Smart Home Appliances, Operating Method Of Thereof, And Voice Recognition System Using The Smart Home Appliances

LEE; Juwan ; et al.

U.S. patent application number 16/289558 was filed with the patent office on 2019-02-28 and published on 2019-08-29 for smart home appliances, operating method of thereof, and voice recognition system using the smart home appliances. The applicant listed for this patent is LG ELECTRONICS INC. Invention is credited to Lagyoung Kim, Juwan LEE, Daegeun Seo, Taedong Shin.

| Application Number | 16/289558 |

| Publication Number | 20190267004 |

| Family ID | 53371410 |

| Filed Date | 2019-02-28 |

| United States Patent Application | 20190267004 |

| Kind Code | A1 |

| LEE; Juwan ; et al. | August 29, 2019 |

SMART HOME APPLIANCES, OPERATING METHOD OF THEREOF, AND VOICE RECOGNITION SYSTEM USING THE SMART HOME APPLIANCES

Abstract

Provided is a smart home appliance. The smart home appliance includes: a voice input unit collecting a voice; a voice recognition unit recognizing a text corresponding to the voice collected through the voice input unit; a capturing unit collecting an image for detecting a user's visage or face; a memory unit mapping the text recognized by the voice recognition unit and a setting function and storing the mapped information; and a control unit determining whether to perform a voice recognition service on the basis of at least one information of image information collected by the capturing unit and voice information collected by the voice input unit.

| Field | Value |
|---|---|
| Inventors: | LEE; Juwan (Seoul, KR); Kim; Lagyoung (Seoul, KR); Shin; Taedong (Seoul, KR); Seo; Daegeun (Seoul, KR) |
| Applicant: | LG ELECTRONICS INC. |
| Family ID: | 53371410 |
| Appl. No.: | 16/289558 |
| Filed: | February 28, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15103528 | Jun 10, 2016 | 10269344 |
| PCT/KR2014/010536 | Nov 4, 2014 | |
| 16289558 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 9/00302 20130101; G10L 13/033 20130101; G10L 2015/088 20130101; H04L 12/2803 20130101; G06F 3/167 20130101; G10L 15/26 20130101; G10L 21/0208 20130101; G10L 15/22 20130101; G06F 3/012 20130101; G10L 15/00 20130101; G10L 2015/227 20130101; G10L 25/63 20130101; G06K 9/00228 20130101; G10L 2015/223 20130101; G10L 13/027 20130101; G10L 15/08 20130101; G10L 25/84 20130101; G10L 2015/226 20130101; G10L 15/24 20130101; G10L 15/25 20130101; H04L 12/282 20130101; G10L 17/24 20130101 |

| International Class: | G10L 15/22 20060101 G10L015/22; G10L 15/08 20060101 G10L015/08; G10L 25/63 20060101 G10L025/63; G06K 9/00 20060101 G06K009/00; H04L 12/28 20060101 H04L012/28; G10L 21/0208 20060101 G10L021/0208; G10L 13/033 20060101 G10L013/033; G10L 17/24 20060101 G10L017/24; G10L 25/84 20060101 G10L025/84; G10L 15/25 20060101 G10L015/25; G06F 3/16 20060101 G06F003/16; G06F 3/01 20060101 G06F003/01 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Dec 11, 2013 | KR | 10-2013-0153713 |

Claims

1-30. (canceled)

31. A smart home appliance comprising: a voice input unit collecting a voice; a voice recognition unit recognizing a text corresponding to the voice collected through the voice input unit; a capturing unit collecting an image for detecting a user's visage; a memory unit mapping the text recognized by the voice recognition unit and a setting function and storing the mapped information, and storing a keyword information that a user may input to start a voice recognition service; and a control unit determining whether to perform a voice recognition service on the basis of at least one information of image information collected by the capturing unit and voice information collected by the voice input unit, and a region recognition unit determining a user's region on the basis of information on the voice collected through the voice input unit; and an output unit outputting region customized information on the basis of information on a region determined by the region recognition unit and information on the setting function, wherein the control unit comprises a face detection unit recognizing that a user is in a staring state for voice input when image information on a user's visage is collected for more than a setting time through the capturing unit, and wherein the control unit determines that a voice recognition service standby state is entered when it is recognized that there is keyword information in a voice through the voice input unit and a user is in the staring state through the face detection unit.

32. The smart home appliance according to claim 31, further comprising: a filter unit removing a noise sound from the voice inputted through the voice input unit; and a memory unit mapping voice information related to an operation of the smart home appliance and voice information unrelated to an operation of the smart home appliance in the voice inputted through the voice input unit and storing the mapped information.

33. The smart home appliance according to claim 31, wherein the setting function comprises a plurality of functions divided according to regions; and the region customized information including one function matching information on the region among the plurality of functions is outputted through the output unit.

34. The smart home appliance according to claim 31, wherein the output unit outputs the region customized information by using a dialect in the region determined by the region recognition unit.

35. The smart home appliance according to claim 31, wherein the output unit outputs a keyword for security setting and the voice input unit sets a reply word corresponding to the keyword.

36. A smart home appliance comprising: a voice input unit collecting a voice; a voice recognition unit recognizing a text corresponding to the voice collected through the voice input unit; a capturing unit collecting an image for detecting a user's visage; a memory unit mapping the text recognized by the voice recognition unit and a setting function and storing the mapped information, and storing a keyword information that a user may input to start a voice recognition service; and a control unit determining whether to perform a voice recognition service on the basis of at least one information of image information collected by the capturing unit and voice information collected by the voice input unit, and an emotion recognition unit and an output unit, wherein the voice recognition unit recognizes a text corresponding to first voice information in the voice collected through the voice input unit, and the emotion recognition unit extracts a user's emotion on the basis of second voice information in the voice collected through the voice input unit; and the output unit outputs user customized information on information on a user's emotion determined by the emotion recognition unit and information on the setting function, and wherein the control unit comprises a face detection unit recognizing that a user is in a staring state for voice input when image information on a user's visage is collected for more than a setting time through the capturing unit, and wherein the control unit determines that a voice recognition service standby state is entered when it is recognized that there is keyword information in a voice through the voice input unit and a user is in the staring state through the face detection unit.

37. The smart home appliance according to claim 36, wherein the first voice information comprises a language element in the collected voice; and the second voice information comprises a non-language element related to a user's emotion.

38. The smart home appliance according to claim 36, wherein the emotion recognition unit comprises a database where information on user's voice characteristics and information on an emotion state are mapped; and the information on the user's voice characteristics comprises information on a speech spectrum having characteristics for each user's emotion.

39. The smart home appliance according to claim 36, wherein the setting function comprises a plurality of functions to be recommended or selected; and the user customized information including one function matching the information on the user's emotion among the plurality of functions is outputted through the output unit.

40. A smart home appliance comprising: a voice input unit collecting a voice; a voice recognition unit recognizing a text corresponding to the voice collected through the voice input unit; a capturing unit collecting an image for detecting a user's visage; a memory unit mapping the text recognized by the voice recognition unit and a setting function and storing the mapped information, and storing a keyword information that a user may input to start a voice recognition service; and a control unit determining whether to perform a voice recognition service on the basis of at least one information of image information collected by the capturing unit and voice information collected by the voice input unit, and a position information recognition unit recognizing position information; and an output unit outputting the information on the setting function on the basis of position information recognized by the position information recognition unit, and wherein the control unit comprises a face detection unit recognizing that a user is in a staring state for voice input when image information on a user's visage is collected for more than a setting time through the capturing unit, and wherein the control unit determines that a voice recognition service standby state is entered when it is recognized that there is keyword information in a voice through the voice input unit and a user is in the staring state through the face detection unit.

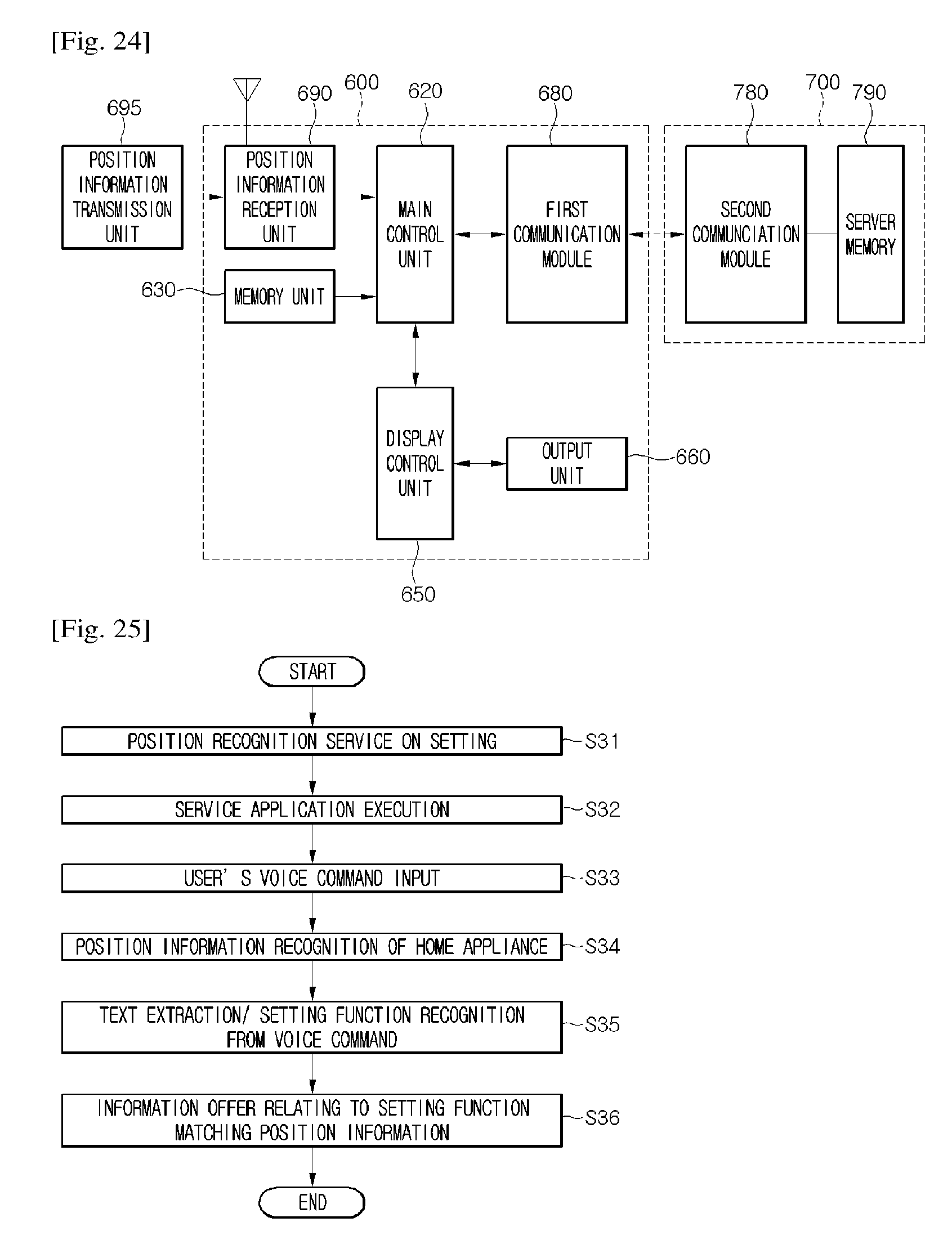

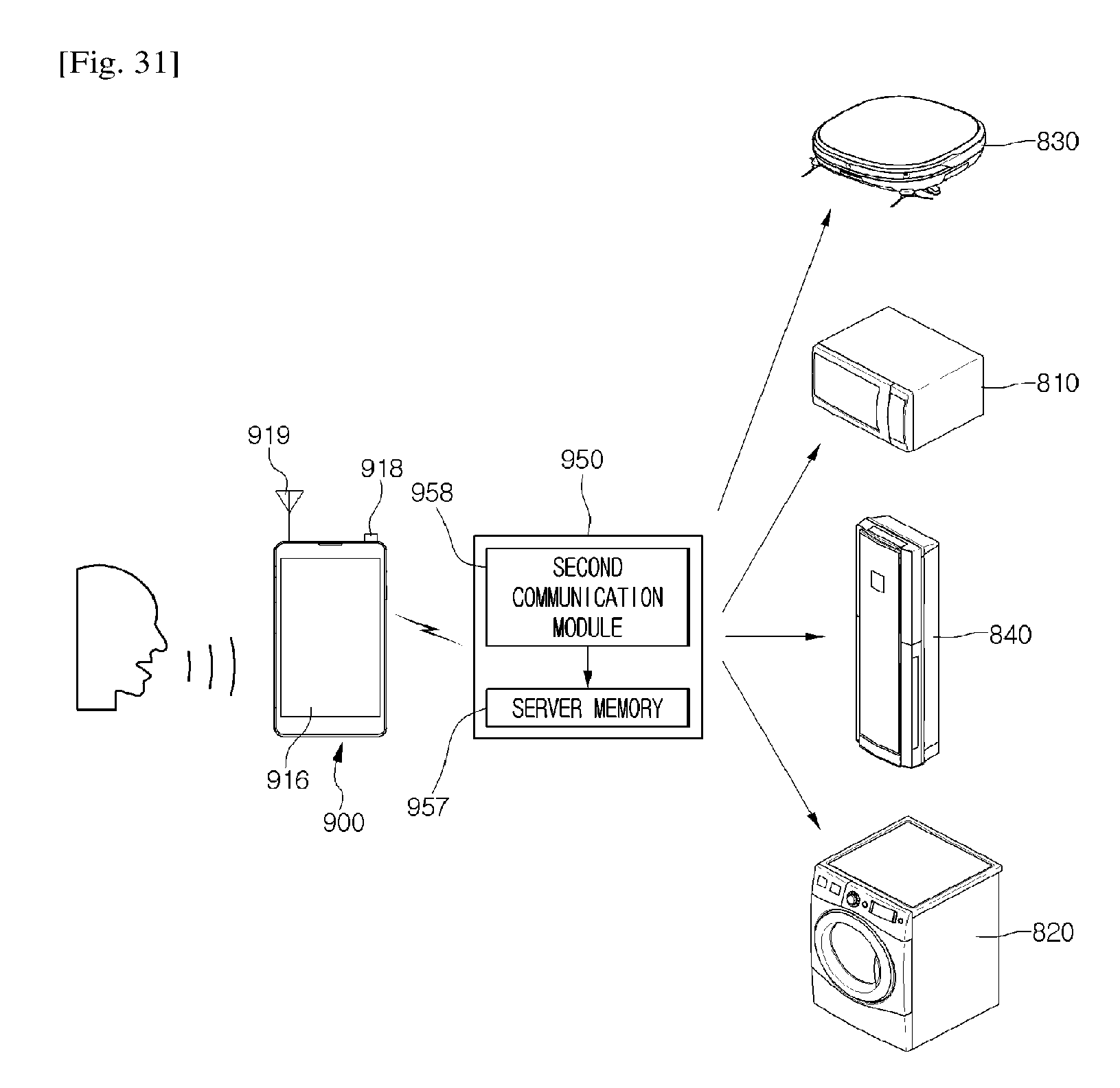

41. The smart home appliance according to claim 40, wherein the position information recognition unit comprises: a GPS reception unit receiving a position coordinate from a position information transmission unit; and a first communication module communicably connected to a second communication module equipped in a server.

42. The smart home appliance according to claim 40, wherein the output unit comprises a voice output unit outputting the information on the setting function as a voice, by using the position recognized by the position information recognition unit or a dialect used in a region.

43. The smart home appliance according to claim 40, wherein the output unit outputs information optimized for a region recognized by the position information recognition unit among a plurality of information on the setting function.

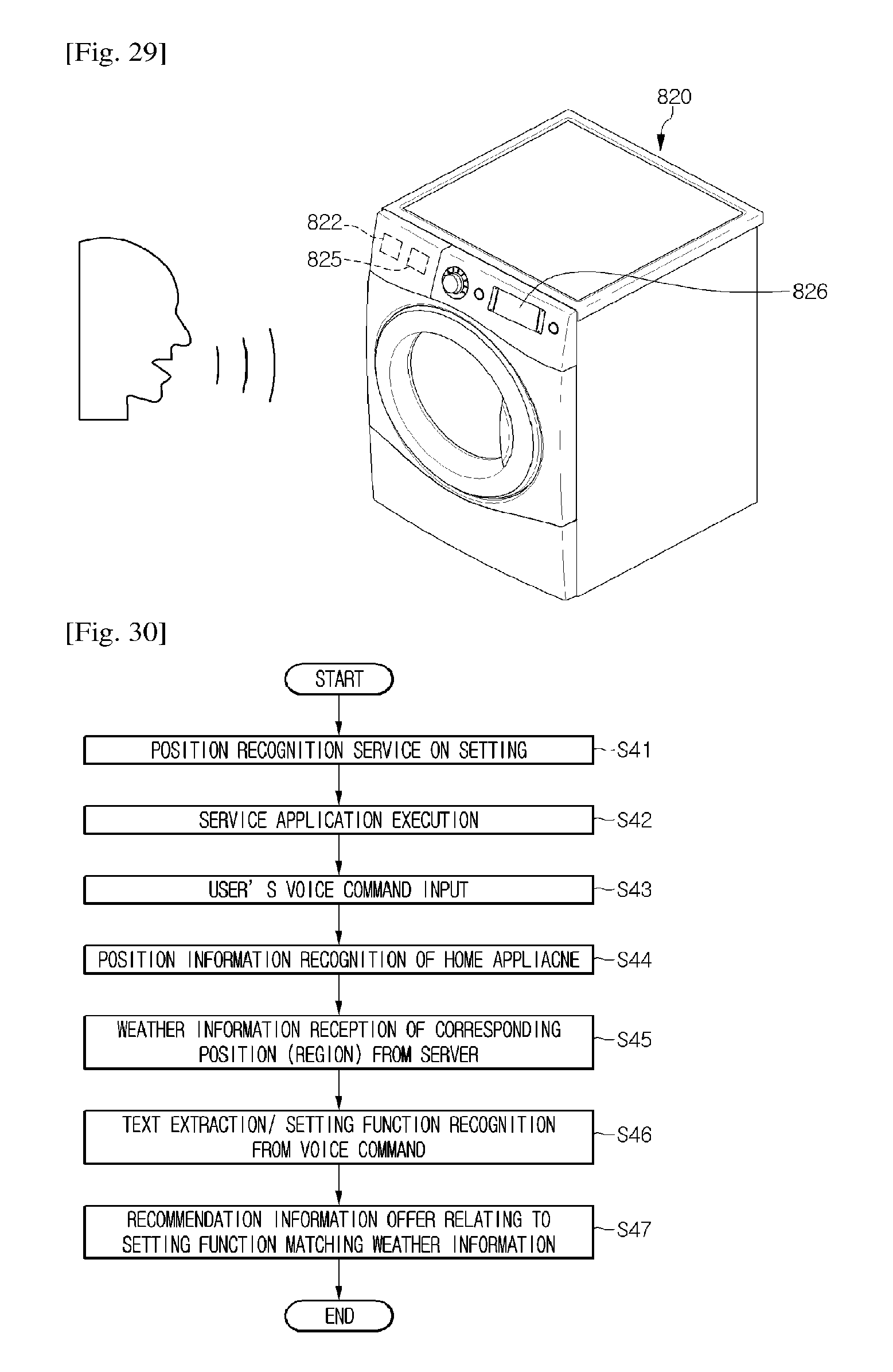

44. The smart home appliance according to claim 40, wherein the position information recognized by the position information recognition unit comprises weather information.

45. An operating method of a smart home appliance comprising: a voice input unit collecting a voice; a voice recognition unit recognizing a text corresponding to the voice collected through the voice input unit; a capturing unit collecting an image for detecting a user's visage; a memory unit mapping the text recognized by the voice recognition unit and a setting function and storing the mapped information, and storing a keyword information that a user may input to start a voice recognition service; and a control unit determining whether to perform a voice recognition service on the basis of at least one information of image information collected by the capturing unit and voice information collected by the voice input unit, and wherein the control unit comprises a face detection unit recognizing that a user is in a staring state for voice input when image information on a user's visage is collected for more than a setting time through the capturing unit, and wherein the control unit determines that a voice recognition service standby state is entered when it is recognized that there is keyword information in a voice through the voice input unit and a user is in the staring state through the face detection unit, and the method comprising: collecting a voice through a voice input unit; recognizing whether keyword information is included in the collected voice; collecting image information on a user's visage through a capturing unit equipped in the smart home appliance; and entering a standby state of a voice recognition service on the basis of the image information on the user's visage.

46. The method according to claim 45, further comprising performing a security setting, wherein the performing of the security setting comprises: outputting a predetermined key word; and inputting a reply word in response to the outputted key word.

47. The method according to claim 45, further comprising: extracting a user's emotion state on the basis of information on the collected voice; and recommending an operation mode on the basis of information on the user's emotion state.

48. The method according to claim 45, further comprising: recognizing an installation position of the smart home appliance through a position information recognition unit; and driving the smart home appliance on the basis of information on the installation position.

49. The method according to claim 48, wherein the recognizing of the installation position of the smart home appliance comprises receiving GPS coordinate information from a GPS satellite or a communication base station.

50. The method according to claim 48, wherein the recognizing of the installation position of the smart home appliance comprises checking a communication address as a first communication module equipped in the smart home appliance is connected to a second communication module equipped in a server.

Description

TECHNICAL FIELD

[0001] The present disclosure relates to smart home appliances, an operating method thereof, and a voice recognition system using the smart home appliances.

BACKGROUND ART

[0002] Home appliances, as electronic products equipped in homes, include refrigerators, air conditioners, cookers, and vacuum cleaners. Conventionally, such home appliances are operated either by approaching and directly manipulating them or by controlling them remotely through a remote controller.

[0003] However, with recent developments in communication technology, a technique has been introduced in which a command for operating a home appliance is inputted by voice and the home appliance recognizes the inputted voice content and operates accordingly.

[0004] FIG. 1 is a view illustrating a configuration of a conventional home appliance and its operating method.

[0005] A conventional home appliance includes a voice recognition unit 2, a control unit 3, a memory 4, and a driving unit 5.

[0006] When a user speaks a specific command, the home appliance 1 collects the spoken voice and interprets the collected voice by using the voice recognition unit 2.

[0007] As an interpretation result of the collected voice, a text corresponding to the voice may be extracted. The control unit 3 compares extracted first text information and second text information stored in the memory 4 to determine whether the text is matched.

[0008] When the first and second text information matches, the control unit 3 may recognize a predetermined function of the home appliance 1 corresponding to the second text information.

[0009] Then, the control unit 3 may operate the driving unit 5 on the basis of the recognized function.
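The conventional flow in paragraphs [0006] to [0009] can be illustrated with a minimal sketch. It assumes the recognized text is already available as a string; the table `STORED_COMMANDS`, the function names, and the `drive` callback are hypothetical and are not taken from the disclosure.

```python
from typing import Callable, Dict, Optional

# Hypothetical stored mapping: "second text information" -> appliance function.
STORED_COMMANDS: Dict[str, str] = {
    "turn on": "power_on",
    "turn off": "power_off",
}


def match_command(recognized_text: str,
                  stored: Dict[str, str] = STORED_COMMANDS) -> Optional[str]:
    """Return the function mapped to the recognized text, or None if no entry matches."""
    key = recognized_text.strip().lower()
    return stored.get(key)


def operate(recognized_text: str, drive: Callable[[str], None]) -> bool:
    """Call the driving unit only when the recognized text matches a stored entry."""
    function = match_command(recognized_text)
    if function is None:
        return False
    drive(function)
    return True
```

Because this flow matches on text alone, any sound that happens to be recognized as a stored phrase would drive the appliance, which is the weakness described in paragraph [0010].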

[0010] However, when such a conventional home appliance is in use, noise generated in the surroundings may be wrongly recognized as a voice. Additionally, even when a user simply talks with other people near a home appliance without any intention of speaking a command for voice recognition, the speech may also be wrongly recognized. That is, the home appliance malfunctions.

DISCLOSURE OF INVENTION

Technical Problem

[0011] Embodiments provide a smart home appliance with improved voice recognition rate, an operation method thereof, and a voice recognition system using the smart home appliance.

Solution to Problem

[0012] In one embodiment, a smart home appliance includes: a voice input unit collecting a voice; a voice recognition unit recognizing a text corresponding to the voice collected through the voice input unit; a capturing unit collecting an image for detecting a user's visage or face; a memory unit mapping the text recognized by the voice recognition unit and a setting function and storing the mapped information; and a control unit determining whether to perform a voice recognition service on the basis of at least one information of image information collected by the capturing unit and voice information collected by the voice input unit.

[0013] The control unit may include a face detection unit recognizing that a user is in a staring state for voice input when image information on a user's visage or face is collected for more than a setting time through the capturing unit.

[0014] The control unit may determine that a voice recognition service standby state is entered when it is recognized that there is keyword information in a voice through the voice input unit and a user is in the staring state through the face detection unit.
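The two conditions in paragraphs [0013] and [0014] can be sketched as follows. This is only an illustration under stated assumptions: face detection is reduced to a per-frame boolean, and the keyword value, the 2-second setting time, and the class and method names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class StandbyDecider:
    keyword: str = "air conditioner"  # hypothetical keyword information
    setting_time_s: float = 2.0       # hypothetical "setting time" in seconds

    def is_staring(self, frames: Iterable[Tuple[float, bool]]) -> bool:
        """frames holds (timestamp_seconds, face_detected) pairs in time order.

        Returns True once a face has been seen without interruption for more
        than setting_time_s.
        """
        start = None
        for timestamp, face_detected in frames:
            if not face_detected:
                start = None  # the run of face frames is broken
                continue
            if start is None:
                start = timestamp
            if timestamp - start > self.setting_time_s:
                return True
        return False

    def should_enter_standby(self, recognized_text: str,
                             frames: Iterable[Tuple[float, bool]]) -> bool:
        """Standby requires both the keyword and the staring state."""
        has_keyword = self.keyword in recognized_text.lower()
        return has_keyword and self.is_staring(frames)
```

Requiring both signals means that a keyword overheard in conversation, or a face that merely passes in front of the capturing unit, does not start the service on its own.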

[0015] The smart home appliance may further include: a filter unit removing a noise sound from the voice inputted through the voice input unit; and a memory unit mapping voice information related to an operation of the smart home appliance and voice information unrelated to an operation of the smart home appliance in advance in the voice inputted through the voice input unit and storing the mapped information.

[0016] The smart home appliance may further include: a region recognition unit determining a user's region on the basis of information on the voice collected through the voice input unit; and an output unit outputting region customized information on the basis of information on a region determined by the region recognition unit and information on the setting function.

[0017] The setting function may include a plurality of functions divided according to regions; and the region customized information including one function matching information on the region among the plurality of functions is outputted through the output unit.

[0018] The output unit may output the region customized information by using a dialect in the region determined by the region recognition unit.
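A minimal sketch of the selection described in paragraphs [0016] to [0018] follows. It assumes the region recognition unit has already produced a region label; how the region is inferred from the voice is not modeled. The region labels, function names, and message templates are hypothetical placeholders.

```python
from typing import Dict

# Hypothetical per-region variants of one setting function.
REGION_FUNCTIONS: Dict[str, Dict[str, str]] = {
    "cooling": {
        "region_a": "cooling_profile_a",
        "region_b": "cooling_profile_b",
    },
}

# Hypothetical message templates standing in for regional dialects.
DIALECT_TEMPLATES: Dict[str, str] = {
    "region_a": "[region_a dialect] Starting {function}.",
    "region_b": "[region_b dialect] Starting {function}.",
}
DEFAULT_TEMPLATE = "Starting {function}."


def region_customized_info(setting_function: str, region: str) -> str:
    """Pick the function variant for the region and phrase it with that region's template."""
    variants = REGION_FUNCTIONS.get(setting_function, {})
    function = variants.get(region, setting_function)
    template = DIALECT_TEMPLATES.get(region, DEFAULT_TEMPLATE)
    return template.format(function=function)
```

An unrecognized region falls back to the unmodified function and the default wording in this sketch.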

[0019] The output unit may output a key word for security setting and the voice input unit may set a reply word corresponding to the key word.

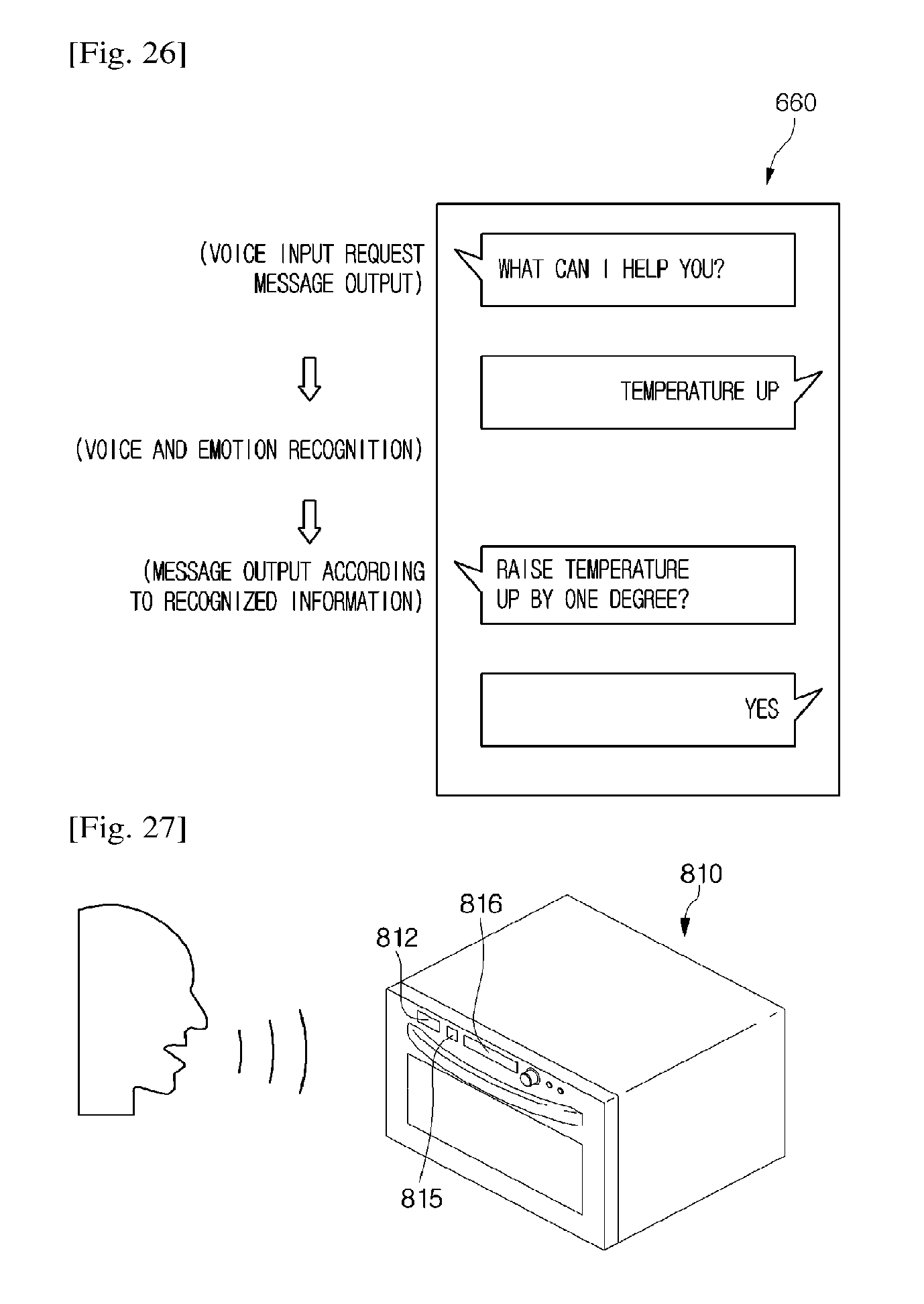

[0020] The smart home appliance may further include an emotion recognition unit and an output unit, wherein the voice recognition unit may recognize a text corresponding to first voice information in the voice collected through the voice input unit; the emotion recognition unit may extract a user's emotion on the basis of second voice information in the voice collected through the voice input unit; and the output unit may output user customized information on information on a user's emotion determined by the emotion recognition unit and information on the setting function.

[0021] The first voice information may include a language element in the collected voice; and the second voice information may include a non-language element related to a user's emotion.

[0022] The emotion recognition unit may include a database where information on user's voice characteristics and information on an emotion state are mapped; and the information on the user's voice characteristics may include information on a speech spectrum having characteristics for each user's emotion.

[0023] The setting function may include a plurality of functions to be recommended or selected; and the user customized information including one function matching the information on the user's emotion among the plurality of functions is outputted through the output unit.
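The mapping in paragraphs [0020] to [0023] can be illustrated with a nearest-match lookup. The disclosure does not specify how the speech spectrum is compared against the database, so the two-value feature vectors, the Euclidean distance, and the recommended mode names below are all assumptions made for illustration.

```python
import math
from typing import Dict, Sequence, Tuple

# Hypothetical database: emotion state -> reference voice-characteristic vector.
EMOTION_DB: Dict[str, Tuple[float, float]] = {
    "happy": (0.8, 0.6),
    "angry": (0.9, 0.1),
    "sad": (0.2, 0.3),
}

# Hypothetical functions to recommend for each emotion state.
RECOMMENDED_FUNCTION: Dict[str, str] = {
    "happy": "normal_mode",
    "angry": "strong_cooling_mode",
    "sad": "soft_breeze_mode",
}


def classify_emotion(features: Sequence[float],
                     db: Dict[str, Tuple[float, float]] = EMOTION_DB) -> str:
    """Return the emotion whose reference vector is closest to the measured features."""
    return min(db, key=lambda emotion: math.dist(features, db[emotion]))


def recommend(features: Sequence[float]) -> str:
    """Map the classified emotion to one function among those to be recommended."""
    return RECOMMENDED_FUNCTION[classify_emotion(features)]
```

The feature vector here stands for the non-language element of the voice; the text of the command would be recognized separately from the language element.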

[0024] The smart home appliance may further include: a position information recognition unit recognizing position information; and an output unit outputting the information on the setting function on the basis of position information recognized by the position information recognition unit.

[0025] The position information recognition unit may include: a GPS reception unit receiving a position coordinate from an external position information transmission unit; and a first communication module communicably connected to a second communication module equipped in an external server.

[0026] The output unit may include a voice output unit outputting the information on the setting function as a voice, by using the position recognized by the position information recognition unit or a dialect used in a region.

[0027] The output unit may output information optimized for a region recognized by the position information recognition unit among a plurality of information on the setting function.

[0028] The position information recognized by the position information recognition unit may include weather information.

[0029] In another embodiment, an operating method of a smart home appliance includes: collecting a voice through a voice input unit; recognizing whether keyword information is included in the collected voice; collecting image information on a user's visage or face through a capturing unit equipped in the smart home appliance; and entering a standby state of a voice recognition service on the basis of the image information on the user's visage or face.

[0030] When the image information on the user's visage or face is collected for more than a setting time, it may be recognized that a user is in a staring state for voice input; and when it is recognized that there is keyword information in the voice and the user is in the staring state for voice input, a standby state of the voice recognition service may be entered.

[0031] The method may further include: determining a user's region on the basis of information on the collected voice; and driving the smart home appliance on the basis of information on the setting function and information on the determined region.

[0032] The method may further include outputting region customized information related to the driving of the smart home appliance on the basis of the information on the determined region.

[0033] The outputting of the region customized information may include outputting a voice or a screen by using a dialect used in the user's region.

[0034] The method may further include performing a security setting, wherein the performing of the security setting may include: outputting a set key word; and inputting a reply word in response to the suggested key word.
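The security setting in paragraph [0034] resembles a key word and reply word exchange, which can be sketched as below. It assumes both words are available as recognized text; the class name, method names, and the normalization step are hypothetical.

```python
from typing import Dict


def _normalize(word: str) -> str:
    """Lower-case and collapse whitespace so recognized text compares consistently."""
    return " ".join(word.lower().split())


class VoiceSecurity:
    """Stores a reply word per key word and checks later replies against it."""

    def __init__(self) -> None:
        self._replies: Dict[str, str] = {}

    def set_reply(self, key_word: str, reply_word: str) -> None:
        """Security setting: remember the reply the user spoke for the outputted key word."""
        self._replies[_normalize(key_word)] = _normalize(reply_word)

    def verify(self, key_word: str, spoken_reply: str) -> bool:
        """Return True only when a reply was set for the key word and the spoken reply matches it."""
        expected = self._replies.get(_normalize(key_word))
        return expected is not None and expected == _normalize(spoken_reply)
```

A key word with no stored reply is always rejected in this sketch, so an arbitrary user cannot pass by answering an unregistered prompt.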

[0035] The method may further include: extracting a user's emotion state on the basis of information on the collected voice; and recommending an operation mode on the basis of information on the user's emotion state.

[0036] The method may further include: recognizing an installation position of the smart home appliance through a position information recognition unit; and driving the smart home appliance on the basis of information on the installation position.

[0037] The recognizing of the installation position of the smart home appliance may include receiving GPS coordinate information from a GPS satellite or a communication base station.

[0038] The recognizing of the installation position of the smart home appliance may include checking a communication address as a first communication module equipped in the smart home appliance is connected to a second communication module equipped in a server.

[0039] In yet another embodiment, a voice recognition system includes: a mobile device including a voice input unit receiving a voice; a smart home appliance operating and controlled based on a voice collected through the voice input unit; and a communication module equipped in each of the mobile device and the smart home appliance, wherein the mobile device includes a movement detection unit determining whether to enter a standby state of a voice recognition service in the smart home appliance by detecting a movement of the mobile device.

[0040] The movement detection unit may include an acceleration sensor or a gyro sensor detecting a change in an inclined angle of the mobile device, wherein the voice input unit may be disposed at a lower part of the mobile device; and when a user grips the mobile device and brings the voice input unit close to the mouth for voice input, an angle value detected by the acceleration sensor or the gyro sensor may be reduced.

[0041] The movement detection unit may include an illumination sensor detecting an intensity of an external light collected by the mobile device; and when a user grips the mobile device and brings the voice input unit close to the mouth for voice input, an intensity value of a light detected by the illumination sensor may be increased.
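The movement detection in paragraphs [0040] and [0041] can be sketched as a comparison of two sensor samples. The disclosure presents the tilt sensor and the illumination sensor as alternatives; requiring both here is an assumption, as are the threshold values and all names.

```python
from dataclasses import dataclass


@dataclass
class SensorSample:
    tilt_deg: float  # inclined angle from an acceleration or gyro sensor
    lux: float       # external light intensity from an illumination sensor


def lifted_to_mouth(before: SensorSample, after: SensorSample,
                    min_tilt_drop_deg: float = 20.0,
                    min_lux_gain: float = 50.0) -> bool:
    """Return True when the tilt angle fell and the light intensity rose by at least the thresholds.

    Both thresholds are hypothetical; a real device would need calibration.
    """
    tilt_dropped = before.tilt_deg - after.tilt_deg >= min_tilt_drop_deg
    lux_rose = after.lux - before.lux >= min_lux_gain
    return tilt_dropped and lux_rose
```

When this check passes, the mobile device would signal the smart home appliance to enter the voice recognition standby state through the communication modules.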

[0042] The details of one or more embodiments are set forth in the accompanying drawings and the description below. Other features will be apparent from the description and drawings, and from the claims.

Advantageous Effects of Invention

[0043] According to the present invention, since a user controls an operation of a smart home appliance through a voice, usability may be improved.

[0044] Additionally, a user's face or an action of manipulating a mobile device is recognized, and from this it is determined whether the user intends to speak a voice command, so that voice misrecognition may be prevented.

[0045] Furthermore, when there are a plurality of voice recognition available smart home appliances, a command subject may be recognized by extracting the feature (specific word) of a command voice that a user makes and only a specific electronic product among a plurality of electronic products responds according to the recognized command subject. Therefore, miscommunication may be prevented during an operation of an electronic product.

[0046] Additionally, whether a user speaks in standard language or dialect is recognized, and customized information is provided according to the content or dialect type of the recognized voice, so that user's convenience may be improved.

[0047] Moreover, since a setting on whether to use a voice recognition function of a home appliance and a security setting are possible, an arbitrary user may be prevented from using a corresponding function. Therefore, the reliability of a product operation may be increased.

[0048] In particular, happy, angry, sad, and trembling emotions may be classified from a user's voice by using mapped information of voice characteristics and emotional states, and on the basis of the classified emotion, an operation of a home appliance may be performed or recommended.

[0049] Additionally, since mapping information for distinguishing voice information related to an operation of an air conditioner from voice information related to noise in a user's voice is stored in the air conditioner, the misrecognition of a user's voice may be reduced.

[0050] According to the present invention, since a user controls an operation of a smart home appliance through a voice, usability may be improved. Thus, industrial applicability is remarkable.

BRIEF DESCRIPTION OF DRAWINGS

[0051] FIG. 1 is a view illustrating a configuration of a conventional home appliance and its operating method.

[0052] FIG. 2 is a view illustrating a configuration of an air conditioner as one example of a smart appliance according to a first embodiment of the present invention.

[0053] FIGS. 3 and 4 are block diagrams illustrating a configuration of the air conditioner.

[0054] FIG. 5 is a flowchart illustrating a control method of a smart home appliance according to a first embodiment of the present invention.

[0055] FIG. 6 is a schematic view illustrating a configuration of a voice recognition system according to a second embodiment of the present invention.

[0056] FIG. 7 is a block diagram illustrating a configuration of a voice recognition system according to a second embodiment of the present invention.

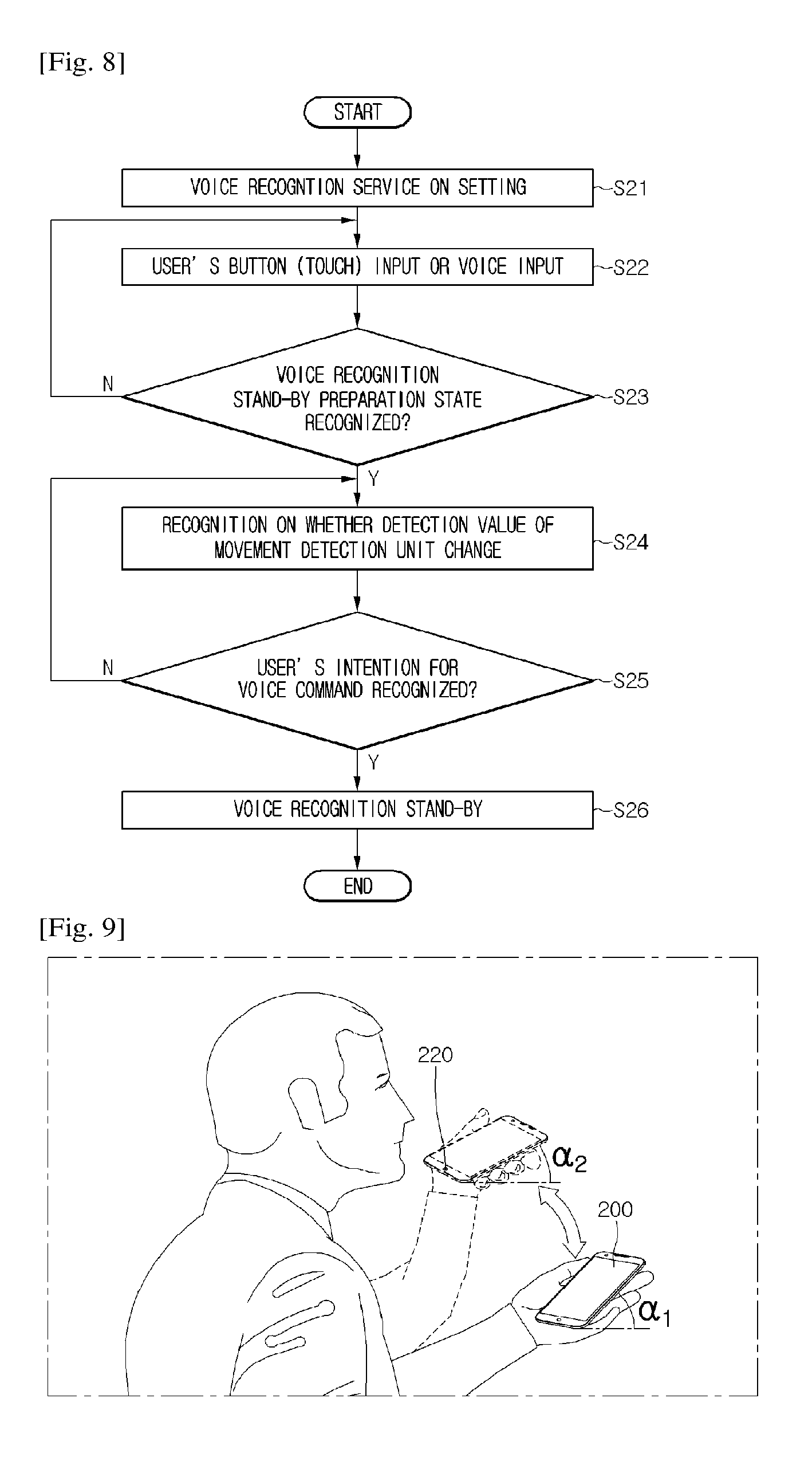

[0057] FIG. 8 is a flowchart illustrating a control method of a smart home appliance according to a first embodiment of the present invention.

[0058] FIG. 9 is a view when a user performs an action for starting a voice recognition by using a mobile device according to a second embodiment of the present invention.

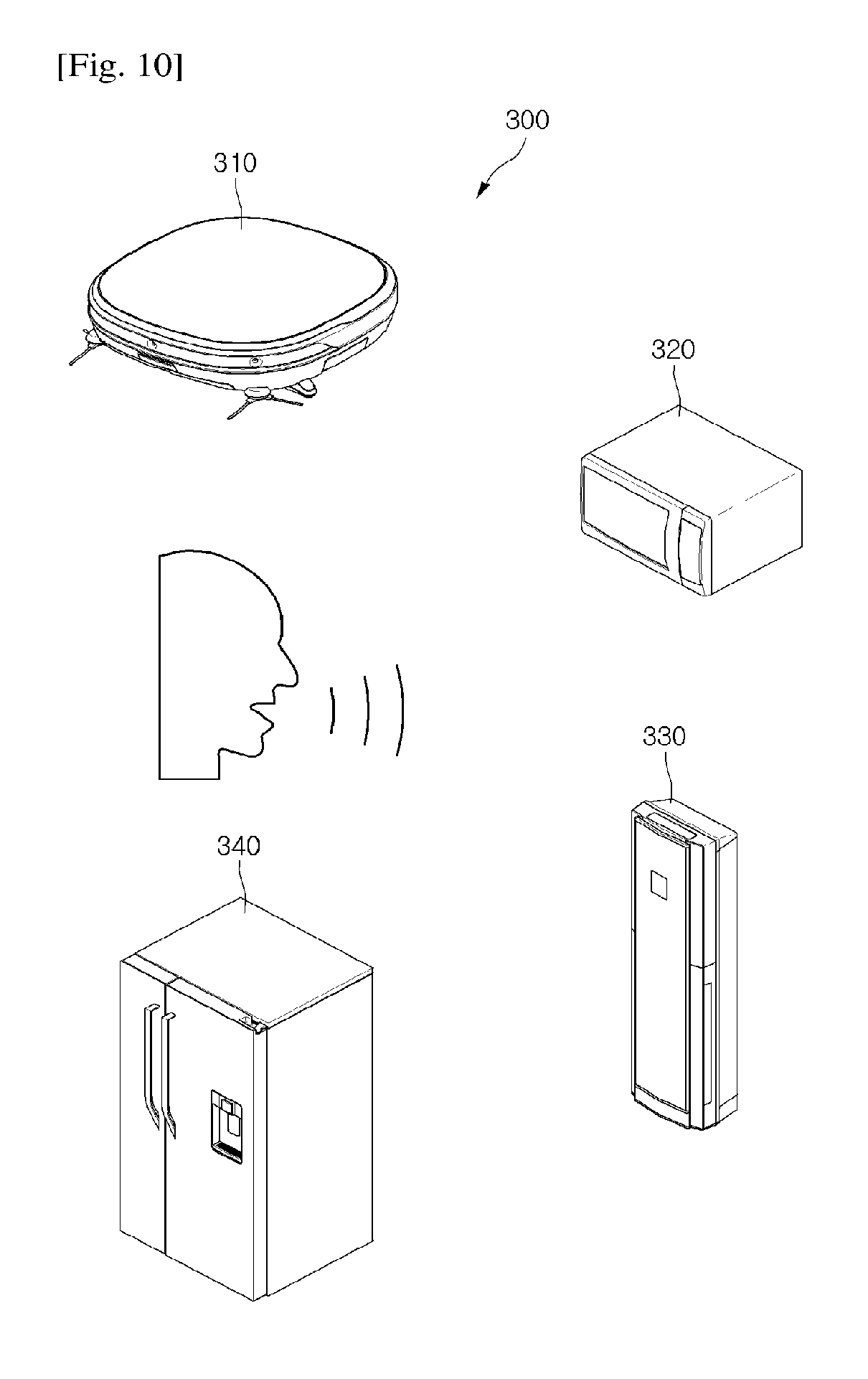

[0059] FIG. 10 is a view illustrating a configuration of a plurality of smart home appliances according to a third embodiment of the present invention.

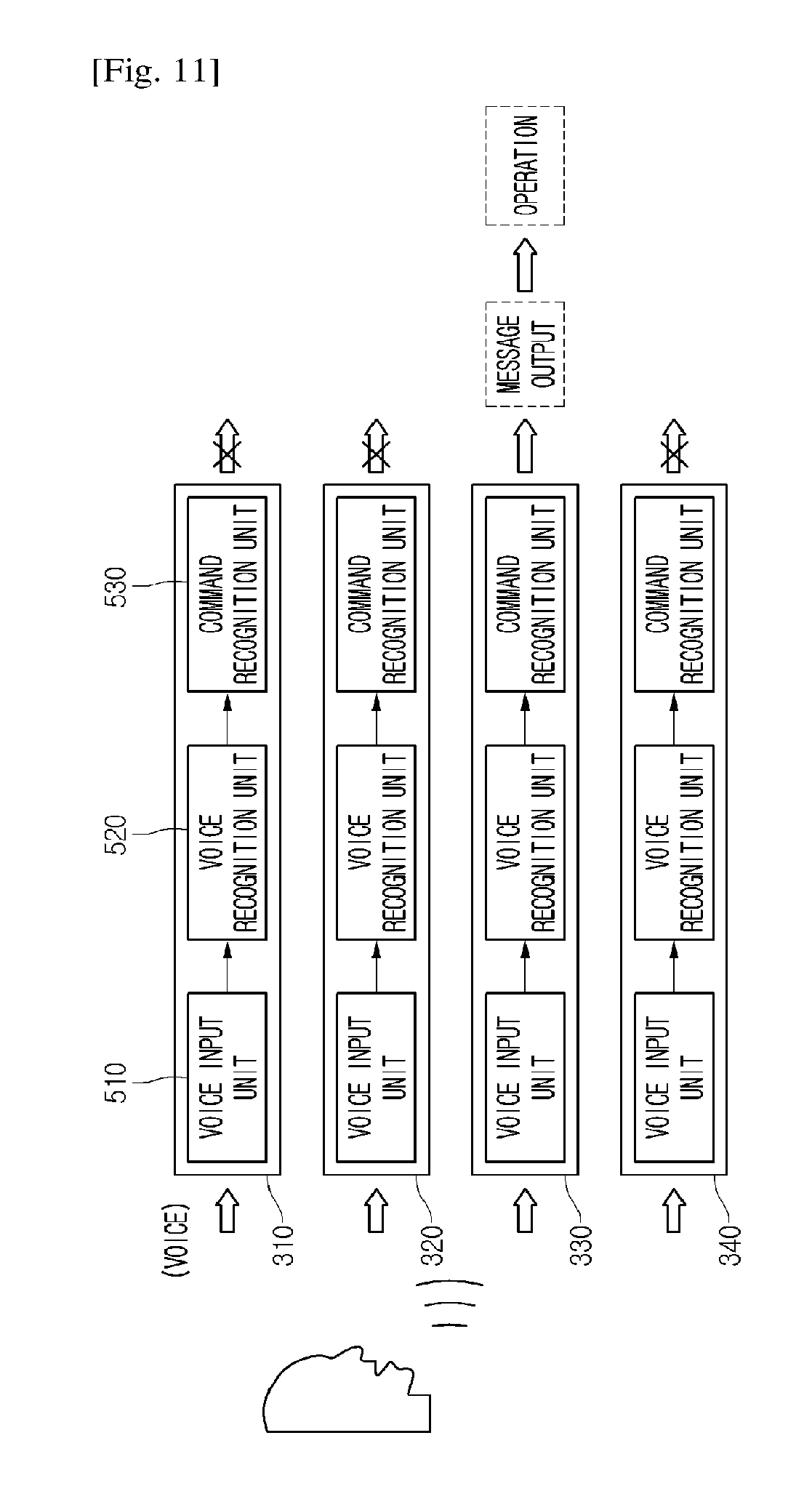

[0060] FIG. 11 is a view when a user makes a voice on a plurality of smart home appliances according to a third embodiment of the present invention.

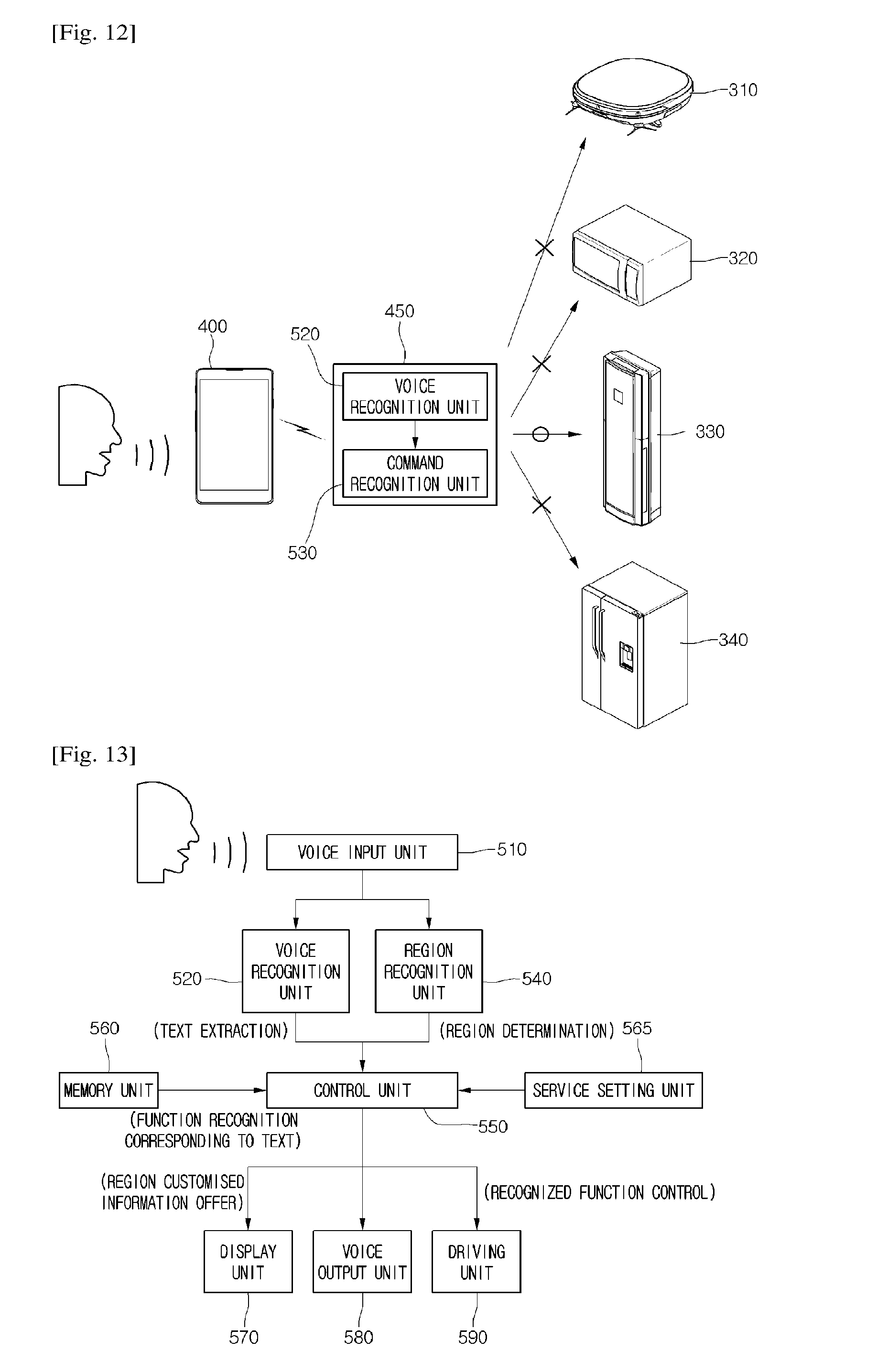

[0061] FIG. 12 is a view when a plurality of smart home appliances operate by using a mobile device according to a fourth embodiment of the present invention.

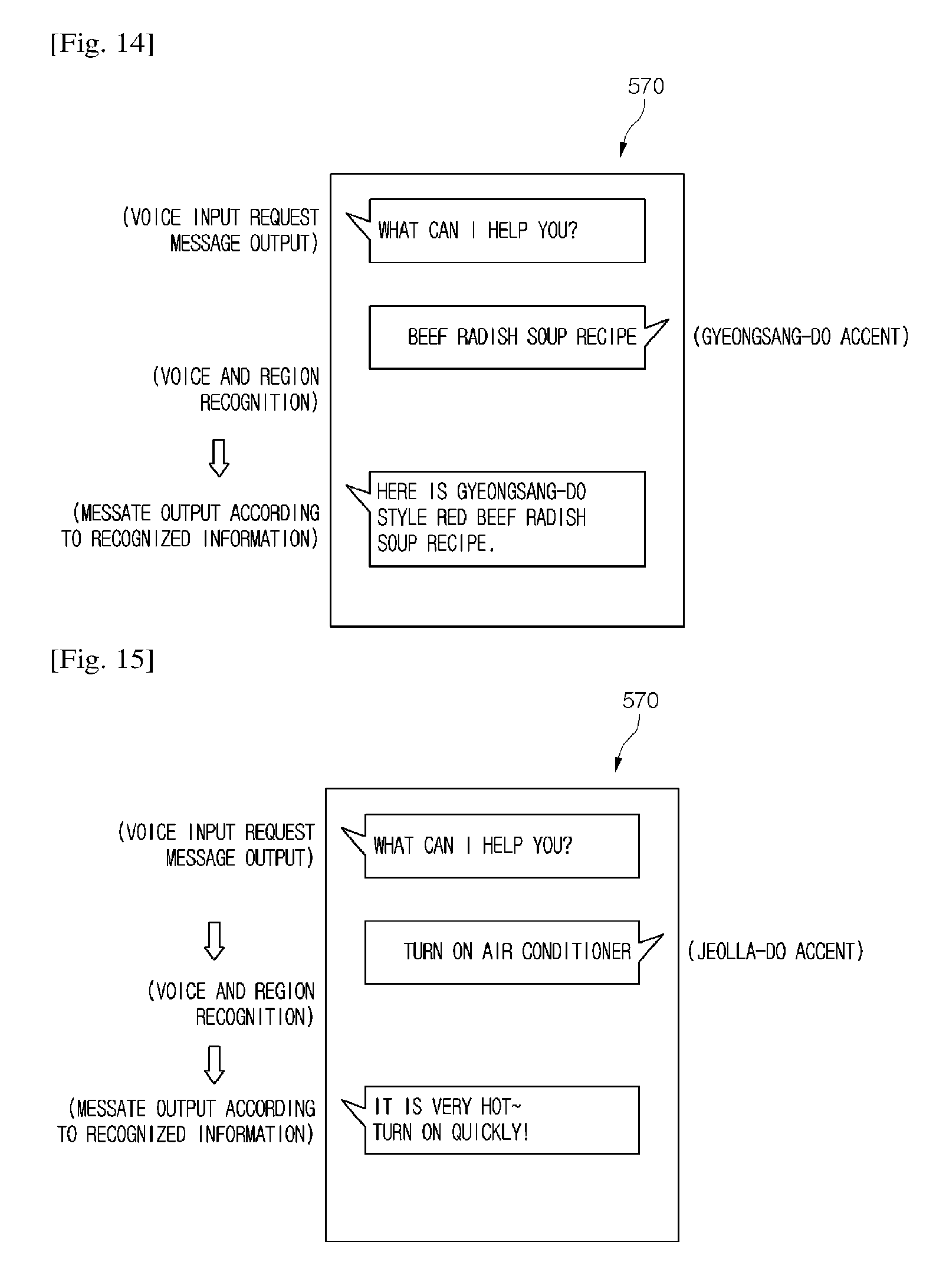

[0062] FIG. 13 is a view illustrating a configuration of a smart home appliance or a mobile device and an operating method thereof according to an embodiment of the present invention.

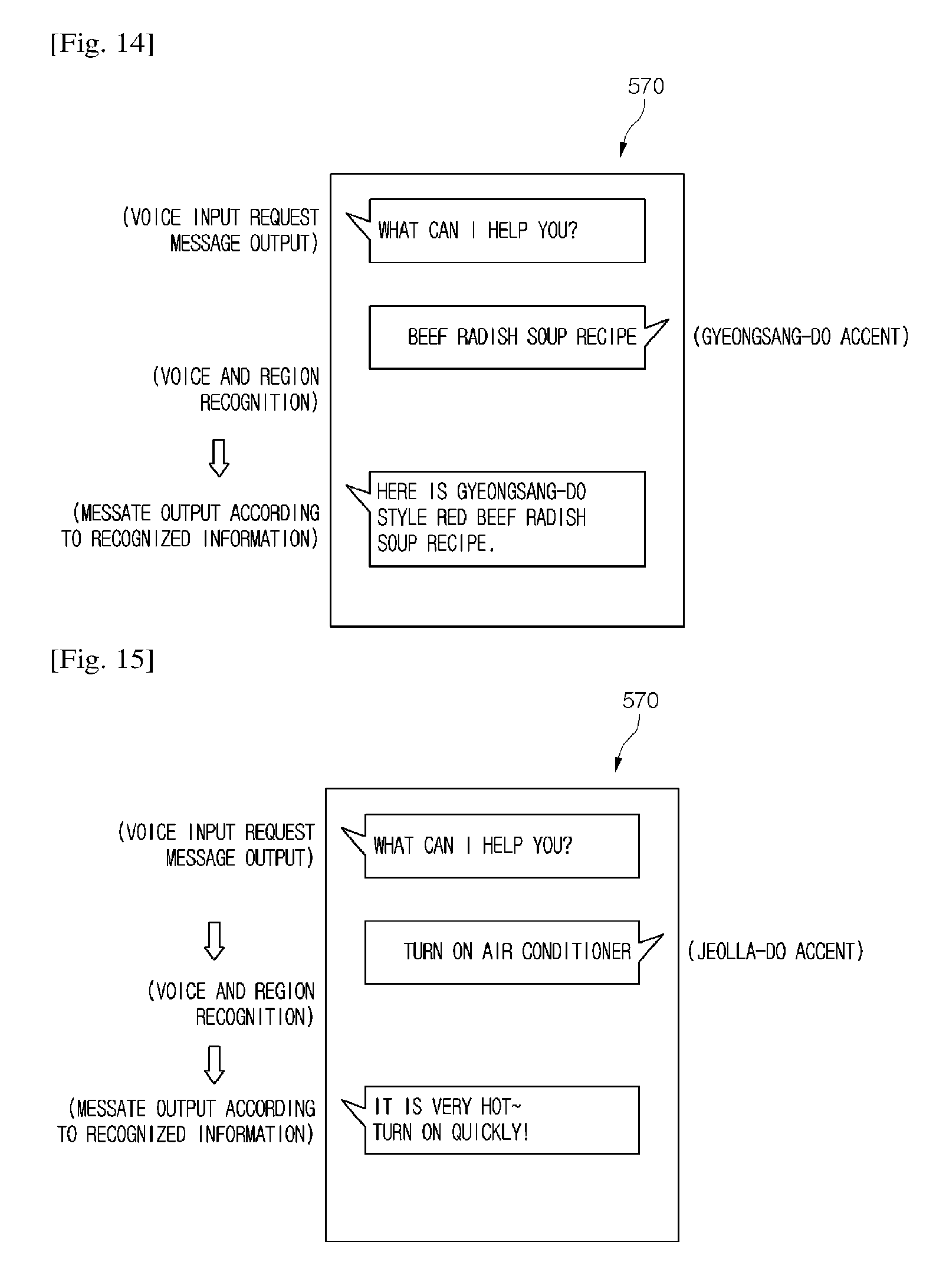

[0063] FIG. 14 is a view illustrating a message output of a display unit according to an embodiment of the present invention.

[0064] FIG. 15 is a view illustrating a message output of a display unit according to another embodiment of the present invention.

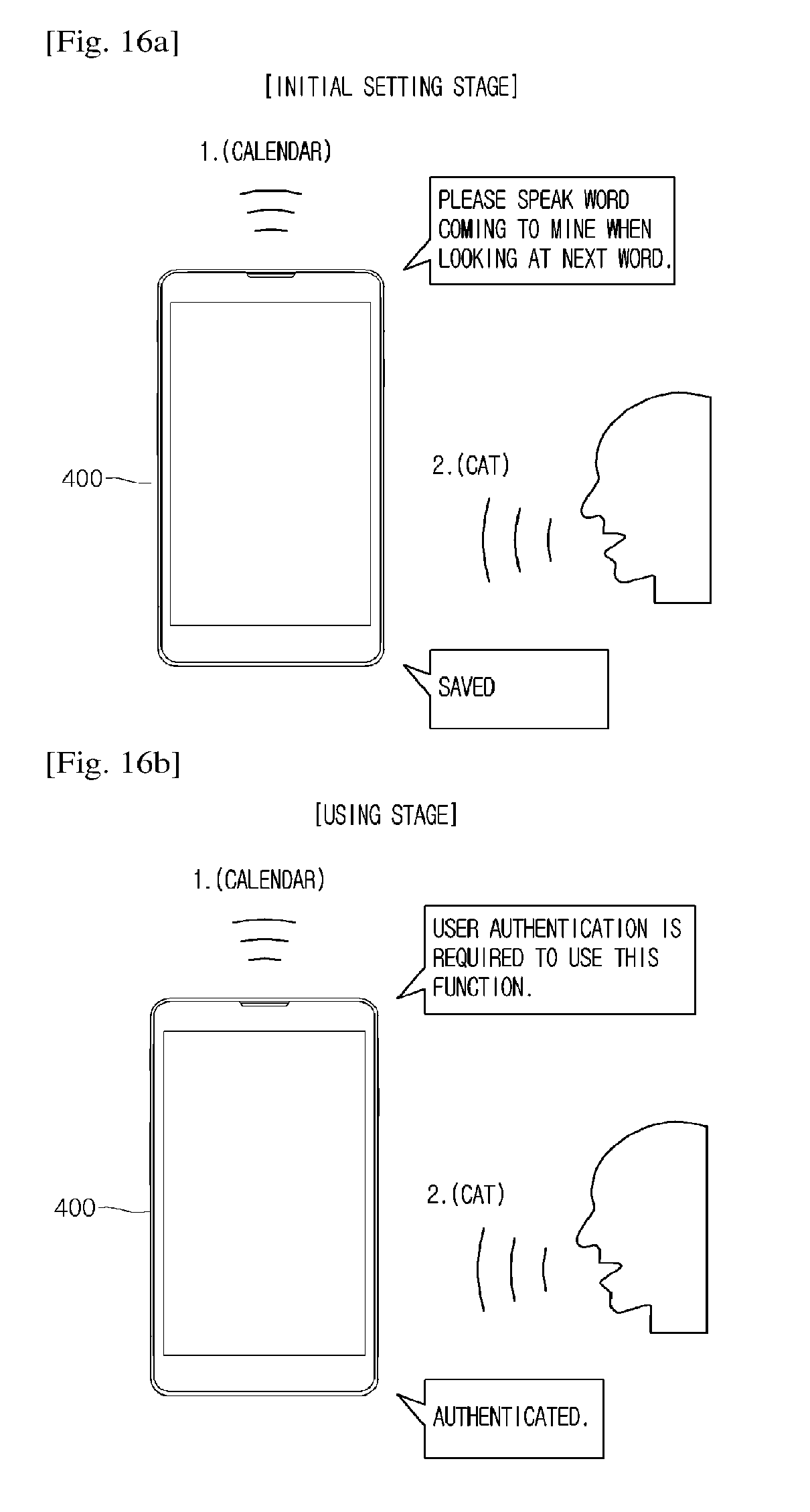

[0065] FIGS. 16A and 16B are views illustrating a security setting for voice recognition function performance according to a fifth embodiment of the present invention.

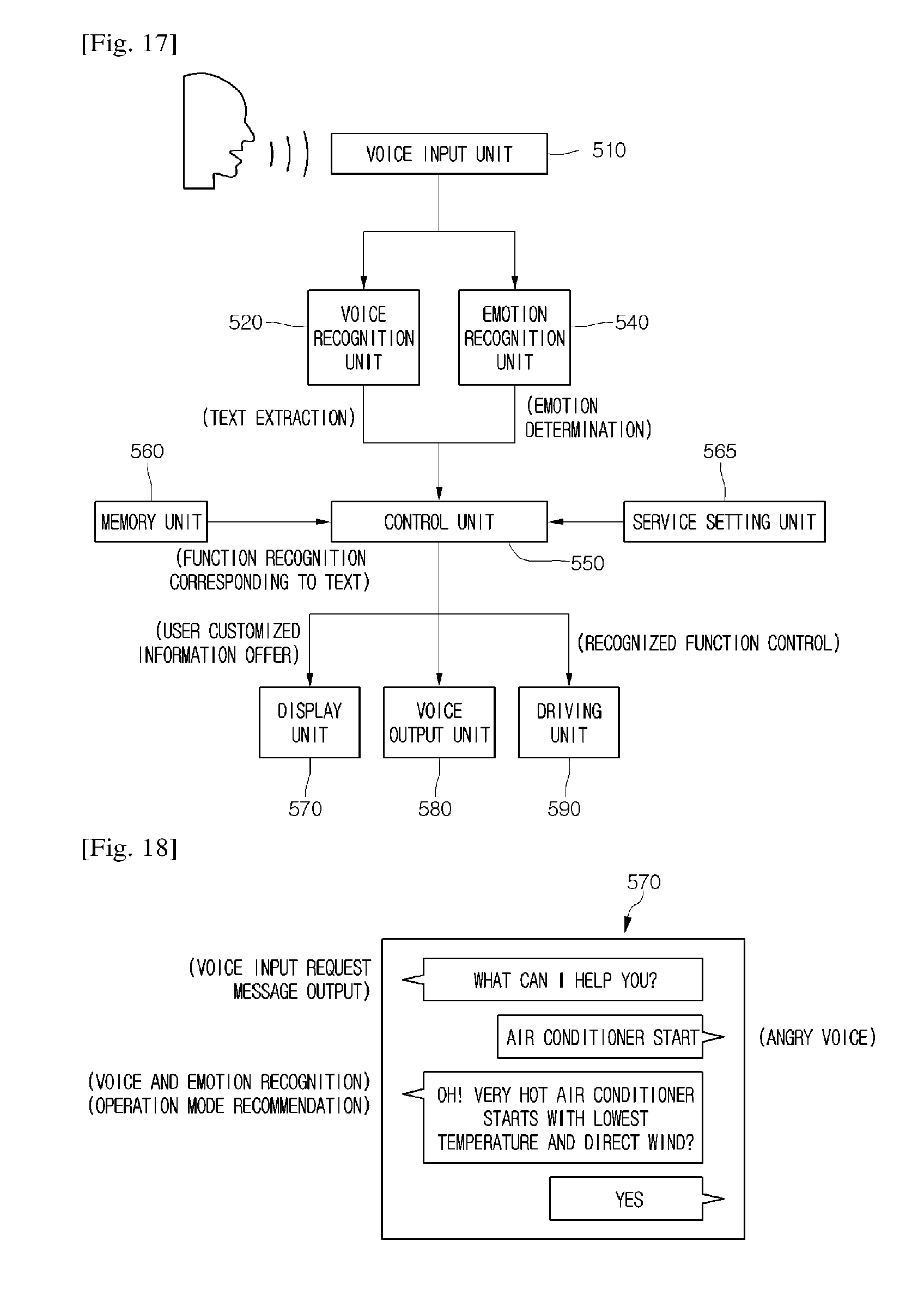

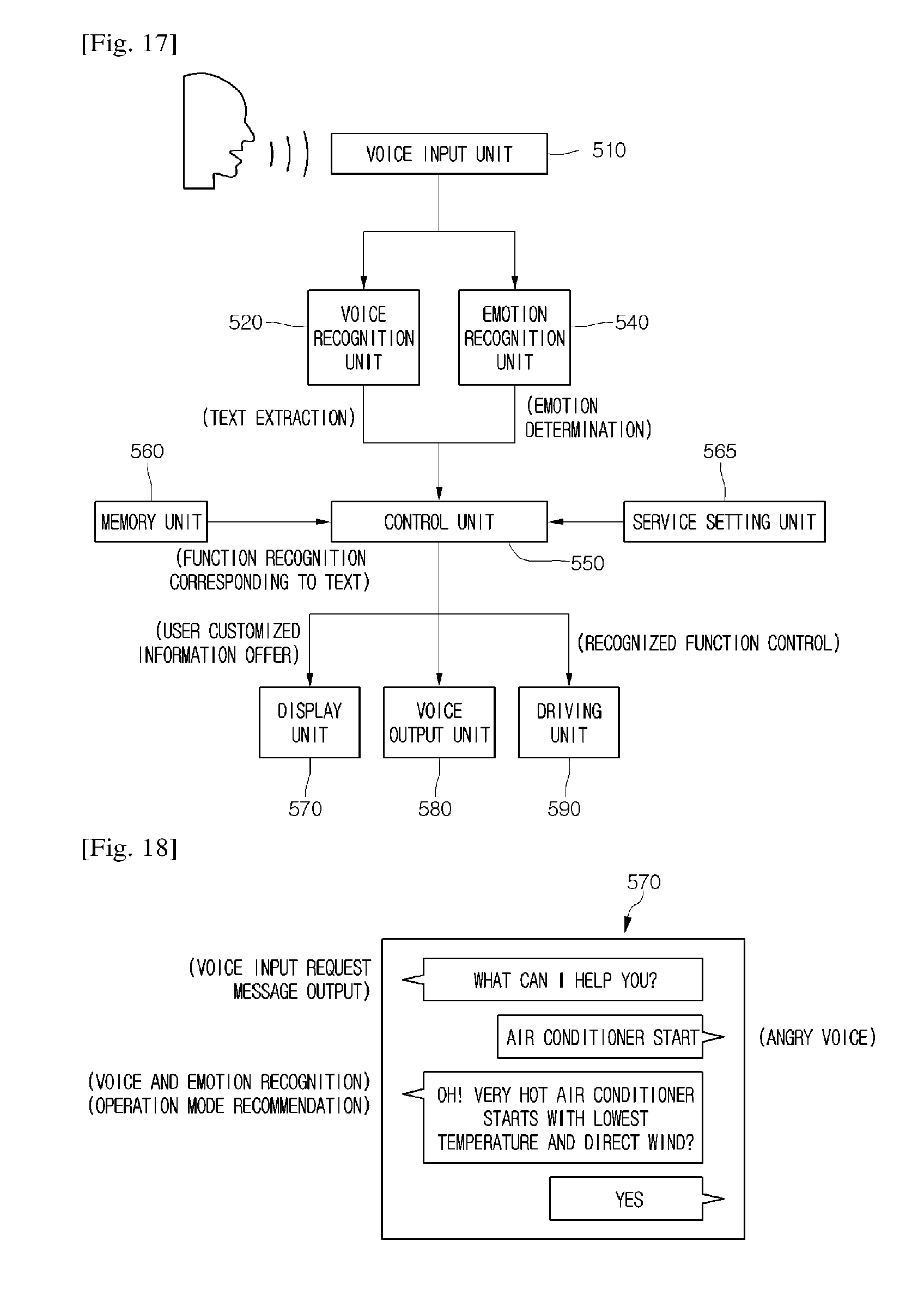

[0066] FIG. 17 is a view illustrating a configuration of a voice recognition system and its operation method according to a sixth embodiment of the present invention.

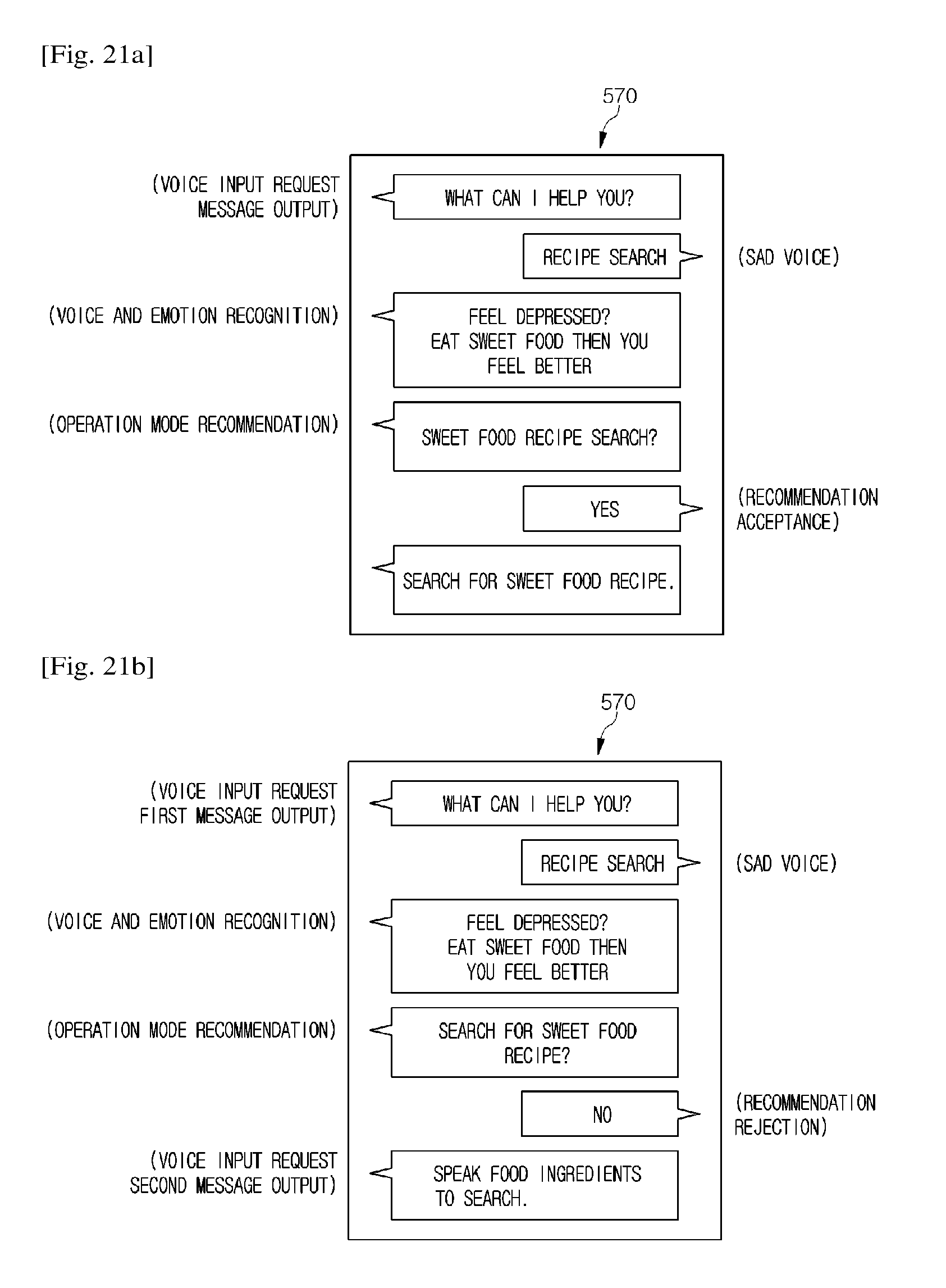

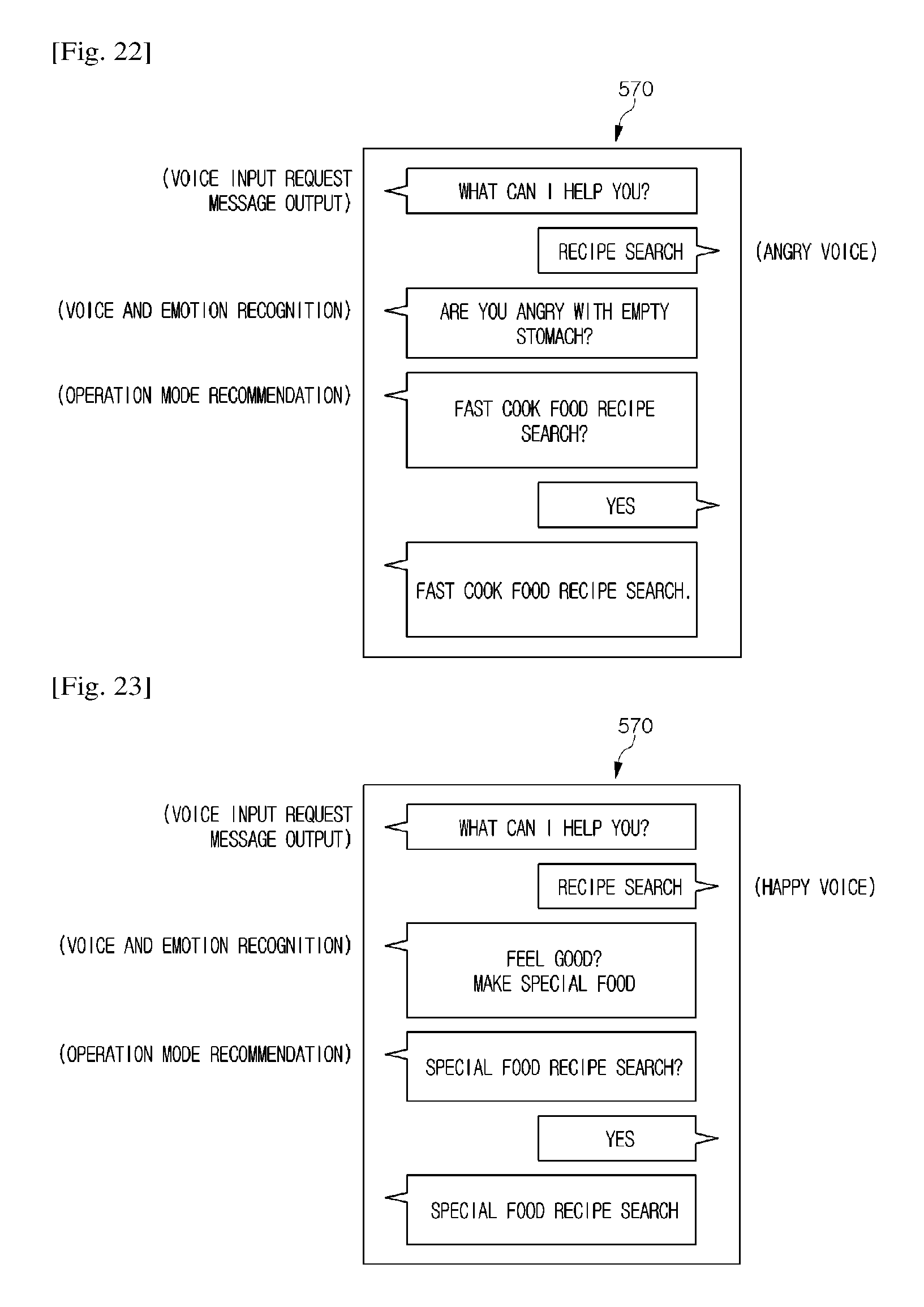

[0067] FIGS. 18 to 20 are views illustrating a message output of a display unit according to a sixth embodiment of the present invention.

[0068] FIGS. 21A to 23 are views illustrating a message output of a display unit according to another embodiment of the present invention.

[0069] FIG. 24 is a block diagram illustrating a configuration of an air conditioner as one example of a smart home appliance according to a seventh embodiment of the present invention.

[0070] FIG. 25 is a flowchart illustrating a control method of a smart home appliance according to a seventh embodiment of the present invention.

[0071] FIG. 26 is a view illustrating a display unit of a smart home appliance.

[0072] FIG. 27 is a view illustrating a configuration of a cooker as another example of a smart home appliance according to a seventh embodiment of the present invention.

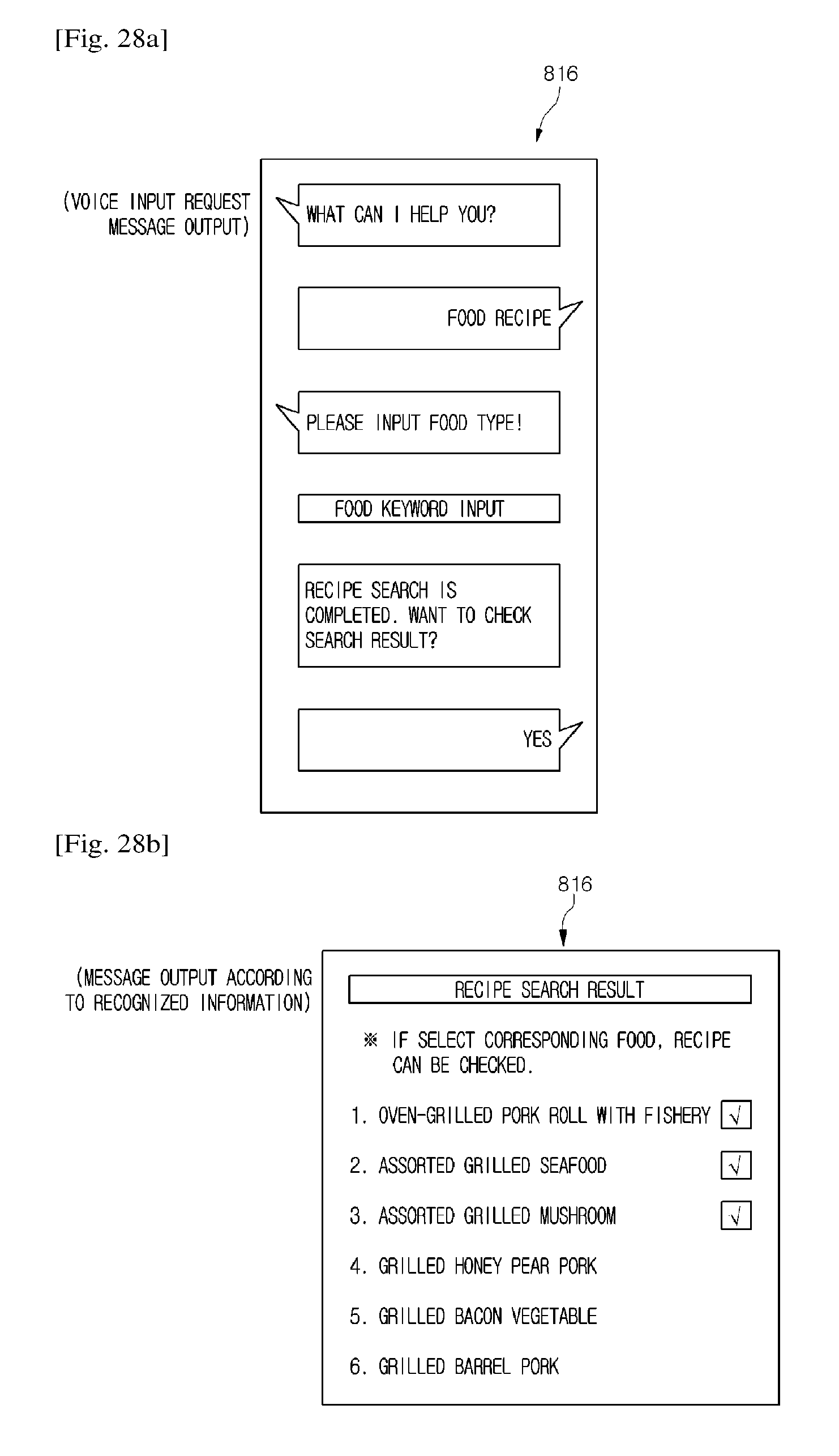

[0073] FIGS. 28A and 28B are views illustrating a display unit of the cooker.

[0074] FIG. 29 is a view illustrating a configuration of a washing machine as another example of a smart home appliance according to an eighth embodiment of the present invention.

[0075] FIG. 30 is a flowchart illustrating a control method of a smart home appliance according to an eighth embodiment of the present invention.

[0076] FIG. 31 is a block diagram illustrating a configuration of a voice recognition system according to a ninth embodiment of the present invention.

BEST MODE FOR CARRYING OUT THE INVENTION

[0077] Hereinafter, specific embodiments of the present invention are described with reference to the accompanying drawings. However, the idea of the present invention is not limited to suggested embodiments and those skilled in the art may suggest other embodiments within the scope of the same idea.

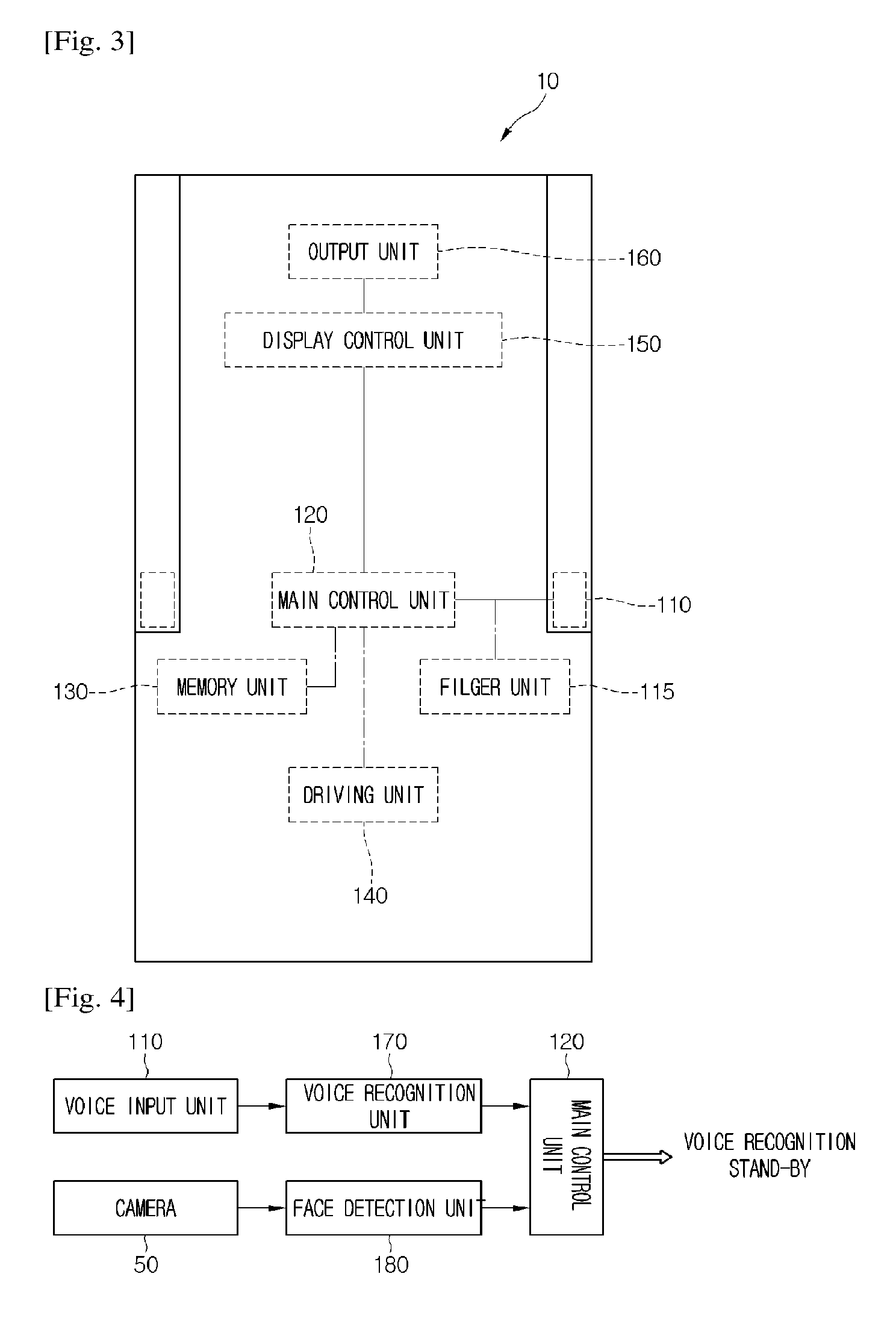

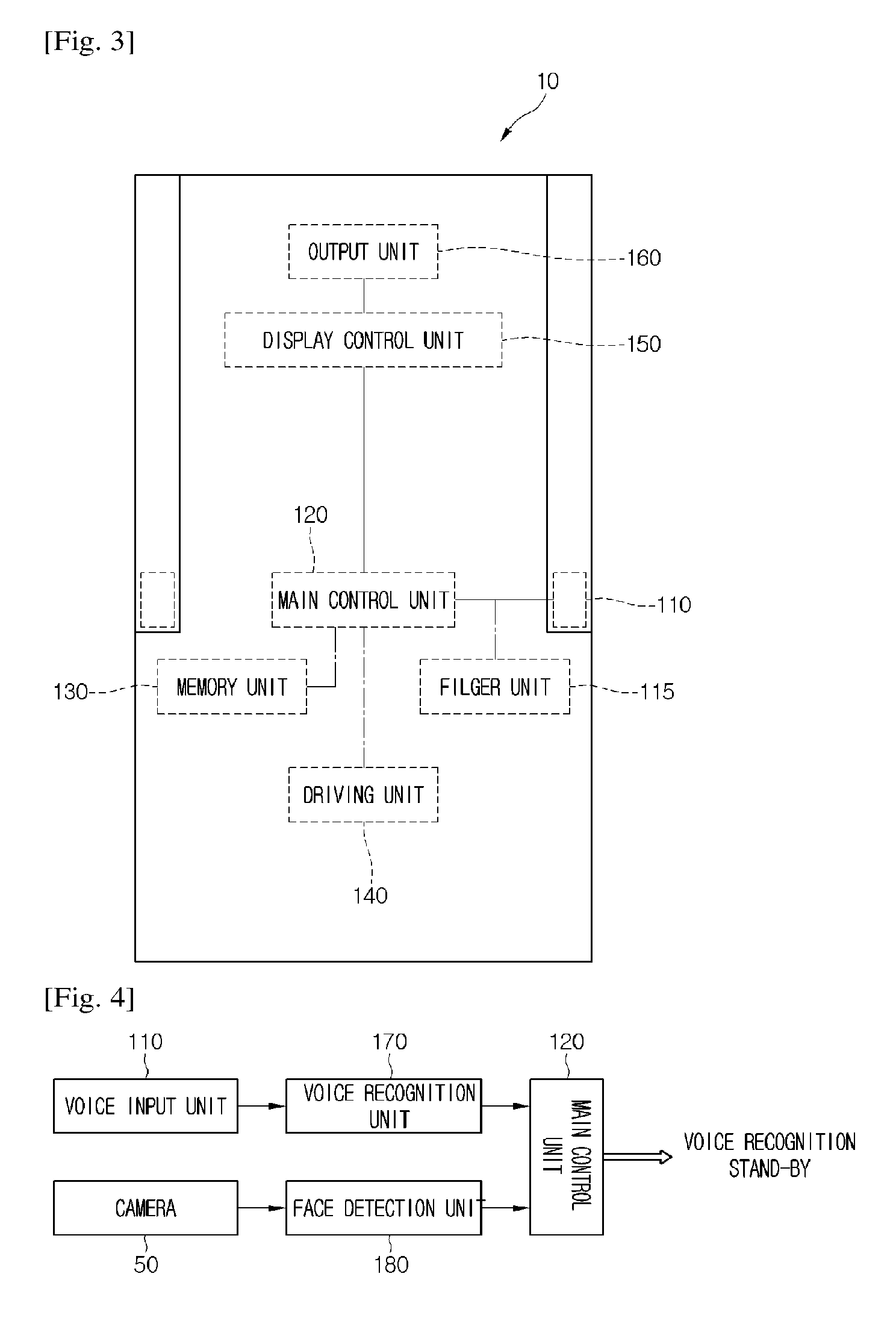

[0078] FIG. 2 is a view illustrating a configuration of an air conditioner as one example of a smart appliance according to a first embodiment of the present invention. FIGS. 3 and 4 are block diagrams illustrating a configuration of the air conditioner.

[0079] Hereinafter, although an air conditioner is described as one example of a smart appliance, it should be clear in advance that the ideas related to a voice recognition or communication (information offer) procedure except for the unique setting functions of an air conditioner may be applied to other smart home appliances, for example, cleaners, cookers, washing machines, or refrigerators.

[0080] Referring to FIGS. 2 to 4, an air conditioner 10 according to the first embodiment of the present invention includes a suction part 22, discharge parts 25 and 42, and a case 20 forming an external appearance. The air conditioner 10 shown in FIG. 2 may be an indoor unit installed in an indoor space to discharge air.

[0081] The suction part 22 may be formed at the rear of the case 20. Then, the discharge parts 25 and 42 include a main discharge part 25 through which the air suctioned through the suction part 22 is discharged to the front or side of the case 20 and a lower discharge part 42 discharging the air downwardly.

[0082] The main discharge part 25 may be formed at both sides of the case 20 and its opening/closing degree may be adjusted by a discharge vane 26. The discharge vane 26 may be rotatably provided at one side of the main discharge part 25. The opening/closing degree of the lower discharge part 42 may be adjusted by a lower discharge vane 44.

[0083] A vertically movable upper discharge device 30 may be provided at an upper part of the case 20. When the air conditioner 10 is turned on, the upper discharge device 30 may move to protrude from an upper end of the case 20 toward an upper direction and when the air conditioner 10 is turned off, the upper discharge device 30 may move downwardly and may be received inside the case 20.

[0084] An upper discharge part 32 discharging air is defined at the front of the upper discharge device 30 and an upper discharge vane 34 adjusting a flow direction of the discharged air is equipped inside the upper discharge device 30. The upper discharge vane 34 may be provided rotatably.

[0085] A voice input unit 110 receiving a user's voice is equipped on at least one side of the case 20. For example, the voice input unit 110 may be equipped at the left side or right side of the case 20. The voice input unit 110 may be referred to as a "voice collection unit" in that it collects voice. The voice input unit 110 may include a microphone. The voice input unit 110 may be disposed at the rear of the main discharge part 25 so as not to be affected by the air discharged from the main discharge part 25.

[0086] The air conditioner 10 may further include a body detection unit 36 detecting a body in an indoor space or a body's movement. For example, the body detection unit 36 may include at least one of an infrared sensor and a camera. The body detection unit 36 may be disposed at the front part of the upper discharge device 30.

[0087] The air conditioner 10 may further include a capsule injection device 60 through which a capsule with aroma is injected. The capsule injection device 60 may be installed at the front of the air conditioner 10 to be withdrawable. When a capsule is put into the capsule injection device 60, a capsule release device (not shown) disposed inside the air conditioner 10 pops the capsule and a predetermined aroma fragrance is diffused. Then, the diffused aroma fragrance may be discharged to the outside of the air conditioner 10 together with the air discharged from the discharge parts 25 and 42. According to the type of capsule, various aroma fragrances may be provided, for example, lavender, rosemary, or peppermint.

[0088] The air conditioner 10 may include a filter unit 115 for removing noise from a voice inputted through the voice input unit 110. Through the filter unit 115, the voice may be filtered into a voice frequency from which voice information is easy to recognize.

[0089] The air conditioner 10 includes control units 120 and 150 recognizing information for an operation of the air conditioner 10 from the voice information passing through the filter unit 115. The control units 120 and 150 include a main control unit 120 controlling an operation of the driving unit 140 for an operation of the air conditioner 10 and a control unit 150 communicably connected to the main control unit 120 and controlling the output unit 160 to display operation information of the air conditioner 10 to the outside.

[0090] The driving unit 140 may include a compressor or a blow fan. Then, the output unit 160 includes a display unit displaying operation information of the air conditioner 10 as an image and a voice output unit outputting the operation information as a voice. The voice output unit may include a speaker. The voice output unit may be disposed at one side of the case 20 and may be provided separated from the voice input unit 110.

[0091] Then, the air conditioner 10 may include a memory unit 130 mapping and storing voice information related to an operation of the air conditioner 10 and voice information irrelevant to an operation of the air conditioner 10 in advance among voices inputted through the voice input unit 110. First voice information, second voice information, and third voice information are stored in the memory unit 130. In more detail, frequency information defining the first to third voice information may be stored in the memory unit 130.

[0092] The first voice information may be understood as voice information related to an operation of the air conditioner 10, that is, keyword information. When it is recognized that the first voice information is inputted, an operation of the air conditioner 10 corresponding to the inputted first voice information may be performed or stopped. The memory unit 130 may include text information corresponding to the first voice information and information on a setting function corresponding to the text information.

[0093] For example, when the first voice information corresponds to ON of the air conditioner 10, as the first voice information is recognized, an operation of the air conditioner 10 starts. On the other hand, when the first voice information corresponds to OFF of the air conditioner 10, as the first voice information is recognized, an operation of the air conditioner 10 stops.

[0094] As another example, when the first voice information corresponds to one operation mode of the air conditioner 10, that is, air conditioning, heating, ventilation or dehumidification, as the first voice information is recognized, a corresponding operation mode may be performed. As a result, the first voice information and an operation method (on/off and an operation mode) of the air conditioner 10 corresponding to the first voice information may be mapped in advance in the memory unit 130.
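The pre-mapped relationship between recognized text and a setting function can be pictured as a simple lookup table. The following is only a minimal sketch: the keyword strings, the `(setting, value)` pairs, and the function name `lookup_setting_function` are illustrative assumptions and are not taken from the disclosure.

```python
from typing import Optional, Tuple

# Hypothetical stand-in for the mapping held in the memory unit 130.
# Keys are recognized texts; values are (setting, value) pairs.
SETTING_FUNCTIONS = {
    "turn on": ("power", "on"),
    "turn off": ("power", "off"),
    "air conditioning": ("mode", "air conditioning"),
    "heating": ("mode", "heating"),
    "ventilation": ("mode", "ventilation"),
    "dehumidification": ("mode", "dehumidification"),
}


def lookup_setting_function(recognized_text: str) -> Optional[Tuple[str, str]]:
    """Return the setting function mapped to the text, or None if unmapped."""
    # Normalize whitespace and case before the lookup.
    return SETTING_FUNCTIONS.get(recognized_text.strip().lower())
```

A text that is not found in the table returns `None`, which corresponds to a voice for which no operation is performed.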

[0095] The second voice information may include frequency information similar to the first voice information related to an operation of the air conditioner 10 but is substantially understood as voice information unrelated to an operation of the air conditioner 10. Herein, the frequency information similar to the first voice information may be understood as frequency information whose frequency difference from the first voice information is inside a setting range.

[0096] When it is recognized that the second voice information is inputted, corresponding voice information may be filtered as noise information. That is, the main control unit 120 may recognize the second voice information but may not perform an operation of the air conditioner 10.

[0097] The third voice information may be understood as voice information irrelevant to an operation of the air conditioner 10. The third voice information may be understood as frequency information whose frequency difference from the first voice information is outside the setting range. The second voice information and the third voice information may be referred to as "unrelated information" in that they are voice information unrelated to an operation of the air conditioner 10.

[0098] In such a way, since voice information related to an operation of a home appliance and voice information unrelated to an operation of a home appliance are mapped and stored in advance in a smart home appliance, voice recognition may be performed effectively.
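The three-way classification described above can be sketched as follows. This is a simplified illustration only: it assumes that each voice is summarized by a single frequency value, and the parameter `match_tolerance_hz` (how close an input must be to count as the keyword itself) is an assumption added for the sketch, since the text does not state how an exact keyword match is distinguished from a merely similar voice. All names and default values are hypothetical.

```python
from enum import Enum


class VoiceClass(Enum):
    FIRST = "keyword information"
    SECOND = "similar but unrelated information"
    THIRD = "unrelated information"


def classify_voice(freq_hz: float, keyword_freq_hz: float,
                   match_tolerance_hz: float = 5.0,
                   setting_range_hz: float = 50.0) -> VoiceClass:
    """Classify a voice by its frequency difference from the keyword."""
    diff = abs(freq_hz - keyword_freq_hz)
    if diff <= match_tolerance_hz:
        return VoiceClass.FIRST   # treated as the keyword itself
    if diff <= setting_range_hz:
        return VoiceClass.SECOND  # inside the setting range: filtered as noise
    return VoiceClass.THIRD       # outside the setting range


def should_operate(voice_class: VoiceClass) -> bool:
    """Only first voice information triggers an operation of the appliance."""
    return voice_class is VoiceClass.FIRST
```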

[0099] The air conditioner 10 includes a camera 50 as a capturing unit capturing a user's face. For example, the camera 50 may be installed at the front part of the air conditioner 10.

[0100] The air conditioner 10 may further include a face detection unit 180 recognizing, on the basis of an image captured through the camera 50, that a user looks at the air conditioner 10 for voice recognition. The face detection unit 180 may be installed inside the camera 50 as one function of the camera 50 or installed separately in the case 20. When the camera 50 captures a user's face for a predetermined time, the face detection unit 180 recognizes that the user stares at the air conditioner 10 for voice input (that is, a staring state).

[0101] The air conditioner 10 further includes a voice recognition unit 170 extracting text information from a voice collected through the voice input unit 110 and recognizing a setting function of the air conditioner 10 on the basis of the extracted text information.

[0102] The information recognized by the voice recognition unit 170 or the face detection unit 180 may be delivered to the main control unit 120. The main control unit 120 may recognize a user's intention of using a voice recognition service and may then enter a standby state on the basis of the information recognized by the voice recognition unit 170 and the face detection unit 180.

[0103] Although the voice recognition unit 170, the face detection unit 180, and the main control unit 120 are separately configured as shown in FIG. 4, the voice recognition unit 170 and the face detection unit 180 may be installed as one component of the main control unit 120. That is, the voice recognition unit 170 may be understood as a function component of the main control unit 120 to perform a voice recognition function and also the face detection unit 180 may be understood as a function component of the main control unit 120 to perform a face detection function.

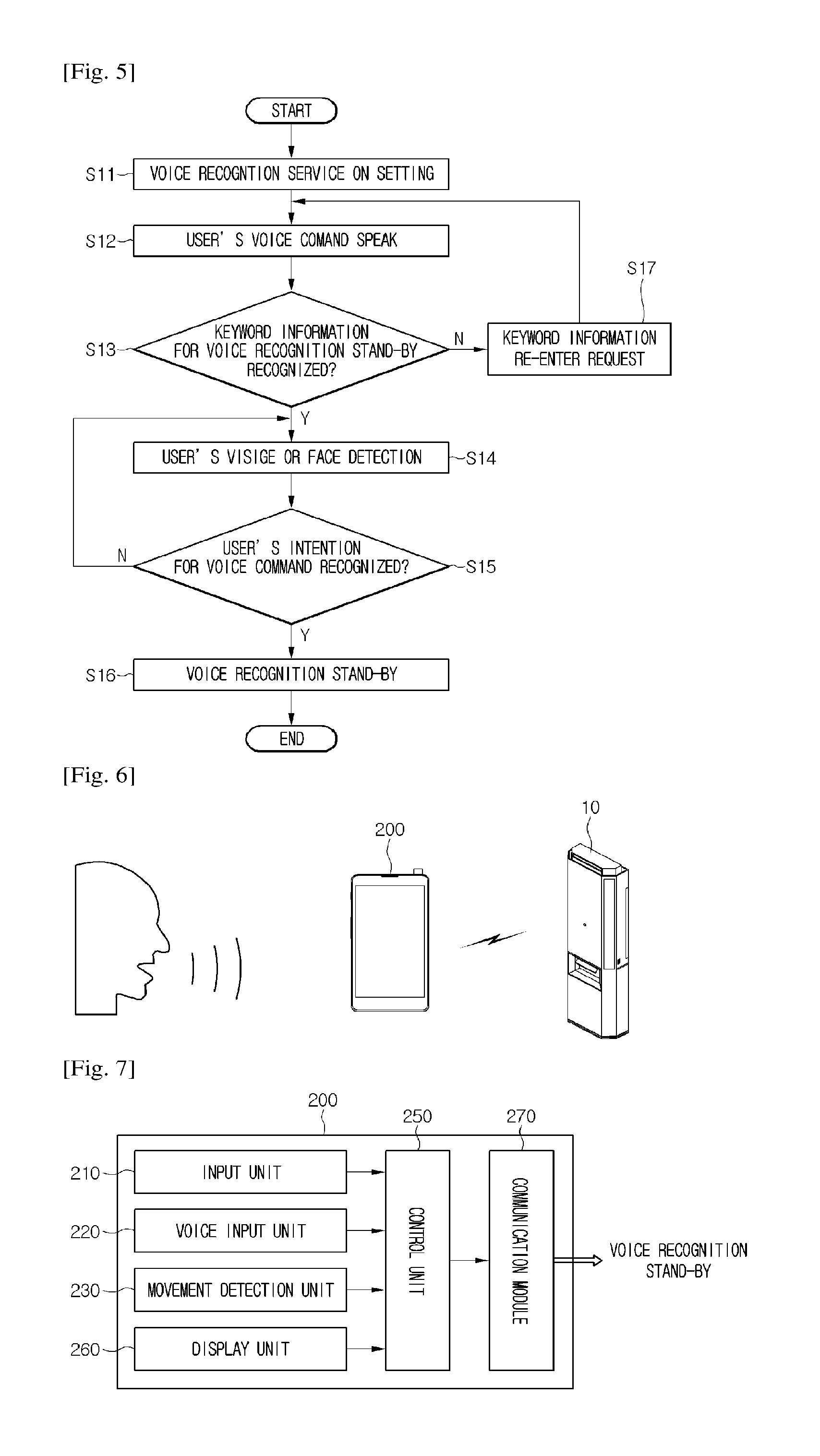

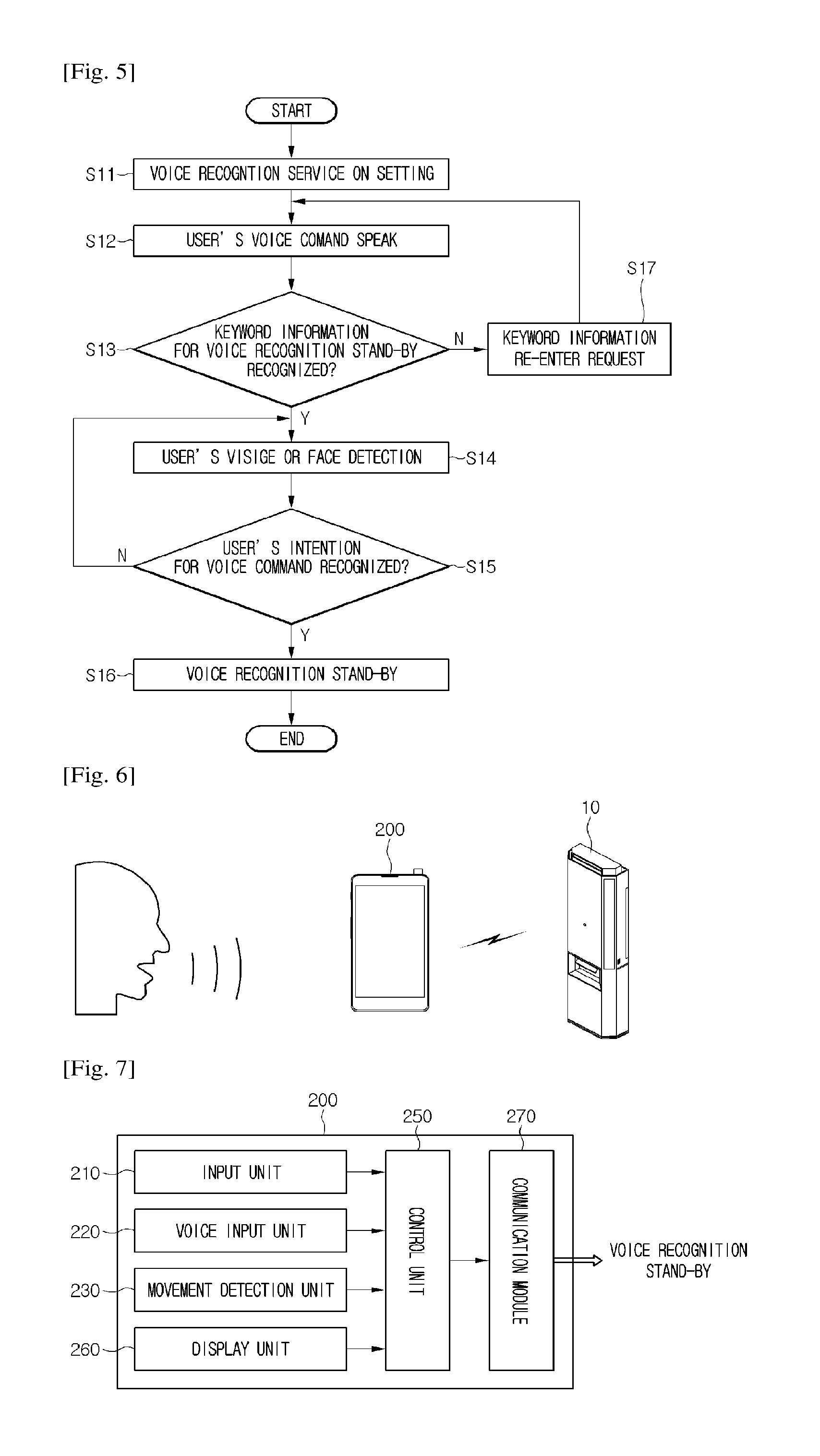

[0104] FIG. 5 is a flowchart illustrating a control method of a smart home appliance according to a first embodiment of the present invention.

[0105] Referring to FIG. 5, in controlling a smart home appliance according to the first embodiment of the present invention, a voice recognition service may be set to be turned on in operation S11. The voice recognition service is understood as a service controlling an operation of the air conditioner 10 by inputting a voice command. For example, in order to turn on the voice recognition service, a predetermined input unit (not shown) may be manipulated. Of course, when a user does not want to use a voice recognition service, it may be set to be turned off.

[0106] Then, a user speaks a predetermined voice command in operation S12. The spoken voice is collected through the voice input unit 110. It is determined whether keyword information for activating voice recognition is included in the collected voice.

[0107] The keyword information, as first voice information stored in the memory unit 130, is understood as information that a user may input to start a voice recognition service. That is, even when the voice recognition service is set to be turned on, the keyword information may be inputted in order to represent a user's intention of using a voice recognition service after the current time.

[0108] For example, the keyword information may include pre-mapped information such as "turn on air conditioner" or "voice recognition start". In such a manner, at the beginning of using a voice recognition service, by inputting the keyword information, a time for preparation to minimize surrounding noise or conversation is provided to a user.

[0109] When it is recognized that the keyword information is included in a voice command inputted through the voice input unit 110, it is recognized whether a user truly has an intention for voice command.

[0110] On the other hand, when it is recognized that the keyword information is not included in the voice command, a request message for re-inputting keyword information may be outputted from the output unit 160 of the air conditioner 10.

[0111] Whether a user truly has an intention for voice command may be determined based on whether a user's visage or face is detected for more than a setting time through the camera 50. For example, the setting time may be 2 sec to 3 sec. When a user stares at the camera 50 at the front of the air conditioner 10, the camera 50 captures the user's visage or face and transmits it to the face detection unit 180.

[0112] Then, the face detection unit 180 may recognize whether a user stares at the camera 50 for a setting time. When the face detection unit 180 recognizes the user's staring state, the main control unit 120 determines that the user has an intention on voice command and enters a voice recognition standby state in operations S14 and S15.

[0113] Upon entering the voice recognition standby state, a filtering process is performed on all voice information collected through the voice input unit 110 of the air conditioner 10 and then, whether there is a voice command is recognized. The termination of the voice recognition standby state may be performed when a user inputs keyword information for voice recognition termination as voice or manipulates an additional input unit in operation S16.

[0114] In such a way, since whether to activate a voice recognition service is determined by simply recognizing keyword information according to a voice input or detecting a user's visage or face, voice misrecognition may be prevented.

[0115] Although it is described with reference to FIG. 5 that the user face detection operation is performed after the voice keyword information recognition operation, unlike this, after the user face detection operation, the voice keyword information recognition operation may be performed.

[0116] As another example, although it is described with reference to FIG. 5 that when two conditions on the voice keyword information recognition and the user face detection are satisfied, the voice recognition standby is entered, when any one condition on the voice keyword information recognition and the user face detection is satisfied, the voice recognition standby may be entered.
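The decision to enter the voice recognition standby state can be sketched as a small predicate over the two conditions. This is a minimal sketch under stated assumptions: the `Observation` type, the function name, and the `require_both` flag are hypothetical, and the 2-second default is one value from the 2 sec to 3 sec example given above.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    keyword_recognized: bool      # keyword information found in the collected voice
    face_detected_seconds: float  # how long the camera has continuously seen the face


def should_enter_standby(obs: Observation,
                         setting_time_s: float = 2.0,
                         require_both: bool = True) -> bool:
    """Decide whether to enter the voice recognition standby state."""
    # Staring state: the face has been detected for at least the setting time.
    staring = obs.face_detected_seconds >= setting_time_s
    if require_both:
        # Both conditions must be satisfied.
        return obs.keyword_recognized and staring
    # Variant in which any one condition is sufficient.
    return obs.keyword_recognized or staring
```

Because the predicate does not depend on the order in which the two conditions are observed, it covers both the keyword-first and the face-first sequences.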

[0117] Hereinafter, a second embodiment of the present invention is described. In this embodiment, since there is a difference from the first embodiment in that a voice recognition service is performed through a mobile device, the difference is mainly described and for the same parts as in the first embodiment, the description and reference numbers of the first embodiment are incorporated.

[0118] FIG. 6 is a schematic view illustrating a configuration of a voice recognition system according to a second embodiment of the present invention.

[0119] Referring to FIG. 6, the voice recognition system includes a mobile device 200 receiving a user's voice input and an air conditioner 10 operating and controlled based on a voice inputted to the mobile device 200. The air conditioner 10 is just one example of a smart home appliance. Thus, the idea of this embodiment is applicable to other smart home appliances. The mobile device 200 may include a smartphone, a remote controller, and a tap book.

[0120] The mobile device 200 may include a voice input unit 220 through which a voice can be inputted, an input unit 210 that can be manipulated, a display unit 260 equipped at the front part to display information on an operation state of the mobile device 200 or information provided from the mobile device 200, and a movement detection unit 230 detecting a movement of the mobile device 200.

[0121] The voice input unit 220 may include a microphone. The voice input unit 220 may be disposed at a lower part of the mobile device 200. The input unit 210 may include a button that a user can press or a touch panel that a user can touch.

[0122] Then, the movement detection unit 230 may include an acceleration sensor or a gyro sensor. The acceleration sensor or the gyro sensor may detect information on an inclined angle of the mobile device 200, for example, an inclined angle with respect to the ground.

[0123] For example, when a user stands up the mobile device 200 in order to stare at the display unit 260 of the mobile device 200 and when the user lays down the mobile device 200 in order to input a voice command through the voice input unit 220, the acceleration sensor or the gyro sensor may detect a different angle value according to the standing degree of the mobile device 200.

[0124] As another example, the movement detection unit 230 may include an illumination sensor. The illumination sensor may detect the intensity of external light collected according to the standing degree of the mobile device 200. For example, when a user stands up the mobile device 200 in order to stare at the display unit 260 of the mobile device 200 and when the user lays down the mobile device 200 in order to input a voice command through the voice input unit 220, the illumination sensor may detect a different light intensity value according to the standing degree of the mobile device 200.

[0125] The mobile device 200 further includes a control unit 250 receiving information detected by the input unit 210, the voice input unit 220, the display unit 260, and the movement detection unit 230 and recognizing a user's voice command input intention, and a communication module 270 communicating with the air conditioner 10.

[0126] For example, a communication module of the air conditioner 10 and the communication module 270 of the mobile device 200 may directly communicate with each other. That is, direct communication is possible without going through a wireless access point by using a Wi-Fi Direct technique, an Ad-Hoc mode (or network), or Bluetooth.

[0127] In more detail, Wi-Fi Direct may mean a technique for communicating at high speed by using communication standards such as 802.11a, b, g, and n regardless of the installation of an access point. This technique is understood as a communication technique for wirelessly connecting the air conditioner 10 and the mobile device 200 by using Wi-Fi without an internet network.

[0128] The Ad-Hoc mode (or Ad-Hoc network) is a communication network including only mobile hosts without a fixed wired network. Since there are no limitations in the movement of a host and it does not require a wired network and a base station, fast network configuration is possible and its cost is inexpensive. That is, wireless communication is possible without the access point. Accordingly, in the Ad-Hoc mode, without the access point, wireless communication is possible between the air conditioner 10 and the mobile device 200.

[0129] In the Bluetooth communication, as a short-range wireless communication method, wireless communication is possible within a specific range through a pairing process between a communication module (a first Bluetooth module) of the air conditioner 10 and the communication module 270 (a second Bluetooth module) of the mobile device.

[0130] As another example, the air conditioner 10 and the mobile device 200 may communicate with each other through an access point and a server (not shown) or a wired network. When a user's voice command intention is recognized, the control unit 250 may transmit to the air conditioner 10 information that the voice recognition service standby state is entered through the communication module 270.

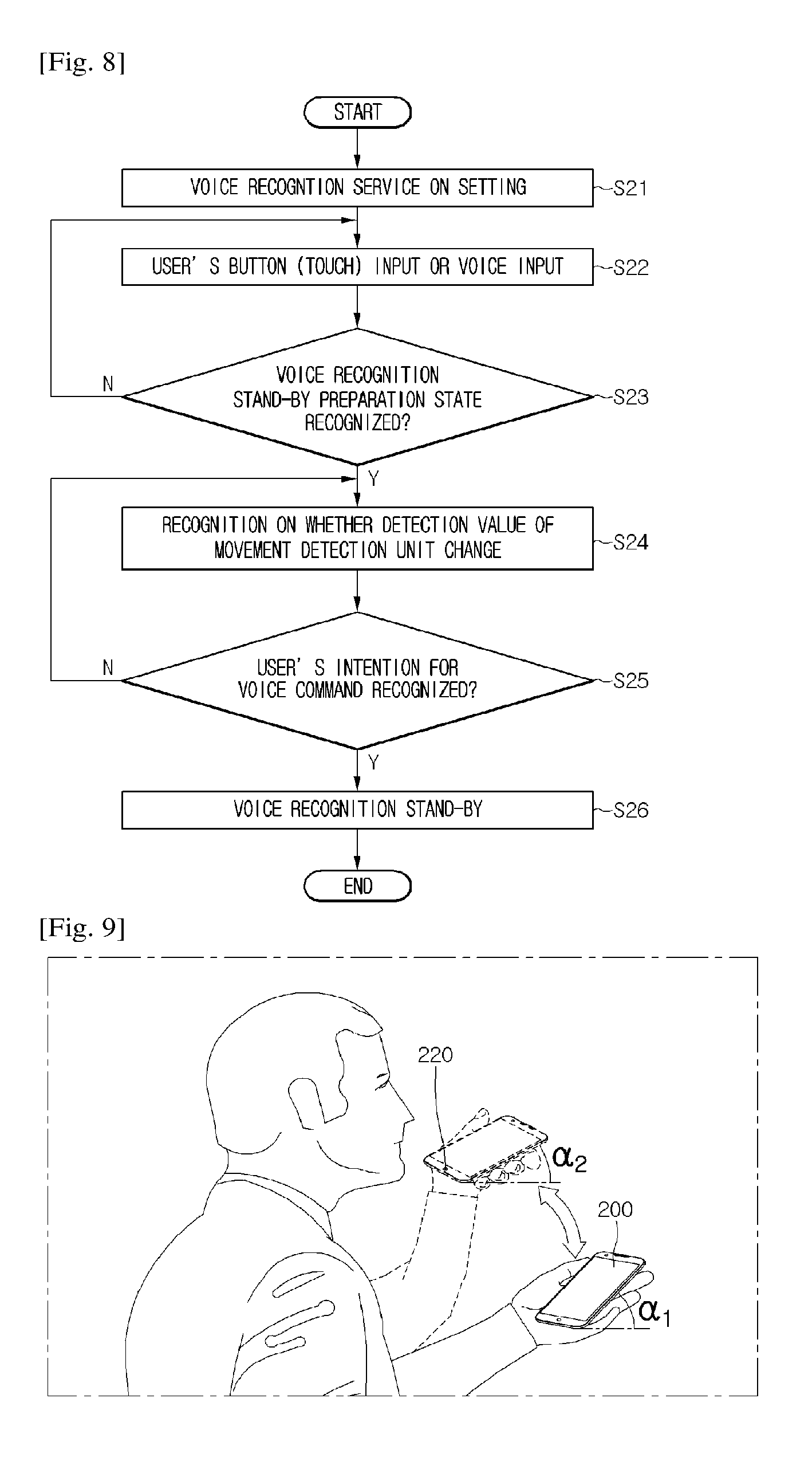

[0131] FIG. 8 is a flowchart illustrating a control method of a smart home appliance according to a second embodiment of the present invention. FIG. 9 is a view when a user performs an action for starting a voice recognition by using a mobile device according to a second embodiment of the present invention.

[0132] Referring to FIG. 8, in controlling a smart home appliance according to the second embodiment of the present invention, a voice recognition service may be set to be turned on in operation S21.

[0133] Then, a user may input or speak a command for a voice recognition standby preparation state through a manipulation of the input unit 210 or the voice input unit 220. When an input of the input unit 210 is recognized or it is recognized that keyword information for voice recognition standby is included in a voice collected through the voice input unit 220, it is determined that "voice recognition standby preparation state" is entered.

[0134] As described in the first embodiment, the keyword information, as first voice information stored in the memory unit 130, is understood as information that a user may input to start a voice recognition service in operations S22 and S23.

[0135] When it is recognized that "the voice recognition standby preparation state" is entered, whether a user truly has an intention on voice command is recognized. On the other hand, when it is not recognized that "the voice recognition standby preparation state" is entered, operation S22 and the following operations may be performed again.

[0136] Whether a user truly has an intention on voice command may be determined based on whether a detection value is changed in the movement detection unit 230. For example, when the movement detection unit 230 includes an acceleration sensor or a gyro sensor, whether a value detected by the acceleration sensor or the gyro sensor is changed is recognized. The change may depend on whether an inclined value (or range) at which the mobile device 200 stands changes into an inclined value (or range) at which the mobile device 200 lies.

[0137] As shown in FIG. 9, while a user stares at the display unit 260 while gripping the mobile device 200, the mobile device 200 may stand up somewhat. At this point, the angle that the mobile device 200 makes with the ground may be α1.

[0138] On the other hand, when a user inputs a predetermined voice command through the voice input unit 220 disposed at a lower part of the mobile device 200 while gripping the mobile device 200, the mobile device 200 may lie somewhat. At this point, the angle that the mobile device 200 makes with the ground may be α2. Here, α1 > α2. Values for α1 and α2 may be predetermined within a predetermined setting range.

[0139] When a value detected by the acceleration sensor or the gyro sensor changes from a setting range of α1 into a setting range of α2, it is recognized that the user puts the voice input unit 220 close to the user's mouth. In this case, it is recognized that the user has an intention on voice command.
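The transition from the standing range to the lying range can be sketched with a tilt angle estimated from an accelerometer reading. This is a sketch under stated assumptions, not the disclosed implementation: it assumes the device is at rest so that the accelerometer measures only gravity, that the y axis runs along the long side of the device, and that the setting ranges below (in degrees) are hypothetical values chosen for illustration.

```python
import math

# Hypothetical setting ranges, in degrees, for the two postures.
ALPHA1_RANGE = (50.0, 90.0)  # device standing up (user looks at the display)
ALPHA2_RANGE = (0.0, 30.0)   # device lying down (user speaks into the microphone)


def tilt_angle_deg(ax: float, ay: float, az: float) -> float:
    """Angle between the device's long (y) axis and the ground, in degrees."""
    # With only gravity acting, the y component grows as the device stands up.
    return math.degrees(math.atan2(abs(ay), math.hypot(ax, az)))


def in_range(value: float, bounds: tuple) -> bool:
    return bounds[0] <= value <= bounds[1]


def has_voice_command_intention(prev_angle: float, curr_angle: float) -> bool:
    """True when the angle moved from the α1 range into the α2 range."""
    return in_range(prev_angle, ALPHA1_RANGE) and in_range(curr_angle, ALPHA2_RANGE)
```

For example, a change from 70 degrees to 20 degrees is treated as an intention on voice command, while the reverse change is not.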

[0140] As another example, when the movement detection unit 230 includes an illumination sensor, it is recognized whether a value detected by the illumination sensor is changed. The change may depend on whether the intensity of light (first intensity) collected when the mobile device 200 stands changes into the intensity of light (second intensity) collected when the mobile device 200 lies.

[0141] Herein, the second intensity may be greater than the first intensity. That is, the intensity of light collected from the outside when the mobile device 200 lies may be greater than the intensity of light collected from the outside when the mobile device 200 stands. Herein, values for the first intensity and the second intensity may be predetermined within a predetermined setting range.

[0142] When it is detected that a value detected by the illumination sensor changes from a setting range of the first intensity into a setting range of the second intensity, it is determined that a user puts the voice input unit 220 closer to the user's mouth. In this case, it is recognized that the user has an intention on voice command in operations S24 and S25.

[0143] Through such a method, when it is recognized that the user has an intention on voice command, a voice recognition standby state may be entered. Upon entering the voice recognition standby state, a filtering process is performed on all voice information collected through the voice input unit 220 of the mobile device 200 and then, whether there is a voice command is recognized.

[0144] The termination of the voice recognition standby state may be performed when a user inputs keyword information for voice recognition termination as voice or manipulates an additional input unit in operation S26. In such a way, since whether to activate a voice recognition service is determined by recognizing keyword information from a simple voice input and by detecting a movement of a mobile device, voice misrecognition may be prevented.

[0145] Although it is described with reference to FIG. 8 that the movement detection operation of the mobile device is performed after the user's button (touch) input or voice input operation, the user's button (touch) input or voice input operation may be performed after the movement detection operation of the mobile device.

[0146] As another example, although it is described with reference to FIG. 8 that when two conditions on the user's button (touch) input or voice input operation and the movement detection operation of the mobile device are satisfied, a voice recognition standby is entered, when any one condition on the user's button (touch) input or voice input operation and the movement detection operation of the mobile device is satisfied, the voice recognition standby state may be entered.

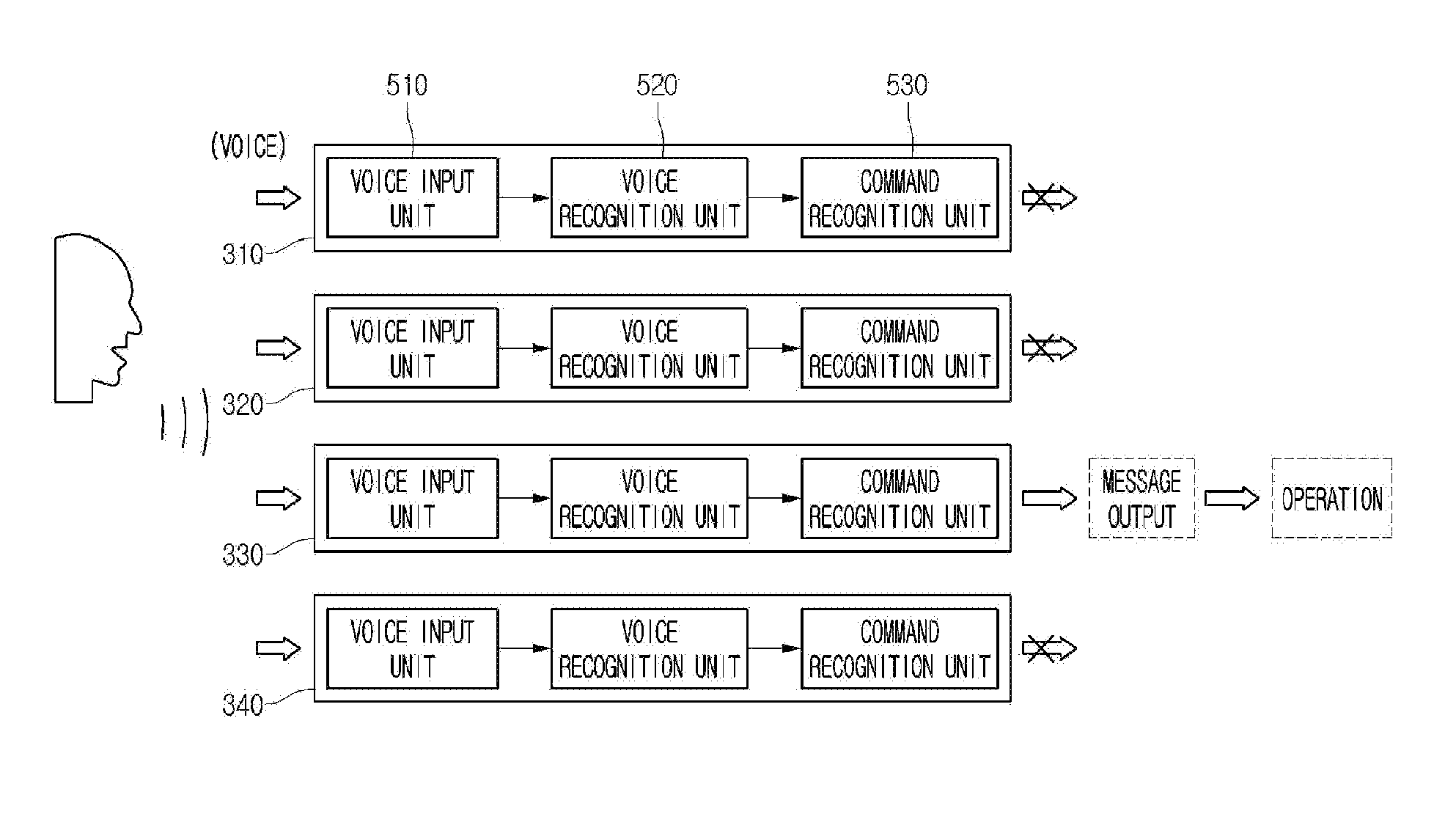

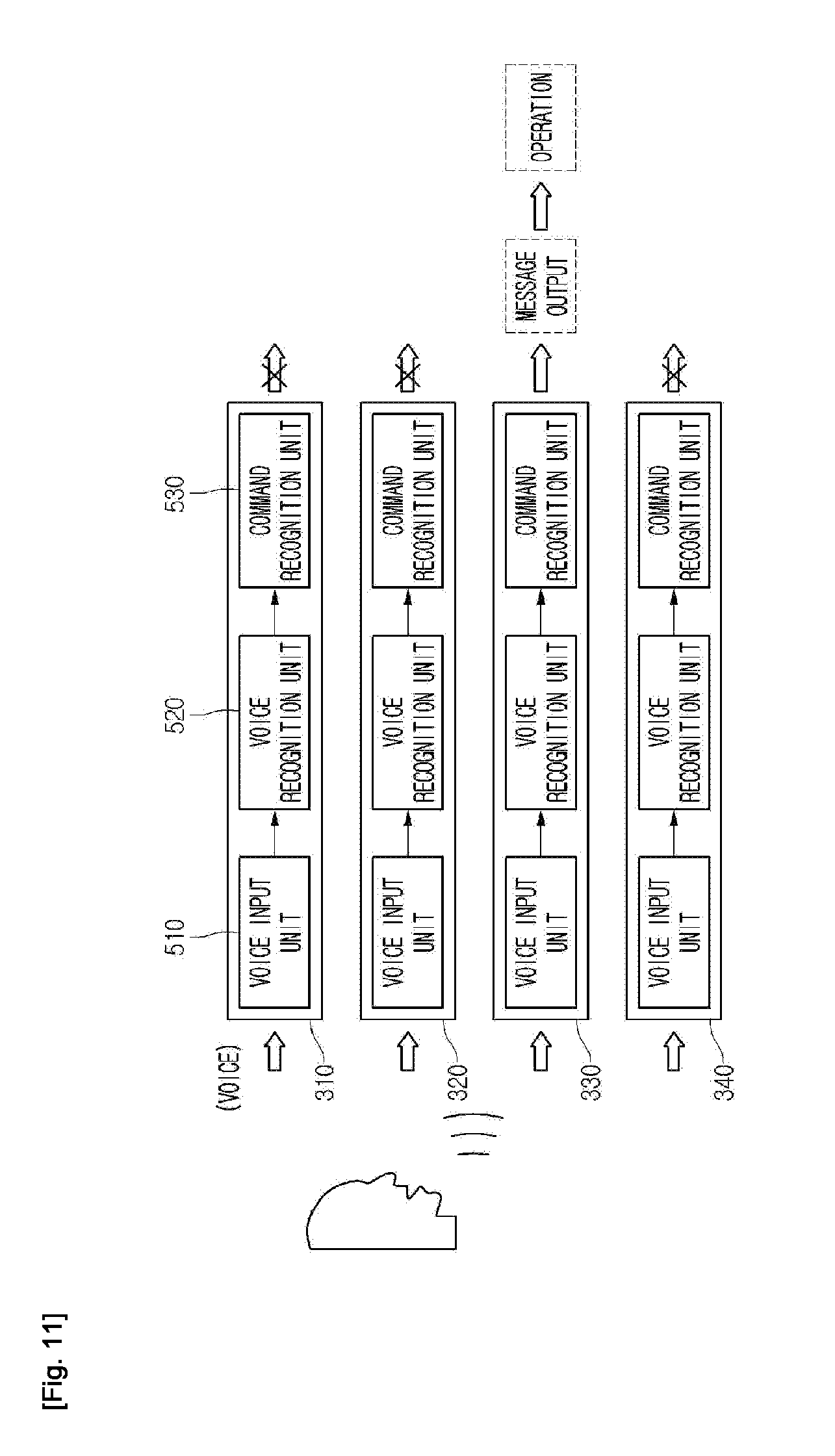

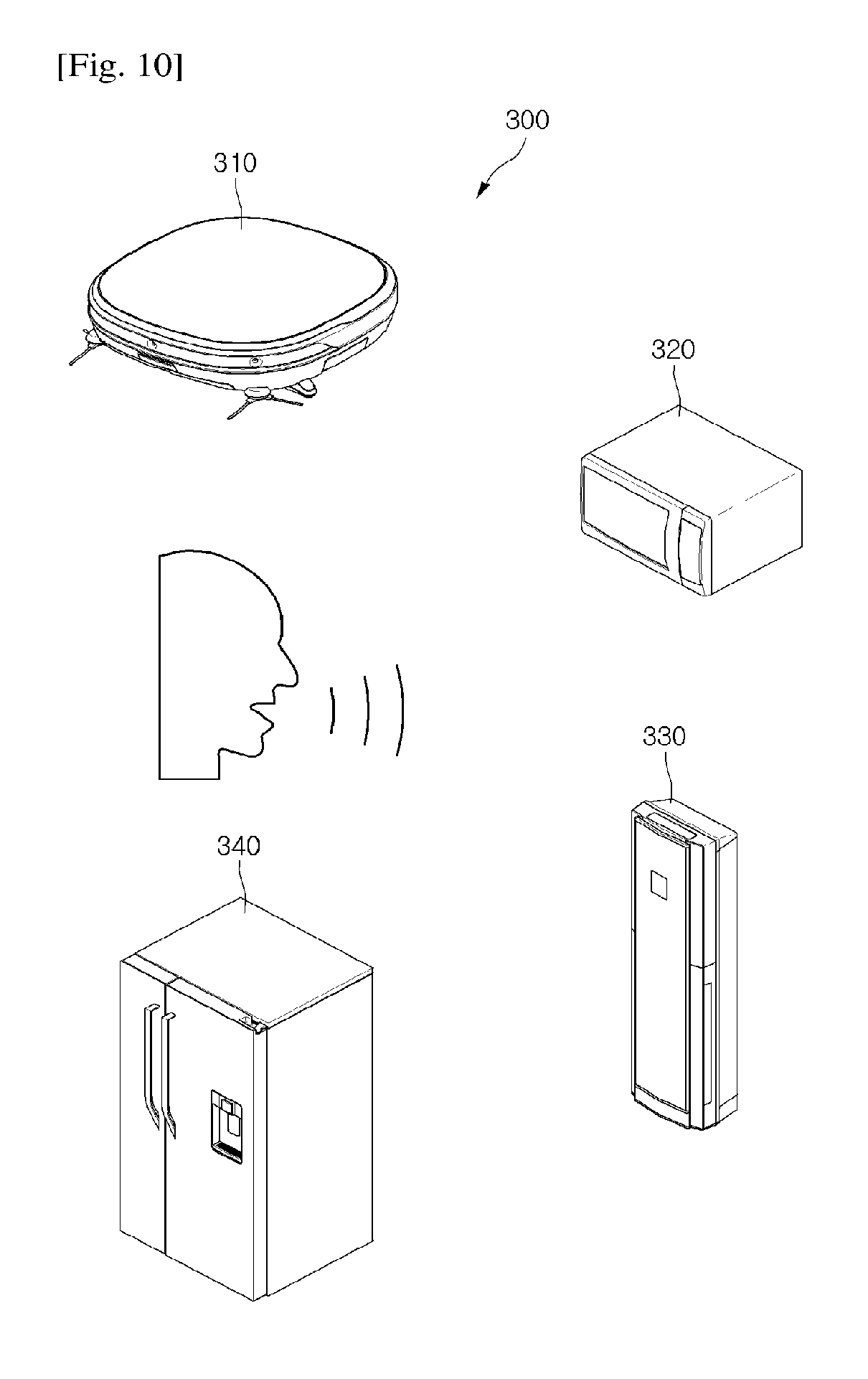

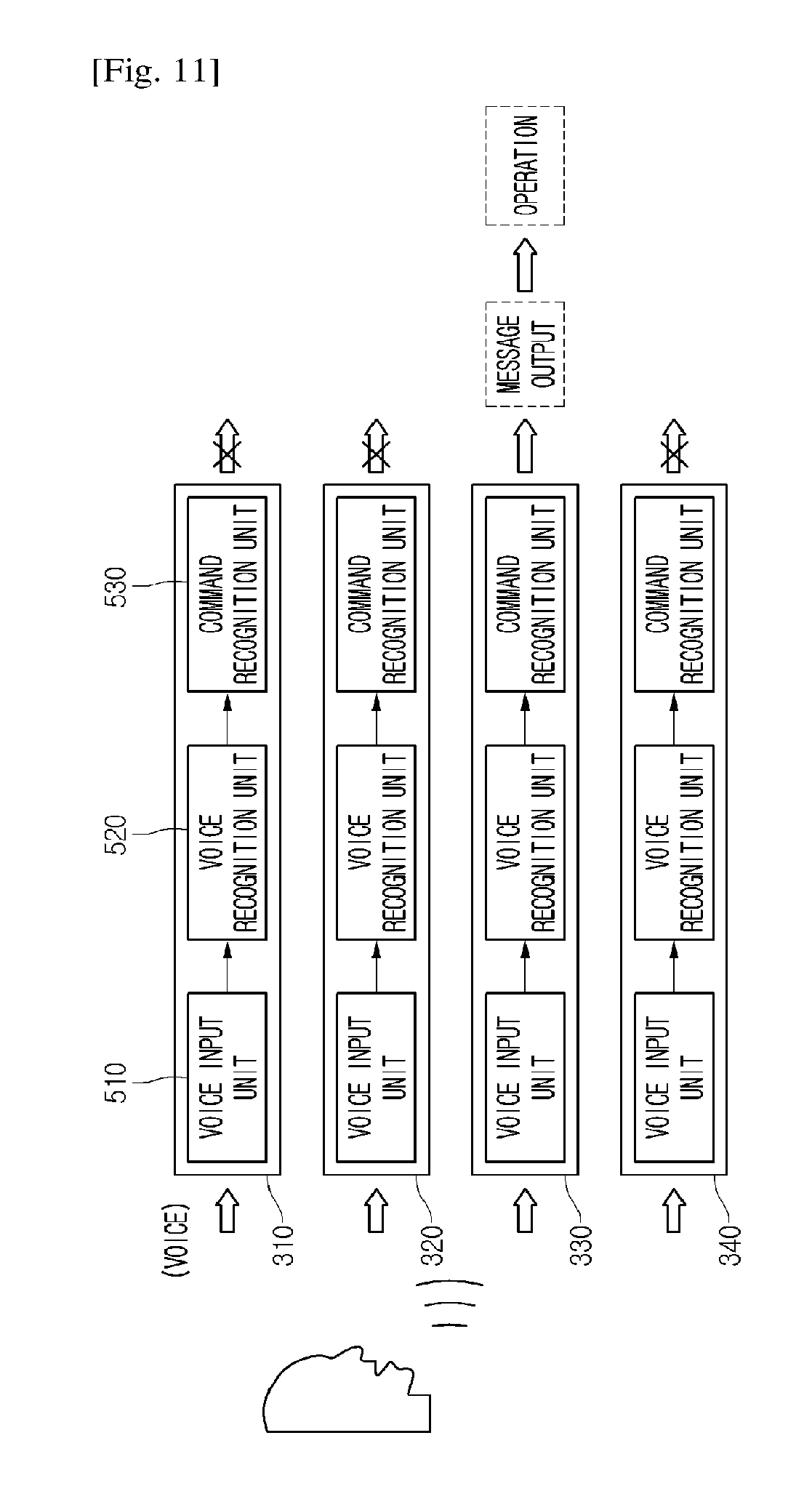

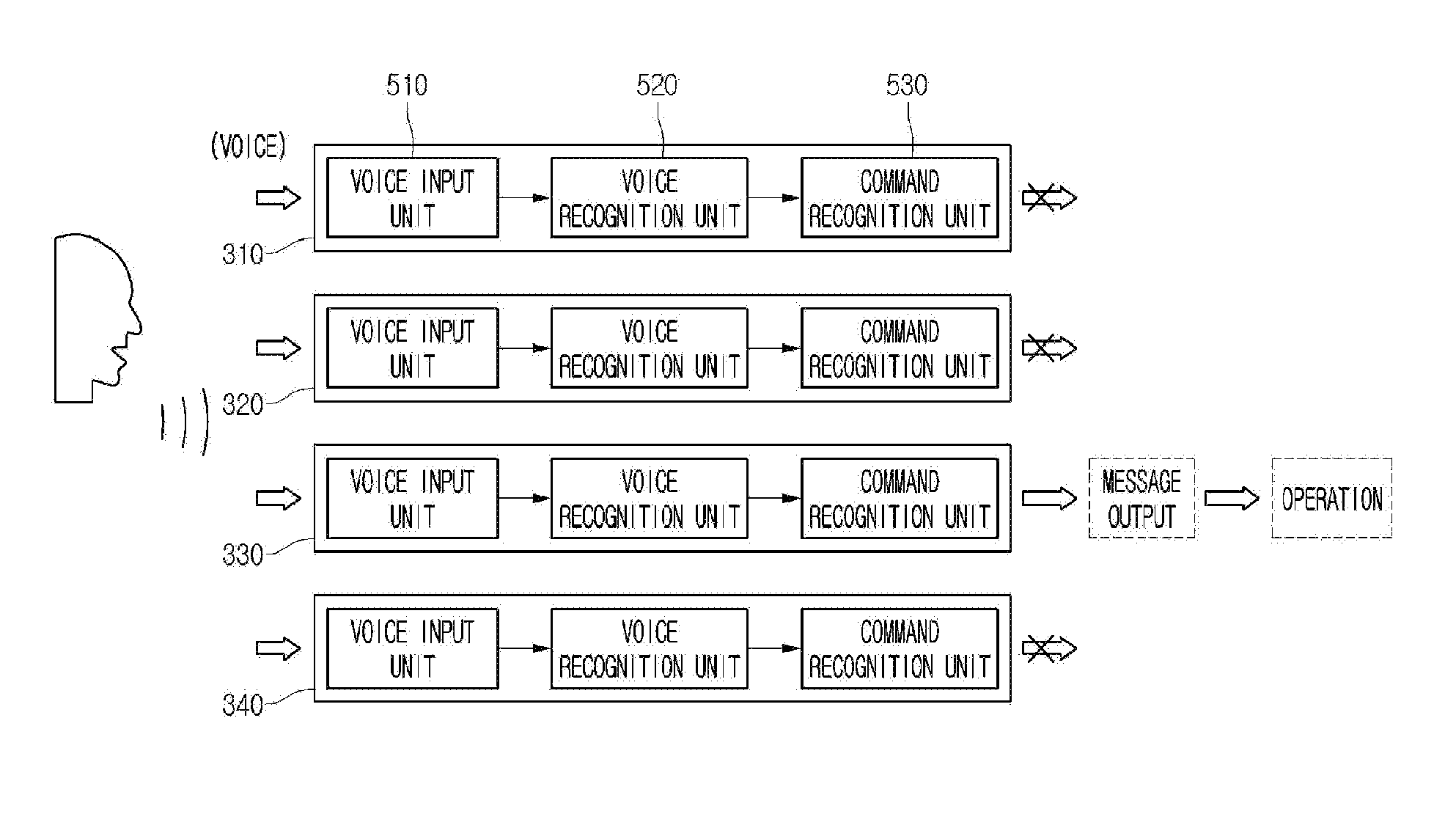

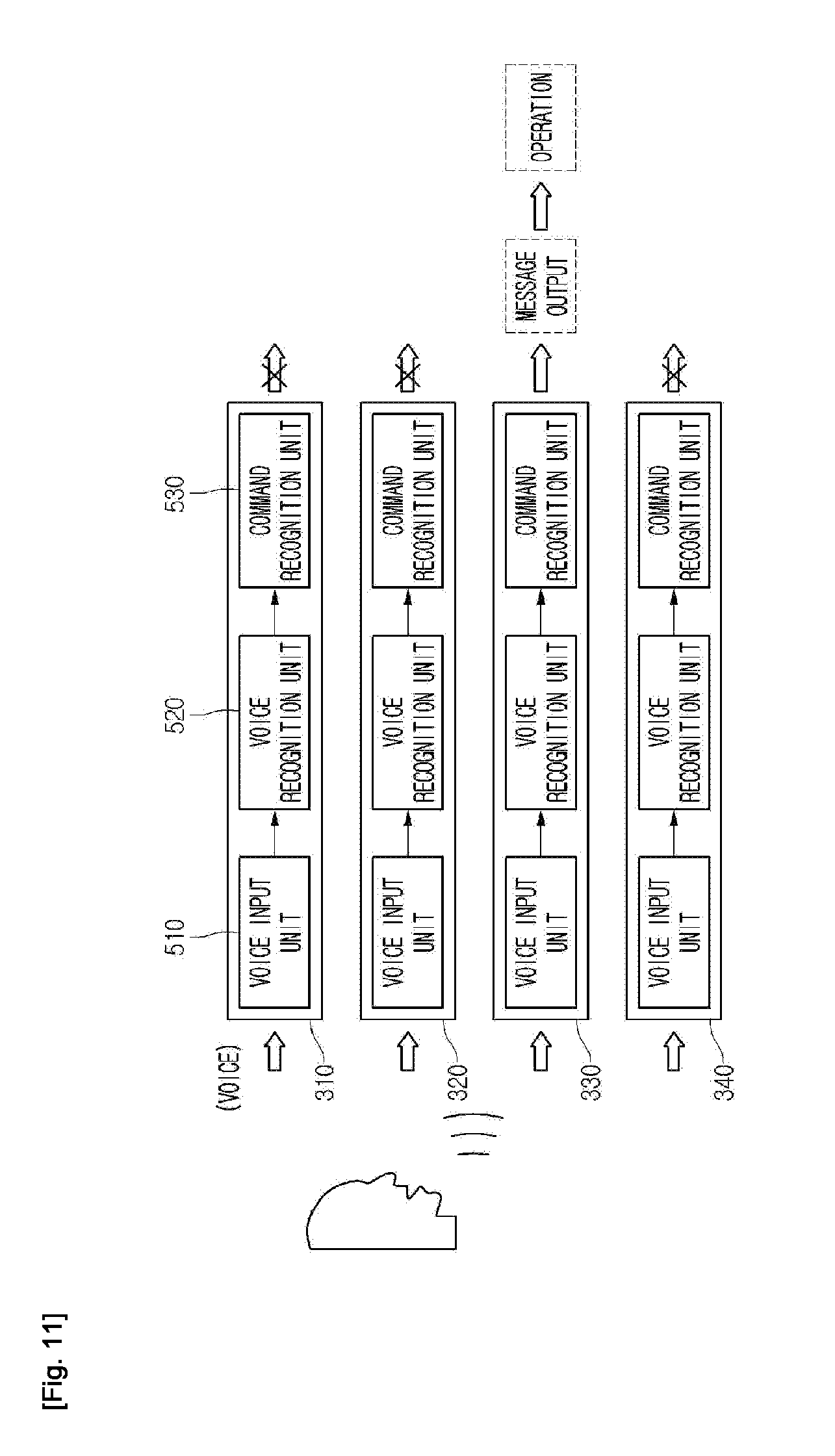

[0147] FIG. 10 is a view illustrating a configuration of a plurality of smart home appliances according to a third embodiment of the present invention. FIG. 11 is a view when a user makes a voice on a plurality of smart home appliances according to a third embodiment of the present invention.

[0148] Referring to FIG. 10, a voice recognition system 10 according to the third embodiment of the present invention includes a plurality of voice recognition available smart home appliances 310, 320, 330, and 340. For example, the plurality of smart home appliances 310, 320, 330, and 340 may include a cleaner 310, a cooker 320, an air conditioner 330, and a refrigerator 340.

[0149] The plurality of smart home appliances 310, 320, 330, and 340 may be in a standby state for receiving a voice. The standby state may be entered when a user sets a voice recognition mode in each smart home appliance. Then, the setting of the voice recognition mode may be accomplished by an input of a predetermined input unit or an input of a set voice.

[0150] The plurality of smart home appliances 310, 320, 330, and 340 may be disposed together in a predetermined space. In this case, even when a user speaks a predetermined voice command toward a specific one among the plurality of smart home appliances 310, 320, 330, and 340, another home appliance may react to the voice command. Accordingly, this embodiment is characterized in that when a user makes a predetermined voice, a target home appliance to be commanded is estimated or determined appropriately.

[0151] In more detail, referring to FIG. 11, each of the smart home appliances 310, 320, 330, and 340 includes a voice input unit 510, a voice recognition unit 520, and a command recognition unit 530.

[0152] The voice input unit 510 may collect voices that a user makes. For example, the voice input unit 510 may include a microphone. The voice recognition unit 520 extracts a text from the collected voice. The command recognition unit 530 determines, by using the extracted text, whether there is a text in which a specific word related to an operation of each home appliance is used. The command recognition unit 530 may include a memory storing information related to the specific word.

[0153] If a voice in which the specific word is used is included in the collected voice, the command recognition unit 530 may recognize that a corresponding home appliance is a home appliance that is a user's command target. The voice recognition unit 520 and the command recognition unit 530 are functionally distinguished and described but may be equipped inside one controller.

[0154] A home appliance recognized as a command target may output a message asking whether the user's command target is the home appliance itself. For example, when a home appliance recognized as a command target is the air conditioner 330, a voice or text message "turn on air conditioner?" may be outputted. Here, the outputted voice or text message is referred to as a "recognition message".

[0155] In response, when an operation of the air conditioner 330 is desired, a user may input a confirmation message that the air conditioner is the target, for example, a concise message "air conditioner operation" or "OK". Here, the inputted voice message is referred to as a "confirmation message".

[0156] On the other hand, if a voice in which the specific word is used is not included in the collected voice, the command recognition unit 530 may recognize that corresponding home appliances, that is, the cleaner 310, the cooker 320, and the refrigerator 340, are excluded from a user's command target. Then, even when a user's voice is inputted for a setting time after the recognition, the home appliances excluded from the command target are not recognized as the user's command target and do not react to the user's voice.

[0157] It is shown in FIG. 11 that when it is recognized that a voice corresponding to the air conditioner 330 among a plurality of home appliances is inputted, a recognition message is outputted and an operation in response to an inputted command is performed.

[0158] Moreover, when more than one home appliance is recognized as the command target, as mentioned above, each home appliance may output a recognition message asking whether the user's command target is the home appliance itself. Then, a user may specify a home appliance that is a command target by inputting a voice for the type of a home appliance to be commanded among a plurality of home appliances.

[0159] For example, when the voice recognition available cleaner 310, cooker 320, air conditioner 330, and refrigerator 340 are together in a home, as a user makes a voice "air conditioning start", the air conditioner 330 recognizes that a specific word "air conditioning" is used and also recognizes that the home appliance itself is a command target. Of course, information on the text "air conditioning" may be stored in the memory of the air conditioner 330 in advance.

[0160] On the other hand, since the word "air conditioning" is not a specific word of a corresponding home appliance with respect to the cleaner 310, the cooker 320, and the refrigerator 340, it is recognized that the home appliances 310, 320, and 340 are excluded from the command target.

[0161] As another example, when a user makes the voice "temperature up", the cooker 320, the air conditioner 330, and the refrigerator 340 may recognize that a specific word "temperature" is used. That is, the plurality of home appliances 320, 330, and 340 may recognize that they are the command targets.

[0162] At this point, the plurality of home appliances 320, 330, and 340 may output a message asking whether the user's command targets are the home appliances 320, 330, and 340 themselves. Then, as a user inputs a voice for a specific home appliance, for example, "air conditioner", it is specified that a command target is the air conditioner 330. When a command target is specified in the above manner, an operation of a home appliance may be controlled through an interactive communication between a user and a corresponding home appliance.

[0163] In such a manner, when there are a plurality of voice recognition available smart home appliances, a command subject may be recognized by extracting the feature (specific word) of a voice that a user makes and only a specific electronic product among a plurality of electronic products responds according to the recognized command subject. Therefore, miscommunication may be prevented during an operation of an electronic product.
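The specific-word matching and follow-up disambiguation described above can be illustrated with a minimal Python sketch. The keyword table, the function names `find_command_targets` and `resolve_target`, and the word "cleaning" for the cleaner are hypothetical choices made for illustration; the description does not specify how the stored words are matched.

```python
# Hypothetical table of "specific words" stored in each appliance's memory.
# Only "air conditioning" and "temperature" come from the description;
# the remaining entries are assumptions for illustration.
APPLIANCE_KEYWORDS = {
    "cleaner": {"cleaning"},
    "cooker": {"temperature"},
    "air conditioner": {"air conditioning", "temperature"},
    "refrigerator": {"temperature"},
}


def find_command_targets(utterance, keywords=APPLIANCE_KEYWORDS):
    """Return every appliance whose stored specific word occurs in the utterance."""
    text = utterance.lower()
    return [name for name, words in keywords.items()
            if any(word in text for word in words)]


def resolve_target(utterance, follow_up=None):
    """Return the single command target, or None while it is still ambiguous.

    If several appliances match, the user's follow-up voice naming an
    appliance type (for example "air conditioner") selects one of them.
    """
    candidates = find_command_targets(utterance)
    if len(candidates) == 1:
        return candidates[0]
    if follow_up is not None:
        reply = follow_up.lower()
        named = [name for name in candidates if name in reply]
        if len(named) == 1:
            return named[0]
    return None
```

In this sketch, "air conditioning start" matches only the air conditioner, while "temperature up" matches three appliances and stays unresolved until a follow-up such as "air conditioner" is given.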

[0164] FIG. 12 is a view illustrating a plurality of smart home appliances operating by using a mobile device according to a fourth embodiment of the present invention.

[0165] Referring to FIG. 12, a voice recognition system 10 according to the fourth embodiment of the present invention includes a mobile device 400 receiving a user's voice input, a plurality of home appliances 310, 320, 330, and 340 operating and controlled based on a voice inputted to the mobile device 400, and a server 450 communicably connecting the mobile device 400 and the plurality of home appliances 310, 320, 330, and 340.

[0166] The mobile device 400 is equipped with the voice input unit 510 described with reference to FIG. 11 and the server 450 includes the voice recognition unit 520 and the command recognition unit 530.

[0167] The mobile device 400 may include an application connected to the server 450. Once the application is executed, a voice input mode for a user's voice input may be activated in the mobile device 400.

[0168] When a user's voice is inputted through the voice input unit 510 of the mobile device 400, the inputted voice is delivered to the server 450 and the server 450 determines which home appliance is the target of a voice command as the voice recognition unit 520 and the command recognition unit 530 operate.

[0169] When a specific home appliance is recognized as the command target on the basis of a determination result, the server 450 notifies the specific home appliance of the recognized result. The home appliance notified of the result responds to the user's command. For example, when the air conditioner 330 is recognized as a command target and notified of a result, it may output a recognition message such as "turn on air conditioner?". In response, a user may input a confirmation message such as "OK" or "air conditioner operation". In relation to this, the contents described with reference to FIG. 11 are used.

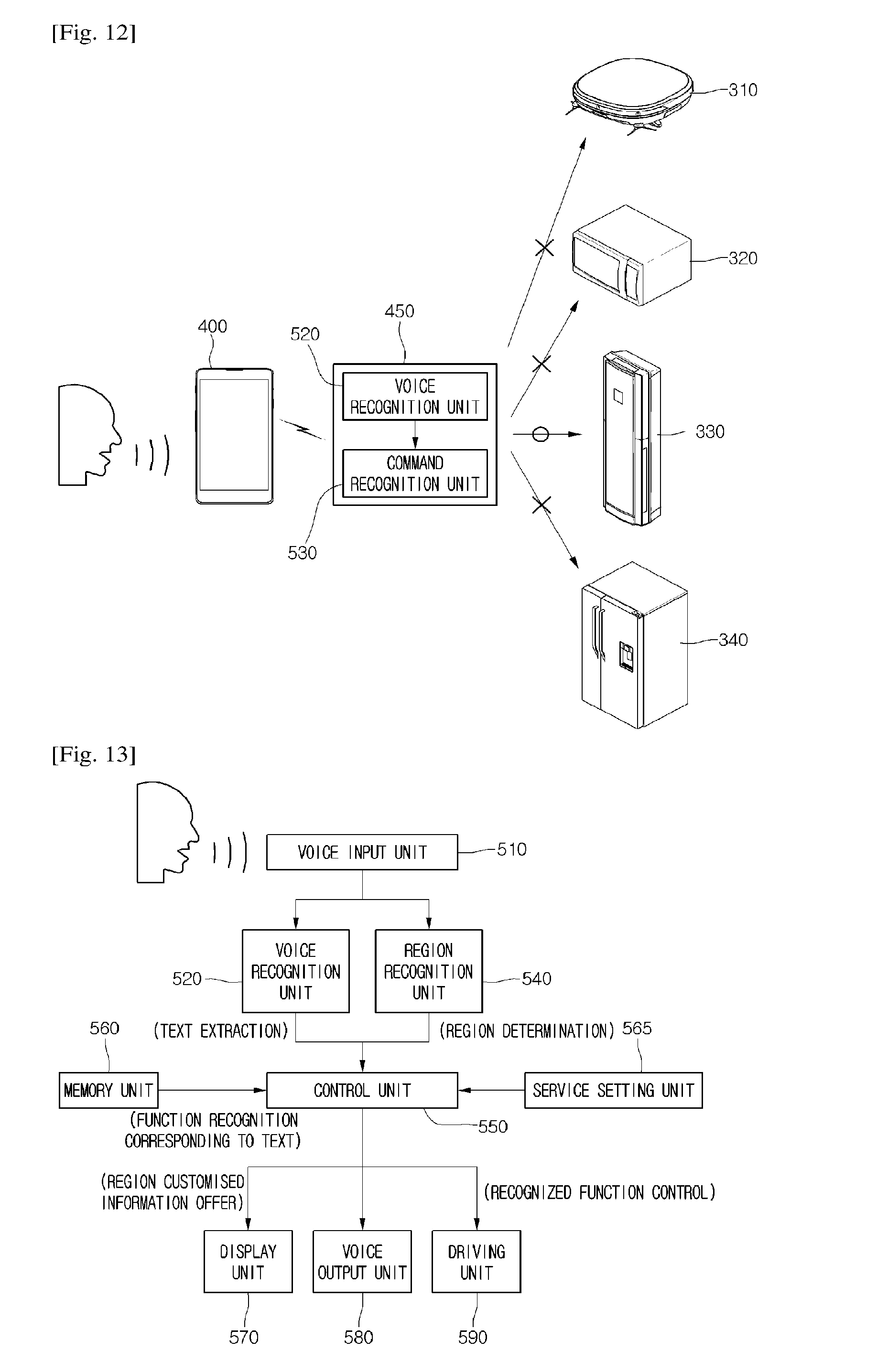

[0170] FIG. 13 is a view illustrating a configuration of a smart home appliance or a mobile device and an operating method thereof according to an embodiment of the present invention. Configurations shown in FIG. 13 may be equipped in smart home appliances or mobile devices. Hereinafter, smart home appliances will be described for an example.

[0171] Referring to FIG. 13, a smart home appliance according to an embodiment of the present invention includes a voice input unit 510 receiving a user's voice input and a voice recognition unit 520 extracting a text from a voice collected through the voice input unit 510. The voice recognition unit 520 may include a memory unit where the frequency of a voice and a text are mapped.

[0172] The smart home appliance may further include a region recognition unit 540 extracting the intonation of a voice inputted from the voice input unit 510 to determine the local color of the voice, that is, which region dialect is used. The region recognition unit 540 may include a database for dialects used in a plurality of regions. The database may store information on the intonation recognized when speaking in dialect, that is, unique frequency changes.
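As a rough illustration of how a region recognition unit might compare intonation against a dialect database, the following Python sketch matches frame-to-frame pitch changes against stored templates. The region labels, the template values, and the mean-squared-distance matching rule are all assumptions made for illustration; the description states only that the database stores the unique frequency changes of each dialect.

```python
# Hypothetical dialect database: one template of pitch changes (in Hz per
# frame) per region. The labels and numbers are invented for illustration.
DIALECT_TEMPLATES = {
    "region_a": [10.0, 10.0, -20.0],
    "region_b": [-10.0, -5.0, 15.0],
}


def pitch_changes(contour):
    """Convert a pitch contour (Hz per frame) into frame-to-frame changes."""
    return [later - earlier for earlier, later in zip(contour, contour[1:])]


def mean_squared_distance(a, b):
    """Mean squared difference between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def recognize_region(contour, templates=DIALECT_TEMPLATES):
    """Return the region whose stored frequency changes are closest.

    Assumes the contour has already been resampled so that its change
    sequence has the same length as each template.
    """
    changes = pitch_changes(contour)
    return min(templates,
               key=lambda region: mean_squared_distance(changes, templates[region]))
```

A practical recognizer would need pitch extraction, time alignment, and speaker normalization, none of which are shown here.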

[0173] The text extracted through the voice recognition unit 520 and the information on a region determined through the region recognition unit 540 may be delivered to the control unit 550.

[0174] The smart home appliance may further include a memory unit 560 mapping the text extracted by the voice recognition unit 520 and a function corresponding to the text and storing them.

[0175] The control unit 550 may recognize a function corresponding to the text extracted by the voice recognition unit 520 on the basis of the information stored in the memory unit 560. Then, the control unit 550 may control a driving unit 590 equipped in the home appliance in order to perform the recognized function.

[0176] For example, the driving unit 590 may include a suction motor of a cleaner, a motor or a heater of a cooker, a compressor motor of an air conditioner, or a compressor motor of a refrigerator.
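The relationship between the memory unit 560, the control unit 550, and the driving unit 590 can be sketched in Python as a lookup followed by a dispatch. The class names, the function identifiers such as `start_compressor_motor`, and the table contents are hypothetical; the sketch only records the requested function instead of driving real hardware.

```python
class DrivingUnit:
    """Stand-in for the driving unit; records functions instead of driving hardware."""

    def __init__(self):
        self.performed = []

    def run(self, function_name):
        self.performed.append(function_name)


class ControlUnit:
    """Looks up the function mapped to a recognized text and triggers it."""

    def __init__(self, memory, driving_unit):
        # memory plays the role of the memory unit: text -> function name.
        self.memory = {text.lower(): name for text, name in memory.items()}
        self.driving_unit = driving_unit

    def handle_text(self, text):
        """Return True if the text maps to a stored function, else False."""
        function_name = self.memory.get(text.strip().lower())
        if function_name is None:
            return False
        self.driving_unit.run(function_name)
        return True


# Hypothetical mapping for an air conditioner.
AIR_CONDITIONER_MEMORY = {
    "air conditioning start": "start_compressor_motor",
    "air conditioning stop": "stop_compressor_motor",
}
```

For example, `ControlUnit(AIR_CONDITIONER_MEMORY, DrivingUnit()).handle_text("air conditioning start")` returns `True` and records `start_compressor_motor`, while an unmapped text returns `False` and leaves the driving unit untouched.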

[0177] The home appliance further includes a display unit 570 outputting or providing region customized information to a screen or a voice output unit 580 outputting a voice on the basis of a setting function corresponding to the text extracted by the voice recognition unit 520 and the region information determined by the region recognition unit 540. The combined display unit 570 and voice output unit 580 may be referred to as an "output unit".

[0178] That is, the setting function may include a plurality of functions divided according to regions and one function matching the region information determined by the region recognition unit 540 among the plurality of functions may be outputted. In summary, combined information of the recognized function and the determined local color may be inputted to the display unit 570 or the voice output unit 580 (region customized information providing service).

[0179] Moreover, the smart home appliance further includes a selection available mode setting unit 565 to perform a mode for the region customized information providing service. A user may use the region customized information providing service when the mode setting unit 565 is in "ON" state. Of course, a user may not use the region customized information providing service when the mode setting unit 565 is in "OFF" state.
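The selection of one function among a plurality of region-divided functions, gated by the mode setting unit 565, can be sketched in Python as follows. The table contents, the `"default"` entry, and the choice to fall back to it when the mode is OFF or the region is unknown are assumptions for illustration; the description states only that the service is unavailable in the OFF state.

```python
# Hypothetical setting-function table: each recognized text maps to
# several region-specific variants plus an assumed default variant.
REGION_FUNCTIONS = {
    "beef radish soup recipe": {
        "Gyeongsang-do": "Gyeongsang-do style beef radish soup recipe",
        "default": "standard beef radish soup recipe",
    },
}


def select_output(text, region, mode_on, table=REGION_FUNCTIONS):
    """Pick the variant of the setting function to send to the output unit.

    Returns None when no setting function is mapped to the text.
    """
    variants = table.get(text)
    if variants is None:
        return None
    if not mode_on:
        # Service disabled: region information is ignored (assumed behavior).
        return variants["default"]
    return variants.get(region, variants["default"])
```

With the mode ON and the region determined as Gyeongsang-do, the Gyeongsang-do variant is returned; with the mode OFF, the same request yields the default variant.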

[0180] Hereinafter, by referring to the drawings, contents of region customized information outputted to the display unit 570 are described.

[0181] FIG. 14 is a view illustrating a message output of a display unit according to an embodiment of the present invention.

[0182] Referring to FIG. 14, the display unit 570 may be equipped in the cooker 320, the refrigerator 340, or the mobile device 400. Hereinafter, the display unit 570 equipped at the refrigerator 340 is described for an example.

[0183] The cooker 320 or the refrigerator 340 may provide information on a recipe for a predetermined cooking to a user. In other words, the cooker 320 or the refrigerator 340 may include a memory unit storing recipe information on at least one cooking.

[0184] When a region customized information providing service is used to obtain recipe information, a user may provide an input when the mode setting unit 565 of the refrigerator 340 is in ON state.

[0185] When the region customized information providing service starts, for example, a guide message such as "what can I help you", that is, a voice input request message, may be displayed on a screen of the display unit 570. Of course, the voice input request message may be outputted as a voice through the voice output unit 580.

[0186] In response to the voice input request message, a user may input a specific recipe, for example, as shown in FIG. 14, a voice "beef radish soup recipe". The refrigerator 340 receives and recognizes the user's voice command and extracts a text corresponding to the recognized voice command. Accordingly, the display unit 570 may display a screen "beef radish soup recipe".

[0187] Then, the refrigerator 340 extracts the intonation from a voice inputted by a user and recognizes a frequency change corresponding to the extracted intonation, so that it may recognize a dialect for a specific region.

[0188] For example, when a user inputs a voice "beef radish soup recipe" in Gyeongsang-do accent, the refrigerator 340 recognizes the Gyeongsang-do dialect and prepares to provide a recipe optimized for Gyeongsang-do region. That is, when there are a plurality of beef radish recipes according to regions, one recipe matching the recognized region, Gyeongsang-do, may be recommended.

[0189] As a result, the refrigerator 340 may recognize that a user in Gyeongsang-do region wants to receive "beef radish soup recipe" and may then read information on a Gyeongsang-do style radish recipe to provide it to the user. For example, the display unit 570 may display a message "here is Gyeongsang-do style red beef radish soup recipe". In addition, a voice message may be outputted through the voice output unit 580.

[0190] Another example is described.

[0191] When the smart home appliance is an air conditioner for conditioning an indoor space and recognizes that a user's region is a cold region such as Gangwon-do, as a user inputs a voice command "temperature down", under the assumption that a user in a cold region likes cold weather, the smart home appliance may operate to set a relatively low temperature as a setting temperature. Then, information on contents related to adjusting a setting temperature to a relatively low temperature, for example, 20° C., may be outputted to the output units 570 and 580.

[0192] According to such a configuration, without a user's input for specific information, the dialect that a user speaks is recognized and region customized information is provided on the basis of the recognized dialect information. Therefore, usability may be improved.

[0193] FIG. 15 is a view illustrating a message output of a display unit according to another embodiment of the present invention.

[0194] Referring to FIG. 15, the display unit 570 according to another embodiment of the present invention may be equipped in the air conditioner 330 or the mobile device 400. When a region customized information providing service is used to input a command for an operation of the air conditioner 330, a user may provide an input when the mode setting unit 565 of the air conditioner 330 is in ON state.

[0195] When the region customized information providing service starts, for example, a guide message such as "what can I help you", that is, a voice input request message, may be displayed on a screen of the display unit 570. Of course, the voice input request message may be outputted as a voice through the voice output unit 580.

[0196] With respect to the voice input request message, a user may input a command on an operation of the air conditioner 330, for example, as shown in FIG. 15, a voice "turn on air conditioner (in dialect)". The air conditioner 330 receives and recognizes the user's voice command and extracts a text corresponding to the recognized voice command. Accordingly, the display unit 570 may display a screen "turn on air conditioner (in dialect)".

[0197] Then, the air conditioner 330 extracts the intonation from a voice inputted by a user and recognizes a frequency change corresponding to the extracted intonation, so that it may recognize a dialect for a specific region. For example, when a user inputs a voice "turn on air conditioner" in Jeolla-do accent, the air conditioner 330 may recognize the Jeolla-do dialect and may then generate a response message for the user in the Jeolla-do dialect.

[0198] That is, the air conditioner 330 recognizes that a user in Jeolla-do region wants "air conditioner operation" and reads dialect information on a message that an air conditioner operation is performed from the memory unit 560 to provide it to a user. For example, the display unit 570 may output a message using the Jeolla-do dialect, for example, "it is very hot and turn on quickly (in the Jeolla-do dialect)". In addition, a voice message may be outputted through the voice output unit 580.

[0199] By such a configuration, the dialect that a user speaks is recognized and information to be provided to a user is provided as a dialect on the basis of the recognized dialect information, so that the user may feel the intimacy.

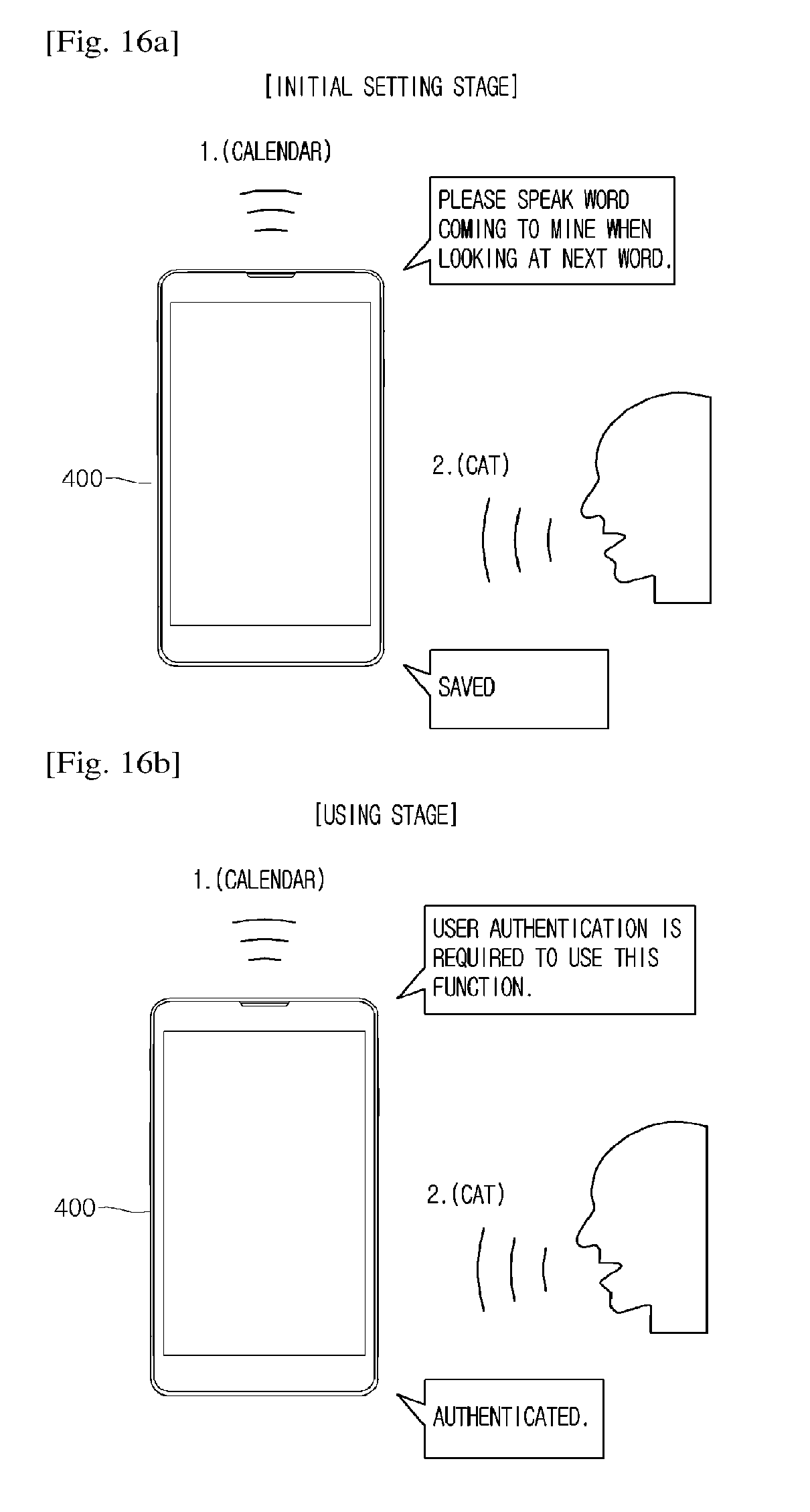

[0200] FIGS. 16A and 16B are views illustrating a security setting for voice recognition function performance according to a fifth embodiment of the present invention.

[0201] Referring to FIGS. 16A and 16B, a user's security setting is possible in the voice recognition system according to the fifth embodiment of the present invention. The security setting may be completed by a smart home appliance directly or by using a mobile device. Hereinafter, for example, security setting and authentication procedures by using a mobile device are described.

[0202] When a user wants to use the region customized information providing service, an input may be provided when the mode setting unit 565 in the mobile device 400 is in ON state. As an input is provided when the mode setting unit 565 is in ON state, an operation for setting an initial security may be performed.

[0203] The mobile device 400 may output a message for a predetermined key word. For example, as shown in the drawing, a key word "calendar" may be outputted as a text through a screen of the mobile device 400 or may be outputted as voice through a speaker.

[0204] Then, together with a message for the outputted key word, a first guide message may be outputted. The first guide message includes contents for an input of a reply word to the key word. For example, the first guide message may include content "please speak word coming to mind when looking at the next word". Then, the first guide message may be outputted as a text through a screen of the mobile device 400 or may be outputted as a voice through a speaker.

[0205] For the first guide message, a user may input a word to be set as a password as a voice. For example, as shown in the drawing, a reply word "cat" may be inputted. When the mobile device 400 recognizes the reply word, a second guide message notifying that the reply word is stored may be outputted through a screen or a voice.

[0206] In such way, when the region customized information providing service is used after the completion of the security setting, as shown in FIG. 16B, a procedure for performing an authentication by inputting a reply word to the key word may be performed.

[0207] In more detail, when an input is provided as the mode setting unit 565 in the mobile device 400 is in ON state, the mobile device 400 outputs a message for the key word, for example, "calendar", and outputs a third guide message notifying the need for authentication, for example, "user authentication is required for this function". The message for the key word and the third guide message may be outputted through a screen of the mobile device 400 or a voice.

[0208] For the key word, a user may input the previously set reply word, for example, a voice of "cat". Upon recognizing that the inputted reply word matches the reply word set for the key word, the mobile device 400 may output a fourth guide message notifying that authentication is successful, for example, a text or voice message "authenticated".
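The security setting of FIG. 16A and the authentication of FIG. 16B can be sketched in Python as an enrollment step followed by a matching step. The class name `KeywordAuthenticator`, its method names, and the case-insensitive comparison are assumptions for illustration; the sketch operates on already-recognized text and stores the reply word in plain form, which a practical system would likely avoid.

```python
class KeywordAuthenticator:
    """Stores one reply word per key word and checks later replies against it."""

    def __init__(self):
        self._replies = {}

    @staticmethod
    def _normalize(word):
        # Assumed normalization: ignore case and surrounding whitespace.
        return word.strip().lower()

    def enroll(self, key_word, reply_word):
        """Security setting: remember the reply word spoken for the key word."""
        self._replies[key_word] = self._normalize(reply_word)

    def authenticate(self, key_word, spoken_reply):
        """Authentication: True only if the reply matches the stored reply word."""
        expected = self._replies.get(key_word)
        if expected is None:
            return False  # no security setting exists for this key word
        return self._normalize(spoken_reply) == expected
```

After `enroll("calendar", "cat")`, a later `authenticate("calendar", "cat")` succeeds, whereas any other reply, or a key word that was never enrolled, fails.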