Light Field Imaging Device and Method for Depth Acquisition and Three-Dimensional Imaging

Saari; Jonathan Ikola ; et al.

U.S. patent application number 16/308173, for a light field imaging device and method for depth acquisition and three-dimensional imaging, was published on 2019-08-22. The applicant listed for this patent is AIRY3D INC. Invention is credited to Ji-Ho Cho, Jonathan Ikola Saari.

| Application Number | 20190257987 16/308173 |

| Family ID | 60578320 |

| Publication Date | 2019-08-22 |

| United States Patent Application | 20190257987 |

| Kind Code | A1 |

| Saari; Jonathan Ikola ; et al. | August 22, 2019 |

Abstract

A light field imaging device and method are provided. The device can include a diffraction grating assembly receiving a wavefront from a scene and including one or more diffraction gratings, each having a grating period along a grating axis and diffracting the wavefront to generate a diffracted wavefront. The device can also include a pixel array disposed under the diffraction grating assembly and detecting the diffracted wavefront in a near-field diffraction regime to provide light field image data about the scene. The pixel array has a pixel pitch along the grating axis that is smaller than the grating period. The device can further include a color filter array disposed over the pixel array to spatio-chromatically sample the diffracted wavefront prior to detection by the pixel array. The device and method can be implemented in backside-illuminated sensor architectures. Diffraction grating assemblies for use in the device and method are also disclosed.

| Inventors: | Saari; Jonathan Ikola (Montreal, CA); Cho; Ji-Ho (Montreal, CA) |
|---|---|
| Applicant: | AIRY3D INC. |
| Family ID: | 60578320 |
| Appl. No.: | 16/308173 |
| Filed: | June 6, 2017 |
| PCT Filed: | June 6, 2017 |
| PCT NO: | PCT/CA2017/050686 |
| 371 Date: | December 7, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62346884 | Jun 7, 2016 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 13/232 20180501; G02B 27/0075 20130101; G02B 27/4205 20130101; H01L 27/14607 20130101; H04N 13/207 20180501; G03B 11/00 20130101; G02B 3/0006 20130101; H01L 27/14645 20130101; G02B 5/1871 20130101; H04N 5/22541 20180801; G02B 27/46 20130101; H01L 27/14625 20130101; G02B 5/201 20130101; H01L 27/1464 20130101; G02B 5/1814 20130101; H04N 13/257 20180501; G02B 5/1842 20130101; G03B 33/00 20130101; H01L 27/14627 20130101; G03B 35/08 20130101; H04N 9/04551 20180801; H01L 27/14621 20130101 |

| International Class: | G02B 5/18 20060101 G02B005/18; G02B 5/20 20060101 G02B005/20; G02B 3/00 20060101 G02B003/00; G02B 27/42 20060101 G02B027/42; G02B 27/46 20060101 G02B027/46; G03B 11/00 20060101 G03B011/00; H01L 27/146 20060101 H01L027/146; H04N 13/257 20060101 H04N013/257; H04N 13/207 20060101 H04N013/207; H04N 9/04 20060101 H04N009/04; H04N 5/225 20060101 H04N005/225 |

Claims

1. A light field imaging device for capturing light field image data about a scene, the light field imaging device comprising: a diffraction grating assembly configured to receive an optical wavefront originating from the scene, the diffraction grating assembly comprising a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis, the diffraction grating diffracting the optical wavefront to generate a diffracted wavefront; and a pixel array comprising a plurality of light-sensitive pixels disposed under the diffraction grating assembly and detecting the diffracted wavefront as the light field image data, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period.

2. The light field imaging device of claim 1, further comprising a color filter array disposed over the pixel array and comprising a plurality of color filters arranged in a mosaic color pattern, the color filter array spatially and spectrally filtering the diffracted wavefront according to the mosaic color pattern prior to detection of the diffracted wavefront by the plurality of light-sensitive pixels.

3. The light field imaging device of claim 2, wherein each color filter is optically coupled to a corresponding one of the light-sensitive pixels.

4. The light field imaging device of claim 2, wherein each color filter is optically coupled to at least two corresponding ones of the plurality of light-sensitive pixels.

5. The light field imaging device of claim 2, wherein each color filter is one of a red pass filter, a green pass filter and a blue pass filter.

6. The light field imaging device of claim 2, wherein the mosaic color pattern is a Bayer pattern.

7. The light field imaging device of claim 1, wherein the diffraction grating is configured to diffract the optical wavefront in a waveband ranging from 400 nanometers to 1550 nanometers.

8. The light field imaging device of claim 1, wherein the grating period ranges from 1 micrometer to 20 micrometers.

9. The light field imaging device of claim 1, wherein the diffraction grating includes between two and ten repetitions of the grating period.

10. The light field imaging device of claim 1, wherein the diffraction grating is a phase grating.

11. The light field imaging device of claim 10, wherein the diffraction grating is a binary phase grating and the refractive index modulation pattern comprises a series of ridges periodically spaced-apart at the grating period, interleaved with a series of grooves periodically spaced-apart at the grating period.

12. The light field imaging device of claim 11, wherein the diffraction grating has a duty cycle of about 50%.

13. The light field imaging device of claim 12, wherein each light-sensitive pixel is positioned under and in alignment with either a corresponding one of the ridges or a corresponding one of the grooves.

14. The light field imaging device of claim 12, wherein each light-sensitive pixel is positioned under and in alignment with a transition between a corresponding one of the ridges and a corresponding adjacent one of the grooves.

15. The light field imaging device of claim 11, wherein the diffraction grating has a duty cycle different from 50%.

16. The light field imaging device of claim 11, wherein the series of ridges has a step height with respect to the series of grooves, the step height ranging from 0.2 micrometer to 1 micrometer.

17. The light field imaging device of claim 1, wherein a separation distance between the refractive index modulation pattern of the diffraction grating and a light-receiving surface of the pixel array ranges from 0.5 micrometer to 20 micrometers.

18. The light field imaging device of claim 1, wherein a separation distance between the refractive index modulation pattern of the diffraction grating and a light-receiving surface of the pixel array is less than about ten times a center wavelength of the optical wavefront.

19. The light field imaging device of claim 1, wherein a ratio of the grating period to the pixel pitch along the grating axis is substantially equal to two.

20. The light field imaging device of claim 1, wherein the plurality of light-sensitive pixels is arranged in a rectangular pixel grid defined by two orthogonal pixel axes, and wherein the grating axis is either parallel to one of the two orthogonal pixel axes or oblique to both the two orthogonal pixel axes.

21. The light field imaging device of claim 1, wherein the pixel pitch ranges from 1 micrometer to 10 micrometers.

22. The light field imaging device of claim 1, further comprising dispersive optics disposed in a light path of the optical wavefront between the scene and the diffraction grating assembly, the dispersive optics being configured to receive and spectrally disperse the optical wavefront.

23. The light field imaging device of claim 1, further comprising a microlens array disposed over the pixel array and comprising a plurality of microlenses, each microlens being optically coupled to a corresponding one of the light-sensitive pixels.

24. The light field imaging device of claim 1, further comprising pixel array circuitry disposed either under the pixel array, in a backside illumination configuration, or between the diffraction grating assembly and the pixel array, in a frontside illumination configuration.

25. The light field imaging device of claim 1, wherein the diffraction grating is one of a plurality of diffraction gratings of the diffraction grating assembly, the plurality of diffraction gratings being arranged in a two-dimensional grating array disposed over the pixel array.

26. The light field imaging device of claim 25, wherein the plurality of diffraction gratings comprises multiple sets of diffraction gratings, the grating axes of the diffraction gratings of different ones of the sets having different orientations.

27. The light field imaging device of claim 26, wherein the multiple sets of diffraction gratings comprise a first set of diffraction gratings and a second set of diffraction gratings, the grating axes of the diffraction gratings of the first set extending substantially perpendicularly to the grating axes of the diffraction gratings of the second set.

28. The light field imaging device of claim 24, wherein each diffraction grating comprises a grating substrate including a top surface having the refractive index modulation pattern formed thereon, the grating substrate comprising a spectral filter material or region configured to spectrally filter the diffracted wavefront prior to detection of the diffracted wavefront by the plurality of light-sensitive pixels, the plurality of diffraction gratings thus forming a color filter array.

29. The light field imaging device of claim 28, wherein the grating substrate of each diffraction grating acts as one of a red pass filter, a green pass filter and a blue pass filter.

30. The light field imaging device of claim 28, wherein the color filter array is arranged in a Bayer pattern.

31. A backside-illuminated light field imaging device for capturing light field image data about a scene, the backside-illuminated light field imaging device comprising: a substrate having a front surface and a back surface; a diffraction grating assembly disposed over the back surface of the substrate and configured to receive an optical wavefront originating from the scene, the diffraction grating assembly comprising a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis, the diffraction grating diffracting the optical wavefront to generate a diffracted wavefront; a pixel array formed in the substrate and comprising a plurality of light-sensitive pixels configured to receive through the back surface and detect as the light field image data the diffracted wavefront, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period; and pixel array circuitry disposed under the front surface and coupled to the pixel array.

32. The backside-illuminated light field imaging device of claim 31, further comprising a color filter array disposed over the back surface and comprising a plurality of color filters arranged in a mosaic color pattern, the color filter array spatially and spectrally filtering the diffracted wavefront according to the mosaic color pattern prior to detection of the diffracted wavefront by the plurality of light-sensitive pixels.

33. The backside-illuminated light field imaging device of claim 32, wherein the mosaic color pattern is a Bayer pattern.

34. The backside-illuminated light field imaging device of claim 31, wherein the diffraction grating is a binary phase grating and the refractive index modulation pattern comprises a series of ridges periodically spaced-apart at the grating period, interleaved with a series of grooves periodically spaced-apart at the grating period.

35. The backside-illuminated light field imaging device of claim 34, wherein the diffraction grating has a duty cycle of about 50% and each light-sensitive pixel is positioned under and in alignment with either a corresponding one of the ridges or a corresponding one of the grooves.

36. The backside-illuminated light field imaging device of claim 34, wherein the diffraction grating has a duty cycle of about 50% and each light-sensitive pixel is positioned under and in alignment with a transition between a corresponding one of the ridges and a corresponding adjacent one of the grooves.

37. The backside-illuminated light field imaging device of claim 31, wherein a separation distance between the refractive index modulation pattern of the diffraction grating and a light-receiving surface of the pixel array ranges from 0.5 micrometer to 5 micrometers.

38. The backside-illuminated light field imaging device of claim 31, wherein a ratio of the grating period to the pixel pitch along the grating axis is substantially equal to two.

39. The backside-illuminated light field imaging device of claim 31, wherein the plurality of light-sensitive pixels is arranged in a rectangular pixel grid defined by two orthogonal pixel axes, and wherein the grating axis is either parallel to one of the two orthogonal pixel axes or oblique to both the two orthogonal pixel axes.

40. The backside-illuminated light field imaging device of claim 31, wherein the pixel pitch ranges from 1 micrometer to 5 micrometers.

41. The backside-illuminated light field imaging device of claim 31, further comprising dispersive optics disposed in a light path of the optical wavefront between the scene and the diffraction grating assembly, the dispersive optics being configured to receive and spectrally disperse the optical wavefront.

42. The backside-illuminated light field imaging device of claim 31, further comprising a microlens array disposed over the pixel array and comprising a plurality of microlenses, each microlens being optically coupled to a corresponding one of the light-sensitive pixels.

43. The backside-illuminated light field imaging device of claim 31, wherein the diffraction grating is one of a plurality of diffraction gratings of the diffraction grating assembly, the plurality of diffraction gratings being arranged in a two-dimensional grating array disposed over the pixel array.

44. The backside-illuminated light field imaging device of claim 43, wherein the plurality of diffraction gratings comprises multiple sets of diffraction gratings, the grating axes of the diffraction gratings of different ones of the sets having different orientations.

45. The backside-illuminated light field imaging device of claim 44, wherein the multiple sets of diffraction gratings comprise a first set of diffraction gratings and a second set of diffraction gratings, the grating axes of the diffraction gratings of the first set extending substantially perpendicularly to the grating axes of the diffraction gratings of the second set.

46. The backside-illuminated light field imaging device of claim 31, further comprising: a color filter array disposed over the back surface and comprising a plurality of color filters, each of which is optically coupled to a corresponding one of the plurality of light-sensitive pixels, the color filter array spatially and spectrally filtering the diffracted wavefront prior to detection of the diffracted wavefront by the plurality of light-sensitive pixels; and a microlens array disposed over the color filter array and comprising a plurality of microlenses, each microlens being optically coupled to a corresponding one of the plurality of the color filters, wherein the diffraction grating further comprises a grating substrate including a top surface having the refractive index modulation pattern formed thereon and a bottom surface disposed over the microlens array.

47. A light field imaging device comprising: a diffraction grating assembly comprising a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis; and a pixel array comprising a plurality of light-sensitive pixels disposed under the diffraction grating, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period.

48. The light field imaging device of claim 47, further comprising a color filter array disposed over the pixel array and comprising a plurality of color filters arranged in a mosaic color pattern, the color filter array spatially and spectrally filtering the diffracted wavefront according to the mosaic color pattern prior to detection of the diffracted wavefront by the plurality of light-sensitive pixels.

49. The light field imaging device of claim 47, wherein the grating period ranges from 1 micrometer to 20 micrometers.

50. The light field imaging device of claim 47, wherein the diffraction grating is a binary phase grating and the refractive index modulation pattern comprises a series of ridges periodically spaced-apart at the grating period, interleaved with a series of grooves periodically spaced-apart at the grating period.

51. The light field imaging device of claim 50, wherein the diffraction grating has a duty cycle of about 50% and each light-sensitive pixel is positioned under and in alignment with either a corresponding one of the ridges or a corresponding one of the grooves.

52. The light field imaging device of claim 50, wherein the diffraction grating has a duty cycle of about 50% and each light-sensitive pixel is positioned under and in alignment with a transition between a corresponding one of the ridges and a corresponding adjacent one of the grooves.

53. The light field imaging device of claim 47, wherein a ratio of the grating period to the pixel pitch along the grating axis is substantially equal to two.

54. The light field imaging device of claim 47, wherein the plurality of light-sensitive pixels is arranged in a rectangular pixel grid defined by two orthogonal pixel axes, and wherein the grating axis is either parallel to one of the two orthogonal pixel axes or oblique to both the two orthogonal pixel axes.

55. The light field imaging device of claim 47, further comprising dispersive optics disposed in a light path of the optical wavefront between the scene and the diffraction grating assembly, the dispersive optics being configured to receive and spectrally disperse the optical wavefront.

56. The light field imaging device of claim 47, wherein the diffraction grating is one of a plurality of diffraction gratings of the diffraction grating assembly, the plurality of diffraction gratings being arranged in a two-dimensional grating array disposed over the pixel array.

57. The light field imaging device of claim 56, wherein the plurality of diffraction gratings comprises multiple sets of diffraction gratings, the grating axes of the diffraction gratings of different ones of the sets having different orientations.

58. The light field imaging device of claim 57, wherein the multiple sets of diffraction gratings comprise a first set of diffraction gratings and a second set of diffraction gratings, the grating axes of the diffraction gratings of the first set extending substantially perpendicularly to the grating axes of the diffraction gratings of the second set.

59. A diffraction grating assembly for use with an image sensor comprising a pixel array having a plurality of light-sensitive pixels to capture light field image data about a scene, the diffraction grating assembly comprising a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis, the grating period being larger than a pixel pitch of the pixel array along the grating axis, the diffraction grating being configured to receive and diffract an optical wavefront originating from the scene to generate a diffracted wavefront for detection by the light-sensitive pixels as the light field image data, the diffraction grating assembly being configured to be disposed over the pixel array.

60. The diffraction grating assembly of claim 59, wherein the diffraction grating assembly is configured to be disposed over a color filter array of the image sensor, the color filter array being disposed over the pixel array and configured to spatially and spectrally filter the diffracted wavefront prior to detection of the diffracted wavefront by the plurality of light-sensitive pixels.

61. The diffraction grating assembly of claim 59, wherein the diffraction grating is configured to diffract the optical wavefront in a waveband ranging from 400 nanometers to 1550 nanometers.

62. The diffraction grating assembly of claim 59, wherein the grating period ranges from 1 micrometer to 20 micrometers.

63. The diffraction grating assembly of claim 59, wherein the diffraction grating is a binary phase grating and the refractive index modulation pattern comprises a series of ridges periodically spaced-apart at the grating period, interleaved with a series of grooves periodically spaced-apart at the grating period.

64. The diffraction grating assembly of claim 59, wherein a ratio of the grating period to the pixel pitch along the grating axis is substantially equal to two.

65. The diffraction grating assembly of claim 59, wherein the diffraction grating is one of a plurality of diffraction gratings of the diffraction grating assembly, the plurality of diffraction gratings being arranged in a two-dimensional grating array disposed over the pixel array.

66. The diffraction grating assembly of claim 65, wherein the plurality of diffraction gratings comprises multiple sets of diffraction gratings, the grating axes of the diffraction gratings of different ones of the sets having different orientations.

67. The diffraction grating assembly of claim 66, wherein the multiple sets of diffraction gratings comprise a first set of diffraction gratings and a second set of diffraction gratings, the grating axes of the diffraction gratings of the first set extending substantially perpendicularly to the grating axes of the diffraction gratings of the second set.

68. The diffraction grating assembly of claim 59, wherein each diffraction grating includes between two and ten repetitions of the grating period.

69. A method of capturing light field image data about a scene, the method comprising: diffracting an optical wavefront originating from the scene with a diffraction grating having a grating period along a grating axis to generate a diffracted wavefront; and detecting the diffracted wavefront as the light field image data with a pixel array comprising a plurality of light-sensitive pixels disposed under the diffraction grating, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period.

70. The method of claim 69, further comprising spatio-spectrally filtering the diffracted wavefront with a color filter array prior to detecting the diffracted wavefront with the plurality of light-sensitive pixels.

71. The method of claim 69, wherein diffracting the optical wavefront originating from the scene comprises diffracting the optical wavefront in a waveband ranging from 400 nanometers to 1550 nanometers.

72. The method of claim 69, further comprising selecting the grating period in a range from 1 micrometer to 20 micrometers.

73. The method of claim 69, further comprising providing the diffraction grating as a binary phase grating comprising a series of ridges periodically spaced-apart at the grating period, interleaved with a series of grooves periodically spaced-apart at the grating period.

74. The method of claim 73, further comprising providing the diffraction grating with a duty cycle of about 50% and positioning each light-sensitive pixel under and in alignment with either a corresponding one of the ridges or a corresponding one of the grooves, or under and in alignment with a transition between a corresponding one of the ridges and a corresponding adjacent one of the grooves.

75. The method of claim 73, further comprising setting a step height of the ridges relative to the grooves to control an optical path difference between adjacent ones of the ridges and grooves.

76. The method of claim 69, further comprising setting a separation distance between the refractive index modulation pattern of the diffraction grating and a light-receiving surface of the pixel array to less than about ten times a center wavelength of the optical wavefront.

77. The method of claim 69, further comprising setting a ratio of the grating period to the pixel pitch along the grating axis to be substantially equal to two.

78. The method of claim 69, further comprising providing the plurality of light-sensitive pixels in a rectangular pixel grid defined by two orthogonal pixel axes, and orienting the grating axis either parallel to one of the two orthogonal pixel axes or oblique to both the two orthogonal pixel axes.

79. The method of claim 69, further comprising spectrally dispersing the optical wavefront prior to diffracting the optical wavefront.

80. A method of providing three-dimensional imaging capabilities to an image sensor viewing a scene and comprising a pixel array having a plurality of light-sensitive pixels, the method comprising: disposing a diffraction grating assembly in front of the image sensor, the diffraction grating assembly comprising a diffraction grating having a grating axis and a grating period along the grating axis, the grating period being larger than a pixel pitch of the pixel array along the grating axis; receiving and diffracting an optical wavefront originating from the scene with the diffraction grating to generate a diffracted wavefront; and detecting the diffracted wavefront with the light-sensitive pixels.

81. The method of claim 80, further comprising spatio-spectrally filtering the diffracted wavefront with a color filter array prior to detecting the diffracted wavefront by the plurality of light-sensitive pixels.

82. The method of claim 80, further comprising selecting the grating period in a range from 1 micrometer to 20 micrometers.

83. The method of claim 80, further comprising providing the diffraction grating as a binary phase grating comprising a series of ridges periodically spaced-apart at the grating period, interleaved with a series of grooves periodically spaced-apart at the grating period.

84. The method of claim 83, further comprising providing the diffraction grating with a duty cycle of about 50% and positioning the diffraction grating assembly over the pixel array such that either each ridge and each groove extends over and in alignment with a corresponding one of the light-sensitive pixels, or each transition between the interleaved ridges and grooves extends over and in alignment with a corresponding one of the light-sensitive pixels.

85. The method of claim 83, further comprising setting a step height of the ridges relative to the grooves to control an optical path difference between adjacent ones of the ridges and grooves.

86. The method of claim 80, wherein disposing the diffraction grating assembly in front of the image sensor comprises positioning the diffraction grating assembly at a separation distance from the pixel array selected such that an optical path length of the diffracted wavefront prior to being detected with the light-sensitive pixels is less than about ten times a center wavelength of the optical wavefront.

87. The method of claim 80, further comprising setting the grating period equal to substantially twice the pixel pitch along the grating axis.

88. The method of claim 80, wherein disposing the diffraction grating assembly in front of the image sensor comprises orienting the grating axis either parallel to one of two orthogonal pixel axes of the pixel array or oblique to both the two orthogonal pixel axes.

89. The method of claim 80, further comprising spectrally dispersing the optical wavefront prior to diffracting the optical wavefront.
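The numerical limits recited in claims 1, 8, 17, 18, 19 and 21 can be gathered into a small design check. The sketch below is only an illustration: the class `GratingDesign`, the function `check_design`, and the tolerance used to interpret "substantially equal to two" are hypothetical choices, and "about ten times" is read here as a strict upper bound, which the claims do not require.

```python
from dataclasses import dataclass


@dataclass
class GratingDesign:
    """Hypothetical container for the geometric parameters named in the claims."""
    grating_period_um: float
    pixel_pitch_um: float
    separation_um: float          # grating pattern to light-receiving surface
    center_wavelength_um: float


def check_design(d: GratingDesign, ratio_tol: float = 0.05) -> dict:
    """Evaluate a design against ranges recited in the claims.

    ratio_tol is an assumed relative tolerance for "substantially equal to two".
    """
    ratio = d.grating_period_um / d.pixel_pitch_um
    return {
        # Claim 1: pixel pitch smaller than the grating period.
        "pitch_smaller_than_period": d.pixel_pitch_um < d.grating_period_um,
        # Claim 8: grating period from 1 to 20 micrometers.
        "period_in_1_to_20_um": 1.0 <= d.grating_period_um <= 20.0,
        # Claim 17: separation distance from 0.5 to 20 micrometers.
        "separation_in_0p5_to_20_um": 0.5 <= d.separation_um <= 20.0,
        # Claim 18: separation less than about ten center wavelengths
        # (interpreted here as a strict bound, an assumption).
        "separation_below_10_wavelengths":
            d.separation_um < 10.0 * d.center_wavelength_um,
        # Claim 19: period-to-pitch ratio substantially equal to two.
        "ratio_about_two": abs(ratio - 2.0) <= ratio_tol * 2.0,
        # Claim 21: pixel pitch from 1 to 10 micrometers.
        "pitch_in_1_to_10_um": 1.0 <= d.pixel_pitch_um <= 10.0,
    }


# Example values chosen for illustration only.
example = GratingDesign(grating_period_um=2.0, pixel_pitch_um=1.0,
                        separation_um=3.0, center_wavelength_um=0.532)
for name, ok in check_design(example).items():
    print(f"{name}: {ok}")
```

Each dependent claim narrows the independent claim separately, so a device need not satisfy all of these checks at once; the dictionary simply reports which recited ranges a given parameter set falls within.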

Description

TECHNICAL FIELD

[0001] The general technical field relates to imaging systems and methods and, more particularly, to a light field imaging device and method for depth acquisition and three-dimensional (3D) imaging.

BACKGROUND

[0002] Traditional imaging hardware involves the projection of complex three-dimensional (3D) scenes onto simplified two-dimensional (2D) planes, forgoing dimensionality inherent in the incident light. This loss of information is a direct result of the nature of square-law detectors, such as charge-coupled devices (CCD) or complementary metal-oxide-semiconductor (CMOS) sensor arrays, which can only directly measure the time-averaged intensity $I$ of the incident light, not its phase, $\phi$, or wave vector, $\vec{k}$, or angular frequency, $\omega$:

$$I \sim \langle E(t) \rangle; \quad \text{where } E(t) = E_0 \cos(\vec{k} \cdot \vec{r} - \omega t + \phi). \tag{1}$$
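As a rough numerical illustration of why such a detector loses phase, the sketch below averages the squared field of Equation (1) over an integer number of optical periods for several phase values. It assumes, as the term "square-law" suggests, that the detector responds to the time average of E(t) squared; the function name `time_averaged_intensity` and the sample values are illustrative and not taken from the source.

```python
import math


def time_averaged_intensity(e0, omega, phi, k_dot_r=0.0,
                            periods=10, samples=10000):
    """Average E(t)**2 over an integer number of periods.

    Assumes a square-law response; E(t) = e0 * cos(k_dot_r - omega*t + phi).
    """
    total_time = periods * 2.0 * math.pi / omega
    dt = total_time / samples
    acc = 0.0
    for i in range(samples):
        t = i * dt
        field = e0 * math.cos(k_dot_r - omega * t + phi)
        acc += field * field
    return acc / samples


# The averaged value stays at e0**2 / 2 whatever the phase is.
for phi in (0.0, math.pi / 4, math.pi / 2, 2.0):
    print(phi, time_averaged_intensity(e0=1.0, omega=1.0, phi=phi))
```

Under this assumption, the measured quantity depends only on the amplitude, which is why phase, and with it direction-of-arrival information, has to be recovered by other means.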

Working within this constraint, plenoptic cameras are forced to recover depth information through either the comparative analysis of multiple simultaneously acquired images, complicated machine learning and/or reconstruction techniques, or the use of active illuminators and sensors. Plenoptic cameras generally describe a scene through the "plenoptic function" which parameterizes a light field impingent on an observer or point by:

$$P = P(x, y, \lambda, t, V_x, V_y, V_z, p), \tag{2}$$

[0003] where the x and y coordinates define a certain image plane at time t, for wavelength $\lambda$, and polarization angle p, as witnessed by an observer at location $(V_x, V_y, V_z)$. While they may be single- or multi-sensor based systems, current plenoptic cameras can rely, at minimum, solely on the intensity of light detected by any given pixel of a sensor array. More practically, existing solutions, such as stereovision or microlensing, sacrifice overall image quality and sensor footprint by employing multiple sensors or sensor segmentation to accommodate the various fields of view required to discern depth.

[0004] Random binary occlusion masks and coded apertures are other existing approaches that provide single-sensor solutions with minimal impact on packaging or overall footprint. However, despite advances in compressed sensing and non-linear reconstruction techniques, these solutions remain hindered by the massive image dictionaries and computational expense involved.

[0005] Time-of-flight and structured-light based techniques actively illuminate a scene with pulsed, patterned, or modulated continuous-wave infrared light, and determine depth via the full return-trip travel time or subtle changes in the illuminated light pattern. While these techniques do not suffer from image segmentation, they generally require additional active infrared emitters and detectors, which increase both power consumption and overall device footprint. These techniques also tend to be sensitive to interfering signals, specular reflections, and ambient infrared light, which limits their viability outdoors.
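The time-of-flight relation mentioned above can be sketched in a few lines. This is a generic illustration of depth from round-trip travel time (depth equals the speed of light times the travel time, divided by two), not a description of any particular sensor; the function names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact, by definition of the metre


def depth_from_round_trip(round_trip_time_s):
    """Depth in metres: the light covers the distance twice, so divide by two."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def round_trip_for_depth(depth_m):
    """Round-trip travel time in seconds for a target at the given depth."""
    return 2.0 * depth_m / SPEED_OF_LIGHT_M_PER_S


print(depth_from_round_trip(10e-9))   # a 10 ns round trip
print(round_trip_for_depth(0.01))     # timing needed to resolve 1 cm
```

The second call shows why such systems need fast emitters and detectors: resolving a depth difference of one centimetre corresponds to a timing difference on the order of tens of picoseconds.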

[0006] Challenges therefore remain in the field of light field imaging.

SUMMARY

[0007] The present description generally relates to light field imaging techniques for depth mapping and other 3D imaging applications.

[0008] In accordance with an aspect, there is provided a light field imaging device for capturing light field image data about a scene, the light field imaging device including:

[0009] a diffraction grating assembly configured to receive an optical wavefront originating from the scene, the diffraction grating assembly including a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis, the diffraction grating diffracting the optical wavefront to generate a diffracted wavefront; and

[0010] a pixel array including a plurality of light-sensitive pixels disposed under the diffraction grating assembly and detecting the diffracted wavefront as the light field image data, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period.

[0011] In some implementations, the diffracted wavefront has an intensity profile along the grating axis, and the pixel array is separated from the diffraction grating by a separation distance at which the intensity profile of the diffracted wavefront has a spatial period that substantially matches the grating period.

[0012] In accordance with another aspect, there is provided a backside-illuminated light field imaging device for capturing light field image data about a scene, the backside-illuminated light field imaging device including: [0013] a substrate having a front surface and a back surface; [0014] a diffraction grating assembly disposed over the back surface of the substrate and configured to receive an optical wavefront originating from the scene, the diffraction grating assembly including a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis, the diffraction grating diffracting the optical wavefront to generate a diffracted wavefront; [0015] a pixel array formed in the substrate and including a plurality of light-sensitive pixels configured to receive through the back surface and detect as the light field image data the diffracted wavefront, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period; and [0016] pixel array circuitry disposed under the front surface and coupled to the pixel array.

[0017] In some implementations, the diffracted wavefront has an intensity profile along the grating axis, and the pixel array is separated from the diffraction grating by a separation distance at which the intensity profile of the diffracted wavefront has a spatial period that substantially matches the grating period.

[0018] In accordance with another aspect, there is provided a light field imaging device including: [0019] a diffraction grating assembly including a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis; and [0020] a pixel array including a plurality of light-sensitive pixels disposed under the diffraction grating, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period.

[0021] In accordance with another aspect, there is provided a diffraction grating assembly for use with an image sensor including a pixel array having a plurality of light-sensitive pixels to capture light field image data about a scene, the diffraction grating assembly including a diffraction grating having a grating axis and a refractive index modulation pattern having a grating period along the grating axis, the grating period being larger than a pixel pitch of the pixel array along the grating axis, the diffraction grating being configured to receive and diffract an optical wavefront originating from the scene to generate a diffracted wavefront for detection by the light-sensitive pixels as the light field image data, the diffraction grating assembly being configured to be disposed over the pixel array. In some implementations, the diffraction grating assembly is configured to be separated from the pixel array by a separation distance at which the diffracted wavefront has an intensity profile along the grating axis with a spatial period that substantially matches the grating period.

[0022] In accordance with another aspect, there is provided a method of capturing light field image data about a scene, the method including: [0023] diffracting an optical wavefront originating from the scene with a diffraction grating having a grating period along a grating axis to generate a diffracted wavefront; and [0024] detecting the diffracted wavefront as the light field image data with a pixel array including a plurality of light-sensitive pixels disposed under the diffraction grating, the pixel array having a pixel pitch along the grating axis that is smaller than the grating period.

[0025] In some implementations, the diffracted wavefront has an intensity profile along the grating axis, and the pixel array is separated from the diffraction grating by a separation distance at which the intensity profile of the diffracted wavefront has a spatial period that substantially matches the grating period.

[0026] In accordance with another aspect, there is provided a method of providing three-dimensional imaging capabilities to an image sensor viewing a scene and including a pixel array having a plurality of light-sensitive pixels, the method including: [0027] disposing a diffraction grating assembly in front of the image sensor, the diffraction grating assembly including a diffraction grating having a grating axis and a grating period along the grating axis, the grating period being larger than a pixel pitch of the pixel array along the grating axis; [0028] receiving and diffracting an optical wavefront originating from the scene with the diffraction grating to generate a diffracted wavefront; and [0029] detecting the diffracted wavefront with the light-sensitive pixels.

[0030] In some implementations, disposing the diffraction grating assembly in front of the image sensor includes positioning the diffraction grating assembly at a separation distance from the pixel array at which the diffracted wavefront has an intensity profile along the grating axis with a spatial period that substantially matches the grating period.

[0031] In some implementations, the light field imaging device can include an array of light-sensitive elements; an array of color filters overlying and aligned with the array of light-sensitive elements such that each color filter covers at least one of the light-sensitive elements, the color filters being spatially arranged according to a mosaic color pattern; and a diffraction grating structure extending over the array of color filters.

[0032] In some implementations, the light field imaging device can include a diffraction grating structure exposed to an optical wavefront incident from a scene, the diffraction grating structure diffracting the optical wavefront to produce a diffracted wavefront; an array of color filters spatially arranged according to a mosaic color pattern, the array of color filters extending under the diffraction grating structure and spatio-chromatically filtering the diffracted wavefront according to the mosaic color pattern to produce a filtered wavefront including a plurality of spatially distributed wavefront components; and an array of light-sensitive elements detecting the filtered wavefront as light field image data, the array of light-sensitive elements underlying and being aligned with the array of color filters such that each light-sensitive element detects at least a corresponding one of the spatially distributed wavefront components.

[0033] In some implementations, the method can include diffracting an optical wavefront incident from a scene to produce a diffracted wavefront; filtering the diffracted wavefront through an array of color filters spatially arranged according to a mosaic color pattern, thereby obtaining a filtered wavefront including a plurality of spatially distributed wavefront components; and detecting the filtered wavefront as light field image data with an array of light-sensitive elements underlying and aligned with the array of color filters such that each light-sensitive element detects at least part of a corresponding one of the spatially distributed wavefront components.

[0034] In some implementations, the method can include diffracting an optical wavefront incident from a scene to produce a diffracted wavefront; spectrally and spatially filtering the diffracted wavefront to produce a filtered wavefront including a plurality of spatially distributed and spectrally filtered wavefront components; and detecting as light field image data the plurality of spatially distributed and spectrally filtered wavefront components at a plurality of arrayed light-sensitive elements.

[0035] Other features and advantages of the present description will become more apparent upon reading of the following non-restrictive description of specific embodiments thereof, given by way of example only with reference to the appended drawings.

BRIEF DESCRIPTION OF THE DRAWINGS

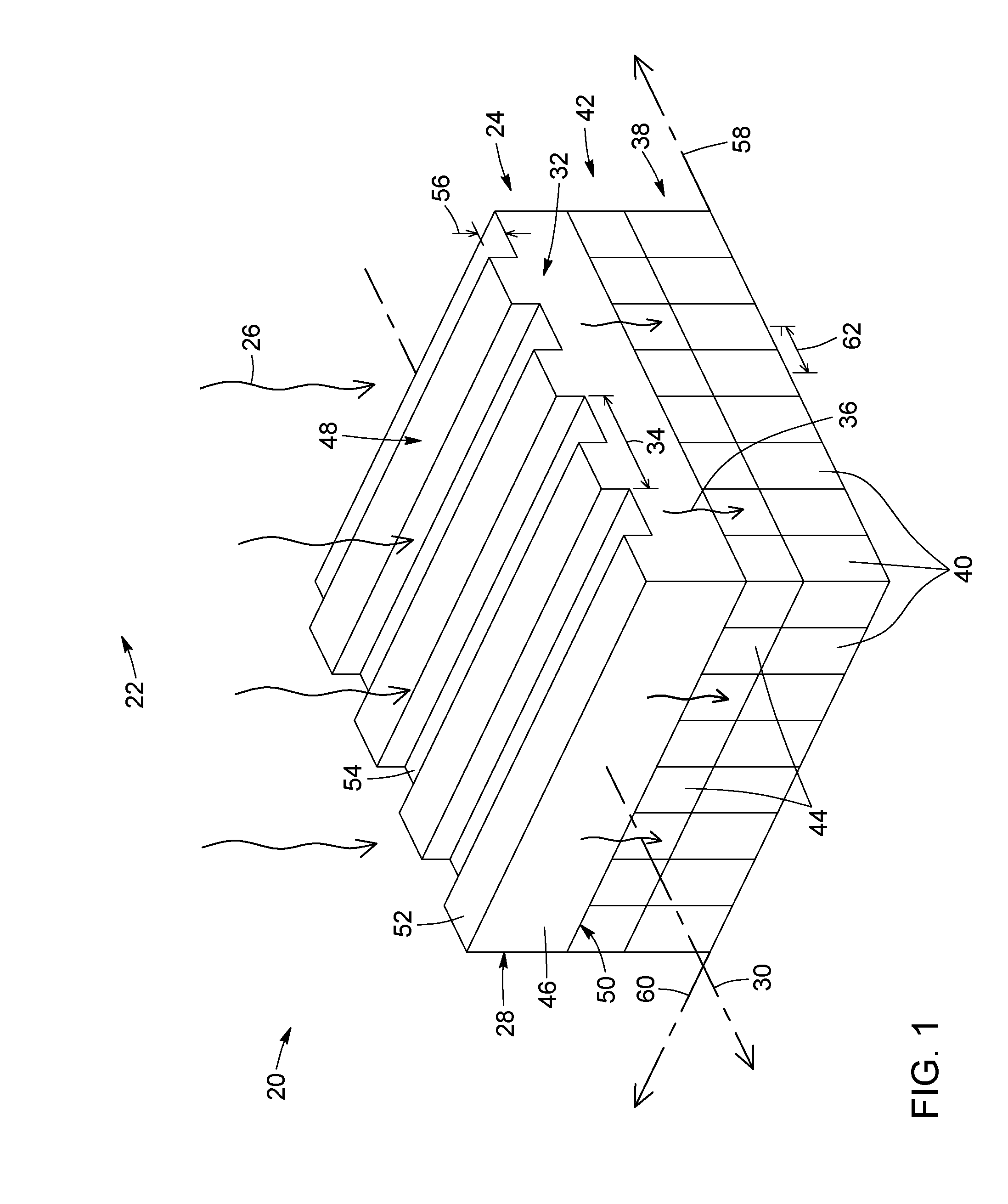

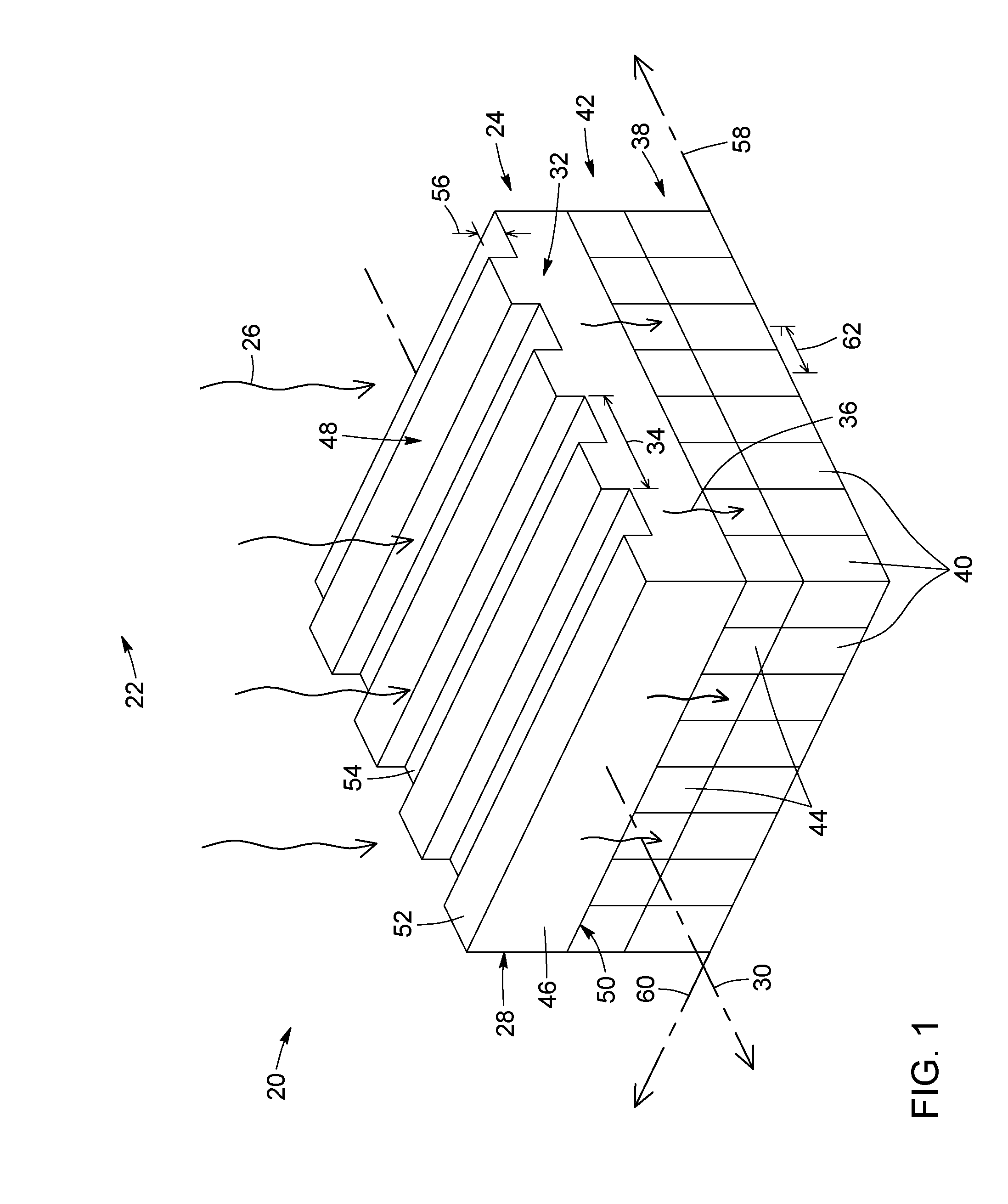

[0036] FIG. 1 is a schematic perspective view of a light field imaging device, in accordance with a possible embodiment.

[0037] FIG. 2 is a schematic partially exploded perspective view of the light field imaging device of FIG. 1.

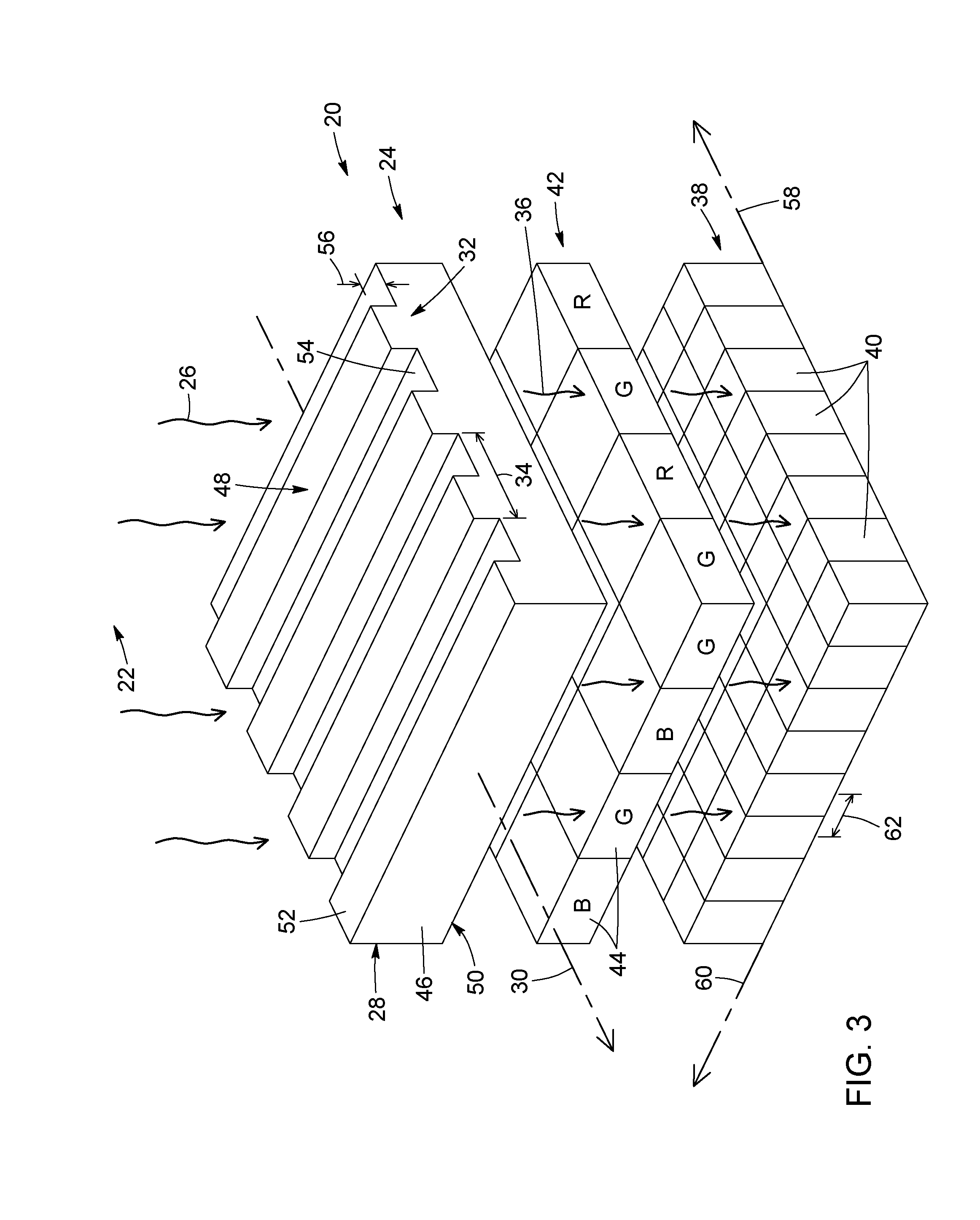

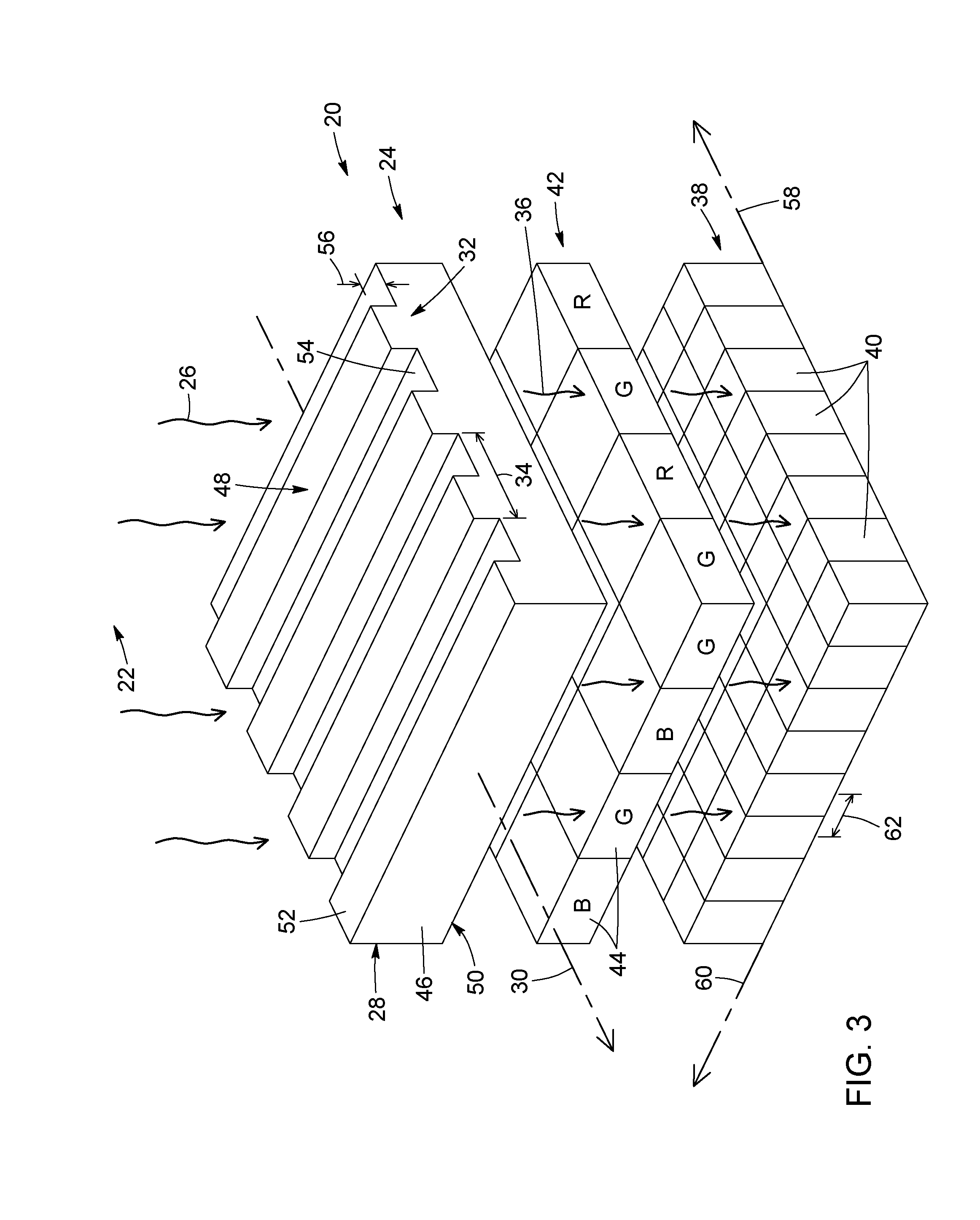

[0038] FIG. 3 is a schematic partially exploded perspective view of a light field imaging device, in accordance with another possible embodiment, where each color filter overlies a 2×2 block of light-sensitive pixels.

[0039] FIG. 4 is a schematic perspective view of a light field imaging device, in accordance with another possible embodiment, where the light field imaging device is configured for monochrome imaging applications.

[0040] FIG. 5 is a schematic partially exploded perspective view of the light field imaging device of FIG. 4.

[0041] FIG. 6 is a schematic partially exploded perspective view of the light field imaging device, in accordance with another possible embodiment, where the light field imaging device includes a microlens array on top of the color filter array.

[0042] FIG. 7 is a schematic partially exploded side view of a light field imaging device, in accordance with another possible embodiment, where the propagation of a wavefront of light through the device is schematically depicted. The light field imaging device of FIG. 7 is suitable for monochrome imaging applications.

[0043] FIGS. 8A to 8C are schematic partially exploded side views of three other possible embodiments of a light field imaging device, where the propagation of a wavefront of light through the device is schematically depicted. In FIG. 8A, each light-sensitive pixel is vertically aligned with a transition between one ridge and one groove. In FIG. 8B, the ratio of the grating period to the pixel pitch along the grating axis is equal to four. In FIG. 8C, the duty cycle of the diffraction grating is different from 50%.

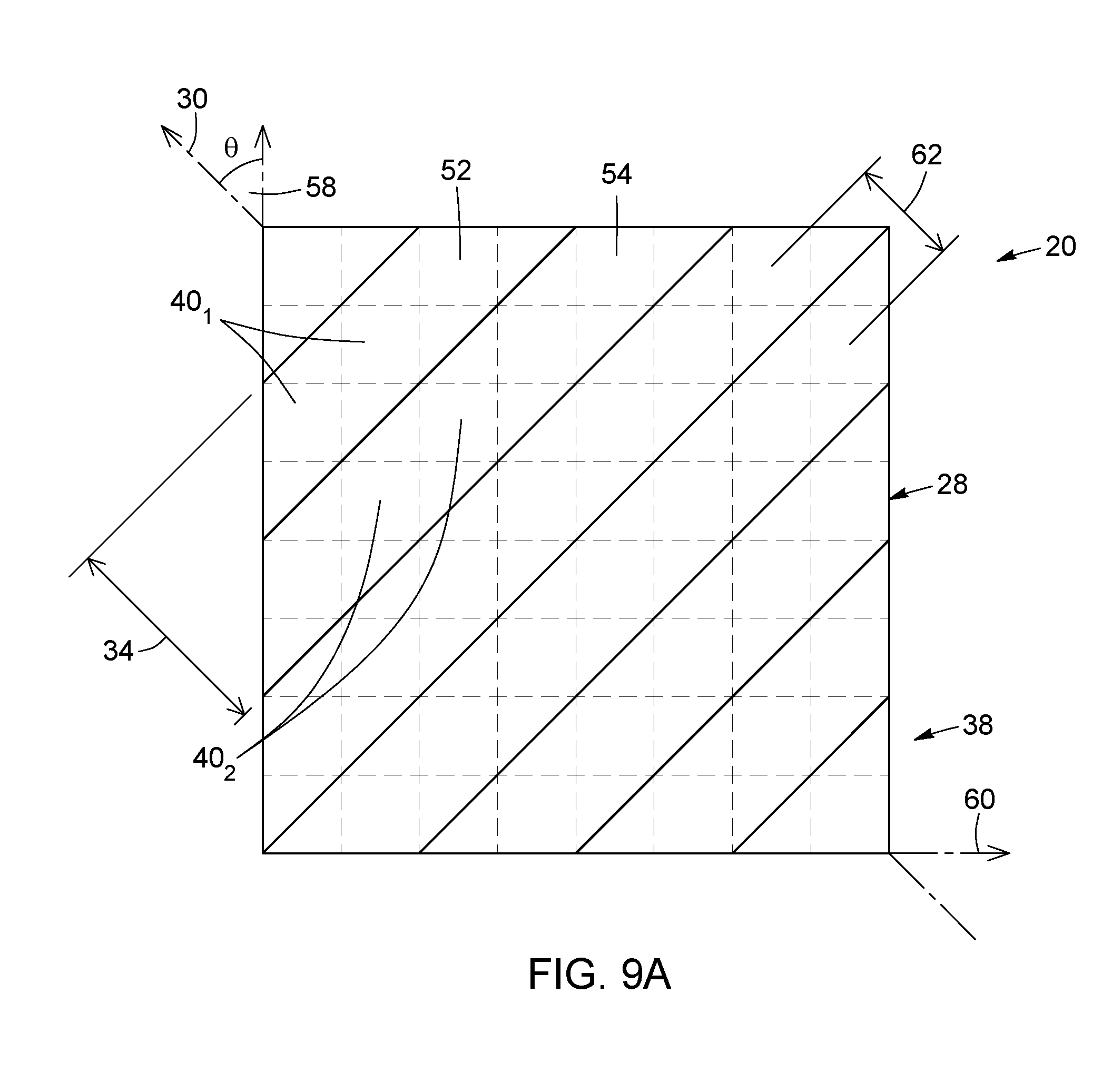

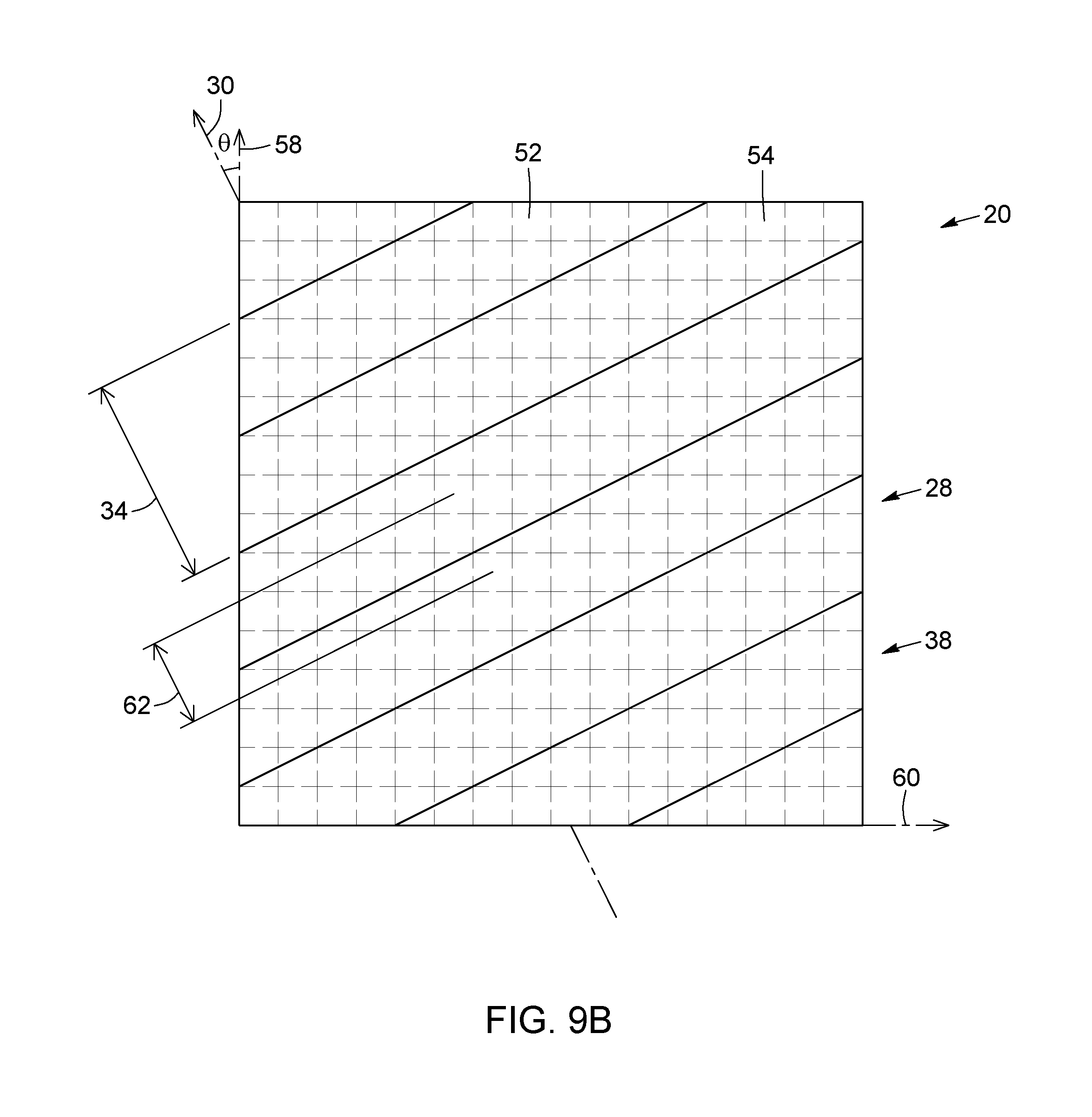

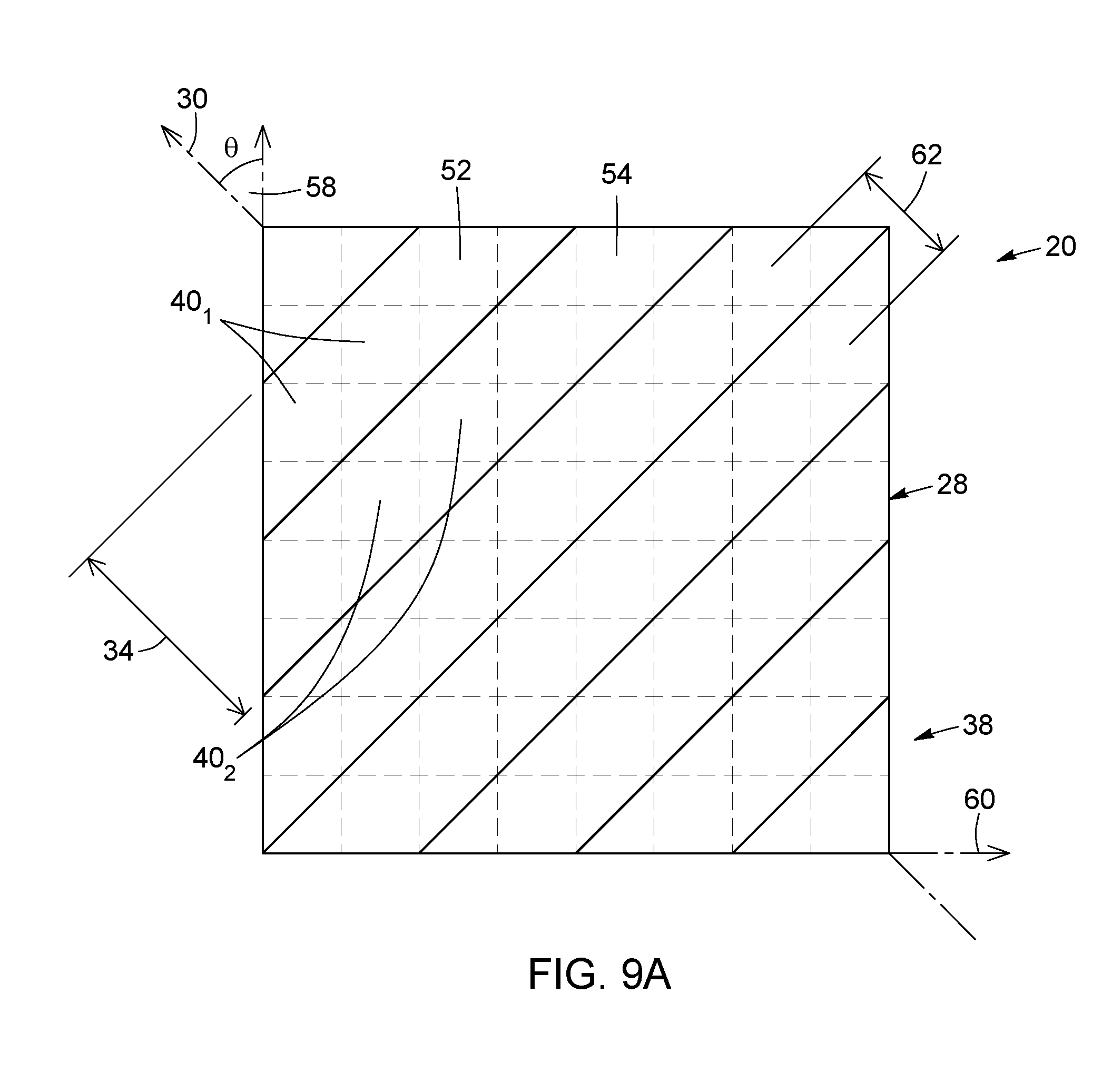

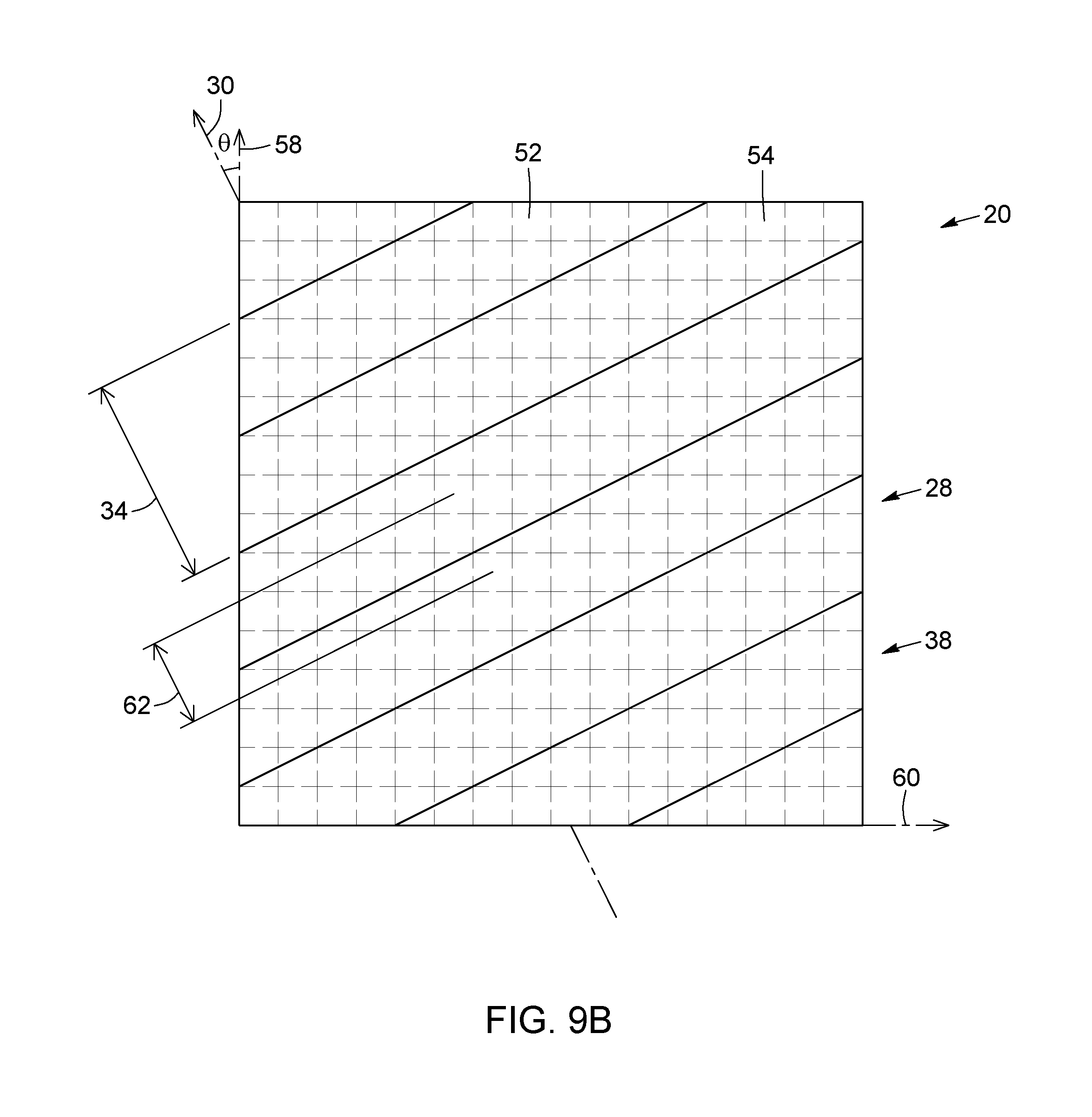

[0044] FIGS. 9A and 9B are schematic partially transparent top views of two other possible embodiments of a light field imaging device, where the grating axis of the diffraction grating is oblique to either of the two orthogonal pixel axes.

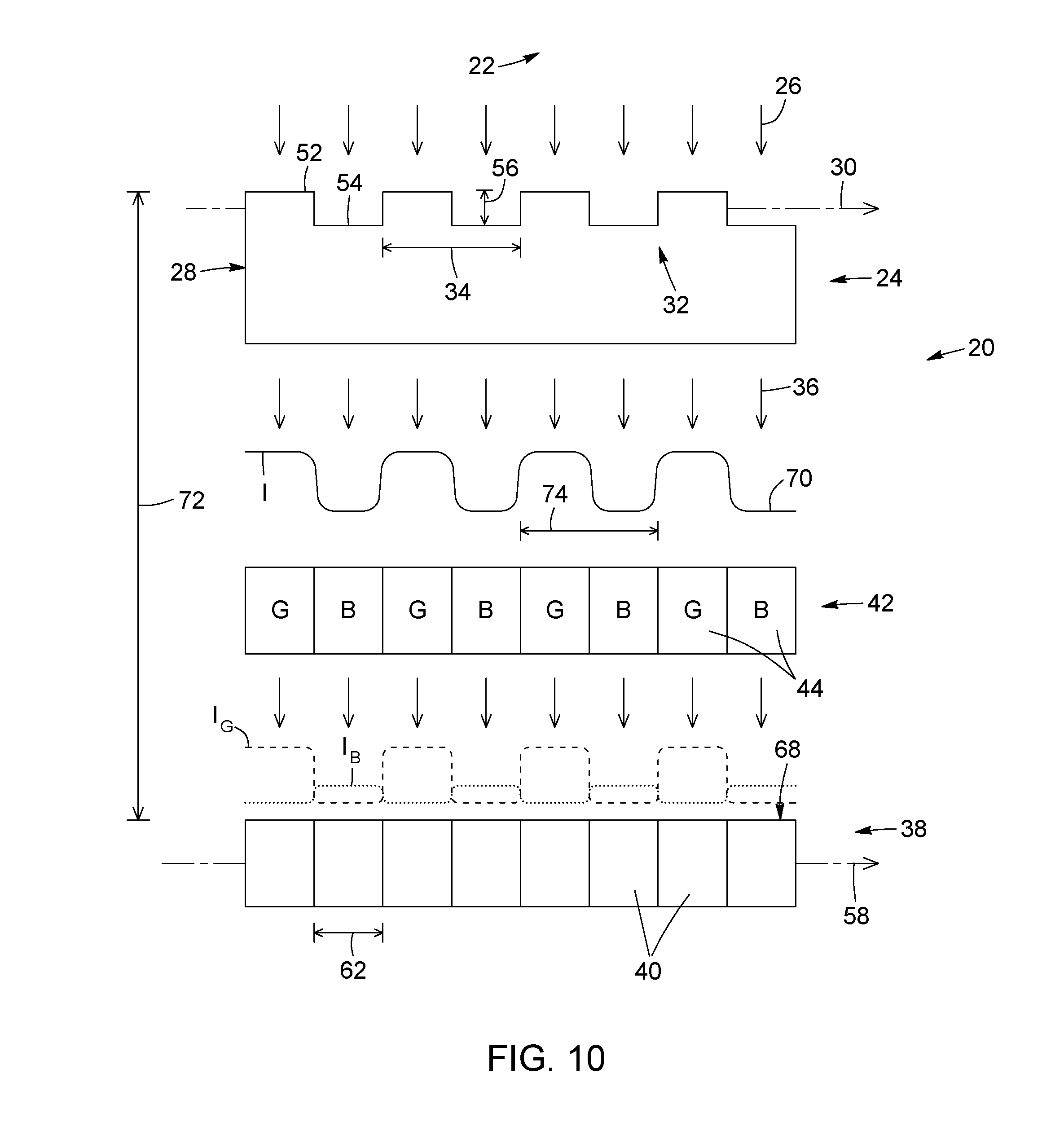

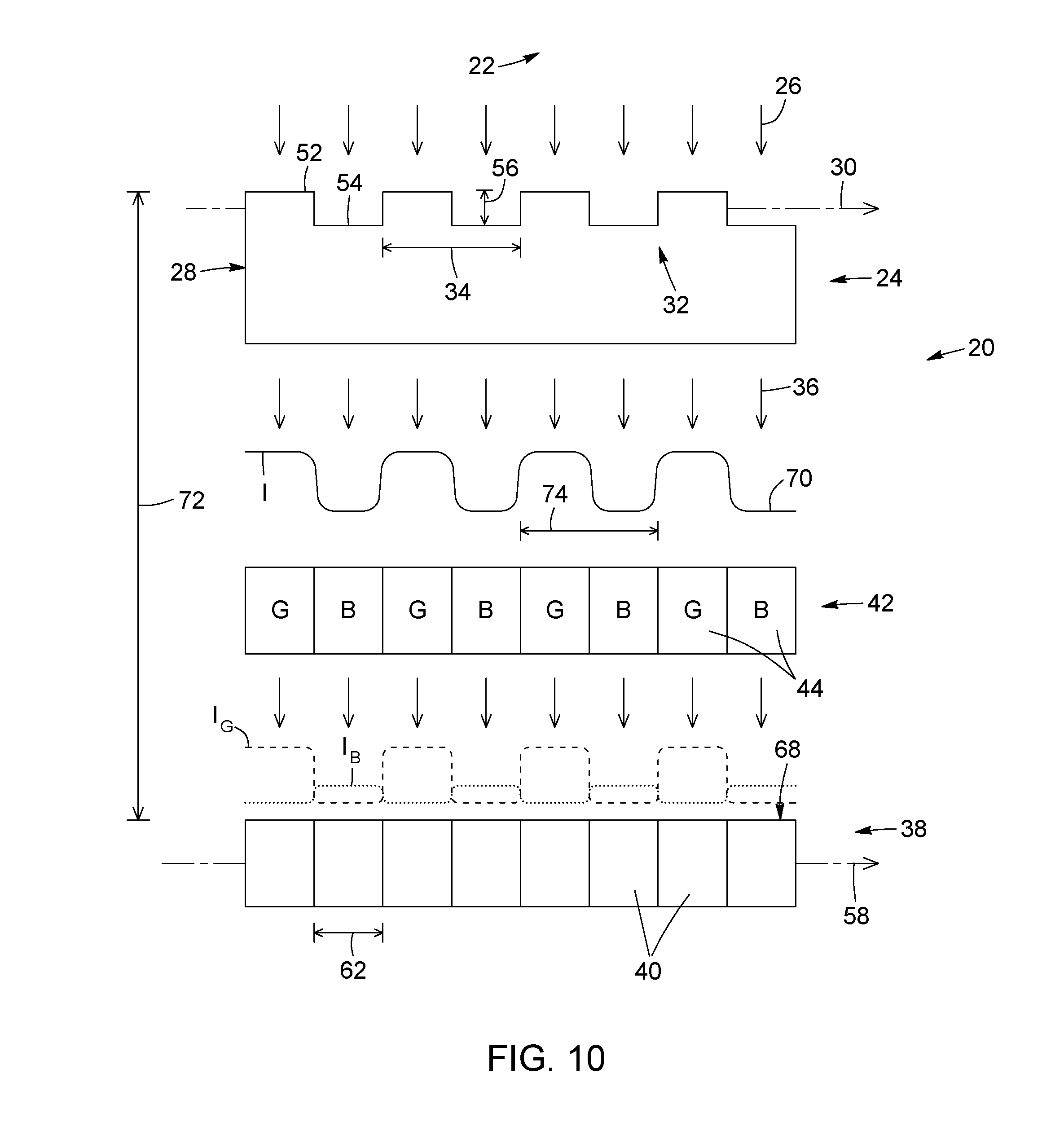

[0045] FIG. 10 is a schematic partially exploded side view of a light field imaging device, in accordance with another possible embodiment, where the propagation of a wavefront of light through the device is schematically depicted. The light field imaging device of FIG. 10 is suitable for color imaging applications.

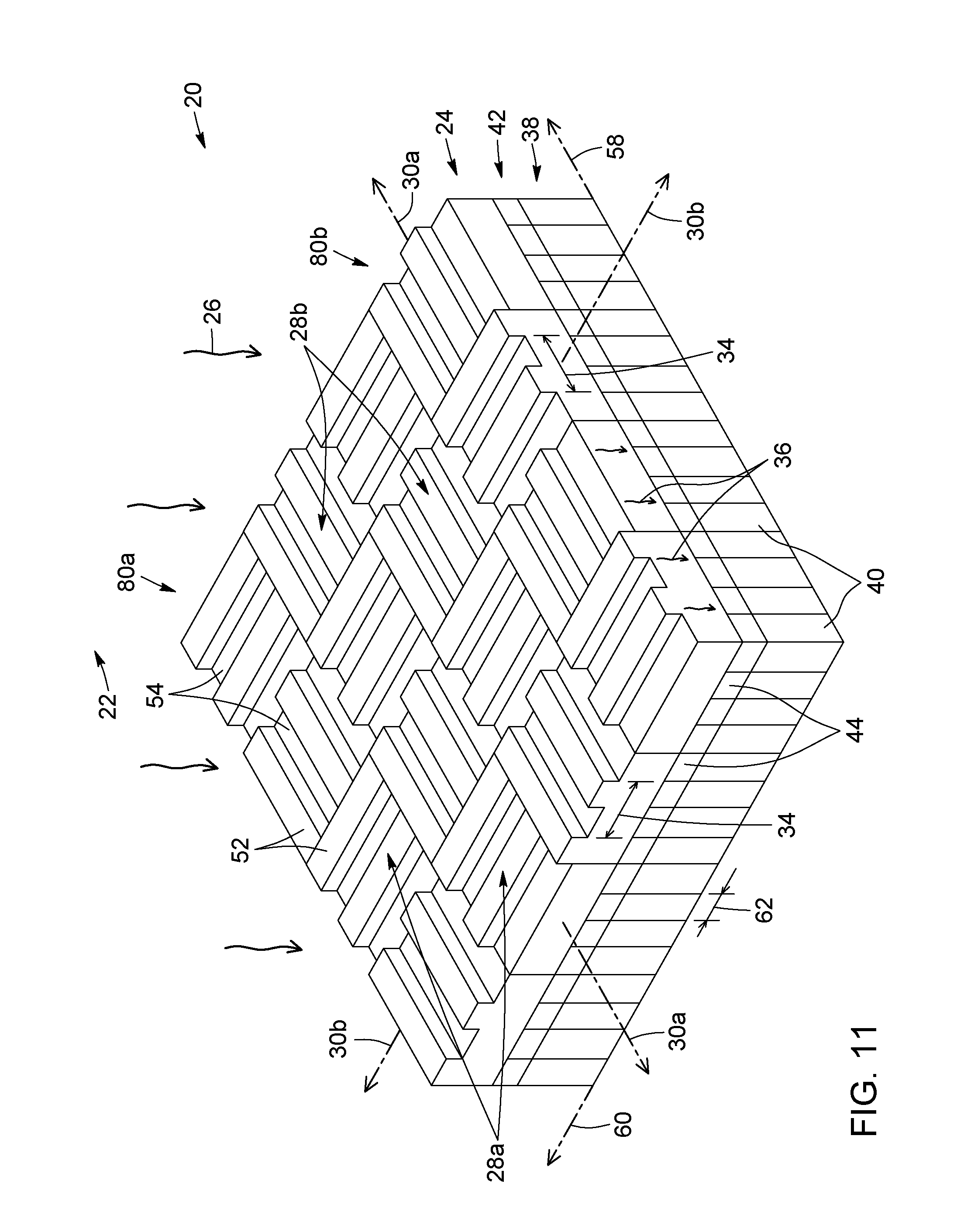

[0046] FIG. 11 is a schematic perspective view of a light field imaging device, in accordance with another possible embodiment, where the diffraction grating assembly includes two sets of orthogonally oriented diffraction gratings arranged to alternate in both rows and columns to define a checkerboard pattern.

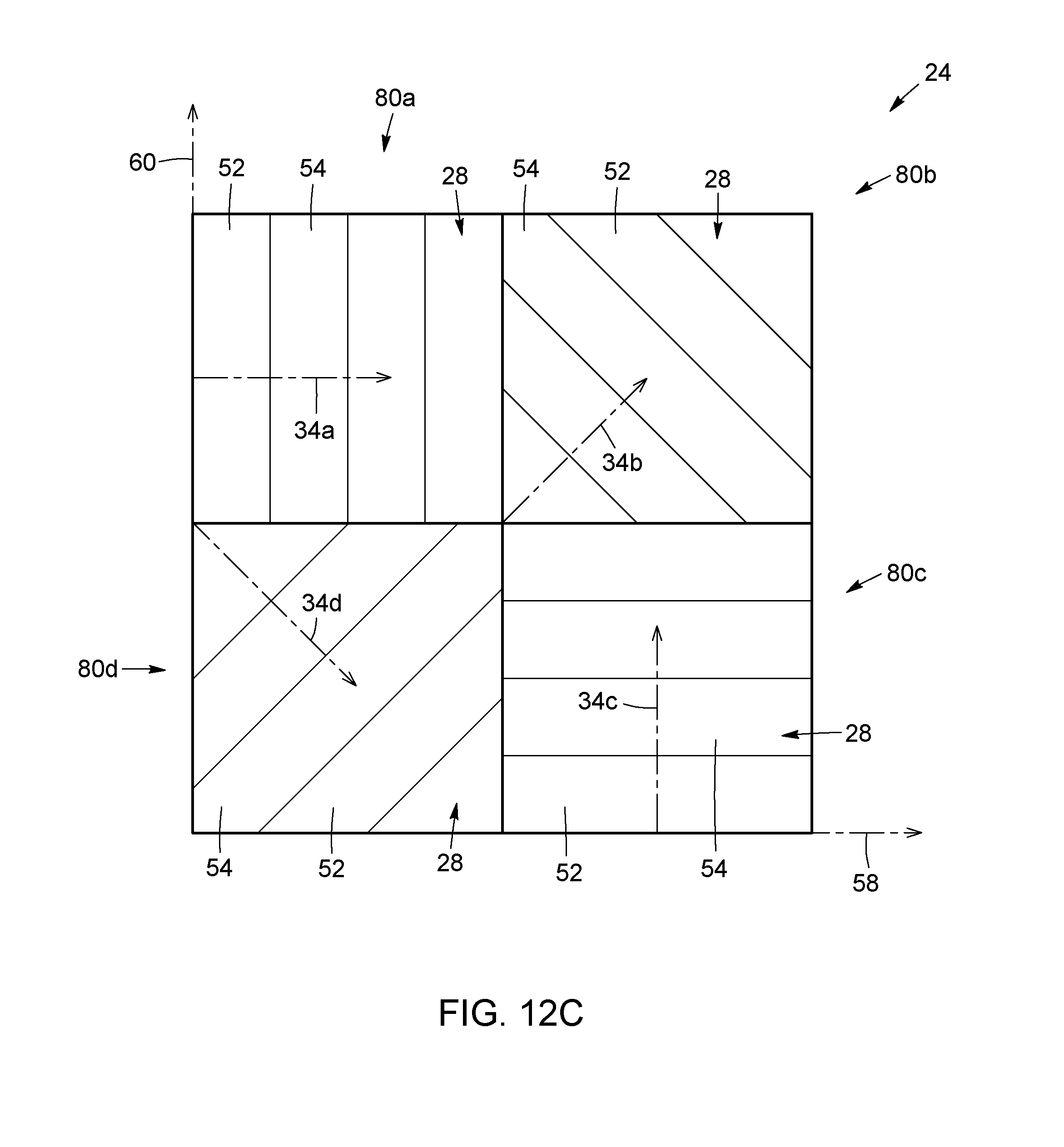

[0047] FIGS. 12A to 12C illustrate alternative embodiments of diffraction grating assemblies including a plurality of diffraction gratings arranged in a two-dimensional array.

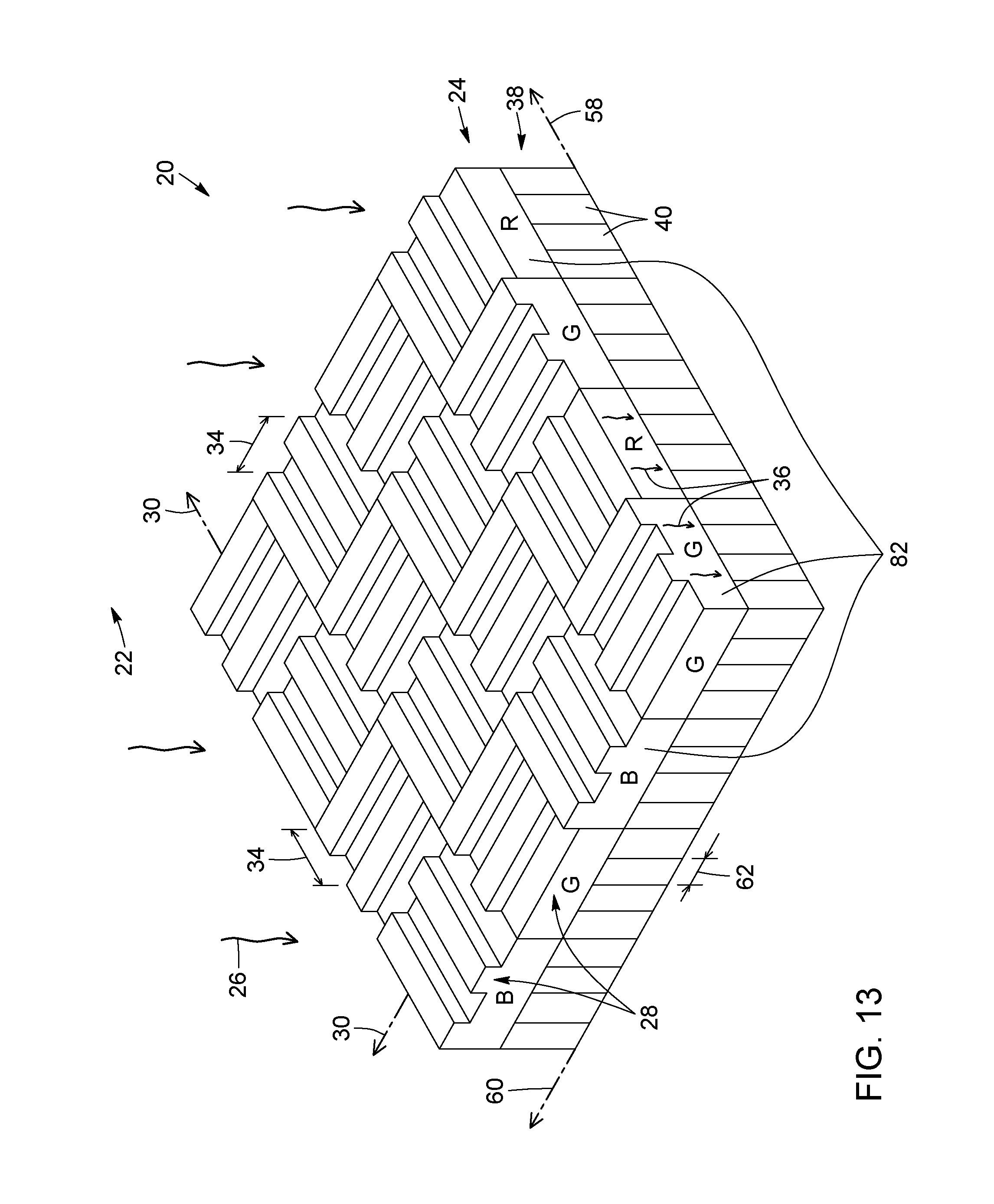

[0048] FIG. 13 is a schematic perspective view of a light field imaging device, in accordance with another possible embodiment, where the diffraction grating assembly includes a plurality of diffraction gratings forming an array of color filters, each of which is embodied by a respective one of the diffraction gratings.

[0049] FIG. 14 is a schematic side view of a light field imaging device, in accordance with another possible embodiment, where the light field imaging device includes dispersive optics disposed in front of the diffraction grating assembly to spatio-spectrally spread the optical wavefront originating from the scene prior to it reaching the diffraction grating assembly.

[0050] FIG. 15 is a schematic side view of a light field imaging device in a frontside illumination configuration, in accordance with another possible embodiment.

[0051] FIG. 16 is a schematic side view of a light field imaging device in a backside illumination configuration, in accordance with another possible embodiment.

[0052] FIG. 17 is a schematic perspective view of a diffraction grating assembly for use in an image sensor including a pixel array having a plurality of light-sensitive pixels to capture light field image data about a scene, in accordance with a possible embodiment.

[0053] FIG. 18 is a flow diagram of a method of capturing light field image data about a scene, in accordance with a possible embodiment.

[0054] FIG. 19 is a flow diagram of a method of providing 3D imaging capabilities to an image sensor viewing a scene and including an array of light-sensitive pixels, in accordance with a possible embodiment.

DETAILED DESCRIPTION

[0055] In the present description, similar features in the drawings have been given similar reference numerals, and, to not unduly encumber the figures, some elements may not be indicated on some figures if they were already identified in a preceding figure. It should also be understood that the elements of the drawings are not necessarily depicted to scale, since emphasis is placed upon clearly illustrating the elements and structures of the present embodiments.

[0056] In the present description, and unless stated otherwise, the terms "connected", "coupled" and variants and derivatives thereof refer to any connection or coupling, either direct or indirect, between two or more elements. The connection or coupling between the elements may be mechanical, optical, electrical, operational or a combination thereof. It will also be appreciated that positional descriptors and other like terms indicating the position or orientation of one element with respect to another element are used herein for ease and clarity of description and should, unless otherwise indicated, be taken in the context of the figures and should not be considered limiting. It will be understood that such spatially relative terms are intended to encompass different orientations in use or operation of the present embodiments, in addition to the orientations exemplified in the figures. More particularly, it is to be noted that in the present description, the terms "over" and "under" in specifying the relative spatial relationship of two elements denote that the two elements can be either in direct contact with each other or separated from each other by one or more intervening elements.

[0057] In the present description, the terms "a", "an" and "one" are defined to mean "at least one", that is, these terms do not exclude a plural number of items, unless specifically stated otherwise.

[0058] The present description generally relates to light field imaging techniques for acquiring light field information or image data about an optical wavefront emanating from a scene. In accordance with various aspects, the present description relates to a light field imaging device for capturing light field image data about a scene, for example a backside-illuminated light field imaging device; a diffraction grating assembly for use with an image sensor to obtain light field image data about a scene; a method of capturing light field image data about a scene; and a method of providing three-dimensional (3D) imaging capabilities to an image sensor array viewing a scene.

[0059] In some implementations, the present techniques enable the specific manipulation and comparison of the chromatic dependence of diffraction by means of one or many diffractive optical elements paired with an appropriate chromatic encoding mechanism, as well as its use in 3D imaging. In some implementations, the light field imaging devices and methods disclosed herein are sensitive to not only the intensity and angle of incidence of an optical wavefront originating from an observable scene, but also the wavelength, through a specific spatio-spectral subsampling of a generated interference pattern allowing for direct measurement of the chromatic dependence of diffraction. Light field information, or image data, can include information about not only the intensity of the optical wavefront emanating from an observable scene, but also other light field parameters including, without limitation, the angle of incidence, the phase, the wavelength and the polarization of the optical wavefront. Therefore, light field imaging devices, for example depth cameras, can acquire more information than traditional cameras, which typically record only light intensity. The image data captured by light field imaging devices can be used or processed in a variety of ways to provide multiple functions including, but not limited to, 3D depth map extraction, 3D surface reconstruction, image refocusing, and the like. Depending on the application, the light field image data of an observable scene can be acquired as one or more still images or as a video stream.

[0060] The present techniques can be used in imaging applications that require or can benefit from enhanced depth sensing and other 3D imaging capabilities, for example to allow a user to change the focus, the point of view and/or the depth of field of a captured image of a scene. The present techniques can be applied to or implemented in various types of 3D imaging systems and methods including, without limitation, light field imaging applications using plenoptic descriptions, ranging applications through the comparative analysis of the chromatic dependence of diffraction, and single-sensor single-image depth acquisition applications. Non-exhaustive advantages and benefits of certain implementations of the present techniques can include: compatibility with passive sensing modalities that employ less power to perform their functions; compatibility with single-sensor architectures having reduced footprint; enablement of depth mapping functions while preserving 2D performance; simple and low-cost integration into existing image sensor hardware and manufacturing processes; compatibility with conventional CMOS and CCD image sensors; and elimination of the need for multiple components, such as dual cameras or cameras equipped with active lighting systems for depth detection.

[0061] In the present description, the terms "light" and "optical" are used to refer to radiation in any appropriate region of the electromagnetic spectrum. More particularly, the terms "light" and "optical" are not limited to visible light, but can also include invisible regions of the electromagnetic spectrum including, without limitation, the terahertz (THz), infrared (IR) and ultraviolet (UV) spectral bands. In some implementations, the terms "light" and "optical" can encompass electromagnetic radiation having a wavelength ranging from about 175 nanometers (nm) in the deep ultraviolet to about 300 micrometers (μm) in the terahertz range, for example from about 400 nm at the blue end of the visible spectrum to about 1550 nm at telecommunication wavelengths, or between about 400 nm and about 650 nm to match the spectral range of typical red-green-blue (RGB) color filters. Those skilled in the art will understand, however, that these wavelength ranges are provided for illustrative purposes only and that the present techniques may operate beyond these ranges.

[0062] In the present description, the terms "color" and "chromatic", and variants and derivatives thereof, are used not only in their usual context of human perception of visible electromagnetic radiation (e.g., red, green and blue), but also, and more broadly, to describe spectral characteristics (e.g., diffraction, transmission, reflection, dispersion, absorption) over any appropriate region of the electromagnetic spectrum. In this context, and unless otherwise specified, the terms "color" and "chromatic" and their derivatives can be used interchangeably with the term "spectral" and its derivatives.

Light Field Imaging Device Implementations

[0063] Referring to FIGS. 1 and 2, there is provided a schematic representation of an exemplary embodiment of a light field imaging device 20 for capturing light field or depth image data about an observable scene 22. In the present description, the term "light field imaging device" broadly refers to any image capture device capable of acquiring an image representing a light field or wavefront emanating from a scene and containing information about not only light intensity at the image plane, but also other light field parameters such as, for example, the direction from which light rays enter the device and the spectrum of the light field. It is to be noted that in the present description, the term "light field imaging device" can be used interchangeably with terms such as "light field camera", "light field imager", "light field image capture device", "depth image capture device", "3D image capture device", and the like.

[0064] In the illustrated embodiment, the light field imaging device 20 includes a diffraction grating assembly or structure 24 configured to receive an optical wavefront 26 originating from the scene 22. The diffraction grating assembly 24 can include at least one diffraction grating 28, each having a grating axis 30 and a refractive index modulation pattern 32 having a grating period 34 along the grating axis 30. In FIGS. 1 and 2, the diffraction grating assembly 24 includes a single diffraction grating 28, but as described below, in other embodiments the diffraction grating assembly can include more than one diffraction grating. The diffraction grating 28 is configured to diffract the incoming optical wavefront 26, thereby generating a diffracted wavefront 36. The diffraction grating 28 in FIGS. 1 and 2 is used in transmission since the incident optical wavefront 26 and the diffracted wavefront 36 lie on opposite sides of the diffraction grating 28.

[0065] Referring still to FIGS. 1 and 2, the light field imaging device 20 also includes a pixel array 38 comprising a plurality of light-sensitive pixels 40 disposed under the diffraction grating assembly 24 and configured to detect the diffracted wavefront 36 as the light field image data about the scene 22. In color implementations, the light field imaging device 20 can also include a color filter array 42 disposed over the pixel array 38. The color filter array 42 includes a plurality of color filters 44 arranged in a mosaic color pattern, each of which filters incident light by wavelength to capture color information at a respective location in the color filter array 42. The color filter array 42 is configured to spatially and spectrally filter the diffracted wavefront 36 according to the mosaic color pattern prior to detection of the diffracted wavefront 36 by the plurality of light-sensitive pixels 40. Therefore, as mentioned above, by providing a color filter array to perform a direct spatio-chromatic subsampling of the diffracted wavefront generated by the diffraction grating assembly prior to its detection by the pixel array, the light field imaging device can be sensitive to not only the angle and intensity of an incident wavefront of light, but also its spectral content.
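By way of a non-limiting illustration, the spatio-chromatic subsampling described above can be sketched in Python as follows. The sketch assumes an RGGB Bayer tile as the mosaic color pattern, which is only one possible pattern, and the names `bayer_color`, `mosaic_sample` and `toy_intensity` are hypothetical helpers introduced for illustration rather than elements of the present description.

```python
def bayer_color(row, col):
    """Filter color at pixel (row, col) for an ASSUMED RGGB Bayer tile."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic_sample(intensity, rows, cols):
    """Spatially and spectrally subsample a diffracted intensity pattern.

    `intensity(row, col, color)` returns the intensity that would reach
    pixel (row, col) in the given color channel. Each pixel keeps only
    the channel passed by its own color filter.
    """
    return [
        [intensity(r, c, bayer_color(r, c)) for c in range(cols)]
        for r in range(rows)
    ]


def toy_intensity(row, col, color):
    """Hypothetical stand-in for the diffracted wavefront at the pixel plane."""
    channel_gain = {"R": 1.0, "G": 2.0, "B": 3.0}
    # Alternate bright and dim columns to mimic a periodic pattern.
    modulation = 1.0 if col % 2 == 0 else 0.5
    return channel_gain[color] * modulation


raw = mosaic_sample(toy_intensity, rows=4, cols=4)
```

In this sketch each entry of `raw` carries both a position and a color channel, which is the sense in which the sampling is simultaneously spatial and chromatic.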

[0066] It is to be noted that a color filter array need not be provided in some applications, for example for monochrome imaging. It is also to be noted that the wavefront detected by the light-sensitive pixels will be generally referred to as a "diffracted wavefront" in both monochrome and color implementations, although in the latter case, the terms "filtered wavefront" or "filtered diffracted wavefront" may, in some instances, be used to denote the fact that the diffracted wavefront generated by the diffraction grating assembly is both spatially and spectrally filtered by the color filter array prior to detection by the underlying pixel array. It is also to be noted that in some implementations where a color filter array is not provided, it may be envisioned that the diffraction grating could act as a color filter. For example, the diffraction grating could include a grating substrate with a top surface having the refractive index modulation pattern formed thereon, the grating substrate including a spectral filter material or region configured to spectrally filter the diffracted wavefront according to wavelength prior to detection of the diffracted wavefront by the plurality of light-sensitive pixels. For example, the spectral filter material or region could act as one of a red pass filter, a green pass filter and a blue pass filter.

[0067] Depending on the application or use, embodiments of the light field imaging device can be implemented using various image sensor architectures and pixel array configurations. For example, in some implementations, the light field imaging device can be implemented simply by adding or coupling a diffraction grating assembly on top of an already existing image sensor including a pixel array and, in color-based applications, a color filter array. For example, the existing image sensor can be a conventional 2D CMOS or CCD imager. However, in other implementations, the light field imaging device can be implemented and integrally packaged as a separate, dedicated and/or custom-designed device incorporating therein all or most of its components (e.g., diffraction grating assembly, pixel array, color filter array).

[0068] More detail regarding the structure, configuration and operation of the components introduced in the preceding paragraphs as well as other possible components of the light field imaging device will be described below.

[0069] In the embodiment illustrated in FIGS. 1 and 2, the diffraction grating 28 includes a grating substrate 46 extending over the color filter array 42. The grating substrate 46 has a top surface 48, on which is formed the periodic refractive index modulation pattern 32, and a bottom surface 50. The grating substrate 46 is made of a material that is transparent, or sufficiently transparent, in the spectral operating range to permit the diffracted wavefront 36 to be transmitted therethrough. Non-limiting examples of such material include silicon oxide (SiOx), polymers, colloidal particles, SU-8 photoresist, and glasses. For example, in some implementations the diffraction grating 28 can be configured to diffract the optical wavefront 26 in a waveband ranging from about 400 nm to about 1550 nm.

[0070] As known in the art, diffraction occurs when a wavefront, whether electromagnetic or otherwise, encounters a physical object or a refractive-index perturbation. The wavefront tends to bend around the edges of the object. Should a wavefront encounter multiple objects, whether periodic or otherwise, the corresponding wavelets may interfere some distance away from the initial encounter as demonstrated by Young's double slit experiment. This interference creates a distinct pattern, referred to as a "diffraction pattern" or "interference pattern", as a function of distance from the original encounter, which is sensitive to the incidence angle and the spectral content of the wavefront, and the general size, shape, and relative spatial relationships of the encountered objects. This interference can be described through the evolving relative front of each corresponding wavelet, as described by the Huygens-Fresnel principle.
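As a non-limiting numerical illustration of the two-slit interference mentioned above, the following Python sketch evaluates the textbook small-angle expressions for the fringe spacing and the normalized intensity of two interfering wavelets. The function names, the small-angle approximation and the example values are assumptions made for illustration only and are not taken from the present description.

```python
import math


def fringe_spacing(wavelength, slit_separation, screen_distance):
    """Distance between adjacent bright fringes (small-angle approximation)."""
    return wavelength * screen_distance / slit_separation


def two_slit_intensity(x, wavelength, slit_separation, screen_distance,
                       incidence_rad=0.0):
    """Normalized intensity (0 to 1) at position x on the observation plane.

    The path difference between the two wavelets is approximated as
    d * (x / L - sin(theta_i)), so a tilted incident wavefront shifts
    the fringes sideways without changing their spacing.
    """
    path_difference = slit_separation * (
        x / screen_distance - math.sin(incidence_rad)
    )
    phase = 2.0 * math.pi * path_difference / wavelength
    return math.cos(phase / 2.0) ** 2


# Hypothetical example values, in meters.
d, L = 50e-6, 0.1
green_spacing = fringe_spacing(532e-9, d, L)
red_spacing = fringe_spacing(650e-9, d, L)
```

Under these assumptions the fringe spacing grows with wavelength and the fringe position shifts with incidence angle, consistent with the stated sensitivity of the interference pattern to spectral content and incidence angle.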

[0071] In the present description, the term "diffraction grating", or simply "grating", generally refers to a periodic structure having periodically modulated optical properties (e.g., a refractive index modulation pattern) that spatially modulates the amplitude and/or the phase of an optical wavefront incident upon it. A diffraction grating can include a periodic arrangement of diffracting elements (e.g., alternating ridges and grooves) whose spatial period--the grating period--is nearly equal to or slightly longer than the wavelength of light. An optical wavefront containing a range of wavelengths incident on a diffraction grating will, upon diffraction, have its amplitude and/or phase modified, and, as a result, a space- and time-dependent diffracted wavefront is produced. In general, a diffraction grating is spectrally dispersive so that each wavelength of an input optical wavefront will be outputted along a different direction. However, diffraction gratings exhibiting a substantially achromatic response over an operating spectral range exist and can be used in some implementations. For example, in some implementations, the diffraction grating can be achromatic in the spectral range of interest and be designed for the center wavelength of the spectral range of interest. More particularly, in the case of a Bayer patterned color filter array, the diffraction grating can be optimized for the green channel, that is, around a center wavelength of about 532 nm. It is to be noted that when the diffraction grating is achromatic, it is the mosaic color pattern of the color filter array that provides the chromatic sub-sampling of the diffraction pattern of the diffracted wavefront.
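The spectral dispersion mentioned above can be illustrated, in a non-limiting manner, with the standard grating equation for a transmission grating. The Python sketch below assumes the same medium on both sides of the grating and one common sign convention, sin(theta_m) = sin(theta_i) + m * wavelength / period. The function name and the example period of 1000 nm are hypothetical choices made for illustration.

```python
import math


def diffraction_angle_deg(wavelength_nm, period_nm, order=1, incidence_deg=0.0):
    """Propagation angle of diffraction order `order`, in degrees.

    Uses sin(theta_m) = sin(theta_i) + order * wavelength / period.
    Returns None when the order is evanescent (does not propagate).
    """
    s = math.sin(math.radians(incidence_deg)) + order * wavelength_nm / period_nm
    if abs(s) > 1.0:
        return None
    return math.degrees(math.asin(s))


# First-order angles at normal incidence for three illustrative wavelengths.
period_nm = 1000.0  # hypothetical grating period
angles = {
    wl: diffraction_angle_deg(wl, period_nm) for wl in (450.0, 532.0, 650.0)
}
```

In this sketch longer wavelengths are diffracted at larger first-order angles, which is one way of expressing that a dispersive grating outputs each wavelength along a different direction.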

[0072] Depending on whether the diffracting elements forming the diffraction grating are transmitting or reflective, the diffraction grating will be referred to as a "transmission grating" or a "reflection grating". In the embodiments disclosed in the present description, the diffraction gratings are transmission gratings, although the use of reflection gratings is not excluded a priori. Diffraction gratings can also be classified as "amplitude gratings" or "phase gratings", depending on the nature of the diffracting elements. In amplitude gratings, the perturbations to the initial wavefront caused by the grating are the result of a direct amplitude modulation, while in phase gratings, these perturbations are the result of a specific modulation of the relative group-velocity of light caused by a periodic variation of the refractive index of the grating material. In the embodiments disclosed in the present description, the diffraction gratings are phase gratings, although amplitude gratings can be used in other embodiments.

[0073] In the illustrated embodiment of FIGS. 1 and 2, the diffraction grating 28 is a phase grating, more specifically a binary phase grating for which the refractive index modulation pattern 32 includes a series of ridges 52 periodically spaced-apart at the grating period 34, interleaved with a series of grooves 54 also periodically spaced-apart at the grating period 34. The spatial profile of the refractive index modulation pattern 32 thus exhibits a two-level step function, or square-wave function, for which the grating period 34 corresponds to the sum of the width, along the grating axis 30, of one ridge 52 and one adjacent groove 54. In some implementations, the grating period 34 can range from about 1 μm to about 20 μm, although other values are possible in other implementations. In the illustrated embodiment of FIGS. 1 and 2, the grooves 54 are empty (i.e., they are filled with air), but they could alternatively be filled with a material having a refractive index different from that of the ridge material. Also, depending on the application, the diffraction grating 28 can have a duty cycle substantially equal to or different from 50%, the duty cycle being defined as the ratio of the ridge width to the grating period 34. Another parameter of the diffraction grating 28 is the step height 56, that is, the difference in level between the ridges 52 and the grooves 54. For example, in some implementations the step height 56 can range from about 0.2 μm to about 1 μm. It is to be noted that in some implementations, the step height 56 can be selected so that the diffraction grating 28 causes a predetermined optical path difference between adjacent ridges 52 and grooves 54. For example, the step height 56 can be controlled to provide, at a given wavelength (e.g., the center wavelength) and angle of incidence of the optical wavefront, a half-wave optical path difference between the ridges and the grooves. Of course, other optical path difference values can be used in other implementations.
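
By way of a non-limiting illustration, the relationship between the step height and the optical path difference can be sketched as follows. The sketch assumes normal incidence and a thin grating, so that the optical path difference equals the step height multiplied by the refractive index difference between the ridge and groove materials. The function names, the 532 nm wavelength and the ridge index of 1.46 are hypothetical values chosen for the example and are not taken from the present description.

```python
def step_height_for_opd(wavelength_um, n_ridge, n_groove=1.0, opd_in_waves=0.5):
    """Step height (in micrometers) giving the requested optical path difference.

    Assumes normal incidence and a thin grating, so that
    OPD = (n_ridge - n_groove) * step_height.
    """
    delta_n = abs(n_ridge - n_groove)
    if delta_n == 0:
        raise ValueError("ridge and groove refractive indices must differ")
    return opd_in_waves * wavelength_um / delta_n


def duty_cycle(ridge_width_um, grating_period_um):
    """Duty cycle defined as the ratio of the ridge width to the grating period."""
    return ridge_width_um / grating_period_um


# Hypothetical example: air-filled grooves, ridge index 1.46, 532 nm light.
half_wave_height_um = step_height_for_opd(0.532, n_ridge=1.46)
print(f"half-wave step height: {half_wave_height_um:.3f} um")
print(f"duty cycle: {duty_cycle(1.0, 2.0):.0%}")
```

Under these assumed values, the computed step height falls within the range of about 0.2 μm to about 1 μm mentioned above. A different angle of incidence or groove-filling material would change the result.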

[0074] It is to be noted that while the diffraction grating 28 in the embodiment of FIGS. 1 and 2 is a linear, or one-dimensional, binary phase grating consisting of alternating sets of parallel ridges 52 and grooves 54 forming a square-wave refractive index modulation pattern 32, other embodiments can employ different types of diffraction gratings. For example, other implementations can use diffraction gratings where at least one among the grating period, the duty cycle and the step height is variable; diffraction gratings with non-straight features perpendicular to the grating axis; diffraction gratings having more elaborate refractive index profiles; 2D diffraction gratings; and the like. It will be understood that the properties of the diffracted wavefront can be tailored by proper selection of the grating parameters. Further details regarding the operation of the diffraction grating, its positioning relative to the other components of the light field imaging device, and its optical coupling to those components are described further below.

[0075] Referring still to FIGS. 1 and 2, as mentioned above, the pixel array 38 includes a plurality of light-sensitive pixels 40 disposed under the color filter array 42, which is itself disposed under the diffraction grating assembly 24. The term "pixel array" refers generally to a sensor array made up of a plurality of photosensors, referred to herein as "light-sensitive pixels" or simply "pixels", which are configured to detect electromagnetic radiation incident thereonto from an observable scene and to generate an image of the scene, typically by converting the detected radiation into electrical data. In the present techniques, the electromagnetic radiation that is detected by the light-sensitive pixels 40 as light field image data corresponds to an optical wavefront 26 incident from the scene 22, which has been diffracted and, optionally, spatio-chromatically filtered, prior to reaching the pixel array 38. The pixel array 38 can be embodied by a CMOS or a CCD image sensor, but other types of photodetector arrays (e.g., charge injection devices or photodiode arrays) could alternatively be used. As mentioned above, the pixel array 38 can be configured to detect electromagnetic radiation in any appropriate region of the spectrum. Depending on the application, the pixel array 38 may be configured according to either a rolling or global shutter readout design. The pixel array 38 may further be part of a stacked, backside, or frontside illumination sensor architecture, as described in greater detail below. The pixel array 38 may be of any standard or non-standard optical format, for example, but not limited to, 4/3'', 1'', 2/3'', 1/1.8'', 1/2'', 1.27'', 1/3'', 1/3.2'', 1/3.6'', 35 mm, and the like. The pixel array 38 may also include either a contrast or a phase-detection autofocus mechanism and their respective pixel architectures. It is to be noted that in the present description, the term "pixel array" can be used interchangeably with terms such as "photodetector array", "photosensor array", "imager array", and the like.

[0076] Each light-sensitive pixel 40 of the pixel array 38 can convert the spatial part of the diffracted wavefront 36 incident upon it into accumulated charge, the amount of which is proportional to the amount of light collected and registered by the pixel 40. Each light-sensitive pixel 40 can include a light-sensitive surface and associated pixel circuitry for processing signals at the pixel level and communicating with other electronics, such as a readout unit. Those skilled in the art will understand that various other components can be integrated into the pixel circuitry of each pixel. In general, the light-sensitive pixels 40 can be individually addressed and read out.

[0077] Referring still to FIGS. 1 and 2, the light-sensitive pixels 40 can be arranged into a rectangular grid of rows and columns defined by two orthogonal pixel axes 58, 60, the number of rows and columns defining the resolution of the pixel array 38. For example, in some implementations, the pixel array 38 can have a resolution of at least 16 pixels, although a wide range of other resolution values, including up to 40 megapixels or more, can be used in other embodiments. It is to be noted that while the light-sensitive pixels 40 are organized into a 2D array in the embodiment of FIGS. 1 and 2, they may alternatively be configured as a linear array in other embodiments. It is also to be noted that while the light-sensitive pixels 40 are square in the embodiment of FIGS. 1 and 2, corresponding to a pixel aspect ratio of 1:1, other pixel aspect ratio values can be used in other embodiments.

[0078] The pixel array 38 can also be characterized by a pixel pitch 62. In the present description, the term "pixel pitch" generally refers to the spacing between the individual pixels 40 and is typically defined as the center-to-center distance between adjacent pixels 40. Depending on the physical arrangement of the pixel array 38, the pixel pitch 62 along the two orthogonal pixel axes 58, 60 may or may not be the same. It is to be noted that a pixel pitch can also be defined along an arbitrary axis, for example along a diagonal axis oriented at 45° with respect to the two orthogonal pixel axes 58, 60. It is also to be noted that, in the present techniques, a relevant pixel pitch 62 is the one along the grating axis 30 of the overlying diffraction grating 28, as depicted in FIGS. 1 and 2. As described in greater detail below, the grating period 34 of the diffraction grating 28 is selected to be larger than the pixel pitch 62 of the pixel array 38 along the grating axis 30. For example, in some implementations the pixel pitch 62 along the grating axis 30 can range from 1 μm or less to 10 μm, although different pixel pitch values can be used in other implementations.
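
By way of a non-limiting illustration, the constraint that the grating period be larger than the pixel pitch along the grating axis can be expressed as a simple check. In the sketch below, the function names and numerical values are hypothetical. The pitch along a diagonal axis is taken, as an assumption of this example, to be the center-to-center distance between diagonally adjacent pixels, which corresponds to a 45° axis only when the pitch is the same along both pixel axes.

```python
import math


def diagonal_pitch(pitch_x_um, pitch_y_um):
    """Center-to-center distance between diagonally adjacent pixels."""
    return math.hypot(pitch_x_um, pitch_y_um)


def pixels_per_grating_period(grating_period_um, pixel_pitch_um):
    """Number of pixel pitches spanned by one grating period along the grating axis.

    Raises ValueError if the grating period is not larger than the pixel pitch.
    """
    if grating_period_um <= pixel_pitch_um:
        raise ValueError("grating period must be larger than the pixel pitch")
    return grating_period_um / pixel_pitch_um


# Hypothetical example: 1 um square pixels under a grating with a 2 um period.
print(pixels_per_grating_period(2.0, 1.0))                  # grating axis along a pixel axis
print(pixels_per_grating_period(2.0, diagonal_pitch(1.0, 1.0)))  # grating axis along the diagonal
```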

[0079] In the present description, the term "pixel data" refers to the image information captured by each individual pixel and can include intensity data indicative of the total amount of optical energy absorbed by each individual pixel over an integration period. Combining the pixel data from all the pixels 40 yields light field image data about the scene 22. In the present techniques, because the optical wavefront 26 incident from the scene 22 is diffracted and, possibly, spatially and spectrally filtered prior to detection, the light field image data can provide information about not only the intensity of the incident wavefront 26, but also other light field parameters such as its angle of incidence, phase and spectral content. More particularly, it will be understood that the present techniques can allow recovery or extraction of depth or other light field information from the intensity-based diffraction pattern captured by the pixel array 38, as described further below.

[0080] Referring still to FIGS. 1 and 2, the color filter array 42 is spatially registered with the pixel array 38, such that each color filter 44 is optically coupled to a corresponding one of the light-sensitive pixels 40. That is, each color filter 44 covers a single light-sensitive pixel 40, such that there is a one-to-one relationship, or mapping, between the color filters 44 and the light-sensitive pixels 40. However, in other implementations, each color filter can be optically coupled to at least two corresponding ones of the plurality of light-sensitive pixels. For example, turning briefly to FIG. 3, there is shown another embodiment of a light field imaging device 20 in which each color filter 44 of the color filter array 42 overlies a group or subset of light-sensitive pixels 40, namely a 2×2 block of light-sensitive pixels 40. In both the embodiment of FIGS. 1 and 2 and the embodiment of FIG. 3, the color filter array 42 and the pixel array 38 together enable the direct spatio-chromatic sampling of the diffracted wavefront produced by the overlying diffraction grating assembly 24, as detailed and explained below.

[0081] As mentioned above regarding the terms "color" and "chromatic", terms such as "color filter" and "color filtering" are to be understood as being equivalent to "spectral filter" and "spectral filtering" in any appropriate spectral range of the electromagnetic spectrum, and not only within the visible range. Depending on the application, the color filters can achieve spectral filtering through absorption of unwanted spectral components, for example using dye-based color filters, although other filtering principles may be used without departing from the scope of the present techniques.

[0082] Returning to FIGS. 1 and 2, the color filters 44 are physically organized according to a mosaic color pattern or configuration. In some implementations, each color filter 44 is one of a red pass filter, a green pass filter and a blue pass filter. For example, in the illustrated embodiment, the mosaic color pattern of the color filter array 42 is a Bayer pattern, in which the color filters are arranged in a checkerboard pattern, with rows of alternating red (R) and green (G) filters interleaved with rows of alternating green (G) and blue (B) filters. As known in the art, a Bayer pattern contains twice as many green filters as red or blue filters, such that the green component of the mosaic color pattern is more densely sampled than the red and blue components. In alternative implementations, the mosaic color pattern can be embodied by more elaborate Bayer-type patterns, for example Bayer-type patterns with an n-pixel unit cell, where n is an integer greater than 4. Of course, the present techniques are not limited to Bayer-type patterns, but can be applied to any appropriate mosaic color pattern including, but not limited to, RGB, RGB-IR, RGB-W, CYGM, CYYM, RGBE, RGBW #1, RGBW #2, RGBW #3, and monochrome. It is to be noted that in some implementations, the color filter array 42 may be extended beyond the standard visible Bayer pattern to include hyperspectral imaging and filtering techniques or interferometric filtering techniques. In such embodiments, the design of the diffraction grating 28 (e.g., the grating period 34) can be adjusted to accommodate the increased spectral sampling range.
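
By way of a non-limiting illustration, a Bayer mosaic of the kind described above can be generated and its sampling densities verified as follows. The function name `bayer_mosaic` and its `block` parameter are hypothetical. Setting `block=1` models one color filter per pixel as in FIGS. 1 and 2, while `block=2` models each color filter overlying a 2×2 block of pixels as in FIG. 3. The choice of red as the top-left filter is an assumption of this sketch.

```python
import numpy as np


def bayer_mosaic(rows, cols, block=1):
    """Return a (rows, cols) array of 'R', 'G', 'B' labels, one per pixel.

    Each color filter covers a block x block group of pixels.
    """
    unit_cell = np.array([["R", "G"],
                          ["G", "B"]])
    # Index of the filter overlying each pixel row/column, wrapped to the 2x2 unit cell.
    row_idx = (np.arange(rows) // block) % 2
    col_idx = (np.arange(cols) // block) % 2
    return unit_cell[np.ix_(row_idx, col_idx)]


mosaic = bayer_mosaic(4, 4)
print(mosaic)

# Green is sampled twice as densely as red or blue.
labels, counts = np.unique(mosaic, return_counts=True)
print(dict(zip(labels.tolist(), counts.tolist())))
```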

[0083] Referring now to FIGS. 4 and 5, there is shown another embodiment of a light field imaging device 20, which is suitable for monochrome imaging applications. This embodiment shares many features with the embodiment described above and illustrated in FIGS. 1 and 2, insofar as it generally includes a diffraction grating assembly 24 including at least one diffraction grating 28 and disposed over a pixel array 38 including a plurality of light-sensitive pixels 40. These components can generally be similar in terms of structure and operation to like components of the embodiment of FIGS. 1 and 2. The light field imaging device 20 of FIGS. 4 and 5 differs from that of FIGS. 1 and 2 mainly in that it does not include a color filter array disposed between the diffraction grating assembly 24 and the pixel array 38. As a result, the light-sensitive pixels 40 directly detect the diffracted wavefront 36 transmitted by the diffraction grating 28.

[0084] Referring to FIG. 6, there is shown another embodiment of a light field imaging device 20, which shares similar features with the embodiment of FIGS. 4 and 5, but differs in that it further includes a microlens array 64 disposed over the pixel array 38 and including a plurality of microlenses 66. Each microlens 66 is optically coupled to a corresponding one of the light-sensitive pixels 40 and is configured to focus the spatial part of the diffracted wavefront 36 incident upon it onto its corresponding light-sensitive pixel 40. It is to be noted that in embodiments where an array of color filters is provided, such as in FIGS. 1 and 2, the microlens array would be disposed over the color filter array such that each microlens would be optically coupled to a corresponding one of the color filters. In some variants, the light field imaging device may also include an anti-reflection coating (not shown) provided over the pixel array 38.

[0085] Referring now to FIG. 7, there is shown a schematic partially exploded side view of an embodiment of a light field imaging device 20 suitable for monochrome imaging applications. The light field imaging device 20 shares similarities with the one shown in FIGS. 4 and 5, in that it includes a diffraction grating 28 disposed on top of a pixel array 38 of light-sensitive pixels 40. The diffraction grating 28 is a binary phase transmission grating having a duty cycle of 50% and a periodic refractive index modulation pattern 32 consisting of alternating sets of ridges 52 and grooves 54. FIG. 7 also depicts schematically the propagation of light through the device 20. In operation, the light field imaging device 20 has a field of view encompassing an observable scene 22. The diffraction grating 28 receives an optical wavefront 26 (solid line) incident from the scene 22 on its input side, and diffracts the optical wavefront 26 to generate, on its output side, a diffracted wavefront 36 (solid line) that propagates toward the pixel array 38 for detection thereby. For simplicity, the incoming optical wavefront 26 in FIG. 7 corresponds to the wavefront of a plane wave impinging on the diffraction grating 28 at normal incidence. However, the present techniques can be implemented for an optical wavefront of arbitrary shape incident on the diffraction grating 28 at an arbitrary angle within the field of view of the light field imaging device.

[0086] Referring still to FIG. 7, the diffracted wavefront 36 can be characterized by a diffraction pattern whose form is a function of the geometry of the diffraction grating 28, the wavelength and angle of incidence of the optical wavefront 26, and the position of the observation plane, which corresponds to the light-receiving surface 68 of the pixel array 38. In the observation plane, the diffraction pattern of the diffracted wavefront 36 can be characterized by a spatially varying intensity profile 70 along the grating axis 30 in the light-receiving surface 68 of the pixel array 38. It is to be noted that in FIG. 7, the grating axis 30 is parallel to the pixel axis 58.
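
By way of a non-limiting illustration, the dependence of the intensity profile on the grating geometry, the wavelength and the position of the observation plane can be explored numerically. The sketch below is not the method of the present description; it is a simplified scalar model that assumes a thin binary phase grating, a plane wave at normal incidence, propagation in a homogeneous medium of refractive index 1 between the grating and the observation plane, and angular-spectrum propagation in which evanescent components are discarded. The function name and all numerical values are hypothetical.

```python
import numpy as np


def near_field_intensity(period_um, step_height_um, n_ridge, wavelength_um,
                         distance_um, n_groove=1.0, duty_cycle=0.5,
                         samples_per_period=64, n_periods=8):
    """Intensity profile along the grating axis at a distance behind the grating.

    Simplified model: thin binary phase grating, normal-incidence plane wave,
    scalar angular-spectrum propagation in a medium of index 1.
    """
    n = samples_per_period * n_periods
    dx = period_um / samples_per_period
    x = np.arange(n) * dx

    # Ridges occupy the first duty_cycle fraction of each period.
    ridge = (np.arange(n) % samples_per_period) < duty_cycle * samples_per_period
    phase = 2 * np.pi * (n_ridge - n_groove) * step_height_um / wavelength_um
    field = np.where(ridge, np.exp(1j * phase), 1.0 + 0.0j)

    # Angular-spectrum transfer function; evanescent components are set to zero.
    fx = np.fft.fftfreq(n, d=dx)                      # spatial frequency, cycles/um
    kz_sq = (1.0 / wavelength_um) ** 2 - fx ** 2
    propagating = kz_sq > 0
    kz = 2 * np.pi * np.sqrt(np.where(propagating, kz_sq, 0.0))
    transfer = np.where(propagating, np.exp(1j * kz * distance_um), 0.0)

    out = np.fft.ifft(np.fft.fft(field) * transfer)
    return x, np.abs(out) ** 2


# Hypothetical example: 2 um period, half-wave step at 532 nm, observed 5 um away.
x, intensity = near_field_intensity(period_um=2.0, step_height_um=0.578,
                                    n_ridge=1.46, wavelength_um=0.532,
                                    distance_um=5.0)
print(f"intensity range: {intensity.min():.3f} to {intensity.max():.3f}")
```

Varying `distance_um`, `wavelength_um` or `period_um` in this sketch changes the computed profile, consistent with the dependencies listed above. Oblique incidence, which the present techniques also contemplate, is not modeled here.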