Augmented Reality Navigation Systems For Use With Robotic Surgical Systems And Methods Of Their Use

Johnson; Norbert ; et al.

U.S. patent application number 15/899038 was filed with the patent office on 2018-02-19 and published on 2019-08-22 for augmented reality navigation systems for use with robotic surgical systems and methods of their use. The applicant listed for this patent is GLOBUS MEDICAL, INC. Invention is credited to Bessam Al Jewad, Neil Crawford, Jeffrey Forsyth, Weston Healy, Norbert Johnson, Ken Jones, Sanjay Joshi, Christine Russ.

| Field | Value |
|---|---|
| Application Number | 15/899038 |
| Publication Number | 20190254753 |
| Kind Code | A1 |
| Family ID | 65685116 |
| Filed Date | 2018-02-19 |
| Publication Date | 2019-08-22 |
| Inventors | Johnson; Norbert; et al. |

AUGMENTED REALITY NAVIGATION SYSTEMS FOR USE WITH ROBOTIC SURGICAL SYSTEMS AND METHODS OF THEIR USE

Abstract

The present disclosure is directed to augmented reality navigation systems and methods of their use that, inter alia, address the need for systems and methods of robotic surgical system navigation with reduced distraction to surgeons. Augmented reality navigation systems disclosed herein enable a surgeon to maintain focus on a surgical site and/or surgical tool being used in a surgical procedure while obtaining a wide range of navigational information relevant to the procedure. Navigational information can appear in the augmented reality navigation system as being presented on virtual displays that sit in a natural field of view of a surgeon during a procedure. Navigational information can also appear to be overlaid over a patient's anatomy. Augmented reality navigation systems comprise a head mounted display comprising an at least partially transparent display screen, at least one detector connected to the head mounted display for identifying real-world features, and a computer subsystem.

| Field | Value |
|---|---|
| Inventors: | Johnson; Norbert; (North Andover, MA) ; Forsyth; Jeffrey; (Cranston, RI) ; Crawford; Neil; (Chandler, AZ) ; Joshi; Sanjay; (Andover, MA) ; Al Jewad; Bessam; (Madbury, NH) ; Healy; Weston; (Cambridge, MA) ; Russ; Christine; (Stoneham, MA) ; Jones; Ken; (Wellesley, MA) |
| Applicant: | GLOBUS MEDICAL, INC. |
| Family ID: | 65685116 |
| Appl. No.: | 15/899038 |
| Filed: | February 19, 2018 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G06T 19/006 20130101; A61B 2017/00207 20130101; A61B 2017/00203 20130101; A61B 2034/258 20160201; G02B 27/017 20130101; A61B 2034/254 20160201; G16H 50/50 20180101; A61B 2034/256 20160201; A61B 2090/3616 20160201; A61B 2090/374 20160201; G06F 3/011 20130101; A61B 34/30 20160201; A61B 2017/00216 20130101; A61B 2034/2057 20160201; A61B 2090/371 20160201; G16H 40/63 20180101; A61B 34/20 20160201; A61B 2034/107 20160201; A61B 2034/2048 20160201; A61B 2034/2074 20160201; A61B 2090/502 20160201; A61B 2090/365 20160201; A61B 2090/376 20160201; G16H 20/40 20180101; A61B 2090/372 20160201; A61B 2034/2068 20160201; A61B 2090/378 20160201 |
| International Class: | A61B 34/20 20060101 A61B034/20; G06T 19/00 20060101 G06T019/00; G06F 3/01 20060101 G06F003/01; G02B 27/01 20060101 G02B027/01 |

Claims

1. An augmented reality navigation system for use with a robotic surgical system, the system comprising: a head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics which appear to a user to be superimposed on at least a portion of a natural field of view of the user; at least one detector for identifying real-world features, the at least one detector connected to the head mounted display; a surgical tool having markers and configured to be detected by the at least one detector, wherein a representation of at least a portion of the surgical tool and/or a trajectory of the surgical tool is presented in the head mounted display, wherein a detector input signal from the at least one detector corresponds to a field of view of the at least one detector and the field of view comprises at least a portion of anatomy of a patient during a surgical procedure, wherein the detector input signal includes a relative location and/or orientation for each of one or more of the real-world features, wherein the surgical tool is inserted into or connected to the robotic surgical system.

2. The augmented reality navigation system of claim 1, wherein a camera system for detecting real-world features is electrically coupled to the head mounted display.

3. The augmented reality navigation system of claim 1, wherein the head mounted display provides a representation of the surgical tool and a trajectory of the surgical tool overlaid on the anatomy of the patient.

4. The augmented reality navigation system of claim 1, further comprising a motion sensor connected to the head mounted display for outputting a motion signal based on measured motion of the head mounted display.

5. The augmented reality navigation system of claim 1, wherein the robotic surgical system includes an arm, an end effector coupled to a first end of the arm and a base coupled to the second end of the arm, wherein the arm moves the end effector that is configured to receive the surgical tool to a trajectory selected by a user.

6. The augmented reality navigation system of claim 5, wherein the robotic surgical system and the augmented reality navigation system include a haptic feedback system to control the robotic arm within a selected trajectory.

7. The augmented reality navigation system of claim 6, wherein the surgical tool includes a communicating with the haptic feedback system that constrains the surgical tool to the user selected trajectory.

8. The augmented reality navigation system of claim 1, wherein the at least one detector comprises a detector with at least a minimum field of view of 40 degrees.

9. The augmented reality navigation system of claim 1, wherein the display screen has a resolution of at least 1280×720 pixels.

10. The augmented reality navigation system of claim 1, comprising a pointer tool for making surgical planning selections, wherein the pointer tool is configured to be detected by the at least one detector.

11. The augmented reality navigation system of claim 1, wherein the robotic surgical system registers anatomy of a patient with the augmented reality navigation system, and an anatomical model of the patient based on medical image data.

12. The augmented reality navigation system of claim 1, wherein the at least one detector comprises a video camera and transmits a video signal to the head mounted display to display augmentation graphics which appear to the user to be superimposed on at least a portion of a natural field of view of the user.

13. The augmented reality navigation system of claim 1, wherein the surgical procedure comprises at least one of a spinal surgical procedure, an orthopedic surgical procedure, an orthopedic trauma surgical procedure, and a neurosurgical procedure.

14. An augmented reality navigation system for use with a robotic surgical system, the system comprising: a head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics which appear to a user to be superimposed on at least a portion of a natural field of view of the user; at least one detector for identifying real-world features, the at least one detector connected to the head mounted display; and a computer subsystem configured to generate and/or access a representation of at least a portion of a surgical tool and/or a trajectory of the surgical tool during a surgical procedure, modify at least a portion of the representation based on a relative position and/or orientation of one or more real-world features in a detector input signal received from the at least one detector, and display, on the display screen, surgical tool augmentation graphics based on the modified representation, wherein the surgical tool is inserted into or connected to the robotic surgical system.

15. The augmented reality navigation system of claim 14, wherein the computer subsystem is configured to render a surgical tool augmentation graphic for each of a plurality of surgical tool trajectories, and display, on the display screen, the plurality of surgical tool augmentation graphics such that the surgical tool augmentation graphics appear overlaid on the anatomy of the patient and each of the trajectory augmentation graphics indicate a physical trajectory that could be followed during the surgical procedure.

16. The augmented reality navigation system of claim 14, wherein the computer subsystem is configured to modify an anatomical model of a patient based on one or more relative location(s) and/or orientation(s) determined from the detected input signal, thereby forming an updated anatomical model, and the computer subsystem is configured to display, on the display screen, anatomical model augmentation graphics corresponding to the updated anatomical model such that the updated anatomical model appears overlaid on the anatomy of the patient.

17. The augmented reality navigation system of claim 14, comprising: a motion sensor connected to the head mounted display for outputting a motion signal based on measured motion of the head mounted display, wherein the computer subsystem is configured to update the surgical tool augmentation graphics based on motion detected by the motion sensor.

18. The augmented reality navigation system of claim 14, wherein the computer subsystem is configured to determine a selected trajectory based at least in part on a user input trajectory selection signal that selects the selected trajectory from a set of one or more planned trajectories, and automatically move a robotic arm and/or end effector of the robotic surgical system to be aligned with the selected trajectory.

19. The augmented reality navigation system of claim 18, wherein the computer subsystem is configured to automatically move the robotic arm and/or end effector of the robotic surgical system along the trajectory.

20. The augmented reality navigation system of claim 18, wherein the computer subsystem is configured to define a haptic feedback system that comprises the trajectory and constrains motion of a robotic arm and/or end effector such that motion of at least a portion of a surgical tool attached to the robotic arm and/or end effector is constrained to within the haptic feedback system.

Description

FIELD

[0001] The present invention relates generally to augmented reality systems for use with robotic surgical systems and methods of their use.

BACKGROUND

[0002] Robotic surgical systems are used in many surgical procedures in order to assist surgeons in precisely and accurately performing the procedures. Frequently, these procedures require precise placement of one or more implants and can be performed using minimally invasive techniques. Robotic surgical systems follow pre-planned or intra-operatively planned trajectories that assist the surgeon in placing implants while maintaining their intended alignment. Navigation markers placed throughout the surgical environment are used to register the environment (e.g., patient anatomy) with the robotic surgical system in order to properly orient the robot to the pre-planned or intra-operatively planned trajectories. Additionally, medical image data can be registered to the robotic surgical system to provide a model of the patient's anatomy for use in navigation.

[0003] Surgeons plan and monitor trajectories as well as monitor status of a robotic surgical system and a patient's anatomy during a procedure using a fixed display, for example, attached to or next to the robotic surgical system. Such a fixed display is the primary mechanism for navigating and monitoring a robotic surgical system during a procedure. This is especially true for minimally invasive procedures where a patient's anatomy obstructs direct view of the surgical site. However, fixed displays require a surgeon to divert his or her vision away from the surgical site and/or surgical tools that he or she is manipulating in order to obtain navigational information displayed on the screen. Moreover, the display screen can physically obstruct a surgeon's view of a portion of the surgical environment.

SUMMARY

[0004] There is a need for systems and methods for viewing navigational information from a robotic surgical system that reduce a surgeon's need to divert his or her vision while not obstructing view of the surgical environment. The present disclosure is directed to augmented reality navigation systems and methods of their use that, inter alia, address the need for systems and methods of robotic surgical system navigation with reduced distraction to surgeons. Augmented reality navigation systems disclosed herein enable a surgeon to maintain focus on a surgical site and/or surgical tool being used in a surgical procedure while obtaining a wide range of navigational information relevant to the procedure. Navigational information includes, but is not limited to, a model of a patient's anatomy derived from medical image data, a trajectory or position of a surgical tool or robotic surgical system, or a position and orientation of a surgical implant. Navigational information can be sent to an augmented reality navigation system as navigation input data from a robotic surgical system. Navigational information can appear in the augmented reality navigation system as being presented on virtual displays that sit in a natural field of view of a surgeon during a procedure. Navigational information can also appear to be overlaid over a patient's anatomy. Navigational information can include information otherwise not visible in a surgeon's natural field of view, for example trajectories and/or portions of a surgical tool obscured by a patient's anatomy.

[0005] Augmented reality navigation systems comprise a head mounted display comprising an at least partially transparent display screen, at least one detector connected to the head mounted display for identifying real-world features, and a computer subsystem. The display screen displays augmentation graphics, for example navigation augmentation graphics that provide navigational information to a surgeon. The navigation augmentation graphics can appear as a separate display in the field of view of a surgeon or overlaid over a patient's anatomy. The at least one detector identifies real-world features, wherein the real-world features can be, for example fiducials and/or patient anatomy recognized via image recognition methods. In this way, the at least one detector mounted to the head mounted display acts as the detector in a typical navigation system used during surgery (e.g., can be used to register a patient's anatomy and a robotic surgical system) without requiring an additional piece of equipment in the surgical environment. The computer subsystem can be configured to perform a variety of navigational tasks useful to a surgeon during a procedure including, for example, trajectory planning and execution. A motion sensor can optionally be included to detect motion of the head of a surgeon wearing the augmented reality navigation system providing additional functionality and/or performance (e.g., a selection input means or drift correction).

[0006] In certain embodiments, an augmented reality navigation system eliminates the need for an auxiliary navigation subsystem such as those commonly used with current robotic surgical systems. The at least one detector in the augmented reality navigation system detects real-world features (e.g., fiducials) in sufficient quantity and at sufficient resolution to properly register a patient to a robotic surgical system and, optionally, one or more models of patient anatomy derived from medical image data. Therefore, the augmented reality navigation system acts as a standalone system without the need for additional equipment. In certain embodiments, however, an auxiliary detector is used in conjunction with the augmented reality navigation system. An auxiliary detector may provide a larger registered field, improved resolution of registration, and/or redundancy.

[0007] In one aspect, the invention is directed to an augmented reality navigation system for use with a robotic surgical system, the system comprising: a head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics (e.g., semi-opaque images) (e.g., navigation augmentation graphics) which appear to a user to be superimposed on at least a portion of a natural field of view of the user; at least one detector for identifying real-world features, the at least one detector connected to the head mounted display [e.g., wherein the at least one detector comprises at least one of an optical camera (e.g., a video camera), an EMF detector, a LiDAR detector, an acoustic detector, and an RF detector] [e.g., wherein the real-world features comprise fiducials and/or identified patient anatomy (e.g., wherein the real-world features are fiducials connected to at least one of a patient, a surgical tool, and the robotic surgical system (e.g., a robotic arm, a part of a robotic arm, and/or an end-effector of a robotic arm))]; a processor of a computing device; and a non-transitory computer readable medium having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: receive, by the processor, a detector input signal from the at least one detector, wherein the detector input signal corresponds to a field of view of the at least one detector and the field of view comprises at least a portion of anatomy of a patient during a surgical procedure, determine, by the processor, a relative location and/or orientation for each of one or more of the real-world features in the detector input signal, generate and/or access, by the processor, a representation of at least a portion of a surgical tool and/or a trajectory of the surgical tool, wherein the surgical tool is inserted into or connected to the robotic surgical system (e.g., wherein the portion of the surgical tool is hidden from the natural field of view of the user, e.g., within a patient), modify (e.g., at least one of rotate, scale, and translate), by the processor, at least a portion of the representation based on the relative location and/or orientation of the one or more real-world features, thereby forming an updated representation, render, by the processor, surgical tool augmentation graphics based on the updated representation, and display, by the processor, the surgical tool augmentation graphics on the display screen (e.g., display, via the at least partially transparent display screen of the head mounted display, the surgical tool augmentation graphics superimposed on at least a portion of the natural field of view of the user).
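The modify step in paragraph [0007] (rotate, scale, translate) can be illustrated with a minimal sketch. It assumes the representation is a list of 3D points and that the relative pose of a real-world feature has already been reduced to a rotation matrix, a uniform scale, and a translation. The function names and sample values are hypothetical and are not taken from the disclosure.

```python
import math


def rotation_z(angle_rad):
    """3x3 rotation matrix for a rotation of angle_rad about the z-axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def transform_point(point, rotation, scale, translation):
    """Apply p' = scale * (R @ p) + t to a single 3D point."""
    rotated = [sum(rotation[i][j] * point[j] for j in range(3)) for i in range(3)]
    return tuple(scale * rotated[i] + translation[i] for i in range(3))


def update_representation(points, rotation, scale, translation):
    """Return an updated representation: every point rotated, scaled, translated."""
    return [transform_point(p, rotation, scale, translation) for p in points]


# Hypothetical tool trajectory (entry point and target) in the tool's own frame, in mm.
trajectory = [(0.0, 0.0, 0.0), (100.0, 0.0, 0.0)]

# Assumed pose derived from a detected fiducial: 90 degree turn about z plus an offset.
updated = update_representation(
    trajectory, rotation_z(math.pi / 2), 1.0, (10.0, 20.0, 30.0)
)
```

In this sketch the updated points would then be handed to whatever renderer draws the surgical tool augmentation graphics; that rendering step is outside the scope of the example.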

[0008] In some embodiments, the instructions cause the processor to: render, by the processor, a surgical tool augmentation graphic for each of a plurality of surgical tool trajectories (e.g., planned surgical tool trajectories); and display, by the processor, on the display screen, the plurality of surgical tool augmentation graphics such that the surgical tool augmentation graphics appear overlaid on the anatomy of the patient and each of the trajectory augmentation graphics indicate a physical trajectory that could be followed during the surgical procedure.

[0009] In some embodiments, the instructions cause the processor to: determine, by the processor, a relative location and/or orientation for each of at least one real-world feature from the detected input signal; modify, by the processor, (e.g., by at least one of rotation, scaling, and translation) an anatomical model of a patient (e.g., a 3D model) based on the relative locations and/or orientations determined from the detected input signal, thereby forming an updated anatomical model (e.g., that is registered to the anatomy of the patient); render, by the processor, anatomical model augmentation graphics based at least in part on the updated anatomical model; and display, by the processor, on the display screen, the anatomical model augmentation graphics such that the updated anatomical model appears overlaid on the anatomy of the patient.

[0010] In some embodiments, the augmented reality navigation system comprises a motion sensor (e.g., an inertial motion unit (IMU)) connected to the head mounted display for outputting a motion signal based on measured motion of the head mounted display and wherein the instructions cause the processor to: update, by the processor, the relative position and orientation of the determined real-world features in the detector input signal based on motion detected by the motion sensor; and update, by the processor, the surgical tool augmentation graphics based on the updated relative position and orientation.
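One way to picture the motion-sensor update in paragraph [0010] is as a change of reference frame between detector readings. The sketch below is an assumption, not the disclosed method: it supposes the feature is stationary in the room, that the motion sensor reports a head yaw (rotation about the vertical z-axis) and a head translation since the last reading, and that both are expressed in the previous head frame. Under those assumptions the feature position in the new head frame is p_new = R^T (p_prev - d).

```python
import math


def predict_feature_position(p_prev, yaw_rad, head_translation):
    """Predict a static feature's position in the new head frame.

    p_prev: feature position in the previous head frame.
    yaw_rad: head rotation about z since the last reading (assumed IMU output).
    head_translation: head displacement d, in the previous head frame.
    Implements p_new = R^T (p_prev - d) for a rotation R about z.
    """
    dx = p_prev[0] - head_translation[0]
    dy = p_prev[1] - head_translation[1]
    dz = p_prev[2] - head_translation[2]
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    # Transpose of the z-rotation matrix applied to the shifted point.
    return (c * dx + s * dy, -s * dx + c * dy, dz)


# A fiducial one unit straight ahead (along x); the head then yaws 90 degrees.
predicted = predict_feature_position((1.0, 0.0, 0.0), math.pi / 2, (0.0, 0.0, 0.0))
```

After the yaw, the fiducial that was straight ahead lies along the negative y-axis of the new head frame, so augmentation graphics anchored to it would be redrawn at that location until the next detector reading arrives.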

[0011] In some embodiments, the instructions cause the processor to: receive, by the processor, a user input trajectory selection signal that selects a trajectory from a set of one or more planned trajectories (e.g., one or more preoperatively or intraoperatively planned trajectories) (e.g., wherein the user input trajectory selection signal corresponds to a gesture or sound made by the user or a position and/or orientation of a robotic arm and/or end effector of the robotic surgical system); determine, by the processor, a selected trajectory based at least in part on the user input trajectory selection signal; and automatically move, by the processor, a robotic arm and/or end effector of the robotic surgical system to be aligned with the selected trajectory.
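Paragraph [0011] allows the trajectory selection signal to correspond to the orientation of the end effector. A hedged sketch of one such rule follows: pick the planned trajectory whose direction is angularly closest to the end effector axis, and reject the selection if nothing is within a tolerance. The `Trajectory` type, the tolerance, and the sample values are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Trajectory:
    name: str
    entry: tuple      # entry point (x, y, z)
    direction: tuple  # direction vector (need not be normalized)


def angle_between(u, v):
    """Angle in radians between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    cos_theta = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.acos(cos_theta)


def select_trajectory(planned, effector_axis, max_angle_rad):
    """Return the planned trajectory closest to the effector axis, or None."""
    if not planned:
        return None
    best = min(planned, key=lambda t: angle_between(t.direction, effector_axis))
    if angle_between(best.direction, effector_axis) > max_angle_rad:
        return None
    return best


planned = [
    Trajectory("A", (0.0, 0.0, 0.0), (0.0, 0.0, -1.0)),
    Trajectory("B", (5.0, 0.0, 0.0), (0.0, 0.6, -0.8)),
]
selected = select_trajectory(planned, (0.0, 0.5, -0.85), math.radians(10))
```

A gesture- or sound-based selection signal, also named in the paragraph, would replace the angular test with a different matching rule while keeping the same overall shape: a set of planned trajectories in, one selected trajectory out.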

[0012] In some embodiments, the instructions cause the processor to: automatically move, by the processor, the robotic arm and/or end effector of the robotic surgical system along the selected trajectory (e.g., towards the anatomy of the patient).

[0013] In some embodiments, the instructions cause the processor to: define and/or update, by the processor, a haptic object that comprises the selected trajectory; and constrain, by the processor, motion of a robotic arm and/or end effector such that motion of at least a portion of the surgical tool inserted into or attached to the robotic arm and/or end effector is constrained to within the haptic object.
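The haptic object of paragraph [0013] can be pictured, under simplifying assumptions, as a cylinder around the selected trajectory. The sketch below clamps a commanded tool-tip position to that cylinder. The cylindrical shape, the radius, and the function name are illustrative assumptions; the disclosure does not specify the geometry of the haptic object.

```python
import math


def constrain_to_cylinder(point, entry, target, radius):
    """Clamp a 3D point to a cylinder whose axis runs from entry to target."""
    axis = [t - e for e, t in zip(entry, target)]
    length = math.sqrt(sum(a * a for a in axis))
    if length == 0.0:
        raise ValueError("entry and target must differ")
    unit = [a / length for a in axis]

    rel = [p - e for p, e in zip(point, entry)]
    along = sum(r * u for r, u in zip(rel, unit))
    # Component of the offset perpendicular to the trajectory axis.
    radial = [r - along * u for r, u in zip(rel, unit)]
    radial_len = math.sqrt(sum(a * a for a in radial))

    # Limit travel along the axis to the segment between entry and target.
    along_clamped = max(0.0, min(length, along))
    # Limit sideways deviation to the cylinder radius.
    if radial_len > radius:
        radial = [a * radius / radial_len for a in radial]

    return tuple(e + along_clamped * u + r for e, u, r in zip(entry, unit, radial))


entry, target = (0.0, 0.0, 0.0), (0.0, 0.0, 100.0)
inside = constrain_to_cylinder((0.5, 0.5, 10.0), entry, target, 2.0)
sideways = constrain_to_cylinder((3.0, 4.0, 50.0), entry, target, 2.0)
overshoot = constrain_to_cylinder((0.0, 0.0, 120.0), entry, target, 2.0)
```

A point already inside the cylinder is returned unchanged, a point that strays sideways is pulled back to the cylinder wall, and a point beyond the target is held at the target depth.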

[0014] In some embodiments, the at least one detector comprises a detector with at least a minimum field of view of 40 degrees (e.g., as measured on a diagonal). In some embodiments, the display screen has a resolution of at least 1280×720 pixels.
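Detector data sheets often quote horizontal and vertical fields of view rather than the diagonal figure used in paragraph [0014]. As a worked example, and assuming an ideal rectilinear (pinhole) camera, the diagonal field of view d follows from tan(d/2) = sqrt(tan²(h/2) + tan²(v/2)). The function names and sample values below are hypothetical.

```python
import math


def diagonal_fov_deg(h_fov_deg, v_fov_deg):
    """Diagonal field of view of an ideal rectilinear camera, in degrees."""
    tan_h = math.tan(math.radians(h_fov_deg) / 2)
    tan_v = math.tan(math.radians(v_fov_deg) / 2)
    return math.degrees(2 * math.atan(math.hypot(tan_h, tan_v)))


def detector_fov_ok(h_fov_deg, v_fov_deg, min_diag_deg=40.0):
    """True if the detector's diagonal field of view meets the minimum."""
    return diagonal_fov_deg(h_fov_deg, v_fov_deg) >= min_diag_deg


def display_resolution_ok(width_px, height_px, min_width=1280, min_height=720):
    """True if the display screen meets the minimum pixel resolution."""
    return width_px >= min_width and height_px >= min_height


wide_detector = detector_fov_ok(40.0, 22.5)    # diagonal of roughly 45 degrees
narrow_detector = detector_fov_ok(30.0, 17.0)  # diagonal of roughly 34 degrees
```

Real lenses with distortion deviate from the pinhole relation, so a measured diagonal value should take precedence over this estimate when one is available.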

[0015] In some embodiments, the augmented reality navigation system comprises a pointer tool for making surgical planning selections (e.g., of a trajectory and/or position(s) and/or orientation(s) that define a trajectory), wherein the pointer tool is configured to be detected by the at least one detector.

[0016] In some embodiments, the instructions cause the processor to register anatomy of a patient with the robotic surgical system, the augmented reality navigation system, and, optionally, an anatomical model of the patient based on medical image data (e.g., X-ray data, CT data, MRI data, fluoroscopy data).

[0017] In some embodiments, the at least one detector comprises a video camera and the instructions cause the processor to: generate, by the processor, a video signal based on the detector input signal; and output, by the processor, the video signal for display on at least one of (i) a monitor and (ii) a second head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics (e.g., semi-opaque images) which appear to a user to be superimposed on at least a portion of a natural field of view of the user.

[0018] In some embodiments, the system comprises one or more fiducial markers connected to the head mounted display. In some embodiments, the instructions cause the processor to: receive, by the processor, a relative location and orientation of the one or more fiducial markers connected to the head mounted display, wherein the one or more fiducial markers are detected by a secondary detector (e.g., not physically connected to the head mounted display) (e.g., an EMF detector, an RF detector, an acoustic detector, a LiDAR detector, an optical detector); and modify (e.g., at least one of rotate, scale, and translate) at least one of (i) an anatomical model, (ii) a representation of a surgical implant, (iii) a representation of a trajectory of a surgical tool, and (iv) a representation of at least a portion of a surgical tool hidden from a natural field of view based on the one or more fiducial markers detected by the secondary detector.

[0019] In some embodiments, the instructions cause the processor to: receive, by the processor, a relative location and orientation of one or more real-world features detected by a secondary detector (e.g., not physically connected to the head mounted display) (e.g., an EMF detector, an RF detector, an acoustic detector, a LiDAR detector, an optical detector); modify (e.g., at least one of rotate, scale, and translate), by the processor, at least one of (i) an anatomical model, (ii) a representation of a surgical implant, (iii) a representation of a trajectory of a surgical tool, and (iv) a representation of at least a portion of a surgical tool hidden from a natural field of view based on the one or more real-world features detected by the secondary detector; render and/or update, by the processor, updated augmentation graphics based at least in part on the modified at least one of (i), (ii), (iii), and (iv); and display, by the processor, on the display screen, the updated augmentation graphics.

[0020] In some embodiments, the surgical procedure comprises at least one of a spinal surgical procedure, an orthopedic surgical procedure, an orthopedic trauma surgical procedure, and a neurosurgical procedure. In some embodiments, the surgical procedure comprises a minimally invasive surgical procedure.

[0021] In one aspect, the invention is directed to an augmented reality navigation system for use with a robotic surgical system, the system comprising: a head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics (e.g., semi-opaque images) (e.g., navigation augmentation graphics) which appear to a user to be superimposed on at least a portion of a natural field of view of the user; at least one detector for identifying real-world features, the at least one detector connected to the head mounted display [e.g., wherein the at least one detector comprises at least one of an optical camera (e.g., a video camera), an EMF detector, a LiDAR detector, an acoustic detector, and an RF detector] [e.g., wherein the real-world features comprise fiducials and/or identified patient anatomy (e.g., wherein the real-world features are fiducials connected to at least one of a patient, a surgical tool, and the robotic surgical system (e.g., a robotic arm, a part of a robotic arm, and/or an end-effector of a robotic arm))]; and a computer subsystem configured to generate and/or access a representation of at least a portion of a surgical tool and/or a trajectory of the surgical tool during a surgical procedure, modify at least a portion of the representation based on a relative position and/or orientation of one or more real-world features in a detector input signal received from the at least one detector, and display, on the display screen, surgical tool augmentation graphics based on the modified representation, wherein the surgical tool is inserted into or connected to the robotic surgical system (e.g., wherein the portion of the surgical tool is hidden from the natural field of view of the user, e.g., within a patient).

[0022] In some embodiments, the computer subsystem is configured to render a surgical tool augmentation graphic for each of a plurality of surgical tool trajectories (e.g., planned surgical tool trajectories), and display, on the display screen, the plurality of surgical tool augmentation graphics such that the surgical tool augmentation graphics appear overlaid on the anatomy of the patient and each of the trajectory augmentation graphics indicate a physical trajectory that could be followed during the surgical procedure.

[0023] In some embodiments, the computer subsystem is configured to modify (e.g., by at least one of rotation, scaling, and translation) an anatomical model of a patient (e.g., a 3D model) based on one or more relative location(s) and/or orientation(s) determined from the detected input signal, thereby forming an updated anatomical model (e.g., that is registered to the anatomy of the patient), and the computer subsystem is configured to display, on the display screen, anatomical model augmentation graphics corresponding to the updated anatomical model such that the updated anatomical model appears overlaid on the anatomy of the patient.

[0024] In some embodiments, the augmented reality navigation system comprises a motion sensor (e.g., an inertial motion unit (IMU)) connected to the head mounted display for outputting a motion signal based on measured motion of the head mounted display, wherein the computer subsystem is configured to update the surgical tool augmentation graphics based on motion detected by the motion sensor.

[0025] In some embodiments, the computer subsystem is configured to determine a selected trajectory based at least in part on a user input trajectory selection signal that selects the selected trajectory from a set of one or more planned trajectories (e.g., one or more preoperatively or intraoperatively planned trajectories) (e.g., wherein the user input trajectory selection signal corresponds to a gesture or sound made by the user or a position and/or orientation of a robotic arm and/or end effector of the robotic surgical system), and automatically move a robotic arm and/or end effector of the robotic surgical system to be aligned with the selected trajectory.

[0026] In some embodiments, the computer subsystem is configured to automatically move the robotic arm and/or end effector of the robotic surgical system along the trajectory (e.g., towards the anatomy of the patient).

[0027] In some embodiments, the computer subsystem is configured to define a haptic object that comprises the trajectory and constrain motion of a robotic arm and/or end effector such that motion of at least a portion of a surgical tool attached to the robotic arm and/or end effector is constrained to within the haptic object.

[0028] In some embodiments, the at least one detector comprises a detector with at least a minimum field of view of 40 degrees (e.g., as measured on a diagonal). In some embodiments, the display screen has a resolution of at least 1280×720 pixels. In some embodiments, the augmented reality navigation system comprises a pointer tool for making surgical planning selections, wherein the pointer tool is configured to be detected by the at least one detector.

[0029] In some embodiments, the computer subsystem is configured to register anatomy of a patient with the robotic surgical system, the augmented reality navigation system, and, optionally, an anatomical model of the patient based on medical image data (e.g., X-ray data, CT data, MRI data, fluoroscopy data).

[0030] In some embodiments, the computer subsystem is configured to generate a video signal based on the detector input signal and output the video signal for display on at least one of (i) a monitor and (ii) a second head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics (e.g., semi-opaque images) which appear to a user to be superimposed on at least a portion of a natural field of view of the user.

[0031] In some embodiments, the system comprises one or more fiducial markers connected to the head mounted display. In some embodiments, the computer subsystem is configured to receive a relative location and orientation of the one or more fiducial markers connected to the head mounted display detected by a secondary detector (e.g., not physically connected to the head mounted display) (e.g., an EMF detector, an RF detector, an acoustic detector, a LiDAR detector, an optical detector) and modify (e.g., at least one of rotate, scale, and translate) at least one of (i) an anatomical model, (ii) a representation of a surgical implant, (iii) a representation of a trajectory of a surgical tool, and (iv) a representation of at least a portion of a surgical tool hidden from a natural field of view based on the one or more fiducial markers detected by the secondary detector.

[0032] In some embodiments, the computer subsystem is configured to receive a relative location and orientation of one or more real-world features detected by a secondary detector (e.g., not physically connected to the head mounted display) (e.g., an EMF detector, an RF detector, an acoustic detector, a LiDAR detector, an optical detector) and modify (e.g., at least one of rotate, scale, and translate) at least one of (i) an anatomical model, (ii) a representation of a surgical implant, (iii) a representation of the trajectory, and (iv) a representation of at least a portion of a surgical tool hidden from a natural field of view based on the one or more real-world features detected by the secondary detector, and the computer subsystem is configured to display, on the display screen, updated augmentation graphics based at least in part on the modified at least one of (i), (ii), (iii), and (iv).
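The modification described above (at least one of rotating, scaling, and translating) can be sketched as a similarity transform applied to the points of a representation. This is a minimal sketch under assumptions: the helper names `rotation_z` and `transform_points` are hypothetical, the rotation is restricted to the z-axis for brevity, and how the rotation, scale, and translation are derived from detected features is not shown.

```python
import math


def rotation_z(angle_rad):
    """3x3 rotation matrix about the z-axis (illustrative choice of axis)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def transform_points(points, rotation, scale, translation):
    """Apply p' = scale * R * p + t to each 3D point of a model."""
    out = []
    for p in points:
        # Rotate the point, then scale and translate it.
        rotated = [sum(rotation[i][j] * p[j] for j in range(3)) for i in range(3)]
        out.append([scale * rotated[i] + translation[i] for i in range(3)])
    return out
```

A point at (1, 0, 0) rotated by 90 degrees about z, scaled by 2, and shifted by (0, 0, 1) ends up at approximately (0, 2, 1).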

[0033] In some embodiments, the surgical procedure comprises at least one of a spinal surgical procedure, an orthopedic surgical procedure, an orthopedic trauma surgical procedure, and a neurosurgical procedure. In some embodiments, the surgical procedure comprises a minimally invasive surgical procedure.

[0034] In one aspect, the invention is directed to a method of using an augmented reality navigation system with a robotic surgical system, the method comprising: providing and/or accessing the augmented reality navigation system, wherein the augmented reality navigation system comprises: a head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics (e.g., semi-opaque images) (e.g., navigation augmentation graphics) which appear to a user to be superimposed on at least a portion of a natural field of view of the user; optionally, a motion sensor (e.g., an inertial motion unit (IMU)) connected to the head mounted display for outputting a motion signal based on measured motion of the head mounted display; and at least one detector for identifying real-world features, the at least one detector connected to the head mounted display [e.g., wherein the at least one detector comprises at least one of an optical camera (e.g., a video camera), an EMF detector, a LiDAR detector, an acoustic detector, and an RF detector] [e.g., wherein the real-world features comprise fiducials and/or identified patient anatomy (e.g., wherein the real-world features are fiducials connected to at least one of a patient, a surgical tool, and the robotic surgical system (e.g., a robotic arm, a part of a robotic arm, and/or an end-effector of a robotic arm))]; receiving (e.g., by a processor of a computer subsystem) a detector input signal from the at least one detector, wherein the detector input signal corresponds to a field of view of the at least one detector and the field of view comprises at least a portion of anatomy of a patient during a surgical procedure, determining (e.g., by a processor of a computer subsystem) a relative location and/or orientation for each of one or more of the real-world features in the detector input signal, generating and/or accessing (e.g., by a processor of a computer subsystem) a representation of at least a portion of a surgical tool and/or a trajectory of the surgical tool, wherein the surgical tool is inserted into or connected to the robotic surgical system (e.g., wherein the portion of the surgical tool is hidden from the natural field of view of the user, e.g., within a patient), modifying (e.g., at least one of rotating, scaling, and translating) (e.g., by a processor of a computer subsystem) at least a portion of the representation based on the relative location and orientation of the one or more real-world features, thereby forming an updated representation, rendering (e.g., by a processor of a computer subsystem) surgical tool augmentation graphics based on the updated representation, and displaying (e.g., by a processor of a computer subsystem) the surgical tool augmentation graphics on the display screen (e.g., displaying, via the at least partially transparent display screen of the head mounted display, the surgical tool augmentation graphics superimposed on at least a portion of the natural field of view of the user).

[0035] In some embodiments, the method comprises: rendering (e.g., by a processor of a computer subsystem) a surgical tool augmentation graphic for each of a plurality of surgical tool trajectories (e.g., planned surgical tool trajectories); and displaying (e.g., by a processor of a computer subsystem) on the display screen, the plurality of surgical tool augmentation graphics such that the surgical tool augmentation graphics appear overlaid on the anatomy of the patient and each of the trajectory augmentation graphics indicate a physical trajectory that could be followed during the surgical procedure.

[0036] In some embodiments, the method comprises: determining (e.g., by a processor of a computer subsystem) a relative location and/or orientation for each of at least one real-world feature from the detected input signal; modifying (e.g., by at least one of rotating, scaling, and translating) (e.g., by a processor of a computer subsystem) an anatomical model of a patient (e.g., a 3D model) based on the relative locations and/or orientations determined from the detected input signal, thereby forming an updated anatomical model (e.g., that is registered to the anatomy of the patient); rendering (e.g., by a processor of a computer subsystem) anatomical model augmentation graphics based at least in part on the updated anatomical model; and displaying, on the display screen, (e.g., by a processor of a computer subsystem) the anatomical model augmentation graphics such that the updated anatomical model appears overlaid on the anatomy of the patient.

[0037] In some embodiments, the method comprises: updating (e.g., by a processor of a computer subsystem) the relative position and orientation of the determined real-world features in the detector input signal based on motion detected by the motion sensor; and updating (e.g., by a processor of a computer subsystem) the surgical tool augmentation graphics based on the updated relative position and orientation.

[0038] In some embodiments, the method comprises: receiving (e.g., by a processor of a computer subsystem) a user input trajectory selection signal that selects a trajectory from a set of one or more planned trajectories (e.g., one or more preoperatively or intraoperatively planned trajectories) (e.g., wherein the user input trajectory selection signal corresponds to a gesture or sound made by the user or a position and/or orientation of a robotic arm and/or end effector of the robotic surgical system); determining (e.g., by a processor of a computer subsystem) a selected trajectory based at least in part on the user input trajectory selection signal; and automatically (e.g., by a processor of a computer subsystem) moving a robotic arm and/or end effector of the robotic surgical system to be aligned with the selected trajectory.
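One way the selection step above might work, when the selection signal corresponds to an orientation of the end effector, is to pick the planned trajectory whose direction is closest in angle to the end effector axis. The following is a sketch under that assumption; the function names, the dictionary of planned trajectories, and the trajectory labels are hypothetical and are not taken from the disclosure.

```python
import math


def angle_between(u, v):
    """Angle in radians between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    # Clamp to guard against rounding slightly outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.acos(cosine)


def select_trajectory(planned, effector_axis):
    """Return the key of the planned direction closest to the effector axis.

    `planned` maps a trajectory label to its direction vector (assumed input).
    """
    return min(planned, key=lambda name: angle_between(planned[name], effector_axis))


# Hypothetical planned trajectories and a measured end effector axis.
planned = {"left": (0.3, 0.0, -1.0), "right": (-0.3, 0.0, -1.0)}
selected = select_trajectory(planned, (0.25, 0.05, -1.0))
```

The selected label would then be handed to whatever routine aligns the robotic arm with the corresponding trajectory.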

[0039] In some embodiments, the method comprises: automatically (e.g., by a processor of a computer subsystem) moving the robotic arm and/or end effector of the robotic surgical system along the selected trajectory (e.g., towards the anatomy of the patient). In some embodiments, the method comprises: defining and/or updating (e.g., by a processor of a computer subsystem) a haptic object that comprises the selected trajectory; and constraining motion of a robotic arm and/or end effector such that motion of at least a portion of the surgical tool inserted into or attached to the robotic arm and/or end effector is constrained to within the haptic object.

[0040] In some embodiments, the at least one detector comprises a detector with at least a minimum field of view of 40 degrees (e.g., as measured on a diagonal). In some embodiments, the display screen has a resolution of at least 1280×720 pixels.

[0041] In some embodiments, the method comprises: registering anatomy of a patient with the robotic surgical system, the augmented reality navigation system, and, optionally, an anatomical model of the patient based on medical image data (e.g., X-ray data, CT data, MRI data, fluoroscopy data).

[0042] In some embodiments, the at least one detector comprises a video camera and the method comprises: generating (e.g., by a processor of a computer subsystem) a video signal based on the detector input signal; and outputting (e.g., by a processor of a computer subsystem) the video signal for display on at least one of (i) a monitor and (ii) a second head mounted display comprising an at least partially transparent display screen configured to display augmentation graphics (e.g., semi-opaque images) which appear to a user to be superimposed on at least a portion of a natural field of view of the user.

[0043] In some embodiments, the system comprises one or more fiducial markers connected to the head mounted display and the method comprises: receiving (e.g., by a processor of a computer subsystem) a relative location and orientation of the one or more fiducial markers connected to the head mounted display, wherein the one or more fiducial markers are detected by a secondary detector (e.g., not physically connected to the head mounted display) (e.g., an EMF detector, an RF detector, an acoustic detector, a LiDAR detector, an optical detector); and modifying (e.g., at least one of rotating, scaling, and translating) (e.g., by a processor of a computer subsystem) at least one of (i) an anatomical model, (ii) a representation of a surgical implant, (iii) a representation of a trajectory of a surgical tool, and (iv) a representation of at least a portion of a surgical tool hidden from a natural field of view based on the one or more fiducial markers detected by the secondary detector.

[0044] In some embodiments, the method comprises: receiving (e.g., by a processor of a computer subsystem) a relative location and orientation of one or more real-world features detected by a secondary detector (e.g., not physically connected to the head mounted display) (e.g., an EMF detector, an RF detector, an acoustic detector, a LiDAR detector, an optical detector); modifying (e.g., at least one of rotating, scaling, and translating) (e.g., by a processor of a computer subsystem) at least one of (i) an anatomical model, (ii) a representation of a surgical implant, (iii) a representation of a trajectory of a surgical tool, and (iv) a representation of at least a portion of a surgical tool hidden from a natural field of view based on the one or more real-world features detected by the secondary detector; rendering and/or updating (e.g., by a processor of a computer subsystem) updated augmentation graphics based at least in part on the modified at least one of (i), (ii), (iii), and (iv); and displaying (e.g., by a processor of a computer subsystem) on the display screen, the updated augmentation graphics.

[0045] In some embodiments, the surgical procedure comprises at least one of a spinal surgical procedure, an orthopedic surgical procedure, an orthopedic trauma surgical procedure, and a neurosurgical procedure. In some embodiments, the surgical procedure comprises a minimally invasive surgical procedure.

Definitions

[0046] In order for the present disclosure to be more readily understood, certain terms used herein are defined below. Additional definitions for the following terms and other terms may be set forth throughout the specification.

[0047] In this application, the use of "or" means "and/or" unless stated otherwise. As used in this application, the term "comprise" and variations of the term, such as "comprising" and "comprises," are not intended to exclude other additives, components, integers or steps. As used in this application, the terms "about" and "approximately" are used as equivalents. Any numerals used in this application with or without about/approximately are meant to cover any normal fluctuations appreciated by one of ordinary skill in the relevant art. In certain embodiments, the term "approximately" or "about" refers to a range of values that fall within 25%, 20%, 19%, 18%, 17%, 16%, 15%, 14%, 13%, 12%, 11%, 10%, 9%, 8%, 7%, 6%, 5%, 4%, 3%, 2%, 1%, or less in either direction (greater than or less than) of the stated reference value unless otherwise stated or otherwise evident from the context (except where such number would exceed 100% of a possible value).

[0048] The following description generally makes use of a Cartesian coordinate system in describing positions, orientations, and directions of travel of various elements of and relating to the systems and methods described herein. However, it should be understood that specific coordinates (e.g., "x, y, z") and related conventions based on them (e.g., a "positive x-direction", an "x, y, or z-axis", an "xy, xz, or yz-plane", and the like) are presented for convenience and clarity, and that, as understood by one of skill in the art, other coordinate systems could be used (e.g., cylindrical, spherical) and are considered to be within the scope of the claims.
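To illustrate that a position expressed in Cartesian coordinates carries over to the other systems mentioned, the standard conversions can be sketched as follows. The function names are chosen for this sketch, and the spherical convention used here (radius, polar angle from the positive z-axis, azimuth in the xy-plane) is one common choice, not one stated in the disclosure.

```python
import math


def cartesian_to_cylindrical(x, y, z):
    """Return (rho, phi, z): radial distance, azimuth in radians, height."""
    return math.hypot(x, y), math.atan2(y, x), z


def cartesian_to_spherical(x, y, z):
    """Return (r, theta, phi): radius, polar angle from +z, azimuth."""
    r = math.sqrt(x * x + y * y + z * z)
    # The polar angle is undefined at the origin; report 0.0 there.
    theta = math.acos(z / r) if r > 0 else 0.0
    return r, theta, math.atan2(y, x)
```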

[0049] Navigational information: As used herein, the term "navigational information" means information useful in navigating during a surgical procedure. In certain embodiments, navigating includes navigating one or more surgical tools and/or implants (or other surgical apparatus). The surgical tool(s) may be attached to a robotic surgical system. Navigational information includes, but is not limited to, one or more of surgical trajectories, positions and/or orientations of surgical tools and/or apparatus (e.g., implants) and/or surgical equipment (e.g., surgical tables), patient anatomy and/or models thereof, medical image data, and positions and/or orientations of a robotic surgical system. As used herein, where an augmented reality navigation system is described as displaying navigational information to a surgeon, it is understood that other information not immediately relevant to navigation, but relevant generally to a surgical procedure may also be displayed (e.g., in a similar fashion). For example, patient health information regarding a patient's vitals or condition (e.g., patient history) or status information related to a surgical procedure (e.g., progress, instructions, or other information) may be displayed (e.g., on a virtual display presented on a display screen of an augmented reality navigation system). When appropriate, navigational information can optionally appear overlaid over a patient's anatomy.

[0050] Augmentation graphic: As used herein, the term "augmentation graphic" refers to a graphic that is rendered by a processor and displayed on a display screen of an augmented reality navigation system such that the graphic appears superimposed on the natural field of view of a surgeon as viewed through the display screen. In certain embodiments, an augmentation graphic may also be rendered for display on a remote monitor to allow third party observers to observe what a surgeon is seeing (e.g., one that is mounted on a wall of an operating room). An augmentation graphic may be a standalone graphic that appears in the natural field of view (e.g., as a virtual display floating in the field of view of a surgeon). An augmentation graphic may appear overlaid over one or more objects (e.g., patient anatomy) in the natural field of view, such that the augmentation graphic coincides with a physical object (e.g., portion of a patient anatomy) that is represented by the augmentation graphic (e.g., wherein the physical object or a portion thereof is not otherwise seen by a surgeon). In certain embodiments, an augmentation graphic may appear as a 3D object that sits adjacent to the physical object that it represents (e.g., with a common orientation but offset by a spatial translation). In some embodiments, augmentation graphics comprise several graphics for display in a chronological order such that they appear as a video on a display screen that augments a surgeon's natural field of view. For example, a surgeon can view a portion of a procedure as it will occur overlaid over a patient's physical anatomy.

[0051] Medical image data: As used herein, medical image data refers to image data that represents at least a portion of a patient. Medical image data may be generated using any suitable technique including, but not limited to, X-ray techniques, radiography techniques (fluoroscopy techniques (e.g., generated using an O-arm or C-arm)), tomographic techniques (e.g., X-ray computed tomography, positron emission tomography (PET), or magnetic resonance imaging (MRI)), ultrasound techniques, or elastography techniques.

[0052] Pointer tool: As used herein, the term "pointer tool" refers to a tool that is used to indicate a desired position and/or orientation. A pointer tool may be configured to be inserted into a robotic surgical system, for example, an end effector thereof or a tool guide attached thereto. A pointer tool may be a specially configured instrument solely used for pointing. A surgical tool may be used as a pointer tool. For example, a drill bit, a drill guide, a tool guide, an awl, or similar surgical tool may be used as a pointer tool. A pointer tool may have one or more fiducials attached to the tool (e.g., sufficient to determine a position and orientation of the pointer tool (e.g., by triangulation)).

[0053] Real-world feature: As used herein, the term "real-world feature" refers to a physical object or portion thereof that can be detected by a detector such that spatial information about the object can be determined. Spatial information comprises a position and/or orientation. A real-world feature may be identified patient anatomy (e.g., identified by reference to an anatomy database (e.g., a database of images of patient anatomy)) that is detected using one or more image-recognition techniques. A real-world feature may be any suitable fiducial. A fiducial may be attached to, for example, surgical equipment (e.g., an operating table), a patient, a surgical tool, an implant, a robotic surgical system, or an augmented reality navigation system (e.g., on the head mounted display). A fiducial may comprise a plurality of markers to assist in orienting the fiducial in the environment during navigation (e.g., tracking). For example, in certain embodiments, a fiducial comprises a plurality of spatially separated markers (e.g., 3 markers or 4 markers) attached to a rigid holding apparatus that is attachable to an object, wherein each of the markers is configured to be detected by a detector disposed on the head mounted display (e.g., emit or alter an electromagnetic field for an EMF detector or have a certain reflectivity for an optical detector). Real-world features are used to determine position and orientation of objects in a surgical environment. An example of a technique used to make such a determination using the systems disclosed herein is triangulation; however, it will be apparent to those of ordinary skill in the art of patient registration that any of a number of techniques may be used, alone or in combination, such as surface matching or other similar correlation techniques.
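As a hedged illustration of triangulation, the sketch below estimates a marker position from two viewing rays using the midpoint method: it finds the closest points on the two rays and returns the point halfway between them. The disclosure does not specify a particular triangulation method; the midpoint method, the function name `triangulate_midpoint`, and the assumption that each detector already provides a ray origin and direction toward the marker are choices made for this example.

```python
def _dot(u, v):
    """Dot product of two 3D vectors."""
    return sum(a * b for a, b in zip(u, v))


def triangulate_midpoint(p1, d1, p2, d2):
    """Estimate a 3D point from two rays, each given as (origin, direction)."""
    w0 = [a - b for a, b in zip(p1, p2)]
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; no unique closest point")
    # Ray parameters of the mutually closest points.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    q1 = [p + s * k for p, k in zip(p1, d1)]
    q2 = [p + t * k for p, k in zip(p2, d2)]
    # With noisy rays the two points differ; report their midpoint.
    return [(u + v) / 2.0 for u, v in zip(q1, q2)]
```

Repeating this for each marker of a fiducial yields the set of 3D marker positions from which a position and orientation of the fiducial could then be derived.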

[0054] Trajectory: As used herein, the term "trajectory" refers to a path desired and/or intended to be followed. A trajectory may be modeled and graphics representing the trajectory may be displayed on a display screen of an augmented reality navigation system. A trajectory may be a linear trajectory (e.g., wherein all points along the trajectory fall onto a line) or a non-linear trajectory. A trajectory sent by a processor (e.g., to a robotic surgical system) or stored on a computer readable medium (e.g., for manipulation by a processor) may be represented by any data sufficient to define the trajectory. Non-limiting examples of data used to define a trajectory include a single coordinate in space and an orientation (e.g., vector), a plurality of coordinates, and a functional relationship (e.g., involving an x, y, and z variable). In certain embodiments, a path may be followed using a robotic surgical system (e.g., automatically) or manually by a surgeon.
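The three kinds of trajectory data named above can be sketched in code as follows. This is an illustrative sketch only: the class `LinearTrajectory`, the helper `from_two_points`, and the example `helix` function are hypothetical names, and the disclosure does not prescribe any particular data structure.

```python
import math
from dataclasses import dataclass


@dataclass
class LinearTrajectory:
    """A single coordinate in space plus an orientation vector."""
    origin: tuple
    direction: tuple

    def point_at(self, distance):
        """Point located `distance` units from the origin along the line."""
        norm = math.sqrt(sum(c * c for c in self.direction))
        return tuple(o + distance * d / norm
                     for o, d in zip(self.origin, self.direction))


def from_two_points(start, end):
    """Build a linear trajectory from a plurality of (here, two) coordinates."""
    return LinearTrajectory(start, tuple(e - s for s, e in zip(start, end)))


def helix(t):
    """A non-linear trajectory given as a functional relationship in t."""
    return (math.cos(t), math.sin(t), t)
```

For instance, `from_two_points((1, 1, 1), (1, 1, 4)).point_at(3)` evaluates to the end point `(1.0, 1.0, 4.0)`.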

[0055] Robotic surgical system: As used herein, the term "robotic surgical system" refers to a system comprising a robotic arm configured to assist in a surgical procedure. A robotic arm may assist in a surgical procedure by holding and/or manipulating (e.g., guiding and/or moving) one or more surgical tool(s). In certain embodiments, a robotic surgical system comprises an active, non-backdriveable robotic arm. In certain embodiments, a robotic surgical system comprises a passive, backdriveable robotic arm. In certain embodiments, a robotic surgical system is configured to be manipulated directly by a surgeon (e.g., by grasping and maneuvering). In certain embodiments, a robot is configured to be manipulated remotely by a surgeon (e.g., similarly to a master/slave system). In certain embodiments, a robotic arm of a robotic surgical system is configured to assist in a surgical procedure by preventing a surgeon from actively maneuvering a surgical tool attached to the robotic arm outside of a defined haptic object (i.e., haptic volume). In certain embodiments, a robotic arm of a robotic surgical system is configured to automatically move, upon input from a surgeon, onto and/or along a trajectory, such as a trajectory pre-operatively or intra-operatively planned using an augmented reality navigation system in communication with the robotic surgical system. In certain embodiments, a robotic surgical system and an augmented reality navigation system have a common computer subsystem.

BRIEF DESCRIPTION OF THE DRAWING

[0056] Drawings are presented herein for illustration purposes, not for limitation. The foregoing and other objects, aspects, features, and advantages of the invention will become more apparent and may be better understood by referring to the following description taken in conjunction with the accompanying drawings, in which:

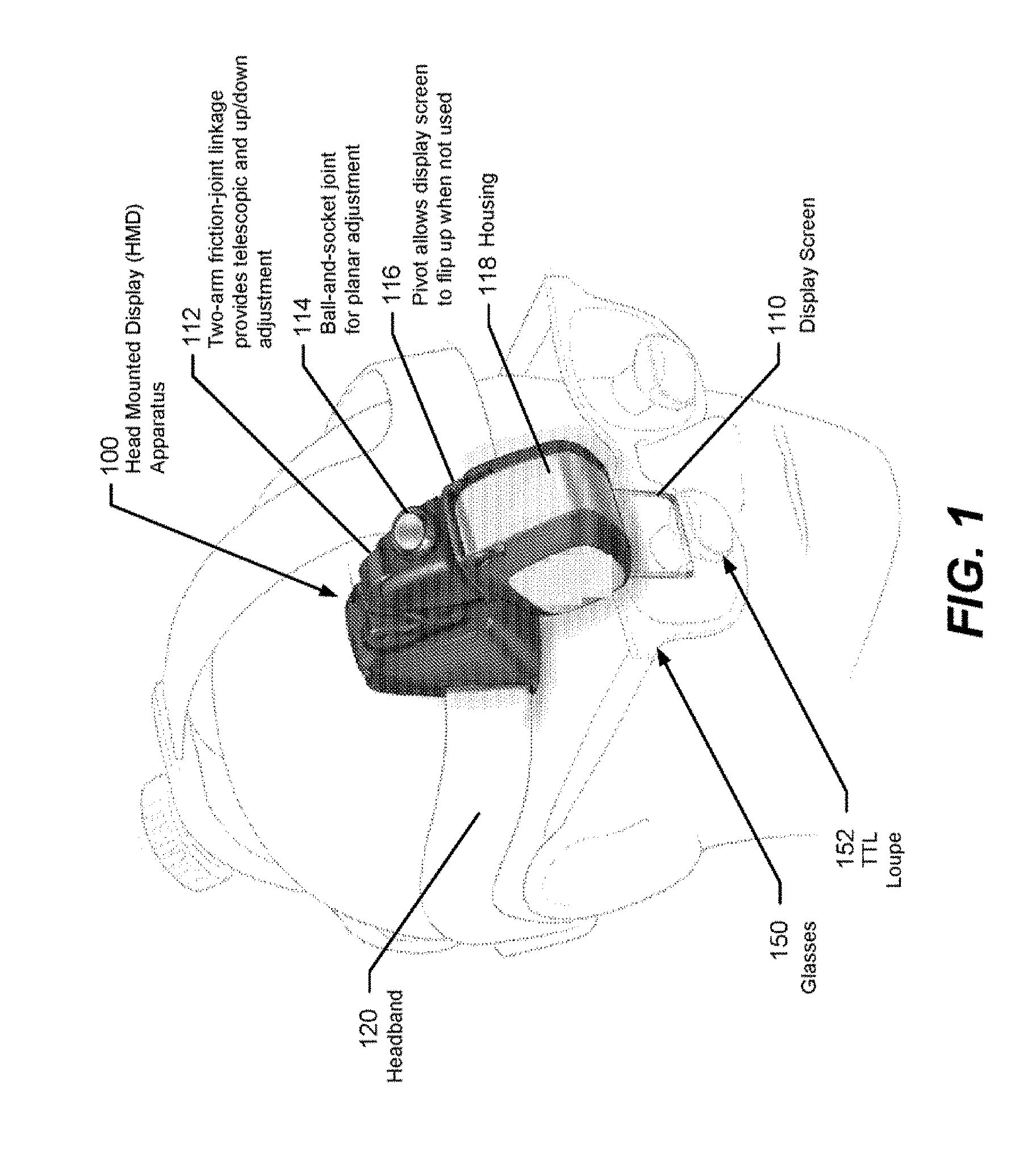

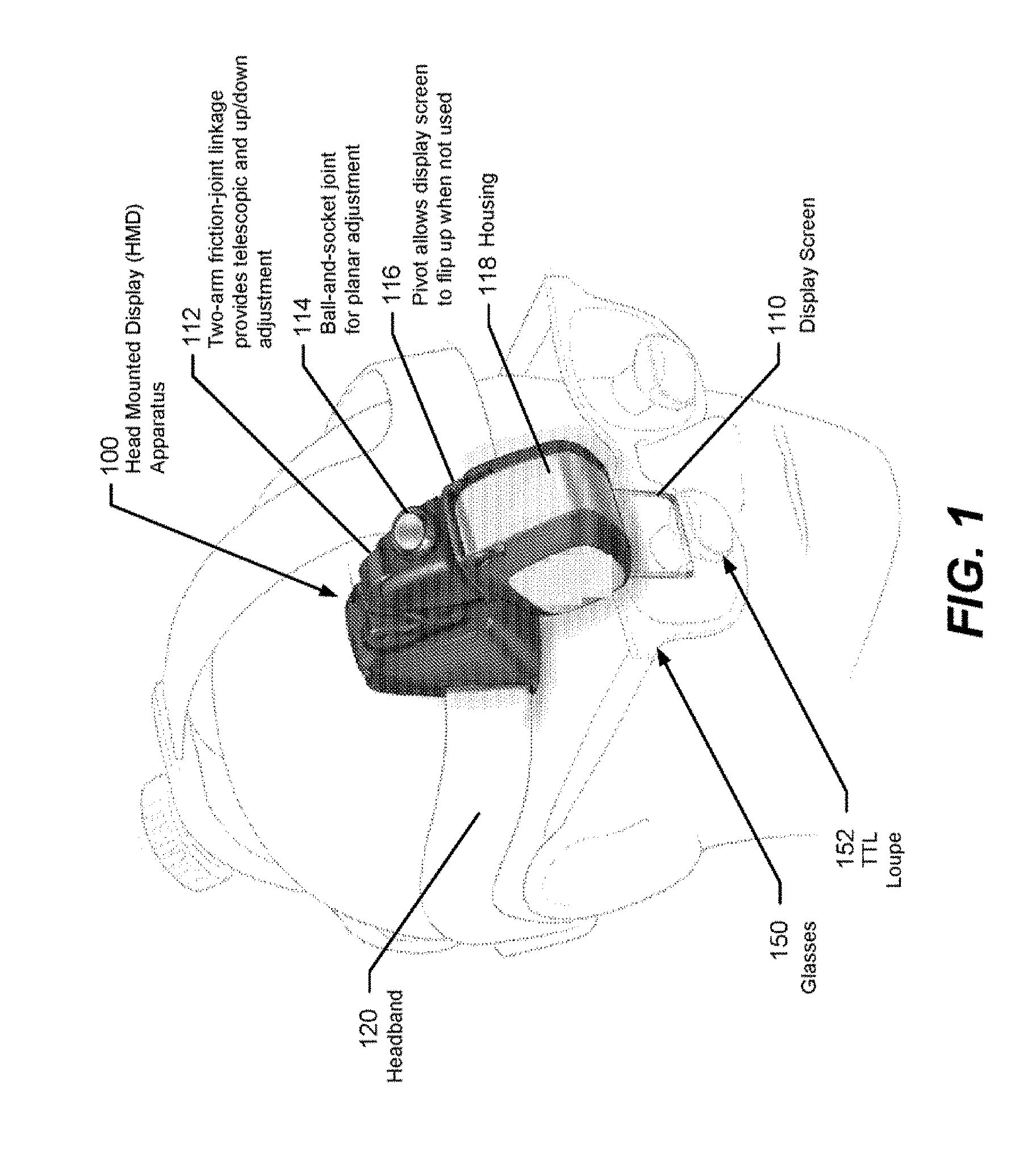

[0057] FIG. 1 illustrates a head mounted display apparatus that can be worn on a user's head and operates in accordance with some embodiments of the invention;

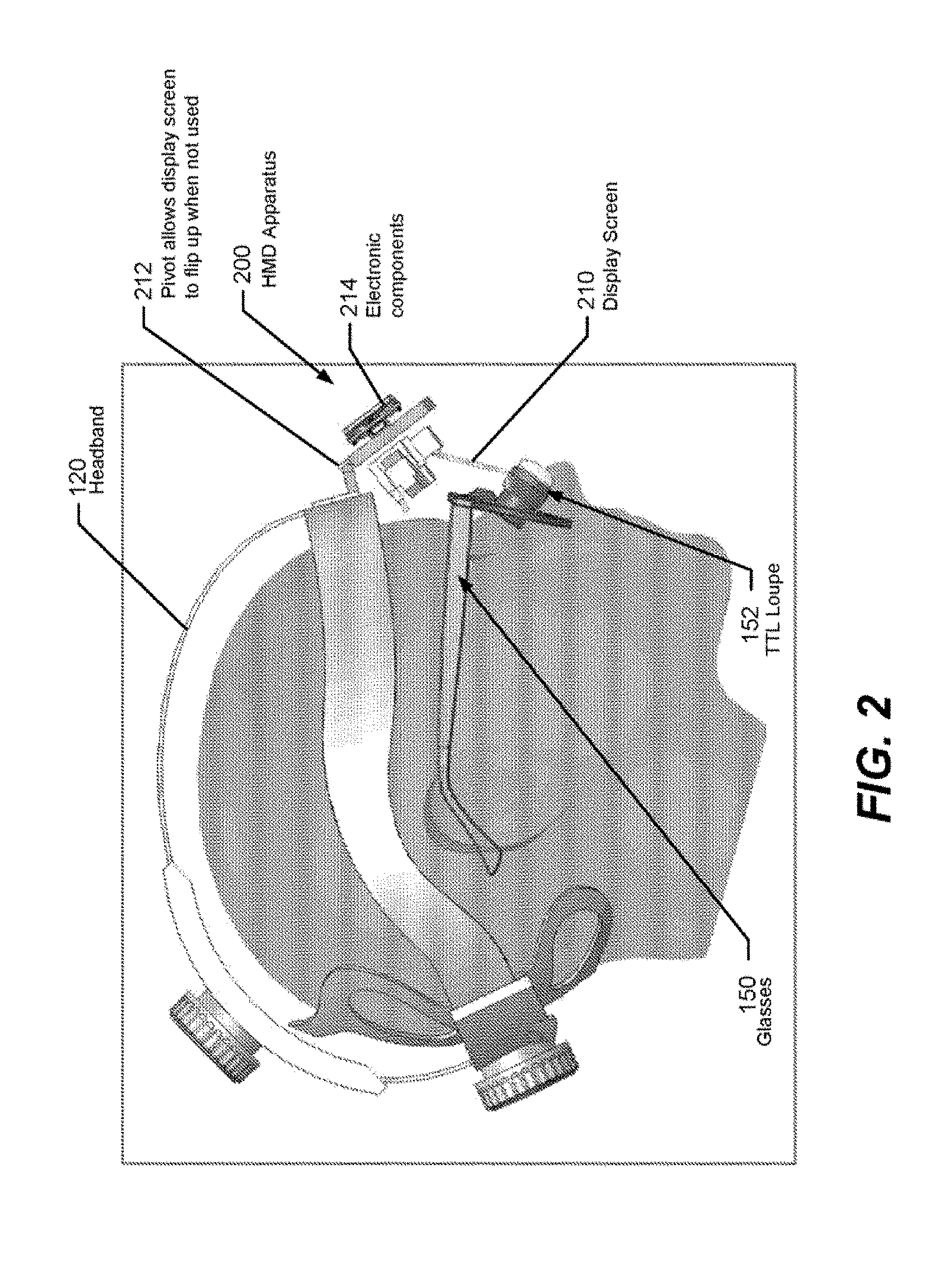

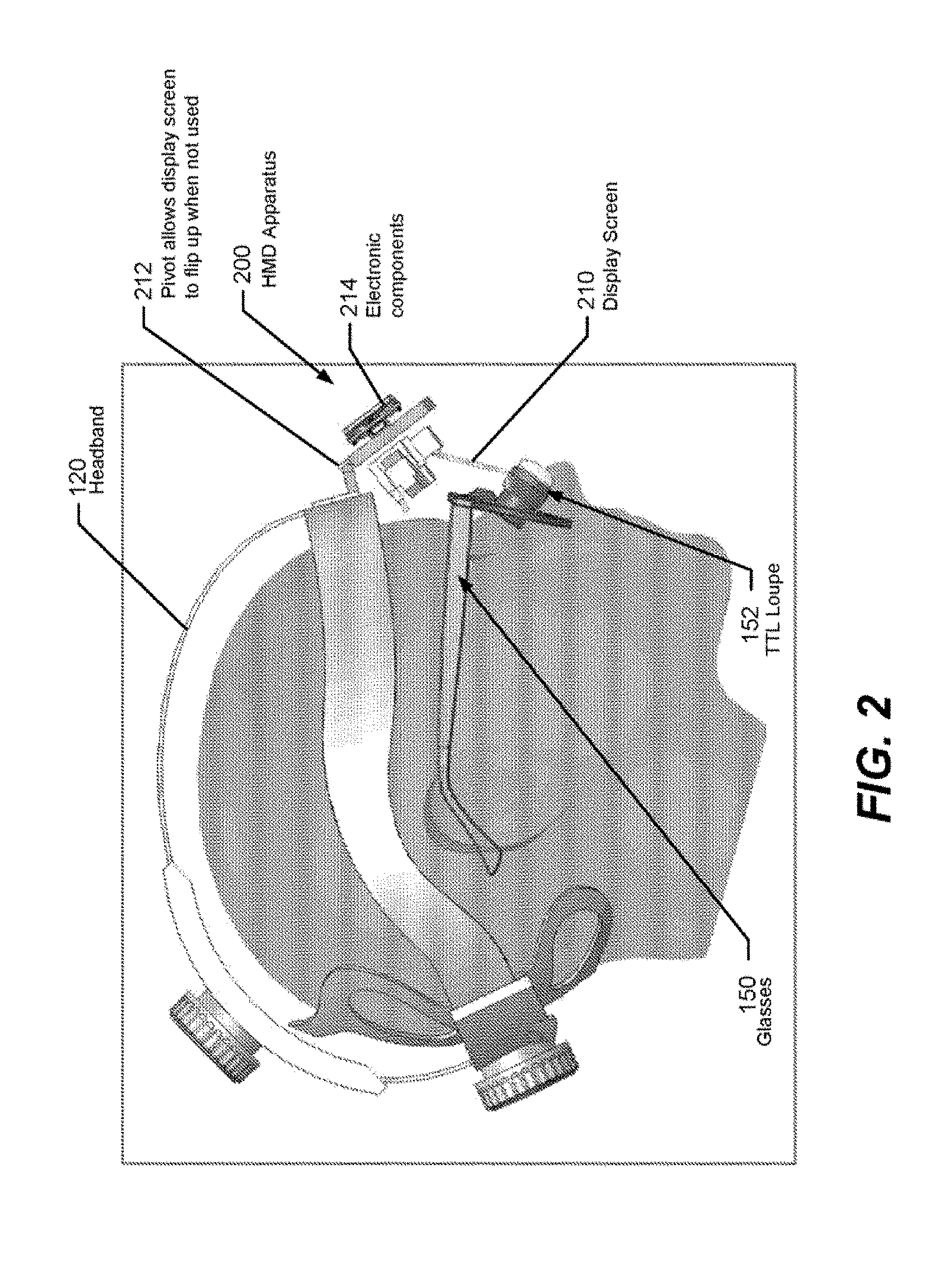

[0058] FIG. 2 illustrates a head mounted display apparatus that can be worn on a user's head and operates in accordance with some embodiments of the invention;

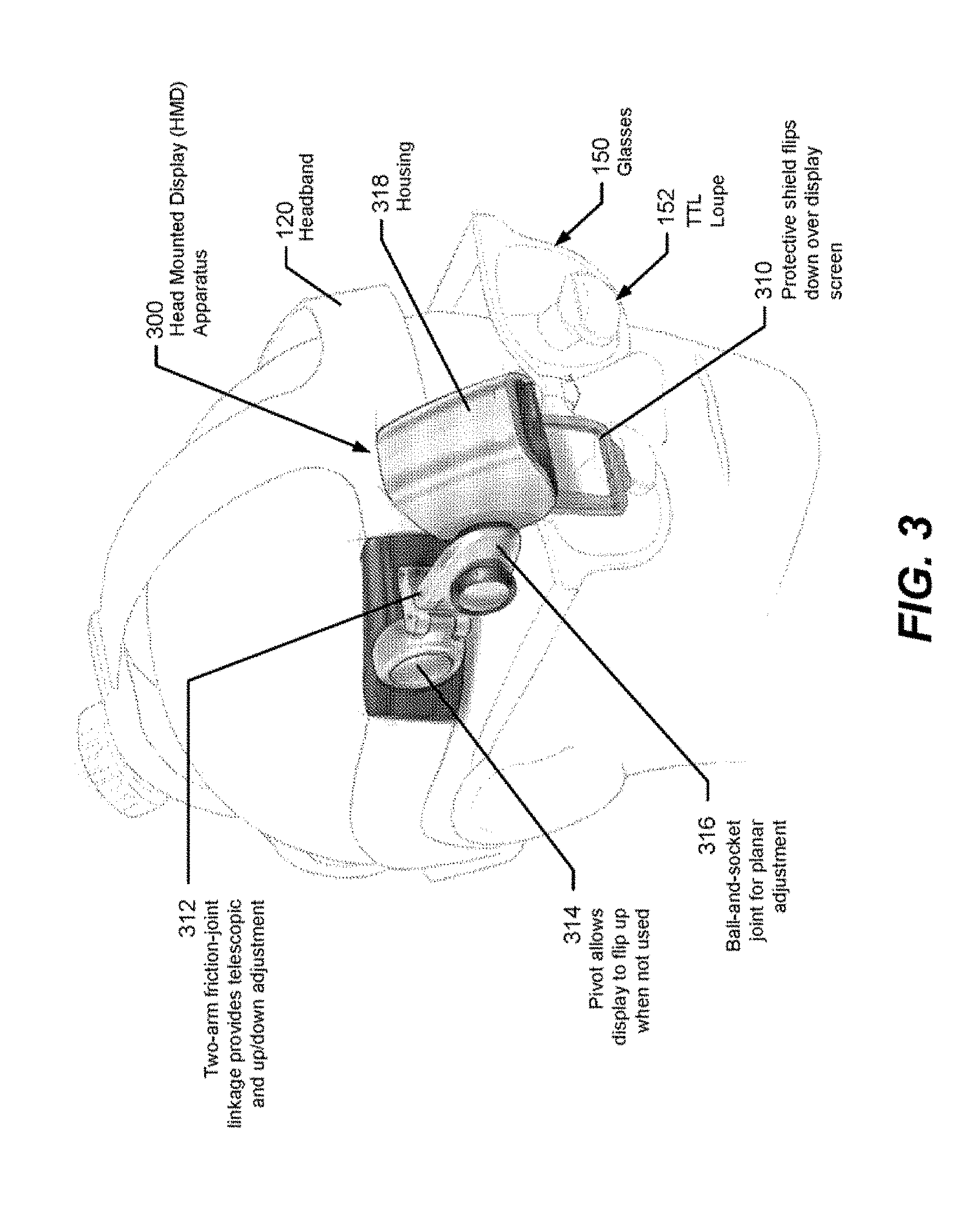

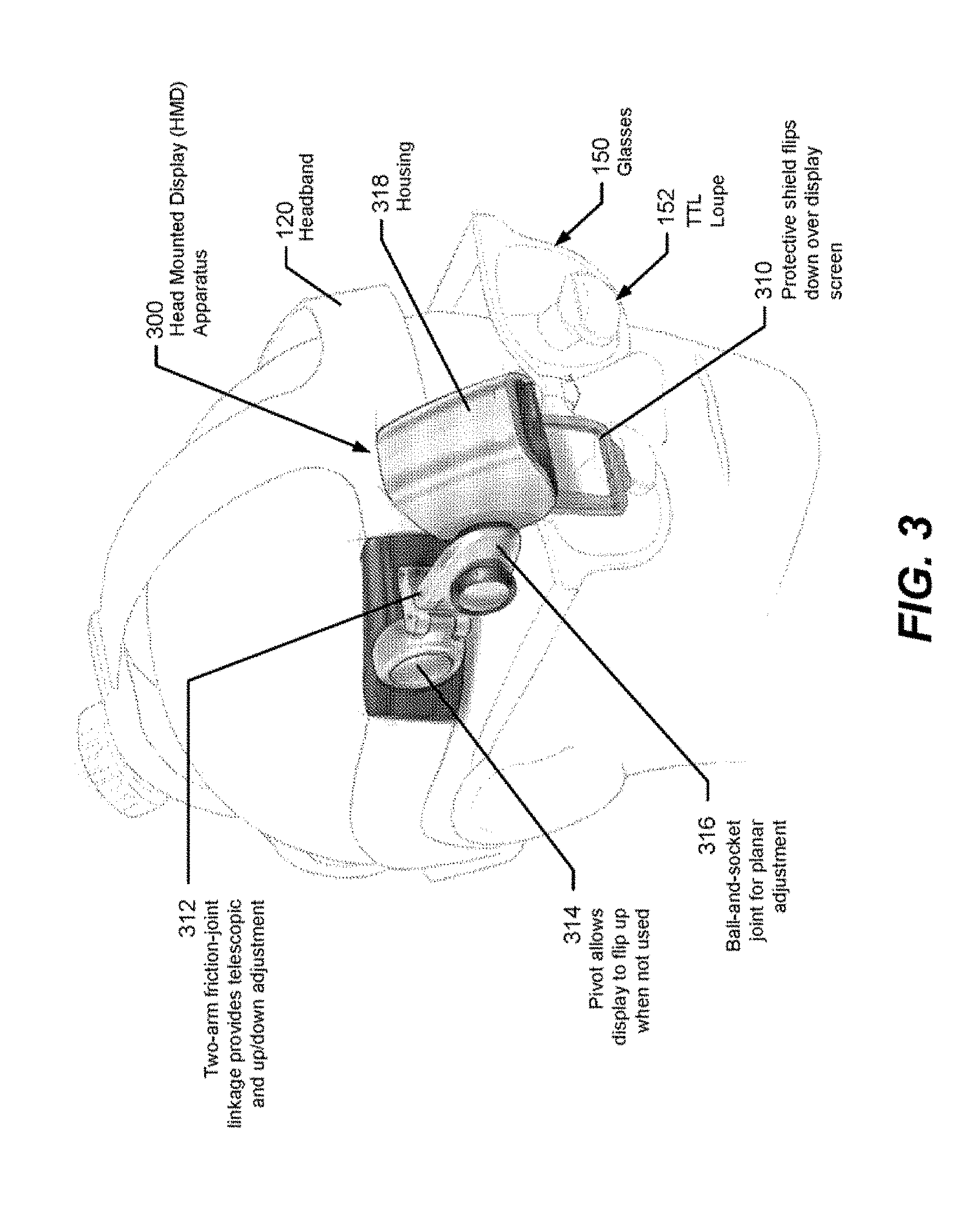

[0059] FIG. 3 illustrates a head mounted display apparatus that can be worn on a user's head and operates in accordance with some embodiments of the invention;

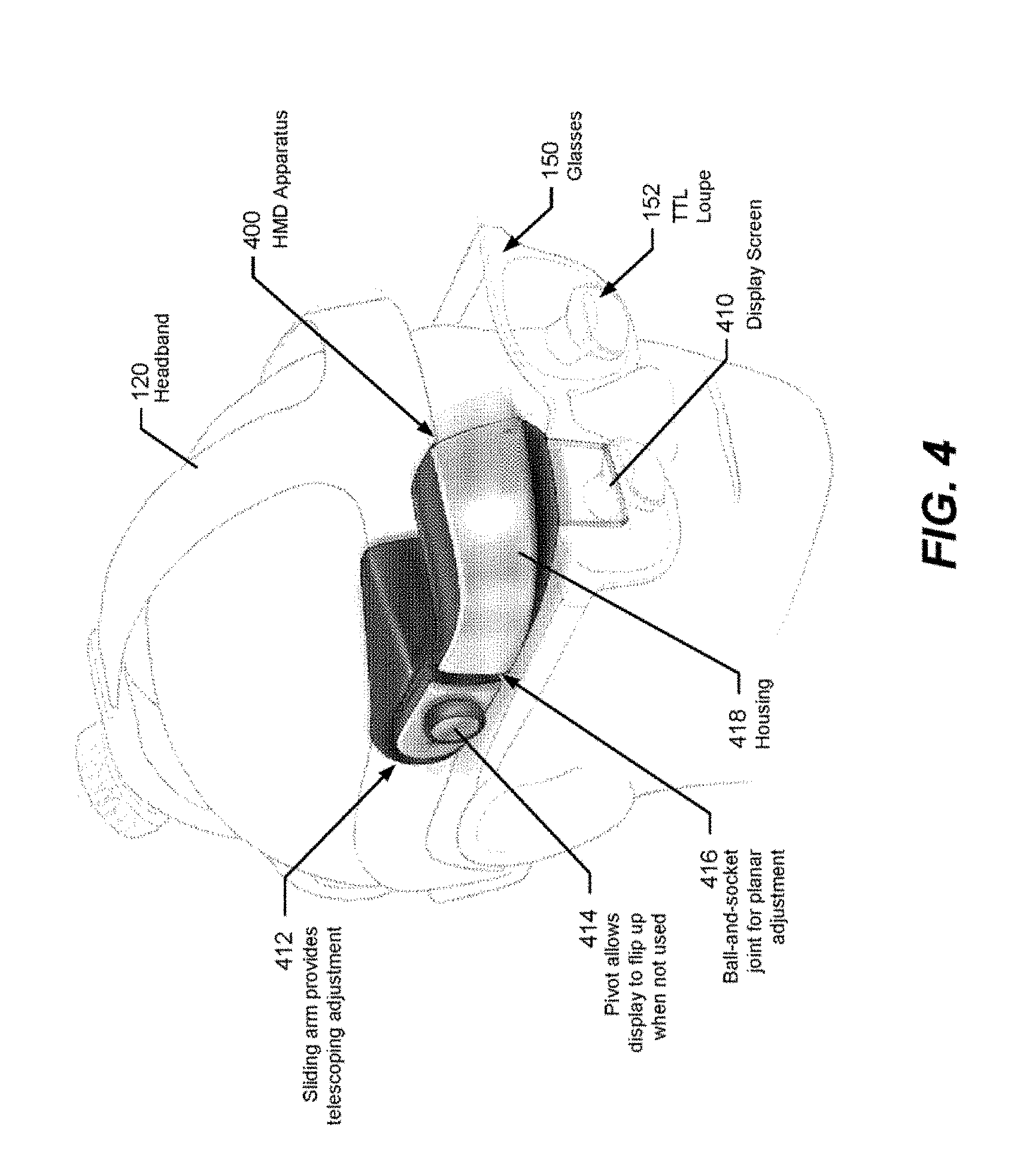

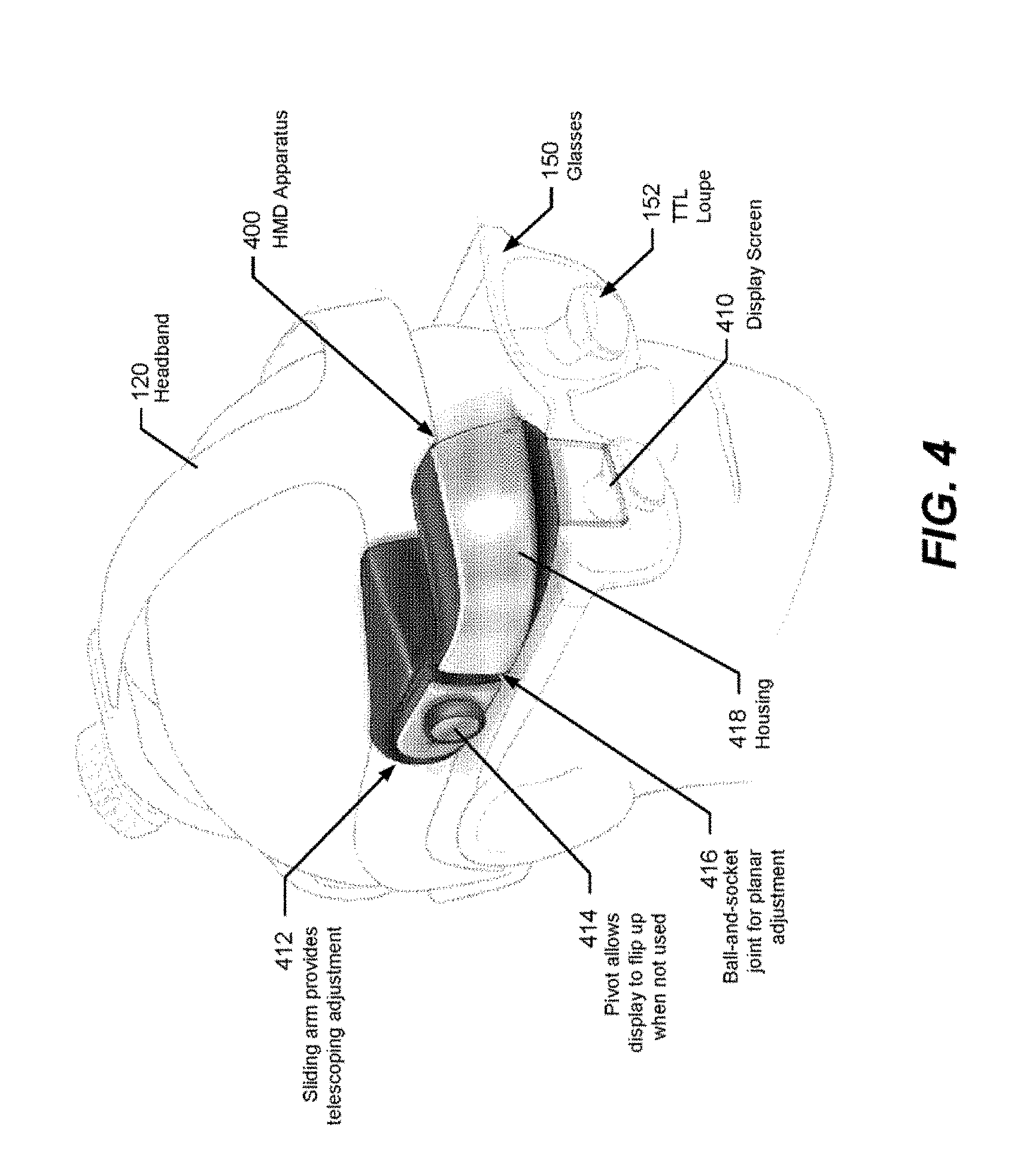

[0060] FIG. 4 illustrates a head mounted display apparatus that can be worn on a user's head and operates in accordance with some embodiments of the invention;

[0061] FIG. 5 illustrates a head mounted display apparatus that can be worn on a user's head and operates in accordance with some embodiments of the invention;

[0062] FIG. 6 illustrates a head mounted display apparatus that can be worn on a user's head and operates in accordance with some embodiments of the invention;

[0063] FIG. 7 illustrates a head mounted display apparatus that can be worn on a user's head and operates in accordance with some embodiments of the invention;

[0064] FIG. 8 illustrates operations and methods that may be performed by an augmented reality navigation system that includes a head mounted display to display virtual display panels through a display screen of the head mounted display, according to some illustrative embodiments of the invention;

[0065] FIG. 9 is a block diagram of electronic components of a computer subsystem coupled to head mounted display in an augmented reality navigation system, according to illustrative embodiments of the invention;

[0066] FIG. 10 is a block diagram of components of an augmented reality navigation system that tracks the location of equipment (surgical tools, a surgeon's head mounted display, and parts of a patient's anatomy) and generates a three dimensional (3D) model from patient data that is displayed on the head mounted display to be rendered super-imposed at a visually aligned location on the patient's body, in accordance with illustrative embodiments of the invention;

[0067] FIG. 11 is another block diagram of the electronic components and modules of an augmented reality surgical system, in accordance with illustrative embodiments of the invention;

[0068] FIG. 12 is a block diagram of an exemplary method for using an augmented reality navigation system to display a graphical representation of a surgical tool and/or its trajectory, according to illustrative embodiments of the invention;

[0069] FIG. 13 is a block diagram of an exemplary method for using an augmented reality navigation system with fixed crosshairs, according to illustrative embodiments of the invention;

[0070] FIG. 14 is a block diagram of an exemplary method for using an augmented reality navigation system with a pointer tool, according to illustrative embodiments of the invention;

[0071] FIG. 15 illustrates navigation information that may be displayed on an augmented reality navigation system during a surgical procedure, according to illustrative embodiments of the invention;

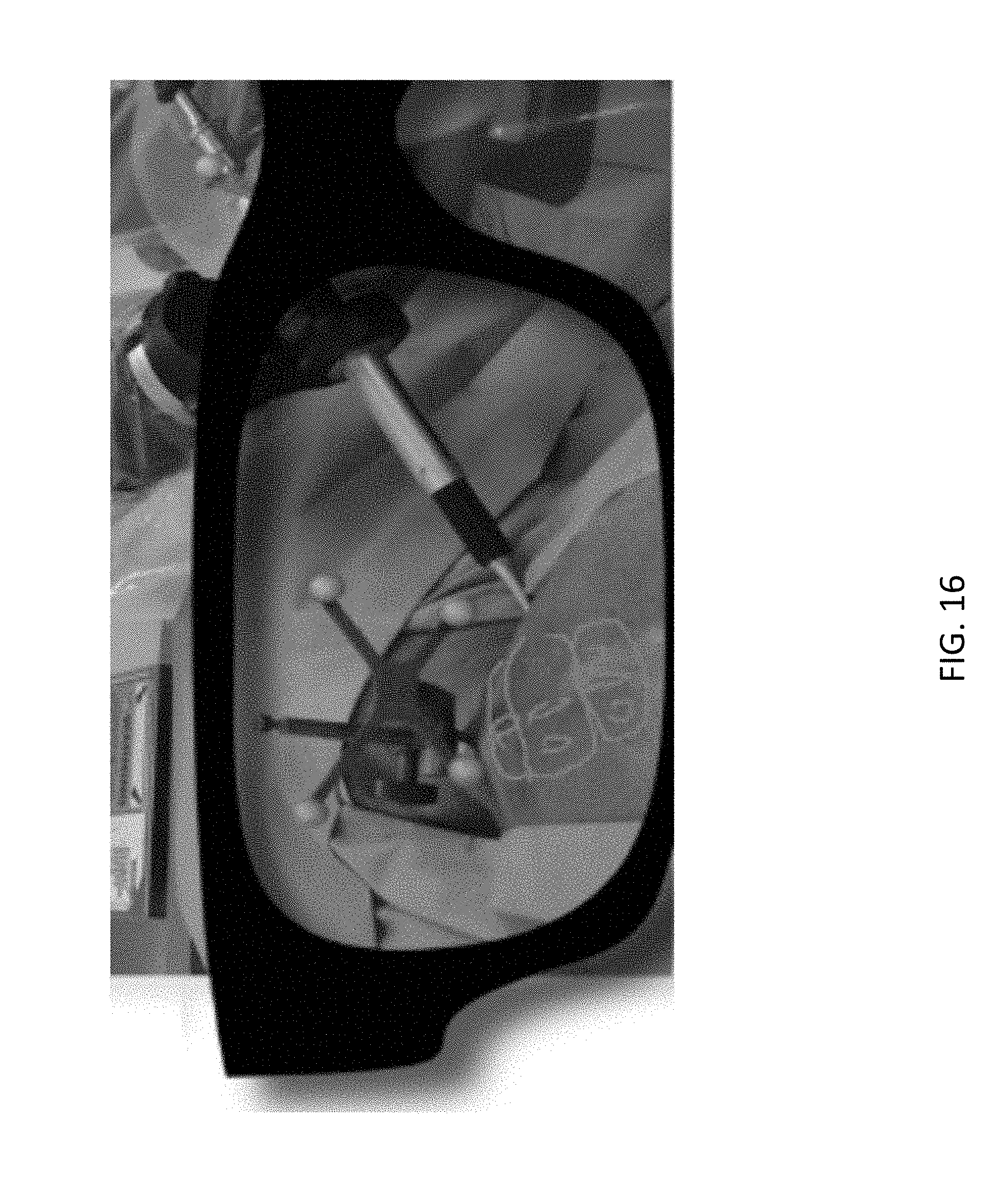

[0072] FIG. 16 schematically illustrates portions of a model of a patient anatomy and an implant being inserted along a pre-planned or intra-operatively planned trajectory using a surgical tool connected to a robotic surgical system that are otherwise not visible to the wearer, as displayed on an augmented reality navigation system, according to illustrative embodiments of the invention;

[0073] FIG. 17 is a block diagram of an example network environment for use in the methods and systems described herein, according to illustrative embodiments of the invention; and

[0074] FIG. 18 is a block diagram of an example computing device and an example mobile computing device, for use in illustrative embodiments of the invention.

DETAILED DESCRIPTION

[0075] It is contemplated that systems, devices, methods, and processes of the claimed invention encompass variations and adaptations developed using information from the embodiments described herein. Adaptation and/or modification of the systems, devices, methods, and processes described herein may be performed by those of ordinary skill in the relevant art.

[0076] Throughout the description, where articles, devices, and systems are described as having, including, or comprising specific components, or where processes and methods are described as having, including, or comprising specific steps, it is contemplated that, additionally, there are articles, devices, and systems of the present invention that consist essentially of, or consist of, the recited components, and that there are processes and methods according to the present invention that consist essentially of, or consist of, the recited processing steps.

[0077] It should be understood that the order of steps or order for performing certain actions is immaterial so long as the invention remains operable. Moreover, two or more steps or actions may be conducted simultaneously.

[0078] The mention herein of any publication, for example, in the Background section, is not an admission that the publication serves as prior art with respect to any of the claims presented herein. The Background section is presented for purposes of clarity and is not meant as a description of prior art with respect to any claim. Headers are provided for the convenience of the reader and are not intended to be limiting with respect to the claimed subject matter.

Augmented Reality Navigation Systems and Components Thereof

[0079] The augmented reality navigation systems disclosed herein comprise a head mounted display comprising an at least partially transparent display screen, at least one detector connected to (e.g., disposed on) the head mounted display for identifying real-world features, and a computer subsystem. The computer subsystem can be configured to perform a variety of navigational tasks useful to a surgeon during a procedure including, for example, trajectory planning and execution. A motion sensor can optionally be included to detect motion of the head of a surgeon wearing the augmented reality navigation system providing additional functionality and/or performance (e.g., a selection input means or drift correction). Augmented reality navigation systems for use in certain surgical procedures that are in accordance with certain embodiments of the present disclosure are described in U.S. Patent Publication No. 2016-0225192 A1, published on Aug. 4, 2016, the disclosure of which is hereby incorporated by reference herein in its entirety.

[0080] Embodiments of the present disclosure are directed to an augmented reality surgical system that includes a head mounted display (HMD) apparatus that can be worn by a surgeon, physician, or other personnel during a medical procedure. Throughout the present disclosure, where an augmented reality navigation system is described as being worn by or used by a wearer, user, or surgeon, it is understood that any person assisting with, leading, or observing a surgical procedure can equivalently interact with an augmented reality navigation system in the same manner as is being described. The user is not limited to any one particular individual with a specific relationship to a surgical procedure. The HMD can be configured to provide localized, real-time situational awareness to the wearer. The HMD includes a display screen that can be positioned within the natural line-of-sight and/or periphery field of view (FOV) of the wearer to provide visual information that can be organized and displayed as a single virtual display or as a collection of virtual displays that a wearer can navigate between to view using head movement, hand gestures, voice commands, eye control, and/or other operations disclosed herein. In certain embodiments, a display screen can be manipulated to be in or out of a natural field of view of a surgeon (e.g., by flipping the screen from an "in use" position to an "out of use" position).

[0081] In certain embodiments, a surgeon or other person can wear an HMD to see a graphical representation of what is within the patient's body but covered from view by skin, muscle, organs, skeletal structure, etc. In certain embodiments, using an HMD can enable a surgeon to minimize the size of an incision by observing where the incision needs to be made to reveal a targeted portion of the body. Similarly, an HMD can be used when replacing a bone with a prosthesis to enable a surgeon to observe a real-world feature that aids with orienting and moving surgical tool(s) (e.g., attached to a robotic surgical system) and/or a prosthesis during the procedure. An HMD may operate to improve the efficiency, productivity, throughput, accuracy, and/or safety of the wearer's performance of a medical procedure. Moreover, an HMD can reduce mental fatigue by reducing or eliminating a need for the wearer to reference remote display devices having substantial angular offsets during a medical procedure.

[0082] In certain embodiments, an augmented reality navigation system has an initialization time of under 10 minutes (e.g., under 5 minutes) thereby minimizing delay and interference of surgical procedures. In certain embodiments, an augmented reality navigation system can be comfortably worn for a minimum of one hour and up to four hours or more. In certain embodiments, an augmented reality navigation system is compatible with surgeon's loupes. In certain embodiments, an augmented reality navigation system is compatible with personal prescription glasses. In certain embodiments, an augmented reality navigation system provides a minimum field of view of 40 degrees (e.g., 50 degrees, 60 degrees, 70 degrees, 90 degrees, or more) as measured on a diagonal. In certain embodiments, an augmented reality navigation system comprises a head mounted display and computer subsystem coupled together to provide less than 50 millisecond latency (e.g., less than 20 millisecond latency) from the time that a display screen moves until updated navigation information is received by the display screen. In certain embodiments, a display screen can have a frame rate of 30 frames per second or more (e.g., 60 frames per second or 120 frames per second).
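By way of non-limiting illustration, the relationship between the latency and frame rate figures recited above can be worked through numerically. The following is a minimal sketch only; the function names `frame_period_ms` and `frames_within_latency` are hypothetical and do not appear in the disclosure.

```python
# Illustrative arithmetic only; names are hypothetical, not part of the disclosure.

def frame_period_ms(frames_per_second: float) -> float:
    """Time between consecutive display frames, in milliseconds."""
    return 1000.0 / frames_per_second


def frames_within_latency(latency_ms: float, frames_per_second: float) -> float:
    """Number of frame periods that fit inside a given latency budget."""
    return latency_ms / frame_period_ms(frames_per_second)


# Compare the frame rates named above against the 50 ms latency bound.
for fps in (30, 60, 120):
    period = round(frame_period_ms(fps), 2)
    frames = round(frames_within_latency(50.0, fps), 2)
    print(f"{fps} fps: {period} ms per frame, {frames} frames per 50 ms")
```

Under these figures, a 50 millisecond bound spans roughly one and a half frames at 30 frames per second and about six frames at 120 frames per second.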

[0083] The augmented reality navigation systems disclosed herein comprise a head mounted display comprising an at least partially transparent display screen. The head mounted display comprising an at least partially transparent display screen can have a form factor similar to eyeglasses. For example, it can be binocular or monocular (i.e., the display screen can be used to augment vision in one or both eyes). In certain embodiments, the head mounted display is a binocular head mounted display. A binocular arrangement may be made of one contiguous display screen at least partially covering both eyes when worn or it may be two separate display screens (i.e., one screen for each eye). The head mounted display may be held on a wearer's head using armatures (as in a typical pair of eyeglasses) or some other mounting means (e.g., a strap, band, or fixture sized and shaped to be worn on a human head). The at least partially transparent display screen configured to display augmentation graphics can be any suitable commercially available display screen, for example, those used in heads up displays for piloting or athletics. The display screen is at least partially transparent such that a wearer can see at least a portion of their natural field of view through the display screen while also seeing any augmentation graphics displayed on the screen. In certain embodiments, the display screen is a high resolution display screen (e.g., has a resolution of at least 1280×720).

[0084] The augmented reality navigation systems disclosed herein comprise at least one detector. A detector is one suitable for use in determining spatial information about real-world features in the field of view of the detector(s). A detector can be an optical camera (e.g., a video camera), an EMF detector, a LiDAR detector, an acoustic detector, an RF detector, or similar energy sensor. The detector receives information from the environment in the field of view of the detector and produces a detector input signal that is sent to the computer subsystem. In certain embodiments, a detector is disposed on (e.g., attached to) a head mounted display. In certain embodiments, a detector is connected to (e.g., electrically) but spatially remote from the head mounted display. In certain embodiments, fiducials of an appropriate type are selected and used during a surgical procedure based on the type of detector connected to the head mounted display. For example, fiducials that output an EMF signal are detected by an EMF detector mounted to the head mounted display. One or more fiducials may be disposed on a head mounted display for tracking and/or orienting the head mounted display in the surgical environment (e.g., as detected by an auxiliary detector or a second augmented reality navigation system). In certain embodiments, the detector is an optical camera and image recognition is performed by the computer subsystem on the detector input signal in order to determine one or more real-world features. Detectors of different types can be used in combination in a single augmented reality navigation system. Two detectors of the same type may be disposed on a head mounted display and spatially separated thereon (e.g., in order to triangulate real-world features). In certain embodiments, a detector is removable such that it can be replaced by a detector of a different type. In certain embodiments, a detector coupled with a computer subsystem enables patient registration accurate to within 2 mm (e.g., within 1.5 mm or within 1 mm) RMS for each position degree of freedom. In certain embodiments, one or more detector(s) (e.g., disposed on a head mounted display) are configured to provide accurate registration throughout a period of time (e.g., a surgical procedure) over a certain spatial volume in a surgical environment (e.g., a volume larger than a spherical volume of 2 foot radius, 4 foot radius, 6 foot radius, or larger). In certain embodiments, such a volume may further be defined by a cone whose apex is located at the head mounted display.
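By way of non-limiting illustration, a registration accuracy stated as an RMS value for each position degree of freedom can be evaluated by comparing registered fiducial positions against reference positions one axis at a time. The following is a minimal sketch under assumed inputs (lists of x, y, z positions in millimeters); the names `rms_per_axis` and `within_tolerance` are hypothetical and are not taken from the disclosure.

```python
import math

# Hypothetical helper names; positions are (x, y, z) tuples in millimeters.

def rms_per_axis(measured, reference):
    """Return the RMS error along x, y, and z between paired point lists."""
    if not measured or len(measured) != len(reference):
        raise ValueError("point lists must be non-empty and of equal length")
    squared_sums = [0.0, 0.0, 0.0]
    for m, r in zip(measured, reference):
        for axis in range(3):
            squared_sums[axis] += (m[axis] - r[axis]) ** 2
    count = len(measured)
    return tuple(math.sqrt(s / count) for s in squared_sums)


def within_tolerance(rms, tolerance_mm=2.0):
    """True when every positional axis meets the RMS tolerance."""
    return all(value <= tolerance_mm for value in rms)


# Example with made-up numbers: two fiducials, each off by 1 mm along x.
measured = [(1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)]
reference = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
errors = rms_per_axis(measured, reference)
print(errors, within_tolerance(errors))
```

The 2 mm default mirrors the bound recited above; a tighter value (e.g., 1.5 mm or 1 mm) can be passed as `tolerance_mm`.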

[0085] In certain embodiments, an augmented reality navigation system comprises a motion sensor. The motion sensor can track motion of a wearer's head over time. Such tracking can be used to, for example, smooth, or even remove, jitter of augmentation graphics generated by natural small movements of a wearer's head. In certain embodiments, a motion sensor also provides a means for determining motion of a wearer's head and provides a new orientation and/or position of the head mounted display to the computer subsystem in order to update augmentation graphics displayed on a display screen of the head mounted display. In certain embodiments, a motion sensor is configured to provide a less than 2 cm (e.g., less than 1 cm) drift in the position of augmentation graphics from their baseline position (i.e., as determined by the registration of the augmented reality navigation system to a patient's anatomy), as measured in an open loop. In certain embodiments, the motion sensor coupled with a detector in an augmented reality navigation system provides optically calibrated (i.e., closed loop) sub-millimeter drift per hour. In certain embodiments, a motion sensor is used to record user input used for making selections (e.g., a head nod or shake).
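By way of non-limiting illustration, jitter caused by small head movements can be attenuated by low-pass filtering the tracked position before augmentation graphics are updated. The disclosure does not specify a particular filter; the following minimal sketch assumes an exponential moving average as one common choice, and the class name `JitterFilter` and its `alpha` parameter are hypothetical.

```python
# Assumption: an exponential moving average stands in for whatever smoothing
# a given embodiment uses. JitterFilter and alpha are hypothetical names.

class JitterFilter:
    """Exponentially smooths a stream of (x, y, z) position samples."""

    def __init__(self, alpha=0.2):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha  # weight given to the newest sample
        self.state = None   # last smoothed position, or None before any sample

    def update(self, sample):
        """Blend a new sample into the smoothed position and return it."""
        if self.state is None:
            self.state = tuple(float(v) for v in sample)
        else:
            self.state = tuple(
                self.alpha * new + (1.0 - self.alpha) * old
                for new, old in zip(sample, self.state)
            )
        return self.state


# Example: a sudden 2-unit jump is only half applied when alpha is 0.5.
smoother = JitterFilter(alpha=0.5)
smoother.update((0.0, 0.0, 0.0))
print(smoother.update((2.0, 0.0, 0.0)))
```

A smaller `alpha` suppresses more jitter at the cost of added lag, which would need to be weighed against any latency target for the system.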

[0086] In certain embodiments, an augmented reality navigation system comprises a microphone. The microphone may be disposed on the head mounted display. The microphone may be used to receive surgeon commands (i.e., verbal commands) for use in controlling the augmented reality navigation system. For example, verbal commands may serve as user input for making a selection, modifying or updating settings or graphics of the display screen, or performing other similar tasks. For example, verbal commands may be used to rearrange virtual display panels displayed on a display screen of a head mounted display or to change the navigational information displayed on a virtual display. Similarly, overlaid graphics may be modified by verbal commands (e.g., to change brightness, color, or data source for the overlaid graphics).

[0087] In some embodiments, in addition to or in place of verbal commands or commands input by motion of a motion sensor, gestures made by a surgeon may be used to signal selections or control an augmented reality navigation system. For example, in certain embodiments, a surgeon can make swipe, click, resize, or refresh type gestures (e.g., similar to those used with smartphones or other touch control devices) to control the augmented reality navigation system. Such gestures may be made in a manner that is detectable by a detector of the augmented reality navigation system. For example, gestures may be detected using image-recognition type procedures (e.g., when a detector is an optical camera). For example, a surgeon may wear or hold a fiducial detectable by the augmented reality navigation system when making a gesture such that the gesture is detected based on motion of the fiducial. In certain embodiments, alternatively or additionally, an auxiliary mechanical input (e.g., foot pedal) may be used as an input (e.g., selection) device.
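By way of non-limiting illustration, a swipe type gesture can be recognized from the motion of a fiducial worn or held by a surgeon by comparing the first and last tracked positions. The following is a minimal sketch only; the function name `classify_swipe`, the 2D position format, and the 0.10 meter travel threshold are assumptions made for illustration and are not taken from the disclosure.

```python
# Hypothetical sketch: positions are (x, y) in meters in the detector's view,
# with x increasing to the right and y increasing upward.

def classify_swipe(track, min_travel=0.10):
    """Classify a fiducial track as a swipe direction, or None if too short."""
    if len(track) < 2:
        return None
    dx = track[-1][0] - track[0][0]
    dy = track[-1][1] - track[0][1]
    # Ignore small motions so natural hand tremor is not read as a command.
    if max(abs(dx), abs(dy)) < min_travel:
        return None
    if abs(dx) >= abs(dy):
        return "swipe_right" if dx > 0 else "swipe_left"
    return "swipe_up" if dy > 0 else "swipe_down"


print(classify_swipe([(0.00, 0.00), (0.08, 0.01), (0.20, 0.02)]))
print(classify_swipe([(0.00, 0.00), (0.01, 0.01)]))
```

Only the endpoints of the track are compared here; a practical recognizer would likely also consider timing and path shape.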

[0088] The augmented reality navigation systems disclosed herein comprise a computer subsystem. The computer subsystem, inter alia, processes (e.g., renders) augmentation graphics for display on a display screen of an augmented reality navigation system. The computer subsystem can be remote. For example, a computer subsystem can be connected to a head mounted display through a cable with a quick disconnect. Such a cable can also provide power to components of a head mounted display (e.g., a display screen). In certain embodiments, a computer subsystem is disposed partially or entirely on a head mounted display (e.g., that operates using battery power). In certain embodiments, a computer subsystem is configured to receive navigation input data that comprises navigational information from a robotic surgical system. For example, the navigational information can comprise a trajectory stored in the robotic surgical system and/or coordinates stored in the robotic surgical system (e.g., recorded using the robotic surgical system). In certain embodiments, a computer subsystem can control a robotic surgical system using output (such as trajectory data output) from an augmented reality navigation system.

[0089] In certain embodiments, a computer subsystem is configured to assist in pre-operative and/or intra-operative planning (e.g., trajectory planning). In certain embodiments, the computer subsystem is configured to perform trajectory planning using a pointer tool and/or trajectory selection guidance augmentation graphic (e.g., crosshair) displayed on a display screen. In certain embodiments, a computer subsystem comprises a model module that, inter alia, stores, accesses, and/or updates a model of patient anatomy (e.g., derived from medical image data). In certain embodiments, a computer subsystem comprises a coordinate module that, inter alia, creates, stores, accesses, and/or updates a reference coordinate system used for navigation. Such a reference coordinate system may be defined during a registration method (e.g., at the start of a surgical procedure). A coordinate module may be used to perform reregistration that may happen continuously or periodically during a surgical procedure, wherein the registration may be performed using the augmented reality navigation system.

[0090] In certain embodiments, an augmented reality navigation system operates cooperatively with an auxiliary navigation subsystem. For example, an auxiliary detector in a surgical environment may be used to provide additional detection of real-world features in a surgical environment (in addition to those detected by a detector disposed on a head mounted display). In this way, in certain embodiments, an augmented reality navigation system is coupled with an existing navigation system used in conjunction with a robotic surgical system in order to navigate during a surgical procedure. For example, a registration performed by an augmented reality navigation system can be compared to a registration performed by an auxiliary detector to determine accuracy of the registration being used by the augmented reality navigation system during navigation of a surgical procedure. A computer subsystem can perform a reregistration to minimize error between a registration according to an augmented reality navigation system and an auxiliary detector. When an auxiliary detector is used, registration precision and tracking during a procedure may be improved, for example, due to each detector detecting real-world features that are otherwise obfuscated from the other detector(s). In certain embodiments, an auxiliary detector is a detector disposed on a head mounted display of a second augmented reality navigation system. An auxiliary detector, when present, may be used to detect one or more fiducials disposed on a head mounted display (e.g., to assist in registration, jitter correction and/or drift correction).
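By way of non-limiting illustration, two registrations can be compared by applying each to the same set of points and measuring how far apart the results land. The following is a minimal sketch that assumes each registration is represented as a rigid transform (a 3×3 rotation matrix and a translation vector); that representation and the names `apply_registration` and `registration_discrepancy` are hypothetical and are not specified by the disclosure.

```python
import math

# Assumption: a registration is a (rotation, translation) pair, where rotation
# is a 3x3 nested sequence and translation is a length-3 sequence.

def apply_registration(rotation, translation, point):
    """Map a 3D point through a rigid transform: R * p + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


def registration_discrepancy(reg_a, reg_b, points):
    """Largest distance between where two registrations place the same points."""
    worst = 0.0
    for p in points:
        a = apply_registration(reg_a[0], reg_a[1], p)
        b = apply_registration(reg_b[0], reg_b[1], p)
        worst = max(worst, math.dist(a, b))
    return worst


# Example with made-up values: identical rotations, translations differing
# by (3, 4, 0), giving a discrepancy of 5 units at every point.
identity = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
hmd_registration = (identity, (0.0, 0.0, 0.0))
auxiliary_registration = (identity, (3.0, 4.0, 0.0))
points = [(0.0, 0.0, 0.0), (10.0, 0.0, 5.0)]
print(registration_discrepancy(hmd_registration, auxiliary_registration, points))
```

A discrepancy above a chosen threshold could serve as one possible trigger for the reregistration described above; the choice of threshold is left open here.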

[0091] In certain embodiments, two or more augmented reality navigation systems are used cooperatively and/or conjunctively (e.g., simultaneously). For example, two surgeons may each wear a head mounted display during a surgical procedure. In certain embodiments, navigational information from one head mounted display (e.g., detector input data from a detector mounted to the head mounted display) may be provided to the other head mounted display, for example, in order to share a common registration or provide a video input feed for display on a display screen of one head mounted display from a detector of another head mounted display. In certain embodiments, a video input feed is provided to an external monitor (e.g., on a nearby wall or in a remote location, such as a classroom) for view by other persons (e.g., in the same surgical environment), either in addition to or as an alternative to being provided to a second augmented reality navigation system. In certain embodiments, fiducials disposed on each head mounted display assist in co-registering two augmented reality navigation systems used in a single surgical environment. Two or more augmented reality navigation systems may share a common computer subsystem (e.g., each be connected by its own cable to a common computer subsystem).

Exemplary Augmented Reality Navigation Systems

[0092] FIG. 1 illustrates an augmented reality navigation system 100 (also referred to as "HMD 100") configured according to some embodiments of the present disclosure. Referring to FIG. 1, the HMD 100 includes a semitransparent display screen 110 connected to a display module that processes and displays augmentation graphics (e.g., video and other images) on the display screen 110 (e.g., an LCD display, a reflective screen on which the display module projects images, etc.) for viewing by a user. The display module may be within a housing 118 of the HMD 100 or may be contained within a communicatively connected computer subsystem.