System And Method For Pose Estimation Of An Imaging Device And For Determining The Location Of A Medical Device With Respect To A Target

BARAK; RON ; et al.

U.S. patent application number 16/270414 was filed with the patent office on 2019-08-08 for system and method for pose estimation of an imaging device and for determining the location of a medical device with respect to a target. The applicant listed for this patent is COVIDIEN LP. Invention is credited to GUY ALEXANDRONI, RON BARAK, ARIEL BIRENBAUM, OREN P. WEINGARTEN.

| Application Number | 20190239838 16/270414 |

| Document ID | / |

| Family ID | 67475240 |

| Filed Date | 2019-08-08 |

| United States Patent Application | 20190239838 |

| Kind Code | A1 |

| BARAK; RON ; et al. | August 8, 2019 |

SYSTEM AND METHOD FOR POSE ESTIMATION OF AN IMAGING DEVICE AND FOR DETERMINING THE LOCATION OF A MEDICAL DEVICE WITH RESPECT TO A TARGET

Abstract

A system and method for constructing fluoroscopic-based three-dimensional volumetric data of a target area within a patient from two-dimensional fluoroscopic images acquired via a fluoroscopic imaging device.

| Inventors: | BARAK; RON (TEL AVIV, IL); BIRENBAUM; ARIEL (HERZLIYA, IL); ALEXANDRONI; GUY (RAMAT HASHARON, IL); WEINGARTEN; OREN P. (HERZLIYA, IL) |
|---|---|
| Applicant: | COVIDIEN LP |
| Family ID: | 67475240 |
| Appl. No.: | 16/270414 |
| Filed: | February 7, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62628017 | Feb 8, 2018 | |
| 62641777 | Mar 12, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A61B 90/36 20160201; G06T 2207/30021 20130101; A61B 2034/2055 20160201; G06T 7/269 20170101; A61B 1/042 20130101; A61B 90/39 20160201; G06T 2207/10068 20130101; A61B 5/064 20130101; A61B 1/00158 20130101; G06T 2207/10016 20130101; A61B 2090/3983 20160201; G06T 7/251 20170101; G06T 2207/30244 20130101; A61B 2090/364 20160201; G06T 7/73 20170101; A61B 6/487 20130101; G06T 2207/30208 20130101; G06T 7/75 20170101; G06T 2207/10088 20130101; G06T 7/248 20170101; A61B 5/055 20130101; A61B 6/032 20130101; A61B 34/20 20160201; G06T 7/77 20170101; A61B 1/043 20130101; G06T 2207/10081 20130101; G06T 2207/10121 20130101; A61B 1/0005 20130101; A61B 2034/2051 20160201 |

| International Class: | A61B 6/00 20060101 A61B006/00; A61B 5/055 20060101 A61B005/055; A61B 34/20 20060101 A61B034/20; A61B 1/04 20060101 A61B001/04; A61B 1/00 20060101 A61B001/00; G06T 7/73 20060101 G06T007/73; A61B 90/00 20060101 A61B090/00 |

Claims

1. A system for constructing fluoroscopic-based three-dimensional volumetric data of a target area within a patient from two-dimensional fluoroscopic images acquired via a fluoroscopic imaging device, comprising: a structure of markers, wherein a sequence of images of the target area and of the structure of markers is acquired via the fluoroscopic imaging device; and a computing device configured to: estimate a pose of the fluoroscopic imaging device for a plurality of images of the sequence of images based on detection of a possible and most probable projection of the structure of markers as a whole on each image of the plurality of images; and construct fluoroscopic-based three-dimensional volumetric data of the target area based on the estimated poses of the fluoroscopic imaging device.

2. The system of claim 1, wherein the computing device is further configured to: facilitate an approach of a medical device to the target area, wherein a medical device is positioned in the target area prior to acquiring the sequence of images; and determine an offset between the medical device and the target based on the fluoroscopic-based three-dimensional volumetric data.

3. The system of claim 2, further comprising a locating system indicating a location of the medical device within the patient, wherein the computing device comprises a display and is configured to: display the target area and the location of the medical device with respect to the target; facilitate navigation of the medical device to the target area via the locating system and the display; and correct the display of the location of the medical device with respect to the target based on the determined offset between the medical device and the target.

4. The system of claim 3, wherein the computing device is further configured to: display a 3D rendering of the target area on the display; and register the locating system to the 3D rendering, wherein correcting the display of the location of the medical device with respect to the target comprises updating the registration between the locating system and the 3D rendering.

5. The system of claim 3, wherein the locating system is an electromagnetic locating system.

6. The system of claim 3, wherein the target area comprises at least a portion of lungs and the medical device is navigable to the target area through airways of a luminal network.

7. The system of claim 1, wherein the structure of markers is at least one of a periodic pattern or a two-dimensional pattern.

8. The system of claim 1, wherein the target area comprises at least a portion of lungs and the target is a soft-tissue target.

9. A method for constructing fluoroscopic-based three dimensional volumetric data of a target area within a patient from a sequence of two-dimensional (2D) fluoroscopic images of a target area and of a structure of markers acquired via a fluoroscopic imaging device, wherein the structure of markers is positioned between the patient and the fluoroscopic imaging device, the method comprising using at least one hardware processor for: estimating a pose of the fluoroscopic imaging device for at least a plurality of images of the sequence of 2D fluoroscopic images based on detection of a possible and most probable projection of the structure of markers as a whole on each image of the plurality of images; and constructing fluoroscopic-based three-dimensional volumetric data of the target area based on the estimated poses of the fluoroscopic imaging device.

10. The method of claim 9, wherein a medical device is positioned in the target area prior to acquiring the sequence of images, and wherein the method further comprises using the at least one hardware processor for determining an offset between the medical device and the target based on the fluoroscopic-based three-dimensional volumetric data.

11. The method of claim 10, further comprising using the at least one hardware processor for: facilitating navigation of the medical device to the target area via a locating system indicating a location of the medical device and via a display; and correcting a display of the location of the medical device with respect to the target based on the determined offset between the medical device and the target.

12. The method of claim 11, further comprising using the at least one hardware processor for: displaying a 3D rendering of the target area on the display; and registering the locating system to the 3D rendering, wherein the correcting of the location of the medical device with respect to the target comprises updating the registration of the locating system to the 3D rendering.

13. The method of claim 12, further comprising using the at least one hardware processor for generating the 3D rendering of the target area based on previously acquired CT volumetric data of the target area.

14. The method of claim 10, wherein the target area comprises at least a portion of lungs and wherein the medical device is navigable to the target area through airways of a luminal network.

15. The method of claim 11, wherein the structure of markers is at least one of a periodic pattern or a two-dimensional pattern.

16. The method of claim 11, wherein the target area comprises at least a portion of lungs and the target is a soft-tissue target.

17. A system for constructing fluoroscopic-based three-dimensional volumetric data of a target area within a patient from two-dimensional fluoroscopic images acquired via a fluoroscopic imaging device, comprising: a computing device configured to: estimate a pose of the fluoroscopic imaging device for a plurality of images of a sequence of images based on detection of a possible and most probable projection of a structure of markers as a whole on each image of the plurality of images; and construct fluoroscopic-based three-dimensional volumetric data of the target area based on the estimated poses of the fluoroscopic imaging device.

18. The system of claim 17, wherein the computing device is further configured to: facilitate an approach of a medical device to the target area, wherein a medical device is positioned in the target area prior to acquisition of the sequence of images; and determine an offset between the medical device and the target based on the fluoroscopic-based three-dimensional volumetric data.

19. The system of claim 18, further comprising a locating system indicating a location of the medical device within the patient, wherein the computing device comprises a display and is configured to: display the target area and the location of the medical device with respect to the target; facilitate navigation of the medical device to the target area via the locating system and the display; and correct the display of the location of the medical device with respect to the target based on the determined offset between the medical device and the target.

20. The system of claim 19, wherein the computing device is further configured to: display a 3D rendering of the target area on the display; and register the locating system to the 3D rendering, wherein correcting the display of the location of the medical device with respect to the target comprises updating the registration between the locating system and the 3D rendering.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims the benefit of the filing date of provisional U.S. Patent Application No. 62/628,017, filed Feb. 8, 2018, provisional U.S. Patent Application No. 62/641,777, filed Mar. 12, 2018, and U.S. patent application Ser. No. 16/022,222, filed Jun. 28, 2018, the entire contents of each of which are incorporated herein by reference.

BACKGROUND

[0002] The disclosure relates to the field of imaging, and particularly to the estimation of a pose of an imaging device and to three-dimensional imaging of body organs.

[0003] Pose estimation of an imaging device, such as a camera or a fluoroscopic device, may be required or used for a variety of applications, including registration between different imaging modalities or the generation of augmented reality. One of the known uses of a pose estimation of an imaging device is the construction of a three-dimensional volume from a set of two-dimensional images captured by the imaging device while in different poses. Such three-dimensional construction is commonly used in the medical field and has a significant impact.

[0004] There are several commonly applied medical methods, such as endoscopic procedures or minimally invasive procedures, for treating various maladies affecting organs including the liver, brain, heart, lung, gall bladder, kidney and bones. Often, one or more imaging modalities, such as magnetic resonance imaging, ultrasound imaging, computed tomography (CT), fluoroscopy, as well as others, are employed by clinicians to identify and navigate to areas of interest within a patient and ultimately targets for treatment. In some procedures, pre-operative scans may be utilized for target identification and intraoperative guidance. However, real-time imaging may often be required in order to obtain a more accurate and current image of the target area. Furthermore, real-time image data displaying the current location of a medical device with respect to the target and its surroundings may be required in order to navigate the medical device to the target in a safer and more accurate manner (e.g., without unnecessary damage, or with no damage, to other tissues and organs).

SUMMARY

[0005] According to one aspect of the disclosure, a system for constructing fluoroscopic-based three-dimensional volumetric data of a target area within a patient from two-dimensional fluoroscopic images acquired via a fluoroscopic imaging device is provided. The system includes a structure of markers and a computing device. A sequence of images of the target area and of the structure of markers is acquired via the fluoroscopic imaging device. The computing device is configured to estimate a pose of the fluoroscopic imaging device for a plurality of images of the sequence of images based on detection of a possible and most probable projection of the structure of markers as a whole on each image of the plurality of images, and construct fluoroscopic-based three-dimensional volumetric data of the target area based on the estimated poses of the fluoroscopic imaging device.

[0006] In an aspect, the computing device is further configured to facilitate an approach of a medical device to the target area, wherein a medical device is positioned in the target area prior to acquiring the sequence of images, and determine an offset between the medical device and the target based on the fluoroscopic-based three-dimensional volumetric data.

[0007] In an aspect, the system further comprises a locating system indicating a location of the medical device within the patient. Additionally, the computing device may be further configured to display the target area and the location of the medical device with respect to the target, facilitate navigation of the medical device to the target area via the locating system and the display, and correct the display of the location of the medical device with respect to the target based on the determined offset between the medical device and the target.

[0008] In an aspect, the computing device is further configured to display a 3D rendering of the target area on the display, and register the locating system to the 3D rendering, wherein correcting the display of the location of the medical device with respect to the target comprises updating the registration between the locating system and the 3D rendering.

[0009] In an aspect, the locating system is an electromagnetic locating system.

[0010] In an aspect, the target area comprises at least a portion of lungs and the medical device is navigable to the target area through airways of a luminal network.

[0011] In an aspect, the structure of markers is at least one of a periodic pattern or a two-dimensional pattern. The target area may include at least a portion of lungs and the target may be a soft tissue target.

[0012] In yet another aspect of the disclosure, a method for constructing fluoroscopic-based three dimensional volumetric data of a target area within a patient from a sequence of two-dimensional (2D) fluoroscopic images of a target area and of a structure of markers acquired via a fluoroscopic imaging device is provided. The structure of markers is positioned between the patient and the fluoroscopic imaging device. The method includes using at least one hardware processor for estimating a pose of the fluoroscopic imaging device for at least a plurality of images of the sequence of 2D fluoroscopic images based on detection of a possible and most probable projection of the structure of markers as a whole on each image of the plurality of images, and constructing fluoroscopic-based three-dimensional volumetric data of the target area based on the estimated poses of the fluoroscopic imaging device.

[0013] In an aspect, a medical device is positioned in the target area prior to acquiring the sequence of images, and wherein the method further comprises using the at least one hardware processor for determining an offset between the medical device and the target based on the fluoroscopic-based three-dimensional volumetric data.

[0014] In an aspect, the method further includes facilitating navigation of the medical device to the target area via a locating system indicating a location of the medical device and via a display, and correcting a display of the location of the medical device with respect to the target based on the determined offset between the medical device and the target.

[0015] In an aspect, the method further includes displaying a 3D rendering of the target area on the display, and registering the locating system to the 3D rendering, where the correcting of the location of the medical device with respect to the target comprises updating the registration of the locating system to the 3D rendering.

[0016] In an aspect, the method further includes using the at least one hardware processor for generating the 3D rendering of the target area based on previously acquired CT volumetric data of the target area.

[0017] In an aspect, the target area includes at least a portion of lungs and the medical device is navigable to the target area through airways of a luminal network.

[0018] In an aspect, the structure of markers is at least one of a periodic pattern or a two-dimensional pattern. The target area may include at least a portion of lungs and the target may be a soft-tissue target.

[0019] In yet another aspect of the disclosure, a system for constructing fluoroscopic-based three-dimensional volumetric data of a target area within a patient from two-dimensional fluoroscopic images acquired via a fluoroscopic imaging device is provided. The system includes a computing device configured to estimate a pose of the fluoroscopic imaging device for a plurality of images of a sequence of images based on detection of a possible and most probable projection of a structure of markers as a whole on each image of the plurality of images, and construct fluoroscopic-based three-dimensional volumetric data of the target area based on the estimated poses of the fluoroscopic imaging device.

[0020] In an aspect, the computing device is further configured to facilitate an approach of a medical device to the target area, wherein a medical device is positioned in the target area prior to acquisition of the sequence of images, and determine an offset between the medical device and the target based on the fluoroscopic-based three-dimensional volumetric data.

[0021] In an aspect, the computing device is further configured to display the target area and the location of the medical device with respect to the target, facilitate navigation of the medical device to the target area via the locating system and the display, and correct the display of the location of the medical device with respect to the target based on the determined offset between the medical device and the target.

[0022] In an aspect, the computing device is further configured to display a 3D rendering of the target area on the display, and register the locating system to the 3D rendering, wherein correcting the display of the location of the medical device with respect to the target comprises updating the registration between the locating system and the 3D rendering.

BRIEF DESCRIPTION OF THE DRAWINGS

[0023] Various exemplary embodiments are illustrated in the accompanying figures with the intent that these examples not be restrictive. It will be appreciated that for simplicity and clarity of the illustration, elements shown in the figures referenced below are not necessarily drawn to scale. Also, where considered appropriate, reference numerals may be repeated among the figures to indicate like, corresponding or analogous elements. The figures are listed below.

[0024] FIG. 1 is a flow chart of a method for estimating the pose of an imaging device by utilizing a structure of markers in accordance with one aspect of the disclosure;

[0025] FIG. 2A is a schematic diagram of a system configured for use with the method of FIG. 1 in accordance with one aspect of the disclosure;

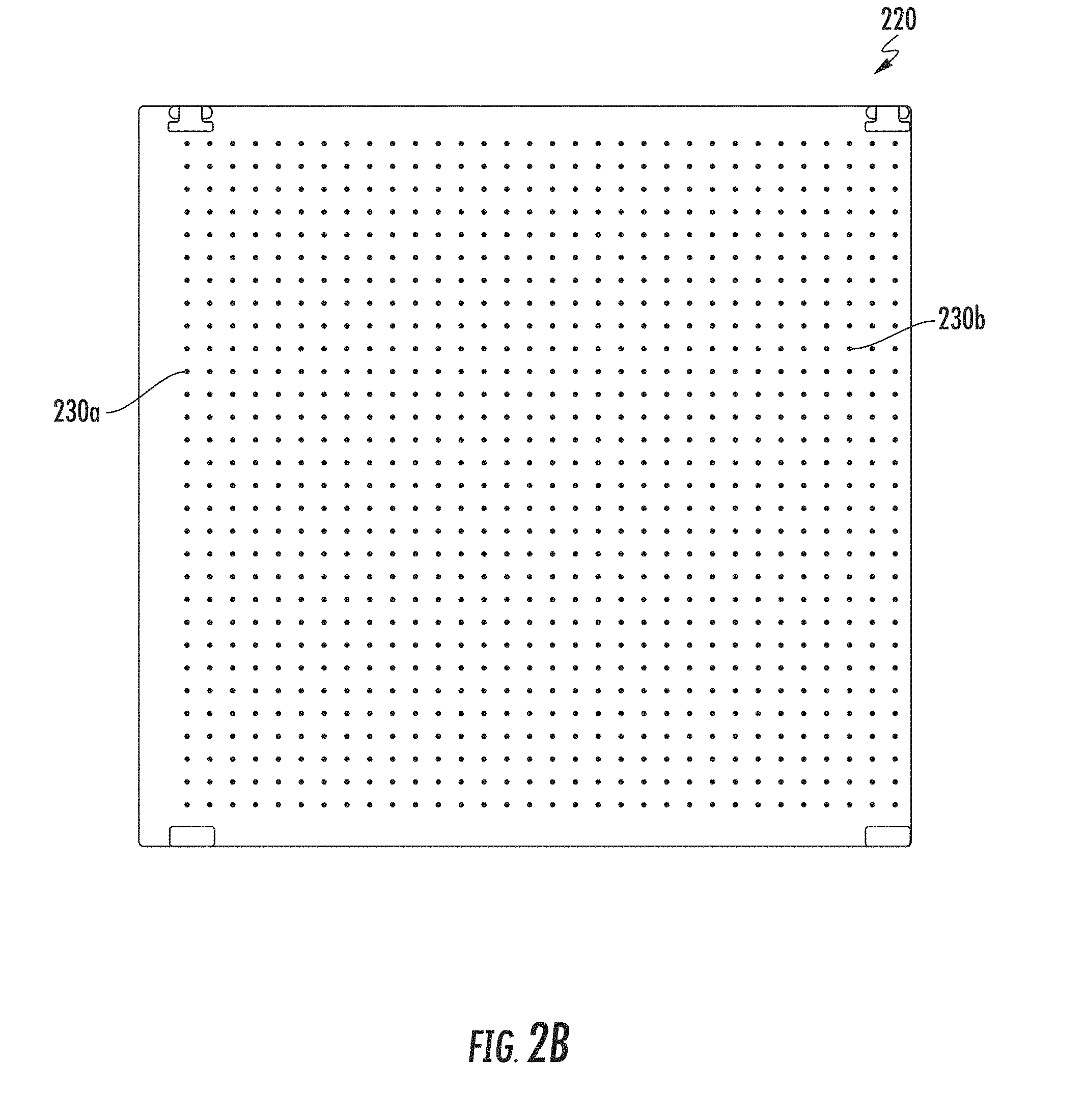

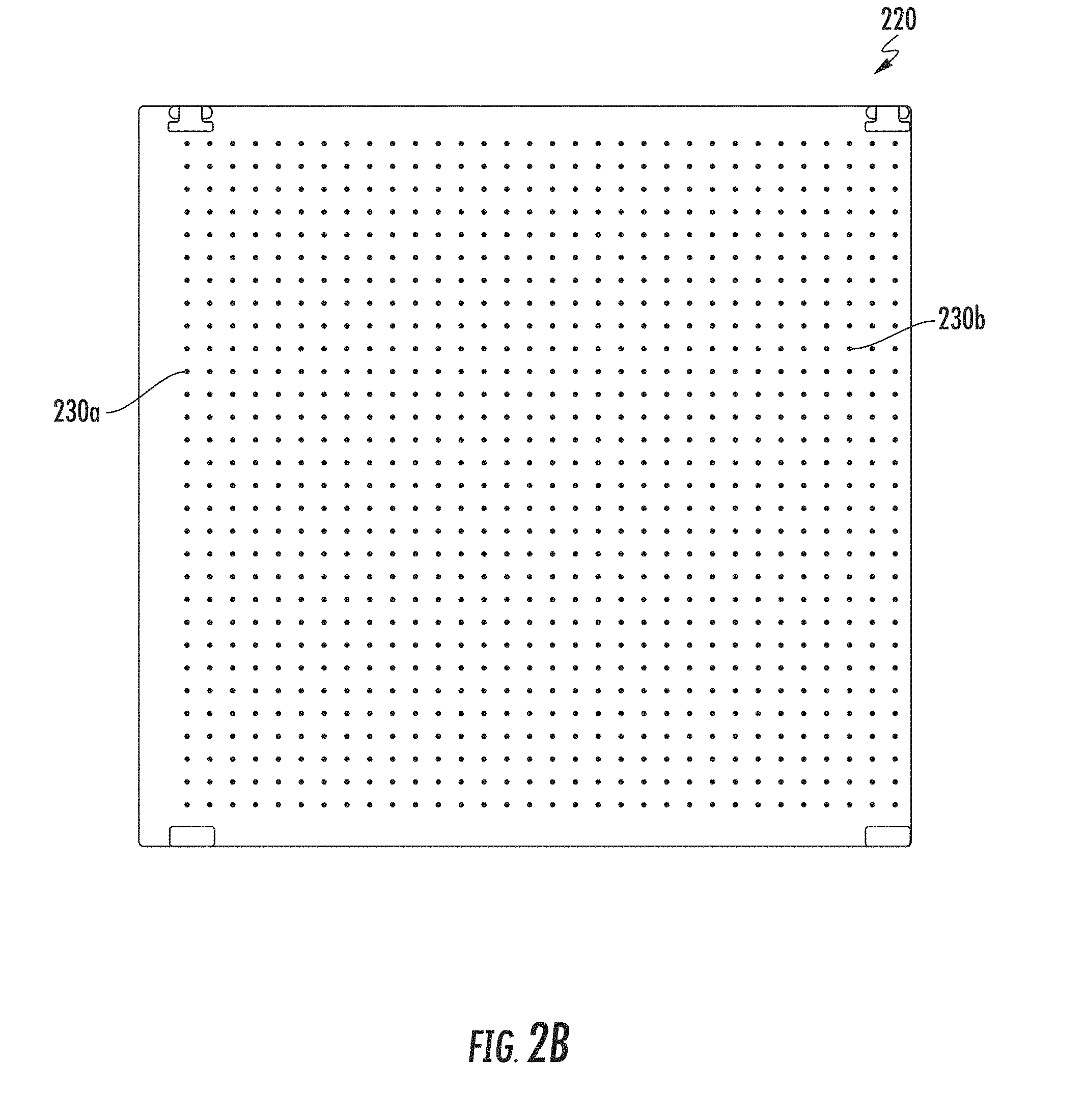

[0026] FIG. 2B is a schematic illustration of a two-dimensional grid structure of sphere markers in accordance with one aspect of the disclosure;

[0027] FIG. 3 shows an exemplary image captured by a fluoroscopic device of an artificial chest volume of a Multipurpose Chest Phantom N1 "LUNGMAN", by Kyoto Kagaku, placed over the grid structure of radio-opaque markers of FIG. 2B;

[0028] FIG. 4 is a probability map generated for the image of FIG. 3 in accordance with one aspect of the disclosure;

[0029] FIGS. 5A-5C show different exemplary candidates for the projection of the 2D grid structure of sphere markers of FIG. 2B on the image of FIG. 3 overlaid on the probability map of FIG. 4;

[0030] FIG. 6A shows a selected candidate for the projection of the 2D grid structure of sphere markers of FIG. 2B on the image of FIG. 3, overlaid on the probability map of FIG. 4 in accordance with one aspect of the disclosure;

[0031] FIG. 6B shows an improved candidate for the projection of the 2D grid structure of sphere markers of FIG. 2B on the image of FIG. 3, overlaid on the probability map of FIG. 4 in accordance with one aspect of the disclosure;

[0032] FIG. 6C shows a further improved candidate for the projection of the 2D grid structure of sphere markers of FIG. 2B on image 300 of FIG. 3, overlaid on the probability map of FIG. 4 in accordance with one aspect of the disclosure;

[0033] FIG. 7 is a flow chart of an exemplary method for constructing fluoroscopic three-dimensional volumetric data in accordance with one aspect of the disclosure; and

[0034] FIG. 8 is a view of one illustrative embodiment of an exemplary system for constructing fluoroscopic-based three-dimensional volumetric data in accordance with the disclosure.

DETAILED DESCRIPTION

[0035] Prior art methods and systems for pose estimation may be inappropriate for real time use, inaccurate or non-robust. Therefore, there is a need for a method and system, which provide a relatively fast, accurate and robust pose estimation, particularly in the field of medical imaging.

[0036] In order to navigate medical devices to a remote target, for example, for biopsy or treatment, both the medical device and the target should be visible in some sort of a three-dimensional guidance system. When the target is a small soft-tissue object, such as a tumor or a lesion, an X-ray volumetric reconstruction is needed in order to be able to identify it. Several solutions exist that provide three-dimensional volume reconstruction, such as CT and Cone-beam CT, which are extensively used in the medical world. These machines algorithmically combine multiple X-ray projections from known, calibrated X-ray source positions into a three-dimensional volume in which, inter alia, soft tissues are visible. For example, a CT machine can be used with iterative scans during a procedure to provide guidance through the body until the tools reach the target. This is a tedious procedure as it requires several full CT scans, a dedicated CT room and blind navigation between scans. In addition, each scan requires the staff to leave the room due to high levels of ionizing radiation and exposes the patient to such radiation. Another option is a Cone-beam CT machine, which is available in some operating rooms and is somewhat easier to operate, but is expensive and, like the CT, only provides blind navigation between scans, requires multiple iterations for navigation and requires the staff to leave the room. In addition, a CT-based imaging system is extremely costly, and in many cases is not available at the location where a procedure is carried out.

[0037] A fluoroscopic imaging device is commonly located in the operating room during navigation procedures. The standard fluoroscopic imaging device may be used by a clinician, for example, to visualize and confirm the placement of a medical device after it has been navigated to a desired location. However, although standard fluoroscopic images display highly dense objects such as metal tools and bones as well as large soft-tissue objects such as the heart, the fluoroscopic images have difficulty resolving small soft-tissue objects of interest such as lesions. Furthermore, a fluoroscopic image is only a two-dimensional projection, whereas accurate and safe navigation within the body requires volumetric or three-dimensional imaging.

[0038] An endoscopic approach has proven useful in navigating to areas of interest within a patient, and particularly so for areas within luminal networks of the body such as the lungs. To enable the endoscopic, and more particularly the bronchoscopic, approach in the lungs, endobronchial navigation systems have been developed that use previously acquired MRI data or CT image data to generate a three dimensional rendering or volume of the particular body part such as the lungs.

[0039] The resulting volume generated from the MRI scan or CT scan is then utilized to create a navigation plan to facilitate the advancement of a navigation catheter (or other suitable medical device) through a bronchoscope and a branch of the bronchus of a patient to an area of interest. A locating system, such as an electromagnetic tracking system, may be utilized in conjunction with the CT data to facilitate guidance of the navigation catheter through the branch of the bronchus to the area of interest. In certain instances, the navigation catheter may be positioned within one of the airways of the branched luminal networks adjacent to, or within, the area of interest to provide access for one or more medical instruments.

[0040] As another example, minimally invasive procedures, such as laparoscopy procedures, including robotic-assisted surgery, may employ intraoperative fluoroscopy in order to increase visualization, e.g., for guidance and lesion locating, or in order to prevent injury and complications.

[0041] Therefore, a fast, accurate and robust three-dimensional reconstruction of images is required, which is generated based on a standard fluoroscopic imaging performed during medical procedures.

[0042] FIG. 1 illustrates a flow chart of a method for estimating the pose of an imaging device by utilizing a structure of markers in accordance with an aspect of the disclosure. In a step 100, a probability map may be generated for an image captured by an imaging device. The image includes a projection of a structure of markers. The probability map may indicate, for each pixel of the image, the probability that the pixel belongs to the projection of a marker of the structure of markers. In some embodiments, the structure of markers may have a two-dimensional pattern. In some embodiments, the structure of markers may have a periodic pattern, such as a grid. The image may include a projection of at least a portion of the structure of markers.

[0043] Reference is now made to FIGS. 2B and 3. FIG. 2B is a schematic illustration of a two-dimensional (2D) grid structure of sphere markers 220 in accordance with the disclosure. FIG. 3 is an exemplary image 300 captured by a fluoroscopic device of an artificial chest volume of a Multipurpose Chest Phantom N1 "LUNGMAN", by Kyoto Kagaku, placed over the 2D grid structure of sphere markers 220 of FIG. 2B. 2D grid structure of sphere markers 220 includes a plurality of sphere shaped markers, such as sphere markers 230a and 230b, arranged in a two-dimensional grid pattern. Image 300 includes a projection of a portion of 2D grid structure of sphere markers 220 and a projection of a catheter 320. The projection of 2D grid structure of sphere markers 220 on image 300 includes projections of the sphere markers, such as sphere marker projections 310a, 310b and 310c.

[0044] The probability map may be generated, for example, by feeding the image into a simple marker (blob) detector, such as a Harris corner detector, which outputs a new image of smooth densities corresponding to the probability that each pixel belongs to a marker. FIG. 4 illustrates a probability map 400 generated for image 300 of FIG. 3. Probability map 400 includes pixels or densities, such as densities 410a, 410b and 410c, which correspond respectively to marker projections 310a, 310b and 310c. In some embodiments, the probability map may be downscaled (e.g., reduced in size) in order to make the required computations simpler and more efficient. It should be noted that probability map 400, as shown in FIGS. 5A-6B, is downscaled by four and probability map 400, as shown in FIG. 6C, is downscaled by two.
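As an illustration only, the generation and downscaling of a probability map might be sketched as follows. This sketch does not use a Harris corner detector; it substitutes a Gaussian-smoothed, inverted-intensity response, on the assumption that radio-opaque markers appear as dark blobs on a brighter background. The function names, the `sigma` parameter and the block-averaging downscale are hypothetical choices and are not taken from the disclosure.

```python
import numpy as np

def gaussian_kernel(sigma):
    """1D Gaussian kernel, normalized to sum to one."""
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    k = np.exp(-x ** 2 / (2 * sigma ** 2))
    return k / k.sum()

def probability_map(image, sigma=2.0):
    """Return a map in [0, 1] that is high where dark blobs are (assumed marker look)."""
    inverted = image.max() - image.astype(float)  # dark markers become bright
    k = gaussian_kernel(sigma)
    # Separable smoothing: along rows first, then along columns.
    smooth = np.apply_along_axis(lambda r: np.convolve(r, k, mode="same"), 1, inverted)
    smooth = np.apply_along_axis(lambda c: np.convolve(c, k, mode="same"), 0, smooth)
    smooth -= smooth.min()
    peak = smooth.max()
    return smooth / peak if peak > 0 else smooth

def downscale(pmap, factor):
    """Reduce the map size by averaging factor-by-factor pixel blocks."""
    h, w = pmap.shape
    h2, w2 = h // factor, w // factor
    trimmed = pmap[:h2 * factor, :w2 * factor]
    return trimmed.reshape(h2, factor, w2, factor).mean(axis=(1, 3))
```

Under these assumptions, `downscale(probability_map(image), 4)` would correspond to a map downscaled by four.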

[0045] In a step 110, different candidates may be generated for the projection of the structure of markers on the image. The different candidates may be generated by virtually positioning the imaging device in a range of different possible poses. By "possible poses" of the imaging device, it is meant three-dimensional positions and orientations of the imaging device. In some embodiments, such a range may be limited according to the geometrical structure and/or degrees of freedom of the imaging device. For each such possible pose, a virtual projection of at least a portion of the structure of markers is generated, as if the imaging device actually captured an image of the structure of markers while positioned at that pose.
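By way of a non-limiting sketch, candidate generation over a range of virtual poses could look like the following. The pinhole projection model, the single rotation axis, and the `focal`, `distance` and `center` values are all assumptions made for illustration; the disclosure does not specify a camera model. Coordinates are expressed with respect to the grid of markers, which is assumed planar and centered on the origin.

```python
import numpy as np

def grid_points(rows, cols, spacing):
    """3D marker centers of a planar grid (z = 0), centered on the origin."""
    ys, xs = np.mgrid[0:rows, 0:cols]
    pts = np.stack([xs.ravel() * spacing, ys.ravel() * spacing,
                    np.zeros(rows * cols)], axis=1)
    return pts - pts.mean(axis=0)

def rotation_about_y(angle_deg):
    """Rotation matrix for a single tilt angle (assumed degree of freedom)."""
    a = np.radians(angle_deg)
    c, s = np.cos(a), np.sin(a)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])

def project(points, position, angle_deg, focal=1000.0, distance=1000.0,
            center=(256.0, 256.0)):
    """Virtual projection of the markers for one hypothetical pose."""
    cam = (points - np.asarray(position, dtype=float)) @ rotation_about_y(angle_deg).T
    cam[:, 2] += distance  # assumed source-to-grid distance along the optical axis
    u = focal * cam[:, 0] / cam[:, 2] + center[0]
    v = focal * cam[:, 1] / cam[:, 2] + center[1]
    return np.stack([u, v], axis=1)

def generate_candidates(points, positions, angles):
    """One candidate (pose, projected points) per position and angle combination."""
    return [((tuple(p), a), project(points, p, a))
            for p in positions for a in angles]
```

Limiting `positions` and `angles` to the values the imaging device can physically reach reflects the range restriction described above.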

[0046] In a step 120, the candidate having the highest probability of being the projection of the structure of markers on the image may be identified based on the image probability map. Each candidate, e.g., a virtual projection of the structure of markers, may be overlaid on or associated with the probability map. A probability score may then be determined for or associated with each marker projection of the candidate. In some embodiments, the probability score may be positive or negative, e.g., there may be a cost in case virtual marker projections fall within pixels of low probability. The probability scores of all of the marker projections of a candidate may then be summed and a total probability score may be determined for each candidate. For example, if the structure of markers is a two-dimensional grid, then the projection will have a grid form. Each point of the projection grid would lie on at least one pixel of the probability map. A 2D grid candidate will receive the highest probability score if its points lie on the highest density pixels, that is, if its points lie on projections of the centers of the markers on the image. The candidate having the highest probability score may be determined as the candidate which has the highest probability of being the projection of the structure of markers on the image. The pose of the imaging device for the image may then be estimated based on the virtual pose of the imaging device used to generate the identified candidate.
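The scoring and selection described above may be sketched, under stated assumptions, as follows. The threshold `low` and the negative `penalty` are hypothetical values chosen only to show a score that can be positive or negative; points projected outside the image are simply skipped here, on the assumption that only a portion of the structure needs to be visible.

```python
import numpy as np

def score_candidate(pmap, points, low=0.1, penalty=-0.5):
    """Sum per-marker scores for one candidate; points are (u, v) = (column, row)."""
    h, w = pmap.shape
    total = 0.0
    for u, v in points:
        col, row = int(round(u)), int(round(v))
        if 0 <= row < h and 0 <= col < w:
            p = pmap[row, col]
            # Reward high-probability pixels, charge a cost for low-probability ones.
            total += p if p >= low else penalty
    return total

def best_candidate(pmap, candidates):
    """Return the (pose, points) pair with the highest total score."""
    return max(candidates, key=lambda cand: score_candidate(pmap, cand[1]))
```

The pose stored with the returned candidate would then serve as the estimated pose for that image.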

[0047] FIGS. 5A-5C illustrate different exemplary candidates 500a-c for the projection of 2D grid structure of sphere markers 220 of FIG. 2B on image 300 of FIG. 3 overlaid on probability map 400 of FIG. 4. Candidates 500a, 500b and 500c are indicated as a grid of plus signs ("+"), while each such sign indicates the center of a projection of a marker. Candidates 500a, 500b and 500c are virtual projections of 2D grid structure of sphere markers 220, as if the fluoroscope used to capture image 300 is located at three different poses associated correspondingly with these projections. In this example, candidate 500a was generated as if the fluoroscope is located at: position [0, -50, 0], angle: -20 degrees. Candidate 500b was generated as if the fluoroscope is located at: position [0, -10, 0], angle: -20 degrees. Candidate 500c was generated as if the fluoroscope is located at: position [7.5, -40, 11.25], angle: -25 degrees. The above-mentioned coordinates are with respect to 2D grid structure of sphere markers 220. Densities 410a of probability map 400 are indicated in FIGS. 5A-5C. Plus signs 510a, 510b and 510c are the centers of the markers projections of candidates 500a, 500b and 500c correspondingly, which are the ones closest to densities 410a. One can see that plus sign 510c is the sign which best fits densities 410a and therefore would receive the highest probability score among signs 510a, 510b and 510c of candidates 500a, 500b and 500c correspondingly. One can further see that accordingly, candidate 500c would receive the highest probability score since its markers projections best fit probability map 400. Thus, among these three exemplary candidates, 500a, 500b and 500c, candidate 500c would be identified as the candidate with the highest probability of being the projection of 2D grid structure of sphere markers 220 on image 300.

[0048] Further steps may be performed in order to refine the above described pose estimation. In an optional step 130, a locally deformed version of the candidate may be generated in order to maximize its probability of being the projection of the structure of markers on the image. The locally deformed version may be generated based on the image probability map. A local search algorithm may be utilized to deform the candidate so that it would maximize its score. For example, in case the structure of markers is a 2D grid, each 2D grid point may be treated individually. Each point may be moved towards the neighboring local maximum on the probability map using a gradient ascent method.
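By way of non-limiting illustration, the per-point local search of step 130 may be sketched as follows. The sketch substitutes a discrete steepest-ascent walk over the 8-connected pixel neighborhood for a continuous gradient ascent. The function names and the step limit are hypothetical.

```python
def climb_to_local_max(prob_map, point, max_steps=50):
    """Move one (row, col) point uphill until no neighbor is higher."""
    rows, cols = len(prob_map), len(prob_map[0])
    r, c = point
    for _ in range(max_steps):
        best_val, best_r, best_c = prob_map[r][c], r, c
        # Inspect the 8-connected neighborhood of the current pixel.
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                nr, nc = r + dr, c + dc
                if (0 <= nr < rows and 0 <= nc < cols
                        and prob_map[nr][nc] > best_val):
                    best_val, best_r, best_c = prob_map[nr][nc], nr, nc
        if (best_r, best_c) == (r, c):
            break  # local maximum reached
        r, c = best_r, best_c
    return (r, c)


def deform_candidate(prob_map, points):
    """Treat each grid point individually, as in the 2D grid example."""
    return [climb_to_local_max(prob_map, p) for p in points]


# Toy probability map with a single density peak at (2, 2).
bump = [
    [0.0, 0.1, 0.2, 0.1, 0.0],
    [0.1, 0.3, 0.5, 0.3, 0.1],
    [0.2, 0.5, 1.0, 0.5, 0.2],
    [0.1, 0.3, 0.5, 0.3, 0.1],
    [0.0, 0.1, 0.2, 0.1, 0.0],
]
deformed = deform_candidate(bump, [(0, 0), (4, 3), (2, 2)])
```

Because each point moves independently, the result is generally not a valid projection of the rigid structure of markers, which is why a subsequent fitting step is needed.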

[0049] In an optional step 140, an improved candidate for the projection of the structure of markers on the image may be detected based on the locally deformed version of the candidate. The improved candidate is determined such that it fits (exactly or approximately) the locally deformed version of the candidate. Such improved candidate may be determined by identifying a transformation that will fit a new candidate to the local deformed version, e.g., by using homography estimation methods. The virtual pose of the imaging device associated with the improved candidate may be then determined as the estimated pose of the imaging device for the image.
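By way of non-limiting illustration, one homography estimation method that could be used in step 140 is a least-squares direct linear transformation with the last matrix element fixed to one. The sketch below is one possible stand-in and is not asserted to be the method used in the disclosure. All function names are hypothetical, and the source points are expressed in grid-index units for numerical convenience.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_homography(src, dst):
    """Least-squares 3x3 homography mapping src points to dst points."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        rhs.append(v)
    # Normal equations (A^T A) h = A^T b for the 8 unknown elements.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(8)]
           for i in range(8)]
    atb = [sum(r[i] * t for r, t in zip(rows, rhs)) for i in range(8)]
    h = solve_linear(ata, atb) + [1.0]
    return [h[0:3], h[3:6], h[6:9]]


def apply_homography(h, pt):
    """Map one 2D point through the homography h."""
    x, y = pt
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


# Synthetic check: recover a known homography from a 3x3 grid.
true_h = [[1.2, 0.1, 0.5], [-0.1, 0.9, 0.3], [0.02, 0.01, 1.0]]
grid = [(float(i), float(j)) for i in range(3) for j in range(3)]
deformed = [apply_homography(true_h, p) for p in grid]
est_h = fit_homography(grid, deformed)
```

Here src would hold the marker positions in the plane of the structure and dst the locally deformed projection points. At least four non-collinear correspondences are required.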

[0050] In some embodiments, the generation of a locally deformed version of the candidate and the determination of an improved candidate may be iteratively repeated. These steps may be iteratively repeated until the process converges to a specific virtual projection of the structure of markers on the image, which may be determined as the improved candidate. Thus, since the structure of markers converges as a whole, false local maxima are avoided. In an aspect, as an alternative to using a list of candidates and finding an optimal candidate for estimating the camera pose, the camera pose may be estimated by solving for a homography that transforms a 2D fiducial structure in 3D space into image coordinates that match the fiducial probability map generated from the imaging device output.

[0051] FIG. 6A shows a selected candidate 600a, for projection of 2D grid structure of sphere markers 220 of FIG. 2B on image 300 of FIG. 3, overlaid on probability map 400 of FIG. 4. FIG. 6B shows an improved candidate 600b, for the projection of 2D grid structure of sphere markers 220 of FIG. 2B on image 300 of FIG. 3, overlaid on probability map 400 of FIG. 4. FIG. 6C shows a further improved candidate 600c, for the projection of 2D grid structure of sphere markers 220 of FIG. 2B on image 300 of FIG. 3, overlaid on probability map 400 of FIG. 4. As described above, the identified or selected candidate is candidate 500c, which is now indicated 600a. Candidate 600b is the improved candidate which was generated based on a locally deformed version of candidate 600a according to the method disclosed above. Candidate 600c is a further improved candidate with respect to candidate 600b, generated by iteratively repeating the process of locally deforming the resulting candidate and determining an approximation to maximize the candidate probability. FIG. 6C illustrates the results of refined candidates based on a higher resolution probability map. In an aspect, this is done after completing a refinement step using the down-sampled version of the probability map. Plus signs 610a, 610b and 610c are the centers of the marker projections of candidates 600a, 600b and 600c correspondingly, which are the ones closest to densities 410a of probability map 400. One can see how the candidates for the projection of 2D grid structure of sphere markers 220 on image 300 converge to the candidate of the highest probability according to probability map 400.

[0052] In some embodiments, the imaging device may be configured to capture a sequence of images. A sequence of images may be captured, automatically or manually, by continuously sweeping the imaging device at a certain angle. When pose estimation of a sequence of images is required, the estimation process may become more efficient by reducing the range or area of possible virtual poses for the imaging device. A plurality of non-sequential images of the sequence of images may then be determined, for example, the first image in the sequence, the last image, and one or more images in-between. The one or more images in-between may be determined such that the sequence is divided into equal image portions. At a first stage, the pose of the imaging device may be estimated only for the determined non-sequential images. At a second stage, the area or range of possible different poses for virtually positioning the imaging device may be reduced. The reduction may be performed based on the estimated poses of the imaging device for the determined non-sequential images. The pose of the imaging device for the rest of the images may then be estimated according to the reduced area or range. For example, the poses of the imaging device for the first and tenth images of the sequence are determined at the first stage. The pose of the imaging device for each of the second to ninth images must then lie along a feasible and continuous path between its pose for the first image and its pose for the tenth image, and so on.
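By way of non-limiting illustration, the two-stage approach may be sketched as follows for a sweep parameterized by a single angle. The linear interpolation between key images, the margin value, and the function names are assumptions made for this sketch only.

```python
def pick_key_frames(num_frames, num_portions):
    """First image, last image, and evenly spaced images in between."""
    return sorted({round(i * (num_frames - 1) / num_portions)
                   for i in range(num_portions + 1)})


def reduced_search_ranges(key_poses, margin_deg=2.0):
    """Angle search window for each image between consecutive key images.

    key_poses maps an image index to its estimated angle in degrees.
    Assumption: the sweep is roughly uniform, so the expected angle is
    linearly interpolated and widened by a hypothetical margin.
    """
    keys = sorted(key_poses)
    ranges = {}
    for lo, hi in zip(keys, keys[1:]):
        for f in range(lo + 1, hi):
            t = (f - lo) / (hi - lo)
            expected = key_poses[lo] + t * (key_poses[hi] - key_poses[lo])
            ranges[f] = (expected - margin_deg, expected + margin_deg)
    return ranges


# First stage result (hypothetical): angles for the first and tenth images.
key_frames = pick_key_frames(10, 3)
ranges = reduced_search_ranges({0: -25.0, 9: 20.0})
```

Candidates for the second to ninth images would then be generated only for virtual poses whose angle falls inside the corresponding window.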

[0053] In some embodiments, geometrical parameters of the imaging device may be pre-known, or pre-determined, such as the field of view of the source, height range, rotation angle range and the like, including the device degrees of freedom (e.g., independent motions allowed). In some embodiments, such geometrical parameters of the imaging device may be determined in real-time while estimating the pose of the imaging device for the captured images. Such information may also be used to reduce the area or range of possible poses. In some embodiments, a user practicing the disclosure may be instructed to limit the motion of the imaging device to certain degrees of freedom or to certain ranges of motion for the sequence of images. Such limitations may also be considered when determining the possible poses of the imaging device and thus may be used to make the imaging device pose estimation faster.

[0054] In some embodiments, image pre-processing methods may first be applied to the one or more images in order to correct distortions and/or enhance the visualization of the projection of the structure of markers on the image. For example, in case the imaging device is a fluoroscope, correction of "pincushion" distortion, which slightly warps the image, may be performed. This distortion may be automatically addressed by modelling the warp with a polynomial surface and applying a compatible warp which will cancel out the pincushion effect. In case a grid of metal spheres is used, the image may be inverted in order to enhance the projections of the markers. In addition, the image may be blurred using a Gaussian filter with a sigma value equal, for example, to one half of the spheres' diameter, in order to facilitate the search and evaluation of candidates as disclosed above.
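By way of non-limiting illustration, the inversion and Gaussian blurring may be sketched as follows for an 8-bit grayscale image stored as a list of rows. The kernel radius of three sigma, the border clamping, and the function names are assumptions of this sketch. The pincushion correction is not shown.

```python
import math


def invert(image, max_value=255):
    """Invert intensities so dark marker shadows become bright."""
    return [[max_value - v for v in row] for row in image]


def gaussian_kernel(sigma):
    """Normalized 1D Gaussian kernel truncated at about 3 sigma."""
    radius = max(1, int(math.ceil(3 * sigma)))
    weights = [math.exp(-(i * i) / (2 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve one row or column, clamping indices at the borders."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * values[j]
        out.append(acc)
    return out


def gaussian_blur(image, sigma):
    """Separable Gaussian blur: rows first, then columns."""
    kernel = gaussian_kernel(sigma)
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def preprocess(image, sphere_diameter_px):
    """Invert, then blur with sigma equal to half the sphere diameter."""
    return gaussian_blur(invert(image), sigma=sphere_diameter_px / 2.0)


# Toy image: bright background with one dark marker pixel in the center.
img = [[255] * 5 for _ in range(5)]
img[2][2] = 0
out = preprocess(img, sphere_diameter_px=2.0)
```

The sphere diameter is given here in pixels. Converting the physical diameter to pixels depends on the imaging geometry and is outside this sketch.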

[0055] In some embodiments, one or more models of the imaging device may be calibrated to generate calibration data, such as a data file, which may be used to automatically calibrate the specific imaging device. The calibration data may include data referring to the geometric calibration and/or distortion calibration, as disclosed above. In some embodiments, the geometric calibration may be based on data provided by the imaging device manufacturer. In some embodiments, a manual distortion calibration may be performed once for a specific imaging device. In an aspect, the imaging device distortion correction can be calibrated as a preprocessing step during every procedure as the pincushion distortion may change as a result of imaging device maintenance or even as a result of a change in time.

[0056] FIG. 2A illustrates a schematic diagram of a system 200 configured for use with the method of FIG. 1 in accordance with one aspect of the disclosure. System 200 may include a workstation 80, an imaging device 215 and a markers structure 218. In some embodiments, workstation 80 may be coupled with imaging device 215, directly or indirectly, e.g., by wireless communication. Workstation 80 may include a memory 202, a processor 204, a display 206 and an input device 210. Processor or hardware processor 204 may include one or more hardware processors. Workstation 80 may optionally include an output module 212 and a network interface 208. Memory 202 may store an application 81 and image data 214. Application 81 may include instructions executable by processor 204, inter alia, for executing the method of FIG. 1 and a user interface 216. Workstation 80 may be a stationary computing device, such as a personal computer, or a portable computing device such as a tablet computer. Workstation 80 may embed a plurality of computer devices.

[0057] Memory 202 may include any non-transitory computer-readable storage media for storing data and/or software including instructions that are executable by processor 204 and which control the operation of workstation 80 and in some embodiments, may also control the operation of imaging device 215. In an embodiment, memory 202 may include one or more solid-state storage devices such as flash memory chips. Alternatively, or in addition to the one or more solid-state storage devices, memory 202 may include one or more mass storage devices connected to the processor 204 through a mass storage controller (not shown) and a communications bus (not shown). Although the description of computer-readable media contained herein refers to a solid-state storage, it should be appreciated by those skilled in the art that computer-readable storage media can be any available media that can be accessed by the processor 204. That is, computer readable storage media may include non-transitory, volatile and non-volatile, removable and non-removable media implemented in any method or technology for storage of information such as computer-readable instructions, data structures, program modules or other data. For example, computer-readable storage media may include RAM, ROM, EPROM, EEPROM, flash memory or other solid-state memory technology, CD-ROM, DVD, Blu-Ray or other optical storage, magnetic cassettes, magnetic tape, magnetic disk storage or other magnetic storage devices, or any other medium which may be used to store the desired information and which may be accessed by workstation 80.

[0058] Application 81 may, when executed by processor 204, cause display 206 to present user interface 216. Network interface 208 may be configured to connect to a network such as a local area network (LAN) consisting of a wired network and/or a wireless network, a wide area network (WAN), a wireless mobile network, a Bluetooth network, and/or the internet. Network interface 208 may be used to connect between workstation 80 and imaging device 215. Network interface 208 may be also used to receive image data 214. Input device 210 may be any device by means of which a user may interact with workstation 80, such as, for example, a mouse, keyboard, foot pedal, touch screen, and/or voice interface. Output module 212 may include any connectivity port or bus, such as, for example, parallel ports, serial ports, universal serial busses (USB), or any other similar connectivity port known to those skilled in the art.

[0059] Imaging device 215 may be any imaging device which captures 2D images, such as a standard fluoroscopic imaging device or a camera. In some embodiments, markers structure 218 may be a structure of markers having a two-dimensional pattern, such as a grid having two dimensions of width and length (e.g., 2D grid), as shown in FIG. 2B. Using a 2D pattern, as opposed to a 3D pattern, may facilitate the pose estimation process. Furthermore, when, for example, a patient is required to lie on markers structure 218 in order to estimate the pose of a medical imaging device while scanning the patient, a 2D pattern would be more convenient for the patient. The markers should be formed such that they will be visible in the imaging modality used. For example, if the imaging device is a fluoroscopic device, then the markers should be made of a material which is at least partially radio-opaque. In some embodiments, the shape of the markers may be symmetric and such that the projection of the markers on the image would be the same at any pose in which the imaging device may be placed. Such a configuration may simplify and enhance the pose estimation process and/or make it more efficient. For example, when the imaging device is rotated around the markers structure, markers having a rotational symmetry, such as spheres, may be preferred. The size of the markers structure and/or the number of markers in the structure may be determined according to the specific use of the disclosed systems and methods. For example, if the pose estimation is used to construct a 3D volume of an area of interest within a patient, then the markers structure may be of a size similar to or larger than the size of the area of interest. In some embodiments, the pattern of markers structure 218 may be two-dimensional and/or periodic, such as a 2D grid. Using a periodic and/or two-dimensional pattern for the structure of markers may further enhance and facilitate the pose estimation process and make it more efficient.

[0060] Referring now to FIG. 2B, 2D grid structure of sphere markers 220 has a 2D periodic pattern of a grid and includes symmetric markers in the shape of a sphere. Such a configuration simplifies and enhances the pose estimation process, as described in FIG. 1, specifically when generating the virtual candidates for the markers structure projection and when determining the optimal one. The structure of markers, as a fiducial, should be positioned in a stationary manner during the capturing of the one or more images. In an exemplary 2D grid structure of sphere markers such as described above, used in medical imaging of the lungs area, the sphere markers diameter may be 2±0.2 mm and the distance between the spheres may be about 15±0.15 mm isotropic.
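By way of non-limiting illustration, the nominal sphere centers of such a grid may be generated as follows, using the exemplary 15 mm spacing. Placing the origin at a corner sphere, putting the grid in the plane z = 0, and the function name are assumptions of this sketch.

```python
def marker_grid(rows, cols, pitch_mm=15.0):
    """Nominal 3D sphere centers (x, y, z) in millimeters.

    Assumption: the grid lies in the plane z = 0 of its own coordinate
    system, with the origin at a corner sphere.
    """
    return [(c * pitch_mm, r * pitch_mm, 0.0)
            for r in range(rows) for c in range(cols)]


# Hypothetical 3 x 4 grid; the actual number of markers is not specified.
centers = marker_grid(3, 4)
```

These 3D centers are the points that would be virtually projected for each candidate pose of the imaging device.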

[0061] Referring now back to FIG. 2A, imaging device 215 may capture one or more images (i.e., a sequence of images) such that at least a projection of a portion of markers structure 218 is shown in each image. The image or sequence of images captured by imaging device 215 may be then stored in memory 202 as image data 214. The image data may be then processed by processor 204 and according to the method of FIG. 1, to determine the pose of imaging device 215. The pose estimation data may be then output via output module 212, display 206 and/or network interface 208. Markers structure 218 may be positioned with respect to an area of interest, such as under an area of interest within the body of a patient going through a fluoroscopic scan. Markers structure 218 and the patient will then be positioned such that the one or more images captured by imaging device 215 would capture the area of interest and a portion of markers structure 218. If required, once the pose estimation process is complete, the projection of markers structure 218 on the images may be removed by using well known methods. One such method is described in commonly-owned U.S. patent application Ser. No. 16/259,612, entitled: "IMAGE RECONSTRUCTION SYSTEM AND METHOD", filed on Jan. 28, 2019, by Alexandroni et al., the entire content of which is hereby incorporated by reference.

[0062] FIG. 7 is a flow chart of an exemplary method for constructing fluoroscopic three-dimensional volumetric data in accordance with the disclosure. A method for constructing fluoroscopic-based three-dimensional volumetric data of a target area within a patient from two dimensional fluoroscopic images, is hereby disclosed. In step 700, a sequence of images of the target area and of a structure of markers is acquired via a fluoroscopic imaging device. The structure of markers may be the two-dimensional structure of markers described with respect to FIGS. 1, 2A and 2B. The structure of markers may be positioned between the patient and the fluoroscopic imaging device. In some embodiments, the target area may include, for example, at least a portion of the lungs, and as exemplified with respect to the system of FIG. 8. In some embodiments, the target is a soft-tissue target, such as within a lung, kidney, liver and the like.

[0063] In a step 710, a pose of the fluoroscopic imaging device for at least a plurality of images of the sequence of images may be estimated. The pose estimation may be performed based on detection of a possible and most probable projection of the structure of markers as a whole on each image of the plurality of images, and as described with respect to FIG. 1.

[0064] In some embodiments, other methods for estimating the pose of the fluoroscopic device may be used. There are various known methods for determining the poses of imaging devices, such as an external angle measuring device or based on image analysis. Some of such devices and methods are particularly described in commonly-owned U.S. Patent Publication No. 2017/0035379, filed on Aug. 1, 2016, by Weingarten et al, the entire content of which is hereby incorporated by reference.

[0065] In a step 720, a fluoroscopic-based three-dimensional volumetric data of the target area may be constructed based on the estimated poses of the fluoroscopic imaging device. Exemplary systems and methods for constructing such fluoroscopic-based three-dimensional volumetric data are disclosed in the above commonly-owned U.S. Patent Publication No. 2017/0035379, which is incorporated by reference.

[0066] In an optional step 730, a medical device may be positioned in the target area prior to the acquiring of the sequence of images. Thus, the sequence of images and consequently the fluoroscopic-based three-dimensional volumetric data may also include a projection of the medical device in addition to the target. The offset (i.e., Δx, Δy and Δz) between the medical device and the target may then be determined based on the fluoroscopic-based three-dimensional volumetric data. The target may be visible or better exhibited in the generated three-dimensional volumetric data. Therefore, the target may be detected, automatically, or manually by the user, in the three-dimensional volumetric data. The medical device may be detected, automatically or manually by a user, in the sequence of images, as captured, or in the generated three-dimensional volumetric data. The automatic detection of the target and/or the medical device may be performed based on systems and methods as known in the art and such as described, for example, in commonly-owned U.S. Patent Application No. 62/627,911, titled: "SYSTEM AND METHOD FOR CATHETER DETECTION IN FLUOROSCOPIC IMAGES AND UPDATING DISPLAYED POSITION OF CATHETER", filed on Feb. 8, 2018, by Birenbaum et al. The manual detection may be performed by displaying to the user the three-dimensional volumetric data and/or captured images and requesting the user's input. Once the target and the medical device are detected in the three-dimensional volumetric data and/or the captured images, their locations in the fluoroscopic coordinate system of reference may be obtained and the offset between them may be determined.
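By way of non-limiting illustration, once both locations are known in the fluoroscopic coordinate system of reference, the offset reduces to a component-wise difference. The sign convention (target minus medical device) and the function names are assumptions of this sketch.

```python
import math


def offset(device_xyz, target_xyz):
    """Return (dx, dy, dz) from the medical device to the target."""
    dx, dy, dz = (t - d for d, t in zip(device_xyz, target_xyz))
    return dx, dy, dz


def offset_length(delta):
    """Euclidean length of an offset vector."""
    return math.sqrt(sum(v * v for v in delta))


# Hypothetical locations in millimeters.
delta = offset((10, 20, 30), (13, 24, 30))
length = offset_length(delta)
```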

[0067] The offset between the target and the medical device may be utilized for various medical purposes, including facilitating approach of the medical device to the target area and treatment. The navigation of a medical device to the target area may be facilitated via a locating system and a display. The locating system locates or tracks the motion of the medical device through the patient's body. The display may display the medical device location to the user with respect to the surroundings of the medical device within the patient's body and the target. The locating system may be, for example, an electromagnetic or optic locating system, or any other such system as known in the art. When, for example, the target area includes a portion of the lungs, the medical device may be navigated to the target area through the airways luminal network and as described with respect to FIG. 8.

[0068] In an optional step 740, a display of the location of the medical device with respect to the target may be corrected based on the determined offset between the medical device and the target. In some embodiments, a 3D rendering of the target area may be displayed on the display. The 3D rendering of the target area may be generated based on CT volumetric data of the target area which was acquired previously, e.g., prior to the current procedure or operation (e.g., preoperative CT). In some embodiments, the locating system may be registered to the 3D rendering of the target, such as described, for example, with respect to FIG. 8 below. The correction of the offset between the medical device and the target may be then performed by updating the registration of the locating system to the 3D rendering. Generally, to perform such updating, a transformation between coordinate system of reference of the fluoroscopic images and the coordinate system of reference of the locating system should be known. The geometrical positioning of the structure of markers with respect to the locating system may determine such a transformation. In some embodiments, and as shown in the embodiment of FIG. 8, the structure of markers and the locating system are positioned such that the same coordinate system of reference would apply to both, or such that the one would be only a translated version of the other.

[0069] In some embodiments, the updating of the registration of the locating system to the 3D rendering (e.g., CT-based) may be performed in a local manner and/or in a gradual manner. For example, the registration may be updated only in the surroundings of the target, e.g., only within a certain distance from the target. This is because the update may be less accurate when not performed around the target. In some embodiments, the updating may be performed in a gradual manner, e.g., by applying weights according to distance from the target. In addition to accuracy considerations, such gradual updating may be more convenient or easier for the user to look at, process and make the necessary changes during the procedure than an abrupt change in the medical device location on the display.
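By way of non-limiting illustration, a local and gradual update may be sketched with a weight that equals one near the target and falls linearly to zero farther away. The two radii, the linear falloff, the direction in which the offset is applied, and the function names are assumptions of this sketch.

```python
import math


def correction_weight(dist_mm, full_radius_mm=10.0, zero_radius_mm=30.0):
    """Weight in [0, 1] as a function of distance from the target."""
    if dist_mm <= full_radius_mm:
        return 1.0
    if dist_mm >= zero_radius_mm:
        return 0.0
    return (zero_radius_mm - dist_mm) / (zero_radius_mm - full_radius_mm)


def corrected_position(point, target, offset_vec):
    """Shift a displayed point by the offset scaled by its weight."""
    w = correction_weight(math.dist(point, target))
    return tuple(p + w * o for p, o in zip(point, offset_vec))


target = (0.0, 0.0, 0.0)
shift = (2.0, 0.0, 0.0)
near = corrected_position((0.0, 0.0, 0.0), target, shift)
mid = corrected_position((20.0, 0.0, 0.0), target, shift)
far = corrected_position((40.0, 0.0, 0.0), target, shift)
```

Points at the target receive the full correction, points beyond the outer radius are left unchanged, and points in between are corrected partially, which avoids an abrupt jump on the display.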

[0070] In some embodiments, the patient may be instructed to stop breathing (or caused to stop breathing) during the capture of the images in order to prevent movements of the target area due to breathing. In other embodiments, methods for compensating breathing movements during the capture of the images may be performed. For example, the estimated poses of the fluoroscopic device may be corrected according to the movements of a fiducial marker placed in the target area. Such a fiducial may be a medical device, e.g., a catheter, placed in the target area. The movement of the catheter, for example, may be determined based on the locating system. In some embodiments, a breathing pattern of the patient may be determined according to the movements of a fiducial marker, such as a catheter, located in the target area. The movements may be determined via a locating system. Based on that pattern, only images of inhale or exhale may be considered when determining the pose of the imaging device.
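By way of non-limiting illustration, selecting only images of one breathing phase may be sketched as follows. The sketch assumes one scalar displacement per image for the fiducial, as reported by the locating system, and assumes that the smallest displacement corresponds to exhale. The tolerance and the function name are hypothetical.

```python
def select_phase_frames(displacements, phase="exhale", tolerance=0.2):
    """Indices of images whose fiducial displacement is near one extreme.

    tolerance is a fraction of the full displacement range.
    Assumption: minimum displacement is exhale, maximum is inhale.
    """
    lo, hi = min(displacements), max(displacements)
    span = hi - lo
    if span == 0:
        return list(range(len(displacements)))
    ref = lo if phase == "exhale" else hi
    return [i for i, d in enumerate(displacements)
            if abs(d - ref) <= tolerance * span]


# Hypothetical fiducial displacements in millimeters, one per image.
signal = [0.0, 2.5, 5.0, 9.0, 10.0, 7.0, 4.0, 1.0, 0.5]
exhale_frames = select_phase_frames(signal, "exhale")
inhale_frames = select_phase_frames(signal, "inhale")
```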

[0071] In embodiments, as described above, for each captured frame, the imaging device three-dimensional position and orientation are estimated based on a set of static markers positioned on the patient bed. This process requires knowledge about the markers 3D positions in the volume, as well as the compatible 2D coordinates of the projections in the image plane. Adding one or more markers from different planes in the volume of interest may lead to more robust and accurate pose estimation. One possible marker that can be utilized in such a process is the catheter tip (or other medical device tip positioned through the catheter). The tip is visible throughout the video captured by fluoroscopic imaging and the compatible 3D positions may be provided by a navigation or tracking system (e.g., an electromagnetic navigation tracking system) as the tool is navigated to the target (e.g., through the electromagnetic field). Therefore, the only remaining task is to deduce the exact 2D coordinates from the video frames. As described above, one embodiment of the tip detection step may include fully automated detection and tracking of the tip throughout the video. Another embodiment may implement semi-supervised tracking in which the user manually marks the tip in one or more frames and the detection process computes the tip coordinates for the rest of the frames.

[0072] In embodiments, the semi-supervised tracking process may be implemented by solving one frame at a time using template matching between the current frame and previous ones, by using optical flow to estimate the tip movement along the video, and/or by using model-based trackers. Model-based trackers train a detector to estimate the probability of each pixel belonging to the catheter tip, which is followed by a step of combining the detections into a single most probable list of coordinates along the video. One possible embodiment of the model-based trackers involves dynamic programming. Such an optimization approach enables finding a seam with maximal probability, i.e., a connected list of coordinates in the 3D space of the video frames, in which the first two dimensions belong to the image plane and the third axis is time. Another possible way to obtain a seam of two-dimensional coordinates is to train a detector to estimate the tip coordinate in each frame while incorporating into the loss function a regularization term for the proximity between detections in adjacent frames.
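By way of non-limiting illustration, the dynamic programming embodiment may be sketched as a Viterbi-style search over per-frame candidate detections. The sketch assumes that a detector has already reduced each frame to a short list of (x, y, probability) candidates with non-zero probability. The objective (sum of log-probabilities minus a distance penalty between adjacent frames), the smoothness value, and the function name are assumptions.

```python
import math


def best_seam(frames, smoothness=0.1):
    """Most probable connected list of tip coordinates along the video.

    frames holds, per video frame, a list of (x, y, prob) candidates.
    Returns one (x, y) per frame.
    """
    score = [math.log(p) for _, _, p in frames[0]]
    back = []
    for prev, cur in zip(frames, frames[1:]):
        new_score, pointers = [], []
        for x, y, p in cur:
            # Best predecessor: previous score minus a jump penalty.
            options = [score[k] - smoothness * math.hypot(x - px, y - py)
                       for k, (px, py, _) in enumerate(prev)]
            k_best = max(range(len(options)), key=options.__getitem__)
            new_score.append(options[k_best] + math.log(p))
            pointers.append(k_best)
        score = new_score
        back.append(pointers)
    # Backtrack from the best final candidate.
    idx = max(range(len(score)), key=score.__getitem__)
    path = [idx]
    for pointers in reversed(back):
        idx = pointers[idx]
        path.append(idx)
    path.reverse()
    return [frames[t][i][:2] for t, i in enumerate(path)]


# Toy video: a true tip near (10, 10) and a distractor near (50, 50).
frames = [
    [(10, 10, 0.9), (50, 50, 0.2)],
    [(11, 10, 0.6), (52, 50, 0.7)],
    [(12, 11, 0.9), (51, 49, 0.1)],
]
seam = best_seam(frames)
```

In the second frame the distractor has the higher individual probability, but the distance penalty keeps the seam on the spatially consistent detections.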

[0073] FIG. 8 illustrates an exemplary system 800 for constructing fluoroscopic-based three-dimensional volumetric data in accordance with the disclosure. System 800 may be configured to construct fluoroscopic-based three-dimensional volumetric data of a target area including at least a portion of the lungs of a patient from 2D fluoroscopic images. System 800 may be further configured to facilitate approach of a medical device to the target area by using Electromagnetic Navigation Bronchoscopy (ENB) and for determining the location of a medical device with respect to the target.

[0074] System 800 may be configured for reviewing CT image data to identify one or more targets, planning a pathway to an identified target (planning phase), navigating an extended working channel (EWC) 812 of a catheter assembly to a target (navigation phase) via a user interface, and confirming placement of EWC 812 relative to the target. One such EMN system is the ELECTROMAGNETIC NAVIGATION BRONCHOSCOPY® system currently sold by Medtronic PLC. The target may be tissue of interest identified by review of the CT image data during the planning phase. Following navigation, a medical device, such as a biopsy tool or other tool, may be inserted into EWC 812 to obtain a tissue sample from the tissue located at, or proximate to, the target.

[0075] FIG. 8 illustrates EWC 812 which is part of a catheter guide assembly 840. In practice, EWC 812 is inserted into a bronchoscope 830 for access to a luminal network of the patient "P." Specifically, EWC 812 of catheter guide assembly 840 may be inserted into a working channel of bronchoscope 830 for navigation through a patient's luminal network. A locatable guide (LG) 832, including a sensor 844, is inserted into EWC 812 and locked into position such that sensor 844 extends a desired distance beyond the distal tip of EWC 812. The position and orientation of sensor 844 relative to the reference coordinate system, and thus the distal portion of EWC 812, within an electromagnetic field can be derived. Catheter guide assemblies 840 are currently marketed and sold by Medtronic PLC under the brand names SUPERDIMENSION® Procedure Kits, or EDGE™ Procedure Kits, and are contemplated as useable with the disclosure. For a more detailed description of catheter guide assemblies 840, reference is made to commonly-owned U.S. Patent Publication No. 2014/0046315, filed on Mar. 15, 2013, by Ladtkow et al, U.S. Pat. Nos. 7,233,820, and 9,044,254, the entire contents of each of which are hereby incorporated by reference.

[0076] System 800 generally includes an operating table 820 configured to support a patient "P," a bronchoscope 830 configured for insertion through the patient's "P's" mouth into the patient's "P's" airways; monitoring equipment 835 coupled to bronchoscope 830 (e.g., a video display, for displaying the video images received from the video imaging system of bronchoscope 830); a locating system 850 including a locating module 852, a plurality of reference sensors 854 and a transmitter mat coupled to a structure of markers 856; and a computing device 825 including software and/or hardware used to facilitate identification of a target, pathway planning to the target, navigation of a medical device to the target, and confirmation of placement of EWC 812, or a suitable device therethrough, relative to the target. Computing device 825 may be similar to workstation 80 of FIG. 2A and may be configured, inter alia, to execute the method of FIG. 1.

[0077] A fluoroscopic imaging device 810 capable of acquiring fluoroscopic or x-ray images or video of the patient "P" is also included in this particular aspect of system 800. The images, sequence of images, or video captured by fluoroscopic imaging device 810 may be stored within fluoroscopic imaging device 810 or transmitted to computing device 825 for storage, processing, and display, as described with respect to FIG. 2A. Additionally, fluoroscopic imaging device 810 may move relative to the patient "P" so that images may be acquired from different angles or perspectives relative to patient "P" to create a sequence of fluoroscopic images, such as a fluoroscopic video. The pose of fluoroscopic imaging device 810 relative to patient "P" and for the images may be estimated via the structure of markers and according to the method of FIG. 1. The structure of markers is positioned under patient "P," between patient "P" and operating table 820 and between patient "P" and a radiation source of fluoroscopic imaging device 810. Structure of markers is coupled to the transmitter mat (both indicated 856) and positioned under patient "P" on operating table 820. Structure of markers and transmitter mat 856 are positioned under the target area within the patient in a stationary manner. Structure of markers and transmitter mat 856 may be two separate elements which may be coupled in a fixed manner or alternatively may be manufactured as one unit. Fluoroscopic imaging device 810 may include a single imaging device or more than one imaging device. In embodiments including multiple imaging devices, each imaging device may be a different type of imaging device or the same type. Further details regarding the imaging device 810 are described in U.S. Pat. No. 8,565,858, which is incorporated by reference in its entirety herein.

[0078] Computing device 825 may be any suitable computing device including a processor and storage medium, wherein the processor is capable of executing instructions stored on the storage medium. Computing device 825 may further include a database configured to store patient data, CT data sets including CT images, fluoroscopic data sets including fluoroscopic images and video, navigation plans, and any other such data. Although not explicitly illustrated, computing device 825 may include inputs, or may otherwise be configured to receive, CT data sets, fluoroscopic images/video and other data described herein. Additionally, computing device 825 includes a display configured to display graphical user interfaces. Computing device 825 may be connected to one or more networks through which one or more databases may be accessed.

[0079] With respect to the planning phase, computing device 825 utilizes previously acquired CT image data for generating and viewing a three-dimensional model of the patient's "P's" airways, enables the identification of a target on the three-dimensional model (automatically, semi-automatically, or manually), and allows for determining a pathway through the patient's "P's" airways to tissue located at and around the target. More specifically, CT images acquired from previous CT scans are processed and assembled into a three-dimensional CT volume, which is then utilized to generate a three-dimensional model of the patient's "P's" airways. The three-dimensional model may be displayed on a display associated with computing device 825, or in any other suitable fashion. Using computing device 825, various views of the three-dimensional model or enhanced two-dimensional images generated from the three-dimensional model are presented. The enhanced two-dimensional images may possess some three-dimensional capabilities because they are generated from three-dimensional data. The three-dimensional model may be manipulated to facilitate identification of the target on the three-dimensional model or two-dimensional images, and selection of a suitable pathway through the patient's "P's" airways to access tissue located at the target can be made. Once selected, the pathway plan, three-dimensional model, and images derived therefrom, can be saved and exported to a navigation system for use during the navigation phase(s). One such planning software is the ILOGIC® planning suite currently sold by Medtronic PLC.

[0080] With respect to the navigation phase, a six degrees-of-freedom electromagnetic locating or tracking system 850, e.g., similar to those disclosed in U.S. Pat. Nos. 8,467,589, 6,188,355, and published PCT Application Nos. WO 00/10456 and WO 01/67035, the entire contents of each of which are incorporated herein by reference, or other suitable positioning measuring system, is utilized for performing registration of the images and the pathway for navigation, although other configurations are also contemplated. Tracking system 850 includes a locating or tracking module 852, a plurality of reference sensors 854, and a transmitter mat 856. Tracking system 850 is configured for use with a locatable guide 832 and particularly sensor 844. As described above, locatable guide 832 and sensor 844 are configured for insertion through an EWC 812 into a patient's "P's" airways (either with or without bronchoscope 830) and are selectively lockable relative to one another via a locking mechanism.

[0081] Transmitter mat 856 is positioned beneath patient "P." Transmitter mat 856 generates an electromagnetic field around at least a portion of the patient "P" within which the position of a plurality of reference sensors 854 and the sensor 844 can be determined with use of a tracking module 852. One or more of reference sensors 854 are attached to the chest of the patient "P." The six degrees of freedom coordinates of reference sensors 854 are sent to computing device 825 (which includes the appropriate software) where they are used to calculate a patient coordinate frame of reference. Registration is generally performed to coordinate locations of the three-dimensional model and two-dimensional images from the planning phase with the patient's "P's" airways as observed through the bronchoscope 830, and allow for the navigation phase to be undertaken with precise knowledge of the location of the sensor 844, even in portions of the airway where the bronchoscope 830 cannot reach. Further details of such a registration technique and its implementation in luminal navigation can be found in U.S. Patent Application Pub. No. 2011/0085720, the entire content of which is incorporated herein by reference, although other suitable techniques are also contemplated.
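By way of a non-limiting illustration only, the following Python sketch shows one way a tracked sensor position could be expressed in a patient coordinate frame of reference. It is not the disclosed implementation: it assumes a single reference sensor whose six degrees-of-freedom pose is available as a rotation matrix and a position in the transmitter-mat frame, and it assumes the patient moves rigidly. The function names (`rot_z`, `mat_mul`, `mat_vec`, `to_patient_frame`) and all numeric readings are hypothetical.

```python
import math


def rot_z(angle_rad):
    """3x3 rotation about the z axis (used only to build example poses)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def mat_mul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def mat_vec(m, v):
    """Product of a 3x3 matrix and a 3-vector."""
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]


def to_patient_frame(ref_rotation, ref_position, point_in_mat_frame):
    """Express a point measured in the transmitter-mat frame in the frame
    carried by a reference sensor: R^T (p - t). Assumed model, not the
    disclosed computation."""
    diff = [p - t for p, t in zip(point_in_mat_frame, ref_position)]
    r_transposed = [[ref_rotation[j][i] for j in range(3)] for i in range(3)]
    return mat_vec(r_transposed, diff)


# Hypothetical readings (millimeters) in the transmitter-mat frame.
ref_rot, ref_pos = rot_z(0.1), [10.0, 20.0, 30.0]
tip = [15.0, 22.0, 28.0]
before = to_patient_frame(ref_rot, ref_pos, tip)

# Simulate the patient shifting rigidly on the table: the same motion is
# applied to the reference sensor and to the tracked sensor.
motion_rot, motion_shift = rot_z(0.3), [1.0, -2.0, 0.5]
moved_ref_rot = mat_mul(motion_rot, ref_rot)
moved_ref_pos = [a + b for a, b in zip(mat_vec(motion_rot, ref_pos), motion_shift)]
moved_tip = [a + b for a, b in zip(mat_vec(motion_rot, tip), motion_shift)]
after = to_patient_frame(moved_ref_rot, moved_ref_pos, moved_tip)
```

Under these assumptions, `before` and `after` agree to within floating-point error, which is the reason for referring the sensor position to a frame attached to the patient rather than to the transmitter mat.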

[0082] Registration of the patient's "P's" location on the transmitter mat 856 is performed by moving LG 832 through the airways of the patient "P." More specifically, data pertaining to locations of sensor 844, while locatable guide 832 is moving through the airways, is recorded using transmitter mat 856, reference sensors 854, and tracking module 852. A shape resulting from this location data is compared to an interior geometry of passages of the three-dimensional model generated in the planning phase, and a location correlation between the shape and the three-dimensional model based on the comparison is determined, e.g., utilizing the software on computing device 825. In addition, the software identifies non-tissue space (e.g., air filled cavities) in the three-dimensional model. The software aligns, or registers, an image representing a location of sensor 844 with the three-dimensional model and two-dimensional images generated from the three-dimensional model, which are based on the recorded location data and an assumption that locatable guide 832 remains located in non-tissue space in the patient's "P's" airways. Alternatively, a manual registration technique may be employed by navigating the bronchoscope 830 with the sensor 844 to pre-specified locations in the lungs of the patient "P", and manually correlating the images from the bronchoscope to the model data of the three-dimensional model.
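By way of a non-limiting illustration only, the following Python sketch shows how the assumption that the locatable guide remains in non-tissue space could be used to score a candidate alignment. It is a deliberately simplified stand-in, not the disclosed registration: it searches integer translations only (no rotation or deformation), represents the airway as a set of voxel indices, and uses an exhaustive grid search. The names `fraction_in_airway` and `best_translation`, the voxel size, and the sample data are all hypothetical.

```python
from itertools import product


def fraction_in_airway(points, airway_voxels, offset, voxel_size=1.0):
    """Fraction of recorded sensor points that fall inside airway
    (non-tissue) voxels after being shifted by `offset`."""
    hits = 0
    for p in points:
        voxel = tuple(int((c + o) // voxel_size) for c, o in zip(p, offset))
        if voxel in airway_voxels:
            hits += 1
    return hits / len(points)


def best_translation(points, airway_voxels, search_range, voxel_size=1.0):
    """Exhaustively try every (dx, dy, dz) drawn from `search_range` and
    keep the shift that places the most points in airway voxels."""
    candidates = product(search_range, repeat=3)
    return max(
        candidates,
        key=lambda off: fraction_in_airway(points, airway_voxels, off, voxel_size),
    )


# Hypothetical model: a straight airway segment, ten voxels long, along x.
airway_voxels = {(x, 5, 5) for x in range(10)}

# Hypothetical recorded sensor locations: points on the airway centerline,
# displaced by an unknown shift that the search should recover.
true_offset = (2, -1, 0)
recorded = [
    (x + 0.5 - true_offset[0], 5.5 - true_offset[1], 5.5 - true_offset[2])
    for x in range(10)
]

estimated_offset = best_translation(recorded, airway_voxels, range(-3, 4))
score = fraction_in_airway(recorded, airway_voxels, estimated_offset)
```

In this toy case only one shift in the search range places every recorded point inside the airway, so the search recovers `true_offset`. A practical registration would also have to estimate rotation and cope with breathing motion and sensor noise, none of which is modeled here.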

[0083] Following registration of the patient "P" to the image data and pathway plan, a user interface is displayed in the navigation software which sets forth the pathway that the clinician is to follow to reach the target. One such navigation software is the ILOGIC® navigation suite currently sold by Medtronic PLC.

[0084] Once EWC 812 has been successfully navigated proximate the target as depicted on the user interface, the locatable guide 832 may be unlocked from EWC 812 and removed, leaving EWC 812 in place as a guide channel for guiding medical devices including, without limitation, optical systems, ultrasound probes, marker placement tools, biopsy tools, ablation tools (e.g., microwave ablation devices), laser probes, cryogenic probes, sensor probes, and aspirating needles to the target.

[0085] The disclosed exemplary system 800 may be employed by the method of FIG. 7 to construct fluoroscopic-based three-dimensional volumetric data of a target located in the lung area and to correct the location of a medical device navigated to the target area with respect to the target.

[0086] System 800, or a similar version of it, in conjunction with the method of FIG. 7 may be used, with the required modifications, in various procedures other than ENB procedures, such as laparoscopy or robotic-assisted surgery.

[0087] Systems and methods in accordance with the disclosure may be usable for facilitating the navigation of a medical device to a target and/or its area using real-time two-dimensional fluoroscopic images of the target area. The navigation is facilitated by using local three-dimensional volumetric data, in which small soft-tissue objects are visible, constructed from a sequence of fluoroscopic images captured by a standard fluoroscopic imaging device available in most procedure rooms. The fluoroscopic-based constructed local three-dimensional volumetric data may be used to correct a location of a medical device with respect to a target or may be locally registered with previously acquired volumetric data (e.g., CT data). In general, the location of the medical device may be determined by a tracking system, for example, an electromagnetic tracking system. The tracking system may be registered with the previously acquired volumetric data. A local registration of the real-time three-dimensional fluoroscopic data to the previously acquired volumetric data may then be performed via the tracking system. Such real-time data may be used, for example, for guidance, navigation planning, improved navigation accuracy, navigation confirmation, and treatment confirmation.

[0088] In some embodiments, the methods disclosed may further include a step for generating a 3D rendering of the target area based on a pre-operative CT scan. A display of the target area may then include a display of the 3D rendering. In another step, the tracking system may be registered with the 3D rendering. As described above, a correction of the location of the medical device with respect to the target, based on the determined offset, may then include the local updating of the registration between the tracking system and the 3D rendering in the target area. In some embodiments, the methods disclosed may further include a step for registering the fluoroscopic 3D reconstruction to the tracking system. In another step, and based on the above, a local registration between the fluoroscopic 3D reconstruction and the 3D rendering may be performed in the target area.
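By way of a non-limiting illustration only, the following Python sketch shows one way a determined offset could be turned into a local correction of the registration near the target. It is a sketch under stated assumptions rather than the disclosed method: the correction is modeled as a pure translation, and the device-to-target offset measured in the fluoroscopic 3D reconstruction is assumed to be already expressed along the same axes as the 3D rendering. The function name `registration_correction` and all coordinates are hypothetical.

```python
def registration_correction(device_ct, target_ct, fluoro_device_to_target):
    """Return the corrected device position and the local translation to
    add to the registration so that the displayed device-to-target vector
    matches the offset measured in the fluoroscopic 3D reconstruction."""
    # Place the device where the fluoroscopic offset says it is relative
    # to the target, keeping the target fixed in the 3D rendering.
    corrected_device = [t - o for t, o in zip(target_ct, fluoro_device_to_target)]
    # The local registration update is the shift from the old device
    # position to the corrected one.
    correction = [c - d for c, d in zip(corrected_device, device_ct)]
    return corrected_device, correction


# Hypothetical positions in the coordinates of the 3D rendering (millimeters).
device_ct = [100.0, 50.0, 30.0]      # device location per the tracking system
target_ct = [105.0, 52.0, 30.0]      # target location per the pre-operative CT
fluoro_offset = [3.0, 2.0, 1.0]      # device-to-target offset seen in fluoroscopy

corrected_device, correction = registration_correction(
    device_ct, target_ct, fluoro_offset
)
```

With these example values the registration places the device 5 mm from the target along x, while the fluoroscopic data places it 3 mm away, so the sketch shifts the displayed device by [2.0, 0.0, -1.0] in the target area only.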

[0089] From the foregoing and with reference to the various figure drawings, those skilled in the art will appreciate that certain modifications can also be made to the disclosure without departing from the scope of the same. For example, although the systems and methods are described as usable with an EMN system for navigation through a luminal network such as the lungs, the systems and methods described herein may be utilized with systems that utilize other navigation and treatment devices such as percutaneous devices. Additionally, although the above-described systems and methods are described as used within a patient's luminal network, it is appreciated that the above-described systems and methods may be utilized in other target regions such as the liver. Further, the above-described systems and methods are also usable for transthoracic needle aspiration procedures.

[0090] Detailed embodiments of the disclosure are disclosed herein. However, the disclosed embodiments are merely examples of the disclosure, which may be embodied in various forms and aspects. Therefore, specific structural and functional details disclosed herein are not to be interpreted as limiting, but merely as a basis for the claims and as a representative basis for teaching one skilled in the art to variously employ the disclosure in virtually any appropriately detailed structure.

[0091] As can be appreciated, a medical instrument such as a biopsy tool or an energy device, such as a microwave ablation catheter, that is positionable through one or more branched luminal networks of a patient to treat tissue may prove useful in the surgical arena and the disclosure is directed to systems and methods that are usable with such instruments and tools. Access to luminal networks may be percutaneous or through a natural orifice using navigation techniques. Additionally, navigation through a luminal network may be accomplished using image-guidance. These image-guidance systems may be separate or integrated with the energy device or a separate access tool and may include MRI, CT, fluoroscopy, ultrasound, electrical impedance tomography, optical, and/or device tracking systems. Methodologies for locating the access tool include EM, IR, echolocation, optical, and others. Tracking systems may be integrated with an imaging device, where tracking is done in virtual space or fused with preoperative or live images. In some cases the treatment target may be directly accessed from within the lumen, such as for the treatment of the endobronchial wall for COPD, asthma, lung cancer, etc. In other cases, the energy device and/or an additional access tool may be required to pierce the lumen and extend into other tissues to reach the target, such as for the treatment of disease within the parenchyma. Final localization and confirmation of energy device or tool placement may be performed with imaging and/or navigational guidance using a standard fluoroscopic imaging device incorporated with methods and systems described above.

[0092] While several embodiments of the disclosure have been shown in the drawings, it is not intended that the disclosure be limited thereto, as it is intended that the disclosure be as broad in scope as the art will allow and that the specification be read likewise. Therefore, the above description should not be construed as limiting, but merely as exemplifications of particular embodiments. Those skilled in the art will envision other modifications within the scope and spirit of the claims appended hereto.

* * * * *
