Imaging Systems And Methods For Immersive Surveillance

Chuang; Daniel B. ; et al.

U.S. patent application number 16/380568 for imaging systems and methods for immersive surveillance was filed with the patent office on April 10, 2019, and published on August 1, 2019. The applicant listed for this patent is Massachusetts Institute of Technology. Invention is credited to Mark E. Beattie, Jonathan P. Blanchard, Mark Bury, Lawrence M. Candell, Daniel B. Chuang, Cindy Y. Fang, Gary M. Long, JR., Svetlana V. Panasyuk, Bobby Ren, William D. Ross, Lauren L. White.

| Application Number | 16/380568 (Publication No. 20190238800) |


| Family ID | 46380414 |

| Publication Date | 2019-08-01 |


| United States Patent Application | 20190238800 |

| Kind Code | A1 |

| Chuang; Daniel B. ; et al. | August 1, 2019 |

IMAGING SYSTEMS AND METHODS FOR IMMERSIVE SURVEILLANCE

Abstract

Security guards at large facilities, such as airports, monitor multiple screens that display images from individual surveillance cameras dispersed throughout the facility. If a guard zooms with a particular camera, he may lose image resolution, along with perspective on the surrounding area. Embodiments of the inventive Imaging System for Immersive Surveillance (ISIS) solve these problems by combining multiple cameras in one device. When properly mounted, example ISIS systems offer up to 360-degree, 240-megapixel views on a single screen. (Other fields of view and resolutions are also possible.) Image-stitching software merges multiple video feeds into one scene. The system also allows operators to tag and follow targets, and can monitor restricted areas and sound an alert when intruders breach them.

| Inventors: | Chuang; Daniel B.; (Cambridge, MA) ; Candell; Lawrence M.; (Arlington, MA) ; Ross; William D.; (Westford, MA) ; Beattie; Mark E.; (Westford, MA) ; Fang; Cindy Y.; (Arlington, MA) ; Ren; Bobby; (Cambridge, MA) ; Blanchard; Jonathan P.; (Sterling, MA) ; Long, JR.; Gary M.; (Lowell, MA) ; White; Lauren L.; (Burlington, MA) ; Panasyuk; Svetlana V.; (Lexington, MA) ; Bury; Mark; (Acton, MA) |
|---|---|
| Applicant: | Massachusetts Institute of Technology |
| Family ID: | 46380414 |
| Appl. No.: | 16/380568 |
| Filed: | April 10, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14639244 | Mar 5, 2015 | 10306186 |
| 16380568 | | |
| 13327416 | Dec 15, 2011 | 9007432 |
| 14639244 | | |
| 61423938 | Dec 16, 2010 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G08B 13/19682 20130101; H04N 7/181 20130101; H04N 5/247 20130101; G08B 13/19608 20130101; H04N 5/3415 20130101; H04N 5/23238 20130101; G08B 13/1968 20130101; G08B 13/19628 20130101; G08B 13/19619 20130101 |

| International Class: | H04N 7/18 20060101 H04N007/18; H04N 5/341 20060101 H04N005/341; H04N 5/247 20060101 H04N005/247; H04N 5/232 20060101 H04N005/232; G08B 13/196 20060101 G08B013/196 |

Government Interests

GOVERNMENT SUPPORT

[0002] This invention was made with government support under Air Force Contract FA8721-05-C-0002. The government has certain rights in the invention.

Claims

1. A method of determining a model representing views of a scene from cameras in an array of cameras, each camera in the array of cameras having a field of view that overlaps with the field of view of another camera in the array of cameras, the method comprising: (A) for each pair of overlapping fields of view: (A1) selecting image features in a region common to the overlapping fields of view; (A2) matching points corresponding to a subset of the image features in one field of view in the pair of overlapping fields of view to points corresponding to the subset of the image features in the other field of view in the pair of overlapping fields of view to form a set of matched points; (B) merging at least a subset of each set of the matched points to form a set of merged points; and (C) estimating parameters associated with each field of view based on the set of merged points to form the model representing the views of the scene.

2. The method of claim 1 wherein (A) comprises identifying candidate image features using a random sample and consensus procedure.

3. The method of claim 1 wherein (A2) comprises calculating a homography that maps points from one field of view in the pair of overlapping fields of view to the other field of view in the pair of overlapping fields of view.

4. The method of claim 1 wherein (A2) comprises: projecting points corresponding to the image features from one field of view in the pair of overlapping fields of view onto the other field of view in the pair of overlapping fields of view; and for each projected point, determining whether the projected point lies within a predefined distance of a corresponding point in the other field of view.

5. The method of claim 4 further comprising: selecting the subset of each set of matched points from among those projected points that lie within the predefined distance of the corresponding points.

6. The method of claim 1 wherein (A) further comprises: (A3) repeating steps (A1) and (A2) for different image features in the region common to the overlapping fields of view.

7. The method of claim 1 further comprising: (D) calculating a re-projection error associated with the model representing the views of the scene; and (E) weighting the parameters to reduce the re-projection error.

8. A method of compensating for imbalances in color and white levels in color images of respective portions of a wide-area scene, each color image comprising red, green, and blue color channels acquired by a respective camera in a camera array disposed to image the wide-area scene, the method comprising: (A) normalizing values representing the red, green, and blue color channels to a reference value representing a response of the cameras in the camera array to white light; (B) equalizing the values representing the red, green, and blue color channels to red, green, and blue equalization values, respectively; (C) identifying high- and low-percentile values among each of the red, green, and blue color channels; and (D) scaling each of the red, green, and blue color channels based on the high- and low-percentile values to provide compensated values representing the red, green, and blue color channels.

9. The method of claim 8 wherein (B) further comprises: (B1) calculating red, green, and blue median values for the red, green, and blue color channels, respectively; (B2) calculating red, green, and blue equalization coefficients by dividing the red, green, and blue median values, respectively, by the mean of the red, green, and blue median values; and (B3) scaling the values representing the red, green, and blue color channels by the red, green, and blue equalization coefficients, respectively.

10. The method of claim 8, wherein (A) further comprises: (A1) disposing the camera array inside a calibration hemisphere, the calibration hemisphere comprising: a diffusive hemisphere, a reflective hemisphere disposed outside the diffusive hemisphere, and a plurality of light emitting diodes (LEDs), disposed between the diffusive hemisphere and the reflective hemisphere, to emit light that is reflected by the reflective hemisphere and transmitted by the diffusive hemisphere; (A2) capturing a first image of the plurality of LEDs using the camera array when the plurality of LEDs is off; (A3) capturing a second image of the plurality of LEDs using the camera array when the plurality of LEDs is on; and (A4) generating the reference value based at least in part on the first image captured in (A2) and the second image captured in (A3).

Description

CROSS-REFERENCE TO RELATED PATENT APPLICATIONS

[0001] This application is a divisional application of U.S. application Ser. No. 14/639,244, filed on Mar. 5, 2015, and entitled "Imaging Systems and Methods for Immersive Surveillance," which is a divisional application of U.S. application Ser. No. 13/327,416, filed on Dec. 15, 2011, and entitled "Imaging Systems and Methods for Immersive Surveillance," which claims the benefit, under 35 U.S.C. § 119(e), of U.S. Provisional Application No. 61/423,938, filed on Dec. 16, 2010, and entitled "Imaging System for Immersive Surveillance." Each of these applications is hereby incorporated herein by reference in its entirety.

BACKGROUND

[0003] In order to provide video surveillance of a wide area at high resolution, a conventional surveillance system employs many cameras, each emplaced at a different location throughout the scene being surveilled. Each camera has its own pan, tilt and zoom mechanism, and is connected to its own video feed at a video operator control console.

[0004] This approach has several problems. First, the spatial resolution of available cameras is not fine enough to cover a wide area at high resolution. Thus, the user must choose whether to zoom in or out using a particular camera. Zooming out provides wide area coverage, but makes the resolution insufficient to identify faces or other important features. Zooming in provides high resolution images, but creates significant gaps in video surveillance coverage of the scene. Furthermore, tying each camera to an individual video view creates many disparate views of the scene, which can be confusing and overwhelming for a single end user or require multiple users to monitor.

[0005] In addition, the spatial resolution of images acquired by conventional surveillance systems varies with the distance to the target and the particular optical arrangement of the cameras. Thus, not all imagery collected by conventional surveillance systems is suitable for target tracking, pattern (face) recognition, etc.

SUMMARY

[0006] Embodiments of the present invention include a system for monitoring a wide-area scene and a corresponding method of monitoring a wide-area scene. An illustrative system includes an array of first cameras and an array of second cameras. Each first camera has a first field of view, and each second camera has a second field of view that is different from the first field of view. In another example, the first field of view may be a first angular field of view, and the second field of view may be a second angular field of view that is smaller than the first angular field of view. The array of first cameras and the array of second cameras acquire first imagery and second imagery, respectively, which are used to form an image of the wide-area scene.

[0007] An alternative embodiment includes a surveillance system comprising an array of first cameras, an array of second cameras, a processor, a server, and an interface. Each first camera has a first angular field of view and is configured to provide respective first real-time imagery of a corresponding portion of the wide-area scene. Similarly, each second camera has a second angular field of view different from (e.g., smaller than) the first angular field of view and is configured to provide respective second real-time imagery of a corresponding portion of the wide-area scene. The processor is operably coupled to the array of first cameras and the array of second cameras and is configured to decompose the first real-time imagery and the second real-time imagery into image tiles and to compress the image tiles at each of a plurality of resolutions. The server, which is operably coupled to the processor, is configured to serve one or more image tiles at one of the plurality of resolutions in response to a request for an image of a particular portion of the wide-area scene. An interface communicatively coupled to the server (e.g., via a communications network) is configured to render a real-time image of the wide-area scene represented by the one or more image tiles.

[0008] Still another embodiment includes a (computer) method of compressing, transmitting, and, optionally, rendering image data. A processor decomposes images into image tiles and compresses the image tiles at each of a plurality of resolutions. The processor, or a server operably coupled to the processor, serves one or more image tiles at one of the plurality of resolutions in response to a request for an image of a particular portion of the wide-area scene. Optionally, an interface communicatively coupled to the server (e.g., via a communications network) renders a real-time image of the wide-area scene represented by the one or more image tiles.
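The decompose-compress-serve flow of [0007] and [0008] can be illustrated with a minimal Python sketch. It assumes grayscale frames held as NumPy arrays, a hypothetical 256-pixel tile edge, 2x2 averaging between pyramid levels, and the standard-library `zlib` codec as a stand-in for the hardware compression boards; the names `build_tile_store`, `serve`, and `decode` are hypothetical, and this is not presented as the ISIS implementation. The server picks the coarsest pyramid level that still supplies at least as many pixels as the requesting viewport is wide, then returns only the tiles that cover the requested region.

```python
import zlib

import numpy as np

TILE = 256  # hypothetical tile edge length, in pixels


def downsample(img: np.ndarray) -> np.ndarray:
    """Halve resolution by averaging 2x2 blocks (an odd trailing row/column is dropped)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    blocks = img[:h, :w].astype(np.float32).reshape(h // 2, 2, w // 2, 2)
    return blocks.mean(axis=(1, 3)).astype(img.dtype)


def build_tile_store(img: np.ndarray, levels: int) -> dict:
    """Compress every tile at every pyramid level; level 0 is full resolution."""
    store = {}
    for level in range(levels):
        for r in range(0, img.shape[0], TILE):
            for c in range(0, img.shape[1], TILE):
                tile = np.ascontiguousarray(img[r:r + TILE, c:c + TILE])
                store[(level, r // TILE, c // TILE)] = (tile.shape, zlib.compress(tile.tobytes()))
        img = downsample(img)
    return store


def serve(store: dict, levels: int, region: tuple, viewport_width: int):
    """Return (level, tiles) for region = (x0, y0, x1, y1) in full-resolution pixels, x1/y1 exclusive."""
    x0, y0, x1, y1 = region
    level = 0
    # Step to coarser levels while the region would still span the whole viewport.
    while level + 1 < levels and (x1 - x0) / 2 ** (level + 1) >= viewport_width:
        level += 1
    s = 2 ** level
    rows = range(y0 // s // TILE, (y1 - 1) // s // TILE + 1)
    cols = range(x0 // s // TILE, (x1 - 1) // s // TILE + 1)
    keys = [(level, r, c) for r in rows for c in cols if (level, r, c) in store]
    return level, {k: store[k] for k in keys}


def decode(entry, dtype=np.uint8) -> np.ndarray:
    """Decompress one stored tile back into an array."""
    shape, data = entry
    return np.frombuffer(zlib.decompress(data), dtype=dtype).reshape(shape)
```

A zoomed-out request for a whole 2048-pixel-wide frame on a 512-pixel viewport is answered from level 2 with two small tiles, while a close-up of one 256-pixel patch is answered with a single full-resolution tile, which is how bandwidth stays roughly proportional to the viewer's screen rather than to the sensor.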

[0009] Yet another embodiment includes a (computer) method of determining a model representing views of a scene from cameras in an array of cameras, where each camera in the array of cameras has a field of view that overlaps with the field of view of another camera in the array of cameras. For each pair of overlapping fields of view, a processor selects image features in a region common to the overlapping fields of view and matches points corresponding to a subset of the image features in one field of view in the pair of overlapping fields of view to points corresponding to the subset of the image features in the other field of view in the pair of overlapping fields of view to form a set of matched points. Next, the processor merges at least a subset of each set of the matched points to form a set of merged points. The processor then estimates parameters associated with each field of view based on the set of merged points to form the model representing the views of the scene.
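The pairwise matching step of [0009] (and claims 2 to 5) can be sketched in Python, assuming features have already been detected and tentatively paired in each overlap region. The sketch fits a homography with a normalized direct linear transform, uses a random sample consensus (RANSAC) loop, keeps points whose projections land within a predefined distance (an assumed 2 pixels), and pools the surviving points from every camera pair. All function names and parameter values are hypothetical, and the final global parameter estimation (e.g., a bundle adjustment over the merged points) is deliberately left out.

```python
import numpy as np


def _normalize(pts):
    """Translate to the centroid and scale to mean distance sqrt(2) (Hartley normalization)."""
    c = pts.mean(axis=0)
    s = np.sqrt(2) / np.mean(np.linalg.norm(pts - c, axis=1))
    T = np.array([[s, 0, -s * c[0]], [0, s, -s * c[1]], [0, 0, 1.0]])
    return (pts - c) * s, T


def fit_homography(src, dst):
    """Direct linear transform: 3x3 H with dst ~ H @ src, from >= 4 point pairs."""
    ns, Ts = _normalize(src)
    nd, Td = _normalize(dst)
    rows = []
    for (x, y), (u, v) in zip(ns, nd):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    _, _, vt = np.linalg.svd(np.asarray(rows))
    H = np.linalg.inv(Td) @ vt[-1].reshape(3, 3) @ Ts  # undo the normalization
    return H / H[2, 2]


def project(H, pts):
    """Map (N, 2) points from one field of view into the other through H."""
    p = np.c_[pts, np.ones(len(pts))] @ H.T
    return p[:, :2] / p[:, 2:3]


def ransac_homography(src, dst, max_dist=2.0, iters=500, seed=0):
    """Keep the model whose projections fall within max_dist pixels for the most points."""
    rng = np.random.default_rng(seed)
    best = np.zeros(len(src), dtype=bool)
    with np.errstate(divide="ignore", invalid="ignore"):  # degenerate samples yield NaNs
        for _ in range(iters):
            idx = rng.choice(len(src), 4, replace=False)
            err = np.linalg.norm(project(fit_homography(src[idx], dst[idx]), src) - dst, axis=1)
            inliers = err < max_dist
            if inliers.sum() > best.sum():
                best = inliers
    # Refit on all inliers (assumes at least 4 were found).
    return fit_homography(src[best], dst[best]), best


def merge_matches(pair_matches, max_dist=2.0):
    """pair_matches: {(cam_a, cam_b): (pts_a, pts_b)} -> pooled inlier tie points."""
    merged = []
    for (a, b), (pa, pb) in pair_matches.items():
        _, inl = ransac_homography(pa, pb, max_dist)
        merged += [(a, tuple(p), b, tuple(q)) for p, q in zip(pa[inl], pb[inl])]
    return merged
```

The merged tie points would then feed whatever global solver estimates each camera's parameters; a homography per pair is only an adequate local model when the overlap is small or the scene is distant relative to the camera baseline.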

[0010] Still another embodiment includes a (computer) method of compensating for imbalances in color and white levels in color images of respective portions of a wide-area scene, where each color image comprises red, green, and blue color channels acquired by a respective camera in a camera array disposed to image the wide-area scene. A processor normalizes values representing the red, green, and blue color channels to a reference value representing a response of the cameras in the camera array to white light. The processor equalizes the values representing the red, green, and blue color channels to red, green, and blue equalization values, respectively, then identifies high- and low-percentile values among each of the red, green, and blue color channels. The processor scales each of the red, green, and blue color channels based on the high- and low-percentile values to provide compensated values representing the red, green, and blue color channels.
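The four compensation steps of [0010] (claims 8 to 10) map naturally onto a few NumPy operations. This is a minimal sketch under stated assumptions: images are (H, W, 3) arrays in RGB order; the white reference is a single per-channel value taken as the mean of an LEDs-on calibration frame minus an LEDs-off frame; the "scaling by" the equalization coefficients of claim 9 is interpreted as dividing by them, so that every channel median ends up equal to the mean of the medians; and the 1st and 99th percentiles are assumed defaults. The function names are hypothetical.

```python
import numpy as np


def white_reference(led_on, led_off):
    """Per-channel white response from calibration-hemisphere frames (LEDs on minus LEDs off)."""
    return (led_on.astype(float) - led_off.astype(float)).reshape(-1, 3).mean(axis=0)


def compensate(img, white_ref, lo_pct=1.0, hi_pct=99.0):
    """Return an (H, W, 3) float image in [0, 1] with white and color levels balanced."""
    x = img.astype(float) / white_ref                # (A) normalize to the white response
    med = np.median(x.reshape(-1, 3), axis=0)        # (B1) per-channel medians
    coeff = med / med.mean()                         # (B2) equalization coefficients
    x = x / coeff                                    # (B3) channel medians now coincide
    lo, hi = np.percentile(x.reshape(-1, 3), [lo_pct, hi_pct], axis=0)  # (C) per channel
    return np.clip((x - lo) / (hi - lo), 0.0, 1.0)   # (D) linear stretch, clipped
```

For a gray scene seen through a color cast (for example, channel gains of 1.0, 0.7, and 0.5), the three output channels come out identical, which is the intended effect of equalization followed by a per-channel stretch.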

[0011] A yet further embodiment comprises a calibration apparatus suitable for performing white and color balancing of a sensor array or camera head. An illustrative calibration apparatus includes a hemispherical shell of diffusive material with a first surface that defines a cavity to receive the sensor array or camera head. The illustrative calibration apparatus also includes a reflective material disposed about a second surface of the hemispherical shell of diffusive material. One or more light sources disposed between the hemispherical shell of diffusive material and the reflective material are configured to emit light that diffuses through the hemispherical shell of diffusive material towards the cavity.

[0012] A further embodiment includes an interface for a surveillance system that monitors a scene. The interface may include a full-scene view configured to render a real-time panoramic image of the entire scene monitored by the surveillance system and a zoom view configured to render a close-up of a region of the panoramic view. In at least one example, the full-scene view and/or the zoom view may display a pre-warped image. An illustrative interface may optionally be configured to enable a user to select a region of the scene in the full-scene view for display in the zoom view. An illustrative interface may also be configured to enable a user to set a zone in the panoramic image to be monitored for activity and, optionally, to alert the user upon detection of activity in the zone. The illustrative interface may further populate an activity database with an indication of detected activity in the zone; the illustrative interface may also include an activity view configured to display the indication of detected activity to the user in a manner that indicates a time and a location of the detected activity and/or to display images of detected activity in the full-scene view and/or the zoom view. An exemplary interface may be further configured to track a target throughout the scene and to display an indication of the target's location in at least one of the full-scene view and the zoom view, and, further optionally, to enable a user to select the target.
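The zone-monitoring behavior in [0012] can be illustrated with a small Python sketch. It assumes a tracker already reports each target as an identifier plus an (x, y) position in panorama pixel coordinates, represents a user-drawn zone as a polygon, and uses an in-memory list as a stand-in for the activity database. The `Zone` class, `inside` function, and alert callback are hypothetical illustrations, not the ISIS interface code; the containment test is the standard even-odd ray-casting rule.

```python
from dataclasses import dataclass, field


def inside(pt, polygon):
    """Even-odd ray casting: True if pt = (x, y) lies inside polygon [(x, y), ...]."""
    x, y = pt
    hit = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal ray through pt
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                hit = not hit
    return hit


@dataclass
class Zone:
    """A user-drawn watch zone in panorama pixel coordinates (hypothetical structure)."""
    name: str
    polygon: list
    activity: list = field(default_factory=list)  # stand-in for the activity database

    def update(self, timestamp, detections, alert=print):
        """detections: iterable of (target_id, (x, y)) reported by the tracker for one frame."""
        for target_id, pt in detections:
            if inside(pt, self.polygon):
                self.activity.append((timestamp, target_id, pt))  # time and location
                alert(f"{self.name}: target {target_id} at {pt}, t={timestamp}")
```

Storing the timestamp and location with each record is what would let an activity view plot detections by time and place and jump the full-scene or zoom view to them.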

[0013] It should be appreciated that all combinations of the foregoing concepts and additional concepts discussed in greater detail below (provided such concepts are not mutually inconsistent) are contemplated as being part of the inventive subject matter disclosed herein. In particular, all combinations of claimed subject matter appearing at the end of this disclosure are contemplated as being part of the inventive subject matter disclosed herein. It should also be appreciated that terminology explicitly employed herein that also may appear in any disclosure incorporated by reference should be accorded a meaning most consistent with the particular concepts disclosed herein.

BRIEF DESCRIPTION OF THE DRAWINGS

[0014] The foregoing will be apparent from the following more particular description of example embodiments of the invention, as illustrated in the accompanying drawings in which like reference characters refer to the same parts throughout the different views. The drawings are not necessarily to scale, emphasis instead being placed upon illustrating embodiments of the present invention.

[0015] FIG. 1 is a diagram that illustrates an imaging system for immersive surveillance (ISIS) and major ISIS components according to an embodiment of the present invention.

[0016] FIG. 2A is an exploded view of an ISIS camera head according to an embodiment of the present invention.

[0017] FIG. 2B is a plot that shows coverage provided by the cameras in the ISIS camera head of FIG. 2A.

[0018] FIG. 2C is a plot of resolution versus target distance for the ISIS camera head of FIG. 2A and two single-focal-length surveillance systems.

[0019] FIGS. 3A and 3B illustrate example modular architectures suitable for use in ISIS systems according to embodiments of the present invention.

[0020] FIG. 4 illustrates client-server operation for an example ISIS system according to an embodiment of the present invention.

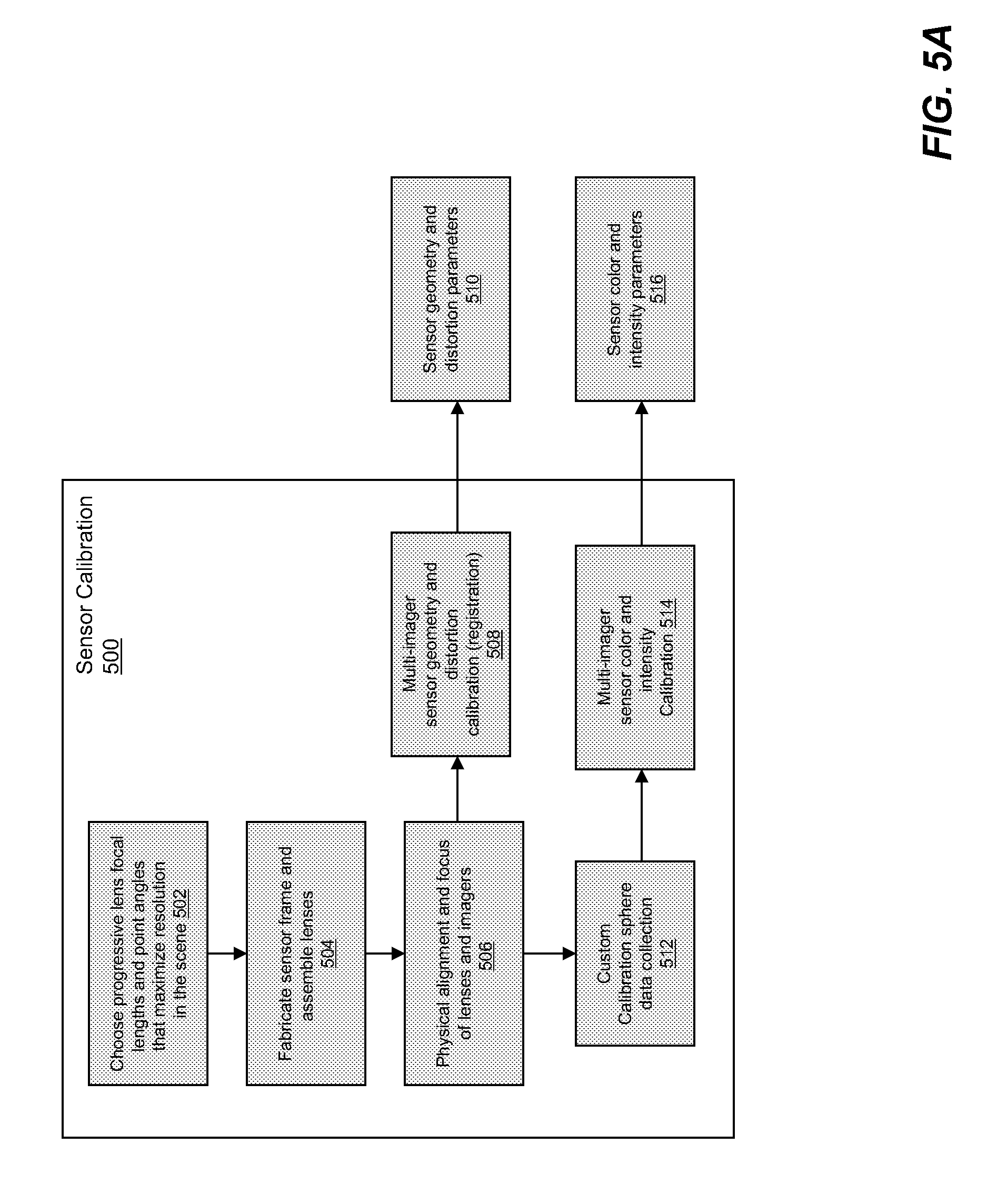

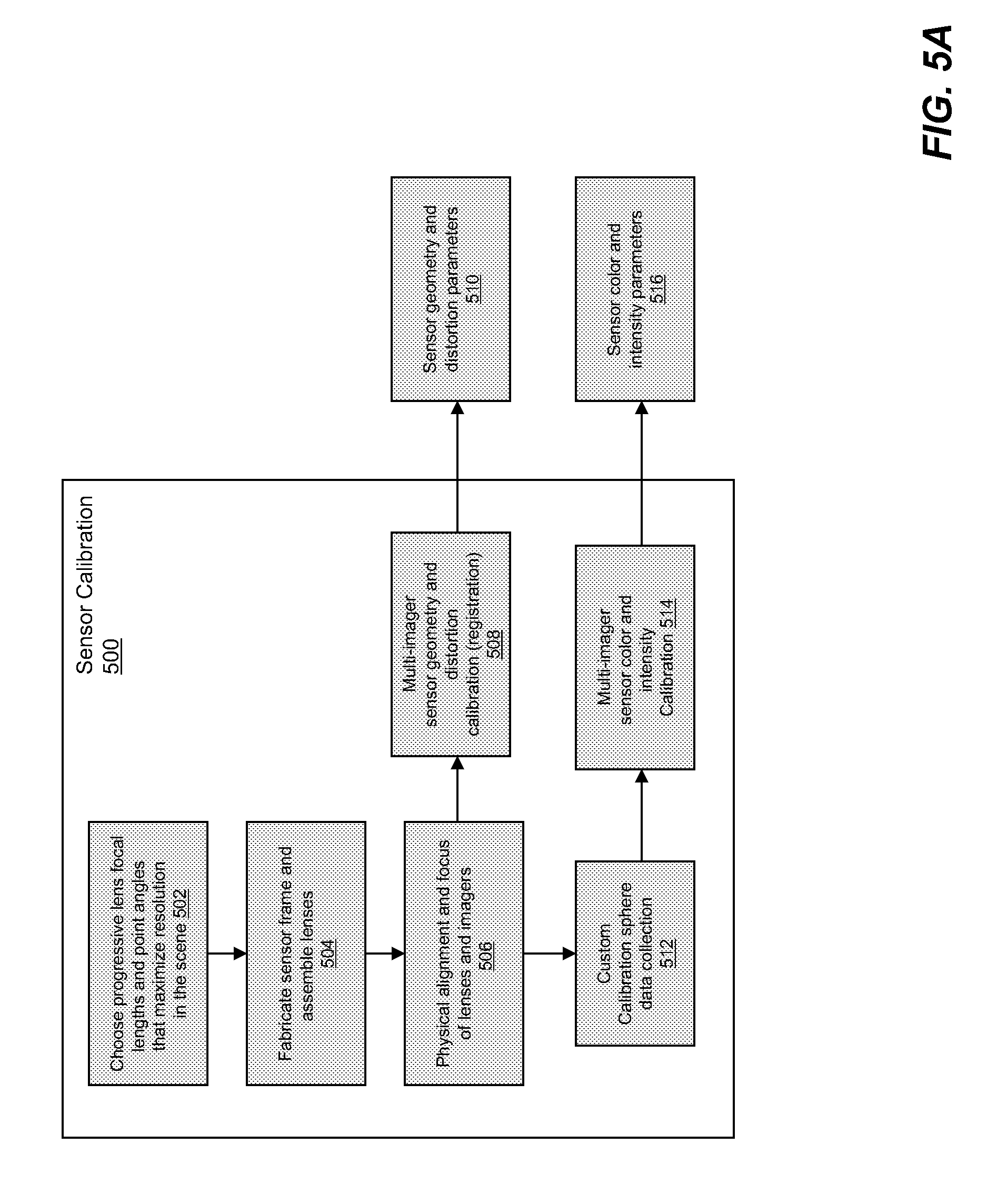

[0021] FIG. 5A shows an illustrative sensor calibration process according to an embodiment of the present invention.

[0022] FIG. 5B illustrates ISIS processing architecture and data flow according to an embodiment of the present invention.

[0023] FIGS. 6A and 6B illustrate ISIS registration processes with (FIG. 6A) and without (FIG. 6B) the use of a wide-angle reference image according to embodiments of the present invention.

[0024] FIG. 6C illustrates images acquired with an illustrative ISIS system before (left) and after (right) relative registration (FIG. 6B) according to one embodiment of the present invention.

[0025] FIG. 6D illustrates images acquired with an illustrative ISIS system before (left) and after (right) seam blending according to one embodiment of the present invention.

[0026] FIG. 7A shows how non-uniformity correction can be used to reduce image roll-off according to an embodiment of the present invention.

[0027] FIG. 7B illustrates a novel diffuse-reflectance hemisphere used for color calibration of an ISIS system according to an embodiment of the present invention.

[0028] FIG. 7C includes low-resolution images of part of a wide-area scene before (left) and after (right) white balancing and color equalization.

[0029] FIG. 7D includes low-resolution images of a wide-area scene at night, in the rain, from a working ISIS system with visible-wavelength cameras before (left) and after (right) white balancing and color equalization.

[0030] FIG. 8 shows how demosaicing can be used to improve image quality according to an embodiment of the present invention.

[0031] FIGS. 9A and 9B illustrate exemplary ISIS compression processes according to an embodiment of the present invention.

[0032] FIG. 10 illustrates adaptive statistical background modeling suitable for use with ISIS image data according to an embodiment of the present invention.

[0033] FIGS. 11-13 illustrate aspects of a user interface for an ISIS image viewer suitable for displaying and analyzing real-time and recorded data acquired by an ISIS system according to an embodiment of the present invention.

[0034] FIG. 14 shows rendered video views of 90-degree and 3-degree scenes acquired with an example ISIS system according to an embodiment of the present invention.

[0035] FIG. 15 shows a fisheye view that was acquired and corrected with an example ISIS system according to an embodiment of the present invention.

[0036] FIGS. 16A-16Q illustrate aspects of a user interface according to embodiments of the present invention.

[0037] FIGS. 17A-17C illustrate aspects of an ISIS cooling system according to embodiments of the present invention.

DETAILED DESCRIPTION

[0038] A description of example embodiments of the invention follows. The teachings of all patents, published applications and references cited herein are incorporated by reference in their entirety.

[0039] An Imaging System for Immersive Surveillance (ISIS) is a video surveillance system that provides wide-area, persistent surveillance from a single vantage point. The system provides 360-degree video surveillance coverage in the scene by being mounted to a ceiling, tower or wall. Example applications of the system include supporting security personnel in maintaining situational awareness in an area, aiding operators in real-time decision-making, and providing a comprehensive and high-resolution record of all activity in an area of interest.

[0040] The system has other applications as well, including but not limited to the provision of operational support for facilities operators, and the acquisition of high resolution video coverage for media applications. For example, an ISIS could be used for military base protection, port security, border security, airport security, and/or casino security. An ISIS could be used at train and subway stations, indoor and outdoor stadiums, shopping malls, entertainment venues, theme parks, convention centers, and even refugee centers.

[0041] Exemplary ISIS systems can also be used for sports, entertainment, and news broadcasting. For instance, a portable ISIS system can be used instead of or as a supplement to a conventional camera crew to provide coverage of sporting events, parades, and breaking news. An ISIS system mounted in a baseball or football stadium or hung from the ceiling of a basketball arena can provide real-time, 360-degree coverage of the entire field or court. ISIS can provide such coverage to multiple viewers simultaneously. The "virtual camera" features of ISIS mimic the pan/tilt/zoom (PTZ) functionality of conventional video cameras and allow television producers--and even individual viewers--to zoom in, zoom out, or follow particular sections of the shot. Exemplary ISIS systems give fans instant replay on demand, enable referees to review close calls, and allow investigators to track the movements of suspects through crowded areas.

[0042] ISIS unifies two or more arrays of cameras (each of which includes a lens and a detector array), custom image processing, custom hardware compression boards, a smart compression architecture and parallel processing to provide wide-area (e.g., 180°, 270°, or 360°) scene surveillance at a spatial resolution sufficient to identify a human face out to a radius of 10, 25, 50, 75, 100, or even 200 meters in all directions simultaneously. In some embodiments, the spatial resolution is about 0.5 cm, 1.0 cm, 1.2 cm, 1.5 cm, 2 cm, 13 cm, 20 cm, or 50 cm. As understood by those of skill in the art, 1.2 cm resolution is sufficient to recognize a person's face, 0.5 cm resolution is enough to read a license plate, 13 cm resolution is sufficient to identify a car, 20 cm resolution allows motion detection for people, and 50 cm resolution allows motion detection for cars.
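A short worked example shows why these resolution targets drive the very large pixel counts discussed below. Under the small-angle approximation, a pixel whose angular pitch is theta (radians) covers about d * theta at range d, so holding 1.2 cm at 100 m requires theta of about 120 microradians. The Python sketch below, with hypothetical function names, turns that into the number of pixels needed around a full 360-degree sweep at that single range; it is an illustrative calculation, not a statement of the ISIS design.

```python
import math


def required_ifov(resolution_m: float, range_m: float) -> float:
    """Angular pixel pitch (radians) that spans resolution_m at range_m (small-angle approx.)."""
    return resolution_m / range_m


def pixels_for_sweep(sweep_deg: float, resolution_m: float, range_m: float) -> int:
    """Pixels needed across an angular sweep to hold that resolution at that range."""
    return math.ceil(math.radians(sweep_deg) / required_ifov(resolution_m, range_m))


if __name__ == "__main__":
    # Face-recognition resolution (1.2 cm) at a 100 m radius, from the paragraph above.
    print(required_ifov(0.012, 100))          # about 1.2e-4 rad
    print(pixels_for_sweep(360, 0.012, 100))  # 52360 pixels around the horizon
```

That is more than 52,000 pixels for a single ring at one range; covering the ground from the horizon in to the foot of the mount multiplies this many times over.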

[0043] To provide this kind of wide-area coverage, most surveillance systems use many cameras with individual pan/tilt/zoom mechanisms emplaced at various points throughout the scene. This approach means that each camera provides either a wide field of view at low resolution, which makes it difficult or impossible to identify individuals, or a narrow field of view at high resolution, which means that situational awareness outside the field of view is lost. The ISIS sensor provides both wide area coverage and high resolution in a single sensor.

[0044] Furthermore, existing camera systems tie each individual camera to a single discrete view at a control center, where each operator monitors one or more camera views. Because an operator's ability to monitor separately presented views declines as the number of views grows, adding camera views requires adding operators to staff the control center. In contrast, ISIS combines imagery from many cameras into a single, continuous image that appears to the end user as if it came from a single lens-imager combination. As a result, ISIS enables a single user to monitor an entire wide-area scene--the number of users does not scale with the number of cameras (or the camera resolution). In some embodiments, an ISIS interface may extract and present sub-regions of the single, continuous image in separate windows so that the number of displays in an ISIS system scales with the number of events of interest in a scene. In addition, adding cameras or improving spatial resolution does not necessarily increase the complexity of an illustrative ISIS user interface.

[0045] An exemplary ISIS system may include a very large number (e.g., 100 million, 120 million, 240 million, or 480 million) of individual sensing elements (pixels) to provide fine spatial resolution throughout the wide-area scene. The use of inexpensive imagers, lenses, and custom electronic boards that can be manufactured at low cost in quantity, combined with the use of a single installation point, means that the cost per pixel is very low compared to other surveillance solutions. Further, the unification of an illustrative ISIS system into a complete, end-to-end, transportable platform means that the solution is cost effective, is quickly deployable, and bypasses the need for a system integration effort in which similar components are purchased separately.

[0046] An exemplary ISIS system may also provide a memory, or data storage, solution that is capable of handling these very high data rates (e.g., 240 million pixels at eight frames per second). ISIS also provides a tiled, multi-resolution compression scheme, accelerated through hardware-based compression in combination with an image client-server architecture, that allows for efficient bandwidth usage and fast playback speeds.

[0047] Finally, embodiments of ISIS provide a combination of image processing, viewer software design, and video analytics that enable the user to interact with the very large data in an efficient way. An illustrative ISIS viewer gives one or more users the ability to maintain wide-area situational awareness with a contextual view while simultaneously viewing different parts of the scene at high resolution through the means of the virtual pan/tilt/zoom view of the scene. Automated detection and tracking of moving objects in the scene can cue users to activity of interest instead of requiring an active search of the scene for activity.
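Automated detection of moving objects is commonly built on an adaptive background model. The Python sketch below shows one widely used form, a per-pixel running Gaussian with exponential forgetting; it is offered only as an example of the general technique and is not necessarily the adaptive statistical background model of FIG. 10. It assumes grayscale frames as NumPy arrays, and the class name and the learning rate `alpha`, threshold `k`, and variance floor `min_var` are hypothetical choices.

```python
import numpy as np


class RunningGaussianBackground:
    """Per-pixel Gaussian background model with exponential forgetting (grayscale frames)."""

    def __init__(self, first_frame, alpha=0.05, k=3.0, min_var=4.0):
        self.mean = first_frame.astype(float)
        self.var = np.full_like(self.mean, min_var)
        self.alpha, self.k, self.min_var = alpha, k, min_var

    def apply(self, frame):
        """Return a boolean foreground mask and adapt the model to background pixels."""
        x = frame.astype(float)
        diff = x - self.mean
        foreground = diff ** 2 > (self.k ** 2) * self.var  # more than k sigma from the mean
        bg = ~foreground
        # Update mean and variance only where the pixel still looks like background,
        # so slow lighting changes are absorbed without learning moving targets.
        self.mean[bg] += self.alpha * diff[bg]
        self.var[bg] = np.maximum(
            (1 - self.alpha) * self.var[bg] + self.alpha * diff[bg] ** 2, self.min_var
        )
        return foreground
```

Connected regions of the returned mask would then be handed to a tracker, which is the kind of cue that lets a user be alerted to activity instead of searching the whole scene.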

[0048] Overview of ISIS

[0049] FIG. 1 is a diagram of an ISIS system 100 showing major ISIS components: a high-resolution camera head 200 (also called a sensor assembly 200); a video data compression server array 104 and storage array 114; a video analytics engine 106; and a multi-user video data interface 108. The camera head 200 can be mounted at a position that provides mostly unobstructed views of the scene, such as on a ceiling, tower or wall. In one example, the camera head 200 includes two or more arrays of cameras selected to image a wide-area scene from a height of approximately 30 feet, although different mount heights are possible. Image data, such as real-time video data, from the camera head 200 is transmitted to an array of computers or "servers" 104 over a bundle of fiber optic cables 110. Fiber optic cables 110 provide high bandwidth transmissions over distances as short as several feet or as long as several kilometers. In one example, a bundle of 32 fibers 110 provides a 100 Gbps link from the camera head 200 to an array of server computers 104 and an associated hard drive array 114.
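The data-rate figures quoted above ([0046] and this paragraph) can be checked with simple arithmetic. The Python sketch below computes the uncompressed rate for 240 million pixels at eight frames per second under two assumed raw bit depths (8 and 12 bits per pixel, neither stated in the source) and compares it with the 100 Gbps, 32-fiber link. The function name is hypothetical, and the calculation ignores line-coding and protocol overhead.

```python
def raw_rate_gbps(pixels: float, fps: float, bits_per_pixel: int) -> float:
    """Uncompressed sensor data rate in gigabits per second."""
    return pixels * fps * bits_per_pixel / 1e9


if __name__ == "__main__":
    link_gbps, fibers = 100.0, 32  # from the 32-fiber, 100 Gbps example above
    for bpp in (8, 12):  # assumed raw bit depths, not stated in the source
        rate = raw_rate_gbps(240e6, 8, bpp)
        print(f"{bpp}-bit: {rate:.2f} Gbps raw, {rate / link_gbps:.0%} of the link")
    print(f"per fiber: {link_gbps / fibers:.3f} Gbps")
```

Under these assumptions the raw stream is 15.36 Gbps (8-bit) or 23.04 Gbps (12-bit), well within the link, with each fiber carrying 3.125 Gbps of capacity; the same figures also indicate why compression is needed before the data are written to disk.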

[0050] The server computers 104 compress the video data using hierarchical image compression (described below) and write the compressed data to an array of disks 114. The servers 104 also serve image and video data to video data viewer interfaces 108 resident on respective client computers. The client computers include the data browsing interfaces 108, which are connected to the data servers 104 by a transmission control protocol/internet protocol (TCP/IP) connection 116. This connection may be Ethernet (copper), fiber, or wireless. Multiple clients may connect to the servers 104 simultaneously, providing multiple users with simultaneous access to both current and past image data.

[0051] While the system 100 described below uses a wired, gigabit Ethernet link 116, the link 116 between the server cluster 104 and client PC/interface 108 may be any packet-switched network, including wireless and wired links. A wireless link may make a physical connection between the client PCs and server cluster 104 unnecessary, for example. Other links, for example, free space optical links, can also be used as understood by those of skill in the art.

[0052] The video data browsing interface also includes or is operably coupled to a video processing (analytics) engine 106 responsible for providing automated activity detection and image registration, and can incorporate external video analytics software as well. This video processing engine 106 may also run on the server computers 104.

[0053] Camera Head

[0054] The camera head 200 includes two or more arrays of cameras arranged to provide imagery of substantially all of a wide-area scene. Each camera in the camera head 200 includes a lens that images a respective portion of the wide-area scene onto a detector, such as a charge-coupled device (CCD), complementary metal-oxide-semiconductor (CMOS) array, bolometer array, or any other suitable detector (or combination of detectors). The detector may detect visible, infrared, or ultraviolet radiation. In the example below, the camera head includes a total of forty-eight cameras arranged in two different arrays, or tiers. Each camera includes a lens, a five-megapixel detector array, and associated electronics. Depending on both the application and the geometry of the scene, different numbers of imagers and a different number of pixels per imager may be used.

[0055] The lens and detector array of each camera are selected to provide a particular field of view of the wide-area scene. Each camera's field of view may be characterized as an angular field of view, which is the range of angles detected by the camera, or as a linear (or areal) field of view, which is the length (or area) of the portion of the scene detected by the camera. The angular field of view depends on the lens and the detector array and is independent of the scene, whereas the linear field of view depends on the angular field of view and the distance from the camera to the scene. Depending on the embodiment, an ISIS camera head may include cameras whose fields of view range from about 1-2 degrees to as high as 360 degrees. Each camera's field of view overlaps with the field of view of at least one other camera in the array to provide spatially continuous monitoring of the wide-area scene. In some examples, each camera's field of view overlaps with the fields of view of the neighboring cameras in the arrays of cameras.

[0056] The angular field of view of each camera in the camera head is chosen (e.g., by selecting a lens of a particular focal length) to provide relatively uniform spatial resolution throughout substantially all of the wide-area scene. As described in more detail below, each camera in one array of cameras may have a relatively narrow angular field of view (e.g., about 10° to about 30°) and may be positioned to image a portion of the scene that is relatively far away from the camera head (e.g., a portion of the horizon). Each camera in another array of cameras may have a relatively wide angular field of view (e.g., about 30° to about 60°) and may be positioned to image a portion of the scene that is relatively close to the camera head (e.g., a portion of the scene below the camera head). In general, varying the angular field of view as a function of camera position yields progressively varying angular resolution across the scene; in turn, this yields images whose spatial resolution is tailored to the geometry of the surveilled scene.

[0057] FIG. 2A shows an example camera head 200 that contains three arrays (tiers) 202a, 202b, and 202c (collectively, arrays 202), each with its own group of lenses 204a, 204b, and 204c (collectively, lenses 204), imager boards 206, and camera merger boards 208. Each lens 204/imager 206 pair forms a respective camera. In some embodiments, the frame to which the lenses 204 and electronics 206, 208 are mounted is made of Duraform GF, a glass-filled nylon material, formed by a technique called selective laser sintering (SLS), a type of three-dimensional printing. The use of SLS as a manufacturing technique allows more complex structures to be fabricated than other methods do. For example, a five-axis CNC (computer numerical control) machine cannot be controlled as precisely as an SLS machine. One example of such a structure is the set of imager board insets 206 on the inside of each array 202, which may be difficult to machine using more traditional methods. The use of a rapid prototyping methodology like SLS also can reduce production times, for example, from several months to one week. The imagers 206 and merger boards 208 are mounted in a protective case 210, which also encloses fans 212 used to cool the electronics in the camera head 200.

[0058] The top two arrays 202a, 202b are capped by an aluminum plate that holds two ventilation fans 212 which blow the air out of the casing. Also resident on the plate are two 150 W power supplies and an RJ-45 power connector for the boards. Removing the plate breaks the power connection to reduce the risks of accidental electrocution and human contact with the internal electronics.

[0059] Another aluminum plate, attached to the support rods, provides structural support and separates the second array 202b from the third array 202c. This additional plate holds two additional fans that move air upward from the third tier into the top two tiers. The third array 202c holds eighteen lens-imager pairs (cameras). Sixteen of the lenses 204c are 9.6-mm lenses, which provide coverage of the scene between approximately 10 and 50 meters from the sensor. In one embodiment, the camera head 200 includes another array of two cameras with 1.27-mm lenses mounted towards the bottom of the camera head 200. Alternatively, or in addition, one imager 206 is paired with a 2.6-mm lens, which provides coverage of the scene from 0 to 10 meters from the sensor, and another imager 206 is paired with a 1.1-mm lens (i.e., a fisheye lens) to provide full 360-degree coverage of the scene, providing reference imagery for image registration.

[0060] A 13.5-inch diameter case 210 covers the top two arrays 202a, 202b. This case 210 also contains transparent windows 214 that provide limited resistance to moisture and outdoor weather conditions. The case 210 contains two vents to allow the hot air being blown out of the camera to escape. The fiber bundles and power cables attached to the camera merger boards 208 inside the camera head 200 are threaded through the center of the aluminum plates and then out through commercially available liquid-tight non-metallic conduits that are installed in the top of the case 210. Five support rods that attach to an external mount are inserted through holes in the top of the case 210, through the first aluminum plate, and to the second plate separating the second and third arrays 202b, 202c. The two pieces of the glass-filled nylon frame (the top two tiers are a single unit and the bottom tier is separate) are attached by screws to the aluminum plate along their outer rim.

[0061] Camera Geometry and Field of View

[0062] FIG. 2B illustrates coverage provided by lenses of the camera head 200 shown in FIG. 2A. The top two arrays 202a, 202b of the frame each hold fifteen 25-mm focal length lenses 204a, 204b, fifteen imager boards 206, and five camera merger boards 208. In some examples, each lens 204 is paired with a single imager 206. Other arrangements of arrays and camera focal lengths are possible and may be selected based on the height of the sensor and the three-dimensional geometry of the scene being monitored. The geometry of the frame is designed such that the lenses 204 cover a continuous circular region approximately 50 m and farther from the lens 204 when the camera is placed at a height of 7.5 meters (the region beyond the circle of sixteen sensors in FIG. 2B). The fields of view of the sensors in each of the top two arrays 202a, 202b are offset (e.g., by the width of one-half of an imager) so that the field of view of one imager 206 is adjacent to the fields of view of the two imagers 206 immediately above or below it. Each camera's field of view may overlap with the fields of view of the neighboring cameras. Overlap helps to ensure that there are no gaps between imagers, and helps to provide enough common imagery between adjacent imagers to provide imagery for relative registration (described below).
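The half-imager stagger between the two upper tiers can be checked with a simple azimuth-only model. The sketch below places fifteen cameras per tier (as in FIG. 2B), rotates the second tier by half the in-tier spacing as an approximation of the stated offset, and reports the smallest overlap between azimuthally adjacent fields of view. It ignores elevation and lens distortion, the function names are hypothetical, and the 13-degree horizontal field of view used in the example is an assumed value, not one stated in this disclosure.

```python
def staggered_azimuths(cameras_per_tier: int, tiers: int = 2) -> list:
    """Azimuth (deg) of each camera's optical axis, with each successive tier
    rotated by a fraction of the in-tier spacing so that the tiers interleave."""
    spacing = 360.0 / cameras_per_tier
    centers = []
    for t in range(tiers):
        offset = t * spacing / tiers
        centers.extend((offset + k * spacing) % 360.0 for k in range(cameras_per_tier))
    return sorted(centers)

def min_overlap_deg(centers: list, fov_deg: float) -> float:
    """Smallest overlap between azimuthally adjacent fields of view
    (a negative value means there is a gap in coverage)."""
    n = len(centers)
    steps = [(centers[(i + 1) % n] - centers[i]) % 360.0 for i in range(n)]
    return fov_deg - max(steps)

centers = staggered_azimuths(15, tiers=2)   # 30 interleaved cameras, 12 deg apart
print(min_overlap_deg(centers, 13.0))       # positive: neighbors overlap
print(min_overlap_deg(staggered_azimuths(15, tiers=1), 13.0))  # negative: one tier leaves gaps
```

In this simplified model, a single tier of fifteen narrow-field cameras would leave gaps, whereas two interleaved tiers can close them, which is one way to see why the staggered arrangement permits a compact head.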

[0063] The geometry of the imagers 206 and lenses 204 may be chosen so that (1) the area of interest is covered with no gaps, and (2) the resolution on the objects of interest over the field of view is within a desired range regardless of the range from the object of interest to the camera head 200. Assuming that a high level of resolution is desired everywhere in the scene, the best use of the pixels in the sensor array 200 is to keep the resolution within a particular range, or as constant as possible, across the scene. In other words, the focal lengths of the lenses 204 paired with the cameras (imagers 206) may be chosen to vary progressively in order to provide a substantially constant resolution across the entire scene.

[0064] In some embodiments, the ISIS system 100 achieves this goal through a tiered lens approach. Different tiers (arrays) of cameras have lenses 204 with different focal lengths, so that a given tier (e.g., an upper array 202a) includes lenses 204 having a given focal length (e.g., lenses 204a have relatively long focal lengths) and a corresponding angular field of view. In one such embodiment, the upper array 202a of the ISIS system 100 contains lenses 204a having longer focal lengths (and narrower fields of view), and the lower arrays 202b, 202c contain lenses 204b, 204c having shorter focal lengths (and wider fields of view). In this way, the upper array 202a gives a higher angular resolution to maintain constant spatial resolution on more distant objects, and the lower arrays 202b, 202c have a coarser angular resolution but, because of their closer proximity to the targets in question, maintain a relatively constant spatial resolution on targets within the field of view of interest (in one such embodiment, 100 meters or less from the sensor). Other arrangements are possible, for example, an alternative ISIS system having shorter focal-length lenses in the upper tiers and longer focal-length lenses in the lower tiers, or an alternative ISIS system having tiers that contain both shorter and longer focal-length lenses.

[0065] As described above, the ISIS sensor 200 of FIG. 2A has three arrays 202 of lenses 204. The top two arrays 202a, 202b of cameras contain lenses 204a, 204b with focal lengths of 25 mm. The cameras' fields of view are staggered such that each camera's field of view overlaps with the fields of view of azimuthally adjacent cameras in the other tier of cameras. This arrangement allows the lenses 204 and imagers 206 to be packed in a tighter configuration and allows the sensor 200 to be significantly smaller. The bottom array 202c contains shorter focal length lenses 204c because the imagers 206 on the bottom array 202c are looking directly down. The upper arrays 202a and 202b provide coverage over the horizon, which appears as an outer ring, or annular portion, of the wide-area scene viewed from the perspective of a fisheye lens, and the lower array 202c provides coverage over a central portion (e.g., a circular region or inner annular region) of the wide-area scene viewed from the perspective of a fisheye lens.

[0066] The tiered design allows a graduated focal length along the sensor in the vertical direction, much like the bifocal or trifocal design of eyeglasses, or a graduated lens. The cameras need not be arranged in tiers; they can be arranged in arrays of different geometries, including interleaved geometries, and the number and arrangement of tiers depend on the application. In addition, the number of lenses and number of different focal lengths can be varied depending on the desired spatial resolution and the desired uniformity of spatial resolution across the scene.

[0067] FIG. 2C is a plot of the spatial resolution of targets as a function of distance for surveillance systems with single focal lengths and for the ISIS sensor 200 (FIG. 2A) mounted at an example height of 7.5 m above the ground plane. Targets are assumed to be on the ground plane for the purposes of this graph. The spatial resolution of a camera with a single-focal length lens varies linearly with distance to the target at a slope depending on the focal length as shown by lines 292 and 294. In contrast, the ISIS sensor 200, which includes cameras with multiple focal lengths, provides a substantially constant resolution (e.g., between about 5 mm and about 15 mm) over the entire field of view as shown by line 296. The line 296 is jagged because each array 202 of the sensor 200 has lenses 204 of different focal lengths and because the lenses 204 in each array 202 have different distances to their respective targets. Note that the optical design of the sensor head 200 is optimized to see out to a distance of 100 meters, although the diagram shows a coverage area of 200 meters.
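The trend in FIG. 2C can be approximated with a small-angle model in which one pixel subtends roughly (pixel pitch × slant range / focal length) on a surface facing the camera. The sketch below assumes a 2.2 µm pixel pitch and uses the 7.5 m mounting height and the 2.6 mm, 9.6 mm, and 25 mm focal lengths and coverage bands described for FIG. 2A. It ignores ground-plane foreshortening and lens distortion, so it will not reproduce the curves in FIG. 2C exactly; the function names are hypothetical.

```python
import math

PIXEL_PITCH_UM = 2.2    # assumed detector pixel pitch, micrometers
MOUNT_HEIGHT_M = 7.5    # sensor height above the ground plane (FIG. 2C)

def footprint_mm(ground_distance_m, focal_length_mm):
    """Approximate per-pixel footprint, in mm, of a ground target at the given
    horizontal distance, measured perpendicular to the line of sight.
    (um * m / mm = mm, so no further unit conversion is needed.)"""
    slant_m = math.hypot(ground_distance_m, MOUNT_HEIGHT_M)
    return PIXEL_PITCH_UM * slant_m / focal_length_mm

def tiered_focal_length_mm(ground_distance_m):
    """Focal length of the FIG. 2A tier that covers a given ground distance."""
    if ground_distance_m < 10.0:
        return 2.6     # near field, about 0-10 m
    if ground_distance_m < 50.0:
        return 9.6     # about 10-50 m
    return 25.0        # about 50 m and beyond

for d in (5, 20, 49, 50, 100):
    print(f"{d:3d} m: 25 mm only {footprint_mm(d, 25.0):5.1f} mm, "
          f"9.6 mm only {footprint_mm(d, 9.6):5.1f} mm, "
          f"tiered {footprint_mm(d, tiered_focal_length_mm(d)):5.1f} mm")
```

Each single-focal-length column grows roughly linearly with distance, while the tiered column stays within a narrow band and jumps at each tier boundary, which is the qualitative behavior of lines 292, 294, and 296.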

[0068] A sensor head with more tiers and/or lenses of a greater number of different focal lengths enables the sensor to obtain imagery whose spatial resolution is more uniform as a function of target distance. A greater number of different focal lengths also yields a spatial resolution versus target distance that would be represented by a line on the plot in FIG. 2C with many small "jags," each of which may be centered about a mean spatial resolution. The number of lenses, number of different lens focal lengths, lens focal length values, and target distance(s) determine an ISIS system's exact spatial resolution and the uniformity of the spatial resolution across the entire scene.

[0069] Camera Head Electronics

[0070] Besides the lenses 204, frame and cover, the sensor head 200 shown in FIG. 2A contains three major categories of electronic components: forty-eight imager printed circuit boards (PCBs) 206, sixteen camera merger PCBs 208, and two power boards.

[0071] Each of the imager boards 206 can be paired with a single lens 204, as described above, and carries a single detector array (e.g., a Micron Technology MT9P001 5-megapixel CMOS 12-bit digital RGB Bayer pattern image sensor array). In one example, the imager board 206 provides 15 frames of image data per second (fps) at full resolution while consuming less than 400 mW of power. It incorporates sophisticated camera functions such as windowing, column and row skip mode, and snapshot mode, and is programmable through a two-wire serial interface. The board 206 also contains a programmable read-only memory (PROM) for imager identification purposes and a ribbon cable connector for bidirectional data/control signals and power.

[0072] Each camera merger PCB 208 interfaces to three of the imager PCBs 206 via ribbon cables. A field programmable gate array (FPGA) (e.g., a Xilinx.RTM. Virtex 5 FPGA) on the camera merger PCB 208 is used to clock the imagers 206 at 96 MHz and capture data acquired by the imagers 206. One frame at a time, the data from all three imagers 206 is transferred from the FPGA to a 1-Gigabyte double data rate (DDR2) synchronous dynamic random-access memory (SDRAM) module at 400 MHz. The camera merger PCB 208 has two DDR SDRAM modules in order to perform a ping-pong data transfer scheme, i.e., data is written to one memory while being read back from the other. The data being read from memory is transferred, via a 3.125 Gigabit fiber-optic interface, to a data server containing a custom PCI JPEG2000 compression board. The camera merger PCB 208 also contains power conditioning circuitry for local components as well as for the imager board 206. Other FPGAs and memory configurations may be used as well. Two power boards (not shown) are located in the top array 202a of the sensor head 200. Each power board contains a 150 Watt DC-DC converter that accepts 24 volts and outputs 3.3 volts. In other embodiments, the power boards may be located elsewhere in the ISIS system.
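The ping-pong transfer scheme can be modeled in software as two banks that swap roles at each frame boundary: one bank is filled by the capture side while the other is drained by the transmit side. The sketch below is a behavioral model only, with hypothetical class and method names; on the camera merger PCB 208 the equivalent logic runs in the FPGA against two DDR2 modules.

```python
class PingPongBuffer:
    """Two frame buffers: the writer fills one while the reader drains the other."""

    def __init__(self):
        self._banks = [None, None]
        self._write_bank = 0          # index of the bank currently being written

    def write_frame(self, frame):
        # Capture side: store the newest frame in the current write bank.
        self._banks[self._write_bank] = frame

    def swap(self):
        # At a frame boundary the just-written bank becomes readable and
        # the other bank becomes the new write target.
        self._write_bank ^= 1

    def read_frame(self):
        # Transmit side: always read from the bank that is not being written.
        return self._banks[self._write_bank ^ 1]

buf = PingPongBuffer()
buf.write_frame("frame 0")
buf.swap()
buf.write_frame("frame 1")    # written while "frame 0" is being read out
print(buf.read_frame())       # frame 0
```

Because reads and writes never target the same bank, a full frame can be streamed out over the fiber link while the next frame is captured.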

[0073] Modular Camera Head Construction

[0074] The electronics in the camera head are designed in a modular fashion so that the camera head itself can be broken into several pieces to accommodate the geometry of the mounting point. For example, the camera head 200 can be broken down into four pieces, each of which is mounted to a face of a building to provide 360-degree coverage. The electronics in the camera are designed so that sub-cameras may be broken out without the need to modify the back end collection electronics or viewer software. In one example, the cameras are divided into groups of three, but other groupings or arrangements of cameras may be selected as desired.

[0075] FIGS. 3A and 3B illustrate alternative architectures 300 and 350, respectively, for image data acquired by the ISIS system 100 and camera head 200 described above. The ISIS system 100 includes four compression boards 302 per computer server 104, for a total of eight compression boards 302 distributed over two computer servers 104. Each computer server 104 receives a compressed data stream from each of the compression boards 302 over the internal data bus. The data is written to a multiple terabyte array of hard drives 114 that is also resident in each computer server 104. The array of hard drives 114 is configured as a redundant array of independent drives (RAID) to maximize both throughput and data redundancy for robustness to drive failure.

[0076] Each camera merger board 208 can transmit data over a single fiber pair 354 and thus forms a natural module and working unit. In a single-camera module embodiment 300 of the sensor 200, shown in FIG. 3A, all sixteen camera merger boards 208 reside in a single housing, and the fiber pairs 354 are grouped into a single fiber bundle 304. However, each camera merger board 208 or group of camera merger boards 208 can be broken into its own unit, as shown in the architecture 350 of FIG. 3B, so that if the camera head 200 were to be broken into multiple pieces, the system 100 would not need to be redesigned; rather, each merger board 208 and associated imagers 206 could reside in its own housing, be mounted separately, and each fiber pair 354 could be run separately to an associated compression card 302 or server 104, as shown in FIG. 3B. Furthermore, each compression card 302 could reside in a different server 104, and the servers 104 need not be co-located, as long as they reside on the same network. Thus, the system 100 is modular at the camera head level, the compression card level, and the server level, and these items need not be co-located.
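Because each unit in the chain serves a fixed number of units upstream, the route from a camera to its merger board, compression board, and server can be computed directly. The sketch below uses the ratios stated in this description (three imagers per merger board, two merger boards per compression board, four compression boards per server) and assumes cameras are numbered consecutively within each group; the names are hypothetical.

```python
from typing import NamedTuple

IMAGERS_PER_MERGER = 3             # imager PCBs per camera merger PCB
MERGERS_PER_COMPRESSION_BOARD = 2  # merger boards feeding one compression engine
COMPRESSION_BOARDS_PER_SERVER = 4  # compression boards per computer server

class Route(NamedTuple):
    merger_board: int
    compression_board: int
    server: int

def route_for_camera(camera: int) -> Route:
    """Map a 0-based camera index to the modules that carry its data."""
    merger = camera // IMAGERS_PER_MERGER
    board = merger // MERGERS_PER_COMPRESSION_BOARD
    server = board // COMPRESSION_BOARDS_PER_SERVER
    return Route(merger, board, server)

routes = [route_for_camera(c) for c in range(48)]
print(len({r.merger_board for r in routes}),        # 16 merger boards
      len({r.compression_board for r in routes}),   # 8 compression boards
      len({r.server for r in routes}))              # 2 servers
```

With forty-eight cameras these ratios give sixteen merger boards, eight compression boards, and two servers, matching the counts given above; breaking the head into pieces only changes where each module is housed, not this mapping.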

[0077] Video and Image Data Compression

[0078] The video data transmitted from the camera merger boards is compressed using JPEG2000 compression engines, or compression boards. (Other image and video compression techniques may be used as well.) Each compression engine receives video data from two camera merger boards over 3.125 Gigabit fiber. The compression engine decomposes each frame of the video data into tiles, which may be 128×128 pixels, 256×256 pixels, 512×512 pixels, or any other suitable size, including asymmetric (e.g., rectangular) sizes, and the tiles are stored at a plurality of resolutions. In one embodiment, an ISIS system encodes the data from each image tile at four different resolution levels, each one half the width and height of the preceding resolution. The data is encoded progressively such that multiple quality levels of imagery with varied regions of interest (ROIs) can be requested at a later time.
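The tiling arithmetic for one frame can be sketched as follows, assuming a 2592 × 1944 frame (the nominal size of a 5-megapixel detector), 256 × 256 tiles, and four resolution levels, with partial tiles at the right and bottom edges rounded up. The function name and return layout are illustrative; a JPEG2000 codec handles this bookkeeping internally.

```python
import math

def tile_layout(width, height, tile=256, levels=4):
    """Return (tiles_x, tiles_y, sizes), where sizes[r] = (frame_w, frame_h, tile_side)
    at resolution level r; level 0 is full resolution and each level halves the previous."""
    tiles_x = math.ceil(width / tile)    # partial tiles at the right edge round up
    tiles_y = math.ceil(height / tile)   # partial tiles at the bottom edge round up
    sizes = [(math.ceil(width / 2 ** r), math.ceil(height / 2 ** r), tile // 2 ** r)
             for r in range(levels)]
    return tiles_x, tiles_y, sizes

tx, ty, sizes = tile_layout(2592, 1944)
print(tx * ty, "tiles per frame")
for r, (w, h, t) in enumerate(sizes):
    print(f"level {r}: {w}x{h} frame, {t}x{t} per tile")
```

Under these assumptions each frame splits into 88 tiles, and the coarsest of the four levels represents each tile with only 32 × 32 pixels, which is what lets a wide, low-zoom view be served cheaply.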

[0079] FIG. 4 illustrates an example operation 400 of an image client-server software architecture that serves image data efficiently, such as the architecture 300 of FIG. 3A. A viewer interface 108 on a client computer sends a directory request 401 to the server cluster 104 for a directory of all collected imagery data sets (402). The server cluster 104 retrieves the data set start and end times (404) from metadata files stored in the hard drive archive 114, then forwards the requested directory 403 to the viewer/client 108.

[0080] Next, the viewer/client 108 requests imagery for a particular angular field of view (azimuth, elevation and angular width) sufficient to render an image of a particular size (406). Using image registration information (described below), the viewer/client 108 is able to back-project (408) the field of view in angle space to regions on specific imagers. This allows the viewer/client 108 to determine which cameras in the camera arrays are collecting video from a desired angular coverage area (410); the "tiles" or sub-regions within each imager to render the view (412); and the quality level or resolution of imagery to render the view of the scene at the desired size (414).
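Steps 410 and 414 can be sketched with two small helpers: one keeps the cameras whose azimuth span intersects the requested view, and the other picks the coarsest stored resolution level that still samples the scene at least as finely as the requested display. Both are simplified, azimuth-only illustrations with hypothetical names; the example values (a 13-degree camera field of view across 2592 pixels, thirty cameras 12 degrees apart) are assumptions.

```python
import math

def cameras_in_view(view_az_deg, view_fov_deg, camera_az_deg, camera_fov_deg):
    """Indices of cameras whose azimuth span overlaps the requested view."""
    half = (view_fov_deg + camera_fov_deg) / 2.0
    hits = []
    for i, az in enumerate(camera_az_deg):
        # Signed azimuth difference wrapped into [-180, 180).
        diff = (az - view_az_deg + 180.0) % 360.0 - 180.0
        if abs(diff) < half:
            hits.append(i)
    return hits

def pick_level(view_fov_deg, out_width_px, camera_fov_deg, camera_width_px, levels=4):
    """Coarsest level (0 = full resolution, each level halves it) whose angular
    sampling is still at least as fine as the requested display needs."""
    requested = view_fov_deg / out_width_px        # degrees per displayed pixel
    native = camera_fov_deg / camera_width_px      # degrees per full-resolution pixel
    ratio = requested / native
    if ratio <= 1.0:
        return 0
    return min(levels - 1, math.floor(math.log2(ratio)))

cams = [12.0 * k for k in range(30)]
print(cameras_in_view(0.0, 20.0, cams, 13.0))   # cameras near azimuth 0
print(pick_level(90.0, 1920, 13.0, 2592))       # wide view: coarsest level suffices
```

A wide view rendered at screen size needs only the coarse levels, while a tight zoom falls back to level 0; this is the mechanism by which the client limits how much data it requests.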

[0081] The viewer/client 108 then sends a request 415 for compressed data with the appropriate message headers over TCP/IP to the cluster of servers 104 for imagery for the tiles of imagery from the desired imagers at the desired resolution/quality level. The server 104 retrieves the requested data from the hard drive array (416), packages the data with the appropriate headers, and sends the packaged data 417 back to the viewer/client 108 that requested the data over the TCP/IP network connection. By sending only those image tiles at the resolution level necessary to render a particular angular view, the server 104 reduces the data bandwidth over the TCP/IP link. The viewer/client 108 decompresses and color corrects (418) the compressed image tile data, then renders the decompressed image tiles in a composite view (420).

[0082] Illustrative ISIS architectures may include video-based compression (which takes advantage of temporal correlation between frames and uses key frames to reduce data size), still image compression (where each image is stored as a separate image with no correlation between frames), or both. One advantage of using still image compression is that the user, upon "seeking" in the browser to a particular moment in time, can pull up the image very quickly without waiting for the video to "buffer" before playback can begin. Instant seek allows the user to browse the data for a particular object, person, or event more efficiently.
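The seek advantage can be made concrete by counting how many frames must be decoded to display an arbitrary frame. The sketch below assumes a simple chain in which each key frame is followed by predicted frames that each depend on their predecessor (no bidirectional frames); with still-image compression every frame is independently decodable. The function name is hypothetical.

```python
def frames_to_decode(target_frame: int, keyframe_interval: int) -> int:
    """Frames that must be decoded to display target_frame when only every
    keyframe_interval-th frame is independently decodable."""
    if keyframe_interval <= 1:
        return 1  # every frame is self-contained, as with still-image compression
    last_key = (target_frame // keyframe_interval) * keyframe_interval
    return target_frame - last_key + 1

print(frames_to_decode(29, 30))  # worst case just before the next key frame
print(frames_to_decode(29, 1))   # still-image storage: always one frame
```

The worst case grows with the key-frame interval, whereas still-image storage always costs one decode per displayed frame.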

[0083] Video and Image Processing

[0084] FIGS. 5A and 5B illustrate exemplary video and image processing chains, respectively, according to embodiments of the present invention. FIG. 5A shows the calibration process of the camera head 200 itself, and FIG. 5B shows how the imagery collected from the imagers makes its way to the end user.

[0085] FIG. 5A shows a calibration process 500 suitable for aligning the cameras in the camera head (sensor assembly). Once the progressive focal lengths and point angles of each lens have been chosen to optimize the resolution throughout the scene (502), the sensor frame is fabricated to hold the lenses in the computed geometry (504). The individual cameras (lens/imager pairs) are then physically aligned so that they overlap slightly with no gaps in coverage, and each camera is focused onto a corresponding (e.g., unique, but overlapping) portion of the wide-area scene (506). At this time, video data from the imager is collected in a typical scene. The collected imagery is used to estimate physical parameters 510 of the system, such as focal length, pixel size, point angle, and lens distortion (508). This estimation process (also called registration) is described below. These parameters 510 may be stored in a memory for later use during image processing. The imagery is also collected from the sensor inside a custom calibration sphere (512) for multi-imager sensor color and intensity calibration (514), as described below. This imagery is used to compute color and intensity correction parameters (516), which are also used during the image processing chain.
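One simple way to derive intensity correction parameters from the calibration sphere is to compute per-camera, per-channel gains that bring every camera's mean response to a uniformly lit target onto a common value. The sketch below assumes a gain-only model with hypothetical camera labels and function names; this disclosure does not specify the form of the correction parameters 516, and a fuller model could also include offsets or per-pixel (flat-field) terms.

```python
from statistics import mean

def channel_gains(sphere_means):
    """sphere_means: {camera_id: (R, G, B) mean response to the uniform target}.
    Returns per-camera RGB gains mapping each camera onto the array-wide mean."""
    per_channel = list(zip(*sphere_means.values()))   # all R values, all G, all B
    targets = [mean(values) for values in per_channel]
    return {cam: tuple(t / m for t, m in zip(targets, means))
            for cam, means in sphere_means.items()}

def apply_gains(pixel, gains):
    """Correct one (R, G, B) pixel with a camera's gains."""
    return tuple(v * g for v, g in zip(pixel, gains))

gains = channel_gains({"cam_a": (100.0, 200.0, 50.0), "cam_b": (300.0, 200.0, 150.0)})
print(gains["cam_a"])   # cam_a's red and blue are boosted toward the common level
```

Once both cameras are scaled to the same response, the same scene point should have similar values on either side of a seam, which reduces the blending work needed later.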

[0086] FIG. 5B shows an exemplary image processing architecture 520 suitable for use during ISIS system operation. Light from the wide-area scene being covered by the sensor is collected by the cameras (lenses and imagers) in a synchronized fashion. The camera sensor 102 formats and transmits the image data over the fiber bundle 304 to a server computer array 104, which analyzes the data and sends back commands to the camera head 200, also via fiber 304, to control the integration time (exposure) of each of the cameras (imager/lens pairs) to maximize dynamic range in the camera head 200.
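This description does not specify the control law used to set integration times. As one possibility, the sketch below shows a single step of a proportional auto-exposure loop for one camera, which moves the integration time part of the way toward the value that would place the mean normalized pixel level at a target. The target level, loop gain, and limits (the upper limit is roughly one frame period at 15 fps) are assumptions, as is the function name.

```python
def next_integration_time(current_ms, mean_level, target_level=0.45,
                          min_ms=0.05, max_ms=66.0, loop_gain=0.5):
    """One proportional auto-exposure step for a single camera.

    mean_level is the mean pixel value normalized to [0, 1]. Assuming the sensor
    response is roughly linear in integration time, the ideal time is the one
    that would scale mean_level to target_level; the loop moves loop_gain of
    the way there and clamps the result to the allowed range."""
    if mean_level <= 0.0:
        return max_ms                     # black frame: open up as far as allowed
    ideal_ms = current_ms * target_level / mean_level
    proposed = current_ms + loop_gain * (ideal_ms - current_ms)
    return min(max_ms, max(min_ms, proposed))

# Simulated camera whose mean level is 0.09 per millisecond of integration.
t = 10.0
for _ in range(10):
    t = next_integration_time(t, 0.09 * t)
print(round(t, 3))   # approaches 5 ms, where the mean level is 0.45
```

Running an independent loop per camera lets each camera expose for its own portion of the scene, so a camera facing a bright horizon and one looking down into shadow are not forced to share one exposure.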

[0087] The server computer array 104 then tiles the imagery and compresses each tile in a hierarchical, multi-resolution fashion. In some implementations of the architecture 520, JPEG2000 is used, but any suitable hierarchical data compression technique may be used. The compressed tiles are written to a large storage array 114 (FIG. 1).

[0088] One or more client computers 528 can connect to the server computer array 104 via TCP/IP (or similar communication protocol) over an Ethernet (copper) or wireless communication link. A user may use the client's graphical user interface (GUI) 108 to specify a desired field of view (or desired fields of view) of the scene in terms of azimuth, elevation, desired angular field of view, and time (current or past). The client 528 uses the sensor geometry and distortion parameters 510 (FIG. 5A) computed during calibration 500 to determine which image tiles, cameras, and resolution can be used to render the requested view(s). The client 528 requests those tiles and camera data from the server computer array 104. The client 528 and server computer array 104 together form the client-server architecture described later.

[0089] Once the server 104 has received the tile requests, it retrieves the desired image tiles at the requested resolution levels from the storage array 114 and sends them to the client computer 528 over the TCP/IP network. The client computer 528 receives and decompresses the tiles. It then corrects the decompressed tiles for color and intensity using the color and intensity parameters 516 (FIG. 5A) computed during the calibration stage 500. The client 528 then demosaics the color-corrected images into RGB imagery, which is stretched according to the specified controls on the GUI 108. The client 528 processes the stretched imagery for seam blending, a process by which the overlapping regions between the imagers are combined in a way so as to reduce the visibility of the seam to the end user. The client 528 renders the seam-blended image tiles on a display (not shown), for example, as projected onto a virtual camera space for the fields of view specified by the user and displayed on the GUI 108. This process may be repeated each time an image is output to the screen, e.g., as part of the display refresh or when the user selects a new point of view or portion of the scene as described below.
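Linear feathering is one common way to perform seam blending: across the overlap, the weight given to one camera ramps down while the weight given to its neighbor ramps up. The one-dimensional sketch below assumes the two rows are already registered and color corrected, so that their last and first `overlap` samples image the same scene points; it is an illustration with a hypothetical function name, not necessarily the blending method used in the ISIS client.

```python
def feather_blend(left, right, overlap):
    """Join two rows of pixel values whose last/first `overlap` samples image the
    same scene points, ramping the weights linearly across the seam."""
    if overlap <= 0:
        return list(left) + list(right)
    out = list(left[:-overlap])
    for i in range(overlap):
        w = (i + 1) / (overlap + 1)          # weight given to the right-hand image
        out.append((1.0 - w) * left[len(left) - overlap + i] + w * right[i])
    out.extend(right[overlap:])
    return out

print(feather_blend([10, 10, 10, 10], [20, 20, 20, 20], overlap=3))
```

A brightness step between two cameras becomes a gradual ramp across the overlap instead of a visible edge; the same weighting extends to two dimensions by applying it along the seam's normal direction.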

[0090] The client 528 may also process the rendered imagery using image processing plug-ins, including, but not limited to (motion) detection, object tracking and other video analytics. The client 528 may store indications of motion detection in a motion detection database 530 that can be accessed by one or more other clients 528 on the network. The client 528 may also re-load stored indications of motion detection at a later time. The results of object tracking can be fed back into the client-server architecture 520 to cue (e.g., continuously) the virtual camera view to be centered on one or more objects of interest. Other video analytics, such as behavior analysis and incident detection, can be subsequently applied to some or all of the processed data. Annotation tools also store operator-annotated events and specific incidents in the scene.
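As an example of the simplest kind of motion-detection plug-in, the sketch below flags pixels whose intensity changes by more than a threshold between consecutive grayscale frames. The threshold, minimum pixel count, and function names are hypothetical; practical detectors typically add background modeling and noise filtering.

```python
def motion_mask(prev, curr, threshold=25):
    """Flag pixels whose absolute intensity change between frames exceeds threshold.
    prev and curr are equally sized 2-D lists of grayscale values."""
    return [[abs(c - p) > threshold for p, c in zip(prow, crow)]
            for prow, crow in zip(prev, curr)]

def motion_detected(prev, curr, threshold=25, min_pixels=1):
    """Report motion when at least min_pixels pixels changed."""
    changed = sum(sum(row) for row in motion_mask(prev, curr, threshold))
    return changed >= min_pixels

prev = [[0, 0], [0, 0]]
curr = [[0, 100], [0, 0]]
print(motion_detected(prev, curr))   # True: one pixel changed by 100
```

Detections produced this way could be stored with their time stamps in a database such as the motion detection database 530 and reloaded later.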

[0091] Image Registration and Conditioning

[0092] In some examples, the ISIS system viewer 108 renders an interactive virtual camera view of the scene and displays it to a viewer (user). This virtual camera view represents an arbitrary angular field of view into the scene that is independent of the field of view of any single camera. The user has the ability to request a particular angular field of view and have the resultant imagery appear as if it came from a single camera (imager-lens combination), even if the viewer 108 uses imagery from multiple imagers to create that imagery. Thus, the relative position, point angle, pixel size, radial distortion, and focal length of each camera (imager/lens combination) should be determined to a sufficient degree of accuracy, and the imagery from each camera should be warped, resized, and placed in the virtual camera at the correct position so that the transition from one camera to another appears to be seamless. The process of determining the relative locations, relative pointing angles, appropriate image scale factors, and distortion correction for the cameras in the camera arrays to provide a seamless image is called image registration.

[0093] An ISIS system may use any suitable registration technique, including registration of imagery from individual cameras to a panoramic (e.g., fisheye) reference image of substantially all of the scene and registration of imagery from individual cameras to imagery from other cameras in the camera arrays. In theory, registration only needs to be done once per camera. In practice, however, cameras are re-calibrated periodically to compensate for mechanical drift, thermally induced expansion and contraction, and other environmental perturbations. For instance, outdoor ISIS systems may be re-calibrated once every two months, depending on the weather; indoor ISIS systems may be re-calibrated less frequently, and ISIS systems on moving platforms (e.g., unmanned aerial vehicles, ships, cars, and trucks) may be re-registered as indicated by visual inspection or when an error metric computed from image data reaches or exceeds a predefined threshold.
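The error-metric trigger for re-registration can be sketched as a root-mean-square distance between where the current registration predicts reference features should appear and where they are actually observed. The 2-pixel threshold and the function names are assumptions for illustration; this disclosure does not specify the metric.

```python
import math

def rms_error(predicted, observed):
    """Root-mean-square distance (pixels) between predicted and observed feature positions."""
    sq = [(px - ox) ** 2 + (py - oy) ** 2
          for (px, py), (ox, oy) in zip(predicted, observed)]
    return math.sqrt(sum(sq) / len(sq))

def needs_reregistration(predicted, observed, threshold_px=2.0):
    """True once the registration error reaches or exceeds the threshold."""
    return rms_error(predicted, observed) >= threshold_px

pred = [(0.0, 0.0), (10.0, 0.0)]
obs = [(3.0, 4.0), (10.0, 0.0)]
print(round(rms_error(pred, obs), 3), needs_reregistration(pred, obs))
```

On a moving platform, such a check could run periodically on recent imagery, so that the cost of a full registration is paid only when drift has become noticeable.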

[0094] Image Registration to a Wide Angle ("Fisheye") Reference

[0095] As described above, the camera head 200 of FIG. 2A comprises forty-eight lens-imager combinations, or forty-eight cameras (forty-eight is an arbitrary number; other numbers can be used). Most of these cameras have a narrow field of view (e.g., <30 degrees); these are designated "projective" cameras because they can be modeled fairly accurately as a flat projection of a three-dimensional world onto a plane perpendicular to the camera's optical axis. The camera head 200 may also include another camera (not shown) with a fisheye lens to provide a reference image that encompasses substantially all of the wide-area scene.
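The "projective" camera model is the ideal pinhole projection. The sketch below maps a point expressed in a camera's own coordinate frame to pixel coordinates; the focal length in pixels (focal length divided by pixel pitch), the principal point (cx, cy), and the function name are hypothetical inputs, and lens distortion is ignored.

```python
def project_pinhole(point_cam, focal_px, cx, cy):
    """Ideal pinhole projection of a 3-D point given in camera coordinates
    (z along the optical axis, in front of the camera) to pixel coordinates."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point is not in front of the camera")
    # Perspective divide: image coordinates scale with x/z and y/z.
    return cx + focal_px * x / z, cy + focal_px * y / z

print(project_pinhole((1.0, 2.0, 10.0), 1000.0, 500.0, 400.0))   # (600.0, 600.0)
```

This model holds well for narrow fields of view; as the field of view widens toward 180 degrees the x/z and y/z terms grow without bound, which is why the fisheye camera needs a different model.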

[0096] ISIS employs different models for the projective cameras and the fisheye camera to compensate for the unique distortion characteristics of the conventional (e.g., spherical) lenses in the projective cameras and the fisheye lens in the fisheye camera. These models are applied to the imagery to remove distortion. For projective cameras, the ISIS system 100 calculates distortion parameters and combines these parameters with the projection matrix, which is computed from an initial gross azimuth/elevation point angle estimation of the camera. For the fisheye lens/imager combination, the fisheye distortion parameters were computed using a publicly available calibration software toolbox. These distortion parameters were used to more accurately map camera pixels to three-dimensional world coordinates.
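This description does not name the distortion models. For illustration only, the sketch below uses two standard textbook models: a two-term radial polynomial for the projective cameras (with an iterative inverse to remove distortion) and the equidistant model for a fisheye lens, in which image radius is proportional to the off-axis angle. The coefficients k1 and k2, the focal length in pixels, and the function names are hypothetical.

```python
import math

def distort(x, y, k1, k2):
    """Two-term radial polynomial: ideal normalized coords -> distorted coords."""
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale

def undistort(xd, yd, k1, k2, iterations=20):
    """Invert distort() by fixed-point iteration (adequate for mild distortion)."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / scale, yd / scale
    return x, y

def equidistant_radius(theta_rad, focal_px):
    """Equidistant fisheye model: image radius (pixels) proportional to the
    angle between the incoming ray and the optical axis."""
    return focal_px * theta_rad

xd, yd = distort(0.3, -0.2, k1=-0.1, k2=0.01)
print(undistort(xd, yd, k1=-0.1, k2=0.01))          # recovers about (0.3, -0.2)
print(equidistant_radius(math.pi / 2, 600.0))       # a ray 90 degrees off-axis stays finite
```

The fisheye model keeps rays at 90 degrees off-axis at a finite image radius, which a pinhole projection cannot do; this is why the two camera types are handled with different models.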

[0097] Because the fisheye camera provides imagery that covers 360 degrees, the field of view of each projective camera covers a subset of the fisheye camera's field of view. As a result, the fisheye camera provides a single, seamless common reference frame for all of the projective cameras. The ISIS system 100 overlays imagery taken by a given projective camera on top of imagery captured by the fisheye lens camera, then matches the projective camera imagery to the underlying fisheye reference image in a process called image registration.

[0098] FIG. 6A illustrates an example image registration 600 performed by the ISIS system 100 using imagery 602 acquired by the fisheye camera and imagery 604 acquired by at least one other camera in the sensor head 200. To project image data onto an image plane perpendicular to the optical axis of the virtual camera view being generated, the image is warped using a "transform" or a linear algebra operation on the image data. Because of the 360-degree nature of the fisheye camera imagery 602, the fisheye camera imagery 602 is best represented with a three-dimensional spherical model. The registration process 600 performed for each projective camera by the ISIS system 100 is as follows.

[0099] First, one or more processors (e.g., on the client side) in the ISIS system 100 project the projective camera imagery 604 according to the current projection matrix (initialized to a default value based on a rough estimate of the point angle) (620) and render a non-linear, distortion-corrected projection of the reprojected camera imagery onto a spherical, three-dimensional model in (x, y, z) space (622). Next, the processor renders a non-linear, distortion-corrected projection of the fisheye camera imagery 602 onto the spherical, three-dimensional model in (x, y, z) space (606). The processor then renders a "camera view" planar projection from the spherically projected imagery of both the fisheye and projective imagery 602, 604 in a local angular area of overlap onto the tangent plane (virtual camera) in (x, y) space (608, 624).
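The geometry of steps 606, 608, 622, and 624 can be sketched for a single ray: an azimuth and elevation define a point on the unit sphere, and the gnomonic (central) projection maps that point onto the plane tangent to the sphere at the virtual camera's optical axis. Multiplying (u, v) by a focal length in pixels gives virtual-camera pixel coordinates. The coordinate convention (x east, y north, z up) and the function names are assumptions.

```python
import math

def azel_to_unit(az_deg, el_deg):
    """Unit ray for an azimuth (clockwise from north) and elevation,
    in a frame with x east, y north, z up."""
    az, el = math.radians(az_deg), math.radians(el_deg)
    return (math.cos(el) * math.sin(az), math.cos(el) * math.cos(az), math.sin(el))

def to_tangent_plane(ray, center):
    """Gnomonic projection of a unit ray onto the plane tangent to the unit sphere
    at `center` (the virtual camera axis). Returns (u, v) with u horizontal and
    v vertical, or None if the ray is 90 degrees or more off-axis.
    Undefined when `center` points straight up or down."""
    cx, cy, cz = center
    d = ray[0] * cx + ray[1] * cy + ray[2] * cz     # cosine of the off-axis angle
    if d <= 1e-12:
        return None
    norm = math.hypot(cx, cy)
    east = (cy / norm, -cx / norm, 0.0)             # (center x z-axis), normalized
    up = (east[1] * cz - east[2] * cy,              # east x center
          east[2] * cx - east[0] * cz,
          east[0] * cy - east[1] * cx)
    p = tuple(c / d for c in ray)                   # scale the ray to meet the plane
    u = sum(a * b for a, b in zip(p, east))
    v = sum(a * b for a, b in zip(p, up))
    return u, v

axis = azel_to_unit(0.0, 0.0)                       # virtual camera looking north
print(to_tangent_plane(azel_to_unit(45.0, 0.0), axis))   # about (1.0, 0.0)
```

Because both the fisheye and projective imagery pass through the same sphere-to-plane mapping, features from the two sources land in a common planar view where they can be compared directly.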

[0100] The processor then finds robust image features in both images (610, 626) using the Scale Invariant Feature Transform (SIFT), Speeded Up Robust Features (SURF), corner detection, edge filtering, hand selection, or any other suitable technique. (For more on SIFT, see U.S. Pat. No. 6,711,293 to Lowe, which is hereby incorporated herein by reference in its entirety.) Suitable image features are relatively robust to slight differences in color and scale and are relatively well localized (e.g., bright points, not blobs). The processor filters outliers from among the image features using random sample consensus (RANSAC; developed by Fischler and Bolles in 1981) or any other suitable technique (612). It then computes a homography between the filtered image features in the fisheye camera and projective camera views and uses the homography to determine a registration matrix (614). As understood by those of skill in the art, a homography is a projective transformation, represented by a 3×3 matrix defined up to scale, that maps points in one image (projection) onto the corresponding points in another image (projection). This registration matrix updates the projection matrix used above. The processor iterates through the registration process 600 until the homography converges or until it reaches a specified maximum number of iterations (e.g., M).
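Steps 612 and 614 can be sketched with the standard direct linear transform (DLT) fit inside a RANSAC loop. The code below, which requires NumPy, is a generic illustration rather than the ISIS implementation, and its function names are hypothetical. For pixel coordinates in the thousands, a production version would normally normalize coordinates (Hartley normalization) before fitting, and would be fed matched SIFT or SURF features rather than synthetic points.

```python
import numpy as np

def fit_homography(src, dst):
    """Direct linear transform (DLT): least-squares 3x3 H, scaled so H[2, 2] = 1,
    with dst ~ H @ [x, y, 1] for each correspondence (at least 4 required)."""
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1.0, 0.0, 0.0, 0.0, u * x, u * y, u])
        rows.append([0.0, 0.0, 0.0, -x, -y, -1.0, v * x, v * y, v])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    h = vt[-1].reshape(3, 3)
    return h / h[2, 2]

def apply_homography(h, pts):
    """Map an (N, 2) array of points through H, including the perspective divide."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ h.T
    return homog[:, :2] / homog[:, 2:3]

def ransac_homography(src, dst, iterations=500, tol=2.0, seed=0):
    """RANSAC: fit H to random minimal 4-point samples, keep the hypothesis with
    the most inliers (reprojection error < tol), then refit on those inliers."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    rng = np.random.default_rng(seed)
    best = np.zeros(len(src), dtype=bool)
    with np.errstate(divide="ignore", invalid="ignore"):  # degenerate samples give inf/nan
        for _ in range(iterations):
            idx = rng.choice(len(src), size=4, replace=False)
            h = fit_homography(src[idx], dst[idx])
            err = np.linalg.norm(apply_homography(h, src) - dst, axis=1)
            inliers = err < tol
            if inliers.sum() > best.sum():
                best = inliers
    if best.sum() < 4:
        raise ValueError("no homography with at least 4 inliers found")
    return fit_homography(src[best], dst[best]), best
```

In use, `src` and `dst` would hold matched feature locations from the projective and fisheye tangent-plane views; the returned inlier mask corresponds to the outlier filtering of step 612, and the refit matrix to the registration update of step 614.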

[0101] Relative Image Registration

[0102] FIG. 6B illustrates a relative registration process 650 in which overlapping images from the cameras on an ISIS camera head are registered to each other rather than to a reference image. A processor locates robust image features in a region of overlap, or image seam, common to an overlapping pair of images (or fields of view). The processor uses the locations of the image features to solve for intrinsic and extrinsic camera parameters, such as camera position, camera (angular or linear) field of view, camera pointing angle, camera offset, etc., by minimizing an error associated with re-projecting the image features onto the image seams using the camera parameters. Precise estimates of point angle, focal length, and pixel size are especially useful for registering cameras to each other without introducing perceptually significant discontinuities in the composite image. The relative registration process 650 yields a set of equations, or model, of the cameras' locations and attitudes relative to each other and to the scene. This model can be used to register imagery from the cameras to form a continuous image of all or substantially all of the wide-area scene. For instance, the registration process can be used to transform imagery that is aligned using initial estimates of camera parameters (shown at left in FIG. 6C) into registered imagery (shown at right in FIG. 6C).

[0103] FIG. 6B shows that the relative image registration process 650 begins with obtaining raw video or image data from cameras in the array (652) and generating or loading a set of nominal sensor parameters (654). A processor, such as a graphics processing unit (GPU) on the server side or the client side, uses the nominal sensor parameters to find regions of overlap, or seams, in the video and image data. The processor identifies and extracts points representing image features (658) that are robust to slight differences in color and scale (e.g., bright point sources) in each seam using SIFT, corner detection, edge filtering, user input, or any other suitable selection technique.

[0104] Once the processor has extracted the image feature points, it filters them to reject outliers using the following RANSAC procedure. The processor selects a random set of at least four non-colinear image features in a seam common to images from a pair of cameras, then calculates a planar homography, which defines a transform from one camera perspective to the other, based on the selected non-colinear features. Each point is characterized by an x coordinate and a y coordinate, so four points yield eight constraints--enough to solve for a 3×3 matrix that transforms one plane to another, because such a matrix is defined only up to scale and therefore has eight degrees of freedom.
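One standard way to solve for the planar homography from four or more correspondences is the direct linear transform (DLT), sketched below with NumPy. Each point pair contributes two linear equations in the nine entries of the matrix, and the solution is the null vector of the stacked system. This is an assumed solver chosen for illustration, not necessarily the one used in ISIS; practical implementations usually normalize the point coordinates first (Hartley normalization) to improve numerical conditioning.

```python
import numpy as np

def homography_dlt(src, dst):
    """Estimate H with dst ~ H @ src from N >= 4 point pairs (DLT).

    Each pair gives two rows, so four non-colinear pairs supply the eight
    constraints needed for a 3x3 matrix defined up to scale.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    if len(src) < 4 or len(src) != len(dst):
        raise ValueError("need at least four matching point pairs")
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        # From u = (h1 x + h2 y + h3) / (h7 x + h8 y + h9), likewise for v.
        rows.append([-x, -y, -1.0, 0.0, 0.0, 0.0, u * x, u * y, u])
        rows.append([0.0, 0.0, 0.0, -x, -y, -1.0, v * x, v * y, v])
    # The right singular vector with the smallest singular value solves A h = 0
    # in the least-squares sense (exactly, for four noise-free pairs).
    _, _, vt = np.linalg.svd(np.asarray(rows))
    H = vt[-1].reshape(3, 3)
    return H / H[2, 2]  # fix the arbitrary scale (assumes H[2, 2] != 0)
```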

[0105] The processor tests the homography on all of the image features as follows. First, the processor determines an inlier consensus set by projecting points representing the image features using the homography from one camera view (image) to the other camera view (image) sharing a common seam (660). The processor selects those points from one camera view that lie within a predefined Euclidean distance of the matching points in the other camera view and rejects outlying pairs of points (662). If desired, the processor may select another set of at least four image features, compute a new homography, and test the new homography, repeating until it reaches a maximum number of iterations or until it determines that the probability of choosing an outlier is less than a predefined probability. The processor retains the largest inlier consensus set on each seam for parameter optimization.

[0106] Next, the processor subsamples the inlier consensus set to produce an even distribution of points across the image seams (664). In general, the processor finds a large number of image features that contribute to the inlier consensus set, but highly spatially correlated features add very few additional constraints for the calibration software. Therefore, the processor prunes the matched points spatially so that there is an even sampling of points in a set of tiles distributed across every seam in the scene. Point reduction reduces the optimizer's sensitivity to specific seams, e.g., seams that have excess features. Point pruning also increases the speed of the calibration, since fewer points are re-projected at each iteration of the optimization. If a set of points in the world has a known three-dimensional location, a user can select the corresponding points in the raw imagery during a one-time initialization step, and the calibration software will produce a solution with metrically accurate absolute orientation.
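The tile-based pruning can be sketched as follows, assuming NumPy, square tiles of a hypothetical size `tile_size` laid over seam pixel coordinates, and a cap of `max_per_tile` points per tile. Points are kept in the order given, so sorting them by match quality beforehand would keep the strongest matches; that ordering rule is an assumption, not something the text specifies.

```python
import numpy as np

def prune_to_tiles(points, tile_size, max_per_tile=1):
    """Return indices of points kept so that each tile_size x tile_size tile
    holds at most `max_per_tile` points (first come, first kept)."""
    counts = {}
    keep = []
    for i, (x, y) in enumerate(np.asarray(points, dtype=float)):
        tile = (int(np.floor(x / tile_size)), int(np.floor(y / tile_size)))
        if counts.get(tile, 0) < max_per_tile:
            counts[tile] = counts.get(tile, 0) + 1
            keep.append(i)
    return np.array(keep, dtype=int)
```

For points (1, 1), (2, 2), (15, 1), (16, 2), (1, 15) with 10-pixel tiles and one point per tile, indices 0, 2, and 4 survive, one per occupied tile.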

[0107] The processor merges the pruned points from each seam in a procedure called multi-dataset calibration (666). Feature locations are scene dependent, with more features found in regions of the scene with higher entropy. Rotating the sensor (camera head) and acquiring additional images moves the feature-rich parts of the scene into other overlap regions (image seams), so that more seams receive constraints. Including more data samples helps avoid solutions that settle into local minima. In addition, using more samples averages out uncertainties in the location of each image feature.

[0108] Relative image registration 650 concludes with an optimization routine (668) to calculate the sensor model parameters. The optimizer uses the pruned point pairs from the overlap regions (image seams) to minimize a cost function based on the re-projection error. The processor iteratively minimizes a global cost equal to the sum of the re-projection errors using a boundary-constrained non-linear objective function that accounts for the camera parameters. Each camera parameter can be scaled with respect to other camera parameters to weight certain camera parameters more or less in the objective function at each iteration.
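As an illustration of the global cost, the sketch below assumes a simple rotation-only pinhole model with three parameters per camera (yaw, pitch, focal length in pixels) and pixel coordinates measured from the image center; the actual ISIS sensor model also includes pixel size, offsets, and distortion. All names are hypothetical. Parameter weighting is shown as optimizing over `params / scale`, one common way to scale parameters against each other. In a full calibration, this cost (or the stacked residuals behind it) would be handed to a bounded non-linear solver; SciPy's `scipy.optimize.least_squares`, for instance, accepts `bounds` and `x_scale` arguments for these purposes.

```python
import numpy as np

def camera_rotation(yaw, pitch):
    """Camera-to-world rotation: yaw about the vertical (y) axis, then pitch
    about x. Assumed camera frame: x right, y down, z along the optical axis."""
    cy, sy = np.cos(yaw), np.sin(yaw)
    cp, sp = np.cos(pitch), np.sin(pitch)
    ry = np.array([[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]])
    rx = np.array([[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]])
    return ry @ rx

def pixel_to_world(pts, yaw, pitch, f):
    """Back-project (N, 2) pixel offsets from the image center to world rays."""
    pts = np.asarray(pts, dtype=float)
    rays = np.column_stack([pts[:, 0] / f, pts[:, 1] / f, np.ones(len(pts))])
    return rays @ camera_rotation(yaw, pitch).T  # row-vector form of R @ ray

def world_to_pixel(rays, yaw, pitch, f):
    """Project (N, 3) world rays into a camera (pixel offsets from center)."""
    cam = rays @ camera_rotation(yaw, pitch)  # row-vector form of R.T @ ray
    return f * cam[:, :2] / cam[:, 2:3]

def seam_cost(scaled_params, scale, seams):
    """Global cost: sum of squared re-projection errors over all seams.

    scaled_params: flat (yaw, pitch, f) per camera, divided elementwise by
    `scale`, so larger scale entries let a parameter move further per step.
    seams: list of (cam_a, cam_b, pts_a, pts_b) matched feature pixels.
    """
    params = (np.asarray(scaled_params, dtype=float) * scale).reshape(-1, 3)
    total = 0.0
    for a, b, pts_a, pts_b in seams:
        predicted = world_to_pixel(pixel_to_world(pts_a, *params[a]), *params[b])
        total += float(np.sum((predicted - pts_b) ** 2))
    return total
```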

[0109] Because some of the functions defining the sensor model are non-differentiable, a completely analytic Jacobian of the cost function cannot be derived. (As understood by those of skill in the art, the Jacobian of the cost function is the matrix of all first-order partial derivatives of the cost function with respect to the vector of camera parameters.) The derivatives of these functions, such as lens distortion, are approximated using a three-point numerical approximation. The combined analytic and numerical Jacobian of the cost function directs the gradient descent iterations of the optimization routine to optimal parameter values more rapidly than a purely numerical approach. The gradient descent update portion of the software uses the Jacobian to predict the change in parameters at the next iteration that will yield a lower overall cost. Parameter estimation proceeds until a maximum number of iterations is reached, the error objective reaches a specified lower limit, or a particular parameter moves outside a defined interval.
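A combined analytic and numerical Jacobian can be sketched as below, assuming NumPy and a hypothetical dictionary `analytic` that supplies closed-form derivative columns for some parameters; every other column uses the three-point (central-difference) formula. In the usage check, the distortion coefficient column is treated as if no closed form were available. (SciPy's `least_squares` offers the same numerical scheme through `jac='3-point'`.)

```python
import numpy as np

def hybrid_jacobian(fun, x, analytic=None, rel_step=1e-6):
    """Jacobian of a vector-valued function `fun` at parameter vector `x`.

    Columns listed in `analytic` (dict: parameter index -> callable returning
    that column) use closed-form derivatives; all other columns use
        dF/dx_j ~ (F(x + h e_j) - F(x - h e_j)) / (2 h),
    whose truncation error is O(h^2).
    """
    analytic = analytic or {}
    x = np.asarray(x, dtype=float)
    n_out = np.atleast_1d(fun(x)).size
    jac = np.empty((n_out, x.size))
    for j in range(x.size):
        if j in analytic:
            jac[:, j] = analytic[j](x)
            continue
        h = rel_step * max(1.0, abs(x[j]))  # step relative to parameter size
        step = np.zeros_like(x)
        step[j] = h
        jac[:, j] = (np.atleast_1d(fun(x + step)) - np.atleast_1d(fun(x - step))) / (2 * h)
    return jac
```

For example, with a toy radial model `F(k1, s) = s * r * (1 + k1 * r**2)`, the `s` column can be given analytically while the `k1` column is estimated numerically and approaches `s * r**3`.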

[0110] The parameter optimization technique may also be used to calibrate sub-groups of imagers to achieve better results. For example, the thirty longer focal length cameras (i.e., those with 25 mm focal length lenses) in FIG. 2A may be registered to each other by solving for the point angle, pixel size, and focal length of each camera, and the sixteen medium focal length imagers (i.e., those with 9.6 mm focal length lenses) in FIG. 2A may be registered to each other using the same process. These two arrays of cameras may then be registered to each other using the global iterative process. Finally, any wide-angle, downward-pointing cameras (with 1.3 mm lenses) in the array have more inherent distortion; that distortion may also be modeled through the global optimization process, and these cameras are registered to the medium focal length cameras.

[0111] Seam Blending

[0112] Demosaicing yields an image like the one shown at left in FIG. 6D. Once demosaicing is finished, one or more processors (e.g., GPUs) perform a seam blending operation to blend the registered images together and produce an image such as the one shown at right in FIG. 6D. In some embodiments, seam blending has two parts: first, introducing an opacity mask that assigns a value to each pixel in each image, and second, blending the opacity masks together during the rendering process.

[0113] An opacity mask for an underlying image is a secondary image with the same dimensions (number of pixels) as the underlying image. Each pixel in the opacity mask has an opacity value between 0 and 1. The user may choose a number N which, in some embodiments, is slightly larger than the expected number of overlapping pixels in the registered images. Consider a border of width N on the edge of an image tile from a given camera. At the inside of the border, N pixels away from the outside edge of the image tile, the opacity mask has a value of 1, and along the edge of the image, the opacity mask has a value of 0. Between the inside and outside edges of the border region, the opacity mask value transitions smoothly from 1 to 0. The transition may be linear or may fall off in another fashion, e.g., exponentially. Each border of a given image tile may have a different width.
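A linear-ramp opacity mask with a separate width for each border could be built as in the sketch below, assuming NumPy; the function name is hypothetical, and combining the four edge ramps with a minimum (rather than, say, a product) is an assumed choice for handling the corners.

```python
import numpy as np

def opacity_mask(height, width, top, bottom, left, right):
    """Opacity mask for one image tile with per-edge border widths (pixels).

    Pixels on the outer edge get 0, pixels at least N pixels inside a border
    of width N get 1, and values ramp linearly in between.
    """
    rows = np.arange(height, dtype=float)[:, None]  # shape (H, 1)
    cols = np.arange(width, dtype=float)[None, :]   # shape (1, W)

    def ramp(dist, n):
        # Distance from an edge mapped to [0, 1]; zero width means no fade.
        return np.ones_like(dist) if n <= 0 else np.clip(dist / n, 0.0, 1.0)

    m_rows = np.minimum(ramp(rows, top), ramp(height - 1 - rows, bottom))
    m_cols = np.minimum(ramp(cols, left), ramp(width - 1 - cols, right))
    return np.minimum(m_rows, m_cols)  # broadcasts to (H, W)
```

An exponential falloff could replace `ramp`, provided it still reaches 0 at the edge and 1 at the inner border.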

[0114] Once the processor has created the opacity masks for each of the requested image tiles, it projects the opacity masks onto the virtual camera space and resamples them accordingly. Each pixel in the projected image may have opacity mask values associated with between 1 and M image tiles, depending on the number of overlapping images (camera fields of view). All of the opacity mask values associated with a single pixel are normalized so that they sum to one. For example, if a single pixel appears in an image seam common to three image tiles, the corresponding opacity mask values t1, t2, and t3 are each multiplied by a factor F, where F=1/(t1+t2+t3). If there is only one opacity value t1 associated with a given pixel, it is also normalized by F=1/t1, which is the same as setting that value to one.

[0115] Once the processor has normalized the opacity masks, it applies them to the imagery--that is, it multiplies each pixel value from each image tile by its associated opacity mask value and sums the weighted values from the overlapping tiles at each pixel. In this way, the edges of each image tile are blended into each other to create imagery that appears smoother, e.g., as shown at right in FIG. 6D.
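The normalization of [0114] and the weighted sum of [0115] can be sketched together, assuming NumPy and tiles and masks already resampled into the virtual camera space, with zeros wherever a tile has no data. The function name and array layout are assumptions for illustration.

```python
import numpy as np

def blend_tiles(tiles, masks, eps=1e-12):
    """Blend M overlapping tiles into one image.

    tiles: (M, H, W) or (M, H, W, C) pixel values.
    masks: (M, H, W) opacity values t1..tM (zero where a tile has no data).
    At each pixel the masks are multiplied by F = 1 / (t1 + ... + tM) so they
    sum to one, then each tile is weighted by its mask and the results summed.
    """
    masks = np.asarray(masks, dtype=float)
    total = masks.sum(axis=0)
    # Pixels with no coverage (total == 0) get zero weight from every tile.
    weights = np.where(total > eps, masks / np.maximum(total, eps), 0.0)
    tiles = np.asarray(tiles, dtype=float)
    if tiles.ndim == 4:
        weights = weights[..., None]  # broadcast over color channels
    return (weights * tiles).sum(axis=0)
```

Across a seam where one tile's mask falls from 1 to 0 as the other's rises from 0 to 1, the output fades smoothly from the first tile's values to the second's.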

[0116] Distortion Modeling

[0117] Exemplary ISIS systems may use models of the radial distortion of certain cameras (lenses) to correct the imagery and maximize the accuracy of the projection. For some of the shorter focal length lenses in the system, the lens distortion may be severe enough to warrant estimating distortion parameters and using them to undistort the imagery. Camera distortion may be estimated using the global parameter estimation described above or with any suitable camera calibration toolbox.
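The text does not specify the distortion model. A common choice is the polynomial radial model x_d = x_u (1 + k1 r^2 + k2 r^4) on normalized image coordinates; the sketch below assumes that model, NumPy, and hypothetical coefficients `k1` and `k2`, and inverts it by fixed-point iteration, which converges quickly for moderate distortion.

```python
import numpy as np

def distort(xy, k1, k2):
    """Apply radial distortion to (N, 2) normalized coordinates."""
    xy = np.asarray(xy, dtype=float)
    r2 = np.sum(xy ** 2, axis=-1, keepdims=True)
    return xy * (1 + k1 * r2 + k2 * r2 ** 2)

def undistort(xy_d, k1, k2, iters=20):
    """Invert `distort` by iterating x_u <- x_d / (1 + k1 r_u^2 + k2 r_u^4)."""
    xy_d = np.asarray(xy_d, dtype=float)
    xy_u = xy_d.copy()  # initial guess: the distorted coordinates themselves
    for _ in range(iters):
        r2 = np.sum(xy_u ** 2, axis=-1, keepdims=True)
        xy_u = xy_d / (1 + k1 * r2 + k2 * r2 ** 2)
    return xy_u
```

For very strong distortion (such as the 1.3 mm lenses), more iterations or a Newton step may be needed; the fixed-point form is chosen here only for brevity.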

[0118] Demosaicing Using Edge Sensing

[0119] Many imagers, including those used by this project, incorporate color filter arrays (typically overlaid with micro-lens arrays) to create a single image whose pixels alternately represent the blue, red, and green light in the image at a given point. The image read off such an imager is said to be mosaiced. The imagery can be converted to three individual full-resolution images, each representing a separate color component (blue, red, green). This process is called demosaicing and is shown in FIG. 8. One problem is that filling in the missing pixels for each color to create a whole image can cause visually distracting artifacts, particularly along strong edges in the images (sometimes called "zippering"). To sharpen the edges of the color-interpolated image so that they better represent the actual transitions in the imagery, a gradient-following approach is used: each missing pixel is interpolated along the direction of the strongest edge, as determined by examining its neighboring pixels. Demosaicing is a common problem in image conditioning. For descriptions of demosaicing methods suitable for use with ISIS imagery, see K. Hirakawa and T. W. Parks, "Chromatic Adaptation and White-Balance Problem," IEEE ICIP, 2005, or Edward Chang, Shiufun Cheung, and Davis Pan, "Color filter array recovery using a threshold-based variable number of gradients," Proc. SPIE, Vol. 3650, 36 (1999), each of which is incorporated herein by reference in its entirety.
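A simple instance of gradient-following interpolation is edge-directed reconstruction of the green channel, sketched below with NumPy under an assumed RGGB Bayer layout (green where row + column is odd). At each red or blue site the missing green value is averaged along whichever direction, horizontal or vertical, has the smaller green gradient, i.e., along the edge rather than across it. This is neither the threshold-based variable-number-of-gradients method of Chang et al. nor necessarily the ISIS implementation, and red/blue reconstruction is omitted.

```python
import numpy as np

def interpolate_green(mosaic):
    """Edge-directed green interpolation for an RGGB Bayer mosaic (assumed).

    Mirror (reflect) padding keeps the Bayer parity at the borders, so the
    four neighbours of every red/blue site are still green samples.
    """
    m = np.asarray(mosaic, dtype=float)
    h, w = m.shape
    p = np.pad(m, 1, mode="reflect")
    green = m.copy()
    rows, cols = np.indices((h, w))
    for r, c in zip(*np.nonzero((rows + cols) % 2 == 0)):  # red/blue sites
        pr, pc = r + 1, c + 1  # position in the padded array
        left, right = p[pr, pc - 1], p[pr, pc + 1]
        up, down = p[pr - 1, pc], p[pr + 1, pc]
        d_h, d_v = abs(left - right), abs(up - down)
        if d_h < d_v:    # smoother horizontally: interpolate along the row
            green[r, c] = (left + right) / 2
        elif d_v < d_h:  # smoother vertically: interpolate along the column
            green[r, c] = (up + down) / 2
        else:
            green[r, c] = (left + right + up + down) / 4
    return green
```

On a sharp vertical edge, plain bilinear averaging would blur the red/blue sites next to the edge (the source of "zippering"), whereas this rule interpolates vertically there and preserves the step.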

[0120] White and Color Balancing Using a Reference Image

[0121] In addition to registration, which determines the geometry of the reconstructed imager, the imagery can be "conditioned," or adjusted, so that the user can view imagery with excellent color balance, contrast, and sharpness. White balancing removes unrealistic color casts from the image so that white objects "look" white. Over the course of the day, the color temperature of the light may change, requiring occasional adjustments to the image. A white balancing tool was implemented that allows the user to select a region of the image that "should" be white; the tool computes the scaling factors that bring that region to true white and adjusts all of the imagery from the imager/lens combinations to match the new ratio.
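The scaling-factor computation might look like the sketch below, assuming NumPy, an RGB image array, and a rectangular user-selected region. The function names and the convention of anchoring the gains to the green channel (so the region becomes neutral, with equal R, G, and B) are assumptions; the same gains would then be applied to imagery from every imager/lens combination.

```python
import numpy as np

def white_balance_gains(image, region):
    """Per-channel gains that make a selected region neutral.

    image: (H, W, 3) RGB array; region: (row0, row1, col0, col1) bounds.
    Gains are normalized so the green gain is 1 (assumed convention).
    """
    r0, r1, c0, c1 = region
    patch = np.asarray(image, dtype=float)[r0:r1, c0:c1]
    mean = patch.reshape(-1, 3).mean(axis=0)
    return mean[1] / mean

def apply_gains(image, gains, max_value=255.0):
    """Scale each channel by its gain and clip to the valid range."""
    return np.clip(np.asarray(image, dtype=float) * gains, 0.0, max_value)
```

For a patch averaging (200, 180, 150), the gains are (0.9, 1.0, 1.2), and the balanced patch becomes (180, 180, 180).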