System And Method For Estimating Cardiorespiratory Fitness

Kovach; F. Grant ; et al.

U.S. patent application number 16/259416 was filed with the patent office on January 28, 2019 and published on 2019-08-01 for system and method for estimating cardiorespiratory fitness. The applicant listed for this patent is Under Armour, Inc. Invention is credited to Jeffrey Allen, F. Grant Kovach, and Michael Mazzoleni.

| Field | Value |
|---|---|
| United States Patent Application | 20190232108 |
| Application Number | 16/259416 |
| Kind Code | A1 |
| Family ID | 67392717 |
| Inventors | Kovach; F. Grant; et al. |
| Publication Date | August 1, 2019 |

SYSTEM AND METHOD FOR ESTIMATING CARDIORESPIRATORY FITNESS

Abstract

A fitness tracking system for generating movement variables corresponding to movement of a user includes a monitoring device, a personal electronic device, and a remote processing server. The monitoring device is configured to be worn or carried by the user and includes a movement sensor configured to collect movement data. The personal electronic device is operably connected to the monitoring device. At least one of the personal electronic device and the monitoring device is configured to calculate feature data by applying a set of rules to the movement data, to calculate raw speed data corresponding to a speed of the user from the subset of the movement data, and to calculate raw distance data corresponding to a distance moved by the user from the subset of the movement data. The remote processing server includes a machine learning model for processing at least the feature data.

| Field | Value |
|---|---|
| Inventors | Kovach; F. Grant (Baltimore, MD); Mazzoleni; Michael (Baltimore, MD); Allen; Jeffrey (Baltimore, MD) |
| Applicant | Under Armour, Inc. |
| Family ID | 67392717 |
| Appl. No. | 16/259416 |
| Filed | January 28, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62624214 | Jan 31, 2018 | |

| Classification | Value |
|---|---|
| Current U.S. Class | 1/1 |
| Current CPC Class | A63B 2071/0663 20130101; A63B 24/0062 20130101; A63B 2220/12 20130101; G16H 10/60 20180101; G16H 50/70 20180101; G16H 80/00 20180101; G16H 20/30 20180101; A63B 24/0003 20130101; A63B 2220/22 20130101; G16H 40/63 20180101; G16H 40/67 20180101; G01C 22/006 20130101; G06K 9/00348 20130101 |
| International Class | A63B 24/00 20060101 A63B024/00; G16H 20/30 20060101 G16H020/30; G06K 9/00 20060101 G06K009/00 |

Claims

1. A fitness tracking system for generating at least one movement variable corresponding to movement of a user, the fitness tracking system comprising: a monitoring device configured to be worn or carried by the user and comprising a movement sensor configured to collect movement data corresponding to movement of the user; a personal electronic device operably connected to the monitoring device and including a controller and a transceiver, the controller configured to receive at least a subset of the movement data from the monitoring device, wherein at least one of the monitoring device and the controller is configured to calculate feature data by applying a set of rules to the subset of the movement data, to calculate raw speed data corresponding to a speed of the user from the subset of the movement data, and to calculate raw distance data corresponding to a distance moved by the user from the subset of the movement data; and a remote processing server comprising a machine learning model, the remote processing server configured to receive the feature data, the raw speed data, and the raw distance data from the transceiver of the personal electronic device, and the remote processing server further configured to apply the machine learning model to the feature data, the raw speed data, and the raw distance data to determine movement variable data corresponding to the at least one movement variable, wherein the at least one movement variable comprises at least one of an estimated speed of the user, an estimated distance moved by the user, and an estimated stride length of the user, and wherein the transceiver of the personal electronic device is configured to receive the at least one movement variable determined by the machine learning model of the remote processing server.

2. The fitness tracking system of claim 1, wherein: the machine learning model is a neural network regression model, at least one of the monitoring device and the controller of the personal electronic device is further configured to calculate at least one of raw stride length data corresponding to a stride length of the user from the subset of the movement data, raw cadence data corresponding to a cadence of the user from the subset of the movement data, and raw ground contact time data corresponding to a ground contact time of the user from the subset of the movement data, the movement data includes acceleration data corresponding to an acceleration of the user, the feature data comprises at least one of a mean acceleration, a median acceleration, a root mean square acceleration, a maximum acceleration, and a minimum acceleration, the remote processing server is further configured to apply the machine learning model to at least one of the raw stride length data, the raw cadence data, and the raw ground contact time data to determine the movement variable data, and the at least one movement variable further comprises at least one of an estimated cadence of the user and an estimated ground contact time of the user.

3. The fitness tracking system of claim 1, wherein the personal electronic device further comprises: a display unit operably connected to the controller, the display unit configured to display a visual representation of the movement variable data.

4. The fitness tracking system of claim 3, wherein the transceiver of the personal electronic device is configured to wirelessly transmit the feature data to the remote processing server, to wirelessly receive the movement variable data from the remote processing server, and to wirelessly receive the movement data from the monitoring device.

5. The fitness tracking system of claim 1, wherein: the monitoring device is permanently embedded in a sole of a shoe worn by the user, and the personal electronic device is worn or carried by the user during the collection of the movement data.

6. The fitness tracking system of claim 1, wherein: the estimated speed of the user is a more accurate representation of an actual speed of the user than the raw speed data, and the estimated distance moved by the user is a more accurate representation of an actual distance moved by the user than the raw distance data.

7. A method of operating a fitness tracking system by determining at least one movement variable corresponding to movement of a user comprising: collecting movement data corresponding to movement of a user with a monitoring device worn or carried by the user; calculating feature data by applying a set of rules to at least a subset of the movement data with at least one of the monitoring device and a personal electronic device operably connected to the monitoring device; calculating raw speed data corresponding to a speed of the user from the subset of the movement data with at least one of the monitoring device and the personal electronic device; calculating raw distance data corresponding to a distance moved by the user from the subset of the movement data with at least one of the monitoring device and the personal electronic device; transmitting the feature data, the raw speed data, and the raw distance data to a remote processing server with the personal electronic device; applying at least the feature data, the raw speed data, and the raw distance data to a machine learning model to determine movement variable data corresponding to the at least one movement variable, the at least one movement variable comprising at least one of an estimated speed of the user, an estimated distance moved by the user, and an estimated stride length of the user, the machine learning model stored on the remote processing server; transmitting the movement variable data to the personal electronic device with the remote processing server; and displaying a visual representation of the movement variable data on a display of the personal electronic device, wherein the estimated speed of the user is a more accurate representation of an actual speed of the user than the raw speed data, and wherein the estimated distance moved by the user is a more accurate representation of an actual distance moved by the user than the raw distance data.

8. The method of claim 7, wherein: the machine learning model comprises a neural network regression model, the movement data includes acceleration data corresponding to an acceleration of the user, the feature data comprises at least one of a mean acceleration, a median acceleration, a root mean square acceleration, a maximum acceleration, and a minimum acceleration, and the method further comprises: calculating, with at least one of the monitoring device and the controller of the personal electronic device, at least one of raw stride length data corresponding to a stride length of the user from the subset of the movement data, raw cadence data corresponding to a cadence of the user from the subset of the movement data, and raw ground contact time data corresponding to a ground contact time of the user from the subset of the movement data; and applying at least one of the raw stride length data, the raw cadence data, and the raw ground contact time data to the machine learning model to determine the movement variable data, the at least one movement variable further comprising at least one of an estimated cadence of the user and an estimated ground contact time of the user.

9. The method of claim 7, further comprising: processing the movement data with at least one of the monitoring device and the personal electronic device to detect a movement event, wherein the subset of the movement data corresponds to the detected movement event.

10. The method of claim 9, wherein the detected movement event corresponds to a stride of the user comprising a stance phase event, a takeoff event, a flight phase event, and a landing event, and the method further comprises: identifying, with at least one of the monitoring device and the personal electronic device, at least one of stance phase data of the subset of the movement data that corresponds to the stance phase event, takeoff data of the subset of the movement data that corresponds to the takeoff event, flight phase data of the subset of the movement data that corresponds to the flight phase event, and landing data of the subset of the movement data that corresponds to the landing event; and calculating the feature data based on and corresponding to at least one of the stance phase data, the takeoff data, the flight phase data, and the landing data.

11. The method of claim 7, further comprising: identifying demographic data corresponding to the user, the demographic data comprising at least one of gender data, height data, weight data, body mass index data, and age data; and applying at least the demographic data to the machine learning model to determine the movement variable data.

12. The method of claim 7, wherein the set of rules comprises at least one of a complexity calculation, a skewness calculation, a kurtosis calculation, a percentage of acceleration data samples above or below a mean acceleration, and an autocorrelation calculation.

13. The method of claim 7, wherein: the movement data includes acceleration data corresponding to an acceleration of the user, and the set of rules comprises at least one of a lower quartile acceleration calculation, an upper quartile acceleration calculation, an interquartile acceleration range calculation, a percentage of acceleration data samples above or below a mean acceleration, a percentage of acceleration data samples above the mean acceleration and below the upper quartile acceleration calculation, and a percentage of acceleration data samples below the mean acceleration and above the lower quartile acceleration calculation.

14. The method of claim 7, wherein: the machine learning model comprises a heel-strike non-linear regression model, a midfoot strike non-linear regression model, and a forefoot strike non-linear regression model, the movement data includes acceleration data corresponding to an acceleration of the user, and the method further comprises: calculating, with at least one of the monitoring device and the controller of the personal electronic device, at least one of raw stride length data corresponding to a stride length of the user from the subset of the movement data, raw cadence data corresponding to a cadence of the user from the subset of the movement data, and raw ground contact time data corresponding to a ground contact time of the user from the subset of the movement data; determining if the subset of the acceleration data corresponds to a heel-strike stride of the user, a midfoot-strike stride of the user, or a forefoot-strike stride of the user; applying at least the feature data, the raw speed data, the raw distance data, the raw stride length data, the raw cadence data, and the raw ground contact time data to only the heel-strike non-linear regression model to determine the movement variable data, if the subset of the acceleration data corresponds to the heel-strike stride; applying at least the feature data, the raw speed data, the raw distance data, the raw stride length data, the raw cadence data, and the raw ground contact time data to only the midfoot-strike non-linear regression model to determine the movement variable data, if the subset of the acceleration data corresponds to the midfoot-strike stride; and applying at least the feature data, the raw speed data, the raw distance data, the raw stride length data, the raw cadence data, and the raw ground contact time data to only the forefoot-strike non-linear regression model to determine the movement variable data, if the subset of the acceleration data corresponds to the forefoot-strike stride.

15. A method for calculating at least one of a speed of a user and a distance traversed by a user with a fitness tracking system, comprising: collecting movement data corresponding to movement of the user with a monitoring device worn or carried by the user; calculating feature data by applying a set of rules to at least a subset of the movement data collected by the monitoring device with at least one of the monitoring device and a personal electronic device, the personal electronic device comprising a controller, a display unit, and a wireless transceiver, and the personal electronic device worn or carried by the user; wirelessly transmitting the feature data from the personal electronic device to a remote processing server with the wireless transceiver, wherein the remote processing server comprises a central processing unit (CPU) and a machine learning model; calculating, by the CPU and the machine learning model, movement variable data based on the feature data and corresponding to the at least one movement variable, the at least one movement variable including at least one of the speed of the user and the distance traversed by the user; transmitting the movement variable data from the server to the personal electronic device; and displaying a visual representation of the movement variable data on the display unit of the personal electronic device.

16. The method of claim 15, wherein: the machine learning model comprises a neural network regression model, the movement data includes acceleration data corresponding to an acceleration of the user, the feature data comprises at least one of a mean acceleration, a median acceleration, a root mean square acceleration, a maximum acceleration, and a minimum acceleration, and the method further comprises calculating, with at least one of the monitoring device and the personal electronic device, at least one of raw stride length data corresponding to a stride length of the user from the subset of the movement data, raw cadence data corresponding to a cadence of the user from the subset of the movement data, and raw ground contact time data corresponding to a ground contact time of the user from the subset of the movement data, applying at least one of the raw stride length data, the raw cadence data, and the raw ground contact time data to the machine learning model to determine the movement variable data, the at least one movement variable further comprising at least one of an estimated cadence of the user and an estimated ground contact time of the user.

17. The method of claim 15, wherein: the movement data includes acceleration data corresponding to an acceleration of the user, and the set of rules comprises at least one of a complexity calculation, a skewness calculation, a kurtosis calculation, a percentage of acceleration data samples above or below a mean acceleration, and an autocorrelation calculation.

18. The method of claim 15, wherein: the movement data includes acceleration data corresponding to an acceleration of the user, and the set of rules comprises at least one of a lower quartile acceleration calculation, an upper quartile acceleration calculation, an interquartile acceleration range calculation, a percentage of acceleration data samples above or below a mean acceleration calculation, a percentage of acceleration data samples above the mean acceleration and below the upper quartile acceleration calculation, and a percentage of acceleration data samples below the mean acceleration and above the lower quartile acceleration calculation.

19. The method of claim 15, further comprising: calculating combined feature data, at one or more of the monitoring device and the personal electronic device, based on a combination of a subset of the calculated feature data; wirelessly transmitting the combined feature data from the personal electronic device to the remote processing server; and calculating, by the CPU and the machine learning model, the movement variable data based in part on the combined feature data.

20. The method of claim 19, wherein the combined feature data is calculated using at least principal component analysis.
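The statistical rules named in claims 2, 12, and 13 (mean, median, root mean square, maximum, minimum, quartiles, skewness, kurtosis, autocorrelation) can be illustrated with a minimal sketch. The claims do not give formulas, so everything below is an assumption made for illustration: the function name `acceleration_features`, the dictionary keys, the use of population moments, the inclusive quartile method, and the lag-1 autocorrelation are all hypothetical choices, not the applicant's implementation.

```python
import math
import statistics


def acceleration_features(samples):
    """Return summary features for a list of at least two acceleration samples.

    ASSUMPTION: population (not sample) moments and inclusive quartiles;
    the claims name the statistics but do not define them.
    """
    n = len(samples)
    mean = statistics.fmean(samples)
    var = sum((x - mean) ** 2 for x in samples) / n  # population variance
    std = math.sqrt(var)
    q1, _, q3 = statistics.quantiles(samples, n=4, method="inclusive")

    def central_moment(k):
        # k-th central moment of the samples
        return sum((x - mean) ** k for x in samples) / n

    def autocorr(lag):
        # Normalized autocorrelation at the given lag; 0.0 for a constant signal
        if var == 0 or lag >= n:
            return 0.0
        cov = sum(
            (samples[i] - mean) * (samples[i + lag] - mean)
            for i in range(n - lag)
        ) / n
        return cov / var

    return {
        "mean": mean,
        "median": statistics.median(samples),
        "rms": math.sqrt(sum(x * x for x in samples) / n),
        "max": max(samples),
        "min": min(samples),
        "lower_quartile": q1,
        "upper_quartile": q3,
        "iqr": q3 - q1,
        "pct_above_mean": 100.0 * sum(x > mean for x in samples) / n,
        "skewness": central_moment(3) / std ** 3 if std else 0.0,
        "kurtosis": central_moment(4) / std ** 4 if std else 0.0,
        "autocorr_lag1": autocorr(1),
    }
```

A call such as `acceleration_features([1.0, 2.0, 3.0, 4.0, 5.0])` returns one dictionary per window of samples; in the claimed system such a window would correspond to the subset of the movement data.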

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims priority to U.S. Provisional Patent Application Ser. No. 62/624,214, filed Jan. 31, 2018, the content of which is incorporated herein by reference in its entirety.

COPYRIGHT

[0002] A portion of the disclosure of this patent document contains material that is subject to copyright protection. The copyright owner has no objection to the facsimile reproduction by anyone of the patent document or the patent disclosure, as it appears in the Patent and Trademark Office patent files or records, but otherwise reserves all copyright rights whatsoever.

FIELD

[0003] The methods and systems disclosed in this document relate to the field of fitness tracking systems for monitoring user activity and, in particular, to determining movement variables associated with a user of a fitness tracking system.

BACKGROUND

[0004] Active individuals, such as walkers, runners, and other athletes commonly use fitness tracking systems to track exercise metrics such as average speed and distance traversed during an exercise session. One type of fitness tracking system includes an accelerometer that collects data used to determine the exercise metrics. In order to improve the user experience of fitness tracking systems, it is desirable to increase the accuracy of the fitness tracking system and associated metrics. Accordingly, improvements in fitness tracking systems are desirable.

SUMMARY

[0005] In accordance with one exemplary embodiment of the disclosure, a fitness tracking system for generating at least one movement variable corresponding to movement of a user includes a monitoring device, a personal electronic device, and a remote processing server. The monitoring device is configured to be worn or carried by the user and comprises a movement sensor configured to collect movement data corresponding to movement of the user. The personal electronic device is operably connected to the monitoring device and includes a controller and a transceiver. The controller is configured to receive at least a subset of the movement data from the monitoring device. At least one of the monitoring device and the controller is configured to calculate feature data by applying a set of rules to the subset of the movement data, to calculate raw speed data corresponding to a speed of the user from the subset of the movement data, and to calculate raw distance data corresponding to a distance moved by the user from the subset of the movement data. The remote processing server includes a machine learning model and the remote processing server is configured to receive the feature data, the raw speed data, and the raw distance data from the transceiver of the personal electronic device. The remote processing server is further configured to apply the machine learning model to the feature data, the raw speed data, and the raw distance data to determine movement variable data corresponding to the at least one movement variable. The at least one movement variable comprises at least one of an estimated speed of the user, an estimated distance moved by the user, and an estimated stride length of the user. The transceiver of the personal electronic device is configured to receive the at least one movement variable determined by the machine learning model of the remote processing server.

[0006] Pursuant to another exemplary embodiment of the disclosure, a method of operating a fitness tracking system by determining at least one movement variable corresponding to movement of a user includes collecting movement data corresponding to movement of a user with a monitoring device worn or carried by the user, calculating feature data by applying a set of rules to at least a subset of the movement data with at least one of the monitoring device and a personal electronic device operably connected to the monitoring device, and calculating raw speed data corresponding to a speed of the user from the subset of the movement data with at least one of the monitoring device and the personal electronic device. The method further includes calculating raw distance data corresponding to a distance moved by the user from the subset of the movement data with at least one of the monitoring device and the personal electronic device, transmitting the feature data, the raw speed data, and the raw distance data to a remote processing server with the personal electronic device, and applying at least the feature data, the raw speed data, and the raw distance data to a machine learning model to determine movement variable data corresponding to the at least one movement variable. The at least one movement variable includes at least one of an estimated speed of the user, an estimated distance moved by the user, and an estimated stride length of the user, and the machine learning model is stored on the remote processing server. The method further includes transmitting the movement variable data to the personal electronic device with the remote processing server, and displaying a visual representation of the movement variable data on a display of the personal electronic device. The estimated speed of the user is a more accurate representation of an actual speed of the user than the raw speed data, and the estimated distance moved by the user is a more accurate representation of an actual distance moved by the user than the raw distance data.

[0007] In accordance with yet another exemplary embodiment, a further method for calculating at least one of a speed of a user and a distance traversed by a user with a fitness tracking system includes collecting movement data corresponding to movement of the user with a monitoring device worn or carried by the user, and calculating feature data by applying a set of rules to at least a subset of the movement data collected by the monitoring device with at least one of the monitoring device and a personal electronic device. The personal electronic device includes a controller, a display unit, and a wireless transceiver, and the personal electronic device is worn or carried by the user. The method further includes wirelessly transmitting the feature data from the personal electronic device to a remote processing server with the wireless transceiver. The remote processing server includes a central processing unit (CPU) and a machine learning model. The method further includes calculating, by the CPU and the machine learning model, movement variable data based on the feature data and corresponding to the at least one movement variable. The at least one movement variable includes at least one of the speed of the user and the distance traversed by the user. The method further includes transmitting the movement variable data from the server to the personal electronic device, and displaying a visual representation of the movement variable data on the display unit of the personal electronic device.

[0008] These and other aspects shall become apparent when considered in light of the disclosure provided herein.

BRIEF DESCRIPTION OF THE FIGURES

[0009] The foregoing aspects and other features of a fitness tracking system are explained in the following description, taken in connection with the accompanying drawings.

[0010] FIG. 1 is a diagram illustrating a fitness tracking system, as disclosed herein;

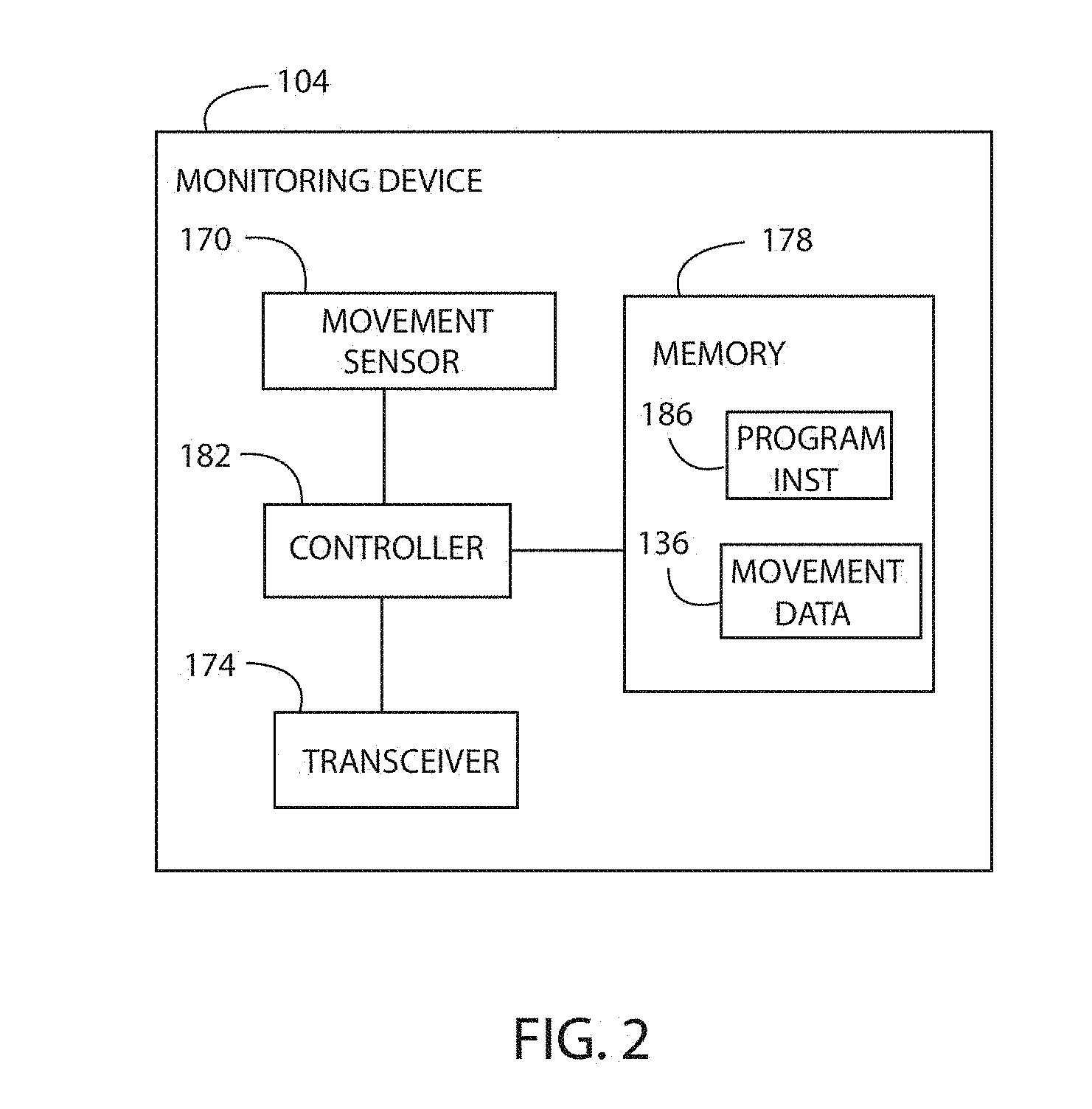

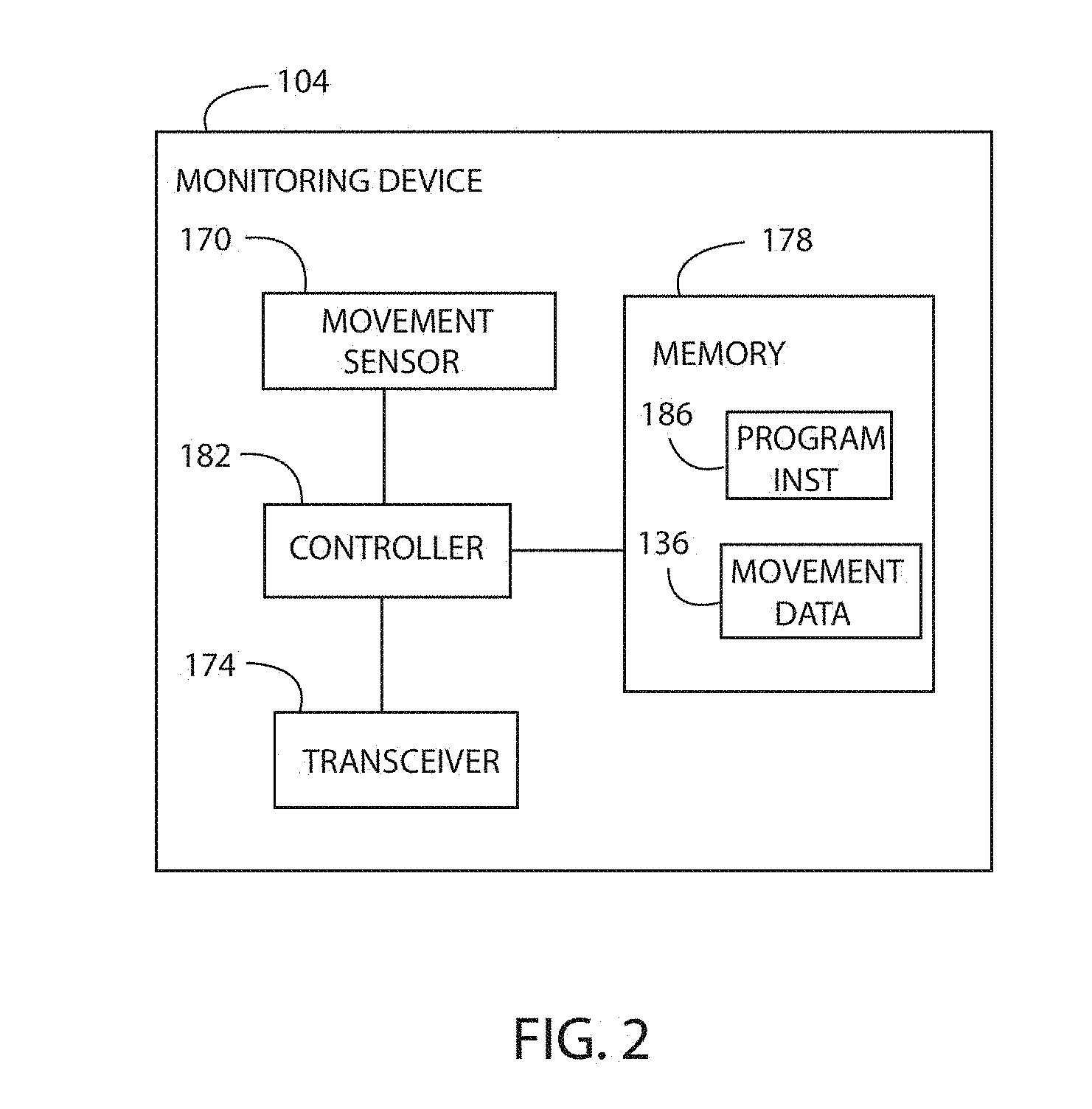

[0011] FIG. 2 is a diagram illustrating a monitoring device of the fitness tracking system of FIG. 1;

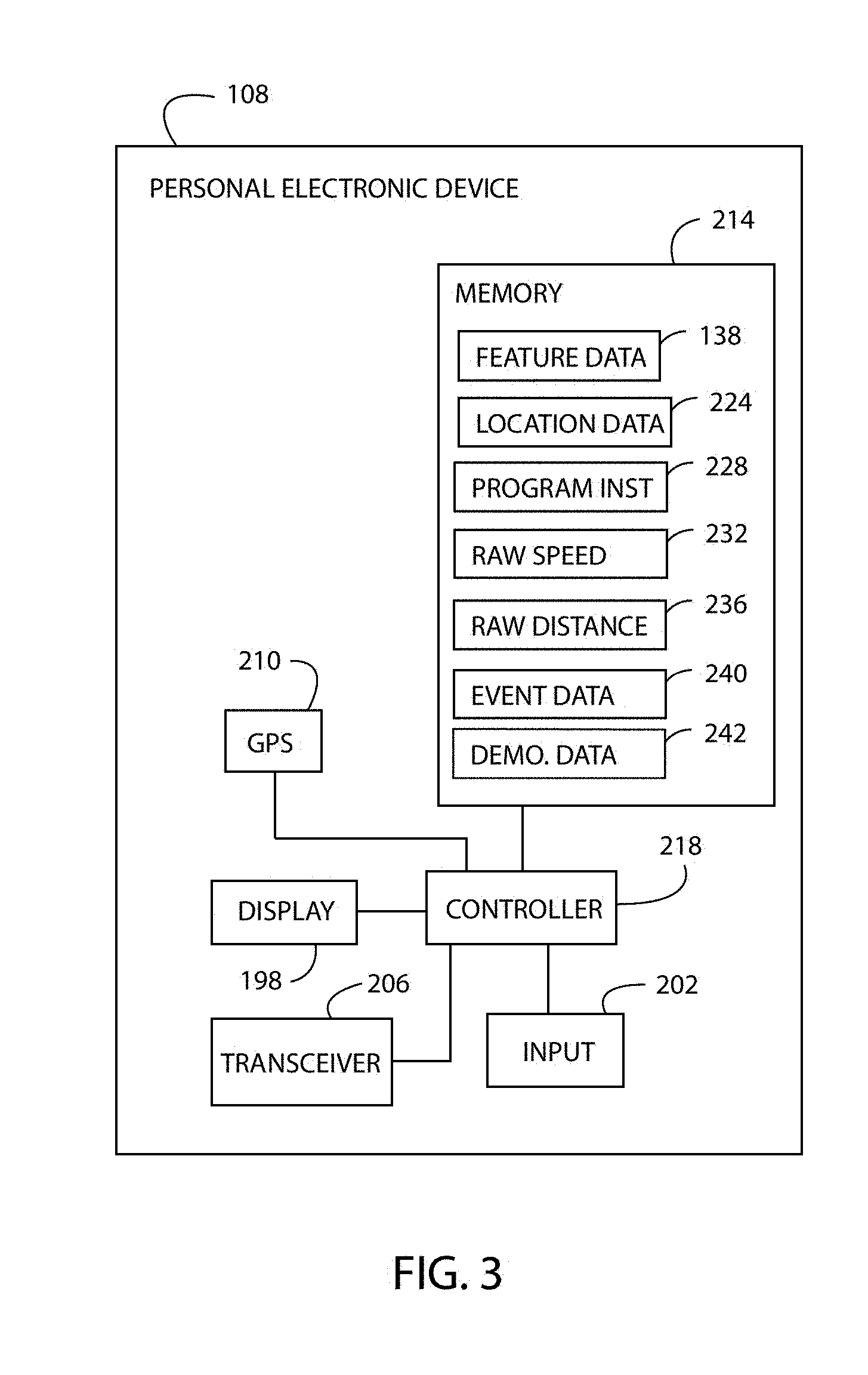

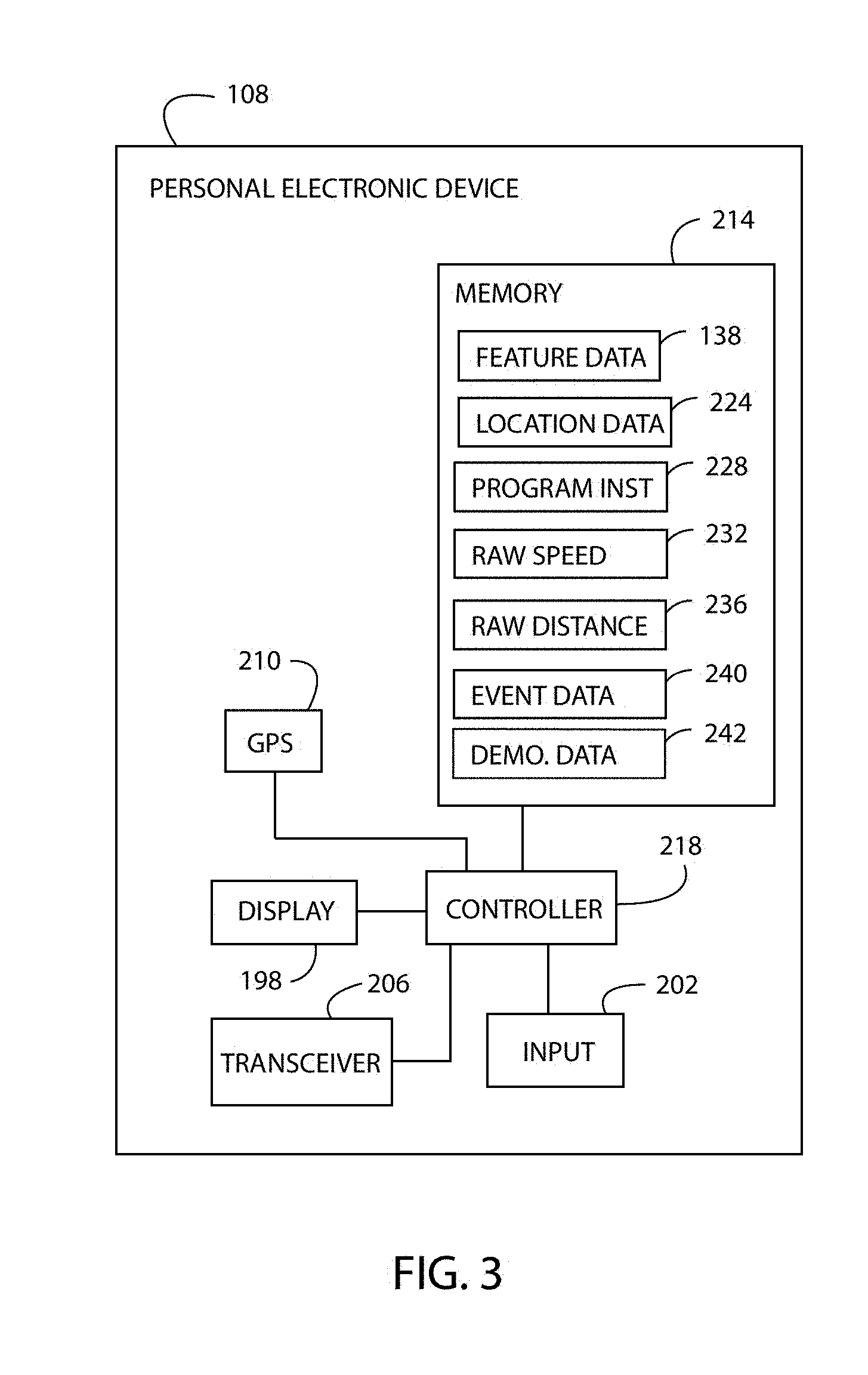

[0012] FIG. 3 is a diagram illustrating a personal electronic device of the fitness tracking system of FIG. 1;

[0013] FIG. 4 is a diagram illustrating a machine learning model of the fitness tracking system of FIG. 1;

[0014] FIG. 5 is a flowchart illustrating a method of operating the fitness tracking system of FIG. 1;

[0015] FIG. 6 is a plot of movement event data, foot position, acceleration data, feature data shown as a mean acceleration, raw speed data, and estimated speed data versus time;

[0016] FIG. 7 is a plot of an estimated speed of the user as determined by the fitness tracking system versus an actual speed of the user;

[0017] FIG. 8 is a diagram illustrating another embodiment of a personal electronic device as disclosed herein; and

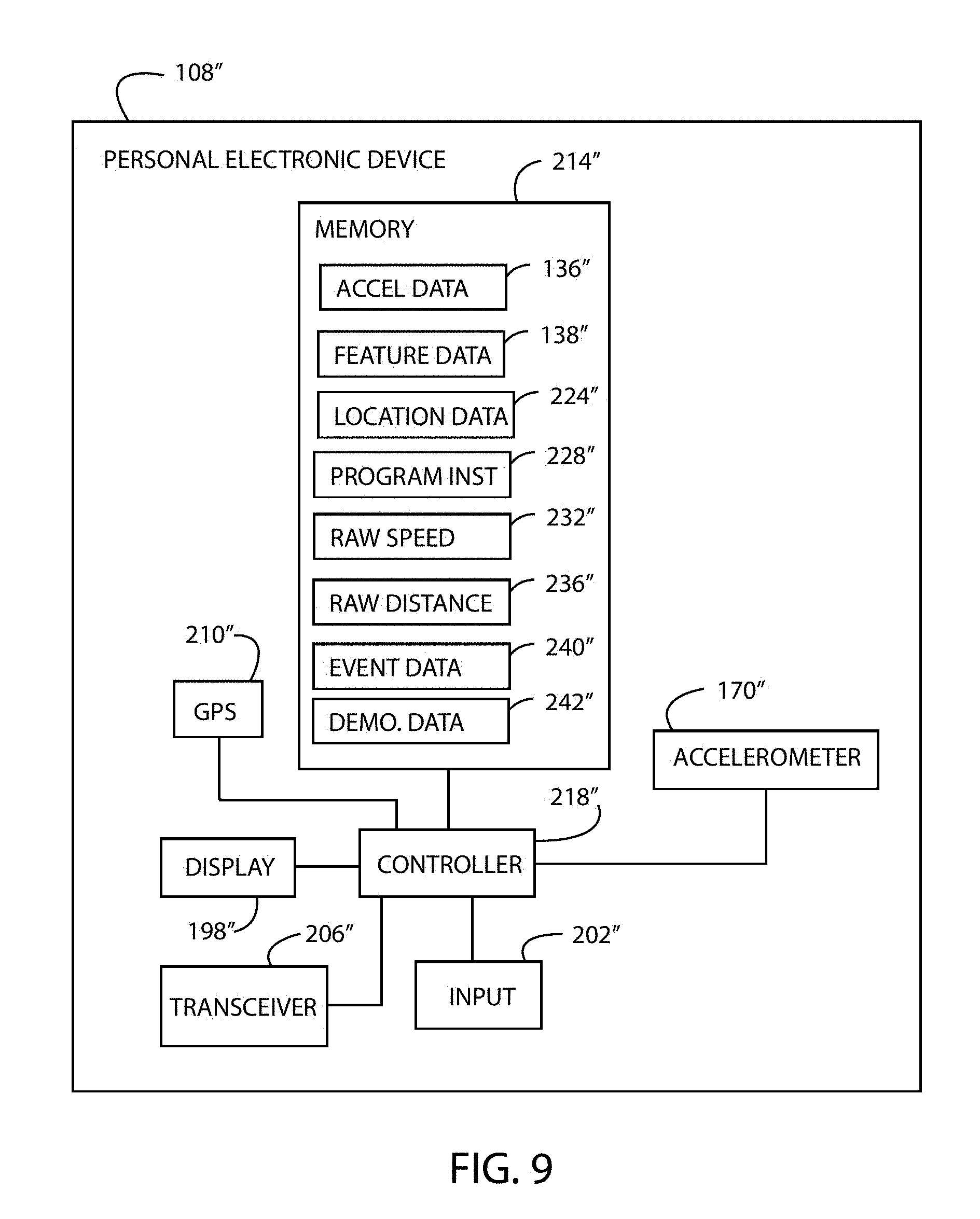

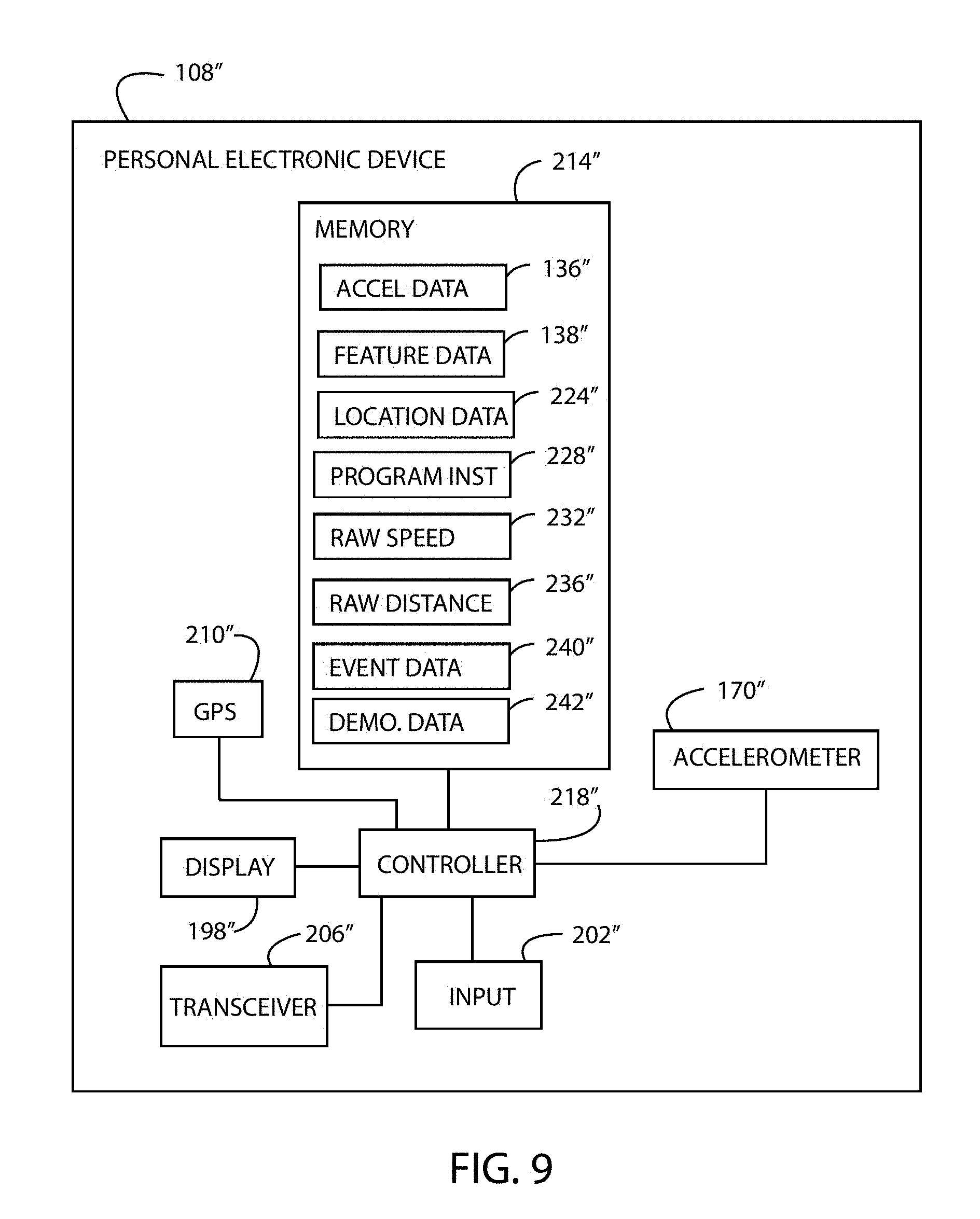

[0018] FIG. 9 is a diagram illustrating yet another embodiment of a personal electronic device as disclosed herein.

[0019] All Figures © Under Armour, Inc. 2018. All rights reserved.

DETAILED DESCRIPTION

[0020] Disclosed embodiments include systems, apparatus, methods, and storage media associated with generating at least one movement variable corresponding to a movement of a user, and, in particular, with generating at least one movement variable using a machine learning model.

[0021] For the purpose of promoting an understanding of the principles of the disclosure, reference will now be made to the embodiments illustrated in the drawings and described in the following written specification. It is understood that no limitation to the scope of the disclosure is thereby intended. It is further understood that this disclosure includes any alterations and modifications to the illustrated embodiments and includes further applications of the principles of the disclosure as would normally occur to one skilled in the art to which this disclosure pertains.

[0022] In the following detailed description, reference is made to the accompanying drawings which form a part hereof wherein like numerals designate like parts throughout, and in which is shown, by way of illustration, embodiments that may be practiced. It is to be understood that other embodiments may be utilized, and structural or logical changes may be made without departing from the scope of the present disclosure. Therefore, the following detailed description is not to be taken in a limiting sense, and the scope of embodiments is defined by the appended claims and their equivalents.

[0023] Aspects of the disclosure are disclosed in the accompanying description. Alternate embodiments of the present disclosure and their equivalents may be devised without departing from the spirit or scope of the present disclosure. It should be noted that any discussion herein regarding "one embodiment," "an embodiment," "an exemplary embodiment," and the like indicates that the embodiment described may include a particular feature, structure, or characteristic, and that such particular feature, structure, or characteristic may not necessarily be included in every embodiment. In addition, references to the foregoing do not necessarily comprise a reference to the same embodiment. Finally, irrespective of whether it is explicitly described, one of ordinary skill in the art would readily appreciate that each of the particular features, structures, or characteristics of the given embodiments may be utilized in connection or combination with those of any other embodiment discussed herein.

[0024] Various operations may be described as multiple discrete actions or operations in turn, in a manner that is most helpful in understanding the claimed subject matter. However, the order of description should not be construed as to imply that these operations are necessarily order dependent. In particular, operations described may be performed in a different order than the described embodiments. Various additional operations may be performed and/or described operations may be omitted in additional embodiments.

[0025] For the purposes of the present disclosure, the phrase "A and/or B" means (A), (B), or (A and B). For the purposes of the present disclosure, the phrase "A, B, and/or C" means (A), (B), (C), (A and B), (A and C), (B and C), or (A, B and C).

[0026] The terms "comprising," "including," "having," and the like, as used with respect to embodiments of the present disclosure, are synonymous.

[0027] As shown in FIG. 1, a fitness tracking system 100 includes a monitoring device 104, a personal electronic device 108, and a remote processing server 112. The fitness tracking system 100 transmits and receives data over the Internet 124 using a cellular network 128, for example. The fitness tracking system 100 may also be configured for use with a global positioning system ("GPS") 132. As disclosed herein, the fitness tracking system 100 collects movement data 136 (FIG. 2) with the monitoring device 104 while the user exercises. At least one of the personal electronic device 108 and the monitoring device 104 calculates feature data 138 (FIG. 3) based on the movement data 136. The feature data 138 is remotely processed by a machine learning model 140 stored on the remote processing server 112 to determine movement variable data 144 based on movements of the user. Exemplary movement variables of the movement variable data 144 include estimated speed, distance, and stride length of the user. The machine learning model 140 increases the accuracy of the determined movement variables, as compared to known approaches for determining the movement variables. Each component of the fitness tracking system 100 and method for operating the fitness tracking system 100 are described herein.
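The data flow described in the preceding paragraph (device-side feature data and raw values sent to the server, model output returned as movement variable data) can be sketched as follows. This is only an illustration under stated assumptions: the class names `ServerRequest` and `MovementVariableData`, the field names, the units, and especially `placeholder_model` are hypothetical. The placeholder applies a fixed linear correction so the example runs; it is not the machine learning model 140 of the disclosure.

```python
from dataclasses import dataclass


@dataclass
class ServerRequest:
    """Hypothetical payload sent by the personal electronic device."""
    feature_data: dict    # e.g. {"mean_acceleration": 9.8}
    raw_speed: float      # assumed unit: m/s, computed on the device
    raw_distance: float   # assumed unit: m, computed on the device


@dataclass
class MovementVariableData:
    """Hypothetical payload returned by the remote processing server."""
    estimated_speed: float
    estimated_distance: float


def placeholder_model(request):
    """Stand-in for a trained model.

    ASSUMPTION: a fixed linear correction driven by one feature,
    chosen only so that the sketch is runnable.
    """
    scale = 1.0 + 0.01 * request.feature_data.get("mean_acceleration", 0.0)
    return MovementVariableData(
        estimated_speed=request.raw_speed * scale,
        estimated_distance=request.raw_distance * scale,
    )


def remote_processing_server(request, model=placeholder_model):
    """Apply the model to the request and return the movement variable data."""
    return model(request)
```

Passing the model as a parameter keeps the transport shape separate from the estimator, so a trained regression model could be substituted for the placeholder without changing the request or response types.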

[0028] The monitoring device 104 is configured to be worn or carried by a user of the fitness tracking system 100. In one embodiment, the monitoring device 104 is permanently embedded in the sole of a shoe 150 worn by the user, such that the monitoring device 104 cannot be removed from the shoe 150 without destroying the shoe 150. The monitoring device 104 may also be configured for placement in the shoe 150, may be attached to the shoe 150, may be carried in a pocket 154 of the user's clothing, may be attached to a hat 156 worn by the user, and/or may be attached to any portion of the user or the user's clothing or accessories (e.g., wrist band, eyeglasses, necklace, visor, etc.). Moreover, in some embodiments, a left monitoring device 104 is located and/or affixed to the user's left shoe 150 and a right monitoring device 104 is located and/or affixed to the user's right shoe 150; both monitoring devices 104 being configured substantially identically.

[0029] In other embodiments, the monitoring device 104 includes a strap 158 to mount the monitoring device 104 onto the user. In this embodiment, the monitoring device 104 may be strapped to the user's wrist, arm, ankle, or chest, for example. In at least one embodiment, the strap 158 and the monitoring device 104 are provided as a watch or a watch-like electronic device. In a further embodiment, the monitoring device 104 is included in a heartrate monitoring device (not shown) that is worn around the wrist, chest, or other body location that is typically used to measure heartrate. Thus, the monitoring device 104 is configured for mounting (permanently or removably) on any element of the user or the user's clothing, footwear, or other article of apparel using any of various mounting means such as adhesives, stitching, pockets, or any of various other mounting means. The monitoring device 104 is located proximate to the user during activities and exercise sessions such as hiking, running, jogging, walking, and the like; whereas the personal electronic device 108 may be left behind or remote to the user during an exercise session. In a further embodiment, which is discussed in greater detail at FIG. 8, the components of the monitoring device 104 are included as part of the personal electronic device 108.

[0030] As shown in FIG. 2, the monitoring device 104, which is also referred to herein as a measuring device, a health parameter monitoring device, a distance monitoring device, a speed monitoring device, and/or an activity monitoring device, includes a movement sensor 170, a transceiver 174, and a memory 178 each of which is operably connected to a controller 182. The movement sensor 170 is configured to collect movement data 136, which corresponds to movement of the user during an exercise session. In one embodiment, the movement sensor 170 is an accelerometer sensor (such as a MEMS accelerometer) and the movement data 136 is (or includes) acceleration data, which corresponds to acceleration of the user during the exercise session. In this embodiment, the movement sensor 170 collects acceleration data that corresponds to bipedal movement of the user. The movement data 136 is stored by the controller 182 in the memory 178. The movement sensor 170 is provided as any type of sensor configured to generate the movement data 136, such as a single-axis or a multi-axis microelectromechanical (MEMS) accelerometer, a gyroscope, and/or a magnetometer.

[0031] The transceiver 174 of the monitoring device 104, which is also referred to as a wireless transmitter and/or receiver, is configured to transmit data to and to receive data from the personal electronic device 108. In one embodiment, the transceiver 174 is configured for operation according to the Bluetooth® wireless data transmission standard. In other embodiments, the transceiver 174 comprises any desired transceiver configured to wirelessly transmit and receive data using a protocol including, but not limited to, Near Field Communication ("NFC"), IEEE 802.11, Global System for Mobiles ("GSM"), and Code Division Multiple Access ("CDMA").

[0032] The memory 178 of the monitoring device 104 is an electronic data storage unit, which is also referred to herein as a non-transient computer readable medium. The memory 178 is configured to store the program instruction data 186 and the movement data 136 generated by the movement sensor 170, as well as any other electronic data associated with the fitness tracking system 100, such as user profile information, for example. The program instruction data 186 includes computer executable instructions for operating the monitoring device 104.

[0033] The controller 182 of the monitoring device 104 is configured to execute the program instruction data 186 for controlling the movement sensor 170, the transceiver 174, and the memory 178. The controller 182 is provided as a microprocessor, a processor, or any other type of electronic control chip.

[0034] As shown in FIG. 3, the exemplary personal electronic device 108 is configured as a smartphone. In other embodiments, the personal electronic device 108 is provided as a smartwatch, an electronic wristband, or the like. In one embodiment, the personal electronic device 108 is configured to be worn or carried by the user during collection of the movement data 136 by the monitoring device 104. In another embodiment, the personal electronic device 108 is not carried or worn by the user during collection of the movement data 136, and the personal electronic device 108 receives the movement data 136 from the monitoring device 104 after the user completes an exercise session. In a further embodiment, data may be transmitted from the monitoring device 104 to the personal electronic device 108 both during and after completion of an exercise session.

[0035] The personal electronic device 108 includes a display unit 198, an input unit 202, a transceiver 206, a GPS receiver 210, and a memory 214, each of which is operably connected to a processor or a controller 218. The display unit 198 is configured to display a visual representation of the movement variable data 144 (i.e. the estimated speed, distance, stride length, and cadence of the user as determined by the machine learning model 140). The display unit 198 may comprise a liquid crystal display (LCD) panel configured to display static and dynamic text, images, and other visually comprehensible data. For example, the display unit 198 is configurable to display one or more interactive interfaces or display screens to the user including a display of at least an estimated distance traversed by the user, a display of an estimated speed of the user, and a display of an estimated stride length of the user. The display unit 198, in another embodiment, is any display unit as desired by those of ordinary skill in the art.

[0036] The input unit 202 of the personal electronic device 108 is configured to receive data input via manipulation by a user. The input unit 202 may be configured as a touchscreen applied to the display unit 198 that is configured to enable a user to input data via the touch of a finger and/or a stylus. In another embodiment, the input unit 202 comprises any device configured to receive user inputs, as may be utilized by those of ordinary skill in the art, including e.g., one or more buttons, switches, keys, and/or the like.

[0037] With continued reference to FIG. 3, the transceiver 206 of the personal electronic device 108 is configured to wirelessly communicate with the transceiver 174 of the monitoring device 104 and the remote processing server 112. The transceiver 206 wirelessly communicates with the remote processing server 112 either directly or indirectly via the cellular network 128 (FIG. 1), a wireless local area network ("Wi-Fi"), a personal area network, and/or any other wireless network over the Internet 124. Accordingly, the transceiver 206 is compatible with any desired wireless communication standard or protocol including, but not limited to, Near Field Communication ("NFC"), IEEE 802.11, Bluetooth®, Global System for Mobiles ("GSM"), and Code Division Multiple Access ("CDMA"). To this end, the transceiver 206 is configured to wirelessly transmit and receive data from the remote processing server 112, and to wirelessly transmit and receive data from the monitoring device 104.

[0038] The GPS receiver 210 of the personal electronic device 108 is configured to receive GPS signals from the GPS 132 (FIG. 1). The GPS receiver 210 is further configured to generate location data 224 that is representative of a current location on the Earth of the personal electronic device 108 based on the received GPS signals. The location data 224, in one embodiment, includes latitude and longitude information. The controller 218 is configured to store the location data 224 generated by the GPS receiver 210 in the memory 214.

[0039] As shown in FIG. 3, the memory 214 of the personal electronic device 108 is an electronic data storage unit, which is also referred to herein as a non-transient computer readable medium. The memory 214 is configured to store electronic data associated with operating the personal electronic device 108 and the monitoring device 104 including program instruction data 228, raw speed data 232, raw distance data 236, movement event data 240, demographic data 242, and the feature data 138. The program instruction data 228 includes computer executable instructions for determining the feature data 138, the raw speed data 232, the raw distance data 236, the event data 240, and the demographic data 242. The raw speed data 232 corresponds to a speed of the user that is calculated by the personal electronic device 108 based on at least a subset of the movement data 136 collected by the monitoring device 104 during an exercise session. The raw distance data 236 corresponds to a distance traversed by the user that is calculated by the personal electronic device 108 based on at least a subset of the movement data 136 collected by the monitoring device 104 during an exercise session. The event data 240 corresponds to movement events of the user during the exercise session, such as a takeoff event 302 (FIG. 6), a landing event 306 (FIG. 6), a stance phase event 310 (FIG. 6), and a flight phase event 314 (FIG. 6).

[0040] The demographic data 242 is based on demographic information of the user and may include user gender, user height, user weight, user body mass index ("BMI"), and user age, among other data. Any other user demographic and/or psychographic data may be included in the demographic data 242.

[0041] The feature data 138 stored in the memory 214 of the personal electronic device 108 corresponds to data calculated by the personal electronic device 108 and/or the monitoring device 104 by applying a set of rules to at least a subset of the movement data 136. In one embodiment, the rules of the set of rules are categorized as mathematical operations, event-specific operations, and processed signals. Exemplary feature data 138 based on the set of rules include mean acceleration, root mean square acceleration, median acceleration, lower quartile acceleration, upper quartile acceleration, an interquartile acceleration range, maximum acceleration, minimum acceleration, a predetermined range of acceleration, standard deviation, variance, complexity, skewness, kurtosis, autocorrelation, a percentage of acceleration data samples above or below the mean acceleration, a percentage of acceleration data samples above the mean acceleration and below the upper quartile acceleration, and a percentage of acceleration data samples below the mean acceleration and above the lower quartile acceleration. Additional feature data 138 based on the set of rules include all statistical moments including, but not limited to, mean (moment ordinal 1), variance (moment ordinal 2), skewness (moment ordinal 3), kurtosis (moment ordinal 4), hyperskewness (moment ordinal 5), and hyperflatness (moment ordinal 6). The feature data 138 include any (or none) of the infinite number of statistical moments. The above rules are exemplary, and the controller 218 and/or the controller 182 are configured to perform at least one rule of the set of rules in calculating the feature data 138 from the movement data 136.
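
As a non-limiting illustration, several of the exemplary rules listed above can be sketched as follows. The function name `compute_features`, the dictionary keys, the choice of population (rather than sample) variance, and the inclusive quartile method are assumptions made for this sketch only and are not specified by the disclosure.

```python
import math
import statistics


def compute_features(samples):
    """Apply a few exemplary rules to a window of acceleration samples.

    `samples` is a sequence of at least two acceleration values.
    All names here are hypothetical and chosen for illustration.
    """
    n = len(samples)
    mean = statistics.fmean(samples)
    # Population variance: the second central moment (moment ordinal 2).
    variance = sum((x - mean) ** 2 for x in samples) / n
    std = math.sqrt(variance)
    # Quartile convention is an assumption; other conventions give other values.
    lower_q, median, upper_q = statistics.quantiles(
        samples, n=4, method="inclusive"
    )

    def standardized_moment(order):
        # Central moment of the given order divided by std**order.
        if std == 0:
            return 0.0
        return sum((x - mean) ** order for x in samples) / (n * std ** order)

    return {
        "mean": mean,
        "rms": math.sqrt(sum(x * x for x in samples) / n),
        "median": median,
        "lower_quartile": lower_q,
        "upper_quartile": upper_q,
        "iqr": upper_q - lower_q,
        "max": max(samples),
        "min": min(samples),
        "std": std,
        "variance": variance,
        "skewness": standardized_moment(3),   # moment ordinal 3
        "kurtosis": standardized_moment(4),   # moment ordinal 4
        "pct_above_mean": 100.0 * sum(x > mean for x in samples) / n,
    }
```

Higher-order moments such as hyperskewness could be obtained in the same sketch by calling `standardized_moment(5)`.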

[0042] The controller 218 of the personal electronic device 108 is operatively connected to the monitoring device 104 and is configured to execute the program instruction data 228 in order to control the display unit 198, the input unit 202, the transceiver 206, the GPS receiver 210, the memory 214, and the monitoring device 104. The controller 218 is provided as a microprocessor, a processor, or any other type of electronic control chip. The controller 218 is further configured to process at least a subset of the movement data 136 and to calculate the feature data 138 by applying at least one rule of the set of rules to the subset of the movement data 136, for example. Moreover, the controller 218 is configured to calculate the raw speed data 232 and the raw distance data 236 from the subset of the movement data 136 by integrating the subset of the movement data 136, for example. Further, the controller 218 is configured to receive the movement variable data 144 determined by the machine learning model 140 of the remote processing server 112 and to store the movement variable data 144 in the memory 214. In another embodiment, the controller 182 of the monitoring device 104 is configured to execute the program instructions 186 to process at least a subset of the movement data 136 and to calculate the feature data 138 by applying at least one rule of the set of rules to the subset of the movement data 136, for example. Moreover, in this embodiment, the controller 182 is configured to calculate the raw speed data 232 and the raw distance data 236 from the subset of the movement data 136 by integrating the subset of the movement data 136, for example. In another embodiment, the monitoring device 104 is configured to calculate the raw speed data and the raw distance data. 
Furthermore, in some embodiments, at least one of the personal electronic device 108 and the monitoring device 104 is configured to calculate raw stride length data corresponding to the stride length of the user from the subset of the movement data 136, raw cadence data corresponding to the cadence of the user from the subset of the movement data 136, and raw ground contact time data corresponding to the ground contact time of the user from the subset of the movement data 136. The raw stride length data, the raw cadence data, and the raw ground contact time data are stored in at least one of the memory 178 and the memory 214. The "raw" data may be determined or calculated by one or more of the personal electronic device 108 and the monitoring device 104.

[0043] With reference to FIG. 1, the remote processing server 112 is remotely located from the monitoring device 104 and the personal electronic device 108. That is, the server 112 is located in a first physical location and the personal electronic device 108 and the monitoring device 104 are located in a second physical location that is different from the first physical location. In a further embodiment, which is discussed in greater detail at FIG. 8, the components of the server 112 are included as part of the personal electronic device 108.

[0044] The server 112 is configured to calculate, generate, and/or determine the movement variable data 144 based on at least the feature data 138 calculated, generated, and/or determined by at least one of the personal electronic device 108 and the monitoring device 104. Accordingly, the server 112 is configured to receive the feature data 138, the raw speed data 232, and the raw distance data 236 from the personal electronic device 108 via the Internet 124. To this end, the server 112 includes a transceiver 252 and a memory 256 storing program instructions 260, the machine learning model 140, and the movement variable data 144. Each of the transceiver 252 and the memory 256 is operably connected to a central processing unit ("CPU") 264.

[0045] The movement variable data 144 generated by the machine learning model 140 of the server 112 includes at least one of an estimated speed of the user, an estimated distance traversed by the user, an estimated stride length of the user, an estimated cadence of the user, and an estimated ground contact time of the user's foot/shoe. The estimated speed of the user is an estimated ground speed of the user determined at least periodically during an exercise session monitored by the monitoring device 104. The estimated distance traversed by the user is an estimated distance moved/traversed by the user during the exercise session. The estimated distance is determined accurately when the user utilizes a treadmill, or the like, during the exercise session and is representative of a corresponding distance moved. That is, the user is not required to move a corresponding distance on the ground in order for the machine learning model 140 to determine the estimated distance. The estimated stride length is an estimated heel-to-heel distance of the user determined at least periodically during the exercise session. The estimated cadence of the user is an estimation of the number of steps taken per unit time (e.g. steps per minute) during the exercise session. The estimated ground contact time of the user is an estimation of the time the user's foot is in contact with the ground during each stride of the exercise session.

[0046] An advantage of using the machine learning model 140 is that, for example, the estimated speed/distance of the user is a more accurate representation of an actual speed 234 (FIG. 6) and an actual distance of the user than is the raw speed/distance (raw speed data 232 and raw distance data 236) of the user as determined by the personal electronic device 108 or the monitoring device 104. Each "estimated" value of the movement variable data 144 is typically more accurate than the corresponding value (if any) as determined by the personal electronic device 108 or the monitoring device 104.

[0047] The transceiver 252 of the remote processing server 112 is configured to wirelessly communicate with the personal electronic device 108 either directly or indirectly via the cellular network 128, a wireless local area network ("Wi-Fi"), a personal area network, and/or any other wireless network. Accordingly, the transceiver 252 is compatible with any desired wireless communication standard or protocol including, but not limited to, Near Field Communication ("NFC"), IEEE 802.11, Bluetooth®, Global System for Mobiles ("GSM"), and Code Division Multiple Access ("CDMA").

[0048] The CPU 264 of the remote processing server 112 is configured to execute the program instruction data 260 stored in the memory 256 for generating and/or determining movement variable data 144 by applying at least one of the feature data 138, the raw speed data 232, the raw distance data 236, the demographic data 242, the raw stride length data, the raw cadence data, and the raw ground contact time data to the machine learning model 140 to generate the movement variable data 144. The CPU 264 is provided as a microprocessor, a processor, or any other type of electronic control chip. Typically, the CPU 264 is more powerful than the controller 218 of the personal electronic device 108, thereby enabling the remote processing server 112 to generate the movement variable data 144 more quickly than the personal electronic device 108.

[0049] The machine learning model 140 is stored in the memory 256 of the remote processing server 112 and, in one embodiment, includes a non-linear neural network regression model to determine the movement variable data 144. In other embodiments, the machine learning model 140 uses other regression models such as multiple linear regression. A single feature datum 138 (such as mean acceleration only) or multiple feature data 138 (such as mean acceleration and root mean square acceleration) may be used as inputs to the machine learning model 140. Moreover, the machine learning model 140 may be implemented in a piecewise manner, and dimensionality reduction, such as principal component analysis ("PCA"), may be used in some embodiments to reduce the complexity of the machine learning model 140.

[0050] The machine learning model 140 is trained using training data (i.e. existing feature data and movement variable data) to output data corresponding to the movement variable data 144 (i.e. the estimated speed, distance, stride length, and cadence of the user). As used herein, the term "machine learning model" refers to a system or a set of program instructions configured to implement an algorithm or mathematical model that predicts and provides a desired output based on a given input. In one example, the input is training "feature data" and the desired output is the data corresponding to the movement variable data 144. The machine learning model 140 is typically not explicitly programmed or designed to follow particular rules in order to provide the desired output for a given input. Instead, the machine learning model 140 is provided with the corpus of training data from which the machine learning model 140 identifies or "learns" patterns and statistical relationships or structures in the training data, which are generalized to make predictions with respect to new data inputs (i.e. feature data 138, raw speed data 232, and raw distance data 236 corresponding to "user-generated" movement data 136). In the case of supervised machine learning, the training data is labeled as inputs and outputs, and the machine learning model 140 is trained to predict outputs for new data based on the patterns and other relationships or structures identified in the training data. The training data, however, may also be unlabeled as is the case with unsupervised machine learning. Both training scenarios are suitable for training the machine learning model 140 included in the server 112.
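
By way of a simplified, non-limiting sketch of supervised training, the following fits a single-feature linear regression by ordinary least squares and then predicts an output for a new input. This is a deliberately simple stand-in and is not the non-linear neural network regression model of the disclosure; the function names `fit_line` and `predict` are hypothetical, and any training values passed to them are illustrative only.

```python
def fit_line(features, targets):
    """Fit targets ~ slope * feature + intercept by ordinary least squares.

    `features` are labeled training inputs (e.g. mean acceleration values)
    and `targets` are the matching labeled outputs (e.g. measured speeds).
    Assumes at least two distinct feature values.
    """
    n = len(features)
    mean_x = sum(features) / n
    mean_y = sum(targets) / n
    sxx = sum((x - mean_x) ** 2 for x in features)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(features, targets))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, feature):
    """Apply the fitted relationship to a new, unseen feature value."""
    slope, intercept = model
    return slope * feature + intercept
```

The fitted pair returned by `fit_line` plays the role of the learned relationship, and `predict` generalizes that relationship to new feature data.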

[0051] FIG. 4 illustrates an exemplary simplified block diagram of the logical components of the machine learning model 140 as well as the data that is input to the model 140 and the data that is output from the model 140. The machine learning model 140 includes an encoder 270 operatively connected to a trained model 274, which is operatively connected to a decoder 278. The encoder 270 is configured to reformat the input data (i.e. at least the feature data 138, the raw speed data 232, the raw distance data 236, and the demographic data 242) into a format that is suitable for processing by the trained model 274.

[0052] The trained model 274 processes the encoded input data and generates output data. The trained model 274, in at least one embodiment, includes sub-models such as a sub-model 276a, a sub-model 276b, and a sub-model 276c. The trained model 274 includes any number of the sub-models 276a, 276b, 276c. Each of the sub-models 276a, 276b, 276c is configured to receive and process a particular type of input data. For example, in one embodiment, the sub-model 276a is trained to receive feature data 138 that is generated from a walking user, the sub-model 276b is trained to receive feature data 138 that is generated from a running user, and the sub-model 276c is trained to receive feature data 138 that is generated by a fast running user. In an exemplary embodiment, a walking user moves at a speed less than about 4.0 miles per hour ("mph"), a running user moves at a speed from 4.0 to 7.0 mph, and a fast running user moves at a speed greater than 7.0 mph. An exemplary output according to the walking/running/fast running model is shown in FIG. 7 and is discussed in detail herein. In another example, the sub-model 276a is trained to receive feature data 138 that is generated from a forefoot-strike mover and is referred to as a forefoot-strike non-linear regression model, the sub-model 276b is trained to receive feature data 138 that is generated from a midfoot-strike mover and is referred to as a midfoot-strike non-linear regression model, and the sub-model 276c is trained to receive feature data 138 that is generated from a heel-strike mover and is referred to as a heel-strike non-linear regression model. The CPU 264 and/or the controller 218 of the personal electronic device 108 is configured to process the movement data 136 and/or the feature data 138 to determine the appropriate sub-model 276a, 276b, 276c (if any) to which the feature data 138 and/or the other input data should be applied.
In another embodiment, the trained model 274 does not include the sub-models 276a, 276b, 276c, and the trained model 274 generates the output data for all input data.
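
A minimal sketch of routing input data to a speed-based sub-model, using the exemplary 4.0 mph and 7.0 mph boundaries, might look as follows. The function name `select_sub_model`, the use of a rough speed estimate as the routing input, and the string labels returned are assumptions for illustration; the disclosure does not specify how the appropriate sub-model is determined.

```python
WALK_MAX_MPH = 4.0  # exemplary upper bound for a walking user
RUN_MAX_MPH = 7.0   # exemplary upper bound for a running user


def select_sub_model(speed_mph):
    """Return a label for the sub-model matching a rough speed estimate."""
    if speed_mph < WALK_MAX_MPH:
        return "276a"  # walking sub-model
    if speed_mph <= RUN_MAX_MPH:
        return "276b"  # running sub-model
    return "276c"      # fast running sub-model
```

For instance, a rough estimate of 5.5 mph would be routed to the running sub-model 276b under these assumptions.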

[0053] The decoder 278 receives the output data from the trained model 274 and reformats the output data into the movement variable data 144 and confidence factor data 282. The confidence factor data 282 is indicative of an accuracy of the determined movement variable data 144. In one embodiment, the confidence factor data 282 is a value from zero to one, but in other embodiments, the confidence factor data 282 includes values within any desired range. Typically, a low confidence factor is associated with less accurately determined movement variable data 144 and a high confidence factor is associated with more accurately determined movement variable data 144. The confidence factor data 282 may or may not be stored in the memory 256 of the remote processing server 112.

[0054] In operation, the fitness tracking system 100 is operated according to a method 500 illustrated by the flowchart of FIG. 5. The fitness tracking system 100 executes the method 500 to determine at least one movement variable (i.e. the movement variable data 144) corresponding to movement of a user wearing or carrying the monitoring device 104 during an exercise session. In block 504, the user engages in an exercise session and wears or carries the monitoring device 104. Specifically, the user runs, walks, or hikes while wearing or carrying the monitoring device 104. Typically, the monitoring device 104 is affixed to the user's shoe 150. The user may also wear or carry the personal electronic device 108; however, the user is not required to wear or carry the personal electronic device 108 during the exercise session.

[0055] At block 508, during the exercise session, the monitoring device 104 collects movement data 136 corresponding to, in this exemplary embodiment, the acceleration of the user. Specifically, since the monitoring device 104 is typically affixed to the user's shoe 150, the movement data 136 corresponds to the acceleration of the user's shoe/foot 150 during the exercise session. FIG. 6 includes a plot of exemplary movement data 136 collected by the monitoring device 104 and data corresponding to a distance of the user's shoe 150 from the ground.

[0056] Either during the exercise session or at the conclusion of the exercise session, the movement data 136 collected by the monitoring device 104 is wirelessly transmitted to the personal electronic device 108 via the transceivers 174, 206. The personal electronic device 108 stores the movement data 136 in the memory 214.

[0057] Next, in block 512, the personal electronic device 108 determines whether the movement data 136 should be processed with movement event detection. Whether or not movement event detection is performed may be specified by the user of the personal electronic device 108 using the input unit 202, or by the program instruction data 228. Movement event detection (or simply "event detection") generates the movement event data 240 (FIG. 3) and associates the movement event data 240 with the feature data 138 and/or the movement data 136. Movement event detection is a processing step that is not required to determine the movement variable data 144, but that tends to increase the accuracy of the movement variable data 144. The movement variable data 144 is accurately determinable without event detection. In other embodiments, the monitoring device 104 determines whether the movement data 136 should be processed with movement event detection.

[0058] In block 516, if event detection is to be performed, the personal electronic device 108 detects movement events in the collected movement data 136 and stores the movement event data 240 to the memory 214. Detecting movement events, in one embodiment, includes identifying subsets of the movement data 136 that correspond to the takeoff event 302, the landing event 306, the stance phase event 310, and the flight phase event 314 by processing the movement data 136 with the controller 218 of the personal electronic device 108. In other embodiments, the controller 182 of the monitoring device 104 detects the movement events in the collected movement data 136.

[0059] The takeoff event 302, the landing event 306, the stance phase event 310, and the flight phase event 314 are identified in FIG. 6. The takeoff event 302 is indicative of when the user's shoe 150 has left the ground at the beginning of a stride. The landing event 306 is indicative of when the user's shoe 150 has struck the ground at the end of the stride. The flight phase event 314 is located between the takeoff event 302 and the landing event 306. During the flight phase event 314, the user's shoe is spaced apart from the ground and is moving through the air. The stance phase event 310 is located between the landing event 306 and the next takeoff event 302. During the stance phase event 310, the user's shoe 150 is positioned on the ground.

[0060] The detected movement events of block 516 are stored as the event data 240 in the memory 214 of the personal electronic device 108 or the memory 178 of the monitoring device 104. In one embodiment, the event data 240 is categorized by event type and includes or identifies subsets of the movement data 136 and/or feature data 138 corresponding to the takeoff event 302, the landing event 306, the stance phase event 310, and the flight phase event 314. As a result, for example, using the event data 240 a subset of the feature data 138 corresponding to only the flight phase event 314 can be isolated and applied to the machine learning model 140 without applying feature data 138 corresponding to the takeoff event 302, the landing event 306, and the stance phase event 310. The event data 240 for the events identified above may be referred to herein as stance phase data including a subset of the movement data 136 that corresponds to the stance phase event 310, takeoff data including a subset of the movement data 136 that corresponds to the takeoff event 302, flight phase data including a subset of the movement data 136 that corresponds to the flight phase event 314, and landing data including a subset of the movement data 136 that corresponds to the landing event 306.
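
As an illustrative sketch only, event data categorized by event type can be used to isolate the samples of one event as follows. The representation of each event as an `(event_type, start_index, end_index)` tuple and the function name `samples_for_event` are assumptions of this sketch; the disclosure does not prescribe a storage format for the event data 240.

```python
def samples_for_event(movement, events, event_type):
    """Collect the movement samples that fall inside events of one type.

    `movement` is a sequence of samples, and `events` is a list of
    hypothetical (event_type, start_index, end_index) tuples in which
    end_index is exclusive.
    """
    selected = []
    for kind, start, end in events:
        if kind == event_type:
            selected.extend(movement[start:end])
    return selected
```

Feature data restricted to, for example, the flight phase could then be calculated from the result of `samples_for_event(movement, events, "flight")` alone.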

[0061] Continuing to block 520, the personal electronic device 108 determines if the movement data 136 should be processed to calculate at least one of the raw speed data 232 and the raw distance data 236. Whether or not the raw data 232, 236 is calculated may be specified by the user of the personal electronic device 108 using the input unit 202, or by the program instruction data 228. The calculation of the raw data 232, 236 is a processing step that is not required to determine the movement variable data 144, but that tends to increase the accuracy of the movement variable data 144. The movement variable data 144 is accurately determinable without applying the raw data 232, 236 to the machine learning model 140.

[0062] In block 524, if the raw data calculation is to be performed, the personal electronic device 108 calculates at least one of the raw speed data 232 and the raw distance data 236 by processing the movement data 136. Specifically, to determine the raw speed data 232, the controller 218 integrates at least a subset of the movement data 136. To determine the raw distance data 236, the controller 218 integrates the raw speed data 232. The raw speed data 232 and the raw distance data 236 are stored in the memory 214 of the personal electronic device 108. Exemplary raw speed data 232 is plotted in FIG. 6. The plotted raw speed data 232 is based on the plotted movement data 136, which is acceleration data. In other embodiments, the controller 182 of the monitoring device 104 calculates the raw data 232, 236 from the movement data 136.
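
The two integration steps described above can be sketched with a cumulative trapezoidal rule, as shown below. The trapezoidal rule, the fixed sample period `dt`, the zero initial speed and distance, and the function names are assumptions of this sketch; the disclosure states only that the data is integrated.

```python
def cumulative_trapezoid(values, dt, initial=0.0):
    """Running integral of uniformly sampled values (trapezoidal rule)."""
    if not values:
        return []
    out = [initial]
    for prev, cur in zip(values, values[1:]):
        out.append(out[-1] + 0.5 * (prev + cur) * dt)
    return out


def raw_speed_and_distance(acceleration, dt):
    """Integrate acceleration once for speed and again for distance."""
    speed = cumulative_trapezoid(acceleration, dt)
    distance = cumulative_trapezoid(speed, dt)
    return speed, distance
```

Because each integration accumulates sensor error, a raw result of this kind tends to drift over time, which is consistent with treating the raw data as an input to the machine learning model 140 rather than as a final estimate.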

[0063] Next, in block 528, the controller 218 of the personal electronic device 108 calculates the feature data 138 by applying at least one rule of the set of rules to at least a subset of the movement data 136. The controller 218 stores the feature data 138 in the memory 214 of the personal electronic device 108. The controller 218 performs any desired mathematical operation on the movement data 136 to generate feature data 138 corresponding to a mean acceleration, a median acceleration, a root mean square acceleration, a maximum acceleration, and/or a minimum acceleration. FIG. 6 includes an exemplary plot of a mean acceleration (i.e. feature data 138) based on the plotted movement data 136, which is acceleration data. In one embodiment, the mean acceleration in FIG. 6 is a moving mean taken over a predetermined time period. Moreover, the controller 218 may perform mathematical operations on the movement data 136 to determine feature data 138 including a complexity calculation, a skewness calculation, a kurtosis calculation, a percentage of acceleration samples above or below the mean acceleration, and an autocorrelation calculation. Still further, the controller 218 may perform mathematical operations on the movement data 136 to determine feature data 138 including a lower quartile calculation, an upper quartile calculation, an interquartile range calculation, a percentage of acceleration samples above or below the mean acceleration, a percentage of acceleration data samples above the mean acceleration and below the upper quartile acceleration calculation, and a percentage of acceleration data samples below the mean acceleration and above the lower quartile acceleration calculation. The controller 218 may apply any rule or set of rules and/or perform any mathematical operation or statistical operation on the movement data 136 in calculating the feature data 138.
In another embodiment, the controller 182 of the monitoring device 104 determines and/or calculates the feature data 138.
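By way of a non-limiting illustration, several of the statistical features listed above can be computed from a window of acceleration samples as sketched below. The function name `acceleration_features`, the dictionary keys, the sample values, and the choice of the "inclusive" quartile method are assumptions made for this sketch and are not taken from the source.

```python
import math
import statistics


def acceleration_features(samples):
    """Compute a few summary features for one window of acceleration samples."""
    mean = statistics.fmean(samples)
    # Quartile cut points; the interpolation method is an assumption.
    q1, _, q3 = statistics.quantiles(samples, n=4, method="inclusive")
    return {
        "mean": mean,
        "median": statistics.median(samples),
        "rms": math.sqrt(statistics.fmean(x * x for x in samples)),
        "max": max(samples),
        "min": min(samples),
        "lower_quartile": q1,
        "upper_quartile": q3,
        "iqr": q3 - q1,
        # Percentage of samples strictly above the mean acceleration.
        "pct_above_mean": 100.0 * sum(x > mean for x in samples) / len(samples),
    }


# Hypothetical acceleration samples in m/s^2.
features = acceleration_features([1.0, 2.0, 3.0, 4.0, 10.0])
```

A moving mean, as in FIG. 6, could be obtained by calling such a function on successive windows of the predetermined time period.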

[0064] According to an exemplary embodiment, the controller 218 is configured to calculate the feature data 138 based on and corresponding to the movement data 136 of the movement events detected at block 516 of the method 500. For example, the controller 218 may generate a subset of the feature data 138 based on only the movement data 136 of the flight phase event 314 using the event data 240. Additionally or alternatively, the controller 218 may generate another subset of the feature data 138 based on only the movement data 136 of the takeoff event 302 using the event data 240. The controller 218 is configured to generate the feature data 138 based on the movement data 136 of any detected movement event using the event data 240.
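By way of a non-limiting illustration, restricting the feature calculation to the samples of one detected movement event can be sketched as follows. The source does not specify a format for the event data 240; the (label, start index, end index) tuples, the sample values, and the function name `features_for_event` are hypothetical.

```python
import statistics

# Hypothetical layout for the event data 240: (label, start, end), with the
# end index exclusive. This layout is an assumption, not the source's format.
event_data = [
    ("takeoff", 0, 3),
    ("flight_phase", 3, 6),
]

# Hypothetical acceleration samples (movement data 136) in m/s^2.
movement_data = [12.0, 15.0, 18.0, 1.0, 2.0, 3.0]


def features_for_event(samples, events, label):
    """Mean and maximum acceleration over the samples of one event type."""
    selected = [
        x
        for name, start, end in events
        if name == label
        for x in samples[start:end]
    ]
    return {"mean": statistics.fmean(selected), "max": max(selected)}


flight_features = features_for_event(movement_data, event_data, "flight_phase")
takeoff_features = features_for_event(movement_data, event_data, "takeoff")
```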

[0065] In one embodiment, the controller 218 is further configured to calculate combined features stored as combined feature data. The combined feature data is stored in the memory 214 and is applied to the machine learning model 140 in the same way that the feature data 138 is applied to the machine learning model 140. The combined feature data is included in the feature data 138 in the figures. The combined feature data is based on a combination of the feature data 138. For example, combined feature data is formed by combining a mean acceleration and a root mean square acceleration using principal component analysis techniques. Any other data combination technique or process may be used to generate the combined feature data from the feature data 138 using the controller 218.
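By way of a non-limiting illustration, combining a mean acceleration and a root mean square acceleration by principal component analysis can be sketched for the two-feature case using the closed-form principal axis of a 2x2 covariance matrix. The function name `first_principal_component` and the input values are assumptions made for this sketch; a practical implementation would likely standardize the features first and may retain more than one component.

```python
import math


def first_principal_component(pairs):
    """Project 2-D feature pairs onto their first principal axis."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    centered = [(x - mean_x, y - mean_y) for x, y in pairs]
    # Entries of the 2x2 sample covariance matrix.
    cxx = sum(x * x for x, _ in centered) / (n - 1)
    cyy = sum(y * y for _, y in centered) / (n - 1)
    cxy = sum(x * y for x, y in centered) / (n - 1)
    # Angle of the eigenvector associated with the largest eigenvalue.
    theta = 0.5 * math.atan2(2.0 * cxy, cxx - cyy)
    return [x * math.cos(theta) + y * math.sin(theta) for x, y in centered]


# Hypothetical (mean acceleration, RMS acceleration) pairs, one per window.
windows = [(1.0, 1.0), (2.0, 2.0), (3.0, 3.0)]
combined_feature = first_principal_component(windows)
```

Each entry of `combined_feature` is a single value per window that captures the direction of greatest joint variation of the two input features.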

[0066] Moving to block 532, the personal electronic device 108 determines if the user or the program instruction data 228 requires classification of the feature data 138. Classification of the feature data 138 enables the feature data 138 to be organized into subsets that are each associated with a particular type of user. For example, in one embodiment, the controller 218 classifies the feature data 138 based on the speed of the user during various segments of the exercise session each having a predetermined time period.

[0067] In block 536, if classification is to be performed, the controller 218 or the controller 182 applies classification rules to the feature data 138 to classify the feature data 138. The classification rules enable the controller 218 or the controller 182 to categorize the feature data 138 into the desired classification structure. An exemplary classification rule states that the mean acceleration feature data 138 is classified as walking data when a maximum acceleration is less than 70 meters per second squared (70 m/s²), running data when the maximum acceleration is 70 m/s² to 100 m/s², and fast running data when the maximum acceleration is greater than 100 m/s². Another classification rule classifies the feature data 138 according to the manner in which the user's feet strike the ground when running or walking. For example, the controller 218 determines if the feature data 138 originated from movement data 136 corresponding to a heel-strike mover, a midfoot-strike mover, or a forefoot-strike mover using an appropriate metric.
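By way of a non-limiting illustration, the exemplary speed classification rule can be sketched as a simple threshold test. The thresholds of 70 and 100 meters per second squared are those stated above; the function name and the returned labels are assumptions made for this sketch.

```python
def classify_by_max_acceleration(max_acceleration):
    """Apply the exemplary rule: thresholds in meters per second squared."""
    if max_acceleration < 70.0:
        return "walking"
    if max_acceleration <= 100.0:
        return "running"
    return "fast running"


# Hypothetical maximum accelerations for three segments of a session.
labels = [classify_by_max_acceleration(a) for a in (50.0, 85.0, 120.0)]
```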

[0068] Next, in block 540 data is transmitted from the personal electronic device 108 to the remote processing server 112. The transmission of data is typically performed wirelessly using the cellular network 128, a local area network, and/or the Internet 124. The personal electronic device 108 may transmit any data used to generate the movement variable data 144 to the server 112 including the feature data 138 (including combined feature data, classified feature data 138, and/or feature data 138 based on the event data 240), the raw speed data 232, the raw distance data 236, and the demographic data 242. In some embodiments, at least one of the raw speed data 232, the raw distance data 236, and the demographic data 242 are not generated and are not transmitted to the server 112. In a specific embodiment, only the feature data 138 (without combined feature data, classified feature data 138, and feature data 138 based on the event data 240) is transmitted to the remote processing server 112. That is, in this embodiment, the movement data 136 is not transmitted to the remote processing server 112.

[0069] As shown in block 544, the remote processing server 112 generates the movement variable data 144 using the machine learning model 140. The movement variable data 144 includes at least the estimated speed of the user based on the movement data 136, the estimated distance the user has moved/traversed based on the movement data 136, the estimated stride length of the user based on the movement data 136, the estimated cadence of the user based on the movement data 136, and the estimated ground contact time of the user based on the movement data 136, as determined by the machine learning model 140. Depending on the embodiment, at least one of the feature data 138, the raw speed data 232, the raw distance data 236, the demographic data 242, the raw stride length data, the raw cadence data, and the raw ground contact time data are applied to (i.e. processed through) the machine learning model 140 to cause the machine learning model 140 to generate the movement variable data 144, which is stored in the memory 256. Depending on the desired processing demands of the remote processing server 112, only certain data are applied to the machine learning model 140. For example, an approach that generates movement variable data 144 with a suitable level of accuracy applies only the feature data 138 to the machine learning model 140, without event detection (i.e. block 516), without the "raw" data, such as the raw speed data 232 and the raw distance data 236 (i.e. block 524), and without classifying the feature data 138 (i.e. block 536). Such an approach generates the movement variable data 144 based on only the feature data 138 without prior integration of the movement data 136 to determine the raw speed data 232 and the raw distance data 236. That is, the machine learning model 140 determines the estimated speed of the user and the estimated distance moved by the user without the movement data 136 (which in this example includes acceleration data) being integrated by either the personal electronic device 108 or the remote processing server 112.

[0070] Another approach for determining the movement variable data 144 is to apply only the feature data 138, the raw speed data 232, and the raw distance data 236 to the machine learning model 140. Such an approach generates the movement variable data 144 including at least the estimated speed of the user and the estimated distance traveled by the user. Since the machine learning model 140 is trained based on feature data 138 from many users, the output of the machine learning model 140 is more accurate than the raw movement variables determined directly from the movement data 136. Specifically, the estimated speed of the user as determined by the machine learning model 140 is a more accurate representation of the actual speed 234 (FIG. 6) of the user than the raw speed data 232, and the estimated distance traveled by the user as determined by the machine learning model 140 is a more accurate representation of the actual distance traveled by the user than the raw distance data 236. Exemplary estimated speed data 144 as determined by the machine learning model 140 and raw speed data 232 are plotted for comparison in FIG. 6. As shown in FIG. 6, both the raw speed data 232 and the estimated speed data 144 closely approximate the actual speed 234 of the user, but the estimated speed data 144 is a closer approximation because the estimated speed data 144 deviates less from the actual speed 234.

[0071] Yet another approach for determining the movement variable data 144 is to apply classified feature data 138 and certain "raw" data to the machine learning model 140. For example, the feature data 138 is classified according to the walking/running/fast-running model, as set forth above. At least one of the classified feature data 138, the raw speed data 232, the raw distance data 236, the raw stride length data, the raw cadence data, and the raw ground contact time data is then applied to only the appropriate sub-model 276a, 276b, 276c of the trained model 274. In a specific example, the feature data 138 is classified as "walking" and the feature data 138 is applied to only the walking sub-model 276a to generate the movement variable data 144. Since the sub-model 276a is specially trained with data from "walking" users, the movement variable data 144 is a particularly accurate representation of the actual movements of the user. To illustrate this point, FIG. 7 plots the estimated speed of the user (i.e. movement variable data 144) versus the actual speed of the user for each of the three speed classifications. In one embodiment, the overall mean absolute percentage error ("MAPE") for a model including the walking, running, and fast running sub-models 276a, 276b, 276c is about 3.77 ± 3.01%. In this embodiment, the MAPE for the walking sub-model 276a is only about 2.76 ± 2.44%, the MAPE for the running sub-model 276b is only about 4.22 ± 3.18%, and the MAPE for the fast running sub-model 276c is only about 3.40 ± 2.60%.
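By way of a non-limiting illustration, a mean absolute percentage error of the kind reported above can be computed as sketched below. The speed values are invented for this sketch and are not the data of FIG. 7. Treating the figure after the plus-or-minus sign as the standard deviation of the individual percentage errors is an assumption; the source does not state how that spread is defined.

```python
import statistics


def absolute_percentage_errors(estimated, actual):
    """Per-sample absolute error as a percentage of the actual value."""
    return [100.0 * abs(e - a) / a for e, a in zip(estimated, actual)]


# Hypothetical actual and estimated speeds in m/s.
actual_speed = [2.0, 4.0, 5.0]
estimated_speed = [2.1, 3.8, 5.0]

errors = absolute_percentage_errors(estimated_speed, actual_speed)
mape = statistics.fmean(errors)       # mean absolute percentage error
spread = statistics.pstdev(errors)    # assumed meaning of the "±" term
```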

[0072] In a similar approach, the feature data 138 is classified according to the heel/midfoot/forefoot strike model, as set forth above. At least one of the classified feature data 138, the raw speed data 232, the raw distance data 236, the raw stride length data, the raw cadence data, and the raw ground contact time data is then applied to only the appropriate sub-model 276a, 276b, 276c of the trained model 274. In a specific example, the feature data 138 is classified as "forefoot-strike" and the feature data 138 (and any "raw" data, if desired) is applied to only the forefoot sub-model 276c to generate the movement variable data 144. Since the sub-model 276c is specially trained with data from "forefoot-strike" users, the movement variable data 144 is a particularly accurate representation of the actual movements of the user. A corresponding approach is taken for feature data 138 classified as "midfoot-strike" and "heel-strike."
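By way of a non-limiting illustration, routing classified feature data to only the matching sub-model can be sketched as a lookup keyed on the classification label. The stand-in sub-models below are simple linear functions with invented coefficients; they are not the trained sub-models of the trained model 274, and the names `SUB_MODELS` and `estimate_speed` are hypothetical.

```python
# Stand-in sub-models: each maps a feature dictionary to an estimated speed
# in m/s. The coefficients are invented for illustration, not trained values.
def heel_sub_model(features):
    return 0.125 * features["mean_acceleration"]


def midfoot_sub_model(features):
    return 0.25 * features["mean_acceleration"]


def forefoot_sub_model(features):
    return 0.5 * features["mean_acceleration"]


SUB_MODELS = {
    "heel-strike": heel_sub_model,
    "midfoot-strike": midfoot_sub_model,
    "forefoot-strike": forefoot_sub_model,
}


def estimate_speed(features, label):
    """Apply the features to only the sub-model matching the classification."""
    return SUB_MODELS[label](features)


# Hypothetical feature data already classified as "forefoot-strike".
estimated = estimate_speed({"mean_acceleration": 8.0}, "forefoot-strike")
```

The same lookup structure would apply to the walking, running, and fast running classification by substituting the corresponding labels and sub-models.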

[0073] Next, in block 548 of FIG. 5, the remote processing server 112 transmits the movement variable data 144 to the personal electronic device 108. The transmission of data 144 is typically performed wirelessly using the cellular network 128, a local area network, and/or the Internet 124.

[0074] In block 552, the personal electronic device 108 displays a visual representation of the movement variable data 144 on the display unit 198. For example, at the conclusion of the exercise session, at least one of the estimated speed, estimated distance, estimated stride length, estimated cadence, and estimated ground contact time is displayed on the display unit 198 for review by the user. The movement variable data 144 may also be stored on the server 112 for access by the user and others via the Internet 124.

[0075] The fitness tracking system 100 includes specific improvements to computer functionality by improving the manner in which the system 100 generates the movement variable data 144. Known fitness tools locally generate user movement data using an on-board processor. In contrast, in at least one embodiment, the fitness tracking system 100 generates the movement variable data 144 at the server 112, which is remote from the monitoring device 104 and the personal electronic device 108. The remote processing configuration reduces the processing demands of the controller 218 of the personal electronic device 108, thereby reducing the electrical power consumed by the personal electronic device 108. Moreover, the powerful remote processing server 112 generates the movement variable data 144 more quickly than the controller 218 to improve the user experience. Furthermore, storing the machine learning model 140 on the server 112 reduces the capacity requirements of the memory 214 of the personal electronic device 108.