Method Of Showing Availability Zones On A Map For Customer-owned And Cloud Provider-owned Datacenters

McLarty; Melina ; et al.

U.S. patent application number 15/879923 was filed with the patent office on 2018-01-25 and published on 2019-07-25 for a method of showing availability zones on a map for customer-owned and cloud provider-owned datacenters. The applicant listed for this patent is Nutanix, Inc. Invention is credited to Van Co, Melina McLarty, John Torres.

| Field | Value |
|---|---|
| Application Number | 15/879923 |
| United States Patent Application | 20190230462 |
| Family ID | 67299032 |
| Publication Date | July 25, 2019 |
| Kind Code | A1 |
| Inventors | McLarty; Melina; et al. |

METHOD OF SHOWING AVAILABILITY ZONES ON A MAP FOR CUSTOMER-OWNED AND CLOUD PROVIDER-OWNED DATACENTERS

Abstract

A method of discovering geographic locations of one or more data centers of a data center cluster. The method includes receiving a request for geolocation data of a first data center of a data center cluster; identifying a host machine of a second data center of the data center cluster based on the request. The method includes sending a discovery request to the host machine of the second data center. The discovery request configured to cause operations comprising generating, by the host machine of the second data center, discovery data associated with a host machine of a first data center. The method includes generating display data based on the discovery data. The method includes sending the display data to the user device. The display data configured to cause the user device to display the geolocation data of the first data center in a window of the application.

| Inventors: | Melina McLarty (Aptos, CA); John Torres (San Jose, CA); Van Co (San Jose, CA) |
|---|---|
| Applicant: | Nutanix, Inc. |
| Family ID: | 67299032 |
| Appl. No.: | 15/879923 |
| Filed: | January 25, 2018 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | H04W 4/02 20130101; H04W 64/003 20130101; H04L 41/12 20130101; H04L 41/5058 20130101; H04L 67/1097 20130101; H04L 67/12 20130101; H04L 41/22 20130101; H04L 67/18 20130101; H04L 41/5096 20130101; H04L 67/10 20130101 |
| International Class: | H04W 4/02 20060101 H04W004/02; H04L 29/08 20060101 H04L029/08; H04W 64/00 20060101 H04W064/00; H04L 12/24 20060101 H04L012/24 |

Claims

1. A computer-implemented method comprising: receiving, by an infrastructure management server and from an application executing on a user device, a request for geolocation data of a first data center of a data center cluster; identifying, by the infrastructure management server, a host machine of a second data center of the data center cluster based on the request; sending, by the infrastructure management server, a discovery request to the host machine of the second data center; generating, by the infrastructure management server, display data based on discovery data associated with a second host machine of the first data center of the data center cluster; and sending, by the infrastructure management server, the display data to the user device.

2. (canceled)

3. The method of claim 1, wherein identifying the host machine of the second data center of the data center cluster comprises: retrieving, by the infrastructure management server, a list of data center clusters from a database, each data center cluster having a plurality of host machines associated with a customer and a second plurality of host machines associated with a cloud provider; comparing, by the infrastructure management server, the request for the geolocation data to the list of data center clusters to determine a match; extracting, by the infrastructure management server in response to determining the match, a network identifier of the host machine of the second data center; and establishing, by the infrastructure management server based on the network identifier, a communication channel between the infrastructure management server and the host machine of the second data center.

4. The method of claim 1, wherein the discovery request causes operations comprising: identifying, by the host machine of the second data center, the second host machine of the first data center.

5. The method of claim 1, wherein the discovery request causes operations comprising: measuring, by the host machine of the second data center, a round-trip time of a message routing between the host machine of the second data center and the second host machine of the first data center; and generating, by the host machine of the second data center and based on the round-trip time of the message, the discovery data associated with the second host machine of the first data center of the data center cluster.

6. The method of claim 1, wherein identifying, by the infrastructure management server, the host machine of the second data center comprises: extracting, by the infrastructure management server from the request for the geolocation data, a network address associated with the host machine of the second data center; and sending, by the infrastructure management server based on the network address, the discovery request to the host machine of the second data center.

7. (canceled)

8. The method of claim 1, wherein the discovery request causes operations comprising: sending, by the host machine of the second data center, a second discovery request to a third host machine of the second data center; wherein the second discovery request causes operations comprising generating, by the third host machine, second discovery data associated with the second host machine of the first data center.

9. The method of claim 8, further comprising: generating, by the infrastructure management server, the display data based on the discovery data and the second discovery data.

10. The method of claim 1, wherein the display data causes operations comprising: displaying, by the user device in a window of the application and on a geographical map, the geolocation data of the first data center and a geolocation of the second data center.

11. (canceled)

12. An apparatus comprising a processor having programmed instructions to: receive, from an application executing on a user device, a request for geolocation data of a first data center of a data center cluster; identify a host machine of a second data center of the data center cluster based on the request; send a discovery request to the host machine of the second data center; generate display data based on discovery data associated with a second host machine of the first data center of the data center cluster; and send the display data to the user device.

13. (canceled)

14. The apparatus of claim 12, wherein the processor further has programmed instructions to: retrieve a list of data center clusters from a database, each data center cluster having a plurality of host machines associated with a customer and a second plurality of host machines associated with a cloud provider; compare the request for the geolocation data to the list of data center clusters to determine a match; extract, in response to determining the match, a network identifier of the host machine of the second data center; and establish, based on the network identifier, a communication channel between the apparatus and the host machine of the second data center.

15. The apparatus of claim 12, wherein the discovery request causes the host machine of the second data center to identify the second host machine of the first data center.

16. The apparatus of claim 12, wherein the discovery request causes the host machine of the second data center to: measure a round-trip time of a message routing between the host machine of the second data center and the second host machine of the first data center; and generate, based on the round-trip time of the message, discovery data associated with the second host machine of the first data center of the data center cluster.

17. The apparatus of claim 12, wherein the processor further has programmed instructions to: extract, from the request for the geolocation data, a network address associated with the host machine of the second data center; and send, based on the network address, the discovery request to the host machine of the second data center.

18. (canceled)

19. The apparatus of claim 12, wherein the discovery request causes the host machine of the second data center to: send a second discovery request to a third host machine of the second data center; wherein the second discovery request causes generating, by the third host machine, second discovery data associated with the second host machine of the first data center.

20. The apparatus of claim 19, wherein the processor further has programmed instructions to: generate the display data based on the discovery data and the second discovery data.

21. The apparatus of claim 12, wherein the display data causes the user device to: display, in a window of the application and on a geographical map, the geolocation data of the first data center and a geolocation of the second data center.

22. (canceled)

23. A non-transitory computer readable storage medium to store a computer program configured to execute a method comprising: receiving, from an application executing on a user device, a request for geolocation data of a first data center of a data center cluster; identifying a host machine of a second data center of the data center cluster based on the request; sending a discovery request to the host machine of the second data center; generating display data based on discovery data associated with a second host machine of the first data center of the data center cluster; and sending the display data to the user device.

24. The method of claim 1, wherein the discovery request causes operations comprising generating, by the host machine of the second data center, the discovery data associated with the second host machine of the first data center of the data center cluster.

25. The method of claim 1, wherein the display data causes the user device to display the geolocation data of the first data center in a window of the application.

26. The apparatus of claim 12, wherein the discovery request causes operations comprising generating, by the host machine of the second data center, the discovery data associated with the second host machine of the first data center of the data center cluster.

27. The apparatus of claim 12, wherein the display data causes the user device to display the geolocation data of the first data center in a window of the application.

28. The non-transitory computer readable storage medium of claim 23, wherein the discovery request causes operations comprising generating, by the host machine of the second data center, the discovery data associated with the second host machine of the first data center of the data center cluster.

29. The non-transitory computer readable storage medium of claim 23, wherein the display data causes the user device to display the geolocation data of the first data center in a window of the application.

30. The non-transitory computer readable storage medium of claim 23, wherein identifying the host machine of the second data center of the data center cluster comprises: retrieving a list of data center clusters from a database, each data center cluster having a plurality of host machines associated with a customer and a second plurality of host machines associated with a cloud provider; comparing the request for the geolocation data to the list of data center clusters to determine a match; extracting, in response to determining the match, a network identifier of the host machine of the second data center; and establishing, based on the network identifier, a communication channel between the computer program and the host machine of the second data center.

31. The non-transitory computer readable storage medium of claim 23, wherein the discovery request causes operations comprising: identifying, by the host machine of the second data center, the second host machine of the first data center.

32. The non-transitory computer readable storage medium of claim 23, wherein the discovery request causes operations comprising: measuring, by the host machine of the second data center, a round-trip time of a message routing between the host machine of the second data center and the second host machine of the first data center; and generating, by the host machine of the second data center and based on the round-trip time of the message, the discovery data associated with the second host machine of the first data center of the data center cluster.

33. The non-transitory computer readable storage medium of claim 23, wherein identifying the host machine of the second data center comprises: extracting, from the request for the geolocation data, a network address associated with the host machine of the second data center; and sending, based on the network address, the discovery request to the host machine of the second data center.

34. The non-transitory computer readable storage medium of claim 23, wherein the discovery request causes operations comprising: sending, by the host machine of the second data center, a second discovery request to a third host machine of the second data center; wherein the second discovery request causes operations comprising generating, by the third host machine, second discovery data associated with the second host machine of the first data center.

35. The non-transitory computer readable storage medium of claim 34, further comprising: generating the display data based on the discovery data and the second discovery data.

36. The non-transitory computer readable storage medium of claim 23, wherein the display data causes operations comprising: displaying, by the user device in a window of the application and on a geographical map, the geolocation data of the first data center and a geolocation of the second data center.

37. A non-transitory computer readable storage medium to store a computer program configured to execute a method comprising: receiving, from an application executing on a user device, a request for geolocation data of a first data center and a second data center; determining the geolocation data of the first data center and the second data center; generating display data based on the geolocation data; and sending the display data to the user device, wherein the display data causes the user device to display the geolocation data of the first data center and the second data center in a single view of a window of the application.

38. The non-transitory computer readable storage medium of claim 37, the method further comprising: identifying a host machine of a third data center based on the request; and sending a discovery request to the host machine of the third data center, wherein the discovery request causes the host machine of the third data center to generate the geolocation data of the first data center and the second data center.

39. The non-transitory computer readable storage medium of claim 37, the method further comprising sending a discovery request to a host machine of a third data center, wherein the discovery request causes operations comprising: sending, by the host machine of the third data center, a message to each of a first host machine of the first data center and a second host machine of the second data center, respectively; measuring, by the host machine of the third data center, a plurality of round-trip times, wherein a round-trip time of the plurality of round-trip times is measured, by the host machine of the third data center, for each message; and generating, by the host machine of the third data center and based on the plurality of round-trip times, the geolocation data associated with the first data center and the second data center.

40. The non-transitory computer readable storage medium of claim 37, the method further comprising sending a first discovery request to a first host machine of a third data center and a second discovery request to a second host machine of the third data center, wherein the first discovery request and the second discovery request cause the first host machine and the second host machine of the third data center, respectively, to generate the geolocation data of the first data center and the second data center, respectively.

Description

BACKGROUND

[0001] A virtual machine ("VM") may refer to a specific software-based implementation of a machine in a virtualization environment, in which the hardware resources of a real computer (e.g., CPU, memory, etc.) are virtualized or transformed into the underlying support for the fully functional virtual machine that can run its own operating system and applications on the underlying physical resources just like a real computer.

SUMMARY

[0002] Aspects of the present disclosure relate generally to a cloud infrastructure and virtual environment management system, and more particularly to systems and methods for automatically discovering the geographic locations of the customer-owned data centers and the cloud provider-owned data centers of a data center cluster.

[0003] One implementation disclosed herein is a method of discovering the geographic locations of one or more data centers of a data center cluster. The method includes receiving, by an infrastructure management server and from an application executing on a user device, a request for geolocation data of a first data center of a data center cluster. In some implementations, the method includes identifying, by the infrastructure management server, a host machine of a second data center of the data center cluster based on the request. In some implementations, the method includes sending, by the infrastructure management server, a discovery request to the host machine of the second data center. The discovery request causes operations comprising generating, by the host machine of the second data center, discovery data associated with a host machine of the first data center of the data center cluster. In some implementations, the method includes generating, by the infrastructure management server, display data based on the discovery data. In some implementations, the method includes sending, by the infrastructure management server, the display data to the user device, the display data causing the user device to display the geolocation data of the first data center in a window of the application.
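The flow in paragraph [0003] can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the disclosed implementation: the names `DiscoveryData`, `handle_geolocation_request`, and `send_discovery`, the latitude and longitude fields, and the shape of the returned display data are all hypothetical, and the network call is replaced by a caller-supplied function.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class DiscoveryData:
    data_center: str   # the first data center, whose location was requested
    latitude: float    # hypothetical field, not named in the source
    longitude: float   # hypothetical field, not named in the source


# Hypothetical stand-in for the network call:
# (host in second data center, target data center) -> discovery data.
SendDiscovery = Callable[[str, str], DiscoveryData]


def handle_geolocation_request(
    first_dc: str,
    cluster_hosts: Dict[str, str],
    send_discovery: SendDiscovery,
) -> dict:
    """Serve a request for the geolocation of `first_dc`.

    `cluster_hosts` maps each data center of the cluster to one of its hosts.
    """
    # Identify a host machine in a second data center of the same cluster.
    candidates = [(dc, h) for dc, h in cluster_hosts.items() if dc != first_dc]
    if not candidates:
        raise ValueError("cluster has no second data center to query")
    second_dc, host = candidates[0]

    # Send the discovery request; the remote host generates the discovery data.
    discovery = send_discovery(host, first_dc)

    # Generate display data for the application window.
    return {
        "data_center": discovery.data_center,
        "discovered_by": second_dc,
        "lat": discovery.latitude,
        "lon": discovery.longitude,
    }
```

In this sketch the server never contacts the first data center directly; it relies on a host in the second data center to produce the discovery data, which mirrors the division of work described above.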

[0004] In some implementations, the first data center is owned by one of a customer and a cloud provider, and the second data center is owned by the other of the customer and the cloud provider.

[0005] In some implementations, identifying the host machine of the second data center of the data center cluster includes retrieving, by the infrastructure management server, a list of data center clusters from a database. In some implementations, each data center cluster has a plurality of host machines associated with a customer and a plurality of host machines associated with a cloud provider.

[0006] In some implementations, the method includes comparing, by the infrastructure management server, the request for the geolocation data to the list of data center clusters to determine a match.

[0007] In some implementations, the method includes extracting, by the infrastructure management server in response to determining the match, a network identifier of the host machine of the second data center.

[0008] In some implementations, the method includes establishing, by the infrastructure management server based on the network identifier, a communication channel between the infrastructure management server and the host machine of the second data center.
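The lookup described in paragraphs [0005] through [0007] might look like the following Python sketch. The record types `Host` and `Cluster`, their fields, and the function `find_second_dc_network_id` are hypothetical names chosen for illustration; the in-memory list stands in for the database, and establishing the communication channel of paragraph [0008] is left out.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Host:
    data_center: str
    network_id: str  # e.g. an IP address or hostname (assumed representation)
    owner: str       # "customer" or "cloud_provider"


@dataclass(frozen=True)
class Cluster:
    name: str
    hosts: List[Host]


def find_second_dc_network_id(
    requested_dc: str, clusters: List[Cluster]
) -> Optional[str]:
    """Return the network identifier of a host in a second data center.

    The request is compared against each cluster; on a match, the first host
    located in a different data center of that cluster is selected.
    """
    for cluster in clusters:
        data_centers = {h.data_center for h in cluster.hosts}
        if requested_dc not in data_centers:
            continue  # no match in this cluster
        for host in cluster.hosts:
            if host.data_center != requested_dc:
                return host.network_id
    return None  # no cluster matched, or the cluster has a single data center
```

Returning `None` rather than raising keeps the "no match" case explicit for the caller, which is a design choice of this sketch rather than something the source specifies.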

[0009] In some implementations, the discovery request further causes operations comprising identifying, by the host machine of the second data center, the host machine of the first data center.

[0010] In some implementations, the discovery request further causes operations comprising measuring, by the host machine of the second data center, a round-trip time of a message routing between the host machine of the second data center and the host machine of the first data center.

[0011] In some implementations, the discovery request further causes operations comprising generating, by the host machine of the second data center and based on the round-trip time of the message, discovery data associated with the host machine of the first data center of the data center cluster.
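A round-trip-time measurement of the kind described in paragraphs [0010] and [0011] can be sketched as follows. The source does not say how the round-trip time is turned into discovery data; converting it into an upper bound on distance is an assumption of this example, as are the function names and the constant of roughly 200 km per millisecond, which approximates the speed of light in optical fiber.

```python
import time
from typing import Callable

# Approximate propagation speed in optical fiber (assumption for this sketch).
FIBER_KM_PER_MS = 200.0


def measure_rtt_ms(ping: Callable[[], None]) -> float:
    """Time one request/response exchange; `ping` must block until the reply."""
    start = time.perf_counter()
    ping()
    return (time.perf_counter() - start) * 1000.0


def max_distance_km(rtt_ms: float) -> float:
    """Upper bound on the one-way distance implied by a round-trip time.

    The message travels out and back, so only half the RTT counts toward
    the one-way path.
    """
    return (rtt_ms / 2.0) * FIBER_KM_PER_MS
```

Because queuing and processing delays inflate the measured time, a bound like this only says the remote host is no farther than the computed distance; it does not pinpoint a location on its own.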

[0012] In some implementations, identifying, by the infrastructure management server, the host machine of the second data center comprises extracting, by the infrastructure management server from the request for the geolocation data, a network address associated with the host machine of the second data center.

[0013] In some implementations, identifying, by the infrastructure management server, the host machine of the second data center comprises sending, by the infrastructure management server based on the network address, the discovery request to the host machine of the second data center.

[0014] In some implementations, generating, by the infrastructure management server, the display data based on the discovery data comprises sending, by the infrastructure management server, a second discovery request to a second host machine of the second data center, the second discovery request causing operations comprising generating second discovery data associated with the host machine of the first data center of the data center cluster; and generating, by the infrastructure management server, the display data based on the discovery data and the second discovery data.

[0015] In some implementations, the discovery request causes operations further comprising sending, by the host machine of the second data center, a second discovery request to a second host machine of the second data center, wherein the second discovery request causes operations comprising generating, by the second host machine, second discovery data associated with the host machine of the first data center.

[0016] In some implementations, the method includes generating, by the infrastructure management server, display data based on the discovery data and the second discovery data.
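The source does not state how the discovery data and the second discovery data are merged before display data is generated. As one hedged possibility, if each host reports a round-trip time per target, the server could keep the lowest value seen for each target. The function name `combine_discovery` and the dictionary representation are hypothetical.

```python
from typing import Dict, Iterable


def combine_discovery(results: Iterable[Dict[str, float]]) -> Dict[str, float]:
    """Merge per-host discovery results, keeping the lowest RTT per target.

    Each result maps a target host identifier to a round-trip time in
    milliseconds, as reported by one host of the second data center.
    """
    combined: Dict[str, float] = {}
    for result in results:
        for target, rtt_ms in result.items():
            if target not in combined or rtt_ms < combined[target]:
                combined[target] = rtt_ms
    return combined
```

Keeping the minimum is a common choice for latency data because delays only ever add to the true propagation time, but other merge rules would fit the description equally well.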

[0017] In some implementations, the display data further causes operations comprising displaying, by the user device in the window of the application and on a geographical map, the geolocation data of the first data center and a geolocation of the second data center.
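Display data for a geographical map could, for example, be encoded as a GeoJSON feature collection. The source does not name a format, so the use of GeoJSON, the function `to_geojson`, and the `name` property are assumptions of this sketch. Note that GeoJSON orders point coordinates as longitude, then latitude.

```python
from typing import Dict, Tuple


def to_geojson(locations: Dict[str, Tuple[float, float]]) -> dict:
    """Build a GeoJSON FeatureCollection from data center locations.

    `locations` maps a data center name to a (latitude, longitude) pair.
    """
    features = [
        {
            "type": "Feature",
            # GeoJSON positions are [longitude, latitude].
            "geometry": {"type": "Point", "coordinates": [lon, lat]},
            "properties": {"name": name},
        }
        for name, (lat, lon) in locations.items()
    ]
    return {"type": "FeatureCollection", "features": features}
```

A single collection holding both the first and the second data center lets a map component render them together in one view.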

[0018] In another aspect, the present disclosure is directed to a system for discovering the geographic locations of one or more data centers of a data center cluster. The system comprises an infrastructure management server configured to receive, from an application executing on a user device, a request for geolocation data of a first data center of a data center cluster. In some implementations, the system is configured to identify a host machine of a second data center of the data center cluster based on the request.

[0019] In some implementations, the system is configured to send a discovery request to the host machine of the second data center, wherein the discovery request causes the host machine of the second data center to generate discovery data associated with a host machine of the first data center of the data center cluster.

[0020] In some implementations, the system is configured to generate display data based on the discovery data. In some implementations, the system is configured to send the display data to the user device, the display data causing the user device to display the geolocation data of the first data center in a window of the application.

[0021] In some implementations, the first data center is owned by one of a customer and a cloud provider, and the second data center is owned by the other of the customer and the cloud provider.

[0022] In some implementations, the infrastructure management server is further configured to retrieve a list of data center clusters from a database, each data center cluster having a plurality of host machines associated with a customer and a plurality of host machines associated with a cloud provider. In some implementations, the infrastructure management server is further configured to compare the request for the geolocation data to the list of data center clusters to determine a match. In some implementations, the infrastructure management server is further configured to extract, in response to determining the match, a network identifier of the host machine of the second data center. In some implementations, the infrastructure management server is further configured to establish, based on the network identifier, a communication channel between the infrastructure management server and the host machine of the second data center.

[0023] In some implementations, the discovery request further causes the host machine of the second data center to identify the host machine of the first data center.

[0024] In some implementations, the discovery request further causes the host machine of the second data center to measure a round-trip time of a message routing between the host machine of the second data center and the host machine of the first data center. In some implementations, the discovery request further causes the host machine of the second data center to generate, based on the round-trip time of the message, discovery data associated with the host machine of the first data center of the data center cluster.

[0025] In some implementations, the infrastructure management server is further configured to extract, from the request for the geolocation data, a network address associated with the host machine of the second data center and send, based on the network address, the discovery request to the host machine of the second data center.

[0026] In some implementations, the infrastructure management server is further configured to send a second discovery request to a second host machine of the second data center, the second discovery request causing operations comprising generating second discovery data associated with the host machine of the first data center of the data center cluster, and to generate the display data based on the discovery data and the second discovery data.

[0027] In some implementations, the discovery request further causes the host machine of the second data center to send a second discovery request to a second host machine of the second data center, wherein the second discovery request causes operations comprising generating, by the second host machine, second discovery data associated with the host machine of the first data center.

[0028] In some implementations, the infrastructure management server is further configured to generate display data based on the discovery data and the second discovery data.

[0029] In some implementations, the display data further causes the user device to display, in the window of the application and on a geographical map, the geolocation data of the first data center and a geolocation of the second data center.

[0030] In another aspect, the present disclosure is directed to a non-transitory computer readable storage medium to store a computer program configured to execute a method for discovering the geographic locations of one or more data centers of a data center cluster. The method includes receiving, by an infrastructure management server and from an application executing on a user device, a request for geolocation data of a first data center of a data center cluster. In some implementations, the method includes identifying, by the infrastructure management server, a host machine of a second data center of the data center cluster based on the request. In some implementations, the method includes sending, by the infrastructure management server, a discovery request to the host machine of the second data center. The discovery request causes operations comprising generating, by the host machine of the second data center, discovery data associated with a host machine of the first data center of the data center cluster. In some implementations, the method includes generating, by the infrastructure management server, display data based on the discovery data. In some implementations, the method includes sending, by the infrastructure management server, the display data to the user device, the display data causing the user device to display the geolocation data of the first data center in a window of the application.

[0031] In another aspect, the present disclosure is directed to a method of discovering the status of entities associated with one or more data centers of a data center cluster. The method includes receiving, by an infrastructure management server and from an application executing on a user device, a request for entity data associated with one or more data centers of a data center cluster; identifying, by the infrastructure management server, a host machine of a data center of the one or more data centers based on the request; sending, by the infrastructure management server, a discovery request to the host machine of the data center, the discovery request causing operations comprising generating, by the host machine of the data center, entity data associated with the host machine of the data center and a second host machine of a second data center; generating, by the infrastructure management server, display data based on the entity data; and sending, by the infrastructure management server, the display data to the user device, the display data causing the user device to display the entity data in a window of the application.

[0032] In another aspect, the present disclosure is directed to a system for discovering the status of entities associated with one or more data centers of a data center cluster, the system comprising an infrastructure management server configured to receive, from an application executing on a user device, a request for entity data associated with one or more data centers of a data center cluster; identify a host machine of a data center of the one or more data centers based on the request; send a discovery request to the host machine of the data center, the discovery request causing operations comprising generating, by the host machine of the data center, entity data associated with the host machine of the data center and a second host machine of a second data center; generate display data based on the entity data; and send the display data to the user device, the display data causing the user device to display the entity data in a window of the application.

[0033] In another aspect, the present disclosure is directed to a non-transitory computer readable storage medium to store a computer program configured to execute a method for discovering the status of entities associated with one or more data centers of a data center cluster. The method includes receiving, by an infrastructure management server and from an application executing on a user device, a request for entity data associated with one or more data centers of a data center cluster; identifying, by the infrastructure management server, a host machine of a data center of the one or more data centers based on the request; sending, by the infrastructure management server, a discovery request to the host machine of the data center, the discovery request causing operations comprising generating, by the host machine of the data center, entity data associated with the host machine of the data center and a second host machine of a second data center; generating, by the infrastructure management server, display data based on the entity data; and sending, by the infrastructure management server, the display data to the user device, the display data causing the user device to display the entity data in a window of the application.

BRIEF DESCRIPTION OF THE DRAWINGS

[0034] Illustrative embodiments will hereafter be described with reference to the accompanying drawings.

[0035] FIG. 1A is a block diagram illustrating a clustered virtualization environment according to an illustrative implementation.

[0036] FIG. 1B is a block diagram illustrating the data flow within an example clustered virtualization environment according to an illustrative implementation.

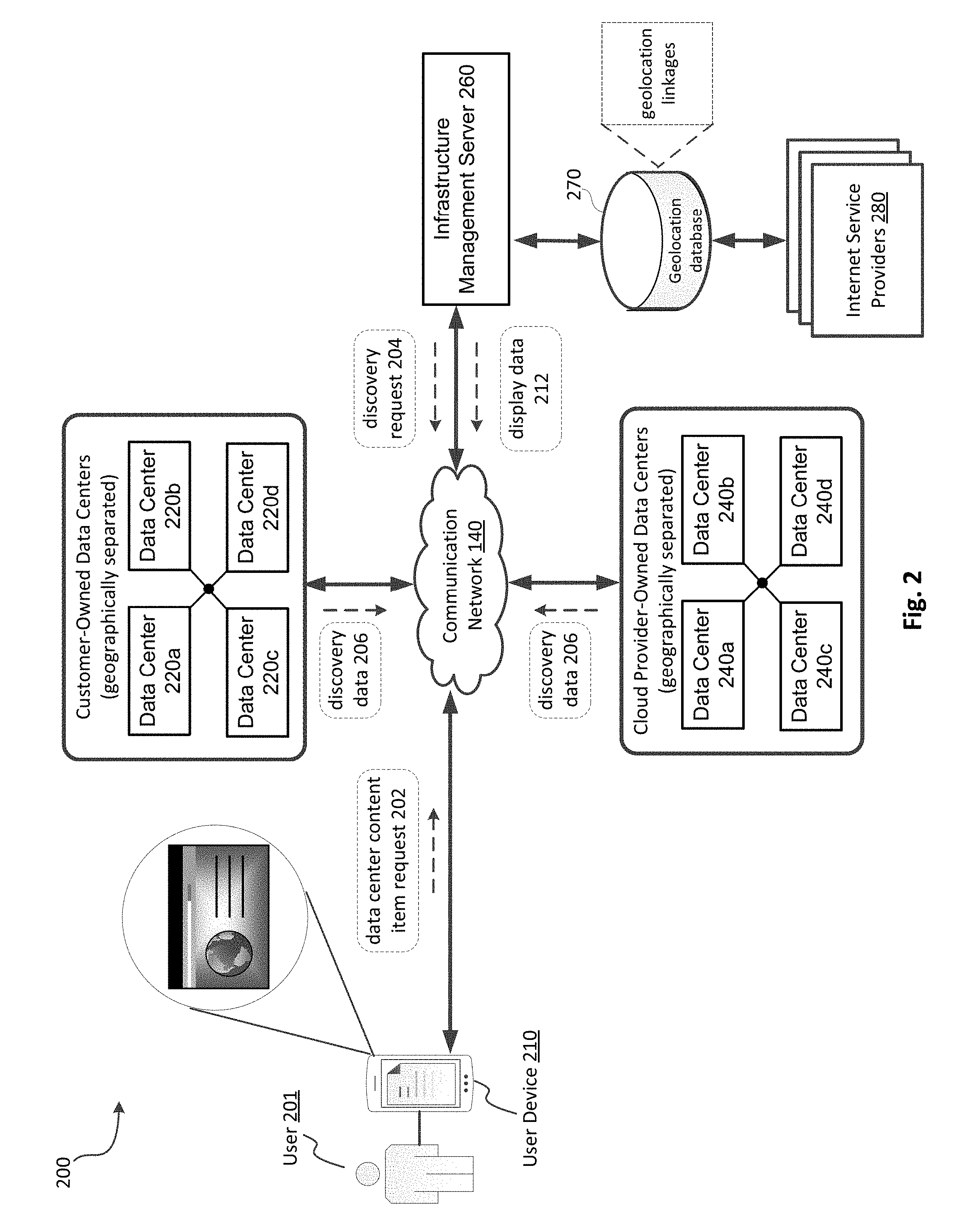

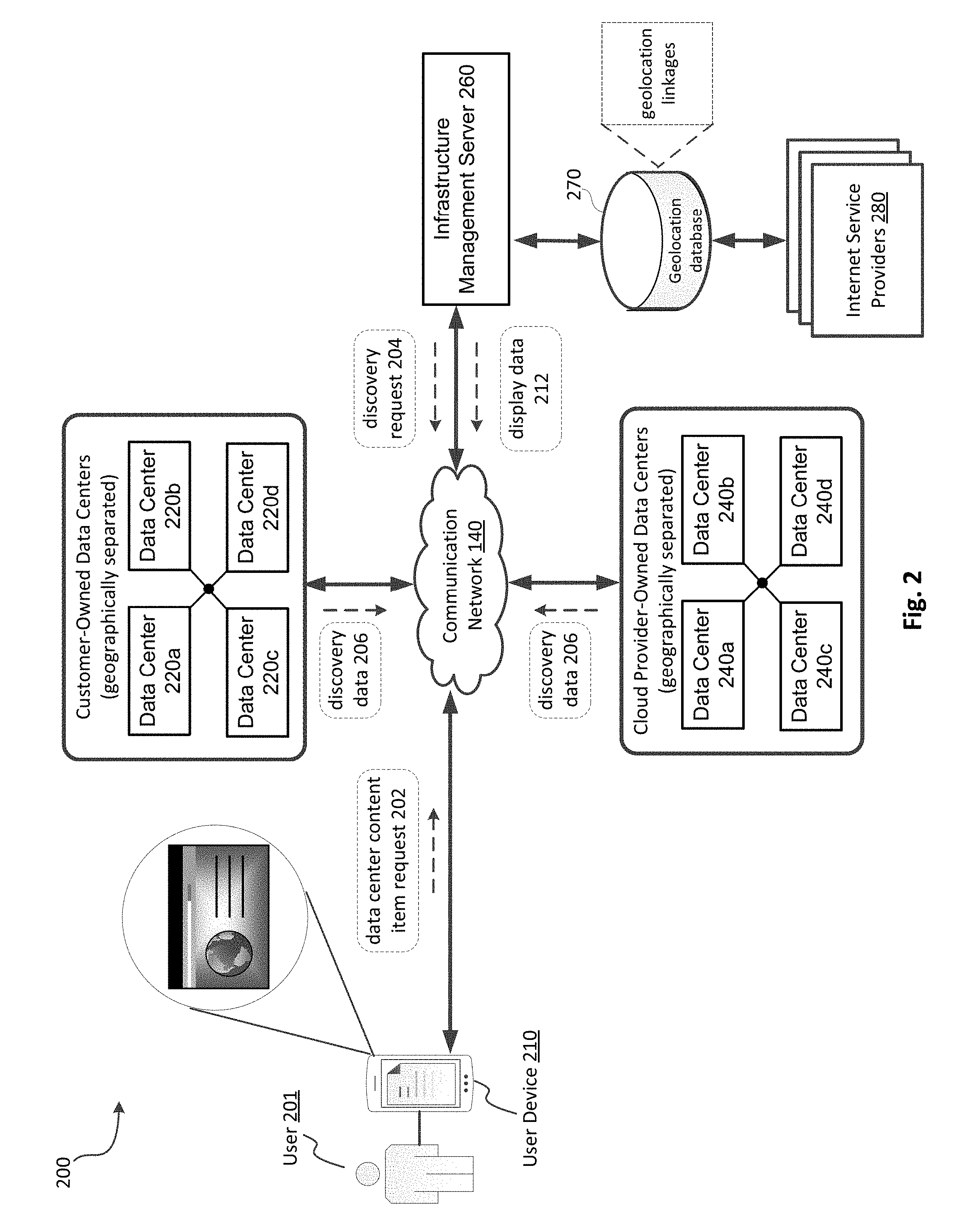

[0037] FIG. 2 is a block diagram depicting an example environment for automatically discovering the location of customer-owned data centers and cloud provider-owned data centers of a data center cluster and the status of their respective cloud entities, according to an illustrative implementation.

[0038] FIG. 3A is a block diagram depicting an example user device of the environment in FIG. 2, according to an illustrative implementation.

[0039] FIG. 3B is a block diagram depicting an example infrastructure management server of the environment in FIG. 2, according to an illustrative implementation.

[0040] FIG. 4A is a diagram depicting an example browser for displaying discovery data associated with a data center cluster, according to an illustrative implementation.

[0041] FIG. 4B is a diagram depicting an example browser for displaying the geographic locations of customer-owned data centers and cloud provider-owned data centers of a data center cluster, according to an illustrative implementation.

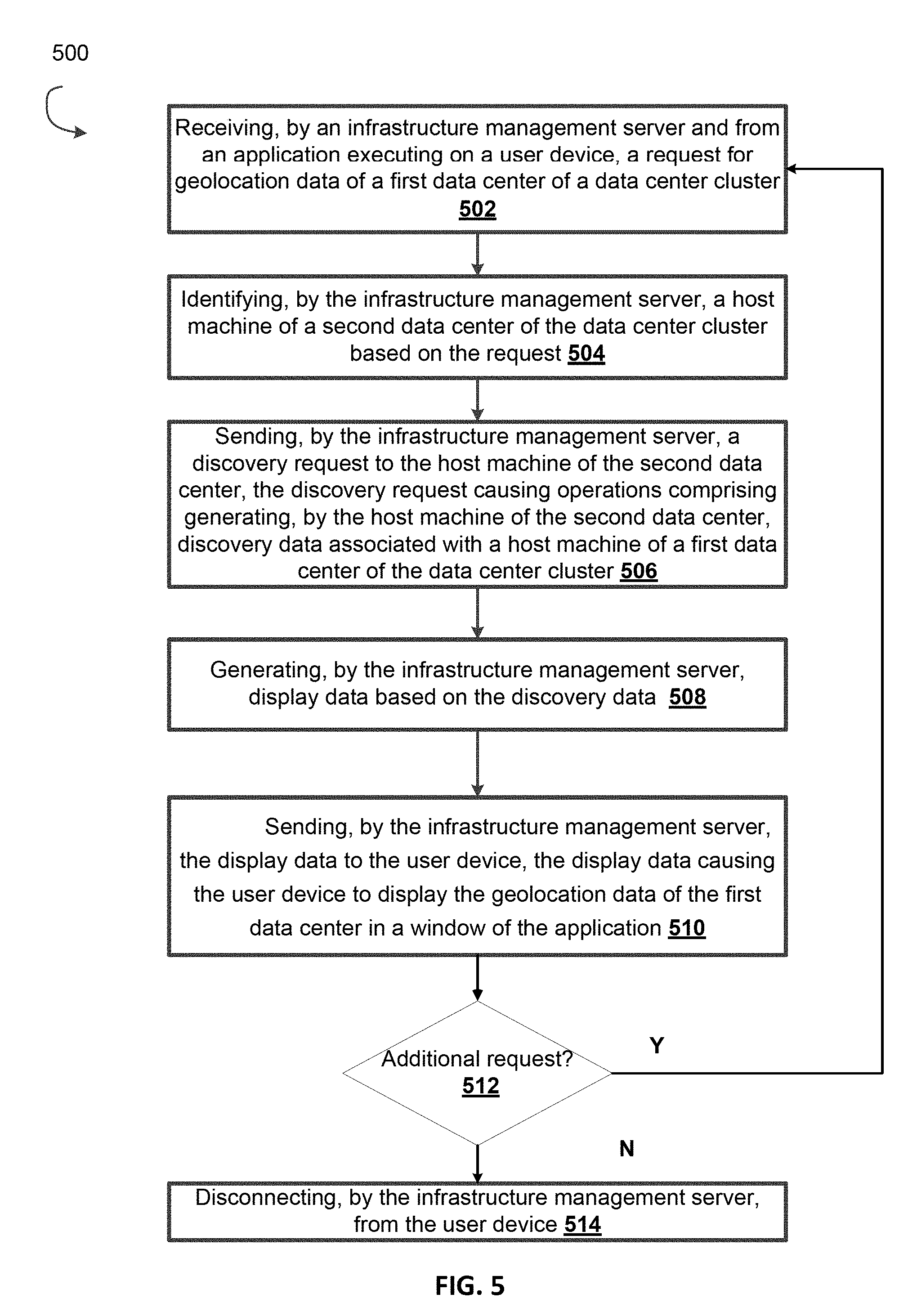

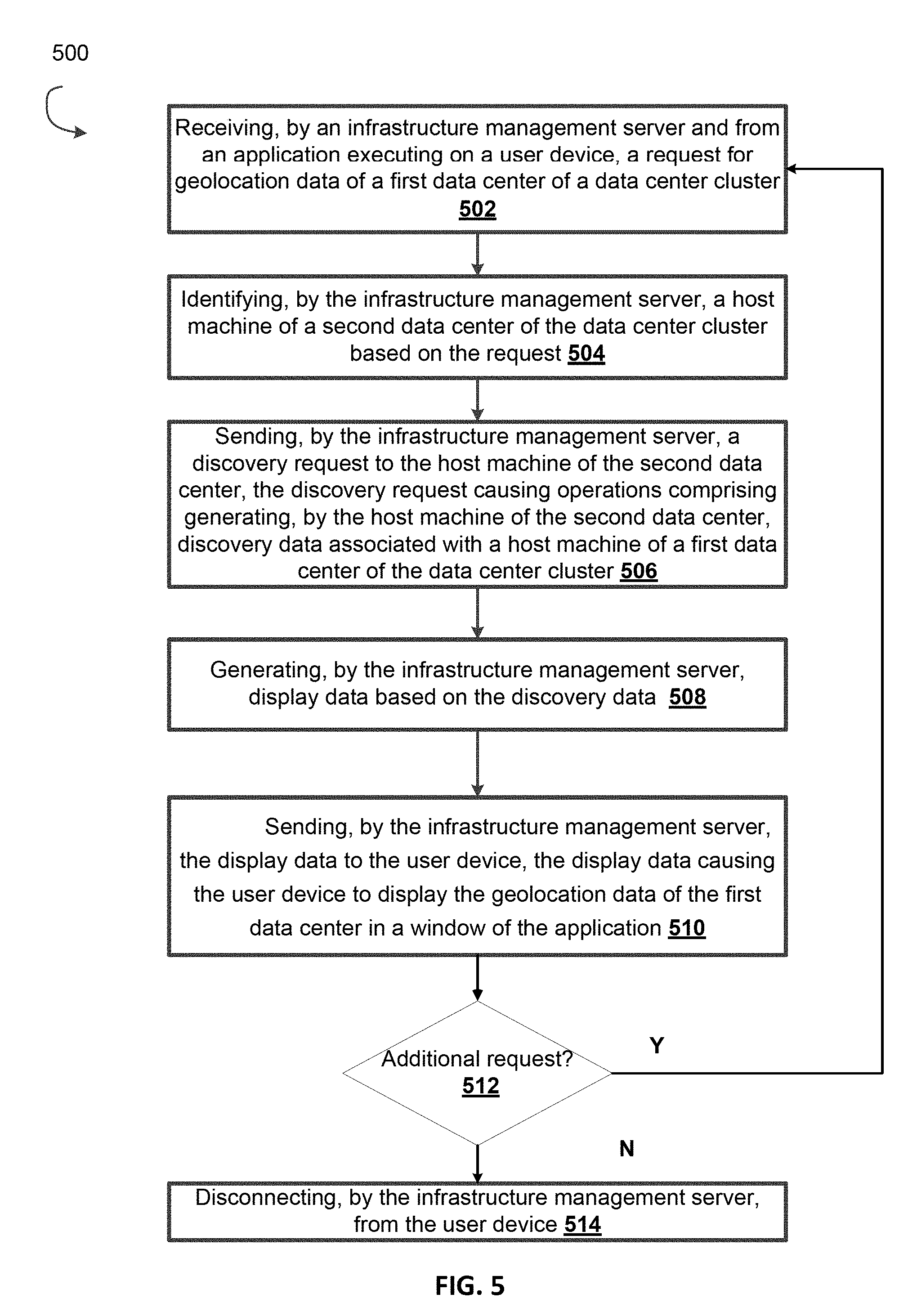

[0042] FIG. 5 is a flow diagram depicting a method for automatically discovering the geographic locations of customer-owned data centers and cloud provider-owned data centers of a data center cluster and the status of their respective entities, according to an illustrative implementation.

[0043] FIG. 6 is a flow diagram depicting a method for automatically discovering the status of the entities associated with data centers spanning across multiple geographic locations and causing display of the entity status in a single-view, according to an illustrative implementation.

[0044] Like reference numbers and designations in the various drawings indicate like elements.

DETAILED DESCRIPTION

[0045] Virtual machines allow multiple independent instances to coexist simultaneously on the same physical server. This allows vastly increased utilization of the physical server's resources, requiring fewer physical systems at a data center to operate the same number of business workloads, resulting in lower system maintenance costs, less power consumption, and reduced cooling demands for the enterprise. Despite the scaling advantages of virtual machines, businesses with multiple locations or many employees may need to build more than one data center in order to provide sufficient computing resources/services to all employees of the business. Moreover, unexpected demands for computing resources by the employees, such as during the holidays, may overburden the business's computing network, requiring the business to contract with third-party cloud providers to lease their data centers during these peak times. However, the cloud providers tend to build their data centers far apart from one another and at locations that are undisclosed to the business leasing the data center, which presents numerous technical problems for a system administrator who builds the business's network. For example, employees who send network requests to the data center cluster to request computing resources/services may be randomly connected to a data center that is distant from the employee's location. As such, the employee may experience significant latency issues in receiving their data or viewing applications running in a virtual machine.

[0046] Accordingly, the present disclosure is directed to systems and methods for automatically discovering the geographic locations of the customer-owned data centers and the cloud provider-owned data centers of a data center cluster and displaying their locations on one or more maps. A system administrator informed of the location of each data center may create efficient networking rules that redirect an employee's networking request for computing resources/services of a data center cluster to only local data centers, thereby dramatically reducing network latency and network congestion.

[0047] Furthermore, a data center cluster with many data centers makes it difficult for the system administrator to monitor the status of the virtual machines executing at each data center. For example, a system administrator must connect to and disconnect from each data center one at a time to query the virtual machine status for that data center. This prevents the system administrator from viewing the activity of all virtual machines in real time and requires the system administrator to send multiple connection and disconnection requests to the data center cluster.

[0048] Accordingly, the present disclosure also describes systems and methods for automatically discovering the status of the entities associated with data centers spanning across multiple geographic locations and causing display of the entity status in a single-view. Therefore, the system administrator may only need to send one request to simultaneously access the real-time status for all virtual machines, which alleviates network bottlenecks and network congestion.

[0049] In general, the system and method discussed herein allows a system administrator of a cluster of data centers (referred to herein as a data center cluster) to send a request for geolocation data from a user device to an infrastructure management server, which automatically determines and delivers the precise (or approximate) geographic location of each data center created by the cloud provider (and in some implementations, each data center created by the customer) contained within the data center cluster. That is, the infrastructure management server identifies each customer-owned data center of the data center cluster, establishes a connection with one or more host machines of each of the customer-owned data centers, sends instructions to the connected, customer-owned host machines causing them to (a) measure the round-trip time for a message that they send/receive from a single host machine residing at the cloud provider-owned data center, and (b) send the measured round-trip time(s) to the infrastructure management server. The infrastructure management server calculates the geolocation for the cloud provider-owned data center based on the round-trip time and generates display data that, when delivered to the user device, instructs (and causes) the user device to assemble/render the requested geolocation data on one or more geographic maps showing the geolocation of each data center in the data center cluster. In some implementations, the infrastructure management server may also identify and discover the status of each virtual machine executing (or previously executed) on the host machines of data centers spanning across multiple geographic locations. The infrastructure management server may then include the status data in the generation of the display data to instruct (and cause) the user device to assemble/render the multi-regional status data into a single-view.
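By way of a non-limiting illustration, the round-trip-time calculation described above could be sketched as follows. The disclosure does not specify the calculation itself, so this sketch assumes a simple multilateration: each round-trip time is converted to a distance using an assumed propagation speed of roughly 200 km per millisecond in optical fiber, and a coarse one-degree grid search selects the point whose distances to the measuring host machines best match. The names `haversine_km`, `rtt_to_distance_km`, `estimate_location`, and `KM_PER_MS` are hypothetical. Real round-trip times are inflated by routing and queuing delays, so an actual estimate would be approximate at best.

```python
import math

EARTH_RADIUS_KM = 6371.0
# Assumption: signals propagate at about 2/3 the speed of light in fiber,
# i.e. roughly 200 km per millisecond (one way).
KM_PER_MS = 200.0


def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1 = math.radians(a[0]), math.radians(a[1])
    lat2, lon2 = math.radians(b[0]), math.radians(b[1])
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def rtt_to_distance_km(rtt_ms):
    """Convert a round-trip time to a one-way distance under the assumed speed."""
    return (rtt_ms / 2.0) * KM_PER_MS


def estimate_location(probes):
    """Estimate a (lat, lon) from measurements taken by known host machines.

    probes: list of ((lat, lon), rtt_ms) pairs, one per customer-owned
    host machine that measured a round trip to the target.
    Returns the one-degree grid point minimizing the squared distance error.
    """
    targets = [(pos, rtt_to_distance_km(rtt)) for pos, rtt in probes]
    best, best_err = None, float("inf")
    for lat in range(-90, 91):
        for lon in range(-180, 180):
            candidate = (float(lat), float(lon))
            err = sum((haversine_km(pos, candidate) - dist) ** 2
                      for pos, dist in targets)
            if err < best_err:
                best, best_err = candidate, err
    return best
```

At least three measuring host machines that do not lie along a single great circle are needed for the estimate to be unambiguous; a finer grid or a numerical optimizer could replace the exhaustive search.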

[0050] Virtualization Technology and Environment

[0051] Virtualization works by inserting a thin layer of software directly on the computer hardware or on a host operating system. This layer of software contains a virtual machine monitor or "hypervisor" that allocates hardware resources dynamically and transparently. Multiple operating systems run concurrently on a single physical computer and share hardware resources with each other. By encapsulating an entire machine, including CPU, memory, operating system, and network devices, a virtual machine is completely compatible with most standard operating systems, applications, and device drivers. Most modern implementations allow several operating systems and applications to safely run at the same time on a single computer, with each having access to the resources it needs when it needs them.

[0052] Virtualization allows one to run multiple virtual machines on a single physical machine, with each virtual machine sharing the resources of that one physical computer across multiple environments. Different virtual machines can run different operating systems and multiple applications on the same physical computer.

[0053] One reason for the broad adoption of virtualization in modern business and computing environments is because of the resource utilization advantages provided by virtual machines. Without virtualization, if a physical machine is limited to a single dedicated operating system, then during periods of inactivity by the dedicated operating system the physical machine is not utilized to perform useful work. This is wasteful and inefficient if there are users on other physical machines which are currently waiting for computing resources. To address this problem, virtualization allows multiple VMs to share the underlying physical resources so that during periods of inactivity by one VM, other VMs can take advantage of the resource availability to process workloads. This can produce great efficiencies for the utilization of physical devices, and can result in reduced redundancies and better resource cost management.

[0054] Furthermore, there are now products that can aggregate multiple physical machines running virtualization environments, not only to utilize the processing power of the physical devices but also to aggregate the storage of the individual physical devices to create a logical storage pool, wherein the data may be distributed across the physical devices but appears to the virtual machines to be part of the system on which the virtual machine is hosted. Such systems operate under the covers by using metadata, which may be distributed and replicated any number of times across the system, to locate the indicated data. These systems are commonly referred to as clustered systems, wherein the resources of the group are pooled to provide logically combined, but physically separate systems.

[0055] Further details of aspects, objects, and advantages of the invention are described below in the detailed description, drawings, and claims. Both the foregoing general description and the following detailed description are exemplary and explanatory, and are not intended to be limiting as to the scope of the invention. Particular embodiments may include all, some, or none of the components, elements, features, functions, operations, or steps of the embodiments disclosed above. The subject matter which can be claimed comprises not only the combinations of features as set out in the attached claims but also any other combination of features in the claims, wherein each feature mentioned in the claims can be combined with any other feature or combination of other features in the claims. Furthermore, any of the embodiments and features described or depicted herein can be claimed in a separate claim and/or in any combination with any embodiment or feature described or depicted herein or with any of the features of the attached claims.

[0056] FIG. 1A is a block diagram illustrating a clustered virtualization environment 100 according to an illustrative implementation. The architecture of FIG. 1A can be implemented for a distributed platform that contains multiple host machines 101 a-c (also collectively referred to herein as "host machines 101") that manage multiple tiers of storage. The multiple tiers of storage may include storage that is accessible through communication network 140, such as, by way of example and not limitation, cloud storage 126 (e.g., which may be accessible through the Internet), network-attached storage (NAS) 128 (e.g., which may be accessible through a LAN), or a storage area network (SAN). Unlike the prior art, the present embodiment also permits local storage 122a-c that is incorporated into or directly attached to the respective host machine 101 and/or appliance to be managed as part of storage pool 160. Examples of such local storage include Solid State Drives 125 (henceforth "SSDs"), Hard Disk Drives 127 (henceforth "HDDs" or "spindle drives"), optical disk drives, external drives (e.g., a storage device connected to a host machine via a native drive interface or a serial attached SCSI interface), or any other direct-attached storage. These storage devices, both direct-attached and network-accessible, collectively form storage pool 160. Virtual disks (or "vDisks") may be structured from the physical storage devices in storage pool 160, as described in more detail below. As used herein, the term vDisk refers to the storage abstraction that is exposed by a Controller/Service VM (CVM) 110 to be used by a user VM 105. In particular embodiments, the vDisk may be exposed via iSCSI ("internet small computer system interface") or NFS ("network file system") and is mounted as a virtual disk on the user VM. In particular embodiments, vDisks may be organized into one or more volume groups (VGs).

[0057] Each host machine 101 may run virtualization software, such as VMWARE ESX(I), MICROSOFT HYPER-V, or REDHAT KVM. The virtualization software includes hypervisor 130a-c to create, manage, and destroy user VMs 105, as well as managing the interactions between the underlying hardware and user VMs 105. User VMs 105 may run one or more applications that may operate as "clients" with respect to other elements within virtualization environment 100. Though not depicted in FIG. 1A, a hypervisor may connect to communication network 140. In particular embodiments, a host machine 101 may be a physical hardware computing device; in particular embodiments, a host machine 101 may be a virtual machine.

[0058] CVMs 110a-c are used to manage storage and input/output ("I/O") activities according to particular embodiments. These special VMs act as the storage controller in the currently described architecture. Multiple such storage controllers may coordinate within a cluster to form a unified storage controller system. CVMs 110 may run as virtual machines on the various host machines 101, and work together to form a distributed system 110 that manages all the storage resources, including local storage 122, NAS 128, and cloud storage 126. The CVMs may connect to communication network 140 directly, or via a hypervisor. Since the CVMs run independent of hypervisors 130a-c, this means that the current approach can be used and implemented within any virtual machine architecture, since the CVMs of particular embodiments can be used in conjunction with any hypervisor from any virtualization vendor.

[0059] A host machine may be designated as a leader node within a cluster of host machines. For example, host machine 101b, as indicated by the asterisks, may be a leader node. A leader node may have a software component designated to perform operations of the leader. For example, CVM 110b on host machine 101b may be designated to perform such operations. A leader may be responsible for monitoring or handling requests from other host machines or software components on other host machines throughout the virtualized environment. If a leader fails, a new leader may be designated. In particular embodiments, a management module (e.g., in the form of an agent) may be running on the leader node.
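As a non-limiting illustration of designating a new leader when the current leader fails, the following sketch keeps the current leader while it is healthy and otherwise selects a replacement. The disclosure does not state how a replacement is chosen; picking the healthy host machine with the lowest identifier is an assumption made only for this example, and `designate_leader` is a hypothetical name.

```python
from typing import Dict, Optional


def designate_leader(health: Dict[str, bool],
                     current: Optional[str] = None) -> Optional[str]:
    """Return the leader node, replacing it only if it has failed.

    health maps a host machine identifier to True (healthy) or False (failed).
    Assumption: the replacement is the healthy host with the lowest identifier.
    """
    if current is not None and health.get(current, False):
        return current  # the current leader is still healthy
    healthy = sorted(name for name, ok in health.items() if ok)
    return healthy[0] if healthy else None
```

For example, with host machine 101b as the leader, a health report marking 101b as failed would cause 101a to be designated under this assumed rule.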

[0060] Each CVM 110a-c exports one or more block devices or NFS server targets that appear as disks to user VMs 105a-c. These disks are virtual, since they are implemented by the software running inside CVMs 110a-c. Thus, to user VMs 105a-c, CVMs 110a-c appear to be exporting a clustered storage appliance that contains some disks. All user data (including the operating system) in the user VMs 105a-c resides on these virtual disks.

[0061] Significant performance advantages can be gained by allowing the virtualization system to access and utilize local storage 122 as disclosed herein. This is because I/O performance is typically much faster when performing access to local storage 122 as compared to performing access to NAS 128 across a communication network 140. This faster performance for locally attached storage 122 can be increased even further by using certain types of optimized local storage devices, such as SSDs. Further details regarding methods and mechanisms for implementing the virtualization environment illustrated in FIG. 1A are described in U.S. Pat. No. 8,601,473, which is hereby incorporated by reference in its entirety.

[0062] FIG. 1B illustrates data flow within an example clustered virtualization environment 100 according to an illustrative implementation. As described above, one or more user VMs and a CVM may run on each host machine 101 along with a hypervisor. As a user VM performs I/O operations (e.g., a read operation or a write operation), the I/O commands of the user VM may be sent to the hypervisor that shares the same server as the user VM. For example, the hypervisor may present to the virtual machines an emulated storage controller, receive an I/O command and facilitate the performance of the I/O command (e.g., via interfacing with storage that is the object of the command, or passing the command to a service that will perform the I/O command). An emulated storage controller may facilitate I/O operations between a user VM and a vDisk. A vDisk may present to a user VM as one or more discrete storage drives, but each vDisk may correspond to any part of one or more drives within storage pool 160. Additionally or alternatively, CVM 110a-c may present an emulated storage controller either to the hypervisor or to user VMs to facilitate I/O operations. CVM 110a-c may be connected to storage within storage pool 160. CVM 110a may have the ability to perform I/O operations using local storage 122a within the same host machine 101a, by connecting via communication network 140 to cloud storage 126 or NAS 128, or by connecting via communication network 140 to local storage 122b-c within another host machine 101b-c (e.g., via connecting to another CVM 110b or 110c). In some implementations, any suitable computing device (e.g., infrastructure management server 260) may be used to implement a host machine 101.

[0063] Management System for Cloud Infrastructure and Virtual Environments

[0064] FIG. 2 is a block diagram depicting an example environment 200 for managing cloud infrastructure and virtual environments, according to an illustrative implementation. The environment 200 includes a user device 210 for a user 201 to send a request (e.g., data center content item request 202) to an infrastructure management server 260. The environment includes a cluster (referred to herein as a "data center cluster") of customer-owned data centers 220a-220d (also collectively referred to herein as "data centers 220") and cloud provider-owned data centers (also collectively referred to herein as "data centers 240") that may each be physically (e.g., geographically) or communicatively (e.g., firewalled) separated from one another. In some implementations, the cluster may include only customer-owned data centers or only cloud provider-owned data centers. The environment includes an infrastructure management server 260 for automatically discovering the geographical locations of customer-owned (or customer-associated) and cloud provider-owned (or cloud provider-associated) data centers and the status of their executed virtual machines, and delivering display data (e.g., display data 212) to a user (e.g., user 201) of a computing device (e.g., user device 210). The user 201 may be an administrator of the data center cluster who is responsible for the upkeep, configuration, and/or reliable operation of computer systems (e.g., host machines 101a-101c in FIG. 1A) of one or more data centers of the data center cluster. In some implementations, the user 201 may be associated (e.g., member, employee, independent contractor) with the customer-owned data centers, the cloud provider-owned data centers of the data center cluster, or both. In some implementations, the user 201 may be an individual or a business entity (e.g., a company, a corporation, a partnership, a trust, an association, or the like). The environment 200 includes a communication network 140 that connects user device 210 to one or more customer-owned and/or cloud provider-owned data centers and one or more infrastructure management servers 260.

[0065] The environment 200 may include a customer (not shown), such as an individual or business entity, that owns data centers 220. The environment 200 may include a cloud provider (not shown), such as an individual or business entity, that owns data centers 240 and provides an administrator (e.g., user 201) with the authority (e.g., via a lease, rental agreement, oral agreement) to manage, control, and use the resources of the data centers 240.

[0066] The environment 200 includes a geolocation database 270 for storing geolocation linkages provided from one or more internet service providers 280. The geolocation linkages associate (e.g., map or link) "identifiable information" (as discussed herein) of any computing device or entity (e.g., data centers 220, 240) in environment 200 with its geolocation data. For example, the geolocation database 270 may associate and store the device identifier of data center 220b with the geolocation of data center 220b as "ID25<=> San Diego, Calif.". As discussed herein, in some implementations, the environment 200 may include fewer, additional, and/or different components. For example, the environment may omit the geolocation database 270 and/or internet service providers 280.
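As a non-limiting illustration, a geolocation linkage of the form shown above could be parsed and stored as in the following sketch. The class name `GeolocationDatabase`, the function `parse_linkage`, and the in-memory dictionary are assumptions made for illustration only; the disclosure does not specify a storage format beyond the example linkage string.

```python
from typing import Dict, Optional, Tuple


def parse_linkage(entry: str, linkage: str = "<=>") -> Tuple[str, str]:
    """Split 'ID25<=> San Diego, Calif.' into ('ID25', 'San Diego, Calif.')."""
    identifier, sep, location = entry.partition(linkage)
    if not sep:
        raise ValueError(f"no linkage sign {linkage!r} in {entry!r}")
    return identifier.strip(), location.strip()


class GeolocationDatabase:
    """Hypothetical in-memory stand-in for geolocation database 270."""

    def __init__(self) -> None:
        self._links: Dict[str, str] = {}

    def store(self, entry: str) -> None:
        """Parse one linkage string and record it."""
        identifier, location = parse_linkage(entry)
        self._links[identifier] = location

    def lookup(self, identifier: str) -> Optional[str]:
        """Return the stored geolocation, or None if it is unknown."""
        return self._links.get(identifier)
```

A lookup that returns no result corresponds to the case in which geolocation data is unavailable from the database and must be discovered by other means.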

[0067] A data center cluster is a collection of data centers (e.g., data centers 220, 240) interconnected by a common network connection (e.g., communication network 140). A data center is a collection of racks, and each rack is a collection of computing systems, such as servers (e.g., host machines 101a-c in FIG. 1B). A server may contain a predetermined number of virtual nodes that function as the data storage layer within each server. Each data center provides a user and/or customer of the data center with storage and/or virtual machine resources.
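The containment hierarchy described above (cluster, data center, rack, server, virtual node) may be modeled, purely for illustration, as nested records. The class and field names below are hypothetical and are not drawn from the disclosure.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Server:
    name: str
    virtual_nodes: int  # predetermined number of virtual nodes on this server


@dataclass
class Rack:
    servers: List[Server] = field(default_factory=list)


@dataclass
class DataCenter:
    name: str
    owner: str  # e.g. "customer" or "cloud provider"
    racks: List[Rack] = field(default_factory=list)


@dataclass
class DataCenterCluster:
    data_centers: List[DataCenter] = field(default_factory=list)

    def servers(self) -> List[Server]:
        """Flatten the hierarchy into a list of every server in the cluster."""
        return [s for dc in self.data_centers
                for rack in dc.racks
                for s in rack.servers]

    def total_virtual_nodes(self) -> int:
        """Sum the virtual nodes across all servers in all data centers."""
        return sum(s.virtual_nodes for s in self.servers())
```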

[0068] The environment 200 may include many thousands of user devices 210, customer-owned data centers 220, cloud provider-owned data centers 240, customers (not shown), cloud providers (not shown), and infrastructure management servers 260; each interconnected via communication network 140. Each data center 220, 240 may share some or all of its stored "identifiable information" (e.g., geolocation data, entity status data, device identifiers, session identifiers, display data, etc.) with any other data center 220, 240. In some implementations, environment 200 may include sub-clusters of data centers (data centers 220 and/or data centers 240) where the data centers within a sub-cluster are interconnected to one another via communication network 140 but communicatively unavailable (e.g., disconnected, isolated, firewalled) to data centers of another sub-cluster. As such, each data center within a sub-cluster may share some or all of its stored data with other data centers connected to that sub-cluster. Although shown outside of data centers 220 and data centers 240, infrastructure management server 260 may be housed within any one of data centers 220, data centers 240, a customer location (e.g., a headquarters, a remote/satellite office), or a cloud provider location. In some implementations, a cloud provider may own multiple infrastructure management servers 260 and scatter (e.g., geographically/physically separate) them throughout a particular geographic region, such as a town, a city, a state, or a country.

[0069] The user device 210 is an electronic device that is under control/management of a user (e.g., user 201) and is capable of sending/receiving requests (e.g., data center content item requests 202) and resources/data (e.g., user data, user device identifiers, session identifiers, display data, and content items) over communication network 140. Example user devices 210 include personal computers (e.g., desktop or laptop), mobile communication devices (e.g., smartphones or tablets), video game consoles, servers, and other devices that can send and receive data over communication network 140.

[0070] The user device 210 may execute, operate, or otherwise provide an application (e.g., application 370 in FIG. 3A), such as any type and/or form of an internet/web browser, a web-based client, a client-server application, a thin-client computing client, a graphical user interface (GUI), an email reader/client, a File Transfer Protocol (FTP) client, an HTTP client, an Oscar client, a Telnet client, a voice over internet protocol (VoIP) client, an infrastructure/virtual environment monitoring tool, an ActiveX control or a Java applet, or any other type and/or form of executable instructions capable of executing on user device 210, to facilitate the sending and receiving (and processing) of data between user device 210 and infrastructure management servers 260, via communication network 140. The user device 210 renders the data within/via the software application or may include (or execute) other content rendering applications (e.g., .pdf viewer, .doc viewer, .txt viewer, .xls viewer, .ppt viewer, HyperText Markup Language (HTML) viewer, .jpg/.bmp/.png viewer, video viewer, map viewer, etc.) to display the received data on a display screen. In some implementations, user device 210 may receive display output data from a server (e.g., infrastructure management server 260) executing any thin-client or remote-display protocol, such as the Independent Computing Architecture (ICA) protocol, the Remote Desktop Protocol (RDP), the X11 protocol, the Virtual Network Computing (VNC) protocol, the SPICE protocol, the Net2Display protocol, the PC-over-IP protocol, the TCX protocol, the THINC protocol, the Virtual-D protocols, any thin-client or remote-display protocol manufactured by VMware, Inc. or Nutanix, Inc., or any other thin-client or remote-display protocol.

[0071] The user device 210 may be configured to send a connection request to infrastructure management server 260 to establish a communication channel between the user device 210 and the infrastructure management server 260. In some implementations, the infrastructure management server 260 establishes an encrypted/secure communication channel between the user device 210 and the infrastructure management server 260. The connection request may include any "identifiable information" for infrastructure management server 260 to authenticate and grant the requested connection. For example, user device 210 may send a username/password combination that is encoded in its connection request to infrastructure management server 260. The user device 210 may be configured to receive from infrastructure management server 260, via the authenticated and established connection, display data (e.g., display data 212) that includes the geolocation and entity status data (e.g., virtual machine status, virtual node status, etc.) associated with one or more data centers 220 and/or data centers 240. The user device 210 may display (e.g., assemble/render) the data on a display within a window (e.g., browser window, application window) of a software application (e.g., application 370 in FIG. 3A) executing on user device 210.

[0072] The user device 210 connects to the computing systems (e.g., host machines 101) of data centers 220, 240 and the infrastructure management server 260 via communication network 140. The communication network 140 is any suitable Local Area Network (LAN), Wide Area Network (WAN), or the Internet, operating through a variety of communication protocols, such as TCP/IP, IPX, SPX, NetBIOS, Ethernet, ARCNET, SONET, SDH, Fiber Distributed Data Interface (FDDI), RS232, cellular (e.g., Frequency Division Multiple Access (FDMA), Time Division Multiple Access (TDMA), Code Division Multiple Access (CDMA) (particularly, Evolution-Data Optimized (EVDO)), Universal Mobile Telecommunications Systems (UMTS) (particularly, Time Division Synchronous CDMA (TD-SCDMA or TDS), Wideband Code Division Multiple Access (WCDMA), Long Term Evolution (LTE), evolved Multimedia Broadcast Multicast Services (eMBMS), High-Speed Downlink Packet Access (HSDPA), and the like), Universal Terrestrial Radio Access (UTRA), Global System for Mobile Communications (GSM), Code Division Multiple Access 1x Radio Transmission Technology (1x), General Packet Radio Service (GPRS), Personal Communications Service (PCS)), Bluetooth, Wi-Fi, any suitable wired network, any combination thereof, and/or the like. The communication network 140 is structured to permit the exchange of data, values, instructions, messages, commands, and the like between the user device 210, the computing systems (e.g., host machines 101) of data centers 220, 240, and the infrastructure management server 260. Although not illustrated, in many implementations, communication network 140 may comprise one or more intermediary devices, including gateways, routers, firewalls, switches, network accelerators, Wi-Fi access points or hotspots, or other devices.

[0073] The user device 210 may be configured to send a request to infrastructure management server 260 requesting the delivery of the precise (or approximate) geographic location of one or more customer-owned data centers 220 and one or more cloud provider-owned data centers of a data center cluster. The request may be in the form of a data center content item request 202. The data center content item request 202 may include any information (referred to herein as "identifiable information") that allows infrastructure management server 260 to identify/discover the one or more data centers--and their respective host machines 101--of the data center cluster. For example, the user device 210 may include the MAC address for each host machine 101 of data center 220a, data center 220b, and data center 220c in the data center content item request 202 that it sends to infrastructure management server 260. Identifiable information may include any type and form of identification, including without limitation, a name of a user 201 (e.g., a name of the system administrator of a data center cluster), a customer name (e.g., Company `A`) and/or identifier, a cloud provider name (e.g., Company `B`) and/or identifier, a data center name and/or identifier, a data center cluster name and/or identifier, a data center sub-cluster name and/or identifier, a virtual machine name and/or identifier, a virtual node name and/or identifier, a process name and/or identifier, a rack name and/or identifier, a server name and/or identifier, a Media Access Control (MAC) address, a network address, an Internet Protocol (IP) address (e.g., in the IPv4 form, such as "210.43.92.4", or in the IPv6 form, such as "2001:0db8:85a3:0000:0000:8a2e:0370:7334"), a device identifier, a session identifier, any data or user data collected by a collection agent (e.g., collection agent 315 in FIG. 3), and any other information associated with one or more data center clusters, data center sub-clusters, data centers 220, data centers 240, users 201, user devices 210, and/or host machines 101. In some implementations, the user device 210 may include the "identifiable information" in the data center content item request 202 in a delimited string list format (e.g., device ID-SanDiego, Calif.). Example delimiters include commas (,), semicolons (;), quotes (", `), braces ({ }), pipes (|), slashes (/, \), a space ( ), a hyphen (-), an underscore (_), a hash sign (#), a percentage sign (%), a linkage sign (<=>), any single character (a-z), or any alphanumeric character string (e.g., b8).
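As a non-limiting illustration, the identifiable information carried in a data center content item request 202 could be serialized into a delimited string list as in the following sketch. The choice of a pipe as the field delimiter, the linkage sign as the key/value separator, and the function names `encode_request` and `decode_request` are assumptions for this example only; the disclosure permits many other delimiters and does not define a wire format.

```python
from typing import Dict, List


def encode_request(hosts_by_data_center: Dict[str, List[str]],
                   delimiter: str = "|", linkage: str = "<=>") -> str:
    """Serialize {data center id: [MAC addresses]} into one delimited string."""
    fields = []
    for data_center, macs in hosts_by_data_center.items():
        for mac in macs:
            for part in (data_center, mac):
                # Reject values that would make the string ambiguous to parse.
                if delimiter in part or linkage in part:
                    raise ValueError(f"{part!r} contains a reserved separator")
            fields.append(f"{data_center}{linkage}{mac}")
    return delimiter.join(fields)


def decode_request(payload: str, delimiter: str = "|",
                   linkage: str = "<=>") -> Dict[str, List[str]]:
    """Inverse of encode_request, as the receiving server might apply it."""
    result: Dict[str, List[str]] = {}
    if not payload:
        return result
    for item in payload.split(delimiter):
        data_center, sep, mac = item.partition(linkage)
        if not sep:
            raise ValueError(f"malformed field {item!r}")
        result.setdefault(data_center, []).append(mac)
    return result
```

A delimiter that cannot occur inside the values is chosen here because a comma or hyphen would collide with values such as "San Diego, Calif." or hyphenated identifiers.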

[0074] In some implementations, the user device 210 may be configured to send a request to infrastructure management server 260 requesting the delivery of the status (referred to herein as "entity status") of one or more entities (e.g., virtual machines, virtual entities, virtual nodes, virtual cluster, hypervisor, etc.) executing on one or more host machines 101 of the customer-owned data centers 220 and one or more host machines 101 of the cloud provider-owned data centers of a data center cluster. The request may be in the form of a data center content item request 202 and may include a description and/or an identifier of the requested entity status. Example entity status may include name; version; identifier; description; network address (e.g., assigned IP address, subnet address); guest operating system properties; CPU name, identifier, and/or usage; memory name, identifier, and/or usage; disk I/O name, identifier, and/or usage (e.g., used, capacity); network I/O interface (NIC) name, identifier, and/or usage; NIC flaps; NIC Link Down status; IPMI IP status; launch status (e.g., running/enabled, shutoff/disabled, suspended); number of entities (e.g., running/enabled, shutoff/disabled, suspended); input/output operations per second (IOPS); I/O bandwidth; latency; and data resiliency. The entity status may also provide the status and health of the physical hardware (e.g., any component of host machine 101) on which the entities execute.
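As a non-limiting illustration, entity status gathered from several data centers could be combined into a single view as sketched below. The record `EntityStatus`, its fields (a small subset of the status items listed above), and the function `single_view` are hypothetical names chosen for this example.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class EntityStatus:
    name: str
    data_center: str
    launch_status: str  # e.g. "running", "shutoff", "suspended"
    cpu_usage_pct: float


def single_view(per_data_center: Dict[str, List[EntityStatus]]) -> dict:
    """Merge per-data-center status lists into one combined structure.

    Returns every entity sorted by data center and name, plus the number
    of entities in each launch status across all data centers.
    """
    entities = [e for statuses in per_data_center.values() for e in statuses]
    return {
        "entities": sorted(entities, key=lambda e: (e.data_center, e.name)),
        "counts": dict(Counter(e.launch_status for e in entities)),
    }
```

The combined structure is the kind of input from which display data could be generated, so that one request yields the status of entities in every data center.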

[0075] In some implementations, the user device 210 may be configured to receive a delivery request from a computing device (e.g., infrastructure management server 260, a host machine 101 from data center 220, a host machine from data center 240, or another user device 210) to deliver its stored "identifiable information" to another computing device (e.g., infrastructure management server 260, a host machine 101, data center 220, data center 240, or another user device 210). For example, user device 210 may receive a request from infrastructure management server 260 to deliver identifiable information associated with data centers 220a and data centers 240 to infrastructure management server 260. In response to the delivery request from infrastructure management server 260, a collection agent (e.g., collection agent 315 in FIG. 3) on user device 210 searches all software applications (e.g., application 370) executing on user device 210 and searches all hardware components (e.g., devices connected to network interface 305, devices connected to input/output circuit 306, cache areas of processor 303, memory 304, and internal/external hard drive storage) housed within or under the control of user device 210 for all "identifiable information" associated with the entity (e.g., data centers 220a and data centers 240) identified in the delivery request. The user device 210 then sends the requested identifiable information to infrastructure management server 260. In some implementations, the user device 210 may be configured to establish a connection with one or more host machines 101 associated with each data center 220, 240 to retrieve identifiable information. In some implementations, the user device 210 may be unable (e.g., geolocation information is unavailable) to retrieve or discover geolocation information directly from any of the data centers 220, 240 in the data center cluster or from its communication with any of their respective host machines 101. In some implementations, a delivery request from one infrastructure management server 260 for identifiable information associated with the data centers of the data center cluster prompts the user device 210 to send the requested identifiable information to all infrastructure management servers 260 managed/controlled by the cloud provider (not shown).

[0076] In some implementations, the user device 210 ("requestee") may be configured to receive a delivery request from a computing device ("requestor") to deliver the "identifiable information" of the computing device ("requestor") to another computing device ("beneficiary"). The delivery request may include any "identifiable information" stored by the requestor computing device, requestee computing device, and beneficiary computing device. For example, user device 210 may receive a request from a host machine 101 of data center 220a to deliver the geolocation of host machine 101--included in the request--to infrastructure management server 260. In response, user device 210 sends the geolocation of host machine 101 to infrastructure management server 260, which infrastructure management server 260 may store in its local storage (e.g., discovery data database 350 in FIG. 3B). In some implementations, user device 210 includes the received "identifiable information" (e.g., geolocation of host machine 101) from the requestor computing device in the data center content item request 202 that user device 210 sends to infrastructure management server 260.
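
The requestor/requestee/beneficiary flow described above can be sketched as follows. This is an illustrative model only: the `DeliveryRequest` class, the `relay` function, the in-memory `outboxes` dictionary standing in for network delivery, and the example identifiers are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeliveryRequest:
    """Hypothetical delivery request received by the requestee."""
    requestor: str           # e.g., a host machine of a data center
    beneficiary: str         # e.g., the infrastructure management server
    identifiable_info: dict  # information the requestor included in the request


def relay(request, outboxes):
    """Requestee side: forward the requestor's information to the beneficiary.

    `outboxes` maps a destination name to the list of messages queued for
    it; a real system would send over the network instead.
    """
    message = {"source": request.requestor, **request.identifiable_info}
    outboxes.setdefault(request.beneficiary, []).append(message)
    return message


outboxes = {}
request = DeliveryRequest(
    requestor="host-101",
    beneficiary="mgmt-server-260",
    identifiable_info={"geolocation": "San Jose, CA"},
)
relay(request, outboxes)
```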

[0077] In some implementations, user device 210 compresses (e.g., .bz2, .F, .gz, .lz, .lzma, .lzo, .rz, .sfark, .sz, .xz, .z, .Z) all or any portion of the identifiable information prior to transmitting it to the requested destination (e.g., infrastructure management server 260). In some implementations, user device 210 packages all or any portion of the identifiable information into an archive file (e.g., .7z, .apk, .b1, .ba, .cab, .cfs, .ear, .jar, .zip). In some implementations, user device 210 transmits all or any portion of the identifiable information to infrastructure management server 260 without the need to receive a request, such as upon establishing a connection with infrastructure management server 260. In some implementations, user device 210 periodically transmits all or any portion of the identifiable information to infrastructure management server 260, such as every minute, hourly, daily, weekly, monthly, or any other such time period.
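
A minimal sketch of the compression and archiving steps, using only the Python standard library, is shown below. The JSON serialization, the choice of gzip and ZIP among the listed formats, and the function names are assumptions for illustration.

```python
import gzip
import io
import json
import zipfile


def compress_info(info):
    """Serialize a dict to JSON and gzip-compress it (.gz)."""
    return gzip.compress(json.dumps(info, sort_keys=True).encode("utf-8"))


def decompress_info(blob):
    """Reverse compress_info on the receiving side."""
    return json.loads(gzip.decompress(blob).decode("utf-8"))


def archive_info(items):
    """Package several named records into one in-memory .zip archive."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for name, info in items.items():
            archive.writestr(f"{name}.json", json.dumps(info, sort_keys=True))
    return buffer.getvalue()


info = {"device_id": "device-42", "geolocation": "San Diego, Calif."}
blob = compress_info(info)
archive_bytes = archive_info({"device-42": info})
```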

[0078] FIG. 3A is a block diagram depicting example details of the user device 210 of the environment 200 in FIG. 2, according to an illustrative implementation. The user device 210 is shown to include various circuits and logic for implementing the activities described herein. More particularly, the user device 210 includes one or more of a processing circuit 302, a network interface 305, an input/output circuit 306, a device identification circuit 307 (shown in FIG. 3A as device ID circuit 307), session identification circuit 308 (shown in FIG. 3A as session ID circuit 308), and application 370. While various circuits, interfaces, and logic with particular functionality are shown, it should be understood that the user device 210 includes any number of circuits, interfaces, and logic for facilitating the functions described herein. For example, the activities of multiple circuits may be combined into a single circuit and implemented on the same processing circuit (e.g., the processing circuit 302), and additional circuits with additional functionality may be included.

[0079] The user device 210 also includes a bus (not shown), such as an address bus, a data bus, or other communication mechanism for communicating information, which interconnects subsystems and devices, such as processing circuit 302, network interface 305, input/output circuit 306, device identification circuit 307 (shown in FIG. 3A as device ID circuit 307), session identification circuit 308 (shown in FIG. 3A as session ID circuit 308), and application 370. As such, some or all of the circuits of user device 210 are coupled to network interface 305 for communicating with one or more of the infrastructure management servers 260, host machines 101 of data centers 220, host machines 101 of data centers 240, or other user devices 210.

[0080] The processing circuit 302 includes a processor 303 and memory 304. The processor 303 is implemented as a general-purpose processor, a microprocessor, an application-specific integrated circuit (ASIC), one or more field-programmable gate arrays (FPGA), a digital signal processor (DSP), a group of processing components that are distributed over various geographic locations or housed in a single location or device, or other suitable electronic processing components. In many implementations, processor 303 may be a multi-core processor or an array of processors. The memory 304 stores data and/or computer instructions/code for facilitating the various processes described herein. The memory 304 may include tangible memory such as non-volatile random-access memory (NVRAM), random access memory (RAM), read only memory (ROM), EEPROM, EPROM, Flash Memory, hard disk storage, a floppy disk, optical disk (e.g., compact disk-read only memory (CD-ROM), digital video disk (DVD)), a magnetic disk, a memory chip, or any other suitable memory from which processor 303 can read instructions. The memory 304 may include database components, object code components, script components, or any other type of information structure for supporting the various activities and information structures described herein. The memory 304 stores programming logic (e.g., instructions/code) that, when executed by the processor 303, controls the operations of the user device 210. The instructions may include code from any suitable computer programming language such as, but not limited to, C, C++, C#, Java, JavaScript, VBScript, Perl, HTML, XML, Python, TCL, Basic, AWK, and assembly.

[0081] The network interface 305 is configured for and structured to establish a communication session via the communication network 140 with the infrastructure management server 260. Accordingly, the network interface 305 is an interface such as, but not limited to, the network interface 345, as described herein.

[0082] The input/output circuit 306 is configured to receive user input from and provide information to the user 201. In this regard, the input/output circuit 306 is structured to exchange data, communications, instructions, etc. with an input/output component of the user device 210. Accordingly, input/output circuit 306 may be any electronic device that conveys data to a user 201 by generating sensory information (e.g., a visualization on a display, one or more sounds, tactile feedback, etc.) and/or converts received sensory information from a user 201 into electronic signals (e.g., a keyboard, a mouse, a pointing device, a touch screen display, a microphone, etc.). The one or more user interfaces may be internal to the housing of user device 210, such as a built-in display, touch screen, microphone, etc., or external to the housing of user device 210, such as a monitor connected to user device 210, a speaker connected to user device 210, etc., according to various implementations. In some implementations, the input/output circuit 306 includes communication circuitry for facilitating the exchange of data, values, messages, commands, instructions, and the like between the input/output device and the components of the user device 210. In some implementations, the input/output circuit 306 includes machine-readable media for facilitating the exchange of information between the input/output device and the components of the user device 210. In still another implementation, the input/output circuit 306 includes any combination of hardware components (e.g., a touchscreen), communication circuitry, and machine-readable media.

[0083] The device identification circuit 307 is configured to generate and/or manage a device identifier associated with user device 210. A device identifier may include any type and form of identification, including without limitation a Media Access Control (MAC) address, text and/or numerical data string, a username, a cryptographic public key, cookies, device serial numbers, user profile data, network addresses, an Internet Protocol (IP) address, or any other such identifier that may be used to distinguish the user device 210 from other user devices 210. In some implementations, a device identifier may be associated with one or more other device identifiers (e.g., a device identifier `A` for a mobile device that is associated with a device identifier `B` for a home computer). In many implementations, to preserve privacy, the device identifier may be cryptographically generated (via any hash function algorithm including, e.g., HMAC, SHA-1, SHA-2, SHA-3, MD2, MD4, and MD5), encrypted (e.g., via triple Data Encryption Standard (DES), RSA, Blowfish, Twofish, or the Advanced Encryption Standard (AES)), or otherwise obfuscated by any circuit (e.g., processing circuit 302, device identification circuit 307, etc.) of user device 210.
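
As a hedged illustration of cryptographically generating a device identifier, the sketch below derives an obfuscated identifier from a raw one using HMAC with SHA-256 (a member of the SHA-2 family). The function name, the example MAC address, and the secret keys are hypothetical; the description does not specify how the key would be provisioned.

```python
import hashlib
import hmac


def obfuscate_device_id(raw_id, secret_key):
    """Return a keyed SHA-256 digest of a raw device identifier.

    The same raw identifier and key always yield the same result, so the
    obfuscated value still distinguishes one device from another without
    exposing the raw identifier.
    """
    return hmac.new(secret_key, raw_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Example values are made up for illustration.
device_id = obfuscate_device_id("00:1A:2B:3C:4D:5E", b"example-secret-key")
```

A keyed digest is used here rather than a bare hash because raw identifiers such as MAC addresses come from a small, guessable space.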

[0084] The session identification circuit 308 is configured to generate and/or manage a session identifier associated with communication sessions between user device 210 and any other node/entity (e.g., infrastructure management server 260, a host machine 101, and other user devices 210, etc.) on communication network 140. A session identifier may be similar to a device identifier, but generated more frequently, such as every minute, hourly, daily, upon launching/executing application 370, or any other such time period or triggering event. A session identifier may be generated by the session ID circuit 308 or received from any other node/entity (e.g., host machine 101 from data center 220a or host machine 101 from data center 240a) on communication network 140. A session identifier may be used in place of device identifiers to increase anonymity, or may be used in connection with device identifiers to distinguish interactions of one session from those of another session. In many implementations, to preserve privacy, the session identifier may be cryptographically generated (via any hash function algorithm including, e.g., HMAC, SHA-1, SHA-2, SHA-3, MD2, MD4, and MD5), encrypted (e.g., via triple Data Encryption Standard (DES), RSA, Blowfish, Twofish, or the Advanced Encryption Standard (AES)), or otherwise obfuscated by any circuit (e.g., processing circuit 302, session identification circuit 308, etc.) of user device 210.
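
The periodic regeneration of a session identifier can be sketched as below. The `SessionIdManager` class, its parameters, and the use of random tokens are assumptions for illustration; the clock is injectable only so the rotation behavior can be exercised without waiting.

```python
import secrets
import time


class SessionIdManager:
    """Hypothetical manager that issues a new session identifier once the
    current one is older than `max_age_seconds`."""

    def __init__(self, max_age_seconds, clock=time.monotonic):
        self._max_age = max_age_seconds
        self._clock = clock
        self._session_id = None
        self._issued_at = None

    def current(self):
        """Return the active session identifier, rotating it if expired."""
        now = self._clock()
        if self._session_id is None or now - self._issued_at >= self._max_age:
            self._session_id = secrets.token_hex(16)  # 32 hex characters
            self._issued_at = now
        return self._session_id


manager = SessionIdManager(max_age_seconds=60)
session_id = manager.current()
```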

[0085] The application 370 is communicably coupled to the infrastructure management server 260 via the communication network 140. The application 370 is structured to assemble/render display data 212 that it receives from infrastructure management server 260 on a display screen of user device 210, or on an external display screen under the control of user device 210, so as to show the geolocation of each data center in the data center cluster on a geographic map. In other implementations, the geolocation data is displayed as a list of Global Positioning System (GPS) coordinates and/or a list of streets, cities, states, and/or countries.
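
The list-style presentation mentioned above could look like the following sketch. The layout of the display data (a list of dictionaries with `name`, `lat`, `lon`, `city`, and `country` keys) and the sample entries are assumptions; the description does not define the format of display data 212.

```python
def format_geolocations(display_data):
    """Render each data center's geolocation as one line of text."""
    lines = []
    for entry in display_data:
        lines.append(
            f"{entry['name']}: {entry['lat']:.4f}, {entry['lon']:.4f} "
            f"({entry['city']}, {entry['country']})"
        )
    return lines


# Sample entries are made up for illustration.
display_data = [
    {"name": "data center 220a", "lat": 37.3382, "lon": -121.8863,
     "city": "San Jose", "country": "USA"},
    {"name": "data center 240a", "lat": 32.7157, "lon": -117.1611,
     "city": "San Diego", "country": "USA"},
]
lines = format_geolocations(display_data)
```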