Computer System And Method For Monitoring Key Performance Indicators (KPIs) Online Using Time Series Pattern Model

Ma; Jian ; et al.

U.S. patent application number 16/307620 was filed with the patent office on 2019-07-25 for computer system and method for monitoring key performance indicators (KPIs) online using time series pattern model. The applicant listed for this patent is Aspen Technology, Inc. Invention is credited to Willie K. C. Chan, Andrew L. Lui, Jian Ma, Ashok Rao, Hong Zhao.

| Application Number | 20190227504 16/307620 |

| Document ID | / |

| Family ID | 59381708 |

| Filed Date | 2019-07-25 |

| United States Patent Application | 20190227504 |

| Kind Code | A1 |

| Ma; Jian ; et al. | July 25, 2019 |

Abstract

Embodiments are directed to computer methods and systems that build and deploy a pattern model to detect an operating event in an online plant process. To build the pattern model, the methods and systems define a signature of the operating event, such that the defined signature contains a time series pattern for a KPI associated with the operating event. The methods and systems deploy the pattern model to automatically monitor, during online execution of the plant process, trends in movement of the KPI as a time series. The methods and systems determine, in real-time, a distance score between a range of the monitored time series and the time series pattern contained in the defined signature. The methods and systems automatically detect the operating event in the online industrial process based on the determined distance score, and alter parameters of the process (e.g., valves, actuators, etc.) to prevent the operating event.

| Field | Value |
|---|---|
| Inventors: | Ma; Jian; (Lexington, MA) ; Zhao; Hong; (Sugar Land, TX) ; Rao; Ashok; (Sugar Land, TX) ; Lui; Andrew L.; (Newton, MA) ; Chan; Willie K. C.; (Framingham, MA) |
| Applicant: | Aspen Technology, Inc. |
| Family ID: | 59381708 |
| Appl. No.: | 16/307620 |
| Filed: | July 7, 2017 |
| PCT Filed: | July 7, 2017 |
| PCT NO: | PCT/US2017/041003 |
| 371 Date: | December 6, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62359575 | Jul 7, 2016 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G05B 23/0227 20130101; G05B 13/042 20130101; G05B 23/024 20130101 |

| International Class: | G05B 13/04 20060101 G05B013/04 |

Claims

1. A computer-implemented method of detecting an operating event in an industrial process, the method comprising: defining a signature of an operating event in an industrial process, the defined signature containing a time series pattern for a KPI associated with the operating event; monitoring, during online execution of the industrial process, trends in movement of the KPI, wherein the trends in movement are monitored as a time series of the KPI; determining, in real-time, a distance score between (i) a range of the monitored time series of the KPI and (ii) the time series pattern for the KPI contained in the defined signature; detecting the operating event associated with the defined signature in the executed industrial process based on the determined distance score; and in response to detecting the operating event, adjusting parameters of the industrial process to prevent the detected operating event.

2. The method of claim 1, wherein defining the signature further comprises: loading historical plant data via data server from plant historian database; identifying time series patterns of the KPI in the loaded historical plant data, the identified time series patterns being associated with the operating event, wherein identifying the time series patterns in the loaded historical plant data by at least one of: automatic pattern search and identification techniques, application of operation logs, and review by a domain expert; selecting a time series range for the signature; and configuring the signature to contain an entire identified time series pattern that corresponds to the selected time series range.

3. The method of claim 2, wherein the automatic pattern search and identification techniques is a supervised pattern discovery technique comprising: defining one or more pattern shapes representing pattern characteristics of an abnormal operating condition, the defined pattern shapes being stored in a shape library; selecting a pattern shape from the shape library for the operating event, the selecting indicating inclusion or exclusion of the selected pattern shape when identifying time series patterns; determining a distance profile between (i) the selected pattern shape and (ii) a time series of the KPI from the loaded historical plant data, wherein generating a search profile based on the determined distance profile; and using the generated search profile, determining one or more pattern clusters that contain the identified time series patterns associated with the operating event.

4. The method of claim 1, wherein calculating the distance score in real-time for the KPI further comprises: performing Z-normalization on the monitored time series range and the time series pattern; applying a proprietary amplitude filter to the Z-normalized monitored time series range; calculating an Euclidean distance with dynamic time warping (DTW) between the Z-normalized monitored time series range and the Z-normalized time series pattern; calculating a zero-line Euclidean distance between a vector of zeros and the Z-normalized time series pattern; and determining the distance score based on the calculated Euclidean distance with dynamic time warping and the calculated zero-line Euclidean distance, the distance score indicating the probability that the monitored time series range matches the time series pattern.

5. The method of claim 4, further comprising: applying filters to eliminate certain variations between the monitored time series range and the time series pattern when determining the distance score.

6. The method of claim 4, wherein the distance score is a value between 0 and 1, and wherein 1 indicates a highest probability of occurrence of the operating event and 0 indicates a lowest probability of occurrence of the operating event.

7. The method of claim 1, further comprising: performing the defining, monitoring, calculating, and detecting multiple signatures of the KPI in parallel; combining the distance score calculated for each of the multiple defined signatures of the KPI into a combined distance score for the KPI; and detecting the operating event based on the combined distance score for the KPI.

8. The method of claim 7, wherein the time series patterns contained in the multiple defined signatures vary according to at least one of: amplitude, offset, shape, and time.

9. The method of claim 7, further comprising configuring a signature library for storing the multiple defined signatures.

10. The method of claim 1, further comprising: performing the defining, monitoring, calculating, and detecting for multiple KPIs in parallel; defining a weight coefficient corresponding to each of the multiple KPIs; weighting the distance score calculated for a respective KPI based on the corresponding weight coefficient; combining the weighted distance score for each of the multiple KPIs into a total distance score; and detecting the operating event based on the total distance score.

11. A computer system for detecting an operating event in an industrial process, the system comprising: a processor; and a memory with computer code instructions stored thereon, the memory operatively coupled to the processor such that, when executed by the processor, the computer code instructions cause the computer system to implement: a modeler engine configured to define a signature of an operating event in an industrial process, the defined signature containing a time series pattern for a KPI associated with the operating event; an analysis engine configured to: monitor, during online execution of the industrial process, trends in movement of the KPI, wherein the trends in movement are monitored as a time series of the KPI; determine, in real-time, a distance score between (i) a range of the monitored time series of the KPI and (ii) the time series pattern for the KPI contained in the defined signature; and detect the operating event associated with the defined signature in the executed industrial process based on the determined distance score; and a process control system configured to, in response to receiving information related to the detected operating event, adjust parameters of the industrial process to prevent the detected operating event.

12. The system of claim 11, wherein the modeler engine is further configured to: load historical plant data from data server; identify time series patterns of the KPI in the loaded historical plant data, the identified time series patterns being associated with the operating event, wherein identifying the time series patterns in the loaded historical plant data by at least one of: automatic pattern search and identification techniques, application of operation logs, and review by a domain expert; select a time series range for the signature; and configure the signature to contain an entire identified time series pattern that corresponds to the selected time series range.

13. The method of claim 12, wherein the automatic pattern search and identification techniques is a supervised pattern discovery technique comprising: defining one or more pattern shapes representing pattern characteristics of an abnormal operating condition, the defined pattern shapes being stored in a shape library; selecting a pattern shape from the shape library for the operating event, the selecting indicating inclusion or exclusion of the selected pattern shape when identifying time series patterns; determining a distance profile between (i) the selected pattern shape and (ii) a time series of the KPI from the loaded historical plant data, wherein generating a search profile based on the determined distance profile; and using the generated search profile, determining one or more pattern clusters that contain the identified time series patterns associated with the operating event.

14. The system of claim 11, wherein the analysis engine is configured to calculate the distance score in real-time for the KPI by: performing Z-normalization on the monitored time series range and the time series pattern; calculating an Euclidean distance with DTW between the Z-normalized monitored time series range and the Z-normalized time series pattern; calculating a zero-line Euclidean distance between a vector of zeros and the Z-normalized time series pattern; and determining the distance score based on the calculated Euclidean distance and the calculated zero-line Euclidean distance, the distance score indicating the probability that the monitored time series range matches the time series pattern.

15. The system of claim 14, wherein the analysis engine is further configured to: apply filters to eliminate certain variations between the monitored time series range and the time series pattern when determining the distance score.

16. The system of claim 14, wherein the distance score is a value between 0 and 1, and wherein 1 indicates a highest probability of occurrence of the operating event and 0 indicates a lowest probability of occurrence of the operating event.

17. The system of claim 16, wherein the analysis engine is further configured to: apply filters to eliminate certain variations between the monitored time series and the time series patterns when determining the distance score.

18. The system of claim 16, wherein the distance score is a value between zero to one, and wherein one indicates a highest probability of occurrence of the defined signature and zero indicates a lowest probability of occurrence of the defined signature.

19. The system of claim 11, wherein: the modeler engine is further configured to: perform the defining for multiple signatures of the operating event in parallel; and the analysis engine is further configured to: perform the defining, monitoring, calculating, and detecting for multiple signatures of the KPI in parallel; combine the distance score calculated for each of the multiple defined signatures of the KPI into a combined distance score for the KPI; and detect the operating event based on the combined distance score for the KPI.

20. The system of claim 19, wherein the time series patterns contained in the multiple defined signatures vary according to at least one of: amplitude, offset, shape, and time.

21. The system of claim 19, wherein the modeler engine is further configured to create a signature library for storing the multiple defined signatures.

22. The system of claim 11, wherein: the modeler engine is further configured to: perform the defining for multiple KPIs in parallel; and the analysis engine is further configured to: perform the monitoring, calculating, and detecting for multiple KPIs in parallel; define a weight coefficient corresponding to each of the multiple KPIs; weight the distance score calculated for a respective KPI based on the corresponding weight coefficient; combine the weighted distance score for each of the multiple KPIs into a total distance score; and detect the operating event based on the total distance score.

23. A computer program product comprising: a non-transitory computer-readable storage medium having code instructions stored thereon, the storage medium operatively coupled to a processor, such that, when executed by the processor for detecting an operating event in an industrial process, the computer code instructions cause the processor to: define a signature of an operating event in an industrial process, the defined signature containing a time series pattern for a KPI associated with the operating event; monitor, during online execution of the industrial process, trends in movement of the KPI, wherein the trends in movement are monitored as a time series of the KPI; determine, in real-time, a distance score between (i) a range of the monitored time series of the KPI and (ii) the time series pattern for the KPI contained in the defined signature; detect the operating event associated with the defined signature in the executed industrial process based on the determined distance score; and in response to detecting the operating event, adjust parameters of the industrial process to prevent the detected operating event.

Description

RELATED APPLICATION

[0001] This application claims the benefit of U.S. Provisional Application No. 62/359,575, filed on Jul. 7, 2016, which is herein incorporated by reference in its entirety.

BACKGROUND

[0002] In the process industry, sustaining and maintaining process performance has become an important component in advanced process control and asset optimization of an industrial or chemical plant. Sustaining and maintaining process performance may provide an extended period of efficient and safe operation and reduced maintenance costs at the plant. To sustain and maintain performance of a plant process, a set of Key Performance Indicators (KPIs) may be monitored to detect issues and inefficiencies in the plant process. In particular, monitoring trends in the movement (e.g., as a time series) of the KPIs provides insight into the plant process operation and indicates potential incoming undesirable events (e.g., faults) affecting performance of the plant process. However, traditional plant models are not suitable for such a task, e.g., monitoring time series of KPIs to detect and diagnose these undesirable operating events. This unsuitability is due, in part, to the nature of these undesirable events, which often occur randomly when the plant process operates in an extreme condition outside the coverage of the traditional plant model, and which have a varied root cause at each random occurrence.

[0003] First, a traditional first-principle model is too complicated and expensive to develop for the detailed dynamic predictions required for monitoring KPI time series in an online plant process. Further, a first-principle model is typically calibrated with normal operational conditions, whereas the undesirable events are often caused by extreme operational conditions that such a calibration does not cover. Second, building a traditional empirical model (e.g., correlation and regression models) requires repeatable process event data to train and validate the model. However, undesirable event data is rare, and the time series readings (e.g., amplitude, shape, and such) of a KPI from the same plant process producing the same product also often vary over time. Thus, repeatable process event data for a time series of a KPI is unavailable to sufficiently train and validate the empirical model. Third, a statistical model (univariate or multivariate) is only capable of distinguishing anomalous from normal conditions (e.g., indicating that an anomaly occurred), and has limited capabilities in KPI time series monitoring and fault identification (e.g., reporting what occurred). Further, the results presented from a statistical model require expert knowledge in statistics to understand and explain, and, thus, are often not intuitive to plant operators and other plant personnel.

[0004] Moreover, traditional multivariate statistical approaches for time series may use techniques such as Principal Component Analysis (PCA), Partial Least Squares Regression (PLSR), nonlinear neural networks, and the like, to monitor and detect undesirable operating events (faults). These traditional multivariate statistical approaches require periodic recalibrating and retraining to prevent the model from classifying a new normal operating state as an outlier (and issuing false alerts). Further, fuzzy-reasoning approaches, which have also been employed for fault detection, require a complicated event signature reasoning system for KPI trend processing. The complicated event signature reasoning requires disassembling time series patterns into primitives, rebuilding the primitives, applying the similarity matrix, and such.

[0005] Recently, machine learning approaches (techniques) for time series and shape data mining have been advancing rapidly (e.g., Thanawin Rakthanmanon et al., "Searching and Mining Trillions of Time Series Subsequences under Dynamic Time Warping," the 18th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, Aug. 12-16, 2012). Typical analysis techniques and their applications include Novelty detection, Motif discovery, Clustering, Classification, Indexing and Visualizing Massive Datasets, and the like. These new techniques have been applied to image recognition, medical data analysis, symbolic aggregate approximation, visual comparison in DNA species, and such. In the process industry, however, there are no known suitable techniques applied, or successful applications reported, for time series and shape data mining. There are several difficulties in applying the new techniques mentioned above in the process industry. First, general time series data analysis algorithms alone are not suitable for process applications without problem-specific systems and methods, such as data collection and preparation, pattern model development and management, and the like. Second, for plant operators and engineers, the model and results need to be consistent with their domain knowledge. In many cases, the system should be able to accept their domain knowledge into the modeling process, e.g., allow a user to be involved in the modeling and monitoring process. Third, the system and methods must allow users to develop one or more models and to run them in an online environment in daily (e.g., 24/7) non-stop operations. None of the art references mentioned above meets these requirements for the process industry.

SUMMARY OF THE INVENTION

[0006] Embodiments of the present invention are directed to a modeling approach suitable for detecting and predicting an operating event in an online plant process. These embodiments do not require the building of a complicated first-principle, empirical, or statistical model, or the costly preparation of data sets for training/validating these models. Rather, these embodiments build event pattern models that include a set of event signatures of a time series for a KPI associated with the operating event. Each event signature contains a whole time series pattern for the KPI that indicates the operating event in the plant process. The embodiments may configure the event signatures for the KPI to contain time series patterns of varying amplitude, offset, spread, shape, time range, and such.

[0007] These embodiments enable process operators, engineers, and systems to define an event signature of an event pattern model in several ways, e.g., (i) use a known time series event pattern from past events; (ii) use a signature pattern taken from a sister operation unit or plant; (iii) import a time series pattern from other resources; or (iv) select a pattern from an event signature library. In the case where no signature pattern is available or available signature patterns do not meet the user's criteria, the user may search for event patterns in the plant historian by using one of: (i) unsupervised (unguided) pattern discovery, (ii) supervised (guided) pattern discovery, or (iii) combined iterative pattern discovery. The embodiments further enable the user to construct a library of the built event pattern models.

[0008] As described above, the search and location of event patterns for event pattern models can be performed according to users' specifications on pattern characteristics of a time series or in an automated way by using a pattern discovery approach. In an unsupervised (e.g., "data-only") pattern discovery approach, the embodiments apply a pattern discovery algorithm to a time series of given (finite) length for a KPI (e.g., KPI measurements) and locate clusters that have similarities at a given length in a time window of the time series. In a supervised (user-guided) pattern discovery approach, the embodiments further combine user-selected primitive shapes with the pattern discovery algorithm to speed up the pattern search process, reduce the number of non-event patterns, and yield the most meaningful pattern models for online deployment. The supervised (user-guided) pattern discovery algorithm enables a user to apply a default primitive shape library (e.g., bell-shape peak, rising, sinking, and the like), or dynamically (on the fly) draw a shape on an ad hoc drawing pane, or such. Through the supervised (user-guided) pattern discovery approach, the user can influence which pattern characteristics are included in or excluded from pattern clusters during the pattern discovery process. The supervised pattern discovery approach reduces the time taken to build desired pattern clusters by trimming off/skipping unwanted pattern clusters before building pattern clusters.
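The unsupervised ("data-only") search for similar fixed-length windows can be illustrated with a minimal sketch. It assumes non-overlapping windows, Z-normalized Euclidean distance, and a simple pairwise threshold in place of a real clustering step; the function names and the threshold value are hypothetical and are not taken from the described embodiments.

```python
import math

def z_normalize(values, eps=1e-8):
    """Shift to zero mean and scale to unit standard deviation."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std < eps:
        return [0.0] * n  # a flat window carries no shape information
    return [(v - mean) / std for v in values]

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def find_similar_windows(series, length, threshold):
    """Return (start_a, start_b) pairs of windows with similar shape.

    Assumption: windows are non-overlapping and compared pairwise; a real
    pattern discovery algorithm would use a more scalable search.
    """
    starts = range(0, len(series) - length + 1, length)
    windows = [(s, z_normalize(series[s:s + length])) for s in starts]
    matches = []
    for a in range(len(windows)):
        for b in range(a + 1, len(windows)):
            if euclidean(windows[a][1], windows[b][1]) <= threshold:
                matches.append((windows[a][0], windows[b][0]))
    return matches

# A ramp, an unrelated window, and the same ramp at a different scale/offset.
series = list(range(10)) + [3, 1, 4, 1, 5, 9, 2, 6, 5, 3] + [2 * v + 5 for v in range(10)]
print(find_similar_windows(series, length=10, threshold=0.5))
```

Because each window is Z-normalized first, the two ramps are grouped together even though their amplitude and offset differ.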

[0009] In an online environment, these embodiments further monitor trends in movement (time series) of a KPI that are associated with an operating event during execution of the online plant process using the library of built event pattern models. The embodiments deploy one or more pattern models from the library, in iterations, to the online execution of the plant process. In deploying an iteration of a pattern model, these embodiments apply the corresponding set of event signatures to the trends in KPI movement (time series) in the online plant process. For each applied event signature, the embodiments compare the KPI time series pattern contained in the event signature, as a whole, to a range of the online KPI time series to determine similarity. The embodiments account for variations in amplitude, offset, shape, and time in determining the similarity between the online KPI time series range and the time series pattern model. The embodiments calculate a distance (similarity) score based on the comparison, which quantifies the likelihood that the online KPI time series indicates the occurrence of the operating event in the online plant process. To do so, some embodiments provide a new distance criterion called Aspen Tech Distance (ATD) that measures similarities of a given length of a time series against a pattern library model. The ATD has similar mathematical properties to the conventional Euclidean Distance (ED), but it has several advantages over ED for process industrial applications.
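The online comparison of a signature, as a whole, against the most recent range of KPI samples can be sketched with a rolling buffer. This is only an illustration: the class name, the pluggable `score_fn`, and the alert threshold are hypothetical, and the toy scoring function below stands in for the distance score described in this application.

```python
from collections import deque

class OnlineKpiMonitor:
    """Keeps the latest len(pattern) samples and scores them against a signature."""

    def __init__(self, pattern, score_fn, threshold):
        self.pattern = list(pattern)
        self.score_fn = score_fn    # assumed to return a value in [0, 1]
        self.threshold = threshold  # hypothetical alert level
        self.buffer = deque(maxlen=len(self.pattern))

    def update(self, value):
        """Add one KPI sample; return (score, detected) once the buffer is full."""
        self.buffer.append(value)
        if len(self.buffer) < self.buffer.maxlen:
            return None
        score = self.score_fn(list(self.buffer), self.pattern)
        return score, score >= self.threshold

# Toy score: 1.0 on an exact match, 0.0 otherwise (placeholder only).
exact_match = lambda window, pattern: 1.0 if window == pattern else 0.0

monitor = OnlineKpiMonitor(pattern=[1, 2, 3], score_fn=exact_match, threshold=0.8)
results = [monitor.update(v) for v in [5, 1, 2, 3]]
print(results)
```

Passing the scoring function as a parameter keeps the buffering logic separate from the choice of distance measure.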

[0010] The pattern models of these embodiments are formulated such that they can be used in monitoring the varied, daily operations of a subject plant. The pattern models may also be used in monitoring a related (sister) plant with similar operations under a similar or different scale. Further, the pattern models are formulated to be adaptable to changing operation conditions by simply replacing the event signatures as the process operation of the subject plant is changed, such as changed to produce a different product. The pattern models are also formulated such that they apply time series patterns as a whole, rather than applying disassembled time series primitives using a similarity matrix. The pattern models are further formulated such that they are independent of the data sampling rates used to measure the KPI time series pattern of an event signature and the KPI time series of an online plant process. The pattern models also enable the embodiments to present results that are intuitive to plant operators and other plant personnel, such as graphics and the distance score in an understandable full range (e.g., values between 0-1).
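How independence from the sampling rate is achieved is not detailed in this section. One plausible approach, shown here purely as an assumption, is to resample the signature pattern and the online range to a common number of points by linear interpolation before comparing them. The helper name `resample_to_length` is hypothetical.

```python
def resample_to_length(values, n_points):
    """Linearly interpolate a sequence onto n_points evenly spaced positions."""
    if n_points < 2 or len(values) < 2:
        raise ValueError("need at least two input and two output points")
    scale = (len(values) - 1) / (n_points - 1)
    resampled = []
    for k in range(n_points):
        pos = k * scale                      # fractional index into values
        i = min(int(pos), len(values) - 2)   # left neighbor, clamped at the end
        frac = pos - i
        resampled.append(values[i] * (1 - frac) + values[i + 1] * frac)
    return resampled

# A pattern recorded at 3 points, compared against data sampled at 5 points.
print(resample_to_length([0, 2, 4], 5))
```

After resampling, both sequences have the same length and can be passed to any point-wise distance measure.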

[0011] Example embodiments of the present invention are directed to computer-implemented methods, computer systems, and computer program products that detect an operating event in an industrial process. The operating event may be an undesirable or abnormal operating event occurring in the industrial process. The computer systems comprise a processor and a memory with computer code instructions stored thereon. The memory is operatively coupled to the processor such that, when executed by the processor, the computer code instructions cause the computer system to implement a modeler engine and an analysis engine. The computer program products comprise a non-transitory computer-readable storage medium having code instructions stored thereon. The storage medium is operatively coupled to a processor, such that, when executed by the processor, the computer code instructions cause the processor to detect an operating event in an industrial process.

[0012] In example embodiments, the computer methods, systems, and program products comprise at least a data server, a historian database, a web user interface (UI), and an application server. The embodiments reside in the application server, which may load plant historical data via the data server from the historian database. The historical data may include data collected from sensors by instrumentation or calculated KPIs. The embodiments may also interact with users through the web UI, e.g., accept users' specifications about a pattern signature or selection of an existing pattern model. The embodiments may then execute tasks such as pattern discovery, pattern model building, and online KPI time series monitoring in the application server and send the results to the web UI for display.

[0013] The computer methods, systems (via the modeler engine), and program products define a signature of an operating event in an industrial process. The computer methods, systems, and program products define the signature to contain a time series pattern for a KPI associated with the operating event. In some embodiments, the computer methods, systems, and program products define the signature by loading historical plant data from computer memory (e.g., plant historian database via a data server). The computer methods, systems, and program products identify time series patterns of the KPI in the loaded historical plant data that are associated with the operating event. The computer methods, systems, and program products identify the time series patterns in the loaded historical plant data by at least one of: automatic pattern search and identification methods, application of operation logs, and review by a domain expert. The computer methods, systems, and program products then select a time series range for the signature and configure the signature to contain an identified time series pattern that corresponds to the time series range.

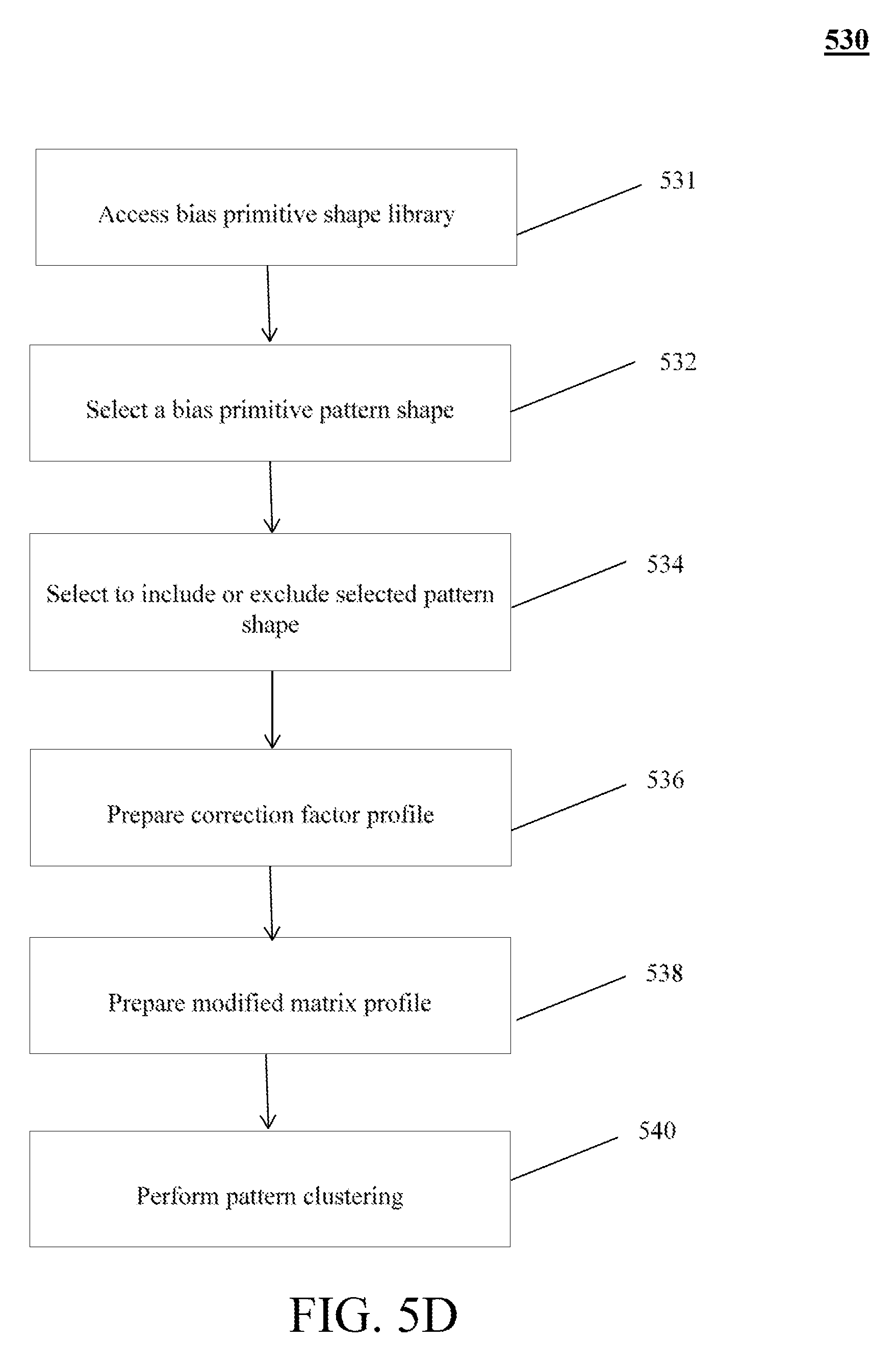

[0014] In some embodiments, the automatic pattern search and identification methods include a supervised pattern discovery method. In these embodiments, the computer methods, systems, and program products execute the supervised pattern discovery method. The supervised pattern discovery method defines one or more pattern shapes representing pattern characteristics of an abnormal operating condition. The defined pattern shapes are stored in a shape library. The supervised pattern discovery method selects a pattern shape from the shape library for the operating event. As part of the selecting, the supervised pattern discovery method indicates inclusion or exclusion of the selected pattern shape when identifying time series patterns. The supervised pattern discovery method determines a distance profile between (i) the selected pattern shape and (ii) a time series of the KPI from the loaded historical plant data, and generates a search profile based on the determined distance profile. The supervised pattern discovery method, using the generated search profile, determines one or more pattern clusters that contain the identified time series patterns associated with the operating event.
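The distance profile between a selected shape and a historical KPI series can be sketched as follows. The sketch assumes a Z-normalized Euclidean distance at every window position and a simple threshold to turn the profile into included or excluded positions; the names `distance_profile` and `select_positions` and the threshold are hypothetical, and the actual search profile of the embodiments may be built differently.

```python
import math

def z_normalize(values, eps=1e-8):
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std < eps:
        return [0.0] * n
    return [(v - mean) / std for v in values]

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def distance_profile(shape, series):
    """Distance from the shape to every same-length window of the series."""
    shape_z = z_normalize(shape)
    m = len(shape_z)
    return [euclidean(z_normalize(series[i:i + m]), shape_z)
            for i in range(len(series) - m + 1)]

def select_positions(profile, threshold, include=True):
    """include=True keeps windows resembling the shape; False keeps the rest."""
    if include:
        return [i for i, d in enumerate(profile) if d <= threshold]
    return [i for i, d in enumerate(profile) if d > threshold]

bell_peak = [0, 1, 2, 1, 0]  # a primitive shape from a hypothetical shape library
history = [0, 0, 0, 0, 0, 0, 3, 6, 3, 0, 0, 0, 0]
profile = distance_profile(bell_peak, history)
print(select_positions(profile, threshold=0.1))
```

The `include` flag mirrors the inclusion or exclusion choice described above: the same profile either narrows the search to windows that resemble the shape or removes them from consideration.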

[0015] The computer methods, systems (via the analysis engine), and program products then monitor, during online execution of the industrial process, trends in movement of the KPI as a time series of the KPI. Based on the monitoring, the computer methods, systems, and program products determine, in real-time, a distance score between (i) a range of the monitored time series of the KPI and (ii) the time series pattern for the KPI contained in the defined signature.

[0016] In some embodiments, the computer methods, systems, and program products calculate the distance score in real-time for the KPI by performing Z-normalization on both the monitored time series range and the time series pattern. The computer methods, systems, and program products then calculate an ATD distance between the Z-normalized monitored time series range and the Z-normalized time series pattern by allowing some degree of dynamic time warping (DTW). The computer methods, systems, and program products also calculate a zero-line Euclidean distance between a vector of zeros (i.e., zero-line) and the Z-normalized time series pattern. The computer methods, systems, and program products determine the distance score based on the calculated ATD distance and the calculated zero-line Euclidean distance. The determined distance score indicates the probability that the monitored time series range matches the time series pattern.

[0017] In some embodiments, the computer methods, systems, and program products also apply filters to eliminate certain variations between the monitored time series range and the time series pattern when determining the distance score. In example embodiments, the determined distance score is re-scaled to a value between 0 and 1, such that 1 indicates a highest probability of occurrence of the operating event and 0 indicates a lowest probability of occurrence of the operating event.
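The scoring steps (Z-normalization, a distance that tolerates some time warping, a zero-line distance, and re-scaling to the 0-to-1 range) can be sketched as below. The exact ATD formula is not given in this section, so the sketch makes two labeled assumptions: a band-constrained DTW distance stands in for the ATD, and the score is taken as `1 - d / d_zero`, clipped at 0. All function names are hypothetical.

```python
import math

def z_normalize(values, eps=1e-8):
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std < eps:
        return [0.0] * n
    return [(v - mean) / std for v in values]

def dtw_distance(a, b, window):
    """DTW with squared point costs, limited to a band of +/- window samples."""
    n, m = len(a), len(b)
    window = max(window, abs(n - m))
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(max(1, i - window), min(m, i + window) + 1):
            step = (a[i - 1] - b[j - 1]) ** 2
            cost[i][j] = step + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return math.sqrt(cost[n][m])

def distance_score(monitored, pattern, window=2):
    """Assumed scoring rule: 1 means a close match, 0 means no better than a flat line."""
    x = z_normalize(monitored)
    p = z_normalize(pattern)
    d = dtw_distance(x, p, window)
    d_zero = math.sqrt(sum(v ** 2 for v in p))  # zero-line Euclidean distance
    if d_zero == 0.0:
        return 0.0
    return max(0.0, 1.0 - d / d_zero)

pattern = [0, 1, 2, 3, 2, 1, 0]
print(distance_score([10 * v + 50 for v in pattern], pattern))  # same shape, other scale
print(distance_score([7, 7, 7, 7, 7, 7, 7], pattern))           # flat line
```

Dividing by the zero-line distance gives the score a natural reference point: a monitored range that is no closer to the pattern than a flat line scores 0, and an identical shape scores 1 regardless of amplitude or offset.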

[0018] The computer methods, systems (via the analysis engine), and program products detect the operating event associated with the defined signature in the executed industrial process based on the calculated distance score. The computer methods, systems, and program products, in response to detecting the operating event, may send event-alerts to operators that advise the operators to adjust parameters of the industrial process to prevent an undesirable operating event. In some embodiments, the computer methods, systems, and program products transmit the information related to the detected operating event to a process control system, which may adjust the parameters of the industrial process automatically (e.g., free of human intervention) to prevent such an undesirable operating event.

[0019] In some embodiments, the computer methods, systems, and program products perform the defining, monitoring, calculating, and detecting operations for multiple signatures for a KPI in parallel. In some of these embodiments, the time series patterns contained in the multiple defined signatures vary according to at least one of: amplitude, offset, shape, and time. The computer methods, systems, and program products then combine the distance score calculated for each of the multiple defined signatures of the KPI into a combined distance score. In these embodiments, the computer methods, systems, and program products detect the operating event based on the combined distance score for the KPI. In some of these embodiments, the computer methods, systems, and program products configure a signature library for storing the multiple defined signatures for future online monitoring.

[0020] In some embodiments, the computer methods, systems, and program products further perform the defining, monitoring, calculating, and detecting operations for multiple KPIs in parallel. The computer methods, systems, and program products define a set of weight coefficients corresponding to each of the multiple KPIs and weight the distance score calculated for a respective KPI based on the corresponding weight coefficient. The computer methods, systems, and program products combine the weighted distance score for each of the multiple KPIs into a total distance score. In these embodiments, the computer methods, systems, and program products detect the operating event based on the total distance score. In the foregoing ways, embodiments provide computer-based automated improvements to process monitoring technology and sustained process performance.

BRIEF DESCRIPTION OF THE DRAWINGS

[0021] The foregoing will be apparent from the following more particular description of example embodiments of the invention, as illustrated in the accompanying drawings in which like reference characters refer to the same parts throughout the different views. The drawings are not necessarily to scale, emphasis instead being placed upon illustrating embodiments of the present invention.

[0022] FIG. 1 is a flow diagram depicting an example method of building a pattern model in embodiments of the present invention.

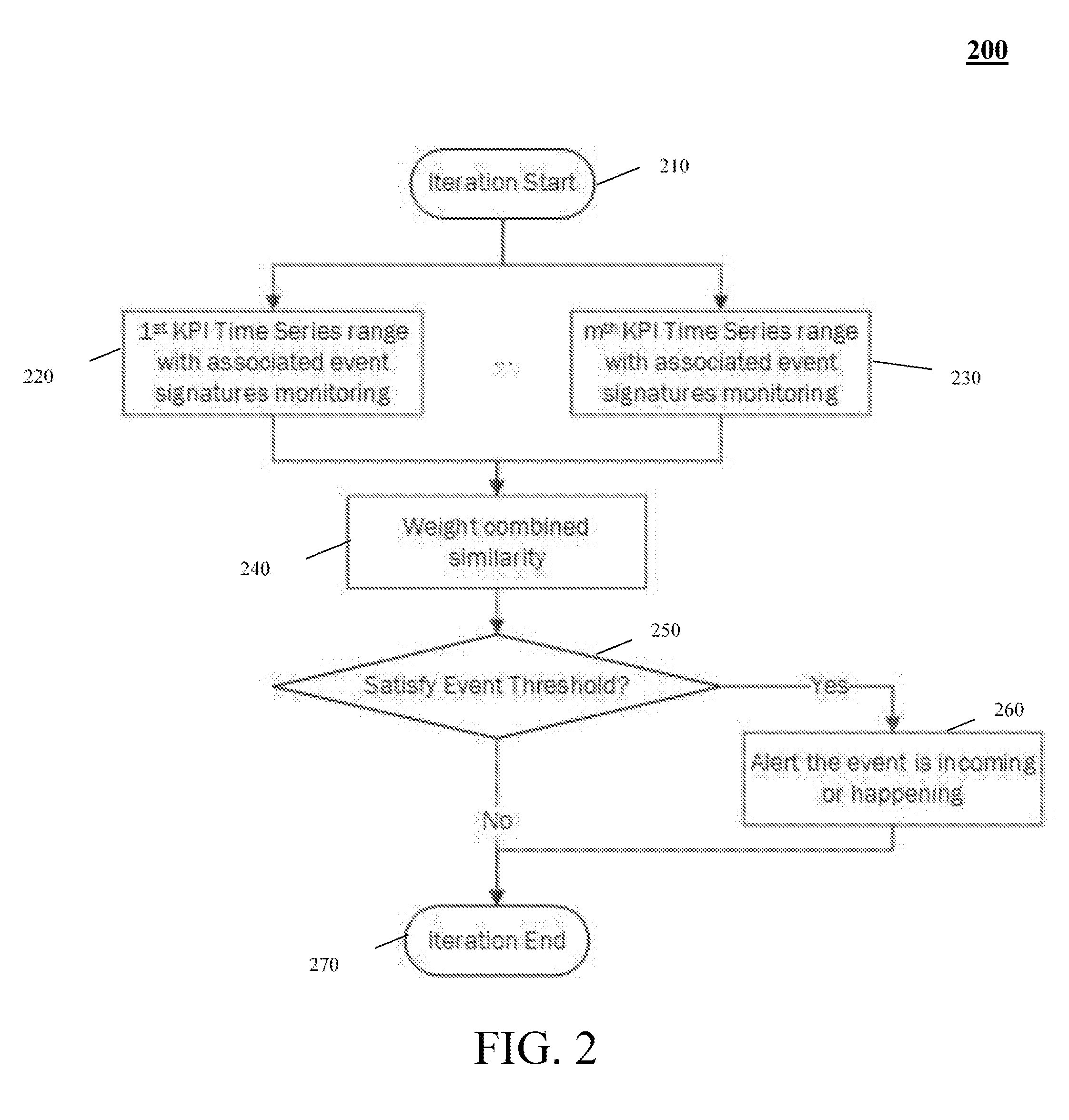

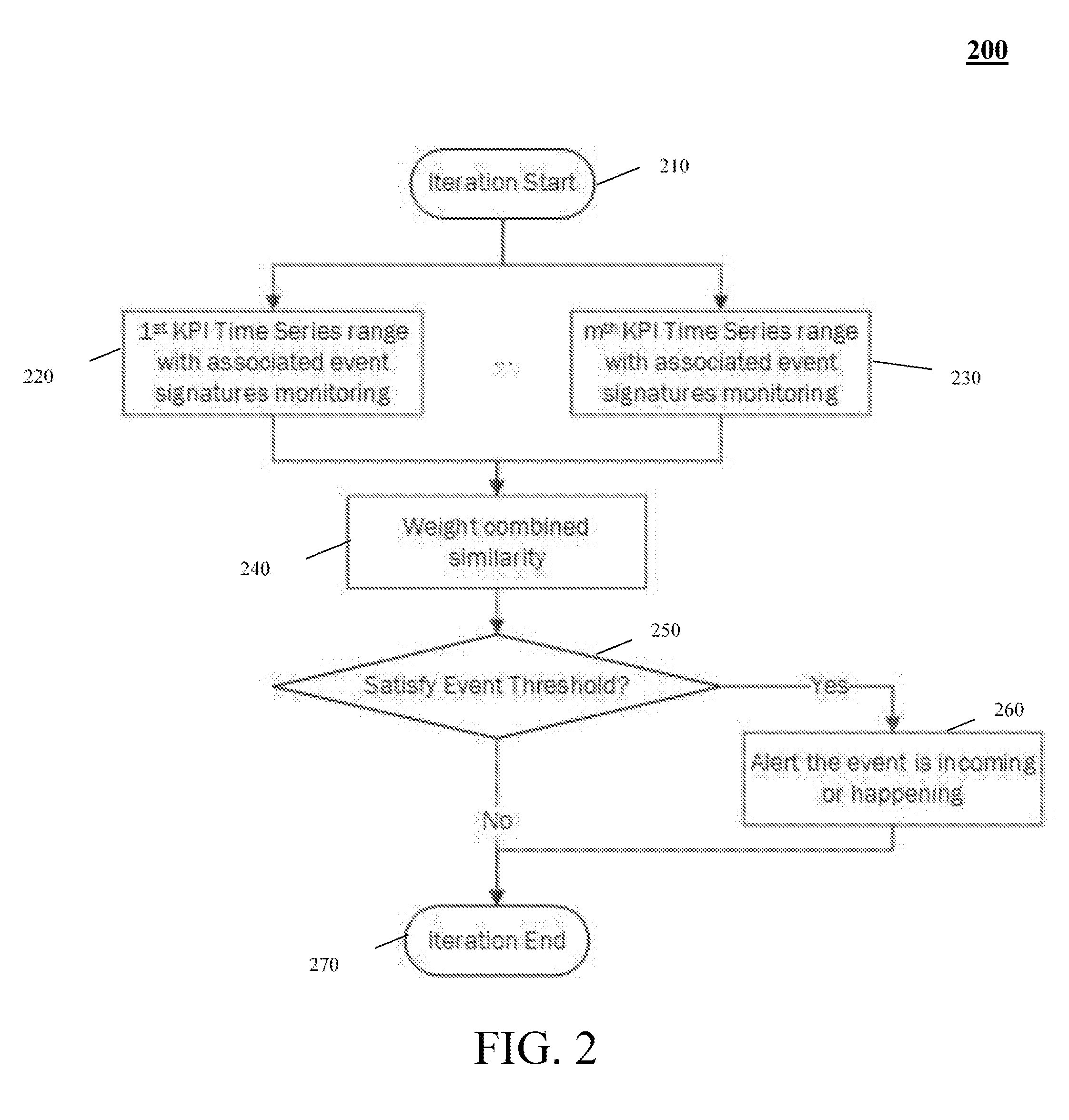

[0023] FIG. 2 is a flow diagram depicting an example method of online monitoring and iteration of time series patterns in embodiments of the present invention.

[0024] FIG. 3 is a flow diagram depicting an example method of deploying pattern models of FIG. 1 in the online monitoring of FIG. 2 in embodiments of the present invention.

[0025] FIG. 4 is a block diagram depicting an example computer system for deploying a pattern model using the methods of FIGS. 1-3 in embodiments of the present invention.

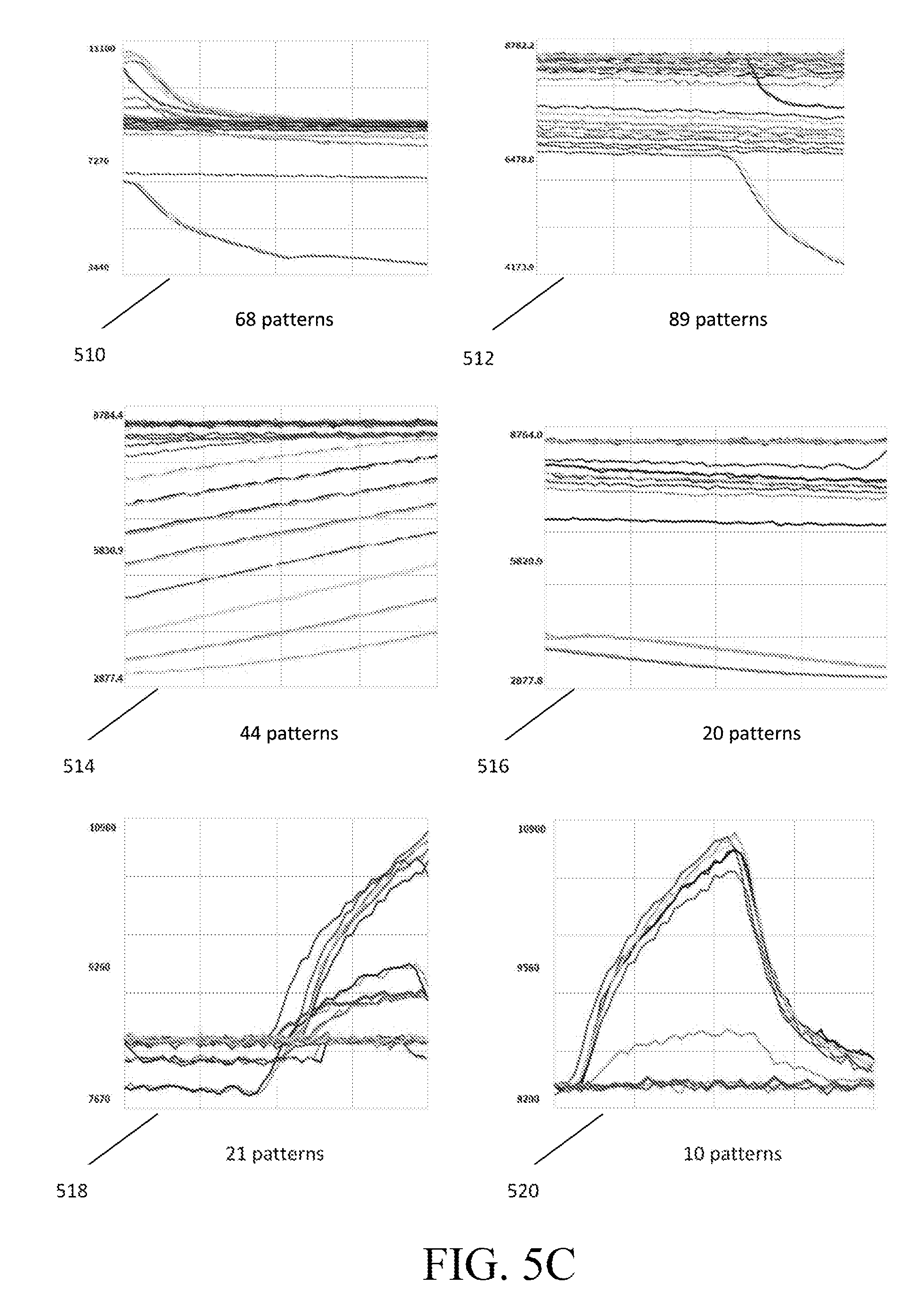

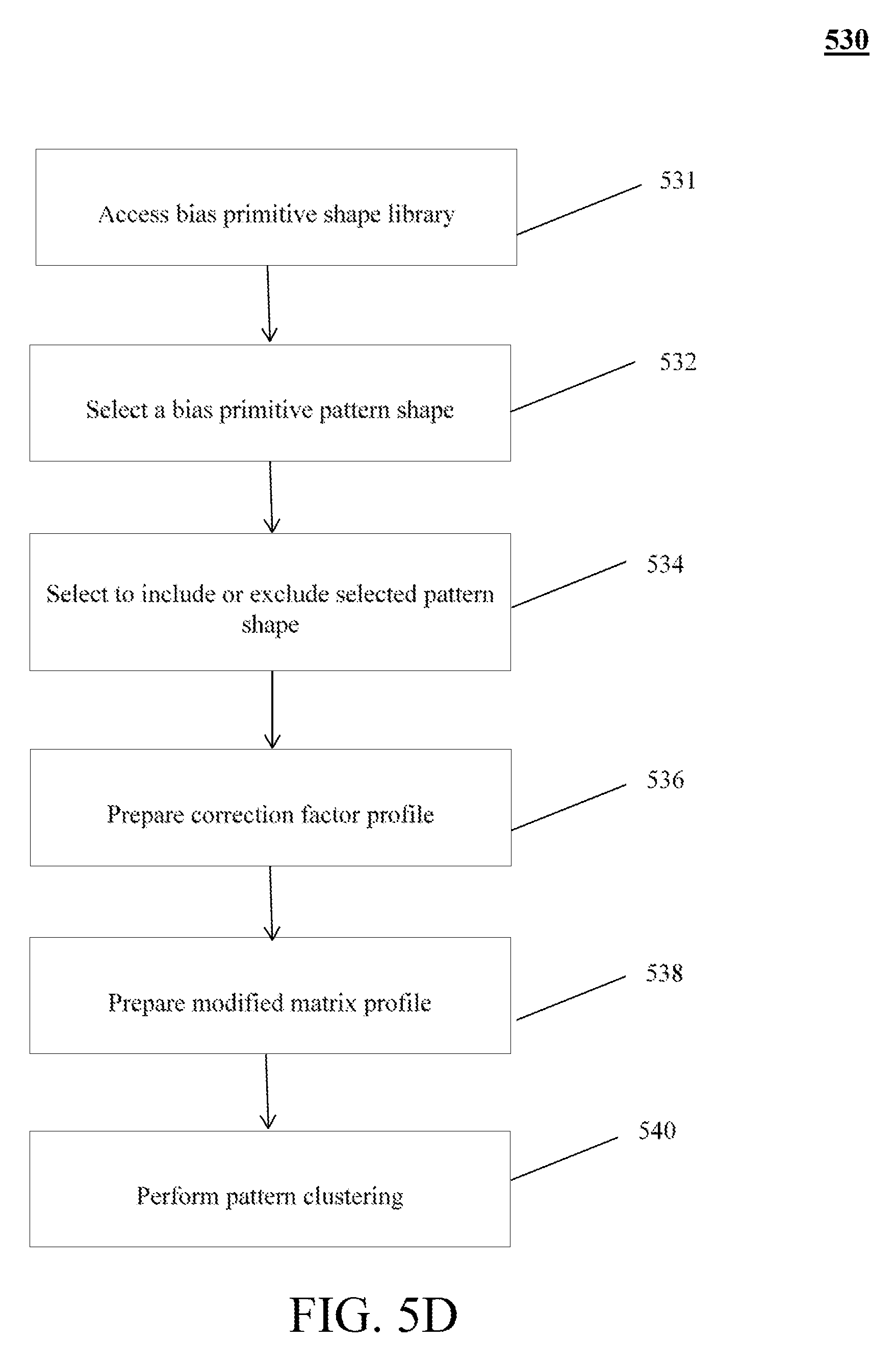

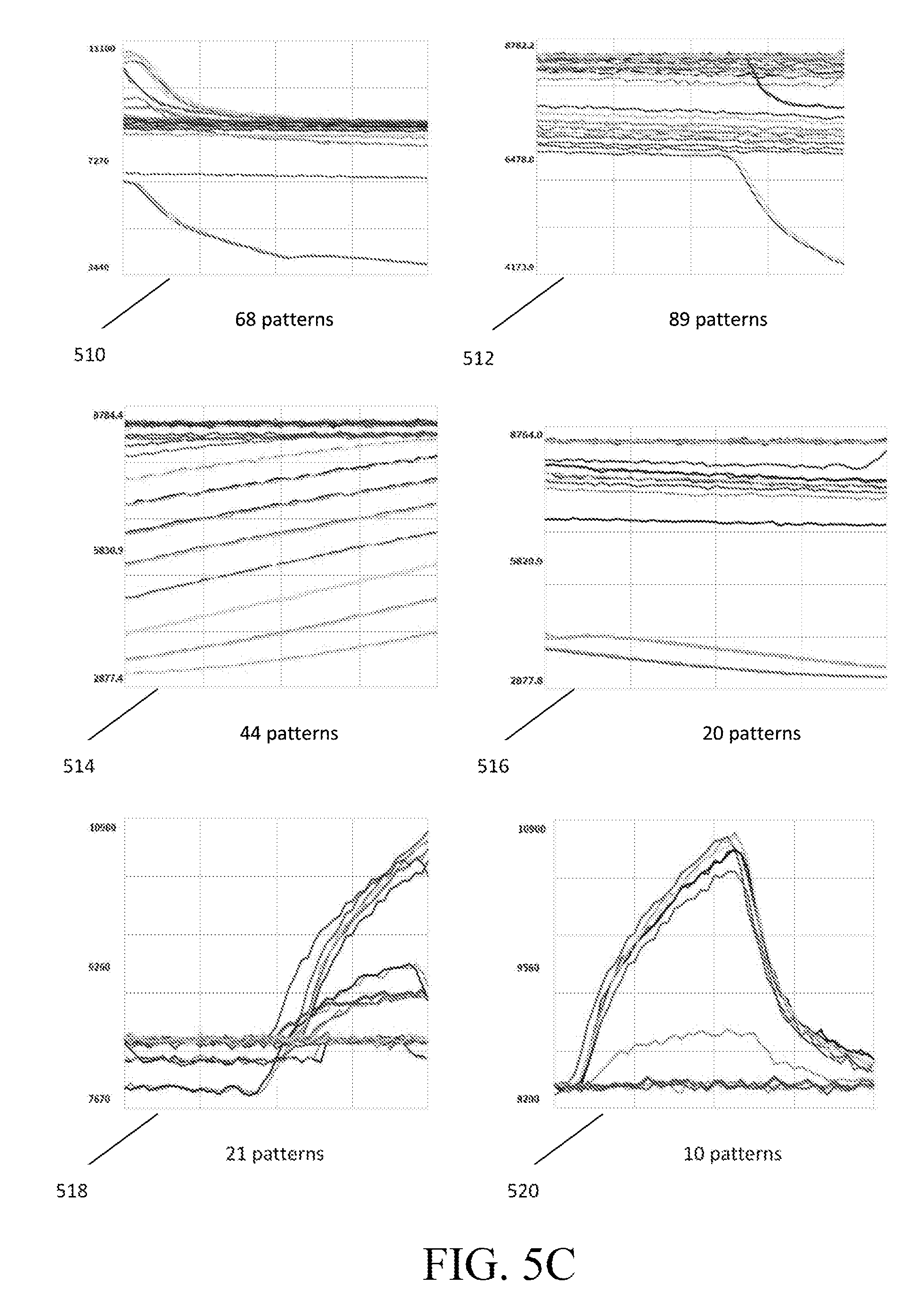

[0026] FIGS. 5A-5E are diagrams depicting an example guided pattern discovery technique in embodiments of the present invention.

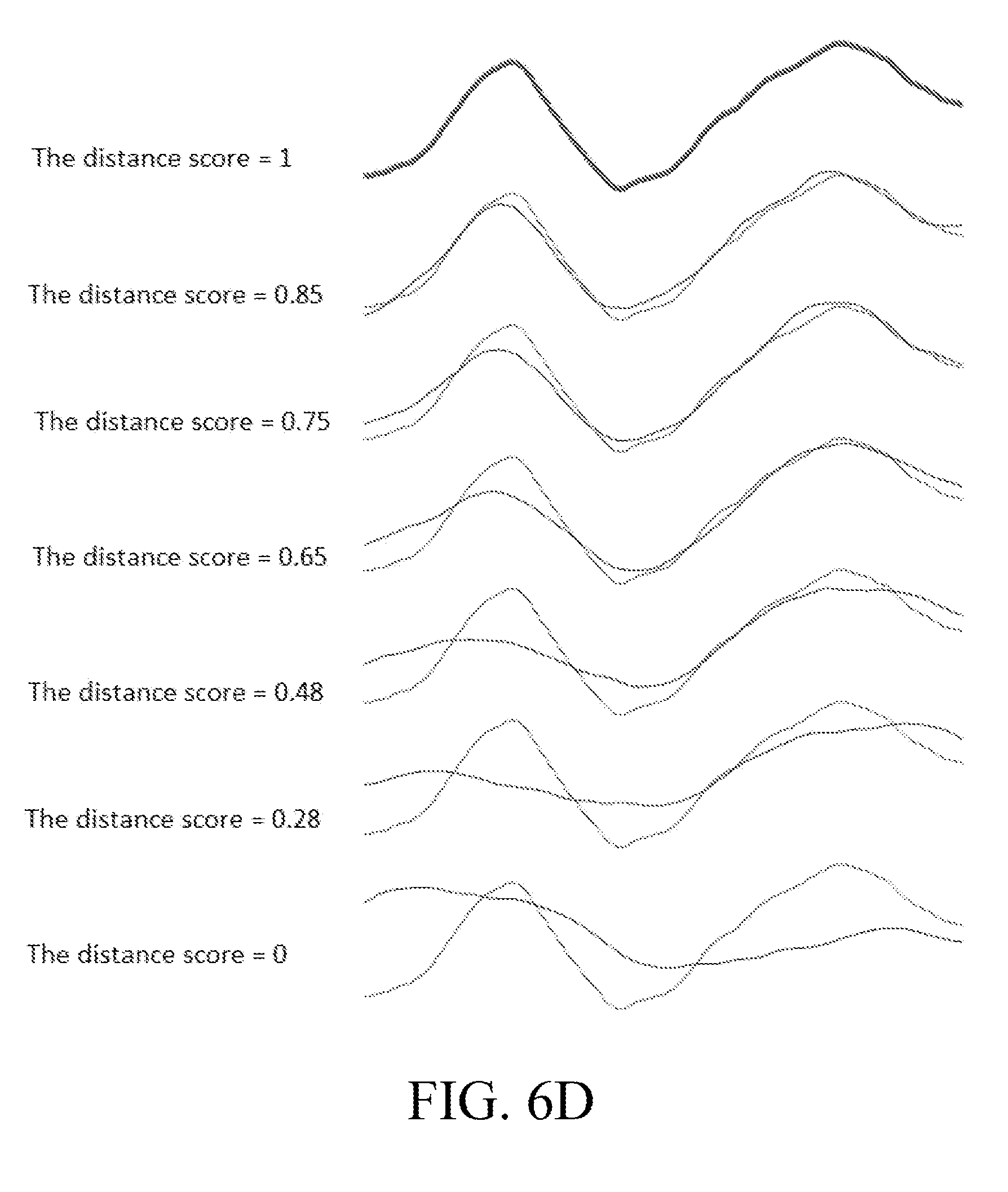

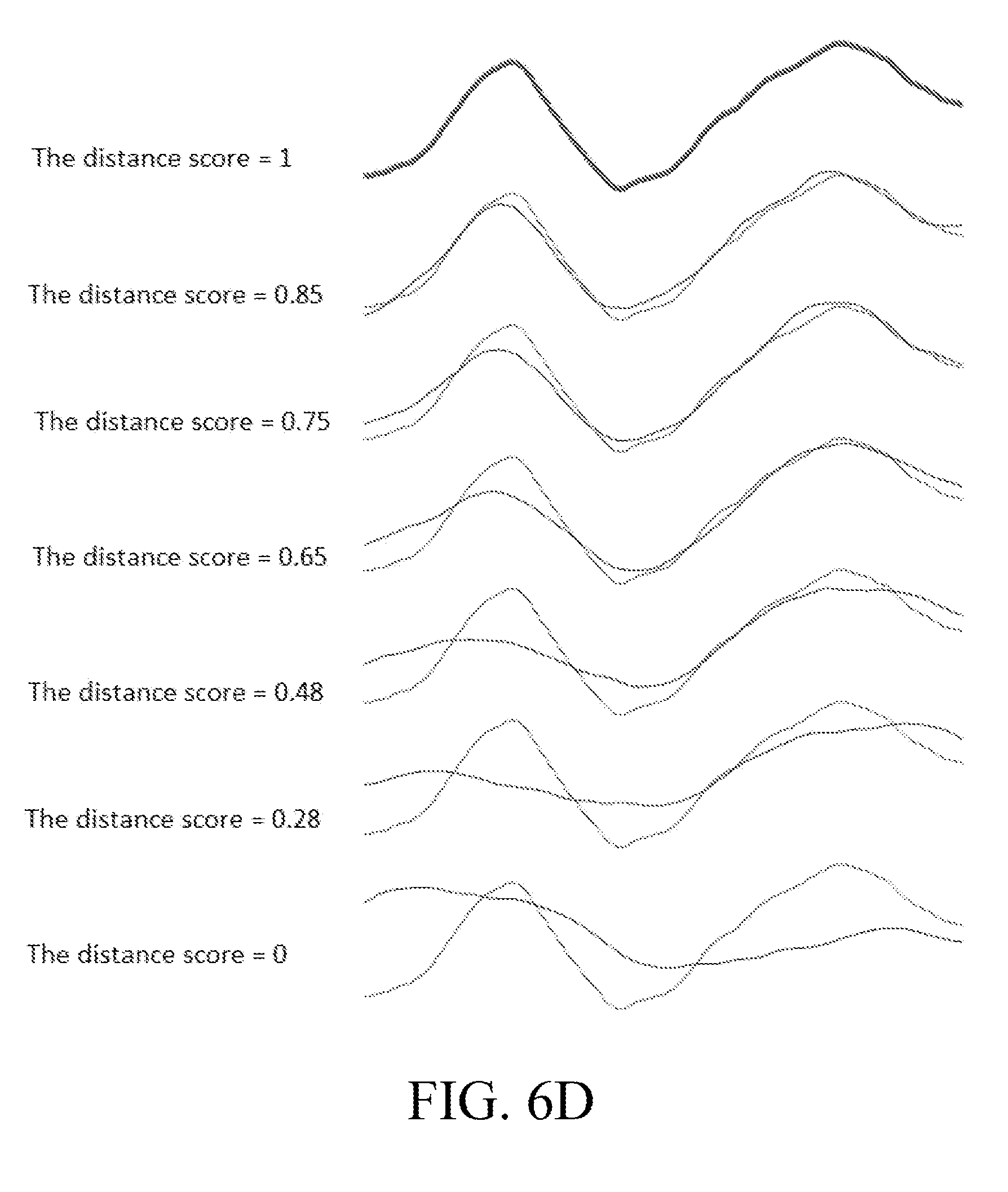

[0027] FIGS. 6A-6D are graphs depicting example distance computations used in embodiments of the present invention.

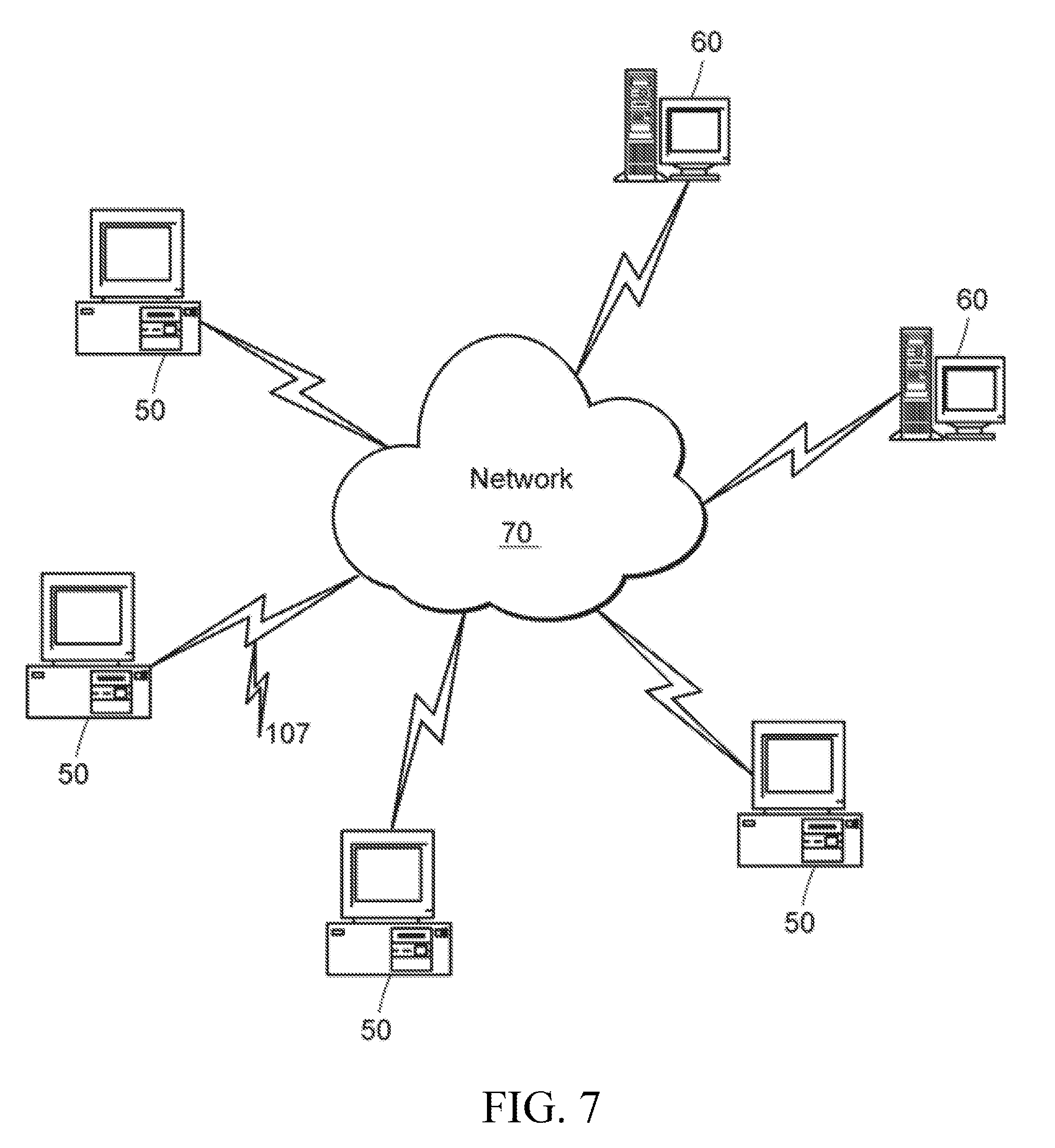

[0028] FIG. 7 is a schematic view of an example computer network in which embodiments of the present invention may be implemented.

[0029] FIG. 8 is a block diagram of an example computer node in the computer network of FIG. 7.

DETAILED DESCRIPTION OF THE INVENTION

[0030] A description of example embodiments of the invention follows.

Overview

[0031] Embodiments of the present invention provide a modeling approach (tools) for sustaining and maintaining performance of a plant process. Random abnormal (or otherwise undesirable) operating events, based on various root-causes, occur in an online plant process and adversely affect the performance of the online plant process. These embodiments build and deploy a pattern model used to monitor trends in the movement (time series) of KPIs to detect a certain undesirable operating event in an online plant process. The deployed pattern models enable plant personnel (e.g., plant operators, maintenance personnel, and such) and plant operating systems to quickly locate, identify, monitor, and detect/predict the undesirable operating events (or any other plant event without limitation) and then take actions to prevent such undesirable events from happening.

[0032] Previous modeling approaches are not suitable for monitoring and detecting trends in the movement (time series) of KPIs associated with an undesirable operating event in an online plant process. First, a traditional first-principle model is too complicated and expensive to develop and calibrate for the detailed dynamic predictions required to monitor readings of KPI time series in an online plant process. Further, a first-principle model is typically calibrated with normal operational conditions, whereas the undesirable events are often caused by extreme operational conditions not included in a model calibrated only by normal operational conditions.

[0033] Second, a traditional empirical model requires repeatable process event data to train and validate the model. However, the time series readings (e.g., amplitude, shape, and such) of a KPI from the same subject plant, producing the same product, may vary over time. The variance may be due to the subject plant producing the same product at varying scales during different times based on regional/global market demand and supply. For example, Plant A may produce Ethylene at 100 ton/hour in January, and produce Ethylene at 150 ton/hour in February. Further, other (sister) plants producing similar products often operate at a completely different process scale from the subject plant all the time. For example, Plant B (subject plant) may produce Ethylene at 30 ton/hour, while Plant C (sister plant) produces Ethylene at 100 ton/hour. As the time series for KPIs may differ significantly between time periods and plants, repeatable process event data for a time series of a KPI is likely unavailable to sufficiently train and validate an empirical model.

[0034] Third, a statistical model (univariate or multivariate) has limited capabilities in KPI monitoring and event (fault) detection. In statistical models, preparing good training data sets and validation data sets is time consuming, but crucial for their success. Statistical models require frequent recalibration, as operating conditions outside the statistical norms might be treated as outliers and trigger false alarms. For example, without recalibration, a new normal operating condition of a plant process may be incorrectly classified as an outlier, resulting in false event (fault) detection in the plant process. Further, statistical models built from a subject plant (plant A) are not likely to be very helpful to a sister plant (plant B), unless products/operation conditions at the two plants are nearly identical. Moreover, statistical models are complicated and costly to build and deploy in an online process, and only function well within the training/validation conditions of the plant for which they are built. In addition, the results presented from a statistical model require expert knowledge in statistics to understand and explain, and, thus, are often not intuitive to plant operators and other plant personnel.

[0035] Recently, machine learning in time series and shape data mining techniques have been advancing rapidly (see, e.g., Thanawin Rakthanmanon et al. "Searching and Mining Trillions of Time Series Subsequences under Dynamic Time Warping," the 18th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, Aug. 12-16, 2012; Eamonn Keogh, "Machine Learning in Time Series Databases (and Everything Is a Time Series!)," Tutorial in AAAI 2011, 7th Aug. 2011), herein incorporated by reference in their entirety, which describe typical new analysis techniques that include Dynamic Time Warping (DTW), Euclidean Distance (ED) in Novelty detection, Motif discovery, Clustering, Classification, Indexing and Visualizing Massive Datasets, and such. These new analysis techniques have been applied to image recognition, medical data analysis, symbolic aggregate approximation, visual comparison in DNA species, and such. In the process industry, however, there are neither known suitable techniques applied nor successful applications reported for time series and shape data mining. There are several difficulties in applying the new techniques mentioned above in the process industry. First, general time series data analysis algorithms alone are not suitable for process applications without problem-specific systems and methods, such as data collection and preparation, pattern model development and management, and the like. Second, these analysis algorithms do not provide models and results that are consistent with the domain knowledge of plant operators and engineers, and do not enable plant operators and engineers to apply their domain knowledge in the modeling process. Third, these analysis algorithms do not enable users to develop one or more models and to run them in an online environment in daily (e.g., 24/7) non-stop operations, as required in the process industry. All the art references mentioned above are unable to meet these requirements.

[0036] In contrast to these traditional models and time series analysis approaches, the pattern model of embodiments of the present invention is less complicated to build, does not require the costly preparation of training and validation data, and is specifically suited to monitor trends in movement of KPIs. Embodiments can build the pattern model by defining a set of event signatures for each KPI of an operating event, where each event signature set contains time series patterns of the KPI associated with the operating event. The time series patterns of a KPI may be identified from historical plant data by a domain expert, by comparison to operator logs, or by a pattern search and discovery technique. The embodiments may configure each event signature to contain time series patterns of the KPI having certain amplitude, offset, shape, time range, and such. During execution of the online process, the embodiments match the time series patterns of the KPI (contained in the event signatures) to the online trends in movement (time series) of the KPI to generate a distance (similarity) score. The distance score presents, to plant personnel or the plant operating system, the likelihood that the trends in movement of the KPI indicate a potential operating event.

[0037] The pattern models are formulated such that they can be used in monitoring the varied, daily operation of a subject plant, and in monitoring a related (sister) plant of similar design that operates at a similar or different scale. For example, when the process operation of the plant is changed, such as changed to produce different products, the pattern models may be adapted to the current operation conditions by simply replacing the event signatures. The distance scores are calculated such that they are independent of the data sampling rates used to measure the KPI time series patterns of the event signatures and the KPI time series of an online plant process. The pattern models also enable the embodiments to present results that are intuitive to plant operators and other plant personnel, such as the distance (similarity) score in an understandable full range (e.g., values between 0-1).
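This passage does not state how independence from the sampling rate is obtained. One common approach, assumed here purely for illustration, is to resample the stored pattern and the monitored range onto the same number of points before comparing them. The helper name `resample_linear` and the sample values are hypothetical.

```python
def resample_linear(values, target_length):
    """Linearly interpolate `values` onto `target_length` evenly spaced points
    that span the same time range."""
    if target_length < 2 or len(values) < 2:
        raise ValueError("need at least two points on both sides")
    step = (len(values) - 1) / (target_length - 1)
    resampled = []
    for k in range(target_length):
        position = k * step
        lower = min(int(position), len(values) - 2)  # keep lower + 1 inside the list
        fraction = position - lower
        resampled.append(values[lower] * (1.0 - fraction) + values[lower + 1] * fraction)
    return resampled

# A signature recorded at a coarse rate, brought to the finer rate of a monitored window.
signature_coarse = [0, 2, 4]
signature_fine = resample_linear(signature_coarse, 5)
```

After resampling, both series have the same length and can be Z-normalized and compared point by point or with limited warping.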

[0038] In general, pattern recognition is a specific technique that has been applied in voice, image, and time-series recognitions in web, medical, smart phones, and the like. In contrast to example embodiments of the present invention, the process industry has been focused on multivariate statistical approaches for time series, such as Principal Component Analysis (PCA) and Partial Least Squares Regression (PLSR) model, nonlinear neural network, and the like, in implementing monitoring and fault diagnosis technology. See, e.g., "A hybrid process monitoring and fault diagnosis approach for chemical plants" by Guo et al.; "Fuzzy-logic based trend classification for fault diagnosis of chemical processes" by Dash et al.; "Fault diagnosis in chemical processes using Fisher discriminant analysis, discriminant partial least squares, and principal component analysis" by Chiang et al.; and "Monitoring chemical processes for early fault detection using multivariate data analysis methods" by Westad. For example, Fuzzy-reasoning approaches with time series pattern were attempted for fault diagnosis of chemical processes. See, e.g., "Fuzzy-logic based trend classification for fault diagnosis of chemical processes" by Dash et al.

[0039] In Fuzzy-reasoning approaches, a typical event signature reasoning system has three major components: a trend representation, a trend identification technique, and a technique for mapping trends to operational conditions. Further, the reasoning system extracts trend primitives using interval-halving, and defines a primitive similarity matrix to quantify the similarity between the trend primitives. The reasoning system then describes a trend pattern as a series of trend primitives, i.e., a string of characters. To prototype events for given KPIs, the reasoning system builds a library to include the trend primitives and similarity matrix. The reasoning system computes the similarity between a trend representing an unknown pattern and a string describing a prototype pattern to recognize whether the unknown pattern is one of the prototyped patterns. The reasoning system uses an IF-THEN rule library to relate trends to process state.

[0040] In contrast, embodiments of the present invention do not require the complicated event signature system of the reasoning system, which requires three components for trend representation, identification, and mapping. Rather, the embodiments include less complicated defining and matching of event signatures that contain whole time series patterns of a KPI associated with an operational condition. The embodiments may determine similarities between a KPI time series and a whole KPI time series pattern without disassembling the KPI time series pattern into primitives, rebuilding the primitives, applying the similarity matrix, and such. Further, unlike the reasoning system, the embodiments may apply the same whole KPI time series patterns to detect an operation condition over variations in the same plant or in other similar plants.

Method of Building Pattern Model

[0041] FIG. 1 depicts an example computer-based method 100 of building a pattern model in embodiments of the present invention. The method 100 includes various workflows (steps) to build the pattern model. The example method 100 enables process operators, engineers, and systems to define an event signature of a time series (to build an event pattern model) in several ways. Some of these ways include: (i) use a known time series event-pattern from past events; (ii) use a signature pattern taken from a sister operation unit or plant; (iii) import a time series pattern from other resources; and (iv) select a pattern from an event signature library. In the case where no signature pattern is available or the available signature patterns do not meet the user's criteria, the user may search for event patterns in the plant historian by using: (i) an unsupervised (unguided) pattern discovery; (ii) a supervised (guided) pattern discovery; or (iii) a combined iterative pattern discovery. The method 100 further enables the user to build a library of the located event patterns (signature patterns).

[0042] The method 100 begins at step 110, by enabling a human or system user to select one or more key performance indicator (KPI) of an industrial or chemical process at a subject plant (e.g. a refinery or Ethylene plant). Method 100 (step 110) enables the user to select one or more KPI from the available process variables for the plant process. In some embodiments, step 110 may provide a user interface for the user to select the one or more KPI from the available process variables for the plant process. The user selects one or more KPI as an indicator of an undesirable (or abnormal) operating event related to the plant process, such as column flooding, heat exchanger fouling, compressor failure, pump shut-down, and the like, that is of interest to the user. For example, the user may select a flooding risk factor variable (e.g., column pressure difference between top and bottom of a column) as the KPI of a distillation column flooding event of interest related to the plant process. The movement (time series) of the one or more KPI may indicate that the operating event of interest is currently occurring in the plant process or is predicted to occur at a future time in the plant process.

[0043] The method 100, at step 120, then automatically loads historical data for the plant process from a plant historian database for the selected one or more KPI (KPI(s) of choice). The loaded historical data includes data specific to the selected KPI. The historical data may include one process variable measurement of the one or more KPI or may be an index of one or more process variable measurements of the KPI calculated using a process formula or model. The method 100, steps 130-157, identifies primary time series patterns for the one or more KPI (KPI time series patterns) of the operating event of interest, or a similar operating event, in the historical data. The method 100 may perform one or more of steps 130-157 to identify such KPI time series patterns. The method 100 may enable process operators, engineers, and systems to search KPI time series patterns in the plant historian and build the pattern model from the KPI time series patterns located by the search (i.e., define event signature patterns on time series in the plant historian data). The method 100 (steps 130-157) can perform the search and location of KPI time series patterns according to users' specifications on pattern characteristics or in an automated way, such as by using a pattern discovery approach. The KPI time series patterns identified by steps 130-157 may be of varying duration (length or range), amplitude, offset, shape, time, and such.

[0044] At step 130, the method 100 enables a domain expert to analyze the historical data and identify (predefine) KPI time series patterns related to the operating event of interest. For example, step 130 may provide a user interface that enables the user (e.g., domain expert) to review the historical data as a time series (e.g., in a graph or other such format) and identify KPI time series patterns related to the operating event of interest in the historical data. The historical data may include a known time series event-pattern from past occurrences of the operating event of interest. At step 140, the method 100 automates identification of KPI time series patterns based on operator logs from the subject plant, another (sister) plant or operation unit, or another plant resource having similar structure, feed stocks, processing capacity, and the like. The operator logs include undesirable event records and associations with the selected KPI over a certain time period, which were recorded by a plant operator during the operating event of interest (or a similar or related operating event). In some embodiments, the user selects a signature pattern from the operator logs of the subject plant, sister plant or operation unit, or other plant resource. In other embodiments, step 140 compares the historical data against the operator logs to automatically identify time series patterns for the KPI during the operating event of interest.

[0045] At step 150, method 100 automatically performs a pattern search and identification technique on the historical data of the KPI. In some example embodiments, the method 100 (step 150) may automatically perform a pattern search and identification technique on KPI data from the plant process during online execution at the subject plant. The pattern search and identification technique of step 150 is an unsupervised pattern discovery technique (e.g., Motif pattern search and discovery technique). This technique applies a pattern discovery algorithm to a KPI time series (e.g., KPI measurements taken from the plant historian) of a given-length (finite) time window, and searches/locates pattern clusters that have similarities to the operating event of interest in the time window. That is, the pattern discovery algorithm traverses through the time window and builds pattern clusters that contain repeatable KPI time series patterns ("event-like" patterns). The identified clusters may include a cluster of patterns that are close in characteristics to the operating event of interest.
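To make the idea of building pattern clusters concrete, the following is a deliberately simple brute-force sketch, not the motif search algorithm of the embodiments. It assumes fixed-length windows, Z-normalized Euclidean distance, and a hypothetical `radius` parameter that decides when two windows count as the same repeated pattern; near-flat windows are skipped because they carry no shape.

```python
import math

def z_normalize(values, min_std=1e-9):
    """Z-normalize a window; return None for (near-)flat windows."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std < min_std:
        return None
    return [(v - mean) / std for v in values]

def discover_pattern_clusters(series, window, radius):
    """Group non-overlapping windows whose Z-normalized shapes lie within
    `radius` of a candidate window. Returns lists of window start indices."""
    subsequences = [
        z_normalize(series[start:start + window])
        for start in range(len(series) - window + 1)
    ]
    clusters = []
    for i, centre in enumerate(subsequences):
        if centre is None:
            continue
        members = [i]
        for j, other in enumerate(subsequences):
            if other is None:
                continue
            # Skip windows overlapping a member already kept (trivial matches).
            overlaps = any(abs(j - k) < window for k in members)
            if not overlaps and math.dist(centre, other) <= radius:
                members.append(j)
        members.sort()
        if len(members) > 1 and members not in clusters:
            clusters.append(members)
    return clusters

# Hypothetical KPI history with two peaks of the same shape but different amplitude.
history = [0, 0, 0, 0, 0, 1, 4, 9, 4, 1, 0, 0, 0, 0, 0, 0, 2, 8, 18, 8, 2, 0, 0, 0]
clusters = discover_pattern_clusters(history, window=5, radius=0.5)
```

The result contains the cluster of the two full peaks (starts 5 and 16) but also clusters for their rising and falling edges, which illustrates why an unguided search can return "event-like" clusters that still need review or guidance.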

[0046] At step 155, method 100 automatically performs a supervised (guided) pattern discovery. The supervised pattern discovery technique enables the user to select primitive shapes, as part of the pattern discovery algorithm of step 150, to speed up the pattern cluster search process, reduce the number of non-event pattern clusters located by the algorithm, and result in the most meaningful pattern models for online deployment. In the supervised pattern discovery, as the pattern discovery algorithm traverses through the time window to build the pattern clusters, the technique identifies candidates from the repeated KPI time series patterns. The supervised technique applies primitive shapes selected by the user from a default primitive shape library (e.g., bell-shape peak, rising, sinking, and the like), or dynamically drawn (on the fly) by the user on an ad hoc drawing pane, to the identified KPI time series pattern candidates to identify patterns with most characteristics of the operating event of interest. The supervised technique builds and returns pattern clusters containing those patterns identified as most characteristic of the operating event of interest. The application of the primitive shapes to the pattern candidates reduces the time taken to build desired pattern clusters by trimming off/skipping unwanted pattern clusters before building pattern clusters.

[0047] At steps 150 and 155, the method 100 may further interact with a user (e.g., plant operator or other plant personnel) to confirm that the identified KPI time series patterns and/or pattern clusters relate to the operating event of interest. For example, steps 150 and 155 may provide a user interface that presents each identified KPI time series pattern or pattern cluster to the user and enables the user to confirm the pattern/cluster as a KPI time series related to the operating event of interest. By presenting the identified time series patterns/clusters to plant personnel, steps 150 and 155 further incorporate plant knowledge and expertise into the performance of the automatic search and identification technique. At step 157, the method 100 may also select KPI time series patterns from a signature library.

[0048] The method 100, at step 160, automatically defines and confirms a signature (i.e., as a tag or calculated tag) of the operating event of interest based on the KPI time series patterns/pattern clusters identified in steps 130-157. At step 160, the method 100 may define the event signature to comprise one or more time series range (time window) or vary in amplitude, spread, offset, shape, dynamic time warping (DTW) and such. For each time series range, offset, shape, and such, step 160 includes one or more corresponding KPI time series patterns (e.g., contained in pattern cluster) identified (found) in steps 130-157. Step 160 includes the entire identified KPI time series pattern in the event signature, rather than disassembling the KPI time series pattern into primitive components. In other embodiments, a user interface may be provided for a user to interactively define the event signature, which may include options for configuring time series ranges, amplitude, offset, shape, and such for the event signature and selecting one or more corresponding KPI time series pattern (e.g., contained in pattern cluster).

[0049] The method 100, at step 170, builds a pattern model for the operating event of interest and adds the defined event signature in the pattern model. The pattern model may be used to monitor for the event signature during online execution of the plant process at the subject plant. The method 100 may also transfer the pattern model to a similar plant, where the pattern may also be used to monitor for the event signature during online execution of the plant process. The method 100, at step 175, adds more event signatures that include the KPI time series patterns identified in steps 130-157, which are also saved in the pattern model. In repeating these steps, method 100 builds the pattern models with different event signatures that account for various aspects of a KPI time series range associated with the operating event of interest. For example, the event signatures may include KPI time series patterns that account for varying amplitude, offset, shape, time, and such in a KPI time series range associated with the operating event of interest. The variation of amplitude, offset, shape, time, and the like may be an indication of undesirable or abnormal conditions associated with the operating event of interest. Further, method 100 may include in the pattern model event signatures containing KPI time series patterns that are precursors for predicting a future occurrence of the operating event of interest (e.g., a KPI for a C2 splitter indicates future occurrence of Ethylene leak). The method 100 may include in the same pattern model event signatures containing KPI time series patterns that are detectors of an ongoing occurrence of the operating event of interest.

[0050] The method 100 may then repeat steps 130-175 to define event signatures based on another selected KPI of the operating event of interest, which may be included in the same or a different pattern model. The method 100 may further repeat to define event signatures for additional undesirable operating events, which may be included in other pattern models. In addition, when the process operation scheme of the plant changes (revamps), such as to produce a different product, steps 110-170 may be repeated to build a new pattern model to replace a model built for the previous process operation scheme. The method 100 ends at step 180.

[0051] In some embodiments, each of the defined event signatures may be collected, saved, classified, and documented in an event signature library (in step 170). In the event signature library, the event signatures may be organized according to an operating event of interest, KPI associated with the operating event of interest, and such. The method 100 (step 170) may provide a user interface to enable a user to add, delete, and edit (tune) the event signatures in the event signature library. For example, fine tuning KPI time series patterns of an event signature could accommodate a minor structure change or customized operation conditions, such as switching to a specially designed catalyst for a reactor. The user interface may further enable the user to create and update pattern models using event signatures from the event signature library. In these embodiments, the event signature library and pattern models may be stored at the plant historian database or another location in a server computer. The user interface may further enable the user to simulate and test the stored pattern models in an offline or online plant process.
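One way to picture such a library is as a small keyed collection of whole-pattern signatures from which pattern models are assembled per operating event. The sketch below is only an assumed data layout; the class names, field names, `role` values, and sample signatures are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class EventSignature:
    name: str
    kpi: str                 # KPI the pattern was taken from
    event: str               # operating event of interest
    pattern: list            # whole KPI time series pattern, not primitives
    role: str = "detector"   # assumed labels: "detector" or "precursor"

@dataclass
class SignatureLibrary:
    signatures: dict = field(default_factory=dict)

    def add(self, signature):
        """Add a signature, or replace (tune) an existing one of the same name."""
        self.signatures[signature.name] = signature

    def delete(self, name):
        self.signatures.pop(name, None)

    def for_event(self, event, kpi=None):
        """Signatures organized by operating event and, optionally, by KPI."""
        return [
            s for s in self.signatures.values()
            if s.event == event and (kpi is None or s.kpi == kpi)
        ]

    def build_pattern_model(self, event):
        """Pattern model for one event: a mapping from KPI to its set of signatures."""
        model = {}
        for s in self.for_event(event):
            model.setdefault(s.kpi, []).append(s)
        return model

library = SignatureLibrary()
library.add(EventSignature("flood-fast", "column_dp", "flooding", [0.0, 1.0, 3.0, 6.0]))
library.add(EventSignature("flood-slow", "column_dp", "flooding",
                           [0.0, 0.5, 1.0, 2.0, 4.0], role="precursor"))
library.add(EventSignature("foul-1", "hx_duty", "fouling", [5.0, 4.0, 3.5, 3.0]))
flooding_model = library.build_pattern_model("flooding")
```

Replacing the signatures in the library and rebuilding the model corresponds to adapting a pattern model to new operating conditions without retraining.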

Method of Online Monitoring of Pattern Model

[0052] FIG. 2 depicts an example computer-based method 200 of online monitoring and iteration of time series patterns in embodiments of the present invention. Prior to the start of method 200, a plant operations system executes a plant process online at a subject plant (e.g., a refinery or Ethylene plant). The plant operations system also loads a stored pattern model of an undesirable (abnormal) operating event of interest related to the online plant process. In some embodiments, the plant operations system may load multiple event-pattern models together online for improved reliability of the system to monitor and predict one or more operating events of interest. The stored model includes a first set of event signatures for a first KPI of the operating event of interest. Each event signature of the first set contains a time series pattern of the first KPI associated with the operating event of interest. The stored model also includes a second set of event signatures for a second KPI of the operating event of interest. Each event signature of the second set contains a time series pattern of the second KPI associated with the operating event of interest. The stored model also includes up to an m-th set of event signatures for an m-th KPI of the operating event of interest. Each event signature of the m-th set contains a time series pattern of the m-th KPI associated with the operating event of interest. In some example embodiments, the pattern model was built according to the method 100 of FIG. 1.

[0053] The method 200, at step 210, starts an iteration of applying the pattern model (resulting from FIG. 1) to a specified time range (e.g., a short history time duration or portion up to current time) of the online plant process. The iteration may be repeated at a certain time interval scheduled by the user. In some embodiments, the specified range may be predefined by a user via a user interface, and in other embodiments, the specified range may be automatically determined based on features of the plant process. In the iteration, the method 200, at step 220, executes a first computer process that monitors trends in movement of the first KPI over the specified range of the online plant process (e.g., the KPI value movements over the last two hours). Step 220 monitors the trends in movement of the first KPI (as a time series) over the specified range, referred to as the "first monitored KPI time series." In particular, the first computer process (at step 220) selects associated event signatures from the first set of event signatures (for the first KPI) that contain time series patterns corresponding to the first monitored KPI time series. For each selected event signature, the first computer process compares the time series patterns for the KPI contained in the selected event signature to the first monitored KPI time series. Based on the comparisons, the first computer process determines a level of similarity between the selected event signatures and the first monitored KPI time series, which is output as a distance score.

[0054] The method 200, at step 230, executes an m-th computer process simultaneously (in parallel) with the first computer process. The m-th computer process monitors trends in movement of the m-th KPI (as a time series) over the specified range of the online plant process, referred to as the "m-th monitored KPI time series." In particular, the m-th computer process (at step 230) selects associated event signatures from the m-th set of event signatures (for the m-th KPI) that contain time series patterns corresponding to the m-th monitored KPI time series. For each selected event signature, the m-th computer process compares the time series patterns for the KPI contained in the selected event signature to the m-th monitored KPI time series. Based on the comparisons, the m-th computer process determines a level of similarity between the selected event signatures and the m-th monitored KPI time series, which is output as a distance score. The method 200 may similarly execute any number of other computer processes (as indicated by the markings between the 1st process 220 and m-th process 230) to determine a level of similarity between the corresponding monitored KPI time series and associated selected event signatures.
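The parallel per-KPI monitoring of steps 220-230 can be sketched with a thread pool, as below. This is an assumption-laden illustration: threads stand in for the "computer processes", the `similarity` function is a simplified placeholder score (plain Z-normalized Euclidean distance, no warping), and taking the best score over a KPI's signatures is only one possible way to reduce several signature comparisons to one score. All names and sample values are hypothetical.

```python
import math
from concurrent.futures import ThreadPoolExecutor

def z_normalize(values):
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std == 0.0:
        return [0.0] * n
    return [(v - mean) / std for v in values]

def similarity(window, pattern):
    """Placeholder score in [0, 1]: 1 - d / d_zero, clipped at 0 (assumed form)."""
    w, p = z_normalize(window), z_normalize(pattern)
    zero_line = math.sqrt(sum(v * v for v in p))
    if zero_line == 0.0:
        return 0.0
    return max(0.0, 1.0 - math.dist(w, p) / zero_line)

def monitor_kpi(window, signature_patterns):
    """One monitoring task: compare the monitored range with each signature of
    matching length and report the best score (assumed reduction)."""
    scores = [similarity(window, p) for p in signature_patterns if len(p) == len(window)]
    return max(scores, default=0.0)

def monitor_all(windows, model):
    """Run one monitoring task per KPI in parallel; return {kpi: distance score}."""
    with ThreadPoolExecutor() as pool:
        futures = {
            kpi: pool.submit(monitor_kpi, windows[kpi], patterns)
            for kpi, patterns in model.items()
        }
        return {kpi: future.result() for kpi, future in futures.items()}

# Hypothetical pattern model (KPI -> signature patterns) and monitored ranges.
model = {"column_dp": [[0, 1, 2, 1, 0], [0, 0, 1, 2, 3]], "column_temp": [[3, 2, 1, 0, 0]]}
windows = {"column_dp": [10, 11, 12, 11, 10], "column_temp": [5, 5, 5, 5, 6]}
kpi_scores = monitor_all(windows, model)
```

Each task is independent of the others, so the same structure would carry over to separate operating-system processes or machines if the per-KPI work were heavier.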

[0055] At step 240, the method 200 receives the distance score for the first KPI from the first computer monitoring process (step 220). At step 240, the method 200 also receives the distance score from each other computer monitoring process, up to the m.sup.th computer monitoring process (step 230). In other embodiments, step 240 may receive a distance score for only one KPI (from a single computer monitoring process). In yet other embodiments, step 240 may receive a distance score for any number of multiple KPIs (e.g., from corresponding monitoring processes in series).

[0056] Step 240 assigns a weight coefficient to the distance score for each monitored KPI. For example, step 240 may assign a first weight coefficient to the distance score for the first monitored KPI, up to an m.sup.th weight coefficient to the distance score for the m.sup.th monitored KPI. The assigned weight coefficients indicate the significance or impact of the corresponding KPI in detecting the operating event of interest. For example, a KPI for the pressure difference between the top and bottom of a distillation column may be more significant in determining a column flooding event than a column pressure KPI. In example embodiments, by default, each distance score is assigned the same weight coefficient of 1, indicating similar significance of the corresponding KPIs in detecting the operating event of interest. In some embodiments, a user (e.g., plant operator) may configure the weight coefficient for each KPI via a user interface. In this way, process domain knowledge can be applied by plant personnel through assigning different coefficients to increase/decrease the influence of a KPI on the overall combined distance score.

[0057] Step 240 next performs the real-time operation of combining the weighted distance scores of the monitored KPIs into a combined distance score. The method 200, at step 250, then performs the real-time operation of comparing the combined distance score to an event similarity threshold for the operating event of interest. In some embodiments, the method 200 provides a user interface for a user (e.g., plant operator) to define the event similarity threshold for the operating event of interest. If the combined distance score satisfies the event similarity threshold, the method 200, at step 260, detects the current occurrence of the operating event of interest or predicts a future occurrence of the operating event of interest. Step 260 then alerts the online process system or operational personnel to stop or prevent the occurrence of the operating event of interest based on the combined distance score and related information collected by method 200. For example, the method 200 (step 260) may alert and advise operators to adjust parameters (variables) of the online plant process or plant equipment associated with the online plant process to avoid a shut-down caused by, or to prevent, the occurrence of the operating event of interest (adapt process/plant changes in operation conditions).
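As a non-limiting illustration, the weighting and threshold comparison of steps 240 and 250 may be sketched as follows. The description does not state the combining formula, so this sketch assumes a weighted average; it also assumes that a higher score means greater similarity and that the threshold is "satisfied" when the combined score is greater than or equal to it. The function names and KPI names are hypothetical.

```python
def combined_distance_score(scores, weights=None):
    """Combine per-KPI distance scores into one score.

    ASSUMPTION: a weighted average is used; the description only says the
    weighted scores are combined. Weights default to 1 for every KPI.
    """
    if not scores:
        raise ValueError("at least one KPI distance score is required")
    if weights is None:
        weights = {kpi: 1.0 for kpi in scores}
    total_weight = sum(weights[kpi] for kpi in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(weights[kpi] * scores[kpi] for kpi in scores) / total_weight


def event_detected(scores, threshold, weights=None):
    """ASSUMPTION: the threshold is satisfied when combined score >= threshold."""
    return combined_distance_score(scores, weights) >= threshold


# Hypothetical KPI names and values, for illustration only.
scores = {"column_dp": 0.9, "column_pressure": 0.5}
weights = {"column_dp": 3.0, "column_pressure": 1.0}

print(combined_distance_score(scores))           # equal weights
print(combined_distance_score(scores, weights))  # pressure difference emphasized
print(event_detected(scores, 0.75, weights))
```

With equal weights the two KPIs contribute equally; raising the weight of the pressure-difference KPI pulls the combined score toward that KPI's score, which is how an operator's domain knowledge would influence detection under these assumptions.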

[0058] Step 260 may also only alert (alarm) a user (e.g., plant operator) of the occurrence or predicted (incoming) occurrence of the operating event of interest. The combined distance (similarity) score, event signatures, and pattern model can be further applied to event root-cause analysis based on correlations with available process variable measurements. Detailed example embodiments of root-cause analysis from event KPIs are described in Applicant's U.S. patent application Ser. No. 15/141,701, herein incorporated by reference.

[0059] The method 200 completes at step 270 with the termination of the iteration of applying the pattern model to the online plant process. Method 200 may then repeat steps 210-270 to perform a next iteration of applying the pattern model to the online plant process, as scheduled at a certain time interval (e.g., every 10 minutes).

Method of Deploying Pattern Model

[0060] FIG. 3 depicts an example computer-based method 300 of deploying pattern models in embodiments of the present invention. The method 300 is a detailed example embodiment of the step 220 or 230 of method 200 (FIG. 2). The method 300 starts at step 305. The method 300, at step 310, receives time series of a KPI from a specified range of choice for an online plant process, referred to as the "monitored KPI time series." If method 300 is executing an embodiment of step 220, the KPI is the "first KPI," and the monitored KPI time series is the "first monitored KPI time series." If method 300 is executing an embodiment of step 230, the KPI is the "m.sup.th KPI," and the monitored KPI time series is the "m.sup.th monitored KPI time series," and so forth.

[0061] At step 310, the method 300 also receives a set of event signatures in a pattern model for the KPI. If method 300 is executing an embodiment of step 220, the set of event signatures is the "first set of event signatures," and if method 300 is executing an embodiment of step 230, the set of event signatures is the "m.sup.th set of event signatures," and so forth. At step 310, the method 300 selects event signatures from the set of event signatures, each of which contains a time series pattern for the KPI corresponding in range to the monitored KPI time series. In particular, in the embodiment of FIG. 3, the method 300 selects a first event signature and an n.sup.th event signature from the set of event signatures. The first event signature includes a time series pattern for the KPI that varies in at least one of amplitude, offset, shape, or time from the time series pattern for the KPI in the n.sup.th event signature. The time series pattern for the KPI from the first event signature is referred to as the "first KPI time series pattern" and the time series pattern for the KPI from the n.sup.th event signature is referred to as the "n.sup.th KPI time series pattern." By simultaneously applying both the first and the n.sup.th event signatures to the monitored KPI time series, the method 300 can monitor, in real-time, different manifestations of the operating event of interest in the online plant process.

[0062] In the following descriptions, a data transform called Z-normalization is repeatedly applied. Z-normalization, also known as "Normalization to Zero Mean and Unit of Energy," was described by Goldin & Kanellakis, "On Similarity Queries for Time Series Data: Constraint Specification and Implementation" (1995), herein incorporated by reference in its entirety. The procedure ensures that all elements of the input vector are transformed into an output vector whose mean is approximately 0 and whose standard deviation is close to 1. The formula (Equation 1) used in the transform is shown below, where $i \in \mathbb{N}$:

$$x'_i = \frac{x_i - \mu}{\sigma}$$ (Equation 1)

[0063] First, the time series mean is subtracted from original values, and second, the difference is divided by the standard deviation value. According to most of the recent work concerned with time series structural pattern mining, z-normalization is an essential preprocessing step which allows a mining algorithm to focus on the structural similarities/dissimilarities rather than on the amplitude-driven ones.
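As a non-limiting illustration, Equation 1 may be sketched in a few lines. The use of the population standard deviation and the handling of a constant (flat) series are assumptions of this sketch; the description does not specify either. The function name `z_normalize` is hypothetical.

```python
import math


def z_normalize(series, eps=1e-12):
    """Apply Equation 1: subtract the mean, then divide by the standard deviation."""
    n = len(series)
    if n == 0:
        return []
    mu = sum(series) / n
    # ASSUMPTION: population standard deviation (divide by n, not n - 1).
    sigma = math.sqrt(sum((x - mu) ** 2 for x in series) / n)
    if sigma < eps:
        # ASSUMPTION: a flat series carries no shape, so return all zeros
        # rather than dividing by (near) zero.
        return [0.0] * n
    return [(x - mu) / sigma for x in series]


pattern = [10.0, 12.0, 15.0, 12.0, 10.0]
# Same shape, different amplitude and offset.
scaled_and_shifted = [3.0 * x + 100.0 for x in pattern]

print(z_normalize(pattern))
print(z_normalize(scaled_and_shifted))
```

The two printed vectors agree up to floating-point rounding, which illustrates why the transform lets a comparison focus on structure rather than on amplitude or offset.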

[0064] The method 300 continues to step 320, where the method 300 performs Z-normalization on the first and the n.sup.th event signatures and the monitored KPI time series. Z-normalization converts the first KPI time series pattern of the first event signature, the n.sup.th KPI time series pattern of the n.sup.th event signature, and the monitored KPI time series to a common scale. For example, the Z-normalization removes impacts of amplitude, offset, shape, and such from the first KPI time series pattern, the n.sup.th KPI time series pattern, and the monitored KPI time series. After the conversion of the KPI time series patterns and monitored KPI time series, method 300, at step 325, checks if the monitored KPI time series range satisfies a proprietary filter (e.g., amplitude spread) placed on the first event signature to remove false positives. If so, the method 300, at step 325, transmits the event signature to a separate computer process, along with the monitored KPI time series.

[0065] In particular, after conversion, step 325 transmits the first event signature to a first computer process 330, along with the monitored KPI time series. The first computer process (step 330) calculates a Euclidean distance with dynamic time warping (DTW) between the first KPI time series pattern and the monitored KPI time series. To enable the first computer process to calculate the distance in real-time, the typical upper bound used for the DTW is 10%, as a larger upper bound will slow calculation of the distance significantly. The use of Euclidean distance with DTW allows the method 300 to accommodate small variations in time duration in the calculation of the distance.

[0066] To calculate the Euclidean distance with DTW, step 330 inputs the first KPI time series pattern and monitored KPI time series into a Euclidean Distance (ED) with DTW function, for example, as shown in Equation 2. In other embodiments, the standard Euclidean distance function may be used.

$$ED(A,B) = \sqrt{\sum_{i=1}^{n} (a_i - b_i)^2}$$ (Equation 2)

[0067] In Equation 2, A is the first KPI time series pattern, after being converted by Z-normalization, and $a_i$ is the i-th object of the converted first KPI time series pattern; B is the monitored KPI time series, after being converted by Z-normalization, and $b_i$ is the i-th object of the converted monitored KPI time series; and n is the length of A and B. In practice, A and B may not be well aligned in time, and a correction may be made by a DTW algorithm. An example process to calculate a distance using a Euclidean distance function with DTW is disclosed in "Exact indexing of dynamic time warping," by E. Keogh, et al., Knowledge and Information Systems (2004), which is herein incorporated by reference.
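As a non-limiting illustration, a Euclidean distance with DTW may be sketched as follows. This sketch interprets the 10% upper bound as a warping-window (band) constraint on how far the alignment may stray from the diagonal; that interpretation, the squared point-wise cost with a final square root, and the function name `dtw_distance` are assumptions and are not taken from the description.

```python
import math


def dtw_distance(a, b, window_fraction=0.10):
    """Euclidean-style DTW distance restricted to a band around the diagonal."""
    n, m = len(a), len(b)
    if n == 0 or m == 0:
        raise ValueError("both series must be non-empty")
    # Band half-width in samples (ASSUMED meaning of the 10% bound); widened
    # if needed so that the final cell (n, m) stays reachable.
    w = max(int(math.ceil(window_fraction * max(n, m))), abs(n - m))
    inf = float("inf")
    prev = [inf] * (m + 1)
    prev[0] = 0.0  # empty prefix aligned with empty prefix costs nothing
    for i in range(1, n + 1):
        curr = [inf] * (m + 1)
        for j in range(max(1, i - w), min(m, i + w) + 1):
            cost = (a[i - 1] - b[j - 1]) ** 2
            # Extend the cheapest of the three admissible predecessor cells.
            curr[j] = cost + min(prev[j], curr[j - 1], prev[j - 1])
        prev = curr
    return math.sqrt(prev[m])


# The same bump, delayed by one sample in the second series.
a = [0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0]
b = [0.0, 0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0, 0.0, 0.0]

print(dtw_distance(a, b, window_fraction=0.0))   # no warping: Equation 2
print(dtw_distance(a, b, window_fraction=0.10))  # one-sample warp allowed
```

With a window of zero the function reduces to Equation 2 for equal-length series. With the 10% window the one-sample delay is absorbed by the warp, so the small time misalignment no longer inflates the distance. A narrower band also visits fewer cells, which is consistent with the description's remark that a larger bound slows the calculation.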

[0068] Some embodiments provide a new distance criterion, which may be referred to as Aspen Tech Distance (ATD), which measures similarities of a given length of a time series against a pattern library model. The ATD has similar mathematical properties to the conventional Euclidean Distance, but it has several advantages over Euclidean Distance for process industry applications. As part of the ATD, Equation 2 calculates the Euclidean Distance with DTW (ED(A, B)) between the first KPI time series pattern and the monitored KPI time series. In other embodiments, a standard Euclidean Distance may be used. By calculating the Euclidean distance with DTW, the method 300 directly determines the distance between the first KPI time series pattern (as a whole) and the monitored KPI time series, rather than disassembling the first KPI time series pattern into primitives, applying a similarity matrix, and such.

[0069] The calculated distance (ED(A, B)) becomes smaller as the first KPI time series pattern and the monitored KPI time series become more similar. For example, if the first KPI time series pattern perfectly matched the monitored KPI time series (i.e., closest possible similarity), Equation 2 would calculate a distance of 0. Note that in Equation 2, the data sampling rates used in originally measuring the KPI time series pattern and the monitored KPI time series affect the value of the calculated distance. For example, if the same first KPI time series pattern is measured at a higher data sampling rate and at a lower data sampling rate, Equation 2 would output a larger distance between the first KPI time series pattern and the monitored KPI time series for the higher data sampling rate than for the lower data sampling rate, because the sum in Equation 2 runs over more samples.

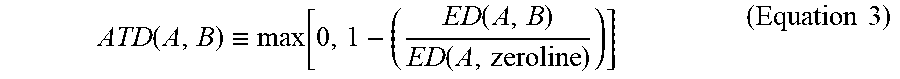

[0070] The method 300, at step 340, inputs the calculated distance (ED(A,B)) to an ATD distance scoring function. Based on the calculated distance, the ATD distance scoring function measures the similarity between the first KPI time series pattern and the monitored KPI time series to compute a first distance score. The ATD distance score function is defined as follows in Equation 3:

$$ATD(A,B) \equiv \max\left[0,\ 1 - \frac{ED(A,B)}{ED(A,\text{zero-line})}\right]$$ (Equation 3)

[0071] In Equation 3, ED(A,B) is the Euclidean distance calculated from Equation 2 in step 330. A is the first KPI time series pattern, after being converted by Z-normalization. B is the monitored KPI time series, after being converted by Z-normalization. The zero-line is a constant vector of zeros of the same length as the monitored KPI time series, and ED(A,zero-line) is the Euclidean distance calculated between A and the zero-line. There are two advantages to using the ATD distance score instead of ED to measure similarity: (i) the distance scores are rescaled to range from zero (smallest similarity) to one (highest similarity), which matches most engineering measurement scales and is easy for process operation personnel to understand; and (ii) the ATD distance score is not sensitive to pattern sampling rate (e.g., when the sampling time interval is changed, the ATD scores are still valid for comparisons of two time series patterns with different sampling rates).

[0072] Step 340, using Equation 3, calculates a distance score (ATD(A,B)) that has a value in the range 0 to 1. That is, if step 340 applies ATD(A,a) across each object i in a large dataset, the distribution of the distance score ATD(A,a) spans the full range [0, 1], rather than being confined to a smaller range, such as [0.95, 0.97]. The more similar the monitored KPI time series (B) is to the first KPI time series pattern (A), the closer the distance score ATD(A,B) is to 1. Conversely, the less similar the monitored KPI time series (B) is to the first KPI time series pattern (A), the closer the distance score ATD(A,B) is to 0. Further, Equation 3 calculates ATD(A,B) to be essentially independent of (immune to) the data sampling rate used to measure A and B. That is, for the same first KPI time series pattern (A) and monitored KPI time series (B), Equation 3 calculates approximately the same distance score, regardless of whether A and B were measured using a higher or lower data sampling rate. As such, the impact of the data sampling rate in the calculated distance (ED(A,B)) by Equation 2 is essentially eliminated when converting the calculated distance into the distance score (ATD(A,B)).
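As a non-limiting illustration, Equation 3 and its insensitivity to sampling rate may be sketched as follows. The sketch uses the standard Euclidean distance of Equation 2 without DTW, a synthetic sine-wave pattern, and a phase-shifted copy standing in for the monitored series; the function names, the test signal, and the treatment of a pattern that coincides with the zero-line are assumptions of the sketch.

```python
import math


def z_normalize(xs):
    """Equation 1 with the population standard deviation (an assumption)."""
    mu = sum(xs) / len(xs)
    sigma = math.sqrt(sum((x - mu) ** 2 for x in xs) / len(xs))
    return [(x - mu) / sigma for x in xs]


def euclidean_distance(a, b):
    """Equation 2 for two equal-length series."""
    if len(a) != len(b):
        raise ValueError("series must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def atd_score(a, b, distance=euclidean_distance):
    """Equation 3: max(0, 1 - ED(A, B) / ED(A, zero-line))."""
    zero_line = [0.0] * len(a)
    denominator = euclidean_distance(a, zero_line)
    if denominator == 0.0:
        # ASSUMPTION: a pattern identical to the zero-line scores 0.
        return 0.0
    return max(0.0, 1.0 - distance(a, b) / denominator)


def sample(n, phase):
    """One period of a sine wave sampled at n points (synthetic test signal)."""
    return [math.sin(2.0 * math.pi * k / n + phase) for k in range(n)]


for n in (50, 200):  # lower and higher sampling rate over the same period
    a = z_normalize(sample(n, 0.0))
    b = z_normalize(sample(n, 0.3))
    print(n, round(euclidean_distance(a, b), 3), round(atd_score(a, b), 3))
```

In this example the Euclidean distance grows as the number of samples increases, while the ATD score stays essentially unchanged, because the numerator and the denominator of Equation 3 scale together with the series length. A DTW-based distance function may be passed through the `distance` parameter in place of the plain Euclidean distance.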