Fast Encoding Loss Metric

Thiagarajan; Arvind ; et al.

U.S. patent application number 16/248508 was filed with the patent office on 2019-01-15 and published on 2019-07-18 for fast encoding loss metric. The applicant listed for this patent is MatrixView, Inc. Invention is credited to Ravilla Jaisimha, Arvind Thiagarajan.

| Application Number | 20190222864 16/248508 |

| Family ID | 61620802 |

| Filed Date | 2019-01-15 |

| United States Patent Application | 20190222864 |

| Kind Code | A1 |

| Thiagarajan; Arvind ; et al. | July 18, 2019 |

FAST ENCODING LOSS METRIC

Abstract

Provided is a process including: obtaining source and destination video data, the destination video data being a transformed version of the source video data; for a plurality of blocks in the given source frame, determining a respective source aggregate value that is based on a measure of central tendency of pixel values; for a plurality of blocks in the given destination frame, determining a respective destination aggregate value that is based on a measure of central tendency of pixel values; determining a plurality of differences between source and destination aggregate values; determining a frame aggregate value that is based on a measure of central tendency of the determined differences; and determining a measure of distortion of the destination video data based on the frame aggregate value.

| Inventors: | Thiagarajan; Arvind; (Sunnyvale, CA) ; Jaisimha; Ravilla; (818 Kifer Road, CA) |

| Applicant: | MatrixView, Inc. |

| Family ID: | 61620802 |

| Appl. No.: | 16/248508 |

| Filed: | January 15, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 15824341 | Nov 28, 2017 | 10182244 | 16248508 |
| 15447755 | Mar 2, 2017 | 10154288 | 15824341 |
| 62474350 | Mar 21, 2017 | | |
| 62513681 | Jun 1, 2017 | | |
| 62487777 | Apr 20, 2017 | | |
| 62302436 | Mar 2, 2016 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 19/61 20141101; H04N 19/625 20141101; G10L 19/0212 20130101; H04N 19/91 20141101; H04N 19/126 20141101; H04N 19/176 20141101 |

| International Class: | H04N 19/61 20060101 H04N019/61; H04N 19/126 20060101 H04N019/126; H04N 19/91 20060101 H04N019/91; H04N 19/625 20060101 H04N019/625; H04N 19/176 20060101 H04N019/176 |

Claims

1. A tangible, non-transitory, machine-readable medium storing instructions that when executed by one or more processors effectuate operations comprising: obtaining, with one or more processors, source video data, the source video data having a plurality of frames, the frames each having a plurality of pixel values; obtaining, with one or more processors, destination video data, the destination video data being a transformed version of the source video data and having transformed versions of the plurality of frames; for a given frame, accessing, with one or more processors, a given source frame among the plurality of frames in the source video data and a given destination frame among the plurality of frames in the destination video data, the given destination frame being a transformed version of the given source frame; segmenting, with one or more processors, the given source frame and the given destination frame into a plurality of blocks, each block corresponding to a region of pixels in the respective frame; for a plurality of blocks in the given source frame, determining, with one or more processors, a respective source aggregate value that is based on a measure of central tendency of pixel values in the respective block of the given source frame; for a plurality of blocks in the given destination frame, determining, with one or more processors, a respective destination aggregate value that is based on a measure of central tendency of pixel values in the respective block of the given destination frame; determining, with one or more processors, a plurality of differences between source aggregate values and corresponding destination aggregate values of the given frame; determining, with one or more processors, a frame aggregate value that is based on a measure of central tendency of the determined differences between source aggregate values and corresponding destination aggregate values of the given frame; determining, with one or more processors, a measure of 
distortion of the destination video data relative to the source video data based on the frame aggregate value and a plurality of other frame aggregate values of a plurality of other frames; and storing, with one or more processors, the measure of distortion in memory in association with the destination video data.

2. The medium of claim 1, wherein: blocks in the source frame have coterminous perimeter pixel positions with corresponding blocks in the destination frame; the plurality of blocks are each rectangular regions of pixels; sizes of the blocks are between 4 by 4 pixels in horizontal and vertical directions, respectively, of the given frame and 128 by 128 pixels in the horizontal and vertical directions, respectively, of the given frame; the frame aggregate value is formed with steps for determining a frame aggregate value; the destination video data is formed with steps for compressing video data; the operations comprise steps for adjusting compression parameters in response to a video quality measurement; the frame aggregate value is based on a mean square error of the plurality of differences between source aggregate values and corresponding destination aggregate values of the given frame; and each frame has more than or equal to 3840 pixel positions along more than or equal to 2160 lines.

3. The medium of claim 1, wherein: the source aggregate value is based on a mean of pixel values in the respective block in the source frame; and the destination aggregate value is based on a mean of pixel values in the respective block in the destination frame.

4. The medium of claim 1, wherein: at least one of the measures of aggregate value is a mean, median, or mode.

5. The medium of claim 1, wherein: each of the measures of aggregate value is the same type of measure and is one of a mean, median, or mode.

6. The method of claim 1, wherein: the frame aggregate value is based on each and every block in the given source frame and the given destination frame.

7. The medium of claim 1, wherein: the measure of distortion is based on a sampling, wherein the sampling is of: pixels in blocks and does not include all pixels of the sampled blocks, blocks in the plurality of frames and does not include all blocks in the plurality of frames, frames and does not include all of the plurality of frames, or a combination thereof.

8. The medium of claim 1, wherein: each of the plurality of blocks in the given source frame and in the given destination frame is the same size and does not overlap other blocks in the same frame.

9. The medium of claim 1, wherein: for the given frame, a plurality of different frame aggregate values are determined for a plurality of different types of pixel values corresponding to different color or luminance constituents of an image depicted in the given frame; and the measure of distortion is based on the plurality of different frame aggregate values.

10. The medium of claim 9, wherein: different weights are associated with the different types of pixel values; and the measure of distortion is based on a weighted combination of the plurality of different frame aggregate values in which different frame aggregate values are multiplied by their respective corresponding one of the different weights.

11. The medium of claim 10, wherein: a weight associated with pixel values indicating luminance is greater than a weight associated with pixel values indicating a constituent color.

12. The medium of claim 1, wherein: the measure of distortion is a mean of a plurality of frame aggregate values.

13. The medium of claim 1, wherein: the measure of distortion is a moving mean of a plurality of frame aggregate values of a threshold number of sequential frames in the video data.

14. The medium of claim 1, the operations comprising: concurrently determining a plurality of differences between source aggregate values and corresponding destination aggregate values.

15. The medium of claim 1, wherein: the pixel values are residual values indicative of differences between an intra-frame prediction of blocks or inter-frame prediction of blocks and displayed pixel values.

16. The medium of claim 1, the operations comprising: determining an aggregate amount of data discarded when rounding quotients formed with an element-by-element division of a transform matrix by a quantization matrix; and storing the aggregate amount of data discarded in association with the destination video data.

17. The medium of claim 1, the operations comprising: forming the destination video data by encoding in a compressed bitstream the source video data with lossy compression and then decoding the compressed bitstream.

18. The medium of claim 17, the operations comprising: adjusting the quantization matrix used in encoding the compressed bitstream or encoding a new compressed bitstream representation of the source video data in response to the measure of distortion.

19. The medium of claim 17, the operations comprising: adjusting values of a transform matrix formed when encoding the compressed bitstream or encoding a new compressed bitstream representation of the source video data in response to the measure of distortion.

20. The medium of claim 1, the operations comprising: receiving a request for video content from a client computing device; accessing a library of video content; sending the compressed video bitstream to the client computing device; and one or more of: determining that the client computing device is associated with a subscription; or sending an advertisement to the client computing device for display.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This patent filing is a continuation of U.S. patent application Ser. No. 15/824,341, titled FAST ENCODING LOSS METRIC, filed 28 Nov. 2017, which claims the benefit of U.S. Provisional Patent App. 62/474,350, titled FAST ENCODING LOSS METRIC, filed 21 Mar. 2017, U.S. Provisional Patent App. 62/513,681, titled MODIFYING COEFFICIENTS OF A TRANSFORM MATRIX, filed 1 Jun. 2017, and U.S. Provisional Patent App. 62/487,777, titled ON THE FLY REDUCTION OF QUALITY BY SKIPPING LEAST SIGNIFICANT AC COEFFICIENTS OF A DISCRETE COSINE TRANSFORM MATRIX, filed 20 Apr. 2017. The patent filing U.S. patent application Ser. No. 15/824,341, titled FAST ENCODING LOSS METRIC, filed 28 Nov. 2017 is also a continuation-in-part of U.S. patent application Ser. No. 15/447,755, titled APPARATUS AND METHOD TO IMPROVE IMAGE OR VIDEO QUALITY OR ENCODING PERFORMANCE BY ENHANCING DISCRETE COSINE TRANSFORM COEFFICIENTS, filed 2 Mar. 2017, which claims the benefit of U.S. Provisional Patent App. 62/302,436, titled APPARATUS AND METHOD TO IMPROVE IMAGE OR VIDEO QUALITY OR ENCODING PERFORMANCE BY ENHANCING DISCRETE COSINE TRANSFORM COEFFICIENTS, filed 2 Mar. 2016, the entire content of each of which is hereby incorporated by reference.

BACKGROUND

1. Field

[0002] The present disclosure relates generally to image compression and, more specifically, to fast encoding loss metrics for video encoding.

2. Description of the Related Art

[0003] Data compression underlies much of modern information technology infrastructure. Compression is often used before storing data, to reduce the amount of media consumed and lower storage costs. Compression is also often used before transmitting the data over networks to reduce the bandwidth consumed. Certain types of data are particularly amenable to compression, including images (e.g., still images or video) and audio.

[0004] Prior to compression, data is often obtained through sensors, data entry, or the like, in a format that is relatively voluminous. Often the data contains redundancies and less-perceivable information that can be leveraged to reduce the amount of data needed to represent the original data. In some cases, end users are not particularly sensitive to portions of the data, and these portions can be discarded to reduce the amount of data used to represent the original data. Compression can, thus, be lossless or, when data is discarded, "lossy," in the sense that some of the information is lost in the compression process.

[0005] A common technique for lossy data compression is based on the discrete cosine transform (DCT). Data is generally represented as the sum of cosine functions at various frequencies, with the amplitude of the function at the respective frequencies being modulated to produce a result that approximates the original data. Another example is asymmetric discrete sine transform (ADST). At higher compression rates, however, a blocky artifact appears that is undesirable.

[0006] Traditionally, artifacts and other losses in image compression are measured with a metric referred to as peak signal-to-noise ratio (PSNR). In some cases, this metric may be relatively computationally expensive, often requiring a relatively slow calculation on every pixel in an image (which is not to suggest that this attribute or any other is disclaimed in all embodiments). This problem is expected to be aggravated as higher-resolution, higher-frame rate video is deployed more widely, particularly on less powerful computing devices. As a result, it can be difficult to calculate these metrics on the fly and dynamically adjust compression, encoding, or decoding responsive to such metrics.

SUMMARY

[0007] The following is a non-exhaustive listing of some aspects of the present techniques. These and other aspects are described in the following disclosure.

[0008] Some aspects include a process, including: obtaining, with one or more processors, source video data, the source video data having a plurality of frames, the frames each having a plurality of pixel values; obtaining, with one or more processors, destination video data, the destination video data being a transformed version of the source video data and having transformed versions of the plurality of frames; for a given frame, accessing, with one or more processors, a given source frame among the plurality of frames in the source video data and a given destination frame among the plurality of frames in the destination video data, the given destination frame being a transformed version of the given source frame; segmenting, with one or more processors, the given source frame and the given destination frame into a plurality of blocks, each block corresponding to a region of pixels in the respective frame; for a plurality of blocks in the given source frame, determining, with one or more processors, a respective source aggregate value that is based on a measure of central tendency of pixel values in the respective block of the given source frame; for a plurality of blocks in the given destination frame, determining, with one or more processors, a respective destination aggregate value that is based on a measure of central tendency of pixel values in the respective block of the given destination frame; determining, with one or more processors, a plurality of differences between source aggregate values and corresponding destination aggregate values of the given frame; determining, with one or more processors, a frame aggregate value that is based on a measure of central tendency of the determined differences between source aggregate values and corresponding destination aggregate values of the given frame; determining, with one or more processors, a measure of distortion of the destination video data relative to the source video data based on the frame aggregate 
value and a plurality of other frame aggregate values of a plurality of other frames; and storing, with one or more processors, the measure of distortion in memory in association with the destination video data.

[0009] Some aspects include a tangible, non-transitory, machine-readable medium storing instructions that when executed by a data processing apparatus cause the data processing apparatus to perform operations including the above-mentioned process.

[0010] Some aspects include a system, including: one or more processors; and memory storing instructions that when executed by the processors cause the processors to effectuate operations of the above-mentioned process.

BRIEF DESCRIPTION OF THE DRAWINGS

[0011] The above-mentioned aspects and other aspects of the present techniques will be better understood when the present application is read in view of the following figures in which like numbers indicate similar or identical elements:

[0012] FIG. 1 shows an example of a video distribution system in accordance with some embodiments;

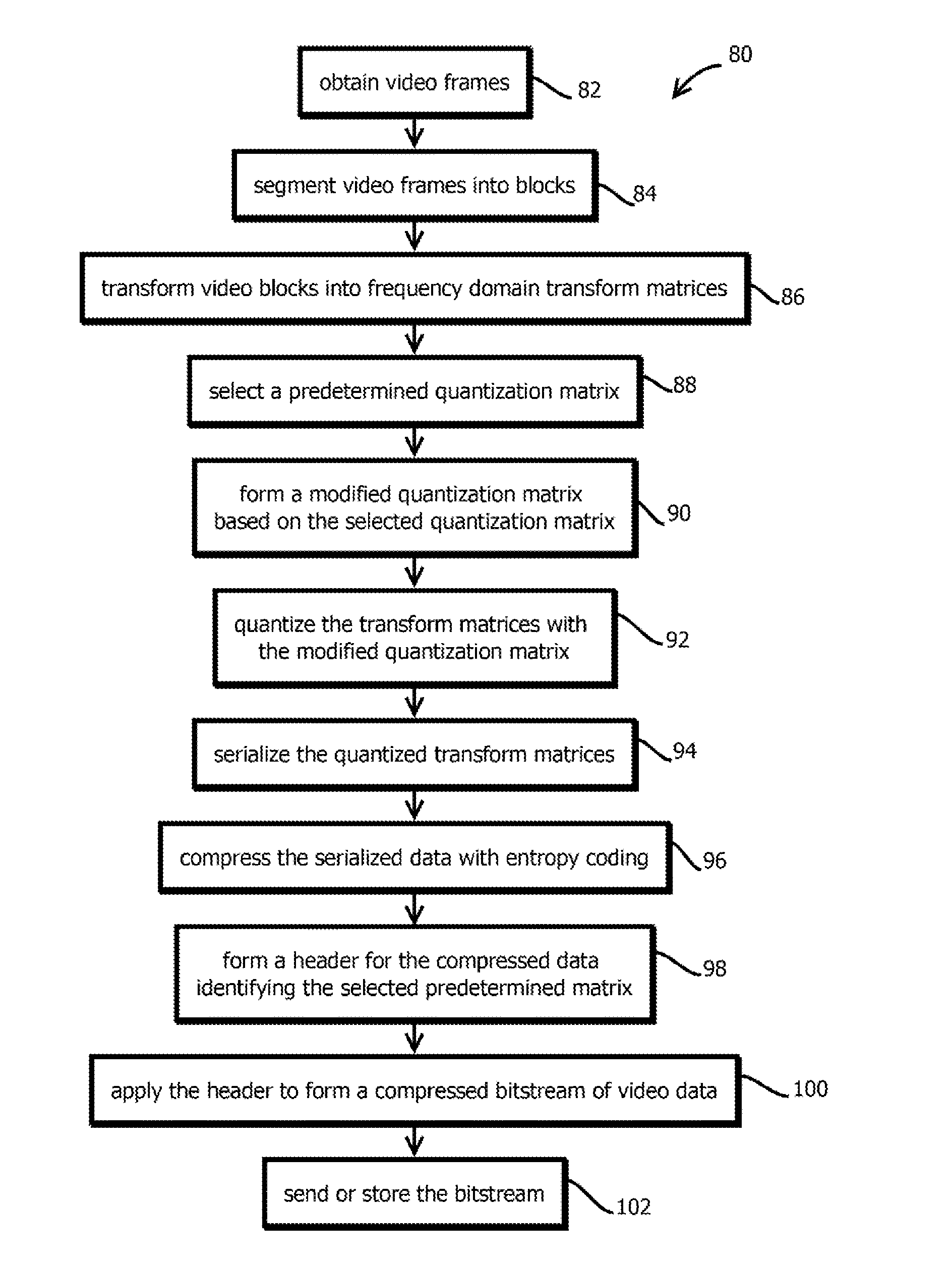

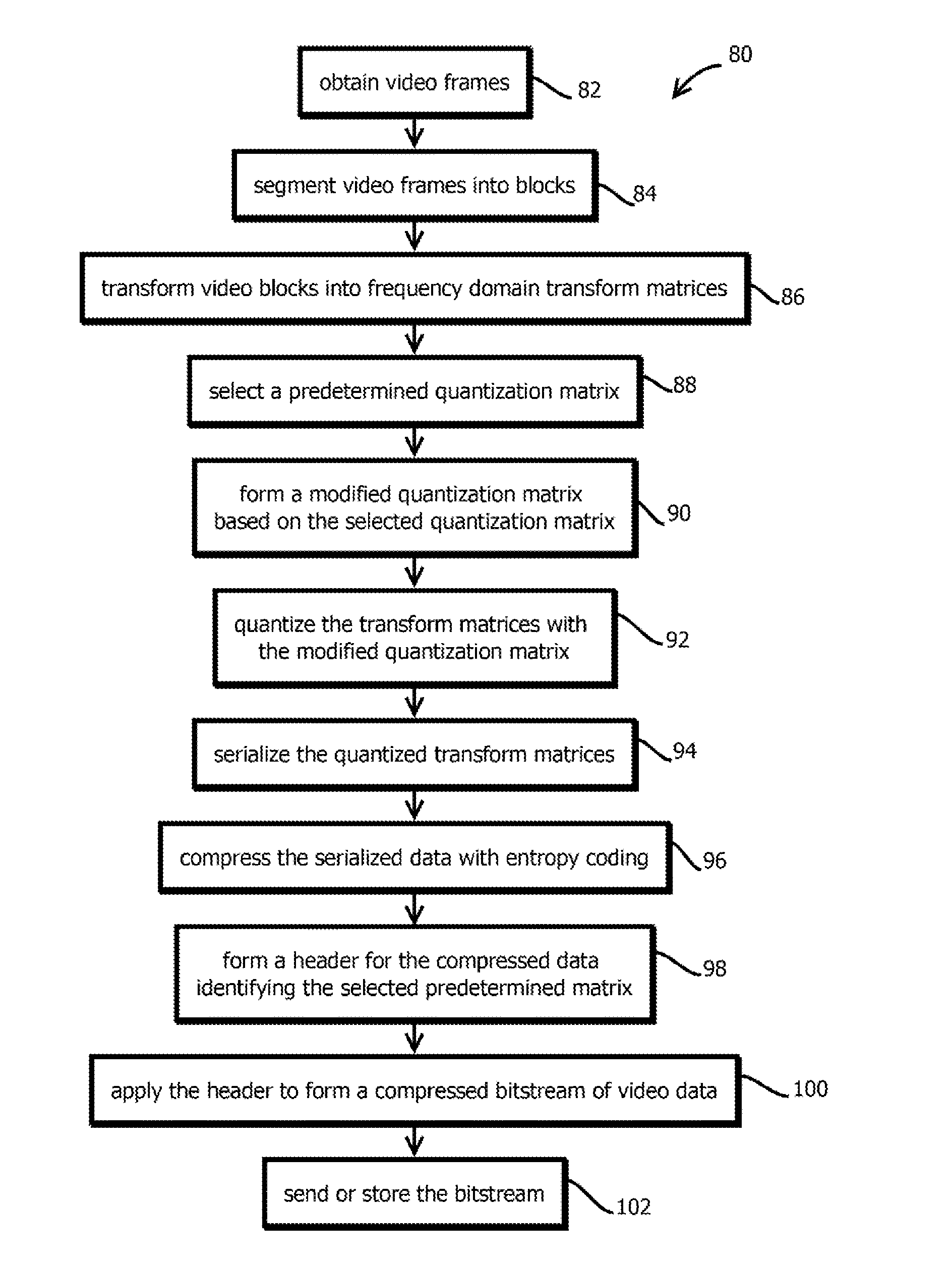

[0013] FIG. 2 shows an example of a video compression process in accordance with some embodiments;

[0014] FIG. 3 shows an example of a distortion measurement process that may serve as feedback for the above processes in accordance with some embodiments; and

[0015] FIG. 4 shows an example of a computer system by which the above processes and systems may be implemented.

[0016] While the present techniques are susceptible to various modifications and alternative forms, specific embodiments thereof are shown by way of example in the drawings and will herein be described in detail. The drawings may not be to scale. It should be understood, however, that the drawings and detailed description thereto are not intended to limit the present techniques to the particular form disclosed, but to the contrary, the intention is to cover all modifications, equivalents, and alternatives falling within the spirit and scope of the present techniques as defined by the appended claims.

DETAILED DESCRIPTION OF CERTAIN EMBODIMENTS

[0017] To mitigate the problems described herein, the inventors had to both invent solutions and, in some cases just as importantly, recognize problems overlooked (or not yet foreseen) by others in the field of data compression. Indeed, the inventors wish to emphasize the difficulty of recognizing those problems that are nascent and will become much more apparent in the future should trends in industry continue as the inventors expect. Further, because multiple problems are addressed, it should be understood that some embodiments are problem-specific, and not all embodiments address every problem with traditional systems described herein or provide every benefit described herein. That said, improvements that solve various permutations of these problems are described below.

[0018] Many image compression techniques used with video compression exhibit a "blocky" artifact, often at higher levels of compression. Often, smooth transitions between pixels in original images exhibit sudden changes at the edges of blocks in decompressed video. These and similar artifacts often serve as a constraint on the amount of compression that can be applied to a video file, causing excessive storage and network bandwidth use relative to what would be desirable with greater compression. Further, these artifacts often are distracting to users and can make it difficult to enjoy or extract information from compressed video and other content.

[0019] Some embodiments may mitigate these problems with a technique for measuring an amount of information loss in compression described below. Some embodiments may calculate a block-based measure of compression error, for instance, based on the below-described blocks of pixels. Some embodiments may calculate the measurement on eight-by-eight blocks of pixels (or other sizes noted below). An example of the technique is described in greater detail below in a series of steps and pseudocode. In some cases, the metric may be calculated and streaming video may be adjusted responsive to the metric. For example, some embodiments may adjust the various parameters described above for changing the compression parameters of a video stream or some embodiments may select between different resolutions, color bit depths, or frame rates in response to the calculated metric. In some cases, adjustments may be made on live streams or previously recorded and encoded streams.
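The feedback idea in the preceding paragraph can be illustrated with a minimal sketch. It assumes the metric is expressed in decibels (higher meaning less distortion) and that the adjustable parameter is a single integer quantization parameter in the range 0 to 51, as used by H.264. The function name `adjust_qp`, the tolerance band, and the step size are hypothetical choices for illustration and are not taken from the filing.

```python
def adjust_qp(qp, measured_db, target_db, tolerance_db=1.0, step=1,
              qp_min=0, qp_max=51):
    """Nudge a quantization parameter toward a target quality level.

    All names and defaults here are illustrative assumptions.
    A lower QP means finer quantization (higher quality, more bits).
    """
    if measured_db < target_db - tolerance_db:
        qp -= step  # too much distortion: quantize less coarsely
    elif measured_db > target_db + tolerance_db:
        qp += step  # quality to spare: compress harder
    # Keep the result inside the permitted range.
    return max(qp_min, min(qp_max, qp))
```

A caller might invoke this once per group of frames, passing in the most recent metric value, so that the encoder drifts toward the target quality without large jumps.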

[0020] Some embodiments may implement the following pseudocode process:

[0021] 1. For each 8×8 block of pixels, a block average may be calculated as

$$\mathrm{avg\_block} = \frac{1}{64} \sum_{x=0}^{7} \sum_{y=0}^{7} \mathrm{pixel}_{xy}$$

[0022] 2. Repeat step 1 for the whole frame to obtain m×n block averages, which are represented as

$$\mathrm{BAvg\_Frame} = \mathrm{avg\_block}_{m \times n}$$

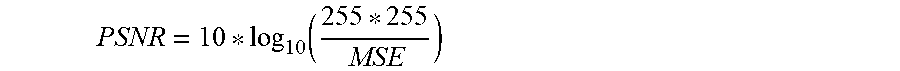

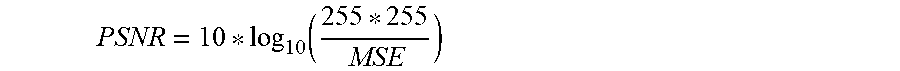

[0023] 3. An example of a loss metric, referred to as a block peak signal-to-noise ratio (BPSNR), for the source and destination frame may be calculated as

[0024] Block Mean Squared Error:

$$\mathrm{MSE} = \frac{1}{mn} \sum_{x=0}^{m-1} \sum_{y=0}^{n-1} \left( \mathrm{Source\_BAvg\_Frame}_{x,y} - \mathrm{Destination\_BAvg\_Frame}_{x,y} \right)^{2}$$

[0025] Block PSNR:

$$\mathrm{PSNR} = 10 \cdot \log_{10}\left( \frac{255 \cdot 255}{\mathrm{MSE}} \right)$$

[0026] It should be noted that:

[0027] $\mathrm{Source\_BAvg\_Frame}_{x,y}$ is the source frame of block average

[0028] $\mathrm{Destination\_BAvg\_Frame}_{x,y}$ is the destination frame of block average

[0029] For example, consider a 1080p frame, which has a pixel width of 1920 and a height of 1080. By doing the 8×8 block average, embodiments are expected to get a block-average frame of size 240 by 135 (as m = 1920/8 = 240 and n = 1080/8 = 135).
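The steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming each frame is a row-major list of rows of 8-bit sample values and that the frame dimensions are exact multiples of the block size. The function names `block_averages` and `bpsnr` are illustrative and do not come from the filing.

```python
import math


def block_averages(frame, block=8):
    """Return a 2-D list holding the mean pixel value of each block.

    Assumes (for this sketch) that the frame height and width are
    exact multiples of `block`.
    """
    height, width = len(frame), len(frame[0])
    averages = []
    for top in range(0, height, block):
        row = []
        for left in range(0, width, block):
            total = sum(
                frame[y][x]
                for y in range(top, top + block)
                for x in range(left, left + block)
            )
            row.append(total / (block * block))
        averages.append(row)
    return averages


def bpsnr(source, destination, block=8, peak=255):
    """Block PSNR in decibels between two equally sized frames."""
    src = block_averages(source, block)
    dst = block_averages(destination, block)
    count = len(src) * len(src[0])
    # Mean squared error over block averages rather than over pixels.
    mse = sum(
        (s - d) ** 2
        for src_row, dst_row in zip(src, dst)
        for s, d in zip(src_row, dst_row)
    ) / count
    if mse == 0:
        return math.inf  # identical block averages: no measurable loss
    return 10 * math.log10(peak * peak / mse)
```

For a 1920 by 1080 frame, `block_averages` would return 135 rows of 240 values, so the squared-error sum runs over 32,400 block averages instead of roughly two million pixels.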

[0030] These metrics may be used to adjust various compression parameters, including both parameters provided by compression standards described below and other parameters relevant to an independently useful invention that may be used with or without the fast encoding loss metric. This other invention is described in another patent filing sharing portions of a disclosure with this filing, but the inventors reserve the right to claim combinations of features of these techniques. In particular, the inventors of the present application have observed that certain subsets of the information in images, for example, in a frame of video, are more important for avoiding these types of artifacts than other subsets of that information, relative to the balance that is typically struck in conventional video compression. In particular, when video images (e.g., frames) are transformed into the frequency domain from the spatial domain, certain lower-frequency components appear to contribute disproportionately to the blocky artifact when the video is decompressed and displayed.

[0031] Traditional video compression algorithms are not well-suited to exploit this insight. Typically, there is a fixed (and predetermined) set of parameter settings, called quantization matrices, that specify how much low-frequency and high-frequency information is retained during lossy compression. Thus, acting on the above insight is not merely a matter of tuning existing algorithms, as the set of available balances is in a sense often "baked-in" to these algorithms.

[0032] Further complicating this issue is that it is desirable, and often commercially essential for a compression algorithm, for the existing installed base of video decoders to be able to decompress a bitstream of compressed video data. Typically, the installed base of decoders relies on the same predetermined discrete set of parameters used in compression, that is, the same discrete set of quantization matrices. As a result, engineers are often dissuaded from deviating from this predetermined discrete set of quantization matrices, because if they use a custom quantization matrix, decoders generally will not have that custom quantization matrix available to decode video, as it is outside of the predetermined set, limiting the audience and imposing burdens on those that wish to decode the video.

[0033] Some embodiments mitigate this problem by modifying some of the predetermined, discrete set of quantization matrices during compression, to decrease the amount of higher-frequency information that is retained, and then instructing decoders to use a different quantization matrix from that used to compress data to decode video bitstreams, while remaining standard compliant. As a result, for a given file size or bit rate, the above-described artifacts are expected to be reduced or eliminated, or for a given amount of artifacts being introduced, the file size or bit rate may be reduced, while remaining compliant with existing standards and decoders built to comply with those standards. That said, embodiments are not limited to systems that afford all of these benefits, as various tradeoffs are envisioned, and independently useful techniques are described herein, none of which is to suggest that any other description is limiting.

[0034] Alternatively, or additionally, some embodiments modify certain transform matrix (e.g. DCT or ADST transform matrices) values to improve the effectiveness of compression, either dynamically as noted above, or by selecting fixed values once (or some embodiments may not modify transform matrix values, as some embodiments may only implement a subset of the techniques described herein, which is not to suggest that any other description is limiting). Some embodiments modify higher-frequency amplitudes to enhance compression rates at a given level of perceived quality, or improve perceived quality at a given compression rate. In many forms of DCT or ADST, a matrix is produced, with the first row and column value being a non-oscillating (DC) value, and the adjacent values being the primary oscillating (AC) components (e.g., at progressively higher frequencies further from the DC component along rows or columns of the matrix). These oscillating components are selectively modified, in some embodiments, by reducing their granularity or setting their values to zero to enhance entropy coding in subsequent steps. (Though one down-side of this approach is that data is discarded without regard to the amplitude of a value for a given frequency, so some patterns and textures in video data may be adversely affected, which is not to suggest that this or any other approach is disclaimed.) This approach is expected to be consistent with many existing, standard-compliant decoders, thereby avoiding the need for users to reconfigure their video players or install new software, while providing files and streaming video with relatively high-quality images at relatively low-bit rates.
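One way to picture setting higher-frequency oscillating components to zero is sketched below. It assumes the transform output is a square matrix with the DC value at row 0, column 0, and it uses the sum of the row and column indices as a rough stand-in for frequency. That proxy, the function name `suppress_high_frequencies`, and the `cutoff` parameter are assumptions made for illustration, not details from the filing.

```python
def suppress_high_frequencies(coefficients, cutoff):
    """Return a copy of a square coefficient matrix in which every entry
    whose row index plus column index exceeds `cutoff` is set to zero.

    The DC value at [0][0] is always preserved when cutoff >= 0.
    Using (row + column) as the frequency measure is an assumption
    of this sketch.
    """
    return [
        [value if u + v <= cutoff else 0 for v, value in enumerate(row)]
        for u, row in enumerate(coefficients)
    ]


# Example: keep only the DC value and the two lowest anti-diagonals.
block = [
    [50, 10, 4, 1],
    [9, 5, 2, 1],
    [3, 2, 1, 1],
    [1, 1, 1, 1],
]
trimmed = suppress_high_frequencies(block, cutoff=2)
```

Because the function returns a new matrix, the original coefficients remain available for measuring how much information the suppression discarded.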

[0035] Observed results significantly reduce the "blocky" artifact, without significantly impairing the effectiveness of compression. As a result, it is expected that a given bit-rate for transmission can deliver higher fidelity data, or a given level of fidelity can be delivered at a lower bit rate. For example, the present techniques may be used for improving video broadcasting (e.g., a broadcaster that desires to compress video before distributing via satellite, e.g., from 50 megabits per second (Mbps) to 7 Mbps, may use the technique to compress further at the same quality, or offer better quality at the same bit rate), improving online video streaming or video upload from mobile devices to the same ends. Some embodiments may support a service by which mobile devices are used for fast, on-the-fly video editing, e.g., a hosted service by which video files in the cloud can be edited with a mobile device to quickly compose a video about what the user is experiencing, e.g., at a basketball game.

[0036] In some embodiments, the techniques may be implemented in software (e.g., in a video or audio codec) or hardware (e.g., encoding accelerator circuits, such as those implemented with a field programmable gate array or an application specific integrated circuit (or subset of a larger system-on-a-chip ASIC)). The process may begin with obtaining data to be compressed (e.g., a file, such as a segment of a stream, including a sequence of video frames). Examples include a raw image file or a feed from a microphone (e.g., in mono or stereo). In some embodiments, setting these values to zero, or suppressing some values with modified quantization matrices, may increase the length of consecutive zeros after serialization of the matrix, thereby enhancing the compression techniques described herein, e.g., run-length coding or dictionary compression.
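The effect of zeroed values on run-length coding can be illustrated with a short sketch. It assumes a zig-zag serialization of a square matrix along anti-diagonals, as used in JPEG-style coders, followed by a plain (value, count) run-length pass. The function names `zigzag` and `run_lengths` are illustrative, and the filing does not specify this particular serialization order.

```python
from itertools import groupby


def zigzag(matrix):
    """Serialize a square matrix along anti-diagonals, alternating direction."""
    size = len(matrix)
    order = sorted(
        ((row, col) for row in range(size) for col in range(size)),
        key=lambda rc: (
            rc[0] + rc[1],                             # which anti-diagonal
            rc[0] if (rc[0] + rc[1]) % 2 else -rc[0],  # alternate direction
        ),
    )
    return [matrix[row][col] for row, col in order]


def run_lengths(values):
    """Collapse consecutive equal values into (value, count) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]


# A block whose higher-frequency entries have been set to zero.
block = [
    [9, 4, 0],
    [3, 0, 0],
    [0, 0, 0],
]
encoded = run_lengths(zigzag(block))
# encoded == [(9, 1), (4, 1), (3, 1), (0, 6)]
```

Here the six trailing zeros collapse into a single pair, which is the kind of gain that longer zero runs are expected to give a subsequent entropy-coding stage.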

[0037] In some embodiments, different parameters described above may be selected based on whether a frame is an I-frame, a B-frame, or a P-frame (or, more generally, a reference frame or a frame described by reference to that reference frame). Some embodiments may selectively apply parameters above that produce higher quality compressed images on I-frames relative to the parameters applied to B-frames or P-frames. For instance, some embodiments may apply a higher-quality low-quality compression encoding, a higher threshold frequency for DCT matrix value injection, or a different threshold for injecting sub-block sizes for I-frames.

[0038] In some embodiments, the above techniques may be implemented in a computing environment 10 shown in FIG. 1. In some embodiments, the computing environment 10 includes a video distribution system 12 having a video compression system 14 in accordance with some embodiments of the present techniques. In some embodiments, the computing environment 10 is a distributed computing environment in which a plurality of computing devices communicate with one another via the Internet 16 and various other networks, such as cellular networks, wireless local area networks, and the like. In some embodiments, the video distribution system 12 is configured to distribute, for example, stream or download, video to mobile computing devices 18, desktop computing devices 20, and set-top box computing devices 22, or various other types of user computing devices, including wearable computing devices, in-dash automotive computing devices, seat-back video players on planes (or trains or busses), in-store kiosks, and the like.

[0039] Three user computing devices 18 through 22 are shown, but embodiments are consistent with substantially more, for example, more than 100, more than 10,000, or more than 1 million different user computing devices, in some cases, with several hundred or several thousand concurrent video viewing sessions, or more. In some embodiments, the computing devices 18 through 22 may be relatively bandwidth sensitive or memory constrained. To mitigate these challenges, in some cases, the video distribution system 12 may compress video, in some cases to a plurality of different rates of compression with a plurality of different levels of quality suitable for different bandwidth constraints. Some embodiments may select among these different versions to achieve a target bit rate, a target latency, a target bandwidth utilization, or based on feedback from the user device indicative of dropped frames. Alternatively, or additionally, in some embodiments, the video compression system 14 may be executed within one of the mobile computing devices 18, for example, to facilitate video compression before upload, for instance, on video captured with a camera of the mobile computing device 18, to be uploaded to the video distribution system 12.

[0040] The video may be any of a variety of different types of video, including user generated content, virtual reality formatted video, television shows (including 4K or 8K, high-dynamic range), movies, video of a video game rendered on a cloud-based graphical processing unit, and the like. The present techniques are described with reference to video, but some are applicable to a variety of other types of media, including audio.

[0041] In some embodiments, the video distribution system 12 includes a server 24 that may serve videos or receive uploaded videos, a controller 26 that may coordinate the operation of the other components of the video distribution system 12, an advertisement repository 28, and a user profile repository 30. In some embodiments, the controller 26 may be operative to direct the server 24 to stream compressed video content to one or more of the user computing devices 18 through 22, in some cases, dynamically selecting among different copies of different segments of a video file that has been compressed with different quality/compression-rate tradeoffs. The selection may be based upon bit rate, bandwidth usage, packet loss, or the like, for example, targeting a target bit rate median value over a trailing or future duration of the video, in some cases, switching as needed at discrete intervals, for example, every two seconds, in response to a measured value exceeding a maximum or minimum delta from the target.
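The switching behavior described above might look like the following sketch. It assumes the available copies form a list of bit rates sorted from lowest to highest, that the measured value is throughput in kilobits per second, and that the controller moves at most one step per decision interval. The function name `next_rendition`, its parameters, and the example ladder values are hypothetical and not taken from the filing.

```python
def next_rendition(index, ladder_kbps, measured_kbps, max_delta_kbps):
    """Pick the rendition index to use for the next interval.

    `ladder_kbps` is assumed to be sorted in ascending order and
    `index` is the rendition currently being streamed. The current
    rendition is kept unless the measurement deviates from its bit
    rate by more than `max_delta_kbps`.
    """
    target = ladder_kbps[index]
    if measured_kbps < target - max_delta_kbps and index > 0:
        return index - 1  # falling short of the target: step down
    if measured_kbps > target + max_delta_kbps and index < len(ladder_kbps) - 1:
        return index + 1  # comfortably above the target: step up
    return index          # within the tolerated band, or at a ladder end


# Hypothetical ladder of four copies of the same segment.
ladder = [800, 1500, 3000, 6000]
choice = next_rendition(2, ladder, measured_kbps=2000, max_delta_kbps=500)
```

Calling this once per interval (for example, every two seconds) with a fresh measurement keeps switches infrequent, since small fluctuations inside the delta band leave the selection unchanged.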

[0042] In some embodiments, the controller may be operative to recommend videos based upon user profiles in profile repository 30, and in some cases select advertisements based upon records in the advertisement repository 28. In some cases, the advertisements may be streamed before, during, or after a user-requested streamed video. Or in some cases, the video compression system 14 may have use cases in other environments, for example, in subscription supported video distribution systems that do not serve advertisements, and client-side computing devices, for example, in mobile computing device 18 to compress video before upload, in desktop computing device 20 to compress video feeds before wireless transmission to a wireless virtual reality headset, or the like. In some cases, the video compression system 14 may be executed within an Internet of things (IoT) appliance, such as a baby monitor or security camera, to compress video streaming before upload to a cloud-based video distribution system 12.

[0043] In some embodiments, each of the user computing devices 18 through 22 may include an operating system, and a video player, for example embedded within a web browser or native application. In some embodiments, the video player may include a video decoder, such as a video decoder compliant with various standards, like H.264, H.265, VP8, VP9, AOMedia Video 1 (AV1), Daala, or Thor. In some embodiments, an installation base of these video decoders may impose constraints upon the types of video compression that are commercially viable, as users are often unwilling to install new decoders until those decoders obtain wide acceptance. Some embodiments may modify existing standard-compliant compression algorithms in ways that afford even more efficient compression, while remaining standard compliant in the resulting output file, such that the existing installed base of various standard-compliant decoders may still decode and play the resulting files. (That said, embodiments are also consistent with non-standard-compliant bespoke compression techniques, which is not to imply that any other description is limiting.) Further, such compression may be achieved while offering greater quality in some traditional compression techniques, or while offering greater compression rates at a given level of quality.

[0044] In some embodiments, the video compression system 14 may include an input video file repository 32, a video encoder 34, and an output video file repository 36. In some embodiments, the video encoder may compress video files from the input video file repository 32 and store the compressed video files in the output video file repository 36. In some embodiments, a given single input video file may be stored in multiple copies, each copy having a different rate of compression in the output video file repository 36, and in some cases the controller 26 may select among these different copies dynamically during playback of a video file, for example, to target a set point bit rate (e.g., specified in user profiles). In some cases, the different segments of different copies may be associated with metadata indicating the identifier of the corresponding input video file, a position of the segment in a sequence of segments, and an identifier of the rate of compression or level of quality. In some cases, metadata in headers of video files may indicate parameters by which the videos were encoded, which may be referenced during decoding to select appropriate settings and stored values in the decoder, for example QP (quantization parameter) values that serve as identifiers for, or seed values for generating, quantization matrices. In some cases, the stored output video files may be segmented as well in time, for example, stored in two-second or five-second segments to facilitate switching, or in some cases, a single input video file may be stored as a single, and segmented, output video file, which is not to suggest that other descriptions are limiting. References to video files include streaming video, for example, in cases in which the entire video is not resident on a single instance of storage media concurrently. References to video files also include use cases in which an entire copy of a video is resident concurrently on a storage medium, for example, stored in a directory of a filesystem or as a binary blob in a database on a solid-state drive or hard disk drive.

[0045] In some embodiments, the video encoder is an H.264, H.265, VP8, VP9, AOMedia Video 1 (AV1), Daala, or Thor video encoder having been modified in the manner indicated below to selectively adjust generally higher-frequency components of a transformation matrix in a way that causes those values to tend to be zero with a higher probability than traditional standard-compliant video encoding techniques. As a result, the modified transformation matrices are expected to produce relatively long strings of zeros relatively frequently, which are expected to facilitate more efficient compression, for example with entropy coding. And in some embodiments, the resulting file may remain compliant with corresponding decoders for H.264, H.265, VP8, VP9, AOMedia Video 1 (AV1), Daala, or Thor video. Further, these techniques are expected to be extensible to future generations of video encoders.

[0046] In some embodiments, the video encoder 34 includes an image block segmenter 38, a spatial-to-frequency domain transformer 40, a quantizer 42, a quantization matrix repository 44, a matrix editor 46, a serializer 48, a quality sensor 52, and an encoder 50. In some embodiments, the matrix editor 46 may be operative to select subsets of quantized transformation matrices and set values in the subsets to zero while leaving other, unselected subsets unmodified, or modified in a different way, for example, without setting values to zero, but quantizing the values more coarsely than other values in the matrix (e.g., quantizing values to the nearest even value, while other, lower-frequency values are quantized to the nearest integer).

[0047] In some embodiments, the image block segmenter 38 is configured to segment a frame of video (or a layer of a frame) into blocks. In some embodiments, different layers of a frame may be processed through the illustrated pipeline concurrently, for example, a chrominance layer or luminance layer. In some embodiments, the image block segmenter 38 may first segment a video frame into tiles of uniform and consistent size corresponding to one or more rows in one or more columns of the frame, and then each of those tiles may be segmented into one or more blocks, for example, blocks that are 4×4 pixels, 8×8 pixels, 16×16 pixels, 32×32 pixels, or 64×64 pixels, e.g., based on an amount of entropy in the segmented region, a compression quality setting, and an amount of movement between sequential frames. In some cases, block-sizes may be dynamically adjusted with the technique described in U.S. Provisional Patent Application 62/487,785, titled VIDEO ENCODING WITH ADAPTIVE RATE DISTORTION CONTROL BY SKIPPING BLOCKS OF A LOWER QUALITY VIDEO INTO A HIGHER QUALITY VIDEO, filed 20 Apr. 2017, the contents of which are incorporated by reference.

[0048] In some embodiments, different tiles and different portions of different tiles may be segmented into different sized blocks, for example based upon an amount of uniformity of image values (e.g. various attributes of pixels, like luminance, chrominance, red, blue, green, or the like) across the tile, with more uniformity corresponding to larger blocks. In some cases, thresholds for selecting the boxes may depend upon a compression rate or quality setting applied to the frame and the video, and in some cases, the compression rate or quality setting may vary between frames, for example, based on whether the frame is an I-frame, a P-frame, or a B-frame, with higher-quality, lower-compression rates being applied to I-frames. In some cases, higher-quality, lower-compression settings may be applied also based on amount of movement between consecutive frames, with more movement corresponding to lower-quality, higher-compression rate settings. The settings may affect each of the operations in the illustrated pipeline up to (and in some cases including) encoding and serialization, in some cases.

[0049] Next, some embodiments may input each of the blocks into the spatial-to-frequency domain transformer 40. In some embodiments, the transformer is a discrete cosine transformer configured to produce a transformation matrix. In some embodiments, the transformer is an asymmetric discrete sine transformer also configured to produce an asymmetric discrete sine transform matrix. In some cases, the transform matrix may include a plurality of rows and a plurality of columns, for example, in a square matrix, and different values in the matrix may correspond to different frequency components of spatial variation in image values in the input block, for example, with a value in the first row and first column position corresponding to a DC value, a value in a first row and a second column corresponding to a first frequency of variation in a horizontal direction, and a value in a second column and the first row corresponding to a second frequency that is higher than the first frequency of variation in a horizontal direction, or vice versa, and so on, with frequency increasing monotonically across rows and columns of the transform matrix.

[0050] In some embodiments, blocks may be processed by a block-modeler that approximates the block with a prediction, such as by approximating a block with a set of uniform values (e.g., an average of the values in the block) that are uniform over the block, or by approximating the block with a linear gradient of values, for instance, that linearly vary from left to right or top to bottom, or a combination thereof, according to horizontal and vertical coefficients. Some embodiments may then determine a residual value by calculating differences in corresponding pixel positions between these predicted values and the values in the block. Some embodiments may then perform subsequent operations based on these residual values and encode the prediction in a video bitstream such that the video may be decoded by re-creating the prediction and then summing the residual value for a given pixel position with the predicted value. In some cases, the predictions may be intra-frame predictions, such as predictions based upon adjacent blocks. In some cases, the predictions may be inter-frame predictions, such as predictions based upon subsequent or previous frames, for instance predictions based upon movement of items depicted in frames, like predictions based on segments of a video frame in a different position in a previous frame that are expected to move and a position of a given block being predicted, for instance, as a camera pans from left to right or an item moves through a frame.
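The uniform-value prediction and residual described above can be illustrated with a minimal sketch. The function names `mean_prediction_residual` and `reconstruct` are hypothetical and chosen only for illustration; the sketch assumes a block given as a list of rows of pixel values and uses the block average as the prediction.

```python
def mean_prediction_residual(block):
    """Approximate a block by its average value and return (prediction, residual).

    Assumption: the prediction is a single uniform value (the block mean).
    """
    count = sum(len(row) for row in block)
    mean = sum(sum(row) for row in block) / count
    # Residual: per-pixel difference between the block and the prediction.
    residual = [[value - mean for value in row] for row in block]
    return mean, residual


def reconstruct(mean, residual):
    """Re-create the block by summing the prediction with each residual value."""
    return [[mean + r for r in row] for row in residual]


block = [[10, 12], [14, 16]]
prediction, residual = mean_prediction_residual(block)
restored = reconstruct(prediction, residual)
```

In this sketch the decoder side only needs the prediction and the residual values to recover the block, which mirrors the summation described in the paragraph above.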

[0051] In some embodiments, the transform matrix for each block may be input into the quantizer 42, which may quantize the transform matrix to produce a quantized transform matrix. In some cases, quantization may selectively suppress certain frequencies that are less likely to be perceived by a human viewer in the transform matrix or reduce an amount of resolution with which the frequencies are represented. In some embodiments, quantizing may be based upon a quantization matrix selected from the quantization matrices repository 44 and modified as described below. In some embodiments, a finite, discrete set of video encoding quality settings may each be associated with a different quantization matrix in the repository 44, and some embodiments may select a matrix based upon this setting. In some cases, a value for the setting may be stored in association with the block, the tile, or the frame, or the video file, for example in a header. In some embodiments, a quantization matrix repository like that of element 44 may be stored in a decoder of the user computing devices 18 through 22, and those user computing devices may select the corresponding matrix when decoding video based upon the setting in the header. In some embodiments, the quantization matrices are specified by (e.g., calculated based on) a QP value stored in the header that ranges from 0 to 51, with 0 corresponding to lower compression rates and higher quality, and 51 corresponding to higher compression rates and lower quality (of human perceived images in compressed video, e.g., as determined by the metrics described below with reference to quality sensor 52).

[0052] In some embodiments, the quantizer 42 accesses (e.g., retrieves from memory or calculates) a matrix that is the same size as the transform matrix and performs an element-by-element division of the transform matrix by the quantization matrix, for example, dividing the value in the first row and first column by the corresponding value in the first row and first column, and so on throughout the matrices. In some embodiments, division may produce a set of quotients in place of each of the values of the transform matrix, and some embodiments may truncate less significant digits of the quotients, for example, less significant than a threshold, or rounding off to the nearest integer, for example, rounding up, rounding down, or rounding to the nearest integer. As a result, particularly large values in the quantization matrix at a given frequency position may tend to produce relatively small quotients, which may tend to be rounded to zero. Thus, in some cases, the quantization matrix may be tuned with relatively large values corresponding to positions that correspond to frequencies that are less perceptible to the human eye, which may cause the corresponding values in the quantized transform matrix to tend toward zero (discarding their information), unless the corresponding component in the transform matrix is particularly large and sufficient to overcome the division by the quantization matrix and produce a value that rounds to a nonzero integer.
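As a minimal sketch of the element-by-element division and rounding described above, the following assumes matrices represented as lists of rows and rounding to the nearest integer. The function name `quantize` and the sample values are hypothetical and are not taken from any standard.

```python
def quantize(transform, quant):
    """Divide each transform value by the matching quantization value, then round.

    Assumption: rounding is to the nearest integer. Python's round() resolves
    exact .5 ties to the nearest even integer.
    """
    return [
        [round(t / q) for t, q in zip(t_row, q_row)]
        for t_row, q_row in zip(transform, quant)
    ]


# Illustrative 2x2 values only. The large divisor in the highest-frequency
# position (bottom right) drives that coefficient to zero.
transform = [[240.0, 31.0], [-20.0, 6.0]]
quant = [[16, 11], [12, 40]]
quantized = quantize(transform, quant)
```

Here the bottom-right quotient, 6/40, rounds to zero, while the larger low-frequency coefficients survive quantization as nonzero integers.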

[0053] In some embodiments, the matrix editor 46 may modify existing predetermined quantization matrices among a discrete set specified by a compression standard, in some cases dynamically responsive to feedback from a video file currently being compressed, such as from a stats file or from a single pass compression. In some embodiments, the matrix editor 46, in cooperation with the video encoder 34, may perform the operations of a process described below with reference to FIG. 2 to obtain and use these modified quantization matrices.

[0054] In some embodiments, the matrix editor 46 may select a base quantization matrix from which a modified quantization matrix is determined (or upon which it is based if previously formed). A base quantization matrix may be selected from among a predetermined discrete set of quantization matrices, such as those provided by various compression standards, like the compression standards mentioned herein. For example, the quantization matrix may be selected from among 52 quantization matrices provided for by the H.264 standard and specified by a quantization parameter, such as a QP value, that ranges between 0 and 51 and is expressed in a header of a compressed video bitstream. In another example, the quantization matrix may be selected from among those provided for in the H.265, VP8, VP9, AOMedia Video 1 (AV1), Daala, or Thor standards and is specified by a quantization parameter included in other types of headers in the bitstream specified by the respective standard. In some cases, the base quantization matrix may be selected with traditional techniques for selecting a quantization matrix, for example, responsive to a configuration of the video encoder specifying a quantization parameter, responsive to a single pass target bit rate quantization parameter selection routine, responsive to a double pass quantization parameter selection routine that targets a bit rate or file size, or the like.

[0055] Some embodiments may use a modified form of the base quantization matrix to quantize each transform matrix from each block of at least some video data. In some cases, this may include dynamically forming the modified quantization matrix during video compression, or some embodiments may form this matrix by accessing a previously stored version of the modified matrix. In some cases, modification may include creating a new copy of the quantization matrix or overwriting values of an existing copy.

[0056] The modified quantization matrix (that is one modified relative to a predetermined discrete set of quantization matrices specified by a video compression standard in use by the video encoder 34 and identified in a bitstream produced by the video encoder 34) may be formed with a variety of different techniques. Some embodiments may merge two predetermined quantization matrices specified by a video encoding standard, for instance, merging element-by-element some values from a lower-image-quality (and higher-compression amount) quantization matrix into corresponding positions in a higher-image-quality (and lower-compression amount) quantization matrix, such as the base matrix. Thus, values in a given position in one matrix may replace values in that position in the other matrix, for instance, changing a value in a rightmost column, lowermost row in one matrix to be equal to a value in the same position in another matrix, and so on through a variety of different positions.

[0057] To this end, some embodiments may select a lower-image-quality quantization matrix (relative to the base quantization matrix) from which values are to be merged into the higher-quality base quantization matrix. In some embodiments, the selection may be of (and responsive to determining) a quantization matrix at a fixed rank-order distance along a ranking according to image quality of a discrete set of predetermined quantization matrices provided for by a compression standard, for example, selecting a next lower-image-quality quantization matrix, or skipping to a lower-image-quality quantization matrix that is two, five, or 10 quantization matrices in a lower-image-quality direction along the ranking.

[0058] In some embodiments, the number of positions in this ranking that are "jumped" to select the lower-image-quality quantization matrix is dynamically adjusted, for example, in response to a score output by the quality sensor 52, in response to an amount of movement between frames, in response to a size of a block, in response to the ranking of the base quantization matrix, or a combination thereof, for example, a weighted combination that produces a score that is rounded to a next highest or lowest predetermined quantization matrix among a discrete set specified by a video compression standard, with some embodiments implementing logic to select a lowest-image-quality quantization matrix when the jump exceeds the predetermined range of the discrete set of quantization matrices, for example, jumping beyond the 52nd one in the ranking in some standards.

[0059] Which values are merged into the base quantization matrix may be determined with a variety of different techniques. Some embodiments may merge values that correspond to spatial domain frequencies that exceed a threshold frequency, for example, merging values into the base quantization matrix from the selected lower-image-quality quantization matrix that are in positions of greater than a threshold row or greater than a threshold column, thereby preserving lower-frequency components of the base quantization matrix, while inserting higher-frequency components of the selected lower-image-quality quantization matrix. Or some embodiments may merge values based on scan position in a scan pattern used by the below-described serializer 48, for example, merging into the base quantization matrix values in positions corresponding to greater than a threshold scan position in the lower-image-quality quantization matrix that is selected.
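The row-or-column threshold merge described above might be sketched as follows. The function name `merge_high_frequency`, the zero-based indexing, and the sample matrices are assumptions made for illustration; they do not come from any standard's tables.

```python
def merge_high_frequency(base, lower_quality, row_threshold, col_threshold):
    """Copy values from the lower-quality matrix into positions beyond a threshold.

    Assumption: indices are zero-based, and a position is merged when its row
    index exceeds row_threshold or its column index exceeds col_threshold.
    """
    merged = [row[:] for row in base]  # new copy; the base matrix is not modified
    for r, row in enumerate(lower_quality):
        for c, value in enumerate(row):
            if r > row_threshold or c > col_threshold:
                merged[r][c] = value
    return merged


# Illustrative 3x3 matrices; the lower-quality one has larger divisors.
base = [[10, 12, 14], [12, 14, 16], [14, 16, 18]]
lower_quality = [[20, 24, 28], [24, 28, 32], [28, 32, 36]]
merged = merge_high_frequency(base, lower_quality, row_threshold=1, col_threshold=1)
```

The upper-left 2×2 region keeps the base matrix's lower-frequency values, while the last row and last column take the larger values of the lower-image-quality matrix.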

[0060] Generally, the values inserted into the base quantization matrix are expected to be larger than the values that are replaced in the base quantization matrix. This is expected to increase the likelihood that information in the transform matrix at corresponding positions will be discarded, thereby increasing the likelihood of higher-frequency components being discarded during compression and increasing the amount of compression. To quantize the transform matrix, some embodiments may divide values in the transform matrix by values in corresponding positions in the modified quantization matrix (e.g., dividing the value in the first row and first column in one matrix by the value in the first row and first column in the other matrix, and so on with element-by-element divisions), and then round the resulting value to the nearest integer or round down. As a result, relatively large values in the quantization matrix may tend to cause values in the quantized version of the transform matrix to be set to zero, which is expected to enhance the effect of entropy coding by the encoder 50 described below.

[0061] Some embodiments may form the modified quantization matrix based on multiple lower-image-quality quantization matrices specified in a predetermined discrete set of a given standard. For instance, the base quantization matrix may be modified by inserting some values from a next-lower-image-quality quantization matrix in the discrete set of a standard and other values from an even-lower-quality matrix, for example, next in the ranking of quantization matrices in that set. In some cases, the insertion operations are configured such that the further along a ranking in a discrete set a selection is made to insert values, the higher the frequency of the position in the base quantization matrix of the inserted values. In some embodiments, an insertion mapping matrix may specify "jumped" amounts that indicate how many positions to jump in an image-quality ranking of the discrete set to obtain an inserted value. An example is shown below, and as indicated, a first row, rightmost column may jump four positions relative to the base quantization matrix to obtain the inserted value, while the first row, second-to-last column may jump three positions, and the first column, first row position may retain the value of the base quantization matrix.

[0062] Example jump matrix specifying composition of a modified matrix from lower-image-quality quantization matrices a specified number of positions away in a quality ranking from a base quantization matrix:

[0063] [0, 1, 1, 3, 4]

[0064] [1, 1, 2, 3, 4]

[0065] [3, 3, 4, 4, 5]

[0066] [4, 4, 4, 5, 8]
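One way the example jump matrix above could be applied is sketched below. The ranked set of matrices is a toy stand-in produced by the hypothetical helper `toy_matrix`, not a table from any compression standard, and `compose_modified` is likewise an illustrative name. Jumps past the end of the ranking are clamped to the lowest-image-quality matrix, consistent with the logic described for jumps that exceed the predetermined range.

```python
JUMPS = [
    [0, 1, 1, 3, 4],
    [1, 1, 2, 3, 4],
    [3, 3, 4, 4, 5],
    [4, 4, 4, 5, 8],
]


def toy_matrix(rank, rows=4, cols=5):
    """Hypothetical stand-in for the matrix at a given quality rank.

    Values grow with rank (lower quality) and with row and column position.
    """
    return [[(rank + 1) * (1 + r + c) for c in range(cols)] for r in range(rows)]


def compose_modified(ranked, base_index, jumps):
    """Take each position's value from the matrix `jump` ranks below the base."""
    last = len(ranked) - 1
    return [
        [ranked[min(base_index + jump, last)][r][c] for c, jump in enumerate(jump_row)]
        for r, jump_row in enumerate(jumps)
    ]


ranked = [toy_matrix(k) for k in range(12)]  # rank 0 = highest image quality
modified = compose_modified(ranked, base_index=2, jumps=JUMPS)
clamped = compose_modified(ranked, base_index=5, jumps=JUMPS)
```

With a jump of 0, the first row, first column position retains the base matrix's value, while higher-frequency positions draw from progressively lower-quality matrices.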

[0067] In some cases, a similar matrix may specify an alphabet of symbols for rounding operations, e.g., indicating whether quotients are to be rounded to the nearest integer, nearest multiple of 2, nearest multiple of 4, 8, 16, or the like. When rounding quotients after element-by-element division of the transform matrix by the quantization matrix, these values may be accessed to determine how coarsely to round. In some cases, a DC component may be rounded to the nearest integer, while other components may be rounded to a nearest even number or a nearest (e.g., nearest smaller) multiple of 4 or 8, with larger frequencies having higher values (and thus, smaller alphabets).
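A minimal sketch of position-dependent rounding follows, assuming a hypothetical `steps` matrix whose entries give the multiple to which each quotient is rounded (1 for the DC position, larger multiples at higher frequencies). The function name and sample values are illustrative only.

```python
def round_to_steps(quotients, steps):
    """Round each quotient to the nearest multiple of the step at its position.

    Assumption: rounding is to the nearest multiple, not the nearest smaller one.
    """
    return [
        [step * round(q / step) for q, step in zip(q_row, s_row)]
        for q_row, s_row in zip(quotients, steps)
    ]


quotients = [[15.3, 2.8], [-1.7, 5.1]]
steps = [[1, 2], [2, 4]]  # DC to nearest integer, highest frequency to multiple of 4
rounded = round_to_steps(quotients, steps)
```

Coarser steps at higher frequencies reduce the number of distinct symbols that can appear in those positions.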

[0068] In some embodiments, all of the values in the base quantization matrix may be modified to some degree, though the DC component may be modified by a smaller amount, for example, by less than 5%, less than 10%, or less than 20% of its original value in the discrete set of quantization matrices provided for by a standard. In some embodiments, values in the base quantization matrix may be modified according to a formula rather than according to other quantization matrices in a discrete set specified by a standard. For example, some embodiments may apply a function to each value or a subset of values in the base quantization matrix that includes as parameters the row and column position (or corresponding frequencies) of those values in the base quantization matrix. The function may also include as a parameter the value of that corresponding position in the base quantization matrix. In some embodiments, the function may output a modified value for the corresponding position in the modified quantization matrix. In some cases, the function may tend to (e.g., for more than 80 or 90% of values) increase values as frequencies increase, for example, tending to increase values as column position increases and tending to increase values as row position increases, thereby increasing the likelihood that higher-frequency components will be discarded during compression, unless those higher-frequency-components are particularly strongly exhibited in the original image or residual values. In some cases, the function may be a product of a scaling coefficient, a row position, a column position, and the value in the corresponding position in the base quantization matrix. Or in some cases, the function may scale nonlinearly, for example, applying a multiple to each value at greater than a threshold row or column position. Or in some cases, scan position in a scanning pattern used by the serializer 48 may serve the role of the row and column position in these techniques. 
In some cases, the multiple may be a square of the scan position multiplied by a scaling coefficient.
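The product-form function mentioned above (scaling coefficient × row position × column position × base value) can be sketched as follows. One-based positions are an assumption made here so that the DC position is not multiplied by zero; the function name `scale_by_position` and the sample values are hypothetical.

```python
def scale_by_position(base, coefficient):
    """Return a modified matrix: coefficient * row * column * base value.

    Assumption: row and column positions are counted from 1.
    """
    return [
        [coefficient * (r + 1) * (c + 1) * value for c, value in enumerate(row)]
        for r, row in enumerate(base)
    ]


base = [[16, 11], [12, 14]]
modified = scale_by_position(base, coefficient=1.0)
```

With a coefficient of 1.0 in this sketch, the DC value is unchanged and the values grow with row and column position, so higher-frequency components are more likely to quantize to zero.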

[0069] In some embodiments, the modified quantization matrix may be determined (for example selected among a plurality of previously calculated quantization matrices or dynamically formed) in response to various signals. In some embodiments, the modified quantization matrix may be changed between blocks within a tile of a frame (e.g. a row tile or a column tile, each containing a plurality of blocks, which in some cases may be concurrently processed during encoding or decoding, such that two or more tiles are at least partially processed at the same time). In some cases, different modified quantization matrices (that are modified relative to a predetermined discrete set of quantization matrices provided for by a compression standard otherwise being applied) may be applied to different blocks within a segment of a frame (e.g., as specified in a bitstream to identify subsets of a frame (like a list of blocks) subject to similar parameters or the same parameters). In some cases, different modified quantization matrices may be applied to different blocks in different frames, for example, to the same block in the same position in different consecutive frames. In some cases, the selection of the modified quantization matrix may be made based on whether a frame is an I-frame, a B-frame, a P-frame, or other type of frame that distinguishes between reference frames and frames that are described with reference to those reference frames.

[0070] In some embodiments, the amount of the above-described jumps in rankings according to quality of discrete sets of quantization matrices specified by standards, or the above-described scaling coefficients, may be determined based upon a feedback score, for instance, from the below-described quality sensor 52. In some cases, these values by which quantization matrices are determined may be modulated to decrease an amount of difference between a target bit rate and a predicted or actual bit rate of compressed video produced by the encoder 50. In some cases, predictions may be produced by accessing a stats file determined during an initial pass through video to be compressed, such that forward-looking predictions may be made based on parameters, such as amounts of movement in video, amounts of entropy in images, and the like. In some cases, these values may be modulated to decrease an amount of difference between a predicted or currently exhibited file size of compressed video. In some cases, these values may be modulated to decrease an amount of difference between a predicted or currently exhibited target measure of difference between original images prior to compression in frames and decompressed versions of those images, for instance with peak signal-to-noise ratio or block peak signal-to-noise ratio scores like those described below. In some embodiments, values by which the modified quantization matrix is formed may be modulated in response to a score that is a combination of one or more of these types of feedback, such as a weighted sum of these different types of differences. Or in some cases, the score may be a nonlinear combination of these different types of distances between targets and measured values, for instance, measures of difference between images pre-and-post compression may dominate the score when those differences exceed some amount, for instance, based on a square of these differences or other higher-order contribution.

[0071] Some embodiments may further modify the quantized transform matrix to increase the amount of zero values in the quantized transform matrix in areas that are less perceptible to the human eye while having a relatively large effect on the rate of compression. Thus, some embodiments may set certain values to zero that the quantization matrix (which may be specified by a value in a header of a block, tile, layer, frame, or file) would not otherwise cause to be zero. In some cases, the highest-frequency values or higher-frequency values of the quantized transform matrix may be set to zero with the techniques described in U.S. Provisional Patent App. 62/513,681, titled MODIFYING COEFFICIENTS OF A TRANSFORM MATRIX, filed 1 Jun. 2017, which is incorporated by reference. This is expected to further enhance compression resulting from subsequent entropy coding operations, or some embodiments may omit this operation, which is not to suggest that any other operation or feature may not also be omitted.

[0072] In some embodiments, parameters may be dynamically adjusted, for example, within a frame, between frames, or between blocks or tiles responsive to feedback from a quality sensor 52. In some embodiments, the quality sensor 52 may be configured to compare the input video file to an output compressed video file (which includes a streaming portion thereof), in some cases decoding the encoded video files and performing a pixel-by-pixel comparison, or a block-by-block comparison, and calculating an aggregate measure of difference, for example, a root mean square difference, mean absolute error, a signal-to-noise ratio, such as a peak signal-to-noise ratio (PSNR) value, or a block-based signal-to-noise ratio, such as a BPSNR value as described in U.S. patent application 62/474,350, titled FAST ENCODING LOSS METRIC, filed 21 Mar. 2017, the contents of which are hereby incorporated by reference. For instance, some embodiments may increase the threshold frequency (moving the values set to zero to the right and down) in response to BPSNR increases, e.g., dynamically while streaming or while encoding video, for instance between frames or during frames. In some embodiments, the quality sensor 52 may execute various algorithms to measure psychophysical attributes of the output compressed video file, for example, a mean opinion score (MOS), and those specified in ITU-R Rec. BT.500-11 (ITU-R, 1998) and ITU-T Rec. P.910 (ITU-T, 1999), like Double Stimulus Continuous Quality Scale (DSCQS), Single Stimulus Continuous Quality Evaluation (SSCQE), Absolute Category Rating (ACR), and Pair Comparison (PC). In some cases, video files may be compressed, measured, and re-compressed based on feedback, e.g., by interfacing a video terminal to the quality sensor 52 and providing a user interface by which human subjects enter values upon which the feedback is based, or some embodiments may simulate the input of human subjects, e.g., by training a deep convolutional neural network on a training set of previous scores supplied by humans and the corresponding stimulus with stochastic gradient descent or various other deep learning techniques.
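Of the aggregate measures listed above, the conventional pixel-by-pixel PSNR can be sketched briefly. This is the standard definition, 10·log10(MAX²/MSE), and not the BPSNR metric of the incorporated application. The function name `psnr` and the assumption of 8-bit pixel values (peak of 255) are illustrative.

```python
import math


def psnr(source, decoded, peak=255):
    """Peak signal-to-noise ratio in dB between two equally sized frames.

    Assumption: frames are lists of rows of pixel values with maximum `peak`.
    """
    squared_errors = [
        (s - d) ** 2
        for s_row, d_row in zip(source, decoded)
        for s, d in zip(s_row, d_row)
    ]
    mse = sum(squared_errors) / len(squared_errors)
    if mse == 0:
        return math.inf  # identical frames: no distortion
    return 10 * math.log10(peak ** 2 / mse)


source = [[100, 100], [100, 100]]
decoded = [[101, 99], [100, 100]]
score = psnr(source, decoded)
```

Higher scores indicate less distortion, so a feedback loop of the kind described above could treat a rising score as headroom for more aggressive quantization.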

[0073] In some embodiments, the parameters may be adjusted to target an output attribute of the compressed video file, such as a set point bit rate, for example, over a trailing duration of time, like an average bit rate over a trailing 20 seconds or 30 seconds. In some embodiments, the parameters described above may be adjusted along with a plurality of other attributes of the video encoding algorithm in concert to target such values. In some embodiments, the parameters described above may be adjusted based upon a weighted combination of the output of the quality sensor 52 indicative of quality of the compressed video and a target bit rate. For example, some embodiments may calculate a weighted sum of these values, and adjust the parameters described above in response to determining that the difference between the weighted sum and a target value exceeds a maximum or minimum. In some embodiments, proportional, proportional-integral, or proportional-integral-derivative feedback control may be exercised over the threshold applied by the matrix editor 46 responsive to this weighted sum.
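By way of a hedged example, proportional-integral-derivative control over the threshold might be sketched as follows. The class and function names, the gains, the weights, the clamping range, and the sign convention (a weighted sum above the target raises the threshold) are all assumptions chosen for illustration; the description above does not specify them.

```python
class PIDController:
    """Textbook discrete PID controller (gains are hypothetical)."""

    def __init__(self, kp, ki, kd):
        self.kp = kp
        self.ki = ki
        self.kd = kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt=1.0):
        """Return the control output for the latest error sample."""
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0  # no previous sample yet
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative

def weighted_score(quality, bit_rate, w_quality, w_rate):
    """Weighted sum of the quality-sensor output and the measured bit rate."""
    return w_quality * quality + w_rate * bit_rate

def adjust_threshold(threshold, controller, score, target, lowest=0.0, highest=7.0):
    """Move the threshold by the controller output, clamped to an assumed range."""
    error = score - target
    return max(lowest, min(highest, threshold + controller.update(error)))

# Example: a proportional-only controller nudging a threshold of 4.0.
score = weighted_score(quality=40.0, bit_rate=2.0, w_quality=0.5, w_rate=5.0)
new_threshold = adjust_threshold(4.0, PIDController(0.5, 0.0, 0.0), score, target=28.0)
```

Setting `ki` and `kd` to zero yields proportional control, and setting only `kd` to zero yields proportional-integral control, so one class covers the three variants mentioned above.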

[0074] In some embodiments, the quantizer 42 outputs a quantized transform matrix where more of the values are zero relative to traditional techniques, and in some cases, some of the values have been reduced in their resolution, for example, transforming the values from a first alphabet having a first number of symbols to a second alphabet having a second number of symbols that is smaller than the first number of symbols, for example, using only even values or only odd values. As a result, the distribution of occurrences of particular symbols in the modified quantized transform matrix may be tuned to enhance the effectiveness of entropy encoding, where relatively frequent symbols are represented with smaller numbers of bits than less frequent symbols.
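The effect of reducing the alphabet can be illustrated with a short sketch that maps values to even integers and compares the Shannon entropy of the symbol distribution before and after. The rounding rule (toward zero) and the helper names are assumptions for the example; other mappings to a smaller alphabet would serve equally well.

```python
import math
from collections import Counter

def to_even(value):
    """Map an integer to the nearest even integer toward zero (assumed rule)."""
    if value >= 0:
        return value - (value % 2)
    return value + ((-value) % 2)

def entropy_bits(symbols):
    """Shannon entropy, in bits per symbol, of the empirical distribution."""
    counts = Counter(symbols)
    total = len(symbols)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

# Hypothetical quantized values before and after the alphabet reduction.
original_values = [0, 1, 2, 3]
reduced_values = [to_even(v) for v in original_values]

bits_before = entropy_bits(original_values)  # four equally likely symbols
bits_after = entropy_bits(reduced_values)    # two equally likely symbols
```

Lower entropy means an ideal entropy coder needs fewer bits per symbol on average, which is the tuning effect described above.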

[0075] The quantized transform matrix may be input to the serializer 48, which may apply one of various scan patterns to convert the modified quantized transform matrix into a sequence of values, for instance, placing the values of the modified quantized transform matrix into an ordered list according to the scan pattern, e.g., loading the values to a first-in-first-out buffer that feeds the encoder 50.

[0076] For serialization, some embodiments may select a scan pattern that tends to increase the number of consecutive zeros in the resulting sequence of values to enhance the efficiency of entropy encoding by the encoder 50. In some embodiments, the scan pattern has a "Z" shape starting with a DC component, for example, in an upper-left corner of the quantized transform matrix and then moving diagonally back and forth across the quantized transform matrix, for example, from the second column-first row, to the first column-second row, and then to the first column-third row, then to the second column-second row, and then to the third column-first row, and so on, moving in diagonal lines back and forth, rastering diagonally across the quantized transform matrix from the DC value in one corner to a value in an opposing corner.
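A minimal sketch of the diagonal back-and-forth scan described above follows, assuming a square matrix stored as a list of rows. The function names are hypothetical, and the example matrix values are invented to show how trailing zeros cluster at the end of the sequence.

```python
def zigzag_order(n):
    """Return (row, column) positions of an n-by-n matrix in zigzag order."""
    order = []
    for s in range(2 * n - 1):  # s indexes the anti-diagonal where row + column == s
        diagonal = [(r, s - r) for r in range(n) if 0 <= s - r < n]
        if s % 2 == 0:
            diagonal.reverse()  # even diagonals run from lower-left to upper-right
        order.extend(diagonal)
    return order

def serialize(matrix):
    """Flatten a square matrix into a list following the zigzag scan."""
    return [matrix[r][c] for r, c in zigzag_order(len(matrix))]

def deserialize(values, n):
    """Reverse the scan, rebuilding the n-by-n matrix from the sequence."""
    matrix = [[0] * n for _ in range(n)]
    for value, (r, c) in zip(values, zigzag_order(n)):
        matrix[r][c] = value
    return matrix

# Hypothetical quantized transform matrix with the DC value in the upper-left.
quantized = [[9, 3, 0],
             [2, 1, 0],
             [1, 0, 0]]
sequence = serialize(quantized)
```

Because the nonzero values sit near the DC corner, the scan places them first and leaves a single run of zeros at the end of `sequence`.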

[0077] In another example, the scan pattern may swing back and forth in a non-linear path through some back-and-forth movements. For example, some diagonal swings back or forth may only transit a portion of that diagonal, thereby imparting a curved shape to subsequent swings back or forth that remain adjacent to a previous diagonal scan back or forth across the quantized transform matrix. In some cases, these partial diagonal scans back or forth may be biased, for example, above or below the diagonal between the position of the DC component in the quantized transform matrix and the opposing corner. In some cases, a bias may be selected based upon a type of spatial-to-frequency domain transform performed, for example, based upon whether a DCT is applied or an ADST is applied.

[0078] Next, some embodiments may compress the sequence of values produced by scanning according to the scan pattern with the encoder 50. In some embodiments, the encoder 50 is an entropy coder. In some embodiments, the encoder 50 is configured to apply Huffman coding, arithmetic coding, context adaptive binary arithmetic coding, range coding, or the like (which is not to suggest that this list of items describes mutually exclusive designations or that any other list herein does, as some list items may be species of other list items). Some embodiments may determine the frequency with which various sequences occur within the sequence of values and construct a Huffman tree according to the frequencies, or access a Huffman tree in memory formed based upon expected frequencies, to convert relatively long, but frequent, sequences in the sequence of values output by the serializer 48 into relatively short sequences of binary values, while converting relatively infrequent sequences of values output by the serializer 48 into longer sequences of binary values. In some embodiments, the decoder in the user computing devices 18 to 22 may access another copy of the Huffman tree to reverse the operation, traversing the Huffman tree based upon each value in the binary sequence output by the encoder 50 until reaching a leaf node, which may be mapped in the Huffman tree to a corresponding sequence of values output by the serializer 48. When decoding, the sequence of values may be de-serialized by reversing the scan pattern, de-quantized by performing a value-by-value multiplication with the quantization matrix designated in a header of the video file, and transformed back to the spatial domain by reversing the transform to reconstruct images in frames.
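The Huffman coding option may be sketched as follows, under simplifying assumptions: each symbol is a single value rather than a sequence of values, the tree is built from observed frequencies, and decoding uses a lookup table derived from the tree in place of an explicit traversal to a leaf node (the two are equivalent for a prefix-free code). All names are hypothetical.

```python
import heapq
from collections import Counter

def build_huffman_codes(symbols):
    """Build a symbol -> bit-string table from observed symbol frequencies."""
    counts = Counter(symbols)
    # Heap entries are (frequency, tie_breaker, tree). A leaf is a 1-tuple
    # (symbol,) and an internal node is a 2-tuple (left, right).
    heap = [(freq, i, (sym,)) for i, (sym, freq) in enumerate(counts.items())]
    heapq.heapify(heap)
    if len(heap) == 1:
        return {heap[0][2][0]: "0"}  # degenerate single-symbol input
    next_id = len(heap)
    while len(heap) > 1:
        f1, _, t1 = heapq.heappop(heap)
        f2, _, t2 = heapq.heappop(heap)
        heapq.heappush(heap, (f1 + f2, next_id, (t1, t2)))
        next_id += 1
    codes = {}

    def walk(tree, prefix):
        if len(tree) == 1:  # leaf
            codes[tree[0]] = prefix
        else:
            walk(tree[0], prefix + "0")
            walk(tree[1], prefix + "1")

    walk(heap[0][2], "")
    return codes

def encode(symbols, codes):
    """Concatenate the code of each symbol into one bit string."""
    return "".join(codes[s] for s in symbols)

def decode(bits, codes):
    """Recover the symbols; relies on the code being prefix-free."""
    inverse = {code: sym for sym, code in codes.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in inverse:
            out.append(inverse[current])
            current = ""
    return out

# Hypothetical serialized values dominated by zeros.
serialized = [0, 0, 0, 0, 0, 3, 0, 0, 1, 0]
table = build_huffman_codes(serialized)
bitstream = encode(serialized, table)
```

In this example the frequent value 0 receives a one-bit code while the rare values 3 and 1 receive two-bit codes, so the ten values occupy twelve bits.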

[0079] In some embodiments, the bitstream output by the encoder 50 may be stored in the output video file repository 36, in some cases combining different bitstreams corresponding to different layers of a frame and combining different frames together into a file format, and in some cases appending header information indicating how to decode the file.

[0080] The operation of the matrix editor 46 is described above as interfacing with the quantizer 42, but similar techniques may be applied elsewhere within the pipeline of video encoding implemented by the video encoder 34. For example, image blocks may be modified before being applied to the spatial-to-frequency domain transformer 40. Some embodiments may apply a low-pass or band-pass filter to variation in image values in the spatial domain, for example, horizontally, or vertically, or a combination thereof, across the image block. For example, some embodiments may apply a convolution that sets each image value (e.g., a pixel value at a layer of a frame) to the mean of that image value, the image value to the left, and the image value to the right along a row; or the mean may be based on those image values left, right, above, and below, or based on each adjacent pixel image value (or those within a threshold number of positions in the spatial domain) to implement an example of a low-pass filter applied before performing the spatial-to-frequency domain transform, thereby suppressing higher-frequency components.
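The row-wise mean described above may be sketched as a three-tap filter. Edge handling is not specified in the description; this example assumes that pixels at the ends of a row are averaged over the neighbors that exist. The function names and sample values are hypothetical.

```python
def smooth_row(row):
    """Replace each value with the mean of itself and its left and right neighbors."""
    smoothed = []
    for i in range(len(row)):
        window = row[max(0, i - 1):i + 2]  # shorter at the row ends (assumed handling)
        smoothed.append(sum(window) / len(window))
    return smoothed

def smooth_block(block):
    """Apply the horizontal three-tap mean to every row of an image block."""
    return [smooth_row(row) for row in block]

# A row alternating between 0 and 9 carries mostly high-frequency content.
filtered = smooth_row([0, 9, 0, 9])
```

The alternating input swings by 9 between neighbors, while `filtered` swings by at most 3, showing the suppression of high-frequency variation before the transform.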

[0081] In another example, the transform matrix may be modified by the matrix editor before being quantized by the quantizer 42, for example, setting values to zero in the manner described above or setting values to even or odd integer multiples of corresponding values at corresponding positions of the quantization matrix to reduce the granularity of certain values. (Code may perform a division-by-zero check before dividing these values by the corresponding value in the quantization matrix and leave zero values as zero to avoid division-by-zero errors.)
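One possible reading of this pre-quantization edit is sketched below: a transform value is snapped to the nearest even integer multiple of the corresponding quantization value, and the subsequent division is guarded against zero divisors. Treating a zero quantization entry as producing a zero output is an assumption, as are the function names and the sample matrices.

```python
def snap_to_even_multiple(value, step):
    """Round value to the nearest even integer multiple of step (assumed rule)."""
    if step == 0:
        return 0  # guard: nothing to snap to
    return 2 * step * round(value / (2 * step))

def quantize(transform, qmatrix):
    """Divide element-wise and round, leaving zero where the divisor is zero."""
    quantized = []
    for t_row, q_row in zip(transform, qmatrix):
        quantized.append([0 if q == 0 else round(t / q) for t, q in zip(t_row, q_row)])
    return quantized

# A transform value of 50 with a quantization value of 8 snaps to 48 (6 x 8).
snapped = snap_to_even_multiple(50, 8)
levels = quantize([[snapped, 5], [0, 7]], [[8, 0], [4, 3]])
```

After snapping, the quantized level at that position is always even, which halves the number of distinct symbols the entropy coder may encounter there.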

[0082] In another example, the matrix editor may operate upon the output of the serializer 48, for example, accessing the scan pattern to determine which values in a sequence of values are to be modified.

[0083] FIG. 2 shows an example of a process 80 that may be implemented by the above-described video encoder, but is not limited to embodiments having those features, which is not to suggest that any other description is limiting. In some embodiments, the operations by which the process 80 (and the other functionality described herein) is effectuated may be encoded as computer readable instructions stored in a tangible, non-transitory, machine-readable medium, such that when those instructions are executed by one or more processors, the operations described herein are effectuated. In some cases, some or all of the steps of the process 80 may be hardcoded into a hardware accelerator, such as a video encoder hardware accelerator application specific integrated circuit or a functional block of a processor or system-on-a-chip. In some embodiments, some of the operations of the process 80 may be omitted, replicated, executed in a different order, executed concurrently, or otherwise varied, which is not to suggest that any other description is limiting.

[0084] In some embodiments, the process 80 begins with obtaining video frames, as indicated by block 82, and then segmenting video frames into blocks, as indicated by block 84. In some embodiments, the process 80 further includes transforming video blocks into frequency domain transform matrices, as indicated by block 86. In some cases, this may further include performing the above-described operations by which predictions of blocks are determined and residuals are calculated, and those residuals may be subject to the transformation of block 86, rather than the original pixel values (though it should be noted that residuals are a type of pixel value).

[0085] Next, some embodiments may select a predetermined quantization matrix, as indicated by block 88, and form a modified quantization matrix based on the selected quantization matrix, as indicated by block 90. Some embodiments may then quantize the transform matrices with the modified quantization matrix, as indicated by block 92.

[0086] Some embodiments may then serialize the quantized transform matrices, as indicated by block 94, and compress the serialized data with entropy coding, as indicated by block 96. Some embodiments may then form a header for the compressed data identifying the selected predetermined matrix, as indicated by block 98. In some cases, headers may be compressed or uncompressed portions of a video bitstream. In some cases, the header may be associated with, for example, serve as a prefix in a bitstream of, a compressed portion of data containing data to which the header applies. In some cases, the header may include various parameters by which a decoder may reverse some or all of the above-described operations to re-create frames (which includes re-creating approximations thereof when using lossy compression algorithms) to decode and display compressed video. In some cases, the headers include a parameter that identifies a quantization matrix purported to (according to the standard and header values) have been used during compression. And in some cases, decoders on a client computing device may play back video by accessing the identified quantization matrix to reverse the above-described quantization operations (to the extent possible, as some information may be discarded during the quantization process). In some cases, the quantization parameter may identify one of the quantization matrices in a discrete set specified by a given standard, which may also be identified in the bitstream. For instance, a quantization parameter may be a value from 0 to 51 that identifies one of a discrete set of 52 quantization matrices that vary in tradeoffs between image quality and compression amounts.

[0087] As a result of the above described modifications to the quantization matrix that is identified in the header, which may be the base quantization matrix, the decoder may be instructed by the header to use a different quantization matrix to decode a given block than was used to encode that block. For instance, a decoder may use the base quantization matrix to decode a given block, while the given block was encoded with a modified version of that base quantization matrix, e.g., where some higher-frequency component values are different (such as larger) from that used by the decoder. As a result, in some cases, the block of data may be compressed by a greater amount than is provided for by the base quantization matrix due to the insertion of values described above, while the lower-frequency components of information may be retained, and the standard-compliant decoder may have information sufficient to process and decode and play the compressed data.
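The mismatch between the encoding and decoding matrices can be illustrated with a small worked sketch. The rule used to form the modified matrix (multiplying entries at or beyond an assumed diagonal threshold by a large factor), the 2-by-2 matrix size, and all numeric values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def modify_matrix(base, threshold, factor):
    """Enlarge entries whose row + column index reaches the threshold (assumed rule)."""
    return [[q * factor if r + c >= threshold else q for c, q in enumerate(row)]
            for r, row in enumerate(base)]

def quantize(coefficients, qmatrix):
    """Encoder side: element-wise division followed by rounding."""
    return [[round(v / q) for v, q in zip(v_row, q_row)]
            for v_row, q_row in zip(coefficients, qmatrix)]

def dequantize(levels, qmatrix):
    """Decoder side: element-wise multiplication by the quantization matrix."""
    return [[level * q for level, q in zip(l_row, q_row)]
            for l_row, q_row in zip(levels, qmatrix)]

base_matrix = [[16, 11], [12, 14]]      # identified in the header
coefficients = [[160, 33], [-24, 28]]   # hypothetical transform coefficients

modified_matrix = modify_matrix(base_matrix, threshold=2, factor=100)
levels = quantize(coefficients, modified_matrix)  # encoder uses the modified matrix
decoded = dequantize(levels, base_matrix)         # decoder uses the base matrix
```

Here the three lower-frequency coefficients are recovered exactly by the decoder, while the highest-frequency coefficient is quantized to zero, which lengthens zero runs without requiring any change to the decoder.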

[0088] Some embodiments may apply the header to form a compressed bitstream of video data, as indicated by block 100, and then send or store the bitstream, as indicated by block 102. In some cases, sending the bitstream may include streaming the bitstream to one or more of the above described computing devices 18, 20, or 22. Streaming may include determining whether user credentials associated with those devices are associated with valid subscriptions, or may include selecting one or more advertisements to be sent to the user computing devices 18, 20, or 22 for presentation to users in association with the streamed video, for instance, before or after the streamed video.

[0089] In some cases, different versions (at different compression rates) of a file may be processed concurrently, temporal segments of a video file may be processed concurrently, frames may be processed concurrently, different tiles may be processed concurrently, different blocks may be processed concurrently, or different layers within a frame may be processed concurrently, or a combination thereof.