Data Processing Method, System, and Apparatus

ZHANG; Jiajin ; et al.

U.S. patent application number 16/369102 was filed with the patent office on 2019-07-18 for data processing method, system, and apparatus. The applicant listed for this patent is HUAWEI TECHNOLOGIES CO., LTD.. Invention is credited to Pak-Ching LEE, Matt M.T. YIU, Jiajin ZHANG.

| Application Number | 20190220356 16/369102 |

| Document ID | / |

| Family ID | 61763162 |

| Filed Date | 2019-07-18 |

View All Diagrams

| United States Patent Application | 20190220356 |

| Kind Code | A1 |

| ZHANG; Jiajin ; et al. | July 18, 2019 |

Data Processing Method, System, and Apparatus

Abstract

A data processing method is disclosed, and the method includes: encoding a data chunk of a predetermined size, to generate an error-correcting data chunk corresponding to the data chunk, where the data chunk includes a data object, and the data object includes a key, a value, and metadata; and generating a data chunk index and a data object index, where the data chunk index is used to retrieve the data chunk and the error-correcting data chunk corresponding to the data chunk, the data object index is used to retrieve the data object in the data chunk, and each data object index is used to retrieve a unique data object.

| Inventors: | ZHANG; Jiajin; (Shenzhen, CN) ; YIU; Matt M.T.; (Hong Kong, CN) ; LEE; Pak-Ching; (Hong Kong, CN) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 61763162 | ||||||||||

| Appl. No.: | 16/369102 | ||||||||||

| Filed: | March 29, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| PCT/CN2017/103678 | Sep 27, 2017 | |||

| 16369102 | ||||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 12/0246 20130101; G06F 3/0619 20130101; G06F 12/02 20130101; G06F 2212/7207 20130101; G06F 3/0644 20130101; G06F 11/1076 20130101; G06F 3/0673 20130101 |

| International Class: | G06F 11/10 20060101 G06F011/10; G06F 3/06 20060101 G06F003/06 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Sep 30, 2016 | CN | 201610875562.X |

Claims

1. A data management method, comprising: encoding a data chunk of a predetermined size, to generate an error-correcting data chunk corresponding to the data chunk, wherein the data chunk comprises a data object, and the data object comprises a key, a value, and metadata; and generating a data chunk index and a data object index, wherein the data chunk index is used to retrieve the data chunk and the error-correcting data chunk corresponding to the data chunk, the data object index is used to retrieve the data object in the data chunk, and each data object index is used to retrieve a unique data object.

2. The data management method according to claim 1, wherein before the encoding a data chunk of the predetermined size, to generate the error-correcting data chunk corresponding to the data chunk, the method further comprises: storing the data object in the data chunk of the predetermined size, wherein the data chunk of the predetermined size is located in a first storage device; and correspondingly, after the step of the encoding the data chunk of the predetermined size, to generate the error-correcting data chunk corresponding to the data chunk, the method further comprises: storing the error-correcting data in a second storage device, wherein the first storage device and the second storage device are located at different locations in a distributed storage system.

3. The data management method according to claim 2, wherein the data chunk index comprises a stripe list ID, a stripe ID, and location information; the stripe list ID is used to uniquely determine one of a plurality of storage device groups in the distributed storage system, and the storage device group comprises a plurality of first devices and a plurality of second devices; the stripe ID is used to determine a sequence number of an operation of storing the data chunk and the error-correcting data chunk corresponding to the data chunk in the storage device group indicated by the stripe list ID; and the location information is used to determine the first storage device that is in the storage device group determined based on the stripe list ID and in which the data chunk is located, and the second storage device that is in the storage device group determined based on the stripe list ID and in which the error-correcting data chunk is located.

4. The data management method according to claim 3, wherein the generating the data chunk index and the data object index comprises: generating the data chunk index and the data object index in the first storage device; and generating the data chunk index and the data object index in the second storage device.

5. The data management method according to claim 2, wherein before the storing the data object in the data chunk of the predetermined size, the method further comprises: selecting one first storage device and one or more second storage devices; and separately sending the data object to the first storage device and the second storage device.

6. The data management method according to claim 5, wherein the first storage device stores the data object in the data chunk; and the method further comprises: when a size of the data object in the data chunk approximates or equals a storage limit of the data chunk, stops writing a new data object into the data chunk, and sending, to the second storage device, key values of data objects stored in the data chunk; and wherein the second storage device receives the key values, sent by the first storage device, of the data objects in the data chunk, the second storage device reconstructs the data chunk in the second storage device based on the key values of the data objects, and the second storage device encodes the reconstructed data chunk.

7. The data management method according to claim 5, wherein the method further comprises: when the data chunk comprises a plurality of data objects: determining whether a size of a to-be-stored data object is less than a threshold; and storing and encoding the to-be-stored data object whose size is less than the threshold.

8. A data management method, comprising: selecting one storage site and a plurality of backup sites for a to-be-stored data object, wherein the storage site and the plurality of backup sites have a same stripe list ID; sending the to-be-stored data object to the storage site and the plurality of backup sites, wherein the data object comprises metadata, a key, and a value; in the storage site, storing the to-be-stored data object in a data chunk that has a constant size and that is not encapsulated, adding data index information of the to-be-stored data object to a data index, wherein the data index comprises data index information of data objects in the data chunk, and generating a data chunk index; in the backup site, storing the to-be-stored data object in a temporary buffer; after the to-be-stored data object is stored in the data chunk, if a total data size of the data objects stored in the data chunk approximates a storage limit of the data chunk, encapsulating the data chunk, sending a key list of the data objects stored in the encapsulated data chunk to the backup site, and generating a same stripe ID for the storage site and the backup site; and after receiving the key list, retrieving, by the backup site from the temporary buffer based on a key in the key list, a data object corresponding to the key, reconstructing, based on the data object, a data chunk corresponding to the key list, encoding the reconstructed data chunk, to obtain a backup data chunk, and updating the data chunk index and the data object index that is stored in the backup site and that corresponds to the encapsulated data chunk.

9. The data management method according to claim 8, further comprising: searching for, based on a key of a first target data object, the first target data object, a first target data chunk in which the first target data object is located, and a first target storage site in which the first target data chunk is located; sending an updated value of the first target data object to the first target storage site; updating a value of the first target data object in the first target storage site based on the updated value, and sending a difference value between the updated value of the first target data object and an original value of the first target data object to first target backup sites that have a stripe ID the same as that of the first target storage site; and if the first target data chunk is not encapsulated, finding, based on the key, the first target data object stored in a buffer of the first target backup site, and adding the difference value and the original value of the first target data object, to obtain the updated value of the first target data object; or if the first target data chunk is already encapsulated, updating, based on the difference value, first target backup data chunks that are in the plurality of first target backup sites and that correspond to the first target data chunk.

10. The data management method according to claim 8, further comprising: searching for, based on the key of the first target data object, the first target data object, the first target data chunk in which the first target data object is located, and the first target storage site in which the first target data chunk is located; sending, to the first target storage site, a delete request for deleting the first target data object; and if the first target data chunk is not encapsulated, deleting the first target data object in the first target storage site, and sending a delete instruction to the first target backup site, to delete the first target data object stored in the buffer of the first target backup site; or if the first target data chunk is already encapsulated, setting the value of the first target data object in the first target storage site to a special value, and sending a difference value between the special value and the original value of the first target data object to the plurality of first target backup sites, wherein the first target backup data chunks in the plurality of first target backup sites are updated based on the difference value, and the first target backup data chunks correspond to the first target data chunk.

11. The data management method according to claim 8, further comprising: selecting one second target storage site and a plurality of second target backup sites for a second target data object, wherein the second target storage site and the plurality of second target backup sites have a same stripe list ID; sending the second target data object to the second target storage site; and when the second target storage site is a faulty storage site, sending the second target data object to a coordinator manager, wherein the coordinator manager obtains a stripe list ID corresponding to the second target data object, the coordinator manager determines a normal storage site that has a stripe list ID the same as the stripe list ID as a first temporary storage site, and the coordinator manager instructs to send the second target data object to the first temporary storage site for storage; storing the second target data object in the first temporary storage site; and after a fault of the second target storage site is cleared, migrating the second target data object stored in the first temporary storage site to the second target storage site whose fault is cleared.

12. The data management method according to claim 8, further comprising: sending, to the second target storage site, a data obtaining request for requesting the second target data object; and when the second target storage site is the faulty storage site, sending the data obtaining request to the coordinator manager, wherein the coordinator manager obtains, according to the data obtaining request, the stripe list ID corresponding to the second target data object, the coordinator manager determines a normal storage site that has a stripe list ID the same as the stripe list ID as a second temporary storage site, the coordinator manager instructs to send the data obtaining request to the second temporary storage site, and the second temporary storage site returns the corresponding second target data object according to the data obtaining request.

13. The data management method according to claim 12, wherein the second temporary storage site returns the corresponding second target data object according to the data obtaining request comprises: if a second data chunk in which the second target data object is located is not encapsulated, sending, by the second temporary storage site, a data request to the second target backup site corresponding to the second target storage site; obtaining, by the second target backup site, the corresponding second target data object from a buffer of the second target backup site according to the data request, and returning the second target data object to the second temporary storage site; and returning, by the second temporary storage site, the requested second target data object; or if the second target data object requested by the data request is newly added or modified after the second target storage site becomes faulty, obtaining the corresponding second target data object from the second temporary storage site, and returning the corresponding second target data object; or otherwise, obtaining, by the second temporary storage site based on a stripe ID corresponding to the second target data object, a second backup data chunk that is from a second target backup site having a stripe ID the same as the stripe ID corresponding to the second target data object and that corresponds to the second target data object, restoring, based on the second backup data chunk, a second target data chunk comprising the second target data object, and obtaining the second target data object from the second target data chunk, and returning the second target data object.

14. A data management apparatus, comprising: a non-transitory memory storage comprising instructions; and one or more hardware processors in communication with the non-transitory memory storage, wherein the one or more hardware processors execute the instructions to: encode a data chunk of a predetermined size, to generate an error-correcting data chunk corresponding to the data chunk, wherein the data chunk comprises a data object, and the data object comprises a key, a value, and metadata; and generate a data chunk index and a data object index, wherein the data chunk index is used to retrieve the data chunk and the error-correcting data chunk corresponding to the data chunk, the data object index is used to retrieve the data object in the data chunk, and each data object index is used to retrieve a unique data object.

15. The data management apparatus according to claim 14, wherein the one or more hardware processors execute the instructions to: store the data object in the data chunk of the predetermined size, wherein the data chunk of the predetermined size is located in a first storage device; and store the error-correcting data in a second storage device, wherein the first storage device and the second storage device are located at different locations in a distributed storage system.

16. The data management apparatus according to claim 15, wherein the data chunk index comprises a stripe list ID, a stripe ID, and location information; the stripe list ID is used to uniquely determine one of a plurality of storage device groups in the distributed storage system, and the storage device group comprises a plurality of first devices and a plurality of second devices; the stripe ID is used to determine a sequence number of an operation of storing the data chunk and the error-correcting data chunk corresponding to the data chunk in the storage device group indicated by the stripe list ID; and the location information is used to determine a first storage device that is in the storage device group determined based on the stripe list ID and in which the data chunk is located, and a second storage device that is in the storage device group determined based on the stripe list ID and in which the error-correcting data chunk is located.

17. The data management apparatus according to claim 16, wherein the one or more hardware processors execute the instructions to: generate the data chunk index and the data object index in the first storage device; and generate the data chunk index and the data object index in the second storage device.

18. The data management apparatus according to claim 15, wherein the one or more hardware processors execute the instructions to: select one first storage device and one or more second storage devices; and separately send the data object to the first storage device and the second storage device.

19. The data management apparatus according to claim 18, wherein the one or more hardware processors execute the instructions to: store the data object in the data chunk; when a size of the data object in the data chunk approximates or equals a storage limit of the data chunk, stop writing a new data object into the data chunk, and send key values of data objects stored in the data chunk; receive the key values of the data objects in the data chunk; reconstruct the data chunk in the second storage device based on the key values of the data objects; and encode the reconstructed data chunk.

20. A data management system, comprising: a client, configured to: select one storage site and a plurality of backup sites for a to-be-stored data object, wherein the storage site and the plurality of backup sites have a same stripe list ID, and send the to-be-stored data object to the storage site and the plurality of backup sites, wherein the data object comprises metadata, a key, and a value; the storage site, configured to: store the to-be-stored data object in a data chunk that has a constant size and that is not encapsulated, add data index information of the to-be-stored data object to a data index, wherein the data index comprises data index information of data objects in the data chunk, and generate a data chunk index; and the backup site, configured to store the to-be-stored data object in a temporary buffer, wherein after the to-be-stored data object is stored in the data chunk, if a total data size of the data objects stored in the data chunk approximates a storage limit of the data chunk, the storage site is configured to: encapsulate the data chunk, send a key list of the data objects stored in the encapsulated data chunk to the backup site, and generate a same stripe ID for the storage site and the backup site; and the backup site is further configured to: receive the key list sent by the storage site, retrieve, from the temporary buffer based on a key in the key list, a data object corresponding to the key, reconstruct, based on the data object, a data chunk corresponding to the key list, encode the reconstructed data chunk, to obtain a backup data chunk, and update the data chunk index and the data object index that is stored in the backup site and that corresponds to the encapsulated data chunk.

21. The data management system according to claim 20, wherein the client is further configured to: search for, based on a key of a first target data object, the first target data object, a first target data chunk in which the first target data object is located, and a first target storage site in which the first target data chunk is located, and send an updated value of the first target data object to the first target storage site; the first target storage site is configured to: update a value of the first target data object, and send a difference value between the updated value of the first target data object and an original value of the first target data object to first target backup sites that have a stripe ID the same as that of the first target storage site; and if the first target data chunk is not encapsulated, the plurality of first target backup sites are configured to: find, based on the key, the first target data object stored in buffers, and add the difference value and the original value of the first target data object, to obtain the updated value of the first target data object; or if the first target data chunk is already encapsulated, the plurality of first target backup sites are configured to update, based on the difference value, first target backup data chunks corresponding to the first target data chunk.

22. The data management system according to claim 20, wherein the client is further configured to: search for, based on the key of the first target data object, the first target data object, the first target data chunk in which the first target data object is located, and the first target storage site in which the first target data chunk is located, and send, to the first target storage site, a delete request for deleting the first target data object; and if the first target data chunk is not encapsulated, the first target storage site is configured to: delete the first target data object, and send a delete instruction to the first target backup site; and the first target backup site is configured to delete, according to the delete instruction, the first target data object stored in the buffer; or if the first target data chunk is already encapsulated, the first target storage site is configured to: set the value of the first target data object to a special value, and send a difference value between the special value and the original value of the first target data object to the plurality of first target backup sites; and the plurality of first target backup sites are configured to update the first target backup data chunk based on the difference value.

23. The data management system according to claim 20, wherein the client is further configured to: select one second target storage site and a plurality of second target backup sites for a second target data object, wherein the second target storage site and the plurality of second target backup sites have a same stripe list ID; send the second target data object to the second target storage site; and when the second target storage site is a faulty storage site, send the second target data object to a coordinator manager; wherein the coordinator manager is configured to: obtain a stripe list ID corresponding to the second target data object, determine a normal storage site that has a stripe list ID the same as the stripe list ID as a first temporary storage site, and instruct the client to send the second target data object to the first temporary storage site for storage; and the first temporary storage site is configured to: store the second target data object, and after a fault of the second target storage site is cleared, migrate the second target data object to the second target storage site whose fault is cleared.

24. The data management system according to claim 20, wherein the client is further configured to: send, to the second target storage site, a data obtaining request for requesting the second target data object, and when the second target storage site is the faulty storage site, send the data obtaining request to the coordinator manager; the coordinator manager is configured to: obtain the stripe list ID corresponding to the second target data object, determine a normal storage site that has a stripe list ID the same as the stripe list ID as a second temporary storage site, and instruct the client to send the data obtaining request to the second temporary storage site; and the second temporary storage site is configured to return the corresponding second target data object according to the data obtaining request.

25. A data management apparatus, comprising: a non-transitory memory storage comprising instructions; and one or more hardware processors in communication with the non-transitory memory storage, wherein the one or more hardware processors execute the instructions to: select one storage site and a plurality of backup sites for a to-be-stored data object, wherein the storage site and the plurality of backup sites have a same stripe list ID, and send the to-be-stored data object, wherein the data object comprises metadata, a key, and a value; store the to-be-stored data object in a data chunk that has a constant size in the storage site and that is not encapsulated, add data index information of the to-be-stored data object to a data index, wherein the data index comprises data index information of data objects in the data chunk, and generate a data chunk index; store the to-be-stored data object in a temporary buffer of the backup site; after the to-be-stored data object is stored in the data chunk, if a total data size of the data objects stored in the data chunk approximates a storage limit of the data chunk, encapsulate the data chunk, send a key list of the data objects stored in the encapsulated data chunk, and generate a same stripe ID for the storage site and the backup site; and retrieve, from a temporary buffer of the storage site based on a key in the key list, a data object corresponding to the key, reconstruct, based on the data object, a data chunk corresponding to the key list, encode the reconstructed data chunk, to obtain a backup data chunk, and update the data chunk index and the data object index that is stored in the backup site and that corresponds to the encapsulated data chunk.

26. The data management apparatus according to claim 25, wherein the one or more hardware processors execute the instructions to: search for, based on a key of a first target data object, the first target data object, a first target data chunk in which the first target data object is located, and a first target storage site in which the first target data chunk is located, and send an updated value of the first target data object; update a value of the first target data object in the first target storage site based on the updated value, and send a difference value between the updated value of the first target data object and an original value of the first target data object; and update first target backup data that is in first target backup sites having a stripe ID the same as that of the first target storage site and that corresponds to the first target data object; and if the first target data chunk is not encapsulated, find, based on the key, the first target data object stored in a buffer of the first target backup site, use the first target data object as the first target backup data, and add the difference value and an original value of the first target backup data, to obtain the updated value of the first target data object; or if the first target data chunk is already encapsulated, update, based on the difference value, first target backup data chunks in the plurality of first target backup sites, wherein the first target backup data chunks correspond to the first target data chunk.

27. The data management apparatus according to claim 25, wherein the one or more hardware processors execute the instructions to: search for, based on the key of the first target data object, the first target data object, the first target data chunk in which the first target data object is located, and the first target storage site in which the first target data chunk is located, and send a delete request for deleting the first target data object; and if the first target data chunk is not encapsulated, delete the first target data object in the first target storage site, and send a delete instruction; and delete, according to the delete instruction, the first target data object stored in the buffer of the first target backup site; or if the first target data chunk is already encapsulated, set the value of the first target data object in the first target storage site to a special value, and send a difference value between the special value and the original value of the first target data object; and update the first target backup data chunks in the plurality of first target backup sites based on the difference value, wherein the first target backup data chunks correspond to the first target data chunk.

28. The data management apparatus according to claim 25, wherein the one or more hardware processors execute the instructions to: select one second target storage site and a plurality of second target backup sites for a second target data object, wherein the second target storage site and the plurality of second target backup sites have a same stripe list ID; send the second target data object to the second target storage site; and when the second target storage site is a faulty storage site, send the second target data object to a coordinator manager; wherein the coordinator manager is configured to: obtain a stripe list ID corresponding to the second target data object, determine a normal storage site that has a stripe list ID the same as the stripe list ID as a first temporary storage site, and send the second target data object; and the one or more hardware processors execute the instructions to: store the second target data object in the first temporary storage site; and after a fault of the second target storage site is cleared, migrate the second target data object stored in the first temporary storage site to the second target storage site whose fault is cleared.

29. The data management apparatus according to claim 25, wherein the one or more hardware processors execute the instructions to: send a data obtaining request for requesting the second target data object; when determining that the second target storage site that stores the second target data object is the faulty storage site, send the data obtaining request to the coordinator manager; and wherein the coordinator manager is configured to: obtain, according to the data obtaining request, the stripe list ID corresponding to the second target data object, and determine a normal storage site that has a stripe list ID the same as the stripe list ID as a second temporary storage site; and the one or more hardware processors execute the instructions to: obtain the second target data object from the second temporary storage site according to the data obtaining request, and return the second target data object.

30. The data management apparatus according to claim 25, wherein the one or more hardware processors execute the instructions to: if a second data chunk in which the second target data object is located is not encapsulated, send a data request; obtain, according to the data request, the corresponding second target data object from buffers of the plurality of second target backup sites corresponding to the second target storage site, and return the second target data object; and return the requested second target data object; or if the second target data object requested by the data request is newly added or modified after the second target storage site becomes faulty, obtain the corresponding second target data object from the second temporary storage site, and return the corresponding second target data object; or otherwise, obtain, based on a stripe ID corresponding to the second target data object, a second backup data chunk that is from a second target backup site having a stripe ID the same as the stripe ID corresponding to the second target data object and that corresponds to the second target data object, restore, based on the second backup data chunk, a second target data chunk comprising the second target data object, obtain the second target data object from the second target data chunk, and return the second target data object.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of International Application No. PCT/CN2017/103678, filed on Sep. 27, 2017, which claims priority to Chinese Patent Application No. 201610875562.X, filed on Sep. 30, 2016. The disclosures of the aforementioned applications are hereby incorporated by reference in their entireties.

TECHNICAL FIELD

[0002] The present invention relates to the computing field, and more specifically, to a data processing method, system, and apparatus.

BACKGROUND

[0003] As a price of a memory drops, a distributed memory storage system is widely applied to a distributed computing system, to store hot data. A most commonly used data storage manner is key-value (key-Value, KV) pair storage. Currently, mainstream commercial products include Memcached, Redis, RAMCloud, and the like, and are commercially applied to data storage systems of Twitter, Facebook, and Amazon.

[0004] A mainstream fault tolerance method of the distributed memory storage system is mainly a full backup solution. A manner of the full backup solution is replicating an entire piece of data to different devices. When some devices become faulty, backup data on other devices that are not faulty may be used to restore data on the faulty devices. This implementation solution is simple and reliable. However, data redundancy is relatively high, and at least two backups are required. In addition, to ensure data consistency, data modification efficiency is not high.

[0005] Another fault tolerance solution is an erasure coding (Erasure Coding, EC) fault tolerance solution. An erasure coding technology is used to encode data, to obtain an erasure code (Parity). A length of the erasure code is usually less than a length of original data. The original data and the erasure code are distributed on a plurality of different devices. When some devices become faulty, a part of the original data and a part of the erasure code may be used to restore complete data. In this way, an overall data redundancy rate is less than 2, and an objective of saving memory is achieved.

[0006] Currently, mainstream technologies using erasure coding include LH*RS, Atlas, Cocytus, and the like. In these technologies, erasure coding is performed on a value of a key-value (KV) pair, and the full backup solution is still used for other data of the key-value pair. Specifically, a KV data structure of first target data (object) usually includes three parts: a key, a value, and metadata. The key is a unique identifier of the first target data, and may be used to uniquely determine the corresponding first target data. The value is actual content of the first target data. The metadata stores some attribute information of the first target data. The attribute information is, for example, a size of the key, a size of the value, or a timestamp of creating/modifying the first target data. When a current mainstream erasure coding technology is used to back up the first target data, the full backup solution is used for a metadata part and a key part of the first target data, and the EC solution is used for a value part. For example, three data objects need to be stored and backed up, and are represented by using M1, M2, M3, Data 1, Data 2, and Data 3, where M represents metadata and a key of a data object, and Data is a value of the data object. In this case, EC encoding is performed on the Data 1, the Data 2, and the Data 3, to obtain error-correcting codes Parity 1 and Parity 2. The five pieces of data, that is, the Data 1, the Data, the Data 3, the Parity 1, and the Parity 2, are distributed on five devices, and three duplicates of each of M1, M2, and M3 are created and are distributed on the five devices.

[0007] This solution may also be referred to as a partial encoding storage solution. In a scenario in which a large data object is stored, to be specific, in a scenario in which a data length of metadata and a key is far less than a data length of a value, the partial encoding storage solution has relatively high storage efficiency. However, this solution has low efficiency in processing a small data object, because a data length of metadata and a key of the small data object is slightly different from a data length of a value of the small data object, or even the data length of the metadata and the key is greater than the data length of the value. According to statistics released by Facebook, most of data objects stored in memory storage are small data objects, and even more than 40% of the data objects have less than 11 bits. This indicates that most data is small data. EC encoding cannot be used to advantage in such a partial encoding storage solution. As a result, data storage redundancy is relatively high, and storage costs are increased.

SUMMARY

[0008] This application provides a data processing method, system, and apparatus, to reduce data redundancy during storage of a data object, and reduce storage costs.

[0009] According to a first aspect, this application provides a data processing method, where the data processing method includes: encoding a data chunk of a predetermined size, to generate an error-correcting data chunk corresponding to the data chunk, where the data chunk includes a data object, and the data object includes a key, a value, and metadata; and

[0010] generating a data chunk index and a data object index, where the data chunk index is used to retrieve the data chunk and the error-correcting data chunk corresponding to the data chunk, the data object index is used to retrieve the data object in the data chunk, and each data object index is used to retrieve a unique data object.

[0011] In the method, a plurality of data objects are gathered in one data chunk and are encoded in a centralized manner. In this way, data encoding and backup can be effectively used to advantage. In other words, data redundancy is relatively low. This avoids disadvantages of low efficiency and high system redundancy that are caused by independent encoding and backing up of each data object.

[0012] In a first implementation of the first aspect of the present invention, before the encoding a data chunk of a predetermined size, to generate an error-correcting data chunk corresponding to the data chunk, in the method, the plurality of data objects first need to be stored in the data chunk of the predetermined size, where the data chunk of the predetermined size is located in a first storage device, the data chunk of the predetermined size may be specifically a storage address that is unoccupied in the first storage device, and the predetermined size is an optimum data size, for example, 4 KB, based on which encoding and backup can be used to advantage and a reading speed and storage reliability of a data object stored in the data chunk can be ensured. After the encoding a data chunk of a predetermined size, to generate an error-correcting data chunk corresponding to the data chunk, the method further includes: storing the error-correcting data in a second storage device, where the first storage device and the second storage device are located at different locations in a distributed storage system.

[0013] In the method, the data chunk and the corresponding error-correcting data chunk are stored at different storage locations in the distributed storage system, for example, the first storage device and the second storage device. This can ensure that when some parts of the first storage device become faulty, data in the faulty storage device can be restored based on data in the second storage device and other parts of the first storage device that operate normally. Therefore, the distributed storage system can have a redundancy capability that meets a basic requirement, namely, reliability. The first storage device may be understood as a conventional data server configured to store the data chunk, and the second storage device may be understood as a parity server that stores the error-correcting data chunk of the data chunk. When a maximum of M servers (M data servers, M parity servers, or M servers including data servers and parity servers) in K data servers and M parity servers become faulty, remaining K servers may restore data in the faulty servers by using an encoding algorithm. The encoding algorithm may be EC encoding, XOR encoding, or another encoding algorithm that can implement data encoding and backup.

[0014] With reference to another implementation of the first implementation of the first aspect of the present invention, the data chunk index includes a stripe list ID, a stripe ID, and location information; the stripe list ID is used to uniquely determine one of a plurality of storage device groups in the distributed storage system, and the storage device group includes a plurality of first devices and a plurality of second devices; the stripe ID is used to determine a sequence number of an operation of storing the data chunk and the error-correcting data chunk corresponding to the data chunk in the storage device group indicated by the stripe list ID; and the location information is used to determine a first storage device that is in the storage device group determined based on the stripe list ID and in which the data chunk is located, and a second storage device that is in the storage device group determined based on the stripe list ID and in which the error-correcting data chunk is located.

[0015] To restore a storage device after the storage device becomes faulty, in the present invention, a manner of a three-level index, that is, a stripe list ID, a stripe ID, and location information, is used to retrieve a data chunk. When a client needs to access a data chunk, the client may reconstruct a correct data chunk based on the index in combination with a key of a data object index, and all requests in a faulty period may be processed by another storage device determined based on the stripe ID. After the faulty storage device is restored, data is migrated to the restored storage device in a unified manner. This effectively ensures reliability of a distributed storage device using the data management method. The data chunk index and the data object index are stored in both the first storage device and the second storage device. The data chunk index and the data object index are generated in the first storage device, and the data chunk index and the data object index are generated in the second storage device. Therefore, redundancy backup is not required, and when the faulty device is restored, an index can be reconstructed locally.

[0016] With reference to another implementation of any implementation of the first aspect of the present invention, before the plurality of data objects are stored in the data chunk of the predetermined size, the method further includes: selecting one first storage device and one or more second storage devices; and separately sending the plurality of data objects to the first storage device and the second storage device.

[0017] A data storage method in the present invention allows the client to specify the first storage device and the second storage device before sending a service request, for example, a data storage service request. The first storage device and the second storage device may be pre-selected. In other words, the second storage device is a dedicated backup site of the first storage device, namely, a parity server. In this way, an addressing operation in a data storage and backup process can be simplified. For ease of searching for the first storage device and the second storage device, the first storage device and the second storage device are included in a same stripe, so that the first storage device and the second storage device have a same stripe identifier, namely, a stripe ID. In addition, when the second storage device is selected, load statuses of a plurality of candidate storage devices in the distributed storage system may be further referenced, and one or more candidate storage devices with minimum load are used as the one or more second storage devices. In this way, workload of sites can be balanced in the entire distributed storage system, device utilization is improved, and overall data processing efficiency is improved.

[0018] With reference to another implementation of a third implementation of the first aspect of the present invention, the first storage device stores the data object in the data chunk; when a size of the data object in the data chunk approximates or equals a storage limit of the data chunk, stops writing a new data object into the data chunk; and sends, to the second storage device, key values of all data objects stored in the data chunk; and

[0019] the second storage device receives the key values, sent by the first storage device, of all the data objects in the data chunk, reconstructs the data chunk in the second storage device based on the key values of the data objects, and encodes the reconstructed data chunk.

[0020] The method ensures that content in each data chunk is not greater than the preset storage limit, to ensure that a size of a data unit is smallest by using the data management method. Therefore, encoding and backup can be fully used to advantage while a system throughput is ensured.

[0021] With reference to a fifth implementation of any implementation of the first aspect of the present invention, when the data chunk includes the plurality of data objects, the method further includes: determining whether a size of a to-be-stored data object is less than a threshold, and store and encode the to-be-stored data object whose size is less than the threshold.

[0022] To effectively use the data management method in the present invention to advantage, and to avoid an operation such as data segmentation performed on a relatively large data object when the relatively large data object is stored in the data chunk, a threshold filtering manner is used, and a small data object is stored in the data chunk, to ensure that the method can effectively overcome disadvantages of low efficiency and high data redundancy that are caused when the small data object is stored and backed up. In addition, in a continuous time period, sizes of data objects usually have a particular similarity. In the method, the threshold can further be dynamically adjusted, so that the method can be more widely applied, and can be more suitable for data characteristics of the Internet.

[0023] With reference to another implementation of any implementation of the first aspect of the present invention, the method further includes: the storing, by the first storage device, the data object in the data chunk includes: when the size of the data object is greater than a size of storage space in the data chunk, or when the size of the data object is greater than a size of remaining storage space in the data chunk, segmenting the data object, storing, in the data chunk, a segmented data object whose size after the segmentation is less than the size of the storage space in the data chunk or less than the size of the remaining storage space in the data chunk, and storing data chunk segmentation information in metadata of the segmented data object, to recombine the segmented data object.

[0024] In a process of storing the data object, if the data object is excessively large or the size of the data object is greater than the size of the storage space in the data chunk, segmentation of the data chunk may be allowed for storage in the present invention. In this way, applicability of the method can be improved, and complexity of the method can be reduced.

[0025] According to a second aspect, a data management method is provided, where the method includes: selecting one storage site and a plurality of backup sites for a to-be-stored data object, where the storage site and the plurality of backup sites have a same stripe list ID; and sending the to-be-stored data object to the storage site and the plurality of backup sites, where the data object includes metadata, a key, and a value;

[0026] in the storage site, storing the to-be-stored data object in a data chunk that has a constant size and that is not encapsulated, adding data index information of the to-be-stored data object to a data index, where the data index includes data index information of all data objects in the data chunk, and generating a data chunk index; in the backup site, storing the to-be-stored data object in a temporary buffer; after the to-be-stored data object is stored in the data chunk, if a total data size of all the data objects stored in the data chunk approximates a storage limit of the data chunk, encapsulating the data chunk, sending a key list of all the data objects stored in the encapsulated data chunk to the backup site, and generating a same stripe ID for the storage site and the backup site; and

[0027] after receiving the key list, retrieving, by the backup site from the temporary buffer based on a key in the key list, a data object corresponding to the key, reconstructing, based on the data object, a data chunk corresponding to the key list, encoding the reconstructed data chunk, to obtain a backup data chunk, and updating the data chunk index and the data object index that is stored in the backup site and that corresponds to the encapsulated data chunk.

[0028] In the method, a plurality of data objects are gathered in one data chunk and are encoded in a centralized manner. In this way, data encoding and backup can be effectively used to advantage. In other words, data redundancy is relatively low. This avoids disadvantages of low efficiency and high system redundancy that are caused by independent encoding and backing up of each data object. It should be noted that in the method, the storage site and the backup site are usually disposed at different locations in a distributed storage system, to provide a required redundancy capability. In other words, when a storage site becomes faulty, content in the storage site can be restored by using a backup site. In addition, in the method, a data storage method may be combined with the method provided in the first aspect of the present invention, for example, the methods that are provided in the first aspect of the present invention and that are for selecting a storage device, screening a data object, and segmenting a data object. For example, the method may include the solutions in the first aspect of the present invention. (1) When the data chunk includes the plurality of data objects, the method further includes: determining whether a size of a to-be-stored data object is less than a threshold, and store and encode the to-be-stored data object whose size is less than the threshold; (2) dynamically adjusting the threshold at a predetermined time interval based on an average size of the to-be-stored data object; (3) when the distributed storage system includes a plurality of candidate storage devices, using one or more candidate storage devices with minimum load as the one or more second storage devices based on load statuses of the plurality of candidate storage devices; and (4) when a size of the data object is greater than a size of storage space in the data chunk, or when the size of the data object is greater than a size of remaining storage space in the data chunk, segmenting the data object, storing, in the data chunk, a segmented data object whose size after the segmentation is less than the size of the storage space in the data chunk or less than the size of the remaining storage space in the data chunk, and storing data chunk segmentation information in metadata of the segmented data object, to recombine the segmented data object.

[0029] With reference to the second aspect, in an implementation of the second aspect, the method further includes: searching for, based on a key of a first target data object, the first target data object, a first target data chunk in which the first target data object is located, and a first target storage site in which the first target data chunk is located; sending an updated value of the first target data object to the first target storage site; updating a value of the first target data object in the first target storage site based on the updated value, and sending a difference value between the updated value of the first target data object and an original value of the first target data object to all first target backup sites that have a stripe ID the same as that of the first target storage site; and if the first target data chunk is not encapsulated, finding, based on the key, the first target data object stored in a buffer of the first target backup site, and adding the difference value and the original value of the first target data object, to obtain the updated value of the first target data object; or if the first target data chunk is already encapsulated, updating, based on the difference value, first target backup data chunks that are in the plurality of first target backup sites and that correspond to the first target data chunk.

[0030] In the method, a data updating method is provided, so that data synchronization can be implemented for a target data object and a corresponding target backup data chunk in a most economical way by using the foregoing method.

[0031] With reference to the foregoing implementation of the second aspect, in another implementation of the second aspect, the method further includes: searching for, based on the key of the first target data object, the first target data object, the first target data chunk in which the first target data object is located, and the first target storage site in which the first target data chunk is located; sending, to the first target storage site, a delete request for deleting the first target data object; and if the first target data chunk is not encapsulated, deleting the first target data object in the first target storage site, and sending a delete instruction to the first target backup site, to delete the first target data object stored in the buffer of the first target backup site; or

[0032] if the first target data chunk is already encapsulated, setting the value of the first target data object in the first target storage site to a special value, and sending a difference value between the special value and the original value of the first target data object to the plurality of first target backup sites, so that the first target backup data chunks in the plurality of first target backup sites are updated based on the difference value, where the first target backup data chunks correspond to the first target data chunk.

[0033] In the method, a data deletion method that can reduce system load is provided. To be specific, a target data object stored in the data chunk is not deleted immediately; instead, a value of the target data object is set to a special value, for example, 0, and the target data object may be deleted when the system is idle.

[0034] With reference to any one of the second aspect or the foregoing implementations based on the second aspect, in another implementation of the second aspect, the method further includes: selecting one second target storage site and a plurality of second target backup sites for a second target data object, where the second target storage site and the plurality of second target backup sites have a same stripe list ID; sending the second target data object to the second target storage site; when the second target storage site is a faulty storage site, sending the second target data object to a coordinator manager, so that the coordinator manager obtains a stripe list ID corresponding to the second target data object, determines a normal storage site that has a stripe list ID the same as the stripe list ID as a first temporary storage site, and instructs to send the second target data object to the first temporary storage site for storage; storing the second target data object in the first temporary storage site; and after a fault of the second target storage site is cleared, migrating the second target data object stored in the first temporary storage site to the second target storage site whose fault is cleared.

[0035] The method ensures that, in the data management method, when a specified second target storage site becomes faulty, the coordinator manager specifies a first temporary storage site to take the place of the faulty second target storage site, and when the faulty second target storage site is restored, migrates, to the second target storage site, the second target data object that is stored in the first temporary storage site and that points to the second target storage site; and the second target storage site stores the second target data object according to a normal storage method.

[0036] With reference to any one of the second aspect or the foregoing implementations based on the second aspect, in another implementation of the second aspect, the method further includes: sending, to the second target storage site, a data obtaining request for requesting the second target data object; and when the second target storage site is the faulty storage site, sending the data obtaining request to the coordinator manager, so that the coordinator manager obtains, according to the data obtaining request, the stripe list ID corresponding to the second target data object, determines a normal storage site that has a stripe list ID the same as the stripe list ID as a second temporary storage site, and instructs to send the data obtaining request to the second temporary storage site, and the second temporary storage site returns the corresponding second target data object according to the data obtaining request.

[0037] In the method, it can be ensured that, in this data management method, even if a second target storage site becomes faulty, a client can still be allowed to access a data object stored in the faulty second target storage site. A specific method is described as above, and the second temporary storage site takes the place of the second target storage site, to implement access to the faulty site, thereby improving system reliability.

[0038] With reference to any one of the second aspect or the foregoing implementations based on the second aspect, in another implementation of the second aspect, the returning, by the second temporary storage site, the corresponding second target data object according to the data obtaining request includes:

[0039] if a second data chunk in which the second target data object is located is not encapsulated, sending, by the second temporary storage site, a data request to the second target backup site corresponding to the second target storage site; obtaining, by the second target backup site, the corresponding second target data object from a buffer of the second target backup site according to the data request, and returning the second target data object to the second temporary storage site; and returning, by the second temporary storage site, the requested second target data object; and

[0040] if the second target data object requested by the data request is newly added or modified after the second target storage site becomes faulty, obtaining the corresponding second target data object from the second temporary storage site, and returning the corresponding second target data object; or

[0041] otherwise, obtaining, by the second temporary storage site based on a stripe ID corresponding to the second target data object, a second backup data chunk that is from a second target backup site having a stripe ID the same as the stripe ID corresponding to the second target data object and that corresponds to the second target data object, restoring, based on the second backup data chunk, a second target data chunk including the second target data object, obtaining the second target data object from the second target data chunk, and returning the second target data object.

[0042] In the method, when a data storage site, for example, the second target storage site, becomes faulty, a fault occurrence time point and duration are uncertain. Therefore, the method provides methods for accessing data in the faulty site in a plurality of different cases, to improve system flexibility and applicability.

[0043] With reference to any one of the second aspect or the foregoing implementations based on the second aspect, in another implementation of the second aspect, the method further includes: sending, to a third target storage site, a data modification request for modifying a third target data object; and when the third target storage site is a faulty storage site, sending the data modification request to the coordinator manager, so that the coordinator manager obtains a stripe list ID corresponding to the third target data object, determines a normal storage site that has a stripe list ID the same as the stripe list ID as a third temporary storage site, and instructs to send the data modification request to the third temporary storage site, and the third temporary storage site modifies the third target data object according to the data modification request.

[0044] In the method, when the third target storage site becomes faulty, the third temporary storage site may process modification performed on the third target data object, keep data in a third target backup site consistent with the modified third target data object, and after the third target storage site is restored, re-send the modification request to the third target storage site, so that the data in the third target backup site is consistent with third target data in the third target storage site.

[0045] With reference to any one of the second aspect or the foregoing implementations based on the second aspect, in another implementation of the second aspect, the modifying, by the third temporary storage site, the third target data object according to the data modification request includes: storing the data modification request in the third temporary storage site, so that the third temporary storage site obtains, based on a stripe ID corresponding to the third target data object, a third backup data chunk that is from a third target backup site having a stripe ID the same as the stripe ID corresponding to the third target data object and that corresponds to the third target data object, restores, based on the third backup data chunk, a third target data chunk including the third target data object, and sends a difference value between an updated value carried in the data modification request and an original value of the third target data object to the third target backup site, and the third target backup site updates the third backup data chunk based on the difference value; and

[0046] after a fault of the third target storage site is cleared, migrating the data modification request stored in the third temporary storage site to the third target storage site, so that the third target storage site modifies the third target data object in the third target storage site according to the data modification request.

[0047] According to a fourth aspect, a data management apparatus is provided, where the data management apparatus includes a unit or a module configured to perform the method in the first aspect.

[0048] According to a fifth aspect, a data management system is provided, where the data management system includes various functional bodies, for example, a client, a storage site, a backup site, and a coordinator manager, and is configured to perform the method in the second aspect.

[0049] According to a sixth aspect, a data management apparatus is provided, where the data management apparatus includes a unit or a module configured to perform the method in the second aspect.

[0050] A seventh aspect of the present invention provides a data structure, including:

[0051] a plurality of data chunks of a constant size, stored in a first storage device first storage device, where each data chunk includes a plurality of data objects, and each data object includes a key, a value, and metadata;

[0052] a plurality of error-correcting data chunks, stored in a second storage device, where the error-correcting data chunks are obtained by encoding the plurality of data chunks of the constant size, and the first storage device and the second storage device are located at different locations in a distributed storage system;

[0053] a data chunk index, where the data chunk index is used to retrieve the data chunks and the error-correcting data chunks corresponding to the data chunks; and

[0054] a data object index, where the data object index is used to retrieve the data objects in the data chunks, and each data object index is used to retrieve a unique data object.

[0055] In the data structure provided in the present invention, the plurality of data objects are gathered in one data chunk, a corresponding error-correcting data chunk is constructed for the data chunk, the data chunk and the error-correcting data chunk are respectively distributed at different storage locations, namely, the first storage device and the second storage device, in the distributed storage system, and a data chunk index is associated with the data chunk and the error-correcting data chunk. When the first storage device in which the data chunk is located becomes faulty, content in the original data chunk can be restored based on the data structure, and encoding and backup can be fully used to advantage, thereby implementing relatively low data redundancy. This avoids disadvantages of low efficiency and high system redundancy that are caused by independent encoding and backing up of each data object.

BRIEF DESCRIPTION OF DRAWINGS

[0056] To describe the technical solutions in the embodiments of the present invention more clearly, the following briefly describes the accompanying drawings required for describing the embodiments or the prior art. Apparently, the accompanying drawings in the following description show merely some embodiments of the present invention, and a person of ordinary skill in the art may still derive other drawings from these accompanying drawings without creative efforts.

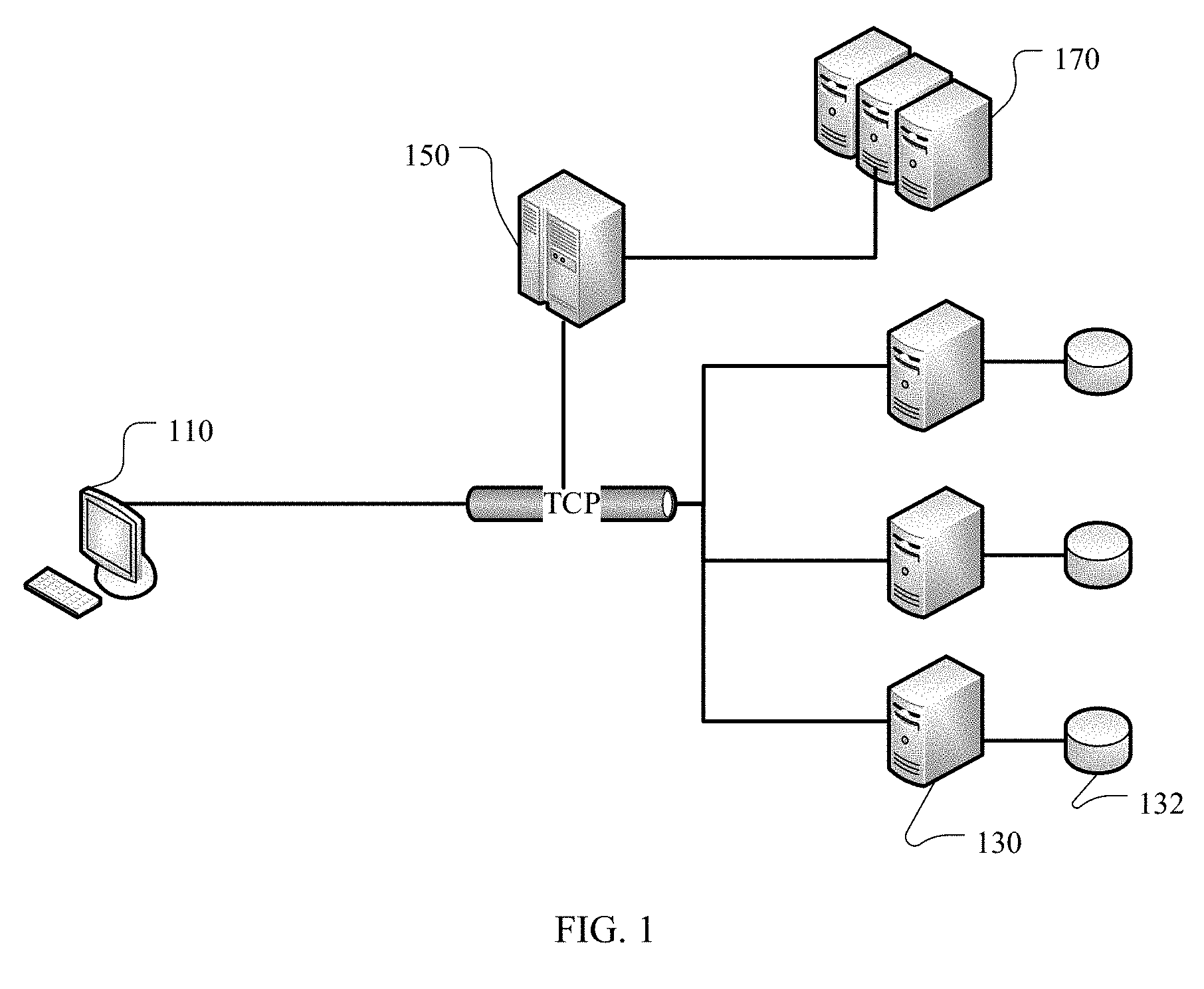

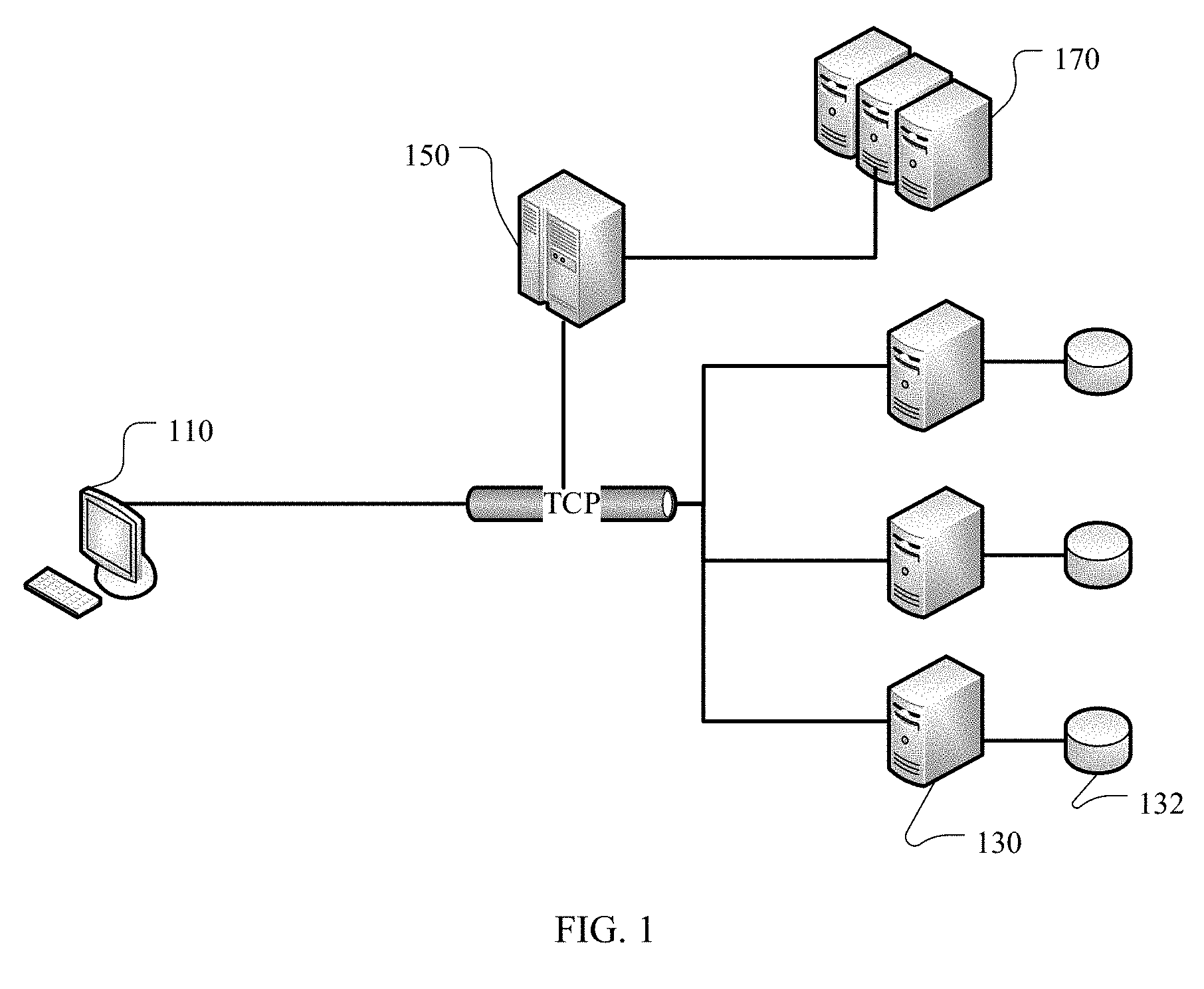

[0057] FIG. 1 is a schematic architectural diagram of a distributed storage system;

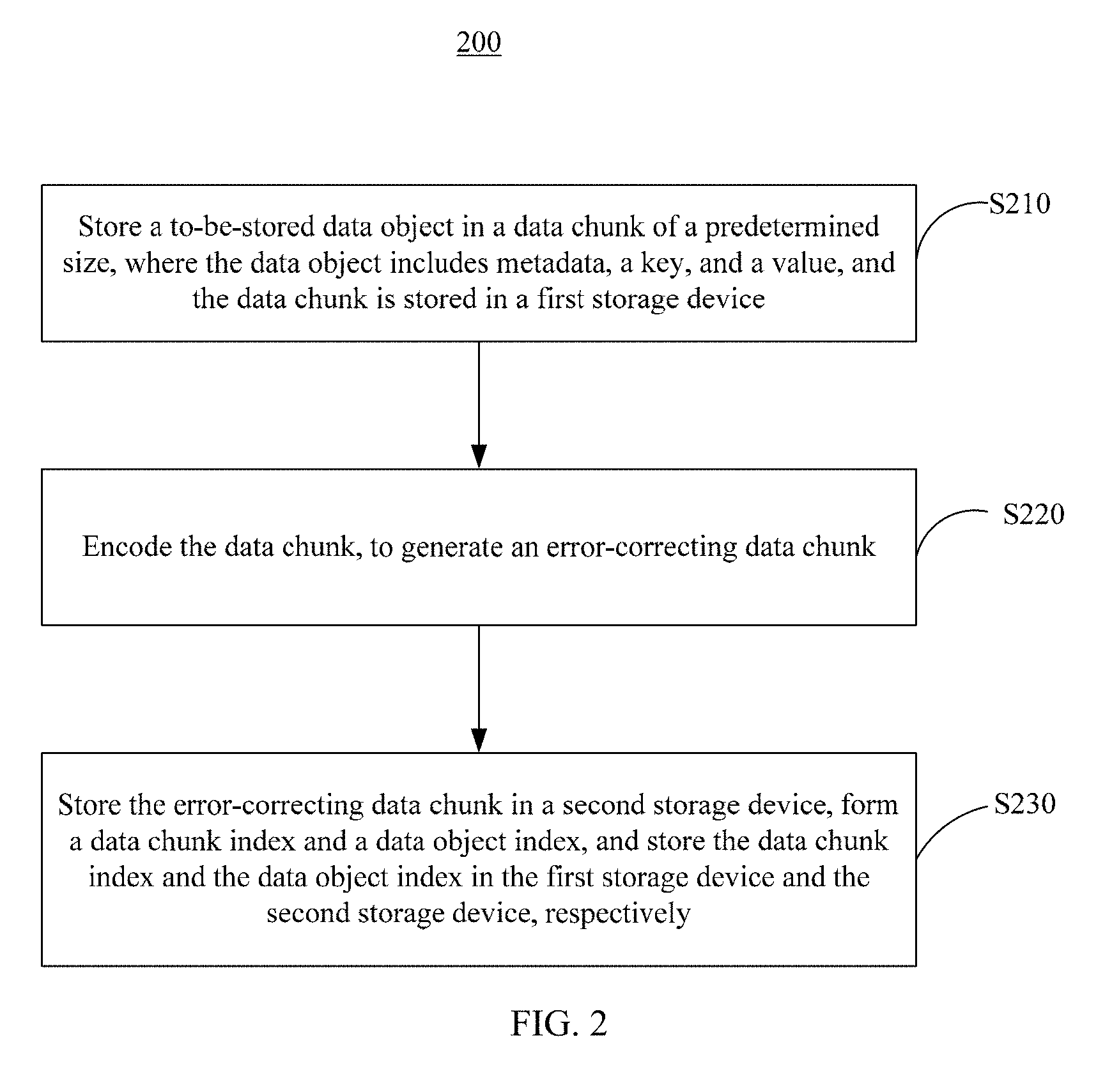

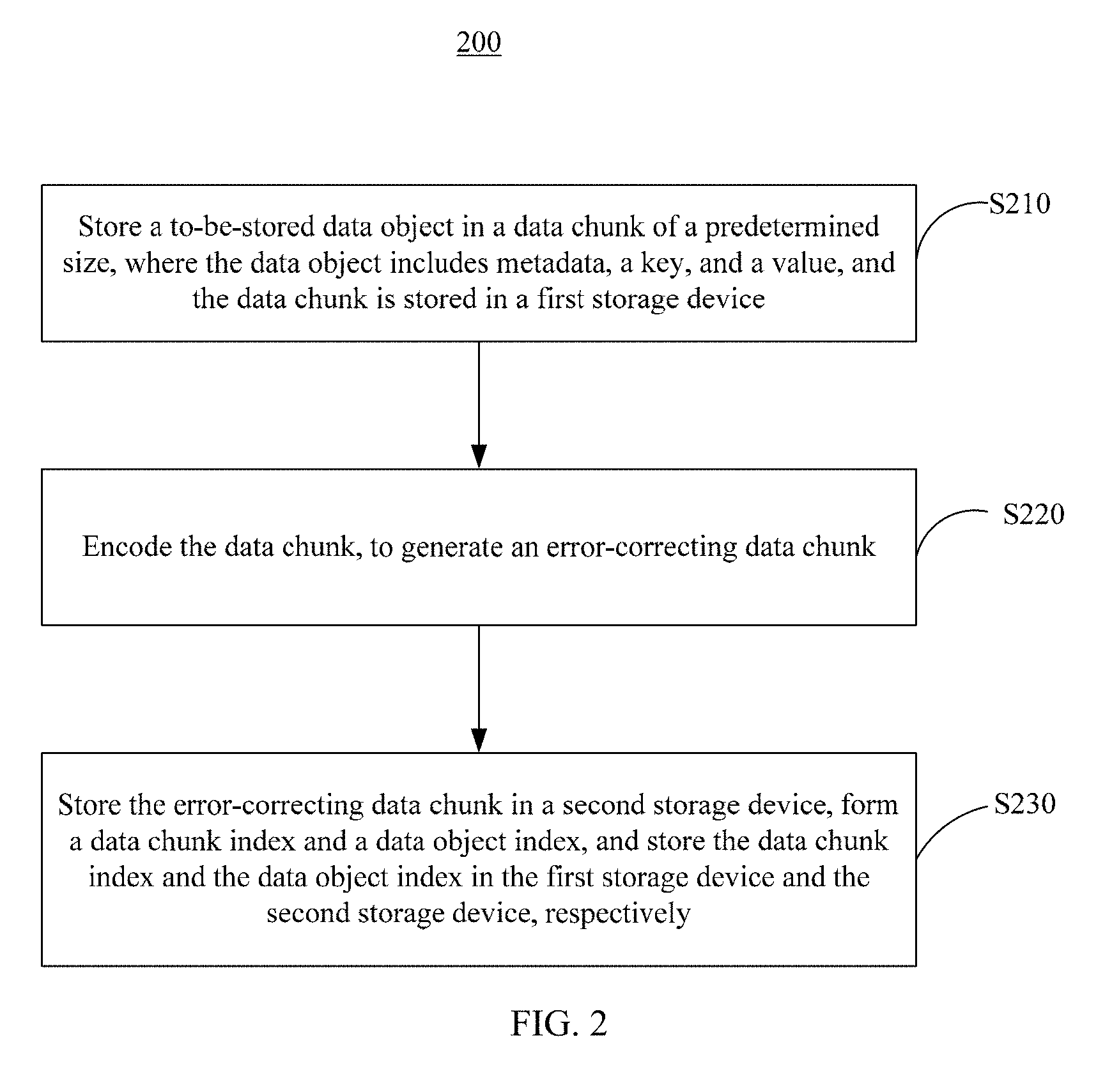

[0058] FIG. 2 is a schematic flowchart of a data management method according to an embodiment of the present invention;

[0059] FIG. 3 is an architectural diagram of data generated in a data management method according to an embodiment of the present invention;

[0060] FIG. 4 is a schematic flowchart of a data management method according to another embodiment of the present invention;

[0061] FIG. 5 is a schematic flowchart of a data management method according to another embodiment of the present invention;

[0062] FIG. 6 is a schematic flowchart of a data management method according to another embodiment of the present invention;

[0063] FIG. 7A to FIG. 7H are schematic flowcharts of a data management method according to another embodiment of the present invention;

[0064] FIG. 8 is a schematic block diagram of a data management apparatus according to an embodiment of the present invention;

[0065] FIG. 9 is a schematic block diagram of a data management apparatus according to another embodiment of the present invention; and

[0066] FIG. 10 is a schematic block diagram of a data management apparatus according to another embodiment of the present invention.

DESCRIPTION OF EMBODIMENTS

[0067] The following clearly describes the technical solutions in the embodiments of the present invention with reference to the accompanying drawings in the embodiments of the present invention. Apparently, the described embodiments are some but not all of the embodiments of the present invention. All other embodiments obtained by a person of ordinary skill in the art based on the embodiments of the present invention without creative efforts shall fall within the protection scope of the present invention.

[0068] The following describes composition of a distributed storage system. FIG. 1 is a schematic architectural diagram of a distributed storage system 100. Referring to FIG. 1, the distributed storage system 100 includes the following key entities: a client 110, a storage site 130, a coordinator manager 150, and a backup site 170. These key entities are connected by using a network. For example, normal operation of information transmission channels between the key entities is maintained by using a long-term Transmission Control Protocol (Transmission Control Protocol, TCP).

[0069] The client 110 is an access point of an upper-layer service provided by the distributed storage system 100, and is connected to each key entity of the storage system 100 by using a communications network. The client 110 serves as an initiator of a service request and may initiate a service request to the storage site 130. The service request may include requesting data, writing data, modifying data, updating data, deleting data, and the like. The client 110 may be a device such as a handheld device, a consumer electronic device, a general-purpose computer, a special-purpose computer, a distributed computer, or a server. It may be understood that, to implement a basic function of the client 110, the client 110 should have a processor, a memory, an I/O port, and a data bus connecting these components.

[0070] The storage site 130 is configured to store a data object (data object). The storage site 130 may be allocated by a network server in a unified manner. To be specific, the storage site 130 is homed on one or more servers that are connected by using a network, and is managed by the server in a unified manner. Therefore, the storage site 130 may also be referred to as a data server (Data server). The storage site 130 may be further connected to a disk 132 that is configured to store some seldom-used data.

[0071] The coordinator manager 150 is configured to manage the whole distributed storage system 100, for example, use a heartbeat mechanism to detect whether the storage site 130 and/or the backup site 170 become/becomes faulty, and be responsible for restoring, when some sites become faulty, data objects stored in the faulty sites.

[0072] The backup site 170 is configured to back up the data object stored in the storage site 130, and is also referred to as a backup server or a parity server (Parity server). It should be noted that the backup site 170 and the storage site 130 are relative concepts and may interchange roles depending on different requirements. The backup sites 170 may be distributed at different geographical locations or network topologies. Specifically, the backup site 170 in form may be any device that can instantly store data, such as a network site (site) or a memory of a distributed server.

[0073] It should be noted that all the key entities included in the distributed storage system 100 have a data processing capability, and therefore all the key entities have such components as a CPU, a buffer (memory), an I/O interface, and a data bus. In addition, a quantity of each type of the key entities may be at least one, and quantities of the key entities may be the same or different. For example, in some system environments, a quantity of the backup sites 170 needs to be greater than a quantity of the storage sites 130; and in some environments, a quantity of the backup sites 170 may be equal to a quantity of the storage sites, or even less than a quantity of the storage sites 130.

[0074] The following describes in detail a data management method 200 that is proposed in the present invention based on the foregoing distributed storage system 100. The data management method 200 is mainly performed by a storage site 130 and a backup site 170, to describe how to store a data object having a relatively small data length.

[0075] As shown in FIG. 2, the data management method 200 in the present invention includes the following steps.

[0076] S210. Store a to-be-stored data object in a data chunk of a predetermined size (size/volume/capacity), where the data object includes a key (key), a value (value), and metadata (metadata), and the data chunk is stored in a first storage device.

[0077] In an application scenario in which current data object sizes are relatively small, in the present invention, a predetermined size (size/volume/capacity) is preset for a data chunk, to store these small data objects. The data chunk (Data Chunk) of the predetermined size may be visually understood as a data container. The data container has a predetermined volume, namely, a constant size, for example, 4 KB. The to-be-stored data object has a KV (Key-value) structure, that is, a key-value structure. The data structure includes three parts: a key (Key), a value (Value), and metadata (Metadata). The key is a unique identifier of the data object, and the key may be used to uniquely determine the corresponding data object, so that when the stored data object is to be read, modified, updated, or deleted, a first target data object is located by using the key. The value is actual content of the data object. The metadata stores attribute information of the data object, such as a size of the key, a size of the value, a timestamp of creating/modifying the data object, and like information. A plurality of small data objects are stored in the data chunk of the predetermined size. When a size of the data objects stored in the data chunk approximates or equals a size limit of the data chunk, the data chunk is encapsulated. In other words, new data is no longer written into the data chunk.

[0078] In most cases, a size of the to-be-stored data object is less than a size of the data chunk. Correspondingly, the data chunk in which the data object is stored is a data chunk that has a predetermined size and that includes a plurality of data objects. In some cases, the size of the to-be-stored data object is greater than the size of the data chunk, or the size of the to-be-stored data object is greater than a size of remaining available storage space in the data chunk. The to-be-stored data object may be segmented to obtain small-size data objects. For example, a data object A is segmented into a plurality of small-size data objects a1, a2, . . . , and aN whose sizes approximate the data size, where A=a1+a2+ . . . +aN, and N is a natural number. Sizes of the data objects a1, a2, . . . , and aN may be the same. In other words, the data objects have a unified size. Alternatively, the sizes of the data objects may be different. All or some data objects have different sizes. A size of at least one data object aM (1.ltoreq.M.ltoreq.N) of the small data objects a1, a2, . . . , and aN that are obtained through segmentation is less than the size of the data chunk or less than the size of the remaining available storage space in the data chunk. The small-size data object aM obtained through segmentation is stored in the data chunk, and segmentation information is stored in metadata (metadata) of the small data object aM. Remaining data objects in the small-size data objects a1, a2, . . . , and aN except aM are stored in a new data chunk of a constant size, and the new data chunk of the constant size is preferably located in a different storage site 130. When the data object A is read, the data objects a1, a2, . . . , and aN stored in the different storage site 130 are found based on a key of the data object A, and the data objects a1, a2, . . . , and aN are recombined based on segmentation information in metadata, to obtain the original data object. There may be a plurality of data chunks of a constant size, and the plurality of data chunks are preferably located in a plurality of different storage sites 130. In addition, the data chunks may be alternatively located in different storage partitions of a same storage site 130, and each data chunk has a predetermined size. Preferably, the plurality of data chunks have a same constant size.

[0079] The data chunk of the predetermined size is a virtual data container. The virtual data container may be considered as storage space in a storage device. For ease of understanding, the storage device may be referred to as the first storage device. The first storage device may specifically correspond to a storage site 130 in the distributed storage system 100. An implementation process of storing the to-be-stored data object in the distributed storage system 100 may be as follows: A client 110 initiates a data storage request, where the data storage request carries specific location information of the storage site 130, and sends, to the storage site 130, that is, the first storage device, based on the location information and for storage, the to-be-stored data object carried in the data storage request; the storage site 130 stores the to-be-stored data object in a data chunk that is not encapsulated; and if the data chunk has no remaining storage space after the to-be-stored data object is received, or the remaining storage space is less than a predetermined threshold, the storage site 130 encapsulates the data chunk, and a new data object is no longer accepted by the encapsulated data chunk. In this case, the storage site 130 may add, to a storage success message returned to the client 110, a message indicating that the data chunk is already encapsulated, and when initiating a new storage request, the client 110 may select a different storage site 130 for performing a storage operation.

[0080] S220. Encode the data chunk, to generate an error-correcting data chunk.

[0081] When a total size of data objects stored in the data chunk approximates the size limit of the data chunk, a data object is no longer stored in the data chunk. In other words, the data chunk is encapsulated. Specifically, a write operation attribute may be disabled for the data object. Then, data redundancy encoding, such as XOR encoding or EC encoding, is performed on the data chunk. EC encoding is used as an example to describe how to perform redundancy encoding. An EC encoding process may be understood as: Information including K objects is changed to information including K+M objects, and when any M or fewer objects of the K+M objects are damaged, the M damaged objects can be restored by using remaining K objects. A relatively frequently-used EC encoding algorithm is Reed-Solomon Code (Reed-solomon codes, RS-code). According to the RS-code, the following formula is used to calculate error-correcting data (parity) in a finite field based on original data, namely, the data chunk.

[ P 1 P 2 P M ] = [ 1 1 1 1 a 1 1 a 1 K - 1 1 a M - 1 1 a M - 1 K - 1 ] * [ D 1 D 2 D K ] ##EQU00001##

[0082] D.sub.1 to D.sub.K represent data chunks, P.sub.1 to P.sub.M represent error-correcting data chunks, and a matrix is referred to as a Vandermonde matrix. In the present invention, the error-correcting data chunk may be generated by using the RS-Code. The error-correcting data chunk and the data chunk may be used to restore damaged data chunks within a maximum acceptable damage range, in other words, a quantity of the damaged data chunks is not greater than M.