Session Description Protocol Mechanisms For Signaling Radio Access Network Capabilities In Multimedia Telephony Sessions

Oyman; Ozgur ; et al.

U.S. patent application number 16/353783 was filed with the patent office on March 14, 2019 and published on 2019-07-11 for session description protocol mechanisms for signaling radio access network capabilities in multimedia telephony sessions. The applicant listed for this patent is Intel Corporation. Invention is credited to Usharani Ayyalasomayajula, Thomas Luetzenkirchen, Ozgur Oyman, Jerome Parron, Fabrice Plante, Ganesh Vijayan.

| Application Number | 20190215729 16/353783 |

| Family ID | 67141210 |

| Filed Date | 2019-03-14 |

| United States Patent Application | 20190215729 |

| Kind Code | A1 |

| Oyman; Ozgur ; et al. | July 11, 2019 |

SESSION DESCRIPTION PROTOCOL MECHANISMS FOR SIGNALING RADIO ACCESS NETWORK CAPABILITIES IN MULTIMEDIA TELEPHONY SESSIONS

Abstract

Systems, apparatuses, methods, and computer-readable media are provided for negotiating Radio Access Network (RAN)-level capabilities toward improving end-to-end quality of Internet Protocol Multimedia Subsystem (IMS) communication sessions, such as Voice over Long-Term Evolution (VoLTE) calls. Disclosed embodiments include Session Description Protocol-based mechanisms to signal the RAN-level capabilities. The RAN-level capabilities may include, for example, delay budget information signaling, Transmission Time Interval bundling, RAN frame aggregation, RAN-assisted codec adaptation or access network bitrate recommendation, and/or other like capabilities. Other embodiments may be described and/or claimed.

| Inventors: | Oyman; Ozgur; (Palo Alto, CA) ; Luetzenkirchen; Thomas; (Taufkirchen BY, DE) ; Plante; Fabrice; (Hillsboro, OR) ; Ayyalasomayajula; Usharani; (Bangalore, IN) ; Vijayan; Ganesh; (Bangalore, IN) ; Parron; Jerome; (Fuerth BY, DE) | ||||||||||

| Applicant: | Intel Corporation |

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 67141210 | ||||||||||

| Appl. No.: | 16/353783 | ||||||||||

| Filed: | March 14, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 62643541 | Mar 15, 2018 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 65/80 20130101; H04L 65/1016 20130101; H04W 76/28 20180201; H04W 28/22 20130101; H04W 28/20 20130101; H04W 76/27 20180201; H04W 8/24 20130101; H04L 5/0082 20130101; H04W 80/10 20130101; H04L 65/1006 20130101; H04L 65/608 20130101; H04W 80/02 20130101 |

| International Class: | H04W 28/20 20060101 H04W028/20; H04W 8/24 20060101 H04W008/24; H04W 80/02 20060101 H04W080/02; H04W 80/10 20060101 H04W080/10; H04W 76/27 20060101 H04W076/27; H04W 76/28 20060101 H04W076/28; H04L 5/00 20060101 H04L005/00 |

Claims

1. A System-on-Chip (SoC) to be implemented in a first user equipment (UE), the SoC comprising: baseband circuitry to generate a Session Description Protocol (SDP) message to indicate one or more radio capabilities of the first UE; and interface circuitry coupled with the baseband circuitry, the interface circuitry to provide the SDP message to radio-frequency (RF) circuitry for transmission to a second UE via a Radio Access Network (RAN) node.

2. The SoC of claim 1, wherein the SDP message is a first SDP message, the one or more radio capabilities of the first UE are first radio capabilities, and wherein the baseband circuitry is further to: identify one or more second radio capabilities of the second UE within a second SDP message obtained from the second UE; and determine one or more desired radio level adaptations based on the one or more second radio capabilities.

3. The SoC of claim 2, wherein the baseband circuitry is further to: determine the one or more desired radio level adaptations based on the one or more second radio capabilities and based on detected radio conditions.

4. The SoC of claim 3, wherein the detected radio conditions are one or both of conditions of a radio link between the first UE and the RAN node and conditions of a radio link between the second UE and another RAN node, and wherein: the baseband circuitry is further to generate a Radio Resource Control (RRC) message to indicate a desired radio level adaptation; and the interface circuitry is further to provide the RRC message to the RF circuitry for transmission to the RAN node.

5. The SoC of claim 4, wherein the desired radio level adaptation is to turn off connected mode discontinuous reception (cDRX) when the one or more second radio capabilities indicate support of Delay Budget Information (DBI) signaling and Transmission Time Interval (TTI) bundling, the detected radio conditions are better than threshold radio conditions, and an end-to-end (e2e) packet loss rate is below a threshold e2e packet loss rate.

6. The SoC of claim 4, wherein the RRC message is to include a UE Assistance Information (UEAssistanceInformation) information element (IE), and the UEAssistanceInformation IE is to indicate the desired radio level adaptation.

7. The SoC of claim 2, wherein: the baseband circuitry is to further generate a first recommended bit rate Media Access Control (MAC) Control Element (CE), the first recommended bit rate MAC CE to query for a recommended bit rate or indicate a desired radio level adaptation; and the interface circuitry is further to provide the first recommended bit rate MAC CE to the RF circuitry for transmission to the RAN node, and obtain a second recommended bit rate MAC CE from the RF circuitry, the second recommended bit rate MAC CE to include Access Network Bitrate Recommendation (ANBR) information.

8. The SoC of claim 7, wherein the baseband circuitry is further to: adapt a bit rate based on the ANBR information; generate a Codec Mode Request (CMR) or an Application-defined Real-Time Transport Control Protocol packet (APP) for voice rate adaptation based on the ANBR information; or generate a Temporary Maximum Media Bit-rate Request (TMMBR) or a Temporary Maximum Media Bit-rate Notification (TMMBN) message for video rate adaptation based on the ANBR information.

9. The SoC of claim 2, wherein the first SDP message is an SDP offer message and the second SDP message is an SDP answer message, or the second SDP message is an SDP offer message and the first SDP message is an SDP answer message, and wherein the baseband circuitry is to: generate the first SDP message to include a first radio capabilities attribute to indicate the one or more first radio capabilities; and identify the one or more second radio capabilities within a second radio capabilities attribute in the second SDP message.

10. The SoC of claim 1, wherein the one or more radio capabilities include one or more of a delay budget reporting capability, a transmission time interval (TTI) bundling capability, a RAN frame aggregation capability, and a RAN-assisted codec adaptation capability or an ANBR signaling capability.

11. One or more non-transitory computer-readable media (NTCRM) comprising instructions, wherein execution of the instructions is to cause a first user equipment (UE) to operate a Multimedia Telephony Service for Internet Protocol Multimedia Subsystem (MTSI) client to: generate a Session Description Protocol (SDP) message to indicate one or more Radio Access Network (RAN)-level radio capabilities of the first UE; and control transmission of the SDP message to a second UE via a radio link between the first UE and a RAN node.

12. The one or more NTCRM of claim 11, wherein the SDP message is a first SDP message, the one or more radio capabilities of the first UE are first radio capabilities, and wherein execution of the instructions is to cause the first UE to operate the MTSI client to: identify one or more second radio capabilities of the second UE within a second SDP message obtained from the second UE; and determine one or more desired radio level adaptations based on the one or more second radio capabilities and based on detected radio conditions, wherein the detected radio conditions are one or both of conditions of the radio link between the first UE and the RAN node and conditions of a radio link between the second UE and another RAN node.

13. The one or more NTCRM of claim 12, wherein execution of the instructions is to cause the first UE to operate the MTSI client to: generate a Radio Resource Control (RRC) message to indicate a desired radio level adaptation, wherein the desired radio level adaptation is to turn off connected mode discontinuous reception (cDRX) when the one or more second radio capabilities indicate support of Delay Budget Information (DBI) signaling and Transmission Time Interval (TTI) bundling, the detected radio conditions are better than threshold radio conditions, or an end-to-end (e2e) packet loss rate is below a threshold e2e packet loss rate; and control transmission of the RRC message to the RAN node.

14. The one or more NTCRM of claim 13, wherein the RRC message is to include a UE Assistance Information (UEAssistanceInformation) information element (IE), and the UEAssistanceInformation IE is to indicate the desired radio level adaptation.

15. The one or more NTCRM of claim 12, wherein execution of the instructions is to cause the first UE to operate the MTSI client to: generate a recommended bit rate Media Access Control (MAC) Control Element (CE) to include a recommended bit rate query, the recommended bit rate query to query for a recommended bit rate or indicate a desired radio level adaptation; control transmission of the recommended bit rate MAC CE including the recommended bit rate query to the RAN node; and receive, from the RAN node, a recommended bit rate MAC CE that includes a recommended bit rate message, the recommended bit rate message to indicate an Access Network Bitrate Recommendation (ANBR), and the ANBR is based on the recommended bit rate query.

16. The one or more NTCRM of claim 15, wherein execution of the instructions is to cause the first UE to operate the MTSI client to: adapt a bit rate based on the ANBR; generate a Codec Mode Request (CMR) or an Application-defined Real-Time Transport Control Protocol packet (APP) for voice rate adaptation based on the ANBR; or generate a Temporary Maximum Media Bit-rate Request (TMMBR) or a Temporary Maximum Media Bit-rate Notification (TMMBN) message for video rate adaptation based on the ANBR.

17. The one or more NTCRM of claim 12, wherein the first SDP message is an SDP offer message and the second SDP message is an SDP answer message, or the second SDP message is an SDP offer message and the first SDP message is an SDP answer message, and wherein execution of the instructions is to cause the first UE to operate the MTSI client to: generate the first SDP message to include a first radio capabilities attribute to indicate the one or more first radio capabilities; and identify the one or more second radio capabilities within a second radio capabilities attribute in the second SDP message.

18. An MTSI client in terminal comprising: identification means for identifying radio capabilities of a remote device based on a session description protocol (SDP) offer message obtained from the remote device; message generation means for generating an SDP answer message to include a radio capabilities attribute, the radio capabilities attribute to indicate one or more radio capabilities of the apparatus; means for receiving the SDP offer message from the remote device, wherein the SDP offer message is to include another radio capabilities attribute of the remote device; and means for transmitting the SDP answer message to the remote device.

19. The MTSI client in terminal of claim 18, wherein the radio capabilities attribute of the apparatus is to indicate whether the apparatus supports one or more of a delay budget reporting, transmission time interval (TTI) bundling, Radio Access Network (RAN) frame aggregation, and RAN-assisted codec adaptation or an access network bitrate recommendation (ANBR) signaling capability; and the other radio capabilities attribute of the remote device is to indicate whether the remote device supports one or more of a delay budget reporting, TTI bundling, RAN frame aggregation, and RAN-assisted codec adaptation or an ANBR signaling capability.

20. The MTSI client in terminal of claim 18, further comprising: means for detecting radio conditions local to the MTSI client in terminal, wherein the detected radio conditions are one or both of conditions of the radio link between the MTSI client in terminal and the RAN node and conditions of a radio link between the remote device and another RAN node, and wherein the message generation means is for generating a message to indicate one or more desired radio level adaptations based on the radio capabilities of the remote device and based on the detected radio conditions.

21. The MTSI client in terminal of claim 20, wherein the message is a Radio Resource Control (RRC) message, the means for transmitting is for transmitting the RRC message to a local RAN node; and the means for receiving is for receiving another message from the RAN node based on the RRC message, and the MTSI client in terminal further comprises: means for adjusting a bit rate based on the message received from the RAN node.

22. The MTSI client in terminal of claim 21, wherein: when the detected radio conditions include a relatively long packet delay and jitter, the other message is a recommended bit rate Media Access Control (MAC) Control Element (CE); and when the detected radio conditions include a relatively large number of packet losses, the other message is a delay budget information acknowledgement indicating that additional budget has been granted by the RAN node.

23. The MTSI client in terminal of claim 21, wherein the message is a recommended bit rate query MAC CE for querying the recommended bitrate or indicating a desired bit rate, and wherein the means for receiving is for receiving a recommended bit rate message MAC CE, the recommended bit rate message MAC CE including an ANBR based on the recommended bit rate query MAC CE.

24. The MTSI client in terminal of claim 23, further comprising: means for adapting a bit rate based on the ANBR.

25. The MTSI client in terminal of claim 23, wherein the message generation means is for: generating a Codec Mode Request (CMR) or an Application-defined Real-Time Transport Control Protocol packet (APP) for voice rate adaptation based on the ANBR; or generating a Temporary Maximum Media Bit-rate Request (TMMBR) or a Temporary Maximum Media Bit-rate Notification (TMMBN) message for video rate adaptation based on the ANBR.

Description

RELATED APPLICATIONS

[0001] The present application claims priority under 35 U.S.C. § 119 to U.S. Provisional App. No. 62/643,541 filed Mar. 15, 2018, the contents of which are hereby incorporated by reference in their entirety.

FIELD

[0002] Various embodiments of the present application generally relate to the field of wireless communications, and in particular, to Multimedia Telephony Service for IMS technologies.

BACKGROUND

[0003] MTSI supports conversational speech, video, and text transported over RTP to deliver a user experience equivalent to or better than that of circuit switched conversational services using the same amount of network resources. MTSI defines media handling (e.g., signaling, transport, jitter buffer management, packet-loss handling, and adaptation), as well as interactivity (e.g., adding or dropping media during a call). The focus is to ensure a reliable and interoperable service with a predictable media quality, while allowing for flexibility in the service offerings. MTSI uses SIP, SDP, and SDP capabilities negotiation protocols for media negotiation and configuration. No signaling mechanisms are currently defined to provide e2e coordination between UEs with respect to radio capabilities.

BRIEF DESCRIPTION OF THE FIGURES

[0004] FIG. 1 depicts an architecture of a system of a network in accordance with some embodiments.

[0005] FIG. 2 illustrates an example Multimedia Telephony Service for IMS architecture according to various embodiments.

[0006] FIG. 3 depicts an example use case for RAN delay budget reporting.

[0007] FIG. 4 depicts an example procedure for RAN delay budget reporting in MTSI according to some embodiments.

[0008] FIG. 5 shows an example bitmask, which may be used for SDP-based negotiation of RAN capabilities, in accordance with various embodiments.

[0009] FIG. 6 depicts an example of infrastructure equipment in accordance with various embodiments.

[0010] FIG. 7 depicts example components of a computer platform in accordance with various embodiments.

[0011] FIG. 8 depicts a block diagram illustrating components, according to some example embodiments, able to read instructions from a machine-readable or computer-readable medium (e.g., a non-transitory machine-readable storage medium) and perform any one or more of the methodologies discussed herein.

[0012] FIG. 9 depicts example components of baseband circuitry and radio frequency circuitry in accordance with various embodiments.

[0013] FIG. 10 is an illustration of various protocol functions that may be used for various protocol stacks in accordance with various embodiments.

[0014] FIG. 11 depicts example bit rate recommendation MAC CEs according to various embodiments.

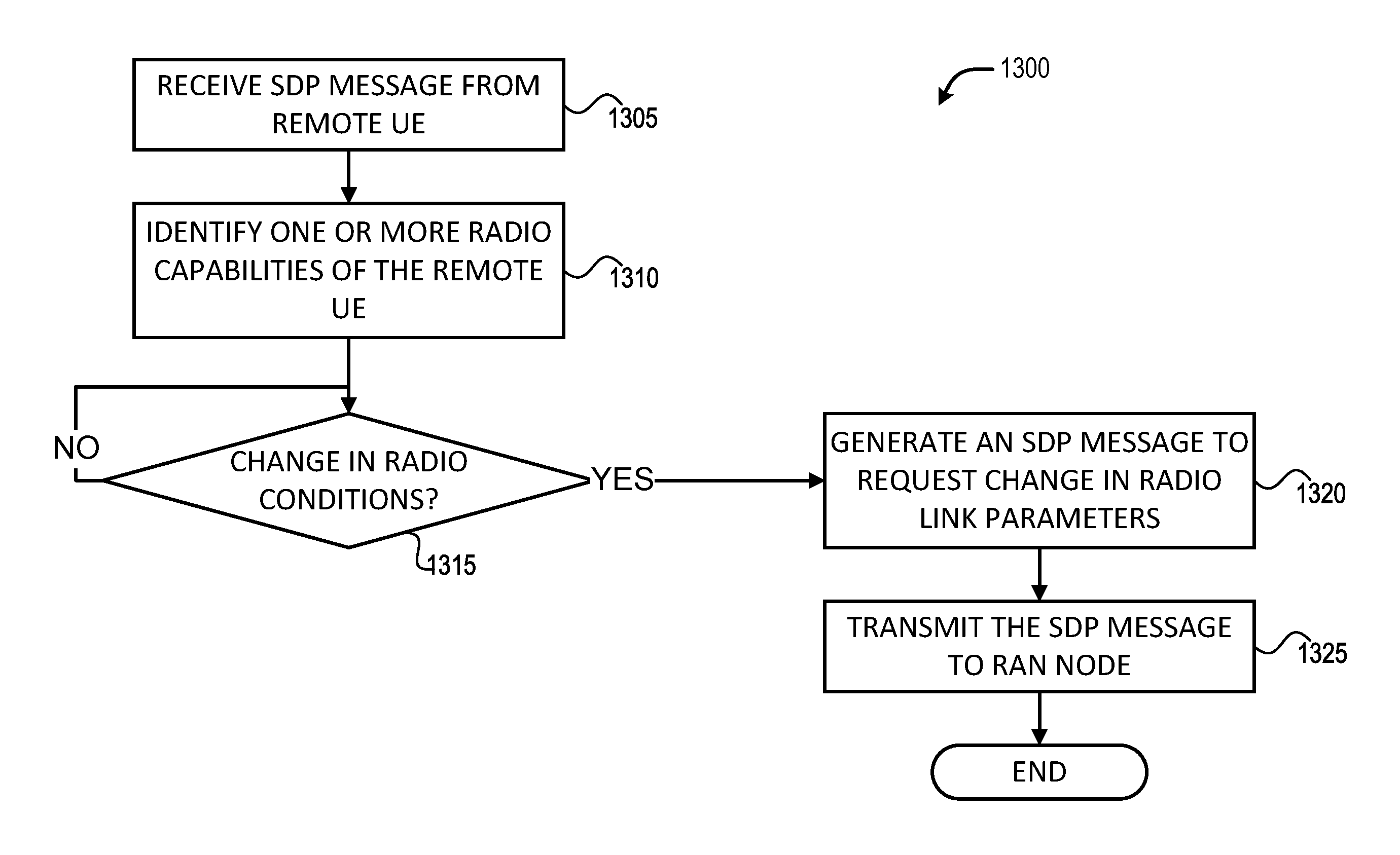

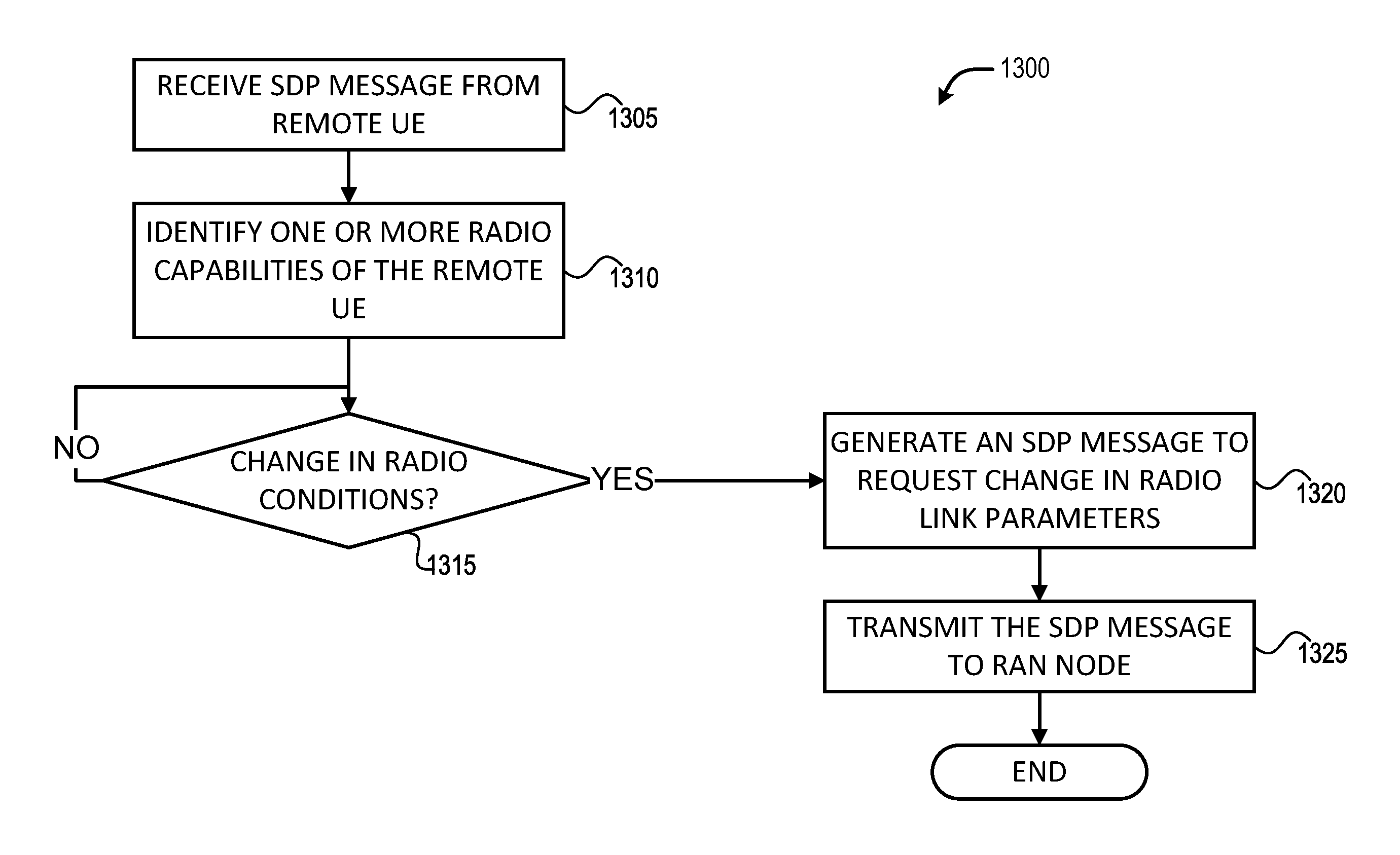

[0015] FIGS. 12-13 depict example processes for practicing the various embodiments discussed herein.

DETAILED DESCRIPTION

[0016] Embodiments herein are related to SDP-based mechanisms to signal specific RAN-level capabilities toward improving e2e quality of VoLTE/ViLTE calls. Signaled RAN-level capabilities may include, for example, Delay Budget Reporting, TTI bundling, RAN frame aggregation, RAN-assisted codec adaptation, etc. According to various embodiments, a first UE is operable to perform multimedia telephony with a second UE, wherein the second UE is remote from the first UE. The first UE is configured to receive an SDP offer message from the second UE with an attribute describing radio capabilities of the second UE, and send an SDP answer message to the second UE with an attribute describing the radio capabilities of the first UE. In some embodiments, the attribute describing radio capabilities includes descriptors, configurations, and/or parameters pertaining to specific RF circuitry or modem (or baseband circuitry) capabilities. In some embodiments, the specific RF circuitry or modem (or baseband circuitry) capabilities include one or more of a delay budget reporting capability, a TTI bundling capability, a RAN frame aggregation and RAN-assisted codec adaptation capability, and/or other like capabilities. Other embodiments may be described and/or claimed.
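As a minimal sketch of how the capabilities listed above could be packed into a single SDP attribute, the following Python snippet encodes them as a bitmask. The attribute name `ran-capabilities`, the bit assignments, and the hexadecimal value format are assumptions made for illustration only; they are not taken from this description or from any standard.

```python
from enum import IntFlag


class RanCapability(IntFlag):
    """Hypothetical bit assignments for the RAN-level capabilities."""
    DELAY_BUDGET_REPORTING = 0x1
    TTI_BUNDLING = 0x2
    RAN_FRAME_AGGREGATION = 0x4
    RAN_ASSISTED_CODEC_ADAPTATION = 0x8


# Hypothetical attribute name, chosen only for this example.
ATTR_NAME = "ran-capabilities"


def format_attribute(caps: RanCapability) -> str:
    """Render the capability set as one SDP attribute line."""
    return f"a={ATTR_NAME}:0x{int(caps):02X}"


def parse_attribute(line: str) -> RanCapability:
    """Recover the capability set from an attribute line."""
    prefix = f"a={ATTR_NAME}:"
    if not line.startswith(prefix):
        raise ValueError(f"not a {ATTR_NAME} attribute: {line!r}")
    return RanCapability(int(line[len(prefix):], 16))


local = RanCapability.DELAY_BUDGET_REPORTING | RanCapability.TTI_BUNDLING
line = format_attribute(local)
remote = parse_attribute(line)
print(line, RanCapability.TTI_BUNDLING in remote)
```

A UE receiving such a line in an offer could test individual bits, as in the last line, before deciding which radio level adaptations to request.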

[0017] Referring now to FIG. 1, an example architecture of a system 100 of a network according to various embodiments is illustrated. The following description is provided for an example system 100 that operates in conjunction with the LTE system standards and 5G or NR system standards as provided by 3GPP technical specifications. However, the example embodiments are not limited in this regard and the described embodiments may apply to other networks that benefit from the principles described herein, such as future 3GPP systems (e.g., Sixth Generation (6G) systems), IEEE 802.16 protocols (e.g., WMAN, WiMAX, etc.), or the like.

[0018] As shown by FIG. 1, the system 100 includes UE 101a and UE 101b (collectively referred to as "UEs 101" or "UE 101"). In this example, UEs 101 are illustrated as smartphones (e.g., handheld touchscreen mobile computing devices connectable to one or more cellular networks), but may also comprise any mobile or non-mobile computing device, such as consumer electronics devices, cellular phones, smartphones, feature phones, tablet computers, wearable computer devices, personal digital assistants, pagers, wireless handsets, desktop computers, laptop computers, in-vehicle infotainment, in-car entertainment devices, an Instrument Cluster, head-up display (HUD) devices, onboard diagnostic devices, dashtop mobile equipment, mobile data terminals, Electronic Engine Management System, electronic/engine control units, electronic/engine control modules, embedded systems, microcontrollers, control modules, engine management systems, networked or "smart" appliances, MTC devices, M2M, IoT devices, and/or the like. As discussed in more detail infra, the UEs 101 (and the RAN nodes 111) incorporate the SDP-based signaling embodiments discussed herein. In these embodiments, the UEs 101 are capable of, inter alia, signaling specific RAN-level capabilities using SDP toward improving e2e quality of VoLTE calls. The RAN-level capabilities may include, for example, delay budget reporting, TTI bundling, RAN frame aggregation, RAN-assisted codec adaptation, and/or the like. These and other embodiments are discussed in more detail infra.

[0019] In some embodiments, any of the UEs 101 may be IoT UEs, which may comprise a network access layer designed for low-power IoT applications utilizing short-lived UE connections. An IoT UE can utilize technologies such as M2M or MTC for exchanging data with an MTC server or device via a PLMN, ProSe or D2D communication, sensor networks, or IoT networks. The M2M or MTC exchange of data may be a machine-initiated exchange of data. An IoT network describes interconnecting IoT UEs, which may include uniquely identifiable embedded computing devices (within the Internet infrastructure), with short-lived connections. The IoT UEs may execute background applications (e.g., keep-alive messages, status updates, etc.) to facilitate the connections of the IoT network.

[0020] The UEs 101 may be configured to connect, for example, communicatively couple, with a RAN 110. In embodiments, the RAN 110 may be an NG RAN or a 5G RAN, an E-UTRAN, or a legacy RAN, such as a UTRAN or GERAN. The term "NG RAN" or the like refers to a RAN 110 that operates in an NR or 5G system 100, and the term "E-UTRAN" or the like refers to a RAN 110 that operates in an LTE or 4G system 100. The UEs 101 utilize connections (or channels) 103 and 104, respectively, each of which comprises a physical communications interface or layer.

[0021] In this example, the connections 103 and 104 are illustrated as an air interface to enable communicative coupling, and can be consistent with cellular communications protocols, such as a GSM protocol, a CDMA network protocol, a PTT protocol, a POC protocol, a UMTS protocol, a 3GPP LTE protocol, a 5G protocol, a NR protocol, and/or any of the other communications protocols discussed herein. In embodiments, the UEs 101 may directly exchange communication data via a ProSe interface 105. The ProSe interface 105 may alternatively be referred to as a SL interface 105 and may comprise one or more logical channels, including but not limited to a PSCCH, a PSSCH, a PSDCH, and a PSBCH.

[0022] The UE 101b is shown to be configured to access an AP 106 (also referred to as "WLAN node 106," "WLAN 106," "WLAN Termination 106," "WT 106" or the like) via connection 107. The connection 107 can comprise a local wireless connection, such as a connection consistent with any IEEE 802.11 protocol, wherein the AP 106 would comprise a WiFi® router. In this example, the AP 106 is shown to be connected to the Internet without connecting to the core network of the wireless system (described in further detail below). In various embodiments, the UE 101b, RAN 110, and AP 106 may be configured to utilize LWA operation and/or LWIP operation. The LWA operation may involve the UE 101b in RRC_CONNECTED being configured by a RAN node 111a-b to utilize radio resources of LTE and WLAN. LWIP operation may involve the UE 101b using WLAN radio resources (e.g., connection 107) via IPsec protocol tunneling to authenticate and encrypt packets (e.g., IP packets) sent over the connection 107. IPsec tunneling may include encapsulating the entirety of original IP packets and adding a new packet header, thereby protecting the original header of the IP packets.

[0023] The RAN 110 can include one or more AN nodes or RAN nodes 111a and 111b (collectively referred to as "RAN nodes 111" or "RAN node 111") that enable the connections 103 and 104. These access nodes can be referred to as BS, gNBs, RAN nodes, eNBs, NodeBs, RSUs, TRxPs or TRPs, and so forth, and can comprise ground stations (e.g., terrestrial access points) or satellite stations providing coverage within a geographic area (e.g., a cell). The term "NG RAN node" or the like refers to a RAN node 111 that operates in an NR or 5G system 100 (for example, a gNB), and the term "E-UTRAN node" or the like refers to a RAN node 111 that operates in an LTE or 4G system 100 (e.g., an eNB). According to various embodiments, the RAN nodes 111 may be implemented as one or more of a dedicated physical device such as a macrocell base station, and/or a low power base station for providing femtocells, picocells or other like cells having smaller coverage areas, smaller user capacity, or higher bandwidth compared to macrocells.

[0024] In some embodiments, all or parts of the RAN nodes 111 may be implemented as one or more software entities running on server computers as part of a virtual network, which may be referred to as a CRAN and/or a vBBUP. In these embodiments, the CRAN or vBBUP may implement a RAN function split, such as a PDCP split wherein RRC and PDCP layers are operated by the CRAN/vBBUP and other L2 protocol entities are operated by individual RAN nodes 111; a MAC/PHY split wherein RRC, PDCP, RLC, and MAC layers are operated by the CRAN/vBBUP and the PHY layer is operated by individual RAN nodes 111; or a "lower PHY" split wherein RRC, PDCP, RLC, MAC layers and upper portions of the PHY layer are operated by the CRAN/vBBUP and lower portions of the PHY layer are operated by individual RAN nodes 111. This virtualized framework allows the freed-up processor cores of the RAN nodes 111 to perform other virtualized applications. In some implementations, an individual RAN node 111 may represent individual gNB-DUs that are connected to a gNB-CU via individual F1 interfaces (not shown by FIG. 1). In these implementations, the gNB-DUs may include one or more remote radio heads or RFEMs (see, e.g., FIG. 6), and the gNB-CU may be operated by a server that is located in the RAN 110 (not shown) or by a server pool in a similar manner as the CRAN/vBBUP. Additionally or alternatively, one or more of the RAN nodes 111 may be next generation eNBs (ng-eNBs), which are RAN nodes that provide E-UTRA user plane and control plane protocol terminations toward the UEs 101, and are connected to a 5GC 120 via an NG interface.

[0025] In V2X scenarios one or more of the RAN nodes 111 may be or act as RSUs. The term "Road Side Unit" or "RSU" refers to any transportation infrastructure entity used for V2X communications. An RSU may be implemented in or by a suitable RAN node or a stationary (or relatively stationary) UE. In one example, an RSU is a computing device coupled with RF circuitry located on a roadside that provides connectivity support to passing vehicle UEs 101 (vUEs 101). The RSU may also include internal data storage circuitry to store intersection map geometry, traffic statistics, media, as well as applications/software to sense and control ongoing vehicular and pedestrian traffic. The RSU may operate on the 5.9 GHz Direct Short Range Communications (DSRC) band to provide very low latency communications required for high speed events, such as crash avoidance, traffic warnings, and the like. Additionally or alternatively, the RSU may operate on the cellular V2X band to provide the aforementioned low latency communications, as well as other cellular communications services. Additionally or alternatively, the RSU may operate as a Wi-Fi hotspot (2.4 GHz band) and/or provide connectivity to one or more cellular networks to provide uplink and downlink communications. The computing device(s) and some or all of the radiofrequency circuitry of the RSU may be packaged in a weatherproof enclosure suitable for outdoor installation, and may include a network interface controller to provide a wired connection (e.g., Ethernet) to a traffic signal controller and/or a backhaul network.

[0026] Any of the RAN nodes 111 can terminate the air interface protocol and can be the first point of contact for the UEs 101. In some embodiments, any of the RAN nodes 111 can fulfill various logical functions for the RAN 110 including, but not limited to, RNC functions such as radio bearer management, uplink and downlink dynamic radio resource management and data packet scheduling, and mobility management. In embodiments, the UEs 101 can be configured to communicate using OFDM communication signals with each other or with any of the RAN nodes 111 over a multicarrier communication channel in accordance with various communication techniques, such as, but not limited to, an OFDMA communication technique (e.g., for downlink communications) or a SC-FDMA communication technique (e.g., for uplink and ProSe or SL communications), although the scope of the embodiments is not limited in this respect. The OFDM signals can comprise a plurality of orthogonal subcarriers.

[0027] In embodiments, the UEs 101 implement or operate a client for MTSI supporting conversational speech (including DTMF), video, and text transported over RTP with the scope to deliver a user experience equivalent to or better than that of Circuit Switched (CS) conversational services using the same amount of network resources. MTSI defines media handling (e.g., signaling, transport, jitter buffer management, packet-loss handling, adaptation, etc.), as well as interactivity (e.g., adding or dropping media during a call). In these embodiments, the UEs 101 may connect to the IMS (e.g., AS 130) using 3GPP access (e.g., via RAN 110 and CN 120) or using non-3GPP access (e.g., via WLAN 106, Bluetooth, DECT/NG DECT).

[0028] According to various embodiments, UEs 101 may communicate with one another using VoLTE mechanisms. VoLTE is a standard for high-speed wireless communication, which is based on IMS networks where specific profiles for control and media planes of voice service over an LTE network may be defined. In various embodiments, SIP is used to convey information during a call setup procedure. SIP is an application-layer control protocol for creating, modifying, and terminating sessions (e.g., Internet multimedia conferences, Internet telephone calls, and multimedia distribution using an offer/answer model) that works independently of underlying transport protocols and without dependency on the type of session that is being established. SIP works in concert with various protocols for carrying various forms of real-time multimedia session data such as voice, video, and/or text messages. SIP works in concert with these protocols by enabling Internet endpoints (referred to as "user agents") to discover one another and to agree on a characterization of a session they would like to share. For locating prospective session participants, and for other functions, SIP enables the creation of an infrastructure of network hosts (referred to as "proxy servers") to which user agents can send registrations, invitations to sessions, and other requests.

[0029] SIP messages used to create sessions may carry session descriptions that allow participants to agree on a set of compatible media types to be used during the communication session. The session descriptions may be formatted according to SDP, wherein media type and parameter negotiation and media setup is performed with SDP that is carried as payload in SIP messages. SIP employs many aspects of the HTTP request/response model, including reuse of header fields, encoding rules, and status codes of HTTP. Furthermore, a suitable transport layer protocol may be used to convey data before session establishment (e.g., audio and/or video as early media) or during an established session. The transport layer protocol may include, for example, UDP, TCP, RTSP, SCTP, RTP, SRTP, and/or the like for the transmission of media streams (e.g., voice, video). Moreover, the SIP messages may be encrypted using TLS, SRTP, and/or the like. In some embodiments, another encapsulation protocol, such as RTSP, may be used to convey SDP messages. RTSP is an application-level protocol for controlling the delivery of data with real-time properties. RTSP provides an extensible framework to enable controlled, on-demand delivery of real-time data, such as audio and video. An RTSP client and server negotiate an appropriate set of parameters for media delivery, partially using SDP syntax to describe those parameters.
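To illustrate how an SDP session description travels as the payload of a SIP message, the following Python sketch assembles a simplified INVITE request whose body is an SDP description. The host names, tag, branch, and Call-ID values are placeholders, and the function `build_invite` is a hypothetical helper written for this example; a real user agent would generate these values and handle many more headers.

```python
def build_invite(sdp_body: str, caller: str, callee: str, call_id: str) -> str:
    """Build a simplified SIP INVITE carrying an SDP body (illustrative only)."""
    # SIP and SDP lines end with CRLF, so normalize the body first.
    body = sdp_body.replace("\r\n", "\n").replace("\n", "\r\n")
    headers = [
        f"INVITE sip:{callee} SIP/2.0",
        # Placeholder Via host and branch value.
        "Via: SIP/2.0/UDP ue-a.example.com;branch=z9hG4bK776asdhds",
        "Max-Forwards: 70",
        f"To: <sip:{callee}>",
        f"From: <sip:{caller}>;tag=1928301774",
        f"Call-ID: {call_id}",
        "CSeq: 1 INVITE",
        f"Contact: <sip:{caller}>",
        # The header fields reuse the HTTP-style "Name: value" form.
        "Content-Type: application/sdp",
        f"Content-Length: {len(body.encode('utf-8'))}",
    ]
    # A blank line separates the header section from the SDP payload.
    return "\r\n".join(headers) + "\r\n\r\n" + body


sdp = "v=0\no=- 1 1 IN IP4 192.0.2.1\ns=-\nt=0 0\n"
message = build_invite(sdp, "alice@example.com", "bob@example.com", "a84b4c76e66710")
print(message)
```

The `Content-Type: application/sdp` header is what tells the receiving user agent to interpret the body as a session description.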

[0030] SDP is used to set up a call and create a session, such as a real-time text, voice, or video call. The purpose of SDP is to convey information about media streams in multimedia sessions to allow the recipients of a session description to participate in the session. SDP provides a means to communicate the existence of a session, and a means to convey sufficient information to enable joining and participating in the session. Media streams can be many-to-many, and sessions need not be continually active. An SDP session description includes the following: session name and purpose; time(s) the session is active; the media comprising the session; and information needed to receive those media (e.g., addresses, ports, formats, etc.). The session description may also include information about the bandwidth to be used by the session, and contact information for the person responsible for the session.

[0031] During the creation of the session, two endpoints (e.g., UE 101a and UE 101b) that will later exchange media packets send each other SDP offer messages and answer messages so that the two endpoints exchange respective capability information. For example, a sender (e.g., UE 101a) may want to understand what kind of decoders the receiver (e.g., UE 101b) can support, what kind of codecs the receiver can support, and so forth. The sender and the receiver need to agree on the parameters to be used during the session, such as the codecs, protocols, payload formats, and other like parameters related to the delivery of content. According to various embodiments, various radio capabilities of the UEs 101 may also be indicated during the SDP offer/answer exchanges.

[0032] The offer/answer exchange of session descriptions assumes the existence of a higher layer protocol (e.g., SIP), which is capable of exchanging SDP for the purposes of session establishment between agents. SDP protocol operation begins when one agent (e.g., UE 101a) sends an initial offer to another agent (e.g., UE 101b). An agent is the protocol implementation involved in the offer/answer exchange, and there are at least two agents involved in an offer/answer exchange. An offer is an SDP message sent by an offerer, and an offerer is an agent that generates a session description in order to create or modify a session. An offer is an initial offer if it is outside of any context that may have already been established through the higher layer protocol. It is assumed that the higher layer protocol provides maintenance of some kind of context which allows the various SDP exchanges to be associated together.

[0033] The agent receiving the offer may generate an answer, or the agent may reject the offer. An answer is an SDP message sent by an answerer in response to an offer, and an answerer is an agent which receives a session description from another agent describing aspects of desired media communication, and then responds to that with its own session description. The means for rejecting an offer are dependent on the higher layer protocol. The offer/answer exchange is atomic in that if the answer is rejected, the session reverts to the state prior to the offer, which may be absence of a session. At any time, either agent may generate a new offer that updates the session. However, an agent may not generate a new offer if it has received an offer that it has not yet answered or rejected. Furthermore, an agent may not generate a new offer if the agent has generated a prior offer for which it has not yet received an answer or a rejection. If an agent receives an offer after having sent one, but before receiving an answer to it, this is considered a glare condition. The term "glare" was originally used in circuit switched telecommunications networks to describe the condition where two switches both attempt to seize the same available circuit on the same trunk at the same time. For purposes of the present disclosure, "glare" may mean that both agents have attempted to send an updated offer at the same time.
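The offer/answer rules above can be pictured as a small state machine. The following Python sketch is illustrative only and is not part of the disclosed embodiments; the class and method names (OfferAnswerAgent, send_offer, receive_offer, complete) are hypothetical, and the sketch simplifies by treating the accepted offer as the committed session description.

```python
from enum import Enum, auto


class OfferState(Enum):
    STABLE = auto()          # no offer outstanding
    OFFER_SENT = auto()      # local offer awaiting an answer or rejection
    OFFER_RECEIVED = auto()  # remote offer not yet answered or rejected


class GlareError(Exception):
    """An offer arrived while a locally generated offer was still pending."""


class OfferAnswerAgent:
    """Hypothetical agent that enforces the offer/answer ordering rules."""

    def __init__(self, session=None):
        self.state = OfferState.STABLE
        self.session = session  # committed description; None means no session
        self._pending = None

    def send_offer(self, sdp):
        # A new offer is not allowed while any offer is outstanding.
        if self.state is not OfferState.STABLE:
            raise RuntimeError("an offer is already outstanding")
        self._pending = sdp
        self.state = OfferState.OFFER_SENT

    def receive_offer(self, sdp):
        # Receiving an offer after sending one, before its answer, is glare.
        if self.state is OfferState.OFFER_SENT:
            raise GlareError("offer received while local offer is pending")
        if self.state is OfferState.OFFER_RECEIVED:
            raise RuntimeError("previous remote offer not yet resolved")
        self._pending = sdp
        self.state = OfferState.OFFER_RECEIVED

    def complete(self, accepted):
        # Atomic exchange: on rejection the prior session state is kept.
        if self.state is OfferState.STABLE:
            raise RuntimeError("no offer outstanding")
        if accepted:
            self.session = self._pending
        self._pending = None
        self.state = OfferState.STABLE
```

In this sketch, a rejected initial offer leaves `session` as `None`, which corresponds to the absence of a session described above.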

[0034] For example, an originating UE 101a may generate and send an SIP INVITE request to be delivered to a terminating UE 101b. The INVITE request message may include an SDP offer, at least one media description, and one or more radio capabilities of the UE 101a. The SDP offer may reflect the capabilities and user preferences of the UE 101a for the session. In this example, after the INVITE message is conveyed to the terminating UE 101b, a response message including response code 180 may be conveyed to the originating UE 101a. The response code 180 may indicate that the destination user agent (e.g., terminating UE 101b) received the INVITE, and is alerting the user of the terminating UE 101b of the call/session. While the call/session is in a ringing state, early media may be conveyed between the two UEs 101 using a suitable mechanism, such as encoding media data in RTP packets and conveying those RTP packets according to RTP. Response messages may be sent by a user agent server indicating a result of a received request. Several classes of responses are recognized, determined by the numerical range of result codes. For example, the 200 response code may indicate a successful completion of the request and/or may indicate that a call/session has been established in response to the INVITE message. The SIP and/or SDP messages may include or indicate other information than that described previously such as, for example, user location, which is a determination of the end system to be used for communication, and user availability, which is a determination of the willingness of the called party to engage in communications.
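As an illustration of how response classes follow from the numerical range of the code, the following Python sketch maps a SIP response code to its class. The function name sip_response_class is hypothetical; the class names follow the general SIP convention (1xx through 6xx) rather than anything specific to the present disclosure.

```python
SIP_RESPONSE_CLASSES = {
    1: "Provisional",     # e.g., 180 Ringing
    2: "Success",         # e.g., 200 OK
    3: "Redirection",
    4: "Client Error",
    5: "Server Error",
    6: "Global Failure",
}


def sip_response_class(code: int) -> str:
    """Return the response class for a SIP response code (100 to 699)."""
    if not 100 <= code <= 699:
        raise ValueError(f"not a SIP response code: {code}")
    # The hundreds digit alone determines the class.
    return SIP_RESPONSE_CLASSES[code // 100]
```

For example, `sip_response_class(180)` falls in the provisional class, consistent with the ringing state described above, while `sip_response_class(200)` falls in the success class.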

[0035] An SDP session description itself is entirely textual, and includes a number of lines of text in the form of <type>=<value>. In general, <value> is either a number of fields delimited by a single space character or a free format string, and is case-significant unless a specific field defines otherwise. An SDP session description comprises a session level section followed by zero or more media level sections. The session level part starts with a "v=" line and continues to the first media level section. Each media-level section starts with an "m=" line and continues to the next media-level section or end of the whole session description. Generally, session level values are the default for all media unless overridden by an equivalent media-level value. Example SDP session description parameters are shown by table 1.

TABLE-US-00001 TABLE 1 SDP Session Descriptions

| Field | Description |
|---|---|
| Session level description | |
| v=(protocol version) | Specifies the version of Session Description Protocol. |
| o=<username> <sess-id> <sess-version> <nettype> <addrtype> <unicast-address> | Details about the originator and identification of the session. · <username>: user login. · <sess-id>: numeric string used as unique identifier for the session. · <sess-version>: numeric string used as version number for this session description. · <nettype>: text string specifying the network type, e.g., IN for internet. · <addrtype>: text string specifying the type of the address of the originator, e.g., IP4 or IP6. · <unicast-address>: the address of the machine from where the session is originating, which can be either an FQDN or an IP address. |
| s=<session name> | Only one session name per session description can be specified. It must not be empty; therefore, if no name is assigned to the session, a single empty space should be used as the session name. |
| i=<session information> | Only one session-level "i" field can be specified in the session description. The "i" field can be used in the session or media description. It is primarily intended for labeling media streams when used in the media description section. It can be a human readable description. |
| u=<URI> | The URI (Uniform Resource Identifier) specified in the "u" field is a pointer to additional information about the session. |
| e=<email address> | Email address of the person responsible for the conference or session. |
| p=<phone number> | Specifies contact information for the person responsible for the conference or session. |
| c=<connection information>; c=<nettype> <addrtype> <connection-address> | Connection information can be included in the session description or in the media description. A session description MUST contain either at least one "c=" field in each media description or a single "c=" field at the session level. · <nettype>: a text string describing the network type, e.g., IN for internet. · <addrtype>: a text string describing the type of the address used in connection-address, e.g., IP4 or IP6. · <connection-address>: a multicast IP address is specified including TTL, e.g., 224.2.36.42/127. |
| b=<bwtype>:<bandwidth> | The bandwidth field can be used in the session description, specifying the total bandwidth of the whole session, and can also be used in the media description, per media session. · <bwtype>: bandwidth type can be CT (conference total), the upper limit of bandwidth to be used, or AS (application specific), the application's concept of maximum bandwidth. · <bandwidth>: interpreted as kilobits per second by default. |
| z=<adjustment time> <offset> <adjustment time> <offset> | To schedule a repeated session that spans a change from daylight saving time to standard time or vice versa, it is necessary to specify the difference from the originating time. |
| k=<method>:<encryption key> | If the channel is secure and trusted, SDP can be used to convey encryption keys. A key can be specified for the whole session or for each media description. |
| a=<attribute>:<value> | Zero or more session attribute lines. Attributes may be defined at "session-level" or at "media-level" or both. Session level attributes are used to advertise additional information that applies to the conference as a whole. Media level attributes are specific to the media, i.e., advertising information about the media stream. |
| Time description | |
| t=<start-time> <stop-time> | Specifies the start and stop times for a session. If a session is active at irregular intervals, multiple time entries can be used. |
| r=<repeat interval> <active duration> <offsets from start-time> | Zero or more repeat times. If a session is to be repeated at fixed intervals, the "r" field is used. By default all values should be specified in seconds, but to make the description more compact, time can also be given in different units, such as days, hours or minutes; e.g., r=6d 2h 14m. |
| Media description | |
| m=<media> <port>/<number of ports> <proto> <fmt> | Media name and transport address. This field is used in the media description section to advertise properties of the media stream, such as the port it will be using for transmitting, the protocol used for streaming, and the format or codec. · <media>: used to specify the media type; generally this can be audio, video, text, etc. · <port>: the port to which the media stream will be sent. Multiple ports can also be specified if more than one port is being used. · <proto>: the transport protocol used for streaming, e.g., RTP (Real-time Transport Protocol). · <fmt>: the format of the media being sent, e.g., in which codec the media is encoded; e.g., PCMU, GSM, etc. |
| i=<media title> | Media title or information field. |
| c=<connection information> | Connection information; optional if included at session level. |
| b=<bwtype>:<bandwidth> | Bandwidth information. |
| k=<method>:<encryption key> | Encryption key. |
| a=<attribute>:<value> | Zero or more media attribute lines. |
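The <type>=<value> line structure and the split into one session-level section followed by zero or more media-level sections can be illustrated with a short parser. The Python sketch below is a simplified illustration, not a complete SDP implementation; the function name parse_sdp and the addresses, ports, and payload types in the example are hypothetical.

```python
def parse_sdp(text):
    """Split an SDP description into session-level and media-level lines.

    Returns (session, media), where session is a list of (type, value)
    tuples and media is a list of such lists, one per "m=" section.
    """
    session, media = [], []
    current = session
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        type_, sep, value = line.partition("=")
        if not sep or len(type_) != 1:
            raise ValueError(f"malformed SDP line: {line!r}")
        if type_ == "m":
            # Each "m=" line opens a new media-level section.
            current = []
            media.append(current)
        current.append((type_, value))
    if not session or session[0][0] != "v":
        raise ValueError("session-level section must start with a v= line")
    return session, media


EXAMPLE_SDP = """\
v=0
o=alice 2890844526 2890844526 IN IP4 host.example.com
s=-
c=IN IP4 192.0.2.1
t=0 0
m=audio 49170 RTP/AVP 0
a=rtpmap:0 PCMU/8000
m=video 51372 RTP/AVP 99
a=rtpmap:99 H264/90000
"""

session_lines, media_sections = parse_sdp(EXAMPLE_SDP)
```

Here the session-level "c=" line acts as the default connection information for both media sections, since neither section overrides it with its own "c=" line.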

[0036] Recent voice and video enhancements for LTE include several VoLTE/ViLTE enhancement features including RAN-assisted codec adaptation, VoLTE/ViLTE signaling optimization and VoLTE/ViLTE quality/coverage enhancement. In addition to RAN-assisted codec adaptation, another important feature is VoLTE quality/coverage enhancement functionality. As part of this functionality, a delay budget reporting framework has been specified so that the VoLTE coverage can be effectively enhanced by relaxing the air interface delay budget. This involves the UE 101 using RRC signaling to report DBI. Based on the reported DBI, when the UE 101 is in "good" coverage, the RAN node 111 (e.g., an eNB or gNB) can configure longer DRX for power saving purpose. When a remote UE 101 is in "bad" coverage, the RAN node 111 (e.g., an eNB or gNB) can reduce DRX cycle in order to reduce e2e delay and jitter, and/or configure the remote UE 101 in the "bad" coverage to increase the retransmission times in order to reduce the packet loss.

[0037] While RAN-layer delay budget reporting allows UEs 101 to locally adjust their own air interface delay, such a mechanism does not provide coordination between the UEs 101 to manage delay and jitter on an e2e basis. In particular, considering the autonomous operation of the MTSI sender and MTSI receiver (as discussed in more detail with respect to FIG. 4), an MTSI receiver's decision to turn cDRX off may be dependent on the remote MTSI sender's ability to leverage the available delay budget to further improve the reliability of its transmissions. As such, it would be desirable for the UEs 101 to exchange information about specific RAN capabilities that would be relevant in influencing the remote UE's 101 adaptation and impact the e2e quality of the VoLTE call. Such RAN capabilities may include RAN delay budget reporting, TTI bundling, HARQ support and RAN-assisted codec adaptation or ANBR, and/or other like RAN capabilities.

[0038] Embodiments herein provide mechanisms to enable SDP-based methods to signal specific RAN-level capabilities toward improving e2e quality of VoLTE calls. Signaled RAN-level capabilities may include Delay Budget Reporting, TTI bundling, RAN frame aggregation, RAN-assisted codec adaptation or ANBR, and the like. In various embodiments, a new SDP attribute "RANCapabilities" is defined, which includes dedicated parameters that allow UEs 101 to indicate support for specific RAN capabilities. The RANCapabilities attribute may include individual parameters that correspond to support of each of RAN delay budget reporting, TTI bundling, RAN frame aggregation, RAN-assisted codec adaptation or ANBR, and/or the like. These aspects are discussed in more detail with respect to FIG. 5.

[0039] With respect to ANBR, it has been observed that the ANBR procedures, in the absence of e2e coordination, may suffer from unfavorable consequences such as relatively high PLR and poor quality. Enabling such e2e coordination may be useful for a UE 101 to learn the ANBR capabilities of its remote UE 101, so that the UE 101 may accordingly determine the most suitable media adaptation on its end, for example, in response to ANBR information received from its local RAN node 111 (e.g., an eNB and/or gNB). Moreover, as also observed in 3GPP TR 26.919, such signaling can be useful for the PCF/PCRF when setting GBR<MBR bearers. Furthermore, from a conformance point of view, the LTE_VoLTE_ViLTE_enh test case included in 3GPP TS 36.523-1 assumes that if a UE 101 is capable of receiving ANBR information then it is also capable of using this ANBR information as an adaptation trigger. Such a conformance point is currently not supported by the existing media handling requirements on ANBR in 3GPP TS 26.114, where ANBR is an optional/recommended feature; for example, the UE 101 is free to ignore ANBR information as an adaptation trigger. The present disclosure introduces SDP-based indications of ANBR capabilities. In embodiments, if a UE 101 signals the ANBR SDP capability, then the UE 101 is capable of receiving ANBR information from its access network and its MTSI client is capable of ANBR as defined in clause 10.7 of 3GPP TS 26.114 v16.0.0 (2018 December).

[0040] RAN-assisted codec adaptation or ANBR functionality, as defined in 3GPP TS 26.114, 3GPP TS 36.321, and 3GPP TS 38.321 provides a means for the RAN node 111 (e.g., an eNB or gNB) to send a codec adaptation indication with recommended bit rate to assist the UE 101 to select or adapt to a codec rate for voice or video. The RAN-assisted codec adaptation mechanism supports the uplink/downlink bit rate increase or decrease. For a bearer associated with configuration of MBR greater than GBR, the recommended uplink/downlink bit rate is within boundaries set by the MBR and GBR of the concerned bearer. For uplink or downlink bit rate adaptation, the RAN node 111 may send a recommended bit rate to the UE 101 to inform the UE 101 on the currently recommended transport bit rate on the local uplink or downlink, which the UE 101 may use in combination with other information to adapt the bit rate. For example, the UE 101a may send a bit rate request to the peer UE 101b via application layer messages as specified in 3GPP TS 26.114, which the peer UE 101b may use in combination with other information to adapt the codec bit rate. The recommended bit rate is in kbps at the physical layer at the time when the decision is made. The recommended bit rate for UL and DL is conveyed as a MAC CE from the RAN node 111 to the UE 101. Based on the recommended bit rate from the RAN node 111, a UE 101 may initiate an e2e bit rate adaptation with its peer (e.g., another UE 101 or MGW). The UE 101 may also send a query message to its local RAN node 111 to check if a bit rate recommended by its peer can be provided by the RAN node 111. The UE 101 is not expected to go beyond the recommended bit rate from the RAN node 111.
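The relationship between the recommended bit rate, the bearer's GBR and MBR, and the codec rate chosen by the UE can be sketched as follows. This Python sketch is a simplified illustration under stated assumptions: the function names and the list of codec rates are hypothetical, and the sketch ignores the protocol overhead that separates a physical-layer bit rate from a codec bit rate.

```python
def bounded_recommendation(recommended_kbps, gbr_kbps, mbr_kbps):
    """Keep a recommended bit rate within the bearer's [GBR, MBR] range.

    Applies to a bearer configured with MBR greater than GBR.
    """
    if not mbr_kbps > gbr_kbps:
        raise ValueError("expected a bearer with MBR greater than GBR")
    return max(gbr_kbps, min(recommended_kbps, mbr_kbps))


def select_codec_rate(codec_rates_kbps, recommended_kbps):
    """Pick the highest codec rate that does not exceed the recommendation.

    Falls back to the lowest available rate when every rate exceeds the
    recommendation (an assumption of this sketch, not a stated requirement).
    """
    eligible = [r for r in codec_rates_kbps if r <= recommended_kbps]
    return max(eligible) if eligible else min(codec_rates_kbps)


# Hypothetical codec rates (kbps), for illustration only.
rates = [8, 16, 24, 32]
limit = bounded_recommendation(recommended_kbps=50, gbr_kbps=10, mbr_kbps=40)
chosen = select_codec_rate(rates, limit)
```

In practice the UE may combine the recommendation with other information before adapting, so a real adaptation policy would likely be more involved than this single comparison.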

[0041] According to various embodiments, a session description may include a media-level SDP attribute to indicate support for ANBR. For example, the media-level SDP attribute may be "anbr." Use of ANBR with dynamic bitrate adaptation is described in 3GPP TS 26.114 v16.0.0 (2018 December) clause 10.7.3, and related adaptation of sent and received media is described in 3GPP TS 26.114 v16.0.0 (2018 December) clauses 10.7.3.2 and 10.7.3.3, respectively. At the radio signaling level, ANBR signaling capability, also known as RAN-assisted codec adaptation, is specified in 3GPP TS 36.321 for LTE access and 3GPP TS 38.321 for NR access. The embodiments discussed herein allow for little or no e2e coordination between the UEs 101 on their ANBR-triggered adaptation capabilities.

[0042] If an MTSI client in terminal signals the ANBR attribute in the SDP, then the MTSI client in terminal supports ANBR as described in 3GPP TS 26.114 v16.0.0 (2018 December) clause 10.7, including the use of ANBR with dynamic bitrate adaptation as described in 3GPP TS 26.114 v16.0.0 (2018 December) clause 10.7.3. An MTSI client in terminal is an MTSI client that is implemented in a terminal or UE 101, and an MTSI client is a function in a terminal (or UE 101) or in a network entity (e.g., a MRFP) that supports MTSI. An MTSI client capable of supporting multiple streams may be referred to as an "MSMTSI client." If the MTSI client in terminal signals the ANBR attribute in the SDP, then its UE 101 is capable of RAN-assisted codec adaptation specified in 3GPP TS 36.321 for LTE access and/or 3GPP TS 38.321 for NR access. For LTE access, inclusion of "anbr" in the SDP indicates whether the UE 101 is able to query and receive ANBR information (for both downlink and uplink ANBR) from its eNB. Likewise, for NR access, inclusion of this attribute indicates whether the UE 101 is able to query and receive ANBR information. In various embodiments, the "a=anbr" attribute may only be used on media level.

[0043] Informing the remote MTSI client via the SDP about the ANBR support using the "anbr" attribute helps the remote MTSI client to determine the most suitable media adaptation on its end. This is especially valid if the remote MTSI client itself is also capable of performing ANBR-triggered adaptation, e.g., in response to ANBR information received from its local RAN node 111 (e.g., an eNB or gNB). As an example, for ANBR-triggered rate up-switching, an MTSI client may adapt its sent bitrate and also perform CMR/RTCP-APP/TMMBR/TMMBN signaling more responsively based on the ANBR information it receives from its local RAN node 111, if it knows that the remote MTSI client also supports ANBR. In the absence of the knowledge of the ANBR capabilities of the remote UE 101, an MTSI client may not have dynamic knowledge on the feasibility of the new bitrate based on ANBR over the access network of the remote UE 101, and this may not be desirable especially in a rate up-switching scenario. Furthermore, signaling of ANBR capabilities in the SDP via "a=anbr" can also be useful for the PCF/PCRF when setting GBR<MBR bearers. An example use of the "a=anbr" attribute relative to a media line is as follows: a=anbr. The IANA registration information for the "a=anbr" SDP attribute is provided in Table 2.

TABLE-US-00002 TABLE 2

| Registration item | Value |
|---|---|
| Contact name, email address, and telephone number | 3GPP Specifications Manager; 3gppContact@etsi.org; +33 (0)492944200 |
| Attribute Name (as it will appear in SDP) | anbr |
| Long-form Attribute Name in English | 3GPP access network bitrate recommendation (ANBR) support attribute |
| Type of Attribute | Media level |
| Is Attribute Value subject to the Charset Attribute? | This Attribute is not dependent on charset. |
| Purpose of the attribute | This attribute is used to indicate the UE's ability to use ANBR as an adaptation trigger and also its ability to receive ANBR information from the access network. |
| Appropriate Attribute Values for this Attribute | No values. See clause 6.2.X for detailed usage. |
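As an illustration of how a receiving client might check for the media-level "a=anbr" attribute, the following Python sketch scans an SDP description and reports, per media section, whether the attribute is present. The function name media_sections_with_anbr is hypothetical, as are the ports, payload types, and codecs in the example offer; an "a=anbr" line that appears before the first "m=" line is ignored here because the attribute is used on media level only.

```python
def media_sections_with_anbr(sdp_text):
    """Return a list of (m-line value, anbr_signaled) per media section."""
    results = []
    for raw in sdp_text.splitlines():
        line = raw.strip()
        if line.startswith("m="):
            # A new media-level section begins; no attribute seen yet.
            results.append([line[2:], False])
        elif line == "a=anbr" and results:
            # Attribute applies to the most recent media section.
            results[-1][1] = True
    return [tuple(entry) for entry in results]


EXAMPLE_OFFER = """\
v=0
o=- 0 0 IN IP4 192.0.2.10
s=-
c=IN IP4 192.0.2.10
t=0 0
m=audio 49152 RTP/AVP 96
a=rtpmap:96 AMR-WB/16000
a=anbr
m=video 49154 RTP/AVP 97
a=rtpmap:97 H264/90000
"""

anbr_support = media_sections_with_anbr(EXAMPLE_OFFER)
```

In this example only the audio section signals ANBR support, so a remote client following the reasoning above might apply ANBR-aware adaptation to the audio stream and a more conservative policy to the video stream.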

[0044] Referring back to FIG. 1, the RAN nodes 111 are configured to communicate with one another via interface 112. In embodiments where the system 100 is an LTE system (e.g., when CN 120 is an EPC 120), the interface 112 may be an X2 interface 112. The X2 interface may be defined between two or more RAN nodes 111 (e.g., two or more eNBs and the like) that connect to EPC 120, and/or between two eNBs connecting to EPC 120. In some implementations, the X2 interface may include an X2-U and an X2-C. The X2-U may provide flow control mechanisms for user data packets transferred over the X2 interface, and may be used to communicate information about the delivery of user data between eNBs. For example, the X2-U may provide specific sequence number information for user data transferred from a master eNB (MeNB) to a secondary eNB (SeNB); information about successful in-sequence delivery of PDCP PDUs to a UE 101 from an SeNB for user data; information of PDCP PDUs that were not delivered to a UE 101; information about a current minimum desired buffer size at the SeNB for transmitting user data to the UE; and the like. The X2-C may provide intra-LTE access mobility functionality, including context transfers from source to target eNBs, user plane transport control, etc.; load management functionality; as well as inter-cell interference coordination functionality.

[0045] In embodiments where the system 100 is a 5G or NR system (e.g., when CN 120 is a 5GC 120), the interface 112 may be an Xn interface 112. The Xn interface is defined between two or more RAN nodes 111 (e.g., two or more gNBs and the like) that connect to 5GC 120, between a RAN node 111 (e.g., a gNB) connecting to 5GC 120 and an eNB, and/or between two eNBs connecting to 5GC 120. In some implementations, the Xn interface may include an Xn-U interface and an Xn-C interface. The Xn-U may provide non-guaranteed delivery of user plane PDUs and support/provide data forwarding and flow control functionality. The Xn-C may provide management and error handling functionality; functionality to manage the Xn-C interface; and mobility support for UE 101 in a CM-CONNECTED mode, including functionality to manage the UE mobility for connected mode between one or more RAN nodes 111. The mobility support may include context transfer from an old (source) serving RAN node 111 to a new (target) serving RAN node 111; and control of user plane tunnels between the old (source) serving RAN node 111 and the new (target) serving RAN node 111. A protocol stack of the Xn-U may include a transport network layer built on an IP transport layer, and a GTP-U layer on top of a UDP and/or IP layer(s) to carry user plane PDUs. The Xn-C protocol stack may include an application layer signaling protocol (referred to as XnAP) and a transport network layer that is built on SCTP. The SCTP may be on top of an IP layer, and may provide the guaranteed delivery of application layer messages. In the transport IP layer, point-to-point transmission is used to deliver the signaling PDUs. In other implementations, the Xn-U protocol stack and/or the Xn-C protocol stack may be same or similar to the user plane and/or control plane protocol stack(s) shown and described herein.

[0046] The RAN 110 is shown to be communicatively coupled to a core network, which in this embodiment is CN 120. The CN 120 may comprise one or more network elements 122, which are configured to offer various data and telecommunications services to customers/subscribers (e.g., users of UEs 101) who are connected to the CN 120 via the RAN 110. The CN 120 includes one or more servers 122, which may implement various core network elements or AFs such as those discussed herein. The components of the CN 120 may be implemented in one physical node or separate physical nodes including components to read and execute instructions from a machine-readable or computer-readable medium (e.g., a non-transitory machine-readable storage medium). In some embodiments, NFV may be utilized to virtualize any or all network node functions via executable instructions stored in one or more computer-readable storage mediums (described in further detail below). A logical instantiation of the CN 120 may be referred to as a network slice, and a logical instantiation of a portion of the CN 120 may be referred to as a network sub-slice. NFV architectures and infrastructures may be used to virtualize one or more network functions, alternatively performed by proprietary hardware, onto physical resources comprising a combination of industry-standard server hardware, storage hardware, or switches. In other words, NFV systems can be used to execute virtual or reconfigurable implementations of one or more EPC components/functions.

[0047] In some embodiments, the CN 120 may be an EPC (referred to as "EPC 120"). In these embodiments, the one or more network elements 122 may include or operate one or more MMEs, SGSNs, S-GWs, P-GWs, HSSs, PCRFs, and/or other like LTE core network elements. Additionally, the RAN 110 (referred to as "E-UTRAN 110" or the like) may be connected with the EPC 120 via an S1 interface 113. In embodiments, the S1 interface 113 may be split into two parts, an S1-U interface 114, which carries traffic data between the RAN nodes 111 and the S-GW, and the S1-MME interface 115, which is a signaling interface between the RAN nodes 111 and MMEs. Additionally, the P-GW within the EPC 120 may route data packets between the EPC 120 and external networks such as a network including a PDN 130 via an IP interface 125. The PDN 130 may be an operator external public, a private PDN (e.g., enterprise network, etc.), or an intra-operator PDN (e.g., for provision of IMS and/or IP-CAN services).

[0048] In some embodiments, the CN 120 may be a 5GC (referred to as "5GC 120"). In these embodiments, the network elements 122 may implement, inter alia, an AUSF, AMF, SMF, NEF, PCF, NRF, UDM, AF, UPF, SMSF, N3IWF, NSSF and/or other like NR NFs. Additionally, the RAN 110 (referred to as "5G-RAN 110," "NG-RAN 110," or the like) may be connected with the 5GC 120 via an NG interface 113. In these embodiments, the NG interface 113 may be split into two parts, an NG-U interface 114, which carries traffic data between the RAN nodes 111 and a UPF, and the NG-C interface 115, which is a signaling interface between the RAN nodes 111 and AMFs. Additionally, the UPF within the 5GC 120 may perform packet routing, filtering, inspection, forwarding, etc., between the 5GC 120 and external networks such as a DN 130 via an IP interface 125. The DN 130 may represent one or more data networks, including one or more LADNs, and may be an operator external public, a private PDN (e.g., enterprise network, etc.), or an intra-operator PDN, for example, for provision of IMS and/or IP-CAN services.

[0049] The CN 120 is shown to be communicatively coupled to PDN/DN 130 via an IP communications interface 125. The PDN/DN 130 may include one or more application servers, for example, the AS 250a and 250b depicted by FIG. 2. The application server(s) comprise one or more physical and/or virtualized systems for providing functionality (or services) to one or more clients (e.g., UEs 101) over a network. The server(s) within PDN/DN 130 and/or the server(s) 122 may include various computer devices with rack computing architecture component(s), tower computing architecture component(s), blade computing architecture component(s), and/or the like. The server(s) may represent a cluster of servers, a server farm, a cloud computing service, or other grouping or pool of servers, which may be located in one or more datacenters. The server(s) may also be connected to, or otherwise associated with one or more data storage devices (not shown). Moreover, the server(s) may include an operating system (OS) that provides executable program instructions for the general administration and operation of the individual server computer devices, and may include a computer-readable medium storing instructions that, when executed by a processor of the servers, may allow the servers to perform their intended functions. Suitable implementations for the OS and general functionality of servers are known or commercially available, and are readily implemented by persons having ordinary skill in the art. Generally, the server(s) offer applications or services that use IP/network resources. As examples, the server(s) may provide traffic management services, cloud analytics, content streaming services, immersive gaming experiences, social networking and/or microblogging services, and/or other like services. 
In addition, the various services provided by the server(s) 130 may include initiating and controlling software and/or firmware updates for applications or individual components implemented by the UEs 101. The server(s) can also be configured to support one or more communication services (e.g., VoIP sessions, PTT sessions, group communication sessions, social networking services, etc.) for the UEs 101 via the CN 120.

[0050] FIG. 2 illustrates an example Multimedia Telephony Service for IMS (MTSI) architecture 200 according to various embodiments. MTSI (also referred to as "Multimedia Telephony") is an IMS telephony service that builds on IMS capabilities to establish multimedia communications between terminals (e.g., UEs 101a, 101b) within and in-between operator networks (operator networks 202a, 202b). The terminals connect to the IMS using either a fixed access network or a 3GPP access network.

[0051] The MTSI architecture 200 includes two operator networks, including an operator A network 202a and an operator B network 202b. In this example, operator A network 202a serves UE 101a and operator B network 202b serves UE 101b. The UEs 101a, 101b may include MTSI clients and/or MSMTSI clients. An MTSI client in terminal is an MTSI client that is implemented in a terminal or UE 101. The term "MTSI client in terminal" is used in the present disclosure when entities such as MRFP, MRFC or media gateways are excluded. An MSMTSI client is a multi-stream capable MTSI client supporting multiple streams. An MTSI client may support multiple streams, even of the same media type, without being an MSMTSI client. Such an MTSI client may, for example, add a second video to an ongoing video telephony session.

[0052] Each of the operator networks 202a and 202b includes a RAN 210 (including RAN 210a in the operator A network 202a and RAN 210b in the operator B network 202b) and a PS domain 220 (including PS domain 220a in the operator A network 202a and PS domain 220b in the operator B network 202b), which may be the same or similar to the RAN 110 and CN 120 of FIG. 1, respectively. Each of the operator networks includes various CSCF mechanisms to route control-plane signaling between the UEs 101a and 101b involved in a call, including a P-CSCF 230 (including P-CSCF 230a in the operator A network 202a and P-CSCF 230b in the operator B network 202b) and an S-CSCF 240 (including S-CSCF 240a in the operator A domain and S-CSCF 240b in the operator B domain). Operator B network 202b includes an I-CSCF 245b; however, in other embodiments the operator A network 202a may also include an I-CSCF. In some embodiments, the operator networks 202 may include other elements that are not shown by FIG. 2, such as an MRFP, MRFC, MGW, and/or the like.

[0053] The P-CSCF 230 is the first contact point for the UE 101a, 101b within the IMS. An address of the P-CSCF 230 is discovered by UEs using the mechanism described in the clause "Procedures related to Local CSCF Discovery". The P-CSCF 230 behaves like a proxy (also referred to as "SIP proxy servers" or the like) in that the P-CSCF 230 accepts requests and services the requests internally or forwards them to an appropriate entity. In particular, the P-CSCF 230 forwards SIP register requests received from the UE 101 to an entry point determined using the home domain name, as provided by the UE 101, forwards SIP messages received from the UE 101 to an SIP server (e.g., the S-CSCF 240) whose name the P-CSCF 230 has received as a result of the registration procedure, and forwards the SIP request or response to the UE 101. The P-CSCF 230 may behave as a UA wherein the P-CSCF 230 may terminate and independently generate SIP transactions in abnormal conditions. The P-CSCF 230 also performs SIP message compression/decompression.

[0054] The S-CSCF 240 handles session states in the network. For registration, the S-CSCF 240 may behave as a registrar (also referred to as an "SIP registration server" or the like) in that the S-CSCF 240 accepts registration requests and makes its information available through a location server (e.g., HSS 224). The S-CSCF 240 also notifies subscribers about registration changes including the GRUU sets assigned to registered instances. During the registration process, the S-CSCF 240 provides policy information, if available, for a Public User Identity from the HSS 224 to the P-CSCF 230 and/or UE 101. For example, the policy information includes MPS IMS Subscription status and policy applicable to enterprise network subscribers. For session-related and session-unrelated flows, the S-CSCF 240 provides session control for the sessions of the registered endpoints (e.g., UEs 101), and rejects IMS communication to/from Public User Identity(s) that are barred for IMS communications after completion of registration. The S-CSCF 240 may behave as a proxy server in that the S-CSCF 240 accepts requests and services them internally or forwards them on, possibly after translation. The S-CSCF 240 may behave as a UA in that the S-CSCF 240 may terminate and independently generate SIP transactions. Based on the determined served user, the S-CSCF 240 handles interactions with the services platforms for the support of services. The S-CSCF 240 provides endpoints with service event related information (e.g., notification of tones/announcement together with location of additional media resources, billing notification).

[0055] The I-CSCF 245b is the contact point within an operator's network (e.g., the operator B network 202b) for all IMS connections destined to a subscriber of that network operator (e.g., the UE 101b), or a roaming subscriber currently located within that network operator's service area. The I-CSCF 245b also generates CDRs for charging and resource utilization.

[0056] Each operator network in the IMS architecture 200 also includes an AS 250 (including AS 250a in the operator A network 202a and AS 250b in the operator B network 202b). The AS 250 may influence and impact the SIP session on behalf of the services supported by the operator's network. An AS 250 may host and execute services. The AS 250 may reside either in the user's home network or in a third party location. The third party could be a network or simply a stand-alone AS. In the control plane, the AS 250 provides supplementary services such as call hold/resume, call forwarding, multi-party calls, and/or the like. The AS 250 may be an SIP AS, OSA AS, or CAMEL IM-SSF. The OSA AS does not interact with the IMS network entities directly, but through the OSA SCS-s. An SIP Application Server that supports IMS reference points (e.g., ISC, Sh, Ut (not shown by FIG. 2)) in support of an application is considered part of the IM CN subsystem. Examples of such ASs are SCC AS and TAS. The AS (SIP AS, OSA SCS, and/or IM-SSF) can communicate with the HSS 224 over the Sh and Si interfaces (not shown by FIG. 2). An S-CSCF to AS interface is used to provide services residing in an AS, and an I-CSCF to AS interface is used to forward SIP requests destined to a Public Service Identity hosted by the AS directly to that AS.

[0057] The HSS/SLF 224b is a master database wherein the HSS portion of the HSS/SLF 224 (hereinafter referred to as "HSS 224") includes (or stores) subscription-related information to support the network entities actually handling calls/sessions, and the SLF portion of the HSS/SLF 224 (hereinafter referred to as "SLF 224") includes (or stores) information used to locate the subscription-related information. Although FIG. 2 only depicts HSS/SLF 224b located in the operator B network 202b, in other embodiments, the operator A network 202a may also include an HSS/SLF 224. In some embodiments, the SLF is not required, such as in a single HSS environment (e.g., a server farm architecture) or when the AS 250 is configured/managed to use a pre-defined HSS 224. A home network may contain one or several HSSs 224 depending on the number of mobile subscribers, the capacity of the equipment, and the organization of the network. As an example, the HSS 224 provides support to the call control servers in order to complete the routing/roaming procedures by solving authentication, authorization, naming/addressing resolution, location dependencies, etc.

[0058] The HSS 224 is responsible for holding (or storing) the following user related information: user identification, numbering, and addressing information; user security information including network access control information for authentication and authorization; user location information at inter-system level wherein the HSS 224 supports the user registration and stores inter-system location information, etc.; and user profile information. The HSS 224 also generates user security information for mutual authentication, communication integrity check and ciphering. Based on this information, the HSS 224 also supports the call control and session management entities of the different domains and subsystems of the operator network. The HSS 224 may integrate heterogeneous information, and enable enhanced features in the core network to be offered to the application & services domain, at the same time hiding the heterogeneity. Furthermore, the HSS 224 includes IP multimedia functionality, which provides support to control functions of the IM subsystem such as the CSCF 230, 240, 245. The IP multimedia functionality enables subscriber usage of the IM CN subsystem services and is independent of the access network used to access the IM CN subsystem.

[0059] The SLF 224 is queried by the I-CSCF 245b via a Dx interface (not shown by FIG. 2) during the registration and session setup to get the name of the HSS 224 containing the required subscriber specific data. Furthermore, the SLF 224 is also queried by the S-CSCF 240 via the Dx interface (not shown by FIG. 2) during registration. The SLF 224 is queried by the AS 250 via the Dh interface (not shown by FIG. 2) in conjunction with the Sh interface (not shown by FIG. 2) operation to get the name of the HSS 224 containing the required subscriber specific data. The SLF 224 is queried by a 3GPP AAA server (not shown by FIG. 2) via the Dw interface (not shown by FIG. 2) to get the name of the HSS 224 containing the required subscriber specific data.

[0060] As mentioned previously, the UEs 101 may use SIP, SDP, and SDPCapNeg for media negotiation and configuration. General SIP signaling (see e.g., 3GPP TS 24.229) is used to convey SDP offer and answer messages. The SIP messages include SDP offer and answer messages in the message body portion of the SIP messages. In some implementations, the MTSI client in terminal may use OMA-DM solutions to enhance the SDP negotiation and resource reservation processes.

[0061] The session setup for RTP transported media may determine, for each media, IP address(es), RTP profile, UDP port number(s), codec(s), RTP Payload Type number(s), RTP Payload Format(s), the maximum bandwidth that is allowed in the session, and/or the like. The session setup may also determine ECN usage and any additional session parameters. The session setup for UDP transported media without RTP may determine IP address(es), UDP port number(s), and additional session parameters.
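
As a non-limiting illustration, the per-media parameters listed above may be gathered in a single record and rendered as SDP media-level lines. The following Python sketch is provided only as an aid to understanding; the class name `RtpMediaSetup`, its field names, and the example address, port, payload type, codec, and bandwidth values are assumptions made for illustration and are not taken from the present disclosure.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RtpMediaSetup:
    """Hypothetical record of parameters settled for one RTP media stream."""
    media: str               # media type, e.g. "audio" or "video"
    ip_address: str          # IPv4 connection address for the media
    udp_port: int            # UDP port number
    rtp_profile: str         # e.g. "RTP/AVP" or "RTP/AVPF"
    payload_type: int        # RTP Payload Type number
    codec: str               # encoding name used in the rtpmap attribute
    clock_rate: int          # RTP clock rate in Hz
    max_bandwidth_kbps: int  # maximum bandwidth, carried in b=AS

    def to_sdp_lines(self) -> List[str]:
        # Media-level lines in the order SDP expects: m=, c=, b=, a=
        return [
            f"m={self.media} {self.udp_port} {self.rtp_profile} {self.payload_type}",
            f"c=IN IP4 {self.ip_address}",
            f"b=AS:{self.max_bandwidth_kbps}",
            f"a=rtpmap:{self.payload_type} {self.codec}/{self.clock_rate}",
        ]


# Illustrative values only (192.0.2.1 is a documentation address).
audio = RtpMediaSetup("audio", "192.0.2.1", 49152, "RTP/AVP", 97,
                      "AMR-WB", 16000, 38)
print("\n".join(audio.to_sdp_lines()))
```

The sketch covers a single payload type and omits ECN usage and other session parameters, which an actual session setup may also determine.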

[0062] An MTSI client (e.g., a UE 101) offers at least one RTP profile for each RTP media stream. Multiple RTP profiles may be offered using SDPCapNeg. For voice and real-time text, the first SDP offer may include at least the AVP profile. For video, the first SDP offer for a media type may include at least the AVPF profile. Subsequent SDP offers may include only other RTP profiles if it is known from a preceding offer that this RTP profile is supported by the answerer. The MTSI client may be capable of receiving an SDP offer containing both AVP and AVPF offers in order to support interworking. ECN may be configured for media transported with RTP, for both speech and video.

[0063] SDPCapNeg is used to negotiate RTP profiles for all media types where AVPF is supported. MTSI clients supporting SDPCapNeg may support the complete SDPCapNeg framework. SDPCapNeg attributes that are directly applicable for the RTP profile negotiation include, inter alia, tcap, pcfg and acfg attributes. For voice and real-time text, SDPCapNeg may be used when offering AVPF the first time for a new media type in the session since the support for AVPF in the answering client is not known at this stage. For video, an MTSI client may either offer AVPF and AVP together using SDPCapNeg, or the MTSI client may offer only AVPF without using SDPCapNeg. When offering AVP and AVPF using SDPCapNeg, the MTSI client may offer AVP on the media (m=) line and may offer AVPF using SDPCapNeg mechanisms. The SDPCapNeg mechanisms are used as follows: The support for AVPF is indicated in an attribute (a=) line using the transport capability attribute `tcap`. AVPF may be preferred over AVP. At least one configuration using AVPF may be listed using the attribute for potential configurations `pcfg`.
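
As a non-limiting illustration of the offer described above, the following Python sketch builds the media-level lines of an SDP offer that places AVP on the media (m=) line and offers AVPF through the `tcap` and `pcfg` attributes. The function name `build_capneg_offer` and the example port and payload type are assumptions made for illustration; the sketch lists a single transport capability and a single potential configuration.

```python
from typing import List


def build_capneg_offer(media: str, port: int, payload_types: List[int]) -> List[str]:
    """Hypothetical helper: offer AVP on the m= line and AVPF via SDPCapNeg."""
    pts = " ".join(str(pt) for pt in payload_types)
    return [
        f"m={media} {port} RTP/AVP {pts}",  # AVP is offered on the m= line
        "a=tcap:1 RTP/AVPF",                # transport capability 1 is AVPF
        "a=pcfg:1 t=1",                     # potential configuration 1 uses capability 1
    ]


# Illustrative values only.
offer = build_capneg_offer("video", 49154, [99])
print("\n".join(offer))
```

Because the potential configuration is preferred over the configuration on the m= line under SDPCapNeg, an answerer that supports AVPF would be expected to select AVPF in this example.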

[0064] An invited MTSI client should accept using AVPF whenever supported. If AVPF is to be used in the session then the MTSI client selects one configuration out of the potential configurations defined in the SDP offer for using AVPF; indicates in the media (m=) line of the SDP answer that the profile to use is AVPF; and indicates the selected configuration for using AVPF in the attribute for actual configurations `acfg`. If AVP is to be used then the MTSI client may not indicate any SDPCapNeg attributes for using AVPF in the SDP answer.
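
As a non-limiting illustration of the answerer behavior described above, the following Python sketch selects a potential configuration that uses AVPF, places AVPF on the media (m=) line of the answer, and echoes the selection in `acfg`; otherwise it answers with AVP and no SDPCapNeg attributes. The function name `answer_media`, its parameters, and the example lines are assumptions made for illustration. The sketch assumes that the first offer line is the m= line and that each `pcfg` line names a single transport capability with `t=<number>`; alternatives and attribute capabilities are not handled.

```python
from typing import Dict, List


def answer_media(offer: List[str], local_port: int, supports_avpf: bool) -> List[str]:
    """Hypothetical helper: build the media-level lines of an SDP answer."""
    m_fields = offer[0][2:].split()   # media, port, profile, payload types...
    m_fields[1] = str(local_port)     # the answerer advertises its own port

    # Collect transport capabilities: "a=tcap:<first number> <proto> [<proto> ...]"
    tcaps: Dict[int, str] = {}
    for line in offer:
        if line.startswith("a=tcap:"):
            number, *protos = line[len("a=tcap:"):].split()
            for offset, proto in enumerate(protos):
                tcaps[int(number) + offset] = proto

    if supports_avpf:
        for line in offer:
            if not line.startswith("a=pcfg:"):
                continue
            cfg_number, *params = line[len("a=pcfg:"):].split()
            for param in params:
                if (param.startswith("t=") and param[2:].isdigit()
                        and tcaps.get(int(param[2:])) == "RTP/AVPF"):
                    m_fields[2] = "RTP/AVPF"  # AVPF goes on the m= line of the answer
                    return ["m=" + " ".join(m_fields),
                            f"a=acfg:{cfg_number} {param}"]

    # AVP is used: no SDPCapNeg attributes appear in the answer.
    return ["m=" + " ".join(m_fields)]


# Illustrative offer and port values only.
example_offer = ["m=video 49154 RTP/AVP 99", "a=tcap:1 RTP/AVPF", "a=pcfg:1 t=1"]
print(answer_media(example_offer, 50000, supports_avpf=True))
print(answer_media(example_offer, 50000, supports_avpf=False))
```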

[0065] The SDP may include bandwidth information for each media stream and also for the session in total. The bandwidth information for each media stream and for the session is defined by the application specific bandwidth modifier as defined in RFC 4566. An MTSI client in terminal may include the `a=bw-info` attribute in the SDP offer. When accepting a media type where the `a=bw-info` attribute is included the MTSI client in terminal may include the `a=bw-info` attribute in the SDP answer if it supports the attribute. When the `a=bw-info` attribute is supported, the following bandwidth properties may be included for each RTP payload type in the SDP: Maximum Supported Bandwidth for sending direction; Maximum Desired Bandwidth for sending direction; Minimum Desired Bandwidth for sending direction; Minimum Supported Bandwidth for sending direction; Maximum Supported Bandwidth for receiving direction (with some exceptions); Maximum Desired Bandwidth for receiving direction; Minimum Desired Bandwidth for receiving direction; and Minimum Supported Bandwidth for receiving direction.

[0066] When an MTSI client in terminal receives an SDP offer or answer it may determine the maximum sending rate for the selected codec by selecting the smallest of the following: the bandwidth value, if the b=AS parameter was included in the received SDP offer or answer; the Maximum Supported Bandwidth for the receiving direction, if included in the received SDP; the preconfigured data rate for the selected codec, if the MTSI client has been preconfigured by the operator to use a particular data rate for the selected codec; and the maximum data rate for the selected codec as determined by examining the codec information (e.g., codec, mode, profile, level) and any other media information (e.g., ptime and maxptime) included in the received SDP offer or answer. The maximum data rate is determined assuming no extra bandwidth is allowed for redundancy. The maximum sending rate may be further updated by the MTSI client in terminal based on receiving an indication of the granted QoS.
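
As a non-limiting illustration of the selection described above, the following Python sketch returns the smallest of the candidate values that are present. The function name `max_sending_rate_kbps` and its parameter names are assumptions made for illustration, as is the assumption that all values are expressed in the same unit (kbit/s). The later update based on the granted QoS is not modeled.

```python
from typing import Optional


def max_sending_rate_kbps(codec_max_kbps: float,
                          b_as_kbps: Optional[float] = None,
                          max_sup_bw_recv_kbps: Optional[float] = None,
                          preconfigured_kbps: Optional[float] = None) -> float:
    """Hypothetical helper: smallest of the applicable rate limits.

    codec_max_kbps is the maximum data rate derived from the codec and media
    information, assuming no extra bandwidth for redundancy. The optional
    arguments are None when the corresponding value was not signaled or
    was not preconfigured.
    """
    candidates = [codec_max_kbps]
    for value in (b_as_kbps, max_sup_bw_recv_kbps, preconfigured_kbps):
        if value is not None:
            candidates.append(value)
    return min(candidates)


# Illustrative values only: b=AS is the tightest limit here.
rate = max_sending_rate_kbps(codec_max_kbps=38.0, b_as_kbps=30.0,
                             max_sup_bw_recv_kbps=35.0)
print(rate)
```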