Methods And Apparatus For Exploiting Interfaces Smart Environment Device Application Program Interfaces

Kortz; Samuel W. ; et al.

U.S. patent application number 16/293358, for methods and apparatus for exploiting interfaces smart environment device application program interfaces, was filed with the patent office on 2019-03-05 and published on 2019-07-04. This patent application is currently assigned to Google LLC. The applicant listed for this patent is Google LLC. Invention is credited to Gregory J. Hu, Samuel W. Kortz, Mark McBride, Amanda Surya.

| Application Number | 16/293358 |

| Publication Number | 20190208390 |

| Family ID | 54869564 |

| Publication Date | 2019-07-04 |

| United States Patent Application | 20190208390 |

| Kind Code | A1 |

| Kortz; Samuel W. ; et al. | July 4, 2019 |

Abstract

A tangible, non-transitory, machine-readable medium, comprising instructions to obtain an estimated time of arrival for an occupant of a household; calculate a transition time to reach a desired temperature of the occupant from a current ambient temperature within the household; if the estimated time of arrival is less than or equal to the transition time, activate a transition to the desired temperature; otherwise, if the estimated time of arrival is greater than the transition time, do not activate the transition.
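The decision rule in the abstract can be sketched in a few lines of Python. This is a minimal illustration, not the claimed implementation: it assumes the transition time is estimated from a constant heating/cooling rate (`rate_deg_per_min`, a hypothetical parameter), which the abstract does not specify, and the function names are likewise hypothetical.

```python
def transition_time_minutes(current_temp: float, desired_temp: float,
                            rate_deg_per_min: float) -> float:
    """Estimate the minutes needed to move from current_temp to desired_temp.

    Assumes a constant heating/cooling rate, a simplifying assumption
    made only for this sketch.
    """
    if rate_deg_per_min <= 0:
        raise ValueError("rate_deg_per_min must be positive")
    return abs(desired_temp - current_temp) / rate_deg_per_min


def should_activate_transition(eta_minutes: float, current_temp: float,
                               desired_temp: float,
                               rate_deg_per_min: float) -> bool:
    """Apply the abstract's rule: start the transition when the occupant's
    ETA is less than or equal to the time the transition needs; otherwise wait."""
    needed = transition_time_minutes(current_temp, desired_temp, rate_deg_per_min)
    return eta_minutes <= needed


# Example: 62 -> 70 degrees at 0.5 degrees/minute needs 16 minutes.
activate_now = should_activate_transition(10.0, 62.0, 70.0, 0.5)
```

With an ETA of 10 minutes the transition starts immediately; with an ETA of 30 minutes the system waits, because starting now would condition an empty home for longer than necessary.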

| Inventors: | Kortz; Samuel W.; (Palo Alto, CA); Hu; Gregory J.; (Los Altos, CA); Surya; Amanda; (Santa Clara, CA); McBride; Mark; (Santa Clara, CA) |
|---|---|
| Applicant: | Google LLC |
| Assignee: | Google LLC, Mountain View, CA |
| Family ID: | 54869564 |
| Appl. No.: | 16/293358 |
| Filed: | March 5, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14577635 | Dec 19, 2014 | |
| 16293358 | | |
| 62016052 | Jun 23, 2014 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 9/546 20130101; H04L 67/12 20130101; H04L 63/08 20130101; F24F 11/30 20180101; H04L 12/2818 20130101; H04L 12/2829 20130101; H04W 4/60 20180201; G05D 23/1904 20130101; H04W 4/80 20180201; H04L 12/282 20130101; G05B 17/02 20130101; H05B 47/105 20200101; G05B 15/02 20130101; H04L 12/2816 20130101; H04L 12/2823 20130101; H04L 12/2803 20130101; H04L 67/30 20130101; H04W 4/021 20130101; G08B 17/10 20130101; H04L 29/06047 20130101; G05D 23/1917 20130101; G06F 9/541 20130101; H04L 67/22 20130101; F24F 11/62 20180101; H04L 2012/2841 20130101; G06F 9/54 20130101; H04L 67/42 20130101; G05B 13/04 20130101; H04L 2012/285 20130101; H04L 67/1097 20130101; H04M 1/72533 20130101; G05B 2219/2642 20130101; H05B 47/19 20200101 |

| International Class: | H04W 4/80 20060101 H04W004/80; G05B 15/02 20060101 G05B015/02; H04L 12/28 20060101 H04L012/28; G05D 23/19 20060101 G05D023/19; G05B 13/04 20060101 G05B013/04; H04L 29/08 20060101 H04L029/08; H05B 37/02 20060101 H05B037/02; F24F 11/30 20060101 F24F011/30; G05B 17/02 20060101 G05B017/02; H04W 4/021 20060101 H04W004/021; F24F 11/62 20060101 F24F011/62; H04L 29/06 20060101 H04L029/06; H04M 1/725 20060101 H04M001/725; H04W 4/60 20060101 H04W004/60; G08B 17/10 20060101 G08B017/10; G06F 9/54 20060101 G06F009/54 |

Claims

1. (canceled)

2. A method for controlling a smart-home lighting device, the method comprising: receiving, by an application, an audible cue from a user that corresponds to a command to control the smart-home lighting device; converting the audible cue into a device request message to command the smart-home lighting device; forwarding the device request message to an application programming interface (API) server that is effective to cause the API server to: update a data model based on the device request message; and send the command to the smart-home lighting device via an update signal communicated to the smart-home lighting device that causes the smart-home lighting device to perform an action.

3. The method of claim 2, comprising: receiving, by the application, one or more device status parameters of the smart-home lighting device via an API of the API server.

4. The method of claim 2, wherein the data model comprises information related to one or more smart-home devices including the smart-home lighting device, one or more smart-device environment structures comprising the smart-devices, or both.

5. The method of claim 2, wherein the audible cue sets a value of a parameter of the smart-home lighting device.

6. The method of claim 5, wherein the parameter of the smart home lighting device is one of: a light setting, a dim state of one or more lights, activating lighting, deactivating lighting, or a lighting color.

7. The method of claim 2, wherein the audible cue sets an operational mode of the smart-home lighting device.

8. The method of claim 7, wherein the operation mode is an occupancy mode, and wherein the occupancy mode comprises a home mode and an away mode.

9. The method of claim 2, comprising receiving, by the application, an indication of crossing a geo-fence boundary that corresponds to another command to control the smart-home lighting device; converting the indication of crossing a geo-fence boundary into another device request message to command the smart-home lighting device; forwarding the other device request message to the application programming interface (API) server that is effective to cause the API server to: update the data model based on the other device request message; and send the other command to the smart-home lighting device via another update signal communicated to the smart-home lighting device that causes the smart-home lighting device to perform another action.

10. A system comprising: an application programming interface (API) server; and an electronic device configured to: receive an audible cue from a user that corresponds to a command to control a smart-home lighting device; convert the audible cue into a device request message to command the smart-home lighting device; and forward the device request message to the API server that is effective to cause the API server to: update a data model based on the device request message; and send the command to the smart-home lighting device via an update signal communicated to the smart-home lighting device that causes the smart-home lighting device to perform an action.

11. The system of claim 10, the electronic device configured to: receive one or more device status parameters of the smart-home lighting device via an API of the API server.

12. The system of claim 10, wherein the data model comprises information related to one or more smart-home devices including the smart-home lighting device, one or more smart-device environment structures comprising the smart-devices, or both.

13. The system of claim 10, wherein the audible cue sets a value of a parameter of the smart-home lighting device.

14. The system of claim 10, wherein the audible cue sets an operational mode of the smart-home lighting device.

15. The system of claim 14, wherein the operation mode is an occupancy mode, and wherein the occupancy mode comprises a home mode and an away mode.

16. One or more non-transitory, computer-readable media comprising instructions to: receive an audible cue from a user that corresponds to a command to control a smart-home lighting device; convert the audible cue into a device request message to command the smart-home lighting device; and forward the device request message to an application programming interface (API) server that is effective to cause the API server to: update a data model based on the device request message; and send the command to the smart-home lighting device via an update signal communicated to the smart-home lighting device that causes the smart-home lighting device to perform an action.

17. The one or more non-transitory, computer-readable media of claim 16, comprising instructions to receive one or more device status parameters of the smart-home device via an API of the API server.

18. The one or more non-transitory, computer-readable media of claim 16, wherein the data model comprises information related to one or more smart-home devices including the smart-home lighting device, one or more smart-device environment structures comprising the smart-devices, or both.

19. The one or more non-transitory, computer-readable media of claim 16, wherein the audible cue sets a value of a parameter of the smart-home lighting device.

20. The one or more non-transitory, computer-readable media of claim 19, wherein the parameter of the smart home lighting device is one of: a light setting, a dim state of one or more lights, activating lighting, deactivating lighting, or a lighting color.

21. The one or more non-transitory, computer-readable media of claim 16, wherein the audible cue sets an operational mode of the smart-home lighting device, wherein the operation mode is an occupancy mode, and wherein the occupancy mode comprises a home mode and an away mode.
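To make the data flow recited in claims 2, 10, and 16 concrete, here is a minimal Python sketch. It assumes speech recognition has already turned the audible cue into a text transcript; the phrase table, the `DeviceRequest` and `ApiServer` names, and the callback standing in for the "update signal" are hypothetical illustrations, not an API described in this application.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class DeviceRequest:
    """Device request message produced from a voice command (hypothetical shape)."""
    device_id: str
    parameter: str
    value: object


def convert_cue(transcript: str, device_id: str) -> Optional[DeviceRequest]:
    """Map a transcribed audible cue to a device request message.

    The phrase table is an illustrative assumption, not a defined vocabulary.
    """
    phrases = {
        "turn on the lights": ("power", "on"),
        "turn off the lights": ("power", "off"),
        "dim the lights": ("brightness", 30),
    }
    match = phrases.get(transcript.strip().lower())
    if match is None:
        return None
    parameter, value = match
    return DeviceRequest(device_id, parameter, value)


@dataclass
class ApiServer:
    # Data model: device_id -> {parameter: value}.
    data_model: Dict[str, Dict[str, object]] = field(default_factory=dict)
    # Delivery hooks: device_id -> callable that sends an update signal.
    devices: Dict[str, Callable[[str, object], None]] = field(default_factory=dict)

    def handle(self, request: DeviceRequest) -> None:
        # 1) Update the data model based on the device request message.
        self.data_model.setdefault(request.device_id, {})[request.parameter] = request.value
        # 2) Send the command to the lighting device as an update signal.
        self.devices[request.device_id](request.parameter, request.value)


# Usage: a lighting device that simply records the update signals it receives.
received = []
server = ApiServer(devices={"light-1": lambda p, v: received.append((p, v))})
req = convert_cue("Dim the lights", "light-1")
if req is not None:
    server.handle(req)
```

Keeping the data model update and the device signal inside the server mirrors the claims: the application never talks to the lighting device directly.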

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a Continuation Application of, and claims priority to, U.S. application Ser. No. 14/577,635, entitled "Methods And Apparatus For Exploiting Interfaces Smart Environment Device Application Program Interfaces", filed Dec. 19, 2014, which in turn claims priority to U.S. Provisional Patent Application No. 62/016,052, entitled "Methods and Apparatus for Exploiting Application Programming Interfaces to Smart Home Environment Electronic Components", filed Jun. 23, 2014, which is herein incorporated by reference.

BACKGROUND

[0002] This disclosure relates to controlling access to electronic devices via application programming interface (API) restrictions.

[0003] This section is intended to introduce the reader to various aspects of art that may be related to various aspects of the present disclosure, which are described and/or claimed below. This discussion is believed to be helpful in providing the reader with background information to facilitate a better understanding of the various aspects of the present disclosure. Accordingly, it should be understood that these statements are to be read in this light, and not as admissions of prior art.

[0004] People interact with a number of different electronic devices on a daily basis. In a home setting, for example, a person may interact with smart thermostats, lighting systems, alarm systems, entertainment systems, and a variety of other electronic devices. To interact with some of these electronic devices, a person may communicate a command using an application program running on another electronic device. For instance, a person may control the temperature setting on a smart thermostat using an application program running on a smartphone. The application program may communicate with a secure online service that interacts with that thermostat.

[0005] To preserve the user experience associated with an electronic device, the manufacturer of the electronic device may develop the application programs to control the electronic device. Opening access to the electronic devices to third-party developers, however, may improve the experience of some people with the devices, but only if third-party application programs do not cause the electronic devices to behave in an undesirable manner. Accordingly, while it may be desirable to open access to the electronic devices to third-party developers, it may also be desirable to place restrictions on that access so as to reduce the risk that the third-party access may negatively impact the operation of the electronic devices and thus the user experience associated with those devices.

SUMMARY

[0006] A summary of certain embodiments disclosed herein is set forth below. It should be understood that these aspects are presented merely to provide the reader with a brief summary of these certain embodiments and that these aspects are not intended to limit the scope of this disclosure. Indeed, this disclosure may encompass a variety of aspects that may not be set forth below.

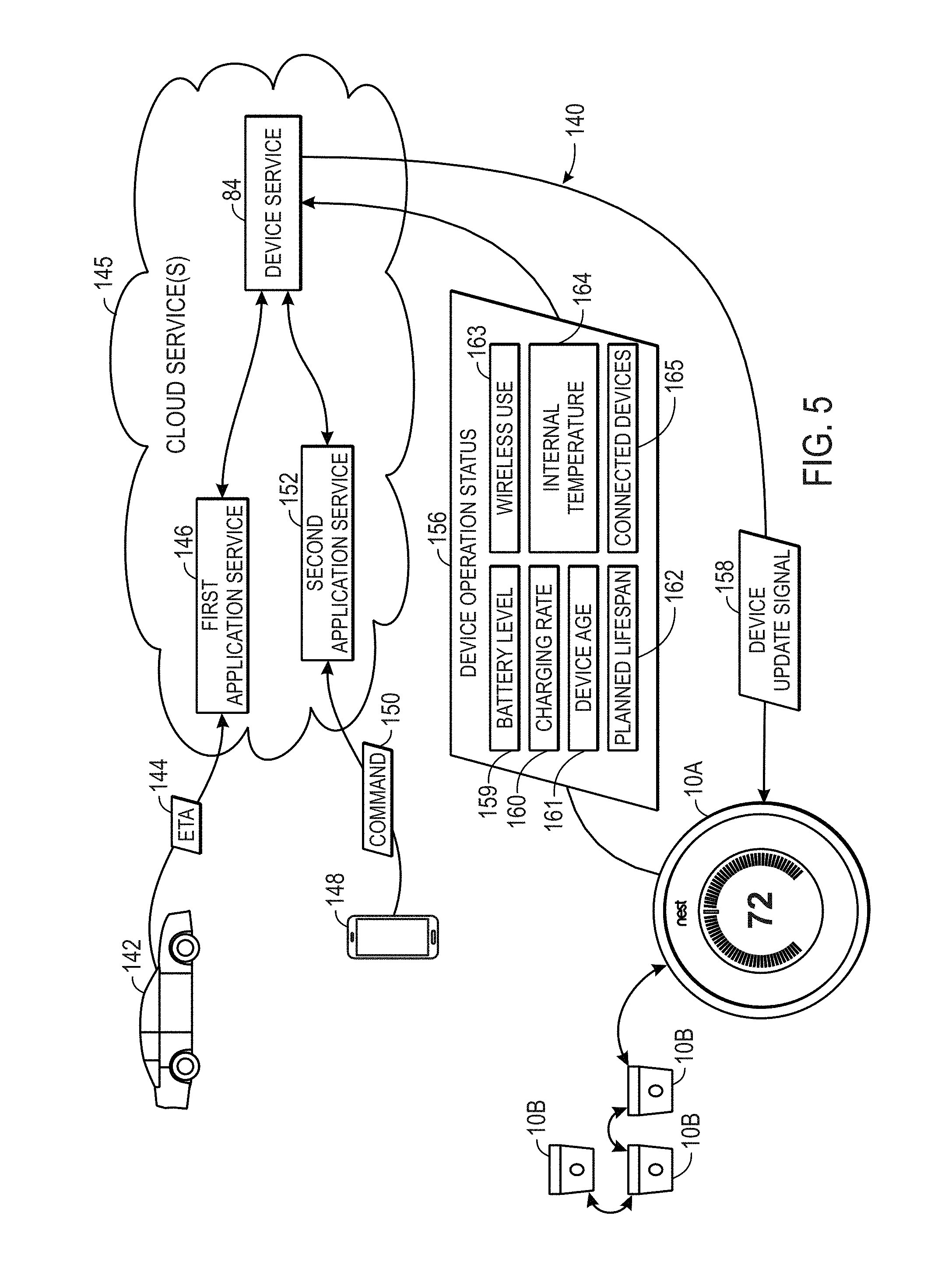

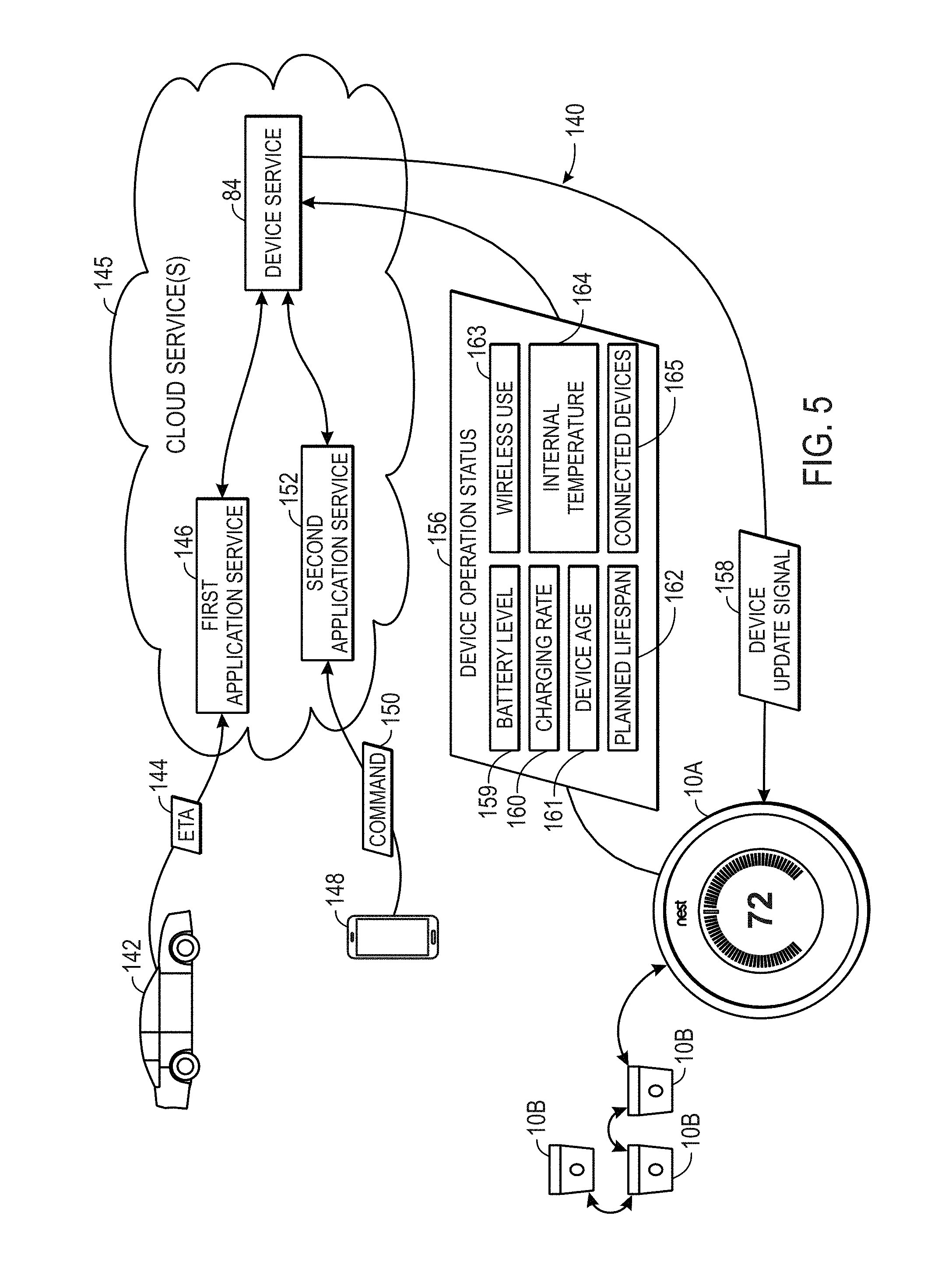

[0007] According to embodiments of this disclosure, third-party applications may access different installations of smart home devices (e.g., via an application programming interface (API)). Rather than communicating directly with a smart home device, the third-party applications may communicate through a device service. The device service may provide a corresponding update signal to the target smart home device based on one or more factors, such as operation status parameters of the device.
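The idea of a device service that decides what update signal to send, based on the device's operation status parameters, can be sketched as follows. The specific status fields (`online`, `locked`, an allowed target range) and the clamping policy are assumptions chosen for illustration; the paragraph above does not say which parameters are consulted or how.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DeviceStatus:
    """Hypothetical operation status parameters held by the device service."""
    online: bool
    locked: bool        # e.g. set while the device is in a safety mode
    min_target: float
    max_target: float


def device_service_update(status: DeviceStatus,
                          requested_target: float) -> Optional[float]:
    """Return the target to send to the device, or None to reject the request.

    Third-party requests never reach the device directly: the service drops
    them when the device is offline or locked, and otherwise clamps the
    requested value into the allowed range (an assumed policy).
    """
    if not status.online or status.locked:
        return None
    return min(max(requested_target, status.min_target), status.max_target)
```

Clamping rather than rejecting out-of-range values is only one possible design; a service could equally return an error to the third-party application.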

[0008] Various refinements of the features noted above may exist in relation to various aspects of the present disclosure. Further features may also be incorporated in these various aspects as well. These refinements and additional features may exist individually or in any combination. For instance, various features discussed below in relation to one or more of the illustrated embodiments may be incorporated into any of the above-described aspects of the present disclosure alone or in any combination. The brief summary presented above is intended only to familiarize the reader with certain aspects and contexts of embodiments of the present disclosure without limitation to the claimed subject matter.

BRIEF DESCRIPTION OF THE DRAWINGS

[0009] Various aspects of this disclosure may be better understood upon reading the following detailed description and upon reference to the drawings in which:

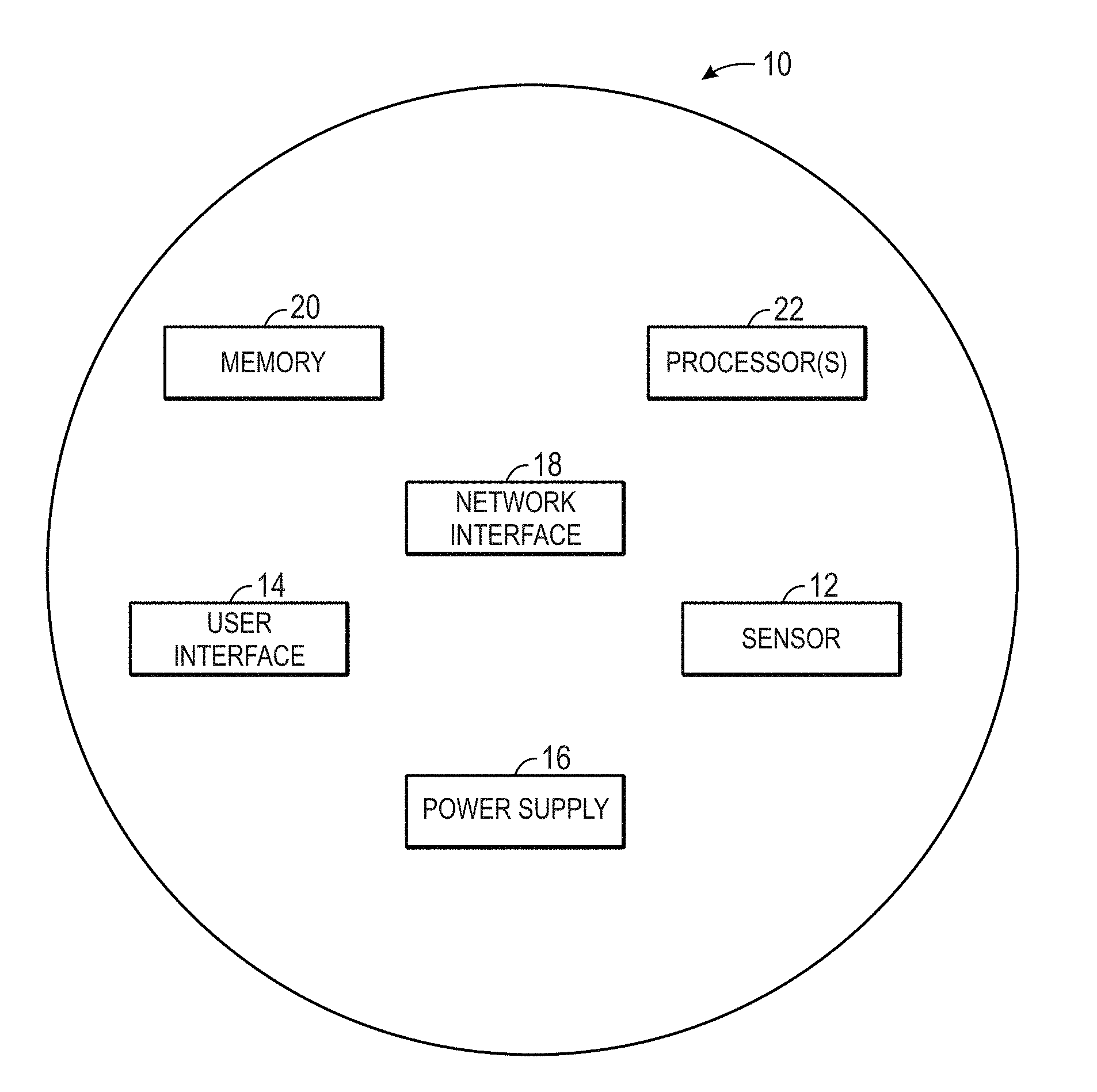

[0010] FIG. 1 is a block diagram of a smart home device, in accordance with an embodiment;

[0011] FIG. 2 is a block diagram of a connected smart home environment that includes a number of smart home devices, in accordance with an embodiment;

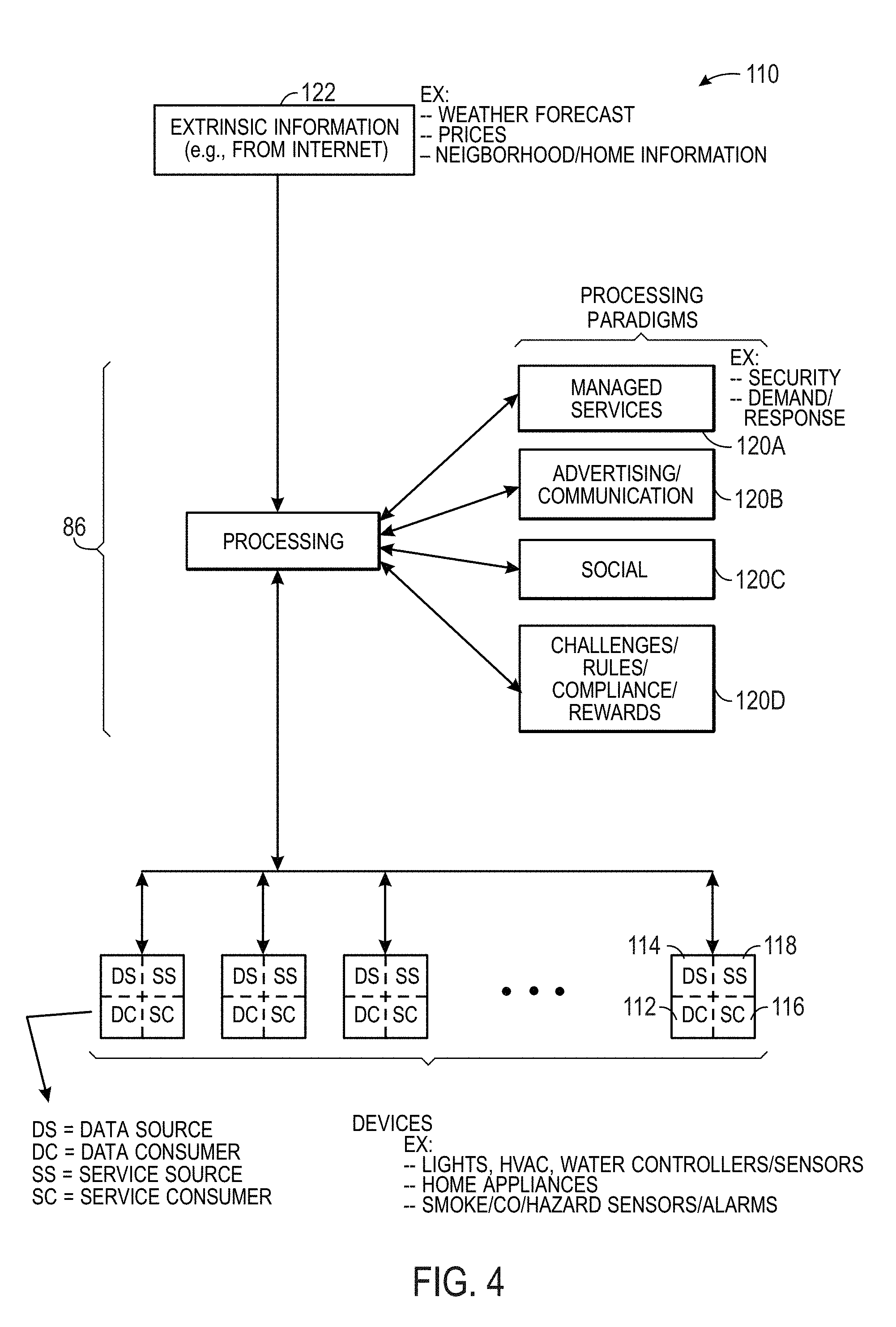

[0012] FIG. 3 is a block diagram illustrating a manner of controlling and/or accessing the smart home environment using services over the internet, in accordance with an embodiment;

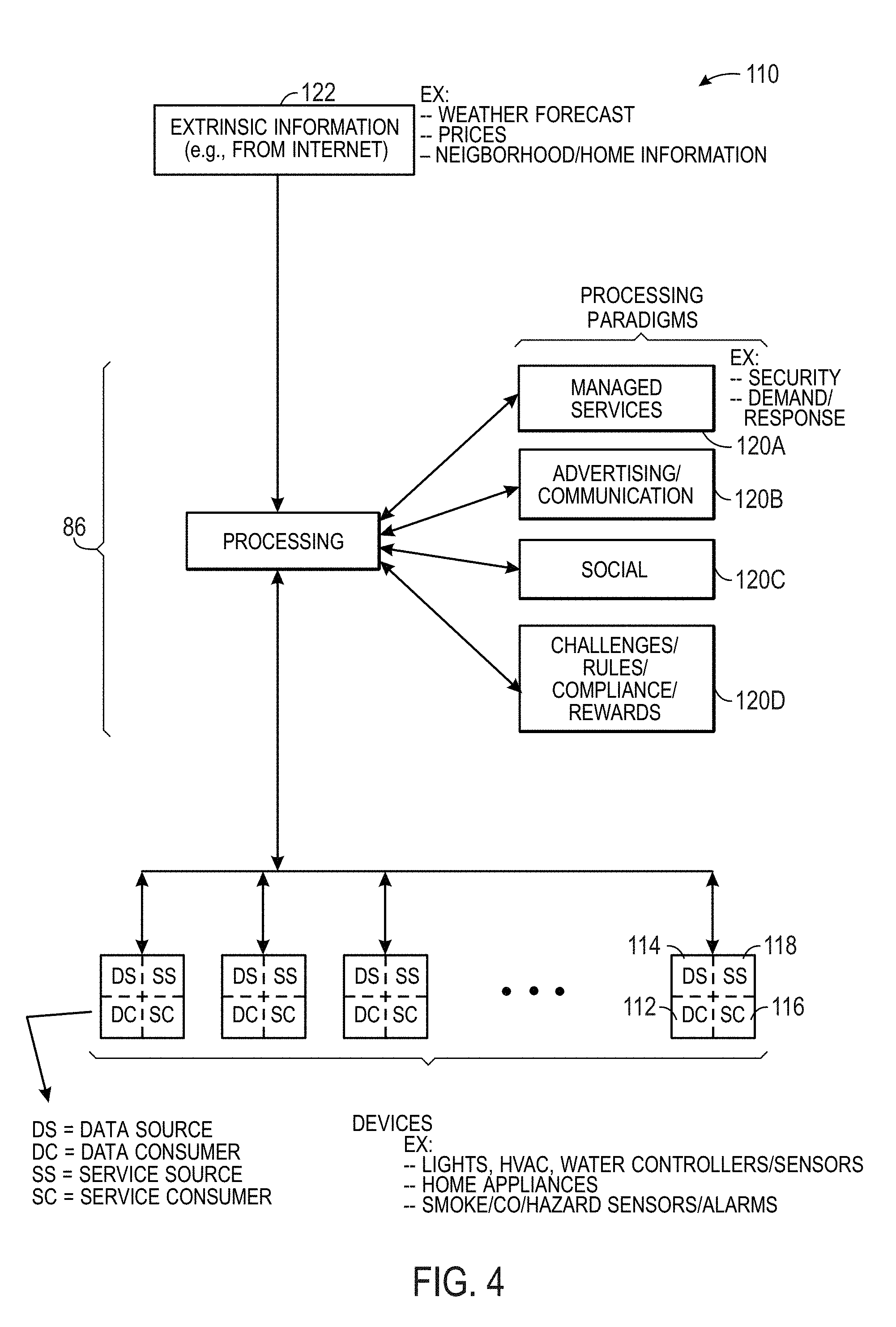

[0013] FIG. 4 is a block diagram of processing paradigms that may be used to control devices of the smart home environment, in accordance with an embodiment;

[0014] FIG. 5 is a block diagram of a system that provides access to smart home devices, in accordance with an embodiment;

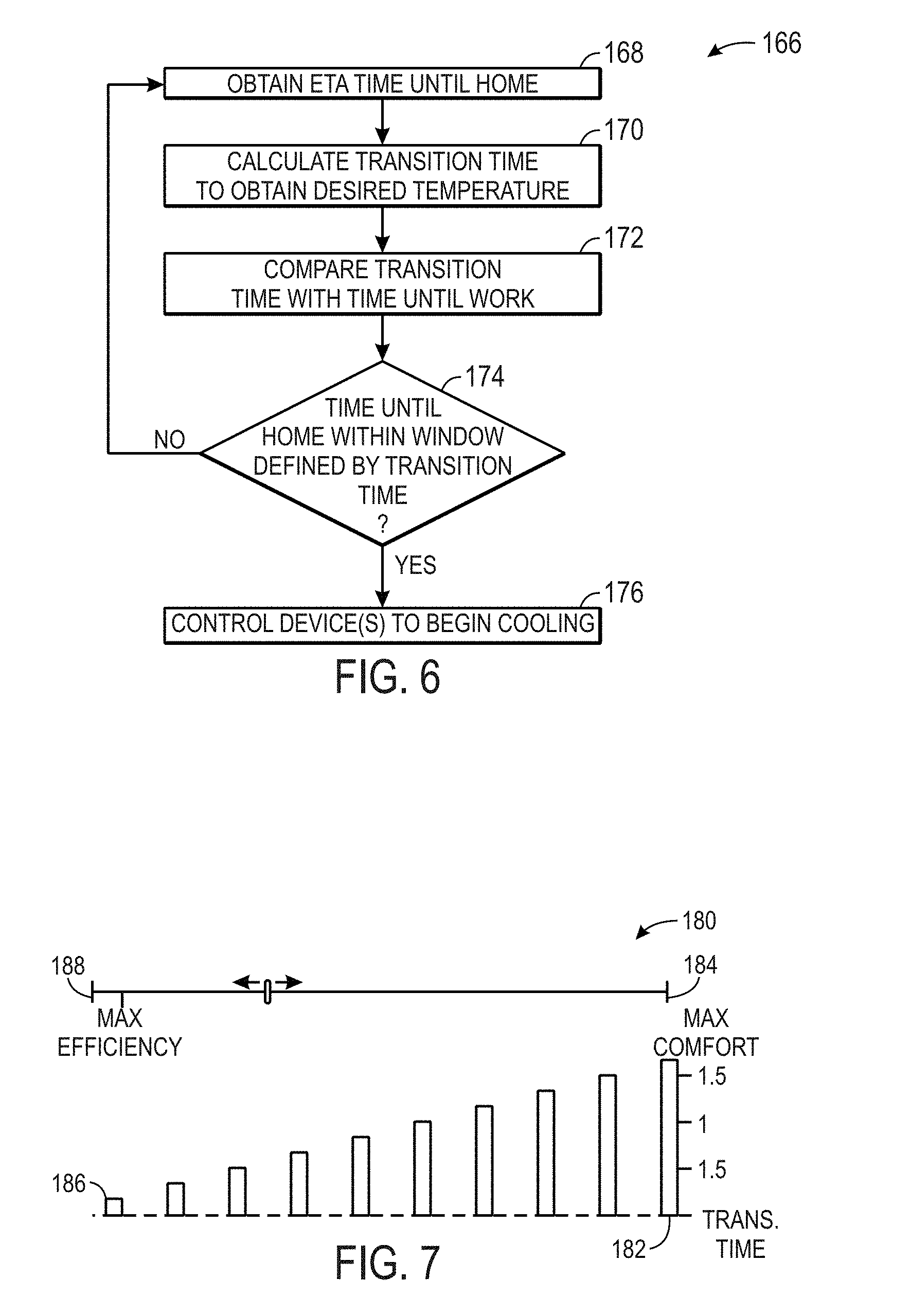

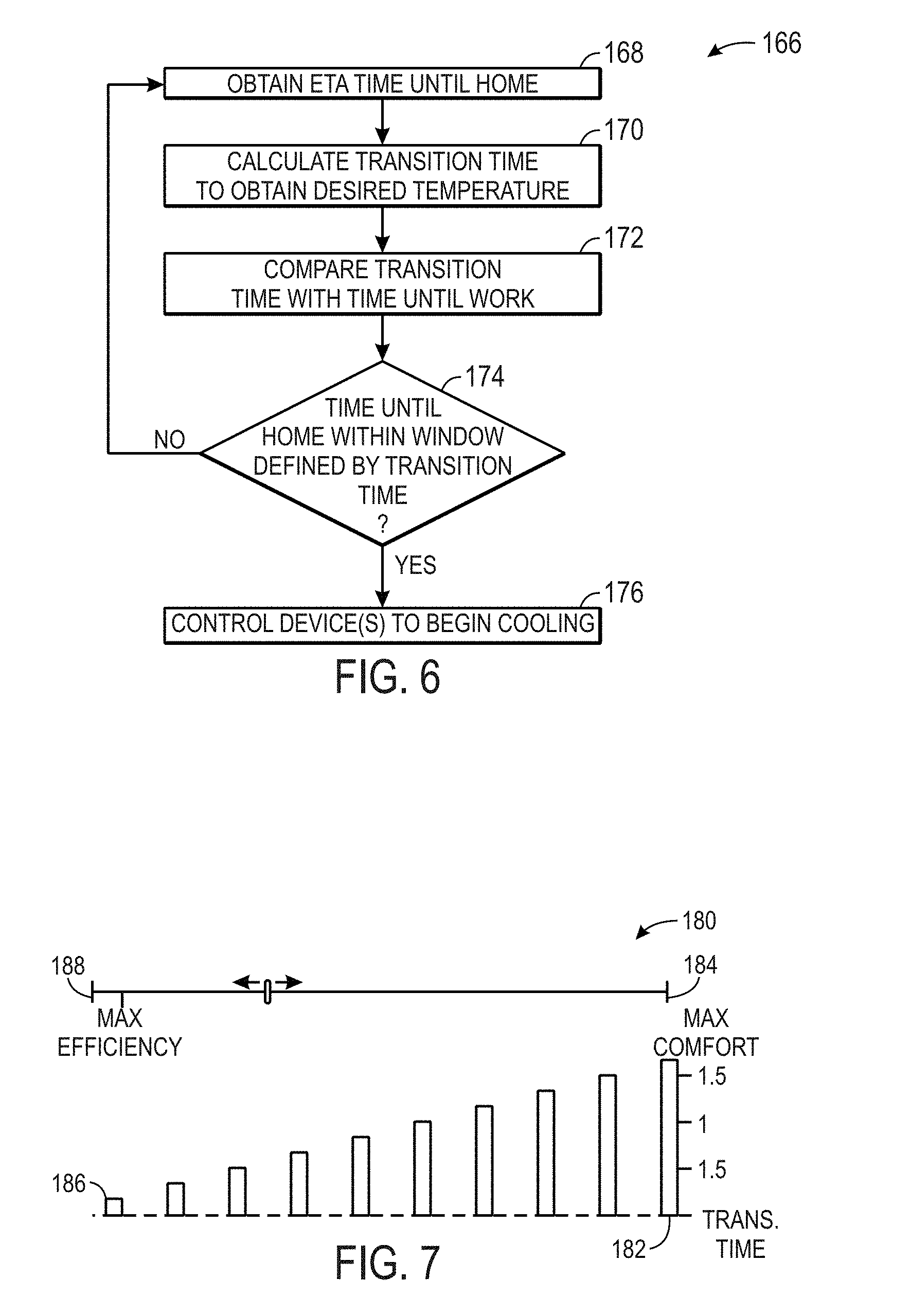

[0015] FIG. 6 is a flow diagram illustrating a method for transitioning temperatures based upon an estimated time of arrival, in accordance with an embodiment;

[0016] FIG. 7 is a block diagram illustrating window creation for the method of FIG. 6, in accordance with an embodiment;

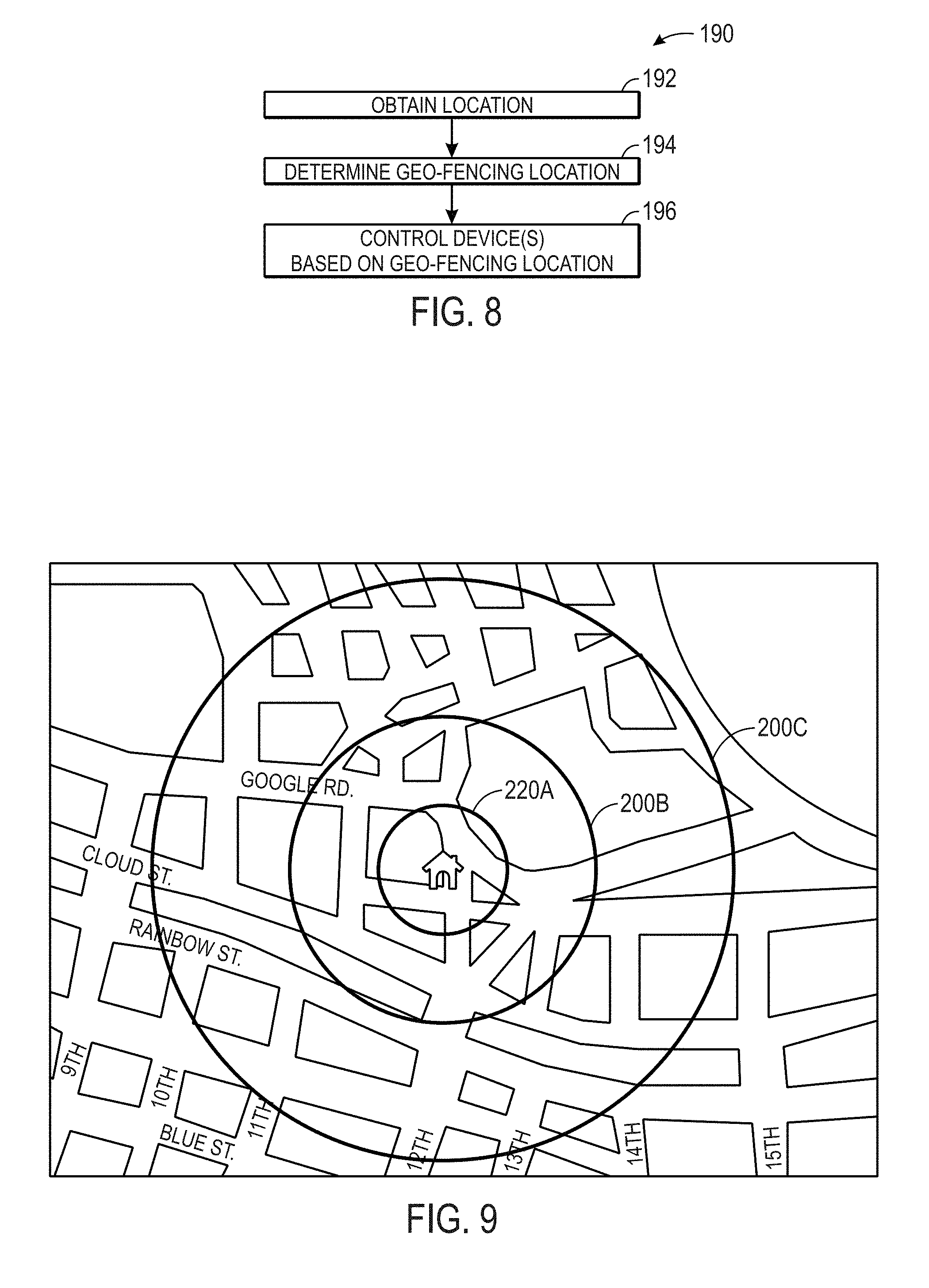

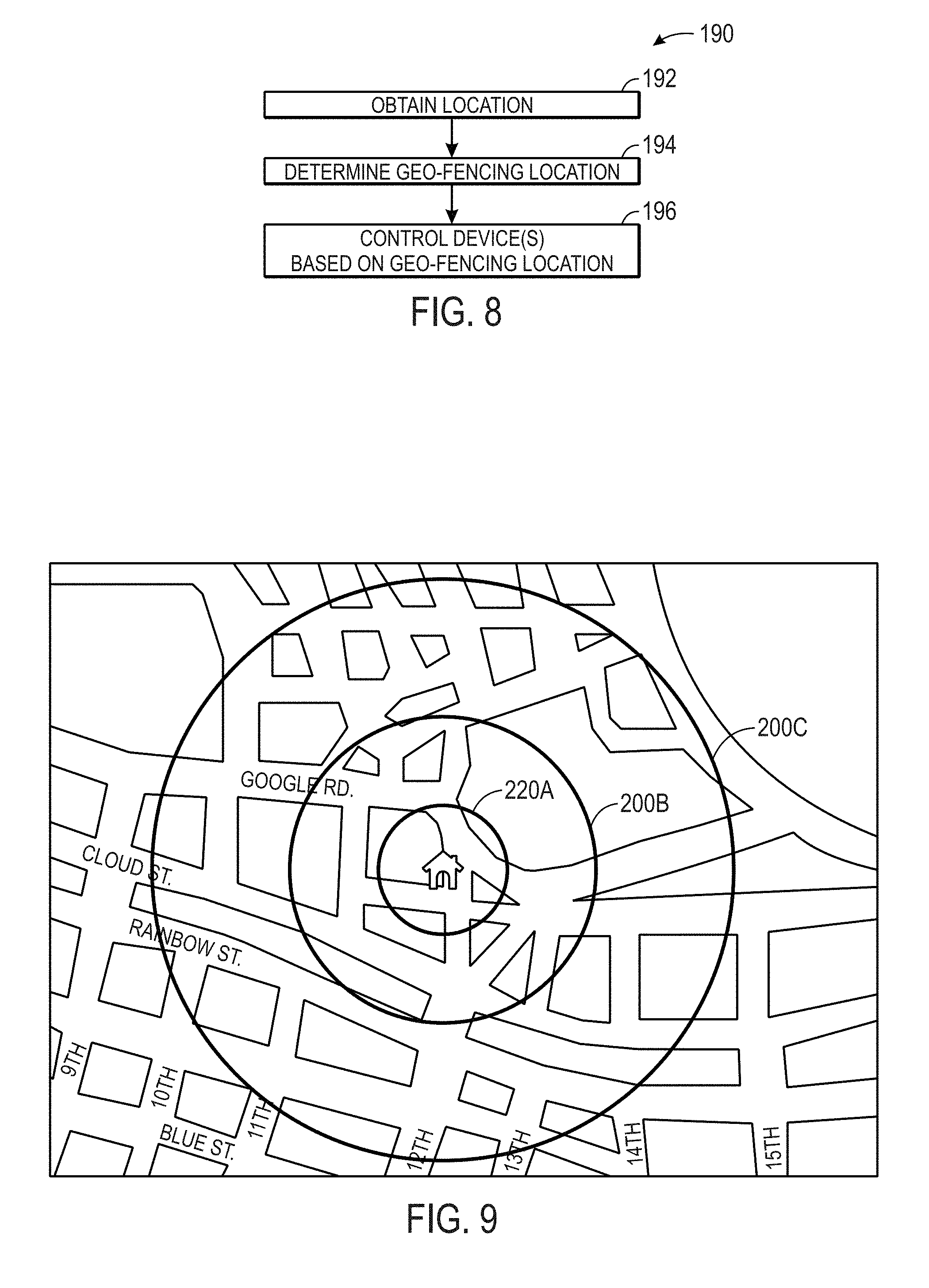

[0017] FIG. 8 is a flow diagram illustrating a method for controlling devices using geo-fencing, in accordance with an embodiment;

[0018] FIG. 9 is a block diagram illustrating a set of geo-fence boundaries, in accordance with an embodiment;

[0019] FIG. 10 is a block diagram illustrating a geo-fencing application on a handheld electronic device, in accordance with an embodiment;

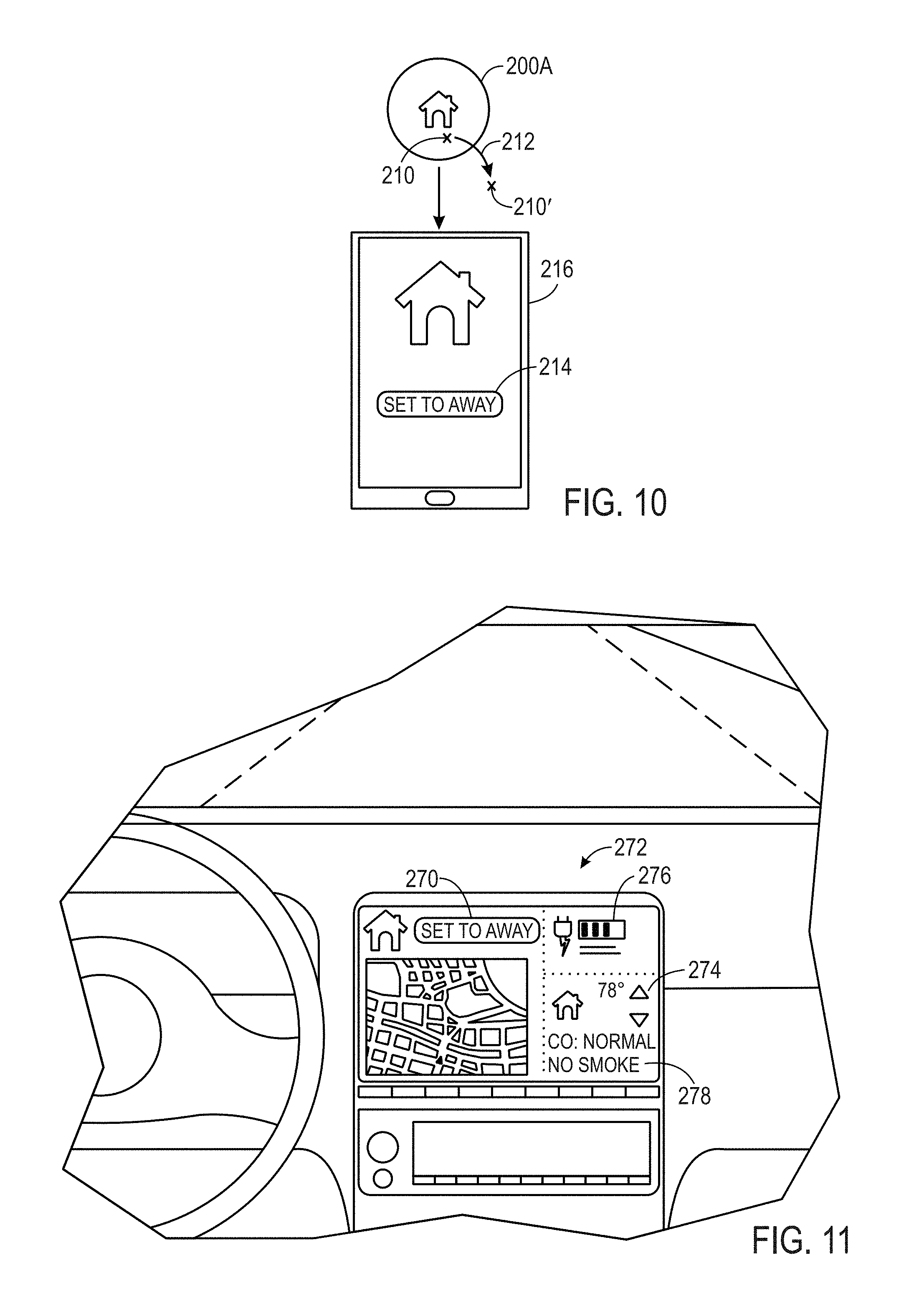

[0020] FIG. 11 is a block diagram illustrating an application running from an in-dash interface, in accordance with an embodiment;

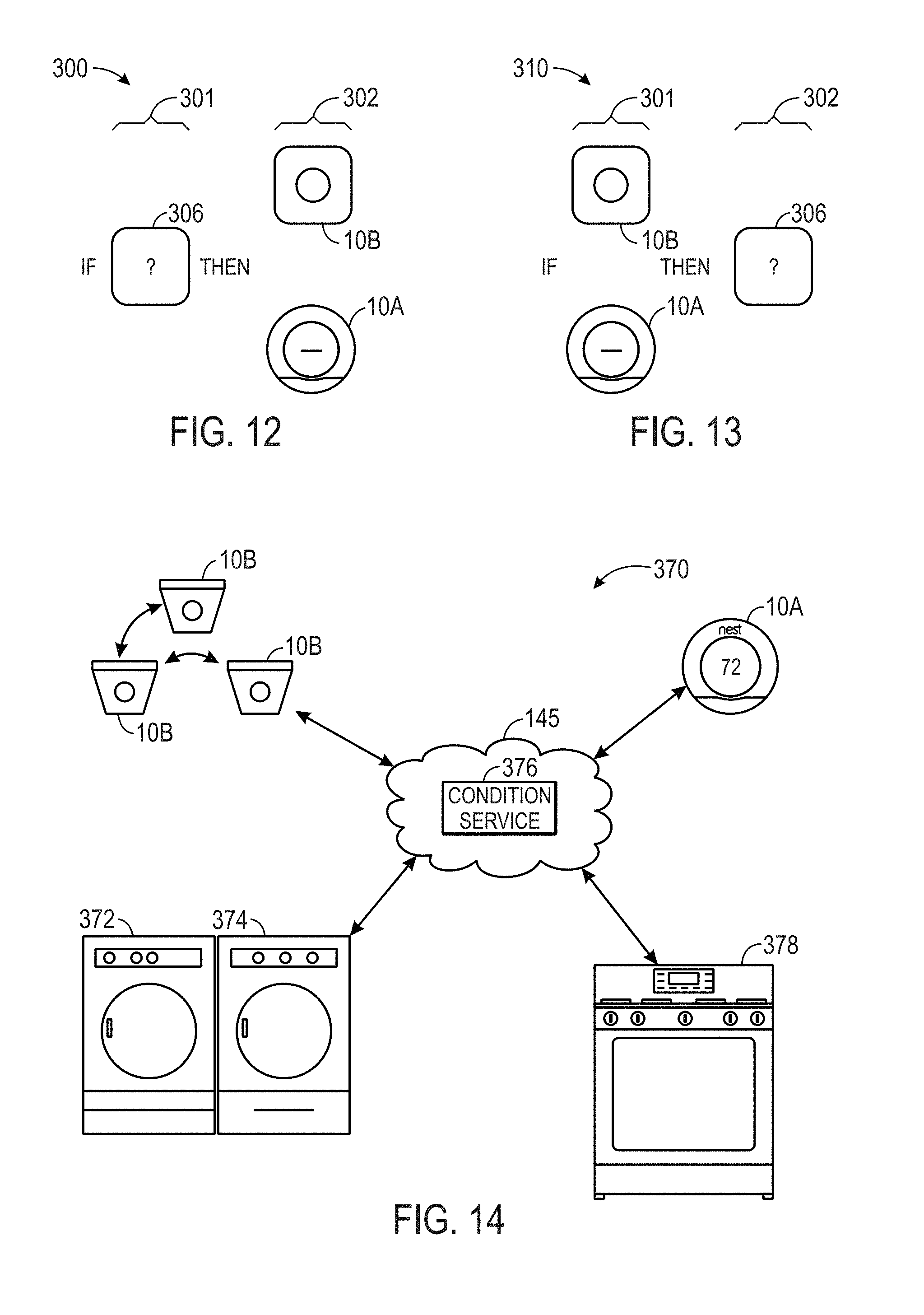

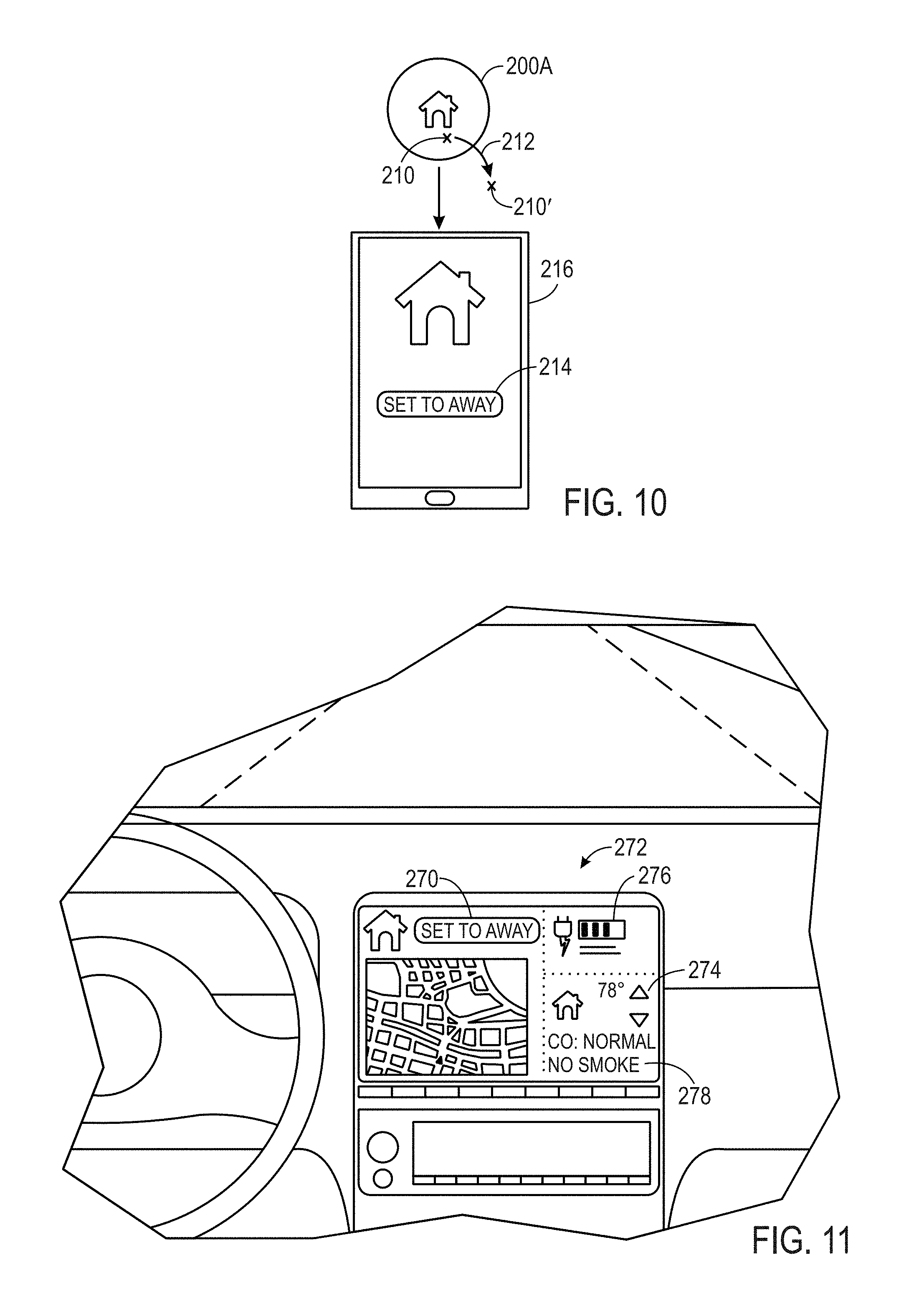

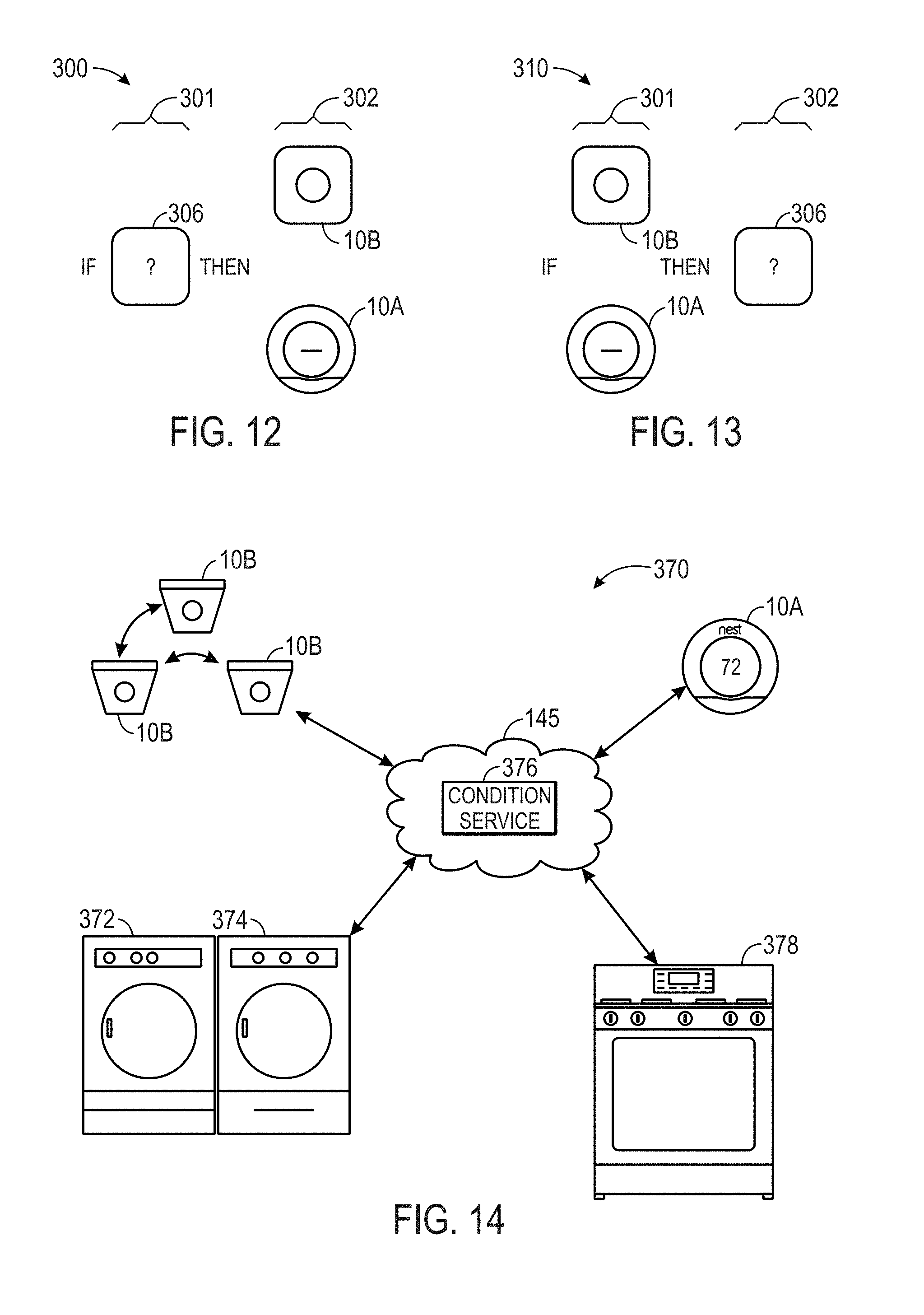

[0021] FIG. 12 is a schematic illustration of a conditional rule where a thermostat, a smoke/carbon monoxide detector, or both are outputs, in accordance with an embodiment;

[0022] FIG. 13 is a schematic illustration of a conditional rule where data from a thermostat, a smoke/carbon monoxide detector, or both are conditions, in accordance with an embodiment;

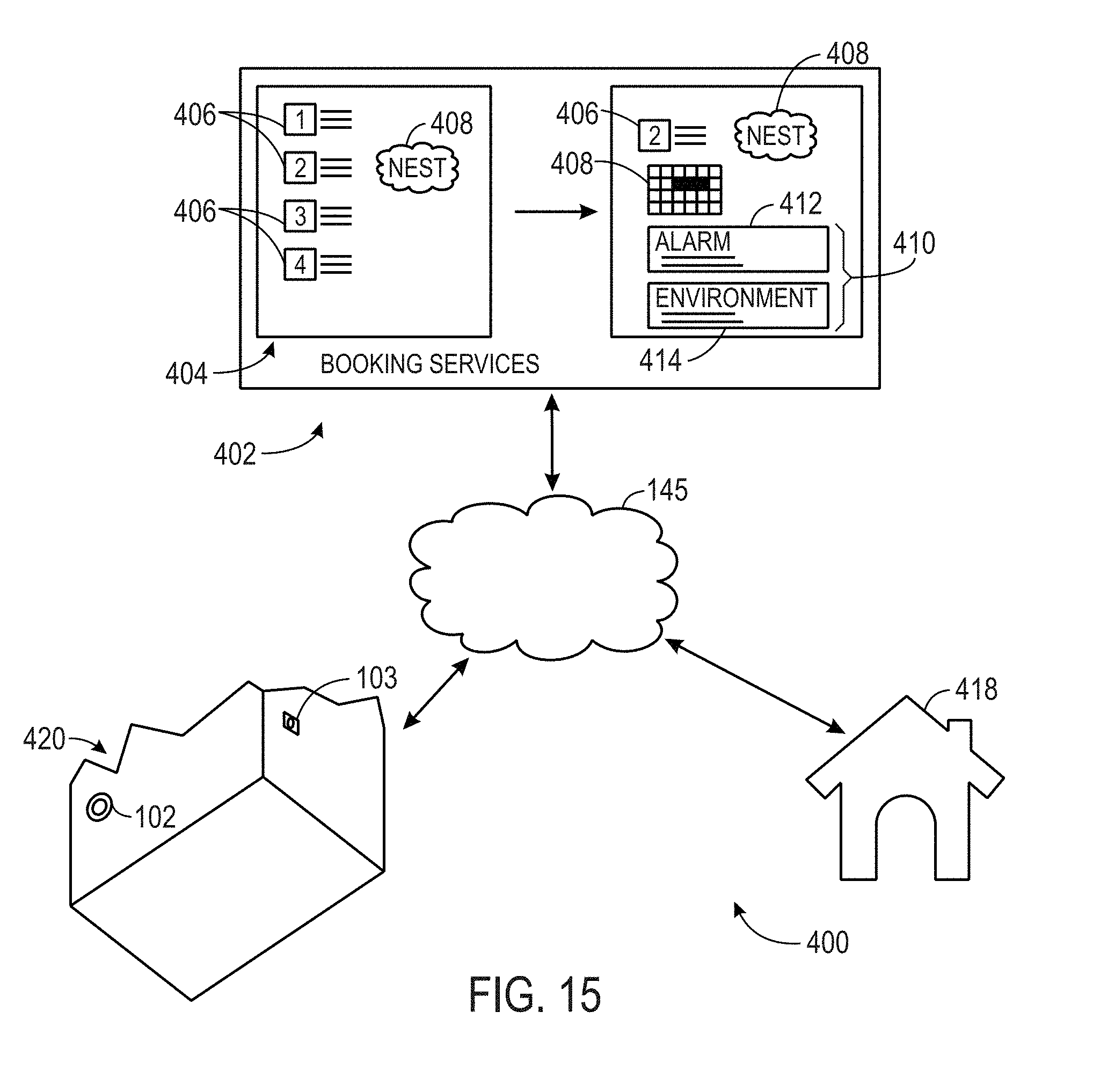

[0023] FIG. 14 is a block diagram of a system that integrates household appliances with a thermostat, smoke/carbon monoxide detector, or both, in accordance with an embodiment;

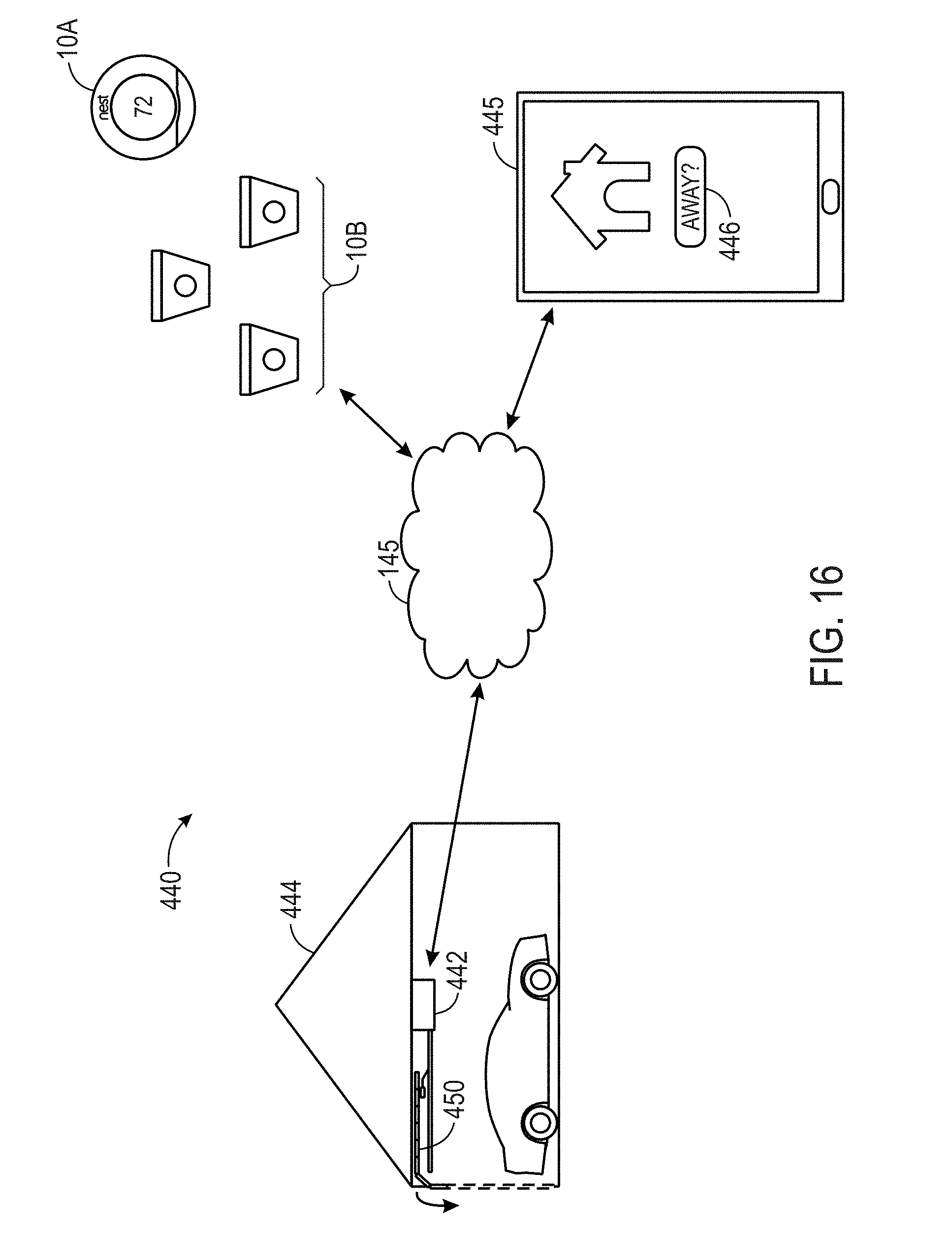

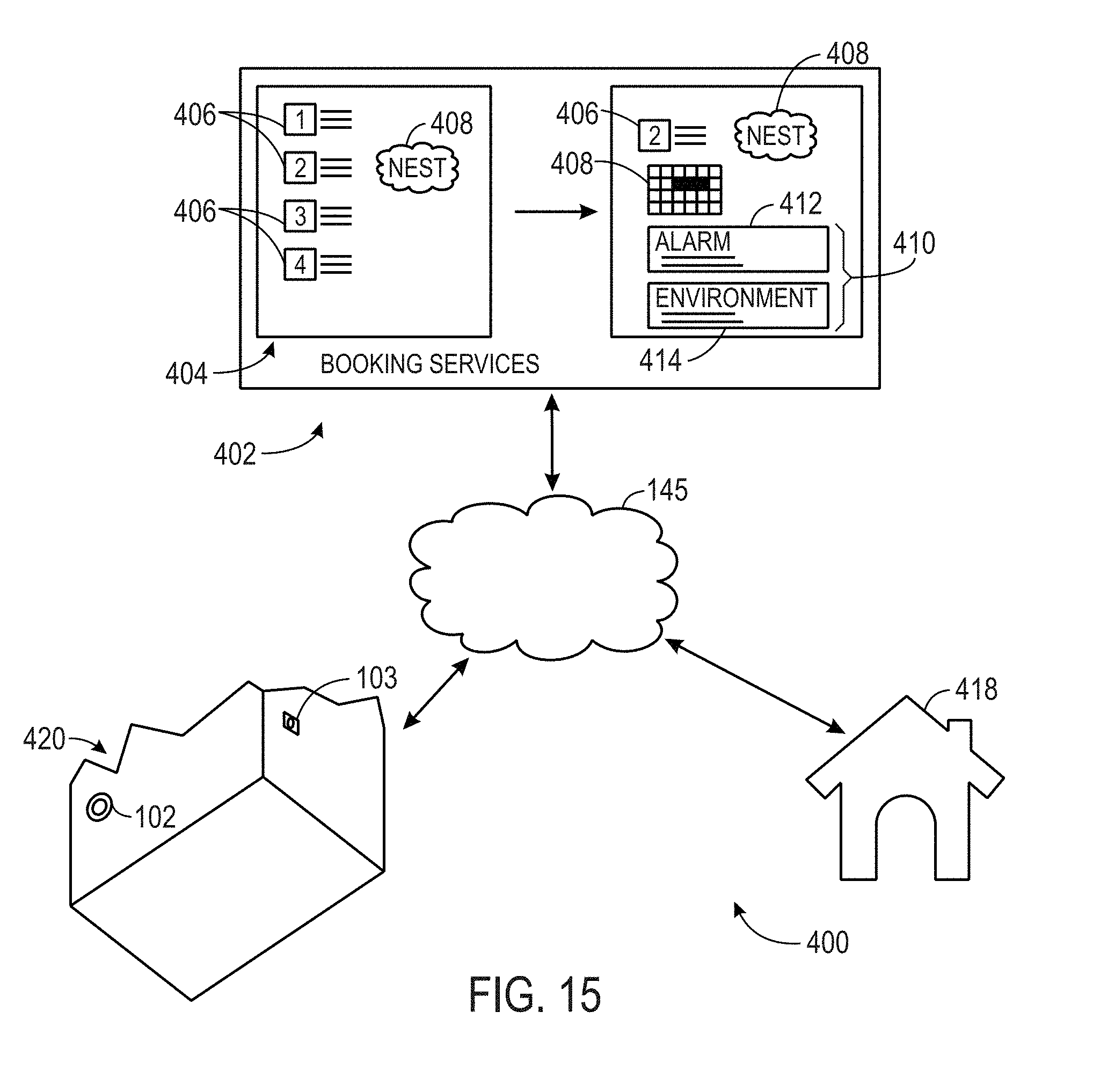

[0024] FIG. 15 is a block diagram of a system that integrates a booking service with a thermostat, smoke/carbon monoxide detector, an alarm system, or a combination thereof, in accordance with an embodiment; and

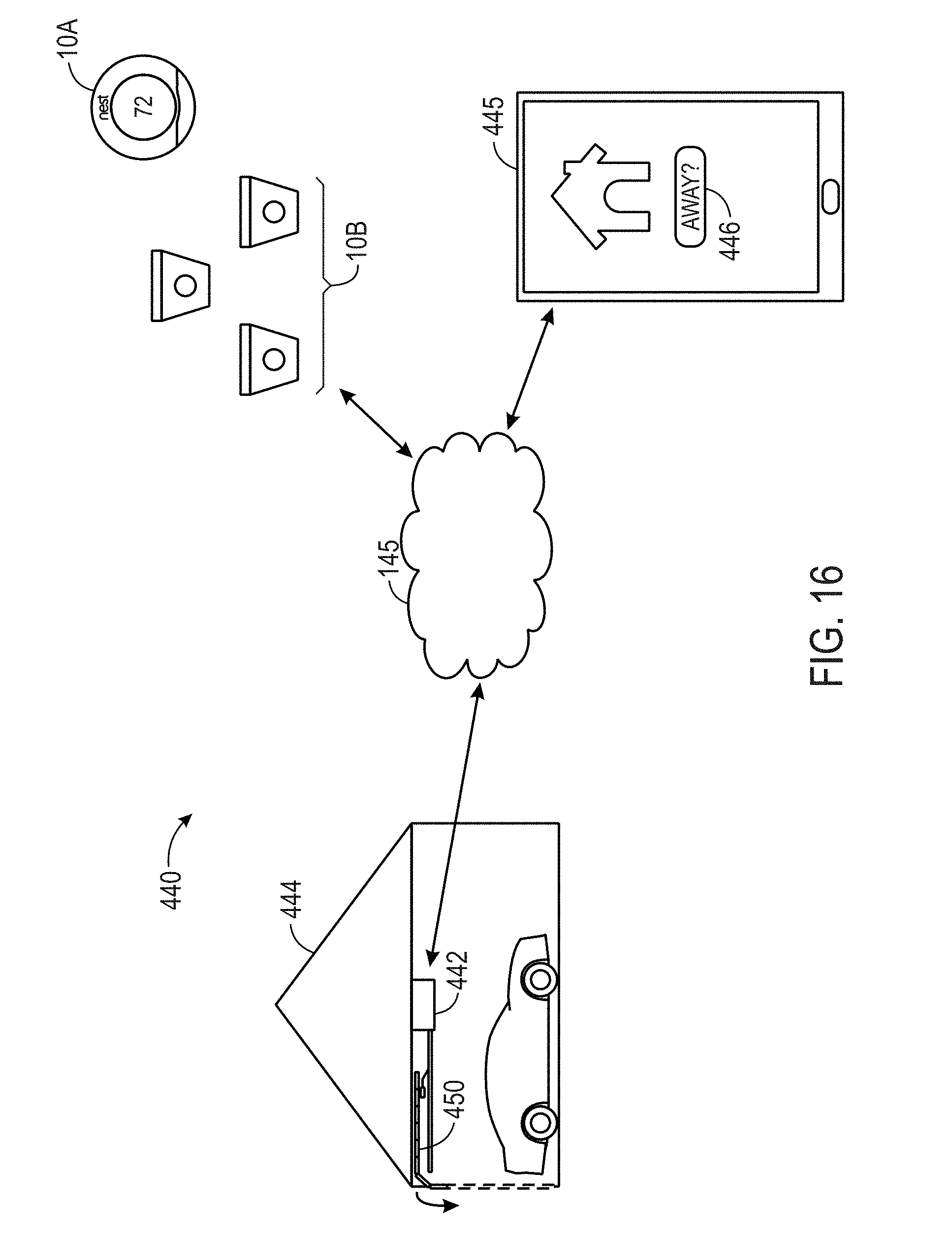

[0025] FIG. 16 is a block diagram of a system that integrates a garage door opener with a thermostat, smoke/carbon monoxide detector, or both, in accordance with an embodiment.

DETAILED DESCRIPTION OF SPECIFIC EMBODIMENTS

[0026] One or more specific embodiments will be described below. In an effort to provide a concise description of these embodiments, not all features of an actual implementation are described in the specification. It should be appreciated that in the development of any such actual implementation, as in any engineering or design project, numerous implementation-specific decisions must be made to achieve the developers' specific goals, such as compliance with system-related and business-related constraints, which may vary from one implementation to another. Moreover, it should be appreciated that such a development effort might be complex and time consuming, but would nevertheless be a routine undertaking of design, fabrication, and manufacture for those of ordinary skill having the benefit of this disclosure.

[0027] Embodiments of the present disclosure relate to an electronic device, such as a thermostat or a hazard detector (e.g., smoke detector), that may be disposed in a building (e.g., home or office) such that the electronic device may detect the presence of a human being in the building and distinguish between the presence of the human being and a pet. Generally, the electronic device may employ a sensor, such as a passive infrared (PIR) sensor, to detect the presence of a human being. However, different PIR sensors may have inherently different sensitivities to noise. By accounting for the sensitivity of each PIR sensor, the electronic device may improve its detection of human beings and better distinguish between the presence of human beings and pets.

[0028] Keeping this in mind, the electronic device may include a low-power processor that may store the sensor measurements acquired by the PIR sensor during a time period when the building, or the portion of the building monitored by the electronic device, is not expected to have a human being present. In one embodiment, after storing the sensor measurements over some period of time, the low-power processor may send the stored sensor measurements to a high-power processor of the electronic device. The high-power processor may then calculate a new threshold, or adjust the previous threshold, for determining the presence of a human based on the stored sensor measurements that correspond to the time period when a human being is likely not present in the building. The high-power processor may then send the newly calculated or adjusted threshold to the low-power processor, which may use it to detect the presence of a human. Since the new threshold is calculated from the sensor measurements of the respective PIR sensor of a respective electronic device, the new threshold may compensate for the inherent sensitivity characteristics of that PIR sensor. As a result, the electronic device may detect the presence of a human being more effectively and efficiently.
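The calibration loop described above (buffer noise-only samples on the low-power processor, compute a threshold on the high-power processor, hand it back) can be sketched in Python. The text does not give the threshold formula; the "mean plus `k` standard deviations of the sample magnitudes" rule used here, the class name `LowPowerProcessor`, and the `k = 3` default are assumptions for illustration only.

```python
import statistics
from typing import List


class LowPowerProcessor:
    """Buffers PIR samples and applies the current detection threshold."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold
        self.buffer: List[float] = []

    def record_unoccupied_sample(self, value: float) -> None:
        # Store readings taken while no human is expected to be present.
        self.buffer.append(value)

    def flush(self) -> List[float]:
        # Hand the stored samples to the high-power processor and reset.
        samples, self.buffer = self.buffer, []
        return samples

    def human_present(self, value: float) -> bool:
        return abs(value) > self.threshold


def compute_threshold(samples: List[float], k: float = 3.0) -> float:
    """High-power step: derive a sensor-specific threshold from noise samples.

    Uses mean + k * population standard deviation of the magnitudes,
    an assumed rule rather than one stated in the text.
    """
    magnitudes = [abs(s) for s in samples]
    return statistics.mean(magnitudes) + k * statistics.pstdev(magnitudes)


# Usage: calibrate against readings collected while the home was empty.
lpp = LowPowerProcessor(threshold=10.0)
for reading in [1.0, -2.0, 1.0, 2.0]:
    lpp.record_unoccupied_sample(reading)
lpp.threshold = compute_threshold(lpp.flush())
```

Because each sensor's threshold is derived from its own noise floor, a noisier PIR sensor ends up with a higher threshold, which is the compensation effect the paragraph describes.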

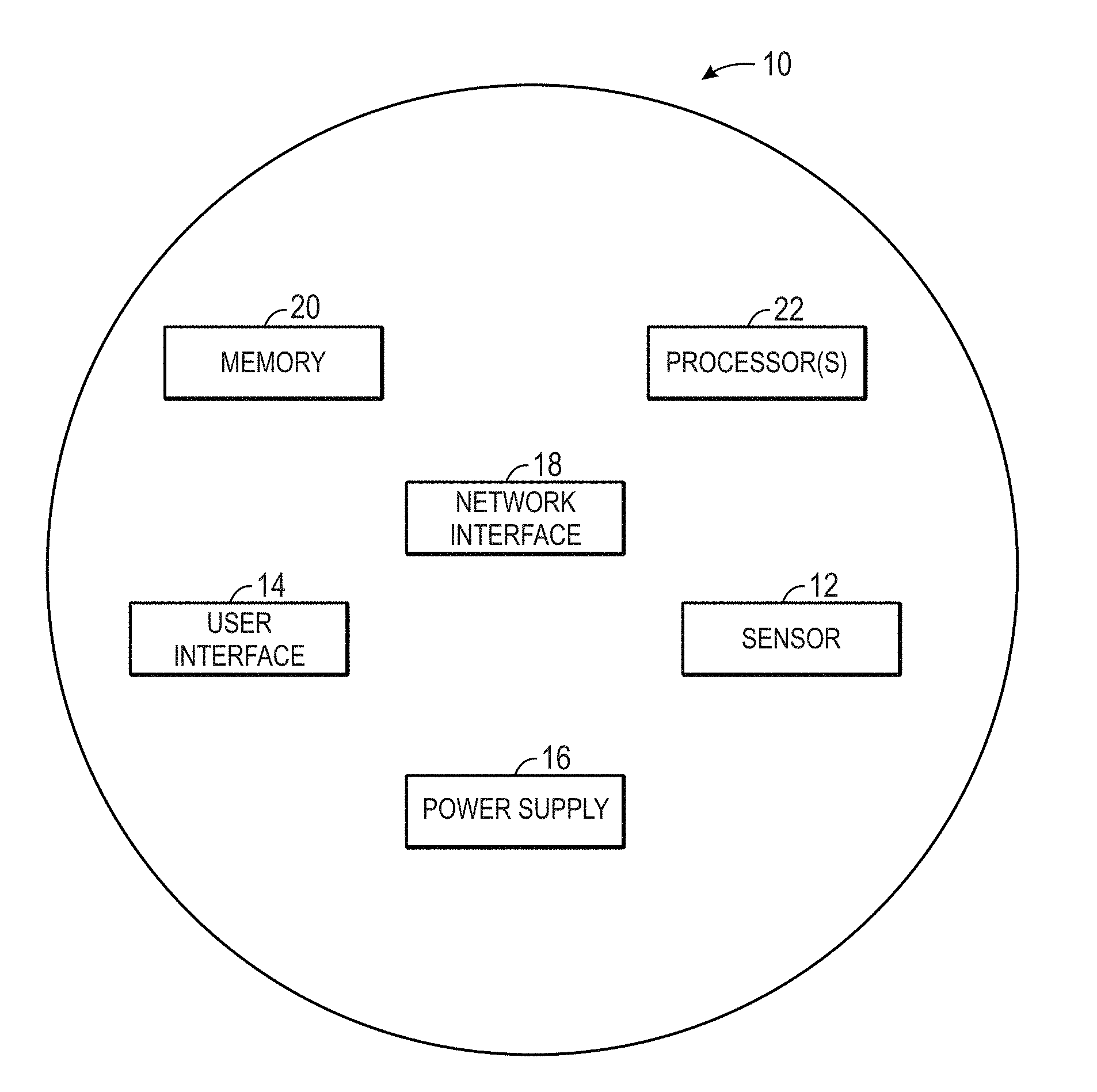

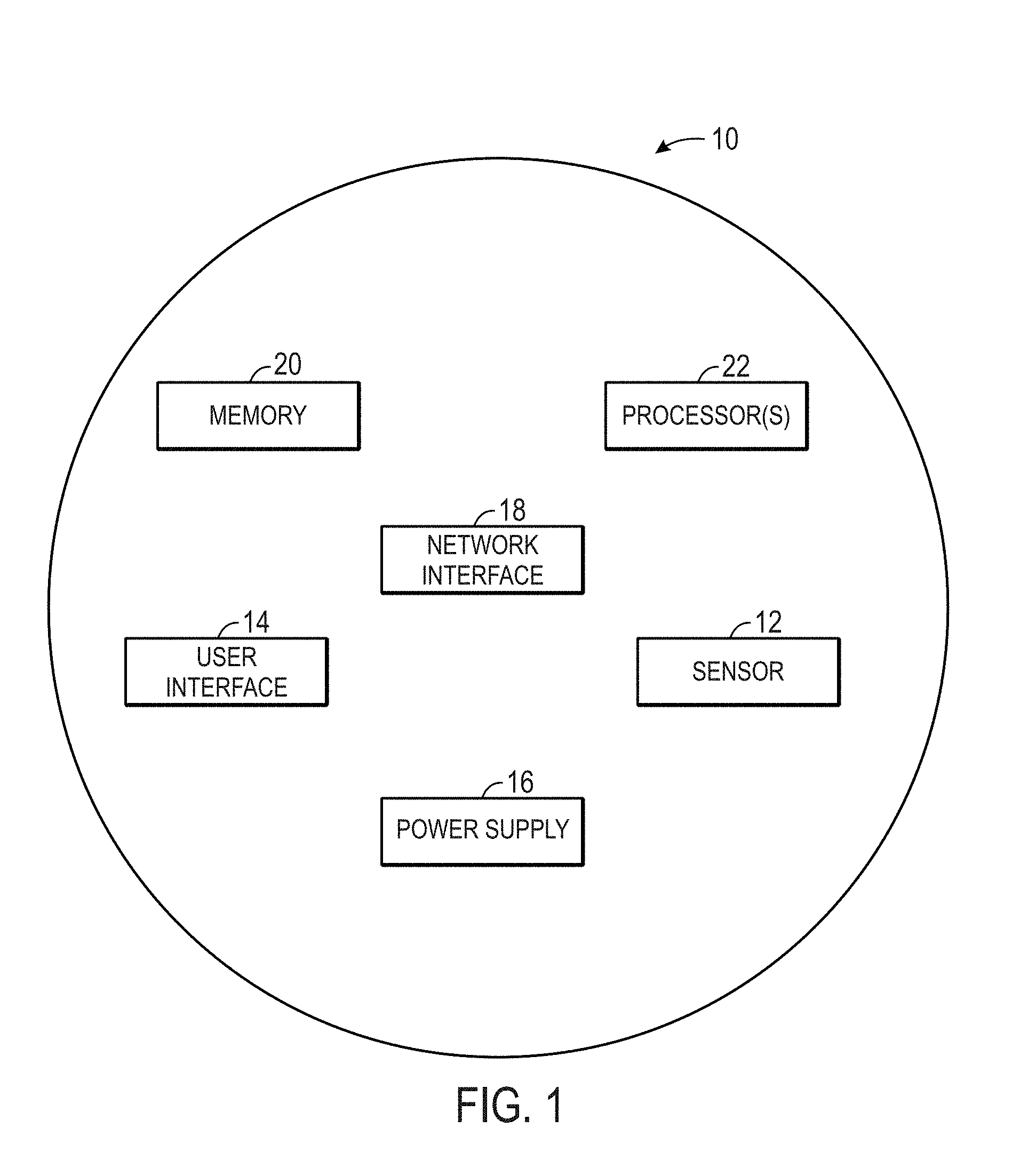

[0029] Smart Device in Smart Home Environment

[0030] By way of introduction, FIG. 1 illustrates an example of a general device 10 that may be disposed within a building environment. In one embodiment, the device 10 may include one or more sensors 12, a user-interface component 14, a power supply 16 (e.g., including a power connection and/or battery), a network interface 18, a high-power processor 20, a low-power processor 22, a passive infrared (PIR) sensor 24, a light source 26, and the like.

[0031] The sensors 12, in certain embodiments, may detect various properties such as acceleration, temperature, humidity, water, supplied power, proximity, external motion, device motion, sound signals, ultrasound signals, light signals, fire, smoke, carbon monoxide, global-positioning-satellite (GPS) signals, radio-frequency (RF), other electromagnetic signals or fields, or the like. As such, the sensors 12 may include temperature sensor(s), humidity sensor(s), hazard-related sensor(s) or other environmental sensor(s), accelerometer(s), microphone(s), optical sensors up to and including camera(s) (e.g., charge-coupled-device or video cameras), active or passive radiation sensors, GPS receiver(s), or radio-frequency identification (RFID) detector(s). While FIG. 1 illustrates an embodiment with a single sensor, many embodiments may include multiple sensors. In some instances, the device 10 may include one or more primary sensors and one or more secondary sensors. Here, the primary sensor(s) may sense data central to the core operation of the device (e.g., sensing a temperature in a thermostat or sensing smoke in a smoke detector), while the secondary sensor(s) may sense other types of data (e.g., motion, light, or sound), which can be used for energy-efficiency objectives or smart-operation objectives.

[0032] One or more user-interface components 14 in the device 10 may receive input from the user and/or present information to the user. The received input may be used to determine a setting. In certain embodiments, the user-interface components may include a mechanical or virtual component that responds to the user's motion. For example, the user can mechanically move a sliding component (e.g., along a vertical or horizontal track) or rotate a rotatable ring (e.g., along a circular track), or the user's motion along a touchpad may be detected. Such motions may correspond to a setting adjustment, which can be determined based on an absolute position of a user-interface component 14 or based on a displacement of a user-interface component 14 (e.g., adjusting a set point temperature by 1 °F for every 10° rotation of a rotatable-ring component). Physically and virtually movable user-interface components can allow a user to set a setting along a portion of an apparent continuum. Thus, the user may not be confined to choosing between two discrete options (e.g., as would be the case if up and down buttons were used) but can quickly and intuitively define a setting along a range of possible setting values. For example, a magnitude of a movement of a user-interface component may be associated with a magnitude of a setting adjustment, such that a user may dramatically alter a setting with a large movement or finely tune a setting with a small movement.
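The displacement-based adjustment in the example above (1 °F per 10° of ring rotation) is simple arithmetic. The helper below is a hypothetical sketch; how partial steps are handled is not stated in the text, so truncating toward zero is an assumed choice.

```python
def setpoint_after_rotation(setpoint_f: float, rotation_deg: float,
                            deg_per_step: float = 10.0,
                            step_f: float = 1.0) -> float:
    """Adjust a set point by step_f degrees F per deg_per_step of rotation.

    Positive rotation raises the set point and negative rotation lowers it.
    Partial steps are truncated toward zero (an assumption for this sketch).
    """
    steps = int(rotation_deg / deg_per_step)
    return setpoint_f + steps * step_f
```

For instance, turning the ring 35° from a 68 °F set point yields 71 °F, and turning it back 25° from 68 °F yields 66 °F, so a larger movement produces a proportionally larger change.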

[0033] The user-interface components 14 may also include one or more buttons (e.g., up and down buttons), a keypad, a number pad, a switch, a microphone, and/or a camera (e.g., to detect gestures). In one embodiment, the user-interface component 14 may include a click-and-rotate annular ring component that may enable the user to interact with the component by rotating the ring (e.g., to adjust a setting) and/or by clicking the ring inwards (e.g., to select an adjusted setting or to select an option). In another embodiment, the user-interface component 14 may include a camera that may detect gestures (e.g., to indicate that a power or alarm state of a device is to be changed). In some instances, the device 10 may have one primary input component, which may be used to set a plurality of types of settings. The user-interface components 14 may also be configured to present information to a user via, e.g., a visual display (e.g., a thin-film-transistor display or organic light-emitting-diode display) and/or an audio speaker.

[0034] The power-supply component 16 may include a power connection and/or a local battery. For example, the power connection may connect the device 10 to a power source such as a line voltage source. In some instances, an AC power source can be used to repeatedly charge a local (e.g., rechargeable) battery, such that the battery may be used later to supply power to the device 10 when the AC power source is not available.

[0035] The network interface 18 may include a component that enables the device 10 to communicate with other devices 10 via a wired or wireless network. The network interface 18 may include a wireless card or some other transceiver connection to facilitate this communication.

[0036] The high-power processor 20 and the low-power processor 22 may support one or more of a variety of different device functionalities. As such, the high-power processor 20 and the low-power processor 22 may each include one or more processors configured and programmed to carry out and/or cause to be carried out one or more of the functionalities described herein. In one embodiment, the high-power processor 20 and the low-power processor 22 may include general-purpose processors carrying out computer code stored in local memory (e.g., flash memory, hard drive, random access memory), special-purpose processors or application-specific integrated circuits, combinations thereof, and/or using other types of hardware/firmware/software processing platforms. In certain embodiments, the high-power processor 20 may execute computationally intensive operations such as operating the user-interface component 14 and the like. The low-power processor 22, on the other hand, may manage less complex processes such as detecting a hazard or temperature from the sensor 12. In one embodiment, the low-power processor may wake or initialize the high-power processor for computationally intensive processes.

[0037] By way of example, the high-power processor 20 and the low-power processor 22 may detect when a location (e.g., a house or room) is occupied (i.e., includes a presence of a human), up to and including whether it is occupied by a specific person or is occupied by a specific number of people (e.g., relative to one or more thresholds). In one embodiment, this detection can occur, e.g., by analyzing microphone signals, detecting user movements (e.g., in front of a device), detecting openings and closings of doors or garage doors, detecting wireless signals, detecting an internet protocol (IP) address of a received signal, detecting operation of one or more devices within a time window, or the like. Moreover, the high-power processor 20 and the low-power processor 22 may include image recognition technology to identify particular occupants or objects.

[0038] In certain embodiments, the high-power processor 20 and the low-power processor 22 may detect the presence of a human using the PIR sensor 24. The PIR sensor 24 may be a passive infrared sensor that measures infrared (IR) light radiating from objects in its field of view. As such, the PIR sensor 24 may detect the infrared radiation emitted from an object.

[0039] In some instances, the high-power processor 20 may predict desirable settings and/or implement those settings. For example, based on the presence detection, the high-power processor 20 may adjust device settings to, e.g., conserve power when nobody is home or in a particular room or to accord with user preferences (e.g., general at-home preferences or user-specific preferences). As another example, based on the detection of a particular person, animal or object (e.g., a child, pet or lost object), the high-power processor 20 may initiate an audio or visual indicator of where the person, animal or object is or may initiate an alarm or security feature if an unrecognized person is detected under certain conditions (e.g., at night or when lights are off).

[0040] In some instances, devices may interact with each other such that events detected by a first device influence actions of a second device. For example, a first device can detect that a user has entered into a garage (e.g., by detecting motion in the garage, detecting a change in light in the garage, or detecting opening of the garage door). The first device can transmit this information to a second device via the network interface 18, such that the second device can, e.g., adjust a home temperature setting, a light setting, a music setting, and/or a security-alarm setting. As another example, a first device can detect a user approaching a front door (e.g., by detecting motion or sudden light pattern changes). The first device may, e.g., cause a general audio or visual signal to be presented (e.g., such as sounding of a doorbell) or cause a location-specific audio or visual signal to be presented (e.g., to announce the visitor's presence within a room that a user is occupying).
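The garage example above, where an event detected by one device changes the settings of another, maps naturally onto a publish/subscribe pattern. The sketch below is an assumption about how such coordination might be structured: `HomeEventBus` stands in for communication over the network interface 18, and the event name, payload, and target settings are hypothetical.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Handler = Callable[[Dict], None]


class HomeEventBus:
    """Minimal publish/subscribe hub standing in for the home network."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event: str, handler: Handler) -> None:
        self._subscribers[event].append(handler)

    def publish(self, event: str, payload: Dict) -> None:
        # Deliver the event to every device that registered interest in it.
        for handler in self._subscribers[event]:
            handler(payload)


# Hypothetical state of a second device (thermostat plus lights).
settings = {"target_temp_f": 62, "lights": "off"}


def on_garage_entry(payload: Dict) -> None:
    # Second device reacts to the first device's detection.
    settings["target_temp_f"] = 70
    settings["lights"] = "on"


bus = HomeEventBus()
bus.subscribe("garage_entry", on_garage_entry)
bus.publish("garage_entry", {"source": "garage-sensor"})
```

Decoupling publishers from subscribers lets new devices react to existing events without changing the device that detects them.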

[0041] In addition to detecting various types of events, the device 10 may include a light source 26 that may illuminate when a living being, such as a human, is detected as approaching. The light source 26 may include any type of light source such as one or more light-emitting diodes or the like. The light source 26 may be communicatively coupled to the high-power processor 20 and the low-power processor 22, which may provide a signal to cause the light source 26 to illuminate.

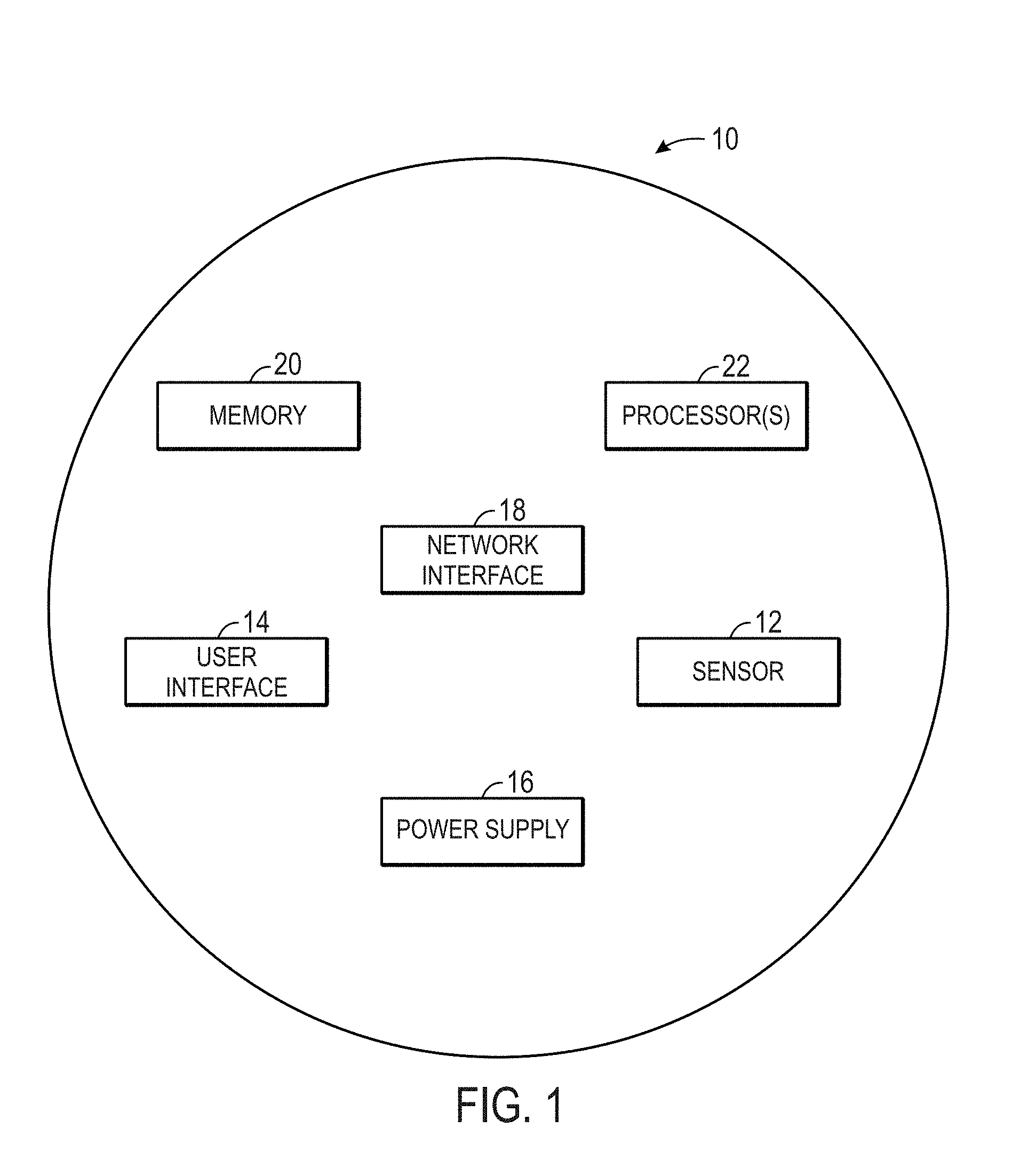

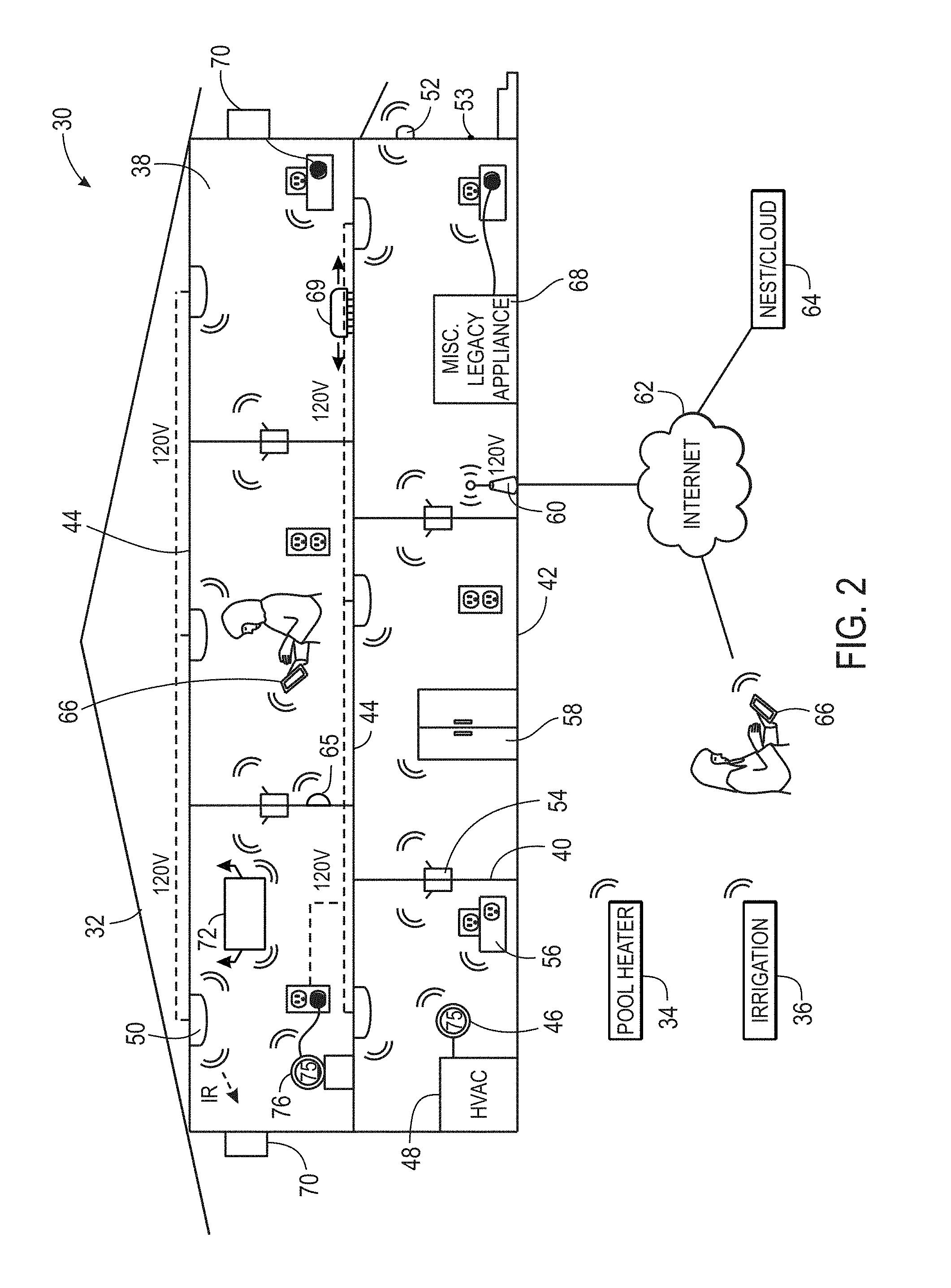

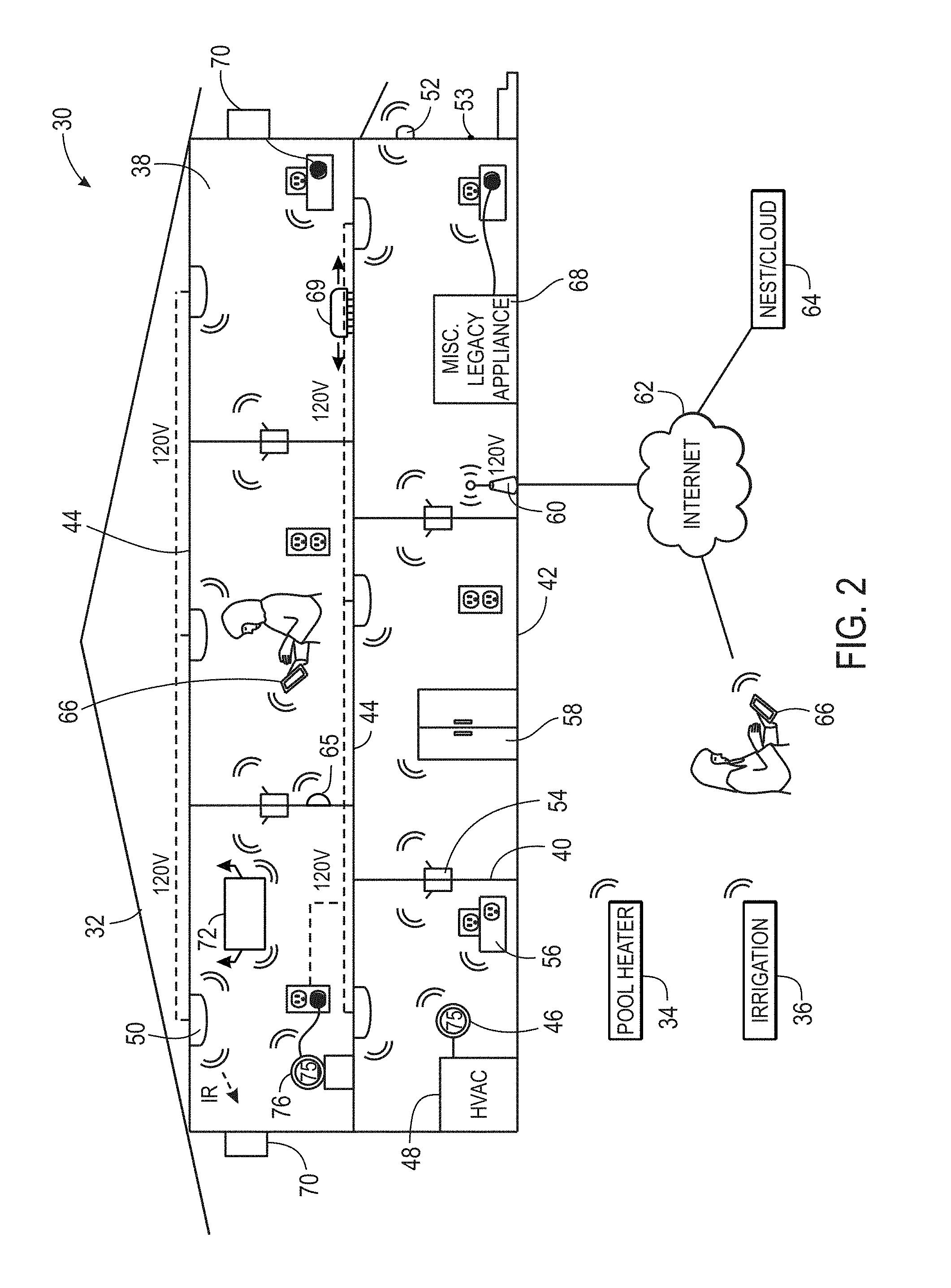

[0042] Keeping the foregoing in mind, FIG. 2 illustrates an example of a smart-home environment 30 within which one or more of the devices 10 of FIG. 1, methods, systems, services, and/or computer program products described further herein can be applicable. The depicted smart-home environment 30 includes a structure 32, which can include, e.g., a house, office building, garage, or mobile home. It will be appreciated that devices can also be integrated into a smart-home environment 30 that does not include an entire structure 32, such as an apartment, condominium, or office space. Further, the smart home environment can control and/or be coupled to devices outside of the actual structure 32. Indeed, several devices in the smart home environment need not physically be within the structure 32 at all. For example, a device controlling a pool heater or irrigation system can be located outside of the structure 32.

[0043] The depicted structure 32 includes a plurality of rooms 38, separated at least partly from each other via walls 40. The walls 40 can include interior walls or exterior walls. Each room can further include a floor 42 and a ceiling 44. Devices can be mounted on, integrated with and/or supported by a wall 40, floor 42 or ceiling 44.

[0044] In some embodiments, the smart-home environment 30 of FIG. 2 includes a plurality of devices 10, including intelligent, multi-sensing, network-connected devices, that can integrate seamlessly with each other and/or with a central server or a cloud-computing system to provide any of a variety of useful smart-home objectives. The smart-home environment 30 may include one or more intelligent, multi-sensing, network-connected thermostats 46 (hereinafter referred to as "smart thermostats 46"), one or more intelligent, network-connected, multi-sensing hazard detection units 50 (hereinafter referred to as "smart hazard detectors 50"), and one or more intelligent, multi-sensing, network-connected entryway interface devices 52 (hereinafter referred to as "smart doorbells 52"). According to embodiments, the smart thermostat 46 may include a Nest® Learning Thermostat 1st Generation (T100577) or Nest® Learning Thermostat 2nd Generation (T200577) by Nest Labs, Inc., among others. The smart thermostat 46 detects ambient climate characteristics (e.g., temperature and/or humidity) and controls an HVAC system 48 accordingly.

[0045] The smart hazard detector 50 may detect the presence of a hazardous substance or a substance indicative of a hazardous substance (e.g., smoke, fire, or carbon monoxide). The smart hazard detector 50 may include a Nest® Protect that may include sensors 12 such as smoke sensors, carbon monoxide sensors, and the like. As such, the hazard detector 50 may determine when smoke, fire, or carbon monoxide may be present within the building.

[0046] The smart doorbell 52 may detect a person's approach to or departure from a location (e.g., an outer door), control doorbell functionality, announce a person's approach or departure via audio or visual means, or control settings on a security system (e.g., to activate or deactivate the security system when occupants go and come). The smart doorbell 52 may interact with other devices 10 based on whether someone has approached or entered the smart-home environment 30.

[0047] In some embodiments, the smart-home environment 30 further includes one or more intelligent, multi-sensing, network-connected wall switches 54 (hereinafter referred to as "smart wall switches 54"), along with one or more intelligent, multi-sensing, network-connected wall plug interfaces 56 (hereinafter referred to as "smart wall plugs 56"). The smart wall switches 54 may detect ambient lighting conditions, detect room-occupancy states, and control a power and/or dim state of one or more lights. In some instances, smart wall switches 54 may also control a power state or speed of a fan, such as a ceiling fan. The smart wall plugs 56 may detect occupancy of a room or enclosure and control supply of power to one or more wall plugs (e.g., such that power is not supplied to the plug if nobody is at home).

[0048] Still further, in some embodiments, the smart-home environment 30 may include a plurality of intelligent, multi-sensing, network-connected appliances 58 (hereinafter referred to as "smart appliances 58"), such as refrigerators, stoves and/or ovens, televisions, washers, dryers, lights, stereos, intercom systems, garage-door openers, floor fans, ceiling fans, wall air conditioners, pool heaters, irrigation systems, security systems, and so forth. According to embodiments, the network-connected appliances 58 are made compatible with the smart-home environment by cooperating with the respective manufacturers of the appliances. For example, the appliances can be space heaters, window AC units, motorized duct vents, etc. When plugged in, an appliance can announce itself to the smart-home network, such as by indicating what type of appliance it is, and it can automatically integrate with the controls of the smart home. Such communication by the appliance to the smart home can be facilitated by any wired or wireless communication protocol known to those having ordinary skill in the art. The smart home also can include a variety of non-communicating legacy appliances 68, such as old conventional washers/dryers, refrigerators, and the like, which can be controlled, albeit coarsely (ON/OFF), by virtue of the smart wall plugs 56. The smart-home environment 30 can further include a variety of partially communicating legacy appliances 70, such as infrared ("IR") controlled wall air conditioners or other IR-controlled devices, which can be controlled by IR signals provided by the smart hazard detectors 50 or the smart wall switches 54.

[0049] According to embodiments, the smart thermostats 46, the smart hazard detectors 50, the smart doorbells 52, the smart wall switches 54, the smart wall plugs 56, and other devices of the smart-home environment 30 are modular and can be incorporated into older and new houses. For example, the devices 10 are designed around a modular platform consisting of two basic components: a head unit and a back plate, which is also referred to as a docking station. Multiple configurations of the docking station are provided so as to be compatible with any home, such as older and newer homes. However, all of the docking stations include a standard head-connection arrangement, such that any head unit can be removably attached to any docking station. Thus, in some embodiments, the docking stations are interfaces that serve as physical connections to the structure and the voltage wiring of the homes, and the interchangeable head units contain all of the sensors 12, processors 28, user interfaces 14, the power supply 16, the network interface 18, and other functional components of the devices described above.

[0050] This modular design makes many commercial and functional options for provisioning, maintenance, and upgrade possible. For example, after years of using a particular head unit, a user will be able to buy a new version of the head unit and simply plug it into the old docking station. There are also many different versions of the head units, such as low-cost versions with few features, followed by a progression of increasingly capable versions, up to and including premium head units with a large number of features. Thus, it should be appreciated that the various versions of the head units can all be interchangeable, with any of them working when placed into any docking station. This can advantageously encourage sharing and re-deployment of old head units; for example, when an important high-capability head unit, such as a hazard detector, is replaced by a new version of the head unit, the old head unit can be re-deployed to a back room or basement, etc. According to embodiments, when first plugged into a docking station, the head unit can ask the user (by 2D LCD display, 2D/3D holographic projection, voice interaction, etc.) a few simple questions such as "Where am I?", and the user can indicate "living room", "kitchen", and so forth.

[0051] The smart-home environment 30 may also include communication with devices outside of the physical home but within a proximate geographical range of the home. For example, the smart-home environment 30 may include a pool heater monitor 34 that communicates a current pool temperature to other devices within the smart-home environment 30 or receives commands for controlling the pool temperature. Similarly, the smart-home environment 30 may include an irrigation monitor 36 that communicates information regarding irrigation systems within the smart-home environment 30 and/or receives control information for controlling such irrigation systems. According to embodiments, an algorithm is provided for considering the geographic location of the smart-home environment 30, such as based on the zip code or geographic coordinates of the home. The geographic information is then used to obtain data helpful for determining optimal times for watering; such data may include sun location information, temperature, dewpoint, soil type of the land on which the home is located, etc.

[0052] By virtue of network connectivity, one or more of the smart-home devices of FIG. 2 can further allow a user to interact with the device even if the user is not proximate to the device. For example, a user can communicate with a device using a computer (e.g., a desktop computer, laptop computer, or tablet) or other portable electronic device (e.g., a smartphone) 66. A web page or app can be configured to receive communications from the user and control the device based on the communications and/or to present information about the device's operation to the user. For example, the user can view a current setpoint temperature for a device and adjust it using a computer. The user can be in the structure during this remote communication or outside the structure.

[0053] As discussed, users can control the smart thermostat and other smart devices in the smart-home environment 30 using a network-connected computer or portable electronic device 66. In some examples, some or all of the occupants (e.g., individuals who live in the home) can register their device 66 with the smart-home environment 30. Such registration can be made at a central server to authenticate the occupant and/or the device as being associated with the home and to give permission to the occupant to use the device to control the smart devices in the home. An occupant can use their registered device 66 to remotely control the smart devices of the home, such as when the occupant is at work or on vacation. The occupant may also use their registered device to control the smart devices when the occupant is actually located inside the home, such as when the occupant is sitting on a couch inside the home. It should be appreciated that instead of or in addition to registering devices 66, the smart-home environment 30 makes inferences about which individuals live in the home and are therefore occupants and which devices 66 are associated with those individuals. As such, the smart-home environment "learns" who is an occupant and permits the devices 66 associated with those individuals to control the smart devices of the home.
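A minimal sketch of the registration check described above, assuming the central server keeps, for each home, a mapping from registered device identifiers to occupants. `HomeRegistry`, `register`, and `may_control` are hypothetical names; authentication and the inference-based path (learning which devices belong to occupants) are omitted.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class HomeRegistry:
    """Hypothetical central-server record of devices registered to one home."""

    home_id: str
    # Maps a registered device id to the occupant it belongs to.
    devices: dict[str, str] = field(default_factory=dict)

    def register(self, occupant: str, device_id: str) -> None:
        # In practice the server would authenticate the occupant first.
        self.devices[device_id] = occupant

    def may_control(self, device_id: str) -> bool:
        # A registered device may control the home's smart devices
        # whether the occupant is at work, on vacation, or on the couch.
        return device_id in self.devices
```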

[0054] In some instances, guests may wish to control the smart devices. For example, the smart-home environment may receive communication from an unregistered mobile device of an individual inside of the home, where said individual is not recognized as an occupant of the home. Further, for example, a smart-home environment may receive communication from a mobile device of an individual who is known to be or who is registered as a guest.

[0055] According to embodiments, a guest layer of controls can be provided to guests of the smart-home environment 30. The guest layer of controls gives guests access to basic controls (e.g., a judiciously selected subset of features of the smart devices), such as temperature adjustments, but it locks out other functionalities. The guest layer of controls can be thought of as a "safe sandbox" in which guests have limited controls, but they do not have access to more advanced controls that could fundamentally alter, undermine, damage, or otherwise impair the occupant-desired operation of the smart devices. For example, the guest layer of controls will not permit the guest to adjust the heat-pump lockout temperature.

[0056] As a use case example, a guest in a smart home could walk up to the thermostat and turn the dial manually, but the guest may not want to walk around the house "hunting" for the thermostat, especially at night while the home is dark and others are sleeping. Further, the guest may not want to go through the hassle of downloading the necessary application to their device for remotely controlling the thermostat. In fact, the guest may not have the home owner's login credentials, etc., and therefore cannot remotely control the thermostat via such an application. Accordingly, in embodiments of the invention, the guest can open a mobile browser on their mobile device, type a keyword, such as "NEST", into the URL field, and tap "Go" or "Search", etc. In response, the device presents the guest with a user interface that allows the guest to move the target temperature within a limited range, such as between 65 and 80 degrees Fahrenheit. As discussed, the user interface provides a guest layer of controls that are limited to basic functions. The guest cannot change the target humidity or modes, or view energy history.
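The guest layer can be illustrated with a short sketch that exposes only the target temperature and keeps it inside the example 65 to 80 degrees Fahrenheit range. The setting name `target_temperature` and the function `apply_guest_request` are hypothetical, and clamping (rather than rejecting) out-of-range requests is an assumed design choice, not one stated in the text.

```python
# Example guest range from the text (degrees Fahrenheit).
GUEST_MIN_F = 65.0
GUEST_MAX_F = 80.0
# Hypothetical setting name; target humidity, modes, energy history,
# heat-pump lockout, etc. are deliberately absent from the guest layer.
GUEST_SETTINGS = frozenset({"target_temperature"})


def apply_guest_request(setting: str, value_f: float) -> float:
    """Return the value actually applied for a guest-layer request."""
    if setting not in GUEST_SETTINGS:
        raise PermissionError(f"guest layer does not expose {setting!r}")
    # Clamp rather than reject, so an out-of-range request still moves
    # the setpoint as far as the guest range allows.
    return min(max(value_f, GUEST_MIN_F), GUEST_MAX_F)
```

Rejecting out-of-range values outright would be an equally reasonable policy; clamping simply gives the guest the nearest permitted setpoint.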

[0057] According to embodiments, to enable guests to access the user interface that provides the guest layer of controls, a local web server is provided that is accessible in the local area network (LAN). It does not require a password, because physical presence inside the home is established reliably enough by the guest's presence on the LAN. In some embodiments, during installation of the smart device, such as the smart thermostat, the home owner is asked if they want to enable a Local Web App (LWA) on the smart device. Business owners will likely say no; home owners will likely say yes. When the LWA option is selected, the smart device broadcasts to the LAN that the above-referenced keyword, such as "NEST", is now a host alias for its local web server. Thus, no matter whose home a guest visits, that same keyword (e.g., "NEST") is always the URL the guest uses to access the LWA, provided the smart device is purchased from the same manufacturer. Further, according to embodiments, if there is more than one smart device on the LAN, the second and subsequent smart devices do not offer to set up another LWA. Instead, they register themselves as target candidates with the master LWA. In this case, the LWA user is asked which smart device they want to change the temperature on before getting the simplified user interface for the particular smart device they choose.
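The master/candidate arrangement above can be sketched as follows, under the assumption that the LAN is modeled as a simple table of host aliases. `LocalNetwork`, `SmartDevice`, and `install` are hypothetical, the actual alias broadcast (for example, via a local name-resolution protocol) is not modeled, and the sketch assumes that if the first device's owner declines the LWA, a later device may still offer it.

```python
from __future__ import annotations

from dataclasses import dataclass, field

LWA_ALIAS = "NEST"  # keyword from the text; the real alias may differ


@dataclass
class SmartDevice:
    name: str
    owner_enables_lwa: bool = True  # answer given during installation


@dataclass
class LocalNetwork:
    """Hypothetical model of host aliases and LWA targets on one LAN."""

    aliases: dict[str, SmartDevice] = field(default_factory=dict)
    targets: list[SmartDevice] = field(default_factory=list)

    def install(self, device: SmartDevice) -> str:
        if LWA_ALIAS in self.aliases:
            # A master LWA already exists, so no second LWA is offered;
            # the device registers as a target candidate instead.
            self.targets.append(device)
            return "candidate"
        if not device.owner_enables_lwa:
            return "no-lwa"
        # Claim the alias so the keyword resolves to this device's server.
        self.aliases[LWA_ALIAS] = device
        self.targets.append(device)
        return "master"

    def target_names(self) -> list[str]:
        # Devices the LWA would list when asking which one to adjust.
        return [d.name for d in self.targets]
```

For example, installing a hallway thermostat and then a bedroom thermostat yields one master (hallway) and a target list of both devices.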

[0058] According to embodiments, a guest layer of controls may also be provided to users by means other than a device 66. For example, the smart device, such as the smart thermostat, may be equipped with walkup-identification technology (e.g., face recognition, RFID, ultrasonic sensors) that "fingerprints" or creates a "signature" for the occupants of the home. The walkup-identification technology can be the same as or similar to the fingerprinting and signature creating techniques described in other sections of this application. In operation, when a person who does not live in the home or is otherwise not registered with the smart home or whose fingerprint or signature is not recognized by the smart home "walks up" to a smart device, the smart device provides the guest with the guest layer of controls, rather than full controls.

[0059] As described below, the smart thermostat 46 and other smart devices "learn" by observing occupant behavior. For example, the smart thermostat learns occupants' preferred temperature set-points for mornings and evenings, and it learns when the occupants are asleep or awake, as well as when the occupants are typically away or at home, for example. According to embodiments, when a guest controls the smart devices, such as the smart thermostat, the smart devices do not "learn" from the guest. This prevents the guest's adjustments and controls from affecting the learned preferences of the occupants.
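To illustrate how guest adjustments can be kept out of the learned preferences, here is a minimal sketch assuming a learner that simply averages occupant setpoints per time-of-day slot. The averaging model and the names `SetpointLearner`, `record_adjustment`, and `preferred` are hypothetical stand-ins for whatever learning the device actually performs.

```python
from __future__ import annotations

from collections import defaultdict


class SetpointLearner:
    """Hypothetical learner that averages occupant setpoints per time slot."""

    def __init__(self) -> None:
        self._samples: dict[str, list[float]] = defaultdict(list)

    def record_adjustment(self, slot: str, setpoint_f: float, is_guest: bool) -> None:
        if is_guest:
            # The guest's change is applied to the device elsewhere,
            # but it is never fed into the occupants' learned schedule.
            return
        self._samples[slot].append(setpoint_f)

    def preferred(self, slot: str) -> float | None:
        samples = self._samples.get(slot)
        if not samples:
            return None
        return sum(samples) / len(samples)
```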

[0060] According to some embodiments, a smart television remote control is provided. The smart remote control recognizes occupants by thumbprint, visual identification, RFID, etc., and it recognizes a user as a guest or as someone belonging to a particular class having limited control and access (e.g., child). Upon recognizing the user as a guest or someone belonging to a limited class, the smart remote control only permits that user to view a subset of channels and to make limited adjustments to the settings of the television and other devices. For example, a guest cannot adjust the digital video recorder (DVR) settings, and a child is limited to viewing child-appropriate programming.
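The class-based restrictions of the smart remote control can be expressed as a small policy table. This sketch assumes the user has already been recognized and classified; the channel names, the `UserClass` enumeration, and both functions are hypothetical.

```python
from enum import Enum


class UserClass(Enum):
    OCCUPANT = "occupant"
    GUEST = "guest"
    CHILD = "child"


# Hypothetical channel subsets; classes absent from this table are unrestricted.
ALLOWED_CHANNELS = {
    UserClass.GUEST: {"news", "sports", "movies", "kids"},
    UserClass.CHILD: {"kids"},  # child-appropriate programming only
}
# Only occupants may change digital video recorder (DVR) settings.
DVR_CLASSES = {UserClass.OCCUPANT}


def can_view(user: UserClass, channel: str) -> bool:
    allowed = ALLOWED_CHANNELS.get(user)
    return allowed is None or channel in allowed


def can_change_dvr(user: UserClass) -> bool:
    return user in DVR_CLASSES
```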

[0061] According to some embodiments, similar controls are provided for other instruments, utilities, and devices in the house. For example, sinks, bathtubs, and showers can be controlled by smart spigots that recognize users as guests or as children and therefore prevent water from exceeding a designated temperature that is considered safe.
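A smart spigot's limit can be reduced to a per-class temperature cap, sketched here under the assumption that the spigot already knows the user's class. The class names and limit values are hypothetical placeholders, not safety figures from the text.

```python
# Hypothetical safe maximums in degrees Fahrenheit; classes not listed
# (e.g. recognized adult occupants) are not capped by this sketch.
SAFE_MAX_F = {"guest": 110.0, "child": 100.0}


def spigot_output_temperature(requested_f: float, user_class: str) -> float:
    limit = SAFE_MAX_F.get(user_class)
    return requested_f if limit is None else min(requested_f, limit)
```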

[0062] In some embodiments, in addition to containing processing and sensing capabilities, each of the devices 34, 36, 46, 50, 52, 54, 56, and 58 (collectively referred to as "the smart devices") is capable of data communications and information sharing with any other of the smart devices, as well as to any central server or cloud-computing system or any other device that is network-connected anywhere in the world. The required data communications can be carried out using any of a variety of custom or standard wireless protocols (Wi-Fi, ZigBee, 6LoWPAN, etc.) and/or any of a variety of custom or standard wired protocols (CAT6 Ethernet, HomePlug, etc.).

[0063] According to embodiments, all or some of the smart devices can serve as wireless or wired repeaters. For example, a first one of the smart devices can communicate with a second one of the smart devices via a wireless router 60. The smart devices can further communicate with each other via a connection to a network, such as the Internet 62. Through the Internet 62, the smart devices can communicate with a central server or a cloud-computing system 64. The central server or cloud-computing system 64 can be associated with a manufacturer, support entity, or service provider associated with the device. In one embodiment, a user may be able to contact customer support using a device itself rather than needing to use other communication means such as a telephone or Internet-connected computer. Further, software updates can be automatically sent from the central server or cloud-computing system 64 to devices (e.g., when available, when purchased, or at routine intervals).

[0064] According to embodiments, the smart devices combine to create a mesh network of spokesman and low-power nodes in the smart-home environment 30, where some of the smart devices are "spokesman" nodes and others are "low-powered" nodes. Some of the smart devices in the smart-home environment 30 are battery powered, while others have a regular and reliable power source, such as by connecting to wiring (e.g., to 120V line voltage wires) behind the walls 40 of the smart-home environment. The smart devices that have a regular and reliable power source are referred to as "spokesman" nodes. These nodes are equipped with the capability of using any wireless protocol or manner to facilitate bidirectional communication with any of a variety of other devices in the smart-home environment 30 as well as with the central server or cloud-computing system 64. On the other hand, the devices that are battery powered are referred to as "low-power" nodes. These nodes tend to be smaller than spokesman nodes and can only communicate using wireless protocols that require very little power, such as ZigBee, 6LoWPAN, etc. Further, some, but not all, low-power nodes are incapable of bidirectional communication. These low-power nodes send messages, but they are unable to "listen". Thus, other devices in the smart-home environment 30, such as the spokesman nodes, cannot send information to these low-power nodes.

[0065] As described, the smart devices serve as low-power and spokesman nodes to create a mesh network in the smart-home environment 30. Individual low-power nodes in the smart-home environment regularly send out messages regarding what they are sensing, and the other low-powered nodes in the smart-home environment, in addition to sending out their own messages, repeat the messages, thereby causing the messages to travel from node to node (i.e., device to device) throughout the smart-home environment 30. The spokesman nodes in the smart-home environment 30 are able to "drop down" to low-powered communication protocols to receive these messages, translate the messages to other communication protocols, and send the translated messages to other spokesman nodes and/or the central server or cloud-computing system 64. Thus, the low-powered nodes using low-power communication protocols are able to send messages across the entire smart-home environment 30 as well as over the Internet 62 to the central server or cloud-computing system 64. According to embodiments, the mesh network enables the central server or cloud-computing system 64 to regularly receive data from all of the smart devices in the home, make inferences based on the data, and send commands back to one of the smart devices to accomplish some of the smart-home objectives described herein.
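The node-to-node relaying described in [0064] and [0065] can be simulated as a breadth-first flood. This is a minimal sketch that assumes each node repeats a given message at most once and that spokesman nodes forward every message they hear upstream; `Node`, `flood`, and the string upload format are hypothetical, and real radio timing, routing tables, and protocol translation are not modeled.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    spokesman: bool  # True for line-powered nodes that can reach the cloud
    neighbors: list[str] = field(default_factory=list)
    listens: bool = True  # some low-power nodes can send but not receive


def flood(nodes: dict[str, Node], origin: str, msg_id: str, payload: str) -> tuple[set[str], list[str]]:
    """Simulate one low-power message hopping from node to node.

    Returns the nodes that received it and the uploads made by spokesman
    nodes after translating it to a higher-power protocol.
    """
    received = {origin}
    uploads: list[str] = []
    queue = deque([origin])
    while queue:
        node = nodes[queue.popleft()]
        if node.spokesman:
            # "Drop down" to receive, then forward upstream (e.g. over Wi-Fi).
            uploads.append(f"{node.name}:{msg_id}:{payload}")
        for neighbor in node.neighbors:
            # Each node repeats the message at most once; nodes that cannot
            # listen never receive, and so never repeat, the message.
            if neighbor not in received and nodes[neighbor].listens:
                received.add(neighbor)
                queue.append(neighbor)
    return received, uploads
```

In a practical system the cloud side would also deduplicate uploads when several spokesman nodes hear the same message.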

[0066] As described, the spokesman nodes and some of the low-powered nodes are capable of "listening". Accordingly, users, other devices, and the central server or cloud-computing system 64 can communicate controls to the low-powered nodes. For example, a user can use the portable electronic device (e.g., a smartphone) 66 to send commands over the Internet 62 to the central server or cloud-computing system 64, which then relays the commands to the spokesman nodes in the smart-home environment 30. The spokesman nodes drop down to a low-power protocol to communicate the commands to the low-power nodes throughout the smart-home environment, as well as to other spokesman nodes that did not receive the commands directly from the central server or cloud-computing system 64.

[0067] An example of a low-power node is a smart night light 65. In addition to housing a light source, the smart night light 65 houses an occupancy sensor, such as an ultrasonic or passive IR sensor, and an ambient light sensor, such as a photoresistor or a single-pixel sensor that measures light in the room. In some embodiments, the smart night light 65 is configured to activate the light source when its ambient light sensor detects that the room is dark and when its occupancy sensor detects that someone is in the room. In other embodiments, the smart night light 65 is simply configured to activate the light source when its ambient light sensor detects that the room is dark. Further, according to embodiments, the smart night light 65 includes a low-power wireless communication chip (e.g., ZigBee chip) that regularly sends out messages regarding the occupancy of the room and the amount of light in the room, including instantaneous messages coincident with the occupancy sensor detecting the presence of a person in the room. As mentioned above, these messages may be sent wirelessly, using the mesh network, from node to node (i.e., smart device to smart device) within the smart-home environment 30 as well as over the Internet 62 to the central server or cloud-computing system 64.
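The two night-light embodiments differ only in whether occupancy is required, which this sketch captures with a flag. The lux cutoff used to decide that "the room is dark" is a hypothetical value, and `night_light_on` is an illustrative name.

```python
DARK_LUX_THRESHOLD = 10.0  # hypothetical cutoff for "the room is dark"


def night_light_on(ambient_lux: float, occupied: bool, require_occupancy: bool = True) -> bool:
    dark = ambient_lux < DARK_LUX_THRESHOLD
    # One embodiment needs darkness and occupancy; the other needs darkness only.
    return dark and (occupied or not require_occupancy)
```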

[0068] Other examples of low-powered nodes include battery-operated versions of the smart hazard detectors 50. These smart hazard detectors 50 are often located in an area without access to constant and reliable power and, as discussed in detail below, may include any number and type of sensors, such as smoke/fire/heat sensors, carbon monoxide/dioxide sensors, occupancy/motion sensors, ambient light sensors, temperature sensors, humidity sensors, and the like. Furthermore, smart hazard detectors 50 can send messages that correspond to each of the respective sensors to the other devices and the central server or cloud-computing system 64, such as by using the mesh network as described above.

[0069] Examples of spokesman nodes include smart thermostats 46, smart doorbells 52, smart wall switches 54, and smart wall plugs 56. These devices 46, 52, 54, and 56 are often located near and connected to a reliable power source, and therefore can include more power-consuming components, such as one or more communication chips capable of bidirectional communication in any of a variety of protocols.

[0070] In some embodiments, these low-powered and spokesman nodes (e.g., devices 46, 50, 52, 54, 56, 58, and 65) can function as "tripwires" for an alarm system in the smart-home environment. For example, in the event a perpetrator circumvents detection by alarm sensors located at windows, doors, and other entry points of the smart-home environment 30, the alarm could be triggered upon receiving an occupancy, motion, heat, sound, etc. message from one or more of the low-powered and spokesman nodes in the mesh network. For example, upon receiving a message from a smart night light 65 indicating the presence of a person, the central server or cloud-computing system 64 or some other device could trigger an alarm, provided the alarm is armed at the time of detection. Thus, the alarm system could be enhanced by various low-powered and spokesman nodes located throughout the smart-home environment 30. In this example, a user could enhance the security of the smart-home environment 30 by buying and installing extra smart nightlights 65. However, in a scenario where the perpetrator uses a radio transceiver to jam the wireless network, the devices 10 may be incapable of communicating with each other. Therefore, as discussed in detail below, the present techniques provide network communication jamming attack detection and notification solutions to such a problem.
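The "tripwire" behavior can be sketched as a check over incoming node messages that fires only while the alarm is armed. The event types come from the text; the `NodeMessage` structure, node identifiers, and `tripwire_alarm` function are hypothetical, and jamming detection is outside the scope of this sketch.

```python
from __future__ import annotations

from dataclasses import dataclass

# Message types named in the text as able to trip the alarm.
TRIPWIRE_EVENTS = frozenset({"occupancy", "motion", "heat", "sound"})


@dataclass(frozen=True)
class NodeMessage:
    node: str   # hypothetical id, e.g. "night_light_hallway"
    event: str  # e.g. "occupancy" or "temperature"


def tripwire_alarm(armed: bool, messages: list[NodeMessage]) -> str | None:
    """Return the node that tripped the alarm, or None if nothing should fire."""
    if not armed:
        return None
    for msg in messages:
        if msg.event in TRIPWIRE_EVENTS:
            return msg.node
    return None
```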

[0071] In some embodiments, the mesh network can be used to automatically turn on and off lights as a person transitions from room to room. For example, the low-powered and spokesman nodes detect the person's movement through the smart-home environment and communicate corresponding messages through the mesh network. Using the messages that indicate which rooms are occupied, the central server or cloud-computing system 64 or some other device activates and deactivates the smart wall switches 54 to automatically provide light as the person moves from room to room in the smart-home environment 30. Further, users may provide pre-configuration information that indicates which smart wall plugs 56 provide power to lamps and other light sources, such as the smart night light 65. Alternatively, this mapping of light sources to wall plugs 56 can be done automatically (e.g., the smart wall plugs 56 detect when a light source is plugged into them, and they send a corresponding message to the central server or cloud-computing system 64). Using this mapping information in combination with messages that indicate which rooms are occupied, the central server or cloud-computing system 64 or some other device activates and deactivates the smart wall plugs 56 that provide power to lamps and other light sources so as to track the person's movement and provide light as the person moves from room to room.
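Combining room occupancy with the switch and lamp-plug mapping can be sketched as a pure function that returns the desired power state of each light-controlling device. The identifiers and the `light_commands` function are hypothetical; how the commands are delivered over the mesh network is not modeled.

```python
from __future__ import annotations


def light_commands(
    occupied_rooms: set[str],
    switch_rooms: dict[str, str],
    lamp_plug_rooms: dict[str, str],
) -> dict[str, bool]:
    """Return the desired on/off state for every light-controlling device.

    switch_rooms maps a smart wall switch id to its room; lamp_plug_rooms maps
    a smart wall plug id to the room of the lamp it powers (either entered by
    the user or learned when the plug detects a light source).
    """
    devices = {**switch_rooms, **lamp_plug_rooms}
    return {device_id: room in occupied_rooms for device_id, room in devices.items()}
```

Re-evaluating this function each time an occupancy message arrives makes the lights follow the person from room to room.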

[0072] In some embodiments, the mesh network of low-powered and spokesman nodes can be used to provide exit lighting in the event of an emergency. In some instances, to facilitate this, users provide pre-configuration information that indicates exit routes in the smart-home environment 30. For example, for each room in the house, the user provides a map of the best exit route. It should be appreciated that instead of a user providing this information, the central server or cloud-computing system 64 or some other device could automatically determine the routes using uploaded maps, diagrams, architectural drawings of the smart-home house, as well as using a map generated based on positional information obtained from the nodes of the mesh network (e.g., positional information from the devices is used to construct a map of the house). In operation, when an alarm is activated (e.g., when one or more of the smart hazard detectors 50 detect smoke and activate an alarm), the central server or cloud-computing system 64 or some other device uses occupancy information obtained from the low-powered and spokesman nodes to determine which rooms are occupied and then turns on lights (e.g., nightlights 65, wall switches 54, wall plugs 56 that power lamps, etc.) along the exit routes from the occupied rooms so as to provide emergency exit lighting.
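For the case where routes are determined automatically, one possible approach (an assumption, not the method stated in the text) is a breadth-first search over a room adjacency map, choosing the route that passes through the fewest rooms. `exit_route`, `rooms_to_light`, and the room names are hypothetical; a real system might weigh distance, hazards, or user-provided routes instead.

```python
from __future__ import annotations

from collections import deque


def exit_route(floor_plan: dict[str, list[str]], start: str, exits: set[str]) -> list[str] | None:
    """Breadth-first search for the fewest-rooms path from start to any exit."""
    parent: dict[str, str | None] = {start: None}
    queue = deque([start])
    while queue:
        room = queue.popleft()
        if room in exits:
            # Walk back through parents to rebuild the route.
            path = []
            node: str | None = room
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for nxt in floor_plan.get(room, []):
            if nxt not in parent:
                parent[nxt] = room
                queue.append(nxt)
    return None  # no exit reachable from this room


def rooms_to_light(floor_plan: dict[str, list[str]], occupied: set[str], exits: set[str]) -> set[str]:
    """Union of all rooms on the exit routes from every occupied room."""
    lit: set[str] = set()
    for room in occupied:
        route = exit_route(floor_plan, room, exits)
        if route:
            lit.update(route)
    return lit
```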

[0073] Further included and illustrated in the smart-home environment 30 of FIG. 2 are service robots 69 each configured to carry out, in an autonomous manner, any of a variety of household tasks. For some embodiments, the service robots 69 can be respectively configured to perform floor sweeping, floor washing, etc. in a manner similar to that of known commercially available devices such as the ROOMBA™ and SCOOBA™ products sold by iRobot, Inc. of Bedford, Mass. Tasks such as floor sweeping and floor washing can be considered as "away" or "while-away" tasks for purposes of the instant description, as it is generally more desirable for these tasks to be performed when the occupants are not present. For other embodiments, one or more of the service robots 69 are configured to perform tasks such as playing music for an occupant, serving as a localized thermostat for an occupant, serving as a localized air monitor/purifier for an occupant, serving as a localized baby monitor, serving as a localized hazard detector for an occupant, and so forth, it being generally more desirable for such tasks to be carried out in the immediate presence of the human occupant. For purposes of the instant description, such tasks can be considered as "human-facing" or "human-centric" tasks.

[0074] When serving as a localized thermostat for an occupant, a particular one of the service robots 69 can be considered to be facilitating what can be called a "personal comfort-area network" for the occupant, with the objective being to keep the occupant's immediate space at a comfortable temperature wherever that occupant may be located in the home. This can be contrasted with conventional wall-mounted room thermostats, which have the more attenuated objective of keeping a statically-defined structural space at a comfortable temperature. According to one embodiment, the localized-thermostat service robot 69 is configured to move itself into the immediate presence (e.g., within five feet) of a particular occupant who has settled into a particular location in the home (e.g. in the dining room to eat their breakfast and read the news). The localized-thermostat service robot 69 includes a temperature sensor, a processor, and wireless communication components configured such that control communications with the HVAC system, either directly or through a wall-mounted wirelessly communicating thermostat coupled to the HVAC system, are maintained and such that the temperature in the immediate vicinity of the occupant is maintained at their desired level. If the occupant then moves and settles into another location (e.g. to the living room couch to watch television), the localized-thermostat service robot 69 proceeds to move and park itself next to the couch and keep that particular immediate space at a comfortable temperature.

[0075] Technologies by which the localized-thermostat service robot 69 (and/or the larger smart-home system of FIG. 2) can identify and locate the occupant whose personal-area space is to be kept at a comfortable temperature can include, but are not limited to, RFID sensing (e.g., person having an RFID bracelet, RFID necklace, or RFID key fob), synthetic vision techniques (e.g., video cameras and face recognition processors), audio techniques (e.g., voice, sound pattern, vibration pattern recognition), ultrasound sensing/imaging techniques, and infrared or near-field communication (NFC) techniques (e.g., person wearing an infrared or NFC-capable smartphone), along with rules-based inference engines or artificial intelligence techniques that draw useful conclusions from the sensed information (e.g., if there is only a single occupant present in the home, then that is the person whose immediate space should be kept at a comfortable temperature, and the selection of the desired comfortable temperature should correspond to that occupant's particular stored profile).
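The example inference rule at the end of the paragraph above (a single occupant present means that occupant's stored profile applies) can be sketched as follows. The fallback to a default setpoint when the rule does not decide is a hypothetical addition, as are the names `comfort_target` and `profiles`.

```python
from __future__ import annotations


def comfort_target(present: list[str], profiles: dict[str, float], default_f: float) -> float:
    """Pick the temperature to maintain around the tracked occupant."""
    # Rule from the text: if exactly one known occupant is home, use their profile.
    if len(present) == 1 and present[0] in profiles:
        return profiles[present[0]]
    # Hypothetical fallback when the rule does not identify a single occupant.
    return default_f
```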

[0076] When serving as a localized air monitor/purifier for an occupant, a particular service robot 69 can be considered to be facilitating what can be called a "personal health-area network" for the occupant, with the objective being to keep the air quality in the occupant's immediate space at healthy levels. Alternatively or in conjunction therewith, other health-related functions can be provided, such as monitoring the temperature or heart rate of the occupant (e.g., using finely remote sensors, near-field communication with on-person monitors, etc.). When serving as a localized hazard detector for an occupant, a particular service robot 69 can be considered to be facilitating what can be called a "personal safety-area network" for the occupant, with the objective being to ensure there is no excessive carbon monoxide, smoke, fire, etc., in the immediate space of the occupant. Methods analogous to those described above for personal comfort-area networks in terms of occupant identifying and tracking are likewise applicable for personal health-area network and personal safety-area network embodiments.

[0077] According to some embodiments, the above-referenced facilitation of personal comfort-area networks, personal health-area networks, personal safety-area networks, and/or other such human-facing functionalities of the service robots 69, are further enhanced by logical integration with other smart sensors in the home according to rules-based inferencing techniques or artificial intelligence techniques for achieving better performance of those human-facing functionalities and/or for achieving those goals in energy-conserving or other resource-conserving ways. Thus, for one embodiment relating to personal health-area networks, the air monitor/purifier service robot 69 can be configured to detect whether a household pet is moving toward the currently settled location of the occupant (e.g., using on-board sensors and/or by data communications with other smart-home sensors along with rules-based inferencing/artificial intelligence techniques), and if so, the air purifying rate is immediately increased in preparation for the arrival of more airborne pet dander. For another embodiment relating to personal safety-area networks, the hazard detector service robot 69 can be advised by other smart-home sensors that the temperature and humidity levels are rising in the kitchen, which is near the occupant's current dining room location, and responsive to this advisory the hazard detector service robot 69 will temporarily raise a hazard detection threshold, such as a smoke detection threshold, under an inference that any small increases in ambient smoke levels will most likely be due to cooking activity and not due to a genuinely hazardous condition.
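The cooking inference for the hazard detector service robot can be sketched as a threshold that is temporarily scaled up when nearby kitchen temperature and humidity are both rising. The base threshold, the scale factor, and the function names are hypothetical; the text does not specify units or magnitudes.

```python
BASE_SMOKE_THRESHOLD = 1.0  # hypothetical normalized smoke level
COOKING_FACTOR = 1.5        # hypothetical temporary increase


def cooking_likely(temp_rising: bool, humidity_rising: bool, kitchen_near_occupant: bool) -> bool:
    # Inference from the text: rising kitchen temperature and humidity near
    # the occupant suggest cooking rather than a genuine hazard.
    return kitchen_near_occupant and temp_rising and humidity_rising


def smoke_alarm(smoke_level: float, cooking: bool) -> bool:
    threshold = BASE_SMOKE_THRESHOLD * (COOKING_FACTOR if cooking else 1.0)
    return smoke_level >= threshold
```

With these placeholder values, a smoke level of 1.2 triggers the alarm normally but not while cooking is inferred, whereas a level of 1.6 triggers it in both cases.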

[0078] The above-described "human-facing" and "away" functionalities can be provided, without limitation, by multiple distinct service robots 69 having respective dedicated ones of such functionalities, by a single service robot 69 having an integration of two or more different ones of such functionalities, and/or any combinations thereof (including the ability for a single service robot 69 to have both "away" and "human-facing" functionalities) without departing from the scope of the present teachings. Electrical power can be provided by rechargeable batteries or other rechargeable means, such as an out-of-the-way docking station at which the service robots 69 automatically dock and recharge their batteries (if needed) during periods of inactivity. Preferably, each service robot 69 includes wireless communication components that facilitate data communications with one or more of the other wirelessly communicating smart-home sensors of FIG. 2 and/or with one or more other service robots 69 (e.g., using Wi-Fi, Zigbee, Z-Wave, 6LoWPAN, etc.), and one or more of the smart-home devices 10 can be in communication with a remote server over the Internet. Alternatively or in conjunction therewith, each service robot 69 can be configured to communicate directly with a remote server by virtue of cellular telephone communications, satellite communications, 3G/4G network data communications, or other direct communication methods.

[0079] Provided according to some embodiments are systems and methods relating to the integration of the service robot(s) 69 with home security sensors and related functionalities of the smart home system. The embodiments are particularly applicable and advantageous when applied to those service robots 69 that perform "away" functionalities or that otherwise are desirable to be active when the home is unoccupied (hereinafter "away-service robots"). Included in the embodiments are methods and systems for ensuring that home security systems, intrusion detection systems, and/or occupancy-sensitive environmental control systems (for example, occupancy-sensitive automated setback thermostats that enter into a lower-energy-using condition when the home is unoccupied) are not erroneously triggered by the away-service robots.

[0080] Provided according to one embodiment is a home automation and security system (e.g., as shown in FIG. 2) that is remotely monitored by a monitoring service by virtue of automated systems (e.g., cloud-based servers or other central servers, hereinafter "central server") that are in data communications with one or more network-connected elements of the home automation and security system. The away-service robots are configured to be in operative data communication with the central server, and are configured such that they remain in a non-away-service state (e.g., a dormant state at their docking station) unless permission is granted from the central server (e.g., by virtue of an "away-service-OK" message from the central server) to commence their away-service activities. The system can arrive at an away-state determination (i) exclusively by local on-premises smart device(s) based on occupancy sensor data, (ii) exclusively by the central server based on received occupancy sensor data and/or based on received proximity-related information such as GPS coordinates from user smartphones or automobiles, or (iii) by any combination of (i) and (ii); this determination can then trigger the central server to grant away-service permission to the away-service robots. While the away-service robots are active, they may continuously detect and send their in-home location coordinates to the central server, which can then readily filter signals from the occupancy-sensing devices to distinguish away-service robot activity from any unexpected intrusion activity, thereby avoiding a false intrusion alarm condition while still ensuring that the home is secure.
Alternatively or in conjunction therewith, the central server may provide filtering data (such as an expected occupancy-sensing profile triggered by the away-service robots) to the occupancy sensing nodes or associated processing nodes of the smart home, such that the filtering is performed at the local level. Although somewhat less secure, it would also be within the scope of the present teachings for the central server to temporarily disable the occupancy sensing equipment for the duration of the away-service robot activity.
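The central-server role described above (granting "away-service-OK" permission and filtering occupancy signals against reported robot positions) can be sketched as follows. This is a hypothetical illustration: the class and method names, the use of a logical AND as the "combination" in option (iii), and the 1.5 m matching radius are all assumptions made for the example.

```python
import math
from dataclasses import dataclass, field

@dataclass
class CentralServer:
    # Hypothetical tolerance (metres) within which a motion event is attributed to a robot.
    match_radius_m: float = 1.5
    home_away: bool = False
    robot_positions: dict = field(default_factory=dict)  # robot_id -> (x, y)

    def update_away_state(self, local_says_away: bool, all_phones_far: bool) -> None:
        # One possible form of option (iii): require both local occupancy data
        # and proximity information (e.g., smartphone GPS) to indicate "away".
        self.home_away = local_says_away and all_phones_far

    def away_service_ok(self) -> bool:
        """Grant away-service permission only when the home is deemed unoccupied."""
        return self.home_away

    def report_robot_location(self, robot_id: str, x: float, y: float) -> None:
        # Robots continuously report their in-home coordinates while active.
        self.robot_positions[robot_id] = (x, y)

    def is_intrusion(self, motion_x: float, motion_y: float) -> bool:
        """Treat a motion event as an intrusion unless it is near a reporting robot."""
        for rx, ry in self.robot_positions.values():
            if math.hypot(motion_x - rx, motion_y - ry) <= self.match_radius_m:
                return False
        return True
```

The same `is_intrusion` test could equally run on a local processing node if the server pushed the expected robot positions down to it, which corresponds to the local-filtering alternative.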

[0081] According to another embodiment, functionality similar to that of the central server in the above example can be performed by an on-site computing device such as a dedicated server computer, a "master" home automation console or panel, or as an adjunct function of one or more of the smart-home devices of FIG. 2. In such an embodiment, there would be no dependency on a remote service provider either for the "away-service-OK" permission to the away-service robots or for the false-alarm-avoidance filtering service or filter information applied to the sensed intrusion detection signals.

[0082] According to other embodiments, there are provided methods and systems for implementing away-service robot functionality while avoiding false home security alarms and false occupancy-sensitive environmental controls without the requirement of a single overall event orchestrator. For simplicity in the present disclosure, the home security systems and/or occupancy-sensitive environmental controls that would be triggered by the motion, noise, vibrations, or other disturbances of the away-service robot activity are referenced simply as "activity sensing systems," and when so triggered will yield a "disturbance-detected" outcome representative of the false trigger (for example, an alarm message to a security service, or an "arrival" determination for an automated setback thermostat that causes the home to be heated or cooled to a more comfortable "occupied" setpoint temperature). According to one embodiment, the away-service robots are configured to emit a standard ultrasonic sound throughout the course of their away-service activity, the activity sensing systems are configured to detect that standard ultrasonic sound, and the activity sensing systems are further configured such that no disturbance-detected outcome will occur for as long as that standard ultrasonic sound is detected.
For other embodiments, the away-service robots are configured to emit a standard notification signal throughout the course of their away-service activity, the activity sensing systems are configured to detect that standard notification signal, and the activity sensing systems are further configured such that no disturbance-detected outcome will occur for as long as that standard notification signal is detected, wherein the standard notification signal comprises one or more of: an optical notifying signal; an audible notifying signal; an infrared notifying signal; an infrasonic notifying signal; a wirelessly transmitted data notification signal (e.g., an IP broadcast, multicast, or unicast notification signal, or a notification message sent in a TCP/IP two-way communication session).
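The suppression behavior of an activity sensing system can be sketched as a small state machine. "For as long as the signal is detected" is modeled here with a hypothetical grace period (`HOLD_S`): the notification counts as present if it was last observed within that window. The class name, method names, and the 5-second value are illustrative assumptions.

```python
from typing import Optional

class ActivitySensingSystem:
    """Suppresses disturbance-detected outcomes while a robot notification is present."""

    HOLD_S = 5.0  # hypothetical grace period, in seconds

    def __init__(self) -> None:
        self._last_notification: Optional[float] = None

    def on_notification(self, t: float) -> None:
        # Called whenever the standard notification signal (ultrasonic tone,
        # infrared pulse, IP broadcast, etc.) is observed at time t.
        self._last_notification = t

    def notification_active(self, t: float) -> bool:
        return (
            self._last_notification is not None
            and t - self._last_notification <= self.HOLD_S
        )

    def on_disturbance(self, t: float) -> Optional[str]:
        """Return a disturbance-detected outcome, or None if it is suppressed."""
        if self.notification_active(t):
            return None
        return "disturbance-detected"
```

A short grace period tolerates brief dropouts in the notification signal; once the robot stops emitting, normal detection resumes automatically.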

[0083] According to some embodiments, the notification signals sent by the away-service robots to the activity sensing systems are authenticated and encrypted such that the notifications cannot be learned and replicated by a potential burglar. Any of a variety of known encryption/authentication schemes can be used to ensure such data security including, but not limited to, methods involving third-party data security services or certificate authorities. For some embodiments, a permission request-response model can be used, wherein any particular away-service robot requests permission from each activity sensing system in the home when it is ready to perform its away-service tasks, and does not initiate such activity until receiving a "yes" or "permission granted" message from each activity sensing system (or from a single activity sensing system serving as a "spokesman" for all of the activity sensing systems). One advantage of the described embodiments that do not require a central event orchestrator is that there can (optionally) be more of an arm's-length relationship between the supplier(s) of the home security/environmental control equipment, on the one hand, and the supplier(s) of the away-service robot(s), on the other hand, because the respective suppliers need only agree upon the described standard one-way notification protocol or the described standard two-way request/permission protocol.
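The authenticated request-response model can be sketched with a message authentication code plus a single-use nonce, so that a captured request cannot simply be replayed. This sketch assumes a pre-shared key between the robot and each sensing system (a deployment might instead rely on certificates from a certificate authority); `SensingSystem`, `request_permission`, and the message layout are hypothetical. It uses only Python's standard `hmac`, `hashlib`, and `secrets` modules.

```python
import hashlib
import hmac
import secrets

# Assumption: a key pre-shared between the robot and the sensing systems.
SHARED_KEY = secrets.token_bytes(32)

def sign(key: bytes, message: bytes) -> bytes:
    return hmac.new(key, message, hashlib.sha256).digest()

class SensingSystem:
    def __init__(self, key: bytes) -> None:
        self._key = key
        self._seen_nonces: set = set()

    def handle_request(self, robot_id: str, nonce: bytes, tag: bytes) -> bool:
        """Return True ("permission granted") only for a fresh, authentic request."""
        if nonce in self._seen_nonces:
            return False  # replayed request, e.g. recorded by an eavesdropper
        expected = sign(self._key, robot_id.encode() + nonce)
        if not hmac.compare_digest(expected, tag):
            return False  # sender does not hold the shared key
        self._seen_nonces.add(nonce)
        return True

def request_permission(robot_id: str, key: bytes, systems: list) -> bool:
    """The robot begins away-service work only if every sensing system says yes."""
    nonce = secrets.token_bytes(16)
    tag = sign(key, robot_id.encode() + nonce)
    return all(s.handle_request(robot_id, nonce, tag) for s in systems)
```

The "spokesman" variant corresponds to calling `request_permission` with a single-element list.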

[0084] According to still other embodiments, the activity sensing systems are configured to detect sounds, vibrations, RF emissions, or other detectable environmental signals or "signatures" that are intrinsically associated with the away-service activity of each away-service robot, and are further configured such that no disturbance-detected outcome will occur for as long as that particular detectable signal or environmental "signature" is detected. By way of example, a particular kind of vacuum-cleaning away-service robot may emit a specific sound or RF signature. For one embodiment, the away-service environmental signatures for each of a plurality of known away-service robots are stored in the memory of the activity sensing systems based on empirically collected data, the environmental signatures being supplied with the activity sensing systems and periodically updated by a remote update server. For another embodiment, the activity sensing systems can be placed into a "training mode" for the particular home in which they are installed, wherein they "listen" and "learn" the particular environmental signatures of the away-service robots for that home during that training session, and thereafter will suppress disturbance-detected outcomes for intervals in which those environmental signatures are heard.
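Signature-based suppression, covering both the factory-supplied library and the in-home "training mode," might look like the sketch below. It assumes each sound or RF signature has already been reduced to a fixed-length feature vector (for example, per-band energy) and compares vectors by cosine similarity; the feature representation, the 0.95 threshold, and all names are hypothetical.

```python
import math

def cosine(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

class SignatureFilter:
    def __init__(self, threshold: float = 0.95) -> None:
        self.threshold = threshold  # hypothetical similarity cut-off
        self.known: dict = {}       # label -> feature vector (supplied or learned)
        self.training = False

    def load_factory_signatures(self, sigs: dict) -> None:
        # e.g., empirically collected signatures pushed by a remote update server
        self.known.update(sigs)

    def observe(self, label: str, features: list) -> None:
        """In training mode, remember a signature heard in this particular home."""
        if self.training:
            self.known[label] = features

    def suppress(self, features: list) -> bool:
        """True if the current signal matches a known away-service robot signature."""
        return any(cosine(features, sig) >= self.threshold for sig in self.known.values())
```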

[0085] For still another embodiment, which is particularly useful when the activity sensing system is associated with occupancy-sensitive environmental control equipment rather than a home security system, the activity sensing system is configured to automatically learn the environmental signatures for the away-service robots by automatically performing correlations over time between detected environmental signatures and detected occupancy activity. By way of example, for one embodiment an intelligent automated nonoccupancy-triggered setback thermostat such as the Nest Learning Thermostat can be configured to constantly monitor for audible and RF activity as well as to perform infrared-based occupancy detection. In particular, because the environmental signature of the away-service robot will remain relatively constant from event to event, and because the away-service events will likely either (a) themselves be triggered by some sort of nonoccupancy condition as measured by the away-service robots themselves, or (b) occur at regular times of day, there will be patterns in the collected data from which the events themselves will become apparent and the environmental signatures can be readily learned. Generally speaking, for this automatic-learning embodiment, in which the environmental signatures of the away-service robots are learned without requiring user interaction, it is preferable that a certain number of false triggers be tolerable over the course of the learning process.
Accordingly, this automatic-learning embodiment is better suited to occupancy-sensitive environmental control equipment (such as an automated setback thermostat) than to home security systems, because a few false occupancy determinations may cause a few instances of unnecessary heating or cooling but will not otherwise have any serious consequences, whereas false home security alarms may have more serious consequences.
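One way to exploit pattern (b), recurrence at regular times of day, is sketched below. It assumes signatures have already been quantized into hashable IDs and learns a signature once it has coincided with an occupancy trigger at the same hour on a minimum number of distinct days. `AutoLearner` and `MIN_DAYS` are hypothetical, and this regularity test is only one of many possible correlation schemes.

```python
from collections import defaultdict

class AutoLearner:
    """Learns robot signatures from recurring, time-of-day-regular events."""

    MIN_DAYS = 5  # hypothetical tuning parameter

    def __init__(self) -> None:
        # (signature_id, hour_of_day) -> set of day indices on which it occurred
        self._days = defaultdict(set)
        self.learned: set = set()

    def record(self, signature_id: str, day: int, hour: int, occupancy_triggered: bool) -> None:
        # Only events that coincided with an occupancy trigger are candidate
        # false triggers worth explaining away.
        if not occupancy_triggered:
            return
        key = (signature_id, hour)
        self._days[key].add(day)
        if len(self._days[key]) >= self.MIN_DAYS:
            self.learned.add(signature_id)

    def is_robot(self, signature_id: str) -> bool:
        return signature_id in self.learned
```

Until a signature is learned, its events still produce occupancy determinations, which is the tolerated cost of false triggers during learning described above.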

[0086] According to embodiments, technologies including the sensors of the smart devices located in the mesh network of the smart-home environment, in combination with rules-based inference engines or artificial intelligence provided at the central server or cloud-computing system 64, are used to provide a personal "smart alarm clock" for individual occupants of the home. For example, user-occupants can communicate with the central server or cloud-computing system 64 via their mobile devices 66 to access an interface for the smart alarm clock. There, occupants can turn on their "smart alarm clock" and input a wake time for the next day and/or for additional days. In some embodiments, the occupant may have the option of setting a specific wake time for each day of the week, as well as the option of setting some or all of the inputted wake times to "repeat". Artificial intelligence can then be used to consider the occupant's response to these alarms when they go off and to make inferences about the user's preferred sleep patterns over time.
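The user-facing scheduling part of the smart alarm clock (an on/off switch, per-weekday wake times, and a "repeat" option) can be sketched with Python's standard `datetime` module. The class and method names are hypothetical, and the sketch deliberately omits the learning of sleep preferences.

```python
from datetime import datetime, time, timedelta
from typing import Optional

class SmartAlarmSchedule:
    """Per-weekday wake times (0 = Monday ... 6 = Sunday) with an optional repeat."""

    def __init__(self) -> None:
        self.enabled = False
        self.wake_times: dict = {}  # weekday -> datetime.time
        self.repeat: dict = {}      # weekday -> bool

    def set_wake_time(self, weekday: int, wake: time, repeat: bool = False) -> None:
        self.wake_times[weekday] = wake
        self.repeat[weekday] = repeat

    def next_alarm(self, now: datetime) -> Optional[datetime]:
        """Return the next alarm strictly after `now`, or None if none is set."""
        if not self.enabled:
            return None
        for offset in range(8):  # today plus the following seven days
            day = now.date() + timedelta(days=offset)
            wake = self.wake_times.get(day.weekday())
            if wake is None:
                continue
            candidate = datetime.combine(day, wake)
            if candidate > now:
                return candidate
        return None

    def fired(self, when: datetime) -> None:
        """Drop a one-shot alarm after it goes off; keep repeating ones."""
        wd = when.weekday()
        if not self.repeat.get(wd, False):
            self.wake_times.pop(wd, None)
            self.repeat.pop(wd, None)
```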

[0087] According to embodiments, the smart device in the smart-home environment 30 that happens to be closest to the occupant when the occupant falls asleep will be the device that transmits messages regarding when the occupant stopped moving, from which the central server or cloud-computing system 64 will make inferences about where and when the occupant prefers to sleep. This closest smart device will also be the device that sounds the alarm to wake the occupant. In this manner, the "smart alarm clock" will follow the occupant throughout the house by tracking the individual occupants based on their "unique signature," which is determined based on data obtained from sensors located in the smart devices. For example, the sensors include ultrasonic sensors, passive IR sensors, and the like. The unique signature is based on a combination of walking gait, patterns of movement, voice, height, size, etc. It should be appreciated that facial recognition may also be used.
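Choosing which device sounds the alarm can be sketched in two steps: match each sighting to an enrolled occupant by nearest-neighbor comparison of signature features, then pick the device reporting the smallest distance to that occupant. The `Sighting` fields, the feature choice (e.g., gait cadence and height), and the function names are hypothetical assumptions for illustration.

```python
import math
from dataclasses import dataclass
from typing import Optional

@dataclass
class Sighting:
    device_id: str
    features: tuple     # e.g., (gait cadence, height); hypothetical units
    distance_m: float   # the device's range estimate to the person

def identify(features: tuple, profiles: dict) -> str:
    """Nearest-neighbor match of a sighting against enrolled occupant signatures."""
    return min(profiles, key=lambda name: math.dist(features, profiles[name]))

def alarm_device(occupant: str, sightings: list, profiles: dict) -> Optional[str]:
    """Pick the device closest to the given occupant, if any device currently sees them."""
    mine = [s for s in sightings if identify(s.features, profiles) == occupant]
    if not mine:
        return None
    return min(mine, key=lambda s: s.distance_m).device_id
```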