Covert Identification Tags Viewable By Robots and Robotic Devices

Smith; Fraser M.

U.S. patent application number 15/859618 for covert identification tags viewable by robots and robotic devices was filed with the patent office on December 31, 2017 and published on July 4, 2019. The applicant listed for this patent is Sarcos Corp. The invention is credited to Fraser M. Smith.

| United States Patent Application | 20190202057 |
|---|---|
| Kind Code | A1 |
| Application Number | 15/859618 |
| Family ID | 65036577 |
| Publication Date | July 4, 2019 |
| Inventor | Smith; Fraser M. |


Abstract

A system and method comprises marking or identifying an object so that it is perceptible to a robot, while being invisible or substantially invisible to humans. Such marking can facilitate interaction and navigation by the robot, and can create a machine or robot navigable environment for the robot. The machine-readable indicia can comprise symbols that can be perceived and interpreted by the robot. The robot can utilize a camera with an image sensor to see the indicia. In addition, the indicia can be invisible or substantially invisible to the unaided human eye so that such indicia does not create an unpleasant environment for humans, and remains aesthetically pleasing to humans. For example, the indicia can reflect UV light, while the image sensor of the robot can be capable of detecting such UV light. Thus, the indicia can be perceived by the robot, while not interfering with the aesthetics of the environment.

| Inventors: | Smith; Fraser M.; (Salt Lake City, UT) |
|---|---|
| Applicant: | Sarcos Corp. |
| Filed: | December 31, 2017 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | Y10S 901/49 20130101; G06K 19/06037 20130101; G06K 7/10861 20130101; Y10S 901/47 20130101; Y10S 901/09 20130101; B25J 9/1669 20130101; B25J 11/008 20130101; B25J 9/1674 20130101; B25J 9/0003 20130101; G06K 19/0614 20130101; G06K 7/10722 20130101; B25J 9/1697 20130101; Y10S 901/01 20130101; G05D 1/0234 20130101; G06K 19/06131 20130101; G06F 16/90335 20190101; B25J 19/02 20130101; G06K 7/12 20130101 |
| International Class: | B25J 9/16 20060101 B25J009/16; G06K 7/10 20060101 G06K007/10; G06F 17/30 20060101 G06F017/30; B25J 9/00 20060101 B25J009/00; B25J 11/00 20060101 B25J011/00 |

Claims

1. A method for creating a machine navigable environment for a robot, the method comprising: identifying a desired space in which the robot will operate; selecting one or more objects that are or will be located in the space; marking the one or more objects with indicia being machine-readable and human-imperceptible; and introducing the robot into the space, the robot being capable of movement within the space, and the robot having a sensor capable of perceiving the indicia.

2. The method of claim 1, wherein the indicia comprises interaction information that assists the robot with interaction with the object.

3. The method of claim 1, wherein the indicia facilitates access to interaction information stored in a computer database.

4. The method of claim 1, wherein marking the one or more objects with indicia further comprises marking the one or more objects with indicia that is UV reflective and visible in the UV spectrum, and the robot has a camera with an image sensor operable in the UV spectrum and a UV light source.

5. The method of claim 1, wherein marking the one or more objects further comprises applying the indicia on an external, outfacing surface of the object facing into the space.

6. The method of claim 1, wherein marking the one or more objects further comprises applying the indicia over a finished surface of the object.

7. The method of claim 1, wherein marking the one or more objects further comprises applying a removable applique with the indicia to an outermost finished surface of the object.

8. The method of claim 1, wherein marking the one or more objects further comprises disposing the indicia to be under an outermost finished surface of the object.

9. The method of claim 1, wherein the indicia is disposed in a laminate with an outer layer disposed over the indicia, and the outer layer is opaque to visible light.

10. The method of claim 1, wherein marking the one or more objects further comprises marking the one or more objects with indicia transparent to visible light.

11. The method of claim 1, wherein selecting the one or more objects further comprises selecting a transparent object, and wherein marking the one or more objects further comprises applying the indicia on the transparent object without interfering with the transparency of the object.

12. The method of claim 1, wherein the indicia identifies the object.

13. The method of claim 1, wherein the indicia identifies a location of an interface of the object.

14. The method of claim 1, wherein the indicia identifies a location of an interface of the object and a direction of operation of the interface.

15. The method of claim 1, wherein marking the one or more objects further comprises marking an object inside a perimeter of the object.

16. The method of claim 1, wherein marking the one or more objects further comprises marking an object adjacent an interface of the object.

17. The method of claim 1, wherein marking the one or more objects further comprises marking an object substantially circumscribing a perimeter of the object.

18. The method of claim 1, wherein marking the one or more objects with indicia further comprises marking a single object of the one or more objects with multiple different indicia on different parts of the single object to identify the different parts of the single object.

19. The method of claim 1, wherein selecting the one or more objects further comprises selecting an object representing a physical boundary or barrier to movement or action of the robot; and wherein marking the one or more objects further comprises marking the object to indicate the physical boundary or barrier.

20. The method of claim 1, wherein selecting the one or more objects further comprises selecting an object representing a physical boundary or barrier subject to damage of the object by the robot, and wherein marking the one or more objects further comprises marking the object to indicate the physical boundary or barrier.

21. The method of claim 1, wherein selecting the one or more objects further comprises selecting an object representing a physical boundary or barrier subject to damage of the robot, and wherein marking the one or more objects further comprises marking the object to indicate the physical boundary or barrier.

22. The method of claim 1, wherein selecting the one or more objects further comprises selecting an object representing a physical boundary or barrier subject to frequent movement, and wherein marking the one or more objects further comprises marking the object to indicate the physical boundary or barrier.

23. The method of claim 1, further comprising: identifying a desired or probable task to be given to the robot; and identifying one or more objects corresponding to the desired or probable task.

24. The method of claim 1, further comprising: giving an instruction to the robot involving at least one of the one or more objects.

25. The method of claim 1, wherein marking the one or more objects with indicia further comprises marking the one or more objects with indicia that is IR reflective and visible in the IR spectrum, and the robot has a camera with an image sensor operable in the IR spectrum and an IR light source.

26. The method of claim 1, wherein marking the one or more objects with indicia further comprises marking the one or more objects with indicia that is RF reflective, and the robot has a sensor operable in the RF spectrum.

27. The method of claim 1, wherein marking the one or more objects with indicia further comprises marking the one or more objects with indicia that emits ultrasonic wavelengths, and the robot has a sensor operable in the ultrasonic spectrum.

28. An object configured for use with a robot with a sensor in a space defining a machine navigable environment, the object comprising: a surface; indicia disposed on the surface, the indicia being machine-readable by the sensor of the robot, and the indicia being human-imperceptible; and the indicia identifying the object and comprising information pertaining to interaction of the robot with the object.

29. The object of claim 28, wherein the indicia comprises interaction information indicating, at least in part, how the robot is to interact with the object.

30. The object of claim 28, wherein the interaction information is stored on a computer database to be later conveyed to the robot.

31. The object of claim 28, wherein the indicia is visible in the UV spectrum; and wherein the robot has a camera with an image sensor operable in the UV spectrum and a UV light source.

32. The object of claim 28, wherein the indicia is disposed over a finished surface of the object.

33. The object of claim 28, wherein the indicia comprises an applique removably disposed on an outermost finished surface of the object.

34. The object of claim 28, wherein the indicia is disposed under an outermost finished surface of the object.

35. The object of claim 28, wherein the indicia comprises a laminate with an outer layer disposed over the indicia; and wherein the outer layer is opaque to visible light.

36. The object of claim 28, wherein the indicia is transparent to visible light.

37. The object of claim 28, further comprising a transparent portion; and wherein the indicia is disposed on the transparent portion without interfering with the transparency of the portion.

38. The object of claim 28, wherein the indicia identifies a location of an interface of the object and a direction of operation of the interface.

39. The object of claim 28, wherein the indicia is disposed inside a perimeter of the object.

40. The object of claim 28, wherein the indicia is disposed adjacent an interface of the object.

41. The object of claim 28, wherein the indicia substantially circumscribes a perimeter of the object.

42. The object of claim 28, wherein the indicia further comprises multiple different indicia on different parts of a single object identifying the different parts of the single object.

43. The object of claim 28, wherein the object represents a physical boundary or barrier to movement or action of the robot; and wherein the indicia indicates the physical boundary or barrier.

44. The object of claim 28, wherein the object represents a physical boundary or barrier subject to damage of the object by the robot; and wherein the indicia indicates the physical boundary or barrier.

45. The object of claim 28, wherein the object represents a physical boundary or barrier subject to damage of the robot; and wherein the indicia indicates the physical boundary or barrier.

46. The object of claim 28, wherein the object represents a physical boundary or barrier subject to frequent movement; and wherein the indicia indicates the physical boundary or barrier.

47. The object of claim 28, wherein the indicia is visible in the IR spectrum, and the robot has a camera with an image sensor operable in the IR spectrum and an IR light source.

48. The object of claim 28, wherein the indicia is RF reflective, and the robot has a sensor operable in the RF spectrum.

49. The object of claim 28, wherein the indicia emits ultrasonic wavelengths, and the robot has a sensor operable in the ultrasonic spectrum.

50. A machine readable marker, comprising: human-imperceptible marking indicia, wherein the marking indicia is configured to be applied to an object, and comprises information pertaining to robot interaction with the object.

51. The machine readable marker of claim 50, wherein the information comprises interaction information that assists the robot with interaction with the object.

52. The machine readable marker of claim 50, wherein the information comprises a link facilitating access to interaction information stored in a computer database, the interaction information assisting the robot with interaction with the object.

53. A robotic system comprising: human-imperceptible indicia associated with an object within an environment in which the robot operates, the human-imperceptible indicia comprising linking information; a robot having at least one sensor operable to sense the human-imperceptible indicia and the linking information; and interaction information stored in a database accessible by the robot, the interaction information being associated with the linking information and pertaining to a predetermined intended interaction of the robot with the object, wherein the linking information is operable to facilitate access to the database and the interaction information therein by the robot, the interaction information being operable to instruct the robot to interact with the object in accordance with the predetermined intended interaction.

54. A robotic system comprising: human-imperceptible indicia associated with an object within an environment in which a robot operates, the human-imperceptible indicia comprising interaction information directly thereon pertaining to a predetermined intended interaction of the robot with the object; and the robot having at least one sensor operable to sense the human-imperceptible indicia and the interaction information, the interaction information being operable to instruct the robot to interact with the object in accordance with the predetermined intended interaction.

Description

BACKGROUND

[0001] Having a robot traverse a space and interact with objects can be a difficult task. The robot must be able to recognize obstacles that could damage the robot, as well as obstacles that could be damaged by the robot. In addition, the robot must be able to identify the objects with which it must interact in order to carry out instructions. Furthermore, some objects can present dangers or difficulties to interaction, such as distinguishing a freezer door from a refrigerator door, or determining which knob on a stove corresponds to which burner.

BRIEF DESCRIPTION OF THE DRAWINGS

[0002] Features and advantages of the invention will be apparent from the detailed description which follows, taken in conjunction with the accompanying drawings, which together illustrate, by way of example, features of the invention; and, wherein:

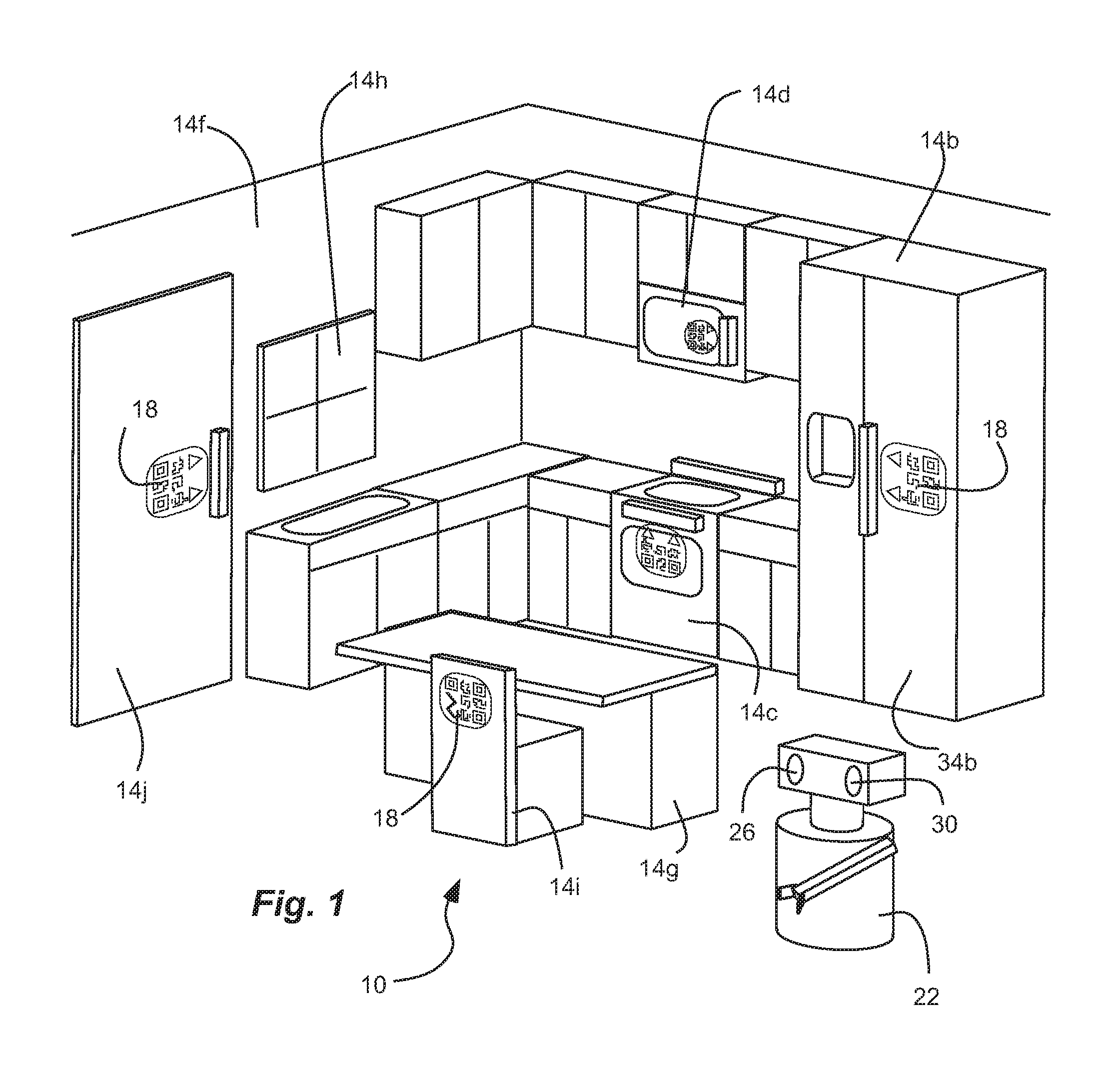

[0003] FIG. 1 is a schematic perspective view of a space with objects therein marked with machine-readable, but human-imperceptible, indicia, defining a machine-navigable environment through which a robot can navigate and interact, in accordance with an example.

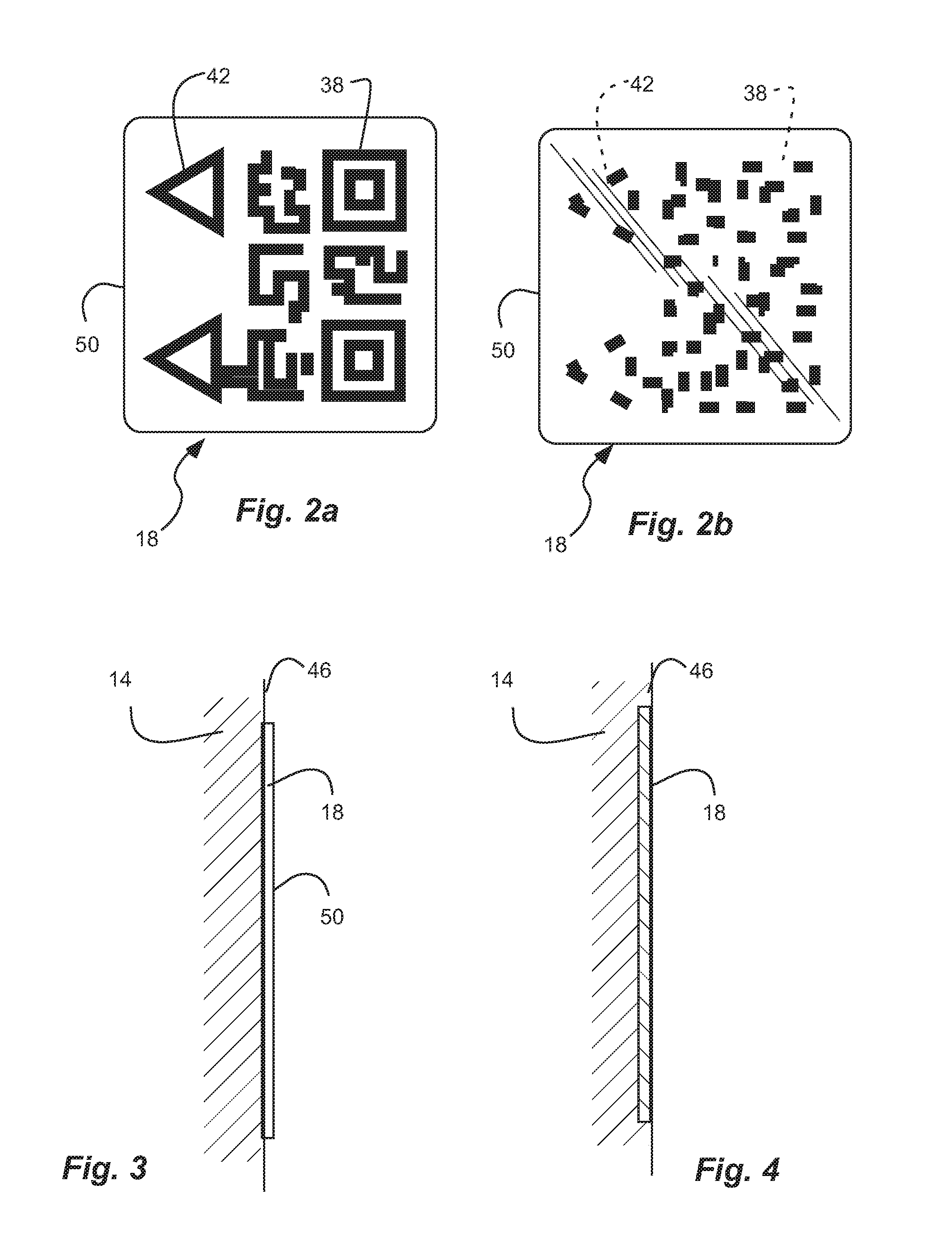

[0004] FIG. 2a is a schematic front view of a machine-readable, but human-imperceptible, label or indicia for marking an object of FIG. 1 in accordance with an example, and shown visible for the sake of clarity.

[0005] FIG. 2b is a schematic front view of the label or indicia for marking an object of FIG. 1 in accordance with an example, illustrated in such a way as to indicate invisibility.

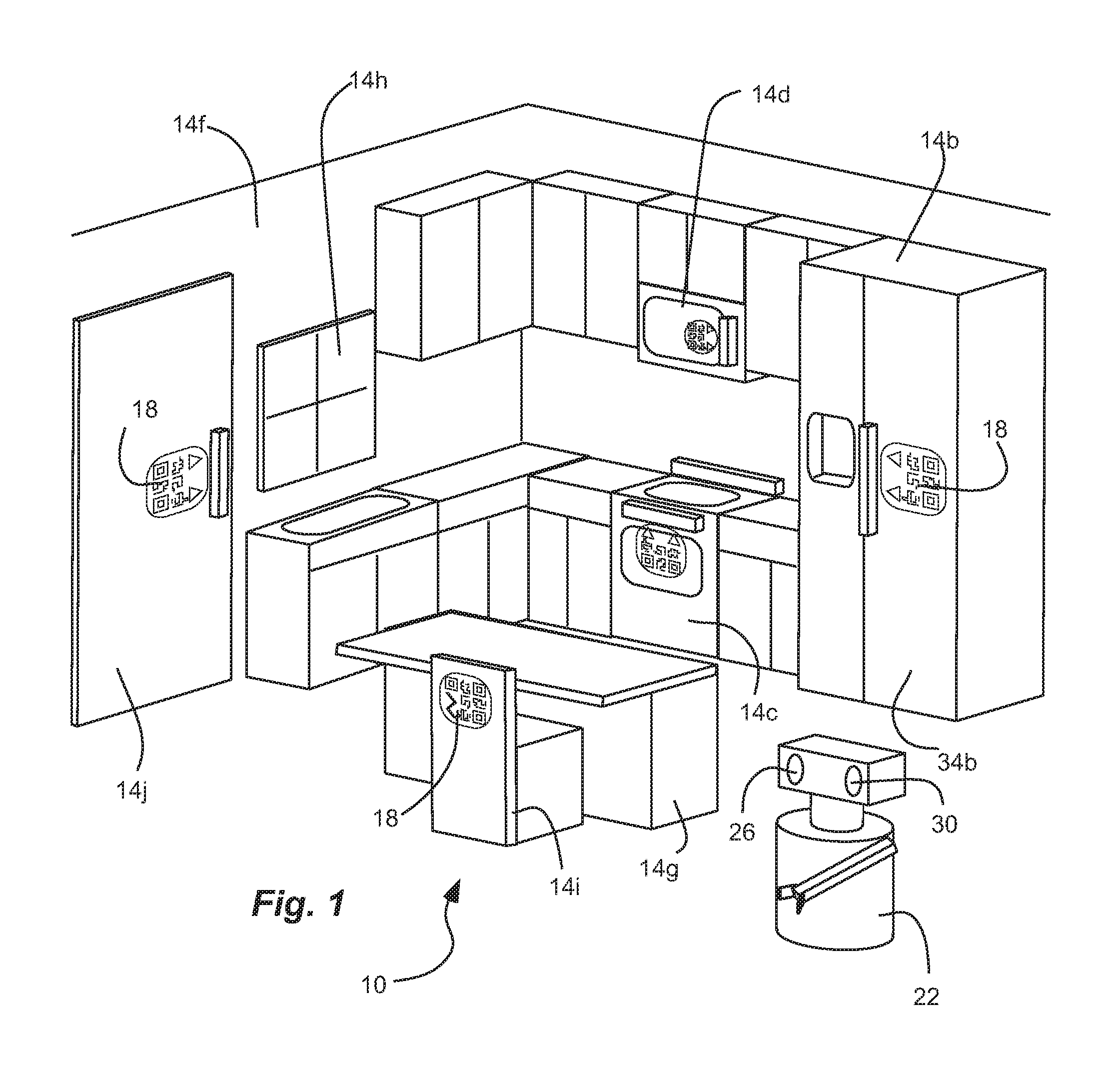

[0006] FIG. 3 is a schematic side view of the label or indicia for marking an object of FIG. 1 in accordance with an example.

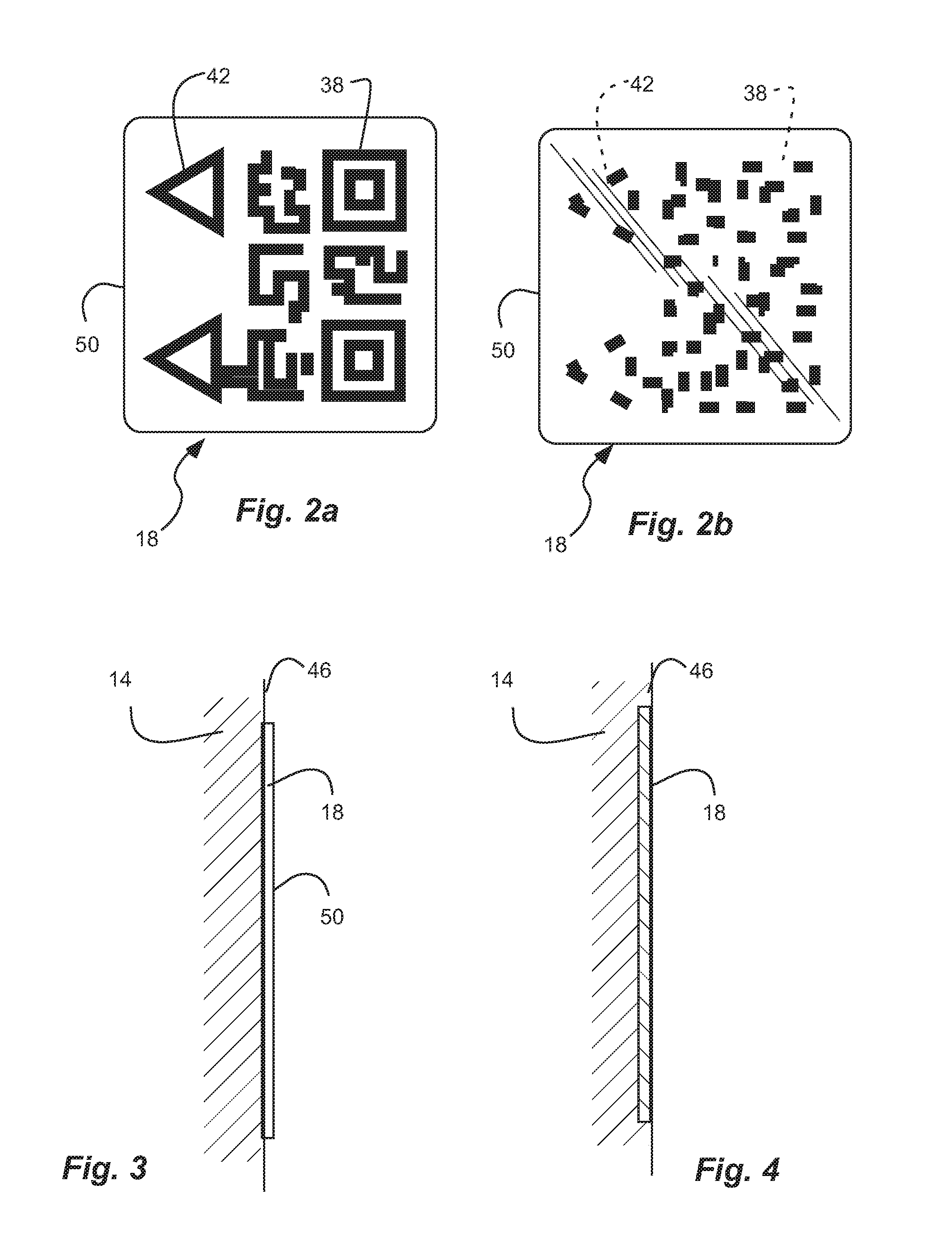

[0007] FIG. 4 is a schematic cross-sectional side view of the label or indicia for marking an object of FIG. 1 in accordance with an example.

[0008] FIG. 5 is a schematic front view of an object of FIG. 1 in accordance with an example.

[0009] FIG. 6 is a schematic front view of an object of FIG. 1 in accordance with an example.

[0010] FIG. 7 is a schematic front view of an object of FIG. 1 in accordance with an example.

[0011] FIG. 8 is a schematic view of a look-up table or library of indicia.

[0012] Reference will now be made to the exemplary embodiments illustrated, and specific language will be used herein to describe the same. It will nevertheless be understood that no limitation of the scope of the invention is thereby intended.

SUMMARY OF THE INVENTION

[0013] An initial overview of technology embodiments is provided below and then specific technology embodiments are described in further detail later. This initial summary is intended to aid readers in understanding the technology more quickly but is not intended to identify key features or essential features of the technology nor is it intended to limit the scope of the claimed subject matter.

[0014] Disclosed herein is a system and method for marking or identifying an object so that it is perceptible to a robot, or machine-readable, while being invisible or substantially invisible to humans. Such marking can facilitate identification, interaction and navigation by the robot, and can create a machine or robot navigable environment for the robot. By way of example, such an object can be an appliance, such as a refrigerator, which the robot approaches and with which it interacts in order to complete a task, such as retrieving an item (e.g., a beverage). Thus, the appliance or refrigerator can have a machine-readable marking, label, tag or indicia that identifies the appliance as a refrigerator, and thus as the object with which the machine or robot will interact to retrieve the item. As used herein, the term "indicia" refers to one or more than one "indicium." In addition, the indicia can also identify the parts of the refrigerator with which the robot will interact, such as the handle. Multiple different markings, labels, tags or indicia can be used to distinguish the multiple different parts of the refrigerator, such as the refrigerator handle, the freezer handle, the ice maker dispenser, the water dispenser, different shelves or bins, etc. Furthermore, the indicia can also indicate a direction, magnitude or other parameters that may be useful to the robot in terms of how it should interact with the object. For example, the indicia can indicate which direction the door opens, how much force should be exerted to open the door, and the degree of rotation to be carried out.
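As a rough illustration of how a single indicium could carry both identification and the interaction parameters described above (part, direction, force, rotation), the sketch below decodes a textual payload into a record. The `key=value;...` payload format, the field names and the `parse_indicium` helper are all hypothetical; the disclosure does not specify any encoding.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    """Decoded contents of one indicium (hypothetical schema)."""
    obj: str                              # what the object is, e.g. "refrigerator"
    part: Optional[str] = None            # which part, e.g. "freezer_handle"
    direction: Optional[str] = None       # direction of operation, e.g. "left"
    force_n: Optional[float] = None       # force to exert, in newtons
    rotation_deg: Optional[float] = None  # degree of rotation to carry out


def parse_indicium(payload: str) -> Interaction:
    """Parse an assumed 'key=value;key=value' payload into an Interaction."""
    fields = {}
    for item in payload.split(";"):
        if not item:
            continue  # tolerate a trailing separator
        key, sep, value = item.partition("=")
        if not sep:
            raise ValueError(f"malformed field: {item!r}")
        fields[key.strip()] = value.strip()
    if "obj" not in fields:
        raise ValueError("indicium does not identify the object")
    return Interaction(
        obj=fields["obj"],
        part=fields.get("part"),
        direction=fields.get("dir"),
        force_n=float(fields["force_n"]) if "force_n" in fields else None,
        rotation_deg=float(fields["rot_deg"]) if "rot_deg" in fields else None,
    )


handle = parse_indicium("obj=refrigerator;part=freezer_handle;dir=left;force_n=30;rot_deg=90")
print(handle.part, handle.direction, handle.force_n, handle.rotation_deg)
```

Distinct payloads on the refrigerator handle, freezer handle and dispensers would let the robot tell those parts apart, which is the role the paragraph assigns to multiple different indicia.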

[0015] The machine readable indicia can comprise symbols that can be perceived and interpreted by the robot, but that are not visible to the unaided human eye. In one example, the robot can utilize a camera with an image sensor to see the indicia. In addition, the indicia can be invisible or substantially invisible to the unaided human eye so that such indicia does not create an unpleasant environment for humans, and remains aesthetically pleasing to humans. For example, the indicia can reflect UV light, while the image sensor of the robot can be capable of detecting such UV light. Thus, the indicia can be perceived by the robot, while not interfering with the aesthetics of the environment.

DETAILED DESCRIPTION

[0016] As used herein, the term "substantially" refers to the complete or nearly complete extent or degree of an action, characteristic, property, state, structure, item, or result. For example, an object that is "substantially" enclosed would mean that the object is either completely enclosed or nearly completely enclosed. The exact allowable degree of deviation from absolute completeness may in some cases depend on the specific context. However, generally speaking the nearness of completion will be so as to have the same overall result as if absolute and total completion were obtained. The use of "substantially" is equally applicable when used in a negative connotation to refer to the complete or near complete lack of an action, characteristic, property, state, structure, item, or result.

[0017] As used herein, "adjacent" refers to the proximity of two structures or elements. Particularly, elements that are identified as being "adjacent" may be either abutting or connected. Such elements may also be near or close to each other without necessarily contacting each other. The exact degree of proximity may in some cases depend on the specific context.

[0018] As used herein, "visible" refers to visible to the unaided human eye and visible with light in the visible spectrum, with a wavelength of approximately 400 to 750 nanometers.

[0019] As used herein, "indicia" refers to both indicia and indicium, unless specified otherwise.

[0020] FIG. 1 depicts a schematic perspective view of a space 10 with exemplary objects 14 therein marked with machine-readable, but human-imperceptible, indicia 18, defining a machine-navigable environment through which a robot 22 can navigate and with which it can interact, in accordance with an example. The robot 22 can be mobile and self-propelled (e.g., with wheels and a motor), tele-operated, humanoid, etc., as understood by those of skill in the art. The robot 22 can have one or more arms with grippers, fingers, or the like for grasping or engaging objects. In addition, the robot 22 can have a camera 26 with an image sensor to view or sense objects and the environment. In one aspect, the camera 26 or image sensor can be operable in the UV spectrum, and can be capable of viewing or sensing UV light. In addition, the robot 22 can have a UV light source 30 capable of emitting UV light to illuminate, and reflect off of, the objects and environment. In one aspect, the UV light source 30 can emit, and the UV camera 26 or image sensor thereof can detect, near UV (NUV) light with a wavelength between 200 and 380 nanometers.
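The covert-marking idea reduces to a band check: the indicia should return light the robot's sensor can detect but none in the human-visible band. The sketch below uses the visible range defined in [0018] (400 to 750 nm) and the UV range given above for light source 30 and camera 26 (200 to 380 nm). Modeling an indicium as a short list of reflected wavelengths, and the helper names, are simplifying assumptions for illustration.

```python
# Band limits in nanometres: visible per [0018], robot UV band per [0020].
VISIBLE_NM = (400.0, 750.0)
ROBOT_UV_NM = (200.0, 380.0)


def in_band(wavelength_nm: float, band: tuple[float, float]) -> bool:
    """True if the wavelength lies within the closed interval `band`."""
    lo, hi = band
    return lo <= wavelength_nm <= hi


def covert_and_readable(reflect_nm: list[float], sensor_band: tuple[float, float]) -> bool:
    """True if the indicium reflects no visible wavelengths (human-imperceptible)
    but reflects at least one wavelength the robot's sensor can detect.

    `reflect_nm` is a simplified stand-in for a reflectance spectrum: the
    wavelengths at which the indicium reflects appreciably (an assumption).
    """
    human_sees = any(in_band(w, VISIBLE_NM) for w in reflect_nm)
    robot_sees = any(in_band(w, sensor_band) for w in reflect_nm)
    return robot_sees and not human_sees
```

For example, an ink reflecting only at 365 nm passes the check, while one that also reflects at 550 nm fails because a person could see it.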

[0021] The objects 14 can vary depending upon the environment or space. In one aspect, the environment or space 10 can be a home or a kitchen therein. Thus, the objects can comprise built-in appliances, such as a refrigerator 14b, a range 14c or stove and cooktop, a microwave oven 14d, a dishwasher, a trash compactor, etc. In another aspect, the objects can comprise countertop appliances, such as a blender, a rice cooker, a vegetable steamer, a slow cooker, a pressure cooker, etc. In addition, the objects can have an interface, such as a handle, knob, button, actuator, etc. The objects can also be or represent a physical boundary or barrier to movement or action of the robot, such as walls 14f, counters or islands 14g, etc. Likewise, the objects can be or represent a physical boundary or barrier subject to damage by the robot, such as an outdoor window 14h, an oven window, a microwave window, a mirror, a glass door of a cabinet, etc. An object can also be or represent a physical boundary or barrier hazardous to the robot, such as stairs, etc. Furthermore, an object can be or represent a physical boundary or barrier subject to frequent movement, such as a chair 14i, a door 14j, etc. The objects can have exterior, outfacing surfaces, represented by surface 34b of the refrigerator 14b, that face outwardly into the space 10.
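The four barrier categories above could be carried as a small enumeration in the indicia, so that a planner can treat each one differently. The category list comes from the paragraph; the `MapRule` fields and the handling assigned to each category are hypothetical planning choices shown only to illustrate why the distinction is useful.

```python
from dataclasses import dataclass
from enum import Enum


class Barrier(Enum):
    """Boundary or barrier categories named in [0021] (names are illustrative)."""
    MOVEMENT = "barrier to movement or action of the robot"  # walls 14f, counters 14g
    FRAGILE = "subject to damage by the robot"               # window 14h, mirrors
    HAZARD = "hazardous to the robot"                        # stairs
    MOVABLE = "subject to frequent movement"                 # chair 14i, door 14j


@dataclass(frozen=True)
class MapRule:
    static: bool   # keep the barrier in a persistent map between visits?
    approach: str  # how the planner treats it (hypothetical wording)


# Hypothetical policy; the source defines the categories, not this handling.
RULES = {
    Barrier.MOVEMENT: MapRule(static=True, approach="avoid collision"),
    Barrier.FRAGILE: MapRule(static=True, approach="gentle contact only"),
    Barrier.HAZARD: MapRule(static=True, approach="keep out"),
    Barrier.MOVABLE: MapRule(static=False, approach="re-sense before contact"),
}


def rule_for(tag: str) -> MapRule:
    """Map a barrier tag read from an indicium (e.g. "HAZARD") to a rule.

    Raises KeyError for an unknown tag, since Enum name lookup does so.
    """
    return RULES[Barrier[tag]]
```

Frequently moved objects such as the chair 14i or door 14j are the natural candidates for being re-read on every visit rather than trusted from a stored map.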

[0022] The indicia 18 can be disposed on and carried by the object 14, such as on the exterior, outfacing surface, etc. In one aspect, the indicia 18 can comprise information pertaining to the object, to the interaction of the robot with the object, to the environment, etc. For example, the indicia 18 can identify the object or a location of an interface of the object, or can indicate the physical boundary or barrier thereof. The indicia 18 can also comprise certain interaction information directly thereon, or can comprise database-related or linking information that facilitates access to such interaction information, such as by providing a link to, opening, or causing to be opened a computer database comprising the information, or by other access-initiating indicia. The robot (or an operator) can then interact with the computer database to obtain the information designed to assist the robot in how to interact with the object, its environment, etc. This is explained in further detail below.
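The two options in this paragraph, interaction information carried directly on the indicium versus linking information that points into a database, can be sketched as a single resolver. The `info:` and `link:` prefixes, the callable standing in for the computer database, and the example entries are hypothetical.

```python
from typing import Callable, Optional

# Stand-in for the computer database: maps a link key to interaction info.
Database = Callable[[str], Optional[str]]


def resolve(indicium: str, database: Database) -> str:
    """Return interaction information for a decoded indicium.

    Assumed encoding: "info:<text>" carries the information directly;
    "link:<key>" carries only linking information into the database.
    """
    kind, sep, body = indicium.partition(":")
    if not sep:
        raise ValueError(f"unrecognised indicium: {indicium!r}")
    if kind == "info":
        return body  # information is on the indicium itself
    if kind == "link":
        info = database(body)  # follow the link into the database
        if info is None:
            raise KeyError(body)
        return info
    raise ValueError(f"unknown indicium kind: {kind!r}")


db = {"fridge-01": "pull handle outward; hinge on left"}.get
print(resolve("info:press button once", db))
print(resolve("link:fridge-01", db))
```

Carrying the information directly keeps the robot independent of any network, while a link keeps the indicium small and lets the stored instructions be updated without re-marking the object.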

[0023] The phrase "interaction information," as used herein, can mean information conveyed to the robot, facilitated by reading of the marking indicia, that can be needed by or useful to the robot to interact with an object in one or more ways, wherein the interaction information facilitates efficient, accurate and useful interaction with the object by the robot. More specifically, the interaction information can comprise information that pertains to a predetermined intended interaction of the robot with an object, and any other type of information readily apparent to those skilled in the art upon reading the present disclosure that could be useful in carrying out predetermined and intended robot interaction with the object in one or more ways. Interaction information can comprise, but is not limited to: information pertaining to the identification of the object (e.g., what the object is, the make or model of the object, etc.); information about the properties of the object (e.g., the material makeup, size, shape, configuration, orientation, weight, component parts, surface properties, material properties, etc. of the object); computer readable instructions regarding specific ways the robot can manipulate the object, or a component part or element of the object (e.g., task-based instructions or algorithms relating to the object and how it can be interacted with, such as "if this task, then these instructions"); information pertaining to how the robot can interact with the object (e.g., direction, magnitude, time duration, etc.); relative information as it pertains to the object (e.g., information about the object relative to its environment, such as distance from a wall or a location to which it is to be moved, or relative to one or more other objects, and information about its location, such as coordinates based on GPS or other navigational information); and information about tasks that utilize multiple marking indicia (e.g., sequence instructions that can include identification of the sequence, where to go to find the next sequential marking indicia, etc.). The nature, amount and detail of the interaction information can vary and can depend upon a variety of things, such as what the robot already knows and how the robot is operated, for example whether the robot is intended to function autonomously, semi-autonomously, or under complete or partial control via a computer program or human control. The interaction information described and identified herein is not intended to be limiting in any way. Indeed, those skilled in the art will recognize other interaction information that can be made available via the indicia to a robot or robotic device, or to a system in which a robot or robotic device is operated (e.g., to an operator, or to a system operating in or with a virtual environment). It is contemplated that such interaction information can be any that may be needed or desired for a robot, robotic device, etc. to interact with any given object, and as such, not all possible scenarios can be identified and discussed herein. In one aspect, the indicia can represent machine language that is recognizable and understood by the robot or a computer processor thereof. In another aspect, the indicia can represent codes or numbers that can be compared to a look-up table or library (see FIG. 8). Thus, the look-up table or library can comprise codes or numbers associated with interaction information. In one aspect, the look-up table or library can be internal to the robot. In another aspect, the look-up table or library can be external to the robot, and the robot can communicate with it during operation (e.g., via a computer network or cloud-based system accessible by the robot).
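The internal and external look-up table options can be combined: consult a table held on the robot first, and fall back to an external library only for unknown codes. The sketch below assumes integer codes, a plain dict for the internal table, and a callable for the external (network or cloud) library; the class name and the caching of remote results are illustrative choices, not part of the disclosure.

```python
from typing import Callable, Optional


class InteractionLibrary:
    """Code -> interaction-information look-up (cf. the table of FIG. 8).

    `local` stands in for a table internal to the robot; `remote` stands in
    for an external library reached over a network. Both are hypothetical.
    """

    def __init__(self, local: dict[int, str], remote: Callable[[int], Optional[str]]):
        self.local = dict(local)
        self.remote = remote

    def lookup(self, code: int) -> Optional[str]:
        """Return interaction info for `code`, or None if no table knows it."""
        if code in self.local:
            return self.local[code]
        info = self.remote(code)  # fall back to the external library
        if info is not None:
            self.local[code] = info  # cache so later reads stay on board
        return info
```

For instance, `InteractionLibrary({1: "press button"}, {7: "open drawer"}.get)` answers code 1 from its own table, fetches code 7 externally once and then keeps it locally. Caching trades memory for fewer network round trips during operation.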

[0024] In another example, the environment or space can be an office. Thus, the objects can comprise walls, cubicles, desks, copiers, etc. In another example, the environment or space can be an industrial or manufacturing warehouse, plant, shipyard, etc., in which case the objects can comprise products, machinery, etc. Essentially, it is contemplated herein that the labels or indicia can be utilized in any conceivable environment in which a robot could operate, on any objects, items or structural elements within such environments, and that they can comprise any type of information of or pertaining to the environment, the objects, their interaction with one another, etc.

[0025] FIG. 2a depicts a schematic front view of a marker in the form of a label comprising indicia 18 configured to be applied to an object, in accordance with an example. As such, the marker can comprise a physical medium or carrier operable to support or carry thereon the marking indicia. For the sake of clarity, the label or indicia 18 is shown as being visible, although such indicia is configured to be invisible to the unaided human eye (human-imperceptible) and substantially transparent to visible light. FIG. 2b depicts a schematic front view of the label and the indicia 18 supported thereon, illustrated in such a way as to indicate invisibility to the unaided human eye and substantial transparency to visible light. The indicia 18 is machine-readable. Thus, the indicia 18 can include symbols or patterns 38, or both, that can be perceived by the camera 26 or its image sensor (or other sensor or detector), and recognized by the robot 22. In one aspect, the indicia 18 can be or can comprise a linear or one-dimensional bar code with a series of parallel lines of variable thickness or spacing to provide a unique code, or a two-dimensional code, such as a MaxiCode or QR code, with a pattern of dots or modules to provide a unique code, etc. In another aspect, the indicia can include symbols, letters, and even arrows 42 or the like. In another aspect, the indicia 18 can be passive, as opposed to an active identifier, such as a radio frequency identification (RFID) device or tag.
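Because a faint UV-reflective bar code may be misread, a check digit lets the robot reject a bad read before acting on it. The disclosure does not name a symbology; EAN-13 is used below purely as a familiar example of a linear bar code whose 13th digit is a weighted checksum of the first 12 (weights alternating 1 and 3).

```python
def ean13_is_valid(code: str) -> bool:
    """Validate a 13-digit EAN-13 string using its final check digit."""
    if len(code) != 13 or not (code.isascii() and code.isdigit()):
        return False
    digits = [int(c) for c in code]
    # Weights alternate 1, 3, 1, 3, ... over the first 12 digits.
    total = sum(d * (3 if i % 2 else 1) for i, d in enumerate(digits[:12]))
    return (10 - total % 10) % 10 == digits[12]
```

A read such as `4006381333931` passes, while the same digits with a corrupted final digit are rejected, prompting the robot to re-scan rather than act on a wrong identification.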

[0026] In addition, the indicia 18 can be human-imperceptible, or invisible or substantially invisible to the unaided human eye, as indicated in FIG. 2b. In one aspect, the indicia 18 can be invisible in the visible spectrum, and thus does not substantially reflect visible light. In one aspect, the indicia 18 can be transparent or translucent, so that visible light substantially passes therethrough. In one aspect, the indicia can be UV-reflective, reflecting ultraviolet light either from surrounding light sources, such as the sun, or as illuminated by the UV light source 30 of the robot 22. Thus, the indicia 18 can be visible in the UV spectrum. Therefore, the indicia 18 can be applied to an object and appear to the human eye as translucent, clear, or transparent to visible light, and thus can be substantially imperceptible to the unaided human eye, maintaining the aesthetic appearance of the object. But the indicia 18 can be visible, such as in the UV spectrum, to the camera 26 of the robot 22.
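One simple way a robot could separate UV-reflective indicia from their surroundings is to capture two UV-camera frames, one with the UV light source 30 on and one with it off, and keep pixels that brighten markedly. This frame-differencing approach, the threshold of 40 grey levels, and the assumption that the scene does not move between the two frames are illustrative choices, not the disclosed method.

```python
Image = list[list[int]]  # grayscale frame: rows of 0-255 pixel values


def uv_indicia_mask(lit: Image, unlit: Image, threshold: int = 40) -> list[list[bool]]:
    """Mark pixels that brighten noticeably when the robot's UV source is on.

    `lit` is captured with the UV light source on, `unlit` with it off, from
    the same (assumed static) viewpoint. Differencing cancels ambient UV such
    as sunlight, leaving the response to the robot's own illumination.
    """
    if len(lit) != len(unlit) or any(len(a) != len(b) for a, b in zip(lit, unlit)):
        raise ValueError("frames must have the same shape")
    return [
        [(on - off) > threshold for on, off in zip(row_on, row_off)]
        for row_on, row_off in zip(lit, unlit)
    ]
```

The resulting mask would then be handed to a bar code or QR decoder; the threshold would need tuning for the actual ink, camera and lighting.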

[0027] The indicia can be visible outside of the visible spectrum. In one aspect, the indicia can fluoresce or be visible in the infrared (IR) spectrum (near, mid and/or far). For example, the object can be marked with indicia that is IR reflective and visible in the IR spectrum, and the robot can have a camera with an image sensor operable in the IR spectrum and an IR light source. In another aspect, the indicia can use radio frequencies, or sound at frequencies outside of the human audible range. For example, the object can be marked with indicia that is RF reflective, and the robot can have a sensor operable in the RF spectrum. As another example, the robot can touch the object with an ultrasonic emitter and receive a characteristic return signal, or emit a sound that causes an internal mechanism to resonate at a given frequency, providing identification. Thus, the object can be marked with indicia that emits ultrasonic wavelengths, and the robot can have a sensor operable in the ultrasonic spectrum.
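The resonance-based identification described above amounts to matching a measured return frequency against known signatures. The sketch assumes a table of per-object resonant frequencies, a matching tolerance of 250 Hz, and 20 kHz as the upper limit of human hearing; all values and names are hypothetical.

```python
from typing import Optional

HUMAN_HEARING_MAX_HZ = 20_000.0  # commonly cited upper limit of human hearing


def identify_by_resonance(measured_hz: float, signatures: dict[str, float],
                          tolerance_hz: float = 250.0) -> Optional[str]:
    """Return the object whose resonant signature is nearest `measured_hz`.

    Returns None if the return is not ultrasonic, if no signatures are known,
    or if the nearest signature is farther away than `tolerance_hz`.
    """
    if measured_hz <= HUMAN_HEARING_MAX_HZ:
        return None  # audible or lower: not an ultrasonic indicium
    best = min(signatures, key=lambda k: abs(signatures[k] - measured_hz), default=None)
    if best is None or abs(signatures[best] - measured_hz) > tolerance_hz:
        return None
    return best
```

With signatures of 32 kHz for a cabinet door and 41 kHz for a drawer, a 32.12 kHz return identifies the cabinet door, while a 36.5 kHz return matches neither and is ignored.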

[0028] Referring again to FIG. 1, the object 14, such as a window of the range 14c, or a window of the microwave 14d, or the outside window 14h, can have a transparent portion. The indicia 18 can be disposed on the transparent portion, and can be transparent, as described above, so that the indicia does not interfere with the operation of the object or transparent portion thereof. Thus, the indicia 18 applied to the window 14h or transparent portion of the range 14c or microwave 14d does not impede viewing through the window or transparent portion.

[0029] FIG. 3 depicts a schematic cross-sectional side view of the label or indicia 18 for marking an object 14. In one aspect, the indicia 18 can be disposed over a finished surface 46 of the object 14. In one aspect, the indicia 18 can be or can comprise a removable label or a removable applique 50 removably disposed on or applied to an outermost finished surface 46 of the object 14. For example, the indicia 18 can be printed on the label or the applique 50. The applique 50 can adhere to the finished surface 46 of the object, such as with a releasable or permanent adhesive. Thus, the indicia 18 or labels 50 can be applied to objects 14 already in an existing space 10, after the objects have been manufactured and transported to the space.

[0030] FIG. 4 depicts a schematic cross-sectional side view of the label or indicia 18 for marking an object 14. In one aspect, the indicia 18 can be disposed under an outermost finished surface 46 of the object 14. For example, the indicia 18 can be disposed directly on the object, such as by printing or painting, and then the outermost finished surface 46, such as a clear coat or the like, can be applied over the indicia 18. Thus, the indicia 18 can be or can comprise a laminate with an outer layer 46 disposed over the indicia 18. In one aspect, the outer layer 46 can be transparent or translucent to visible light, such as a clear coat. In another aspect, the outer layer 46 can be opaque to visible light. In another aspect, the outer layer 46 can be transparent or translucent to UV light. Thus, the indicia 18 can be visible to the robot 22 or camera 26 or image sensor thereof, even when covered by the finished outer layer 46 of the object. Thus, the aesthetic appearance of the object can be maintained. In addition, the indicia 18 can be applied to the objects during manufacture and at the place of manufacture.

[0031] In another example, the indicia can be applied directly to the object without the use of a carrier medium (e.g., a label). In one example, the indicia can be applied by printing onto the object using a suitable ink. Many different types of inks are available, such as those that fluoresce at specific wavelengths.

[0032] In another aspect, the indicia can be applied or painted on during production of the object. In one aspect, the entire object can be painted with fluorescent paint or covering, and the indicia can be formed by a part that is unpainted or uncovered. In another aspect, the indicia or object can comprise paint that becomes capable of fluorescence when another chemical is applied to the paint.

[0033] In another aspect, fluorescent markers can be used. For example, the fluorescent markers can include fluorescently doped silicas and sol-gels, hydrophilic polymers (hydrogels), hydrophobic organic polymers, semiconducting polymer dots, quantum dots, carbon dots, other carbonaceous nanomaterials, upconversion nanoparticles (NPs), noble metal NPs (mainly gold and silver), various other nanomaterials, and dendrimers.

[0034] In another aspect, the indicia can be part of an attached piece. For example, the indicia can be on a handle or trim that is mechanically fastened or chemically adhered to the object. As another example, the indicia can be molded into a polymer of the handle, trim or other part.

[0035] FIG. 5 depicts a schematic front view of an object, namely a refrigerator 14b, in accordance with an example. The object 14b can have an interface, such as one or more handles 54 or one or more buttons, knobs, or actuators 58. In addition, the object 14b can have multiple labels or indicia 18. In one aspect, the indicia 18 can identify the object 14b as a refrigerator. In another aspect, other indicia 62 and 64 can identify the location of an interface, namely the handle 54 and the actuator 58, respectively. Thus, the object 14b can have multiple different indicia 18, 62, and 64 on different parts of a single object, identifying the different parts 54 and 58 of the object and providing information on how to interact with them. As described above, the indicia 18 can include an arrow 42 (FIGS. 2a and 2b) to indicate the location of the interface. The indicia 62 and 64 can be disposed or located adjacent to the interface, i.e., the handle 54 and the actuator 58, of the object so that the arrow 42 is proximal to the interface. In addition, the indicia can include other information, or can facilitate access to other information (interaction information), such as a direction of operation of the interface or handle (e.g., outwardly, inwardly, downward, to the right, or to the left), or the magnitude or limit of the force required to operate the interface. For example, the indicia 62 in FIG. 5 can indicate, or facilitate access to information indicating, that the object 14b is a refrigerator; the location of the handle 54; that a force of 10-20 lbs. is required to open the door; that the applied force should not exceed 30 lbs.; and that the door opens to the right, that the maximum rotation of the door is not to exceed 120 degrees, or both. Such information can reduce the calculation time and computational power required by the robot to complete a task, and can reduce the need for the robot to learn certain aspects useful or required for interacting with the object(s).
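
The interaction information described for indicia 62 could be held as a small record that the robot consults before acting. The sketch below is a hypothetical data structure: the class and field names, the choice of pounds and degrees as units, and the `check_plan` helper are assumptions. Only the example values (10-20 lbs. to open, a 30 lbs. limit, opening to the right, a 120 degree maximum rotation) come from the paragraph above.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class InteractionInfo:
    """Hypothetical record of interaction information tied to an indicium."""
    object_name: str
    interface: str
    opening_force_lbs: Tuple[float, float]  # typical force range to operate
    force_limit_lbs: float                  # force that should not be exceeded
    opening_direction: str
    max_rotation_deg: float


def check_plan(info: InteractionInfo, planned_force_lbs: float,
               planned_rotation_deg: float) -> List[str]:
    """Return problems with a planned motion; an empty list means none found."""
    problems = []
    low, high = info.opening_force_lbs
    if planned_force_lbs > info.force_limit_lbs:
        problems.append("force exceeds limit")
    elif planned_force_lbs < low:
        problems.append("force likely too low to open")
    elif planned_force_lbs > high:
        problems.append("force above typical range")
    if planned_rotation_deg > info.max_rotation_deg:
        problems.append("rotation exceeds maximum")
    return problems


# Values taken from the refrigerator example in the text.
FRIDGE_HANDLE = InteractionInfo(
    object_name="refrigerator",
    interface="handle 54",
    opening_force_lbs=(10.0, 20.0),
    force_limit_lbs=30.0,
    opening_direction="right",
    max_rotation_deg=120.0,
)
```

With these values, a plan of 15 lbs. and 90 degrees passes with no problems, while 35 lbs. and 130 degrees is flagged for both the force limit and the rotation limit. Checking the plan against stored limits, rather than discovering them by trial, is the kind of computational saving the paragraph describes.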

[0036] The indicia 18, 62, and 64 can be located or disposed inside a perimeter 68, or lateral perimeter, of the object 14b. In another aspect, indicia 72 can be disposed at the perimeter 68 or lateral perimeter of the object 14b to help identify the boundaries of the object. The indicia 72 can substantially circumscribe the perimeter of the object.
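
If the robot detects several perimeter indicia and has located each one, it could approximate the object's boundary from them. The sketch below assumes each detected indicium has already been resolved to an (x, y) point in a common frame (for example, meters in the plane of the refrigerator door) and simply takes the axis-aligned bounding box. The function names and coordinate convention are hypothetical, and a real system might fit a tighter outline.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (min_x, min_y, max_x, max_y)


def boundary_from_perimeter(points: Iterable[Point]) -> Box:
    """Axis-aligned box enclosing the detected perimeter indicia."""
    pts = list(points)
    if not pts:
        raise ValueError("no perimeter indicia detected")
    xs = [x for x, _ in pts]
    ys = [y for _, y in pts]
    return min(xs), min(ys), max(xs), max(ys)


def inside(box: Box, p: Point) -> bool:
    """True if point p lies within (or on) the estimated boundary."""
    min_x, min_y, max_x, max_y = box
    return min_x <= p[0] <= max_x and min_y <= p[1] <= max_y
```

For example, indicia found at the four corners of a 0.9 m by 1.8 m door yield the box `(0.0, 0.0, 0.9, 1.8)`, and the robot can then test whether a planned contact point falls on the object.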

[0037] FIG. 6 depicts a schematic front view of an object, namely a doorway 78, with an interface, namely the opening 80 therethrough, in accordance with an example. The indicia 82 can be disposed on a wall 14f to identify the wall or the doorway 78, and to indicate the interface or opening 80 thereof and other pertinent information. The indicia 82 can be located adjacent to or proximal to the opening 80 at a perimeter thereof.

[0038] Similarly, FIG. 7 depicts a schematic front view of an object, namely a door 86, with an interface, namely a doorknob 88, in accordance with an example. The indicia 90 can be disposed on the door 86 to identify the door, and to indicate the interface or the doorknob 88 thereof and other pertinent information. To do so, the indicia 90 can be located adjacent to or proximal to the interface or the doorknob 88. Again, the indicia 90 can also indicate, or can facilitate access to information that can indicate, the direction of operation of the object or door (e.g., inwardly, outwardly, to the left, to the right), the direction of operation of the interface or doorknob (e.g., clockwise or counterclockwise), the force required to open the door or turn the doorknob, etc.

[0039] FIG. 8 depicts a schematic view of a look-up table 100 or library of information corresponding to the indicia 18, with the look-up table 100 or library of information stored in a computer database accessible to the robot by any of a variety of means known in the art. As indicated above, the indicia 18 can identify or facilitate identification of the object, indicate a location of an interface of the object, indicate or facilitate indication of the physical boundary or barrier thereof, and can comprise or facilitate access to information pertaining to robot interaction (or interaction information or instructions) with the object.
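
A look-up table such as table 100 could be as simple as a mapping from the identifier decoded from an indicium to its associated record. The sketch below assumes the indicia encode short string identifiers. The identifiers, field names, entries, and the `lookup` helper are all hypothetical, and an actual robot might instead query a local or remote database.

```python
from typing import Dict, Optional

# Hypothetical contents of a look-up table keyed by decoded indicia identifiers.
LOOKUP_TABLE: Dict[str, dict] = {
    "OBJ-0001": {"object": "refrigerator", "interface": "handle",
                 "interaction": "pull outward; door opens to the right"},
    "OBJ-0002": {"object": "door", "interface": "doorknob",
                 "interaction": "turn knob clockwise; push door inward"},
    "OBJ-0003": {"object": "wall", "interface": "doorway opening",
                 "interaction": "barrier; pass only through the opening"},
}


def lookup(indicium_id: str) -> Optional[dict]:
    """Return the record for a decoded identifier, or None if unknown."""
    return LOOKUP_TABLE.get(indicium_id.strip().upper())
```

Keeping only a short identifier in the indicium and the detailed interaction information in the table keeps the printed symbol small and lets the information be updated without re-marking the object.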

[0040] As indicated above, in one aspect, the indicia 18 can comprise multiple indicia 18 on a single object. In another aspect, the indicia 18 can comprise a series of indicia on a series of objects or a single object. Thus, the indicia 18 can comprise a series identifier to indicate its position in the series. The series of indicia can correspond to a series of objects, or aspects of one or more objects, with which the robot must interact in series or in sequence. For example, a first indicia can be associated with the refrigerator door, and can comprise indicia indicating that it is the first in a series of indicia, while a second indicia can be associated with a drawer in the refrigerator, and can comprise indicia indicating that it is the second in the series, while a third indicia can be associated with a container in the drawer, and can comprise indicia indicating that it is the third in the series. Thus, if the robot opens the refrigerator door, looks through the drawer to the container, and thus sees the third indicia, the robot will know that there has been a skip in the sequence and that it must search for the second indicia. Such a sequence in the indicia can help the robot perform the correct tasks, and can also provide safety to the objects and the robot.
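
The skip check in the refrigerator example can be sketched as follows. It assumes each indicium in a series carries an integer position (1, 2, 3, and so on) and that the robot tracks the highest position it has completed. The function name and its string return values are hypothetical.

```python
def check_sequence(last_completed: int, seen_position: int) -> str:
    """Compare a perceived series position with the next expected one.

    Returns "proceed" when the indicium is the next in the series, a message
    naming the missing position when the robot has skipped ahead, and
    "already completed" for an indicium from an earlier step.
    """
    expected = last_completed + 1
    if seen_position == expected:
        return "proceed"
    if seen_position > expected:
        return f"skipped: search for indicium {expected}"
    return "already completed"
```

In the example above, the robot has completed step 1 (the door) and sees the container's indicium, so `check_sequence(1, 3)` returns `"skipped: search for indicium 2"`, prompting it to find the drawer first.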

[0041] In another aspect, the indicia can provide further instructions. For example, the indicia can represent an instruction directing the robot to turn on a sensor that will be needed to interact with the object.
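
One way to act on such an instruction is to map an instruction code decoded from the indicium to the sensor the robot should enable before interacting. The instruction codes, sensor names, and the `Robot` class below are hypothetical placeholders used only to show the dispatch.

```python
from typing import Dict, Set

# Hypothetical mapping from instruction codes carried by indicia to sensors.
SENSOR_FOR_INSTRUCTION: Dict[str, str] = {
    "ENABLE_THERMAL": "thermal_camera",
    "ENABLE_FORCE": "wrist_force_sensor",
    "ENABLE_ULTRASONIC": "ultrasonic_transceiver",
}


class Robot:
    """Minimal stand-in that only tracks which sensors are active."""

    def __init__(self) -> None:
        self.active_sensors: Set[str] = set()

    def apply_instruction(self, code: str) -> bool:
        """Enable the sensor named by an instruction code.

        Returns False, leaving the sensor set unchanged, if the code is unknown.
        """
        sensor = SENSOR_FOR_INSTRUCTION.get(code)
        if sensor is None:
            return False
        self.active_sensors.add(sensor)
        return True
```

Returning `False` for an unrecognized code lets the robot decline to proceed rather than interact without a sensor it may need.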

[0042] A method for creating a machine navigable environment for a robot 22, as described above, comprises identifying a desired space 10 in which the robot 22 will operate (e.g., a home or kitchen); selecting one or more objects 14 (e.g., a refrigerator 14b) that are or will be located in the space 10; marking the one or more objects 14 with indicia 18 that are machine-readable and human-imperceptible; and introducing the robot 22 into the space 10. The robot 22 is capable of movement within the space 10, and the robot has a sensor 26 capable of perceiving the indicia 18; the robot can process the information contained therein to assist in performing a task. Indeed, one or more desired or probable tasks to be given to the robot 22 can be identified, such as to obtain a beverage from the refrigerator 14b. In addition, one or more objects 14b corresponding to the desired or probable task can be identified. Furthermore, instructions can be given to the robot 22 involving at least one of the one or more objects 14b.
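
The method steps above, together with the linkage between tasks and objects, can be outlined in code. This is a hypothetical outline: the `Environment` class, its fields, the identifiers, and the task names are illustrative assumptions, and marking is modeled only as recording which indicium was applied to which object.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Environment:
    space: str                                                 # e.g. "kitchen"
    marked: Dict[str, str] = field(default_factory=dict)       # object -> indicium id
    tasks: Dict[str, List[str]] = field(default_factory=dict)  # task -> objects

    def mark(self, obj: str, indicium_id: str) -> None:
        """Record that an object carries a machine-readable, human-imperceptible indicium."""
        self.marked[obj] = indicium_id

    def add_task(self, task: str, objects: List[str]) -> None:
        """Associate a probable task with the objects it involves; all must be marked."""
        missing = [o for o in objects if o not in self.marked]
        if missing:
            raise ValueError(f"unmarked objects for task {task!r}: {missing}")
        self.tasks[task] = list(objects)

    def indicia_for(self, task: str) -> List[str]:
        """Indicia the robot should look for while performing a task."""
        return [self.marked[o] for o in self.tasks[task]]
```

For the beverage example, marking the refrigerator and registering the task lets the robot know in advance which indicium to search for; refusing tasks that involve unmarked objects mirrors the ordering of the method, in which marking precedes introducing the robot.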

[0043] Reference was made to the examples illustrated in the drawings and specific language was used herein to describe the same. It will nevertheless be understood that no limitation of the scope of the technology is thereby intended. Alterations and further modifications of the features illustrated herein and additional applications of the examples as illustrated herein are to be considered within the scope of the description.

[0044] Although the disclosure may not expressly disclose that some embodiments or features described herein may be combined with other embodiments or features described herein, this disclosure should be read to describe any such combinations that would be practicable by one of ordinary skill in the art. The use of "or" in this disclosure should be understood to mean non-exclusive or, i.e., "and/or," unless otherwise indicated herein.

[0045] Furthermore, the described features, structures, or characteristics may be combined in any suitable manner in one or more examples. In the preceding description, numerous specific details were provided, such as examples of various configurations to provide a thorough understanding of examples of the described technology. It will be recognized, however, that the technology may be practiced without one or more of the specific details, or with other methods, components, devices, etc. In other instances, well-known structures or operations are not shown or described in detail to avoid obscuring aspects of the technology.

[0046] Although the subject matter has been described in language specific to structural features and/or operations, it is to be understood that the subject matter defined in the appended claims is not necessarily limited to the specific features and operations described above. Rather, the specific features and acts described above are disclosed as example forms of implementing the claims. Numerous modifications and alternative arrangements may be devised without departing from the spirit and scope of the described technology.

* * * * *
