Methods And System For Managing And Displaying Virtual Content In A Mixed Reality System

TATE-GANS; June ; et al.

U.S. patent application number 16/224719, filed with the patent office on December 18, 2018, was published on 2019-06-27 for methods and system for managing and displaying virtual content in a mixed reality system. This patent application is currently assigned to MAGIC LEAP, INC. The applicant listed for this patent is MAGIC LEAP, INC. Invention is credited to David William HOVER, Mark Ashley RUSHTON, June TATE-GANS, Eric Norman YISKIS.

| Field | Value |
|---|---|
| Publication Number | 20190197785 |
| Application Number | 16/224719 |
| Family ID | 66950554 |
| Publication Date | 2019-06-27 |


| United States Patent Application | 20190197785 |
|---|---|
| Kind Code | A1 |
| TATE-GANS; June; et al. | June 27, 2019 |

METHODS AND SYSTEM FOR MANAGING AND DISPLAYING VIRTUAL CONTENT IN A MIXED REALITY SYSTEM

Abstract

Disclosed is an approach for managing and displaying virtual content in a mixed reality environment. Instead of each application managing and displaying its virtual content independently on a one-on-one basis, each virtual content is rendered by its respective application into a bounded volume referred to herein as a "Prism." Each Prism may have characteristics and properties that allow a universe application to manage and display the Prism in the mixed reality environment such that the universe application may manage the placement and display of the virtual content in the mixed reality environment by managing the Prism itself.

| Field | Value |
|---|---|
| Inventors: | June TATE-GANS (Tamarac, FL); Eric Norman YISKIS (Boca Raton, FL); Mark Ashley RUSHTON (Fort Lauderdale, FL); David William HOVER (Tamarac, FL) |
| Applicant: | MAGIC LEAP, INC. |
| Assignee: | MAGIC LEAP, INC. (Plantation, FL) |
| Family ID: | 66950554 |
| Appl. No.: | 16/224719 |
| Filed: | December 18, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62610101 | Dec 22, 2017 | |

| Classification | Value |
|---|---|
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G06T 19/006 (2013.01); G06T 2210/12 (2013.01) |
| International Class: | G06T 19/00 (2006.01) |

Claims

1. A method for displaying virtual content in a 3D spatial environment, the method comprising: receiving, from an application, a request to display a virtual content in a 3D spatial environment; creating a prism, wherein the prism is a volume of space configured to bound the virtual content inside a boundary of the prism; receiving the virtual content from the application; rendering the virtual content inside the boundaries of the prism; and associating the prism to an object in the 3D spatial environment.

2. The method of claim 1, wherein boundaries of the prism are not displayed.

3. The method of claim 1, wherein the 3D spatial environment is a physical real world environment of a user.

4. The method of claim 1, wherein the prism is created automatically having a set of functionalities.

5. The method of claim 4, wherein the set of functionalities comprises an association between the prism to the object in the 3D spatial environment.

6. The method of claim 4, wherein the set of functionalities comprises one or more of a minimum size allowed for the prism, maximum size allowed for the prism, and an aspect ratio for resizing the prism.

7. The method of claim 1, wherein the application renders a first virtual content into a first prism and a second virtual content into a second prism, the first prism and the second prism being different prisms.

8. The method of claim 7, wherein the prism does not overlap with other prisms in the 3D spatial environment.

9. The method of claim 1, wherein the prism is placed in context to an object in the 3D spatial environment.

10. The method of claim 9, wherein the object is a user of an augmented reality device.

11. The method of claim 1, wherein the prism comprises: one or more universal features; one or more application-specific features; and wherein the one or more universal features and the one or more application-specific features are selected from a list of pre-approved options.

12. The method of claim 11, wherein the one or more universal features ensure different applications interact in a consistent manner with one another.

13. A display system for displaying virtual content in a 3D spatial environment, the display system comprising: an augmented reality head-mounted display system; and one or more modules for processing data, wherein the one or more modules are stored in one or more memory, the one or more modules configured to perform: receiving, from an application, a request to display a virtual content in a 3D spatial environment, creating a prism, wherein the prism is a volume of space configured to bound the virtual content inside a boundary of the prism, receiving the virtual content from the application, rendering the virtual content inside the boundaries of the prism, and associating the prism to an object in the 3D spatial environment.

14. The display system of claim 13, wherein boundaries of the prism are not displayed.

15. The display system of claim 13, wherein the 3D spatial environment is a physical real world environment of a user.

16. The display system of claim 13, wherein the prism is created automatically having a set of functionalities.

17. The display system of claim 16, wherein the set of functionalities comprises an association between the prism to the object in the 3D spatial environment.

18. The display system of claim 16, wherein the set of functionalities comprises one or more of a minimum size allowed for the prism, maximum size allowed for the prism, and an aspect ratio for resizing the prism.

19. The display system of claim 13, wherein the application renders a first virtual content into a first prism and a second virtual content into a second prism, the first prism and the second prism being different prisms.

20. The display system of claim 19, wherein the prism does not overlap with other prisms in the 3D spatial environment.

21. The display system of claim 13, wherein the prism is placed in context to an object in the 3D spatial environment.

22. The display system of claim 21, wherein the object is a user of an augmented reality device.

23. The display system of claim 13, wherein the prism comprises: one or more universal features; one or more application-specific features; and wherein the one or more universal features and the one or more application-specific features are selected from a list of pre-approved options.

24. The display system of claim 23, wherein the one or more universal features ensure different applications interact in a consistent manner with one another.

25. A method for starting a mixed reality system, the method comprising: determining a current location of a user; retrieving one or more prisms previously deployed at the current location; restoring the one or more prisms at the current location of the user; and displaying the one or more prisms restored at the current location of the user.

26. A method for managing application states of virtual content in a mixed reality system, the method comprising: segmenting a 3D volume into a volumetric grid; determining a first location of a user within the volumetric grid; determining a second location of an application within the 3D volume; calculating a distance between the user and the application within the 3D volume; and modifying a state of the application based at least in part on the distance calculated between the user and the application.

27. A method for opening and placing an application in an augmented reality environment, the method comprising: receiving a first user input from a user indicating a request for new content; launching an application to generate the content; creating a mini display volume of a 3D display volume managing unit, wherein a page preview is displayed in the mini display volume, wherein the 3D display volume managing unit is created simultaneously with the launching of the application; receiving a second user input indicating a movement of the mini display volume; receiving a third user input indicating a placement of the mini display volume at a location in the augmented reality environment; and expanding the 3D display volume managing unit in place of the mini display volume at the location, the 3D display volume managing unit displaying the content fully loaded within the 3D display volume managing unit.

28. A method for managing virtual content, the method comprising: receiving content from a content generating application; displaying the content in a 3D spatial environment by a universe application; and constantly managing the display of the content in the 3D spatial environment by the universe application.

29. A method comprising: accessing a scene graph for a scene, wherein the scene graph comprises one or more transform trees, each tree comprising a plurality of nodes; adding a tag to one or more nodes from a plurality of nodes within the one or more transform trees, wherein the one or more nodes tagged form a transform group, wherein the one or more nodes tagged comprise a first tagged node and a second tagged node; and moving the first tagged node, wherein moving the first tagged node causes the second tagged node to move.

30. A method of displaying virtual content in a 3D shared space, the method comprising: generating, by a first application, virtual content in the 3D shared space; and displaying, by a second application, the virtual content generated by the first application, the first application and the second application being different applications.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] The present application claims benefit under 35 U.S.C. § 119 to U.S. Provisional Patent Application Ser. No. 62/610,101 filed on Dec. 22, 2017, entitled "METHODS AND SYSTEM FOR MANAGING AND DISPLAYING VIRTUAL CONTENT IN A MIXED REALITY SYSTEM," which is hereby incorporated by reference into the present application in its entirety.

[0002] The present disclosure is related to co-owned U.S. patent application Ser. No. 14/205,126, filed on Mar. 11, 2014, entitled "SYSTEM AND METHOD FOR AUGMENTED AND VIRTUAL REALITY", which is hereby incorporated by reference in its entirety.

FIELD OF INVENTION

[0003] The present disclosure generally relates to systems and methods configured to facilitate interactive virtual or augmented reality environments for one or more users.

BACKGROUND

[0004] Modern computing and display technologies have facilitated the development of systems for so-called "virtual reality" (VR), "augmented reality" (AR) experiences, and/or "mixed reality" experiences (hereinafter collectively referred to as "mixed reality" and/or "MR"), where digitally reproduced images or portions thereof are presented to a user in a manner where they seem to be, or may be perceived as, real. A VR scenario typically involves presentation of digital or virtual image information without transparency to other actual real-world visual input, whereas an AR or MR scenario typically involves presentation of digital or virtual image information as an augmentation to visualization of the real world around the user such that the digital or virtual image (e.g., virtual content) may appear to be a part of the real world. However, MR may integrate the virtual content in a contextually meaningful way, whereas AR may not.

[0005] Therefore, there is a need for an approach to manage and display virtual content in a mixed reality environment.

SUMMARY

[0006] In accordance with some embodiments, instead of managing and displaying virtual content in a mixed reality environment on a one-at-a-time basis independently by each application, each virtual content is rendered by its respective application into a bounded volume, which hereinafter may be referred to as a "Prism." Each Prism may have characteristics and properties that allow an application, sometimes called a universe application, to manage and display the Prism in the mixed reality environment such that the universe application may manage the placement and display of the virtual content in the mixed reality environment by managing the Prism itself.

[0007] One embodiment is directed to a method for displaying virtual content in a 3D spatial environment, the method comprising 1) receiving, from an application, a request to display a virtual content in a 3D spatial environment, 2) creating a Prism for managing the display of the virtual content, wherein the Prism is a cubic and/or rectangular volume of space configured to bound the virtual content inside a boundary of the Prism, 3) receiving the virtual content from the application, 4) rendering the virtual content inside the boundary of the Prism, 5) associating the Prism to an object in the 3D spatial environment based at least in part on a user input, and 6) anchoring the Prism into the 3D spatial environment.

[0008] In one or more embodiments, borders of the boundary of the Prism are not displayed. The 3D spatial environment may be a physical real world environment of a user. The Prism may be created automatically having a set of functionalities. The set of functionalities may comprise a minimum/maximum size allowed for the Prism, and/or an aspect ratio for resizing the Prism. The set of functionalities may comprise an association between the Prism to the object in the 3D spatial environment. The application may render additional virtual content into additional Prisms, wherein each virtual content may be rendered into a separate Prism.

[0009] In one or more embodiments, the Prism does not overlap with other Prisms in the 3D spatial environment. The Prism may comprise one or more universal features to ensure different applications interact appropriately with one another, and/or one or more application-specific features, wherein the one or more universal features and the one or more application-specific features are selected from a list of pre-approved options.
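The bounded-volume properties summarized above (a cubic or rectangular volume, minimum and maximum sizes, aspect-ratio-preserving resizing, and non-overlap with other Prisms) can be illustrated with a minimal sketch. The class name, field names, and default limits below are assumptions made for illustration only and are not taken from the disclosure.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Prism:
    """Hypothetical axis-aligned bounded volume; all names are illustrative."""
    center: Vec3
    extent: Vec3                          # full width, height, depth
    min_extent: Vec3 = (0.1, 0.1, 0.1)    # assumed minimum size
    max_extent: Vec3 = (2.0, 2.0, 2.0)    # assumed maximum size

    def bounds(self):
        """Return the (low, high) corners of the volume."""
        lo = tuple(c - e / 2 for c, e in zip(self.center, self.extent))
        hi = tuple(c + e / 2 for c, e in zip(self.center, self.extent))
        return lo, hi

    def contains(self, point: Vec3) -> bool:
        """True if the point lies inside the boundary of the Prism."""
        lo, hi = self.bounds()
        return all(l <= p <= h for l, p, h in zip(lo, point, hi))

    def resize(self, scale: float) -> None:
        """Scale uniformly (keeping the aspect ratio), clamped to the limits."""
        lo_scale = max(mn / e for mn, e in zip(self.min_extent, self.extent))
        hi_scale = min(mx / e for mx, e in zip(self.max_extent, self.extent))
        scale = min(max(scale, lo_scale), hi_scale)
        self.extent = tuple(e * scale for e in self.extent)

    def overlaps(self, other: "Prism") -> bool:
        """True if the two volumes share interior space on every axis."""
        a_lo, a_hi = self.bounds()
        b_lo, b_hi = other.bounds()
        return all(al < bh and bl < ah
                   for al, ah, bl, bh in zip(a_lo, a_hi, b_lo, b_hi))
```

A managing application could, under these assumptions, call `overlaps` before placing a new Prism and reject or shift the placement when it returns true.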

[0010] Another embodiment is directed to a display system for displaying virtual content in a 3D spatial environment, the display system may include an augmented reality head-mounted display system, and one or more modules for processing data, wherein the one or more modules are stored in one or more memory, the one or more modules configured to perform 1) receiving, from an application, a request to display a virtual content in a 3D spatial environment, 2) creating a prism, wherein the prism is a volume of space configured to bound the virtual content inside a boundary of the prism, 3) receiving the virtual content from the application, 4) rendering the virtual content inside the boundaries of the prism, and 5) associating the prism to an object in the 3D spatial environment.

[0011] In one or more embodiments, borders of the boundary of the Prism are not displayed. The 3D spatial environment may be a physical real world environment of a user. The Prism may be created automatically having a set of functionalities. The set of functionalities may comprise a minimum/maximum size allowed for the Prism, and/or an aspect ratio for resizing the Prism. The set of functionalities may comprise an association between the Prism to the object in the 3D spatial environment. The application may render additional virtual content into additional Prisms, wherein each virtual content may be rendered into a separate Prism.

[0012] In one or more embodiments, the Prism does not overlap with other Prisms in the 3D spatial environment. The Prism may comprise one or more universal features to ensure different applications interact appropriately with one another, and/or one or more application-specific features, wherein the one or more universal features and the one or more application-specific features are selected from a list of pre-approved options.

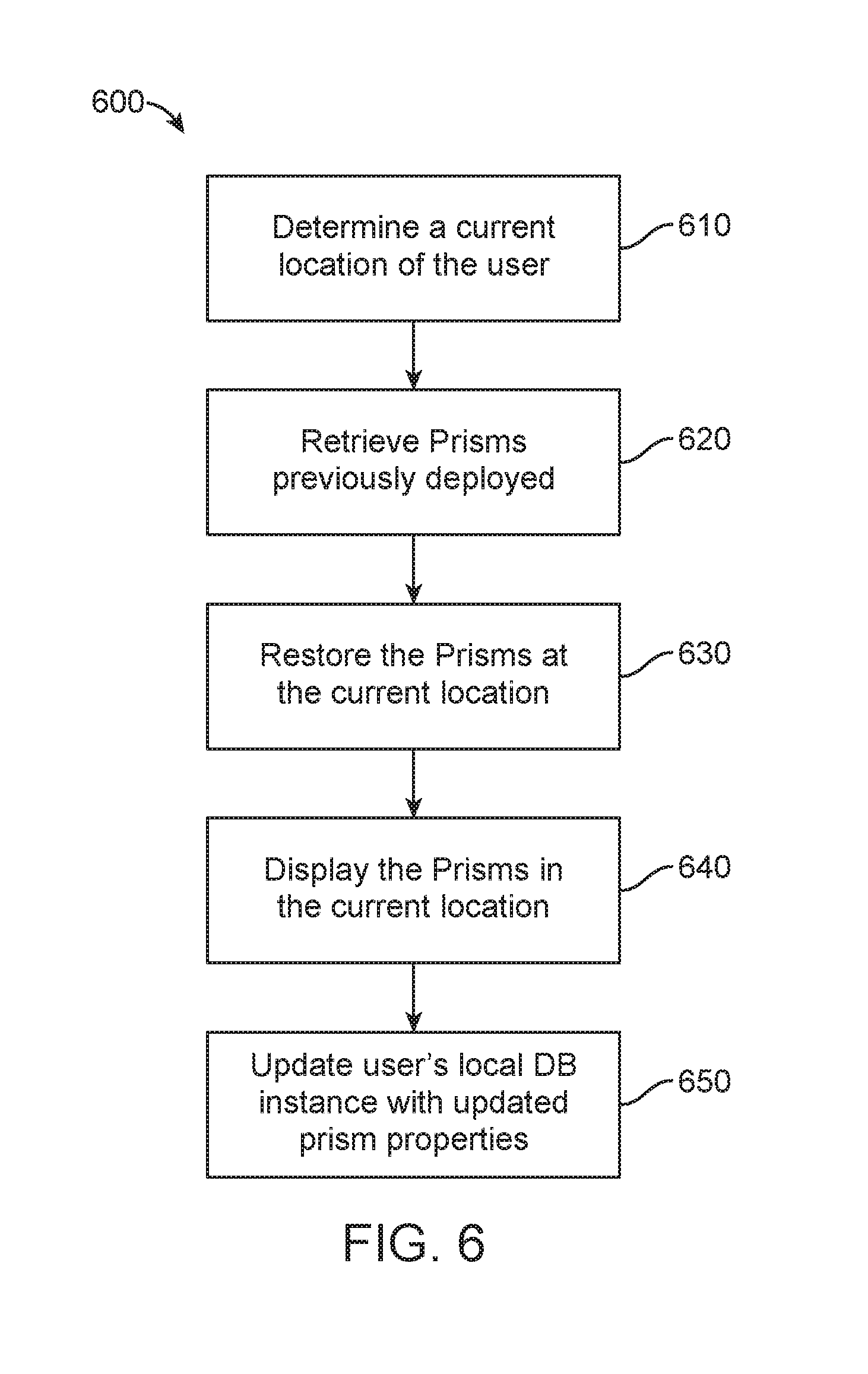

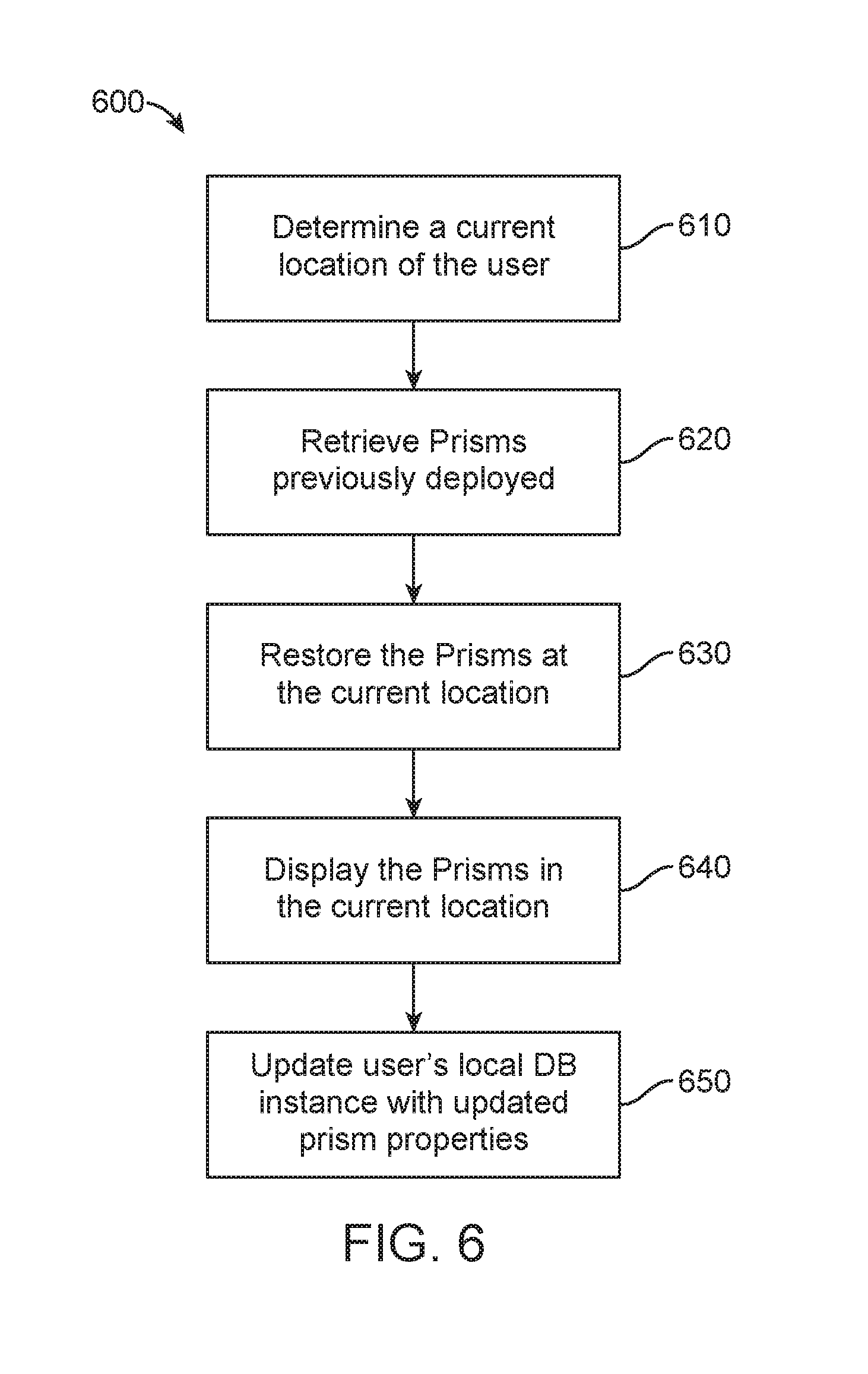

[0013] Another embodiment is directed to a method for starting a mixed reality system, the method may include determining a current location of a user, retrieving one or more Prisms previously deployed at the current location, restoring the one or more Prisms at the current location of the user, and displaying the one or more Prisms restored at the current location of the user.

[0014] In one or more embodiments, a Prism is a cubic and/or rectangular volume of space that virtual content from an application is displayed into, wherein the application may render into more than one Prism. In other words, in some embodiments, a single application may correspond to more than one Prism. In some embodiments, a single application corresponds to a single Prism. A Prism represents a sub-tree of a multi-application scene graph for the current location of the user. Retrieving the one or more Prisms previously deployed at the current location of the user may comprise retrieving instance data for the one or more Prisms, from an external database for example, and reconstructing a local Prism database with the instance data for the one or more Prisms, wherein the instance data for each Prism include a data structure of Prism properties defining the Prism, the Prism properties comprising at least one of a location, an orientation, an extent width, an extent height, an extent depth, an anchor type, and/or an anchor position, wherein the instance data for each Prism include key value pairs of application specific properties comprising state information of virtual content previously rendered into the Prism by an application. In some embodiments, data is stored locally and an external database is not needed.

[0015] In one or more embodiments, restoring the one or more Prisms comprises launching respective applications corresponding to the one or more Prisms previously deployed at the current location, creating one or more new Prisms corresponding to the one or more Prisms previously deployed, and rendering respective virtual content into the one or more new Prisms.

[0016] In one or more embodiments, the method further comprises updating the local Prism database of the user with updated Prism instance data as the user interacts with the one or more Prisms, and synchronizing the local Prism database with the external database.
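The retrieve, restore, and synchronize steps described in the preceding paragraphs can be sketched as follows. This is a minimal illustration under stated assumptions: a plain dictionary keyed by a location identifier stands in for the external database, JSON stands in for the storage format, and the `app_id` field, the quaternion encoding of orientation, and the `launch_app` callback are hypothetical names that do not appear in the disclosure.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class PrismInstance:
    """Instance data for one Prism (field names are illustrative)."""
    location: list           # [x, y, z]
    orientation: list        # assumed quaternion encoding [x, y, z, w]
    extent: list             # [width, height, depth]
    anchor_type: str
    anchor_position: list
    app_id: str              # hypothetical: which application to relaunch
    app_state: dict = field(default_factory=dict)  # application key-value pairs


def save_prisms(store, location_id, prisms):
    """Synchronize the local Prism records to the (stand-in) external store."""
    store[location_id] = json.dumps([asdict(p) for p in prisms])


def restore_prisms(store, location_id, launch_app):
    """Rebuild the local Prism database for a location and relaunch each app."""
    records = json.loads(store.get(location_id, "[]"))
    local_db = [PrismInstance(**record) for record in records]
    for prism in local_db:
        # The relaunched application re-renders its content from saved state.
        launch_app(prism.app_id, prism.app_state)
    return local_db
```

Calling `restore_prisms` for a location with no saved data returns an empty list, which corresponds to starting the system in a place where no Prisms were previously deployed.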

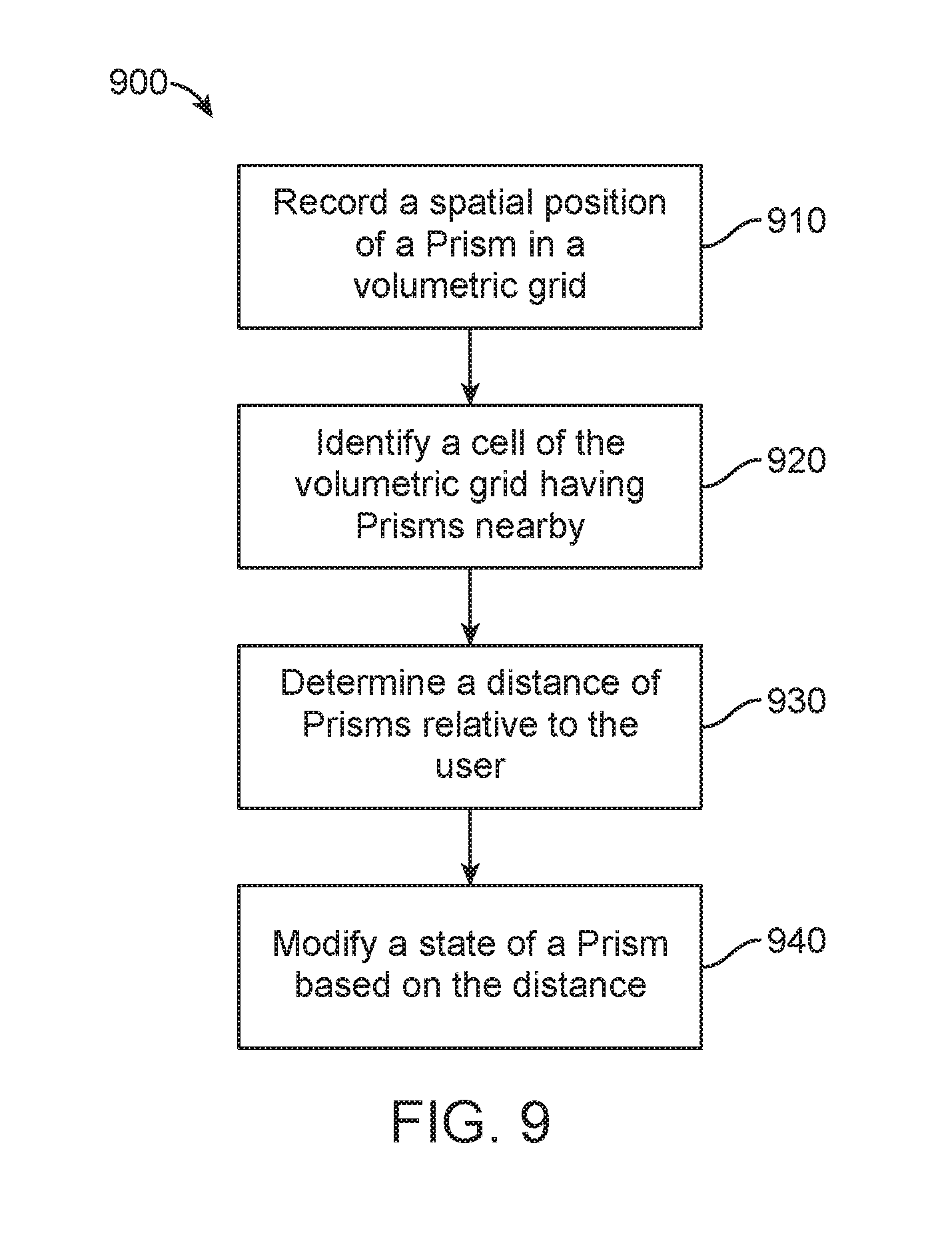

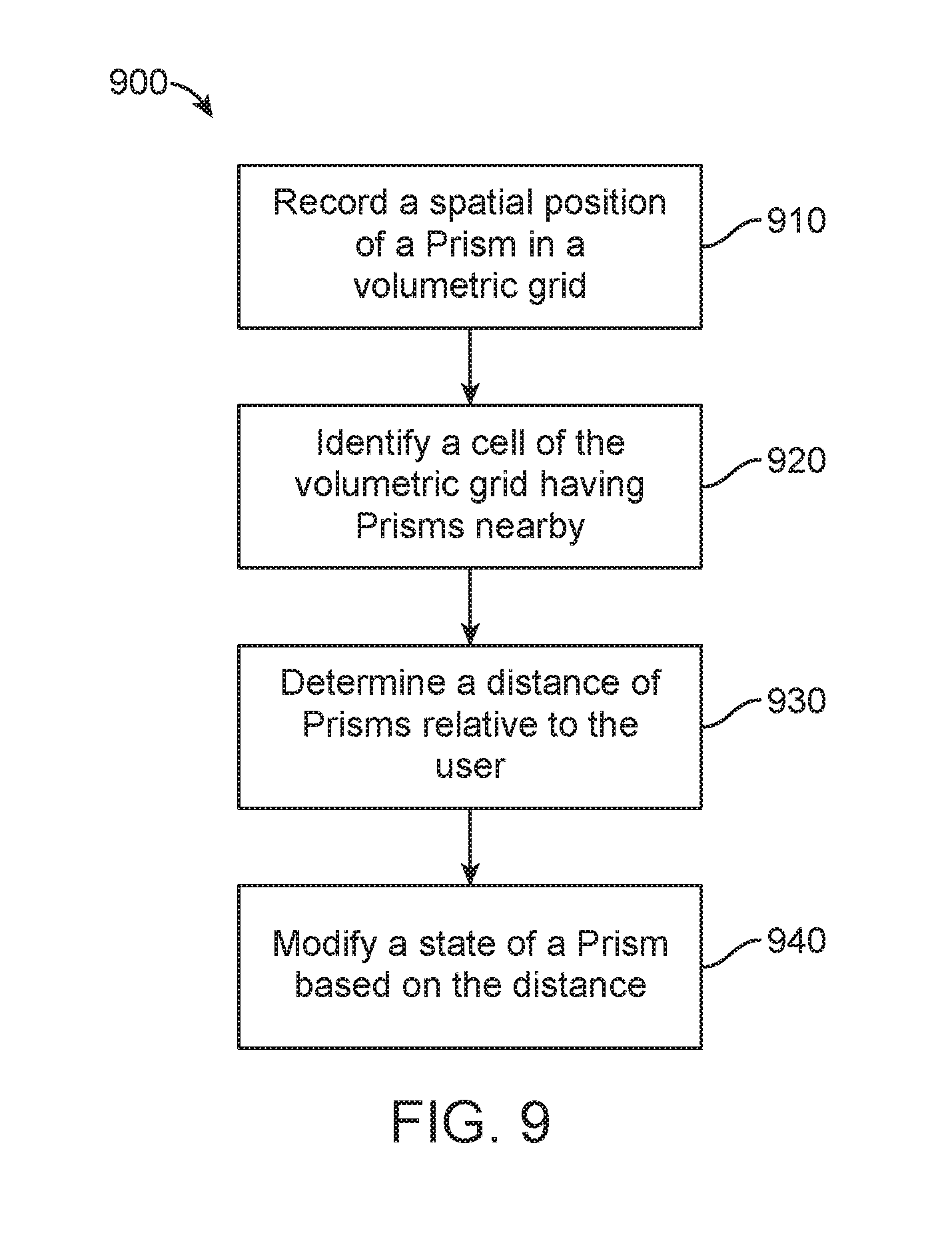

[0017] Some embodiments are directed to a method for managing application states of virtual content in a mixed reality system, the method may include segmenting a 3D volume into a volumetric grid. The method may also include determining a first location of a user within the volumetric grid. The method may further include determining a second location of an application within the 3D volume. The method may also include calculating a distance between the user and the application within the 3D volume. The method may additionally include modifying a state of the application based at least in part on the distance calculated between the user and the application.

[0018] Another embodiment is directed to a method for managing application states of virtual content in a mixed reality system, the method comprising: recording a spatial position of one or more applications in a volumetric grid, the one or more applications providing content displayed inside one or more respective Prisms, the volumetric grid corresponding to a coarse representation of a physical environment in an x, y, and z axis; identifying a cell of the volumetric grid comprising applications located within the cell, wherein a width of the cell is equal to or larger than a radius of an active zone; determining a distance of known positions of each application within the cell and neighboring cells when a user using a mixed reality device moves within the cell; and modifying a state of an application based at least in part on a distance between the mixed reality device and each application within the cell and neighboring cells.

[0019] In one or more embodiments, the radius of the active zone defines a circular/spherical area around the user using the mixed reality device, wherein the user may be at the center of the circle/sphere. In some embodiments, modifying the state of the application may be based, at least in part, on whether the application is occluded by another application. In some embodiments, modifying the state of the application is based, at least in part, on a head pose of the user. A head pose of the user is a measurement of a location and/or orientation of a user's head. The head pose can be used to render a scene to match a user's dynamically changing head location and orientation to provide an increased sense of immersion in a virtual/augmented/mixed space. In some embodiments, the head pose may be determined, at least in part, by an inertial measurement unit mounted on the head mounted display system, or the user's head, although other suitable methods may also be used. The distance of known positions of each application within the cell may be determined only for the cell the user using the mixed reality device is in and the neighboring cells.

[0020] In one or more embodiments, there may be a buffer zone around an exterior of the active zone, wherein the buffer zone prevents intermittent or rapid changes to the state of the applications.
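The grid-based state management described above can be sketched as follows. The sketch assumes two hypothetical state names ("active" and "sleeping") and illustrative values for the active-zone radius, buffer width, and cell width; none of these values come from the disclosure. The cell width is chosen to be at least the active radius plus the buffer, which also satisfies the stated condition that a cell is at least as wide as the active-zone radius. It further assumes that states are updated frequently relative to how fast the user moves.

```python
import math

ACTIVE_RADIUS = 3.0   # assumed radius of the active zone
BUFFER_WIDTH = 0.5    # assumed buffer band outside the active zone
CELL_SIZE = 4.0       # assumed cell width, >= ACTIVE_RADIUS + BUFFER_WIDTH


def cell_of(pos):
    """Map a 3D position to the integer index of its grid cell."""
    return tuple(math.floor(c / CELL_SIZE) for c in pos)


def build_grid(app_positions):
    """Record which applications sit in which cell of the volumetric grid."""
    grid = {}
    for app, pos in app_positions.items():
        grid.setdefault(cell_of(pos), []).append(app)
    return grid


def nearby_apps(grid, user_pos):
    """Yield applications in the user's cell and its 26 neighboring cells."""
    cx, cy, cz = cell_of(user_pos)
    for dx in (-1, 0, 1):
        for dy in (-1, 0, 1):
            for dz in (-1, 0, 1):
                yield from grid.get((cx + dx, cy + dy, cz + dz), [])


def update_states(app_positions, grid, states, user_pos):
    """Modify application states based on distance to the user."""
    for app in nearby_apps(grid, user_pos):
        d = math.dist(user_pos, app_positions[app])
        if d <= ACTIVE_RADIUS:
            states[app] = "active"
        elif d > ACTIVE_RADIUS + BUFFER_WIDTH:
            states[app] = "sleeping"
        # Inside the buffer band the previous state is kept, which avoids
        # rapid toggling when the user hovers near the zone boundary.
    return states
```

Only applications in the user's cell and adjacent cells are examined on each update, so the cost depends on local density rather than on the total number of placed applications.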

[0021] Another embodiment is directed to a method for launching an application from a launcher menu for displaying virtual content in a mixed reality system, the method comprising: receiving a request for starting an application; creating, by a universe application, a Prism for displaying and managing virtual content, wherein the Prism is a volumetric display space having boundaries for the application to render the virtual content into; starting, by the Prism, the application through a lifecycle service; determining, by the Prism, a unique identifier (UID) of the application through a package manager service; registering, by the application, a listener with the universe application; determining, by the universe application, the UID of the application; associating, by the universe application, the Prism to the listener; assigning, by the universe application, the Prism to the application using the listener of the application; and placing the Prism in a sub-set of a 3D displayable space of the mixed reality system.
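The launch sequence above amounts to matching a newly created Prism with the listener that the started application registers, using the application's UID as the key. The following is a simplified sketch of that matching only. It folds the steps attributed to the Prism into a single hypothetical `Universe` class, uses a dictionary as a stand-in for the package manager service, and uses a callback as a stand-in for the lifecycle service; all of these names are assumptions for illustration.

```python
class Universe:
    """Hypothetical stand-in for the universe application."""

    def __init__(self, package_uids):
        self.package_uids = package_uids   # stand-in for a package manager service
        self.pending = {}                  # uid -> Prism awaiting a listener
        self.assigned = {}                 # uid -> (Prism, listener)
        self._next_id = 0

    def launch(self, app_name, start_app):
        """Create a Prism, look up the application's UID, and start the app."""
        prism = {"id": self._next_id, "app": app_name}
        self._next_id += 1
        uid = self.package_uids[app_name]
        # Record the Prism before starting the app, in case the app
        # registers its listener immediately.
        self.pending[uid] = prism
        start_app(app_name)                # stand-in for the lifecycle service
        return prism

    def register_listener(self, uid, listener):
        """Associate the waiting Prism with the listener and hand it over."""
        prism = self.pending.pop(uid)
        self.assigned[uid] = (prism, listener)
        listener(prism)                    # the application now owns the Prism
```

Keying the pending table by UID lets the universe application pair the right Prism with the right application even when several launches are in flight, provided each application has at most one pending Prism at a time in this simplified form.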

[0022] Some embodiments are directed to a method for opening and placing an application in an augmented reality environment, the method may include receiving a first user input from a user indicating an interest in content. The method may also include launching an application to generate the content. The method may further include creating a mini display volume of a 3D display volume managing unit, wherein a page preview is displayed in the mini display volume, wherein the 3D display volume managing unit is created simultaneously with the launching of the application. The method may further include receiving a second user input indicating a movement of the mini display volume. The method may also include receiving a third user input indicating a placement of the mini display volume at a location in the augmented reality environment, and expanding the 3D display volume managing unit in place of the mini display volume at the location, the 3D display volume managing unit displaying the content fully loaded within the 3D display volume managing unit.

[0023] In one or more embodiments, the first user input may be a cursor movement over a link on a web page, wherein the second user input is a selection of the link, and movement of the mini display volume. The mini display volume may be an initial default size of the 3D display volume managing unit. The content may be loaded into the mini display volume while the user is moving and placing the mini display volume. The location may be fixed to an object in the augmented reality environment, wherein the object is the user.

[0024] Some embodiments may be directed to a method for managing virtual content, the method may include receiving content from a content generating application. The method may also include displaying the content in a 3D spatial environment by a universe application. The method may further include constantly managing the display of the content in the 3D spatial environment by the universe application.

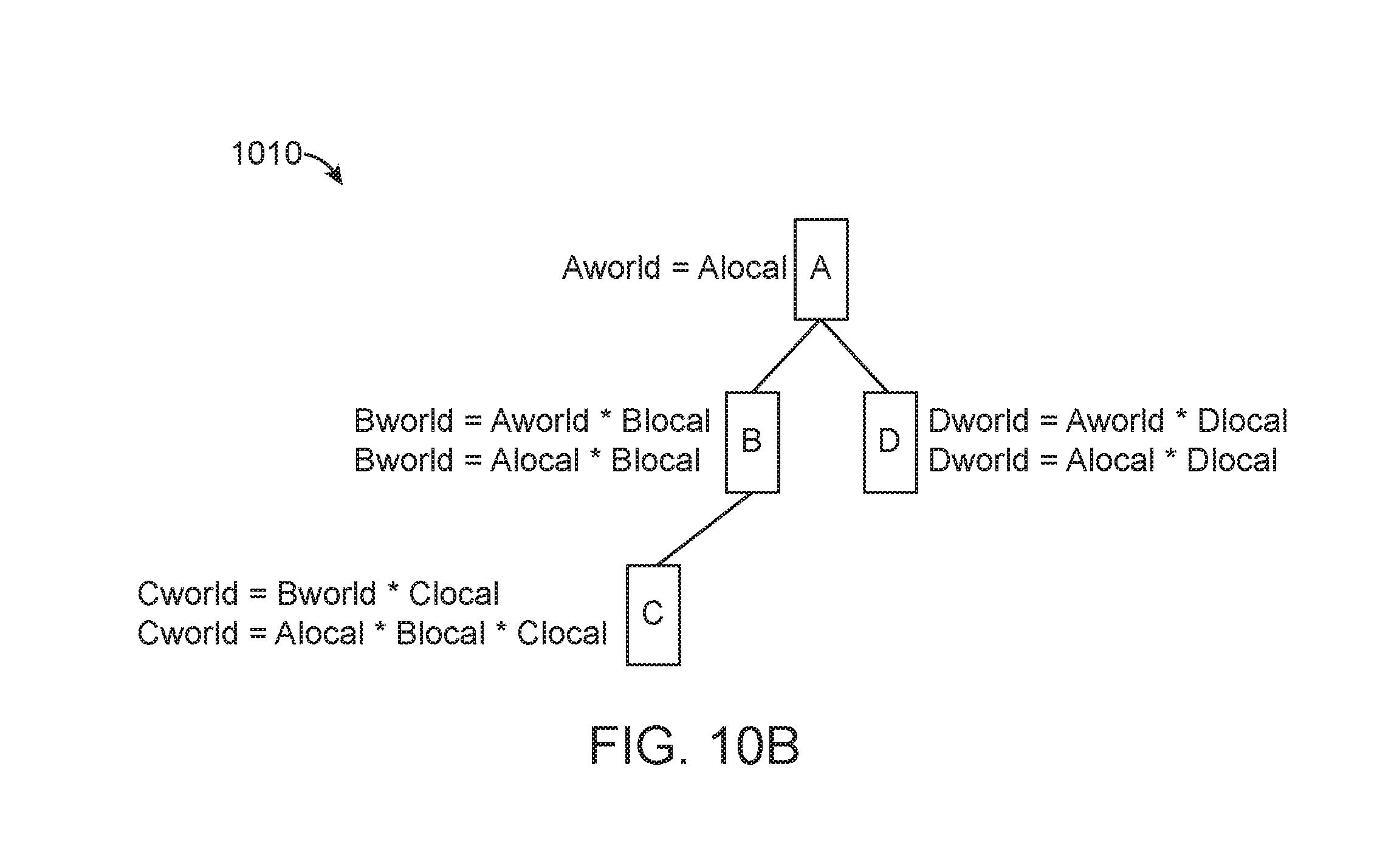

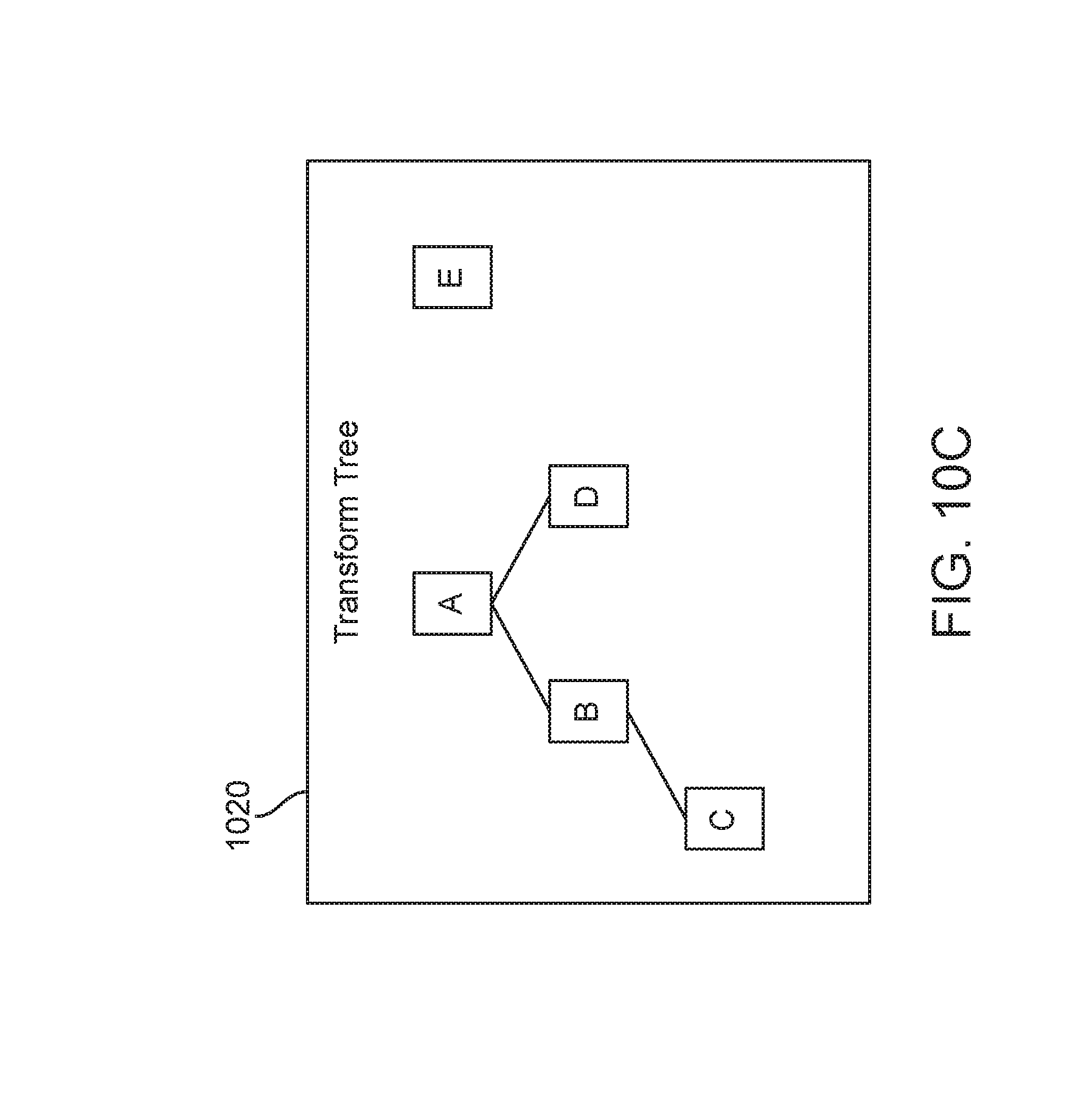

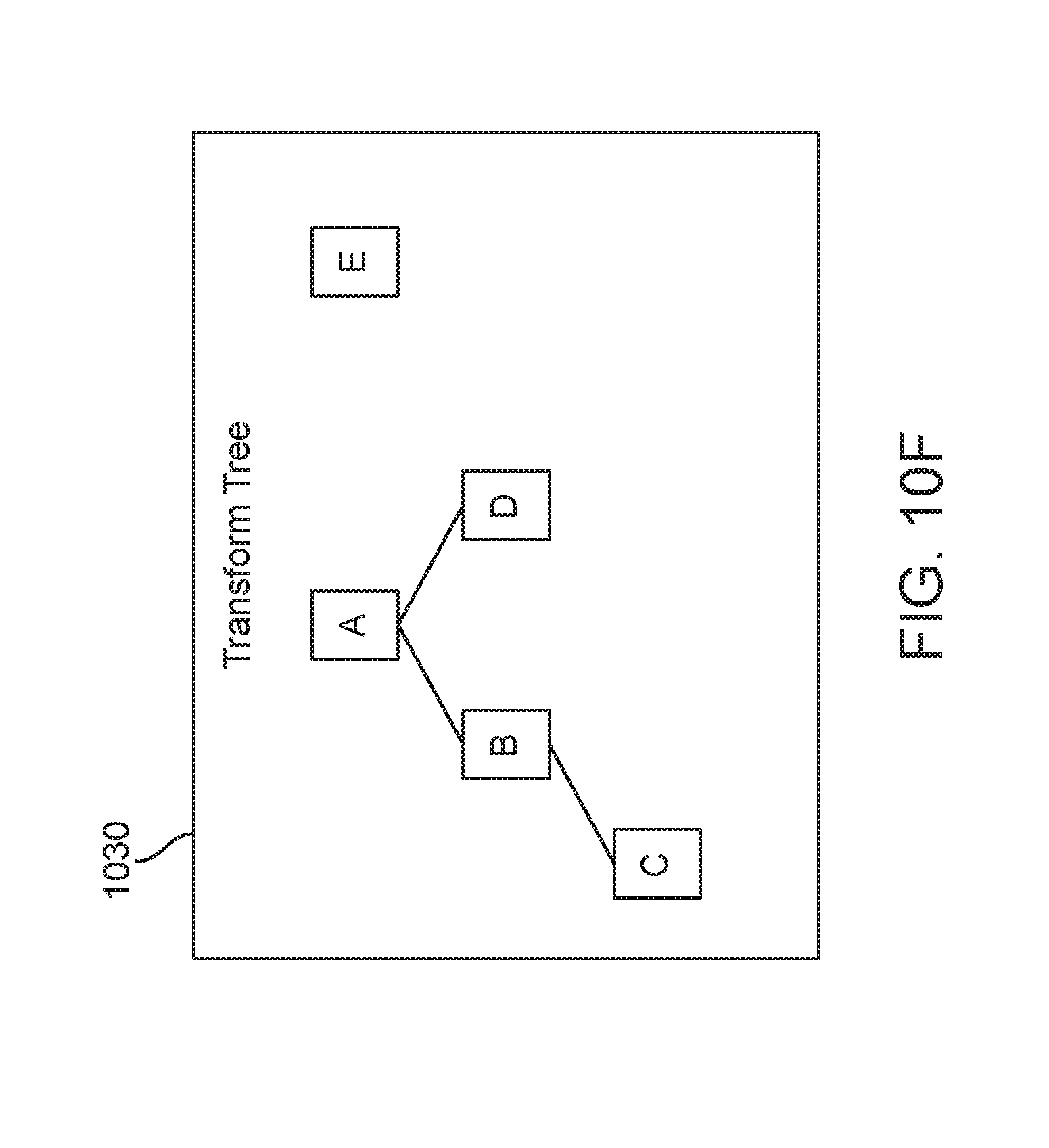

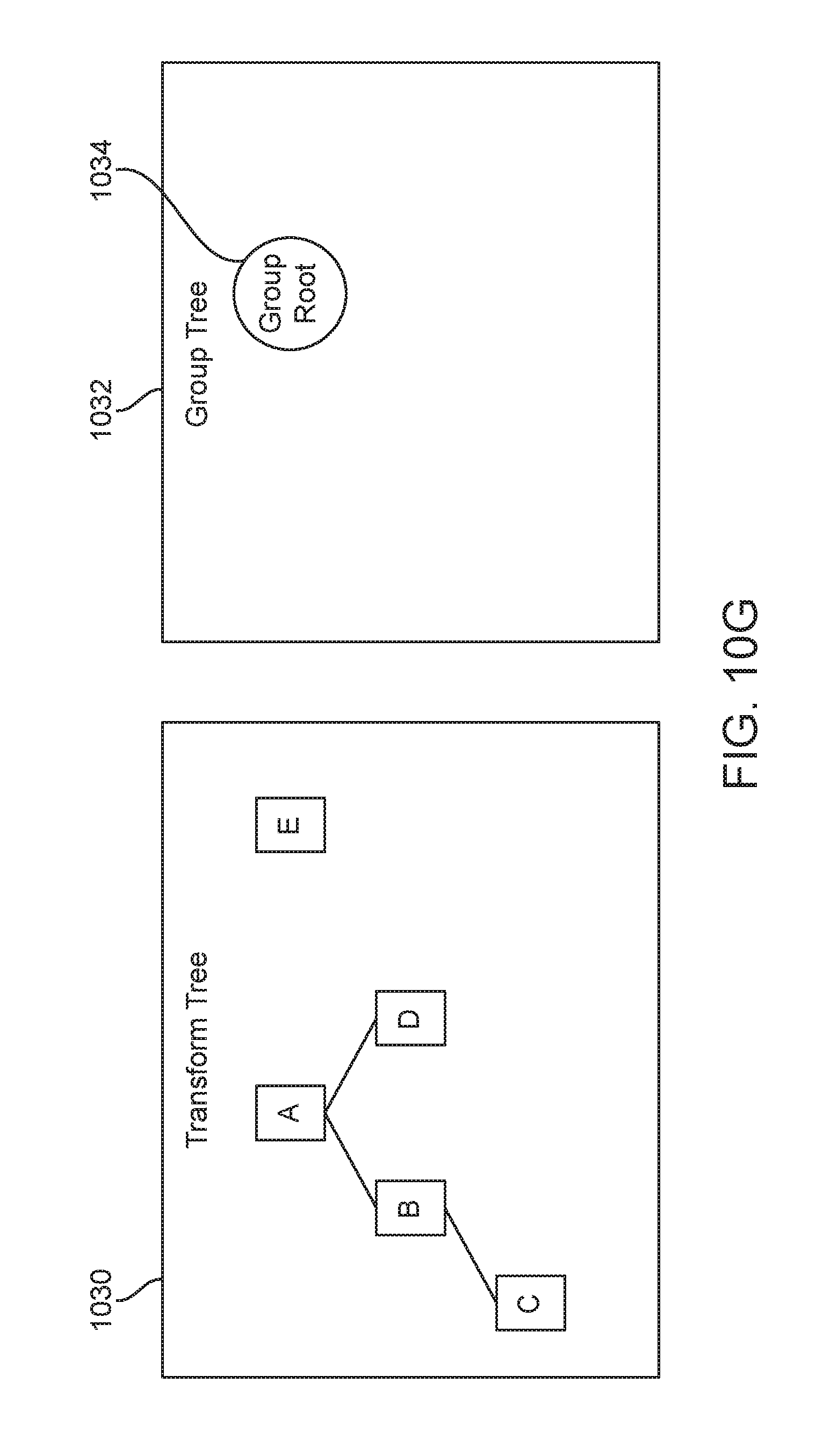

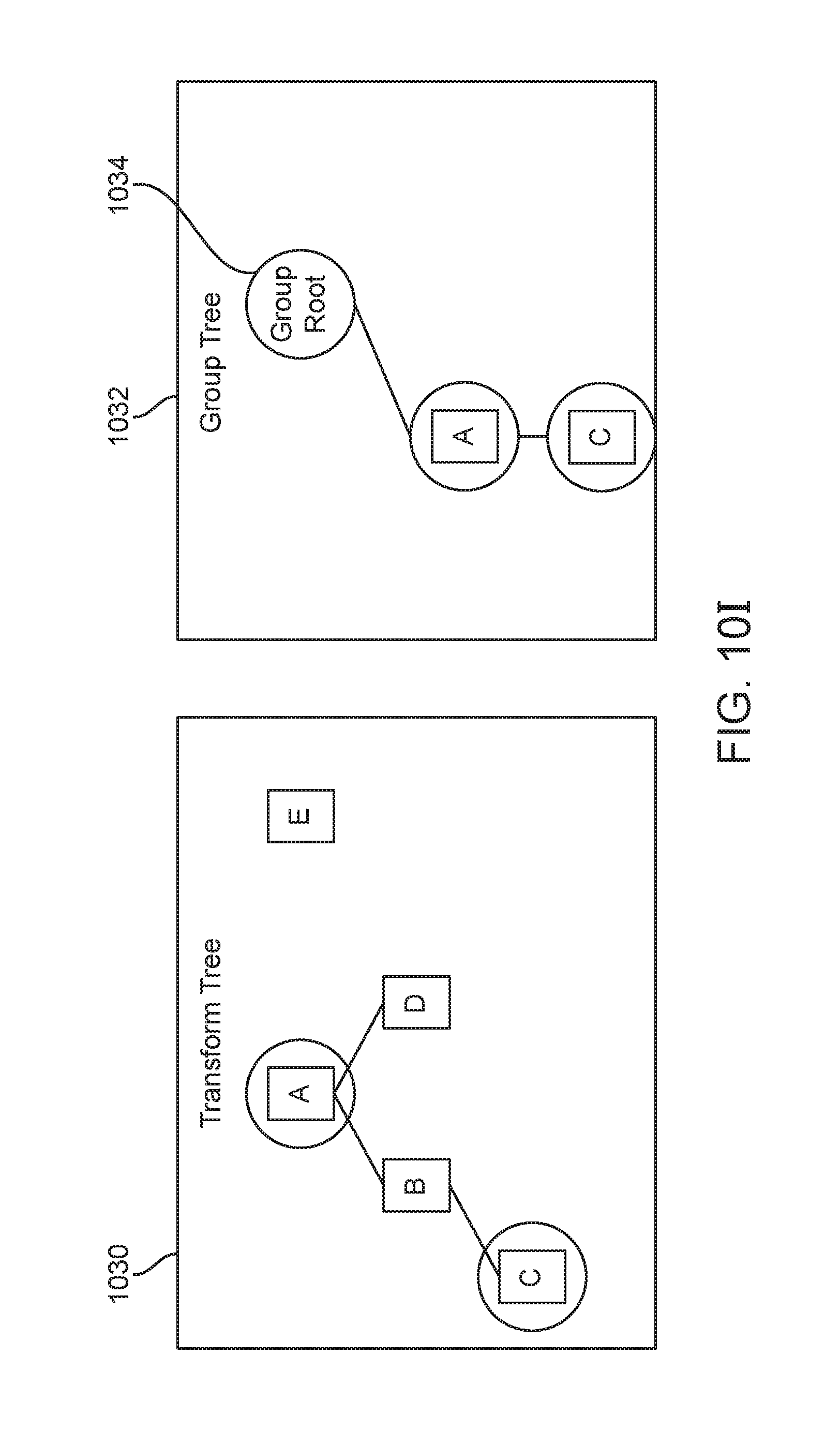

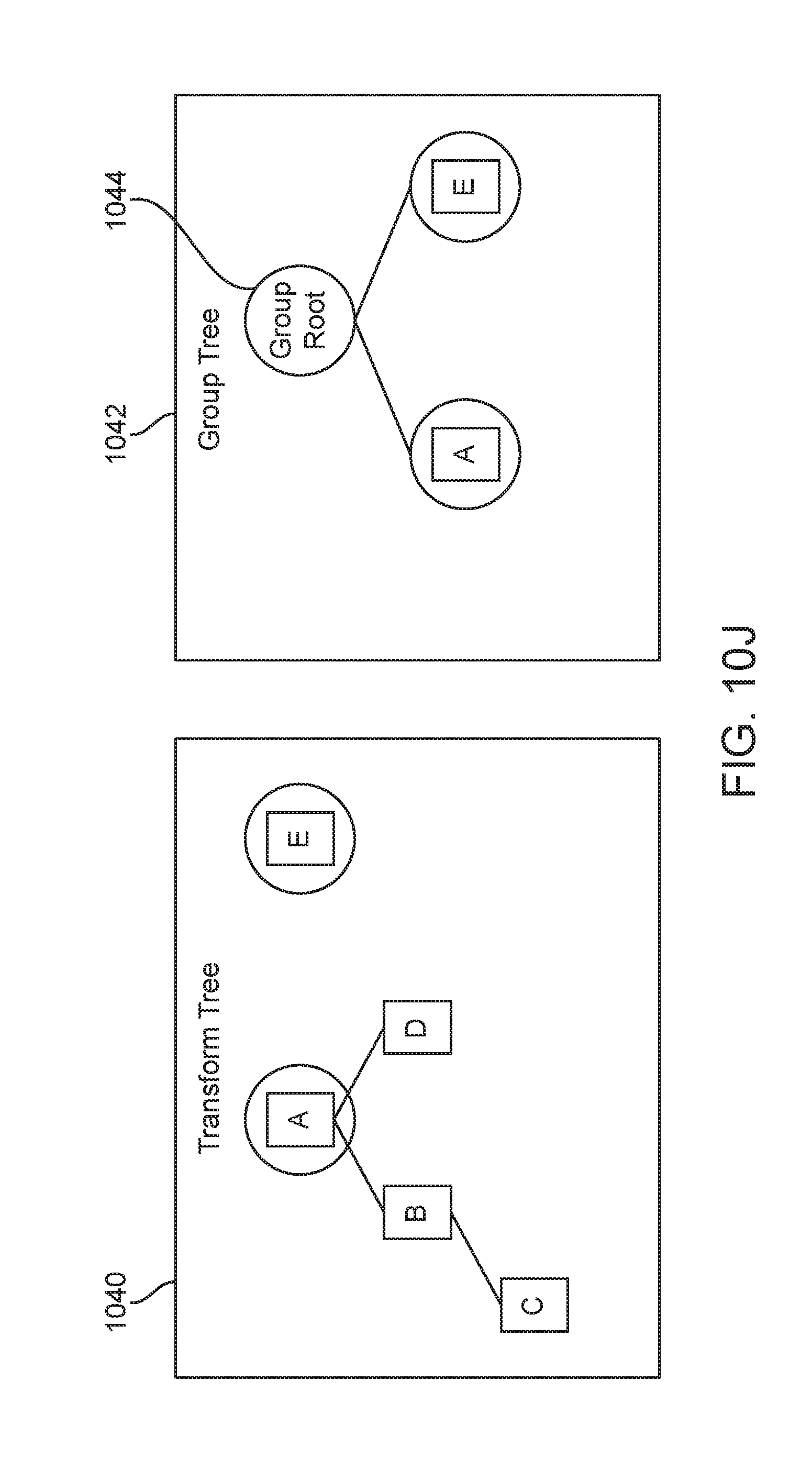

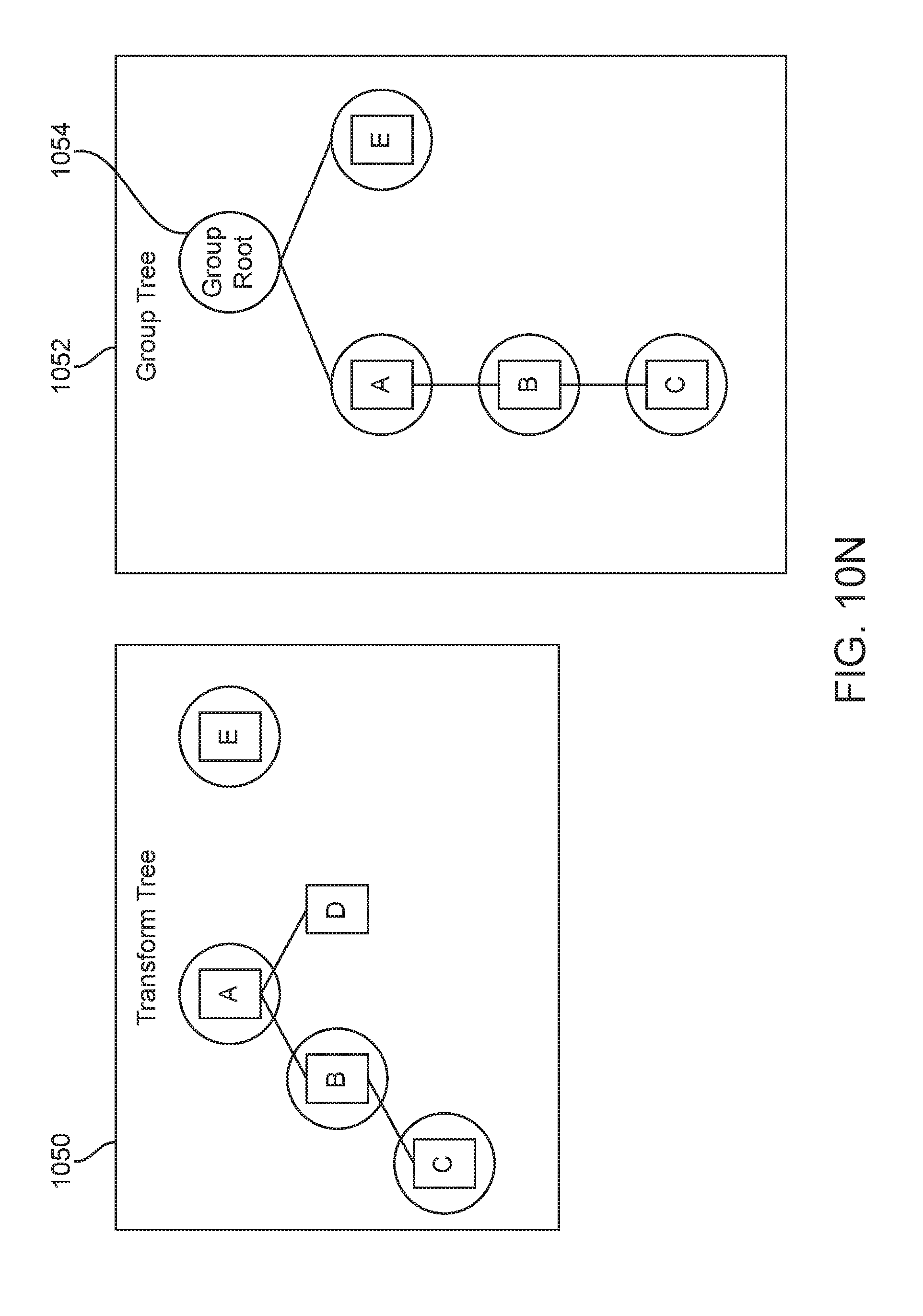

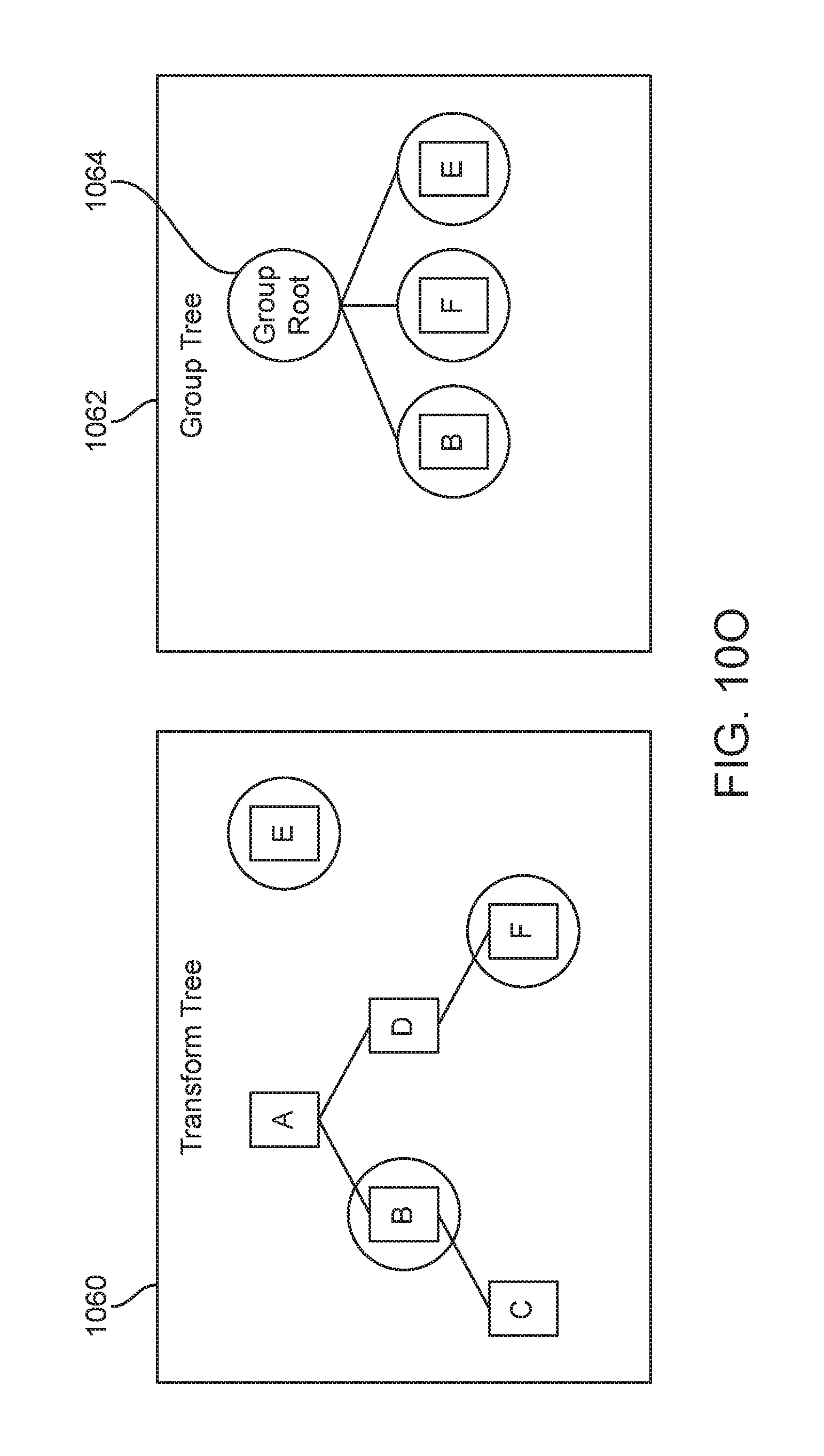

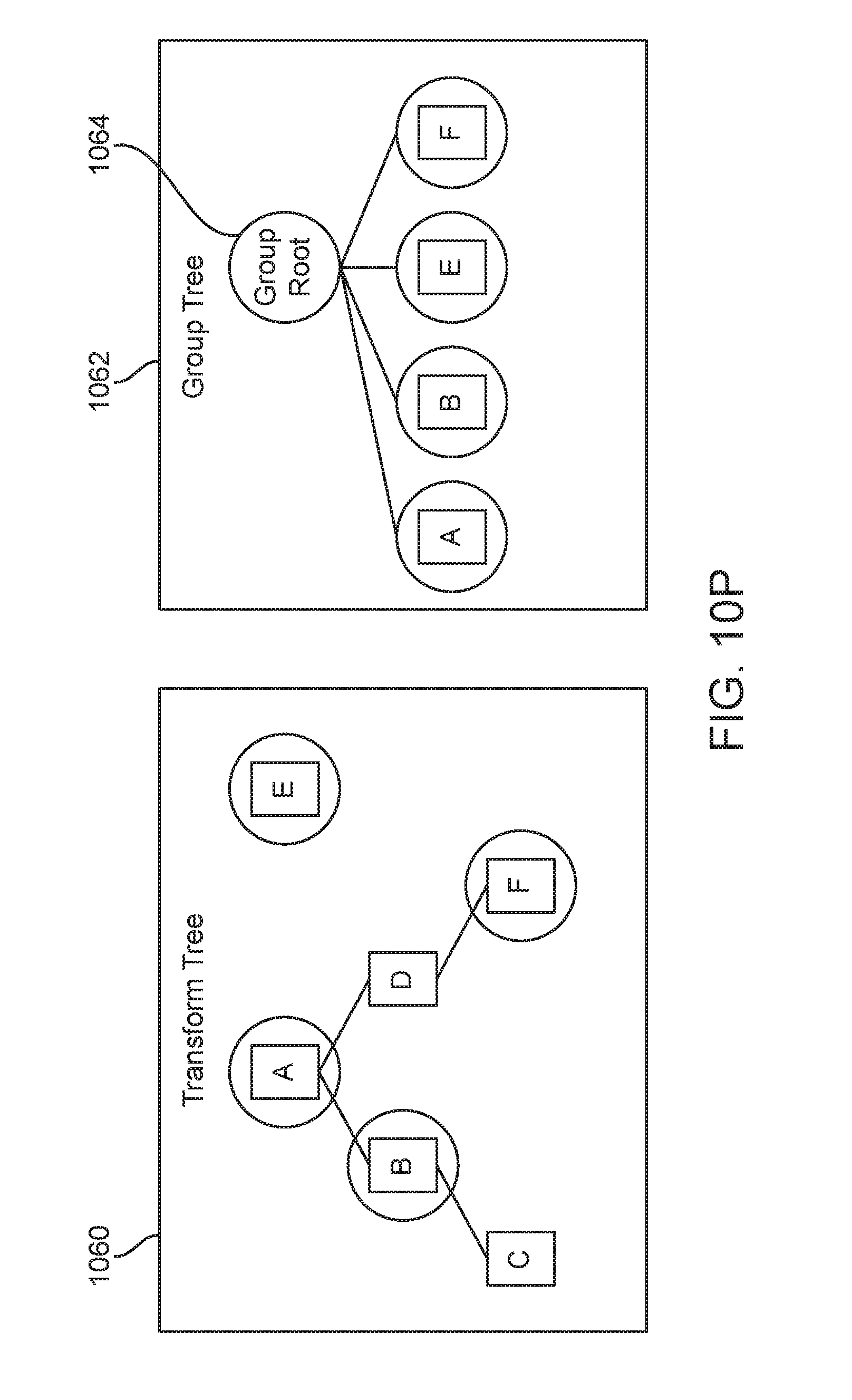

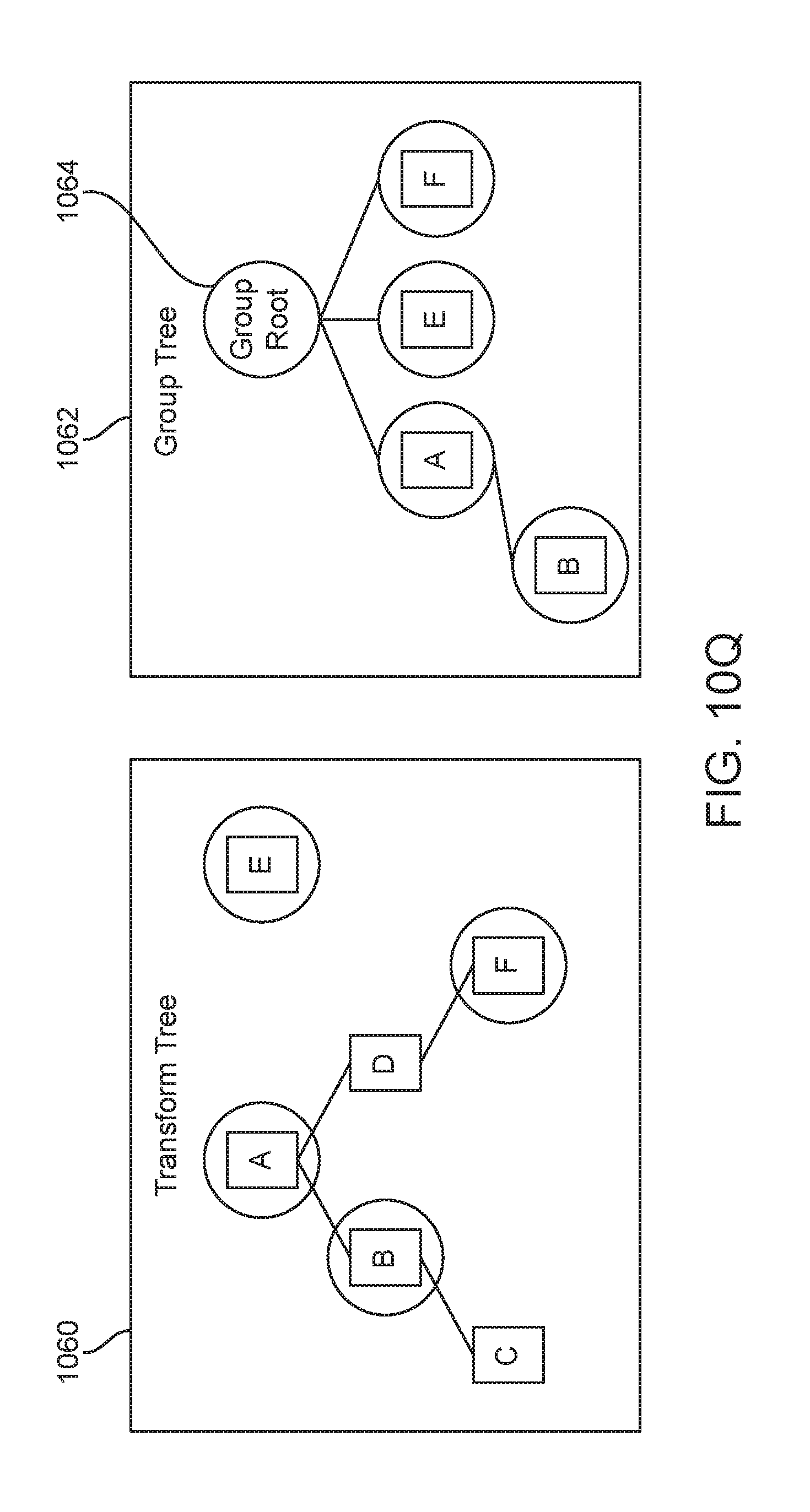

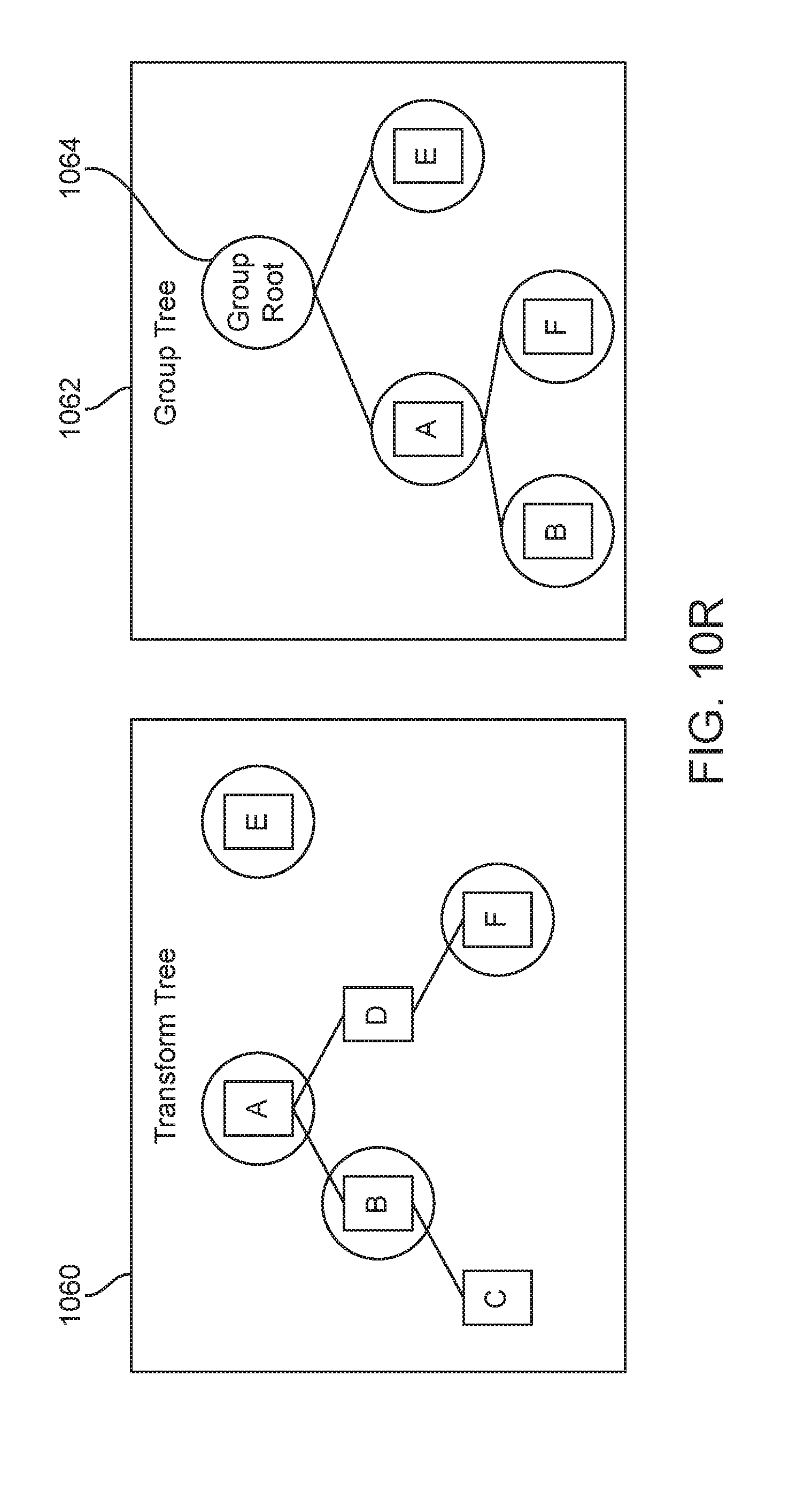

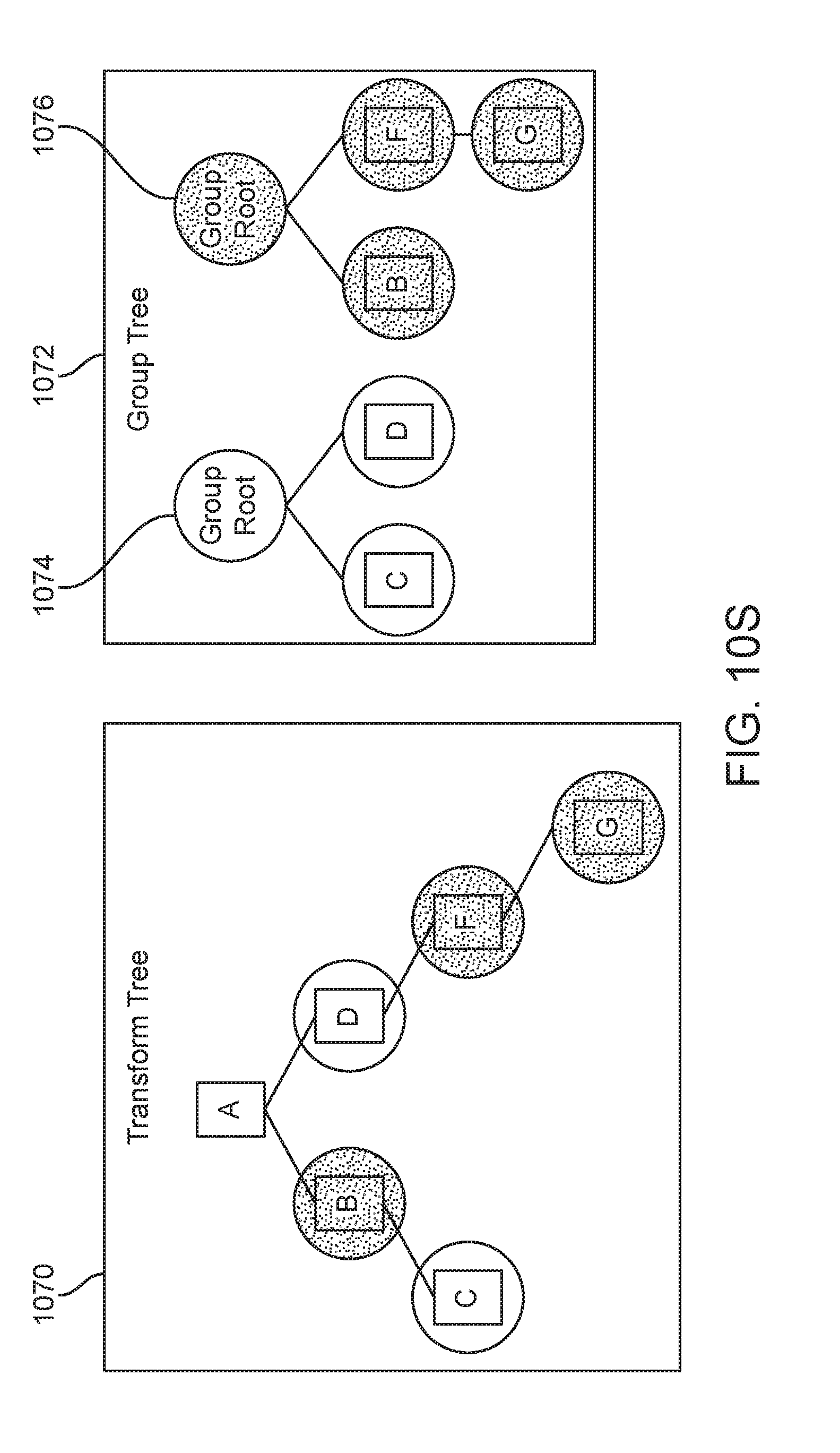

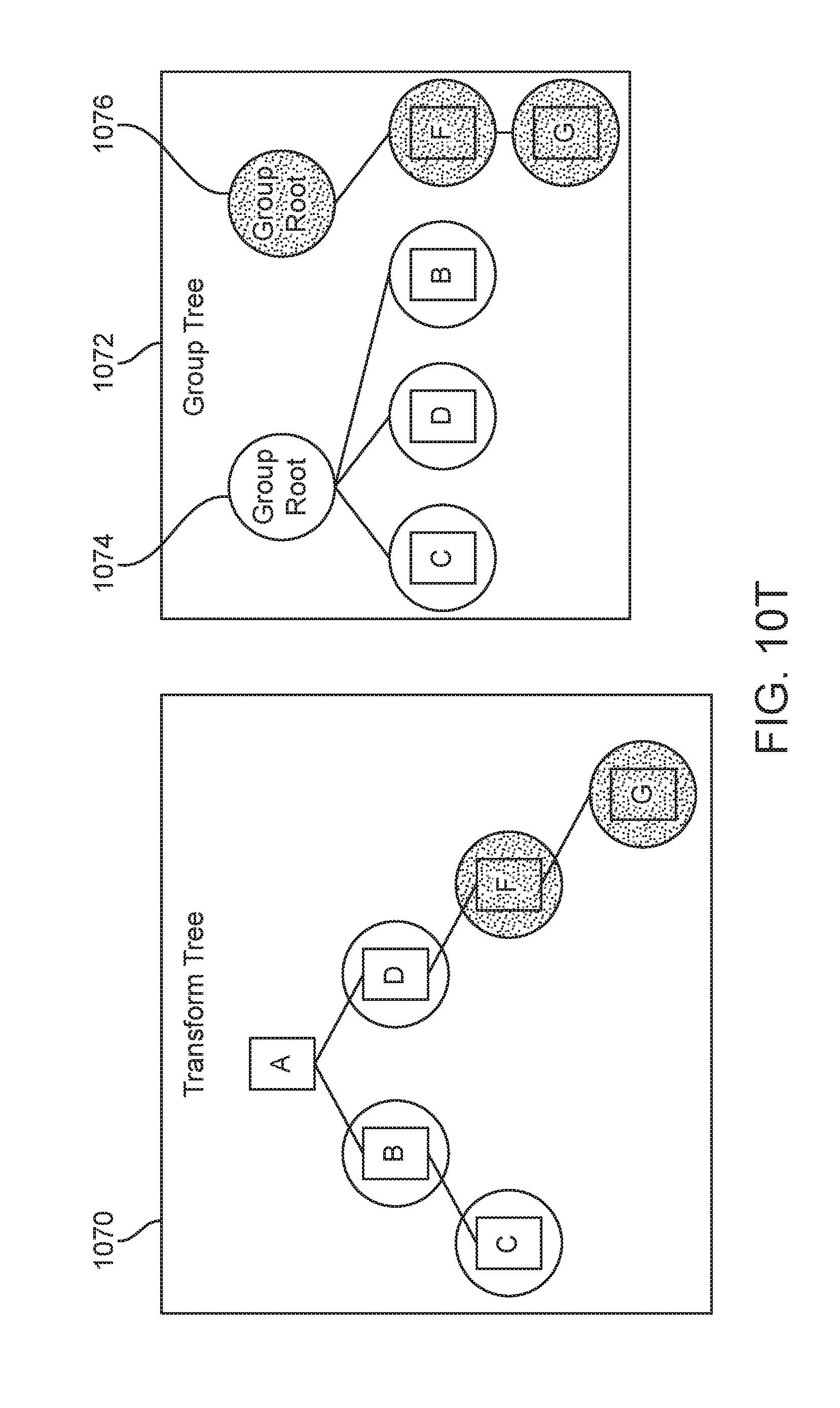

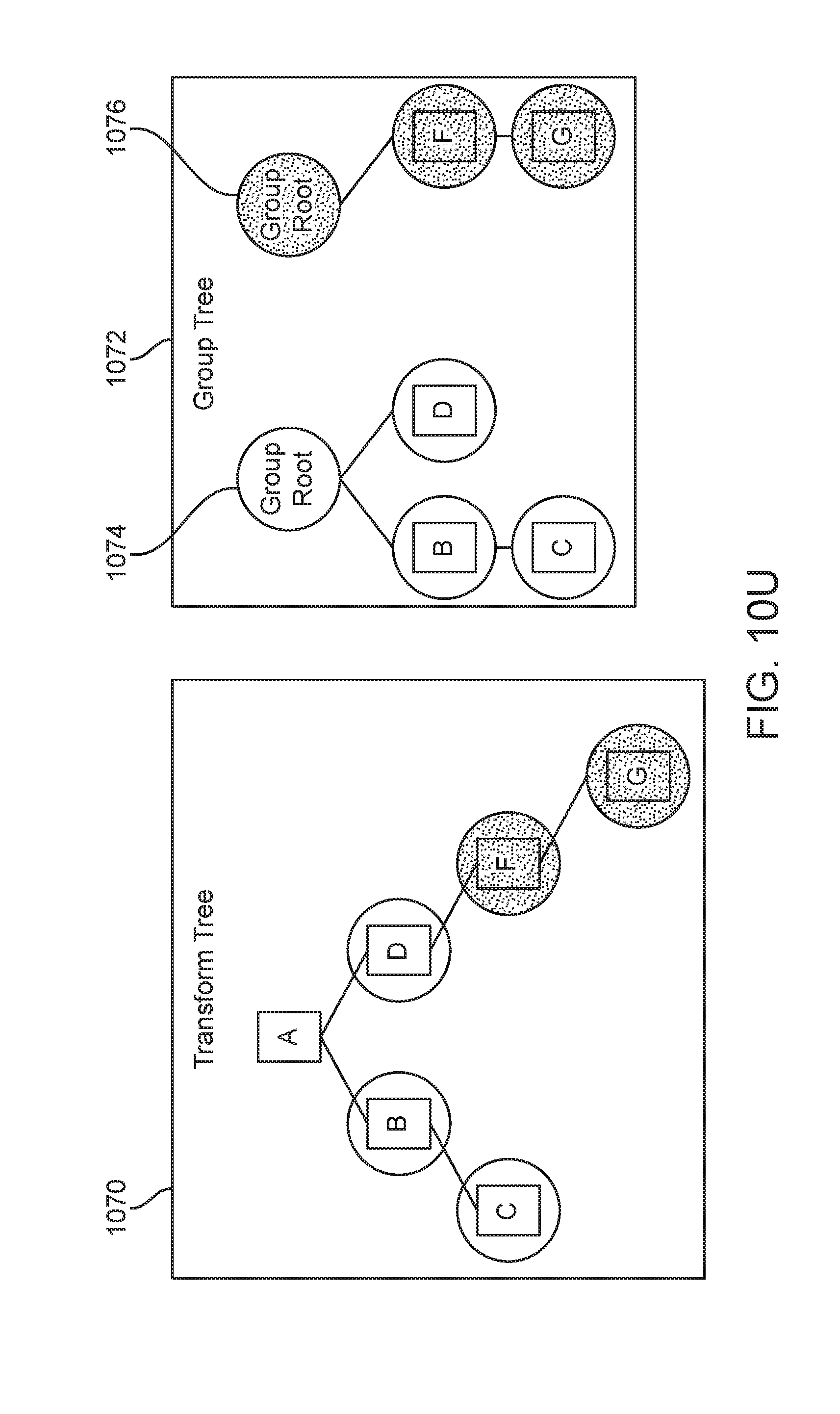

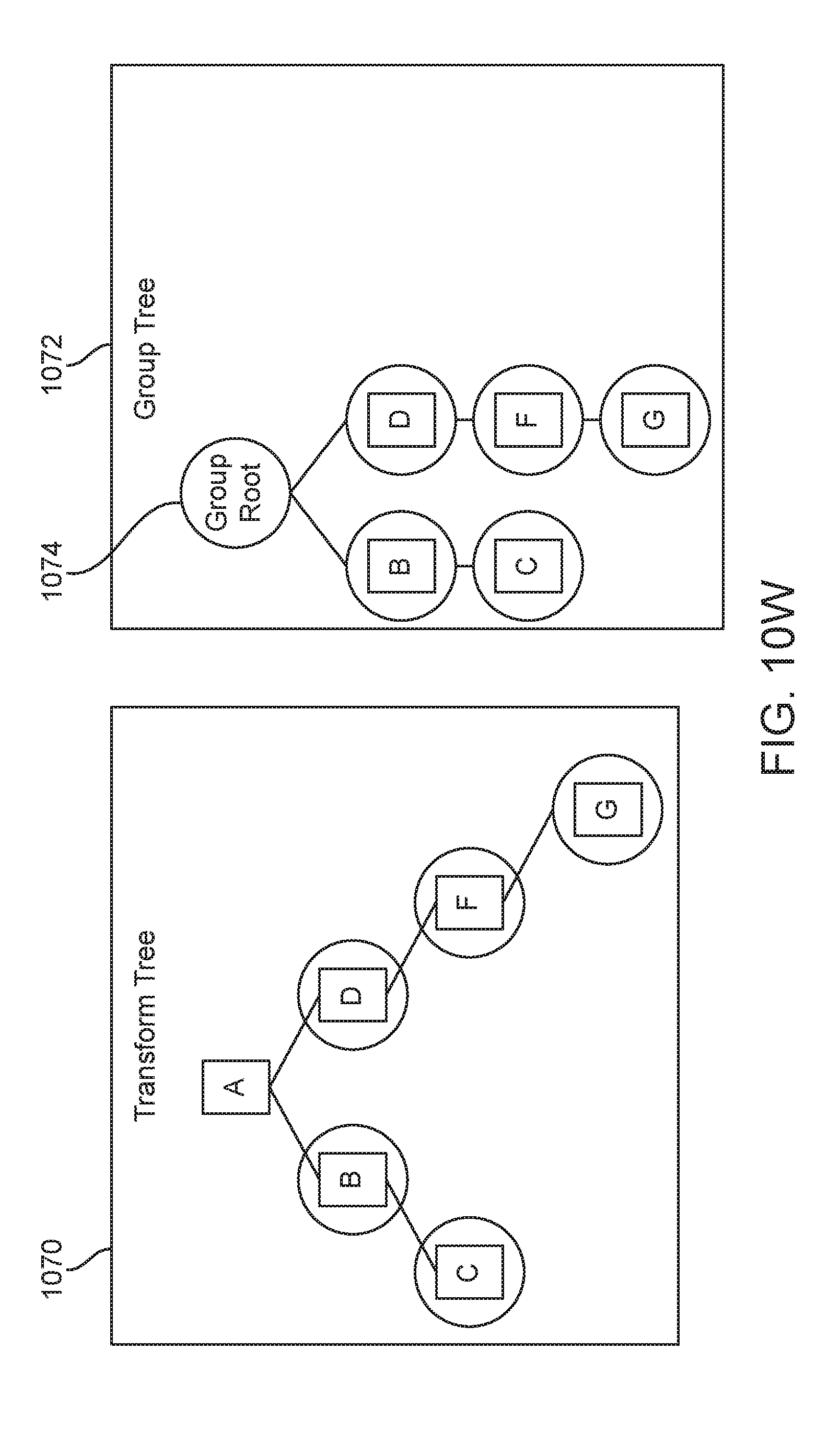

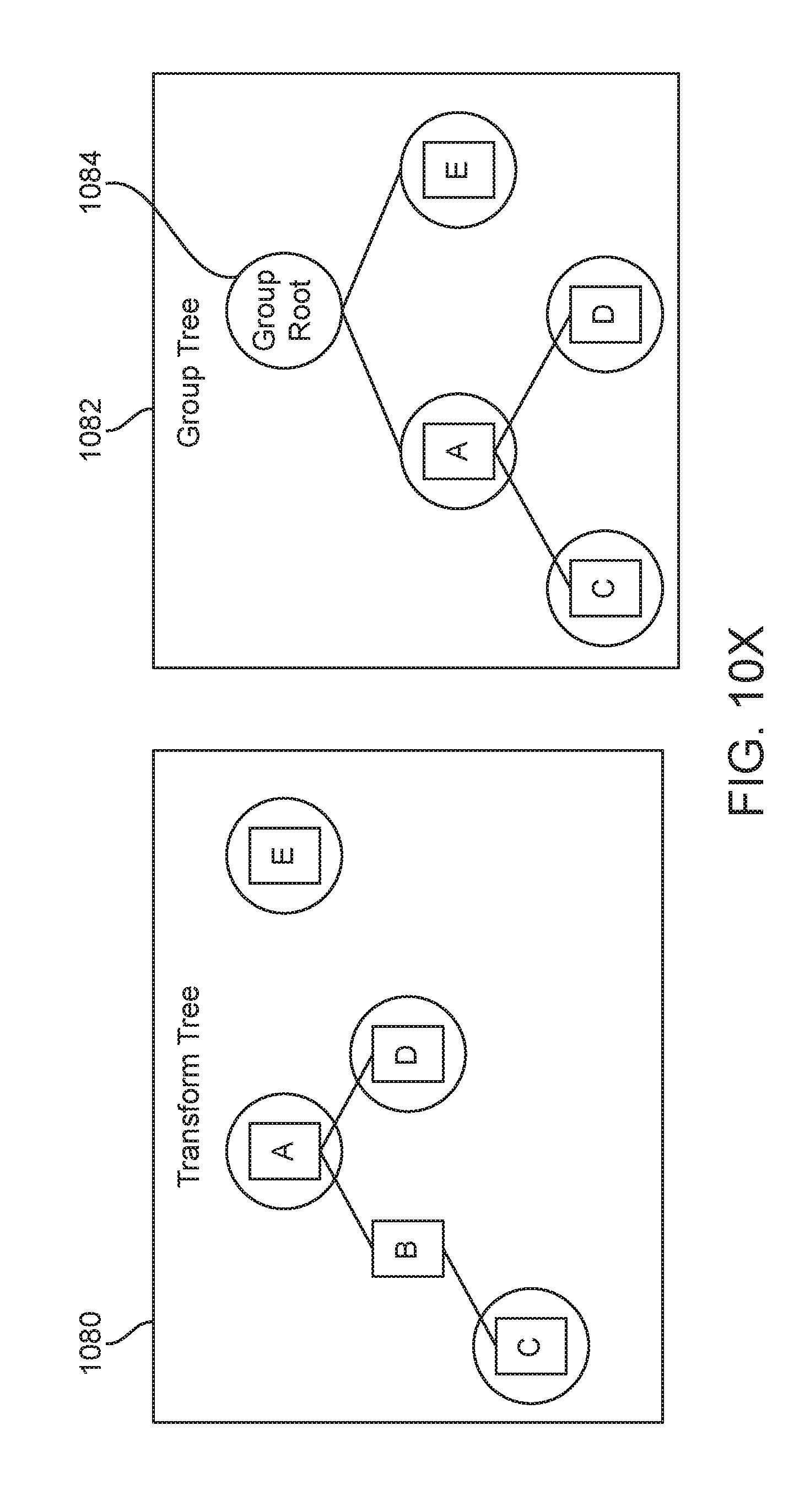

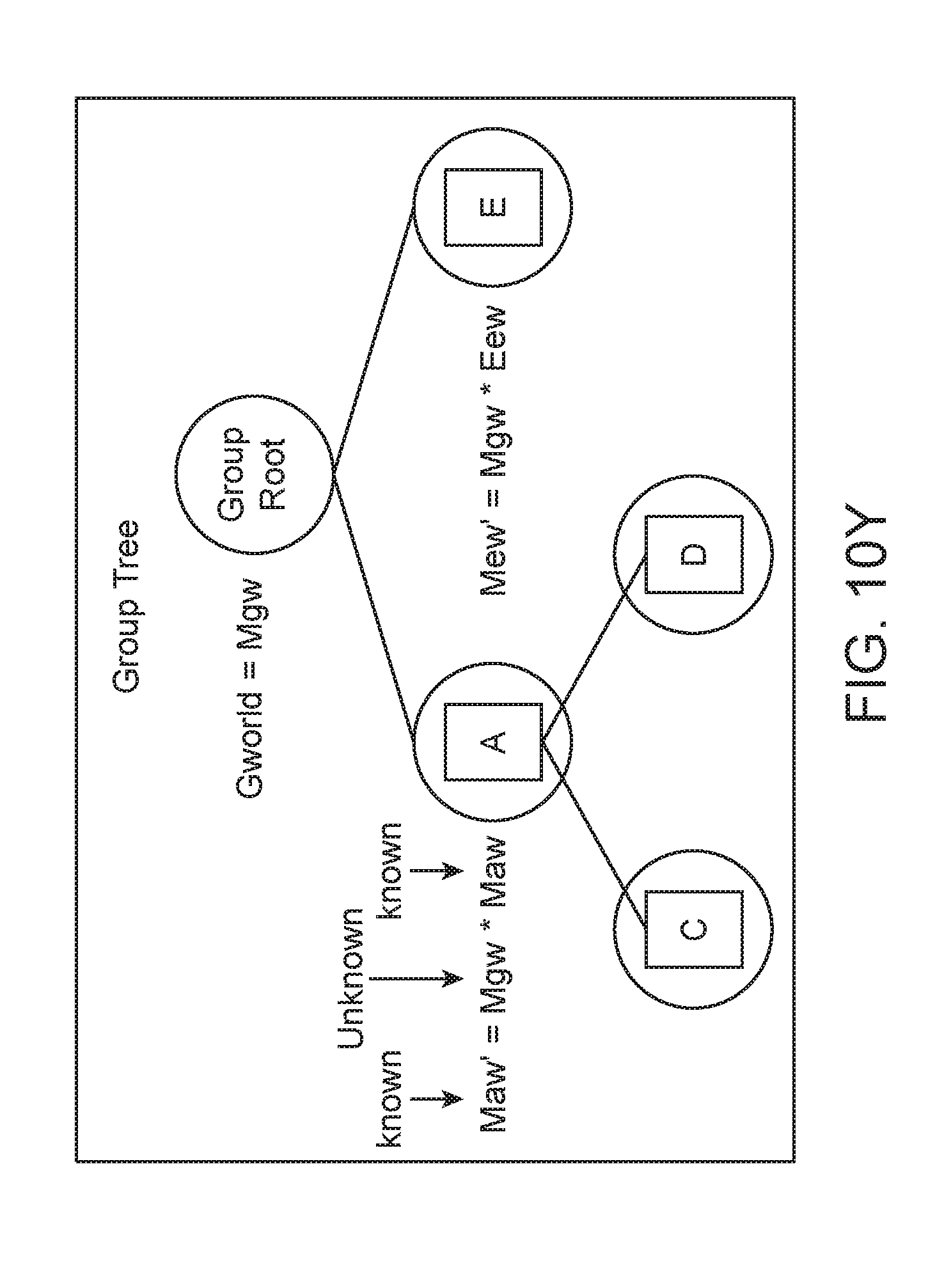

[0025] Some embodiments may be directed to a method that includes accessing a scene graph for a scene, wherein the scene graph comprises one or more transform trees, each tree comprising a plurality of nodes. The method may also include adding a tag to one or more nodes from a plurality of nodes within the one or more transform trees, wherein the one or more nodes tagged form a transform group, wherein the one or more nodes tagged comprise a first tagged node and a second tagged node. The method may further include moving the first tagged node, wherein moving the first tagged node causes the second tagged node to move.
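The transform-group behavior described above, in which moving one tagged node causes the other tagged nodes to move, can be sketched as follows. The `Node` class, the tag value, and the helper names are hypothetical. The sketch treats each node's position as a local translation and assumes that tagged nodes are not ancestors of one another, so that no node receives the same displacement twice.

```python
class Node:
    """Hypothetical transform-tree node holding a local translation."""

    def __init__(self, name, position=(0.0, 0.0, 0.0)):
        self.name = name
        self.position = position
        self.children = []
        self.tag = None


def iter_nodes(roots):
    """Walk every node of every transform tree in the scene graph."""
    stack = list(roots)
    while stack:
        node = stack.pop()
        yield node
        stack.extend(node.children)


def tag_nodes(nodes, tag):
    """Tag the given nodes so that they form one transform group."""
    for node in nodes:
        node.tag = tag


def move(roots, node, delta):
    """Move a node; if it is tagged, move every node sharing its tag."""
    if node.tag is None:
        targets = [node]
    else:
        targets = [n for n in iter_nodes(roots) if n.tag == node.tag]
    for target in targets:
        target.position = tuple(p + d for p, d in zip(target.position, delta))
```

Because the group is defined by a tag rather than by parentage, nodes in different transform trees can move together without restructuring the scene graph.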

[0026] Another embodiment is directed to a method of displaying virtual content in a 3D shared space, the method may include generating, by a first application, virtual content in the 3D shared space, and displaying, by a second application, the virtual content generated by the first application, the first application and the second application being different applications.

[0027] Some embodiments may be directed to a method for assigning to a Prism universal features and application selected features from a list of pre-approved options for configurations of display customizations by an application. Another embodiment is directed to a method for displaying virtual content into one or more Prisms, wherein the one or more Prisms do not overlap with one another. Another embodiment is directed to a method for changing a state of a Prism based at least in part on a relative position and location of the Prism to a user. Another embodiment is directed to a method for managing content creation in an application and managing content display in a separate application. Another embodiment is directed to a method for opening an application that will provide content into a Prism while simultaneously placing the Prism in a mixed reality environment.

[0028] Some embodiments may be directed to a method for assigning location, orientation, and extent data to a Prism for displaying virtual content within the Prism, wherein the virtual content is 3D virtual content.

[0029] In one or more embodiments, the location is a coordinate of an anchor position of the Prism in a mixed reality environment. The extent data defines a size of the Prism.

[0030] Some embodiments may be directed to a method for pinning a launcher application to a real world object within a mixed reality environment.

[0031] In one or more embodiments, the pinned launcher application launches content of the application within a Prism in a same location as the pinned launcher application.

[0032] Some embodiments may be directed to a method for assigning a behavior type to each Prism, the behavior type comprising at least one of a world lock, a billboard, an edge billboard, a follow headlock, a follow based on external sensor, or a fade (described in more detail below). Some embodiments are directed to a method for identifying a most used content specific to a placed location of a launcher application. Some embodiments are directed to a method for displaying favorite applications, by a placed launcher application, the favorite applications based at least in part on context relative to a location of the placed launcher.

[0033] Additional and other objects, features, and advantages of the disclosure are described in the detailed description, figures, and claims.

BRIEF DESCRIPTION OF THE DRAWINGS

[0034] The drawings illustrate the design and utility of preferred embodiments of the present disclosure, in which similar elements are referred to by common reference numerals. In order to better appreciate how the above-recited and other advantages and objects of the present disclosure are obtained, a more particular description of the present disclosure briefly described above will be rendered by reference to specific embodiments thereof, which are illustrated in the accompanying drawings. Understanding that these drawings depict only typical embodiments of the disclosure and are not therefore to be considered limiting of its scope, the disclosure will be described and explained with additional specificity and detail through the use of the accompanying drawings.

[0035] The drawings use like reference numerals to identify like elements. A letter after a reference numeral, such as "120a," indicates that the text refers specifically to the element having that particular reference numeral. A reference numeral in the text without a following letter, such as "120," refers to any or all of the elements in the drawings bearing that reference numeral (e.g. "120" in the text refers to reference numerals "120a" and/or "120b" in the drawings).

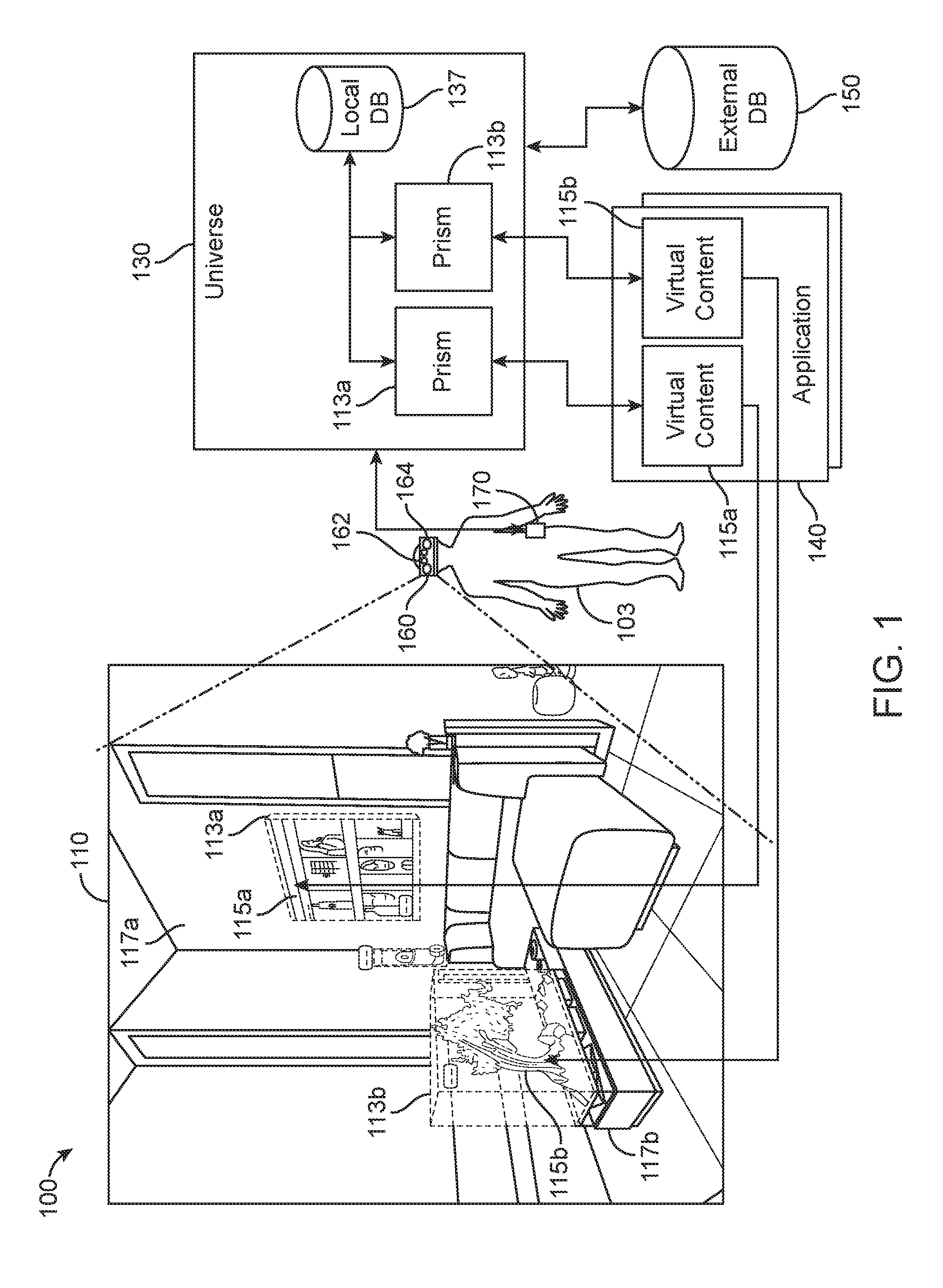

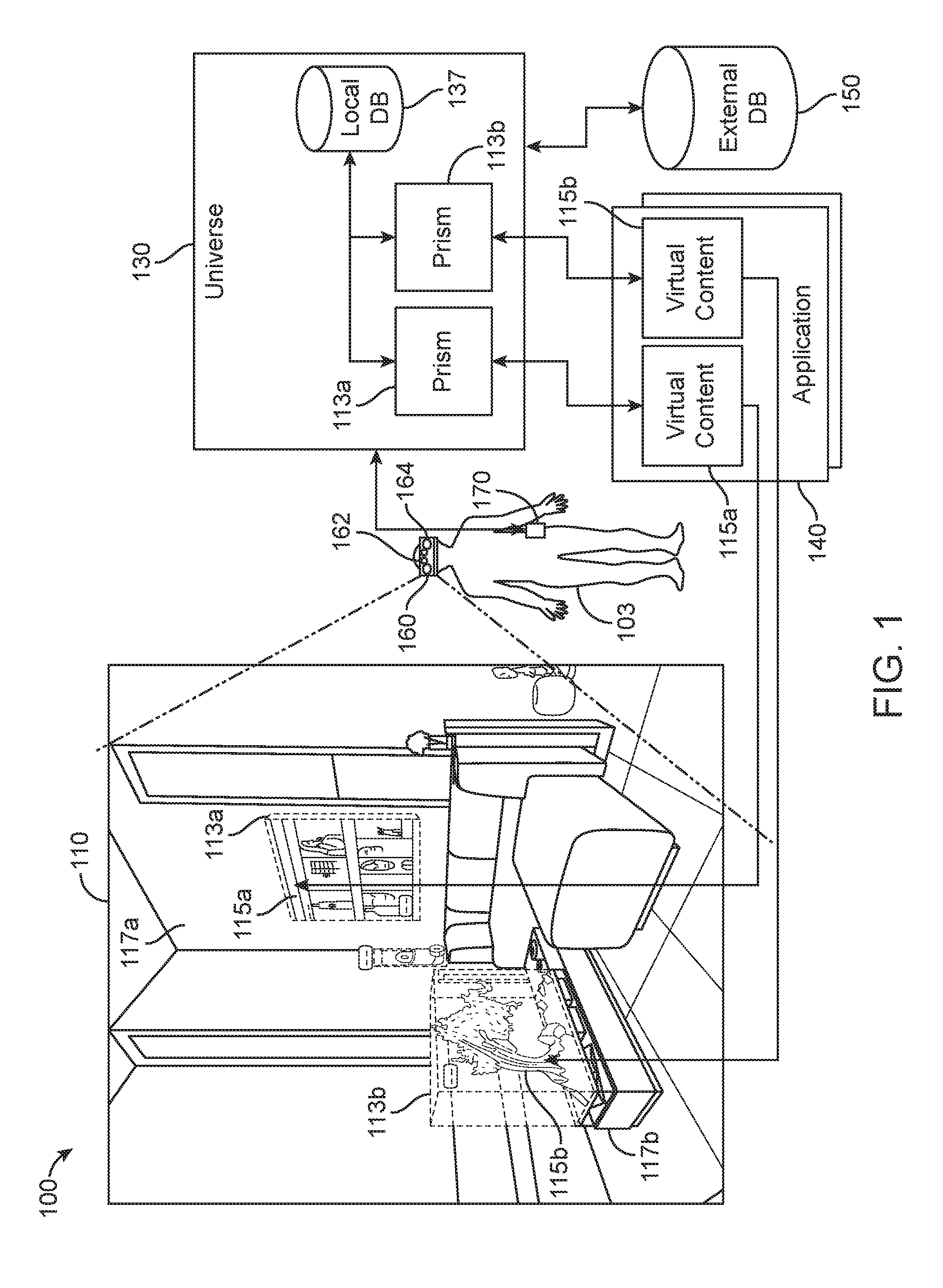

[0036] FIG. 1 shows an example user physical environment and system architecture for managing and displaying virtual content in a mixed reality system, according to some embodiments.

[0037] FIG. 2 shows a system architecture for managing and displaying virtual content in a mixed reality system, according to some embodiments.

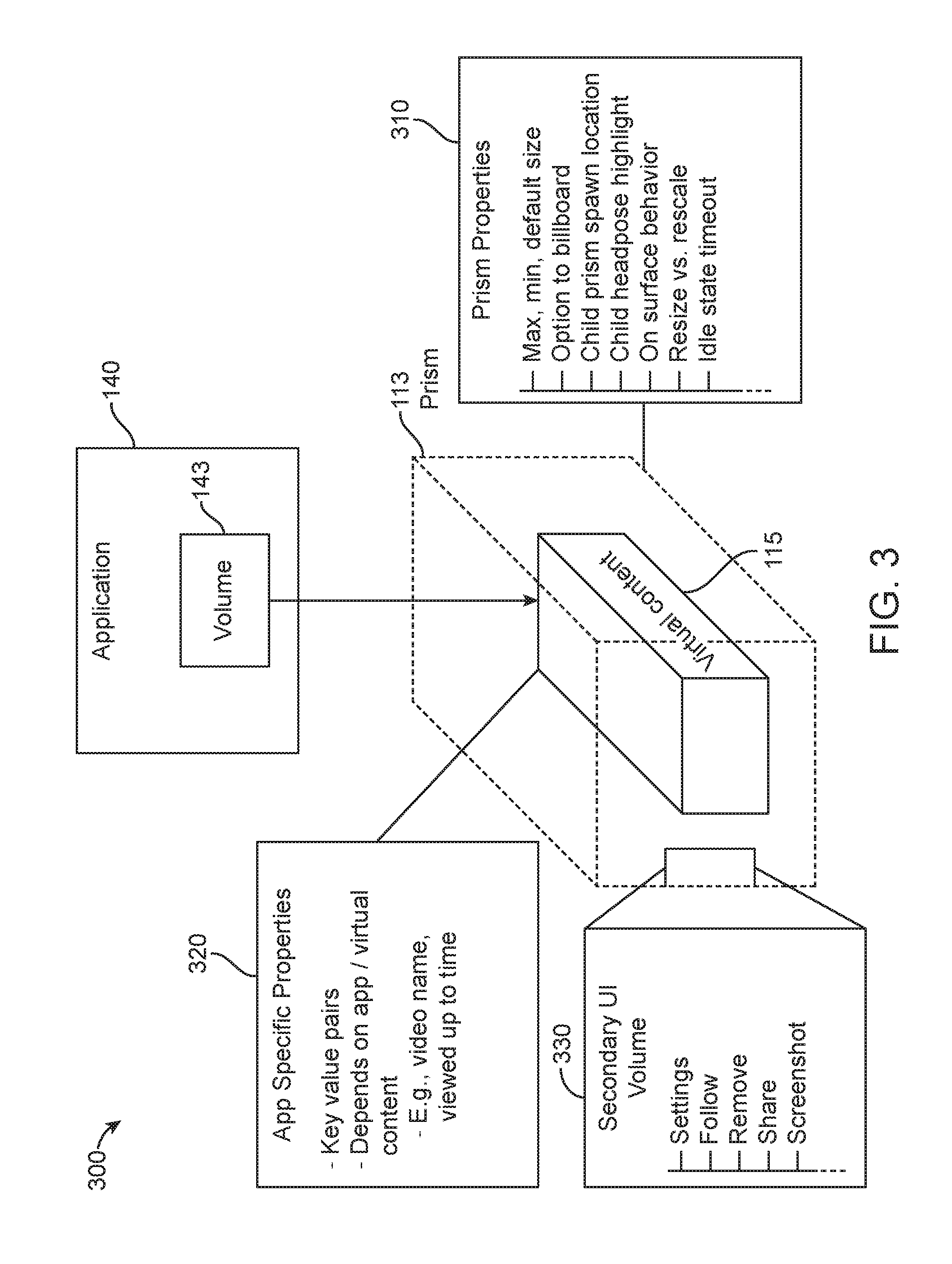

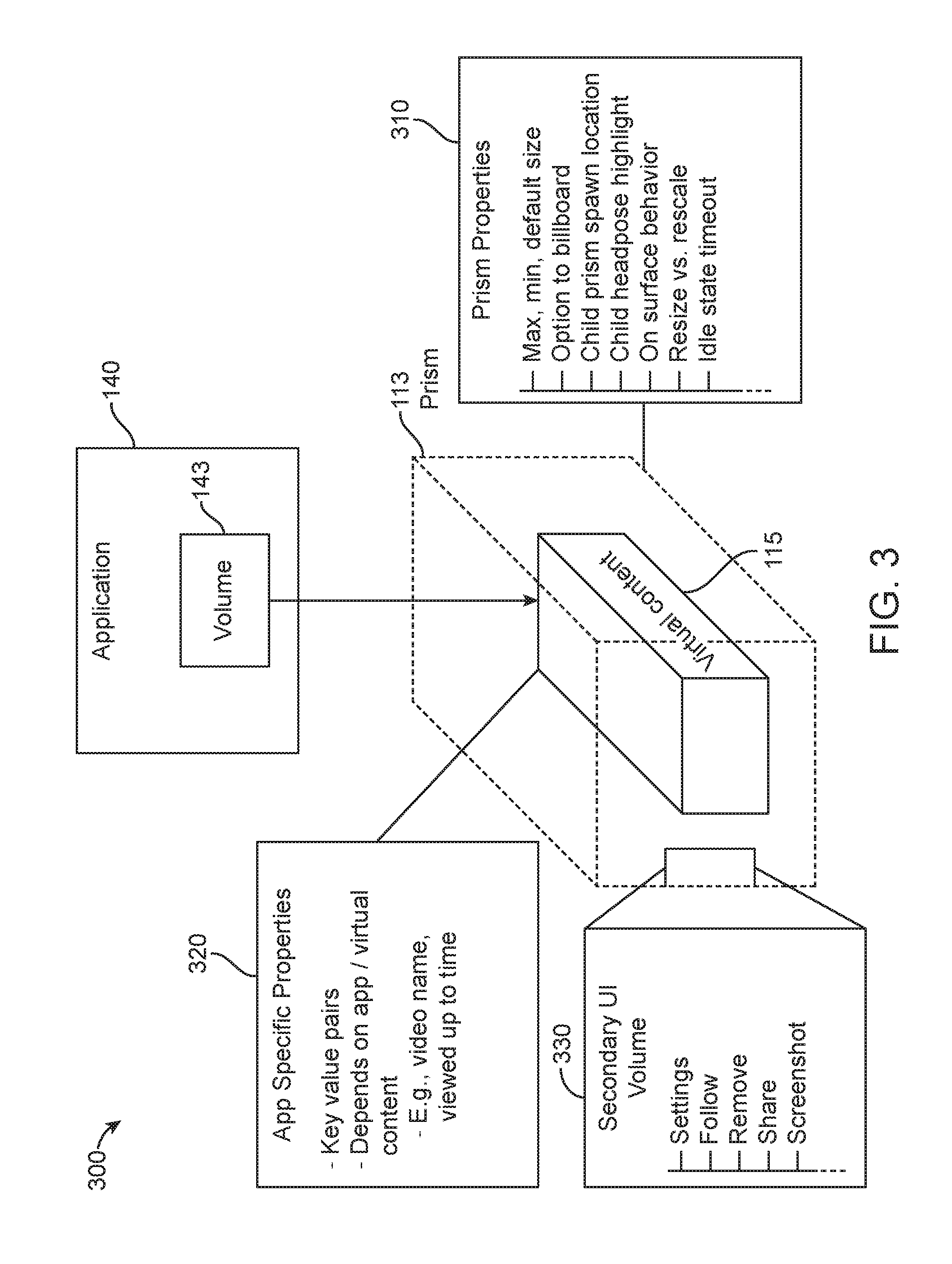

[0038] FIG. 3 shows a Prism/bounding volume, according to some embodiments.

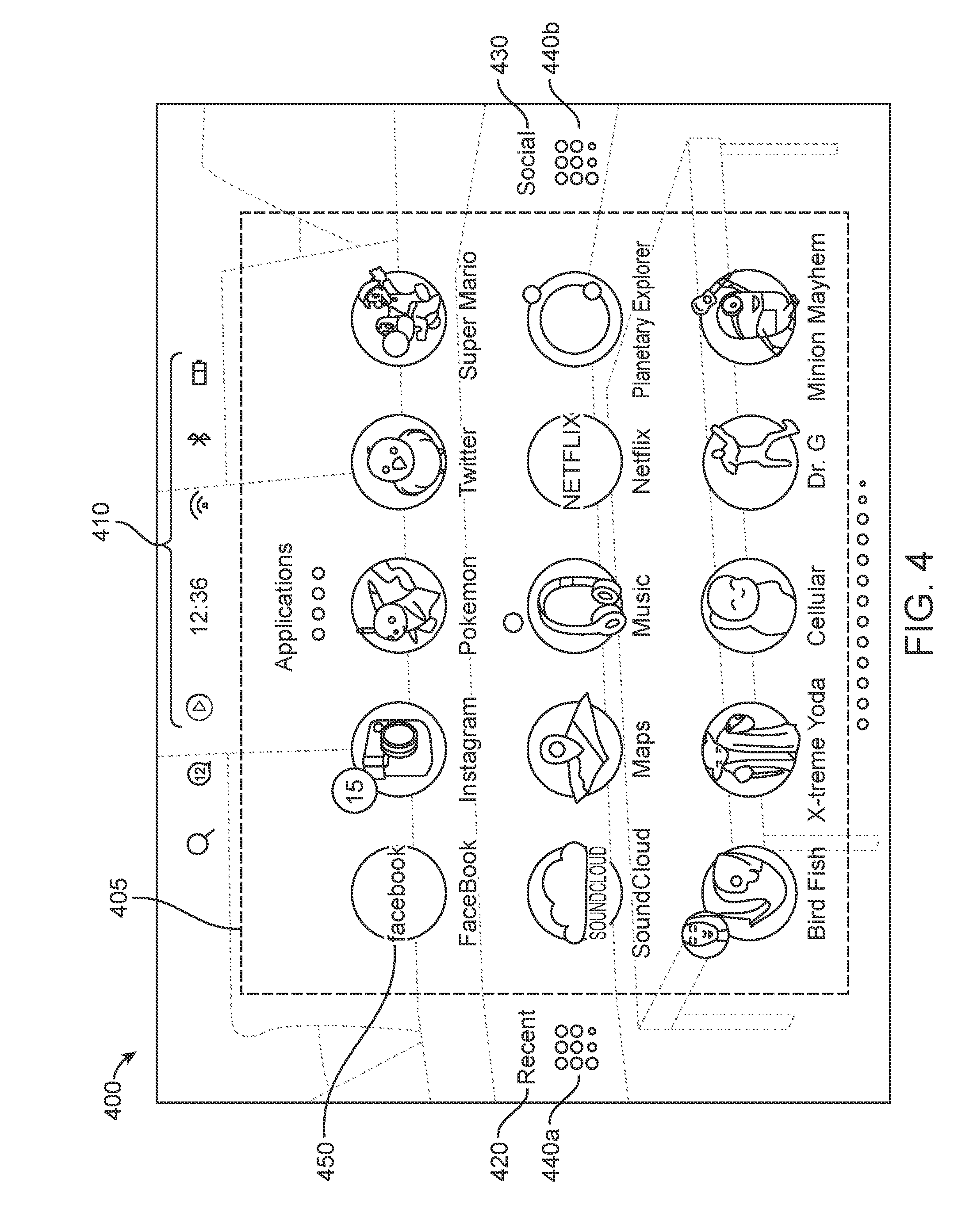

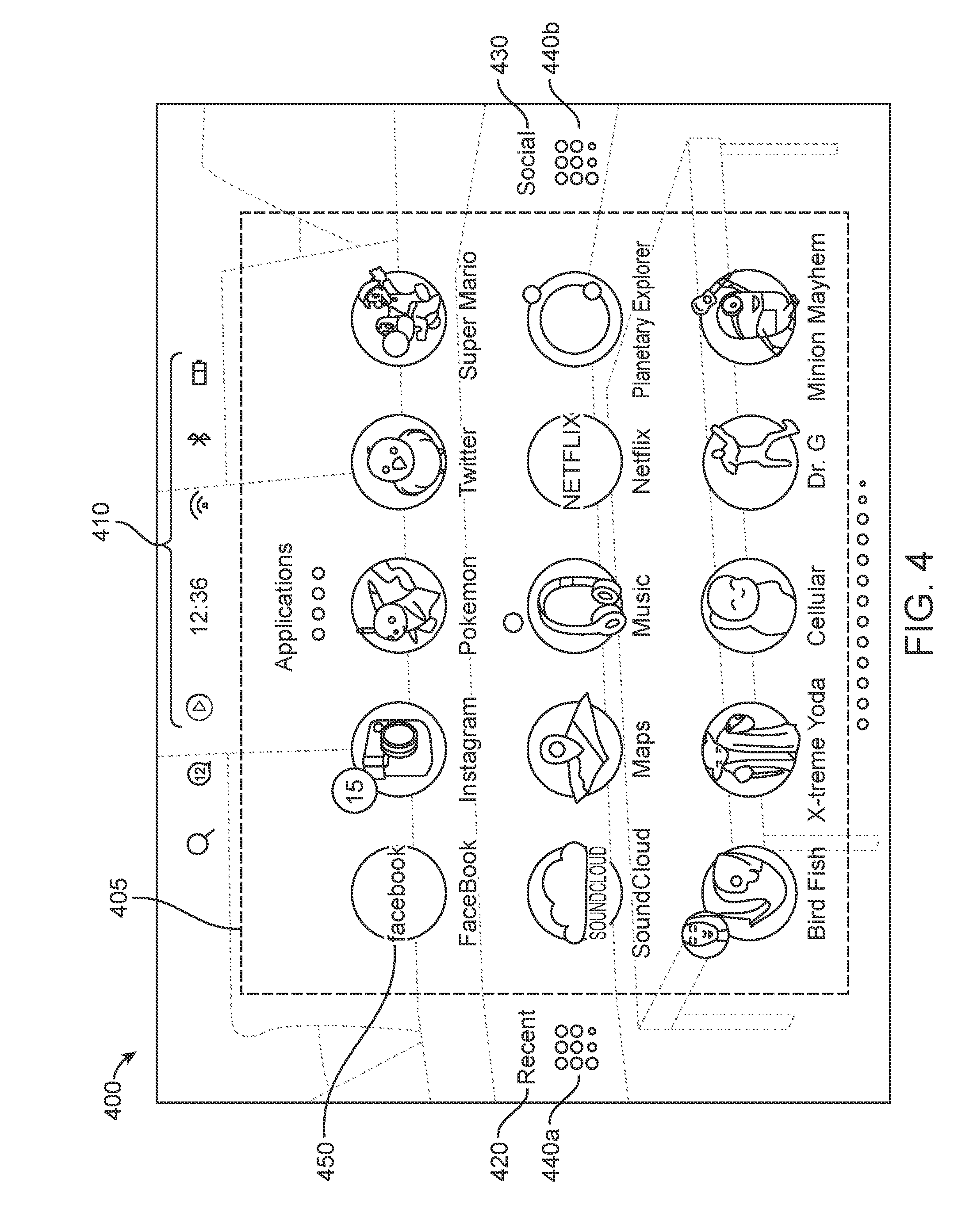

[0039] FIG. 4 shows a launcher menu for launching applications to display virtual content, according to some embodiments.

[0040] FIGS. 5A-5B show a panel carousel change, according to some embodiments.

[0041] FIG. 6 shows a flowchart for an approach for starting a mixed reality system, according to some embodiments.

[0042] FIG. 7 shows a flowchart for an approach for displaying a virtual content in a mixed reality environment, according to some embodiments.

[0043] FIG. 8 is a diagram for managing application states, according to some embodiments.

[0044] FIG. 9 shows a flowchart for managing an application state relative to a user location, according to some embodiments.

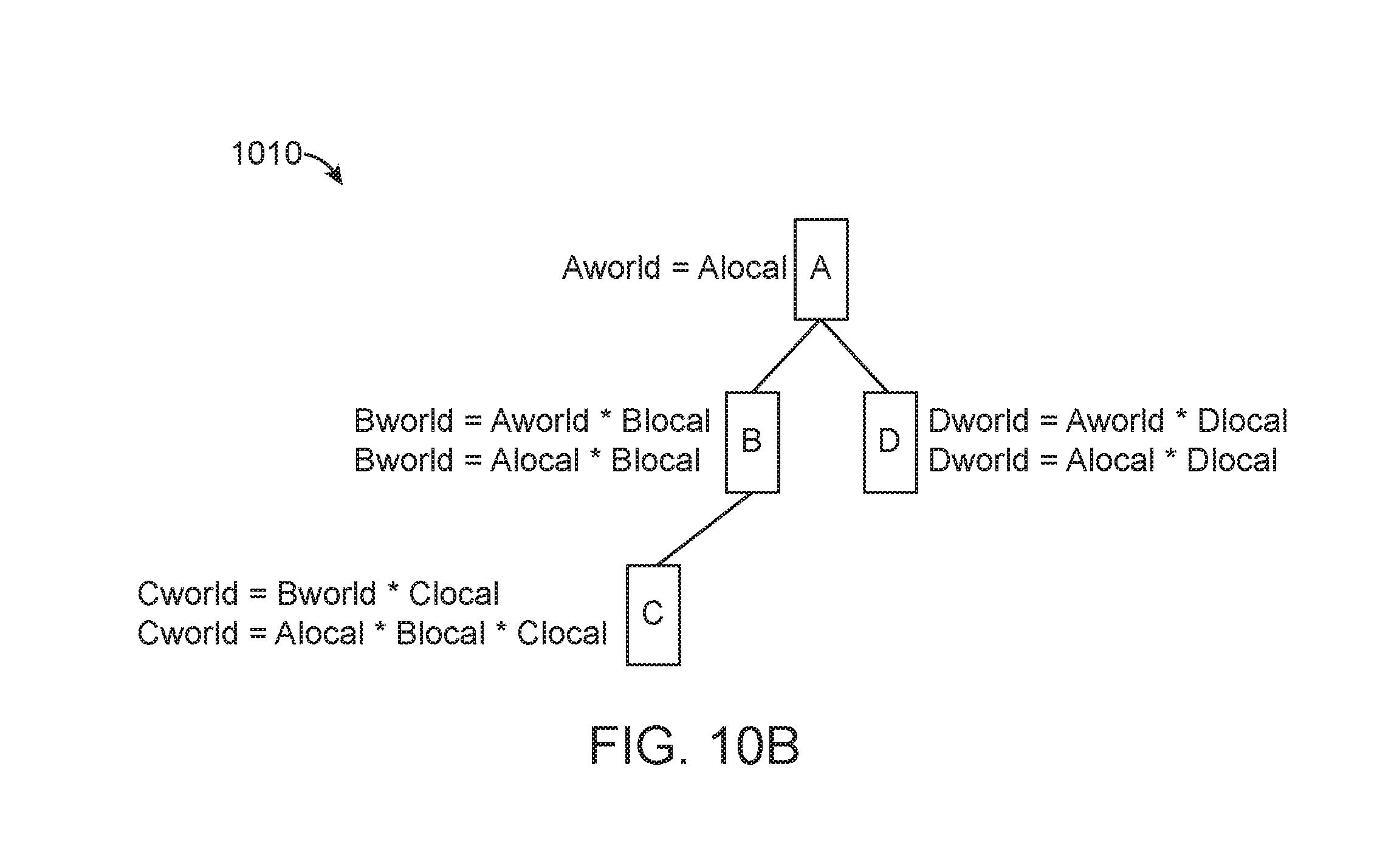

[0045] FIG. 10A illustrates a tree node of a scene graph, according to some embodiments.

[0046] FIGS. 10B-10AA illustrate various transform trees and group trees, according to some embodiments.

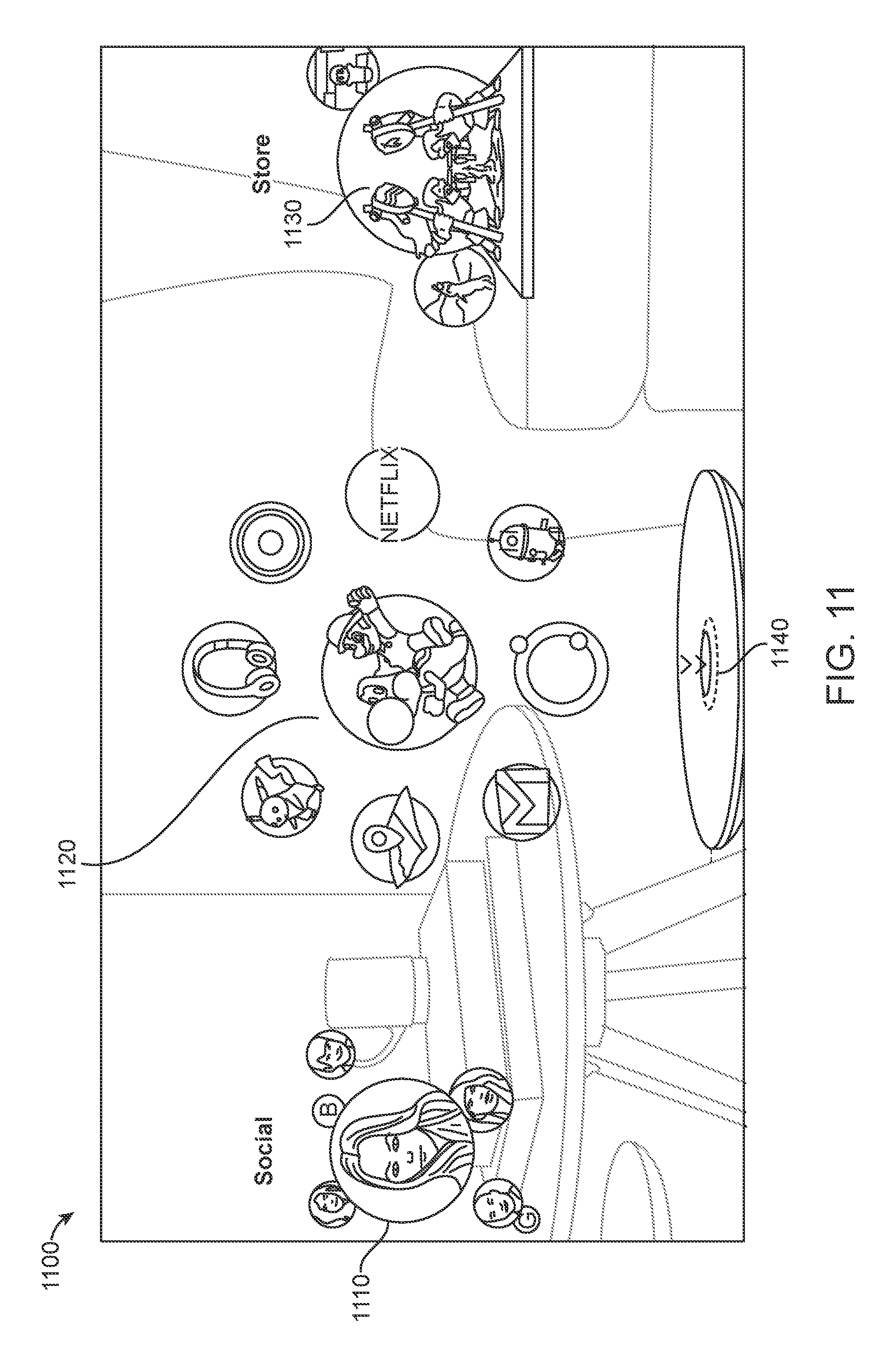

[0047] FIG. 11 shows a view of a placed launcher, according to some embodiments.

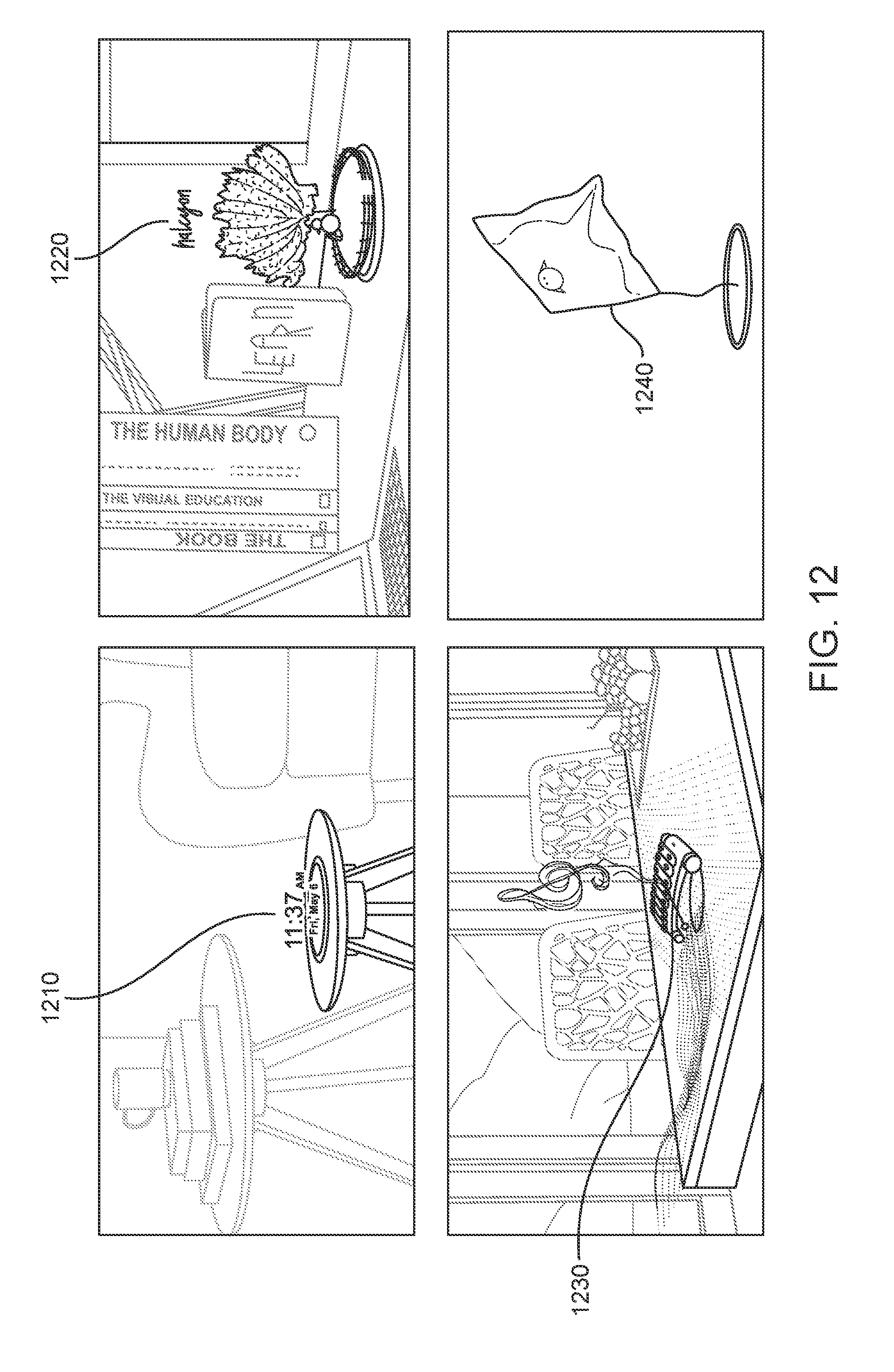

[0048] FIG. 12 shows types of content that may be displayed while a launcher is in an idle/sleeping state, according to some embodiments.

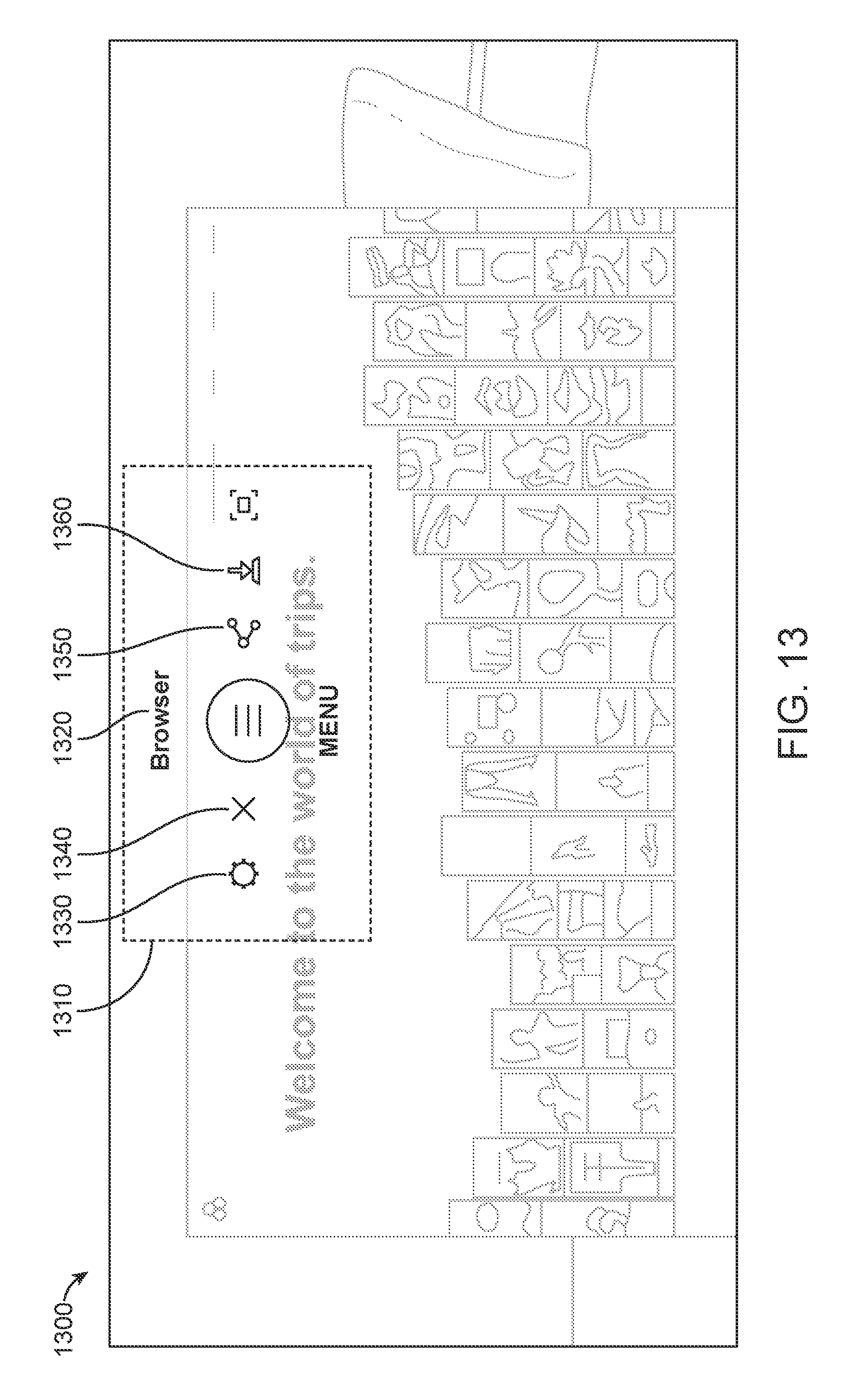

[0049] FIG. 13 shows a Secondary UI volume, according to some embodiments.

[0050] FIG. 14 shows an example of body dynamics, according to some embodiments.

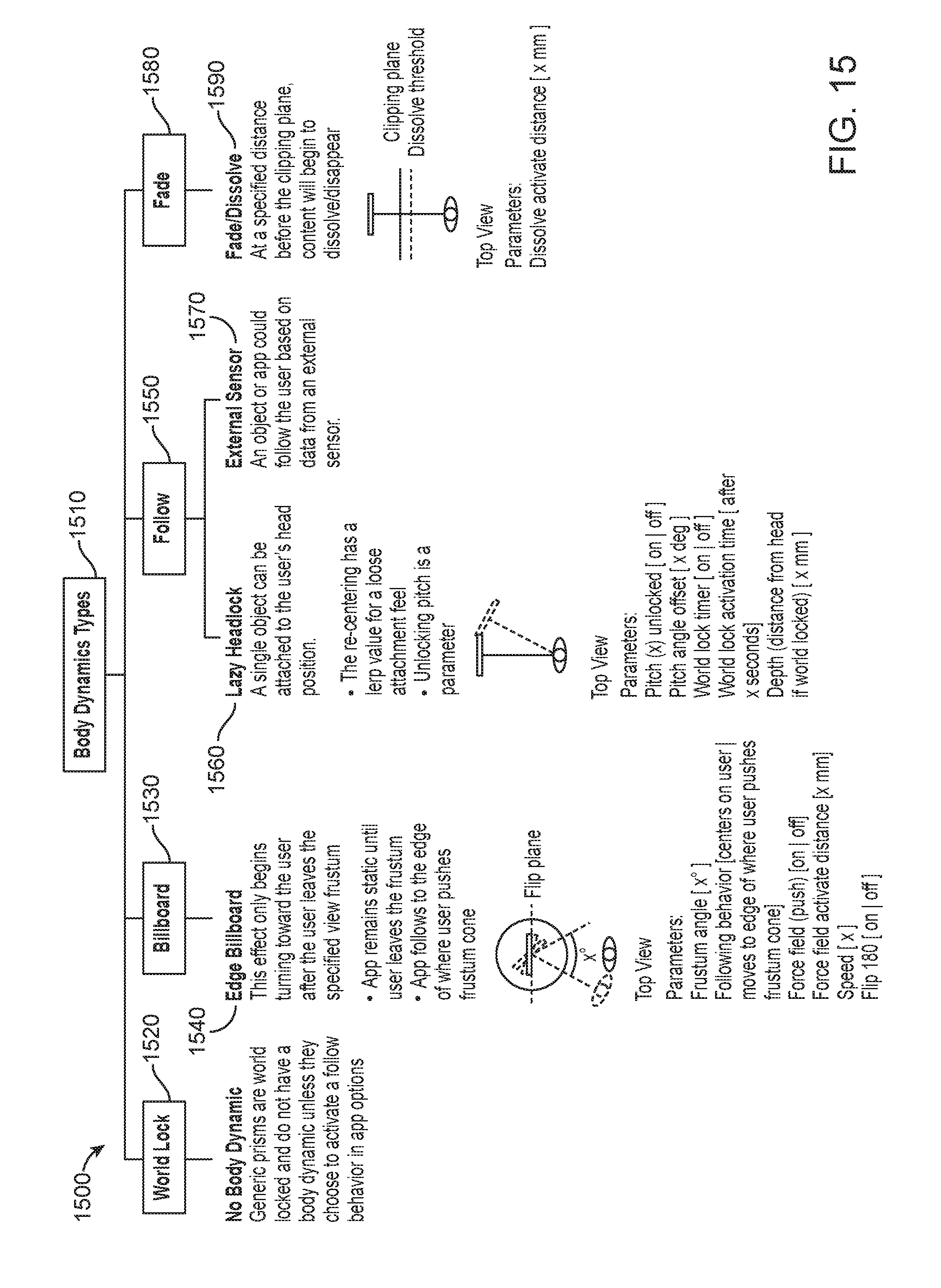

[0051] FIG. 15 shows different types of body dynamics, according to some embodiments.

[0052] FIG. 16 shows a flowchart for simultaneously launching and placing an application in a mixed reality environment, according to some embodiments.

[0053] FIG. 17 is a block diagram of an illustrative computing system 1400 suitable for implementing one or more of the embodiments of the present disclosure.

DETAILED DESCRIPTION

[0054] The present disclosure is directed to managing and displaying virtual content in a mixed reality system. Instead of allowing multiple applications to manage and display virtual content in a mixed reality system independently of one another, embodiments of the present disclosure disclose a universe application that manages how and where virtual content is displayed in the mixed reality system using 3D windows called Prisms.

[0055] This disclosure provides a description of an illustrative mixed reality system with which some embodiments of the disclosure may be practiced, followed by a description of one or more embodiments of processes and mechanisms to manage and display virtual content in the mixed reality system.

[0056] The description that follows pertains to an illustrative mixed reality system with which the disclosure may be practiced. However, it is to be understood that the disclosure also lends itself to applications in other types of augmented reality (AR) and virtual reality (VR) systems, and therefore the disclosure is not to be limited to only the illustrative system disclosed herein.

[0057] FIG. 1 shows an example user physical environment and system architecture for managing and displaying virtual content in a mixed reality system. The representative environment 100 includes a user's landscape 110 as viewed by a user 103 through a head-mounted system 160. The user's landscape 110 is a 3D view of the world where user-placed content may be composited on top of the real world. The representative environment 100 further includes accessing a universe application 130 via a processor 170 operatively coupled to a network (not shown). Although the processor 170 is shown as an isolated component separate from the head-mounted system 160, in an alternate embodiment, the processor 170 may be integrated with one or more components of the head-mounted system 160, and/or may be integrated into other system components within the representative environment 100 such as, for example, a network to access a computing network (not shown) and external storage device(s) 150. In some embodiments, the processor 170 may not be connected to a network. The processor 170 may be configured with software (e.g., a universe application 130) for receiving and processing information such as video, audio, and/or other data (e.g., depth camera data) received from the head-mounted system 160, a local storage device 137, application(s) 140, a computing network, and/or external storage device(s) 150.

[0058] The universe application 130 may be a 3D windows manager that is analogous to a 2D windows manager running on, for example, a desktop computer for managing 2D windows displayed on the display screen of the desktop computer. However, the universe application 130 (which may hereinafter be referred to as "the Universe") manages the creation, placement and display of virtual content 115 in a 3D spatial environment, as well as interactions between a plurality of virtual content 115 displayed in a user's landscape 110. Virtual content 115 from applications 140 is presented to users 103 inside of one or more 3D window display management units such as bounded volumes and/or 3D windows, which may hereinafter be referred to as Prisms 113.

[0059] A bounded volume/3D window/Prism 113 may be a rectangular, cubic, cylindrical, or any other shape volume of space that may be positioned and oriented in space. A Prism 113 may be a volumetric display space having boundaries for content (e.g., virtual content) to be rendered/displayed into, wherein the boundaries are not displayed. In some embodiments, the boundaries may be displayed. The Prism 113 may present a standard base level of interaction and control over an application's content and its placement. The Prism 113 may represent a sub-tree of a multi-application scene graph, which may be embedded inside of the Universe, or may be external to but accessed by the Universe. A scene graph is a general data structure commonly used by vector-based graphics, editing applications and modern gaming software, which arranges the logical and often (but not necessarily) spatial representation of a graphical scene. A scene graph may be considered a data structure that defines how content is positioned and transformed relative to other content within its structure. Application(s) 140 are given instances of Prisms 113 to place content within. Applications may render 2D/3D content within a Prism 113 using relative placement algorithms and arbitrary transforms, but the Universe may still ultimately be in charge of gross interaction patterns such as content extraction. Multiple applications may render to the Universe via the Prisms 113, with process boundaries separating the Prisms 113. There may be n bounded volumes/Prisms 113 per application process, but this is explicitly an n:1 relationship: only one application process may be running for each bounded volume/Prism 113, although there may be m processes running, each with its own bounded volume(s)/Prism(s) 113.
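By way of non-limiting illustration only, the relationship between Prisms, the multi-application scene graph, and application processes may be sketched as follows. This is a minimal sketch under stated assumptions: the names Node, Prism, Universe, create_prism, prisms_of and world_position are hypothetical and do not appear in the disclosure, and only translation (no rotation or scale) is accumulated along the tree.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Node:
    """Scene graph node; its offset is relative to its parent node."""
    name: str
    local_offset: Vec3 = (0.0, 0.0, 0.0)
    children: List["Node"] = field(default_factory=list)

    def add(self, child: "Node") -> "Node":
        self.children.append(child)
        return child


@dataclass
class Prism:
    """Bounded volume whose root node is a sub-tree of the shared graph."""
    prism_id: int
    owner_pid: int   # n:1, each Prism belongs to exactly one process
    extents: Vec3    # width, height, depth of the bounding volume
    root: Node


class Universe:
    """Hypothetical owner of the multi-application scene graph."""

    def __init__(self) -> None:
        self.scene_root = Node("universe-root")
        self.prisms: Dict[int, Prism] = {}

    def create_prism(self, owner_pid: int, position: Vec3, extents: Vec3) -> Prism:
        prism_id = len(self.prisms) + 1
        root = self.scene_root.add(Node(f"prism-{prism_id}", position))
        prism = Prism(prism_id, owner_pid, extents, root)
        self.prisms[prism_id] = prism
        return prism

    def prisms_of(self, pid: int) -> List[Prism]:
        return [p for p in self.prisms.values() if p.owner_pid == pid]


def world_position(node: Node, target: Node,
                   origin: Vec3 = (0.0, 0.0, 0.0)) -> Optional[Vec3]:
    """Sum translation offsets from `node` down to `target`."""
    here = tuple(o + l for o, l in zip(origin, node.local_offset))
    if node is target:
        return here
    for child in node.children:
        found = world_position(child, target, here)
        if found is not None:
            return found
    return None


universe = Universe()
# One process (pid 100) owning two Prisms.
video = universe.create_prism(100, (1.0, 0.0, -2.0), (1.6, 0.9, 0.1))
tree = universe.create_prism(100, (0.0, 1.0, 0.0), (0.5, 0.5, 0.5))
# The application places content relative to its own Prism only.
quad = video.root.add(Node("video-quad", (0.5, 0.5, 0.0)))
```

In this sketch the application only ever supplies offsets relative to its Prism root, so moving the Prism root moves all of the application's content without the application being involved.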

[0060] The Universe operates using a Prism/distributed scene graph approach for 2D and/or 3D content. A portion of the Universe's scene graph is reserved for each application to render to. Each interaction with an application, for example the launcher menu, the landscape, or body-centric application zones (all described in more detail below) may be done through a multi-application scene graph. Each application is allocated 1 to n rectangular Prisms that represent a sub-tree of the scene graph. Prisms are not allocated by the client side applications, but instead are created through the interaction of the user inside of the Universe, for example when the user opens a new application in the landscape by clicking a button on a controller. In some embodiments, an application can request a Prism from the Universe, but the request may be denied. In some embodiments, if an application requests and is allowed a new Prism, the application may only transform the new Prism relative to one of its other Prisms.
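As a non-limiting illustration of the allocation rules described above, the following minimal sketch shows a Prism created through user interaction, an application request that may be denied, and placement of a granted Prism relative to one of the application's own Prisms. The class PrismBroker, its method names, and the per-application limit used as the reason for denial are assumptions made for illustration and are not taken from the disclosure.

```python
from typing import Dict, Optional, Tuple

Vec3 = Tuple[float, float, float]


class PrismBroker:
    """Hypothetical Universe-side bookkeeping for Prism allocation."""

    def __init__(self, max_prisms_per_app: int = 2) -> None:
        self.max_prisms_per_app = max_prisms_per_app  # assumed policy limit
        self.owner: Dict[int, str] = {}
        self.position: Dict[int, Vec3] = {}
        self._next_id = 1

    def _allocate(self, app: str, position: Vec3) -> int:
        prism_id = self._next_id
        self._next_id += 1
        self.owner[prism_id] = app
        self.position[prism_id] = position
        return prism_id

    def on_user_launch(self, app: str, position: Vec3) -> int:
        """User interaction (e.g., a controller click) creates a Prism."""
        return self._allocate(app, position)

    def request_prism(self, app: str, anchor_id: int,
                      offset: Vec3) -> Optional[int]:
        """Application request; returns None when the request is denied."""
        if self.owner.get(anchor_id) != app:
            return None  # may only place relative to one of its own Prisms
        owned = sum(1 for a in self.owner.values() if a == app)
        if owned >= self.max_prisms_per_app:
            return None  # denied by the assumed per-application limit
        ax, ay, az = self.position[anchor_id]
        dx, dy, dz = offset
        return self._allocate(app, (ax + dx, ay + dy, az + dz))


broker = PrismBroker()
first = broker.on_user_launch("browser", (0.0, 1.0, -1.0))
second = broker.request_prism("browser", first, (0.5, 0.0, 0.0))
```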

[0061] The Universe encloses virtual content 115 from application(s) 140 in objects called Prisms 113. Each application process or instance may render its virtual content into its own individual Prism 113 or set of Prisms. The Universe manages a world space, sometimes called a landscape, where Prisms 113 are displayed. In some embodiments, the Universe provides the ability to attach applications to walls and surfaces, place Prisms at an arbitrary location in space, register them with the mixed reality system's world database, and/or control sharing of content between multiple users of the mixed reality system.

[0062] In some embodiments, the purpose of the Prisms 113 is to provide behaviors and control over the rendering and display of the content. Much like a 2D display, where a window may be used to define location, menu structures, and display of 2D content within a 2D window, with 3D virtual display, the Prism allows the mixed reality system (e.g., the Universe) to wrap control relating to, for example, content locations, 3D window behavior, and/or menu structures around the display of 3D content. For example, controls may include at least placing the virtual content in a particular location in the user's landscape 110, removing the virtual content from the landscape 110, copying the virtual content and/or placing the copy in a different location, etc. In some embodiments, Prisms may be created and destroyed by the user and only the user. This may be done explicitly to help control abuse of the interfaces provided and to help the user maintain control of the user's content. Additionally, in some embodiments, application(s) 140 do not know where their volumes are placed in the landscape--only that they exist. In some embodiments, applications may request one or more Prisms, and the request may or may not be granted. After the new Prism is created, the user may change the position, and/or the application may automatically position the new Prism relative to a currently existing Prism associated with the application. In some embodiments, each application 140 making use of the Universe service to render 3D content (e.g., composited 3D content) into the Universe process may be required to first register a listener with the Universe. This listener may be used to inform the application 140 of creation and destruction of rendering Prisms, based upon user movement and user interaction with those Prisms. A listener is an interface object that receives messages from an inter-process communication system. For example, in the Android operating system, a listener is an object that receives messages through an Android Binder interface. However, any IPC system may be used such that a Binder is not always used.
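As a non-limiting illustration of the listener registration described above, the following minimal sketch uses ordinary in-process method calls as a stand-in for an inter-process communication system. The names PrismListener, UniverseService, on_prism_created and on_prism_destroyed are hypothetical; no particular IPC mechanism or Binder interface is modeled.

```python
from typing import Dict, List, Tuple


class PrismListener:
    """Callback interface an application hands to the Universe."""

    def __init__(self) -> None:
        self.events: List[Tuple[str, int]] = []

    def on_prism_created(self, prism_id: int) -> None:
        self.events.append(("created", prism_id))

    def on_prism_destroyed(self, prism_id: int) -> None:
        self.events.append(("destroyed", prism_id))


class UniverseService:
    """In-process stand-in for the Universe side of an IPC channel."""

    def __init__(self) -> None:
        self._listeners: Dict[str, PrismListener] = {}
        self._owner: Dict[int, str] = {}
        self._next_id = 1

    def register_listener(self, app: str, listener: PrismListener) -> None:
        self._listeners[app] = listener

    def create_prism(self, app: str) -> int:
        # An application must register a listener before it can render.
        if app not in self._listeners:
            raise RuntimeError(f"{app} must register a listener first")
        prism_id = self._next_id
        self._next_id += 1
        self._owner[prism_id] = app
        self._listeners[app].on_prism_created(prism_id)
        return prism_id

    def destroy_prism(self, prism_id: int) -> None:
        app = self._owner.pop(prism_id)
        self._listeners[app].on_prism_destroyed(prism_id)


service = UniverseService()
listener = PrismListener()
service.register_listener("video-app", listener)
created_id = service.create_prism("video-app")
service.destroy_prism(created_id)
```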

[0063] In some embodiments, Prisms may be created from the following interactions: (1) The user has extracted content from an extractable node (disclosed further below); (2) The user has started an application from the launcher; (3) The user has downloaded a nearby passable world map tile that includes a placed instance of an application that the user has permission to see; (4) The user has downloaded a nearby passable world map tile that includes an object that the passable world object recognizer infrastructure has detected, that a given application must render content for; and/or (5) The user has triggered a dispatch from another application that must be handled in a different application. In some embodiments, a passable world model allows a user to effectively pass over a piece of the user's world (e.g., ambient surroundings, interactions, etc.) to another user.

[0064] Extractable Content is content inside a Prism (including but not limited to an icon, 3D icon, word in a text display, and/or image) that can be pulled out of the Prism using an input device and placed in the landscape. For example, a Prism might display a web page showing a running shoe for sale. To extract the running shoe, the shoe can be selected and "pulled" with an input device. A new Prism would be created with a 3D model representing the shoe, and that Prism would move out of the original Prism and towards the user. Like any other Prism, the user may use an input device to move, grow, shrink or rotate the new Prism containing the shoe in the 3D space of the landscape. An Extractable Node is a node in the Prism's scene graph that has been tagged as something that can be extracted. In the Universe, to extract content means to select an extractable node, and use an input device to pull the content out of the Prism. The input to initiate this pull could be aiming a 6dof pointing device at extractable content and pulling the trigger on the input device.
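As a non-limiting illustration of extraction, the following minimal sketch tags a scene graph node as extractable and creates a new Prism from it when it is selected. The names SceneNode, Prism, find and extract, and the asset name "shoe.glb", are hypothetical; input handling and the motion of the new Prism toward the user are omitted.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SceneNode:
    name: str
    extractable: bool = False      # the "extractable node" tag
    model: Optional[str] = None    # 3D asset to show after extraction
    children: List["SceneNode"] = field(default_factory=list)


@dataclass
class Prism:
    prism_id: int
    root: SceneNode


def find(node: SceneNode, name: str) -> Optional[SceneNode]:
    """Depth-first search of a Prism's sub-tree by node name."""
    if node.name == name:
        return node
    for child in node.children:
        hit = find(child, name)
        if hit is not None:
            return hit
    return None


def extract(source: Prism, node_name: str, next_id: int) -> Optional[Prism]:
    """Return a new Prism for the node's model, or None if not extractable."""
    node = find(source.root, node_name)
    if node is None or not node.extractable:
        return None
    return Prism(next_id, SceneNode(node.name, model=node.model))


page = Prism(1, SceneNode("web-page", children=[
    SceneNode("headline"),
    SceneNode("running-shoe", extractable=True, model="shoe.glb"),
]))
shoe_prism = extract(page, "running-shoe", next_id=2)
```

In this sketch the source Prism is left unchanged, and content that has not been tagged as extractable cannot be pulled out.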

[0065] Each user's respective individual mixed reality system (e.g., mixed reality devices) captures information as the user passes through or inhabits an environment, which the mixed reality system processes to produce a passable world model. More details regarding a passable world are described in U.S. patent application Ser. No. 14/205,126, filed on Mar. 11, 2014, entitled "SYSTEM AND METHOD FOR AUGMENTED AND VIRTUAL REALITY", which has been previously incorporated by reference. The individual mixed reality system may communicate or pass the passable world model to a common or shared collection of data, referred to as the cloud. The individual mixed reality system may communicate or pass the passable world model to other users, either directly or via the cloud. The passable world model provides the ability to efficiently communicate or pass information that essentially encompasses at least a field of view of a user. In one embodiment, the system uses the pose and orientation information, as well as collected 3D points described above in order to create the passable world. In some embodiments, the passable world model allows the user the ability to integrate content (e.g., virtual and/or physical content) with the real world. A passable world system may include one or more mixed reality systems or mixed reality user devices that are able to connect to a cloud network, a passable world model, a set of object recognizers, and a database (e.g., external database 150). The passable world model may be configured to receive information from the mixed reality user devices and also transmit data to them through the network. For example, based on the input from a user, a piece of the passable world may be passed on from one user to another user. The passable world model may be thought of as a collection of images, points and other information (e.g., real world information) based on which the mixed reality system is able to construct, update and build the virtual world on the cloud, and effectively pass pieces of the virtual world to various users. For example, a set of real world points collected from a mixed reality user device may be collected in the passable world model. Various object recognizers may crawl through the passable world model to recognize objects, tag images, etc., and attach semantic information to the objects. The passable world model may use the database to build its knowledge of the world, attach semantic information, and store data associated with the passable world.

[0066] In the case of a Prism that is visible to the user but whose controlling application is not currently installed, the Universe may render a temporary placeholder for that application that, when interacted with, redirects the user to the application store page for that application.

[0067] In some embodiments, Prisms may be destroyed in similar interactions: (1) The user has walked far enough from a passable world map tile that the placed instance of an application has been unloaded (i.e. removed) from volatile memory; (2) The user has destroyed a placed instance of an application; and/or (3) An application has requested that a Prism be closed.

[0068] In some embodiments, if no Prisms for an application are visible and/or loaded, then the process associated with those Prisms may be paused or ended. Once a placed Prism for that application is visible again, the process may be restarted. Prisms may also be hidden, but, in some embodiments, this may only happen at the behest of the Universe and the user. In some embodiments, multiple Prisms may be placed at the same exact location. In such embodiments, the Universe may only show one instance of a placed Prism in one place at a time, and manage the rendering by hiding a Prism (and its associated content) until a user interaction is detected, such as the user "swiping" to the next visible element (e.g., Prism) in that location.
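As a non-limiting illustration of the two behaviors described above, the following minimal sketch shows a set of Prisms placed at the same location with only one shown at a time, and a simple decision about pausing or restarting an application process. The names PrismStack and process_action are hypothetical, and the returned action strings are assumptions rather than states defined by the disclosure.

```python
from typing import List


class PrismStack:
    """Prisms placed at the same location; only one is shown at a time."""

    def __init__(self, prism_ids: List[int]) -> None:
        if not prism_ids:
            raise ValueError("a stack needs at least one Prism")
        self._ids = list(prism_ids)
        self._shown = 0

    def visible(self) -> int:
        return self._ids[self._shown]

    def hidden(self) -> List[int]:
        return [p for i, p in enumerate(self._ids) if i != self._shown]

    def swipe(self) -> int:
        """User swipe: hide the current Prism and reveal the next one."""
        self._shown = (self._shown + 1) % len(self._ids)
        return self.visible()


def process_action(visible_or_loaded_prisms: int, running: bool) -> str:
    """Decide what to do with an application's process (assumed policy)."""
    if visible_or_loaded_prisms == 0:
        return "pause" if running else "none"
    return "none" if running else "restart"


stack = PrismStack([11, 12, 13])
```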

[0069] In some embodiments, each Prism 113 may be exposed to the application 140 via a volume listener interface with methods for accessing properties of the Prism 113 and registering content in a scene graph sub-tree for shared resources such as meshes, textures, animations, and so on.

[0070] In some embodiments, since the application 140 does not know where a given Prism 113 is placed in 3D space, the volume listener interface may provide accessor methods to a set of hints that help to define where the given Prism is present in the Universe, for example hand centric, stuck in the landscape, Body Centric, etc. These properties additionally specify expected behavior of the Prisms, and may be controlled in a limited fashion either by the user, the application 140, or the Universe. A given Prism can be positioned relative to another Prism that an application owns. Applications can specify that Prisms should snap together (two sides of their bounding volumes touch) while Prisms from that application are being placed. Additionally, Prisms may provide an API for key-value data storage. Some of these key-value pairs are only writable by privileged applications.
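As a non-limiting illustration of key-value data storage in which some keys are writable only by privileged applications, consider the following minimal sketch. The class PrismProperties, its put and get methods, and the key names are hypothetical and are not the interface of the disclosure.

```python
from typing import Any, Dict, FrozenSet


class PrismProperties:
    """Per-Prism key-value store with keys reserved for privileged callers."""

    def __init__(self, privileged_keys: FrozenSet[str]) -> None:
        self._privileged = privileged_keys
        self._data: Dict[str, Any] = {}

    def put(self, key: str, value: Any, privileged: bool = False) -> None:
        # Reject writes to reserved keys from unprivileged callers.
        if key in self._privileged and not privileged:
            raise PermissionError(
                f"'{key}' is writable only by privileged applications")
        self._data[key] = value

    def get(self, key: str, default: Any = None) -> Any:
        return self._data.get(key, default)


props = PrismProperties(frozenset({"placement_hint"}))
props.put("placement_hint", "body_centric", privileged=True)
props.put("title", "Running shoes")
```

In this sketch any caller may read a hint such as the placement type, while only a privileged caller may change it.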

[0071] In some embodiments, application(s) 140 are client software applications that provide content that is to be displayed to the user 103 in the user's landscape 110. For example, an application 140 may be a video streaming application, wherein video data may be streamed to the user to be displayed on a 2D planar surface. As another example, an application 140 may be a Halcyon application that provides 3D imaging of physical objects that may denote a period of time in the past that was idyllically happy and peaceful for the user. Application 140 provides the content that a user may want to include in the user's landscape 110. The Universe via the Prisms 113 manages the placement and management of the content that is generated by application 140.

[0072] When a non-immersive application is executed/launched in the user's landscape 110, its content (e.g., virtual content) is rendered inside of a Prism 113. A non-immersive application may be an application that is able to run and/or display content simultaneously with one or more other applications in a shared 3D environment. Although the virtual content may be contained within the Prism, a user may still interact with the virtual content, such as, for example, hovering over an object, clicking on it, etc. The Prism 113 may also bound application 140's displayed content so different applications 140 do not interfere with each other or other objects in the user's landscape 110. Prisms 113 may also provide a useful abstraction for suspending, pausing, and/or minimizing virtual content from application(s) 140 that are out of view or too far away from the user.

[0073] The Prisms 113 may be anchored/attached/pinned to various objects within a user's landscape 110, including snapping or anchoring to another Prism. For example, Prism 113a, which displays virtual content 115 (e.g., a video 115a from a video streaming application), may be anchored to a vertical wall 117a. As another example, Prism 113b, which displays a 3D tree 115b from a Halcyon application, is shown in FIG. 1 to be anchored to a table 117b. Furthermore, a Prism 113 may be anchored relative to a user 103 (e.g., body-centric), wherein the Prism 113 which displays virtual content 115 may be anchored to a user's body, such that as the user's body moves, the Prism 113 moves relative to the movement of the user's body. Body-centric content may be application content such as planes, meshes, etc. that follows the user and remains positionally consistent with the user, for example, a small dialog box that follows the user around but exists relative to the user's spine rather than the landscape 110. Additionally, a Prism 113 may also be anchored to a virtual object such as a virtual display monitor displayed within the user's landscape 110. The Prism 113 may be anchored in different ways, which are disclosed below.

[0074] The Universe may include a local database 137 to store properties and characteristics of the Prisms 113 for the user. The stored Prism information may include Prisms activated by the user within the user's landscape 110. Local database 137 may be operatively coupled to an external database 150 that may reside in the cloud or in an external storage facility. External database 150 may be a persisted database that maintains information about the mixed reality environment of the user and of other users.

[0075] For example, as a user launches a new application to display virtual content in the user's physical environment, the local database 137 may store information corresponding to a Prism that is created and placed at a particular location by the Universe, wherein an application 140 may render content into the Prism 113 to be displayed in the user's landscape 110. The information corresponding to the Prism 113, virtual content 115, and application 140 stored in the local database 137 may be synchronized to the external database 150 for persistent storage.

[0076] In some embodiments, the persisted storage may be important because when the mixed reality system is turned off, data stored in the local database 137 may be erased, deleted, or non-persisted. Thus, when a user turns on the mixed reality system, the Universe may synchronize with the external database 150 to retrieve an instance of the local database 137 corresponding to the user 103 and the user's landscape 110 prior to the mixed reality system being turned off. The local database 137 may be an instance of the external database 150, wherein the instance of the local database 137 includes information pertinent to the user 103 and the user's current environment. The external database 150 may additionally store instances of local databases of other users, multiple users, the same user over time, and/or other environments. The external database 150 may contain information that is used to manage and share virtual content between multiple users of the mixed reality system, whereas the local database 137 stores and maintains information corresponding to the user 103.
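As a non-limiting illustration of the synchronization between the local database 137 and the external database 150, the following minimal sketch stores Prism records locally, synchronizes them to a persisted store, loses them at power-off, and restores them at start-up. The class and method names, the dictionary-based storage, and the (user, environment) key are assumptions made for illustration only.

```python
import copy
from typing import Dict, Tuple

Key = Tuple[str, str]  # (user id, environment id), an assumed keying scheme


class ExternalDatabase:
    """Stands in for the persisted external database 150."""

    def __init__(self) -> None:
        self._snapshots: Dict[Key, dict] = {}

    def save(self, key: Key, records: dict) -> None:
        self._snapshots[key] = copy.deepcopy(records)

    def load(self, key: Key) -> dict:
        return copy.deepcopy(self._snapshots.get(key, {}))


class LocalDatabase:
    """Stands in for the local database 137; contents are lost at power-off."""

    def __init__(self, key: Key) -> None:
        self.key = key
        self.records: dict = {}

    def store_prism(self, prism_id: int, app: str, position: tuple) -> None:
        self.records[prism_id] = {"app": app, "position": position}

    def sync_to(self, external: ExternalDatabase) -> None:
        external.save(self.key, self.records)

    def power_off(self) -> None:
        self.records = {}

    def restore_from(self, external: ExternalDatabase) -> None:
        self.records = external.load(self.key)


cloud = ExternalDatabase()
local = LocalDatabase(("user-103", "living-room"))
local.store_prism(1, "video-app", (0.0, 1.5, -2.0))
local.sync_to(cloud)
local.power_off()
empty_after_power_off = local.records == {}
local.restore_from(cloud)
```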

[0077] The Universe may create a Prism 113 for an application 140 each time the application 140 needs to render virtual content 115 onto a user's landscape 110. In some embodiments, the Prism 113 created by the Universe allows application 140 to focus on rendering virtual content for display while the Universe focuses on creating and managing the placement and display of the Prism 113 having the virtual content 115 displayed within the boundaries of the Prism by the application 140.

[0078] Each virtual content 115 rendered by an application 140, displayed in the user's landscape 110, may be displayed within a single Prism 113. For example, if an application 140 needs to render two virtual contents (e.g., 115a and 115b) to be displayed within a user's landscape 110, then application 140 may render the two virtual contents 115a and 115b. Since virtual contents 115 include only the rendered virtual contents, the Universe may create Prisms 113a and 113b to correspond with each of the virtual content 115a and 115b, respectively. The Prism 113 may include 3D windows management properties and characteristics of the virtual content 115 to allow the Universe to manage the virtual content 115 inside the Prism 113 and the placement and display of the Prism 113 in the user's landscape 110.

[0079] The Universe may be the first application a user 103 sees when the user 103 turns on the mixed reality device. The Universe may be responsible for at least (1) rendering the user's world landscape; (2) 2D window management of planar applications and 3D windows (e.g., Prisms) management; (3) displaying and executing the application launcher menu; (4) allowing the user to place virtual content into the user's landscape 110; and/or (5) managing the different states of the display of the Prisms 113 within the user's landscape 110.

[0080] The head-mounted system 160 may be a mixed reality head-mounted system that includes a display system (e.g., a user interface) positioned in front of the eyes of the user 103, a speaker coupled to the head-mounted system and positioned adjacent the ear canal of the user, a user-sensing system, an environment sensing system, and a processor (all not shown). The head-mounted system 160 presents to the user 103 the display system (e.g., user interface) for interacting with and experiencing a digital world. Such interaction may involve the user and the digital world, one or more other users interfacing the representative environment 100, and objects within the digital and physical world.

[0081] The user interface may include viewing, selecting, positioning and managing virtual content via user input through the user interface. The user interface may be at least one or a combination of a haptics interface device, a keyboard, a mouse, a joystick, a motion capture controller, an optical tracking device, an audio input device, a smartphone, a tablet, or the head-mounted system 160. A haptics interface device is a device that allows a human to interact with a computer through bodily sensations and movements. Haptics refers to a type of human-computer interaction technology that encompasses tactile feedback or other bodily sensations to perform actions or processes on a computing device.

[0082] An example of a haptics controller may be a totem (not shown). In some embodiments, a totem is a hand-held controller that tracks its position and orientation relative to the headset 160. In this example, the totem may be a six degree-of-freedom (six DOF) controller where a user may move a Prism around in altitude and azimuth (on a spherical shell) by moving the totem up or down. In some embodiments, to move the object closer or farther away, the user may use the joystick on the totem to "push" or "pull" the Prism, or may simply move the totem forward or backward. This may have the effect of changing the radius of the shell. In some embodiments, two buttons on the totem may cause the Prism to grow or shrink. In some embodiments, rotating the totem itself may rotate the Prism. Other totem manipulations and configurations may be used, and should not be limited to the embodiments described above.
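As a non-limiting illustration of moving a Prism on a spherical shell around the user, the following minimal sketch converts an azimuth, an altitude and a shell radius into a position, and models the joystick "push" or "pull" as a change of radius. The coordinate convention (y up, negative z forward, angles in radians), the function names, and the radius limits are assumptions and are not specified by the disclosure.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def shell_position(center: Vec3, radius: float,
                   azimuth: float, altitude: float) -> Vec3:
    """Point on a sphere around `center`; y is up and -z is forward."""
    cx, cy, cz = center
    horizontal = radius * math.cos(altitude)
    return (
        cx + horizontal * math.sin(azimuth),
        cy + radius * math.sin(altitude),
        cz - horizontal * math.cos(azimuth),
    )


def push_pull(radius: float, delta: float,
              nearest: float = 0.3, farthest: float = 10.0) -> float:
    """Joystick push/pull changes the shell radius within assumed limits."""
    return max(nearest, min(farthest, radius + delta))


head = (0.0, 1.6, 0.0)
straight_ahead = shell_position(head, 2.0, azimuth=0.0, altitude=0.0)
overhead = shell_position(head, 2.0, azimuth=0.0, altitude=math.pi / 2)
```

Under these assumptions, raising the totem increases the altitude angle while the radius stays fixed, so the Prism stays at a constant distance from the user until the radius is changed explicitly.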

[0083] The user-sensing system may include one or more sensors 162 operable to detect certain features, characteristics, or information related to the user 103 wearing the head-mounted system 160. For example, in some embodiments, the sensors 162 may include a camera or optical detection/scanning circuitry capable of detecting real-time optical characteristics/measurements of the user 103 such as, for example, one or more of the following: pupil constriction/dilation, angular measurement/positioning of each pupil, sphericity, eye shape (as eye shape changes over time) and other anatomic data. This data may provide, or be used to calculate information (e.g., the user's visual focal point) that may be used by the head-mounted system 160 to enhance the user's viewing experience.

[0084] The environment-sensing system may include one or more sensors 164 for obtaining data from the user's landscape 110. Objects or information detected by the sensors 164 may be provided as input to the head-mounted system 160. In some embodiments, this input may represent user interaction with the virtual world. For example, a user (e.g., the user 103) viewing a virtual keyboard on a desk (e.g., the table 188) may gesture with their fingers as if the user were typing on the virtual keyboard. The motion of the fingers moving may be captured by the sensors 164 and provided to the head-mounted system 160 as input, wherein the input may be used to change the virtual world or create new virtual objects.

[0085] The sensors 164 may include, for example, a generally outward-facing camera or a scanner for capturing and interpreting scene information, for example, through continuously and/or intermittently projected infrared structured light. The environment-sensing system may be used for mapping one or more elements of the user's landscape 110 around the user 103 by detecting and registering one or more elements from the local environment, including static objects, dynamic objects, people, gestures and various lighting, atmospheric and acoustic conditions, etc. Thus, in some embodiments, the environment-sensing system may include image-based 3D reconstruction software embedded in a local computing system (e.g., the processor 170) and operable to digitally reconstruct one or more objects or information detected by the sensors 164.

[0086] In some embodiments, the environment-sensing system provides one or more of the following: motion capture data (including gesture recognition), depth sensing, facial recognition, object recognition, unique object feature recognition, voice/audio recognition and processing, acoustic source localization, noise reduction, infrared or similar laser projection, as well as monochrome and/or color CMOS sensors (or other similar sensors), field-of-view sensors, and a variety of other optical-enhancing sensors. It should be appreciated that the environment-sensing system may include components other than those discussed above.

[0087] As mentioned above, the processor 170 may, in some embodiments, be integrated with other components of the head-mounted system 160, integrated with other components of the system of the representative environment 100, or may be an isolated device (wearable or separate from the user 103) as shown in FIG. 1. The processor 170 may be connected to various components of the head-mounted system 160 through a physical, wired connection, or through a wireless connection such as, for example, mobile network connections (including cellular telephone and data networks), Wi-Fi, Bluetooth, or any other wireless connection protocol. The processor 170 may include a memory module, integrated and/or additional graphics processing unit, wireless and/or wired internet connectivity, and codec and/or firmware capable of transforming data from a source (e.g., a computing network, and the user-sensing system and the environment-sensing system from the head-mounted system 160) into image and audio data, wherein the images/video and audio may be presented to the user 103 via the user interface (not shown).

[0088] The processor 170 handles data processing for the various components of the head-mounted system 160 as well as data exchange between the head-mounted system 160 and the software applications such as the Universe, the external database 150, etc. For example, the processor 170 may be used to buffer and process data streaming between the user 103 and the computing network, including the software applications, thereby enabling a smooth, continuous and high fidelity user experience. The processor 170 may be configured to execute a set of program code instructions. The processor 170 may include a memory to hold the set of program code instructions, in which the set of program code instructions comprises program code to display virtual content within a subset of available 3D displayable space by displaying the virtual content within a volumetric display space, wherein boundaries of the volumetric display space are not displayed. In some embodiments, the processor may be two or more processors operatively coupled.

[0089] In some embodiments, the mixed reality system may be configured to assign to a Prism universal features and application-selected/application-specific features from a list of pre-approved options for configurations of display customizations by an application. For example, universal features ensure different applications interact well together. Some examples of universal features may include max/min size, no overlapping Prisms (excluding temporary overlap from collision behavior), no displaying of content outside the boundaries of the Prism, and a requirement that an application obtain permission from the user before accessing sensors or sensitive information. Application-selected/application-specific features enable optimized application experiences. Application-selected/application-specific features may include max/min size (within limits from the system), default size (within limits from the system), type of body dynamic (e.g., none/world lock, billboard, edge billboard, follow/lazy headlock, follow based on external sensor, fade--discussed below), child Prism spawn location, child headpose highlight, child Prism relational behavior, on surface behavior, independent transformation control, resize vs. scale, idle state timeout, collision behavior, permission/password to access application, etc. In another embodiment, the mixed reality system may be configured to display virtual content into one or more Prisms, wherein the one or more Prisms do not overlap with one another. In some embodiments, one or more Prisms may overlap in order to provide specific interactions. In some embodiments, one or more Prisms may overlap, but only with other Prisms from the same application. In another embodiment, the mixed reality system may be configured to change a state of a Prism based at least in part on a relative position and location of the Prism to a user. In another embodiment, the mixed reality system may be configured to manage content creation in an application and manage content display in a separate application. In another embodiment, the mixed reality system may be configured to open an application that will provide content into a Prism while simultaneously placing the Prism in a mixed reality environment.
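As a non-limiting illustration of how a universal feature may bound an application-selected feature, the following minimal sketch clamps a requested Prism size first to the application's own limits and then to system limits, and tests two axis-aligned Prism volumes for overlap. The numeric limits, the axis-aligned box model, and the names clamp_size, Box and overlaps are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]

# Assumed system-wide limits on Prism edge length, in meters.
SYSTEM_MIN_SIZE = 0.1
SYSTEM_MAX_SIZE = 3.0


def _clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def clamp_size(requested: Vec3, app_min: float, app_max: float) -> Vec3:
    """Apply the application's limits, then the system limits, which win."""
    return tuple(
        _clamp(_clamp(edge, app_min, app_max), SYSTEM_MIN_SIZE, SYSTEM_MAX_SIZE)
        for edge in requested
    )


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounds of a Prism: center and full extents."""
    center: Vec3
    size: Vec3


def overlaps(a: Box, b: Box) -> bool:
    """True when two volumes interpenetrate; touching faces do not count."""
    return all(
        abs(ca - cb) < (sa + sb) / 2.0
        for ca, cb, sa, sb in zip(a.center, b.center, a.size, b.size)
    )


size = clamp_size((5.0, 0.05, 1.0), app_min=0.2, app_max=4.0)
left = Box((0.0, 0.0, 0.0), (1.0, 1.0, 1.0))
touching = Box((1.0, 0.0, 0.0), (1.0, 1.0, 1.0))
intruding = Box((0.9, 0.0, 0.0), (1.0, 1.0, 1.0))
```

Treating touching faces as non-overlapping is a design choice of this sketch, so that Prisms snapped side by side would not violate a no-overlap rule.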

[0090] In some embodiments, the mixed reality system may be configured to assign location, orientation, and extent data to a Prism for displaying virtual content within the Prism, where the virtual content is 3D virtual content. In some embodiments, the mixed reality system may be configured to pin a launcher application to a real world object within a mixed reality environment. In some embodiments, the mixed reality system may be configured to assign a behavior type to each Prism, the behavior type comprising at least one of a world lock, a billboard, an edge billboard, a follow headlock, a follow based on external sensor, or a fade (described below in more detail). In some embodiments, the mixed reality system may be configured to identify the most used content or application that is specific to a placed location of a launcher application, and consequently re-order the applications from most to least frequently used, for example. In another embodiment, the mixed reality system may be configured to display favorite applications at a placed launcher application, the favorite applications based at least in part on context relative to a location of the placed launcher.
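
As a non-limiting illustration of the location-specific re-ordering described in paragraph [0090], the following Python sketch counts launches per placed launcher location and orders applications from most to least frequently used. The class `PlacedLauncherUsage`, its methods, and the string location keys are hypothetical and are introduced only for this example.

```python
from collections import Counter, defaultdict


class PlacedLauncherUsage:
    """Hypothetical per-location launch counter for a placed launcher."""

    def __init__(self):
        # location identifier -> Counter of application launches
        self._counts = defaultdict(Counter)

    def record_launch(self, location, app):
        """Record that app was launched from the launcher at location."""
        self._counts[location][app] += 1

    def ordered_apps(self, location, apps):
        """Return apps ordered from most to least used at location.

        sorted() is stable, so applications with equal counts keep
        their original relative order.
        """
        counts = self._counts[location]
        return sorted(apps, key=lambda app: -counts[app])


usage = PlacedLauncherUsage()
usage.record_launch("kitchen", "recipes")
usage.record_launch("kitchen", "recipes")
usage.record_launch("kitchen", "music")
kitchen_order = usage.ordered_apps("kitchen", ["music", "mail", "recipes"])
```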

[0091] FIG. 2 shows a system architecture for managing and displaying virtual content in a mixed reality system, according to some embodiments. System 200 includes a Universe 130, application 140, icon grid application 260, status bar app 270, social panel app 280, and store panel app 290. These applications may represent the base level of applications on system 200, however, in some embodiments, more or fewer applications may be part of system 200.

[0092] As discussed in FIG. 1 above, the Universe may be thought of as a 3D windows (e.g., Prisms) manager, analogous to a 2D windows manager that manages 2D windows in conventional computer desktop systems and such. FIG. 2 may provide further details of the Universe from FIG. 1. Here, the universe application 130 may also include universe server 205, loader volumes 210, secondary UI volumes 220, universe client 225, and launcher application 230. Universe server 205 may be a processing thread of the Universe in a multi-threaded processing environment for multi-parallel processing.

[0093] Loader volumes 210 are placeholder volumes that are displayed to a user while the Universe is creating a Prism for displaying virtual content in the user's landscape 110. For example, when a user selects an application to display in the user's landscape 110 at a particular location, for example, on a vertical wall of the user's landscape 110, while the Universe is setting up the Prism and starting the application for rendering the virtual content into the Prism, the Universe may display a loader volume 210 with a default icon as a placeholder volume to indicate to the user that the Universe is setting up the Prism for display. Once the application finishes rendering the virtual content into the Prism for display in the user's landscape, the loader volume 210 is replaced with the actual Prism containing the rendered virtual content.

[0094] In some embodiments, while the Universe is starting up an application for displaying virtual content, the user 103 may move the loader volume 210 to a desired different location. In some embodiments, the user may move the loader volume 210 to a location that is different than the location of the loader volume/Prism that was initially selected. Once the Universe is done creating the Prism and the application has rendered the virtual content into the Prism, the Universe may replace the loader volume 210, wherever the user may have placed the loader volume 210, with the Prism displaying the virtual content.
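
As a non-limiting illustration of the loader volume behavior described in paragraphs [0093] and [0094], the following Python sketch shows a placeholder being displayed, moved by the user, and then replaced in place by the finished Prism. The class `Landscape`, its methods, and the tuple representation of a volume are assumptions made for this example.

```python
class Landscape:
    """Hypothetical registry of volumes shown in the user's landscape."""

    def __init__(self):
        # volume id -> (kind, position), where kind is "loader" or "prism"
        self.volumes = {}

    def show_loader(self, volume_id, position):
        """Display a placeholder while the Prism is being set up."""
        self.volumes[volume_id] = ("loader", position)

    def move(self, volume_id, position):
        """Move a volume; the user may do this while the app starts."""
        kind, _ = self.volumes[volume_id]
        self.volumes[volume_id] = (kind, position)

    def replace_with_prism(self, volume_id):
        """Swap the loader for the Prism at the loader's current position."""
        _, position = self.volumes[volume_id]
        self.volumes[volume_id] = ("prism", position)


landscape = Landscape()
landscape.show_loader("browser", (0.0, 1.5, -2.0))   # initially selected spot
landscape.move("browser", (1.0, 1.5, -2.0))          # user relocates the loader
landscape.replace_with_prism("browser")              # content has been rendered
```

The point of the sketch is that the replacement reads the loader's current position rather than the originally selected one, so the Prism appears wherever the user left the loader.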

[0095] Secondary UI volume 220 is another Prism that may be created when a Prism 113 (e.g., its "parent Prism") is created. The Secondary UI volume 220 provides a universal interface of Prisms for users. For example, the Secondary UI volume 220 may be considered as window dressing because the Secondary UI volume 220 provides a mechanism to manage a Prism (e.g., close/remove, share, follow, take a screenshot of the Prism's content, etc.). When a Prism is created, a Secondary UI volume 220 may be created for the Prism if the Prism is NOT part of the Launcher (Launcher applications may not have Secondary UI volumes). The Secondary UI volume 220 provides the space/volume to display graphical user interface icons such as close/remove, share, follow, screenshot, etc. for the user to interact with and manage the Prism. The Secondary UI volume 220 is associated with the parent Prism and may be grouped with the parent Prism. The lifetime of the Secondary UI volume 220 ends when the lifetime of its associated parent Prism ends.

[0096] In some embodiments, the Secondary UI volume 220 may have at least three states: (1) Display nothing when the parent Prism is out of focus; (2) Display the component's "visible name" when the parent Prism is in focus; and (3) Display a "carousel" of application menu option icons when a specific user interaction is detected, for example, a home button of a handheld controller (e.g., a Totem, or other suitable user interaction controllers) has been held for a certain number of seconds, wherein the carousel displays a collection of icons, one of which may be a large "X" icon for closing the Prism. In some embodiments, the Secondary UI volume 220 receives input via its parent Prism. In other words, the parent Prism may determine if the Secondary UI volume 220 is displaying its carousel, and if so the parent Prism redirects user input to the Secondary UI. The carousel of the Secondary UI volume 220 is disclosed below.
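
As a non-limiting illustration of the three states described in paragraph [0096], the following Python sketch selects a Secondary UI state and routes user input accordingly. The names `SecondaryUIState`, `secondary_ui_state`, and `route_input` are hypothetical, the one-second hold threshold is an arbitrary placeholder for "a certain number of seconds", and the sketch assumes the carousel is only shown while the parent Prism is in focus.

```python
from enum import Enum


class SecondaryUIState(Enum):
    HIDDEN = 1        # parent Prism out of focus: display nothing
    VISIBLE_NAME = 2  # parent Prism in focus: display the visible name
    CAROUSEL = 3      # home button held: display the icon carousel


def secondary_ui_state(parent_in_focus, home_held_seconds, hold_threshold=1.0):
    """Pick the Secondary UI state from focus and home-button hold time."""
    if not parent_in_focus:
        return SecondaryUIState.HIDDEN
    if home_held_seconds >= hold_threshold:
        return SecondaryUIState.CAROUSEL
    return SecondaryUIState.VISIBLE_NAME


def route_input(state):
    """Return which volume should receive user input.

    The parent Prism redirects input to the Secondary UI only while
    the carousel is being displayed.
    """
    if state is SecondaryUIState.CAROUSEL:
        return "secondary_ui"
    return "parent_prism"


state = secondary_ui_state(parent_in_focus=True, home_held_seconds=2.0)
target = route_input(state)
```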

[0097] In some embodiments, the launcher may be the default "home" menu for the mixed reality system. The launcher may bring together multiple panels of content alongside a system status bar. Each panel may represent a different content type. Applications may be pulled from the launcher and pinned into the landscape for quick recall. The launcher itself may be placed into the landscape for customization per location and/or for quick access.

[0098] Launcher 230 provides the user with the ability to launch new applications into the user's landscape 110. The launcher 230 may be an application composed of a series of body-centric Prisms called panels. The panels may be vertically and horizontally scrollable and a user may switch between panels with a swiping motion, for example. In some embodiments, one panel may be visible at a time (e.g., a central panel), with its two neighboring panels visible as placeholder panels at its side. When the user swipes to the next panel, the placeholder panels may expand to show the full panel. Panels may include an Icon Grid application 260, a Social panel 280, and a Store panel 290. In some embodiments, when the user swipes to the next panel, the panels themselves are not moved or changed, but instead, contents (e.g., icons) within the different panels may be animated in and out of the central panel (e.g., active panel). Furthermore, applications may be pulled from the launcher 230 and pinned into the user's landscape 110 for customization per location, discussed further below.

[0099] In some embodiments, an application 140 may communicate with the Universe via a centralized rendering service client 250 on each application 140. The centralized rendering service client 250 may be in communication with a universe server 205 within the Universe 130. The centralized rendering service client 250 may be a client service of a centralized rendering system that allows application(s) 140 and other applications that generate content for display in the user's landscape to communicate with the Universe via the universe server 205.

[0100] The universe server 205 may comprise a service of the centralized rendering system that allows the Universe to communicate with applications that provide the Universe content to be displayed in the user's landscape. In some embodiments, the communication may comprise more than rendering data, for example, input data, requesting a security privilege, requesting to show or hide the virtual keyboard, etc.

[0101] In some embodiments, the centralized rendering system may be a system of hardware and software resources dedicated to receiving graphical data from multiple applications to be displayed on a single display (e.g., in a user's landscape in the mixed reality system). The centralized rendering system combines graphical data from multiple applications 140 into a "centralized" data structure, such as a scene graph, which may be used to render, to a display, a scene reflecting the graphical data from the multiple applications in a realistic and efficient manner. In order to achieve the centralized rendering system, in some embodiments, an application may make changes to a local representation of the Prism called the Client Prism (e.g., Client Prism 215 from FIG. 2). These changes may then be sent to the Universe Server 205 and stored in a Server Prism. The centralized rendering system may then render the updated data in the Server Prism. The centralized rendering system may hereinafter be referred to as the "Cali" or "Kali" system. The Universe may be thought of as an enhanced version of the Cali Server, for example, because the Universe can manage the Prisms in the real world.
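
As a non-limiting illustration of the Client Prism to Server Prism flow described in paragraph [0101], the following Python sketch has an application edit a local representation and then send only the pending changes to a server, which stores them for later rendering. The classes, the `flush` method, and the flat dictionary standing in for a scene graph are assumptions made for this example and do not describe the actual interfaces of the centralized rendering system.

```python
class ServerPrism:
    """Hypothetical server-side copy of a Prism's content."""

    def __init__(self):
        self.nodes = {}  # node name -> node data (stand-in for a scene graph)


class UniverseServer:
    """Hypothetical receiver that stores changes in Server Prisms."""

    def __init__(self):
        self.server_prisms = {}

    def apply(self, prism_id, changes):
        prism = self.server_prisms.setdefault(prism_id, ServerPrism())
        prism.nodes.update(changes)


class ClientPrism:
    """Hypothetical local representation edited by the application."""

    def __init__(self, prism_id, server):
        self.prism_id = prism_id
        self.server = server
        self.nodes = {}
        self._pending = {}

    def set_node(self, name, data):
        """Change the local representation and remember the change."""
        self.nodes[name] = data
        self._pending[name] = data

    def flush(self):
        """Send accumulated changes to the server, then clear them."""
        self.server.apply(self.prism_id, dict(self._pending))
        self._pending.clear()


server = UniverseServer()
client = ClientPrism("prism-1", server)
client.set_node("cube", {"color": "red"})
client.set_node("cube", {"color": "blue"})  # later change overwrites earlier
client.flush()
```

Note that the application side never records where the content is displayed; in keeping with the text, placement remains a server-side concern.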

[0102] In some embodiments, each application 140 that creates virtual content 115 for the Universe communicates with the centralized rendering system and the Universe via the centralized rendering service client 250 (hereinafter may be referred to as a "Cali client") installed on each of the respective application(s) 140. More information may be disclosed in a related Application Ser. No. 62/479,134 entitled "CENTRALIZED RENDERING", filed on Mar. 30, 2017, and which is hereby incorporated by reference in its entirety. The centralized rendering system improves the user's experience by ensuring that virtual content from multiple different applications is properly analyzed and processed, if necessary, to ensure the virtual content is displayed in a realistic manner to the user. In some embodiments, the Universe is an instance of a Cali Server with additional functionality, such as managing Prisms. In some embodiments, a Client Prism is an instance of a Cali Client Volume and a Server Prism is an instance of a Cali Server Volume, with additional functionality, such as the ability to bring up an App Options display, to display a Loader Volume while the Prism is loading its content, to collide with other Prisms, and to be part of a Transform Tree.

[0103] Client Prism 215a and Client Prism 215b comprise virtual content that is generated by the application 140 and sent by the Cali Client 250a to the Universe Server 205 to be displayed in the user's landscape. In some embodiments, as the application 140 makes changes to the virtual content 115a and 115b, the changes to the virtual content are communicated from the Client Prism 215 to the Universe Server 205, and that information is stored inside the Universe in the corresponding Server Prism data structures 113. In some embodiments, the application 140 does not know where in the user's landscape a virtual content 115a is displayed. The Universe may manage display location of the virtual content 115a via the corresponding Server Prism 113a that is associated to the Client Prism 215a (e.g., the virtual content 115a after it has been processed by the centralized rendering system). The application 140 may request a new Prism by accessing Universe Server 205. In some embodiments, the universe server 205 may be a software module in the Universe that communicates with centralized rendering service client(s) 250 from applications that provide virtual content for display in the user's landscape 110. For example, when a user wants to launch an application and display virtual content from the application in the user's landscape, the application may provide the virtual content to the Universe via the centralized rendering service client from the application to the universe centralized rendering service on the Universe to be displayed in a Prism that may be anchored in the user's landscape.

[0104] In some embodiments, the icon grid application 260 may comprise a recent application section (not shown) and/or a general application section (not shown). The general application section comprises an icon representing each application installed on the mixed reality system. The general application section may be initially populated with a call to a Package Manager (not shown) to determine a list of installed packages. An icon is added for each application in each package. When the Package Manager notifies the Universe of package installation and uninstallation, the icon grid application 260 adjusts its icons accordingly. The Package Manager Service manages the installation of applications and maintains information about those applications such as their names, icon graphics, security permissions, executable files and data files.
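
As a non-limiting illustration of the general application section described in paragraph [0104], the following Python sketch populates icons from an initial list of installed packages and then adjusts them on installation and uninstallation notifications. The class `IconGrid`, its callback names, and the dictionary mapping package names to application names are hypothetical stand-ins for whatever the Package Manager actually provides.

```python
class IconGrid:
    """Hypothetical model of the general application section."""

    def __init__(self, installed_packages):
        # installed_packages: package name -> list of application names,
        # standing in for the result of the initial Package Manager call.
        self.icons = {}
        for package, apps in installed_packages.items():
            self.on_package_installed(package, apps)

    def on_package_installed(self, package, apps):
        """Add one icon for each application in the package."""
        self.icons[package] = list(apps)

    def on_package_uninstalled(self, package):
        """Remove every icon that belonged to the package."""
        self.icons.pop(package, None)

    def all_icons(self):
        """Return all application icons in alphabetical order."""
        return sorted(app for apps in self.icons.values() for app in apps)


grid = IconGrid({"com.example.media": ["Gallery", "Player"]})
grid.on_package_installed("com.example.web", ["Browser"])
grid.on_package_uninstalled("com.example.media")
remaining = grid.all_icons()
```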

[0105] The recent application section may be initially reconstructed from a log on disk, and then updated by calls from other services. The package name may be logged to disk when a Lifecycle Service notifies the launcher of an application start event, and when the Package Manager notifies the launcher of a package uninstallation event. A user may interact with the icon grid application 260 by choosing icons to launch, or extracting icons to place into the landscape.

[0106] The Lifecycle Service may be a centralized service that manages the process of starting, stopping, putting to sleep, and waking up applications. The Lifecycle Service also knows when applications terminate unexpectedly (crash). When any of these events happen, the service's listeners are notified, and the Universe is one of the listeners. The Universe accesses this service to start, stop, sleep and wake applications. In some embodiments, the Lifecycle Services provide application programming interfaces (APIs) for controlling the lifecycle of application processes running in the mixed reality system. The Lifecycle Services may spawn new processes to run application binaries with a set of permissions, and call APIs on a predefined interface implemented by the applications to control their lifecycle. The Lifecycle Service also provides a listener interface through which other modules may keep track of applications being started/stopped/paused/resumed. The Lifecycle Services may be a separate program from the launcher or the Universe. In some embodiments, the Lifecycle Services may be a middleware.
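
As a non-limiting illustration of the listener interface described in paragraph [0106], the following Python sketch tracks application state and notifies registered listeners of each lifecycle event, with the Universe modeled as one such listener. The class `LifecycleService`, its method names, and the state and event strings are assumptions made for this example; process spawning and permissions are omitted.

```python
class LifecycleService:
    """Hypothetical centralized service tracking application lifecycle."""

    def __init__(self):
        self._listeners = []
        self._states = {}  # application name -> current state

    def add_listener(self, callback):
        """Register a callback invoked as callback(app, event)."""
        self._listeners.append(callback)

    def _transition(self, app, state, event):
        self._states[app] = state
        for listener in self._listeners:
            listener(app, event)

    def start(self, app):
        self._transition(app, "running", "started")

    def sleep(self, app):
        self._transition(app, "sleeping", "paused")

    def wake(self, app):
        self._transition(app, "running", "resumed")

    def stop(self, app):
        self._transition(app, "stopped", "stopped")

    def report_crash(self, app):
        """Record an unexpected termination and notify listeners."""
        self._transition(app, "stopped", "crashed")

    def state(self, app):
        return self._states.get(app, "stopped")


# The Universe registers as one of the service's listeners.
universe_log = []
service = LifecycleService()
service.add_listener(lambda app, event: universe_log.append((app, event)))
service.start("gallery")
service.sleep("gallery")
service.wake("gallery")
service.report_crash("gallery")
```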