Probability Of Hire Scoring For Job Candidate Searches

Jersin; John Robert ; et al.

U.S. patent application number 15/942383 was filed with the patent office on 2018-03-30 and published on 2019-06-27 for probability of hire scoring for job candidate searches. The applicant listed for this patent is Microsoft Technology Licensing, LLC. Invention is credited to Alexis Blevins Baird, John Robert Jersin, Benjamin John McCann, Divyakumar Menghani, Huanyu Zhao.

| Application Number | 20190197486 15/942383 |


| Family ID | 66948980 |

| Filed Date | 2018-03-30 |


| United States Patent Application | 20190197486 |

| Kind Code | A1 |

| Jersin; John Robert ; et al. | June 27, 2019 |

PROBABILITY OF HIRE SCORING FOR JOB CANDIDATE SEARCHES

Abstract

Techniques for calculating probability of hire scores in a streaming environment are described. In an embodiment, a system accesses a candidate stream definition comprising a hiring organization and a role. Additionally, the system accesses, based on the candidate stream definition, one or more stream-related information sources, and extracts attributes from the stream-related information sources. Moreover, the system identifies similar organizations based on the attributes, the role, and features of the hiring organization. Then, the system accesses historical data regarding hiring patterns at the similar organizations for the role. The system calculates, based on the historical data and interactions by the hiring organization with member profiles of an online system (e.g., hosting a social networking service), a probability of hire for the role. Next, the system presents the probability of hire. In some embodiments, the system identifies the similar organizations by identifying related roles based on the attributes and the role.

| Inventors: | Jersin; John Robert; (San Francisco, CA) ; Baird; Alexis Blevins; (San Francisco, CA) ; Menghani; Divyakumar; (Sunnyvale, CA) ; McCann; Benjamin John; (Mountain View, CA) ; Zhao; Huanyu; (Fremont, CA) |
|---|---|
| Applicant: | Microsoft Technology Licensing, LLC |
| Family ID: | 66948980 |
| Appl. No.: | 15/942383 |
| Filed: | March 30, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62609910 | Dec 22, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 16/90324 20190101; G06F 16/1834 20190101; H04L 51/14 20130101; H04L 67/306 20130101; G06F 16/9032 20190101; H04L 51/02 20130101; G06N 20/00 20190101; H04L 51/20 20130101; G06F 16/285 20190101; G06F 16/635 20190101; G06F 16/9535 20190101; G06F 16/24578 20190101; H04L 67/18 20130101; G06N 7/005 20130101; G06Q 10/063112 20130101; G06F 16/735 20190101; G06Q 50/01 20130101; H04L 51/32 20130101; G06Q 10/1053 20130101 |

| International Class: | G06Q 10/10 20060101 G06Q010/10; G06N 7/00 20060101 G06N007/00; G06Q 50/00 20060101 G06Q050/00 |

Claims

1. A computer system, comprising: one or more processors; and a non-transitory computer readable storage medium storing instructions that when executed by the one or more processors cause the computer system to perform operations comprising: accessing a candidate stream definition comprising a hiring organization and a role; accessing, based on the candidate stream definition, one or more stream-related information sources; extracting one or more attributes from the one or more stream-related information sources; identifying one or more similar organizations based on the one or more attributes, the role, and features of the hiring organization; accessing historical data regarding hiring patterns at the one or more similar organizations for the role; calculating, based on the historical data and interactions by the hiring organization with one or more member profiles of an online system, a probability of hire for the role; and causing presentation, on a display device, of the probability of hire.

2. The computer system of claim 1, wherein: the candidate stream definition further comprises a location; identifying the one or more similar organizations comprises: identifying related roles based on the one or more attributes and the role; and identifying, based on the one or more attributes, the role, the location, the related roles, and features of the hiring organization, the one or more similar organizations; accessing the historical data comprises accessing historical data regarding hiring patterns at the one or more similar organizations for the role and the related roles; and the historical data includes, for each successful hire for the role and the related roles at the one or more similar organizations: a number of member profiles reviewed by the one or more similar organizations; a number of messages sent by the one or more similar organizations to members of the online system; a number of messages accepted or responded to by members of the online system; and a number of members of the online system hired via the online system, the number of members of the online system hired being based on updates to a current employer attribute of member profiles subsequent to a message sent by the one or more similar organizations to members of the online system.

3. The computer system of claim 2, wherein the calculating is based on comparing the historical data with a number of the one or more member profiles reviewed and a number of messages sent to members of the online system by the hiring organization.

4. The computer system of claim 1, the operations further comprising: causing presentation, on the display device, of a prompt to display insights regarding actions that the hiring organization can take to increase the probability of hire.

5. The computer system of claim 4, the operations further comprising: responsive to receiving a response to the prompt, causing presentation, on the display device, of the actions.

6. The computer system of claim 5, wherein the actions include one or more of: a number of member profiles of members of the online system to be reviewed; and a number of messages to be sent by the hiring organization to members of the online system.

7. The computer system of claim 1, wherein the probability of hire is a percentage chance of filling the role in a certain time frame.

8. The computer system of claim 1, wherein the certain time frame is 30 days.

9. The computer system of claim 1, wherein: the online system hosts a social networking service; and the one or more member profiles are member profiles in the social networking service corresponding to candidates for the role.

10. The computer system of claim 1, wherein: the features of the hiring organization include one or more of: an industry, organization size, and geographic locations where the hiring organization has employees; and identifying the one or more similar organizations comprises comparing the features of the hiring organization to features of the one or more similar organizations.

11. The computer system of claim 1, wherein: identifying the one or more similar organizations comprises using a factorization machine to conduct collaborative filtering, and wherein the one or more similar organizations are deemed similar to the hiring organization based on a flow of members of the online system between the hiring organization and one or more similar organizations.

12. The computer system of claim 11, wherein the flow of members of the online system between the hiring organization and the one or more similar organizations includes: a number of employees of the hiring organization that have worked for one or more similar organizations; and a number of employees of the one or more similar organizations that have worked for the hiring organization.

13. The computer system of claim 1, wherein identifying the one or more similar organizations is based at least in part on a number of employees at the hiring organization and the one or more similar organizations who have the role.

14. The computer system of claim 1, wherein identifying the one or more similar organizations is based on relationships between the hiring organization and the one or more similar organizations, the relationships including one or more of: co-viewing relationships indicating overlapping sets of members of the online system who have viewed a page of the hiring organization and a page of the one or more similar organizations; and co-application relationships indicating overlapping sets of members of the online system who have applied to work for the hiring organization and applied to work for the one or more similar organizations.

15. A computer-implemented method, comprising: accessing a candidate stream definition comprising a hiring organization and a role; accessing, based on the candidate stream definition, one or more stream-related information sources; extracting one or more attributes from the one or more stream-related information sources; identifying one or more similar organizations based on the one or more attributes, the role, and features of the hiring organization; accessing historical data regarding hiring patterns at the one or more similar organizations for the role; calculating, based on the historical data and interactions by the hiring organization with one or more member profiles of an online system, a probability of hire for the role; and causing presentation, on a display device, of the probability of hire.

16. The computer-implemented method of claim 15, further comprising: causing presentation, on the display device, of a prompt to display insights regarding actions that the hiring organization can take to increase the probability of hire.

17. The computer-implemented method of claim 16, further comprising: responsive to receiving a response to the prompt, causing presentation, on the display device, of the actions, wherein the actions include one or more of: a number of member profiles of members of the online system to be reviewed; and a number of messages to be sent by the hiring organization to members of the online system.

18. A non-transitory machine-readable storage medium comprising instructions that, when executed by one or more processors of a machine, cause the machine to perform operations comprising: accessing a candidate stream definition comprising a hiring organization and a role; accessing, based on the candidate stream definition, one or more stream-related information sources; extracting one or more attributes from the one or more stream-related information sources; identifying one or more similar organizations based on the one or more attributes, the role, and features of the hiring organization; accessing historical data regarding hiring patterns at the one or more similar organizations for the role; calculating, based on the historical data and interactions by the hiring organization with one or more member profiles of an online system, a probability of hire for the role; and causing presentation, on a display device, of the probability of hire.

19. The non-transitory machine-readable storage medium of claim 18, wherein: the candidate stream definition further comprises a location; identifying the one or more similar organizations comprises: identifying related roles based on the one or more attributes and the role; and identifying the one or more similar organizations based on the one or more attributes, the role, the location, the related roles, and features of the hiring organization; accessing the historical data comprises accessing historical data regarding hiring patterns at the one or more similar organizations for the role and the related roles; and the historical data includes, for each successful hire for the role and the related roles at the one or more similar organizations: a number of member profiles reviewed by the one or more similar organizations; a number of messages sent by the one or more similar organizations to members of the online system; a number of messages accepted or responded to by members of the online system; and a number of members of the online system hired via the online system, the number of members of the online system hired being based on updates to a current employer attribute of member profiles subsequent to a message sent by the one or more similar organizations to members of the online system.

20. The non-transitory machine-readable storage medium of claim 19, wherein the calculating is based on comparing the historical data with a number of the one or more profiles viewed and a number of messages sent to members of the online system by the hiring organization.
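By way of illustration only, the calculation recited in claims 2, 3, and 7 can be sketched as follows. The sketch assumes that each message sent has an independent chance of leading to a hire, estimated from the historical funnel at similar organizations; that independence assumption, the `FunnelStats` record, and every function name are hypothetical and are not taken from the claims. For brevity the sketch uses only the number of messages sent and ignores the time frame of claim 7.

```python
import math
from dataclasses import dataclass


@dataclass
class FunnelStats:
    """Hypothetical historical record for one similar organization."""
    profiles_reviewed: int
    messages_sent: int
    messages_accepted: int
    hires: int


def per_message_hire_rate(history):
    """Historical hires per message sent, pooled over similar organizations."""
    sent = sum(h.messages_sent for h in history)
    hired = sum(h.hires for h in history)
    return hired / sent if sent else 0.0


def probability_of_hire(history, messages_sent_by_hirer):
    """Chance of at least one hire, assuming independent per-message outcomes."""
    p = per_message_hire_rate(history)
    return 1.0 - (1.0 - p) ** messages_sent_by_hirer


def messages_needed(history, target):
    """Smallest message count whose estimated probability reaches the target."""
    if not 0.0 < target < 1.0:
        raise ValueError("target must be strictly between 0 and 1")
    p = per_message_hire_rate(history)
    if p <= 0.0:
        return None  # no historical hires, so no estimate is possible
    if p >= 1.0:
        return 1
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - p))
```

A function such as `messages_needed` corresponds loosely to the insights of claims 4 to 6, where the system reports a number of messages to be sent in order to increase the probability of hire.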

Description

PRIORITY CLAIM

[0001] This application claims the benefit of priority to U.S. Provisional Patent Application No. 62/609,910 entitled "Creating and Modifying Job Candidate Search Streams", [reference number 902004-US-PSP (3080.G98PRV)] filed on Dec. 22, 2017, which is incorporated herein by reference in its entirety.

TECHNICAL FIELD

[0002] The present disclosure generally relates to computer technology for solving technical challenges in determining query attributes (e.g., locations, skills, positions, job titles, industries, years of experience and other query terms) for search queries. More specifically, the present disclosure relates to creating a stream of candidates based on attributes such as locations and titles, which may be suggested to a user, and refining the stream based on user feedback. The disclosure also relates to generating communications such as messages and alerts related to the stream.

BACKGROUND

[0003] The rise of the Internet has occasioned two disparate phenomena: the increase in the presence of social networks, with their corresponding member profiles visible to large numbers of people, and the increase in use of social networks for job searches, by applicants, employers, social referrals, and recruiters. Employers and recruiters attempting to connect candidates and employers, or refer them to a suitable position (e.g., job title), often perform searches on social networks to identify candidates who have relevant qualifications that make them good candidates for whatever job opening the employers or recruiters are attempting to fill. The employers or recruiters then can contact these candidates to see if they are interested in applying for the job opening.

[0004] Traditional querying of social networks for candidates involves the employer or recruiter entering one or more search terms to manually create a query. A key challenge in a search for candidates (e.g., talent search) is to translate the criteria of a hiring position into a search query that leads to desired candidates. To fulfill this goal, the searcher typically needs to understand which skills are typically required for the position (e.g., job title), what are the alternatives, which companies are likely to have such candidates, which schools the candidates are most likely to graduate from, etc. Moreover, this knowledge varies over time. Furthermore, some attributes, such as the culture of a company, are not easily entered into a search box as query terms. As a result, it is not surprising that even for experienced recruiters, many search trials are often required in order to obtain an appropriate query that meets the recruiters' search intent. Additionally, small business owners do not have the time to navigate through and review large numbers of candidates.

BRIEF DESCRIPTION OF THE DRAWINGS

[0005] Some embodiments of the technology are illustrated, by way of example and not limitation, in the figures of the accompanying drawings.

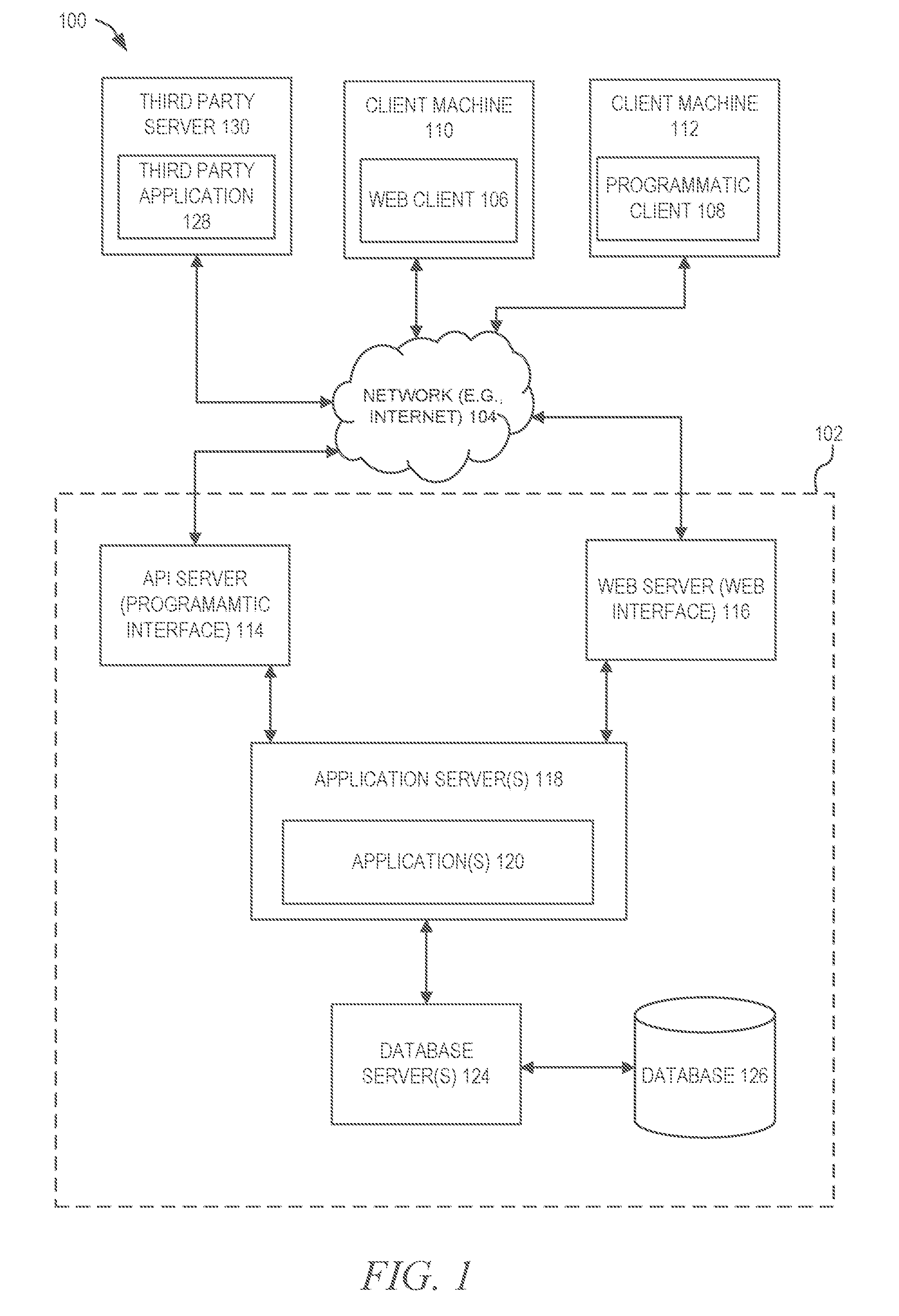

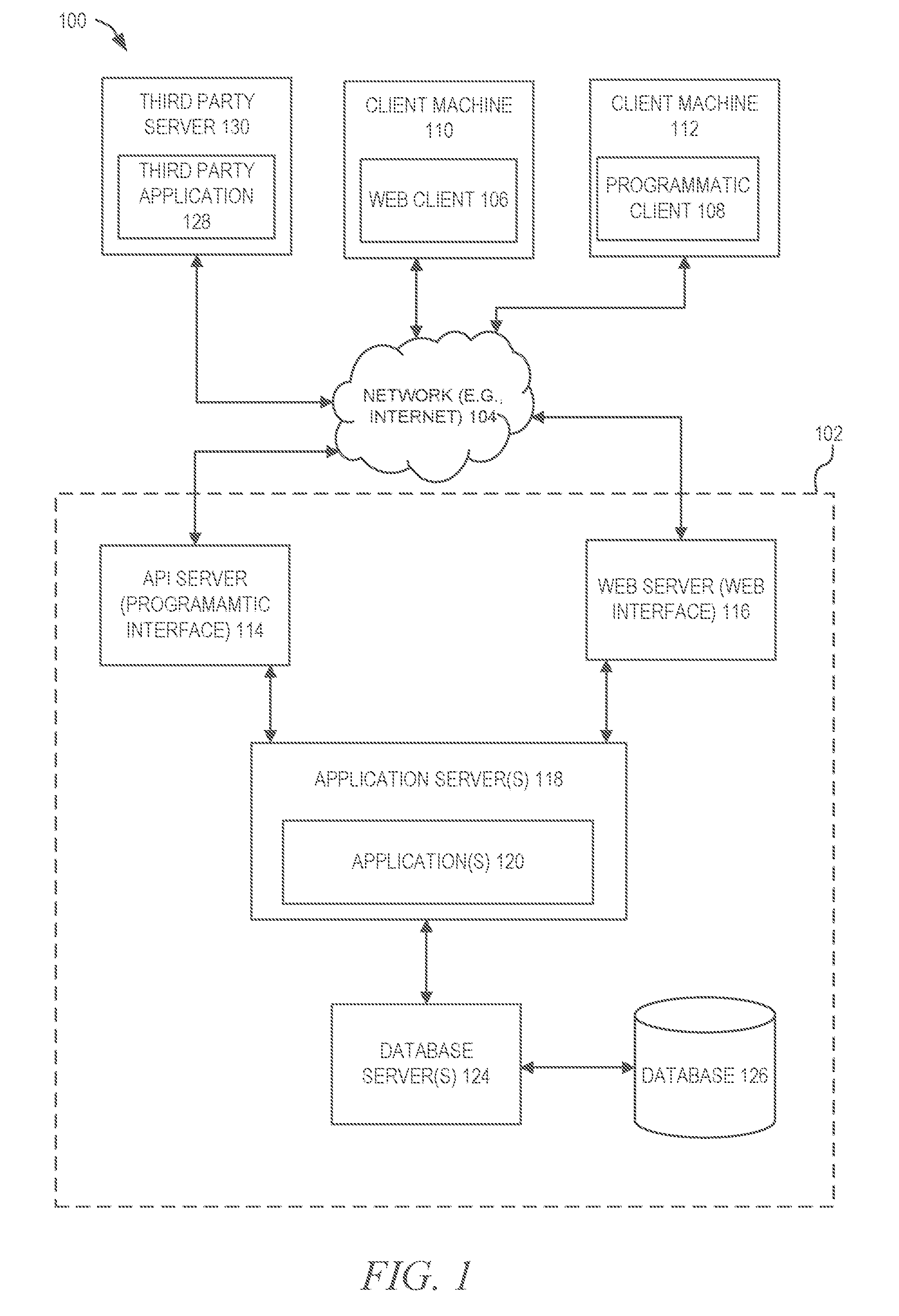

[0006] FIG. 1 is a block diagram illustrating a client-server system, in accordance with an example embodiment.

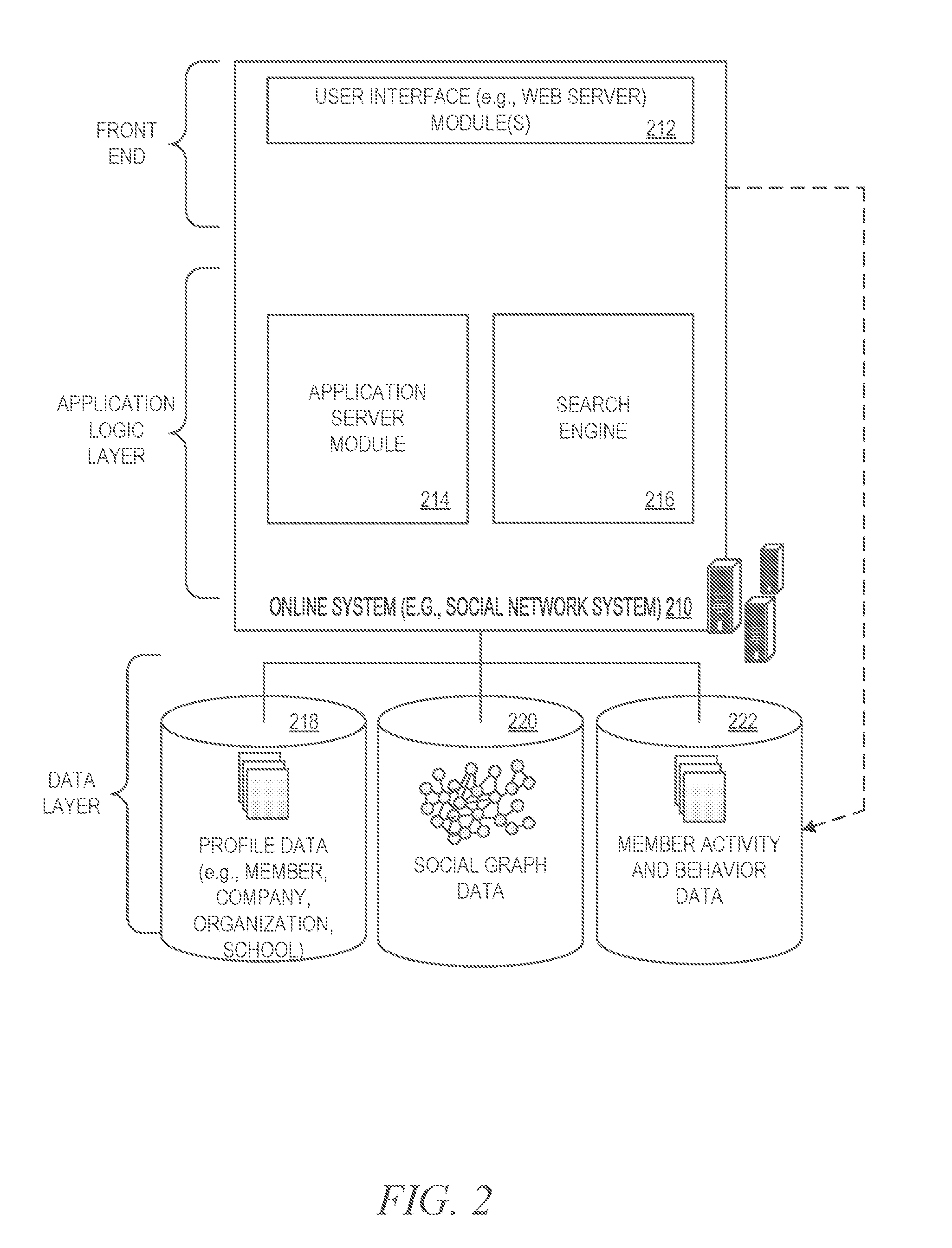

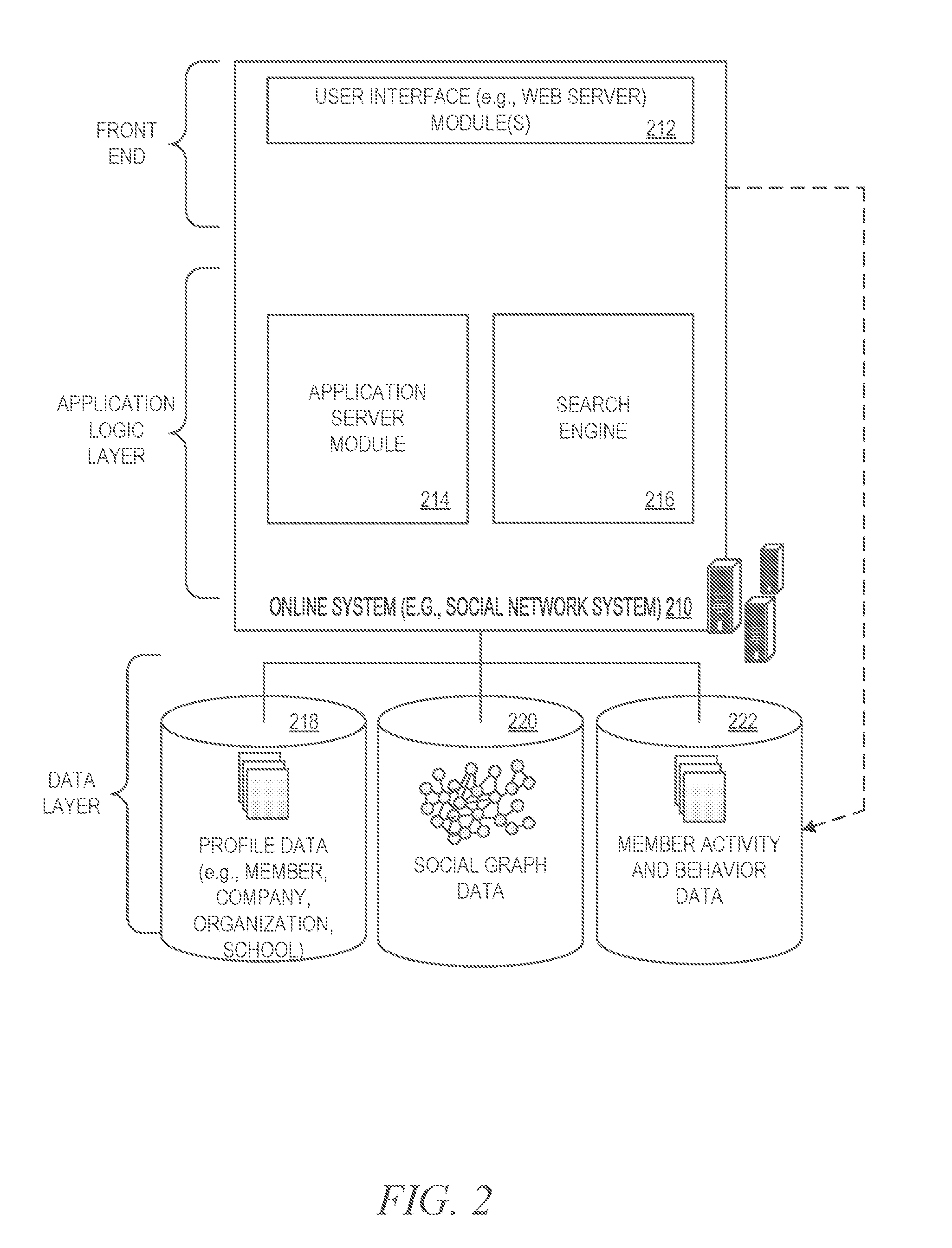

[0007] FIG. 2 is a block diagram showing the functional components of a social networking service, including a data processing block referred to herein as a search engine, for use in generating and providing search results for a search query, consistent with some embodiments of the present disclosure.

[0008] FIG. 3 is a block diagram illustrating an application server block of FIG. 2 in more detail, in accordance with an example embodiment.

[0009] FIG. 4 is a block diagram illustrating an intelligent matches system, in accordance with an embodiment.

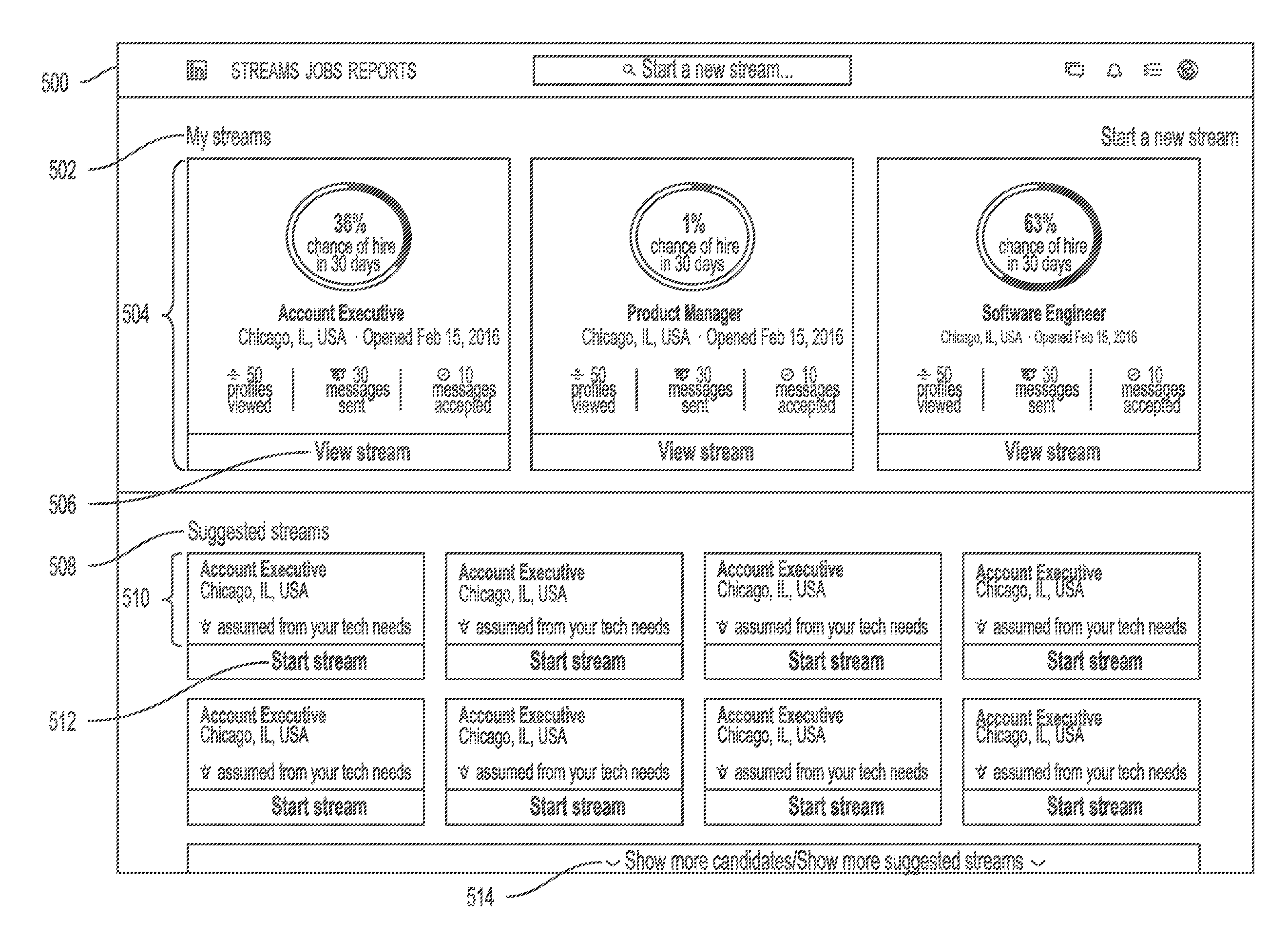

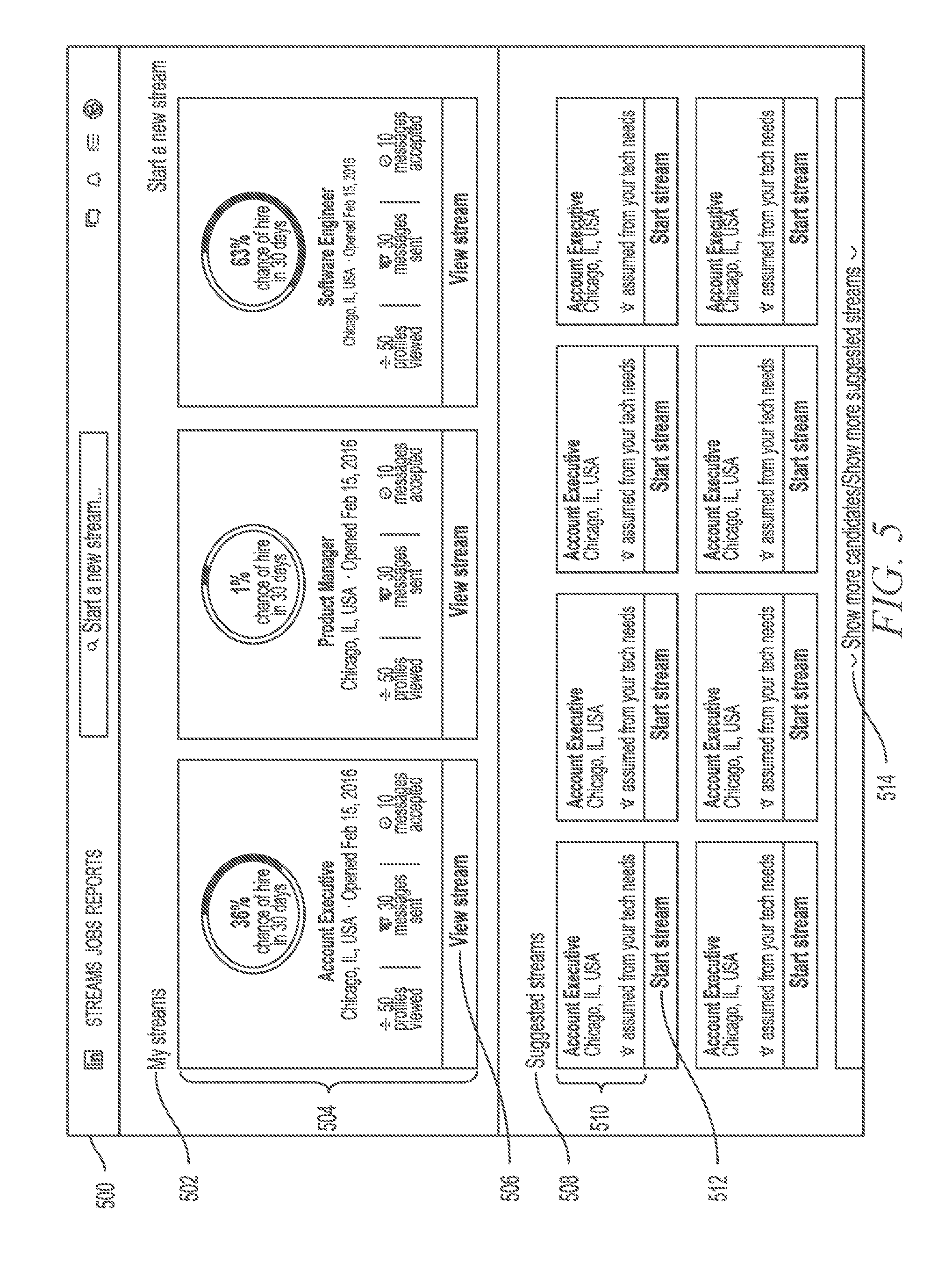

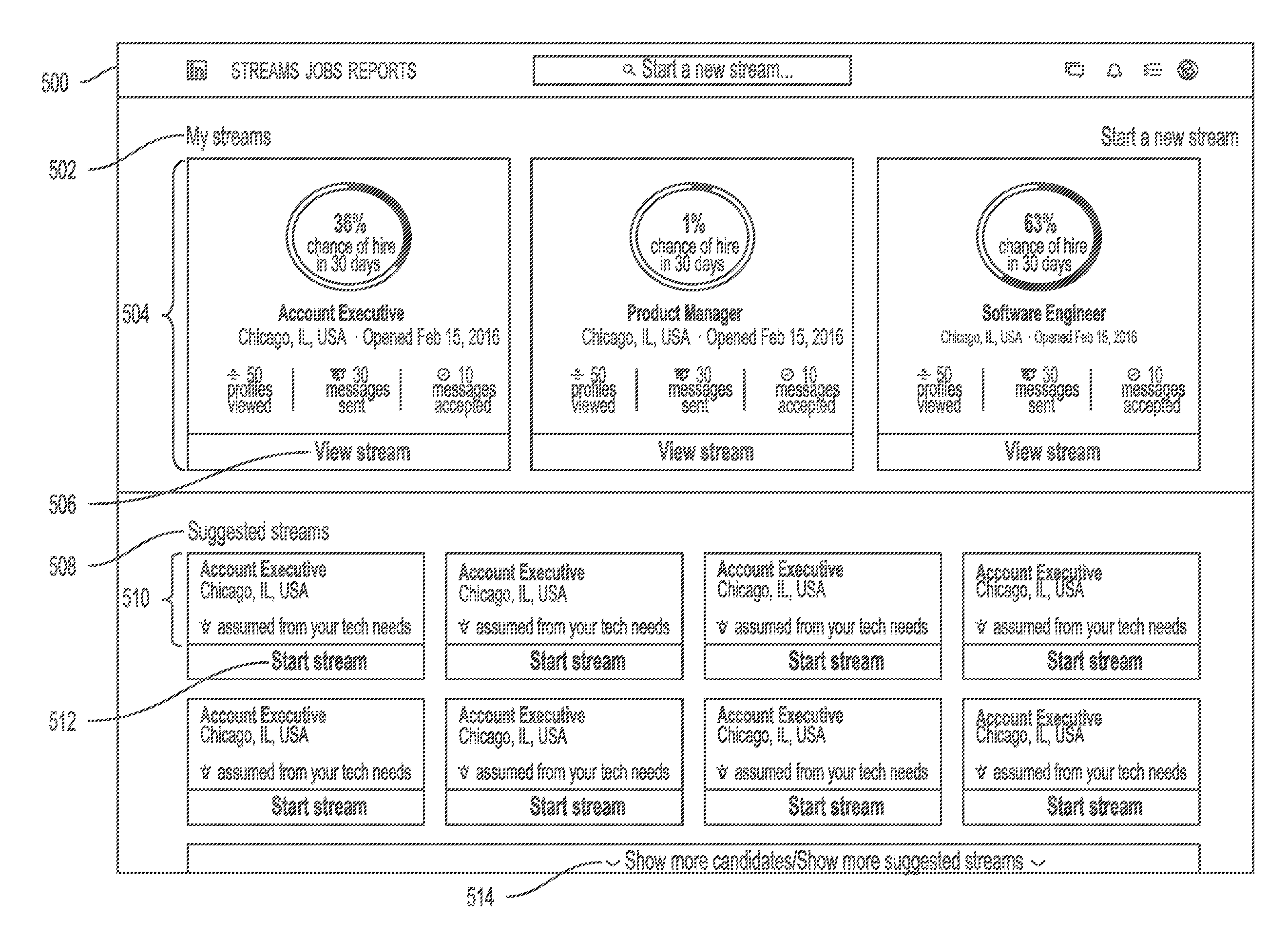

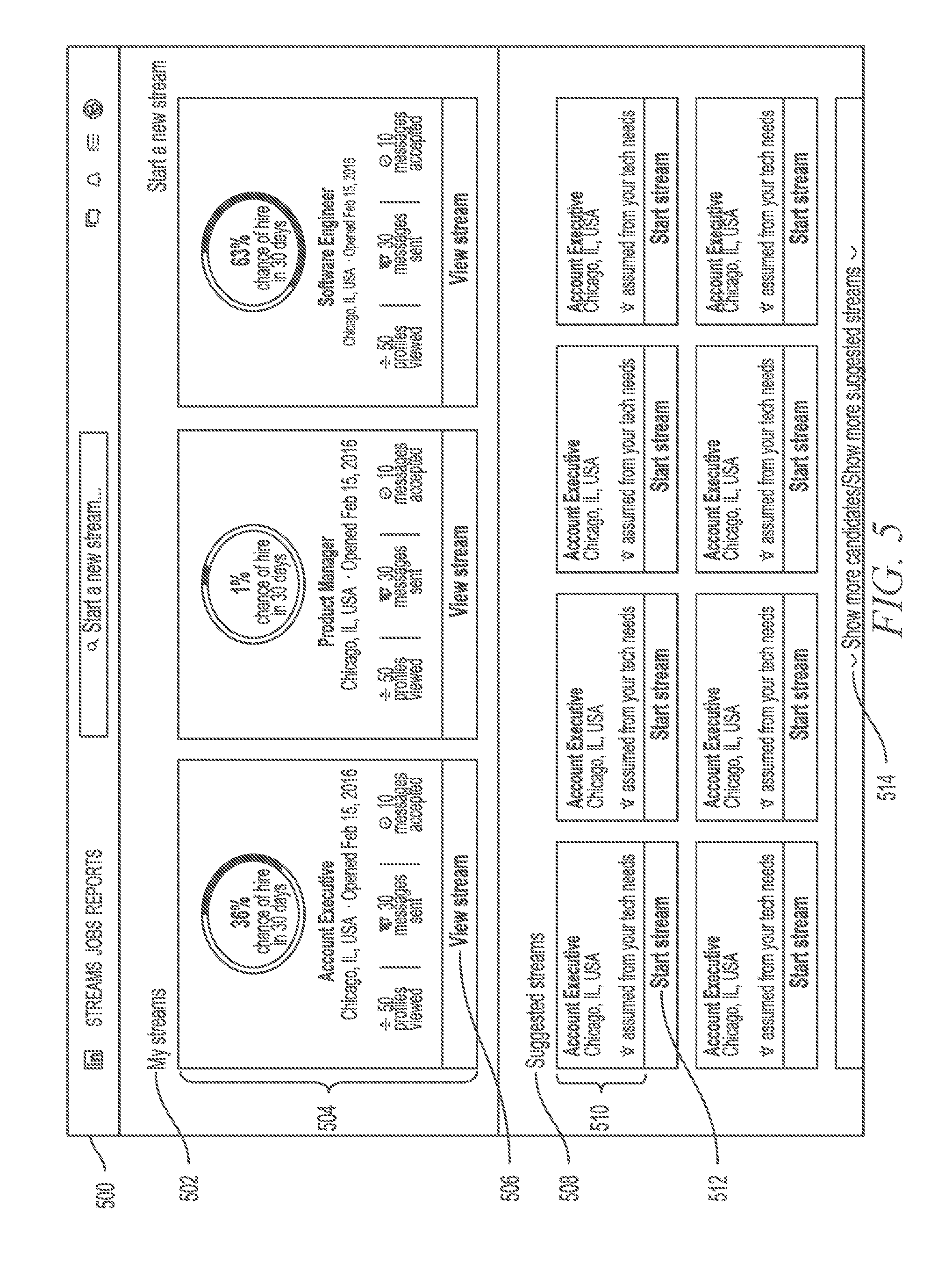

[0010] FIG. 5 is a screen capture illustrating a first screen of a user interface for editing and displaying suggested candidate streams, in accordance with an example embodiment.

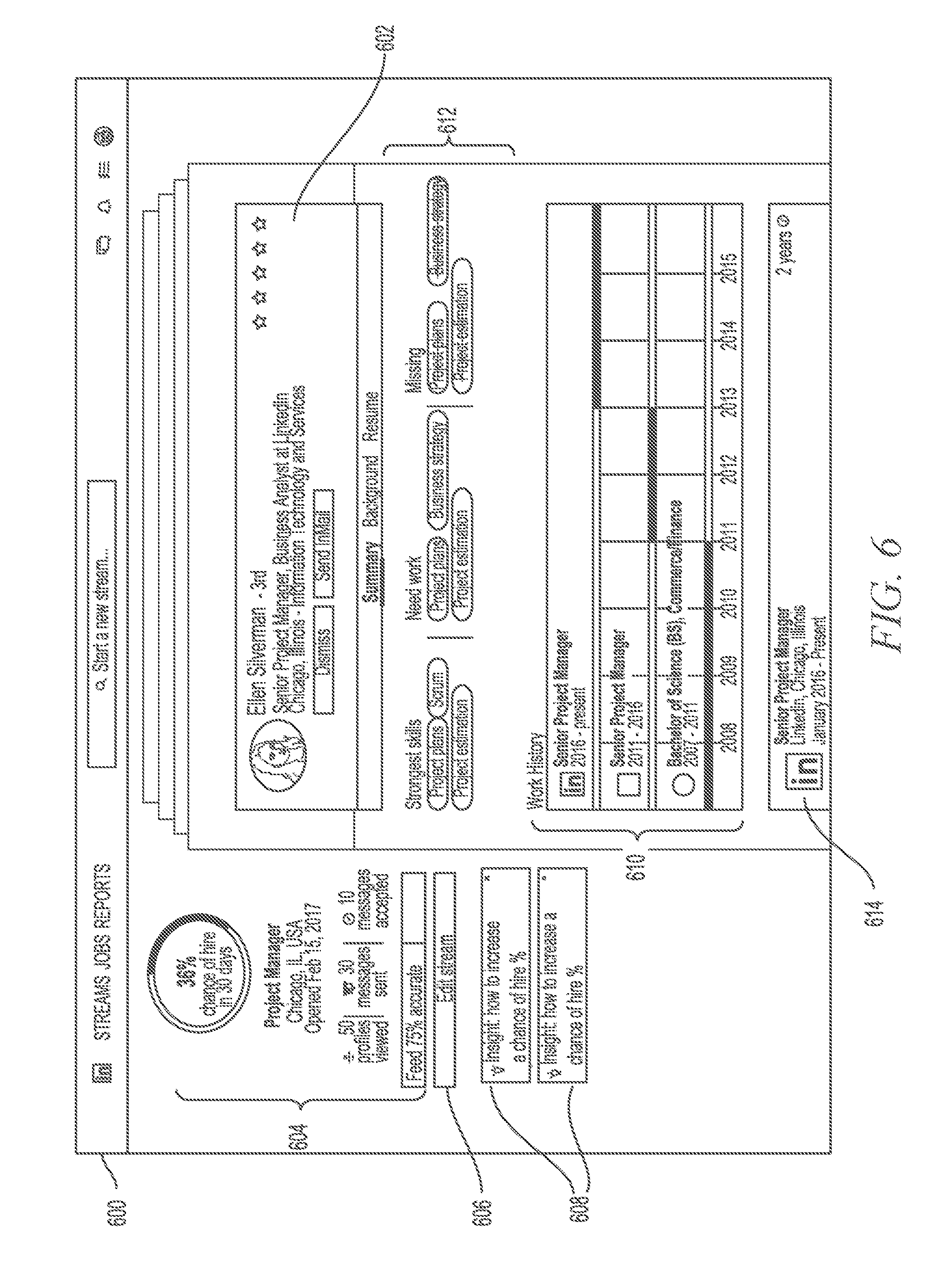

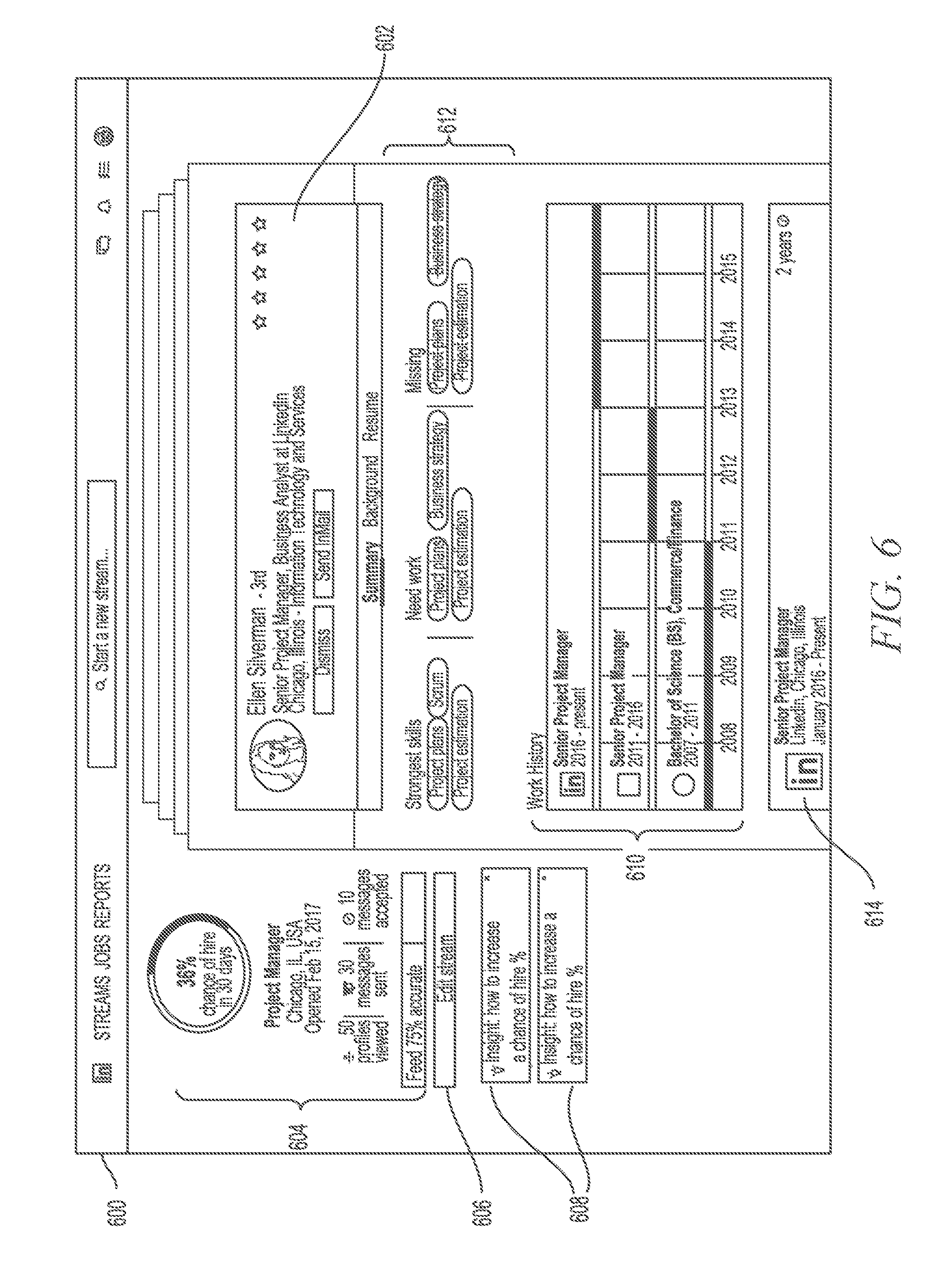

[0011] FIG. 6 is a screen capture illustrating a second screen of a user interface for editing and displaying suggested candidate streams, in accordance with an example embodiment.

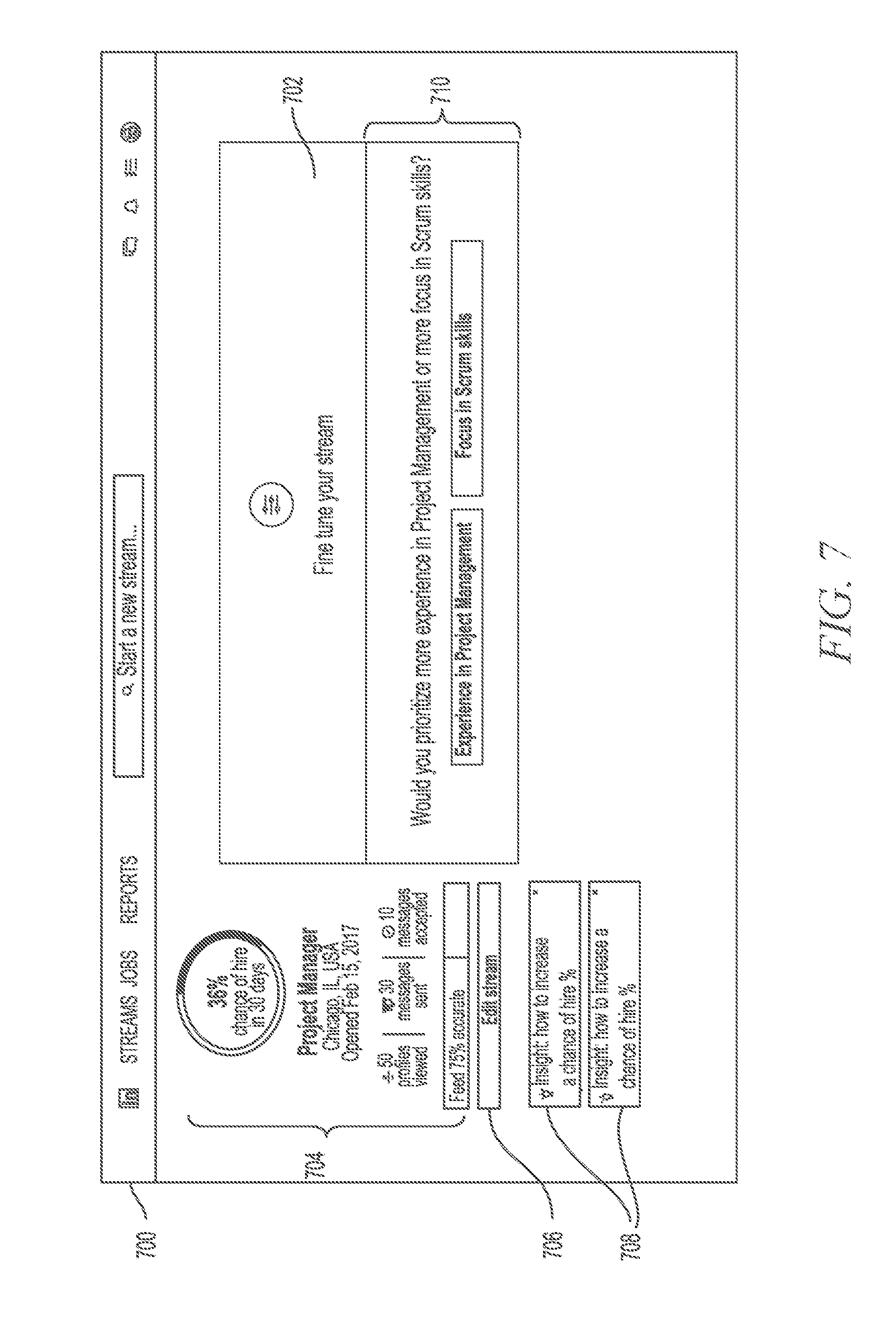

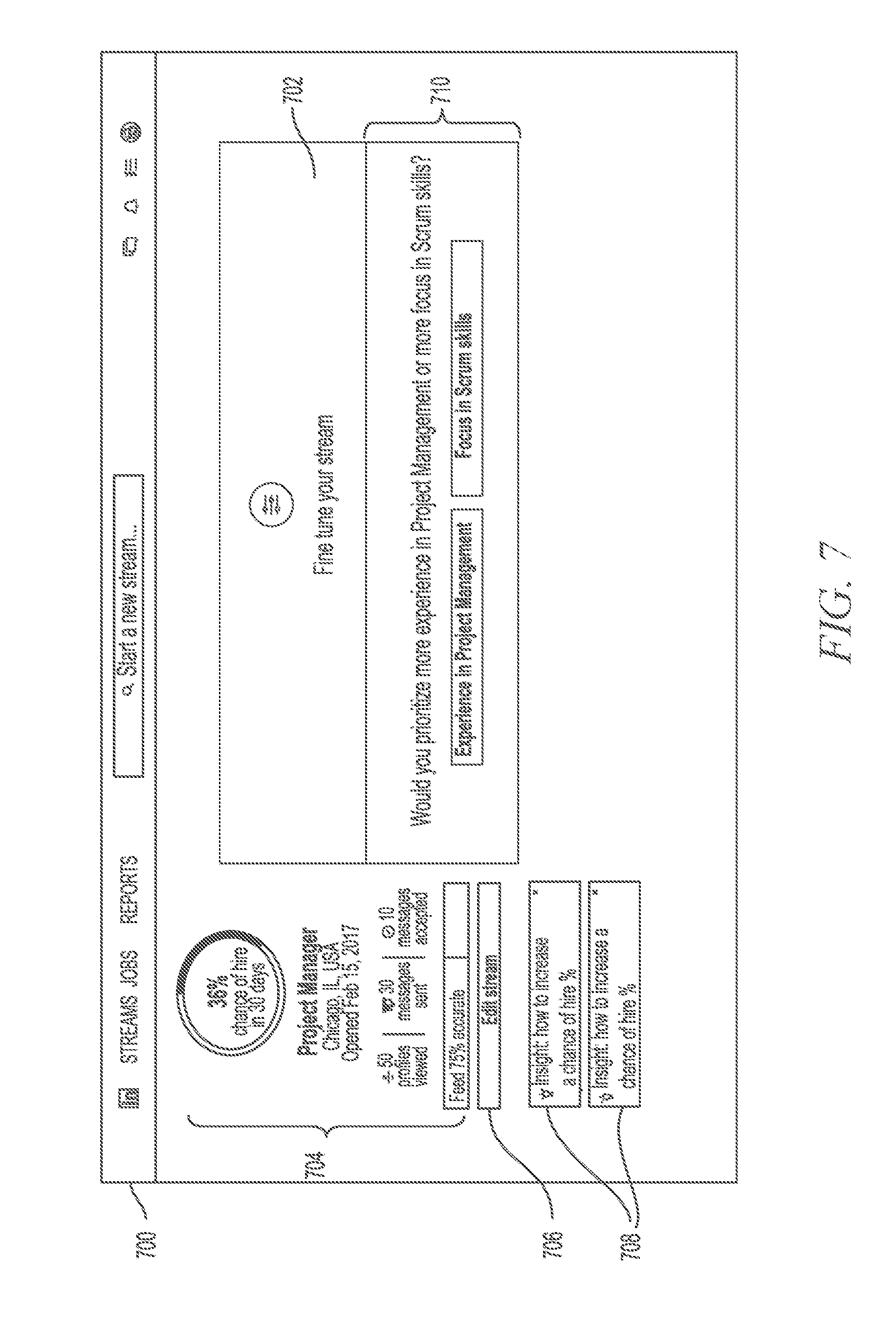

[0012] FIG. 7 is a screen capture illustrating a third screen of a user interface for editing and displaying suggested candidate streams, in accordance with an example embodiment.

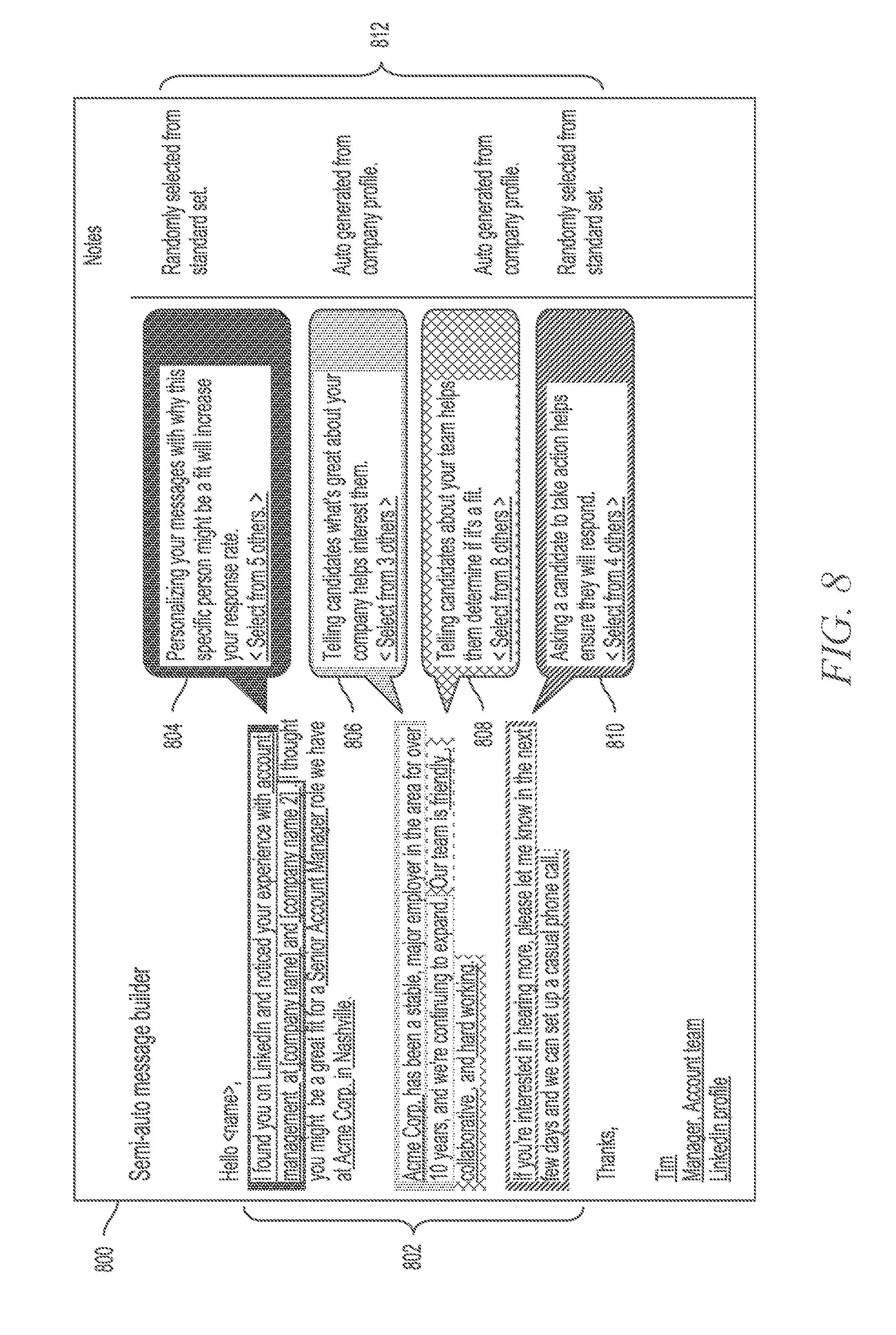

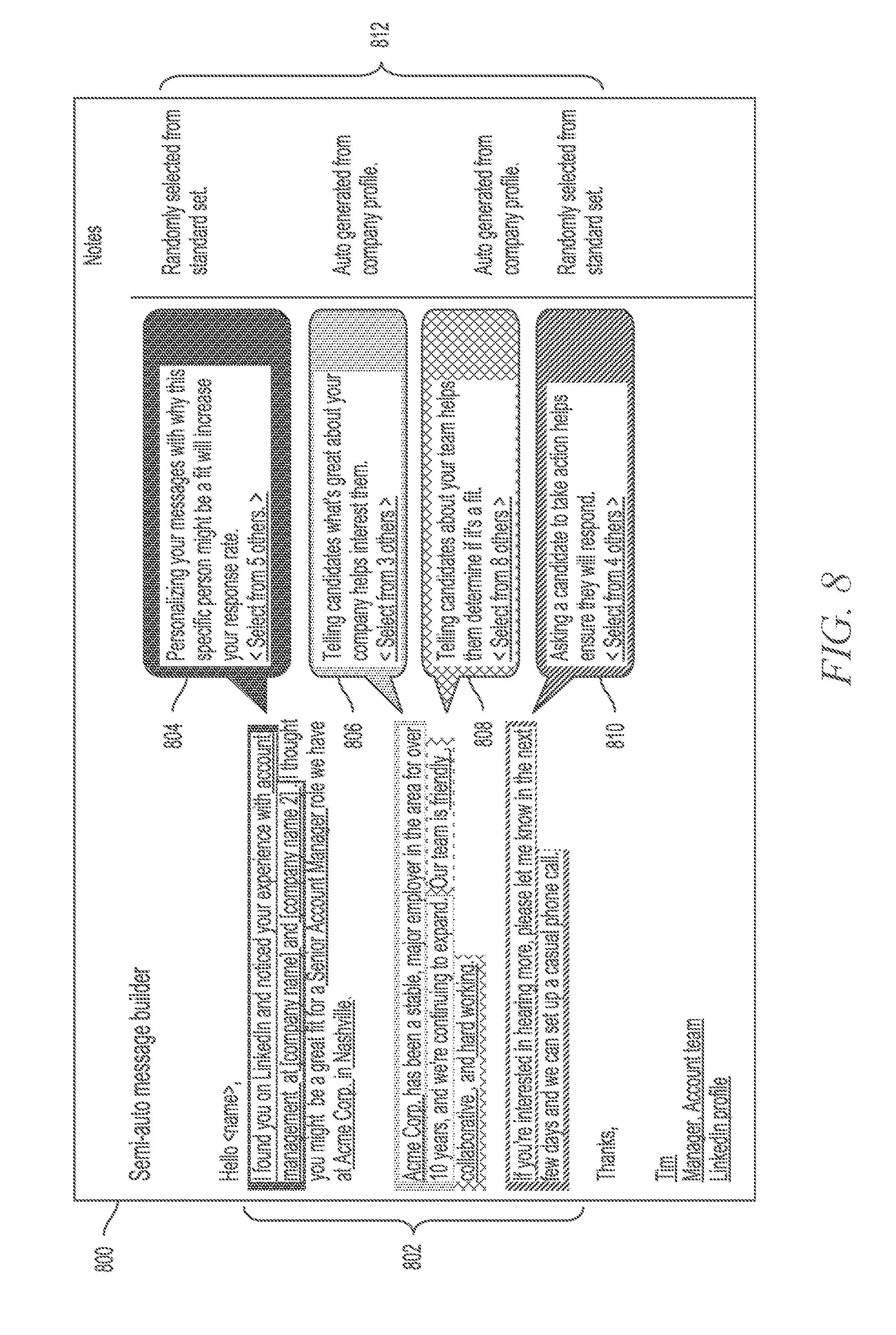

[0013] FIG. 8 is a screen capture illustrating a user interface for a semi-automatic message builder, in accordance with an example embodiment.

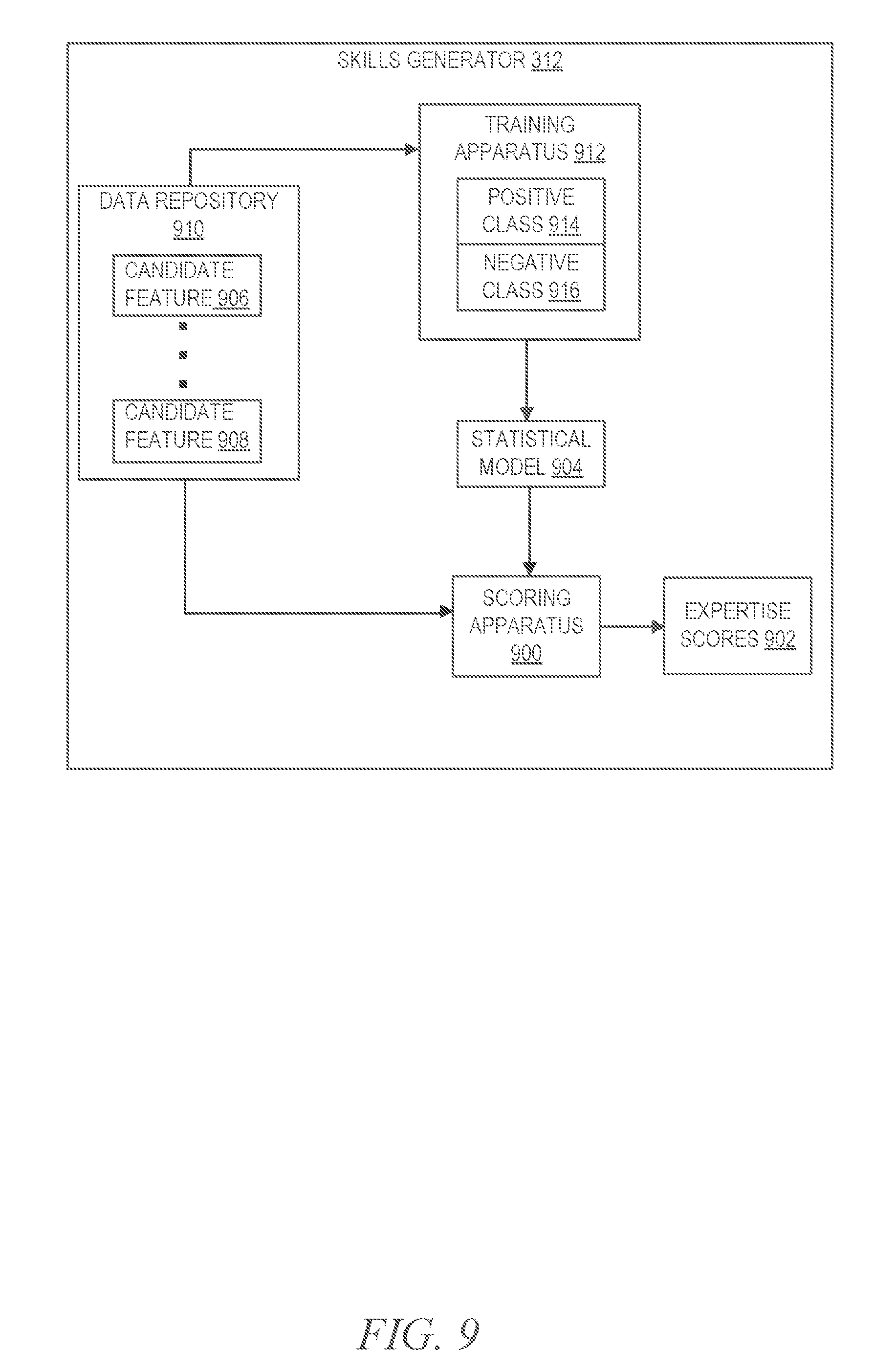

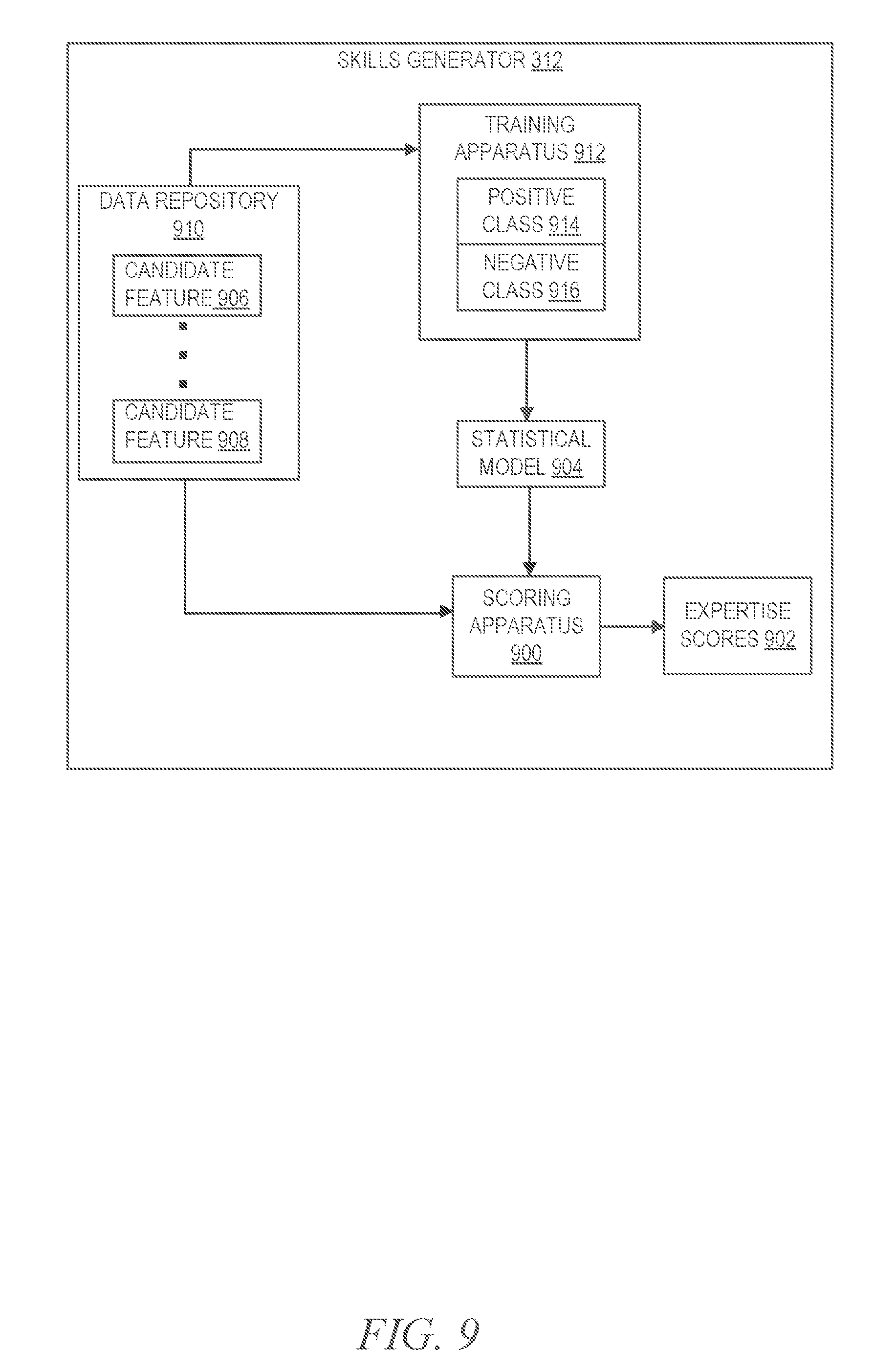

[0014] FIG. 9 is a block diagram illustrating a skills generator in more detail, in accordance with an example embodiment.

[0015] FIG. 10 is a diagram illustrating an offline process to estimate expertise scores, in accordance with another example embodiment.

[0016] FIG. 11 is a flow diagram illustrating a method for performing a suggested candidate-based search, in accordance with an example embodiment.

[0017] FIG. 12 is a flow diagram illustrating a method for soliciting and using feedback to create and modify a candidate stream, in accordance with an example embodiment.

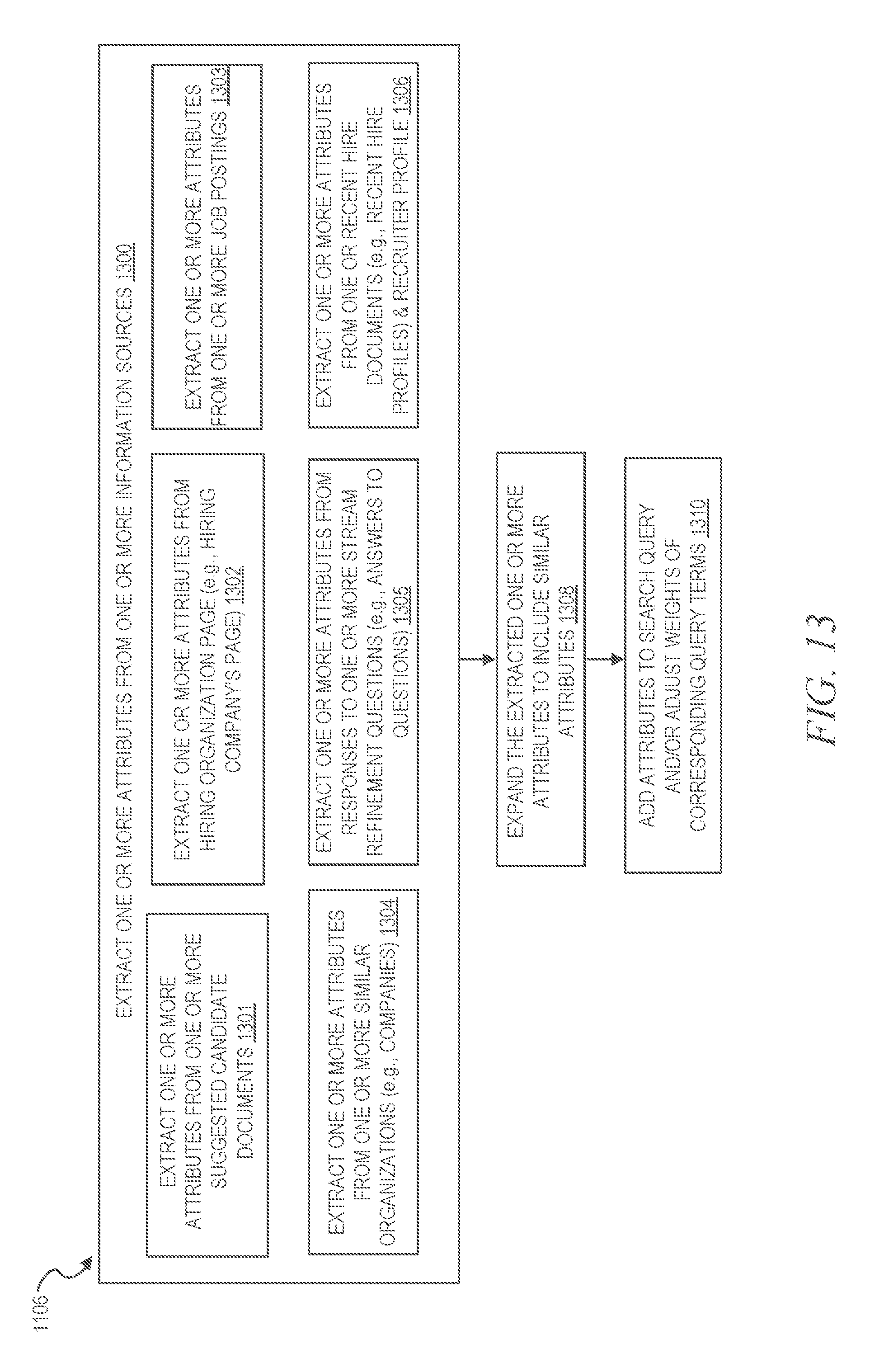

[0018] FIG. 13 is a flow diagram illustrating generating a search query based on one or more extracted attributes, in accordance with an example embodiment.

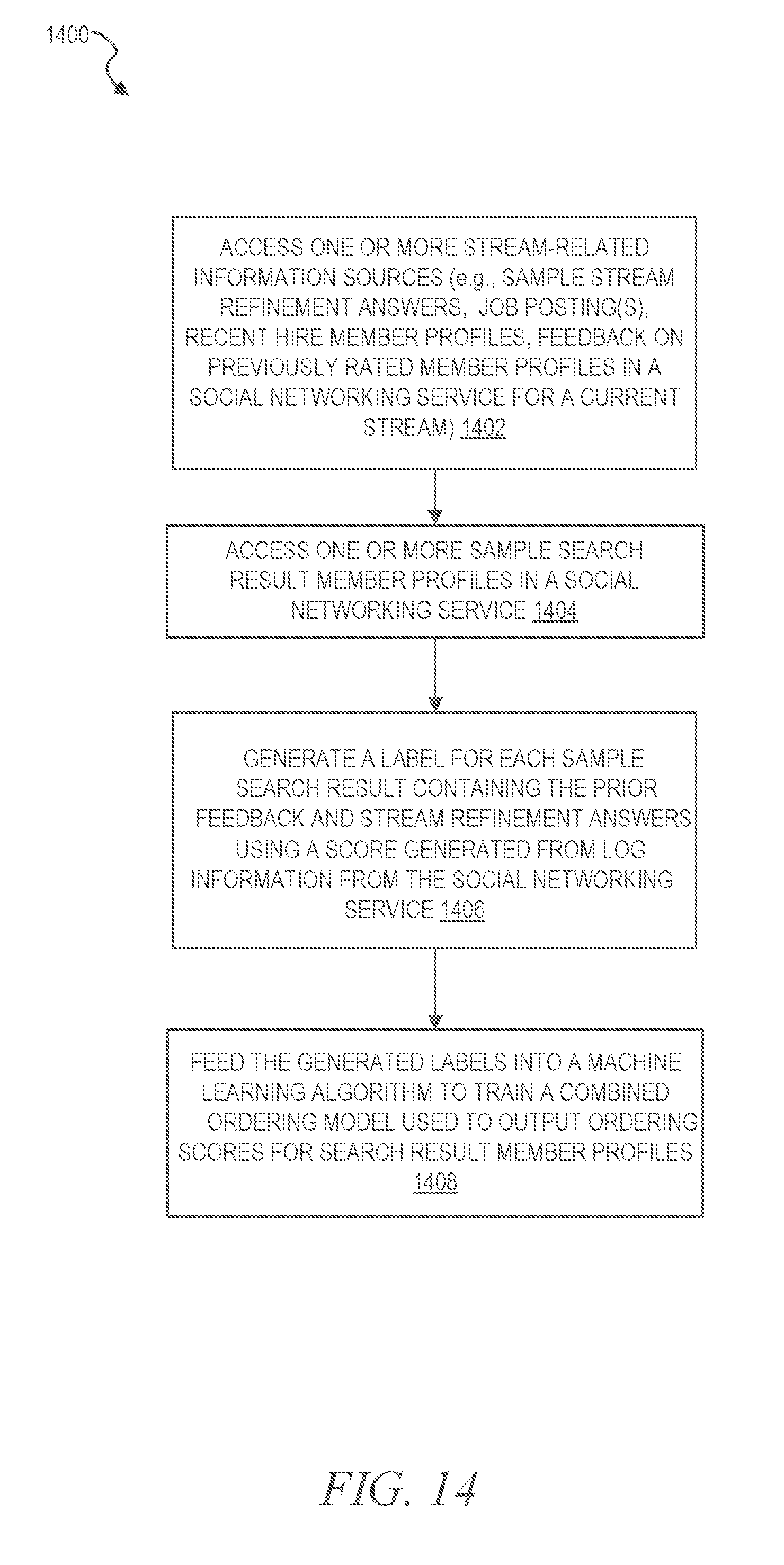

[0019] FIG. 14 is a flow diagram illustrating a method for generating labels for sample suggested candidate member profiles in accordance with an example embodiment.

[0020] FIG. 15 is a flow diagram illustrating a method of generating suggested streams in accordance with an example embodiment.

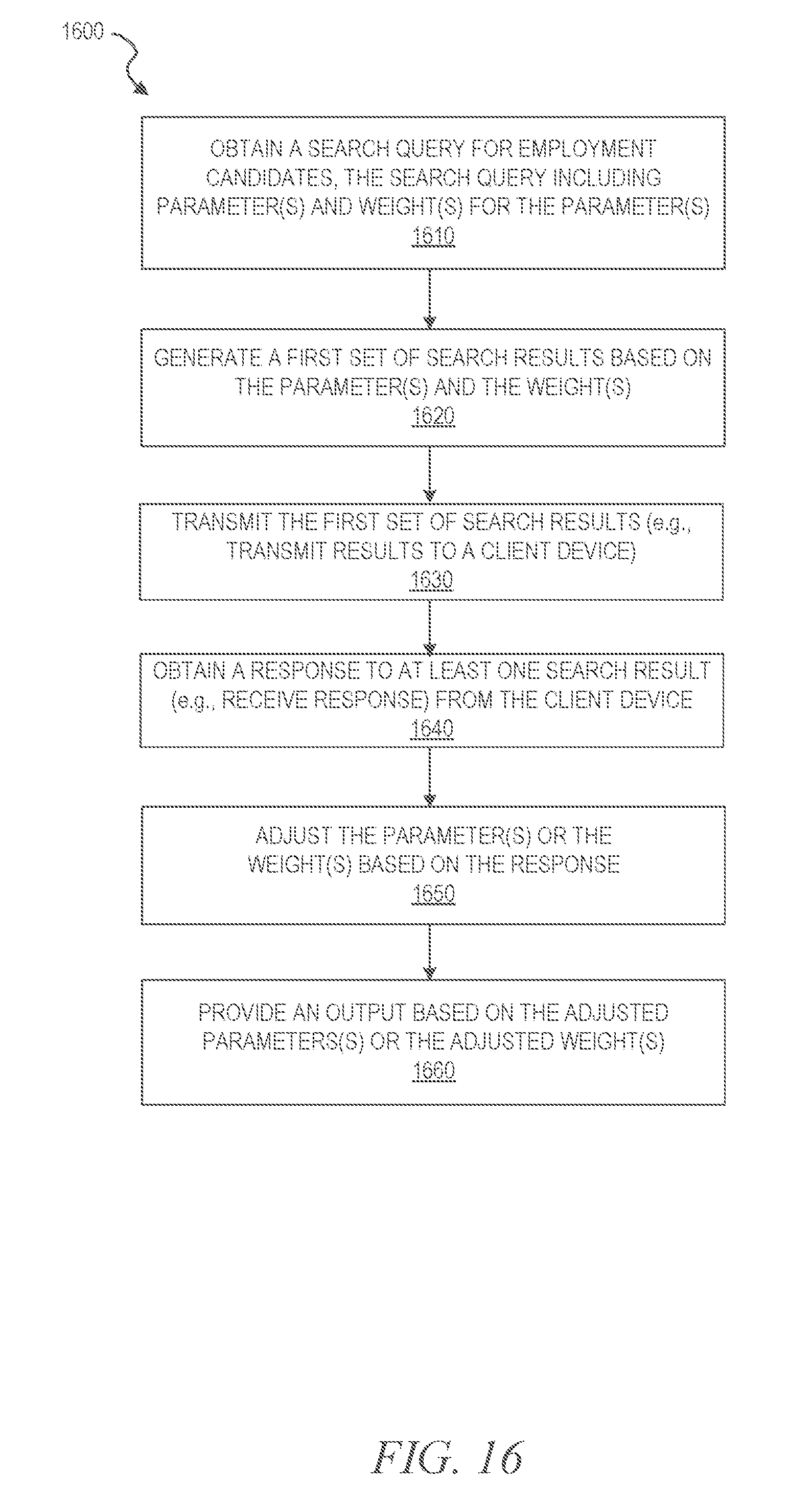

[0021] FIG. 16 is a flow chart illustrating a method for query term weighting, in accordance with an example embodiment.

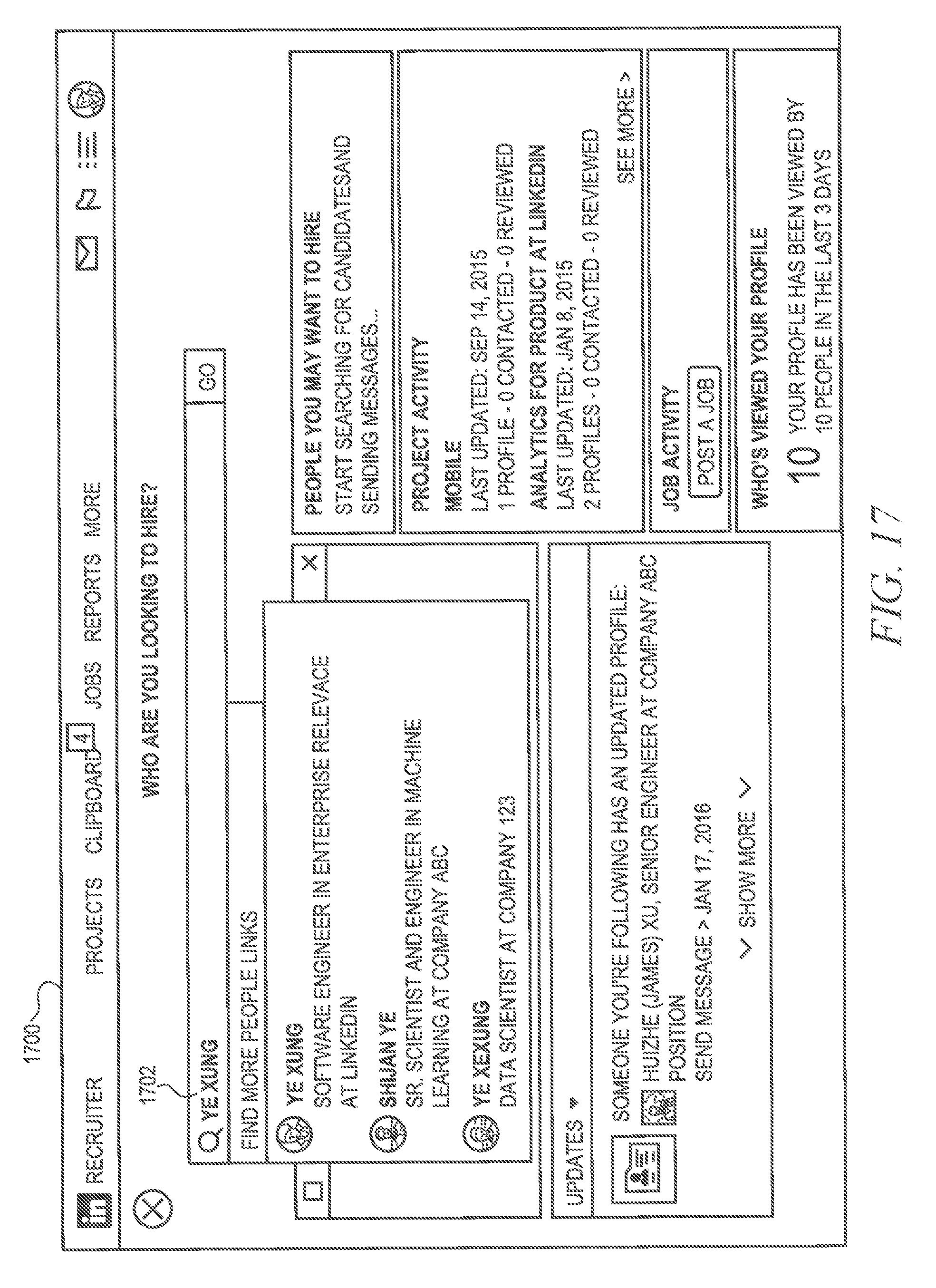

[0022] FIG. 17 is a screen capture illustrating a first screen of a user interface for performing a suggested candidate-based search, in accordance with an example embodiment.

[0023] FIG. 18 is a screen capture illustrating a second screen of the user interface for performing a stream refinement question-based search, in accordance with an example embodiment.

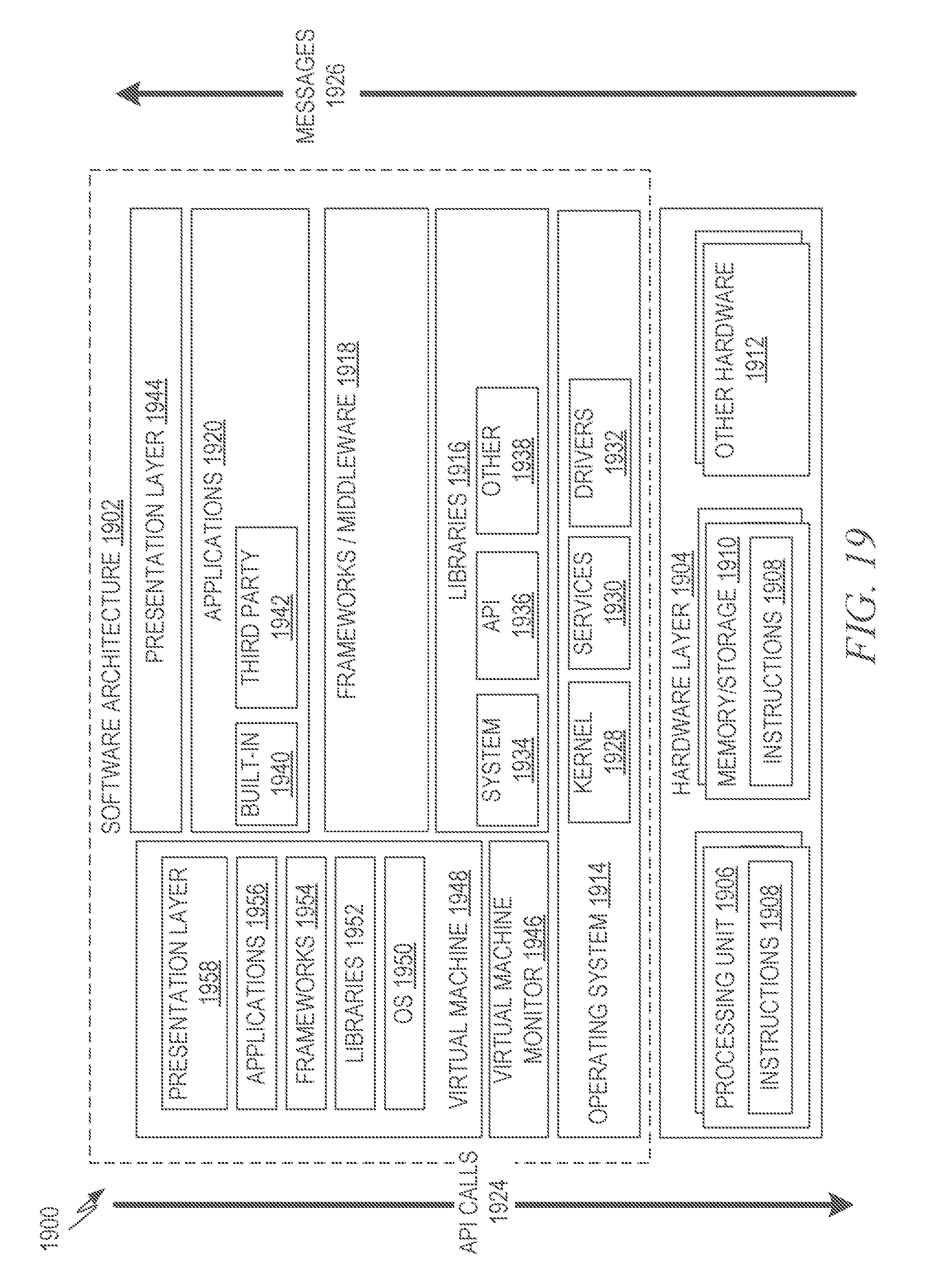

[0024] FIG. 19 is a block diagram illustrating a representative software architecture, which may be used in conjunction with various hardware architectures herein described.

[0025] FIG. 20 is a block diagram illustrating components of a machine, according to some example embodiments, able to read instructions from a machine-readable medium (e.g., a machine-readable storage medium) and perform any one or more of the methodologies discussed herein.

DETAILED DESCRIPTION

[0026] The present disclosure describes, among other things, methods, systems, and computer program products that individually provide various functionality. In the following description, for purposes of explanation, numerous specific details are set forth in order to provide a thorough understanding of the various aspects of different embodiments of the present disclosure. It will be evident, however, to one skilled in the art, that the present disclosure may be practiced without all of the specific details.

[0027] Techniques for recommending candidate search streams (i.e., suggested streams) to recruiters are disclosed herein. In an example, job titles are suggested by presenting the titles to a user of a recruiting tool (e.g., a recruiter or hiring manager). Based on a variety of features, a system can suggest a position or job title that a recruiter may want to fill. For example, a hiring manager at a small-but-growing Internet company may want to hire a head of human resources (HR). Such suggested job titles may be used to create candidate search streams comprising recommended candidates for the job titles. Some embodiments create streams of suggested candidates based on predicted positions for which a recruiter may be hiring.

[0028] In some embodiments, a role or stream may consist of three attributes: title, location, and company. To suggest a stream for the user to create, certain embodiments suggest values for these attributes. No suggestion needs to be generated for organization (e.g., company) since some embodiments may simply use the company that the hiring manager is employed by. The logic for suggesting a position (e.g., a job title or role) is described herein. The location suggestion is more straightforward than the job title or position suggestion. Some embodiments suggest a role, stream, or position for a hiring search rather than just a job title.
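As a minimal sketch of the three-attribute stream described above, the following record fills in the company from the hiring manager's employer rather than suggesting it. The class name, field names, and helper function are hypothetical and are used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateStream:
    """Hypothetical stream definition: title, location, and company."""
    title: str
    location: str
    company: str


def suggest_stream(user_company, suggested_title, suggested_location):
    """Build a suggested stream; the company is not suggested but taken
    from the organization that employs the current user."""
    return CandidateStream(
        title=suggested_title,
        location=suggested_location,
        company=user_company,
    )
```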

[0029] To suggest a position for a hiring search (e.g., a job candidate search), certain embodiments first find similar organizations and companies. Certain embodiments can accomplish this in two ways. The first is to use a factorization machine to conduct collaborative filtering. In this method, companies are deemed similar if there is a flow of human capital between the companies or related companies. The other way is to consider directly where a company's employees have worked in the past. Once some similar companies have been identified, an embodiment can consider common titles held by employees at those companies and draw suggestions (e.g., suggested job titles) from this set of titles. A goal of both methods is to suggest the most common titles, but also maintain diversity amongst the positions suggested (e.g., avoid suggesting only titles in the sales department by also suggesting positions in marketing, accounting, IT, and other departments). In order to do this, an embodiment may model each of the titles as a particle in space and run a physics simulation in which there is an attractive force driven by how often a title occurs and a repulsive force driven by how similar a title is to other titles.
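The second, direct approach (looking at where a company's employees have worked in the past, then drawing on common titles at those companies) might be sketched as follows. The input shapes, function names, and alphabetical tie-breaking are assumptions made for this illustration; the sketch does not use a factorization machine.

```python
from collections import Counter


def similar_companies_by_history(company, work_histories, top_n=3):
    """Rank past employers of the company's current employees.

    work_histories is assumed to be a list of employer lists, one per
    member, ordered oldest first, with the last entry the current employer.
    """
    counts = Counter()
    for history in work_histories:
        if history and history[-1] == company:
            # Count each past employer once per member.
            counts.update(set(history[:-1]) - {company})
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:top_n]]


def common_titles(companies, titles_by_company, top_n=5):
    """Most frequent titles held at the given companies."""
    counts = Counter()
    for name in companies:
        counts.update(titles_by_company.get(name, []))
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [title for title, _ in ranked[:top_n]]
```

The resulting titles form only the candidate set; the diversity step described in the paragraph above would still be applied before anything is shown to the user.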

[0030] Example techniques for using such a physics simulation and a factorization machine are described in U.S. patent application Ser. No. 15/827,350, entitled "MACHINE LEARNING TO PREDICT NUMERICAL OUTCOMES IN A MATRIX-DEFINED PROBLEM SPACE", filed on Nov. 30, 2017, which is incorporated herein by reference in its entirety. The referenced document describes techniques for using a physics simulation to suggest answers for areas of expertise stream refinement questions. The referenced document also describes factorization machines (FMs) and their extension, field-aware factorization machines (FFMs), which have a broad range of applications for machine learning tasks including regression, classification, collaborative filtering, search ranking, and recommendation. The referenced document further describes a scalable implementation of the FFM learning model that runs on a standard Spark/Hadoop cluster. The referenced document also describes a prediction algorithm that runs in linear time for models with higher order interactions of rank three or greater, and further describes the basic components of the FFM, including feature engineering and negative sampling, sparse matrix transposition, a training data and model co-partitioning strategy, and a computational graph-inspired training algorithm. In the referenced document, one distributed training algorithm and system optimizations enable some aspects to train FFM models at high speed and scale on commodity hardware using off-the-shelf big data processing frameworks such as Hadoop and Spark.

[0031] The above-referenced document additionally describes Factorization machines (FMs) that model interactions between features (e.g., attributes) using factorized parameters. Field-aware factorization machines (FFMs) are a subset of factorization machines that are used, for example, in recommender systems, including job candidate recommendation systems. FMs and FFMs may be used in machine learning tasks, such as regression, classification, collaborative filtering, search ranking, and recommendation. Some implementations of the technology described herein leverage FFMs to provide a prediction algorithm that runs in linear time. The training algorithm and system optimizations described herein enable training of the FFM models at high speed and at a large scale. Thus, FFMs based on large datasets and large parameter spaces may be trained. In some embodiments, FMs may be a model class that combines the advantages of Support Vector Machines (SVMs) with factorization models. Like SVMs, FMs are a general predictor working with any real valued feature vector. In contrast to SVMs, FMs model all interactions between features using factorized parameters. Thus, they are able to estimate interactions even in problems with huge sparsity (like recommender systems) where SVMs fail. FMs are a class of models which generalize Tensor Factorization models and polynomial kernel regression models. FMs uniquely combine the generality and expressive power of prior approaches (e.g., SVMs) with the ability to learn from sparse datasets using factorization (e.g., SVD++). FFMs extend FMs to also model effects of different interaction types, defined by fields.
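For illustration, the widely used second-order FM formulation scores a feature vector x as a bias, plus a linear term, plus a sum over feature pairs of the inner product of their latent vectors times the two feature values. The sketch below computes that score two ways: by enumerating pairs, and with the well-known algebraic rearrangement that is linear in the number of features. It is a plain FM, not an FFM, and is not asserted to be the algorithm of the referenced application; all names are hypothetical.

```python
def fm_predict_naive(x, w0, w, V):
    """Second-order FM score by enumerating every feature pair."""
    n = len(x)
    y = w0 + sum(w[i] * x[i] for i in range(n))
    for i in range(n):
        for j in range(i + 1, n):
            # Pairwise weight is the dot product of the two latent vectors.
            dot = sum(vi * vj for vi, vj in zip(V[i], V[j]))
            y += dot * x[i] * x[j]
    return y


def fm_predict_linear(x, w0, w, V):
    """Same score in O(n * k) time, where k is the latent dimension.

    Uses the identity: sum over pairs i<j of <v_i, v_j> x_i x_j equals
    0.5 * sum over f of ((sum_i v_if x_i)^2 - sum_i (v_if x_i)^2).
    """
    n = len(x)
    k = len(V[0])
    y = w0 + sum(w[i] * x[i] for i in range(n))
    for f in range(k):
        s = sum(V[i][f] * x[i] for i in range(n))
        s_sq = sum((V[i][f] * x[i]) ** 2 for i in range(n))
        y += 0.5 * (s * s - s_sq)
    return y
```

Because the pairwise weights are factorized into per-feature latent vectors, an interaction between two features can be estimated even when that exact pair never co-occurs in the training data, which is the sparsity advantage noted above.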

[0032] For a large professional networking or employee-finding service, a production-level machine learning system may have a number of operational goals, such as scale. Large-scale distributed systems are needed to adequately process data of millions of professional network members. The referenced document describes how the FFM model extends the FM model with information about groups (called fields) of features. In some embodiments, a latent space with more than two dimensions (e.g., three, four, or five dimensions) may be used. To generate this mapping, latent vectors learned by a factorization machine trained on examples of <Current Title, Skills> tuples are extracted from a data store. In some examples, the data store stores member profiles from a professional networking service (see, e.g., the profile database 218 of FIG. 2). According to some examples described in the referenced document, a server extracts training examples from the data store, where each training example includes a <current title, skills> tuple. The server trains a factorization machine, and latent vectors learned by the factorization machine may be viewed as a mapping of titles to the latent space and a mapping of skills to the latent space. In other words, for each title, there is a vector that numerically represents the title. For each skill, there is a vector that numerically represents the skill.
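Once each title has a latent vector, related titles can be found by comparing vectors. The sketch below uses cosine similarity, which is an assumption of this illustration rather than a measure named in the disclosure, and the three-dimensional vectors are made-up values rather than learned ones.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def nearest_titles(title, title_vectors, top_n=2):
    """Titles whose latent vectors are closest to the given title's vector."""
    query = title_vectors[title]
    scored = [
        (cosine(query, vec), other)
        for other, vec in title_vectors.items()
        if other != title
    ]
    # Highest similarity first; ties broken alphabetically.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [other for _, other in scored[:top_n]]


# Hypothetical latent vectors, for illustration only.
EXAMPLE_VECTORS = {
    "Software Engineer": [0.9, 0.1, 0.0],
    "Software Developer": [0.85, 0.15, 0.05],
    "Accountant": [0.0, 0.2, 0.95],
}
```

The same lookup could be applied to skill vectors, since the factorization machine maps titles and skills into the same latent space.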

[0033] By using titles that similar companies have hired people for, embodiments can produce a list of suggested titles. Then, the physics simulation discussed above and described in the above-referenced document can be applied to produce the most important titles (e.g., the most needed titles) while also ensuring that the few options of titles presented are different. In this way, embodiments avoid the issue of presenting closely-related or synonymous titles such as, for example, "Software Engineer" and "Software Developer" at the same time. That is, embodiments improve the efficiency of suggesting titles to a user by filtering out titles that are semantically identical or duplicative.
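The physics simulation itself is described in the referenced document and is not reproduced here. As a simplified stand-in that captures the same trade-off, the sketch below greedily picks titles, rewarding frequency (the attractive force) and penalizing similarity to titles already chosen (the repulsive force). The greedy procedure, the `repulsion` weight, and the input shapes are all assumptions of this illustration.

```python
def pick_diverse_titles(frequency, similarity, k=3, repulsion=1.0):
    """Greedy stand-in for the frequency/similarity trade-off.

    frequency:  title -> relative frequency, assumed scaled to [0, 1]
    similarity: frozenset({title_a, title_b}) -> similarity in [0, 1];
                missing pairs are treated as dissimilar (0.0)
    """
    chosen = []
    remaining = set(frequency)
    while remaining and len(chosen) < k:

        def score(title):
            # Penalize by the strongest similarity to any chosen title.
            push = max(
                (similarity.get(frozenset((title, c)), 0.0) for c in chosen),
                default=0.0,
            )
            return frequency[title] - repulsion * push

        # Iterate in sorted order so ties resolve deterministically.
        best = max(sorted(remaining), key=score)
        chosen.append(best)
        remaining.remove(best)
    return chosen
```

With a high similarity between "Software Engineer" and "Software Developer", only one of the two is likely to appear among the first few suggestions, which is the behavior the paragraph above describes.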

[0034] In addition to what is described in the referenced document, certain embodiments include deciding to ask a stream refinement question to improve a candidate stream and incorporating the answer into the relevance for which candidate is suggested next in the stream.

[0035] Embodiments can suggest positions based on a hiring organization's size, location, and existing titles--including titles that differ from each other, but have overlapping areas of expertise. Such embodiments can predict feature values using a factorization machine that is configured to find a related entity for a given entity. For instance, a factorization machine may be used to find similar companies for a given hiring organization. Embodiments suggest positions that may be different from each other. For instance, some embodiments suggest locations where an organization should hire based on where the organization has offices and where similar organizations have offices, apart from specific job titles. Also, for example, embodiments suggest broader positions beyond just job titles related to one area of expertise. According to such examples, in addition to suggesting software-related job titles such as software engineer, software developer and related or synonymous titles, a system can additionally suggest non-software positions based on accessing title data from similar companies and different, non-software groups and divisions at the hiring company.

[0036] Some embodiments create streams of suggested candidates (i.e., suggested streams) based on predicted positions for which a recruiter may be hiring. In certain embodiments, positions or job titles may be predicted based on criteria, such as, for example, past hiring activity, organization (e.g., company) size, a currently logged-in user's job title (i.e., the title of a current user of a recruiting tool), competitors' hiring activity, industry hiring activity, recent candidate searches, recent member profiles viewed, recent member profiles prospected, and job searches by candidates who have engaged with the user's company page (e.g., the organization page or company page of the currently logged-in user).

[0037] To suggest a location, an embodiment first generates a list of all the places a company has employees (generally a few offices, but may include knowledge such as whether this company has remote workers). Then, the embodiment can use simple rule-based logic. The embodiment can utilize the language of the selected title to determine which office locations are in countries with that language. Then, the embodiment can display a small list of top suggestions to the user. This list can be sorted according to number of people in the corresponding department in each office (e.g., if this job is for an Accountant and most accounting staff are in Los Angeles then suggest Los Angeles first). If the department is small or this is the first hire in the department, an embodiment can use office size instead (the most likely place to hire a new employee would be the headquarters).
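The rule-based logic above can be sketched as follows. The `Office` record, the `min_dept_size` threshold that decides when a department counts as small, and the alphabetical tie-breaking are hypothetical choices made for this illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Office:
    """Hypothetical office record."""
    city: str
    languages: set   # languages of the country the office is in
    headcount: int   # total employees at this office
    dept_headcount: dict = field(default_factory=dict)  # department -> count


def suggest_locations(offices, title_language, department,
                      top_n=3, min_dept_size=1):
    """Rank offices for a new hire using the rules described above."""
    # Rule 1: keep offices in countries that use the title's language.
    eligible = [o for o in offices if title_language in o.languages]

    # Rule 2: sort by department size; fall back to office size when the
    # department is small or this would be its first hire.
    dept_total = sum(o.dept_headcount.get(department, 0) for o in eligible)
    if dept_total >= min_dept_size:
        def size(o):
            return o.dept_headcount.get(department, 0)
    else:
        def size(o):
            return o.headcount

    ranked = sorted(eligible, key=lambda o: (-size(o), o.city))
    return [o.city for o in ranked[:top_n]]
```

Under this sketch, an Accountant role at a company whose accounting staff sit mostly in Los Angeles ranks Los Angeles first, while a role in a department with no existing staff ranks the largest office first.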

[0038] According to an embodiment, a system learns attributes of recent hires as compared to the rest of a population of candidates. In certain examples, the attributes can include a combination of title and location (e.g., a candidate's job title and geographic location). The geographic location can be, for example, a metropolitan area, such as a city, a county, a town, a province, or any other municipality. Also, for example, the geographic location may be a postal code, a larger area such as a country, or may specify that the candidate can be a remote worker.

[0039] Techniques for returning candidates to recruiters based on recruiter feedback are disclosed herein. In an example, candidate feedback is received by presenting a stream of candidates to a user of a recruiting tool (e.g., a recruiter or hiring manager). Based on recruiter feedback, a system can revise the stream to include additional candidates and to filter out other candidates. For instance, based on explicit feedback such as, for example, a user rating (e.g., acceptance, deferral, or rejection), a user-specified job title, and a user-specified location, an intelligent matching system can return potential candidates to a user of the system (e.g., a recruiter or hiring manager). Also, for example, based on implicit user feedback, such as similar company matching, user dwell time on a candidate, candidate member profile sections viewed, and a number of revisits to a saved candidate profile, the intelligent matching system can revise a stream of suggested candidates that are returned to the user.

[0040] An example system recommends potential candidates to users, such as, for example, users of a recruiting tool. The system stores the employment history (titles and positions held with dates and descriptions), location, educational history, industry, and skills of members of a social networking service. The system uses explicit (specified job title, location, positively-rated candidates, etc.) and implicit user input (features of positively-rated candidates, similar company/organization matching, data about past hiring activity, etc.) to return potential candidates to a user (e.g., a hiring manager or a recruiter).

[0041] Techniques for query inference based on explicit (specified job title, location, etc.) and implicit user feedback (features of positively rated candidates, similar company matching, etc.) are also disclosed herein. In an example, a system returns potential, suggested candidates as a candidate stream based on executing an inferred query generated by a query builder. In some embodiments, a candidate stream is presented to a user (e.g., a recruiter) as a series of potential candidates which the user may then rate as a good fit or not a good fit (e.g., explicit feedback). Based on this explicit feedback, the user may then be shown different candidates or asked for further input on the stream.

[0042] Example embodiments provide systems and methods for using feedback to create and modify a search query, where the search query is a candidate query in an intelligent matching context. According to these embodiments, intelligent matching allows a user, such as, for example, a recruiter or hiring manager, to create a stream from a minimal set of attributes. As used herein, in certain embodiments, the terms `intelligent matches` and `intelligent matching` refer to systems and methods that offer intelligent candidate suggestions to users such as, for example, recruiters, hiring managers, and small business owners. For example, a recommendation system offers such intelligent candidate suggestions while accounting for users' personal preferences and immediate interactions with the recommendation system. Intelligent matching enables such users to review and select relevant candidates from a candidate stream without having to navigate or review a large list of irrelevant candidates. For instance, intelligent matching can provide a user with intelligent suggestions automatically selected from a candidate stream or flow of candidates for a position to be filled without requiring the user to manually move through a list of thousands of candidates. In the intelligent matching context, such a candidate stream can be created based on minimal initial contributions or inputs from users such as small business owners and hiring managers. For example, embodiments may use a recruiter tool to build a candidate stream with the only required input into the tool being a job title and location.

[0043] Instead of requiring large amounts of explicit user input, intelligent matching techniques infer query criteria with attributes and information derived from the user's company or organization, job titles, positions, job descriptions, other companies or organizations in similar industries, data about recent hires, and the like. Among many attributes or factors that can contribute to the criteria for including members of a social networking service in a stream of candidates, embodiments use a standardized job title and location to start a stream, which may be suggested to the user, as well as the user's current company. In certain embodiments, the social networking service is an online professional network. As a user is fed a stream of candidates, the user can assess the candidates' fit for the role. This interaction information can be fed back into a relevance engine that includes logic for determining which candidates end up in a stream. In this way, intelligent matching techniques continue to improve the stream.

[0044] In an example embodiment, a system is provided whereby candidate features for a query are evaluated (e.g., weighed and re-weighed) based on a mixture of explicit and implicit feedback. The feedback can include user feedback for candidates in a given stream. The user can be, for example, a recruiter or hiring manager reviewing candidates in a stream of candidates presented to the user. Explicit feedback can include acceptance, deferral, or rejection of a presented candidate by a user (e.g., recruiter feedback). Explicit feedback can also include a user's interest or disinterest in member profile attributes (i.e., urns) and may be used to identify ranking and recall limitations of previously displayed member profiles and to devise reformulation schemes for candidate query facets and query term weighting (QTW). For instance, starting with a set of suggested candidates for a candidate search (i.e., suggested candidates for a given title, location) specified by the stream, an embodiment represents a candidate profile as a bag of urns. In this example, an urn is an identifier for a standardized value of an entity type associated with a member profile, where an entity type represents an attribute or feature of the member's profile (e.g., skills, education, experience, current and past organizations). For example, member profiles can be represented by urns, where the urns can include urns for skills (e.g., C++ programming experience) and other urns for company or organization names (e.g., names of current and former employers). Implicit feedback can include measured metrics such as dwell time, profile sections viewed, and number of revisits to a saved candidate profile.

[0045] In certain embodiments, a candidate stream collects two types of explicit feedback. The first type of explicit feedback includes signals from a user such as acceptance, deferral, or rejection of a candidate shown or presented to the user (e.g., a recruiter). The second type of explicit feedback includes member urns that are similar or dissimilar to urns of a desired hire. If the explicit feedback includes many negative responses, an embodiment can prompt the user to identify what is disliked about the returned profiles. An embodiment may also allow the user to highlight or select sections of the profile that were particularly liked or disliked in order to provide targeted explicit feedback at any time.

[0046] In some embodiments, implicit feedback can be measured in part by using log data from a recruiting tool to measure an amount of items in a member profile a user has reviewed or seen (e.g., a number of profile sections viewed). In an example, such log data from a recruiting product can be used to determine if a user is interested in a particular skill set, seniority or tenure in a position, seniority or tenure at an organization, and other implicit feedback that can be determined from log data. In another example, log data from an intelligent matching product may be used to determine if a user of the intelligent matching product is interested in a particular skill set, seniority or tenure in a position, seniority or tenure at an organization, and other implicit feedback that can be determined from intelligent matching log data.

[0047] Embodiments incorporate the above-noted types of explicit and implicit feedback signals into a single weighted scheme of signals. In additional or alternative embodiments, the explicit member urns feedback may be used to identify ranking and recall limitations of previously displayed member profiles and to devise reformulation schemes for query facets and query term weighting (QTW). The approach does not require displaying a query for editing by the user. Instead, the user's query can be tuned automatically behind the scenes. For example, query facets and QTW may be used to automatically reformulate and tune an inferred query based on a mixture of explicit and implicit feedback as the user is looking for candidates, and reviews, selects, defers, or rejects candidates in a candidate stream.
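
As one hedged illustration of a single weighted scheme of signals, the sketch below folds an explicit rating and three implicit metrics into one number. The numeric values for each rating, the normalization caps (60 seconds, 5 sections, 3 revisits), and the mixing weights are all hypothetical choices made for the example; the embodiments do not specify them.

```python
# Assumed numeric encoding of the explicit rating.
EXPLICIT_VALUES = {"accept": 1.0, "defer": 0.0, "reject": -1.0}


def feedback_signal(rating, dwell_seconds, sections_viewed, revisits,
                    weights=(0.6, 0.2, 0.1, 0.1)):
    """Combine explicit and implicit feedback into one weighted signal."""
    # Scale each implicit metric into [0, 1] using assumed caps.
    dwell = min(dwell_seconds / 60.0, 1.0)
    sections = min(sections_viewed / 5.0, 1.0)
    visits = min(revisits / 3.0, 1.0)
    w_rating, w_dwell, w_sections, w_visits = weights
    return (w_rating * EXPLICIT_VALUES[rating]
            + w_dwell * dwell
            + w_sections * sections
            + w_visits * visits)


strong_interest = feedback_signal("accept", dwell_seconds=90,
                                  sections_viewed=5, revisits=3)
quick_reject = feedback_signal("reject", dwell_seconds=0,
                               sections_viewed=0, revisits=0)
```

A signal of this kind could then drive the automatic query tuning described above without exposing the query to the user.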

[0048] The above-noted types of explicit and implicit feedback can be used to promote and demote candidates by re-weighting candidate features (e.g., inferred query criteria). That is, values assigned to weights corresponding to candidate features (e.g., desired hire features) can be re-calculated or re-weighted. Some feedback can conflict with other feedback. For instance, seniority feedback can indicate that a user wants a candidate with long seniority at a start-up company, but relatively shorter seniority at a larger organization (e.g., a Fortune 500 company).

[0049] In an example, a system returns potential, suggested candidates as a candidate stream based on executing an inferred query generated by a query builder. In some embodiments, a candidate stream is presented to a user (e.g., a recruiter) as a series of potential candidates which the user may then rate as a good fit or not a good fit (e.g., explicit feedback). Based on this explicit feedback, the user may then be shown different candidates or asked for further input on the stream.

[0050] A user can use a recruiting tool to move or navigate through a candidate stream. For example, as a stream engine returns each candidate to the user, the user can provide feedback to rate the candidate in one of a discrete number of ways. Non-limiting examples of the feedback include acceptance (e.g., Yes--interested in candidate), deferral (e.g., maybe later), and rejection (e.g., No--not interested).

[0051] Techniques for soliciting and using feedback to modify a candidate stream are disclosed. In some embodiments, a user (e.g., a hiring manager or recruiter) can be presented with a series of potential, suggested candidates which the user provides feedback on (e.g., by rating candidates as a good fit or not a good fit). Based on this feedback, an embodiment shows the user different candidates or prompts the user for further input on the stream.

[0052] Some embodiments present a user (e.g., a hiring manager or recruiter) with a series of potential candidates (e.g., a candidate stream) in order to solicit feedback. In certain embodiments, the feedback includes user ratings of the candidates. For example, the user may rate candidates in the stream as a good fit or not a good fit for a position the user is seeking to fill. Also, for example, the user may rate candidates in the stream as a good fit or not a good fit for an organization (e.g., a company) the user is recruiting on behalf of. Based on such feedback, the user may be shown different candidates or additional feedback may be solicited. For instance, when the user repeatedly assigns negative ratings to candidates in the stream (e.g., by rejecting them or rating them as not a good fit), the user may be asked for further input on the stream in order to improve the stream. In an example, the user may be asked which attribute of the last rated candidate was displeasing (e.g., location, title, company, seniority, industry, and the like).

[0053] Certain embodiments return candidates to users such as recruiters or hiring managers based on solicited feedback. In an example, user feedback is solicited and received by presenting a stream of candidates to a user of a recruiting tool (e.g., an intelligent matching tool or product). Based on received feedback, a system can revise the stream to include additional candidates and to filter out other candidates. For instance, based on explicit feedback such as, for example, a user rating (e.g., acceptance, deferral, or rejection), a user-specified job title, and a user-specified location, an intelligent matching system can return potential candidates to a user of the system (e.g., a recruiter or hiring manager). Also, for example, based on implicit user feedback, such as user dwell time on a candidate, candidate member profile sections viewed, and a number of revisits to a saved candidate profile, the intelligent matching system can revise a stream of suggested candidates that are returned to the user.

[0054] Certain embodiments include a semi-automated message builder or generator. For instance, once a user chooses to reach out to a candidate, the semi-automated message builder may semi-automatically generate a message that is optimized for a high response rate and personalized based on the recipient's member profile information, the user's profile information, and the position being hired for. For example, techniques for automatically generating a message on behalf of a recruiter or job applicant are disclosed. In one example, a message generated for a recruiter can include information about the recruiter, job posting, and organization (e.g., company) that is relevant to the job candidate. In another example, a message generated for the job applicant may include information about the job applicant (e.g., job experience, skills, connections, education, industry, certifications, and other candidate-based features) that is relevant for a specific job posting.

[0055] Some embodiments include a message alert system that can send alerts to notify a user (e.g., a hiring manager) of events that may spur the user to take actions in the recruiting tool. For example, the alerts may include notifications and emails such as `There are X new candidates for you to review.` In additional or alternative embodiments, alerts and notifications that are sent at other possible stages of recruiting trigger user reengagement with the recruiting tool. For instance, alerts may be sent when no candidate streams exist, when relevant candidates have not yet been messaged by the user (e.g., no LinkedIn InMail messages sent to qualified, suggested candidates), and when interested candidates have not been responded to (e.g., when no reply has been sent to accepting recipients of LinkedIn InMail messages).

[0056] Embodiments pertain to recruiting, but the techniques could be applied in a variety of settings--not just in employment candidate search and intelligent matches contexts. The technology is described herein in the professional networking, employment candidate search, and intelligent matches contexts. However, the technology described herein may be useful in other contexts also. For example, the technology described herein may be useful in any other search context. In some embodiments, the technology described herein may be applied to a search for a mate in a dating service or a search for a new friend in a friend-finding service.

[0057] Intelligent matching allows a user, such as, for example, a recruiter or hiring manager, to create a candidate stream from a minimal set of attributes. Intelligent matching recommends candidates to a user of a recruiting tool (e.g., a recruiter or hiring manager), while accounting for the user's personal preferences and user interactions with the tool. In some embodiments, suggested titles can be used to build a candidate stream for a recruiter based on predicted positions (e.g., titles) for which a recruiter may be hiring. For example, the suggested stream may include candidates that are suggested to the user based on the predicted positions.

[0058] Example techniques for predicting values for features (e.g., locations, titles and other features) are described in U.S. patent application Ser. Nos. 15/827,289 and 15/827,350 entitled "PREDICTING FEATURE VALUES IN A MATRIX" and "MACHINE LEARNING TO PREDICT NUMERICAL OUTCOMES IN A MATRIX-DEFINED PROBLEM SPACE", respectively, both filed on Nov. 30, 2017, which are incorporated herein by reference in their entireties. The referenced documents describe techniques for predicting numerical outcomes in a matrix-defined problem space where the numerical outcomes may include any outcomes that may be expressed numerically, such as Boolean outcomes, integer outcomes or any other outcomes that are capable of being expressed with number(s). The referenced documents also describe a control server that stores (or is coupled with a data repository that stores) a matrix with multiple dimensions, where one of the dimensions represents features, such as employers, job titles, universities attended, and the like, and another one of the dimensions represents entities, such as individuals or employees. The control server separates the matrix into multiple submatrices along a first dimension (e.g. features). The referenced documents further describe techniques for title recommendation and company recommendation. For title recommendation, the referenced document describes techniques that train a model over each member's titles (rather than companies) in his/her work history (e.g., members of a social networking service). Title recommendation seeks to find a cause-effect relationship between job history and future jobs, whereas title similarity is non-temporal in nature.

[0059] In an example embodiment, a system is provided whereby a stream of candidates is created from a minimal set of attributes, such as, for example, a combination of title and geographic location. As used herein, the terms `stream of candidates` and `candidate stream` generally refer to sets of candidates that can be presented or displayed to a user. The user can be a user of an intelligent matches recruiting tool or a user of a program that accesses an application programming interface (API). For example, the user can be a recruiter or a hiring manager that interacts with a recruiting tool to view and review a stream of candidates being considered for a position or job. Member profiles for a set of candidate profiles can be represented as document vectors, and urns for job titles (e.g., positions), skills, previous companies, educational institutions, seniority, years of experience, and industries to hire from can be determined. In additional or alternative embodiments, derived latent features based on member profiles and hiring companies can be used to predict a position (e.g., a job title) for which a recruiter or hiring manager may be hiring.

[0060] Embodiments can personalize results in a stream by modifying query term weights. Techniques for QTW to weigh query-based features are described in U.S. patent application Ser. No. 15/845,477 filed on Dec. 18, 2017, entitled "QUERY TERM WEIGHTING", which is hereby incorporated by reference in its entirety. The referenced document describes weighting parameters in a query that includes multiple parameters to address the problem in the computer arts of identifying relevant records in a data repository for responding to a search query from a user of a client device. The referenced document further describes a system wherein a server receives, from a client device, a search query for employment candidates, where the search query includes a set of parameters and each parameter in the set of parameters has a weight. The referenced document also describes that the server generates, from a data repository storing records associated with professionals, a first set of search results based on the set of parameters and the weights of the parameters in the set. The server transmits, to the client device, the first set of search results. The server receives, from the client device, a response to search result(s) from the first set of search results. The search result(s) are associated with a set of factors. The factor(s) in the set may correspond to any parameters, for example, skills, work experience, education, years of experience, seniority, pedigree, and industry. The response indicates a level of interest in the search result(s). For example, the response may indicate that the user of the client device is interested in the search result(s), is not interested in the search result(s), or has ignored the search result(s). The server adjusts the parameters in the set of parameters or adjusts the weights of the parameters based on the response to the search result(s). The server provides an output based on the adjusted parameters or the adjusted weights.

[0061] In accordance with an embodiment, a weight may be associated with each query term, which may be either an urn or free text. For example, a search may be conducted with query term weights {Java: 0.9, HTML: 0.4, CSS: 0.3} to indicate that the skills Java, HTML, and Cascading Style Sheets (CSS) are relevant to the position, but that Java is relatively more important than HTML and CSS. Query term weights may be initialized to include query terms known before the sourcing process begins. For instance, the system may include a query term weight corresponding to the hiring manager's industry and the skills mentioned in the job posting. Query term weights may be adjusted in response to the answers given to stream refinement questions.
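
To make the role of query term weights concrete, the following sketch scores candidate profiles by summing the weights of the query terms found on each profile. The additive scoring rule, the `score_profile` name, and the sample candidates are assumptions for illustration; the embodiment does not state how weights are combined into a score.

```python
def score_profile(profile_terms, query_term_weights):
    """Sum the weights of the query terms that appear on the profile."""
    return sum(weight for term, weight in query_term_weights.items()
               if term in profile_terms)


# Weights taken from the example in the text.
query_term_weights = {"Java": 0.9, "HTML": 0.4, "CSS": 0.3}

# Hypothetical candidate profiles, each reduced to a set of skill terms.
candidates = {
    "candidate_a": {"Java", "CSS"},
    "candidate_b": {"HTML", "CSS"},
}

ranked = sorted(candidates,
                key=lambda name: score_profile(candidates[name],
                                               query_term_weights),
                reverse=True)
```

Under these weights, a profile listing Java outranks one listing only HTML and CSS, which reflects the statement that Java is relatively more important for the position.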

[0062] In some embodiments, there are four types of stream refinement questions that may be asked, and attributes may be extracted from answers to these questions. The first type of stream refinement question that may be asked is regarding areas of expertise (e.g., what skill sets are most important?). An area of expertise is typically higher level than individual skills. For example, skills might be CSS, HTML, and JavaScript, whereas the corresponding area of expertise might be web development. A second type of refinement question that may be asked is regarding a user's ideal candidate (e.g., do you know someone that would be a good fit for this job so that we can find similar people?). A third type of refinement question that may be asked is regarding organizations (e.g., what types of companies would you like to hire from?). A fourth type of refinement question that may be asked is regarding keywords (e.g., are there any keywords that are relevant to this job?). The system may extract attributes from answers to one or more of these types of stream refinement questions.

[0063] According to certain embodiments, the system may provide suggested answers for each stream refinement question. For instance, for an ideal candidate question, the system may present to the user three connections that may be a good fit for the job or allow the user to type an answer. Example processes for suggesting answers for questions are described in U.S. patent application Ser. No. 15/827,350, entitled "MACHINE LEARNING TO PREDICT NUMERICAL OUTCOMES IN A MATRIX-DEFINED PROBLEM SPACE", filed on Nov. 30, 2017, which is incorporated herein by reference in its entirety. The referenced document describes techniques for using a physics simulation to suggest answers for areas of expertise stream refinement questions. The referenced document also describes an example where five iterations are performed before the physics simulation of the forces is stopped. In the referenced document, after the simulation of the forces is completed, the sampled points are remapped to areas of expertise. The referenced document describes presenting the three areas of expertise closest to a title after the simulation to a user that can then specify which, if any, of the areas of expertise are applicable to the title. In addition to what is described in the referenced document, certain embodiments include deciding to ask a stream refinement question to improve a candidate stream and incorporating the answer into the relevance for which candidate is suggested next in the stream.

[0064] In an example embodiment, the system may ask the user which of three areas of expertise is most relevant to the position and adjust the query term weights based upon the answer. Positive feedback about a candidate may increase the weights of the query terms appearing on that profile while negative feedback may decrease the weights of the query terms appearing on that profile. In some embodiments, the user may select attributes of a profile that are particularly of interest to them. When attributes are selected the query term weights of those attributes may be adjusted while the query term weights related to the other attributes of the profile may not be adjusted. A flowchart for an example method for QTW is provided in FIG. 16, which is described below.
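
The feedback-driven weight adjustment described above might look like the sketch below. The fixed `step` size, the clamping of weights to the range 0 to 1, and the `adjust_weights` name are assumptions for illustration and are not the method of FIG. 16.

```python
def adjust_weights(weights, profile_terms, positive, selected=None, step=0.1):
    """Return new query term weights after feedback on one profile.

    If the user selected specific attributes, only those are adjusted;
    otherwise every query term appearing on the profile is adjusted.
    """
    targets = set(selected) if selected else set(profile_terms)
    delta = step if positive else -step
    updated = dict(weights)
    for term in targets:
        # Only terms already in the query and present on the profile change.
        if term in updated and term in profile_terms:
            updated[term] = min(1.0, max(0.0, updated[term] + delta))
    return updated


weights = {"Java": 0.9, "HTML": 0.4, "CSS": 0.3}
profile = {"Java", "CSS"}

# Positive feedback on the whole profile raises Java and CSS.
after_accept = adjust_weights(weights, profile, positive=True)
# Negative feedback with only CSS selected lowers CSS alone.
after_reject = adjust_weights(weights, profile, positive=False,
                              selected={"CSS"})
```

Returning a new dictionary rather than mutating the input keeps earlier weight states available, which may be convenient if feedback is later revised.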

[0065] Embodiments exploit correlations between certain attributes of member profiles and other attributes. One such correlation is the correlation between a member's title and the member's skills. For example, within member profile data, there exists a strong correlation between title and skills (e.g., a title+skills correlation). Such a title+skills correlation can be used as a model for ordering candidates. Example techniques for ordering candidates are described in U.S. patent application Ser. No. 15/827,337, entitled "RANKING JOB CANDIDATE SEARCH RESULTS", filed on Nov. 30, 2017, which is incorporated herein by reference in its entirety. The referenced document describes techniques for predicting numerical outcomes in a matrix-defined problem space, where the numerical outcomes may include any outcomes that may be expressed numerically, such as Boolean outcomes, integer outcomes or any other outcomes that are capable of being expressed with number(s). The referenced document also describes techniques for solving the problem of ordering job candidates when multiple candidates are available for an opening at a business. In one example, a server receives, from a client device, a request for job candidates for an employment position, the request comprising search criteria. The server generates, based on the request, a set of job candidates for the employment position. The server provides, to the client device, a prompt for ordering the set of job candidates. The server receives, from the client device, a response to the prompt. The server orders the set of job candidates based on the received response. The server provides, for display at the client device, an output based on the ordered set of job candidates. Some advantages and improvements include the ability to order job candidates based on feedback from the client device and the provision of prompts for this feedback.

[0066] Query facets may be generated as part of the inferred search query. As a first step towards generating a bootstrap query for intelligent matches, an embodiment includes similar titles. Additional facet values increase the recall set at the cost of increased computation. In an embodiment, additional facets may be added or removed to target a specific recall set size. For example, if a given query which includes only titles is returning 26,000 candidates, but only 6,400 candidates can be scored in time to return a response to the user without incurring high latency, then additional facets may be added to lower the size of the recall set. Also, for example, if a query is returning only 150 candidates for a given title, then additional similar titles may be included since the current query is below the 6,400 candidate limit. Techniques for expanding queries to return additional results (e.g., suggested candidates) by adding additional facets are further described in U.S. patent application Ser. No. 15/922,732, entitled "QUERY EXPANSION", filed on Mar. 15, 2018, which is incorporated herein by reference in its entirety.
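
A minimal sketch of targeting a recall set size follows. It assumes a hypothetical `count_fn` that estimates how many candidates a query returns; the toy counting function, the facet names, and the greedy order in which titles and facets are tried are illustrative assumptions only.

```python
def tune_recall(count_fn, titles, similar_titles, narrowing_facets,
                limit=6400):
    """Broaden or narrow a query so its recall set fits within limit.

    count_fn(titles, facets) -> estimated number of matching candidates.
    """
    titles = list(titles)
    facets = []
    # Below the limit: add similar titles as long as the set still fits.
    for title in similar_titles:
        if count_fn(titles + [title], facets) > limit:
            break
        titles.append(title)
    # Above the limit: add facets until the set fits or facets run out.
    for facet in narrowing_facets:
        if count_fn(titles, facets) <= limit:
            break
        facets.append(facet)
    return titles, facets


def toy_count(titles, facets):
    """Hypothetical estimator: each facet cuts the recall set to a quarter."""
    base = {"Accountant": 26000, "Bookkeeper": 150, "Ledger Clerk": 900}
    total = sum(base[t] for t in titles)
    for _ in facets:
        total //= 4
    return total


narrowed = tune_recall(toy_count, ["Accountant"], ["Bookkeeper"],
                       ["industry", "seniority"])
broadened = tune_recall(toy_count, ["Bookkeeper"],
                        ["Ledger Clerk", "Accountant"],
                        ["industry", "seniority"])
```

With the toy numbers, the 26,000-candidate title query gains facets until it drops under 6,400, while the 150-candidate query gains one similar title and no facets.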

[0067] In an example embodiment, a system is provided whereby, given attributes from a set of input recent hires, a search query is built capturing the key information in the candidates' profiles. The query is then used to retrieve and/or order results. In this manner, a user (e.g., a searcher) may list one or several examples of good candidates for a given position. For instance, hiring managers or recruiters can utilize profiles of existing members of the team to which the position pertains. In this new paradigm, instead of specifying a complex query capturing the position requirements, the searcher can simply pick out a small set of recent hires for the position. The system then builds a query automatically extracted from the input candidates and searches for result candidates based on this built query.
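
One simple way to build a query from a few recent hires is to keep the attributes shared by a sufficient share of them and weight each by that share. The sketch below assumes profiles are already reduced to sets of attribute strings; the `min_share` threshold and the frequency-based weighting are hypothetical choices, not the extraction method of the embodiment.

```python
from collections import Counter


def build_query(recent_hires, min_share=0.5):
    """Build weighted query terms from attributes common to recent hires.

    recent_hires: list of attribute sets, one per hire (assumed layout).
    Returns a dict mapping attribute -> share of hires that have it.
    """
    counts = Counter(attr for profile in recent_hires
                     for attr in set(profile))
    total = len(recent_hires)
    return {attr: count / total for attr, count in counts.items()
            if count / total >= min_share}


recent_hires = [
    {"skill:Java", "title:Engineer"},
    {"skill:Java", "skill:SQL"},
    {"skill:Java", "title:Engineer", "skill:Go"},
]
query = build_query(recent_hires)
```

Here the attribute shared by all three hires receives the highest weight, and attributes held by only one hire are left out of the query.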

[0068] In some example embodiments, the query terms of an automatically constructed query can also be presented to the searcher, which helps explain why a certain result shows up in a search ordering, making the system more transparent to the searcher. Further, the searcher can then interact with the system and have control over the results by modifying the initial query. Some example embodiments may additionally show the query term weights to the searcher and let the searcher interact with and control the weights. Other example embodiments may not show the query term weights to the searcher, but may show only those query terms with the highest weights.

[0069] Some embodiments present a candidate stream to a recruiter that includes candidates selected based on qualifications, positions held, interests, cultural fit with the recruiter's organization, seniority, skills, and other criteria. In some embodiments, the recruiter is shown a variety of candidates early in a calibration process in order to solicit the recruiter's feedback about which types of candidates are a good or bad fit for a position the recruiter is seeking to fill. In examples, this may be accomplished by finding clusters, such as, but not limited to, skills clusters, in a vector space. For instance, the feedback may include the recruiter's stream interactions (e.g., candidate views, selections and other interactions with candidate profiles presented as part of the stream). In other examples, QTW is used to assign weights to terms of a candidate search query, and skills clusters are not needed.

[0070] In accordance with certain embodiments, a candidate stream includes suggested candidates based on comparing member profile attributes to features of a job opening. The comparison may compare attributes and features such as, but not limited to, geography, industry, job role, job description text, member profile text, hiring organization size (e.g., company size), required skills, desired skills, education (e.g., schools, degrees, and certifications), experience (e.g., previous and current employers), group memberships, a member's interactions with the hiring organization's website (e.g., company page interactions), past job searches by a member, past searches by the hiring organization, and interactions with feed items.

[0071] According to some embodiments, a recruiting tool outputs a candidate stream that includes candidate suggestions and a model strength. In some such embodiments, the model strength communicates to a user of the tool the model calibration. For example, the tool may prompt the user to review a certain number of additional candidates (e.g., five more candidates) in order to improve the recommendations in the stream. An example model used by the tool seeks to identify ideal candidates, by taking as input member IDs of recent hires and/or member IDs of previously suggested candidates, accessing member profiles for those member IDs, and then extracting member attributes from the profiles. Example member attributes include skills, education, job titles held, seniority, and other attributes. After extracting the attributes, the model generates a candidate search query based on the attributes, and then performs a search using the generated query.

[0072] In accordance with certain embodiments, a candidate stream primarily includes candidates that are not among the example, input member IDs provided. That is, a recruiting tool may seek to create a suggested candidate stream that primarily excludes member IDs of recent hires and member IDs of suggested candidates that were previously reviewed by a user (e.g., a recruiter). However, in some cases, it may be desirable to include certain re-surface candidates in the stream. For example, it may be desirable to include previously suggested candidates that a recruiter deferred or skipped rather than accepting or rejecting outright. In this example, recruiter feedback received at the tool for a candidate can include acceptance, deferral, or rejection of a presented candidate by a user (e.g., recruiter feedback), and highly-ranked candidates that were deferred or skipped may be included as re-surface candidates in a subsequent candidate stream. Embodiments may use candidate scores, such as, for example, expertise scores and/or skill reputation scores, in combination with a machine learning model to determine how often, or in what circumstances, to include such re-surface candidates in a suggested candidate stream.

[0073] In some example embodiments, machine-learning programs (MLP), also referred to as machine-learning algorithms, models, or tools, are utilized to perform operations associated with searches, such as job candidate searches. Machine learning is a field of study that gives computers the ability to learn without being explicitly programmed. Machine learning explores the study and construction of algorithms, also referred to herein as tools and machine learning models, that may learn from existing data and make predictions about new data. Such machine-learning tools operate by building a model from example training data in order to make data-driven predictions or decisions expressed as outputs or assessments. Although example embodiments are presented with respect to a few machine-learning tools, the principles presented herein may be applied to other machine-learning tools.

[0074] In some example embodiments, different machine-learning tools may be used. For example, Logistic Regression (LR), Naive-Bayes, Random Forest (RF), neural networks (NN), matrix factorization, and Support Vector Machines (SVM) tools may be used for classifying or scoring candidate skills and job candidates.

[0075] Some embodiments seek to predict job changes by predicting how likely each candidate in a stream is to be moving to a new job in the near future. Some such embodiments predict a percentage probability that a given candidate will change jobs within the next K months (e.g., 3 months, 6 months, or a year). Some of these embodiments predict job changes based on candidate attributes such as, but not limited to, time at current position, periodicity of job changes at previous positions, career length (taking into consideration that early-career people tend to change jobs more often), platform interactions (e.g., member interactions indicating a job search is underway), month of year (taking into consideration that job changes in some industries and fields are more frequent during certain seasons, quarters, and months of the year), upcoming current position anniversaries (e.g., stock vesting schedules, retirement plan match schedules, bonus pay out schedules), company-specific seasonal patterns (e.g., a company's annual bonus pay out may be a known date on which many people leave), and industry- or title-specific patterns (e.g., a time of year when most people in a job title change jobs).
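For illustration only, a job-change probability of the kind described above could be produced by a logistic model over a handful of the listed attributes. The feature names, weights, and bias below are hypothetical values chosen for the sketch; in practice they would be learned from training data, and the disclosure does not prescribe this particular model.

```python
import math

# Hypothetical weights; a real model would learn these from training data.
WEIGHTS = {
    "years_in_current_role": 0.30,
    "avg_years_between_past_changes": -0.25,
    "recent_job_search_interactions": 0.60,
    "months_to_next_vesting_anniversary": -0.10,
}
BIAS = -1.5

def job_change_probability(features):
    """Logistic score: sigmoid of a weighted sum of candidate features."""
    z = BIAS + sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))

settled = {
    "years_in_current_role": 1,
    "avg_years_between_past_changes": 5,
    "recent_job_search_interactions": 0,
    "months_to_next_vesting_anniversary": 10,
}
searching = {
    "years_in_current_role": 3,
    "avg_years_between_past_changes": 2,
    "recent_job_search_interactions": 4,
    "months_to_next_vesting_anniversary": 1,
}
p_settled = job_change_probability(settled)
p_searching = job_change_probability(searching)
```

With these illustrative weights, the candidate showing recent job-search interactions receives a higher probability than the recently hired candidate with a distant vesting anniversary.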

[0076] Certain embodiments also seek to predict geographic moves of candidates so that those candidates can be considered for inclusion in a stream. For example, these embodiments may predict how likely each candidate is to geographically move from their current location to a location of a job opening. In this example, the candidate that is likely to move should match the organization's candidate queries for that job even if that candidate's current location does not match the job location. Additional or alternative embodiments also seek to predict how likely each candidate is to move to multiple, specific areas (e.g., more than one geographic region, metropolitan area, or city). For instance, these embodiments may predict that there is a 20% chance that a given candidate will move to the San Francisco Bay Area in the next K months, and a 5% chance the candidate will move to the Greater Los Angeles Area in the next K months. Some of these embodiments predict geographic moves based on the candidate attributes described above with reference to predicting job changes. Additional or alternative embodiments also predict geographic moves based on candidate attributes such as, but not limited to, the candidate's past locations (e.g., geographic locations where the candidate has lived, worked, or studied) and the candidate's frequency or periodicity of previous moves.
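The location-matching behavior described above can be sketched as follows, using the 20% and 5% figures from the example. The function name `matches_job_location`, the 0.15 threshold, and the candidate's current region are assumptions made for illustration; the disclosure does not specify a threshold.

```python
def matches_job_location(candidate, job_region, min_move_probability=0.15):
    """Treat a candidate as a location match if they already live in the job's
    region or their predicted probability of moving there meets the threshold."""
    if candidate["current_region"] == job_region:
        return True
    predicted = candidate["move_probabilities"].get(job_region, 0.0)
    return predicted >= min_move_probability

candidate = {
    "current_region": "Seattle",  # hypothetical current location
    # Predicted per-region move probabilities over the next K months.
    "move_probabilities": {
        "San Francisco Bay Area": 0.20,
        "Greater Los Angeles Area": 0.05,
    },
}

bay_area_match = matches_job_location(candidate, "San Francisco Bay Area")
la_match = matches_job_location(candidate, "Greater Los Angeles Area")
```

Under the assumed threshold, the candidate would match a Bay Area opening despite living elsewhere, but would not match a Los Angeles opening.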

[0077] Certain embodiments also seek to promote active job seekers higher in the stream. Users on a professional social network may be asked to identify themselves as candidates open to new opportunities. An open candidate may also specify that they prefer to work in a certain industry, geographic region, or field.

[0078] Some embodiments determine a job description strength and a company description strength by extracting features of the job and company from the job and company descriptions. The job description strength and company description strength are used to predict response rates and positive acceptance rates to a job posting, with higher strength values corresponding to higher predicted rates. The strength values are based on comparing a bag of words to words in the descriptions, the length of the descriptions, and comparing the descriptions to other job and company descriptions in the same industry or field. The feature extraction can be based on performing job description parsing to parse key features out of a job description. Such key features may include title, location, and skills.
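As one possible illustration of a description strength value, the sketch below averages two of the signals named above: overlap with a bag of words, and description length relative to peer descriptions in the same industry. The bag contents, the equal weighting, and the function name `description_strength` are hypothetical; the disclosure does not give a formula. The sketch assumes `peer_lengths` is non-empty.

```python
import re

# Hypothetical bag of words; the disclosure does not list the actual terms.
BAG_OF_WORDS = {"salary", "benefits", "remote", "responsibilities", "skills", "team"}

def description_strength(text, peer_lengths, bag=BAG_OF_WORDS):
    """Average of keyword coverage and a length score relative to peer descriptions."""
    words = re.findall(r"[a-z']+", text.lower())
    coverage = len(bag & set(words)) / len(bag)
    peer_avg = sum(peer_lengths) / len(peer_lengths)
    # Cap at 1.0 so that descriptions longer than the peer average are not rewarded further.
    length_score = min(len(words) / peer_avg, 1.0)
    return (coverage + length_score) / 2

peer_lengths = [7, 7]  # word counts of comparable descriptions (illustrative)
strong = description_strength("Remote team with great benefits and salary", peer_lengths)
weak = description_strength("Apply now", peer_lengths)
```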

[0079] FIG. 1 is a block diagram illustrating a client-server system 100, in accordance with an example embodiment. A networked system 102 provides server-side functionality via a network 104 (e.g., the Internet or a wide area network (WAN)) to one or more clients. FIG. 1 illustrates, for example, a web client 106 (e.g., a browser) and a programmatic client 108 executing on respective client machines 110 and 112.

[0080] An API server 114 and a web server 116 are coupled to, and provide programmatic and web interfaces respectively to, one or more application servers 118. The application server(s) 118 host one or more applications 120. The application server(s) 118 are, in turn, shown to be coupled to one or more database servers 124 that facilitate access to one or more databases 126. While the application(s) 120 are shown in FIG. 1 to form part of the networked system 102, it will be appreciated that, in alternative embodiments, the application(s) 120 may form part of a service that is separate and distinct from the networked system 102.

[0081] Further, while the client-server system 100 shown in FIG. 1 employs a client-server architecture, the present disclosure is, of course, not limited to such an architecture, and could equally well find application in a distributed, or peer-to-peer, architecture system, for example. The various applications 120 could also be implemented as standalone software programs, which do not necessarily have networking capabilities.

[0082] The web client 106 accesses the various applications 120 via the web interface supported by the web server 116. Similarly, the programmatic client 108 accesses the various services and functions provided by the application(s) 120 via the programmatic interface provided by the API server 114.

[0083] FIG. 1 also illustrates a third-party application 128, executing on a third-party server 130, as having programmatic access to the networked system 102 via the programmatic interface provided by the API server 114. For example, the third-party application 128 may, utilizing information retrieved from the networked system 102, support one or more features or functions on a website hosted by a third-party. The third-party website may, for example, provide one or more functions that are supported by the relevant applications 120 of the networked system 102.

[0084] In some embodiments, any website referred to herein may comprise online content that may be rendered on a variety of devices including, but not limited to, a desktop personal computer (PC), a laptop, and a mobile device (e.g., a tablet computer, smartphone, etc.). In this respect, any of these devices may be employed by a user to use the features of the present disclosure. In some embodiments, a user can use a mobile app on a mobile device (any of the client machines 110, 112 and the third-party server 130 may be a mobile device) to access and browse online content, such as any of the online content disclosed herein. A mobile server (e.g., API server 114) may communicate with the mobile app and the application server(s) 118 in order to make the features of the present disclosure available on the mobile device. In some embodiments, the networked system 102 may comprise functional components of a social networking service.

[0085] FIG. 2 is a block diagram illustrating components of an online system 210 (e.g., a social network system hosting a social networking service), according to some example embodiments. The online system 210 is an example of the networked system 102 of FIG. 1. In certain embodiments, the online system 210 may be implemented as a social network system. As illustrated in FIG. 2, the functional components of a social network system may include a data processing block referred to herein as a search engine 216, for use in generating and providing search results for a search query, consistent with some embodiments of the present disclosure. In some embodiments, the search engine 216 may reside on the application server(s) 118 in FIG. 1. However, it is contemplated that other configurations are also within the scope of the present disclosure.

[0086] As shown in FIG. 2, a front end may comprise a user interface module or block 212 (e.g., a web server 116), which receives requests from various client computing devices, and communicates appropriate responses to the requesting client devices. For example, the user interface module(s) 212 may receive requests in the form of Hypertext Transfer Protocol (HTTP) requests or other web-based API requests. In addition, a member interaction detection functionality is provided by the online system 210 to detect various interactions that members have with different applications 120, services, and content presented. As shown in FIG. 2, upon detecting a particular interaction, such as a member interaction, the online system 210 logs the interaction, including the type of interaction and any metadata relating to the interaction, in a member activity and behavior database 222.

[0087] An application logic layer may include the search engine 216 and one or more various application server module(s) 214 which, in conjunction with the user interface module(s) 212, generate various user interfaces (e.g., web pages) with data retrieved from various data sources in a data layer. In some embodiments, individual application server module(s) 214 are used to implement the functionality associated with various applications 120 and/or services provided by the social networking service. Member interaction detection happens inside the user interface module(s) 212, the application server module(s) 214, and the search engine 216, each of which can fire tracking events in the online system 210. That is, upon detecting a particular member interaction, any of the user interface module(s) 212, the application server module(s) 214, and the search engine 216 can log the interaction, including the type of interaction and any metadata relating to the interaction, in a member activity and behavior database 222.
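The logging of tracking events described above can be sketched, purely for illustration, with an in-memory stand-in for the member activity and behavior database 222. The class name `MemberActivityLog`, the event field names, and the example interaction types are assumptions and are not taken from the disclosure.

```python
import time

class MemberActivityLog:
    """In-memory stand-in for the member activity and behavior database 222."""

    def __init__(self):
        self.events = []

    def log_interaction(self, source, member_id, interaction_type, metadata=None):
        """Record one tracking event with its type and any associated metadata."""
        event = {
            "source": source,                  # module that fired the tracking event
            "member_id": member_id,
            "type": interaction_type,
            "metadata": dict(metadata or {}),  # copy so later caller edits do not leak in
            "timestamp": time.time(),
        }
        self.events.append(event)
        return event

log = MemberActivityLog()
# Any of the modules (212, 214, 216) may fire an event into the same log.
log.log_interaction("search_engine", "m42", "search", {"query": "data engineer"})
log.log_interaction("user_interface", "m42", "profile_view", {"viewed": "m7"})
```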

[0088] As shown in FIG. 2, the data layer may include several databases, such as a profile database 218 for storing profile data, including both member profile data and profile data for various organizations (e.g., companies, research institutes, government organizations, schools, etc.). Consistent with some embodiments, when a person initially registers to become a member of the social networking service, the person will be prompted to provide some personal information, such as his or her name, age (e.g., birthdate), gender, interests, contact information, home town, address, spouse's and/or family members' names, educational background (e.g., schools, majors, matriculation and/or graduation dates, etc.), employment history, skills, professional organizations, and so on. This information is stored, for example, in the profile database 218. Similarly, when a representative of an organization initially registers the organization with the social networking service, the representative may be prompted to provide certain information about the organization. This information may be stored, for example, in the profile database 218, or another database (not shown). In some embodiments, the profile data may be processed (e.g., in the background or offline) to generate various derived profile data. For example, if a member has provided information about various job titles that the member has held with the same organization or different organizations and for how long, this information can be used to infer or derive a member profile attribute indicating the member's overall seniority level, or seniority level within a particular organization. In some embodiments, importing or otherwise accessing data from one or more externally hosted data sources may enrich profile data for both members and organizations. For instance, with organizations in particular, financial data may be imported from one or more external data sources and made part of an organization's profile. This importation of organization data and enrichment of the data will be described in more detail later in this document.
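As a non-limiting sketch of deriving a seniority attribute from the positions a member has listed and their durations, the example below maps total years of experience to a coarse label. The three labels, the 3-year and 8-year cutoffs, and the function name `derive_seniority` are hypothetical. For simplicity the sketch assumes positions do not overlap in time; overlapping positions would be double-counted.

```python
from datetime import date

def derive_seniority(positions, today):
    """Map total years of listed experience to a coarse seniority label."""
    # A position with end=None is treated as the member's current role.
    total_days = sum(((p["end"] or today) - p["start"]).days for p in positions)
    years = total_days / 365.25
    if years < 3:       # assumed cutoff
        return "entry"
    if years < 8:       # assumed cutoff
        return "mid"
    return "senior"

positions = [
    {"title": "Analyst", "start": date(2010, 1, 1), "end": date(2013, 1, 1)},
    {"title": "Manager", "start": date(2013, 1, 1), "end": None},  # current role
]
level = derive_seniority(positions, today=date(2019, 1, 1))
```

Filtering `positions` to a single organization before calling the function would yield the seniority level within that organization rather than the overall level.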

[0089] Once registered, a member may invite other members, or be invited by other members, to connect via the social networking service. A 'connection' may constitute a bilateral agreement by the members, such that both members acknowledge the establishment of the connection. Similarly, in some embodiments, a member may elect to 'follow' another member. In contrast to establishing a connection, 'following' another member typically is a unilateral operation and, at least in some embodiments, does not require acknowledgement or approval by the member that is being followed. When one member follows another, the member who is following may receive status updates (e.g., in an activity or content stream) or other messages published by the member being followed, or relating to various activities undertaken by the member who is being followed. Similarly, when a member follows an organization, the member becomes eligible to receive messages or status updates published on behalf of the organization. For instance, messages or status updates published on behalf of an organization that a member is following will appear in the member's personalized data feed, commonly referred to as an activity stream or content stream. In any case, the various associations and relationships that the members establish with other members, or with other entities and objects, are stored and maintained within a social graph in a social graph database 220.
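The distinction between bilateral connections and unilateral follows can be illustrated with a minimal in-memory stand-in for the social graph database 220. The class and method names below are hypothetical, and the invitation/acceptance flow is one assumed way to model the mutual acknowledgement described above.

```python
class SocialGraph:
    """Tiny in-memory stand-in for the social graph database 220."""

    def __init__(self):
        self.pending = set()      # (inviter, invitee) invitations awaiting a reply
        self.connections = set()  # frozenset({a, b}): bilateral, order-independent
        self.follows = set()      # (follower, followed): unilateral, directed

    def invite(self, inviter, invitee):
        self.pending.add((inviter, invitee))

    def accept(self, invitee, inviter):
        """A connection exists only after the invitee acknowledges the invitation."""
        if (inviter, invitee) not in self.pending:
            raise ValueError("no pending invitation to accept")
        self.pending.remove((inviter, invitee))
        self.connections.add(frozenset((inviter, invitee)))

    def follow(self, follower, followed):
        """Following takes effect immediately; no approval step is required."""
        self.follows.add((follower, followed))

    def is_connected(self, a, b):
        return frozenset((a, b)) in self.connections

graph = SocialGraph()
graph.invite("ann", "bob")
connected_before_accept = graph.is_connected("ann", "bob")
graph.accept("bob", "ann")
graph.follow("cy", "ann")  # "cy" may also be a member following an organization
```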