System, Device And Method For Dental Intraoral Scanning

PESACH; Benny

U.S. patent application number 16/199954 was filed with the patent office on November 26, 2018 and published on June 27, 2019 for a system, device and method for dental intraoral scanning. This patent application is currently assigned to Dentlytec G.P.L. LTD. The applicant listed for this patent is Dentlytec G.P.L. LTD. The invention is credited to Benny PESACH.

| United States Patent Application | 20190192262 |
|---|---|
| Kind Code | A1 |
| Application Number | 16/199954 |
| Family ID | 56405339 |
| Inventor | PESACH; Benny |
| Publication Date | June 27, 2019 |


Abstract

There is provided according to some embodiments, a system, device and method for a three dimensional (3D) intraoral scanning of at least a portion of a tooth. An intraoral scanner may include a shadow casting object extending between a light emitter and the portion of the tooth. An imaging module may image the portion of the tooth and/or the projected shadow of the shadow casting object. A method to construct a 3D model may include illuminating at least a portion of the tooth with a light emitter; casting a shadow on the portion of the tooth by an object located between the emitter and the tooth; imaging the portion of the tooth, including at least a part of the shadow; and determining a location of a point on the tooth and related to the shadow, using the image.

| Inventors: | PESACH; Benny; (Rosh Haayin, IL) |
|---|---|
| Assignee: | Dentlytec G.P.L. LTD., Tel-Aviv, IL |
| Family ID: | 56405339 |
| Appl. No.: | 16/199954 |
| Filed: | November 26, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15115196 | Jul 28, 2016 | 10136970 |
| PCT/IL2016/050058 | Jan 18, 2016 | |
| 16199954 | | |
| 62104835 | Jan 18, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06T 7/20 20130101; A61C 19/04 20130101; G06T 2200/08 20130101; G06T 7/74 20170101; A61C 9/006 20130101; A61B 1/06 20130101; G06T 1/0007 20130101; G06T 2207/10028 20130101; A61B 1/24 20130101; H04N 5/2256 20130101; A61C 3/02 20130101; G06T 7/13 20170101; G06T 15/60 20130101; G06T 2210/41 20130101; H04N 2005/2255 20130101; A61C 9/008 20130101; G06T 17/00 20130101; G06T 2207/30036 20130101 |

| International Class: | A61C 9/00 20060101 A61C009/00; G06T 7/13 20060101 G06T007/13; G06T 1/00 20060101 G06T001/00; G06T 7/73 20060101 G06T007/73; G06T 15/60 20060101 G06T015/60; G06T 17/00 20060101 G06T017/00; G06T 7/20 20060101 G06T007/20; A61C 3/02 20060101 A61C003/02; A61B 1/24 20060101 A61B001/24; A61C 19/04 20060101 A61C019/04; H04N 5/225 20060101 H04N005/225; A61B 1/06 20060101 A61B001/06 |

Claims

1.-31. (canceled)

32. A three dimensional (3D) intraoral scanner system for imaging at least a portion of a tooth in an oral cavity comprising: an intraoral portion sized and shaped for insertion into the oral cavity, said intraoral portion including: a light emitter for emitting a light; a shadow casting object; at least a portion of said shadow casting object in a field of illumination of said light emitter and extending away from a housing of said intraoral portion; an imager positioned to image at least an edge of a shadow cast by said shadow casting object in said light reflected off a portion of a tooth located at a distance of between 3 mm and 5 cm from said imager.

33. The intraoral scanner system of claim 32 further comprising: a processor configured to process an image of said light received by said imager to provide location information.

34. The system of claim 33, wherein said processor is configured to process said image to determine a location of a portion at an edge of said shadow.

35. The intraoral scanner system of claim 33 wherein said processor is configured to process said image to determine a shape of said tooth.

36. The system of claim 33, wherein said processor is further configured to: establish a position of said imager with respect to a reference feature in the oral cavity.

37. The system of claim 36, wherein said processor is configured for said establishing said position of said imager from said image.

38. The system of claim 32, wherein said imager is located at a known orientation to said light emitter.

39. The system of claim 33, wherein said processor is further configured to: establish a position of said shadow casting object; and establish a relative location of said imager with respect to said light emitter and a portion of said shadow casting object.

40. The system of claim 32, wherein a portion of said shadow casting object casting said edge of said shadow has a known fixed orientation to said light emitter.

41. The system of claim 33, wherein said processor is further configured to: establish a relative location with respect to said light emitter of a portion of said shadow casting object casting said edge of said shadow.

42. The system of claim 32, wherein said at least a portion of said shadow casting object, said light emitter and said imager are collocated such that they do not fall within 0.5 mm of a single plane.

43. The system of claim 33, wherein said at least a portion of said shadow casting object is within a field of view of said imager and wherein said processor is further configured to determine a position of said shadow casting object from said image.

44. The system of claim 32, wherein said light emitter comprises a plurality of light sources, not all illuminated at a same time.

45. The system of claim 33, wherein said processor is configured to process a plurality of images to designate at least one reference feature in said plurality of images and calculate said location of at least one point related to said shadow with respect to said reference feature.

46. The system of claim 33, wherein said processor is configured to designate features which appear in at least 2 of a plurality of images and calculate the movement of said imager relative to said features.

47. The system of claim 46, wherein said processor is further configured to combine location information determined at different imager locations to a single 3D model.

48. A system for imaging a tooth in an oral cavity comprising: a light emitter sized and shaped to fit in an oral cavity; an imager sized and shaped to fit in said oral cavity simultaneously with the light emitter and directed to collect light from the light emitter reflected off the tooth at a point of an edge of a shadow on said tooth; a processor configured to receive an image from said imager and process said image to determine a location of said point and a shape of said tooth.

49. The system of claim 48, wherein said processor is further configured to: establish a position of said imager with respect to a reference feature in the oral cavity from said image.

50. The system of claim 49, wherein said shadow has at least two edges cast by opposite exterior sides of the shadow casting object.

51. The system of claim 48, wherein said processor is further configured to: establish a position of said object producing the shadow from said image.

52. The system of claim 33, wherein said light emitter comprises a plurality of light emitter elements and wherein said processor is configured to track said shadow casting object and to select one or more of said light emitter elements based on the location of the shadow casting object to cast a shadow on the tooth.

53. The system of claim 48, wherein said imager comprises two separate and spaced apart imager elements aimed at said point.

54. The system of claim 53, wherein said processor is configured to determine a location of said point based on a difference between images acquired by different imager elements.

Description

RELATED APPLICATION/S

[0001] This application is a continuation of U.S. patent application Ser. No. 15/115,196 filed on Jul. 28, 2016 (U.S. Pat. No. 10,136,970), which is a National Phase of PCT Patent Application No. PCT/IL2016/050058 having International filing date of Jan. 18, 2016, which claims the benefit of priority under 35 USC § 119(e) of U.S. Provisional Patent Application No. 62/104,835 filed on Jan. 18, 2015. The contents of each of the above applications are incorporated herein by reference in their entirety.

FIELD AND BACKGROUND OF THE INVENTION

[0002] The present invention, in some embodiments thereof, relates to a system and method for intra-oral scanning and, more particularly, but not exclusively, to a system and method of determining the geometry of a tooth.

[0003] U.S. Published Application 2015/0348320 to the present applicant and others relates to, "A method for measuring regions of a tooth in a mouth including: measuring at least one surface point on a surface of the tooth with respect to an element mechanically coupled to said surface point; determining a location of at least one visible reference mechanically coupled to said surface point with respect to said element; estimating a location of said surface point with respect to said visible reference. A device used for such measuring may include a main body comprising a final optical element of an imager which defines an optical field of view directed in a first direction; and a measurement element coupled to said main body extending generally in said first direction; where a tip of said measurement element is sized and shaped to be inserted between a tooth and adjacent gingiva; where said optical field of view is sized to image at least part of a tooth."

SUMMARY OF THE INVENTION

[0004] According to an aspect of some embodiments of the invention, there is provided a method of imaging a tooth comprising: illuminating at least a portion of the tooth with a light emitter; casting a shadow on the portion of the tooth by an object between the emitter and the tooth; imaging the portion of the tooth, including at least a part of the shadow; and determining a location of a point on the tooth and related to the shadow, using the image including the shadow.
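The determining step above can be illustrated with classic shadow triangulation, offered here as a sketch rather than as the method of this application. If the light emitter is treated as a point source and the shadow-casting edge of the object is a straight segment, the emitter and the edge span a "shadow plane"; the imager pixel that sees the shadow edge defines a viewing ray, and the tooth point lies where that ray meets the plane. The sketch assumes a pinhole imager at the origin of its own frame, with the emitter position and two points on the object edge already known in that frame (e.g. from the fixed geometry of paragraph [0027]). The function names, intrinsics, and millimetre coordinates are hypothetical.

```python
from typing import Tuple

Vec = Tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def pixel_ray(u: float, v: float, fx: float, fy: float, cx: float, cy: float) -> Vec:
    """Direction of the viewing ray through pixel (u, v) of a pinhole imager at the origin."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)


def shadow_edge_point(light: Vec, edge_a: Vec, edge_b: Vec, ray: Vec) -> Vec:
    """Intersect the imager ray with the plane through the light and the object edge."""
    normal = cross(sub(edge_a, light), sub(edge_b, light))
    denom = dot(normal, ray)
    if abs(denom) < 1e-12:
        raise ValueError("viewing ray is parallel to the shadow plane")
    # Plane: dot(normal, X - light) = 0; ray: X = s * ray (imager at the origin).
    s = dot(normal, light) / denom
    return (s * ray[0], s * ray[1], s * ray[2])


# Hypothetical geometry (mm): emitter 10 mm beside the imager, object edge 10 mm ahead.
ray = pixel_ray(320, 240, 500, 500, 320, 240)
print(shadow_edge_point((10.0, 0.0, 0.0), (5.0, -5.0, 10.0), (5.0, 5.0, 10.0), ray))
```

Repeating this for every pixel along the imaged shadow edge yields a line of surface points, which is one way to read the "plurality of points in a same cast shadow" of paragraph [0017].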

[0005] According to some embodiments of the invention, the method comprises constructing a shape of the portion based on the determining.

[0006] According to some embodiments of the invention, constructing comprises repeating the illuminating, casting, imaging and determining for a plurality of locations.

[0007] According to some embodiments of the invention, the method further includes: producing an image of light collected from the portion of the tooth including at least two points related to the shadow; processing the image to determine a 3D location of the at least two points.

[0008] According to some embodiments of the invention, the determining comprises determining relative to a model of the tooth.

[0009] According to some embodiments of the invention, the determining comprises determining relative to a model of a plurality of teeth.

[0010] According to some embodiments of the invention, the method comprises acquiring a plurality of images of the portion and wherein the determining comprises determining a movement of the imager from a plurality of images.

[0011] According to some embodiments of the invention, the method further comprises: storing a plurality of the images; designating at least one reference feature in the plurality of images; and calculating the location of at least two points related to the shadow with respect to the at least one reference feature.

[0012] According to some embodiments of the invention, the reference is at least one of a tooth or gums.

[0013] According to some embodiments of the invention, the determining comprises determining the relative positions of an imager used for imaging, the tooth and the object.

[0014] According to some embodiments of the invention, the object is fixed relative to the imager.

[0015] According to some embodiments of the invention, the object is rigid. According to some embodiments of the invention, the method comprises identifying a deformation of the object.
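One possible way to identify a deformation, assuming the object's tip is visible to the imager (as in paragraph [0046]) and its nominal position in the imager frame is known from the rigid design, is to project the nominal tip into the image and compare the result with where the tip is actually detected. The pinhole projection, the 2-pixel tolerance, and the function names below are illustrative assumptions, not values taken from this application.

```python
import math
from typing import Tuple


def project(point: Tuple[float, float, float], fx: float, fy: float,
            cx: float, cy: float) -> Tuple[float, float]:
    """Pinhole projection of a 3D point (imager frame, z > 0) to pixel coordinates."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the imager")
    return (fx * x / z + cx, fy * y / z + cy)


def is_deformed(nominal_tip: Tuple[float, float, float],
                detected_px: Tuple[float, float],
                fx: float, fy: float, cx: float, cy: float,
                tol_px: float = 2.0) -> bool:
    """Flag a possible deformation when the detected tip strays from its nominal projection."""
    u, v = project(nominal_tip, fx, fy, cx, cy)
    return math.hypot(detected_px[0] - u, detected_px[1] - v) > tol_px
```

A tolerance in pixels is used here only for simplicity. Converting the discrepancy back to millimetres at the tip depth would make the threshold independent of how far the tip is from the imager.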

[0016] According to some embodiments of the invention, the method comprises identifying a shape of a portion of the shadow in the image and identifying a point of intersection defined by the shadow and the tooth based on the shape.

[0017] According to some embodiments of the invention, the determining comprises determining the location of a line comprising a plurality of points in the same cast shadow.

[0018] According to some embodiments of the invention, imaging comprises imaging a plurality of teeth simultaneously with the part of the shadow.

[0019] According to some embodiments of the invention, illuminating comprises selecting an illumination point.

[0020] According to some embodiments of the invention, illuminating comprises selecting a plurality of illumination points, applied in sequence.

[0021] According to some embodiments of the invention, the imaging includes imaging from a viewpoint, and the determining includes: sighting the point from the viewpoint; and establishing a relative location of the object with respect to the viewpoint, wherein the determining is based on the relative location.

[0022] According to some embodiments of the invention, the determining includes: sighting the point from each of two viewpoints; and establishing a relative location of the two viewpoints, wherein the determining is based on the relative location.
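Sighting the same point from two viewpoints whose relative location is known is ordinary two-view triangulation. Measured rays rarely intersect exactly, so a common choice, assumed here, is to take the midpoint of the shortest segment joining the two rays. Viewpoint positions and ray directions are expressed in one shared frame, and the helper names are hypothetical.

```python
from typing import Tuple

Vec = Tuple[float, float, float]


def _dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def midpoint_triangulate(c1: Vec, d1: Vec, c2: Vec, d2: Vec) -> Vec:
    """Midpoint of the closest approach of rays c1 + s*d1 and c2 + t*d2."""
    w0 = tuple(p - q for p, q in zip(c1, c2))
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the viewpoints give no depth")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray 1
    t = (a * e - b * d) / denom  # parameter of the closest point on ray 2
    p1 = tuple(p + s * q for p, q in zip(c1, d1))
    p2 = tuple(p + t * q for p, q in zip(c2, d2))
    return tuple((x + y) / 2.0 for x, y in zip(p1, p2))
```

The length of the segment between `p1` and `p2` can also serve as a simple consistency check. A large gap suggests that the two viewpoints are not sighting the same point.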

[0023] According to some embodiments of the invention, the method further comprises: inserting an imager into an oral cavity containing the tooth and wherein the determining further includes sighting the tooth with the imager.

[0024] According to some embodiments of the invention, the illuminating includes: inserting a light emitter into an oral cavity containing the tooth, and wherein the illuminating is by the light emitter.

[0025] According to some embodiments of the invention, the method further comprises: establishing a relative position of the shadow casting object with respect to the light emitter.

[0026] According to some embodiments of the invention, the location is with respect to the imager.

[0027] According to some embodiments of the invention, the object, an imager used for imaging and a light emitter used for illuminating are fixedly coupled and inserted as a unit into an oral cavity containing the tooth, with the object extending away from a housing of the unit.

[0028] According to some embodiments of the invention, the location is with respect to a shadow casting object.

[0029] According to some embodiments of the invention, the method comprises storing at most a lower-resolution representation of the image after the determining.

[0030] According to an aspect of some embodiments of the invention, there is provided a three dimensional (3D) intraoral scanner system for imaging at least a portion of a tooth in an oral cavity comprising: an intraoral portion sized and shaped for insertion into the oral cavity, the intraoral portion including: a light emitter for emitting a light; a shadow casting object; at least a portion of the shadow casting object in a field of illumination of the light emitter and extending away from a housing of the intraoral portion; an imager positioned to image at least an edge of a shadow cast by the shadow casting object in the light reflected off a portion of a tooth located at a distance of between 3 mm and 5 cm from the imager.

[0031] According to some embodiments of the invention, the intraoral scanner system further comprises: a processor configured to process an image of the light received by the imager to provide location information.

[0032] According to some embodiments of the invention, the processor is configured to process the image to determine a location of a portion at an edge of the shadow.

[0033] According to some embodiments of the invention, the processor is configured to process the image to determine a shape of the tooth.

[0034] According to some embodiments of the invention, the processor is further configured to: establish a position of the imager with respect to a reference feature in the oral cavity.

[0035] According to some embodiments of the invention, the processor is configured to track a position of the portion in the cavity.

[0036] According to some embodiments of the invention, the position is provided using the imager.

[0037] According to some embodiments of the invention, the processor is configured for the establishing the position of the imager from the image.

[0038] According to some embodiments of the invention, the processor is further configured to: establish a position of the object producing the shadow.

[0039] According to some embodiments of the invention, the imager is located at a known orientation to the light emitter.

[0040] According to some embodiments of the invention, the processor is further configured to: establish a position of the shadow casting object; and establish a relative location of the imager with respect to the light emitter and a portion of the shadow casting object.

[0041] According to some embodiments of the invention, a portion of the shadow casting object casting the edge of the shadow has a known orientation to the light emitter.

[0042] According to some embodiments of the invention, the processor is further configured to: establish a relative location with respect to the light emitter of a portion of the shadow casting object casting the edge of the shadow.

[0043] According to some embodiments of the invention, the system further comprises an elongated handle having a length of between 8 and 30 cm and a width of less than 15 cm.

[0044] According to some embodiments of the invention, the at least a portion of the shadow casting object, the light emitter and the imager are collocated such that they do not fall within 0.5 mm of a single plane.

[0045] According to some embodiments of the invention, the system further includes an orientation sensor sensing an orientation of the at least a portion of the shadow casting object with respect to the light emitter.

[0046] According to some embodiments of the invention, the orientation sensor includes the imager and wherein the at least a portion of the shadow casting object is within a field of view of the imager.

[0047] According to some embodiments of the invention, the emitter radiates light from a surface fitting within a sphere of radius 5 mm.
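One reason to keep the emitting surface small is penumbra: light from an extended source blurs the shadow edge. By similar triangles, a source of diameter D at distance a from the object's edge produces, on a surface a further distance b beyond that edge, a penumbra approximately D·b/a wide. The sketch below evaluates this geometric-optics approximation. The example distances and the 1 mm source are illustrative assumptions, not figures from this application.

```python
def penumbra_width(source_diameter: float, source_to_edge: float,
                   edge_to_surface: float) -> float:
    """Approximate penumbra width (same units as the inputs) by similar triangles.

    Rays from opposite rims of the source graze the object edge and diverge by
    source_diameter * edge_to_surface / source_to_edge at the surface.
    """
    if source_to_edge <= 0:
        raise ValueError("source_to_edge must be positive")
    return source_diameter * edge_to_surface / source_to_edge


# Hypothetical: 1 mm emitter, object edge 20 mm away, tooth 10 mm beyond the edge.
print(penumbra_width(1.0, 20.0, 10.0))  # 0.5
```

Because the width grows linearly with D, a compact emitter keeps the shadow edge sharp enough to localize to a fraction of a millimetre at typical intraoral distances.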

[0048] According to some embodiments of the invention, the light emitter comprises a plurality of light sources, not all illuminated at a same time.

[0049] According to some embodiments of the invention, the processor is configured for computing a location of at least two spaced apart portions of the shadow with respect to the imager.

[0050] According to some embodiments of the invention, the processor is configured to process a plurality of images to designate at least one reference feature in the plurality of images and calculate the location of the at least two portions with respect to the reference feature.

[0051] According to some embodiments of the invention, the processor is configured to designate at least 3 features which appear in at least 2 of a plurality of images and calculate the movement of the imager relative to the features.
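Suppose the 3D positions of at least three non-collinear features are known in the imager frame at two imager locations, for example from shadow or stereo measurements. The imager's rigid motion between those locations can then be recovered with the Kabsch (orthogonal Procrustes) method. This is one standard choice, assumed here, since the application does not name an algorithm. The sketch uses NumPy, and `rigid_motion` is a hypothetical name.

```python
import numpy as np


def rigid_motion(p, q):
    """Least-squares rotation r and translation t such that q ~= p @ r.T + t.

    p, q: (N, 3) arrays holding the same N >= 3 features seen from two imager
    locations, with corresponding rows.
    """
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    if p.shape != q.shape or p.ndim != 2 or p.shape[1] != 3 or p.shape[0] < 3:
        raise ValueError("need matching (N, 3) arrays with N >= 3")
    pc, qc = p.mean(axis=0), q.mean(axis=0)
    h = (p - pc).T @ (q - qc)  # 3x3 cross-covariance of the centred points
    u, _, vt = np.linalg.svd(h)
    # Flip the last axis if needed so that r is a proper rotation, not a reflection.
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0
    r = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    t = qc - r @ pc
    return r, t
```

With more than three features, the same least-squares fit averages out measurement noise. In practice, outlier rejection (e.g. RANSAC over feature triples) is often added.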

[0052] According to some embodiments of the invention, the processor is further configured to combine location information determined at different imager locations to a single 3D model.
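Assume the pose of each imager location in a common model frame is known, for instance by chaining the motions estimated between successive locations. Combining location information then amounts to mapping every measured point into that frame and accumulating the results. The minimal pure-Python sketch below assumes that each pose is given as a rotation matrix R and a translation t mapping imager coordinates to model coordinates. The names are hypothetical.

```python
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]
Mat = Sequence[Sequence[float]]


def to_model(point: Vec, r: Mat, t: Vec) -> Vec:
    """Map a point from imager coordinates to model coordinates: R @ p + t."""
    return tuple(sum(r[i][j] * point[j] for j in range(3)) + t[i] for i in range(3))


def merge_scans(scans: Sequence[Tuple[Mat, Vec, Sequence[Vec]]]) -> List[Vec]:
    """Combine (R, t, points) measured at several imager locations into one point set."""
    model: List[Vec] = []
    for r, t, points in scans:
        model.extend(to_model(p, r, t) for p in points)
    return model
```

This only pools the points. Building a surface from them (meshing, de-duplicating overlapping measurements) would be a separate step.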

[0053] According to some embodiments of the invention, the processor is further configured to combine locations of the at least 3 features identified at different imager locations to the single 3D model that includes the location information determined at each imager location.

[0054] According to some embodiments of the invention, the processor is configured to compute a location of at least two points related to the edge of the shadow with respect to an intraoral reference feature.

[0055] According to an aspect of some embodiments of the invention, there is provided a system for imaging a tooth in an oral cavity comprising: a light emitter sized and shaped to fit in an oral cavity; an imager sized and shaped to fit in the oral cavity simultaneously with the light emitter and directed to collect light from the light emitter reflected off the tooth at a point of an edge of a shadow on the tooth; a processor configured to receive an image from the imager and process the image to determine a location of the point and a shape of the tooth.

[0056] According to some embodiments of the invention, the processor is further configured to: establish a position of the imager with respect to a reference feature in the oral cavity.

[0057] According to some embodiments of the invention, the processor is configured for the establishing the position of the imager from the image.

[0058] According to some embodiments of the invention, the processor is further configured to: establish a position of the object producing the shadow.

[0059] According to some embodiments of the invention, the system comprises: a dental probe sized and shaped so the shadow can be cast by the dental probe.

[0060] According to some embodiments of the invention, the light emitter comprises a plurality of light emitter elements, and the processor is configured to track the dental probe and to select, based on the location of the probe, one or more of the light emitter elements to use for casting a shadow of the probe on the tooth.
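Selecting an emitter element from the tracked probe position can be sketched geometrically. For each candidate element, extend the line from the element through the probe tip until it reaches the tooth, and keep the element whose tip shadow lands nearest a desired target point. The sketch models the tooth locally as a plane and uses hypothetical names and coordinates. A practical implementation would more likely intersect with the current tooth model and also check that the shadow is visible to the imager.

```python
from typing import Optional, Sequence, Tuple

Vec = Tuple[float, float, float]


def _dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def shadow_of_tip(emitter: Vec, tip: Vec, plane_point: Vec,
                  plane_normal: Vec) -> Optional[Vec]:
    """Where the shadow of the probe tip falls on a plane, or None if it misses."""
    direction = tuple(b - a for a, b in zip(emitter, tip))  # emitter -> tip
    denom = _dot(plane_normal, direction)
    if abs(denom) < 1e-12:
        return None
    s = _dot(plane_normal, tuple(p - e for p, e in zip(plane_point, emitter))) / denom
    if s <= 1.0:  # the surface must lie beyond the tip, not between emitter and tip
        return None
    return tuple(e + s * d for e, d in zip(emitter, direction))


def select_emitter(emitters: Sequence[Vec], tip: Vec, plane_point: Vec,
                   plane_normal: Vec, target: Vec) -> Optional[int]:
    """Index of the emitter whose tip shadow lands closest to the target point."""
    best, best_dist = None, float("inf")
    for i, emitter in enumerate(emitters):
        hit = shadow_of_tip(emitter, tip, plane_point, plane_normal)
        if hit is None:
            continue
        dist = sum((h - g) ** 2 for h, g in zip(hit, target))
        if dist < best_dist:
            best, best_dist = i, dist
    return best
```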

[0061] According to some embodiments of the invention, the processor is configured to determine a tooth location relative to the dental probe.

[0062] According to some embodiments of the invention, the processor is configured to locate an edge of a shadow in an image from the imager and avoid storing a full resolution image thereafter.
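Locating the shadow edge and then discarding the full-resolution frame can reduce memory considerably, because only one edge position per image row needs to be kept. The sketch below assumes a grayscale image given as a list of rows, a lit region to the left of the shadow, and a fixed intensity threshold. It finds the first lit-to-shadow crossing in each row and refines it to sub-pixel precision by linear interpolation. These choices are illustrative assumptions, not the detection method of this application.

```python
from typing import List, Optional, Sequence


def edge_columns(image: Sequence[Sequence[float]],
                 threshold: float) -> List[Optional[float]]:
    """Per-row sub-pixel column of the first lit-to-shadow transition (None if absent).

    Only this list needs to be stored; the full-resolution image can then be dropped.
    """
    edges: List[Optional[float]] = []
    for row in image:
        edge = None
        for x in range(len(row) - 1):
            a, b = row[x], row[x + 1]
            if a >= threshold > b:  # lit pixel followed by a shadowed one
                edge = x + (a - threshold) / (a - b)
                break
        edges.append(edge)
    return edges
```

For a 640×480 frame, this keeps 480 values instead of 307,200 pixels.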

[0063] According to some embodiments of the invention, the imager comprises two separate and spaced apart imager elements aimed at the point.

[0064] According to some embodiments of the invention, a first imager element has a wider FOV (field of view) than a second one of the imager elements, suitable for imaging an object outside of the tooth, while the second imager element images the point.

[0065] According to some embodiments of the invention, the processor is configured to determine a location of the point based on a difference between images acquired by different imager elements.
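Consider two imager elements with parallel optical axes, forming a rectified stereo pair (an assumption here). The difference between their images then reduces to a horizontal disparity d, and depth follows from Z = f·B/d, where f is the focal length in pixels and B is the baseline between the elements. The function name and numbers below are hypothetical.

```python
def depth_from_disparity(x_left: float, x_right: float,
                         focal_px: float, baseline_mm: float) -> float:
    """Depth (mm) of a point seen at columns x_left / x_right in a rectified pair."""
    disparity = x_left - x_right
    if disparity <= 0:
        raise ValueError("expected positive disparity for a point in front of the pair")
    return focal_px * baseline_mm / disparity


# Hypothetical: f = 600 px, elements 4 mm apart, point imaged at columns 350 and 230.
print(depth_from_disparity(350.0, 230.0, 600.0, 4.0))  # 20.0
```

Because depth varies as 1/d, a one-pixel disparity error matters more for distant points. A short baseline therefore suits the short working distances recited in paragraph [0030].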

[0066] Unless otherwise defined, all technical and/or scientific terms used herein have the same meaning as commonly understood by one of ordinary skill in the art to which the invention pertains. Although methods and materials similar or equivalent to those described herein can be used in the practice or testing of embodiments of the invention, exemplary methods and/or materials are described below. In case of conflict, the patent specification, including definitions, will control. In addition, the materials, methods, and examples are illustrative only and are not intended to be necessarily limiting.

[0067] As will be appreciated by one skilled in the art, some embodiments of the present invention may be embodied as a system, method or computer program product. Accordingly, some embodiments of the present invention may take the form of an entirely hardware embodiment, an entirely software embodiment (including firmware, resident software, micro-code, etc.) or an embodiment combining software and hardware aspects that may all generally be referred to herein as a "circuit," "module" or "system." Furthermore, some embodiments of the present invention may take the form of a computer program product embodied in one or more computer readable medium(s) having computer readable program code embodied thereon. Implementation of the method and/or system of some embodiments of the invention can involve performing and/or completing selected tasks manually, automatically, or a combination thereof. Moreover, according to actual instrumentation and equipment of some embodiments of the method and/or system of the invention, several selected tasks could be implemented by hardware, by software or by firmware and/or by a combination thereof, e.g., using an operating system.

[0068] For example, hardware for performing selected tasks according to some embodiments of the invention could be implemented as a chip or a circuit. As software, selected tasks according to some embodiments of the invention could be implemented as a plurality of software instructions being executed by a computer using any suitable operating system. In an exemplary embodiment of the invention, one or more tasks according to some exemplary embodiments of method and/or system as described herein are performed by a data processor, such as a computing platform for executing a plurality of instructions. Optionally, the data processor includes a volatile memory for storing instructions and/or data and/or a non-volatile storage, for example, a magnetic hard-disk and/or removable media, for storing instructions and/or data. Optionally, a network connection is provided as well. A display and/or a user input device such as a keyboard or mouse are optionally provided as well.

[0069] Any combination of one or more computer readable medium(s) may be utilized for some embodiments of the invention. The computer readable medium may be a computer readable signal medium or a computer readable storage medium. A computer readable storage medium may be, for example, but not limited to, an electronic, magnetic, optical, electromagnetic, infrared, or semiconductor system, apparatus, or device, or any suitable combination of the foregoing. More specific examples (a non-exhaustive list) of the computer readable storage medium would include the following: an electrical connection having one or more wires, a portable computer diskette, a hard disk, a random access memory (RAM), a read-only memory (ROM), an erasable programmable read-only memory (EPROM or Flash memory), an optical fiber, a portable compact disc read-only memory (CD-ROM), an optical storage device, a magnetic storage device, or any suitable combination of the foregoing. In the context of this document, a computer readable storage medium may be any tangible medium that can contain, or store a program for use by or in connection with an instruction execution system, apparatus, or device.

[0070] A computer readable signal medium may include a propagated data signal with computer readable program code embodied therein, for example, in baseband or as part of a carrier wave. Such a propagated signal may take any of a variety of forms, including, but not limited to, electro-magnetic, optical, or any suitable combination thereof. A computer readable signal medium may be any computer readable medium that is not a computer readable storage medium and that can communicate, propagate, or transport a program for use by or in connection with an instruction execution system, apparatus, or device.

[0071] Program code embodied on a computer readable medium and/or data used thereby may be transmitted using any appropriate medium, including but not limited to wireless, wireline, optical fiber cable, RF, etc., or any suitable combination of the foregoing.

[0072] Computer program code for carrying out operations for some embodiments of the present invention may be written in any combination of one or more programming languages, including an object oriented programming language such as Java, Smalltalk, C++ or the like and conventional procedural programming languages, such as the "C" programming language or similar programming languages. The program code may execute entirely on the user's computer, partly on the user's computer, as a stand-alone software package, partly on the user's computer and partly on a remote computer or entirely on the remote computer or server. In the latter scenario, the remote computer may be connected to the user's computer through any type of network, including a local area network (LAN) or a wide area network (WAN), or the connection may be made to an external computer (for example, through the Internet using an Internet Service Provider).

[0073] Some embodiments of the present invention may be described below with reference to flowchart illustrations and/or block diagrams of methods, apparatus (systems) and computer program products according to embodiments of the invention. It will be understood that each block of the flowchart illustrations and/or block diagrams, and combinations of blocks in the flowchart illustrations and/or block diagrams, can be implemented by computer program instructions. These computer program instructions may be provided to a processor of a general purpose computer, special purpose computer, or other programmable data processing apparatus to produce a machine, such that the instructions, which execute via the processor of the computer or other programmable data processing apparatus, create means for implementing the functions/acts specified in the flowchart and/or block diagram block or blocks.

[0074] These computer program instructions may also be stored in a computer readable medium that can direct a computer, other programmable data processing apparatus, or other devices to function in a particular manner, such that the instructions stored in the computer readable medium produce an article of manufacture including instructions which implement the function/act specified in the flowchart and/or block diagram block or blocks.

[0075] The computer program instructions may also be loaded onto a computer, other programmable data processing apparatus, or other devices to cause a series of operational steps to be performed on the computer, other programmable apparatus or other devices to produce a computer implemented process such that the instructions which execute on the computer or other programmable apparatus provide processes for implementing the functions/acts specified in the flowchart and/or block diagram block or blocks.

[0076] Some of the methods described herein are generally designed only for use by a computer, and may not be feasible or practical for performing purely manually, by a human expert. A human expert who wanted to manually perform similar tasks might be expected to use completely different methods, e.g., making use of expert knowledge and/or the pattern recognition capabilities of the human brain, which would be vastly more efficient than manually going through the steps of the methods described herein.

BRIEF DESCRIPTION OF THE SEVERAL VIEWS OF THE DRAWING(S)

[0077] Some embodiments of the invention are herein described, by way of example only, with reference to the accompanying drawings. With specific reference now to the drawings in detail, it is stressed that the particulars shown are by way of example and for purposes of illustrative discussion of embodiments of the invention. In this regard, the description taken with the drawings makes apparent to those skilled in the art how embodiments of the invention may be practiced.

[0078] In the drawings:

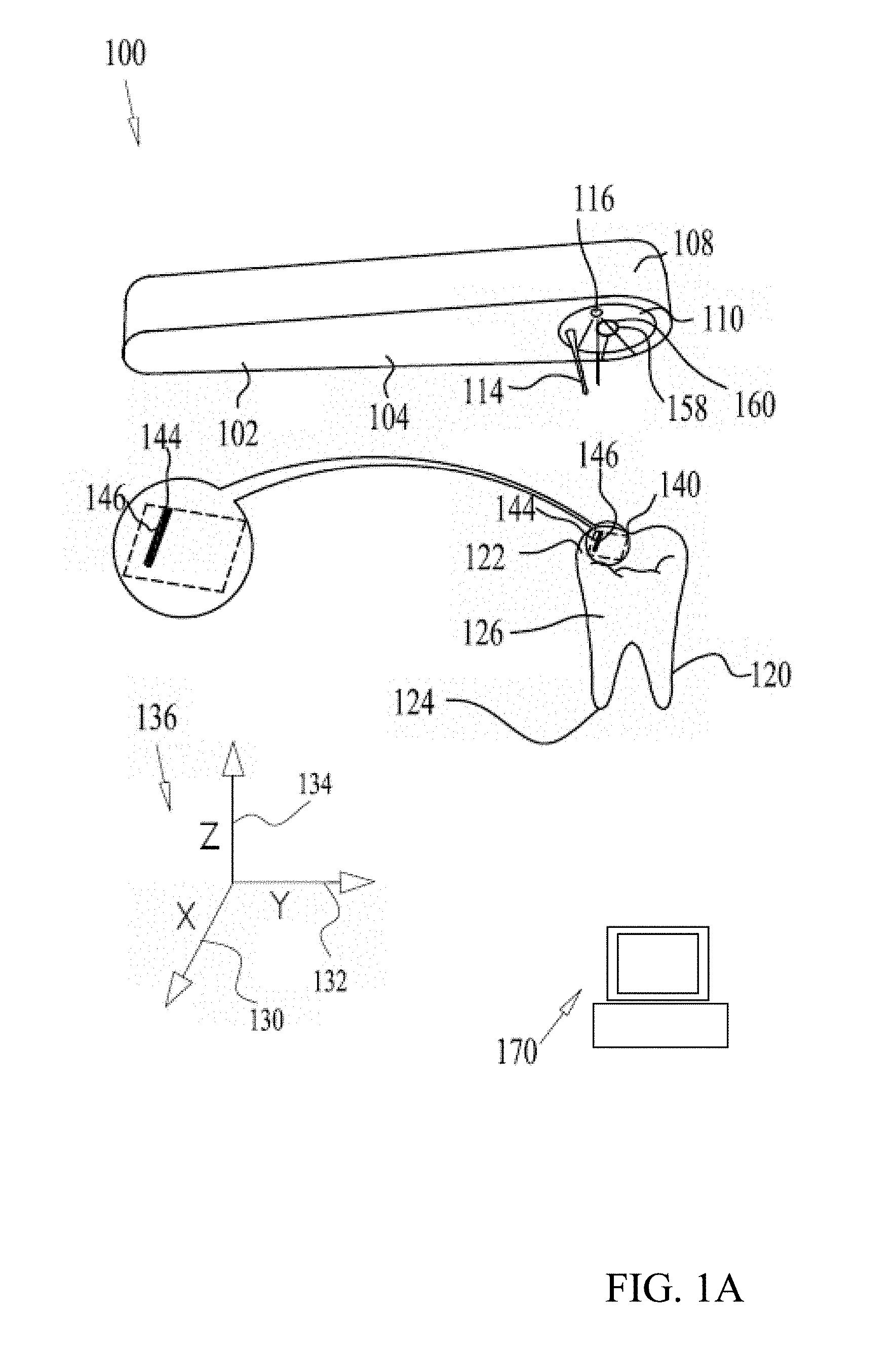

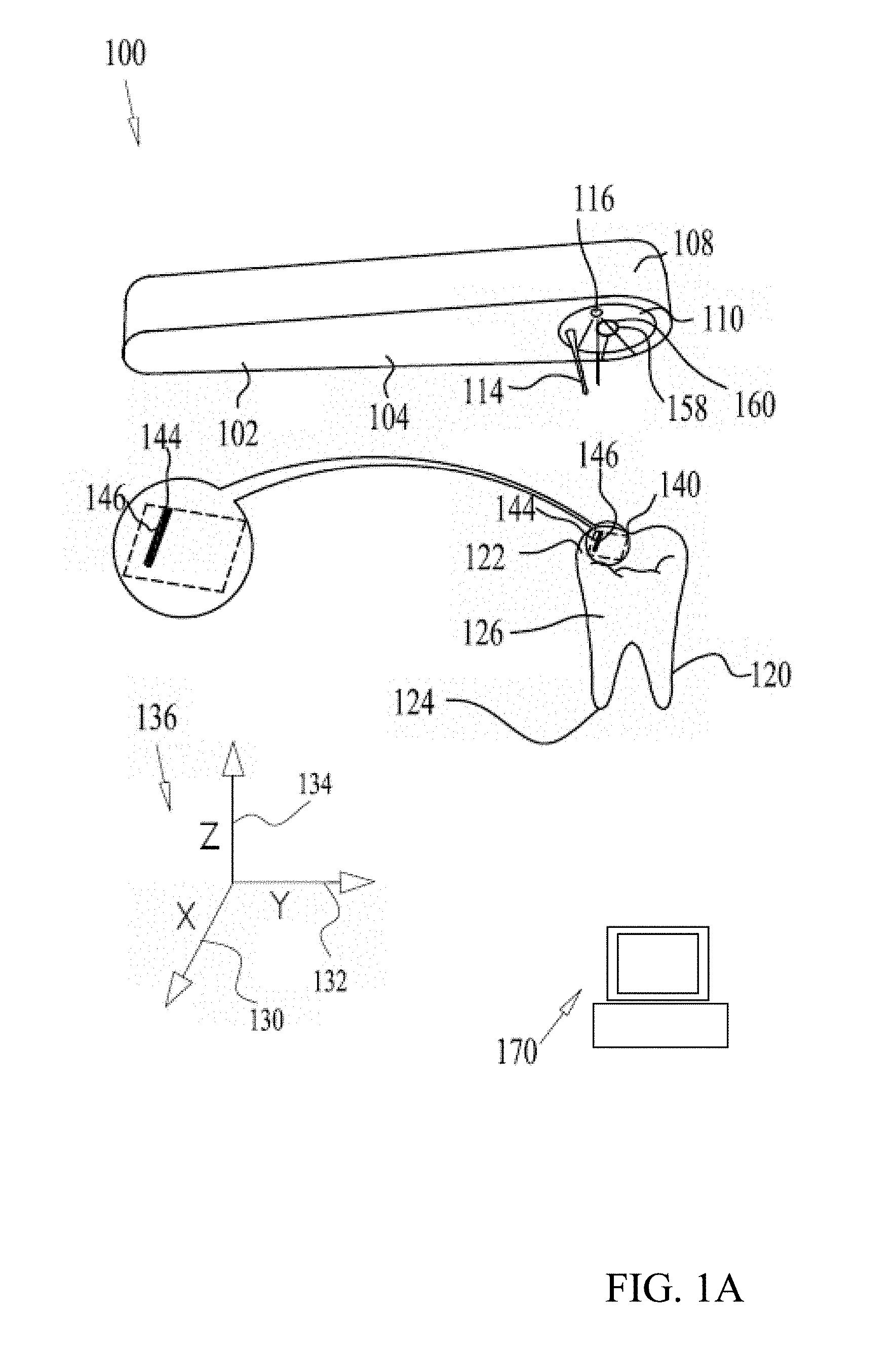

[0079] FIGS. 1A and 1B are simplified schematic illustrations of a 3D intraoral scanner system according to some embodiments of the present invention;

[0080] FIG. 1C is a simplified illustration of a 3D intraoral scanner system according to some embodiments of the present invention;

[0081] FIG. 1D is a simplified schematic illustration of the configurations of the shadow parameter of FIG. 1C;

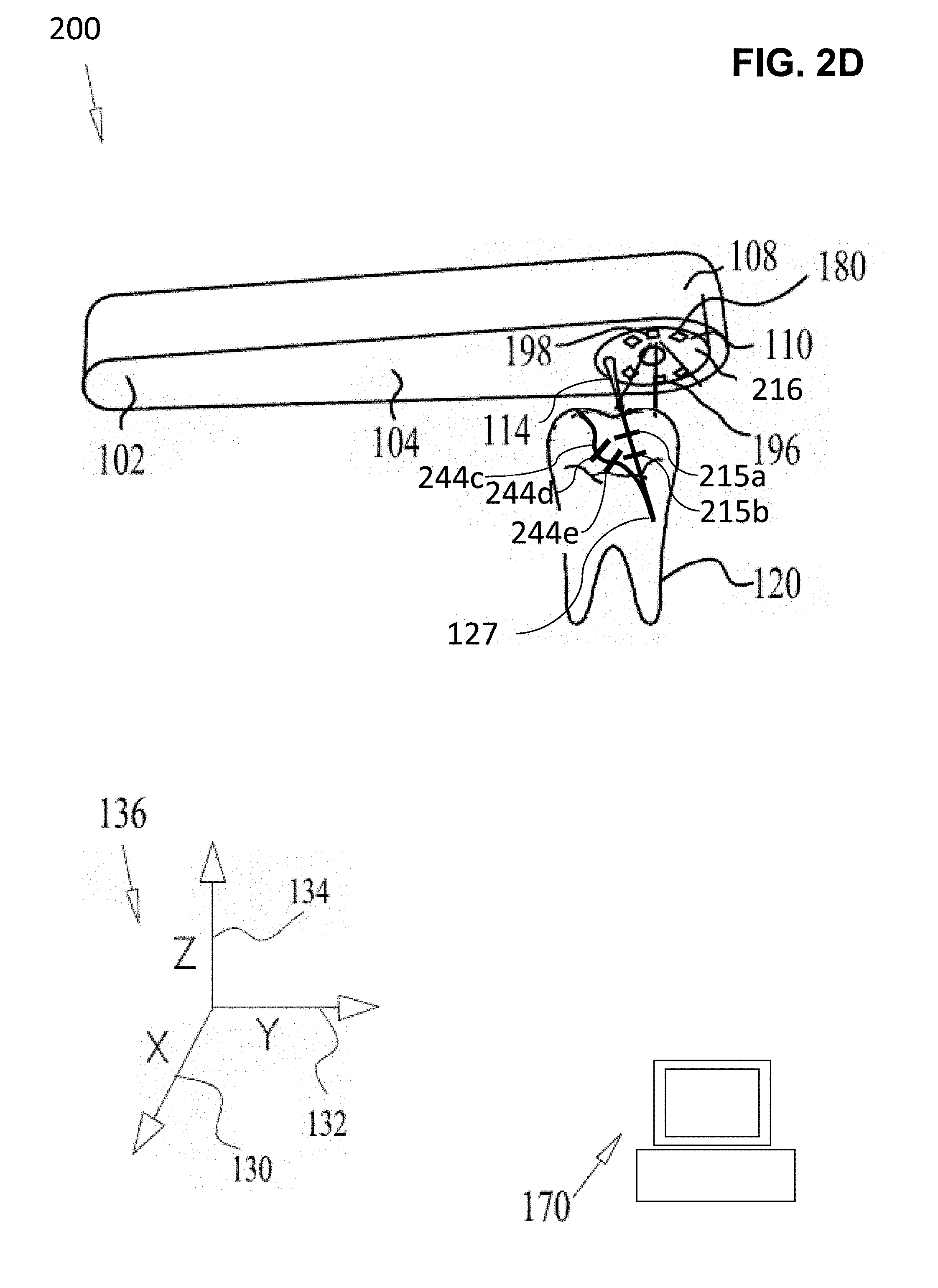

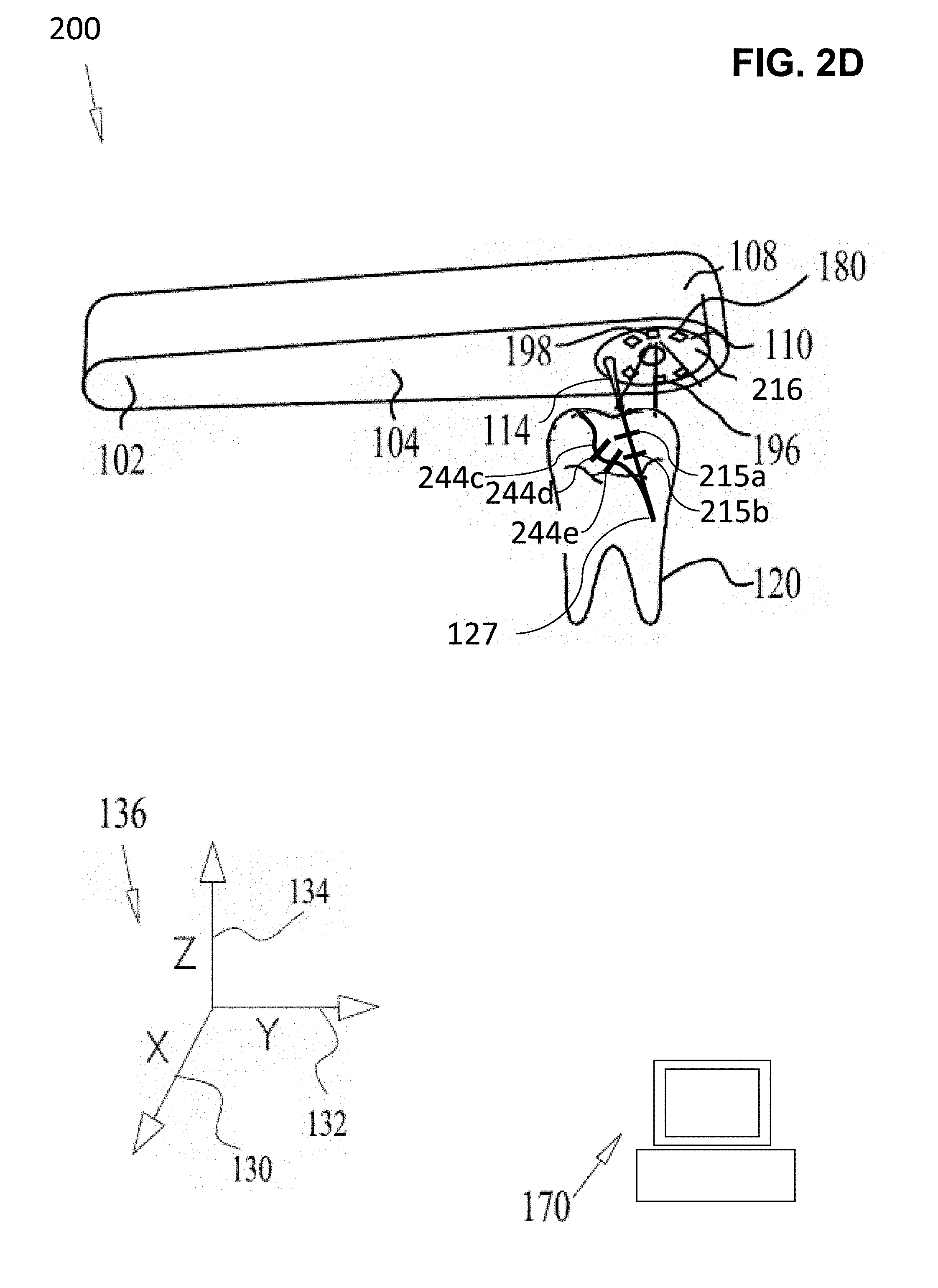

[0082] FIGS. 2A, 2B, 2C and 2D are simplified schematic illustrations of a 3D dental intraoral scanner system with multiple light emitters, according to some embodiments of the present invention;

[0083] FIG. 3A illustrates a high level flow chart of an embodiment of a method of intraoral scanning using shadows in accordance with an embodiment of the present invention;

[0084] FIG. 3B is a flow chart illustrating further details of a method of obtaining 3D data from an intraoral scan in accordance with an embodiment of the present invention;

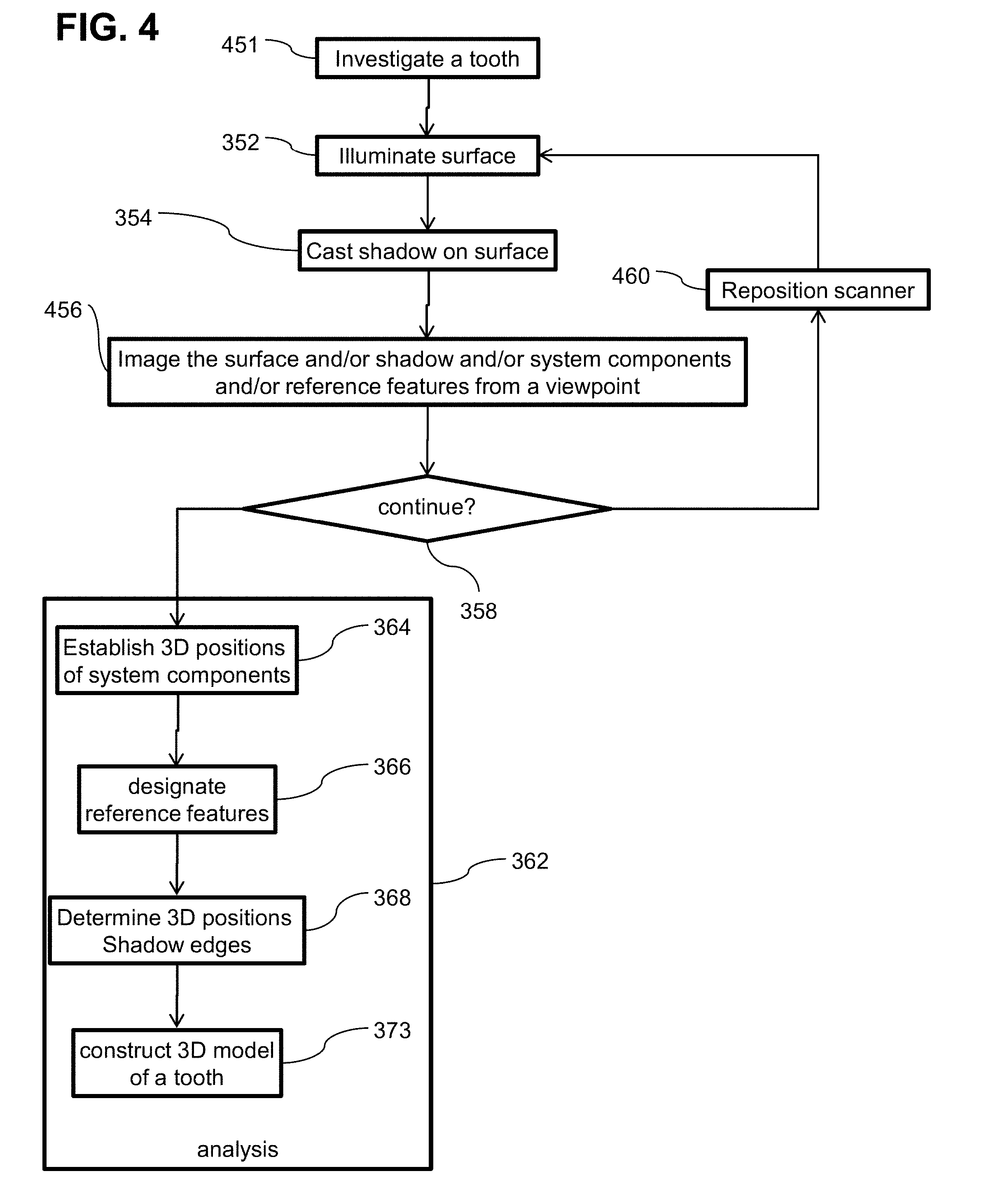

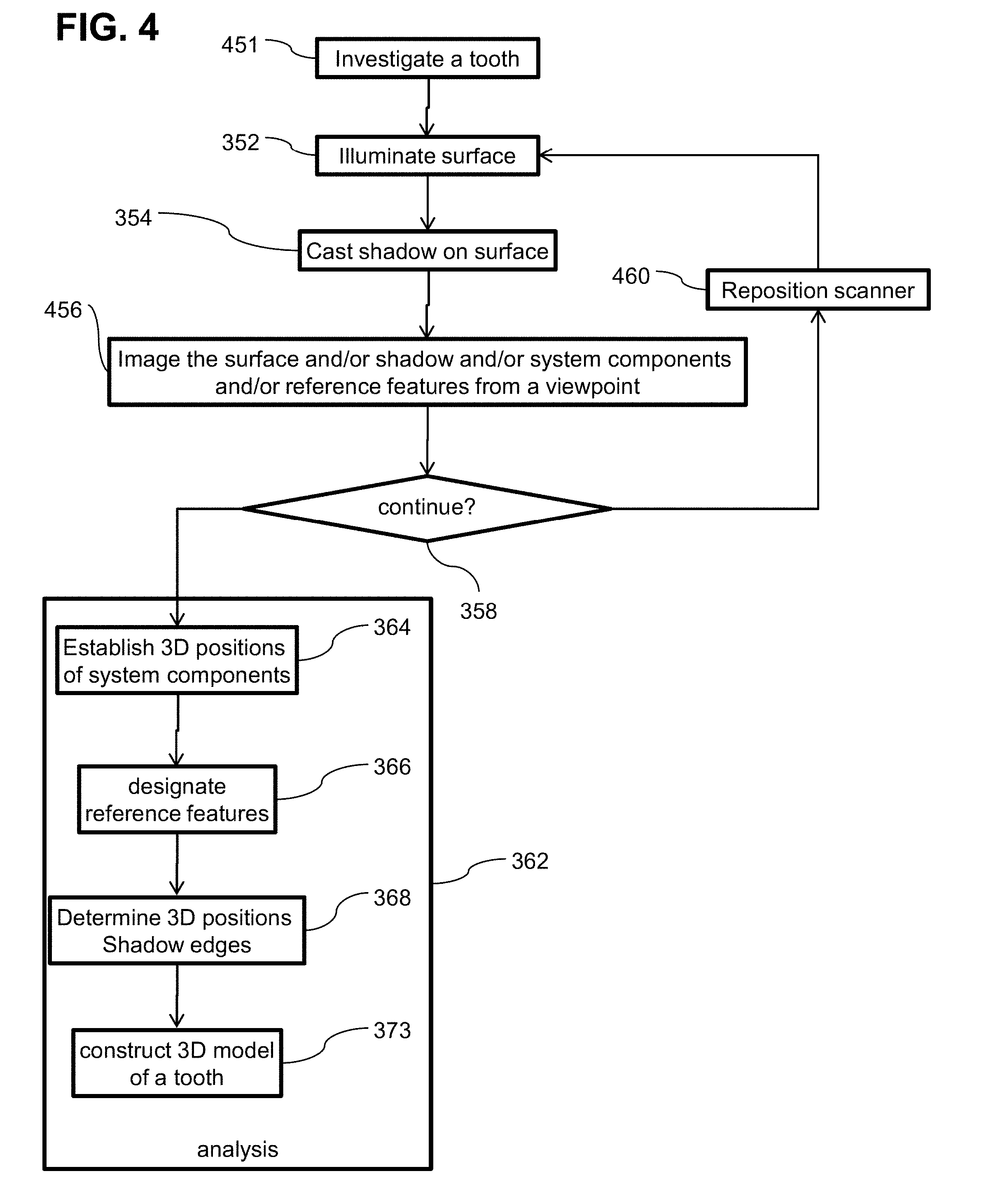

[0085] FIG. 4 is a flow chart illustration of a method of modeling a tooth while investigating a tooth with a scanner including a dental probe in accordance with an embodiment of the present invention;

[0086] FIG. 5 is a flow chart illustration of a method of modeling a tooth while investigating a tooth with a scanner including a dental probe and multiple light emitters in accordance with an embodiment of the present invention;

[0087] FIG. 6 is a flow chart illustration of a semi-passive method of modeling a tooth while investigating a tooth in accordance with an embodiment of the present invention;

[0088] FIG. 7 is a flow chart illustration of a method of modeling a tooth when navigation data for the oral cavity is available in accordance with an embodiment of the present invention;

[0089] FIG. 8 is a flow chart illustration of a method of modeling a tooth using multiple overlapping image sensors in accordance with an embodiment of the present invention;

[0090] FIG. 9 is a schematic illustration of a scanner having multiple shadow casting objects in accordance with an embodiment of the present invention;

[0091] FIG. 10 is a schematic illustration of a scanning system having multiple imagers in accordance with an embodiment of the present invention;

[0092] FIG. 11 is a schematic illustration of a scanner having multiple imagers in accordance with an embodiment of the present invention;

[0093] FIG. 12 is a block diagram of an intraoral scanner in accordance with an embodiment of the present invention;

[0094] FIG. 13 is a block diagram of a portion of an intraoral scanner system in accordance with an embodiment of the present invention; and

[0095] FIG. 14 is a block diagram of a portion of an intraoral scanner system in accordance with an embodiment of the present invention.

DESCRIPTION OF SPECIFIC EMBODIMENTS OF THE INVENTION

[0096] The present invention, in some embodiments thereof, relates to a system and method for intra-oral scanning and, more particularly, but not exclusively, to a system and method of constructing a 3D model of a tooth or teeth.

Overview

[0097] An aspect of some embodiments of the current invention relates to a method of producing and/or imaging shadows to determine the three dimensional geometry of a tooth surface. Optionally, a shadow may be cast onto the tooth by an object located between the tooth and a light emitter illuminating the surface. In some embodiments, an image of the surface and/or the shadow and/or a portion thereof is captured by a light collector and/or analyzed. The 3D location of a point related to the shadow may then be determined (for example a point on the surface at an edge of the shadow, and/or one point inside the shadow and one point outside the shadow that together delineate the edge of the shadow between them). Optionally, multiple images may be combined to construct a 3D model of the surface, the tooth, the gums, an arch, a portion of the oral cavity and/or the entire oral cavity. Optionally, multiple points along a shadow edge may be determined; multiple points may give information about the size and/or direction of a shadow.

[0098] In some embodiments, use of shadows may facilitate measuring and/or determining the three dimensional geometry of an intra-oral object (for example a tooth, a bone, and/or an arch). Optionally, the imaging is done while simultaneously measuring sub-gingival structures. Optionally, measurements are made without the use of penetrating radiation (such as x-ray methods, for example traditional x-rays, computerized axial tomography (CAT) scans and cone beam imaging). Optionally, measurements may be made without the use of a laser and/or structured light (for example a projected optical pattern) and/or computer-controlled scanning light emitters. A potential disadvantage of using a shadow to discern 3D features is that shadow edges tend to become fuzzy, diffuse and/or spread as the distance between the shadow producing object and the surface on which the shadow falls increases. Nevertheless, in some embodiments, a device producing shadows may be cheaper and/or smaller than a device producing a projected grid or other structured pattern.

[0099] In some embodiments, the location of a point on a shadow may be calculated, for example, as the intersection of two lines. For example, the two lines may be two lines of sight from two spaced apart view points to the point on the shadow. For the sake of this disclosure, a view point may be the location of a light collector (for example a camera and/or another imager), for example the centroid of its optical aperture. For the sake of this disclosure, a line of sight may include the line from the view point to the point being measured (e.g. the edge of the shadow on the surface and/or the centroid of an area, for example a blurred edge). For example, the coordinates of the point being measured may be found using stereo vision methods, for example binocular range finding, where the location is at the intersection of the two lines of sight from two spaced apart view points to the point being measured on the surface. Alternatively or additionally, the location of a point on a shadow on the surface may be identified as the intersection of a line of sight and a line of illumination. For the sake of this disclosure, a line of illumination may include a line from an illumination point and/or a centroid of a light emitter to the location being measured, for example the centroid thereof. For example, a line of illumination to a point on the edge of a shadow includes a line passing from the illumination point, past the edge of the shadow producing object, to the edge of the shadow on the surface. Alternatively or additionally, for example when a shadow is cast by an object with a straight edge, all the points of the shadow edge may fall in a plane defined by the illumination point and the line of the edge of the shadow casting object. In some embodiments, the 3D location of a point on the tooth may be determined by computing the point of intersection between the plane of the shadow edge and the line of sight from the imager to the point.
The direction of the line of sight to the point is optionally calculated from the location of the point in an image. The plane of the shadow is optionally determined by the location of the light emitter aperture and the edge of the shadow casting object.
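
A minimal sketch of the two-line case (two lines of sight, or a line of sight and a line of illumination), assuming both lines are already expressed in a common scanner coordinate system. Because measured lines almost never meet exactly, the sketch returns the midpoint of the shortest segment joining them; this closest-point rule is a common choice in stereo triangulation and is an assumption here, not something the text specifies. The function names are illustrative only.

```python
# Sketch: locate a shadow point as the near-intersection of two 3D lines,
# e.g. two lines of sight (stereo) or a line of sight and a line of
# illumination. Returns the midpoint of the shortest connecting segment.

Vec3 = tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def triangulate(p1: Vec3, d1: Vec3, p2: Vec3, d2: Vec3) -> Vec3:
    """p1, p2: line origins (e.g. aperture or emitter centroids);
    d1, d2: line directions (any nonzero length)."""
    w0 = sub(p1, p2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("lines are (nearly) parallel; no unique point")
    s = (b * e - c * d) / denom  # parameter of closest point on line 1
    t = (a * e - b * d) / denom  # parameter of closest point on line 2
    q1 = add(p1, scale(d1, s))
    q2 = add(p2, scale(d2, t))
    return scale(add(q1, q2), 0.5)


# Two view points 2 mm apart, both sighting the point (1, 0, 10):
print(triangulate((0, 0, 0), (1, 0, 10), (2, 0, 0), (-1, 0, 10)))  # (1.0, 0.0, 10.0)
```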

[0100] In some embodiments, the illuminated portion of a shadow producing object may have a short dimension of between 0.1 to 0.3 mm and/or between 0.3 to 0.7 mm and/or between 0.7 to 3 mm and/or between 3 to 10 mm and/or between 10 to 30 mm. Optionally the illuminated portion of a shadow producing object may have a long dimension of between 0.1 to 0.3 mm and/or between 0.3 to 0.7 mm and/or between 0.7 to 3 mm and/or between 3 to 10 mm and/or between 10 to 30 mm and/or between 30 to 50 mm or more. For example the shadow casting object may be elongate with a long illuminated dimension between 3 to 5 times the short illuminated dimension and/or between 5 to 10 times and/or between 10 to 50 times or more.

[0101] In some embodiments, the area of a shadow on an image may be less than 1/100 of the illuminated area and/or between 1/100 and 1/20 of the illuminated area and/or between 1/20 and 1/10 of the illuminated area and/or between 1/10 and 1/4 of the illuminated area and/or between 1/4 and 1/2 of the illuminated area and/or between 1/2 of and equal to the illuminated area and/or between equal to and twice the illuminated area and/or between twice and four times the illuminated area and/or between four and ten times the illuminated area and/or between ten and one hundred times the illuminated area.

[0102] In some embodiments, an image may include fewer than 100 shadow edges and/or fewer than 10 shadow edges and/or fewer than 5 shadow edges and/or fewer than 3 shadow edges. In some embodiments, an image may include fewer than 100 parallel shadow edges and/or fewer than 10 parallel shadow edges and/or fewer than 5 parallel shadow edges and/or fewer than 3 parallel shadow edges. In some embodiments, the total length of shadow edges in an image may be less than 100 times the length of the long edge of the image and/or between 10 and 100 times the length of the long edge of the image and/or between 1 and 10 times the length of the long edge of the image and/or between 1/4 and 1 times the length of the long edge of the image and/or less than 1/4 the length of the long edge.

[0103] In some embodiments, a shadow will be in the central portion of an image. For example, the shadow may fall entirely in the central (from side to side and/or from top to bottom) 1/2 of the image and/or the central 1/4 of the image. Alternatively or additionally, the shadow may fall entirely in the peripheral (from side to side and/or from top to bottom) 1/2 of the image and/or the peripheral 1/4 of the image. In some embodiments, a shadow may have one outer edge inside an image and/or between 1 and 4 outer edges in the image. In some embodiments, a shadow in an image may include a projection of an outer edge of the shadow producing object, and/or two opposing edges of the shadow may be projections of two opposing outer edges of the shadow producing object.

[0104] In some embodiments, the distance of the shadow producing objects to the surface being imaged may be less than 1 mm and/or between 1 mm to 3 mm and/or between 3 mm to 10 mm. In some embodiments, the distance of the light emitter to the surface being imaged may be less than 1 mm and/or between 1 mm to 3 mm and/or between 3 mm to 10 mm.

[0105] In some embodiments, a feature in a shadow may be used to mark a point on a surface (for example where the surface lacks identifiable features, e.g. a smooth surface). For example, the marked point may be imaged from two view points and its location found by triangulation (for example by stereoscopy, or using at least 2 images made from spaced apart viewpoints; the images are optionally made by a single camera and/or by multiple cameras and/or by a camera with multiple apertures). Alternatively or additionally, the shape of a shadow may be used to determine the shape of a surface. For example, bending of a shadow may indicate that a surface is bent, and/or lengthening of a shadow may indicate that a surface is angled away from the light emitter and/or from an axis of the shadow producing object. Optionally, a cast shadow may be used to discover an object's concavities, which are sometimes difficult to measure based on other cues such as occluding boundaries.

[0106] Optionally, some or all of the light collector, light emitter and/or shadow producing object have fixed relative locations. For example, they may be fixed in an intraoral scanning device. Alternatively or additionally, the field of view (FOV) of the light collector may include at least a portion of the shadow casting object, such that the location and/or deformation of the shadow casting object may be identified by image analysis. The location in 3D space of a point related to the shadow may be adjusted using an image of the shadow casting object. Optionally, the location of the light collector may be identified with respect to features in an oral cavity by image analysis.

[0107] In some embodiments, an imaging method may include establishing a spatial relationship between components of the system. For example, a spatial relationship may be tracked in real time. For example, relative positions may be tracked using an optical tracking system, for example one or more cameras sighting a system component and/or the oral cavity. Alternatively or additionally, a location of a component may be tracked using a beacon (for example a radio frequency (RF) beacon) and/or a local positioning system. Alternatively or additionally, some aspects of a spatial relationship between components of the system may be fixed.

[0108] In some embodiments, a light emitter may include any object that illuminates the tooth. For example, the light emitter may include a light source such as a light emitting diode and/or a laser. Alternatively or additionally, the light emitter may include a light shaping element such as a lens and/or an optical fiber.

[0109] In some embodiments, an object may be actively introduced between a light emitter and a tooth to produce the shadow. Alternatively or additionally, a shadow may be produced by the geometry of the tooth itself and/or by a passive object. For example, the shadow casting object may include a dental tool and/or a specially designed probe with optional fiducial marking.

[0110] In some embodiments, measurements of the shadow edge 3D profile may have a tolerance of, for example, between 10 um to 50 um and/or between 50 um to 100 um and/or from 100 um to 200 um.

[0111] In some embodiments, the relative positions of system components may be established to a linear tolerance of, for example, between 10 um to 50 um and/or between 50 um to 100 um and/or from 100 um to 200 um. Optionally, an imaging sensor may have a resolution of about 1 Mpixel. For example, the resolution may vary over a range of, for example, 0.4-15 Mpixels, or even lower or higher resolutions. Optionally, a light collector may have a FOV of about 60 deg. For example, the FOV may vary over a range of 10 to 30 degrees and/or 30 to 60 degrees and/or 60 to 90 degrees, or greater or smaller.
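
To relate the sensor resolution and FOV figures above to the quoted tolerances, the following sketch estimates the nominal width of tooth surface covered by one pixel. It assumes an ideal pinhole camera viewing a flat surface head-on at a hypothetical working distance; real accuracy also depends on the optics, lens distortion and sub-pixel edge localization, so this is only an order-of-magnitude check. The function name is illustrative.

```python
import math


def lateral_sampling_um(fov_deg: float, pixels_across: int, distance_mm: float) -> float:
    """Approximate surface width imaged by one pixel, in micrometres.

    Assumes an ideal pinhole camera looking perpendicularly at a flat
    surface; ignores lens distortion and depth variation.
    """
    footprint_mm = 2.0 * distance_mm * math.tan(math.radians(fov_deg) / 2.0)
    return 1000.0 * footprint_mm / pixels_across


# ~1 Mpixel sensor (about 1000 pixels across), 60 deg FOV,
# hypothetical 15 mm working distance
print(round(lateral_sampling_um(60.0, 1000, 15.0), 1))  # 17.3
```

With these assumed numbers one pixel spans roughly 17 um of surface, which falls inside the 10 um to 50 um tolerance band mentioned above.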

[0112] In some embodiments, imaging a shadow may result in measurements and/or images that will be used to establish a spatial relationship during post processing. For example, images produced by a light collector may be analyzed to establish a spatial relationship. Optionally, the light collector may have a field of view (FOV) that includes a shadow producing object and/or a light emitter. Optionally, an image produced by the light collector may be analyzed to establish a position of the shadow producing object and/or a navigational reference feature with respect to the light collector. Alternatively or additionally, a fiducial marker and/or a position sensor may be used. Data from the marker and/or the position sensor may be processed to establish the location of one or more components of the system.

[0113] In some embodiments, measuring an oral cavity and/or intra-oral object may include establishing a spatial location of components of the system and/or determining a spatial location of an image feature with respect to a reference feature, for example an intra-oral object and/or feature. Except where clearly used otherwise, the term navigation is used in this disclosure to mean establishing the location of a system component with respect to a reference (for example a reference feature in the oral cavity). For example, a location may be tracked in real time. Optionally, the location may be tracked using an optical tracking system, for example one or more cameras viewing a system component and/or the oral cavity. Alternatively or additionally, a location of a system component and/or an imaged object and/or a reference feature may be tracked using a marker and/or a beacon (for example a radio frequency (RF) beacon) and/or a local positioning system signal.
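
Navigation in this sense amounts to knowing the rigid transform (rotation plus translation) from a component's frame to the reference frame, and chaining transforms when a tracker sits in between. The sketch below assumes poses are already known as 3x3 rotation matrices and translation vectors; the `Pose` class and the example poses are hypothetical.

```python
Vec3 = tuple[float, float, float]
Mat3 = tuple[Vec3, Vec3, Vec3]


def mat_vec(r: Mat3, p: Vec3) -> Vec3:
    return tuple(sum(r[i][k] * p[k] for k in range(3)) for i in range(3))


def mat_mat(a: Mat3, b: Mat3) -> Mat3:
    return tuple(
        tuple(sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3))
        for i in range(3)
    )


class Pose:
    """Rigid transform mapping points from a child frame into a parent frame:
    p_parent = R * p_child + t."""

    def __init__(self, r: Mat3, t: Vec3):
        self.r, self.t = r, t

    def apply(self, p: Vec3) -> Vec3:
        rp = mat_vec(self.r, p)
        return (rp[0] + self.t[0], rp[1] + self.t[1], rp[2] + self.t[2])

    def compose(self, child: "Pose") -> "Pose":
        """Pose equivalent to applying child first, then self."""
        return Pose(mat_mat(self.r, child.r), self.apply(child.t))


RZ90 = ((0, -1, 0), (1, 0, 0), (0, 0, 1))      # 90 deg rotation about z
IDENTITY = ((1, 0, 0), (0, 1, 0), (0, 0, 1))
ref_from_tracker = Pose(RZ90, (10, 0, 0))      # hypothetical tracker pose
tracker_from_cam = Pose(IDENTITY, (0, 0, 5))   # hypothetical camera mount offset
ref_from_cam = ref_from_tracker.compose(tracker_from_cam)
print(ref_from_cam.apply((1, 0, 0)))  # (10, 1, 5)
```

Composing once and reusing `ref_from_cam` keeps per-point work to a single matrix-vector product, which matters when many shadow points per frame are mapped into the reference frame.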

[0114] In some embodiments, a previously identified reference feature (for example an object identified in a previous intra-oral scan and/or in a CT scan) may be used as a reference to establish the location of a system component and/or determine the location of an imaged object. Alternatively or additionally, reference features may be recognized during processing of a collection of data. In some embodiments, artificial reference markers may be used, for example marking a location on a tooth with a dye and/or powder and/or a sticker, and/or placing a fiducial marking in the oral cavity. In some embodiments, natural reference features may be used, for example a distinguishable feature of a tooth and/or a palate and/or an arch. In some embodiments, a fixed dental structure may be used as a reference, for example a crown and/or a bridge. Alternatively or additionally, inertial navigation may be employed to track the location of a system component and/or an object in the oral cavity. For example, a gyroscope and/or an accelerometer may be attached to a component of the system and/or an object in the oral cavity.

[0115] In some embodiments, images may be made simultaneously or nearly simultaneously. For example, an imager may capture a pair of images in less than one second (sec) and/or between 1 and 1/30 sec and/or between 1/30 and 1/200 sec and/or between 1/200 and 1/700 sec and/or between 1/700 and 1/1000 sec. Optionally, the capture rate may be even higher for lower resolution and/or smaller regions of interest (ROIs). For example, separate images may be captured with a light emitter activated in a different position, resulting in a different position of a shadow. Alternatively or additionally, multiple light emitters may illuminate the imaged structure from different locations. The different light emitters may have different frequencies and/or be activated in quick succession to produce shadows at multiple locations substantially simultaneously. For the sake of this disclosure, substantially simultaneously may mean within a time period in which the oral cavity normally moves less than a measurement tolerance. For images made substantially simultaneously, the spatial relationship between locations in the images may be determined from their relative spatial positions.
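
The definition of "substantially simultaneously" can be turned into a simple bound: if the scanner and teeth move relative to each other at a roughly constant speed, the longest acceptable interval between captures is the measurement tolerance divided by that speed. The speed used below is a hypothetical value chosen for illustration, not a measured one, and the function names are illustrative.

```python
def max_simultaneous_interval_s(tolerance_mm: float, relative_speed_mm_s: float) -> float:
    """Longest capture interval during which motion stays within tolerance.

    Assumes a roughly constant relative speed between scanner and teeth.
    """
    if relative_speed_mm_s <= 0:
        return float("inf")
    return tolerance_mm / relative_speed_mm_s


def is_substantially_simultaneous(interval_s: float, tolerance_mm: float,
                                  relative_speed_mm_s: float) -> bool:
    return interval_s <= max_simultaneous_interval_s(tolerance_mm, relative_speed_mm_s)


# Hypothetical: 50 um tolerance and 5 mm/s relative motion give about 0.01 s (1/100 sec)
print(max_simultaneous_interval_s(0.05, 5.0))
print(is_substantially_simultaneous(1 / 200, 0.05, 5.0))  # True
print(is_substantially_simultaneous(1 / 30, 0.05, 5.0))   # False
```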

[0116] An aspect of some embodiments of the current invention relates to an intra-oral scanning device. Optionally, the device includes one or more light collectors and/or one or more light emitters and/or one or more shadow producing elements (for example a dental tool). Optionally, the scanning device may include a housing (for example components such as the light emitter, imager and/or shadow caster may be mounted to the housing and/or contained in the housing and/or integral to the housing). Optionally, the relative orientation of the components in the housing is known and/or fixed. Optionally, one or more of the system components is included in a portion of the housing shaped and sized to fit into an oral cavity. Optionally, the housing is shaped and sized to be handled by a single human hand from outside the oral cavity. For example, a user may use a handle outside the oral cavity to manipulate the scanner inside the cavity.

[0117] In some embodiments, a spatial relationship may be established based on a fixed spatial relationship between some elements of the system. For example, a light emitter and a light collector may be fixed in a tool with a fixed geometric structure. Alternatively or additionally, a shadow producing object such as a probe may be fixed in the tool. Alternatively, a light collector and a shadow producing object may be fixed into a tool. Alternatively, a light emitter and a shadow producing object may be fixed into a tool. Alternatively or additionally one or more light emitters and/or light collectors may be independent of the tool. Alternatively or additionally there may be multiple tools including one or more of a light emitter, a light collector and/or a shadow producing object.

[0118] In some embodiments, a light collector may include an image sensor and/or a light shaping element (for example a lens and/or optical fiber) directing light to an image sensor. An example of an image sensor is a CMOS sensor (for example an ON-Semi Python1300 CMOS sensor available from ON Semiconductor, 5005 East McDowell Road, Phoenix, Ariz. 85008 USA, with 1.3 Mpixel (1024×1280) resolution and a global shutter to avoid image distortions caused by movement, which captures up to 545 frames per second (FPS) at full frame and thousands of FPS on smaller ROIs). Optionally, a sensor may have between 10 and 10³ pixels and/or from 10³ to 10⁵ pixels and/or 10⁵ to 5×10⁵ pixels and/or 5×10⁵ to 5×10⁶ pixels and/or 5×10⁶ to 5×10⁷ pixels and/or 5×10⁷ to 10⁹ pixels.
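
As an arithmetic check of the quoted sensor figures, the sketch computes the frame interval, pixel rate and raw data rate at full frame. The 10-bit pixel depth is an assumption for illustration, and the function name is hypothetical.

```python
def sensor_throughput(width: int, height: int, fps: float, bits_per_pixel: int = 8):
    """Return (frame interval in ms, pixels per second, megabytes per second)."""
    interval_ms = 1000.0 / fps
    pixels_per_s = width * height * fps
    megabytes_per_s = pixels_per_s * bits_per_pixel / 8 / 1e6
    return interval_ms, pixels_per_s, megabytes_per_s


# 1280 x 1024 at 545 FPS, assuming a hypothetical 10-bit readout
interval_ms, px_rate, mb_rate = sensor_throughput(1280, 1024, 545, bits_per_pixel=10)
print(f"{interval_ms:.2f} ms/frame, {px_rate / 1e6:.0f} Mpixel/s, {mb_rate:.0f} MB/s")
# 1.83 ms/frame, 714 Mpixel/s, 893 MB/s
```

At 545 FPS the frame interval is about 1.83 ms, which lies between the 1/700 sec and 1/200 sec capture intervals listed in [0115].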

[0119] In some embodiments, a light emitter may include a light emitting element and/or a light shaping element (for example an optical fiber and/or a lens) directing light to a region of interest (ROI) (for example an intra-oral object including a tooth and/or a reference feature and/or a shadow producing object, for example a dental tool). For example, a white LED may be used. In some embodiments, white illumination facilitates obtaining a color image of the ROI. For example, the light emitters may be point emitters of light, for example having a light emitting surface of between 1 and 3 mm² and/or between 1/10 and 1 mm² and/or between 1/100 and 1/10 mm² and/or between 1/10000 and 1/100 mm². For example, a larger light emitter may include an LED and/or a smaller light emitter may include a laser diode. For example, where the light emitter is large, the distance between the shadow producing object and the surface being imaged may be decreased and/or the distance between the light emitter and the surface being imaged may be increased.
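
The trade-off between emitter size and the distances involved follows from similar triangles: rays from opposite sides of an emitter of width D that graze the same edge diverge, so for an edge at distance a from the emitter and a surface at distance b beyond the edge, the blurred band at the shadow edge (the penumbra) is about D * b / a wide. The sketch assumes geometric optics (no diffraction) and a roughly parallel emitter, edge and surface; the example distances and function names are hypothetical.

```python
def penumbra_width_mm(emitter_size_mm: float, emitter_to_edge_mm: float,
                      edge_to_surface_mm: float) -> float:
    """Width of the blurred band at a shadow edge (similar triangles).

    Assumes geometric optics, a thin straight occluding edge, and emitter,
    edge and surface roughly parallel to one another.
    """
    return emitter_size_mm * edge_to_surface_mm / emitter_to_edge_mm


def max_edge_to_surface_mm(blur_tolerance_mm: float, emitter_size_mm: float,
                           emitter_to_edge_mm: float) -> float:
    """Largest object-to-surface gap that keeps the penumbra within tolerance."""
    return blur_tolerance_mm * emitter_to_edge_mm / emitter_size_mm


# Hypothetical geometry: edge 10 mm from the emitter, surface 5 mm beyond the edge
print(penumbra_width_mm(1.0, 10.0, 5.0))   # ~1 mm LED: 0.5 mm of blur
print(penumbra_width_mm(0.01, 10.0, 5.0))  # ~10 um laser diode: ~0.005 mm of blur
```

Halving the edge-to-surface gap, or doubling the emitter-to-edge distance, halves the blur, which is consistent with the distance adjustments described above for large emitters.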

[0120] In some embodiments, a housing may have a length ranging between 10-25 cm. In some embodiments, a housing may have a maximum width ranging between 1-3 cm. In some embodiments, a system may have a weight ranging between 20 g and 1 kg.

[0121] In some embodiments, the shadow producing element may include a dental tool, for example, a periodontal probe. For example, the tool may protrude to a distance between 5-25 mm from the housing. For example, the tool may have a maximum width ranging between 1-3 mm. For example, the tool may include a fiducial marking. For example, fiducial markings may include a protrusion, a small ball, a hole, a colored area and/or an indentation. Optionally, markings may be arranged to indicate an orientation, for example an angle and/or a length. In some embodiments, the distance between a light emitter and a light collector may range between 1 to 10 mm and/or between 10 to 50 mm and/or greater than 50 mm. In some embodiments, a distance between a light emitter and a probe tip may range between 5-50 mm or more than 50 mm. In some embodiments, the distance between a light collector and a probe tip may range between 5-50 mm or more than 50 mm. In some embodiments, a probe may have a sharp tip.

[0122] In some embodiments, a navigation sensor may be provided. For example, a tool may include a light collector for imaging a tooth and/or a shadow producing object, and/or a navigation sensor which is used to establish the location of the light collector. For example, the navigation sensor may include an imaging light collector whose orientation relative to a light emitter, light collector and/or shadow casting object is fixed. The navigation sensor is optionally configured to detect its location with respect to a reference feature, and/or optionally has a wide field of view for capturing its orientation relative to a reference feature. Alternatively or additionally, a single light collector may be used for locating a point on a shadow and/or for navigating; for example, the light collector may have a wide FOV to capture the reference features along with the shadow. Optionally, the FOV may be between 5 to 25 degrees and/or between 25 to 45 degrees and/or between 45 to 90 degrees and/or greater than 90 degrees. Reference features in the FOV of the light collector may optionally be used for navigation.

[0123] An aspect of some embodiments of the current invention relates to intra-oral scanning and/or navigation during use of a dental tool (for example a probe used to measure a sub-gingival feature or a dental drill). For example, images may be recorded of a dental probe, a revealed surface of a tooth and/or gums, a reference feature and/or a shadow on the surface. The images may be processed in real time and/or in post processing to establish the location of the probe in relation to the reference feature and/or the geometry of the surface and/or the orientation of geometric features of the surface with respect to the reference feature. Optionally image recording may be accomplished with minimal or no interruption of the use of the probe.

[0124] In some embodiments, while a user measures teeth or gums of a subject using a dental probe, images may be collected showing the probe position and/or a shadow of the probe on a tooth and/or shadows of shadows cast by tooth structures. The images may be later processed to produce a model of geometry of the tooth and/or determine locations of measurements made with the probe. For example, a portion of the shadow casting object may be distanced from the light source between 0.1 to 1 mm and/or between 1 mm to 5 mm and/or between 5 mm to 30 mm and/or between 30 mm to 50 mm and/or greater than 50 mm. For example, the shadow casting object may move independently of the probe.

[0125] In some embodiments, the device may measure deformations of the probe. For example, deformation of the probe may be used to determine when and/or where the probe tip contacts a sub-gingival object. Alternatively or additionally, a calculation of shadow location may be corrected for deformations of the shadow casting object.
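
One possible way to use probe deformation, sketched under stated assumptions: a point on the visible part of the probe shaft (for example a fiducial marking) is localized in the scanner frame, and its position is compared with where it would be if the probe were rigid, as known from the probe's mounting geometry. A deflection above a threshold suggests that the tip is pressing on something. The 0.1 mm threshold and the function names are hypothetical and would need calibration for a real probe.

```python
import math


def marker_deflection_mm(expected_xyz, observed_xyz) -> float:
    """Distance between where a probe marker would be if the probe were rigid
    (expected, from the known mounting geometry) and where it is observed."""
    return math.dist(expected_xyz, observed_xyz)


def probe_in_contact(expected_xyz, observed_xyz, threshold_mm: float = 0.1) -> bool:
    """Flag probable tip contact when the visible probe shaft is displaced by
    more than threshold_mm (a hypothetical, probe-specific value)."""
    return marker_deflection_mm(expected_xyz, observed_xyz) > threshold_mm


print(probe_in_contact((0.0, 0.0, 20.0), (0.05, 0.0, 20.0)))  # False
print(probe_in_contact((0.0, 0.0, 20.0), (0.3, 0.0, 19.99)))  # True
```

The same measured displacement could also be used to update the assumed probe edge before computing the shadow plane, which is one way to apply the deformation correction mentioned above.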

[0126] In some embodiments, a light emitter may be configured to reduce interference with activity of the user. For example, while a user is measuring teeth and/or sub-gingival structures under visible light (for example wide bandwidth white light), light emitters and/or collectors may be illuminating and/or imaging structures using non-visible wavelengths (e.g. UV and/or IR). Alternatively or additionally, while a user is measuring teeth and/or sub-gingival structures under visible light (for example wide bandwidth white light), light emitters and/or collectors may be illuminating and/or imaging structures using visible light in a narrow bandwidth.

[0127] An aspect of some embodiments of the current invention relates to a method and system to track a shadow casting object in real time and direct a device for imaging a shadow of the object on a tooth. In some embodiments, a processor may be configured to track the shadow casting object. The processor may optionally select a light emitter for activation and/or direct a light emitter in order to cast a shadow of the object on the tooth. Alternatively or additionally, a system for mapping an oral cavity may track movement of a shadow casting object and direct a light collector towards the location of the shadow. Alternatively or additionally, the processor may track a position of a tooth and a light source and position the shadow casting object to cast a shadow on the tooth. In some embodiments, features and/or shadows are located in a model and/or in the coordinate system of a model. Optionally, features of the model may be constructed using interpolation and/or extrapolation. Optionally, measured features will be related to the model.

[0128] In some embodiments, an intra-oral scanning system may include multiple light sources and/or one or more shadow casting objects. A dental system may include a processor that tracks the orientation of the light sources and/or shadow casting objects and selectively activates a light source in order to cast a shadow on a particular tooth and/or a particular region of a tooth.
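
A minimal sketch of selective activation, assuming the processor already knows the 3D positions of the light sources and of a point on the shadow casting object, and approximates the target tooth region locally as a plane. For each light source it predicts where the shadow of that point lands (the line of illumination intersected with the plane) and picks the source whose predicted shadow is closest to the center of the target region. The planar tooth model, the distance criterion and all names are assumptions for illustration.

```python
import math


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def predicted_shadow_point(emitter, probe_point, plane_point, plane_normal):
    """Where the shadow of probe_point lands on a locally planar tooth surface,
    or None if the line of illumination misses the plane beyond the probe."""
    d = _sub(probe_point, emitter)
    denom = _dot(plane_normal, d)
    if abs(denom) < 1e-12:
        return None
    t = _dot(plane_normal, _sub(plane_point, emitter)) / denom
    if t <= 1.0:  # surface must lie beyond the probe point, seen from the emitter
        return None
    return (emitter[0] + t * d[0], emitter[1] + t * d[1], emitter[2] + t * d[2])


def select_emitter(emitters, probe_point, plane_point, plane_normal, roi_center):
    """Name of the emitter whose predicted shadow is closest to roi_center."""
    best_name, best_dist = None, math.inf
    for name, pos in emitters.items():
        hit = predicted_shadow_point(pos, probe_point, plane_point, plane_normal)
        if hit is None:
            continue
        dist = math.dist(hit, roi_center)
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name


emitters = {"A": (0, 0, 0), "B": (-2, 0, 0), "C": (2, 0, 0)}  # hypothetical layout (mm)
print(select_emitter(emitters, (0, 0, 5), (0, 0, 10), (0, 0, 1), (2, 0, 10)))  # B
```

A fuller selector could add the contrast and baseline factors mentioned in [0129] to the score instead of using distance alone.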

[0129] In some embodiments, the processor identifies the location of a scanned tooth in an image captured by an imager. For instance, the tooth may be distinguished from the gums using color segmentation. The processor optionally uses the location of the tooth relative to the probe to illuminate the probe with at least one light source so as to cast a shadow on an ROI on the tooth. For example, while the probe scans the tooth, different light sources may be selected to illuminate the ROI and cast the shadow at a desired location. Factors considered in selecting the light source may include, for instance, the contrast between the shaded and illuminated regions and/or the measurement error at different regions (for example, a shadow may be projected where it has the largest baseline relative to the imager).
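
The color segmentation step can be illustrated with a deliberately simple per-pixel rule: gums are assumed to be distinctly redder than they are green or blue, while teeth are assumed bright and only weakly tinted. The thresholds are hypothetical, and a practical system would likely use a calibrated color space or a trained classifier; the sketch only shows how a tooth location (here a pixel centroid) could be extracted for the subsequent light source selection.

```python
def classify_pixel(rgb, red_margin: int = 40, min_brightness: int = 120) -> str:
    """Label one RGB pixel (0-255 channels) as 'gum', 'tooth' or 'other'.

    Hypothetical thresholds: gums clearly redder than green/blue,
    teeth bright and only weakly tinted.
    """
    r, g, b = rgb
    if r - max(g, b) > red_margin:
        return "gum"
    if (r + g + b) / 3 >= min_brightness:
        return "tooth"
    return "other"


def tooth_centroid(image):
    """Centroid (row, col) of 'tooth' pixels in a list-of-rows RGB image,
    or None if no tooth pixels are found."""
    rows = cols = count = 0
    for i, row in enumerate(image):
        for j, px in enumerate(row):
            if classify_pixel(px) == "tooth":
                rows += i
                cols += j
                count += 1
    if count == 0:
        return None
    return rows / count, cols / count


TOOTH, GUM, DARK = (230, 220, 200), (200, 90, 100), (30, 20, 20)
print(tooth_centroid([[GUM, TOOTH, TOOTH], [GUM, TOOTH, DARK]]))
```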

[0130] In some embodiments, an image of the cast shadow may be analyzed to decide which light source to activate.
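
One possible image-based criterion, matching the contrast factor mentioned in [0129]: capture a trial frame with each candidate light source, measure the Michelson contrast between the mean intensity of pixels expected to be lit and of pixels expected to be shadowed, and keep the source with the highest contrast. How the shadow mask is predicted is outside this sketch, and the function names are hypothetical.

```python
def shadow_contrast(intensities, shadow_mask) -> float:
    """Michelson contrast between mean lit and mean shadowed intensity.

    intensities and shadow_mask are equal-length flat sequences; shadow_mask
    marks pixels predicted to lie in the shadow.
    """
    lit = [v for v, m in zip(intensities, shadow_mask) if not m]
    dark = [v for v, m in zip(intensities, shadow_mask) if m]
    if not lit or not dark:
        return 0.0
    i_lit = sum(lit) / len(lit)
    i_dark = sum(dark) / len(dark)
    if i_lit + i_dark == 0:
        return 0.0
    return (i_lit - i_dark) / (i_lit + i_dark)


def pick_by_contrast(captures):
    """captures: {emitter_name: (intensities, shadow_mask)} from trial frames."""
    return max(captures, key=lambda name: shadow_contrast(*captures[name]))


mask = [False, False, True, True]
captures = {
    "A": ([200, 200, 50, 50], mask),    # contrast 0.6
    "B": ([200, 200, 150, 150], mask),  # contrast about 0.14
}
print(pick_by_contrast(captures))  # A
```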

[0131] Before explaining at least one embodiment of the invention in detail, it is to be understood that the invention is not necessarily limited in its application to the details of construction and the arrangement of the components and/or methods set forth in the following description and/or illustrated in the drawings and/or the Examples. The invention is capable of other embodiments or of being practiced or carried out in various ways.

DETAILED EMBODIMENTS

Intraoral Scanner With a Single Light Source

[0132] Referring now to the figures, FIGS. 1A and 1B are each a simplified schematic illustration of a 3D dental intraoral scanner (IOS) system 100 according to some embodiments of the present disclosure. As seen in FIGS. 1A-1D, the exemplary IOS system 100 comprises an IOS 102. IOS 102 includes a hand piece 104 and a head portion 108. In accordance with some embodiments, a shadow casting object 114 extends from an oral-facing surface 110 of the head portion 108. (A shadow casting object is an object located between a light emitter and the tooth, such that a portion of the shadow casting object casts a shadow on at least a portion of the tooth. In some cases, the shadow casting object may also have a dental function; for example, it may be a dental probe, an ultrasonic scaler, a dental drill, a dental turbine or any other dental tool.) In the schematic embodiment shown in FIGS. 1A-1D, shadow casting object 114 is a dental probe. The oral-facing surface 110 optionally includes a light emitter, light source 116, and an optical aperture 158 of a light collector, camera 160.

[0133] In some embodiments, light source 116 may include any suitable light source, such as a light-emitting diode (LED) or a laser, for example an edge-emitting laser or a VCSEL. Light source 116 optionally includes beam shaping optics, such as a lens, for example to use the emitted light efficiently. The light source spectrum may be, for instance, blue (such as 450 nm LEDs or lasers), which in some embodiments may provide better contrast on the tooth, or any other spectrum, such as white, green or IR light. In some embodiments, optical fibers with a remote light source may be used.

[0134] In some embodiments, probe 114 and/or light source 116 may be utilized for 3D measurements of a portion of a tooth 120, and/or for measurement of the full tooth and/or for measurement of a plurality of teeth and/or for measurement of a partial or full dental arch and/or at least a portion of the dental arch.

[0135] In some embodiments, during scanning, light source 116 may illuminate a chosen surface, for example a surface of a tooth or gums. The illumination is optionally used for imaging the surface. The chosen surface may comprise any surface of the tooth, such as a top surface 122, a bottom surface 124 and/or side surfaces 126 thereof.

[0136] In some embodiments, the illumination also falls on probe 114. Optionally, probe 114 casts a shadow on a chosen surface 140 of a tooth 120 or gums. The shadow may include a projection of the probe geometry on the chosen surface. The tooth image is optionally captured by a light collector, for example camera 160 through an optical aperture 158. Optionally, a portion of probe 114 is seen in the camera image as well as a portion of its shadow 144 (for example as illustrated in FIG. 1A) on a tooth surface 140.

[0137] In some embodiments probe 114 has a linear edge. Optionally, the shadow 144 of the linear edge of the probe may be geometrically considered as lying in a plane 142 (for example as illustrated in FIG. 1C) defined by the respective probe edge and light source 116. Each point of the shadow edge lies somewhere in plane 142. The line of sight from optical aperture 158 to a point on the shadow defines a ray, such as rays X, Y and Z, that extends from optical aperture 158 to plane 142. The point of intersection of the ray and the plane is also a point on the edge of the shadow on the surface. In some embodiments, the 3D location of the intersection of a ray and the plane is calculated. The intersections may optionally provide a 3D profile of a tooth portion. In some embodiments, to get 3D information, optical aperture 158 is located outside of the plane defined by light emitter 116 and probe 114.
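The ray-plane intersection described above can be sketched in a few lines of Python. This is a minimal, illustrative sketch, not the method of the disclosure: it assumes that the ray origin (optical aperture 158), the ray direction and plane 142 (given as a point plus a normal vector) are already expressed in one common coordinate system, and the function name `ray_plane_intersection` is hypothetical.

```python
from typing import Optional, Tuple

Vec = Tuple[float, float, float]


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def ray_plane_intersection(origin: Vec, direction: Vec,
                           plane_point: Vec, plane_normal: Vec,
                           eps: float = 1e-12) -> Optional[Vec]:
    """Return the point where the ray origin + t * direction (t >= 0) meets
    the plane, or None if there is no single intersection in front of origin."""
    denom = dot(plane_normal, direction)
    if abs(denom) < eps:
        # Ray parallel to (or lying in) the plane: depth cannot be recovered.
        return None
    diff = (plane_point[0] - origin[0],
            plane_point[1] - origin[1],
            plane_point[2] - origin[2])
    t = dot(plane_normal, diff) / denom
    if t < 0:
        return None  # the plane is behind the aperture along this ray
    return (origin[0] + t * direction[0],
            origin[1] + t * direction[1],
            origin[2] + t * direction[2])
```

The `None` return for a near-zero denominator reflects the last sentence of the paragraph: if the aperture lay in plane 142, every ray towards a shadow point would lie in that plane, so no unique intersection, and therefore no depth, could be recovered.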

[0138] In some embodiments, features and/or the shape of a surface are inferred from the shape of a shadow on the surface. For example, the intersection between plane 142 and a flat surface would be a straight line. Curves, bends or deformations of the shadow line indicate a curvature, deformation and/or angling of the surface.
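One way to quantify whether an imaged shadow line is straight is to fit a line to the detected shadow-edge points and measure the largest perpendicular deviation from it. The sketch below assumes the edge points have already been extracted from the image as (x, y) pixel coordinates with lens distortion removed (under an ideal pinhole model a straight 3D line images as a straight line); the function name and the choice of a total-least-squares fit are illustrative assumptions.

```python
import math
from typing import Sequence, Tuple

Point2 = Tuple[float, float]


def max_deviation_from_line(points: Sequence[Point2]) -> float:
    """Fit a straight line to shadow-edge points (total least squares) and
    return the largest perpendicular distance of any point from that line."""
    n = len(points)
    if n < 3:
        return 0.0  # two points always lie on a line
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    sxx = sum((p[0] - cx) ** 2 for p in points)
    syy = sum((p[1] - cy) ** 2 for p in points)
    sxy = sum((p[0] - cx) * (p[1] - cy) for p in points)
    # Orientation of the principal axis of the point cloud.
    theta = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    nx, ny = -math.sin(theta), math.cos(theta)  # unit normal to the fitted line
    return max(abs((x - cx) * nx + (y - cy) * ny) for x, y in points)
```

A deviation well above the edge-detection noise suggests that the shadow crosses a curved or bent portion of the surface; the tolerance is application-specific and is not specified here.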

[0139] In some embodiments shadow 144 forms a 3D line on surface 140. The line is optionally imaged by camera 160. In some embodiments, the orientation of a ray (for example rays X, Y, Z) between the camera and the shadow is derived from the image made by the camera. The 3D orientation of the ray may be found from the image, for example using the camera intrinsic and extrinsic parameters, such as the angle of the camera and the camera optics. Alternatively or additionally, the 3D orientation of the ray may be found, for example, by relating the position of the point of the shadow in the image to the position of one or more reference features in the image. The 3D relationship between light source 116 and probe 114 is optionally used to calculate the orientation of plane 142. For example, based on the orientation of plane 142 and the orientation of a ray to a point on shadow 144 (for example rays X, Y and Z), the location of a point on shadow 144 is determined in any coordinate system, for example the system defined by an X axis 130, a Y axis 132 and a Z axis 134 relative to the IOS 102. A non-limiting example of a configuration of the shadow parameters, such as the angle and length, is shown in FIG. 1D, which is self-explanatory.
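Combining [0137] and [0139], the 3D location of one shadow point can be sketched as follows. This is a hypothetical illustration that assumes an undistorted pinhole camera with intrinsics `fx, fy, cx, cy`, the camera aperture at the origin of the IOS frame looking along +Z, and known IOS-frame positions of light source 116 and of two points on the straight probe edge (consistent with the fixed, known geometry mentioned in [0149]). None of the names below come from the disclosure.

```python
from typing import Tuple

Vec = Tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def shadow_plane(light: Vec, edge_a: Vec, edge_b: Vec) -> Tuple[Vec, Vec]:
    """Plane through the light source and two points on the straight probe edge.
    Returns (a point on the plane, an unnormalized normal vector)."""
    return light, cross(sub(edge_a, light), sub(edge_b, light))


def pixel_ray(u: float, v: float, fx: float, fy: float, cx: float, cy: float) -> Vec:
    """Line-of-sight direction through pixel (u, v) for an undistorted pinhole
    camera whose aperture is at the origin and whose optical axis is +Z."""
    return ((u - cx) / fx, (v - cy) / fy, 1.0)


def shadow_point_3d(u: float, v: float, intrinsics: Tuple[float, float, float, float],
                    light: Vec, edge_a: Vec, edge_b: Vec) -> Vec:
    plane_point, normal = shadow_plane(light, edge_a, edge_b)
    d = pixel_ray(u, v, *intrinsics)
    denom = dot(normal, d)
    if abs(denom) < 1e-12:
        raise ValueError("ray is parallel to the shadow plane")
    # The ray starts at the aperture (0, 0, 0), so t = n . p0 / n . d
    t = dot(normal, plane_point) / denom
    if t <= 0:
        raise ValueError("shadow plane is behind the camera along this ray")
    return (t * d[0], t * d[1], t * d[2])
```

Calling `shadow_point_3d` for every detected shadow-edge pixel in a frame would yield the 3D profile of that frame in the camera-attached IOS frame.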

[0140] In some embodiments, the 3D coordinates of shadow 144 obtained in an image are stored in a coordinate system related to the position of the IOS at the time the image was made; this coordinate system may be referred to as an IOS coordinate system. Optionally, the IOS coordinate systems of different images may differ, for example due to movement of the camera between images. In some embodiments, the IOS coordinates of shadow 144 are transformed into a tooth global coordinate system. For example, the transformation may be performed by finding the 3D relation between IOS camera 160 and tooth 120. The 3D relationship between the camera and the tooth may be found using, for example, Structure from Motion (SfM) methods or any other suitable methods. Optionally, probe 114 is scanned around tooth 120, for example to gather data with which to construct a 3D model of tooth 120. The 3D model may be stored in coordinates of the tooth global coordinate system.
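Once the camera pose for an image is known, moving its points from the IOS coordinate system into the tooth global coordinate system is a rigid transform. The sketch below assumes that pose (a rotation `R` and translation `t` mapping IOS-frame points to tooth-frame points) has already been estimated, for example by an SfM pipeline; estimating the pose itself is not shown, and the helper names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]


def to_global(points_ios: Sequence[Vec],
              rotation: Sequence[Sequence[float]],
              translation: Vec) -> List[Vec]:
    """Map points from one image's IOS frame into the tooth global frame:
    p_global = R * p_ios + t, with R a 3x3 rotation and t a translation."""
    result = []
    for p in points_ios:
        result.append(tuple(
            sum(rotation[i][k] * p[k] for k in range(3)) + translation[i]
            for i in range(3)))
    return result


def rot_z(angle_rad: float) -> Tuple[Vec, Vec, Vec]:
    """Rotation about the Z axis (helper for the example)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return ((c, -s, 0.0), (s, c, 0.0), (0.0, 0.0, 1.0))
```

A model could then be accumulated frame by frame, e.g. `model.extend(to_global(points_k, R_k, t_k))` for each image k.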

[0141] FIGS. 1A and 1B illustrate exemplary tooth surfaces and shadows in accordance with some embodiments of the current invention. For example, surface 140 (defined by dashed lines) on tooth 120 may be substantially flat in an X-Y plane. The shadow of a linear probe 114 projected on flat surface 140 may then be a substantially straight line 146, as seen in the insert. In FIG. 1B, an exemplary surface 150 on tooth 120 may include a curved surface 152. The shadow 156 of linear probe 114 projected on the curved surface may then appear curved from some viewpoints.

[0142] In some embodiments, camera 160 may be placed close to the optical aperture 158, which is defined as the camera lens exit pupil. Alternatively or additionally, camera 160 may be located at a larger distance from aperture 158. Optionally, relay optics and/or a mirror may be used to convey light from aperture 158 to camera 160. In some embodiments, this may facilitate reducing the size of the intraoral portion of IOS 102.

[0143] In some embodiments, camera 160 may capture images at a high frame rate, for example between 500 and 2000 frames per second (FPS). In some embodiments, an image may be limited to only the shadow and/or an additional feature of interest. Limiting image size may facilitate a higher frame rate of image capture, a shorter scan time and/or higher precision. For example, the images may be limited by using only a portion or a few portions of the camera image sensor (for example between 0 and 10%, between 10 and 30%, between 30 and 70% and/or between 70 and 100% of the sensor). For example, the image may be limited by using Region of Interest (ROI) readout, which is supported by many CMOS image sensors. In some embodiments, ROI readout facilitates a higher frame rate. Limited data from an image may occupy less than 5%, between 5 and 10%, between 10 and 30%, between 30 and 60%, between 60 and 90% and/or between 90 and 99% of the memory space of the original image.
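A rough estimate of what ROI readout gains can be made with simple arithmetic. The sensor size, full-frame rate and ROI height used below are hypothetical, and the frame-rate model (readout time proportional to the number of rows read) is a simplification: real sensors add exposure time and fixed overheads, so the datasheet of the specific sensor governs.

```python
def roi_data_fraction(roi_w: int, roi_h: int, full_w: int, full_h: int) -> float:
    """Fraction of the full-frame pixel data read when only an ROI is read out."""
    return (roi_w * roi_h) / (full_w * full_h)


def estimated_roi_fps(full_frame_fps: float, roi_h: int, full_h: int) -> float:
    """Rough frame-rate estimate assuming readout time is proportional to the
    number of sensor rows read (ignores exposure time and fixed overheads)."""
    return full_frame_fps * full_h / roi_h
```

For a hypothetical 1280 x 1024 sensor running at 60 FPS full frame, reading a 1280 x 64 band around the shadow gives `roi_data_fraction(1280, 64, 1280, 1024) == 0.0625` (6.25% of the data) and `estimated_roi_fps(60.0, 64, 1024) == 960.0`, which falls within the 500-2000 FPS range mentioned above.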

[0144] In some embodiments, during scanning, shadow 144 may be projected on any surface of tooth 120. Optionally, camera 160 images the shadow. For example, shadow 144 may be moved continuously across one or more surfaces of tooth 120. At any surface, the image of the projected shadow is optionally captured by camera 160. The captured images are optionally processed by a processing system 170. Processing system 170 optionally comprises image analysis functionality and/or data processing functionality. Processed data is optionally used for constructing a 3D model of the tooth surfaces.

[0145] A non-limiting example of the processing algorithm is illustrated in FIG. 3B. In some embodiments, the exemplary process may be performed to obtain a model of a portion of a tooth, a full tooth, a few teeth and/or a full arch.

[0146] In some embodiments processing system 170 may include a controller. Optionally, the controller may control parameters related to light source 116. For example, controller 170 may control the angle of the illumination relative to oral-facing surface 110 and/or relative to a surface on tooth 120, and/or may control a duration of illumination.

[0147] In some embodiments, probe 114 may have some flexibility and/or deviate from a perfect straight edge. Optionally, markers (for example fiducials) may be marked on or attached to the probe. Optionally, by analyzing their locations in the image, the locations of various portions of the deformed probe 114 may be estimated. Exemplary algorithms for such calculations are described in U.S. Patent publication 2015/0348320.
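The algorithms referred to above are those of U.S. Patent publication 2015/0348320 and are not reproduced here. As a simpler stand-in, the sketch below passes a low-order polynomial curve through the 3D positions of fiducial markers located at known distances along the probe and evaluates it at another distance, such as the tip. The marker layout, the use of Lagrange interpolation and all names are assumptions for illustration.

```python
from typing import Sequence, Tuple

Vec = Tuple[float, float, float]


def probe_point_at(s_known: Sequence[float], markers: Sequence[Vec], s: float) -> Vec:
    """Evaluate, at distance s along the probe, the polynomial curve that passes
    through the marker positions markers[i] located at distances s_known[i].
    With three markers this is a quadratic, enough to follow a gentle bend."""
    if len(s_known) != len(markers) or len(s_known) < 2:
        raise ValueError("need at least two markers with matching distances")
    point = [0.0, 0.0, 0.0]
    for i, (s_i, m_i) in enumerate(zip(s_known, markers)):
        # Lagrange basis weight for marker i
        weight = 1.0
        for j, s_j in enumerate(s_known):
            if j != i:
                weight *= (s - s_j) / (s_i - s_j)
        for k in range(3):
            point[k] += weight * m_i[k]
    return (point[0], point[1], point[2])
```

Evaluating the curve beyond the last marker is extrapolation and amplifies measurement noise, so in such a scheme markers placed close to the tip would be preferable.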

[0148] In some embodiments, contact between a tip of probe 114 and a tooth may be identified based on deformation of probe 114. Methods for identifying this contact are described, for instance, in U.S. Patent publication 2015/0348320. In some embodiments, the location 127 where the tip of probe 114 touches tooth 120 may be included in a 3D model of tooth 120. For example, contact between the tip and the tooth is optionally used to map parts of the tooth that are obscured by blood, fluids or the gums.

[0149] In some embodiments, the geometry of the IOS is fixed and/or known. For example, the known geometry may include the 3D relationship between the locations of light source 116, probe 114 and/or aperture 158. In some embodiments, intrinsic parameters of camera 160, such as the equivalent focal length (EFL), distortion, etc., may be obtained by calibrating IOS 102 prior to performing a scan. Alternatively or additionally, the calibration information may be obtained during the tooth scan using suitable methods, for example bundle adjustment.

[0150] In some embodiments, the probe includes fiducials or markers. Optionally, the markers are in the FOV of camera 160. For example, the distance between a pair of markers may be known. For example, data about the markers may be used for calibrating a 3D model. Alternatively or additionally, fiducials seen by camera 160 may be used for identifying probe movements relative to optical aperture 158, for example due to probe distortion. Optionally, calculations may be adjusted to compensate for the distortion. Compensation may be applied to the calculation of the shadow location and/or the calculation of the probe tip location.
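One way a known marker separation can calibrate a 3D model is by fixing its scale; a reconstruction made with a single moving camera (for example by SfM) is otherwise determined only up to an overall scale factor. A minimal sketch, assuming the two marker positions have already been reconstructed in the (arbitrarily scaled) model frame; the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]


def scale_from_markers(marker_a: Vec, marker_b: Vec, known_distance: float) -> float:
    """Factor that makes the reconstructed marker separation equal the known one."""
    measured = math.dist(marker_a, marker_b)
    if measured == 0:
        raise ValueError("marker positions coincide")
    return known_distance / measured


def rescale(points: Sequence[Vec], factor: float) -> List[Vec]:
    """Apply a uniform scale factor to every model point (about the origin)."""
    return [tuple(factor * c for c in p) for p in points]
```

For example, if markers known to be 5.0 mm apart are reconstructed 4.5 units apart, every model coordinate is multiplied by 5.0 / 4.5.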

Probe With Fiducial Markers

[0151] FIGS. 2A and 2B illustrate an IOS 200 with multiple light emitters in accordance with an embodiment of the current invention. A light source 216 optionally comprises a plurality of light emitters, for example LEDs 180 and/or lasers. Alternatively or additionally, optical fibers with one or more remote light sources may be used. In the exemplary embodiment of FIGS. 2A and 2B, six LEDs 180 are arranged circumferentially on the oral-facing surface 110. It is appreciated that any suitable number of light emitters may be provided and arranged in any suitable arrangement at any suitable location within the IOS system 200.

[0152] In some embodiments, a shadow 244a of probe 114 is cast on a surface of tooth 120 to be imaged. The surface is optionally illuminated by one of LEDs 180, selected to cast shadow 244a onto the chosen surface. In some embodiments, controller 170, with the aid of camera 160, may receive a measurement of the spatial orientation (e.g. angle) of the chosen surface relative to probe 114. The angle may be defined, for example, in the 3D coordinate system 136. Controller 170 is optionally configured to select the appropriate LED 180, suitably placed relative to probe 114, to illuminate the surface, so that the shadow is cast upon the chosen surface.

[0153] In a non-limiting example, a surface of tooth 120 comprises a proximal surface 190 and a distal surface 192. The plurality of LEDs 180 comprises a distally positioned LED 196 and a proximally positioned LED 198. For example, as illustrated in FIG. 2A, a chosen surface for imaging may include proximal surface 190. To illuminate and cast a shadow on proximal surface 190, controller 170 optionally selects to illuminate distal LED 196. A shadow 244a is thus projected on the chosen, proximal surface 190.

[0154] FIG. 2B illustrates an exemplary configuration wherein the chosen surface for imaging is distal surface 192. In some embodiments controller 170 may select to illuminate the proximal LED 198. A shadow 244b is thus projected on the chosen, distal surface 192.
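The selection rule of [0153] and [0154] (illuminate from the side opposite the chosen surface) can be sketched as a simple geometric heuristic: pick the LED whose offset from the probe points most nearly away from the target surface. This is an illustrative assumption, not the controller logic of the disclosure; the LED names and coordinates are hypothetical, with +x taken as the proximal direction.

```python
from typing import Dict, Tuple

Vec = Tuple[float, float, float]


def select_led(led_positions: Dict[str, Vec], probe_point: Vec, surface_point: Vec) -> str:
    """Return the name of the LED whose offset from the probe points most
    directly away from the target surface, so that the probe's shadow is
    thrown towards that surface."""
    to_surface = tuple(s - p for s, p in zip(surface_point, probe_point))

    def alignment(name: str) -> float:
        to_led = tuple(q - p for q, p in zip(led_positions[name], probe_point))
        return sum(a * b for a, b in zip(to_led, to_surface))

    # Most negative alignment = LED on the far side of the probe from the surface.
    return min(led_positions, key=alignment)
```

With `{"distal_196": (-5.0, 0.0, 0.0), "proximal_198": (5.0, 0.0, 0.0)}` and the probe at the origin, a proximal target at (3, 0, 0) selects `"distal_196"` and a distal target at (-3, 0, 0) selects `"proximal_198"`, matching FIGS. 2A and 2B.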

[0155] In some embodiments, a user may scan the probe around an object to be scanned. As the user scans the object, the processing unit computes the geometrical relation between probe 114 and tooth 120 for each image during the scan and may activate the appropriate light source accordingly. Thus, LEDs 180 may be activated in accordance with the chosen surface of tooth 120. Optionally, multiple light emitters may be illuminated sequentially. For example, while scanner 200 is scanning around a tooth, different LEDs (e.g. LEDs 198, 196) of light source 216 may be illuminated. For example, the LEDs may be switched in a fast sequence, such that the position of the scanner barely changes between illumination events. Optionally, the resulting images may be related to one another and/or combined into a mosaic to relate (e.g. determine the relative position of) features on different surfaces and/or features revealed by different shadow positions 244a, 244b.

[0156] FIGS. 2C and 2D illustrate an intraoral scanner being used to scan tooth 120 while a user probes tooth 120, for example under the gum line, in accordance with an embodiment of the current invention. Optionally, the user scans probe 114 around the tooth close to and/or under the gums, as seen in FIG. 2C. Optionally, a shadow 244c is cast over the wall and/or crown of tooth 120. Processing unit 170 optionally identifies the location of scanned tooth 120 relative to probe 114 and/or the location of the side of tooth 120 relative to probe 114, and/or illuminates tooth 120 from the side opposite probe 114, to cast a shadow 244c on the scanned surface.

[0157] In some embodiments, the user may scan probe 114 around tooth 120 such that the tip of probe 114 gets below the gum line, for example to scan a subgingival finish line. In some embodiments probe 114 includes additional features, as seen for example in FIG. 2D. For example, shadow 244c, shown schematically cast over the wall and crown of the tooth in FIG. 2D, includes additional shadows 244d and 244e cast by fiducial markers 215a and 215b, respectively. Optionally, markers 215a and 215b are perpendicular to the direction of probe 114 and parallel to the direction of the scan around the tooth; the additional depth points obtained from shadows 244d and 244e then lie out of the plane created by probe 114 and the light source, and may provide 3D information on points of the tooth that are out of said plane. In some embodiments, different fiducial markers may have different directions. Some of the markers may be positioned to cast shadows when illuminated by one LED 198, while others may be positioned to cast a shadow when illuminated by another LED 196. Additionally or alternatively, from the form of the shadow of a fiducial marker, processor 170 may determine the direction of the light source relative to the illuminated surface and/or the orientation of the illuminated surface. The 3D information from the shadows of the fiducial markers may optionally be used by processing unit 170 to estimate probe and/or IOS movements between different images.

[0158] In some embodiments, more than one LED 180 may be illuminated simultaneously to provide more than one shadow on the surface of the tooth 120.

[0159] In some embodiments, more than one LED 180 may be illuminated sequentially, synchronized with camera 160, to provide more than one shadow on the surface of tooth 120 in separate images. In some embodiments, the sequential illumination may be fast enough to be substantially simultaneous (for example, the sequential images may be captured within a time short enough that no significant movement occurs in the meantime).
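Whether sequential illumination is substantially simultaneous can be checked with a back-of-the-envelope calculation. The sketch assumes a round-robin LED schedule locked to the camera frames and a constant hand speed; the 1000 FPS rate lies within the range given in [0143], six LEDs matches FIGS. 2A and 2B, and the 20 mm/s hand speed is a hypothetical value. The function names are illustrative.

```python
def led_for_frame(frame_index: int, num_leds: int) -> int:
    """Round-robin schedule: index of the LED that is on during a given frame."""
    return frame_index % num_leds


def motion_during_cycle(fps: float, num_leds: int, speed_mm_per_s: float) -> float:
    """Distance the scanner travels between the first and last exposure of one
    full LED cycle (num_leds - 1 frame intervals), assuming constant speed."""
    return speed_mm_per_s * (num_leds - 1) / fps
```

With those numbers, `motion_during_cycle(1000.0, 6, 20.0)` is about 0.1 mm between the first and last image of a cycle; whether that counts as insignificant depends on the accuracy required.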

[0160] In some embodiments, the light source 116 may be placed on another object away from the IOS 102.