System And Method For Ranking Using Construction Site Images

Sasson; Michael ; et al.

U.S. patent application number 16/277046 was filed with the patent office on 2019-02-15 and published on 2019-06-13 for system and method for ranking using construction site images. This patent application is currently assigned to CONSTRU LTD. The applicants listed for this patent are Shalom Bellaish, Moshe Nachman, Michael Sasson, and Ron Zass. Invention is credited to Shalom Bellaish, Moshe Nachman, Michael Sasson, and Ron Zass.

| Application Number | 20190180140 16/277046 |

| Document ID | / |

| Family ID | 66696261 |

| Filed Date | 2019-02-15 |


| United States Patent Application | 20190180140 |

| Kind Code | A1 |

| Sasson; Michael ; et al. | June 13, 2019 |

SYSTEM AND METHOD FOR RANKING USING CONSTRUCTION SITE IMAGES

Abstract

Systems and methods for ranking entities using construction site images are provided. For example, image data captured from a construction site using at least one image sensor may be obtained. The image data may be analyzed to detect at least one element depicted in the image data and associated with an entity. The image data may be further analyzed to determine at least one property indicative of quality and associated with the at least one element. The at least one property may be used to generate a ranking of the entity. In some examples, the at least one property may be based on a discrepancy between a construction plan and the construction site, between a project schedule and the construction site, between a financial record and the construction site, between a progress record and the construction site, and so forth.

| Inventors: | Sasson; Michael (Petah Tikva, IL); Zass; Ron (Kiryat Tivon, IL); Bellaish; Shalom (Tel Mond, IL); Nachman; Moshe (Tel-Aviv, IL) |
|---|---|
| Applicant: | Shalom Bellaish; Moshe Nachman; Michael Sasson; Ron Zass |
| Assignee: | CONSTRU LTD, Kiryat Tivon, IL |
| Family ID: | 66696261 |
| Appl. No.: | 16/277046 |
| Filed: | February 15, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62631757 | Feb 17, 2018 | |
| 62666152 | May 3, 2018 | |
| 62791841 | Jan 13, 2019 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06Q 40/08 20130101; G06N 3/08 20130101; G06K 9/00671 20130101; G06T 7/0006 20130101; G06T 2207/30132 20130101; G06F 16/2379 20190101; G06K 9/00637 20130101; G06N 3/02 20130101; G06K 9/6256 20130101; G06T 7/0004 20130101; G06K 9/00664 20130101; G06T 7/001 20130101; G06T 2207/30242 20130101; G06F 16/583 20190101; G06T 7/70 20170101; G06Q 10/06311 20130101; G06T 2207/20092 20130101; G06K 9/6215 20130101; G06K 9/623 20130101; G06T 2200/24 20130101; G06Q 50/08 20130101; G06K 9/00624 20130101; G06N 20/00 20190101; G06Q 40/025 20130101; G06Q 40/12 20131203; G06K 9/78 20130101 |

| International Class: | G06K 9/62 20060101 G06K009/62; G06T 7/00 20060101 G06T007/00; G06K 9/00 20060101 G06K009/00; G06N 20/00 20060101 G06N020/00; G06Q 50/08 20060101 G06Q050/08 |

Claims

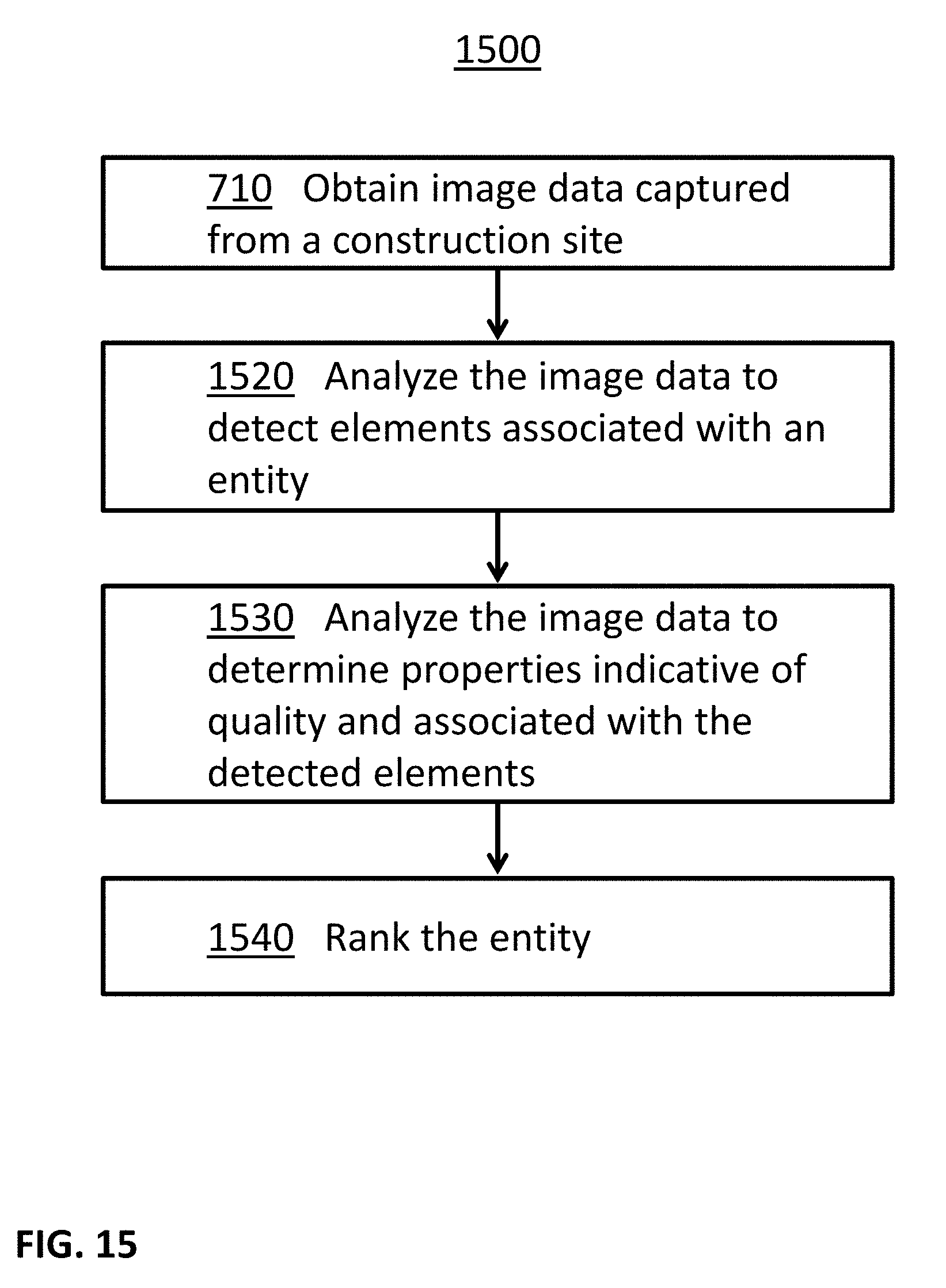

1. A method for ranking using construction site images, the method comprising: obtaining image data captured from a construction site using at least one image sensor; analyzing the image data to detect at least one element depicted in the image data and associated with an entity; analyzing the image data to determine at least one property indicative of quality and associated with the at least one element; and using the at least one property to generate a ranking of the entity.

2. The method of claim 1, wherein the at least one element is selected of a plurality of alternative elements based on the entity.

3. The method of claim 1, wherein the at least one element includes at least one of an element built by the entity, and an element installed by the entity.

4. The method of claim 1, wherein the at least one element includes at least one of an element built by a second entity and affected by a task performed by the entity, and an element installed by a second entity and affected by a task performed by the entity.

5. The method of claim 1, wherein the at least one element is an element supplied by the entity.

6. The method of claim 1, wherein the at least one element is an element manufactured by the entity.

7. The method of claim 1, wherein the image data comprises at least a first image corresponding to a first point in time and a second image corresponding to a second point in time, the elapsed time between the first point in time and the second point in time is at least one day, and the determined at least one property indicative of quality is based on a comparison of the first image and the second image.

8. The method of claim 1, wherein the image data comprises one or more indoor images of the construction site, the at least one element comprises at least one wall built by the entity, and further comprising: analyzing the image data to determine a quantity of plaster applied to the at least one wall; and using the determined quantity of plaster applied to the at least one wall to generate the ranking of the entity.

9. The method of claim 1, wherein the at least one element comprises a room built by the entity, and further comprising: analyzing the image data to determine one or more dimensions of the room; and using the determined one or more dimensions of the room to generate the ranking of the entity.

10. The method of claim 1, further comprising: analyzing the image data to identify signs of water leaks associated with the at least one element; and using the identified signs of water leaks to generate the ranking of the entity.

11. The method of claim 1, wherein the at least one property is based on at least one discrepancy between a construction plan associated with the construction site and the construction site.

12. The method of claim 1, wherein the at least one property is based on at least one discrepancy between a project schedule associated with the construction site and the construction site.

13. The method of claim 1, wherein the at least one property is based on at least one discrepancy between a financial record associated with the construction site and the construction site.

14. The method of claim 1, wherein the at least one property is based on at least one discrepancy between a progress record associated with the construction site and the construction site.

15. The method of claim 1, wherein the generated ranking is further based on information based on at least one image captured from at least one additional construction site.

16. The method of claim 1, wherein the at least one element is further associated with a first technique, the generated ranking is associated with the entity and the first technique, and further comprising: analyzing the image data to detect an additional group of at least one element depicted in the image data and associated with the entity and a second technique; analyzing the image data to determine an additional group of at least one property indicative of quality and associated with the additional group of at least one element; and using the additional group of at least one property to generate a second ranking of the entity related to the second technique.

17. The method of claim 1, wherein the at least one element is associated with a first group of one or more additional elements, the generated ranking is associated with the entity and the first group, and further comprising: analyzing the image data to detect an additional group of at least one element depicted in the image data and associated with the entity and a second group of one or more additional elements; analyzing the image data to determine an additional group of at least one property indicative of quality and associated with the additional group of at least one element; and using the additional group of at least one property to generate a second ranking of the entity related to the second group of one or more additional elements.

18. The method of claim 1, wherein the at least one element is further associated with a second entity, the generated ranking is associated with the entity and the second entity, and further comprising: analyzing the image data to detect an additional group of at least one element depicted in the image data and associated with the entity and a third entity; analyzing the image data to determine an additional group of at least one property indicative of quality and associated with the additional group of at least one element; and using the additional group of at least one property to generate a second ranking of the entity related to the third entity.

19. A system for ranking using construction site images, the system comprising: at least one image sensor configured to capture image data from a construction site; and at least one processor configured to: analyze the image data to detect at least one element depicted in the image data and associated with an entity; analyze the image data to determine at least one property indicative of quality and associated with the at least one element; and use the at least one property to generate a ranking of the entity.

20. A non-transitory computer readable medium storing data and computer implementable instructions for carrying out a method for ranking using construction site images, the method comprising: obtaining image data captured from a construction site using at least one image sensor; analyzing the image data to detect at least one element depicted in the image data and associated with an entity; analyzing the image data to determine at least one property indicative of quality and associated with the at least one element; and using the at least one property to generate a ranking of the entity.

Description

CROSS REFERENCES TO RELATED APPLICATIONS

[0001] This application claims the benefit of priority of U.S. Provisional Patent Application No. 62/631,757, filed on Feb. 17, 2018, and U.S. Provisional Patent Application No. 62/666,152, filed on May 3, 2018, and U.S. Provisional Patent Application No. 62/791,841, filed on Jan. 13, 2019.

[0002] The entire contents of all of the above-identified applications are herein incorporated by reference.

BACKGROUND

Technological Field

[0003] The disclosed embodiments generally relate to systems and methods for processing images. More particularly, the disclosed embodiments relate to systems and methods for processing images of construction sites.

Background Information

[0004] Image sensors are now part of numerous devices, from security systems to mobile phones, and the availability of images and videos produced by those devices is increasing.

[0005] The construction industry deals with building of new structures, additions and modifications to existing structures, maintenance of existing structures, repair of existing structures, improvements of existing structures, and so forth. While construction is widespread, the construction process still needs improvements. Manual monitoring, analysis, inspection, and management of the construction process prove to be difficult, expensive, and inefficient. As a result, many construction projects suffer from cost and schedule overruns, and in many cases the quality of the constructed structures is lacking.

SUMMARY

[0006] In some embodiments, systems comprising at least one processor are provided. In some examples, the systems may further comprise at least one of an image sensor, a display device, a communication device, a memory unit, and so forth.

[0007] In some embodiments, systems and methods for determining the quality of concrete from construction site images are provided.

[0008] In some embodiments, image data captured from a construction site using at least one image sensor may be obtained. The image data may be analyzed to identify a region of the image data depicting at least part of an object, wherein the object is of an object type and made, at least partly, of concrete. The image data may be further analyzed to determine a quality indication associated with the concrete. The object type of the object may be used to select a threshold. The quality indication may be compared with the selected threshold. An indication may be provided to a user based on a result of the comparison of the quality indication with the selected threshold.
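The threshold selection and comparison described above can be illustrated with a minimal Python sketch. It assumes an upstream analysis has already produced an object type and a quality indication normalized to the range 0 to 1; the names `ConcreteRegion`, `QUALITY_THRESHOLDS`, `select_threshold`, and `check_quality`, as well as the threshold values, are hypothetical and are not taken from the disclosure.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical per-object-type thresholds; the values are illustrative only.
QUALITY_THRESHOLDS = {"wall": 0.6, "column": 0.8, "slab": 0.7}
DEFAULT_THRESHOLD = 0.75  # assumed fallback for object types not listed above


@dataclass
class ConcreteRegion:
    object_type: str           # e.g. "wall", assumed to come from a detector
    quality_indication: float  # assumed normalized to [0, 1], higher is better


def select_threshold(object_type: str) -> float:
    """Use the object type of the object to select a threshold."""
    return QUALITY_THRESHOLDS.get(object_type, DEFAULT_THRESHOLD)


def check_quality(region: ConcreteRegion) -> Optional[str]:
    """Compare the quality indication with the selected threshold.

    Returns a message for the user when the quality indication is below
    the threshold, and None otherwise.
    """
    threshold = select_threshold(region.object_type)
    if region.quality_indication < threshold:
        return (f"{region.object_type}: quality {region.quality_indication:.2f} "
                f"is below threshold {threshold:.2f}")
    return None
```

In this sketch the same quality indication of 0.7 would pass for a wall but trigger an indication for a column, which is the reason for selecting the threshold by object type.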

[0009] In some embodiments, systems and methods for providing information based on construction site images are provided.

[0010] In some embodiments, image data captured from a construction site using at least one image sensor may be obtained. Further, at least one electronic record associated with the construction site may be obtained. The image data may be analyzed to identify at least one discrepancy between the at least one electronic record and the construction site. Further, information based on the identified at least one discrepancy may be provided to a user.
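One simple way to picture a discrepancy between an electronic record and the construction site is a comparison of object counts. The following Python sketch assumes, purely for illustration, that the record and the image analysis can each be reduced to a mapping from object type to count; the function name `find_discrepancies` and this representation are assumptions, not part of the disclosure.

```python
from typing import Dict, Tuple


def find_discrepancies(record: Dict[str, int],
                       detected: Dict[str, int]) -> Dict[str, Tuple[int, int]]:
    """Return {object_type: (count_in_record, count_detected)} for every
    object type whose counts differ. Missing types are treated as zero."""
    discrepancies = {}
    for object_type in sorted(set(record) | set(detected)):
        expected = record.get(object_type, 0)
        observed = detected.get(object_type, 0)
        if expected != observed:
            discrepancies[object_type] = (expected, observed)
    return discrepancies
```

The resulting mapping could then serve as the basis for the information provided to the user.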

[0011] In some embodiments, systems and methods for updating records based on construction site images are provided.

[0012] In some embodiments, image data captured from a construction site using at least one image sensor may be obtained. The image data may be analyzed to detect at least one object in the construction site. Further, at least one electronic record associated with the construction site may be updated based on the detected at least one object. In some examples, the at least one electronic record may comprise a searchable database, and updating the at least one electronic record may comprise indexing the at least one object in the searchable database. For example, the searchable database may be searched for a record related to the at least one object. In response to a determination that the searchable database includes a record related to the at least one object, the record related to the at least one object may be updated. In response to a determination that the searchable database does not include a record related to the at least one object, a record related to the at least one object may be added to the searchable database.
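The search-then-update-or-add behavior described above can be sketched as follows. The sketch assumes the searchable database is modeled as an in-memory dictionary keyed by an object identifier; `index_object` and the key scheme are hypothetical stand-ins for whatever database and identifiers an implementation would actually use.

```python
from typing import Dict


def index_object(database: Dict[str, dict], object_id: str, attributes: dict) -> str:
    """Index a detected object in the searchable database.

    If a record related to the object exists, it is updated; otherwise a
    new record is added. Returns "updated" or "added" accordingly.
    """
    record = database.get(object_id)   # search for a related record
    if record is not None:
        record.update(attributes)      # record found: update it in place
        return "updated"
    database[object_id] = dict(attributes)  # no record found: add a copy
    return "added"
```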

[0013] In some embodiments, systems and methods for generating financial assessments based on construction site images are provided.

[0014] In some embodiments, image data captured from a construction site using at least one image sensor may be obtained. Further, at least one electronic record associated with the construction site may be obtained. The image data and the at least one electronic record may be analyzed to generate at least one financial assessment related to the construction site. For example, the image data may be analyzed to identify at least one discrepancy between the at least one electronic record and the construction site, and the identified at least one discrepancy may be used in the generation of the at least one financial assessment.

[0015] In some embodiments, systems and methods for hybrid processing of construction site images are provided.

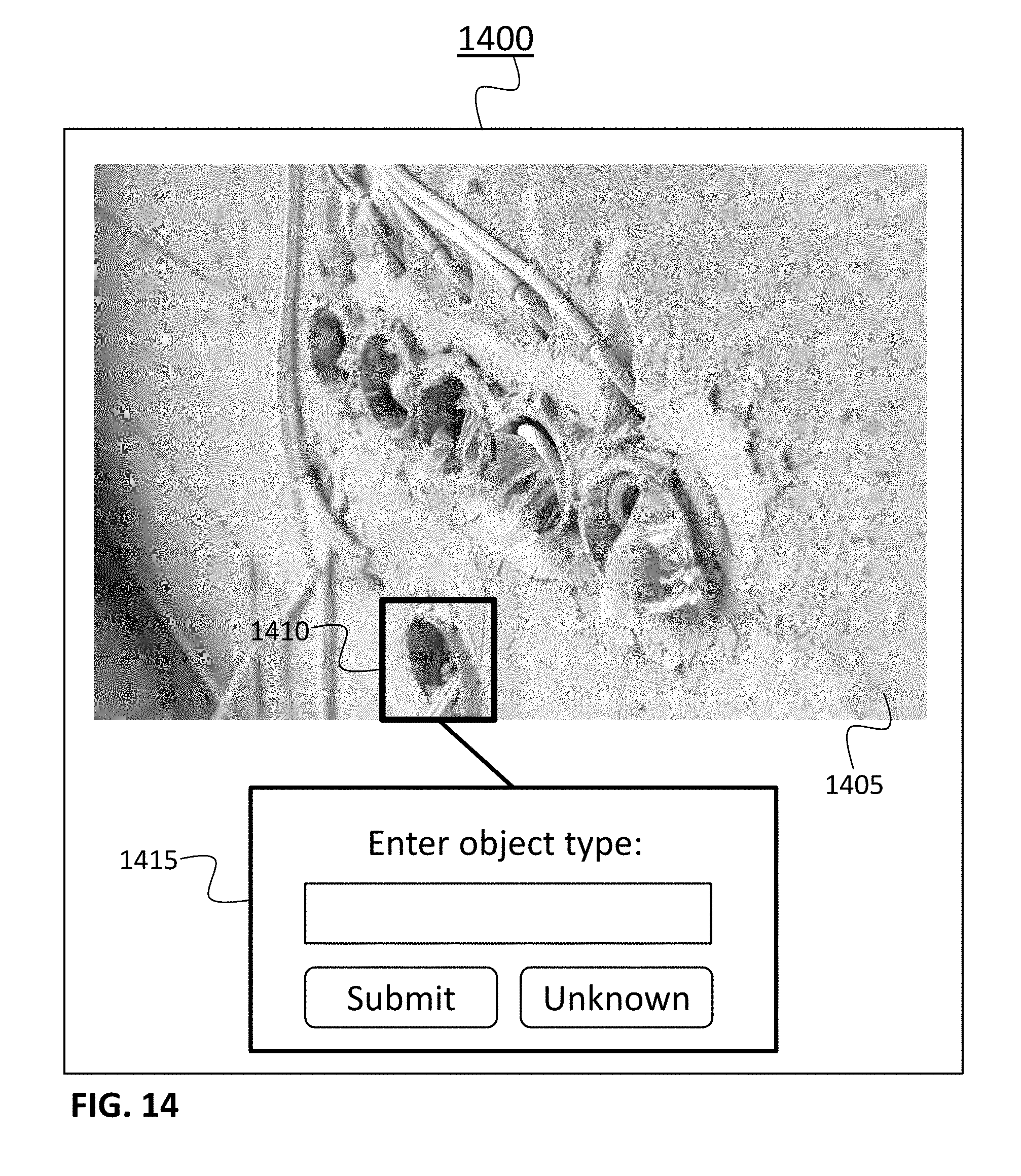

[0016] In some embodiments, image data captured from a construction site using at least one image sensor may be obtained. The image data may be analyzed to attempt to recognize at least one object depicted in the image data. In response to a failure to successfully recognize the at least one object, at least part of the image data may be presented to a user, and a feedback related to the at least one object may be received from the user. For example, the attempt to recognize the at least one object may be based on a construction plan associated with the construction site, and the failure to successfully recognize the at least one object may be identified based on a mismatch between the suggested object type from the attempt to recognize the at least one object and one or more types of one or more objects selected from the construction plan based on the location of the at least one object in the image data.
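The plan-based failure check in the example above can be sketched in Python. The sketch assumes the construction plan is reduced to a list of axis-aligned boxes with object types, and that the object location has already been mapped from image coordinates into plan coordinates; both assumptions, and the names `plan_types_at` and `recognition_failed`, are illustrative only.

```python
from typing import List, Optional, Set, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in plan coordinates


def plan_types_at(plan: List[Tuple[Box, str]], x: float, y: float) -> Set[str]:
    """Select the types of the plan objects whose box contains location (x, y)."""
    return {object_type for (x0, y0, x1, y1), object_type in plan
            if x0 <= x <= x1 and y0 <= y <= y1}


def recognition_failed(suggested_type: Optional[str],
                       plan: List[Tuple[Box, str]],
                       x: float, y: float) -> bool:
    """Treat recognition as failed when no type was suggested, or when the
    suggested type mismatches the types the plan expects at that location."""
    expected = plan_types_at(plan, x, y)
    if suggested_type is None:
        return True
    # If the plan has no object at this location, the plan cannot contradict
    # the suggestion, so this sketch does not flag a failure.
    return bool(expected) and suggested_type not in expected
```

When `recognition_failed` returns `True`, the relevant part of the image data would be presented to the user and feedback collected.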

[0017] In some embodiments, systems and methods for ranking entities using construction site images are provided.

[0018] In some embodiments, image data captured from a construction site using at least one image sensor may be obtained. The image data may be analyzed to detect at least one element depicted in the image data and associated with an entity. The image data may be further analyzed to determine at least one property indicative of quality and associated with the at least one element. The at least one property may be used to generate a ranking of the entity. For example, the at least one element may include an element built by the entity, installed by the entity, affected by a task performed by the entity, supplied by the entity, manufactured by the entity, and so forth. In some examples, the at least one property may be based on a discrepancy between a construction plan associated with the construction site and the construction site, between a project schedule associated with the construction site and the construction site, between a financial record associated with the construction site and the construction site, between a progress record associated with the construction site and the construction site, and so forth.
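As a rough illustration of how properties indicative of quality might be turned into a ranking, the following Python sketch averages per-element quality scores for each entity and sorts the entities by that average. Reducing each property to a single numeric score and using the mean are assumptions made here for illustration; the disclosure does not prescribe a particular aggregation, and `rank_entities` is a hypothetical name.

```python
from collections import defaultdict
from statistics import mean
from typing import Iterable, List, Tuple


def rank_entities(observations: Iterable[Tuple[str, float]]) -> List[Tuple[str, float]]:
    """Rank entities by the mean quality score of their associated elements.

    observations: (entity, quality_score) pairs, one per detected element.
    Returns (entity, mean_score) pairs sorted from best to worst.
    """
    scores = defaultdict(list)
    for entity, score in observations:
        scores[entity].append(score)
    averages = {entity: mean(values) for entity, values in scores.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)
```

Observations from additional construction sites could be appended to the same input, so that the ranking reflects more than one site.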

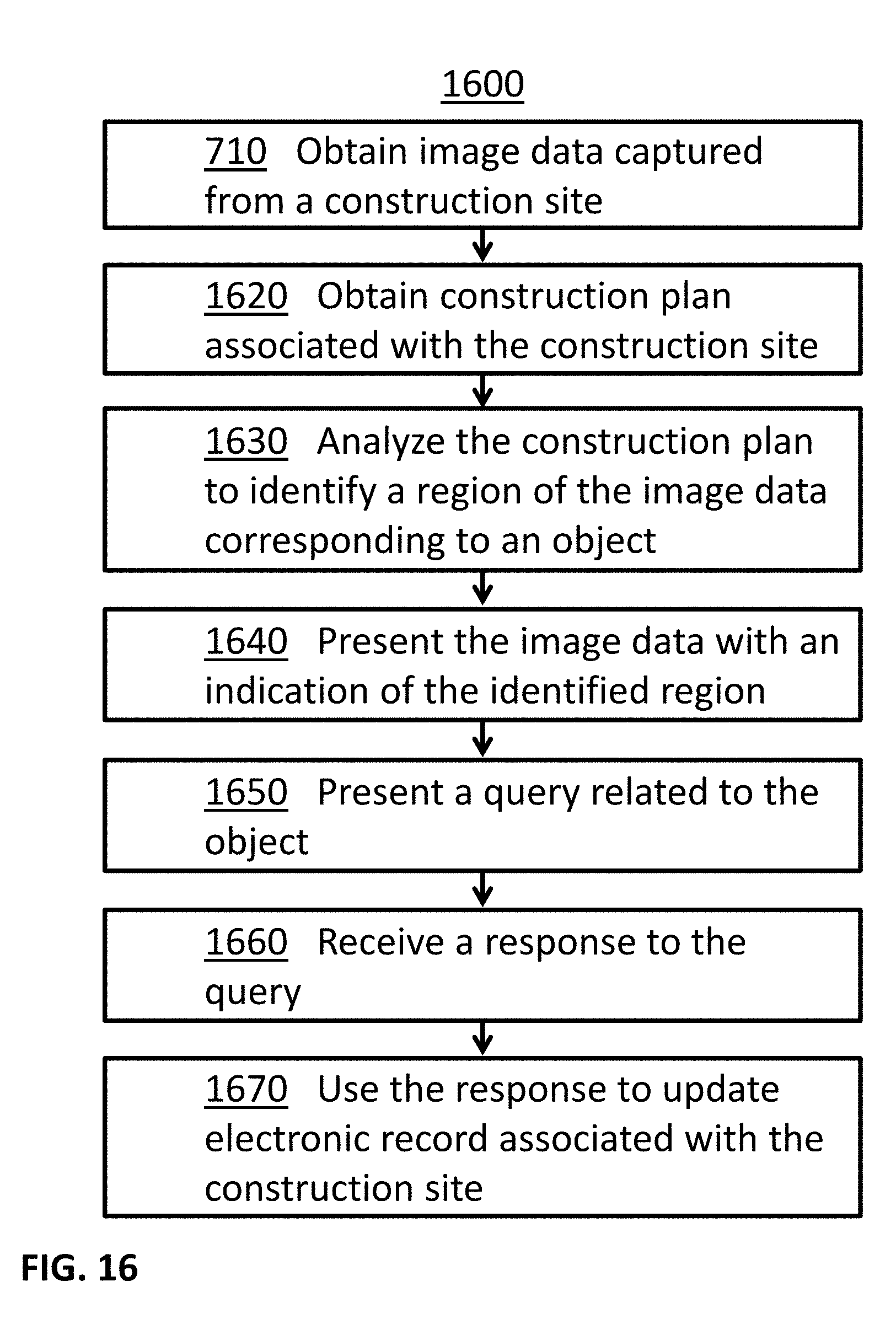

[0019] In some embodiments, systems and methods for annotation of construction site images are provided.

[0020] In some embodiments, image data captured from a construction site using at least one image sensor may be obtained. Further, at least one construction plan associated with the construction site and including information related to an object may be obtained. The at least one construction plan may be analyzed to identify a first region of the image data corresponding to the object. The at least one display device may be used to present at least part of the image data to a user with an indication of the identified first region of the image data corresponding to the object. Further, the at least one display device may be used to present to the user a query related to the object. A response to the query may be received from the user. The response may be used to update information associated with the object in at least one electronic record associated with the construction site.

[0021] Consistent with other disclosed embodiments, non-transitory computer-readable storage media may store data and/or computer implementable instructions for carrying out any of the methods described herein.

[0022] The foregoing general description and the following detailed description are exemplary and explanatory only and are not restrictive of the claims.

BRIEF DESCRIPTION OF THE DRAWINGS

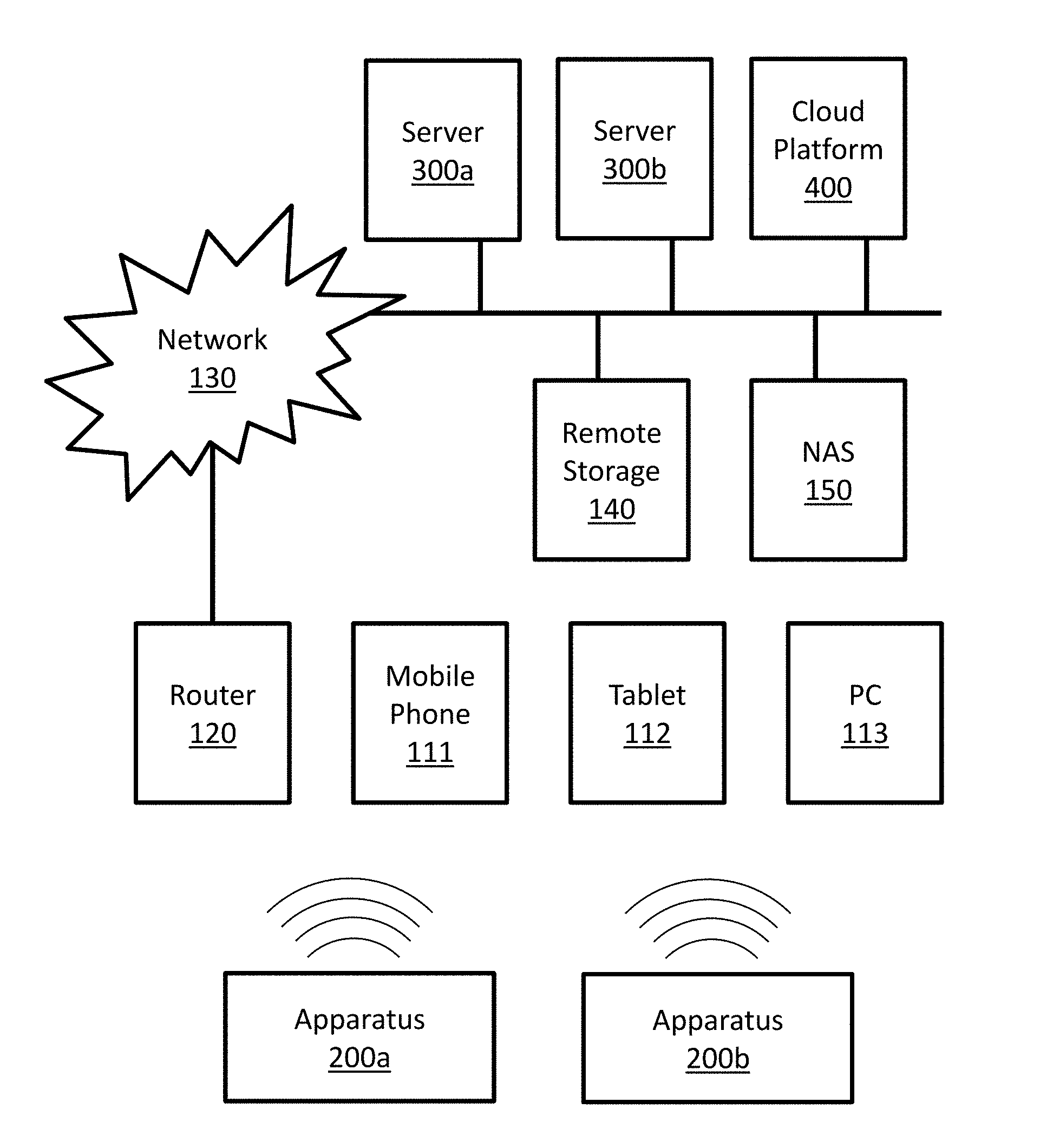

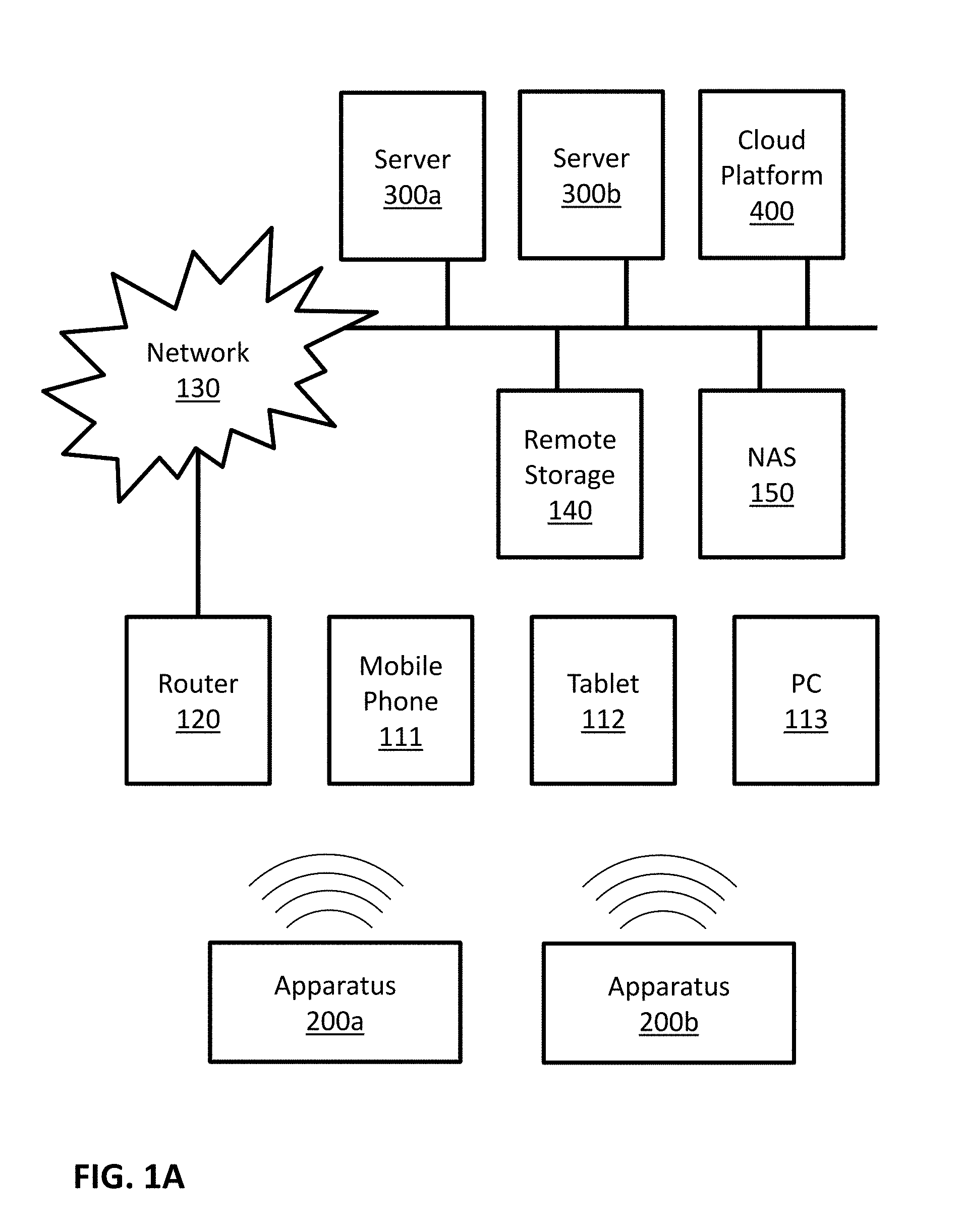

[0023] FIGS. 1A and 1B are block diagrams illustrating some possible implementations of a communicating system.

[0024] FIGS. 2A and 2B are block diagrams illustrating some possible implementations of an apparatus.

[0025] FIG. 3 is a block diagram illustrating a possible implementation of a server.

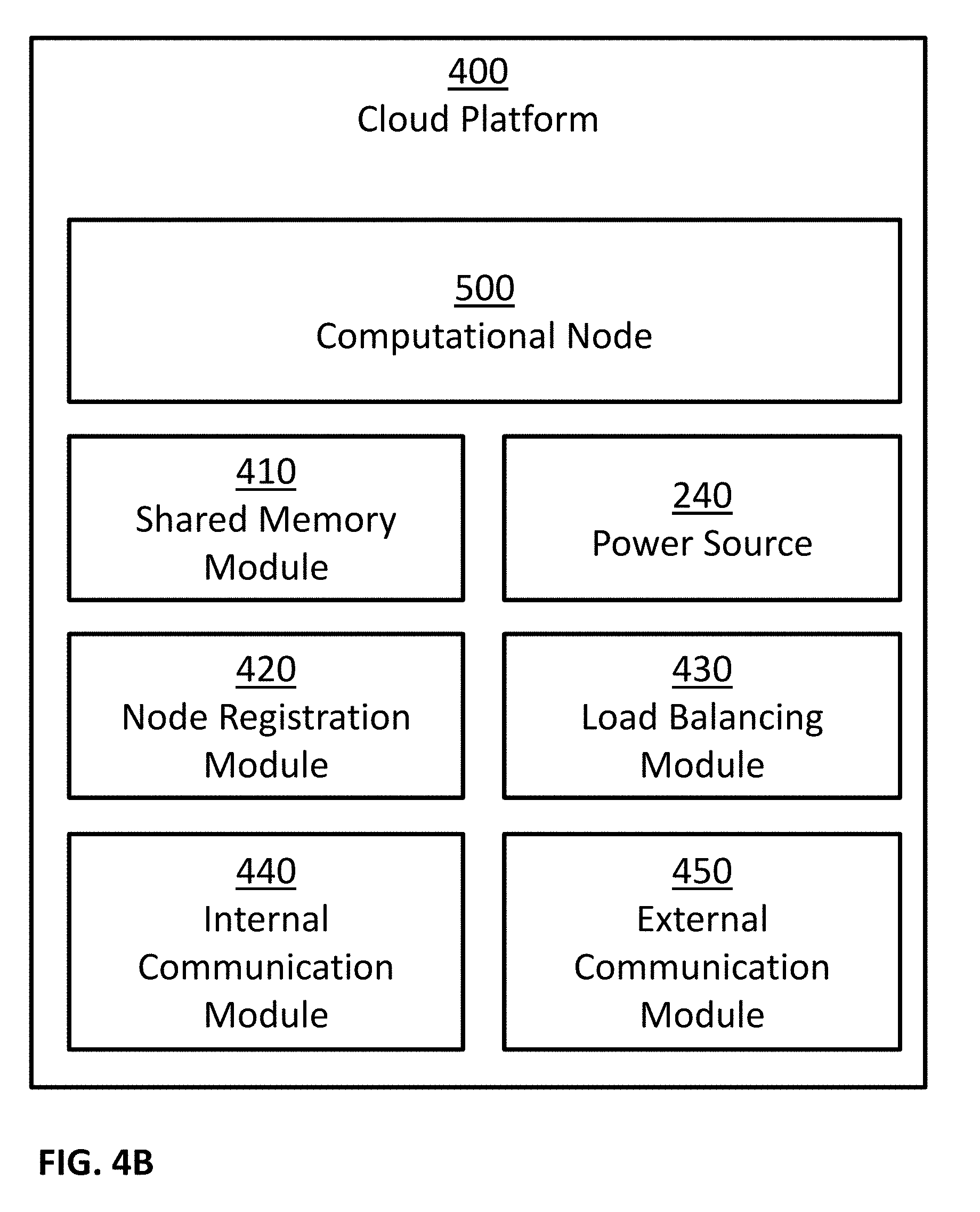

[0026] FIGS. 4A and 4B are block diagrams illustrating some possible implementations of a cloud platform.

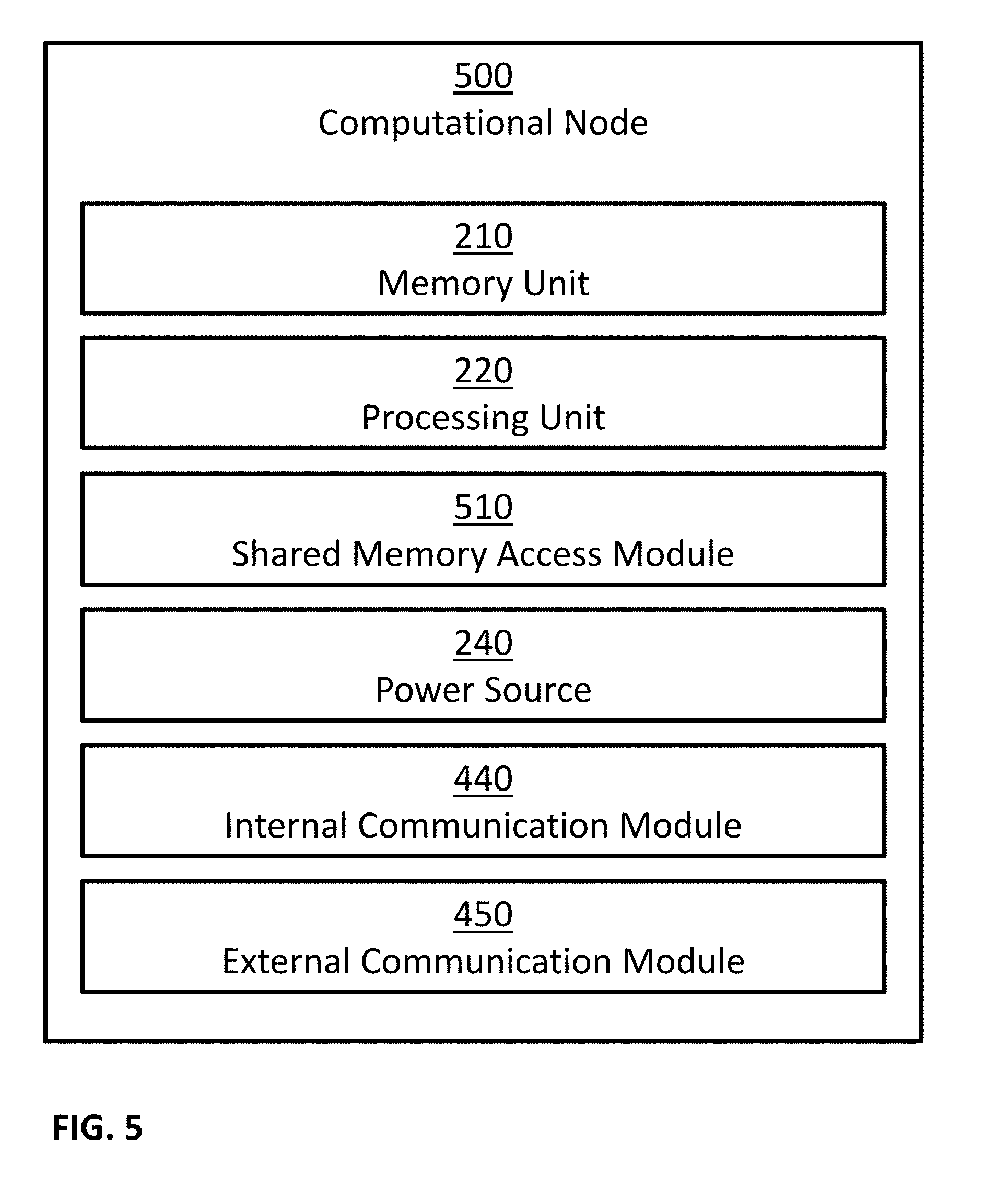

[0027] FIG. 5 is a block diagram illustrating a possible implementation of a computational node.

[0028] FIG. 6 illustrates an exemplary embodiment of a memory storing a plurality of modules.

[0029] FIG. 7 illustrates an example of a method for processing images of concrete.

[0030] FIG. 8 is a schematic illustration of an example image captured by an apparatus consistent with an embodiment of the present disclosure.

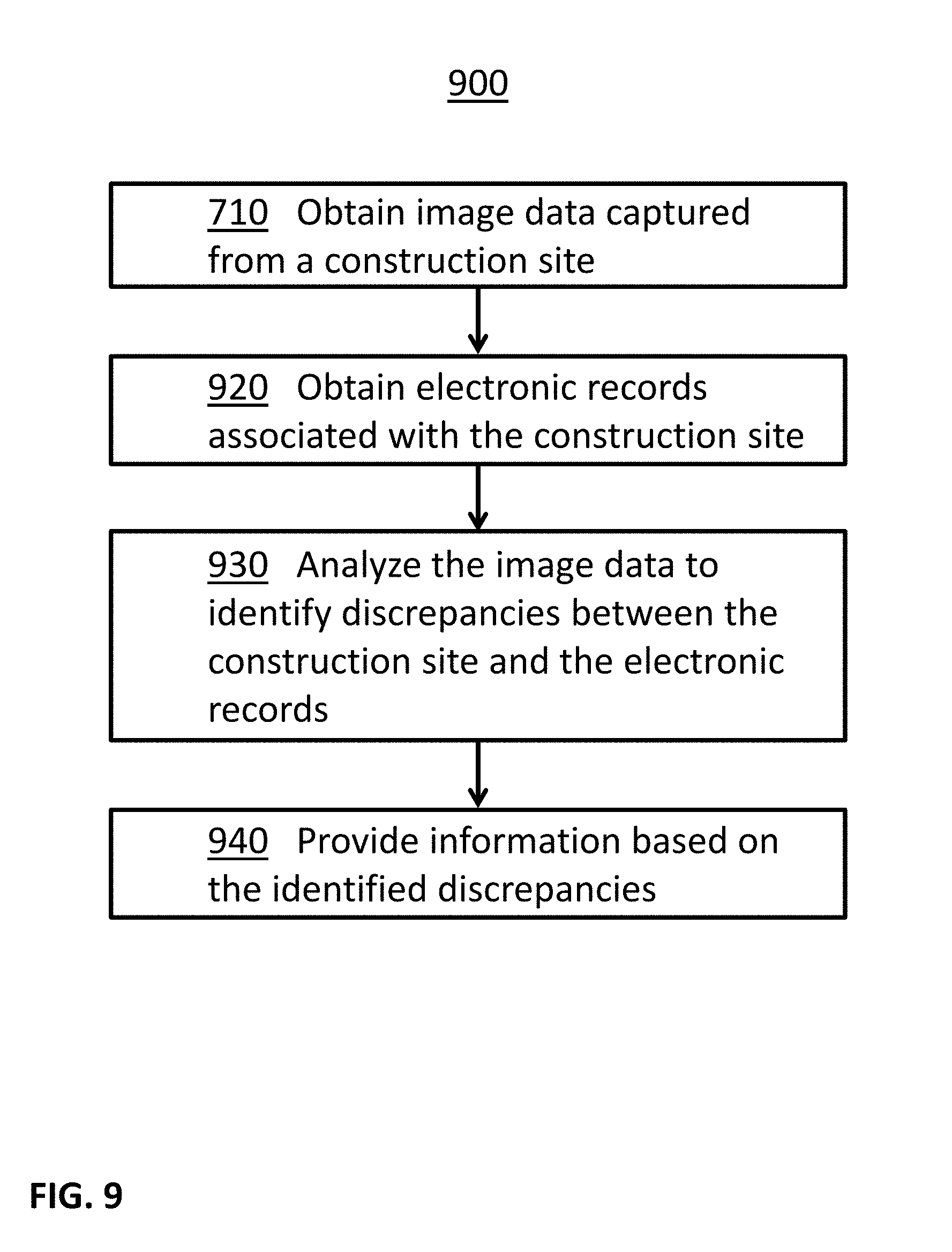

[0031] FIG. 9 illustrates an example of a method for providing information based on construction site images.

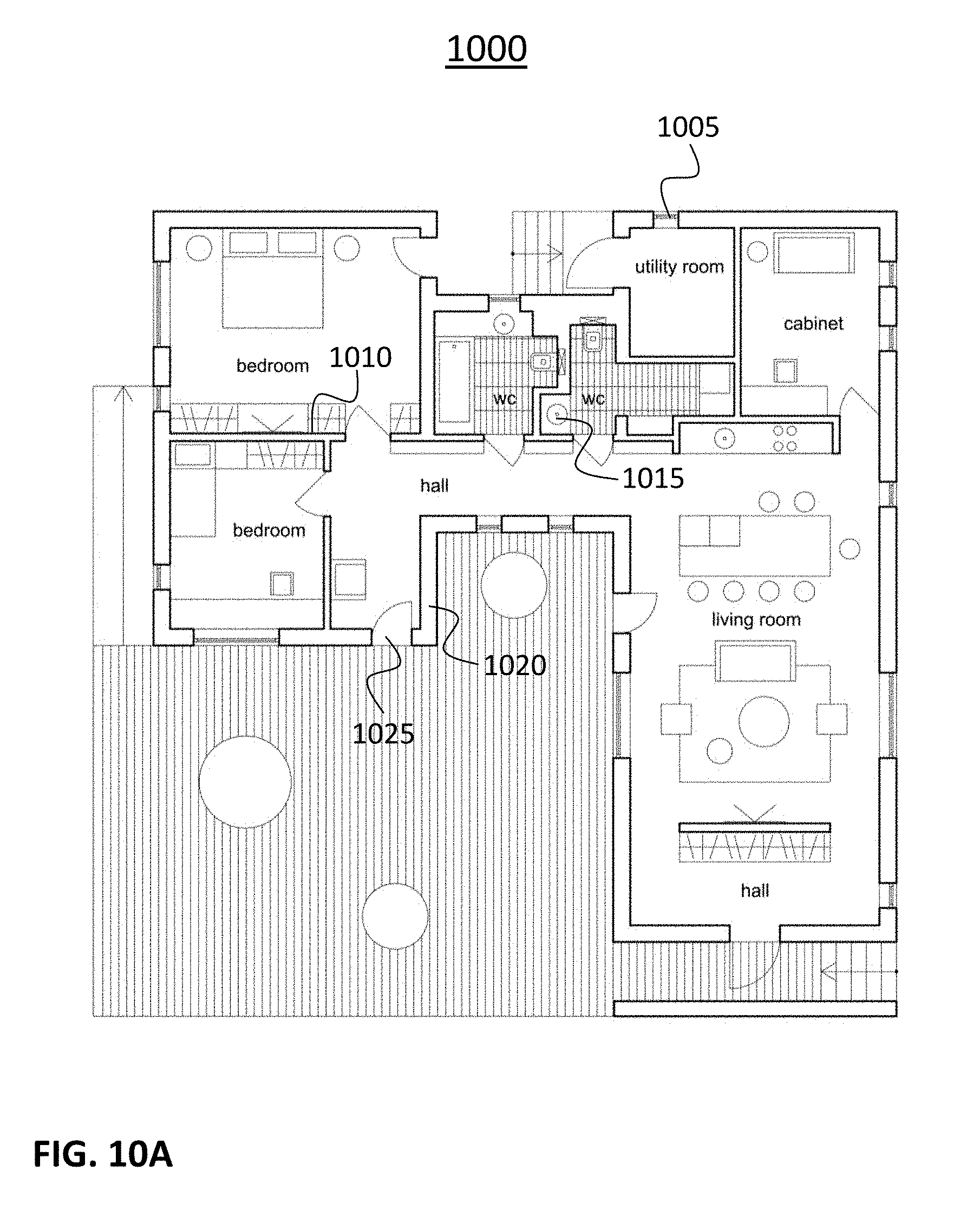

[0032] FIG. 10A is a schematic illustration of an example construction plan consistent with an embodiment of the present disclosure.

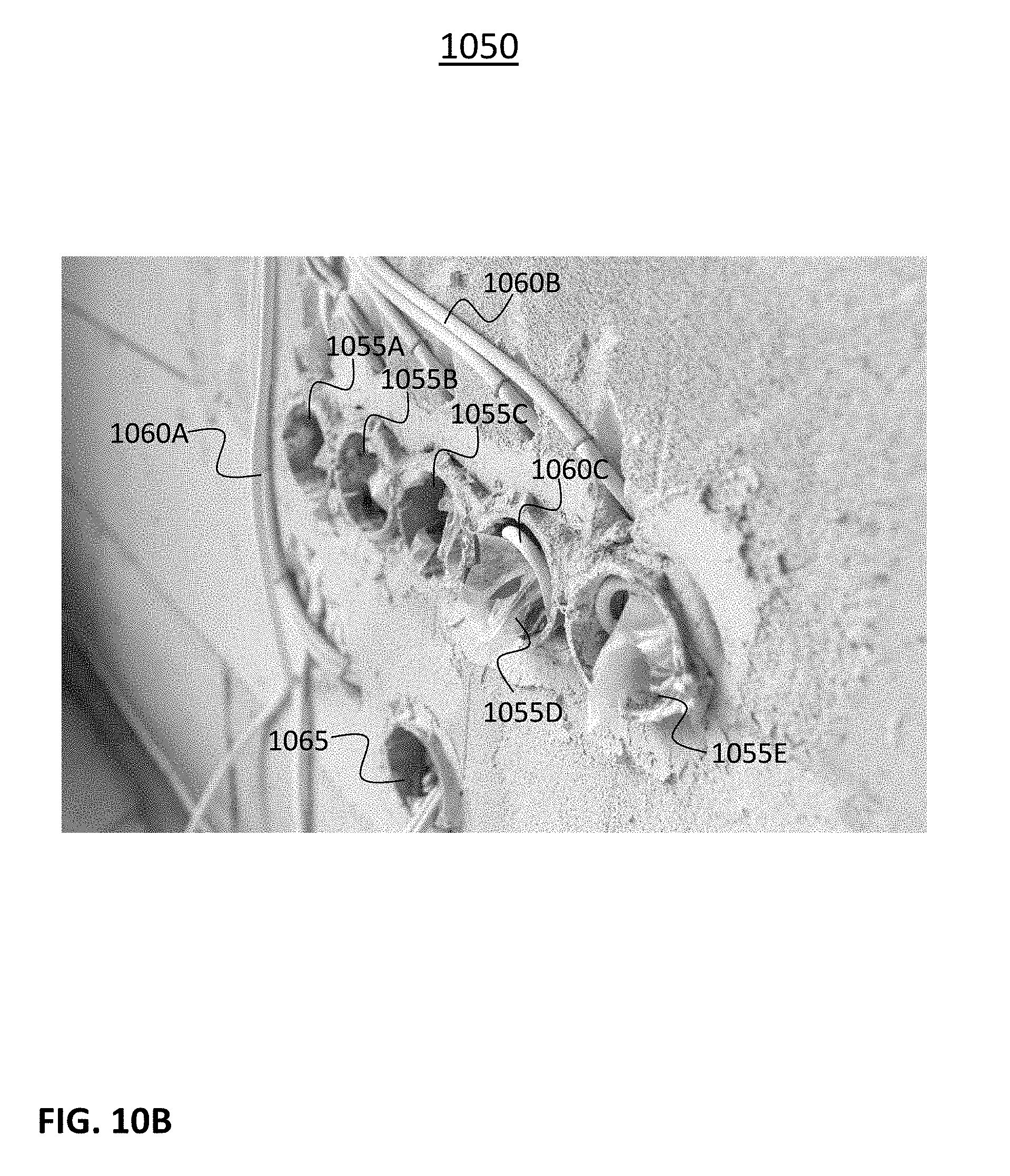

[0033] FIG. 10B is a schematic illustration of an example image captured by an apparatus consistent with an embodiment of the present disclosure.

[0034] FIG. 11 illustrates an example of a method for updating records based on construction site images.

[0035] FIG. 12 illustrates an example of a method for generating financial assessments based on construction site images.

[0036] FIG. 13 illustrates an example of a method for hybrid processing of construction site images.

[0037] FIG. 14 is a schematic illustration of a user interface consistent with an embodiment of the present disclosure.

[0038] FIG. 15 illustrates an example of a method for ranking using construction site images.

[0039] FIG. 16 illustrates an example of a method for annotation of construction site images.

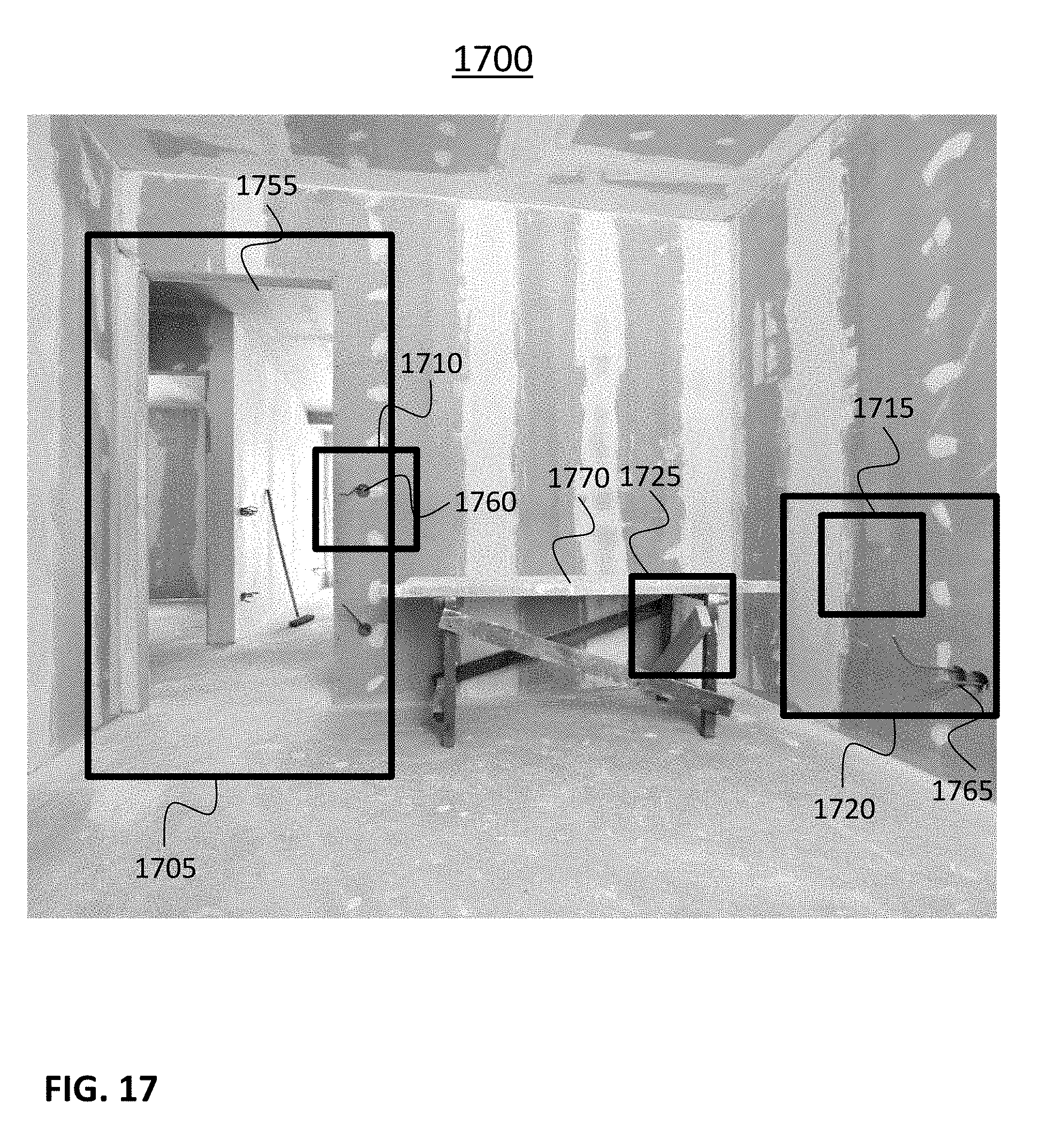

[0040] FIG. 17 is a schematic illustration of an example image captured by an apparatus consistent with an embodiment of the present disclosure.

DESCRIPTION

[0041] Unless specifically stated otherwise, as apparent from the following discussions, it is appreciated that throughout the specification discussions utilizing terms such as "processing", "calculating", "computing", "determining", "generating", "setting", "configuring", "selecting", "defining", "applying", "obtaining", "monitoring", "providing", "identifying", "segmenting", "classifying", "analyzing", "associating", "extracting", "storing", "receiving", "transmitting", or the like, include actions and/or processes of a computer that manipulate and/or transform data into other data, said data represented as physical quantities, such as electronic quantities, and/or said data representing physical objects. The terms "computer", "processor", "controller", "processing unit", "computing unit", and "processing module" should be expansively construed to cover any kind of electronic device, component or unit with data processing capabilities, including, by way of non-limiting example, a personal computer, a wearable computer, a tablet, a smartphone, a server, a computing system, a cloud computing platform, a communication device, a processor (for example, a digital signal processor (DSP), an image signal processor (ISP), a microcontroller, a field programmable gate array (FPGA), an application specific integrated circuit (ASIC), a central processing unit (CPU), a graphics processing unit (GPU), a visual processing unit (VPU), and so on), possibly with embedded memory, a single core processor, a multi core processor, a core within a processor, any other electronic computing device, or any combination of the above.

[0042] The operations in accordance with the teachings herein may be performed by a computer specially constructed or programmed to perform the described functions.

[0043] As used herein, the phrases "for example", "such as", "for instance" and variants thereof describe non-limiting embodiments of the presently disclosed subject matter. Reference in the specification to "one case", "some cases", "other cases" or variants thereof means that a particular feature, structure or characteristic described in connection with the embodiment(s) may be included in at least one embodiment of the presently disclosed subject matter. Thus the appearance of the phrase "one case", "some cases", "other cases" or variants thereof does not necessarily refer to the same embodiment(s). As used herein, the term "and/or" includes any and all combinations of one or more of the associated listed items.

[0044] It is appreciated that certain features of the presently disclosed subject matter, which are, for clarity, described in the context of separate embodiments, may also be provided in combination in a single embodiment. Conversely, various features of the presently disclosed subject matter, which are, for brevity, described in the context of a single embodiment, may also be provided separately or in any suitable sub-combination.

[0045] The term "image sensor" is recognized by those skilled in the art and refers to any device configured to capture images, a sequence of images, videos, and so forth. This includes sensors that convert optical input into images, where optical input can be visible light (like in a camera), radio waves, microwaves, terahertz waves, ultraviolet light, infrared light, x-rays, gamma rays, and/or any other light spectrum. This also includes both 2D and 3D sensors. Examples of image sensor technologies may include: CCD, CMOS, NMOS, and so forth. 3D sensors may be implemented using different technologies, including: stereo camera, active stereo camera, time of flight camera, structured light camera, radar, range image camera, and so forth.

[0046] The term "compressive strength test" is recognized by those skilled in the art and refers to a test that mechanically measures the maximal amount of compressive load a material, such as a body or a cube of concrete, can bear before fracturing.

[0047] The term "water permeability test" is recognized by those skilled in the art and refers to a test of a body or a cube of concrete that measures the depth of penetration of water maintained at predetermined pressures for predetermined time intervals.

[0048] The term "rapid chloride ion penetration test" is recognized by those skilled in the art and refers to a test that measures the ability of concrete to resist chloride ion penetration.

[0049] The term "water absorption test" is recognized by those skilled in the art and refers to a test of concrete specimens that, after drying the specimens, immerses the specimens in water at predetermined temperature and/or pressure for predetermined time intervals, and measures the weight of water absorbed by the specimens.

[0050] The term "initial surface absorption test" is recognized by those skilled in the art and refers to a test that measures the flow of water per concrete surface area when subjected to a constant water head.

[0051] In embodiments of the presently disclosed subject matter, one or more stages illustrated in the figures may be executed in a different order and/or one or more groups of stages may be executed simultaneously and vice versa. The figures illustrate a general schematic of the system architecture in accordance with embodiments of the presently disclosed subject matter. Each module in the figures can be made up of any combination of software, hardware and/or firmware that performs the functions as defined and explained herein. The modules in the figures may be centralized in one location or dispersed over more than one location.

[0052] It should be noted that some examples of the presently disclosed subject matter are not limited in application to the details of construction and the arrangement of the components set forth in the following description or illustrated in the drawings. The invention can be capable of other embodiments or of being practiced or carried out in various ways. Also, it is to be understood that the phraseology and terminology employed herein is for the purpose of description and should not be regarded as limiting.

[0053] In this document, an element of a drawing that is not described within the scope of the drawing and is labeled with a numeral that has been described in a previous drawing may have the same use and description as in the previous drawings.

[0054] The drawings in this document may not be to any scale. Different figures may use different scales, and different scales can be used even within the same drawing, for example different scales for different views of the same object or different scales for two adjacent objects.

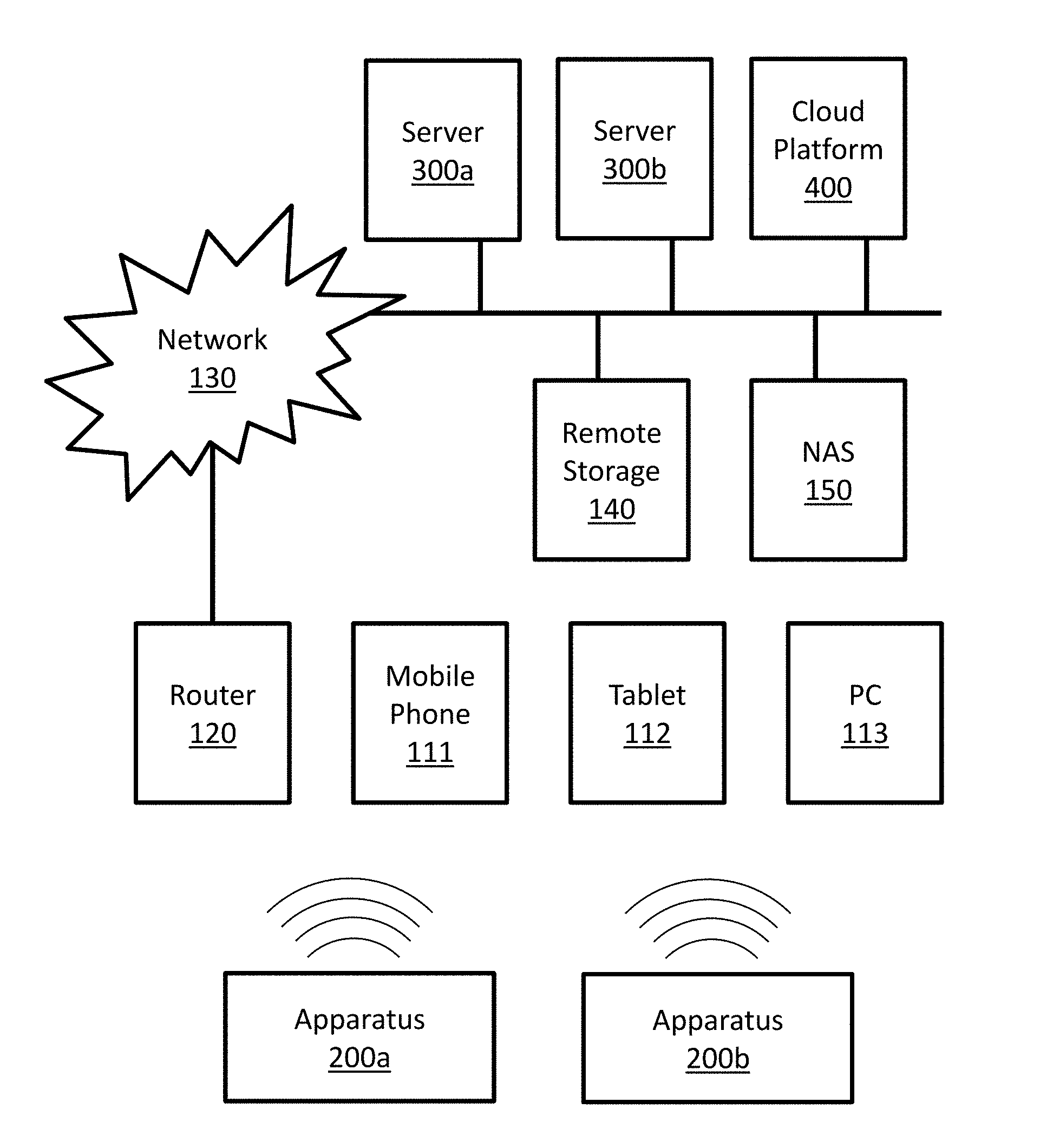

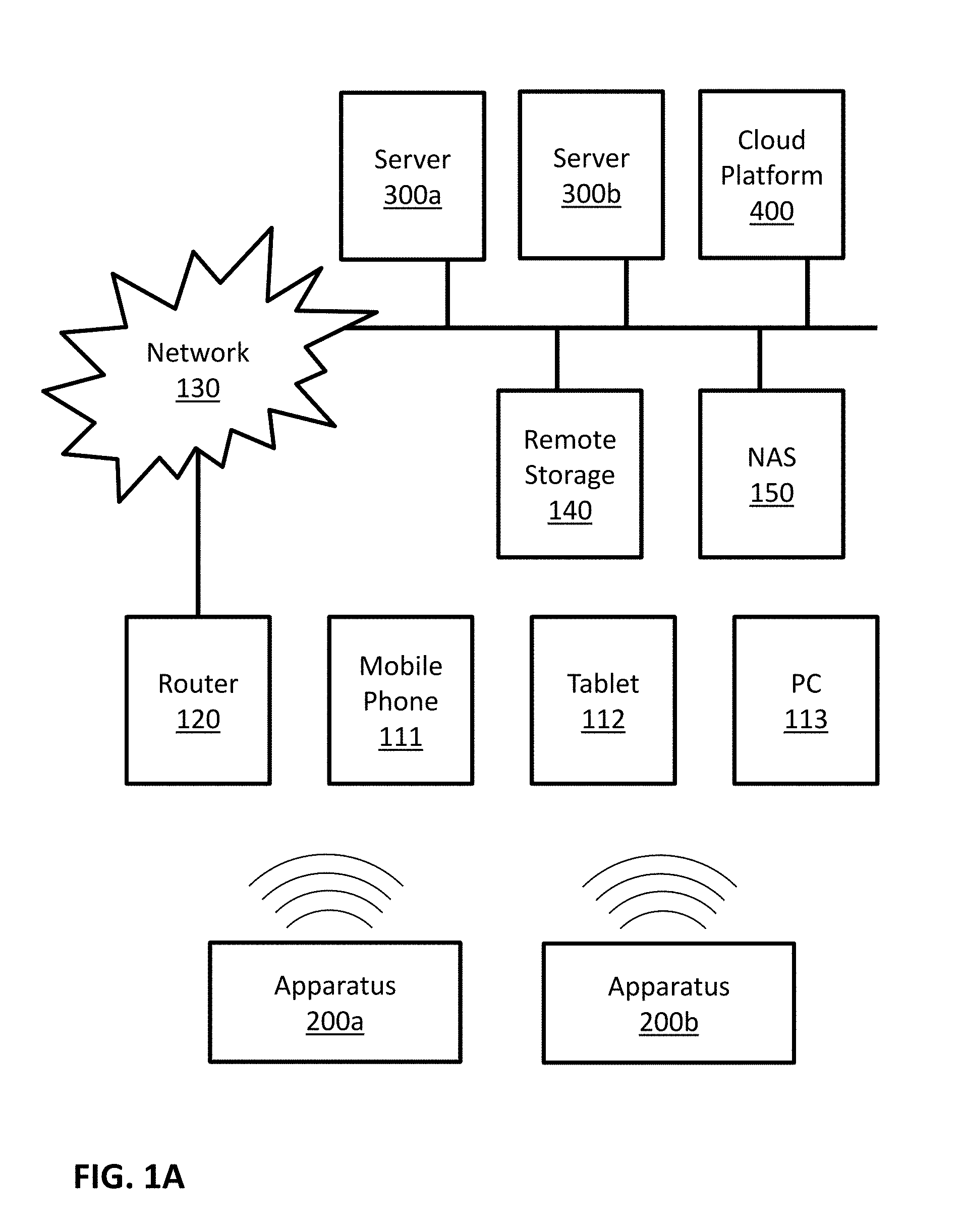

[0055] FIG. 1A is a block diagram illustrating a possible implementation of a communicating system. In this example, apparatuses 200a and 200b may communicate with server 300a, with server 300b, with cloud platform 400, with each other, and so forth. Possible implementations of apparatuses 200a and 200b may include apparatus 200 as described in FIGS. 2A and 2B. Possible implementations of servers 300a and 300b may include server 300 as described in FIG. 3. Some possible implementations of cloud platform 400 are described in FIGS. 4A, 4B and 5. In this example, apparatuses 200a and 200b may communicate directly with mobile phone 111, tablet 112, and personal computer (PC) 113. Apparatuses 200a and 200b may communicate with local router 120 directly, and/or through at least one of mobile phone 111, tablet 112, and personal computer (PC) 113. In this example, local router 120 may be connected with a communication network 130. Examples of communication network 130 may include the Internet, phone networks, cellular networks, satellite communication networks, private communication networks, virtual private networks (VPN), and so forth. Apparatuses 200a and 200b may connect to communication network 130 through local router 120 and/or directly. Apparatuses 200a and 200b may communicate with other devices, such as server 300a, server 300b, cloud platform 400, remote storage 140 and network attached storage (NAS) 150, through communication network 130 and/or directly.

[0056] FIG. 1B is a block diagram illustrating a possible implementation of a communicating system. In this example, apparatuses 200a, 200b and 200c may communicate with cloud platform 400 and/or with each other through communication network 130. Possible implementations of apparatuses 200a, 200b and 200c may include apparatus 200 as described in FIGS. 2A and 2B. Some possible implementations of cloud platform 400 are described in FIGS. 4A, 4B and 5.

[0057] FIGS. 1A and 1B illustrate some possible implementations of a communication system. In some embodiments, other communication systems that enable communication between apparatus 200 and server 300 may be used. In some embodiments, other communication systems that enable communication between apparatus 200 and cloud platform 400 may be used. In some embodiments, other communication systems that enable communication among a plurality of apparatuses 200 may be used.

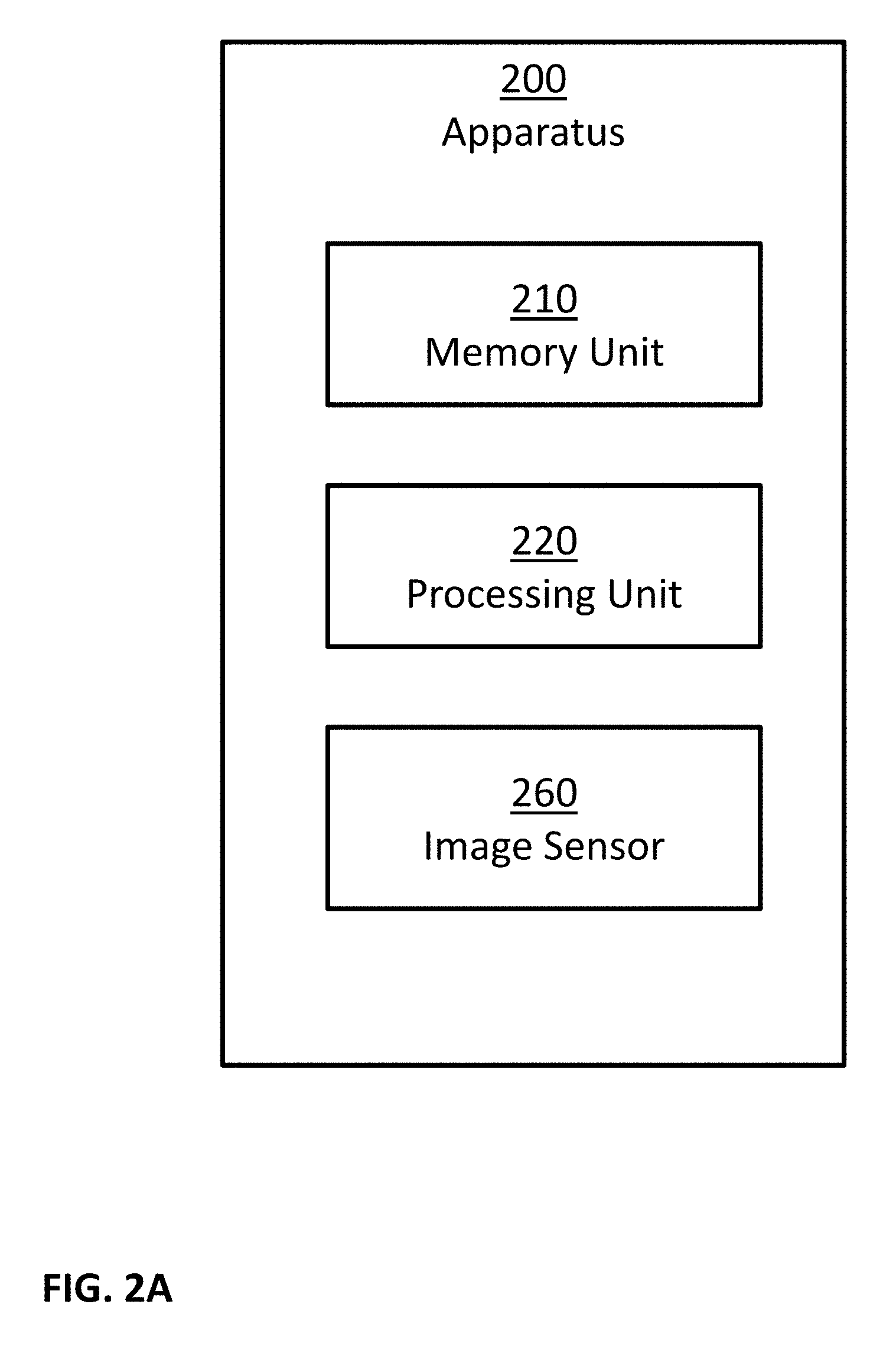

[0058] FIG. 2A is a block diagram illustrating a possible implementation of apparatus 200. In this example, apparatus 200 may comprise: one or more memory units 210, one or more processing units 220, and one or more image sensors 260. In some implementations, apparatus 200 may comprise additional components, while some components listed above may be excluded.

[0059] FIG. 2B is a block diagram illustrating a possible implementation of apparatus 200. In this example, apparatus 200 may comprise: one or more memory units 210, one or more processing units 220, one or more communication modules 230, one or more power sources 240, one or more audio sensors 250, one or more image sensors 260, one or more light sources 265, one or more motion sensors 270, and one or more positioning sensors 275. In some implementations, apparatus 200 may comprise additional components, while some components listed above may be excluded. For example, in some implementations apparatus 200 may also comprise at least one of the following: one or more barometers; one or more user input devices; one or more output devices; and so forth. In another example, in some implementations at least one of the following may be excluded from apparatus 200: memory units 210, communication modules 230, power sources 240, audio sensors 250, image sensors 260, light sources 265, motion sensors 270, and positioning sensors 275.

[0060] In some embodiments, one or more power sources 240 may be configured to: power apparatus 200; power server 300; power cloud platform 400; and/or power computational node 500. Possible implementation examples of power sources 240 may include: one or more electric batteries; one or more capacitors; one or more connections to external power sources; one or more power convertors; any combination of the above; and so forth.

[0061] In some embodiments, the one or more processing units 220 may be configured to execute software programs. For example, processing units 220 may be configured to execute software programs stored on the memory units 210. In some cases, the executed software programs may store information in memory units 210. In some cases, the executed software programs may retrieve information from the memory units 210. Possible implementation examples of the processing units 220 may include: one or more single core processors; one or more multicore processors; one or more controllers; one or more application processors; one or more system on a chip processors; one or more central processing units; one or more graphical processing units; one or more neural processing units; any combination of the above; and so forth.

[0062] In some embodiments, the one or more communication modules 230 may be configured to receive and transmit information. For example, control signals may be transmitted and/or received through communication modules 230. In another example, information received through communication modules 230 may be stored in memory units 210. In an additional example, information retrieved from memory units 210 may be transmitted using communication modules 230. In another example, input data may be transmitted and/or received using communication modules 230. Examples of such input data may include: input data inputted by a user using user input devices; information captured using one or more sensors; and so forth. Examples of such sensors may include: audio sensors 250; image sensors 260; motion sensors 270; positioning sensors 275; chemical sensors; temperature sensors; barometers; and so forth.

[0063] In some embodiments, the one or more audio sensors 250 may be configured to capture audio by converting sounds to digital information. Some examples of audio sensors 250 may include: microphones, unidirectional microphones, bidirectional microphones, cardioid microphones, omnidirectional microphones, onboard microphones, wired microphones, wireless microphones, any combination of the above, and so forth. In some examples, the captured audio may be stored in memory units 210. In some additional examples, the captured audio may be transmitted using communication modules 230, for example to other computerized devices, such as server 300, cloud platform 400, computational node 500, and so forth. In some examples, processing units 220 may control the above processes. For example, processing units 220 may control at least one of: capturing of the audio; storing of the captured audio; transmitting of the captured audio; and so forth. In some cases, the captured audio may be processed by processing units 220. For example, the captured audio may be compressed by processing units 220, possibly followed by: storing the compressed captured audio in memory units 210; transmitting the compressed captured audio using communication modules 230; and so forth. In another example, the captured audio may be processed using speech recognition algorithms. In another example, the captured audio may be processed using speaker recognition algorithms.

[0064] In some embodiments, the one or more image sensors 260 may be configured to capture visual information by converting light to: images; sequences of images; videos; 3D images; sequences of 3D images; 3D videos; and so forth. In some examples, the captured visual information may be stored in memory units 210. In some additional examples, the captured visual information may be transmitted using communication modules 230, for example to other computerized devices, such as server 300, cloud platform 400, computational node 500, and so forth. In some examples, processing units 220 may control the above processes. For example, processing units 220 may control at least one of: capturing of the visual information; storing of the captured visual information; transmitting of the captured visual information; and so forth. In some cases, the captured visual information may be processed by processing units 220. For example, the captured visual information may be compressed by processing units 220; possibly followed: [0065] by storing the compressed captured visual information in memory units 210; [0066] by transmitting the compressed captured visual information using communication modules 230; and so forth. In another example, the captured visual information may be processed in order to: detect objects, detect events, detect actions, detect faces, detect people, recognize persons, and so forth.

[0067] In some embodiments, the one or more light sources 265 may be configured to emit light, for example in order to enable better image capturing by image sensors 260. In some examples, the emission of light may be coordinated with the capturing operation of image sensors 260. In some examples, the emission of light may be continuous. In some examples, the emission of light may be performed at selected times. The emitted light may be visible light, infrared light, x-rays, gamma rays, and/or in any other light spectrum. In some examples, image sensors 260 may capture light emitted by light sources 265, for example in order to capture 3D images and/or 3D videos using an active stereo method.

[0068] In some embodiments, the one or more motion sensors 270 may be configured to perform at least one of the following: detect motion of objects in the environment of apparatus 200; measure the velocity of objects in the environment of apparatus 200; measure the acceleration of objects in the environment of apparatus 200; detect motion of apparatus 200; measure the velocity of apparatus 200; measure the acceleration of apparatus 200; and so forth. In some implementations, the one or more motion sensors 270 may comprise one or more accelerometers configured to detect changes in proper acceleration and/or to measure proper acceleration of apparatus 200. In some implementations, the one or more motion sensors 270 may comprise one or more gyroscopes configured to detect changes in the orientation of apparatus 200 and/or to measure information related to the orientation of apparatus 200. In some implementations, motion sensors 270 may be implemented using image sensors 260, for example by analyzing images captured by image sensors 260 to perform at least one of the following tasks: track objects in the environment of apparatus 200; detect moving objects in the environment of apparatus 200; measure the velocity of objects in the environment of apparatus 200; measure the acceleration of objects in the environment of apparatus 200; measure the velocity of apparatus 200, for example by calculating the egomotion of image sensors 260; measure the acceleration of apparatus 200, for example by calculating the egomotion of image sensors 260; and so forth. In some implementations, motion sensors 270 may be implemented using image sensors 260 and light sources 265, for example by implementing a LIDAR using image sensors 260 and light sources 265. In some implementations, motion sensors 270 may be implemented using one or more RADARs. 
In some examples, information captured using motion sensors 270 may be stored in memory units 210, may be processed by processing units 220, may be transmitted and/or received using communication modules 230, and so forth.
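
As a rough illustration of how the velocity and acceleration of a tracked object might be derived from image analysis, the following minimal sketch applies finite differences to a sequence of tracked positions. The function name `estimate_motion`, the constant frame interval `dt`, and the assumption that positions are already expressed in meters are hypothetical choices made for this example and are not specified by the disclosure.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def estimate_motion(positions: List[Point], dt: float) -> Tuple[List[Point], List[Point]]:
    """Estimate velocity and acceleration by finite differences.

    positions: tracked object positions, one per frame (assumed to be in meters).
    dt: time between consecutive frames in seconds (assumed constant).
    Returns (velocities, accelerations) as lists of (x, y) components.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    # First difference of position gives velocity between consecutive frames.
    velocities = [
        ((x1 - x0) / dt, (y1 - y0) / dt)
        for (x0, y0), (x1, y1) in zip(positions, positions[1:])
    ]
    # First difference of velocity gives acceleration.
    accelerations = [
        ((vx1 - vx0) / dt, (vy1 - vy0) / dt)
        for (vx0, vy0), (vx1, vy1) in zip(velocities, velocities[1:])
    ]
    return velocities, accelerations
```

The same differences could be applied to the estimated pose of the image sensor itself to approximate the motion of apparatus 200, under the assumption that an egomotion estimate is available.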

[0069] In some embodiments, the one or more positioning sensors 275 may be configured to obtain positioning information of apparatus 200, to detect changes in the position of apparatus 200, and/or to measure the position of apparatus 200. In some examples, positioning sensors 275 may be implemented using one of the following technologies: Global Positioning System (GPS), GLObal NAvigation Satellite System (GLONASS), Galileo global navigation system, BeiDou navigation system, other Global Navigation Satellite Systems (GNSS), Indian Regional Navigation Satellite System (IRNSS), Local Positioning Systems (LPS), Real-Time Location Systems (RTLS), Indoor Positioning System (IPS), Wi-Fi based positioning systems, cellular triangulation, and so forth. In some examples, information captured using positioning sensors 275 may be stored in memory units 210, may be processed by processing units 220, may be transmitted and/or received using communication modules 230, and so forth.

[0070] In some embodiments, the one or more chemical sensors may be configured to perform at least one of the following: measure chemical properties in the environment of apparatus 200; measure changes in the chemical properties in the environment of apparatus 200; detect the presence of chemicals in the environment of apparatus 200; measure the concentration of chemicals in the environment of apparatus 200. Examples of such chemical properties may include: pH level, toxicity, temperature, and so forth. Examples of such chemicals may include: electrolytes, particular enzymes, particular hormones, particular proteins, smoke, carbon dioxide, carbon monoxide, oxygen, ozone, hydrogen, hydrogen sulfide, and so forth. In some examples, information captured using chemical sensors may be stored in memory units 210, may be processed by processing units 220, may be transmitted and/or received using communication modules 230, and so forth.

[0071] In some embodiments, the one or more temperature sensors may be configured to detect changes in the temperature of the environment of apparatus 200 and/or to measure the temperature of the environment of apparatus 200. In some examples, information captured using temperature sensors may be stored in memory units 210, may be processed by processing units 220, may be transmitted and/or received using communication modules 230, and so forth.

[0072] In some embodiments, the one or more barometers may be configured to detect changes in the atmospheric pressure in the environment of apparatus 200 and/or to measure the atmospheric pressure in the environment of apparatus 200. In some examples, information captured using the barometers may be stored in memory units 210, may be processed by processing units 220, may be transmitted and/or received using communication modules 230, and so forth.

[0073] In some embodiments, the one or more user input devices may be configured to allow one or more users to input information. In some examples, user input devices may comprise at least one of the following: a keyboard, a mouse, a touch pad, a touch screen, a joystick, a microphone, an image sensor, and so forth. In some examples, the user input may be in the form of at least one of: text, sounds, speech, hand gestures, body gestures, tactile information, and so forth. In some examples, the user input may be stored in memory units 210, may be processed by processing units 220, may be transmitted and/or received using communication modules 230, and so forth.

[0074] In some embodiments, the one or more user output devices may be configured to provide output information to one or more users. In some examples, such output information may comprise at least one of: notifications, feedback, reports, and so forth. In some examples, user output devices may comprise at least one of: one or more audio output devices; one or more textual output devices; one or more visual output devices; one or more tactile output devices; and so forth. In some examples, the one or more audio output devices may be configured to output audio to a user, for example through: a headset, a set of speakers, and so forth. In some examples, the one or more visual output devices may be configured to output visual information to a user, for example through: a display screen, an augmented reality display system, a printer, an LED indicator, and so forth. In some examples, the one or more tactile output devices may be configured to output tactile feedback to a user, for example through vibrations, through motions, by applying forces, and so forth. In some examples, the output may be provided: in real time, offline, automatically, upon request, and so forth. In some examples, the output information may be read from memory units 210, may be provided by software executed by processing units 220, may be transmitted and/or received using communication modules 230, and so forth.

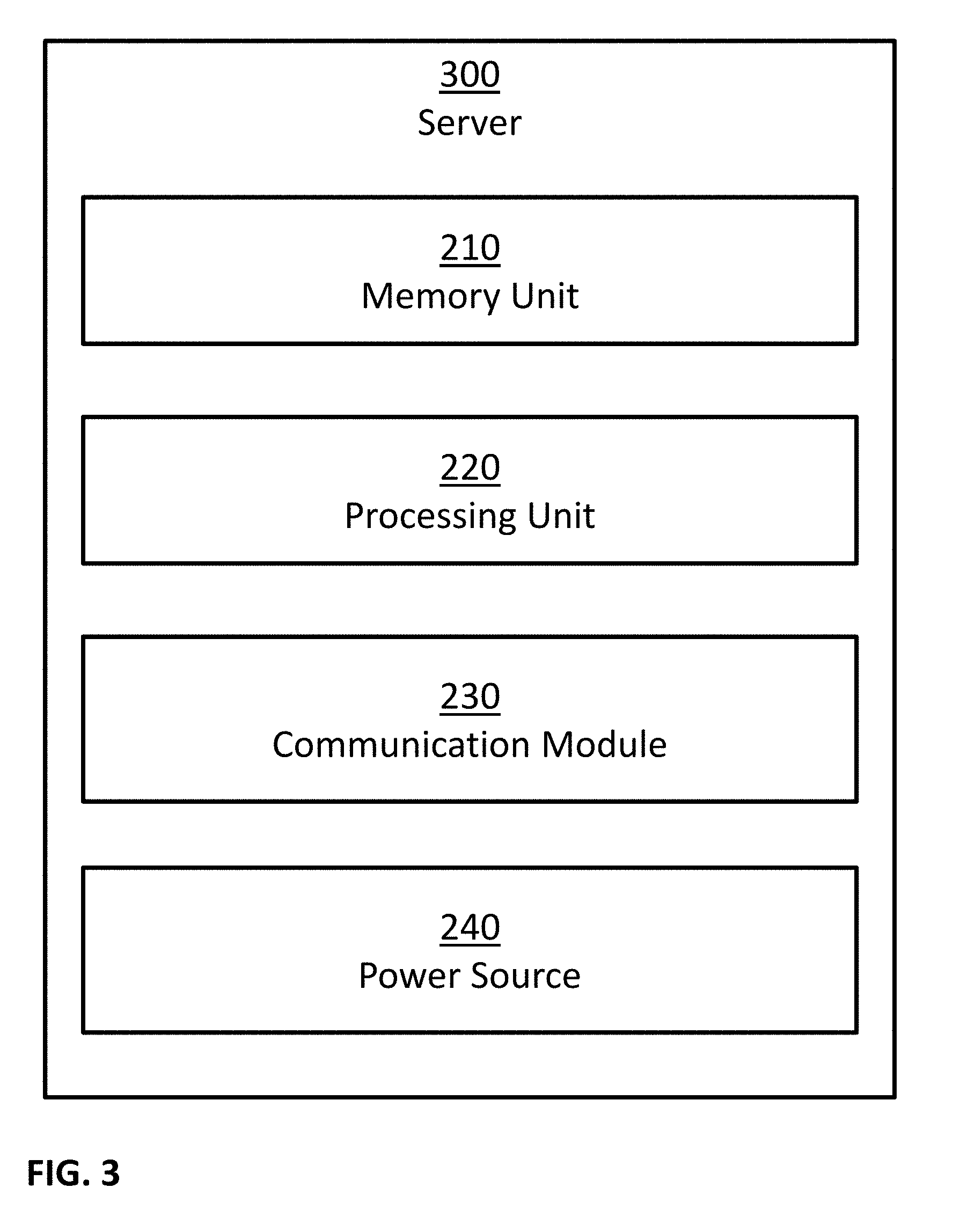

[0075] FIG. 3 is a block diagram illustrating a possible implementation of server 300. In this example, server 300 may comprise: one or more memory units 210, one or more processing units 220, one or more communication modules 230, and one or more power sources 240. In some implementations, server 300 may comprise additional components, while some components listed above may be excluded. For example, in some implementations server 300 may also comprise at least one of the following: one or more user input devices; one or more output devices; and so forth. In another example, in some implementations at least one of the following may be excluded from server 300: memory units 210, communication modules 230, and power sources 240.

[0076] FIG. 4A is a block diagram illustrating a possible implementation of cloud platform 400. In this example, cloud platform 400 may comprise computational node 500a, computational node 500b, computational node 500c and computational node 500d. In some examples, a possible implementation of computational nodes 500a, 500b, 500c and 500d may comprise server 300 as described in FIG. 3. In some examples, a possible implementation of computational nodes 500a, 500b, 500c and 500d may comprise computational node 500 as described in FIG. 5.

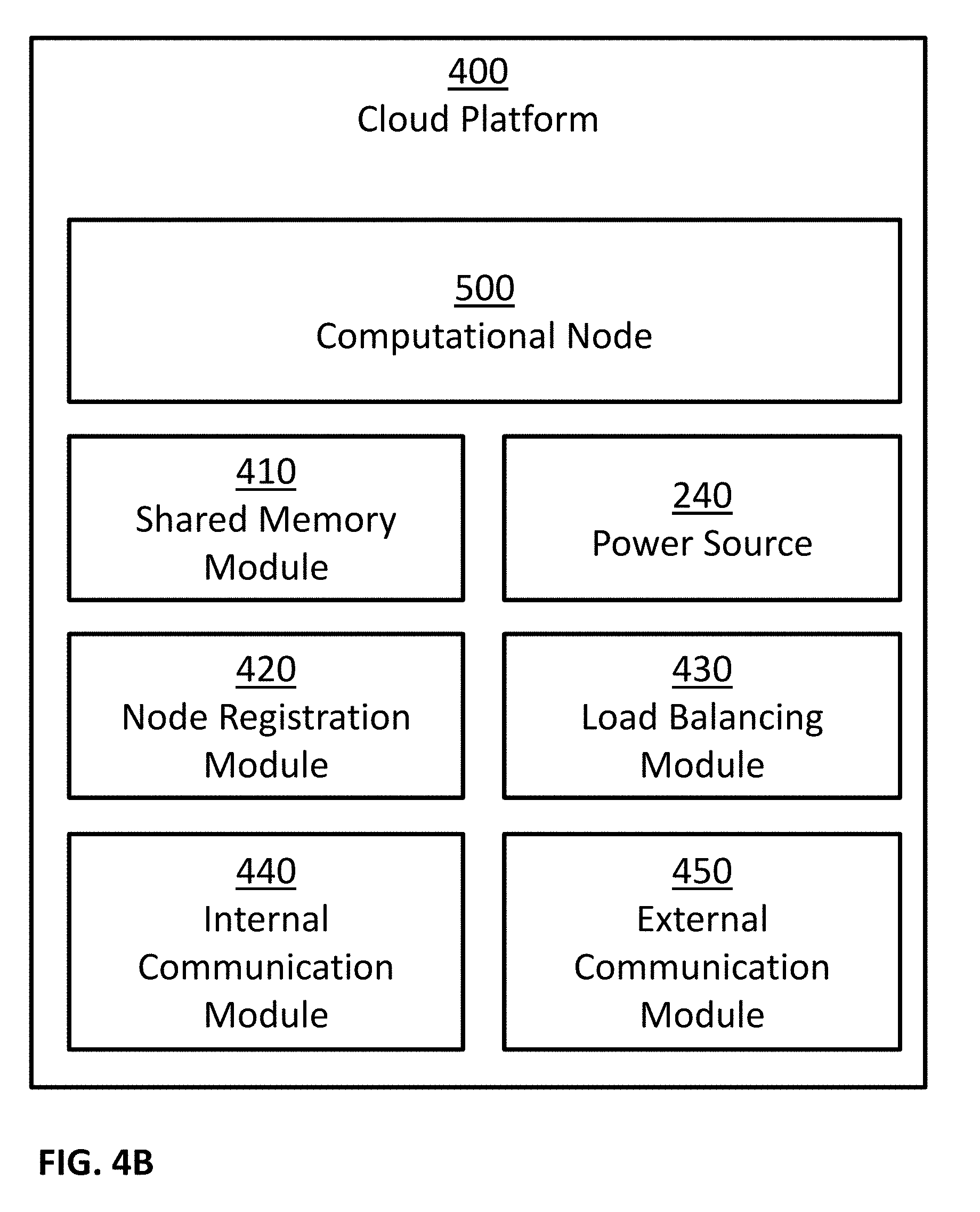

[0077] FIG. 4B is a block diagram illustrating a possible implementation of cloud platform 400. In this example, cloud platform 400 may comprise: one or more computational nodes 500, one or more shared memory modules 410, one or more power sources 240, one or more node registration modules 420, one or more load balancing modules 430, one or more internal communication modules 440, and one or more external communication modules 450. In some implementations, cloud platform 400 may comprise additional components, while some components listed above may be excluded. For example, in some implementations cloud platform 400 may also comprise at least one of the following: one or more user input devices; one or more output devices; and so forth. In another example, in some implementations at least one of the following may be excluded from cloud platform 400: shared memory modules 410, power sources 240, node registration modules 420, load balancing modules 430, internal communication modules 440, and external communication modules 450.

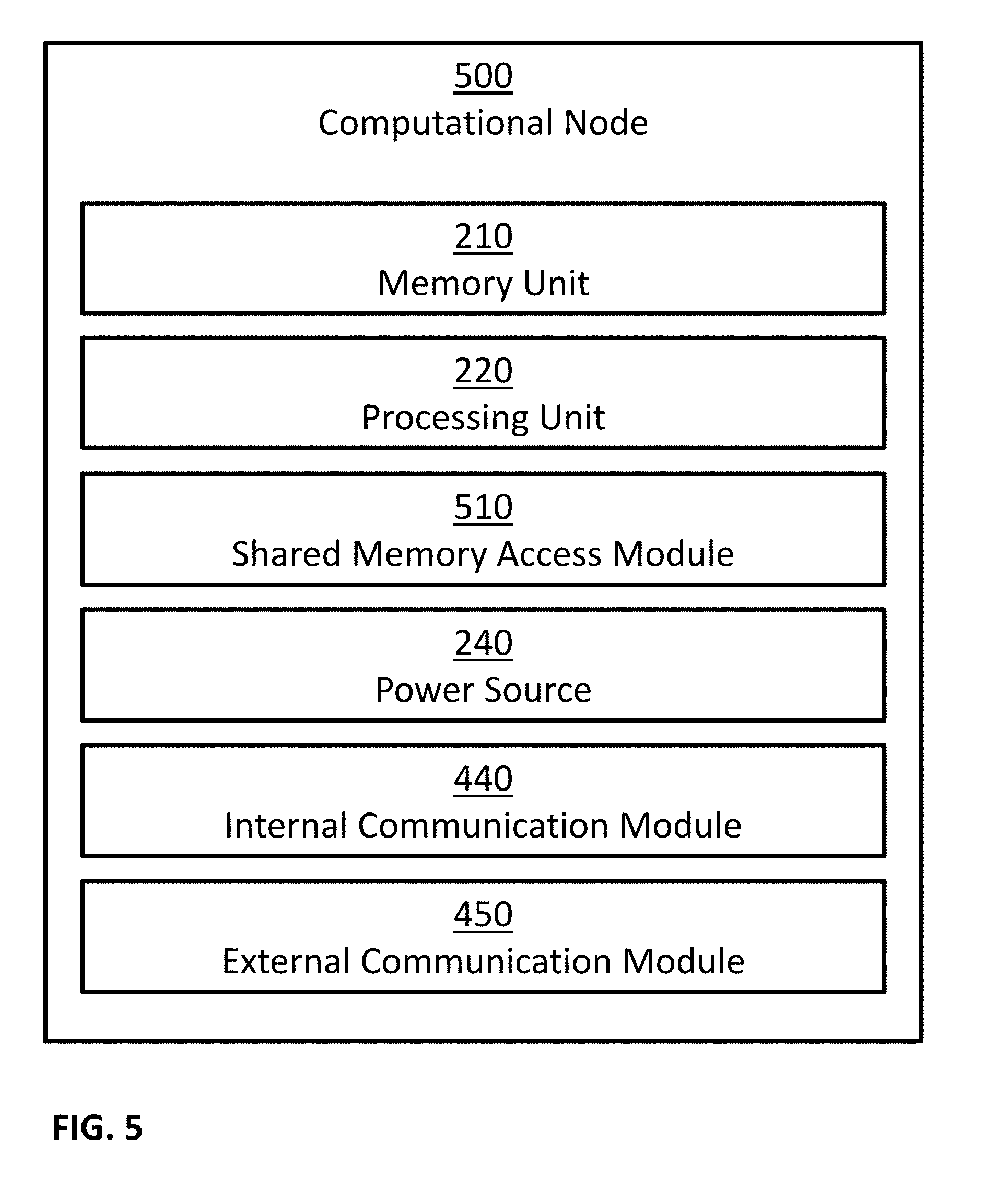

[0078] FIG. 5 is a block diagram illustrating a possible implementation of computational node 500. In this example, computational node 500 may comprise: one or more memory units 210, one or more processing units 220, one or more shared memory access modules 510, one or more power sources 240, one or more internal communication modules 440, and one or more external communication modules 450. In some implementations, computational node 500 may comprise additional components, while some components listed above may be excluded. For example, in some implementations computational node 500 may also comprise at least one of the following: one or more user input devices; one or more output devices; and so forth. In another example, in some implementations at least one of the following may be excluded from computational node 500: memory units 210, shared memory access modules 510, power sources 240, internal communication modules 440, and external communication modules 450.

[0079] In some embodiments, internal communication modules 440 and external communication modules 450 may be implemented as a combined communication module, such as communication modules 230. In some embodiments, one possible implementation of cloud platform 400 may comprise server 300. In some embodiments, one possible implementation of computational node 500 may comprise server 300. In some embodiments, one possible implementation of shared memory access modules 510 may comprise using internal communication modules 440 to send information to shared memory modules 410 and/or receive information from shared memory modules 410. In some embodiments, node registration modules 420 and load balancing modules 430 may be implemented as a combined module.

[0080] In some embodiments, the one or more shared memory modules 410 may be accessed by more than one computational node. Therefore, shared memory modules 410 may allow information sharing among two or more computational nodes 500. In some embodiments, the one or more shared memory access modules 510 may be configured to enable access of computational nodes 500 and/or the one or more processing units 220 of computational nodes 500 to shared memory modules 410. In some examples, computational nodes 500 and/or the one or more processing units 220 of computational nodes 500, may access shared memory modules 410, for example using shared memory access modules 510, in order to perform at least one of: executing software programs stored on shared memory modules 410, store information in shared memory modules 410, retrieve information from the shared memory modules 410.

[0081] In some embodiments, the one or more node registration modules 420 may be configured to track the availability of the computational nodes 500. In some examples, node registration modules 420 may be implemented as: a software program, such as a software program executed by one or more of the computational nodes 500; a hardware solution; a combined software and hardware solution; and so forth. In some implementations, node registration modules 420 may communicate with computational nodes 500, for example using internal communication modules 440. In some examples, computational nodes 500 may notify node registration modules 420 of their status, for example by sending messages: at computational node 500 startup; at computational node 500 shutdown; at constant intervals; at selected times; in response to queries received from node registration modules 420; and so forth. In some examples, node registration modules 420 may query about computational nodes 500 status, for example by sending messages: at node registration module 420 startup; at constant intervals; at selected times; and so forth.
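
One way the availability tracking described above could be realized in software is a registry that records the last status message received from each node and treats a node as unavailable once its messages stop arriving. The following is a minimal sketch under that assumption; the class name `NodeRegistry`, the timeout value, and the string node identifiers are hypothetical and are not taken from the disclosure.

```python
import time
from typing import Dict, List, Optional


class NodeRegistry:
    """Hypothetical sketch of a node registration module based on status messages."""

    def __init__(self, timeout_seconds: float = 30.0) -> None:
        # A node is considered unavailable if silent for longer than this (assumed policy).
        self.timeout_seconds = timeout_seconds
        self._last_seen: Dict[str, float] = {}

    def heartbeat(self, node_id: str, now: Optional[float] = None) -> None:
        """Record a status message sent at startup, at intervals, or in reply to a query."""
        self._last_seen[node_id] = time.monotonic() if now is None else now

    def notify_shutdown(self, node_id: str) -> None:
        """Remove a node that announced its shutdown."""
        self._last_seen.pop(node_id, None)

    def available_nodes(self, now: Optional[float] = None) -> List[str]:
        """Return identifiers of nodes heard from within the timeout window."""
        current = time.monotonic() if now is None else now
        return sorted(
            node_id
            for node_id, seen in self._last_seen.items()
            if current - seen <= self.timeout_seconds
        )
```

The optional `now` parameter exists only so that the sketch can be exercised with fixed timestamps; a deployed module would more likely rely on the system clock.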

[0082] In some embodiments, the one or more load balancing modules 430 may be configured to divide the work load among computational nodes 500. In some examples, load balancing modules 430 may be implemented as: a software program, such as a software program executed by one or more of the computational nodes 500; a hardware solution; a combined software and hardware solution; and so forth. In some implementations, load balancing modules 430 may interact with node registration modules 420 in order to obtain information regarding the availability of the computational nodes 500. In some implementations, load balancing modules 430 may communicate with computational nodes 500, for example using internal communication modules 440. In some examples, computational nodes 500 may notify load balancing modules 430 of their status, for example by sending messages: at computational node 500 startup; at computational node 500 shutdown; at constant intervals; at selected times; in response to queries received from load balancing modules 430; and so forth. In some examples, load balancing modules 430 may query about computational nodes 500 status, for example by sending messages: at load balancing module 430 startup; at constant intervals; at selected times; and so forth.
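
The disclosure does not prescribe a particular policy for dividing the work load. As one possible illustration, the sketch below assigns each task to the available node with the fewest active tasks. The class name `LoadBalancer`, the least-loaded policy, and the string task and node identifiers are assumptions made for this example; the list of available nodes is assumed to come from a node registration module.

```python
from typing import Dict, Iterable


class LoadBalancer:
    """Hypothetical least-loaded assignment of tasks to computational nodes."""

    def __init__(self) -> None:
        self._active_tasks: Dict[str, int] = {}  # node id -> number of running tasks
        self._assignments: Dict[str, str] = {}   # task id -> node id

    def assign(self, task_id: str, available_nodes: Iterable[str]) -> str:
        """Assign a task to the available node with the fewest active tasks."""
        candidates = sorted(available_nodes)  # sorted so that ties break deterministically
        if not candidates:
            raise RuntimeError("no computational node is available")
        node = min(candidates, key=lambda n: self._active_tasks.get(n, 0))
        self._active_tasks[node] = self._active_tasks.get(node, 0) + 1
        self._assignments[task_id] = node
        return node

    def complete(self, task_id: str) -> None:
        """Release the capacity held by a finished task; unknown tasks are ignored."""
        node = self._assignments.pop(task_id, None)
        if node is not None:
            self._active_tasks[node] -= 1
```

Other policies, such as round-robin or weighting by node capacity, would fit the same interface.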

[0083] In some embodiments, the one or more internal communication modules 440 may be configured to receive information from one or more components of cloud platform 400, and/or to transmit information to one or more components of cloud platform 400. For example, control signals and/or synchronization signals may be sent and/or received through internal communication modules 440. In another example, input information for computer programs, output information of computer programs, and/or intermediate information of computer programs, may be sent and/or received through internal communication modules 440. In another example, information received through internal communication modules 440 may be stored in memory units 210, in shared memory modules 410, and so forth. In an additional example, information retrieved from memory units 210 and/or shared memory modules 410 may be transmitted using internal communication modules 440. In another example, input data may be transmitted and/or received using internal communication modules 440. Examples of such input data may include input data inputted by a user using user input devices.

[0084] In some embodiments, the one or more external communication modules 450 may be configured to receive and/or to transmit information. For example, control signals may be sent and/or received through external communication modules 450. In another example, information received through external communication modules 450 may be stored in memory units 210, in shared memory modules 410, and so forth. In an additional example, information retrieved from memory units 210 and/or shared memory modules 410 may be transmitted using external communication modules 450. In another example, input data may be transmitted and/or received using external communication modules 450. Examples of such input data may include: input data inputted by a user using user input devices; information captured from the environment of apparatus 200 using one or more sensors; and so forth. Examples of such sensors may include: audio sensors 250; image sensors 260; motion sensors 270; positioning sensors 275; chemical sensors; temperature sensors; barometers; and so forth.

[0085] FIG. 6 illustrates an exemplary embodiment of memory 600 storing a plurality of modules. In some examples, memory 600 may be separate from and/or integrated with memory units 210, separate from and/or integrated with shared memory modules 410, and so forth. In some examples, memory 600 may be included in a single device, for example in apparatus 200, in server 300, in cloud platform 400, in computational node 500, and so forth. In some examples, memory 600 may be distributed across several devices. Memory 600 may store more or fewer modules than those shown in FIG. 6. In this example, memory 600 may comprise: objects database 605, construction plans 610, as-built models 615, project schedules 620, financial records 625, progress records 630, safety records 635, and construction errors 640.

[0086] In some embodiments, objects database 605 may comprise information related to objects associated with one or more construction sites. For example, the objects may include objects planned to be used in a construction site, objects ordered for a construction site, objects that arrived at a construction site and are waiting to be used and/or installed, objects used in a construction site, objects installed in a construction site, and so forth. In some examples, the information related to an object in database 605 may include properties of the object, type, brand, configuration, dimensions, weight, price, supplier, manufacturer, identifier of related construction site, location (for example, within the construction site), time of planned arrival, time of actual arrival, time of usage, time of installation, actions that need to be taken that involve the object, actions performed using and/or on the object, people associated with the actions (such as persons that need to perform an action, persons that performed an action, persons that monitor the action, persons that approve the action, etc.), tools associated with the actions (such as tools required to perform an action, tools used to perform the action, etc.), quality, quality of installation, other objects used in conjunction with the object, and so forth. In some examples, elements in objects database 605 may be indexed and/or searchable, for example using a database, using an indexing data structure, and so forth.
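
To make the notion of indexed and searchable elements more concrete, the following minimal sketch stores a small subset of the listed properties and maintains in-memory indexes by object type and by supplier. The names `ObjectRecord` and `ObjectsDatabase`, the chosen fields, and the use of dictionaries as the indexing data structure are assumptions made for illustration only.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ObjectRecord:
    """A hypothetical subset of the properties listed for an object."""
    identifier: str
    object_type: str
    supplier: str
    site_id: str
    location: Optional[str] = None
    installed: bool = False


class ObjectsDatabase:
    """Hypothetical in-memory store with indexes by type and by supplier."""

    def __init__(self) -> None:
        self._records: Dict[str, ObjectRecord] = {}
        self._by_type: Dict[str, List[str]] = defaultdict(list)
        self._by_supplier: Dict[str, List[str]] = defaultdict(list)

    def add(self, record: ObjectRecord) -> None:
        """Store a record and update both indexes."""
        if record.identifier in self._records:
            raise ValueError(f"duplicate identifier: {record.identifier}")
        self._records[record.identifier] = record
        self._by_type[record.object_type].append(record.identifier)
        self._by_supplier[record.supplier].append(record.identifier)

    def find_by_type(self, object_type: str) -> List[ObjectRecord]:
        """Look up records through the type index instead of scanning all records."""
        return [self._records[i] for i in self._by_type.get(object_type, [])]

    def find_by_supplier(self, supplier: str) -> List[ObjectRecord]:
        """Look up records through the supplier index."""
        return [self._records[i] for i in self._by_supplier.get(supplier, [])]
```

A persistent database with equivalent indexes would serve the same purpose; the dictionaries here merely stand in for it.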

[0087] In some embodiments, construction plans 610 may comprise documents, drawings, models, representations, specifications, measurements, bill of materials, architectural plans, architectural drawings, floor plans, 2D architectural plans, 3D architectural plans, construction drawings, feasibility plans, demolition plans, permit plans, mechanical plans, electrical plans, space plans, elevations, sections, renderings, computer-aided design data, Building Information Modeling (BIM) models, and so forth, indicating design intention for one or more construction sites and/or one or more portions of one or more construction sites. Construction plans 610 may be digitally stored in memory 600, as described above.

[0088] In some embodiments, as-built models 615 may comprise documents, drawings, models, representations, specifications, measurements, list of materials, architectural drawings, floor plans, 2D drawings, 3D drawings, elevations, sections, renderings, computer-aided design data, Building Information Modeling (BIM) models, and so forth, representing one or more buildings or spaces as they were actually constructed. As-built models 615 may be digitally stored in memory 600, as described above.

[0089] In some embodiments, project schedules 620 may comprise details of planned tasks, milestones, activities, deliverables, expected task start time, expected task duration, expected task completion date, resource allocation to tasks, linkages of dependencies between tasks, and so forth, related to one or more construction sites. Project schedules 620 may be digitally stored in memory 600, as described above.

[0090] In some embodiments, financial records 625 may comprise information, records and documents related to financial transactions, invoices, payment receipts, bank records, work orders, supply orders, delivery receipts, rental information, salaries information, financial forecasts, financing details, loans, insurance policies, and so forth, associated with one or more construction sites. Financial records 625 may be digitally stored in memory 600, as described above.

[0091] In some embodiments, progress records 630 may comprise information, records and documents related to tasks performed in one or more construction sites, such as actual task start time, actual task duration, actual task completion date, items used, item affected, resources used, results, and so forth. Progress records 630 may be digitally stored in memory 600, as described above.

[0092] In some embodiments, safety records 635 may include information, records and documents related to safety issues (such as hazards, accidents, near accidents, safety related events, etc.) associated with one or more construction sites. Safety records 635 may be digitally stored in memory 600, as described above.

[0093] In some embodiments, construction errors 640 may include information, records and documents related to construction errors (such as execution errors, divergence from construction plans, improper alignment of items, improper placement of items, improper installation of items, concrete of low quality, missing item, excess item, and so forth) associated with one or more construction sites. Construction errors 640 may be digitally stored in memory 600, as described above.

[0094] In some embodiments, a method, such as methods 700, 900, 1100, 1200, 1300, 1500 and 1600, may comprise one or more steps. In some examples, these methods, as well as all individual steps therein, may be performed by various aspects of apparatus 200, server 300, cloud platform 400, computational node 500, and so forth. For example, a system comprising at least one processor, such as processing units 220, may perform any of these methods as well as all individual steps therein, for example by processing units 220 executing software instructions stored within memory units 210 and/or within shared memory modules 410. In some examples, these methods, as well as all individual steps therein, may be performed by dedicated hardware. In some examples, a computer readable medium, such as a non-transitory computer readable medium, may store data and/or computer implementable instructions for carrying out any of these methods as well as all individual steps therein. Some examples of possible execution manners of a method may include continuous execution (for example, returning to the beginning of the method once the normal execution of the method ends), periodic execution, execution of the method at selected times, execution upon the detection of a trigger (some examples of such a trigger may include a trigger from a user, a trigger from another process, a trigger from an external device, etc.), and so forth.

[0095] FIG. 7 illustrates an example of a method 700 for determining the quality of concrete from construction site images. In this example, method 700 may comprise: obtaining image data captured from a construction site (Step 710); analyzing the image data to identify a region depicting an object of an object type and made of concrete (Step 720); analyzing the image data to determine a quality indication associated with concrete (Step 730); selecting a threshold (Step 740); and comparing the quality indication with the selected threshold (Step 750). Based, at least in part, on the result of the comparison, method 700 may provide an indication to a user (Step 760). In some implementations, method 700 may comprise one or more additional steps, while some of the steps listed above may be modified or excluded. For example, Step 720 and/or Step 740 and/or Step 750 and/or Step 760 may be excluded from method 700. In some implementations, one or more steps illustrated in FIG. 7 may be executed in a different order and/or one or more groups of steps may be executed simultaneously and vice versa. For example, Step 720 may be executed after and/or simultaneously with Step 710, Step 730 may be executed after and/or simultaneously with Step 710, Step 730 may be executed before, after and/or simultaneously with Step 720, Step 740 may be executed at any stage before Step 750, and so forth.
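
The flow of Steps 720 through 760 can be sketched as a short pipeline in which the image analysis itself is supplied by the caller. In the sketch below, the function `run_method_700`, the `Region` type, the selection of the threshold by object type, and the convention that a quality indication below the threshold triggers the indication to the user are all assumptions made for illustration; the image data is assumed to have been obtained already (Step 710).

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Region:
    """Hypothetical result of Step 720: a concrete object found in the image data."""
    object_type: str  # for example "wall" or "column" (illustrative labels)
    bounds: tuple     # hypothetical (x, y, width, height) of the region


def run_method_700(
    image_data: object,
    identify_region: Callable[[object], Optional[Region]],
    estimate_quality: Callable[[object, Region], float],
    thresholds: Dict[str, float],
    default_threshold: float,
) -> Optional[str]:
    """Return an indication for the user, or None when no indication is needed."""
    region = identify_region(image_data)                                # Step 720
    if region is None:
        return None  # no concrete object identified in the image data
    quality = estimate_quality(image_data, region)                      # Step 730
    # Step 740: threshold chosen by object type (an assumed selection rule).
    threshold = thresholds.get(region.object_type, default_threshold)
    if quality < threshold:                                             # Step 750
        # Step 760: indication provided to the user (assumed message format).
        return (
            f"Concrete quality {quality:.2f} is below threshold "
            f"{threshold:.2f} for object type '{region.object_type}'"
        )
    return None
```

Passing the analysis steps as callables keeps the sketch independent of any particular detection or quality estimation technique.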

[0096] In some embodiments, obtaining image data captured from a construction site (Step 710) may comprise obtaining image data captured from a construction site using at least one image sensor, such as image sensors 260. In some examples, obtaining the image data may comprise capturing the image data from the construction site. Some examples of image data may include: one or more images; one or more portions of one or more images; sequence of images; one or more video clips; one or more portions of one or more video clips; one or more video streams; one or more portions of one or more video streams; one or more 3D images; one or more portions of one or more 3D images; sequence of 3D images; one or more 3D video clips; one or more portions of one or more 3D video clips; one or more 3D video streams; one or more portions of one or more 3D video streams; one or more 360 images; one or more portions of one or more 360 images; sequence of 360 images; one or more 360 video clips; one or more portions of one or more 360 video clips; one or more 360 video streams; one or more portions of one or more 360 video streams; information based, at least in part, on any of the above; any combination of the above; and so forth.

[0097] In some examples, Step 710 may comprise obtaining image data captured from a construction site (and/or capturing the image data from the construction site) using at least one wearable image sensor, such as wearable version of apparatus 200 and/or wearable version of image sensor 260. For example, the wearable image sensors may be configured to be worn by construction workers and/or other persons in the construction site. For example, the wearable image sensor may be physically connected and/or integral to a garment, physically connected and/or integral to a belt, physically connected and/or integral to a wrist strap, physically connected and/or integral to a necklace, physically connected and/or integral to a helmet, and so forth.

[0098] In some examples, Step 710 may comprise obtaining image data captured from a construction site (and/or capturing the image data from the construction site) using at least one stationary image sensor, such as stationary version of apparatus 200 and/or stationary version of image sensor 260. For example, the stationary image sensors may be configured to be mounted to ceilings, to walls, to doorways, to floors, and so forth. For example, a stationary image sensor may be configured to be mounted to a ceiling, for example substantially at the center of the ceiling (for example, less than two meters from the center of the ceiling, less than one meter from the center of the ceiling, less than half a meter from the center of the ceiling, and so forth), adjacent to an electrical box in the ceiling, at a position in the ceiling corresponding to a planned connection of a light fixture to the ceiling, and so forth. In another example, two or more stationary image sensors may be mounted to a ceiling in a way that ensures that the combined fields of view of the image sensors include all walls of the room.

[0099] In some examples, Step 710 may comprise obtaining image data captured from a construction site (and/or capturing the image data from the construction site) using at least one mobile image sensor, such as mobile version of apparatus 200 and/or mobile version of image sensor 260. For example, mobile image sensors may be operated by construction workers and/or other persons in the construction site to capture image data of the construction site. In another example, mobile image sensors may be part of a robot configured to move through the construction site and capture image data of the construction site. In yet another example, mobile image sensors may be part of a drone configured to fly through the construction site and capture image data of the construction site.

[0100] In some examples, Step 710 may comprise, in addition or alternatively to obtaining image data and/or other input data, obtaining motion information captured using one or more motion sensors, for example using motion sensors 270. Examples of such motion information may include: indications related to motion of objects; measurements related to the velocity of objects; measurements related to the acceleration of objects; indications related to motion of motion sensor 270; measurements related to the velocity of motion sensor 270; measurements related to the acceleration of motion sensor 270; information based, at least in part, on any of the above; any combination of the above; and so forth.

[0101] In some examples, Step 710 may comprise, in addition or alternatively to obtaining image data and/or other input data, obtaining position information captured using one or more positioning sensors, for example using positioning sensors 275. Examples of such position information may include: indications related to the position of positioning sensors 275; indications related to changes in the position of positioning sensors 275; measurements related to the position of positioning sensors 275; indications related to the orientation of positioning sensors 275; indications related to changes in the orientation of positioning sensors 275; measurements related to the orientation of positioning sensors 275; measurements related to changes in the orientation of positioning sensors 275; information based, at least in part, on any of the above; any combination of the above; and so forth.

[0102] In some embodiments, Step 710 may comprise receiving input data using one or more communication devices, such as communication modules 230, internal communication modules 440, external communication modules 450, and so forth. Examples of such input data may include: input data captured using one or more sensors; image data captured using image sensors, for example using image sensors 260; motion information captured using motion sensors, for example using motion sensors 270; position information captured using positioning sensors, for example using positioning sensors 275; and so forth.

[0103] In some embodiments, Step 710 may comprise reading input data from memory units, such as memory units 210, shared memory modules 410, and so forth. Examples of such input data may include: input data captured using one or more sensors; image data captured using image sensors, for example using image sensors 260; motion information captured using motion sensors, for example using motion sensors 270; position information captured using positioning sensors, for example using positioning sensors 275; and so forth.

[0104] In some embodiments, analyzing image data, for example by Step 720, Step 730, Step 930, Step 1120, Step 1320, Step 1520, Step 1530, etc., may comprise analyzing the image data to obtain preprocessed image data, and subsequently analyzing the image data and/or the preprocessed image data to obtain the desired outcome. One of ordinary skill in the art will recognize that the following are examples, and that the image data may be preprocessed using other kinds of preprocessing methods. In some examples, the image data may be preprocessed by transforming the image data using a transformation function to obtain transformed image data, and the preprocessed image data may comprise the transformed image data. For example, the transformed image data may comprise one or more convolutions of the image data. For example, the transformation function may comprise one or more image filters, such as low-pass filters, high-pass filters, band-pass filters, all-pass filters, and so forth. In some examples, the transformation function may comprise a nonlinear function. In some examples, the image data may be preprocessed by smoothing the image data, for example using Gaussian convolution, using a median filter, and so forth. In some examples, the image data may be preprocessed to obtain a different representation of the image data. For example, the preprocessed image data may comprise: a representation of at least part of the image data in a frequency domain; a Discrete Fourier Transform of at least part of the image data; a Discrete Wavelet Transform of at least part of the image data; a time/frequency representation of at least part of the image data; a representation of at least part of the image data in a lower dimension; a lossy representation of at least part of the image data; a lossless representation of at least part of the image data; a time ordered series of any of the above; any combination of the above; and so forth. In some examples, the image data may be preprocessed to extract edges, and the preprocessed image data may comprise information based on and/or related to the extracted edges. In some examples, the image data may be preprocessed to extract image features from the image data. Some examples of such image features may comprise information based on and/or related to: edges; corners; blobs; ridges; Scale Invariant Feature Transform (SIFT) features; temporal features; and so forth.
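As a minimal sketch of two of the preprocessing options named above (smoothing with a median filter, followed by edge extraction), the following assumes a grayscale image stored as a list of rows of integer intensities. The function names, the use of a lower median, the simple horizontal-difference edge rule, and the threshold value are illustrative assumptions and are not specified by the disclosure.

```python
from statistics import median_low


def median_filter(image, radius=1):
    """Smooth a grayscale image (list of rows) with a square median filter."""
    height, width = len(image), len(image[0])
    smoothed = []
    for y in range(height):
        row = []
        for x in range(width):
            # Collect the neighbourhood, clipped at the image borders.
            window = [
                image[ny][nx]
                for ny in range(max(0, y - radius), min(height, y + radius + 1))
                for nx in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            # median_low always returns a value that occurs in the window.
            row.append(median_low(window))
        smoothed.append(row)
    return smoothed


def horizontal_edges(image, threshold):
    """Mark pixels whose intensity differs from the left neighbour by more than threshold."""
    edges = []
    for row in image:
        edge_row = [False]  # the first pixel of a row has no left neighbour
        for x in range(1, len(row)):
            edge_row.append(abs(row[x] - row[x - 1]) > threshold)
        edges.append(edge_row)
    return edges


# Hypothetical 5x6 image: dark left half, bright right half, one noisy pixel.
image = [
    [10, 10, 10, 200, 200, 200],
    [10, 10, 10, 200, 200, 200],
    [10, 255, 10, 200, 200, 200],
    [10, 10, 10, 200, 200, 200],
    [10, 10, 10, 200, 200, 200],
]
smoothed = median_filter(image)
edges = horizontal_edges(smoothed, threshold=50)
```

In this toy input the median filter removes the isolated bright pixel, so the edge map marks only the boundary between the dark and bright halves. A practical system would more likely rely on an established image processing library than on nested Python loops.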

[0105] In some embodiments, analyzing image data, for example by Step 720, Step 730, Step 930, Step 1120, Step 1320, Step 1520, Step 1530, etc., may comprise analyzing the image data and/or the preprocessed image data using one or more rules, functions, procedures, artificial neural networks, object detection algorithms, face detection algorithms, visual event detection algorithms, action detection algorithms, motion detection algorithms, background subtraction algorithms, inference models, and so forth. Some examples of such inference models may include: an inference model preprogrammed manually; a classification model; a regression model; a result of training algorithms, such as machine learning algorithms and/or deep learning algorithms, on training examples, where the training examples may include examples of data instances, and in some cases, a data instance may be labeled with a corresponding desired label and/or result; and so forth.

[0106] In some embodiments, analyzing the image data to identify a region depicting an object of an object type and made of concrete (Step 720) may comprise analyzing image data (such as image data captured from a construction site using at least one image sensor and obtained by Step 710) and/or preprocessed image data to identify a region of the image data depicting at least part of an object, wherein the object is of an object type and made, at least partly, of concrete. In one example, multiple regions may be identified, depicting multiple such objects of a single object type and made, at least partly, of concrete. In another example, multiple regions may be identified, depicting multiple such objects of a plurality of object types and made, at least partly, of concrete. In some examples, an identified region of the image data may comprise a rectangular region of the image data containing a depiction of at least part of the object, a map of pixels of the image data containing a depiction of at least part of the object, a single pixel of the image data within a depiction of at least part of the object, a continuous segment of the image data including a depiction of at least part of the object, a non-continuous segment of the image data including a depiction of at least part of the object, and so forth.
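To illustrate how two of the region representations listed above relate to each other, the following sketch derives a rectangular region from a map of pixels. It assumes the map is a list of rows of booleans; the function name and the inclusive (top, left, bottom, right) convention are hypothetical choices made for this example only.

```python
def bounding_box(mask):
    """Return the inclusive (top, left, bottom, right) rectangle around the
    True pixels of a boolean pixel map, or None if no pixel is set."""
    rows = [y for y, row in enumerate(mask) if any(row)]
    if not rows:
        return None
    cols = [x for row in mask for x, inside in enumerate(row) if inside]
    return (min(rows), min(cols), max(rows), max(cols))


# Hypothetical pixel map of a region depicting part of an object.
mask = [
    [False, False, False, False],
    [False, True, True, False],
    [False, False, True, False],
]
box = bounding_box(mask)
```

The rectangle is a coarser representation than the pixel map: it also covers pixels inside the rectangle that do not belong to the object.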

[0107] In some examples, the image data may be preprocessed to identify colors and/or textures within the image data, and a rule for detecting concrete based, at least in part, on the identified colors and/or textures may be used. For example, local histograms of colors and/or textures may be assembled, and concrete may be detected when the assembled histograms meet predefined criteria. In some examples, the image data may be processed with an inference model to detect regions of concrete. For example, the inference model may be a result of a machine learning and/or deep learning algorithm trained on training examples. A training example may comprise example images together with markings of regions depicting concrete in the images. The machine learning and/or deep learning algorithms may be trained using the training examples to identify images depicting concrete, to identify the regions within the images that depict concrete, and so forth.
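The histogram-based rule described above might be sketched as follows, assuming a grayscale image stored as a list of rows of 8-bit intensities. The tile size, the number of bins, the choice of mid-gray bins, and the 0.8 fraction are all hypothetical values chosen for illustration; the disclosure does not specify the predefined criteria.

```python
def gray_histogram(patch, bins=8, max_value=256):
    """Count the pixels of a patch (list of rows) falling in each gray-level bin."""
    counts = [0] * bins
    bin_width = max_value // bins
    for row in patch:
        for value in row:
            counts[min(value // bin_width, bins - 1)] += 1
    return counts


def looks_like_concrete(patch, gray_bins=(3, 4), min_fraction=0.8):
    """Hypothetical criterion: most pixels of the patch fall in mid-gray bins."""
    counts = gray_histogram(patch)
    total = sum(counts)
    in_band = sum(counts[b] for b in gray_bins)
    return total > 0 and in_band / total >= min_fraction


def concrete_tiles(image, tile=2):
    """Return the (top, left) corners of tiles whose local histogram meets the criterion."""
    hits = []
    for top in range(0, len(image), tile):
        for left in range(0, len(image[0]), tile):
            patch = [row[left:left + tile] for row in image[top:top + tile]]
            if looks_like_concrete(patch):
                hits.append((top, left))
    return hits


# Hypothetical 2x4 image: a uniform mid-gray tile next to a high-contrast tile.
image = [
    [120, 130, 20, 240],
    [125, 140, 120, 250],
]
hits = concrete_tiles(image)
```

A gray-level band alone cannot separate concrete from other gray materials, which is one reason the paragraph above also mentions textures and trained inference models.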

[0108] In some examples, the image data may be processed using object detection algorithms to identify objects made of concrete, for example to identify objects made of concrete of a selected object type. Some examples of such object detection algorithms may include: appearance based object detection algorithms, gradient based object detection algorithms, gray scale object detection algorithms, color based object detection algorithms, histogram based object detection algorithms, feature based object detection algorithms, machine learning based object detection algorithms, artificial neural networks based object detection algorithms, 2D object detection algorithms, 3D object detection algorithms, still image based object detection algorithms, video based object detection algorithms, and so forth.

[0109] In some examples, Step 720 may further comprise analyzing the image data to determine at least one property related to the detected concrete, such as a size of the surface made of concrete, a color of the concrete surface, a position of the concrete surface (for example based, at least in part, on the position information and/or motion information obtained by Step 710), a type of the concrete surface, and so forth. For example, a histogram of the pixel colors and/or gray scale values of the identified regions of concrete may be generated. In another example, the size in pixels of the identified regions of concrete may be calculated. In yet another example, the image data may be analyzed to identify a type of the concrete surface, such as an object type (for example, a wall, a ceiling, a floor, a stair, and so forth). For example, the image data and/or the identified region of the image data may be analyzed using an inference model configured to determine the type of surface (such as an object type). The inference model may be a result of a machine learning and/or deep learning algorithm trained on training examples. A training example may comprise example images and/or image regions together with a label describing the type of concrete surface (such as an object type). The inference model may be applied to new images and/or image regions to determine the type of the surface (such as an object type).
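Two of the properties mentioned above, the size in pixels of an identified region and a histogram of its gray scale values, could be computed along the following lines. The sketch assumes a grayscale image and a boolean pixel map of the same shape; the function name and the dictionary keys are hypothetical.

```python
from collections import Counter


def region_properties(image, mask):
    """Compute the size in pixels and the gray-value histogram of a masked region."""
    values = [
        image[y][x]
        for y, row in enumerate(mask)
        for x, inside in enumerate(row)
        if inside
    ]
    return {
        "size_in_pixels": len(values),
        "gray_histogram": Counter(values),  # maps gray value to pixel count
    }


# Hypothetical image and identified region of concrete.
image = [
    [100, 110],
    [110, 50],
]
mask = [
    [True, True],
    [True, False],
]
properties = region_properties(image, mask)
```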

[0110] In some examples, Step 720 may comprise analyzing a construction plan 610 associated with the construction site to determine the object type of the object. For example, the construction plan may be analyzed to identify an object type specified for an object in the construction plan, for example based on a position of the object in the construction site.
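A position-based lookup of this kind might be sketched as follows. The `PlanElement` record, its axis-aligned footprint in site coordinates, and the sample plan are assumptions made for illustration; the disclosure does not define a data format for construction plan 610.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PlanElement:
    """Hypothetical plan entry: an object type and its footprint in site coordinates (meters)."""
    object_type: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def object_type_at(plan: List[PlanElement], x: float, y: float) -> Optional[str]:
    """Return the object type of the first plan element whose footprint contains (x, y)."""
    for element in plan:
        if element.x_min <= x <= element.x_max and element.y_min <= y <= element.y_max:
            return element.object_type
    return None


# Hypothetical plan: a wall along one side of a room and the room's floor slab.
plan = [
    PlanElement("wall", 0.0, 0.0, 5.0, 0.2),
    PlanElement("floor", 0.0, 0.2, 5.0, 4.0),
]
detected_type = object_type_at(plan, 1.0, 0.1)
```

The position passed to the lookup would come from elsewhere, for example from the position information obtained by Step 710.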

[0111] In some examples, Step 720 may comprise analyzing an as-build model 615 associated with the construction site to determine the object type of the object. For example, the as-build model may be analyzed to identify an object type specified for an object in the as-build model, for example based on a position of the object in the construction site.

[0112] In some examples, Step 720 may comprise analyzing a project schedule 620 associated with the construction site to determine the object type of the object. For example, the project schedule may be analyzed to identify which object types should be present in the construction site (or in parts of the construction site) at a certain time (for example, the capturing time of the image data) according to the project schedule.
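One way to read this schedule-based analysis is as a filter on planned completion dates. The sketch below assumes the schedule is available as (object type, planned completion date) pairs; that representation, the function name, and the sample dates are hypothetical.

```python
from datetime import date


def expected_object_types(schedule, capture_date):
    """Return the object types whose planned completion is on or before capture_date."""
    return [
        object_type
        for object_type, planned_completion in schedule
        if planned_completion <= capture_date
    ]


# Hypothetical schedule entries for one part of a construction site.
schedule = [
    ("floor", date(2019, 1, 10)),
    ("wall", date(2019, 2, 1)),
    ("ceiling", date(2019, 3, 15)),
]
expected = expected_object_types(schedule, date(2019, 2, 20))
```

The resulting list could then narrow the candidate object types considered when analyzing image data captured at that time.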

[0113] In some examples, Step 720 may comprise analyzing financial records 625 associated with the construction site to determine the object type of the object. For example, the financial records may be analyzed to identify which object types should be present in the construction site (or in parts of the construction site) at a certain time (for example, the capturing time of the image data) according to the delivery receipts, invoices, purchase orders, and so forth.

[0114] In some examples, Step 720 may comprise analyzing progress records 630 associated with the construction site to determine the object type of the object. For example, the progress records may be analyzed to identify which object types should be present in the construction site (or in parts of the construction site) at a certain time (for example, the capturing time of the image data) according to the progress records.

[0115] In some examples, the image data may be analyzed to determine the object type of the object of Step 720. For example, the image data may be analyzed using a machine learning model trained using training examples to determine the object type of an object from one or more images depicting the object (and/or any other input described above). In another example, the image data may be analyzed by an artificial neural network configured to determine the object type of an object from one or more images depicting the object (and/or any other input described above).