Mobile Robot And Control Method For Controlling The Same

NOH; Dongki ; et al.

U.S. patent application number 16/327452 was filed with the patent office on 2019-06-13 for mobile robot and control method for controlling the same. This patent application is currently assigned to LG ELECTRONICS INC.. The applicant listed for this patent is LG ELECTRONICS INC.. Invention is credited to Seungmin BAEK, Junghwan KIM, Juhyeon LEE, Dongki NOH, Wonkeun YANG.

| Application Number | 20190179333 16/327452 |

| Document ID | / |

| Family ID | 61245133 |

| Filed Date | 2019-06-13 |

| United States Patent Application | 20190179333 |

| Kind Code | A1 |

| NOH; Dongki ; et al. | June 13, 2019 |

MOBILE ROBOT AND CONTROL METHOD FOR CONTROLLING THE SAME

Abstract

A mobile robot includes a travel drive unit configured to move a main body, an image acquisition unit configured to acquire an image of surroundings of the main body, a storage configured to store the image acquired by the image acquisition unit, a sensor unit having one or more sensors configured to sense an object during the movement of the body, and a controller configured to perform control to extract a part of the image acquired by the image acquisition unit in correspondence to a direction in which the object sensed by the sensor unit is present.

| Inventors: | NOH; Dongki; (Seoul, KR) ; LEE; Juhyeon; (Seoul, KR) ; KIM; Junghwan; (Seoul, KR) ; BAEK; Seungmin; (Seoul, KR) ; YANG; Wonkeun; (Seoul, KR) |
|---|---|
| Applicant: | LG ELECTRONICS INC. |
| Assignee: | LG ELECTRONICS INC., Seoul, KR |
| Family ID: | 61245133 |
| Appl. No.: | 16/327452 |
| Filed: | August 24, 2017 |
| PCT Filed: | August 24, 2017 |
| PCT NO: | PCT/KR2017/009257 |
| 371 Date: | February 22, 2019 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | G05D 1/0221 20130101; B25J 11/00 20130101; B25J 19/023 20130101; B25J 5/007 20130101; G06K 9/4628 20130101; G06K 9/4671 20130101; B25J 9/16 20130101; G05D 1/0255 20130101; G06N 20/00 20190101; B25J 19/02 20130101; B25J 9/00 20130101; B25J 9/1666 20130101; B25J 11/0085 20130101; B25J 9/1697 20130101; G05D 1/0234 20130101; G05D 1/0246 20130101; A47L 9/28 20130101; G05D 2201/0203 20130101; G06K 9/3233 20130101; G06K 9/00664 20130101; G01S 15/931 20130101; G05D 1/0272 20130101; G06K 9/627 20130101 |
| International Class: | G05D 1/02 20060101 G05D001/02; G01S 15/93 20060101 G01S015/93 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Aug 25, 2016 | KR | 10-2016-0108384 |
| Aug 25, 2016 | KR | 10-2016-0108385 |
| Aug 25, 2016 | KR | 10-2016-0108386 |

Claims

1. A mobile robot comprising: a travel drive unit configured to move a main body; an image acquisition unit configured to acquire an image of surroundings of the main body; a storage configured to store the image acquired by the image acquisition unit; a sensor unit having one or more sensors configured to sense an object during the movement of the main body; and a controller configured to perform control to extract a part of the image acquired by the image acquisition unit in correspondence to a direction in which the object sensed by the sensor unit is present.

2. The mobile robot of claim 1, wherein the controller comprises: an object recognition module configured to recognize an object in the extracted part of the image based on data pre-learned through machine learning; and a travel control module configured to control driving of the travel drive unit based on an attribute of the recognized object.

3. The mobile robot of claim 2, wherein the controller further comprises an image processing module configured to extract a part of the image acquired by the image acquisition unit in correspondence to the direction in which the object sensed by the sensor unit is present.

4. The mobile robot of claim 2, further comprising a communication unit configured to transmit the extracted part of the image to a predetermined server, and receive machine learning-related data from the predetermined server.

5. The mobile robot of claim 4, wherein the object recognition module is updated based on the machine learning-related data received from the predetermined server.

6. The mobile robot of claim 1, further comprising an image processing unit configured to extract a part of the image acquired by the image acquisition unit in correspondence to the direction in which the object sensed by the sensor is present.

7. The mobile robot of claim 1, wherein the controller is further configured to: when the object is sensed from a forward-right direction of the main body, extract a right lower area of the selected image acquired at the specific point in time; when the object is sensed from a forward-left direction of the main body, extract a left lower area of the selected image acquired at the specific point in time; and when the object is sensed from a forward direction of the main body, extract a central lower area of the selected image acquired at the specific point in time.

8. The mobile robot of claim 1, wherein the sensor unit comprises: a first sensor disposed on a front surface of the main body; and a second sensor and a third sensor spaced apart in left and right directions from the first sensor.

9. The mobile robot of claim 8, wherein the controller is further configured to extract an extraction target area from the image acquired by the image acquisition unit by shifting the extraction target area in proportion to a difference between a distance from the sensed object to the second sensor and a distance from the sensed object to the third sensor.

10. The mobile robot of claim 1, wherein a plurality of continuous images acquired by the image acquisition unit is stored in the storage, and wherein the controller is further configured to extract, based on a moving direction and a moving speed of the main body, a part of an image acquired at a specific point in time earlier than an object sensing time of the sensor unit from among the plurality of continuous images.

11. The mobile robot of claim 10, wherein the controller is further configured to extract a part of an image acquired at a point in time earlier than the object sensing time of the sensor as the moving speed is slower.

12. The mobile robot of claim 1, wherein the controller is further configured to store position information of the sensed object and position information of the mobile robot in the storage, and perform control to register an area having a predetermined size around a position of the sensed object as an object area in a map.

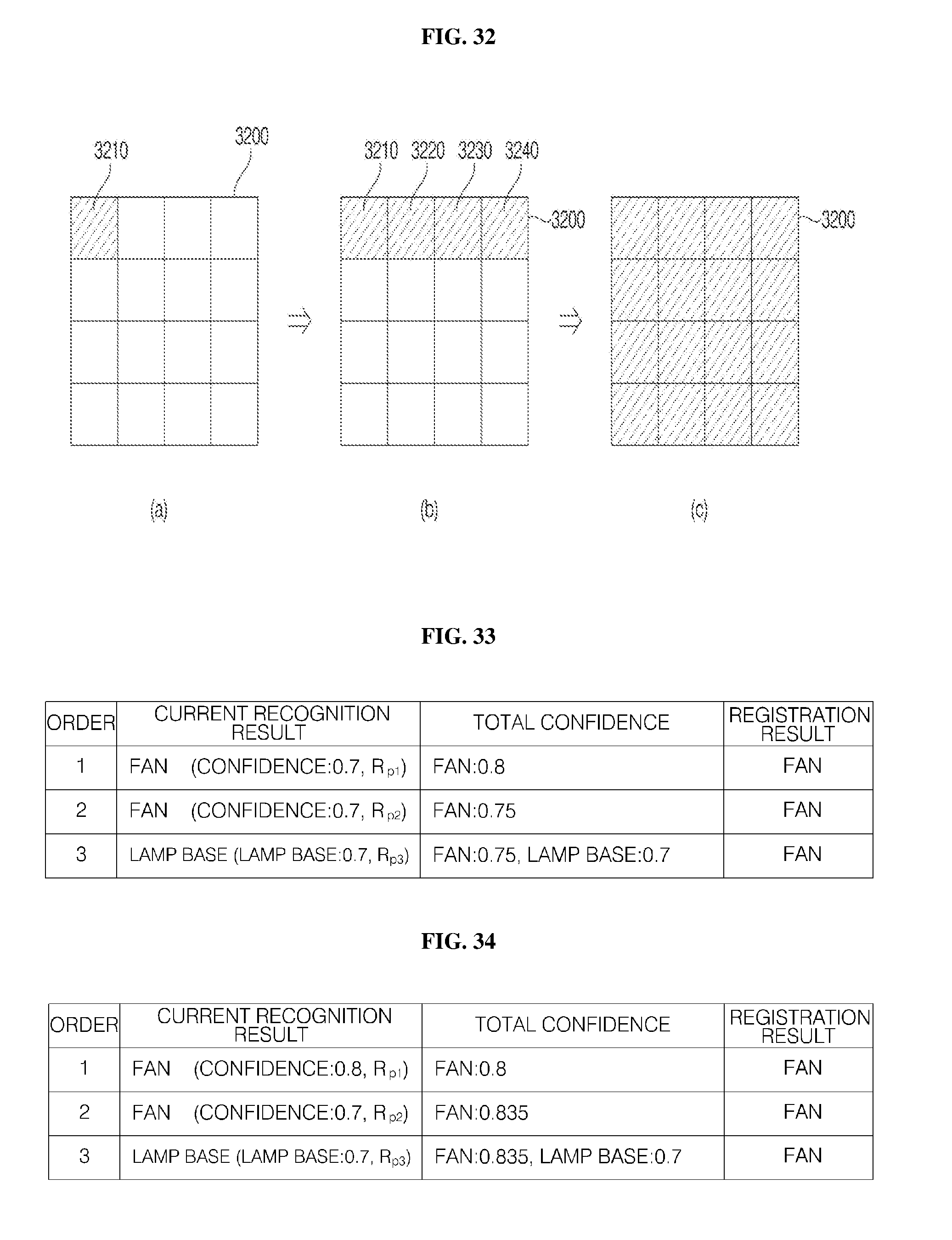

13. The mobile robot of claim 12, wherein the controller is further configured to recognize an attribute of an object sequentially with respect to images acquired in a predetermined object area by the image acquisition unit, and determine a final attribute of the object based on a plurality of recognition results from the sequential recognition.

14. A method for controlling a mobile robot comprising: sensing, by a sensor unit, an object during movement; extracting a part of an image acquired by an image acquisition unit in correspondence to a direction in which the object sensed by the sensor unit is present; recognizing an object in the extracted part of the image; and controlling driving of a travel drive unit based on an attribute of the recognized object.

15. The method of claim 14, wherein the recognizing of the object comprises recognizing an attribute of the object in the extracted part of the image based on data that is pre-learned through machine learning.

16. The method of claim 14, wherein the extracting of the part of the image acquired by the image acquisition unit comprises: when the object is sensed from a forward-right direction of the main body, extracting a right lower area of the selected image acquired at the specific point in time; when the object is sensed from a forward-left direction of the main body, extracting a left lower area of the selected image acquired at the specific point in time; and when the object is sensed from a forward direction of the main body, extracting a central lower area of the selected image acquired at the specific point in time.

17. The method of claim 14, further comprising storing the extracted part of the image.

18. The method of claim 14, wherein the controlling of the driving of the travel drive unit comprises performing control to perform an avoidance operation when the sensed object is not an object that the mobile robot is able to climb.

19. The method of claim 14, wherein the extracting of the part of the image comprises extracting, based on a moving direction and a moving speed of the main body, a partial area of an image acquired at a specific point in time earlier than an object sensing time of the sensor unit from among the plurality of continuous images.

20. The method of claim 14, further comprising: when the sensor unit senses an object, storing position information of the sensed object and position information of the mobile robot in the storage and registering an area having a predetermined size around a position of the sensed object as an object area in a map; recognizing an attribute of the object sequentially with respect to images acquired by the image acquisition unit during movement in the object area; and determining a final attribute of the object based on a plurality of recognition results from the sequential recognition.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a U.S. National Phase entry under 35 U.S.C. § 371 from PCT International Application No. PCT/KR2017/009257, filed Aug. 24, 2017, which claims the benefit of priority of Korean Patent Applications Nos. 10-2016-0108384, 10-2016-0108385, and 10-2016-0108386, all filed Aug. 25, 2016, the contents of which are incorporated herein by reference in their entireties.

TECHNICAL FIELD

[0002] The present invention relates to a mobile robot and a method for controlling the same, and, more particularly, to a mobile robot capable of performing object recognition and avoidance and a method for controlling the mobile robot.

BACKGROUND ART

[0003] Robots have been developed for industrial use and have taken charge of a portion of factory automation. As robots have recently been used in more diverse fields, medical robots, aerospace robots, etc. have been developed, and domestic robots for ordinary households are also being made. Among those robots, a robot capable of traveling on its own is referred to as a mobile robot.

[0004] A typical example of mobile robots used in households is a robot cleaner, which cleans a predetermined area by suctioning ambient dust or foreign substances while traveling in the predetermined area on its own.

[0005] A mobile robot is capable of moving on its own and thus has freedom to move, and the mobile robot is provided with a plurality of sensors to avoid an object during traveling so that the mobile robot may travel while detouring around the object.

[0006] In general, the mobile robot uses an infrared sensor or an ultrasonic sensor to sense an object. The infrared sensor determines the presence of an object and the distance to the object based on the amount of light reflected by the object or the time taken to receive the reflected light, whereas the ultrasonic sensor emits ultrasonic waves having a predetermined period and, when ultrasonic waves reflected by an object are received, determines the distance to the object based on the difference between the time at which the ultrasonic waves were emitted and the time at which the reflected waves return from the object.
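By way of illustration only, the time-difference calculation described for the ultrasonic sensor may be sketched as follows. The function name, the timestamps, and the speed-of-sound constant are assumptions introduced for this sketch and do not appear in the original disclosure.

```python
# Assumed value: speed of sound in dry air at roughly 20 degrees Celsius.
SPEED_OF_SOUND_M_S = 343.0


def ultrasonic_distance_m(emit_time_s: float, echo_time_s: float,
                          speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Estimate the distance to an object from emit and echo timestamps."""
    round_trip_s = echo_time_s - emit_time_s
    if round_trip_s < 0:
        raise ValueError("echo cannot be received before emission")
    # The wave travels to the object and back, so only half of the
    # round-trip path is the distance to the object.
    return speed_m_s * round_trip_s / 2.0


if __name__ == "__main__":
    # A 10 ms round trip corresponds to roughly 1.7 m under the assumed speed.
    print(ultrasonic_distance_m(0.0, 0.010))
```

The division by two reflects that the measured time covers the outbound and the return path.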

[0007] Meanwhile, object recognition and avoidance significantly affect not just the driving performance but also the cleaning performance of the mobile robot, and thus the reliability of the object recognizing capability needs to be secured.

[0008] A related art (Korean Patent Registration No. 10-0669892) discloses a technology that embodies highly reliable object recognition by combining an infrared sensor and an ultrasonic sensor.

[0009] However, the related art (Korean Patent Registration No. 10-0669892) has a problem in its incapability of determining an attribute of an object.

[0010] FIG. 1 is a diagram for explanation of a method in which a conventional mobile robot senses and avoids an object.

[0011] Referring to FIG. 1, a robot cleaner performs cleaning by suctioning dust and foreign substance while moving (S11).

[0012] The robot cleaner recognizes presence of an object in response to sensing, by an ultrasonic sensor, an ultrasonic signal reflected by an object (S12), and determines whether the recognized object has a height that the robot cleaner is able to climb (S13).

[0013] If it is determined that the recognized object has a height that the robot cleaner is able to climb, the robot cleaner may move in a straight forward direction (S14), and, otherwise, rotate by an angle of 90 degrees (S15).

[0014] For example, if the object is a low door threshold, the robot cleaner recognizes the door threshold, and, if a recognition result indicates that the robot cleaner is able to pass the door threshold, the robot cleaner moves over the door threshold.

[0015] However, if an object determined to have a height that the mobile robot is able to climb is an electric wire, the robot cleaner may become caught and restrained by the electric wire when moving over it.

[0016] In addition, as a fan base has a height similar to or lower than a height of a door threshold, the robot cleaner may determine that the fan base is an object that the robot cleaner is able to climb. In this case, when attempting to climb the fan base, the robot cleaner may be restricted with wheels spinning.

[0017] In addition, in the case where not an entire human body but only a part of it, such as human hair, is sensed, the robot cleaner may determine that the human hair has a height that the robot cleaner is able to climb and travel forward, in which case the robot cleaner may suction the human hair, possibly causing an accident.

[0018] Thus, a method for identifying an attribute of an object ahead and changing a moving pattern according to the attribute is required.
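By way of illustration only, the conventional height-based decision of FIG. 1 (S13 to S15) and the failure cases described above may be sketched as follows. The climbable-height limit and the example object heights are hypothetical values chosen for this sketch; the source gives no numbers.

```python
from enum import Enum


class Action(Enum):
    MOVE_STRAIGHT = "S14: move straight"
    ROTATE_90 = "S15: rotate by 90 degrees"


# Hypothetical limit; the source does not state a climbable height.
MAX_CLIMBABLE_HEIGHT_M = 0.02


def conventional_decision(object_height_m: float,
                          max_climbable_height_m: float = MAX_CLIMBABLE_HEIGHT_M) -> Action:
    """Height-only decision (S13): the object attribute is never considered."""
    if object_height_m <= max_climbable_height_m:
        return Action.MOVE_STRAIGHT
    return Action.ROTATE_90


if __name__ == "__main__":
    # Hypothetical heights in metres, for illustration only.
    examples = {
        "door threshold": 0.015,
        "electric wire": 0.005,
        "fan base": 0.015,
        "human hair": 0.002,
        "wall": 0.5,
    }
    for name, height_m in examples.items():
        print(f"{name}: {conventional_decision(height_m).value}")
```

Under these assumed heights, the door threshold, the electric wire, the fan base, and the human hair all lead to the same straight-ahead action, because height alone cannot distinguish them.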

[0019] Meanwhile, interest in machine learning, such as artificial intelligence and deep learning, has grown significantly in recent years.

[0020] Machine learning is one field of artificial intelligence, and refers to training a computer with data and having the computer perform a certain task, such as prediction or classification, based on the data.

[0021] The representative definition of machine learning is proposed by Professor Tom M. Mitchell, as follows: "if performance at a specific task in T, as measured by P, improves with experience E, it can be said that the task T is learned from experience E." That is, improving performance with respect to a certain task T with consistent experience E is considered machine learning.

[0022] Conventional machine learning focuses on statistics-based classification, regression, and clustering models. In particular, in supervised learning of the classification and regression models, a human defines in advance the properties of training data and a learning model that distinguishes new data based on those properties.

[0023] Meanwhile, unlike conventional machine learning, deep learning technologies have been developed recently. Deep learning is an artificial intelligence technology that enables a computer to learn on its own like a human on the basis of Artificial Neural Networks (ANN). That is, deep learning means that a computer discovers and determines properties on its own.

[0024] One factor that has accelerated the development of deep learning is, for example, deep learning frameworks provided as open source. For example, deep learning frameworks include Theano developed by the University of Montreal, Canada, Torch developed by New York University, U.S.A., Caffe developed by the University of California, Berkeley, and TensorFlow developed by Google.

[0025] With the release of deep learning frameworks, the learning process, the learning method, and the extraction and selection of data used for learning have become more important, in addition to the deep learning algorithm itself, for effective learning and recognition.

[0026] In addition, more and more efforts are being made to use artificial intelligence and machine learning in a diversity of products and services.

DISCLOSURE

Technical Problem

[0027] One object of the present invention is to provide a mobile robot capable of acquiring image data for improving accuracy in object attribute recognition, and a method for controlling the mobile robot.

[0028] Another object of the present invention is to provide a mobile robot capable of determining an attribute of an object and adjusting a traveling pattern according to the attribute of the object to thereby recognize and avoid the object with high reliability, and a method for controlling the mobile robot.

[0029] Yet another object of the present invention is to provide a mobile robot, which is capable of moving forward, moving backward, stopping, detouring, and the like according to a recognition result on an object to enhance stability of the mobile robot and user convenience and improve operational efficiency and cleaning efficiency, and a method for controlling the mobile robot.

[0030] Yet another object of the present invention is to provide a mobile robot capable of accurately recognizing an attribute of an object based on machine learning, and a method for controlling the mobile robot.

[0031] Yet another object of the present invention is to provide a mobile robot capable of performing machine learning efficiently and extracting data for object attribute recognition, and a method for controlling the mobile robot.

Technical Solution

[0032] In order to achieve the above or other objects, a mobile robot in one general aspect of the present invention includes: a travel drive unit configured to move a main body; an image acquisition unit configured to acquire an image of surroundings of the main body; a storage configured to store the image acquired by the image acquisition unit; a sensor unit having one or more sensors configured to sense an object during the movement of the main body; and a controller configured to perform control to extract a part of the image acquired by the image acquisition unit in correspondence to a direction in which the object sensed by the sensor unit is present, and accordingly, it is possible to extract data that is efficient for machine learning and object attribute recognition.

[0033] In addition, in order to achieve the above or other objects, a mobile robot in one general aspect of the present invention includes an object recognition module configured to recognize, in an extracted image, an object pre-learned through machine learning and a travel control module configured to control driving of a travel drive unit based on an attribute of the recognized object, and accordingly, it is possible to improve stability, user convenience, operation efficiency, and cleaning efficiency.

[0034] In addition, in order to achieve the above or other objects, a method for controlling a mobile robot in one general aspect of the present invention may include: sensing, by a sensor unit, an object during movement; extracting a part of an image acquired by an image acquisition unit in correspondence to a direction in which the object sensed by the sensor unit is present; recognizing an object in the extracted part of the image; and controlling driving of a travel drive unit based on an attribute of the recognized object.
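By way of illustration only, the extracting step may be sketched using the direction-to-area mapping recited in claims 7 and 16 (right lower, left lower, and central lower areas). The function names, the direction labels, and the default crop size of half the image width and height are assumptions made for this sketch; the disclosure does not fix a crop size.

```python
def extraction_box(image_w, image_h, direction, crop_w=None, crop_h=None):
    """Return (left, top, right, bottom) of the lower area to extract.

    The default crop size (half the width and half the height) is an
    assumption for this sketch.
    """
    crop_w = image_w // 2 if crop_w is None else crop_w
    crop_h = image_h // 2 if crop_h is None else crop_h
    top = image_h - crop_h  # always take the lower part of the image
    if direction == "forward-left":
        left = 0
    elif direction == "forward-right":
        left = image_w - crop_w
    elif direction == "forward":
        left = (image_w - crop_w) // 2
    else:
        raise ValueError(f"unknown direction: {direction!r}")
    return (left, top, left + crop_w, image_h)


def crop(image, box):
    """Crop an image given as a list of pixel rows."""
    left, top, right, bottom = box
    return [row[left:right] for row in image[top:bottom]]


if __name__ == "__main__":
    print(extraction_box(640, 480, "forward"))        # (160, 240, 480, 480)
    print(extraction_box(640, 480, "forward-right"))  # (320, 240, 640, 480)
    print(extraction_box(640, 480, "forward-left"))   # (0, 240, 320, 480)
```

The extracted part would then be passed to an object recognizer, and the recognized attribute would drive the travel control; both of those steps are outside the scope of this sketch.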

Advantageous Effects

[0035] According to at least one of the embodiments of the present invention, it is possible to acquire image data that helps increase accuracy of recognizing an attribute of an object.

[0036] According to at least one of the embodiments of the present invention, since a mobile robot is able to determine an attribute of an object and adjust a traveling pattern according to the attribute of the object, it is possible to perform highly reliable object recognition and avoidance operation.

[0037] In addition, according to at least one of the embodiments of the present invention, it is possible to provide a mobile robot which is capable of moving forward, moving backward, stopping, detouring, and the like according to a recognition result on an object to enhance user convenience and improve operational efficiency and cleaning efficiency, and a method for controlling the mobile robot.

[0038] In addition, according to at least one of the embodiments of the present invention, it is possible to provide a mobile robot capable of accurately recognizing an attribute of an object based on machine learning, and a method for controlling the mobile robot.

[0039] In addition, according to at least one of the embodiments of the present invention, it is possible for a mobile robot to perform machine learning efficiently and extract data for recognition.

[0040] Meanwhile, other effects may be explicitly or implicitly disclosed in the following detailed description according to embodiments of the present invention.

DESCRIPTION OF DRAWINGS

[0041] FIG. 1 is a diagram for explanation of a method for sensing and avoiding an object by a conventional robot cleaner.

[0042] FIG. 2 is a perspective view of a mobile robot according to an embodiment of the present invention and a base station for charging the mobile robot.

[0043] FIG. 3 is a view of the top section of the mobile robot in FIG. 2.

[0044] FIG. 4 is a view of the front section of the mobile robot in FIG. 2.

[0045] FIG. 5 is a view of the bottom section of the mobile robot in FIG. 2.

[0046] FIGS. 6 and 7 are block diagrams of a control relationship between major components of a mobile robot according to an embodiment of the present invention.

[0047] FIG. 8 is an exemplary schematic internal block diagram of a server according to an embodiment of the present invention.

[0048] FIGS. 9 to 12 are diagrams for explanation of deep learning.

[0049] FIG. 13 is a flowchart of a method for controlling a mobile robot according to an embodiment of the present invention.

[0050] FIG. 14 is a flowchart of a method for controlling a mobile robot according to an embodiment of the present invention.

[0051] FIGS. 15 to 21 are diagrams for explanation of a method for controlling a mobile robot according to an embodiment of the present invention.

[0052] FIG. 22 is a diagram for explanation of an operation method of a mobile robot and a server according to an embodiment of the present invention.

[0053] FIG. 23 is a flowchart of an operation method of a mobile robot and a server according to an embodiment of the present invention.

[0054] FIGS. 24 to 26 are diagrams for explanation of a method for controlling a mobile robot according to an embodiment of the present invention.

[0055] FIG. 27 is a flowchart of a method for controlling a mobile robot according to an embodiment of the present invention.

[0056] FIG. 28 is a diagram for explanation of a method for controlling a mobile robot according to an embodiment of the present invention.

[0057] FIG. 29 is a flowchart of a method for controlling a mobile robot according to an embodiment of the present invention.

[0058] FIGS. 30 to 34 are diagrams for explanation of a method for controlling a mobile robot according to an embodiment of the present invention.

BEST MODE

[0059] Hereinafter, embodiments of the present invention will be described in detail with reference to the accompanying drawings. While the invention will be described in conjunction with exemplary embodiments, it will be understood that the present description is not intended to limit the invention to the exemplary embodiments.

[0060] In the drawings, in order to clearly and briefly describe the invention, parts which are not related to the description will be omitted, and, in the following description of the embodiments, the same or similar elements are denoted by the same reference numerals even though they are depicted in different drawings.

[0061] In the following description, with respect to constituent elements used in the following description, the suffixes "module" and "unit" are used or combined with each other only in consideration of ease in the preparation of the specification, and do not have or serve as different meanings. Accordingly, the suffixes "module" and "unit" may be interchanged with each other.

[0062] A mobile robot 100 according to an embodiment of the present invention refers to a robot capable of moving on its own using wheels and the like, and may be a domestic robot, a robot cleaner, etc. A robot cleaner having a cleaning function is hereinafter described as an example of the mobile robot, but the present invention is not limited thereto.

[0063] FIG. 2 is a perspective view of a mobile robot and a charging station for charging the mobile robot according to an embodiment of the present invention.

[0064] FIG. 3 is a view of the top section of the mobile robot illustrated in FIG. 2, FIG. 4 is a view of the front section of the mobile robot illustrated in FIG. 2, and FIG. 5 is a view of the bottom section of the mobile robot illustrated in FIG. 2.

[0065] FIGS. 6 and 7 are block diagrams of a control relationship between major components of a mobile robot according to an embodiment of the present invention.

[0066] Referring to FIGS. 2 to 7, a mobile robot 100, 100a, or 100b includes a main body 110, and an image acquisition unit 120, 120a, or 120b for acquiring an image of an area around the main body 110.

[0067] Hereinafter, each part of the main body 110 is defined as below: a portion facing a ceiling of a cleaning area is defined as the top section (see FIG. 3), a portion facing a floor of the cleaning area is defined as the bottom section (see FIG. 5), and a portion facing a direction of travel in portions constituting a circumference of the main body 110 between the top section and the bottom section is defined as the front section (see FIG. 4).

[0068] The mobile robot 100, 100a, or 100b includes a travel drive unit 160 for moving the main body 110. The travel drive unit 160 includes at least one drive wheel 136 for moving the main body 110. The travel drive unit 160 includes a driving motor (not shown) connected to the drive wheel 136 to rotate the drive wheel. Drive wheels 136 may be provided on the left side and the right side of the main body 110, respectively, and such drive wheels 136 are hereinafter referred to as a left wheel 136(L) and a right wheel 136(R), respectively.

[0069] The left wheel 136(L) and the right wheel 136(R) may be driven by one driving motor, but, if necessary, a left wheel drive motor to drive the left wheel 136(L) and a right wheel drive motor to drive the right wheel 136(R) may be provided. The travel direction of the main body 110 may be changed to the left or to the right by making the left wheel 136(L) and the right wheel 136(R) have different rates of rotation.
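By way of illustration only, the effect of giving the left wheel and the right wheel different rates of rotation may be sketched with the standard differential-drive relations. The function name, the wheel-speed values, and the wheel separation are hypothetical; the disclosure gives no dimensions.

```python
def body_velocity(v_left_m_s: float, v_right_m_s: float, wheel_separation_m: float):
    """Return (forward speed, yaw rate) of a two-wheel differential drive.

    A positive yaw rate means a counter-clockwise turn, i.e. toward the left.
    """
    if wheel_separation_m <= 0:
        raise ValueError("wheel separation must be positive")
    forward_m_s = (v_left_m_s + v_right_m_s) / 2.0
    yaw_rate_rad_s = (v_right_m_s - v_left_m_s) / wheel_separation_m
    return forward_m_s, yaw_rate_rad_s


if __name__ == "__main__":
    # Equal wheel speeds: straight travel, no rotation.
    print(body_velocity(0.2, 0.2, 0.25))
    # Right wheel faster than left wheel: the body turns to the left.
    print(body_velocity(0.1, 0.3, 0.25))
```

Equal wheel speeds give a yaw rate of zero, and a faster right wheel turns the main body to the left, and vice versa.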

[0070] A suction port 110h to suction air may be formed on the bottom surface of the body 110, and the body 110 may be provided with a suction device (not shown) to provide suction force to cause air to be suctioned through the suction port 110h, and a dust container (not shown) to collect dust suctioned together with air through the suction port 110h.

[0071] The body 110 may include a case 111 defining a space to accommodate various components constituting the mobile robot 100, 100a, or 100b. An opening allowing insertion and retrieval of the dust container therethrough may be formed on the case 111, and a dust container cover 112 to open and close the opening may be provided rotatably to the case 111.

[0072] There may be provided a roll-type main brush 134 having bristles exposed through the suction port 110h and an auxiliary brush 135 positioned in the front of the bottom surface of the body 110 and having bristles forming a plurality of radially extending blades. Dust is removed from the floor in a cleaning area by rotation of the brushes 134 and 135, and the dust separated from the floor in this way is suctioned through the suction port 110h and collected in the dust container.

[0073] A battery 138 serves to supply power necessary not only for the drive motor but also for overall operations of the mobile robot 100, 100a, or 100b. When the battery 138 of the robot cleaner 100 is running out, the mobile robot 100, 100a, or 100b may perform return travel to the charging base 200 to charge the battery, and during the return travel, the robot cleaner 100 may autonomously detect the position of the charging base 200.

[0074] The charging base 200 may include a signal transmitting unit (not shown) to transmit a predetermined return signal. The return signal may include, but is not limited to, an ultrasonic signal or an infrared signal.

[0075] The mobile robot 100, 100a, or 100b may include a signal sensing unit (not shown) to receive the return signal. The charging base 200 may transmit an infrared signal through the signal transmitting unit, and the signal sensing unit may include an infrared sensor to sense the infrared signal. The mobile robot 100, 100a, or 100b moves to the position of the charging base 200 according to the infrared signal transmitted from the charging base 200 and docks with the charging base 200. By docking, charging of the robot cleaner 100 is performed between a charging terminal 133 of the mobile robot 100, 100a, or 100b and a charging terminal 210 of the charging base 200.

[0076] In some implementations, the mobile robot 100, 100a, or 100b may perform return travel to the base station 200 based on an image or in a laser-pattern extraction method.

[0077] The mobile robot 100, 100a, or 100b may return to the base station by recognizing a predetermined pattern formed at the base station 200 using an optical signal emitted from the main body 110 and extracting the predetermined pattern.

[0078] For example, the mobile robot 100, 100a, or 100b according to an embodiment of the present invention may include an optical pattern sensor (not shown).

[0079] The optical pattern sensor may be provided in the main body 110, emit an optical pattern to an active area where the mobile robot 100, 100a, or 100b moves, and acquire an input image by photographing an area into which the optical pattern is emitted. For example, the optical pattern may be light having a predetermined pattern, such as a cross pattern.

[0080] The optical pattern sensor may include a pattern emission unit to emit the optical pattern, and a pattern image acquisition unit to photograph an area into which the optical pattern is emitted.

[0081] The pattern emission unit may include a light source, and an Optical Pattern Projection Element (OPPE). The optical pattern is generated as light incident from the light source passes through the OPPE. The light source may be a Laser Diode (LD), a Light Emitting Diode (LED), etc.

[0082] The pattern emission unit may emit light in a direction forward of the main body, and the pattern image acquisition unit acquires an input image by photographing an area where an optical pattern is emitted. The pattern image acquisition unit may include a camera, and the camera may be a structured light camera.

[0083] Meanwhile, the base station 200 may include two or more locator beacons spaced a predetermined distance apart from each other.

[0084] A locator beacon forms a mark distinguishable from the surroundings when an optical pattern is incident on a surface of the locator beacon. This mark may be caused by transformation of the optical pattern due to a morphological characteristic of the locator beacon, or may be caused by difference in light reflectivity (or absorptivity) due to a material characteristic of the locator beacon.

[0085] The locator beacon may include an edge that forms the mark. An optical pattern incident on the surface of the locator beacon is bent on the edge with an angle, and the bending is found as a cusp in an input image as the mark.

[0086] The mobile robot 100, 100a, or 100b may automatically search for the base station when a remaining battery capacity is insufficient, or may search for the base station even when a charging command is received from a user.

[0087] When the mobile robot 100, 100a, or 100b searches for the base station, a pattern extraction unit extracts cusps from an input image and the controller 140 acquires location information of the extracted cusps. The location information may include locations in a three-dimensional space, which take into consideration distances from the mobile robot 100, 100a, or 100b to the cusps.

[0088] The controller 140 calculates an actual distance between the cusps based on the acquired location information of the cusps and compares the actual distance with a preset reference threshold, and, if a difference between the actual distance and the reference threshold falls within a predetermined range, it may be determined that the charging station 200 is found.
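
As a non-limiting illustration, the comparison described in paragraph [0088] may be sketched as follows. The function name, the use of a Euclidean distance between three-dimensional cusp locations, and the numeric values are assumptions made for this example only and are not taken from the embodiment.

```python
import math


def beacon_spacing_matches(cusp_a, cusp_b, reference, tolerance):
    """Return True if the distance between two cusps is close to the reference.

    cusp_a, cusp_b: (x, y, z) locations of two extracted cusps (hypothetical units: meters).
    reference:      preset reference value for the spacing of the locator beacons.
    tolerance:      half-width of the predetermined range around the reference.
    """
    actual = math.dist(cusp_a, cusp_b)  # Euclidean distance in 3D space
    return abs(actual - reference) <= tolerance


# Hypothetical example: two cusps 0.20 m apart, reference spacing 0.20 m
found = beacon_spacing_matches((0.10, 0.0, 1.0), (0.30, 0.0, 1.0),
                               reference=0.20, tolerance=0.02)
```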

[0089] Alternatively, the mobile robot 100, 100a, or 100b may return to the base station 200 by acquiring images of the surroundings with a camera of the image acquisition unit 120 and extracting and identifying a shape corresponding to the charging station 200 from the acquired image.

[0090] In addition, the mobile robot 100, 100a, or 100b may return to the base station by acquiring images of surroundings with the camera of the image acquisition unit 120 and identifying a predetermined optical signal emitted from the base station 200.

[0091] The image acquisition unit 120, which is configured to photograph the cleaning area, may include a digital camera. The digital camera may include at least one optical lens, an image sensor (e.g., a CMOS image sensor) including a plurality of photodiodes (e.g., pixels) on which an image is created by light transmitted through the optical lens, and a digital signal processor (DSP) to construct an image based on signals output from the photodiodes. The DSP may produce not only a still image, but also a video consisting of frames constituting still images.

[0092] Preferably, the image acquisition unit 120 includes a front camera 120a provided to acquire an image of a front field of view from the main body 110, and an upper camera 120b provided on the top section of the main body 110 to acquire an image of a ceiling in a travel area, but the position and capture range of the image acquisition unit 120 are not necessarily limited thereto.

[0093] In this embodiment, a camera may be installed at a portion (e.g., the front, the rear, or the bottom) of the mobile robot and continuously acquire captured images during a cleaning operation. A plurality of such cameras may be installed at each portion for photography efficiency. An image captured by the camera may be used to recognize a type of a substance existing in a corresponding space, such as dust, a human hair, and floor, to determine whether cleaning has been performed, or to confirm a point in time to clean.

[0094] The front camera 120a may capture an object or a condition of a cleaning area ahead in a direction of travel of the mobile robot 100, 100a, or 100b.

[0095] According to an embodiment of the present invention, the image acquisition unit 120 may acquire a plurality of images by continuously photographing the surroundings of the main body 110, and the plurality of acquired images may be stored in a storage 150.

[0096] The mobile robot 100, 100a, or 100b may increase accuracy in object recognition by using a plurality of images or may increase accuracy of recognition of an object by selecting one or more images from a plurality of images to use effective data.

[0097] In addition, the mobile robot 100, 100a, or 100b may include a sensor unit 170 that includes sensors serving to sense a variety of data related to operation and states of the mobile robot.

[0098] For example, the sensor unit 170 may include an object detection sensor to sense an object present ahead. In addition, the sensor unit 170 may further include a cliff detection sensor 132 to sense presence of a cliff on the floor of a travel region, and a lower camera sensor 139 to acquire an image of the floor.

[0099] Referring to FIGS. 2 and 4, the object detection sensor 131 may include a plurality of sensors installed at a predetermined interval on an outer circumferential surface of the mobile robot 100.

[0100] For example, the sensor unit 170 may include a first sensor disposed on the front surface of the main body 110, and second and third sensors spaced apart from the first sensor in the left and right direction.

[0101] The object detection sensor 131 may include an infrared sensor, an ultrasonic sensor, an RF sensor, a magnetic sensor, a position sensitive device (PSD) sensor, etc.

[0102] Meanwhile, the positions and types of sensors included in the object detection sensor 131 may differ depending on a model of a mobile robot, and the object detection sensor 131 may further include various sensors.

[0103] The object detection sensor 131 is a sensor for sensing a distance to a wall or an object in an indoor space, and is described herein as, but is not limited to, an ultrasonic sensor.

[0104] The object detection sensor 131 senses an object, especially an obstacle, present in a direction of travel (movement) of the mobile robot and transmits object information to the controller 140. That is, the object detection sensor 131 may sense a protrusion, a household item, furniture, a wall surface, a wall corner, etc. that are present in a path through which the robot cleaner moves, and deliver related information to the controller 140.

[0105] In this case, the controller 140 may sense a position of the object based on at least two signals received through the ultrasonic sensor, and control movement of the mobile robot 100 according to the sensed position of the object.

[0106] In some implementations, the object detection sensor 131 provided on an outer side surface of the case 110 may include a transmitter and a receiver.

[0107] For example, the ultrasonic sensor may be provided such that at least one transmitter and at least two receivers cross one another. Accordingly, it is possible to transmit a signal in various directions and receive a signal, reflected by an object, from various directions.

[0108] In some implementations, a signal received from the object detection sensor 131 may go through signal processing, such as amplification and filtering, and thereafter a distance and a direction to an object may be calculated.
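
As a non-limiting illustration, one way of deriving a distance and a direction from two received signals may be sketched as follows. The sketch assumes, for this example only, that each of two receivers has already yielded a range to the object, that the receivers are located on the front of the main body at equal offsets to the left and right of its center, and that the object lies ahead of the receivers. The function name and geometry are hypothetical.

```python
import math


def locate_object(range_left, range_right, baseline):
    """Estimate (distance, bearing) of an object from two range measurements.

    Receivers are assumed at (-baseline/2, 0) and (+baseline/2, 0); the y axis
    points forward. Bearing is in radians, positive toward the right.
    Returns None if the two ranges are geometrically inconsistent.
    """
    # Intersect the two range circles: subtracting their equations gives x.
    x = (range_left ** 2 - range_right ** 2) / (2.0 * baseline)
    y_squared = range_left ** 2 - (x + baseline / 2.0) ** 2
    if y_squared < 0.0:
        return None
    y = math.sqrt(y_squared)  # take the solution in front of the robot
    return math.hypot(x, y), math.atan2(x, y)
```

An object placed 0.3 to the right and 0.4 ahead of the center would, under these assumptions, be reported at a distance of 0.5 with a positive (rightward) bearing.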

[0109] Meanwhile, the sensor unit 170 may further include an operation detection sensor that serves to sense operation of the mobile robot 100, 100a, or 100b upon driving of the main body 110, and output operation information. As the operation detection sensor, a gyro sensor, a wheel sensor, an acceleration sensor, etc. may be used.

[0110] When the mobile robot 100, 100a, or 100b moves according to an operation mode, the gyro sensor senses a direction of rotation and detects an angle of rotation. The gyro sensor detects an angular velocity of the mobile robot 100, 100a, or 100b, and outputs a voltage value proportional to the angular velocity. The controller 140 may calculate the direction of rotation and the angle of rotation using the voltage value that is output from the gyro sensor.

[0111] The wheel sensor is connected to the left wheel 136(L) and the right wheel 136(R) to sense the revolution per minute (RPM) of the wheels. In this example, the wheel sensor may be a rotary encoder. The rotary encoder senses and outputs the RPM of the left wheel 136(L) and the right wheel 136(R).

[0112] The controller 140 may calculate the speed of rotation of the left and right wheels using the RPM. In addition, the controller 140 may calculate an angle of rotation using a difference in the RPM between the left wheel 136(L) and the right wheel 136(R).
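
As a non-limiting illustration, the calculation described in paragraph [0112] may be sketched using a standard differential-drive model. The wheel radius, the wheel base (distance between the left and right wheels), and the time interval are parameters assumed for this example and are not specified in the embodiment.

```python
import math


def wheel_speeds(rpm_left, rpm_right, wheel_radius):
    """Convert the RPM of each wheel to a linear speed (length units per second)."""
    factor = 2.0 * math.pi * wheel_radius / 60.0  # one revolution = one circumference
    return rpm_left * factor, rpm_right * factor


def rotation_angle(rpm_left, rpm_right, wheel_radius, wheel_base, dt):
    """Heading change in radians over dt seconds.

    Positive values mean a counterclockwise (left) turn, which occurs when
    the right wheel turns faster than the left wheel.
    """
    v_left, v_right = wheel_speeds(rpm_left, rpm_right, wheel_radius)
    return (v_right - v_left) / wheel_base * dt
```

When both wheels report the same RPM, the difference term vanishes and the calculated angle of rotation is zero, which corresponds to straight travel.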

[0113] The acceleration sensor senses a change in speed of the mobile robot 100, 100a, or 100b, e.g., a change in speed according to a start, a stop, a direction change, collision with an object, etc. The acceleration sensor may be attached to a position adjacent to a main wheel or a secondary wheel to detect the slipping or idling of the wheel.

[0114] In addition, the acceleration sensor may be embedded in the controller 140 and detect a change in speed of the mobile robot 100, 100a, or 100b. That is, the acceleration sensor detects impulse according to a change in speed and outputs a voltage value corresponding thereto. Thus, the acceleration sensor may perform the function of an electronic bumper.

[0115] The controller 140 may calculate a change in the positions of the mobile robot 100, 100a, or 100b based on operation information output from the operation detection sensor. Such a position is a relative position corresponding to an absolute position that is based on image information. By recognizing such a relative position, the mobile robot may enhance position recognition performance using image information and object information.

[0116] Meanwhile, the mobile robot 100, 100a, or 100b may include a power supply (not shown) having a rechargeable battery 138 to provide power to the robot cleaner.

[0117] The power supply may supply driving power and operation power to each element of the mobile robot 100, 100a, or 100b, and may be charged by receiving a charging current from the charging station 200 when a remaining power capacity is insufficient.

[0118] The mobile robot 100, 100a, or 100b may further include a battery sensing unit to sense the state of charge of the battery 138 and transmit a sensing result to the controller 140. The battery 138 is connected to the battery sensing unit such that a remaining battery capacity state and the state of charge of the battery are transmitted to the controller 140. The remaining battery capacity may be displayed on a screen of an output unit (not shown).

[0119] In addition, the mobile robot 100, 100a, or 100b includes an operator 137 to input an on/off command or any other various commands. Through the operator 137, various control commands necessary for overall operations of the mobile robot 100 may be received. In addition, the mobile robot 100, 100a, or 100b may include an output unit (not shown) to display reservation information, a battery state, an operation mode, an operation state, an error state, etc.

[0120] Referring to FIGS. 6 and 7, the mobile robot 100a or 100b includes the controller 140 for processing and determining a variety of information, such as recognizing the current position, and the storage 150 for storing a variety of data. In addition, the mobile robot 100, 100a, or 100b may further include a communication unit 190 for transmitting and receiving data with an external terminal.

[0121] The external terminal may be provided with an application for controlling the mobile robot 100a and 100b, may display a map of a travel area to be cleaned by executing the application, and may designate a specific area on the map to be cleaned. Examples of the external terminal may include a remote control, a PDA, a laptop, a smartphone, a tablet, and the like in which an application for configuring a map is installed.

[0122] By communicating with the mobile robot 100a and 100b, the external terminal may display the current position of the mobile robot, in addition to the map, and display information on a plurality of areas. In addition, the external terminal may update the current position in accordance with traveling of the mobile robot and display the updated current position.

[0123] The controller 140 controls the image acquisition unit 120, the operator 137, and the travel drive unit 160 of the mobile robot 100a and 100b so as to control overall operations of the mobile robot 100.

[0124] The storage 150 serves to record various kinds of information necessary for control of the robot cleaner 100 and may include a volatile or non-volatile recording medium. The recording medium serves to store data which is readable by a microprocessor and may include a hard disk drive (HDD), a solid state drive (SSD), a silicon disk drive (SDD), a ROM, a RAM, a CD-ROM, a magnetic tape, a floppy disk, an optical data storage, etc.

[0125] Meanwhile, a map of the travel area may be stored in the storage 150. The map may be input by an external terminal or a server capable of exchanging information with the mobile robot 100a and 100b through wired or wireless communication, or may be constructed by the mobile robot 100a and 100b through self-learning.

[0126] On the map, positions of rooms within the cleaning area may be marked. In addition, the current position of the mobile robot 100a and 100b may be marked on the map, and the current position of the mobile robot 100a and 100b on the map may be updated during travel of the mobile robot 100a and 100b. The external terminal stores a map identical to a map stored in the storage 150.

[0127] The storage 150 may store cleaning history information. The cleaning history information may be generated each time cleaning is performed.

[0128] The map of a travel area stored in the storage 150 may be a navigation map used for travel during cleaning, a simultaneous localization and mapping (SLAM) map used for position recognition, a learning map used for learning cleaning by storing corresponding information upon collision with an object or the like, a global localization map used for global localization recognition, an object recognition map where information on a recognized object is recorded, etc.

[0129] Meanwhile, maps may be stored and managed in the storage 150 by purposes, but may not be classified exactly by purposes. For example, a plurality of information items may be stored in one map so as to be used for at least two purposes. For instance, information on a recognized object may be recorded on a learning map so that the learning map may replace an object recognition map, and a global localization position may be recognized using the SLAM map used for position recognition so that the SLAM map may replace or be used together with the global localization map.

[0130] The controller 140 may include a travel control module 141, a position recognition module 142, a map generation module 143, and an object recognition module 144.

[0131] Referring to FIGS. 3 to 7, the travel control module 141 serves to control travelling of the mobile robot 100, 100a, or 100b, and controls driving of the travel drive unit 160 according to a travel setting. In addition, the travel control module 141 may determine a travel path of the mobile robot 100, 100a, or 100b based on operation of the travel drive unit 160. For example, the travel control module 141 may determine the current or previous moving speed or distance travelled of the mobile robot 100 based on an RPM of the drive wheel 136, and may also determine the current or previous direction shifting process based on directions of rotation of each drive wheel 136(L) or 136(R). Based on travel information of the mobile robot 100, 100a, or 100b determined in this manner, the position of the mobile robot 100, 100a, or 100b on the map may be updated.

[0132] The map generation module 143 may generate a map of a travel area. The map generation module 143 may write a map by processing an image acquired by the image acquisition unit 120. That is, a cleaning map corresponding to a cleaning area may be written.

[0133] In addition, the map generation module 143 may enable global localization in association with a map by processing an image acquired by the image acquisition unit 120 at each position.

[0134] The position recognition module 142 may estimate and recognize the current position. The position recognition module 142 identifies the position in association with the map generation module 143 using image information of the image acquisition unit 120, and is thereby able to estimate and recognize the current position even when the position of the mobile robot 100, 100a, or 100b suddenly changes.

[0135] The mobile robot 100, 100a, or 100b may recognize the position using the position recognition module 142 during continuous travel, and may also learn a map and estimate the current position by using the map generation module 143 and the object recognition module 144 without the position recognition module 142.

[0136] While the mobile robot 100, 100a, or 100b travels, the image acquisition unit 120 acquires images of the surroundings of the mobile robot 100. Hereinafter, an image acquired by the image acquisition unit 120 is defined as an "acquisition image".

[0137] An acquisition image includes various features located at a ceiling, such as lighting devices, edges, corners, blobs, ridges, etc.

[0138] The map generation module 143 detects features from each acquisition image. In computer vision, there are a variety of well-known techniques for detecting features from an image. A variety of feature detectors suitable for such feature detection are well known. For example, there are Canny, Sobel, Harris&Stephens/Plessey, SUSAN, Shi&Tomasi, Level curve curvature, FAST, Laplacian of Gaussian, Difference of Gaussians, Determinant of Hessian, MSER, PCBR, and Grey-level blobs detectors.

[0139] The map generation module 143 calculates a descriptor based on each feature. For feature detection, the map generation module 143 may convert a feature into a descriptor using Scale Invariant Feature Transform (SIFT).

[0140] Meanwhile, the descriptor is defined as a group of individual features present in a specific space and may be expressed as an n-dimensional vector. For example, various features at a ceiling, such as edges, corners, blobs, ridges, etc. may be calculated into respective descriptors and stored in the storage 150.

[0141] Based on descriptor information acquired from an image of each position, at least one descriptor is classified by a predetermined subordinate classification rule into a plurality of groups, and descriptors included in the same group may be converted by a predetermined subordinate representation rule into subordinate representative descriptors. That is, a standardization process may be performed by designating a representative value for descriptors obtained from an individual image.

[0142] The SIFT enables detecting a feature invariant to scale, rotation, and change in brightness of a subject, and thus, it is possible to detect an invariant (i.e., rotation-invariant) feature of an area even when images of the area are captured by changing a position of the mobile robot 100. However, aspects of the present invention are not limited thereto, and various other techniques (e.g., Histogram of Oriented Gradient (HOG), Haar feature, Ferns, Local Binary Pattern (LBP), and Modified Census Transform (MCT)) can be applied.

[0143] The map generation module 143 may classify at least one descriptor of each acquisition image into a plurality of groups based on descriptor information, obtained from an acquisition image of each position, by a predetermined subordinate classification rule, and may convert descriptors included in the same group into subordinate representative descriptors by a predetermined subordinate representation rule.

[0144] In another example, all descriptors obtained from acquisition images of a predetermined area, such as a room, may be classified into a plurality of groups by a predetermined subordinate classification rule, and descriptors included in the same group may be converted into subordinate representative descriptors by the predetermined subordinate representation rule.

[0145] Through the above procedure, the map generation module 143 may obtain a feature distribution for each position. The feature distribution of each position may be represented by a histogram or an n-dimensional vector. In another example, without using the predetermined subordinate classification rule and the predetermined subordinate representation rule, the map generation module 143 may estimate an unknown current position based on a descriptor calculated from each feature.
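
As a non-limiting illustration, the derivation of a feature distribution from descriptors may be sketched as follows. The embodiment does not specify the subordinate classification rule or the subordinate representation rule; this sketch assumes, for this example only, that a group is represented by the component-wise mean of its descriptors and that each descriptor is counted toward its nearest representative descriptor. All function names are hypothetical.

```python
import math


def representative(group):
    """Component-wise mean of a group of n-dimensional descriptors (assumed rule)."""
    count = len(group)
    dims = len(group[0])
    return tuple(sum(d[i] for d in group) / count for i in range(dims))


def feature_distribution(descriptors, representatives):
    """Histogram giving the fraction of descriptors nearest to each representative."""
    counts = [0] * len(representatives)
    for d in descriptors:
        nearest = min(range(len(representatives)),
                      key=lambda k: math.dist(d, representatives[k]))
        counts[nearest] += 1
    total = len(descriptors)
    return [c / total for c in counts]
```

The resulting list has one entry per representative descriptor and may be treated either as a histogram or as an n-dimensional vector for a given position.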

[0146] In addition, when the current position of the mobile robot 100, 100a, or 100b has become unknown due to position hopping, the map generation module may estimate the current position of the mobile robot 100 based on data such as a pre-stored descriptor and a subordinate representative descriptor.

[0147] The mobile robot 100, 100a, or 100b acquires an acquisition image at the unknown current position using the image acquisition unit 120. Various features located at a ceiling, such as lighting devices, edges, corners, blobs, and ridges, are found in the acquisition image.

[0148] The position recognition module 142 detects features from an acquisition image. Descriptions about various methods for detecting features from an image in computer vision and various feature detectors suitable for feature detection are the same as described above.

[0149] The position recognition module 142 calculates a recognition descriptor based on each recognition feature through a recognition descriptor calculating process. In this case, the recognition feature and the recognition descriptor are used to describe a process performed by the object recognition module 144, and to be distinguished from the terms describing the process performed by the map generation module 143. However, these are merely terms defined to describe characteristics of a world outside the mobile robot 100, 100a, or 100b.

[0150] For such feature detection, the position recognition module 142 may convert a recognition feature into a recognition descriptor using the SIFT technique. The recognition descriptor may be represented by an n-dimensional vector.

[0151] The SIFT is an image recognition technique by which easily distinguishable features, such as corners, are selected in an acquisition image, and an n-dimensional vector is then obtained for each of the features. Each dimension of the vector has a value indicating how abrupt the change is in a corresponding direction, with respect to a distribution characteristic (a direction of brightness change and a degree of abruptness of the change) of the gradient of pixels included in a predetermined area around the feature.

[0152] Based on information on at least one recognition descriptor obtained from an acquisition image acquired at an unknown current position, the position recognition module 142 performs conversion by a predetermined subordinate conversion rule into information (a subordinate recognition feature distribution), which is comparable with position information (e.g., a subordinate feature distribution for each position) subject to comparison.

[0153] By a predetermined subordinate comparison rule, a feature distribution for each position may be compared with a corresponding recognition feature distribution to calculate a similarity therebetween. A similarity (probability) may be calculated for each position, and the position having the highest probability may be determined to be the current position.
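
As a non-limiting illustration, the comparison described in paragraph [0153] may be sketched as follows. The embodiment does not specify the subordinate comparison rule; cosine similarity is used here purely as an assumed stand-in, and the position labels and histogram values are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two feature distributions of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_likely_position(stored, observed):
    """Return (best_position, scores) given stored per-position distributions."""
    scores = {pos: cosine_similarity(dist, observed) for pos, dist in stored.items()}
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical stored feature distributions for two positions
stored = {"position_a": [0.7, 0.2, 0.1], "position_b": [0.1, 0.1, 0.8]}
best, scores = most_likely_position(stored, observed=[0.6, 0.3, 0.1])
```

Here the recognition feature distribution is closest in shape to the distribution stored for the first position, so that position would be selected as the current position.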

[0154] As such, the controller 140 may generate a map of a travel area consisting of a plurality of areas, or recognize the current position of the main body 110 based on a pre-stored map.

[0155] When the map is generated, the controller 140 transmits the map to an external terminal through the communication unit 190. In addition, as described above, when a map is received from the external terminal, the controller 140 may store the map in the storage 150.

[0156] In addition, when the map is updated during traveling, the controller 140 may transmit update information to the external terminal so that the map stored in the external terminal becomes identical to the map stored in the mobile robot 100. As the identical map is stored in the external terminal and the mobile robot 100, the mobile robot 100 may clean a designated area in response to a cleaning command from a mobile terminal, and the current position of the mobile robot may be displayed in the external terminal.

[0157] In this case, the map divides a cleaning area into a plurality of areas, includes a connection channel connecting the plurality of areas, and includes information on an object in an area.

[0158] When a cleaning command is received, the controller 140 determines whether a position on the map and the current position of the mobile robot match each other. The cleaning command may be received from a remote controller, an operator, or the external terminal.

[0159] When the current position does not match with a position marked on the map, or when it is not possible to confirm the current position, the controller 140 recognizes the current position, recovers the current position of the mobile robot 100 and then controls the travel drive unit 160 to move to a designated area based on the current position.

[0160] When the current position does not match with a position marked on the map or when it is not possible to confirm the current position, the position recognition module 142 analyzes an acquisition image received from the image acquisition unit 120 and estimates the current position based on the map. In addition, the object recognition module 144 or the map generation module 143 may recognize the current position as described above.

[0161] After the current position of the mobile robot 100, 100a, or 100b is recovered by recognizing the position, the travel control module 141 may calculate a travel path from the current position to a designated area and control the travel drive unit 160 to move to the designated area.

[0162] When cleaning pattern information is received from a server, the travel control module 141 may divide the entire travel area into a plurality of areas based on the received cleaning pattern information and set one or more areas as a designated area.

[0163] In addition, the travel control module 141 may calculate a travel path based on the received cleaning pattern information, and perform cleaning while traveling along the travel path.

[0164] When cleaning of a set designated area is completed, the controller 140 may store a cleaning record in the storage 150.

[0165] In addition, through the communication unit 190, the controller 140 may transmit an operation state or a cleaning state of the mobile robot 100 at a predetermined cycle.

[0166] Accordingly, based on the received data, the external terminal displays the position of the mobile robot together with a map on a screen of an application in execution, and outputs information on a cleaning state.

[0167] The mobile robot 100, 100a, or 100b moves in one direction until detecting an object or a wall, and, when the object recognition module 144 recognizes an object, the mobile robot 100, 100a, or 100b may determine a travel pattern, such as forward travel and rotation, depending on an attribute of the recognized object.

[0168] For example, if the attribute of the recognized object implies an object that the mobile robot is able to climb, the mobile robot 100, 100a, or 100b may keep traveling straight forward. Alternatively, if the attribute of the recognized object implies an object that the mobile robot is not able to climb, the mobile robot 100, 100a, or 100b may travel in a zigzag pattern in a manner in which the mobile robot 100, 100a, or 100b rotates, moves a predetermined distance, and then moves a distance in a direction opposite to the initial direction of travel until detecting another object.

[0169] The mobile robot 100, 100a, or 100b may perform object recognition and avoidance based on machine learning.

[0170] The controller 140 may include the object recognition module 144 for recognizing an object, which is pre-learned through machine learning, in an input image, and the travel control module 141 for controlling driving of the travel drive unit 160 based on an attribute of the recognized object.

[0171] The mobile robot 100, 100a, or 100b may include the object recognition module 144 that has learned an attribute of an object through machine learning.

[0172] Machine learning refers to a technique which enables a computer to learn without logic instructed by a user so as to solve a problem on its own.

[0173] Deep learning is an artificial intelligence technology that trains a computer to learn human thinking based on Artificial Neural Networks (ANN) for constructing artificial intelligence so that the computer is capable of learning on its own without a user's instruction.

[0174] The ANN may be implemented in a software form or a hardware form, such as a chip and the like.

[0175] The object recognition module 144 may include an ANN in a software form or a hardware form, in which attributes of objects are learned.

[0176] For example, the object recognition module 144 may include a Deep Neural Network (DNN) trained through deep learning, such as a Convolutional Neural Network (CNN), a Recurrent Neural Network (RNN), a Deep Belief Network (DBN), etc.

[0177] Deep learning will be described in more detail with reference to FIGS. 9 to 12.

[0178] The object recognition module 144 may determine an attribute of an object in input image data based on weights between nodes included in the DNN.

[0179] Meanwhile, when the sensor unit 170 senses an object while the mobile robot 100, 100a, or 100b moves, the controller 140 may perform control to extract a part from an image acquired by the image acquisition unit 120 in correspondence to a direction in which the object sensed by the sensor unit 170 is present.

[0180] The image acquisition unit 120, especially the front camera 120a, may acquire an image within a predetermined range of angles in a moving direction of the mobile robot 100, 100a, or 100b.

[0181] The controller 140 may distinguish an attribute of the object present in the moving direction, not by using the whole image acquired by the image acquisition unit, especially the front camera 120a, but by using only a part of the image.

[0182] Referring to FIG. 6, the controller 140 may further include an image processing module 145 for extracting a part of an image acquired by the image acquisition unit 120 in correspondence to a direction in which an object sensed by the sensor unit 170 is present.

[0183] Alternatively, referring to FIG. 7, the mobile robot 100b may further include an additional image processing unit 125 for extracting a part of an image acquired by the image acquisition unit 120 in correspondence to a direction in which an object sensed by the sensor unit 170 is present.

[0184] The mobile robot 100a in FIG. 6 and the mobile robot 100b in FIG. 7 are identical to each other, except for the image processing module 145 and the image processing unit 125.

[0185] Alternatively, in some implementations, the image acquisition unit 120 may extract a part of the image on its own, unlike the examples of FIGS. 6 and 7.

[0186] The object recognition module 144 having been trained through machine learning has a higher recognition rate if a learned object occupies a larger portion of input image data.

[0187] Accordingly, the present invention extracts a different area of an image acquired by the image acquisition unit 120 depending on a direction in which an object sensed by the sensor unit 170, such as an ultrasonic sensor, is present, and uses the extracted part of the image as data for recognition, thereby enhancing a recognition rate.

[0188] The object recognition module 144 may recognize an object in the extracted part of the image, based on data that is pre-learned through machine learning.

[0189] In addition, the travel control module 141 may control driving of the travel drive unit 160 based on an attribute of the recognized object.

[0190] Meanwhile, when the object is sensed from a forward-right direction of the front surface of the main body, the controller 140 may perform control to extract a right lower area of the image acquired by the image acquisition unit; when the object is sensed from a forward-left direction of the main body, the controller 140 may perform control to extract a left lower area of the image acquired by the image acquisition unit; and when the object is sensed from a forward direction of the main body, the controller 140 may perform control to extract a central lower area of the image acquired by the image acquisition unit.
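
The direction-dependent extraction described above can be illustrated with a minimal sketch. The function names, the direction labels, and the fixed crop size are assumptions made for illustration only; the image is modeled as a plain list of pixel rows rather than an actual camera frame.

```python
from typing import List, Tuple

Image = List[List[int]]  # rows of pixel values; a stand-in for a camera frame


def crop_box(width: int, height: int, crop_w: int, crop_h: int,
             direction: str) -> Tuple[int, int, int, int]:
    """Return (left, top, right, bottom) of the lower area for a direction."""
    if crop_w > width or crop_h > height:
        raise ValueError("crop size exceeds image size")
    top = height - crop_h  # the lower part of the image is always used
    if direction == "forward-left":
        left = 0
    elif direction == "forward-right":
        left = width - crop_w
    elif direction == "forward":
        left = (width - crop_w) // 2
    else:
        raise ValueError(f"unknown direction: {direction}")
    return left, top, left + crop_w, top + crop_h


def extract(image: Image, box: Tuple[int, int, int, int]) -> Image:
    """Cut the given box out of the image."""
    left, top, right, bottom = box
    return [row[left:right] for row in image[top:bottom]]


# Hypothetical 6x4 test image whose pixel value encodes (row, column).
image = [[r * 10 + c for c in range(6)] for r in range(4)]
for direction in ("forward-left", "forward", "forward-right"):
    print(direction, extract(image, crop_box(6, 4, 2, 2, direction)))
```

In this sketch the crop always touches the bottom edge of the image, and only its horizontal position changes with the sensed direction.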

[0191] In addition, the controller 140 may perform control to shift an extraction target area of an image acquired by the image acquisition unit to correspond to a direction in which the sensed object is present, and then extract the extraction target area.

[0192] A feature of cropping a part from an image acquired by the image acquisition unit 120 and extracting the cropped part will be described in more detail with reference to FIGS. 15 to 21.

[0193] In addition, when the sensor unit 170 senses an object while the mobile robot 100, 100a, or 100b moves, the controller 140 may perform control to select, based on a moving direction and a moving speed of the main body 110, an image acquired at a specific point in time earlier than an object sensing time by the sensor unit 170 from among a plurality of continuous images acquired by the image acquisition unit 120.

[0194] In the case where the image acquisition unit 120 acquires an image using an object sensing time of the sensor unit 170 as a trigger signal, an object may not be included in the acquired image or may be captured in a very small size because the mobile robot keeps moving.

[0195] Thus, in one embodiment of the present invention, it is possible to select, based on a moving direction and a moving speed of the main body 110, an image acquired at a specific point in time earlier than an object sensing time of the sensor unit 170 from among a plurality of continuous images acquired by the image acquisition unit 120, and to use the selected image as data for object recognition.
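
One possible way to select such an earlier image is sketched below. The selection rule is an assumption, not a rule stated here: when the main body moves straight, the sketch looks back by the time needed to travel a fixed distance (`lookback_distance_m`), so a slower speed selects an earlier image; otherwise it falls back to the image closest to the sensing time. All names and default values are hypothetical.

```python
from typing import List, Optional, Tuple

Frame = Tuple[float, str]  # (capture time in seconds, image identifier)


def select_earlier_frame(frames: List[Frame], sensing_time: float,
                         speed_m_s: float, moving_straight: bool,
                         lookback_distance_m: float = 0.25,
                         max_lookback_s: float = 2.0) -> Optional[Frame]:
    """Pick a buffered frame captured before the object sensing time."""
    # Only frames captured at or before the sensing time are eligible.
    candidates = [f for f in frames if f[0] <= sensing_time]
    if not candidates:
        return None
    if moving_straight and speed_m_s > 0:
        # Assumed rule: look back by the time taken to travel a fixed distance.
        lookback_s = min(lookback_distance_m / speed_m_s, max_lookback_s)
    else:
        lookback_s = 0.0
    target_time = sensing_time - lookback_s
    # Choose the frame whose capture time is closest to the target time.
    return min(candidates, key=lambda f: abs(f[0] - target_time))


# Hypothetical buffer of continuous images captured every 0.1 s.
frames = [(i / 10, f"frame-{i}") for i in range(11)]
print(select_earlier_frame(frames, 1.0, 0.5, True))
print(select_earlier_frame(frames, 1.0, 0.25, True))
```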

[0196] Meanwhile, the object recognition module 144 may recognize an attribute of an object included in the selected image acquired at the predetermined point in time, based on data that is pre-learned through machine learning.

[0197] Meanwhile, input data for distinguishing an attribute of an object, and data for training the DNN may be stored in the storage 150.

[0198] An original image acquired by the image acquisition unit 120, and extracted images of a predetermined area may be stored in the storage 150.

[0199] In addition, in some implementations, weights and biases of the DNN may be stored in the storage 150.

[0200] Alternatively, in some implementations, weights and biases of the DNN may be stored in an embedded memory of the object recognition module 144.

[0201] Meanwhile, whenever a part of an image acquired by the image acquisition unit 120 is extracted, the object recognition module 144 may perform a learning process using the extracted part of the image as training data, or, after a predetermined number of images is extracted, the object recognition module 144 may perform a learning process.

[0202] That is, whenever an object is recognized, the object recognition module 144 may add the recognition result to update a DNN architecture, such as a weight, or, when a predetermined number of training data items is secured, the object recognition module 144 may perform a learning process using the secured data sets as training data so as to update the DNN architecture.

[0203] Alternatively, the mobile robot 100, 100a, or 100b may transmit the extracted part of the image to a predetermined server through the communication unit 190, and receive machine learning-related data from the predetermined server.

[0204] In this case, the mobile robot 100, 100a, or 100b may update the object recognition module 144 based on the machine learning-related data received from the predetermined server.
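
The two update timings described above (learning on every extracted image, or learning after a predetermined number of images has been secured) can be sketched with a small scheduler. The class name, the `train_fn` callback, and the `batch_size` parameter are hypothetical; the actual learning process is not modeled.

```python
from typing import Callable, List


class LearningScheduler:
    """Triggers a learning process once enough extracted images are secured."""

    def __init__(self, train_fn: Callable[[List[str]], None],
                 batch_size: int = 1) -> None:
        if batch_size < 1:
            raise ValueError("batch_size must be at least 1")
        self.train_fn = train_fn      # hypothetical learning callback
        self.batch_size = batch_size  # 1 means learning on every image
        self._pending: List[str] = []

    def on_extracted(self, patch: str) -> bool:
        """Register an extracted image part; return True if learning ran."""
        self._pending.append(patch)
        if len(self._pending) >= self.batch_size:
            self.train_fn(list(self._pending))
            self._pending.clear()
            return True
        return False


# Learning runs only after three extracted parts have been collected.
runs: List[List[str]] = []
scheduler = LearningScheduler(runs.append, batch_size=3)
for name in ("patch-a", "patch-b", "patch-c", "patch-d"):
    scheduler.on_extracted(name)
print(runs)
```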

[0205] FIG. 8 is an exemplary schematic internal block diagram of a server according to an embodiment of the present invention.

[0206] Referring to FIG. 8, a server 70 may include a communication unit 820, a storage 830, a learning module 840, and a processor 810.

[0207] The processor 810 may control overall operations of the server 70.

[0208] Meanwhile, the server 70 may be a server operated by a manufacturer of a home appliance such as the mobile robot 100, 100a, or 100b, a server operated by a service provider, or a kind of Cloud server.

[0209] The communication unit 820 may receive a variety of data, such as state information, operation information, controlled information, etc., from a mobile terminal, a home appliance such as the mobile robot 100, 100a, or 100b, a gateway, or the like.

[0210] In addition, the communication unit 820 may transmit data responsive to the received data to a mobile terminal, a home appliance such as the mobile robot 100, 100a, or 100b, a gateway, or the like.

[0211] To this end, the communication unit 820 may include one or more communication modules such as an Internet module, a communication module, etc.

[0212] The storage 830 may store received information and data necessary to generate result information responsive to the received information.

[0213] In addition, the storage 830 may store data used for machine learning, result data, etc.

[0214] The learning module 840 may serve as a learning machine of a home appliance such as the mobile robot 100, 100a, or 100b, etc.

[0215] The learning module 840 may include an artificial neural network, for example, a Deep Neural Network (DNN) such as a Convolutional Neural Network (CNN), a Recurrent Neural Network (RNN), a Deep Belief Network (DBN), etc., and may train the DNN.

[0216] As a learning scheme for the learning module 840, both unsupervised learning and supervised learning may be used.

[0217] Meanwhile, according to a setting, the processor 810 may perform control to update an artificial neural network architecture of a home appliance, such as the mobile robot 100, 100a, or 100b, into a learned artificial neural network architecture.

[0218] FIGS. 9 to 12 are diagrams for explanation of deep learning.

[0219] Deep learning is a kind of machine learning that learns from data by going progressively deeper through multiple stages.

[0220] Deep learning may represent a set of machine learning algorithms that extract more critical data from a plurality of data sets on each layer than on the previous layer.

[0221] Deep learning architectures may include an Artificial Neural Network (ANN) and may be, for example, composed of a DNN such as a Convolutional Neural Network (CNN), a Recurrent Neural Network (RNN), a Deep Belief Network (DBN), etc.

[0222] Referring to FIG. 9, the ANN may include an input layer, a hidden layer, and an output layer. Each layer includes a plurality of nodes, and each layer is connected to a subsequent layer. Nodes of adjacent layers may be connected to each other with weights.

[0223] Referring to FIG. 10, a computer (machine) constructs a feature map by discovering a predetermined pattern from input data 1010. The computer (machine) may recognize an object by extracting a low level feature 1020 through an intermediate level feature 1030 to an upper level feature 1040, and output a corresponding result 1050.

[0224] The ANN may abstract a higher level feature at each subsequent layer.

[0225] Referring to FIGS. 9 and 10, each node may operate based on an activation model, and an output value corresponding to an input value may be determined according to the activation model.

[0226] An output value of an arbitrary node, for example, a node of the low level feature 1020, may be input to a subsequent layer connected to the corresponding node, for example, a node of the intermediate level feature 1030. The node of the subsequent layer, for example, the node of the intermediate level feature 1030, may receive values output from a plurality of nodes of the low level feature 1020.

[0227] At this time, a value input to each node may be an output value of a previous layer with a weight applied. A weight may refer to strength of connection between nodes.

[0228] In addition, a deep learning process may be considered a process for determining an appropriate weight.
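
The weighted connection between layers can be sketched as follows. This is an illustrative example only: the sigmoid function is an assumed choice of activation model, and the weight and bias values are arbitrary.

```python
import math
from typing import Callable, List


def sigmoid(x: float) -> float:
    """Assumed activation model; other activation functions are possible."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs: List[float], weights: List[List[float]],
                  biases: List[float],
                  activation: Callable[[float], float] = sigmoid) -> List[float]:
    """Compute the output of every node in one layer.

    weights[k][i] is the strength of the connection from input i to node k.
    """
    outputs = []
    for node_weights, bias in zip(weights, biases):
        # Each node receives the previous layer's outputs with weights applied.
        weighted_sum = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(activation(weighted_sum))
    return outputs


# Two inputs feed a hidden layer of two nodes, which feeds one output node.
hidden = layer_forward([1.0, 0.5], [[0.4, -0.2], [0.3, 0.8]], [0.0, -0.1])
output = layer_forward(hidden, [[1.0, -1.0]], [0.0])
print(hidden, output)
```

Determining suitable values for `weights` and `biases` is what the learning process amounts to in this picture.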

[0229] Meanwhile, an output value of an arbitrary node, for example, a node of the intermediate level feature 1030, may be input to a node of a subsequent layer connected to the corresponding node, for example, a node of the upper level feature 1040. The node of the subsequent layer, for example, the node of the upper level feature 1040, may receive values output from a plurality of nodes of the intermediate level feature 1030.

[0230] The ANN may extract feature information corresponding to each level using a trained layer corresponding to each level. The ANN may perform abstraction sequentially such that a predetermined object may be recognized using feature information of the highest layer.

[0231] For example, face recognition with deep learning is as follows: a computer may classify bright pixels and dark pixels by degrees of brightness from an input image, distinguish simple forms such as boundaries and edges, and then distinguish more complex forms and objects. Lastly, the computer may recognize a form that defines a human face.

[0232] A deep learning architecture according to the present invention may employ a variety of well-known architectures. For example, the deep learning architecture may be a CNN, RNN, DBN, etc.

[0233] The RNN is widely used in natural language processing and is efficient in processing time-series data, which changes over time; thus, the RNN may construct an artificial neural network architecture by stacking a layer at each time step.

[0234] The DBN is a deep learning architecture that is constructed by stacking multiple layers of Restricted Boltzmann Machines (RBMs), the RBM being a deep learning technique. If a predetermined number of layers is constructed by repeating RBM learning, a DBN having the predetermined number of layers may be constructed.

[0235] The CNN is an architecture widely used especially in object recognition and the CNN will be described with reference to FIGS. 11 and 12.

[0236] The CNN is a model that imitates human brain functions based on an assumption that a human extracts basic features of an object, performs complex calculation on the extracted basic features, and recognizes the object based on a result of the calculation.

[0237] FIG. 11 is a diagram of a CNN architecture.

[0238] A CNN may also include an input layer, a hidden layer, and an output layer.

[0239] A predetermined image is input to the input layer.

[0240] Referring to FIG. 11, the hidden layer may be composed of a plurality of layers and include a convolutional layer and a sub-sampling layer.

[0241] In the CNN, various filters are basically used to extract features of an image through convolution computation, and pooling or a non-linear activation function is used to add non-linear characteristics.

[0242] Convolution is mainly used in filter computation in image processing fields and used to implement a filter for extracting a feature from an image.

[0243] For example, as shown in FIG. 12, if convolution computation is repeatedly performed with respect to the entire image by moving a 3×3 window, an appropriate result may be obtained according to a weight of the window.

[0244] Referring to (a) of FIG. 12, if convolution computation is performed with respect to a predetermined area 1210 using a 3×3 window, a result 1201 is output.

[0245] Referring to (b) of FIG. 12, if computation is performed again with respect to an area 1220 by moving the 3×3 window to the right, a predetermined result 1202 is output.

[0246] That is, as shown in (c) of FIG. 12, if computation is performed with respect to an entire image by moving a predetermined window, a final result may be obtained.
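
The sliding-window computation of FIG. 12 can be sketched in a few lines. The stride of one pixel and the absence of padding are assumptions, as are the example image and window weights. As is common in CNN implementations, the window is applied without flipping.

```python
from typing import List

Matrix = List[List[float]]


def convolve2d(image: Matrix, window: Matrix) -> Matrix:
    """Slide a square window over the image (stride 1, no padding)."""
    k = len(window)
    out_h = len(image) - k + 1
    out_w = len(image[0]) - k + 1
    result = []
    for top in range(out_h):          # move the window downward
        row = []
        for left in range(out_w):     # move the window to the right
            acc = 0.0
            for i in range(k):
                for j in range(k):
                    # Multiply each covered pixel by the window weight.
                    acc += image[top + i][left + j] * window[i][j]
            row.append(acc)
        result.append(row)
    return result


# Hypothetical 4x4 image and a 3x3 window whose weights are all 1.
image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12],
         [13, 14, 15, 16]]
window = [[1, 1, 1]] * 3
print(convolve2d(image, window))
```

With the all-ones window, each output value is simply the sum of the nine pixels covered by the window at that position; other weights yield other filters.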

[0247] A convolution layer may be used to perform convolution filtering for filtering information extracted from a previous layer with a filter of a predetermined size (for example, a 3×3 window exemplified in FIG. 12).

[0248] The convolutional layer performs convolutional computation on input image data 1100 and 1102 with a convolutional filter, and generates feature maps 1101 and 1103 representing features of an input image 1100.

[0249] As a result of convolution filtering, as many filtered images as there are filters included in the convolution layer may be generated. The convolution layer may be composed of nodes included in the filtered images.

[0250] In addition, a sub-sampling layer paired with a convolution layer may include the same number of feature maps as the paired convolution layer.

[0251] The sub-sampling layer reduces the dimensions of the feature maps 1101 and 1103 through sampling or pooling.
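
As an illustration of sub-sampling, the sketch below applies max pooling, which is one common pooling scheme and is assumed here for the example. The 2×2 pooling size and the sample feature map are also assumptions.

```python
from typing import List

Matrix = List[List[float]]


def max_pool(feature_map: Matrix, size: int = 2) -> Matrix:
    """Keep the largest value of each non-overlapping size x size block."""
    rows = len(feature_map) // size
    cols = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(cols)
        ]
        for r in range(rows)
    ]


# A hypothetical 4x4 feature map is reduced to 2x2.
feature_map = [[1, 2, 3, 4],
               [5, 6, 7, 8],
               [9, 10, 11, 12],
               [13, 14, 15, 16]]
print(max_pool(feature_map))
```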

[0252] The output layer recognizes the input image 1100 by combining various features represented in a feature map 1104.

[0253] An object recognition module of a mobile robot according to the present invention may employ the aforementioned various deep learning architectures. For example, a CNN widely used in recognizing an object in an image may be employed, but aspects of the present invention are not limited thereto.

[0254] Meanwhile, training of an ANN may be performed by adjusting a weight of a connection line between nodes so that a desired output is produced in response to a given input. In addition, the ANN may continuously update the weight through the training. In addition, back propagation or the like may be used to train the ANN.
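
Weight adjustment can be sketched with a single node trained by gradient descent, which is the one-layer case of back propagation. The sigmoid activation, the squared-error measure, the learning rate, the number of epochs, and the logical-OR training data are all assumptions chosen to keep the example small.

```python
import math
from typing import List, Tuple

Sample = Tuple[List[float], float]  # (inputs, desired output)


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def predict(weights: List[float], bias: float, inputs: List[float]) -> float:
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def mean_squared_error(weights: List[float], bias: float,
                       samples: List[Sample]) -> float:
    return sum((predict(weights, bias, x) - t) ** 2
               for x, t in samples) / len(samples)


def train(samples: List[Sample], epochs: int = 2000,
          learning_rate: float = 0.5) -> Tuple[List[float], float]:
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            output = predict(weights, bias, inputs)
            # Chain rule: error gradient with respect to the weighted sum.
            delta = (output - target) * output * (1.0 - output)
            # Move each weight against its gradient.
            weights = [w - learning_rate * delta * x
                       for w, x in zip(weights, inputs)]
            bias -= learning_rate * delta
    return weights, bias


# Hypothetical training data: the logical OR of two inputs.
samples = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0),
           ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]
weights, bias = train(samples)
print(mean_squared_error([0.0, 0.0], 0.0, samples),
      mean_squared_error(weights, bias, samples))
```

In a network with hidden layers, the same error term is propagated backward layer by layer to update the weights of every connection.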

[0255] FIGS. 13 and 14 are flowcharts illustrating a method for controlling a mobile robot according to an embodiment of the present invention.

[0256] Referring to FIGS. 2 to 7 and 13, the mobile robot 100, 100a, or 100b may perform cleaning while moving according to a command or a setting (S1310).

[0257] The sensor unit 170 includes an obstacle detection sensor 131 and may sense a forward object.