Method For Automatically Mapping Light Elements In An Assembly Of Light Structures

Green; Alexander ; et al.

U.S. patent application number 16/267341, for a method for automatically mapping light elements in an assembly of light structures, was filed with the patent office on February 4, 2019, and published on 2019-06-06. The applicant listed for this patent is Symmetric Labs, Inc. The invention is credited to Kyle Fleming and Alexander Green.

| Field | Value |
|---|---|
| United States Patent Application | 20190174603 |
| Application Number | 16/267341 |
| Family ID | 59680302 |
| Kind Code | A1 |
| Publication Date | June 6, 2019 |
| Inventors | Green; Alexander; et al. |

METHOD FOR AUTOMATICALLY MAPPING LIGHT ELEMENTS IN AN ASSEMBLY OF LIGHT STRUCTURES

Abstract

One variation of a method for automatically mapping light elements in light structures arranged in an assembly includes: defining a sequence of test frames--each specifying activation of a unique subset of light elements in the assembly--executable by the set of light structures; serving the sequence of test frames to the assembly for execution; receiving photographic test images of the assembly, each photographic test image recorded during execution of one test frame by the assembly; for each photographic test image, identifying a location of a particular light element based on a local change in light level represented in the photographic test image, the particular light element activated by the set of light structures according to a test frame during recordation of the photographic test image; and aggregating locations of light elements identified in photographic test images into a virtual map representing positions of light elements within the assembly.

| Field | Value |
|---|---|
| Inventors | Green; Alexander; (San Francisco, CA); Fleming; Kyle; (San Francisco, CA) |
| Applicant | Symmetric Labs, Inc. |
| Family ID | 59680302 |
| Appl. No. | 16/267341 |
| Filed | February 4, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 15909688 | Mar 1, 2018 | 10237957 | 16267341 |
| 15445911 | Feb 28, 2017 | 9942970 | 15909688 |
| 62424523 | Nov 20, 2016 | | 15445911 |
| 62301581 | Feb 29, 2016 | | 15445911 |

| Classification | Codes |
|---|---|
| Current U.S. Class | 1/1 |
| Current CPC Class | H05B 45/22 20200101; G06T 2207/20224 20130101; H05B 47/125 20200101; H04N 7/18 20130101; G06T 2207/10016 20130101; G06T 7/74 20170101; H05B 47/155 20200101 |
| International Class | H05B 37/02 20060101 H05B037/02; H05B 33/08 20060101 H05B033/08; G06T 7/73 20060101 G06T007/73; H04N 7/18 20060101 H04N007/18 |

Claims

1. A method for automatically mapping light elements in a set of light structures arranged in an assembly, the method comprising: serving a sequence of test frames to the assembly for execution by the set of light structures, each test frame in the sequence of frames executable by the set of light structures and specifying activation of a subset of light elements within the set of light structures; receiving a set of optical test images of the assembly, each optical test image in the set of optical test images recorded by an imaging system during execution of one test frame, in the sequence of test frames, by the set of light structures; for each optical test image in the set of optical test images, detecting a location of a particular light element within a field of view of the imaging system based on a local change in light level represented in the optical test image, the particular light element activated within the set of light structures according to a test frame in the sequence of test frames executed by the assembly during recordation of the optical test image; and aggregating locations of light elements detected in the set of optical test images into a virtual map representing positions of light elements within the assembly.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation application of U.S. application Ser. No. 15/909,688, filed on 1 Mar. 2018, which is a continuation application of U.S. application Ser. No. 15/445,911, filed on 28 Feb. 2017, which claims priority to U.S. Provisional Application No. 62/301,581, filed on 29 Feb. 2016, and to U.S. Provisional Application No. 62/424,523, filed on 20 Nov. 2016, all of which are incorporated in their entireties by this reference.

TECHNICAL FIELD

[0002] This invention relates generally to the field of lighting systems and more specifically to a new and useful method for automatically mapping light elements in an assembly of light structures in the field of lighting systems.

BRIEF DESCRIPTION OF THE FIGURES

[0003] FIG. 1 is a flowchart representation of a method;

[0004] FIG. 2 is a schematic representation of a system;

[0005] FIGS. 3A, 3B, 3C, and 3D are schematic representations of one variation of the system;

[0006] FIG. 4 is a flowchart representation of one variation of the method;

[0007] FIG. 5 is a flowchart representation of one variation of the method;

[0008] FIG. 6 is a flowchart representation of one variation of the method; and

[0009] FIG. 7 is a schematic representation of one variation of the method.

DESCRIPTION OF THE EMBODIMENTS

[0010] The following description of embodiments of the invention is not intended to limit the invention to these embodiments but rather to enable a person skilled in the art to make and use this invention. Variations, configurations, implementations, example implementations, and examples described herein are optional and are not exclusive to the variations, configurations, implementations, example implementations, and examples they describe. The invention described herein can include any and all permutations of these variations, configurations, implementations, example implementations, and examples.

1. Method

[0011] As shown in FIG. 1, a method S100 for automatically mapping light elements in an assembly of light structures includes: retrieving addresses from light structures in the assembly in Block S110; and, based on the addresses retrieved from light structures in the assembly, generating a sequence of test frames in Block S120. The sequence of test frames includes: a reset frame specifying activation of multiple light elements in light structures across the assembly; a set of synchronization frames succeeding the reset frame and specifying activation of light elements in light structures across the assembly; a set of pairs of blank frames interposed between adjacent synchronization frames and specifying deactivation of light elements in light structures across the assembly; and a set of test frames interposed between adjacent blank frame pairs, each test frame in the set of test frames specifying activation of a unique subset of light elements in light structures within the assembly. The method S100 also includes, during a test period: transmitting the sequence of test frames to light structures in the assembly in Block S130; and, at an imaging system, capturing a sequence of images of the assembly in Block S140. Furthermore, the method S100 includes: aligning the sequence of test frames to the sequence of images based on the reset frame, the set of synchronization frames, and values of pixels in images in the sequence of images in Block S150; for a particular test frame in the set of test frames, determining a position of a light element, addressed in the particular test frame, within a particular image, in the sequence of images, corresponding to the particular test frame based on values of pixels in the particular image in Block S160; and aggregating positions of light elements determined in images in the sequence of images into a virtual 3D map of positions and addresses of light elements within the assembly in Block S170.
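The frame structure in Block S120 can be sketched as follows. This is a minimal illustration, not the patented implementation, and it rests on several assumptions: a frame is a hypothetical dict mapping each light-structure address to one RGB color value per light element; the reset and synchronization frames light every element white (so this sketch distinguishes the reset frame only by its position); and each test frame lights exactly one element. The ordering used (reset, then for each test frame: sync, two blanks, test, two blanks, then a closing sync) is one ordering consistent with [0011].

```python
from typing import Dict, List, Tuple

RGB = Tuple[int, int, int]
Frame = Dict[str, List[RGB]]  # light-structure address -> one color value per light element

WHITE: RGB = (255, 255, 255)
OFF: RGB = (0, 0, 0)


def uniform_frame(element_counts: Dict[str, int], color: RGB) -> Frame:
    """Frame that assigns the same color to every light element in the assembly."""
    return {addr: [color] * n for addr, n in element_counts.items()}


def build_test_sequence(element_counts: Dict[str, int]):
    """Return (frames, labels); labels[i] names what frames[i] is for."""
    frames: List[Frame] = [uniform_frame(element_counts, WHITE)]  # reset frame
    labels: List = ["reset"]
    blank = uniform_frame(element_counts, OFF)  # shared, never mutated
    for addr, n in element_counts.items():
        for idx in range(n):
            test = uniform_frame(element_counts, OFF)
            test[addr][idx] = WHITE  # activate exactly one light element
            frames += [uniform_frame(element_counts, WHITE), blank, blank, test, blank, blank]
            labels += ["sync", "blank", "blank", (addr, idx), "blank", "blank"]
    frames.append(uniform_frame(element_counts, WHITE))  # closing sync frame
    labels.append("sync")
    return frames, labels
```

The `labels` list records which (address, subaddress) each test frame activates, which is the bookkeeping needed later to attach a detected image location to a specific light element.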

[0012] As shown in FIG. 4, one variation of the method S100 includes: reading an address from each light structure in the set of light structures in Block S110; defining a sequence of test frames executable by the set of light structures in Block S120, each test frame in the sequence of frames specifying activation of a unique subset of light elements across the set of light structures; serving the sequence of test frames to the assembly for execution by the set of light structures in Block S130; receiving a set of photographic test images of the assembly in Block S140, each photographic test image in the set of photographic test images recorded by an imaging system during execution of one test frame, in the sequence of test frames, by the set of light structures; for each photographic test image in the set of photographic test images, identifying a location of a particular light element within a field of view of the imaging system based on a local change in light level represented in the photographic test image in Block S160, the particular light element activated by the set of light structures according to a test frame in the sequence of test frames during recordation of the photographic test image; and aggregating locations of light elements identified in the set of photographic test images into a virtual map representing positions of light elements within the assembly in Block S170.
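One way to identify a light element "based on a local change in light level" (Block S160) is to subtract a baseline image from the test image and take the brightness-weighted centroid of pixels that brightened beyond a threshold. The sketch below assumes grayscale images given as nested lists of equal size, a fixed camera, and a hypothetical `threshold` parameter; it is an illustration of simple frame differencing, not necessarily the detector the applicants use.

```python
from typing import List, Optional, Tuple

Image = List[List[float]]  # grayscale brightness, indexed [row][col]


def locate_light_element(test: Image, baseline: Image,
                         threshold: float = 50.0) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of pixels that brightened by at least `threshold`."""
    sum_w = sum_x = sum_y = 0.0
    for y, (test_row, base_row) in enumerate(zip(test, baseline)):
        for x, (t, b) in enumerate(zip(test_row, base_row)):
            change = t - b  # local increase in light level at this pixel
            if change >= threshold:
                sum_w += change
                sum_x += change * x
                sum_y += change * y
    if sum_w == 0.0:
        return None  # element not visible (e.g., occluded or outside the field of view)
    return (sum_x / sum_w, sum_y / sum_w)
```

Weighting by the size of the change gives sub-pixel locations when a lit element spans several pixels; returning `None` leaves room for elements the camera cannot see from its current position.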

2. Applications

[0013] Generally, the method S100 can be executed by a computer system in conjunction with an assembly of one or more light structures, each including one or more light elements, in order to automatically determine a position of each light element in the assembly in real space and to construct a 3D virtual map of the assembly. In particular, a set of light structures can be assembled into a unique assembly, such as on a theater or performing arts stage, and connected to the computer system. The computer system can then: generate a sequence of test frames in which each test frame specifies activation of a unique subset of light elements (e.g., one unique light element) in the assembly; sequentially serve the sequence of test frames to the assembly for execution while a camera in a fixed position records photographic test images of the assembly; identify positions of light elements in the field of view of the camera based on differences in lighted areas between photographic test images or between photographic test images and a baseline image; and compile these positions of light elements in the field of view of the camera into a virtual 3D representation of the assembly, including relative positions and subaddresses of each light element.
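Compiling detections into a map with "relative positions and subaddresses" can be illustrated with a small aggregation step. In this sketch the keys are hypothetical (light-structure address, light-element subaddress) pairs, undetected elements arrive as `None`, and "relative" is assumed to mean relative to the centroid of all detected elements; the source does not fix a reference point.

```python
from typing import Dict, Optional, Tuple

Address = Tuple[str, int]  # (light-structure address, light-element subaddress)


def aggregate_virtual_map(detections: Dict[Address, Optional[Tuple[float, float]]]
                          ) -> Dict[Address, Tuple[float, float]]:
    """Keep detected elements and express positions relative to their centroid."""
    found = {addr: pos for addr, pos in detections.items() if pos is not None}
    if not found:
        return {}
    cx = sum(p[0] for p in found.values()) / len(found)
    cy = sum(p[1] for p in found.values()) / len(found)
    return {addr: (x - cx, y - cy) for addr, (x, y) in found.items()}
```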

[0014] In the foregoing example, during setup, the computer system can read addresses from each light structure in the assembly in Block S110, retrieve a virtual model of each light structure based on its address in Block S170, and, in Block S160, align the virtual model of a light structure to light elements of that same light structure identified in photographic test images recorded by the camera, thereby locating the virtual model within the virtual map. Alternatively, the computer system can serve two sequences of test frames to the assembly: a first sequence while the camera is arranged in a first location, and a second sequence while the camera is arranged in a second location offset from the first location. The computer system can then compile detected positions of light elements in the two separate sets of photographic test images, together with a known distance between the first and second locations, into a 3D virtual map of the assembly.
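The two-location variant amounts to stereo triangulation. A minimal sketch, assuming an idealized rectified setup that the source does not specify: both camera locations share the same pinhole intrinsics (focal length `focal_px` in pixels, principal point `principal_px`) and orientation, and the second location is offset by `baseline_m` along the camera's x-axis. Depth then follows from the horizontal disparity as Z = f·B / d.

```python
from typing import Tuple


def triangulate(left_px: Tuple[float, float], right_px: Tuple[float, float],
                baseline_m: float, focal_px: float,
                principal_px: Tuple[float, float]) -> Tuple[float, float, float]:
    """3D point (X, Y, Z) in the first camera's frame, in the units of baseline_m."""
    (u_l, v_l), (u_r, _v_r) = left_px, right_px
    disparity = u_l - u_r  # horizontal shift between the two camera locations
    if disparity <= 0:
        raise ValueError("point must appear shifted left in the second image")
    z = focal_px * baseline_m / disparity  # depth from similar triangles
    cx, cy = principal_px
    return ((u_l - cx) * z / focal_px, (v_l - cy) * z / focal_px, z)
```

For example, with f = 1000 px, B = 0.5 m, and principal point (640, 360), an element seen at (840, 460) from the first location and (740, 460) from the second has a disparity of 100 px and lies at (1.0, 0.5, 5.0) m. A real rig would first calibrate and rectify both views (for example with a standard stereo-calibration library).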

[0015] With a virtual map of the assembly thus constructed, the computer system can project a new or preexisting dynamic virtual light pattern onto the virtual 3D map of the assembly and generate a sequence of replay frames that, when executed by the assembly, recreate the dynamic virtual light pattern in real space. For example, a lighting crew can assemble a set of dozens or hundreds of one-meter cubic light structures, as shown in FIG. 2, on a stage according to a custom assembly geometry without regard to the orientation of these light structures and then connect these light structures to a router without regard to their order. Once the assembly is complete and connected to the computer system via the router, the computer system can execute Blocks of the method S100 to automatically construct a virtual map of the assembly. In this example, a lighting engineer can then select a predefined virtual light pattern, customize an existing virtual light pattern, code a new virtual light pattern, or string together multiple virtual light patterns into a composite virtual light pattern through a user interface executing on the computer system. Once a virtual light pattern is selected, the computer system can scale, translate, and rotate the pattern in three dimensions in order to locate the virtual light pattern on the virtual map and generate a sequence of replay frames, wherein each replay frame assigns a color value to each light element in the assembly according to a 3D color pattern defined by the light pattern at one instant within the duration of the dynamic light pattern.
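Generating replay frames from a placed pattern can be sketched as sampling a function of position and time at every mapped light element. Assumptions, all hypothetical: a pattern is a Python callable `(x, y, z, t) -> RGB`; the virtual map is a dict from (address, subaddress) to a 3D position; and, to keep the sketch short, rotation is applied only about the z-axis (a full 3D placement would use a rotation matrix or quaternion).

```python
import math
from typing import Callable, Dict, List, Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[int, int, int]
Address = Tuple[str, int]
# Hypothetical pattern signature: position and time in pattern space -> color value
Pattern = Callable[[float, float, float, float], RGB]


def to_pattern_space(p: Vec3, scale: float, yaw_rad: float, offset: Vec3) -> Vec3:
    """Map an element position into pattern space: translate, rotate about z, then scale."""
    x, y, z = (p[i] - offset[i] for i in range(3))
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return ((c * x - s * y) * scale, (s * x + c * y) * scale, z * scale)


def replay_frames(virtual_map: Dict[Address, Vec3], pattern: Pattern,
                  duration_s: float, fps: float, scale: float = 1.0,
                  yaw_rad: float = 0.0, offset: Vec3 = (0.0, 0.0, 0.0)
                  ) -> List[Dict[Address, RGB]]:
    """Sample the dynamic pattern at every light element once per replay frame."""
    local = {a: to_pattern_space(p, scale, yaw_rad, offset) for a, p in virtual_map.items()}
    frames = []
    for k in range(int(round(duration_s * fps))):
        t = k / fps  # instant within the pattern's duration
        frames.append({a: pattern(x, y, z, t) for a, (x, y, z) in local.items()})
    return frames
```

Because positions are transformed once and the pattern is re-sampled per frame, the same pattern can be moved, resized, or turned on a new assembly without editing the pattern itself.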

[0016] The computer system can therefore execute Blocks of the method S100 to automatically generate a virtual map of a custom assembly of light structures, to automatically project a virtual dynamic 3D light pattern onto the virtual map, and to automatically define a sequence of replay frames that--when executed on the assembly--recreate the virtual dynamic 3D light pattern in real space without necessitating (substantial) consideration of light structure addresses or orientations during construction of the assembly or manual configuration of the virtual map within the user interface.

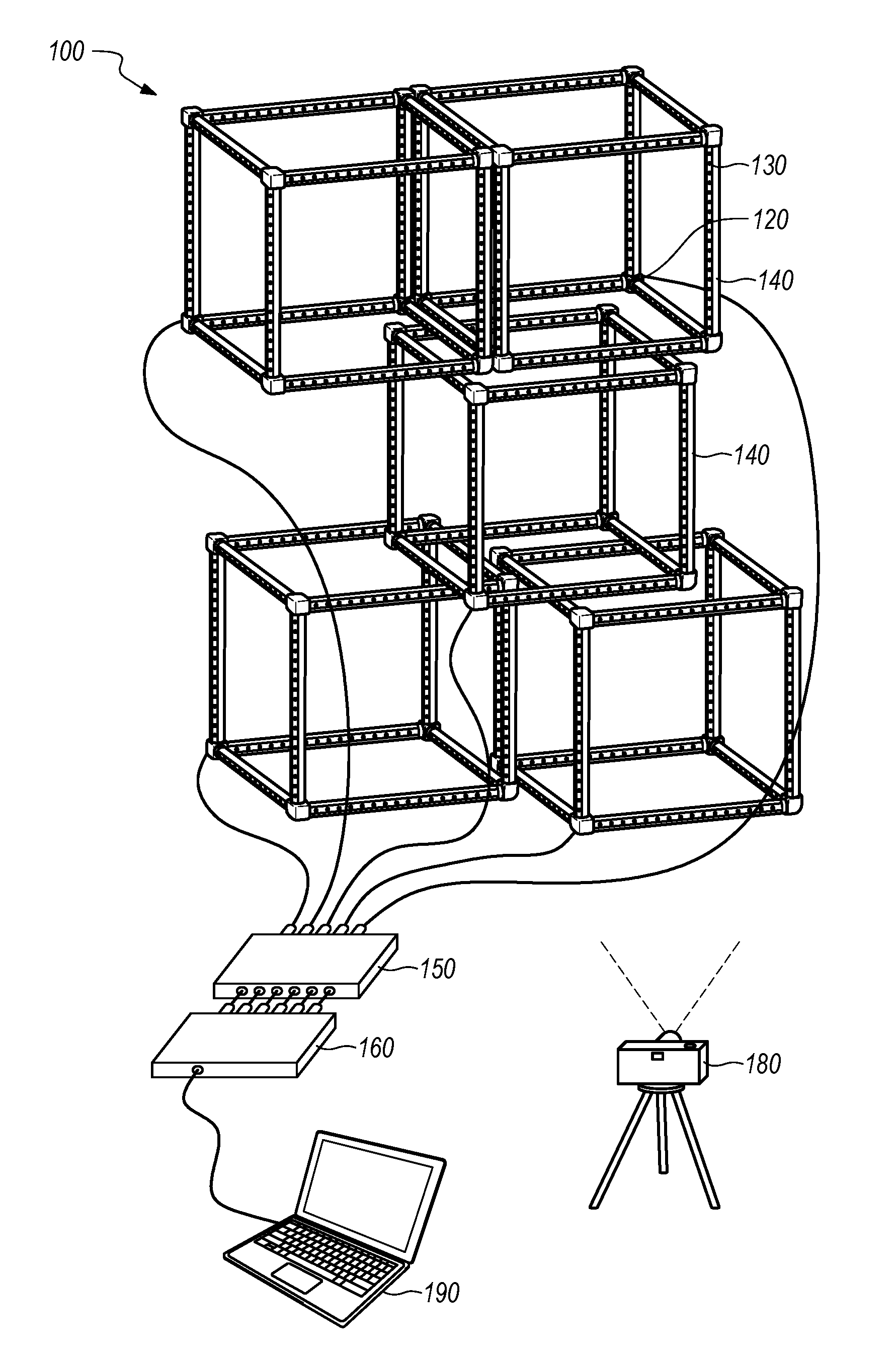

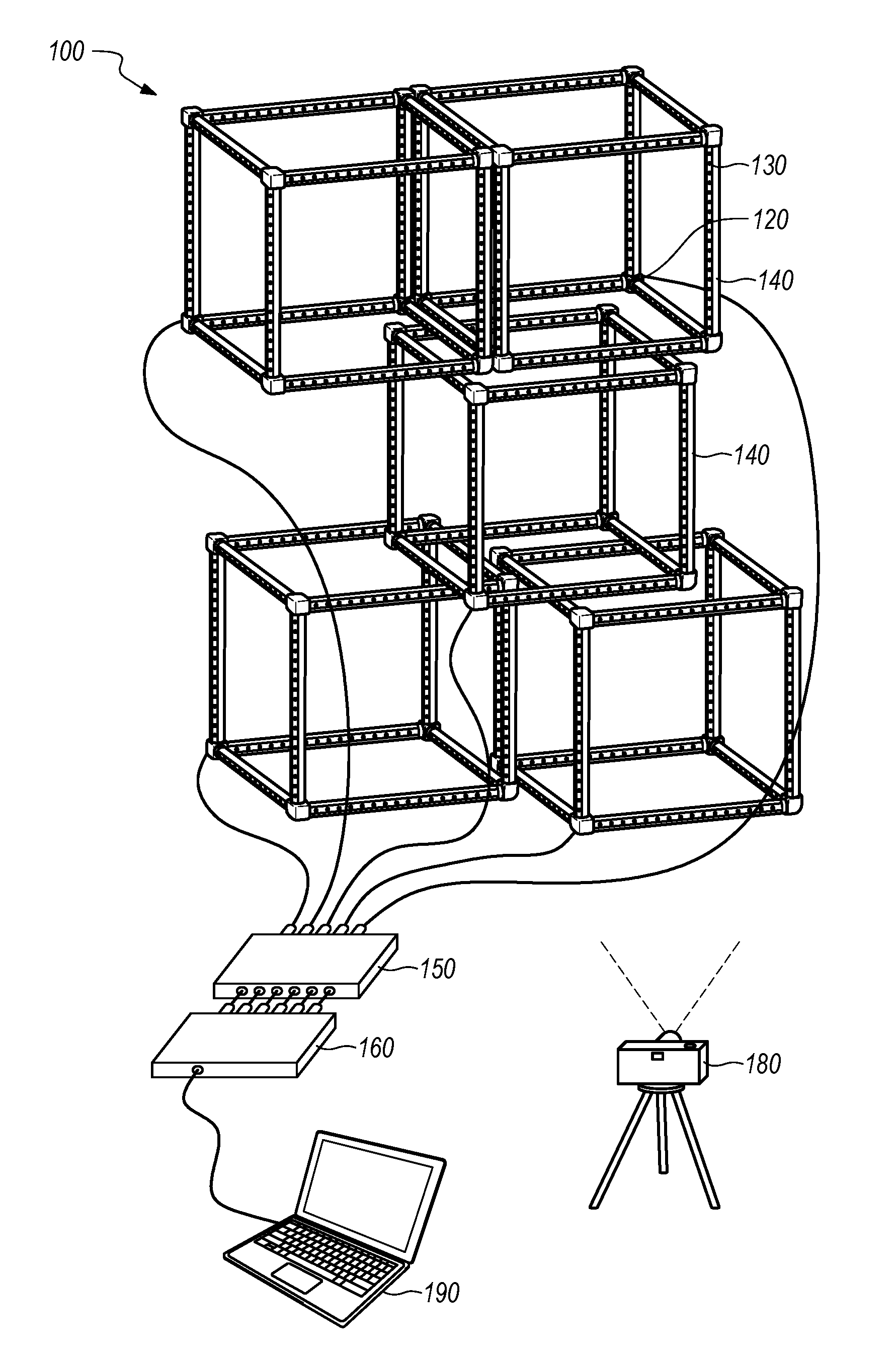

[0017] As shown in FIG. 2, the method S100 can be implemented by a system 100 including one or more light structures 110 forming an assembly of light structures, a computer system 190 (e.g., a remote controller), a power supply 150, a router 160, and/or an imaging system 180 (such as a camera), etc.

3. Light Structure

[0018] In one implementation, a light structure 110 defines an open cubic structure (a "light cube") including: twelve linear beams 112 that define the twelve edges of the light cube; eight corner elements 120 that each receive three perpendicular linear beams 112 to define the eight corners of the light cube; and a single chain of light elements 130 (e.g., 180 LEDs) arranged along each linear beam in the light cube, as shown in FIGS. 2, 3B, and 3C.
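A virtual model of the light cube can be approximated from this description. With 180 light elements on twelve beams, each beam carries 15 elements. The sketch below assumes, for illustration only, an axis-aligned cube of side `size`, uniform spacing that keeps elements clear of the corner elements, and a hypothetical edge order; the actual chain order along the beams is not given in the source.

```python
from itertools import product
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def cube_edges(size: float = 1.0) -> List[Tuple[Vec3, Vec3]]:
    """The twelve edges of an axis-aligned cube, as (start, end) corner pairs."""
    corners = list(product((0.0, size), repeat=3))  # eight corners
    edges = []
    for a in corners:
        for axis in range(3):
            if a[axis] == 0.0:  # each edge once: from its low corner along one axis
                b = list(a)
                b[axis] = size
                edges.append((a, tuple(b)))
    return edges


def light_element_positions(size: float = 1.0, per_edge: int = 15) -> List[Vec3]:
    """Evenly spaced element positions along each edge (hypothetical chain order)."""
    positions = []
    for start, end in cube_edges(size):
        for k in range(per_edge):
            f = (k + 0.5) / per_edge  # offset by half a step from each corner
            positions.append(tuple(s + f * (e - s) for s, e in zip(start, end)))
    return positions
```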

3.1 Light Element

[0019] In this implementation, each light element 130 can include one or more light emitters, as shown in FIGS. 3B and 3C. For example, each light element 130 can include a discrete red emitter, a discrete green emitter, and a discrete blue emitter packaged in a single light element 130 housing. In this example, each red, green, and blue emitter can be independently powered (e.g., from 0% to 100% power in 256 steps) in order to achieve a wide range of color outputs from the light element 130 (e.g., ~16.8M colors). Furthermore, each light element 130 can be independently addressable and can be assigned a color value independent of other light elements in the chain. For example, during a first frame (e.g., a 0.2-second frame), a light element 130 in the chain can be assigned a color value [0, 0, 0], or "OFF." In this example, during a second, subsequent frame, the light element 130 can be assigned a color value [255, 255, 255], or "white" at full (gamma-corrected) brightness. Furthermore, in this example, during a third frame, the light element 130 can be assigned a color value [0, 255, 255], or "cyan" at full brightness. During a fourth frame, the light element 130 can be assigned a color value [0, 127, 127], or "cyan" at 50% (gamma-corrected) brightness.
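The color arithmetic above can be checked directly: 256 levels on each of three emitters gives 256³ = 16,777,216 combinations, which is where "~16.8M colors" comes from. The sketch also maps an 8-bit color value to an emitter duty cycle. The `gamma` parameter is an assumption: the source mentions gamma-corrected brightness but does not give a curve, so the default of 1.0 is a plain linear mapping.

```python
from typing import Tuple

RGB = Tuple[int, int, int]
STEPS = 256  # 8-bit color value per emitter: 0..255

OFF: RGB = (0, 0, 0)
WHITE: RGB = (255, 255, 255)
CYAN_FULL: RGB = (0, 255, 255)
CYAN_HALF: RGB = (0, 127, 127)


def duty_cycles(color: RGB, gamma: float = 1.0) -> Tuple[float, float, float]:
    """Duty cycle (0.0 to 1.0) per emitter; gamma=1.0 is an assumed linear map."""
    return tuple((v / (STEPS - 1)) ** gamma for v in color)


print(STEPS ** 3)  # 16777216 distinct colors, i.e. ~16.8M
```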

3.2 Local Controller

[0020] In this implementation, each light element 130 can include a local controller that: receives a string (or array) of color values for light elements in the chain from a preceding light element 130 (or, for the first light element 130 in the chain, from the control module 140 described below); strips its assigned color value from the string (or array) of color values; passes the remaining portion of the string (or array) of color values to a subsequent light element 130; and implements its assigned color value, such as immediately or in response to a trigger (e.g., a clock output), for each frame during operation of the light structure 110. For example, the local controller can set a duty cycle for each color emitter in its corresponding light element 130, such as from 0% to 100% duty in 256 increments, based on color values received from the control module 140 in order to achieve a color output from the light element 130 as specified by the control module 140 for the current frame. For a subsequent frame, the control module 140 can pass a new array of color values to the chain of light elements, and each light element 130 can repeat the foregoing methods and techniques to output colored light according to a color value specified by the control module 140 for the subsequent frame. In a similar implementation, the light structure 110 includes multiple discrete chains of light elements, wherein each chain and/or each light element 130 in each chain is independently addressable with a color value. Each chain and/or each light element 130 in each chain can thus implement the foregoing methods and techniques to output colored light according to a color value specified by the control module 140.

[0021] In one implementation, the computer system 190 described below generates one array of color values per light structure 110 per replay frame, wherein each array includes one color value per light element 130 in its corresponding light structure 110. In this implementation, each color value represents both a hue and a brightness of light output by the corresponding light element 130 during execution of the replay frame. For example, for a light structure 110 that includes a chain of 180 light elements connected in series, in which each light element 130 contains three color elements (e.g., red (R), green (G), and blue (B) color elements), the computer system 190 can output one array of the form $[R_0, G_0, B_0, R_1, G_1, B_1, R_2, G_2, B_2, R_3, G_3, B_3, \ldots, R_{178}, G_{178}, B_{178}, R_{179}, G_{179}, B_{179}]$ to the light structure 110 per replay frame. In this implementation, when a local controller of a first light element 130 in the chain receives a new array from the computer system 190, the local controller can: strip the first three values from the array; set a brightness of the red component of the light element 130 according to the first stripped value; set a brightness of the green component of the light element 130 according to the second stripped value; set a brightness of the blue component of the light element 130 according to the third stripped value; and pass the remainder of the array to a next light element 130 in the chain within the light structure 110. Upon receipt of the revised array, a local controller of a second light element 130 in the chain can implement similar methods and techniques to update RGB components in its light element 130, to again revise the array, and to pass the revised array to a next light element 130 in the chain, and so on, until the array reaches and is implemented by the final light element 130 in the chain.
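The strip-and-pass behavior of the local controllers can be simulated in a few lines. This is a software model for illustration, with hypothetical class and function names; real local controllers do this in firmware on a serial data line.

```python
from typing import List, Tuple


class LightElement:
    """Models one local controller in a daisy chain of light elements."""

    def __init__(self) -> None:
        self.rgb: Tuple[int, int, int] = (0, 0, 0)

    def receive(self, values: List[int]) -> List[int]:
        # Strip the first three values, apply them as R, G, B brightness,
        # and return the remainder for the next element in the chain.
        r, g, b = values[:3]
        self.rgb = (r, g, b)
        return values[3:]


def push_array(chain: List[LightElement], array: List[int]) -> None:
    """Propagate one per-frame array down the chain, as the control module would."""
    if len(array) != 3 * len(chain):
        raise ValueError("expected one RGB triple per light element")
    remainder = array
    for element in chain:
        remainder = element.receive(remainder)
```

Each hop shortens the array by three values, so no controller needs to know its own position in the chain.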

[0022] Alternatively, the first local controller can read a color value--from the array--at array positions predefined for the first light element 130, implement this color value, and pass the complete array to the next light element 130 in the chain.
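The offset-based alternative can be sketched by having each controller index into the unmodified array. The interleaved R, G, B layout and the hypothetical `element_index` (each controller's preconfigured position) are assumptions carried over from the previous paragraph.

```python
from typing import List, Tuple


def read_own_color(array: List[int], element_index: int) -> Tuple[int, int, int]:
    """Read this element's RGB triple at its predefined offset; the array is not modified."""
    start = 3 * element_index  # assumed interleaved R, G, B layout
    r, g, b = array[start:start + 3]
    return (r, g, b)


frame = [10, 20, 30, 40, 50, 60]
colors = [read_own_color(frame, i) for i in range(2)]  # each element sees the full array
```

The trade-off relative to stripping is that every hop forwards the full array, but each controller must be configured with its own index.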

[0023] Yet alternatively, the computer system 190 described below can generate a set of three arrays of color values (e.g., red, green, and blue arrays) per light structure 110 per replay frame, wherein each array in the set includes one color value, in one color channel (e.g., red, green, or blue), per light element 130 in its corresponding light structure 110. In this implementation, each color value represents a brightness of one component of a corresponding light element 130 for the replay frame. Upon receipt of a set of arrays from the computer system 190, local controllers at light elements in a light structure 110 can implement methods and techniques described above to read color values from this set of arrays and to update components of light elements in the light structure 110 according to these color values.
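The three-array variant is a planar layout of the same data. A small sketch, with hypothetical helper names, converting the interleaved array described in [0021] into separate red, green, and blue arrays and reading one element's color back out:

```python
from typing import List, Tuple


def to_planar(interleaved: List[int]) -> Tuple[List[int], List[int], List[int]]:
    """Split [R0, G0, B0, R1, ...] into separate red, green, and blue arrays."""
    return interleaved[0::3], interleaved[1::3], interleaved[2::3]


def color_of(planar: Tuple[List[int], List[int], List[int]], i: int) -> Tuple[int, int, int]:
    """A local controller reads the i-th value from each channel array."""
    red, green, blue = planar
    return (red[i], green[i], blue[i])
```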

[0024] The computer system 190 and chains of light elements and local controllers across an assembly of light structures can repeat this process for a sequence of replay frames--such as at a preset frame rate (or "refresh rate," such as 20 Hz, 200 Hz, or 400 Hz)--during playback of a lighting pattern, as described below.

3.3 Control Module

[0025] The light structure 110 also includes a control module 140 connected to the chain of light elements and configured to interface with the computer system 190 as shown in FIGS. 3B and 3C. In one implementation, the control module 140 is preloaded with a unique ID. In one example, the unique ID defines a MAC address (or IP address) that includes: a pseudorandomly assigned or serialized UUID segment; and additional data, such as a light element 130 configuration (e.g., a number of light elements, an offset distance between light elements), a power limit of the light structure 110 (e.g., 25 W, 100 W), a light structure 110 configuration (e.g., point light source, cubic light structure 110, tetrahedral light structure 110), and/or a make or model of the light structure 110; etc. The computer system 190 or router 160 can thus read these data from the control module 140 directly. Alternatively, the control module 140 can be loaded with a pseudorandomly assigned or serialized MAC address, and any of the foregoing data can be stored in a remote database and linked to the MAC address of the light structure 110 via a domain name system (DNS).

3.4 Buffer

[0026] In one implementation, a control module 140 within a light structure 110 includes a buffer in local memory and writes multiple arrays received from the computer system 190, via the router 160, to this buffer. During operation, the control module 140 can push the oldest array in the buffer to the first light element 130 in the chain at a regular interval, such as at a preset frame rate (or "refresh rate," such as 20 Hz, 200 Hz, or 400 Hz), and then delete this array from the buffer once it has been distributed to the chain of light elements. Furthermore, once the buffer is full, the control module 140 can delete the oldest array from the buffer upon receipt of a new array from the computer system 190 in order to keep light structures in one assembly synchronized to within the maximum time spanned by the buffer.
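The buffer behaves like a bounded FIFO that drops its oldest entry when full. A minimal sketch using Python's `collections.deque` with `maxlen`, which discards from the opposite end on overflow; the class and method names are hypothetical.

```python
from collections import deque
from typing import Deque, List, Optional


class FrameBuffer:
    """Fixed-size FIFO of color-value arrays held by a control module."""

    def __init__(self, capacity: int) -> None:
        # A deque with maxlen silently discards the oldest array when full.
        self._arrays: Deque[List[int]] = deque(maxlen=capacity)

    def receive(self, array: List[int]) -> None:
        """Store a newly received array from the computer system."""
        self._arrays.append(array)

    def tick(self) -> Optional[List[int]]:
        """On each refresh, remove and return the oldest array for the chain."""
        return self._arrays.popleft() if self._arrays else None
```

For example, after five arrays arrive at a buffer of capacity three with no refreshes in between, only the three newest remain, so a light structure that fell behind catches up to the others rather than drifting further out of step.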

[0027] In this implementation, the control module 140 can implement a buffer whose size is based on the frame rate implemented by the system (e.g., a larger buffer for a higher frame rate). For example: for a frame rate of 20 fps (i.e., a refresh rate of 20 Hz), the control module 140 can implement a buffer configured to store five arrays; for a frame rate of 100 fps (i.e., a refresh rate of 100 Hz), the control module 140 can implement a buffer configured to store ten arrays; and, for a frame rate of 400 fps (i.e., a refresh rate of 400 Hz), the control module 140 can implement a buffer configured to store fifty arrays.
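Because synchronization is bounded by the time a full buffer spans, it helps to compute that span for the example sizes: capacity divided by frame rate. The helper name is hypothetical; the figures are the three examples from this paragraph.

```python
def buffer_span_ms(fps: float, capacity: int) -> float:
    """Time spanned by a full buffer, i.e. the bound on inter-structure drift."""
    return 1000.0 * capacity / fps


for fps, capacity in [(20, 5), (100, 10), (400, 50)]:
    print(f"{fps} fps x {capacity} arrays -> {buffer_span_ms(fps, capacity):.0f} ms")
# 20 fps x 5 arrays -> 250 ms
# 100 fps x 10 arrays -> 100 ms
# 400 fps x 50 arrays -> 125 ms
```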

3.5 Communication Port

[0028] The light structure 110 also includes a communication port and receptacle (e.g., an Ethernet port) that receives power and control signals from the computer system 190, such as in the form of an array of color values for each light element 130 in the light structure 110. The control module 140 can also transmit its assigned MAC address (or IP address or other locally stored ID), confirmation of receipt of an array (or arrays) of color values, confirmation of implementation of an array of color values across light elements in the light structure 110, and/or any other data to the computer system 190 (e.g., via the router 160) over the communication port.

3.6 Light Structure Assembly

[0029] Multiple light structures can be constructed into an assembly of light structures. For example, multiple light structures can be mounted (e.g., clamped) to a truss and/or stacked and mounted to other light structures.

[0030] The method S100 is described herein as interfacing with and automatically generating a virtual map of one or more light cubes. However, the method S100 can interface with and generate a virtual map of any other one-, two-, or three-dimensional light structures or combinations of light structures of various types, such as a point light source, a linear light structure (shown in FIG. 7), a cubic light structure 110, or a tetrahedral light structure 110, etc. Each light structure 110 can also be foldable, such as to pack flat and nest with other like light structures, as shown in FIGS. 3A and 3D.

4. Computer System

[0031] Blocks of the method S100 are described herein as executed by a computer system 190, such as a computer system 190 integrated into or external to the assembly of light structures. For example, the computer system 190 can include a laptop computer (shown in FIG. 2), a desktop computer, a smartphone, a tablet, a remote server, or any other one or combination of local, remote, and/or distributed computing devices. Blocks of the method S100 are described herein as executed by a remote controller that is external but local to the assembly of light structures. However, Blocks of the method S100 can be executed by any other one or more local or remote computer systems.

[0032] Generally, the computer system 190 can aggregate arrays of color values addressed to specific light structures in the assembly into a frame that can then be loaded into and executed by the assembly. For example, the computer system 190 can generate frames of a lighting sequence for the light structure 110 assembly by mapping one or more standard lighting sequence modules onto a 3D representation of the light structure 110 assembly, as described below. The computer system 190 can then feed these frames, including arrays of color values addressed to one or more light structures within the assembly, to the assembly in order to realize the lighting sequence, such as during a show, act, gig, exhibit, or other performance.

[0033] However, because a group of light structures may be assembled into a custom assembly and because groups of light structures may not be identically ordered or oriented when assembled, dismantled, and reassembled (e.g., between venues), the computer system 190 can execute Blocks of the method S100, as described below, to: automatically generate a virtual 3D representation (hereinafter a "virtual map") of an assembly of one or more light structures; and automatically map a predefined lighting sequence template, lighting sequence module, or complete lighting sequence to the virtual 3D representation of the light structure 110 assembly. In particular, the computer system 190 can execute Blocks of the method S100 prior to implementation of a lighting sequence at the light structure 110 assembly in order to automatically determine positions and orientations of a group of light structures (and therefore the positions and orientations of each light element 130 in each light structure 110) in a light structure 110 assembly. The computer system 190 can therefore execute Blocks of the method S100 after the assembly is built, such that a group of light structures can be assembled without tedious attention to order or orientation, such that a human operator need not manually reprogram or modify a predefined light sequence for a current assembly of light structures, and such that an operator need not manually map a predefined light sequence to each light element 130 in each light structure 110 in the current assembly.

5. Router

[0034] As shown in FIG. 2, the system can also include a router 160 interposed between the computer system 190 and the assembly of light structures. The router 160 can be connected to each light structure 110 via wired connections, such as via one category-5 cable extending from the communication port on a light structure 110 to a corresponding port in the router 160 for each light structure 110 in the assembly. Alternatively, all or a subset of light structures in the assembly can be connected in series (e.g., "daisy-chained") and connected to a single port in the router 160 via a wired connection. The router 160 can also assign an IP address (or other interface identification and location addressing label) to each light structure 110 in the assembly. For example, when an assembly of light structures is completed and first powered on, the router 160 can read a MAC address (or other identifier) from each light structure 110 in the assembly, assign a static IP address to each received MAC address, populate a DNS with MAC address and IP address pairs, and pass a list of these IP addresses (or the complete DNS value pairs) to the computer system 190.
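The MAC-to-static-IP pairing can be sketched with Python's standard `ipaddress` module. The subnet, the convention of reserving the first host address for the router, and the function name are all illustrative assumptions; the source does not specify an addressing plan.

```python
import ipaddress
from typing import Dict, List


def assign_static_ips(macs: List[str], subnet: str = "10.0.0.0/24") -> Dict[str, str]:
    """Pair each light structure's MAC address with a static IP from `subnet`."""
    hosts = iter(ipaddress.ip_network(subnet).hosts())
    next(hosts)  # reserve the first host address for the router itself (assumed)
    table: Dict[str, str] = {}
    for mac in macs:
        try:
            table[mac] = str(next(hosts))
        except StopIteration:
            raise ValueError("subnet too small for the assembly") from None
    return table
```

The returned dict is the same MAC/IP pairing the router would publish to its name service and pass on to the computer system 190.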

[0035] During operation, such as during an automatic mapping cycle (described below) or later during execution of a lighting sequence, the router 160 receives frames, each including an array of color values and a light structure 110 identifier (e.g., an IP address) for each light structure 110 in the assembly, from the computer system 190, such as over a single wired or wireless connection. Upon receipt of a frame from the computer system 190, the router 160 distributes each array of color values in the frame to its corresponding light structure 110 via a port in the router 160 connected (e.g., wired) to, and assigned an IP address associated with, the corresponding light structure 110. Light structures in the assembly then distribute color value assignments in their received color value arrays to corresponding light elements, and light elements across the assembly implement their respective color values, such as immediately or in response to a next clock value, flag, or interrupt transmitted from the router 160. For example, the computer system 190, router 160, light structures, and light elements can cooperate to implement a frame rate of 5 Hz (i.e., to execute a new frame once every 0.2 seconds).

[0036] In one implementation, the router 160 receives one packet of arrays (or a "replay frame") from the computer system 190 per update period, such as at a rate of 20, 200, or 400 frames per second over a 2 MHz serial connection to the computer system 190. The router 160 can then funnel each array to its corresponding light structure 110. For example, at startup (e.g., when the router 160 is first powered on following connection to an assembly of light structures), the router 160 can read a MAC address (or other identifier) from each connected light structure 110 and then generate a lookup table linking one MAC address to one port of the router 160 for the current configuration of light structures and router 160 ports, based on the port from which each MAC address was received. When generating a new replay frame, the computer system 190 can assign a MAC address (or IP address or other identifier) to each array in the replay frame based on results of the automatic mapping cycle described below. Upon receipt of a new replay frame from the computer system 190, the router 160 can access the lookup table and funnel each array in the replay frame through the port associated with the MAC address of its corresponding light structure 110, such as via user datagram protocol (UDP) packets.
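The startup lookup table and per-frame funneling can be sketched without real networking: the function returns (port, payload) pairs that a router would then send as UDP datagrams. The dict shapes, the one-byte-per-color-value payload, and the function names are hypothetical.

```python
from typing import Dict, List, Tuple


def build_port_table(port_reports: Dict[int, str]) -> Dict[str, int]:
    """Invert {router port: MAC read on that port} into {MAC: router port}."""
    return {mac: port for port, mac in port_reports.items()}


def funnel(replay_frame: Dict[str, List[int]],
           port_table: Dict[str, int]) -> List[Tuple[int, bytes]]:
    """Route each array in a replay frame to the port of its light structure."""
    out = []
    for mac, array in replay_frame.items():
        port = port_table[mac]  # KeyError flags a structure not seen at startup
        out.append((port, bytes(array)))  # payload for one datagram on that port
    return out
```

Building the table once at startup keeps per-frame routing to a dictionary lookup, which matters at refresh rates of hundreds of frames per second.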

6. Power Supply

[0037] In one implementation, the system also includes an external power supply 150, such as a power over Ethernet (PoE) switch interposed between each light structure 110 (or each chain of light structures) and a corresponding port in the router 160, as shown in FIG. 2. In this implementation, the power supply 150 can add a DC power signal to an AC data signal received from the router 160 and pass this combined power-data signal to a corresponding light structure 110.

[0038] Alternatively, the system can include one or more discrete power supplies separately wired to light structures in the assembly. A light structure 110 can additionally or alternatively include an integrated power supply, such as a rechargeable battery. In this implementation, the router 160 (or the computer system 190 directly) can wirelessly broadcast color value arrays to light structures in the assembly, such as over a short-range wireless communication protocol.

7. Light Structure Identification

[0039] Block S110 recites retrieving addresses from light structures in the assembly. Generally, in Block S110, the computer system collects IP addresses, MAC addresses, and/or other configuration data from each light structure connected to the router, as shown in FIG. 1.

[0040] In one implementation, the computer system collects IP addresses from the router in Block S110. In this implementation, the computer system can pass each IP address received from the router into a DNS to retrieve configuration data for each light structure in the assembly, such as including a total number of light elements in each light structure, a shape and size (e.g., one-meter cube) of each light structure, and a maximum power output of each light structure (e.g., 25 Watts, 100 Watts). For example, the computer system can pass IP addresses received from light structures in the assembly to a remote computer system over the Internet; the remote computer system can then query a name mapping system for a virtual model of each light structure and then return digital files containing these virtual models to the computer system via the Internet. The computer system can then extract a number, configuration, and/or subaddresses of light elements in each light structure from these virtual models in Block S110 and implement these data in Block S120 to generate a sequence of test frames for the assembly. As described below, the computer system can also implement virtual models of the light structures to construct the virtual map of the assembly in Block S170.

[0041] Alternatively, a light structure can store its configuration data in local memory and can transmit these data upon request. For example, upon receipt of an IP address from a light structure at the router, the computer system can query the light structure at this IP address for its configuration and then store data subsequently returned by the light structure (e.g., a number, configuration, and/or subaddresses of light elements in the light structure) with this IP address in Block S110.

[0042] In another implementation, the computer system can receive unique identifiers--encoded with light structure configuration data--from each light structure in the assembly. For example, once light structures are connected to the router and the computer system is connected to the router, the computer system can query each port of the router for a unique ID. The computer system can then extract configuration data--such as number, configuration, and subaddresses of light elements--of each light structure from its unique identifier and associate these configuration data with the corresponding port in the router. However, the computer system can implement any other method or technique to collect an identifier and configuration data for each light structure in the assembly.

8. Test Frame Sequence Generation

[0043] Block S120 of the method S100 recites, based on addresses retrieved from light structures in the assembly, generating a sequence of test frames. Generally, in Block S120, the computer system (or any other remote computer system) generates a sequence of test frames that can be executed by the assembly of light structures to selectively activate and deactivate light elements across the assembly. An imaging system 190 (e.g., a camera distinct from or integrated into the computer system) can capture images of the assembly in Block S140 while the assembly executes the sequence of test frames, and the computer system (or other remote computer system) can manipulate and compile these images into a virtual 3D map of real positions of each addressed light element within each light structure within the assembly in Block S170.

[0044] In one implementation, the computer system generates a sequence of test frames including: a reset frame specifying activation of multiple (e.g., all) light elements in light structures (e.g., all light structures) in the assembly; a set of synchronization frames succeeding the reset frame and specifying activation of light elements (e.g., all light elements) in multiple (e.g., all) light structures in the assembly; a set of pairs of blank frames interposed between adjacent synchronization frames and specifying deactivation of light elements (e.g., all light elements) in light structures (e.g., all light structures) in the assembly; and a set of test frames interposed between adjacent blank frame pairs, each test frame in the set of test frames specifying activation of a unique subset of light elements (e.g., a single light element) in light structures (e.g., a single light structure) within the assembly. For example, the computer system generates a sequence of test frames that, when executed by the assembly of light structures: turns all light elements in all light structures in the assembly off; turns all light elements in all light structures in the assembly on in the reset frame to indicate the beginning of the test sequence; turns all light elements in all light structures in the assembly off; and then, for each light element in the assembly, turns the light element on while keeping all other light elements off in the test frame, turns all light elements off in a first blank frame, turns all light elements on in a synchronization frame to provide a reference synchronization marker, and then turns all light elements off in a second blank frame.

[0045] In the foregoing implementation, the reset frame defines a start of the test sequence that can later be optically identified in the sequence of images--captured by the imaging system during execution of the test sequence--such as by counting a number of bright (e.g., white) pixels in each image and comparing adjacent images in the sequence of images. In particular, the computer system can insert, at the beginning of the test sequence, a reset frame that defines activation of a pattern of light elements (e.g., all light elements) in the assembly and that, when executed by light structures in the assembly, can be identified in an image of the assembly to synchronize the beginning of the test sequence to the sequence of images in Block S150. The computer system (or other remote computer system) can also compare an image of the assembly executing the reset frame to an image of the assembly executing a blank frame to generate an image mask that, when applied to an image in the sequence of images, rejects a region in the image that does not correspond to the assembly in Block S162, as described below. In one example in which each light structure in the assembly includes 180 RGB light elements, the computer system: defines a color value array including a sequence of 180 bright white (e.g., [255, 255, 255]) color values; and compiles a set of such color value arrays--including one such color value array per light structure in the assembly--to generate the reset frame.

[0046] The computer system can set an extended duration for the reset frame. For example, the imaging system can be configured to capture images at a frame rate of 120 Hz, and each light structure can be configured to load and implement a new frame at a rate of 30 Hz. During an automatic mapping cycle (and during other operating periods), the computer system can write a new frame to the light structure assembly at a rate of 30 Hz in Block S130 such that the imaging system can capture at least two images of the assembly executing each test frame in the sequence of test frames in Block S140. In this example, the computer system can specify an extended delay between execution of the reset frame and execution of a subsequent blank frame, such as a delay of two seconds, in the test frame sequence. Alternatively, the computer system can insert 60 identical reset frames into the test frame sequence to achieve a similar reset period at the assembly during execution of the test frame sequence.
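The timing relationships in this example reduce to simple arithmetic. The sketch below, using hypothetical helper names, computes how many camera images fall within one displayed frame and how many identical frames are needed to hold a pattern for a target duration (the duplicate-frame alternative to an explicit delay).

```python
import math


def images_per_frame(camera_hz, frame_hz):
    """Camera images captured while the assembly displays one frame."""
    return camera_hz / frame_hz


def duplicate_frames_for(duration_s, frame_hz):
    """Identical frames needed to hold one pattern for duration_s seconds.

    Rounding before the ceiling absorbs floating-point noise (e.g., 0.1 * 30).
    """
    return math.ceil(round(duration_s * frame_hz, 9))


print(images_per_frame(120, 30))      # 4.0 images per frame (at least two)
print(duplicate_frames_for(2, 30))    # 60 reset frames for a two-second reset
print(duplicate_frames_for(0.5, 30))  # 15 frames for a 0.5-second hold
```

At 120 Hz capture and 30 Hz frame execution, each frame spans four images, which leaves margin for the "at least two images" condition even when a frame boundary falls mid-exposure.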

[0047] In this implementation, the computer system follows the reset frame(s) with a light element test module for each light element in each light structure in the assembly. In particular, for each light element in the assembly, the computer system can insert a light element test module that specifies: a blank frame in which all light elements in the assembly are deactivated; a test frame in which a single corresponding light element is activated; a blank frame; and a synchronization frame in which all light elements in the assembly are activated (i.e., like the reset frame). In one example, the computer system sums a total number of light elements in each light structure in the assembly based on configuration data retrieved for each light structure, as described above, to determine a total number of light element test modules for the test frame sequence. In this example, the computer system then assigns an address (e.g., an IP address) of a particular light structure and an address of a particular light element in the particular light structure to each light element test module. For each light element test module, the computer system then: generates a unique color value array--for the assigned light structure--specifying activation of the assigned light element and specifying deactivation of all other light elements in the assigned light structure; generates color value arrays specifying deactivation of all light elements for all other light structures in the assembly; and compiles the unique color value array for the assigned light structure and the color value arrays of all other light structures in the assembly into a test frame for the light element test module.
As with the reset frame, the computer system can generate a generic synchronization frame by compiling color value arrays specifying activation of (e.g., a [255, 255, 255] color value for) all light elements in all light structures in the assembly and can populate each light element test module with a copy of this generic synchronization frame. Similarly, the computer system can generate a generic blank frame by compiling color value arrays specifying deactivation of (e.g., a [0, 0, 0] color value for) all light elements in all light structures in the assembly and can then populate each light element test module with copies of this generic blank frame.
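The light element test module structure described above can be sketched as follows. This is a minimal illustration, assuming frames are represented as Python dictionaries keyed by light structure address and colors as RGB tuples; the function names are hypothetical, an opening reset frame and a closing reset frame bracket the modules, and frame durations (delays or duplicate frames) are not modeled.

```python
WHITE = (255, 255, 255)  # activated light element
BLACK = (0, 0, 0)        # deactivated light element


def uniform_frame(config, color):
    """Frame setting every light element in every light structure to one color.

    config maps a light structure address to its number of light elements.
    A frame maps each address to that structure's color value array.
    """
    return {addr: [color] * count for addr, count in config.items()}


def build_test_sequence(config):
    """Return (frames, timeline) for one automatic mapping cycle.

    timeline runs parallel to frames: "reset", "blank", "sync", or an
    (address, subaddress) marker naming the element lit in a test frame.
    """
    reset = uniform_frame(config, WHITE)
    blank = uniform_frame(config, BLACK)  # shared, never modified
    sync = uniform_frame(config, WHITE)   # same pattern as the reset frame
    frames, timeline = [reset], ["reset"]
    for addr, count in config.items():
        for sub in range(count):
            test = uniform_frame(config, BLACK)  # fresh arrays, safe to edit
            test[addr][sub] = WHITE  # activate only the assigned element
            frames += [blank, test, blank, sync]
            timeline += ["blank", (addr, sub), "blank", "sync"]
    frames.append(reset)  # a second reset frame marks the end
    timeline.append("reset")
    return frames, timeline
```

The parallel `timeline` list plays the role of the event timeline: its (address, subaddress) markers are what later tag each detected test image with the light element that was lit.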

[0048] As described above, the computer system can define durations of each blank frame, test frame, and synchronization frame in the test frame sequence, such as by defining delays between blank frames, test frames, and synchronization frames in the test frame sequence. For example, the computer system can define 0.5-second delays when switching from a blank frame to a test frame, from a test frame to a blank frame, from a blank frame to a synchronization frame, and from a synchronization frame to a blank frame within a light element test module in order to ensure sufficient time for the imaging system to capture at least two images of each frame executed by light structures in the assembly. Alternatively, the computer system can insert duplicate blank frames, test frames, and/or synchronization frames in the test frame sequence in order to achieve similar frame durations.

[0049] In one variation, the computer system also generates an image mask sequence to precede the reset frame. In one example, the computer system generates an image mask sequence by assembling a number of blank frame and synchronization frame pairs sufficient to span a target mask generation duration, such as 45 blank frame and synchronization frame pairs switched at a frame rate of 30 Hz over a target mask generation duration of three seconds, and then inserts the image mask sequence ahead of the reset frame in the test frame sequence. The imaging system can later capture images of the assembly executing the image mask sequence, and the computer system (or other remote computer system) can generate an image mask for images captured after execution of the reset frame based on the images captured during execution of the image mask sequence in Block S162, as described below.

[0050] Furthermore, the computer system can insert a second reset frame into the test frame sequence following a last blank frame or synchronization frame in order to indicate the end of the test frame sequence to the computer system (or other remote computer system) and/or to a human operator. The computer system can also generate an event timeline including event markers corresponding to the reset frame(s), blank frames, and synchronization frames and light element subaddress markers corresponding to light elements specified as active in test frames within the test frame sequence.

[0051] However, the computer system can implement any other method or technique to specify a color value for a light element in the assembly, to aggregate color values for light elements in a light structure into a color value array or other container, to compile color value containers for one or more light structures into a frame, and to assemble multiple frames into a test frame sequence that can then be executed by the assembly of light structures during an automatic mapping cycle.

8. Test Frame Sequence Execution

[0052] Block S130 of the method S100 recites, during a test period, transmitting the sequence of test frames to light structures in the assembly; and Block S140 of the method S100 recites, during the test period, at an imaging system, capturing a sequence of images of the assembly. Generally, during an automatic mapping cycle, the computer system feeds frames in the test frame sequence to the assembly of light structures in Block S130, light structures in the assembly selectively activate and deactivate their light elements based on their assigned color value containers in each subsequent frame received from the computer system (e.g., via the router), and an imaging system defining a field of view including the assembly captures a sequence of images in Block S140, as shown in FIG. 1.

[0053] In one example, a human operator places the imaging system (e.g., a standalone camera or DSLR, a smartphone) in a first location in front of the assembly, orients the imaging system to face the assembly such that the full assembly is within the field of view of the imaging system, sets the imaging system to capture a first video (i.e., a first sequence of images at a substantially uniform frame rate) according to Block S140, and triggers the computer system to drip-feed frames in the test frame sequence to the assembly according to Block S130. Upon completion of the test frame sequence, the human operator places the imaging system in a second location offset from the first location (e.g., by ten to fifteen feet), again orients the imaging system to face the assembly such that the full assembly is within the field of view of the imaging system, sets the imaging system to capture a second video (i.e., a second sequence of images at a substantially uniform frame rate), and triggers the computer system to again drip-feed frames in the same test frame sequence to the assembly. However, the imaging system and the computer system can be manually or automatically triggered in any other way.

[0054] The imaging system can then upload the first and second videos to the computer system or to another computer system, such as over a wired or wireless communication protocol.

9. Image Mask

[0055] As shown in FIG. 1, one variation of the method S100 includes Block S162, which recites generating an image mask. Generally, in Block S162, the computer system processes a subset of images captured by the imaging system during execution of the test frame sequence (e.g., specifically during the image mask sequence) to identify a region in the field of view of the imaging system that corresponds to light elements in light structures in the assembly and to define this region in an image mask. The computer system can then apply the image mask to other images in the sequence of images in Block S160 to discard regions in these images that do not correspond to light elements in the assembly.

[0056] In the implementation above in which the computer system inserts an image mask sequence in the test frame sequence ahead of the first reset frame, the computer system can: identify a set of discard images at the beginning of the image sequence (e.g., a first 360 images spanning a first three seconds of the image sequence); identify a reset image captured during execution of the (first) reset frame by the assembly in the sequence of images, as described below; and select a set of mask images between the set of discard images and the reset image. The computer system can then record an accumulated difference between adjacent images in the set of mask images to generate the image mask. For example, the computer system can: convert each image to grayscale (e.g., 8-bit, 256-level grayscale images in which a pixel value of "0" corresponds to black and in which a pixel value of "255" corresponds to white); and then, for each pair of adjacent images in the set of mask images, subtract the first image in the pair from the second image in the pair to construct a first difference image and subtract the second image in the pair from the first image in the pair to construct a second difference image. The computer system can then sum all first and second difference images from the set of mask images, normalize this sum, and convert this normalized sum to black and white to generate the image mask.

[0057] In this implementation, for a pair of adjacent images captured by the imaging system while light elements in the assembly are transitioning from deactivated to activated according to a new mask frame, the first difference image will contain image data (e.g., grayscale values greater than "0") representative of a region in the field of view of the imaging system that changes in light value when the assembly transitions from a blank frame to a mask frame. Therefore, the first difference image will contain image data representative of a region in the field of view of the imaging system corresponding to light elements in the assembly (and to surfaces on light structures within the assembly and to background surfaces near light elements in the assembly). However, for this pair of adjacent images captured while light elements in the assembly are transitioning from deactivated to activated according to a new mask frame, pixels in the second difference image may generally saturate to "0" such that summing the first difference image and the second difference image yields approximately the first difference image representing a region in the field of view of the imaging system corresponding to light elements in the assembly.

[0058] Similarly, for a pair of adjacent images captured by the imaging system while light elements in the assembly are transitioning from activated to deactivated according to a new blank frame, the second difference image will contain image data representative of a region in the field of view of the imaging system corresponding to light elements in the assembly. However, for this pair of adjacent images captured while light elements in the assembly are transitioning from activated to deactivated according to a new blank frame, pixels in the first difference image may generally saturate to "0" such that summing the first difference image and the second difference image yields approximately the second difference image representing a region in the field of view of the imaging system corresponding to light elements in the assembly.

[0059] The computer system can thus sum the first and second difference images from all pairs of adjacent images captured by the imaging system during execution of the image mask sequence by the assembly to generate an accumulator image containing image data corresponding to all regions within the field of view of the imaging system that exhibited light changes during the image mask sequence. The computer system can: normalize the accumulator image by dividing grayscale values in the accumulator image by the number of difference images compiled into the accumulator image; and apply a static or dynamic grayscale threshold (e.g., 127 in a grayscale range of 0 for black to 255 for white) to convert pixels in the normalized accumulator image with grayscale values less than or equal to the grayscale threshold to a rejection value (e.g., "-255") and to convert pixels in the normalized accumulator image with grayscale values greater than the grayscale threshold to a pass value (e.g., "0"). The computer system can thus define the image mask from rejection pixels and pass pixels in the normalized accumulator image in Block S162.
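The accumulation and thresholding steps above can be sketched with NumPy as follows. This is a minimal illustration, assuming the mask images are already 8-bit grayscale arrays; the function name is hypothetical, and the clipped subtractions model the "saturate to 0" behavior described for each difference image.

```python
import numpy as np


def build_image_mask(mask_images, threshold=127):
    """Build a pass/reject mask from grayscale images of the mask sequence.

    mask_images: sequence of 2-D uint8 arrays captured during the image mask
    sequence. Returns an int16 array of 0 (pass) or -255 (reject).
    """
    imgs = [np.asarray(img).astype(np.int16) for img in mask_images]
    acc = np.zeros(imgs[0].shape, dtype=np.float64)
    count = 0
    for first, second in zip(imgs, imgs[1:]):
        # Negative results clip to 0, so the first difference keeps only
        # brightening pixels and the second keeps only dimming pixels.
        acc += np.clip(second - first, 0, 255)
        acc += np.clip(first - second, 0, 255)
        count += 2
    normalized = acc / count  # divide by the number of difference images
    return np.where(normalized > threshold, 0, -255).astype(np.int16)
```

When several images are captured per displayed frame, many adjacent pairs show no change, which lowers the normalized values; in that case a lower or dynamic threshold than 127 may be needed.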

[0060] The computer system (or other remote computer system) can then convert remaining images in the sequence of images (e.g., images between the first and second reset images) to grayscale and add (or subtract) the image mask to each remaining image in order to remove regions in these images not corresponding to light elements in the assembly, surfaces on light structures within the assembly, or background surfaces near light elements in the assembly from these images. The computer system can process these masked or "filtered" images in Blocks S150 and S160, as described below.
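Adding the mask to an image can be sketched as a clipped sum. This assumes the 0 (pass) and -255 (reject) mask values from the example above; the function name is hypothetical.

```python
import numpy as np


def apply_image_mask(gray, mask):
    """Add a 0/-255 mask to a uint8 grayscale image, clipping at black.

    Rejected pixels (mask -255) drop to 0; passed pixels (mask 0) are unchanged.
    """
    summed = np.asarray(gray).astype(np.int16) + mask
    return np.clip(summed, 0, 255).astype(np.uint8)
```

Working in a signed integer type before clipping avoids the wraparound that unsigned 8-bit arithmetic would produce.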

10. Event Alignment

[0061] Block S150 of the method S100 recites aligning the sequence of test frames to the sequence of images based on the reset frames, the set of synchronization frames, and values of pixels in images in the sequence of images. Generally, in Block S150, the computer system synchronizes the sequence of images to the test frame sequence to enable subsequent correlation of a light element detected in an image in the image sequence to the subaddress of the light element specified as active in the frame executed by the assembly when the image was recorded, as shown in FIG. 1.

[0062] In one implementation, the computer system converts images in the sequence of images to grayscale, applies the image mask to the grayscale images as described above, and then counts a number of pixels containing a grayscale value greater than a threshold grayscale value (e.g., "light pixels" containing a grayscale value greater than 200 in a grayscale range of 0 for black to 255 for white) in each image. The computer system then flags each image that contains more than a threshold number of light pixels as a synchronization (or reset) image captured at a time that a synchronization (or reset) frame is executed by the assembly. For example, the computer system can calculate the threshold number of light pixels as a fraction of (e.g., 70% of) pixels not masked by the image mask. The computer system also identifies an extended sequence of consecutive flagged images recorded near the beginning of the automatic mapping cycle and stores images in this sequence of consecutive flagged images as reset images. (The computer system can similarly identify a second extended sequence of consecutive flagged images recorded near the end of the automatic mapping cycle and can store images in this second sequence of consecutive flagged images as reset images.)

[0063] The computer system can then count the number of discrete clusters of synchronization images between the reset images and compare this number to the number of discrete synchronization frames (or discrete clusters of synchronization frames) in the test frame sequence to confirm that all synchronization events were identified in the image sequence. If fewer than all synchronization events were identified in the image sequence, the computer system can reduce a grayscale threshold implemented in Block S162 to generate the image mask, reduce a grayscale threshold implemented in Block S150 to identify light pixels, and/or reduce a threshold number of light pixels corresponding to a synchronization image in order to detect more synchronization events in the image sequence. Conversely, if more synchronization events than expected were identified, the computer system can increase these thresholds.
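Flagging bright images and grouping them into clusters can be sketched as follows. This is a minimal illustration, assuming masked 8-bit grayscale images and a 0/-255 image mask; the function names are hypothetical, the defaults (200 and 70%) are the example values from [0062], and the first and last runs are treated as the reset clusters.

```python
import numpy as np


def flag_bright_images(images, mask, light_level=200, fraction=0.7):
    """Flag images whose light-pixel count exceeds a fraction of unmasked pixels.

    images: masked grayscale (uint8) images; mask: 0 = pass, -255 = reject.
    """
    unmasked = np.asarray(mask) == 0
    needed = fraction * int(unmasked.sum())
    return [int(((np.asarray(img) > light_level) & unmasked).sum()) > needed
            for img in images]


def flagged_runs(flags):
    """Return (first, last) indices of each run of consecutive flagged images."""
    runs, start = [], None
    for i, flagged in enumerate(flags):
        if flagged and start is None:
            start = i
        elif not flagged and start is not None:
            runs.append((start, i - 1))
            start = None
    if start is not None:
        runs.append((start, len(flags) - 1))
    return runs


def synchronization_count_ok(flags, expected_sync_clusters):
    """Compare the clusters between the first and last (reset) runs to the plan."""
    return len(flagged_runs(flags)[1:-1]) == expected_sync_clusters
```

If `synchronization_count_ok` returns False, the thresholds above are the parameters to adjust before retrying.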

[0064] Once the number of discrete clusters of synchronization images in the image sequence is confirmed, the computer system can detect a test image (i.e., an image recorded while a test frame is executed by the assembly) between each pair of adjacent clusters of synchronization images and tag each test image with a subaddress of a light element activated during the corresponding test frame. For example, for each adjacent pair of synchronization image clusters in the image sequence, the computer system can: select a subset of masked grayscale images substantially centered between the synchronization image clusters; count a number of pixels containing a grayscale value greater than a threshold grayscale value ("light pixels") in each image in the subset of images; flag a particular image in the subset of images that contains the greatest number of light pixels as a test image; and then tag the test image with the subaddress of the light element recorded in a light element subaddress marker in a corresponding position in the event timeline described above. The computer system can thus identify a single test image for each test frame in the test frame sequence and tag or otherwise associate each test image with the subaddress of the (single) light element in the assembly activated when the test image was captured in Block S150.
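Selecting one test image between adjacent clusters can be sketched as follows. This assumes cluster index ranges like those produced by grouping consecutive flagged images, with the reset clusters included as the outer boundaries; the function name and the `window` size are hypothetical.

```python
import numpy as np


def pick_test_images(images, sync_runs, light_level=200, window=3):
    """Pick one test image between each pair of adjacent bright clusters.

    sync_runs: (first, last) index ranges of reset/synchronization clusters.
    window: hypothetical number of images examined around the midpoint.
    Returns one image index (or None) per gap between clusters.
    """
    picks = []
    for (_, prev_end), (next_start, _) in zip(sync_runs, sync_runs[1:]):
        mid = (prev_end + next_start) // 2
        lo = max(prev_end + 1, mid - window // 2)
        hi = min(next_start, lo + window)
        if lo >= hi:
            picks.append(None)  # clusters touch: no image between them
            continue
        candidates = range(lo, hi)
        # Count light pixels and keep the brightest centered image.
        counts = [int((np.asarray(images[i]) > light_level).sum())
                  for i in candidates]
        picks.append(candidates[int(np.argmax(counts))])
    return picks
```

Each returned index can then be zipped with the test markers in the event timeline to tag it with its light element subaddress.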

11. Light Element Detection

[0065] Block S160 of the method S100 recites, for a particular test frame in the set of test frames, determining a position of a light element, addressed in the particular test frame, within a particular image, in the sequence of images, corresponding to the particular test frame based on values of pixels in the particular image. Generally, in Block S160, the computer system determines a position of an active light element within each test image identified in the image sequence, as shown in FIG. 1.

[0066] In one implementation, for each test image, the computer system: scans the masked grayscale test image for pixels containing grayscale values greater than a threshold grayscale value ("light pixels"); counts numbers of light pixels in contiguous blobs of light pixels in the test image; calculates the centroid of light pixels in a particular blob of light pixels containing the greatest number of light pixels in the test image; and stores the centroid of the particular blob as the position of the light element in the test image. For example, for each test image, the computer system can store the (X, Y) pixel position of a pixel in the test image nearest the centroid of the blob--relative to the top-left corner of the test image--in cells in a light element position table assigned to the light element subaddress corresponding to the test image. In this example, if no light pixel blob is detected in a test image, the computer system can process an adjacent image for a light pixel blob or can leave empty cells in the table assigned to the light element subaddress corresponding to the test image. In this implementation, the computer system can also reject blobs of light pixels in a test image corresponding to light reflected from a surface near an active light element. For example, for each test image in the image sequence, the computer system can: scan to detect light pixels in the masked grayscale test image; count numbers of light pixels in contiguous blobs of light pixels in the test image; identify the two blobs of light pixels containing the greatest numbers of light pixels in the test image; select a particular blob of light pixels--from the two blobs of light pixels--at a highest vertical position in the test image in order to reject light reflected from a floor surface; calculate the centroid of light pixels in the particular blob of light pixels; and store the centroid of the particular blob as the position of the light element in the test image.
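The blob detection and reflection rejection steps above can be sketched with a breadth-first flood fill. This is a minimal illustration, assuming 4-connected blobs, a light-pixel threshold of 200, and row 0 at the top of the image; the function names are hypothetical, and a library routine for connected-component labeling could replace the hand-written fill.

```python
from collections import deque

import numpy as np


def light_blobs(gray, light_level=200):
    """Group light pixels (value > light_level) into 4-connected blobs.

    Returns a list of blobs, each a list of (row, col) pixel coordinates.
    """
    light = np.asarray(gray) > light_level
    seen = np.zeros(light.shape, dtype=bool)
    rows, cols = light.shape
    blobs = []
    for r0 in range(rows):
        for c0 in range(cols):
            if not light[r0, c0] or seen[r0, c0]:
                continue
            seen[r0, c0] = True
            queue, blob = deque([(r0, c0)]), []
            while queue:  # breadth-first flood fill
                r, c = queue.popleft()
                blob.append((r, c))
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and light[nr, nc] and not seen[nr, nc]):
                        seen[nr, nc] = True
                        queue.append((nr, nc))
            blobs.append(blob)
    return blobs


def locate_light_element(gray, light_level=200, reject_reflection=True):
    """Return the (x, y) pixel nearest the chosen blob's centroid, or None.

    With reject_reflection, the higher of the two largest blobs is chosen,
    assuming a floor reflection appears below the light element.
    """
    blobs = sorted(light_blobs(gray, light_level), key=len, reverse=True)
    if not blobs:
        return None  # no detection: leave this subaddress empty
    candidates = blobs[:2] if reject_reflection else blobs[:1]
    # Row 0 is the top of the image, so the smallest mean row is highest.
    chosen = min(candidates, key=lambda b: np.mean([r for r, _ in b]))
    ys, xs = zip(*chosen)
    return int(round(np.mean(xs))), int(round(np.mean(ys)))
```

For example, a three-pixel element blob above a larger five-pixel floor reflection yields the element's centroid when `reject_reflection` is set and the reflection's centroid when it is not.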

[0067] The computer system can then generate a two-dimensional (2D) composite image, representing positions of light elements in the field of view of the imaging system during execution of the test frame sequence, from centroid positions (e.g., (X, Y) values) stored in the light element position table and can tag each point in the composite image with the subaddress of its corresponding light element. For the assembly constructed of light structures including light elements arranged along linear beams and tested in series during the test frame sequence, the computer system can calculate lines of best fit (e.g., one in the X-direction and another in the Y-direction) for subsets of (X, Y) points representing subsets of light elements in each light structure in the assembly based on light element subaddresses of light structures in the assembly and the known number of light elements along linear beams in the light structures. The computer system can then discard or flag outliers in the light element position table before transforming the table--or an image constructed from the table--into a virtual 3D map of the assembly in Block S170.
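One reading of the "one in the X-direction and another in the Y-direction" fits is to model each coordinate as a linear function of a light element's position along its beam. The sketch below takes that interpretation as an assumption; the function name and the pixel threshold are hypothetical. Ordinary least squares is pulled toward the very outliers it is meant to flag, so a robust fit may be preferable in practice.

```python
import numpy as np


def flag_beam_outliers(points, max_residual_px=5.0):
    """Flag (x, y) points of one linear beam that stray from a straight line.

    points: (x, y) tuples or None, ordered by subaddress along the beam.
    Returns booleans, True where a point is missing or an outlier.
    """
    flags = [p is None for p in points]
    idx = np.array([i for i, p in enumerate(points) if p is not None], dtype=float)
    xy = np.array([p for p in points if p is not None], dtype=float)
    if len(idx) < 3:
        return flags  # too few detections to judge a line
    # Separate first-degree fits for X and Y against position along the beam.
    fx = np.polyfit(idx, xy[:, 0], 1)
    fy = np.polyfit(idx, xy[:, 1], 1)
    predicted = np.column_stack([np.polyval(fx, idx), np.polyval(fy, idx)])
    residuals = np.linalg.norm(xy - predicted, axis=1)
    for i, residual in zip(idx.astype(int), residuals):
        if residual > max_residual_px:
            flags[i] = True
    return flags
```

Fitting against the element index, rather than fitting y against x, also works for vertical beams, where y as a function of x would be undefined.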

[0068] The computer system can repeat the foregoing Blocks of the method S100 for the second sequence of images recorded by the imaging system during execution of the same or similar test frame sequence by the assembly in order to generate a second composite image representing positions of light elements in the field of view of the imaging system when arranged at a different location relative to the assembly. However, the computer system can implement any other method or technique to identify positions corresponding to light elements in test images and can store these positions in any other way.

12. Virtual Map

[0069] Block S170 of the method S100 recites aggregating positions of light elements and corresponding subaddresses identified in images in the sequence of images into a virtual 3D map of positions and subaddresses of light elements within the assembly. Generally, in Block S170, the computer system merges two or more composite images--representing positions of light elements in fields of view of the imaging system placed at various locations relative to the assembly during execution of multiple (like or similar) test frame sequences--into a virtual 3D map of the light elements in light structures in the assembly, as shown in FIG. 1.

[0070] In one implementation, the computer system performs three-dimensional (3D) reconstruction methods to generate a 3D point cloud from the composite images generated in Block S160. In particular, the computer system can identify (X, Y) points in the first composite image that correspond to (X, Y) points in the second composite image based on like light element subaddress assignments (i.e., points representing the same light element), define these points as reference points, and then construct a virtual 3D representation of the assembly from the composite images based on these shared reference points in both composite images. In this implementation, the computer system can also retrieve gyroscope data, accelerometer data, compass data, and/or other motion data recorded by sensors within the imaging system when moved from a first position after recording the first image sequence to a second position in preparation to record the second image sequence, and the computer system can implement dead reckoning or other suitable methods and techniques to determine the offset distance and relative orientation between the first and second positions. Alternatively, the computer system can determine the offset distance and relative orientation between the first and second positions based on GPS or other suitable data collected by the imaging system during or between recordation of the image sequences. The computer system can orient the first and second composite images and can calculate a fundamental matrix for the automatic mapping cycle based on the offset distance and relative orientation between the first and second positions.
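The text describes this reconstruction in terms of a fundamental matrix, which by itself relates corresponding image points; recovering 3D positions typically also requires camera projection matrices. As a point of comparison, the sketch below shows standard linear (DLT) triangulation, a swapped-in textbook technique rather than the method described here. It assumes 3x4 projection matrices for both camera positions are already known (e.g., from camera intrinsics and the relative pose estimated from motion data) and pairs points by shared light element subaddress; the function names are hypothetical.

```python
import numpy as np


def triangulate(P1, P2, pt1, pt2):
    """Linear (DLT) triangulation of one point seen from two camera positions.

    P1, P2: 3x4 projection matrices; pt1, pt2: (x, y) image positions.
    Returns the 3-D point as a length-3 array.
    """
    x1, y1 = pt1
    x2, y2 = pt2
    # Each view contributes two linear constraints on the homogeneous point.
    A = np.vstack([
        x1 * P1[2] - P1[0],
        y1 * P1[2] - P1[1],
        x2 * P2[2] - P2[0],
        y2 * P2[2] - P2[1],
    ])
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]  # right singular vector with the smallest singular value
    return X[:3] / X[3]


def triangulate_by_subaddress(P1, P2, pts1, pts2):
    """Triangulate every light element subaddress found in both composites.

    pts1, pts2: {subaddress: (x, y)} from the first and second composite images.
    """
    shared = pts1.keys() & pts2.keys()
    return {s: triangulate(P1, P2, pts1[s], pts2[s]) for s in shared}
```

Subaddresses detected in only one composite image are skipped here; they are candidates for the gap-filling against virtual light structure models described below.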

[0071] The computer system can then pass each point in the composite images through the fundamental matrix to generate a 3D point cloud and can confirm viability of the fundamental matrix and alignment of the 2D composite images in 3D space based on proximity of reference point pairs from the composite images once projected into the 3D point cloud. The computer system can select a group of points in the 3D point cloud--corresponding to a linear beam in a light structure in the assembly--and shift points in this group into alignment with known real positions of light elements in the linear beam of the light structure. For example, for an assembly that includes cubic light structures, the computer system can select groups of points in the point cloud, each group of points corresponding to one cubic light structure, and then transform the 3D point cloud to achieve nearest approximations of cubes across all groups of points in the 3D point cloud. In this example, the computer system can also retrieve real offset distances between light elements in light structures in the assembly and can scale the 3D point cloud based on these real offset distances between light elements. Furthermore, the computer system can map a virtual model of a light structure, as described above, to groups of points in the 3D point cloud to identify and discard points not intersecting or falling within a threshold distance of a light element position within the virtual model of the light structure. Similarly, the computer system can identify points missing from the point cloud, and their corresponding light element subaddresses, based on nearby light element subaddresses and light element positions defined in a virtual model of light structures inserted into the 3D point cloud; the computer system can then complete the 3D point cloud by inserting these points and their corresponding light element subaddresses into the 3D point cloud. The computer system can also shift positions of points in the 3D point cloud to better align with light element positions defined in the virtual model of the light structure applied to the 3D point cloud, thereby improving alignment of light elements represented in the 3D point cloud.
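The scaling, discarding, and completion steps above can be sketched in Python. This is a minimal sketch under stated assumptions. The point cloud is a dictionary keyed by light element subaddress. The virtual model has already been registered into the point cloud's coordinate frame. Pairs of adjacent light elements, and their real spacing, are known from the virtual model. Scaling uses the median measured spacing, an illustrative choice that tolerates a few misplaced points. All names are hypothetical.

```python
import math
from statistics import median
from typing import Dict, Iterable, Tuple

Point3D = Tuple[float, float, float]
Cloud = Dict[str, Point3D]


def scale_to_known_spacing(
    cloud: Cloud, neighbor_pairs: Iterable[Tuple[str, str]], real_spacing: float
) -> Cloud:
    """Uniformly scale the cloud (about its origin) so adjacent light elements
    sit real_spacing apart; raises StatisticsError if no pair is present."""
    measured = [
        math.dist(cloud[a], cloud[b])
        for a, b in neighbor_pairs
        if a in cloud and b in cloud
    ]
    factor = real_spacing / median(measured)
    return {sub: (x * factor, y * factor, z * factor) for sub, (x, y, z) in cloud.items()}


def prune_to_model(cloud: Cloud, model: Cloud, threshold: float) -> Cloud:
    """Discard points with no model counterpart or farther than threshold from it."""
    return {
        sub: point
        for sub, point in cloud.items()
        if sub in model and math.dist(point, model[sub]) <= threshold
    }


def complete_from_model(cloud: Cloud, model: Cloud) -> Cloud:
    """Insert model positions for subaddresses missing from the cloud."""
    completed = dict(cloud)
    for sub, expected in model.items():
        completed.setdefault(sub, expected)
    return completed
```

Applied in sequence, a point that is discarded as an outlier is later replaced by its model position during completion.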

[0072] However, the computer system (or other remote computer system) can implement any other method or technique in Block S170 to generate a 3D point cloud or other virtual 3D map of positions and subaddresses of light elements within the assembly.

13. Tethered Imaging System

[0073] In one variation shown in FIG. 4, the method S100 includes: receiving addresses from light structures in the assembly in Block S110; generating a baseline frame specifying deactivation of light elements in light structures in the assembly in Block S120; based on addresses retrieved from light structures in the assembly, generating a sequence of test frames succeeding the baseline frame in Block S120, each test frame in the sequence of test frames specifying activation of a unique subset of light elements in light structures within the assembly; sequentially serving the baseline frame followed by the sequence of test frames to the assembly for execution in Block S130; and receiving a baseline image recorded by an imaging system during execution of the baseline frame at the assembly in Block S140. In this variation, the method S100 also includes, for a first test frame in the sequence of test frames: receiving a first photographic test image recorded by the imaging system during execution of the first test frame by the assembly in Block S140, the first test frame specifying activation of a first light element in a first light structure in the assembly; and identifying a first location of the first light element within a field of view of the imaging system based on a difference between the first photographic test image and the baseline image in Block S160. Similarly, the method S100 includes, for a second test frame in the sequence of test frames: receiving a second photographic test image recorded by the imaging system during execution of the second test frame by the assembly in Block S140, the second test frame specifying activation of a second light element in the first light structure; and identifying a second location of the second light element within the field of view of the imaging system based on a difference between the second photographic test image and the baseline image in Block S160. The method S100 further includes: retrieving a first virtual model of the first light structure based on an address of the first light structure in Block S170, the first virtual model defining a geometry of the first light structure and locations of light elements within the first light structure; locating the first virtual model of the first light structure within a 3D virtual map of the assembly by projecting the first virtual model of the first light structure onto the first location of the first light element and onto the second location of the second light element in Block S160; and populating the 3D virtual map with points representing locations associated with unique addresses of light elements within the assembly in Block S170.
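Identifying a light element's location from the difference between a photographic test image and the baseline image can be sketched as a thresholded, brightness-weighted centroid. This Python sketch makes the following assumptions, none of which appear in the source. Both images are equal-size grayscale arrays stored as nested lists. A fixed threshold of 40 intensity levels separates an activated light element from noise. A centroid is an adequate location estimate. The function name is hypothetical.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]  # grayscale rows, one intensity value per pixel


def locate_light_element(
    test: Image, baseline: Image, threshold: int = 40
) -> Optional[Tuple[float, float]]:
    """Return the brightness-weighted centroid (x, y) of pixels that brightened
    by more than threshold relative to the baseline image, or None if none did."""
    sum_x = sum_y = total = 0.0
    for y, (test_row, base_row) in enumerate(zip(test, baseline)):
        for x, (lit, dark) in enumerate(zip(test_row, base_row)):
            delta = lit - dark
            if delta > threshold:  # ignore sensor noise and small ambient drift
                sum_x += x * delta
                sum_y += y * delta
                total += delta
    if total == 0.0:
        return None  # no local change in light level: element not detected
    return (sum_x / total, sum_y / total)
```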

[0074] In particular, in this variation, the method S100 can include: based on addresses retrieved from light structures in the assembly, deactivating light elements across the assembly; in response to deactivation of light elements across the assembly, capturing a first reference image of the assembly; activating light elements across the assembly; in response to activation of light elements across the assembly, capturing a second reference image of the assembly; and generating an image mask based on a difference between the first reference image and the second reference image. In this variation, the method S100 also includes: for each subset of light elements in light structures within the assembly, activating the subset of light elements and, in response to activation of the subset of light elements, capturing a test image of the assembly; for each test image, identifying a position of an activated light element within a region of the test image outside of the image mask; and aggregating positions of light elements identified in test images into a virtual 3D map of positions and subaddresses of light elements within the assembly.
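The image mask and the search outside it can be sketched in Python. This sketch assumes, as a convention not stated in the source, that the mask marks (True) every pixel that did not brighten between the all-off and all-on reference images. Such pixels are treated as background, reflections, or ambient light sources. The search then takes the brightest unmasked pixel at or above a hypothetical minimum intensity. Images are grayscale nested lists, and all names and default values are illustrative.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]
Mask = List[List[bool]]


def build_image_mask(all_off: Image, all_on: Image, threshold: int = 40) -> Mask:
    """Mask (True) every pixel that did not brighten by more than threshold
    when all light elements were activated."""
    return [
        [(on - off) <= threshold for off, on in zip(off_row, on_row)]
        for off_row, on_row in zip(all_off, all_on)
    ]


def locate_outside_mask(
    test: Image, mask: Mask, min_value: int = 100
) -> Optional[Tuple[int, int]]:
    """Return (x, y) of the brightest unmasked pixel, or None if no unmasked
    pixel reaches min_value (e.g., the light element failed to activate)."""
    best, best_value = None, min_value - 1
    for y, (row, mask_row) in enumerate(zip(test, mask)):
        for x, (value, masked) in enumerate(zip(row, mask_row)):
            if not masked and value > best_value:
                best, best_value = (x, y), value
    return best
```

Because a steady ambient source is bright in both reference images, it falls inside the mask. It is therefore ignored even when it is brighter than the activated light element.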

[0075] Generally, in this variation, the computer system implements methods and techniques similar to those described above but implements two-way wired or wireless communication with light structures in the assembly and with the imaging system. It uses this communication to receive confirmation that a test frame transmitted to the assembly was executed by the assembly, to trigger the imaging system to capture an image of the assembly while the assembly executes the test frame, and to confirm that the imaging system captured an image of the assembly before loading a new test frame into the assembly. In particular, in this variation, the computer system can: define test frames in a sequence of test frames, each test frame specifying activation of a single light element in the assembly, as described above; feed a single test frame to the assembly; trigger the imaging system to capture an image of the assembly once confirmation of execution of the test frame is received from the assembly; feed a subsequent test frame to the assembly only after receiving confirmation from the imaging system that an image was recorded; and repeat this process for the remaining test frames in the test frame sequence. Therefore, in this variation, the computer system can immediately associate each image recorded by the imaging system with the subaddress of the light element specified as active in the corresponding test frame. The computer system can similarly load blank frames and mask frames into the assembly, trigger the imaging system to capture corresponding blank images and mask images, and implement methods and techniques described above to define an image mask based on these blank images and mask images. The computer system can then implement methods and techniques described above to apply the image mask to test images, identify light pixels corresponding to active light elements in the test images, and generate a 3D point cloud or other virtual 3D map of positions and subaddresses of light elements within the assembly.
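The confirm-then-capture handshake can be sketched as a loop in which the next test frame is served only after the current image is confirmed. In this Python sketch, `serve_frame` and `capture_image` are hypothetical stand-ins for the router and imaging-system links. `serve_frame` is assumed to return True once light structures confirm execution. `capture_image` is assumed to return an image, or None if no recordation is confirmed. Skipping a frame on failure, rather than retrying it, is an illustrative choice.

```python
from typing import Any, Callable, Dict, List, Optional, Sequence, Tuple


def run_tethered_sequence(
    test_frames: Sequence[Tuple[str, Any]],
    serve_frame: Callable[[Any], bool],
    capture_image: Callable[[], Optional[Any]],
) -> Tuple[Dict[str, Any], List[str]]:
    """Serve (subaddress, frame) pairs one at a time.

    Returns images keyed by the subaddress of the light element active in the
    corresponding frame, plus subaddresses whose frame or capture failed.
    """
    images: Dict[str, Any] = {}
    failures: List[str] = []
    for subaddress, frame in test_frames:
        if not serve_frame(frame):  # assembly did not confirm execution
            failures.append(subaddress)
            continue
        image = capture_image()  # triggered only after execution is confirmed
        if image is None:  # imaging system did not confirm recordation
            failures.append(subaddress)
            continue
        images[subaddress] = image  # associated with its subaddress immediately
    return images, failures
```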

[0076] In this variation, the computer system can also cross-reference positive confirmations of test frame execution with their corresponding test images to detect nonresponsive light elements and/or nonresponsive light structures in the assembly (e.g., a light element whose test frame was confirmed as executed but whose test image shows no corresponding change in light level) and to issue notifications or other prompts to test, correct, or replace nonresponsive light elements or light structures.
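Cross-referencing execution confirmations against detection results can be sketched in Python. The sketch makes the following assumptions, none drawn from the source. Confirmations are a dictionary mapping subaddress to a boolean acknowledgment. Detections map subaddress to a located point or None. Subaddresses use a hypothetical "structure:element" format. A light structure is flagged when none of its light elements was both confirmed and detected.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Tuple


def find_nonresponsive(
    confirmed: Dict[str, bool],
    detections: Dict[str, Optional[Tuple[float, float]]],
) -> Tuple[List[str], List[str]]:
    """Return (nonresponsive light elements, nonresponsive light structures)."""
    # Confirmed as executed, yet no change in light level was located.
    elements = sorted(
        sub for sub, ack in confirmed.items() if ack and detections.get(sub) is None
    )
    responded = defaultdict(list)
    for sub, ack in confirmed.items():
        structure = sub.split(":")[0]  # hypothetical "structure:element" format
        responded[structure].append(ack and detections.get(sub) is not None)
    structures = sorted(s for s, results in responded.items() if not any(results))
    return elements, structures
```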

[0077] Furthermore, in this variation, the computer system and the imaging system can be physically coextensive, such as integrated into a smartphone, tablet, or other mobile computing device in communication with the router over a wired or wireless connection.

13.1 Test Frames

[0078] In this variation, the computer system can implement methods and techniques described above to generate a sequence of test frames defining activation of unique subsets (e.g., single light elements) of light elements within the assembly in Block S120. However, in this variation, the computer system can generate a set of test frames that excludes blank and reset frames. In particular, because the computer system is in communication with the imaging system via a wired or wireless link, the computer system can: serve a first test frame--in the sequence of test frames--to the assembly via the router; receive confirmation from light structures in the assembly that the first test frame has been implemented; trigger the imaging system to record a first photographic test image of the assembly; and receive confirmation that the imaging system recorded the first photographic test image before serving a second test frame--in the sequence of test frames--to the assembly.

[0079] In one implementation (and in implementations described above): the computer system defines a sequence of test frames; each test frame includes a set of numerical arrays; each numerical array is associated with an address (e.g., a MAC address, an IP address) of one light structure in the assembly and includes a set of values; and each value in a numerical array defines a color and intensity (or a "color value") of one light element in the corresponding light structure. In the implementation described above in which each light element in each light structure includes a tri-color LED, each numerical array can include one string of three values per light element in the corresponding light structure. In this implementation, each string of values corresponding to one light element can include a first value corresponding to brightness of a red subpixel, a second value corresponding to brightness of a green subpixel, and a third value corresponding to brightness of a blue subpixel in the light element. Upon receipt of such a numerical array, a light structure can pass the numerical array to a first light element. The first light element can strip the first three values from the numerical array, update intensities of its three subpixels according to these first three values, and pass the remainder of the numerical array to the second light element in the light structure. The second light element can strip the first three remaining values from the numerical array, update intensities of its three subpixels according to these three values, and pass the remainder of the numerical array to the third light element in the light structure, and so on to the last light element in the light structure.
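The interleaved array format and its strip-and-forward execution can be simulated in a few lines of Python. This is a sketch for illustration, not firmware. It assumes integer color values (e.g., 0 to 255, an assumption), and the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]


def encode_interleaved(colors: Sequence[RGB]) -> List[int]:
    """Flatten per-element (red, green, blue) values into one numerical array."""
    return [value for color in colors for value in color]


def execute_interleaved(array: Sequence[int]) -> List[RGB]:
    """Simulate the daisy chain: each light element strips the first three
    values, applies them to its red, green, and blue subpixels, and passes the
    remainder to the next light element."""
    if len(array) % 3:
        raise ValueError("array length must be a multiple of three")
    applied: List[RGB] = []
    remainder = list(array)
    while remainder:
        red, green, blue = remainder[:3]
        applied.append((red, green, blue))
        remainder = remainder[3:]  # passed downstream to the next element
    return applied


frame = encode_interleaved([(255, 255, 255), (0, 0, 0), (0, 0, 0)])
print(frame)  # [255, 255, 255, 0, 0, 0, 0, 0, 0]
```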

[0080] Alternatively, each test frame can include one numerical array per subpixel color, such as one "red" numerical array, one "green" numerical array, and one "blue" numerical array. Upon receipt of such a set of numerical arrays, a light structure can pass the red, green, and blue numerical arrays to a first light element; the first light element can strip the first values from each of the numerical arrays, update intensities of its three subpixels according to these first values, and pass remainders of the numerical arrays to the second light element in the light structure; the second light element can strip the first remaining value from each of the numerical arrays, update intensities of its three subpixels according to these values, and pass remainders of the numerical arrays to the third light element in the light structure; etc. to the last light element in the light structure.
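The per-color alternative can be simulated the same way. In the Python sketch below, each light element takes the first value from each of the three arrays and forwards the remainders. A helper, included for comparison, converts an interleaved array into the three per-color arrays. Integer color values and all function names are assumptions for illustration.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]


def execute_planar(red: Sequence[int], green: Sequence[int], blue: Sequence[int]) -> List[RGB]:
    """Simulate each light element stripping the first value from the red,
    green, and blue arrays and passing the remainders downstream."""
    if not len(red) == len(green) == len(blue):
        raise ValueError("color arrays must have equal length")
    applied: List[RGB] = []
    r_rest, g_rest, b_rest = list(red), list(green), list(blue)
    while r_rest:
        applied.append((r_rest[0], g_rest[0], b_rest[0]))
        r_rest, g_rest, b_rest = r_rest[1:], g_rest[1:], b_rest[1:]
    return applied


def interleaved_to_planar(array: Sequence[int]) -> Tuple[List[int], List[int], List[int]]:
    """Split an interleaved R, G, B array into one array per subpixel color."""
    return list(array[0::3]), list(array[1::3]), list(array[2::3])
```

Both formats carry the same information. The choice between them affects only how each light element parses its input.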

[0081] In Block S120, the computer system generates a sequence of test frames, wherein each test frame specifies activation of a unique subset (e.g., one) of light elements in the assembly. In one implementation, the computer system first identifies individually-addressable light elements in all light structures in the assembly. For example, the computer system can extract a number (and subaddresses) of light elements in a light structure in the assembly directly from a MAC address, IP address, or data packet received from the light structure via the router. Alternatively, the computer system can pass a MAC address, IP address, or other identifier received from the light structure to a name mapping system (e.g., a remote database), which can return a number (and subaddresses) of light elements in the light structure. Similarly, the computer system can pass a MAC address, IP address, or other identifier received from the light structure to a name mapping system; the name mapping system can return a 3D virtual model of the light structure--including relative positions and subaddresses of light elements in the light structure--to the computer system; and the computer system can extract relevant information from the virtual model to identify individually-addressable light elements in the light structure. However, the computer system can implement any other method or technique to characterize a light structure in the assembly, and the computer system can repeat this process for each other light structure in the assembly.
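Characterizing each light structure and listing its individually-addressable light elements can be sketched in Python. Here `lookup_element_count` is a hypothetical stand-in for the name mapping system. It takes a structure identifier and returns the number of light elements, or None when the identifier is unknown. The MAC-style addresses and element counts in the usage lines are invented for illustration.

```python
from typing import Callable, Iterable, List, Optional, Tuple


def enumerate_light_elements(
    structure_addresses: Iterable[str],
    lookup_element_count: Callable[[str], Optional[int]],
) -> List[Tuple[str, int]]:
    """Return one (structure address, element index) pair per individually
    addressable light element, in structure order."""
    elements: List[Tuple[str, int]] = []
    for address in structure_addresses:
        count = lookup_element_count(address)  # e.g., a remote database query
        if count is None:
            raise KeyError(f"no record for light structure {address}")
        elements.extend((address, index) for index in range(count))
    return elements


# Hypothetical registry standing in for the name mapping system.
registry = {"02:00:00:00:00:01": 3, "02:00:00:00:00:02": 2}
elements = enumerate_light_elements(registry.keys(), registry.get)
print(len(elements))  # 5
```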

[0082] The computer system can then generate a number of unique test frames equivalent to a number of individually-addressable light elements in the assembly. In this implementation, each test frame can specify maximum brightness (in all subpixels) for one particular light element and specify minimum brightness (e.g., an "off" or deactivated state) for all other light elements in the assembly.
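The one-frame-per-light-element scheme can be sketched in Python, reusing the interleaved array format of [0079]. Each frame maps a structure address to its numerical array, and exactly one light element is set to maximum brightness in all subpixels. An 8-bit maximum value of 255 is an assumption, and the function name is hypothetical.

```python
from typing import Dict, List, Tuple

Frame = Dict[str, List[int]]


def generate_test_frames(
    element_counts: Dict[str, int], max_value: int = 255
) -> List[Tuple[Tuple[str, int], Frame]]:
    """Return ((active address, active index), frame) pairs, one per light
    element, with every other light element in the assembly set to off."""
    frames: List[Tuple[Tuple[str, int], Frame]] = []
    for active_address, active_count in element_counts.items():
        for active_index in range(active_count):
            frame: Frame = {}
            for address, count in element_counts.items():
                values = [0] * (3 * count)  # minimum brightness everywhere
                if address == active_address:
                    start = 3 * active_index
                    values[start:start + 3] = [max_value] * 3  # all subpixels on
                frame[address] = values
            frames.append(((active_address, active_index), frame))
    return frames
```

Keeping the active subaddress next to each frame lets each recorded image be labeled without further bookkeeping.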