Speech Analysis For Cross-language Mental State Identification

Mishra; Taniya ; et al.

U.S. patent application number 16/206135 was filed with the patent office on 2018-11-30 and published on 2019-06-06 for speech analysis for cross-language mental state identification. This patent application is currently assigned to Affectiva, Inc. The applicant listed for this patent is Affectiva, Inc. Invention is credited to Islam Faisal, Taniya Mishra, Mohamed Ezzeldin Abdelmonem Ahmed Mohamed.

| Application Number | 20190172458 16/206135 |

| Family ID | 66658115 |

| Publication Date | 2019-06-06 |

| United States Patent Application | 20190172458 |

| Kind Code | A1 |

| Mishra; Taniya ; et al. | June 6, 2019 |

SPEECH ANALYSIS FOR CROSS-LANGUAGE MENTAL STATE IDENTIFICATION

Abstract

Techniques are described for speech analysis for cross-language mental state identification. A first group of utterances in a first language is collected, on a computing device, with an associated first set of mental states. The first group of utterances and the associated first set of mental states are stored on an electronic storage device. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. A second group of utterances from a second language is processed, on the machine learning system that was trained, wherein the processing determines a second set of mental states corresponding to the second group of utterances. The second set of mental states is output. A series of heuristics is output, based on the correspondence between the first group of utterances and the associated first set of mental states.

| Inventors: | Taniya Mishra (New York, NY); Islam Faisal (Kafr El-Zayat, EG); Mohamed Ezzeldin Abdelmonem Ahmed Mohamed (Cairo, EG) |
|---|---|
| Applicant: | Affectiva, Inc. |
| Assignee: | Affectiva, Inc. (Boston, MA) |
| Family ID: | 66658115 |
| Appl. No.: | 16/206135 |
| Filed: | November 30, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62593449 | Dec 1, 2017 | |
| 62593440 | Dec 1, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G10L 15/005 20130101; G10L 2015/223 20130101; G10L 13/027 20130101; G06T 13/40 20130101; G10L 15/16 20130101; G06K 9/6262 20130101; G10L 15/22 20130101; G06K 9/4628 20130101; G06K 9/00302 20130101; G10L 15/063 20130101; G06K 9/6256 20130101; G10L 25/78 20130101; G06K 9/6271 20130101; G10L 25/63 20130101 |

| International Class: | G10L 15/22 20060101 G10L015/22; G10L 15/00 20060101 G10L015/00; G10L 15/16 20060101 G10L015/16; G10L 25/63 20060101 G10L025/63; G10L 15/06 20060101 G10L015/06 |

Claims

1. A computer-implemented method for speech analysis comprising: collecting, on a computing device, a first group of utterances in a first language with an associated first set of mental states; storing, on an electronic storage device, the first group of utterances and the associated first set of mental states; training a machine learning system using the first group of utterances and the associated first set of mental states that were stored; processing, on the machine learning system that was trained, a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances; and outputting the second set of mental states.

2. The method of claim 1 further comprising learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, to facilitate determining an associated third set of mental states from a third group of utterances.

3. The method of claim 1 further comprising outputting a series of heuristics, based on correspondence between the first group of utterances and the associated first set of mental states.

4. The method of claim 1 wherein the machine learning system includes a deep learning system.

5. The method of claim 4 wherein the machine learning system performs convolving.

6. The method of claim 1 wherein the machine learning system includes a convolutional neural network.

7. The method of claim 1 wherein the first language and the second language are substantially similar.

8. The method of claim 7 wherein the first language and the second language are identical.

9. The method of claim 1 wherein the first language and the second language are different.

10. The method of claim 1 further comprising refining the training of the machine learning system based on one or more additional groups of utterances in the first language or the second language.

11. The method of claim 1 wherein the training comprises segmenting silence from speech in the second group of utterances.

12. The method of claim 1 wherein the training comprises extracting low-level acoustic descriptors from short, overlapping speech segments from the second group of utterances.

13. The method of claim 12 further comprising applying statistical functions to resolve low-level acoustic descriptors over longer speech segments.

14. The method of claim 12 further comprising extracting contextual information from neighboring speech segments.

15. The method of claim 12 further comprising feeding extracted features to a classifier for determining mental states.

16. The method of claim 1 wherein the training comprises estimating mental state metrics over successive, overlapped speech segments.

17. The method of claim 16 further comprising fusing the mental state metrics that were estimated to produce a smoothed mental state metric.

18. The method of claim 16 wherein the successive, overlapped speech segments are windowed around 1200 ms.

19. The method of claim 1 wherein the first group of utterances includes non-speech vocalizations.

20-21. (canceled)

22. The method of claim 1 wherein the outputting is used for developing cross-cultural conversational agents.

23. The method of claim 22 wherein the cross-cultural conversational agents are used in vehicular control.

24. The method of claim 1 further comprising training an application for use with a third language distinct from the first language and the second language.

25. The method of claim 1 further comprising developing cross-linguistic models based on the outputting.

26. The method of claim 25 further comprising training the cross-linguistic models based on one or more human reactions to an application using the cross-linguistic models.

27. (canceled)

28. A computer program product embodied in a non-transitory computer readable medium for speech analysis, the computer program product comprising code which causes one or more processors to perform operations of: collecting, on a computing device, a first group of utterances in a first language with an associated first set of mental states; storing, on an electronic storage device, the first group of utterances and the associated first set of mental states; training a machine learning system using the first group of utterances and the associated first set of mental states that were stored; processing, on the machine learning system that was trained, a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances; and outputting the second set of mental states.

29. A computer system for speech analysis comprising: a memory which stores instructions; one or more processors attached to the memory wherein the one or more processors, when executing the instructions which are stored, are configured to: collect, on a computing device, a first group of utterances in a first language with an associated first set of mental states; store, on an electronic storage device, the first group of utterances and the associated first set of mental states; train a machine learning system using the first group of utterances and the associated first set of mental states that were stored; process, on the machine learning system that was trained, a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances; and output the second set of mental states.

Description

RELATED APPLICATIONS

[0001] This application claims the benefit of U.S. provisional patent applications "Avatar Image Animation Using Translation Vectors" Ser. No. 62/593,440, filed Dec. 1, 2017, and "Speech Analysis for Cross-Language Mental State Identification" Ser. No. 62/593,449, filed Dec. 1, 2017.

[0002] Each of the foregoing applications is hereby incorporated by reference in its entirety.

FIELD OF INVENTION

[0003] This application relates generally to speech analysis and more particularly to speech analysis for cross-language mental state identification.

BACKGROUND

[0004] People around the world use a variety of electronic devices to pass the time and to engage with and share many types of online content. The content includes news, sports, politics, cute puppy videos, children being silly videos, adults being dumb videos, and much, much more. The content is delivered to the electronic devices via websites, apps, streaming, podcasts, and other channels. When a person finds content or a channel that they particularly like or find especially loathsome, she or he may care to share it with friends and followers. As a result, social sharing has provided popular and convenient channels for dissemination of shared content. As the friends and followers view the shared content, they react to it. The reactions include facial expressions and changes in facial expressions which result from movements of facial muscles. The reactions also include audible reactions which can include speaking, shouts, groans, crying, muttering, and other sounds produced by the viewers of the shared content. The reactions of the viewers, whether facial or audible, involve moods, emotions, and mental states. The moods, emotions, and mental states can range from happy to sad, and can include expressions of anger, fear, disgust, surprise, ennui, and many others.

[0005] People around the world use a variety of languages for communication. Some languages are very similar to each other, such as dialects, and some languages are very different from each other. Communication among people around the world is critical, and understanding languages is likewise critical to facilitating that communication. Languages are also intimately connected to the variety of electronic devices that people around the world employ. The ability to use an electronic device in one's own language is a key element of device operability.

SUMMARY

[0006] Speech analysis is used for cross-language mental state identification. Utterances in a first language, with an associated set of mental states, are collected on a computing device. The computing device can include a smartphone, personal digital assistant, tablet, laptop computer, and so on. The utterances and associated mental states are stored on an electronic storage device, where the electronic storage device can be coupled to the computing device used for the collecting, or can be remotely located such as a server, cloud server, etc. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. The training can include supervised training. The machine learning system can include a deep learning system, and can include performing convolution. The machine learning system can include a deep learning system, where the deep learning system can be based on a convolutional neural network. Processing is performed on the machine learning system that was trained, to process a second group of utterances from a second language. The processing is used to determine a second set of mental states corresponding to the second group of utterances. Learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, can be used to facilitate determining an associated third set of mental states from a third group of utterances. A series of heuristics is output, based on the correspondence between the first group of utterances and the associated first set of mental states.

[0007] A computer-implemented method for speech analysis is disclosed comprising: collecting, on a computing device, a first group of utterances in a first language with an associated first set of mental states; storing, on an electronic storage device, the first group of utterances and the associated first set of mental states; training a machine learning system using the first group of utterances and the associated first set of mental states that were stored; processing, on the machine learning system that was trained, a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances; and outputting the second set of mental states.

[0008] Other embodiments disclose a method of using a speech analysis system comprising: obtaining a first group of utterances in a first language with an associated first set of mental states; training a machine learning system using the first group of utterances and associated first set of mental states; obtaining a second group of utterances from a second language; determining an associated second set of mental states corresponding to the second language, wherein the determining is based on the machine learning system that was trained with the first group of utterances and the associated first set of mental states; and outputting the associated second set of mental states.

[0009] Various features, aspects, and advantages of numerous embodiments will become more apparent from the following description.

BRIEF DESCRIPTION OF THE DRAWINGS

[0010] The following detailed description of certain embodiments may be understood by reference to the following figures wherein:

[0011] FIG. 1 is a flow diagram for speech analysis with cross-language mental state identification.

[0012] FIG. 2 is a flow diagram for emotion classification.

[0013] FIG. 3 shows an example of smoothed emotion estimation.

[0014] FIG. 4 illustrates an example of a confusion matrix.

[0015] FIG. 5 is a diagram showing audio and image collection including multiple mobile devices.

[0016] FIG. 6 illustrates feature extraction for multiple faces.

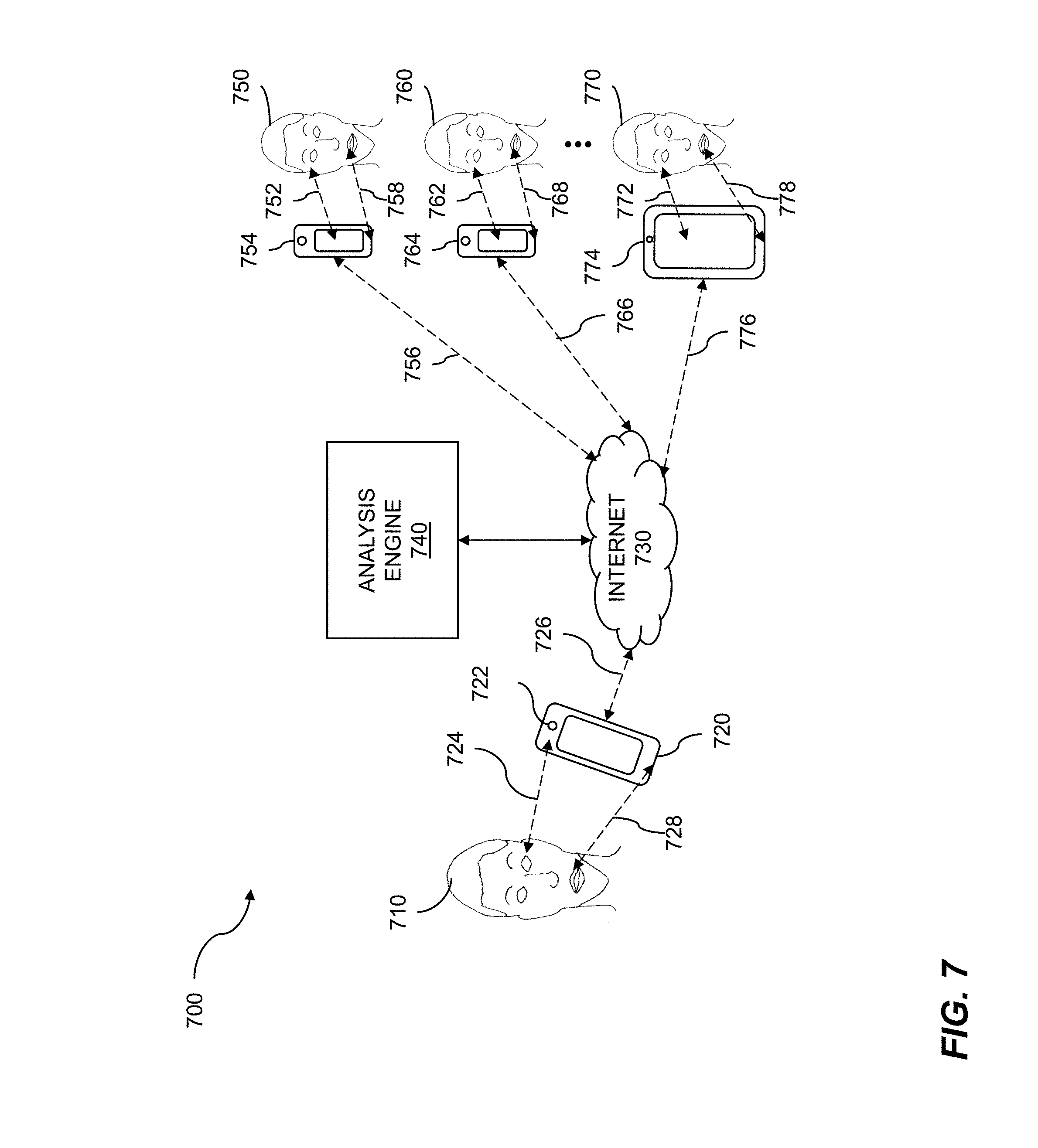

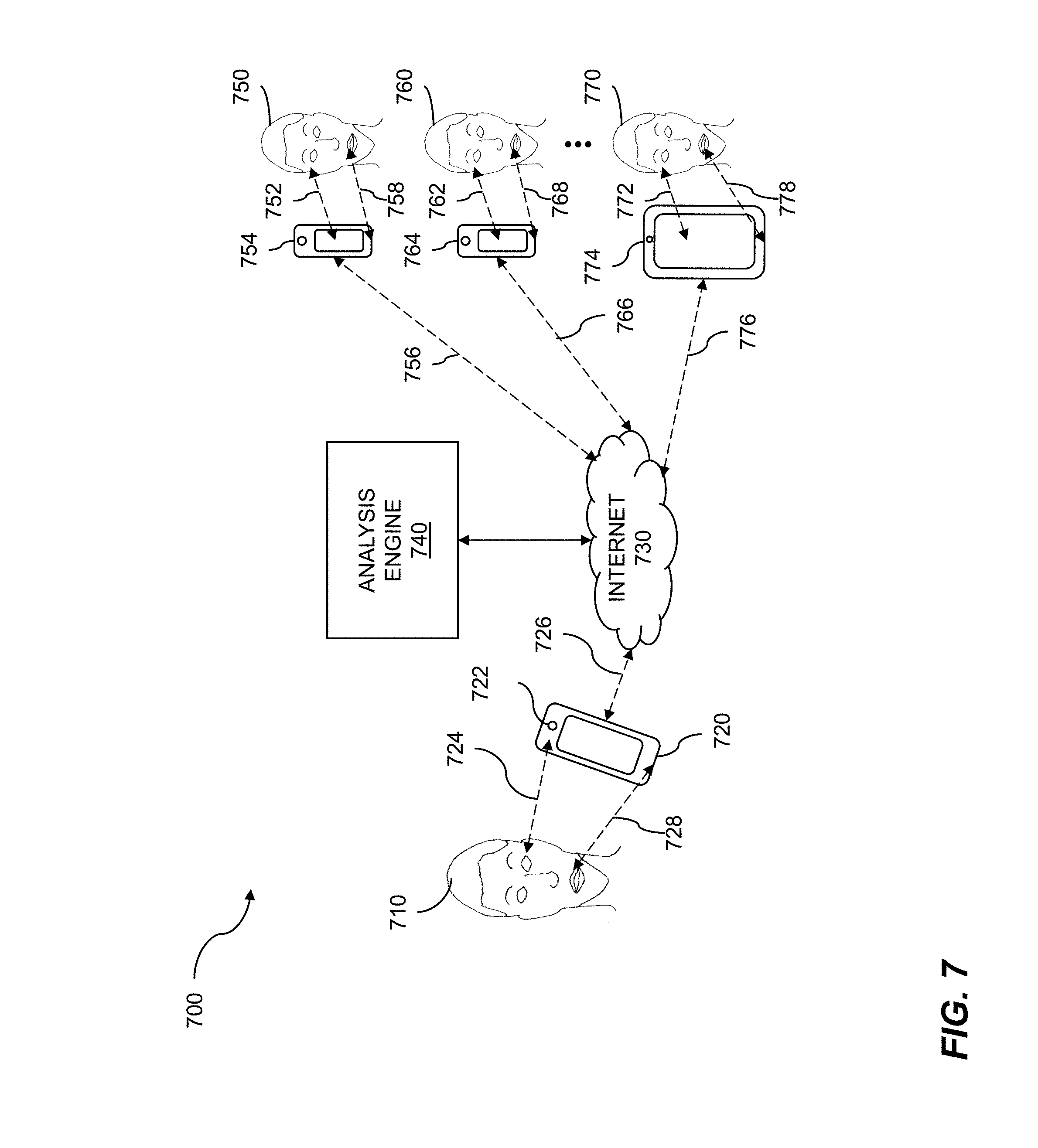

[0017] FIG. 7 shows live streaming of social video and social audio.

[0018] FIG. 8 shows example facial data collection including landmarks.

[0019] FIG. 9 shows example facial data collection including regions.

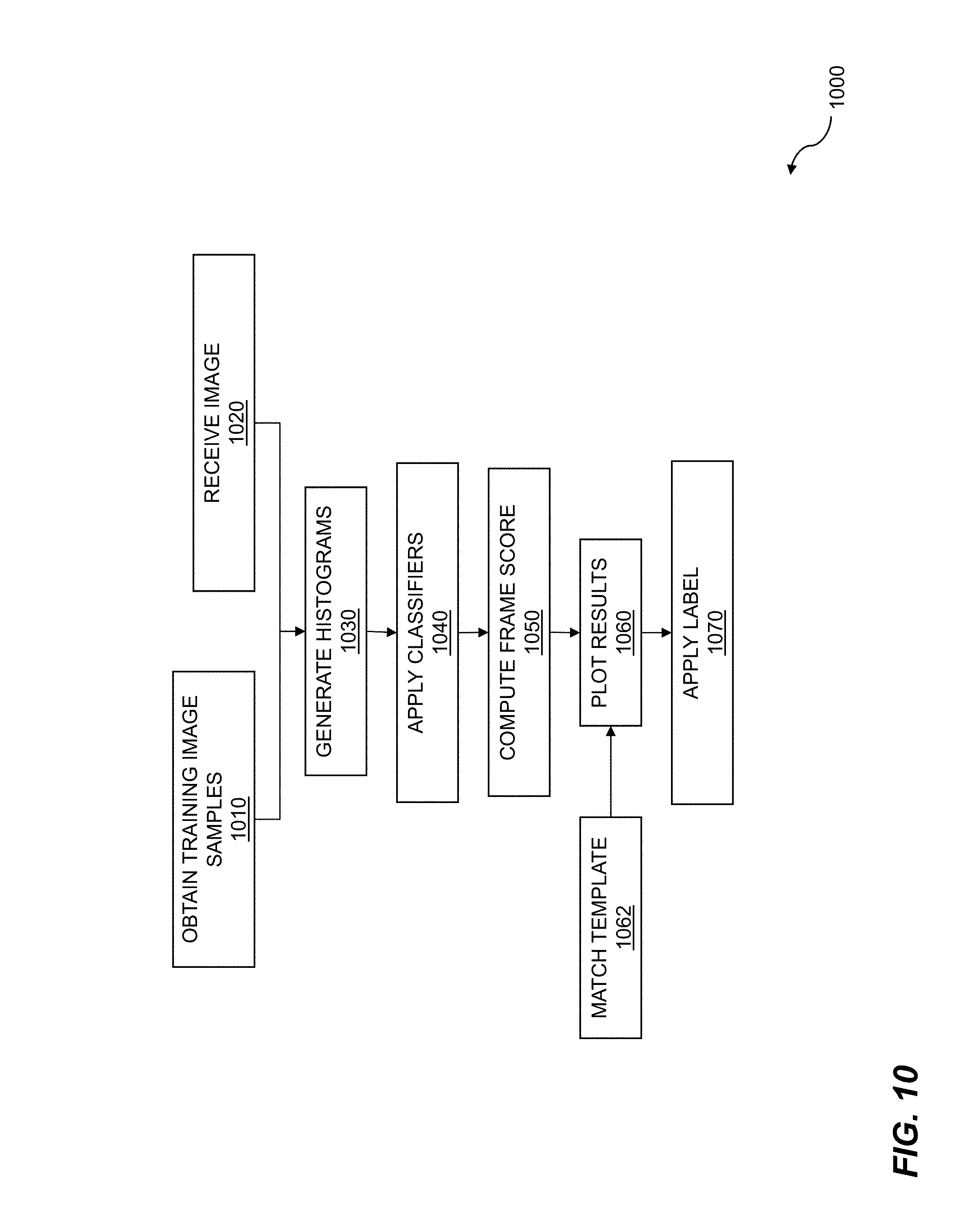

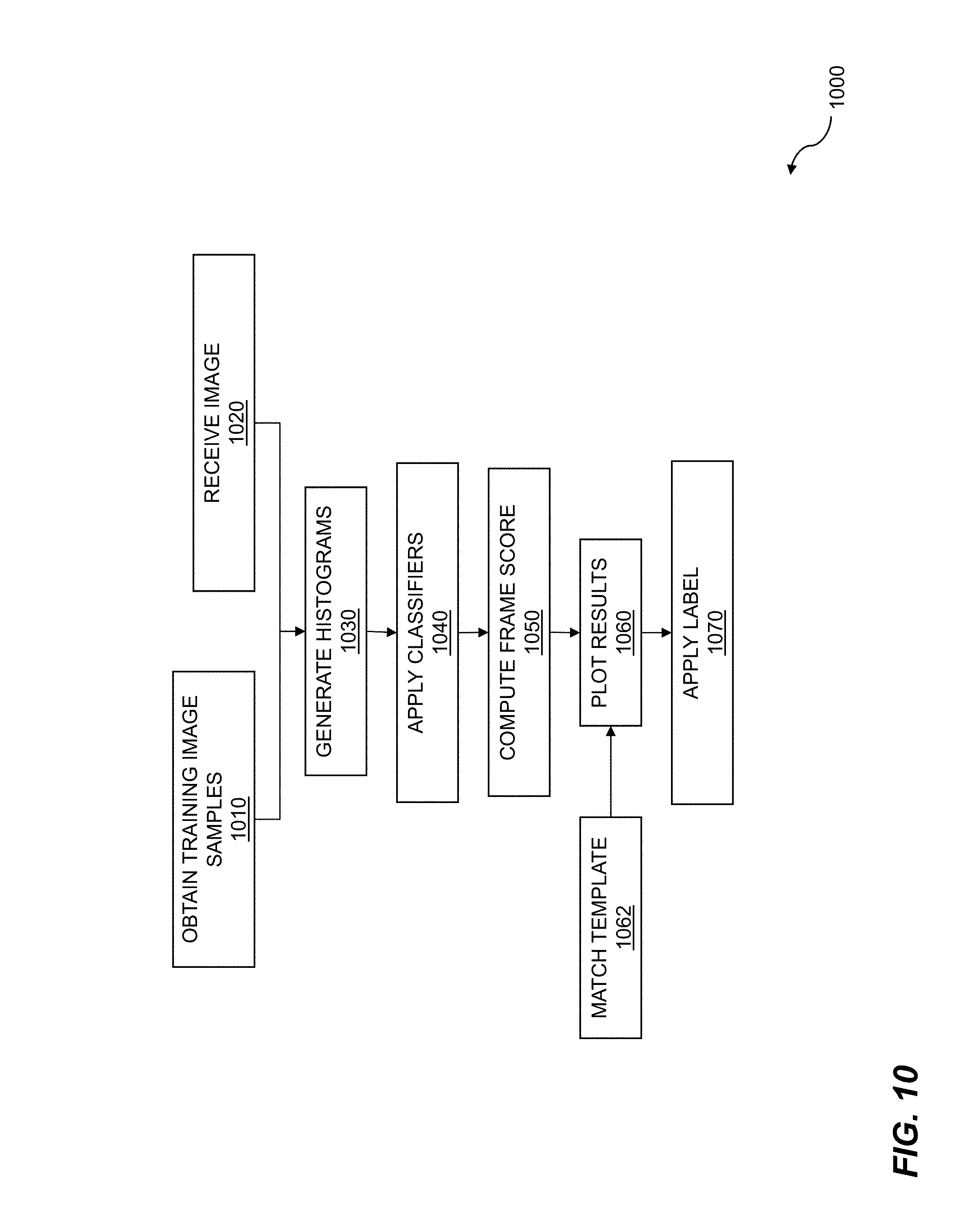

[0020] FIG. 10 is a flow diagram for detecting facial expressions.

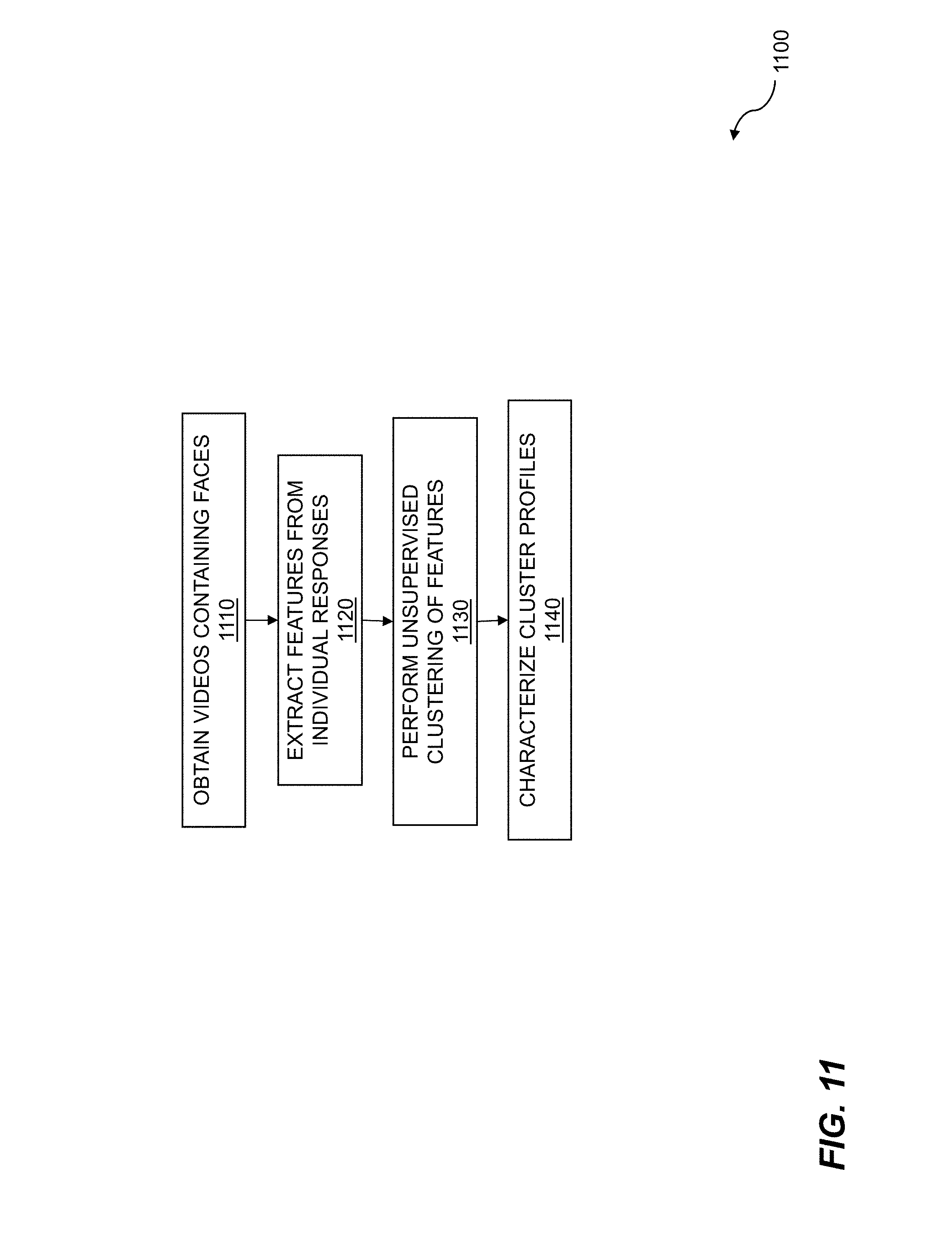

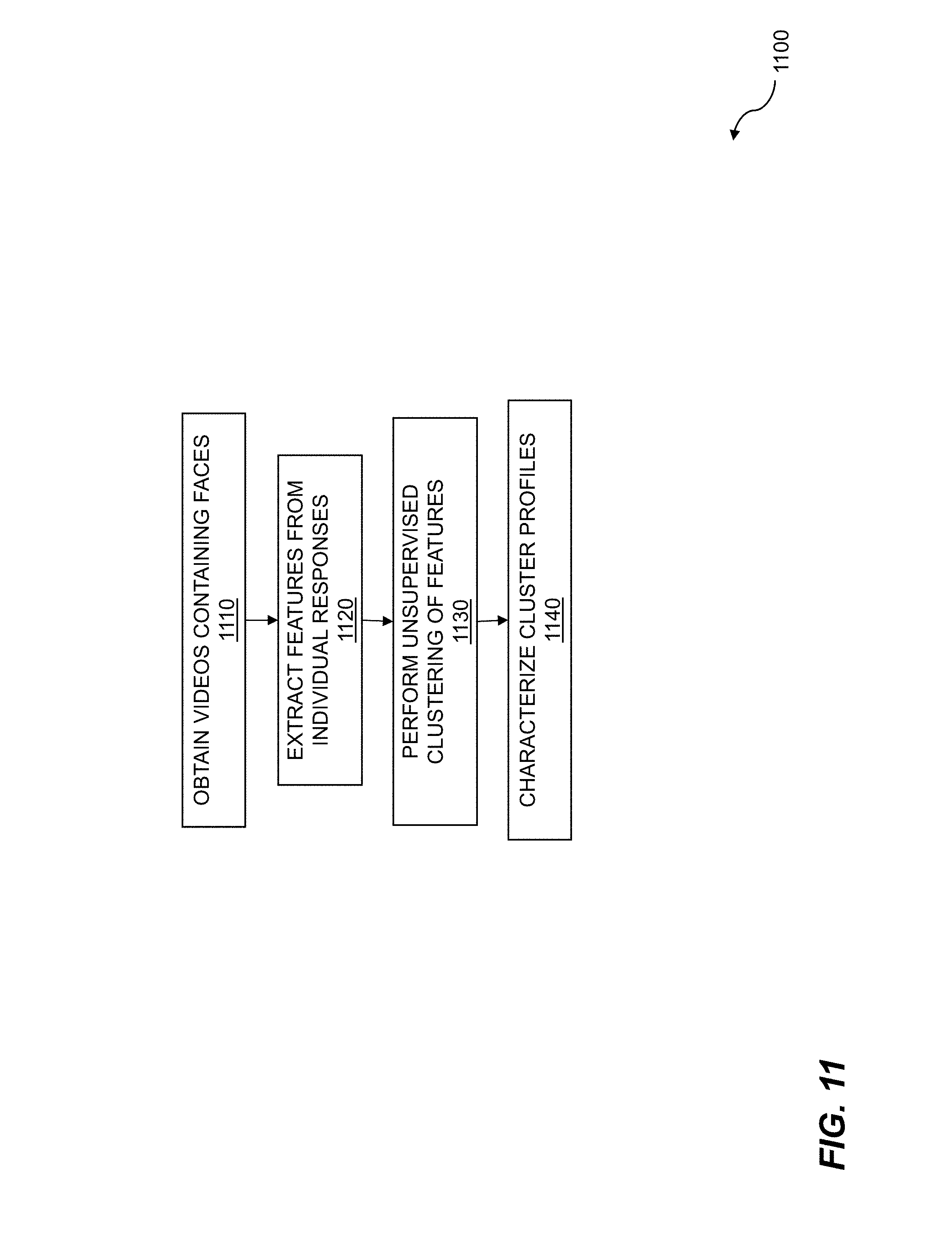

[0021] FIG. 11 is a flow diagram for the large-scale clustering of facial events.

[0022] FIG. 12 illustrates a system diagram for deep learning for emotion analysis.

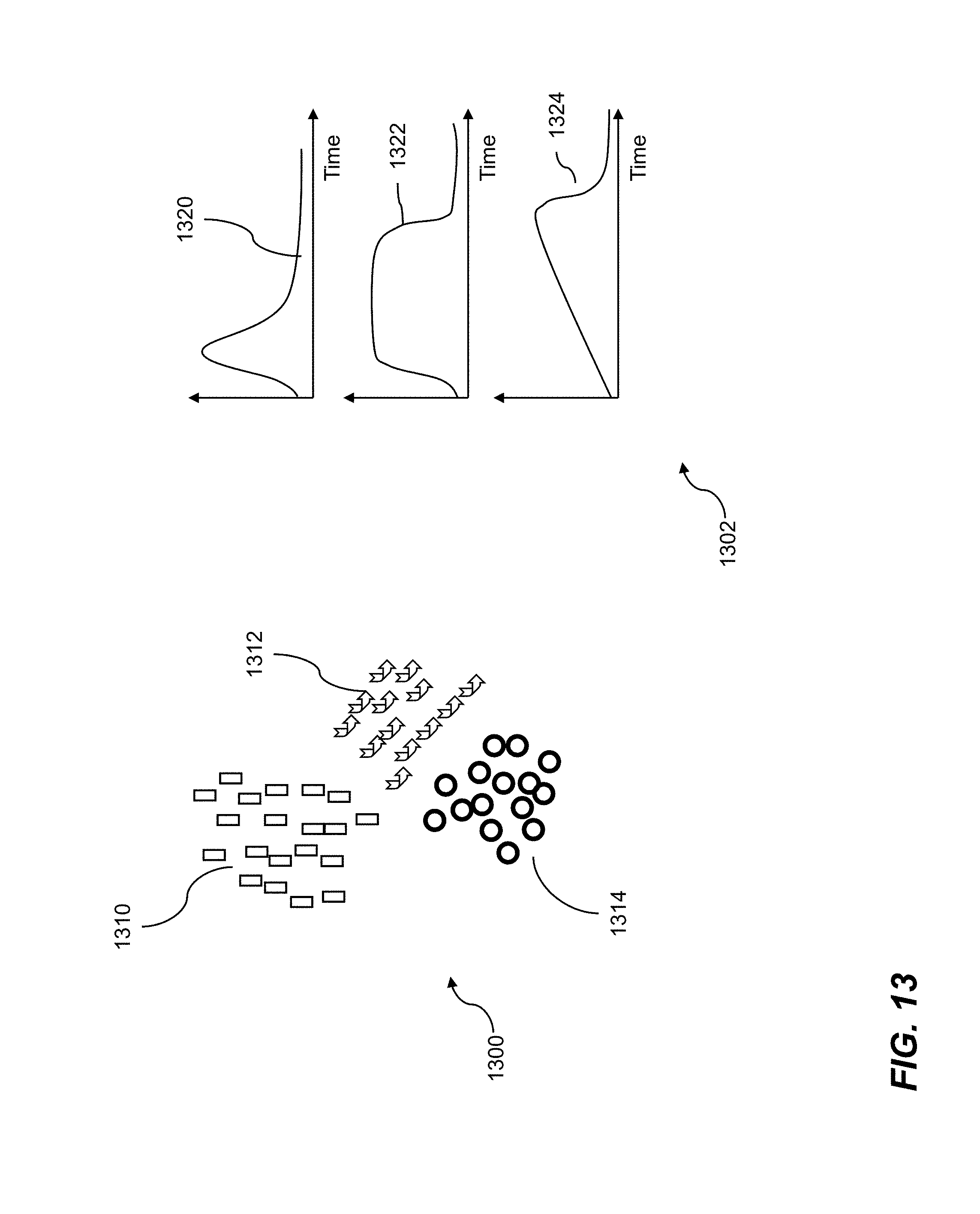

[0023] FIG. 13 shows unsupervised clustering of features and characterizations of cluster profiles.

[0024] FIG. 14A shows example tags embedded in a webpage.

[0025] FIG. 14B shows invoking tags to collect images.

[0026] FIG. 15 is a diagram of a system for speech analysis supporting cross-language mental state identification.

DETAILED DESCRIPTION

[0027] Individuals experience a range of emotions as they interact daily with a variety of electronic devices such as smartphones, personal digital assistants, tablets, laptops, and so on. The individuals use these devices to view and interact with websites, streaming media, social media, and many other channels. The individuals also use these devices to share the variety of content presented on those channels. The channels for sharing can include social media sharing, and the sharing channels can induce emotions, moods, and mental states in the individuals. The channels can inform, amuse, entertain, annoy, anger, bore, etc., those who view the channels. When the channels provide content such as a news story in different languages, the reactions of the individuals to the content may be similar or the same, or may differ, sometimes drastically. The differences in the mental states of the individuals to the content can be based on gender, age, and other demographic information; cultural norms; etc. As a result, the mood of a given individual can be directly influenced not only by the content, but can also be impacted by the language in which content is delivered. The individual may want to find and view content that makes her or him happy, while skipping content they find to be boring, and avoiding content that angers or annoys them. The content that the individual views could be used to cheer up the individual, stir him or her to action, etc.

[0028] Speech analysis can be performed for cross-language mental state identification. Utterances and associated mental states can be collected from one or more individuals using a microphone or other audio capture technique coupled to a computing device such as a smartphone, personal digital assistant (PDA), tablet, laptop computer, and so on. The collected utterances and associated mental states can be stored locally or remotely on an electronic storage device such as flash media, solid-state disk (SSD) media, or other media suitable for electronic storage. The utterances and associated mental states can be used to train a machine learning system such as a deep learning system. Once trained, the machine learning system can process other groups of utterances and associated sets of mental states collected from other individuals. The other individuals may speak the same language or a different language. The machine learning system can be trained in one language and applied to another language without having to train the machine learning system anew.

[0029] In disclosed techniques, speech analysis is used for cross-language mental state identification. A first group of utterances in a first language with an associated first set of mental states is collected on a computing device. The first group of utterances and the associated first set of mental states are stored on an electronic storage device. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. A second group of utterances from a second language is processed on the machine learning system, where the processing determines a second set of mental states corresponding to the second group of utterances. The second set of mental states is output.
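The train-on-one-language, apply-to-another sequence described above can be sketched in a few lines. This is a minimal illustration only: a nearest-centroid classifier stands in for the machine learning system (the disclosure names deep learning and convolutional neural networks, which are not reproduced here), and the two-dimensional feature vectors, the labels, and the function names `train_centroids` and `predict` are all hypothetical.

```python
from collections import defaultdict
from math import dist


def train_centroids(features, labels):
    """Average the feature vectors that share a mental-state label."""
    sums = {}
    counts = defaultdict(int)
    for vec, label in zip(features, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vec)
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total]
            for label, total in sums.items()}


def predict(centroids, features):
    """Assign each feature vector the label of its closest centroid."""
    return [min(centroids, key=lambda label: dist(centroids[label], vec))
            for vec in features]


# Hypothetical acoustic features for first-language utterances,
# stored together with their associated mental states.
first_language_features = [[0.9, 0.8], [0.8, 0.9], [0.2, 0.1], [0.1, 0.3]]
first_language_states = ["anger", "anger", "calmness", "calmness"]

# Train once on the first language.
model = train_centroids(first_language_features, first_language_states)

# Process second-language utterances on the trained model, without retraining.
second_language_features = [[0.85, 0.7], [0.15, 0.2]]
second_language_states = predict(model, second_language_features)
print(second_language_states)
```

The sketch works only to the extent that the chosen features carry mental state information that is shared across the two languages, which is the premise of the cross-language approach.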

[0030] In other disclosed techniques, a speech analysis system is used. A first group of utterances in a first language with an associated first set of mental states is obtained. A machine learning system is trained using the first group of utterances and associated first set of mental states. A second group of utterances from a second language is obtained. An associated second set of mental states corresponding to the second language is determined, where the determining is based on the machine learning system that was trained with the first group of utterances and the associated first set of mental states. The associated second set of mental states is output.

[0031] Training for cross-language speech analysis can include training data across language groups and across different cultures that use those language groups. Differences in language formality and idiomatic expressions across such groups and cultures can be considered. For example, the French spoken in France and the French spoken in the Canadian province of Quebec have developed distinctly and are somewhat different, though generally recognizable. In some instances, language becomes a soft proxy for the culture. Other differences among language groups are more notable. For example, Romance languages and Germanic languages can include not only the obvious difference in words, but also in sentence structure, formality, colloquialism, and so on. Other differences that are emerging in languages show how language is used with other humans versus how it is used in speech directed toward a computer-generated electronic device, such as an artificial intelligence personal voice assistant such as Siri®, Cortana®, Google Now™, and Echo©. In embodiments, the outputting of the second mental state is used for human-directed speech. In embodiments, the outputting of the second mental state is used for computer-directed speech or speech recognition.

[0032] Training for cross-language speech analysis can include non-speech vocalizations, also known as non-lexical vocalizations. Non-speech vocalizations such as a cough, a grunt, crying, or a tongue click, to name just a few, may mean different things in different languages. In embodiments, cross-language speech analysis can include the first group of utterances including non-speech vocalizations. In embodiments, cross-language speech analysis can include the second group of utterances including non-speech vocalizations. Further groups of utterances can likewise include non-speech vocalizations. In embodiments, the non-speech vocalizations can include grunts, yelps, squeals, snoring, sighs, laughter, filled pauses, unfilled pauses, tongue clicks, or yawns.

[0033] The outputting of the second set of mental states can be useful in various scenarios. The outputting can be used to display the mental state on an electronic device. The outputting can be used to develop cross-linguistic models. The outputting can be used to train an application running on an electronic device. The outputting can be used to develop a conversational agent. The conversational agent can be deployed across languages, cultures, regions, countries, and so on. For example, a conversational agent might be deployed in a rental car pool that is used with customers speaking different languages. Of course, to be useful, the rental car application should be able to provide computer-based speech and speech recognition in the customer's preferred language. An even further useful goal is to be able to understand mental states across languages and cultures using cross-language speech analysis. Thus, in embodiments, the outputting is used for developing cross-cultural conversational agents. And in further embodiments, the cross-cultural conversational agents are used in vehicular control.

[0034] FIG. 1 is a flow diagram for speech analysis with cross-language mental state identification. Various disclosed techniques include speech analysis for cross-language mental state identification. The flow 100 includes collecting, on a computing device, a first group of utterances in a first language with an associated first set of mental states 110. The first group of utterances and the associated first set of mental states can include voice data. The utterances and the mental states can be captured using a microphone, a transducer, or other audio capture device. The collecting of the utterances and the mental states can be accomplished using a microphone, etc., coupled to a portable electronic device such as a smartphone, a personal digital assistant, a tablet, a laptop computer, and so on. In embodiments, the flow 100 includes outputting a series of heuristics 112, based on the correspondence between the first group of utterances and the associated first set of mental states. The heuristics can be used by a machine learning system. The series of heuristics can be used to identify one or more mental states based on the utterances. In embodiments, the heuristics can be used to identify mental states of another person based on the utterances of the other person.

[0035] The flow 100 includes storing 120, on an electronic storage device, the first group of utterances and the associated first set of mental states. The storing of the utterances and the mental states can include storing the utterances and the mental states on the computing device that collected the utterances and the mental states; on another computing device such as a PDA, tablet, smartphone, or laptop; on a local server; on a remote server; on a cloud server; and so on. The storage component can include a flash memory, a solid-state disk, or other media suitable for storing the emotional intensity metrics and other data. The flow 100 includes training a machine learning system 130 using the first group of utterances and the associated first set of mental states that were stored. Various techniques can be used to realize the machine learning system. In some techniques, the machine learning system performs convolving. In embodiments, the machine learning system includes a deep learning system. The machine learning system based on a deep learning system can include a convolutional neural network. Other machine learning systems can include a decision tree, an artificial neural network, a convolutional neural network, a support vector machine, a Bayesian network, a genetic algorithm, and so on. The machine learning can be based on a known set of utterances and associated mental states, on control data, and so on. The training can be based on fully and partially annotated data. The machine learning system can be located on a local server, a remote server, a cloud server, and so on. The flow 100 includes refining the training 132 of the machine learning system based on one or more additional groups of utterances in the first language or the second language. The additional groups of utterances can be collected from the same person as the first group of utterances, from a plurality of people, and so on.

[0036] The flow 100 includes processing 140, on the machine learning system that was trained, a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances. The processing, on the machine learning system, can be performed on the computing device used for the collecting; on a portable electronic device such as a smartphone, PDA, tablet, or laptop; on a local server; on a remote server; on a cloud server; and so on. The processing can include preprocessing the raw collected utterances and associated mental states to generate data which is better suited to the processing. In embodiments, the first language and the second language are substantially similar. Substantial similarity here can refer to various dialects and accents of languages such as English spoken in Britain versus America; French spoken in France versus the province of Quebec, Canada; and so on. In embodiments, the first language and the second language can be identical, while in other embodiments, the first language and the second language are different. In the case that the languages are different, speech patterns and mental states can differ in reaction to a media presentation, an event, and so on. In embodiments, the flow 100 includes segmenting silence from speech 142 in the second group of utterances. The segmenting silence from speech can reduce computational overhead. The segmenting silence from speech can segment out data that may not contribute to the identification of one or more mental states. The machine learning system can be updated (e.g. can learn) based on learning from the processing of the first group of utterances and associated first set of mental states, and the second group of utterances and associated second set of mental states. The flow 100 further includes learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, to facilitate determining an associated third set of mental states from a third group of utterances. In embodiments, the determining includes extracting low-level acoustic descriptors (LLD) 144 from short, overlapping speech segments from the second group of utterances. Low-level acoustic descriptors can include prosodic and spectral features. The prosodic low-level descriptors can include pitch, formants, energy, jitter, shimmer, etc. The spectral low-level descriptors can include spectral flux, centroid, entropy, roll-off, and so on.
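As an illustration of the silence segmentation and descriptor extraction just described, the following sketch frames a signal into short, overlapping segments, drops frames whose energy falls below a threshold, and computes one prosodic descriptor (energy) and one spectral descriptor (centroid) for the remaining frames. The frame length, hop, threshold, the energy-based silence rule, and the synthetic test signal are assumptions chosen for the example; the disclosure does not specify them.

```python
import cmath
import math


def frame_signal(samples, frame_len, hop):
    """Split samples into short, overlapping frames (hop < frame_len)."""
    return [samples[i:i + frame_len]
            for i in range(0, len(samples) - frame_len + 1, hop)]


def rms_energy(frame):
    """Root-mean-square energy of one frame."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))


def spectral_centroid(frame, sample_rate):
    """Magnitude-weighted mean frequency, computed with a naive DFT."""
    n = len(frame)
    mags = [abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n)
                    for t in range(n)))
            for k in range(n // 2 + 1)]
    total = sum(mags)
    if total == 0:
        return 0.0
    return sum(k * sample_rate / n * m for k, m in enumerate(mags)) / total


def extract_llds(samples, sample_rate, frame_len, hop, silence_threshold):
    """Skip low-energy frames as silence; describe the rest."""
    descriptors = []
    for frame in frame_signal(samples, frame_len, hop):
        energy = rms_energy(frame)
        if energy < silence_threshold:
            continue  # assumed silence rule: simple energy threshold
        descriptors.append({
            "energy": energy,
            "centroid_hz": spectral_centroid(frame, sample_rate),
        })
    return descriptors


# Synthetic input: 50 ms of silence followed by 50 ms of a 400 Hz tone.
sample_rate = 8000
silence = [0.0] * 400
tone = [0.5 * math.sin(2 * math.pi * 400 * t / sample_rate) for t in range(400)]
signal = silence + tone

# 25 ms frames with a 12.5 ms hop (assumed values).
llds = extract_llds(signal, sample_rate, frame_len=200, hop=100,
                    silence_threshold=0.05)
print(len(llds), llds[-1])
```

A production system would more likely use a fast Fourier transform and a dedicated voice activity detector; the naive DFT is used here only to keep the sketch free of third-party dependencies.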

[0037] The flow 100 includes applying statistical functions 146 to resolve low-level acoustic descriptors over longer speech segments. The applying statistical functions can include curve fitting techniques, smoothing techniques, etc. The applying statistical functions can include signal processing techniques for speech enhancement, improving signal-to-noise ratios, and so on. In embodiments, the extracting includes extracting contextual information 148 from neighboring speech segments. The neighboring segments can be overlapping segments of the voice data including utterances and associated mental states. The contextual information can include data and estimations about the speaker such as gender, age, native language, etc., and fusion rules, window size, and so on. In embodiments, the successive, overlapped speech segments are windowed around 1200 ms. The window sizes can be varied to improve accuracy, to adjust computational complexity, and so on. The flow 100 includes feeding extracted features to a classifier 150 for determining mental states. A plurality of classifiers can be used to determine one or more mental states. In embodiments, the mental states can include one or more of sadness, stress, happiness, anger, frustration, confusion, disappointment, hesitation, cognitive overload, focusing, engagement, attention, boredom, exploration, confidence, trust, delight, disgust, skepticism, doubt, satisfaction, excitement, laughter, calmness, curiosity, humor, sadness, poignancy, or mirth. The determining can include estimating mental state metrics 152 over successive, overlapped speech segments. The metrics can include one or more of mental state onset, duration, decay, intensity, and so on. The flow 100 includes fusing the mental state metrics 154 that were estimated to produce a smoothed mental state metric. The fused mental state metric can be used to improve accuracy of the mental states that are determined.
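The estimation over successive, overlapped segments and the subsequent fusing can be sketched as follows. The 1200 ms window comes from the text above; the 600 ms hop, the use of a plain average as the fusion rule, and the per-window intensity values are assumptions made for the example.

```python
def overlapped_windows(duration_ms, window_ms=1200, hop_ms=600):
    """Start and end times of successive, overlapped analysis windows."""
    starts = range(0, max(duration_ms - window_ms, 0) + 1, hop_ms)
    return [(start, start + window_ms) for start in starts]


def fuse_estimates(windows, estimates, step_ms=600):
    """Average the estimates of all windows that cover each time step."""
    end = max(window_end for _, window_end in windows)
    fused = []
    for t in range(0, end, step_ms):
        covering = [estimate
                    for (start, stop), estimate in zip(windows, estimates)
                    if start <= t < stop]
        fused.append(sum(covering) / len(covering))
    return fused


# A 3-second utterance yields four windows, each overlapping the next by half.
windows = overlapped_windows(3000)

# Hypothetical per-window intensity estimates for one mental state.
estimates = [0.2, 0.8, 0.4, 0.6]

smoothed = fuse_estimates(windows, estimates)
print(windows)
print(smoothed)
```

Because each time step after the first is covered by two windows, an outlier estimate such as 0.8 is pulled toward its neighbors, which is the smoothing effect the fused metric is meant to provide.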

[0038] The flow 100 includes training an application 160. Many applications can be trained using cross-language speech analysis, including any program or app that will be deployed across more than one language, culture, or people group. General purpose training can occur using several of the more common languages, which can then provide a foundation for more specific fine tuning of the training for use in a local language or application. Thus, some embodiments comprise training an application for use with a third language which is distinct from the first language and the second language. The flow 100 includes developing cross-linguistic models 162. The cross-linguistic models can be based on the outputting of the second mental state and can be included in a program, agent, or application. Thus, embodiments include developing cross-linguistic models based on the outputting. The models can be refined based on further analysis of how the models perform in applications that include human interaction. Thus, further embodiments comprise training the cross-linguistic models based on one or more human reactions to an application using the cross-linguistic models.

[0039] Various steps in the flow 100 may be changed in order, repeated, omitted, or the like without departing from the disclosed concepts. Various embodiments of the flow 100 can be included in a computer program product embodied in a non-transitory computer readable medium that includes code executable by one or more processors.

[0040] FIG. 2 is a flow diagram for emotion classification. Emotion classification can be used for speech analysis for cross-language mental state identification. A computing device collects a first group of utterances in a first language with an associated first set of mental states. An electronic storage device stores the first group of utterances and the associated first set of mental states. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. The machine learning system that was trained processes a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances. Learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, further facilitates determining an associated third set of mental states from a third group of utterances. A series of heuristics is output based on the correspondence between the first group of utterances and the associated first set of mental states.

[0041] The flow 200 includes collecting voice data 210. The collecting voice data can be performed on a computing device such as a personal electronic device, a laptop computer, and so on. As discussed previously, the voice data can include a first group of utterances in a first language with an associated first set of mental states. The voice data can include other audio data such as ambient noise, vocalizations, etc. The flow 200 includes segmenting silence from speech 212. Pauses, breaths, periods of inactivity, etc. can be segmented from periods of speech included in the voice data. The silence can be segmented from the speech to improve processing of the speech data. The flow 200 includes extracting low-level acoustic descriptors 220 (LLD) from short, overlapping speech segments. The low-level acoustic descriptors can include prosodic features and spectral features. The prosodic low-level descriptors can include pitch, formants, energy, jitter, shimmer, etc. The spectral low-level descriptors can include spectral flux, centroid, entropy, roll-off, and so on. The flow 200 includes applying statistical functions 230 to the extracted low-level acoustic descriptors. The applying of statistical functions can include applying the functions to longer segments of the voice data. Applying the statistical functions can include curve fitting techniques, smoothing techniques, etc. The flow 200 includes extracting contextual information 240 from neighboring segments. The neighboring segments can be overlapping segments of the voice data. The contextual information can include data and estimations about the speaker such as gender, age, native language, etc., and fusion rules, window size, and so on.
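
By way of a non-limiting sketch, three of the spectral low-level descriptors named above (centroid, flux, and roll-off) could be computed from a magnitude spectrum as shown below. The formulas are common textbook conventions assumed here for illustration, not definitions given in the disclosure; the function names, the 0.85 roll-off fraction, and the sample spectra are likewise assumptions.

```python
def spectral_centroid(freqs, mags):
    """Magnitude-weighted mean frequency of one frame."""
    total = sum(mags)
    if not total:
        return 0.0
    return sum(f * m for f, m in zip(freqs, mags)) / total


def spectral_flux(prev_mags, mags):
    """Sum of squared bin-wise magnitude changes between two frames."""
    return sum((m - p) ** 2 for p, m in zip(prev_mags, mags))


def spectral_rolloff(freqs, mags, fraction=0.85):
    """Lowest frequency below which `fraction` of the total magnitude lies."""
    threshold = fraction * sum(mags)
    running = 0.0
    for f, m in zip(freqs, mags):
        running += m
        if running >= threshold:
            return f
    return freqs[-1] if freqs else 0.0


# Two consecutive frames of a made-up four-bin magnitude spectrum.
freqs = [100, 200, 300, 400]
frame_a = [1, 1, 1, 1]
frame_b = [1, 2, 1, 1]
print(spectral_centroid(freqs, frame_a))
print(spectral_flux(frame_a, frame_b))
print(spectral_rolloff(freqs, frame_a))
```

In practice the magnitude spectra would come from a short-time Fourier transform of the short, overlapping speech segments; that step is omitted here to keep the sketch self-contained.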

[0042] The flow 200 includes feeding extracted features to a classifier 250. The classifier can be used to classify mental states, emotional states, moods, and so on. The flow 200 includes classifying emotion 260. More than one emotion can be classified. In embodiments, the emotions that can be identified can include one or more of sadness, stress, happiness, anger, frustration, confusion, disappointment, hesitation, cognitive overload, focusing, engagement, attention, boredom, exploration, confidence, trust, delight, disgust, skepticism, doubt, satisfaction, excitement, laughter, calmness, curiosity, humor, depression, envy, sympathy, embarrassment, poignancy, mirth, etc. Various steps in the flow 200 may be changed in order, repeated, omitted, or the like without departing from the disclosed concepts. Various embodiments of the flow 200 can be included in a computer program product embodied in a non-transitory computer readable medium that includes code executable by one or more processors.

[0043] FIG. 3 shows an example of smoothed emotion estimation. Smoothed emotion estimation can be used for speech analysis for cross-language mental state identification. A first group of utterances in a first language with an associated first set of mental states is collected on a computing device. The first group of utterances and the associated first set of mental states are stored on an electronic storage device. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. A second group of utterances from a second language is processed on the machine learning system that was trained, wherein the processing determines a second set of mental states corresponding to the second group of utterances. Learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, facilitates determining an associated third set of mental states from a third group of utterances. A series of heuristics is output, based on the correspondence between the first group of utterances and the associated first set of mental states.

[0044] An example 300 of smoothed emotion estimation is shown. An audio clip 310 includes a sample of speech collected from a person over time. The audio clip 310 can be partitioned into segments such as segment 1 320, segment 2 322, and segment 3 324. While three audio segments are shown, other numbers of audio segments can be used. The audio segments can represent partitions or samples of the audio clip at various times such as time t(i-1) 320, time t(i) 322, time t(i+1) 324, etc. Emotions at the times of the various audio segments can be estimated. An estimation can be formulated for time segment t(i-1) 330, an estimation can be formulated for time segment t(i) 332, an estimation can be formulated for time segment t(i+1) 334, and so on. The estimations can include predictions of mental states of a person at different times t(i-1), t(i), t(i+1), etc. The mental states can include happy, sad, angry, confused, attentive, distracted, and so on. The smoothed emotion estimation can include fusion of predictions 340. The mental state predictions can be fused to form combined mental states, multiple mental states, etc. The smoothed emotion estimation can include smoothing emotion estimation at a given time t(i) 350. In the example 300, (i) can be 1, and therefore the smoothed emotion estimation could be at time t(1). The smoothed emotion estimation at time t(i) can include combined mental states such as happy-distracted, sad-angry, and so on.
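
One possible way to fuse the per-segment predictions is sketched below. The disclosure does not specify a fusion rule, so the weighted averaging of per-segment scores used here is an assumption, as are the function names and the example scores.

```python
def fuse_predictions(estimates, weights=None):
    """Weighted average of per-segment score dictionaries (assumed rule)."""
    if weights is None:
        weights = [1.0] * len(estimates)
    total = sum(weights)
    labels = set().union(*estimates)
    return {
        label: sum(w * e.get(label, 0.0) for w, e in zip(weights, estimates)) / total
        for label in labels
    }


def smoothed_estimate(per_segment, i):
    """Fuse the estimates at t(i-1), t(i), and t(i+1), where they exist."""
    neighbors = per_segment[max(i - 1, 0):i + 2]
    fused = fuse_predictions(neighbors)
    return max(fused, key=fused.get), fused


# Hypothetical classifier scores for three consecutive segments.
per_segment = [
    {"happy": 0.6, "sad": 0.4},  # t(i-1)
    {"happy": 0.4, "sad": 0.6},  # t(i)
    {"happy": 0.8, "sad": 0.2},  # t(i+1)
]
label, fused = smoothed_estimate(per_segment, 1)
print(label, fused)
```

In this made-up example the unsmoothed estimate at t(i) favors "sad", while the fused estimate favors "happy" because both neighboring segments do. Reporting the two highest fused scores together would be one way to form a combined state such as happy-distracted.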

[0045] FIG. 4 illustrates an example of a confusion matrix. A confusion matrix 400 can be used for speech analysis for cross-language mental state identification. A computing device collects a first group of utterances in a first language with an associated first set of mental states. An electronic storage device stores the first group of utterances and the associated first set of mental states. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. The machine learning system that was trained processes a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances. Learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, further facilitates determining an associated third set of mental states from a third group of utterances. A series of heuristics is output based on the correspondence between the first group of utterances and the associated first set of mental states.

[0046] A confusion matrix 400 can be a visual representation of the performance of a given algorithm in making correct predictions. The algorithm can be developed as part of a supervised learning technique for machine learning. The matrix shows predicted classes and actual classes. The values entered into the columns 410 can represent the numbers of instances for the predicted classes, while the values entered into the rows 412 can represent the numbers of instances for the actual classes. The diagonal shows the numbers of instances where the actual classes and the predicted classes coincide. When the predicted classes and the actual classes differ, then the algorithm has "confused" the classification. A scale 420 can be an accuracy scale. Higher values on the accuracy scale can indicate that the algorithm has accurately predicted the actual class for a particular datum. Lower values on the accuracy scale indicate that the algorithm has confused the classification of a particular datum and has inaccurately predicted its class.
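
A minimal sketch of building such a matrix follows, with rows for actual classes and columns for predicted classes as described above. The labels and sample data are invented for illustration.

```python
def confusion_matrix(actual, predicted, labels):
    """Count instances; rows are actual classes, columns are predicted."""
    index = {label: k for k, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for a, p in zip(actual, predicted):
        matrix[index[a]][index[p]] += 1
    return matrix


def accuracy(matrix):
    """Fraction of instances on the diagonal (actual equals predicted)."""
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[k][k] for k in range(len(matrix)))
    return correct / total if total else 0.0


labels = ["angry", "happy", "sad"]
actual = ["happy", "happy", "sad", "angry", "sad"]
predicted = ["happy", "sad", "sad", "angry", "happy"]
matrix = confusion_matrix(actual, predicted, labels)
for label, row in zip(labels, matrix):
    print(label, row)
print(accuracy(matrix))
```

Off-diagonal counts identify which pairs of classes the algorithm confuses; here the invented data confuses "happy" and "sad" once in each direction.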

[0047] FIG. 5 is a diagram showing audio and image collection including multiple mobile devices. The collected images and speech can be analyzed for cross-language mental state identification. In the diagram 500, the multiple mobile devices can be used singly or together to collect video data and audio on a user 510. While one person is shown, the video data and the audio data can be collected on multiple people. A user 510 can be observed and recorded as she or he is performing a task, experiencing an event, viewing a media presentation, and so on. The user 510 can be shown one or more media presentations, political presentations, social media, or another form of displayed media. The one or more media presentations can be shown to a plurality of people. The media presentations can be displayed on an electronic display 512 or another display. The data collected on the user 510 or on a plurality of users can be in the form of one or more videos, video frames, still images, etc. The plurality of videos can be of people who are experiencing different situations. Some example situations can include the user or plurality of users being exposed to TV programs, movies, video clips, social media, and other such media. The situations could also include exposure to media such as advertisements, political messages, news programs, and so on. As noted before, video data and audio data can be collected on one or more users in substantially identical or different situations and viewing either a single media presentation or a plurality of presentations. The data collected on the user 510 can be analyzed and viewed for a variety of purposes including expression analysis, mental state analysis, and so on. The electronic display 512 can be on a laptop computer 520 as shown, a tablet computer 550, a cell phone 540, a television, a mobile monitor, or any other type of electronic device. 
In one embodiment, expression data is collected on a mobile device such as a cell phone 540, a tablet computer 550, a laptop computer 520, or a watch 570. Thus, the multiple sources can include at least one mobile device, such as a phone 540 or a tablet 550, or a wearable device such as a watch 570 or glasses 560. A mobile device can include a forward-facing camera and/or a rear-facing camera that can be used to collect expression data. Sources of expression data can include a webcam 522, a phone camera 542, a tablet camera 552, a wearable camera 562, and a mobile camera 530. A wearable camera can comprise various camera devices, such as a watch camera 572. In another embodiment, voice data is collected on a microphone 580, audio transducer, etc., and a mobile device such as a laptop computer 520, and a tablet 550. The microphone 580 can be a web-enabled microphone, a wireless microphone, etc. There can be clear audio paths from the person to the microphone or other audio pickup apparatus. In the example shown, there can be a clear audio path 526 from the laptop 520 to the person 510, an audio path 582 from the microphone 580 to the person 510, an audio path 556 from the tablet 550 to the person 510, and so on.

[0048] As the user 510 is monitored, the user 510 might move due to the nature of the task, boredom, discomfort, distractions, or for another reason. As the user moves, the camera with a view of the user's face can be changed. Thus, as an example, if the user 510 is looking in a first direction, the line of sight 524 from the webcam 522 is able to observe the user's face, but if the user is looking in a second direction, the line of sight 534 from the mobile camera 530 is able to observe the user's face. Furthermore, in other embodiments, if the user is looking in a third direction, the line of sight 544 from the phone camera 542 is able to observe the user's face, and if the user is looking in a fourth direction, the line of sight 554 from the tablet camera 552 is able to observe the user's face. If the user is looking in a fifth direction, the line of sight 564 from the wearable camera 562, which can be a device such as the glasses 560 shown and can be worn by another user or an observer, is able to observe the user's face. If the user is looking in a sixth direction, the line of sight 574 from the wearable watch-type device 570, with a camera 572 included on the device, is able to observe the user's face. In other embodiments, the wearable device is another device, such as an earpiece with a camera, a helmet or hat with a camera, a clip-on camera attached to clothing, or any other type of wearable device with a camera or other sensor for collecting expression data. The user 510 can also use a wearable device including a camera for gathering contextual information and/or collecting expression data on other users. Because the user 510 can move her or his head, the facial data can be collected intermittently when she or he is looking in a direction of a camera. 
In some cases, multiple people can be included in the view from one or more cameras, and some embodiments include filtering out faces of one or more other people to determine whether the user 510 is looking toward a camera. All or some of the expression data can be continuously or sporadically available from the various devices and other devices.

[0049] The captured video data can include facial expressions, and can be analyzed on a computing device such as the video capture device or on another separate device. The captured audio data can include mental states and can also be analyzed on a computing device such as the audio capture device or another separate device. The analysis can take place on one of the mobile devices discussed above, on a local server, on a remote server, on a cloud-based server, and so on. In embodiments, some of the analysis takes place on the mobile device, while other analysis takes place on a server device. The analysis of the video data can include the use of a classifier. The video data and the audio data can be captured using one of the mobile devices discussed above and then sent to a server or another computing device for analysis. However, the captured video data including expressions, and audio data including mental states, can also be analyzed on the device which performed the capturing. The analysis can be performed on a mobile device where the videos were obtained with the mobile device and wherein the mobile device includes one or more of a laptop computer, a tablet, a PDA, a smartphone, a wearable device, and so on. In another embodiment, the analyzing comprises using a classifier on a server or another computing device other than the capturing device.

[0050] FIG. 6 illustrates feature extraction for multiple faces. The feature extraction for multiple faces 600 can be performed for faces that can be detected in multiple images. The feature extraction can include speech analysis for cross-language mental state identification. A computing device collects a first group of utterances in a first language with an associated first set of mental states. An electronic storage device stores the first group of utterances and the associated first set of mental states. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. The machine learning system that was trained processes a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances. Learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, further facilitates determining an associated third set of mental states from a third group of utterances. A series of heuristics is output based on the correspondence between the first group of utterances and the associated first set of mental states.

[0051] In embodiments, the features of multiple faces are extracted for evaluating mental states. Features of a face or a plurality of faces can be extracted from collected video data. Feature extraction for multiple faces can be based on analyzing, using one or more processors, the mental state data for providing analysis of the mental state data to the individual. The feature extraction can be performed by analysis using one or more processors, using one or more video collection devices, and by using a server. The analysis device can be used to perform face detection for a second face, as well as for facial tracking of the first face. One or more videos can be captured, where the videos contain one or more faces. The video or videos that contain the one or more faces can be partitioned into a plurality of frames, and the frames can be analyzed for the detection of the one or more faces. The analysis of the one or more video frames can be based on one or more classifiers. A classifier can be an algorithm, heuristic, function, or piece of code that can be used to identify into which of a set of categories a new or particular observation, sample, datum, etc. should be placed. The decision to place an observation into a category can be based on training the algorithm or piece of code by analyzing a known set of data, known as a training set. The training set can include data for which category memberships of the data can be known. The training set can be used as part of a supervised training technique. If a training set is not available, then a clustering technique can be used to group observations into categories. The latter approach, or "unsupervised learning", can be based on a measure (i.e. distance) of one or more inherent similarities among the data that is being categorized. When the new observation is received, then the classifier can be used to categorize the new observation. 
Classifiers can be used for many analysis applications, including analysis of one or more faces. The use of classifiers can be the basis of analyzing the one or more faces for gender, ethnicity, and age; for detection of one or more faces in one or more videos; for detection of facial features; for detection of facial landmarks; and so on. The observations can be analyzed based on one or more of a set of quantifiable properties. The properties can be described as features and explanatory variables and can include various data types that can include numerical (integer-valued, real-valued), ordinal, categorical, and so on. Some classifiers can be based on a comparison between an observation and prior observations, and can also be based on functions such as a similarity function, a distance function, and so on.
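
To make the supervised case concrete, the following sketch trains a nearest-centroid classifier on a small labeled training set and categorizes new observations with a distance function. Nearest-centroid is only one simple choice, assumed here for illustration; the disclosure does not prescribe it, and the function names and feature values are hypothetical.

```python
from math import dist


def train_centroids(training_set):
    """Average the feature vectors of each known category."""
    sums, counts = {}, {}
    for features, label in training_set:
        acc = sums.setdefault(label, [0.0] * len(features))
        for k, value in enumerate(features):
            acc[k] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: [v / counts[label] for v in acc]
        for label, acc in sums.items()
    }


def classify(centroids, observation):
    """Place the observation in the category with the closest centroid."""
    return min(centroids, key=lambda label: dist(centroids[label], observation))


# Hypothetical two-feature training set with known category memberships.
training_set = [
    ([0.0, 0.0], "a"),
    ([0.0, 2.0], "a"),
    ([10.0, 10.0], "b"),
    ([12.0, 10.0], "b"),
]
centroids = train_centroids(training_set)
print(classify(centroids, [1.0, 1.0]))
print(classify(centroids, [9.0, 9.0]))
```

The same Euclidean distance could drive a clustering technique when no training set is available.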

[0052] Classification can be based on various types of algorithms, heuristics, codes, procedures, statistics, and so on. Many techniques exist for performing classification. This classification of one or more observations into one or more groups can be based on distributions of the data values, probabilities, and so on. Classifiers can be binary, multiclass, linear, etc. Algorithms for classification can be implemented using a variety of techniques, including neural networks, kernel estimation, support vector machines, use of quadratic surfaces, and so on. Classification can be used in many application areas such as computer vision, speech and handwriting recognition, and the like. Classification can be used for biometric identification of one or more people in one or more frames of one or more videos.

[0053] Returning to FIG. 6, the detection of the first face, the second face, and multiple faces can include identifying facial landmarks, generating a bounding box, and prediction of a bounding box and landmarks for a next frame, where the next frame can be one of a plurality of frames of a video containing faces. A first video frame 600 includes a frame boundary 610, a first face 612, and a second face 614. The video frame 600 also includes a bounding box 620. Facial landmarks can be generated for the first face 612. Face detection can be performed to initialize a second set of locations for a second set of facial landmarks for a second face within the video. Facial landmarks in the video frame 600 can include the facial landmarks 622, 624, and 626. The facial landmarks can include corners of a mouth, corners of eyes, eyebrow corners, the tip of the nose, nostrils, chin, the tips of ears, and so on. The performing of face detection on the second face can include performing facial landmark detection with the first frame from the video for the second face, and can include estimating a second rough bounding box for the second face based on the facial landmark detection. The estimating of a second rough bounding box can include the bounding box 620. Bounding boxes can also be estimated for one or more other faces within the boundary 610. The bounding box can be refined, as can one or more facial landmarks. The refining of the second set of locations for the second set of facial landmarks can be based on localized information around the second set of facial landmarks. The bounding box 620 and the facial landmarks 622, 624, and 626 can be used to estimate future locations for the second set of locations for the second set of facial landmarks in a future video frame from the first video frame.

[0054] A second video frame 602 is also shown. The second video frame 602 includes a frame boundary 630, a first face 632, and a second face 634. The second video frame 602 also includes a bounding box 640 and the facial landmarks, or points, 642, 644, and 646. In other embodiments, multiple facial landmarks are generated and used for facial tracking of the two or more faces of a video frame, such as the second shown video frame 602. Facial points from the first face can be distinguished from other facial points. In embodiments, the other facial points include facial points of one or more other faces. The facial points can correspond to the facial points of the second face. The distinguishing of the facial points of the first face and the facial points of the second face can be used to distinguish between the first face and the second face, to track either or both of the first face and the second face, and so on. Other facial points can correspond to the second face. As mentioned above, multiple facial points can be determined within a frame. One or more of the other facial points that are determined can correspond to a third face. The location of the bounding box 640 can be estimated, where the estimating can be based on the location of the generated bounding box 620 shown in the first video frame 600. The three facial points, or landmarks, shown as 642, 644, and 646 might lie completely within the bounding box 640 or might lie partially outside the bounding box 640. For instance, the second face 634 might have moved between the first video frame 600 and the second video frame 602. Based on the accuracy of the estimating of the bounding box 640, a new estimation can be determined for a third, future frame from the video, and so on. The evaluation can be performed, all or in part, on semiconductor-based logic.
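
The relationship between an estimated bounding box and the landmarks of a later frame can be illustrated with a short sketch. It assumes landmarks are (x, y) pixel pairs and that the box carried over from the first frame is simply the padded extent of that frame's landmarks; the disclosure does not state how the box is computed, and the function names, margin, and coordinates are hypothetical.

```python
def bounding_box(landmarks, margin=0):
    """Axis-aligned box (left, top, right, bottom) around the landmarks."""
    xs = [x for x, _ in landmarks]
    ys = [y for _, y in landmarks]
    return (min(xs) - margin, min(ys) - margin,
            max(xs) + margin, max(ys) + margin)


def landmarks_inside(box, landmarks):
    """For each landmark, report whether it lies within the box."""
    left, top, right, bottom = box
    return [left <= x <= right and top <= y <= bottom for x, y in landmarks]


# Landmarks in a first frame, then the same face shifted right in a second.
first_frame = [(10, 10), (20, 10), (15, 20)]
second_frame = [(15, 10), (25, 10), (20, 20)]

estimated_box = bounding_box(first_frame, margin=2)
inside = landmarks_inside(estimated_box, second_frame)
print(estimated_box, inside)

# When some landmarks fall outside, a new estimate can be formed.
if not all(inside):
    print(bounding_box(second_frame, margin=2))
```

The fraction of landmarks that fall inside the carried-over box gives one rough measure of how accurate the estimate was before a new estimation is made for a future frame.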

[0055] FIG. 7 shows live streaming of social video and social audio. The live streaming of social video and social audio can be performed for speech analysis for cross-language mental state identification. The streaming of social video and social audio can include people as they interact with the Internet. A video of a person or people can be transmitted via live streaming. Similarly, audio of a person or people can be transmitted via live streaming. The streaming and analysis can be facilitated by a video capture device, a local server, a remote server, semiconductor-based logic, and so on. The streaming can be live streaming and can include mental state analysis, mental state event signature analysis, etc. Live stream video and live stream audio are examples of one-to-many social media, where video and/or audio can be sent over the Internet from one person to a plurality of people using a social media app and/or platform. Live streaming is one of numerous popular techniques used by people who want to disseminate ideas, send information, provide entertainment, share experiences, and so on. Some of the live streams can be scheduled, such as webcasts, podcasts, online classes, sporting events, news, computer gaming, or video conferences, while others can be impromptu streams that are broadcast as needed or when desirable. Examples of impromptu live stream videos can range from individuals simply wanting to share experiences with their social media followers, to live coverage of breaking news, emergencies, or natural disasters. The latter coverage is known as mobile journalism, or "mojo", and is becoming increasingly common. With this type of coverage, news reporters can use networked, portable electronic devices to provide mobile journalism content to a plurality of social media followers. Such reporters can be quickly and inexpensively deployed as the need or desire arises.

[0056] Several live streaming social media apps and platforms can be used for transmitting video. One such video social media app is Meerkat™, which can link with a user's Twitter™ account. Meerkat™ enables a user to stream video using a handheld, networked electronic device coupled to video capabilities. Viewers of the live stream can comment on the stream using tweets that can be seen and responded to by the broadcaster. Another popular app is Periscope™, which can transmit a live recording from one user to that user's Periscope™ account and other followers. The Periscope™ app can be executed on a mobile device. The user's Periscope™ followers can receive an alert whenever that user begins a video transmission. Another live-stream video platform is Twitch™, which can be used for video streaming of video games and broadcasts of various competitions and events. Audio streaming applications are also popular. Some of the many audio streaming, editing, and disc jockey (DJ) oriented applications include Mixlr™, DJ Player™, LadioCast™, and a variety of MPEG3 (MP3) applications for creating, editing, broadcasting, and streaming MP3 files.

[0057] The example 700 shows a user 710 broadcasting a video live stream and an audio live stream to one or more people as shown by the person 750, the person 760, and the person 770. A portable, network-enabled, electronic device 720 can be coupled to a forward-facing camera 722. The portable electronic device 720 can be a smartphone, a PDA, a tablet, a laptop computer, and so on. The camera 722 coupled to the device 720 can have a line-of-sight view 724 to the user 710 and can capture video of the user 710. The portable electronic device 720 can be coupled to a built-in or other microphone and can have a clear audio path 728 to the user 710. The captured video and audio can be sent to an analysis or recommendation engine 740 using a network link 726 to the Internet 730. The network link can be a wireless link, a wired link, and so on. The recommendation engine 740 can recommend to the user 710 an app and/or platform that can be supported by the server and can be used to provide a video live stream and an audio live stream to one or more followers of the user 710. In the example 700, the user 710 has three followers: the person 750, the person 760, and the person 770. Each follower has a line-of-sight view to a video screen on a portable, networked electronic device, and has a clear audio path to audio transducers in the portable, networked electronic device. In other embodiments, one or more followers follow the user 710 using any other networked electronic device, including a computer. In the example 700, the person 750 has a line-of-sight view 752 to the video screen of a device 754 and a clear audio path 758 to the transducers of the device 754; the person 760 has a line-of-sight view 762 to the video screen of a device 764 and a clear audio path 768 to the transducers of the device 764; and the person 770 has a line-of-sight view 772 to the video screen of a device 774 and a clear audio path 778 to the transducers of the device 774. 
The portable electronic devices 754, 764, and 774 can each be a smartphone, a PDA, a tablet, and so on. Each portable device can receive the video stream and the audio stream being broadcast by the user 710 through the Internet 730 using the app and/or platform that can be recommended by the recommendation engine 740. The device 754 can receive a video stream and an audio stream using the network link 756; the device 764 can receive a video stream and an audio stream using the network link 766; the device 774 can receive a video stream and an audio stream using the network link 776, and so on. The network link can be a wireless link, a wired link, a hybrid link, etc. Depending on the app and/or platform that can be recommended by the recommendation engine 740, one or more followers, such as the followers 750, 760, 770, and so on, can reply to, comment on, remark, and otherwise provide feedback to the user 710 using their devices 754, 764, and 774, respectively.

[0058] The human face and the human voice provide a powerful communications medium through their ability to exhibit a myriad of expressions that can be captured and analyzed for a variety of purposes. In some cases, media producers are acutely interested in evaluating the effectiveness of message delivery by video media. Such video media includes advertisements, political messages, educational materials, television programs, movies, government service announcements, etc. Automated facial analysis can be performed on one or more video frames containing a face in order to detect facial action. Based on the facial action detected, a variety of parameters can be determined, including affect valence, spontaneous reactions, facial action units, and so on. The parameters that are determined can be used to infer or predict emotional and mental states. For example, determined valence can be used to describe the emotional reaction of a viewer to a video media presentation or another type of presentation. Positive valence provides evidence that a viewer is experiencing a favorable emotional response to the video media presentation, while negative valence provides evidence that a viewer is experiencing an unfavorable emotional response to the video media presentation. Other facial data analysis can include the determination of discrete emotional states of the viewer or viewers.

[0059] Facial data can be collected from a plurality of people using any of a variety of cameras. A camera can include a webcam, a video camera, a still camera, a thermal imager, a CCD device, a phone camera, a three-dimensional camera, a depth camera, a light field camera, multiple webcams used to show different views of a person, or any other type of image capture apparatus that can allow captured data to be used in an electronic system. In some embodiments, the person is permitted to "opt-in" to the facial data collection. For example, the person can agree to the capture of facial data using a personal device such as a mobile device or another electronic device by selecting an opt-in choice. Opting-in can then turn on the person's webcam-enabled device and can begin the capture of the person's facial data via a video feed from the webcam or other camera. The video data that is collected can include one or more persons experiencing an event. The one or more persons can be sharing a personal electronic device or can each be using one or more devices for video capture. The videos that are collected can be collected using a web-based framework. The web-based framework can be used to display the video media presentation or event as well as to collect videos from multiple viewers who are online. That is, the collection of videos can be crowdsourced from those viewers who elected to opt-in to the video data collection.

[0060] The videos captured from the various viewers who chose to opt-in can be substantially different in terms of video quality, frame rate, etc. As a result, the facial video data can be scaled, rotated, and otherwise adjusted to improve consistency. Human factors further influence the capture of the facial video data. The facial data that is captured might or might not be relevant to the video media presentation being displayed. For example, the viewer might not be paying attention, might be fidgeting, might be distracted by an object or event near the viewer, or might be otherwise inattentive to the video media presentation. The behavior exhibited by the viewer can prove challenging to analyze due to viewer actions including eating, speaking to another person or persons, speaking on the phone, etc. The videos collected from the viewers might also include other artifacts that pose challenges during the analysis of the video data. The artifacts can include items such as eyeglasses (because of reflections), eye patches, jewelry, and clothing that occludes or obscures the viewer's face. Similarly, a viewer's hair or hair covering can present artifacts by obscuring the viewer's eyes and/or face.

[0061] The captured facial data can be analyzed using the facial action coding system (FACS). The FACS seeks to define groups or taxonomies of facial movements of the human face. The FACS encodes movements of individual muscles of the face, where the muscle movements often include slight, instantaneous changes in facial appearance. The FACS encoding is commonly performed by trained observers but can also be performed by automated, computer-based systems. Analysis of the FACS encoding can be used to determine emotions of the persons whose facial data is captured in the videos. The FACS is used to encode a wide range of facial expressions that are anatomically possible for the human face. The FACS encodings include action units (AUs) and related temporal segments that are based on the captured facial expression. The AUs are open to higher-order interpretation and decision-making. These AUs can be used to recognize emotions experienced by the observed person. Emotion-related facial actions can be identified using the emotional facial action coding system (EMFACS) and the facial action coding system affect interpretation dictionary (FACSAID). For a given emotion, specific action units can be related to the emotion. For example, the emotion of anger can be related to AUs 4, 5, 7, and 23, while happiness can be related to AUs 6 and 12. Other mappings of emotions to AUs have also been established previously. The coding of the AUs can include an intensity scoring that ranges from A (trace) to E (maximum). The AUs can be used for analyzing images to identify patterns indicative of a particular mental and/or emotional state. The AUs range in number from 0 (neutral face) to 98 (fast up-down look). The AUs include so-called main codes (inner brow raiser, lid tightener, etc.), head movement codes (head turn left, head up, etc.), eye movement codes (eyes turned left, eyes up, etc.), visibility codes (eyes not visible, entire face not visible, etc.), and gross behavior codes (sniff, swallow, etc.). Emotion scoring can be included where intensity, as well as specific emotions, moods, or mental states, are evaluated.
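The relationship between action units and emotions described above can be illustrated with a minimal Python sketch. The two AU sets are the examples given in the text (anger and happiness); the function name match_emotions, the rule that every listed AU must be present, and the use of the weakest AU intensity as the reported intensity are assumptions made for illustration only and are not taken from EMFACS or FACSAID.

```python
# Illustrative AU sets from the two examples in the text. Real EMFACS/FACSAID
# tables are larger and are not reproduced here.
EMOTION_AUS = {
    "anger": {4, 5, 7, 23},
    "happiness": {6, 12},
}

# FACS intensity letters, ordered from A (trace) to E (maximum).
INTENSITY_RANK = {"A": 1, "B": 2, "C": 3, "D": 4, "E": 5}


def match_emotions(observed):
    """Return emotions whose AUs are all observed, with the weakest AU intensity.

    `observed` maps an AU number to its intensity letter, e.g. {6: "C", 12: "D"}.
    Requiring every AU to be present is an assumed rule for this sketch.
    """
    matches = {}
    for emotion, required in EMOTION_AUS.items():
        if required.issubset(observed):
            weakest = min((observed[au] for au in required), key=INTENSITY_RANK.get)
            matches[emotion] = weakest
    return matches


# AUs 6 and 12 are present, so happiness matches; AU 4 alone does not imply anger.
print(match_emotions({6: "C", 12: "D", 4: "A"}))
```

A dictionary of sets keeps the mapping easy to extend with further emotions without changing the matching logic.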

[0062] The coding of faces identified in videos captured of people observing an event can be automated. The automated systems can detect facial AUs or discrete emotional states. The emotional states can include amusement, fear, anger, disgust, surprise, and sadness. The automated systems can be based on a probability estimate from one or more classifiers, where the probabilities can correlate with an intensity of an AU or an expression. The classifiers can be used to identify into which of a set of categories a given observation can be placed. In some cases, the classifiers can be used to determine a probability that a given AU or expression is present in a given frame of a video. The classifiers can be used as part of a supervised machine learning technique, where the machine learning technique can be trained using "known good" data. Once trained, the machine learning technique can proceed to classify new data that is captured.
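As a hedged illustration of per-frame probability estimates, the following Python sketch converts raw classifier scores into probabilities and reports the frames in which an AU or expression is deemed present. The logistic mapping, the 0.5 threshold, and the names au_probability and frames_with_au are assumptions chosen for this sketch; the text does not specify how the probabilities are produced.

```python
import math


def au_probability(score):
    """Map a raw classifier score to a probability with the logistic function.

    The logistic mapping is an assumed choice for illustration.
    """
    return 1.0 / (1.0 + math.exp(-score))


def frames_with_au(scores, threshold=0.5):
    """Return indices of frames whose AU probability meets the threshold."""
    return [i for i, s in enumerate(scores) if au_probability(s) >= threshold]


# One hypothetical score per video frame.
frame_scores = [-2.0, 0.0, 1.5, -0.3]
print(frames_with_au(frame_scores))
```

Raising the threshold trades missed detections for fewer false positives.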

[0063] The supervised machine learning models can be based on support vector machines (SVMs). An SVM can have an associated learning model that is used for data analysis and pattern analysis. For example, an SVM can be used to classify data that can be obtained from collected videos of people experiencing a media presentation. An SVM can be trained using "known good" data that is labeled as belonging to one of two categories (e.g. smile and no-smile). The SVM can build a model that assigns new data into one of the two categories. The SVM can construct one or more hyperplanes that can be used for classification. The hyperplane that has the largest distance from the nearest training point can be determined to have the best separation. The largest separation can improve the classification technique by increasing the probability that a given data point can be properly classified.
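The largest-margin criterion can be sketched in a few lines of Python. This is not an SVM solver: an actual SVM finds the separating hyperplane by optimization, whereas this sketch simply compares a handful of hand-picked candidate hyperplanes. The function names, the toy two-dimensional points, and the +1/-1 label convention (e.g. smile and no-smile) are assumptions for illustration.

```python
import math


def decision_value(w, b, x):
    """Signed value of w.x + b for a point x."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def margin(w, b, points):
    """Smallest distance from any point to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(abs(decision_value(w, b, x)) / norm for x in points)


def separates(w, b, points, labels):
    """True if every point lies on the side indicated by its label (+1 or -1)."""
    return all(label * decision_value(w, b, x) > 0 for x, label in zip(points, labels))


def best_hyperplane(candidates, points, labels):
    """Among candidates that separate the data, pick the one with the largest margin.

    Raises ValueError if no candidate separates the data.
    """
    valid = [(w, b) for w, b in candidates if separates(w, b, points, labels)]
    return max(valid, key=lambda wb: margin(wb[0], wb[1], points))


# Toy data: -1 could stand for "no-smile" and +1 for "smile".
points = [(1, 1), (2, 1), (4, 4), (5, 4)]
labels = [-1, -1, 1, 1]
candidates = [((1, 0), -3), ((1, 1), -5.5), ((0, 1), -1.5)]
print(best_hyperplane(candidates, points, labels))
```

All three candidates separate the toy data, but the diagonal one leaves the most room between the hyperplane and the nearest training point, which is the property the paragraph describes.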

[0064] In another example, a histogram of oriented gradients (HoG) can be computed. The HoG can include feature descriptors and can be computed for one or more facial regions of interest. The regions of interest of the face can be located using facial landmark points, where the facial landmark points can include outer edges of nostrils, outer edges of the mouth, outer edges of eyes, etc. A HoG for a given region of interest can count occurrences of gradient orientation within a given section of a frame from a video, for example. The gradients can be intensity gradients and can be used to describe an appearance and a shape of a local object. The HoG descriptors can be determined by dividing an image into small, connected regions, also called cells. A histogram of gradient directions or edge orientations can be computed for pixels in the cell. Histograms can be contrast-normalized based on intensity across a portion of the image or the entire image, thus reducing any influence from differences in illumination or shadowing changes between and among video frames. The HoG can be computed on the image or on an adjusted version of the image, where the adjustment of the image can include scaling, rotation, etc. The image can be adjusted by flipping the image around a vertical line through the middle of a face in the image. The symmetry plane of the image can be determined from the tracker points and landmarks of the image.
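The per-cell histogram computation can be sketched as follows. This is a minimal pure-Python illustration, assuming central-difference gradients, unsigned orientations over 0 to 180 degrees, nine bins, magnitude-weighted votes, and L2 normalization; none of these specifics are fixed by the paragraph above, and the function names are hypothetical.

```python
import math


def cell_histogram(cell, bins=9):
    """Magnitude-weighted histogram of unsigned gradient orientations in one cell.

    `cell` is a 2D list of pixel intensities. Border pixels are skipped because
    central differences need a neighbor on each side.
    """
    rows, cols = len(cell), len(cell[0])
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = cell[r][c + 1] - cell[r][c - 1]
            gy = cell[r + 1][c] - cell[r - 1][c]
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            # The final modulo guards against a floating-point angle of exactly 180.
            hist[int(angle // bin_width) % bins] += magnitude
    return hist


def normalize(hist, eps=1e-9):
    """L2-normalize a histogram to reduce the influence of illumination changes."""
    norm = math.sqrt(sum(v * v for v in hist)) + eps
    return [v / norm for v in hist]


# An 8x8 cell with a vertical edge: intensity changes only along x.
vertical_edge = [[0, 0, 0, 0, 10, 10, 10, 10] for _ in range(8)]
print(normalize(cell_histogram(vertical_edge)))
```

For the vertical-edge cell, all gradient energy falls into the first orientation bin, and normalization makes the result independent of the edge's contrast.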

[0065] In embodiments, an automated facial analysis system identifies five facial actions or action combinations in order to detect spontaneous facial expressions for media research purposes. Based on the facial expressions that are detected, a determination can be made with regard to the effectiveness of a given video media presentation, for example. The system can detect the presence of the AUs or the combination of AUs in videos collected from a plurality of people. The facial analysis technique can be trained using a web-based framework to crowdsource videos of people as they watch online video content. The video can be streamed at a fixed frame rate to a server. Human labelers can code for the presence or absence of facial actions including a symmetric smile, unilateral smile, asymmetric smile, and so on. The trained system can then be used to automatically code the facial data collected from a plurality of viewers experiencing video presentations (e.g. television programs).

[0066] Spontaneous asymmetric smiles can be detected in order to understand viewer experiences. Related literature indicates that as many asymmetric smiles occur on the right hemi face as do on the left hemi face, for spontaneous expressions. Detection can be treated as a binary classification problem, where images that contain a right asymmetric expression are used as positive (target class) samples and all other images as negative (non-target class) samples. Classifiers such as support vector machines and random forests perform the classification. Random forests are ensemble-learning methods that combine multiple decision trees to obtain better predictive performance. Frame-by-frame detection can be performed to recognize the presence of an asymmetric expression in each frame of a video. Facial points can be detected, including the top of the mouth and the two outer eye corners. The face can be extracted, cropped, and warped into a pixel image of specific dimension (e.g. 96×96 pixels). In embodiments, the inter-ocular distance and vertical scale in the pixel image are fixed. Feature extraction can be performed using computer vision software such as OpenCV™. Feature extraction can be based on the use of HoGs. HoGs can include feature descriptors and can be used to count occurrences of gradient orientation in localized portions or regions of the image. Other techniques can be used for counting occurrences of gradient orientation, including edge orientation histograms, scale-invariant feature transform descriptors, etc. The AU recognition tasks can also be performed using Local Binary Patterns (LBP) and Local Gabor Binary Patterns (LGBP). The HoG descriptor represents the face as a distribution of intensity gradients and edge directions and is robust to translation and scaling. Differing patterns, including groupings of cells of various sizes and arranged in variously sized cell blocks, can be used. For example, 4×4 cell blocks of 8×8 pixel cells with an overlap of half of the block can be used. Histograms of channels can be used, including nine channels or bins evenly spread over 0-180 degrees. In this example, the HoG descriptor on a 96×96 image is 25 blocks × 16 cells × 9 bins = 3600, the latter quantity representing the dimension. AU occurrences can be rendered. The videos can be grouped into demographic datasets based on nationality and/or other demographic parameters for further detailed analysis. This grouping and other analyses can be facilitated via semiconductor-based logic.
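The descriptor dimension quoted in the example above can be checked with a short calculation. The sketch below assumes a square image, square cells and blocks, and a block stride of half a block, which is how the "overlap of half of the block" is interpreted here; the function name hog_dimension is hypothetical.

```python
def hog_dimension(image_size=96, cell_size=8, block_cells=4, bins=9):
    """Return (number of blocks, cells per block, descriptor length).

    Assumes a square image and a block stride of half a block.
    """
    cells_per_side = image_size // cell_size                        # 96 / 8 = 12
    stride = block_cells // 2                                       # half-block overlap: 2 cells
    blocks_per_side = (cells_per_side - block_cells) // stride + 1  # (12 - 4) / 2 + 1 = 5
    num_blocks = blocks_per_side ** 2                               # 5 * 5 = 25
    cells_per_block = block_cells ** 2                              # 4 * 4 = 16
    return num_blocks, cells_per_block, num_blocks * cells_per_block * bins


print(hog_dimension())  # (25, 16, 3600)
```

With the stated parameters the calculation yields 25 blocks of 16 cells each, and 25 × 16 × 9 = 3600 values in the descriptor, consistent with the figure given in the text.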

[0067] FIG. 8 shows example facial data collection including landmarks. The collecting of facial data including landmarks 800 can be performed for speech analysis for cross-language mental state identification. A computing device collects a first group of utterances in a first language with an associated first set of mental states. An electronic storage device stores the first group of utterances and the associated first set of mental states. A machine learning system is trained using the first group of utterances and the associated first set of mental states that were stored. The machine learning system that was trained processes a second group of utterances from a second language, wherein the processing determines a second set of mental states corresponding to the second group of utterances. Learning, from the first group of utterances and associated first set of mental states and the second group of utterances and associated second set of mental states, further facilitates determining an associated third set of mental states from a third group of utterances. A series of heuristics is output based on the correspondence between the first group of utterances and the associated first set of mental states.

[0068] A face 810 can be observed using a camera 830 in order to collect facial data that includes facial landmarks. The facial data can be collected from a plurality of people using one or more of a variety of cameras. As previously discussed, the camera or cameras can include a webcam, where a webcam can include a video camera, a still camera, a thermal imager, a CCD device, a smartphone camera, a three-dimensional camera, a depth camera, a light field camera, multiple webcams used to show different views of a person, or any other type of image capture apparatus that can allow captured data to be used in an electronic system. The quality and usefulness of the facial data that is captured can depend on the position of the camera 830 relative to the face 810, the number of cameras used, the illumination of the face, etc. In some cases, if the face 810 is poorly lit or over-exposed (e.g. in an area of bright light), the processing of the facial data to identify facial landmarks might be rendered more difficult. In another example, the camera 830 being positioned to the side of the person might prevent capture of the full face. Other artifacts can degrade the capture of facial data. For example, the person's hair, prosthetic devices (e.g. glasses, an eye patch, and eye coverings), jewelry, and clothing can partially or completely occlude or obscure the person's face. Data relating to various facial landmarks can include a variety of facial features. The facial features can comprise an eyebrow 820, an outer eye edge 822, a nose 824, a corner of a mouth 826, and so on. Multiple facial landmarks can be identified from the facial data that is captured. The facial landmarks that are identified can be analyzed to identify facial action units. The action units that can be identified can include AU02 outer brow raiser, AU14 dimpler, AU17 chin raiser, and so on. Multiple action units can be identified. The action units can be used alone and/or in combination to infer one or more mental states and emotions. A similar process can be applied to gesture analysis (e.g. hand gestures) with all of the analysis being accomplished or augmented by a mobile device, a server, semiconductor-based logic, and so on.