Electronic Device Operating In Associated State With External Audio Device Based On Biometric Information And Method Therefor

BAE; Minho ; et al.

U.S. patent application number 16/201473 was filed with the patent office on 2018-11-27 and published on 2019-05-30 for electronic device operating in associated state with external audio device based on biometric information and method therefor. The applicant listed for this patent is Samsung Electronics Co., Ltd. Invention is credited to Minho BAE, Hochul HWANG, Moonsoo KIM.

| Application Number | 20190166428 16/201473 |

| Family ID | 66633740 |

| Publication Date | 2019-05-30 |

| United States Patent Application | 20190166428 |

| Kind Code | A1 |

| BAE; Minho ; et al. | May 30, 2019 |

ELECTRONIC DEVICE OPERATING IN ASSOCIATED STATE WITH EXTERNAL AUDIO DEVICE BASED ON BIOMETRIC INFORMATION AND METHOD THEREFOR

Abstract

An audio output device is provided. The audio output device determines a similarity between a first external object and a second external object based on first biometric information about the first external object associated with the audio output device and second biometric information about the second external object associated with an external audio output device, and controls the audio output device and the external audio output device to operate in coordination or independently based on the similarity.

| Inventors: | BAE; Minho (Suwon-si, KR); KIM; Moonsoo (Suwon-si, KR); HWANG; Hochul (Suwon-si, KR) |

| Applicant: | Samsung Electronics Co., Ltd. |

| Family ID: | 66633740 |

| Appl. No.: | 16/201473 |

| Filed: | November 27, 2018 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | A61B 5/14532 20130101; H04R 2430/01 20130101; G06F 21/32 20130101; G06F 21/44 20130101; H04R 2420/01 20130101; H04R 5/04 20130101; H04M 1/7253 20130101; H04M 1/72558 20130101; A61B 5/024 20130101; A61B 5/021 20130101; A61B 5/6817 20130101; H04R 2420/07 20130101; H04M 1/6016 20130101; H04M 1/6066 20130101; H04M 1/72569 20130101; A61B 5/6898 20130101; H04R 1/1041 20130101; A61B 5/0488 20130101 |

| International Class: | H04R 5/04 20060101 H04R005/04; H04R 1/10 20060101 H04R001/10; A61B 5/00 20060101 A61B005/00; G06F 21/32 20060101 G06F021/32; H04M 1/725 20060101 H04M001/725 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Nov 28, 2017 | KR | 10-2017-0160311 |

Claims

1. An audio output device comprising: at least one communication circuit configured to communicate with an external audio output device; at least one sensor; and at least one processor configured to: obtain first biometric information about a first external object associated with the audio output device by using the at least one sensor, obtain second biometric information about a second external object associated with the external audio output device from the external audio output device by using the at least one communication circuit, determine a similarity between the first external object and the second external object based at least on the first biometric information and the second biometric information, operate in coordination with the external audio output device when the similarity satisfies a first specified condition, and operate independently of the external audio output device when the similarity satisfies a second specified condition.

2. The audio output device of claim 1, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, output first audio data and transmit at least a portion of the first audio data to the external audio output device by using the at least one communication circuit.

3. The audio output device of claim 1, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, control the audio output device and the external audio output device so as to have same audio output characteristics, and when the similarity satisfies the second specified condition, control the audio output device and the external audio output device so as to have different audio output characteristics, and wherein the audio output characteristics comprise at least one of a volume or a volume for each frequency band.

4. The audio output device of claim 1, wherein the at least one communication circuit communicates with the external audio output device and an external electronic device, and wherein, when the similarity satisfies the second specified condition, the at least one processor is further configured to transmit information, which indicates that objects associated with the audio output device and the external audio output device are different, to the external electronic device by using the at least one communication circuit.

5. The audio output device of claim 1, wherein the first biometric information and the second biometric information include at least one of a heart rate, a heartbeat waveform, a heartbeat timing, or a heartbeat frequency, and wherein the at least one processor is further configured to determine a similarity between the first external object and the second external object based on a similarity between the first biometric information and the second biometric information.

6. An electronic device comprising: at least one communication circuit configured to communicate with a first external audio output device; a display; and at least one processor configured to: receive first biometric information about a first external object associated with the first external audio output device and second biometric information about a second external object associated with a second external audio output device communicating with the first external audio output device from the first external audio output device, by using the at least one communication circuit, determine a similarity between the first external object and the second external object based at least on the first biometric information and the second biometric information, when the similarity satisfies a first specified condition, set the first external audio output device to operate in coordination with the second external audio output device, and when the similarity satisfies a second specified condition, set the first external audio output device to operate independently of the second external audio output device.

7. The electronic device of claim 6, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, transmit first audio data to at least one of the first external audio output device or the second external audio output device by using the at least one communication circuit; and when the similarity satisfies the second specified condition, transmit the first audio data to the first external audio output device and second audio data to the second external audio output device, by using the at least one communication circuit.

8. The electronic device of claim 7, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, display a user interface to control the first audio data in at least a portion of the display; and when the similarity satisfies the second specified condition, display a user interface to independently control the first audio data and the second audio data in the portion of the display.

9. The electronic device of claim 6, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, set the first external audio output device and the second external audio output device to have same audio output characteristics, and when the similarity satisfies the second specified condition, set the first external audio output device and the second external audio output device to have different audio output characteristics, and wherein the audio output characteristics comprise at least one of a volume or a volume for each frequency band.

10. The electronic device of claim 9, wherein, when a user input for controlling the audio output characteristics is received, the at least one processor is further configured to: when the similarity satisfies the first specified condition, display a user interface to control the audio output characteristics of the first external audio output device and the second external audio output device in at least a portion of the display; and when the similarity satisfies the second specified condition, display a user interface to independently control the audio output characteristics of the first external audio output device and the second external audio output device in the portion of the display.

11. The electronic device of claim 6, wherein the at least one processor is further configured to: when the similarity satisfies the second specified condition, display indicating information, which indicates that the first external audio output device and the second external audio output device are associated with different objects, in at least a portion of the display.

12. The electronic device of claim 11, wherein the at least one processor is further configured to display at least one of an icon, a character, an image, or a pop-up message on the display as at least a portion of the indicating information.

13. The electronic device of claim 6, wherein the first biometric information and the second biometric information include at least one of a heart rate, a heartbeat waveform, a heartbeat timing, or a heartbeat frequency, and wherein the at least one processor is further configured to determine a similarity between the first external object and the second external object based on a similarity between the first biometric information and the second biometric information.

14. An electronic device comprising: at least one communication circuit configured to communicate with a first external audio output device and a second external audio output device; a display; and at least one processor configured to: obtain first biometric information about a first external object associated with the first external audio output device and second biometric information about a second external object associated with the second external audio output device, by using the at least one communication circuit, determine a similarity between the first external object and the second external object based at least on the first biometric information and the second biometric information, when the similarity satisfies a first specified condition, set the first external audio output device and the second external audio output device to operate in coordination with each other, and when the similarity satisfies a second specified condition, set the first external audio output device and the second external audio output device to operate independently of each other.

15. The electronic device of claim 14, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, transmit first audio data to at least one of the first external audio output device or the second external audio output device by using the at least one communication circuit; and when the similarity satisfies the second specified condition, transmit the first audio data to the first external audio output device and second audio data to the second external audio output device, by using the at least one communication circuit.

16. The electronic device of claim 15, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, display a user interface to control the first audio data in at least a portion of the display; and when the similarity satisfies the second specified condition, display a user interface to independently control the first audio data and the second audio data in the portion of the display.

17. The electronic device of claim 14, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, set the first external audio output device and the second external audio output device to have same audio output characteristics, and when the similarity satisfies the second specified condition, set the first external audio output device and the second external audio output device to have different audio output characteristics, and wherein the audio output characteristics include at least one of a volume or a volume for each frequency band.

18. The electronic device of claim 17, wherein, when a user input for controlling the audio output characteristics is received, the at least one processor is further configured to: when the similarity satisfies the first specified condition, display a user interface to control the audio output characteristics of the first external audio output device and the second external audio output device in at least a portion of the display; and when the similarity satisfies the second specified condition, display a user interface to independently control the audio output characteristics of the first external audio output device and the second external audio output device in the portion of the display.

19. The electronic device of claim 14, wherein the at least one processor is further configured to: when the similarity satisfies the first specified condition, display indicating information, which indicates that the first external audio output device and the second external audio output device are associated with different objects, in at least a portion of the display.

20. The electronic device of claim 19, wherein the at least one processor is further configured to: display at least one of an icon, a character, an image, or a pop-up message on the display as at least a portion of the indicating information.

Description

CROSS-REFERENCE TO RELATED APPLICATION(S)

[0001] This application is based on and claims priority under 35 U.S.C. § 119(a) of a Korean patent application number 10-2017-0160311, filed on Nov. 28, 2017, in the Korean Intellectual Property Office, the disclosure of which is incorporated by reference herein in its entirety.

BACKGROUND

1. Field

[0002] The disclosure relates to an audio output device and a control method therefor.

2. Description of Related Art

[0003] As the electronic communication industry develops, electronic devices with high mobility and accessibility are being developed. For example, portable electronic devices such as wearable devices are widely used. As portable electronic devices have become widespread, methods for monitoring a user's state by using them are also being developed. For example, a user's state may be monitored through a wireless audio output device (e.g., wireless earphones or a wireless headset) that may be mounted on (or in) the user's ear, and an advanced user experience may be provided by controlling audio output based on the user's state. Accordingly, a method for controlling a wireless audio output device based on information about the user's state is required.

[0004] The above information is presented as background information only to assist with an understanding of the disclosure. No determination has been made, and no assertion is made, as to whether any of the above might be applicable as prior art with regard to the disclosure.

SUMMARY

[0005] An audio output device may include a pair of output devices. For example, the pair of output devices may be worn by different users. Also, the pair of output devices may be interconnected wirelessly. In this case, the different users may want to listen to different music. However, because the pair of output devices operates as one device and provides the same music to both users, the user experience is degraded.

[0006] Aspects of the disclosure are to address at least the above-mentioned problems and/or disadvantages and to provide at least the advantages described below. Accordingly, an aspect of the disclosure is to provide a wireless audio output device that operates based on user information in a manner suited to the user's situation, and a control method therefor.

[0007] Additional aspects will be set forth in part in the description which follows and, in part, will be apparent from the description, or may be learned by practice of the presented embodiments.

[0008] In accordance with an aspect of the disclosure, an audio output device is provided. The audio output device includes at least one communication circuit configured to communicate with an external audio output device, at least one sensor, and at least one processor configured to obtain first biometric information about a first external object associated with the audio output device by using the at least one sensor, obtain second biometric information about a second external object associated with the external audio output device from the external audio output device by using the at least one communication circuit, determine a similarity between the first external object and the second external object based at least on the first biometric information and the second biometric information, operate in coordination with the external audio output device when the similarity satisfies a first specified condition, and operate independently of the external audio output device when the similarity satisfies a second specified condition.
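The decision described in this aspect can be illustrated with a minimal Python sketch. The disclosure does not specify a similarity metric or the two specified conditions, so everything below is an assumption made for illustration: the names `HeartSample`, `similarity`, and `choose_mode`, the 50 ms beat-matching tolerance, the 20 bpm rate scale, and the single 0.8 threshold that stands in for both conditions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Mode(Enum):
    COORDINATED = "coordinated"    # stands in for the first specified condition
    INDEPENDENT = "independent"    # stands in for the second specified condition


@dataclass(frozen=True)
class HeartSample:
    """Hypothetical container for biometric information from one output device."""
    heart_rate_bpm: float
    beat_times_s: Tuple[float, ...]  # timestamps of detected beats, in seconds


def similarity(a: HeartSample, b: HeartSample, tolerance_s: float = 0.05) -> float:
    """Return a score in [0, 1]. The metric is an assumption, not from the disclosure."""
    if not a.beat_times_s or not b.beat_times_s:
        return 0.0
    # Fraction of beats in `a` that have a beat in `b` within the tolerance.
    matched = sum(
        1 for t in a.beat_times_s
        if any(abs(t - u) <= tolerance_s for u in b.beat_times_s)
    )
    timing_score = matched / max(len(a.beat_times_s), len(b.beat_times_s))
    # Heart-rate agreement, falling linearly to 0 at a 20 bpm difference.
    rate_gap = abs(a.heart_rate_bpm - b.heart_rate_bpm)
    rate_score = max(0.0, 1.0 - rate_gap / 20.0)
    return 0.5 * timing_score + 0.5 * rate_score


def choose_mode(score: float, threshold: float = 0.8) -> Mode:
    """Map the similarity score to an operating mode using one assumed threshold."""
    return Mode.COORDINATED if score >= threshold else Mode.INDEPENDENT
```

Under these assumptions, two samples with matching beat timing and heart rate yield the coordinated mode, while samples that disagree on both yield the independent mode. A real device might use separate thresholds with hysteresis so that the two conditions do not toggle on noisy readings.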

[0009] In accordance with another aspect of the disclosure, an electronic device is provided. The electronic device includes at least one communication circuit that communicates with a first external audio output device, a display, and at least one processor configured to receive first biometric information about a first external object associated with the first external audio output device and second biometric information about a second external object associated with a second external audio output device communicating with the first external audio output device from the first external audio output device, by using the at least one communication circuit, determine a similarity between the first external object and the second external object based at least on the first biometric information and the second biometric information, set the first external audio output device such that the first external audio output device operates in coordination with the second external audio output device, when the similarity satisfies a first specified condition, and set the first external audio output device to operate independently of the second external audio output device, when the similarity satisfies a second specified condition.

[0010] In accordance with yet another aspect of the disclosure, an electronic device is provided. The electronic device includes at least one communication circuit that communicates with a first external audio output device and a second external audio output device, a display, and at least one processor configured to obtain first biometric information about a first external object associated with the first external audio output device and second biometric information about a second external object associated with the second external audio output device, by using the at least one communication circuit, determine a similarity between the first external object and the second external object based at least on the first biometric information and the second biometric information, set the first external audio output device and the second external audio output device such that the first external audio output device and the second external audio output device operate in coordination with each other, when the similarity satisfies a first specified condition, and set the first external audio output device and the second external audio output device to operate independently of each other, when the similarity satisfies a second specified condition.
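As a rough illustration of how an electronic device might act on that setting, the following Python sketch routes audio data along the lines recited in claims 7 and 15: the same first audio data in the coordinated case, and distinct first and second audio data in the independent case. The function name `route_audio`, the device keys, and the use of strings as stand-ins for audio streams are hypothetical.

```python
from typing import Dict, Optional


def route_audio(
    coordinated: bool,
    first_audio: str,
    second_audio: Optional[str] = None,
) -> Dict[str, str]:
    """Return a hypothetical mapping of output device -> audio data to transmit."""
    if coordinated:
        # Coordinated operation: both output devices play the first audio data.
        return {"first_device": first_audio, "second_device": first_audio}
    if second_audio is None:
        raise ValueError("independent operation needs second audio data")
    # Independent operation: each output device receives its own audio data.
    return {"first_device": first_audio, "second_device": second_audio}
```

In the coordinated case the claims only require transmission to at least one of the two devices, so a device could equally send the data to the master alone and let it relay; the sketch sends to both for simplicity.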

[0011] According to embodiments of the disclosure, user convenience may be improved by controlling a pair of audio output devices independently based on obtained information.

[0012] Also, according to various embodiments, an independent control of the pair of audio output devices may be implemented through individual user interfaces for a plurality of users.

[0013] In addition, a variety of effects directly or indirectly understood through this disclosure may be provided.

[0014] Other aspects, advantages, and salient features of the disclosure will become apparent to those skilled in the art from the following detailed description, which, taken in conjunction with the annexed drawings, discloses various embodiments of the disclosure.

BRIEF DESCRIPTION OF THE DRAWINGS

[0015] The above and other aspects, features, and advantages of certain embodiments of the disclosure will be more apparent from the following description taken in conjunction with the accompanying drawings, in which:

[0016] FIG. 1 illustrates an operating environment of an output device according to an embodiment of the disclosure;

[0017] FIG. 2 is a block diagram illustrating a configuration of an audio output device and an external electronic device according to an embodiment of the disclosure;

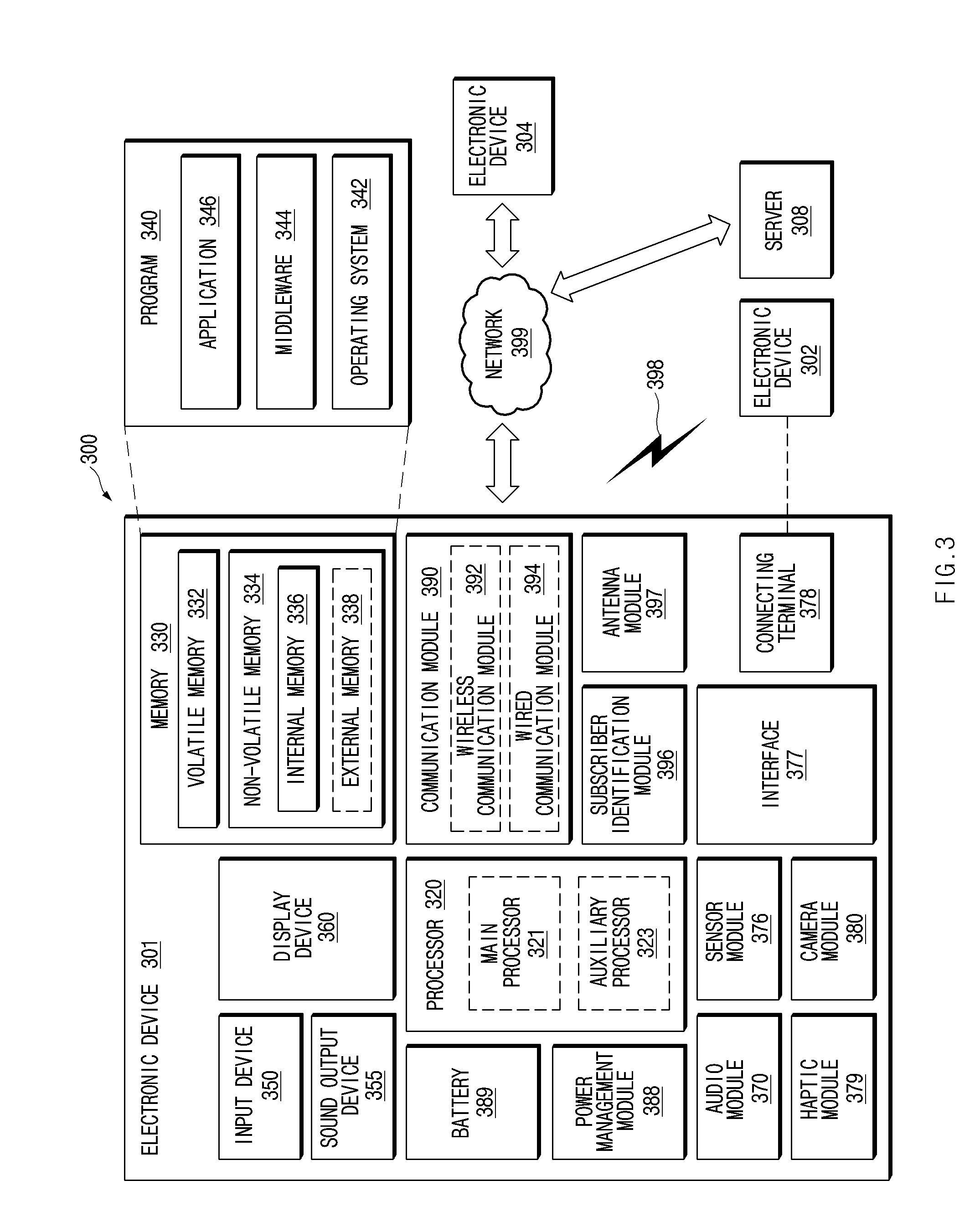

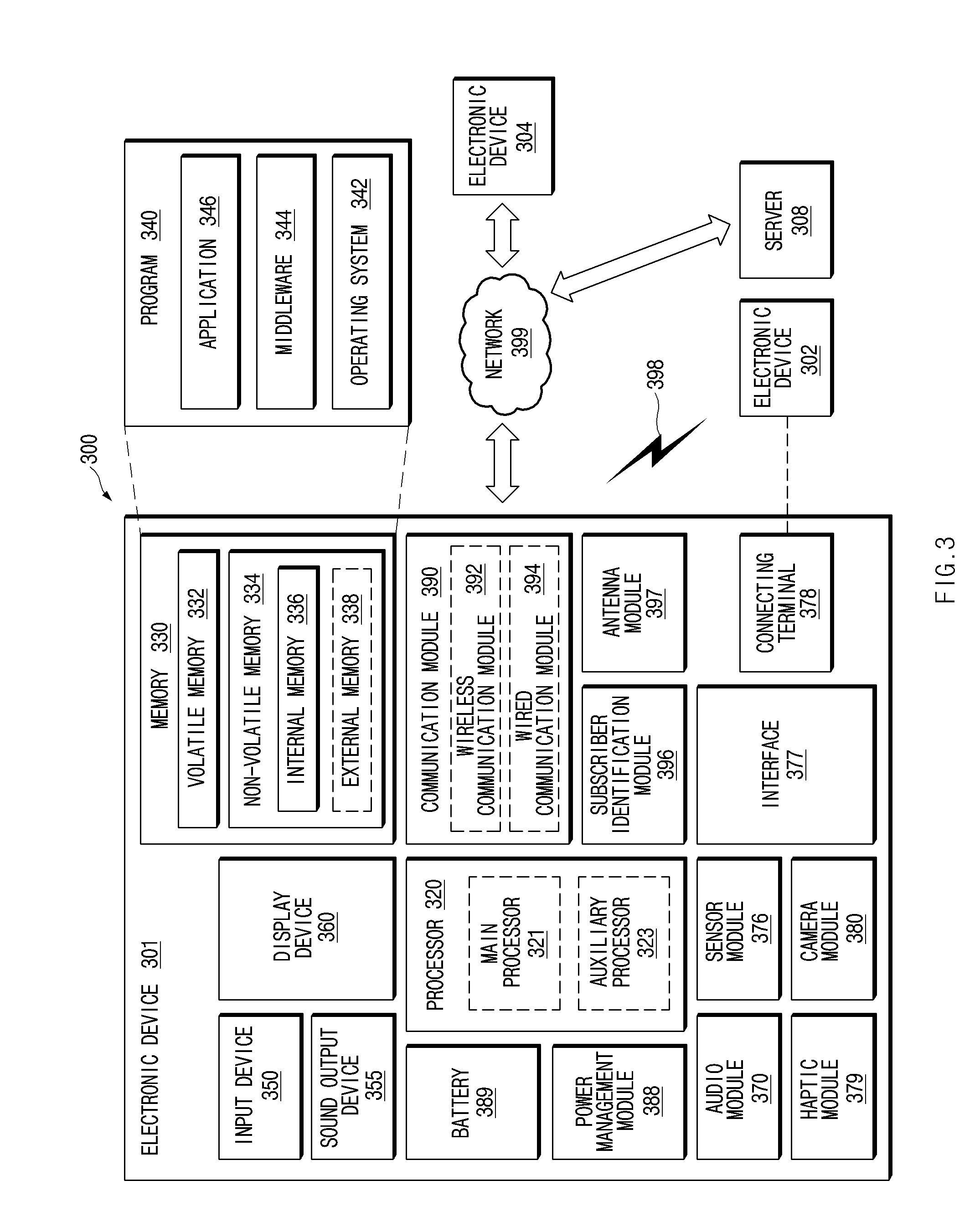

[0018] FIG. 3 illustrates an electronic device in a network environment, according to various embodiments of the disclosure;

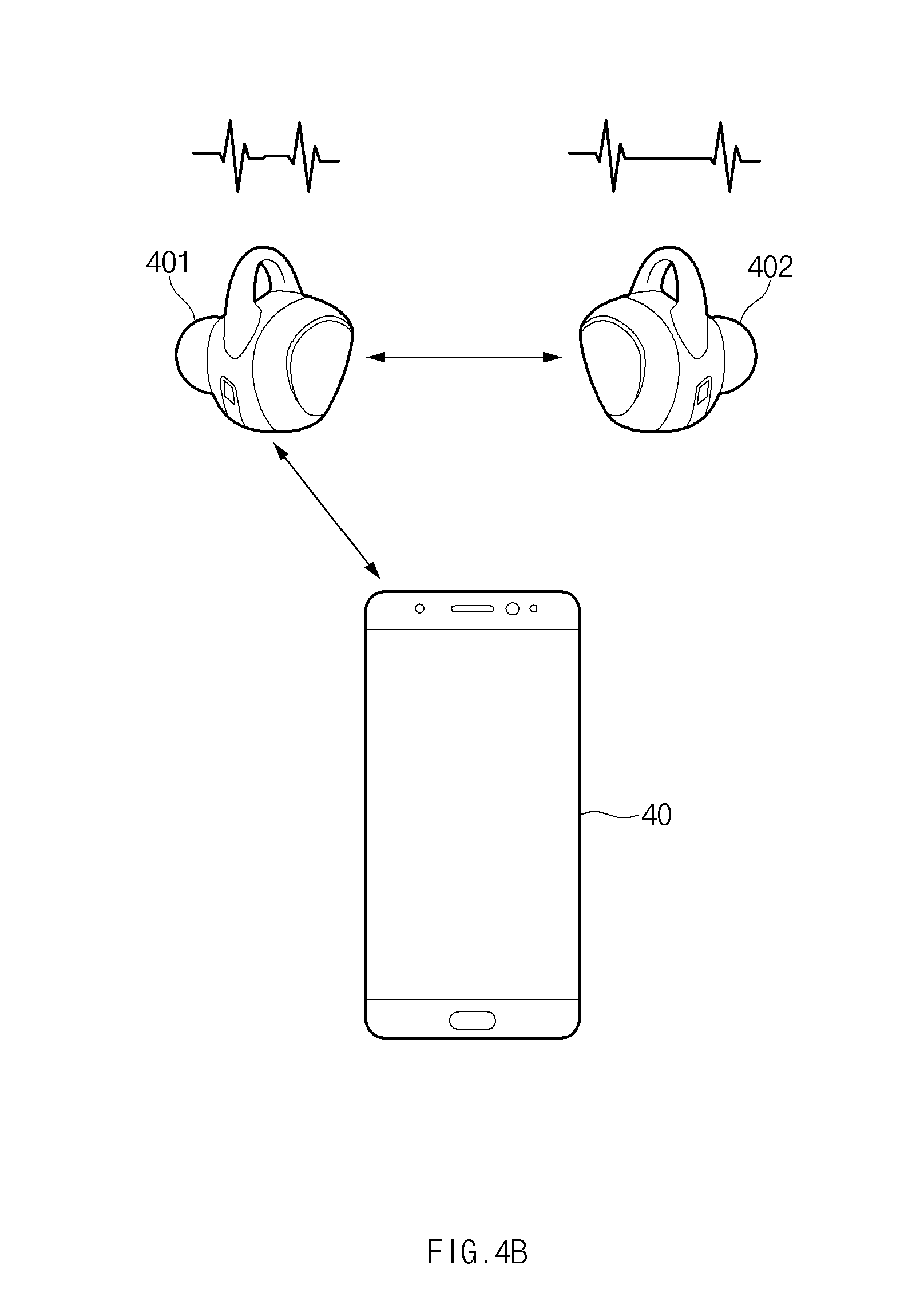

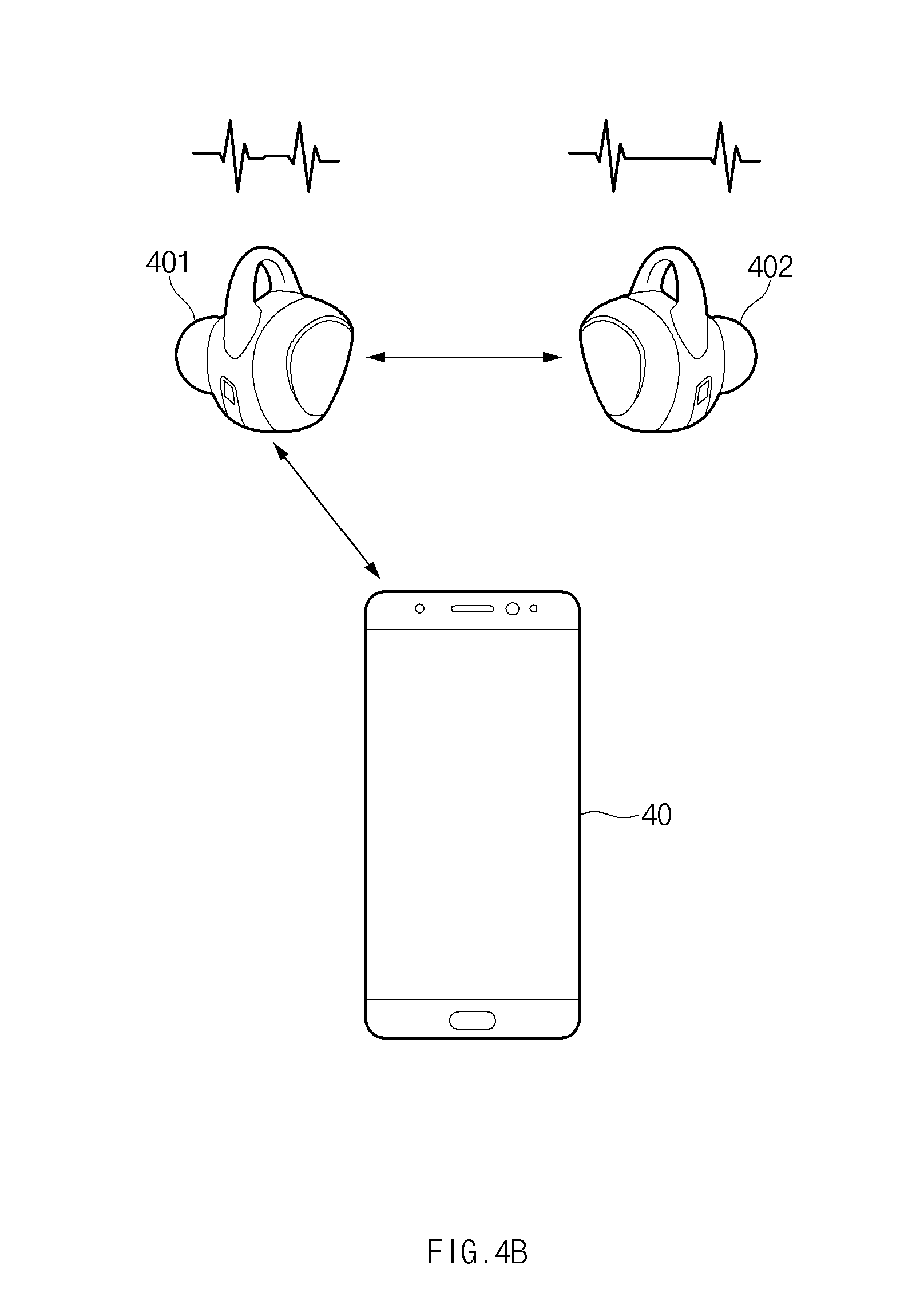

[0019] FIGS. 4A and 4B are views for describing how to determine whether an audio output device is worn by a plurality of users, according to an embodiment of the disclosure;

[0020] FIG. 5 is a view illustrating a method in which an electronic device displays an operation state of an audio output device, according to an embodiment of the disclosure;

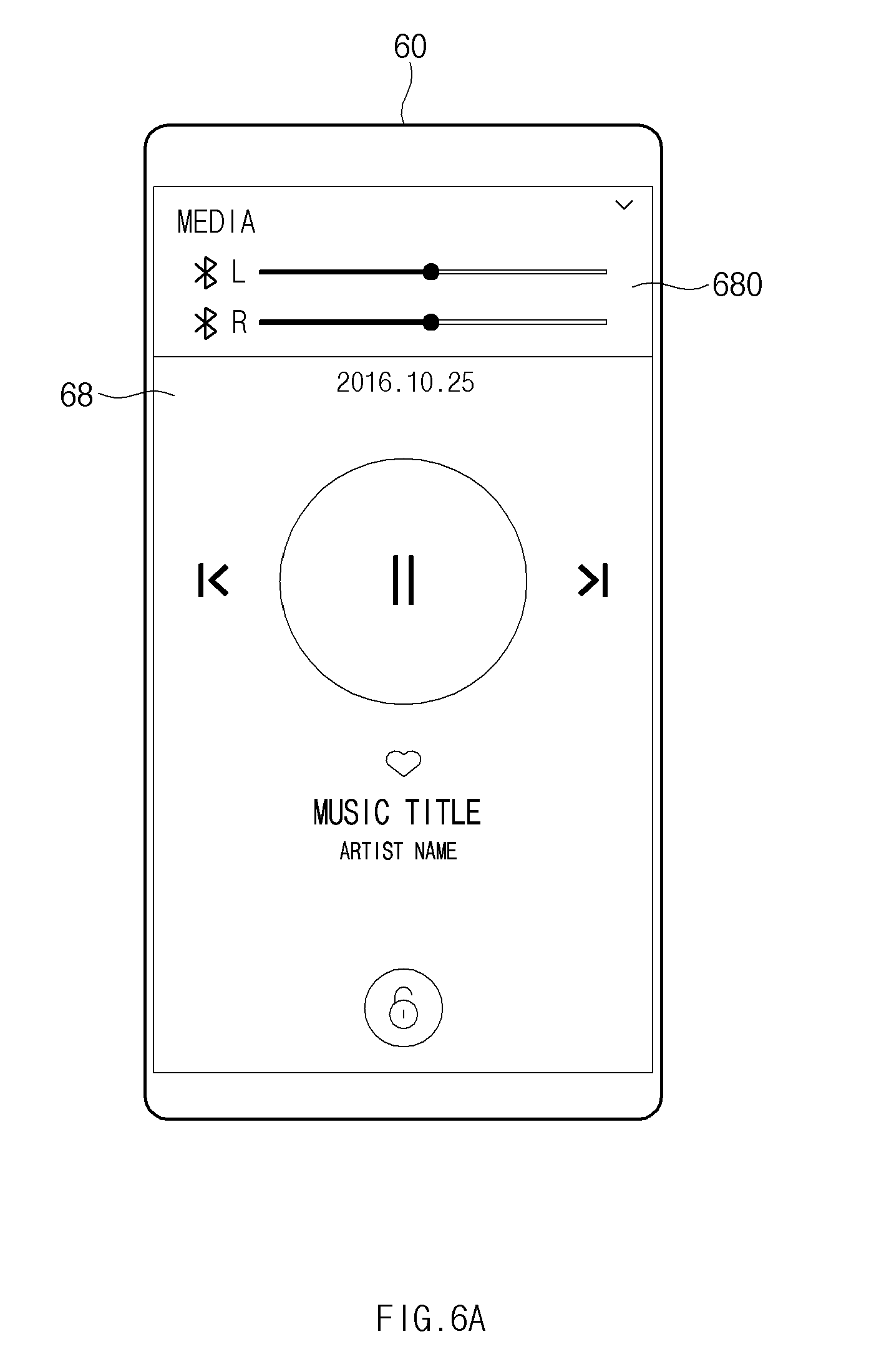

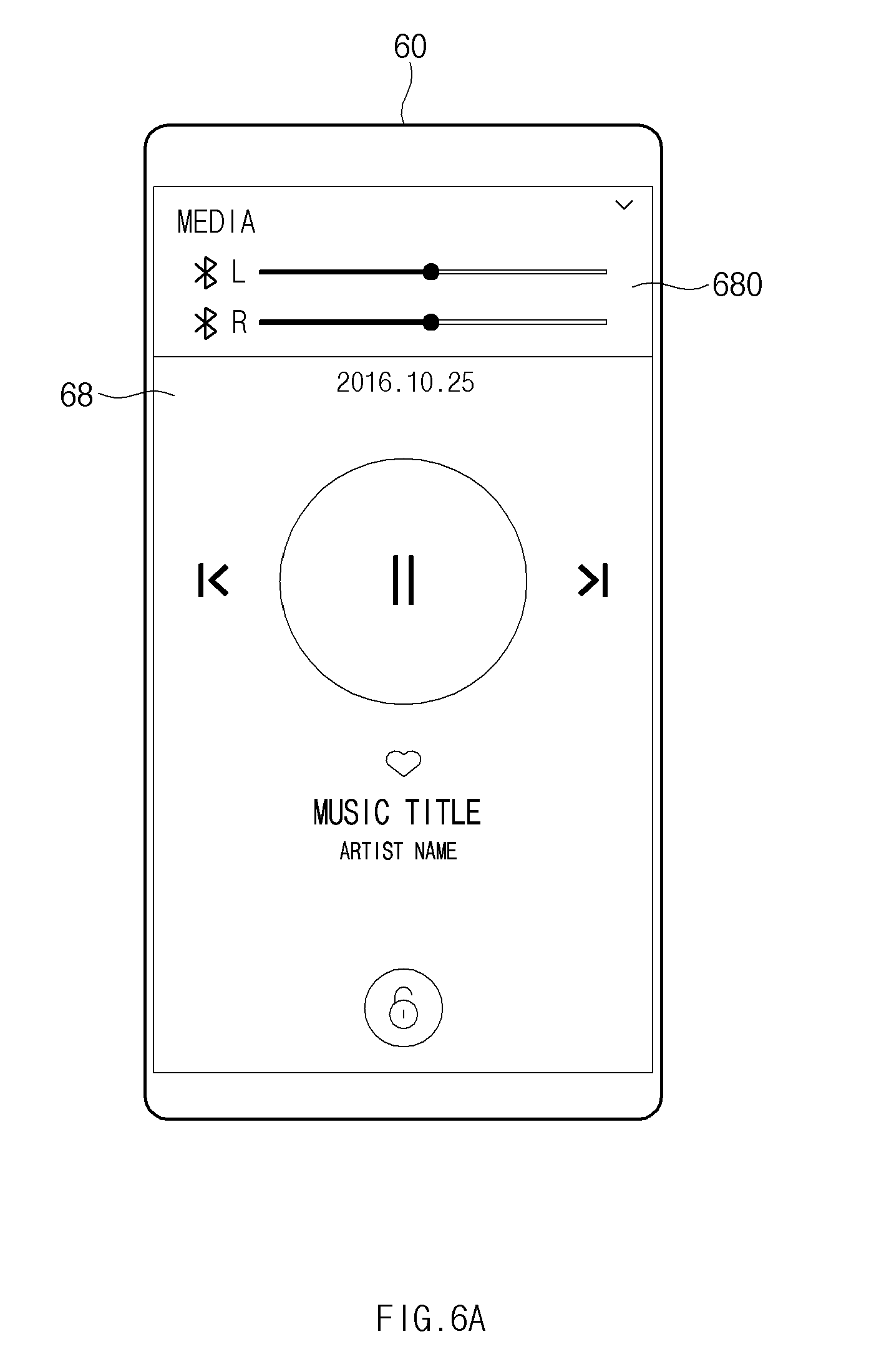

[0021] FIG. 6A illustrates a user interface for an independent volume control of an audio output device, according to an embodiment of the disclosure;

[0022] FIG. 6B illustrates an independent volume control of an audio output device, according to an embodiment of the disclosure;

[0023] FIG. 7 illustrates a user interface for independent music recommendation of an audio output device, according to an embodiment of the disclosure;

[0024] FIG. 8 illustrates a user interface for an independent audio output characteristic control of an audio output device, according to an embodiment of the disclosure;

[0025] FIGS. 9A and 9B illustrate user interfaces for an independent volume control of an audio output device, according to various embodiments of the disclosure;

[0026] FIG. 10 illustrates a master device setting method, according to an embodiment of the disclosure;

[0027] FIG. 11 is a flowchart illustrating a method in which an electronic device controls an external audio output device, according to various embodiments of the disclosure;

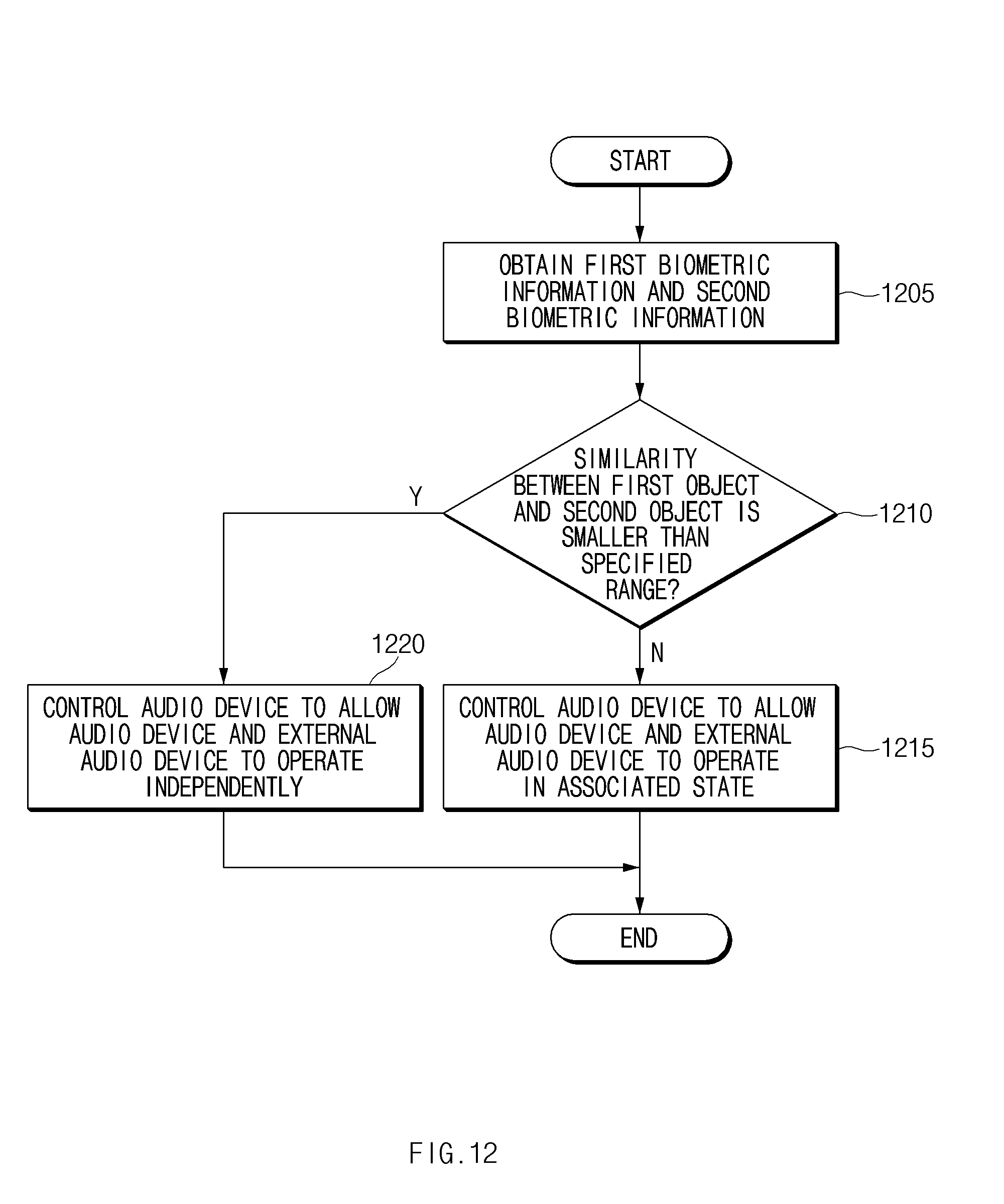

[0028] FIG. 12 is a flowchart illustrating an audio device control method, according to various embodiments of the disclosure; and

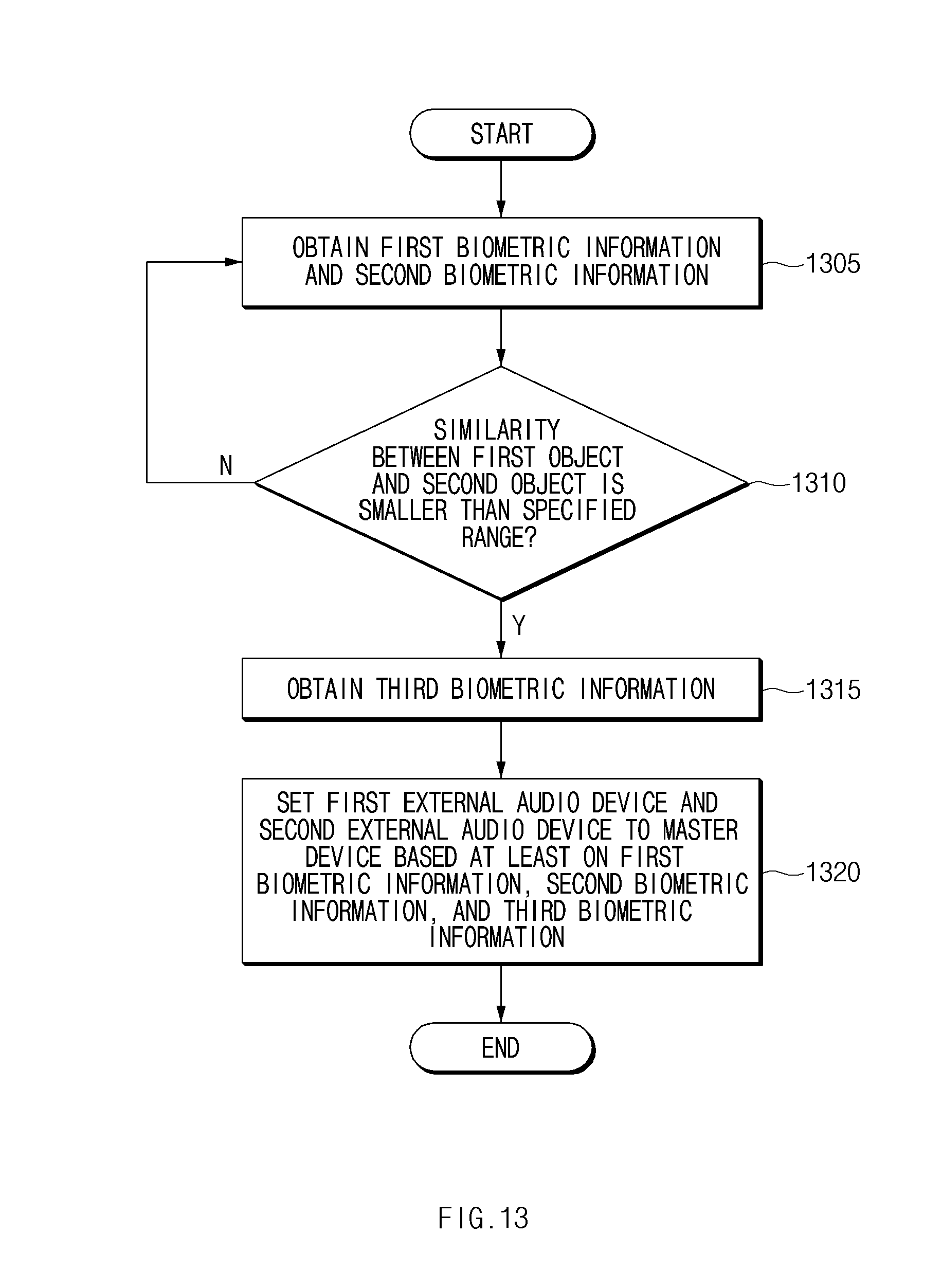

[0029] FIG. 13 is a flowchart illustrating a method in which an electronic device sets a master device, according to various embodiments of the disclosure.

[0030] Throughout the drawings, like reference numerals will be understood to refer to like parts, components, and structures.

DETAILED DESCRIPTION

[0031] The following description is made with reference to the accompanying drawings and is to be regarded as merely exemplary. Accordingly, those of ordinary skill in the art will recognize that various changes and modifications of the various embodiments described herein can be made without departing from the scope and spirit of the disclosure. In addition, descriptions of well-known functions and constructions may be omitted for clarity and conciseness.

[0032] The terms and words used in the following description and claims are not limited to the bibliographical meanings, but are merely used by the inventor to enable a clear and consistent understanding of the disclosure. Accordingly, it should be apparent to those skilled in the art that the following description of various embodiments of the disclosure is provided for illustration purposes only and not for the purpose of limiting the disclosure as defined by the appended claims and their equivalents.

[0033] It is to be understood that the singular forms "a," "an," and "the" include plural referents unless the context clearly dictates otherwise. Thus, for example, reference to "a component surface" includes reference to one or more of such surfaces.

[0034] The terms of a singular form may include plural forms unless otherwise specified. In the disclosure, the expressions "A or B", "at least one of A or/and B", or the like may include any and all combinations of one or more of the associated listed items. The terms, such as "first", "second", and the like may be used to refer to various components regardless of the order and/or the priority and to distinguish the relevant components from other components, but do not limit the components. It will be understood that when a component (e.g., a first component) is referred to as being "(operatively or communicatively) coupled with/to" or "connected to" another component (e.g., a second component), it may be directly coupled with/to or connected to the other component or an intervening component (e.g., a third component) may be present.

[0035] Depending on the situation, the expression "configured to" used in the disclosure may be used interchangeably with, for example, the expression "suitable for", "having the capacity to", "designed to", "adapted to", "made to", or "capable of" in terms of hardware or software. In some situations, the expression "a device configured to" may mean that the device is "capable of" operating together with another device or other parts. For example, a "processor configured to (or set to) perform A, B, and C" may mean a dedicated processor (e.g., an embedded processor) for performing a corresponding operation or a general-purpose processor (e.g., a central processing unit (CPU) or an application processor) which performs corresponding operations by executing one or more software programs which are stored in a memory device.

[0036] An electronic device according to various embodiments of the disclosure may include at least one of, for example, smartphones, tablet personal computers (PCs), mobile phones, video telephones, electronic book readers, desktop PCs, laptop PCs, netbook computers, workstations, servers, personal digital assistants (PDAs), portable multimedia players (PMPs), moving picture experts group (MPEG-1 or MPEG-2) audio layer 3 (MP3) players, medical devices, cameras, or wearable devices. The wearable device may include at least one of an accessory type (e.g., watches, rings, bracelets, anklets, necklaces, glasses, contact lenses, or head-mounted devices (HMDs)), a fabric or garment-integrated type (e.g., electronic apparel), a body-attached type (e.g., a skin pad or tattoos), or a bio-implantable type (e.g., an implantable circuit). According to various embodiments, the electronic device may include at least one of, for example, televisions (TVs), digital versatile disc (DVD) players, audio devices, refrigerators, air conditioners, cleaners, ovens, microwave ovens, washing machines, air cleaners, set-top boxes, home automation control panels, security control panels, media boxes (e.g., Samsung HomeSync™, Apple TV™, or Google TV™), game consoles (e.g., Xbox™ or PlayStation™), electronic dictionaries, electronic keys, camcorders, electronic picture frames, and the like.

[0037] According to another embodiment, an electronic device may include at least one of various medical devices (e.g., various portable medical measurement devices (e.g., a blood glucose monitoring device, a heartbeat measuring device, a blood pressure measuring device, a body temperature measuring device, and the like), a magnetic resonance angiography (MRA) device, a magnetic resonance imaging (MRI) device, a computed tomography (CT) device, scanners, and ultrasonic devices), navigation devices, global navigation satellite system (GNSS) devices, event data recorders (EDRs), flight data recorders (FDRs), vehicle infotainment devices, electronic equipment for vessels (e.g., navigation systems for vessels and gyrocompasses), avionics, security devices, head units for vehicles, industrial or home robots, drones, automated teller machines (ATMs), point-of-sale (POS) devices of stores, or internet of things (IoT) devices (e.g., light bulbs, various sensors, sprinkler devices, fire alarms, thermostats, street lamps, toasters, exercise equipment, hot water tanks, heaters, boilers, and the like). According to an embodiment, the electronic device may include at least one of parts of furniture, buildings/structures, or a vehicle, electronic boards, electronic signature receiving devices, projectors, or various measuring instruments (e.g., water meters, electricity meters, gas meters, or wave meters, and the like). According to various embodiments, the electronic device may be a flexible device or a combination of two or more of the above-described devices. An electronic device according to an embodiment of the disclosure is not limited to the above-described electronic devices. In the disclosure, the term "user" may refer to a person who uses an electronic device or may refer to a device (e.g., an artificial intelligence electronic device) that uses the electronic device.

[0038] FIG. 1 illustrates an operating environment of an output device according to an embodiment of the disclosure.

[0039] Referring to FIG. 1, an audio output device 100 may include a first output device 101 and a second output device 102. For example, the first output device 101 may be mounted on one ear of a user, and the second output device 102 may be mounted on the other ear of the user. In an embodiment, the first output device 101 may communicate with an external electronic device 10 and the second output device 102. The audio output device 100 may operate in association with the external electronic device 10, or may operate standalone.

[0040] According to an embodiment, the external electronic device 10 may be one of various mobile devices such as a smartphone, a tablet personal computer (PC), a smart watch, or the like. The external electronic device 10, for example, may output a voice received from another electronic device during a phone call, a sound source stored in the external electronic device 10 or a sound source being streamed in real time through a communication network, a sound generated upon playing at least one content, or the like. In an embodiment, the voice or the sound source may be transmitted to the audio output device 100, and may be output by the audio output device 100. The audio output device 100 may include the first output device 101 and the second output device 102. The first output device 101 and the second output device 102 are illustrated in FIG. 1 as being canal-type (in-ear) earphones, but the type of the first output device 101 and the second output device 102 is not limited thereto. For example, the first output device 101 and the second output device 102 may be open-type earphones. Below, a configuration of the first output device 101 will be described with reference to FIG. 1.

[0041] According to an embodiment, the first output device 101 may include a wingtip 111, an ear tip 121, a speaker 131, a heart rate (HR) sensor 141, and a terminal 151. The wingtip 111 may be replaceably coupled to the periphery of the first output device 101. For example, the wingtip 111 may have elasticity, and may help the first output device 101 to be closely mounted on the user's ear. The wingtip 111 may form the exterior of the first output device 101. The ear tip 121 may be coupled at one end of the first output device 101. The ear tip 121 may have, for example, a cylindrical shape. The ear tip 121 may have elasticity, and may help the first output device 101 to be closely mounted on the user's ear. The speaker 131 may be positioned within a housing of the first output device 101. The sound output by the speaker 131 may be transferred to the user's eardrum through the hollow of the ear tip 121. The HR sensor 141 may be positioned within the housing of the first output device 101. When the first output device 101 is mounted on the user's ear, the HR sensor 141 may measure a heartbeat of the user by using at least one light emitting unit which may output light in a specified wavelength range. For example, the at least one light emitting unit may include at least one of an infrared (IR) light emitting unit, a red light emitting unit, a green light emitting unit, a blue light emitting unit, or a white light emitting unit. The first output device 101 may determine whether the first output device 101 is worn by the user, based on the data measured by the HR sensor 141. The terminal 151 may be electrically connected with a charging device, and a battery (not illustrated) of the first output device 101 may be charged through the terminal 151.
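The paragraph above states that wear detection may be based on HR sensor data but does not say how. One possible approach, sketched in Python under assumed values, treats the device as worn when enough recent readings fall in a physiologically plausible range. The function name `is_worn`, the 30 to 220 bpm range, and the minimum of three valid readings are all hypothetical.

```python
from typing import Sequence


def is_worn(
    bpm_readings: Sequence[float],
    min_bpm: float = 30.0,    # assumed lower bound of a plausible heart rate
    max_bpm: float = 220.0,   # assumed upper bound of a plausible heart rate
    min_valid: int = 3,       # assumed number of plausible readings required
) -> bool:
    """Guess whether the output device is worn from recent heart-rate readings."""
    valid = [r for r in bpm_readings if min_bpm <= r <= max_bpm]
    return len(valid) >= min_valid
```

Requiring several plausible readings rather than one is a design choice in this sketch to reduce false positives from stray reflections when the earphone is lying on a table.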

[0042] The configuration of the first output device 101 illustrated in FIG. 1 is exemplary, and at least a part of the first output device 101 having the configuration illustrated in FIG. 1 may be omitted or replaced. As described above, according to an embodiment, the first output device 101 may be an open earphone. For example, the first output device 101 may not include the wingtip 111. For another example, the first output device 101 may include a speaker, at least a portion of which is exposed, on one surface of the first output device 101 instead of the ear tip 121.

[0043] According to an embodiment, the first output device 101 may be wirelessly connected with the external electronic device 10. The first output device 101 may be connected with the external electronic device 10 by wireless communication (e.g., Bluetooth or Bluetooth low energy (BLE)). The first output device 101 may be connected with the external electronic device 10 through a hands-free profile (HFP) or an advanced audio distribution profile (A2DP). In the case where the first output device 101 is connected with the external electronic device 10 through the HFP, the external electronic device 10 may be set to an HFP audio gateway (AG), and the first output device 101 may be set to an HFP hands-free unit (HF). In the case where the first output device 101 is connected with the external electronic device 10 through the A2DP, the external electronic device 10 may be set to an A2DP source (SRC), and the first output device 101 may be set to an A2DP sink (SNK).
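
The profile and role assignments described above can be summarized in a minimal sketch. The names `Profile`, `ROLES`, and `roles_for` are hypothetical and are used only for illustration; the sketch records the role pairs stated in the paragraph and does not call any Bluetooth stack.

```python
from enum import Enum


class Profile(Enum):
    HFP = "hands-free profile"
    A2DP = "advanced audio distribution profile"


# Role pairs as stated in the paragraph above:
# (role of the external electronic device 10, role of the first output device 101).
ROLES = {
    Profile.HFP: ("AG", "HF"),     # audio gateway / hands-free unit
    Profile.A2DP: ("SRC", "SNK"),  # source / sink
}


def roles_for(profile):
    """Return the role each side takes for the given profile (illustrative helper)."""
    external_role, output_role = ROLES[profile]
    return {
        "external_electronic_device": external_role,
        "first_output_device": output_role,
    }
```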

[0044] According to an embodiment, the first output device 101 may be wirelessly connected with the second output device 102. The first output device 101 may be connected with the second output device 102 by wireless communication (e.g., Bluetooth or BLE). For example, the first output device 101 may be connected with the second output device 102 through the HFP or A2DP. In this case, the first output device 101 may operate as a master, and the second output device 102 may operate as a slave. An example is illustrated in FIG. 1 as the first output device 101 operates as a master and the second output device 102 operates as a slave, but the disclosure is not limited thereto. For example, the second output device 102 may operate as a master, and the first output device 101 may operate as a slave. According to an embodiment, each of the first output device 101 and the second output device 102 may operate as a master. For example, the first output device 101 and the second output device 102 may operate independently of each other. The first output device 101 and the second output device 102 may operate independently of the external electronic device 10.

[0045] According to an embodiment, the first output device 101 may be wirelessly connected with the second output device 102. When connected with the external electronic device 10, the first output device 101 may receive audio data associated with a voice or a sound source. The first output device 101 may receive the audio data in a streaming manner and may output the received audio data through the speaker 131. The first output device 101 may transmit the received audio data to the second output device 102. The first output device 101 may output a sound source stored in the first output device 101 or the second output device 102. In this case, the first output device 101 may not be connected with the external electronic device 10. According to an embodiment, the second output device 102 may include a wingtip 112, an ear tip 122, a microphone hole 162, and a touch pad 172. The wingtip 112 and the ear tip 122 of the second output device 102 may be identical to the wingtip 111 and the ear tip 121 of the first output device 101. Although not illustrated in FIG. 1, the second output device 102 may include a speaker, an HR sensor, and a terminal which are identical to those of the first output device 101. Also, although not illustrated in FIG. 1, the first output device 101 may include a microphone hole and a touch pad which are identical to those of the second output device 102.

[0046] According to an embodiment, the microphone hole 162 may be formed at a housing of the second output device 102. A microphone may be positioned below the microphone hole 162, and a sound may be transferred to the microphone through the microphone hole 162 from the outside.

[0047] According to an embodiment, the touch pad 172 may be formed at a location where the touch pad 172 is exposed when the second output device 102 is inserted into the user's ear. The touch pad 172 may sense a contact of the user's body. When a touch input is sensed by the touch pad 172, for example, the second output device 102 may perform a function, which corresponds to the touch input, such as play, stop, fast-forward, rewind, volume control, call connection, call end, or the like.

[0048] According to various embodiments, the external electronic device 10 may obtain data which are sensed by sensors included in the first output device 101 and the second output device 102. For example, the external electronic device 10 may obtain data sensed by the first output device 101 and data sensed by the second output device 102 through the first output device 101. For another example, the external electronic device 10 may obtain data from each of the first output device 101 and the second output device 102.

[0049] According to an embodiment, the external electronic device 10 may identify objects associated with the first output device 101 and the second output device 102, based on a result of comparing the obtained data. For example, the external electronic device 10 may determine whether a first object (e.g., a user which utilizes the first output device 101) and a second object (e.g., a user which utilizes the second output device 102) are different from each other. For example, the external electronic device 10 may determine whether the audio output device 100 is used by a plurality of users. For example, the external electronic device 10 may determine whether the audio output device 100 is used by a plurality of users, by comparing data sensed by the first output device 101 and data sensed by the second output device 102.

[0050] According to an embodiment, the external electronic device 10 may control the audio output device 100 based on the data sensed by the first output device 101 and the second output device 102. For example, the external electronic device 10 may control the first output device 101 and the second output device 102 in an associated state or in an independent state. For example, when it is determined that the first object associated with the first output device 101 and the second object associated with the second output device 102 are different from each other, the external electronic device 10 may control the first output device 101 and the second output device 102 so as to operate independently of each other. For another example, when it is determined that the audio output device 100 is used by a plurality of users, the external electronic device 10 may allow the first output device 101 and the second output device 102 to operate independently of each other.

[0051] According to an embodiment, the external electronic device 10 may control the audio output device 100 through communication with the audio output device 100. For example, the external electronic device 10 may control the first output device 101 and the second output device 102 by transmitting a signal to the first output device 101 or the second output device 102. In this case, the external electronic device 10 may transmit a signal to a master device of the audio output device 100. For another example, the external electronic device 10 may control the first output device 101 and the second output device 102 by transmitting a signal to the first output device 101 and the second output device 102. In this case, each of the first output device 101 and the second output device 102 may operate as a master device.
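
The two signaling cases above (a single master device, or both output devices operating as masters) can be sketched as follows. The device names, role labels, and the `signal_targets` helper are assumptions made for illustration and are not defined by the disclosure.

```python
def signal_targets(roles):
    """Return the names of the devices operating as a master, sorted by name.

    `roles` maps a device name to "master" or "slave" (hypothetical labels).
    """
    return sorted(name for name, role in roles.items() if role == "master")


# One master: the control signal is sent to that device only.
single = signal_targets(
    {"first_output_device": "master", "second_output_device": "slave"}
)

# Both devices operate as masters: the control signal is sent to each of them.
both = signal_targets(
    {"first_output_device": "master", "second_output_device": "master"}
)
```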

[0052] According to an embodiment, the external electronic device 10 may set a state of the audio output device 100 through communication with the audio output device 100. For example, the external electronic device 10 may control states of the first output device 101 and the second output device 102 by transmitting a signal to the first output device 101 or the second output device 102. In this case, the external electronic device 10 may transmit a signal to a master device of the audio output device 100. For another example, the external electronic device 10 may control states of the first output device 101 and the second output device 102 by transmitting a signal to the first output device 101 and the second output device 102. In this case, each of the first output device 101 and the second output device 102 may operate as a master device.

[0053] According to an embodiment, depending on an operation state of the audio output device 100, the external electronic device 10 may provide information about operation states of the first output device 101 and the second output device 102 or a user interface for controlling the first output device 101 and the second output device 102. For example, when it is determined that the first object associated with the first output device 101 and the second object associated with the second output device 102 are different from each other or when it is determined that the audio output device 100 is used by a plurality of users, the external electronic device 10 may provide, on a display, user interfaces which may control the first output device 101 and the second output device 102 individually. According to an embodiment, the first output device 101 and the second output device 102 may be associated with different applications. For example, the external electronic device 10 may display, on the display, a user interface corresponding to an application associated with the first output device 101 and a user interface corresponding to an application associated with the second output device 102.

[0054] According to an embodiment, based at least on information sensed by the first output device 101 and the second output device 102, the external electronic device 10 may set the first output device 101 or the second output device 102 to a master device and may set the other device to a slave device. Also, the external electronic device 10 may provide a notification to the master device. For example, the notification may be associated with a call or a message to the external electronic device 10. For another example, the notification may be associated with an operation state of the audio output device 100.

[0055] According to various embodiments, at least a part of the above-described operations of the external electronic device 10 may be performed by the first output device 101. For example, the first output device 101 may obtain data which are sensed by sensors included in the first output device 101 and the second output device 102. For example, the first output device 101 may identify objects associated with the first output device 101 and the second output device 102, by comparing data sensed by the first output device 101 and data sensed by the second output device 102. For example, the first output device 101 may determine whether a first object (e.g., a user which utilizes the first output device 101) and a second object (e.g., a user which utilizes the second output device 102) are different from each other. For example, the first output device 101 may determine whether the audio output device 100 is used by a plurality of users. For example, the first output device 101 may determine whether the audio output device 100 is used by a plurality of users, by comparing data sensed by the first output device 101 and data sensed by the second output device 102. According to an embodiment, the first output device 101 may control the audio output device 100 based on the data sensed by the first output device 101 and the second output device 102. For example, the first output device 101 may control the first output device 101 and the second output device 102 so as to operate in an associated state or in an independent state. Also, the above-described operations may be performed by the second output device 102. Below, exemplary embodiments will be more fully described with reference to FIGS. 2 to 13.

[0056] FIG. 2 is a block diagram illustrating a configuration of an audio output device and an external electronic device according to an embodiment of the disclosure.

[0057] Referring to FIG. 2, an audio output device 200 may include a first output device 201 and a second output device 202. The first output device 201 may include a speaker 211, a microphone 221, a battery 231, a memory 241, a sensing module 251, a communication circuit 261, and a processor 271. For example, the first output device 201 may be the same device as the first output device 101 illustrated in FIG. 1. The audio output device 200 may communicate with an external electronic device 20. The external electronic device 20 is illustrated in FIG. 2 as communicating with the first output device 201, but the disclosure is not limited thereto. For example, the external electronic device 20 may communicate with the second output device 202 or may communicate with the first output device 201 and the second output device 202.

[0058] According to an embodiment, the second output device 202 may include a speaker 212, a microphone 222, a battery 232, a memory 242, a sensing module 252, a communication circuit 262, and a processor 272. For example, the second output device 202 may be the same device as the second output device 102 illustrated in FIG. 1. Configurations and functions of the speaker 212, the microphone 222, the battery 232, the memory 242, the sensing module 252, the communication circuit 262, and the processor 272 of the second output device 202 may be identical to configurations and functions of the speaker 211, the microphone 221, the battery 231, the memory 241, the sensing module 251, the communication circuit 261, and the processor 271 of the first output device 201.

[0059] Below, for convenience of description, the audio output device 200 will be described with respect to the first output device 201, but embodiments to be described below may also be performed by the second output device 202.

[0060] According to an embodiment, the speaker 211 may output a sound. The speaker 211 may convert audio data to a sound. In the case where the first output device 201 is inserted into the user's ear, the sound output by the speaker 211 may be transferred to the user's eardrum.

[0061] According to an embodiment, the microphone 221 may sense a sound generated on the outside. For example, the microphone 221 may sense a voice of the user. For another example, the microphone 221 may sense a sound generated around the first output device 201. The sound sensed by the microphone 221 with regard to an ambient environment may be output by the speaker 211.

[0062] According to an embodiment, the battery 231 may supply power to the other components of the first output device 201. The battery 231 may be electrically connected with a power management integrated circuit (PMIC) (not illustrated). In the case where the first output device 201 is connected with a charging device, the battery 231 may be charged by wire or wirelessly through the PMIC. The level of the battery 231 may be verified by the PMIC.

[0063] According to an embodiment, the memory 241 may store data associated with the first output device 201 and/or the second output device 202. For example, the memory 241 may store a sound source which may be played by the first output device 201 and/or the second output device 202. For another example, the memory 241 may store data sensed by the first output device 201 and/or the second output device 202. For another example, the memory 241 may store data associated with a task which is performed by the first output device 201 and/or the second output device 202.

[0064] According to an embodiment, the sensing module 251 may include at least one sensor. The sensing module 251 may sense, for example, a heartbeat, acceleration, an angular velocity, an infrared light, proximity, and/or an electromyogram (EMG). The sensing module 251 may include, for example, an HR sensor 251a, an acceleration sensor 251b, a gyro sensor 251c, an IR sensor 251d, and a proximity sensor 251e. Although not illustrated in FIG. 2, the sensing module 251 may further include various kinds of sensors such as an EMG sensor.

[0065] According to an embodiment, the communication circuit 261 may wirelessly communicate with at least one of the external electronic device 20 or the second output device 202 coupled with the first output device 201. For example, the communication circuit 261 may search for a connectable device around the first output device 201 and may attempt a connection with the found device. The communication circuit 261 may transmit data to the connected device and may receive data from the connected device. The communication circuit 261 may update mutual states with the connected device and may transmit a command to the connected device. The communication circuit 261 may communicate with the external electronic device 20 or the second output device 202 in various manners such as Bluetooth, BLE, Wi-Fi Direct, and/or ANT+.

[0066] According to an embodiment, the processor 271 may be electrically connected with the speaker 211, the microphone 221, the battery 231, the memory 241, the sensing module 251, and the communication circuit 261. The processor 271 may control the speaker 211, the microphone 221, the battery 231, the memory 241, the sensing module 251, and the communication circuit 261. The processor 271 may control the second output device 202 and/or the external electronic device 20, which is connected through the communication circuit 261.

[0067] According to an embodiment, the processor 271 may obtain data (e.g., a biometric signal) sensed by the sensing module 251 and/or the sensing module 252. For example, the processor 271 may obtain data sensed by the sensing module 252 by using the communication circuit 261. For another example, the processor 271 may obtain information about a state of the sensing module 251 and/or the sensing module 252. For example, the information about the sensing module 251 or 252 may include information about whether each of at least one sensor included in the sensing module 251 or 252 is available.

[0068] According to an embodiment, the processor 271 may determine whether the audio output device 200 is used by the same user or by a plurality of users, based on data sensed by the sensing module 251 and/or the sensing module 252. For example, the processor 271 may determine whether the audio output device 200 is used by a plurality of users, based on a result of comparing data sensed by the sensing module 251 and data sensed by the sensing module 252 or based on a similarity between the two data. For another example, when it is determined that the audio output device 200 is used by a plurality of users, the processor 271 may transmit information indicating that the audio output device 200 is used by a plurality of users, to the external electronic device 20.

[0069] According to an embodiment, the processor 271 may control the first output device 201 or the second output device 202 based on data sensed by the sensing module 251 and/or the sensing module 252. According to an embodiment, the processor 271 may assign a task to the first output device 201 or the second output device 202 based on data sensed by the sensing module 251 and/or the sensing module 252. Components of the sensing module 252 of the second output device 202 are essentially similar to those of the sensing module 251 of the first output device 201. Thus, the HR sensor 252a, the acceleration sensor 252b, the gyro sensor 252c, the IR sensor 252d, and the proximity sensor 252e are substantially similar to the correspondingly named sensors 251a to 251e of the sensing module 251. Descriptions thereof will not be repeated for brevity.

[0070] According to an embodiment, the processor 271 may obtain information about a wearing state of the audio output device 200, based on data sensed by the sensing module 251 and/or the sensing module 252. In the case where it is determined, based on the data sensed by the sensing module 251 and/or the sensing module 252, that the first output device 201 and the second output device 202 are used by different users, the processor 271 may perform one or more additional functions.

[0071] For example, in the case where recession from a contacted object is sensed by the proximity sensor 251e, the processor 271 may determine that the first output device 201 is separated from user's ear. For another example, in the case where acceleration (or acceleration of gravity) of a specified magnitude is sensed by the acceleration sensor 251b, the processor 271 may determine that the first output device 201 is separated from user's ear. For another example, in the case where a contact with an external object is sensed by the proximity sensor 251e, the processor 271 may determine that the first output device 201 is mounted on user's ear. For another example, in the case where a heartbeat is sensed by the HR sensor 251a, the processor 271 may determine that the first output device 201 is mounted on user's ear. For another example, in the case where acceleration or angular velocity of a specified magnitude or more is sensed by the acceleration sensor 251b or the gyro sensor 251c, in the case where proximity of an external object is sensed by the proximity sensor 251e, and in the case where a heartbeat is sensed by the HR sensor 251a, the processor 271 may determine that the first output device 201 is mounted on user's ear.
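
The last example above, in which motion, proximity, and heartbeat conditions are combined, can be sketched as follows. The `SensorSnapshot` type, its field names, and the default threshold are hypothetical; the disclosure refers only to "a specified magnitude" and does not give a value.

```python
from dataclasses import dataclass


@dataclass
class SensorSnapshot:
    motion_magnitude: float    # acceleration or angular velocity magnitude (units assumed)
    object_in_proximity: bool  # proximity sensor reports a nearby external object
    heartbeat_detected: bool   # HR sensor reports a heartbeat


def is_mounted(snapshot, motion_threshold=0.5):
    """Return True when all three conditions of the combined example hold.

    `motion_threshold` stands in for the "specified magnitude"; 0.5 is an
    arbitrary placeholder, not a value taken from the disclosure.
    """
    return (
        snapshot.motion_magnitude >= motion_threshold
        and snapshot.object_in_proximity
        and snapshot.heartbeat_detected
    )
```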

[0072] According to an embodiment, the processor 271 may obtain, from the second output device 202, information about a wearing state of the second output device 202 by using the communication circuit 261. For example, the processor 271 may receive data sensed by the sensing module 252 of the second output device 202 from the second output device 202, and may determine a wearing state of the second output device 202 based on the received information. For another example, in the case where the second output device 202 determines a wearing state of the second output device 202 based on data sensed by the sensing module 252 of the second output device 202, the processor 271 may receive a determination result from the second output device 202.

[0073] According to an embodiment, in the case where a task is assigned to the first output device 201, the processor 271 may perform the assigned task. The processor 271 may store data associated with the performed task to the memory 241. The processor 271 may transmit the data associated with the performed task to the second output device 202 by using the communication circuit 261.

[0074] According to an embodiment, the processor 271 may verify heartbeat information by using the HR sensor 251a of the first output device 201 and an HR sensor 252a of the second output device 202. According to another embodiment, in the case where the first output device 201 and the second output device 202 are mounted on user's ears, the processor 271 may verify heartbeat information. According to another embodiment, the processor 271 may determine whether the audio output device 200 is used by a plurality of users, based at least on first heartbeat information verified from the HR sensor 251a and second heartbeat information verified from the HR sensor 252a. For example, in the case where the similarity between the first heartbeat information and the second heartbeat information is smaller than a specified value or a difference between the first heartbeat information and the second heartbeat information is a specified threshold value or more, the processor 271 may determine that the audio output device 200 is used by a plurality of users. For example, heartbeat information may include at least one of a heart rate, a heartbeat timing, a heartbeat interval, a heartbeat waveform, or a heartbeat frequency.
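
As a minimal sketch of the threshold comparison described above, the following compares heartbeat intervals reported by the two HR sensors. The choice of heartbeat interval as the compared quantity, the mean absolute difference as the measure, the function names, and the 0.05-second default threshold are all assumptions made for illustration; the disclosure does not specify a particular measure or value.

```python
def mean_interval_difference(intervals_a, intervals_b):
    """Mean absolute difference between paired heartbeat intervals, in seconds."""
    pairs = list(zip(intervals_a, intervals_b))
    if not pairs:
        raise ValueError("need at least one heartbeat interval from each device")
    return sum(abs(a - b) for a, b in pairs) / len(pairs)


def used_by_multiple_users(intervals_a, intervals_b, threshold_s=0.05):
    """Return True when the difference is the threshold value or more.

    `threshold_s` is a placeholder for the "specified threshold value".
    """
    return mean_interval_difference(intervals_a, intervals_b) >= threshold_s
```

For instance, two devices reporting nearly identical intervals would be treated as worn by the same user, while intervals that differ by about 0.2 seconds on average would be treated as indicating a plurality of users under this placeholder threshold.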

[0075] According to an embodiment, the processor 271 may determine whether the audio output device 200 is used by a plurality of users, based on accelerations sensed by the acceleration sensor 251b and an acceleration sensor 252b. For example, in the case where a difference between a first acceleration sensed by the acceleration sensor 251b and a second acceleration sensed by the acceleration sensor 252b is a specified value or more, the processor 271 may determine that the audio output device 200 is used by a plurality of users.
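
The acceleration comparison above can be sketched as follows, assuming that each sensor reports a three-axis acceleration vector and that the "difference" is the Euclidean distance between the two vectors. Both assumptions, the function name, and the default threshold are illustrative and are not taken from the disclosure.

```python
import math


def accelerations_differ(first_accel, second_accel, threshold=2.0):
    """Return True when the two acceleration vectors differ by the threshold or more.

    `first_accel` and `second_accel` are (x, y, z) tuples in the same units;
    `threshold` is a placeholder for the "specified value".
    """
    return math.dist(first_accel, second_accel) >= threshold
```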

[0076] According to an embodiment, the processor 271 may determine whether the audio output device 200 is used by a plurality of users, based on a direction of the first output device 201 sensed by the gyro sensor 251c and a direction of the second output device 202 sensed by a gyro sensor 252c. For example, in the case where a direction (e.g., an output direction of the speaker 211) in which the first output device 201 faces and a direction (e.g., an output direction of the speaker 212) in which the second output device 202 faces face each other, the processor 271 may determine that the audio output device 200 is used by a plurality of users. For another example, in the case where a direction in which the first output device 201 faces and a direction in which the second output device 202 faces are out of a specified range, the processor 271 may determine that the audio output device 200 is used by a plurality of users.
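
The second example above (directions falling outside a specified range) can be sketched by computing the angle between two direction vectors. The vector representation, the function names, and the range passed by the caller are assumptions; the disclosure does not state which angular range corresponds to a single wearer.

```python
import math


def angle_between_deg(u, v):
    """Angle in degrees between two non-zero 3-D direction vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    if norm == 0:
        raise ValueError("direction vectors must be non-zero")
    # Clamp to [-1, 1] to guard against floating-point rounding before acos.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


def outside_specified_range(u, v, min_deg, max_deg):
    """Return True when the angle between u and v is outside [min_deg, max_deg]."""
    angle = angle_between_deg(u, v)
    return not (min_deg <= angle <= max_deg)
```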

[0077] According to an embodiment, the processor 271 may determine whether the audio output device 200 is used by a plurality of users, based on a distance between the first output device 201 and the second output device 202. For example, the processor 271 may determine a distance between the first output device 201 and the second output device 202 by transmitting a specified signal to the second output device 202 by using the communication circuit 261 and receiving a response from the second output device 202. The processor 271 may determine a distance based on a time (e.g., a round trip time) between the transmission of the specified signal and the response. For another example, in the case where the time from the transmission to the response or the distance is a specified value or more, the processor 271 may determine that the first output device 201 and the second output device 202 are used by different users.
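
One way the round-trip-time estimate above might be computed is sketched below. The formula (half of the time of flight multiplied by the propagation speed of a radio signal), the optional processing-delay correction, and all names are assumptions; the disclosure states only that the distance is determined based on the time between the transmission and the response.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(rtt_s, processing_delay_s=0.0):
    """Estimate the one-way distance in meters from a round trip time in seconds.

    `processing_delay_s` is a hypothetical correction for the time the
    responding device needs before it sends the response.
    """
    time_of_flight = rtt_s - processing_delay_s
    if time_of_flight < 0:
        raise ValueError("processing delay exceeds the measured round trip time")
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight / 2.0


def used_by_different_users(rtt_s, max_distance_m, processing_delay_s=0.0):
    """Return True when the estimated distance is the specified value or more."""
    return distance_from_round_trip(rtt_s, processing_delay_s) >= max_distance_m
```

In practice, the timing resolution available to a short-range radio may be far coarser than this idealized calculation suggests, so the sketch illustrates only the comparison logic.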

[0078] According to an embodiment, when it is determined that the first output device 201 and the second output device 202 are used by different users, the processor 271 may assign a task being performed by the first output device 201 to the second output device 202. For example, the processor 271 may transmit data associated with the task being performed by the first output device 201 to the second output device 202 by using the communication circuit 261. For another example, while the first output device 201 and the second output device 202 operate in a stereo mode, when it is determined that the first output device 201 and the second output device 202 are used by different users, the processor 271 may set the first output device 201 and the second output device 202 such that the first output device 201 and the second output device 202 operate in a mono mode.
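
The stereo-to-mono transition described above can be sketched as a small state update. The `Mode` enumeration and the `next_mode` helper are hypothetical names used only for illustration.

```python
from enum import Enum


class Mode(Enum):
    STEREO = "stereo"
    MONO = "mono"


def next_mode(current_mode, used_by_different_users):
    """Switch from stereo to mono when the devices are used by different users.

    In every other case the current mode is kept, since the paragraph above
    describes only the stereo-to-mono transition.
    """
    if current_mode is Mode.STEREO and used_by_different_users:
        return Mode.MONO
    return current_mode
```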

[0079] According to an embodiment, the processor 271 may assign a task to the first output device 201 or the second output device 202 based on a user input. For example, the processor 271 may output a call voice, a notification, or the like through the first output device 201 or the second output device 202 in the mono mode. For another example, the processor 271 may control the first output device 201 and/or the second output device 202 based on a task or a user input such that only one of the first output device 201 or the second output device 202 is used. For another example, the processor 271 may activate or deactivate the microphone 221 of the first output device 201 and/or the microphone 222 of the second output device 202.

[0080] According to an embodiment, various embodiments which are above described as being performed by the processor 271 of the first output device 201 may be performed by the processor 272 of the second output device 202. For example, the processor 271 of the first output device 201 may control the processor 272 of the second output device 202 such that the various embodiments described above are performed by the processor 272 of the second output device 202. Below, various embodiments will be described with respect to the external electronic device 20. At least some of the embodiments described above as being performed by the processor 271 may be performed by the external electronic device 20.

[0081] According to an embodiment, the external electronic device 20 may include a memory 24, a communication circuit 26, a display 28, and a processor 27. The processor 27 may be electrically connected with the memory 24, the communication circuit 26, and the display 28. According to an embodiment, the processor 27 may control the first output device 201 and/or the second output device 202, which is connected through the communication circuit 26. For example, the external electronic device 20 may be the same device as the external electronic device 10 illustrated in FIG. 1.

[0082] According to an embodiment, the memory 24 may store data associated with the first output device 201 and/or the second output device 202. For example, the memory 24 may store a sound source which may be played by the first output device 201 and/or the second output device 202. For another example, the memory 24 may store data sensed by the first output device 201 and/or the second output device 202. For another example, the memory 24 may store data associated with a task which is performed by the first output device 201 and/or the second output device 202.

[0083] According to an embodiment, the communication circuit 26 may wirelessly communicate with at least one of the first output device 201 or the second output device 202. For example, the communication circuit 26 may search for a connectable device around the external electronic device 20 and may attempt a connection with the found device. The communication circuit 26 may transmit data to the connected device and may receive data from the connected device. The communication circuit 26 may update mutual states with the connected device and may transmit a command to the connected device. The communication circuit 26 may communicate with the first output device 201 or the second output device 202 in various manners such as Bluetooth, BLE, Wi-Fi Direct, and/or ANT+.

[0084] According to an embodiment, the processor 27 may obtain data (e.g., a biometric signal) sensed by the sensing module 251 and/or the sensing module 252. For example, by using the communication circuit 26, the processor 27 may obtain data sensed by the sensing module 251 from the first output device 201 and may obtain data sensed by the sensing module 252 from the first output device 201 or the second output device 202. For another example, the processor 27 may obtain information about a state of the sensing module 251 and/or the sensing module 252. For example, the information about the sensing module 251 or 252 may include information about whether each of at least one sensor included in the sensing module 251 or 252 is available.

[0085] According to an embodiment, the processor 27 may determine whether the audio output device 200 is used by the same user or by a plurality of users, based on data sensed by the sensing module 251 and/or the sensing module 252. For example, the processor 27 may determine whether the audio output device 200 is used by a plurality of users, based on a result of comparing data sensed by the sensing module 251 and data sensed by the sensing module 252 or based on a similarity between the two data.

[0086] According to an embodiment, the processor 27 may control the first output device 201 or the second output device 202 based on data sensed by the sensing module 251 and/or the sensing module 252. According to an embodiment, the processor 27 may assign a task to the first output device 201 or the second output device 202 based on data sensed by the sensing module 251 and/or the sensing module 252.

[0087] According to an embodiment, the processor 27 may obtain information about a wearing state of the audio output device 200, based on data sensed by the sensing module 251 and/or the sensing module 252. In the case where it is determined, based on the data sensed by the sensing module 251 and/or the sensing module 252, that the first output device 201 and the second output device 202 are used by different users, the processor 27 may perform one or more additional functions. According to another embodiment, the processor 27 may receive information indicating that the first output device 201 and the second output device 202 are used by different users, from the first output device 201 or the second output device 202.

[0088] For example, in the case where recession from a contacted object is sensed by the proximity sensor 251e, the processor 27 may determine that the first output device 201 is separated from the user's ear. For another example, in the case where acceleration (or acceleration of gravity) of a specified magnitude is sensed by the acceleration sensor 251b, the processor 27 may determine that the first output device 201 is separated from the user's ear. For another example, in the case where a contact with an external object is sensed by the proximity sensor 251e, the processor 27 may determine that the first output device 201 is mounted on the user's ear. For another example, in the case where a heartbeat is sensed by the HR sensor 251a, the processor 27 may determine that the first output device 201 is mounted on the user's ear. For another example, in the case where acceleration or angular velocity of a specified magnitude or more is sensed by the acceleration sensor 251b or the gyro sensor 251c, proximity of an external object is sensed by the proximity sensor 251e, and a heartbeat is sensed by the HR sensor 251a, the processor 27 may determine that the first output device 201 is mounted on the user's ear. For another example, the processor 27 may receive, from the first output device 201, information about a wearing state of the first output device 201 determined by the first output device 201.
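The combined wearing-state example above (motion, proximity, and heartbeat all present) can be sketched as follows. This is only an illustrative sketch: the `SensorSnapshot` type, its field names, and the `MOTION_THRESHOLD` value are hypothetical and are not defined in the disclosure, which speaks only of "a specified magnitude".

```python
from dataclasses import dataclass


@dataclass
class SensorSnapshot:
    """Hypothetical container for one reading of the sensing module 251."""
    proximity_contact: bool    # proximity sensor 251e reports a nearby/contacting object
    heartbeat_detected: bool   # HR sensor 251a reports a heartbeat
    motion_magnitude: float    # acceleration or angular velocity magnitude (arbitrary units)


# Assumed stand-in for the "specified magnitude"; the value is illustrative only.
MOTION_THRESHOLD = 1.0


def is_mounted(snapshot: SensorSnapshot, threshold: float = MOTION_THRESHOLD) -> bool:
    """Return True when motion at or above the threshold, proximity, and a heartbeat are all sensed."""
    return (
        snapshot.motion_magnitude >= threshold
        and snapshot.proximity_contact
        and snapshot.heartbeat_detected
    )
```

The other examples in the paragraph rely on a single sensor each; this sketch covers only the case in which all three conditions are required together.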

[0089] According to an embodiment, the processor 27 may obtain information about a wearing state of the audio output device 200 from the first output device 201 and/or the second output device 202 by using the communication circuit 26. For example, the processor 27 may receive data sensed by the sensing module 252 of the second output device 202 from the first output device 201 or the second output device 202, and may determine a wearing state of the second output device 202 based on the received information. For another example, the processor 27 may receive information about a wearing state determined by the second output device 202 from the first output device 201 or the second output device 202.

[0090] According to an embodiment, the processor 27 may verify heartbeat information by using the HR sensor 251a of the first output device 201 and the HR sensor 252a of the second output device 202. According to another embodiment, in the case where the first output device 201 and the second output device 202 are mounted on the user's ears, the processor 27 may verify heartbeat information. According to another embodiment, the processor 27 may determine whether the audio output device 200 is used by a plurality of users, based on first heartbeat information verified from the HR sensor 251a and second heartbeat information verified from the HR sensor 252a. For example, in the case where the similarity between the first heartbeat information and the second heartbeat information is smaller than a specified value or a difference between the first heartbeat information and the second heartbeat information is a specified threshold value or more, the processor 27 may determine that the audio output device 200 is used by a plurality of users. For example, heartbeat information may include at least one of a heart rate, a heartbeat timing, a heartbeat interval, a heartbeat waveform, or a heartbeat frequency.
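The plural-user determination described above may be illustrated with a minimal sketch that uses heart rate as the heartbeat information. The similarity measure, the function names, and both default thresholds are assumptions made for illustration; the disclosure does not specify how the similarity is computed or what the specified values are.

```python
def heart_rate_similarity(first_bpm: float, second_bpm: float) -> float:
    """Hypothetical similarity in [0, 1]: 1.0 means identical heart rates."""
    if first_bpm <= 0 or second_bpm <= 0:
        raise ValueError("heart rates must be positive")
    return 1.0 - abs(first_bpm - second_bpm) / max(first_bpm, second_bpm)


def used_by_plural_users(
    first_bpm: float,
    second_bpm: float,
    min_similarity: float = 0.9,   # assumed "specified value"
    max_difference: float = 10.0,  # assumed "specified threshold value", in beats per minute
) -> bool:
    """Plural users if similarity is below the specified value OR the difference reaches the threshold."""
    similarity = heart_rate_similarity(first_bpm, second_bpm)
    difference = abs(first_bpm - second_bpm)
    return similarity < min_similarity or difference >= max_difference
```

A comparison based on heartbeat timing, interval, or waveform would follow the same pattern with a different similarity function.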

[0091] According to an embodiment, when the audio output device 200 is determined as being used by a plurality of users or when information indicating that the audio output device 200 is used by a plurality of users is received from the audio output device 200 (e.g., the first output device 201 or the second output device 202), the processor 27 may display an image indicating the use of the audio output device 200 by a plurality of users on the display 28. For example, the processor 27 may display a pop-up message indicating that the audio output device 200 is used by a plurality of users, on the display 28. For another example, the processor 27 may display an icon indicating that the audio output device 200 is used by a plurality of users, in at least a partial area of the display 28.

[0092] According to an embodiment, in the case where the audio output device 200 is used by a plurality of users (e.g., in the case where the use of the audio output device 200 by a plurality of users is determined or information indicating the use of the audio output device 200 by a plurality of users is received), an input for controlling an output characteristic of the audio output device 200 may be received. For example, the output characteristic of the audio output device 200 may include at least one of an output intensity (e.g., a volume), an output intensity for each frequency band, or an output filter characteristic. For example, the input associated with the control of the output characteristic may include a touch input on the display 28 or an input to a button on the external electronic device 20. For another example, the input for controlling the output characteristic of the audio output device 200 may be received by the external electronic device 20 through the first output device 201 or the second output device 202.

[0093] According to an embodiment, in the case where the audio output device 200 is used by a plurality of users and the input for controlling the output characteristic of the audio output device 200 is received, the processor 27 may display a user interface for controlling an output characteristic of each of the first output device 201 and the second output device 202 on the display 28. For example, the input for a volume control of the audio output device 200 may be received. In this case, when the audio output device 200 is used by a plurality of users and an input for a volume control is received, the processor 27 may display a user interface (e.g., a scroll bar) for a volume control of the first output device 201 and a user interface for a volume control of the second output device 202 in at least a portion of the display 28. For another example, an input for controlling an output frequency characteristic of the audio output device 200 may be received. In this case, when the audio output device 200 is used by a plurality of users and an input for controlling the output frequency characteristic is received, the processor 27 may display a user interface (e.g., an equalizer) for controlling an output frequency characteristic of the first output device 201 and a user interface for controlling an output frequency characteristic of the second output device 202 in at least a portion of the display 28.

[0094] According to an embodiment, in the case where the audio output device 200 is used by a plurality of users and an input for playing a sound source is received, the processor 27 may display a user interface for playing different sound sources at the first output device 201 and the second output device 202 in at least a portion of the display 28. For example, the processor 27 may display a sound source selection user interface for the first output device 201 and a sound source selection user interface for the second output device 202 in at least a portion of the display 28. For another example, the processor 27 may display a user interface corresponding to a sound source associated with the first output device 201 and a user interface corresponding to a sound source associated with the second output device 202 in at least a portion of the display 28. For another example, the processor 27 may display a user interface associated with the second output device 202 as an image in which the user interface associated with the second output device 202 overlaps at least a portion of a user interface associated with the first output device 201.

[0095] According to an embodiment, in the case where the audio output device 200 is used by a plurality of users, the processor 27 may assign a task being performed by the first output device 201 to the second output device 202. For example, the processor 27 may control the first output device 201 to allow the first output device 201 to transmit data associated with a task being performed by the first output device 201 to the second output device 202. For another example, while the first output device 201 and the second output device 202 operate in a stereo mode, when it is determined that the first output device 201 and the second output device 202 are used by different users, the processor 27 may set the first output device 201 and the second output device 202 such that the first output device 201 and the second output device 202 operate in a mono mode.
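The stereo-to-mono transition in the last example above can be sketched as a small state function. The `OutputMode` enumeration and the `next_mode` function are hypothetical names introduced only for this illustration.

```python
from enum import Enum


class OutputMode(Enum):
    STEREO = "stereo"
    MONO = "mono"


def next_mode(current: OutputMode, used_by_different_users: bool) -> OutputMode:
    """Switch from stereo to mono when the two output devices are used by different users."""
    if current is OutputMode.STEREO and used_by_different_users:
        return OutputMode.MONO
    # Otherwise keep the current mode; the text above does not describe a reverse transition.
    return current
```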

[0096] According to an embodiment, the processor 27 may assign a task to the first output device 201 or the second output device 202 based on a user input. For example, the processor 27 may output a call voice or a notification through the first output device 201 or the second output device 202 in the mono mode. For another example, the processor 27 may control the first output device 201 and/or the second output device 202 based on a task or a user input such that only one of the first output device 201 or the second output device 202 is used. For another example, the processor 27 may activate or deactivate the microphone 221 of the first output device 201 and/or the microphone 222 of the second output device 202.

[0097] According to an embodiment, the first output device 201 may include at least one communication circuit 261, at least one sensor (e.g., the sensing module 251), and a control circuit (e.g., the processor 271). Also, the control circuit may be configured to obtain first biometric information about a first external object associated with the first output device 201 by using the at least one sensor, to obtain second biometric information about a second external object associated with the second output device 202 from the second output device 202 by using the at least one communication circuit 261, and to determine a similarity between the first external object and the second external object based at least on the first biometric information and the second biometric information. Also, the control circuit may be configured to operate together with the second output device 202 when the similarity satisfies a first specified condition, and to operate independently of the second output device 202 when the similarity satisfies a second specified condition.

[0098] According to an embodiment, when the similarity satisfies the first specified condition, the control circuit may be configured to output first audio data through the first output device 201 and to transmit at least a portion of the first audio data to the second output device 202 by using the at least one communication circuit 261.

[0099] According to an embodiment, the control circuit may be configured to control the first output device 201 and the second output device 202 so as to have the same audio output characteristic when the similarity satisfies the first specified condition, and to control the first output device 201 and the second output device 202 so as to have different audio output characteristics when the similarity satisfies the second specified condition. For example, the audio output characteristic may include at least one of a volume or a volume for each frequency band.
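One possible reading of this behavior is sketched below. It assumes that the first specified condition is "similarity at or above a threshold" and the second is "similarity below that threshold", and that in the shared case both devices adopt the setting of the first output device 201. Neither assumption is stated in the disclosure, and the `OutputCharacteristic` type and `resolve_characteristics` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class OutputCharacteristic:
    """Hypothetical audio output characteristic; only a volume level is modeled here."""
    volume: int


def resolve_characteristics(
    similarity: float,
    threshold: float,
    first_setting: OutputCharacteristic,
    second_setting: OutputCharacteristic,
) -> Tuple[OutputCharacteristic, OutputCharacteristic]:
    """Return the (first device, second device) characteristics to apply."""
    if similarity >= threshold:
        # Assumed first specified condition: same user, so both devices share one setting.
        return first_setting, first_setting
    # Assumed second specified condition: different users, so each device keeps its own setting.
    return first_setting, second_setting
```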

[0100] According to an embodiment, the at least one communication circuit 261 may communicate with the second output device 202 and an external electronic device 20. Also, when the similarity satisfies the second specified condition, the control circuit may be configured to transmit information, which indicates that objects associated with the first output device 201 and the second output device 202 are different, to the external electronic device 20 by using the at least one communication circuit 261.

[0101] According to an embodiment, the first biometric information and the second biometric information may include at least one of a heart rate, a heartbeat waveform, a heartbeat timing, or a heartbeat frequency, and the control circuit may be configured to determine a similarity between the first external object and the second external object based on a similarity between the first biometric information and the second biometric information.