Methods For Improving Test Efficiency And Accuracy In A Computer Adaptive Test (CAT)

Gawlick; Lisa ; et al.

U.S. patent application number 16/254315 was filed with the patent office on 2019-01-22 and published on 2019-05-23 for methods for improving test efficiency and accuracy in a computer adaptive test (CAT). The applicant listed for this patent is ACT, INC. Invention is credited to Lingyun Gao, Lisa Gawlick, Nancy Petersen, Changhui Zhang.

| Application Number | 16/254315 |
| Family ID | 53271749 |
| Filed Date | 2019-01-22 |
| United States Patent Application | 20190156692 |
| Kind Code | A1 |
| Gawlick; Lisa ; et al. | May 23, 2019 |

METHODS FOR IMPROVING TEST EFFICIENCY AND ACCURACY IN A COMPUTER ADAPTIVE TEST (CAT)

Abstract

A method for use of pretest items in a test to calculate interim scores is provided. The method includes, for example, a computer implemented test having a plurality of test items that include, for example, a plurality of operational items and one or more pretest items having one or more pretest item parameters. An interim latent construct estimate is calculated using both operational and pretest items. The error for the latent construct estimation is controlled by weighting the contribution of the one or more pretest items.

| Inventors: | Gawlick; Lisa (Iowa City, IA); Zhang; Changhui (Coralville, IA); Petersen; Nancy (Solon, IA); Gao; Lingyun (Coralville, IA) |
| Applicant: | ACT, INC. |
| Family ID: | 53271749 |
| Appl. No.: | 16/254315 |
| Filed: | January 22, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14562183 | Dec 5, 2014 | |
| 16254315 | | |
| 61912774 | Dec 6, 2013 | |

| Current U.S. Class: | 1/1 |
| Current CPC Class: | G09B 7/00 20130101 |
| International Class: | G09B 7/00 20060101 |

Claims

1. A method for generating interim scores with a computer adaptive testing platform by using examinee responses to pretest items to improve scoring accuracy, the method comprising: obtaining a computer adaptive test from a test script administration module, the computer adaptive test comprising a plurality of operational items and one or more pretest items corresponding to one or more pretest item parameters, wherein the pretest item parameters include statistical pretest item response data acquired from other examinees; calculating, with a latent construct estimator, an examinee interim score based on the one or more pretest item parameters and examinee responses to both operational items and pretest items; storing the examinee interim score in a datastore; and re-defining pretest item parameters for one or more of the pretest items based on the examinee responses during the administration of the computer adaptive test to control for error.

2. The method of claim 1 further comprising displaying the computer adaptive test on a graphical user interface and obtaining the examinee responses from the graphical user interface.

3. The method of claim 1 further comprising printing the computer adaptive test and obtaining the examinee responses from a scanner.

4. The method of claim 1 further comprising updating the operational item parameters during administration of the computer adaptive test.

5. The method of claim 1 further comprising using the computer adaptive testing module to set the one or more pretest item parameters to an average of a set of calibrated parameters for the plurality of operational items.

6. The method of claim 1 further comprising updating the one or more pretest item parameters based on corresponding pretest item responses from a threshold number of additional examinees.

7. The method of claim 1 wherein the pretest item parameters comprise maximum likelihood estimators based on the examinee responses.

8. A system for generating interim scores during the administration of a computer adaptive test, the system comprising: a computer adaptive testing server, a data store, and a graphical user interface, the computer adaptive testing server comprising a computer adaptive testing component and a test script administrator, the computer adaptive testing component configured to: generate a computer adaptive test with the test script administrator, the computer adaptive test comprising a plurality of operational items and one or more pretest items having one or more pretest item parameters, wherein the pretest item parameters include statistical pretest item response data acquired from other examinees; calculate an examinee interim score based on the one or more pretest item parameters and examinee responses to both operational items and pretest items; store the examinee interim score in a datastore; and re-define pretest item parameters for one or more of the pretest items based on the examinee responses during the administration of the computer adaptive test to control for error.

9. The system of claim 8, wherein the computer adaptive testing component is further configured to display the computer adaptive test on the graphical user interface and obtain the examinee responses from the graphical user interface.

10. The system of claim 8, wherein the computer adaptive testing component is further configured to print the computer adaptive test on a printer and obtain the examinee responses from a scanner.

11. The system of claim 8, wherein the computer adaptive testing component is further configured to update the operational item parameters during administration of the computer adaptive test.

12. The system of claim 8, wherein the computer adaptive testing component is further configured to set the one or more pretest item parameters to an average of a set of calibrated parameters for the plurality of operational items.

13. The system of claim 12, wherein the computer adaptive testing component is further configured to update the one or more pretest item parameters based on corresponding pretest item responses from a threshold number of additional examinees.

14. The system of claim 8 wherein the pretest item parameters comprise maximum likelihood estimators based on the examinee responses.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 14/562,183, filed Dec. 5, 2014, which further claims priority under 35 U.S.C. § 119 to provisional application Ser. No. 61/912,774, filed Dec. 6, 2013, the disclosures of which are hereby incorporated in their entirety.

BACKGROUND OF THE DISCLOSURE

I. Field of the Disclosure

[0002] The present disclosure relates to computer adaptive testing. More specifically, but not exclusively, the present disclosure relates to methods for improving test efficiency and accuracy by providing a procedure for extracting information from an examinee's responses to pretest items for use in construct estimation in a Computer Adaptive Test (CAT).

II. Description of the Prior Art

[0003] In a Computer Adaptive Test (CAT), pretest items may be embedded in the test without necessarily being intended to contribute to the estimation of the examinee's latent construct. Typically, examinee responses to the embedded pretest items are not used in item selection or scoring; generally, only examinee responses to operational items are used for those purposes. Thus, the information contained in the examinee's responses to the pretest items is underutilized or even wasted.

[0004] Therefore, it is a primary object, feature, or advantage of the present disclosure to use valuable information in the examinee's responses to the pretest items, which may be used together with the information in the examinee's responses to the operational items for construct estimation.

[0005] In a CAT, the next item administered to an examinee can be selected based on the examinee's interim ability score, which can be estimated using responses to the operational items administered thus far. The interim ability estimate is updated after the administration of each operational item.
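The selection step described in paragraph [0005] can be sketched as follows. This is a minimal illustration only: the disclosure does not name an item response model or a selection rule, so the two-parameter logistic (2PL) model, the maximum-information rule, and the names `p_correct`, `item_information`, `select_next_item`, and `pool` are all assumptions introduced here.

```python
import math

def p_correct(theta, a, b):
    """Probability of a correct response under an assumed 2PL model."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta, a, b):
    """Fisher information of a 2PL item at ability theta."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)

def select_next_item(theta, pool, administered):
    """Return the unadministered item that is most informative at theta."""
    candidates = [name for name in pool if name not in administered]
    return max(candidates, key=lambda name: item_information(theta, *pool[name]))

# Hypothetical calibrated pool: name -> (discrimination a, difficulty b).
pool = {"easy": (1.0, -1.5), "medium": (1.0, 0.0), "hard": (1.0, 1.5)}

# With an interim ability of 0.2 and "easy" already used, the item whose
# difficulty is closest to 0.2 carries the most information.
next_item = select_next_item(0.2, pool, administered={"easy"})
```

Because every item in this toy pool has the same discrimination, the rule reduces to picking the item whose difficulty is nearest the interim ability estimate, which is why a more accurate interim estimate leads to a more informative next item.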

[0006] Therefore, it is a primary object, feature, or advantage of the present disclosure to improve efficiency and final score estimation for a CAT by providing a more accurate interim ability score, which means a more informative next item can be selected for administration to the examinee.

[0007] It is another object, feature, or advantage of the present disclosure to use pretest information to provide improved interim ability score estimation, to fine-tune a test, for example, by making a test shorter or more accurate and thereby more effective.

[0008] Still another object, feature, or advantage of the present disclosure provides for using examinee responses to pretest items in interim ability scoring.

[0009] The obstacle to relying on pretest items for construct estimation is that their item parameters are not in place when the items are administered. Technically, the pretest item parameters could be estimated on the fly (i.e., in real time during test administration) and updated right after each item is exposed to a new examinee, but the response sample size is smaller than in the standard practice for calibration. Small sample sizes could lead to large error in the estimated item parameters. The uncertainty of the item parameters of pretest items discourages their use in construct calculations.
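The effect of sample size on parameter error noted in paragraph [0009] can be illustrated with a small calculation. The sketch below assumes a one-parameter logistic (Rasch) model with known examinee abilities and uses the asymptotic standard error of the difficulty estimate; neither the model nor the function name `difficulty_standard_error` comes from the disclosure.

```python
import math

def difficulty_standard_error(thetas, b):
    """Asymptotic standard error of a Rasch difficulty estimate.

    The Fisher information about b is the sum of p * (1 - p) over the
    examinees who answered the item (abilities assumed known).
    """
    info = 0.0
    for theta in thetas:
        p = 1.0 / (1.0 + math.exp(-(theta - b)))
        info += p * (1.0 - p)
    return 1.0 / math.sqrt(info)

# Examinees perfectly matched to the item (p = 0.5) give the most
# information per response, so these are best-case standard errors.
se_small = difficulty_standard_error([0.0] * 25, b=0.0)   # 25 responses
se_large = difficulty_standard_error([0.0] * 400, b=0.0)  # 400 responses
```

Under these assumptions the standard error is 2 divided by the square root of the number of responses, so 25 responses give 0.4 logits while 400 responses give 0.1 logits. A pretest item seen by only a few examinees therefore carries much more parameter uncertainty than a fully calibrated operational item.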

[0010] Therefore, another object, feature, or advantage of the present disclosure uses weighted interim score calculations to control the error impact when including pretest items in construct estimation.

[0011] One or more of these and/or other objects, features or advantages of the present disclosure will become apparent from the specification and claims that follow.

SUMMARY OF THE DISCLOSURE

[0012] The present disclosure improves test efficiency and accuracy in a CAT.

[0013] One exemplary method is for use of pretest items in addition to operational items in a test to calculate interim scores. This may be accomplished, for example, by providing a computer implemented test that includes a plurality of operational items and one or more pretest items having one or more item parameters. Interim latent construct estimates are calculated using both operational and pretest items. Error for the interim latent construct estimates is controlled by weighting the contribution of the pretest items.

[0014] According to one aspect, a method for using pretest items to calculate interim scores is provided. The method uses a computer implemented test having a plurality of test items including a plurality of operational items and one or more pretest items having one or more pretest item parameters. In one exemplary operation, latent construct estimates are calculated using both operational and pretest items by estimating one or more pretest item parameters for use with a set of calibrated parameters for the plurality of operational items.

[0015] According to one aspect, a method for using pretest items in the calculation of interim scores is provided. The method includes providing a computer implemented test having a plurality of test items. The test items include a plurality of operational items and one or more pretest items having one or more pretest item parameters. Steps of the method include, additionally, for example, calculating latent construct estimates using both operational and pretest items, controlling error for the latent construct estimates by weighting the contributions of the one or more pretest items, estimating the one or more pretest item parameters for use with a set of calibrated parameters for the plurality of operational items, and updating an interim score for the computer implemented test based on examinee responses to the one or more pretest items.
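One way to picture the weighting described in this summary is a weighted log-likelihood in which each pretest response counts for less than an operational response. The disclosure does not specify the weighting scheme or the estimator, so the 2PL model, the grid search, the example weights, and the names `weighted_log_likelihood` and `interim_theta` are assumptions made for this sketch.

```python
import math

def p_correct(theta, a, b):
    """Probability of a correct response under an assumed 2PL model."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def weighted_log_likelihood(theta, responses):
    """Sum of weight * log-likelihood over (a, b, u, weight) tuples."""
    total = 0.0
    for a, b, u, w in responses:
        p = p_correct(theta, a, b)
        total += w * (u * math.log(p) + (1 - u) * math.log(1.0 - p))
    return total

def interim_theta(responses, lo=-4.0, hi=4.0, steps=801):
    """Grid-search estimate of ability that maximizes the weighted likelihood."""
    grid = [lo + (hi - lo) * k / (steps - 1) for k in range(steps)]
    return max(grid, key=lambda t: weighted_log_likelihood(t, responses))

# Two operational responses (weight 1.0): one correct, one incorrect.
operational = [(1.0, -0.5, 1, 1.0), (1.0, 0.5, 0, 1.0)]

def with_pretest(weight):
    # One correct response to a pretest item with provisional parameters.
    return operational + [(1.0, 1.0, 1, weight)]

theta_none = interim_theta(with_pretest(0.0))  # pretest response ignored
theta_half = interim_theta(with_pretest(0.5))  # pretest response down-weighted
theta_full = interim_theta(with_pretest(1.0))  # pretest treated as operational
```

In this toy case the correct pretest response pulls the interim estimate upward, and the pull grows with the weight. Choosing a weight below 1.0 limits how far an uncertain pretest item can move the interim score.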

BRIEF DESCRIPTION OF THE DRAWINGS

[0016] Illustrated embodiments of the present disclosure are described in detail below with reference to the attached drawing figures, which are incorporated by reference herein, and where:

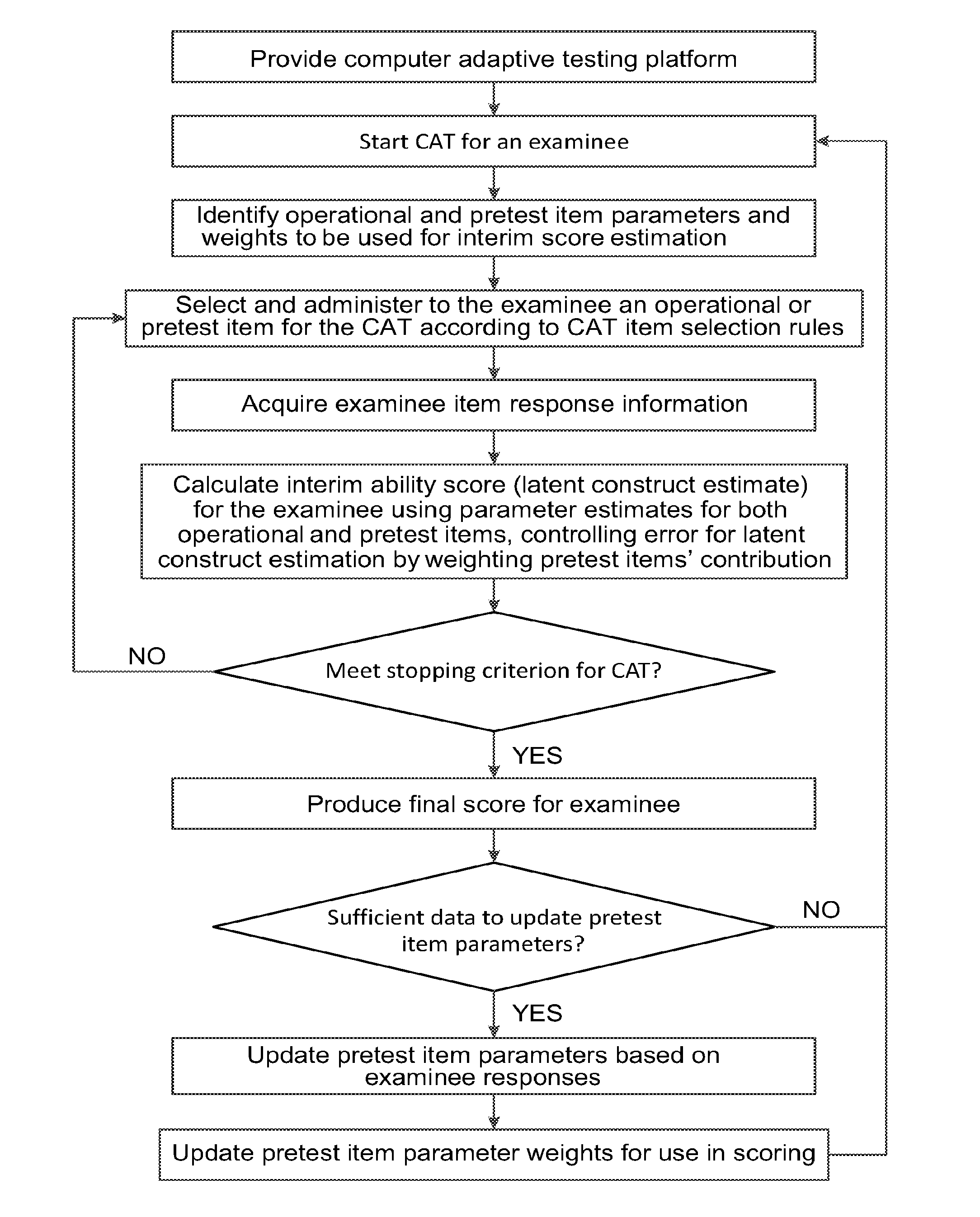

[0017] FIG. 1 is a flowchart of a process for using pretest items for latent construct estimation in computer adaptive testing in accordance with an illustrative embodiment;

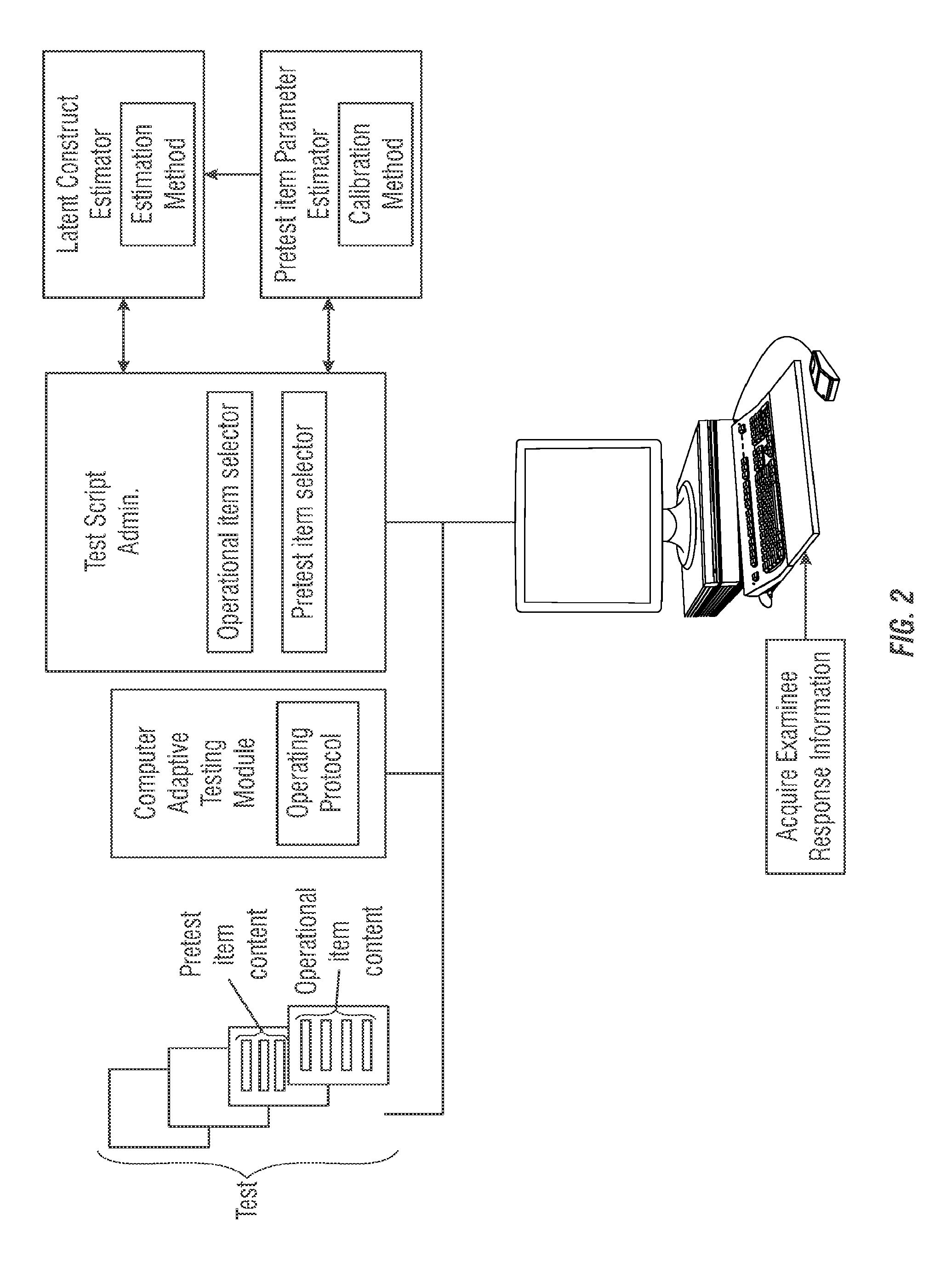

[0018] FIG. 2 is a block diagram providing an overview of a process for using pretest items in latent construct estimations in accordance with an illustrative embodiment.

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS

[0019] The present disclosure provides for various computer adaptive testing methods. One exemplary method includes using pretest items in interim latent construct estimation. The accuracy and efficiency of computer adaptive testing are improved by making the test more succinct and/or accurate, and thus more effective. What results is a testing platform using computer adaptive testing that can estimate an examinee's ability using the examinee's response information relating to a specific set of pretest items.

III. Using Pretest Items to Fine-Tune an Examinee's Interim Score

[0020] According to one aspect, the disclosure may be implemented using a computer adaptive testing platform to create a more succinct and/or accurate, and thereby shorter and more effective, test using examinees' responses to pretest items included in the administration of a test.

[0021] a. Illustrative Embodiments for Using Pretest Items to Fine-Tune an Examinee's Interim Score

[0022] In a computer adaptive test (CAT), pretest items are often embedded but not used to estimate an examinee's latent construct. However, examinees' responses to these items can reveal additional information, which can be used to improve score accuracy and test efficiency. Additionally, including pretest items in interim scoring can have benefits such as improving candidate motivation when the items administered are closer to candidate ability.

[0023] One of the obstacles to the inclusion of pretest items in scoring is that their parameters are not in place when these items are administered. While research studies have demonstrated that pretest item parameters can be estimated on the fly, the challenge of larger error as a result of smaller sample sizes for the pretest items has posed a big concern and no solutions have been found. Consequently, the uncertainty of the pretest item parameters deters people from using them in scoring.
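The on-the-fly handling of pretest item parameters can be sketched along the lines of claims 5 through 7: start each pretest item at the average of the calibrated operational parameters, then re-estimate by maximum likelihood once a threshold number of responses has accumulated. The 2PL model, the bisection solver, the fixed discrimination, the threshold of 30, and all function names below are assumptions for illustration, not details taken from the disclosure.

```python
import math
from statistics import mean

def initial_pretest_params(operational):
    """Average the calibrated (a, b) pairs of the operational items."""
    return mean(a for a, _ in operational), mean(b for _, b in operational)

def reestimate_difficulty(a, thetas, responses, lo=-4.0, hi=4.0, iters=60):
    """Maximum likelihood difficulty with discrimination a held fixed.

    Solves sum(u - p) = 0 for b by bisection; the sum increases with b.
    """
    def score(b):
        return sum(u - 1.0 / (1.0 + math.exp(-a * (t - b)))
                   for t, u in zip(thetas, responses))
    for _ in range(iters):
        mid = (lo + hi) / 2.0
        if score(mid) < 0:   # model predicts too many correct: item is harder
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0

def maybe_update(params, thetas, responses, threshold=30):
    """Keep provisional parameters until enough responses have arrived."""
    if len(responses) < threshold:
        return params
    a, _ = params
    return a, reestimate_difficulty(a, thetas, responses)

# Hypothetical data: two calibrated operational items, and pretest responses
# from examinees whose interim ability estimates are all 0.0.
params = initial_pretest_params([(0.8, -1.0), (1.2, 1.0)])
few = maybe_update(params, [0.0] * 10, [1, 1, 1] + [0] * 7)
many = maybe_update(params, [0.0] * 30, ([1, 1, 1] + [0] * 7) * 3)
```

With only 10 responses the provisional parameters are returned unchanged. With 30 responses, 30 percent of them correct, the difficulty moves from 0.0 to about 0.85 logits, reflecting an item that is harder than the operational average.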

[0024] The impact of less accurate or indiscriminate item parameters on ability estimation, such as latent construct calculations, and ways to control and calibrate the resulting error are examined in the preceding paragraphs and in the accompanying illustrative works identified in the figures incorporated herein. Increasing the efficiency and effectiveness of computer adaptive testing can result in a significant cost savings and, likely, a shorter, more succinct, and more accurate test, thereby increasing the effectiveness of computer administered testing. Even apart from any resultant cost savings, the efficiency, effectiveness, and accuracy of computer adaptive testing can be significantly improved without an increase in cost. Therefore, a method for using pretest items to fine-tune an examinee's interim score is provided herein. For purposes of illustration, a flowchart diagram is provided in FIG. 1 as one pictorial representation of a method for using pretest items to fine-tune an examinee's interim score or latent construct estimation.

[0025] For acquiring an examinee's response information as shown in FIG. 2, a computer adaptive testing platform is provided as illustrated in FIG. 1. The examining interface piece shown in FIG. 2 may be a computer or computer network. An example of the computer adaptive testing platform shown in FIG. 1 is a computer network that includes, for example, a server, workstation, scanner, printer, datastore, and other connected networks. The computer network may be configured to provide a communication path for each device of the computer network to communicate with other devices. Additionally, the computer network may be the internet, a public switched telephone network, a local area network, a private wide area network, a wireless network, or the like. In various embodiments of the disclosure, a computer adaptive testing administration script may be executed on the server and/or workstation. For example, in one embodiment of the disclosure, the server may be configured to execute a computer adaptive testing script, provide outputs for display on the workstation, and receive inputs from the workstation, such as an examinee's response information. In various other embodiments, the workstation may be configured to execute a computer adaptive testing application individually or co-operatively with one or more workstations. The scanner may be configured to scan textual content and output the content in a computer readable format. Additionally, the printer may be configured to output the content from the computer adaptive test application to a print medium, such as paper. Furthermore, data associated with examinee response information of the computer adaptive test application or any of the associated processes illustrated or shown in FIGS. 1-2, and the like, may be stored on a datastore and displayed on a workstation. The datastore may additionally be configured to receive and/or forward some or all of the stored data. Moreover, in yet another embodiment, some or all of the computer network may be subsumed within a single device. Although FIG. 2 depicts a computer, it is understood that the disclosure is not limited to operation within a computer or computer network; rather, the disclosure may be practiced in or on any suitable electronic device or platform. Accordingly, the computer illustrated in FIG. 2 and the computer network (not shown) are illustrated and discussed for purposes of explaining the disclosure and are not meant to limit the disclosure in any respect.

[0026] According to one aspect of the disclosure, an operating protocol is provided on the workstation or computer network for operating a computer adaptive testing module or application. A test made up of pretest item content and operational item content is selected for delivery through a computer adaptive testing module controlled by the operating protocol. In addition to operational item selection and pretest item selection, a test script administration application or process could be implemented to select or establish pretest item parameters for the selected pretest items to be administered by the test script application. Using a computer network, workstation, or electronic device, a test is administered using the selected operational and pretest items having one or more selectable pretest item parameters. Using a workstation, computer network, or other electronic device, examinee response information is acquired for the selected operational and pretest items administered as part of the test script administration process or application. Upon acquiring examinee response information for pretest items or operational items as part of a test script being administered, an interim score for an examinee, such as a latent construct estimate, may be calculated. These calculations could be used to inform subsequent selection of one or more operational items, one or more pretest items, and/or one or more pretest item parameters. Operating on the workstation, network, or other electronic device is a latent construct estimator that uses one or more estimation methods to provide an examinee's interim score, such as a latent construct estimate, or to select one or more pretest items having selected item parameters. Examples of controlling error during latent construct estimation include weighting the contribution of the pretest items to an examinee's interim latent construct estimates. Other methods include adjusting, calibrating, or re-defining pretest item parameters for one or more of the selected pretest items during the administration of the test script administration process or application. A calibration script may also be included and made operable on a computer network, a workstation, or like electronic device for calibrating, adjusting, or re-defining pretest item parameters based on latent construct estimates. Additionally, the resulting interim scores are more diverse when pretest items are included, so the items selected afterward are also more diverse. For example, using such a method, the number of operational items selected in a test may be reduced to make the test shorter, more accurate, more succinct, and more effective based on the use of pretest items in the calculation of interim construct estimates. Thus, including pretest items in interim latent score calculations provides a method to refine a test script administration process or application that uses one or more pretest items in combination with one or more operational items for a testing sequence or event using computer adaptive testing.
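The administration sequence of paragraph [0026] can be sketched as a single loop: administer an item, record the response, recompute the interim score with pretest responses down-weighted, and use that score to pick the next operational item. Everything specific here is an assumption for illustration, including the 2PL model, the maximum-information selection rule, the fixed pretest positions, the weight of 0.5, the simulated examinee, and the name `run_cat`. Pretest responses are simulated from the provisional parameters, which a real administration could not do.

```python
import math
import random

def p_correct(theta, a, b):
    """Probability of a correct response under an assumed 2PL model."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))

def information(theta, a, b):
    """Fisher information of a 2PL item at ability theta."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)

def interim_theta(history):
    """Grid-search the weighted log-likelihood over (a, b, u, weight) tuples."""
    grid = [-4.0 + 0.01 * k for k in range(801)]
    def loglik(t):
        return sum(w * math.log(p_correct(t, a, b) if u else 1.0 - p_correct(t, a, b))
                   for a, b, u, w in history)
    return max(grid, key=loglik)

def run_cat(true_theta, operational, pretest, pretest_slots, length,
            pretest_weight, rng):
    """Administer `length` items, mixing pretest items in at fixed positions."""
    theta, history, used = 0.0, [], set()
    queue = list(pretest.values())
    for position in range(length):
        if position in pretest_slots and queue:
            a, b = queue.pop(0)          # provisional pretest parameters
            weight = pretest_weight
        else:
            name = max((i for i in operational if i not in used),
                       key=lambda i: information(theta, *operational[i]))
            used.add(name)
            a, b = operational[name]     # calibrated operational parameters
            weight = 1.0
        # Simulated examinee response (stand-in for a real response).
        u = 1 if rng.random() < p_correct(true_theta, a, b) else 0
        history.append((a, b, u, weight))
        theta = interim_theta(history)   # interim score after every item
    return theta, history

operational = {f"op{k}": (1.0, -2.0 + 0.5 * k) for k in range(10)}
pretest = {"pt1": (1.0, 0.0), "pt2": (1.0, 0.5)}
theta_hat, history = run_cat(0.5, operational, pretest, {2, 5}, 8, 0.5,
                             random.Random(7))
```

In this sketch the interim score is refreshed after pretest items as well as operational items, so the operational item chosen after a pretest position already reflects the pretest response. Re-defining the pretest parameters during administration, which the paragraph also mentions, is left out to keep the loop short.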

IV. Other Embodiments and Variations

[0027] The present disclosure is not to be limited to the particular embodiments described herein. In particular, the present disclosure contemplates numerous variations in the ways in which embodiments of the disclosure may be applied to computer adaptive testing. The foregoing description has been presented for purposes of illustration and description. It is not intended to be an exhaustive list or to limit any of the disclosure to the precise forms disclosed. It is contemplated that other alternatives or exemplary aspects that are considered are included in the disclosure. The description is merely examples of embodiments, processes, or methods of the disclosure. For example, the methods for controlling weighting for use of pretest items in latent construct estimation may be varied according to use and test setting, test type, and other like parameters. It is understood that any other modifications, substitutions, and/or additions may be made, which are within the intended spirit and scope of the disclosure. From the foregoing, it can be seen that the disclosure accomplishes at least all of the intended objectives.

* * * * *
