Concurrent Scan Of Multiple Pixels In A Lidar System Equipped With A Polygon Mirror

Campbell; Scott R. ; et al.

U.S. patent application number 15/965288, for concurrent scan of multiple pixels in a lidar system equipped with a polygon mirror, was filed with the patent office on 2018-04-27 and published on 2019-05-23. The applicant listed for this patent is LUMINAR TECHNOLOGIES, INC. Invention is credited to Scott R. Campbell, Jason M. Eichenholz, Lane A. Martin, and Matthew D. Weed.

| Application Number | 15/965288 (Publication No. 20190154802) |

| Family ID | 66532279 |

| Filed Date | 2018-04-27 |

| United States Patent Application | 20190154802 |

| Kind Code | A1 |

| Campbell; Scott R. ; et al. | May 23, 2019 |

CONCURRENT SCAN OF MULTIPLE PIXELS IN A LIDAR SYSTEM EQUIPPED WITH A POLYGON MIRROR

Abstract

A lidar system includes one or more light sources configured to generate first and second beams of light, a scanner configured to synchronously scan a field of regard of the lidar system using the two beams, and a receiver configured to detect light of the two beams scattered by one or more remote targets. The scanner includes a rotatable polygon mirror having a block having a first wall, a second wall, and reflective surfaces extending between the first and second walls, the reflective surfaces being angularly offset from one another along a periphery of the block; a polygon mirror axle extending into the block, about which the block rotates; optical elements configured to direct the first and second beams of light respectively to two adjacent reflective surfaces of the rotatable polygon mirror; and a second mirror pivotable along an axis orthogonal to the polygon mirror axle.

| Inventors: | Campbell; Scott R. (Sanford, FL); Eichenholz; Jason M. (Orlando, FL); Weed; Matthew D. (Winter Park, FL); Martin; Lane A. (Sunnyvale, CA) |

| Applicant: | LUMINAR TECHNOLOGIES, INC. |

| Family ID: | 66532279 |

| Appl. No.: | 15/965288 |

| Filed: | April 27, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62590235 | Nov 22, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G02B 5/09 20130101; G01S 17/42 20130101; H01L 27/14694 20130101; G01S 17/89 20130101; G02B 26/105 20130101; G02B 26/123 20130101; G01S 7/4817 20130101; G02B 27/30 20130101; H01L 27/14643 20130101; G01S 17/08 20130101; G02B 26/101 20130101; G01S 7/4813 20130101; G02B 5/1857 20130101; G02B 26/125 20130101; G01S 17/87 20130101; G01S 17/931 20200101; G02B 27/0955 20130101; G02B 7/1821 20130101; G02B 5/22 20130101; H01L 27/14647 20130101; G02B 27/1086 20130101; H01L 25/167 20130101; G02B 5/0841 20130101; G02B 27/0977 20130101 |

| International Class: | G01S 7/481 20060101 G01S007/481; G02B 26/10 20060101 G02B026/10 |

Claims

1. A lidar system comprising: one or more light sources configured to generate a first beam of light and a second beam of light; a scanner configured to synchronously scan a field of regard of the lidar system using the first beam of light and the second beam of light, the scanner including: a rotatable polygon mirror having a block having a first wall, a second wall, and a plurality of reflective surfaces extending between the first and second walls, the reflective surfaces being angularly offset from one another along a periphery of the block, a polygon mirror axle extending into the block through at least one of the first and second walls, about which the block rotates, a plurality of optical elements configured to direct the first and second beams of light respectively to two adjacent reflective surfaces of the rotatable polygon mirror, and a second mirror pivotable along an axis orthogonal to the polygon mirror axle, wherein the first and second beams of light are incident on the second mirror; and a receiver configured to detect the first beam of light and the second beam of light scattered by one or more remote targets.

2. The lidar system of claim 1, wherein each optical element comprises a fiber-optic cable, a fiber-optic collimator, a lens, or a mirror.

3. The lidar system of claim 1, wherein each optical element comprises: a fiber-optic cable to convey light from the light source to the scanner, a collimator or lens at a terminal end of the fiber-optic cable to produce a collimated free-space output beam directed at the polygon mirror.

4. The lidar system of claim 1, wherein: the first beam of light and the second beam of light are scanned by the scanner to define a first field of regard and a second field of regard, respectively, the first field of regard and the second field of regard in combination define the field of regard of the lidar system, and the first field of regard and the second field of regard partially overlap to define an overlap region within the field of regard of the lidar system.

5. The lidar system of claim 4, wherein a width of the overlap region is less than or equal to one-third of a width of the first or second field of regard.

6. The lidar system of claim 4, wherein a width of the overlap region is between 20 and 40 degrees.

7. The lidar system of claim 4, wherein each of the first beam of light and the second beam of light scans the respective field of regard according to a scan pattern that defines horizontal or vertical scan lines, and wherein the scan lines of the first field of regard are offset relative to the scan lines of the second field of regard by approximately one half of a scan line to yield double pixel density within the overlap region.

8. The lidar system of claim 4, wherein the overlap region is oriented in a direction of travel of a vehicle on which the lidar system is deployed.

9. The lidar system of claim 1, wherein each of the first beam of light and the second beam of light are scanned by the scanner to define a respective field of regard approximately 60 degrees wide.

10. The lidar system of claim 1, further comprising: one or more power splitters to split each of the first beam of light and the second beam of light into a pair of beams; four fiber-optic cables to separately convey each of the split beams to the scanner, wherein the scanner mechanically aims each of the split beams to produce angular separation between the split beams of the first beam of light and the split beams of the second beam of light; wherein the receiver includes: a first pair of detectors for the pair of angularly offset beams corresponding to the first beam of light, and a second pair of detectors for the pair of angularly offset beams corresponding to the second beam of light.

11. The lidar system of claim 10, wherein: each detector defines a respective pixel within a scan pattern, and each of the first pair of detectors and the second pair of detectors defines an even pixel and an odd pixel separated by N detector field-of-view (FOV) areas to reduce cross-talk, wherein N is larger than two.

12. The lidar system of claim 10, wherein: each detector defines a respective pixel within a scan pattern, and each of the first pair of detectors and the second pair of detectors define non-integer separation between pixels in a corresponding row or column.

13. The lidar system of claim 10, wherein the splitters include at least one of (i) a diffractive optical element, (ii) a fiber-optic power splitter, or (iii) a free-space power splitter.

14. The lidar system of claim 1, wherein: a first input beam includes light of the first beam of light scattered by one or more remote targets, a second input beam includes light of the second beam of light scattered by one or more remote targets, and the rotatable polygon mirror and the second mirror reflect each of the first input beam and the second input beam to direct the first input beam and the second input beam toward respective detectors of the receiver.

15. The lidar system of claim 14, wherein for each detector of the receiver and a corresponding outbound beam of light associated with a respective light source: an instantaneous field of view (FOV) of the light source is at least partially outside and ahead of a FOV of the detector along a scan direction, at a time when a light pulse associated with the outbound beam is emitted, and the FOV of the detectors is coincident with or overlaps the instantaneous FOV of the light source after a time T during which the light pulse traverses a maximum distance D and returns to the detector, after being scattered by one or more targets.

16. A method in a lidar system for scanning a field of regard, the method comprising: directing, within a single ranging event, a first pulse of light at a first reflective surface of a polygon mirror and a second pulse of light at a second reflective surface of the polygon mirror, wherein the polygon mirror rotates about a polygon mirror axis; directing, with the polygon mirror within the single ranging event, the first pulse of light and the second pulse of light to respective locations on a reflective surface of a second mirror that pivots along an axis orthogonal to the polygon mirror axis; directing, with the second mirror, the first pulse of light and the second pulse of light along a scan direction to illuminate a first instantaneous light-source field of view (FOV) corresponding to a first pixel and a second instantaneous light-source FOV corresponding to a second pixel, respectively; detecting, within a single ranging event, the first beam of light scattered by one or more remote targets using a first detector and the second beam of light scattered by the one or more remote targets using a second detector, to generate values for the first pixel and the second pixel.

17. The method of claim 16, wherein the first pulse of light is associated with a first beam and the second pulse of light is associated with a second beam, the method further comprising: scanning, using the polygon mirror and the second mirror, the first beam and the second beam to define a first field of regard and a second field of regard, respectively, wherein the first field of regard and the second field of regard in combination define the field of regard of the lidar system, and wherein the first field of regard and the second field of regard partially overlap to define an overlap region within the field of regard of the lidar system.

18. The method of claim 17, wherein a width of the overlap region is less than or equal to one-third of a width of the first or second field of regard.

19. The method of claim 17, further comprising scanning the first beam and the second beam across the respective field of regard according to a scan pattern that defines horizontal or vertical scan lines, wherein the scan lines of the first field of regard are offset relative to the scan lines of the second field of regard by approximately one half of a scan line to yield double pixel density within the overlap region.

20. The method of claim 16, further comprising splitting each of the first pulse of light and the second pulse of light into a respective pair of angularly separated pulses corresponding to two different pixels within a same scan line.

Description

REFERENCE TO RELATED APPLICATION

[0001] This application claims priority to provisional U.S. application Ser. No. 62/590,235, filed on Nov. 22, 2017, titled "Low Profile Lidar Scanner with Polygon Mirror," the entire disclosure of which is hereby expressly incorporated by reference herein.

FIELD OF TECHNOLOGY

[0002] This disclosure relates generally to lidar sensor heads and, more specifically, to multi-mirror lidar sensor heads having a compact construction so as to occupy minimal area when deployed on a vehicle.

BACKGROUND

[0003] The background description provided herein is for the purpose of generally presenting the context of the disclosure. Work of the presently named inventors, to the extent it is described in this background section, as well as aspects of the description that may not otherwise qualify as prior art at the time of filing, are neither expressly nor impliedly admitted as prior art against the present disclosure.

[0004] Light detection and ranging (lidar) is a technology that can be used to measure distances to remote targets. Typically, a lidar system includes a light source and an optical receiver. The light source can be, for example, a laser which emits light having a particular operating wavelength. The operating wavelength of a lidar system may lie, for example, in the infrared, visible, or ultraviolet portions of the electromagnetic spectrum. The light source emits light toward a target which then scatters the light. Some of the scattered light is received back at the receiver. The system determines the distance to the target based on one or more characteristics associated with the returned light. For example, the system may determine the distance to the target based on the time of flight of a returned light pulse.
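The time-of-flight relationship mentioned above can be illustrated with a short sketch. It assumes the standard round-trip relation (distance equals the speed of light times the round-trip time, divided by two); the function name is hypothetical and is not taken from the disclosure.

```python
# Speed of light in vacuum, in meters per second (exact by SI definition).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_time_of_flight(round_trip_seconds: float) -> float:
    """Return the one-way distance in meters for a measured round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the target and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


if __name__ == "__main__":
    # A return detected 1 microsecond after emission is roughly 150 m away.
    print(f"{distance_from_time_of_flight(1e-6):.1f} m")
```

The sketch ignores propagation through media other than vacuum and any electronic delays in the receiver.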

[0005] While the precision and efficacy of lidar scanners have continually improved, the power requirements, heat dissipation, and physical dimensions of existing lidar scanners have posed obstacles to designers of lidar systems. With the increasing prevalence of the use of lidar systems in vehicles, such considerations are of increased concern to designers of lidar systems.

SUMMARY

[0006] A lidar system is provided that includes a light source (i.e., a laser), a scanner configured to direct the emitted light to scan a field of regard (FOR) of the lidar system in accordance with a scan pattern, a receiver that detects light scattered by one or more remote targets, and a controller to control one or more mirrors of the scanner. The scanner includes both a polygon mirror and a planar mirror. The polygon mirror may be in the form of a rotatable block having a first wall, a second wall spaced away from and parallel to the first wall, and a plurality of reflective surfaces extending between the first and second walls, the reflective surfaces being angularly offset from one another along a periphery of the block. The planar mirror rotates about an axis orthogonal to an axis of rotation of the polygon mirror, and is thereby considered a pivotable oscillating planar mirror. At least the scanner and the receiver may be disposed inside a housing of a lidar sensor unit (or "sensor head"), and the lidar system can include one or several lidar sensor units.

[0007] The polygon mirror may also be provided with a motor to power its rotation that is disposed at least partially, but preferably substantially or entirely, within the rotatable block. By arranging the motor for the polygon mirror within the rotatable block of the polygon mirror, the overall three dimensional footprint of the scanner can be further reduced.

[0008] The polygon mirror may be provided with one or more tabs that pass through a stationary photo-interrupter as the polygon mirror rotates. The photo-interrupter provides feedback data indicative of the rotational speed of the polygon mirror, which feedback data can then be processed by a controller associated with the motor of the polygon mirror to regulate, stabilize, or adjust the rotational speed of the polygon mirror as needed.
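As a rough illustration of the feedback described above, the following sketch estimates rotational speed from the times at which tabs pass through the photo-interrupter and nudges a motor drive level toward a target speed. The proportional correction, the normalized drive level, the gain value, and all function names are assumptions made for illustration; the disclosure does not specify the control law.

```python
def rpm_from_tab_times(tab_times_s, tabs_per_rev=1):
    """Estimate rotational speed (RPM) from photo-interrupter pulse timestamps."""
    if len(tab_times_s) < 2:
        raise ValueError("need at least two pulses to measure an interval")
    intervals = [b - a for a, b in zip(tab_times_s, tab_times_s[1:])]
    mean_interval = sum(intervals) / len(intervals)
    if mean_interval <= 0:
        raise ValueError("timestamps must be strictly increasing on average")
    # One revolution takes tabs_per_rev pulse intervals.
    return 60.0 / (mean_interval * tabs_per_rev)


def adjust_drive(drive, measured_rpm, target_rpm, gain=1e-4):
    """Proportional correction of a normalized drive level, clamped to [0, 1]."""
    corrected = drive + gain * (target_rpm - measured_rpm)
    return min(1.0, max(0.0, corrected))


if __name__ == "__main__":
    rpm = rpm_from_tab_times([0.00, 0.01, 0.02, 0.03])
    print(f"measured speed: {rpm:.0f} RPM")
    print(f"new drive level: {adjust_drive(0.5, rpm, target_rpm=6100.0):.3f}")
```

A practical controller would likely filter the measurements and add integral action; the sketch only shows how pulse timing maps to speed and to a correction.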

[0009] The scanner of the lidar sensor unit is provided with a low profile when compared to conventional multi-mirror lidar systems. Certain structural and operational features of the lidar sensor units of the present disclosure may be employed, individually or collectively, not only to minimize the three-dimensional footprint or volume of space occupied by the lidar scanner, but also to improve aerodynamic performance (both internally and externally), reduce audible noise, reduce heat, and improve resistance to vibration, acceleration, deceleration, or other environmental factors that might otherwise negatively affect scanner accuracy and performance.

[0010] The orientation of the scanner, and specifically, the orientation of the axis of rotation of the polygon mirror, may be selected so as to align with an orientation of a vehicle in which the lidar sensor unit operates. In some implementations, however, a lidar system operating in a vehicle includes multiple lidar sensor units, with at least some of the lidar sensor units oriented differently from each other.

[0011] The planar mirror of the scanner may be provided with an optimized geometry to enhance durability and service life. For instance, the planar mirror may have a center of gravity closer to its reflective surface than conventional planar mirrors of lidar scanners. This may be effected by constructing a pivotable backing or support surface for the reflective surface of the planar mirror of a honeycomb structure or other ribbed structure, with material arranged such that the center of gravity of the planar mirror is closer to the reflective surface than to an edge of the ribbed or honeycomb structure opposite the reflective surface.

[0012] The speed of oscillation of the planar mirror may be controlled so as to dynamically vary distances between scan lines. In general, a scan line can have a horizontal orientation, vertical orientation, or any other suitable orientation. In at least some of the embodiments discussed herein, each scan line corresponds to a reflection of the emitted light from one of the reflective surfaces of the rotating polygon mirror. The distances between scan lines can vary on a frame-by-frame basis, and can vary in different portions of the field of regard. A drive signal of a motor driving the speed of oscillation of the planar mirror can be shaped as a Gaussian to optimally space scan lines apart. For example, the Y-scan mirror can be driven with a Gaussian-type function so that the mirror has a relatively high scan speed at the ends of its motion and a relatively low scan speed near the middle of its motion. This type of Gaussian scan produces a higher density of scan lines near the middle region of the FOR and a lower density of scan lines at the upper and lower ends of the FOR.
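The effect of a Gaussian-type drive on scan-line density can be sketched numerically. The model below is an assumption made for illustration: the mirror speed is taken to be a constant minus a Gaussian dip centered on the middle of the motion, and one scan line is assumed to be produced per fixed time step. The speeds, the width of the dip, and the function names are hypothetical values, not parameters from the disclosure.

```python
import math


def mirror_speed_deg_per_s(y_deg, v_mid=100.0, v_edge=400.0, sigma_deg=6.0):
    """Hypothetical speed profile: slowest at y = 0, approaching v_edge away from it."""
    dip = math.exp(-(y_deg ** 2) / (2.0 * sigma_deg ** 2))
    return v_edge - (v_edge - v_mid) * dip


def scan_line_angles(half_range_deg=15.0, line_period_s=0.001):
    """Vertical angle of each scan line, assuming one line per fixed time step."""
    angles = []
    y = -half_range_deg
    while y <= half_range_deg:
        angles.append(y)
        # The faster the mirror moves, the farther apart consecutive lines land.
        y += mirror_speed_deg_per_s(y) * line_period_s
    return angles


if __name__ == "__main__":
    lines = scan_line_angles()
    lower = sum(1 for y in lines if y < -5.0)
    middle = sum(1 for y in lines if abs(y) <= 5.0)
    upper = sum(1 for y in lines if y > 5.0)
    print(f"lower: {lower}, middle: {middle}, upper: {upper}")
```

Under these assumptions, the middle third of the range collects more scan lines than either end, which matches the qualitative behavior described above.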

[0013] The width of the planar mirror can determine the horizontal scan range, also referred to below as the horizontal dimension of the field of regard (FOR_H). For a given polygon mirror, FOR_H can be increased by selecting a wider planar mirror. The lidar sensor unit can support modular optical assembly, so that planar mirrors of different widths can be compatible with the same remaining opto-mechanics of the lidar sensor unit. Thus, by providing an oscillating planar mirror of a significantly greater width than the reflective surfaces of the rotating polygon mirror, not only can the oscillating planar mirror achieve desired field of regard along the vertical dimension (FOR_V), but the oscillating planar mirror, in concert with the polygon mirror, can also advantageously increase the FOR_H, all while reducing the overall three dimensional footprint of the lidar sensor unit.

[0014] The planar mirror preferably has a range of motion that exceeds the vertical dimension of the FOR. For instance, if the FOR is 30° vertically by 120° horizontally, the range of motion for the planar mirror (which, for the sake of convenience, is also referred to herein as a Y-scan mirror) can be 60° vertically, to accommodate a 30° vertical component of the FOR in various ranges. This enables a lidar sensor head to scan a greater range of vertical area, such as when a vehicle on which the lidar sensor is mounted approaches an incline.
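The arithmetic behind this example can be sketched as follows. The sketch assumes, as the paragraph above does, that the range of motion and the vertical FOR are expressed in the same angular units, and it ignores any factor relating mechanical mirror tilt to optical beam deflection. The function names are hypothetical.

```python
def window_center_limits(range_of_motion_deg=60.0, for_v_deg=30.0):
    """Lowest and highest center of the vertical FOR window, relative to mid-range."""
    if for_v_deg > range_of_motion_deg:
        raise ValueError("the FOR cannot exceed the range of motion")
    slack = (range_of_motion_deg - for_v_deg) / 2.0
    return (-slack, slack)


def place_window(requested_center_deg, range_of_motion_deg=60.0, for_v_deg=30.0):
    """Clamp the requested window center and return the window's (low, high) edges."""
    low_limit, high_limit = window_center_limits(range_of_motion_deg, for_v_deg)
    center = min(high_limit, max(low_limit, requested_center_deg))
    return (center - for_v_deg / 2.0, center + for_v_deg / 2.0)


if __name__ == "__main__":
    # Shift the 30-degree window upward by 10 degrees, e.g. ahead of an incline.
    print(place_window(10.0))
```

With a 60° range of motion and a 30° vertical FOR, the window center can shift by up to 15° in either direction before an edge of the window reaches the limit of the mirror's motion.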

[0015] As explained in more detail in the following detailed description, the polygon mirror, at any given time during its rotation, includes at least two active, adjacent reflective surfaces. This enables the lidar sensor unit to direct pulses toward different sections of a scan line so as to process at least two distinct return pulses within the time of a single ranging event. The outbound pulses can scatter from the same remote target or different remote targets. Using two beams of light with two facets of the polygon mirror thus increases the FOR_H of the lidar sensor unit without increasing the time it takes to scan one line.

[0016] The adjacent reflective surfaces direct the output beams toward different portions of the planar mirror. Thus, the lidar sensor unit can have two active "eyes" that share both the polygon mirror and the planar mirror, thereby providing both a cost reduction and a size reduction. The beams are incident on the respective surfaces in such a manner that provides a large angular separation between the outbound beams, so as to reduce the probability of cross-talk detection. In one example, two beams can be offset along the x-axis by half a pixel to produce two times the pixel density in the overlap region (e.g., for a pair of adjacent pixels generated using one beam, another pixel centered at the midpoint between the pair of pixels can be generated using the other beam). In another example, two beams can be offset along the y-axis by half a line to produce two times the pixel density in the overlap region (e.g., for a pair of adjacent scan lines generated using one beam, another scan line centered between the pair of adjacent scan lines can be generated using the other beam). The first approach involves offsetting the pixels along the x-axis so that, in the overlap region, the pixels from one beam are interleaved along the x-axis with pixels from the other beam. The second approach involves offsetting the scan lines along the y-axis so that the scan lines are interleaved in the overlap region. These two approaches (interleaving pixels and interleaving scan lines) are independent of each other and can be implemented separately or together.
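The interleaving described above can be sketched in one dimension. The same helper applies to pixels along the x-axis or to scan lines along the y-axis; the unit pitch, the counts, and the function names are assumptions chosen for illustration.

```python
def sample_centers(count, pitch, offset=0.0):
    """Centers of evenly spaced samples (pixels or scan lines) along one axis."""
    return [offset + k * pitch for k in range(count)]


def interleave(first_beam, second_beam):
    """Merge the samples of two beams within the overlap region."""
    return sorted(first_beam + second_beam)


if __name__ == "__main__":
    pitch = 1.0  # one pixel (or one scan line) in arbitrary angular units
    first = sample_centers(4, pitch)
    second = sample_centers(4, pitch, offset=pitch / 2.0)  # half-pitch offset
    merged = interleave(first, second)
    spacing = [b - a for a, b in zip(merged, merged[1:])]
    print(merged)
    print(spacing)
```

Because the second beam is offset by half the pitch, every gap in the merged list is half the original pitch, which corresponds to twice the sample density in the overlap region.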

[0017] By having two adjacent active surfaces, and at least two inactive surfaces of the rotating polygon mirror at any one time, a baffle or shroud can be provided around the inactive surfaces so as to further reduce aerodynamic drag and aid in air circulation of the polygon mirror. The use of such a baffle or shroud is not possible with a 360° scanner, as such a shroud would block active reflective surfaces of the mirror.

[0018] Input and output beams can be incident on the same mirror operating in a lidar scanner, or the same multi-mirror assembly including a mirror to generate scan lines (e.g., a polygon mirror) and another mirror to distribute these scan lines along the other dimension (e.g., a planar mirror). The fields of view (FOVs) of the beams can be arranged to minimize the overall surface area. In another aspect of the present disclosure, the fields of view of two output beams define relatively small circles, whereas the field of view of the input beams defines a relatively large circle. The smaller circles are arranged adjacent to the larger circle, with little or no overlap, and with the imaginary line segment connecting the centers of the smaller circles displaced relative to the diameter of the larger circle. This more compact arrangement facilitates minimization of the overall three dimensional footprint of the scanner.

[0019] The lidar scanner of the present disclosure preferably employs a single lens with off-axis illumination for two detectors, which are placed in the same optical path. The displacement of the transit beam relative to the center of the lens allows the detectors to be placed adjacent one another and off-center, thereby further facilitating a minimized overall profile. The detector diameter is approximately 50-150 microns, and the detector separation distance is approximately 0.5-2 mm.

[0020] The use of off-axis illumination eliminates the need to use an overlap mirror with a center hole, which sometimes is referred to as a "doughnut mirror." In particular, the beams are coupled into the scanner by the side of an overlap mirror that reflects input light to the detector. The output beam(s) and the input beam(s) thus are not entirely coaxial, as discussed in more detail below. The output beam(s) and the input beam(s) are offset relative to each other spatially and angularly. In other implementations, however, a doughnut mirror can be used with the polygon mirror and the planar mirror of this disclosure.

[0021] Methods of manufacture of a suitable polygon mirror are also disclosed herein. To obtain optimal balance of the polygon mirror, and ensure the field of regard is accurately scanned, high-energy laser pulses are used to remove matter at precise locations of the rotating polygon mirror. This can be combined with initial drilling for coarse balancing (so as to achieve both coarse and fine balancing). More particularly, a coarse balancing procedure using a drill or other suitable equipment can be used to form a relatively well-balanced block, and the surfaces can be made reflective (as explained in greater detail below). The block then can be mated to a motor in an assembly to be used in a scanner (rather than using an assembly specifically set up for manufacturing or testing). Once mated to the motor, the block can be rotated, and high-energy laser pulses can remove excess material from the block to achieve a high degree of balancing.

[0022] The polygon mirror is preferably manufactured by surface replication. In embodiments where the polygon mirror includes an even number of facets, pairs of opposite facets may be serviced simultaneously. While a four-sided polygon mirror will be disclosed as the preferred embodiment, the specification will explain that other numbers of sides are possible, with the understanding that the more facets of the polygon mirror, the closer the overall polygon mirror resembles a circle.

[0023] The lidar scanner can be implemented in a manner that directs two angularly separated pulses toward different sections of the scan line and processes the return pulses within the time of a single ranging event, where the two pulses reflect from the same reflective surface of the polygon mirror. Thus, according to some implementations, a single sensor head includes a total of four beams and four detectors: each pair of beams includes two angularly separated beams that reflect from the same surface of the polygon mirror. The lidar system can process return pulses corresponding to a non-integer separation in pixels (for example, an angular separation corresponding to 5½ or 11½ pixels). In this manner, the system can superimpose the return values to more accurately determine the values of pixels 1, 2, 3, . . . , N of the scan line. Otherwise, the lidar system receives duplicate readings for many of the pixels. Additionally, separating the two beams by a significant number of pixels (e.g., approximately 9-13 pixels rather than 3-5 pixels) mitigates problems with defocusing of the beam received at the detectors. The separation distance between the detectors (e.g., 0.8-1.2 mm) corresponds to the angular separation of the beams (e.g., 2-3 degrees). Since the two detectors are separated by a certain distance, if the beams become defocused, there will not be a problem with cross-talk where light from one beam spills over to the other detector.
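The difference between integer and non-integer pixel separation can be checked with a small sketch. It assumes that both beams advance by exactly one pixel per pulse along the scan line and that positions are expressed in pixel units; the function names and the pulse count are hypothetical.

```python
def sample_positions(num_pulses, separation_pixels):
    """Positions (in pixel units) sampled by the leading and trailing beams."""
    lead = [float(k) for k in range(num_pulses)]
    trail = [float(k) + float(separation_pixels) for k in range(num_pulses)]
    return lead, trail


def duplicate_count(num_pulses, separation_pixels):
    """Number of positions that both beams sample during one scan line."""
    lead, trail = sample_positions(num_pulses, separation_pixels)
    return len(set(lead) & set(trail))


if __name__ == "__main__":
    for separation in (5, 5.5, 11.5):
        count = duplicate_count(20, separation)
        print(f"separation {separation}: {count} duplicated positions")
```

With an integer separation, the trailing beam revisits positions already sampled by the leading beam. With a separation of 5½ or 11½ pixels, every sample from the second beam falls midway between samples from the first, so no reading is duplicated.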

[0024] Alternately, the beams are interleaved/offset by ½ pixel so that one beam provides information about pixels 1, 2, 3, etc., and the other beam provides information about pixels 1½, 2½, 3½, etc. Since the pixels can be numbered in any fashion, this can also be expressed as the beams being offset by 1 pixel (e.g., one beam samples the odd pixels and the other beam samples the even pixels), where adjacent pixels may have some amount of overlap.

[0025] In some implementations, diffractive optical elements (DOEs) can be used to produce angularly separated beams. In other implementations, however, the lidar system uses fiber-optic power splitters and mechanical positioning/aiming to produce the angularly separated beams. For example, the output from the light source is split four ways (e.g., with a 4×1 power splitter, or with three 2×1 power splitters) into four fiber-optic cables. Then, each of the four fiber-optic cables is terminated by a collimator (essentially, a lens that is rigidly coupled to the end of a fiber) to form a collimated free-space output beam. For each "eye" of the sensor head, two collimators can be positioned and aimed to form two angularly offset output beams (e.g., with a 2-degree angle between the beams). These two beams are directed so that together they reflect off of one face at a time of the rotating polygon mirror.
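The four-way split built from three two-way splitters can be sketched as a simple cascade. The sketch assumes ideal, lossless splitters with an even 50/50 ratio, which real components only approximate; the function names and the input power are hypothetical.

```python
def split_two_way(power_mw):
    """Ideal 50/50 splitter: each output carries half of the input power."""
    half = power_mw / 2.0
    return [half, half]


def split_four_way_cascade(power_mw):
    """Three two-way splitters: one feeds two more, giving four outputs."""
    first_arm, second_arm = split_two_way(power_mw)
    return split_two_way(first_arm) + split_two_way(second_arm)


if __name__ == "__main__":
    outputs = split_four_way_cascade(100.0)
    print(outputs)  # four equal shares of the input power
```

In this idealized model each of the four fibers carries one quarter of the source power, the same result a single four-way splitter would give.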

[0026] Further, the splitters can also be fiber-optic power splitters or free-space power splitters. The fiber-optic power splitters can be considered to be part of the light source or part of the optical elements.

[0027] The low-profile lidar scanner head can be provided as a box-like protrusion on each corner of the roof of a vehicle, preferably at 45° relative to each of the edges. In a particularly preferred embodiment, the lidar scanner head may be partially embedded in the vehicle roof or other vehicle body part so only a window of the unit protrudes prominently from the roof (or hood, side mirror, rear-view mirror, windshield, bumper, grill, or other body part surface in which the lidar scanner head is disposed).

BRIEF DESCRIPTION OF THE DRAWINGS

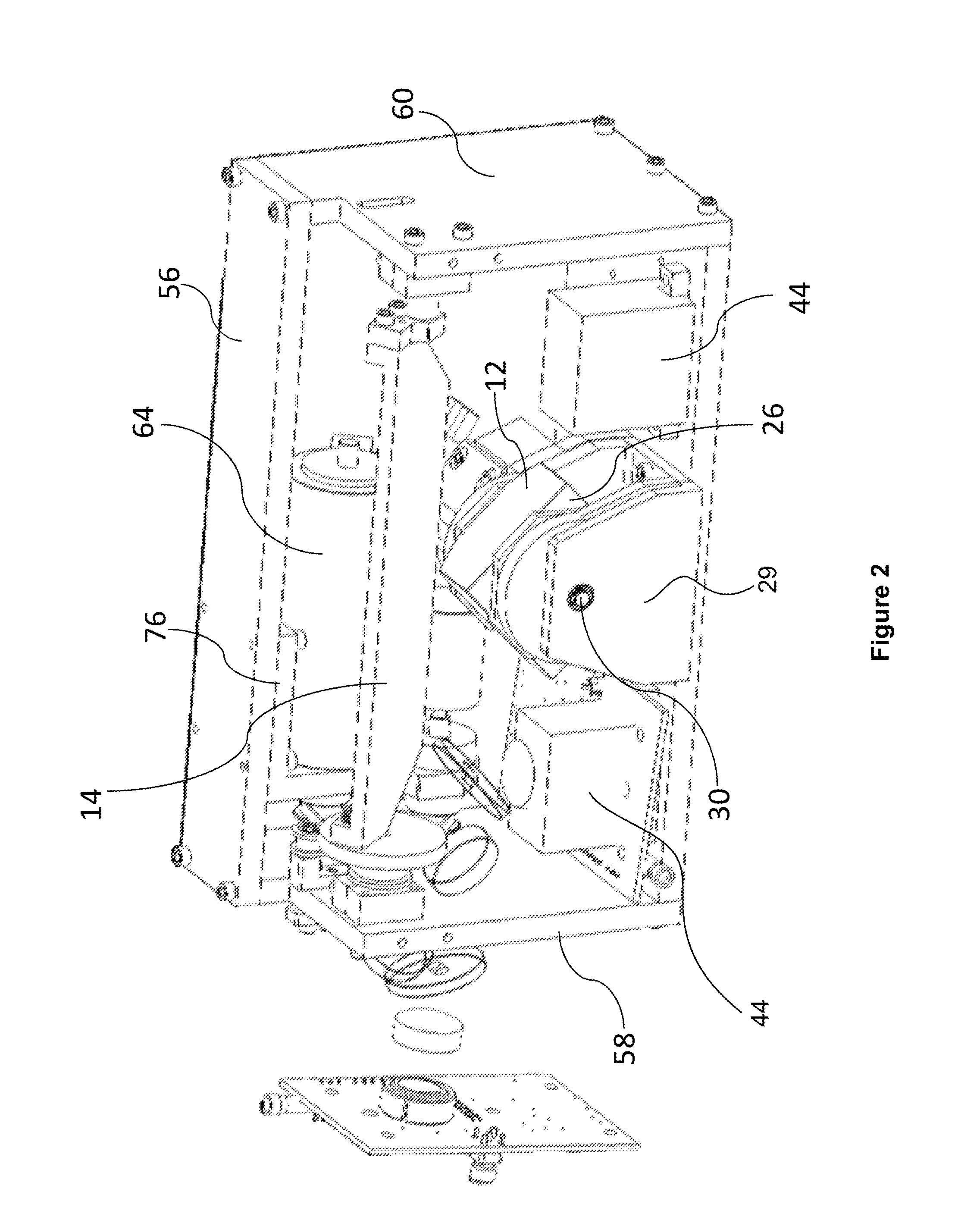

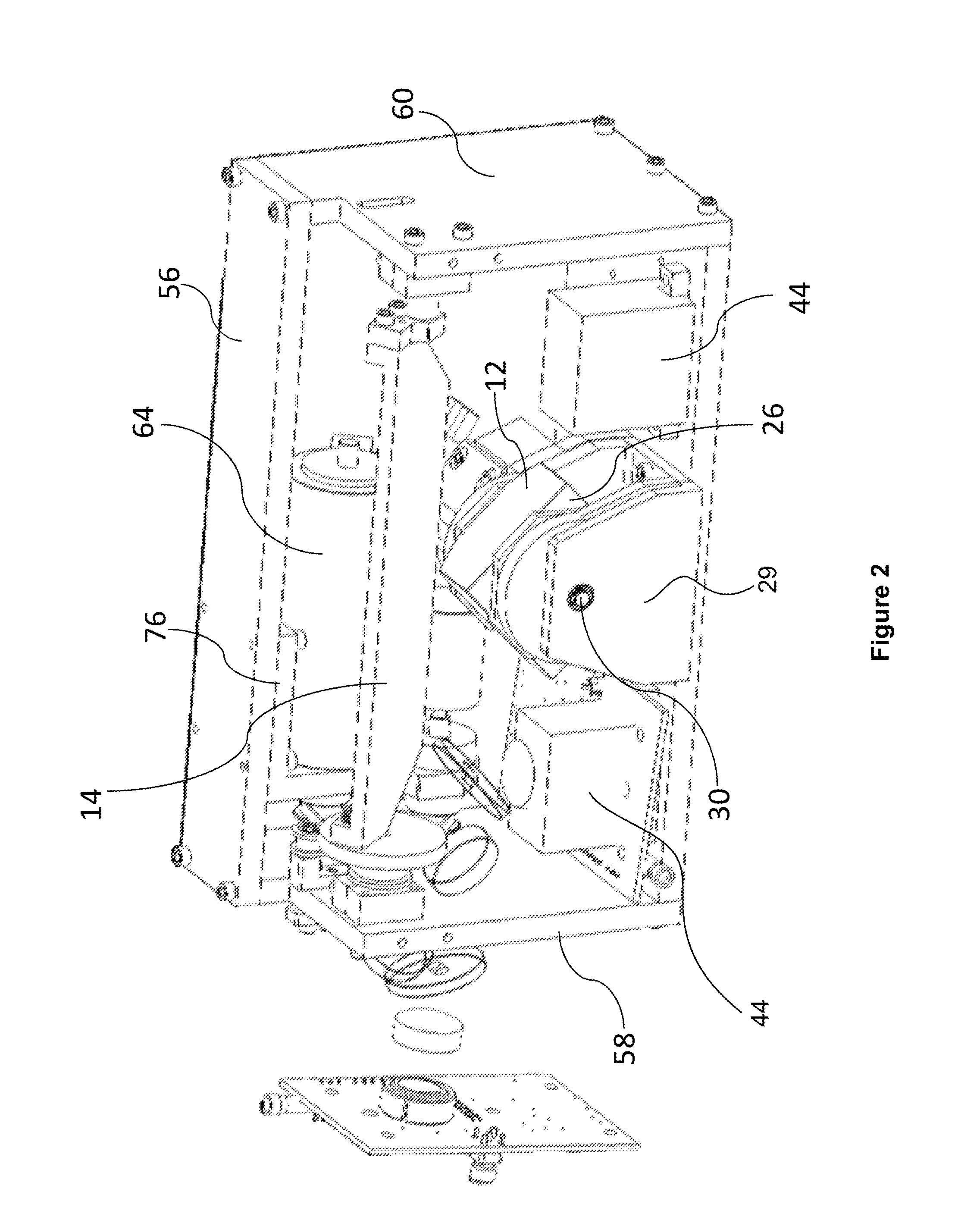

[0028] FIG. 1 is a front perspective view of a lidar sensor unit of the present disclosure;

[0029] FIG. 2 is a top, front perspective view of the lidar sensor unit of FIG. 1;

[0030] FIG. 3 is a front perspective view of the lidar sensor unit of FIG. 1, with the housing removed for clarity;

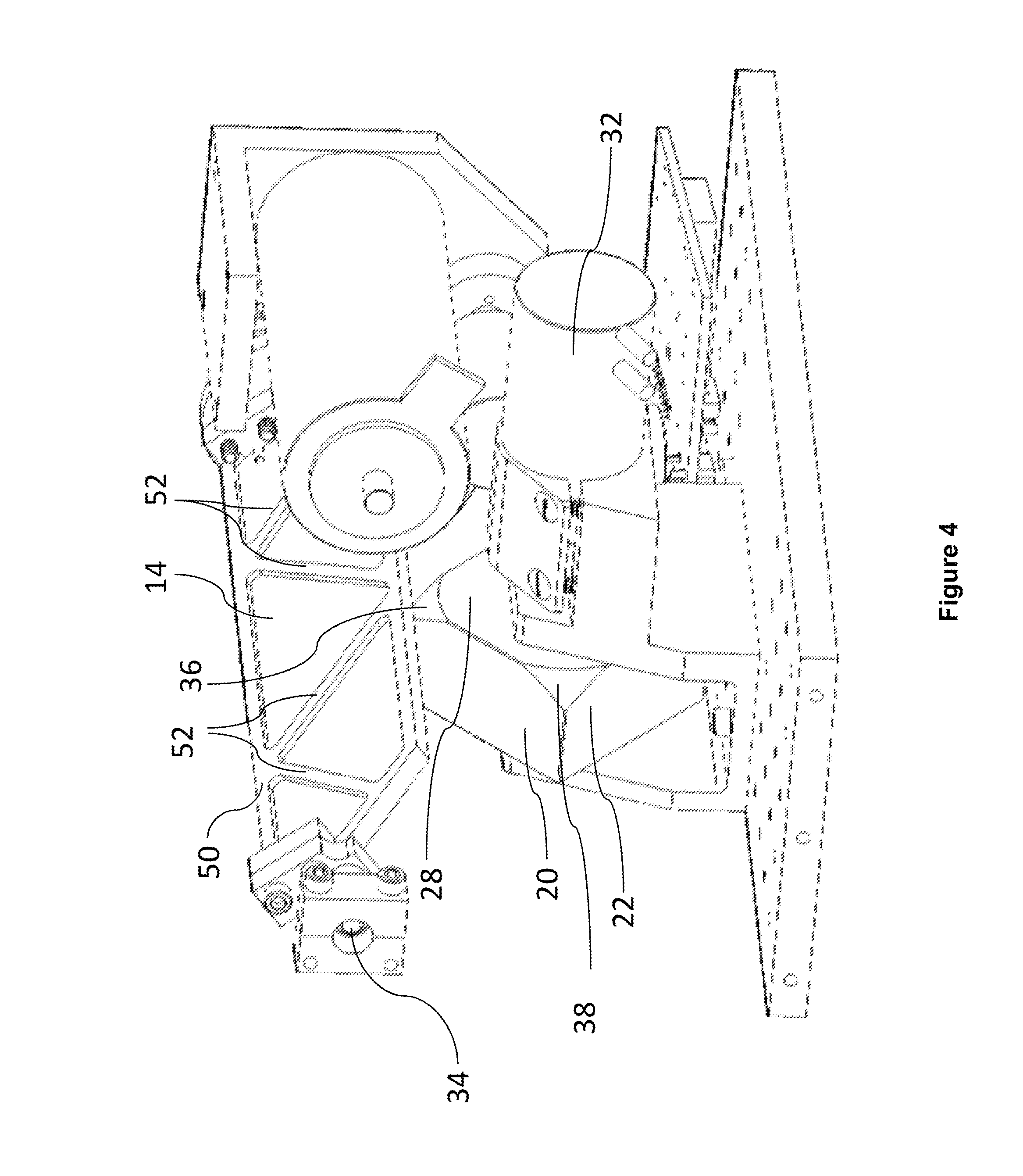

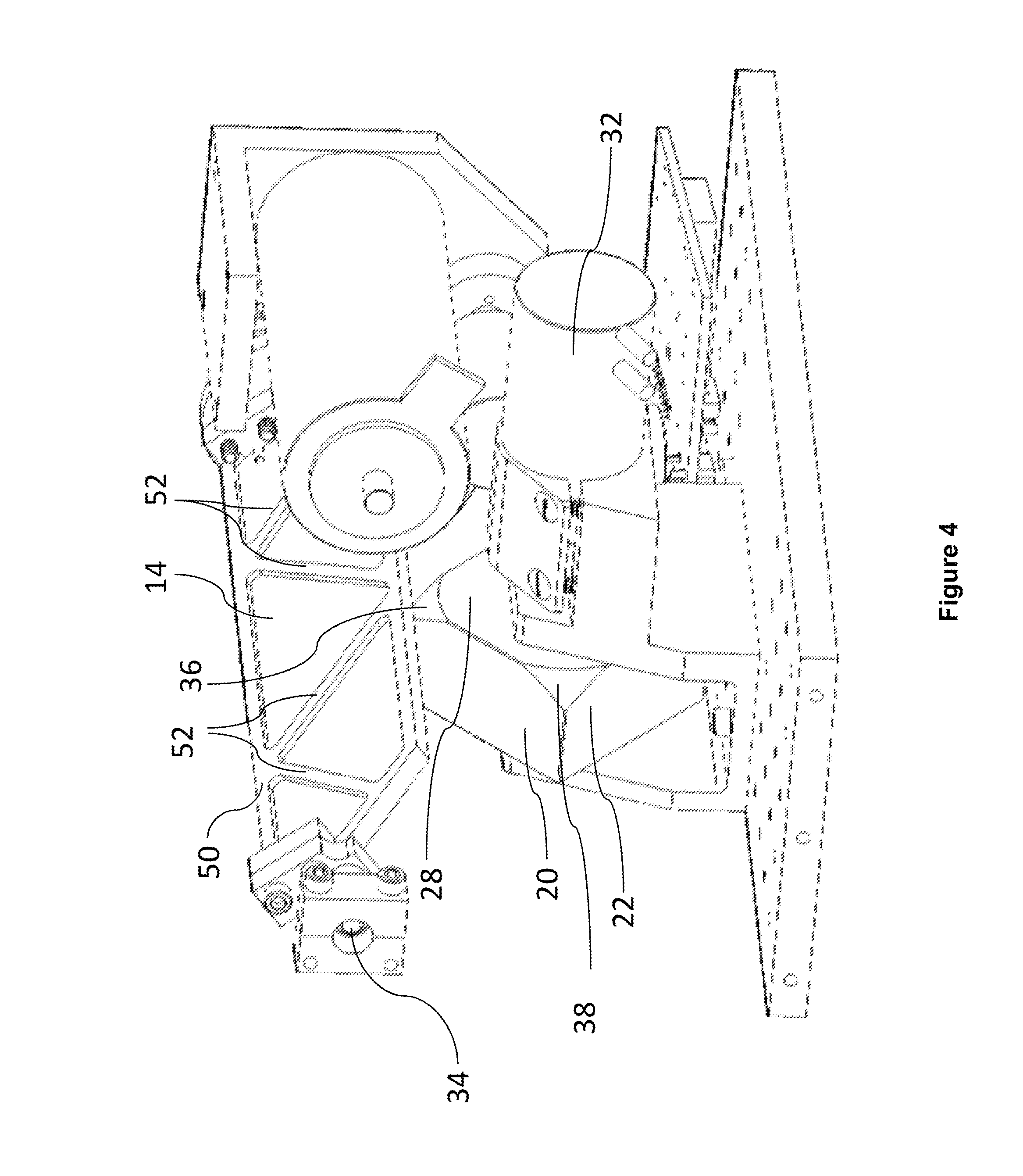

[0031] FIG. 4 is a right, rear perspective view of the lidar sensor unit of FIG. 1;

[0032] FIG. 5 is a right, front perspective view of the lidar sensor unit of FIG. 1;

[0033] FIG. 6 is a right, front perspective view of a polygon mirror and motor assembly of the lidar sensor unit of FIG. 1;

[0034] FIG. 7 is a rear perspective view of the polygon mirror and motor assembly of the lidar sensor unit of FIG. 1;

[0035] FIG. 8 is a left rear perspective view of the polygon mirror and motor assembly of the lidar sensor unit of FIG. 1;

[0036] FIG. 9 is a rear perspective view of the polygon mirror and motor assembly of the lidar sensor unit of FIG. 1;

[0037] FIG. 10 is a top perspective view of a polygon mirror of the lidar sensor unit of FIG. 1;

[0038] FIG. 11 is a rear, top perspective view of the polygon mirror of the lidar sensor unit of FIG. 1;

[0039] FIG. 12 is a right, rear perspective view of the polygon mirror of the lidar sensor unit of FIG. 1;

[0040] FIG. 13 is a rear elevation view of the polygon mirror of the lidar sensor unit of FIG. 1;

[0041] FIG. 14 is a front elevation view of the polygon mirror of the lidar sensor unit of FIG. 1;

[0042] FIG. 15 is a front perspective view of the planar mirror and motor assembly of the lidar sensor unit of FIG. 1;

[0043] FIG. 16 is a front, right perspective view of the planar mirror and motor assembly of the lidar sensor unit of FIG. 1;

[0044] FIG. 17 is a left, front perspective view of just the polygon mirror and the planar mirror of the lidar sensor unit of FIG. 1;

[0045] FIG. 18 is a left elevation view of just the polygon mirror and the planar mirror of the lidar sensor unit of FIG. 1;

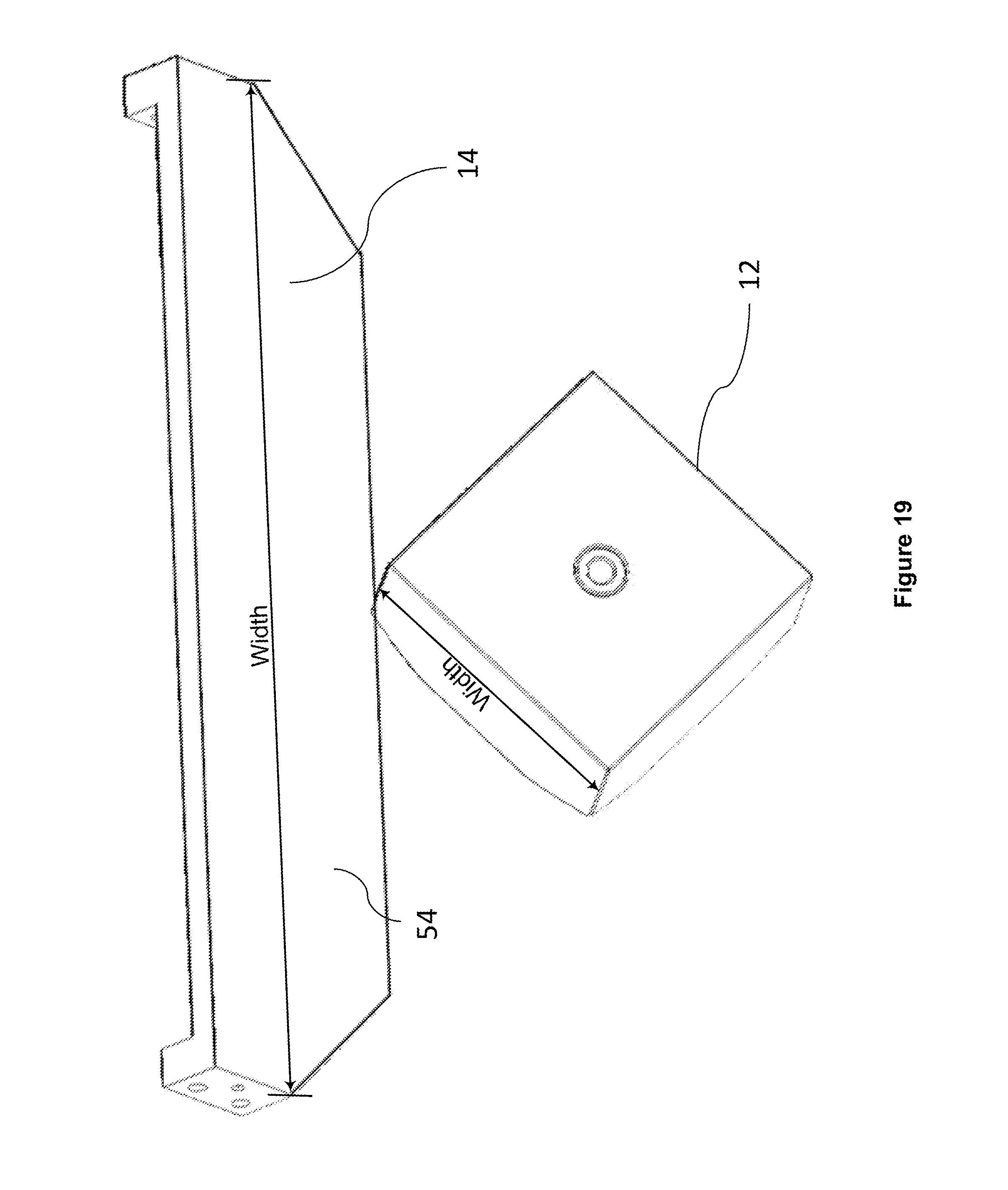

[0046] FIG. 19 is a front perspective view of just the polygon mirror and the planar mirror of the lidar sensor unit of FIG. 1;

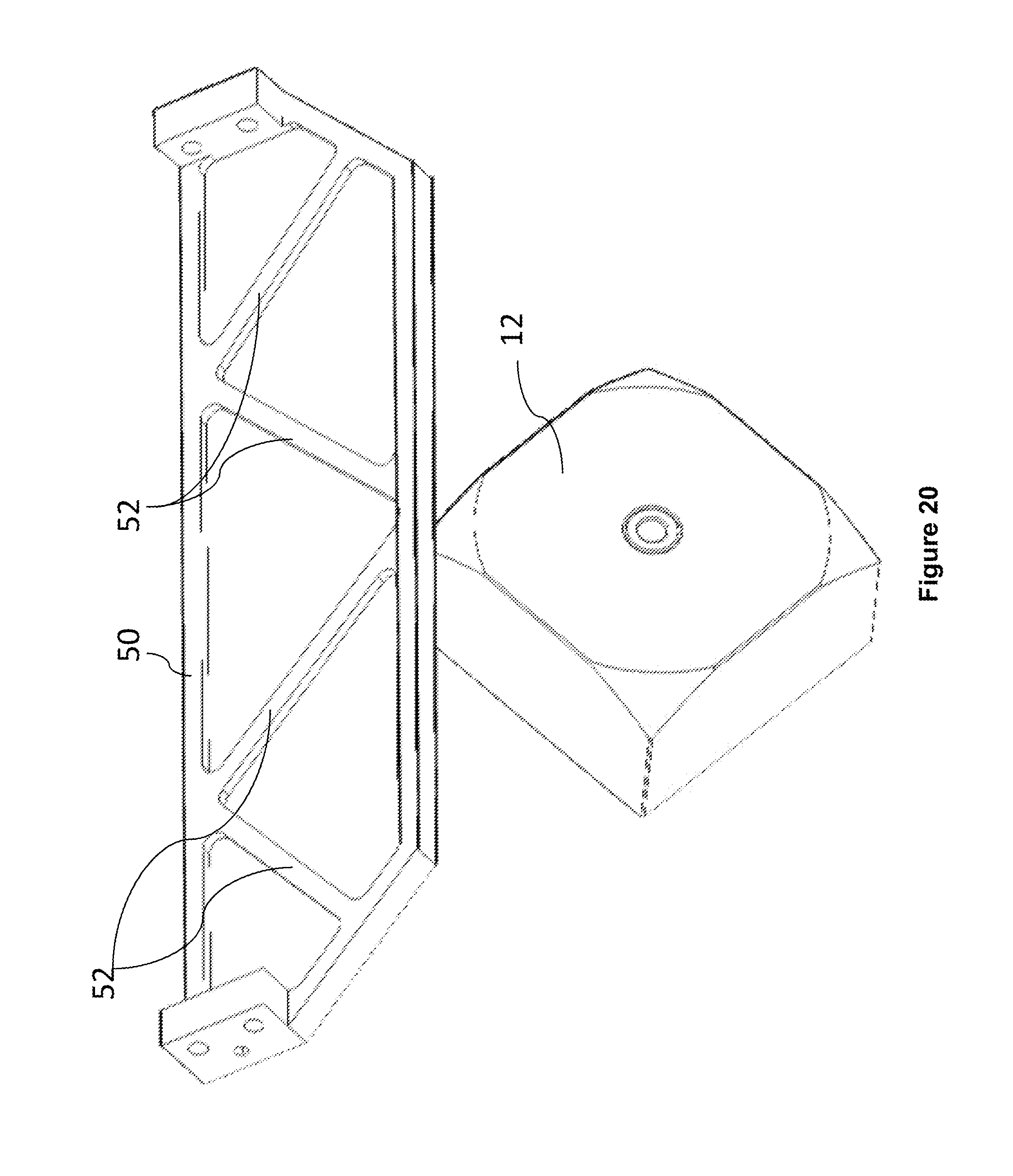

[0047] FIG. 20 is a rear perspective view of just the polygon mirror and the planar mirror of the lidar sensor unit of FIG. 1;

[0048] FIG. 21 is a perspective view of the optical base of the lidar sensor unit of FIG. 1, enclosing a lens and a receiver;

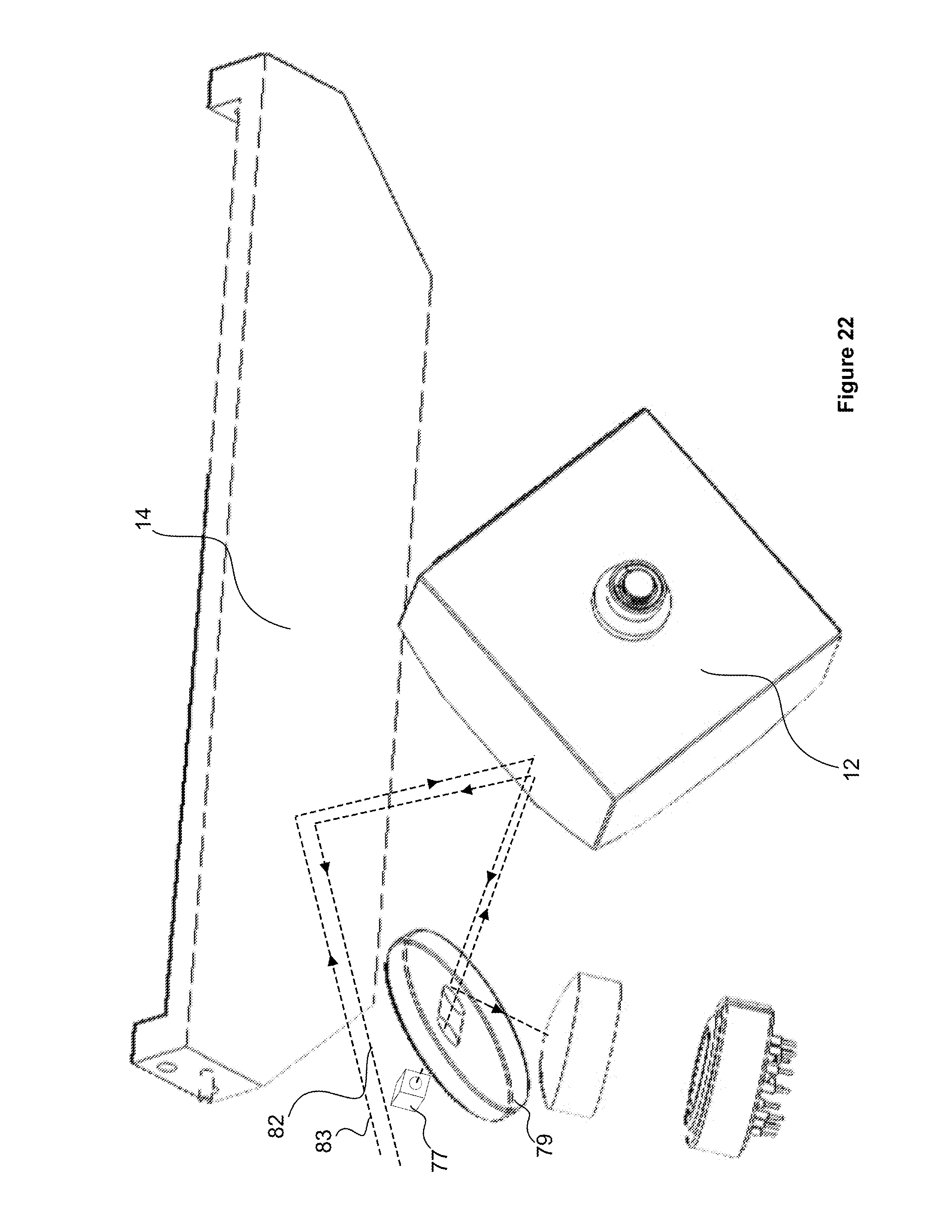

[0049] FIG. 22 is a perspective view of several components of the lidar sensor unit of FIG. 1 in an example implementation that includes an overlap "doughnut mirror," along with a schematic representation of example paths of beams;

[0050] FIG. 23 is a perspective view of several components of the lidar sensor unit of FIG. 1 in an example implementation free of an overlap doughnut mirror;

[0051] FIG. 24 is a perspective view of a path of an input beam relative to the polygon mirror and the planar mirror of the lidar sensor unit of FIG. 1;

[0052] FIG. 25 is a perspective view of paths of an input beam and output beams relative to the polygon mirror and the planar mirror of the lidar sensor unit of FIG. 1;

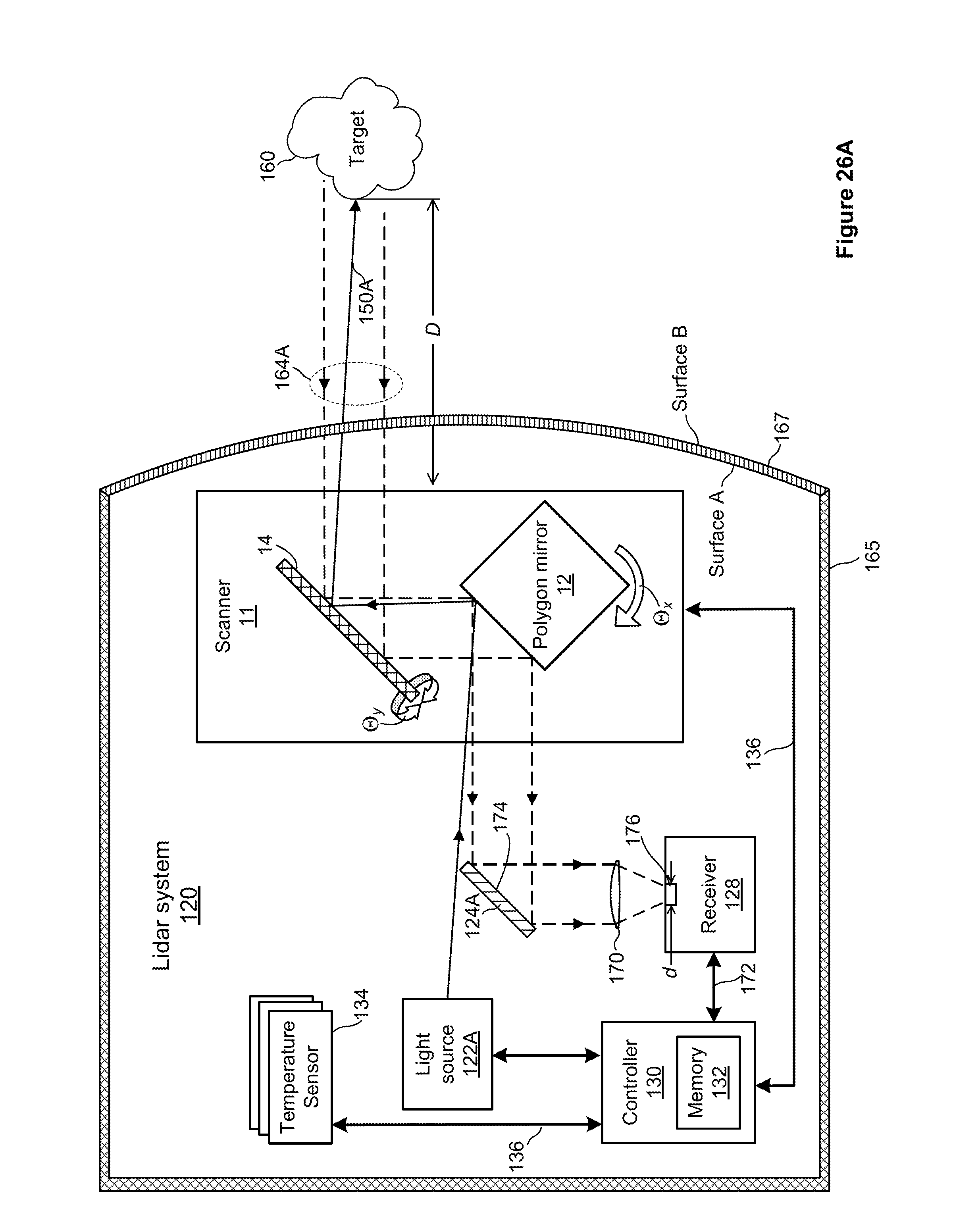

[0053] FIG. 26A is a block diagram of an example lidar system in which the lidar sensor unit of FIG. 1 can operate in a single-eye configuration;

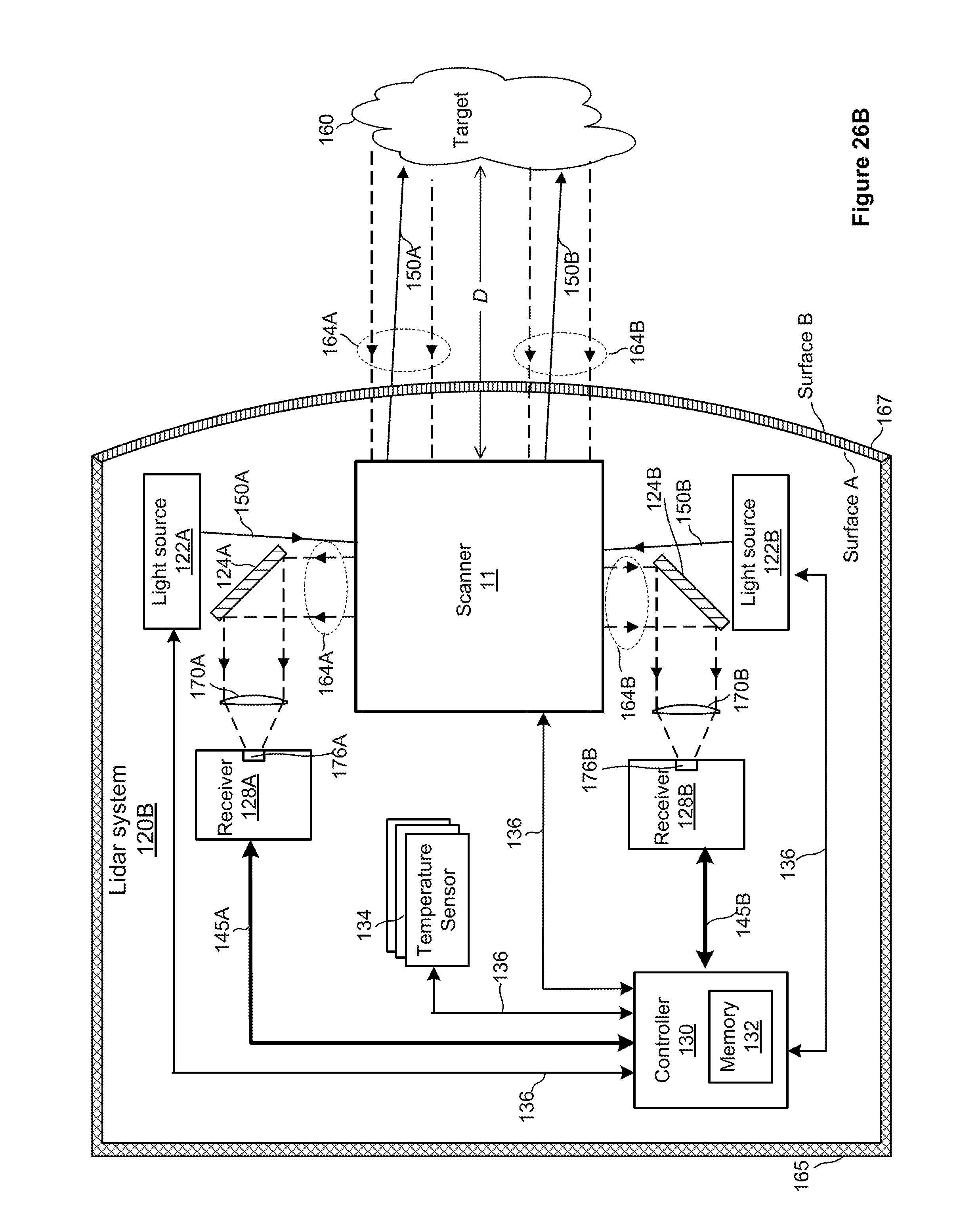

[0054] FIG. 26B is a block diagram of an example lidar system in which the lidar sensor unit of FIG. 1 can operate in a two-eye configuration;

[0055] FIG. 27 illustrates an example InGaAs avalanche photodiode which can operate in the lidar system of FIG. 26A or FIG. 26B;

[0056] FIG. 28 illustrates an example photodiode coupled to a pulse-detection circuit, which can operate in the lidar system of FIG. 26A or 26B;

[0057] FIG. 29 is a perspective view of a housing of a lidar sensor unit, such as the lidar sensor of FIG. 1, protruding from a surface of a vehicle;

[0058] FIG. 30 is a perspective view of several components of the lidar system of FIG. 26A or 26B, disposed on a vehicle so that the axis of rotation of the polygon mirror aligns with an orientation of the vehicle;

[0059] FIG. 31 is a perspective view of a roof of a vehicle, on which four sensor head units are arranged at respective corners;

[0060] FIG. 32 illustrates an example vehicle in which one implementation of the lidar system of FIG. 26A or 26B can operate;

[0061] FIG. 33 illustrates an example vehicle in which another implementation of the lidar system of FIG. 26A or 26B can operate;

[0062] FIG. 34 is a flow diagram of an example method for manufacturing a highly balanced rotatable polygon mirror that can be used in the lidar sensor unit of FIG. 1;

[0063] FIG. 35 schematically illustrates fields of view (FOVs) of a light source and a detector that can operate in the lidar sensor unit of FIG. 1;

[0064] FIG. 36 schematically illustrates the operational vertical field of regard FOR.sub.V of the lidar sensor unit of FIG. 1 relative to the available FOR.sub.V-AVAIL of the lidar sensor unit, within which the operational FOR.sub.V can be adjusted;

[0065] FIG. 37 schematically illustrates non-equal distribution of scan lines within a vertical field of regard FOR.sub.V of the lidar sensor unit of FIG. 1, in a certain operational mode of the lidar sensor unit;

[0066] FIG. 38 is a flow diagram of an example method for repositioning the vertical field of regard FOR.sub.V within the available FOR.sub.V-AVAIL by adjusting the oscillation of the planar mirror of the lidar sensor unit of FIG. 1;

[0067] FIGS. 39A and 39B schematically illustrate adjusting the vertical field of regard FOR.sub.V based on detected changes in the grade of the road, which can be implemented in the lidar sensor unit of FIG. 1;

[0068] FIG. 40 is a diagram of an example detector array with two detectors configured to detect return pulses associated with different respective output beams, which can be implemented in the lidar system of FIG. 26A or 26B;

[0069] FIG. 41 illustrates an example forward scan of a pair of spaced-apart pixels based on the detector array of FIG. 40;

[0070] FIG. 42 illustrates an example interleave of scan lines in an overlap region, which the lidar system of FIG. 26A or 26B can generate;

[0071] FIG. 43 illustrates an example scan using output beams with non-integer pixel separation, which the lidar system of FIG. 26A or 26B can generate; and

[0072] FIG. 44 is a flow diagram of an example method for generating pixel values using output beams with non-integer pixel separation.

DETAILED DESCRIPTION

[0073] A lidar sensor unit and various techniques for operating the lidar sensor unit are discussed below, in particular: (i) an example assembly of a lidar sensor unit, and particularly a scanner of the lidar sensor unit, is discussed with reference to FIGS. 1-21; (ii) propagation of light through the lidar sensor unit in example scenarios is considered in connection with FIGS. 22-25; (iii) example operation of the lidar sensor unit as part of a lidar system is considered with respect to the block diagrams of FIGS. 26A-28; (iv) example placement of a lidar sensor unit on a body of a vehicle is discussed with reference to FIGS. 29-33; (v) an example method of manufacturing a polygon mirror for use in the lidar sensor unit is discussed with reference to FIG. 34; (vi) example modifications to the scan pattern of the lidar sensor unit are discussed with reference to FIGS. 35-39B; and (vii) example generating of pixels is considered in connection with FIGS. 40-44.

I. Lidar Sensor Unit Equipped with a Scanner having a Planar and Polygon Mirrors

[0074] Referring to FIGS. 1-5, a lidar sensor unit 10 of the present disclosure includes a scanner 11 with a rotatable polygon mirror 12 and a pivotable planar mirror 14 that cooperates with the rotatable polygon mirror 12 to perform a scan of a field of regard (FOR) of the lidar sensor unit 10. The pivotable planar mirror 14 may be referred to herein as a Y-scan mirror, but it is understood that depending on the orientation of the rotatable polygon mirror 12 and the pivotable planar mirror 14, the scanning range achieved by the pivotable mirror 14 may be in any of the X-, Y-, or Z-planes. The rotatable polygon mirror 12 includes a block 16 having a plurality of (preferably at least four) finished reflective surfaces 18, 20, 22, 24. It is possible, however, to use a triangle-shaped rotatable polygon mirror with three reflective surfaces. In another implementation, not every surface of the rotatable polygon mirror oriented toward the planar mirror 14 is reflective (e.g., the rotatable polygon mirror can be a flat substrate with reflective surfaces on the front and back sides). More generally, the rotatable polygon mirror 12 may have any suitable number of reflective surfaces, such as for example 2, 3, 4, 5, 6, 7, or 8 reflective surfaces. The polygon mirror 12 may be made from any suitable material, such as for example, glass, plastic (e.g., polycarbonate), metal (e.g., aluminum or beryllium), metal foam, carbon fiber, ceramic, or any suitable combination thereof.

[0075] The rotatable polygon mirror 12 further includes a first wall 26 and a second wall 28. Each of the plurality of reflective surfaces 18, 20, 22, 24 extends between the first and second walls 26, 28. The reflective surfaces 18-24 are angularly offset from one another along a periphery of the block 16.

[0076] Generally speaking, as the polygon mirror 12 rotates, the scanner 11 produces one scan line for each reflective surface of the polygon mirror 12, and the planar mirror 14 pivots to distribute the scan lines across the FOR. Thus, if the scan lines are directed horizontally, the polygon mirror 12 is responsible primarily for the horizontal dimension of the field of regard (FOR.sub.H), and the planar mirror 14 accordingly is responsible for the vertical dimension of the field of regard (FOR.sub.V).
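
By way of illustration only, the relationship between the reflective surfaces and the scan lines can be sketched in Python as follows. The function names, the 30-degree vertical field of regard, and the even spacing of scan lines are assumptions made for this example and are not taken from the disclosure; the rate of 150 revolutions per second is borrowed from the rotational-speed example given elsewhere in this description.

```python
from typing import List


def scan_lines_per_second(num_surfaces: int, revolutions_per_second: float) -> float:
    """One scan line is produced per reflective surface per revolution."""
    return num_surfaces * revolutions_per_second


def scan_line_elevations(for_v_deg: float, num_lines: int) -> List[float]:
    """Evenly spaced elevations (degrees) across FOR_V, centered on zero.

    Even spacing is an assumption of this sketch; the planar mirror may
    distribute scan lines differently in practice.
    """
    if num_lines < 2:
        return [0.0]
    step = for_v_deg / (num_lines - 1)
    return [-for_v_deg / 2 + i * step for i in range(num_lines)]


# Four surfaces (18, 20, 22, 24) at an illustrative 150 revolutions per second.
print(scan_lines_per_second(4, 150.0))
# Four scan lines spread over a hypothetical 30-degree FOR_V.
print(scan_line_elevations(30.0, 4))
```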

[0077] Adjacent reflective surfaces 18-24 of the block are preferably joined to one another along a drag-reducing, non-sharp edge to promote aerodynamic efficiency and reduce audible noise. As an example, the block may include rounded or chamfered edges or corners. As another example, the block may include edges with texturing, grooves, riblets, or a sawtooth pattern.

[0078] As best illustrated in FIGS. 6-9, the rotatable polygon mirror 12 is mounted in a bracket or mount 29 on a polygon mirror axle 30, which polygon mirror axle 30 extends through at least one of the first and second walls 26, 28. A motor 32 drives the polygon mirror axle 30, thereby imparting rotational oscillation to the rotatable polygon mirror 12. The motor 32 may be a synchronous brushless DC motor in driving relationship with the axle 30 and may be external to the block 16. Alternately, the block 16 may accommodate an internal motor, or enable a motor 32 to be at least partially embedded within the block 16, such as where a rotor of the motor 32 is disposed within the block 16, reducing the overall size of the lidar sensor unit 10. The motor 32 may drive rotation of the rotatable polygon mirror 12 in an open-loop or closed-loop fashion. In general, the motor 32 can be any actuator or mechanism suitable for rotating the polygon mirror 12.

[0079] The rotatable polygon mirror 12 may additionally employ an optical beam, the presence or absence of which is detectable by a stationary photo-interrupter, to collect data indicative of the rotational speed of the rotatable polygon mirror 12. One or more tabs may be provided on the axis of rotation of the polygon mirror 12 or an interior surface of the block 16, which tab(s) pass through the stationary photo-interrupter during rotation of the polygon mirror 12. Feedback data indicative of the rotational speed of the polygon mirror 12, received from the photo-interrupter, can then be processed by a controller associated with the motor 32 of the polygon mirror 12 to make any necessary adjustments to the rotational speed of the polygon mirror 12, for example. The controller may regulate or stabilize the rotational speed of the polygon mirror 12 so that the rotational speed is substantially constant. For example, the polygon mirror 12 may be rotated at a rotational speed of approximately 150 Hz (150 revolutions per second), and the rotational speed may be stabilized so that it varies by less than or equal to 1% (e.g., 150 Hz .+-.1.5 Hz), 0.1%, 0.05%, 0.01%, or 0.005%.
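
As a non-limiting sketch, the feedback described above might be processed as shown in the following Python example. The function names, the single tab per revolution, and the proportional correction with a gain of 0.5 are hypothetical choices made for illustration; the disclosure does not specify a particular control law.

```python
from typing import Sequence


def rotation_rate_hz(tab_times_s: Sequence[float], tabs_per_revolution: int = 1) -> float:
    """Average rotation rate from timestamps of successive tab crossings."""
    if len(tab_times_s) < 2:
        raise ValueError("need at least two tab crossings")
    elapsed = tab_times_s[-1] - tab_times_s[0]
    crossings = len(tab_times_s) - 1
    return crossings / (tabs_per_revolution * elapsed)


def within_tolerance(measured_hz: float, target_hz: float = 150.0,
                     tolerance: float = 0.01) -> bool:
    """True if the measured rate is within the fractional tolerance of the target."""
    return abs(measured_hz - target_hz) <= tolerance * target_hz


def speed_correction(measured_hz: float, target_hz: float = 150.0,
                     gain: float = 0.5) -> float:
    """Proportional correction in Hz (hypothetical control law for this sketch)."""
    return gain * (target_hz - measured_hz)


# Simulated crossings of one tab on a mirror turning at 150 revolutions per second.
times = [i / 150.0 for i in range(11)]
rate = rotation_rate_hz(times)
print(rate, within_tolerance(rate), speed_correction(rate))
```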

[0080] The planar mirror 14 is pivotally mounted along a planar support shaft 34 that extends orthogonal to the polygon mirror axle 30. The planar mirror 14 preferably has a body 50 defined by a plurality of rib-like members 52 that form a honeycomb-like structure, supporting a finished planar reflective surface 54 (see FIG. 20). The center of gravity of the planar mirror 14 is closer to the reflective surface 54 than to an edge of the ribbed or honeycomb body 50 opposite the reflective surface 54. The planar mirror 14 may be made from any suitable material, such as for example, metal (e.g., aluminum), ceramic polymer, or carbon fiber.

[0081] The reflective surface 54 of the planar mirror 14 preferably has a width that is greater than a width of each of the reflective surfaces 18-24 of the rotatable polygon mirror 12, measured along a common axis. In the embodiment illustrated in FIGS. 1-25, the width of the planar mirror 14 is measured in the horizontal dimension, i.e., along a scan line (see FIG. 19). The width of each surface of the polygon mirror 12 can be measured along an axis that is parallel to the pivot axis of the planar mirror 14 in a certain orientation of the polygon mirror 12. The width of the planar mirror 14 effectively determines the horizontal range, i.e., FOR.sub.H.

[0082] For the same polygon mirror 12, the FOR.sub.H of the sensor unit 10 can be increased by selecting a wider planar mirror. For example, the planar mirror of width 5.3 inches can provide a FOR.sub.H of about 100 degrees. As a more specific example, the lidar sensor unit 10 can have two eyes, each with an FOR.sub.H of 52 degrees, and a two-degree overlap between the eyes. The planar mirror of width 8.1 inches can provide a FOR.sub.H of about 130 degrees. The possibility of increasing the FOR.sub.H of the lidar sensor unit 10 by selecting a planar mirror of a different width for the same polygon mirror provides for a modular optical design.
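
The two-eye example above can be checked with a short calculation, sketched here in Python. The function name and its generalization to more than two eyes are assumptions made for illustration.

```python
def combined_for_h(eye_for_deg: float, overlap_deg: float, num_eyes: int = 2) -> float:
    """Total horizontal field of regard when adjacent eyes share an overlap region."""
    return num_eyes * eye_for_deg - (num_eyes - 1) * overlap_deg


# Two eyes of 52 degrees each with a two-degree overlap.
print(combined_for_h(52.0, 2.0))
```

With these values the combined FOR.sub.H is 102 degrees, consistent with the figure of about 100 degrees given above.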

[0083] As illustrated in FIG. 14, the first wall 26 of rotatable polygon mirror 12 has a major diameter D1 that extends from the corner of two adjacent finished reflective surfaces 18, 20 to a corner of two opposite finished reflective surfaces 22, 24, and a minor diameter D2 that extends from a center of one of the finished reflective surfaces 18 to a center of an opposite one of the finished reflective surfaces 22. A limiting factor in optimizing the minimal height and width of the lidar sensor unit 10 is the necessary spacing between the finished reflective surfaces 18-24 of the rotatable polygon mirror 12 and the planar mirror 14. By strategically removing portions of material from the block 16, it is found that the dimensional difference between the major diameter D1 and the minor diameter D2 need not serve as a constraint to the dimensioning of the overall lidar sensor unit 10. As illustrated in FIGS. 10-12, a plurality of chamfers 36, 38, 40, 42 are formed in the block 16, each of the chamfers being bounded by a pair of adjacent reflective surfaces 18-24 and the second wall 28. Each of these chamfers 36-42 is preferably cut at an angle of 45.degree. to the adjacent finished reflective surfaces and second wall 28. However, the chamfers may be formed at a different angle to these adjacent surfaces.

[0084] The planar mirror 14 is located on the side of the rotatable polygon mirror 12 closest to the second wall 28. The chamfers 36-42 effectively reduce the major diameter of the rotatable polygon mirror 12 to a maximum dimension D1' (see FIG. 13) that is less than D1, such that a minimum distance between the rotatable polygon mirror 12 and the planar mirror 14 can be maintained while still minimizing the overall height and width dimensions of the lidar sensor unit 10. The reflective surfaces 18-24 of the polygon mirror 12 can be manufactured using surface replication techniques, and coarse as well as fine balancing techniques can be applied to the polygon mirror 12, as discussed below.
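
For illustration, the relationship between the major diameter D1 and the minor diameter D2 can be sketched in Python under the assumption that the first wall 26 has a regular polygonal outline with an even number of sides (a square for four reflective surfaces). The function names and the numeric values of D2 and D1' used below are hypothetical.

```python
import math


def major_diameter(minor_diameter: float, num_surfaces: int = 4) -> float:
    """Corner-to-corner diameter D1 of a regular polygon with an even number
    of sides, given its face-to-face diameter D2."""
    if num_surfaces < 4 or num_surfaces % 2:
        raise ValueError("sketch assumes an even number of surfaces, at least four")
    return minor_diameter / math.cos(math.pi / num_surfaces)


def radial_clearance_gained(minor_diameter: float, chamfered_major: float,
                            num_surfaces: int = 4) -> float:
    """Reduction in swept radius when chamfers shrink D1 to D1'."""
    return (major_diameter(minor_diameter, num_surfaces) - chamfered_major) / 2


d2 = 50.0  # hypothetical minor diameter, in millimeters
print(major_diameter(d2))                 # D1 for a square outline
print(radial_clearance_gained(d2, 60.0))  # with a hypothetical D1' of 60 mm
```

For a square outline D1 equals D2 multiplied by the square root of two, so any D1' between D2 and D1 lets the planar mirror 14 sit closer to the chamfered end of the block 16.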

[0085] By way of example only, and referring back to FIG. 1, the lidar sensor unit 10 may be provided in a housing that includes a shell roof 56, a first shell side wall 58, a second shell side wall 60, and a shell floor 62. Depending on where the lidar sensor unit 10 is mounted on a vehicle, one or more of the surfaces of the housing could coincide with an external or interior surface of a vehicle, as discussed below.

[0086] The housing of the lidar sensor unit 10 is configured so that rotation of the polygon mirror 12 imparts a flow of air through the housing to provide cooling to components enclosed within the housing. The air flow may be a laminar flow, a turbulent flow, or any suitable combination thereof. Such cooling need not be the exclusive means of cooling of the interior components of the lidar sensor unit 10. For instance, one or more of a fan, cooling fins, or a heat exchanger can be used to moderate the temperature of the components of the lidar sensor unit 10. However, the air flow within the housing and the aerodynamic construction of the components of the polygon mirror 12 of the lidar sensor unit 10 preferably account for a substantial portion of the temperature mitigation of the lidar sensor unit 10, even when any one or more of a fan, cooling fins, or a heat exchanger are additionally provided in the housing to supplement cooling. A substantial portion of the temperature mitigation of the lidar sensor unit 10 may be a majority of the cooling, at least 75% of the cooling, at least 80% of the cooling, at least 85% of the cooling, at least 90% of the cooling, at least 95% of the cooling, at least 98% of the cooling, or at least 99% of the cooling. 
Alternatively, the air flow within the housing and the aerodynamic construction of the components of the polygon mirror 12 of the lidar sensor unit 10 may be relied upon to supply all of the cooling when at least one of the temperature within the housing of the lidar sensor unit 10 or the ambient temperature is below a certain predefined temperature, and if the at least one of the temperature within the housing of the lidar sensor unit 10 or the ambient temperature exceeds the predefined temperature, the air flow within the housing and the aerodynamic construction of the components of the polygon mirror 12 of the lidar sensor unit 10 may be supplemented by at least one or more of a fan, cooling fins, or a heat exchanger to provide cooling. In some implementations, the polygon mirror 12 may be at least partially surrounded or enclosed by a shroud that may act to aid or direct the air circulation provided by the polygon mirror 12. The shroud may include a dust collector (e.g., a filter) configured to remove dust from circulating air.

[0087] The planar mirror 14 is actuated by a drive system such as that illustrated in FIG. 15. The drive system includes a drive motor 64, which, by way of example, may be a brushless FAULHABER (trademark) drive motor, a plurality of pulleys 66, 68, 70, one of the pulleys 68 axially aligned with an encoder 72, and a drive belt 74 translating rotational motion of one of the pulleys 68 driven directly by the drive motor 64 to the other two pulleys 68, 70. The drive motor 64 may be secured to the shell roof 56 by a shell roof motor mount 76.

[0088] As discussed in more detail below, the lidar sensor unit 10 according to some implementations includes optical elements configured to receive light signals such as intermittent pulses or continuous beams from a laser, and direct the light signals toward the active reflective surface(s) of the rotatable polygon mirror 12. The optical elements can include a fiber-optic cable via which the lidar sensor unit 10 is coupled to the laser, and a collimator or a lens to produce a collimated free-space output beam. Referring to FIG. 1, one or several output collimators 77 in an example implementation direct light pulses of respective output beams toward the rotatable polygon mirror 12 via apertures of the overlap doughnut mirror 79. However, in other implementations considered in more detail with reference to FIGS. 23-25, output collimators of the lidar sensor unit 10 and an aperture-free overlap mirror implement an off-axis illumination technique. The mirror 79, or an aperture-free mirror oriented similar to the mirror 79, also can be referred to as a superposition mirror or beam-combiner mirror.

[0089] If desired, the housing of the lidar sensor unit 10 can enclose a laser or multiple lasers configured to generate output beams with different wavelengths. Further, a diffractive optical element (DOE) beam splitter 46 can be used to split a beam output by the laser (or the beam received from a remote laser via a fiber-optic cable) into at least two beams. The beams may have distinct wavelengths from one another. The beam splitter 46 in general can be any suitable holographic element, a pixelator, diffractive element, etc.

[0090] In any case, the one or several collimators 77 direct pulses of light at the reflective surfaces of the rotatable polygon mirror 12, which in turn reflect the pulses toward the planar reflective surface 54. The rotation of the rotatable polygon mirror 12 and the pivoting of the planar mirror 14 achieve the horizontal and vertical scan effect of the lidar sensor unit 10.

[0091] An optic base 44 (see FIGS. 1 & 2) can enclose a receiver with one or more detectors. Depending on whether the scanner 11 utilizes a single reflective surface of the polygon mirror 12 or two reflective surfaces, the sensor unit 10 can include a single optic base 44 or two optic bases 44. As illustrated in FIG. 21, the optic base 44 can enclose a lens 80 to focus an input beam onto an assembly 81 including an optical filter and a detector, discussed in more detail below.

[0092] The axis of rotation of the polygon mirror 12 may be aligned with an orientation of predominant motion of the vehicle in which the lidar system 10 operates. For instance, a front-facing lidar system 10 may be oriented such that the axis of rotation of the polygon mirror 12 is aligned with a longitudinal axis of the vehicle. Such an orientation may serve to reduce adverse effects of vibration, acceleration, and deceleration. These techniques are illustrated in FIG. 30.

[0093] The planar mirror 14 may be configured so as to pivot over a range of allowable motion larger than a range corresponding to the vertical angular dimension of the field of regard, so as to define a maximum range of allowable motion larger than a range within which the planar mirror 14 pivots during a scan. A controller associated with the planar mirror 14 selects different portions of the maximum range of allowable motion as the range within which the second mirror pivots, in accordance with modifications of the scan pattern. In particular, to modify at least one of a scan pattern or a scan rate, a controller associated with the motor 32 of the polygon mirror 12 can be configured to cause the motor 32 to vary the speed of rotation of the polygon mirror 12, cause the drive motor 64 to vary the oscillation of the planar mirror 14, or both. The controller can be associated with both the polygon mirror 12 and the planar mirror 14. The controller may be configured to modify the scan pattern on a frame-by-frame basis, each frame corresponding to a complete scan of the field of regard of the lidar system 10. In some implementations, the oscillation of the planar mirror 14 may be varied (e.g., to change the vertical angular dimension of the field of regard), and the rotational speed of the polygon mirror 12 may be regulated or stabilized so that the polygon mirror 12 rotates at a substantially constant speed.
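
One possible way for a controller to select a portion of the maximum range of allowable motion is sketched below in Python. The function name, the clamping policy, and the numeric limits are assumptions made for illustration, and all angles are expressed in degrees of the field of regard rather than as mechanical angles of the planar mirror 14.

```python
from typing import Tuple


def select_pivot_range(center_deg: float, for_v_deg: float,
                       avail_min_deg: float, avail_max_deg: float) -> Tuple[float, float]:
    """Return (low, high) for a window of width for_v_deg centered as close as
    possible to center_deg while staying inside the available range."""
    if for_v_deg > avail_max_deg - avail_min_deg:
        raise ValueError("requested range exceeds the available range")
    low = center_deg - for_v_deg / 2
    # Shift the window back inside the available range if it would overrun.
    low = max(avail_min_deg, min(low, avail_max_deg - for_v_deg))
    return (low, low + for_v_deg)


# Hypothetical 60-degree available range and a 30-degree operational range.
print(select_pivot_range(0.0, 30.0, -30.0, 30.0))   # centered window
print(select_pivot_range(25.0, 30.0, -30.0, 30.0))  # clamped at the upper limit
```

Calling such a function once per frame with a new center would correspond to modifying the scan pattern on a frame-by-frame basis.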

[0094] With reference to FIGS. 1-5, the polygon mirror 12 in some implementations can be disposed between a third of the way from a first edge of the y-scan mirror 14 and a third of the way from a second edge of the y-scan mirror 14. In a particular embodiment, the polygon mirror axis bisects a length of the y-scan mirror 14.

[0095] Besides the lidar sensor unit 10, the scanner 11 can operate in any suitable optical system to scan the FOR. The scanner 11 in an embodiment includes the polygon mirror 12 rotatable about a polygon mirror axis to scan the FOR of the optical system along a horizontal dimension, the polygon mirror 12 including a plurality of reflective surfaces 18-24 being angularly offset from one another along a periphery of the block 16; and a y-scan mirror 14 pivotable along a pivot axis orthogonal to the polygon mirror axis to scan the FOR of the optical system along a vertical dimension. The width of the y-scan mirror 14 is larger than the width of each of the reflective surfaces 18-24 of the polygon mirror 12. The polygon mirror 12 reflects light incident on one of the reflective surfaces toward the y-scan mirror 14. The width of the y-scan mirror 14 ultimately determines the scan range along the horizontal dimension.

II. Propagation of Input and Output Light Beams Through the Lidar Sensor Unit

[0096] FIG. 22 schematically depicts an example implementation of the lidar sensor unit 10 that includes the doughnut overlap mirror 79 discussed above. In this implementation, an output beam 82 travels from the output collimator 77 through an aperture of the overlap mirror 79 and impinges on one of the reflective surfaces of the polygon mirror 12. The reflective surface of the polygon mirror 12 reflects the output beam 82 to a location on the planar mirror 14 that depends on the current orientation of the polygon mirror 12, thereby defining the current angle within the FOR.sub.H. The planar mirror 14 then directs the output beam 82 out of the lidar sensor unit 10 at a vertical angle that depends on the current orientation of planar mirror 14, thereby defining the current angle within the FOR.sub.V. In this manner, the scanner 11 can disperse light pulses of the output beam 82 across the FOR of the lidar sensor unit 10. An input beam 83 travels to the planar mirror 14, which directs the input beam 83 to the polygon mirror 12, which in turn directs the input beam 83 to the overlap mirror 79.

[0097] Now referring to FIG. 23, an assembly 86 is generally similar to the assembly of FIG. 22. However, unlike the overlap doughnut mirror 79, an overlap mirror 90A does not include an aperture, and an output collimator 92A directs an output beam by the side of the overlap mirror 90A toward a reflective surface 12-1 of the polygon mirror 12. An output collimator 94A can direct another output beam by the side of the overlap mirror 90A toward the same reflective surface 12-1 of the polygon mirror 12. The output collimators 92A and 94A can be configured to emit pulses having different wavelengths, and two respective detectors can be configured to detect the corresponding return pulses in a shared input beam reflected by the surface 12-1. In this manner, a lidar sensor unit that includes the assembly 86 can generate values for two pixels in a certain scan line within a same ranging event. Alternatively, the output collimators 92A and 94A can launch the output beams with a particular spatial or angular offset, and the two input beams have a corresponding spatial or angular offset, with the wavelength of the pulses emitted by the output collimators 92A and 94A being the same.

[0098] Further, in the example implementation of FIG. 23, the assembly 86 includes output collimators 92B and 94B mechanically aimed at a surface 12-2 of the polygon mirror 12. The output collimators 92B and 94B also direct output beams by the side of the corresponding overlap mirror 90B. Similar to the overlap mirror 90A, the overlap mirror 90B does not include an aperture.

[0099] The input beam which the reflective surface 12-1 directs to the overlap mirror 90A can be regarded as the first eye of the lidar sensor unit, and the input beam which the reflective surface 12-2 directs to the overlap mirror 90B can be regarded as the second eye of the lidar sensor unit. The assembly 86 thus implements off-axis illumination for both eyes of the lidar sensor unit.

[0100] For further clarity, FIGS. 24 and 25 illustrate example paths along which input and output beams travel in the sensor unit 10 and, in particular, the scanner 11. As discussed in more detail below, an input beam typically contains only a relatively small portion of the energy of an output beam. A receiver field of view (FOV) may define a larger angular cone over which the receiver detects light as compared to the light-source FOV, or the angular cone illuminated by the light source. Accordingly, FIGS. 24 and 25 illustrate input and output beams as cones of different sizes, but neither the sizes of the cones nor the degrees of divergence of these cones are drawn to scale.

[0101] In the scenario of FIG. 24, the input beam 102A first impinges on the reflective surface of the planar mirror 14, which reflects the input beam 102A toward the reflective surface of the polygon mirror 12, which in turn reflects the input beam 102A toward the overlap mirror 90A. The overlap mirror 90A then directs the input beam 102A toward a lens 104A, which focuses the input beam 102A on an active region 106A of a receiver 108A. For a given operational state, the current orientation of the polygon mirror 12 defines the horizontal position of the receiver field of view FOV.sub.A within the FOR of the sensor unit 10, and the current orientation of the planar mirror 14 defines the vertical position of the FOV.sub.A within the FOR. An input beam 102B in the meantime impinges on the planar mirror 14 at a different location. The planar mirror 14 directs the input beam 102B to a different surface of the polygon mirror 12, which in turn directs the input beam 102B to an assembly including an overlap mirror, a lens, an active region of a receiver, etc. (not illustrated to avoid clutter) disposed on the opposite side of the polygon mirror 12 from the components 90A, 104A, etc.

[0102] The output beams according to these implementations are scanned synchronously because these beams reflect off the same mirrors 12 and 14. In other words, the output beams are scanned at approximately the same scanning rate across the field of regard, and the input beams maintain approximately the same angular separation. For example, both output beams may scan horizontally across the field of regard at approximately 600 radians/sec, and the two output beams may have a substantially fixed angular separation of approximately 20 degrees. In addition to the two output beams being scanned synchronously with respect to each other, each receiver FOV is also scanned synchronously with its respective light-source FOV.
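
As an illustrative calculation, and assuming a horizontal direction that lies within the scan range of both output beams, the delay between the leading beam and the trailing beam crossing that direction follows from the fixed angular separation and the common scanning rate. The Python sketch below uses the example values given above; the function name is hypothetical.

```python
import math


def revisit_delay_s(separation_deg: float, scan_rate_rad_per_s: float) -> float:
    """Time for the trailing beam to reach a direction just crossed by the
    leading beam, given a fixed angular separation and a common scan rate."""
    return math.radians(separation_deg) / scan_rate_rad_per_s


# 20-degree separation scanned at approximately 600 radians per second.
print(revisit_delay_s(20.0, 600.0))
```

With these values the delay is on the order of 0.6 milliseconds.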

[0103] As discussed in more detail below, a lidar system can use the input beams 102A and 102B to generate two pixels during the same ranging event, with an integer or non-integer separation between the pixels. Further, in some implementations, each of the input beams 102A and 102B is made up of two beams of light corresponding to two output beams of different wavelengths, .lamda..sub.1 and .lamda..sub.2, and accordingly can be used to produce two pixels (e.g., an odd pixel and an even pixel) rather than a single pixel during a single ranging event. The lidar sensor unit 10 thus can produce a total of four pixels per ranging event. As a more specific example, a DOE or another suitable element can impart to a pulse of light a relatively small angular separation into pulses of wavelengths .lamda..sub.1 and .lamda..sub.2, so that the distance between the light pulses of wavelengths .lamda..sub.1 and .lamda..sub.2 at the maximum range of the lidar system corresponds to the width of multiple pixels. The DOE may split the pulse before directing the resulting output beams to the polygon mirror, or the DOE may be disposed downrange of the mirrors 12 and 14 and split a pulse after propagation through the scanner.

[0104] In another example implementation, the input beam 102A includes two component input beams of the same wavelength, which are substantially overlapped spatially but have a small angular offset (e.g., between approximately 0.1 and 2 degrees) with respect to one another. When the two component input beams pass through the lens 104A, the angular offset results in the two beams being focused on two separate spots, which may be separated by approximately 0.4 to 2 mm. In this manner, the angular offset between the beams results in a spatial separation after passing through the lens.
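
The conversion of an angular offset into a spatial separation at the focal plane can be sketched in Python using the thin-lens relation in which a collimated beam tilted by an angle is focused at a distance of the focal length times the tangent of that angle from the axis. The focal length of 57.3 mm is a hypothetical value chosen for illustration; the disclosure does not state the focal length of the lens 104A.

```python
import math


def focal_spot_separation_mm(angular_offset_deg: float, focal_length_mm: float) -> float:
    """Separation of two focused spots for collimated beams that differ in
    direction by angular_offset_deg (ideal thin lens, one beam on axis)."""
    return focal_length_mm * math.tan(math.radians(angular_offset_deg))


# Hypothetical 57.3 mm focal length with a one-degree angular offset.
print(focal_spot_separation_mm(1.0, 57.3))
```

With this assumed focal length, a one-degree offset yields a separation of roughly 1 mm, which falls within the range of approximately 0.4 to 2 mm mentioned above.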

[0105] FIG. 25 illustrates an example spatial arrangement of the fields of view of the input beam 102A and output beams 110A and 110B. The beams 102A, 110A, and 110B are mechanically aimed so as to minimize the resulting "footprints" on the mirrors 14 and 12. Thus, the beams are adjacent to each other on the reflective surfaces of the mirrors 12 and 14. Further, in accordance with off-axis illumination techniques, the output beams 110A and 110B are directed at a reflective surface of the polygon mirror 12 so as to be not entirely coaxial with the input beam 102A (illustrated in FIG. 25 in an exaggerated manner).

[0106] In contrast to the implementation of FIGS. 23-25, the output beam 82 and the input beam 83 in FIG. 22 are more aligned with each other, and may be substantially coaxial. The output beam 82 and input beam 83 may at least partially overlap or share a common propagation axis, so that the output beam 82 and input beam 83 travel along substantially the same optical path (albeit in opposite directions). As the lidar system scans the output beam 82 across a field of regard, the input beam 83 may follow along with the output beam 82, so that the coaxial relationship between the two beams is maintained.

[0107] Referring again to FIG. 25, the output beams of light 110A and 110B emitted by the light source (such as a light source 122A, discussed below with reference to FIGS. 26A and 26B) are collimated optical beams with any suitable beam divergence, such as a divergence of approximately 0.1 to 3.0 milliradian (mrad). Divergence of the output beams 110A and 110B may refer to an angular measure of an increase in beam size (e.g., a beam radius or beam diameter) as the output beams 110A and 110B travel away from the lidar system. The output beams 110A and 110B may have a substantially circular cross section with a beam divergence characterized by a single divergence value. For example, the output beams 110A and 110B with a circular cross section and a divergence of 1 mrad may have a beam diameter or spot size of approximately 10 cm at a distance of 100 m from the lidar system. In some implementations, the output beams 110A and 110B may be astigmatic beams or may have a substantially elliptical cross section and may be characterized by two divergence values. As an example, the output beams 110A and 110B may have a fast axis and a slow axis, where the fast-axis divergence is greater than the slow-axis divergence. As another example, the output beams 110A and 110B may be astigmatic beams with a fast-axis divergence of 2 mrad and a slow-axis divergence of 0.5 mrad.
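
The spot-size example above follows from the small-angle approximation, as sketched in the following Python example. The function name is hypothetical, divergence is treated as a full angle, and the beam diameter at the lidar system is assumed to be negligible unless supplied.

```python
def spot_diameter_m(divergence_mrad: float, range_m: float,
                    initial_diameter_m: float = 0.0) -> float:
    """Approximate beam diameter at a given range using the small-angle
    approximation, with divergence taken as a full angle in milliradians."""
    return initial_diameter_m + divergence_mrad * 1e-3 * range_m


# Circular beam: 1 mrad at 100 m.
print(spot_diameter_m(1.0, 100.0))
# Astigmatic beam: 2 mrad fast axis and 0.5 mrad slow axis at 100 m.
print(spot_diameter_m(2.0, 100.0), spot_diameter_m(0.5, 100.0))
```

Under these assumptions the circular beam is approximately 10 cm across at 100 m, and the astigmatic beam is approximately 20 cm by 5 cm.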

[0108] The output beams 110A and 110B may be unpolarized or randomly polarized, may have no specific or fixed polarization (e.g., the polarization may vary with time), or may have a particular polarization (e.g., the output beams 110A and 110B may be linearly polarized, elliptically polarized, or circularly polarized). As an example, the light source may produce linearly polarized light, and the lidar system may include a quarter-wave plate that converts this linearly polarized light into circularly polarized light. The lidar system may transmit the circularly polarized light as the output beams 110A and 110B, and receive the input beam(s) 102A, which may be substantially or at least partially circularly polarized in the same manner as the output beams 110A and 110B (e.g., if the output beams 110A and 110B are right-hand circularly polarized, then the input beam 102A may also be right-hand circularly polarized). The input beam 102A may pass through the same quarter-wave plate (or a different quarter-wave plate), resulting in the input beam 102A being converted to linearly polarized light which is orthogonally polarized (e.g., polarized at a right angle) with respect to the linearly polarized light produced by the light source. As another example, the lidar system may employ polarization-diversity detection where two polarization components are detected separately. The output beams 110A and 110B may be linearly polarized, and the lidar system may split the input beam 102A into two polarization components (e.g., s-polarization and p-polarization) which are detected separately by two photodiodes (e.g., a balanced photoreceiver that includes two photodiodes).

[0109] The scanner 11 can scan each of the first beam of light and the second beam of light so as to define a respective field of regard approximately 60 degrees wide. Depending on the implementation, the fields of regard can have a relatively large overlap (e.g., 20 degrees, 30 degrees, 40 degrees), a relatively small overlap (e.g., one degree, two degrees, three degrees, four degrees, five degrees), or no overlap. Dynamic modifications to the fields of regard are discussed in more detail below. The overlap region may be oriented in a direction of travel of a vehicle on which the lidar system 10 is deployed.
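As a non-limiting illustration, the following Python sketch computes the combined angular extent covered by two fields of regard for a given overlap. It assumes that the two fields of regard are adjacent along the same (e.g., horizontal) dimension and that the overlap is measured along that dimension; the function name is hypothetical.

```python
def combined_field_of_regard_deg(width_a_deg: float,
                                 width_b_deg: float,
                                 overlap_deg: float) -> float:
    """Total angular extent covered by two adjacent fields of regard.

    Assumption: both fields of regard lie along the same scan
    dimension, so the combined extent is the sum of the two widths
    minus the shared (overlapping) portion.
    """
    if overlap_deg < 0 or overlap_deg > min(width_a_deg, width_b_deg):
        raise ValueError("overlap must be between 0 and the narrower width")
    return width_a_deg + width_b_deg - overlap_deg


if __name__ == "__main__":
    # Two 60-degree fields of regard with overlaps named in the description.
    for overlap in (0.0, 5.0, 20.0, 40.0):
        total = combined_field_of_regard_deg(60.0, 60.0, overlap)
        print(f"overlap {overlap:4.1f} deg -> combined {total:5.1f} deg")
```

Under this assumption, two 60-degree fields of regard span 120 degrees with no overlap and 100 degrees with a 20-degree overlap.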

[0110] III. Operation of a Lidar System

[0111] Next, FIG. 26A illustrates an example lidar system 120A in which all or some of the components of lidar sensor unit 10 can be implemented according to a single-eye configuration. The lidar system 120A may be referred to as a laser ranging system, a laser radar system, a LIDAR system, a lidar sensor, or a laser detection and ranging (LADAR or ladar) system. The lidar system 120A may include a light source 122A, a mirror 124A (referred to as overlap mirror, superposition mirror, or beam-combiner mirror), a scanner 11, a receiver 128A, and a controller 130 equipped with a memory unit 132. In some implementations, the lidar system 120A also can include one or more sensors 134 such as a temperature sensor, a moisture sensor, etc.

[0112] The scanner 11 may be referred to as a beam scanner, optical scanner, or laser scanner. The scanner 11 may be implemented as discussed above with reference to FIGS. 1-25 and include a polygon mirror 12, a planar mirror 14, and corresponding motors to drive the rotation of the polygon mirror 12 and the oscillation of the planar mirror 14.

[0113] Depending on the implementation, the controller 130 may include one or more processors, an application-specific integrated circuit (ASIC), a field-programmable gate array (FPGA), and/or other suitable circuitry. The non-transitory computer-readable memory 132 of the controller 130 can be configured to store instructions executable by the controller 130 as well as data which the controller 130 can produce based on the signals from the components of the system 120A and/or provide to these components. The memory 132 can include volatile (e.g., RAM) and/or non-volatile (e.g., flash memory, a hard disk) components. The data the controller 130 generates during operation and stores in the memory 132 can include pixel data and other results of analyzing characteristics of the target 160, as well as alarm data (e.g., readings from the sensors 134 that exceed certain predefined thresholds). The configuration data the controller 130 can retrieve from the memory 132 during operation can include definitions of various scan patterns, for example. Alternatively or additionally to the memory 132, the controller 130 can be configured to access memory disposed remotely relative to the lidar system 120A in the vehicle controller (see below) or even memory disposed remotely relative to the vehicle, such as on a network server. In addition to collecting data from receiver 128A, the controller 130 can provide control signals to and, in some implementations, receive diagnostics data from, the light source 122A, the one or more sensors 134, and the scanner 11 via communication links 136.

[0114] In some implementations, the light source 122A can be an output collimator similar to the output collimator(s) 77 discussed above, e.g., a lens rigidly coupled to an end of a fiber-optic cable, with the other end of the fiber-optic cable coupled to a laser disposed remotely relative to the scanner 11. Examples of such configurations are discussed in more detail below with reference to FIGS. 32 and 33. In other implementations, the light source 122A can be an assembly that includes a laser.

[0115] The light source 122A thus may include, or be optically coupled to, a laser which emits light having a particular operating wavelength in the infrared, visible, or ultraviolet portions of the electromagnetic spectrum. As a more specific example, the light source 122A may include a laser with an operating wavelength between approximately 1.2 μm and 1.7 μm.

[0116] In operation, the light source 122A emits an output beam of light 150A which may be continuous-wave, pulsed, or modulated in any suitable manner for a given application. The output beam of light 150A is directed downrange toward a remote target 160 located a distance D from the lidar system 120A and at least partially contained within a field of regard of the system 120A. Depending on the scenario and/or the implementation of the lidar system 120A, the distance D can be between 1 m and 1 km, for example.

[0117] Once the output beam 150A reaches the downrange target 160, the target 160 may scatter or, in some cases, reflect at least a portion of light from the output beam 150A, and some of the scattered or reflected light may return toward the lidar system 120A. In the example of FIG. 26A, the scattered or reflected light is represented by input beam 164A, which passes through the scanner 11 to the mirror 124A. The mirror 124A in turn directs the input beam 164A to the receiver 128A. The input beam 164A may contain only a relatively small fraction of the light from the output beam 150A. For example, the ratio of average power, peak power, or pulse energy of the input beam 164A to average power, peak power, or pulse energy of the output beam 150A may be approximately 10⁻¹, 10⁻², 10⁻³, 10⁻⁴, 10⁻⁵, 10⁻⁶, 10⁻⁷, 10⁻⁸, 10⁻⁹, 10⁻¹⁰, 10⁻¹¹, or 10⁻¹². As another example, if a pulse of the output beam 150A has a pulse energy of 1 microjoule (μJ), then a corresponding pulse of the input beam 164A may have a pulse energy of approximately 10 nanojoules (nJ), 1 nJ, 100 picojoules (pJ), 10 pJ, 1 pJ, 100 femtojoules (fJ), 10 fJ, 1 fJ, 100 attojoules (aJ), 10 aJ, or 1 aJ.
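As a non-limiting illustration of the pulse-energy example above, the following Python sketch scales an output pulse energy by an input-to-output ratio. The function name is hypothetical, and the sketch treats the ratio as a single lumped factor rather than modeling target reflectivity, range, or receiver aperture.

```python
def return_pulse_energy_j(output_energy_j: float, ratio: float) -> float:
    """Energy (joules) of the returned pulse for a given energy ratio.

    Assumption: `ratio` is the lumped input-to-output pulse-energy
    ratio (0 < ratio <= 1); no physical link budget is modeled here.
    """
    if output_energy_j < 0:
        raise ValueError("output energy must be non-negative")
    if not 0 < ratio <= 1:
        raise ValueError("ratio must be in the interval (0, 1]")
    return output_energy_j * ratio


if __name__ == "__main__":
    output_energy_j = 1e-6  # 1 microjoule output pulse
    for exponent in (2, 5, 9, 12):
        energy_j = return_pulse_energy_j(output_energy_j, 10.0 ** -exponent)
        print(f"ratio 1e-{exponent}: {energy_j:.1e} J")
```

For a 1 μJ output pulse, a ratio of 10⁻² corresponds to a return pulse of approximately 10 nJ, and a ratio of 10⁻¹² corresponds to approximately 1 aJ.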

[0118] The output beam 150A may be referred to as a laser beam, light beam, optical beam, emitted beam, or just beam; and the input beam 164A may be referred to as a return beam, received beam, return light, received light, input light, scattered light, or reflected light. As used herein, scattered light may refer to light that is scattered or reflected by the target 160. The input beam 164A may include light from the output beam 150A that is scattered by the target 160, light from the output beam 150A that is reflected by the target 160, or a combination of scattered and reflected light from the target 160. The input beam 164A also can include "passive" light signals, or light from various other sources and of various wavelengths scattered by the target 160.

[0119] The operating wavelength of a lidar system 120A may lie, for example, in the infrared, visible, or ultraviolet portions of the electromagnetic spectrum. The Sun also produces light in these wavelength ranges, and thus sunlight can act as background noise which can obscure signal light detected by the lidar system 120A. This solar background noise can result in false-positive detections or can otherwise corrupt measurements of the lidar system 120A, especially when the receiver 128A includes SPAD detectors (which can be highly sensitive).

[0120] Generally speaking, the light from the Sun that passes through the Earth's atmosphere and reaches a terrestrial-based lidar system such as the system 120A can establish an optical background noise floor for this system. Thus, in order for a signal from the lidar system 120A to be detectable, the signal must rise above the background noise floor. It is generally possible to increase the signal-to-noise ratio (SNR) of the lidar system 120A by raising the power level of the output beam 150A, but in some situations it may be desirable to keep the power level of the output beam 150A relatively low. For example, increasing transmit power levels of the output beam 150A can result in the lidar system 120A not being eye-safe.

[0121] In some implementations, the lidar system 120A operates at one or more wavelengths between approximately 1400 nm and approximately 1600 nm. For example, the light source 122A may produce light at approximately 1550 nm.

[0122] In some implementations, the lidar system 120A operates at frequencies at which atmospheric absorption is relatively low. For example, the lidar system 120A can operate at wavelengths in the approximate ranges from 980 nm to 1110 nm or from 1165 nm to 1400 nm.

[0123] In other implementations, the lidar system 120A operates at frequencies at which atmospheric absorption is high. For example, the lidar system 120A can operate at wavelengths in the approximate ranges from 930 nm to 980 nm, from 1100 nm to 1165 nm, or from 1400 nm to 1460 nm.

[0124] According to some implementations, the lidar system 120A can include an eye-safe laser, or the lidar system 120A can be classified as an eye-safe laser system or laser product. An eye-safe laser, laser system, or laser product may refer to a system with an emission wavelength, average power, peak power, peak intensity, pulse energy, beam size, beam divergence, exposure time, or scanned output beam such that emitted light from the system presents little or no possibility of causing damage to a person's eyes. For example, the light source 122A or the lidar system 120A may be classified as a Class 1 laser product (as specified by the 60825-1 standard of the International Electrotechnical Commission (IEC)) or a Class I laser product (as specified by Title 21, Section 1040.10 of the United States Code of Federal Regulations (CFR)) that is safe under all conditions of normal use. In some implementations, the lidar system 120A may be classified as an eye-safe laser product (e.g., with a Class 1 or Class I classification) configured to operate at any suitable wavelength between approximately 1400 nm and approximately 2100 nm. In some implementations, the light source 122A may include a laser with an operating wavelength between approximately 1400 nm and approximately 1600 nm, and the lidar system 120A may be operated in an eye-safe manner. In some implementations, the light source 122A or the lidar system 120A may be an eye-safe laser product that includes a scanned laser with an operating wavelength between approximately 1530 nm and approximately 1560 nm. In some implementations, the lidar system 120A may be a Class 1 or Class I laser product that includes a fiber laser or solid-state laser with an operating wavelength between approximately 1400 nm and approximately 1600 nm.

[0125] The receiver 128A may receive or detect photons from the input beam 164A and generate one or more representative signals. For example, the receiver 128A may generate an output electrical signal 145A that is representative of the input beam 164A. The receiver 128A may send the electrical signal 145A to the controller 130. The controller 130 can be configured to analyze one or more characteristics of the electrical signal 145A to determine one or more characteristics of the target 160, such as its distance downrange from the lidar system 120A. More particularly, the controller 130 may analyze the time of flight or phase modulation for the beam of light 150A transmitted by the light source 122A. If the lidar system 120A measures a time of flight of T (e.g., T represents a round-trip time of flight for an emitted pulse of light to travel from the lidar system 120A to the target 160 and back to the lidar system 120A), then the distance D from the target 160 to the lidar system 120A may be expressed as D=cT/2, where c is the speed of light (approximately 3.0×10⁸ m/s).
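As a non-limiting illustration of the relationship D=cT/2, the following Python sketch converts a round-trip time of flight to a distance and back. It uses the defined vacuum value of the speed of light and neglects the refractive index of air, which is an assumption of this sketch; the function and constant names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value; air index neglected


def range_from_time_of_flight(round_trip_s: float) -> float:
    """Distance D (meters) to the target from round-trip time T: D = c*T/2."""
    if round_trip_s < 0:
        raise ValueError("time of flight must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def time_of_flight_for_range(distance_m: float) -> float:
    """Round-trip time T (seconds) for a target at distance D: T = 2*D/c."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A 1-microsecond round trip corresponds to roughly 150 m.
    print(f"T = 1 us -> D = {range_from_time_of_flight(1e-6):.1f} m")
    # Round-trip times for targets at 1 m and 1 km.
    for distance_m in (1.0, 1000.0):
        t_ns = time_of_flight_for_range(distance_m) * 1e9
        print(f"D = {distance_m:6.1f} m -> T = {t_ns:.2f} ns")
```

Under these assumptions, a measured round-trip time of 1 microsecond corresponds to a target approximately 150 m downrange, and a target 1 km away produces a round-trip time of approximately 6.67 microseconds.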