Square-root Multi-state Constraint Kalman Filter For Vision-aided Inertial Navigation System

Roumeliotis; Stergios I. ; et al.

U.S. patent application number 16/316718 was filed with the patent office on 2019-05-23 for square-root multi-state constraint kalman filter for vision-aided inertial navigation system. The applicant listed for this patent is Regents of the University of Minnesota. Invention is credited to Stergios I. Roumeliotis, Kejian J. Wu.

| Application Number | 20190154449 16/316718 |

| Document ID | / |

| Family ID | 60451162 |

| Filed Date | 2019-05-23 |

View All Diagrams

| United States Patent Application | 20190154449 |

| Kind Code | A1 |

| Roumeliotis; Stergios I. ; et al. | May 23, 2019 |

SQUARE-ROOT MULTI-STATE CONSTRAINT KALMAN FILTER FOR VISION-AIDED INERTIAL NAVIGATION SYSTEM

Abstract

A vision-aided inertial navigation system (VINS) implements a square-root multi- state constraint Kalman filter (SR-MSCKF) for navigation. In one example, a processor of a VINS receives image data and motion data for a plurality of poses of a frame of reference along a trajectory. The processor executes an Extended Kalman Filter (EKF)- based estimator to compute estimates for a position and orientation for each of the plurality of poses of the frame of reference along the trajectory. For features observed from multiple poses along the trajectory, the estimator computes constraints that geometrically relate the multiple poses of the respective feature. Using the motion data and the computed constraints, the estimator computes state estimates for the position and orientation of the frame of reference. Further, the estimator determines uncertainty data for the state estimates and maintains the uncertainty data as a square root factor of a covariance matrix.

| Inventors: | Roumeliotis; Stergios I.; (St Paul, MN) ; Wu; Kejian J.; (Minneapolis, MN) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 60451162 | ||||||||||

| Appl. No.: | 16/316718 | ||||||||||

| Filed: | July 21, 2017 | ||||||||||

| PCT Filed: | July 21, 2017 | ||||||||||

| PCT NO: | PCT/US2017/043280 | ||||||||||

| 371 Date: | January 10, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 62365803 | Jul 22, 2016 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06T 2207/30244 20130101; G06T 2207/30241 20130101; G01S 5/16 20130101; G01C 21/165 20130101; G06F 17/16 20130101; G06T 7/70 20170101; G06T 7/277 20170101 |

| International Class: | G01C 21/16 20060101 G01C021/16; G06T 7/277 20060101 G06T007/277; G06T 7/70 20060101 G06T007/70; G06F 17/16 20060101 G06F017/16 |

Goverment Interests

GOVERNMENT RIGHTS

[0001] This invention was made with government support under Air Force Office of Scientific Research and Multidisciplinary Research Program of the University Research Initiative grant no. FA9550-10-1-0567. The government has certain rights in the invention.

Claims

1. A method comprising: receiving, from at least one image source, image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, and wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; receiving, from a motion sensor, motion data of the frame of reference in the environment for the period of time; and computing state estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory by executing an Extended Kalman Filter (EKF)-based estimator, wherein computing the state estimates comprises: for one or more of the features observed from multiple poses along the trajectory, computing one or more constraints that geometrically relate the multiple poses from which the corresponding feature was observed; determining, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determining uncertainty data for the state estimates, wherein the uncertainty data comprises a square root factor of a covariance matrix.

2. The method of claim 1, wherein determining the uncertainty data for the state estimates comprises determining a Cholesky factor of the covariance matrix.

3. The method of claim 1, wherein executing the EFK-based estimator further comprises: maintaining a state vector of state information for the positions and orientations of the VINS and for positions with the environment for features observed within the environment; and excluding, from the state vector, state information representing estimates for positions within the environment for the features that were each observed from the multiple poses and for which the one or more constraints were computed.

4. The method of claim 1, further comprising excluding, from the covariance matrix from which the square root factor is determined, the features that were each observed from the multiple poses and for which the one or more constraints were computed.

5. The method of claim 1, wherein executing the EFK-based estimator further comprises: determining, from the image data, feature measurements corresponding to the features observed from the poses along the trajectory; grouping the feature measurements according to the features observed within the image data; and for the one or more of the features observed from multiple poses along the trajectory, computing, based on the corresponding group of feature measurements for the feature, the one or more constraints that geometrically relate the multiple poses from which the feature was observed.

6. The method of claim 1, further comprising outputting, by at least one of a transmitter or a display, a navigation user interface based on the computed state estimates.

7. The method of claim 1, wherein determining the uncertainty data for the state estimates comprises determining a lower-triangular square-root factor of the covariance matrix.

8-12. (canceled)

13. The method of claim 1, further comprising outputting, based on the computed state estimates for the at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory, a map of the environment.

14. The method of claim 1, further comprising outputting, based on the computed state estimates for the at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory, instructions for navigating the environment.

15. A vision-aided inertial navigation system (VINS) comprising: at least one image source configured to generate image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, and wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; a motion sensor configured to generate motion data of the frame of reference in the environment for the period of time; and a processor communicatively coupled to the at least one image source and the motion sensor, the processor configured to: receive, from the at least one image source, the image data for the plurality of poses of the frame of reference along the trajectory within the environment over the period of time; receive, from the motion sensor, the motion data of the frame of reference in the environment for the period of time; and compute state estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory by executing an Extended Kalman Filter (EKF)-based estimator, wherein, to compute the state estimates, the EKF-based estimator is configured to: for one or more of the features observed from multiple poses along the trajectory, compute one or more constraints that geometrically relate the multiple poses from which the corresponding feature was observed; determine, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determine uncertainty data for the state estimates, wherein the uncertainty data comprises a square root factor of a covariance matrix.

16. The VINS of claim 15, wherein to determine the uncertainty data for the state estimates, the EFK-based estimator is further configured to determine a Cholesky factor of the covariance matrix.

17. The VINS of claim 15, wherein the EFK-based estimator is further configured to: maintain a state vector of state information for the positions and orientations of the VINS and for positions with the environment for features observed within the environment; and exclude, from the state vector, state information representing estimates for positions within the environment for the features that were each observed from the multiple poses and for which the one or more constraints were computed.

18. The VINS of claim 15, wherein the EFK-based estimator is further configured to exclude, from the covariance matrix from which the square root factor is determined, the features that were each observed from the multiple poses and for which the one or more constraints were computed.

19. The VINS of claim 15, wherein the EFK-based estimator is further configured to: determine, from the image data, feature measurements corresponding to the features observed from the poses along the trajectory; group the feature measurements according to the features observed within the image data; and for the one or more of the features observed from multiple poses along the trajectory, compute, based on the corresponding group of feature measurements for the feature, the one or more constraints that geometrically relate the multiple poses from which the feature was observed.

20. The VINS of claim 15, further comprising at least one of a transmitter or a display configured to output a navigation user interface based on the computed state estimates.

21. The VINS of claim 15, wherein, to determine the uncertainty data for the state estimates, the EKF-based estimator is configured to determine a lower-triangular square-root factor of the covariance matrix.

22. The VINS of claim 15, wherein the VINS comprises one of a robot, a vehicle, a tablet, a mobile device or a wearable computing device.

23. The VINS of claim 15, wherein the image source includes a camera.

24. The VINS of claim 1153, wherein the motion sensor includes an inertial measurement unit (IMU).

25. A non-transitory, computer-readable medium comprising instructions, that, when executed, cause a processor of a vision-aided inertial navigation system (VINS) to: receive, from at least one image source, image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, and wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; receiving, from a motion sensor, motion data of the frame of reference in the environment for the period of time; and compute state estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory by executing an Extended Kalman Filter (EKF)-based estimator, wherein, to compute the state estimates, the EKF-based estimator is configured to: for one or more of the features observed from multiple poses along the trajectory, compute one or more constraints that geometrically relate the multiple poses from which the corresponding feature was observed; determine, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determine uncertainty data for the state estimates, wherein the uncertainty data comprises a square root factor of a covariance matrix.

Description

TECHNICAL FIELD

[0002] This disclosure relates to navigation and, more particularly, to vision-aided inertial navigation.

BACKGROUND

[0003] In general, a Vision-aided Inertial Navigation System (VINS) fuses data from a camera and an Inertial Measurement Unit (IMU) to track the six-degrees-of-freedom (d.o.f) position and orientation (pose) of a sensing platform through an environment. In this way, the VINS combines complementary sensing capabilities. For example, an IMU can accurately track dynamic motions over short time durations, while visual data can be used to estimate the pose displacement (up to scale) between consecutive views. For several reasons, VINS has gained popularity to address GPS-denied navigation. During the past decade, VINS have been successfully applied to robots, spacecraft, automotive, and personal localization (e.g., by use of smartphones or laptops), demonstrating real-time performance.

SUMMARY

[0004] In general, this disclosure describes techniques for implementing a square-root multi-state constraint Kalman filter (SR-MSCKF) for vision-aided inertial navigation. In one example, at least one image source of a VINS produces image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time. In some examples, the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory. In some examples, the at least one image source observes one or more of the features at multiple ones of the poses of the frame of reference along the trajectory. Further, a motion sensor of the VINS provides motion data of the frame of reference in the environment for the period of time. The VINS further includes a hardware-based processor communicatively coupled to the image source and communicatively coupled to the motion sensor.

[0005] In accordance with the techniques of the disclosure, the processor computes estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory. In one example, the processor executes an Extended Kalman Filter (EKF)-based estimator. In this example, the EKF-based estimator computes, for the one or more of the features observed from multiple poses along the trajectory, one or more constraints that geometrically relate the multiple poses from which the respective feature was observed. The EKF-based estimator further determines, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory. Further, the EKF-based estimator determines uncertainty data for the state estimates and maintains the uncertainty data as a square root factor of a covariance matrix.

[0006] In one example, the techniques of the disclosure describe a VINS comprising: at least one image source to produce image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; a motion sensor configured to provide motion data of the frame of reference in the environment for the period of time; and a hardware-based processor communicatively coupled to the image source and communicatively coupled to the motion sensor, the processor configured to compute estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory, wherein the processor executes an Extended Kalman Filter (EKF)-based estimator configured to: for one or more of the features observed from multiple poses along the trajectory, compute one or more constraints that geometrically relate the multiple poses from which the respective feature was observed; determine, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determine uncertainty data for the state estimates, wherein the EKF-based estimator maintains the uncertainty data to comprise a square root factor of a covariance matrix.

[0007] In another example, the techniques of the disclosure describe a method comprising: receiving, with a processor of a VINS and from at least one image source of the VINS, image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, and wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; receiving, with the processor and from a motion sensor communicatively coupled to the processor, motion data of the frame of reference in the environment for the period of time; computing, with the processor, state estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory by executing an Extended Kalman Filter (EKF)-based estimator, wherein computing the state estimates comprises: for one or more of the features observed from multiple poses along the trajectory, computing one or more constraints that geometrically relate the multiple poses from which the respective feature was observed; determining, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determining uncertainty data for the state estimates, wherein the uncertainty data comprises a square root factor of a covariance matrix.

[0008] In another example, the techniques of the disclosure describe a non-transitory computer-readable medium comprising instructions that, when executed, cause a processor of a VINS to: receive, from at least one image source of the VINS, image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, and wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; receive, from a motion sensor communicatively coupled to the processor, motion data of the frame of reference in the environment for the period of time; compute, state estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory by executing an Extended Kalman Filter (EKF)-based estimator, wherein, to compute the state estimates, the processor is further configured to: for one or more of the features observed from multiple poses along the trajectory, compute one or more constraints that geometrically relate the multiple poses from which the respective feature was observed; determine, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determine uncertainty data for the state estimates, wherein the uncertainty data comprises a square root factor of a covariance matrix.

[0009] The details of one or more embodiments of the techniques are set forth in the accompanying drawings and the description below.

BRIEF DESCRIPTION OF DRAWINGS

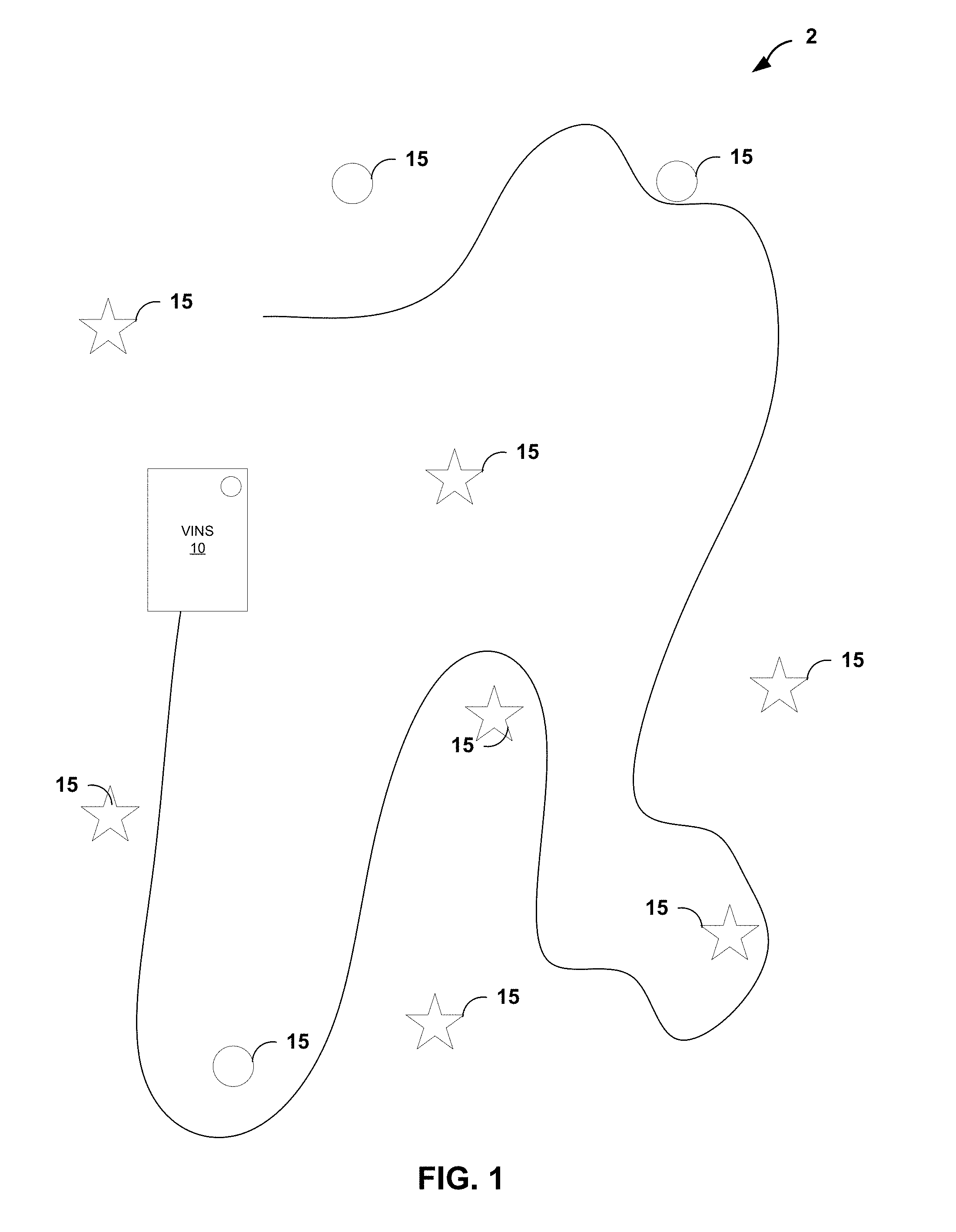

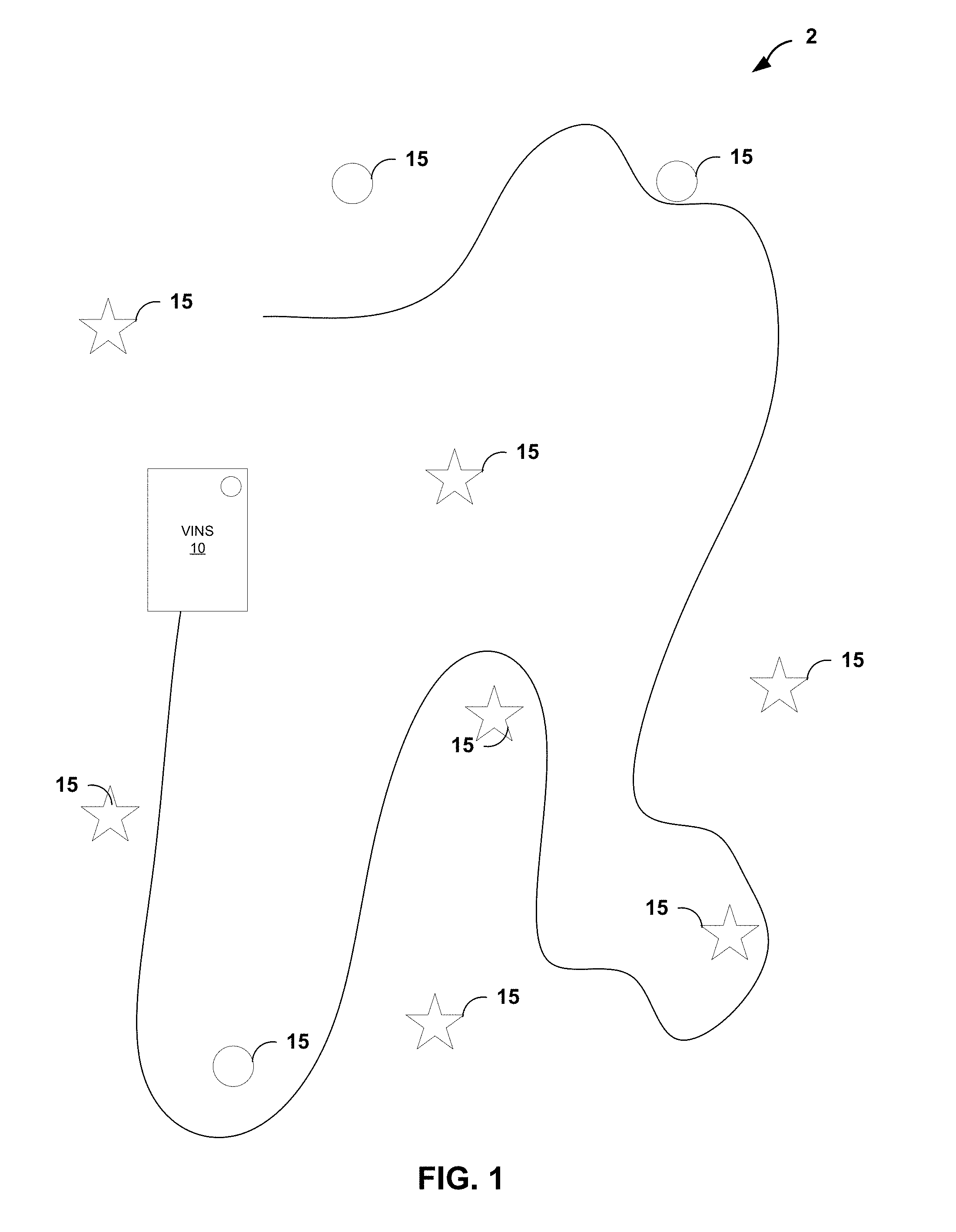

[0010] FIG. 1 is a block diagram illustrating a VINS that navigates an environment having a plurality of features using one or more image sources and inertial measurement unit (IMUs), in accordance with the techniques of the disclosure.

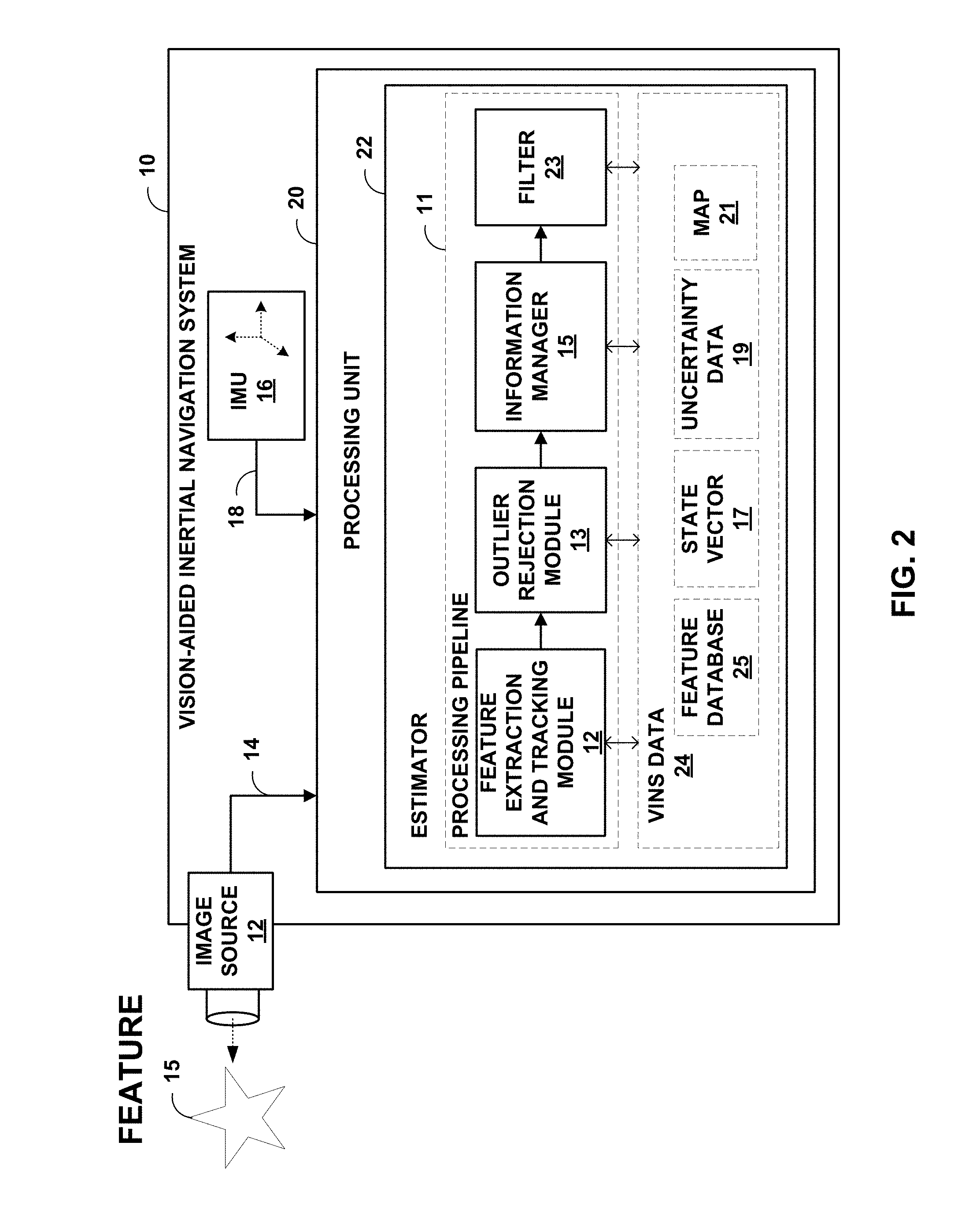

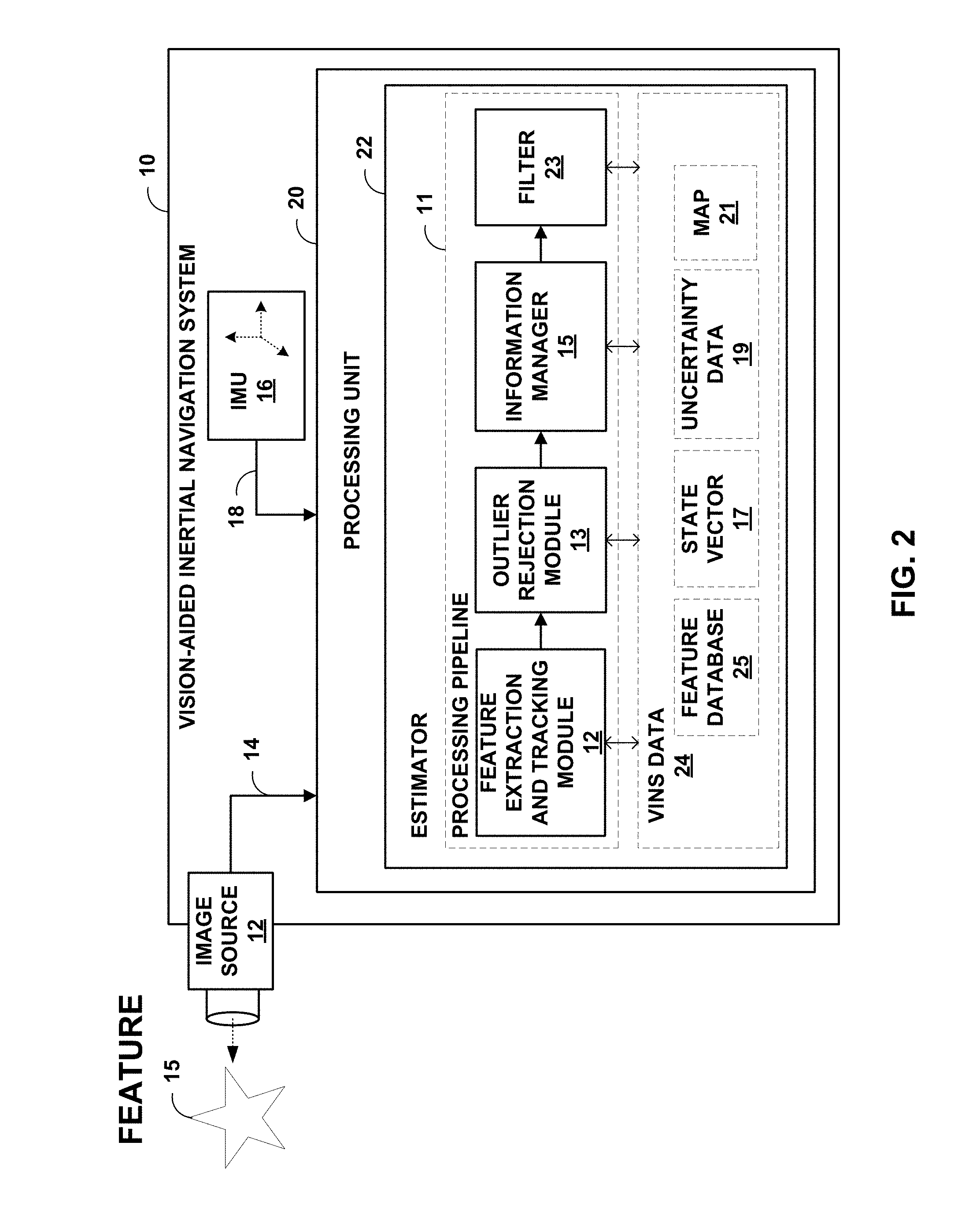

[0011] FIG. 2 is block diagram illustrating an example implementation of the VINS of FIG. 1 in further detail.

[0012] FIG. 3 is a flowchart illustrating an example operation of an estimator, in accordance with the techniques of the disclosure.

[0013] FIG. 4 is an illustration depicting a detailed example of various devices that may be configured to implement some embodiments in accordance with the current disclosure.

[0014] Like reference characters refer to like elements throughout the figures and description.

DETAILED DESCRIPTION

[0015] Techniques are described for implementing an estimator for vision-aided inertial navigation systems (VINS) based on a forward Extended Kalman Filter for localization. In particular, a square root (SQRT) form of a Multi-State Constraint Kalman Filter (MSCKF) is described.

[0016] The techniques described herein may provide increased numerical stability, e.g., can handle double the condition number of the covariance matrix as compared to the Multi-State Constraint Kalman Filter. This may be advantageous for may applications, such as for stereoscopic applications in which the eigenvalues are close to zero. Moreover, in some situations, the SQRT MSCKF estimator described herein may achieve increased performance over the SQRT form of an Inverse Sliding Window Filter estimator for VINS.

[0017] FIG. 1 is a block diagram illustrating a VINS 10 that navigates an environment 2 having a plurality of features 15 using one or more image sources and inertial measurement unit (IMUs). That is, VINS 10 is one example of a device that utilizes a 3D map of environment 2 to determine the position and orientation of VINS 10 as the VINS traverses the environment, where the map may be constructed in real-time by the VINS or previously constructed. Environment 2 may, for example, represent an environment where conventional GPS-signals are unavailable for navigation, such as on the moon or a different planet or even underwater. As additional examples, environment 2 may represent an indoor environment such as the interior of a building, such as a convention center, shopping mall, sporting arena, business office and the like. Features 15, also referred to as landmarks, represent objects visible within environment 2, such as rocks, trees, signs, walls, stairs, chairs, tables, and the like. Features 15 may be moving or stationary objects within environment 2.

[0018] VINS 10 represents any mobile device that implements the techniques described herein. VINS 10 may be, for example, a robot, mobile sensing platform, a mobile phone, a laptop, a tablet computer, a vehicle, a wearable device such as smart glasses and the like. The increasing range of sensing capabilities offered by modern mobile devices, such as cell phones and tables, as well as their increasing computational resources make them ideal for applying VINS. In some implementations, the techniques described herein may be used within environments having GPS or similar signals and may provide supplemental localization and mapping information.

[0019] As one example, VINS 10 may be an autonomous robot although, as discussed above, VINS 10 may take the form of other devices that implement the techniques described herein. While traversing environment 2, the image sources of VINS 10 produce image data at discrete time instances along the trajectory within the three-dimensional (3D) environment, where the image data captures features 15 within the 3D environment at each of the time instances. In addition, IMUs of VINS 10 produces IMU data indicative of a dynamic motion of VINS 10.

[0020] As described in detail herein, VINS 10 includes a hardware-based computing platform that implements an estimator that fuses the image data and the IMU data to perform localization of VINS 10 within environment 10. In general, the estimator process image data 14 and IMU data 18 to estimate the 3D IMU pose and velocity together with the time-varying IMU biases, camera rolling shutter and IMU-camera time synchronization and to produce, based on the captured image data, estimates for poses of VINS 10 along the trajectory and, in some cases, a position and orientation within an overall map of the environment. Utilizing these techniques, VINS 10 may navigate environment 2 and, in some cases, may construct or augment the mapping information for the environment including the positions of features 15. In one example, the map may be constructed using cooperative mapping techniques.

[0021] The estimator of VINS 10 may operate according to different types of estimators. For example, in an example implementation, VINS 10 implements an inverse, sliding-window filter (ISWF). In other examples, VINS 10 implements a sliding-window Iterative Kalman Smoother (IKS).

[0022] In one example, as described herein, the estimator implements a square root (SQRT) form of a Multi-State Constraint Kalman Filter (MSCKF) for localization within environment 10. In one example implementation, as compared to the regular MSCKF, which maintains the covariance matrix, this square-root variant maintains the Cholesky factor of the covariance matrix. This way, the SR-MSCKF algorithm may achieve higher numerical stability than the MSCKF. In linear algebra, the Cholesky factorization is a decomposition of a symmetric positive definite matrix (as is the case of the covariance matrix here) into the product of a lower-triangular matrix (the Cholesky factor) and its transpose. In our context, the use of this factor allows better numerical accuracy of the algorithm as compared to maintaining the covariance matrix itself.

[0023] FIG. 2 is block diagram illustrating an example implementation of VINS 10 of FIG. 1 in further detail. Image source 12 of VINS 10 images an environment in which VINS 10 operates so as to produce image data 14. That is, image source 12 generates image data 14 that captures a number of features visible in the environment surrounding VINS 10. Image source 12 may be, for example, one or more cameras that capture 2D or 3D images, a laser scanner or other optical device that produces a stream of 1D image data, a depth sensor that produces image data indicative of ranges for features within the environment, a stereo vision system or a vision system having multiple cameras to produce 3D information, a Doppler radar and the like. In this way, image data 14 provides exteroceptive information as to the external environment in which VINS 10 operates. Moreover, image source 12 may capture and produce image data 14 at time intervals in accordance one or more clocks associated with the image source. In other words, image source 12 may produce image data 14 at each of a first set of time instances along a trajectory within the three-dimensional (3D) environment, wherein the image data captures features 15 within the 3D environment at each of the first set of time instances.

[0024] IMU 16 produces IMU data 18 indicative of a dynamic motion of VINS 10. IMU 16 may, for example, detect a current acceleration using one or more accelerometers as VINS 10 is translated, and detect the rotational velocity (i.e., the rate of change in rotational attributes like pitch, roll and yaw) using one or more gyroscopes as VINS 10 is rotated. IMU 14 produces IMU data 18 to specify the detected motion. In this way, IMU data 18 provides proprioceptive information as to the VINS 10 own perception of its movement and orientation within the environment. Moreover, IMU 16 may produce IMU data 18 at time intervals in accordance a clock associated with the IMU. In this way, IMU16 produces IMU data 18 for VINS 10 along the trajectory at a second set of time instances, wherein the IMU data indicates a motion of the VINS along the trajectory. In many cases, IMU 16 may produce IMU data 18 at much faster time intervals than the time intervals at which image source 12 produces image data 14. Moreover, in some cases the time instances for image source 12 and IMU 16 may not be precisely aligned such that a time offset exists between the measurements produced, and such time offset may vary over time. In such cases, VINS 10 may compensate and correct for any misalignment.

[0025] In general, estimator 22 fuses image data 14 and IMU data 18 to determine a position and orientation of VINS 10 as well as positions of features 15 as the VINS traverses environment 2. That is, estimator 22 of processing unit 20 process image data 14 and IMU data 18 to compute state estimates for the various degrees of freedom of VINS 10 and, from the state estimates, computes position, orientation, speed, locations of observable features, a map to be used for localization, an odometry, or other higher order derivative information represented by VINS data 24. Processing unit 20 may, for example, comprise a hardware-based computing platform having one or more processors that execute software instructions and/or application-specific hardware for implementing the techniques described herein.

[0026] In the example of FIG. 2, estimator 22 comprises a processing pipeline 11 for measurements from image source 12 and IMU 16. In this example, processing pipeline 11 includes feature extraction and tracking module 12, outlier rejection module 13, information manager 15 and filter 23.

[0027] Feature extraction and tracking module 12 extracts features 15 from image data 14 acquired by image source 12 and stores information describing the features in feature database 25. Feature extraction and tracking module 12 may, for example, perform corner and edge detection to identify features and track features 15 across images using, for example, the Kanade-Lucas-Tomasi (KLT) techniques.

[0028] Outlier rejection module 13 provides robust outlier rejection of measurements from image source 12 and IMU 16. For example, outlier rejection module may apply a Mahalanobis distance tests to the feature measurements to identify and reject outliers. As one example, outlier rejection module 13 may apply a 2-Point Random sample consensus (RANSAC) technique.

[0029] Information manager 15 selects features from feature database 15 and feeds measurements for the selected features to filer 23, which may perform simultaneous localization of the position and orientation for VINS 10 within environment 2 by iteratively optimizing over measurements throughout trajectory, which can be computationally extensive. As described herein, estimator 22 implements filter 23 that iteratively updates predicted state estimates over a bounded-size sliding window of state estimates for poses of VINS 10 and positions of features 15 in real-time as new image data 14 and IMU data 18 are obtained. That is, by implementing the filtering approach, estimator 22 of VINS 10 marginalizes out past state estimates and measurements through the sliding window as VINS 10 traverses environment 2 for simultaneous localization and mapping (SLAM).

[0030] In one example implementation, filter 23 of estimator 22 recursively operates on the streams of image data 14 and IMU data 18 to compute a sliding window of predicted estimates for the state variables maintained within state vector 17 along with uncertainty data 19 representing the respective uncertainties in the form of one or more uncertainty matrices, which may take the form of covariance matrices for an extended Kalman filter (EKF). For example, at any time instant, the EKF state 17 vector comprises the evolving IMU state and a history of up to Nmax past poses of the camera state vector 17 and may take the form of:

x=[x.sub.1x.sub.l.sub.k+n-1 . . . x.sub.l.sub.k]

where x.sub.l denotes the current pose, and x.sub.l.sub.i, for I=k+n-1, . . . , k are the IMU poses in the sliding window, corresponding to the time instants of the last n camera measurements. The current robot pose may be defined as:

x.sub.l=[.sup.lq.sub.G.sup.T .sup.Gv.sub.l.sup.T .sup.Gp.sub.l.sup.T b.sub..alpha..sup.T b.sub.g.sup.T .lamda..sub.d .lamda..sub.T].sup.T

where .sup.lq.sub.G is the quaternion representation of the orientation of {G} in the IMU's frame of reference {I}, .sup.Gv.sub.l and .sup.Gp.sub.l are the velocity and position of {I} in {G} respectively, while b.sub.a and b.sub.g correspond to gyroscope and accelerometer biases.

[0031] Estimator 22 may implement filter 23 such that uncertainty data 19 takes the form of a matrix that contains estimates of the uncertainty of each predicted state estimate in state vector 17 as well as a correlation between uncertainties. When a subsequent measurement is observed from either image data 14 or IMU data 18, filter 23 updates the sliding window of predicted state estimates with state vector 17 and the uncertainty data 19. In general, estimator 22 operates in real-time using the present input measurements of image data 14 and IMU data 18 and the previously calculated state estimates and its uncertainty matrix. In general, when new image data 14 or IMU data 18 is received, filter 23 projects the measurements as the data arrives onto the state estimates within state vector 17 to re-compute the predicted states and to update respective uncertainty data 19 for each state estimate. Any difference between the predicted state estimates as computed by estimator 22 and the actual feature measurements is referred to as a residual.

[0032] In some examples, estimator 22 iteratively processes measurements from image data 14 and IMU data 18 to update estimates only keyframes (key robot/device poses) and key landmarks while also exploiting information (e.g., visual observations and odometry measurements) available to the non-keyframes along the trajectory. In such example implementations, filter 23 projects new measurements onto the keyframes, by generating consistent pose (position and orientation) constraints between keyframes. As used herein, the term keyframes refers to the individual poses of the VINS 10 for which position and orientation of the VINS are to be estimated. In contrast, the term non-keyframes refers to intermediate poses between keyframes and for which, in some examples, complete state estimates of the VINS are not computed. In these example implementations, information from non-keyframes, acquired between keyframes, is not discarded. Instead, this information is projected on to estimates in the state vector associated with the keyframes, in order to generate tight constraints between the keyframes. For example, information from a non-keyframe may be projected onto a preceding keyframe to compute relative position and orientation constraints between the preceding keyframe and the non-keyframe.

[0033] Estimator 22 processes inertial and visual measurements to compute, based on the image data and the IMU data, state estimates for at least a position and orientation of VINS 10 for a plurality of poses of the VINS along the trajectory. That is, estimator 22 process image data 14 and IMU data 18 to update within state vector 17 estimates for the 3D IMU pose and velocity together with the time-varying IMU biases so as to determining the position and orientation of estimator 22 within the environment represented by map 21, where the map may be initially constructed using the cooperative mapping information described herein. Estimator 22 may, in accordance with the techniques described herein, apply estimation techniques that compute state estimates for 3D poses of IMU 16 at each of the first set of time instances associated with capture of the IMU data and 3D poses of image source 12 at each of the second set of time instances associated with capture of the image data along the trajectory.

[0034] In this example implementation, VINS 10 provides two sources of information: motion information (IMU data 18) from an IMU 14, and image data 14 (e.g., feature observations) from image source 12. Estimator 22 may classify the features observations into two main categories: simultaneous localization and mapping (SLAM) features for which estimates are included and updated within a complex system state vector 17 maintained by estimator 22, and multi-state constraint Kalman filter (MSCKF) features for which the estimator has determined to exclude corresponding estimates in the state vector but instead used the features to generate constraints that geometrically constrain the states for the poses of VINS 10 from which the MSCKF feature was observed. That is, rather than maintain state estimates for positions of each observed feature 15 within its internal state vector, the estimator may group the images per feature and elect to exclude state estimates for one or more of those features (i.e., MSCKF features) from its state vector that were observed from multiple poses along the trajectory. For these features excluded from the state vector, referred to as MSCKF features, estimator 22 computes geometric constraints that constrain state estimates for other poses within the sliding window state vector and that are used to compute state updates for those state estimates within the state vector. In this way, MSCKF features relate and constrain estimated poses within the sliding window. They require less computations than SLAM features since their feature states are not directly estimated.

[0035] In one example, as described herein, the estimator implements a square root (SQRT) form of a Multi-State Constraint Kalman Filter (MSCKF) for localization within environment 10. The estimator may, for example, exclude from the state vector state information representing estimates for positions within the environment for the features that were each observed from the multiple poses and for which the one or more constraints were computed. Moreover, the covariance matrix, from which the square root factor is determined, excludes data for the features that were each observed from the multiple poses and for which the one or more constraints were computed.

[0036] As mentioned above, by maintaining the Cholesky factor, the techniques described herein achieve better numerical accuracy as compared to maintaining the covariance matrix itself. This may provide a significant advantage over other estimators. Further, as a result of maintaining the Cholesky factor, the steps (propagation, update, and marginalization) involved in the estimation computation are modified as described herein.

[0037] The step of propagation refers to operations by the estimator 22 of using image data and IMU data between consecutive states to propagate state vector data along the sliding window filter. The step of updating refers to operations by the estimator 22 of using the image data 14 and the IMU data 18 to update the state vector 17 and the covariance of uncertainty data 19. The step of marginalization refers to operations by the estimator 22 of removing older states from the sliding window filter 23 to maintain constant computational complexity. Additional description of each of these steps is provided in detail below.

[0038] As a result of the estimation, estimator 22 performs localization so as to track the position and orientation of the device (frame of reference) within the environment. VINS 10 may output on a display the determined trajectory as superimposed on a 2D or 3D graph of the environment or regions thereof. As additional examples, VINS 10 may output to the display coordinates representing the position with the environment. Moreover, VINS 10 may control navigation of a device, such as a robot, vehicle or mobile computing device by outputting the position and/or instructions for navigating the environment, e.g., to a destination.

[0039] FIG. 3 is a flowchart illustrating an example operation of estimator 22, in accordance with the techniques of the disclosure. The device may, for example, comprise a vision-aided inertial navigation system, mobile device, laptop, table, robot, vehicle, server, cloud-based processing system or other device having a processor or other operating environment for implementing the techniques described herein. For purposes of explanation, FIG. 3 will be described with respect to VINS 10 and estimator 22 of FIG. 1.

[0040] During operation, estimator 22 receives measurement data observed along the trajectory (100). That is, estimator 22 receives image data 14 produced by an image source 12 of the vision-aided inertial navigation system 10 for keyframes and non-keyframes along a trajectory of the VINS. In addition, estimator 22 receives, from an inertial measurement unit (IMU) 16, IMU data 18 indicative of motion of VINS 10 along the trajectory for the keyframes and the one or more non-keyframes. In this way, VINS 10 receives and records, within VINS data 24, image data 14 and IMU data 18 for keyframes and non-keyframes along the trajectory. Each keyframe and non-keyframe may correspond to a pose (position and orientation) of VINS 10 including landmarks (features) observed within the environment at that pose. In general, the term keyframes refers to the individual poses of the VINS for which position and orientation of the VINS are to be estimated. In contrast, the term non-keyframes refers to intermediate poses between keyframes and for which complete state estimates of the VINS are not computed.

[0041] Based on the sliding window of image data and IMU data, estimator 22 applies an extended Kalman filter to iteratively update a state vector to determine state estimates (linearization points) for each pose of the VINS and each landmark (103). For example, estimator 22 may update a state vector to compute state estimates for the position and the orientation of the VINS and for one or more landmarks observed from the VINS at various poses along the trajectory. In an example implementation, the state vector includes state estimates (quantities being estimated) for at least a position and orientation of the vision-aided inertial navigation system for a plurality of poses of the VINS along the trajectory. Along with the state vector, the estimator maintains a respective covariance for each of the state estimates, where the respective covariance represents the amount of uncertainty in the corresponding state estimate.

[0042] For example, as shown in FIG. 3, for each current time epoch, estimator 22 first determines, from the image data, feature measurements corresponding to the features observed from the poses along the trajectory and groups the feature measurements according to the features observed within the image data (104). For one or more of the features observed that were from multiple poses along the trajectory, estimate 22 computes, based on the respective group of feature measurements for the feature, one or more constraints that geometrically relate the multiple poses from which the respective feature was observed (105). Those features for which geometric constraints are computed may be viewed as MSCKF features that are excluded from the state vector by estimator 22.

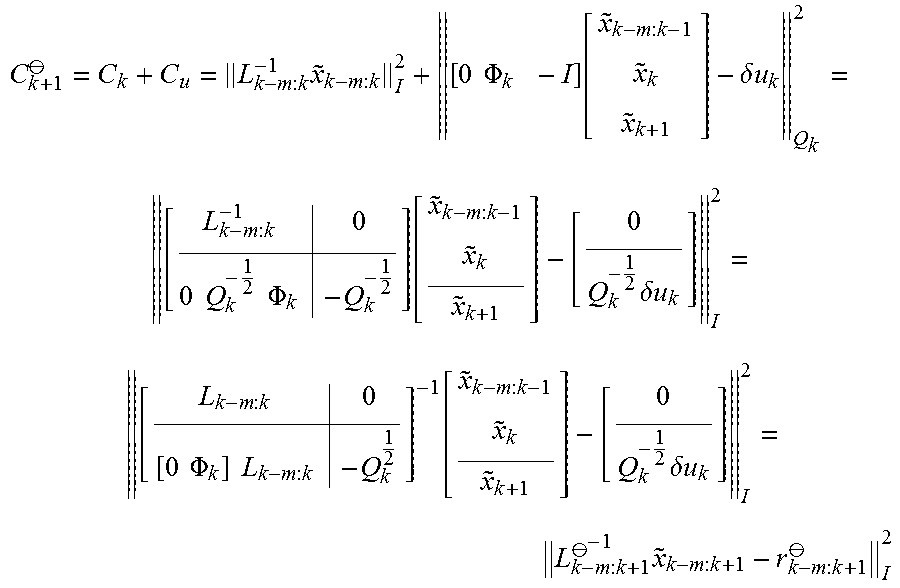

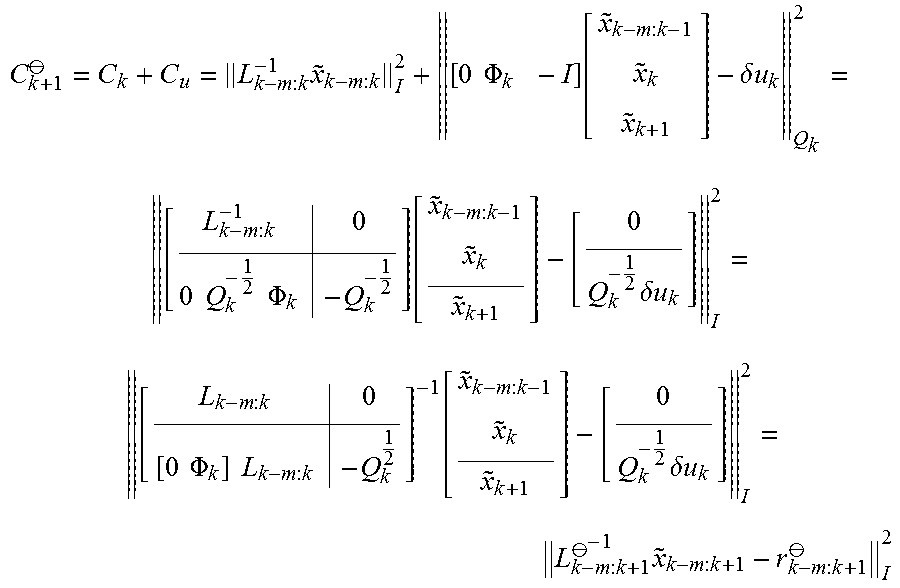

[0043] As one example of the techniques of the disclosure, estimator 22 uses IMU data 18 between consecutive states to propagate state vector 17 along the filter 23. For example, estimator 22 maintains a state vector x comprising poses and other variables of interest over a sliding window of m+2 poses, for a number k of time steps. The estimator 22 maintains the following information in the state vector for the sliding window: the state estimate, {circumflex over (x)}.sub.k-m:k, and the corresponding lower-triangular square-root factor, L.sub.k-m:k of a covariance matrix P.sub.k-m:k such that

P.sub.k-m:k=L.sub.k-m:kL.sub.k-m:k.sup.T

[0044] As described herein, the notation {circumflex over (x)}.sub.k-m:k refers to the state vector comprising all states from time step k-m to k, and similarly for L and P.

[0045] This corresponds to the following cost function:

C.sub.k=.parallel.x.sub.k-m:k-{circumflex over (x)}.sub.k-m:k.parallel..sub.P.sub.k-m:k.sup.2=.parallel.L.sub.k-m:k.sup.- -1{tilde over (x)}.sub.k-m:k.parallel..sub.1.sup.2

where {tilde over (x)}.sub.k-m:k=x.sub.k-m:k-{circumflex over (x)}.sub.k-m:k is the error state at time step k.

[0046] At the next step k+1, a new state, x.sub.k+1 is added into the sliding window of filter 23. In one example, estimator 22 performs state propagation through filter 23 using IMU measurement data between consecutive states x.sub.k and x.sub.k+1. The IMU measurements contribute a linearized cost term of the form:

C u = [ 0 .PHI. k - I ] [ x ~ k - m : k - 1 x ~ k x ~ k + 1 ] - .delta. u k Q k 2 ##EQU00001##

where .PHI..sub.k is the linearized state-transition matrix, .delta.u.sub.k is the residual, and Q.sub.k is the IMU noise covariance matrix of the uncertainty data, respectively. Accordingly, the cost function after propagating the state vector data is:

C k + 1 .crclbar. = C k + C u = L k - m : k - 1 x ~ k - m : k I 2 + [ 0 .PHI. k - I ] [ x ~ k - m : k - 1 x ~ k x ~ k + 1 ] - .delta. u k Q k 2 = [ L k - m : k - 1 0 0 Q k - 1 2 .PHI. k - Q k - 1 2 ] [ x ~ k - m : k - 1 x ~ k x ~ k + 1 ] - [ 0 Q k - 1 2 .delta. u k ] I 2 = [ L k - m : k 0 [ 0 .PHI. k ] L k - m : k - Q k 1 2 ] - 1 [ x ~ k - m : k - 1 x ~ k x ~ k + 1 ] - [ 0 Q k - 1 2 .delta. u k ] I 2 = L k - m : k + 1 .crclbar. - 1 x ~ k - m : k + 1 - r k - m : k + 1 .crclbar. I 2 ##EQU00002##

where Q.sub.k.sup.1/2 is the lower-triangular Cholesky factor of the matrix Q.sub.k, and

L k - m : k + 1 .crclbar. = [ L k - m : k 0 .PHI. k L k - m : k ( k , : ) - Q k 1 2 ] , r k - m : k + 1 .crclbar. = [ 0 Q k - 1 2 .delta. u k ] ##EQU00003##

where L.sub.k-m:k(k,:) denotes the k-th block row of L.sub.k-m:k. Because L.sub.k-m:k and Q.sub.k.sup.1/2 are lower-triangular, L.sub.k-m:k+1.sup..theta. is lower-triangular. Accordingly, the propagated covariance factor of the uncertainty data is given by L.sub.k-m:k+1.sup..theta., which estimator 22 obtains by augmenting the previous prior factor L.sub.k-m:k, as shown above.

[0047] Next, filter 23 of estimator 22 applies an EKF update to update, within the sliding window, each of the state estimates for the VINS and for the features using the IMU data captured throughout the sliding window and the image data obtained at the plurality of poses within the sliding window (106). For example, estimator 22 applies the EKF update to recompute, based on the sliding window of data, the state estimates for the VINS and for the positions of the features with the environment, as represented within the state vector, using (1) the IMU data and the image data associated with features observed at any of the plurality of poses within the sliding window, and (2) the set of computed prior constraints linearly constraining the state estimates for the poses. In some examples, estimator 22 utilizes features associated with all poses within the sliding window. In other examples, estimator 22 may utilizes the budgeting techniques described herein to apply an estimation policy for deciding, which measurements will be processed based on the available computational resources the current EKF update.

[0048] Next, estimator 22 updates, for each of the state estimates, the respective covariance of uncertainty data (108). As described herein, uncertainty data may comprise a square root factor of a covariance matrix. For example, estimator 22 may maintain the uncertainty data in the form of the Cholesky factor of the covariance matrix.

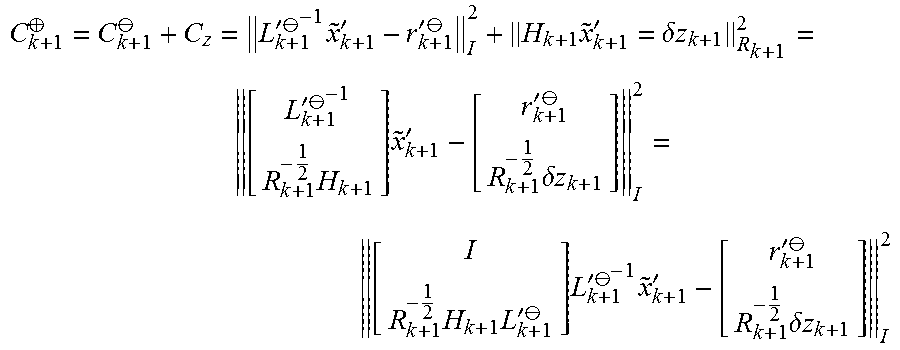

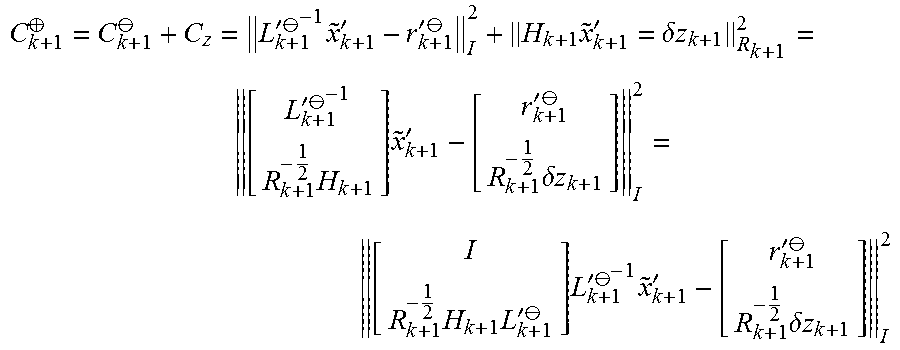

[0049] As one example of the techniques of the disclosure, estimator 22 uses visual observations and image data, such as that obtained with a camera or other image source, to update the state vector and the covariance of uncertainty data. As one example, at time step k+1, the image data contributes a linearized cost term as:

C.sub.z=.parallel.H.sub.k+1{tilde over (x)}.sub.k+1'-.delta.z.sub.k+1.parallel..sub.R.sub.k+1.sup.2

In the foregoing example, to simplify notation, the error state {tilde over (x)}.sub.k-m:k+1 is denoted as {tilde over (x)}.sub.k+1', the square-root factor L.sub.k-m:k+1.sup..theta. is denoted as L'.sub.k+1.sup..theta., and the residual r.sub.k-m:k+1.sup..theta. is denoted as r'.sub.k+1.sup..theta.. Further, in the foregoing example, H.sub.k+1 is the linearized measurement Jacobian, .delta.z.sub.k+1 is the residual, and R.sub.k+1 is the measurement noise covariance matrix, respectively.

[0050] Accordingly, after performing the update, the cost function is:

C k + 1 .sym. = C k + 1 .crclbar. + C z = L k + 1 ' .crclbar. - 1 x ~ k + 1 ' - r k + 1 ' .crclbar. I 2 + H k + 1 x ~ k + 1 ' = .delta. z k + 1 R k + 1 2 = [ L k + 1 ' .crclbar. - 1 R k + 1 - 1 2 H k + 1 ] x ~ k + 1 ' - [ r k + 1 ' .crclbar. R k + 1 - 1 2 .delta. z k + 1 ] I 2 = [ I R k + 1 - 1 2 H k + 1 L k + 1 ' .crclbar. ] L k + 1 ' .crclbar. - 1 x ~ k + 1 ' - [ r k + 1 ' .crclbar. R k + 1 - 1 2 .delta. z k + 1 ] I 2 ##EQU00004##

[0051] Estimator 22 may apply thin QR factorization as follows:

[ I R k + 1 - 1 2 H k + 1 L k + 1 ' .crclbar. ] = QL _ ##EQU00005##

[0052] If L is lower-triangular, then the cost function (ignoring constants) is:

C k + 1 .sym. = Q _ T [ I R k + 1 - 1 2 H k + 1 L k + 1 ' .crclbar. ] L k + 1 ' .crclbar. - 1 x ~ k + 1 ' - Q _ T [ r k + 1 ' .crclbar. R k + 1 - 1 2 .delta. z k + 1 ] I 2 = L _ L k + 1 ' .crclbar. - 1 x ~ k + 1 ' - Q _ T [ r k + 1 ' .crclbar. R k + 1 - 1 2 .delta. z k + 1 ] I 2 = L k + 1 ' .crclbar. - 1 x ~ k + 1 ' - r k + 1 ' .sym. I 2 ##EQU00006## where ##EQU00006.2## L k + 1 ' .sym. = L k + 1 ' .crclbar. L _ - 1 , r k + 1 ' .sym. = Q _ T [ r k + 1 ' .crclbar. R k + 1 - 1 2 .delta. z k + 1 ] ##EQU00006.3##

[0053] With respect to the foregoing equations, because L'.sub.k+1.sup..theta. and L are lower-triangular, L'.sub.k+1.sup..sym. is lower-triangular. Accordingly, the updated covariance factor for the uncertainty data is given as L'.sub.k+1.sup..sym.. Estimator 22 may determine L'k+1.sup..sym. by performing QR factorization (as described above) and solving for L'k+1.sup..sym. using the equations in the preceding paragraph.

[0054] In some examples, estimator 22 obtains the updated residual r'k+1.sup..sym. in place during the QR factorization process, rather than forming Q explicitly. Upon determining the updated factor L'.sub.k+1.sup..sym. and the residual r'.sub.k+1.sup..sym., estimator 22 may update the state estimates of state vector 17 by minimizing the cost function C.sub.k+1.sup..sym. described above, which gives:

{circumflex over (x)}.sub.k+1.sup..sym.={circumflex over (x)}.sub.k+1.sup..theta.+L'.sub.k+1.sup..sym.r'.sub.k+1.sup..sym.

[0055] where {circumflex over (x)}.sub.k+1.sup..theta. and {circumflex over (x)}.sub.k+1.sup..sym. are the state estimates before and after estimator 22 performs the update, respectively.

[0056] In addition, estimator 22 computes updates for the set of prior constraints to be used in the next iteration (110) and marginalizes the oldest keyframe by absorbing the keyframe into the prior of the estimator (112). That this, estimator 22 discards the oldest keyframe from the sliding window and adds a new one to the image processing pipeline.

[0057] As one example of the marginalization process, estimator 22 marginalizes old states within filter 23 to maintain constant computational complexity. In one example, after performing the update process described above for time step k+1, estimator 22 marginalizes the xi component of state vector x.sub.k-m:k+1=[x.sub.1.sup.T x.sub.2.sup.T].sup.T, wherein x.sub.1 includes past poses or features. The techniques of the disclosure contemplate two approaches to marginalizing the old states: 1) an EFK-based derivation, and 2) a cost-function derivation. In accordance with the techniques of the disclosure, each of the two marginalization approaches perform a QR factorization to obtain a marginalized covariance factor L.sub.k+1'=R.sub.2.sup.T. This factor, as well as the state estimate {circumflex over (x)}.sub.2.sup..sym., will serve as the prior information for the subsequent time step.

[0058] In one example of the marginalization process, with respect to the EFK-based derivation, estimator 22 drops corresponding portions of the state estimates and the covariance matrix of the uncertainty data for the marginalized states. For example, assuming that the updated factor L'k+1.sup..sym. has the following structure (since the factor is lower-triangular):

L k + 1 ' .sym. = [ L 11 0 L 21 L 22 ] ##EQU00007##

[0059] Accordingly, the corresponding covariance matrix for the uncertainty data is defined as follows:

P k + 1 ' .sym. = L k + 1 ' .sym. L k + 1 ' .sym. T = [ L 11 L 11 T L 11 L 21 T L 21 L 11 T L 21 L 21 T + L 22 L 22 T ] ##EQU00008##

[0060] Therefore, after removing state x.sub.1 from the state vector, the covariance matrix for the uncertainty data is the (2,2) block element of P'.sub.k+1.sup..sym., as defined by:

P k + 1 ' .sym. ( 2 , 2 ) = L 21 L 21 T + L 22 L 22 T = [ L 21 L 22 ] [ L 21 T L 22 T ] ##EQU00009##

[0061] Estimator 22 may apply the following thin QR factorization:

[ L 21 T L 22 T ] = Q 2 R 2 ##EQU00010##

where R.sub.2 is upper-triangular, which results in:

P'.sub.k+1.sup..sym.(2,2)=R.sub.2.sup.TR.sub.2

Accordingly, after performing marginalization of the old state vector data, the lower-triangular covariance factor is obtained by performing the above QR factorization and is given by:

L.sub.k+1'R.sub.2.sup.T

[0062] As another aspect of the marginalization process, with respect to the cost-function derivation, the updated error state may be defined as:

[ x ~ 1 .sym. x ~ 2 .sym. ] = [ x 1 x 2 ] - [ x ^ 1 .sym. x ^ 2 .sym. ] ##EQU00011##

such that the cost function becomes:

C k + 1 .sym. = L k + 1 ' .sym. - 1 [ x ~ 1 .sym. x ~ 2 .sym. ] I 2 ##EQU00012##

[0063] Accordingly, estimator 22 determines a square orthonormal matrix U, with U.sup.TU=UU.sup.T=I, such that U.sup.TL'k+1.sup..sym..sup.-1 has a block upper-triangular structure. The techniques of the disclosure make use of the fact that the inverse of the updated factor L'.sub.k+1.sup..sym..sup.-1 of matrix U may be represented as:

L k + 1 ' .sym. - 1 = [ L 11 0 L 21 L 22 ] - 1 = [ L 11 - 1 0 - L 22 - 1 L 21 L 11 - 1 L 22 - 1 ] = [ I 0 - L 22 - 1 L 21 I ] [ L 11 - 1 0 0 L 22 - 1 ] ##EQU00013##

[0064] Defining U'=[U.sub.1' U.sub.2'], estimator 22 selects U.sub.1' to span the same column space of the first block column of L'.sub.k+1.sup..sym..sup.-1 and further selects Uz to span the left null space such that:

U 1 ' = [ I - L 22 - 1 L 21 ] , U 2 ' = [ L 21 T L 22 T ] ##EQU00014##

[0065] Accordingly, U.sub.1' and U.sub.2' are orthogonal to one another (e.g., U.sub.1'.sup.T U.sub.2'=0) and the resulting U'.sup.TL'.sub.k+1.sup..sym..sup.-1 is block upper-triangular.

[0066] Next, given U', estimator 22 selects block columns of U to be orthonormal bases of U.sub.1' and U'.sub.2 as follows:

U 1 ' = [ I - L 22 - 1 L 21 ] = Q 1 R 1 Q 1 = [ I - L 22 - 1 L 21 ] R 1 - 1 ##EQU00015## U 2 ' = [ L 21 T L 22 T ] = Q 2 R 2 Q 2 = [ L 21 T L 22 T ] R 2 - 1 ##EQU00015.2##

Following the above, U is given as:

U = [ Q 1 Q 2 ] = [ I L 21 T - L 22 - 1 L 22 T ] [ R 1 - 1 0 0 R 2 - 1 ] ##EQU00016##

As described herein, matrix U is orthonormal because both Q.sub.1 and Q.sub.2 are orthonormal and orthogonal to one another. Further, U.sup.TL'.sub.k+1.sup..sym..sup.-1 is block upper-triangular such that:

U T L k + 1 ' .sym. - 1 = [ R 1 - T 0 0 R 2 - T ] [ I - L 21 T L 22 - T L 21 L 22 ] [ I 0 - L 22 - 1 L 21 I ] [ L 11 - 1 0 0 L 22 - 1 ] = [ R 1 - T 0 0 R 2 - T ] [ I + L 21 T L 22 - T L 22 - 1 L 21 - L 21 T L 22 - T 0 L 22 ] [ L 11 - 1 0 0 L 22 - 1 ] = [ R 1 L 11 - 1 R 1 - T L 21 T L 22 - T L 22 - 1 0 R 2 - T ] ##EQU00017##

[0067] Given the above orthonormal matrix U, the cost function may be written as:

C k + 1 .sym. = L k + 1 ' .sym. - 1 [ x ~ 1 .sym. x ~ 2 .sym. ] I 2 = U T L k + 1 ' .sym. - 1 [ x ~ 1 .sym. x ~ 2 .sym. ] I 2 = [ R 1 L 11 - 1 R 1 - T L 21 T L 22 - T L 22 - 1 0 R 2 - T ] [ x ~ 1 .sym. x ~ 2 .sym. ] I 2 = R 1 L 11 - 1 x ~ 1 .sym. - R 1 - T L 21 T L 22 - t L 22 - 1 x ~ 2 .sym. I 2 + R 2 - T x ~ 2 .sym. I 2 ##EQU00018##

[0068] Estimator 22 may perform marginalization by removing {tilde over (x)}.sub.1.sup..sym. from the foregoing cost function. In other words, estimator 22 may minimize {tilde over (x)}.sub.1.sup..sym. with respect to the cost function as follows:

min x ~ 1 .sym. C k + 1 .sym. = min x ~ 1 .sym. R 1 L 11 - 1 x ~ 1 .sym. - R 1 - T L 21 T L 22 - T L 22 - 1 x ~ 2 .sym. I 2 + R 2 - T x ~ 2 .sym. I 2 = R 2 - T x ~ 2 .sym. I 2 ##EQU00019##

[0069] Because R.sub.1L.sub.11.sup.-1 is invertible, for any {tilde over (x)}.sub.2.sup..sym., there exists an {tilde over (x)}.sub.1.sup..sym. that makes the first cost term in the preceding paragraph zero. Accordingly, the simplified cost function in the preceding paragraph, which involves only {tilde over (x)}.sub.2.sup..sym., contains sufficient information for estimating {tilde over (x)}.sub.2.sup..sym. after marginalization.

[0070] Based on the computed state estimates, estimator 22 may further output a navigation user interface, e.g., a map, e.g., a 2D or 3D map, of the environment overlaid with the position and/or orientation of the frame of reference (114). The map may, for example, construct the user interface to include position and orientation information for the VINS along the trajectory relative to position information for any landmarks observed by the VINS. The user interface may be displayed, stored, used for subsequent navigation and the like.

[0071] FIG. 4 is an illustration depicting a detailed example of various devices that may be configured to implement some embodiments in accordance with the current disclosure. For example, device 500 may be a robot, mobile sensing platform, a mobile phone, a wearable device such as a smartphone or smart watch, a workstation, a computing center, a cluster of servers or other example embodiments of a computing environment, centrally located or distributed, capable of executing the techniques described herein. Any or all of the devices may, for example, implement portions of the techniques described herein for vision-aided inertial navigation systems.

[0072] In this example, a computer 500 includes a hardware-based processor 510 that may be implemented within VINS 10 or any device to execute program instructions or software, causing the computer to perform various methods or tasks, such as performing the techniques described herein. Processor 510 may be a general-purpose processor, a digital signal processor (DSP), a core processor within an Application Specific Integrated Circuit (ASIC) and the like. Processor 510 is coupled via bus 520 to a memory 530, which is used to store information such as program instructions and other data while the computer is in operation. A storage device 540, such as a hard disk drive, nonvolatile memory, or other non-transient storage device stores information such as program instructions, data files of the multidimensional data and the reduced data set, and other information. As another example, computer 500 may provide an operating environment for execution of one or more virtual machines that, in turn, provide an execution environment for software for implementing the techniques described herein.

[0073] The computer also includes various input-output elements 550, including parallel or serial ports, USB, Firewire or IEEE 1394, Ethernet, and other such ports to connect the computer to external device such a printer, video camera, surveillance equipment or the like. Other input-output elements include wireless communication interfaces such as Bluetooth, Wi-Fi, and cellular data networks.

[0074] The following examples illustrate one or more of the techniques described herein:

[0075] Example 1. A VINS comprising: at least one image source to produce image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; a motion sensor configured to provide motion data of the frame of reference in the environment for the period of time; and a hardware-based processor communicatively coupled to the image source and communicatively coupled to the motion sensor, the processor configured to compute estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory, wherein the processor executes an Extended Kalman Filter (EKF)-based estimator configured to: for one or more of the features observed from multiple poses along the trajectory, compute one or more constraints that geometrically relate the multiple poses from which the respective feature was observed; determine, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determine uncertainty data for the state estimates, wherein the EKF-based estimator maintains the uncertainty data to comprise a square root factor of a covariance matrix.

[0076] Example 2. The VINS of example 1, wherein, to determine the uncertainty data for the state estimates, the EKF-based estimator is further configured to determine a Cholesky factor of the covariance matrix as the uncertainty data.

[0077] Example 3. The VINS of example 1, wherein, when executing the EFK-based estimator, the processor: maintains a state vector state information for the positions and orientations of the VINS and for positions with the environment for features observed within the environment; and excludes, from the state vector, state information representing estimates for positions within the environment for the features that were each observed from the multiple poses and for which the one or more constraints were computed.

[0078] Example 4. The VINS of example 1, wherein the covariance matrix, from which the square root factor is determined, excludes the features that were each observed from the multiple poses and for which the one or more constraints were computed.

[0079] Example 5. The VINS of example 1, wherein, when executing the EFK-based estimator, the processor: determines, from the image data, feature measurements corresponding to the features observed from the poses along the trajectory; groups the feature measurements according to the features observed within the image data; and for the one or more of the features observed from multiple poses along the trajectory, computes based on the respective group of feature measurements for the feature, the one or more constraints that geometrically relate the multiple poses from which the respective feature was observed.

[0080] Example 6. The VINS of example 1, wherein the image source includes a camera, and wherein the motion sensor includes an inertial measurement unit (IMU).

[0081] Example 7. The VINS of example 1, wherein the vision-aided inertial navigation system comprises one of a robot, a vehicle, a tablet, a mobile device or a wearable computing device.

[0082] Example 8. The VINS of example 1 further including at least one of a transmitter or a display for outputting a navigation user interface based on the computed state estimates.

[0083] Example 9. A method comprising: receiving, with a processor of a VINS and from at least one image source of the VINS, image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, and wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; receiving, with the processor and from a motion sensor communicatively coupled to the processor, motion data of the frame of reference in the environment for the period of time; computing, with the processor, state estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory by executing an Extended Kalman Filter (EKF)-based estimator, wherein computing the state estimates comprises: for one or more of the features observed from multiple poses along the trajectory, computing one or more constraints that geometrically relate the multiple poses from which the respective feature was observed; determining, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determining uncertainty data for the state estimates, wherein the uncertainty data comprises a square root factor of a covariance matrix.

[0084] Example 10. The method of example 9, wherein determining the uncertainty data for the state estimates comprises determining a Cholesky factor of the covariance matrix as the uncertainty data.

[0085] Example 11. The method of example 9, wherein executing the EFK-based estimator further comprises: maintaining a state vector state information for the positions and orientations of the VINS and for positions with the environment for features observed within the environment; and excluding, from the state vector, state information representing estimates for positions within the environment for the features that were each observed from the multiple poses and for which the one or more constraints were computed.

[0086] Example 12. The method of example 9, further comprising excluding, from the covariance matrix from which the square root factor is determined, the features that were each observed from the multiple poses and for which the one or more constraints were computed.

[0087] Example 13. The method of example 9, wherein executing the EFK-based estimator further comprises: determining, from the image data, feature measurements corresponding to the features observed from the poses along the trajectory; grouping the feature measurements according to the features observed within the image data; and for the one or more of the features observed from multiple poses along the trajectory, computing based on the respective group of feature measurements for the feature, the one or more constraints that geometrically relate the multiple poses from which the respective feature was observed.

[0088] Example 14. The method of example 9, wherein the image source includes a camera, and wherein the motion sensor includes an inertial measurement unit (IMU).

[0089] Example 15. The method of example 9, wherein the VINS comprises one of a robot, a vehicle, a tablet, a mobile device or a wearable computing device.

[0090] Example 16. The method of example 9, further comprising outputting, by at least one of a transmitter or a display, a navigation user interface based on the computed state estimates.

[0091] Example 17. A non-transitory computer-readable medium comprising instructions that, when executed, cause a processor of a VINS to: receive, from at least one image source of the VINS, image data for a plurality of poses of a frame of reference along a trajectory within an environment over a period of time, wherein the image data includes features that were each observed within the environment at poses of the frame of reference along the trajectory, and wherein one or more of the features were each observed at multiple ones of the poses of the frame of reference along the trajectory; receive, from a motion sensor communicatively coupled to the processor, motion data of the frame of reference in the environment for the period of time; compute, state estimates for at least a position and orientation of the frame of reference for each of the plurality of poses of the frame of reference along the trajectory by executing an Extended Kalman Filter (EKF)-based estimator, wherein, to compute the state estimates, the processor is further configured to: for one or more of the features observed from multiple poses along the trajectory, compute one or more constraints that geometrically relate the multiple poses from which the respective feature was observed; determine, in accordance with the motion data and the one or more computed constraints, state estimates for the position and orientation of the frame of reference for each of the plurality of poses along the trajectory; and determine uncertainty data for the state estimates, wherein the uncertainty data comprises a square root factor of a covariance matrix.

[0092] Example 18. The computer-readable medium of example 17, wherein, to determine the uncertainty data for the state estimates, the instructions are further configured to cause the processor to determine a Cholesky factor of the covariance matrix as the uncertainty data.

[0093] Example 19. The computer-readable medium of example 17, wherein, to execute the EFK-based estimator, the instructions are further configured to cause the processor to: maintain a state vector state information for the positions and orientations of the VINS and for positions with the environment for features observed within the environment; and exclude, from the state vector, state information representing estimates for positions within the environment for the features that were each observed from the multiple poses and for which the one or more constraints were computed.

[0094] Example 20. The computer-readable medium of example 17, wherein the instructions are further configured to cause the processor to exclude, from the covariance matrix from which the square root factor is determined, the features that were each observed from the multiple poses and for which the one or more constraints were computed.

[0095] The computer itself may be a traditional personal computer, a rack-mount or business computer or server, or any other type of computerized system. The computer in a further example may include fewer than all elements listed above, such as a thin client or mobile device having only some of the shown elements. In another example, the computer is distributed among multiple computer systems, such as a distributed server that has many computers working together to provide various functions.

[0096] The techniques described herein may be implemented in hardware, software, firmware, or any combination thereof. Various features described as modules, units or components may be implemented together in an integrated logic device or separately as discrete but interoperable logic devices or other hardware devices. In some cases, various features of electronic circuitry may be implemented as one or more integrated circuit devices, such as an integrated circuit chip or chipset.

[0097] If implemented in hardware, this disclosure may be directed to an apparatus such a processor or an integrated circuit device, such as an integrated circuit chip or chipset. Alternatively, or additionally, if implemented in software or firmware, the techniques may be realized at least in part by a computer readable data storage medium comprising instructions that, when executed, cause one or more processors to perform one or more of the methods described above. For example, the computer-readable data storage medium or device may store such instructions for execution by a processor. Any combination of one or more computer-readable medium(s) may be utilized.

[0098] A computer-readable storage medium (device) may form part of a computer program product, which may include packaging materials. A computer-readable storage medium (device) may comprise a computer data storage medium such as random access memory (RAM), read-only memory (ROM), non-volatile random access memory (NVRAM), electrically erasable programmable read-only memory (EEPROM), flash memory, magnetic or optical data storage media, and the like. In general, a computer-readable storage medium may be any tangible medium that can contain or store a program for use by or in connection with an instruction execution system, apparatus, or device. Additional examples of computer readable medium include computer-readable storage devices, computer-readable memory, and tangible computer-readable medium. In some examples, an article of manufacture may comprise one or more computer-readable storage media.

[0099] In some examples, the computer-readable storage media may comprise non-transitory media. The term "non-transitory" may indicate that the storage medium is not embodied in a carrier wave or a propagated signal. In certain examples, a non-transitory storage medium may store data that can, over time, change (e.g., in RAM or cache).

[0100] The code or instructions may be software and/or firmware executed by processing circuitry including one or more processors, such as one or more digital signal processors (DSPs), general purpose microprocessors, application-specific integrated circuits (ASICs), field-programmable gate arrays (FPGAs), or other equivalent integrated or discrete logic circuitry. Accordingly, the term "processor," as used herein may refer to any of the foregoing structure or any other processing circuitry suitable for implementation of the techniques described herein. In addition, in some aspects, functionality described in this disclosure may be provided within software modules or hardware modules.

* * * * *

D00000

D00001

D00002

D00003

D00004

XML

uspto.report is an independent third-party trademark research tool that is not affiliated, endorsed, or sponsored by the United States Patent and Trademark Office (USPTO) or any other governmental organization. The information provided by uspto.report is based on publicly available data at the time of writing and is intended for informational purposes only.

While we strive to provide accurate and up-to-date information, we do not guarantee the accuracy, completeness, reliability, or suitability of the information displayed on this site. The use of this site is at your own risk. Any reliance you place on such information is therefore strictly at your own risk.

All official trademark data, including owner information, should be verified by visiting the official USPTO website at www.uspto.gov. This site is not intended to replace professional legal advice and should not be used as a substitute for consulting with a legal professional who is knowledgeable about trademark law.