Mobile Three-dimensional Measuring Instrument

Zweigle; Oliver ; et al.

U.S. patent application number 16/240843 was filed with the patent office on 2019-01-07 and published on 2019-05-09 for a mobile three-dimensional measuring instrument. The applicant listed for this patent is FARO Technologies, Inc. Invention is credited to Bernd-Dietmar Becker, Robert E. Bridges, Hamidreza Rajaie, Oliver Zweigle.

| Application Number | 20190137627 16/240843 |


| Family ID | 56937290 |

| Filed Date | 2019-01-07 |


| United States Patent Application | 20190137627 |

| Kind Code | A1 |

| Zweigle; Oliver ; et al. | May 9, 2019 |

MOBILE THREE-DIMENSIONAL MEASURING INSTRUMENT

Abstract

A mobile three-dimensional (3D) measuring system includes a 3D measuring device, a multi-legged stand coupled to the 3D measuring device, and a motorized dolly detachably coupled to the multi-legged stand.

| Inventors: | Oliver Zweigle (Stuttgart, DE); Bernd-Dietmar Becker (Ludwigsburg, DE); Hamidreza Rajaie (Stuttgart, DE); Robert E. Bridges (Kennett Square, PA) |

| Applicant: | FARO Technologies, Inc. |

| Family ID: | 56937290 |

| Appl. No.: | 16/240843 |

| Filed: | January 7, 2019 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15084751 | Mar 30, 2016 | 10175360 |
| 16240843 | | |
| 62140706 | Mar 31, 2015 | |
| 62140716 | Mar 31, 2015 | |
| 62140733 | Mar 31, 2015 | |
| 62140743 | Mar 31, 2015 | |
| 62140756 | Mar 31, 2015 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04W 84/12 20130101; G01S 17/86 20200101; G01S 17/48 20130101; G01S 17/42 20130101; H04W 4/70 20180201; G01S 17/66 20130101; G01S 17/36 20130101; G01S 17/89 20130101; G01S 7/4813 20130101; G01S 17/10 20130101; G01B 11/2518 20130101 |

| International Class: | G01S 17/89 20060101 G01S017/89; G01S 17/66 20060101 G01S017/66; G01S 17/02 20060101 G01S017/02; G01S 7/481 20060101 G01S007/481; G01B 11/25 20060101 G01B011/25; H04W 4/70 20060101 H04W004/70; G01S 17/42 20060101 G01S017/42 |

Claims

1. A mobile three-dimensional (3D) measuring system, comprising: a 3D measuring device; a stand having a plurality of legs coupled to the 3D measuring device, wherein the stand includes a plurality of motorized wheels configured to propel and turn the stand under computer system control; and a motorized dolly detachably coupled to the stand; a position sensor configured to obtain a position of the 3D measuring device; a computer system in communication with the 3D measuring system, wherein the computer system is configured to control the motorized dolly based at least in part on data from the position sensor.

2. The system of claim 1, wherein the motorized dolly is configured to move forward or backward and to rotate.

3. The system of claim 1, wherein the motorized dolly is configured to fold into a compact shape.

4. The system of claim 1, wherein the 3D measuring device is a time-of-flight scanner.

5. The system of claim 1, further including a sensor configured for obstacle avoidance.

6. The system of claim 5, wherein the sensor is selected from the group consisting of a two-dimensional (2D) scanner, a 3D scanner that directs light in a horizontal plane, a 2D camera, a 3D depth camera, and an ultrasound sensor.

7. The system of claim 4, wherein the 3D measuring device emits a collimated beam of light.

8. The system of claim 4, wherein the 3D measuring device emits a focused beam of light.

9. The system of claim 8, wherein the 3D measuring device is configured to measure 3D coordinates of a tooling ball.

10. The system of claim 1, wherein the 3D measuring device is a laser tracker or a total station.

11. The system of claim 10, wherein the 3D measuring device is configured to measure 3D coordinates of a retroreflector.

12. The system of claim 11, wherein the 3D measuring device is further configured to measure 3D coordinates of a diffuse surface.

13. The system of claim 1, wherein the 3D measuring device is further configured for wireless communication, wherein the wireless communication is based on at least one of an IEEE 802.11 standard or a cellular communication.

14. The system of claim 1, wherein the stand is a tripod.

15. The system of claim 1, wherein the motorized dolly includes two wheels that turn, each wheel connected to an axle driven by a motor, wherein a rotation of the motor is measured by an angular encoder.

16. The system of claim 15, wherein the motorized dolly includes independent motors attached to each axle.

17. The system of claim 1, wherein the motorized dolly further includes one or more extensions to which a sensor may be attached.

18. A method, comprising: providing a mobile three-dimensional (3D) measuring system including a 3D time-of-flight (TOF) scanner coupled to a first motorized stand and to a plurality of targets, the first motorized stand including a plurality of motorized wheels configured to propel and turn the first motorized stand under computer control; providing a first image sensor in an environment; providing a computer system in communication with the mobile 3D measuring system and the first image sensor; obtaining with the first image sensor a first image of the plurality of targets; determining by the computer system a first position of the mobile 3D measuring system within an environment frame of reference, the first position based at least in part on the first image; providing the first position to the mobile 3D measuring system; and determining by the mobile 3D measuring system its position within the environment frame of reference based at least in part on the provided first position; projecting a beam of scanner light from the 3D TOF scanner onto an object; measuring with the 3D TOF scanner, in response to the projected beam of scanner light, first 3D coordinates of a first point on the object; determining 3D coordinates of the first point in the environment frame of reference based at least in part on the first 3D coordinates of the first point and on the first position of the mobile 3D measuring system in the environment frame of reference; and storing the first 3D coordinates of the first point in the environment frame of reference.

19. A mobile three-dimensional (3D) measuring system, comprising: a 3D measuring device; a stand having a plurality of legs coupled to the 3D measuring device, wherein the stand includes a plurality of motorized wheels configured to propel and turn the stand under computer system control; and a motorized dolly detachably coupled to the stand; an image sensor configured to obtain an image from a plurality of targets; a computer system in communication with the 3D measuring system and the image sensor, wherein the computer system is configured to control the motorized dolly.

20. The system of claim 19, wherein the computer system is configured to control the 3D measuring system upon completion of a first scan by the 3D measuring device at a first position, perform a second scan when the 3D measuring system begins to move to a second position.
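Claims 15 and 16 recite two driven wheels whose motor rotation is measured by angular encoders. One common use of such encoder readings is dead-reckoning odometry for a two-wheeled (differential-drive) platform. The following is a minimal sketch of that general technique, not a method stated in the claims; the function name `update_pose` and the parameters `wheel_radius` and `track_width` are hypothetical, and it assumes the encoder angle has already been converted to wheel rotation in radians.

```python
import math

def update_pose(pose, d_left_rad, d_right_rad, wheel_radius, track_width):
    """Advance a planar pose (x, y, heading) from two wheel-rotation increments.

    Assumptions (not from the source): wheel rotation increments are given in
    radians, wheels do not slip, and track_width is the distance between the
    two wheel contact points.
    """
    x, y, heading = pose
    s_left = d_left_rad * wheel_radius      # arc length rolled by left wheel
    s_right = d_right_rad * wheel_radius    # arc length rolled by right wheel
    ds = (s_left + s_right) / 2.0           # travel of the midpoint of the axle
    dtheta = (s_right - s_left) / track_width  # change in heading
    # Midpoint approximation: move along the average heading of the step.
    x += ds * math.cos(heading + dtheta / 2.0)
    y += ds * math.sin(heading + dtheta / 2.0)
    return x, y, heading + dtheta

# Equal rotations drive straight; opposite rotations turn in place.
straight = update_pose((0.0, 0.0, 0.0), 2 * math.pi, 2 * math.pi, 0.1, 0.4)
spin = update_pose((0.0, 0.0, 0.0), -1.0, 1.0, 0.1, 0.4)
```

Independent motors on each axle, as in claim 16, are what allow the two increments to differ, which is how such a platform both translates and rotates.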

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/084,751 filed Mar. 30, 2016 which claims the benefit of U.S. Application No. 62/140,706 filed Mar. 31, 2015, of U.S. Application No. 62/140,716, filed Mar. 31, 2015, of U.S. Application No. 62/140,733, filed Mar. 31, 2015, of U.S. Application No. 62/140,743, filed Mar. 31, 2015, and of U.S. Application No. 62/140,756, filed Mar. 31, 2015, the contents all of which are incorporated by reference.

BACKGROUND OF THE INVENTION

[0002] The present disclosure relates to a motorized tripod, which may be used to hold a three-dimensional (3D) measuring instrument. The subject matter disclosed herein relates particularly to a 3D laser scanner time-of-flight (TOF) coordinate measurement device. A 3D laser scanner of this type steers a beam of light to a non-cooperative target such as a diffusely scattering surface of an object. A distance meter in the device measures a distance to the object, and angular encoders measure the angles of rotation of two axles in the device. The measured distance and two angles enable a processor in the device to determine the 3D coordinates of the target.

[0003] A TOF laser scanner (or simply TOF scanner) is a scanner in which the distance to a target point is determined based on the speed of light in air between the scanner and a target point. Laser scanners are typically used for scanning closed or open spaces such as interior areas of buildings, industrial installations and tunnels. They may be used, for example, in industrial applications and accident reconstruction applications. A laser scanner optically scans and measures objects in a volume around the scanner through the acquisition of data points representing object surfaces within the volume. Such data points are obtained by transmitting a beam of light onto the objects and collecting the reflected or scattered light to determine the distance, two angles (i.e., an azimuth and a zenith angle), and optionally a gray-scale value. This raw scan data is collected, stored and sent to a processor or processors to generate a 3D image representing the scanned area or object.

[0004] Generating an image requires at least three values for each data point. These three values may include the distance and two angles, or may be transformed values, such as the x, y, z coordinates. In an embodiment, an image is also based on a fourth gray-scale value, which is a value related to irradiance of scattered light returning to the scanner.
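The transformation between a measured distance with two angles and x, y, z coordinates can be illustrated with a short sketch. The angle convention here is an assumption, since the text does not fix one: the zenith angle is measured from the vertical (+z) axis and the azimuth angle is measured in the horizontal plane from the +x axis. The function names are hypothetical.

```python
import math

def polar_to_cartesian(distance, azimuth, zenith):
    """Convert a distance and two angles (radians) to (x, y, z).

    Assumed convention: zenith is measured from the +z axis, azimuth is
    measured in the horizontal plane from the +x axis.
    """
    horizontal = distance * math.sin(zenith)  # projection onto the x-y plane
    x = horizontal * math.cos(azimuth)
    y = horizontal * math.sin(azimuth)
    z = distance * math.cos(zenith)
    return x, y, z

def cartesian_to_polar(x, y, z):
    """Inverse of polar_to_cartesian under the same assumed convention."""
    distance = math.sqrt(x * x + y * y + z * z)
    azimuth = math.atan2(y, x)
    zenith = math.acos(z / distance) if distance > 0 else 0.0
    return distance, azimuth, zenith

# A point 2 m away on the horizon along +x, then a round trip.
on_horizon = polar_to_cartesian(2.0, 0.0, math.pi / 2)
round_trip = cartesian_to_polar(*polar_to_cartesian(5.0, 0.3, 1.1))
```

Either representation carries the same three values per data point; a gray-scale value, when present, would simply be stored alongside them.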

[0005] Most TOF scanners direct the beam of light within the measurement volume by steering the light with a beam steering mechanism. The beam steering mechanism includes a first motor that steers the beam of light about a first axis by a first angle that is measured by a first angular encoder (or other angle transducer). The beam steering mechanism also includes a second motor that steers the beam of light about a second axis by a second angle that is measured by a second angular encoder (or other angle transducer).

[0006] Many contemporary laser scanners include a camera mounted on the laser scanner for gathering camera digital images of the environment and for presenting the camera digital images to an operator of the laser scanner. By viewing the camera images, the operator of the scanner can determine the field of view of the measured volume and adjust settings on the laser scanner to measure over a larger or smaller region of space. In addition, the camera digital images may be transmitted to a processor to add color to the scanner image. To generate a color scanner image, at least three positional coordinates (such as x, y, z) and three color values (such as red, green, blue "RGB") are collected for each data point.

[0007] A 3D image of a scene may require multiple scans from different registration positions. The overlapping scans are registered in a joint coordinate system, for example, as described in U.S. Published Patent Application No. 2012/0069352 ('352), the contents of which are incorporated herein by reference. Such registration is performed by matching targets in overlapping regions of the multiple scans. The targets may be artificial targets such as spheres or checkerboards or they may be natural features such as corners or edges of walls. Some registration procedures involve relatively time-consuming manual procedures such as identifying by a user each target and matching the targets obtained by the scanner in each of the different registration positions. Some registration procedures also require establishing an external "control network" of registration targets measured by an external device such as a total station. The registration method disclosed in '352 eliminates the need for user matching of registration targets and establishing of a control network.

[0008] A TOF laser scanner is usually mounted on a tripod or instrument stand while measuring 3D coordinates of its surroundings. An operator is required to move the tripod from location to location as measurements are taken. In many cases, post-processing is required to properly register the 3D coordinate data. The operational and post-processing steps can be time consuming.

[0009] Accordingly, while existing 3D scanners are suitable for their intended purposes, there is a need for apparatus and methods providing greater efficiency in 3D measurement according to certain features of embodiments of the present invention.

BRIEF DESCRIPTION OF THE INVENTION

[0010] According to one aspect of the invention, a mobile three-dimensional (3D) measuring system includes a 3D measuring device; a multi-legged stand coupled to the 3D measuring device; and a motorized dolly detachably coupled to the multi-legged stand.

[0011] According to a further aspect of the invention, a method includes: providing a mobile three-dimensional (3D) measuring system including a 3D time-of-flight (TOF) scanner coupled to a first motorized stand and to a plurality of targets, the first motorized stand including a plurality of motorized wheels configured to propel and turn the first motorized stand under computer control; providing a first image sensor in an environment; providing a computer system in communication with the mobile 3D measuring system and the first image sensor; obtaining with the first image sensor a first image of the plurality of targets; determining by the computer system a first position of the mobile 3D measuring system within an environment frame of reference, the first position based at least in part on the first image; providing the first position to the mobile 3D measuring system; and determining by the mobile 3D measuring system its position within the environment frame of reference based at least in part on the provided first position; projecting a beam of scanner light from the 3D TOF scanner onto an object; measuring with the 3D TOF scanner, in response to the projected beam of scanner light, first 3D coordinates of a first point on the object; determining 3D coordinates of the first point in the environment frame of reference based at least in part on the first 3D coordinates of the first point and on the first position of the mobile 3D measuring system in the environment frame of reference; and storing the first 3D coordinates of the first point in the environment frame of reference.
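The step of determining 3D coordinates in the environment frame of reference from scanner-frame coordinates and the position of the mobile system can be sketched as a rigid transformation. This is only an illustration under simplifying assumptions that are not in the source: the pose is taken to be a translation plus a single rotation (yaw) about the vertical axis, as for a stand rolling on a level floor, and the name `scanner_to_environment` is hypothetical.

```python
import math

def scanner_to_environment(point, pose):
    """Map a scanner-frame point into the environment frame.

    point: (x, y, z) measured in the scanner frame.
    pose:  (tx, ty, tz, yaw), an assumed pose format giving the scanner
           origin in the environment frame and its rotation about the
           vertical axis in radians.
    """
    x, y, z = point
    tx, ty, tz, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    # Rotate about the vertical axis, then translate.
    return (c * x - s * y + tx,
            s * x + c * y + ty,
            z + tz)

# Scanner at (10, 5, 0), turned 90 degrees; a point 1 m ahead and 2 m up.
env_point = scanner_to_environment((1.0, 0.0, 2.0), (10.0, 5.0, 0.0, math.pi / 2))
```

A full implementation would typically use a general 3D rotation rather than yaw alone, since a stand on an uneven floor may also tilt.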

[0012] According to a further aspect of the invention, a method includes: providing a mobile three-dimensional (3D) measuring system including a 3D time-of-flight (TOF) scanner coupled to a first retroreflector, a second retroreflector, a third retroreflector, and a first motorized stand, the first motorized stand including a plurality of motorized wheels configured to propel and turn the first motorized stand under computer control; providing a 3D measuring device in a first device frame of reference, the 3D measuring device configured to steer a device beam of light onto a retroreflector target, to measure a distance, a first angle, and a second angle from the 3D measuring device to the retroreflector target, and to determine 3D coordinates of the retroreflector target based at least on the measured distance, the measured first angle, and the measured second angle; activating the first motorized stand to move the mobile 3D measuring system to a first position; projecting a beam of scanner light from the 3D TOF scanner onto an object; measuring with the 3D TOF scanner, in response to the projected beam of scanner light, first 3D coordinates of a first point on the object; measuring with the 3D measuring device first 3D coordinates of the first retroreflector, first 3D coordinates of the second retroreflector, and first 3D coordinates of the third retroreflector; determining registered 3D coordinates of the first point in the first device frame of reference based at least in part on the measured first 3D coordinates of the first point on the object, the measured first 3D coordinates of the first retroreflector, the measured first 3D coordinates of the second retroreflector, and the measured first 3D coordinates of the third retroreflector; and storing the registered 3D coordinates of the first point.

[0013] According to a further aspect of the invention, a method includes: providing a mobile three-dimensional (3D) measuring system including a 3D scanner coupled to a first retroreflector, a second retroreflector, a third retroreflector, and a first motorized stand, the first motorized stand including a plurality of motorized wheels configured to propel and turn the first motorized stand under computer control, the 3D scanner including a projector and a camera, the projector configured to project a pattern of light, the camera separated from the projector by a baseline distance; providing a 3D measuring device in a first device frame of reference, the 3D measuring device configured to steer a beam of light onto a retroreflector target, to measure a distance, a first angle, and a second angle from the 3D measuring device to the retroreflector target, and to determine 3D coordinates of the retroreflector target based at least on the measured distance, the measured first angle, and the measured second angle; activating the first motorized stand to move the mobile 3D measuring system to a first position; projecting with the projector a first pattern of light onto an object; capturing with the camera a first image of the first pattern of light on the object; determining first measured 3D coordinates of a first point on the object based at least in part on the projected first pattern of light, the captured first image, and the baseline distance; with the 3D measuring device, determining in the first device frame of reference first 3D coordinates of the first retroreflector, first 3D coordinates of the second retroreflector, and first 3D coordinates of the third retroreflector; determining registered 3D coordinates of the first point in the first device frame of reference based at least in part on the first measured 3D coordinates of the first point, the first 3D coordinates of the first retroreflector, the first 3D coordinates of the second retroreflector, and the first 3D coordinates of the third retroreflector; and storing the registered 3D coordinates of the first point.

[0014] According to a further aspect of the invention, a method includes: providing a mobile three-dimensional (3D) measuring system including a 3D scanner coupled to a first six degree-of-freedom (six-DOF) retroreflector target and a first motorized stand, the first motorized stand including a plurality of motorized wheels configured to propel and turn the first motorized stand under computer control, the 3D scanner including a projector and a camera, the projector configured to project a pattern of light, the camera separated from the projector by a baseline distance; providing a six-DOF laser tracker configured to determine six degrees of freedom of a six-DOF retroreflector target; activating the first motorized stand to move the mobile 3D measuring system to a first position; projecting with the projector a first pattern of light onto an object; capturing with the camera a first image of the first pattern of light on the object; determining first measured 3D coordinates of a first point on the object based at least in part on the projected first pattern of light, the captured first image, and the baseline distance; with the six-DOF laser tracker, determining in a first device frame of reference first six-DOF values of the first six-DOF retroreflector target; determining registered 3D coordinates of the first point in the first device frame of reference based at least in part on the first measured 3D coordinates of the first point and the first six-DOF values of the first six-DOF retroreflector target; and storing the registered 3D coordinates of the first point.

[0015] According to a further aspect of the invention, a method includes: providing a mobile three-dimensional (3D) measuring system including a 3D scanner coupled to a first motorized stand and to a plurality of targets, the first motorized stand including a plurality of motorized wheels configured to propel and turn the first motorized stand under computer control, the 3D scanner including a projector and a camera, the projector configured to project a pattern of light, the camera separated from the projector by a scanner baseline distance; providing a computer system in communication with the mobile 3D measuring system; providing a first image sensor in an environment frame of reference; obtaining with the first image sensor a first image of the plurality of targets; determining by the computer system a first position of the mobile 3D measuring system within the environment frame of reference based at least in part on the first image; providing the first position to the mobile 3D measuring system; determining by the mobile 3D measuring system its position within the environment frame of reference based at least in part on the first position; and storing the determined position of the 3D measuring system within the environment frame of reference.

[0016] According to a further aspect of the invention, a method includes: providing a mobile three-dimensional (3D) measuring system that includes a 3D measuring device and a motorized stand; moving, with the motorized stand, the mobile 3D measuring system to a first position, measuring with the 3D measuring device 3D coordinates of a first point on a diffusely scattering surface, and measuring with the 3D measuring device first 3D coordinates of at least three cooperative targets; moving, with the motorized stand, the mobile 3D measuring system to a second position, measuring with the 3D measuring device 3D coordinates of a second point on the diffusely scattering surface, and measuring with the 3D measuring device second 3D coordinates of the at least three cooperative targets; determining 3D coordinates of the first point and the second point in a common frame of reference based at least in part on the measured 3D coordinates of the first point, the measured 3D coordinates of the second point, the measured first 3D coordinates of the at least three cooperative targets at the first position, and the measured second 3D coordinates of the at least three cooperative targets measured at the second position; and storing the determined 3D coordinates of the first point and the second point in the common frame of reference.
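One way to see why at least three cooperative targets suffice is that three non-collinear points define a complete coordinate frame. The sketch below builds such a frame from the targets as measured at each position and uses it to express a point measured at the second position in the frame of the first. This is an illustrative technique chosen for brevity, not the method stated in the source; it is exact only for noise-free measurements, and a practical system would more likely use a least-squares fit over all targets. All function names are hypothetical.

```python
import math

def sub(a, b):
    return tuple(ai - bi for ai, bi in zip(a, b))

def add(a, b):
    return tuple(ai + bi for ai, bi in zip(a, b))

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def unit(a):
    n = math.sqrt(dot(a, a))
    return tuple(ai / n for ai in a)

def target_frame(t0, t1, t2):
    """Origin and orthonormal axes built from three non-collinear targets."""
    e1 = unit(sub(t1, t0))
    e3 = unit(cross(e1, sub(t2, t0)))  # normal to the plane of the targets
    e2 = cross(e3, e1)
    return t0, (e1, e2, e3)

def to_common_frame(point_b, targets_a, targets_b):
    """Express a point measured at position B in the frame of position A.

    targets_a / targets_b: the same three targets, in the same order, as
    measured from position A and position B respectively.
    """
    origin_a, axes_a = target_frame(*targets_a)
    origin_b, axes_b = target_frame(*targets_b)
    rel = sub(point_b, origin_b)
    local = tuple(dot(rel, e) for e in axes_b)  # coordinates in target frame
    out = origin_a
    for coeff, e in zip(local, axes_a):
        out = add(out, tuple(coeff * ei for ei in e))
    return out

# Targets seen from A, and the same targets seen from B, where B's frame is
# A's frame rotated 90 degrees about z and shifted by (2, 3, 1).
targets_a = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
targets_b = ((2.0, 3.0, 1.0), (2.0, 4.0, 1.0), (1.0, 3.0, 1.0))
registered = to_common_frame((1.75, 3.5, 3.0), targets_a, targets_b)
```

In this example the point measured at the second position maps back to (0.5, 0.25, 2.0) in the first position's frame, which serves as the common frame of reference.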

[0017] According to a further aspect of the invention, a method includes: providing a plurality of mobile three-dimensional (3D) measuring systems, each system including a 3D measuring device and a motorized stand, each 3D measuring device configured to project light onto a point and to determine 3D coordinates of the point by measuring a distance, a first angle, and a second angle to the point; providing a user interface configured to enable user control of the plurality of mobile 3D measuring systems; and by an operator, concurrently directing actions of the plurality of mobile 3D measuring systems through the user interface.

[0018] According to a further aspect of the invention, a method includes: providing a mobile 3D measuring system including a 3D measuring device and a motorized stand, the 3D measuring device configured to project light onto a point and to determine 3D coordinates of the point by measuring a distance, a first angle, and a second angle to the point; activating the motorized stand to locate building elements in need of trim; measuring with the mobile 3D measuring system a size and shape of each required trim element; and providing a link between building location and trim dimensions.

[0019] According to a further aspect of the invention, a method includes: providing a mobile 3D measuring system including a 3D measuring device and a motorized stand, the 3D measuring device configured to project light onto a point and to determine 3D coordinates of the point by measuring a distance, a first angle, and a second angle to the point; measuring with the mobile 3D measuring system a size and shape of a floor region; and cutting a floor covering to the measured size and shape of the floor region.

[0020] According to a further aspect of the invention, a method includes: providing a mobile 3D measuring system including a 3D measuring device and a motorized stand, the 3D measuring device configured to project light onto a point and to determine 3D coordinates of the point by measuring a distance, a first angle, and a second angle to the point; obtaining electronics design plans; scanning a room with the mobile 3D measuring system to obtain measured 3D information; and generating electronic information giving detailed shape and size of the countertops based at least in part on the electronics design plans and the measured 3D information.

[0021] According to a further aspect of the invention, a method includes: providing a mobile 3D measuring system including a 3D measuring device and a motorized stand, the motorized stand configured to move the 3D measuring device under computer control, the 3D measuring device configured to project light onto a point and to determine 3D coordinates of the point by measuring a distance, a first angle, and a second angle to the point, the 3D measuring device further configured to emit a visible beam of light as a pointer beam; measuring with the 3D measuring device 3D coordinates of objects in an environment; providing electronic plans indicating a desired location of a construction installation line within the environment; and emitting with the 3D measuring device the visible beam of light to illuminate the construction installation line in the environment, the illuminated construction installation line positioned within the environment based at least in part on measured 3D coordinates of objects in the environment and on the electronic plans.

[0022] These and other advantages and features will become more apparent from the following description taken in conjunction with the drawings.

BRIEF DESCRIPTION OF THE DRAWINGS

[0023] The subject matter, which is regarded as the invention, is particularly pointed out and distinctly claimed in the claims at the conclusion of the specification. The foregoing and other features, and advantages of the invention are apparent from the following detailed description taken in conjunction with the accompanying drawings in which:

[0024] FIG. 1 is a perspective view of a laser scanner in accordance with an embodiment of the invention;

[0025] FIG. 2 is a side view of the laser scanner illustrating a method of measurement;

[0026] FIG. 3 is a schematic illustration of the optical, mechanical, and electrical components of the laser scanner;

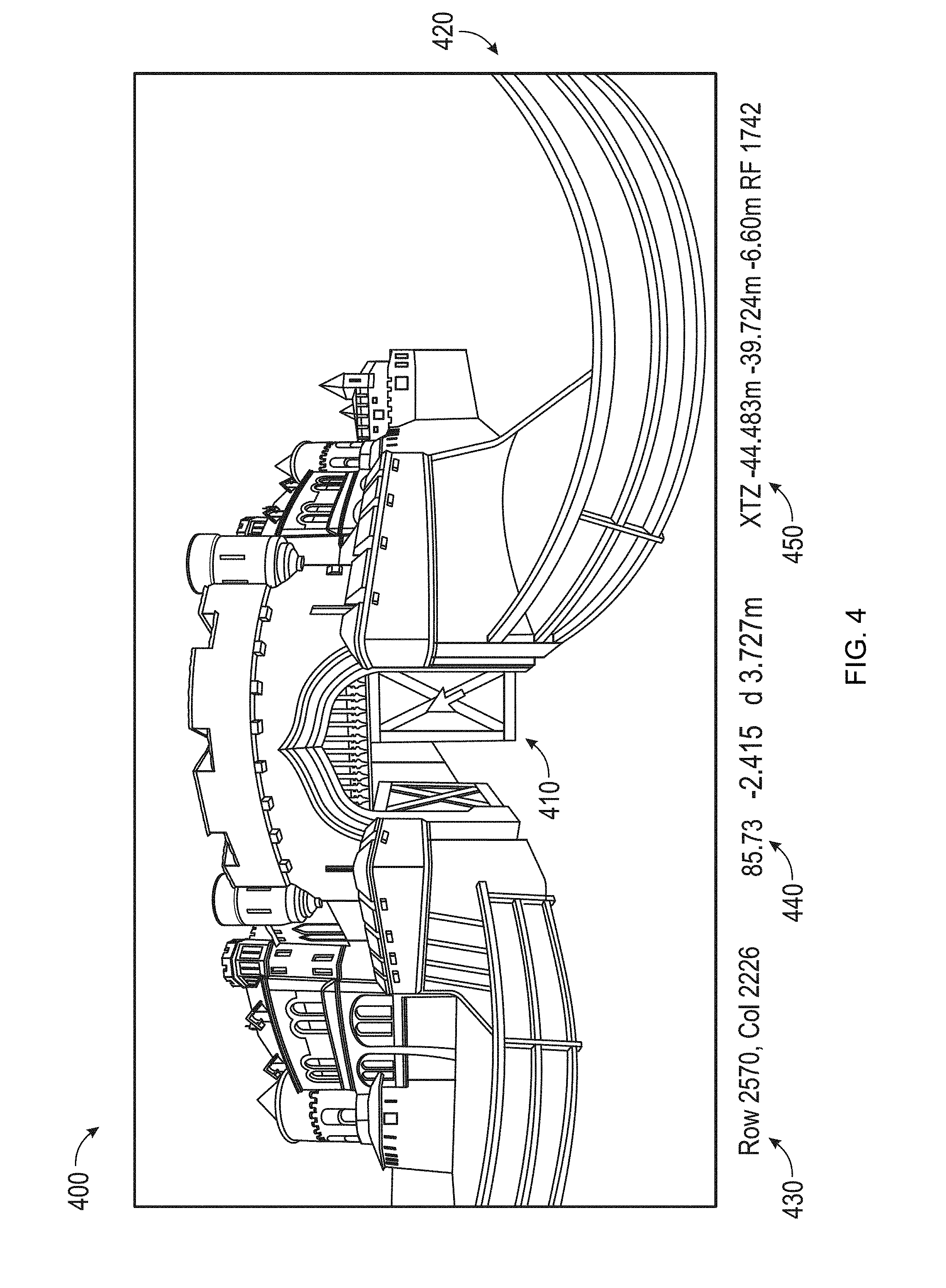

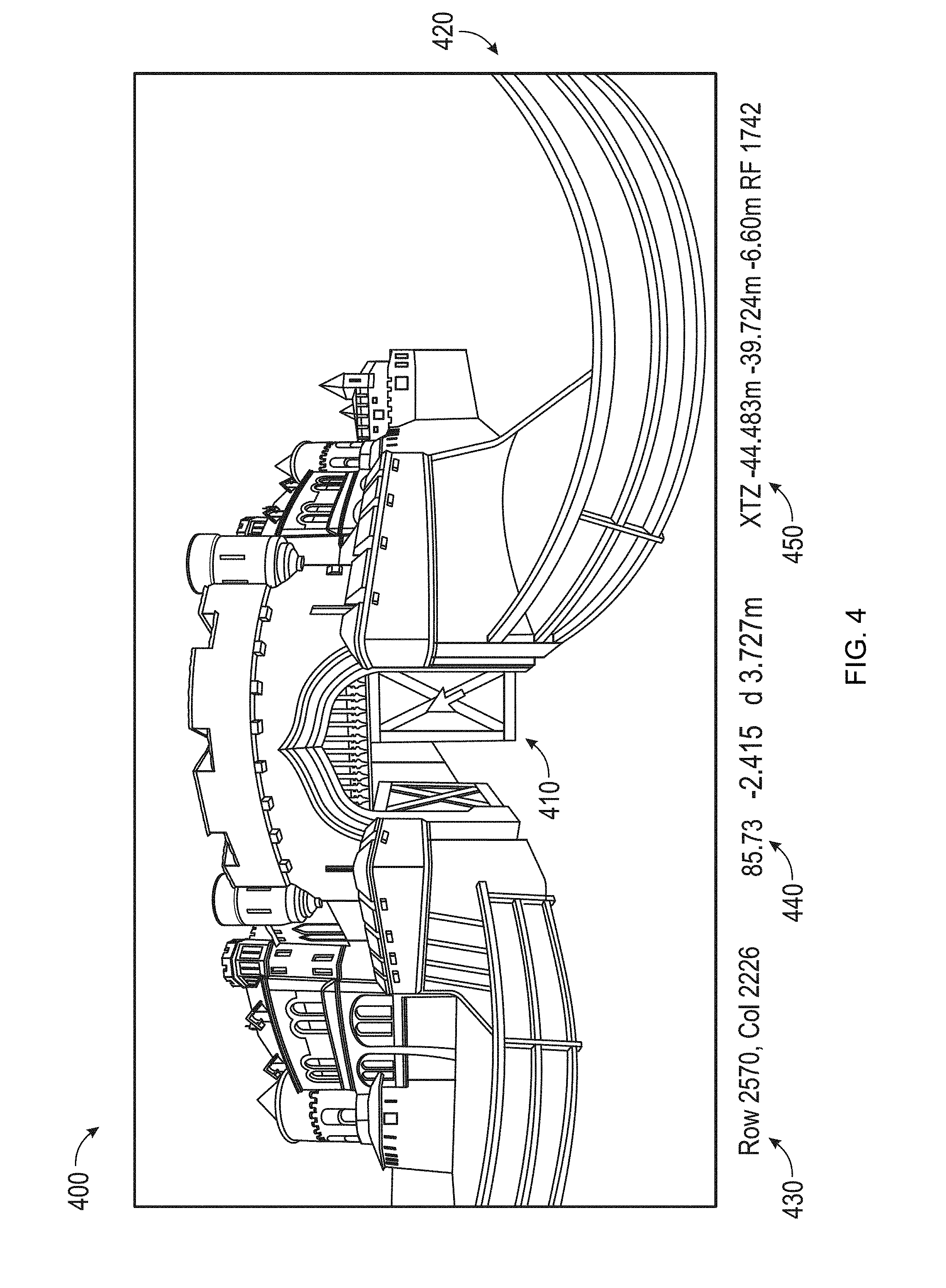

[0027] FIG. 4 depicts a planar view of a 3D scanned image;

[0028] FIG. 5 depicts an embodiment of a panoramic view of a 3D scanned image generated by mapping a planar view onto a sphere;

[0029] FIGS. 6A, 6B and 6C depict embodiments of a 3D view of a 3D scanned image;

[0030] FIG. 7 depicts an embodiment of a 3D view made up of an image of the object of FIG. 6B but viewed from a different perspective and shown only partially;

[0031] FIG. 8A is a perspective view of a mobile 3D measuring system according to an embodiment;

[0032] FIGS. 8B, 8C, and 8D are perspective, top and front views, respectively, of a motorized dolly according to an embodiment;

[0033] FIG. 8E is a perspective view of a motorized dolly folded into a compact shape according to an embodiment;

[0034] FIG. 8F is a schematic representation of electrical circuitry in the motorized dolly according to an embodiment;

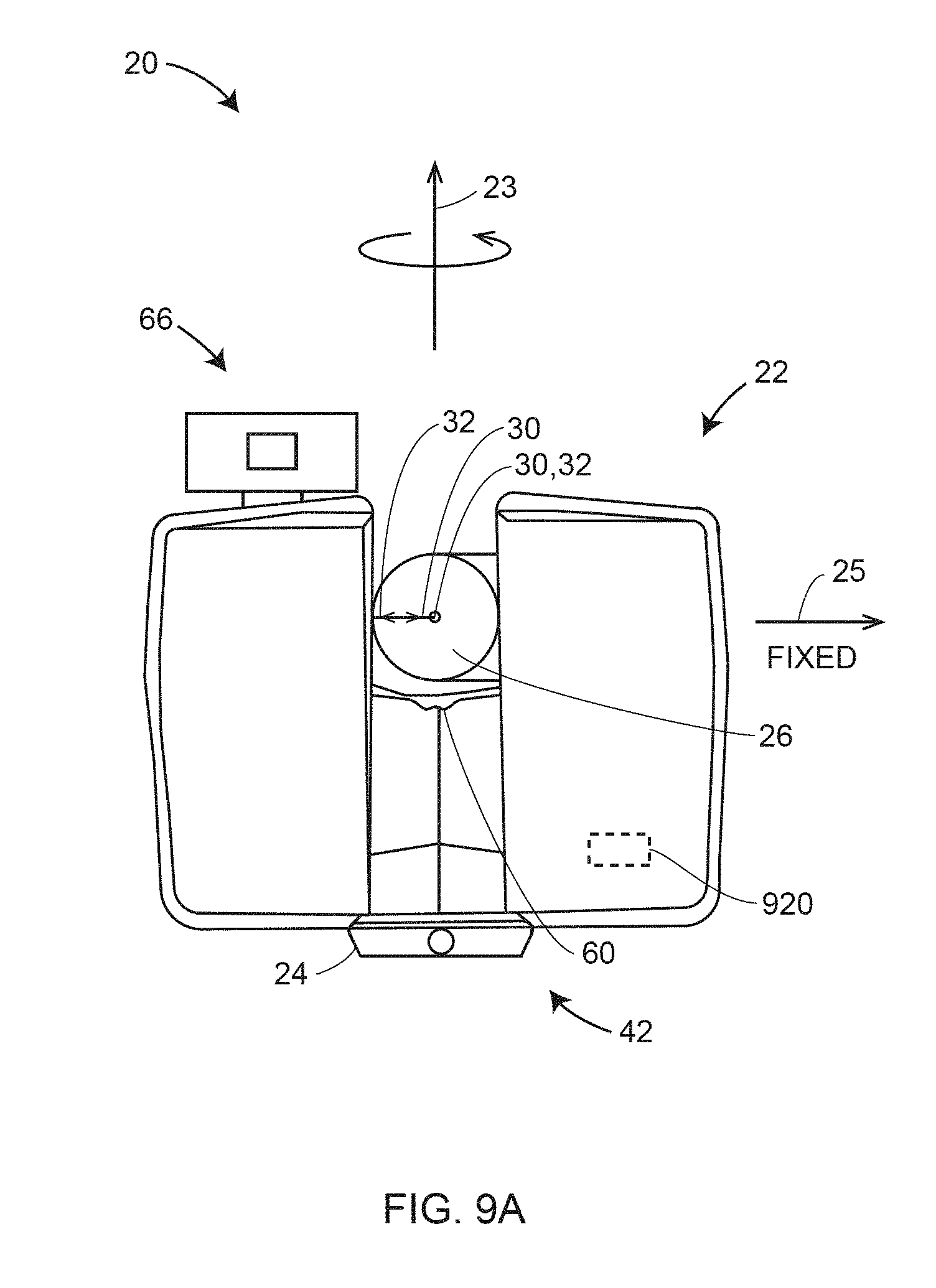

[0035] FIG. 9A is a front view of a 3D scanner configured to project light onto a horizontal plane according to an embodiment;

[0036] FIG. 9B is a block diagram depicting a processor system according to an embodiment;

[0037] FIG. 10 is a schematic representation of a 3D scanner measuring an object from two registration positions according to an embodiment;

[0038] FIG. 11 is a schematic representation of a 3D scanner measuring the object by scanning along a horizontal plane from a plurality of intermediate positions according to an embodiment;

[0039] FIG. 12 shows a 3D scanner capturing portions of the object by scanning along a horizontal plane from a plurality of positions according to an embodiment;

[0040] FIG. 13 shows the 3D scanner capturing portions of the object by scanning along a horizontal plane from a plurality of positions, as seen from a frame of reference of the 3D scanner, according to an embodiment;

[0041] FIGS. 14A, 14B and 14C illustrate a method for finding changes in the position and orientation of the 3D scanner over time according to an embodiment;

[0042] FIG. 15 is a perspective view of a mobile 3D measuring system according to an embodiment;

[0043] FIG. 16 is a perspective view of a mobile 3D measuring system with external camera sensors according to an embodiment;

[0044] FIG. 17 is a perspective view of a mobile 3D measuring system with external laser tracker sensor according to an embodiment;

[0045] FIG. 18 is a perspective view of a mobile 3D measuring system according to an embodiment;

[0046] FIG. 19 is a perspective view of a mobile 3D measuring system used to measure an object according to an embodiment;

[0047] FIG. 20 is a perspective view of a mobile 3D measuring system according to an embodiment;

[0048] FIG. 21 is a perspective view of a first mobile 3D measuring system used to measure an object and a second mobile 3D measuring system used to assist in registration of the first mobile 3D measuring system according to an embodiment; and

[0049] FIG. 22 is a perspective view of a mobile 3D measuring system used to measure an object while external camera sensors assist in registration according to an embodiment.

[0050] The detailed description explains embodiments of the invention, together with advantages and features, by way of example with reference to the drawings.

DETAILED DESCRIPTION OF THE INVENTION

[0051] The present invention relates to a device that includes a 3D scanner and a two-dimensional (2D) scanner working cooperatively to provide automatic registration of 3D scans.

[0052] Referring now to FIGS. 1-3, a laser scanner 20 is shown for optically scanning and measuring the environment surrounding the laser scanner 20. The laser scanner 20 has a measuring head 22 and a base 24. The measuring head 22 is mounted on the base 24 such that the laser scanner 20 may be rotated about a vertical axis 23. In one embodiment, the measuring head 22 includes a gimbal point 27 that is a center of rotation about the vertical axis 23 and a horizontal axis 25. The measuring head 22 has a rotary mirror 26, which may be rotated about the horizontal axis 25. The rotation about the vertical axis may be about the center of the base 24. The terms vertical axis and horizontal axis refer to the scanner in its normal upright position. It is possible to operate a 3D coordinate measurement device on its side or upside down, and so to avoid confusion, the terms azimuth axis and zenith axis may be substituted for the terms vertical axis and horizontal axis, respectively. The term pan axis or standing axis may also be used as an alternative to vertical axis.

[0053] The measuring head 22 is further provided with an electromagnetic radiation emitter, such as light emitter 28, for example, that emits an emitted light beam 30. In one embodiment, the emitted light beam 30 is a coherent light beam such as a laser beam. The laser beam may have a wavelength range of approximately 300 to 1600 nanometers, for example 790 nanometers, 905 nanometers, 1550 nanometers, or less than 400 nanometers. It should be appreciated that other electromagnetic radiation beams having greater or smaller wavelengths may also be used. The emitted light beam 30 is amplitude or intensity modulated, for example, with a sinusoidal waveform or with a rectangular waveform. The emitted light beam 30 is emitted by the light emitter 28 onto the rotary mirror 26, where it is deflected to the environment. A reflected light beam 32 is reflected from the environment by an object 34. The reflected or scattered light is intercepted by the rotary mirror 26 and directed into a light receiver 36. The directions of the emitted light beam 30 and the reflected light beam 32 result from the angular positions of the rotary mirror 26 and the measuring head 22 about the axes 25 and 23, respectively. These angular positions in turn depend on the corresponding rotary drives or motors.

[0054] Coupled to the light emitter 28 and the light receiver 36 is a controller 38. The controller 38 determines, for a multitude of measuring points X, a corresponding number of distances d between the laser scanner 20 and the points X on object 34. The distance to a particular point X is determined based at least in part on the speed of light in air through which electromagnetic radiation propagates from the device to the object point X. In one embodiment, the phase shift between the modulation of the light emitted by the laser scanner 20 and the modulation of the light returned from the point X is determined and evaluated to obtain a measured distance d.

[0055] The speed of light in air depends on the properties of the air such as the air temperature, barometric pressure, relative humidity, and concentration of carbon dioxide. Such air properties influence the index of refraction n of the air. The speed of light in air is equal to the speed of light in vacuum c divided by the index of refraction. In other words, c.sub.air=c/n. A laser scanner of the type discussed herein is based on the time-of-flight (TOF) of the light in the air (the round-trip time for the light to travel from the device to the object and back to the device). Examples of TOF scanners include scanners that measure round trip time using the time interval between emitted and returning pulses (pulsed TOF scanners), scanners that modulate light sinusoidally and measure phase shift of the returning light (phase-based scanners), as well as many other types. A method of measuring distance based on the time-of-flight of light depends on the speed of light in air and is therefore easily distinguished from methods of measuring distance based on triangulation. Triangulation-based methods involve projecting light from a light source along a particular direction and then intercepting the light on a camera pixel along a particular direction. By knowing the distance between the camera and the projector and by matching a projected angle with a received angle, the method of triangulation enables the distance to the object to be determined based on one known length and two known angles of a triangle. The method of triangulation, therefore, does not directly depend on the speed of light in air.
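
The relationships in the preceding paragraph can be illustrated with a short Python sketch. It is an illustration only, not the scanner's implementation: the function names, the example index of refraction, and the example timing value are assumptions chosen for demonstration. The first three functions depend on the speed of light in air, c.sub.air=c/n, while the triangulation function uses only one known length and two known angles.

```python
import math

C_VACUUM = 299_792_458.0  # speed of light in vacuum, m/s


def speed_of_light_in_air(n):
    """c_air = c / n, where n is the index of refraction of the air."""
    return C_VACUUM / n


def distance_pulsed_tof(round_trip_s, n):
    """Pulsed TOF: the light covers the distance twice (out and back)."""
    return speed_of_light_in_air(n) * round_trip_s / 2.0


def distance_phase_based(phase_rad, f_mod_hz, n):
    """Phase-based TOF for sinusoidal modulation at f_mod_hz.

    Valid within one ambiguity interval c_air / (2 * f_mod_hz).
    """
    return speed_of_light_in_air(n) * phase_rad / (4.0 * math.pi * f_mod_hz)


def range_by_triangulation(baseline_m, angle_projector_rad, angle_camera_rad):
    """Distance from projector to object point by the law of sines.

    The angles are the interior angles of the triangle at the projector
    and at the camera; no speed of light appears in the calculation.
    """
    third_angle = math.pi - angle_projector_rad - angle_camera_rad
    return baseline_m * math.sin(angle_camera_rad) / math.sin(third_angle)


N_AIR = 1.00027  # illustrative value only; n varies with air properties
print(distance_pulsed_tof(66.7e-9, N_AIR))         # pulsed example
print(distance_phase_based(math.pi, 10e6, N_AIR))  # phase-based example
print(range_by_triangulation(0.2, math.radians(80), math.radians(85)))
```

Changing N_AIR changes the two time-of-flight results but leaves the triangulation result untouched, which is the distinction the paragraph draws.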

[0056] In one mode of operation, the scanning of the volume around the laser scanner 20 takes place by rotating the rotary mirror 26 about axis 25 relatively quickly while rotating the measuring head 22 about axis 23 relatively slowly, thereby moving the assembly in a spiral pattern. In an exemplary embodiment, the rotary mirror rotates at a maximum speed of 5820 revolutions per minute. For such a scan, the gimbal point 27 defines the origin of the local stationary reference system. The base 24 rests in this local stationary reference system.

[0057] In addition to measuring a distance d from the gimbal point 27 to an object point X, the scanner 20 may also collect gray-scale information related to the received optical power (equivalent to the term "brightness"). The gray-scale value may be determined at least in part, for example, by integration of the bandpass-filtered and amplified signal in the light receiver 36 over a measuring period attributed to the object point X.

[0058] The measuring head 22 may include a display device 40 integrated into the laser scanner 20. The display device 40 may include a graphical touch screen 41, as shown in FIG. 1, which allows the operator to set the parameters or initiate the operation of the laser scanner 20. For example, the screen 41 may have a user interface that allows the operator to provide measurement instructions to the device, and the screen may also display measurement results.

[0059] The laser scanner 20 includes a carrying structure 42 that provides a frame for the measuring head 22 and a platform for attaching the components of the laser scanner 20. In one embodiment, the carrying structure 42 is made from a metal such as aluminum. The carrying structure 42 includes a traverse member 44 having a pair of walls 46, 48 on opposing ends. The walls 46, 48 are parallel to each other and extend in a direction opposite the base 24. Shells 50, 52 are coupled to the walls 46, 48 and cover the components of the laser scanner 20. In the exemplary embodiment, the shells 50, 52 are made from a plastic material, such as polycarbonate or polyethylene for example. The shells 50, 52 cooperate with the walls 46, 48 to form a housing for the laser scanner 20.

[0060] On an end of the shells 50, 52 opposite the walls 46, 48 a pair of yokes 54, 56 are arranged to partially cover the respective shells 50, 52. In the exemplary embodiment, the yokes 54, 56 are made from a suitably durable material, such as aluminum for example, that assists in protecting the shells 50, 52 during transport and operation. The yokes 54, 56 each include a first arm portion 58 that is coupled, such as with a fastener for example, to the traverse 44 adjacent the base 24. The arm portion 58 for each yoke 54, 56 extends from the traverse 44 obliquely to an outer corner of the respective shell 50, 52. From the outer corner of the shell, the yokes 54, 56 extend along the side edge of the shell to an opposite outer corner of the shell. Each yoke 54, 56 further includes a second arm portion that extends obliquely to the walls 46, 48. It should be appreciated that the yokes 54, 56 may be coupled to the traverse 44, the walls 46, 48 and the shells 50, 52 at multiple locations.

[0061] The pair of yokes 54, 56 cooperate to circumscribe a convex space within which the two shells 50, 52 are arranged. In the exemplary embodiment, the yokes 54, 56 cooperate to cover all of the outer edges of the shells 50, 52, while the top and bottom arm portions project over at least a portion of the top and bottom edges of the shells 50, 52. This provides advantages in protecting the shells 50, 52 and the measuring head 22 from damage during transportation and operation. In other embodiments, the yokes 54, 56 may include additional features, such as handles to facilitate the carrying of the laser scanner 20 or attachment points for accessories for example.

[0062] On top of the traverse 44, a prism 60 is provided. The prism extends parallel to the walls 46, 48. In the exemplary embodiment, the prism 60 is integrally formed as part of the carrying structure 42. In other embodiments, the prism 60 is a separate component that is coupled to the traverse 44. When the mirror 26 rotates, during each rotation the mirror 26 directs the emitted light beam 30 onto the traverse 44 and the prism 60. Due to non-linearities in the electronic components, for example in the light receiver 36, the measured distances d may depend on signal strength, which may be measured in optical power entering the scanner or optical power entering optical detectors within the light receiver 36, for example. In an embodiment, a distance correction is stored in the scanner as a function (possibly a nonlinear function) of distance to a measured point and optical power (generally unscaled quantity of light power sometimes referred to as "brightness") returned from the measured point and sent to an optical detector in the light receiver 36. Since the prism 60 is at a known distance from the gimbal point 27, the measured optical power level of light reflected by the prism 60 may be used to correct distance measurements for other measured points, thereby allowing for compensation to correct for the effects of environmental variables such as temperature. In the exemplary embodiment, the resulting correction of distance is performed by the controller 38.

[0063] In an embodiment, the base 24 is coupled to a swivel assembly (not shown) housed within the carrying structure 42. The swivel assembly includes a motor configured to rotate the measuring head 22 about the axis 23.

[0064] An auxiliary image acquisition device 66 may be a device that captures and measures a parameter associated with the scanned volume or the scanned object and provides a signal representing the measured quantities over an image acquisition area. The auxiliary image acquisition device 66 may be, but is not limited to, a pyrometer, a thermal imager, an ionizing radiation detector, or a millimeter-wave detector.

[0065] In an embodiment, a camera (first image acquisition device) 112 is located internally to the scanner and may have the same optical axis as the 3D scanner device. In this embodiment, the first image acquisition device 112 is integrated into the measuring head 22 and arranged to acquire images along the same optical pathway as emitted light beam 30 and reflected light beam 32. In this embodiment, the light from the light emitter 28 reflects off a fixed mirror 116 and travels to dichroic beam-splitter 118 that reflects the light 117 from the light emitter 28 onto the rotary mirror 26. The dichroic beam-splitter 118 allows light to pass through at wavelengths different than the wavelength of light 117. For example, the light emitter 28 may be a near infrared laser light (for example, light at wavelengths of 780 nm or 1150 nm), with the dichroic beam-splitter 118 configured to reflect the infrared laser light while allowing visible light (e.g., wavelengths of 400 to 700 nm) to transmit through. In other embodiments, the determination of whether the light passes through the beam-splitter 118 or is reflected depends on the polarization of the light. The digital camera 112 takes 2D photographic images of the scanned area to capture color data to add to the scanned image. In the case of a built-in color camera having an optical axis coincident with that of the 3D scanning device, the direction of the camera view may be easily obtained by simply adjusting the steering mechanisms of the scanner--for example, by adjusting the azimuth angle about the axis 23 and by steering the mirror 26 about the axis 25.

[0066] FIG. 4 depicts an example of a planar view of a 3D scanned image 400. The planar view depicted in FIG. 4 maps an image based on direct mapping of data collected by the scanner. The scanner collects data in a spherical pattern but with data points collected near the poles more tightly compressed than those collected nearer the horizon. In other words, each point collected near a pole represents a smaller solid angle than does each point collected nearer the horizon. Since data from the scanner may be directly represented in rows and columns, data in a planar image is conveniently presented in a rectilinear format, as shown in FIG. 4. With planar mapping described above, straight lines appear to be curved, as for example the straight fence railings 420 that appear curved in the planar view of the 3D image. The planar view may be a 3D unprocessed scanned image displaying just the gray-scale values received from the distance sensor arranged in columns and rows as they were recorded. In addition, the 3D unprocessed scanned image of the planar view may be in full resolution or reduced resolution depending on system characteristics (e.g., display device, storage, processor). The planar view may be a 3D processed scanned image that depicts either gray-scale values (resulting from the light irradiance measured by the distance sensor for each pixel) or color values (resulting from camera images which have been mapped onto the scan). Although the planar view extracted from the 3D scanner is ordinarily a gray-scale or color image, FIG. 4 is shown as a line drawing for clarity in document reproduction. The user interface associated with the display unit, which may be integral to the laser scanner, may provide a point selection mechanism, which in FIG. 4 is the cursor 410. The point selection mechanism may be used to reveal dimensional information about the volume of space being measured by the laser scanner. In FIG. 4, the row and column at the location of the cursor are indicated on the display at 430. The two measured angles and one measured distance (the 3D coordinates in a spherical coordinate system) at the cursor location are indicated on the display at 440. Cartesian XYZ coordinate representations of the cursor location are indicated on the display at 450.
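
The relationship between the spherical coordinates (two angles and one distance) and the Cartesian XYZ coordinates shown on the display can be sketched as follows. This is a minimal illustration under an assumed convention, not the scanner's documented one: the azimuth angle is taken about the vertical (z) axis, the zenith angle is measured from the +z direction, and the origin is the gimbal point. The function name is hypothetical.

```python
import math


def spherical_to_cartesian(distance, azimuth_rad, zenith_rad):
    """Convert one measured distance and two measured angles to XYZ.

    Assumed convention (not taken from the source): the zenith angle is
    measured from the +z (vertical) axis and the azimuth angle is
    measured from the +x axis in the horizontal plane.
    """
    horizontal = distance * math.sin(zenith_rad)  # projection onto x-y plane
    x = horizontal * math.cos(azimuth_rad)
    y = horizontal * math.sin(azimuth_rad)
    z = distance * math.cos(zenith_rad)
    return x, y, z


# A point 5 m away, 30 degrees in azimuth, on the horizon (zenith 90 degrees)
print(spherical_to_cartesian(5.0, math.radians(30.0), math.radians(90.0)))
```

A different axis convention changes only the assignment of sines and cosines; the distance from the origin is preserved in any case.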

[0067] FIG. 5 depicts an example of a panoramic view of a 3D scanned image 600 generated by mapping a planar view onto a sphere, or in some cases a cylinder. A panoramic view can be a 3D processed scanned image (such as that shown in FIG. 5) in which 3D information (e.g., 3D coordinates) is available. The panoramic view may be in full resolution or reduced resolution depending on system characteristics. It should be pointed out that an image such as FIG. 5 is a 2D image that represents a 3D scene when viewed from a particular perspective. In this sense, the image of FIG. 5 is much like an image that might be captured by a 2D camera or a human eye. Although the panoramic view extracted from the 3D scanner is ordinarily a gray-scale or color image, FIG. 5 is shown as a line drawing for clarity in document reproduction.

[0068] The term panoramic view refers to a display in which angular movement is generally possible about a point in space, but translational movement is not possible (for a single panoramic image). In contrast, the term 3D view as used herein generally refers to a display in which provision is made (through user controls) to enable not only rotation about a fixed point but also translational movement from point to point in space.

[0069] FIGS. 6A, 6B and 6C depict an example of a 3D view of a 3D scanned image. In the 3D view a user can leave the origin of the scan and see the scan points from different viewpoints and angles. The 3D view is an example of a 3D processed scanned image. The 3D view may be in full resolution or reduced resolution depending on system characteristics. In addition, the 3D view allows multiple registered scans to be displayed in one view. FIG. 6A is a 3D view 710 over which a selection mask 730 has been placed by a user. FIG. 6B is a 3D view 740 in which only that part of the 3D view 710 covered by the selection mask 730 has been retained. FIG. 6C shows the same 3D measurement data as in FIG. 6B except as rotated to obtain a different view. FIG. 7 shows a different view of FIG. 6B, the view in this instance being obtained from a translation and rotation of the observer viewpoint, as well as a reduction in observed area. Although the 3D views extracted from the 3D scanner are ordinarily a gray-scale or color image, FIGS. 6A-C and 7 are shown as line drawings for clarity in document reproduction.

[0070] FIG. 8A shows a mobile 3D measuring system 800 that includes a 3D laser scanner 20 mounted on a motorized tripod assembly 810. In an embodiment, the motorized tripod assembly 810 includes a tripod 812 mounted on a motorized dolly 820. Motorized dolly 820 includes clamps 822 to hold tripod legs in contact with the legs 824 of the motorized dolly 820. As shown in the exploded view FIG. 8B, in an embodiment, each of the three dolly legs 824 is attached to a base plate 825 on one end and to a wheel on the other end. In an embodiment, two wheels 862A and 862B are motorized and may be turned independently by motors, while the third wheel 864 is not motorized and is smaller than the other two. In FIG. 8A, the motors that drive the wheels 862A, 862B are not visible beneath the wheel covers 828. Motor assemblies 826A, 826B are used to drive wheels 862A, 862B, respectively, in an alternative embodiment of motorized dolly 820B shown in FIG. 8B. The motor assemblies 826A, 826B drive the wheels 862A, 862B using motors 827A, 827B attached to axles 863A and 863B, respectively. The wheels may be plastic or rubber. For transport on uneven surfaces, rubber wheels may be inflated. Electrical wires attached to the motor assemblies connect them to a control and power unit 840. In an embodiment, the electrical wires are routed from the motor assemblies 826A, 826B to the control and power unit 840 through hollow dolly legs 824.

[0071] Referring now to FIG. 8F, in an embodiment, control and power unit 840 includes a rechargeable battery 890 and an electrical circuit board 880 having a processor 882, a motor controller 884, an IEEE 802.11 (Wi-Fi) transceiver 886, and a cellular transceiver 888. In an embodiment, the cellular transceiver 888 supports 3G and long-term evolution (LTE) formats. In an embodiment, a first power plug 841 enables recharging of the rechargeable battery 890 from an AC power source and a second power plug 842 provides output power from the battery. In an embodiment, the processor 882 is an Intel NUC processor such as might be found in a personal computer. The Intel NUC processor is manufactured by Intel Corporation, with headquarters in Santa Clara, Calif. In an embodiment, the motor controller 884 is a Pololu RoboClaw 2x60A motor controller, manufactured by Pololu Robotics and Electronics with headquarters in Las Vegas, Nev. In an embodiment, the motor controller 884 controls the speed of each wheel 862A, 862B by sending digital signals 892A, 892B to the motor assemblies 826A, 826B over a USB interface configured to act as a virtual serial (COM) port. In an embodiment, the motor assemblies 826A, 826B include rotary encoders 894A, 894B that measure the rotation of the wheels 862A, 862B and send encoder signals 893A, 893B to the motor controller 884. Rotary encoder signals indicate the angle of rotation of the wheels, from which speed and distance of travel may be calculated by the processor 882. The encoders 894A, 894B can be used as odometers to keep track of the total distance traveled by the mobile 3D measuring system 800. In other embodiments, other types of odometry sensors are used.
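
The calculation of distance and speed from rotary encoder signals can be sketched as below. The sketch assumes an incremental encoder that reports a signed count; the counts-per-revolution value and wheel diameter are hypothetical numbers chosen for illustration, and the function names are not taken from any motor controller interface.

```python
import math


def wheel_distance_m(delta_counts, counts_per_rev, wheel_diameter_m):
    """Distance rolled by a wheel for a given change in encoder count."""
    revolutions = delta_counts / counts_per_rev
    return revolutions * math.pi * wheel_diameter_m


def wheel_speed_m_per_s(delta_counts, dt_s, counts_per_rev, wheel_diameter_m):
    """Average wheel speed over the sampling interval dt_s."""
    return wheel_distance_m(delta_counts, counts_per_rev, wheel_diameter_m) / dt_s


# Hypothetical parameters: 2048 counts per revolution, 0.20 m wheel
COUNTS_PER_REV = 2048
WHEEL_DIAMETER_M = 0.20

total_m = 0.0
for delta in (512, 512, 1024):  # encoder count changes in successive samples
    total_m += wheel_distance_m(delta, COUNTS_PER_REV, WHEEL_DIAMETER_M)
print(total_m)  # accumulated odometer reading for one wheel
print(wheel_speed_m_per_s(512, 0.1, COUNTS_PER_REV, WHEEL_DIAMETER_M))
```

Summing the per-sample distances gives the odometer behavior described above; a negative count change would reduce the total, so an odometer that tracks total distance traveled would sum absolute values instead.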

[0072] In an embodiment, conventional wheels such as 862A, 862B are driven differentially by the separate motor assemblies 826A, 826B. If the electrical signals provided by the controller are set to keep the rate of rotation of the two wheels the same, the motorized tripod assembly 810 will travel in a straight line. If the electrical signals provide rotation to one wheel but hold the other wheel locked, the motorized tripod assembly 810 will turn about the fixed wheel. Because of differences in the two motors and the differences in frictional profiles of the two wheels, there may be a tendency for the motorized tripod assembly 810 to turn one direction or the other during travel rather than traveling in a straight line. This tendency to turn can be corrected based on feedback provided by rotary encoders 894A, 894B in the motor assemblies 826A and 826B. In an alternative embodiment, conventional wheels are replaced with wheels that may be driven sideways as well as straight ahead. Examples of such wheels include omni wheels and Mecanum wheels.
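
The differential-drive behavior described in the preceding paragraph can be illustrated with a standard kinematic model. This is a sketch under stated assumptions: the two driven wheels share a common axle line, the track width (distance between the driven wheels) is a hypothetical value, and wheel slip is ignored. The function name and pose representation are illustrative only.

```python
import math


def update_pose(x, y, theta, d_left, d_right, track_width):
    """Advance a pose (x, y, theta) given the distance rolled by each wheel.

    The midpoint of the axle follows a circular arc; equal wheel
    distances give a straight line, and a locked wheel gives a turn
    about that wheel.
    """
    d_center = 0.5 * (d_left + d_right)
    d_theta = (d_right - d_left) / track_width
    if abs(d_theta) < 1e-12:
        # Straight-line travel
        x += d_center * math.cos(theta)
        y += d_center * math.sin(theta)
    else:
        # Travel along an arc of radius d_center / d_theta
        radius = d_center / d_theta
        x += radius * (math.sin(theta + d_theta) - math.sin(theta))
        y -= radius * (math.cos(theta + d_theta) - math.cos(theta))
    return x, y, theta + d_theta


TRACK_WIDTH_M = 0.5  # hypothetical spacing of the two driven wheels

# Equal wheel travel: straight line
print(update_pose(0.0, 0.0, 0.0, 1.0, 1.0, TRACK_WIDTH_M))
# Left wheel locked, right wheel driven: turn about the left wheel
print(update_pose(0.0, 0.0, 0.0, 0.0, TRACK_WIDTH_M * math.pi / 2, TRACK_WIDTH_M))
```

In this model a mismatch between the two encoder-derived distances appears directly as a heading change d_theta, which is the quantity a feedback loop would drive toward zero to keep the assembly on a straight line.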

[0073] In an embodiment, the control and power unit 840 is attached to the dolly legs 824 using a wing nut 852 and bolt 854 on one side, as shown in FIG. 8A, and a hook 856 and eye 858 on the other side, as shown in FIG. 8C. In an embodiment, the control and power unit 840 includes power outlets 872, such as those shown in FIG. 8A, to provide power to accessories such as a 2D laser scanner, a triangulation scanner such as a Kinect, a 2D camera, a depth camera, or an ultrasound sensor. The Kinect device is manufactured by Microsoft Corporation, with headquarters in Redmond, Wash. A fan 877 is provided to ensure cool operation of the unit 840. In an embodiment, the battery 890 is configured to provide power to the laser scanner 20 over the power outlet 878 should the battery of the scanner 20 run low. The power outlet 879 may be further configured to receive power to recharge the rechargeable battery 890.

[0074] In an embodiment, the motorized dolly may be folded into a compact package 895 as shown in FIG. 8E. To obtain the folded shape, the tripod 812 is removed from the motorized dolly 820, and the dolly legs 824 that have the large wheels are folded inward toward the dolly leg 824 that has the small wheel. In an embodiment, a motorized dolly weighs approximately 1.5 kg.

[0075] In the embodiment of FIGS. 8B, 8C, and 8D, extenders 897A, 897B are attached to motor assemblies 826A, 826B. The purpose of the extenders is to provide a location on which to attach accessory devices such as a 2D scanner, a triangulation scanner (for example, a Kinect or similar device), a 2D camera or a camera capable of measuring depth as well as two angles. In an embodiment, the extenders 897A, 897B are connected together to make one long extender region. In an embodiment, a vertical extender is attached to the single long extender. An advantage of attaching accessory devices to extenders rather than tripod legs is that extenders can be consistently aimed in the direction of movement of the system 800. In cases of obstacle avoidance, this is the preferred direction for the sensor. In other embodiments, accessory devices are attached to the 3D measuring device 20. For example, the element 66 in FIG. 1 may be an accessory device.

[0076] In a first mode, a smart device such as a smartphone, tablet, or notebook connects to the Wi-Fi network through a wireless access point. In a second mode, referred to as a client mode, both the unit 840 and a smart device connect to an external network. In a third mode, the unit 840 and a smart device connect to the internet through a cellular channel such as 3G or LTE.

[0077] A smart device such as a smartphone, tablet, or notebook is used to control the mobile 3D measuring system 800 by running an app that may have been downloaded from an app store. The app may include a touchscreen display that provides sensitive joystick control for moving the 3D measuring system. The app may also control measuring and processing of data. Processing of data may also be handed off to an external computer, for example, to one or more servers located on a network. In an embodiment, the app permits locking or unlocking of the motorized wheels when power is not applied. As stated herein above, one wheel may be locked when a turn is executed. On the other hand, if the 3D measuring system is pushed by hand, it is convenient to unlock the wheels.

[0078] The mobile 3D measuring system 800 includes the motorized dolly 820, which has useful features of simplicity, adaptability, small size, and light weight. Methods described herein below may use the mobile 3D measuring system 800. Other embodiments of the methods described herein below may use other types of motorized 3D measuring systems. Figures that accompany descriptions of the methods given herein below usually include the 3D measuring system 800, but it should be understood that alternative motorized platforms may be used. For example, the motorized dolly 820 may be configured to include four or more wheels. In an embodiment, the dolly 820 may be configured to move in a first direction (e.g. forward), a second direction opposite that of the first direction (e.g. backward) and also rotate about an axis, such as an axis passing centrally through the dolly 820 perpendicular to the surface upon which the dolly 820 rests.

[0079] In a common situation, the system 800 is used to make several measurements at a plurality of locations. The 3D point clouds obtained at the plurality of locations are then registered together. In some embodiments, an operator may use personal judgment in deciding the optimum locations of the mobile 3D measuring system 800 to obtain good registrations. In other embodiments, additional measuring and computing devices may be used to help select optimal measurement locations and ensure good registrations. Four examples of using measuring and computing devices to help select optimal measurement locations and ensure good registrations are described in U.S. patent application Ser. Nos. 14/559,290, 14/559,311, 14/559,335, and 14/559,367, all to Zweigle et al., the contents of all of which are incorporated by reference.

[0080] A first example of using measuring and computing devices to help select optimal measurement locations and ensure good registrations is now described with reference to FIGS. 9 to 14. FIGS. 9A and 9B show elements incorporated in an embodiment of a mobile 3D measuring system 800. The embodiment includes a 3D scanner 20 and a processor system 950.

[0081] The processor system 950 includes one or more processing elements, which may include a 3D scanner processor (controller) 38, an external computer 970, and a cloud computer 980. The processors may be microprocessors, field programmable gate arrays (FPGAs), digital signal processors (DSPs), and generally any device capable of performing computing functions. The one or more processors have access to memory for storing information. In an embodiment illustrated in FIG. 9B, the controller 38 represents one or more processors distributed throughout the 3D scanner 20. Also included in the embodiment of FIG. 9B are an external computer 970 and one or more cloud computers 980 for remote computing capability. In an alternative embodiment, only one or two of the processors 38, 970, and 980 is provided in the processor system. Communication among the processors may be through wired links, wireless links, or a combination of wired and wireless links. In an embodiment, scan results are uploaded after each scanning session to the cloud (remote network) for storage and future use. In addition, the processor system 950 cooperates with processors and other electrical components in the control and power unit 840 of the mobile 3D measuring system 800.

[0082] In one mode of operation the 3D scanner 20 measures 2D coordinates in a horizontal plane. In most cases, it does this by steering light within a horizontal plane to illuminate object points in the environment. It collects the reflected (scattered) light from the object points to determine 2D coordinates of the object points in the horizontal plane. In an embodiment, the 3D scanner scans a spot of light and measures the angle of rotation about the axis 23 with an angular encoder while at the same time measuring a corresponding distance value to each illuminated object point in the horizontal plane. The 3D scanner 20 may rotate about the axis 23 at a relatively high speed while performing no rotation about the axis 25. In an embodiment, the laser power emitted from the scanner 20 is set to fall within eye safety limits.

[0083] An optional position/orientation sensor 920 in the 3D scanner 20 may include inclinometers (accelerometers), gyroscopes, magnetometers, and altimeters. Usually devices that include one or more of an inclinometer and gyroscope are referred to as an inertial measurement unit (IMU). In some cases, the term IMU is used in a broader sense to include a variety of additional devices that indicate position and/or orientation--for example, magnetometers that indicate heading based on changes in magnetic field direction relative to the earth's magnetic north and altimeters that indicate altitude (height). An example of a widely used altimeter is a pressure sensor. By combining readings from a combination of position/orientation sensors with a fusion algorithm that may include a Kalman filter, relatively accurate position and orientation measurements can be obtained using relatively low-cost sensor devices. The motorized tripod assembly 810 enables the 3D measuring device 20 to be moved from place to place, typically along a floor that is approximately horizontal.

[0084] In an embodiment, in one mode of operation, the 3D scanner 20 is configured to scan a beam of light over a range of angles in a horizontal plane. At instants in time the 3D scanner 20 returns an angle reading and a corresponding distance reading to provide 2D coordinates of object points in the horizontal plane. In completing one scan over the full range of angles, the 3D scanner 20 returns a collection of paired angle and distance readings. As the mobile 3D measuring system 800 is moved from place to place, it continues to return 2D coordinate values in a horizontal plane. These 2D coordinate values are used to locate the position of the 3D scanner 20 at each stationary registration position, thereby enabling more accurate registration.
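
The conversion of paired angle and distance readings into 2D coordinates in the horizontal plane can be sketched as follows. The data layout (a list of angle and distance pairs, with angles in radians measured from the x axis of the scanner) is an assumption made for illustration.

```python
import math


def scan_to_xy(readings):
    """Convert (angle_rad, distance) pairs into (x, y) points.

    Angles are assumed to be measured in the horizontal plane from the
    scanner's x axis, with the scanner at the origin.
    """
    return [(d * math.cos(a), d * math.sin(a)) for a, d in readings]


# Three illustrative readings from one sweep
sweep = [(0.0, 2.0), (math.pi / 2, 1.0), (math.pi, 3.0)]
for point in scan_to_xy(sweep):
    print(point)
```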

[0085] FIG. 10 shows the mobile 3D measuring system 800 moved to a first registration position 1112 in front of an object 1102 that is to be measured. The object 1102 might for example be a wall in a room. The motorized tripod assembly 810 is brought to a stop with the wheels held stationary. The 3D scanner 20 in the mobile 3D measuring system 800 takes a first 3D scan of the object 1102. In an embodiment, the 3D scanner 20 may if desired obtain 3D measurements in all directions except in downward directions blocked by the structure of the 3D measuring device 800. However, in the example of FIG. 10, in which 3D scanner 20 measures a long, mostly flat structure 1102, a smaller effective FOV 1130 may be selected to provide a more face-on view of features on the structure.

[0086] When the first 3D scan is completed, the processor system 950 causes the 3D scanner 20 to change from 3D scanning mode to 2D scanning mode. In an embodiment, it does this by fixing the mirror 26 to direct the outgoing beam 30 on a horizontal plane. The mirror 26 receives reflected light 32 traveling in the reverse direction. In an embodiment, the scanner begins the 2D scan as soon as the 3D scanning stops. In another embodiment, the 2D scan starts when the processor receives a signal such as a signal from the position/orientation sensor 920, a signal from a brake release sensor, or a signal sent in response to a command from an operator. The 3D scanner 20 may start to collect 2D scan data when the mobile 3D measuring system 800 starts to move. In an embodiment, the 2D scan data is sent to the processor system 950 as it is collected.

[0087] In an embodiment, the 2D scan data is collected as the mobile 3D measuring system 800 is moved toward the second registration position 1114. In an embodiment, 2D scan data is collected and processed as the 3D scanner 20 passes through a plurality of 2D measuring positions 1120. At each measuring position 1120, the 3D scanner collects 2D coordinate data over an effective FOV 1140. Using methods described in more detail below, the processor system 950 uses 2D scan data from the plurality of 2D scans at positions 1120 to determine a position and orientation of the 3D scanner 20 at the second registration position 1114 relative to the first registration position 1112, where the first registration position and the second registration position are known in a 3D coordinate system common to both. In an embodiment, the common coordinate system is represented by 2D Cartesian coordinates x, y and by an angle of rotation .theta. relative to the x or y axis. In an embodiment, the x and y axes lie in the horizontal x-y plane of the 3D scanner 20 and may be further based on a direction of a "front" of the 3D scanner 20. An example of such an (x, y, .theta.) coordinate system is the coordinate system 1410 of FIG. 14A.
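
One way to picture how a succession of small movements yields the pose at the second registration position is to chain (x, y, .theta.) increments. The sketch below is an illustration under assumptions, not the method of the processor system 950: each increment is taken to be expressed in the scanner's frame at the start of that step, and the function names and increment values are hypothetical.

```python
import math


def compose(pose, step):
    """Apply a step (dx, dy, dtheta), given in the current scanner frame,
    to a pose (x, y, theta) given in the frame of the first position."""
    x, y, theta = pose
    dx, dy, dtheta = step
    new_x = x + math.cos(theta) * dx - math.sin(theta) * dy
    new_y = y + math.sin(theta) * dx + math.cos(theta) * dy
    new_theta = theta + dtheta
    # Wrap the angle into (-pi, pi]
    new_theta = math.atan2(math.sin(new_theta), math.cos(new_theta))
    return new_x, new_y, new_theta


def accumulate(steps):
    """Pose after all steps, relative to the starting pose (0, 0, 0)."""
    pose = (0.0, 0.0, 0.0)
    for step in steps:
        pose = compose(pose, step)
    return pose


# Hypothetical increments between successive 2D measuring positions
steps = [(0.5, 0.0, 0.0), (0.5, 0.02, math.radians(2.0)), (0.5, 0.0, math.radians(3.0))]
print(accumulate(steps))
```

Because each step is rotated by the heading accumulated so far, small rotation errors early in the path affect every later position, which is one reason a reliable per-step estimate matters.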

[0088] On the object 1102, there is a region of overlap 1150 between the first 3D scan (collected at the first registration position 1112) and the second 3D scan (collected at the second registration position 1114). In the overlap region 1150 there are registration targets (which may be natural features of the object 1102) that are seen in both the first 3D scan and the second 3D scan. A problem that often occurs in practice is that, in moving the 3D scanner 20 from the first registration position 1112 to the second registration position 1114, the processor system 950 loses track of the position and orientation of the 3D scanner 20 and hence is unable to correctly associate the registration targets in the overlap regions to enable the registration procedure to be performed reliably. By using the succession of 2D scans, the processor system 950 is able to determine the position and orientation of the 3D scanner 20 at the second registration position 1114 relative to the first registration position 1112. This information enables the processor system 950 to correctly match registration targets in the region of overlap 1150, thereby enabling the registration procedure to be properly completed.

[0089] FIG. 12 shows the 3D scanner 20 collecting 2D scan data at selected positions 1120 over an effective FOV 1140. At different positions 1120, the 3D scanner captures 2D scan data over a portion of the object 1102 marked A, B, C, D, and E. FIG. 12 shows the 3D scanner 20 moving in time relative to a fixed frame of reference of the object 1102.

[0090] FIG. 13 includes the same information as FIG. 12 but shows it from the frame of reference of the 3D scanner 20 while taking 2D scans rather than the frame of reference of the object 1102. This figure makes clear that in the scanner frame of reference, the positions of features on the object change over time. Hence the distance traveled by the 3D scanner 20 between the first registration position 1112 and the second registration position 1114 can be determined from the 2D scan data sent from the 3D scanner 20 to the processor system 950.

[0091] FIG. 14A shows a coordinate system that may be used in FIGS. 14B and 14C. In an embodiment, the 2D coordinates x and y are selected to lie on the plane in which the 2D scans are taken, ordinarily the horizontal plane. The angle θ is selected as a rotation angle in that plane, measured relative to an axis such as x or y. FIGS. 14B and 14C represent a realistic case in which the 3D scanner 20 is not moved exactly along a straight line (for example, nominally parallel to the object 1102) but is also displaced to the side. Furthermore, the 3D scanner 20 may be rotated as it is moved.

[0092] FIG. 14B shows the movement of the object 1102 as seen from the frame of reference of the 3D scanner 20 in traveling from the first registration position to the second registration position. In the scanner frame of reference (that is, as seen from the scanner's point of view), the object 1102 is moving while the 3D scanner 20 is fixed in place. In this frame of reference, the portions of the object 1102 seen by the 3D scanner 20 appear to translate and rotate in time. The 3D scanner 20 provides a succession of such translated and rotated 2D scans to the processor system 950. In the example shown in FIGS. 14A and 14B, the scanner translates in the +y direction by a distance 1420 shown in FIG. 14B and rotates by an angle 1430, which in this example is +5 degrees. Of course, the scanner could equally well have moved in the +x or -x direction. To determine the movement of the 3D scanner 20 in the x, y, θ directions, the processor system 950 uses the data recorded in successive horizontal scans as seen in the frame of reference of the scanner 20, as shown in FIG. 14B. In an embodiment, the processor system 950 performs a best-fit calculation using methods well known in the art to match the two scans or features in the two scans as closely as possible.
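
The best-fit calculation is not spelled out in the text. As a minimal sketch, assuming that features in the two 2D scans have already been paired one-to-one (the pairing step itself is not shown), the least-squares rotation and translation between two planar point sets has a closed form. The function name `fit_rigid_2d` and the sample points are illustrative assumptions and do not come from the disclosure.

```python
import math

def fit_rigid_2d(src, dst):
    """Return (dx, dy, dtheta) that best maps src onto dst in the
    least-squares sense. src and dst are equal-length lists of (x, y)
    pairs, assumed to be matched by index."""
    n = len(src)
    # Centroids of both point sets.
    cx_s = sum(p[0] for p in src) / n
    cy_s = sum(p[1] for p in src) / n
    cx_d = sum(p[0] for p in dst) / n
    cy_d = sum(p[1] for p in dst) / n
    # Accumulate dot and cross products of the centred points.
    s_dot = 0.0
    s_cross = 0.0
    for (xs, ys), (xd, yd) in zip(src, dst):
        ax, ay = xs - cx_s, ys - cy_s
        bx, by = xd - cx_d, yd - cy_d
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    # Rotation angle that minimizes the sum of squared residuals.
    dtheta = math.atan2(s_cross, s_dot)
    c, s = math.cos(dtheta), math.sin(dtheta)
    # Translation that carries the rotated source centroid onto the
    # destination centroid.
    dx = cx_d - (c * cx_s - s * cy_s)
    dy = cy_d - (s * cx_s + c * cy_s)
    return dx, dy, dtheta

# Hypothetical feature points seen in a first 2D scan.
first_scan = [(0.0, 2.0), (1.0, 2.0), (2.0, 2.0), (2.0, 3.0), (3.0, 3.5)]
# The same features after a +5 degree rotation and a shift of (0.1, 0.8).
angle = math.radians(5.0)
ca, sa = math.cos(angle), math.sin(angle)
second_scan = [(ca * x - sa * y + 0.1, sa * x + ca * y + 0.8)
               for x, y in first_scan]
dx, dy, dtheta = fit_rigid_2d(first_scan, second_scan)
```

With noise-free, correctly paired points the recovered values reproduce the applied motion. With real scan data the pairing is usually uncertain, which is why iterative methods are commonly used instead.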

[0093] As the 3D scanner 20 takes successive 2D readings and performs best-fit calculations, the processor system 950 keeps track of the translation and rotation of the 3D scanner 20. In this way, the processor system 950 is able to accurately determine the change in the values of x, y, θ as the mobile 3D measuring system 800 moves from the first registration position 1112 to the second registration position 1114.
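
One way to picture this bookkeeping is as a running composition of per-scan increments. The sketch below assumes that each best-fit result is an increment (dx, dy, dθ) expressed in the scanner's own frame at the earlier of the two scans, so each increment must be rotated into the reference frame before it is added. If the increments are already expressed in the reference coordinate system, plain addition suffices. The names `compose` and `accumulate` are hypothetical.

```python
import math

def compose(pose, delta):
    """Apply an increment given in the scanner frame to a pose
    (x, y, theta) given in the reference frame."""
    x, y, theta = pose
    dx, dy, dtheta = delta
    c, s = math.cos(theta), math.sin(theta)
    return (x + c * dx - s * dy,
            y + s * dx + c * dy,
            theta + dtheta)

def accumulate(deltas, start=(0.0, 0.0, 0.0)):
    """Chain a sequence of increments, starting from the first
    registration position, and return the final pose."""
    pose = start
    for delta in deltas:
        pose = compose(pose, delta)
    return pose

# Hypothetical path: four moves of one unit forward, each followed by
# a quarter turn, which traces a closed square.
square = [(1.0, 0.0, math.pi / 2)] * 4
final_pose = accumulate(square)
```

Because each increment carries a small fitting error, the accumulated pose drifts with the number of steps. This is consistent with the later use of the accumulated values only as a starting point for a fine registration.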

[0094] It is important to understand that the processor system 950 determines the position and orientation of the 3D measuring device 800 based on a comparison of the succession of 2D scans and not on fusion of the 2D scan data with 3D scan data provided by the 3D scanner 20 at the first registration position 1112 or the second registration position 1114.

[0095] Instead, the processor system 950 is configured to determine a first translation value, a second translation value, and a first rotation value that, when applied to a combination of the first 2D scan data and second 2D scan data, result in transformed first 2D data that matches transformed second 2D data as closely as possible according to an objective mathematical criterion. In general, the translation and rotation may be applied to the first scan data, the second scan data, or to a combination of the two. For example, a translation applied to the first data set is equivalent to a negative of the translation applied to the second data set in the sense that both actions produce the same match in the transformed data sets. An example of an "objective mathematical criterion" is that of minimizing the sum of squared residual errors for those portions of the scan data judged to overlap. Another type of objective mathematical criterion may involve a matching of multiple features identified on the object. For example, such features might be the edge transitions 1103, 1104, and 1105 shown in FIG. 11. The mathematical criterion may involve processing of the raw 2D scan data provided by the 3D scanner 20 to the processor system 950, or it may involve a first intermediate level of processing in which features are represented as a collection of line segments using methods that are known in the art, for example, methods based on the Iterative Closest Point (ICP) algorithm. Such a method based on ICP is described in Censi, A., "An ICP variant using a point-to-line metric," IEEE International Conference on Robotics and Automation (ICRA) 2008.
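
The sum-of-squared-residuals criterion can be illustrated with a short scoring function. This is a sketch under stated assumptions, not the method of the disclosure: residuals are taken point-to-point against the nearest neighbor (the Censi reference uses a point-to-line metric instead), and a point is "judged to overlap" when its nearest neighbor lies within a hypothetical threshold `max_dist`. All function and parameter names are illustrative.

```python
import math

def transform(points, dx, dy, dtheta):
    """Rotate 2D points by dtheta about the origin, then shift them
    by (dx, dy)."""
    c, s = math.cos(dtheta), math.sin(dtheta)
    return [(c * x - s * y + dx, s * x + c * y + dy) for x, y in points]

def sum_squared_residuals(first, second, dx, dy, dtheta, max_dist=0.5):
    """Score a candidate (dx, dy, dtheta) applied to `second`.
    Returns (total, used): the sum of squared nearest-neighbor
    distances to `first`, and the number of points that counted
    as overlapping."""
    moved = transform(second, dx, dy, dtheta)
    limit = max_dist ** 2
    total = 0.0
    used = 0
    for px, py in moved:
        # Brute-force nearest neighbor; adequate for a small sketch.
        d2 = min((px - qx) ** 2 + (py - qy) ** 2 for qx, qy in first)
        if d2 <= limit:
            total += d2
            used += 1
    return total, used

# Hypothetical first scan: a wall segment with a corner.
first = [(i * 0.1, 2.0) for i in range(20)]
first += [(1.9, 2.0 + j * 0.1) for j in range(1, 10)]
# Second scan: the same points as seen after the scanner has moved by
# (0.3, -0.1) and turned by 5 degrees.
th = math.radians(5.0)
c, s = math.cos(th), math.sin(th)
second = [(c * (x - 0.3) + s * (y + 0.1), -s * (x - 0.3) + c * (y + 0.1))
          for x, y in first]

score_true, used_true = sum_squared_residuals(first, second, 0.3, -0.1, th)
score_none, used_none = sum_squared_residuals(first, second, 0.0, 0.0, 0.0)
```

A search or iterative procedure would then look for the candidate with the lowest score. Here the candidate matching the simulated motion scores essentially zero, while the untransformed scan scores higher.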

[0096] In an embodiment, the first translation value is dx, the second translation value is dy, and the first rotation value is dθ. If the first 2D scan data has translational and rotational coordinates (in a reference coordinate system) of (x₁, y₁, θ₁), then the second 2D scan data collected at a second location has coordinates given by (x₂, y₂, θ₂) = (x₁ + dx, y₁ + dy, θ₁ + dθ). In an embodiment, the processor system 950 is further configured to determine a third translation value (for example, dz) and second and third rotation values (for example, pitch and roll). The third translation value, second rotation value, and third rotation value may be determined based at least in part on readings from the position/orientation sensor 920.

[0097] The 3D scanner 20 collects 2D scan data at the first registration position 1112 and more 2D scan data at the second registration position 1114. In some cases, these 2D scans may suffice to determine the position and orientation of the 3D measuring device at the second registration position 1114 relative to the first registration position 1112. In other cases, the two sets of 2D scan data are not sufficient to enable the processor system 950 to accurately determine the first translation value, the second translation value, and the first rotation value. This problem may be avoided by collecting 2D scan data at intermediate scan locations 1120. In an embodiment, the 2D scan data is collected and processed at regular intervals, for example, once per second. Because consecutive scans then differ only slightly, features are easily identified in successive 2D scans taken at the positions 1120. If more than two 2D scans are obtained, the processor system 950 may choose to use the information from all the successive 2D scans in determining the translation and rotation values in moving from the first registration position 1112 to the second registration position 1114. Alternatively, the processor may choose to use only the first and last scans in the final calculation, simply using the intermediate 2D scans to ensure proper correspondence of matching features. In most cases, accuracy of matching is improved by incorporating information from multiple successive 2D scans.

[0098] The mobile 3D measuring system 800 is moved to the second registration position 1114. In an embodiment, the mobile 3D measuring system 800 is brought to a stop and the brakes are set to hold the 3D scanner 20 stationary. In an alternative embodiment, the processor system 950 starts the 3D scan automatically when the moveable platform is brought to a stop, for example, by the position/orientation sensor 920 noting the lack of movement. The 3D scanner 20 in the mobile 3D measuring system 800 takes a 3D scan of the object 1102. This 3D scan is referred to as the second 3D scan to distinguish it from the first 3D scan taken at the first registration position.
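
The text does not say how the lack of movement is detected. One simple possibility, offered only as a sketch, is to require that a motion magnitude derived from the sensor stay below a tolerance for several consecutive readings. The function name, the window length, the tolerance, and the sample values below are all assumptions.

```python
def is_stationary(samples, window=5, tolerance=0.02):
    """Return True when the most recent `window` motion readings are
    all within `tolerance` of zero. `samples` is a list of motion
    magnitudes (for example, speed estimates), oldest first."""
    if len(samples) < window:
        return False  # not enough history to decide
    return all(abs(v) <= tolerance for v in samples[-window:])

# Hypothetical readings while the platform slows down and then rests.
readings = [0.40, 0.25, 0.10, 0.01, 0.00, 0.01, 0.00, 0.00]
start_scan = is_stationary(readings)
```

Requiring several quiet readings in a row avoids triggering the 3D scan on a single momentary pause.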

[0099] The processor system 950 applies the already calculated first translation value, the second translation value, and the first rotation value to adjust the position and orientation of the second 3D scan relative to the first 3D scan. This adjustment, which may be considered to provide a "first alignment," brings the registration targets (which may be natural features in the overlap region 1150) into close proximity. The processor system 950 performs a fine registration in which it makes fine adjustments to the six degrees of freedom of the second 3D scan relative to the first 3D scan. It makes the fine adjustment based on an objective mathematical criterion, which may be the same as or different than the mathematical criterion applied to the 2D scan data. For example, the objective mathematical criterion may be that of minimizing the sum of squared residual errors for those portions of the scan data judged to overlap. Alternatively, the objective mathematical criterion may be applied to a plurality of features in the overlap region. The mathematical calculations in the registration may be applied to raw 3D scan data or to geometrical representations of the 3D scan data, for example, by a collection of line segments.
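
The "first alignment" can be sketched as a planar rigid motion applied to every point of the second 3D scan. The sketch assumes that the scanner's vertical axis coincides with z in both scans, that the second scan's points are expressed in the scanner frame at the second registration position, and that (dx, dy, dθ) gives the pose of that frame relative to the first. The function name `coarse_align` is hypothetical, and the subsequent six-degree-of-freedom fine registration is not shown.

```python
import math

def coarse_align(points_3d, dx, dy, dtheta):
    """Rotate 3D points about the z axis by dtheta and shift them by
    (dx, dy) in the horizontal plane. Heights are left unchanged."""
    c, s = math.cos(dtheta), math.sin(dtheta)
    return [(c * x - s * y + dx, s * x + c * y + dy, z)
            for x, y, z in points_3d]

# Hypothetical points from the second 3D scan.
second_scan_3d = [(1.0, 0.0, 2.0), (0.0, 1.0, 0.5)]
aligned = coarse_align(second_scan_3d, dx=1.0, dy=0.0, dtheta=math.pi / 2)
```

Only three of the six degrees of freedom are set at this stage. The remaining height, pitch, and roll differences are left to the fine registration, optionally seeded by readings from the position/orientation sensor 920.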

[0100] Outside the overlap region 1150, the aligned values of the first 3D scan and the second 3D scan are combined in a registered 3D data set. Inside the overlap region, the 3D scan values included in the registered 3D data set are based on some combination of 3D scanner data from the aligned values of the first 3D scan and the second 3D scan.

[0101] A second example of using measuring and computing devices to help select optimal measurement locations and ensure good registrations is the same as the first example described hereinabove with reference to FIGS. 9-14 except that the measurements in a horizontal plane are made with a 2D laser scanner rather than by the laser scanner 20 according to the method described with reference to FIG. 9A. The 2D laser scanner in this second example provides the same information as the 3D laser scanner 20 measuring over a horizontal plane. In an embodiment, the 2D scanner is placed directly under the 3D scanner 20 and on top of the motorized tripod assembly 810.

[0102] Examples of 2D scanners that might be included in the 2D scanner accessory include 2D scanners from the SICK LMS100 product family and 2D scanners from Hokuyo such as the Hokuyo models URG-04LX-UG01 and UTM-30LX. The 2D scanners from SICK are manufactured by SICK AG, with headquarters in Waldkirch, Germany. The Hokuyo scanners are manufactured by Hokuyo Automatic Company, Ltd, with headquarters in Osaka, Japan. The scanners in the SICK LMS100 family measure angles over a 270 degree range and over distances up to 20 meters. The Hokuyo model URG-04LX-UG01 is a low-cost 2D scanner that measures angles over a 240 degree range and distances up to 4 meters. The Hokuyo model UTM-30LX is a 2D scanner that measures angles over a 270 degree range and to distances up to 30 meters. Many other types of 2D scanners are also available.

[0103] A third example of using measuring and computing devices to help select optimal measurement locations and ensure good registrations is now given with reference to FIGS. 3 and 8A. Mobile 3D measuring device 800 includes a 3D scanner 20, a processor system 950, a motorized dolly 820, and a 2D camera, which may be a camera 112 internal to the 3D scanner (as shown in FIG. 3), a camera 66 mounted on the 3D scanner (as shown in FIG. 1), or a camera mounted on the motorized dolly 820.

[0104] In one mode of operation of the 3D measuring device 800, the camera (112 or 66) captures overlapping 2D camera images as the 3D measuring device is moved between positions at which 3D scans are taken. For the case in which the camera is an internal camera (such as the central color camera 112) or a camera 66 mounted on the measuring head 22, the camera may be optionally steered about the vertical axis 23 to increase the effective FOV of the camera. In an embodiment, the laser power is turned off as the 2D camera images are collected. In an alternative embodiment, the laser power is left on so that the 3D scanner may make 2D scans in a horizontal plane while the 2D camera images are collected.

[0105] The procedure carried out according to the third example is similar to that of the first two except that the change in distance and orientation is based on the information from the plurality of 2D camera images rather than from the data obtained from the 2D scanner or 3D scanner in a horizontal plane. As in the first and second examples, the change in position and orientation is tracked by the 2D cameras to provide a good starting point for the second registration of the data collected by the 3D scanner in the first and second registration positions. As in the previous cases, 2D and 3D data are not fused to determine the starting position and orientation for the mathematical registration procedure. The distance and orientation change from the first position to the second position may be based on a mathematical method such as "optical flow" described in "Mathematical Models in Computer Vision: The Handbook" by N. Paragios, Y. Chen, and O. Faugeras (editors), Chapter 15, Springer 2005, pp. 239-258, the contents of which are incorporated by reference herein.