Systems And Methods Of Virtual Billboarding And Collaboration Facilitation In An Augmented Reality Environment

Spivack; Nova ; et al.

U.S. patent application number 16/130541 was filed with the patent office on 2018-09-13 and published on 2019-04-11 for systems and methods of virtual billboarding and collaboration facilitation in an augmented reality environment. The applicant listed for this patent application is Magical Technologies, LLC. Invention is credited to Matthew Hoerl, Nova Spivack.

| Application Number | 16/130541 (Publication No. 20190107991) |


| Family ID | 65723564 |

| Filed Date | 2018-09-13 |


| United States Patent Application | 20190107991 |

| Kind Code | A1 |

| Spivack; Nova ; et al. | April 11, 2019 |

SYSTEMS AND METHODS OF VIRTUAL BILLBOARDING AND COLLABORATION FACILITATION IN AN AUGMENTED REALITY ENVIRONMENT

Abstract

Systems and methods of virtual billboarding and collaboration facilitation in an augmented reality environment are disclosed. In one aspect, embodiments of the present disclosure include a method, which may be implemented on a system, to facilitate collaboration in an augmented reality environment through a virtual object that is shareable. The method can further include one or more of: identifying a first user and a second user of the augmented reality environment between whom to facilitate the collaboration on the virtual object; implementing a first edit on the virtual object in the augmented reality environment, made by the first user using the edit function, to generate a first edited version of the virtual object; and/or causing the first edited version of the virtual object to be perceptible to the second user, via a second user view of the augmented reality environment.

| Inventors: | Spivack; Nova (REDMOND, WA); Hoerl; Matthew (REDMOND, WA) |
|---|---|
| Applicant: | Magical Technologies, LLC |
| Family ID: | 65723564 |
| Appl. No.: | 16/130541 |
| Filed: | September 13, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62581989 | Nov 6, 2017 | |
| 62575458 | Oct 22, 2017 | |
| 62557775 | Sep 13, 2017 | |
| 62613595 | Jan 4, 2018 | |
| 62621470 | Jan 24, 2018 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06T 19/006 20130101; G09B 5/00 20130101; G09B 5/02 20130101; A63F 13/87 20140902; G06F 3/011 20130101; G06F 3/147 20130101; G06T 11/60 20130101; H04L 51/10 20130101; G06F 3/013 20130101; G06F 3/1454 20130101; G09G 2340/10 20130101; G06Q 30/0643 20130101; A63F 13/69 20140902; G09B 5/065 20130101; H04L 51/20 20130101; A63F 2300/537 20130101; G09B 5/04 20130101; H04L 51/32 20130101; G09G 5/14 20130101; G09G 2340/12 20130101; G06Q 30/0635 20130101; G06F 3/04815 20130101; A63F 2300/609 20130101; A63F 2300/80 20130101; H04L 51/046 20130101; G06T 19/003 20130101; A63F 13/80 20140902; G09G 2370/20 20130101 |

| International Class: | G06F 3/14 20060101 G06F003/14; G06T 11/60 20060101 G06T011/60; G06T 19/00 20060101 G06T019/00; G09B 5/00 20060101 G09B005/00 |

Claims

1. A method to facilitate collaboration in an augmented reality environment through a virtual object that is shareable, the method, comprising: identifying a first user and a second user of the augmented reality environment between whom to facilitate the collaboration on the virtual object; rendering a first user view of the augmented reality environment based on a first physical location associated with the first user in the real world environment; rendering a second user view of the augmented reality environment based on a second physical location associated with the second user in the real world environment; implementing a first edit on the virtual object in the augmented reality environment, made by the first user using the edit function, to generate a first edited version of the virtual object; wherein, the edit function of the virtual object is accessible by the first user via a first user view of augmented reality environment; causing to be perceptible, the first edited version of the virtual object, to the second user, via a second user view of the augmented reality environment; wherein, the augmented reality environment depicts the virtual object amongst elements physically present in the real world environment.

2. The method of claim 1, further comprising: implementing a second edit on the virtual object in the augmented reality environment, made by the second user using the edit function, to generate a second edited version of the virtual object; wherein, the edit function is accessible by the second user in the second user view of augmented reality environment; causing to be perceptible, the second edited version of the virtual object, to one or more of the first user, via the first user view of the augmented reality environment and a third user, via a third user view of the augmented reality environment.

3. (canceled)

4. (canceled)

5. The method of claim 1, further comprising: adjusting or updating the first user view based on changes to the first physical location, or changes in orientation of the first user in the real world environment; reorienting depiction of the virtual object in the first user view based on changes to the first physical location, or changes in orientation of the first user in the real world environment; adjusting or updating the second view based on changes to the second physical location or changes in orientation of the second user in the real world environment; reorienting depiction of the first edited version of the virtual object in the second user view based on changes to the second physical location, or changes in orientation of the second user in the real world environment.

6. (canceled)

7. The method of claim 1, further comprising: rendering the first user view and the second user view to include at least some shared visible elements of the real world environment; the first user view and the second user view are rendered to include at least some shared perceptible elements of the real world environment responsive to determining that the first user and second user are physically co-located in the real world environment; wherein, the first user and second user are physically co-located if and when at least part of a field of view of the first user and a field of view of the second user at least partially overlaps.

8. (canceled)

9. The method of claim 1, wherein: the virtual object and implementation of the first edit on the virtual object by the first user to generate the first edited version of the virtual object is accessible by the second user through the second user view of the augmented reality environment, if the first user and second user are physically co-located in the real world environment; further wherein, a position or orientation of the first edited version of the virtual object in the second user view is adjusted in response to: completion of the implementation of the first edit on the virtual object, or detection of a share request of the virtual object with the second user, initiated by the first user.

10. The method of claim 1, further comprising: responsive to determining that the first user and the second user are not physically co-located in the real world environment, rendering the first user view to include first real elements of the first physical location; rendering the second user view to include second real elements of the second physical location; wherein the first real elements are distinct from the second real elements; wherein, the first edited version of the virtual object is made perceptible in the second user view in response to: completion of the implementation of the first edit on the virtual object, or detection of a share request of the virtual object with the second user, initiated by the first user.

11. The method of claim 1, wherein: the virtual object includes a collaborative art project constructed in collaboration by the first user and the second user; the virtual object includes, one or more of, a virtual painting, a virtual sculpture, a virtual castle, a virtual snowman.

12. The method of claim 1, wherein: the augmented reality environment includes a collaborative learning environment; wherein, the virtual object facilitates learning by the first user and teaching by the second user or learning by the first user and learning by the second user.

13. (canceled)

14. (canceled)

15. A machine-readable storage medium, having stored thereon instructions, which when executed by a processor, cause the processor to provide an educational experience in a real world environment, via an augmented reality platform, the method, comprising: deploying a virtual object in the augmented reality environment, the virtual object to facilitate interaction between a first user and a second user of the augmented reality platform, to engage in the educational experience in the real world environment; wherein, the virtual object is enabled for interaction with or action on, simultaneously by the first user and the second user; implementing a first manipulation of the virtual object in the augmented reality environment, the first manipulation being made by the first user via a first user view of the augmented reality platform; causing to be perceptible, the virtual object and first changes to the virtual object in the implementing of the first manipulation on the virtual object, to the second user, from a second user view of the augmented reality environment.

16. The method of claim 15, further comprising: causing to be perceptible, the virtual object and the first changes to the virtual object in the implementing of the first manipulation on the virtual object, to a third user, from a third user view of the augmented reality environment; implementing a second manipulation of the virtual object in the augmented reality environment, the second manipulation being made by the second user via the second user view of the augmented reality platform; causing to be perceptible, the implementing of the second manipulation on the virtual object, by the first user, via the first user view of the augmented reality environment.

17. (canceled)

18. The method of claim 15, further comprising: implementing a second manipulation of the virtual object, the second manipulation being made by the second user via the second user view of the augmented reality environment; wherein, at least a part of the second manipulation made by the second user, is implemented on the virtual object simultaneously in time, with the implementing of the first manipulation of the virtual object, made by the first user; causing to be simultaneously perceptible, to the first user and the second user, second changes to the virtual object in the implementing the second manipulation and the first changes to the virtual object in the implementing of the first manipulation; further causing to be simultaneously perceptible, to the first user, second user and the third user, the second changes to the virtual object in the implementing the second manipulation and the first changes to the virtual object in the implementing of the first manipulation, via the third user view of the augmented reality environment.

19. (canceled)

20. The method of claim 15, further comprising: rendering the first user view and the second user view to include at least some shared visible elements of the real world environment; wherein, the first user view and the second user view are rendered to include at least some shared visible elements of the real world environment responsive to determining that the first user and second user are physically co-located in the real world environment.

21. (canceled)

22. The method of claim 15, further comprising: responsive to determining that the first user and the second user are not physically co-located in the real world environment, rendering the first user view of the augmented reality environment based on a first physical location associated with the first user in the real world environment; wherein, the first user view includes first real elements of the first physical location; rendering the virtual object in the first user view among the first real elements; adjusting a first perspective of the virtual object in the first user view based on changes in position or orientation of the first user in the first location.

23. The method of claim 22, further comprising: rendering the second user view of the augmented reality environment based on a second physical location associated with the second user in the real world environment; wherein, the second user view includes second real elements of the second physical location; rendering the virtual object in the second user view among the second real elements; adjusting a second perspective of the virtual object in the second user view based on changes in position or orientation of the second user in the second location; wherein the first real elements are distinct from the second real elements.

24. The method of claim 15, wherein: the virtual object represents, one or more of, a virtual text book, a virtual novel, a virtual pen, a virtual note pad, a virtual blackboard, a blueprint, a virtual painting, a virtual sculpture, a virtual puzzle, a virtual crossword puzzle, a virtual marker, a virtual exam, a virtual exam problem, a virtual home work, a virtual homework problem, a virtual circuit board, a virtual telescope, a virtual instrument, virtual lego, virtual building blocks.

25. (canceled)

26. A system to facilitate interaction with a virtual billboard associated with a physical location in the real world environment, via an augmented reality platform, the system, comprising: a processor; memory coupled to the processor, the memory having stored thereon instructions, which when executed by the processor, cause the processor to: associate the virtual billboard with the physical location in the real world environment, such that the virtual billboard is rendered in an augmented reality environment, at or in a vicinity of the physical location or is rendered in the augmented reality environment to appear to be located at or in the vicinity of the physical location; depict content associated with the virtual billboard, at or in the vicinity of the physical location; depict user replies to the content with the virtual billboard, at or in the vicinity of the physical location.

27. The system of claim 26, wherein the processor: creates the virtual billboard responsive to a request of a creator user; wherein, the physical location with which the virtual billboard is associated is specified in the request of the creator user; the virtual billboard includes one or more of a note, a review, an offer, an ad.

28. The system of claim 26, wherein: the virtual billboard is world-locked, wherein, in world locking the virtual billboard, the virtual billboard is associated with the physical location in the real world environment wherein, the virtual billboard is perceptible to a user, if and when the given user is physically at or in a vicinity of the physical location; the virtual billboard is enabled to be interacted with by the user if and when the user is at or in a vicinity of the physical location.

29. The system of claim 26, wherein: the virtual billboard is user-locked and the physical location with which the virtual billboard is associated, includes a physical space around a user, the physical space around the user being moveable with movement of the user in the real world environment; wherein, in user-locking the virtual billboard, the virtual billboard is rendered in the augmented reality environment to move with or appear to move with the user in the augmented reality environment.

30. The system of claim 29, wherein the processor: detects the movement of the user in the real world environment; identifies changes in location of the physical space around the user due to the movement of the user in the real world environment; renders the virtual billboard to move in the augmented reality environment in accordance with the changes in location of the physical space around the user such that the virtual billboard moves with or appears to move with the user in the augmented reality environment; detects interaction with the virtual billboard by a user; renders augmented reality features embodied in the virtual billboard in the augmented reality environment; wherein, the augmented reality features include the user replies depicted as a 3D thread associated with the virtual billboard; wherein the augmented reality features further include one or more of, animations, objects or scenes rendered in 360 degrees or 3D.

31. (canceled)

32. (canceled)

33. (canceled)

34. (canceled)
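
As an illustration only, the distinction that claims 28 to 30 draw between a world-locked and a user-locked virtual billboard can be sketched as follows. This is a minimal sketch under stated assumptions, not the applicant's implementation: the `Billboard` record, the planar (x, y) coordinates in metres, and the 50 m `vicinity_m` radius are all hypothetical and do not appear in the application.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float]  # assumed planar (x, y) position in metres


@dataclass
class Billboard:
    """Hypothetical record for a virtual billboard; field names are illustrative."""
    content: str
    lock: str                    # "world" or "user"
    anchor: Point                # used when world-locked: fixed physical location
    offset: Point = (0.0, 0.0)   # used when user-locked: offset from the owner


def billboard_position(board: Billboard, owner_pos: Point) -> Point:
    """Return where the billboard should be rendered.

    A world-locked billboard stays at its anchor. A user-locked billboard is
    placed relative to the owner's current position, so it moves with the owner.
    """
    if board.lock == "world":
        return board.anchor
    return (owner_pos[0] + board.offset[0], owner_pos[1] + board.offset[1])


def is_perceptible(board: Billboard, owner_pos: Point, viewer_pos: Point,
                   vicinity_m: float = 50.0) -> bool:
    """True if the viewer is within the assumed vicinity radius of the billboard."""
    bx, by = billboard_position(board, owner_pos)
    return math.hypot(viewer_pos[0] - bx, viewer_pos[1] - by) <= vicinity_m
```

Under these assumptions, a world-locked billboard anchored at (0, 0) is perceptible to a viewer standing at (30, 40), exactly 50 m away, wherever its creator happens to be, while a user-locked billboard is re-positioned every time `owner_pos` changes.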

Description

CLAIM OF PRIORITY

[0001] This application claims the benefit of:

[0002] * U.S. Provisional Application No. 62/557,775, filed Sep. 13, 2017 and entitled "Systems and Methods of Augmented Reality Enabled Applications Including Social Activities or Web Activities and Apparatuses of Tools Therefor," (8004.US00), the contents of which are incorporated by reference in their entirety;

[0003] * U.S. Provisional Application No. 62/575,458, filed Oct. 22, 2017 and entitled "Systems, Methods and Apparatuses of Single directional or Multi-directional Lens/Mirrors or Portals between the Physical World and a Digital World of Augmented Reality (AR) or Virtual Reality (VR) Environment/Objects; Systems and Methods of On-demand Curation of Crowdsourced (near) Real time Imaging/Video Feeds with Associated VR/AR Objects; Systems and Methods of Registry, Directory and/or Index for Augmented Reality and/or Virtual Reality Objects," (8005.US00), the contents of which are incorporated by reference in their entirety; and

[0004] * U.S. Provisional Application No. 62/581,989, filed Nov. 6, 2017 and entitled "Systems, Methods and Apparatuses of: Determining or Inferring Device Location using Digital Markers; Virtual Object Behavior Implementation and Simulation Based on Physical Laws or Physical/Electrical/Material/Mechanical/Optical/Chemical Properties; User or User Customizable 2D or 3D Virtual Objects; Analytics of Virtual Object Impressions in Augmented Reality and Applications; Video objects in VR and/or AR and Interactive Multidimensional Virtual Objects with Media or Other Interactive Content," (8006.US00), the contents of which are incorporated by reference in their entirety.

[0005] * U.S. Provisional Application No. 62/613,595, filed Jan. 4, 2018 and entitled "Systems, methods and apparatuses of: Creating or Provisioning Message Objects Having Digital Enhancements Including Virtual Reality or Augmented Reality Features and Facilitating Action, Manipulation, Access and/or Interaction Thereof," (8008.US00), the contents of which are incorporated by reference in their entirety.

[0006] * U.S. Provisional Application No. 62/621,470, filed Jan. 24, 2018 and entitled "Systems, Methods and Apparatuses to Facilitate Gradual and Instantaneous Change or Adjustment in Levels of Perceptibility of Virtual Objects and Reality Object in a Digital Environment," (8009.US00), the contents of which are incorporated by reference in their entirety.

CROSS-REFERENCE TO RELATED APPLICATIONS

[0007] This application is related to U.S. application Ser. No. ______, also filed on Sep. 13, 2018 and entitled "Systems And Methods Of Shareable Virtual Objects and Virtual Objects As Message Objects To Facilitate Communications Sessions In An Augmented Reality Environment," (8004.US01), the contents of which are incorporated by reference in their entirety.

[0008] This application is related to U.S. application Ser. No. ______, also filed on Sep. 13, 2018 and entitled "Systems And Methods Of Rewards Object Spawning And Augmented Reality Commerce Platform Supporting Multiple Seller Entities" (Attorney Docket No. 99005-8004.US03), the contents of which are incorporated by reference in their entirety.

[0009] This application is related to PCT Application no. PCT/______, also filed on Sep. 13, 2018 and entitled "Systems And Methods Of Shareable Virtual Objects and Virtual Objects As Message Objects To Facilitate Communications Sessions In An Augmented Reality Environment" (Attorney Docket No. 99005-8004.WO01), the contents of which are incorporated by reference in their entirety.

[0010] This application is related to PCT Application no. PCT/US2018/44844, filed Aug. 1, 2018 and entitled "Systems, Methods and Apparatuses to Facilitate Trade or Exchange of Virtual Real-Estate Associated with a Physical Space" (Attorney Docket No. 99005-8002.WO01), the contents of which are incorporated by reference in their entirety.

[0011] This application is related to PCT Application no. PCT/US2018/45450, filed Aug. 6, 2018 and entitled "Systems, Methods and Apparatuses for Deployment and Targeting of Context-Aware Virtual Objects and/or Objects and/or Behavior Modeling of Virtual Objects Based on Physical Principles" (Attorney Docket No. 99005-8003.WO01), the contents of which are incorporated by reference in their entirety.

TECHNICAL FIELD

[0012] The disclosed technology relates generally to augmented reality environments and virtual objects that are shareable amongst users.

BACKGROUND

[0013] The advent of the World Wide Web and its proliferation in the 1990s transformed the way humans conduct business, live lives, consume/communicate information and interact with or relate to others. A new wave of technology is on the horizon to revolutionize our already digitally immersed lives.

BRIEF DESCRIPTION OF THE DRAWINGS

[0014] FIG. 1 illustrates an example block diagram of a host server able to deploy virtual objects for various applications, in accordance with embodiments of the present disclosure.

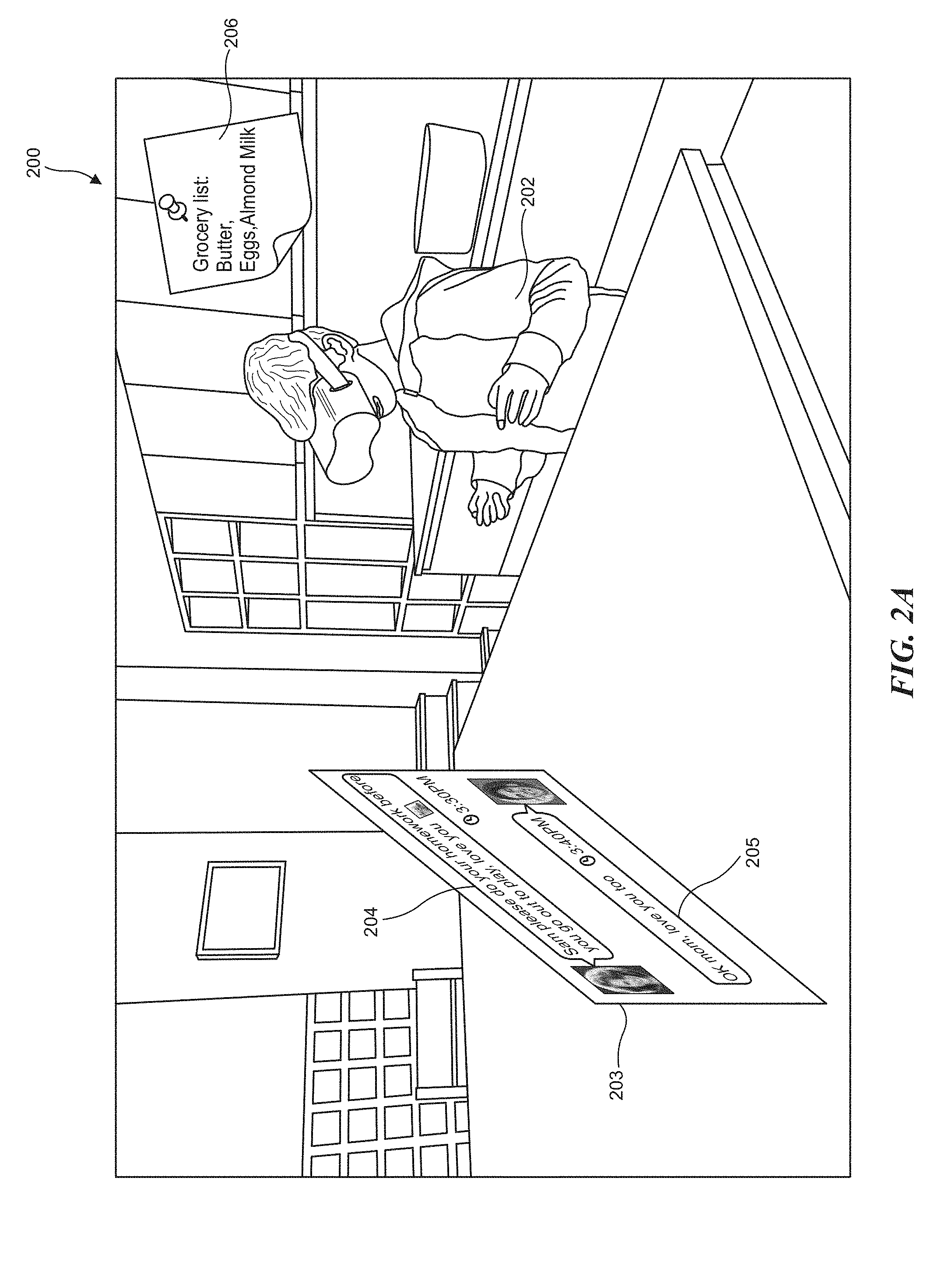

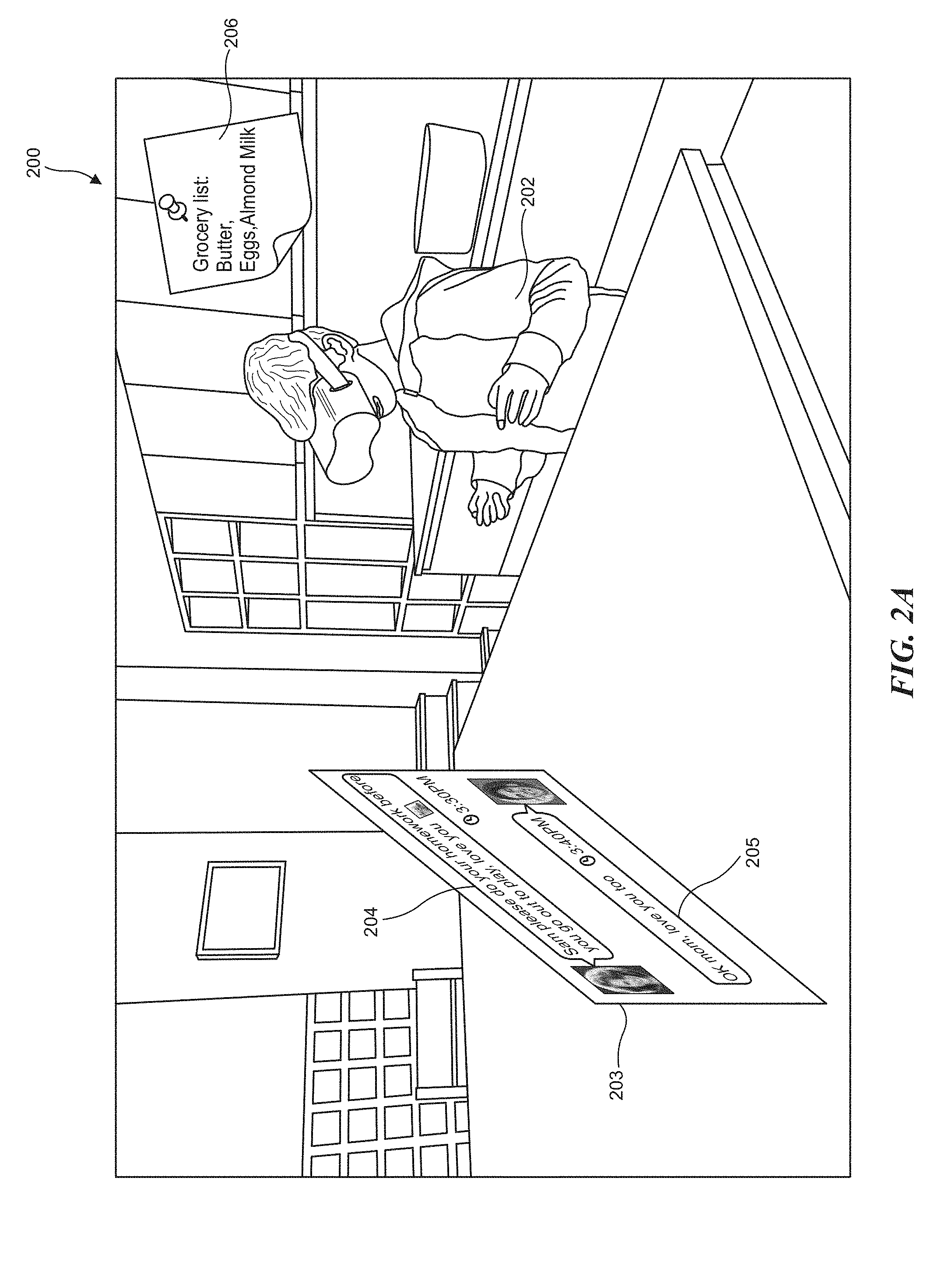

[0015] FIG. 2A depicts an example diagram showing an example of a virtual object to facilitate an augmented reality experience including a communications session and an example of a virtual object which includes a shareable note in an augmented reality environment, in accordance with embodiments of the present disclosure.

[0016] FIG. 2B depicts an example diagram illustrating an example of virtual object posted in an augmented reality environment for a user by another entity, in accordance with embodiments of the present disclosure.

[0017] FIG. 2C depicts an example diagram depicting collaboration facilitated through a virtual object in an augmented reality environment, in accordance with embodiments of the present disclosure.

[0018] FIG. 2D depicts an example diagram of a marketplace administered in an augmented reality environment, in accordance with embodiments of the present disclosure.

[0019] FIG. 2E depicts an example diagram showing an example user experience flow for creating virtual objects, managing a collection of virtual objects, sharing and posting virtual objects or responding to virtual objects, in accordance with embodiments of the present disclosure.

[0020] FIG. 3A depicts an example functional block diagram of a host server that deploys and administers virtual objects for various disclosed applications, in accordance with embodiments of the present disclosure.

[0021] FIG. 3B depicts an example block diagram illustrating the components of the host server that deploys and administers virtual objects for various disclosed applications, in accordance with embodiments of the present disclosure.

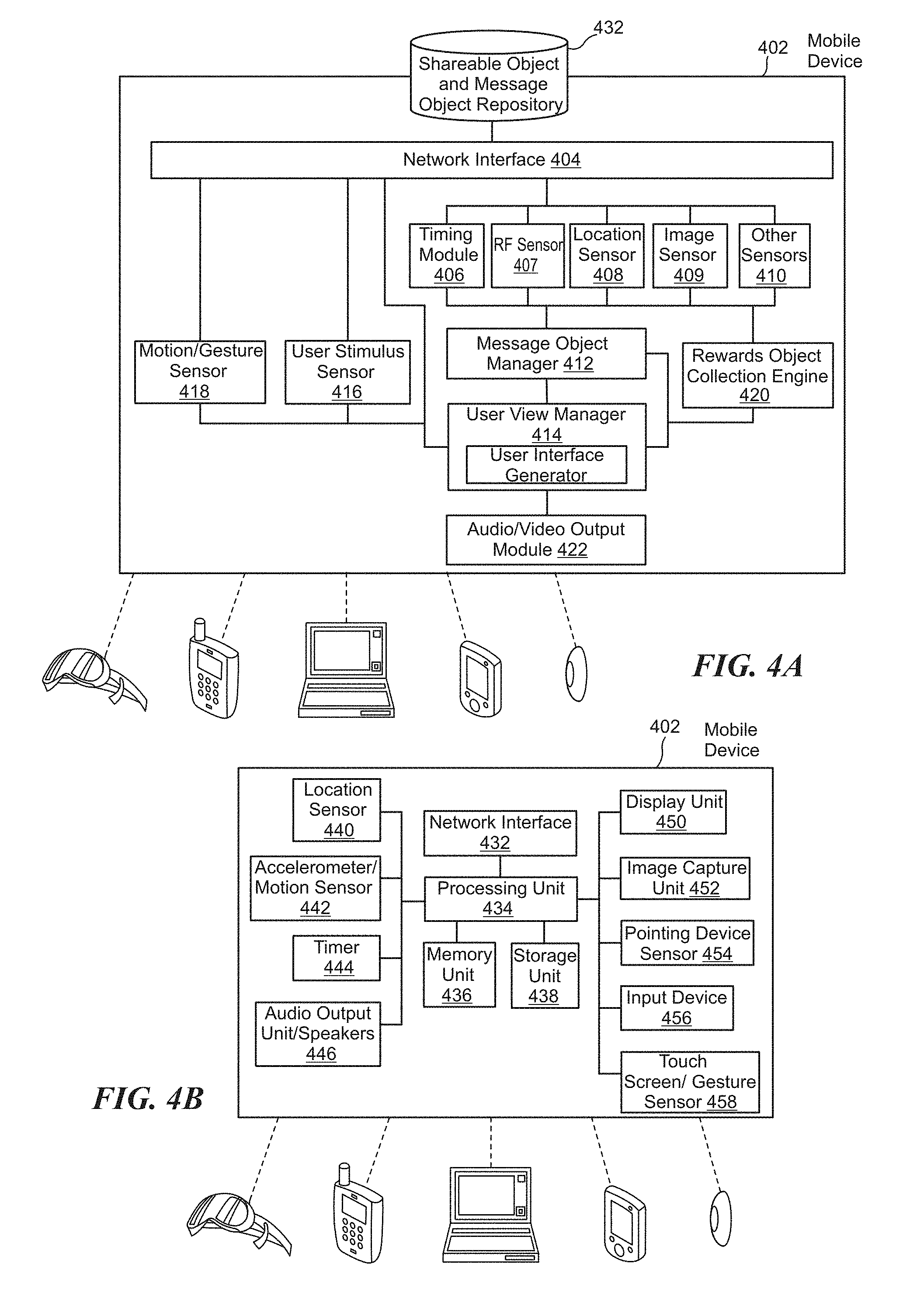

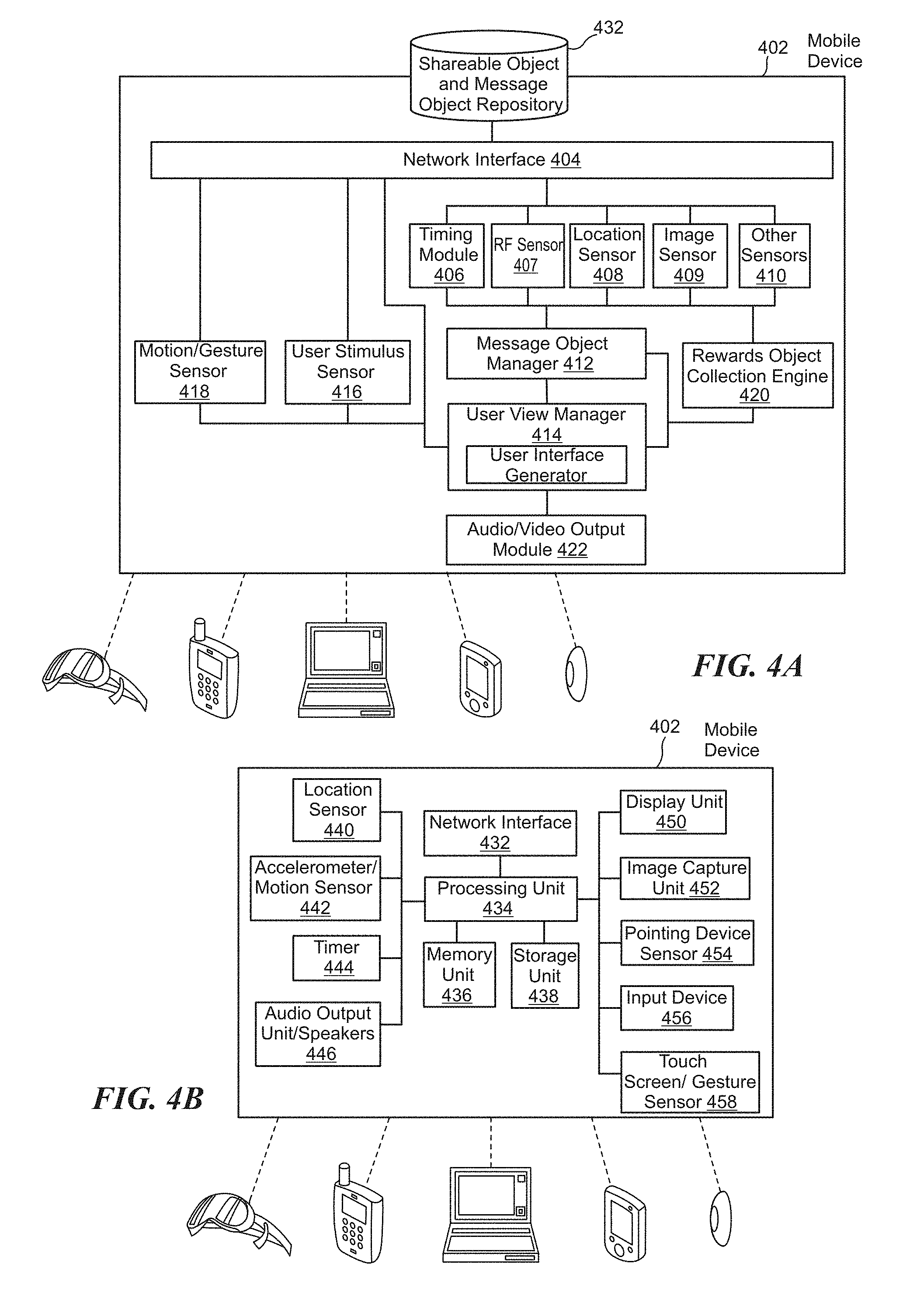

[0022] FIG. 4A depicts an example functional block diagram of a client device such as a mobile device that enables virtual object manipulation and/or virtual object collection for various disclosed applications, in accordance with embodiments of the present disclosure.

[0023] FIG. 4B depicts an example block diagram of the client device, which can be a mobile device that enables virtual object manipulation and/or virtual object collection for various disclosed applications, in accordance with embodiments of the present disclosure.

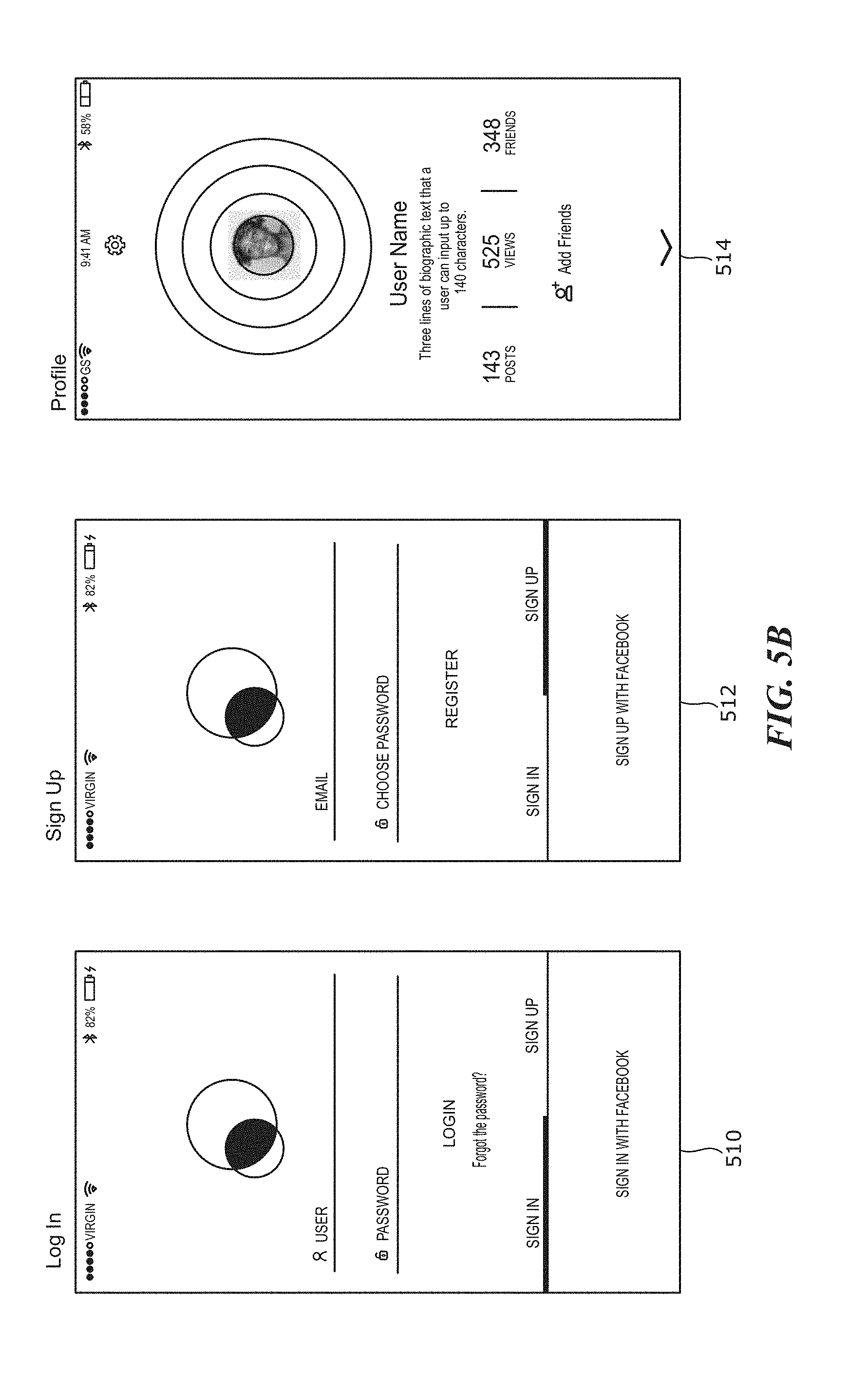

[0024] FIG. 5A graphically depicts diagrammatic examples showing user experience flows in navigating an example user interface for accessing, viewing or interacting with an augmented reality environment, in accordance with embodiments of the present disclosure.

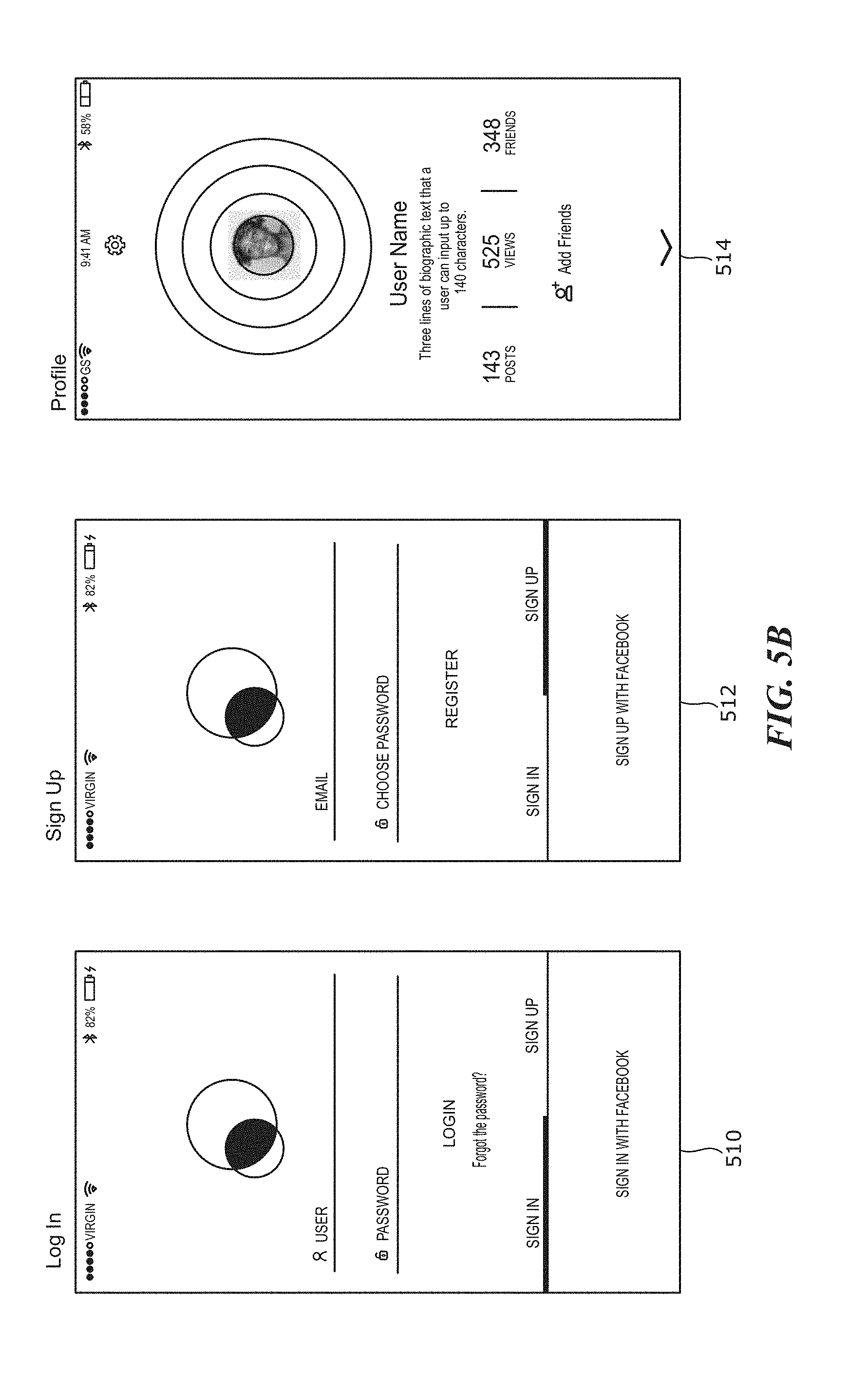

[0025] FIG. 5B graphically depicts example user interfaces for logging in to, signing up for and viewing a user profile in an augmented reality environment, in accordance with embodiments of the present disclosure.

[0026] FIG. 5C graphically depicts example user interfaces for managing friends in an augmented reality environment and an example user interface to manage application settings, in accordance with embodiments of the present disclosure.

[0027] FIG. 5D graphically depicts example user interfaces of an augmented reality environment showing a camera view and a map view, in accordance with embodiments of the present disclosure.

[0028] FIG. 5E graphically depicts example user interfaces for viewing notifications in an augmented reality environment, in accordance with embodiments of the present disclosure.

[0029] FIG. 5F graphically depicts example user interfaces for placing a virtual object at a physical location and example user interfaces for sharing a virtual object with another user via an augmented reality environment, in accordance with embodiments of the present disclosure.

[0030] FIG. 5G graphically depicts example user interfaces for responding to a message or a virtual object with another virtual object via an augmented reality environment, in accordance with embodiments of the present disclosure.

[0031] FIG. 6A graphically depicts example user interfaces for creating, posting and/or sharing a virtual billboard object having text content, in accordance with embodiments of the present disclosure.

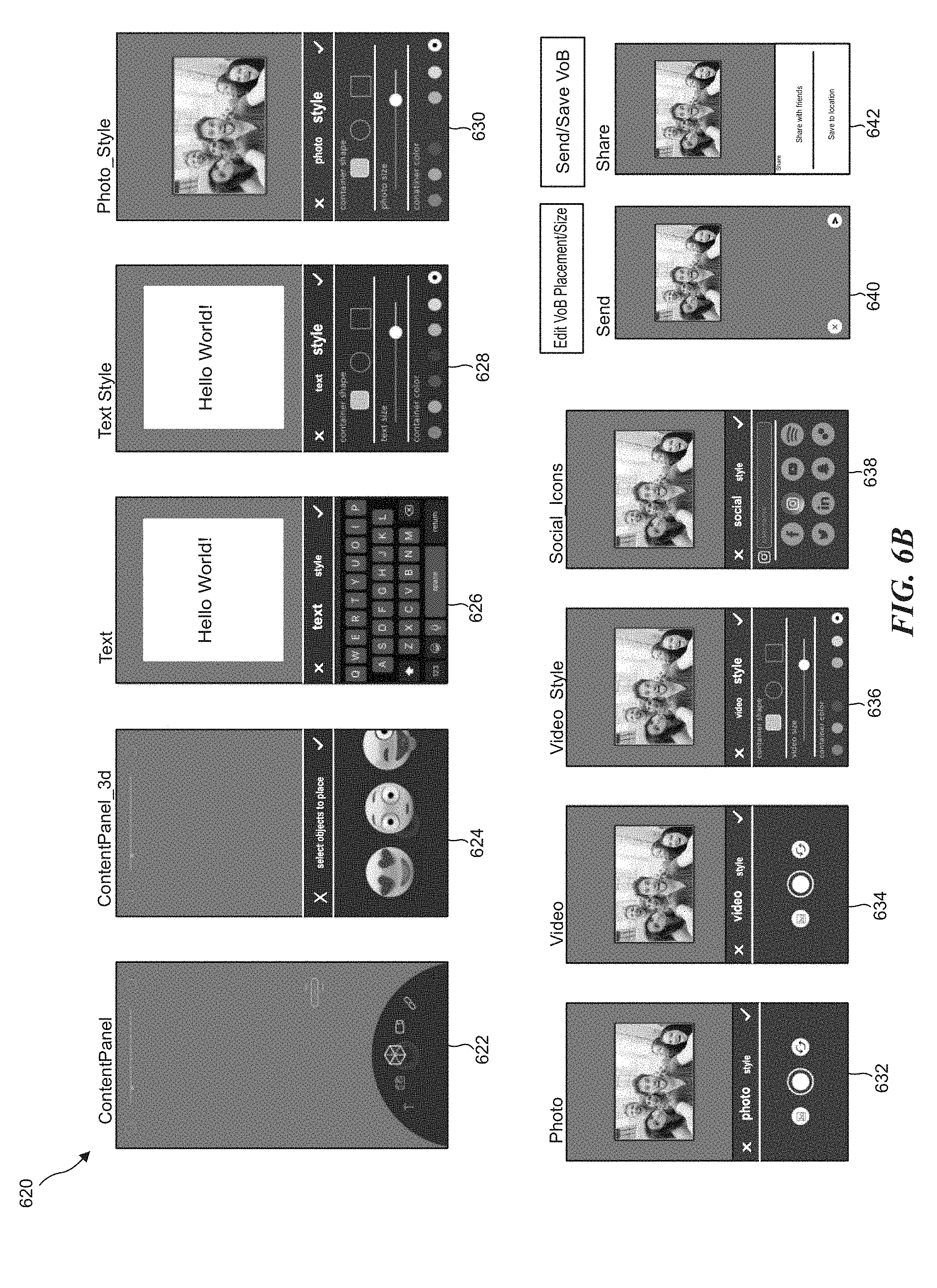

[0032] FIG. 6B graphically depicts additional example user interfaces for creating, posting and/or sharing virtual objects having multimedia content, in accordance with embodiments of the present disclosure.

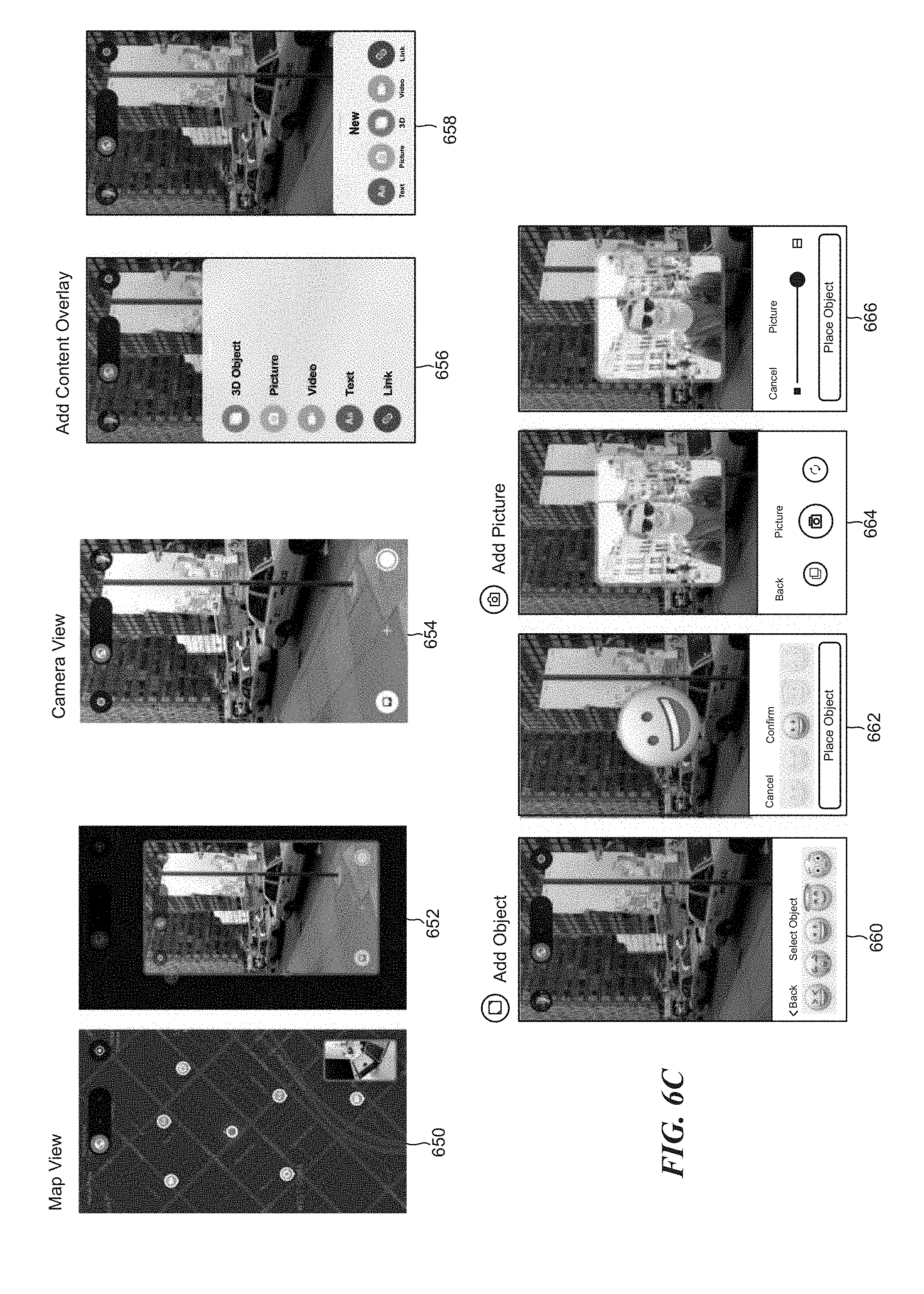

[0033] FIG. 6C graphically depicts example user interfaces for creating a virtual object, posting a virtual object and placing a virtual object at a physical location, in accordance with embodiments of the present disclosure.

[0034] FIG. 7 graphically depicts example user interfaces for creating a virtual billboard, posting a virtual billboard at a physical location, sharing the virtual billboard and views of examples of virtual billboard objects placed at physical locations, in accordance with embodiments of the present disclosure.

[0035] FIG. 8 graphically depicts views of examples of virtual objects associated with a physical location, in accordance with embodiments of the present disclosure.

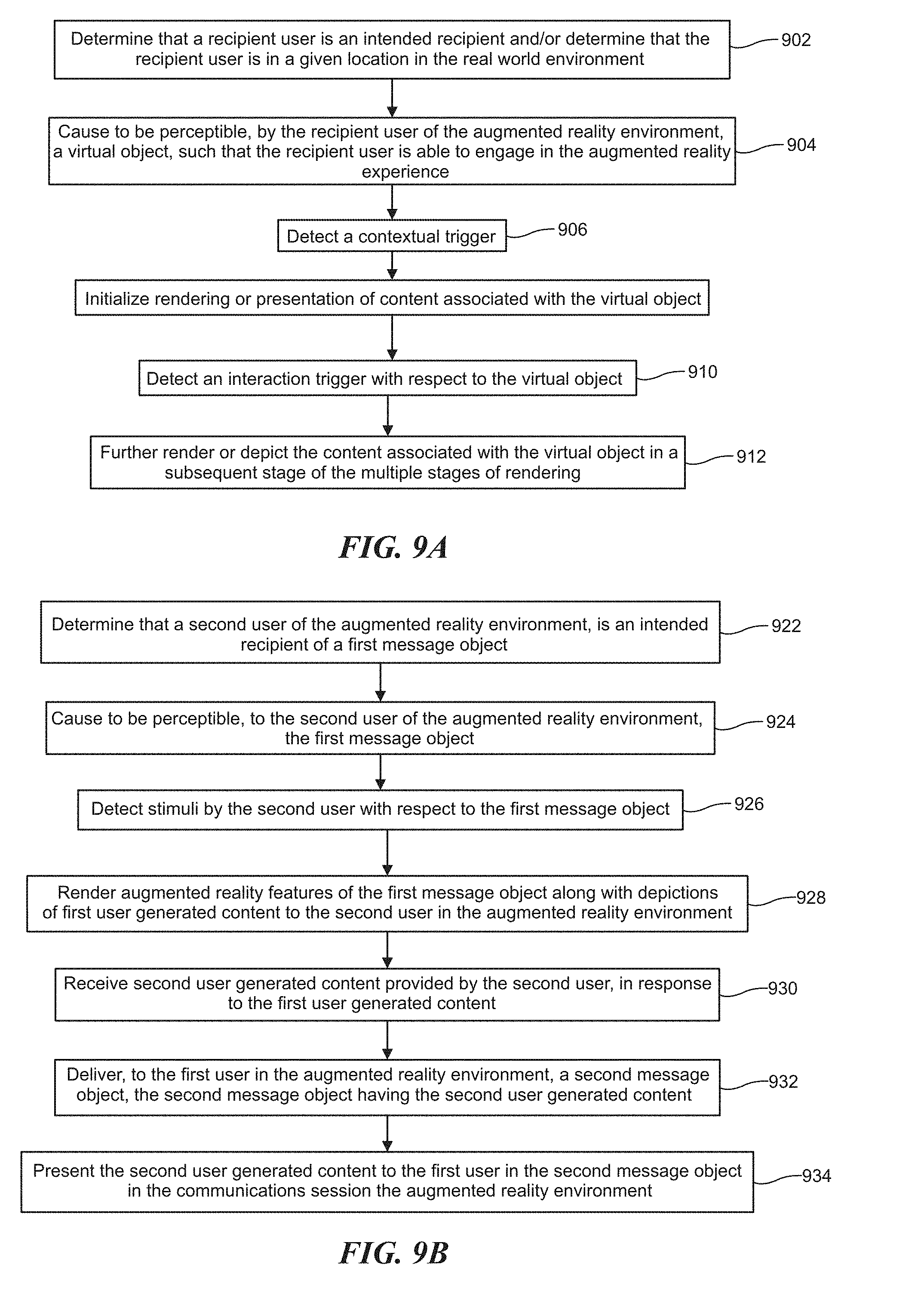

[0036] FIG. 9A depicts a flow chart illustrating an example process to share a virtual object with a recipient user, in accordance with embodiments of the present disclosure.

[0037] FIG. 9B depicts a flow chart illustrating an example process to facilitate a communications session in a real world environment via an augmented reality environment, in accordance with embodiments of the present disclosure.

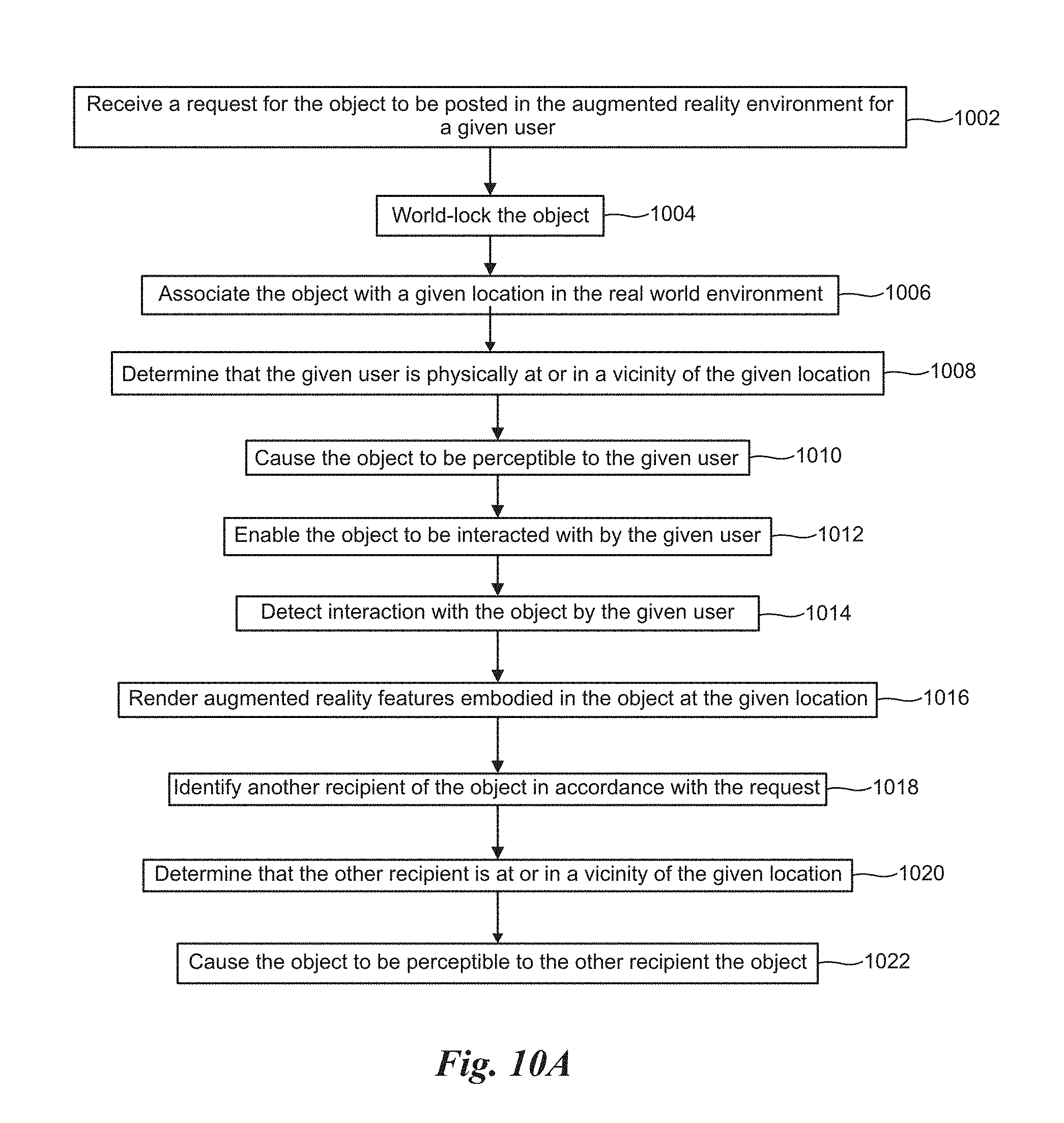

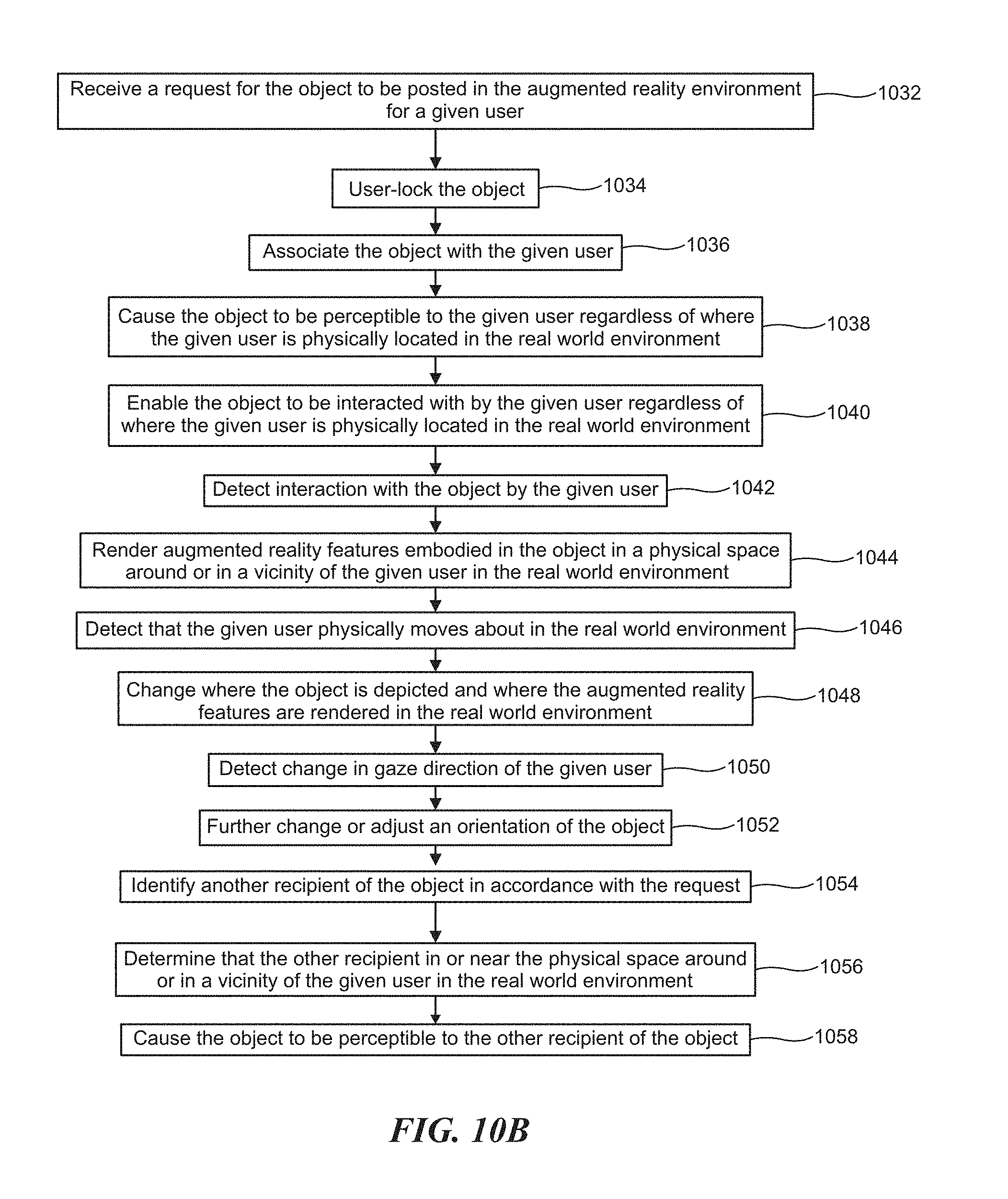

[0038] FIGS. 10A-10B depict flow charts illustrating example processes of posting virtual objects that are world locked and user locked, in accordance with embodiments of the present disclosure.

[0039] FIG. 11 depicts a flow chart illustrating an example process to facilitate collaboration in an augmented reality environment through a virtual object, in accordance with embodiments of the present disclosure.

[0040] FIG. 12A depicts a flow chart illustrating an example process to provide an educational experience via an augmented reality environment, in accordance with embodiments of the present disclosure.

[0041] FIG. 12B depicts a flow chart illustrating an example process to facilitate interaction with a virtual billboard associated with a physical location, in accordance with embodiments of the present disclosure.

[0042] FIG. 13A depicts a flow chart illustrating an example process to administer a marketplace having multiple seller entities via an augmented reality environment, in accordance with embodiments of the present disclosure.

[0043] FIG. 13B depicts a flow chart illustrating an example process to spawn a rewards object in an augmented reality environment, in accordance with embodiments of the present disclosure.

[0044] FIG. 14 is a block diagram illustrating an example of a software architecture that may be installed on a machine, in accordance with embodiments of the present disclosure.

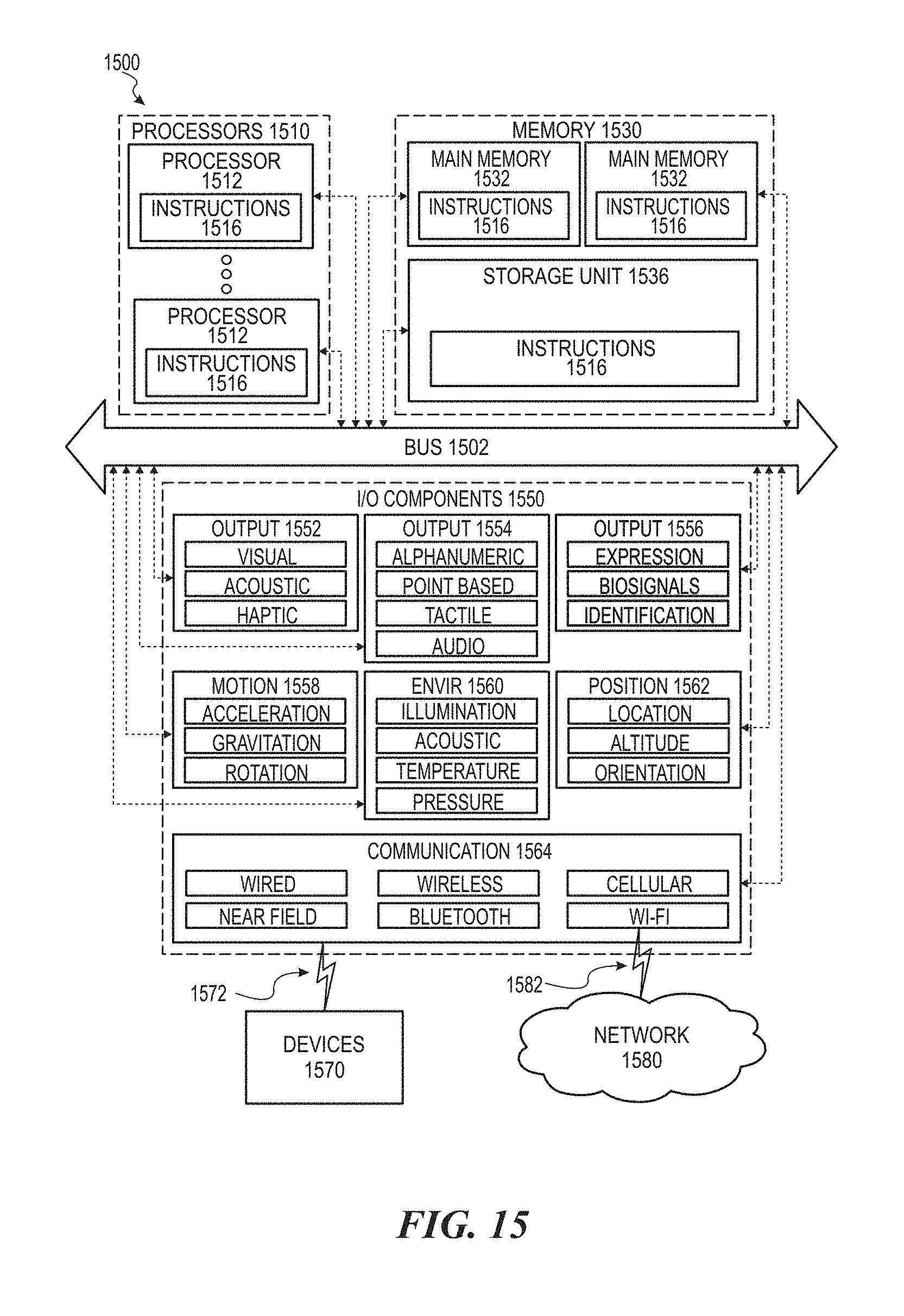

[0045] FIG. 15 is a block diagram illustrating components of a machine, according to some example embodiments, able to read a set of instructions from a machine-readable medium (e.g., a machine-readable storage medium) and perform any one or more of the methodologies discussed herein.

DETAILED DESCRIPTION

[0046] The following description and drawings are illustrative and are not to be construed as limiting. Numerous specific details are described to provide a thorough understanding of the disclosure. However, in certain instances, well-known or conventional details are not described in order to avoid obscuring the description. References to one or an embodiment in the present disclosure can be, but not necessarily are, references to the same embodiment; and, such references mean at least one of the embodiments.

[0047] Reference in this specification to "one embodiment" or "an embodiment" means that a particular feature, structure, or characteristic described in connection with the embodiment is included in at least one embodiment of the disclosure. The appearances of the phrase "in one embodiment" in various places in the specification are not necessarily all referring to the same embodiment, nor are separate or alternative embodiments mutually exclusive of other embodiments. Moreover, various features are described which may be exhibited by some embodiments and not by others. Similarly, various requirements are described which may be requirements for some embodiments but not other embodiments.

[0048] The terms used in this specification generally have their ordinary meanings in the art, within the context of the disclosure, and in the specific context where each term is used. Certain terms that are used to describe the disclosure are discussed below, or elsewhere in the specification, to provide additional guidance to the practitioner regarding the description of the disclosure. For convenience, certain terms may be highlighted, for example using italics and/or quotation marks. The use of highlighting has no influence on the scope and meaning of a term; the scope and meaning of a term is the same, in the same context, whether or not it is highlighted. It will be appreciated that the same thing can be said in more than one way.

[0049] Consequently, alternative language and synonyms may be used for any one or more of the terms discussed herein, nor is any special significance to be placed upon whether or not a term is elaborated or discussed herein. Synonyms for certain terms are provided. A recital of one or more synonyms does not exclude the use of other synonyms. The use of examples anywhere in this specification including examples of any terms discussed herein is illustrative only, and is not intended to further limit the scope and meaning of the disclosure or of any exemplified term. Likewise, the disclosure is not limited to various embodiments given in this specification.

[0050] Without intent to further limit the scope of the disclosure, examples of instruments, apparatus, methods and their related results according to the embodiments of the present disclosure are given below. Note that titles or subtitles may be used in the examples for convenience of a reader, which in no way should limit the scope of the disclosure. Unless otherwise defined, all technical and scientific terms used herein have the same meaning as commonly understood by one of ordinary skill in the art to which this disclosure pertains. In the case of conflict, the present document, including definitions will control.

[0051] Embodiments of the present disclosure include systems, methods and apparatuses of platforms (e.g., as hosted by the host server 100 as depicted in the example of FIG. 1) of shareable virtual objects and virtual objects as message objects to facilitate communications sessions in an augmented reality environment. In general, the object or virtual object is digitally rendered or synthesized by a machine (e.g., a machine can be one or more of, client device 102 of FIG. 1, client device 402 of FIG. 4A or server 100 of FIG. 1, server 300 of FIG. 3A) to be presented in the AR environment and has human perceptible properties to be human discernible or detectable.

[0052] Further embodiments include, systems and methods of collaboration facilitation in an augmented reality environment. Embodiments of the present disclosure further include providing an educational experience in a real world environment, via an augmented reality platform. Embodiments of the present disclosure further include systems, methods and apparatuses to facilitate interaction with a virtual billboard associated with a physical location in the real world environment.

[0053] Further embodiments of the present disclosure include systems, methods and apparatuses of platforms (e.g., as hosted by the host server 100 as depicted in the example of FIG. 1) to spawn a rewards object in an augmented reality platform having value to a user in the real world environment. Yet further embodiments of the present disclosure include an augmented reality commerce platform to administer a marketplace which supports multiple seller entities via an augmented reality environment.

[0054] One embodiment includes, sending a virtual object (VOB) as a message.

[0055] For example, the VOB appears in the recipient's inbox, message stream, or device as a 3D object that can be interacted with (open it, talk to it, touch it or play with it, read it, share it, reply to it, file it, publish it, edit it, customize it, tag it or annotate it, etc.). The recipient's inbox can include a 2D or 3D interface (list, plane, 3D space). The VOB can be set to appear at a fixed distance relative to or near the user, and/or at specific times, and/or within or near specified geolocations or named places, and/or in specified contexts (shopping, working, at home).

[0056] In general, VOBs can function like messages that have one or more recipients like email (to, CC, Bcc) and can also be shared with groups or made public, tagged or pinned to the top or a certain location in an interface. VOBs can also carry envelope metadata (e.g. created date, sent date, received date, etc). A thread of related VOB messages is a conversation that uses VOBs as the medium.
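
By way of a non-limiting illustration, a VOB message with email-like addressing and envelope metadata could be represented as in the following Python sketch. The class name `VobMessage`, its field names, and the helper `thread_of` are hypothetical and are introduced here only for illustration; the disclosure does not prescribe a particular data layout.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class VobMessage:
    """Hypothetical record for a VOB sent as a message (names are illustrative)."""
    vob_id: str
    sender: str
    to: List[str] = field(default_factory=list)
    cc: List[str] = field(default_factory=list)
    bcc: List[str] = field(default_factory=list)
    tags: List[str] = field(default_factory=list)
    is_public: bool = False
    pinned: bool = False
    # Envelope metadata: created, sent and received dates.
    created: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    sent: Optional[datetime] = None
    received: Optional[datetime] = None
    # Assumed link used to build a thread (conversation) of related VOBs.
    in_reply_to: Optional[str] = None

    def visible_recipients(self) -> List[str]:
        """Recipients shown to everyone; Bcc recipients are left out."""
        return self.to + self.cc


def thread_of(messages: List[VobMessage], root_id: str) -> List[VobMessage]:
    """Return the root VOB and every VOB replying to it, directly or not.

    Assumes `messages` is already in chronological order.
    """
    ids = {root_id}
    thread = []
    for m in messages:
        if m.vob_id == root_id or m.in_reply_to in ids:
            ids.add(m.vob_id)
            thread.append(m)
    return thread
```

In this sketch a conversation is simply the chain of VOBs linked by `in_reply_to`, which mirrors the description of a thread of related VOB messages.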

[0057] Embodiments of the present disclosure further include systems, methods and apparatuses of: creating, rendering, depicting, provisioning, and/or generating message objects with digital enhancements. The enhanced messages can include virtual and/or augmented reality features. The enhanced messages can further be rendered, accessed, transmitted, manipulated, acted on and/or otherwise interacted with via various networks in digital environments by or amongst users, real or digital entities, other simulated/virtual objects or computing systems including any virtual reality (VR), non-virtual reality, augmented reality (AR) and/or mixed reality (mixed AR, VR and/or reality) environments or platforms. For instance, enhanced messages can be shared, transmitted or sent/received via communication channels including legacy SMS, Internet, mobile network via web services, applications (e.g., mobile apps) or dedicated platforms such as VR/AR or mixed VR/AR platforms or environments.

[0058] Example embodiments of the present disclosure further include, by way of example:

[0059] In one embodiment, a user drafts, writes or composes a message having augmented reality ("AR") content. The AR content can include one or more virtual objects. A virtual object can include a 2D or 3D graphical rendering, which can include one or more of: text, images, audio, video, or computer graphics animation. The virtual object can appear and be accessed (previewed, viewed, shared, edited, modified), acted on and/or interacted with via an imaging device such as a smartphone camera, wearable device such as an augmented reality (AR) or virtual reality (VR) headset, gaming consoles, any wearable technology, AR glasses, wearable smart watch, wearable computer, heads up display, advanced textiles, smart garments, smart shoes, smart helmets, activity trackers, in car display or in car navigation panel or unit, etc.

[0060] The message can be for instance, supplemented, formatted, optimized or designed with additional text, graphical content, multimedia content, and/or simulated objects or virtual objects. The user can place the enhanced messages (or any of the simulated or virtual objects) in physical locations relative to their device, and/or also relative to other virtual objects or simulated objects in the scene to construct a scene relative to a user view or the user's camera perspective.

[0061] In one example, if a user places a virtual object visually in front of their physical position, the virtual or simulated object can be saved to that physical position or near that physical position or within a range of the physical location. The user can also place and save the object for example, at any angle e.g., 10 degrees to the right of their front position.

[0062] If the user places the virtual object at a particular angle and/or size and/or swivels it, the object can be saved in that particular relative location and orientation. The user can also turn around, place the object behind themselves and then turn forward again before sending the message; the system can then save that virtual object or simulated object as being behind them.

[0063] In one embodiment, the user can further select, identify or specify recipients for the message. For example, the recipients can be from existing contacts lists, or can be added as new contacts and/or individuals or named groups or lists of individuals.

[0064] Note that a recipient need not be a single person. For instance, a recipient can be an AR enabled chatroom, group or mailing list. An AR enabled chatroom, group or mailing list can be associated with a name and/or address. It may also have policies and permissions governing who is the admin, what other roles exist and what their access, preview, view, edit, delete, enhance, sharing, read/write/invite permissions are.

[0065] In one embodiment, an AR enabled chatroom or group is or includes a shared social space where AR messages (e.g., enhanced messages) that are sent to the chatroom or group can be updated synchronously and/or asynchronously to all the recipients. This enables a real-time or near-real-time or asynchronous AR experience for the participants. In some instances, posting AR content to the chatroom or group can be equivalent to sending an AR message to the members of the group or chatroom.

[0066] According to embodiments of the present disclosure, a user sends a message (e.g., the enhanced message) to recipients and the message can be transmitted to recipients. The recipients can be notified that they have received an AR message or that a system has received a message intended for them. If recipients are members of a group that is the recipient or intended recipient, then a group notification can be sent to individual members of the group. Recipients can be notified with a text message, social invite on Facebook or Twitter or another social network, a message in a chatroom, an email message, or a notification on their phone, or a notification in a particular messaging or other type of mobile, desktop or enterprise/Web app.

[0067] In some embodiments, individual recipients can open the AR message to access, preview or view its content. The relevant application can also automatically open to access the message. For example, by clicking on or otherwise selecting the notification, the AR message can be acted on or interacted with. For example, the AR message can be opened and rendered in the appropriate application or reader for display or further action or interaction.

[0068] Identifiers for an application or plug-in used to display, present or depict it can be conveyed or included in metadata in the notification, or it can be implicit in the type of notification or channel that the notification is sent through and received in. The AR application that detects or receives and renders the message can depict or display the content of the message appropriately. In particular, virtual objects or simulated objects that were placed into relative positions around the sender can be rendered in the same relative positions around the receiver.

[0069] If the sender places a virtual/simulated object, or set of objects, in front of their camera when they composed the message, then those objects can appear in front of the recipient's camera in the same relative positions that the sender intended. If a user puts a virtual object behind themselves, the virtual object can also be behind the receiver when they receive the AR message and the receiver can then turn around and see the virtual object behind them in that case.
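
As a minimal sketch of how a placement saved relative to the sender could be replayed around a receiver, the following Python example stores each object as a bearing (measured clockwise from the viewer's forward direction), a distance and a height, and resolves it against the receiver's own position and heading. The names `RelativePlacement`, `Pose` and `resolve`, and the flat east/north coordinate frame, are assumptions made for illustration only.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class RelativePlacement:
    """Where an object sits relative to whoever is viewing it (hypothetical)."""
    bearing_deg: float   # clockwise from the viewer's forward; 180 = behind
    distance_m: float
    height_m: float = 0.0


@dataclass(frozen=True)
class Pose:
    """Viewer position in a flat local frame and compass heading (assumed)."""
    x: float             # metres east
    y: float             # metres north
    heading_deg: float   # 0 = facing north, increasing clockwise


def resolve(placement: RelativePlacement, viewer: Pose) -> Tuple[float, float, float]:
    """Turn a viewer-relative placement into coordinates in the local frame."""
    absolute = math.radians(viewer.heading_deg + placement.bearing_deg)
    return (
        viewer.x + placement.distance_m * math.sin(absolute),
        viewer.y + placement.distance_m * math.cos(absolute),
        placement.height_m,
    )
```

Because only the relative placement travels with the message, an object the sender put behind themselves (bearing 180) resolves to a point behind the receiver, whatever direction the receiver happens to face when the message is opened.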

[0070] In addition, individual recipients can perform social actions on a received AR message, based on the policies and permissions of the application they use to receive it and/or the metadata on the message itself. They can also reply to an AR message with another AR message. They may reply to an AR message with a new AR message, or a non-AR message. With proper permissions and/or upon meeting certain criteria, users or recipients can modify an AR message to be stored, posted publicly/privately and/or sent in reply or forwarded to another user or group of users.

[0071] In the event that an AR message is configured to allow modifications, certain (or any) recipients can add modifications, such as additional virtual objects, to the AR message; these modifications can be added to the original message, and the sender and any/all other recipients of the AR message will also get these updates. Revisions to the original message can be stored so users can roll back to or view any of the previous versions.

[0072] In other words, an AR message can be configured to be a collaborative object that can be modified on an ongoing basis by the sender and any/all recipients such that they can collaboratively add to, interact with or act on the content of the message. Modifications to an AR message can be subject to permissions, criterion and/or policies such as moderation approval by the sender or an admin.
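
The stored revisions and permission checks described above can be sketched as follows. This is an illustrative Python example only: the class `CollaborativeMessage`, the simple editor set used as a permission model, and the choice to record a rollback as a new revision are all assumptions rather than features recited by the disclosure.

```python
class CollaborativeMessage:
    """Hypothetical AR message that keeps every revision of its contents."""

    def __init__(self, sender, objects, editors=()):
        self.sender = sender
        # Assumed permission model: the sender plus explicitly listed editors.
        self.editors = {sender, *editors}
        # Revision 0 is the original message; each entry is (author, contents).
        self._versions = [(sender, tuple(objects))]

    @property
    def current(self):
        """Contents of the latest revision."""
        return self._versions[-1][1]

    def version(self, n):
        """Contents of revision n, for viewing a previous version."""
        return self._versions[n][1]

    def _check(self, user):
        if user not in self.editors:
            raise PermissionError(f"{user} may not modify this message")

    def add_object(self, user, obj):
        """Append a virtual object as a new revision; return its index."""
        self._check(user)
        self._versions.append((user, self.current + (obj,)))
        return len(self._versions) - 1

    def roll_back(self, user, n):
        """Restore revision n by appending a copy, so history is never lost."""
        self._check(user)
        self._versions.append((user, self._versions[n][1]))
        return len(self._versions) - 1
```

A moderation step, such as approval by the sender or an admin, could be added in `_check` or as a pending queue in front of `add_object`; it is omitted here to keep the sketch short.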

[0073] In some embodiments, users can forward an AR message to other recipients. Forwarding an AR message sends it to other recipients with forwarding metadata in the header of the message. They can comment on the AR message. A comment can be or include by way of example, text, document, message, emoji, emoticon, a gif, audio or video, that appears on an associated comments thread which can be non AR based or AR based.

[0074] A comment can also be created and rendered as an AR comment object or part of an AR comments digest object that is associated with the AR message. Users can save, tag, flag, delete, archive, rate, like, mark as spam, apply rules or filters, file into a folder, and perform other actions or activities on, or interact with, AR messages that are similar to the activities that can be performed on email, text messages or other digital objects rendered in a digital environment.

[0075] AR Billboarding and Messaging

[0076] Embodiments of the present disclosure further include AR billboarding and/or messaging. In one embodiment, when people post in the disclosed VR/AR environment, the system enables them to select a person and/or place and/or time to post it to. If they choose just the person it is a message. If just a place it can be a billboard. A person and place is a sticky note or targeted billboard. If they choose just a time then it appears for everyone they can post it to at a time. Place and time or person and time also have effects. The disclosed system can obey, implement or enforce the rules and display what is permitted under the constraints. Enabling a person to post to a place that they are not in can be implemented. As to what plane they post to, at what angle, and at what altitude in a building, the system can enable them to choose that with an altitude or floor of building selector, or building map. In some instances, it is free to post to a place you are in but not free to post to places you are not in.

[0077] People could collaboratively tag places and things in places for us. A person chooses a plane at a place and names it "desk in my room"; then that surface is logged with geo coordinates. It goes on the map as a named target. Other users can select it and post an object to that place. So the object appears at that named location relative to that place for anyone who is there. Billboards could be compound objects like that--e.g., as collages made of stickers, such as the "try it" sticker on top of the "Lose Weight Feel Great" object. Compound objects can be implemented in the system as sticking things on or next to or near other things to build grouped objects and then define as a grouped object so it moves as one--like in a drawing app.
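
The person/place/time selection described above amounts to a small decision table, sketched here in Python. The function name `classify_post` and the label used for a time-only post are hypothetical; the paragraph does not spell out the effects of combining time with a person or place, so this sketch only flags such posts as scheduled.

```python
from typing import Optional, Tuple


def classify_post(person: Optional[str] = None,
                  place: Optional[str] = None,
                  time: Optional[str] = None) -> Tuple[str, bool]:
    """Return (kind of post, whether a time was chosen).

    Mapping follows the text: person only -> message, place only -> billboard,
    person and place -> sticky note or targeted billboard, time only -> shown
    to everyone the poster is allowed to post to at that time.
    """
    if person and place:
        kind = "sticky note or targeted billboard"
    elif person:
        kind = "message"
    elif place:
        kind = "billboard"
    elif time:
        kind = "timed post to all permitted viewers"  # label is an assumption
    else:
        raise ValueError("select at least a person, a place, or a time")
    return kind, bool(time)
```

For instance, `classify_post(person="Joe")` yields a message, while `classify_post(place="desk in my room")` yields a billboard.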

[0078] Algorithmic AR/VR Content Feeds

[0079] Embodiments of the present disclosure further include algorithmic AR/VR Content Feeds. An example embodiment can include: (1) the Twitter near a billboard you designed for the video--or something like it, (2) some interesting things auto-spawning near the user (gems, 50% off coupon, secret VIP event ticket, free gift offer). Some of these could appear and then self-delete after n seconds or minutes, giving you a reason to look at the same place again even though you looked recently.

[0080] The system can award points for actions in the environment, like when you find a special virtual object, or when you do things like click on objects, etc. All of this speaks to the addictiveness and reward of the app, with a process that makes it relevant to each user (e.g., a user activity stream for each user). So the story is that users get: messages from friends that are highly relevant by nature, relevant content near them about their interests (from public+layers they follow, in an algo-curated public feed), and relevant rewards and sponsored content (rewards from us and targeted ads/offers from sponsors). The content from friends and the relevant content around them+rewards, keeps them looking and coming back.

[0081] If the sponsored ad content is at least not irrelevant, and ideally also provides some points or other kinds of rewards (social reward, access to exclusive events, big savings, etc.) then users will not only tolerate them but may even enjoy and want them. If for example a sponsor made some great sponsored 3D content and even made it rewarding to engage with it, and it was relevant to Joe, then Joe would enjoy it rather than find it annoying. The difference between something being "content" or "annoying advertising" is relevancy and quality.

[0082] The system can reward users in a number of ways. One is that the depicted content itself may be delightful or entertaining or useful. The other is that the system includes a built in treasure hunt metagame--which spawns rewards that the system provides and that sponsors can pay for, to each user, intelligently. The process is designed and adapted to keep users playing just like a slot machine.

[0083] In one embodiment, the system's reward system is akin to a real world casino. For example, the system provides a unique experience to the users so Joe doesn't see the same content or rewards every time he logs in. An "ad" in the system should be content+reward. If it is just content it has to be rewarding in itself. Otherwise at least add points rewards to it. The uncertainty and luck of discovery aspect--the potential jackpot aspect--these make it fun and addictive.

[0084] Statistically, the system can use and implement casino math and/or relevant mathematical adaptations for this--specifically, slot machines dynamically adjust the probability of a player winning based on how they are playing, to keep them betting. The system implements this or a version of this. A "bet" is another minute of attention in the environment.

[0085] This is like a customized slot machine where jackpots are sponsored targeted ads (that the customer actually wants). But jackpots are both actually--the system can provide points awards to users for actions they take (time spent, interactions, clicks, etc.) and just by luck and based on their karma. So can advertisers--advertisers can insert rewards and the disclosed system runs them in the spawn. There are also other kinds of jackpots beyond just points--for example a coupon has a bar code and gives the user a huge discount at a store but may not dispense any points. Similarly a really great little game or collector's item VOB could also be rewarding to a user that likes that.

[0086] There can be several streams of content that users are exposed to in the disclosed VR/AR environment: (1) objects addressed explicitly to them, (2) objects that are shared with users and groups they follow (but are not explicitly addressed to them), (3) objects that are shared with them by the system, and sponsors of the system. The public layer can consist of (2)+(3). All other layers can show for example, either (1) or only (2). The system's content and ads only appear in the public layer. A user can choose not to see the public layer, but they cannot choose to see the public layer without sponsored content (ads). The system ensures that the public layer is so good and rewarding that users want to see it all the time.

[0087] One embodiment of the present disclosure includes some coins and gems and power-up objects--the system associates or assigns points with them, but advertisers can also buy them and run them and add some kind of branding to them so that when users redeem them they see some sponsored content, or have to first go through a sponsored interaction.

[0088] The key is lots of quality custom or adapted content to always keep the user engaged: There has to be the optimal ratio. Too much reward is also no longer special. In one embodiment, it is 80/n where n is usually 20% and there is a bell curve of greater and lower reward frequency where the frequency increases a bit while the user has good karma, and then there is another variable for the probability of different sized points rewards as well. For instance, a user who is more engaged can earn better karma and sees more rewards for a while, and there is a dice roll every time a reward spawns where the probability of a higher value reward also can change based on user karma for a while. The more a user uses the disclosed AR/VR environment and the more they engage, the better they perform. Instead of 80/20 maybe it becomes 70/30 best case, and the user can earn bigger rewards on average during that time. But then it decays unless the user maintains it.
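
One way to picture the karma-dependent ratio and dice roll is the following Python sketch. Only the 80/20 baseline and the 70/30 best case come from the description; the linear interpolation between them, the half-life form of the karma decay, and the reward tiers and weights are assumptions chosen purely for illustration.

```python
import random

BASE_REWARD_SHARE = 0.20   # the "20" in the 80/20 baseline
BEST_REWARD_SHARE = 0.30   # the "30" in the 70/30 best case


def _clamp(karma: float) -> float:
    return min(max(karma, 0.0), 1.0)


def reward_share(karma: float) -> float:
    """Share of the experience given to rewards, for karma in [0, 1].

    Linear interpolation between baseline and best case is an assumption.
    """
    return BASE_REWARD_SHARE + (BEST_REWARD_SHARE - BASE_REWARD_SHARE) * _clamp(karma)


def decayed_karma(karma: float, idle_days: float, half_life_days: float = 7.0) -> float:
    """Karma fades when the user stops engaging (half-life form is assumed)."""
    return karma * 0.5 ** (idle_days / half_life_days)


def roll_reward_points(karma: float, rng: random.Random) -> int:
    """Dice roll on reward size; higher karma shifts weight to bigger tiers.

    The tiers (10, 50, 250 points) and the weights are made-up values.
    """
    level = _clamp(karma)
    tiers = [10, 50, 250]
    weights = [0.80 - 0.20 * level, 0.15 + 0.10 * level, 0.05 + 0.10 * level]
    return rng.choices(tiers, weights=weights, k=1)[0]
```

With these assumed weights the three probabilities always sum to one, and a fully engaged user (karma 1) is three times as likely to roll the top tier as a user with no karma (0.15 versus 0.05).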

[0089] As for the 80 or 70% of the experience that is non-sponsored content, that can be user generated content (UGC) or content from APIs like Yelp and Twitter. Messaging, billboarding/community, publishing are the UGC part. Then we need a healthy amount of API content that is useful and contextually relevant (geo-relevant to start with).

[0090] In one example, of the 80% to 70%, about half is allocated or earmarked for UGC, and half could be from APIs. In fact even if there was only API content it could be useful in certain contexts, such as for a tourist or when looking for a place to eat--so that's a mechanism to fill the world with a lot of content: Twitter, Facebook, Instagram, Yelp, Wikipedia, Google Maps--about things near you, the place near you. API content such as an object with the LinkedIn profile or Instagram profile of each person near you can also be utilized.

[0091] Billboarding can be advantageous where there are lots of users. Messaging can be initially useful between friends. The API content can be what populates the world and is the primary excuse for looking through our lens. Adding geo-relevant Twitter, news, media content, and other social media content into the AR view is a good place to start because there is almost always something near you. Note that with the web there is really no page to look at that shows you relevant information from every network to where you are geographically and temporally right now. In a 2D interface you have a list to work with. But in AR, such as the disclosed AR environment, which is 3D, there is so much more room to work with. This touches on the desktop concept, and even personal intelligent assistants--basically the disclosed process includes an intelligent assistant that shows users relevant objects to user's context, through the system's lens.

[0092] A user's context includes, for example the user's identity, past, the user's present activity, location, time of day, who else is there. Usually the system will either have no API content or UGC for a user and place. The disclosed system executes processes which understand that users want to see messages from friends, the best content from people, groups and brands they follow, and the best, most popular, or most relevant stuff from public. Users also want to see rewards that give them value that matters to them--including points where redeemable (cryptocurrency, digital currency, fiat currency, etc.) or useful (buy virtual goods or pay to boost posts and get attention in the AR environment platform or system), and they want to see very entertaining and/or relevant content from sponsors.

[0093] The innovative process includes optimizing this mix to attain and achieve the most engagement and continued engagement from each individual user; the process includes a/b testing across users and populations of users and when it learns that some item is getting great response for some demographic and context it also can increase the frequency of that content for that audience and context. Or it can move it to the "top level" or outside of containers; basically the disclosed system provides a great, non-cluttered UX; there always has to be enough to keep it engaging and interesting, and the system ensures that it nests or manages the world of information; the disclosed innovative rewards system helps with ensuring there is at least a chance there is a reward all the time; as an example, its innovative function is to drive engagement with the UX.

[0094] In a further embodiment, the system enables users to attach AR/VR billboards together in the AR/VR environment--to tile them to make larger surfaces. For example attach a billboard to right edge of a billboard. They can then for example make a passageway formed of billboards facing in that you walk through. It could be an experiential thing--an art exhibit, a sequence of messages. Or a room. Or a maze. Or an entire building, etc.

[0095] The system enables these structures to be grouped so that the edges connect at precise angles and they remain that way relative to each other. In further embodiments, billboards of other shapes can be designed, customized, or selected--for example, a long rectangle that is as wide as two billboards so you can write a long wide message. A taller one. A triangle shape. A hexagon shape. A sphere. A cube. A rectangular block. These enable lots of billboard structures to be built. Users may be able to generate a non standard shape or design any geometric shape they desire. These become structures users can build. Each surface can have color, texture, and optional content (text or image). This enables AR objects to be built like LEGO. The 2D wall shape is one of many building blocks enabled in the disclosed system/platform.

[0096] One embodiment also includes billboard shapes that look like standard sign shapes (like a stop sign shape, a one way shape, etc.) and flags that flap in the wind gently. These could have poles holding them up. Billboard shapes also include doorway, archway, window and portal object shapes. People can build cool things to see and explore. This enables Second Life kind of activities on your own layer. For the public/main layer the real estate game would be necessary to build things that others see. Also note that in a room made of billboard wall objects if it has a floor and a ceiling and four walls it can have a light source inside it.

[0097] One embodiment further includes--a billboard or set of billboards that are portable in real space and/or digital space, e.g., that a user can take with them. For example a protest--they can carry them with them. Wherever the user is their billboard can be near them or above them. Other users can see that.

[0098] In addition, an object or billboard can be placed in a room so that it always appears in the right part of the room in the right orientation to the room--based on the shape of the room. For example the camera may be able to determine the geometry of the room.

[0099] In one embodiment, the system enables users to map spaces for us--crowd sourced--to map or determine the geometry of the room. If I am in my living room and I want to place an object precisely and have it always be oriented that way then to escape the limits of GPS I could map the room--in that mode the user would enter a special mode in the AR/VR environment and would walk the boundaries of the room as well as perhaps take photos from each wall of the room--the software agent or module of the AR/VR environment would learn the room. From this it could then keep 3D objects oriented relative to the shape of the room. The system can build up a database of mapped rooms. And this could improve if more users helped with more perspectives or to fill in missing pieces or missing rooms. The system can also award points to users for doing this.

[0100] Embodiments of the present disclosure further include physical stickers which can include stickers visible to the human eye, stickers that are highly reflective of IR and/or stickers that are not visible to the human eye. For example, a user could make stickers that appear transparent/almost invisible to the naked eye but that are highly visible to IR from camera phones--for example, using IR range finding device or sensors that can be built into portable devices. In one embodiment, these invisible stickers can be placed on the walls of rooms to enable devices to triangulate more precise locations in the rooms. In one embodiment, billboards can be configured or selected to be stuck to walls and other surfaces. This could also include taking a photo of the place where it appears to help identify it. Billboards can be transparent as well--so users just see the letters in space or on a wall.

[0101] One embodiment includes some special objects like lockable boxes and chests. They can be opened if the user has the key. Or the combination. Or certain required items. The system enables any virtual or digital content to be placed on any surface.

[0102] One problem addressed by the disclosed platform is that, if people build in the same or overlapping locations in their private layers, the system decides what is shown to users by default (e.g., in the public layer at that location). In one embodiment, the system can show a summary object that says "there are two giant castles here" unless one of them outbids the other to rent that real estate. If they rent the real estate then they can be the default and the other object is in a small orb or container that indicates there are other objects or content there.

[0103] One embodiment of the AR environment includes a standardized container shape--an orb--that is recognizable. It appears wherever the system needs to contain and summarize many objects at a place without showing them. The orb can be identifiable in an iconography as a special thing. It can have a number on it and could also have an icon for the type of things it contains. A Twitter orb would have the Twitter logo and a badge for the number of Tweets it contains. A MYXR Orb (e.g., an AR/VR orb) would have a MYXR logo and a badge for the number of MYXR or AR/VR objects (billboards, blocks, grouped named structures, etc.) it includes. An example interaction would enable a user to activate an Orb and see an inventory of its contents and then choose the ones to pop out into the environment. They could also pop them all out and then fold them back into the Orb. The size of the Orb could reflect the number of objects it contains as well. In order to be useful and encourage interaction with it, there could be one Orb per location containing sub orbs per type of collection (Twitter, Videos, Photos, etc.).

[0104] Orbs can generally be a bit out of the way in any location--they could either float at about 7 feet altitude, or they could be in the heads up display (HUD) rather than objects in the scene. If they are in the HUD then they would basically be alerts that would appear and say "200 Tweets here"--if you tap on that alert then it puts a timeline of Tweets in front of the user and they can then see them all. Or if it says "100 billboards here" then it gives the user a list of them and they can choose which ones to see in the space.

[0105] One embodiment also includes balloon objects with messages on them. They float to the ceiling or a specific altitude. A giant hot air balloon even. And a dirigible of any size. A small one could cruise around a mall or office. A giant one could cruise over a park or a city. The system can construct some more building block objects to build structures and shapes with and enable them to be glued together to make structures.

[0106] The system deals with multiple objects in the same place from different layers in multiple ways--in the Main or Public view. In one example, the system can summarize them into an Orb, unless there is either <n of them, or they pay to promote. Promoted objects always appear on their own. If multiple or all of them pay they all appear outside the orb but share the location--which could involve them all rotating through the desired location, like a slow orbit. Also note an orb cannot contain only 1 object--so if there is only 1 object left in an orb, the orb goes away and the object becomes first level.

[0107] So in one example, a rule can be: for any location, to avoid clutter, if there are >20 virtual objects there, we put them into <20 Orbs (one Orb named for each layer that is represented and that has >2 virtual objects depicted, presented or posted at the location, and for any layers that only have 1 item at the location they go into an orb for Other Layers). An Orb for a layer has the icon or face for that layer on it.

[0108] For example, if you posted 5 VOBs to a location that has >20 items, an Orb would be formed for you and would contain your virtual objects; it would have your avatar or face on it, and a badge for "5". Other Orbs would group other virtual objects at the location. Except any sponsored/promoted items would appear outside their Orbs as well. You would want them to be listed inside their Orbs too so that if someone looks inside they are there as well, but they are already popped out of the Orb. So the listing of Orb contents would show them, but the state would indicate that they are already visible outside the Orb.
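
The clutter rule and the treatment of promoted items can be sketched in Python as follows. The function name `arrange_location` and the dictionary keys are hypothetical. The text gives ">2" objects as the threshold for a layer's own Orb and "only 1" for the Other Layers Orb, leaving layers with exactly two objects unspecified; this sketch assumes two is enough for an own Orb. It also does not enforce the "<20 Orbs" cap.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

CLUTTER_THRESHOLD = 20  # more than this many objects triggers Orb grouping


def arrange_location(objects: List[dict]) -> Tuple[List[str], Dict[str, List[str]]]:
    """Split one location's objects into top-level ids and Orb listings.

    Each object is a dict with keys 'id', 'layer' and 'promoted' (assumed).
    Promoted objects appear at top level and stay listed inside their Orb.
    """
    if len(objects) <= CLUTTER_THRESHOLD:
        return [o["id"] for o in objects], {}

    by_layer = defaultdict(list)
    for o in objects:
        by_layer[o["layer"]].append(o["id"])

    orbs: Dict[str, List[str]] = {}
    other: List[str] = []
    for layer, ids in by_layer.items():
        if len(ids) >= 2:          # assumption: two objects justify an own Orb
            orbs[layer] = ids
        else:
            other.extend(ids)

    top_level = [o["id"] for o in objects if o["promoted"]]
    if len(other) == 1:
        # An Orb cannot contain only one object, so it becomes first level.
        if other[0] not in top_level:
            top_level.append(other[0])
    elif other:
        orbs["Other Layers"] = other
    return top_level, orbs
```

The badge number for each Orb is then simply the length of its listing, for example "5" for a user who posted five VOBs at a crowded location.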

[0109] When objects are placed in a location on a layer, the system will present them in such a manner so as to prevent them from being right on top of each other, or overly overlapping, unless that was intentional (for example building a VOB where you want an emoticon right on the upper right corner of a billboard). When the system presents or depicts the Main or Public views, the system can move VOBs around slowly at the locations they wanted, so they are all orbiting there. There could be multiple billboards (e.g., 3 billboards) from different locations at a certain location--and in the Main or Public views, the system can present them to prevent them from overlapping in space--by orbiting or some other way. Another option would be that there is a marketplace--whoever pays the most points to promote their item gets the location, and then items that paid less are given locations near or around it. This could be a variant on the real-estate game.

[0110] When a user makes an item, there is an optional "Promote this item" field where you can pay some of your points, or buy more points, to promote it. The points you pay to promote it are somehow ticked down over time, and have to be refreshed when they run out, unless the user can assign an ongoing auto-pay budget to keep the points allotment at a certain level.
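The paragraph above leaves open how the promotion points are "ticked down". The following minimal sketch assumes a linear burn rate per hour and an optional auto-pay top-up. The class `Promotion` and its fields `burn_rate`, `autopay_floor` and `autopay_budget` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Promotion:
    points: float                # points currently promoting the item
    burn_rate: float             # assumed: points consumed per hour, linearly
    autopay_floor: float = 0.0   # level the allotment is kept at (0 disables auto-pay)
    autopay_budget: float = 0.0  # points the user has authorized for top-ups

    def tick(self, hours: float) -> None:
        """Consume points for the elapsed time, then top up from the auto-pay budget."""
        self.points = max(0.0, self.points - self.burn_rate * hours)
        if self.autopay_floor > 0 and self.points < self.autopay_floor:
            top_up = min(self.autopay_floor - self.points, self.autopay_budget)
            self.points += top_up
            self.autopay_budget -= top_up

    @property
    def active(self) -> bool:
        """The item stays promoted only while points remain."""
        return self.points > 0
```

Without auto-pay, the promotion lapses once `points` reaches zero and has to be refreshed manually. With auto-pay, the allotment is restored to `autopay_floor` until the authorized budget is exhausted.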

[0111] At a given location, the objects can be arranged outward from the desired location based on their points budgets. A user could look and see where their object is appearing in the scene and add more points to push it to the center position, or closer to the center, of a desired location. The distance between objects can be configured as the difference in points budgets. For example, if the central object paid 100, the next object paid 50, and the next paid 40, there would be a greater distance between objects 1 and 2 than between objects 2 and 3.
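The spacing rule in this example can be worked through in a few lines. The sketch below assumes that objects are ranked by points budget, that the top-ranked object sits at the desired location, and that each gap equals the difference in budgets times a scale factor. The function name `radial_offsets` and the `scale` parameter are illustrative assumptions.

```python
def radial_offsets(budgets, scale=1.0):
    """Map each object to its distance from the desired location.

    budgets: dict of object name -> points paid.
    The highest-paying object is at distance 0; each following object is
    placed scale * (difference in points) further out than the one before.
    """
    ranked = sorted(budgets.items(), key=lambda kv: kv[1], reverse=True)
    offsets = {}
    distance = 0.0
    previous_points = None
    for name, points in ranked:
        if previous_points is not None:
            distance += scale * (previous_points - points)
        offsets[name] = distance
        previous_points = points
    return offsets
```

With budgets of 100, 50 and 40, the gaps are 50 and 10 units, so objects 1 and 2 are further apart than objects 2 and 3. Two objects with equal budgets would receive a gap of zero under this rule, so a practical system would presumably also need a minimum separation.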

[0112] Examples of Video Objects in Virtual and/or Augmented Reality

[0113] A further embodiment of the present disclosure includes video objects in virtual reality and/or augmented reality. One embodiment includes the ability to render or depict a video on a surface or billboard or virtual screen in a mixed reality environment, similar to picture billboards. The virtual video screen can behave like a 2D or 3D object in the augmented reality scene. It can be a stationary virtual object at a location, and the user can walk around it like a physical object, or it can turn or orient to the user. Users can interact with it to start, stop, rewind, fast forward, mute sound, or adjust volume. It can autoplay when a user is in proximity, or it can loop. Users may also be able to tune the virtual video screen to a channel or a particular content selection. It may also have a hide function, a directory, a search function or a playlist.

[0114] User Generated and User-Customizable 3D or 2D Virtual Objects

[0115] Further embodiments of the present disclosure include user generated and user-customizable 3D or 2D virtual objects. In one embodiment, the system/platform implements 3D or 2D virtual objects that can be user generated and/or user customized. For example, users can choose a virtual object type from a library of types or templates, and then customize it with optional text, formatting, color, fonts, design elements, shapes, borders, frames, illustrations, backgrounds, textures, movement, and other design parameters. They can also choose a template such as a 3D object for a neon sign and customize just the content of the sign in a template, or they can choose a 3D balloon and add customized text to the balloon object. Users can also post their own billboards or words, audio or video onto objects.

[0116] Interactive Multidimensional Virtual Objects with Media or Other Interactive Content

[0117] Further embodiments of the present disclosure include interactive multidimensional virtual objects with media or other interactive content. For example, the system can further map video objects or any other interactive, static, dynamic or media content onto any 2D, 3D or multidimensional object. For example, the system supports and implements a media cube such as a video cube, sphere or another shape where each surface or face of the cube (or other shaped object) shows the same or different video/media/interactive/static/dynamic content. The face of the cube or other shaped object can be of any shape (round, square, triangular, diamond, etc.). The media cube virtual object can be implemented at a larger scale in public arenas (e.g., Times Square) or at concerts or sports games (e.g., jumbotron large screen technology), for example to show zoom-ins or close-ups.

[0118] In one embodiment, live video or live streaming video can be depicted or streamed in real time, near real time or replay on the faces of a 2D virtual object (e.g., a billboard), a cube or a sphere, from a camera in a physical location, from another app, or from another platform user or any user in some specified or random location in the world. For instance, 360 degree, panoramic, or other wide angle videos could be depicted in a spherical virtual object (e.g., like a crystal ball). In one embodiment, a user can view the 360 degree, panoramic, or other wide angle video from outside the video sphere. The user can further `go into` the sphere and enter the 360 degree, panoramic, or other wide angle video in a virtual reality or augmented reality experience, as in a video player (a 360 degree, panoramic, or other wide angle video player).

[0119] Embodiments of the present disclosure include systems, methods and apparatuses of platforms (e.g., as hosted by the host server 100 as depicted in the example of FIG. 1) for deployment and targeting of context-aware virtual objects and/or behavior modeling of virtual objects based on physical laws or principles. Further embodiments relate to how interactive virtual objects that correspond to content or physical objects in the physical world are detected and/or generated, how users can then interact with those virtual objects, and how the behavioral characteristics of the virtual objects can be modeled. Embodiments of the present disclosure further include processes that associate augmented reality data (such as a label or name or other data) with media content, media content segments (digital, analog, or physical) or physical objects. Yet further embodiments of the present disclosure include a platform (e.g., as hosted by the host server 100 as depicted in the example of FIG. 1) to provide an augmented reality (AR) workspace in a physical space, where a virtual object can be rendered as a user interface element of the AR workspace.

[0120] Embodiments of the present disclosure further include systems, methods and apparatuses of platforms (e.g., as hosted by the host server 100 as depicted in the example of FIG. 1) for managing and facilitating transactions or other activities associated with virtual real-estate (e.g., or digital real-estate). In general, the virtual or digital real-estate is associated with physical locations in the real world. The platform facilitates monetization and trading of a portion or portions of virtual spaces or virtual layers (e.g., virtual real-estate) of an augmented reality (AR) environment (e.g., alternate reality environment, mixed reality (MR) environment) or virtual reality (VR) environment.

[0121] In an augmented reality environment (AR environment), scenes or images of the physical world are depicted with a virtual world that appears to a human user as being superimposed or overlaid on the physical world. Augmented reality enabled technology and devices can therefore facilitate and enable various types of activities with respect to and within virtual locations in the virtual world. Due to the interconnectivity and relationships between the physical world and the virtual world in the augmented reality environment, activities in the virtual world can drive traffic to the corresponding locations in the physical world. Similarly, content or virtual objects (VOBs) associated with busier physical locations or placed at certain locations (e.g., eye level versus other levels) will likely have a larger potential audience.

[0122] By virtue of the inter-relationship and connections between virtual spaces and real world locations enabled by or driven by AR, just as there is a value to real-estate in the real world locations, there can be inherent value or values for the corresponding virtual real-estate in the virtual spaces. For example, an entity who is a right holder (e.g., owner, renter, sub-lettor, licensor) of, or is otherwise associated with, a region of virtual real-estate can control what virtual objects can be placed into that virtual real-estate.

[0123] The entity that is the right holder of the virtual real-estate can control the content or objects (e.g., virtual objects) that can be placed in it, by whom, for how long, etc. As such, the disclosed technology includes a marketplace (e.g., as run by server 100 of FIG. 1) to facilitate exchange of virtual real-estate (VRE) such that entities can control object or content placement in a virtual space that is associated with a physical space.

[0124] Embodiments of the present disclosure further include systems, methods and apparatuses of seamless integration of augmented, alternate, virtual, and/or mixed realities with physical realities for enhancement of web, mobile and/or other digital experiences. Embodiments of the present disclosure further include systems, methods and apparatuses to facilitate physical and non-physical interaction/action/reactions between alternate realities. Embodiments of the present disclosure also include systems, methods and apparatuses of multidimensional mapping of universal locations or location ranges for alternate or augmented digital experiences. Yet further embodiments of the present disclosure include systems, methods and apparatuses to create real world value and demand for virtual spaces via an alternate reality environment.

[0125] The disclosed platform enables and facilitates authoring, discovering, and/or interacting with virtual objects (VOBs). One example embodiment includes a system and a platform that can facilitate human interaction or engagement with virtual objects (hereinafter, `VOB,` or `VOBs`) in a digital realm (e.g., an augmented reality environment (AR), an alternate reality environment (AR), a mixed reality environment (MR) or a virtual reality environment (VR)). The human interactions or engagements with VOBs in or via the disclosed environment can be integrated with and bring utility to everyday lives through integration, enhancement or optimization of our digital activities such as web browsing, digital shopping (online or mobile), socializing (e.g., social networking, sharing of digital content, maintaining photos, videos, other multimedia content), digital communications (e.g., messaging, emails, SMS, mobile communication channels, etc.), business activities (e.g., document management, document processing), business processes (e.g., IT, HR, security, etc.), transportation, travel, etc.

[0126] The disclosed innovation provides another dimension to digital activities through integration with the real world environment and real world contexts to enhance utility, usability, relevancy, and/or entertainment or vanity value through optimized contextual, social, spatial, temporal awareness and relevancy. In general, the virtual objects depicted via the disclosed system and platform can be contextually (e.g., temporally, spatially, socially, user-specific, etc.) relevant and/or contextually aware. Specifically, the virtual objects can have attributes that are associated with or relevant to real world places, real world events, humans, real world entities, real world things, real world objects, real world concepts and/or times of the physical world, and thus their deployment as an augmentation of a digital experience provides additional real life utility.

[0127] Note that in some instances, VOBs can be geographically, spatially and/or socially relevant and/or further possess real life utility. In accordance with embodiments of the present disclosure, VOBs can be or appear to be random in appearance or representation with little to no real world relation and have little to marginal utility in the real world. It is possible that the same VOB can appear random or of little use to one human user while being relevant in one or more ways to another user in the AR environment or platform.

[0128] The disclosed platform enables users to interact with VOBs and deployed environments using any device (e.g., devices 102A-N in the example of FIG. 1), including by way of example, computers, PDAs, phones, mobile phones, tablets, head mounted devices, goggles, smart watches, monocles, smart lenses, other smart apparel (e.g., smart shoes, smart clothing), and any other smart devices.

[0129] In one embodiment, the disclosed platform includes information and content in a space similar to the World Wide Web for the physical world. The information and content can be represented in 3D and/or have 360 or near 360 degree views. The information and content can be linked to one another by way of resource identifiers or locators. The host server (e.g., host server 100 as depicted in the example of FIG. 1) can provide a browser, a hosted server, and a search engine for this new Web.

[0130] Embodiments of the disclosed platform enable content (e.g., VOBs, third party applications, AR-enabled applications, or other objects) to be created and placed by anyone into layers (e.g., components of the virtual world, namespaces, virtual world components, digital namespaces, etc.) that overlay geographic locations, and to be focused around a layer that has the largest audience (e.g., a public layer). The public layer can, in some instances, be the main discovery mechanism and the main advertising venue for monetizing the disclosed platform.

[0131] In one embodiment, the disclosed platform includes a virtual world that exists in another dimension superimposed on the physical world. Users can perceive, observe, access, engage with or otherwise interact with this virtual world via a user interface (e.g., user interface 104A-N as depicted in the example of FIG. 1) of a client application (e.g., accessed using a user device, such as devices 102A-N as illustrated in the example of FIG. 1).

[0132] One embodiment of the present disclosure includes a consumer or client application component (e.g., as deployed on user devices, such as user devices 102A-N as depicted in the example of FIG. 1) which is able to provide geo-contextual awareness to human users of the AR environment and platform. The client application can sense, detect or recognize virtual objects and/or other human users, actors, non-player characters or any other human or computer participants that are within range of their physical location, and can enable the users to observe, view, act, interact, react with respect to the VOBs.
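The "within range" detection described above could, for instance, reduce to a great-circle distance check between the device position and each VOB's anchor. The sketch below uses the standard haversine formula. The function names, the dictionary layout of a VOB and the 100 meter default range are assumptions made for illustration and are not specified in the disclosure.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points (degrees)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def vobs_in_range(user_lat, user_lon, vobs, range_m=100.0):
    """Return the VOBs anchored within range_m meters of the user.

    vobs: iterable of dicts with hypothetical keys "id", "lat" and "lon".
    """
    return [
        v for v in vobs
        if haversine_m(user_lat, user_lon, v["lat"], v["lon"]) <= range_m
    ]
```

A client could call `vobs_in_range` on each location update and hand the result to the user interface. A deployed system would more likely query a spatial index on the host server than scan every object.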