Locomotive Control System And Method

Kumar; Ajith Kuttannair ; et al.

U.S. patent application number 16/195950, filed on 2018-11-20, was published on 2019-04-11 for locomotive control system and method. The applicant listed for this patent is General Electric Company. Invention is credited to Anwarul Azam, Daniel Malachi Ballesty, Anju Bind, Matthew Lawrence Blair, Sreyashi Dey Chaki, Wing Yeung Chung, Shannon Joseph Clouse, Brad Thomas Costa, Wolfgang Daum, Dale Martin DiDomenico, Jerry Duncan, Sameh Fahmy, Jeffrey James Kisak, Mark Bradshaw Kraeling, Ajith Kuttannair Kumar, Michael Scott Miner, Nidhi Naithani, Nikhil Uday Naphade, Scott Daniel Nelson, Martin Paget, Dattaraj Jagdish Rao, Daniel Rush, Glenn Robert Shaffer, Seneca Snyder.

| Application Number | 20190106135 16/195950 |

| Family ID | 60157719 |

| Filed Date | 2018-11-20 |

| United States Patent Application | 20190106135 |

| Kind Code | A1 |

| Kumar; Ajith Kuttannair ; et al. | April 11, 2019 |

LOCOMOTIVE CONTROL SYSTEM AND METHOD

Abstract

A locomotive control system includes a mobile platform that moves under remote and/or autonomous control, a sensor package supported by the mobile platform that obtains information relating to a component of a railroad, and one or more processors that receive the sensor information and analyze the information in combination with other information that is not obtained from the sensor package. The processors also generate an output that displays information relating to one or more of a status, a condition, and/or a state of health of the component of the railroad; initiates an action to change an operational state of the component; identifies a hazard to one or more locomotives traveling within the railroad; and/or collects the information relating to the component. Optionally, the component is not communicatively coupled to an information network and the mobile platform provides the information obtained by the sensor package to the information network.

| Inventors: | Kumar; Ajith Kuttannair; (Erie, PA) ; Daum; Wolfgang; (Erie, PA) ; Paget; Martin; (Irvine, CA) ; Rush; Daniel; (Melbourne, FL) ; Fahmy; Sameh; (Montreal, CA) ; Costa; Brad Thomas; (Melbourne, FL) ; Snyder; Seneca; (Melbourne, FL) ; Duncan; Jerry; (Melbourne, FL) ; Kraeling; Mark Bradshaw; (Melbourne, FL) ; Miner; Michael Scott; (Melbourne, FL) ; Clouse; Shannon Joseph; (Erie, PA) ; Azam; Anwarul; (Lawrence Park, PA) ; Blair; Matthew Lawrence; (Lawrence Park, PA) ; Naithani; Nidhi; (Bangalore, IN) ; Rao; Dattaraj Jagdish; (Bangalore, IN) ; Bind; Anju; (Bangalore, IN) ; Chaki; Sreyashi Dey; (Bangalore, IN) ; Nelson; Scott Daniel; (Melbourne, FL) ; Naphade; Nikhil Uday; (Maharashtra, IN) ; Chung; Wing Yeung; (Erie, PA) ; Ballesty; Daniel Malachi; (Wattsburg, PA) ; Shaffer; Glenn Robert; (Erie, PA) ; Kisak; Jeffrey James; (Erie, PA) ; DiDomenico; Dale Martin; (Melbourne, FL) |

| Applicant: | General Electric Company |

| Family ID: | 60157719 |

| Appl. No.: | 16/195950 |

| Filed: | November 20, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15651630 | Jul 17, 2017 | |
| 16195950 | | |
| 14624069 | Feb 17, 2015 | 9873442 |
| 15651630 | | |
| 15044592 | Feb 16, 2016 | |
| 14624069 | | |
| 11750716 | May 18, 2007 | |
| 15044592 | | |
| 11385354 | Mar 20, 2006 | 9733625 |
| 11750716 | | |
| 14541370 | Nov 14, 2014 | 10110795 |
| 11385354 | | |
| 14217672 | Mar 18, 2014 | |
| 14541370 | | |
| 14253294 | Apr 15, 2014 | 9875414 |
| 14217672 | | |
| 14457353 | Aug 12, 2014 | |
| 14253294 | | |
| 14479847 | Sep 8, 2014 | |
| 14457353 | | |
| 14485398 | Sep 12, 2014 | 10049298 |
| 14479847 | | |
| 13109209 | May 17, 2011 | 8913131 |
| 14485398 | | |
| 11146831 | Jun 6, 2005 | 7965312 |
| 13109209 | | |
| 10361968 | Feb 10, 2003 | |
| 11146831 | | |
| 14922787 | Oct 26, 2015 | |
| 10361968 | | |
| 14155454 | Jan 15, 2014 | 9671358 |
| 14922787 | | |
| 12573141 | Oct 4, 2009 | 9233696 |
| 14155454 | | |
| PCT/US13/54284 | Aug 9, 2013 | |
| 14155454 | | |
| 11385354 | Mar 20, 2006 | 9733625 |
| 12573141 | | |
| 62403963 | Oct 4, 2016 | |
| 60894006 | Mar 9, 2007 | |
| 61940813 | Feb 17, 2014 | |
| 61940660 | Feb 17, 2014 | |
| 61940610 | Feb 17, 2014 | |
| 61940696 | Feb 17, 2014 | |
| 61940813 | Feb 17, 2014 | |
| 60626573 | Nov 10, 2004 | |
| 60385645 | Jun 4, 2002 | |
| 62134518 | Mar 17, 2015 | |
| 62134518 | Mar 17, 2015 | |
| 61681843 | Aug 10, 2012 | |
| 61729188 | Nov 21, 2012 | |
| 61860469 | Jul 31, 2013 | |
| 61860496 | Jul 31, 2013 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 5/332 20130101; B61L 15/009 20130101; B64C 2201/208 20130101; B61L 25/026 20130101; B61L 27/0088 20130101; G05D 1/0094 20130101; B64C 2201/123 20130101; H04N 5/23241 20130101; H04N 5/23206 20130101; B61L 25/023 20130101; B61L 25/021 20130101; B61L 25/025 20130101; B61L 15/0027 20130101; B61L 27/0077 20130101; B61L 15/0081 20130101; B64C 39/024 20130101; B64D 47/08 20130101; G06K 9/00798 20130101; B61L 15/0072 20130101; G06K 9/00771 20130101; G06K 9/00651 20130101; H04N 7/185 20130101; B64C 2201/14 20130101; H04N 5/23203 20130101; B61L 23/04 20130101; B61L 27/0094 20130101; H04L 67/12 20130101; B61L 2205/04 20130101; H04N 7/183 20130101 |

| International Class: | B61L 25/02 20060101 B61L025/02; B61L 15/00 20060101 B61L015/00; B61L 27/00 20060101 B61L027/00; B64C 39/02 20060101 B64C039/02; B64D 47/08 20060101 B64D047/08; G06K 9/00 20060101 G06K009/00; H04N 5/232 20060101 H04N005/232; G05D 1/00 20060101 G05D001/00; H04L 29/08 20060101 H04L029/08; H04N 7/18 20060101 H04N007/18 |

Claims

1. A locomotive control system comprising: a locomotive controller that is configured to control the operation of at least one locomotive; a mobile platform configured to move under remote control or under autonomous control; a sensor package supported by the mobile platform and configured to obtain information relating to a component of a railroad; and one or more processors configured to receive the information obtained by the sensor package and to analyze the information in combination with other information that is not obtained from the sensor package, and to generate an output that at least one of: displays information relating to one or more of a status, a condition, or a state of health of the component of the railroad, initiates an action to change an operational state of the component, identifies a hazard to the at least one locomotive within the railroad, or collects the information relating to the component, wherein the component is not communicatively coupled to an information network, and the mobile platform provides the information that is obtained by the sensor package to the information network, wherein the other information that is not obtained from the sensor package is obtained from the locomotive controller.

2. The locomotive control system of claim 1, wherein the information relating to the component of the railroad is one or more of a condition or a state of health of the component.

3. The locomotive control system of claim 1, further comprising a control unit configured to control a path of travel of the mobile platform.

4. The locomotive control system of claim 3, wherein the control unit is configured to autonomously prevent the mobile platform from moving outside of a determined multi-dimensional movement envelope or from moving into a restricted airspace.

5. The locomotive control system of claim 4, wherein the control unit is further configured to control the mobile platform to move to a safe position or operating mode in response to the mobile platform being located within a defined distance of a beacon or being located outside of the determined multi-dimensional movement envelope.

6. The locomotive control system of claim 4, wherein the control unit is further configured to communicate with an air traffic control system prior to controlling the mobile platform from moving from within the determined multi-dimensional movement envelope to commercial airspace.

7. The locomotive control system of claim 3, wherein the control unit is further configured to respond to a signal from a beacon to avoid controlling the mobile platform into a collision with an object operably coupled with the beacon.

8. The locomotive control system of claim 1, wherein the information obtained from the sensor package is video or image data; and the other information that is not obtained from the sensor package is further obtained from one or more of: a route planning system that is configured to map or control locomotives traveling through at least part of the railroad; a wayside device that is configured to monitor a segment of the railroad; or a satellite that is configured to monitor the segment of the railroad.

9. The locomotive control system of claim 8, wherein the route planning system determines at least one of volume and velocity of the locomotives based at least in part on the information obtained from the sensor package.

10. The locomotive control system of claim 8, wherein the one or more processors are configured to determine that the hazard exists on a route of the railroad and the route planning system responds to the hazard being determined by the one or more processors re-routing the locomotives traveling through the railroad to avoid collision with the hazard that is determined.

11. The locomotive control system of claim 8, wherein the one or more processors are configured to determine that the hazard exists on a route of the railroad and the locomotive controller responds to the hazard by at least one of re-routing the at least one locomotive through the railroad to avoid collision with the hazard that is determined, or by stopping the at least one locomotive to avoid collision with the hazard that is determined.

12. The locomotive control system of claim 1, wherein the one or more processors are configured to direct an output device to display the information relating to the one or more of the status, the condition, or the state of health of the component of the railroad, the information that is displayed including a map and icons indicating a location of the component on the map.

13. The locomotive control system of claim 1, wherein the one or more processors are configured to initiate the action to change the operational state of the component by one or more of: activating a switch to change a pathway defined by rail tracks, activating a signal light to change a traffic flow pattern in the railroad, opening a closed gate, closing an open gate, or dumping bulk materials from one or more locomotives.

14. The locomotive control system of claim 1, wherein the one or more processors are configured to collect the information relating to the component, wherein the mobile platform provides instructions or information to the component.

15. The locomotive control system of claim 14, wherein the mobile platform is further configured to communicate with two or more of a plurality of locomotives traveling through the railroad, and the two or more locomotives communicate with each other through the mobile platform.

16. The locomotive control system of claim 1, wherein the one or more processors are configured to be disposed onboard the at least one locomotive, the one or more processors also configured to identify at least one of a broken component of one or more tracks of the railroad or an obstacle on the one or more tracks based on the information relating to the component.

17. The locomotive control system of claim 1, further comprising: a mission optimizer on board the at least one locomotive and configured to determine a trip plan for a trip of the at least one locomotive, the trip plan comprising at least one of plural speed settings, plural power settings, or plural throttle settings as a function of at least one of time or distance of the at least one locomotive along a route of the trip, based on information of the at least one locomotive and information of the route, wherein the mission optimizer is configured to determine the trip plan before the at least one locomotive commences a trip along the route, and wherein the mission optimizer is further configured to output signals representative of the at least one of plural speed settings, plural power settings, or plural throttle settings for control of an engine system of the at least one locomotive along the route; a sensor on board the at least one locomotive configured to collect operational data of the at least one locomotive, the operational data comprising data of at least one of tractive effort or emissions actually generated by the at least one locomotive as the at least one locomotive travels along the route; and a communication system on board the at least one locomotive configured to communicate the operational data to the mission optimizer; wherein the mission optimizer is configured to change the signals representative of the at least one of plural speed settings, plural power settings, or plural throttle settings that are output from the mission optimizer as the at least one locomotive travels along the route, based in part on the data of the at least one of tractive effort or emissions actually generated by the at least one locomotive.

18. The locomotive control system of claim 17, wherein the mission optimizer is configured to re-determine, at a point along the route, the trip plan based on the information of the at least one locomotive and the information of the route and the operational data, and further comprising: a converter coupled to the mission optimizer and the communication system, the converter configured to convert the signals that are output from the mission optimizer to electrical signals for controlling the engine system of the at least one locomotive; a master controller coupled to the converter and the at least one locomotive for controlling the engine system of the at least one locomotive, the master controller including at least one switch operable by an operator of the at least one locomotive, wherein the at least one of plural speed settings, plural power settings, or plural throttle settings are determined in part based on minimizing fuel consumption, time, considerations, and emissions output.

19. A locomotive control method comprising: controlling movement of a mobile platform having a sensor package supported thereon, the sensor package configured to obtain information relating to a component of a railroad; analyzing the information relating to the component of the railroad and obtained by the sensor package, the information relating to the component analyzed in combination with other information that is not obtained from the sensor package; and generating an output that at least one of: displays information relating to one or more of a status, a condition, or a state of health of the component of the railroad, initiates an action to change an operational state of the component, identifies a hazard to one or more locomotives traveling within the railroad, or collects the information relating to the component, wherein the component is not communicatively coupled to an information network, and the mobile platform provides the information that is obtained by the sensor package to the information network.

20. A locomotive control system comprising: a mobile platform configured to move off-board of a locomotive moving along one or more routes of a railroad, the mobile platform including a sensor package configured to sense information and output data indicative of a state of a component of the railroad; and one or more processors configured to receive the data that is output from the sensor package, the one or more processors configured to identify a hazard to the locomotive based on the data that is output, wherein the one or more processors are configured to generate an autonomous control signal that changes movement of the locomotive responsive to the hazard being identified.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/651,630 filed on 17 Jul. 2017, which claims priority to U.S. Provisional Application No. 62/403,963, filed 4 Oct. 2016, is a continuation-in-part to U.S. patent application Ser. No. 14/624,069, filed 17 Feb. 2015, and is a continuation-in-part to U.S. patent application Ser. No. 15/044,592, filed 16 Feb. 2016.

[0002] This application is also a continuation-in-part of U.S. patent application Ser. No. 11/750,716 filed 18 May 2007, which claims priority to U.S. Provisional Application No. 60/894,006, filed 9 Mar. 2007, and is also a continuation-in-part of U.S. application Ser. No. 11/385,354, filed 20 Mar. 2006.

[0003] U.S. patent application Ser. No. 14/624,069 claims priority to U.S. Provisional Application Nos. 61/940,813; 61/940,660; 61/940,610; and 61/940,696, all of which were filed on 17 Feb. 2014.

[0004] U.S. patent application Ser. No. 14/624,069 also is a continuation-in-part of U.S. patent application Ser. No. 14/541,370, filed on 14 Nov. 2014, and which claims priority to U.S. Provisional Application No. 61/940,813, filed on 17 Feb. 2014. U.S. patent application Ser. No. 14/541,370 is a continuation-in-part of U.S. patent application Ser. No. 14/217,672, filed 14 Mar. 2014, U.S. patent application Ser. No. 14/253,294, filed on 15 Apr. 2014, U.S. patent application Ser. No. 14/457,353, filed 12 Aug. 2014, U.S. patent application Ser. No. 14/479,847, filed 8 Sep. 2014, U.S. patent application Ser. No. 14/485,398, filed 12 Sep. 2014, and U.S. patent application Ser. No. 13/109,209, filed 17 May 2011 (now U.S. Pat. No. 8,913,131, issued 16 Dec. 2014).

[0005] U.S. patent application Ser. No. 13/109,209 is a divisional application of U.S. patent application Ser. No. 11/146,831, filed 6 Jun. 2005 (now U.S. Pat. No. 7,965,312, issued 21 Jun. 2011), which claims priority to U.S. Provisional Application No. 60/626,573, filed 10 Nov. 2004.

[0006] U.S. patent application Ser. No. 11/146,831 also is a continuation-in-part of U.S. patent application Ser. No. 10/361,968, filed 10 Feb. 2003, which is now abandoned and which claims priority to U.S. Provisional Application No. 60/385,645, filed 4 Jun. 2002.

[0007] U.S. patent application Ser. No. 15/044,592 claims priority to U.S. Provisional Application No. 62/134,518, filed 17 Mar. 2015, and is a continuation-in-part of U.S. application Ser. No. 14/922,787, filed 26 Oct. 2015, which claims priority to U.S. Provisional Application No. 62/134,518.

[0008] U.S. application Ser. No. 14/922,787 also is a continuation-in-part of U.S. application Ser. No. 14/155,454, filed 15 Jan. 2014, and is a continuation-in-part of U.S. application Ser. No. 12/573,141, filed 4 Oct. 2009.

[0009] U.S. application Ser. No. 14/155,454 is a continuation of International Application No. PCT/US13/54284, filed 9 Aug. 2013, which claims priority to U.S. Provisional Application No. 61/681,843, filed 10 Aug. 2012, to U.S. Provisional Application No. 61/729,188, filed 21 Nov. 2012, to U.S. Provisional Application No. 61/860,469, filed 31 Jul. 2013, and to U.S. Provisional Application No. 61/860,496, filed 31 Jul. 2013.

[0010] U.S. application Ser. No. 12/573,141 is a continuation-in-part of U.S. application Ser. No. 11/385,354, filed 20 Mar. 2006.

[0011] The entire disclosures of all the applications listed above are incorporated herein by reference.

BACKGROUND

Technical Field

[0012] Embodiments of the subject matter disclosed herein relate to obtaining and communicating data using one or more autonomous vehicles as a sensor platform.

Discussion of Art

[0013] Equipment may sometimes be outfitted with various sensors, such as sensor package units for capturing and storing video data of the environment around a vehicle. For example, law enforcement vehicles may be provided with "dashboard cams" to record a view out the front windshield of the vehicle, to capture video data of interactions between a law enforcement officer and (for example) the occupants of another vehicle. As another example, passenger automobiles may be provided with fixed-position rear view sensor packages for capturing a video stream of the region directly behind the automobile, which is displayed on a console display screen to aid the driver in safely backing up.

[0014] In addition to in-vehicle sensor packages, transportation networks (referring to infrastructure for movement of vehicles, e.g., railroad track networks for rail vehicles, or highway and other road networks for automobiles, semi-trailer trucks, and other on-highway vehicles) are sometimes outfitted with wayside sensor packages for capturing video data of the transportation network. For example, a sensor package may be affixed to a mast at the side of a highway, to capture video data of the highway for traffic tracking and reporting purposes.

[0015] For both in-vehicle and wayside sensor package systems, the sensor package systems may be fixed in place, to capture video data only of a designated field of view, e.g., to the front or rear of a vehicle, or a designated segment of road. For vehicles, this may be because the sensor package systems are designated for capturing video data that may be safety critical (rear view) or important from a public-policy standpoint (law enforcement dashboard cams). For wayside sensor package systems, this may be because the designated field of view must be constantly monitored (e.g., view of a tollgate), or to keep data consistent (e.g., roadway monitoring over time).

[0016] It may be desirable to have mobile sensor platforms that differ from those data collection systems that are currently available.

BRIEF DESCRIPTION

[0017] In one embodiment, a system includes a mobile platform configured to move under remote control and/or under autonomous control, a sensor package supported by the mobile platform and configured to obtain information relating to a component of a transportation network, and one or more processors configured to receive the obtained information from the sensor package and to analyze that information in combination with other information that is not obtained from the sensor package. The one or more processors generate an output that displays information relating to a status, condition and/or state of health of the component of the transportation network, initiate an action to make a change to the operational state of the component based at least in part on the output, identify a hazard to one or more vehicles traveling within the transportation network, and collect the information relating to the component. The component is not communicatively coupled to an information network, and the mobile platform provides the collected information to the information network.

[0018] In one embodiment, a system includes a mobile platform configured to move under remote control or under autonomous control, a sensor package supported by the mobile platform and configured to obtain information relating to a component of a transportation network, and one or more processors configured to receive the information obtained by the sensor package and to analyze the information in combination with other information that is not obtained from the sensor package. The one or more processors also are configured to generate an output that at least one of displays information relating to one or more of a status, a condition, or a state of health of the component of the transportation network; initiates an action to change an operational state of the component; identifies a hazard to one or more vehicles traveling within the transportation network; and/or collects the information relating to the component. The component is not communicatively coupled to an information network and the mobile platform provides the information that is obtained by the sensor package to the information network in one embodiment.

[0019] Optionally, the one or more processors can be configured to generate an output, based on the information obtained by the sensor package analyzed in combination with the other information, that identifies a hazard to one or more vehicles traveling within the transportation network, that initiates an action to change an operational state of the component responsive to the identified hazard, and that initiates a control signal for controlling the one or more vehicles responsive to the identified hazard. For example, responsive to the one or more processors receiving the information obtained by the sensor package and analyzing the information in combination with other information that is not obtained from the sensor package, the one or more processors can identify a damaged route segment, a damaged switch at an intersection between routes, a damaged gate, a damaged signal, a damaged or an immobile vehicle ahead on a route, etc. The one or more processors can then generate and communicate a control signal to control a vehicle headed toward the hazard (e.g., to automatically slow, stop, and/or change a direction of movement of the vehicle heading toward the hazard, or to instruct an operator of that vehicle to slow, stop, or change a direction of movement of the vehicle) based on the identification of the hazard. The one or more processors also can generate another control signal (or use the same control signal) and communicate the control signal to a component of the transportation network, such as a signal, gate, switch, etc. This control signal can help in preventing the vehicle heading toward the hazard from reaching the hazard, such as by changing a color or other state of a signal (e.g., changing from a green light to a red light), by lowering a gate (e.g., to prevent passage of the vehicle on a route toward the hazard), by changing which routes are connected by a switch (e.g., to cause the vehicle to move onto another route that does not include the hazard), etc.
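As a non-limiting illustration of the hazard response described above, the following Python sketch maps an identified hazard to one control signal for the approaching vehicle and to optional control signals for a signal, a gate, and a switch. The names `Hazard`, `ControlSignal`, and `respond_to_hazard`, the command strings, and the rule of stopping when the hazard lies within the stopping distance (and slowing otherwise) are assumptions made for illustration only and are not taken from the disclosure.

```python
from dataclasses import dataclass
from enum import Enum


class Hazard(Enum):
    """Hazard types named in the example above."""
    DAMAGED_ROUTE_SEGMENT = "damaged route segment"
    DAMAGED_SWITCH = "damaged switch"
    DAMAGED_GATE = "damaged gate"
    DAMAGED_SIGNAL = "damaged signal"
    IMMOBILE_VEHICLE = "immobile vehicle"


@dataclass(frozen=True)
class ControlSignal:
    target: str   # hypothetical identifier of the vehicle or component
    command: str  # hypothetical command name
    reason: str   # human-readable hazard description


def respond_to_hazard(hazard, vehicle_id, distance_to_hazard_m,
                      stopping_distance_m, signal_id=None,
                      gate_id=None, switch_id=None):
    """Build control signals for the vehicle and any nearby components."""
    reason = hazard.value
    signals = []
    # Assumed rule: stop if the hazard is within the stopping distance,
    # otherwise slow the vehicle.
    if distance_to_hazard_m <= stopping_distance_m:
        signals.append(ControlSignal(vehicle_id, "stop", reason))
    else:
        signals.append(ControlSignal(vehicle_id, "slow", reason))
    # Component-side actions: red signal, lowered gate, diverting switch.
    if signal_id is not None:
        signals.append(ControlSignal(signal_id, "set_red", reason))
    if gate_id is not None:
        signals.append(ControlSignal(gate_id, "lower", reason))
    if switch_id is not None:
        signals.append(ControlSignal(switch_id, "divert", reason))
    return signals
```

In this sketch a single call returns both the vehicle-directed signal and the component-directed signals, which mirrors the option of communicating separate control signals to the vehicle and to the signal, gate, or switch.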

[0020] In one embodiment, a method includes controlling movement of a mobile platform having a sensor package supported thereon. The sensor package is configured to obtain information relating to a component of a transportation network. The method also includes analyzing the information relating to the component of the transportation network and obtained by the sensor package. The information relating to the component is analyzed in combination with other information that is not obtained from the sensor package. The method also includes generating an output that at least one of displays information relating to one or more of a status, a condition, or a state of health of the component of the transportation network; initiates an action to change an operational state of the component; identifies a hazard to one or more vehicles traveling within the transportation network; and/or collects the information relating to the component. The component is not communicatively coupled to an information network, and the mobile platform provides the information that is obtained by the sensor package to the information network in one embodiment.

[0021] In one embodiment, a system includes a mobile platform configured to move off-board of a vehicle moving along one or more routes of a transportation network. The mobile platform includes a sensor package configured to sense information and output data indicative of a state of a component of the transportation network. The system also includes one or more processors configured to receive the data that is output from the sensor package. The one or more processors are configured to identify a hazard to the vehicle based on the data that is output. The one or more processors are configured to generate an autonomous control signal that changes movement of the vehicle responsive to the hazard being identified.

[0022] Certain embodiments of the present disclosure provide a locomotive control system that includes a locomotive controller that is configured to control the operation of at least one locomotive, a mobile platform configured to move under remote control or under autonomous control, a sensor package supported by the mobile platform and configured to obtain information relating to a component of a railroad, and one or more processors configured to receive the information obtained by the sensor package and to analyze the information in combination with other information that is not obtained from the sensor package, and to generate an output that at least one of: displays information relating to one or more of a status, a condition, or a state of health of the component of the railroad, initiates an action to change an operational state of the component, identifies a hazard to the at least one locomotive within the railroad, or collects the information relating to the component. The component is not communicatively coupled to an information network, and the mobile platform provides the information that is obtained by the sensor package to the information network. The other information that is not obtained from the sensor package is obtained from the locomotive controller.

[0023] The information relating to the component of the railroad may be one or more of a condition or a state of health of the component.

[0024] In at least one embodiment, a control unit is configured to control a path of travel of the mobile platform. The control unit is configured to autonomously prevent the mobile platform from moving outside of a determined multi-dimensional movement envelope or from moving into a restricted airspace. In at least one embodiment, the control unit may be further configured to control the mobile platform to move to a safe position or operating mode in response to the mobile platform being located within a defined distance of a beacon or being located outside of the determined multi-dimensional movement envelope. The control unit may be further configured to communicate with an air traffic control system prior to controlling the mobile platform to move from within the determined multi-dimensional movement envelope into commercial airspace. The control unit may be further configured to respond to a signal from a beacon so that the mobile platform is not controlled into a collision with an object operably coupled with the beacon.
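As a non-limiting illustration of the movement envelope and beacon behavior described above, the following Python sketch clips commanded waypoints to an envelope and selects a safe mode when the platform is outside the envelope or within a defined distance of a beacon. Modeling the envelope as an axis-aligned three-dimensional box, and the names `Envelope`, `clamp_to_envelope`, `select_mode`, and `keep_out_m`, are assumptions made for illustration only; the disclosure does not specify the envelope geometry.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Axis-aligned 3-D box standing in for the movement envelope (assumed)."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float
    z_min: float
    z_max: float

    def contains(self, x, y, z):
        return (self.x_min <= x <= self.x_max
                and self.y_min <= y <= self.y_max
                and self.z_min <= z <= self.z_max)


def clamp_to_envelope(env, x, y, z):
    """Clip a commanded waypoint so it never lies outside the envelope."""
    return (min(max(x, env.x_min), env.x_max),
            min(max(y, env.y_min), env.y_max),
            min(max(z, env.z_min), env.z_max))


def select_mode(env, position, beacons, keep_out_m):
    """Return "safe" if outside the envelope or too close to any beacon."""
    if not env.contains(*position):
        return "safe"
    for beacon in beacons:
        # math.dist gives the Euclidean distance between two points.
        if math.dist(position, beacon) <= keep_out_m:
            return "safe"
    return "normal"
```

For example, with `Envelope(0, 100, 0, 50, 0, 30)`, a commanded waypoint of `(120, -5, 10)` would be clipped to `(100, 0, 10)` before being sent to the platform.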

[0025] The information obtained from the sensor package may be video or image data. The other information that is not obtained from the sensor package may be further obtained from one or more of: a route planning system that is configured to map or control locomotives traveling through at least part of the railroad, a wayside device that is configured to monitor a segment of the railroad, or a satellite that is configured to monitor the segment of the railroad. In at least one embodiment, the route planning system determines at least one of volume and velocity of the locomotives based at least in part on the information obtained from the sensor package.
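As a non-limiting illustration of how volume and velocity might be derived from sensor package observations, the following Python sketch estimates a velocity from timestamped positions along a route and a traffic volume from the times at which locomotives pass a point. The function names, the input formats, and the units (seconds, meters, locomotives per hour) are assumptions made for illustration only.

```python
def estimate_velocity_mps(observations):
    """Average velocity from (timestamp_s, position_m) pairs sorted by time."""
    if len(observations) < 2:
        raise ValueError("need at least two observations")
    (t0, p0), (t1, p1) = observations[0], observations[-1]
    if t1 <= t0:
        raise ValueError("timestamps must increase")
    return (p1 - p0) / (t1 - t0)


def traffic_volume_per_hour(pass_times_s, window_start_s, window_end_s):
    """Locomotives per hour passing a point within [start, end)."""
    if window_end_s <= window_start_s:
        raise ValueError("window must have positive length")
    count = sum(window_start_s <= t < window_end_s for t in pass_times_s)
    return count * 3600.0 / (window_end_s - window_start_s)
```

In this sketch, the positions and pass times would be extracted upstream from the video or image data; that extraction step is outside the scope of the example.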

[0026] The one or more processors may be configured to determine that the hazard exists on a route of the railroad, and the route planning system responds to the hazard being determined by the one or more processors by re-routing the locomotives traveling through the railroad to avoid collision with the hazard that is determined.

[0027] The one or more processors may be configured to determine that the hazard exists on a route of the railroad and the locomotive controller responds to the hazard by at least one of re-routing the at least one locomotive through the railroad to avoid collision with the hazard that is determined, or by stopping the at least one locomotive to avoid collision with the hazard that is determined.

[0028] The one or more processors may be configured to direct an output device to display the information relating to the one or more of the status, the condition, or the state of health of the component of the railroad, the information that is displayed including a map and icons indicating a location of the component on the map.

[0029] In at least one embodiment, the one or more processors are configured to initiate the action to change the operational state of the component by one or more of: activating a switch to change a pathway defined by rail tracks, activating a signal light to change a traffic flow pattern in the railroad, opening a closed gate, closing an open gate, or dumping bulk materials from one or more locomotives.

[0030] The one or more processors may be configured to collect the information relating to the component, and the mobile platform may provide instructions or information to the component. In at least one embodiment, the mobile platform is further configured to communicate with two or more of a plurality of locomotives traveling through the railroad, and the two or more locomotives communicate with each other through the mobile platform.

[0031] The one or more processors may be configured to be disposed onboard the at least one locomotive. The one or more processors may also be configured to identify at least one of a broken component of one or more tracks of the railroad or an obstacle on the one or more tracks based on the information relating to the component.

[0032] In at least one embodiment, the locomotive control system includes a mission optimizer on board the at least one locomotive and configured to determine a trip plan for a trip of the at least one locomotive. The trip plan includes at least one of plural speed settings, plural power settings, or plural throttle settings as a function of at least one of time or distance of the at least one locomotive along a route of the trip, based on information of the at least one locomotive and information of the route. The mission optimizer is configured to determine the trip plan before the at least one locomotive commences a trip along the route. The mission optimizer is further configured to output signals representative of the at least one of plural speed settings, plural power settings, or plural throttle settings for control of an engine system of the at least one locomotive along the route. The locomotive control system may also include a sensor on board the at least one locomotive configured to collect operational data of the at least one locomotive. The operational data includes data of at least one of tractive effort or emissions actually generated by the at least one locomotive as the at least one locomotive travels along the route. A communication system may be on board the at least one locomotive configured to communicate the operational data to the mission optimizer. The mission optimizer is configured to change the signals representative of the at least one of plural speed settings, plural power settings, or plural throttle settings that are output from the mission optimizer as the at least one locomotive travels along the route, based in part on the data of the at least one of tractive effort or emissions actually generated by the at least one locomotive.
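
As a rough illustration of a trip plan expressed as throttle settings over distance, and of changing the output based on measured tractive effort, consider the sketch below. The notch range, the 10% tolerance, the one-notch correction rule, and the names `PlanSegment`, `planned_notch`, and `adjusted_notch` are all assumptions made for the example; the disclosure does not specify how the mission optimizer computes or corrects the settings.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanSegment:
    start_km: float      # distance along the route where this setting begins
    throttle_notch: int  # planned throttle setting (notch range is assumed)


def planned_notch(plan, distance_km):
    """Return the planned notch in effect at distance_km.

    The plan must be sorted by start_km.
    """
    starts = [segment.start_km for segment in plan]
    index = bisect_right(starts, distance_km) - 1
    return plan[max(index, 0)].throttle_notch


def adjusted_notch(planned, expected_effort_kn, measured_effort_kn,
                   tolerance=0.10, max_notch=8):
    """Nudge the planned notch by one step when the measured tractive
    effort deviates from the expected value by more than the tolerance
    (a hypothetical correction rule)."""
    if measured_effort_kn < expected_effort_kn * (1 - tolerance):
        return min(planned + 1, max_notch)
    if measured_effort_kn > expected_effort_kn * (1 + tolerance):
        return max(planned - 1, 0)
    return planned
```

For instance, with a plan of notch 4 from 0 km, notch 6 from 10 km, and notch 2 from 25 km, `planned_notch(plan, 12.5)` returns 6, and a measured effort well below the expected value raises the output to notch 7.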

[0033] The mission optimizer may be configured to re-determine, at a point along the route, the trip plan based on the information of the at least one locomotive, the information of the route, and the operational data. The locomotive control system may include a converter coupled to the mission optimizer and the communication system. The converter is configured to convert the signals that are output from the mission optimizer to electrical signals for controlling the engine system of the at least one locomotive. A master controller may be coupled to the converter and the at least one locomotive for controlling the engine system of the at least one locomotive. The master controller includes at least one switch operable by an operator of the at least one locomotive. The at least one of plural speed settings, plural power settings, or plural throttle settings are determined in part based on minimizing fuel consumption, time considerations, and emissions output.

[0034] Certain embodiments of the present disclosure provide a locomotive control method comprising: controlling movement of a mobile platform having a sensor package supported thereon, the sensor package configured to obtain information relating to a component of a railroad; analyzing the information relating to the component of the railroad and obtained by the sensor package, the information relating to the component analyzed in combination with other information that is not obtained from the sensor package; and generating an output that at least one of: displays information relating to one or more of a status, a condition, or a state of health of the component of the railroad, initiates an action to change an operational state of the component, identifies a hazard to one or more locomotives traveling within the railroad, or collects the information relating to the component, wherein the component is not communicatively coupled to an information network, and the mobile platform provides the information that is obtained by the sensor package to the information network.

[0035] Certain embodiments of the present disclosure provide a locomotive control system comprising: a mobile platform configured to move off-board of a locomotive moving along one or more routes of a railroad, the mobile platform including a sensor package configured to sense information and output data indicative of a state of a component of the railroad; and one or more processors configured to receive the data that is output from the sensor package, the one or more processors configured to identify a hazard to the locomotive based on the data that is output, wherein the one or more processors are configured to generate an autonomous control signal that changes movement of the locomotive responsive to the hazard being identified.

BRIEF DESCRIPTION OF THE DRAWINGS

[0036] The subject matter described herein will be better understood from reading the following description of non-limiting embodiments, with reference to the attached drawings, wherein:

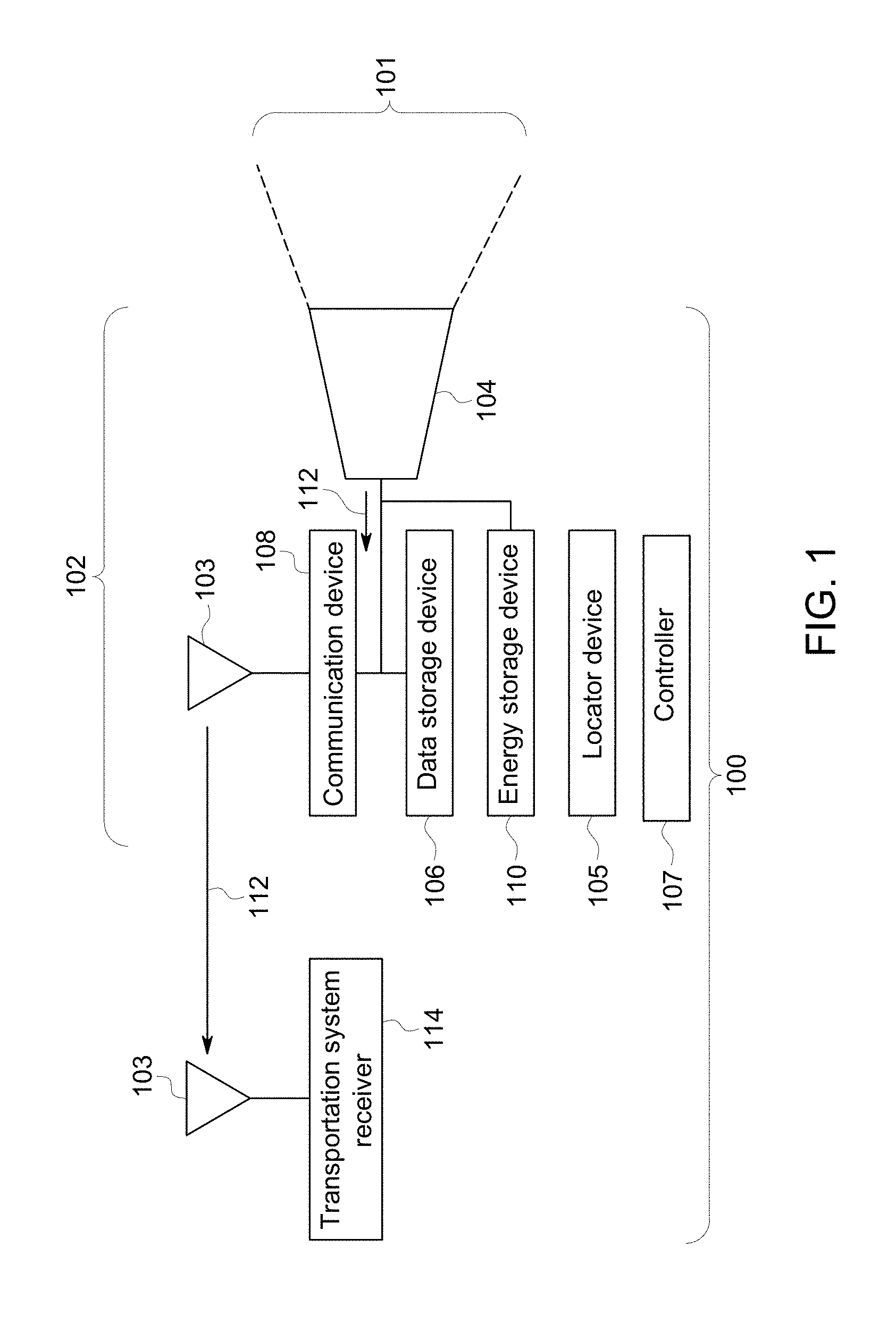

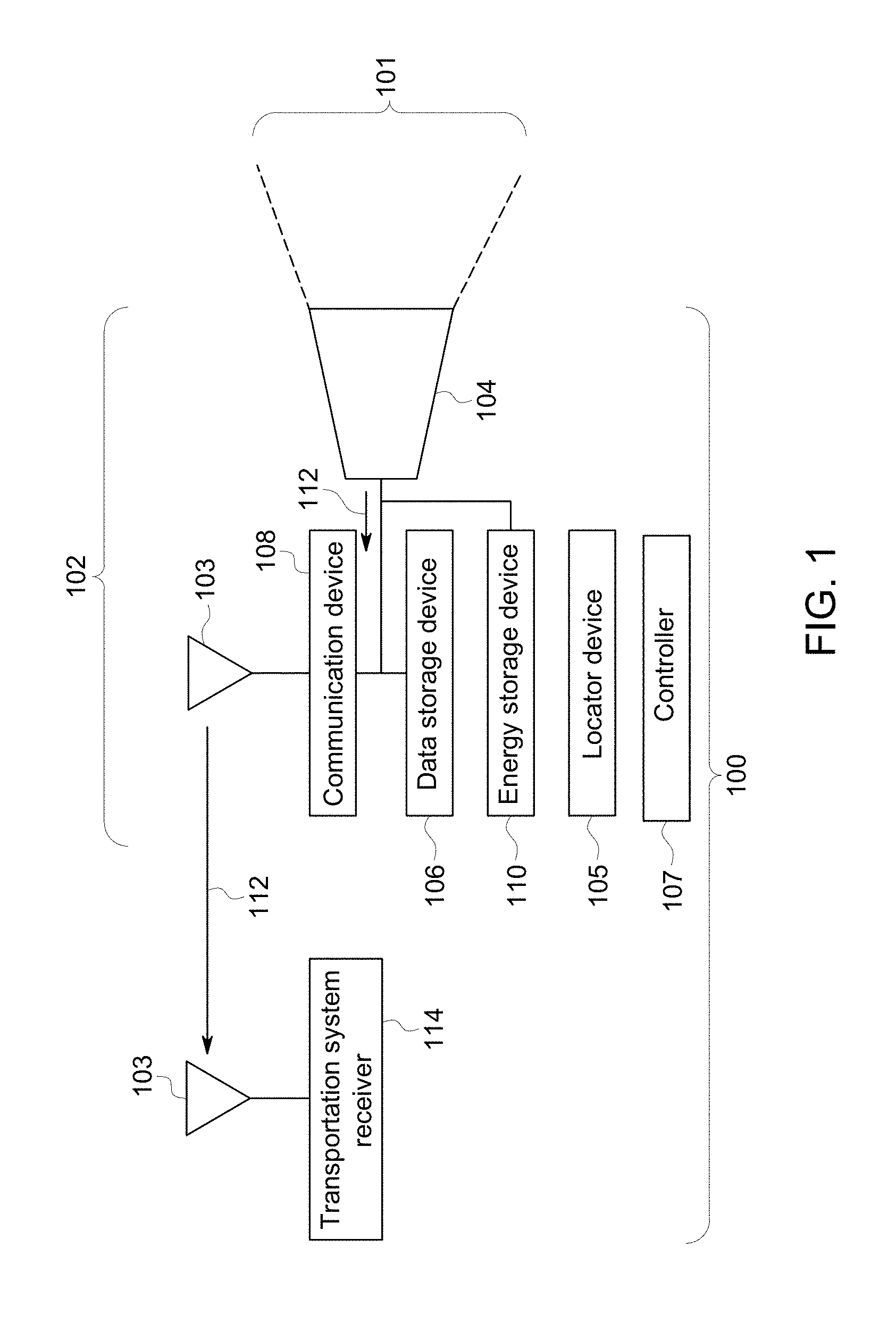

[0037] FIG. 1 illustrates a sensor system for capturing and communicating transportation data related to vehicles or otherwise to a transportation system according to one embodiment;

[0038] FIG. 2 illustrates another embodiment of a sensor system;

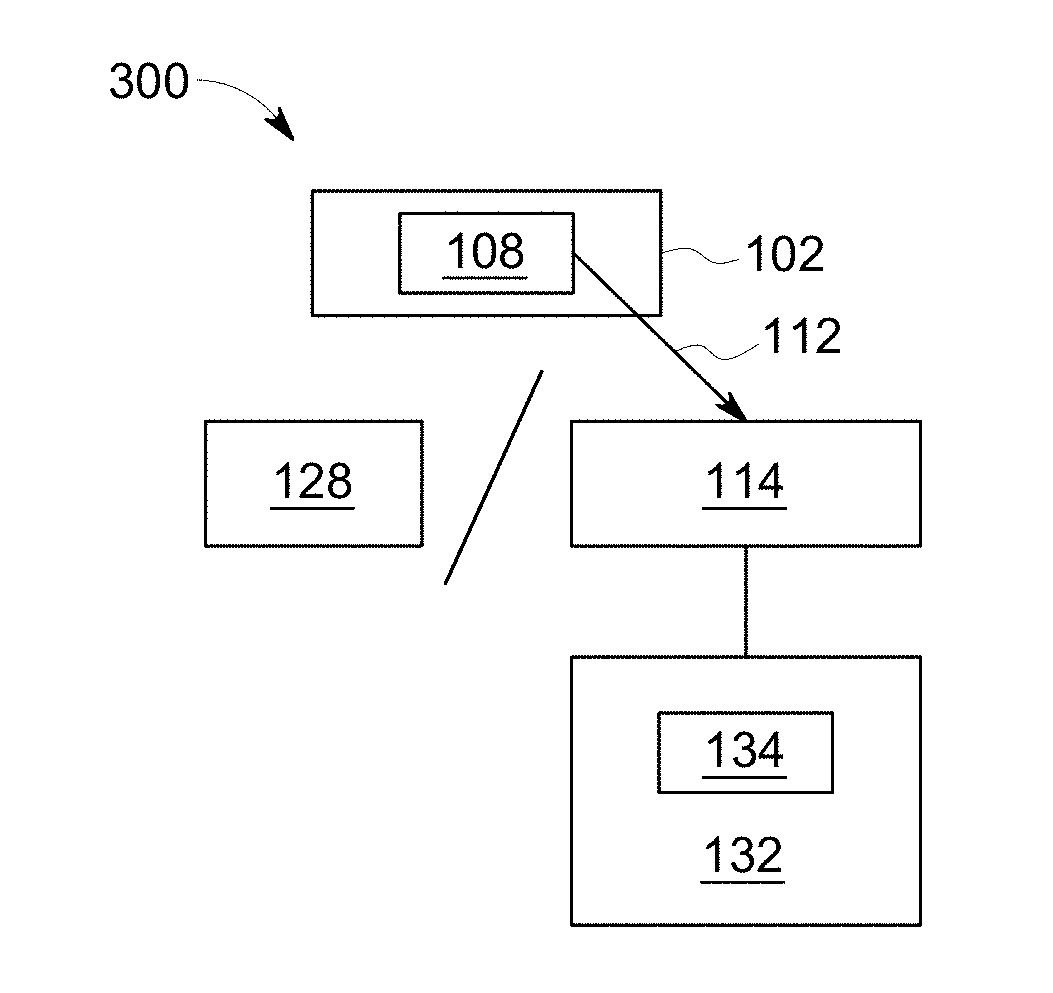

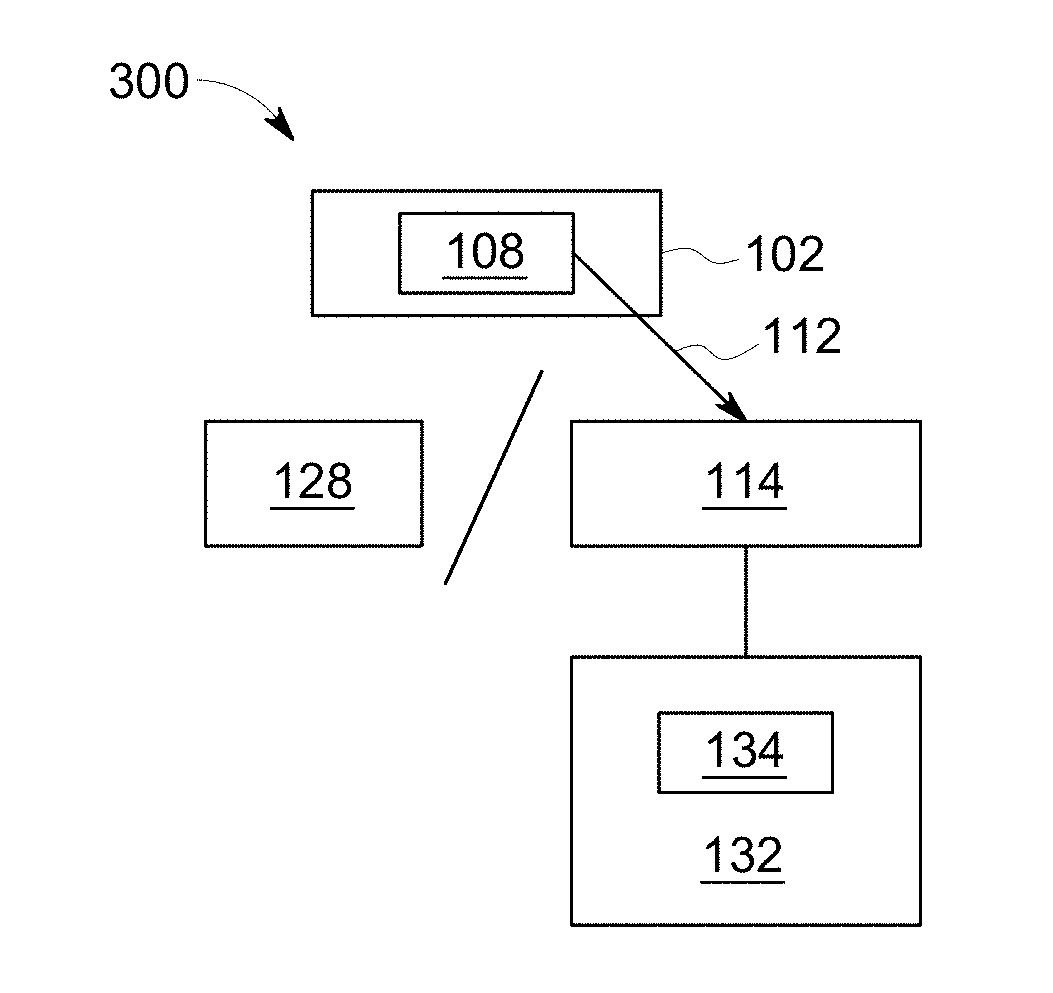

[0039] FIG. 3 illustrates another embodiment of a sensor system;

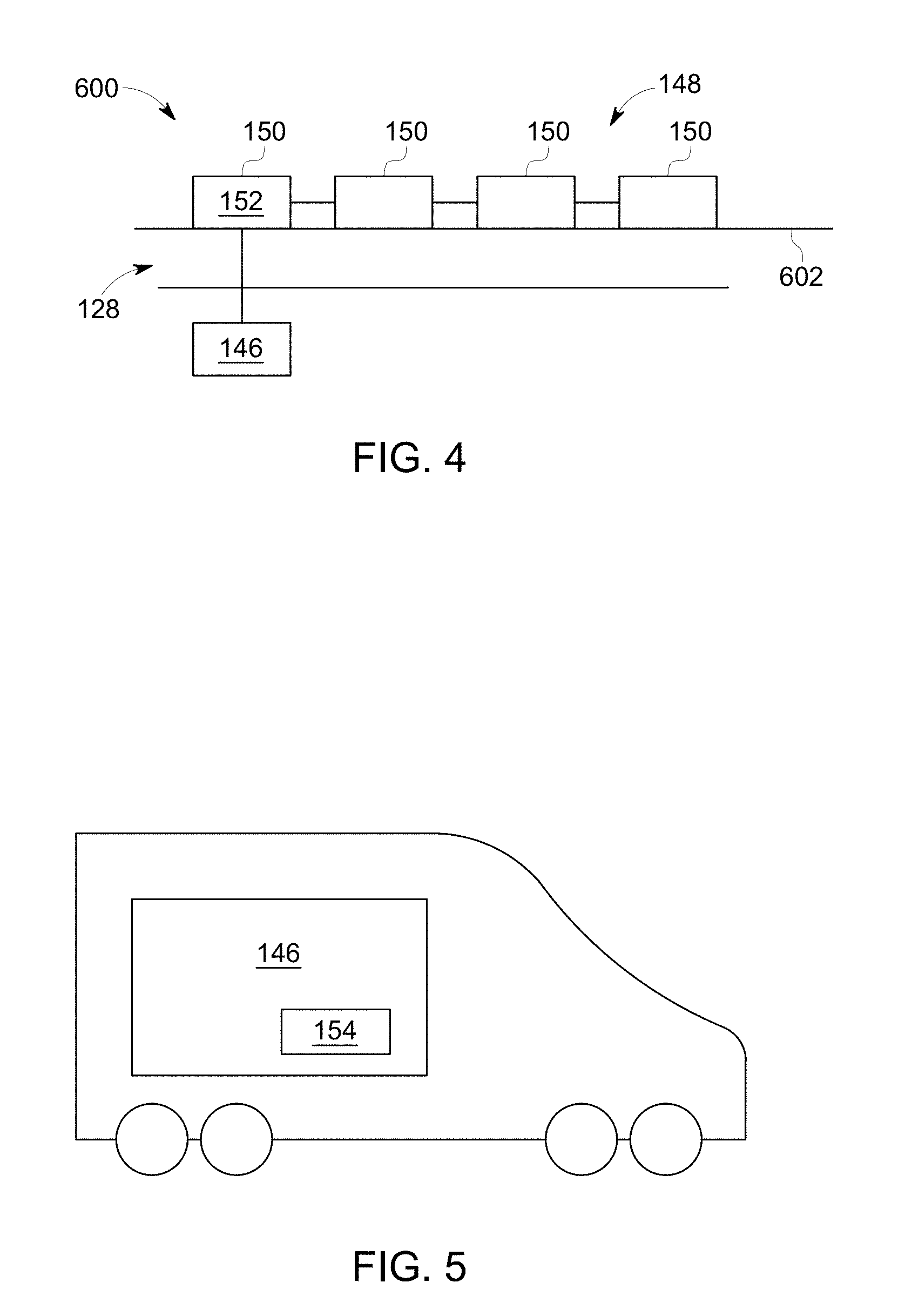

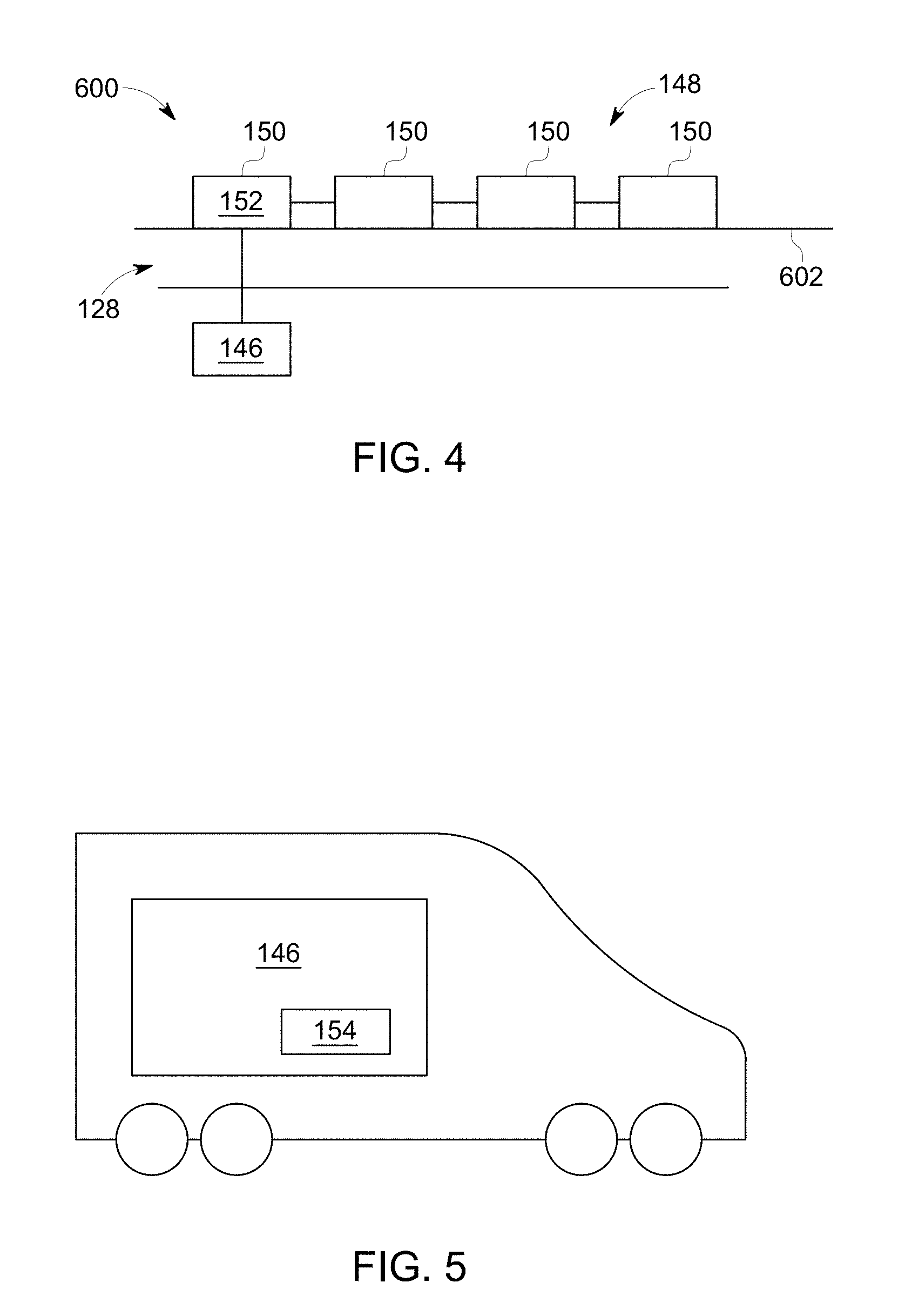

[0040] FIG. 4 illustrates one embodiment of a non-aerial vehicle;

[0041] FIG. 5 illustrates a control system according to one embodiment;

[0042] FIG. 6 illustrates a transportation system receiver located onboard the non-aerial vehicle according to one embodiment;

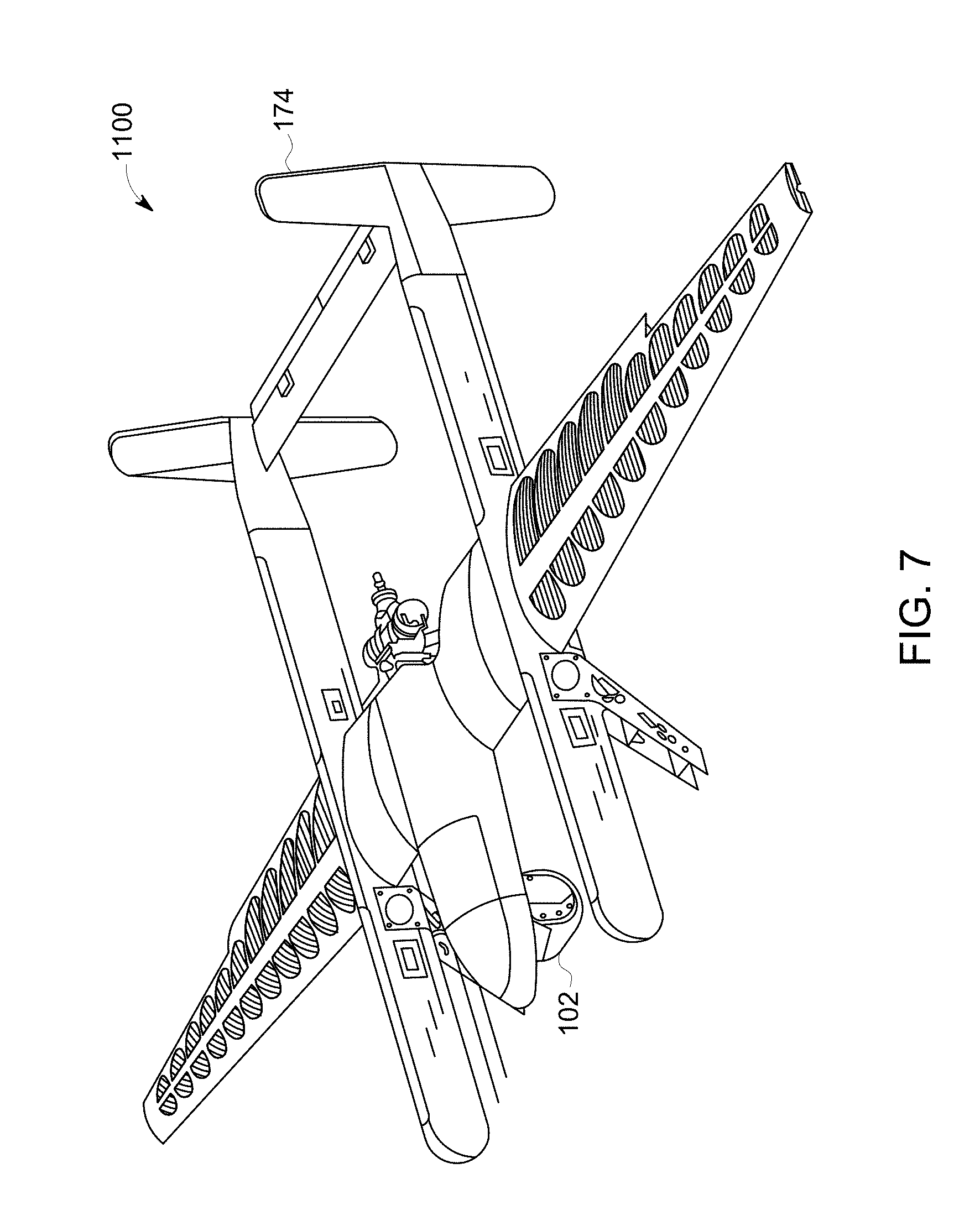

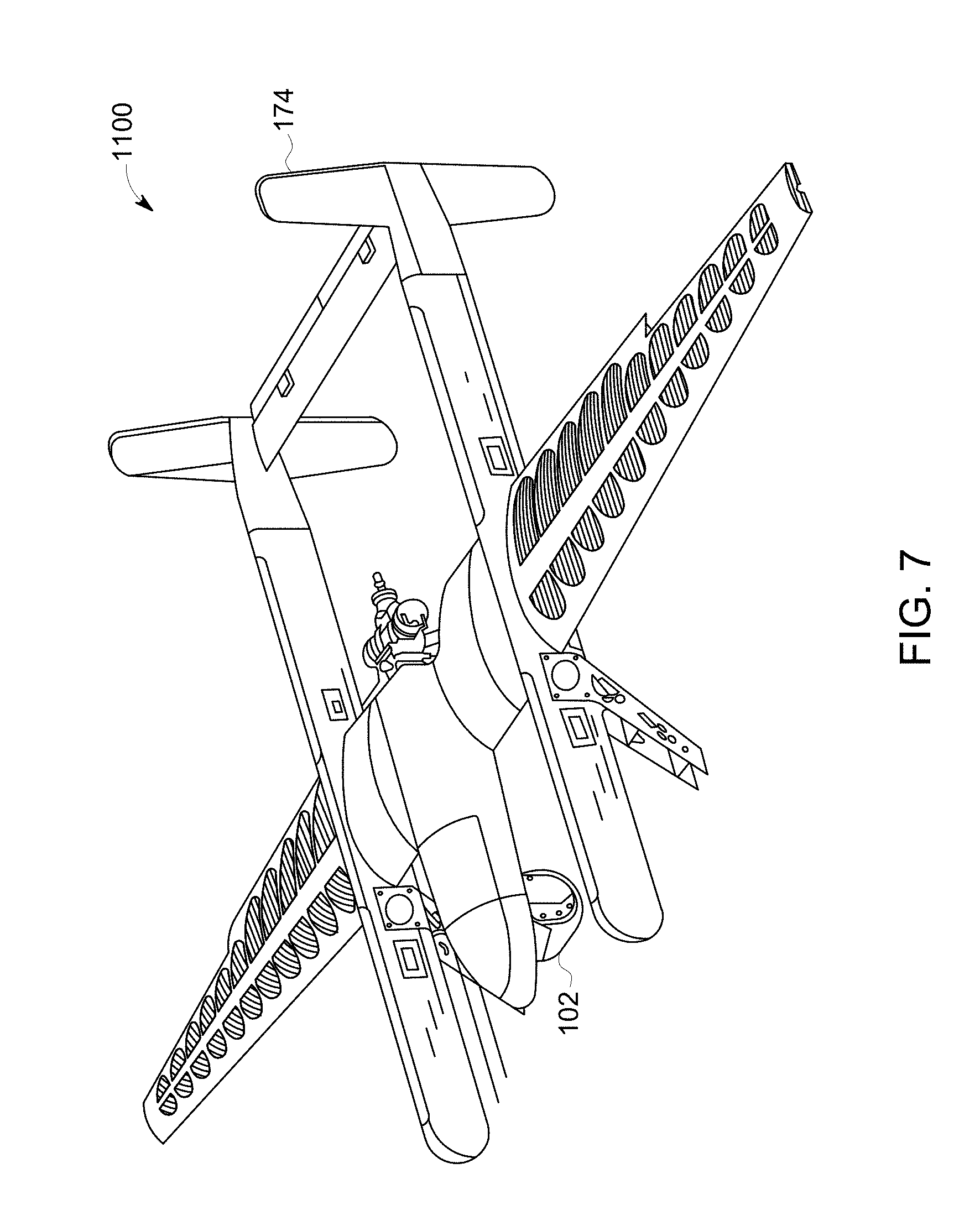

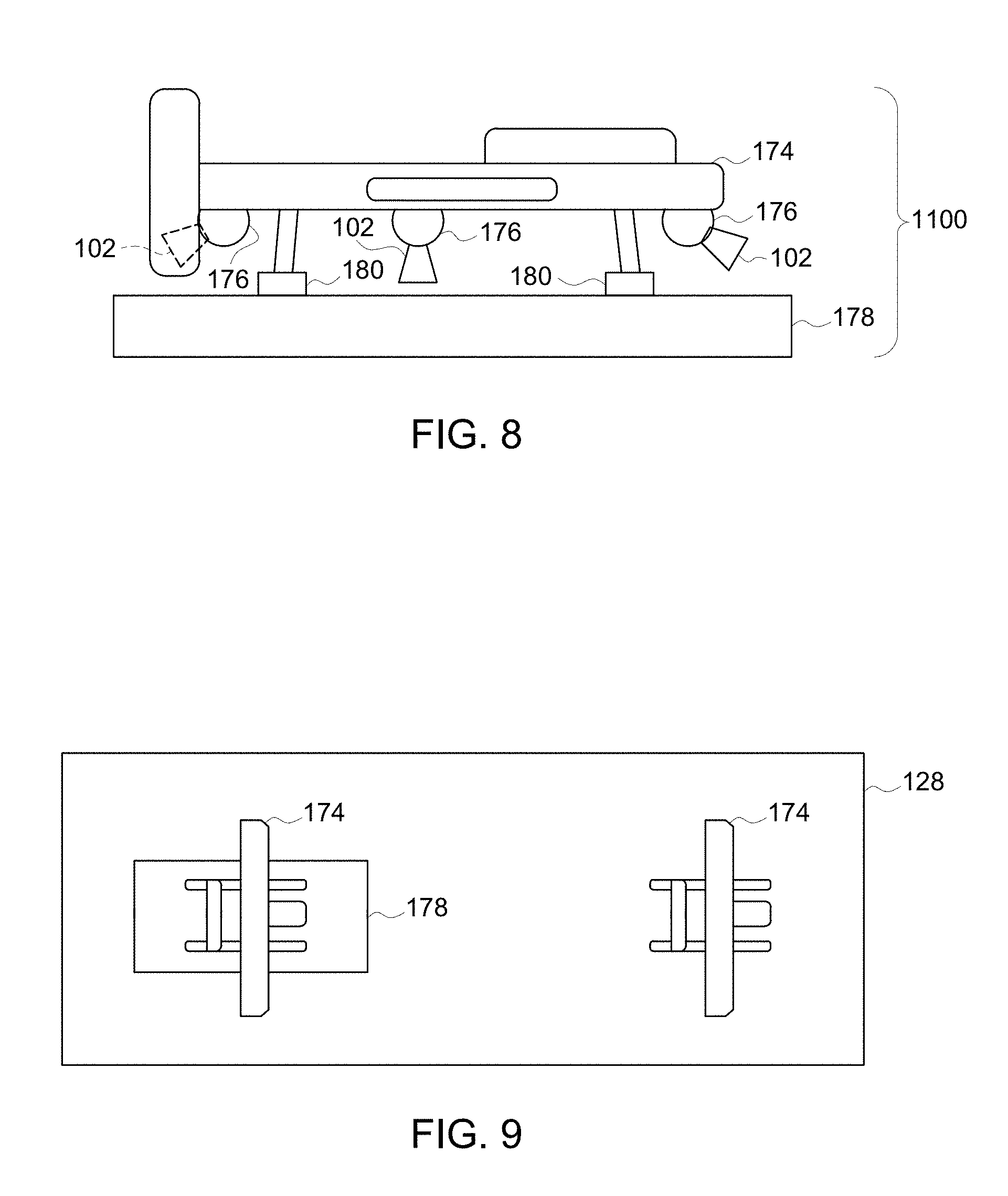

[0043] FIG. 7 illustrates a perspective view of a sensor system;

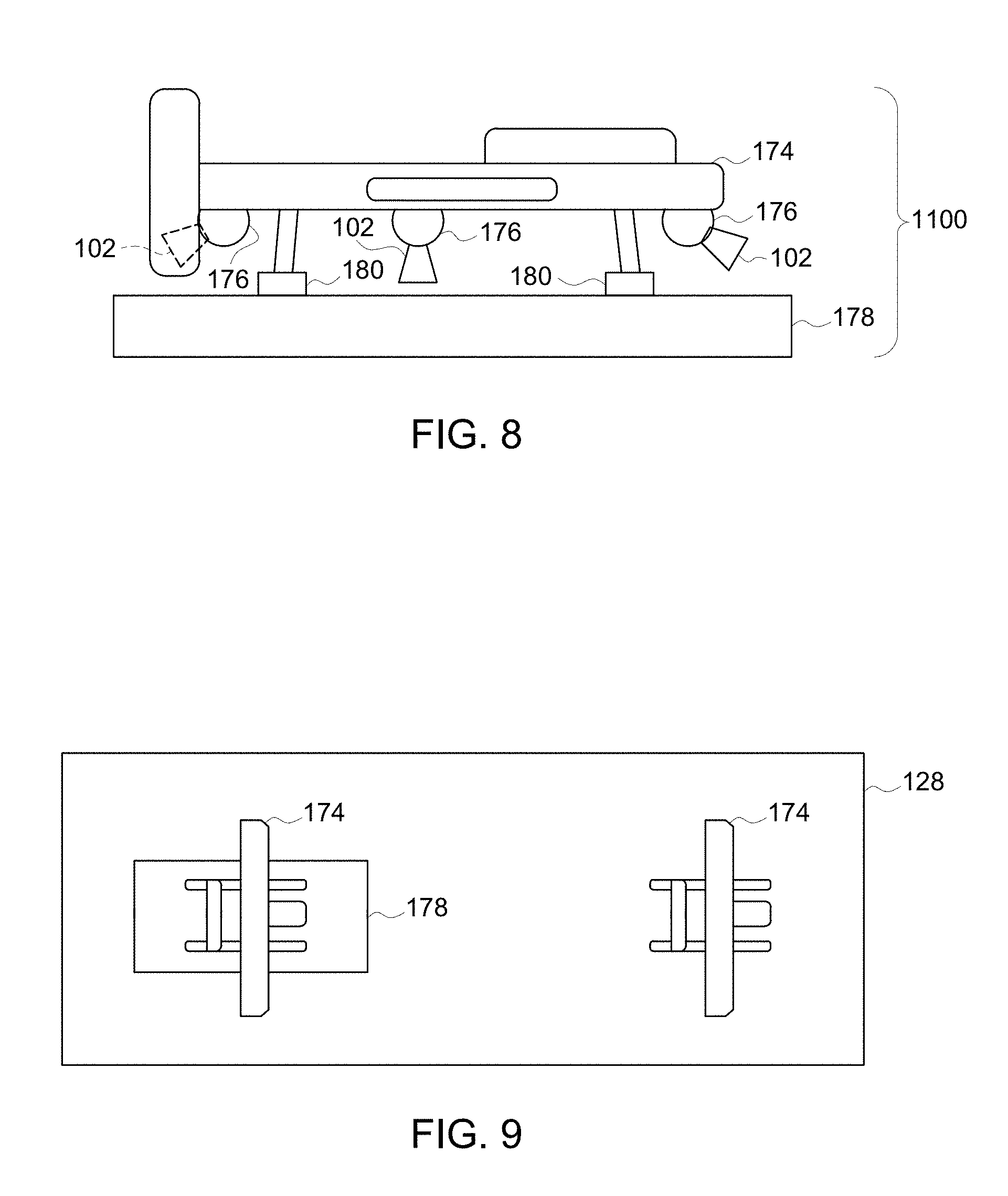

[0044] FIG. 8 illustrates a side view of the sensor system shown in FIG. 7;

[0045] FIG. 9 illustrates a top view of the sensor system shown in FIG. 7;

[0046] FIG. 10 illustrates operation of the sensor system shown in FIGS. 7, 8, and 9 according to one embodiment;

[0047] FIG. 11 illustrates a schematic diagram of a front view of a non-aerial vehicle and a movement envelope of an aerial device according to one embodiment;

[0048] FIG. 12 is a schematic illustration of an image analysis system according to one embodiment;

[0049] FIG. 13 illustrates one example of image data of a segment of a route;

[0050] FIG. 14 illustrates another example of the image data shown in FIG. 13;

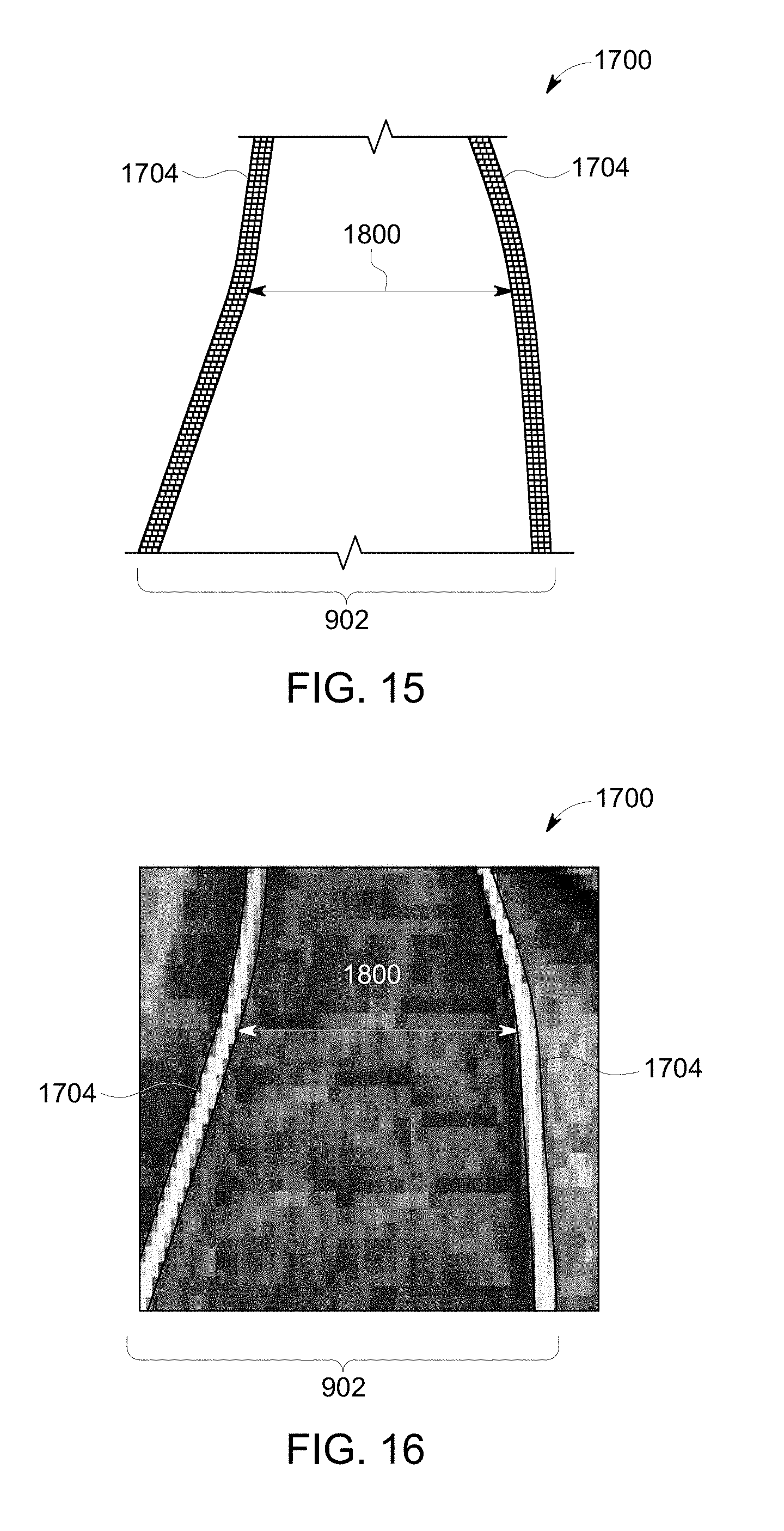

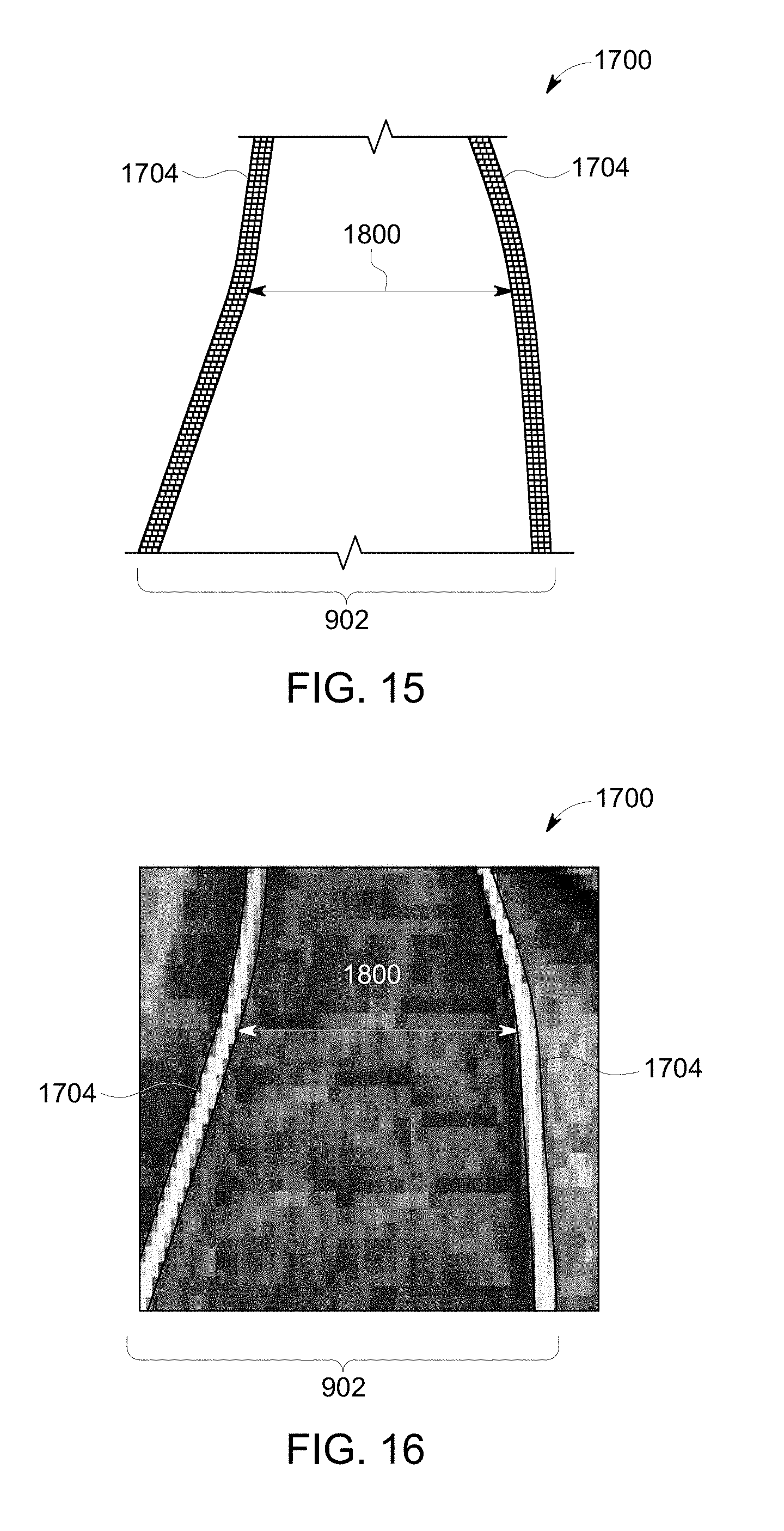

[0051] FIG. 15 illustrates another example of the image data of the route;

[0052] FIG. 16 illustrates another example of the image data of the route;

[0053] FIG. 17 illustrates an example of a benchmark visual profile;

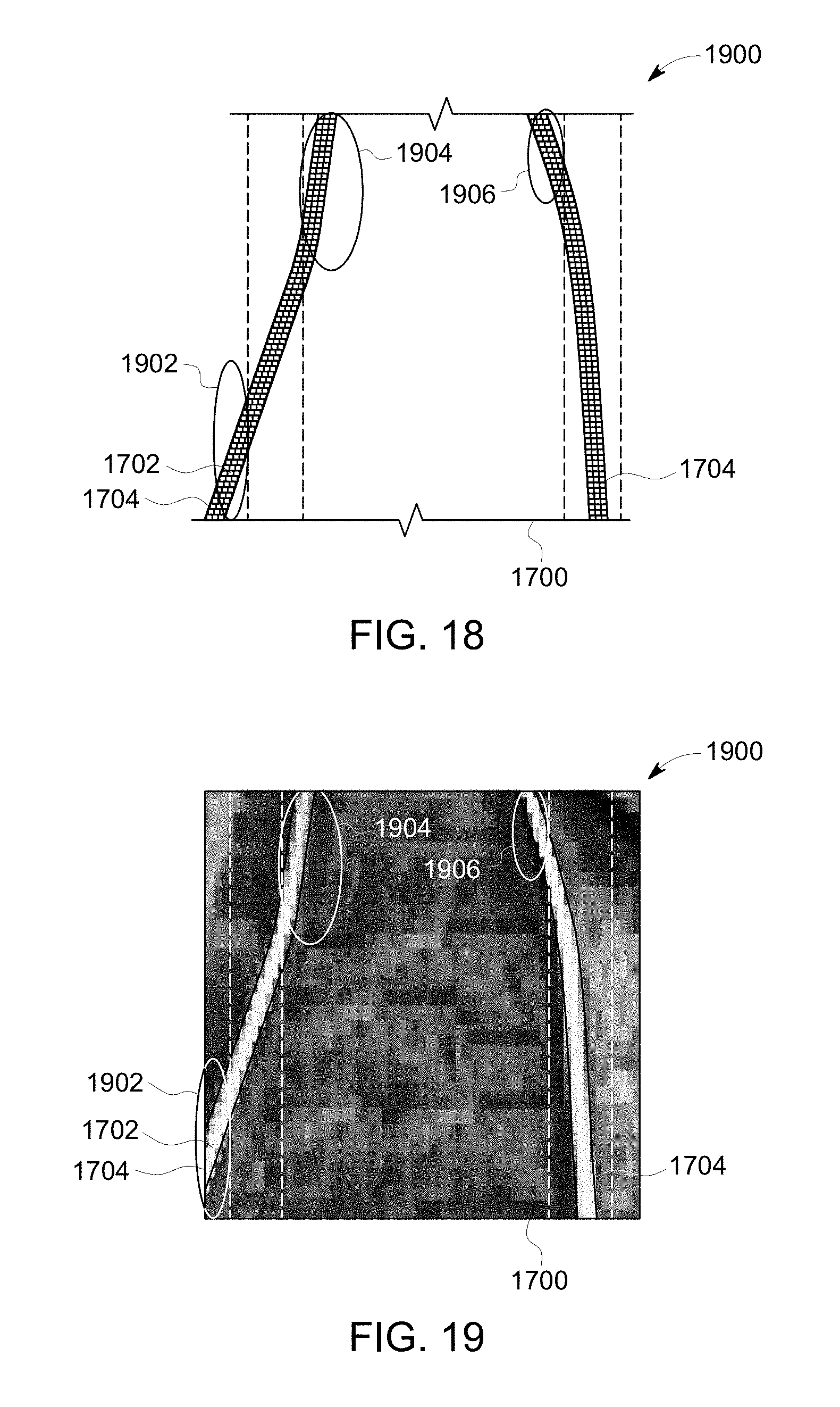

[0054] FIG. 18 illustrates a visual mapping diagram of image data and a benchmark visual profile according to one example;

[0055] FIG. 19 illustrates another view of the visual mapping diagram shown in FIG. 18;

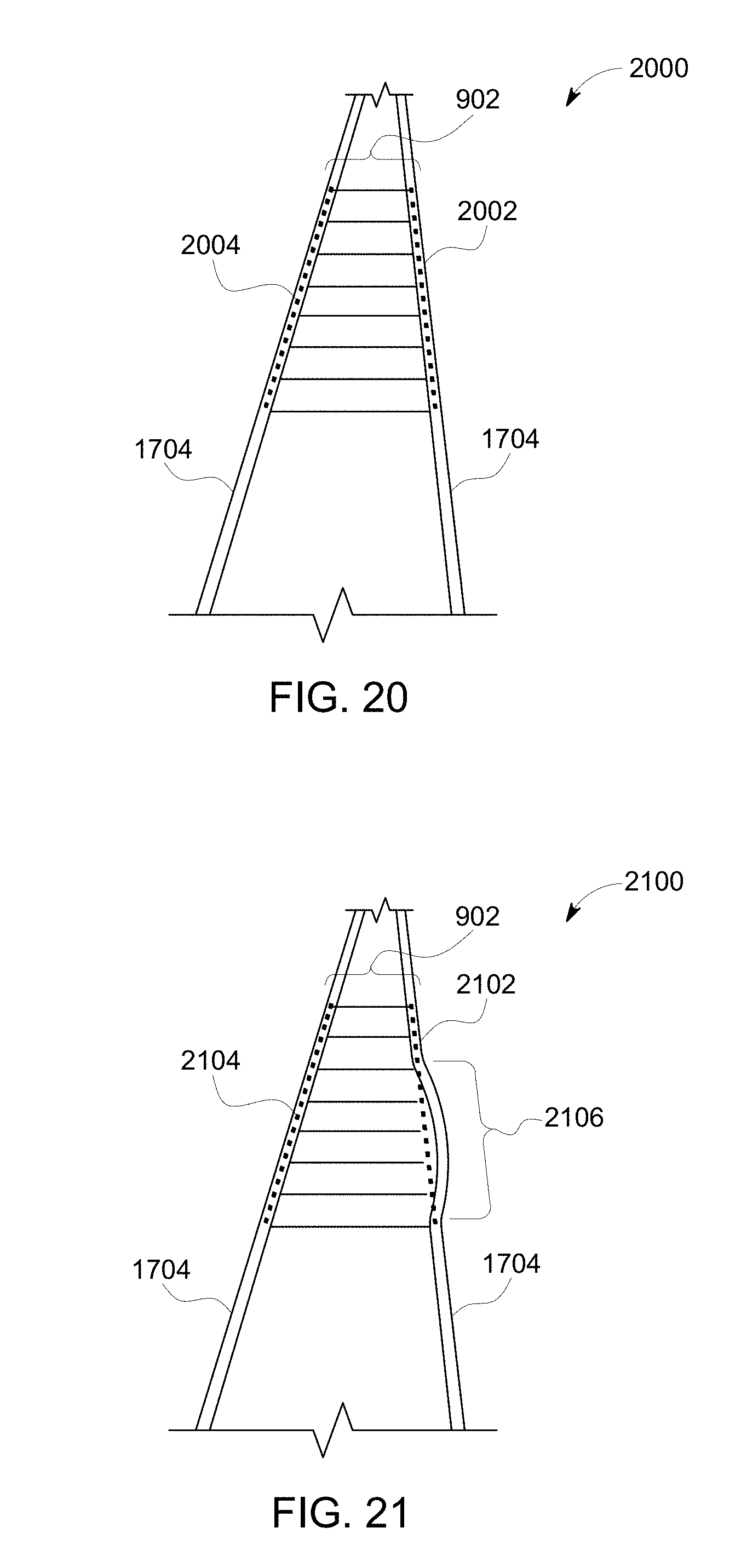

[0056] FIG. 20 illustrates image data generated by one or more camera units disposed onboard the vehicle and/or aerial devices and benchmark visual profiles of the route according to another embodiment;

[0057] FIG. 21 illustrates other image data with benchmark visual profiles of the route according to another embodiment;

[0058] FIG. 22 illustrates a flowchart of one embodiment of a method for identifying route-related hazards;

[0059] FIGS. 23A-E illustrate a block diagram of one embodiment of the communication device shown in FIG. 1;

[0060] FIG. 24 illustrates one embodiment of the mobile platform having multiple propulsion-generating components that operate to propel the mobile platform;

[0061] FIG. 25 illustrates one embodiment of an examination system;

[0062] FIG. 26 illustrates a segment of track occupied by a first train set and a trail vehicle that has an impact sensor;

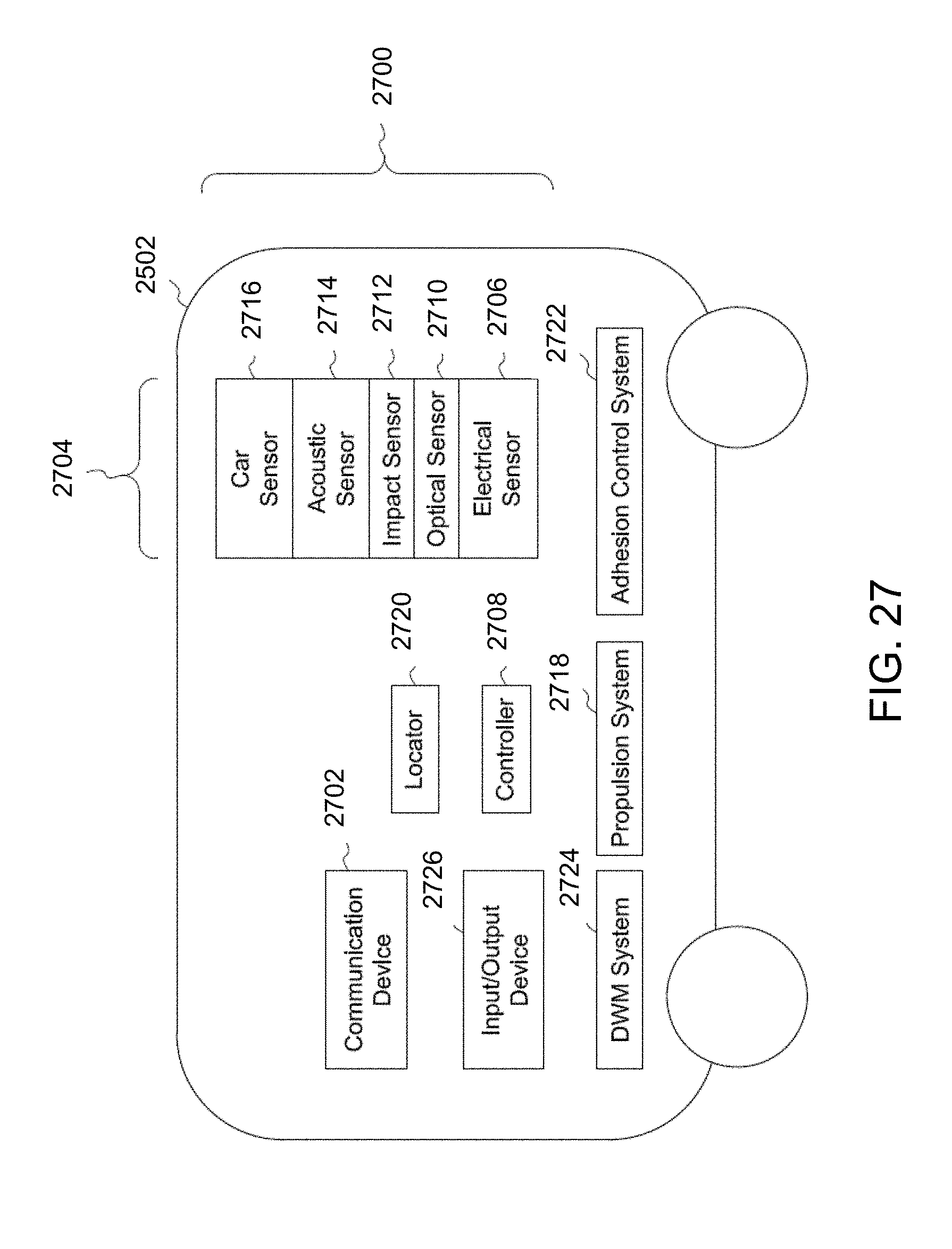

[0063] FIG. 27 includes a schematic illustration of an examination system according to one embodiment;

[0064] FIG. 28 illustrates a flowchart of one embodiment of a method for examining a vehicle and/or route;

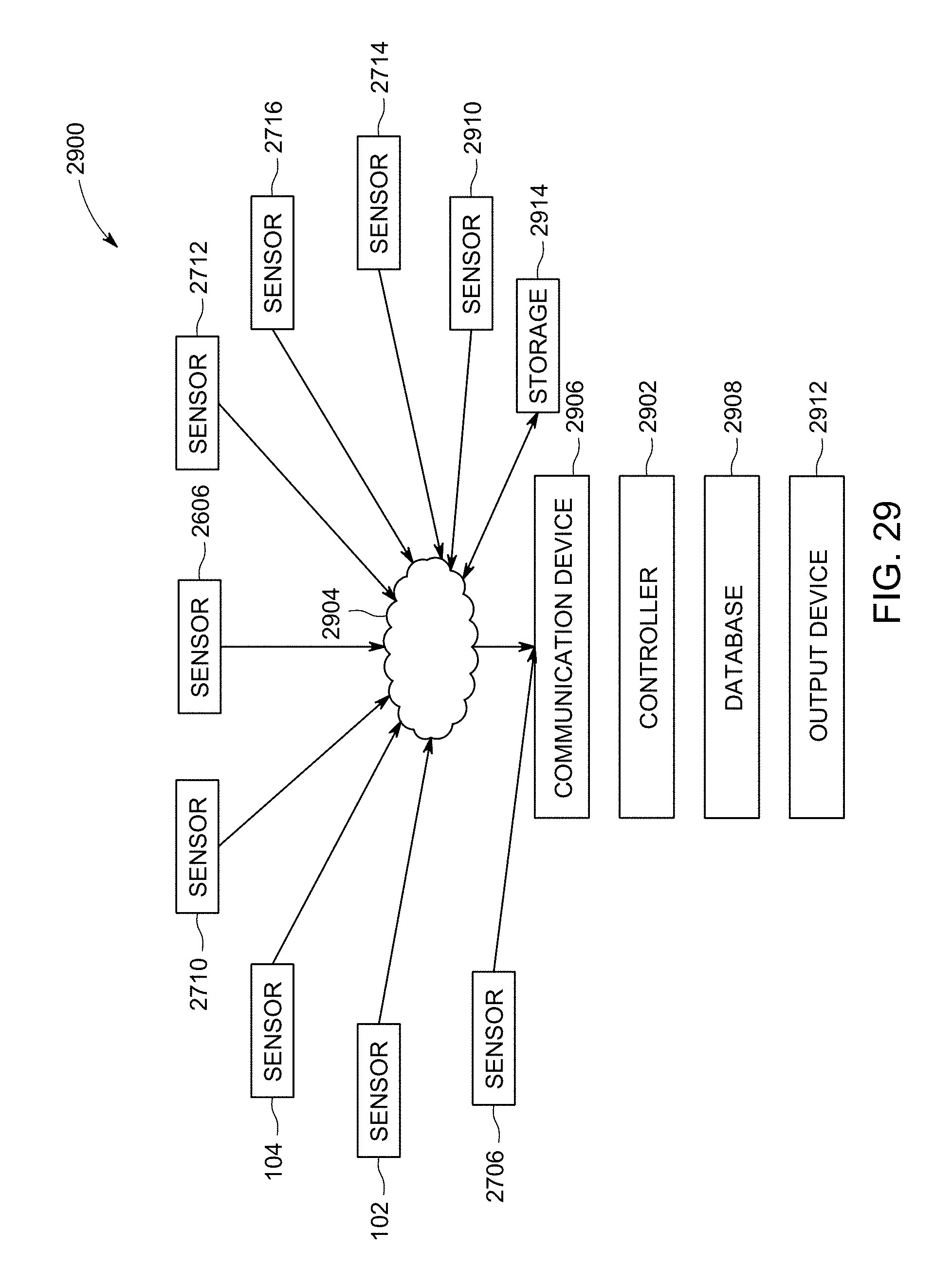

[0065] FIG. 29 illustrates one embodiment of a comprehensive analytic and prognostic system; and

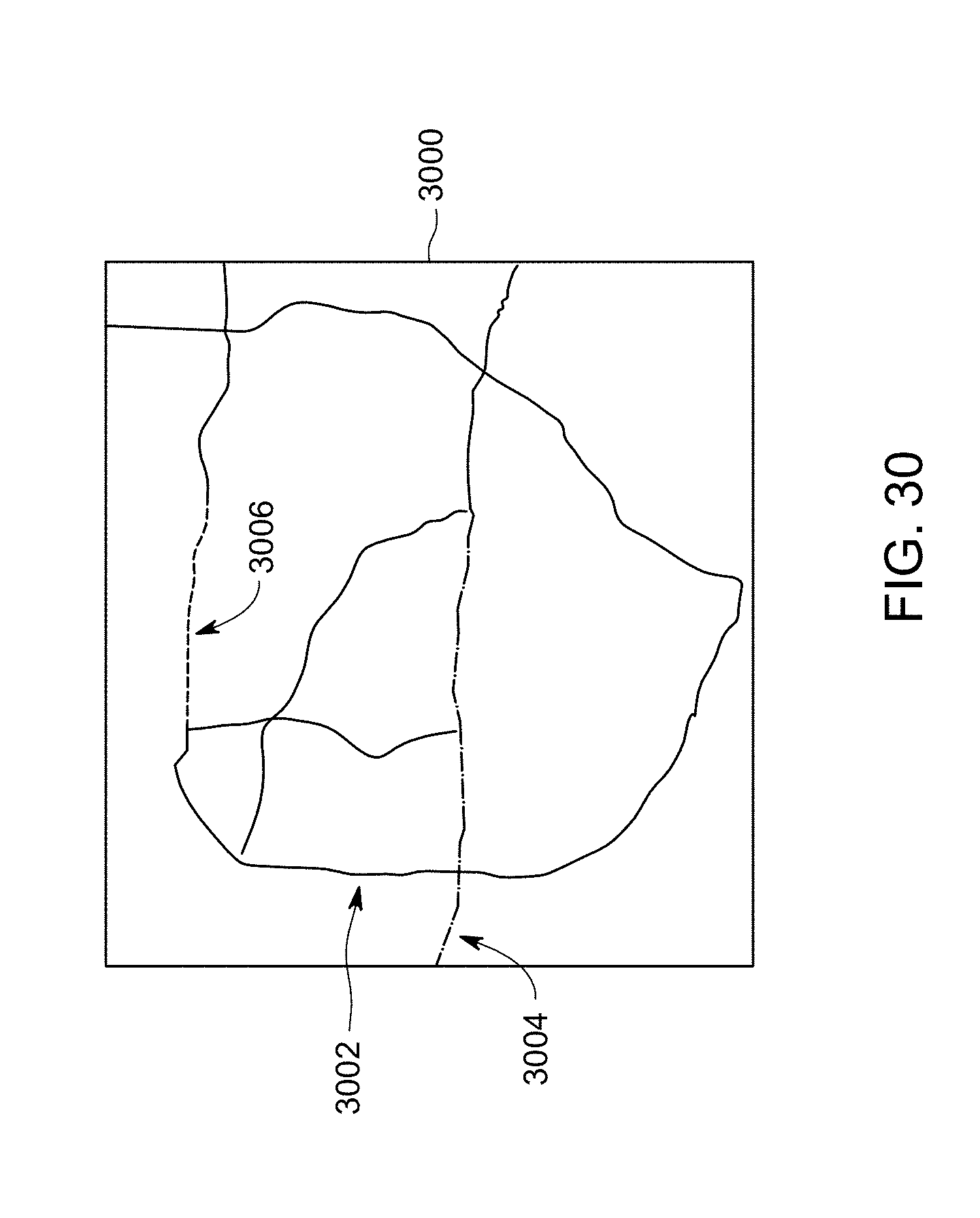

[0066] FIG. 30 illustrates one example of a health score presentation.

DETAILED DESCRIPTION

[0067] Embodiments described herein relate to sensor packages for capturing and communicating data, particularly with regard to a transportation system or network. For example, a sensor package (e.g., a video/IR camera, microphone, accelerometer, radiation detector, LIDAR) may be connected or otherwise disposed onboard a mobile platform (e.g., a driverless or remotely controlled automobile, drone, marine vessel, helicopter, or airplane) to allow the sensor package unit to move. The transportation system or network can include interconnected routes (e.g., tracks, roads, waterways, or other paths), wayside devices, and/or other components, such as bridges, tunnels, gates, etc.

[0068] An aerial unmanned vehicle (also referred to as a drone) may be used as an example of the mobile platform, which, in this example, may have a video camera supported on the drone. The drone can move along a route ahead of a non-aerial transport vehicle and can communicate image data back to the non-aerial vehicle. Suitable examples of non-aerial vehicles include a vehicle that is restricted to propelling itself along non-airborne routes, such as rail vehicles, other off-highway vehicles (e.g., mining vehicles or other ground-based vehicles that are not designed and/or not normally permitted to travel on public roadways), marine vessels, agricultural equipment, automobiles, and the like.

[0069] This image data can include still images (e.g., snapshots), videos (e.g., data that shows movement), or a combination thereof. The image data can provide an operator of the non-aerial vehicle a view of the route well in advance of the arrival of the non-aerial vehicle. For high speed non-aerial vehicles, the stopping distance may be beyond the visibility provided from the vantage of the non-aerial vehicle. The view from the mobile platform, then, may extend or supplement that visible range. In addition, the sensor package itself may be repositionable and may have the ability to pan left, right, up and down, as well as the ability to zoom in and out.

[0070] As used herein, a sensor package that is a video camera is a device for capturing and/or recording visual images. These images may be in the form of still shots, analog video signals, or digital video signals. The signals, particularly the digital video signals, may be subject to compression/decompression algorithms, such as MPEG or HEVC, for example. A suitable sensor package that is a video camera may capture and record in a determined band of wavelengths of light or energy. For example, in one embodiment the sensor package may sense wavelengths in the visible spectrum and in another the sensor package may sense wavelengths in the infrared spectrum. Multiple sensors may be combined in a single sensor package and may be used selectively based on the application. Further, stereoscopic and 3D sensor packages are contemplated for at least some embodiments described herein. These sensor packages may assist in determining distance, velocity, and vectors to predict (and thereby avoid) collision and damage. The term consist, or vehicle consist, refers to two or more vehicles or items of mobile equipment that are mechanically or logically coupled to each other. Logically coupled means that the plural items of mobile equipment are controlled so that a control to move one of the items causes a corresponding movement in the other items in the consist, such as by wireless command. An Ethernet over multiple unit (eMU) system may include, for example, a communication system for use in transmitting data from one vehicle to another in the consist (e.g., an Ethernet network over which data is communicated between two or more vehicles).

[0071] FIG. 1 illustrates a sensor package system 100 for capturing and communicating transportation data related to vehicles or otherwise to a transportation system according to one embodiment. The system includes a portable sensor package unit 102 having a sensor package 104, a data storage device 106 and/or a communication device 108, a battery or another energy storage device 110, and a controller 107. The sensor package unit may be portable in that the sensor package unit is small and/or light enough to be carried by a single adult human, a drone, or the like. The sensor package unit can capture and/or generate image data 112 of a field of view 101 of the sensor package unit. For example, the field of view may represent the solid angle through which the sensor package unit is sensitive to light, electromagnetic radiation, or other energy that is used to form images, videos, or the like. The image data can include still images, videos (e.g., moving images or a series of images representative of a moving object), or the like, of one or more objects within the field of view of the sensor package unit. In any of the embodiments of any of the sensor package systems described herein, data other than image data may be captured and communicated, e.g., the portable sensor package unit may have a microphone for capturing audio data, a vibration sensor for capturing vibration data, and so on.

[0072] A suitable portable sensor package unit may be an Internet protocol sensor package unit, such as a sensor package that can send video data via the Internet or another network. In one aspect, the sensor package can be a digital sensor package capable of obtaining relatively high quality image data (e.g., static or still images and/or videos). For example, the sensor package may be an Internet protocol (IP) sensor package that generates packetized image data. The sensor package can be a high definition (HD) sensor package capable of obtaining image data at relatively high resolutions. For example, the sensor package may obtain image data having at least 480 horizontal scan lines, at least 576 horizontal scan lines, at least 720 horizontal scan lines, at least 1080 horizontal scan lines, or an even greater resolution. Alternatively, the sensor package may be another type of sensor package.

[0073] The data storage device may be electrically connected to the sensor package unit and can store the image data. The data storage device may include one or more computer hard disk drives, removable drives, magnetic drives, read only memories, random access memories, flash drives or other solid state storage devices, or the like. Optionally, the data storage device may be disposed remote from the sensor package unit, such as by being separated from the sensor package unit by at least several centimeters, meters, or kilometers, as determined at least in part by the application at hand.

[0074] The communication device may be electrically connected to the sensor package unit and can wirelessly communicate (e.g., transmit, broadcast, or the like) the image data to a transportation system receiver 114 located off-board the sensor package unit. Optionally, the image data may be communicated to the receiver via one or more wired connections, over power lines, through other data storage devices, or the like. The communication device and/or receiver can represent hardware circuits or circuitry, such as transceiving circuitry and associated hardware (e.g., antennas) 103, that include and/or are connected with one or more processors (e.g., microprocessors, controllers, or the like). In one embodiment, the antenna includes a vertical dipole antenna operating at a gain or focus of 4 dBi (or another value).

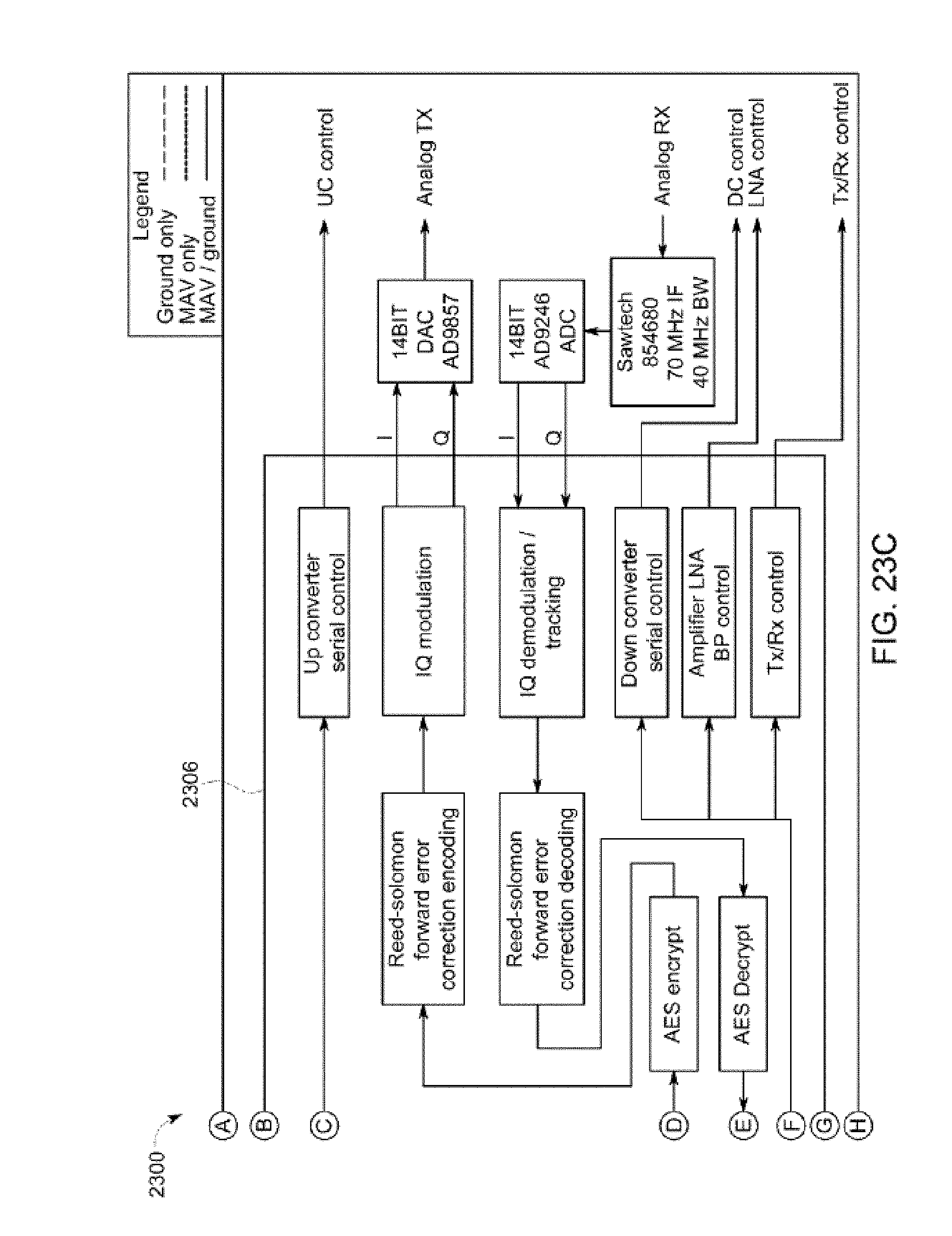

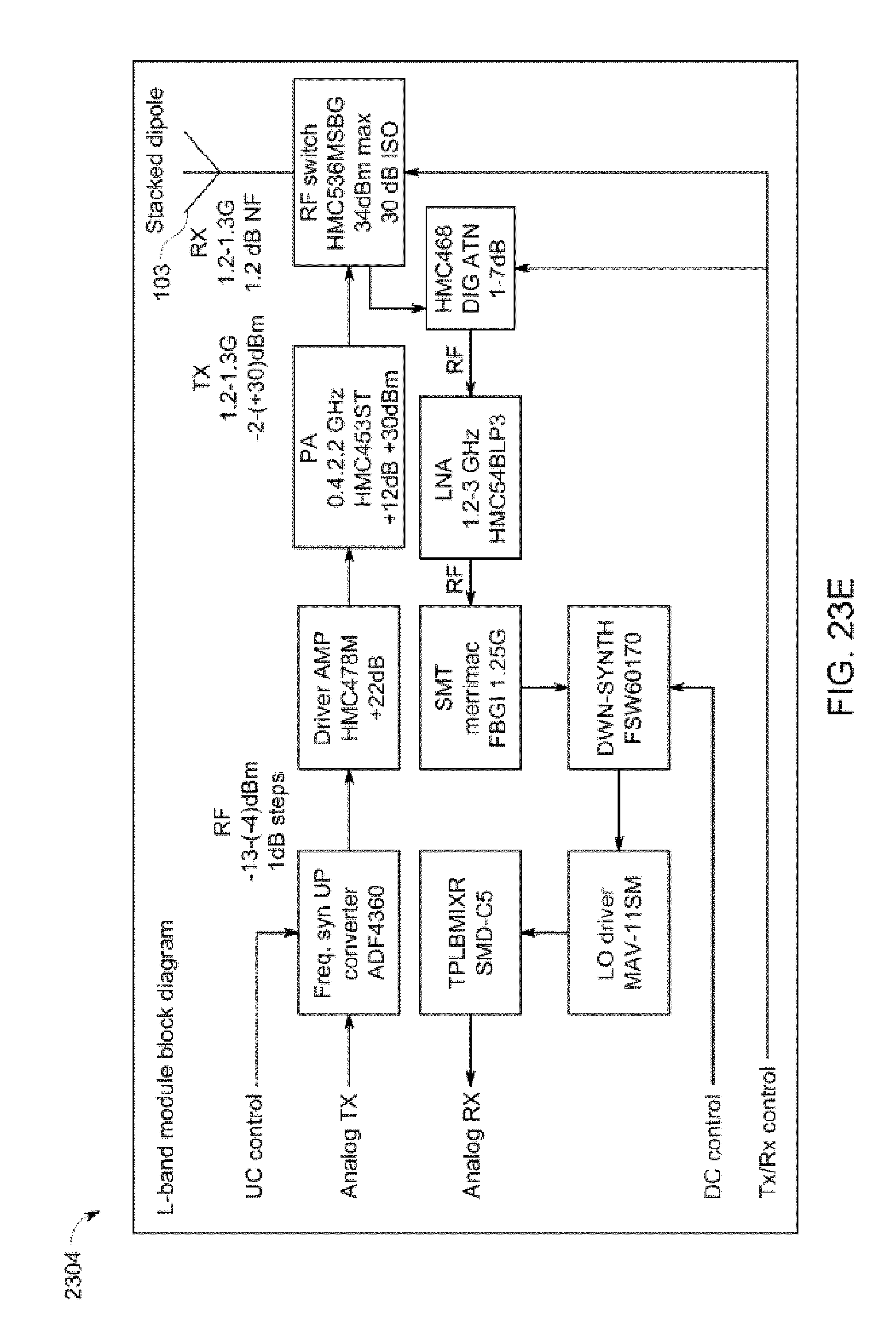

[0075] In one aspect, the communication device includes a low cost, light weight, low power and/or long range L/S band transceiver. FIGS. 23A-E illustrate a block diagram of one embodiment of the communication device shown in FIG. 1. The embodiment of the communication device shown in FIGS. 23A-E is but one example of a communication device that can be used in connection with one or more embodiments described herein. Optionally, the communication device shown in FIGS. 23A-E may be included in the receiver. The block diagram shown in FIGS. 23A-E includes a digital module or section 2300, an S-band module or section 2302, and an L-band module or section 2304. The digital section 2300 can process baseband video and/or serial data for both the communication device and the receiver, or alternatively, for just one of the communication device and receiver. The receiver may process data inversely to the communication device (e.g., with inverse function and/or order), but the processing performed by the communication device and/or receiver may otherwise be the same or similar.

[0076] The processing functions performed by the communication device and/or receiver can include, but are not limited to, serial interface buffering, digital data interleaving, encryption and/or decryption (e.g., using the Advanced Encryption Standard, or AES), forward error correction/restoration, framing and synchronization, digital interface encoding/decoding, video compression, or the like. The digital section 2300 can support a serial I/O interface. One or more communication processors 2306 (e.g., a field programmable gate array or other device) of the digital module 2300 can provide an adaptive transmit power feature of the communication device shown in FIGS. 23A-E. This feature can include receiving an indication of a signal level from a receiver (e.g., the antenna 103) and encoding a back channel command to the digital module 2300, using a dedicated control word in the up-linked serial data line, to command the antenna 103 that is transmitting a data signal to adjust the power level at which the data signal is being wirelessly communicated according to the signal level from the receiver (e.g., the received signal strength).

[0077] The digital module 2300 may default to a designated upper limit on transmitted power levels in the event that an uplink signal is not received, which could occur prior to synchronizing the receiver 114 and the communication device. The digital module 2300 may include one or more video inputs (e.g., analog National Television System Committee video inputs) while the S-band and L-band modules 2302, 2304 may include another type of output device, such as a Universal Serial Bus (USB) connection. Plural interface chips (e.g., processors) can be provided to support digitizing and converting video at the front end of the processor 2306. If video compression is used, de-compression may not be used for the modules 2302, 2304. An external computing device 2308, such as a laptop computer, desktop computer, or the like, may be connected with the digital module 2300 to perform video de-compression. The digital module 2300 can interface with one or more of the modules 2302, 2304 using a common (e.g., the same) interface (e.g., a 70 MHz digital I/F) to either an L band or an S band transceiver module.
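
The adaptive transmit power feature, together with the default to an upper power limit when no uplink signal is received, might be sketched as a simple step controller. Every numeric value below (target signal level, step size, power limits) and the function name `next_tx_power_dbm` are assumptions chosen for illustration; the disclosure does not give the control law or the power range.

```python
def next_tx_power_dbm(current_dbm, reported_rssi_dbm,
                      target_rssi_dbm=-70.0, step_db=1.0,
                      min_dbm=0.0, max_dbm=30.0):
    """Return the transmit power to use for the next interval.

    reported_rssi_dbm is the signal level fed back by the receiver over
    the back channel, or None when no uplink report was received.
    """
    if reported_rssi_dbm is None:
        # No uplink (e.g., before synchronization): use the upper limit.
        return max_dbm
    if reported_rssi_dbm < target_rssi_dbm:
        # Receiver reports a weak signal: raise power, capped at the limit.
        return min(current_dbm + step_db, max_dbm)
    if reported_rssi_dbm > target_rssi_dbm:
        # Receiver reports more signal than needed: lower power to save energy.
        return max(current_dbm - step_db, min_dbm)
    return current_dbm
```

Stepping by a fixed amount keeps the example easy to follow; a practical controller would probably add a deadband or filtering so that the power level does not oscillate around the target.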

[0078] The communication device shown in FIGS. 23A-E can communicate video or image data using single frequency radio operation in the L band or S band by communicating the data using the corresponding module 2304, 2302. The digital module 2300 can use a software-based switch to select the operating band to be used (e.g., the L band or the S band). This software-based switch may be included in one or more sets of instructions for the processor 2306, and may be stored in the data storage device 106 or in another location. The communication device may use 100 MHz of continuous band spectrum space supporting four sub channels (e.g., 25 MHz each channel) for the communication of image data.
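
The sub-channel arithmetic above (100 MHz divided into four 25 MHz sub channels) can be made concrete with a short sketch. The starting frequency passed in is purely hypothetical, since the disclosure does not state where in the L band or S band the 100 MHz block sits.

```python
def subchannels(band_start_mhz, total_mhz=100.0, count=4):
    """Split a contiguous block of spectrum into equal sub channels.

    Returns a list of (low_edge_mhz, high_edge_mhz) tuples.
    """
    width = total_mhz / count
    return [(band_start_mhz + i * width, band_start_mhz + (i + 1) * width)
            for i in range(count)]


# Hypothetical block starting at 2200 MHz, used only to show the arithmetic.
for low, high in subchannels(2200.0):
    print(f"{low:.0f}-{high:.0f} MHz")
```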

[0079] The communicating range of the communication device may be relatively large, such as up to 5 km, up to 10 km, or another distance. The communication device can adapt the power at which signals are wirelessly communicated to the receiver 114. The frame rate of the video and/or images communicated by the communication device may be on the order of 15 frames per second (or another rate), and/or the resolution may be on the order of 320×240 (or another resolution). Multiple serial full duplex channels of the communication device can communicate or process the data at a rate up to 19,200 bits per second (bps), up to 57,000 bps, or another rate.

[0080] As described herein, the communication device can be disposed onboard a mobile platform. Due to the relatively small size of one or more of the mobile platforms described herein, the size and/or weight of the communication device may be relatively small and/or light. For example, the outer dimensions of the communication device may be up to 1.5 inches by 1.5 inches by 0.25 inches, or one or more other dimensions. The total weight of the communication device may be up to 20 grams or another weight. In order to conserve power, the communication device may consume electric power at less than 10 watts or another limit. The communication device can use time division multiplexing (TDM) to encode and wirelessly communicate the image data described herein. Alternatively, another encoding technique may be used.
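
Time division multiplexing can be illustrated by interleaving chunks from several streams into fixed slots of successive frames. The sketch below assumes the multiplexed streams are a video stream and a serial data stream, and it uses `None` to mark an idle slot; the disclosure does not specify the frame layout, so both choices, along with the function names, are assumptions.

```python
from itertools import zip_longest


def tdm_multiplex(streams):
    """Build frames in which slot i of every frame belongs to stream i.

    Streams that run out of data leave their slot idle (None).
    """
    return [list(slots) for slots in zip_longest(*streams, fillvalue=None)]


def tdm_demultiplex(frames, stream_count):
    """Recover the original streams by reading each slot position in turn."""
    streams = [[] for _ in range(stream_count)]
    for frame in frames:
        for index, slot in enumerate(frame):
            if slot is not None:
                streams[index].append(slot)
    return streams


video = [b"v0", b"v1", b"v2"]
serial = [b"s0"]
frames = tdm_multiplex([video, serial])
recovered = tdm_demultiplex(frames, stream_count=2)
```

Because each stream always occupies the same slot position, the receiver can separate the streams without per-chunk addressing, which is the property that makes fixed-slot multiplexing attractive on a low-power link.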

[0081] The energy storage device may be electrically connected to the sensor package unit, the data storage device, and/or the communication device. The energy storage device can represent one or more devices that store and/or generate electric current to power the sensor package unit, the data storage device, and/or the communication device. For example, the energy storage device can include one or more batteries, pantographs (e.g., that receive current from an off-board source via a catenary or overhead line), conductive shoes (e.g., that contact a conductive body, such as an electrified rail, to receive current from an off-board source), generators, alternators, or the like.

[0082] In one embodiment, the sensor package unit comprises the sensor package, the data storage device, and the energy storage device, but not the communication device. In such an embodiment, the sensor package unit may be used for storing captured image data for later retrieval and use. In another embodiment, the sensor package unit comprises the sensor package, the communication device, and the energy storage device, but not the data storage device. In such an embodiment, the portable sensor package unit may be used to communicate the image data to a vehicle or other location for immediate use (e.g., being displayed on a display screen), and/or for storage remote from the portable sensor package unit (that is, for storage not within the portable sensor package unit). In another embodiment, the sensor package unit comprises the sensor package, the communication device, the data storage device, and the energy storage device. In such an embodiment, the portable sensor package unit may have multiple modes of operation, such as a first mode of operation where image data is stored within the portable sensor package unit on the data storage device 106, and a second mode of operation where the image data is transmitted off the portable sensor package unit for remote storage and/or immediate use elsewhere.
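
The three configurations above differ only in which optional components are present, which in turn determines the available modes of operation. A minimal sketch of that relationship follows; the names `Mode` and `available_modes` are hypothetical.

```python
from enum import Flag, auto


class Mode(Flag):
    NONE = 0
    STORE_LOCALLY = auto()  # first mode: keep image data on the data storage device
    TRANSMIT = auto()       # second mode: send image data off the unit


def available_modes(has_data_storage, has_communication_device):
    """Return the operating modes that a given unit configuration supports."""
    modes = Mode.NONE
    if has_data_storage:
        modes |= Mode.STORE_LOCALLY
    if has_communication_device:
        modes |= Mode.TRANSMIT
    return modes
```

A unit with both components supports `Mode.STORE_LOCALLY | Mode.TRANSMIT`, matching the multi-mode embodiment described above.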

[0083] The sensor package may be a digital video sensor package, such as a sensor package having a lens, an electronic sensor for converting light that passes through the lens into electronic signals, and a controller for converting the electronic signals output by the electronic sensor into the image data, which may be formatted according to a standard such as MP4. The data storage device, if present, may be a hard disc drive, flash memory (electronic non-volatile non-transitory computer storage medium), or the like. The communication device, if present, may be a wireless local area network (LAN) transmitter (e.g., Wi-Fi transmitter), a radio frequency (RF) transmitter that transmits in and according to one or more commercial cell frequencies/protocols (e.g., 3G or 4G), and/or an RF transmitter that can wirelessly communicate at frequencies used for vehicle communications (e.g., at a frequency compatible with a wireless receiver of a distributed power system of a rail vehicle; distributed power refers to coordinated traction control, such as throttle and braking, of a train or other rail vehicle consist having plural locomotives or other powered rail vehicle units). A suitable energy storage device may be a battery, such as a rechargeable lithium-ion battery, a rechargeable NiMH battery, an alkaline cell, or other device useful for portable energy storage for use in an electronic device. Other suitable energy devices, albeit more energy providers than storage, include a piezoelectric vibration harvester and a solar panel, where energy is generated and then provided to the sensor package system.

[0084] The sensor package unit can include a locator device 105 that generates data used to determine the location of the sensor package unit. The locator device can represent one or more hardware circuits or circuitry that include and/or are connected with one or more processors (e.g., controllers, microprocessors, or other electronic logic-based devices). In one example, the locator device represents a global positioning system (GPS) receiver that determines a location of the sensor package unit, a beacon or other communication device that broadcasts or transmits a signal that is received by another component (e.g., the transportation system receiver) to determine how far the sensor package unit is from the component that receives the signal (e.g., the receiver), a radio frequency identification (RFID) tag or reader that emits and/or receives electromagnetic radiation to determine how far the sensor package unit is from another RFID reader or tag (e.g., the receiver), or the like. The receiver can receive signals from the locator device to determine the location of the locator device relative to the receiver and/or another location (e.g., relative to a vehicle or vehicle system). Additionally or alternatively, the locator device can receive signals from the receiver (e.g., which may include a transceiver capable of transmitting and/or broadcasting signals) to determine the location of the locator device relative to the receiver and/or another location (e.g., relative to a vehicle or vehicle system).

[0085] The controller 107 represents hardware circuitry that includes and/or is connected with one or more processors (e.g., one or more microprocessors, field programmable gate arrays, and/or integrated circuits) that control operations of the sensor package unit 102. In one embodiment, the controller 107 also controls movement of a mobile platform (e.g., a mobile platform 174 shown in FIGS. 7 through 10) that carries the sensor package unit 102. For example, the controller 107 is disposed onboard the mobile platform and generates control signals to propulsion systems (e.g., rotors, motors, etc.) of the mobile platform to control movement of the mobile platform in one embodiment.

[0086] The image data may be autonomously examined by one or more image data analysis systems or image analysis systems described herein. For example, one or more of the transportation receiver system, a vehicle, and/or the sensor package unit may include an image data analysis system (also referred to as an image analysis system) that examines the image data for one or more purposes described herein.

[0087] FIG. 2 illustrates another embodiment of a sensor package system 300. The system can include a display screen system 132 remotely located from the portable sensor package unit and/or the non-aerial vehicle. Optionally, the display screen system is at least partially disposed onboard the non-aerial vehicle. The display screen system receives the image data from the transportation system receiver as a live feed and displays the image data (e.g., converted back into moving images) on a display screen 134 of the display screen system. The live feed can include image data representative of objects contemporaneous with capturing the video data but for communication lags associated with communicating the image data from the portable sensor package unit to the display screen system. Such an embodiment may be used, for example, for communicating image data, captured by the portable sensor package unit disposed onboard a mobile platform moving ahead of the non-aerial vehicle, to a remote human operator viewing the display screen. The remote human operator, for example, may be onboard the non-aerial vehicle that is moving behind the mobile platform, an expert in the particular task or tasks, or another person, that can examine the image data and/or provide advice or instructions to the on-scene human operator based on the image data.

[0088] In another embodiment, the system has the display screen disposed onboard the non-aerial vehicle, such as in an operator cab of the non-aerial vehicle. The communication device of the portable sensor package unit can wirelessly transmit the image data to the transportation system receiver which may be located onboard the non-aerial vehicle and operably connected to the display screen, for the image data to be displayed on the display screen. Such an embodiment may be used for one operator of the non-aerial vehicle to view the image data captured by a mobile platform moving ahead of the non-aerial vehicle.

[0089] FIG. 3 illustrates another embodiment of a sensor package system 500. A control system 146 onboard the vehicle may be provided for controlling movement of the non-aerial vehicle. The control system can include or represent the control unit, and can include hardware circuits or circuitry that include and/or are connected with one or more processors (e.g., microprocessors, controllers, or the like). The control system can control operations of the non-aerial vehicle, such as by communicating command signals to a propulsion system of the vehicle (e.g., motors, engines, brakes, or the like) for controlling output of the propulsion system.

[0090] The control system can prevent movement of the non-aerial vehicle responsive to a first data content of the image data and allow movement of the non-aerial vehicle responsive to a different, second data content of the image data. For example, the control system onboard the non-aerial vehicle may engage brakes and/or prevent motors from moving the non-aerial vehicle to prevent movement of the non-aerial vehicle responsive to the first data content of the image data from the portable sensor package unit (e.g., onboard a mobile platform moving ahead of the non-aerial vehicle) indicating that one or more segments of the route ahead of the non-aerial vehicle along a direction of travel are damaged, obstructed by one or more obstacles, occupied by one or more other vehicles, or otherwise unsafe to travel over.

[0091] FIG. 4 illustrates one embodiment of the non-aerial vehicle. The non-aerial vehicle can include one or more vehicle consists 148 having plural interconnected non-aerial vehicle units 150, with at least one of the plural vehicle units being a propulsion-generating, non-aerial vehicle unit 152. The non-aerial vehicle can represent a rail vehicle system, such as a train, with the vehicle units 150, 152 representing locomotives, rail cars, or other types of rail vehicles. For example, the vehicle unit 152 can represent a propulsion-generating vehicle unit, while the other vehicle units 150 represent non-propulsion-generating vehicle units, such as rail cars. Alternatively, the non-aerial vehicle can represent another type of vehicle, such as an automobile, marine vessel, mining vehicle, other off-highway vehicle (e.g., a vehicle that is not designed for and/or legally permitted to travel on public roadways), or the like. The consist can represent plural vehicle units mechanically connected to travel together along a land or water route 602, such as a track, road, waterway, or the like. Alternatively, the consist and/or vehicle can include plural vehicle units that communicate with each other to travel together along the route 602, but that are not connected with each other. For example, one of the vehicle units may send command signals to the other vehicle units to instruct the other vehicle units how to move along the route 602 to maintain separation distances between the vehicle units.

[0092] The control system onboard the vehicle can prevent movement of the vehicle consist responsive to the first data content of the image data indicating that one or more segments of the route ahead of the vehicle are damaged or otherwise unsafe for continued travel. For example, responsive to the image data indicating that an upcoming segment of the route is being worked on, is occupied by another vehicle, is impassable due to an obstacle on the route (e.g., an automobile stuck on the track at a crossing), is damaged (e.g., has a broken rail), has a broken switch, or the like, the control system may implement one or more remedial actions. These actions can include, but are not limited to, generating a warning (e.g., visual, audible, or a combination thereof) to an operator of the non-aerial vehicle, automatically slowing or stopping movement of the non-aerial vehicle, communicating a request to an off-board location (e.g., dispatch center, maintenance facility, etc.) for repair, maintenance, and/or inspection of the upcoming segment of the route, changing a scheduled trip or route of the vehicle to avoid the upcoming segment, or the like.
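One way to picture the selection of remedial actions is a lookup from detected route conditions to actions. The description lists the conditions and the actions but does not say which condition triggers which action, so the policy table below, along with every name in it, is an assumption made purely for illustration.

```python
# Hypothetical policy table: which remedial actions follow each route condition.
# The pairing of conditions to actions is an assumption of this sketch.
POLICY = {
    "work_zone": ["warn_operator", "slow_or_stop"],
    "occupied": ["warn_operator", "slow_or_stop"],
    "obstacle": ["warn_operator", "slow_or_stop", "request_offboard_inspection"],
    "broken_rail": ["warn_operator", "slow_or_stop",
                    "request_offboard_inspection", "change_trip"],
    "broken_switch": ["warn_operator", "slow_or_stop",
                      "request_offboard_inspection", "change_trip"],
}

# Fallback for a condition that is not in the table (also an assumption).
DEFAULT_ACTIONS = ["warn_operator"]


def remedial_actions(conditions):
    """Return the actions for all detected conditions, without duplicates,
    in the order in which they are first encountered."""
    actions = []
    for condition in conditions:
        for action in POLICY.get(condition, DEFAULT_ACTIONS):
            if action not in actions:
                actions.append(action)
    return actions
```

Keeping the policy in a table rather than in branching logic makes it easy to adjust which actions are taken for a given condition without touching the selection code.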

[0093] FIG. 5 illustrates the control system according to one embodiment. The control system can be disposed onboard the non-aerial vehicle and also can include an image data analysis system 154. The analysis system can automatically process the image data for identifying data content in the image data. The control system can automatically prevent and allow movement of the vehicle responsive to the first data content and the second data content, respectively, that are identified by the image data analysis system. The image data analysis system can include one or more image analysis processors that autonomously examine the image data obtained by the sensor package unit for one or more purposes, as described herein.

[0094] FIG. 6 illustrates the transportation system receiver 114 located onboard the non-aerial vehicle 128 according to one embodiment. The transportation system receiver can wirelessly communicate network data onboard and/or off-board the non-aerial vehicle, and/or can automatically switch to a mode for receiving the image data from the portable sensor package unit responsive to the portable sensor package unit being active to communicate the image data. For example, responsive to the portable sensor package unit being active to transmit the image data, the transportation system receiver can automatically switch from a network wireless client mode of operation (transmitting data originating from a device onboard the vehicle, such as the control unit) to the mode for receiving the image data from the portable sensor package unit. The mode for receiving the image data from the portable sensor package unit may comprise a wireless access point mode of operation (receiving data from the portable sensor package unit).
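The mode switch of the transportation system receiver can be viewed as a two-state machine. The following is a minimal sketch under stated assumptions: the class and method names are hypothetical, and the return to the wireless client mode once the portable sensor package unit stops transmitting is an assumption, since the description only covers the switch into the access point mode.

```python
from enum import Enum


class ReceiverMode(Enum):
    WIRELESS_CLIENT = "wireless_client"  # transmitting onboard-originated data
    ACCESS_POINT = "access_point"        # receiving image data from the sensor unit


class TransportationSystemReceiver:
    """Hypothetical model of the receiver's two modes of operation."""

    def __init__(self):
        # Start in the network wireless client mode.
        self.mode = ReceiverMode.WIRELESS_CLIENT

    def update(self, sensor_unit_active):
        """Select the mode from whether the sensor package unit is transmitting.

        Falling back to the client mode when the unit is inactive is an
        assumption of this sketch.
        """
        if sensor_unit_active:
            self.mode = ReceiverMode.ACCESS_POINT
        else:
            self.mode = ReceiverMode.WIRELESS_CLIENT
        return self.mode
```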

[0095] In another embodiment, the sensor package system further comprises the transportation system receiver located onboard the non-aerial vehicle. The transportation system receiver can wirelessly communicate network data onboard and/or off-board the vehicle, and/or can automatically switch from a network wireless client mode of operation to a wireless access point mode of operation, for receiving the image data from the portable sensor package unit. This network data can include data other than image data. For example, the network data can include information about an upcoming trip of the vehicle (e.g., a schedule, grades of a route, curvature of a route, speed limits, areas under maintenance or repair, etc.), cargo being carried by the vehicle, volume and type of bulk material being transported by the vehicle, or other information. Bulk material refers to solids, such as grain, coal, sand, or ore, as well as to liquids, such as oil, water, liquefied gases, and the like. Alternatively, the network data can include the image data.

[0096] In another embodiment of one or more of the sensor package systems described herein, the system stores the image data for use locally (e.g., in the vehicle), or it can transmit it to a remote location (e.g., off-vehicle location) based on where the vehicle is located. For example, if the vehicle is in a yard (e.g., a switching yard, maintenance facility, or the like), the image data may be transmitted to a location in the yard. But, prior to the vehicle entering the yard or a designated location in the yard, the image data may be stored onboard the vehicle and not communicated to any location off of the vehicle.

[0097] Thus, in an embodiment, the system further comprises a control unit 800 that, responsive to at least one of a location of the portable sensor package unit or a control input, controls at least one of the portable sensor package unit or the transportation system receiver to a first mode of operation for at least one of storing or displaying the video data on board the vehicle and to a second mode of operation for communicating the video data off board the vehicle for at least one of storage or display of the video data off board the vehicle. For example, the control unit can automatically control at least one of the portable sensor package unit or the transportation system receiver from the first mode of operation to the second mode of operation responsive to the location of the portable sensor package unit being indicative of the rail vehicle being in a yard. The control unit 800 can represent hardware circuitry that includes and/or is connected with one or more processors (e.g., microprocessors, field programmable gate arrays, integrated circuits, etc.).

[0098] During operation of the vehicle and/or sensor package unit outside of a designated area (e.g., a geofence extending around a vehicle yard or other location), the image data generated by the sensor package may be locally stored in the data storage device of the sensor package unit, shown on a display of the vehicle, or the like. Responsive to the vehicle and/or sensor package unit entering into the designated area (e.g., as determined by the controller or control unit), the sensor package unit can switch modes to begin wirelessly communicating the image data to the receiver, which may be located in the designated area. Changing where the image data is communicated based on the location of the vehicle and/or sensor package unit can allow for the image data to be accessible to those operators viewing the image data for safety, analysis, or the like. For example, during movement of the vehicle outside of the vehicle yard, the image data can be presented to an onboard operator, and/or the image data may be analyzed by an onboard analysis system of the vehicle to ensure safe operation of the vehicle. Responsive to the vehicle and/or sensor package unit entering into the vehicle yard, the image data can be communicated to a central office or management facility for remote monitoring of the vehicle and/or operations being performed near the vehicle.
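The location-based mode selection can be sketched as a geofence test. The sketch below assumes a circular geofence defined by a center point and a radius, and uses the haversine great-circle distance; the description does not specify the geofence shape or how position is obtained, so the function names, the mode labels, and the circular model are all assumptions.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def select_mode(position, yard_center, yard_radius_m):
    """Pick the mode of operation from the vehicle position.

    position and yard_center are (latitude, longitude) tuples in degrees.
    Inside the (assumed circular) geofence the image data is communicated
    off board; outside it the data is stored and displayed on board.
    """
    inside = haversine_m(*position, *yard_center) <= yard_radius_m
    return "transmit_off_board" if inside else "store_and_display_onboard"
```

A real yard boundary would more likely be a polygon than a circle; the circular test is used here only because it keeps the example short.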

[0099] As one example, event data transmission (e.g., the transmitting, broadcasting, or other communication of image data) can occur based on various vehicle conditions, geographic locations, and/or situations. The image data may be either pulled (e.g., requested) or pushed (e.g., transmitted and/or broadcast) from the vehicle. For example, image data can be sent from a vehicle to an off-board location by the control unit or controller based on selected operating conditions (e.g., emergency brake application), a geographic location (e.g., in the vicinity of a crossing between two or more routes), selected and/or derived operating areas of concern (e.g., high wheel slip or vehicle speed exceeding area limits), and/or time driven messages (e.g., sent once a day). The off-board location may also request and retrieve the image data from specific vehicles on demand.
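The push and pull triggers listed above can be sketched as a set of independent checks on the vehicle state. The field names and the threshold values (crossing radius, wheel slip threshold, reporting period) are hypothetical placeholders chosen for illustration; the description names the trigger types but gives no numeric limits.

```python
from dataclasses import dataclass


@dataclass
class VehicleState:
    """Hypothetical snapshot of the conditions that can trigger transmission."""
    emergency_brake_applied: bool
    distance_to_crossing_m: float
    wheel_slip_ratio: float
    speed_kph: float
    area_speed_limit_kph: float
    seconds_since_last_report: float


def push_reasons(state, crossing_radius_m=200.0, slip_threshold=0.2,
                 report_period_s=86_400.0):
    """Return the reasons (possibly none) for pushing image data off board.

    All default thresholds are assumptions of this sketch.
    """
    reasons = []
    if state.emergency_brake_applied:
        reasons.append("emergency_brake")
    if state.distance_to_crossing_m <= crossing_radius_m:
        reasons.append("near_crossing")
    if state.wheel_slip_ratio > slip_threshold:
        reasons.append("high_wheel_slip")
    if state.speed_kph > state.area_speed_limit_kph:
        reasons.append("overspeed")
    if state.seconds_since_last_report >= report_period_s:
        reasons.append("scheduled_report")
    return reasons


def should_send(state, pull_requested=False):
    """Send when the off-board location pulls the data or any push trigger fires."""
    return pull_requested or bool(push_reasons(state))
```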

[0100] FIGS. 7, 8, and 9 illustrate another embodiment of a sensor package system 1100. FIG. 7 illustrates a perspective view of the sensor package system, FIG. 8 illustrates a side view of the sensor package system, and FIG. 9 illustrates a top view of the sensor package system 1100. The system includes a mobile platform 174 that may be operated by at least one of remote control or autonomous control moving over a ground route of the non-aerial vehicle. The mobile platform may have one or more sensor package docks 176 for receiving one or more portable sensor package units, and may also have a vehicle dock for coupling the mobile platform to the non-aerial vehicle. In the illustrated example, the mobile platform includes three sensor package units, with one sensor package unit facing along a forward direction of travel of the mobile platform, another sensor package unit facing along a downward direction toward the ground or route over which the mobile platform flies, and another sensor package unit facing along a rearward direction of the mobile platform. Alternatively, a different number of sensor package units may be used and/or the sensor package units may be oriented in other directions.

[0101] When the aerial mobile platform is in the air, the portable sensor package units can be positioned for the sensor packages to view the route, the vehicle, or other areas near the vehicle. The mobile platform may be, for example, a scale dirigible, a scale helicopter, or the like (e.g., the mobile platform may be smaller than needed for transporting humans, such as 1/10 scale or smaller). A suitable scale helicopter can include quadcopters and the like.

[0102] The system can include a mobile platform vehicle dock 178 to attach the mobile platform to the vehicle. The mobile platform vehicle dock can receive the mobile platform for at least one of detachable coupling of the mobile platform to the vehicle, charging of a battery of the mobile platform from a power source of the non-aerial vehicle, or the like. For example, the dock can include one or more connectors 180 that mechanically or magnetically couple with the mobile platform to prevent the mobile platform from moving relative to the dock, and/or that conductively couple an onboard power source (e.g., battery) of the mobile platform with a power source of the vehicle (e.g., generator, alternator, battery, pantograph, or the like) so that the power source of the mobile platform can be charged by the power source of the non-aerial vehicle during movement of the vehicle.

[0103] The mobile platform can move off the vehicle to obtain image data that is communicated from one or more of the sensor packages onboard the mobile platform to one or more receivers 114 onboard the vehicle. The mobile platform can move relative to the vehicle while the vehicle is stationary and/or while the vehicle is moving along a route. The image data may be displayed to an operator on a display device onboard the vehicle and/or may be autonomously examined as described herein. The image data can be examined by the operator and/or an image analysis system, such as to examine the vehicle itself, to examine other vehicles traveling relative to the vehicle (e.g., to avoid collisions between the vehicles), to examine the route being traveled upon (e.g., to perform route inspection), to warn of upcoming obstructions or other problems along the route ahead of the vehicle, and the like. In one aspect, the image data obtained by the mobile platform can be automatically examined by the image analysis system onboard the non-aerial vehicle to detect hazards. Hazards can include one or more of an impending collision with obstacles on the route ahead of the non-aerial vehicle; potential derailment due to cracks, breaks, or other types of damage to the route; a potential collision with another vehicle on an intercept trajectory; or the like.

[0104] When the mobile platform is coupled into the vehicle dock, one or more sensor packages can be positioned to view the route during movement of the vehicle. In one mode of operation, the system includes three or more mobile platforms, such as with two or more of the mobile platforms docked onboard the non-aerial vehicle. A control unit onboard the non-aerial vehicle (e.g., the control unit 146 or a control unit that is separate from the control unit 146) can remotely control flight of at least one of the mobile platforms.