Cross Device Bandwidth Utilization Control

Rothman; Aaron Nathaniel ; et al.

U.S. patent application number 15/638295, filed on 2017-06-29 and published on 2019-04-04, is for cross device bandwidth utilization control. This patent application is currently assigned to Google Inc. The applicant listed for this patent is Google Inc. Invention is credited to Gaurav Bhaya, Aaron Nathaniel Rothman, Robert Stets.

| United States Patent Application | 20190104199 |
|---|---|
| Kind Code | A1 |
| Application Number | 15/638295 |
| Family ID | 65898178 |
| Filed Date | 2017-06-29 |
| Publication Date | 2019-04-04 |
| Inventors | Rothman; Aaron Nathaniel; et al. |

CROSS DEVICE BANDWIDTH UTILIZATION CONTROL

Abstract

A system for multi-modal transmission of packetized data in a voice-activated, data-packet-based computer network environment is provided. A natural language processor component can parse an input audio signal to identify a request and a trigger keyword. Based on the input audio signal, a direct action application programming interface can generate a first action data structure, and a content selector component can select a content item based on a probability that a count reaches a target number. An interface management component can identify first and second candidate interfaces, and respective resource utilization values. The interface management component can select, based on the resource utilization values, the first candidate interface to present the content item.

| Inventors: | Rothman; Aaron Nathaniel (Sunnyvale, CA); Bhaya; Gaurav (Sunnyvale, CA); Stets; Robert (Mountain View, CA) |
|---|---|
| Applicant: | Google Inc. |
| Assignee: | Google Inc., Mountain View, CA |
| Family ID: | 65898178 |
| Appl. No.: | 15/638295 |
| Filed: | June 29, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 13441298 | Apr 6, 2012 | 9922334 |
| 15638295 | | |
| 15395703 | Dec 30, 2016 | 10032452 |
| 13441298 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 43/0805 20130101; H04L 67/327 20130101; G06F 16/243 20190101; H04L 67/42 20130101; G06Q 30/02 20130101; H04L 67/04 20130101; H04L 67/12 20130101; G06Q 30/0275 20130101 |

| International Class: | H04L 29/08 20060101 H04L029/08; H04L 12/26 20060101 H04L012/26; G06F 17/30 20060101 G06F017/30 |

Claims

1.-20. (canceled)

21. A system to provide content to computing devices in an online computer network environment, comprising a data processing system to: receive an audio-based request from a computing device, the audio-based request comprising a device identifier associated with the computing device and the audio-based request detected at the computing device; receive selection criteria comprising device identifier characteristics and the selection criteria associated with a digital component; identify a plurality of digital components associated with the digital component; determine a count representing a number of the plurality of digital components previously transmitted to the computing device; receive a target number of the plurality of digital components previously transmitted to the computing device; determine a probability the count reaches the target number within a predetermined time interval; select a candidate digital component based on the probability and selection criteria; and transmit the candidate digital component to the computing device.

22. The system of claim 21, comprising the data processing system to: select, based on the selection criteria, an exposure model; and determine, by the exposure model, the probability the count reaches the target number.

23. The system of claim 21, comprising the data processing system to: identify an exposure interval based on the selection criteria; determine a second probability the computing device displays the candidate digital component from the exposure interval; and select the candidate digital component based on the second probability.

24. The system of claim 21, comprising: a natural language processor component to: receive, via an interface of the data processing system, data packets comprising the audio-based request; identify, from the audio-based request, a request and a trigger keyword corresponding to the request; a direct action application programming interface to generate, based on at least one of the request and the trigger keyword, a first action data structure; and a content selector component to select the candidate digital component based on the first action data structure.

25. The system of claim 21, comprising an interface management component to: poll a plurality of interfaces to identify a first candidate interface of the computing device and a second candidate interface of a second computing device; determine a first resource utilization value for the first candidate interface and a second resource utilization value for the second candidate interface, the first resource utilization value and the second resource utilization value based on at least one of a battery status, processor utilization, memory utilization, an interface parameter, and network bandwidth utilization; and select to transmit the candidate digital component to the computing device based on the first resource utilization value and the second resource utilization value.

26. The system of claim 21, comprising an interface management component to: poll a plurality of interfaces to identify a first candidate interface of the computing device and a second candidate interface of a second computing device; transmit the candidate digital component to the second computing device.

27. The system of claim 21, comprising: an interface management component to: poll a plurality of interfaces to identify a first candidate interface of the computing device and a second candidate interface of a second computing device; transmit, responsive to a second probability being above a predetermined threshold, the candidate digital component to the second computing device; the data processing system to: determine a second count of a number of the plurality of digital components previously transmitted to the second computing device; and determine the second probability a combination of the count and the second count reaches the target number within the predetermined time interval.

28. The system of claim 21, comprising an interface management component to: poll a plurality of interfaces of the computing device; select, based on a resource utilization value of the plurality of interfaces, a candidate interface; and transmit the candidate digital component to the candidate interface of the computing device.

29. The system of claim 28, wherein the plurality of interfaces includes at least one of a display screen, an audio interface, a vibration interface, an email interface, a push notification interface, a mobile computing device interface, a portable computing device application, a content slot on an online document, a chat application, a mobile computing device application, a laptop, a watch, a virtual reality headset, and a speaker.

30. The system of claim 21, comprising an interface management component to: poll a plurality of candidate interfaces associated with the computing device; determine a distance between each of the plurality of candidate interfaces and the computing device; and select the candidate digital component based on the distance between each of the plurality of candidate interfaces and the computing device.

31. A method to provide content to computing devices in an online computer network environment, comprising: receiving, by a data processing system, an audio-based request from a computing device, the audio-based request comprising a device identifier associated with the computing device and the audio-based request detected at the computing device; receiving, by the data processing system, selection criteria comprising device identifier characteristics and the selection criteria associated with a digital component; identifying, by the data processing system, a plurality of digital components associated with the digital component; determining, by the data processing system, a count representing a number of the plurality of digital components previously transmitted to the computing device; receiving, by the data processing system, a target number of the plurality of digital components previously transmitted to the computing device; determining, by the data processing system, a probability the count reaches the target number within a predetermined time interval; selecting, by the data processing system, a candidate digital component based on the probability and selection criteria; and transmitting, by the data processing system, the candidate digital component to the computing device.

32. The method of claim 31, comprising: selecting, based on the selection criteria, an exposure model; and determining, by the exposure model, the probability the count reaches the target number.

33. The method of claim 31, comprising: identifying an exposure interval based on the selection criteria; determining a second probability the computing device displays the candidate digital component from the exposure interval; and selecting the candidate digital component based on the second probability.

34. The method of claim 31, comprising: receiving, by a natural language processor component executed by the data processing system, via an interface of the data processing system, data packets comprising the audio-based request; identifying, by the natural language processor component, from the audio-based request, a request and a trigger keyword corresponding to the request; generating, by a direct action application programming interface of the data processing system, based on at least one of the request and the trigger keyword, a first action data structure; and selecting, by a content selector component, the candidate digital component based on the first action data structure.

35. The method of claim 31, comprising: polling, by an interface management component of the data processing system, a plurality of interfaces to identify a first candidate interface of the computing device and a second candidate interface of a second computing device; determining, by the interface management component, a first resource utilization value for the first candidate interface and a second resource utilization value for the second candidate interface, the first resource utilization value and the second resource utilization value based on at least one of a battery status, processor utilization, memory utilization, an interface parameter, and network bandwidth utilization; and selecting, by the interface management component, to transmit the candidate digital component to the computing device based on the first resource utilization value and the second resource utilization value.

36. The method of claim 31, comprising: polling, by the interface management component of the data processing system, a plurality of interfaces to identify a first candidate interface of the computing device and a second candidate interface of a second computing device; transmitting, by the interface management component, the candidate digital component to the second computing device.

37. The method of claim 31, comprising: polling, by the interface management component of the data processing system, a plurality of interfaces to identify a first candidate interface of the computing device and a second candidate interface of a second computing device; determining, by the data processing system, a second count of a number of the plurality of digital components previously transmitted to the second computing device; determining, by the data processing system, a second probability a combination of the count and the second count reaches the target number within the predetermined time interval; and transmitting, responsive to the second probability being above a predetermined threshold and by the interface management component, the candidate digital component to the second computing device.

38. The method of claim 31, comprising: polling, by an interface management component, a plurality of interfaces of the computing device; selecting, based on a resource utilization value of the plurality of interfaces, a candidate interface; and transmitting the candidate digital component to the candidate interface of the computing device.

39. The method of claim 38, wherein the plurality of interfaces includes at least one of a display screen, an audio interface, a vibration interface, an email interface, a push notification interface, a mobile computing device interface, a portable computing device application, a content slot on an online document, a chat application, a mobile computing device application, a laptop, a watch, a virtual reality headset, and a speaker.

40. The method of claim 31, comprising: polling, by an interface management component, a plurality of candidate interfaces associated with the computing device; determining, by the interface management component, a distance between each of the plurality of candidate interfaces and the computing device; and selecting, by the interface management component, the candidate digital component based on the distance between each of the plurality of candidate interfaces and the computing device.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] The present application claims the benefit of priority under 35 U.S.C. § 120 as a continuation-in-part of U.S. patent application Ser. No. 13/441,298, filed Apr. 6, 2012. The present application also claims the benefit of priority under 35 U.S.C. § 120 as a continuation-in-part of U.S. patent application Ser. No. 15/395,703, filed Dec. 30, 2016. Each of the foregoing applications is hereby incorporated by reference in its entirety.

BACKGROUND

[0002] Excessive network transmissions, packet-based or otherwise, of network traffic data between computing devices can prevent a computing device from properly processing the network traffic data, completing an operation related to the network traffic data, or timely responding to the network traffic data. The excessive network transmissions of network traffic data can also complicate data routing or degrade the quality of the response if the responding computing device is at or above its processing capacity, which may result in inefficient bandwidth utilization. The control of network transmissions corresponding to content item objects can be complicated by the large number of content item objects that can initiate network transmissions of network traffic data between computing devices.

SUMMARY

[0003] At least one aspect of the disclosure is directed to a system to provide content to computing devices in an online computer network environment. The system includes a data processing system to receive an audio-based request from a computing device. The audio-based request can include a device identifier associated with the computing device. The audio-based request can be detected at the computing device. The data processing system can receive selection criteria that can include device identifier characteristics. The selection criteria can be associated with a digital component. The data processing system can identify a plurality of digital components associated with a digital component. The data processing system can determine a count representing a number of the plurality of digital components previously transmitted to the computing device. The data processing system can receive a target number of the plurality of digital components previously transmitted to the computing device. The data processing system can determine a probability the count reaches the target number within a predetermined time interval. The data processing system can select a candidate digital component based on the probability and selection criteria. The data processing system can transmit the candidate digital component to the computing device.

[0004] At least one aspect of the disclosure is directed to a method to provide content to computing devices in an online computer network environment. The method can include receiving, by a data processing system, an audio-based request from a computing device. The audio-based request can include a device identifier that can be associated with the computing device. The audio-based request can be detected at the computing device. The method can include receiving, by the data processing system, selection criteria that can include device identifier characteristics. The selection criteria can be associated with a digital component. The method can include identifying, by the data processing system, a plurality of digital components associated with the digital component. The method can include determining, by the data processing system, a count representing a number of the plurality of digital components previously transmitted to the computing device. The method can include receiving, by the data processing system, a target number of the plurality of digital components previously transmitted to the computing device. The method can include determining, by the data processing system, a probability the count reaches the target number within a predetermined time interval. The method can include selecting, by the data processing system, a candidate digital component based on the probability and selection criteria. The method can include transmitting, by the data processing system, the candidate digital component to the computing device.

[0005] These and other aspects and implementations are discussed in detail below. The foregoing information and the following detailed description include illustrative examples of various aspects and implementations, and provide an overview or framework for understanding the nature and character of the claimed aspects and implementations. The drawings provide illustration and a further understanding of the various aspects and implementations, and are incorporated in and constitute a part of this specification.

BRIEF DESCRIPTION OF THE DRAWINGS

[0006] The accompanying drawings are not intended to be drawn to scale. Like reference numbers and designations in the various drawings indicate like elements. For purposes of clarity, not every component may be labeled in every drawing. In the drawings:

[0007] FIG. 1A is an example of a block diagram of a computer system in accordance with a described implementation.

[0008] FIG. 1B depicts a system for multi-modal transmission of packetized data in a voice activated computer network environment.

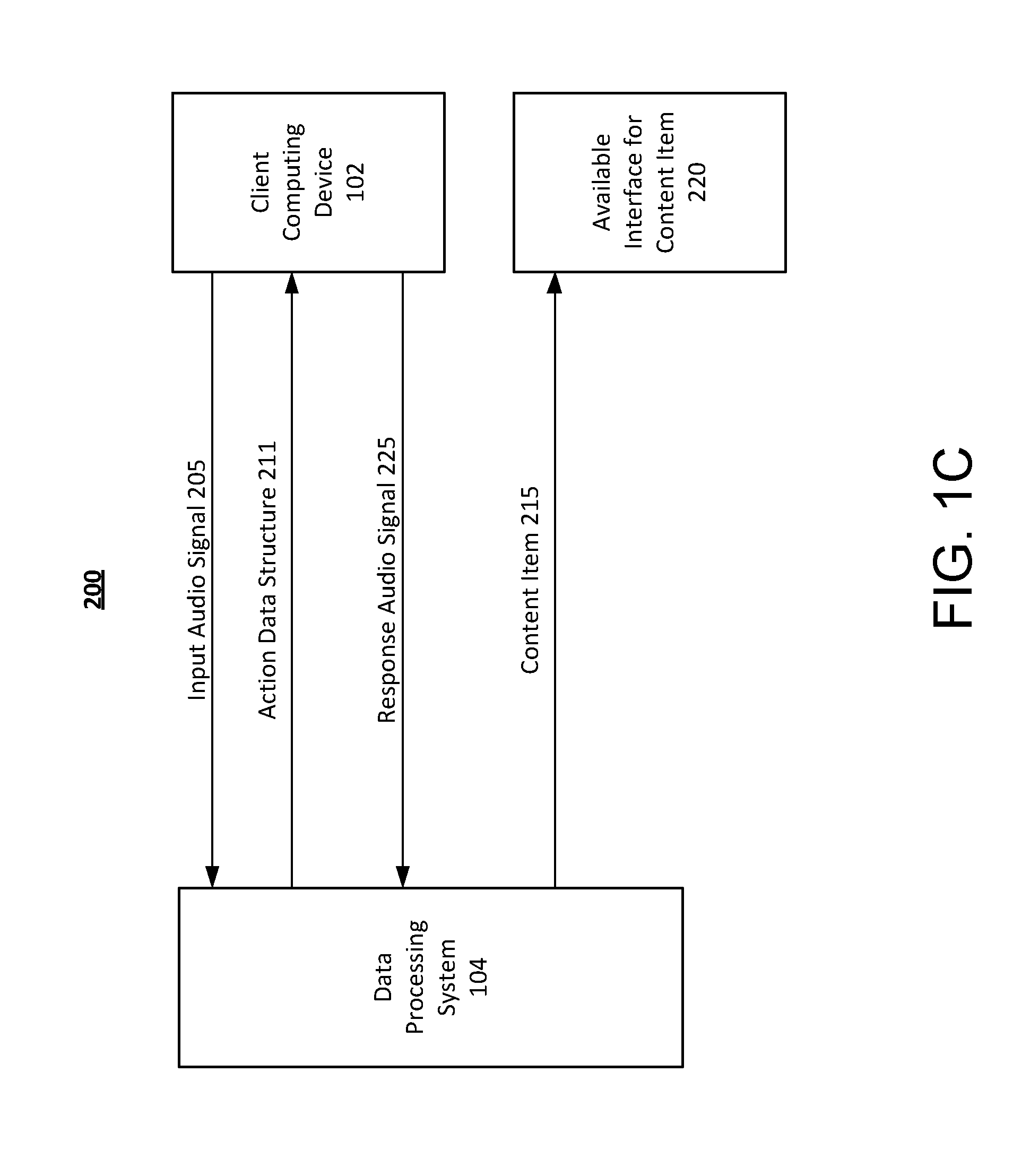

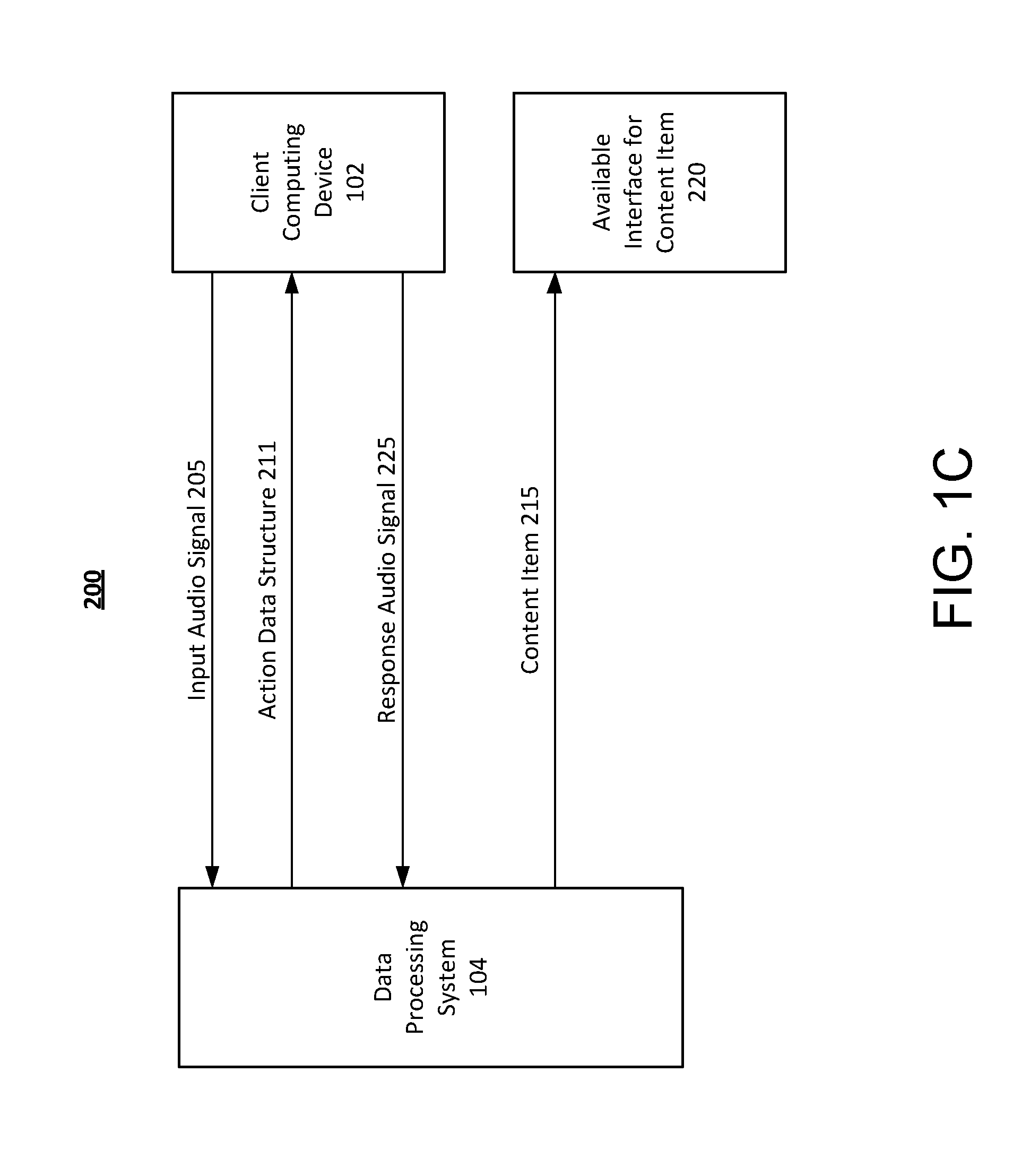

[0009] FIG. 1C depicts a flow diagram for multi-modal transmission of packetized data in a voice activated computer network environment.

[0010] FIG. 2 is an illustration of an example system for serving digital components in accordance with a described implementation.

[0011] FIG. 3 is an illustration of an example interface for a service provider in accordance with a described implementation.

[0012] FIG. 4 is an example of a system for updating the device identifier in accordance with a described implementation.

[0013] FIG. 5 is an example of a flow diagram for serving digital components in accordance with a described implementation.

[0014] FIG. 6 is a block diagram illustrating an example method to provide digital components in an online computer network.

[0015] FIG. 7 is a block diagram illustrating a general architecture for a computer system that may be employed to implement elements of the systems and methods described and illustrated herein.

[0016] Like reference numbers and designations in the various drawings indicate like elements.

DETAILED DESCRIPTION

[0017] Following below are more detailed descriptions of various concepts related to, and implementations of, methods, apparatuses, and systems for multi-modal transmission of packetized data in a voice activated data packet based computer network environment. The various concepts introduced above and discussed in greater detail below may be implemented in any of numerous ways.

[0018] Systems and methods of the present disclosure relate generally to a data processing system that identifies an optimal transmission modality for data packet (or other protocol based) transmission in a voice activated computer network environment. The data processing system can improve the efficiency and effectiveness of data packet transmission over one or more computer networks by, for example, selecting a transmission modality from a plurality of options for data packet routing through a computer network of content items to one or more client computing devices, or to different interfaces (e.g., different apps or programs) of a single client computing device. In some implementations, digital components are transmitted to and displayed by a computing device a predetermined number of times to achieve an outcome. For example, a digital component (or a group of related digital components) may need to be displayed five times within a given time interval such that a user remembers the subject matter of the digital components. Content items can also be referred to as digital components. In some implementations, a digital component can be a component of a content item. The data processing system can also improve the efficiency and effectiveness of the data packet transmissions over one or more computer networks by, for example, determining not to transmit digital components to a computing device. For example, the data processing system can select to not transmit a digital component to a computing device, and save bandwidth and computational resources, if the data processing system determines the digital component will not be displayed the predetermined number of times to achieve the outcome. The data processing system can also select digital components such that the digital components are presented the predetermined number of times, such that bandwidth is not wasted by transmitting digital components that have little chance of reaching the predetermined number of exposures.
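As a non-limiting illustration, the decision not to transmit a digital component that is unlikely to reach its predetermined number of exposures can be sketched as follows. The sketch assumes, purely for illustration, that future exposures arrive as a Poisson process with a known average rate; the disclosure does not name a particular exposure model, and the function names, the `rate_per_day` parameter, and the 0.5 threshold are hypothetical.

```python
import math


def prob_reaches_target(count: int, target: int,
                        rate_per_day: float, days_left: float) -> float:
    """Probability that `count` grows to at least `target` in the time left.

    Assumption (not from the source): the number of future exposures N is
    Poisson distributed with mean rate_per_day * days_left.
    """
    needed = target - count
    if needed <= 0:
        return 1.0  # target already met
    lam = rate_per_day * days_left
    # P(N >= needed) = 1 - sum over k < needed of e^-lam * lam^k / k!
    below = sum(math.exp(-lam) * lam ** k / math.factorial(k)
                for k in range(needed))
    return max(0.0, 1.0 - below)


def should_transmit(count: int, target: int, rate_per_day: float,
                    days_left: float, threshold: float = 0.5) -> bool:
    """Transmit only if the target is likely enough to be reached."""
    return prob_reaches_target(count, target, rate_per_day, days_left) >= threshold
```

Under these assumptions, a device that has seen four of five required exposures and averages one exposure per day would still be served with one day remaining, while a device with no prior exposures and a low exposure rate would be skipped, saving the corresponding transmission.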

[0019] Data packets or other protocol based signals corresponding to the selected operations can be routed through a computer network between multiple computing devices. For example, the data processing system can route a content item to a different interface than an interface from which a request was received. The different interface can be on the same client computing device or a different client computing device from which a request was received. The data processing system can select at least one candidate interface from a plurality of candidate interfaces for content item transmission to a client computing device. The candidate interfaces can be determined based on technical or computing parameters such as processor capability or utilization rate, memory capability or availability, battery status, available power, network bandwidth utilization, interface parameters or other resource utilization values. By selecting an interface to receive and provide the content item for rendering from the client computing device based on candidate interfaces or utilization rates associated with the candidate interfaces, the data processing system can reduce network bandwidth usage, latency, or processing utilization or power consumption of the client computing device that renders the content item. This saves processing power and other computing resources such as memory and reduces electrical power consumption by the data processing system, and the reduced data transmissions via the computer network reduce bandwidth requirements and usage of the data processing system.

[0020] The systems and methods described herein can include a data processing system that receives an input audio query, which can also be referred to as an input audio signal. From the input audio query the data processing system can identify a request and a trigger keyword corresponding to the request. Based on the trigger keyword or the request, the data processing system can generate a first action data structure. For example, the first action data structure can include an organic response to the input audio query received from a client computing device, and the data processing system can provide the first action data structure to the same client computing device for rendering as audio output via the same interface from which the request was received.
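As a non-limiting illustration, identifying a request and a trigger keyword and generating a first action data structure can be sketched as follows. The sketch assumes the input audio query has already been converted to text; the keyword table, the `ActionDataStructure` fields, and the `parse_query` function are hypothetical and are not taken from the disclosure.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical trigger keywords mapped to action types (illustrative only).
TRIGGER_KEYWORDS = {"go": "transport", "order": "purchase", "play": "media"}


@dataclass
class ActionDataStructure:
    action_type: str      # kind of action implied by the trigger keyword
    trigger_keyword: str  # keyword found in the query
    request: str          # the full request text


def parse_query(transcript: str) -> Optional[ActionDataStructure]:
    """Return an action data structure for the first trigger keyword found."""
    for raw in transcript.lower().split():
        word = raw.strip(".,!?")  # drop simple trailing punctuation
        if word in TRIGGER_KEYWORDS:
            return ActionDataStructure(TRIGGER_KEYWORDS[word], word, transcript)
    return None  # no trigger keyword: nothing to act on
```

A production natural language processor would use far richer parsing than keyword lookup; the sketch only shows how the request, the trigger keyword, and the resulting data structure relate to one another.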

[0021] The data processing system can also select at least one content item based on the trigger keyword or the request. The data processing system can identify or determine a plurality of candidate interfaces for rendering of the content item(s). The interfaces can include one or more hardware or software interfaces, such as display screens, audio interfaces, speakers, applications or programs available on the client computing device that originated the input audio query, or on different client computing devices. The interfaces can include JavaScript slots for online documents for the insertion of content items, as well as push notification interfaces. The data processing system can determine utilization values for the different candidate interfaces. The utilization values can indicate power, processing, memory, bandwidth, or interface parameter capabilities, for example. Based on the utilization values for the candidate interfaces the data processing system can select a candidate interface as a selected interface for presentation or rendering of the content item. For example, the data processing system can convert or provide the content item for delivery in a modality compatible with the selected interface. The selected interface can be an interface of the same client computing device that originated the input audio signal or a different client computing device. By routing data packets via a computing network based on utilization values associated with a candidate interface, the data processing system selects a destination for the content item in a manner that can use the least amount of processing power, memory, or bandwidth from available options, or that can conserve power of one or more client computing devices.
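As a non-limiting illustration, selecting an interface based on utilization values can be sketched as follows. The sketch assumes each candidate interface reports normalized costs between 0 and 1 and that the costs are combined by a weighted sum; the field names, the equal weights, and the scoring rule are hypothetical and are not specified by the disclosure.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class CandidateInterface:
    name: str          # e.g. "push notification" (illustrative)
    device: str        # device hosting the interface
    power: float       # each cost is normalized to [0, 1]; lower is cheaper
    processor: float
    memory: float
    bandwidth: float


def utilization_value(c: CandidateInterface,
                      weights: Tuple[float, float, float, float] = (0.25, 0.25, 0.25, 0.25)) -> float:
    """Combine the per-resource costs into one utilization value (assumed rule)."""
    w_power, w_cpu, w_mem, w_bw = weights
    return (w_power * c.power + w_cpu * c.processor
            + w_mem * c.memory + w_bw * c.bandwidth)


def select_interface(candidates: Sequence[CandidateInterface]) -> CandidateInterface:
    """Pick the candidate with the lowest combined utilization value."""
    if not candidates:
        raise ValueError("at least one candidate interface is required")
    return min(candidates, key=utilization_value)
```

With this scoring rule, a text message to a phone with ample battery can be preferred over audio playback on a heavily loaded speaker, even when the request originated at the speaker.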

[0022] The data processing system can provide the content item or the first action data structure by packet or other protocol based data message transmission via a computer network to a client computing device. The output signal can cause an audio driver component of the client computing device to generate an acoustic wave, e.g., an audio output, which can be output from the client computing device. The audio (or other) output can correspond to the first action data structure or to the content item. For example, the first action data structure can be routed as audio output, and the content item can be routed as a text based message. By routing the first action data structure and the content item to different interfaces, the data processing system can conserve resources utilized by each interface, relative to providing both the first action data structure and the content item to the same interface. This results in fewer data processing operations, less memory usage, or less network bandwidth utilization by the selected interfaces (or their corresponding devices) than would be the case without separation and independent routing of the first action data structure and the content item.

[0023] Service providers can determine goals for exposure to certain digital components or groups of digital components within their content campaigns. Service providers may also determine a strategy for what times to serve the digital components to meet their goals for exposure. The strategy may be based on previous exposures of the digital components to the users.

[0024] The exposures may be determined by a number of devices, e.g., a set-top box, a television, a web page, etc. used to serve the digital components. The devices may include a database to store the number of exposures and the time of exposure.
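As a non-limiting illustration, a store that records the number and time of exposures can be sketched as follows. The sketch keeps exposure timestamps in memory, keyed by a device identifier and a group of related digital components; the `ExposureLog` class and its method names are hypothetical, and a deployed system would presumably use a persistent database.

```python
from collections import defaultdict
from datetime import datetime, timedelta


class ExposureLog:
    """In-memory record of when digital components were exposed on a device."""

    def __init__(self) -> None:
        # (device_id, group_id) -> list of exposure timestamps
        self._events = defaultdict(list)

    def record(self, device_id: str, group_id: str, when: datetime) -> None:
        """Store one exposure and the time it occurred."""
        self._events[(device_id, group_id)].append(when)

    def count(self, device_id: str, group_id: str,
              now: datetime, window: timedelta) -> int:
        """Count exposures that fall inside the interval [now - window, now]."""
        start = now - window
        events = self._events.get((device_id, group_id), ())
        return sum(1 for t in events if start <= t <= now)
```

The count returned for a given interval is the quantity that can then be compared against a target number of exposures.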

[0025] Based on the number of exposures of digital components, service providers can determine or update strategies for their proposed, e.g., yet to be served, digital components. For example, service providers concerned with delivering a message believe that users may need to be exposed to the message a minimum number of times, in a given period of time, before the user is aware of the message. Although a click would count as a recognition of the message, clicks are not a reliable source to estimate the minimum number of exposures, e.g., clicks are infrequent and do not capture the occasions when a user is exposed to the message but does not click on it.

[0026] In a general overview, the service provider can determine a minimum number of exposures for an interval of time. The service provider may also determine a maximum aggregate bid value for each user. A digital component server determines the probability that a user will meet the minimum number of exposures within the interval of time. Given the probability, the digital component server adjusts bidding within the service provider's maximum aggregate bid value for each user that meets the minimum number of exposures within the interval of time.
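As a non-limiting illustration, adjusting a bid within the maximum aggregate bid value can be sketched as follows. The disclosure does not give a bidding formula; the sketch assumes, for illustration only, that the remaining budget for a user is spread evenly over the exposures still needed and scaled by the probability that the minimum number of exposures will be met. The function name and parameters are hypothetical.

```python
def adjusted_bid(probability: float, max_aggregate_bid: float,
                 spent: float, exposures_needed: int) -> float:
    """Per-exposure bid for one user under an assumed, illustrative rule.

    probability       -- chance the user meets the minimum number of exposures
    max_aggregate_bid -- service provider's cap on total spend for this user
    spent             -- amount already spent on this user
    exposures_needed  -- exposures still required to reach the minimum
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    remaining = max(0.0, max_aggregate_bid - spent)
    if exposures_needed <= 0 or remaining == 0.0:
        return 0.0  # minimum already met or budget exhausted: do not bid
    return probability * remaining / exposures_needed
```

Under this rule the bid never exceeds the remaining budget, and it falls toward zero for users who are unlikely to reach the minimum number of exposures within the interval of time.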

[0027] FIG. 1A is a block diagram of a computer system 100 in accordance with a described implementation. System 100 includes client computing device 102, which may communicate with other computing devices via a network 106. For example, client computing device 102 may communicate with one or more content sources ranging from a content provider computing device 108 up to an nth content source 110. Content provider computing devices 108 may provide webpages and/or media content (e.g., audio, video, and other forms of digital content) to client computing devices 102. System 100 may include a data processing system 104, which provides digital component data to other computing devices over network 106.

[0028] Network 106 may be any form of computer network that relays information between client computing device 102, data processing system 104, and content provider computing devices 108. For example, network 106 may include the Internet and/or other types of data networks, such as a local area network (LAN), a wide area network (WAN), a cellular network, satellite network, or other types of data networks. Network 106 may include any number of computing devices (e.g., computers, servers, routers, network switches, etc.) that are configured to receive and/or transmit data within network 106. Network 106 may include any number of hardwired and/or wireless connections. For example, client computing device 102 may communicate wirelessly (e.g., via WiFi, cellular, radio, etc.) with a transceiver that is hardwired (e.g., via a fiber optic cable, a CAT5 cable, etc.) to other computing devices in network 106.

[0029] Client computing device 102 may be any number of different user electronic devices configured to communicate via network 106 (e.g., a laptop computer, a desktop computer, a tablet computer, a smartphone, a digital video recorder, a set-top box for a television, a video game console, etc.). Client computing device 102 is shown to include a processor 112 and a memory 114, e.g., a processing circuit. Memory 114 stores machine instructions that, when executed by processor 112, cause processor 112 to perform one or more of the operations described herein. Processor 112 may include a microprocessor, application-specific integrated circuit (ASIC), field-programmable gate array (FPGA), etc., or combinations thereof. Memory 114 may include, but is not limited to, electronic, optical, magnetic, or any other storage or transmission device capable of providing processor 112 with program instructions. Memory 114 may include a floppy disk, CD-ROM, DVD, magnetic disk, memory chip, ASIC, FPGA, read-only memory (ROM), random-access memory (RAM), electrically-erasable ROM (EEPROM), erasable-programmable ROM (EPROM), flash memory, optical media, or any other suitable memory from which processor 112 can read instructions. The instructions may include code from any suitable computer-programming language such as, but not limited to, C, C++, C#, Java, JavaScript, Perl, Python and Visual Basic.

[0030] Client computing device 102 may include one or more user interface devices. In general, a user interface device refers to any electronic device that conveys data to a user by generating sensory information (e.g., a visualization on a display, one or more sounds, etc.) and/or converts received sensory information from a user into electronic signals (e.g., a keyboard, a mouse, a pointing device, a touch screen display, a microphone, etc.). The one or more user interface devices may be internal to a housing of client computing device 102 (e.g., a built-in display, microphone, etc.) or external to the housing of client computing device 102 (e.g., a monitor connected to client computing device 102, a speaker connected to client computing device 102, etc.), according to various implementations. For example, client computing device 102 may include an electronic display 116, which visually displays webpages using webpage data received from content provider computing devices 108 and/or from data processing system 104.

[0031] Content provider computing devices 108 are electronic devices connected to network 106 and provide media content to client computing device 102. For example, content provider computing devices 108 may be computer servers (e.g., FTP servers, file sharing servers, web servers, etc.) or other devices that include a processing circuit. Media content may include, but is not limited to, webpage data, a movie, a sound file, pictures, and other forms of data. Similarly, data processing system 104 may include a processing circuit including a processor 112 and a memory 114. In some implementations, data processing system 104 may include several computing devices (e.g., a data center, a network of servers, etc.). In such a case, the various devices of data processing system 104 may comprise a processing circuit (e.g., processor 112 represents the collective processors of the devices and memory 114 represents the collective memories of the devices).

[0032] Data processing system 104 may provide digital components to client computing device 102 via network 106. For example, content provider computing device 108 may provide a webpage to client computing device 102, in response to receiving a request for a webpage from client computing device 102. In some implementations, a digital component from data processing system 104 may be provided to client computing device 102 indirectly. For example, content provider computing device 108 may receive digital component data from data processing system 104 and use the digital component as part of the webpage data provided to client computing device 102. In other implementations, a digital component from data processing system 104 may be provided to client computing device 102 directly. For example, content provider computing device 108 may provide webpage data to client computing devices 102 that includes a command to retrieve a digital component from data processing system 104. On receipt of the webpage data, client computing device 102 may retrieve a digital component from data processing system 104 based on the command and display the digital component when the webpage is rendered on display 116.

[0033] According to various implementations, a user of client computing device 102 may search for, access, etc. various documents (e.g., web pages, web sites, articles, images, video, etc.) using a search engine via network 106. The web pages may be displayed as a search result from a search engine query containing search terms or keywords. Search engine queries may allow the user to enter a search term or keyword into the search engine to execute a document search. Search engines may be stored in memory 114 of data processing system 104 and may be accessible with client computing device 102. The result of an executed website search on a search engine may include a display on a search engine document of links to websites. Executed search engine queries may result in the display of digital component data generated and transmitted from data processing system 104. In some cases, search engines contract with service providers to display digital components to users of the search engine in response to certain search engine queries.

[0034] In other implementations, a digital component may be displayed in a publication (e.g., a third-party web page) as in a display network. For example, a number of web pages and applications may show relevant digital components. The digital components may be matched to the web pages and other placements, such as mobile computing applications, according to relevant content or themes of the web pages. Specific web pages about specific topics may display the digital component. The digital component may be shown on all of the web pages or on a select number of web pages.

[0035] In another implementation, service providers may purchase or bid on search terms, such as keyword entries entered by users into a document such as a search engine. When the search term or keyword is entered into the document, digital component data such as links to a service provider website may be displayed to the user. In some implementations, data processing system 104 may use an auction model that generates a digital component. Service providers may bid on keywords using the auction model. The auction model may also be adjusted to reflect the maximum amount a service provider is willing to spend so that a user is exposed to a digital component a minimum number of times.
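
By way of a non-limiting illustration, a keyword auction of the kind described above can be sketched as follows. The disclosure does not specify the auction mechanics, so the `Bid` record, the `run_auction` function, the highest-bid-wins rule, and the first-price charging rule are all assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Bid:
    provider: str       # hypothetical service provider identifier
    keyword: str        # search term the provider bid on
    amount: float       # bid per display (assumed unit)
    max_spend: float    # maximum amount the provider is willing to spend
    spent: float = 0.0  # amount already charged to the provider


def run_auction(bids, keyword):
    """Return the winning bid for a keyword, or None if no bid is eligible."""
    # A bid is eligible only if charging it would not exceed the spend cap.
    eligible = [
        b for b in bids
        if b.keyword == keyword and b.spent + b.amount <= b.max_spend
    ]
    if not eligible:
        return None
    # Assumption: the highest eligible bid wins and pays its own bid.
    winner = max(eligible, key=lambda b: b.amount)
    winner.spent += winner.amount
    return winner


bids = [
    Bid("provider_a", "sunscreen", amount=1.0, max_spend=2.0),
    Bid("provider_b", "sunscreen", amount=2.0, max_spend=3.0),
]
first = run_auction(bids, "sunscreen")   # provider_b wins on price
second = run_auction(bids, "sunscreen")  # provider_b is capped, so provider_a wins
```

In this sketch the spend cap removes a provider from later auctions once a further charge would exceed the maximum amount the provider is willing to spend.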

[0036] FIG. 1B depicts an example system 100 for multi-modal transmission of packetized data in a voice activated data packet (or other protocol) based computer network environment. The system 100 can include at least one data processing system 104. The data processing system 104 can include at least one server having at least one processor. For example, the data processing system 104 can include a plurality of servers located in at least one data center or server farm. The data processing system 104 can determine, from an audio input signal, a request and a trigger keyword associated with the request. Based on the request and trigger keyword, the data processing system 104 can determine or select at least one action data structure, and can select at least one content item (and initiate other actions as described herein). The data processing system 104 can identify candidate interfaces for rendering of the action data structures or the content items, and can provide the action data structures or the content items for rendering by one or more candidate interfaces on one or more client computing devices based on resource utilization values for or of the candidate interfaces, for example, as part of a voice activated communication or planning system. The action data structures (or the content items) can include one or more audio files that when rendered provide an audio output or acoustic wave. The action data structures or the content items can include other content (e.g., text, video, or image content) in addition to audio content.

[0037] The data processing system 104 can include multiple, logically-grouped servers and facilitate distributed computing techniques. The logical group of servers may be referred to as a data center, server farm or a machine farm. The servers can be geographically dispersed. A data center or machine farm may be administered as a single entity, or the machine farm can include a plurality of machine farms. The servers within each machine farm can be heterogeneous--one or more of the servers or machines can operate according to one or more type of operating system platform. The data processing system 104 can include servers in a data center that are stored in one or more high-density rack systems, along with associated storage systems, located for example, in an enterprise data center. The data processing system 104 with consolidated servers in this way can improve system manageability, data security, the physical security of the system, and system performance by locating servers and high-performance storage systems on localized high-performance networks. Centralization of all or some of the data processing system 104 components, including servers and storage systems, and coupling them with advanced system management tools allows more efficient use of server resources, which saves power and processing requirements and reduces bandwidth usage.

[0038] The data processing system 104 can include at least one natural language processor (NLP) component 110, at least one interface 115, at least one prediction component 120, at least one content selector component 125, at least one audio signal generator component 130, at least one direct action application programming interface (API) 135, at least one interface management component 140, and at least one data repository 145. The NLP component 110, interface 115, prediction component 120, content selector component 125, audio signal generator component 130, direct action API 135, and interface management component 140 can each include at least one processing unit, server, virtual server, circuit, engine, agent, appliance, or other logic device such as programmable logic arrays configured to communicate with the data repository 145 and with other computing devices (e.g., at least one client computing device 102, at least one content provider computing device 108, or at least one service provider computing device 160) via the at least one computer network 106. The network 106 can include computer networks such as the internet, local, wide, metro or other area networks, intranets, satellite networks, other computer networks such as voice or data mobile phone communication networks, and combinations thereof.

[0039] The network 106 can include or constitute a display network, e.g., a subset of information resources available on the internet that are associated with a content placement or search engine results system, or that are eligible to include third party content items as part of a content item placement campaign. The network 106 can be used by the data processing system 104 to access information resources such as web pages, web sites, domain names, or uniform resource locators that can be presented, output, rendered, or displayed by the client computing device 102. For example, via the network 106 a user of the client computing device 102 can access information or data provided by the data processing system 104, the content provider computing device 108 or the service provider computing device 160.

[0040] The network 106 can include, for example, a point-to-point network, a broadcast network, a wide area network, a local area network, a telecommunications network, a data communication network, a computer network, an ATM (Asynchronous Transfer Mode) network, a SONET (Synchronous Optical Network) network, a SDH (Synchronous Digital Hierarchy) network, a wireless network or a wireline network, and combinations thereof. The network 106 can include a wireless link, such as an infrared channel or satellite band. The topology of the network 106 may include a bus, star, or ring network topology. The network 106 can include mobile telephone networks using any protocol or protocols used to communicate among mobile devices, including advanced mobile phone protocol ("AMPS"), time division multiple access ("TDMA"), code-division multiple access ("CDMA"), global system for mobile communication ("GSM"), general packet radio services ("GPRS") or universal mobile telecommunications system ("UMTS"). Different types of data may be transmitted via different protocols, or the same types of data may be transmitted via different protocols.

[0041] The client computing device 102, the content provider computing device 108, and the service provider computing device 160 can each include at least one logic device such as a computing device having a processor to communicate with each other or with the data processing system 104 via the network 106. The client computing device 102, the content provider computing device 108, and the service provider computing device 160 can each include at least one server, processor or memory, or a plurality of computation resources or servers located in at least one data center. The client computing device 102, the content provider computing device 108, and the service provider computing device 160 can each include at least one computing device such as a desktop computer, laptop, tablet, personal digital assistant, smartphone, portable computer, server, thin client computer, virtual server, or other computing device.

[0042] The client computing device 102 can include at least one sensor 151, at least one transducer 152, at least one audio driver 153, and at least one speaker 154. The sensor 151 can include a microphone or audio input sensor. The transducer 152 can convert the audio input into an electronic signal, or vice-versa. The audio driver 153 can include a script or program executed by one or more processors of the client computing device 102 to control the sensor 151, the transducer 152 or the audio driver 153, among other components of the client computing device 102 to process audio input or provide audio output. The speaker 154 can transmit the audio output signal.

[0043] The client computing device 102 can be associated with an end user that enters voice queries as audio input into the client computing device 102 (via the sensor 151) and receives audio output in the form of a computer generated voice that can be provided from the data processing system 104 (or the content provider computing device 108 or the service provider computing device 160) to the client computing device 102, output from the speaker 154. The audio output can correspond to an action data structure received from the direct action API 135, or a content item selected by the content selector component 125. The computer generated voice can include recordings from a real person or computer generated language.

[0044] The content provider computing device 108 (or the data processing system 104 or service provider computing device 160) can provide audio based content items or action data structures for display by the client computing device 102 as an audio output. The action data structure or content item can include an organic response or offer for a good or service, such as a voice based message that states: "Today it will be sunny and 80 degrees at the beach" as an organic response to a voice-input query of "Is today a beach day?". The data processing system 104 (or other system 100 component such as the content provider computing device 108) can also provide a content item as a response, such as a voice or text message based content item offering sunscreen.

[0045] The content provider computing device 108 or the data repository 145 can include memory to store a series of audio action data structures or content items that can be provided in response to a voice based query. The action data structures and content items can include packet based data structures for transmission via the network 106. The content provider computing device 108 can also provide audio or text based content items (or other content items) to the data processing system 104 where they can be stored in the data repository 145. The data processing system 104 can select the audio action data structures or text based content items and provide (or instruct the content provider computing device 108 to provide) them to the same or different client computing devices 102 responsive to a query received from one of those client computing devices 102. The audio based action data structures can be exclusively audio or can be combined with text, image, or video data. The content items can be exclusively text or can be combined with audio, image or video data.

[0046] The service provider computing device 160 can include at least one service provider natural language processor (NLP) component 161 and at least one service provider interface 162. The service provider NLP component 161 (or other components such as a direct action API of the service provider computing device 160) can engage with the client computing device 102 (via the data processing system 104 or bypassing the data processing system 104) to create a back-and-forth real-time voice or audio based conversation (e.g., a session) between the client computing device 102 and the service provider computing device 160. For example, the service provider interface 162 can receive or provide data messages (e.g., action data structures or content items) to the direct action API 135 of the data processing system 104. The direct action API 135 can also generate the action data structures independent from or without input from the service provider computing device 160. The service provider computing device 160 and the content provider computing device 108 can be associated with the same entity. For example, the content provider computing device 108 can create, store, or make available content items for beach related services, such as sunscreen, beach towels or bathing suits, and the service provider computing device 160 can establish a session with the client computing device 102 to respond to a voice input query about the weather at the beach, directions for a beach, or a recommendation for an area beach, and can provide these content items to the end user of the client computing device 102 via an interface of the same client computing device 102 from which the query was received, a different interface of the same client computing device 102, or an interface of a different client computing device. The data processing system 104, via the direct action API 135, the NLP component 110 or other components, can also establish the session with the client computing device, including or bypassing the service provider computing device 160, to, for example, provide an organic response to a query related to the beach.

[0047] The data repository 145 can include one or more local or distributed databases, and can include a database management system. The data repository 145 can include computer data storage or memory and can store one or more parameters 146, one or more policies 147, content data 148, or templates 149 among other data. The parameters 146, policies 147, and templates 149 can include information such as rules about a voice based session between the client computing device 102 and the data processing system 104 (or the service provider computing device 160). The content data 148 can include content items for audio output or associated metadata, as well as input audio messages that can be part of one or more communication sessions with the client computing device 102.

[0048] The system 100 can optimize processing of action data structures and content items in a voice activated data packet (or other protocol) environment. For example, the data processing system 104 can include or be part of a voice activated assistant service, voice command device, intelligent personal assistant, knowledge navigator, event planning, or another assistant program. The data processing system 104 can provide one or more instances of action data structures as audio output for display from the client computing device 102 to accomplish tasks related to an input audio signal. For example, the data processing system can communicate with the service provider computing device 160 or other third party computing devices to generate action data structures with information about a beach, among other things. For example, an end user can enter an input audio signal into the client computing device 102 of: "OK, I would like to go to the beach this weekend" and an action data structure can indicate the weekend weather forecast for area beaches, such as "it will be sunny and 80 degrees at the beach on Saturday, with high tide at 3 pm."

[0049] The action data structures can include a number of organic or non-sponsored responses to the input audio signal. For example, the action data structures can include a beach weather forecast or directions to a beach. The action data structures in this example include organic or non-sponsored content that is directly responsive to the input audio signal. The content items responsive to the input audio signal can include sponsored or non-organic content, such as an offer to buy sunscreen from a convenience store located near the beach. In this example, the organic action data structure (beach forecast) is responsive to the input audio signal (a query related to the beach), and the content item (a reminder or offer for sunscreen) is also responsive to the same input audio signal. The data processing system 104 can evaluate system 100 parameters (e.g., power usage, available displays, formats of displays, memory requirements, bandwidth usage, power capacity or time of input power (e.g., internal battery or external power source such as a power source from a wall output)) to provide the action data structure and the content item to different candidate interfaces on the same client computing device 102, or to different candidate interfaces on different client computing devices 102.
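
As a non-limiting illustration, the evaluation of candidate interfaces can be sketched as follows. The disclosure lists parameters that may be considered but does not prescribe how they are combined, so the `CandidateInterface` record, its field names, and the single numeric utilization score (lower meaning less resource use) are assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class CandidateInterface:
    device_id: str      # hypothetical identifier of a client computing device
    modality: str       # e.g., "audio" or "display"
    utilization: float  # assumed resource utilization value; lower is cheaper


def select_interface(candidates):
    """Return the candidate with the lowest utilization value, or None."""
    if not candidates:
        return None
    return min(candidates, key=lambda c: c.utilization)


candidates = [
    CandidateInterface("phone", "audio", utilization=0.7),
    CandidateInterface("phone", "display", utilization=0.2),
    CandidateInterface("watch", "display", utilization=0.5),
]
chosen = select_interface(candidates)  # the phone display in this example
```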

[0050] The data processing system 104 can include an application, script or program installed at the client computing device 102, such as an app to communicate input audio signals (e.g., as data packets via a packetized or other protocol based transmission) to at least one interface 115 of the data processing system 104 and to drive components of the client computing device 102 to render output audio signals (e.g., for action data structures) or other output signals (e.g., content items). The data processing system 104 can receive data packets or another signal that includes or identifies an audio input signal. For example, the data processing system 104 can execute or run the NLP component 110 to receive the audio input signal.

[0051] The NLP component 110 can convert the audio input signal into recognized text by comparing the input signal against a stored, representative set of audio waveforms (e.g., in the data repository 145) and choosing the closest matches. The representative waveforms are generated across a large set of users, and can be augmented with speech samples. After the audio signal is converted into recognized text, the NLP component 110 can match the text to words that are associated, for example, via training across users or through manual specification, with actions that the data processing system 104 can serve.
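
The closest-match comparison described above can be sketched, in highly simplified form, as a nearest-neighbor lookup. Practical speech recognition is considerably more elaborate; the Euclidean distance, the equal-length sample lists, and the names `closest_match` and `reference_set` are assumptions made only for illustration.

```python
import math


def closest_match(signal, reference_set):
    """Return the text whose stored waveform is nearest to the input signal.

    reference_set maps recognized text to a representative waveform, given
    here as a list of floats of the same length as the input (an assumption).
    """
    def distance(a, b):
        # Euclidean distance between two equal-length sample lists.
        return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

    return min(reference_set, key=lambda text: distance(signal, reference_set[text]))


reference_set = {
    "go": [0.0, 1.0, 0.0],
    "beach": [1.0, 0.0, 1.0],
}
recognized = closest_match([0.9, 0.1, 0.8], reference_set)
```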

[0052] The audio input signal can be detected by the sensor 151 (e.g., a microphone) of the client computing device. Via the transducer 152, the audio driver 153, or other components the client computing device 102 can provide the audio input signal to the data processing system 104 (e.g., via the network 106) where it can be received (e.g., by the interface 115) and provided to the NLP component 110 or stored in the data repository 145 as content data 148.

[0053] The NLP component 110 can receive or otherwise obtain the input audio signal. From the input audio signal, the NLP component 110 can identify at least one request or at least one trigger keyword corresponding to the request. The request can indicate intent or subject matter of the input audio signal. The trigger keyword can indicate a type of action likely to be taken. For example, the NLP component 110 can parse the input audio signal to identify at least one request to go to the beach for the weekend. The trigger keyword can include at least one word, phrase, root or partial word, or derivative indicating an action to be taken. For example, the trigger keyword "go" or "to go to" from the input audio signal can indicate a need for transport or a trip away from home. In this example, the input audio signal (or the identified request) does not directly express an intent for transport, however the trigger keyword indicates that transport is an ancillary action to at least one other action that is indicated by the request.
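
As a non-limiting illustration, identifying a request and a trigger keyword from recognized text can be sketched as follows. The `TRIGGERS` table, the `parse_input` function, and the rule that the request is the text following the trigger phrase are hypothetical simplifications and are not taken from the disclosure.

```python
import re

# Hypothetical mapping of trigger phrases to the type of action they suggest.
TRIGGERS = {"to go to": "transport", "go to": "transport", "go": "transport"}


def parse_input(text):
    """Return the request, trigger keyword, and action type found in text."""
    lowered = text.lower()
    # Try longer phrases first so "to go to" is preferred over "go".
    for phrase in sorted(TRIGGERS, key=len, reverse=True):
        match = re.search(r"\b" + re.escape(phrase) + r"\b", lowered)
        if match:
            # Assumption: the request is whatever follows the trigger phrase.
            request = lowered[match.end():].strip(" .?!")
            return {
                "request": request,
                "trigger_keyword": phrase,
                "action_type": TRIGGERS[phrase],
            }
    return {
        "request": lowered.strip(" .?!"),
        "trigger_keyword": None,
        "action_type": None,
    }


parsed = parse_input("OK, I would like to go to the beach this weekend")
```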

[0054] The prediction component 120 (or other mechanism of the data processing system 104) can generate, based on the request or the trigger keyword, at least one action data structure associated with the input audio signal. The action data structure can indicate information related to subject matter of the input audio signal. The action data structure can include one or more than one action, such as organic responses to the input audio signal. For example, the input audio signal "OK, I would like to go to the beach this weekend" can include at least one request indicating an interest for a beach weather forecast, surf report, or water temperature information, and at least one trigger keyword, e.g., "go" indicating travel to the beach, such as a need for items one may want to bring to the beach, or a need for transportation to the beach. The prediction component 120 can generate or identify subject matter for at least one action data structure, an indication of a request for a beach weather forecast, as well as subject matter for a content item, such as an indication of a query for sponsored content related to spending a day at a beach. From the request or the trigger keyword the prediction component 120 (or other system 100 component such as the NLP component 110 or the direct action API 135) predicts, estimates, or otherwise determines subject matter for action data structures or for content items. From this subject matter, the direct action API 135 can generate at least one action data structure and can communicate with at least one content provider computing device 108 to obtain at least one content item. The prediction component 120 can access the parameters 146 or policies 147 in the data repository 145 to determine or otherwise estimate requests for action data structures or content items. For example, the parameters 146 or policies 147 could indicate requests for a beach weekend weather forecast action or for content items related to beach visits, such as a content item for sunscreen.

[0055] The content selector component 125 can obtain indications of any of the interest in or request for the action data structure or for the content item. For example, the prediction component 120 can directly or indirectly (e.g., via the data repository 145) provide an indication of the action data structure or content item to the content selector component 125. The content selector component 125 can obtain this information from the data repository 145, where it can be stored as part of the content data 148. The indication of the action data structure can inform the content selector component 125 of a need for area beach information, such as a weather forecast or products or services the end user may need for a trip to the beach.

[0056] From the information received by the content selector component 125, e.g., an indication of a forthcoming trip to the beach, the content selector component 125 can identify at least one content item. The content item can be responsive or related to the subject matter of the input audio query. For example, the content item can include a data message identifying a store near the beach that has sunscreen, or offering a taxi ride to the beach. The content selector component 125 can query the data repository 145 to select or otherwise identify the content item, e.g., from the content data 148. The content selector component 125 can also select the content item from the content provider computing device 108. For example, responsive to a query received from the data processing system 104, the content provider computing device 108 can provide a content item to the data processing system 104 (or component thereof) for eventual output by the client computing device 102 that originated the input audio signal, or for output to the same end user by a different client computing device 102.
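
As a non-limiting illustration, selecting a content item from stored content data by subject matter can be sketched as follows. The `CONTENT_DATA` records, the topic sets, and the overlap-count scoring rule are hypothetical; the disclosure does not specify how the content selector component 125 ranks content items.

```python
# Hypothetical content data, each item tagged with the topics it relates to.
CONTENT_DATA = [
    {"id": "sunscreen-offer", "topics": {"beach", "sunscreen"}},
    {"id": "taxi-offer", "topics": {"beach", "transport"}},
    {"id": "ski-pass", "topics": {"snow"}},
]


def select_content_item(subject_terms, content_data):
    """Return the item sharing the most topics with the subject, or None."""
    if not content_data:
        return None
    terms = set(subject_terms)
    # Score each item by how many of its topics appear in the subject terms.
    scored = [(len(item["topics"] & terms), item) for item in content_data]
    best_score, best_item = max(scored, key=lambda pair: pair[0])
    return best_item if best_score > 0 else None


item = select_content_item({"beach", "transport"}, CONTENT_DATA)
```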

[0057] The audio signal generator component 130 can generate or otherwise obtain an output signal that includes the content item (as well as the action data structure) responsive to the input audio signal. For example, the data processing system 104 can execute the audio signal generator component 130 to generate or create an output signal corresponding to the action data structure or to the content item. The interface component 115 of the data processing system 104 can provide or transmit one or more data packets that include the output signal via the computer network 106 to any client computing device 102. The interface 115 can be designed, configured, constructed, or operational to receive and transmit information using, for example, data packets. The interface 115 can receive and transmit information using one or more protocols, such as a network protocol. The interface 115 can include a hardware interface, software interface, wired interface, or wireless interface. The interface 115 can facilitate translating or formatting data from one format to another format. For example, the interface 115 can include an application programming interface that includes definitions for communicating between various components, such as software components of the system 100.
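
As a non-limiting illustration, dividing an output signal into data packets and reassembling it at the receiving device can be sketched as follows. The packet layout (a sequence number plus a payload slice), the packet size, and the function names are assumptions for this sketch and do not correspond to any particular network protocol.

```python
def packetize(payload: bytes, size: int = 4):
    """Split a payload into sequence-numbered packets of at most `size` bytes."""
    return [
        {"seq": index, "data": payload[offset:offset + size]}
        for index, offset in enumerate(range(0, len(payload), size))
    ]


def reassemble(packets):
    """Rebuild the payload from packets that may arrive out of order."""
    ordered = sorted(packets, key=lambda packet: packet["seq"])
    return b"".join(packet["data"] for packet in ordered)


output_signal = b"sunny and 80 degrees"
packets = packetize(output_signal)
restored = reassemble(list(reversed(packets)))  # simulate out-of-order arrival
```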

[0058] The data processing system 104 can provide the output signal including the action data structure from the data repository 145 or from the audio signal generator component 130 to the client computing device 102. The data processing system 104 can provide the output signal including the content item from the data repository 145 or from the audio signal generator component 130 to the same or to a different client computing device 102.

[0059] The data processing system 104 can also instruct, via data packet transmissions, the content provider computing device 108 or the service provider computing device 160 to provide the output signal (e.g., corresponding to the action data structure or to the content item) to the client computing device 102. The output signal can be obtained, generated, transformed to or transmitted as one or more data packets (or other communications protocol) from the data processing system 104 (or other computing device) to the client computing device 102.

[0060] The content selector component 125 can select the content item or the action data structure as part of a real-time content selection process. For example, the action data structure can be provided to the client computing device 102 for transmission as audio output by an interface of the client computing device 102 in a conversational manner in direct response to the input audio signal. The real-time content selection process to identify the action data structure and provide the content item to the client computing device 102 can occur within one minute or less from the time of the input audio signal and be considered real-time. The data processing system 104 can also identify and provide the content item to at least one interface of the client computing device 102 that originated the input audio signal, or to a different client computing device 102.

[0061] The action data structure (or the content item), for example, obtained or generated by the audio signal generator component 130 and transmitted via the interface 115 and the computer network 106 to the client computing device 102, can cause the client computing device 102 to execute the audio driver 153 to drive the speaker 154 to generate an acoustic wave corresponding to the action data structure or to the content item. The acoustic wave can include words of or corresponding to the action data structure or content item.

[0062] The acoustic wave representing the action data structure can be output from the client computing device 102 separately from the content item. For example, the acoustic wave can include the audio output of "Today it will be sunny and 80 degrees at the beach." In this example, the data processing system 104 obtains the input audio signal of, for example, "OK, I would like to go to the beach this weekend." From this information, the NLP component 110 identifies at least one request or at least one trigger keyword, and the prediction component 120 uses the request(s) or trigger keyword(s) to identify a request for an action data structure or for a content item. The content selector component 125 (or other component) can identify, select, or generate a content item for, e.g., sunscreen available near the beach. The direct action API 135 (or other component) can identify, select, or generate an action data structure for, e.g., the weekend beach forecast. The data processing system 104 or component thereof such as the audio signal generator component 130 can provide the action data structure for output by an interface of the client computing device 102. For example, the acoustic wave corresponding to the action data structure can be output from the client computing device 102. The data processing system 104 can provide the content item for output by a different interface of the same client computing device 102 or by an interface of a different client computing device 102.

[0063] The packet based data transmission of the action data structure by the data processing system 104 to the client computing device 102 can include a direct or real-time response to the input audio signal of "OK, I would like to go to the beach this weekend" so that the packet based data transmissions via the computer network 106 are part of a communication session between the data processing system 104 and the client computing device 102 that has the flow and feel of a real-time person to person conversation. This packet based data transmission communication session can also include the content provider computing device 108 or the service provider computing device 160.

[0064] The content selector component 125 can select the content item or action data structure based on at least one request or at least one trigger keyword of the input audio signal. For example, the requests of the input audio signal "OK, I would like to go to the beach this weekend" can indicate subject matter of the beach, travel to the beach, or items to facilitate a trip to the beach. The NLP component 110 or the prediction component 120 (or other data processing system 104 components executing as part of the direct action API 135) can identify the trigger keyword "go," "go to," or "to go to" and can determine a transportation request to the beach based at least in part on the trigger keyword. The NLP component 110 (or other system 100 component) can also determine a solicitation for content items related to beach activity, such as for sunscreen or beach umbrellas. Thus, the data processing system 104 can infer actions from the input audio signal that are secondary requests (e.g., a request for sunscreen) that are not the primary request or subject of the input audio signal (e.g., information about the beach this weekend).
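As a non-limiting illustration, the trigger keyword identification described above can be sketched as a longest-phrase-first match over the transcribed input. The mapping `TRIGGER_KEYWORDS`, the function name `find_trigger_keyword`, and the "transportation" label are hypothetical and are introduced here only for illustration; the NLP component 110 is not limited to this approach.

```python
import re

# Hypothetical mapping of trigger keywords to request types (illustrative only).
TRIGGER_KEYWORDS = {
    "to go to": "transportation",
    "go to": "transportation",
    "go": "transportation",
}


def find_trigger_keyword(utterance):
    """Return (keyword, request_type) for the longest trigger keyword found, else None."""
    text = utterance.lower()
    # Try longer phrases first so that "to go to" is preferred over "go".
    for keyword in sorted(TRIGGER_KEYWORDS, key=len, reverse=True):
        # Word boundaries avoid matching "go" inside words such as "ago".
        if re.search(r"\b" + re.escape(keyword) + r"\b", text):
            return keyword, TRIGGER_KEYWORDS[keyword]
    return None


match = find_trigger_keyword("OK, I would like to go to the beach this weekend")
```

In this sketch, secondary requests (e.g., for sunscreen) would be inferred by a separate step that is not shown.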

[0065] The action data structures and content items can correspond to subject matter of the input audio signal. The direct action API 135 can execute programs or scripts, for example, from the NLP component 110, the prediction component 120, or the content selector component 125 to identify action data structures or content items for one or more of these actions. The direct action API 135 can execute a specified action to satisfy the end user's intention, as determined by the data processing system 104. Depending on the action specified in its inputs, the direct action API 135 can execute code or a dialog script that identifies the parameters required to fulfill a user request. Such code can look up additional information, e.g., in the data repository 145, such as the name of a home automation service, or it can provide audio output for rendering at the client computing device 102 to ask the end user questions such as the intended destination of a requested taxi. The direct action API 135 can determine necessary parameters and can package the information into an action data structure, which can then be sent to another component such as the content selector component 125 or to the service provider computing device 160 to be fulfilled.

[0066] The direct action API 135 of the data processing system 104 can generate, based on the request or the trigger keyword, the action data structures. The action data structures can be generated responsive to the subject matter of the input audio signal. The action data structures can be included in the messages that are transmitted to or received by the service provider computing device 160. Based on the input audio signal parsed by the NLP component 110, the direct action API 135 can determine to which, if any, of a plurality of service provider computing devices 160 the message should be sent. For example, if an input audio signal includes "OK, I would like to go to the beach this weekend," the NLP component 110 can parse the input audio signal to identify requests or trigger keywords such as the trigger keyword "to go to" as an indication of a need for a taxi. The direct action API 135 can package the request into an action data structure for transmission as a message to a service provider computing device 160 of a taxi service. The message can also be passed to the content selector component 125. The action data structure can include information for completing the request. In this example, the information can include a pick up location (e.g., home) and a destination location (e.g., a beach). The direct action API 135 can retrieve a template 149 from the data repository 145 to determine which fields to include in the action data structure. The direct action API 135 can retrieve content from the data repository 145 to obtain information for the fields of the data structure. The direct action API 135 can populate the fields from the template with that information to generate the data structure. The direct action API 135 can also populate the fields with data from the input audio signal. The templates 149 can be standardized for categories of service providers or can be standardized for specific service providers. 
For example, ride sharing service providers can use the following standardized template 149 to create the data structure: {client_device_identifier; authentication_credentials; pick_up_location; destination_location; no_passengers; service_level}.
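As a non-limiting illustration, populating the fields of a template 149 can be sketched as follows. The field names are those of the ride sharing template, written with underscores. The function name `build_action_data_structure`, the use of dictionaries, and the rule that values parsed from the input audio signal take precedence over values from the data repository 145 are assumptions made for this sketch only.

```python
# Field names taken from the ride sharing template 149 (underscore spelling assumed).
RIDE_SHARING_TEMPLATE = (
    "client_device_identifier",
    "authentication_credentials",
    "pick_up_location",
    "destination_location",
    "no_passengers",
    "service_level",
)


def build_action_data_structure(template, repository_values, signal_values):
    """Populate each template field from the input audio signal or the repository.

    Assumption for this sketch: a value parsed from the input audio signal
    overrides a stored value. Fields with no known value are set to None.
    """
    structure = {}
    for field in template:
        if field in signal_values:
            structure[field] = signal_values[field]
        else:
            structure[field] = repository_values.get(field)
    return structure


action = build_action_data_structure(
    RIDE_SHARING_TEMPLATE,
    repository_values={"pick_up_location": "home", "no_passengers": 1},
    signal_values={"destination_location": "beach"},
)
```

A field left as None in this sketch could, for example, prompt a follow-up question to the end user before the message is sent to the service provider computing device 160.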

[0067] The content selector component 125 can identify, select, or obtain multiple content items resulting from multiple content selection processes. The content selection processes can be real-time, e.g., part of the same conversation, communication session, or series of communications sessions between the data processing system 104 and the client computing device 102 that involve common subject matter. The conversation can include asynchronous communications separated from one another by a period of hours or days, for example. The conversation or communication session can last for a time period from receipt of the first input audio signal until an estimated or known conclusion of a final action related to the first input audio signal, or receipt by the data processing system 104 of an indication of a termination or expiration of the conversation. For example, the data processing system 104 can determine that a conversation related to a weekend beach trip begins at the time of receipt of the input audio signal and expires or terminates at the end of the weekend, e.g., Sunday night or Monday morning. The data processing system 104 that provides action data structures or content items for rendering by one or more interfaces of the client computing device 102 or of another client computing device 102 during the active time period of the conversation (e.g., from receipt of the input audio signal until a determined expiration time) can be considered to be operating in real-time. In this example, the content selection processes and rendering of the content items and action data structures occur in real time.
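As a non-limiting illustration, the active time period of the weekend beach trip conversation can be sketched as a window that opens on receipt of the input audio signal and closes at the end of the weekend. The function names and the choice of midnight at the start of the following Monday as the expiration time are assumptions made for this sketch; the data processing system 104 can determine the expiration in other ways.

```python
from datetime import datetime, timedelta


def weekend_expiration(received_at):
    """Return midnight at the start of the first Monday after received_at."""
    # datetime.weekday() returns 0 for Monday; a Monday input maps to the next Monday.
    days_until_monday = (7 - received_at.weekday()) % 7 or 7
    monday = received_at + timedelta(days=days_until_monday)
    return monday.replace(hour=0, minute=0, second=0, microsecond=0)


def is_conversation_active(received_at, now):
    """True while now falls between receipt of the signal and the expiration time."""
    return received_at <= now < weekend_expiration(received_at)


received = datetime(2017, 6, 28, 9, 30)  # a Wednesday
expires = weekend_expiration(received)
```

Content items rendered while `is_conversation_active` holds would, under this sketch, be treated as part of the same real-time conversation.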

[0068] The interface management component 140 can poll, determine, identify, or select interfaces for rendering of the action data structures and of the content items related to the input audio signal. For example, the interface management component 140 can identify one or more candidate interfaces of client computing devices 102 associated with an end user that entered the input audio signal (e.g., "What is the weather at the beach today?") into one of the client computing devices 102 via an audio interface. The interfaces can include hardware such as sensor 151 (e.g., a microphone), speaker 154, or a screen size of a computing device, alone or combined with scripts or programs (e.g., the audio driver 153) as well as apps, computer programs, online documents (e.g., webpage) interfaces and combinations thereof.

[0069] The interfaces can include social media accounts, text message applications, or email accounts associated with an end user of the client computing device 102 that originated the input audio signal. Interfaces can include the audio output of a smartphone, or an app based messaging device installed on the smartphone, or on a wearable computing device, among other client computing devices 102. The interfaces can also include display screen parameters (e.g., size, resolution), audio parameters, mobile device parameters (e.g., processing power, battery life, existence of installed apps or programs, or sensor 151 or speaker 154 capabilities), content slots on online documents for text, image, or video renderings of content items, chat applications, laptop parameters, smartwatch or other wearable device parameters (e.g., indications of their display or processing capabilities), or virtual reality headset parameters.

[0070] The interface management component 140 can poll a plurality of interfaces to identify candidate interfaces. Candidate interfaces include interfaces having the capability to render a response to the input audio signal (e.g., the action data structure as an audio output, or the content item that can be output in various formats including non-audio formats). The interface management component 140 can determine parameters or other capabilities of interfaces to determine that they are (or are not) candidate interfaces. For example, the interface management component 140 can determine, based on parameters 146 of the content item or of a first client computing device 102 (e.g., a smartwatch wearable device), that the smartwatch includes an available visual interface of sufficient size or resolution to render the content item. The interface management component 140 can also determine that the client computing device 102 that originated the input audio signal has speaker 154 hardware and an installed program (e.g., an audio driver or other script) to render the action data structure.
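As a non-limiting illustration, identifying candidate interfaces can be sketched as a capability filter over polled interfaces. The class names `Interface` and `ContentItem`, their fields, and the pixel thresholds are hypothetical stand-ins for the parameters 146 and are introduced only for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Interface:
    name: str
    has_speaker: bool = False
    display_width: int = 0   # pixels; 0 means no display
    display_height: int = 0


@dataclass
class ContentItem:
    media_format: str        # "audio" or "visual"
    min_width: int = 0
    min_height: int = 0


def is_candidate(interface, item):
    """True if the interface has the capability to render the item."""
    if item.media_format == "audio":
        return interface.has_speaker
    return (
        interface.display_width > 0
        and interface.display_width >= item.min_width
        and interface.display_height >= item.min_height
    )


def candidate_interfaces(interfaces, item):
    """Keep only the polled interfaces that can render the item."""
    return [i for i in interfaces if is_candidate(i, item)]


polled = [
    Interface("smart_speaker_audio", has_speaker=True),
    Interface("smartwatch_display", display_width=320, display_height=320),
    Interface("smartphone_display", has_speaker=True, display_width=1080, display_height=1920),
]
banner = ContentItem("visual", min_width=300, min_height=250)
names = [i.name for i in candidate_interfaces(polled, banner)]
```

Here the audio-only interface is excluded for the visual content item, while both displays qualify as candidate interfaces.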

[0071] The interface management component 140 can determine utilization values for candidate interfaces. The utilization values can indicate that a candidate interface can (or cannot) render the action data structures or the content items provided in response to input audio signals. The utilization values can include parameters 146 obtained from the data repository 145 or other parameters obtained from the client computing device 102, such as bandwidth or processing utilizations or requirements, processing power, power requirements, battery status, memory utilization or capabilities, or other interface parameters that indicate the availability of an interface to render action data structures or content items. The battery status can indicate a type of power source (e.g., internal battery or external power source such as via an output), a charging status (e.g., currently charging or not), or an amount of remaining battery power. The interface management component 140 can select interfaces based on the battery status or charging status.

[0072] The interface management component 140 can order the candidate interfaces in a hierarchy or ranking based on the utilization values. For example, different utilization values (e.g., processing requirements, display screen size, accessibility to the end user) can be given different weights. The interface management component 140 can rank one or more of the utilization values of the candidate interfaces based on their weights to determine an optimal corresponding candidate interface for rendering of the content item (or action data structure). Based on this hierarchy, the interface management component 140 can select the highest ranked interface for rendering of the content item.
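As a non-limiting illustration, ordering candidate interfaces by weighted utilization values can be sketched as a weighted sum followed by a sort. The weights, the value names, and the convention that each value is normalized to the range 0 to 1 with higher meaning more favorable are assumptions made for this sketch; the interface management component 140 can combine utilization values in other ways.

```python
def weighted_score(utilization_values, weights):
    """Sum each utilization value multiplied by its weight; missing values count as 0."""
    return sum(weights[key] * utilization_values.get(key, 0.0) for key in weights)


def rank_interfaces(candidates, weights):
    """Return candidate interface names ordered from highest to lowest score."""
    return sorted(
        candidates,
        key=lambda name: weighted_score(candidates[name], weights),
        reverse=True,
    )


# Hypothetical weights and normalized utilization values (higher is more favorable).
weights = {"processing_headroom": 0.2, "display_size": 0.5, "accessibility": 0.3}
candidates = {
    "smartwatch_display": {"processing_headroom": 0.4, "display_size": 0.2, "accessibility": 0.9},
    "smartphone_display": {"processing_headroom": 0.7, "display_size": 0.6, "accessibility": 0.8},
    "laptop_display": {"processing_headroom": 0.9, "display_size": 1.0, "accessibility": 0.1},
}
ranking = rank_interfaces(candidates, weights)
selected_interface = ranking[0]
```

With these illustrative numbers the laptop display scores highest because display size carries the largest weight; raising the accessibility weight would instead favor the interfaces closer to the end user.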

[0073] Based on utilization values for candidate interfaces, the interface management component 140 can select at least one candidate interface as a selected interface for the content item. The selected interface for the content item can be the same interface from which the input audio signal was received (e.g., an audio interface of the client computing device 102) or a different interface (e.g., a text message based app of the same client computing device 102, or an email account accessible from the same client computing device 102).

[0074] The interface management component 140 can select an interface for the content item that is an interface of a different client computing device 102 than the device that originated the input audio signal. For example, the data processing system 104 can receive the input audio signal from a first client computing device 102 (e.g., a smartphone), and can select an interface such as a display of a smartwatch (or any other client computing device) for rendering of the content item. The multiple client computing devices 102 can all be associated with the same end user. The data processing system 104 can determine that multiple client computing devices 102 are associated with the same end user based on information received with consent from the end user such as user access to a common social media or email account across multiple client computing devices 102.