Secure Broker-Mediated Data Analysis and Prediction

Ceulemans; Hugo ; et al.

U.S. patent application number 15/722742 was filed with the patent office on 2017-10-02 and published on 2019-04-04 for secure broker-mediated data analysis and prediction. This patent application is currently assigned to IMEC VZW. The applicant listed for this patent is IMEC VZW, Janssen Pharmaceutica NV, Katholieke Universiteit Leuven, KU LEUVEN R&D. Invention is credited to Adam Arany, Hugo Ceulemans, Charlotte Herzeel, Yves Jean Luc Moreau, Jaak Simm, Wilfried Verachtert, Roel Wuyts.

| Application Number | 15/722742 (published as 20190102670) |

| Family ID | 63862100 |

| Publication Date | 2019-04-04 |

| United States Patent Application | 20190102670 |

| Kind Code | A1 |

| Ceulemans; Hugo ; et al. | April 4, 2019 |

Secure Broker-Mediated Data Analysis and Prediction

Abstract

The present disclosure relates to secure broker-mediated data analysis and prediction. One example embodiment includes a method. The method includes receiving, by a managing computing device, a plurality of datasets from client computing devices. The method also includes computing, by the managing computing device, a shared representation based on a shared function having one or more shared parameters. Further, the method includes transmitting, by the managing computing device, the shared representation and other data to the client computing devices. In addition, the method includes updating, by the client computing devices, based on the shared representation and the other data, partial representations and individual functions with one or more individual parameters. Still further, the method includes determining, by the client computing devices, feedback values to provide to the managing computing device. Additionally, the method includes updating, by the managing computing device, the one or more shared parameters based on the feedback values.

| Inventors: | Ceulemans, Hugo (Bertem, BE); Wuyts, Roel (Boortmeerbeek, BE); Verachtert, Wilfried (Keerbergen, BE); Simm, Jaak (Leuven, BE); Arany, Adam (Leuven, BE); Moreau, Yves Jean Luc (Heverlee, BE); Herzeel, Charlotte (Leuven, BE) |

| Applicant: | IMEC VZW; Janssen Pharmaceutica NV; Katholieke Universiteit Leuven, KU LEUVEN R&D |

| Assignee: | IMEC VZW (Leuven, BE); Janssen Pharmaceutica NV (Beerse, BE); Katholieke Universiteit Leuven, KU LEUVEN R&D (Leuven, BE) |

| Family ID: | 63862100 |

| Appl. No.: | 15/722742 |

| Filed: | October 2, 2017 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 21/6218 20130101; G16C 20/30 20190201; G06F 21/6245 20130101; G06N 3/084 20130101; G06N 3/04 20130101; G16H 10/60 20180101; G06N 3/0427 20130101; G16C 20/70 20190201 |

| International Class: | G06N 3/04 20060101 G06N003/04; G06N 3/08 20060101 G06N003/08 |

Claims

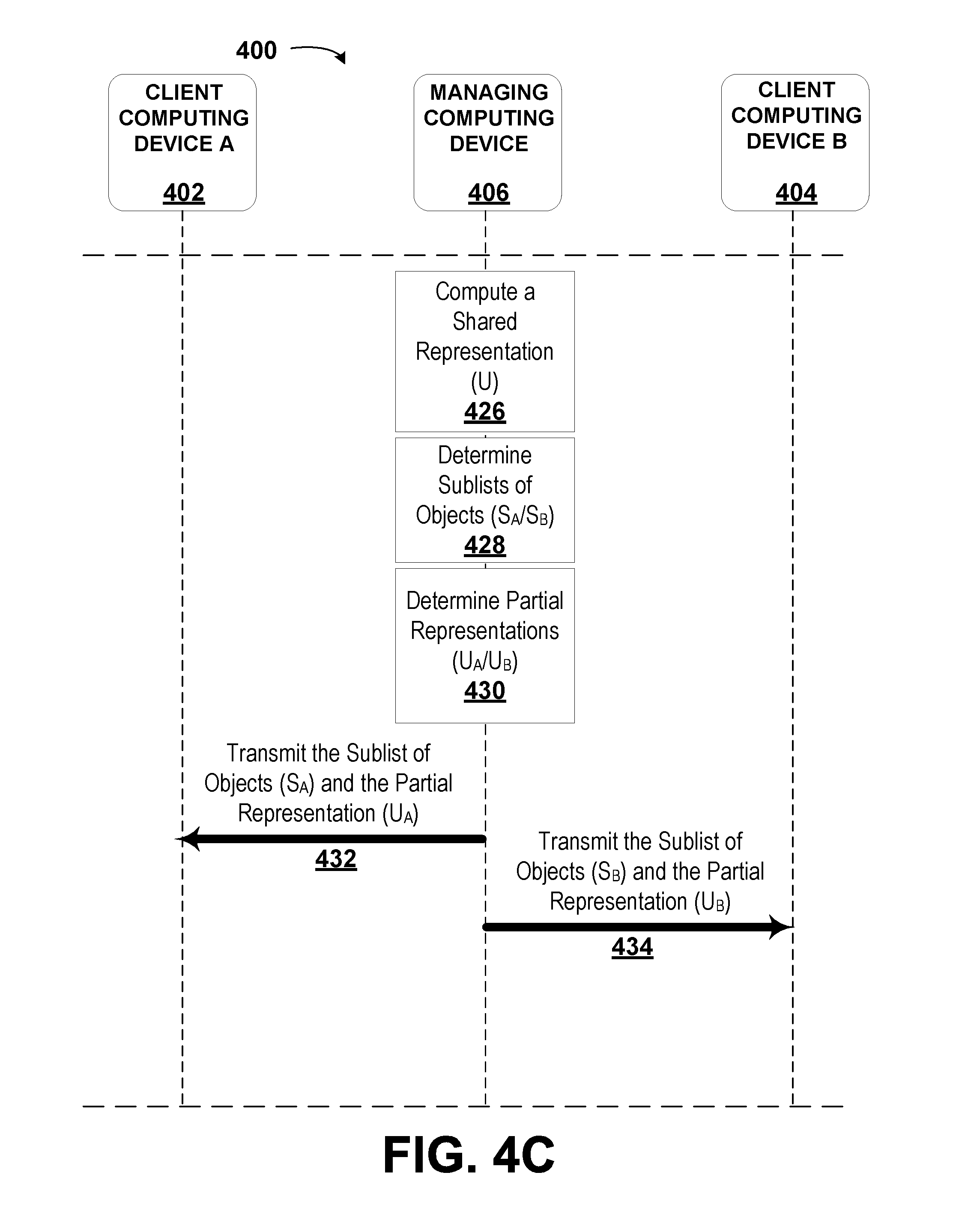

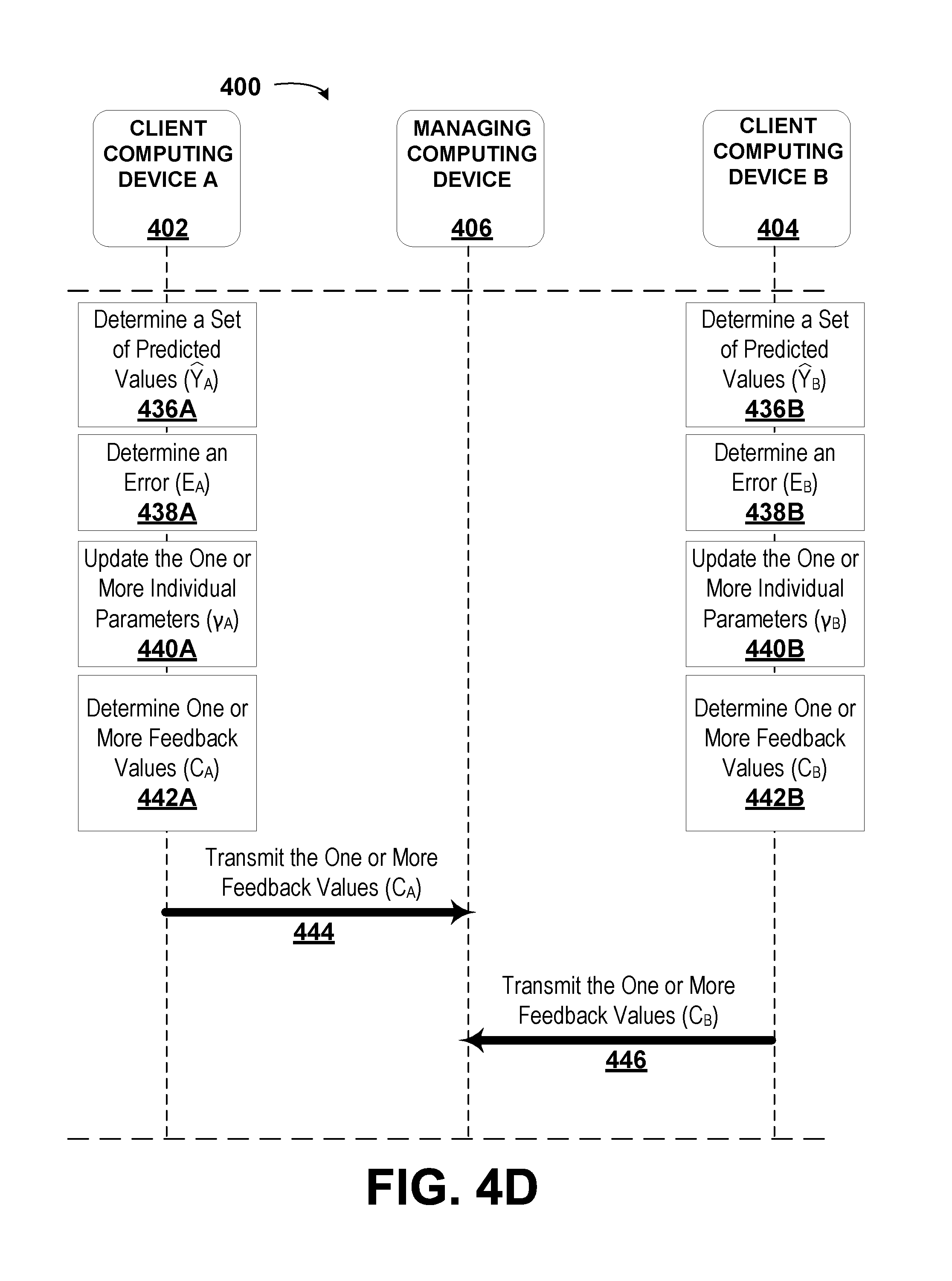

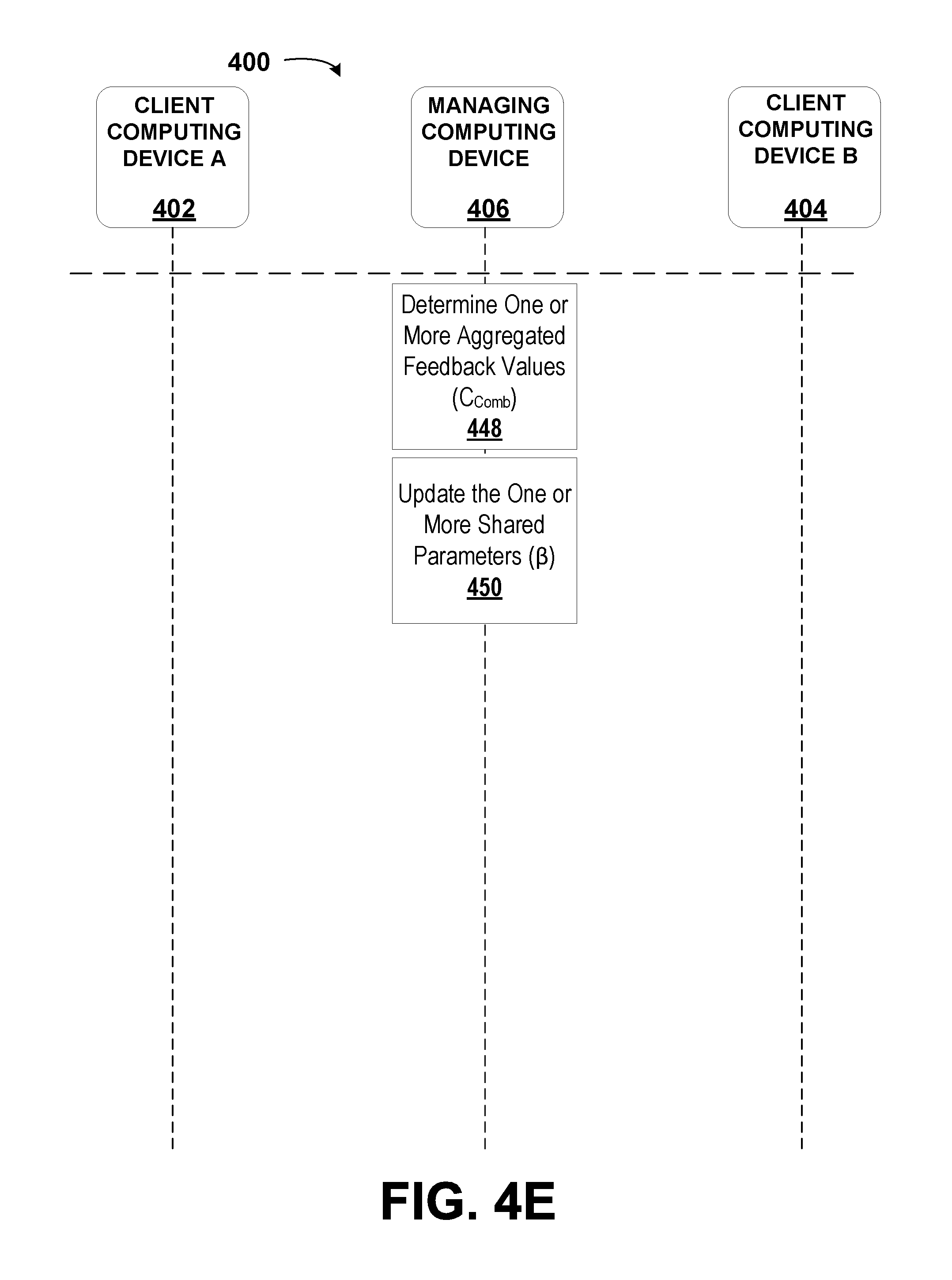

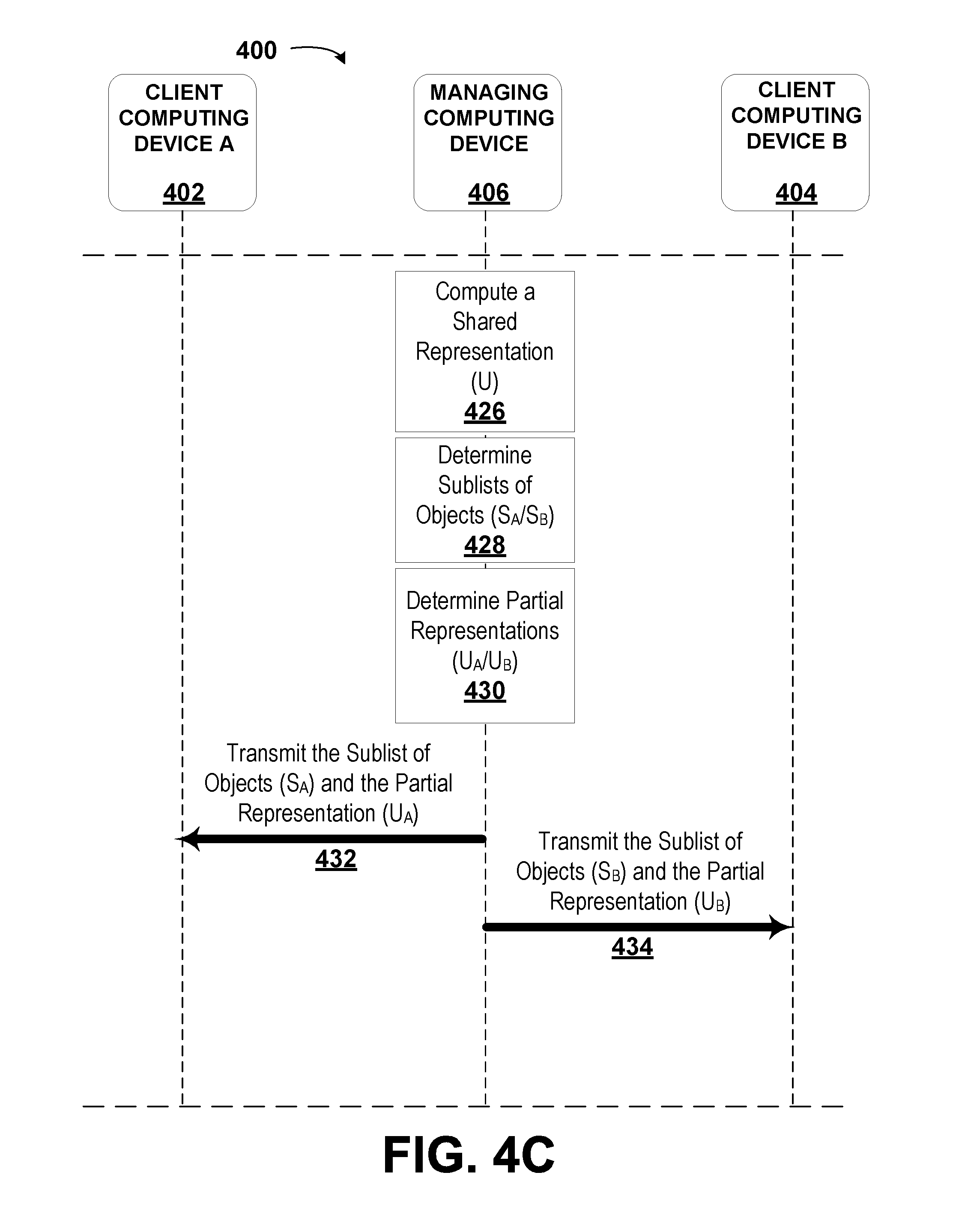

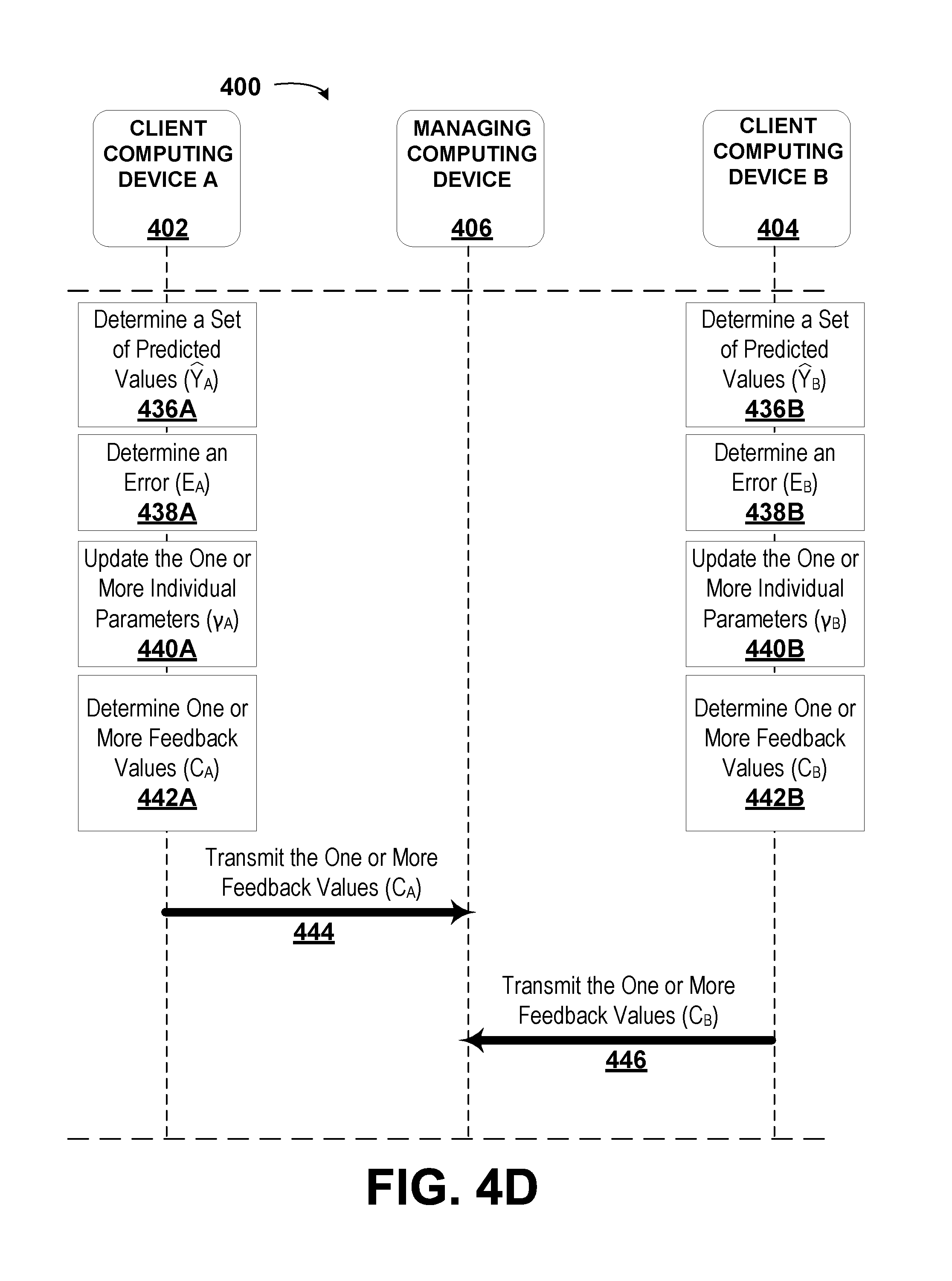

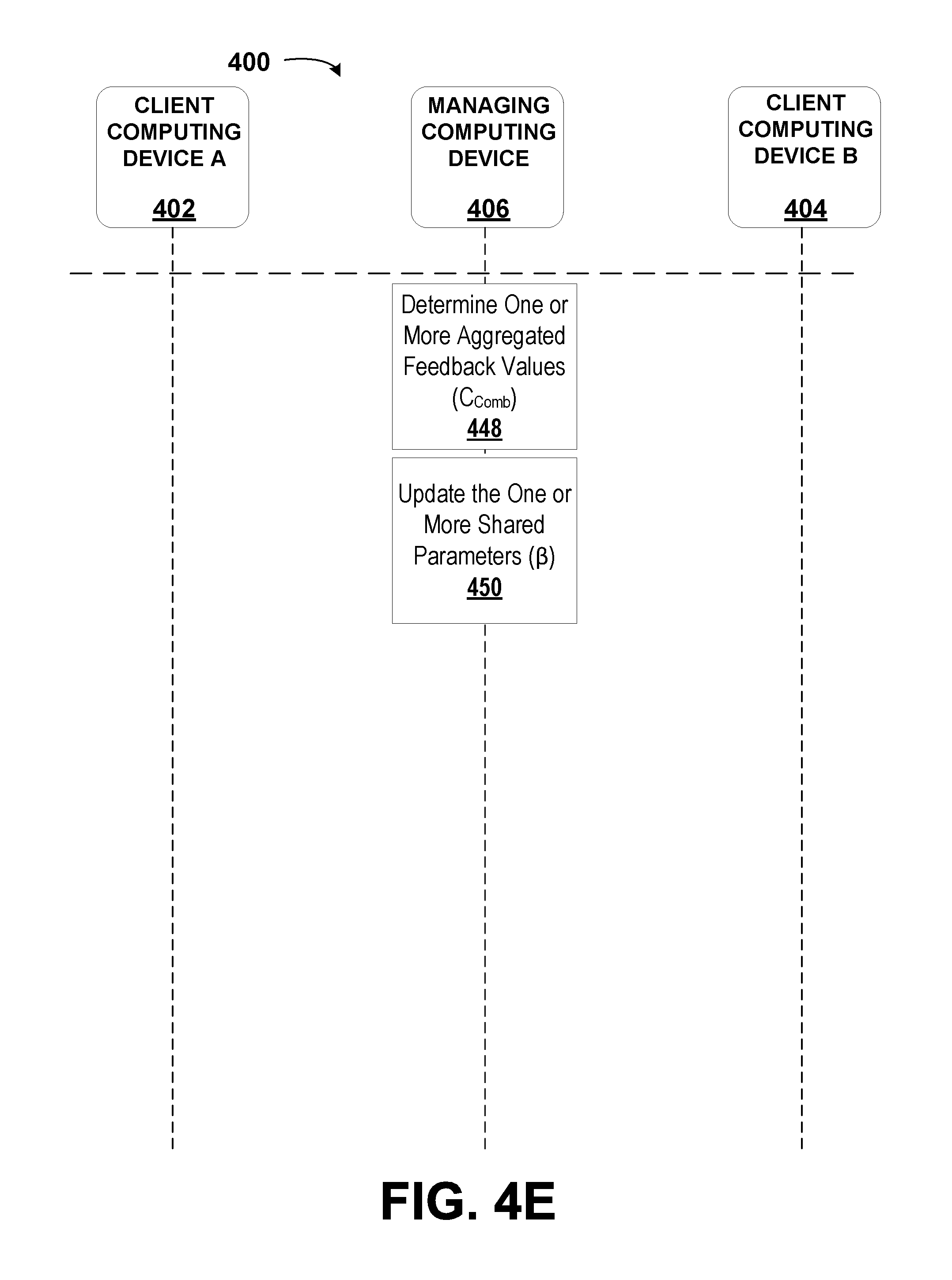

1. A method, comprising: receiving, by a managing computing device, a plurality of datasets, wherein each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices, wherein each dataset corresponds to a set of recorded values, and wherein each dataset comprises objects; determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers comprising a combination of the lists of identifiers of each dataset of the plurality of datasets; determining, by the managing computing device, a list of unique objects from among the plurality of datasets; selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers; determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers; computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters; determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset; determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation; transmitting, by the managing computing device, to each of the client computing devices: the sublist of objects for the respective dataset; and the partial representation for the respective dataset; receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices, wherein the one or more feedback values are determined by the client 
computing devices by: determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset, wherein the set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset; determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset; updating, by the respective client computing device, the one or more individual parameters for the respective dataset; and determining, by the respective client computing device, the one or more feedback values, wherein the one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values; determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values; and updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values.

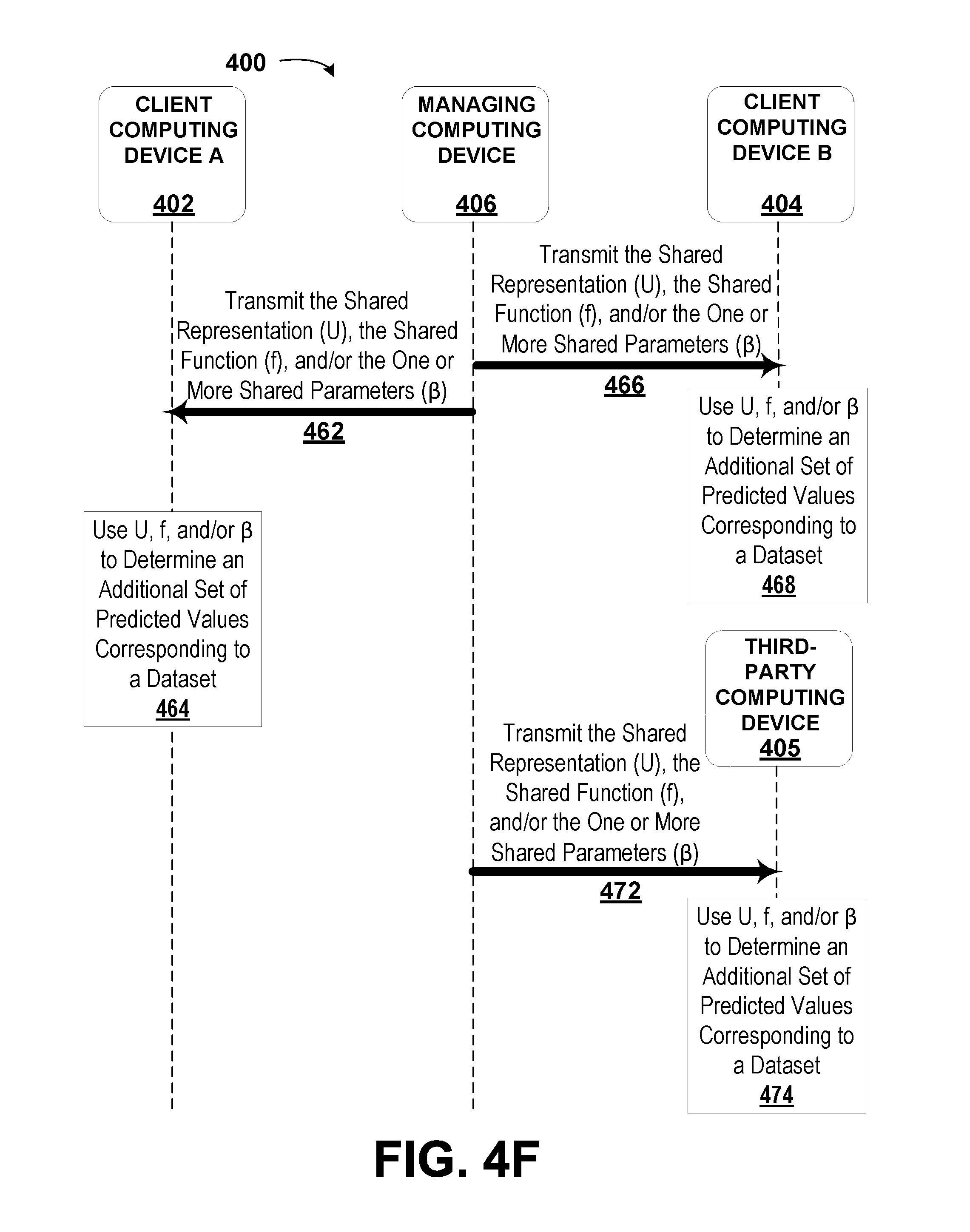

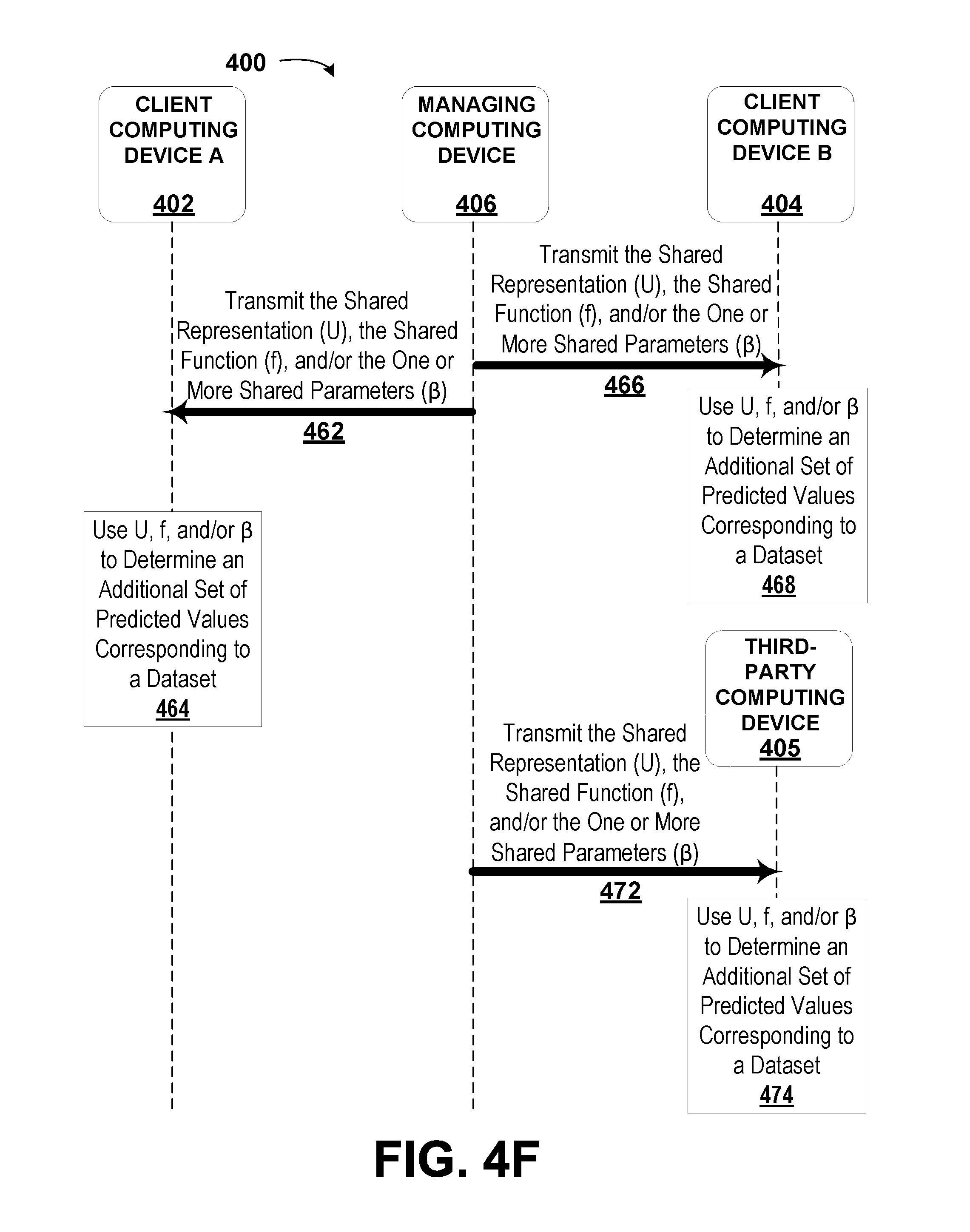

2. The method of claim 1, further comprising transmitting, by the managing computing device, the shared function and the one or more shared parameters to each of the client computing devices.

3. The method of claim 1, wherein determining, by the managing computing device, the list of unique objects from among the plurality of datasets comprises: creating, by the managing computing device, a composite list of objects that is a combination of the objects from each dataset; and removing, by the managing computing device, duplicate objects from the composite list of objects based on an intersection of the lists of identifiers for each of the plurality of datasets.

4. The method of claim 1, wherein determining the error for the respective dataset comprises: identifying, by the respective client computing device, which of the non-empty entries in the set of recorded values corresponding to the respective dataset corresponds to an object in the sublist of objects; determining, by the respective client computing device, a partial error value for each of the identified non-empty entries in the set of recorded values corresponding to the respective dataset by applying the individual loss function between each identified non-empty entry and its corresponding predicted value in the set of predicted values corresponding to the respective dataset; and combining, by the respective client computing device, the partial error values.

5. The method of claim 1, further comprising: calculating, by the managing computing device, a final shared representation of the datasets based on the list of unique objects, the shared function, and the one or more shared parameters; and transmitting, by the managing computing device, the final shared representation of the datasets to each of the client computing devices.

6. The method of claim 5, wherein the final shared representation of the datasets is usable by each of the client computing devices to determine a final set of predicted values corresponding to the respective dataset.

7. The method of claim 6, wherein determining the final set of predicted values corresponding to the respective dataset comprises: receiving, by the respective client computing device, the sublist of objects for the respective dataset; determining, by the respective client computing device, a final partial representation for the respective dataset based on the sublist of objects and the final shared representation; and determining, by the respective client computing device, the final set of predicted values corresponding to the respective dataset based on the final partial representation, the individual function, and the one or more individual parameters corresponding to the respective dataset.

8. The method of claim 1, wherein the one or more feedback values from each of the client computing devices are based on back-propagated errors.

9. The method of claim 1, wherein each of the plurality of datasets comprises an equal number of dimensions.

10. The method of claim 1, wherein each of the plurality of datasets is represented by a tensor, and wherein at least one of the plurality of datasets is represented by a sparse tensor.

11. The method of claim 1, further comprising: selecting, by the managing computing device, an additional subset of identifiers from the composite list of identifiers; determining, by the managing computing device, an additional subset of the list of unique objects corresponding to each identifier in the additional subset of identifiers; computing, by the managing computing device, a revised shared representation of the datasets based on the additional subset of the list of unique objects and the shared function having the one or more shared parameters; determining, by the managing computing device, additional sublists of objects for the respective dataset of each client computing device based on an intersection of the additional subset of identifiers with the list of identifiers for the respective dataset; determining, by the managing computing device, a revised partial representation for the respective dataset of each client computing device based on the additional sublist of objects for the respective dataset and the revised shared representation; transmitting, by the managing computing device, to each of the client computing devices: the additional sublist of objects for the respective dataset; and the revised partial representation for the respective dataset; receiving, by the managing computing device, one or more revised feedback values from at least one of the client computing devices, wherein the one or more revised feedback values are determined by the client computing devices by: determining, by the respective client computing device, a revised set of predicted values corresponding to the respective dataset, wherein the revised set of predicted values is based on the revised partial representation and the individual function with the one or more individual parameters corresponding to the respective dataset; determining, by the respective client computing device, a revised error for the respective dataset based on the individual loss function for the
respective dataset, the revised set of predicted values corresponding to the respective dataset, the additional sublist of objects, and the non-empty entries in the set of recorded values corresponding to the respective dataset; updating, by the respective client computing device, the one or more individual parameters for the respective dataset; and determining, by the respective client computing device, the one or more revised feedback values, wherein the one or more revised feedback values are used to determine a change in the revised partial representation that corresponds to an improvement in the set of predicted values; determining, by the managing computing device, based on the additional sublists of objects and the one or more revised feedback values, one or more revised aggregated feedback values; updating, by the managing computing device, the one or more shared parameters based on the one or more revised aggregated feedback values; and determining, by the managing computing device based on the one or more revised aggregated feedback values, that an aggregated error corresponding to the revised errors for all respective datasets has been minimized.

12. The method of claim 1, further comprising initializing, by the managing computing device, the shared function and the one or more shared parameters based on a related shared function used to model a similar relationship.

13. The method of claim 1, wherein determining the one or more feedback values by the client computing devices further comprises initializing, by the respective client computing device, the individual function and the one or more individual parameters corresponding to the respective dataset based on a random number generator or a pseudo-random number generator.

14. The method of claim 1, wherein each of the plurality of datasets comprises at least two dimensions, wherein a first dimension of each of the plurality of datasets comprises a plurality of chemical compounds, wherein a second dimension of each of the plurality of datasets comprises descriptors of the chemical compounds, wherein entries in each of the plurality of datasets correspond to a binary indication of whether a respective chemical compound exhibits a respective descriptor, wherein each of the sets of recorded values corresponding to each of the plurality of datasets comprises at least two dimensions, wherein a first dimension of each of the sets of recorded values comprises the plurality of chemical compounds, wherein a second dimension of each of the sets of recorded values comprises activities of the chemical compounds in a plurality of biological assays, and wherein entries in each of the sets of recorded values correspond to a binary indication of whether a respective chemical compound exhibits a respective activity.

15. The method of claim 14, further comprising: calculating, by the managing computing device, a final shared representation of the datasets based on the list of unique objects, the shared function, and the one or more shared parameters; and transmitting, by the managing computing device, the final shared representation of the datasets to each of the client computing devices, wherein the final shared representation of the datasets is usable by each of the client computing devices to determine a final set of predicted values corresponding to the respective dataset, and wherein the final set of predicted values is used by at least one of the client computing devices to identify one or more effective treatment compounds among the plurality of chemical compounds.

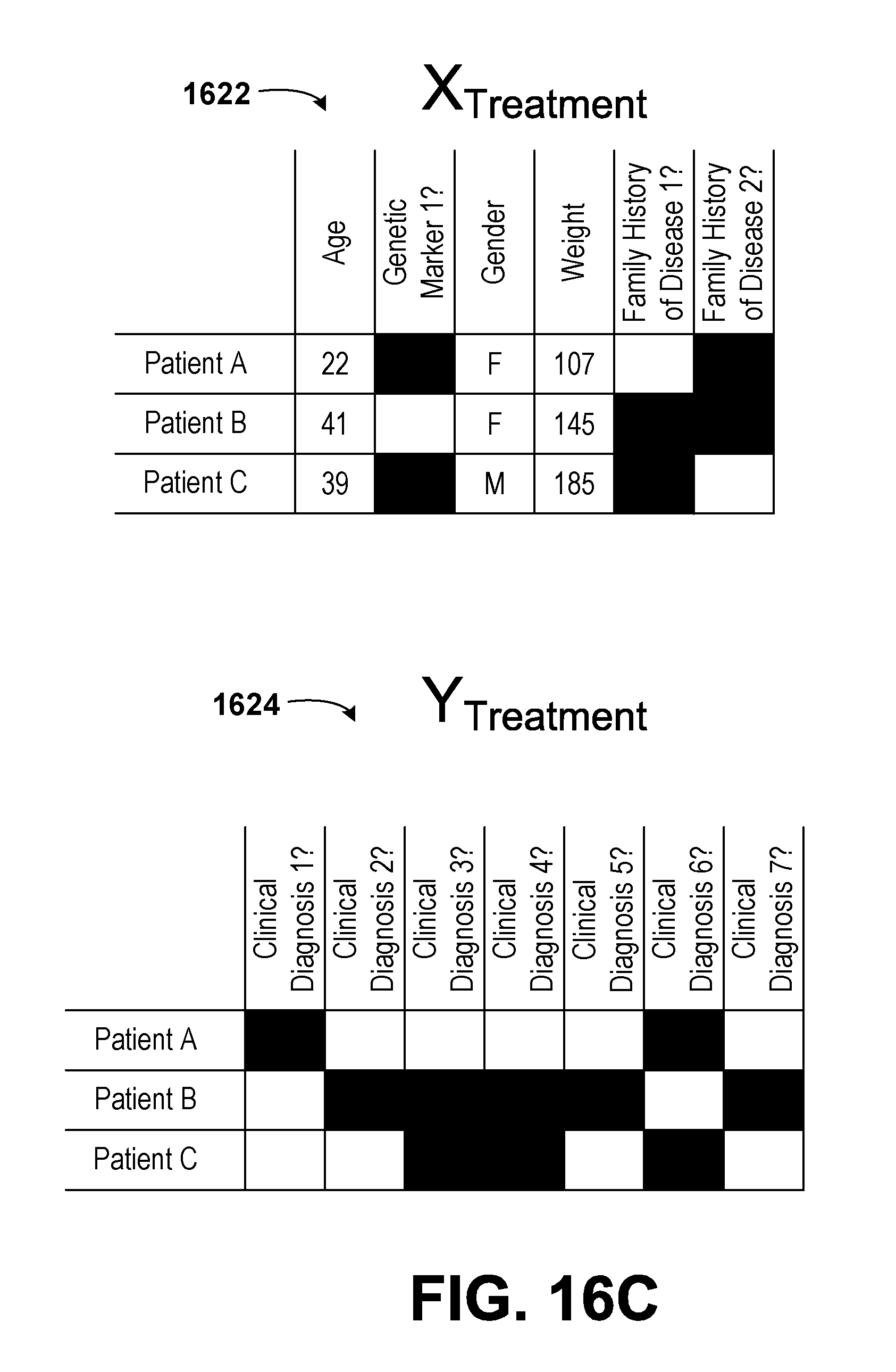

16. The method of claim 1, wherein each of the plurality of datasets comprises at least two dimensions, wherein a first dimension of each of the plurality of datasets comprises a plurality of patients, wherein a second dimension of each of the plurality of datasets comprises descriptors of the patients, wherein entries in each of the plurality of datasets correspond to a binary indication of whether a respective patient exhibits a respective descriptor, wherein each of the sets of recorded values corresponding to each of the plurality of datasets comprises at least two dimensions, wherein a first dimension of each of the sets of recorded values comprises the plurality of patients, wherein a second dimension of each of the sets of recorded values comprises clinical diagnoses of the patients, and wherein entries in each of the sets of recorded values correspond to a binary indication of whether a respective patient exhibits a respective clinical diagnosis.

17. The method of claim 16, further comprising: calculating, by the managing computing device, a final shared representation of the datasets based on the list of unique objects, the shared function, and the one or more shared parameters; and transmitting, by the managing computing device, the final shared representation of the datasets to each of the client computing devices, wherein the final shared representation of the datasets is usable by each of the client computing devices to determine a final set of predicted values corresponding to the respective dataset, and wherein the final set of predicted values is used by at least one of the client computing devices to diagnose at least one of the plurality of patients.

18. The method of claim 1, wherein each of the sets of predicted values corresponding to one of the plurality of datasets corresponds to a predicted value tensor, wherein the predicted value tensor is factored into a first tensor multiplied by a second tensor, and wherein the first tensor corresponds to the respective dataset multiplied by the one or more shared parameters.

19. The method of claim 18, wherein the respective dataset encodes side information about the objects of the dataset.

20. The method of claim 18, wherein the predicted value tensor is factored using a Macau factorization method.

21. A non-transitory, computer-readable medium with instructions stored thereon, wherein the instructions are executable by a processor to perform a method, comprising: receiving a plurality of datasets, wherein each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices, wherein each dataset corresponds to a set of recorded values, and wherein each dataset comprises objects; determining a respective list of identifiers for each dataset and a composite list of identifiers comprising a combination of the lists of identifiers of each dataset of the plurality of datasets; determining a list of unique objects from among the plurality of datasets; selecting a subset of identifiers from the composite list of identifiers; determining a subset of the list of unique objects corresponding to each identifier in the subset of identifiers; computing a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters; determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset; determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation; transmitting to each of the client computing devices: the sublist of objects for the respective dataset; and the partial representation for the respective dataset; receiving one or more feedback values from at least one of the client computing devices, wherein the one or more feedback values are determined by the client computing devices by: determining, by the respective client computing device, a set of predicted values corresponding to the respective 
dataset, wherein the set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset; determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset; updating, by the respective client computing device, the one or more individual parameters for the respective dataset; and determining, by the respective client computing device, the one or more feedback values, wherein the one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values; determining based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values; and updating the one or more shared parameters based on the one or more aggregated feedback values.

22. A memory with a model stored thereon, wherein the model is generated according to a method, comprising: receiving, by a managing computing device, a plurality of datasets, wherein each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices, wherein each dataset corresponds to a set of recorded values, and wherein each dataset comprises objects; determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers comprising a combination of the lists of identifiers of each dataset of the plurality of datasets; determining, by the managing computing device, a list of unique objects from among the plurality of datasets; selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers; determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers; computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters; determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset; determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation; transmitting, by the managing computing device, to each of the client computing devices: the sublist of objects for the respective dataset; and the partial representation for the respective dataset; receiving, by the managing computing device, one or more feedback values from at least one of the client computing 
devices, wherein the one or more feedback values are determined by the client computing devices by: determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset, wherein the set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset; determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset; updating, by the respective client computing device, the one or more individual parameters for the respective dataset; and determining, by the respective client computing device, the one or more feedback values, wherein the one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values; determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values; updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values; and storing, by the managing computing device, the shared representation, the shared function, and the one or more shared parameters on the memory.
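By way of illustration only, the error determination recited in claim 4 can be sketched as below. This is a minimal sketch under stated assumptions, not the claimed implementation: the function name `dataset_error`, the dictionary layout (identifier mapped to a recorded value, with `None` marking an empty entry), the squared-error loss, and the use of a sum to combine the partial error values are all hypothetical choices made for the example.

```python
def squared_loss(recorded_value, predicted_value):
    """Hypothetical individual loss function (squared error)."""
    return (recorded_value - predicted_value) ** 2


def dataset_error(recorded, predicted, sublist, loss=squared_loss):
    """Error for one client's dataset, restricted to the sublist of objects.

    recorded:  {identifier: value or None}, where None marks an empty entry
    predicted: {identifier: predicted value}
    sublist:   identifiers sent by the managing device for this round
    """
    # Identify the non-empty recorded entries that belong to the sublist.
    identified = [i for i in sublist if recorded.get(i) is not None]
    # One partial error value per identified entry.
    partial_errors = [loss(recorded[i], predicted[i]) for i in identified]
    # Combine the partial errors (a plain sum is assumed here).
    return sum(partial_errors)


recorded = {"a": 1.0, "b": None, "c": 0.0}
predicted = {"a": 0.5, "b": 0.9, "c": 0.5}
error_ab = dataset_error(recorded, predicted, ["a", "b"])
error_abc = dataset_error(recorded, predicted, ["a", "b", "c"])
```

Entry `"b"` is skipped in both calls because its recorded value is empty, so it contributes no partial error regardless of the prediction.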

Description

BACKGROUND

[0001] Unless otherwise indicated herein, the materials described in this section are not prior art to the claims in this application and are not admitted to be prior art by inclusion in this section.

[0002] Machine learning is a branch of computer science that seeks to automate the building of an analytical model. In machine learning, algorithms are used to create models that "learn" using datasets. Once "taught", the machine-learned models may be used to make predictions about other datasets, including future datasets. Machine learning has proven useful for developing models in a variety of fields. For example, machine learning has been applied to computer vision, statistics, data analytics, bioinformatics, deoxyribonucleic acid (DNA) sequence identification, marketing, linguistics, economics, advertising, speech recognition, gaming, etc.

[0003] Machine learning involves training the model on a set of data, usually called "training data." Training the model may include two main subclasses: supervised learning and unsupervised learning.

[0004] In supervised learning, training data may include a plurality of datasets for which the outcome is known. For example, training data in the area of image recognition may correspond to images depicting certain objects which have been labeled (e.g., by a human) as containing a specific type of object (e.g., a dog, a pencil, a car, etc.). Such training data may be referred to as "labeled training data."

[0005] In unsupervised learning, the training data may not necessarily correspond to a known value or outcome. As such, the training data may be "unlabeled." Because the outcome for each piece of training data is unknown, the machine learning algorithm may infer a function from the training data. As an example, the function may be weighted based on one or more dimensions within the training data. Further, the function may be used to make predictions about new data to which the model is applied.

[0006] Upon training a model using training data, predictions may be made using the model. The more training data that is used to train a given model, the more the model may be refined and the more accurate the model may become. A common goal in machine learning is to obtain the most robust and reliable model while having access to the least amount of training data.

[0007] In some cases, additional sources of training data may provide a better-trained machine-learned model. However, in some scenarios, attaining more training data may not be possible. For example, two corporations may possess respective sets of training data that could be collectively used to train a machine-learned model that is superior to a model trained on either set of training data utilized individually. However, each corporation may desire that their data remains private (e.g., each corporation does not want to reveal the private data to the other corporation).

SUMMARY

[0008] The specification and drawings disclose embodiments that relate to secure broker-mediated data analysis and prediction.

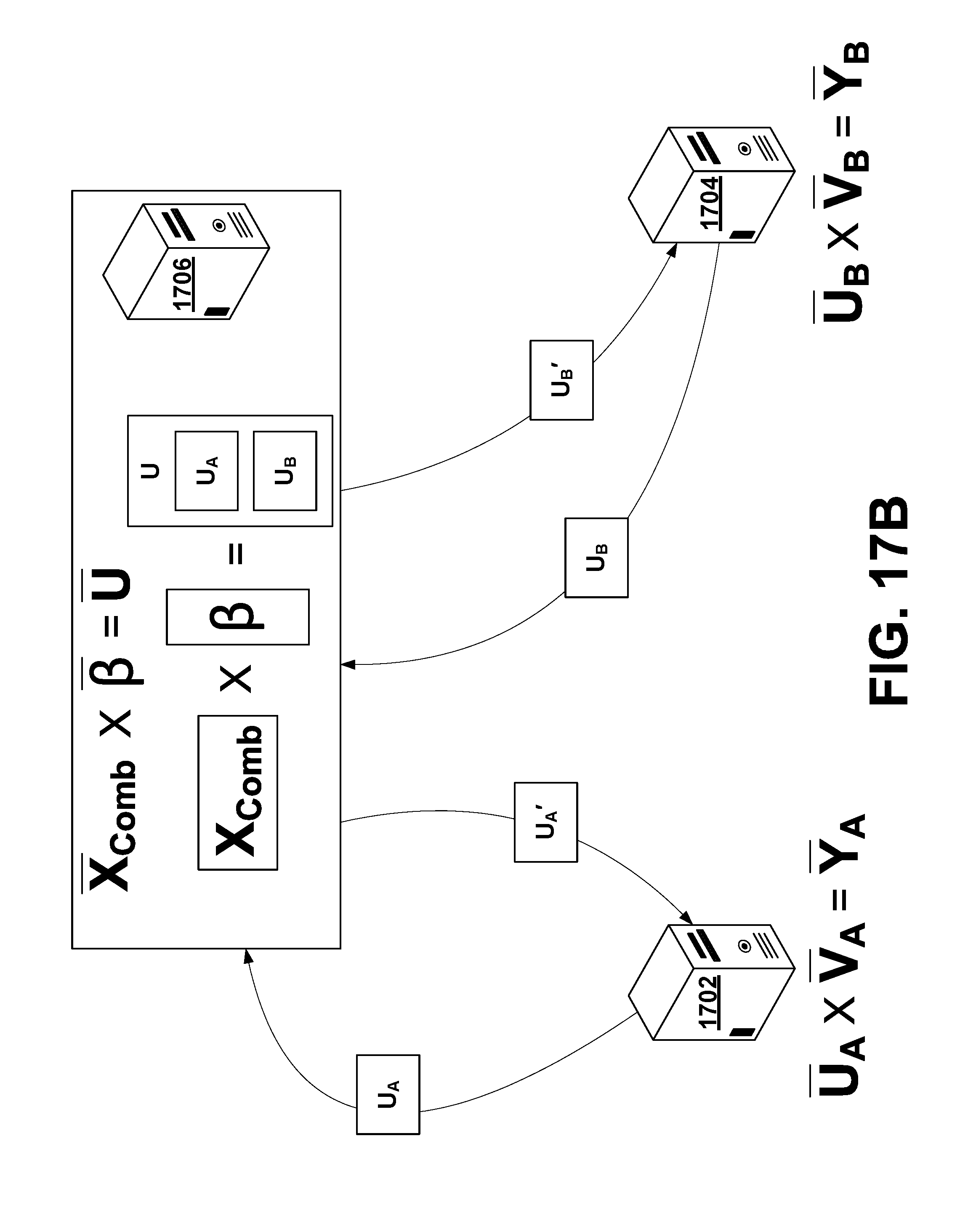

[0009] The disclosure describes a method for performing joint machine learning using multiple datasets from multiple parties without revealing private information between the multiple parties. Such a method may include multiple client computing devices that transmit respective datasets to a managing computing device. The managing computing device may then combine the datasets, perform a portion of a machine learning algorithm on the combined datasets, and then transmit a portion of the results of the machine learning algorithm back to each of the client computing devices. Each client computing device may then perform another portion of the machine learning algorithm and send its portion of the results back to the managing computing device. Based on the results received from each client computing device, the managing computing device may then perform an additional portion of the machine learning algorithm to update a corresponding machine-learned model. In some cases, the method may be carried out multiple times in an optimization-type or recursive-type manner and/or may be carried out on an on-going basis.
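The round described in paragraph [0009] can be sketched as follows. This is a minimal single-process simulation under stated assumptions, not the disclosed implementation: the linear shared function, the linear individual functions, the squared-error loss, the learning rate, the toy data, and every name (`shared_function`, `client_step`, `broker_round`) are hypothetical and chosen only for illustration. In an actual deployment the recorded values and individual parameters would remain on the client computing devices.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def shared_function(W, x):
    """Shared representation of one object: h = W x (W holds the shared parameters)."""
    return [dot(row, x) for row in W]


def client_step(partial_rep, recorded, v, lr):
    """Client side: predict, measure error, update individual parameters v in place,
    and return feedback (loss gradient w.r.t. each object's representation)."""
    feedback, error = {}, 0.0
    grad_v = [0.0] * len(v)
    for obj_id, h in partial_rep.items():
        if obj_id not in recorded:           # empty entry: nothing to compare against
            continue
        residual = dot(v, h) - recorded[obj_id]
        error += 0.5 * residual ** 2
        feedback[obj_id] = [residual * vj for vj in v]
        for j, hj in enumerate(h):
            grad_v[j] += residual * hj
    for j in range(len(v)):
        v[j] -= lr * grad_v[j]
    return feedback, error


def broker_round(W, features, client_ids, clients, batch_ids, lr=0.05):
    """One round run by the managing device over the selected identifiers."""
    shared_rep = {i: shared_function(W, features[i]) for i in batch_ids}
    aggregated = {i: [0.0] * len(W) for i in batch_ids}
    total_error = 0.0
    for name, (recorded, v) in clients.items():
        # Each client only receives the rows for objects it contributed.
        sublist = [i for i in batch_ids if i in client_ids[name]]
        partial_rep = {i: shared_rep[i] for i in sublist}
        feedback, error = client_step(partial_rep, recorded, v, lr)
        total_error += error
        for i, g in feedback.items():        # aggregate feedback per object
            aggregated[i] = [a + b for a, b in zip(aggregated[i], g)]
    for i, g in aggregated.items():          # update the shared parameters
        for r in range(len(W)):
            for c in range(len(W[r])):
                W[r][c] -= lr * g[r] * features[i][c]
    return total_error


# Toy data (hypothetical): three objects, two clients with overlapping objects.
features = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
client_ids = {"client1": {"a", "c"}, "client2": {"b", "c"}}
clients = {
    "client1": ({"a": 1.0, "c": 2.0}, [0.5, -0.5]),
    "client2": ({"b": -1.0, "c": 0.0}, [0.3, 0.3]),
}
W = [[0.1, 0.2], [0.2, -0.1]]
errors = [broker_round(W, features, client_ids, clients, ["a", "b", "c"])
          for _ in range(200)]
```

In this sketch the clients never see each other's recorded values or individual parameters; only per-object feedback flows back to the managing device, which mirrors the division of work described above.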

[0010] In a first aspect, the disclosure describes a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers including a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. In addition, the method includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. The method additionally includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Still further, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Still even further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Even yet further, the method includes transmitting, by the managing computing device, to each of the client computing devices the sublist of objects for the respective dataset and the partial representation for the respective dataset. Yet further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. In addition, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. Even further, the one or more feedback values are also determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. Still further, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values, wherein the one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. 
The method also includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Yet still further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values.
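The managing-device steps of the first aspect can be illustrated with a minimal sketch. It assumes that each dataset maps an object identifier to a feature vector, that the shared function is linear, and that the subset of identifiers is simply the first few entries of the composite list. None of these choices is prescribed by the disclosure, and all function and variable names are hypothetical.

```python
# Hypothetical sketch of the managing-device steps; all names are illustrative.

def identifier_lists(datasets):
    """Return each dataset's identifier list plus the composite (combined) list."""
    per_dataset = {client: sorted(objs) for client, objs in datasets.items()}
    composite = sorted({i for ids in per_dataset.values() for i in ids})
    return per_dataset, composite

def shared_function(features, weights):
    """Assumed linear shared function: one output per row of shared parameters."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def manager_forward(datasets, weights, batch_size):
    per_dataset, composite = identifier_lists(datasets)
    # List of unique objects: one feature vector per identifier across all datasets.
    unique = {}
    for objs in datasets.values():
        for ident, feats in objs.items():
            unique.setdefault(ident, feats)
    # Assumed selection rule: take the first batch_size identifiers.
    subset_ids = composite[:batch_size]
    # Shared representation of the selected unique objects.
    shared_rep = {i: shared_function(unique[i], weights) for i in subset_ids}
    sublists, partials = {}, {}
    for client, ids in per_dataset.items():
        # Sublist: intersection of the selected identifiers with the client's own.
        sublists[client] = [i for i in subset_ids if i in ids]
        # Partial representation: rows of the shared representation for that sublist.
        partials[client] = {i: shared_rep[i] for i in sublists[client]}
    return subset_ids, shared_rep, sublists, partials

datasets = {
    "client_a": {"obj1": [1.0, 0.0], "obj2": [0.0, 1.0]},
    "client_b": {"obj2": [0.0, 1.0], "obj3": [1.0, 1.0]},
}
weights = [[0.5, -0.5], [1.0, 2.0]]  # shared parameters (2 latent dims x 2 features)
subset_ids, shared_rep, sublists, partials = manager_forward(datasets, weights, 2)
```

In this sketch each client receives only the rows of the shared representation that correspond to its own objects, which mirrors the transmission of a sublist of objects and a partial representation per dataset.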

[0011] In a second aspect, the disclosure describes a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values, wherein each dataset relates a plurality of chemical compounds to a plurality of descriptors of the chemical compounds. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers including a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. In addition, the method includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. Still further, the method includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Additionally, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Still even further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Even yet further, the method includes transmitting, by the managing computing device, to each of the client computing devices the sublist of objects for the respective dataset and the partial representation for the respective dataset. Yet further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. Even further, the one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. The set of recorded values corresponding to the respective dataset relates the plurality of chemical compounds to activities of the chemical compounds in a plurality of biological assays. In addition, the one or more feedback values are determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. Still further, the one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values. The one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. 
The method additionally includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Yet still further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values. The shared representation, the shared function, or the one or more shared parameters are usable by at least one of the plurality of client computing devices to identify one or more effective treatment compounds among the plurality of chemical compounds.

[0012] In a third aspect, the disclosure describes a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values, wherein each dataset relates a plurality of patients to a plurality of descriptors of the patients. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers including a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. In addition, the method includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. Still further, the method includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Additionally, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Still even further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Even yet further, the method includes transmitting, by the managing computing device, to each of the client computing devices the sublist of objects for the respective dataset and the partial representation for the respective dataset. Yet further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. Even further, the one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. The set of recorded values corresponding to the respective dataset relates the plurality of patients to clinical diagnoses of patients. In addition, the one or more feedback values are determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. Still further, the one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values. The one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. 
The method additionally includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Yet still further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values. The shared representation, the shared function, or the one or more shared parameters are usable by at least one of the plurality of client computing devices to diagnose one or more of the plurality of patients.

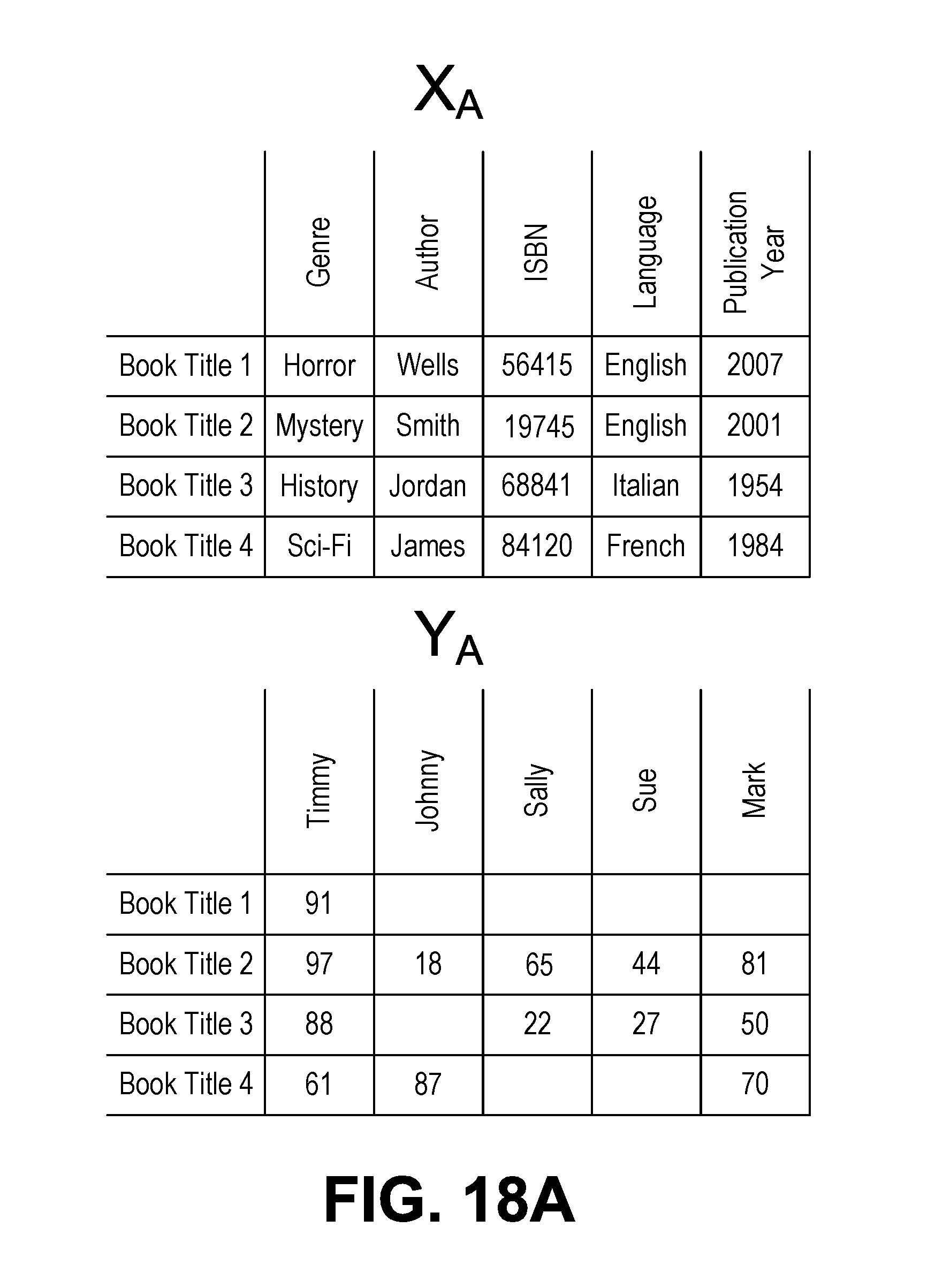

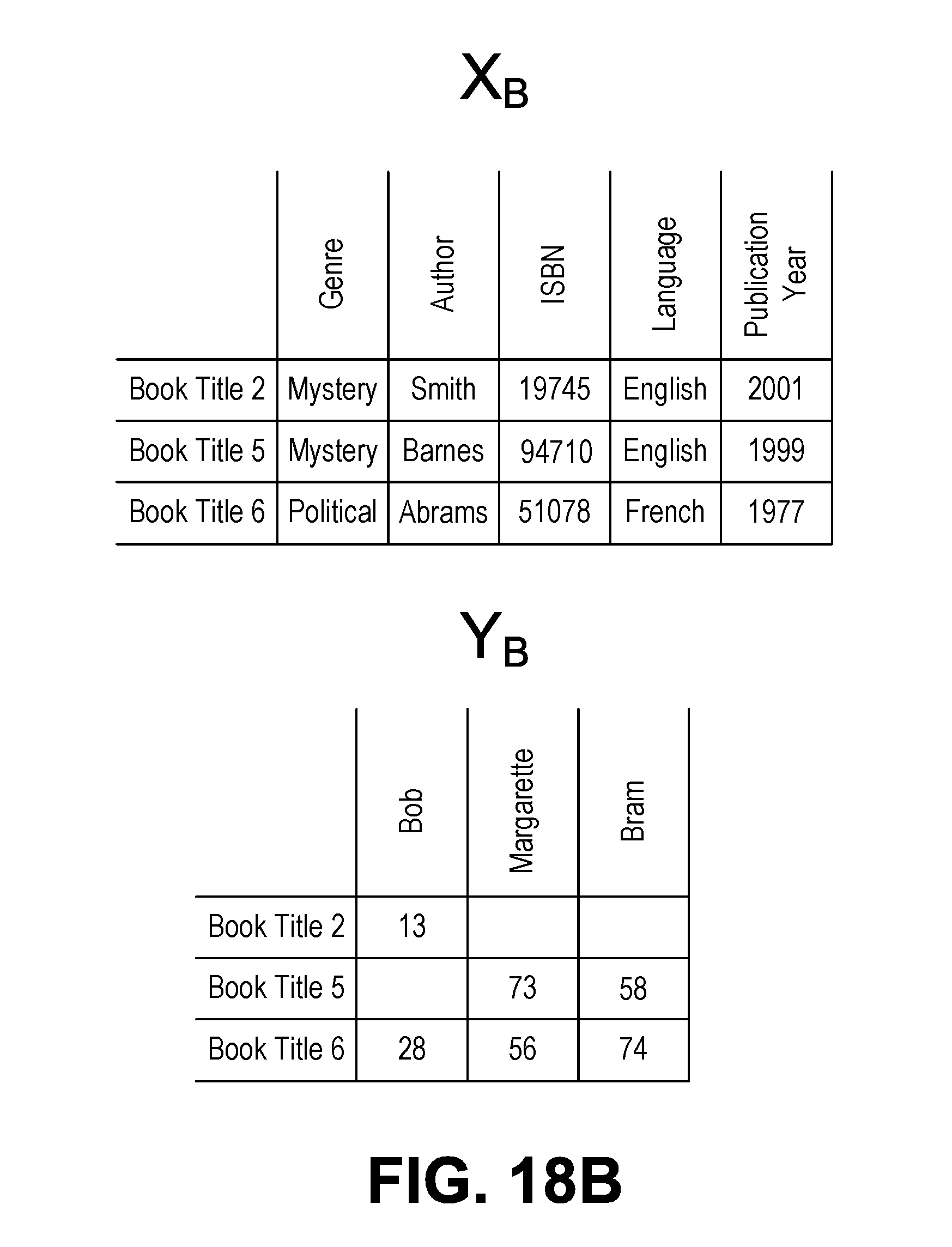

[0013] In a fourth aspect, the disclosure describes a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values, wherein each dataset provides a set of book ratings for a plurality of book titles by a plurality of users. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers including a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. In addition, the method includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. Still further, the method includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Additionally, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Still even further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Even yet further, the method includes transmitting, by the managing computing device, to each of the client computing devices the sublist of objects for the respective dataset and the partial representation for the respective dataset. Yet further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. Even further, the one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. The set of recorded values corresponding to the respective dataset provides a set of movie ratings for a plurality of movie titles by the plurality of users. In addition, the one or more feedback values are determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. Still further, the one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values. The one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. 
The method additionally includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Yet still further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values. The shared representation, the shared function, or the one or more shared parameters are usable by at least one of the plurality of client computing devices to recommend a movie to one or more of the plurality of users.

[0014] In a fifth aspect, the disclosure describes a method. The method includes transmitting, by a first client computing device to a managing computing device, a first dataset corresponding to the first client computing device. The first dataset is one of a plurality of datasets transmitted to the managing computing device by a plurality of client computing devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes receiving, by the first client computing device, a first sublist of objects for the first dataset and a first partial representation for the first dataset. The first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers that includes a combination of the lists of identifiers of each dataset of the plurality of datasets. The first sublist of objects for the first dataset and the first partial representation for the first dataset are also determined by determining, by the managing computing device, a list of unique objects from among the plurality of datasets. Further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. Even further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers.
Still further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by computing, by the managing computing device, a shared representation of the plurality of datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by determining, by the managing computing device, the first sublist of objects for the first dataset based on an intersection of the subset of identifiers with the list of identifiers for the first dataset. Yet further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by determining, by the managing computing device, the first partial representation for the first dataset based on the first sublist of objects and the shared representation. Even further, the method includes determining, by the first client computing device, a first set of predicted values corresponding to the first dataset. The first set of predicted values is based on the first partial representation and a first individual function with one or more first individual parameters corresponding to the first dataset. In addition, the method includes determining, by the first client computing device, a first error for the first dataset based on a first individual loss function for the first dataset, the first set of predicted values corresponding to the first dataset, the first sublist of objects, and non-empty entries in the set of recorded values corresponding to the first dataset. Still further, the method includes updating, by the first client computing device, the one or more first individual parameters for the first dataset. Yet further, the method includes determining, by the first client computing device, one or more feedback values.
The one or more feedback values are used to determine a change in the first partial representation that corresponds to an improvement in the first set of predicted values. Yet still further, the method includes transmitting, by the first client computing device to the managing computing device, the one or more feedback values. The one or more feedback values are usable by the managing computing device along with sublists of objects from the plurality of client computing devices to determine one or more aggregated feedback values. The one or more aggregated feedback values are usable by the managing computing device to update the one or more shared parameters.
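The client-side steps of the fifth aspect can be sketched as follows. The sketch assumes a linear individual function, a squared-error individual loss, and feedback values equal to the gradient of the error with respect to the partial representation. These are illustrative choices, not the only ones the disclosure allows, and `client_step` and its arguments are hypothetical names.

```python
def client_step(partial_rep, sublist, recorded, w, lr=0.1):
    """One hypothetical client update.

    partial_rep: {object id: latent vector} received from the managing device
    sublist:     object ids in this round that belong to this client
    recorded:    {object id: recorded value or None}; None marks an empty entry
    w:           individual parameters (assumed linear individual function)
    """
    # Predicted values from the partial representation and the individual function.
    predicted = {i: sum(wk * uk for wk, uk in zip(w, partial_rep[i])) for i in sublist}
    # Only non-empty recorded entries contribute to the error.
    observed = [i for i in sublist if recorded.get(i) is not None]
    residual = {i: predicted[i] - recorded[i] for i in observed}
    error = sum(r * r for r in residual.values())  # assumed squared-error loss
    # Feedback: gradient of the error with respect to each latent vector,
    # computed with the parameters that produced the predictions.
    feedback = {i: [2.0 * residual[i] * wk for wk in w] for i in observed}
    # Gradient step on the individual parameters.
    grad_w = [sum(2.0 * residual[i] * partial_rep[i][k] for i in observed)
              for k in range(len(w))]
    new_w = [wk - lr * g for wk, g in zip(w, grad_w)]
    return predicted, error, new_w, feedback

partial_rep = {"obj1": [0.5, 1.0], "obj2": [-0.5, 2.0]}
recorded = {"obj1": 1.0, "obj2": None}  # obj2 has no recorded value
predicted, error, new_w, feedback = client_step(
    partial_rep, ["obj1", "obj2"], recorded, w=[1.0, 1.0])
```

Only the feedback values leave the client in this sketch. The recorded values and the individual parameters stay local.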

[0015] In a sixth aspect, the disclosure describes a non-transitory, computer-readable medium with instructions stored thereon. The instructions are executable by a processor to perform a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers including a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. In addition, the method includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. The method additionally includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Still further, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Still even further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Even yet further, the method includes transmitting, by the managing computing device, to each of the client computing devices the sublist of objects for the respective dataset and the partial representation for the respective dataset. Yet further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. In addition, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. Even further, the one or more feedback values are also determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. Still further, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values, wherein the one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. 
The method also includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Yet still further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values.

[0016] In a seventh aspect, the disclosure describes a memory with a model stored thereon. The model is generated according to a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers including a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. In addition, the method includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. The method additionally includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Still further, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Still even further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Even yet further, the method includes transmitting, by the managing computing device, to each of the client computing devices the sublist of objects for the respective dataset and the partial representation for the respective dataset. Yet further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. In addition, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. Even further, the one or more feedback values are also determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. Still further, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values, wherein the one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. 
The method also includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Yet still further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values. Yet even further, the method includes storing, by the managing computing device, the shared representation, the shared function, and the one or more shared parameters on the memory.
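As an illustration of the storing step in the seventh aspect, the following sketch writes the shared representation, an identifier for the shared function, and the shared parameters to a file. The JSON layout, the field names, and the helper functions are assumptions made for this example only.

```python
import json
import os
import tempfile

def store_model(path, shared_representation, shared_parameters, function_name="linear"):
    """Persist a hypothetical model record; the schema is an assumption."""
    model = {
        "shared_function": function_name,
        "shared_parameters": shared_parameters,
        "shared_representation": shared_representation,
    }
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(model, fh)

def load_model(path):
    """Read the model record back from storage."""
    with open(path, "r", encoding="utf-8") as fh:
        return json.load(fh)

model_path = os.path.join(tempfile.mkdtemp(), "shared_model.json")
store_model(model_path,
            {"obj1": [0.5, 1.0], "obj2": [-0.5, 2.0]},
            [[0.5, -0.5], [1.0, 2.0]])
restored = load_model(model_path)
```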

[0017] In an eighth aspect, the disclosure describes a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers including a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. In addition, the method includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. The method additionally includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Still further, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Still even further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Even yet further, the method includes transmitting, by the managing computing device, to each of the client computing devices the sublist of objects for the respective dataset and the partial representation for the respective dataset. Yet further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. In addition, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. Even further, the one or more feedback values are also determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. Still further, the one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values, wherein the one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. 
The method also includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Yet still further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values. Yet even further, the method includes using, by a computing device, the shared representation, the shared function, or the one or more shared parameters to determine an additional set of predicted values corresponding to a dataset.
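The final step of the eighth aspect, using the trained shared function to determine an additional set of predicted values, might look like the sketch below. It assumes linear shared and individual functions, and the function name `predict` is hypothetical.

```python
def predict(features, shared_weights, individual_weights):
    """Apply the assumed linear shared function, then a linear individual function."""
    latent = [sum(w * x for w, x in zip(row, features)) for row in shared_weights]
    return sum(v * u for v, u in zip(individual_weights, latent))

# A new object described by two features, scored with example parameters.
value = predict([1.0, 1.0], [[0.5, -0.5], [1.0, 2.0]], [1.0, 1.0])
```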

[0018] In a ninth aspect, the disclosure describes a server device. The server device has instructions stored thereon that, when executed by a processor, perform a method. The method includes receiving a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes determining a respective list of identifiers for each dataset and a composite list of identifiers that includes a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining a list of unique objects from among the plurality of datasets. In addition, the method includes selecting a subset of identifiers from the composite list of identifiers. Still further, the method includes determining a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. The method additionally includes computing a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Yet further, the method includes determining a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. Even still further, the method includes transmitting to each of the client computing devices: the sublist of objects for the respective dataset and the partial representation for the respective dataset. 
Yet still further, the method includes receiving one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. The one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. Further, the one or more feedback values are determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. In addition, the one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values. The one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. Even yet further, the method includes determining, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values. Still yet further, the method includes updating the one or more shared parameters based on the one or more aggregated feedback values.
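The server-side bookkeeping recited in this aspect (identifier lists, composite list, unique objects, subset selection, shared representation, per-client sublists, and partial representations) can be illustrated with a minimal sketch. It is not the disclosed implementation. It assumes that each object's dictionary key serves as its identifier, that the subset is drawn as a random mini-batch from the de-duplicated composite list, and that the shared function is linear. The names `plan_round`, `features`, `shared_weights`, and `batch_size` are hypothetical.

```python
import random


def plan_round(datasets, features, shared_weights, batch_size, seed=0):
    """Server-side bookkeeping for one round (illustrative assumptions only).

    datasets:       {client: {object_id: recorded value or None}}
    features:       {object_id: [float, ...]}, input to the shared function
    shared_weights: K weight vectors, each as long as a feature vector
    """
    # Respective list of identifiers for each dataset.
    id_lists = {client: sorted(data) for client, data in datasets.items()}
    # Composite list: the per-dataset lists combined, duplicates included.
    composite = [i for ids in id_lists.values() for i in ids]
    # Unique objects from among all datasets.
    unique_ids = sorted(set(composite))
    # Subset of identifiers, drawn here as a random mini-batch (assumption).
    rng = random.Random(seed)
    subset = sorted(rng.sample(unique_ids, min(batch_size, len(unique_ids))))
    # Shared representation: one K-dimensional row per selected object,
    # computed with a linear shared function (assumption).
    shared_rep = {
        i: [sum(x * w for x, w in zip(features[i], w_k)) for w_k in shared_weights]
        for i in subset
    }
    # Sublist per client: intersection of the subset with that client's ids.
    sublists = {
        client: [i for i in subset if i in datasets[client]]
        for client in datasets
    }
    # Partial representation: the shared rows for the objects in each sublist.
    partial_reps = {
        client: [shared_rep[i] for i in ids] for client, ids in sublists.items()
    }
    return subset, sublists, partial_reps
```

In this sketch each client receives only the rows of the shared representation that correspond to objects in its own dataset, which mirrors the intersection step described above.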

[0019] In a tenth aspect, the disclosure describes a server device. The server device has instructions stored thereon that, when executed by a processor, perform a method. The method includes transmitting, to a managing computing device, a first dataset corresponding to the server device. The first dataset is one of a plurality of datasets transmitted to the managing computing device by a plurality of server devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes receiving a first sublist of objects for the first dataset and a first partial representation for the first dataset. The first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by the managing computing device by determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers that includes a combination of the lists of identifiers of each dataset of the plurality of datasets. The first sublist of objects for the first dataset and the first partial representation for the first dataset are also determined by the managing computing device by determining, by the managing computing device, a list of unique objects from among the plurality of datasets. Further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by the managing computing device by selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. In addition, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by the managing computing device by determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. 
Still further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by the managing computing device by computing, by the managing computing device, a shared representation of the plurality of datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. The first sublist of objects for the first dataset and the first partial representation for the first dataset are additionally determined by the managing computing device by determining, by the managing computing device, the first sublist of objects for the first dataset based on an intersection of the subset of identifiers with the list of identifiers for the first dataset. Yet further, the first sublist of objects for the first dataset and the first partial representation for the first dataset are determined by the managing computing device by determining, by the managing computing device, the first partial representation for the first dataset based on the first sublist of objects and the shared representation. Further, the method includes determining a first set of predicted values corresponding to the first dataset. The first set of predicted values is based on the first partial representation and a first individual function with one or more first individual parameters corresponding to the first dataset. Additionally, the method includes determining a first error for the first dataset based on a first individual loss function for the first dataset, the first set of predicted values corresponding to the first dataset, the first sublist of objects, and non-empty entries in the set of recorded values corresponding to the first dataset. Even further, the method includes updating the one or more first individual parameters for the first dataset. The method additionally includes determining one or more feedback values. 
The one or more feedback values are used to determine a change in the first partial representation that corresponds to an improvement in the first set of predicted values. Yet further, the method includes transmitting, to the managing computing device, the one or more feedback values. The one or more feedback values are usable by the managing computing device along with sublists of objects from the plurality of server devices to determine one or more aggregated feedback values. The one or more aggregated feedback values are usable by the managing computing device to update the one or more shared parameters.
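The client-side steps of this aspect (prediction, error over non-empty entries, individual-parameter update, and feedback) can be sketched as follows. This is one possible instance under stated assumptions, not the disclosed method: the individual function is taken to be linear, the individual loss function is taken to be a squared error, the update is a plain gradient step, and the feedback values are taken to be the gradient of the loss with respect to each row of the partial representation. The name `client_step` and the use of `None` for empty entries are hypothetical.

```python
def client_step(partial_rep, recorded, weights, lr=0.1):
    """One client update for a linear individual function (illustrative).

    partial_rep: representation rows, one per object in the client's sublist
    recorded:    recorded values aligned with the sublist; None = empty entry
    weights:     individual parameters, one per representation dimension
    Returns (error, updated weights, feedback rows).
    """
    # Predicted values from the partial representation and individual function.
    preds = [sum(u_k * w_k for u_k, w_k in zip(u, weights)) for u in partial_rep]
    # Residuals count only non-empty recorded entries.
    residuals = [0.0 if y is None else p - y for p, y in zip(preds, recorded)]
    # Squared-error loss over the non-empty entries (assumption).
    error = 0.5 * sum(r * r for r in residuals)
    # Feedback: gradient of the loss with respect to each representation row,
    # evaluated with the parameters used for the predictions.
    feedback = [[r * w for w in weights] for r in residuals]
    # Gradient step on the individual parameters.
    grad_w = [
        sum(r * u[k] for r, u in zip(residuals, partial_rep))
        for k in range(len(weights))
    ]
    new_weights = [w - lr * g for w, g in zip(weights, grad_w)]
    return error, new_weights, feedback
```

Under these assumptions the client returns only per-object gradient rows, so the recorded values and the individual parameters are not themselves transmitted to the managing computing device.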

[0020] In an eleventh aspect, the disclosure describes a system. The system includes a server device. The system also includes a plurality of client devices each communicatively coupled to the server device. The server device has instructions stored thereon that, when executed by a processor, perform a first method. The first method includes receiving a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client device of the plurality of client devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The first method also includes determining a respective list of identifiers for each dataset and a composite list of identifiers that includes a combination of the lists of identifiers of each dataset of the plurality of datasets. Additionally, the first method includes determining a list of unique objects from among the plurality of datasets. Further, the first method includes selecting a subset of identifiers from the composite list of identifiers. The first method additionally includes determining a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. The first method further includes computing a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Still further, the first method includes determining a sublist of objects for the respective dataset of each client device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Yet further, the first method includes determining a partial representation for the respective dataset of each client device based on the sublist of objects for the respective dataset and the shared representation. 
Even further, the first method includes transmitting to each of the client devices: the sublist of objects for the respective dataset and the partial representation for the respective dataset. Each client device has instructions stored thereon that, when executed by a processor, perform a second method. The second method includes determining a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. The second method also includes determining an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. Further, the second method includes updating the one or more individual parameters for the respective dataset. The second method additionally includes determining one or more feedback values. The one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. The second method further includes transmitting, to the server device, the one or more feedback values. Yet even further, the first method includes determining, based on the sublists of objects and the one or more feedback values from the client devices, one or more aggregated feedback values. Still yet further, the first method includes updating the one or more shared parameters based on the one or more aggregated feedback values.

[0021] In a twelfth aspect, the disclosure describes an optimized model. The model is optimized according to a method. The method includes receiving, by a managing computing device, a plurality of datasets. Each dataset of the plurality of datasets is received from a respective client computing device of a plurality of client computing devices. Each dataset corresponds to a set of recorded values. Each dataset includes objects. The method also includes determining, by the managing computing device, a respective list of identifiers for each dataset and a composite list of identifiers that includes a combination of the lists of identifiers of each dataset of the plurality of datasets. Further, the method includes determining, by the managing computing device, a list of unique objects from among the plurality of datasets. The method additionally includes selecting, by the managing computing device, a subset of identifiers from the composite list of identifiers. The method further includes determining, by the managing computing device, a subset of the list of unique objects corresponding to each identifier in the subset of identifiers. Additionally, the method includes computing, by the managing computing device, a shared representation of the datasets based on the subset of the list of unique objects and a shared function having one or more shared parameters. Even further, the method includes determining, by the managing computing device, a sublist of objects for the respective dataset of each client computing device based on an intersection of the subset of identifiers with the list of identifiers for the respective dataset. Yet further, the method includes determining, by the managing computing device, a partial representation for the respective dataset of each client computing device based on the sublist of objects for the respective dataset and the shared representation. 
Still further, the method includes transmitting, by the managing computing device, to each of the client computing devices: the sublist of objects for the respective dataset and the partial representation for the respective dataset. Even still further, the method includes receiving, by the managing computing device, one or more feedback values from at least one of the client computing devices. The one or more feedback values are determined by the client computing devices by determining, by the respective client computing device, a set of predicted values corresponding to the respective dataset. The set of predicted values is based on the partial representation and an individual function with one or more individual parameters corresponding to the respective dataset. The one or more feedback values are also determined by the client computing devices by determining, by the respective client computing device, an error for the respective dataset based on an individual loss function for the respective dataset, the set of predicted values corresponding to the respective dataset, the sublist of objects, and non-empty entries in the set of recorded values corresponding to the respective dataset. Further, the one or more feedback values are determined by the client computing devices by updating, by the respective client computing device, the one or more individual parameters for the respective dataset. The one or more feedback values are additionally determined by the client computing devices by determining, by the respective client computing device, the one or more feedback values. The one or more feedback values are used to determine a change in the partial representation that corresponds to an improvement in the set of predicted values. Even yet further, the method includes determining, by the managing computing device, based on the sublists of objects and the one or more feedback values from the client computing devices, one or more aggregated feedback values.
Still even further, the method includes updating, by the managing computing device, the one or more shared parameters based on the one or more aggregated feedback values. Still yet even further, the method includes computing, by the managing computing device, an updated shared representation of the datasets based on the shared function and the one or more updated shared parameters. The updated shared representation corresponds to the optimized model.
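The aggregation and shared-parameter update that close this method can be sketched as below. The sketch is hedged throughout: it assumes the aggregated feedback for an object is the sum of the feedback rows that clients report for that object, that the shared function is linear in per-object feature vectors, and that the update is a single gradient step obtained by the chain rule. The names `aggregate_feedback`, `update_shared`, and `features` are hypothetical.

```python
def aggregate_feedback(sublists, feedbacks):
    """Sum feedback rows per object across clients (assumed aggregation rule).

    sublists:  {client: [object_id, ...]}
    feedbacks: {client: [feedback row, ...]} aligned with the client's sublist
    """
    agg = {}
    for client, ids in sublists.items():
        for obj_id, row in zip(ids, feedbacks[client]):
            if obj_id in agg:
                agg[obj_id] = [a + b for a, b in zip(agg[obj_id], row)]
            else:
                agg[obj_id] = list(row)
    return agg


def update_shared(shared_weights, features, agg, lr=0.1):
    """Gradient step for a linear shared function (illustrative).

    Row k of the representation of object i is features[i] . shared_weights[k],
    so the gradient for shared_weights[k][d] is sum_i agg[i][k] * features[i][d].
    """
    updated = []
    for k, w_k in enumerate(shared_weights):
        grad = [
            sum(agg[i][k] * features[i][d] for i in agg)
            for d in range(len(w_k))
        ]
        updated.append([w - lr * g for w, g in zip(w_k, grad)])
    return updated
```

Recomputing the shared representation with the updated weights then yields the updated shared representation that this aspect identifies with the optimized model.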

[0022] The foregoing summary is illustrative only and is not intended to be in any way limiting. In addition to the illustrative aspects, embodiments, and features described above, further aspects, embodiments, and features will become apparent by reference to the figures and the following detailed description.

BRIEF DESCRIPTION OF THE FIGURES

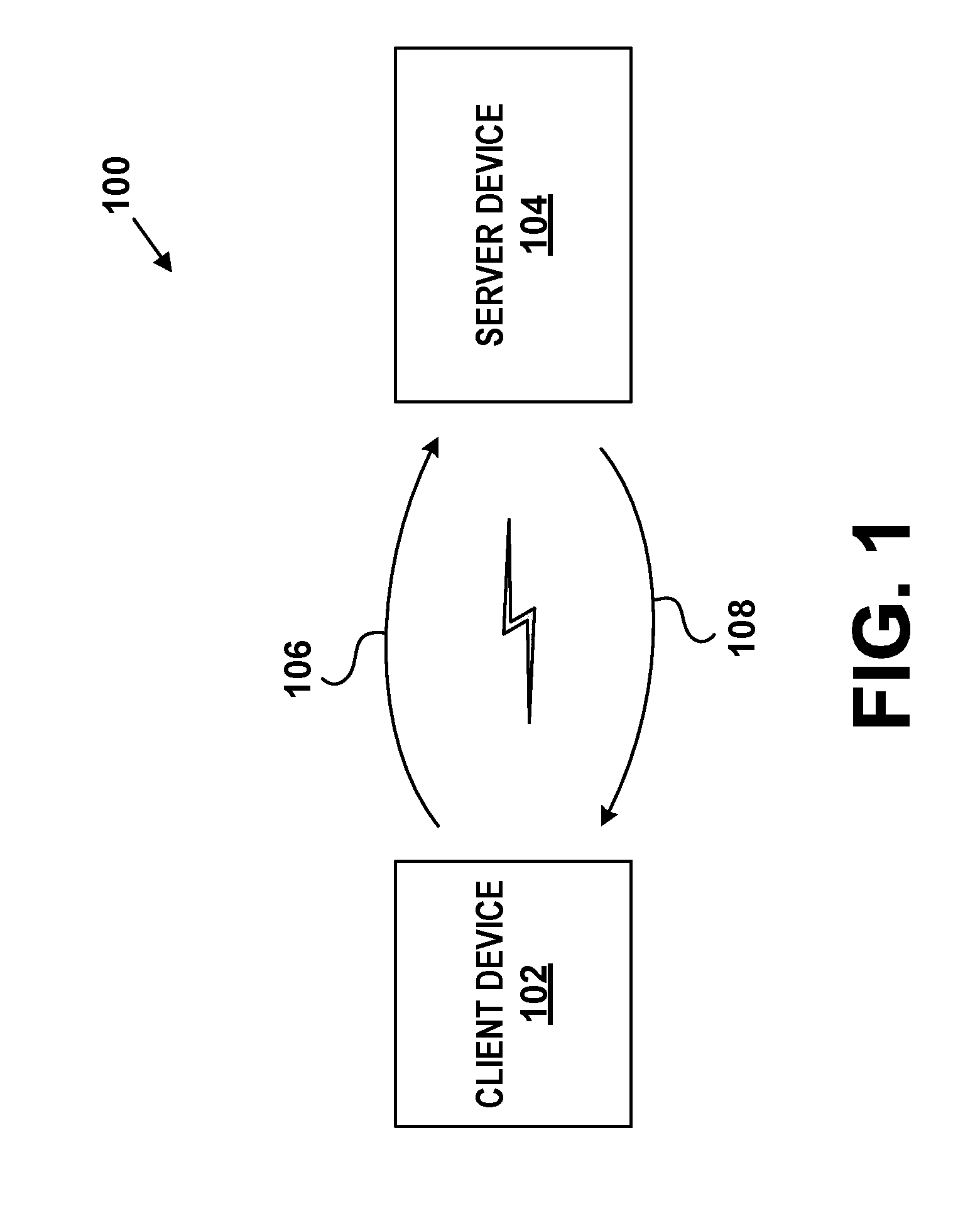

[0023] FIG. 1 is a high-level illustration of a client-server computing system, according to example embodiments.

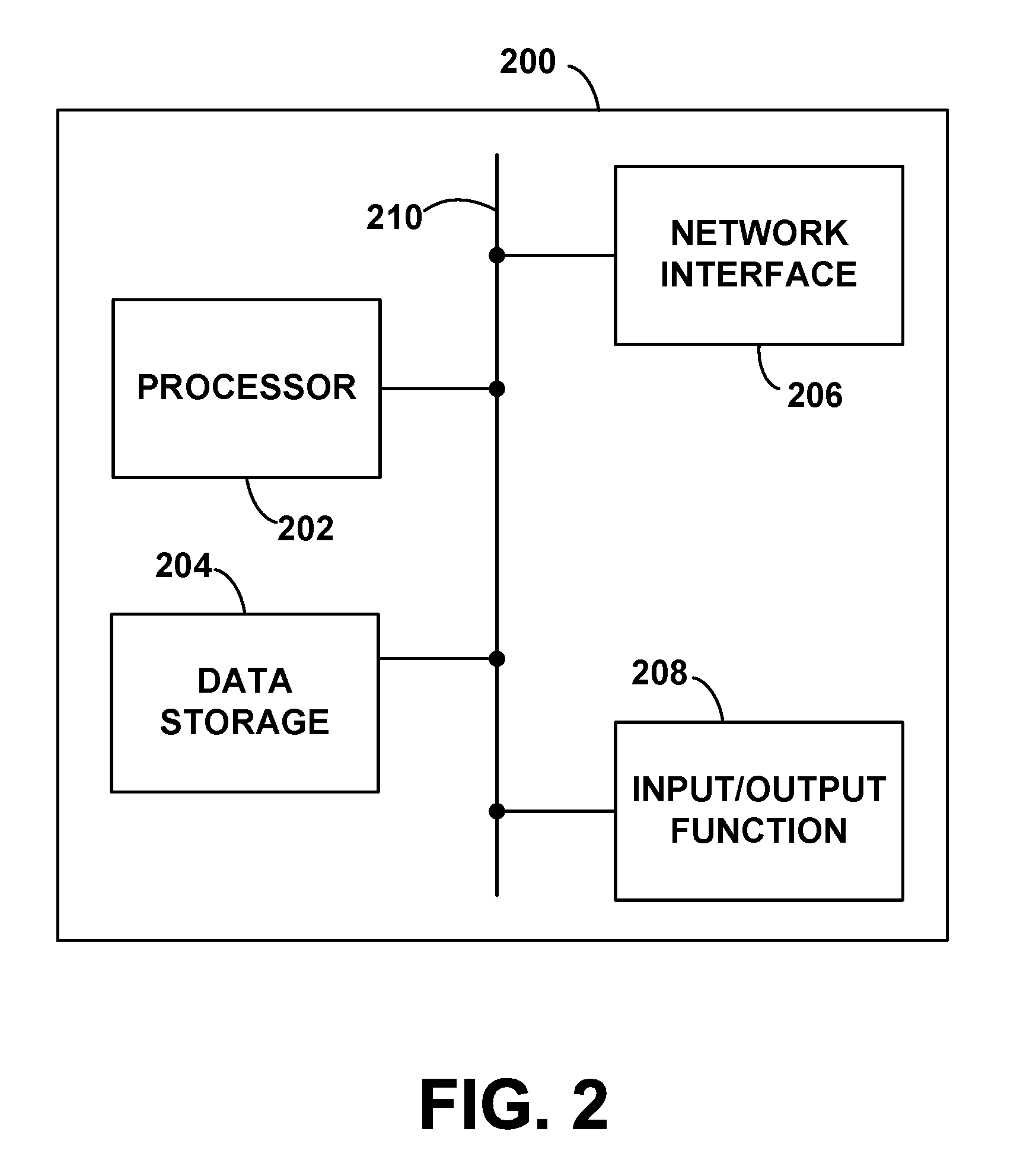

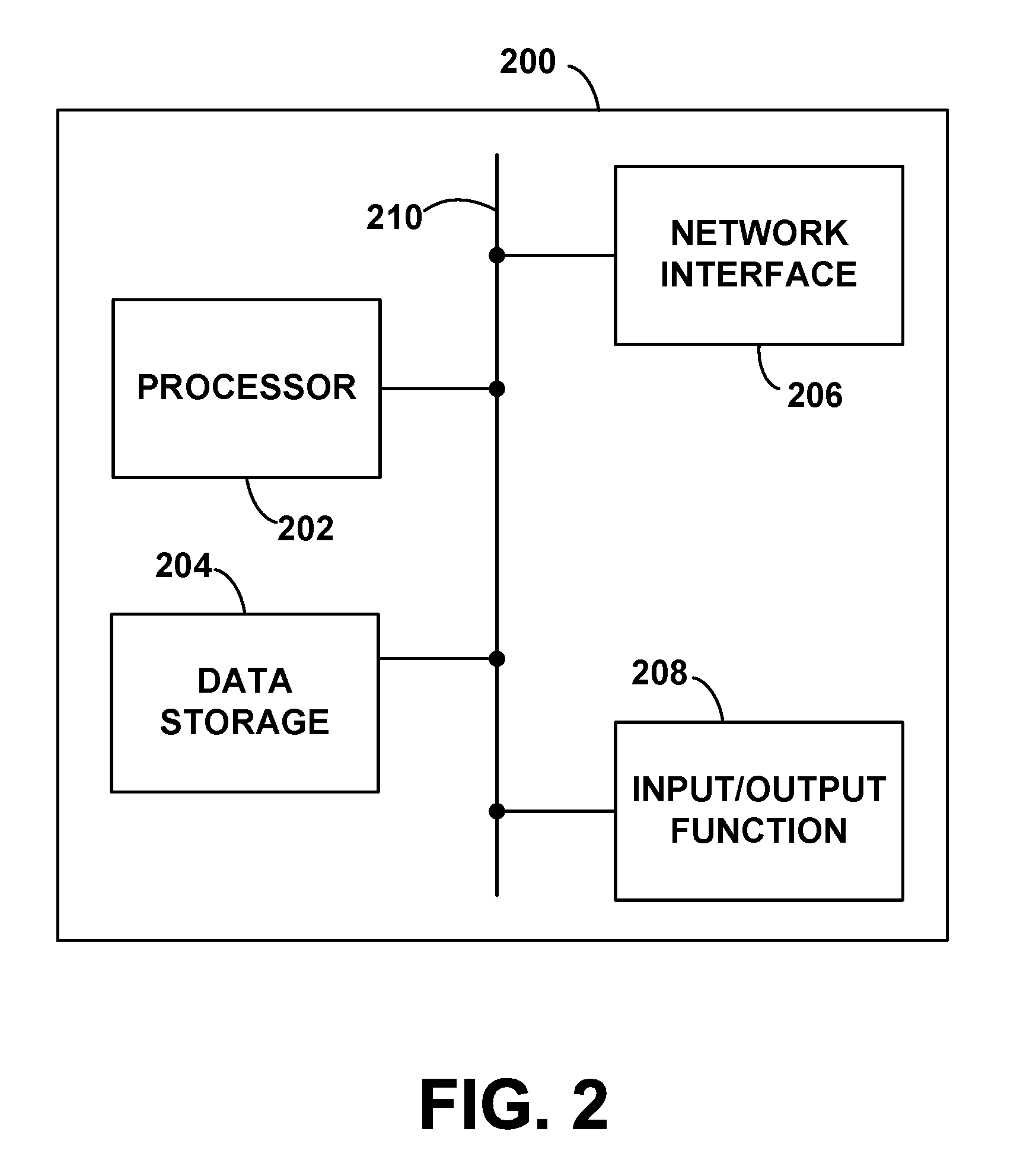

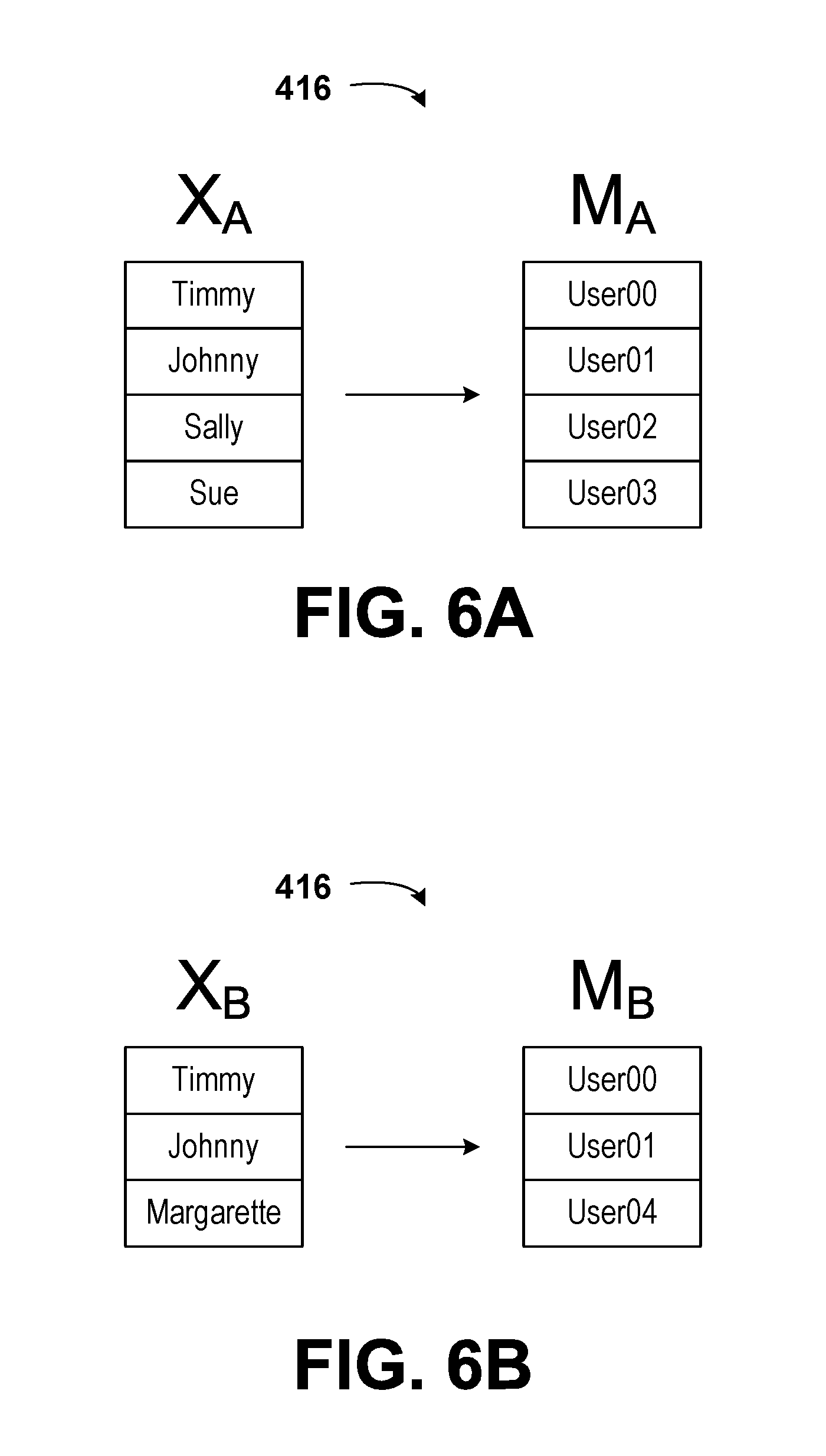

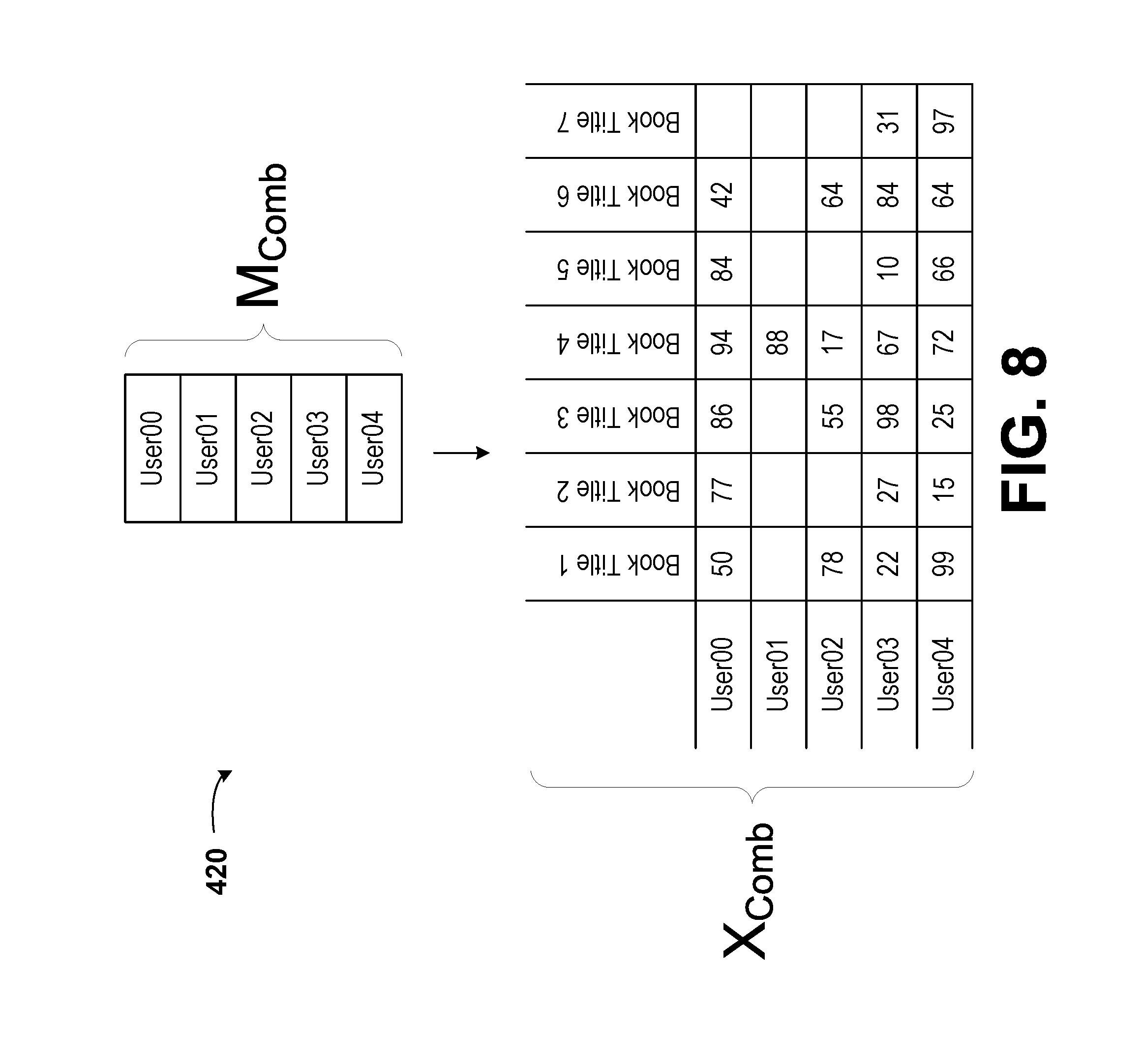

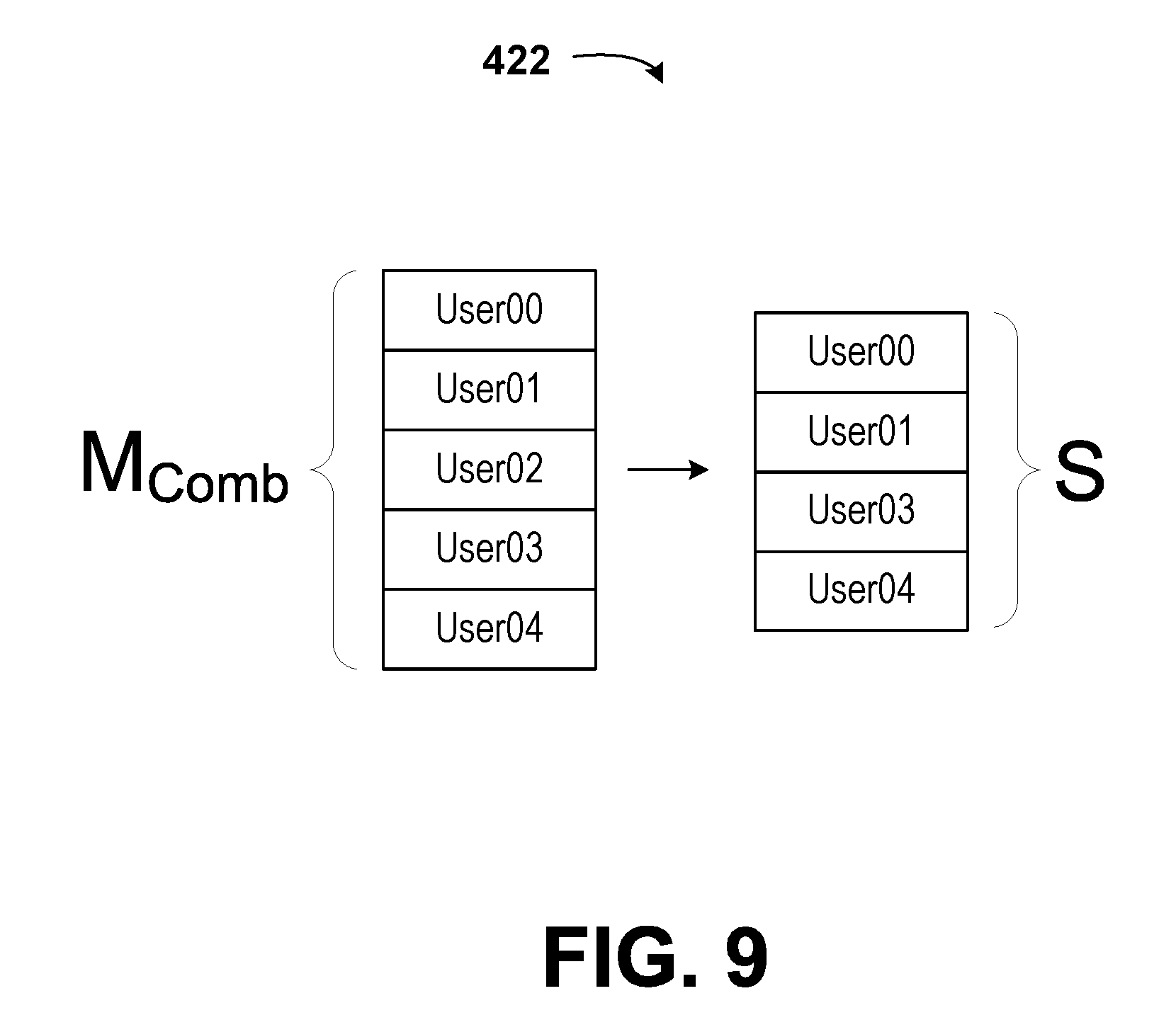

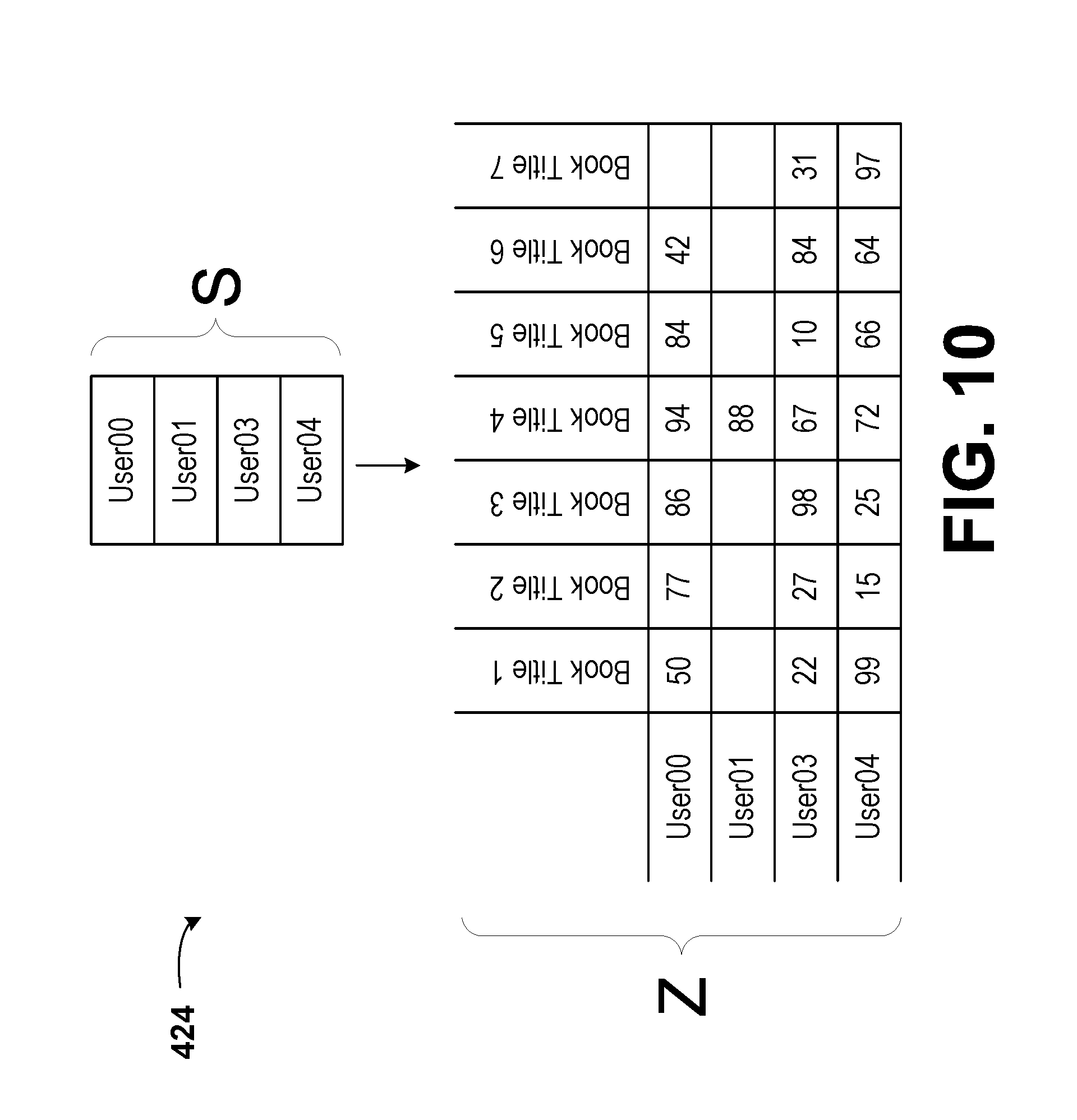

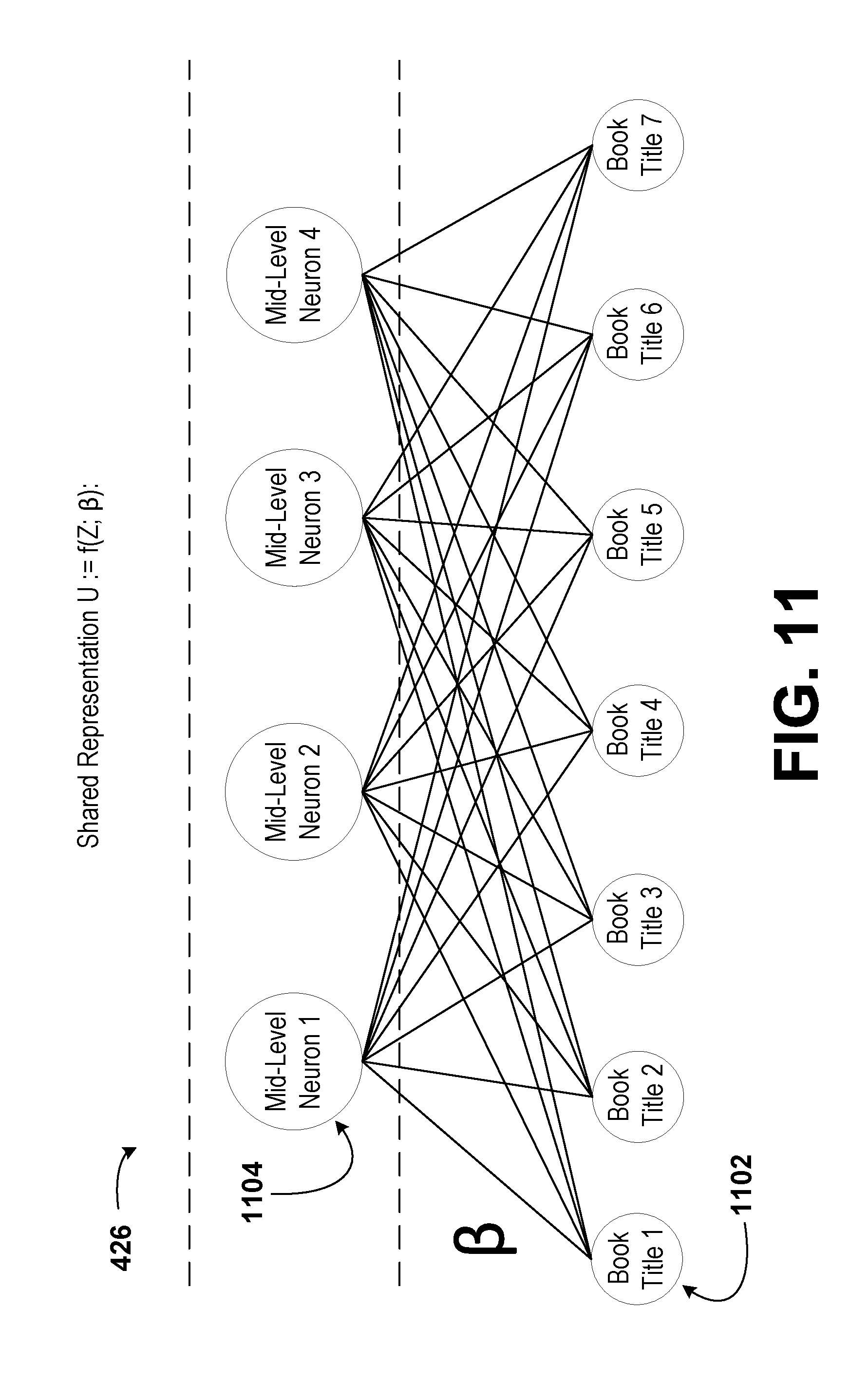

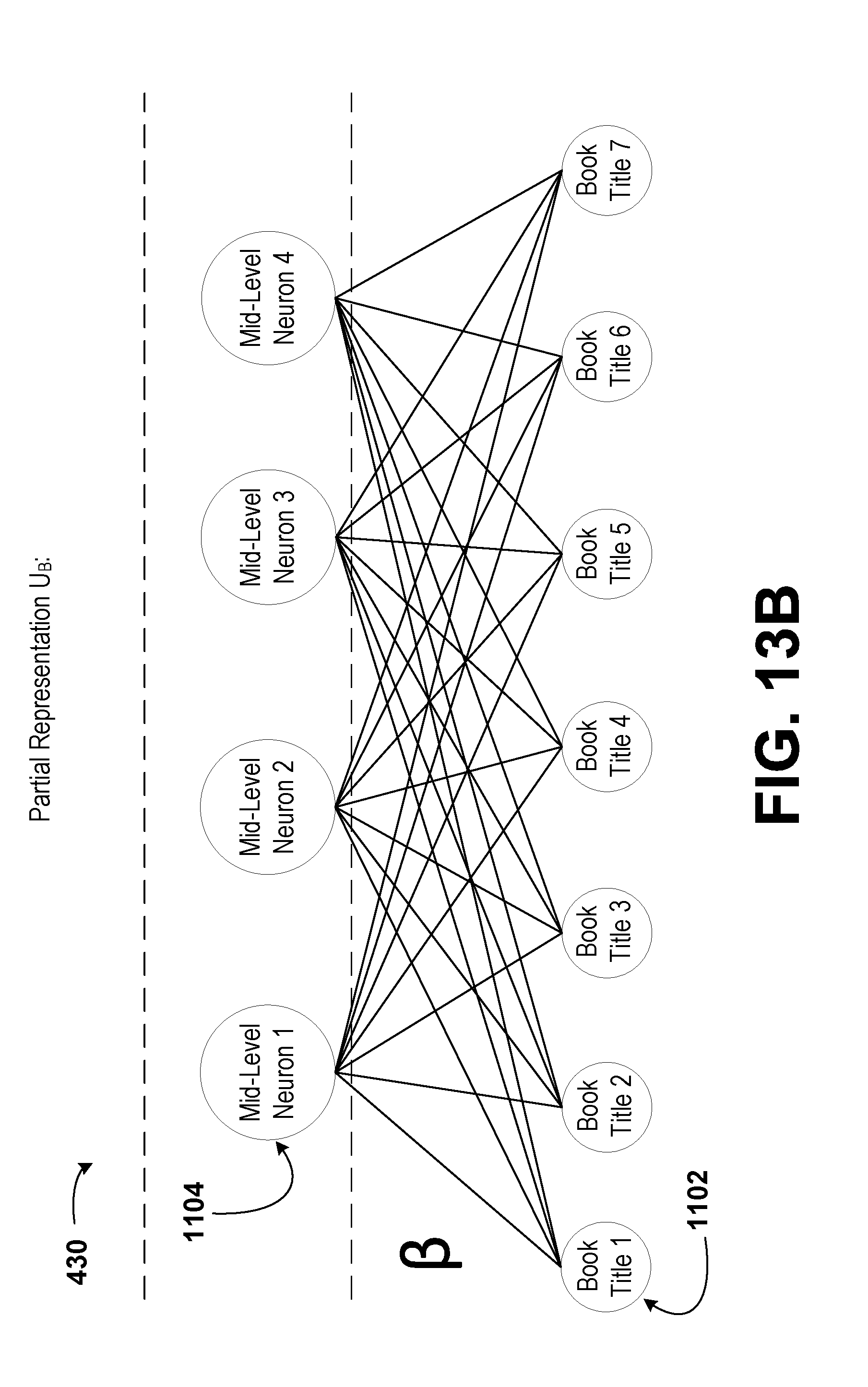

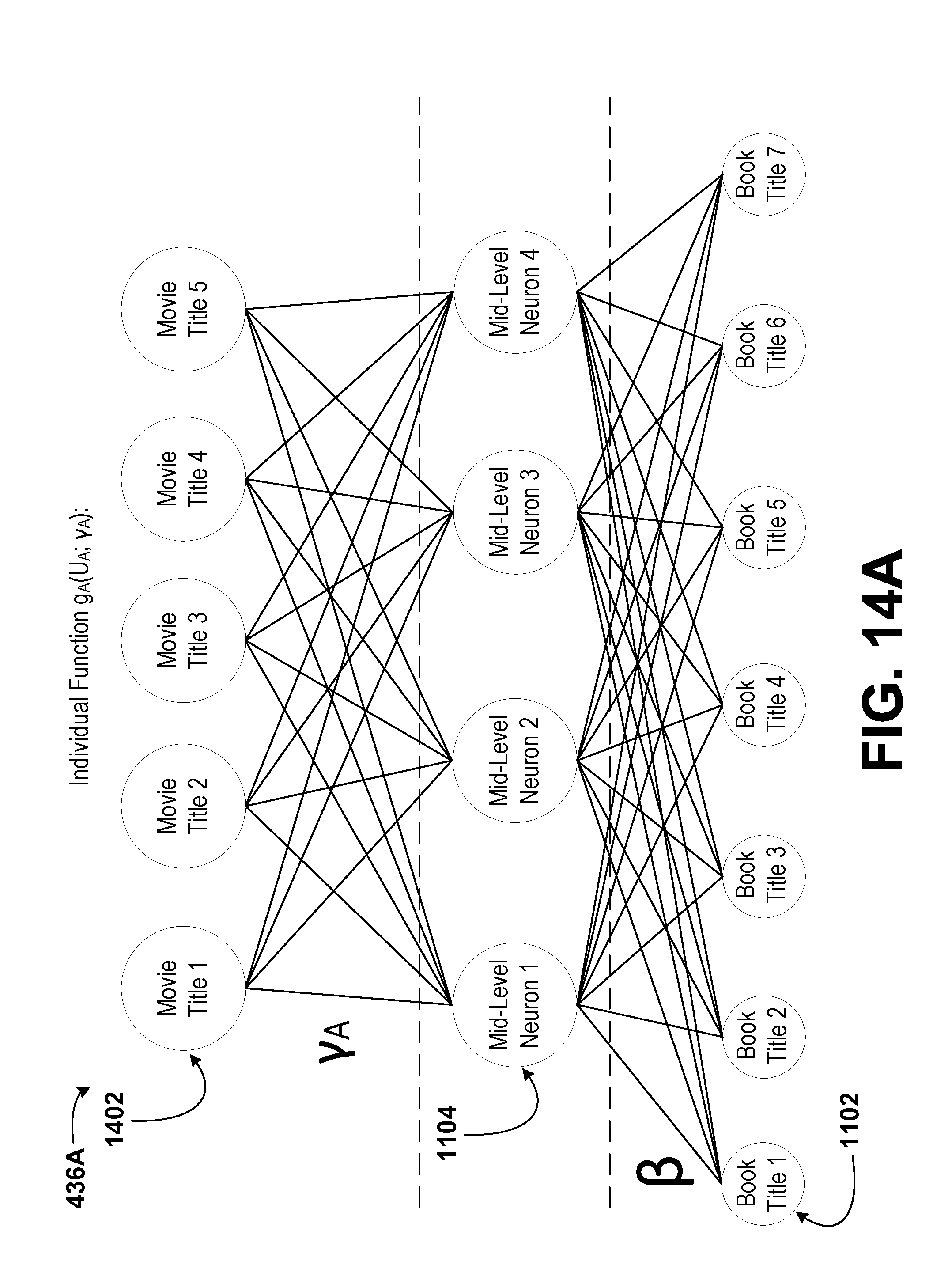

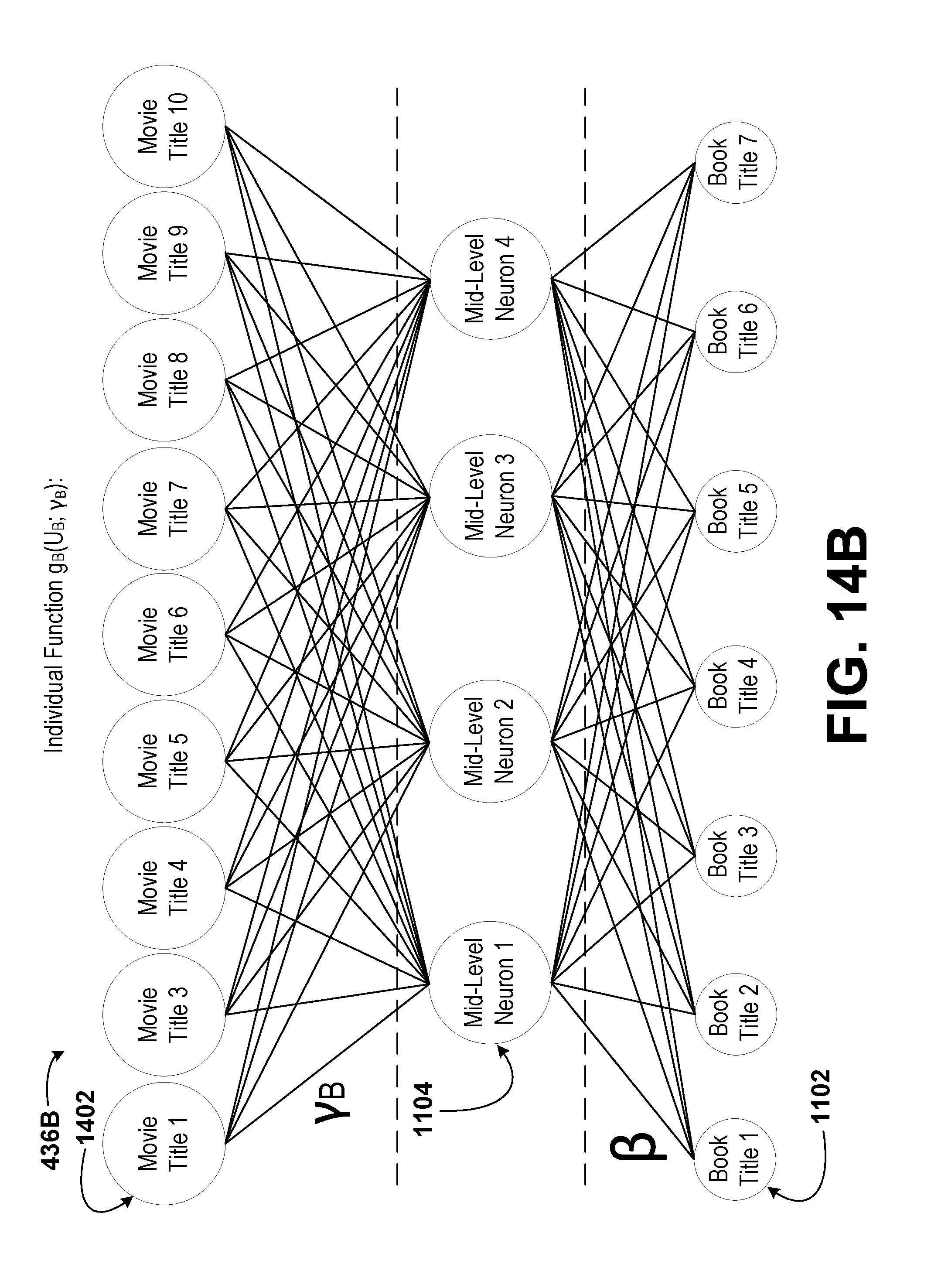

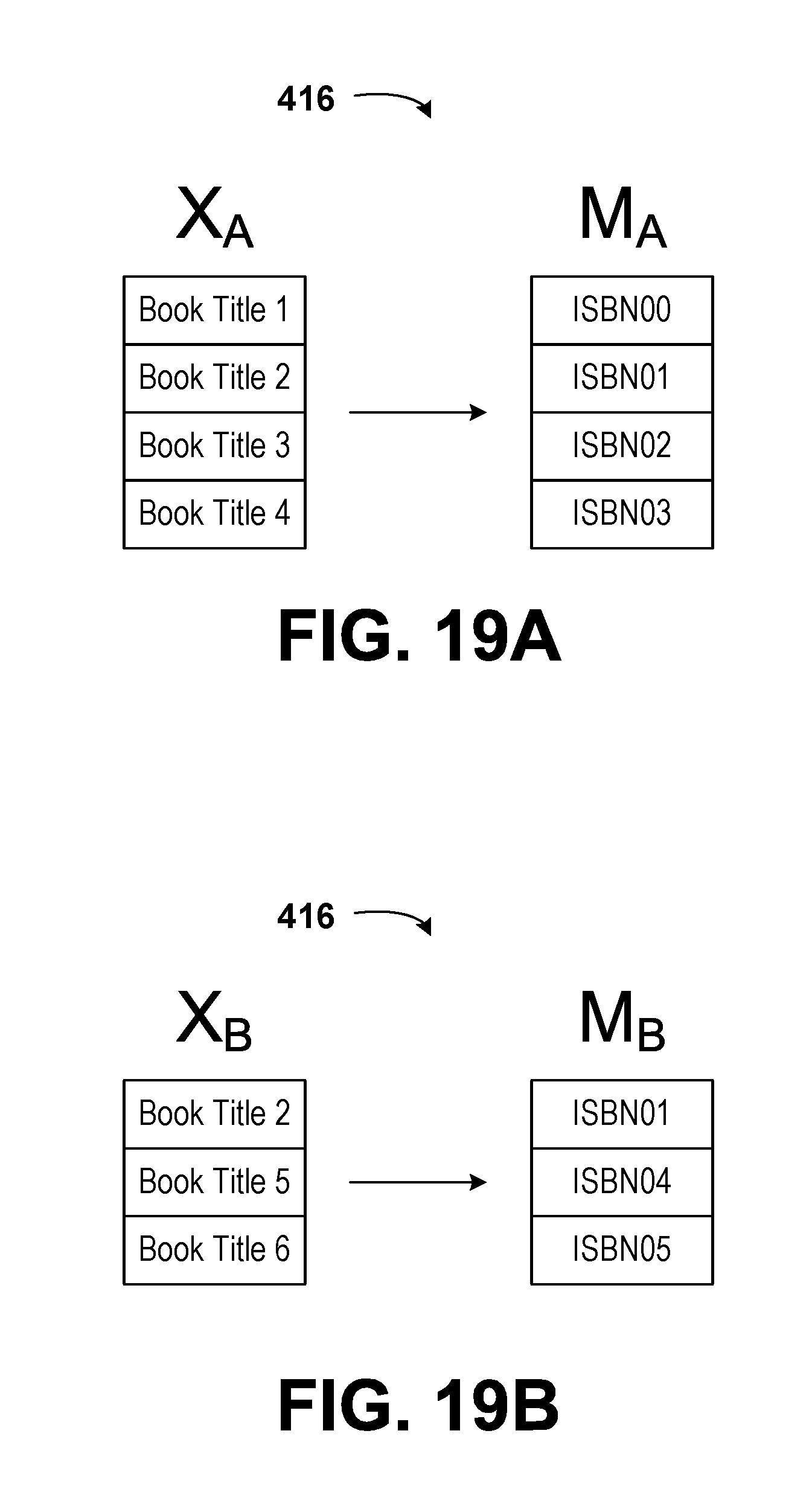

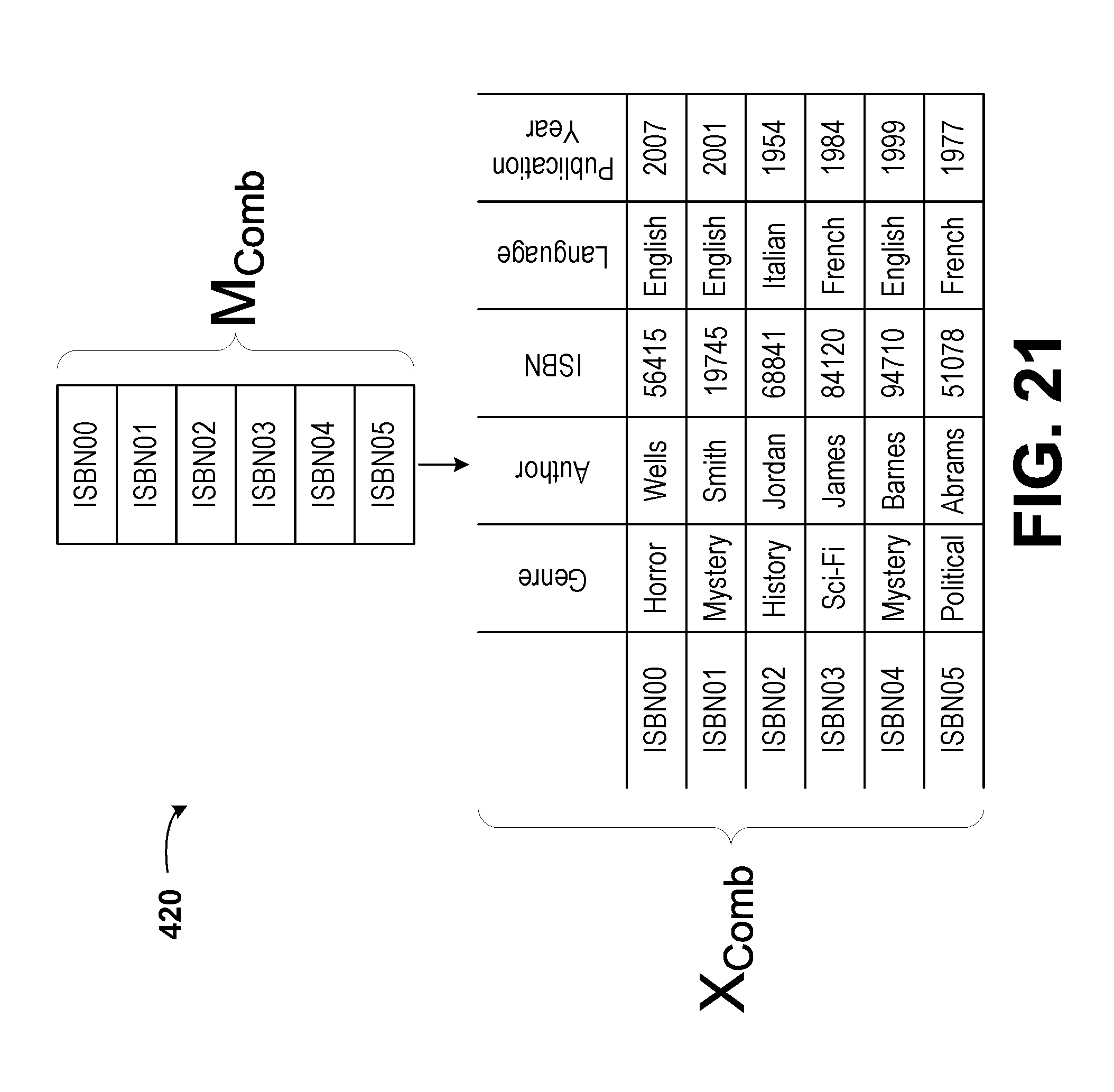

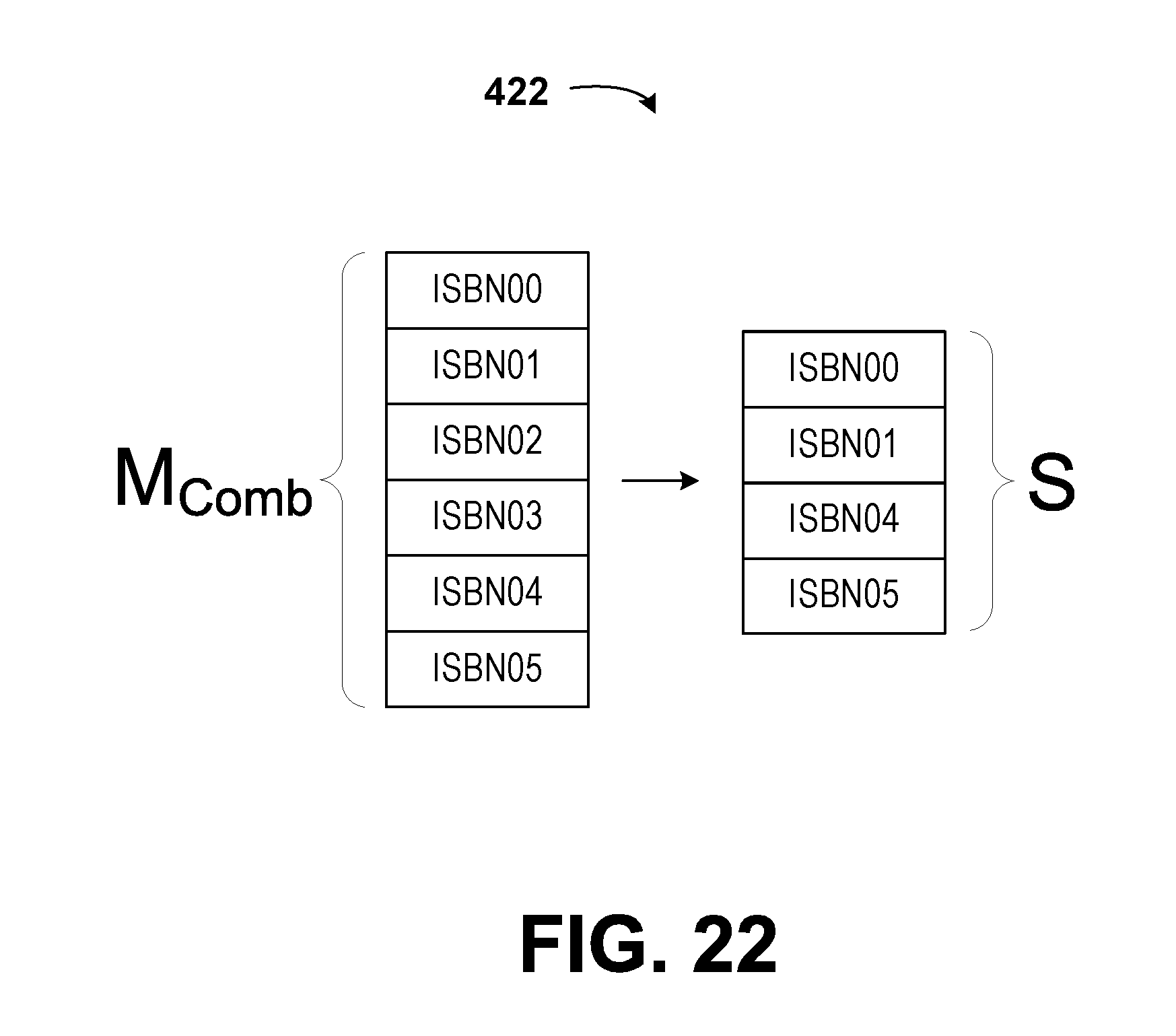

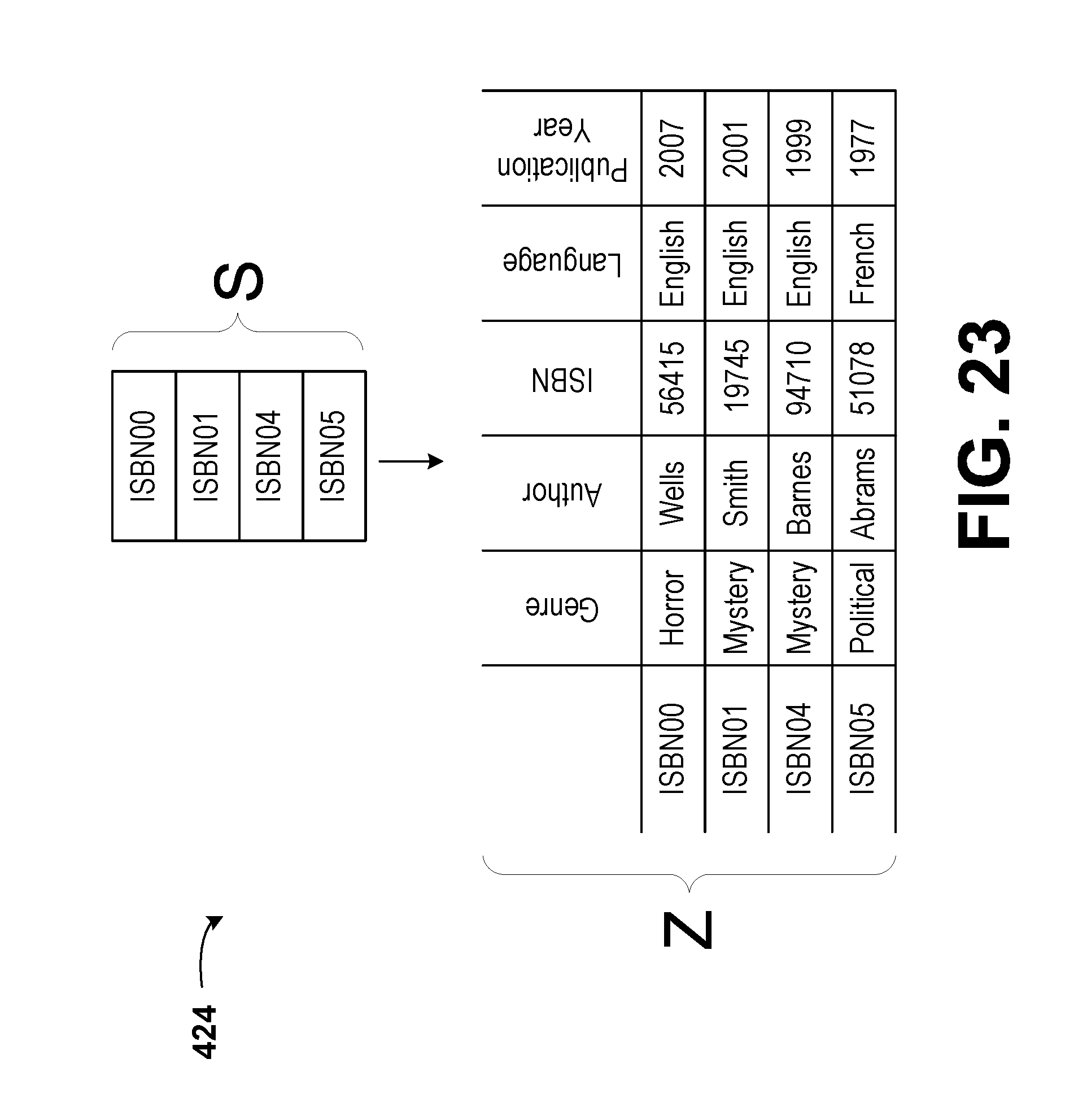

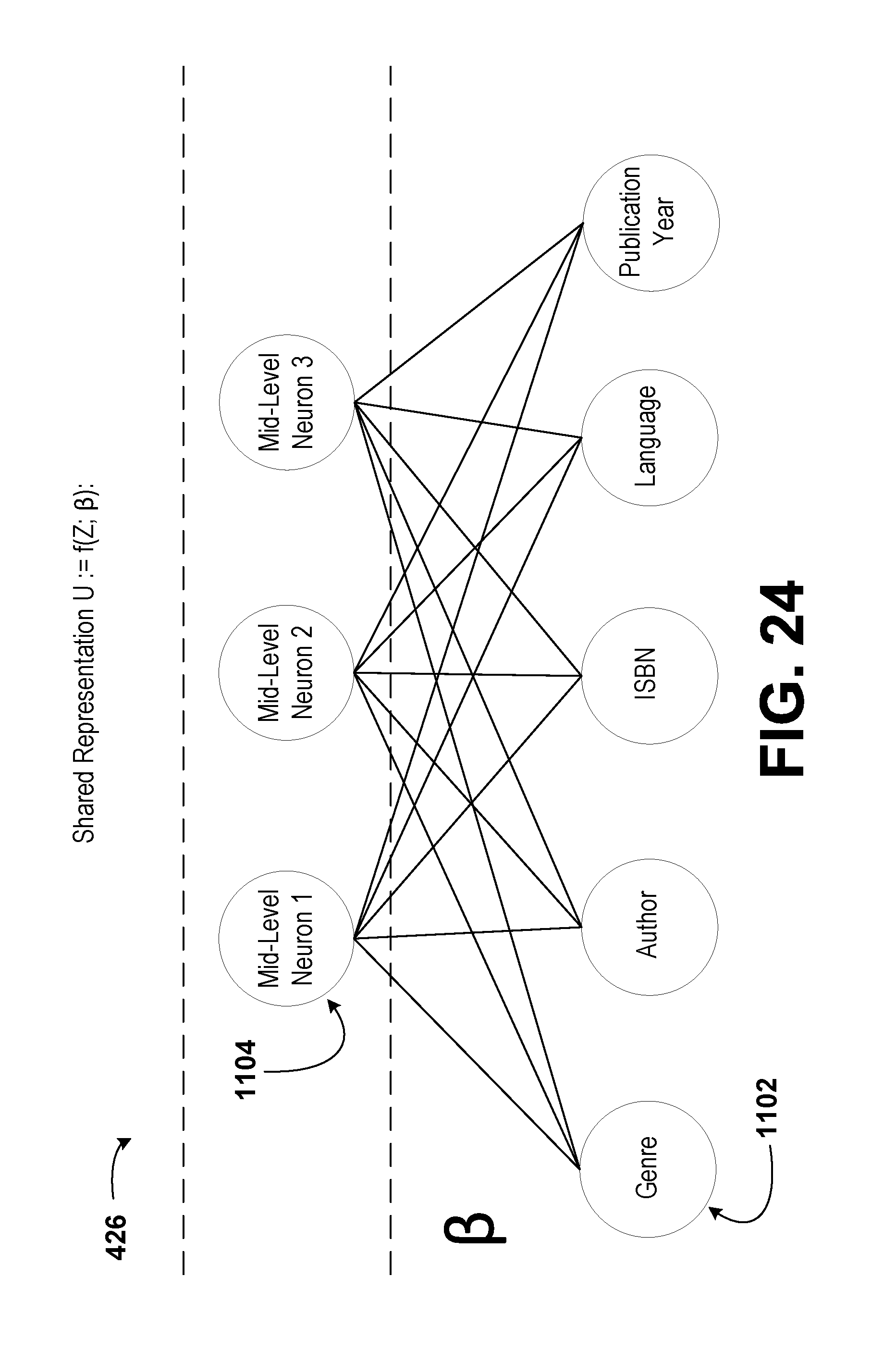

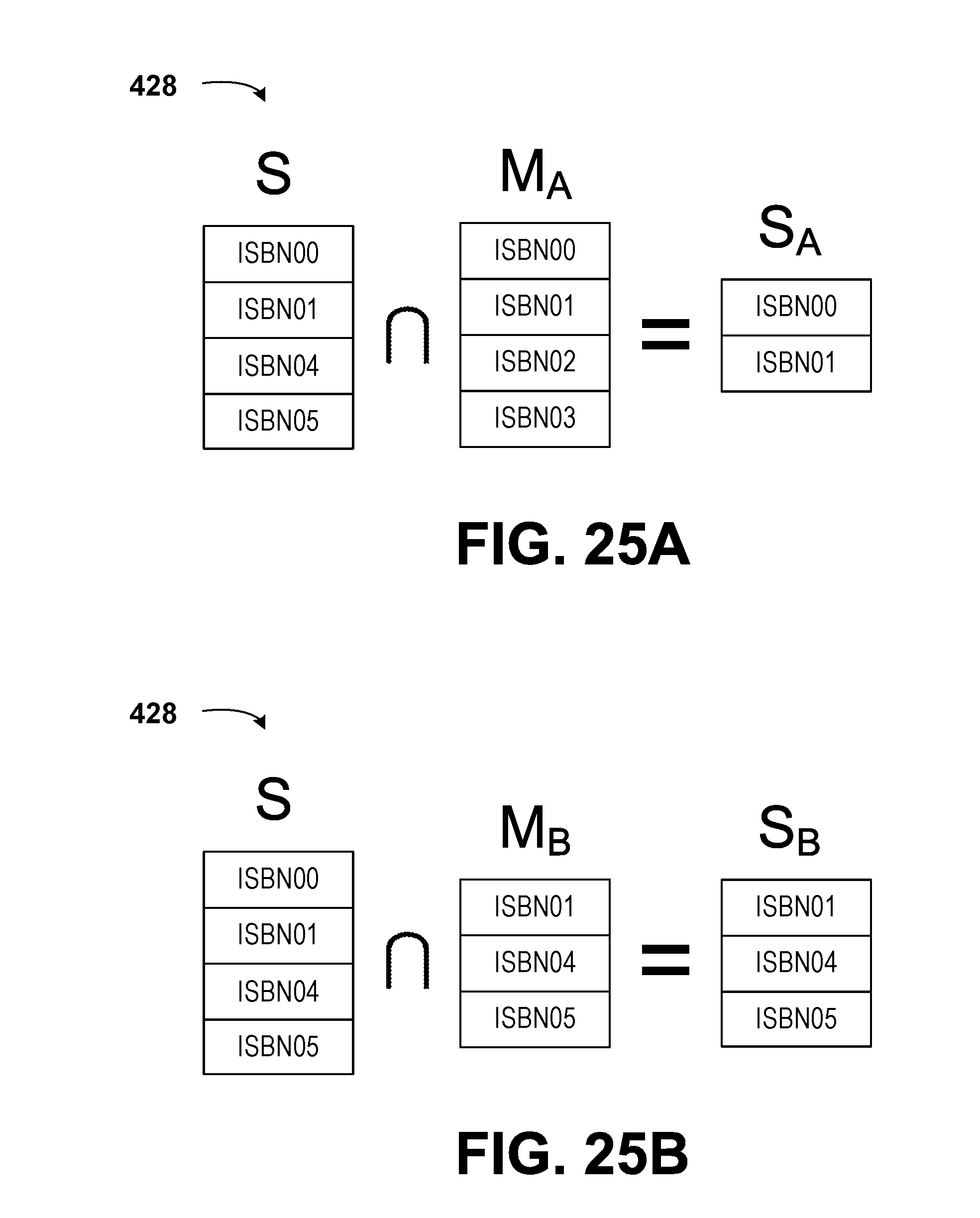

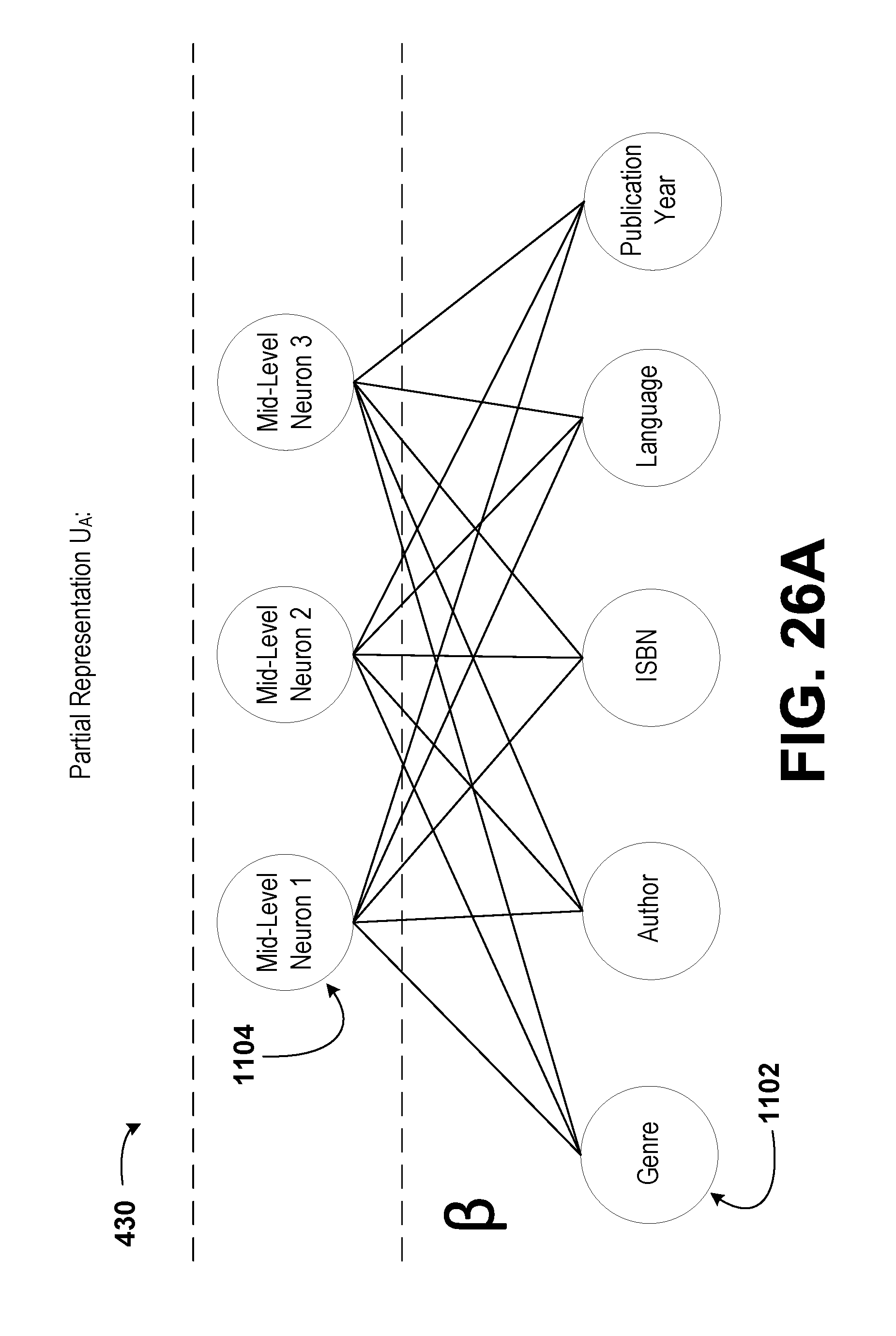

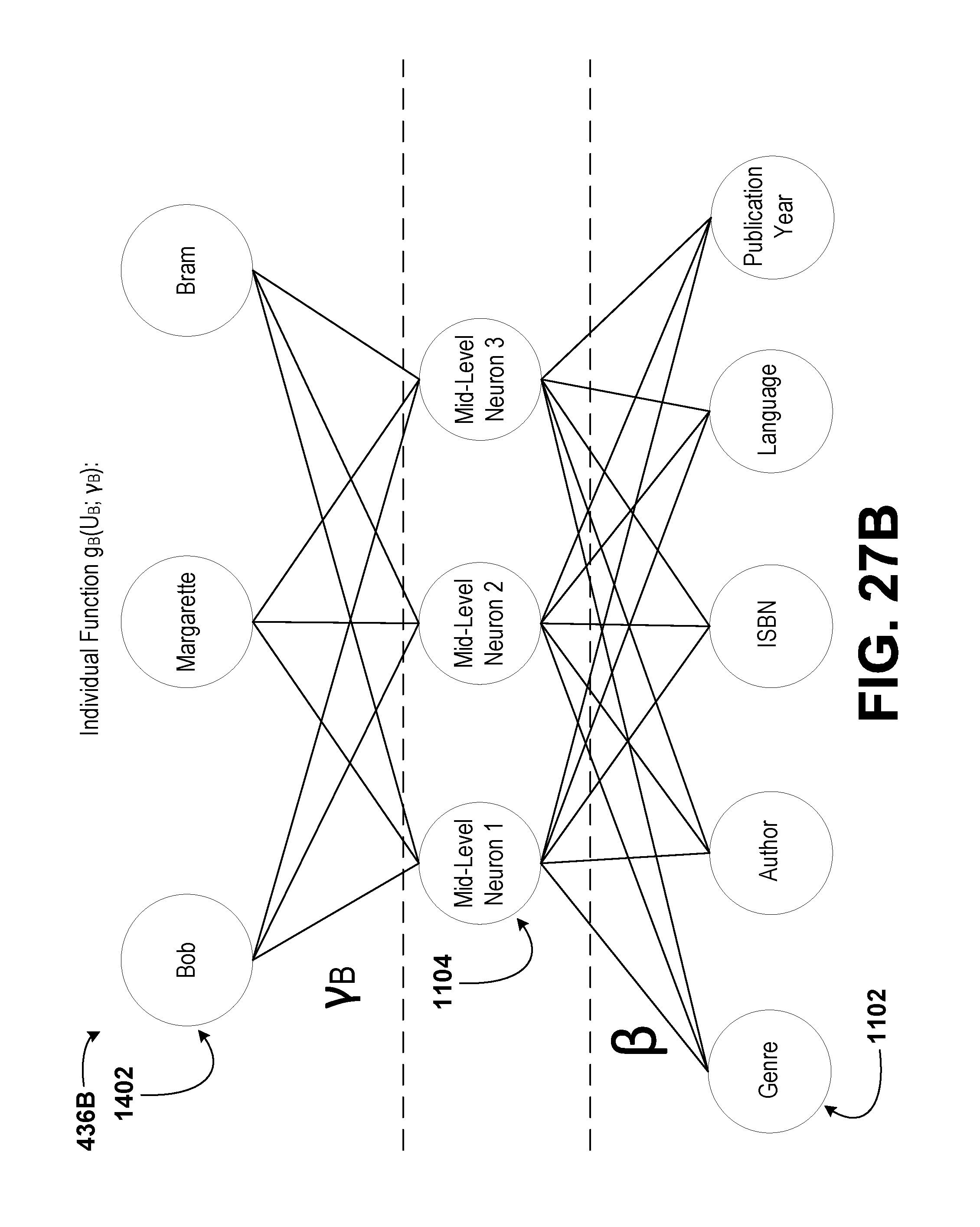

[0024] FIG. 2 is a schematic illustration of a computing device, according to example embodiments.