Web Services For Smart Entity Creation And Maintenance Using Time Series Data

Park; Youngchoon ; et al.

U.S. patent application number 16/142758 was filed with the patent office on 2018-09-26 and published on 2019-03-28 for web services for smart entity creation and maintenance using time series data. The applicant listed for this patent is Johnson Controls Technology Company. Invention is credited to Vijaya S. Chennupati, Youngchoon Park, Erik S. Paulson, Sudhi R. Sinha, Vaidhyanathan Venkiteswaran.

| Application Number | 20190095518 16/142758 |

| Family ID | 65807587 |

| Filed Date | 2019-03-28 |

| United States Patent Application | 20190095518 |

| Kind Code | A1 |

| Park; Youngchoon ; et al. | March 28, 2019 |

WEB SERVICES FOR SMART ENTITY CREATION AND MAINTENANCE USING TIME SERIES DATA

Abstract

One or more non-transitory computer readable media contain program instructions that, when executed, cause one or more processors to: receive first raw data from a first device, the first raw data including one or more first data points generated by the first device; generate first input timeseries according to the data points; access a database of interconnected smart entities, the smart entities including object entities representing each of the plurality of physical devices and data entities representing stored data, the smart entities being interconnected by relational objects indicating relationships between the smart entities; identify a first object entity representing the first device from a first device identifier in the first input timeseries; identify a first data entity from a first relational object indicating a relationship between the first object entity and the first data entity; and store the first input timeseries in the first data entity.

| Inventors: | Youngchoon Park (Brookfield, WI); Sudhi R. Sinha (Milwaukee, WI); Vaidhyanathan Venkiteswaran (Brookfield, WI); Erik S. Paulson (Madison, WI); Vijaya S. Chennupati (Brookfield, WI) |

| Applicant: | Johnson Controls Technology Company |

| Family ID: | 65807587 |

| Appl. No.: | 16/142758 |

| Filed: | September 26, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 62564247 | Sep 27, 2017 | |
| 62588179 | Nov 17, 2017 | |
| 62588190 | Nov 17, 2017 | |
| 62588114 | Nov 17, 2017 | |
| 62611962 | Dec 29, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 9/547 20130101; H04L 41/12 20130101; G06F 16/9024 20190101; H04L 41/142 20130101; G06F 16/2228 20190101; H04L 41/024 20130101; H04L 67/10 20130101; H04L 67/02 20130101; H04W 4/38 20180201; G06F 16/2358 20190101; G06F 16/288 20190101; H04L 67/32 20130101; G06F 16/2379 20190101; H04L 69/08 20130101 |

| International Class: | G06F 17/30 20060101 G06F017/30; H04L 29/08 20060101 H04L029/08; H04L 12/24 20060101 H04L012/24 |

Claims

1. One or more non-transitory computer readable media containing program instructions that, when executed by one or more processors, cause the one or more processors to perform operations comprising: receiving first raw data from a first device of a plurality of physical devices, the first raw data including one or more first data points generated by the first device; generating first input timeseries according to the one or more data points; accessing a database of interconnected smart entities, the smart entities comprising object entities representing each of the plurality of physical devices and data entities representing stored data, the smart entities being interconnected by relational objects indicating relationships between the object entities and the data entities; identifying a first object entity representing the first device from a first device identifier in the first input timeseries; identifying a first data entity from a first relational object indicating a relationship between the first object entity and the first data entity; and storing the first input timeseries in the first data entity.

2. The one or more non-transitory computer readable media of claim 1, wherein the relational objects semantically define the relationships between the object entities and the data entities.

3. The one or more non-transitory computer readable media of claim 1, wherein one or more of the object entities comprises a static attribute to identify the object entity, a dynamic attribute to store data associated with the object entity that changes over time, and a behavioral attribute that defines an expected response of the object entity in response to an input.

4. The one or more non-transitory computer readable media of claim 3, wherein the first input timeseries corresponds to the dynamic attribute of the first object entity.

5. The one or more non-transitory computer readable media of claim 3, wherein at least one of the first data points in the first input timeseries is stored in the dynamic attribute of the first object entity.

6. The one or more non-transitory computer readable media of claim 1, wherein the input timeseries includes the first device identifier, a timestamp indicating a generation time of the one or more first data points, and a value of the one or more first data points.

7. The one or more non-transitory computer readable media of claim 6, wherein the instructions further cause the one or more processors to: identify a second object entity representing a second device from a second relational object indicating a relationship between the first object entity and the second object entity; and identify a second data entity from a third relational object indicating a relationship between the second object entity and the second data entity, the second data entity storing second input timeseries corresponding to one or more second data points generated by the second device.

8. The one or more non-transitory computer readable media of claim 7, wherein the instructions further cause the one or more processors to: identify one or more processing workflows that defines one or more processing operations to generate derived timeseries using the first and second input timeseries; execute the one or more processing workflows to generate the derived timeseries; identify a third data entity from a fourth relational object indicating a relationship between the first object entity and the third data entity; and store the derived timeseries in the third data entity.

9. The one or more non-transitory computer readable media of claim 8, wherein the derived timeseries includes one or more virtual data points calculated according to the first and second input timeseries.

10. The one or more non-transitory computer readable media of claim 8, wherein at least one of the first or second devices is a sensor, and the instructions cause the one or more processors to: periodically receive measurements from the sensor; and update at least the derived timeseries in the third data entity each time a new measurement from the sensor is received.

11. A method for managing data relating to a plurality of physical devices connected to one or more electronic communications networks, comprising: receiving, by one or more processors, first raw data from a first device of a plurality of physical devices, the first raw data including one or more first data points generated by the first device; generating, by the one or more processors, first input timeseries according to the one or more data points; accessing, by the one or more processors, a database of interconnected smart entities, the smart entities comprising object entities representing each of the plurality of physical devices and data entities representing stored data, the smart entities being interconnected by relational objects indicating relationships between the object entities and the data entities; identifying, by the one or more processors, a first object entity representing the first device from a first device identifier in the first input timeseries; identifying, by the one or more processors, a first data entity from a first relational object indicating a relationship between the first object entity and the first data entity; and storing, by the one or more processors, the first input timeseries in the first data entity.

12. The method of claim 11, wherein the relational objects semantically define the relationships between the object entities and the data entities.

13. The method of claim 11, wherein one or more of the object entities comprises a static attribute to identify the object entity, a dynamic attribute to store data associated with the object entity that changes over time, and a behavioral attribute that defines an expected response of the object entity in response to an input.

14. The method of claim 13, wherein the first input timeseries corresponds to the dynamic attribute of the first object entity.

15. The method of claim 13, wherein at least one of the first data points in the first input timeseries is stored in the dynamic attribute of the first object entity.

16. The method of claim 11, wherein the input timeseries includes the first device identifier, a timestamp indicating a generation time of the one or more first data points, and a value of the one or more first data points.

17. The method of claim 16 further comprising: identifying, by the one or more processors, a second object entity representing a second device from a second relational object indicating a relationship between the first object entity and the second object entity; and identifying, by the one or more processors, a second data entity from a third relational object indicating a relationship between the second object entity and the second data entity, the second data entity storing second input timeseries corresponding to one or more second data points generated by the second device.

18. The method of claim 17 further comprising: identifying, by the one or more processors, one or more processing workflows that defines one or more processing operations to generate derived timeseries using the first and second input timeseries; executing, by the one or more processors, the one or more processing workflows to generate the derived timeseries; identifying, by the one or more processors, a third data entity from a fourth relational object indicating a relationship between the first object entity and the third data entity; and storing, by the one or more processors, the derived timeseries in the third data entity.

19. An entity management cloud computing system for managing data relating to a plurality of physical devices connected to one or more electronic communications networks, comprising: one or more processors communicably coupled to a database of interconnected smart entities, the smart entities comprising object entities representing each of the plurality of physical devices and data entities representing stored data, the smart entities being interconnected by relational objects indicating relationships between the object entities and the data entities; and one or more computer-readable storage media communicably coupled to the one or more processors having instructions stored thereon that, when executed by the one or more processors, cause the one or more processors to: receive first raw data from a first device of the plurality of physical devices, the first raw data including one or more first data points generated by the first device; generate first input timeseries according to the one or more data points; identify a first object entity representing the first device from a first device identifier in the first input timeseries; identify a first data entity from a first relational object indicating a relationship between the first object entity and the first data entity; and store the first input timeseries in the first data entity.

20. The system of claim 19, wherein the first input timeseries includes the first device identifier, a timestamp indicating a generation time of the one or more first data points, and a value of the one or more first data points.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims the benefit of and priority to U.S. Provisional Patent Application No. 62/564,247 filed Sep. 27, 2017, U.S. Provisional Patent Application No. 62/588,179 filed Nov. 17, 2017, U.S. Provisional Patent Application No. 62/588,190 filed Nov. 17, 2017, U.S. Provisional Patent Application No. 62/588,114 filed Nov. 17, 2017, and U.S. Provisional Patent Application No. 62/611,962 filed Dec. 29, 2017. The entire disclosure of each of these patent applications is incorporated by reference herein.

BACKGROUND

[0002] One or more aspects of example embodiments of the present disclosure generally relate to creation and maintenance of smart entities using timeseries data. One or more aspects of example embodiments of the present disclosure relate to a system and method for defining relationships of timeseries data between smart entities. One or more aspects of example embodiments of the present disclosure relate to a system and method for identifying and processing timeseries data produced by related smart entities.

[0003] The Internet of Things (IoT) is a network of interconnected objects (or Things), hereinafter referred to as IoT devices, that produce data through interaction with the environment and/or are controlled over a network. An IoT platform is used by application developers to produce IoT applications for the IoT devices. Generally, IoT platforms are utilized by developers to register and manage the IoT devices, gather and analyze data produced by the IoT devices, and provide recommendations or results based on the collected data. As the number of IoT devices used in various sectors increases, the amount of data being produced and collected has been increasing exponentially. Accordingly, effective analysis of a plethora of collected data is desired.

SUMMARY

[0004] One implementation of the present disclosure is an entity management cloud computing system for managing data relating to a plurality of physical devices connected to one or more electronic communications networks. The system includes one or more processors and one or more computer-readable storage media. The one or more processors are communicably coupled to a database of interconnected smart entities, the smart entities including object entities representing each of the plurality of physical devices and data entities representing stored data, the smart entities being interconnected by relational objects indicating relationships between the object entities and the data entities. The one or more computer-readable storage media are communicably coupled to the one or more processors and have instructions stored thereon. When executed by the one or more processors, the instructions cause the one or more processors to receive first raw data from a first device of the plurality of physical devices, the first raw data including one or more first data points generated by the first device, generate first input timeseries according to the one or more data points, identify a first object entity representing the first device from a first device identifier in the first input timeseries, identify a first data entity from a first relational object indicating a relationship between the first object entity and the first data entity, and store the first input timeseries in the first data entity.
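The flow described in this implementation can be pictured with a small in-memory sketch. Everything in it is assumed for illustration only: the class names `ObjectEntity`, `DataEntity`, `RelationalObject`, and `EntityDatabase`, the relationship label `hasTimeseries`, and the dictionary-based storage are hypothetical and are not taken from the disclosure.

```python
from dataclasses import dataclass, field


@dataclass
class ObjectEntity:
    """Represents one physical device (hypothetical structure)."""
    entity_id: str
    device_id: str


@dataclass
class DataEntity:
    """Represents stored data, here a list of timeseries samples."""
    entity_id: str
    samples: list = field(default_factory=list)


@dataclass
class RelationalObject:
    """Links a source entity to a target entity with a semantic label."""
    source_id: str
    relationship: str
    target_id: str


class EntityDatabase:
    """In-memory stand-in for the database of interconnected smart entities."""

    def __init__(self):
        self.object_entities = {}  # device_id -> ObjectEntity
        self.data_entities = {}    # entity_id -> DataEntity
        self.relations = []        # list of RelationalObject

    def find_object_entity(self, device_id):
        return self.object_entities[device_id]

    def find_data_entity(self, object_entity, relationship):
        for rel in self.relations:
            if (rel.source_id == object_entity.entity_id
                    and rel.relationship == relationship):
                return self.data_entities[rel.target_id]
        raise LookupError(
            f"no {relationship!r} relation for {object_entity.entity_id}"
        )


def store_input_timeseries(db, input_timeseries):
    """Store samples shaped as (device_id, timestamp, value) tuples."""
    for device_id, timestamp, value in input_timeseries:
        # Identify the object entity from the device identifier.
        obj = db.find_object_entity(device_id)
        # Identify the data entity from the relational object.
        data = db.find_data_entity(obj, "hasTimeseries")
        data.samples.append((timestamp, value))


db = EntityDatabase()
db.object_entities["sensor-1"] = ObjectEntity("obj-1", "sensor-1")
db.data_entities["data-1"] = DataEntity("data-1")
db.relations.append(RelationalObject("obj-1", "hasTimeseries", "data-1"))

store_input_timeseries(db, [("sensor-1", "2018-09-26T12:00:00Z", 21.5)])
print(db.data_entities["data-1"].samples)
```

In this sketch the relational object, rather than the object entity itself, determines where the samples are stored, which mirrors the lookup order recited above.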

[0005] In some embodiments, the relational objects may semantically define the relationships between the object entities and the data entities.

[0006] In some embodiments, one or more of the object entities may include a static attribute to identify the object entity, a dynamic attribute to store data associated with the object entity that changes over time, and a behavioral attribute that defines an expected response of the object entity in response to an input.
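As a rough illustration of the three attribute kinds, the following sketch models a thermostat as an object entity. The class name `ThermostatEntity`, the field names, and the heating rule are assumptions chosen for the example and do not come from the disclosure.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ThermostatEntity:
    # Static attribute: identifies the entity and does not change.
    entity_id: str
    # Dynamic attribute: data that changes over time (latest temperature).
    temperature_c: float = 0.0
    # Behavioral attribute: expected response to an input (a setpoint).
    expected_response: Callable[[float, float], str] = field(
        default=lambda temperature_c, setpoint_c: (
            "heat" if temperature_c < setpoint_c else "idle"
        )
    )


thermostat = ThermostatEntity(entity_id="thermostat-42")
thermostat.temperature_c = 19.0  # dynamic attribute updated from a new data point
print(thermostat.expected_response(thermostat.temperature_c, 21.0))
```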

[0007] In some embodiments, the first input timeseries may correspond to the dynamic attribute of the first object entity.

[0008] In some embodiments, at least one of the first data points in the first input timeseries may be stored in the dynamic attribute of the first object entity.

[0009] In some embodiments, the input timeseries may include the first device identifier, a timestamp indicating a generation time of the one or more first data points, and a value of the one or more first data points.
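One way to picture an input timeseries with these three fields is sketched below. The `Sample` type, the function `to_input_timeseries`, and the raw payload shape are hypothetical; the disclosure does not specify a wire format.

```python
from datetime import datetime, timezone
from typing import NamedTuple


class Sample(NamedTuple):
    device_id: str
    timestamp: str
    value: float


def to_input_timeseries(raw):
    """Convert a raw payload into a list of timeseries samples.

    The payload shape {"device": ..., "points": [[epoch_seconds, value], ...]}
    is an assumption made for this example.
    """
    samples = []
    for epoch_seconds, value in raw["points"]:
        ts = datetime.fromtimestamp(epoch_seconds, tz=timezone.utc).isoformat()
        samples.append(Sample(raw["device"], ts, float(value)))
    return samples


raw_data = {"device": "sensor-1", "points": [[0, 21.5], [60, 21.7]]}
timeseries = to_input_timeseries(raw_data)
print(timeseries)
```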

[0010] In some embodiments, the instructions may further cause the one or more processors to identify a second object entity representing a second device from a second relational object indicating a relationship between the first object entity and the second object entity, and identify a second data entity from a third relational object indicating a relationship between the second object entity and the second data entity. The second data entity may store second input timeseries corresponding to one or more second data points generated by the second device.

[0011] In some embodiments, the instructions may further cause the one or more processors to identify one or more processing workflows that define one or more processing operations to generate derived timeseries using the first and second input timeseries, execute the one or more processing workflows to generate the derived timeseries, identify a third data entity from a fourth relational object indicating a relationship between the first object entity and the third data entity, and store the derived timeseries in the third data entity.
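The traversal and workflow steps of the two preceding paragraphs can be sketched as follows. The entity names, the relationship labels (`isRelatedTo`, `hasTimeseries`, `hasDerivedTimeseries`), and the choice of a per-timestamp difference as the processing workflow are all assumptions for illustration.

```python
# Relational objects as (source, relationship, target) triples.
# All names and labels here are hypothetical.
relations = [
    ("supply_sensor", "isRelatedTo", "return_sensor"),
    ("supply_sensor", "hasTimeseries", "supply_temp"),
    ("return_sensor", "hasTimeseries", "return_temp"),
    ("supply_sensor", "hasDerivedTimeseries", "temp_delta"),
]

# Data entities hold samples as {timestamp: value}.
data_entities = {
    "supply_temp": {"t0": 13.0, "t1": 12.5},
    "return_temp": {"t0": 24.0, "t1": 23.5},
    "temp_delta": {},
}


def follow(source, relationship):
    """Return the target of the first matching relational object."""
    for src, rel, target in relations:
        if src == source and rel == relationship:
            return target
    raise LookupError(f"{source} has no {relationship} relation")


def delta_workflow(first, second):
    """Example processing workflow: per-timestamp difference of two series."""
    shared = sorted(first.keys() & second.keys())
    return {ts: second[ts] - first[ts] for ts in shared}


first_object = "supply_sensor"
# Second relational object: first object entity -> second object entity.
second_object = follow(first_object, "isRelatedTo")
first_series = data_entities[follow(first_object, "hasTimeseries")]
# Third relational object: second object entity -> second data entity.
second_series = data_entities[follow(second_object, "hasTimeseries")]

derived = delta_workflow(first_series, second_series)
# Fourth relational object: first object entity -> third data entity.
data_entities[follow(first_object, "hasDerivedTimeseries")].update(derived)
print(data_entities["temp_delta"])
```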

[0012] In some embodiments, the derived timeseries may include one or more virtual data points calculated according to the first and second input timeseries.

[0013] In some embodiments, at least one of the first or second devices may be a sensor.

[0014] In some embodiments, the instructions may further cause the one or more processors to periodically receive measurements from the sensor, and update at least the derived timeseries in the third data entity each time a new measurement from the sensor is received.

[0015] In some embodiments, the instructions may further cause the one or more processors to create a shadow entity to store historical values of the first raw data.

[0016] In some embodiments, the instructions may further cause the one or more processors to calculate a virtual data point from the historical values, and create a fourth data entity to store the virtual data point.
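A shadow entity and a virtual data point derived from it might look like the sketch below. The `ShadowEntity` class, the use of a plain dictionary for the fourth data entity, and the choice of an arithmetic mean as the virtual data point are assumptions, not details from the disclosure.

```python
from statistics import mean


class ShadowEntity:
    """Keeps historical raw values for one device (hypothetical structure)."""

    def __init__(self, device_id):
        self.device_id = device_id
        self.history = []  # list of (timestamp, value) pairs

    def record(self, timestamp, value):
        self.history.append((timestamp, value))


def average_virtual_point(shadow):
    """Compute a virtual data point as the mean of all historical values."""
    return mean(value for _, value in shadow.history)


shadow = ShadowEntity("sensor-1")
for ts, value in [("t0", 20.0), ("t1", 22.0), ("t2", 24.0)]:
    shadow.record(ts, value)

# A separate data entity (here a plain dict) stores the virtual data point.
fourth_data_entity = {
    "name": "average_temperature",
    "value": average_virtual_point(shadow),
}
print(fourth_data_entity)
```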

[0017] Another implementation of the present disclosure is a method for managing data relating to a plurality of physical devices connected to one or more electronic communications networks. The method includes receiving first raw data from a first device of a plurality of physical devices. The first raw data includes one or more first data points generated by the first device. The method includes generating first input timeseries according to the one or more data points, and accessing a database of interconnected smart entities. The smart entities include object entities representing each of the plurality of physical devices and data entities representing stored data, the smart entities being interconnected by relational objects indicating relationships between the object entities and the data entities. The method includes identifying a first object entity representing the first device from a first device identifier in the first input timeseries, identifying a first data entity from a first relational object indicating a relationship between the first object entity and the first data entity, and storing the first input timeseries in the first data entity.

[0018] In some embodiments, the relational objects may semantically define the relationships between the object entities and the data entities.

[0019] In some embodiments, one or more of the object entities may include a static attribute to identify the object entity, a dynamic attribute to store data associated with the object entity that changes over time, and a behavioral attribute that defines an expected response of the object entity in response to an input.

[0020] In some embodiments, the first input timeseries may correspond to the dynamic attribute of the first object entity.

[0021] In some embodiments, at least one of the first data points in the first input timeseries may be stored in the dynamic attribute of the first object entity.

[0022] In some embodiments, the input timeseries may include the first device identifier, a timestamp indicating a generation time of the one or more first data points, and a value of the one or more first data points.

[0023] In some embodiments, the method may further include identifying a second object entity representing a second device from a second relational object indicating a relationship between the first object entity and the second object entity, and identifying a second data entity from a third relational object indicating a relationship between the second object entity and the second data entity. The second data entity may store second input timeseries corresponding to one or more second data points generated by the second device.

[0024] In some embodiments, the method may further include identifying one or more processing workflows that define one or more processing operations to generate derived timeseries using the first and second input timeseries, executing the one or more processing workflows to generate the derived timeseries, identifying a third data entity from a fourth relational object indicating a relationship between the first object entity and the third data entity, and storing the derived timeseries in the third data entity.

[0025] In some embodiments, the derived timeseries may include one or more virtual data points calculated according to the first and second input timeseries.

[0026] In some embodiments, at least one of the first or second devices may be a sensor.

[0027] In some embodiments, the method may further include periodically receiving measurements from the sensor, and updating at least the derived timeseries in the third data entity each time a new measurement from the sensor is received.

[0028] In some embodiments, the method may further include creating a shadow entity to store historical values of the first raw data.

[0029] In some embodiments, the method may further include calculating a virtual data point from the historical values, and creating a fourth data entity to store the virtual data point.

[0030] Another implementation of the present disclosure is one or more non-transitory computer readable media containing program instructions. When executed by one or more processors, the instructions cause the one or more processors to perform operations including receiving first raw data from a first device of a plurality of physical devices. The first raw data includes one or more first data points generated by the first device. The operations include generating first input timeseries according to the one or more data points, and accessing a database of interconnected smart entities. The smart entities include object entities representing each of the plurality of physical devices and data entities representing stored data, the smart entities being interconnected by relational objects indicating relationships between the object entities and the data entities. The operations include identifying a first object entity representing the first device from a first device identifier in the first input timeseries, identifying a first data entity from a first relational object indicating a relationship between the first object entity and the first data entity, and storing the first input timeseries in the first data entity.

[0031] In some embodiments, the relational objects may semantically define the relationships between the object entities and the data entities.

[0032] In some embodiments, one or more of the object entities may include a static attribute to identify the object entity, a dynamic attribute to store data associated with the object entity that changes over time, and a behavioral attribute that defines an expected response of the object entity in response to an input.

[0033] In some embodiments, the first input timeseries may correspond to the dynamic attribute of the first object entity.

[0034] In some embodiments, at least one of the first data points in the first input timeseries may be stored in the dynamic attribute of the first object entity.

[0035] In some embodiments, the input timeseries may include the first device identifier, a timestamp indicating a generation time of the one or more first data points, and a value of the one or more first data points.

[0036] In some embodiments, the instructions may further cause the one or more processors to identify a second object entity representing a second device from a second relational object indicating a relationship between the first object entity and the second object entity, and identify a second data entity from a third relational object indicating a relationship between the second object entity and the second data entity. The second data entity may store second input timeseries corresponding to one or more second data points generated by the second device.

[0037] In some embodiments, the program instructions may further cause the one or more processors to identify one or more processing workflows that define one or more processing operations to generate derived timeseries using the first and second input timeseries, execute the one or more processing workflows to generate the derived timeseries, identify a third data entity from a fourth relational object indicating a relationship between the first object entity and the third data entity, and store the derived timeseries in the third data entity.

[0038] In some embodiments, the derived timeseries may include one or more virtual data points calculated according to the first and second input timeseries.

[0039] In some embodiments, at least one of the first or second devices may be a sensor.

[0040] In some embodiments, the instructions may cause the one or more processors to periodically receive measurements from the sensor, and update at least the derived timeseries in the third data entity each time a new measurement from the sensor is received.

[0041] In some embodiments, the instructions may further cause the one or more processors to create a shadow entity to store historical values of the first raw data.

[0042] In some embodiments, the instructions may further cause the one or more processors to calculate a virtual data point from the historical values, and create a fourth data entity to store the virtual data point.

BRIEF DESCRIPTION OF THE DRAWINGS

[0043] The above and other aspects and features of the present disclosure will become more apparent to those skilled in the art from the following detailed description of the example embodiments with reference to the accompanying drawings, in which:

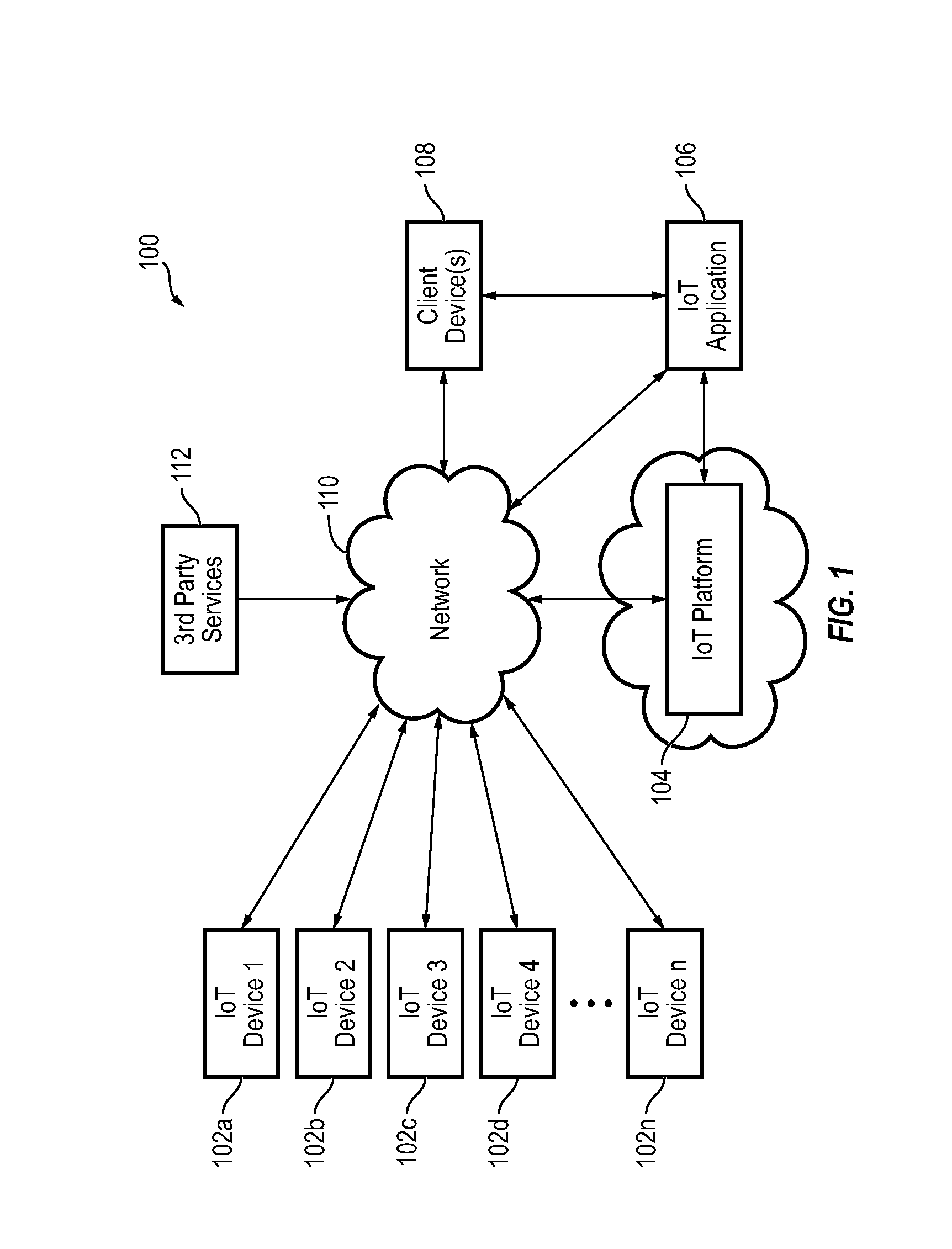

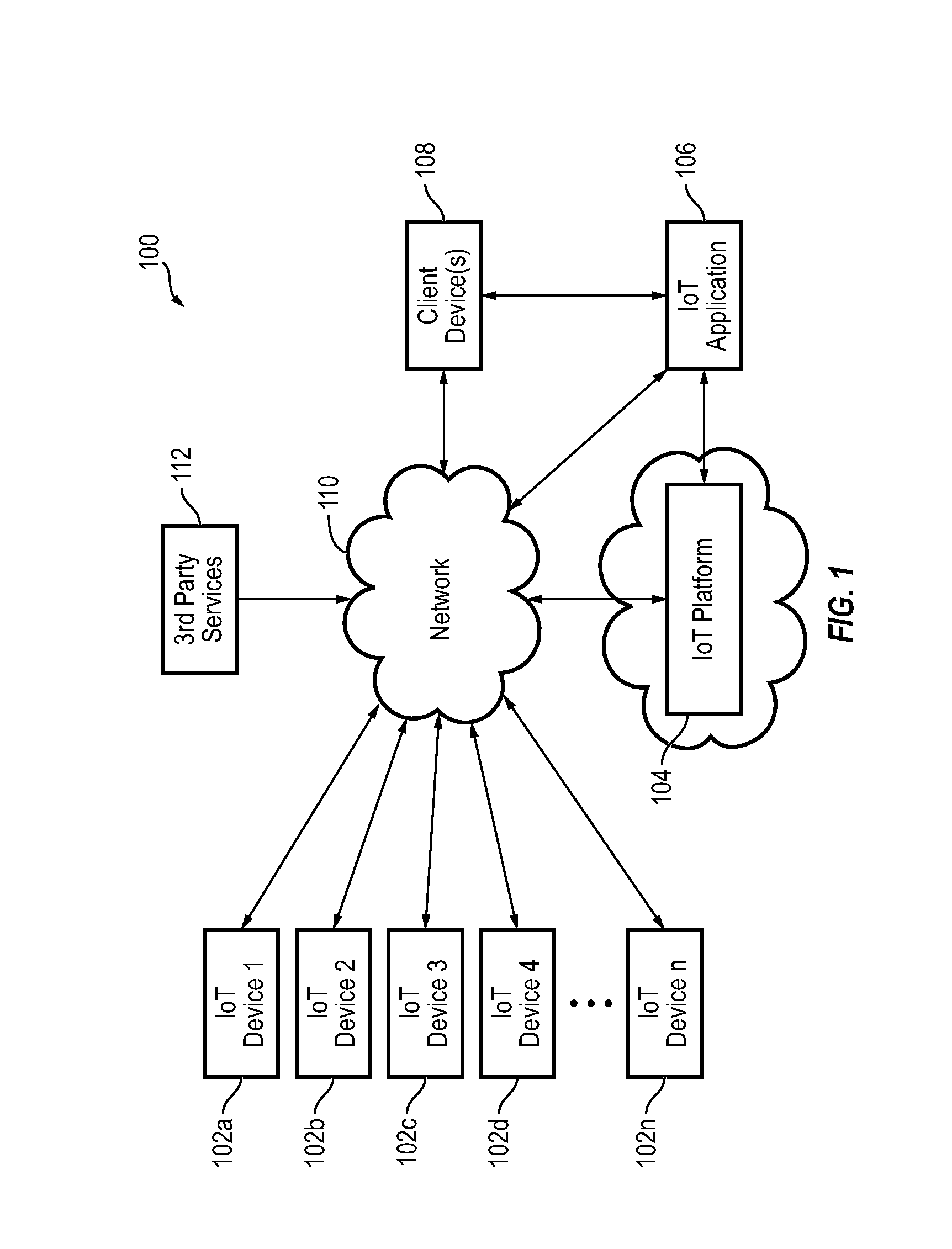

[0044] FIG. 1 is a block diagram of an IoT environment according to some embodiments;

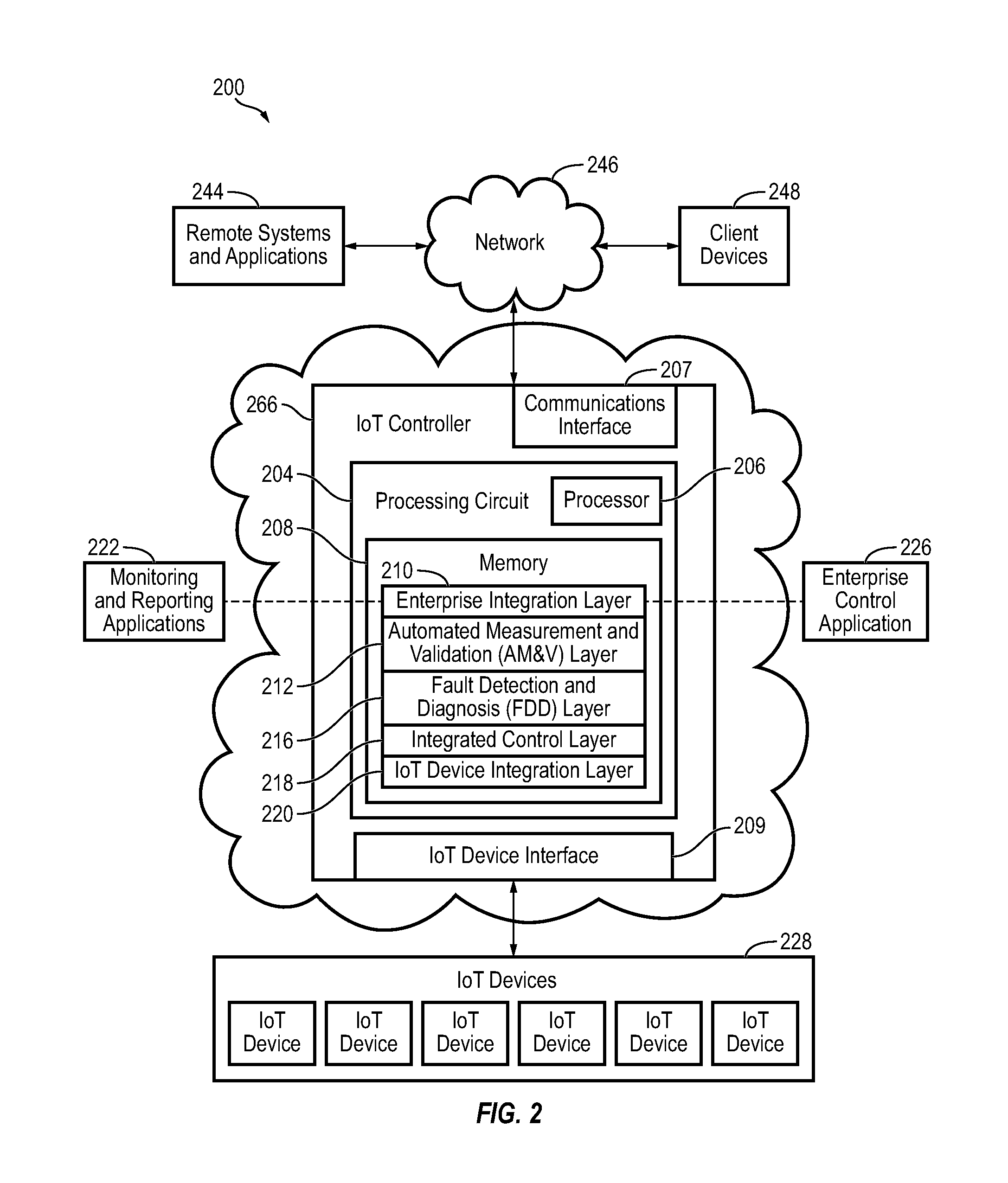

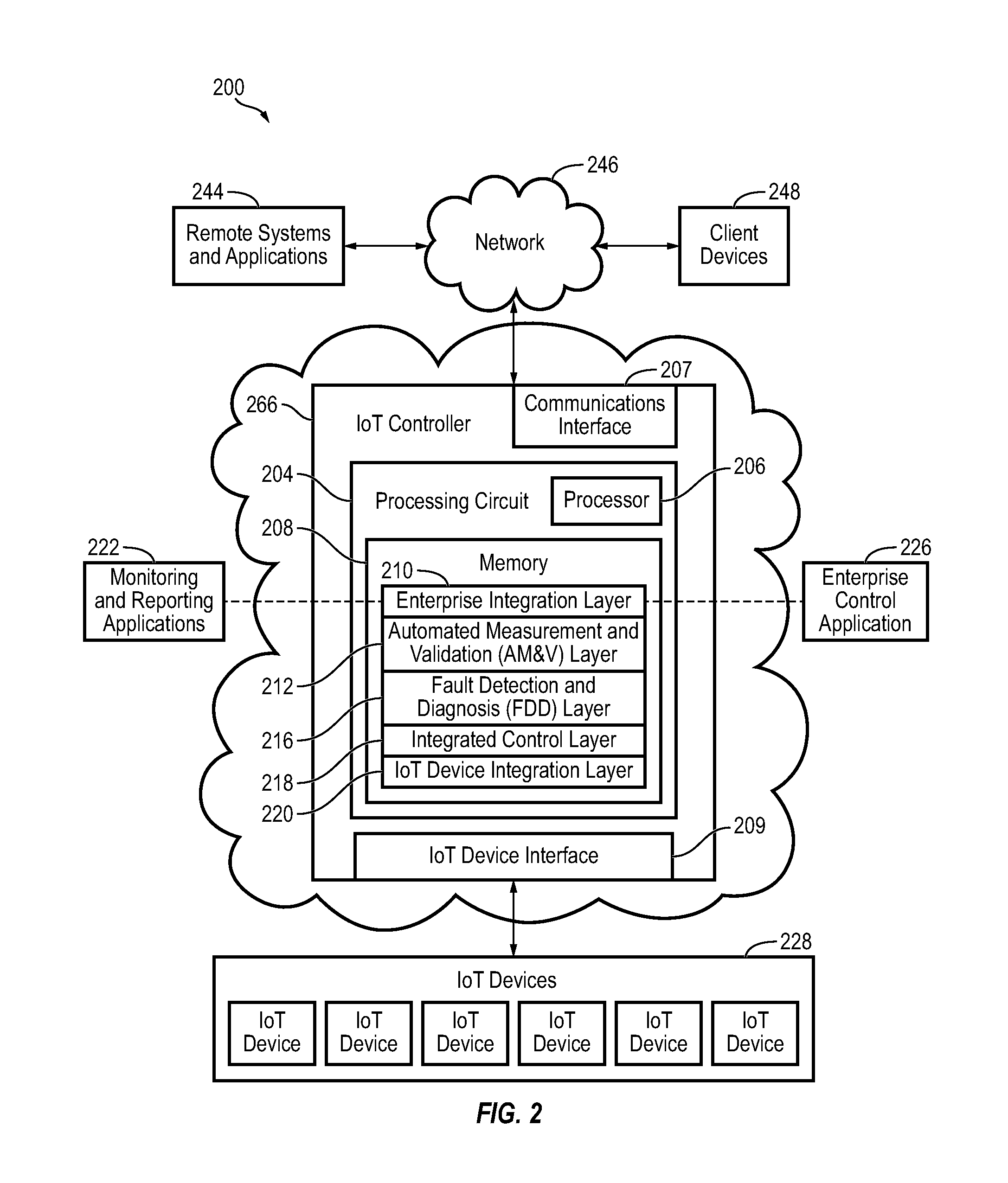

[0045] FIG. 2 is a block diagram of an IoT management system, according to some embodiments;

[0046] FIG. 3 is a block diagram of another IoT management system, according to some embodiments;

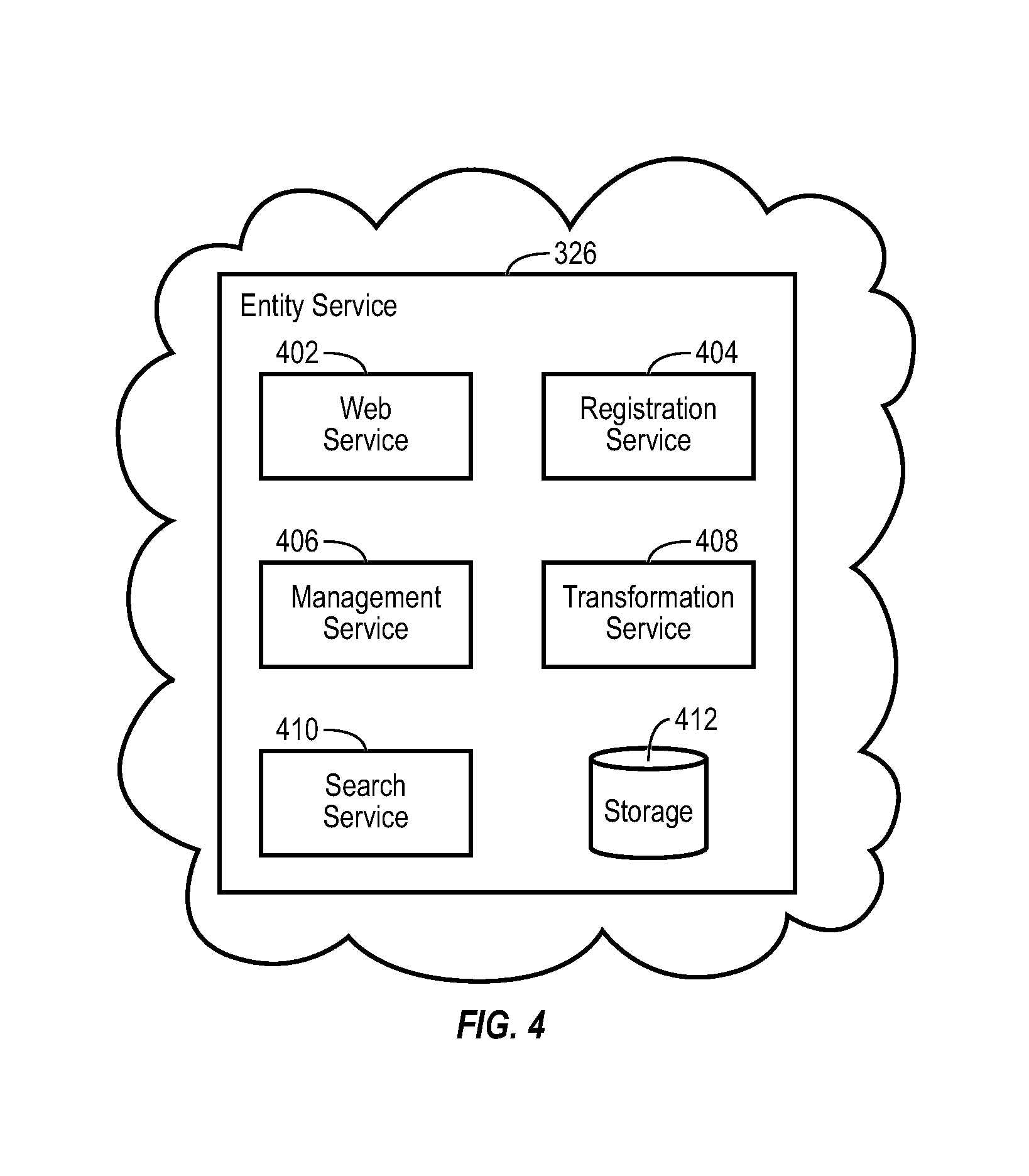

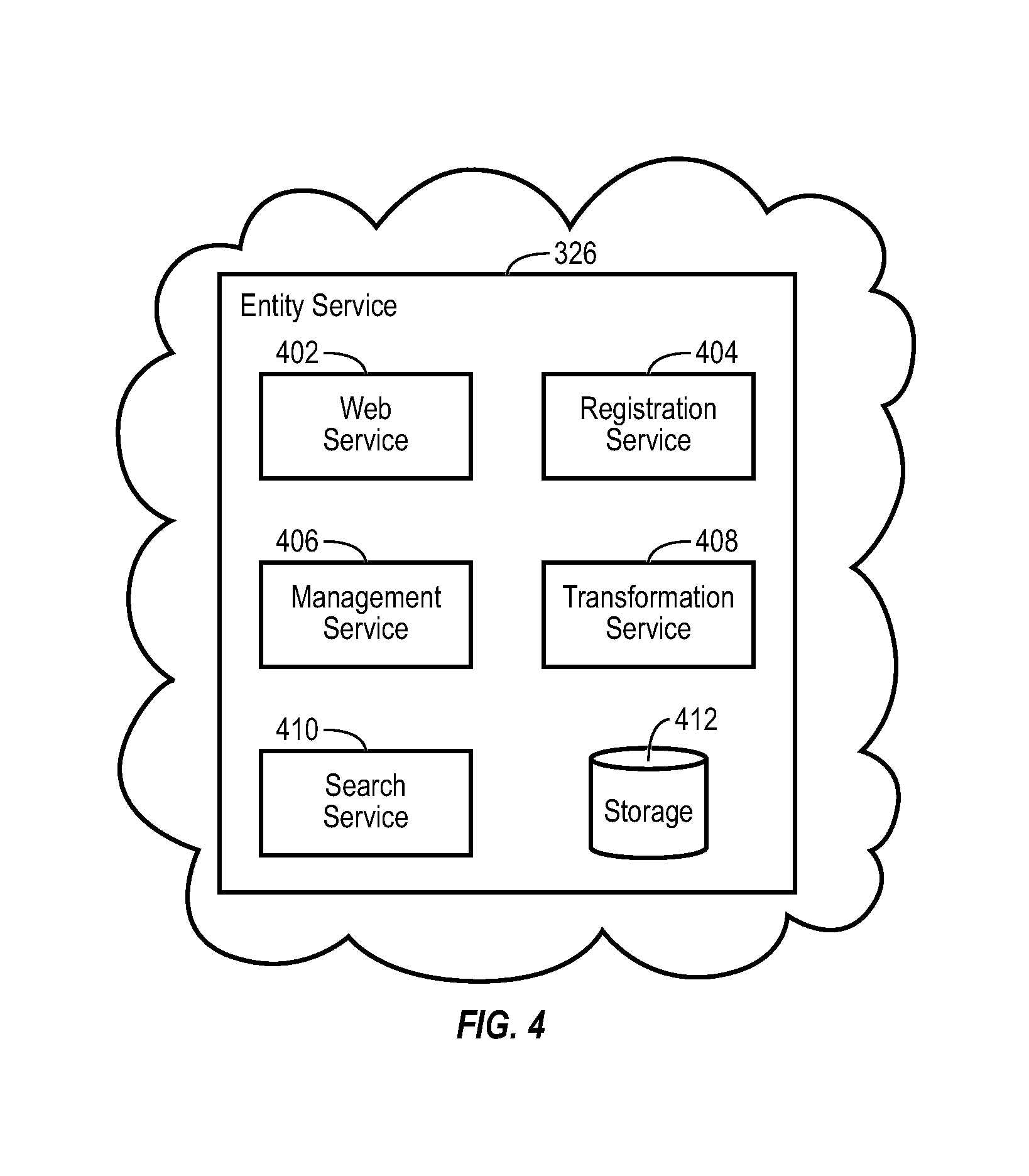

[0047] FIG. 4 is a block diagram illustrating a Cloud entity service of FIG. 3 in greater detail, according to some embodiments;

[0048] FIG. 5 is an example entity graph of entity data, according to some embodiments;

[0049] FIG. 6 is a block diagram illustrating the timeseries service of FIG. 3 in greater detail, according to some embodiments;

[0050] FIG. 7 is a flow diagram of a process or method for updating/creating an attribute of a related entity based on data received from a device, according to some embodiments;

[0051] FIG. 8 is an example entity graph of entity data, according to some embodiments;

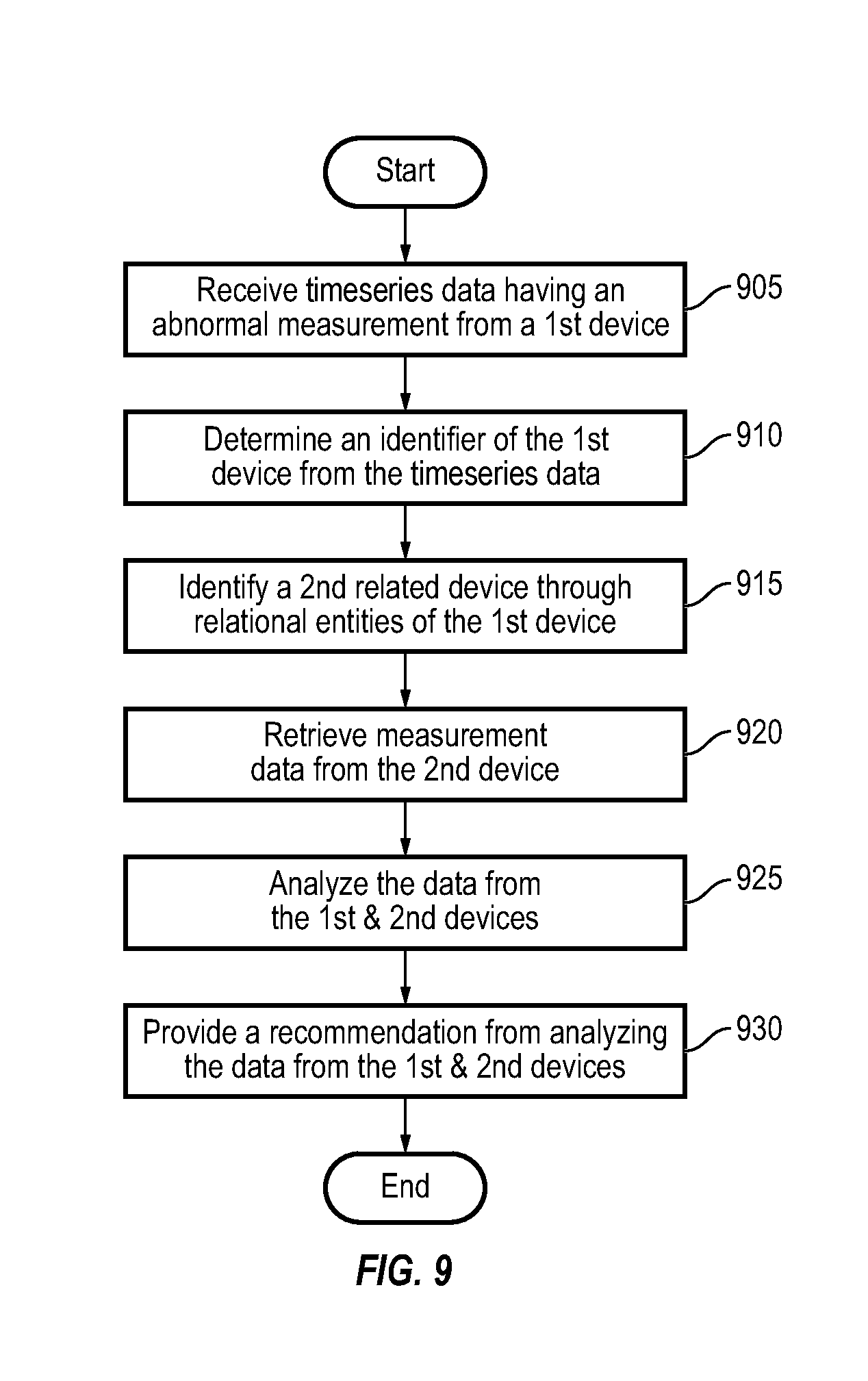

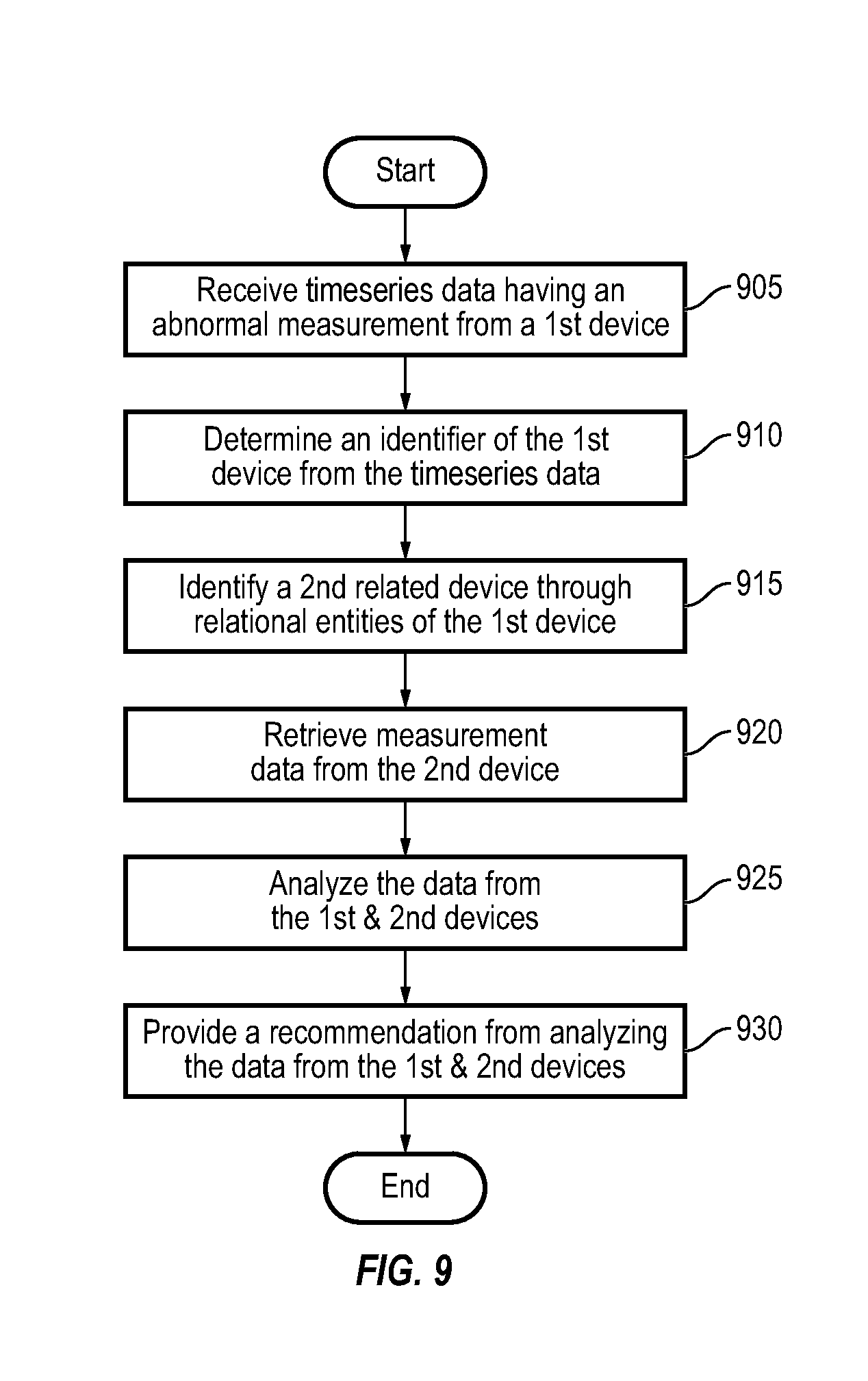

[0052] FIG. 9 is a flow diagram of a process or method for analyzing data from a second related device based on data from a first device, according to some embodiments; and

[0053] FIG. 10 is a flow diagram of a process or method for generating derived timeseries from data generated by a first device and a second device, according to some embodiments.

DETAILED DESCRIPTION

[0054] Hereinafter, example embodiments will be described in more detail with reference to the accompanying drawings.

[0055] FIG. 1 is a block diagram of an IoT environment according to some embodiments. The environment 100 is, in general, a network of connected devices configured to control, monitor, and/or manage equipment, sensors, and other devices in the IoT environment 100. The environment 100 may include, for example, a plurality of IoT devices 102a-102n, a Cloud IoT platform 104, at least one IoT application 106, a client device 108, and any other equipment, applications, and devices that are capable of managing and/or performing various functions, or any combination thereof. Some examples of an IoT environment may include smart homes, smart buildings, smart cities, smart cars, smart medical implants, smart wearables, and the like.

[0056] The Cloud IoT platform 104 can be configured to collect data from a variety of different data sources. For example, the Cloud IoT platform 104 can collect data from the IoT devices 102a-102n, the IoT application(s) 106, and the client device(s) 108. For example, IoT devices 102a-102n may include physical devices, sensors, actuators, electronics, vehicles, home appliances, wearables, smart speakers, mobile phones, mobile devices, medical devices and implants, and/or other Things that have network connectivity to enable the IoT devices 102a-102n to communicate with the Cloud IoT platform 104 and/or be controlled over a network 110 (e.g., a WAN, the Internet, a cellular network, and/or the like). Further, the Cloud IoT platform 104 can be configured to collect data from a variety of external systems or services 112 (e.g., 3rd party services). For example, some of the data collected from external systems or services 112 may include weather data from a weather service, news data from a news service, documents and other document-related data from a document service, media (e.g., video, images, audio, social media, etc.) from a media service, and/or the like. While the devices described herein are generally referred to as IoT devices, it should be understood that, in various embodiments, the devices referenced in the present disclosure could be any type of device capable of communicating data over an electronic network.

[0057] In some embodiments, IoT devices 102a-102n include sensors or sensor systems. For example, IoT devices 102a-102n may include acoustic sensors, sound sensors, vibration sensors, automotive or transportation sensors, chemical sensors, electric current sensors, electric voltage sensors, magnetic sensors, radio sensors, environment sensors, weather sensors, moisture sensors, humidity sensors, flow sensors, fluid velocity sensors, ionizing radiation sensors, subatomic particle sensors, navigation instruments, position sensors, angle sensors, displacement sensors, distance sensors, speed sensors, acceleration sensors, optical sensors, light sensors, imaging devices, photon sensors, pressure sensors, force sensors, density sensors, level sensors, thermal sensors, heat sensors, temperature sensors, proximity sensors, presence sensors, and/or any other type of sensors or sensing systems.

[0058] Examples of acoustic, sound, or vibration sensors include geophones, hydrophones, lace sensors, guitar pickups, microphones, and seismometers. Examples of automotive or transportation sensors include air flow meters, air-fuel ratio meters, AFR sensors, blind spot monitors, crankshaft position sensors, defect detectors, engine coolant temperature sensors, Hall effect sensors, knock sensors, MAP sensors, mass flow sensors, oxygen sensors, parking sensors, radar guns, speedometers, speed sensors, throttle position sensors, tire-pressure monitoring sensors, torque sensors, transmission fluid temperature sensors, turbine speed sensors, variable reluctance sensors, vehicle speed sensors, water sensors, and wheel speed sensors.

[0059] Examples of chemical sensors include breathalyzers, carbon dioxide sensors, carbon monoxide detectors, catalytic bead sensors, chemical field-effect transistors, chemiresistors, electrochemical gas sensors, electronic noses, electrolyte-insulator-semiconductor sensors, fluorescent chloride sensors, holographic sensors, hydrocarbon dew point analyzers, hydrogen sensors, hydrogen sulfide sensors, infrared point sensors, ion-selective electrodes, nondispersive infrared sensors, microwave chemistry sensors, nitrogen oxide sensors, olfactometers, optodes, oxygen sensors, ozone monitors, pellistors, pH glass electrodes, potentiometric sensors, redox electrodes, smoke detectors, and zinc oxide nanorod sensors.

[0060] Examples of electromagnetic sensors include current sensors, Daly detectors, electroscopes, electron multipliers, Faraday cups, galvanometers, Hall effect sensors, Hall probes, magnetic anomaly detectors, magnetometers, magnetoresistances, MEMS magnetic field sensors, metal detectors, planar Hall sensors, radio direction finders, and voltage detectors.

[0061] Examples of environmental sensors include actinometers, air pollution sensors, bedwetting alarms, ceilometers, dew warnings, electrochemical gas sensors, fish counters, frequency domain sensors, gas detectors, hook gauge evaporimeters, humistors, hygrometers, leaf sensors, lysimeters, pyranometers, pyrgeometers, psychrometers, rain gauges, rain sensors, seismometers, SNOTEL sensors, snow gauges, soil moisture sensors, stream gauges, and tide gauges. Examples of flow and fluid velocity sensors include air flow meters, anemometers, flow sensors, gas meters, mass flow sensors, and water meters.

[0062] Examples of radiation and particle sensors include cloud chambers, Geiger counters, Geiger-Muller tubes, ionisation chambers, neutron detectors, proportional counters, scintillation counters, semiconductor detectors, and thermoluminescent dosimeters. Examples of navigation instruments include air speed indicators, altimeters, attitude indicators, depth gauges, fluxgate compasses, gyroscopes, inertial navigation systems, inertial reference units, magnetic compasses, MHD sensors, ring laser gyroscopes, turn coordinators, TiaLinx sensors, variometers, vibrating structure gyroscopes, and yaw rate sensors.

[0063] Examples of position, angle, displacement, distance, speed, and acceleration sensors include auxanometers, capacitive displacement sensors, capacitive sensing devices, flex sensors, free fall sensors, gravimeters, gyroscopic sensors, impact sensors, inclinometers, integrated circuit piezoelectric sensors, laser rangefinders, laser surface velocimeters, LIDAR sensors, linear encoders, linear variable differential transformers (LVDT), liquid capacitive inclinometers, odometers, photoelectric sensors, piezoelectric accelerometers, position sensors, position sensitive devices, angular rate sensors, rotary encoders, rotary variable differential transformers, selsyns, shock detectors, shock data loggers, tilt sensors, tachometers, ultrasonic thickness gauges, variable reluctance sensors, and velocity receivers.

[0064] Examples of optical, light, imaging, and photon sensors include charge-coupled devices, CMOS sensors, colorimeters, contact image sensors, electro-optical sensors, flame detectors, infra-red sensors, kinetic inductance detectors, LEDs as light sensors, light-addressable potentiometric sensors, Nichols radiometers, fiber optic sensors, optical position sensors, thermopile laser sensors, photodetectors, photodiodes, photomultiplier tubes, phototransistors, photoelectric sensors, photoionization detectors, photomultipliers, photoresistors, photoswitches, phototubes, scintillometers, Shack-Hartmann sensors, single-photon avalanche diodes, superconducting nanowire single-photon detectors, transition edge sensors, visible light photon counters, and wavefront sensors.

[0065] Examples of pressure sensors include barographs, barometers, boost gauges, bourdon gauges, hot filament ionization gauges, ionization gauges, McLeod gauges, oscillating u-tubes, permanent downhole gauges, piezometers, pirani gauges, pressure sensors, pressure gauges, tactile sensors, and time pressure gauges. Examples of force, density, and level sensors include bhangmeters, hydrometers, force gauges and force sensors, level sensors, load cells, magnetic level gauges, nuclear density gauges, piezocapacitive pressure sensors, piezoelectric sensors, strain gauges, torque sensors, and viscometers.

[0066] Examples of thermal, heat, and temperature sensors include bolometers, bimetallic strips, calorimeters, exhaust gas temperature gauges, flame detectors, Gardon gauges, Golay cells, heat flux sensors, infrared thermometers, microbolometers, microwave radiometers, net radiometers, quartz thermometers, resistance thermometers, silicon bandgap temperature sensors, special sensor microwave/imagers, temperature gauges, thermistors, thermocouples, thermometers, and pyrometers. Examples of proximity and presence sensors include alarm sensors, Doppler radars, motion detectors, occupancy sensors, proximity sensors, passive infrared sensors, reed switches, stud finders, triangulation sensors, touch switches, and wired gloves.

[0067] In some embodiments, different sensors send measurements or other data to Cloud IoT platform 104 using a variety of different communications protocols or data formats. Cloud IoT platform 104 can be configured to ingest sensor data received in any protocol or data format and translate the inbound sensor data into a common data format. Cloud IoT platform 104 can create a sensor object smart entity for each sensor that communicates with Cloud IoT platform 104. Each sensor object smart entity may include one or more static attributes that describe the corresponding sensor, one or more dynamic attributes that indicate the most recent values collected by the sensor, and/or one or more relational attributes that relate sensor object smart entities to each other and/or to other types of smart entities (e.g., space entities, system entities, data entities, etc.).
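
As an illustration only, the translation into a common data format and the three attribute groups of a sensor object smart entity might be sketched as follows. The class name `SensorEntity`, the function names, the two inbound formats ("json-v1", "csv-v1"), and the "hasData" relationship label are hypothetical and are not defined by this disclosure.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class SensorEntity:
    """Hypothetical sensor object smart entity."""
    entity_id: str
    static: Dict[str, Any] = field(default_factory=dict)    # describes the sensor
    dynamic: Dict[str, Any] = field(default_factory=dict)   # most recent values
    relations: List[Dict[str, str]] = field(default_factory=list)  # links to other entities


def to_common_format(payload: Dict[str, Any], protocol: str) -> Dict[str, Any]:
    """Map a protocol-specific payload onto one common sample shape."""
    if protocol == "json-v1":   # assumed inbound shape: {"id", "val", "ts"}
        return {"sensor_id": payload["id"],
                "value": float(payload["val"]),
                "timestamp": payload["ts"]}
    if protocol == "csv-v1":    # assumed inbound shape: {"line": "id,ts,value"}
        sensor_id, ts, value = payload["line"].split(",")
        return {"sensor_id": sensor_id, "value": float(value), "timestamp": ts}
    raise ValueError(f"unsupported protocol: {protocol}")


def update_entity(entity: SensorEntity, sample: Dict[str, Any]) -> None:
    """Record the latest reading as dynamic attributes of the entity."""
    entity.dynamic["last_value"] = sample["value"]
    entity.dynamic["last_timestamp"] = sample["timestamp"]


sensor = SensorEntity("sensor-1", static={"type": "temperature", "unit": "degF"})
sensor.relations.append({"type": "hasData", "target": "data-1"})
sample = to_common_format({"line": "sensor-1,2016-03-18T14:10:02,71.5"}, "csv-v1")
update_entity(sensor, sample)
```

Both inbound shapes reduce to the same dictionary keys, so downstream code only has to understand one sample layout regardless of how the sensor reported its data.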

[0068] In some embodiments, Cloud IoT platform 104 stores sensor data using data entities. Each data entity may correspond to a particular sensor and may include a timeseries of data values received from the corresponding sensor. In some embodiments, Cloud IoT platform 104 stores relational objects that define relationships between sensor object entities and the corresponding data entity. For example, each relational object may identify a particular sensor object entity, a particular data entity, and may define a link between such entities.
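
A minimal sketch of this lookup is given below, assuming an in-memory store. It resolves a device identifier to an object entity, follows a relational object to the linked data entity, and appends the timeseries samples there. All class names, the `EntityStore` container, and the "hasData" relationship label are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Sample = Tuple[str, str, float]  # (device_id, timestamp, value)


@dataclass
class ObjectEntity:
    entity_id: str
    device_id: str


@dataclass
class DataEntity:
    entity_id: str
    timeseries: List[Sample] = field(default_factory=list)


@dataclass
class RelationalObject:
    source_id: str      # object entity
    relationship: str   # hypothetical label, e.g. "hasData"
    target_id: str      # data entity


class EntityStore:
    """Hypothetical in-memory stand-in for the smart entity database."""

    def __init__(self) -> None:
        self.objects: Dict[str, ObjectEntity] = {}
        self.data: Dict[str, DataEntity] = {}
        self.relations: List[RelationalObject] = []

    def find_object(self, device_id: str) -> ObjectEntity:
        for obj in self.objects.values():
            if obj.device_id == device_id:
                return obj
        raise KeyError(f"no object entity for device {device_id}")

    def find_data_entity(self, obj: ObjectEntity) -> DataEntity:
        for rel in self.relations:
            if rel.source_id == obj.entity_id and rel.relationship == "hasData":
                return self.data[rel.target_id]
        raise KeyError(f"no data entity linked to {obj.entity_id}")

    def store(self, samples: List[Sample]) -> DataEntity:
        # The device identifier carried in the timeseries selects the entity.
        entity = self.find_data_entity(self.find_object(samples[0][0]))
        entity.timeseries.extend(samples)
        return entity


store = EntityStore()
store.objects["obj-1"] = ObjectEntity("obj-1", device_id="sensor-1")
store.data["data-1"] = DataEntity("data-1")
store.relations.append(RelationalObject("obj-1", "hasData", "data-1"))
stored = store.store([("sensor-1", "2016-03-18T14:10:02", 71.5)])
```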

[0069] In some embodiments, Cloud IoT platform 104 generates data internally. For example, Cloud IoT platform 104 may include a web advertising system, a website traffic monitoring system, a web sales system, and/or other types of platform services that generate data. The data generated by Cloud IoT platform 104 can be collected, stored, and processed along with the data received from other data sources. Cloud IoT platform 104 can collect data directly from external systems or devices or via the network 110. Cloud IoT platform 104 can process and transform collected data to generate timeseries data and entity data.

[0070] Client device(s) 108 can include one or more human-machine interfaces or client interfaces (e.g., graphical user interfaces, reporting interfaces, text-based computer interfaces, client-facing web services, web servers that provide pages to web clients, and/or the like) for controlling, viewing, or otherwise interacting with the IoT environment, IoT devices 102, IoT applications 106, and/or the Cloud IoT platform 104. Client device(s) 108 can be a computer workstation, a client terminal, a remote or local interface, or any other type of user interface device. Client device 108 can be a stationary terminal or a mobile device. For example, client device 108 can be a desktop computer, a computer server with a user interface, a laptop computer, a tablet, a smartphone, a PDA, or any other type of mobile or non-mobile device.

[0071] IoT applications 106 may be applications running on the client device 108 or any other suitable device that provides an interface for presenting data from the IoT devices 102 and/or the Cloud IoT platform 104 to the client device 108. In some embodiments, the IoT applications 106 may provide an interface for providing commands or controls from the client device 108 to the IoT devices 102 and/or the Cloud IoT platform 104.

IoT Management System

[0072] Referring now to FIG. 2, a block diagram of an IoT management system (IoTMS) 200 is shown, according to some embodiments. IoTMS 200 can be implemented in an IoT environment to automatically monitor and control various device functions. IoTMS 200 is shown to include Cloud IoT controller 266 and IoT devices 228. IoT devices 228 are shown to include a plurality of IoT devices. However, the number of IoT devices is not limited to those shown in FIG. 2. Each of the IoT devices 228 may include any suitable device having network connectivity, such as, for example, a mobile phone, laptop, tablet, smart speaker, vehicle, appliance, light fixture, thermostat, wearable, medical implant, equipment, sensor, and/or the like. Further, each of the IoT devices 228 can include any number of devices, controllers, and connections for completing its individual functions and control activities. For example, any of the IoT devices 228 can be a system of devices in itself including controllers, equipment, sensors, and/or the like.

[0073] Cloud IoT controller 266 can include one or more computer systems (e.g., servers, supervisory controllers, subsystem controllers, etc.) that serve as system level controllers, application or data servers, head nodes, or master controllers for the IoT devices 228 and/or other controllable systems or devices in an IoT environment. Cloud IoT controller 266 may communicate with multiple downstream IoT devices 228 and/or systems via a communications link (e.g., IoT device interface 209) according to like or disparate protocols (e.g., HTTP(s), TCP-IP, HTML, SOAP, REST, LON, BACnet, OPC-UA, ADX, and/or the like).

[0074] In some embodiments, the IoT devices 228 receive information from Cloud IoT controller 266 (e.g., commands, setpoints, operating boundaries, etc.) and provide information to Cloud IoT controller 266 (e.g., measurements, valve or actuator positions, operating statuses, diagnostics, etc.). For example, the IoT devices 228 may provide Cloud IoT controller 266 with measurements from various sensors, equipment on/off states, equipment operating capacities, and/or any other information that can be used by Cloud IoT controller 266 to monitor or control a variable state or condition within the IoT environment.

[0075] Still referring to FIG. 2, Cloud IoT controller 266 is shown to include a communications interface 207 and an IoT device interface 209. Interface 207 may facilitate communications between Cloud IoT controller 266 and external applications (e.g., monitoring and reporting applications 222, enterprise control applications 226, remote systems and applications 244, applications residing on client devices 248, and the like) for allowing user control, monitoring, and adjustment to Cloud IoT controller 266 and/or IoT devices 228. Interface 207 may also facilitate communications between Cloud IoT controller 266 and client devices 248. IoT device interface 209 may facilitate communications between Cloud IoT controller 266 and IoT devices 228.

[0076] Interfaces 207, 209 can be or include wired or wireless communications interfaces (e.g., jacks, antennas, transmitters, receivers, transceivers, wire terminals, etc.) for conducting data communications with IoT devices 228 or other external systems or devices. In various embodiments, communications via interfaces 207, 209 can be direct (e.g., local wired or wireless communications) or via a communications network 246 (e.g., a WAN, the Internet, a cellular network, etc.). For example, interfaces 207, 209 can include an Ethernet card and port for sending and receiving data via an Ethernet-based communications link or network. In another example, interfaces 207, 209 can include a Wi-Fi transceiver for communicating via a wireless communications network. In another example, one or both of interfaces 207, 209 can include cellular or mobile phone communications transceivers. In some embodiments, communications interface 207 is a power line communications interface and IoT device interface 209 is an Ethernet interface. In other embodiments, both communications interface 207 and IoT device interface 209 are Ethernet interfaces or are the same Ethernet interface.

[0077] Still referring to FIG. 2, in various embodiments, Cloud IoT controller 266 is implemented using a distributed or cloud computing environment with a plurality of processors and memory. That is, Cloud IoT controller 266 can be distributed across multiple servers or computers (e.g., that can exist in distributed locations). For convenience of description, Cloud IoT controller 266 is shown as including at least one processing circuit 204 including a processor 206 and memory 208. Processing circuit 204 can be communicably connected to IoT device interface 209 and/or communications interface 207 such that processing circuit 204 and the various components thereof can send and receive data via interfaces 207, 209. Processor 206 can be implemented as a general purpose processor, an application specific integrated circuit (ASIC), one or more field programmable gate arrays (FPGAs), a group of processing components, or other suitable electronic processing components.

[0078] Memory 208 (e.g., memory, memory unit, storage device, etc.) can include one or more devices (e.g., RAM, ROM, Flash memory, hard disk storage, etc.) for storing data and/or computer code for completing or facilitating the various processes, layers and modules described in the present application. Memory 208 can be or include volatile memory or non-volatile memory. Memory 208 can include database components, object code components, script components, or any other type of information structure for supporting the various activities and information structures described in the present application. According to some embodiments, memory 208 is communicably connected to processor 206 via processing circuit 204 and includes computer code for executing (e.g., by processing circuit 204 and/or processor 206) one or more processes described herein.

[0079] However, the present disclosure is not limited thereto, and in some embodiments, Cloud IoT controller 266 can be implemented within a single computer (e.g., one server, one housing, etc.). Further, while FIG. 2 shows applications 222 and 226 as existing outside of Cloud IoT controller 266, in some embodiments, applications 222 and 226 can be hosted within Cloud IoT controller 266 (e.g., within memory 208).

[0080] Still referring to FIG. 2, memory 208 is shown to include an enterprise integration layer 210, an automated measurement and validation (AM&V) layer 212, a fault detection and diagnostics (FDD) layer 216, an integrated control layer 218, and an IoT device integration layer 220. Layers 210-220 can be configured to receive inputs from IoT devices 228 and other data sources, determine optimal control actions for the IoT devices 228 based on the inputs, generate control signals based on the optimal control actions, and provide the generated control signals to IoT devices 228.

[0081] Enterprise integration layer 210 can be configured to serve clients or local applications with information and services to support a variety of enterprise-level applications. For example, enterprise control applications 226 can be configured to provide subsystem-spanning control to a graphical user interface (GUI) or to any number of enterprise-level business applications (e.g., accounting systems, user identification systems, etc.). Enterprise control applications 226 may also or alternatively be configured to provide configuration GUIs for configuring Cloud IoT controller 266. In yet other embodiments, enterprise control applications 226 can work with layers 210-220 to optimize the IoT environment based on inputs received at interface 207 and/or IoT device interface 209.

[0082] IoT device integration layer 220 can be configured to manage communications between Cloud IoT controller 266 and the IoT devices 228. For example, IoT device integration layer 220 may receive sensor data and input signals from the IoT devices 228, and provide output data and control signals to the IoT devices 228. IoT device integration layer 220 may also be configured to manage communications between the IoT devices 228. IoT device integration layer 220 translates communications (e.g., sensor data, input signals, output signals, etc.) across a plurality of multi-vendor/multi-protocol systems.

[0083] Integrated control layer 218 can be configured to use the data input or output of IoT device integration layer 220 to make control decisions. Due to the IoT device integration provided by the IoT device integration layer 220, integrated control layer 218 can integrate control activities of the IoT devices 228 such that the IoT devices 228 behave as a single integrated supersystem. In some embodiments, integrated control layer 218 includes control logic that uses inputs and outputs from a plurality of IoT device subsystems to provide insights that separate IoT device subsystems could not provide alone. For example, integrated control layer 218 can be configured to use an input from a first IoT device subsystem to make a control decision for a second IoT device subsystem. Results of these decisions can be communicated back to IoT device integration layer 220.

[0084] Automated measurement and validation (AM&V) layer 212 can be configured to verify that control strategies commanded by integrated control layer 218 are working properly (e.g., using data aggregated by AM&V layer 212, integrated control layer 218, IoT device integration layer 220, FDD layer 216, and/or the like). The calculations made by AM&V layer 212 can be based on IoT device data models and/or equipment models for individual IoT devices or subsystems. For example, AM&V layer 212 may compare a model-predicted output with an actual output from IoT devices 228 to determine an accuracy of the model.
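
One simple way to express such a comparison is a mean absolute error between model-predicted and measured outputs, sketched below. The metric and the function name are illustrative assumptions; the disclosure does not specify how the accuracy of the model is computed.

```python
from typing import Sequence


def mean_absolute_error(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Average absolute gap between model-predicted and measured outputs."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("sequences must be non-empty and of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


# Hypothetical values: model predictions versus outputs reported by a device.
error = mean_absolute_error([70.0, 72.0, 74.0], [71.0, 72.0, 72.0])
```

A smaller error indicates closer agreement between the model and the device, which a layer such as AM&V layer 212 could track over time.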

[0085] Fault detection and diagnostics (FDD) layer 216 can be configured to provide on-going fault detection for the IoT devices 228 and subsystem devices (equipment, sensors, and the like), and control algorithms used by integrated control layer 218. FDD layer 216 may receive data inputs from integrated control layer 218, directly from one or more IoT devices or subsystems, or from another data source. FDD layer 216 may automatically diagnose and respond to detected faults. The responses to detected or diagnosed faults can include providing an alert message to a user, a maintenance scheduling system, or a control algorithm configured to attempt to repair the fault or to work-around the fault.

[0086] FDD layer 216 can be configured to output a specific identification of the faulty component or cause of the fault (e.g., faulty IoT device or sensor) using detailed subsystem inputs available at IoT device integration layer 220. In other exemplary embodiments, FDD layer 216 is configured to provide "fault" events to integrated control layer 218 which executes control strategies and policies in response to the received fault events. According to some embodiments, FDD layer 216 (or a policy executed by an integrated control engine or business rules engine) may shut down IoT systems, devices, and/or components or direct control activities around faulty IoT systems, devices, and/or components to reduce waste, extend IoT device life, or to assure proper control response.

[0087] FDD layer 216 can be configured to store or access a variety of different system data stores (or data points for live data). FDD layer 216 may use some content of the data stores to identify faults at the IoT device or equipment level and other content to identify faults at component or subsystem levels. For example, the IoT devices 228 may generate temporal (i.e., time-series) data indicating the performance of IoTMS 200 and the various components thereof. The data generated by the IoT devices 228 can include measured or calculated values that exhibit statistical characteristics and provide information about how the corresponding system or IoT application process is performing in terms of error from its setpoint. These processes can be examined by FDD layer 216 to expose when the system begins to degrade in performance and alert a user to repair the fault before it becomes more severe.
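
As a hedged illustration of examining error from setpoint, the sketch below flags the first sample at which a rolling mean of the absolute setpoint error exceeds a threshold. The rolling-window rule, the function name, and the parameter values are assumptions chosen for the example and are not the fault detection method of this disclosure.

```python
from collections import deque
from typing import Optional, Sequence


def first_degradation_index(values: Sequence[float], setpoint: float,
                            window: int, threshold: float) -> Optional[int]:
    """Return the index at which the rolling mean absolute setpoint error
    first exceeds the threshold, or None if it never does."""
    recent = deque(maxlen=window)  # holds the last `window` absolute errors
    for index, value in enumerate(values):
        recent.append(abs(value - setpoint))
        if len(recent) == window and sum(recent) / window > threshold:
            return index
    return None


# Hypothetical zone temperatures drifting away from a 72.0 setpoint.
drifting = [72.0, 72.5, 71.5, 74.0, 75.0, 76.0]
alert_at = first_degradation_index(drifting, setpoint=72.0, window=3, threshold=2.0)
```

Averaging over a window rather than testing single samples keeps one noisy reading from raising an alert.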

IoT Management System with Cloud IoT Platform Services

[0088] Referring now to FIG. 3, a block diagram of another IoT management system (IoTMS) 300 is shown, according to some embodiments. IoTMS 300 can be configured to collect data samples (e.g., raw data) from IoT devices 228 and provide the data samples to Cloud IoT platform services 320 to generate raw timeseries data, derived timeseries data, and/or entity data from the data samples. Cloud IoT platform services 320 can process and transform the raw timeseries data to generate derived timeseries data. Throughout this disclosure, the term "derived timeseries data" is used to describe the result or output of a transformation or other timeseries processing operation performed by Cloud IoT platform services 320 (e.g., data aggregation, data cleansing, virtual point calculation, etc.). The term "entity data" is used to describe the attributes of various smart entities (e.g., IoT systems, devices, components, sensors, and the like) and the relationships between the smart entities. The derived timeseries data can be provided to various applications 330 of IoTMS 300 and/or stored in storage 314 (e.g., as materialized views of the raw timeseries data). In some embodiments, Cloud IoT platform services 320 separates data collection; data storage, retrieval, and analysis; and data visualization into three different layers. This allows Cloud IoT platform services 320 to support a variety of applications 330 that use the derived timeseries data and/or entity data, and allows new applications 330 to reuse the existing infrastructure provided by Cloud IoT platform services 320.
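
Data aggregation is one of the transformations named above. A minimal sketch of producing derived timeseries data by averaging raw samples into hourly buckets follows; the function name and the choice of an hourly mean are illustrative assumptions.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, List, Tuple

Sample = Tuple[str, datetime, float]  # (key, timestamp, value)


def hourly_mean(raw: List[Sample]) -> List[Sample]:
    """Aggregate raw samples into one mean value per key and hour."""
    buckets: Dict[Tuple[str, datetime], List[float]] = defaultdict(list)
    for key, timestamp, value in raw:
        hour = timestamp.replace(minute=0, second=0, microsecond=0)
        buckets[(key, hour)].append(value)
    return [(key, hour, sum(vals) / len(vals))
            for (key, hour), vals in sorted(buckets.items())]


raw_series = [
    ("sensor-1", datetime(2016, 3, 18, 14, 10), 70.0),
    ("sensor-1", datetime(2016, 3, 18, 14, 40), 72.0),
    ("sensor-1", datetime(2016, 3, 18, 15, 5), 71.0),
]
derived_series = hourly_mean(raw_series)
```

The derived series keeps the same `(key, timestamp, value)` shape as the raw series, so it can be stored or passed to applications in the same way.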

[0089] It should be noted that the components of IoTMS 300 and/or Cloud IoT platform services 320 can be integrated within a single device (e.g., a supervisory controller, an IoT device controller, etc.) or distributed across multiple separate systems or devices. In other embodiments, some or all of the components of IoTMS 300 and/or Cloud IoT platform services 320 can be implemented as part of a cloud-based computing system configured to receive and process data from one or more IoT systems, devices, and/or components. In other embodiments, some or all of the components of IoTMS 300 and/or Cloud IoT platform services 320 can be components of a subsystem level controller, a subplant controller, a device controller, a field controller, a computer workstation, a client device, or any other system or device that receives and processes data from IoT devices.

[0090] IoTMS 300 can include many of the same components as IoTMS 200, as described with reference to FIG. 2. For example, IoTMS 300 is shown to include an IoT device interface 302 and a communications interface 304. Interfaces 302-304 can include wired or wireless communications interfaces (e.g., jacks, antennas, transmitters, receivers, transceivers, wire terminals, etc.) for conducting data communications with IoT devices 228 or other external systems or devices. Communications conducted via interfaces 302-304 can be direct (e.g., local wired or wireless communications) or via a communications network 246 (e.g., a WAN, the Internet, a cellular network, etc.).

[0091] Communications interface 304 can facilitate communications between IoTMS 300 and external applications (e.g., remote systems and applications 244) for allowing user control, monitoring, and adjustment to IoTMS 300. Communications interface 304 can also facilitate communications between IoTMS 300 and client devices 248. IoT device interface 302 can facilitate communications between IoTMS 300, Cloud IoT platform services 320, and IoT devices 228. IoTMS 300 can be configured to communicate with IoT devices 228 and/or Cloud IoT platform services 320 using any suitable protocols (e.g., HTTP(s), TCP-IP, HTML, SOAP, REST, LON, BACnet, OPC-UA, ADX, and/or the like). In some embodiments, IoTMS 300 receives data samples from IoT devices 228 and provides control signals to IoT devices 228 via IoT device interface 302.

[0092] IoT devices 228 may include any suitable device having network connectivity, such as, for example, a mobile phone, laptop, tablet, smart speaker, vehicle, appliance, light fixture, thermostat, wearable, medical implant, equipment, sensor, and/or the like. Further, each of the IoT devices 228 can include any number of devices, controllers, and connections for completing its individual functions and control activities. For example, any of the IoT devices 228 can be a system of devices in itself including controllers, equipment, sensors, and/or the like.

[0093] Still referring to FIG. 3, each of IoTMS 300 and Cloud IoT platform services 320 includes a processing circuit including a processor and memory. Each of the processors can be a general purpose or specific purpose processor, an application specific integrated circuit (ASIC), one or more field programmable gate arrays (FPGAs), a group of processing components, or other suitable processing components. Each of the processors is configured to execute computer code or instructions stored in memory or received from other computer readable media (e.g., CDROM, network storage, a remote server, etc.).

[0094] The memory can include one or more devices (e.g., memory units, memory devices, storage devices, etc.) for storing data and/or computer code for completing and/or facilitating the various processes described in the present disclosure. Memory can include random access memory (RAM), read-only memory (ROM), hard drive storage, temporary storage, non-volatile memory, flash memory, optical memory, or any other suitable memory for storing software objects and/or computer instructions. Memory can include database components, object code components, script components, or any other type of information structure for supporting the various activities and information structures described in the present disclosure. Memory can be communicably connected to the processor via the processing circuit and can include computer code for executing (e.g., by the processor) one or more processes described herein.

[0095] Still referring to FIG. 3, Cloud IoT platform services 320 is shown to include a data collector 312. Data collector 312 is shown receiving data samples from the IoT devices 228 via the IoT device interface 302. However, the present disclosure is not limited thereto, and the data collector 312 may receive the data samples directly from the IoT devices 228 (e.g., via network 246 or via any suitable method). In some embodiments, the data samples include data values for various data points. The data values can be measured or calculated values, depending on the type of data point. For example, a data point received from a sensor can include a measured data value indicating a measurement by the sensor. A data point received from a controller can include a calculated data value indicating a calculated efficiency of the controller. Data collector 312 can receive data samples from multiple different devices (e.g., IoT systems, devices, components, sensors, and the like) of the IoT devices 228.

[0096] The data samples can include one or more attributes that describe or characterize the corresponding data points. For example, the data samples can include a name attribute defining a point name or ID (e.g., "B1F4R2.T-Z"), a device attribute indicating a type of device from which the data samples are received, a unit attribute defining a unit of measure associated with the data value (e.g., °F, °C, kPa, etc.), and/or any other attribute that describes the corresponding data point or provides contextual information regarding the data point. The types of attributes included in each data point can depend on the communications protocol used to send the data samples to Cloud IoT platform services 320. For example, data samples received via the ADX protocol or BACnet protocol can include a variety of descriptive attributes along with the data value, whereas data samples received via the Modbus protocol may include a lesser number of attributes (e.g., only the data value without any corresponding attributes).

[0097] In some embodiments, each data sample is received with a timestamp indicating a time at which the corresponding data value was measured or calculated. In other embodiments, data collector 312 adds timestamps to the data samples based on the times at which the data samples are received. Data collector 312 can generate raw timeseries data for each of the data points for which data samples are received. Each timeseries can include a series of data values for the same data point and a timestamp for each of the data values. For example, a timeseries for a data point provided by a temperature sensor can include a series of temperature values measured by the temperature sensor and the corresponding times at which the temperature values were measured. An example of a timeseries which can be generated by data collector 312 is as follows:

[<key, timestamp_1, value_1>, <key, timestamp_2, value_2>, <key, timestamp_3, value_3>]

where key is an identifier of the source of the raw data samples (e.g., timeseries ID, sensor ID, device ID, etc.), timestamp_i identifies the time at which the i-th sample was collected, and value_i indicates the value of the i-th sample.
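
The collection step can be sketched as follows, assuming a simple in-memory collector. A sample that arrives with its own timestamp keeps it, and a sample without one is stamped with the time of receipt. The class `DataCollector`, its methods, and the injectable `clock` parameter are hypothetical names used only for this example.

```python
from collections import defaultdict
from datetime import datetime, timezone
from typing import Callable, Dict, List, Optional, Tuple

Sample = Tuple[str, datetime, float]  # (key, timestamp, value)


class DataCollector:
    """Hypothetical collector that builds one raw timeseries per key."""

    def __init__(self, clock: Optional[Callable[[], datetime]] = None) -> None:
        # The clock is injectable so the receive time can be fixed in tests.
        self._clock = clock or (lambda: datetime.now(timezone.utc))
        self._series: Dict[str, List[Sample]] = defaultdict(list)

    def ingest(self, key: str, value: float,
               timestamp: Optional[datetime] = None) -> None:
        # Use the sample's own timestamp if present, else the receive time.
        stamped = timestamp if timestamp is not None else self._clock()
        self._series[key].append((key, stamped, value))

    def timeseries(self, key: str) -> List[Sample]:
        return list(self._series.get(key, []))


received_at = datetime(2016, 3, 18, 20, 10, 2, tzinfo=timezone.utc)
collector = DataCollector(clock=lambda: received_at)
collector.ingest("sensor-1", 71.5,
                 timestamp=datetime(2016, 3, 18, 20, 0, 0, tzinfo=timezone.utc))
collector.ingest("sensor-1", 72.0)  # no timestamp supplied by the device
series = collector.timeseries("sensor-1")
```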

[0098] Data collector 312 can add timestamps to the data samples or modify existing timestamps such that each data sample includes a local timestamp. Each local timestamp indicates the local time at which the corresponding data sample was measured or collected and can include an offset relative to universal time. The local timestamp indicates the local time, at the location where the data point was measured, at the time of measurement. The offset indicates the difference between the local time and a universal time (e.g., the time at the prime meridian). For example, a data sample collected in a time zone that is six hours behind universal time can include a local timestamp (e.g., Timestamp=2016-03-18T14:10:02) and an offset indicating that the local timestamp is six hours behind universal time (e.g., Offset=-6:00). The offset can be adjusted (e.g., +1:00 or -1:00) depending on whether the time zone is in daylight savings time when the data sample is measured or collected.

[0099] The combination of the local timestamp and the offset provides a unique timestamp across daylight saving time boundaries. This allows an application using the timeseries data to display the timeseries data in local time without first converting from universal time. The combination of the local timestamp and the offset also provides enough information to convert the local timestamp to universal time without needing to look up a schedule of when daylight savings time occurs. For example, the offset can be subtracted from the local timestamp to generate a universal time value that corresponds to the local timestamp without referencing an external database and without requiring any other information.
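
The subtraction described above can be sketched as follows, using the example timestamp and offset from the preceding paragraph. The function names and the signed "H:MM" offset format are assumptions based on that example.

```python
from datetime import datetime, timedelta


def parse_offset(offset: str) -> timedelta:
    """Parse an offset such as '-6:00' or '+1:00' into a signed timedelta."""
    sign = -1 if offset.startswith("-") else 1
    hours, minutes = offset.lstrip("+-").split(":")
    return sign * timedelta(hours=int(hours), minutes=int(minutes))


def to_universal(local_timestamp: str, offset: str) -> datetime:
    """Subtract the offset from the local timestamp to get universal time."""
    return datetime.fromisoformat(local_timestamp) - parse_offset(offset)


# Example values from the text: six hours behind universal time.
universal = to_universal("2016-03-18T14:10:02", "-6:00")

# Two samples with the same local wall-clock time on either side of a
# daylight saving transition differ in offset, so they stay distinct.
before_change = to_universal("2016-11-06T01:30:00", "-5:00")
after_change = to_universal("2016-11-06T01:30:00", "-6:00")
```

Subtracting an offset of -6:00 adds six hours, so the local time 14:10:02 corresponds to 20:10:02 universal time, and no daylight saving schedule is consulted at any point.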

[0100] In some embodiments, data collector 312 organizes the raw timeseries data. Data collector 312 can identify a system or device associated with each of the data points. For example, data collector 312 can associate a data point with an IoT device, system, component, sensor, or any other type of system or device. In some embodiments, a data entity may be created for the data point, in which case, the data collector 312 (e.g., via entity service 326) can associate the data point with the data entity. In various embodiments, data collector 312 uses the name of the data point, a range of values of the data point, statistical characteristics of the data point, or other attributes of the data point to identify a particular system, device, or data point entity associated with the data point. Data collector 312 can then determine how that system or device relates to the other systems or devices in the IoT environment from entity data. For example, data collector 312 can determine that the identified system or device is part of a larger system or serves a particular function within the larger system from the entity data. In some embodiments, data collector 312 uses or retrieves an entity graph (e.g., via the entity service 326) based on the entity data when organizing the timeseries data.

[0101] Data collector 312 can provide the raw timeseries data to the other Cloud IoT platform services 320 and/or store the raw timeseries data in storage 314. Storage 314 may be internal storage or external storage. For example, storage 314 can be internal storage with relation to Cloud IoT platform service 320 and/or IoTMS 300, and/or may include a remote database, cloud-based data hosting, or other remote data storage. Storage 314 can be configured to store the raw timeseries data obtained by data collector 312, the derived timeseries data generated by Cloud IoT platform services 320, and/or directed acyclic graphs (DAGs) used by Cloud IoT platform services 320 to process the timeseries data.

[0102] Still referring to FIG. 3, Cloud IoT platform services 320 can receive the raw timeseries data from data collector 312 and/or retrieve the raw timeseries data from storage 314. Cloud IoT platform services 320 can include a variety of services configured to analyze, process, and transform the raw timeseries data. For example, Cloud IoT platform services 320 is shown to include a security service 322, an analytics service 324, an entity service 326, and a timeseries service 328. Security service 322 can assign security attributes to the raw timeseries data to ensure that the timeseries data are only accessible to authorized individuals, systems, or applications. Security service 322 may include a messaging layer to exchange secure messages with the entity service 326. In some embodiments, security service 322 may provide permission data to entity service 326 so that entity service 326 can determine the types of entity data that can be accessed by a particular entity or device. Entity service 326 can assign entity information (or entity data) to the timeseries data to associate data points with a particular system, device, or component. Timeseries service 328 and analytics service 324 can apply various transformations, operations, or other functions to the raw timeseries data to generate derived timeseries data.

[0103] In some embodiments, timeseries service 328 aggregates predefined intervals of the raw timeseries data (e.g., quarter-hourly intervals, hourly intervals, daily intervals, monthly intervals, etc.) to generate new derived timeseries of the aggregated values. These derived timeseries can be referred to as "data rollups" since they are condensed versions of the raw timeseries data. The data rollups generated by timeseries service 328 provide an efficient mechanism for IoT applications 330 to query the timeseries data. For example, IoT applications 330 can construct visualizations of the timeseries data (e.g., charts, graphs, etc.) using the pre-aggregated data rollups instead of the raw timeseries data. This allows IoT applications 330 to simply retrieve and present the pre-aggregated data rollups without requiring IoT applications 330 to perform an aggregation in response to the query. Since the data rollups are pre-aggregated, IoT applications 330 can present the data rollups quickly and efficiently without requiring additional processing at query time to generate aggregated timeseries values.
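As a non-limiting illustration, a data rollup over hourly intervals might be computed as in the following Python sketch. The function name `hourly_rollup`, the choice of the arithmetic mean as the aggregation, and the sample values are assumptions made for the example; the disclosure does not prescribe a particular aggregation function.

```python
from collections import defaultdict
from datetime import datetime


def hourly_rollup(samples):
    """Aggregate raw (timestamp, value) samples into one averaged value per hour."""
    buckets = defaultdict(list)
    for timestamp, value in samples:
        # Truncate each timestamp to the start of its hourly interval.
        bucket_start = timestamp.replace(minute=0, second=0, microsecond=0)
        buckets[bucket_start].append(value)
    # The derived timeseries holds one aggregated sample per interval.
    return [(start, sum(vals) / len(vals)) for start, vals in sorted(buckets.items())]


# Hypothetical raw timeseries sampled at quarter-hour intervals.
raw = [
    (datetime(2018, 9, 26, 8, 0), 70.0),
    (datetime(2018, 9, 26, 8, 15), 72.0),
    (datetime(2018, 9, 26, 8, 30), 74.0),
    (datetime(2018, 9, 26, 9, 0), 80.0),
]
rollup = hourly_rollup(raw)
```

Because the rollup is computed once and stored as a derived timeseries, an application answering a query only needs to read `rollup` rather than re-aggregate `raw`.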

[0104] In some embodiments, timeseries service 328 calculates virtual points based on the raw timeseries data and/or the derived timeseries data. Virtual points can be calculated by applying any of a variety of mathematical operations (e.g., addition, subtraction, multiplication, division, etc.) or functions (e.g., average value, maximum value, minimum value, thermodynamic functions, linear functions, nonlinear functions, etc.) to the actual data points represented by the timeseries data. For example, timeseries service 328 can calculate a virtual data point (pointID.sub.3) by adding two or more actual data points (pointID.sub.1 and pointID.sub.2) (e.g., pointID.sub.3=pointID.sub.1+pointID.sub.2). As another example, timeseries service 328 can calculate an enthalpy data point (pointID.sub.4) based on a measured temperature data point (pointID.sub.5) and a measured pressure data point (pointID.sub.6) (e.g., pointID.sub.4=enthalpy(pointID.sub.5, pointID.sub.6)). The virtual data points can be stored as derived timeseries data.
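The addition example above can be sketched in Python as follows. The helper `virtual_point`, the representation of a timeseries as a mapping from timestamp to value, and the rule of keeping only timestamps present in every input are assumptions for illustration only. Any other function of the inputs (e.g., an enthalpy calculation) could be passed in place of the addition.

```python
def virtual_point(operation, *series):
    """Apply an operation sample-by-sample to timeseries aligned on timestamps.

    Each series is a dict mapping timestamp -> value. Only timestamps present
    in every input series produce a virtual sample (an assumption of this sketch).
    """
    common = set(series[0]).intersection(*series[1:])
    return {t: operation(*(s[t] for s in series)) for t in sorted(common)}


# Hypothetical actual data points keyed by timestamp (seconds).
point_id_1 = {0: 1.0, 60: 2.0, 120: 3.0}
point_id_2 = {0: 10.0, 60: 20.0}

# pointID3 = pointID1 + pointID2
point_id_3 = virtual_point(lambda a, b: a + b, point_id_1, point_id_2)
```

The resulting `point_id_3` has the same shape as its inputs, so it can be stored and queried like any other timeseries.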

[0105] IoT applications 330 can access and use the virtual data points in the same manner as the actual data points. IoT applications 330 may not need to know whether a data point is an actual data point or a virtual data point since both types of data points can be stored as derived timeseries data and can be handled in the same manner by IoT applications 330. In some embodiments, the derived timeseries are stored with attributes designating each data point as either a virtual data point or an actual data point. Such attributes allow IoT applications 330 to identify whether a given timeseries represents a virtual data point or an actual data point, even though both types of data points can be handled in the same manner by IoT applications 330. These and other features of timeseries service 328 are described in greater detail with reference to FIG. 6.

[0106] In some embodiments, analytics service 324 analyzes the raw timeseries data and/or the derived timeseries data with the entity data to detect faults. Analytics service 324 can apply a set of fault detection rules based on the entity data to the timeseries data to determine whether a fault is detected at each interval of the timeseries. Fault detections can be stored as derived timeseries data. For example, analytics service 324 can generate a new fault detection timeseries with data values that indicate whether a fault was detected at each interval of the timeseries. The fault detection timeseries can be stored as derived timeseries data along with the raw timeseries data in storage 314.
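One possible shape of such a fault detection timeseries is sketched below in Python. The function `detect_faults`, the `max_supply_temp` entry in the entity data, and the threshold rule are hypothetical and serve only to show a rule being evaluated at each interval.

```python
def detect_faults(timeseries, rule):
    """Return a derived timeseries of booleans, one per sample of the input."""
    return [(timestamp, rule(value)) for timestamp, value in timeseries]


# Hypothetical entity data supplying the limit used by the rule.
entity_data = {"max_supply_temp": 85.0}


def over_temperature(value):
    """Hypothetical fault rule: flag values above the limit from the entity data."""
    return value > entity_data["max_supply_temp"]


# Hypothetical input timeseries of (interval index, value) samples.
supply_temp = [(0, 80.0), (1, 90.0), (2, 84.5)]
fault_timeseries = detect_faults(supply_temp, over_temperature)
```

The output has one sample per input interval, which is what allows it to be stored alongside the raw timeseries as derived timeseries data.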

[0107] Still referring to FIG. 3, IoTMS 300 is shown to include several IoT applications 330 including a resource management application 332, monitoring and reporting applications 334, and enterprise control applications 336. Although only a few IoT applications 330 are shown, it is contemplated that IoT applications 330 can include any of a variety of applications configured to use the raw or derived timeseries generated by Cloud IoT platform services 320. In some embodiments, IoT applications 330 exist as a separate layer of IoTMS 300 (e.g., a part of Cloud IoT platform services 320 and/or data collector 312). In other embodiments, IoT applications 330 can exist as remote applications that run on remote systems or devices (e.g., remote systems and applications 244, client devices 248, and/or the like).

[0108] IoT applications 330 can use the derived timeseries data and entity data to perform a variety of data visualization, monitoring, and/or control activities. For example, resource management application 332 and monitoring and reporting applications 334 can use the derived timeseries data and entity data to generate user interfaces (e.g., charts, graphs, etc.) that present the derived timeseries data and/or entity data to a user. In some embodiments, the user interfaces present the raw timeseries data and the derived data rollups in a single chart or graph. For example, a dropdown selector can be provided to allow a user to select the raw timeseries data or any of the data rollups for a given data point.

[0109] Enterprise control application 336 can use the derived timeseries data and/or entity data to perform various control activities. For example, enterprise control application 336 can use the derived timeseries data and/or entity data as input to a control algorithm (e.g., a state-based algorithm, an extremum seeking control (ESC) algorithm, a proportional-integral (PI) control algorithm, a proportional-integral-derivative (PID) control algorithm, a model predictive control (MPC) algorithm, a feedback control algorithm, etc.) to generate control signals for IoT devices 228. In some embodiments, IoT devices 228 use the control signals to operate other systems, devices, components, and/or sensors, which can affect the measured or calculated values of the data samples provided to IoTMS 300 and/or Cloud IoT platform services 320. Accordingly, enterprise control application 336 can use the derived timeseries data and/or entity data as feedback to control the systems and devices of the IoT devices 228.

Cloud Entity IoT Platform Service

[0110] Referring now to FIG. 4, a block diagram illustrating entity service 326 in greater detail is shown, according to some embodiments. Entity service 326 registers and manages various devices and entities in the Cloud IoT platform services 320. According to various embodiments, an entity may be any person, place, or physical object, hereinafter referred to as an object entity. Further, an entity may be any event, data point, or record structure, hereinafter referred to as a data entity. In addition, relationships between entities may be defined by relational objects.

[0111] In some embodiments, an object entity may be defined as having at least three types of attributes. For example, an object entity may have a static attribute, a dynamic attribute, and a behavioral attribute. The static attribute may include any unique identifier of the object entity or characteristic of the object entity that either does not change over time or changes infrequently (e.g., a device ID, a person's name or social security number, a place's address or room number, and the like). The dynamic attribute may include a property of the object entity that changes over time (e.g., location, age, measurement, data point, and the like). In some embodiments, the dynamic attribute of an object entity may be linked to a data entity. In this case, the dynamic attribute of the object entity may simply refer to a location (e.g., data/network address) or static attribute (e.g., identifier) of the linked data entity, which may store the data (e.g., the value or information) of the dynamic attribute. Accordingly, in some such embodiments, when a new data point (e.g., timeseries data) is received for the object entity, only the linked data entity may be updated, while the object entity remains unchanged. Therefore, resources that would have been expended to update the object entity may be reduced.

[0112] However, the present disclosure is not limited thereto. For example, in some embodiments, there may also be some data that is updated (e.g., during predetermined intervals) in the dynamic attribute of the object entity itself. For example, the linked data entity may be configured to be updated each time a new data point is received, whereas the corresponding dynamic attribute of the object entity may be configured to be updated less often (e.g., at predetermined intervals that are less frequent than the intervals at which the new data points are received). In some implementations, the dynamic attribute of the object entity may include both a link to the data entity and either a portion of the data from the data entity or data derived from the data of the data entity. For example, in an embodiment in which periodic odometer readings are received from a connected car, an object entity corresponding to the car could include the last odometer reading and a link to a data entity that stores a series of the last ten odometer readings received from the car.
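The connected car example can be sketched in Python as follows. The class names, field names, identifiers, and the two helper functions are assumptions introduced for illustration and are not part of the disclosure. The sketch updates the data entity on every reading and refreshes the object entity only when explicitly requested.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DataEntity:
    entity_id: str  # static attribute: identifier used as the link target
    # Dynamic attribute: keeps only the ten most recent readings.
    readings: deque = field(default_factory=lambda: deque(maxlen=10))


@dataclass
class ObjectEntity:
    entity_id: str       # static attribute (e.g., a device ID)
    odometer_link: str   # dynamic attribute: link to the data entity
    last_odometer: Optional[float] = None  # portion of the linked data


def record_reading(car, data_entities, reading):
    """Store a new data point in the linked data entity only."""
    data_entities[car.odometer_link].readings.append(reading)


def refresh_object_entity(car, data_entities):
    """Copy the latest reading into the object entity (run less often)."""
    car.last_odometer = data_entities[car.odometer_link].readings[-1]


# Hypothetical identifiers and readings.
data_entities = {"odometer-001": DataEntity("odometer-001")}
car = ObjectEntity("car-001", odometer_link="odometer-001")

for i in range(12):
    record_reading(car, data_entities, 1000.0 + i)
stale_value = car.last_odometer  # object entity untouched so far
refresh_object_entity(car, data_entities)
```

Separating the two update paths keeps writes to the object entity infrequent, while the data entity still reflects every reading received.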

[0113] The behavioral attribute may define a function of the object entity, for example, based on inputs, capabilities, and/or permissions. For example, behavioral attributes may define the types of inputs that the object entity is configured to accept, how the object entity is expected to respond under certain conditions, the types of functions that the object entity is capable of performing, and the like. As a non-limiting example, if the object entity represents a person, the behavioral attribute of the person may be his/her job title or job duties, user permissions to access certain systems, expected location or behavior given a time of day, tendencies or preferences based on connected activity data received by entity service 326 (e.g., social media activity), and the like. As another non-limiting example, if the object entity represents a device, the behavioral attributes may include the types of inputs that the device can receive, the types of outputs that the device can generate, the types of controls that the device is capable of, the types of software or versions that the device currently has, known responses of the device to certain types of input (e.g., behavior of the device defined by its programming), and the like.

[0114] In some embodiments, the data entity may be defined as having at least a static attribute and a dynamic attribute. The static attribute of the data entity may include a unique identifier or description of the data entity. For example, if the data entity is linked to a dynamic attribute of an object entity, the static attribute of the data entity may include an identifier that is used to link to the dynamic attribute of the object entity. In some embodiments, the dynamic attribute of the data entity represents the data for the dynamic attribute of the linked object entity. In some embodiments, the dynamic attribute of the data entity may represent some other data that is derived, analyzed, inferred, calculated, or determined based on data from a plurality of data sources.