Mechanism To Provide Visual Feedback Regarding Computing System Command Gestures

MONGIA; RAJIV ; et al.

U.S. patent application number 14/954858 was filed with the patent office on 2019-03-21 for mechanism to provide visual feedback regarding computing system command gestures. This patent application is currently assigned to INTEL CORPORATION. The applicant listed for this patent is INTEL CORPORATION. Invention is credited to ACHINTYA K. BHOWMIK, DANA KRIEGER, ED MAGNUM, RAJIV MONGIA, DIANA POVIENG, MARK H. YAHIRO.

| Application Number | 14/954858 |
|---|---|
| United States Patent Application | 20190087008 |
| Family ID | 48669317 |
| Filed Date | 2019-03-21 |
| Kind Code | A9 |

| MONGIA; RAJIV ; et al. | March 21, 2019 |

MECHANISM TO PROVIDE VISUAL FEEDBACK REGARDING COMPUTING SYSTEM COMMAND GESTURES

Abstract

A mechanism to provide visual feedback regarding computing system command gestures. An embodiment of an apparatus includes a sensing element to sense a presence or movement of a user of the apparatus, a processor, wherein operation of the processor includes interpretation of command gestures of a user to provide input to the apparatus; and a display screen, the apparatus to display one or more icons on the display screen, the one or more icons being related to the operation of the apparatus. The apparatus is to display visual feedback for a user of the apparatus, visual feedback including a representation of one or both hands of the user while the one or both hands are within a sensing area for the sensing element.

| Inventors: | MONGIA; RAJIV; (MOUNTAIN VIEW, CA) ; BHOWMIK; ACHINTYA K.; (CUPERTINO, CA) ; YAHIRO; MARK H.; (SANTA CLARA, CA) ; KRIEGER; DANA; (EMERYVILLE, CA) ; MAGNUM; ED; (SAN MATEO, CA) ; POVIENG; DIANA; (SAN FRANCISCO, CA) |
|---|---|
| Applicant: | INTEL CORPORATION |
| Assignee: | INTEL CORPORATION, SANTA CLARA, CA |
| Family ID: | 48669317 |
| Appl. No.: | 14/954858 |
| Filed: | November 30, 2015 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 13997640 | Jun 24, 2013 | |
| PCT/US11/67289 | Dec 23, 2011 | |
| 14954858 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06F 3/017 20130101; G06F 3/04895 20130101; G06F 3/0481 20130101; G06F 3/011 20130101; G06F 3/04812 20130101; G06F 3/0487 20130101; G06F 2203/04806 20130101 |

| International Class: | G06F 3/01 20060101 G06F003/01; G06F 3/0487 20060101 G06F003/0487; G06F 3/0481 20060101 G06F003/0481 |

Claims

1. A computing device capable of being used in processing of movement-related data, the computing device comprising: a user interface; one or more sensors; a processor; and storage capable of storing instructions to be executed by the processor, the instructions when executed by the processor being capable of resulting in performance of operations comprising: detection, via at least one of the one or more sensors, of a movement of a body part of a user; determination of user intent relating to the movement of the body part, wherein the body part includes one or more hands of the user; and presentation, via the user interface, of visual feedback of the movement, wherein the visual feedback provides a visual representation of the body part or the movement, wherein the visual representation is presented by lights of various brightness levels.

2. The computing device of claim 1, wherein the visual feedback of the movement is based on the user intent.

3. The computing device of claim 1, wherein the operations comprise generation, via the lights, of an outline of the body part, wherein the various brightness levels represent the movement of the body part.

4. The computing device of claim 3, wherein the lights to highlight one or more portions of the body part, wherein the one or more portions include one or more finger tips of one or more fingers of the one or more hands.

5. The computing device of claim 1, wherein the visual feedback corresponds to a gesture of a plurality of gestures, wherein the user intent is determined based on the gesture or the movement.

6. The computing device of claim 5, wherein the gesture comprises a command gesture capable of being interpreted to provide an input, via a user interface, to the computing device.

7. A method comprising: detecting, via at least one of one or more sensors of a computing device, a movement of a body part of a user; determining user intent relating to the movement of the body part, wherein the body part includes one or more hands of the user; and presenting, via a user interface, visual feedback of the movement, wherein the visual feedback provides a visual representation of the body part or the movement, wherein the visual representation is presented by lights of various brightness levels.

8. The method of claim 7, wherein the visual feedback of the movement is based on the user intent.

9. The method of claim 7, further comprising generating, via the lights, an outline of the body part, wherein the various brightness levels represent the movement of the body part.

10. The method of claim 9, wherein the lights to highlight one or more portions of the body part, wherein the one or more portions include one or more finger tips of one or more fingers of the one or more hands.

11. The method of claim 7, wherein the visual feedback corresponds to a gesture of a plurality of gestures, wherein the user intent is determined based on the gesture or the movement.

12. The method of claim 11, wherein the gesture comprises a command gesture capable of being interpreted to provide an input, via a user interface, to the computing device.

13. At least one machine-readable storage medium capable of storing instructions which, when executed by a computing device, cause the computing device to: detect, via at least one of one or more sensors, a movement of a body part of a user; determine user intent relating to the movement of the body part, wherein the body part includes one or more hands of the user; and present, via a user interface, visual feedback of the movement, wherein the visual feedback provides a visual representation of the body part or the movement, wherein the visual representation is presented by lights of various brightness levels.

14. The machine-readable storage medium of claim 13, wherein the visual feedback of the movement is based on the user intent.

15. The machine-readable storage medium of claim 13, wherein the computing device is to generate, via the lights, an outline of the body part, wherein the various brightness levels represent the movement of the body part.

16. The machine-readable storage medium of claim 15, wherein the lights to highlight one or more portions of the body part, wherein the one or more portions include one or more finger tips of one or more fingers of the one or more hands.

17. The machine-readable storage medium of claim 13, wherein the visual feedback corresponds to a gesture of a plurality of gestures, wherein the user intent is determined based on the gesture or the movement.

18. The machine-readable storage medium of claim 17, wherein the gesture comprises a command gesture capable of being interpreted to provide an input, via a user interface, to the computing device.

Description

CLAIM OF PRIORITY

[0001] This application is a continuation application of U.S. patent application Ser. No. 13/977,640, Attorney Docket No. 42P40717, entitled, MECHANISM TO PROVIDE VISUAL FEEDBACK REGARDING COMPUTING SYSTEM COMMAND GESTURES, by Rajiv Mongia, et al., filed Jun. 24, 2013, which is a U.S. National Phase application under 35 U.S.C. § 371 of International Application No. PCT/US2011/067289, Attorney Docket No. 42P40717PCT, entitled, MECHANISM TO PROVIDE VISUAL FEEDBACK REGARDING COMPUTING SYSTEM COMMAND GESTURES, by Rajiv Mongia, et al., filed Dec. 23, 2011, the benefit of and priority to which are claimed thereof and the entire contents of which are incorporated herein by reference.

TECHNICAL FIELD

[0002] Embodiments of the invention generally relate to the field of computing systems and, more particularly, to a mechanism to provide visual feedback regarding computing system command gestures.

BACKGROUND

[0003] Computing systems and related systems are being developed to provide a more natural interface for users. In particular, computing systems may include sensing of a user of the computing system, where user sensing may include gesture recognition, in which the system attempts to recognize one or more command gestures of a user, and in particular hand gestures of the user.

[0004] For example, the user may perform several gestures to manipulate symbols and other images shown on a display screen of the computer system.

[0005] However, a user is generally required to perform command gestures without being aware of how the gestures are being interpreted by the computing system. For this reason, the user may not be aware that a gesture will not be interpreted in the manner the user intends.

BRIEF DESCRIPTION OF THE DRAWINGS

[0006] Embodiments of the invention are illustrated by way of example, and not by way of limitation, in the figures of the accompanying drawings in which like reference numerals refer to similar elements.

[0007] FIG. 1 illustrates an embodiment of a computing system including a mechanism to provide visual feedback to users regarding presentation of command gestures;

[0008] FIG. 2 illustrates an embodiment of a computing system providing visual feedback regarding fingertip presentation;

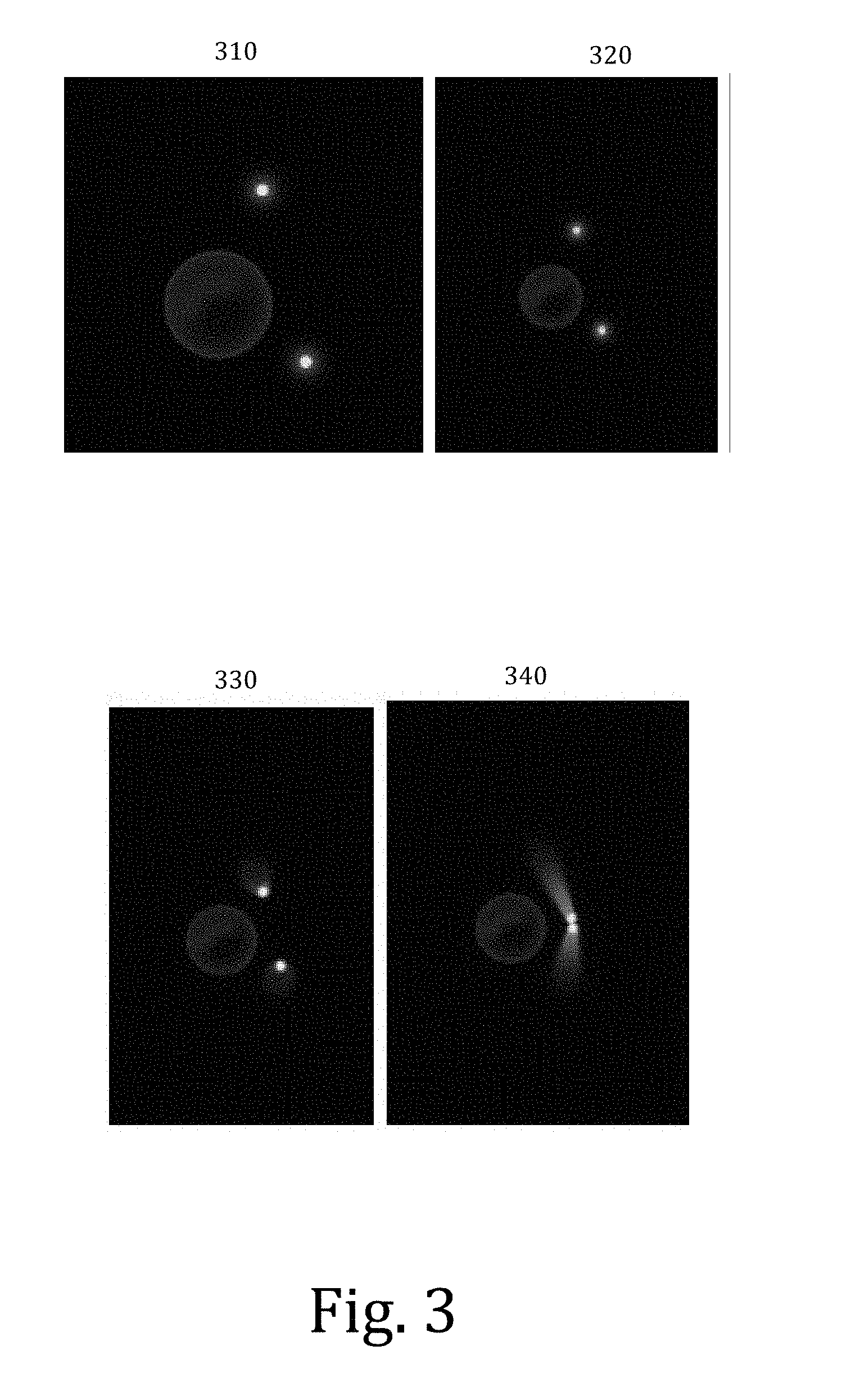

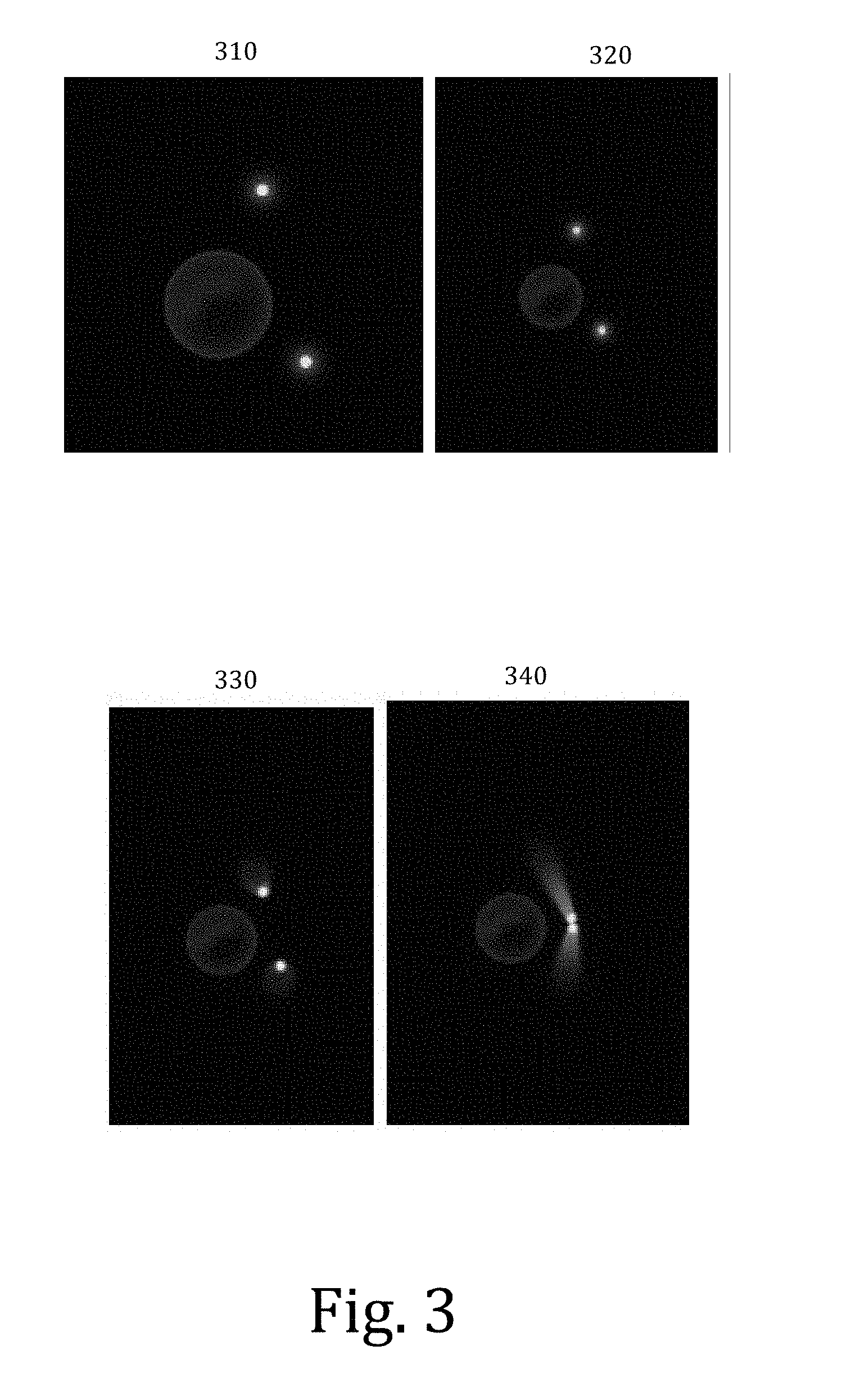

[0009] FIG. 3 illustrates an embodiment of a computing system providing feedback regarding a command gesture to zoom out on an element;

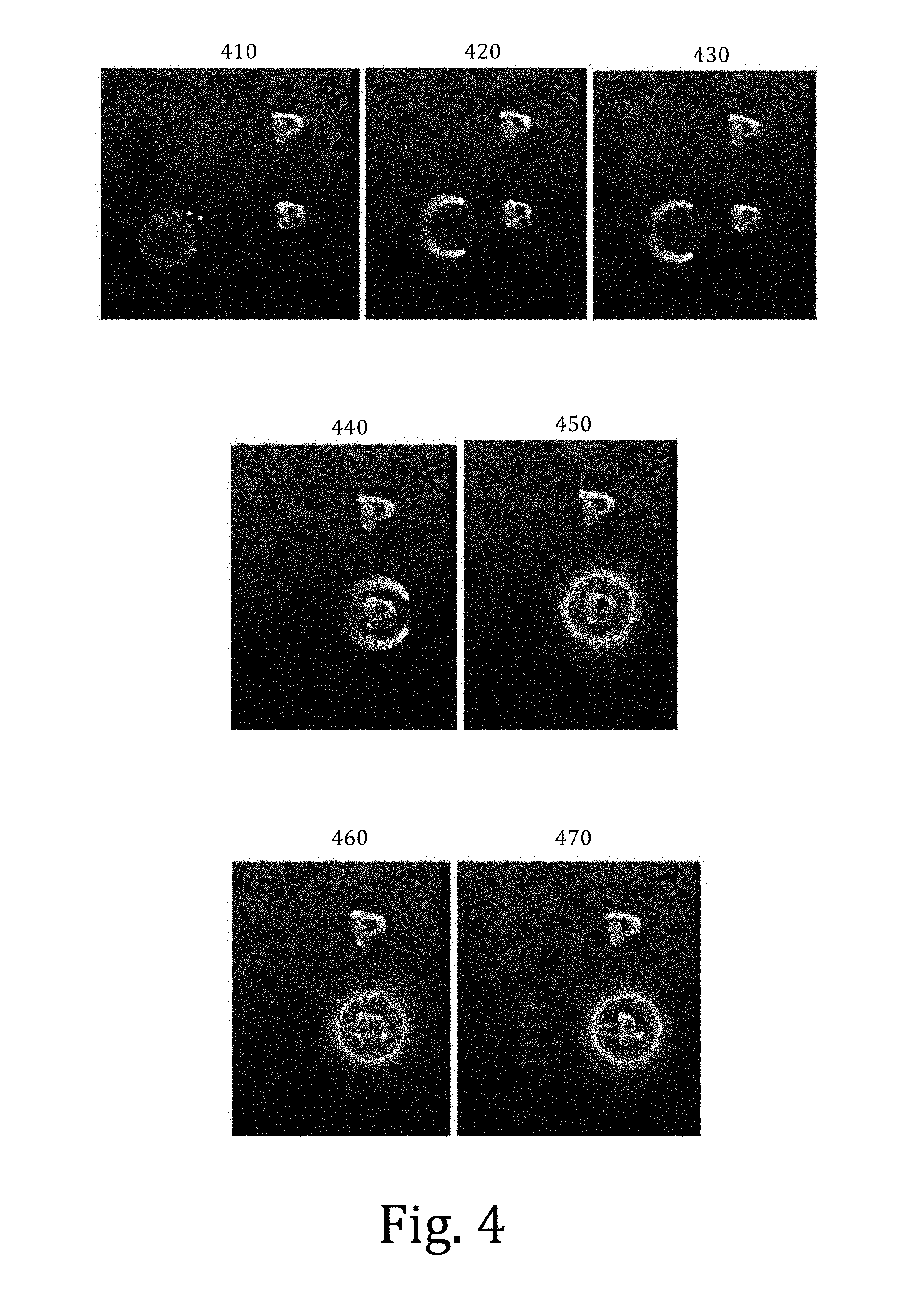

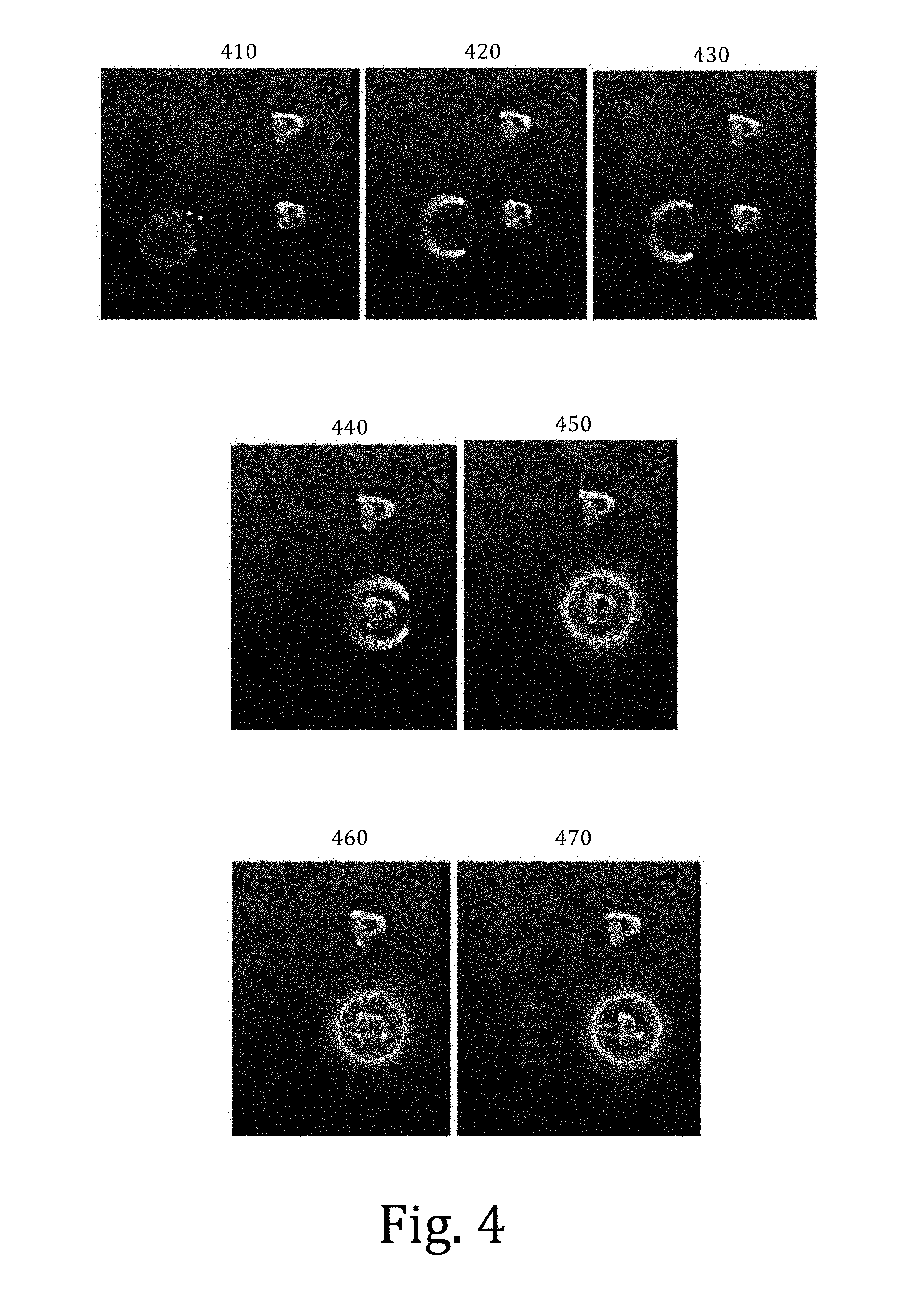

[0010] FIG. 4 illustrates an embodiment of a computing system providing feedback regarding a gesture to select an icon or other symbol;

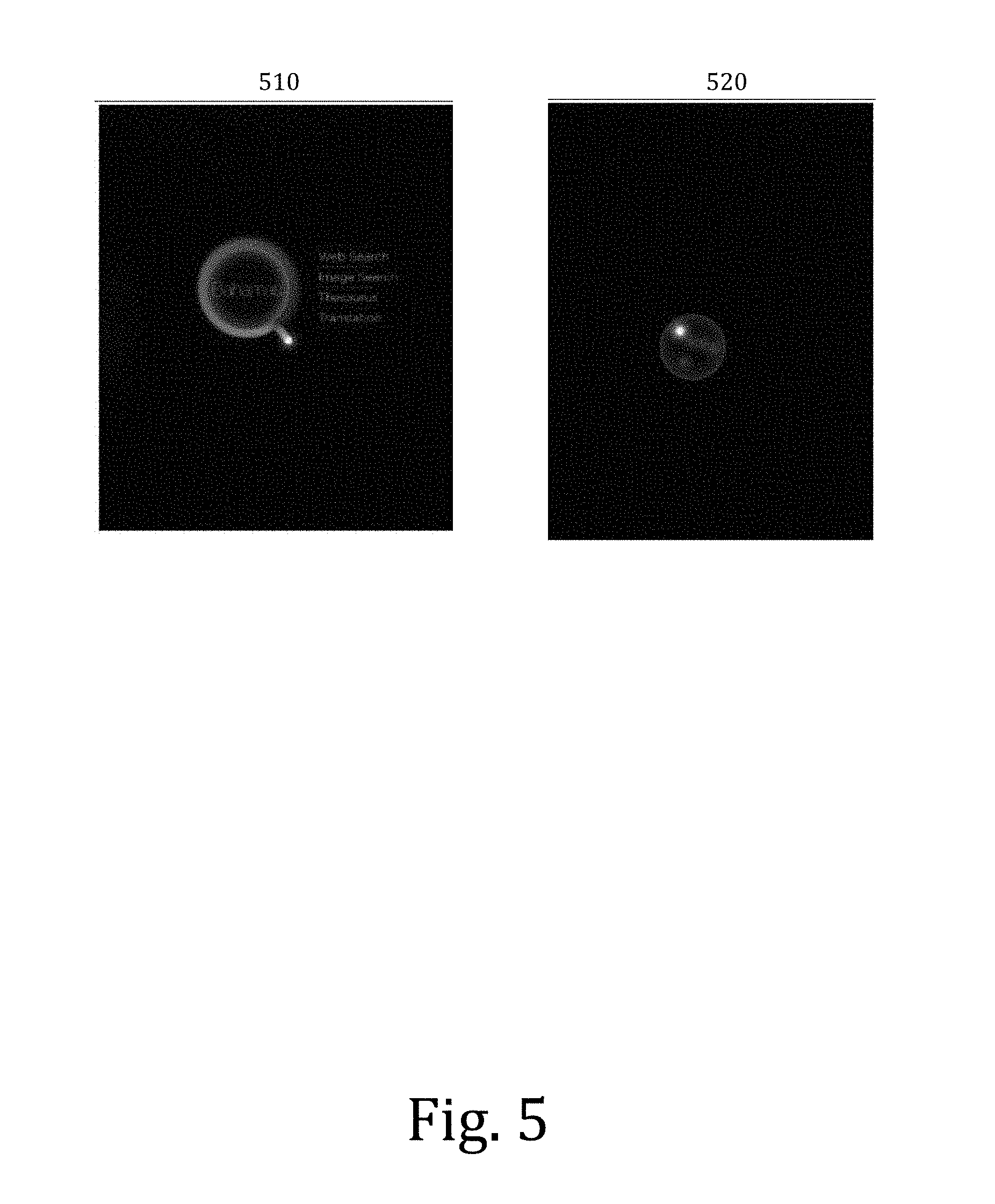

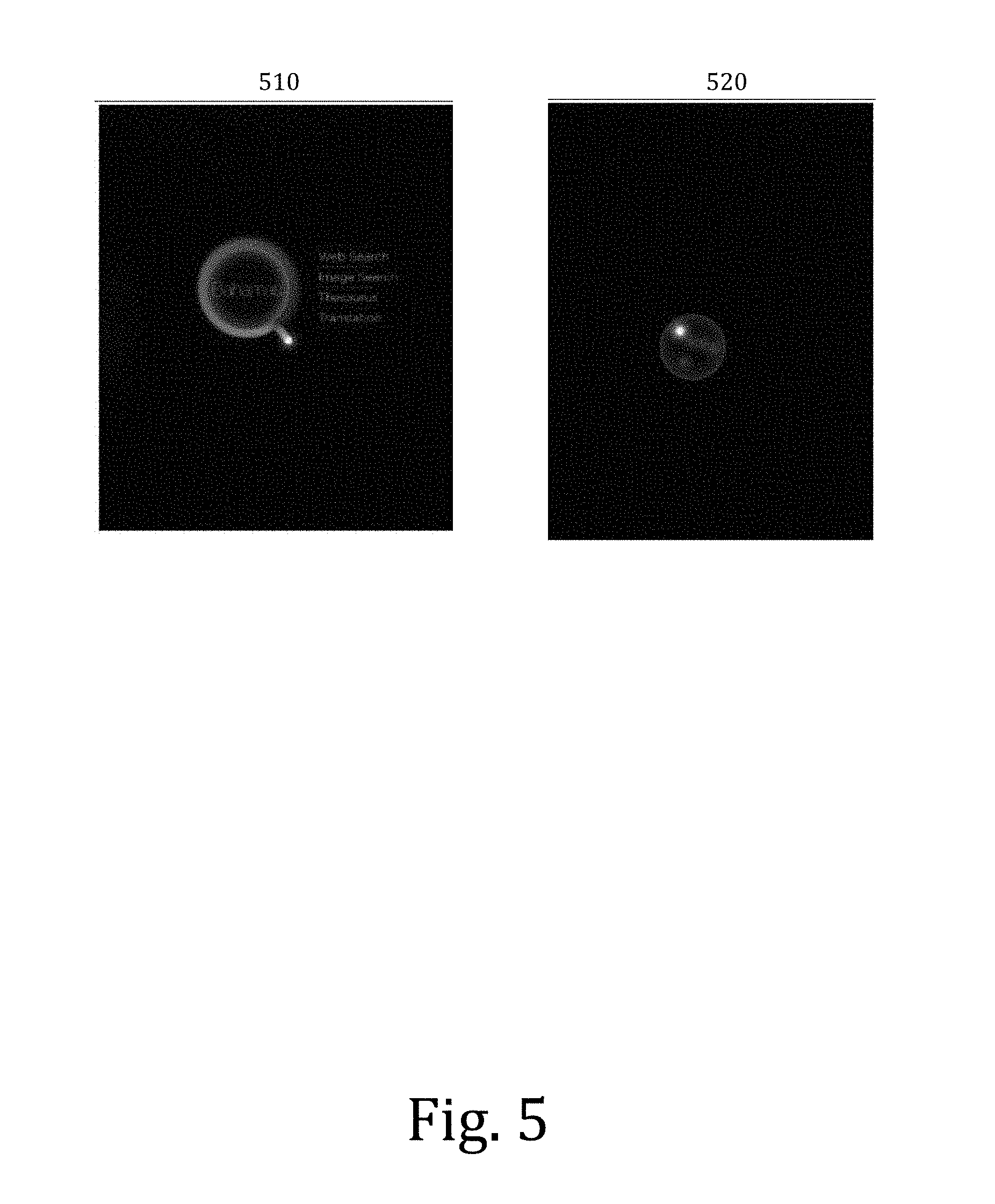

[0011] FIG. 5 illustrates an embodiment of a computing system providing feedback regarding an information function;

[0012] FIG. 6 illustrates an embodiment of a computing system providing feedback regarding user hand orientation;

[0013] FIG. 7 is a flowchart to illustrate an embodiment of a process for providing visual feedback to a user while performing command gestures;

[0014] FIG. 8 is a block diagram to illustrate an embodiment of a computing system including a mechanism to provide feedback to users regarding presentation of command gestures; and

[0015] FIG. 9 illustrates an embodiment of a computing system for perceptual computing.

DETAILED DESCRIPTION

[0016] Embodiments of the invention are generally directed to a mechanism to provide visual feedback regarding computing system command gestures.

[0017] As used herein:

[0018] "User sensing" means a computer operation to sense a user. The sensing of a user may include position and motion detection, such as a computing system detecting and interpreting gestures made by a user of the computing system as inputs to the computing system. User sensing may utilize any technology by which the user may be sensed by the computing system, including visual sensing (using one or more cameras or similar devices), audio sensing (including detection of sounds and detection of sound reflection), heat or infrared sensing, sensing and interpretation of projected light patterns (visible or invisible), and other technologies. User sensing may include operations of a perceptual computing system in sensing user operations and intent, where a perceptual computing system is a system allowing for the addition of alternative input modalities, including gesture recognition.

[0019] "Computing system" means any device or system that includes computing capability. A computing system includes both a single unit and a system of multiple elements. A computing system is not limited to a general-purpose computer, but also includes special purpose devices that include computing capability. A "computing system" includes a desktop computer, a game console, a laptop or notebook computer, an all-in-one (AIO) computer, a tablet computer, a handheld computing device including a smart phone, or other apparatus or system having computing and display capability.

[0020] In some embodiments, an intent of the user to interact with a computing system is determined by the motion of the user, such as by a user's hand or hands, toward an element of the computing system. While embodiments are not limited to a user's hands, this may be the most common operation by the user.

[0021] In a computing system utilizing user sensing to detect system inputs, gestures made by a user to communicate with the computing system (which may be referred to generally as command gestures) may be difficult for the user to gauge as such gestures are being made. Thus, a user may be able to discern from results that a particular gesture was or was not effective to provide a desired result, but may not understand why the gesture was effective or ineffective.

[0022] In some cases, the reason that a gesture is ineffective is that the user isn't accurate in performing the gesture, such as circumstances in which the user isn't actually presenting the gesture the user believes the user is presenting, or the user is making the gesture in a manner that creates difficulty in the computing system sensing the user's gesture, such as in an orientation that is more difficult for the computing system to detect. Thus, a user may be forced to either watch the user's own hand while performing the gesture, or perform the gesture in an exaggerated manner to ensure that the computing system registers the intended gesture. In either case, the user is prevented from interfacing naturally with the computing system.

[0023] In some embodiments, a computing system includes a mechanism to provide feedback regarding computing system command gestures. In some embodiments, a computing system includes an on-screen visual feedback mechanism that can be used to provide feedback to a user of a perceptual computing (PerComp) system while a command gesture is being performed. In some embodiments, a natural feedback mechanism is provided for an end user so that the user is able to properly use the system tools.

[0024] In some embodiments, a mechanism presents certain visual representations of a user's hand or hands on a display screen to allow the user to understand a relationship between the user's hands and symbols on the display screen.

[0025] In some embodiments, a first framework provides visual feedback including bright lights at the points at which the fingers are located (finger-tip-lights) in a visual representation of a position of a user's hand. In some embodiments, the movement of a user's fingers in space is represented by light that trails the finger-tip-lights' locations on the display screen for a certain period of time. In some embodiments, the visual feedback presents an image that is analogous to an image of a finger moving in a pool of water, where a trail is visible following the movement of the finger, but the trail dissipates after a short period of time. In some embodiments, the finger-tip-lights and the image of trailing lights provide feedback to the end user with respect to position and motion of the user's hand, and further create the illusion of a full hand without resorting to using a full hand skeleton in visual feedback. In some embodiments, entry into the computing system may happen gradually, with the finger-tip-lights becoming brighter as the user enters into the proper zone for sensing of command gestures. In some embodiments, entry into the computing system may happen gradually, with the finger-tip-lights becoming more focused as the user enters into the proper zone for sensing of command gestures. In some embodiments, the finger-tip-light color or shape may change when the user is conducting or has completed a command gesture.
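The gradual entry into the sensing zone can be illustrated with a minimal sketch. It assumes, purely for illustration, that the sensing element reports a hand depth in meters and that the proper zone for sensing command gestures is a depth band; the function name `tip_light_style`, the zone limits, the ramp width, and the blur radius are hypothetical values, not parameters taken from the embodiments.

```python
from dataclasses import dataclass


@dataclass
class TipLightStyle:
    brightness: float   # 0.0 (hidden) .. 1.0 (full brightness)
    blur_radius: float  # pixels; a smaller radius draws a more focused light


def tip_light_style(hand_depth_m: float,
                    zone_near_m: float = 0.3,   # hypothetical near edge of the zone
                    zone_far_m: float = 0.6,    # hypothetical far edge of the zone
                    ramp_m: float = 0.2,        # hypothetical fade-in distance
                    max_blur_px: float = 12.0) -> TipLightStyle:
    """Map a sensed hand depth to finger-tip-light brightness and focus.

    Inside [zone_near_m, zone_far_m] the light is fully bright and sharp.
    Within ramp_m outside the zone it dims and blurs linearly; farther out
    it is hidden.
    """
    if zone_near_m <= hand_depth_m <= zone_far_m:
        outside = 0.0
    elif hand_depth_m < zone_near_m:
        outside = zone_near_m - hand_depth_m
    else:
        outside = hand_depth_m - zone_far_m
    # t is 1.0 at the zone boundary and 0.0 at ramp_m (or more) outside it.
    t = max(0.0, 1.0 - outside / ramp_m)
    return TipLightStyle(brightness=t, blur_radius=(1.0 - t) * max_blur_px)
```

A linear ramp is the simplest choice; a smoother easing curve could be substituted without changing the idea that brightness and focus both increase as the hand enters the zone.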

[0026] In some embodiments, a difference in brightness provides feedback to a user regarding the orientation of the hand of the user in relation to the display screen. In some embodiments, the finger-tip-lights are brighter when the fingers of the hand of a user are pointed towards the display screen and dimmer when the fingers are pointed away from the display screen. In some embodiments, the brightness mapping may be reversed, such that the finger-tip-lights are brighter when the fingers are pointed away from the display screen and dimmer when the fingers are pointed towards the display screen. In some embodiments, the feedback regarding the brightness of the fingers of the user may motivate the user to orient the hand of the user towards the display screen and sensor such that the fingers of the user are not obscured in sensing.
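One way to derive orientation-dependent brightness is sketched below. It assumes that the sensor provides a 3D pointing direction for the fingers and uses a coordinate frame in which the negative z axis points from the user toward the display screen. The function `orientation_brightness`, the `min_level` floor, and the `reverse` flag are hypothetical; the flag models the reversed mapping described above.

```python
import math


def orientation_brightness(finger_dir: tuple[float, float, float],
                           toward_screen: tuple[float, float, float] = (0.0, 0.0, -1.0),
                           min_level: float = 0.2,
                           reverse: bool = False) -> float:
    """Return a finger-tip-light brightness in [min_level, 1.0].

    Fingers aligned with toward_screen get full brightness, fingers pointing
    the opposite way get min_level. reverse=True swaps the mapping.
    """
    dot = sum(a * b for a, b in zip(finger_dir, toward_screen))
    norm = math.hypot(*finger_dir) * math.hypot(*toward_screen)
    if norm == 0.0:
        # No usable direction (e.g. the fingers were not resolved): stay dim.
        return min_level
    # Cosine of the angle mapped from [-1, 1] to [0, 1]; 1.0 means toward the screen.
    alignment = (dot / norm + 1.0) / 2.0
    if reverse:
        alignment = 1.0 - alignment
    return min_level + (1.0 - min_level) * alignment
```

Keeping a nonzero `min_level` means that a hand turned away from the screen is still drawn, only dimmer, which matches the intent of nudging the user to reorient rather than hiding the feedback.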

[0027] In some embodiments, a second framework provides feedback for a command gesture to select an element of a display screen, where the gesture involves forming the hand in a shape in which the fingers can pinch or squeeze to select a targeted icon or other symbol (which may be referred to herein as a "claw" gesture). In some embodiments, a computing system may provide feedback for a claw select function by displaying a "C" shape with a partial fill. In some embodiments, the user completes the circle by closing the hand to select the object. If the open claw stays over a particular location for a long period of time, the screen responds by showing what the user can do in order to select the object.
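The "C" shape feedback can be sketched as a mapping from the thumb-to-finger gap to how much of the circle is drawn. This is a minimal illustration under assumed inputs: 2D fingertip positions, calibrated "open" and "closed" gap distances, and a dwell time tracked by the caller. The function `claw_feedback`, the 50% starting fill, and the 2-second hint delay are hypothetical choices.

```python
import math
from dataclasses import dataclass


@dataclass
class ClawFeedback:
    arc_fill: float   # fraction of the circle drawn: min_fill for an open "C", 1.0 when closed
    selected: bool    # True once the circle is complete
    show_hint: bool   # True when the open claw has lingered long enough to show help


def claw_feedback(thumb: tuple[float, float], finger: tuple[float, float],
                  open_gap: float, closed_gap: float,
                  dwell_s: float, hint_after_s: float = 2.0,
                  min_fill: float = 0.5) -> ClawFeedback:
    """Convert a thumb/finger gap into partial-circle feedback for a claw gesture."""
    if open_gap <= closed_gap:
        raise ValueError("open_gap must be larger than closed_gap")
    gap = math.dist(thumb, finger)
    # closure is 0.0 for a fully open claw and 1.0 for a closed one.
    closure = (open_gap - gap) / (open_gap - closed_gap)
    closure = min(1.0, max(0.0, closure))
    fill = min_fill + (1.0 - min_fill) * closure
    selected = closure >= 1.0
    return ClawFeedback(arc_fill=fill,
                        selected=selected,
                        show_hint=(not selected and dwell_s >= hint_after_s))
```

Clamping `closure` keeps the drawn arc stable when the sensed gap briefly exceeds the calibrated range, which is common with noisy hand tracking.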

[0028] In some embodiments, upon selecting an object, a light spins, thus encouraging the user to "rotate" the object to preview it, or to move the object toward a projector light in order to view the file. In some embodiments, the feedback for a "Search" looking-glass gesture is a magnifying glass on the screen, as shown.

[0029] In some embodiments, a "zoom" function may be utilized, where, for example, two finger-tip-lights provide feedback regarding a zoom out gesture formed by a hand of the user.

[0030] In some embodiments, a third framework includes a "magnifying glass" or loop icon to provide visual feedback for a loop gesture performed by a user by touching the tip of the thumb with the tip of at least one other finger of the same hand. In some embodiments, the computing system provides information regarding what is represented by the icon when the loop representation surrounds the icon at least in part.
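Deciding when the loop "surrounds the icon at least in part" amounts to a circle-rectangle overlap test. The sketch below assumes the loop is drawn as a circle in screen coordinates and each icon occupies an axis-aligned rectangle; `Rect`, `loop_overlaps_icon`, `icon_under_loop`, and the nearest-center tie-break are hypothetical illustrations rather than the embodiments' own logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rect:
    x: float  # left edge
    y: float  # top edge
    w: float
    h: float


def loop_overlaps_icon(cx: float, cy: float, r: float, icon: Rect) -> bool:
    """True if a loop (circle centered at (cx, cy), radius r) covers any part of icon."""
    # Closest point of the rectangle to the circle center.
    nearest_x = min(max(cx, icon.x), icon.x + icon.w)
    nearest_y = min(max(cy, icon.y), icon.y + icon.h)
    dx, dy = cx - nearest_x, cy - nearest_y
    return dx * dx + dy * dy <= r * r


def icon_under_loop(cx: float, cy: float, r: float,
                    icons: dict[str, Rect]) -> Optional[str]:
    """Return the overlapped icon whose center is nearest the loop center, if any."""
    hits = [(name, rect) for name, rect in icons.items()
            if loop_overlaps_icon(cx, cy, r, rect)]
    if not hits:
        return None

    def center_dist2(item: tuple[str, Rect]) -> float:
        _, rc = item
        ex, ey = rc.x + rc.w / 2, rc.y + rc.h / 2
        return (ex - cx) ** 2 + (ey - cy) ** 2

    return min(hits, key=center_dist2)[0]
```

For example, with icons `{"photo": Rect(0, 0, 50, 50), "music": Rect(100, 0, 50, 50)}`, a loop of radius 15 centered at (60, 25) partially surrounds only `"photo"`, so information about that icon would be shown.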

[0031] In some embodiments, an apparatus includes a sensing element to sense a presence or movement of a user of the apparatus, a processor, wherein operation of the processor includes interpretation of command gestures of a user to provide input to the apparatus; and a display screen, the apparatus to display one or more icons on the display screen, the one or more icons being related to the operation of the apparatus. The apparatus is to display visual feedback for a user of the apparatus, visual feedback including a representation of one or both hands of the user while the one or both hands are within a sensing area for the sensing element.

[0032] In some embodiments, a method includes commencing a session between a user and a computing system; sensing a presence or movement of the user by a sensing element of the computing system; determining one or more of a position, a movement, and an orientation of one or both hands of the user; and displaying visual feedback to the user regarding the hand or hands of the user, the visual feedback including a representation of the hand or hands of the user in relation to one or more symbols on a display screen.

[0033] FIG. 1 illustrates an embodiment of a computing system including a mechanism to provide visual feedback to users regarding presentation of command gestures. In some embodiments, a computing system 100 includes a display screen 110 to provide feedback to a user 150 regarding command gestures being performed by the user. In some embodiments, the computing system 100 includes one or more sensing elements 120 to sense position and movement of the user 150. Sensing elements may include multiple different elements working together, working in sequence, or both. For example, sensing elements may include elements that provide initial sensing, such as light or sound projection, followed by sensing for gesture detection by, for example, an ultrasonic time of flight camera or a patterned light camera.

[0034] In particular, the sensing elements 120 may detect movement and position of the hand or hands of the user. In some embodiments, the computing system 100 provides visual feedback regarding gestures of the hand or hands of the user, where the visual feedback includes the presentation of images 130 that represent position and movement of the hands of the user as the user attempts to perform command gestures for operation of the computing system.

[0035] FIG. 2 illustrates an embodiment of a computing system providing visual feedback regarding fingertip presentation. In some embodiments, a computing system, such as system 100 illustrated in FIG. 1, provides visual feedback of the position and movement of the hands of the user by generating images representing the hands, where the images illuminate certain portions of the hands or fingers. In some embodiments, a computing system provides a light point in a display image to represent the tip of each finger of the hand of the user. In some embodiments, movement of the fingers of the hand of the user results in a trailing image following the finger-tip-lights.

[0036] In some embodiments, a computing system senses the position and movement of the fingers of the user and illustrates them with the finger-tip-lights shown in 210. In this illustration, the fingers are shown close together without any movement, indicating that the user has not recently moved the hands in an attempt to present a command gesture. In some embodiments, the fingers of the user move outward from the first position, with each finger having a trail following the finger to show the relative movement of the fingers 220. In some embodiments, this process continues 230, with the fingers reaching a fully extended position 240.

[0037] In some embodiments, the computing system 100 operates such that the light trailing the lights representing the fingertips of the user begins to fade 250 after a first period of time, and the trailing light representing the movement of the fingers of the user eventually fades completely, thus returning the feedback display to showing the lights representing the fingertips of the user in a stationary representation without any trailing light 260.
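The hold-then-fade behavior of the trailing light (full trail, fading 250, no trail 260) can be sketched as a timestamped queue of trail points per fingertip. The class `FingertipTrail`, the hold period `hold_s` (standing in for the "first period of time"), and the linear fade over `fade_s` are assumptions made for illustration.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class TrailPoint:
    x: float
    y: float
    t: float  # seconds; time at which the fingertip was sensed at (x, y)


class FingertipTrail:
    """Trailing light behind one finger-tip-light.

    Each trail point stays at full opacity for hold_s seconds, then fades
    linearly to zero over fade_s seconds and is discarded.
    """

    def __init__(self, hold_s: float = 0.2, fade_s: float = 0.5):
        self.hold_s = hold_s
        self.fade_s = fade_s
        self.points = deque()  # TrailPoint objects, oldest first

    def add(self, x: float, y: float, t: float) -> None:
        self.points.append(TrailPoint(x, y, t))

    def opacity(self, p: TrailPoint, now: float) -> float:
        age = now - p.t
        if age <= self.hold_s:
            return 1.0
        return max(0.0, 1.0 - (age - self.hold_s) / self.fade_s)

    def visible(self, now: float) -> list[tuple[float, float, float]]:
        """Return (x, y, opacity) for every trail point still visible at time now."""
        # Points are added in time order, so fully faded points sit at the left end.
        while self.points and self.opacity(self.points[0], now) == 0.0:
            self.points.popleft()
        return [(p.x, p.y, self.opacity(p, now)) for p in self.points]
```

Once the finger stops moving, no new points are added, so the trail empties on its own and only the stationary finger-tip-light remains, as in 260.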

[0038] FIG. 3 illustrates an embodiment of a computing system providing feedback regarding a command gesture to zoom out from an element. In some embodiments, a computing system provides two finger-tip-lights to provide feedback regarding a zoom out gesture formed by a hand of the user 310. In some embodiments, the movement of the thumb and other finger or fingers of the hand provides feedback as the thumb and other finger or fingers draw closer, 320 and 330, and shows contact when the thumb and other finger or fingers make contact 340.
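The two-light zoom-out feedback can be sketched by tracking the distance between the thumb and finger lights relative to the start of the gesture. The sketch assumes fingertip positions in meters, a hypothetical 1 cm contact threshold, and a hypothetical proportional mapping from the gap ratio to a zoom factor; the embodiments describe only the visual feedback (lights drawing closer, then contact), not this mapping.

```python
import math
from dataclasses import dataclass


@dataclass
class PinchFeedback:
    gap: float      # current distance between the two finger-tip-lights
    scale: float    # hypothetical zoom factor relative to gesture start (< 1 zooms out)
    contact: bool   # True when the two lights should be drawn as touching (340)


def pinch_feedback(start_thumb: tuple[float, float], start_finger: tuple[float, float],
                   thumb: tuple[float, float], finger: tuple[float, float],
                   contact_gap: float = 0.01, min_scale: float = 0.1) -> PinchFeedback:
    """Summarize a zoom-out pinch from the start and current fingertip positions."""
    start_gap = math.dist(start_thumb, start_finger)
    gap = math.dist(thumb, finger)
    contact = gap <= contact_gap
    if start_gap <= 0.0:
        scale = 1.0  # degenerate start: no zoom change
    else:
        # Shrink in proportion to how far the fingers have closed, with a floor.
        scale = max(min_scale, gap / start_gap)
    return PinchFeedback(gap=gap, scale=scale, contact=contact)
```

The `min_scale` floor prevents the zoom factor from collapsing to zero when the fingers touch, so contact can be shown as a distinct state rather than as an extreme zoom.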

[0039] FIG. 4 illustrates an embodiment of a computing system providing feedback regarding a gesture to select an icon or other symbol. In some embodiments, the fingertips of a user's hand 410 may change to a "C" symbol to provide feedback regarding a grasping or claw gesture moving towards an icon 420-430. In some embodiments, the computing system provides feedback as the user's hand in space moves into position to grasp or select an icon 440, and provides a complete circle when the thumb and other finger or fingers of the hand of the user close to select the icon 450. In some embodiments, after selecting an icon, feedback in the form of a rotating light may be provided 460. In some embodiments, feedback in the form of a menu to choose an action in relation to the selected icon is provided 470.

[0040] FIG. 5 illustrates an embodiment of a computing system providing feedback regarding an information function. In some embodiments, a loop gesture performed by the thumb of a user in contact with another finger, analogous to a magnifying glass or similar physical object, provides further information regarding what is represented by an icon 510. In some embodiments, 520 indicates a "pointing gesture", where once the loop gesture creates options, the second hand may be used to perform a pointing gesture in order to select a particular option.

[0041] FIG. 6 illustrates an embodiment of a computing system providing feedback regarding user hand orientation. In some embodiments, a computing system provides feedback regarding the orientation of the hands of the user. In this illustration, the feedback indicates that the fingers of a user's hand are oriented towards the display screen by providing a dim representation of the hand 610, and feedback to indicate that the fingers of a user's hand are oriented away from the display screen by providing a brighter representation of the hand 620. However, embodiments are not limited to any particular orientation of the hands. For example, a computing system may provide the opposite feedback regarding the orientation of the hands of the user, where feedback indicates that the fingers of a user's hand are oriented away from the display screen by providing a dim representation of the hand, and feedback indicates that the fingers of a user's hand are oriented towards the display screen by providing a brighter representation of the hands.

[0042] FIG. 7 is a flowchart to illustrate an embodiment of a process for providing visual feedback to a user while performing command gestures. In some embodiments, a computing system commences a session with a user 700, and the presence of the user is sensed in a sensing area of the computing system 705. In some embodiments, one or both hands of the user are detected by the computing system 710, and the system detects the position, orientation, and motion of the hand or hands of the user 715.

[0043] In some embodiments, the computing system provides visual feedback regarding the hand or hands of the user, where the display provides feedback regarding the relationship between the hand or hands of the user and the symbols on the display screen 720. In some embodiments, the feedback includes one or more of visual feedback of the fingertips of the hand or hands of the user (such as finger-tip-lights) and the motion of the fingertips (such as a light trail following a fingertip) 725; visual feedback of a "C" shape to show a grasping or "claw" gesture to select icons 730; and visual feedback of a magnifying glass or loop to illustrate a loop gesture to obtain information regarding what is represented by an icon 735.

[0044] In some embodiments, if gesture movement of the user is detected 740, the computing system provides a gesture recognition process 745, which may include use of data from a gesture data library 750 that is accessible to the computing system.
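The flow of FIG. 7 can be sketched as a per-frame loop. This is a minimal illustration under stated assumptions: each sensed frame is reduced to a list of 2D fingertip positions, movement (740) is detected when the mean fingertip displacement exceeds a threshold, and the gesture data library (750) is modeled as a hypothetical dictionary of fingertip templates matched by mean distance. The class names, thresholds, and template-matching approach are illustrative stand-ins, not the recognition method of the embodiments.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class HandFrame:
    fingertips: list[tuple[float, float]]  # sensed fingertip positions (screen coordinates)


def _mean_dist(a: list[tuple[float, float]], b: list[tuple[float, float]]) -> float:
    """Mean distance between corresponding points of two equal-length lists."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


@dataclass
class FeedbackSession:
    # Hypothetical gesture data library (750): gesture name -> template fingertip layout.
    library: dict[str, list[tuple[float, float]]]
    move_threshold: float = 5.0    # mean displacement that counts as gesture movement
    match_threshold: float = 20.0  # largest template distance accepted as a match
    last: Optional[HandFrame] = None
    rendered: list = field(default_factory=list)  # finger-tip-light positions drawn so far

    def step(self, frame: Optional[HandFrame]) -> Optional[str]:
        """Process one sensed frame; return a recognized gesture name or None."""
        if frame is None or not frame.fingertips:
            # No hand in the sensing area (705/710): reset and show nothing.
            self.last = None
            return None
        # 715-725: record hand position and display finger-tip-lights for it.
        self.rendered.append(list(frame.fingertips))
        prev, self.last = self.last, frame
        if prev is None or len(prev.fingertips) != len(frame.fingertips):
            return None
        # 740: only run recognition when the hand has actually moved.
        if _mean_dist(prev.fingertips, frame.fingertips) < self.move_threshold:
            return None
        return self.recognize(frame)

    def recognize(self, frame: HandFrame) -> Optional[str]:
        """745: nearest-template match against the library (750)."""
        best_name, best_d = None, float("inf")
        for name, template in self.library.items():
            if len(template) != len(frame.fingertips):
                continue
            d = _mean_dist(template, frame.fingertips)
            if d < best_d:
                best_name, best_d = name, d
        return best_name if best_d <= self.match_threshold else None
```

Feedback is recorded on every frame, while recognition runs only after movement is detected, mirroring the separation between steps 720-735 and steps 740-750 in the flowchart.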

[0045] FIG. 8 is a block diagram to illustrate an embodiment of a computing system including a mechanism to provide feedback to users regarding presentation of command gestures. Computing system 800 represents any computing device or system utilizing user sensing, including a mobile computing device, such as a laptop computer, computing tablet, a mobile phone or smartphone, a wireless-enabled e-reader, or other wireless mobile device. It will be understood that certain of the components are shown generally, and not all components of such a computing system are shown in computing system 800. The components may be connected by one or more buses or other connections 805.

[0046] Computing system 800 includes processor 810, which performs the primary processing operations of computing system 800. Processor 810 can include one or more physical devices, such as microprocessors, application processors, microcontrollers, programmable logic devices, or other processing means. The processing operations performed by processor 810 include the execution of an operating platform or operating system on which applications, device functions, or both are executed. The processing operations include, for example, operations related to I/O (input/output) with a human user or with other devices, operations related to power management, and operations related to connecting computing system 800 to another system or device. The processing operations may also include operations related to audio I/O, display I/O, or both. Processor 810 may include one or more graphics processing units (GPUs), including a GPU used for general-purpose computing on graphics processing units (GPGPU).

[0047] In some embodiments, computing system 800 includes audio subsystem 820, which represents hardware (such as audio hardware and audio circuits) and software (such as drivers and codecs) components associated with providing audio functions to the computing system. Audio functions can include speaker output, headphone output, or both, as well as microphone input. Devices for such functions can be integrated into computing system 800, or connected to computing system 800. In some embodiments, a user interacts with computing system 800 by providing audio commands that are received and processed by processor 810.

[0048] Display subsystem 830 represents hardware (for example, display devices) and software (for example, drivers) components that provide a visual display, a tactile display, or a combination of displays for a user to interact with the computing system 800. Display subsystem 830 includes display interface 832, which includes the particular screen or hardware device used to provide a display to a user. In one embodiment, display interface 832 includes logic separate from processor 810 to perform at least some processing related to the display. In one embodiment, display subsystem 830 includes a touchscreen device that provides both output and input to a user.

[0049] In some embodiments, the display subsystem 830 of the computing system 800 includes user gesture feedback 834 to provide visual feedback to the user regarding the relationship between the gestures performed by the user and the elements of the display. In some embodiments, the feedback includes one or more of visual feedback of the fingertips of the hand or hands of the user (such as finger-tip-lights) and the motion of the fingertips (such as a light trail following a fingertip); visual feedback of a "C" shape to show a grasping or "claw" gesture to select icons; and visual feedback of a magnifying glass or loop to illustrate a loop gesture to obtain information regarding what is represented by an icon.

[0050] I/O controller 840 represents hardware devices and software components related to interaction with a user. I/O controller 840 can operate to manage hardware that is part of audio subsystem 820 and hardware that is part of the display subsystem 830. Additionally, I/O controller 840 illustrates a connection point for additional devices that connect to computing system 800 through which a user might interact with the system. For example, devices that can be attached to computing system 800 might include microphone devices, speaker or stereo systems, video systems or other display device, keyboard or keypad devices, or other I/O devices for use with specific applications such as card readers or other devices.

[0051] As mentioned above, I/O controller 840 can interact with audio subsystem 820, display subsystem 830, or both. For example, input through a microphone or other audio device can provide input or commands for one or more applications or functions of computing system 800. Additionally, audio output can be provided instead of or in addition to display output. In another example, if the display subsystem includes a touchscreen, the display device also acts as an input device, which can be at least partially managed by I/O controller 840. There can also be additional buttons or switches on computing system 800 to provide I/O functions managed by I/O controller 840.

[0052] In one embodiment, I/O controller 840 manages devices such as accelerometers, cameras, light sensors or other environmental sensors, or other hardware that can be included in computing system 800. The input can be part of direct user interaction, and can also provide environmental input to the system to influence its operations (such as filtering for noise, adjusting displays based on detected brightness, applying a flash for a camera, or other features).
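
As one illustration of environmental input influencing operation, the following sketch maps an ambient light reading to a display brightness level. The lux thresholds, the brightness range, and the logarithmic interpolation are assumptions chosen for illustration. The description does not specify how brightness is derived from light sensor data.

```python
import math


def brightness_from_lux(lux: float,
                        min_lux: float = 10.0, max_lux: float = 1000.0,
                        min_level: float = 0.2, max_level: float = 1.0) -> float:
    """Map an ambient light reading (lux) to a brightness level in [min_level, max_level].

    The default thresholds and levels are illustrative assumptions only.
    """
    if lux <= min_lux:
        return min_level
    if lux >= max_lux:
        return max_level
    # Interpolate on a log scale, since perceived brightness changes roughly
    # logarithmically with illuminance.
    frac = (math.log10(lux) - math.log10(min_lux)) / (math.log10(max_lux) - math.log10(min_lux))
    return min_level + frac * (max_level - min_level)
```

With the default values, a reading of 100 lux lies halfway between 10 and 1000 lux on a log scale and yields a brightness level of about 0.6. Readings outside the range are clamped to the minimum or maximum level.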

[0053] In one embodiment, computing system 800 includes power management 850 that manages battery power usage, charging of the battery, and features related to power saving operation. Memory subsystem 860 includes memory devices for storing information in computing system 800. Memory can include nonvolatile (state does not change if power to the memory device is interrupted) memory devices and volatile (state is indeterminate if power to the memory device is interrupted) memory devices. Memory subsystem 860 can store application data, user data, music, photos, documents, or other data, as well as system data (whether long-term or temporary) related to the execution of the applications and functions of computing system 800. In particular, memory may include gesture detection data 862 for use in detecting and interpreting gestures by a user of the computing system 800.
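
The description does not define the format of gesture detection data 862. Purely as a hypothetical example, such data could hold one template trajectory per gesture, and an observed fingertip trajectory could be matched to the nearest template. The sketch below assumes that trajectories are already resampled to a common length and normalized to a unit square in screen coordinates. The template names, the `classify` helper, and the distance threshold are invented for illustration.

```python
import math
from typing import Optional, Sequence

Point = tuple[float, float]

# Hypothetical gesture detection data: one template per gesture, resampled to
# five points and normalized to a unit square (screen coordinates, y grows downward).
GESTURE_TEMPLATES: dict[str, list[Point]] = {
    "swipe_right": [(0.0, 0.5), (0.25, 0.5), (0.5, 0.5), (0.75, 0.5), (1.0, 0.5)],
    "swipe_up": [(0.5, 1.0), (0.5, 0.75), (0.5, 0.5), (0.5, 0.25), (0.5, 0.0)],
}


def mean_distance(a: Sequence[Point], b: Sequence[Point]) -> float:
    """Average point-to-point Euclidean distance between two equal-length trajectories."""
    if len(a) != len(b) or not a:
        raise ValueError("trajectories must be non-empty and of equal length")
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


def classify(trajectory: Sequence[Point],
             templates: dict[str, list[Point]] = GESTURE_TEMPLATES,
             threshold: float = 0.2) -> Optional[str]:
    """Return the nearest template's name, or None if no template is close enough."""
    best_name, best_dist = None, math.inf
    for name, template in templates.items():
        d = mean_distance(trajectory, template)
        if d < best_dist:
            best_name, best_dist = name, d
    return best_name if best_dist <= threshold else None
```

The threshold lets the system ignore hand movements that do not resemble any stored gesture rather than forcing every movement into the closest command.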

[0054] In some embodiments, computing system 800 includes one or more user sensing elements 890 to sense presence and motion, which may include one or more cameras or other visual sensing elements, one or more microphones or other audio sensing elements, one or more infrared or other heat sensing elements, or any other element for sensing the presence or movement of a user.

[0055] Connectivity 870 includes hardware devices (such as wireless and wired connectors and communication hardware) and software components (such as drivers and protocol stacks) to enable computing system 800 to communicate with external devices. The external devices may include other computing devices, wireless access points or base stations, and peripherals such as headsets, printers, or other devices.

[0056] Connectivity 870 can include multiple different types of connectivity. To generalize, computing system 800 is illustrated with cellular connectivity 872 and wireless connectivity 874. Cellular connectivity 872 refers generally to cellular network connectivity provided by wireless carriers, such as provided via GSM (global system for mobile communications) or variations or derivatives, CDMA (code division multiple access) or variations or derivatives, TDM (time division multiplexing) or variations or derivatives, or other cellular service standards. Wireless connectivity 874 refers to wireless connectivity that is not cellular, and can include personal area networks (such as Bluetooth), local area networks (such as WiFi), wide area networks (such as WiMax), or other wireless communication. Connectivity 870 may include an omnidirectional or directional antenna for transmission of data, reception of data, or both.

[0057] Peripheral connections 880 include hardware interfaces and connectors, as well as software components (for example, drivers and protocol stacks) to make peripheral connections. It will be understood that computing system 800 could both be a peripheral device ("to" 882) to other computing devices, as well as have peripheral devices ("from" 884) connected to it. Computing system 800 commonly has a "docking" connector to connect to other computing devices for purposes such as managing (such as downloading, uploading, changing, and synchronizing) content on computing system 800. Additionally, a docking connector can allow computing system 800 to connect to certain peripherals that allow computing system 800 to control content output, for example, to audiovisual or other systems.

[0058] In addition to a proprietary docking connector or other proprietary connection hardware, computing system 800 can make peripheral connections 880 via common or standards-based connectors. Common types can include a Universal Serial Bus (USB) connector (which can include any of a number of different hardware interfaces), DisplayPort including MiniDisplayPort (MDP), High Definition Multimedia Interface (HDMI), FireWire, or other types.

[0059] FIG. 9 illustrates an embodiment of a computing system for perceptual computing. The computing system may include a computer, server, game console, or other computing apparatus. In this illustration, certain standard and well-known components that are not germane to the present description are not shown. In some embodiments, the computing system 900 comprises an interconnect or crossbar 905 or other communication means for transmission of data. The computing system 900 may include a processing means such as one or more processors 910 coupled with the interconnect 905 for processing information. The processors 910 may comprise one or more physical processors and one or more logical processors. The interconnect 905 is illustrated as a single interconnect for simplicity, but may represent multiple different interconnects or buses, and the component connections to such interconnects may vary. The interconnect 905 shown in FIG. 9 is an abstraction that represents any one or more separate physical buses, point-to-point connections, or both, connected by appropriate bridges, adapters, or controllers.

[0060] Processing by the one or more processors includes processing for perceptual computing 911, where such processing includes sensing and interpretation of gestures in relation to a virtual boundary of the computing system.
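
The interpretation of gestures in relation to a virtual boundary can be sketched as follows, assuming that feedback for a hand is displayed only while that hand is inside the boundary. The axis-aligned box, the coordinate convention (meters in sensor space, with y pointing up), the linear mapping to screen pixels, and the `VirtualBoundary` and `hands_to_render` names are assumptions made for this example only.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class VirtualBoundary:
    """Hypothetical axis-aligned sensing volume in sensor coordinates (meters, y up)."""
    min_corner: Vec3
    max_corner: Vec3

    def contains(self, p: Vec3) -> bool:
        # Inside only if every coordinate lies within the box on that axis.
        return all(lo <= v <= hi for lo, v, hi in zip(self.min_corner, p, self.max_corner))

    def to_screen(self, p: Vec3, width: int, height: int) -> tuple[float, float]:
        # Linearly map x to [0, width] and y to [0, height]. Screen y grows downward,
        # so the sensor y axis is flipped. Depth (z) is ignored in this sketch.
        (x0, y0, _), (x1, y1, _) = self.min_corner, self.max_corner
        u = (p[0] - x0) / (x1 - x0) * width
        v = (1.0 - (p[1] - y0) / (y1 - y0)) * height
        return (u, v)


def hands_to_render(hands: dict[str, Vec3], boundary: VirtualBoundary,
                    width: int, height: int) -> dict[str, tuple[float, float]]:
    """Return screen positions for the hands that are inside the virtual boundary."""
    return {name: boundary.to_screen(pos, width, height)
            for name, pos in hands.items() if boundary.contains(pos)}


boundary = VirtualBoundary((-0.3, -0.2, 0.2), (0.3, 0.2, 0.6))
print(hands_to_render({"left": (0.0, 0.0, 0.4), "right": (0.5, 0.0, 0.4)},
                      boundary, 1920, 1080))
# -> {'left': (960.0, 540.0)}
```

In this example the right hand lies outside the box on the x axis, so no representation of it would be drawn. The left hand at the center of the volume maps to the center of a 1920 x 1080 screen.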

[0061] In some embodiments, the computing system 900 further comprises a random access memory (RAM) or other dynamic storage device or element as a main memory 912 for storing information and instructions to be executed by the processors 910. RAM includes dynamic random access memory (DRAM), which requires refreshing of memory contents, and static random access memory (SRAM), which does not require refreshing contents but at increased cost. In some embodiments, main memory may include active storage of applications, including a browser application for use in network browsing activities by a user of the computing system. DRAM may include synchronous dynamic random access memory (SDRAM), which uses a clock signal to synchronize its control signals, and extended data-out dynamic random access memory (EDO DRAM). In some embodiments, memory of the system may include certain registers or other special purpose memory.

[0062] The computing system 900 also may comprise a read only memory (ROM) 916 or other static storage device for storing static information and instructions for the processors 910. The computing system 900 may include one or more non-volatile memory elements 918 for the storage of certain elements.

[0063] In some embodiments, the computing system 900 includes one or more input devices 930, where the input devices include one or more of a keyboard, mouse, touch pad, voice command recognition, gesture recognition, or other device for providing an input to a computing system.

[0064] The computing system 900 may also be coupled via the interconnect 905 to an output display 940. In some embodiments, the display 940 may include a liquid crystal display (LCD) or any other display technology for displaying information or content to a user. In some embodiments, the display 940 may include a touch-screen that is also utilized as at least a part of an input device. In some embodiments, the display 940 may be or may include an audio device, such as a speaker for providing audio information.

[0065] One or more transmitters or receivers 945 may also be coupled to the interconnect 905. In some embodiments, the computing system 900 may include one or more ports 950 for the reception or transmission of data. The computing system 900 may further include one or more omnidirectional or directional antennas 955 for the reception of data via radio signals.

[0066] The computing system 900 may also comprise a power device or system 960, which may comprise a power supply, a battery, a solar cell, a fuel cell, or other system or device for providing or generating power. The power provided by the power device or system 960 may be distributed as required to elements of the computing system 900.

[0067] In the description above, for the purposes of explanation, numerous specific details are set forth in order to provide a thorough understanding of the present invention. It will be apparent, however, to one skilled in the art that the present invention may be practiced without some of these specific details. In other instances, well-known structures and devices are shown in block diagram form. There may be intermediate structure between illustrated components. The components described or illustrated herein may have additional inputs or outputs which are not illustrated or described.

[0068] Various embodiments may include various processes. These processes may be performed by hardware components or may be embodied in computer program or machine-executable instructions, which may be used to cause a general-purpose or special-purpose processor or logic circuits programmed with the instructions to perform the processes. Alternatively, the processes may be performed by a combination of hardware and software.

[0069] Portions of various embodiments may be provided as a computer program product, which may include a computer-readable medium having stored thereon computer program instructions, which may be used to program a computer (or other electronic devices) for execution by one or more processors to perform a process according to certain embodiments. The computer-readable medium may include, but is not limited to, floppy diskettes, optical disks, compact disk read-only memory (CD-ROM), magneto-optical disks, read-only memory (ROM), random access memory (RAM), erasable programmable read-only memory (EPROM), electrically-erasable programmable read-only memory (EEPROM), magnetic or optical cards, flash memory, or other type of computer-readable medium suitable for storing electronic instructions. Moreover, embodiments may also be downloaded as a computer program product, wherein the program may be transferred from a remote computer to a requesting computer.

[0070] Many of the methods are described in their most basic form, but processes can be added to or deleted from any of the methods and information can be added or subtracted from any of the described messages without departing from the basic scope of the present invention. It will be apparent to those skilled in the art that many further modifications and adaptations can be made. The particular embodiments are not provided to limit the invention but to illustrate it. The scope of the embodiments of the present invention is not to be determined by the specific examples provided above but only by the claims below.

[0071] If it is said that an element "A" is coupled to or with element "B," element A may be directly coupled to element B or be indirectly coupled through, for example, element C. When the specification or claims state that a component, feature, structure, process, or characteristic A "causes" a component, feature, structure, process, or characteristic B, it means that "A" is at least a partial cause of "B" but that there may also be at least one other component, feature, structure, process, or characteristic that assists in causing "B." If the specification indicates that a component, feature, structure, process, or characteristic "may", "might", or "could" be included, that particular component, feature, structure, process, or characteristic is not required to be included. If the specification or claim refers to "a" or "an" element, this does not mean there is only one of the described elements.

[0072] An embodiment is an implementation or example of the present invention. Reference in the specification to "an embodiment," "one embodiment," "some embodiments," or "other embodiments" means that a particular feature, structure, or characteristic described in connection with the embodiments is included in at least some embodiments, but not necessarily all embodiments. The various appearances of "an embodiment," "one embodiment," or "some embodiments" are not necessarily all referring to the same embodiments. It should be appreciated that in the foregoing description of exemplary embodiments of the present invention, various features are sometimes grouped together in a single embodiment, figure, or description thereof for the purpose of streamlining the disclosure and aiding in the understanding of one or more of the various inventive aspects. This method of disclosure, however, is not to be interpreted as reflecting an intention that the claimed invention requires more features than are expressly recited in each claim. Rather, as the following claims reflect, inventive aspects lie in less than all features of a single foregoing disclosed embodiment. Thus, the claims are hereby expressly incorporated into this description, with each claim standing on its own as a separate embodiment of this invention.

* * * * *
