Rear View Mirror Simulation

Rotzer; Ilka ; et al.

U.S. patent application number 15/602068 for rear view mirror simulation was filed with the patent office on May 22, 2017 and published on 2019-03-14. This patent application is currently assigned to SMR Patents S.a.r.l. The applicant listed for this patent is SMR Patents S.a.r.l. Invention is credited to Oliver Eder, Andreas Herrmann, Frank Linsenmaier, Firas Mualla, Ilka Rotzer.

| Application Number | 20190077332 15/602068 |

| Document ID | / |

| Family ID | 64270244 |

| Publication Date | 2019-03-14 |


| United States Patent Application | 20190077332 |

| Kind Code | A9 |

| Rotzer; Ilka ; et al. | March 14, 2019 |


Abstract

A method is shown to indicate to a driver of a vehicle the presence of an object moving relative to the vehicle. The method begins by collecting data using a camera or other such image capturing device. The data is analyzed to detect a hazard created by the presence of the object, regardless of whether it is moving or not. A warning associated with the hazard is created. Both the hazard and the warning are displayed using the display device.

| Inventors: | Rotzer; Ilka (Denkendorf, DE); Herrmann; Andreas (Winnenden, DE); Eder; Oliver (Pinache, DE); Linsenmaier; Frank (Weinstadt, DE); Mualla; Firas (Stuttgart, DE) |
|---|---|
| Applicant: | |
| Assignee: | SMR Patents S.a.r.l., Luxembourg, LU |
| Prior Publication: | |
| Family ID: | 64270244 |
| Appl. No.: | 15/602068 |
| Filed: | May 22, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 15287554 | Oct 6, 2016 | |
| 15602068 | | |
| 14968132 | Dec 14, 2015 | |
| 15287554 | | |
| 13090127 | Apr 19, 2011 | 9238434 |
| 14968132 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | B60R 2300/306 20130101; B60R 1/00 20130101; B60R 2300/8046 20130101; G06K 9/00791 20130101; G03B 37/04 20130101; B60R 2300/8026 20130101; B60R 2011/004 20130101; G06K 9/00805 20130101; B60R 2300/8093 20130101; B60R 11/04 20130101; G06T 3/0018 20130101 |

| International Class: | B60R 11/04 20060101 B60R011/04; G06K 9/00 20060101 G06K009/00; G06T 3/00 20060101 G06T003/00; H04N 5/357 20060101 H04N005/357; G03B 37/04 20060101 G03B037/04 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Apr 19, 2010 | EP | 10160325.6 |

Claims

1. A method for indicating to a driver of a vehicle the presence of an object at least temporarily moving relative to the vehicle, the method comprising the steps of: collecting data using at least one camera; analyzing the data to detect a hazard created by the presence of the object; creating a warning associated with the hazard; and displaying the hazard and the warning using at least one display device.

2. A method as set forth in claim 1 wherein the step of displaying includes the step of enhancing the hazard detected by the step of analyzing the data collected by at least one camera to assist the driver in recognizing the hazard.

3. A method as set forth in claim 2 wherein the step of enhancing the hazard includes the step of creating audio signals to alert the driver.

4. A method as set forth in claim 2 wherein the step of enhancing the hazard includes the step of marking the hazard to represent a warning as to the presence of the hazard.

5. A method as set forth in claim 4 wherein the step of marking includes the step of marking with a color.

6. A method as set forth in claim 5 wherein the step of marking the hazard with a color includes changing the color of the hazard as the object and the vehicle move relatively closer to each other.

7. A method as set forth in claim 2 including dividing at least one of the at least one display devices into at least two regions.

8. A method as set forth in claim 7 including the step of rectifying an image shown on one of the at least two regions.

9. A method as set forth in claim 8 including the step of presenting an image as captured by at least one camera on at least one of the other of the at least two regions.

10. A method as set forth in claim 2 wherein the step of enhancing the image includes the step of displaying an alphanumeric and/or graphical warning on at least one display device.

11. A method as set forth in claim 10, wherein the alphanumerical and/or graphical warning is displayed adjacent and/or surrounding and/or in close proximity to a spotter area.

12. A method for indicating to a driver of a vehicle the presence of a plurality of objects all at least temporarily moving relative to the vehicle, the method comprising the steps of: collecting data using at least one camera; analyzing the data to detect a plurality of hazards, each of the plurality of hazards associated with each of the plurality of objects; identifying a rating for each of the plurality of hazards; prioritizing each of the plurality of hazards based on the rating thereof; creating a plurality of warnings each associated with each of the plurality of hazards; and displaying the plurality of hazards and warning using at least one display device, wherein only the highest rated hazard and warning of the plurality of hazards and warnings of the highest rated is displayed until the object is no longer present.

13. A method as set forth in claim 12 wherein the step of displaying includes the step of enhancing the hazard detected by the step of analyzing the data collected by at least one camera to assist the driver in recognizing the hazard.

14. A method as set forth in claim 13 wherein the step of enhancing the hazard includes the step of creating audio signals to alert the driver.

15. A method as set forth in claim 13 wherein the step of enhancing the hazard includes the step of marking the hazard with a color designated to represent a warning as to the presence of the hazard.

16. A method as set forth in claim 15 wherein the step of marking the hazard with a color includes changing the color of the hazard as the object and the vehicle move relatively closer to each other.

17. A method as set forth in claim 13 including dividing at least one of the at least one display devices into at least two regions.

18. A method as set forth in claim 17 including the step of rectifying an image shown on one of the at least two regions.

19. A method as set forth in claim 18 including the step of presenting an image as captured by at least one camera on at least one of the other of the at least two regions.

20. A method as set forth in claim 19 wherein the step of enhancing the image includes the step of displaying an alphanumeric warning on at least one display device.

21. A method for indicating to a driver of a vehicle the presence of a plurality of objects or parts of objects all at least temporarily moving relative to the vehicle, the method comprising the steps of: collecting data using at least one camera; analyzing the data to detect a plurality of objects or parts of objects; identifying a distance for each of the plurality of objects or part of objects relative to the vehicle; associating a color with each of the distances; and displaying the plurality of objects or parts of objects with the associated color of the respective distance using at least one display device.

22. A method for indicating to a driver of a vehicle the presence of an object at least temporarily moving relative to the vehicle, the method comprising the steps of: collecting data using at least one camera; analyzing the data to detect a hazard created by the presence of the object; creating a warning associated with the hazard from a group of warnings consisting of haptics, acoustics, odors, chemicals and/or other forms of electromagnetic fields; and displaying the hazard using at least one display device.
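The rating, prioritizing and color-marking steps recited in claims 12 and 21 can be illustrated with a minimal sketch. It assumes that each hazard carries a distance estimate, that the rating is simply the inverse of that distance, and that the color scale has three bands with thresholds at 5 m and 15 m. None of these choices, nor the names `Hazard`, `rating`, `warning_color` and `select_for_display`, are taken from the application; they are hypothetical and serve illustration only.

```python
from dataclasses import dataclass


@dataclass
class Hazard:
    label: str         # hypothetical object label, e.g. "cyclist"
    distance_m: float  # assumed distance estimate to the vehicle, in metres


def rating(hazard: Hazard) -> float:
    """Assumed rating: the closer the object, the higher the rating."""
    return 1.0 / max(hazard.distance_m, 0.1)


def warning_color(distance_m: float) -> str:
    """Assumed three-band color scale; the thresholds are illustrative."""
    if distance_m < 5.0:
        return "red"
    if distance_m < 15.0:
        return "yellow"
    return "green"


def select_for_display(hazards):
    """Return (hazard, color) for the highest-rated hazard, or None if empty."""
    if not hazards:
        return None
    top = max(hazards, key=rating)
    return top, warning_color(top.distance_m)
```

Calling `select_for_display` once per frame keeps only the highest-rated hazard on the display; a hazard drops out as soon as its object is no longer in the list passed in.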

Description

[0001] This patent application is a continuation-in-part of U.S. patent application Ser. No. 14/968,132, which is a continuation of U.S. patent application Ser. No. 13/090,127. Furthermore this patent application claims the priority of U.S. patent application Ser. No. 15/287,554, which is hereby incorporated herein by reference. The invention is based on priority patent applications EP 10160325.6 and U.S. Ser. No. 15/287,554 which are hereby incorporated by reference.

BACKGROUND ART

1. Field of the Invention

[0002] A first aspect of the invention relates to an exterior mirror simulation with image data recording and a display of the recorded and improved data for the driver of a vehicle.

[0003] A second aspect of the invention relates to an environment simulation with image data recording and a display of the recorded and improved data for the driver of a vehicle. In particular, an enlarged optical display, displayed on a display unit and arranged inside a vehicle, is provided. The display view changes to a different display view, especially an enlarged view, when a possibly hazardous situation is detected.

[0004] The display on a display device shows the data in a way favored by the driver or vehicle manufacturer.

2. Description of the Related Art

[0005] Several solutions for recording image data and its display for the driver of a vehicle are known in the prior art. The image recording is done by one or several cameras installed in the vehicle. The different assistance systems process the data from the captured image in very different ways.

[0006] Other well-known solutions relate to image displaying processes for rear-view cameras, suited to display images and, with the help of sensors, distance information regarding an obstacle behind the vehicle. In addition, systems including an image magnification function when changing into reverse gear and activated when the vehicle approaches an obstacle are well known. In US patent application having publication number 2008/0159594, a system is known which records images from the surroundings of the vehicle with a fish-eye lens. Image data is recorded with great distortion through this wide-angle lens. The image data recorded by the camera pixels are rectified block by block. The display of the image is done with the rectified image data, since an image of the surroundings of the vehicle is required.

[0007] A camera for assisting reversing is known in DE 102008031784. The distorted camera image is edited and rectified, which leads to an undistorted image. This is then further processed, in order to optimize the perspective for reversing.

[0008] In U.S. Pat. No. 6,970,184 B2, an image displaying process is known, which determines the distance to an obstacle behind the vehicle with the help of a distance sensor. This process is activated when changing into reverse gear and displayed on the display of a navigation system.

[0009] In DE 102010034140 A1, a process for displaying images on a display device and a driving assistance system is shown with the use of a sensor. The image data from two external cameras, providing each one image from the environment, is used to indicate the present distance to an object and switch from one image to another.

[0010] US 20100259371 A1 discloses a parking assistance system using an ultrasonic sensor. Here, a picture change is suggested, and a distance display reveals the calculated distance to an object.

[0011] WO 2013101075 A1 discloses an object detection system that raises an acoustic warning when an object approaches the vehicle or the vehicle approaches an object, realized with the help of a sensor.

[0012] DE 102012007984 discloses a maneuvering system to automatically move a vehicle with a vehicle-side installed control device which is designed to output control signals to a driving and/or steering device of the motor vehicle and thereby automatically carry out an automatic maneuvering operation of the vehicle.

[0013] An object monitoring system is known from WO 2011153646 A1, whereby images are generated using more than one camera and transmitted to an evaluation unit in order to avoid possible collisions.

[0014] From EP 2481637 A1 a touch display is known, offering the possibility to select an object on the display and to calculate the distance of the respective object. The information can be provided either via an audio signal and/or a visual representation.

[0015] US 20070057816 discloses a parking assistance method using a camera system, which ensures stopping during the vehicle parking process with the aid of a picture taken from the bird's eye view.

[0016] From EP 1725035, an image acquisition system is supplied with images from a plurality of cameras, attached to the body of a vehicle. The driver of the vehicle can then select images via a touch display as required. The driver thus has the possibility to select pictures and get them displayed according to the needs of the present situation.

[0017] From EP 1462342 a parking assist apparatus and method are known, in which a vehicle driver sets a target parking position for the vehicle to be parked in on a display, displaying the image from a back camera. The area can be colored and has to be moved by the driver to a suitable spot, so that the parking assistant can assist in or conduct parking the vehicle.

[0018] From WO200007373, a method and an apparatus for displaying images are known, which use a synthesized image composed of a plurality of images shot by a plurality of cameras to facilitate the understanding of the overall situation.

[0019] U.S. Pat. No. 8,779,911 B2 discloses a blind spot indicator that is adjacent to a second mirror surface of a rear view device, a so-called spotter area, which is used to observe objects located in a blind spot of the vehicle.

[0020] An assistance system is known from EP 1065642 that records an image via a camera and displays the position of the steering axles in the area of the vehicle in order to reach a possible parking position.

[0021] WO 2016126322 relates to a configuration for an autonomously driven vehicle in which the sensors, providing 360 degrees of sensing, are accommodated within the conventional, existing exterior surface or skin of the vehicle.

[0022] CN 103424112 discloses a laser-based visual navigation method to support a movement carrier autonomous navigation system. To increase the reliability, a plurality of vision sensors are combined and the geometric relationship between the laser light source and the vision sensors is effectively utilized.

[0023] WO2014016293 relates to an ultrasonic sensor arrangement placed within a motor vehicle, which can be used for supplying data to a parking assistant to show the distance of the motor vehicle to obstacles to the driver.

SUMMARY OF THE INVENTION

[0024] In contrast, the object of a first aspect of the invention is to create a display of a camera image, which corresponds to the familiar image in a rear view mirror. The distortions of the image caused by the different mirror glasses are provided for the driver in the usual manner.

[0025] The present invention relates to image rectification for a vehicle, which includes a display device, in order to show modified images and an imaging device for receiving the recorded images, which have been improved by image rectification. Furthermore, the system comprises image rectification in communication with the display device and the imaging device, so that pixels, which are located in the recorded images, are improved by reorientation or repositioning of the pixels from a first position to a second position by means of a transmission or transfer process.

[0026] Furthermore, the invention relates to a rear-view image improvement system for a vehicle, which includes a display device for showing modified images, which have been improved by the image improvement system, and an imaging device for receiving recorded images, which have been improved by the image improvement system. The system also comprises an image improvement module in connection with the display device, and indeed in such a way that pixels, which are located in the recorded images, are grouped and spread out, in order to form at least one region of interest, in which reference is made to the pixels from a base plane in the recorded image, in order to form the modified images.

[0027] Additionally, the object of the second aspect of this invention is to create and display a camera image which corresponds to the best true-to-scale image of a region of interest. The distortion and/or the manipulation of the image assists the driver in perceiving the situation displayed in the region of interest.

[0028] The invention relates to a further improvement of the displaying system to relay an accurate or enhanced image from a region of interest, including for example a hazardous situation, to the driver by combining state-of-the-art technology, sensors, image capturing and analysis systems. This is done in such a way that the driver receives the best possible true-to-scale estimation of the region of interest and can perceive the situation within the region of interest, for example with the help of numerical, graphical and/or audio representation variants within the vehicle, particularly displayed on the display unit.

[0029] The invention relates further to a system for improving the perception of the driver by using different graphical representations and color scales.

[0030] Furthermore, the invention relates to a vehicle comprising display devices, processing devices and sensors such as cameras.

[0031] The object of the invention is to also provide an object detection and classification system with image feature descriptors derived from periodic descriptor functions.

[0032] An object detection and classification system analyzes images captured by an image sensor for a hazard detection and information system, such as on a vehicle. Extracting circuitry is configured to extract at least one feature value from one or more keypoints in an image captured by an image sensor of the environment surrounding a vehicle. A new image feature descriptor is derived from a periodic descriptor function, which depends on the distance between at least one of the keypoints and a chosen query point in complex space and depends on a feature value of at least one of the keypoints in the image.

[0033] Query point evaluation circuitry is configured to sample the periodic descriptor function for a chosen query point in the image from the environment surrounding the vehicle to produce a sample value. The sample value for a query point may be evaluated to determine whether the query point is the center of an object or evaluated to determine what type of object the query point is a part of.

[0034] If the evaluated query point satisfies a potential hazard condition, such as if the object is classified as a vulnerable road user or object posing a collision threat, a signal bus is configured to transmit a signal to alert the operator of the vehicle to the object. Additionally, or alternatively, the signal bus may transmit a signal to a control apparatus of the vehicle to alter the vehicle's speed and/or direction to avoid collision with the object.

[0035] The object detection and classification system disclosed herein may be used in the area of transportation for identifying and classifying objects encountered in the environment surrounding a vehicle, such as on the road, rail, water, air, etc., and alerting the operator of the vehicle or autonomously taking control of the vehicle if the system determines the encountered object poses a hazard, such as a risk of collision or danger to the vehicle or to other vehicles or persons in the area.

[0036] Another aspect of this invention is a rearview device and illumination means comprising different functions.

BRIEF DESCRIPTION OF THE DRAWINGS

[0037] Advantages of the invention will be readily appreciated as the same becomes better understood by reference to the following detailed description when considered in connection with the accompanying drawings, wherein:

[0038] FIG. 1 shows an exemplary exterior mirror;

[0039] FIG. 2 shows an example of a mirror type;

[0040] FIG. 3 shows a camera installation;

[0041] FIG. 4 shows an exemplary vehicle;

[0042] FIG. 5 shows a display in the vehicle;

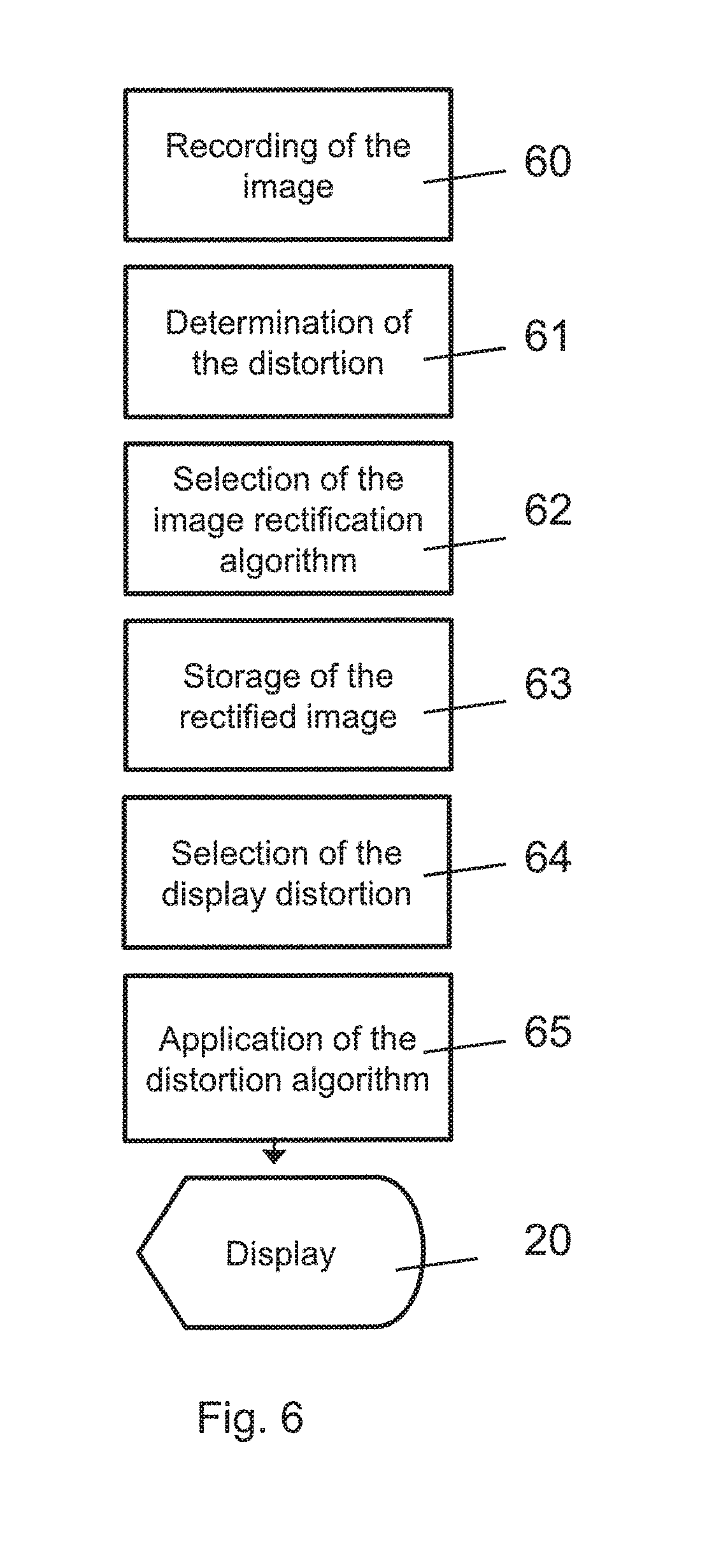

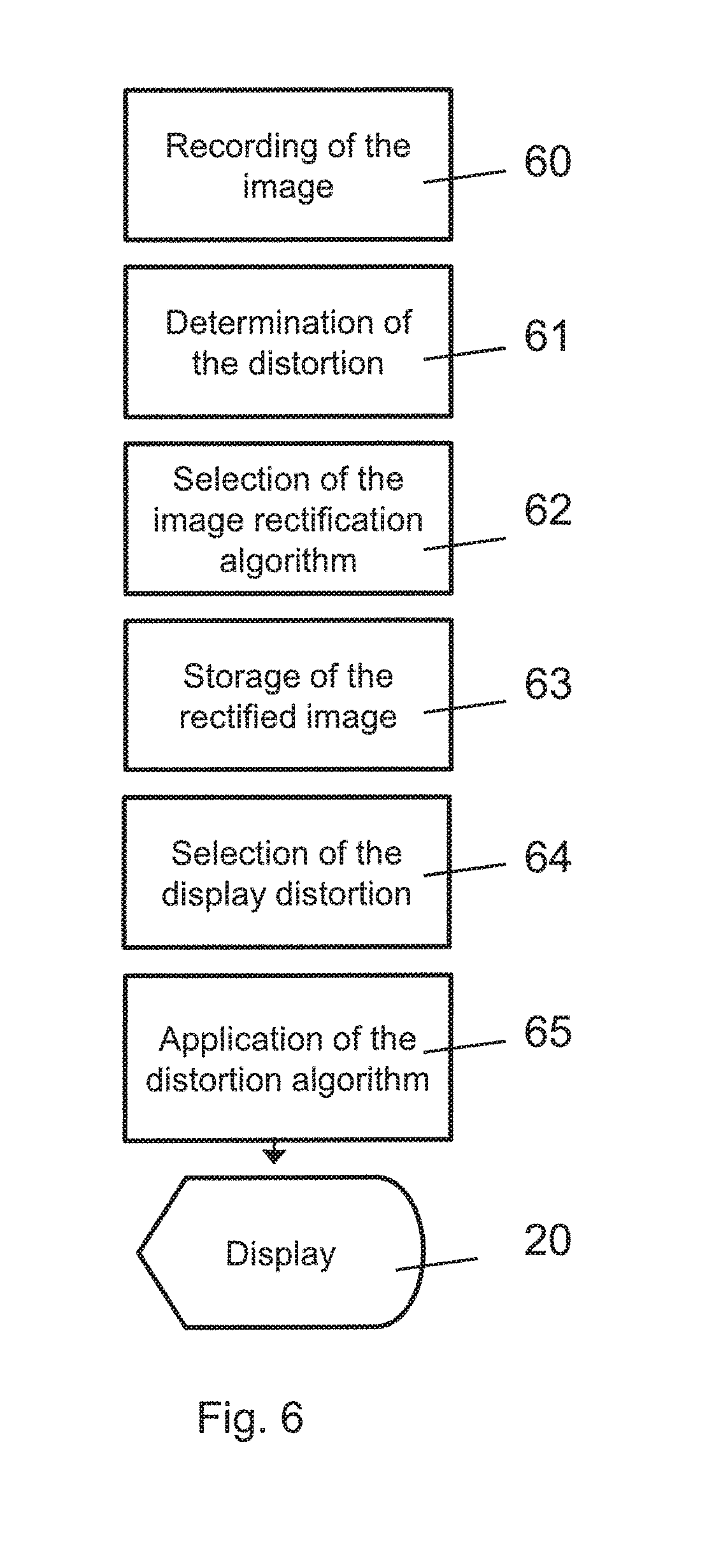

[0043] FIG. 6 shows the process of image capture;

[0044] FIG. 7 shows an alternative process;

[0045] FIG. 8 shows distorted and rectified pixel areas;

[0046] FIG. 9 shows an alternative process from acquiring to displaying the relevant information;

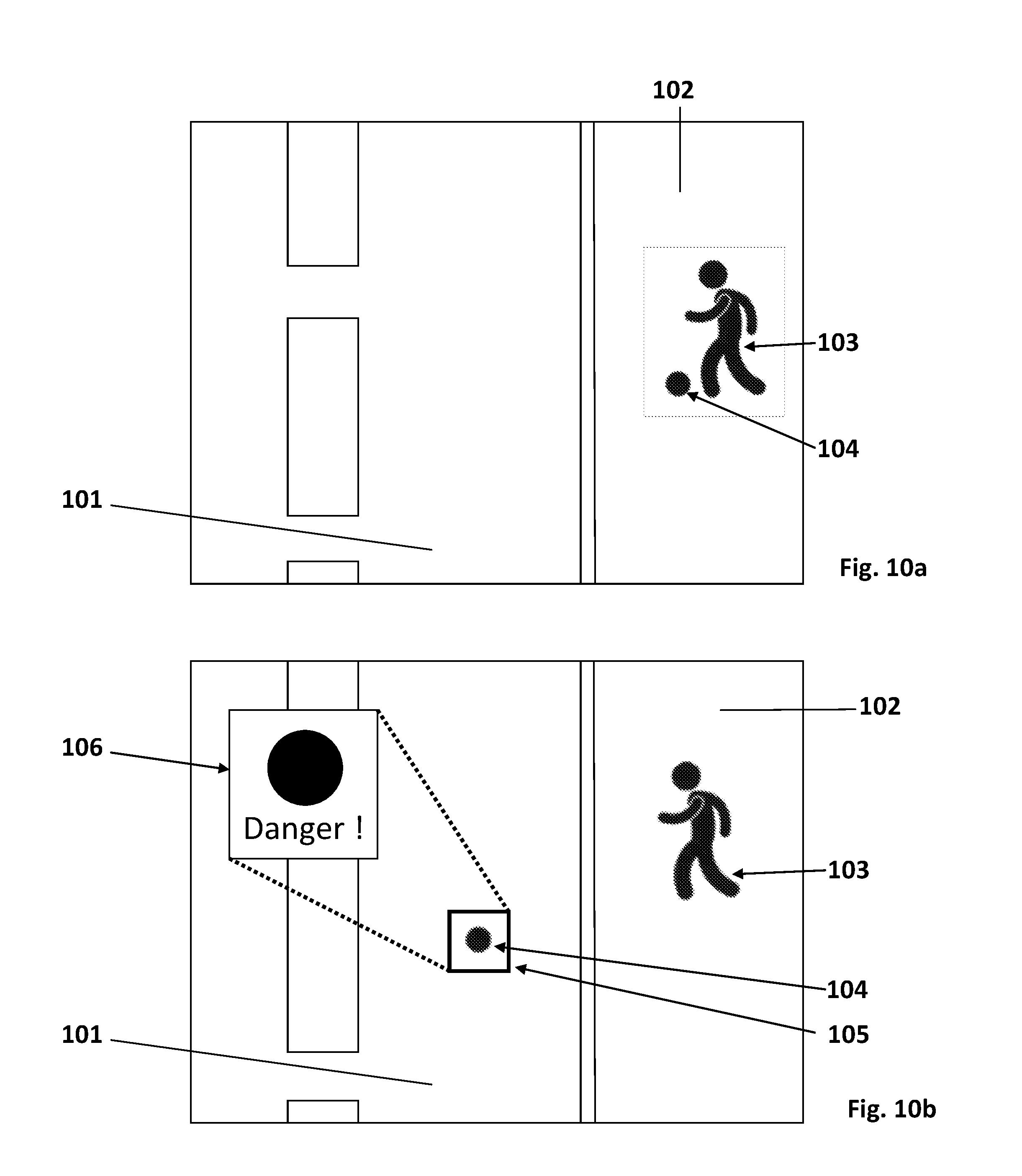

[0047] FIGS. 10a and 10b show an example of hazard detection during operation;

[0048] FIGS. 11a-11k show exemplary forms of color scales;

[0049] FIG. 12 illustrates a rear view of a vehicle with an object detection and classification system;

[0050] FIG. 13 illustrates a schematic of an image capture with a query point and a plurality of keypoints;

[0051] FIG. 14 illustrates a block diagram of a system that may be useful in implementing the implementations disclosed herein;

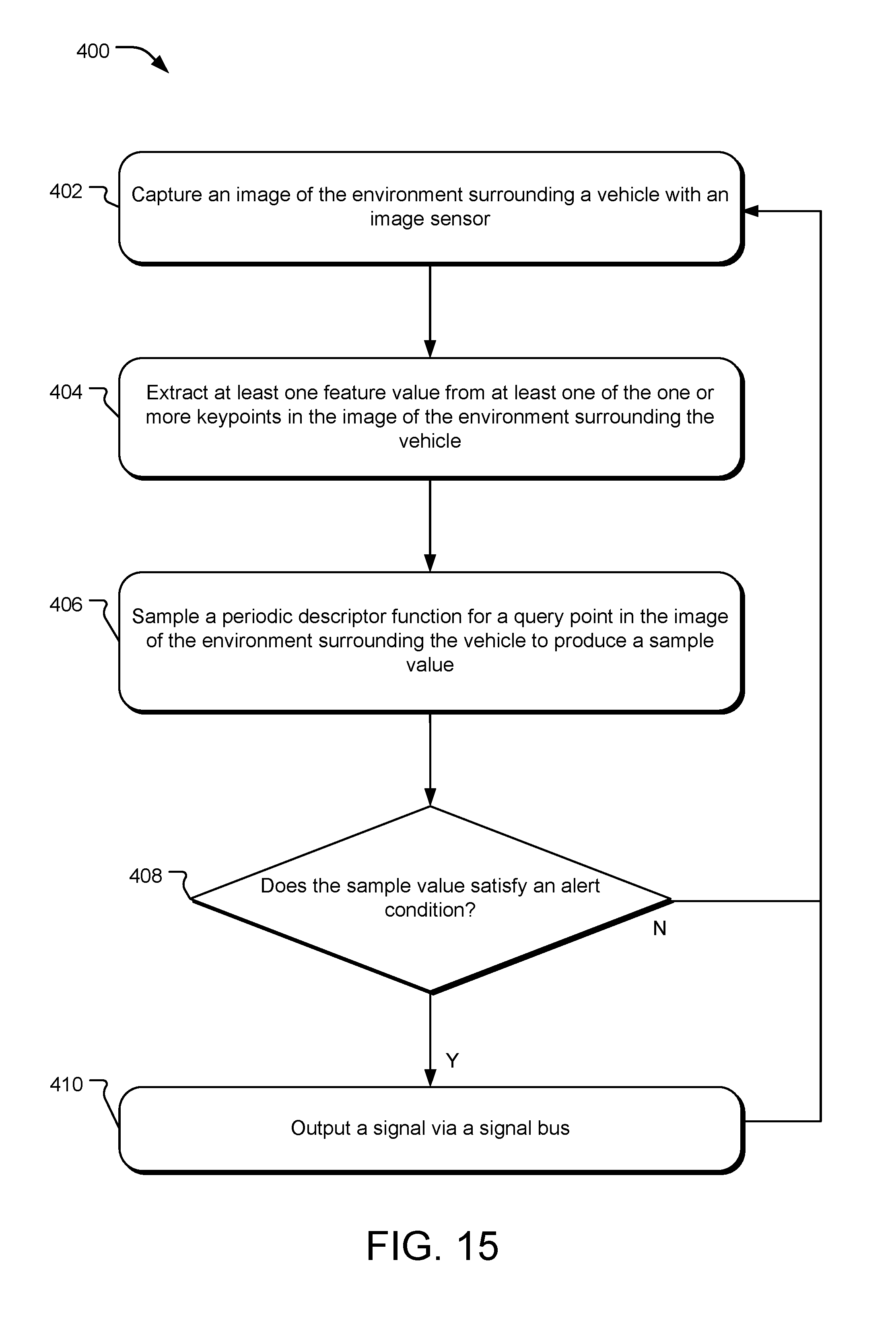

[0052] FIG. 15 illustrates example operations for detecting and classifying an object and transmitting a signal to an alert system and/or to a vehicle control system; and

[0053] FIG. 16 discloses a block diagram of an example processor system suitable for implementing one or more aspects of an object detection and classification system with Fourier fans.

DETAILED DESCRIPTION OF THE DRAWINGS

[0054] FIG. 1 shows an exterior mirror 1, which comprises a mirror head 2, which is connected to a vehicle by a mirror base or bracket 3. The mirror head 2 forms an opening for a mirror glass 4.

[0055] The size of the mirror glass 4 is determined by the mounting on the vehicle, as well as by the different legal regulations about the available field of view. In this process, different glass types for different regions have developed. In the USA, a flat plane glass is used on the driver side.

[0056] A mirror glass 4 with a curvature radius is shown in FIG. 2. The glass in FIG. 2 can be used in mirror assemblies on the passenger side of the vehicle and on the driver side of the vehicle in countries other than the USA. Convex mirror glasses are used, as are glasses that have an aspherical part in addition to the convex part.

[0057] The driver of a vehicle is used to the display of each type of exterior mirror, and therefore can deduce for himself the warning information which he needs to steer the vehicle through the traffic.

[0058] Exterior mirrors contribute to the overall wind resistance of the vehicle and thus influence its aerodynamics. It is therefore sensible to replace the exterior mirror with a camera system that provides the same field of view while reducing the adverse effect on aerodynamics. Reducing the turbulent flows around the vehicle and creating a predominantly laminar flow helps to minimize the total CO2 emissions of the vehicle.

[0059] FIG. 3 shows a possible installation of a rear view assembly, generally indicated at 10 in a vehicle. The optical sensor 6, of which only the optic lens can be seen in the figure, is enclosed in a housing 7. The housing 7 is tightly mounted to a vehicle 8, best seen in FIG. 4. The housing 7 has a form which is streamlined on the vehicle 8. The optical sensor itself is installed in the housing 7, and has a watertight seal against weather effects, as well as against the influence of washing processes with detergents, solvents and high pressure cleaners.

[0060] The housing 7 includes an opening, through which the camera cabling is led. The connection of the camera to the electric system of the vehicle 8 is made by any bus system or a separate cable connection. FIG. 4 shows as an example the attachment position of a sensor in the housing 7 on the vehicle 8. The camera position is to be chosen in a way that fulfils the legally required field of view. The position can therefore be on the front mudguard, on the mirror triangle or on the edge of the vehicle roof 8a. Through the application of a wide-angle lens, it is possible for the field of view of the sensor to be larger than that of a conventional mirror.

[0061] A display device 20, which can be seen by the driver 9, is mounted into a vehicle 8. The picture from the camera is transmitted to the display device 20. In one embodiment, the display device 20 is mounted to an A-pillar 21 of the motor vehicle 8.

[0062] FIG. 5 shows an exemplary embodiment of the present invention 10 with a display device 20, which is provided in the vehicle cab or vehicle interior for observation or viewing by the driver 9. The rear view assembly 10 delivers real-time wide-angle video images to the driver 9 that are captured and converted to electrical signals via the optical sensor 6. The optical sensor 6 is, for example, a sensor technology with a Charge-Coupled Device (`CCD`) or a Complementary Metal Oxide Semiconductor (`CMOS`), for recording continuous real-time images. In FIG. 5, the display device 20 is attached to the A-pillar 21, so that the familiar look in the rear view mirror is led to a position which is similar to the familiar position of the exterior mirror used up to now.

[0063] In the event of mounting on the A-pillar 21 being difficult due to the airbag safety system, a position on the dashboard 22 near to the mirror triangle or the A pillar is also an option. The display device 20 shows the real-time images of camera 6, as they are recorded in this example by a camera 6 in the exterior mirror.

[0064] The invention is not dependent on whether the exterior mirror is completely replaced, or if, as is shown in FIG. 5, it is still available as additional information. The optical sensor 6 can look through a semitransparent mirror glass, for example a semitransparent plane mirror glass.

[0065] The field of view recorded by an optical sensor 6 is processed and improved in an image rectification module, which is associated with the rear view assembly 10, according to the control process shown in FIG. 6. The image rectification module uses a part of the vehicle 8 as a reference (e.g. a part of the vehicle contour) when it modifies the continuous images, which are transmitted to the display device 20 as video data. The display device 20 can be a monitor, a liquid crystal display (LCD) device, a TFT display, a navigation screen or another known video display device, which in the present invention permits the driver 9 to see the area near to the vehicle 8. OLED, holographic or laser projection displays, which are adapted to the contour of the dashboard or the A-pillar 21, are also useful.

[0066] The image rectification occurs onboard the vehicle 8, and comprises processing capacities, which are carried out by a computation unit, such as, for example, a digital signal processor or DSP, a field programmable gate array (`FPGA`), microprocessors or circuits specific to use, or application specific integrated circuits (`ASIC`), or a combination thereof, which show programmability, for example, by a computer-readable medium such as, for example, software or hardware, which is recorded in a microprocessor, including Read Only Memory (`ROM`), or as binary image data, which can be programmed by a user. The image rectification can be formed integrally with the imaging means 20 or the display device 14, or can be positioned away in communication (wired or wireless) with both the imaging means as well as the display device.

[0067] The image rectification is initiated when the driver starts the vehicle. At least one imaging device records continuous images from the side of the vehicle, and transmits the continuous images to the image rectification device. The image rectification device modifies the continuous images and transmits the improved images as video data to the display device 20, in order to help the driver.

[0068] The individual steps of image rectification as well as image distortion are shown in FIG. 6. In this process, the invention rectifies the image of the wide-angle camera and then applies post-distortion to this image, in order to give this image the same view as that of the desired mirror glass.

[0069] The first step is the recording of the image. In a second step, the type of distortion, to which the image is subjected, is determined.

[0070] In a further step, the algorithm is selected, which is adapted to the present distortion. An example is explained in DE 102008031784.

[0071] An optical distortion correction is an improving function, which is applied to the continuous images. The optical distortion correction facilitates the removal of a perspective effect and a visual distortion, which is caused by a wide-angle lens used in the camera 6. The optical distortion correction uses a mathematical model of the distortion, in order to determine the correct position of the pixels, which are recorded in the continuous images. The mathematical model also corrects the pixel position of the continuous images for the differences between the width and height of a pixel unit due to the aspect or side ratio, which is created by the wide-angle lens.

[0072] For certain lenses, which are used by the camera 6, the distortion coefficient values k1 and k2 can be predetermined, in order to help in eliminating the barrel distortion, which is created by the use of a wide-angle lens. The distortion coefficient values are used for the real-time correction of the continuous images.
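The role of the coefficient values k1 and k2 can be illustrated with a minimal sketch. It assumes the commonly used polynomial radial model, in which a distorted point is moved along the ray from the distortion center by the factor 1 + k1·r² + k2·r⁴. The text does not state that this is the model used in the application; the function name `undistort_point` and the use of normalized coordinates are likewise assumptions for illustration.

```python
def undistort_point(xd, yd, center, k1, k2):
    """Map a distorted point to its corrected position.

    Assumed model (not taken from the application): polynomial radial
    correction about the distortion center, in normalized coordinates.
    """
    cx, cy = center
    dx, dy = xd - cx, yd - cy
    r2 = dx * dx + dy * dy                 # squared radius from the center
    scale = 1.0 + k1 * r2 + k2 * r2 * r2   # 1 + k1*r^2 + k2*r^4
    return cx + dx * scale, cy + dy * scale
```

Under this convention, positive coefficients push points outward with growing radius, which counteracts barrel distortion; with k1 = k2 = 0 the mapping is the identity.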

[0073] The distortion coefficient values k1 and k2 can be further adjusted or coordinated by using an image, which is recorded in the continuous images and which shows a known straight line, for example, the lane markings on a road. According to this aspect of the present invention, the distortion center is registered by analysis of the recorded continuous images in the search for the straightest horizontal and vertical lines, whereby the center is situated where the two lines intersect. The recorded image can then be corrected with varied or fine-tuned distortion coefficient values k1 and k2 in a trial and error process. If, for example, the lines on one side of the image are "barrel distorted" ("barreled") and lines on the other side of the image are "pin cushion distorted" ("pin-cushioned"), then the center offset must move in the direction of the pin-cushioned side. If values are found which sufficiently correct the distortion, then the values for the distortion center 42 and the distortion coefficient values k1 and k2 can be used in the mathematical model of optical distortion correction.
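The trial and error adjustment can be sketched as a simple search over candidate coefficient values, scored by how straight a known straight line appears after correction. The sketch is a simplification under stated assumptions: it tunes only k1, keeps the distortion center fixed, assumes a polynomial radial correction model, and measures straightness as the largest distance of any point from the chord between the end points. The names `correct`, `straightness_error` and `tune_k1` are hypothetical.

```python
import math


def correct(point, center, k1, k2=0.0):
    """Assumed polynomial radial correction about the distortion center."""
    dx, dy = point[0] - center[0], point[1] - center[1]
    r2 = dx * dx + dy * dy
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return center[0] + dx * scale, center[1] + dy * scale


def straightness_error(points):
    """Largest distance of any point from the chord joining the end points.

    Assumes the first and last points are distinct.
    """
    (x0, y0), (x1, y1) = points[0], points[-1]
    length = math.hypot(x1 - x0, y1 - y0)
    return max(
        abs((x1 - x0) * (y0 - y) - (x0 - x) * (y1 - y0)) / length
        for x, y in points
    )


def tune_k1(distorted_line, center, candidates):
    """Pick the candidate k1 that makes the corrected line straightest."""
    return min(
        candidates,
        key=lambda k1: straightness_error(
            [correct(p, center, k1) for p in distorted_line]
        ),
    )
```

A fuller search would vary k2 and the center offset in the same loop; a grid over k1 alone is shown only to keep the example short.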

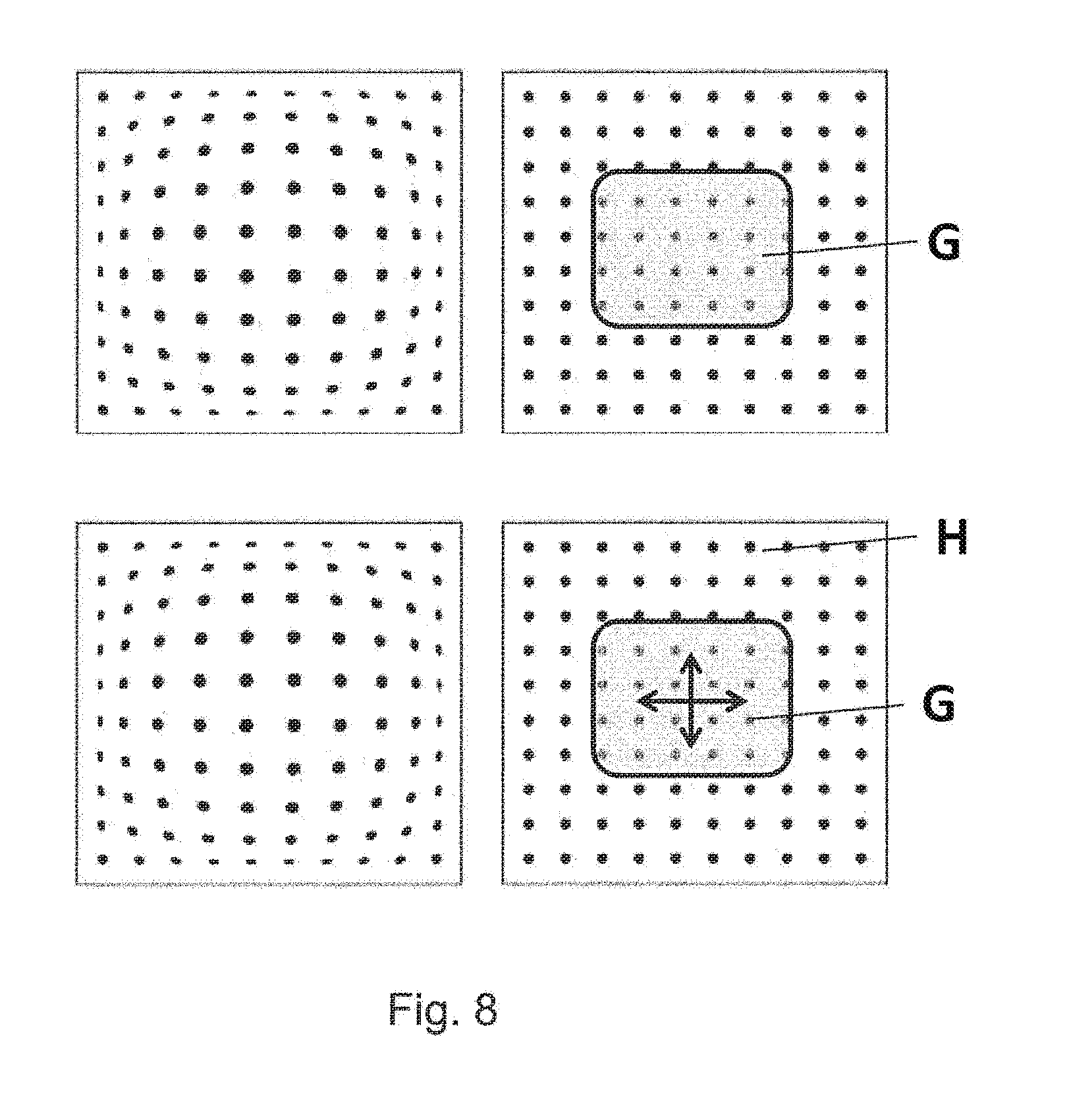

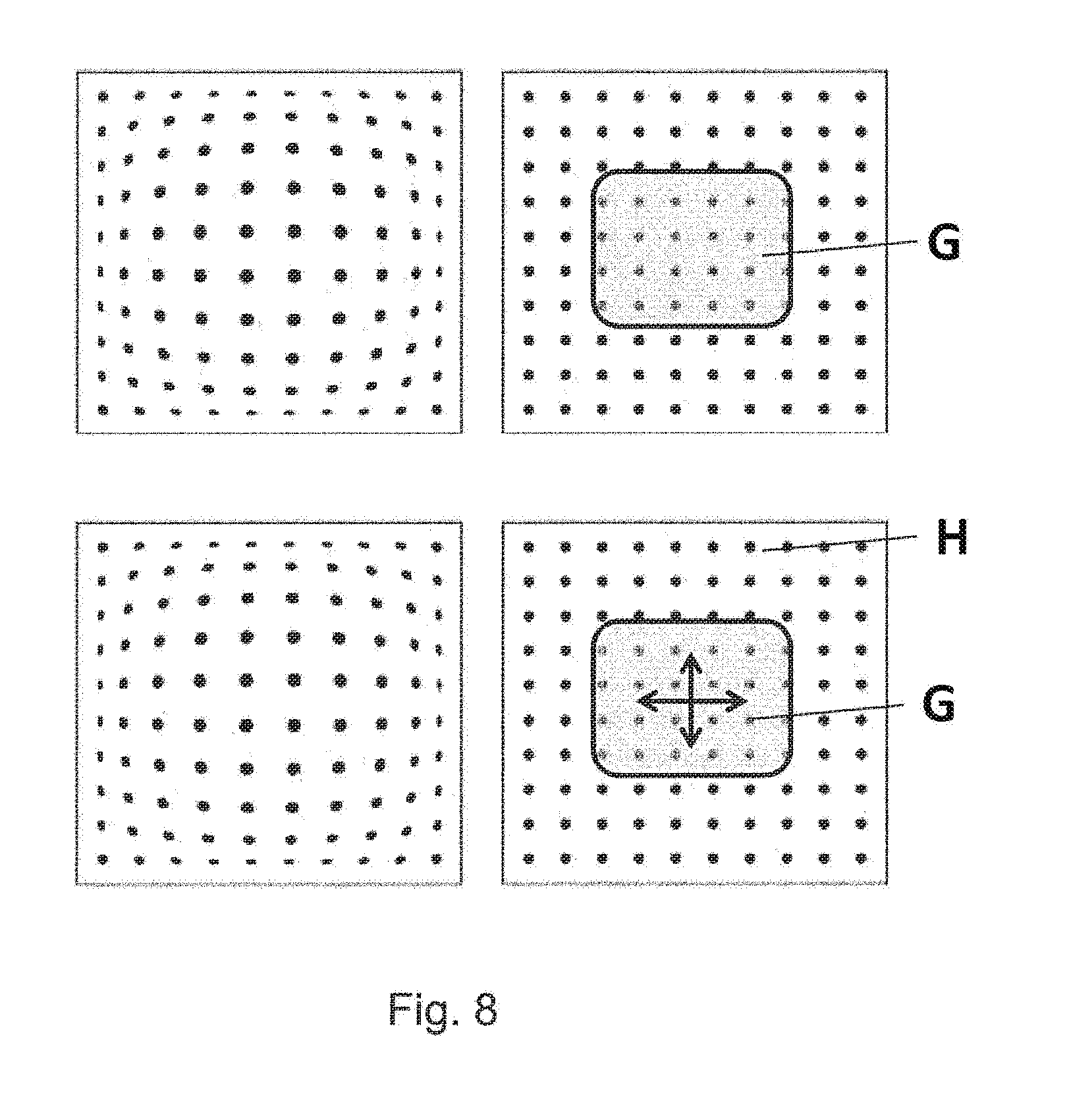

[0074] As a result of the rectification stage at 63, a low-error image is given at 64, which can be shown on the display device 20. The image obtained after rectification corresponds to the image of a plane mirror, whereby the simulated mirror surface would be larger than the usual mirror surface. If such a plane mirror is simulated, the further steps are eliminated and the data is displayed directly on the display according to FIG. 7. The image of a plane mirror is defined by a selection of pixels of the optical sensor. In this way, as shown in FIG. 8, only the pixels in the middle of the optical sensor are chosen. In order to simulate the plane mirror in a larger approximation on the hardware mirror, data must be cut, and the section is limited to a section in the middle of the image.
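The selection of pixels in the middle of the optical sensor for the plane mirror simulation can be sketched as a centered crop. The sketch assumes the rectified image is held as a list of pixel rows; the function name `crop_center` and the choice of an exactly centered section are assumptions for illustration.

```python
def crop_center(image, crop_w, crop_h):
    """Return the centered crop_w x crop_h section of a row-major image."""
    height, width = len(image), len(image[0])
    if crop_w > width or crop_h > height:
        raise ValueError("section must fit inside the sensor image")
    left = (width - crop_w) // 2
    top = (height - crop_h) // 2
    return [row[left:left + crop_w] for row in image[top:top + crop_h]]
```

For a 6 x 4 image, `crop_center(image, 2, 2)` keeps columns 2 to 3 of rows 1 to 2 and discards the surrounding pixels.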

[0075] The operator which is applied to the pixels in order to achieve the desired image is determined in the next step 64. For example, the algorithm is selected in order to again distort the low-error image as would be shown in mirror glass with an aspheric curve, for example. Therefore, the pixel values must be moved in a certain area in order to obtain the impression of curved mirror glass.

[0076] In the next step 65, the post-distortion of the present image is carried out. For example, a plane mirror with a convex additional mirror is chosen. For this purpose, a defined number of pixels is chosen for the display of the plane mirror surface. In FIG. 8, it is area G which shows plane surfaces in the middle of the optical sensor. For the display of information from the convex mirror, all pixels of the sensor must be used, both area G as well as H, in order to provide data to the wide-angle representation of the image, which is situated in a defined area of the display. This is due to the fact that the additional convex mirror will produce an image of which a portion overlaps the image that is created by the plane mirror.

[0077] The information from all pixels is subject to a transformation, and the image of all pixels is distorted and shown on a small area of the display. In this process, information is collated by suitable operators in order to optimally display the image on a lower number of display pixels.
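As one possible example of such a collating operator, block averaging can reduce the information from many sensor pixels to fewer display pixels. The sketch below covers only this collation step, not the convex distortion itself; the choice of block averaging and the function name are assumptions.

```python
def block_average(image, factor):
    """Downscale a grayscale image (list of rows) by averaging factor x factor blocks."""
    if factor < 1:
        raise ValueError("factor must be at least 1")
    rows = len(image) // factor
    cols = len(image[0]) // factor
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            total = 0
            # Sum every sensor pixel that falls into this display pixel.
            for dr in range(factor):
                for dc in range(factor):
                    total += image[r * factor + dr][c * factor + dc]
            out_row.append(total / (factor * factor))
        out.append(out_row)
    return out
```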

[0078] All operations described up to now present a defined image while the vehicle is in motion. The image is adjusted depending on the application of the vehicle.

[0079] A further adjustment possibility of the simulated exterior mirror is the function of adapting the field of view to the driver's position. As in a conventional mirror, which is adapted by an electric drive to the perspective of the driver, the `mirror adjustment` of the plane mirror simulation is done by moving section A on the optical sensor, so that other pixels of the optical sensors are visualized. The number of pixels, and therefore the size of the section, is not changed. This adjustment is indicated by the arrows in FIG. 8.
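A minimal sketch of this adjustment follows, assuming the section is described by its top-left corner and a fixed size. Clamping at the sensor border and the function names are assumptions of the sketch.

```python
def move_section(section, dx, dy, sensor_width, sensor_height):
    """Shift a (left, top, width, height) section; its size never changes."""
    left, top, width, height = section
    # Assumption: the section is clamped so it never leaves the sensor area.
    new_left = min(max(left + dx, 0), sensor_width - width)
    new_top = min(max(top + dy, 0), sensor_height - height)
    return (new_left, new_top, width, height)

def read_section(sensor_pixels, section):
    """Return the pixels of the sensor (list of rows) that lie inside the section."""
    left, top, width, height = section
    return [row[left:left + width] for row in sensor_pixels[top:top + height]]
```

Each press of a mirror control element could then call `move_section` with a small offset, after which the pixels returned by `read_section` are displayed.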

[0080] For a convex mirror, the adjustment to the perspective of the driver is not connected with simply moving a pixel section, but rather with a recalculation of the image.

[0081] The whole control of the exterior mirror simulation is done by control elements, which are used in the conventional way on the vehicle door or on the dashboard.

[0082] Furthermore, the invention also relates to a further improvement of the displaying system to relay an accurate image from a region of interest, which may include, for example, a hazardous situation, to the driver by combining state-of-the-art technology, sensors, image capturing and analysis systems. This is done in such a way that the driver receives the best possible true-to-scale estimation of the region of interest, and the driver can perceive the situation within the region of interest, for example with the help of numerical, graphical, audio representation variants or any combination thereof within the vehicle, many of which may be displayed on the display device 20.

[0083] The vehicle 8 can detect hazardous situations not only when moving, but also when pausing, parking and during the process of parking. The information is delivered to the vehicle 8 using a different signaling device. The signaling device may include sensors, image capturing and data analysis systems, any other possible device to transform information from the environment and from within the vehicle 8 into data usable by the vehicle 8, data links to other objects, for example vehicles or stationary stations, as well as any combination thereof.

[0084] One embodiment of the process from acquiring the data to displaying the relevant information is shown in FIG. 9. The signaling device 90, which by way of example includes a sensor and a camera 91, collects data and analyzes it within a hazard detection module 92 to detect possible hazard situations. When detecting a possible hazard situation, the signaling device 90 transmits information at 93 to the driver assistant software. The method then evaluates the information at 94, rectifies it and transmits it to the display unit to be displayed at 95 to the driver.

[0085] The vehicle 8 recognizes an object moving relative to the vehicle 8, for example a pedestrian walking on the sidewalk, with the help of the signaling device 90. One part of the signaling device 90 detects this moving object and calculates the distance to the vehicle. The same or another part of the signaling device marks this object, for example with a color, and relays the information with the help of a display device to the driver. The display device 20 is configured to pass the information that a potential hazard has been detected at a specific distance, e.g. 30 meters to the vehicle 8. The display device 20, showing the respective region of interest in which the potential hazard has been detected, is now subdivided to show at least two images, for example a normal, and additionally a rectified image of the respective region, whereby the rectified image of the respective region can be an enlarged view of the respective region. Additionally it is possible to subdivide the display device into multiple parts. Then multiple different images, for example rectified or non-rectified images of multiple detected possible hazards, can be shown.

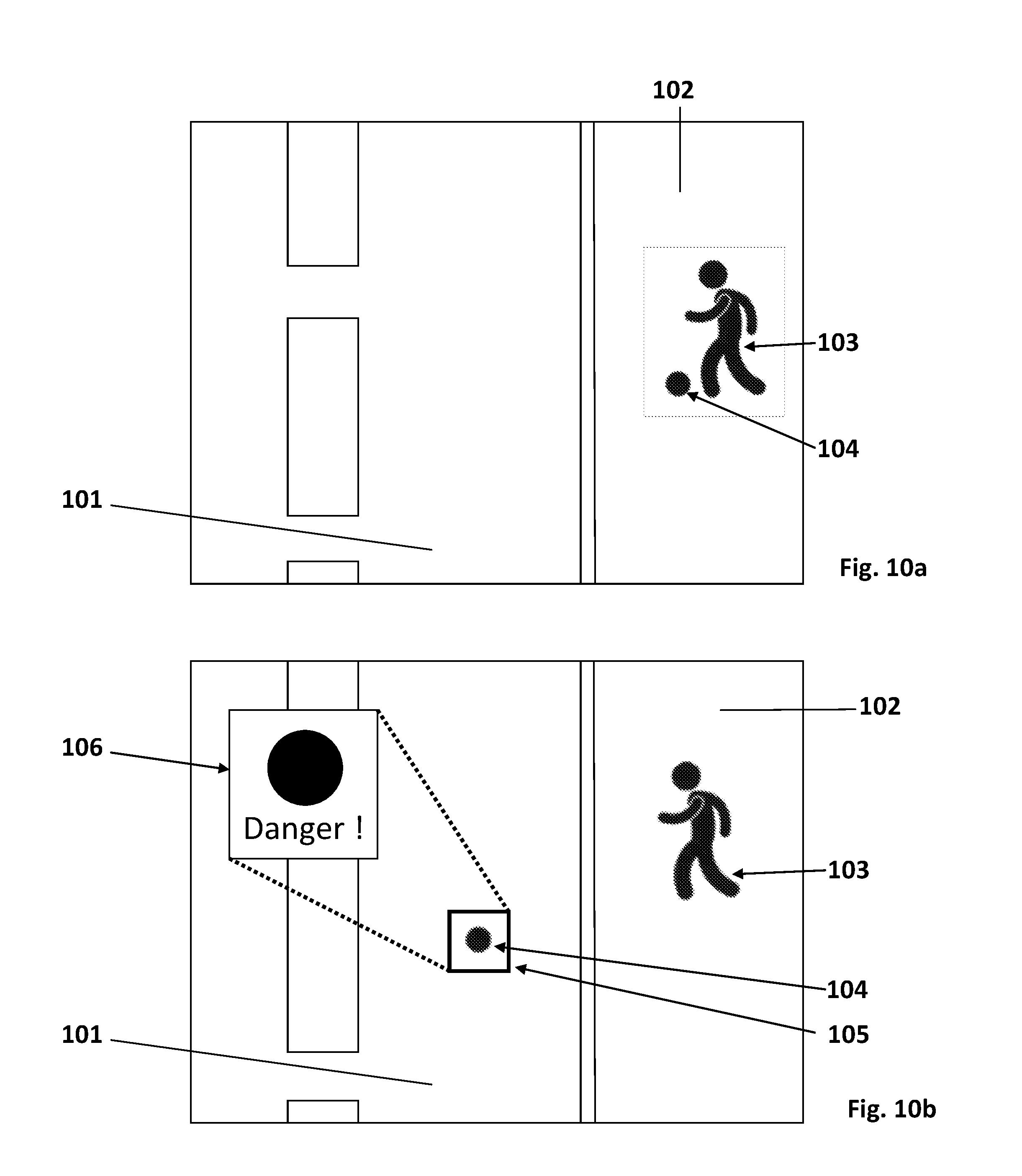

[0086] This is exemplarily shown in FIGS. 10a and 10b, wherein in FIG. 10a the image of the situation in front of a vehicle 8 is depicted, comprising a street 101, a sidewalk 102 and two objects 103 and 104 located on the sidewalk. In FIG. 10b, the object 104 is now present on the street and the signaling device 90 detects this as a possible hazard object within a respective region of interest 105. The display device 20 is subdivided to provide the normal view of the image and additionally an enlarged view 106 of the respective region 105, in which the possible hazard object has been detected. Additionally, another indicator in the form of the alphanumeric characters "Danger!" is used here to support the perception of the situation by the driver.

[0087] To react accordingly to the present situation, either the nearest potential hazard object is shown in the rectified image of the respective region and the view is switched to the next nearest potential hazard object after passing the nearest potential hazard object, or the order in which the potential hazard objects are shown in the rectified image of the respective region is arranged according to the level of hazard the potential hazard objects pose, starting from the highest rated potential hazard to the lowest rated potential hazard. The level of hazard can be derived, for example, from accumulated velocity and position data of the respective objects.
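The two ordering strategies can be sketched as follows. The rating formula (closing speed divided by distance) is only one hypothetical way of deriving a level of hazard from velocity and position data; the class and function names are likewise assumptions.

```python
from dataclasses import dataclass

@dataclass
class HazardObject:
    label: str
    distance_m: float          # distance to the vehicle
    closing_speed_mps: float   # positive when the object approaches the vehicle

def hazard_level(obj):
    """Hypothetical rating: inverse time-to-contact, 0 if the object is not approaching."""
    if obj.distance_m <= 0:
        return float("inf")
    if obj.closing_speed_mps <= 0:
        return 0.0
    return obj.closing_speed_mps / obj.distance_m

def order_by_distance(objects):
    """Nearest potential hazard object first."""
    return sorted(objects, key=lambda o: o.distance_m)

def order_by_hazard(objects):
    """Highest rated potential hazard first."""
    return sorted(objects, key=hazard_level, reverse=True)
```

With either ordering, the first element of the returned list would be the object shown in the rectified image of the respective region.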

[0088] Additionally, the signaling device 90 can mark the potential hazard objects as rated (described earlier) either based on distance or level of hazard, wherein the characteristic properties of these markings can be comprised of color, brightness, shading, hatching or any other type of possible quality as well as any combinations thereof, constant with respect to time or varying with time, displayed on the display device 20.

[0089] Additionally, the signaling device 90 can mark the objects or parts of objects surrounding the vehicle 8 and determine the distances of the respective objects or parts of the objects. Then it can associate a color with each of the respective objects or parts of the objects based on the distance of the respective object or part of the object and display this information on the display device 20. Thereby the distance information is connected with the color information, allowing a better anticipation and understanding of the distance information on the display device 20.

[0090] The display device 20 may also include a navigation device, a head-up display, any other kind of devices suitable for displaying numerical, graphical and/or audio representation variants, or any combination thereof.

[0091] The signaling device 90 can also recognize the situation arising when the driver initializes the process of parking, irrespective of the gear used and the direction in which the parking is performed. When the driver changes for example into reverse gear, the image transmitted to the display device 20 shows a normal image of the region behind and/or adjacent to the sides of the vehicle. As soon as the driver approaches a relevant object in the vicinity of the reversing vehicle 8, for example a curbstone, another vehicle, a fire hydrant or any other type of object, located in a respective region of interest of the display device 20, the situation displayed on the display device 20 changes to a rectified image of the respective region of interest, preferably an extended view of the respective region of interest.

[0092] The situation displayed on the display device 20 during the parking process, the rectified image of the respective region of interest, covers the whole area of the display unit.

[0093] At the same time, the signaling device 90 detects and calculates the distance to the relevant object and relays this information with the help of the display device 20 to the driver by numerical, graphical, audio representation variants or any combination thereof, preferably by using a graphical representation, preferably by using a range of different colors, brightness, shadings, hatchings or any other type of possible quality as well as any combinations thereof, constant with respect to time or varying with time.

[0094] The same holds true for the vehicle 8 attempting to park when using the forward gear so that the display device 20 shows an image of the region in front and/or adjacent to the sides of the vehicle 8.

[0095] Instead of concentrating on a single relevant object, the signaling device 90 can also mark different objects in the vicinity of the vehicle 8 and display the distance information on the display device 20 by numerical, graphical, audio representation variants or any combination thereof, preferably by using a graphical representation, in particular by using a range of different colors, brightness, shadings, hatchings or any other type of possible quality as well as any combinations thereof, constant with respect to time or varying with time, and therefore enhance the perception of the situation by the driver.

[0096] Using color as the characteristic quality and relating the distance of the objects to the displayed color of the objects can especially enhance the perception of the situation by the driver, when the driver is not able to naturally perceive depth or distance information by optical means, due to for example a missing stereoscopic view, a missing ability to read the distance information or other inabilities in one of these directions. The color scheme used to signify the distance of the object can be adapted to the personal needs of the driver, for example a version without ambiguities for persons having the inability to discern between different colors. Instead of the color quality another characteristic quality, for example the brightness, can be used to signify the distance of the respective objects for persons who have the inability to perceive colors, so that also in these cases the perception of the driver can be enhanced. This holds true for all other possible combinations of different qualities and/or the different representation variants.

[0097] When the vehicle 8 is not moving, that is parking or temporarily halting, for example due to a red traffic light, the signaling device 90 is used to identify relevant objects which pose a possible hazard in the near or far vicinity of the vehicle 8. These objects are for example pedestrians, bicycle riders or other vehicles, but also other objects having the possibility to move temporarily or to be moved, for example boom barriers or bollards. The image displayed on the display device 20 is chosen so as to optimize the perception of the situation and the possible hazards by the driver from one or more of the methods described above, for example showing an enlarged view of the possible hazard objects and/or marking the possible hazard objects with different colors.

[0098] When applicable, the perception of the person sitting next to the driver or of another person sitting in the vehicle 8, in fact of all fellow passengers, is also enhanced. This is achieved by using the methods described above and, when necessary, splitting the image displayed on the display device 20 to provide two or more different images, one for each of the respective persons, displaying different images on different display devices 20 for the respective persons or any combination thereof. This is especially useful in situations in which the driver and the fellow passenger require different information, for example when opening the doors and/or exiting on different sides and therefore facing different possible hazards. This is also useful in situations in which the signaling device 90 is not able to display all the different possible hazards on one single display device 20 or the number of possible hazards is so large that the driver alone is not able to perceive the complete situation.

[0099] A special potential hazard situation is present when an object moves into or is located inside a region not visible for the driver and/or another person sitting in the vehicle, often referred to as a blind spot. This typically comprises for example the area left and right of the vehicle which is not captured by the rear-view devices such as the external rear-view mirrors, but also the area in the surrounding of the vehicle 8 where the view is blocked by parts of the vehicle 8 itself. Objects inside these regions are detected and either marked and displayed as described in the situations above, or a special warning signal is sent to the driver and/or the respective persons sitting in the vehicle to inform them of this special possible hazard. This special warning signal can be comprised of numerical, graphical and/or audio representation variants or any combination thereof, in particular a time varying signal, such as for example a blinking graphical representation, preferably a frame or part of a frame, or a tone.

[0100] The term "driver" and "driver of the vehicle" relates here to the person controlling the main parameters of the vehicle, such as for example direction, speed and/or altitude, e.g. normally the person located in the location specified for the controlling person, for example a seat, but can also relate to any other person or object within or outside of the vehicle for which information can be provided.

[0101] To provide information to the driver, it can be advantageous to reduce and/or specify the amount of information provided to the driver, for example in images, which can naturally comprise a huge amount of information that might be unimportant or only partially important to the driver.

[0102] In one embodiment, a zoom function is applied/used to direct the attention of the driver to at least one point of interest (POI), for example like a specific detail, area, event and/or object, by enlarging the view around this POI and reducing the amount of information besides the POI and not related with it, while still providing contextual information about the details and/or the area close to the POI.
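A minimal sketch of how the enlarged region around a POI might be selected is given below. It assumes a rectangular image, a zoom factor of at least 1 and clamping at the image border, none of which is prescribed by the disclosure; the function name is hypothetical.

```python
def zoom_window(poi, zoom, image_width, image_height):
    """Return (left, top, width, height) of the region to enlarge around the POI."""
    if zoom < 1.0:
        raise ValueError("zoom must be at least 1")
    # A higher zoom factor keeps less of the surrounding context.
    width = int(image_width / zoom)
    height = int(image_height / zoom)
    left = int(poi[0] - width / 2)
    top = int(poi[1] - height / 2)
    # Assumption: keep the window inside the image even if the POI is near an edge.
    left = min(max(left, 0), image_width - width)
    top = min(max(top, 0), image_height - height)
    return (left, top, width, height)
```

Scaling the returned window up to the display size enlarges the view around the POI while the remaining margin inside the window supplies the contextual information.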

[0103] In another embodiment of this zoom function, additional information is provided to the driver. This additional information can comprise for example a graphical, audio, tactile, taste, smell signal and/or any combination thereof, providing vehicle and/or environment information in an advantageous way.

[0104] In this embodiment, this signal comprises a graphical representation of the distance between the vehicle and at least one POI. This graphical representation can for example be a scale in which at least a parameter of the signal, for example the color, brightness, contrast, polarization, size and/or form of the output of the graphical representation, is used with at least one function of at least one parameter of the vehicle and/or environment, for example the distance between the vehicle and a POI, and in which the at least one function can be comprised of for example a linear function, an exponential function, a logarithmic function, a polynomial function, a constant function and/or any combination thereof.

[0105] In one specific embodiment the color of the graphical representation is used to enhance the perception of the distance information provided by the vehicle with respect to at least one POI, in which the colors are chosen according to the purpose, for example signifying an approaching object in the direction of travel.

[0106] When driving and/or reversing and approaching a POI with which contact should not be made, the color of the graphical representation can change from green, signaling a large distance, to red, signaling a small distance. When driving and/or reversing and approaching a POI with which contact is desired, for example a coupling device, the colors of the graphical representation can be used in a reversed meaning, that is using the red color to signify a large distance and the green color to signify a small distance. When driving and/or reversing and approaching a POI with which keeping a specific distance is desired, a two-sided scale can be used signaling large distances away from the desired distance in both directions with one color, for example red, and the optimal distance with another color, for example green. The colors can change according to the at least one specified function in for example a constant, linear, exponential, logarithmic, polynomial and/or any combination thereof way. In the example above it could be a standard color bar ranging from red to orange to yellow to green. But any other colors, color bars and/or color schemes can be used.
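The three color schemes described above can be sketched with a linear function as follows. The direct red-to-green interpolation in RGB, the parameter names and the clamping of out-of-range distances are assumptions of this sketch; any other function or color bar could be substituted.

```python
RED = (255, 0, 0)
GREEN = (0, 255, 0)

def _clamp01(t):
    return min(max(t, 0.0), 1.0)

def _blend(c0, c1, t):
    """Linear interpolation between two RGB colors, t in [0, 1]."""
    return tuple(round(a + (b - a) * t) for a, b in zip(c0, c1))

def avoid_contact_color(distance, max_distance):
    """Red at small distance, green at large distance."""
    return _blend(RED, GREEN, _clamp01(distance / max_distance))

def seek_contact_color(distance, max_distance):
    """Reversed meaning, e.g. for a coupling device: green when close."""
    return _blend(GREEN, RED, _clamp01(distance / max_distance))

def keep_distance_color(distance, desired, tolerance):
    """Two-sided scale: green at the desired distance, red when off in either direction."""
    return _blend(GREEN, RED, _clamp01(abs(distance - desired) / tolerance))
```

Replacing the linear ratio by, for example, a logarithmic or exponential function of the distance changes how quickly the color shifts as the POI is approached.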

[0107] The color scale can take various forms, comprising for example a multitude of elements, for example arranged vertically as shown for stripes in FIG. 11a, arranged in a circle as shown for stripes in FIG. 11b, arranged in a half-circle as shown for stripes in FIG. 11c, arranged in a triangle shape as shown for stripes in FIG. 11d, arranged in a rectangular shape as shown for stripes in FIG. 11e. The shape of the elements can also vary and is not limited to the shown stripes, comprising for example triangles, circles, squares, 2D and/or 3D representations of 3D objects, for example cubes, boxes, pyramids and many more.

[0108] The scale can also comprise just a single element, becoming smaller or larger and/or changing colors. Preferably the single element comprises a continuously changing color scale, of which several possible embodiments are shown in FIGS. 11f-11k.

[0109] At the same time, a number representation of the parameter and/or the parameter range can be displayed next to the scale to increase the perception by the driver. The orientation of the scale can be chosen either horizontal, vertical and/or at any angle in between.

[0110] The size, shape, color and volume of the graphical representation can also change with the at least one parameter of the vehicle and/or environment, such that for example a single or multiple elements fade away, disappear and/or appear. The arrows shown in FIGS. 11a-11k indicate exemplarily the direction of such possible changes.

[0111] The graphical representations, for example those shown in FIGS. 11a-11k, can also be placed adjacent to and/or surrounding a present spotter area of a rear view device, irrespective of whether an actual mirror or a mirror replacement, such as a display, is used.

[0112] In all embodiments the changes can also be carried out on multiple parts and in multiple directions, sequentially or at the same time.

[0113] Multiple pieces of information can be displayed on a single display device by splitting the display into at least two parts, one part showing the information of the zoom function, whereas at least one of the other parts can show the normal view and/or part of the normal view.

[0114] A vehicle comprising display devices, processing devices and sensors such as cameras is also described. In or on the vehicle different display devices, processing devices and cameras can be installed, configured and interconnected.

[0115] The display devices can be mounted inside or outside the vehicle and can be used to transmit optical information to the driver and/or any person or object inside and outside of the vehicle. The display devices can also be configured to transmit information via haptics, acoustics, odors, chemicals and/or other forms of electromagnetic fields. The information is typically first collected from sensors and other signal receiving devices on or in the vehicle and then processed by processing devices. A multitude or only one processing device can be installed in the vehicle to process the pictures and information provided by the cameras and sensors. Optionally the processing devices can be remotely located and the vehicle is wirelessly connected to the remote processing unit. The processed information is then directed to the different display devices to inform the driver and/or any person or object inside and outside of the vehicle. Depending on the location of the display devices and the nature of the receiver, the output of different information with different output means is induced.

[0116] The display devices can also be configured to receive input from the driver and/or any person or object inside and outside of the vehicle. This input can be received via different sensing means, comprising for example photosensitive sensors, acoustic sensors, distance sensors, touch-sensitive surfaces, temperature sensors, pressure sensors, odor detectors, gas detectors and/or sensors for other kind of electromagnetic fields. This input can be used to control or change the status of the output of the display device and/or other components on or in the vehicle. For example the field of view, the contrast, the brightness and/or the colors displayed on the display device, but also the strength of the touch feedback, sound volume and other adjustable parameters can be changed. As further examples the position or focus of a camera, the temperature or lighting inside the vehicle, the status of a mobile device, like a mobile phone, carried by a passenger, the status of a driver assistance system or the stiffness of the suspension can be changed. Generally every adjustable parameter of the vehicle can be changed.

[0117] Preferably the information from the sensing means is first processed by a processing device, but it can also be directly processed by the sensor means or the display device comprising a processing device. Preferably the display device comprises a multi-touch display so that the driver or any other passenger can directly react to optical information delivered by the display device by touching specific areas on the display. Optionally gestures, facial expression, eye movement, voice, sound, evaporations, breathing and/or postural changes of the body can also be detected, for example via a camera, and used to provide contact-free input to also control the display device.

[0118] Information stemming from multiple sources can be simultaneously displayed on a display of the display device. The information coming from different sources can either be displayed in separated parts of the display or the different information can be displayed side by side or overlaid together on the same part of the display.

[0119] Selecting a specific region on the display of the display device, for example by touching it, can trigger different functions depending on the circumstances. For example, a specific function can be activated or deactivated, additional information can be displayed, or a menu can be opened. The menu can offer the choice between different functions, for example the possibility to adjust various parameters.

[0120] The adjustment of different parameters via a menu can be done in many ways, known from prior art and especially from the technology used in mobile phones with touch screen technology. Known are for example scrolling or sliding gestures, swiping, panning, pinching, zooming, rotating, single, double or multi tapping, short or long pressing, with one or more than one finger of one or more hands and/or any combination thereof.

[0121] A display device in combination with one or more cameras can be used to replace a rearview mirror, either an interior or an exterior rearview mirror. There are various advantages offered by this constellation. For example, a display device together with a camera monitoring one side of the vehicle and one camera monitoring the rear of the vehicle can replace an external rearview mirror. By combining the pictures of both cameras, the blind spot zone is eliminated and an improved visibility is offered.

[0122] The display devices can be arranged inside the vehicle, eliminating the need for exterior parts. This offers the advantage of smoothing the outer shape of the vehicle, which reduces the air friction and therefore offers power and/or fuel savings.

[0123] The processing device can advantageously handle the input of multiple sources. Correlating the input data of the different sources allows for the reduction of possible errors, increases measurement accuracy and allows as much information as possible to be extracted from the available data.

[0124] When driving, it is especially important to perceive possibly dangerous situations. One part of the processing device analyses the available data and uses different signaling means to enhance the perception of the situation by the driver. For example, an object recognition and classification algorithm can be used to detect different objects surrounding the vehicle, for example based on the pictures acquired by one or more cameras. Comparing the pictures for different points in time or using supplementary sensor data gives information about the relative movement of objects and their velocity. Therefore, objects can be classified into different categories; for example, dangerous, potentially dangerous, noted for continued observance, highly relevant, relevant, and irrelevant.
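As an illustration, relative movement can be estimated from object positions at two points in time and then mapped onto some of the categories named above. The coordinate convention (vehicle at the origin), the time-to-contact thresholds and the mapping of receding objects to "irrelevant" are assumptions of this sketch.

```python
import math

def relative_velocity(pos_t0, pos_t1, dt):
    """Velocity vector of an object from two positions taken dt seconds apart."""
    return ((pos_t1[0] - pos_t0[0]) / dt, (pos_t1[1] - pos_t0[1]) / dt)

def closing_speed(pos_t0, pos_t1, dt):
    """Rate at which the distance to the vehicle (at the origin) shrinks."""
    return (math.hypot(*pos_t0) - math.hypot(*pos_t1)) / dt

def categorize(pos_t0, pos_t1, dt, ttc_dangerous=2.0, ttc_potential=5.0):
    """Assign a category from a hypothetical time-to-contact rule."""
    speed = closing_speed(pos_t0, pos_t1, dt)
    if speed <= 0:
        return "irrelevant"  # assumption: receding or static objects
    time_to_contact = math.hypot(*pos_t1) / speed
    if time_to_contact < ttc_dangerous:
        return "dangerous"
    if time_to_contact < ttc_potential:
        return "potentially dangerous"
    return "noted for continued observance"
```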

[0125] From all the information, a level of danger attributed to each object can be derived. Depending on the danger level or other important parameters, the perception of objects for the driver can be enhanced by using different signaling means to display on the display device, for example highlighting the objects with specific colors, increased brightness, flashing messages, warning signs and/or using audio messages. The overall danger level or the highest danger level can also be displayed by special warning signs, like an increased brightness, a colorful border around the whole or specific parts of the display, constant in time or flashing with increasing or decreasing frequency. The information displayed on the display device is highly situational and is re-evaluated according to the updated information from the various sensors and information sources. An emergency vehicle or a station can, for example, broadcast an emergency message so that vehicles and their drivers can react better to possibly dangerous situations or clear the path for emergency operations. A vehicle involved in an accident or dangerous situation can also broadcast a message to call the attention of other vehicles and their drivers to those situations.

[0126] The implementations disclosed herein also relate to an object detection and classification system for use in a variety of contexts. The present disclosure contains a novel feature descriptor that combines information relating to what a feature is with information relating to where the feature is located with respect to a query point. This feature descriptor provides advantages over prior feature descriptors because, by combining the "what" with the "where," it reduces the resources needed to detect and classify an object because a single descriptor can be used instead of multiple feature descriptors. The resulting system therefore is more efficient than prior systems, and can more accurately detect and classify objects in situations where hardware and/or software resources are limited.

[0127] FIG. 12 illustrates a rear view of a vehicle 112 with an object detection and classification system 110 according to the present disclosure. The vehicle 112 includes an image sensor 114 to capture an image 116 of the environment surrounding the vehicle 112. The image may include a range of view through an angle 118, thus the image 116 may depict only a portion of the area surrounding the vehicle 112 as defined by the angle 118. The image 116 may include an object 120. The object 120 may be any physical object in the environment surrounding the vehicle 112, such as a pedestrian, another vehicle, a bicycle, a building, road signage, road debris, etc. The object detection and classification system 110 may assign a classification to the object 120. The classification may include the type of road object, whether the object is animate or inanimate, whether the object is likely to suddenly change direction, etc. The object detection and classification system 110 may further assign a range of characteristics to the object 120 such as a size, distance, a point representing the center of the object, a velocity of the object, an expected acceleration range, etc.

[0128] The image sensor 114 may be various types of optical image sensors, including without limitation a digital camera, a range finding camera, a charge-coupled device (CCD), a complementary metal oxide semiconductor (CMOS) sensor, or any other type of image sensor capable of capturing continuous real-time images. In an implementation, the vehicle 112 has multiple image sensors 114, and each image sensor 114 may be positioned so as to provide a view of only a portion of the environment surrounding the vehicle 112. As a group, the multiple image sensors 114 may cover various views from the vehicle 112, including a front view of objects in the path of the vehicle 112, a rear-facing image sensor 114 for capturing images 116 of the environment surrounding the vehicle 112 including objects behind the vehicle 112, and/or side-facing image sensors 114 for capturing images 116 of objects next to or approaching the vehicle 112 from the side. In an implementation, image sensors 114 may be located on various parts of the vehicle. For example, without limitation, image sensors 114 may be integrated into an exterior mirror of the vehicle 112, such as on the driver's exterior side mirror 122. Alternatively, or additionally, the image sensor 114 may be located on the back of the vehicle 112, such as in a rear-light unit 124. The image sensor 114 may be forward-facing and located in the interior rear-view mirror, dashboard, or in the front headlight unit of the vehicle 112.

[0129] Upon capture of an image 116 of the environment surrounding the vehicle 112, the object detection and classification system 110 may store the image 116 in a memory and perform analysis on the image 116. One type of analysis performed by the object detection and classification system 110 on the image 116 is the identification of keypoints and associated keypoint data. Keypoints, also known as interest points, are spatial locations or points in the image 116 that define locations that are likely of interest. Keypoint detection methods may be supplied by a third-party library, such as the SURF and FAST methods available in the OpenCV (Open Source Computer Vision) library. Other methods of keypoint detection include without limitation SIFT (Scale-Invariant Feature Transform). Keypoint data may include a vector to the center of the keypoint describing the size and orientation of the keypoint, and visual appearance, shape, and/or texture in a neighborhood of the keypoint, and/or other data relating to the keypoint.

[0130] A function may be applied to a keypoint to generate a keypoint value. A function may take a keypoint as a parameter and calculate some characteristic of the keypoint. As one example, a function may measure the image intensity of a particular keypoint. Such a function may be represented as $f(z_k)$, where $f$ is the image intensity function and $z_k$ is the $k$-th keypoint in an image. Other functions may also be applied, such as a visual word in a visual word index.

[0131] FIG. 13 illustrates a schematic diagram 200 of an image capture 204 taken by an image sensor 202 on a vehicle. The image capture 204 includes a query point (x.sub.c, y.sub.c) and a plurality of keypoints z.sub.0-z.sub.4. A query point is a point of interest, which may or may not be a keypoint, that the object detection and classification system may choose for further analysis. In an implementation, the object detection and classification system may attempt to determine whether a query point is the center of an object to assist in classification of the object.

[0132] Points in the image capture 204 may be described with reference to a Cartesian coordinate system, wherein each point is represented by an ordered pair, the first element of the pair referring to the point's position along the horizontal or x-axis, and the second element of the pair referring to the point's position along the vertical or y-axis. The orientation of the horizontal and vertical axes with respect to the image 204 is shown by the axis 206. Alternatively, points in the image capture 204 may be referred to with complex numbers where each point is described in the form x+iy, wherein $i = \sqrt{-1}$. In another implementation, a query point may serve as the origin of a coordinate system, and the locations of keypoints relative to the query point may be described as vectors from the query point to each of the keypoints.
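By way of non-limiting illustration, the complex-number representation described above may be sketched as follows. The coordinate values and the helper names `to_complex` and `relative_vectors` are hypothetical and are not taken from the disclosure; the sketch only shows how an ordered pair maps to x+iy and how keypoints may be expressed as vectors from the query point.

```python
# Hypothetical pixel coordinates (x, y); the values are illustrative only.
query_point = (120.0, 80.0)
keypoints = [(130.0, 80.0), (120.0, 95.0), (100.0, 60.0)]

def to_complex(point):
    """Map an ordered pair (x, y) to the complex number x + iy."""
    x, y = point
    return complex(x, y)

def relative_vectors(query, points):
    """Return z_k - z_c for each keypoint: vectors from the query point."""
    z_c = to_complex(query)
    return [to_complex(p) - z_c for p in points]

offsets = relative_vectors(query_point, keypoints)
```

Using the query point as the origin in this way means that each offset already has the form z.sub.k-z.sub.c that appears in the descriptor equations.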

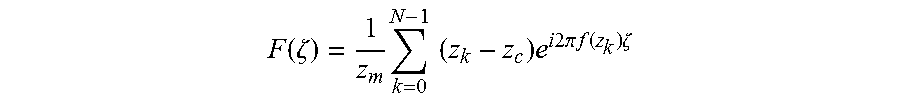

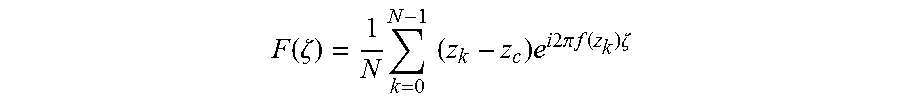

[0133] The object detection and classification system 110 uses a new descriptor function to produce an evaluation of a query point in an image 204 that combines a representation of what the feature is and where the feature is located in relation to the query point into a single representation. For any image 204 with a set of keypoints z.sub.0-z.sub.4 in the neighborhood of a query point (x.sub.c, y.sub.c), the descriptor for the query point is as follows:

$$F(\zeta) = \frac{1}{N} \sum_{k=0}^{N-1} (z_k - z_c)\, e^{i 2\pi f(z_k) \zeta} \qquad (1)$$

[0134] where N is the number of keypoints in the image from the environment surrounding the vehicle in the neighborhood of the query point, z.sub.c is the query point represented in complex space, z.sub.k is the k.sup.th keypoint, f(z.sub.k) is the feature value of the k.sup.th keypoint, and .zeta. is the continuous independent variable of the descriptor function F(.zeta.).
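As a minimal sketch of how Equation (1) might be evaluated at a single value of the independent variable, the following example uses complex numbers for the query point and keypoints. The function name `fourier_fan`, the keypoint positions, and the integer feature values are assumptions made for illustration only.

```python
import cmath

def fourier_fan(keypoints, features, z_c, zeta):
    """Evaluate Equation (1): (1/N) * sum_k (z_k - z_c) * exp(i*2*pi*f(z_k)*zeta)."""
    n = len(keypoints)
    if n == 0:
        raise ValueError("at least one keypoint is required")
    total = 0j
    for z_k, f_k in zip(keypoints, features):
        total += (z_k - z_c) * cmath.exp(2j * cmath.pi * f_k * zeta)
    return total / n

# Hypothetical query point, keypoints, and feature values f(z_k).
z_c = complex(120, 80)
keypoints = [complex(130, 80), complex(120, 95), complex(100, 60)]
features = [1, 2, 3]

value_at_zero = fourier_fan(keypoints, features, z_c, 0.0)
```

At zero, every exponential term equals one, so the descriptor reduces to the mean of the vectors from the query point to the keypoints.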

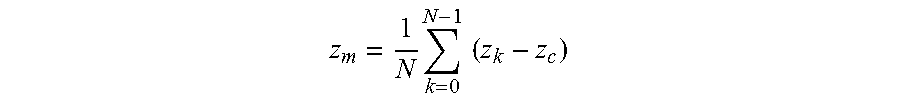

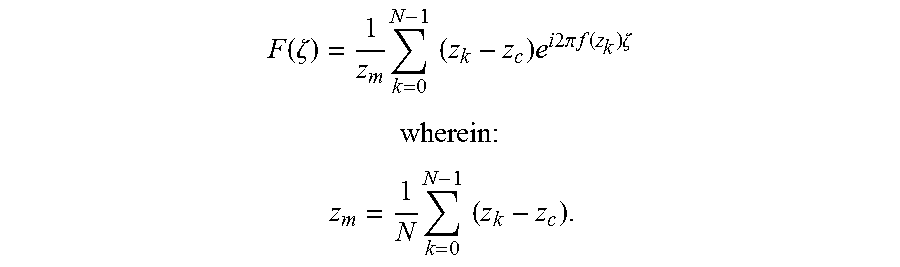

[0135] To obtain a descriptor that is invariant to scale and orientation, Equation (1) may be modified by letting z.sub.m be the mean value of the z.sub.k-z.sub.c values:

$$z_m = \frac{1}{N} \sum_{k=0}^{N-1} (z_k - z_c) \qquad (2)$$

[0136] By dividing the right-hand side of Equation (1) by |z.sub.m|, a scale invariant version of the descriptor is obtained. On the other hand, by dividing both sides of Equation (1) by

$$\frac{z_m}{|z_m|}$$

a rotation-invariant version of the descriptor is obtained. To obtain a descriptor that is invariant in both scale and orientation, the sum is divided by z.sub.m, which yields the following descriptor:

$$F(\zeta) = \frac{1}{z_m} \sum_{k=0}^{N-1} (z_k - z_c)\, e^{i 2\pi f(z_k) \zeta} \qquad (3)$$

[0137] The division by N is omitted from Equation (3) since the contribution of the keypoint number is already neutralized through the division by z.sub.m. Due to the similarity of Equation (3) to the formula for the Inverse Fourier Series, Equation (3) may be referred to herein as a Fourier Fan.
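The invariance property of Equation (3) may be checked numerically. The following sketch, which is illustrative only, scales and rotates a hypothetical set of keypoints about the query point and compares the descriptor before and after. The names `normalized_fan` and `transform`, and all numeric values, are assumptions and do not appear in the disclosure.

```python
import cmath

def normalized_fan(keypoints, features, z_c, zeta):
    """Equation (3): sum_k (z_k - z_c) * exp(i*2*pi*f(z_k)*zeta), divided by z_m."""
    offsets = [z_k - z_c for z_k in keypoints]
    z_m = sum(offsets) / len(offsets)  # Equation (2)
    if z_m == 0:
        raise ZeroDivisionError("mean offset z_m is zero; descriptor undefined")
    total = sum(d * cmath.exp(2j * cmath.pi * f * zeta)
                for d, f in zip(offsets, features))
    return total / z_m

def transform(keypoints, z_c, scale, angle):
    """Scale and rotate the keypoints about the query point z_c."""
    factor = scale * cmath.exp(1j * angle)
    return [z_c + factor * (z - z_c) for z in keypoints]

# Hypothetical data for illustration.
z_c = complex(120, 80)
keypoints = [complex(130, 80), complex(120, 95), complex(100, 60)]
features = [1, 2, 3]

moved = transform(keypoints, z_c, scale=2.5, angle=0.7)
original_value = normalized_fan(keypoints, features, z_c, 0.37)
moved_value = normalized_fan(moved, features, z_c, 0.37)
```

Scaling and rotating about the query point multiplies every offset and z.sub.m by the same complex factor, so the factor cancels and the two values agree up to floating point error. The sketch also makes explicit that the descriptor is undefined when z.sub.m is zero.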

[0138] Since Equation (3) is a function of a continuous variable, it may be sampled for use in the object detection and classification system 100. In an implementation, a sampling frequency greater than 2max(f) may be chosen, where max( ) indicates the maximum value of the function f. Another characteristic of Equation (3) is that it extends infinitely over the domain of the variable .zeta.. Sampling a function of infinite extent would result in an infinite number of samples, which may not be practical for use in the object detection and classification system 100. If Equation (3) is a periodic function, however, then it would be sufficient to sample only a single period of Equation (3), and to ignore the remaining periods. In an implementation, Equation (3) is made to be periodic by requiring all values of the function f to be integer multiples of a single frequency f.sub.0. As such, for Equation (3) to be able to be sampled, the function f must have a known maximum, and for Equation (3) to be periodic, the function f must be quantized such that the values of f are integer multiples of f.sub.0.
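The sampling of a single period may be sketched as follows, under stated assumptions. If every feature value is an integer multiple n.sub.k of f.sub.0, each exponential term repeats with period 1/f.sub.0, so one period suffices. The sketch picks a sampling rate of (2n.sub.max+1)f.sub.0, which is one possible rate above 2max(f); the function names, the rate choice, and the data are hypothetical.

```python
import cmath

def fourier_fan(offsets, features, zeta):
    """Equation (3) for offsets d_k = z_k - z_c and feature values f(z_k)."""
    z_m = sum(offsets) / len(offsets)  # Equation (2)
    total = sum(d * cmath.exp(2j * cmath.pi * f * zeta)
                for d, f in zip(offsets, features))
    return total / z_m

def sample_one_period(offsets, word_indices, f0=1.0):
    """Sample one period [0, 1/f0) of the fan when f(z_k) = n_k * f0."""
    features = [n * f0 for n in word_indices]
    # Rate (2*n_max + 1)*f0 exceeds 2*max(f) = 2*n_max*f0.
    num_samples = 2 * max(word_indices) + 1
    step = (1.0 / f0) / num_samples
    return [fourier_fan(offsets, features, m * step) for m in range(num_samples)]

# Hypothetical offsets from the query point and integer multiples n_k of f0.
offsets = [complex(10, 0), complex(0, 15), complex(-20, -20)]
word_indices = [1, 2, 3]
samples = sample_one_period(offsets, word_indices, f0=0.5)
```

With a maximum multiple of 3, this choice yields seven samples per period, giving a fixed-length feature vector regardless of how many keypoints fall in the neighborhood.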

[0139] In an implementation, the function f may represent more than a simple feature, such as the image intensity. Instead, the function f may be a descriptor function of each of the keypoints, such as those referred to herein (e.g., SIFT and/or SURF descriptors). Such descriptors are usually not simple scalar values, but rather are more likely to be high dimensional feature vectors, which cannot be incorporated directly in Equation (3) in a trivial manner. It is, however, possible to incorporate complex descriptors as feature values by clustering the descriptors in an entire set of training data and using the index of the corresponding cluster as the value for f. Such cluster centers may be referred to as "visual words" for f. Let f.sub.k be the descriptor for a keypoint k. If f.sub.k takes an integer value, e.g., 3, then there is a descriptor at the keypoint located at z.sub.k-z.sub.c, which can be assigned to cluster 3. It should be appreciated that, in this example, f is quantized and the number of clusters is the function's maximum, which is known. These characteristics are relevant because they are the characteristics of f needed to make Equation (3) able to be sampled and periodic.
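As an illustrative sketch of the visual word assignment described above, the following example maps a descriptor vector to the index of its nearest cluster center. The cluster centers are assumed to have been obtained beforehand by clustering training descriptors; the three-dimensional vectors, the Euclidean distance, and the name `nearest_cluster` are hypothetical simplifications (real SIFT or SURF descriptors have many more dimensions).

```python
import math

def nearest_cluster(descriptor, centers):
    """Return the index of the cluster center closest to the descriptor."""
    distances = [math.dist(descriptor, c) for c in centers]
    return distances.index(min(distances))

# Hypothetical cluster centers ("visual words") from a prior training step.
centers = [
    (0.0, 0.0, 0.0),
    (1.0, 1.0, 1.0),
    (5.0, 5.0, 5.0),
    (9.0, 0.0, 0.0),
]

# Hypothetical descriptors at three keypoints.
descriptors = [(4.5, 5.2, 4.9), (0.2, -0.1, 0.1), (8.0, 0.5, 0.3)]

# The cluster index serves as the quantized feature value f(z_k).
feature_values = [nearest_cluster(d, centers) for d in descriptors]
```

Because the result is always an integer between zero and the number of clusters minus one, the feature function is quantized and has a known maximum.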

[0140] In an implementation, an order is imposed on the visual word cluster centers, such that the output of f is not a categorical value. In other words, without an order, the distance between cluster 2 and cluster 3 is not necessarily less than the distance between cluster 2 and cluster 10 because the numerical values are merely identifiers for the clusters. An order for the visual words may be imposed using multidimensional scaling (MDS) techniques. Using MDS, one can find a projection into a low dimensional feature space from a high dimensional feature space such that distances in the low dimensional feature space resemble as much as possible distances in the high dimensional feature space. Applied to the visual words using MDS, the cluster centers may be projected into a one dimensional space for use as a parameter for f. In one implementation, a one dimensional feature space is chosen as the low dimensional feature space because one dimensional space is the only space in which full ordering is possible.
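One way to impose such an order may be sketched with classical MDS reduced to a single dimension. The sketch below is an assumption-laden illustration, not the disclosed implementation: it computes the leading eigenvector of the double-centered squared-distance matrix by power iteration, and then uses the rank of each cluster center along that axis as its ordered word identifier. The names `mds_1d` and `ordered_word_ids` and the sample centers are hypothetical.

```python
import math

def mds_1d(points, iterations=200):
    """Classical MDS to one dimension via power iteration."""
    n = len(points)
    d2 = [[math.dist(p, q) ** 2 for q in points] for p in points]
    row_mean = [sum(row) / n for row in d2]
    total_mean = sum(row_mean) / n
    # Double centering: B = -1/2 * J * D^2 * J (D^2 is symmetric).
    b = [[-0.5 * (d2[i][j] - row_mean[i] - row_mean[j] + total_mean)
          for j in range(n)] for i in range(n)]
    v = [float(i + 1) for i in range(n)]  # fixed, arbitrary start vector
    eigenvalue = 0.0
    for _ in range(iterations):
        w = [sum(b[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break
        v = [x / norm for x in w]
        eigenvalue = norm
    return [math.sqrt(eigenvalue) * x for x in v]

def ordered_word_ids(centers):
    """Rank each cluster center by its one dimensional MDS coordinate."""
    coords = mds_1d(centers)
    order = sorted(range(len(centers)), key=lambda i: coords[i])
    rank = [0] * len(centers)
    for position, index in enumerate(order):
        rank[index] = position
    return rank

# Hypothetical cluster centers lying along one direction in 3-D space.
centers = [(0.0, 0.0, 0.0), (3.0, 6.0, 6.0), (1.0, 2.0, 2.0), (7.0, 14.0, 14.0)]
coords = mds_1d(centers)
ranks = ordered_word_ids(centers)
```

The sign of the projected axis is arbitrary, so the order may come out reversed; either direction preserves which clusters are neighbors. Using ranks rather than raw coordinates is a design choice of this sketch that keeps the feature values integer, as required for periodicity.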

[0141] The object detection and classification system may be tuned according to a set of training data during which parameters for the system may be chosen and refined. For example, descriptor values and types may be chosen, the size of the neighborhood around a query point may be set, and the method of choosing keypoints, the number of keypoints chosen per image, etc. may also be chosen. Since the tuning of the object detection and classification system is a type of machine learning, it may be susceptible to a problem known as "overfitting." Overfitting manifests itself when machine classifiers over-learn the training data, leading to models which do not generalize well on other data, the other data being referred to herein as "test data." In the descriptor of Equation (3), overfitting could occur if, on training data, the object detection and classification system overfits the positions of the keypoints with respect to the query point. Changes in the positions of the keypoints that are not present in training data, which could occur due to noise and intra-class variance, will not always be handled well by the object detection and classification system when acting on test data. To address the issue of overfitting, at each query point (x.sub.c, y.sub.c), instead of extracting a single Fourier Fan according to Equation (3) on training data, multiple random Fans may be extracted, denoted by the set M.sub.f (e.g., 15.sub.f). Each of the random Fans contains only a subset of the available N keypoints in the neighborhood of the query point (x.sub.c, y.sub.c). Later, when the object detection and classification system is running on test data, the same set M.sub.f of random Fourier Fans is extracted, and the result is confirmed according to majority agreement among the set of random Fourier Fans. Random Fourier Fans also allow the object detection and classification system to learn from a small number of images since several feature vectors are extracted at each object center.
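The random Fan extraction and majority agreement described above may be sketched as follows. The sketch is hypothetical in its details: a seeded random generator stands in for whatever mechanism reproduces the same subsets at test time, the subset size is an arbitrary choice, and the per-Fan class labels are placeholders for the output of a classifier that is not shown.

```python
import random
from collections import Counter

def draw_random_fans(num_keypoints, num_fans, subset_size, seed=0):
    """Choose num_fans random index subsets of the available keypoints."""
    rng = random.Random(seed)  # fixed seed so the same subsets can be redrawn
    return [sorted(rng.sample(range(num_keypoints), subset_size))
            for _ in range(num_fans)]

def majority_vote(labels):
    """Return the label predicted by the largest number of fans."""
    return Counter(labels).most_common(1)[0][0]

# Hypothetical setup: 10 keypoints in the neighborhood, 15 random fans of 6 keypoints.
fans = draw_random_fans(num_keypoints=10, num_fans=15, subset_size=6)

# Placeholder per-fan predictions; a real system would classify each sampled fan.
predictions = ["vehicle"] * 9 + ["pedestrian"] * 6
decision = majority_vote(predictions)
```

Each index list selects the keypoints that contribute to one Fan, so a displaced or missing keypoint affects only some of the Fans rather than the single descriptor.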

[0142] In the comparison of Equation (3), the "Fourier Fan," to the formula for the inverse Fourier Series, it should be understood that there are some differences between the two. For example, only those frequencies that belong to the neighborhood of a query point are available for each Fourier Fan. As another example, shifting all coefficients z.sub.k by a constant z.sub.a, i.e., a shift of the object center, is not equivalent to adding a Dirac impulse in the domain .zeta., even if it is assumed that the same keypoints are available in the new query point neighborhood. This is because z.sub.a is not added as a constant at every frequency, but only at the available frequencies, and the added value is zero for the other frequencies.

[0143] FIG. 14 illustrates a block diagram of an object detection and classification system 300 that may be useful for the implementations disclosed herein. The object detection and classification system 300 includes an image sensor 302 directed at the environment surrounding a vehicle. The image sensor 302 may capture images of the environment surrounding the vehicle for further analysis by the object detection and classification system 300. Upon capture, an image from the environment surrounding a vehicle may be stored in the memory 304. The memory 304 may include volatile or non-volatile memory and may store images captured by the image sensor as well as data produced by analysis of the images captured by the image sensor. A processor 306 may carry out operations on the images stored in memory 304. The memory 304 may also store executable program code in the form of program modules that may be executed by the processor 306. Program modules stored on the memory 304 include without limitation, hazard detection program modules, image analysis program modules, lens obstruction program modules, blind spot detection program modules, shadow detection program modules, traffic sign detection program modules, park assistance program modules, collision control and warning program modules, etc.

[0144] The memory 304 may further store parameters and settings for the operation of the object detection and classification system 300. For example, parameters relating to the training data may be stored on the memory 304 including a library of functions f and keypoint settings for computation and calculation of Random Fourier Fans. The memory 304 may further be communicatively coupled to extracting circuitry 308 for extracting keypoints from the images stored on the memory 304. The memory 304 may further be communicatively coupled to query point evaluation circuitry 310 for taking image captures with keypoints and associated keypoint data and evaluating the images with keypoints and keypoint data according to Fourier Fans to produce sampled Fourier Fan values.

[0145] If the sampled Fourier Fan values produced by the query point evaluation circuitry 310 meet a potential hazard condition, then signal bus circuitry 312 may send a signal to an alert system 314 and/or a vehicle control system 316. Sampled Fourier Fan values may first be processed by one or more program modules residing on memory 304 to determine whether the sampled values meet a potential hazard condition. Examples of sampled values that may meet a potential hazard condition are an object determined to be a collision risk to the vehicle, an object that is determined to be a vulnerable road user that is at risk of being struck by the vehicle, a road sign object that indicates the vehicle is traveling in the wrong part of a road or on the wrong road, objects that indicate a stationary object that the vehicle might strike, and objects that represent a vehicle located in a blind spot of the operator of the vehicle.

[0146] If the sampled values of a Fourier Fan function satisfy a potential hazard condition, the signal bus circuitry 312 may send one or more signals to the alert system 314. In an implementation, signals sent to the alert system 314 include acoustic warnings to the operator of the vehicle. Examples of acoustic warnings include bells or beep sounds, and computerized or recorded human language voice instructions to the operator of the vehicle to suggest a remedial course of action to avoid the cause of the sample value meeting the potential hazard condition. In another implementation, signals sent to the alert system 314 include tactile or haptic feedback to the operator of the vehicle. Examples of tactile or haptic feedback to the operator of the vehicle include without limitation shaking or vibrating the steering wheel or control structure of the vehicle, tactile feedback to the pedals, such as a pedal that, if pushed, may avoid the condition that causes the sample value of the Fourier Fan to meet the potential hazard condition, vibrations or haptic feedback to the seat of the driver, etc. In another implementation, signals sent to the alert system 314 include visual alerts displayed to the operator of the vehicle. Examples of visual alerts displayed to the operator of the vehicle include lights or indications appearing on the dashboard, heads-up display, and/or mirrors visible to the operator of the vehicle. In one implementation, the visual alerts to the operator of the vehicle include indications of remedial action that, if taken by the operator of the vehicle, may avoid the cause of the sample value of the Fourier Fan meeting the potential hazard condition. Examples of remedial action include an indication of another vehicle in the vehicle's blind spot, an indication that another vehicle is about to overtake the vehicle, an indication that the vehicle will strike an object in reverse that may not be visible to the operator of the vehicle, etc.