Audio Control System And Related Methods

Bailey; Jonathan ; et al.

U.S. patent application number 16/182055 was filed with the patent office on 2018-11-06 and published on 2019-03-07 for audio control system and related methods. This patent application is currently assigned to iZotope, Inc. The applicant listed for this patent is iZotope, Inc. Invention is credited to Jonathan Bailey, Todd Baker, Brett Bunting, Mark Ethier, Matt Fuerch.

| Application Number | 20190074807 16/182055 |

| Document ID | / |

| Family ID | 63040054 |

| Filed Date | 2018-11-06 |

| United States Patent Application | 20190074807 |

| Kind Code | A1 |

| Bailey; Jonathan ; et al. | March 7, 2019 |

AUDIO CONTROL SYSTEM AND RELATED METHODS

Abstract

Some embodiments of the invention are directed to an audio production system which is more portable, less expensive, faster to set up, and simpler and easier to use than conventional audio production tools. An audio production system implemented in accordance with some embodiments of the invention may therefore be more accessible to the typical user, and easier and more enjoyable to use, than conventional audio production tools.

| Inventors: | Bailey; Jonathan; (Brooklyn, NY) ; Baker; Todd; (Hampstead, NH) ; Bunting; Brett; (Edinburgh, GB) ; Ethier; Mark; (Somerville, MA) ; Fuerch; Matt; (Minneapolis, MN) |

| Applicant: | iZotope, Inc. |

| Assignee: | iZotope, Inc., Cambridge, MA |

| Family ID: | 63040054 |

| Appl. No.: | 16/182055 |

| Filed: | November 6, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| PCT/US2018/015655 | Jan 29, 2018 | |
| 16182055 | | |
| 62454138 | Feb 3, 2017 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H03G 3/3005 20130101; H03G 5/005 20130101; H03G 9/005 20130101; G06F 3/04883 20130101; G06F 3/04847 20130101; G10L 19/00 20130101; G11B 27/34 20130101; H03G 7/00 20130101; H03G 9/00 20130101; H03G 2201/103 20130101; G10H 2210/056 20130101; H03G 3/20 20130101; H03G 5/16 20130101; H03G 7/002 20130101; H04Q 3/64 20130101; G06F 3/167 20130101; H03G 3/301 20130101; H03G 5/165 20130101; H03G 3/3089 20130101; G06F 3/0482 20130101; H03F 2200/03 20130101; G06F 3/165 20130101; H04B 1/034 20130101; H03G 7/007 20130101; H03F 3/183 20130101; H04R 3/04 20130101 |

| International Class: | H03G 3/20 20060101 H03G003/20; H04R 3/04 20060101 H04R003/04; G06F 3/16 20060101 G06F003/16 |

Claims

1. A method for use with an audio system in relation to a multi-track recording of audio, the audio system comprising at least one amplifier, the method comprising acts of: (A) receiving, at the audio system, an indication of a new audio input; (B) in response to receiving the indication, automatically causing, without intervention by a user of the audio system, a new track to be created in the multi-track recording; (C) receiving the audio input; (D) causing the received audio input to be mapped to the new track; (E) determining a gain level associated with the audio input received in the act (C); and (F) automatically, without intervention by the user, adjusting a gain level of the at least one amplifier, so that subsequent reproduction of the audio input by the at least one amplifier falls within a dynamic range delimited by a lower gain level and an upper gain level.

2. The method of claim 1, wherein the indication of the new audio input received in the act (A) comprises the user actuating a record button on the audio system.

3. The method of claim 1, wherein the indication of the new audio input received in the act (A) comprises the user plugging a musical instrument or microphone into an audio input port of the audio system.

4. The method of claim 1, wherein the act (A) comprises receiving an indication of a plurality of audio inputs, the act (B) comprises causing a new track to be created in the multi-track recording for each of the plurality of received audio inputs, the act (C) comprises receiving the plurality of audio inputs, and the act (D) comprises causing each of the plurality of received audio inputs to be mapped to a corresponding new track.

5. The method of claim 4, wherein the act (C) comprises receiving the plurality of audio inputs simultaneously.

6. The method of claim 1, wherein the act (C) comprises receiving the audio input via a component comprising at least one amplifier, and wherein the act (F) comprises automatically, without intervention by the user, analyzing spectral content of the received audio input in each of a plurality of frequency sub-bands, and adjusting spectral content of subsequent output of the at least one amplifier in one or more of the frequency sub-bands.

7. The method of claim 1, wherein the act (B) comprises the audio system issuing an instruction to a device to create the new track in the multi-track recording.

8. The method of claim 7, wherein the device is a mobile device.

9. The method of claim 1, wherein the act (F) comprises causing a digital representation of the received audio input to be stored to memory allocated to the new track.

10. The method of claim 1, comprising an act, performed after the act (A) is started, of causing a waveform representing the received audio input to be rendered by a device.

11. The method of claim 10, wherein the device is a mobile device.

12. The method of claim 1, wherein the new track is a first track in the multi-track recording.

13. The method of claim 1, comprising acts, performed after the act (D), of: receiving, at a first point in time, an instruction from the user to pause a recording of the audio input; receiving, at a second point in time after the first point in time, a second audio input; automatically causing, without intervention by the user, a second new track to be created in the multi-track recording; and causing the second audio input to be mapped to the second new track.

14. The method of claim 1, comprising acts, performed after the act (D), of: receiving, at a first point in time, an instruction from the user to pause a recording of the audio input; receiving, at a second point in time after the first point in time, a second audio input; automatically causing the second audio input to be mapped to the new track.

15. At least one computer-readable storage medium having instructions recorded thereon which, when executed, cause an audio system to perform a method, the audio system comprising at least one amplifier, the method comprising acts, performed by the audio system, of: (A) receiving an indication of a new audio input; (B) in response to receiving the indication, automatically causing, without intervention by a user of the audio system, a new track to be created in a multi-track recording; (C) receiving the audio input; (D) causing the received audio input to be mapped to the new track; (E) determining a gain level associated with the audio input received in the act (C); and (F) automatically, without intervention by the user, adjusting a gain level of the at least one amplifier, so that subsequent reproduction of the audio input by the at least one amplifier falls within a dynamic range delimited by a lower gain level and an upper gain level.

16. An audio system, comprising: at least one amplifier; at least one computer-readable storage medium having instructions recorded thereon; and at least one computer processor, programmed via the instructions to: receive an indication of a new audio input at the audio system; in response to receiving the indication, automatically cause, without intervention by a user of the audio system, a new track to be created in a multi-track recording; receive the audio input; cause the received audio input to be mapped to the new track; determine a gain level associated with the received audio input; and automatically, without intervention by the user, adjust a gain level of the at least one amplifier, so that subsequent reproduction of the audio input by the at least one amplifier falls within a dynamic range delimited by a lower gain level and an upper gain level.

17. The audio system of claim 16, comprising a microphone and an audio input port, wherein the at least one computer processor is programmed via the instructions to receive a plurality of audio inputs, one of the plurality of audio inputs being received via one of the microphone and the audio input port, and another of the plurality of audio inputs being received via the other of the microphone and the audio input port.

18. The audio system of claim 16, wherein the at least one computer processor is programmed via the instructions to determine an average gain level associated with the received audio input during a time interval of predetermined length.

19. The audio system of claim 16, wherein the at least one computer processor is programmed via the instructions to determine a peak gain level associated with the received audio input.

20. The audio system of claim 19, wherein the at least one computer processor is programmed via the instructions to determine the upper gain level of the dynamic range based at least in part on the peak gain level.

21. The audio system of claim 16, comprising a sound emitting device coupled to the at least one amplifier, wherein the at least one computer processor is programmed via the instructions to adjust a gain level of the at least one amplifier and/or the sound emitting device so that subsequent output of the at least one amplifier and the sound emitting device falls within a dynamic range in which response of the at least one amplifier and of the sound emitting device is substantially linear.

22. The audio system of claim 16, comprising a sound emitting device coupled to the at least one amplifier, and wherein the at least one computer processor is programmed via the instructions to adjust a gain level of the at least one amplifier and/or of the sound emitting device so that subsequent output of the at least one amplifier and the sound emitting device includes less than 10% harmonic distortion.

23. The audio system of claim 22, wherein the at least one computer processor is programmed via the instructions to adjust a gain level of the at least one amplifier and/or of the sound emitting device so that subsequent output of the at least one amplifier and the sound emitting device includes less than 5% harmonic distortion.

24. The audio system of claim 23, wherein the at least one computer processor is programmed via the instructions to adjust a gain level of the at least one amplifier and/or of the sound emitting device so that subsequent output of the at least one amplifier and the sound emitting device includes less than 3% harmonic distortion.

25. The audio system of claim 16, wherein the at least one amplifier comprises a plurality of amplifiers, and the at least one computer processor is programmed via the instructions to adjust a gain level of each of the plurality of amplifiers so that subsequent output produced by the plurality of amplifiers is within the dynamic range.

26. The audio system of claim 16, coupled to a mobile device comprising at least one amplifier of a mobile device, wherein the at least one computer processor is programmed via the instructions to adjust a gain level of the at least one amplifier of the mobile device, so that subsequent reproduction of the audio input by the at least one amplifier of the mobile device falls within the dynamic range delimited by the lower gain level and the upper gain level.

27. The audio system of claim 16, wherein the at least one amplifier comprises a plurality of amplifiers, and the at least one computer processor is programmed via the instructions to set a gain level of each of the plurality of amplifiers to be substantially equal.

28. The audio system of claim 16, wherein the at least one computer processor is programmed via the instructions to: receive the audio input from at least one audio source comprising at least one musical instrument; identify the at least one musical instrument from the received audio input; and adjust the gain level of the at least one amplifier in a manner corresponding to the identified musical instrument.

29. The audio system of claim 16, wherein the at least one computer processor is programmed via the instructions to analyze spectral content of the received audio input in each of a plurality of frequency sub-bands, and to adjust the spectral content of subsequent output of the at least one amplifier in one or more of the frequency sub-bands.

30. The audio system of claim 29, wherein the at least one computer processor is programmed via the instructions to equalize a gain level across one or more of the frequency sub-bands.

31. The audio system of claim 29, wherein the at least one computer processor is programmed via the instructions to attenuate a gain level in one or more of the frequency sub-bands.

32. The audio system of claim 29, wherein the at least one computer processor is programmed via the instructions to increase a gain level in one or more of the frequency sub-bands.

Description

RELATED APPLICATIONS

[0001] This application is a continuation of commonly assigned International Application No. PCT/US2018/015655, filed Jan. 29, 2018, entitled "Audio Control System And Related Methods," which claims priority to commonly assigned U.S. Provisional Application Ser. No. 62/454,138, filed Feb. 3, 2017, entitled "Audio Control System And Related Methods." The entirety of each of the applications listed above is incorporated herein by reference.

BACKGROUND INFORMATION

[0002] Audio production tools exist that enable users to produce high-quality audio. For example, some audio production tools include electronic devices and/or computer software applications, and enable users to record one or more audio sources (e.g., vocals and/or speech captured by a microphone, music played with an instrument, etc.), process the audio (e.g., to master, mix, design, and/or otherwise manipulate the audio), and/or control its playback. Audio production tools may be used to produce audio comprising music, speech, sound effects, and/or other sounds.

[0003] Computer-implemented audio production tools often provide a graphical user interface with which users may complete various production tasks on audio source inputs, such as from a microphone or instrument. For example, some tools may receive audio input and generate one or more digital representations of the input, which a user may manipulate, such as to obtain desired audio output through filtering, equalization and/or other operations.

[0004] Conventional audio production tools often also enable a user to "map" audio source inputs to corresponding tracks. In this respect, a "track" is a component of an audio or video recording that is distinct from other components of the recording. For example, the lead vocals for a song may be mapped to one track, the drums for the song may be mapped to another track, the lead guitar may be mapped to yet another track, etc. In some situations (e.g., in live performances), multiple audio inputs may be recorded at the same time and mapped to multiple corresponding tracks, while in other situations (e.g., in recording studios), the various audio inputs collectively comprising a body of audio may be recorded at different times and mapped to corresponding tracks.

BRIEF DESCRIPTION OF DRAWINGS

[0005] Various aspects and embodiments of the invention are described below with reference to the following figures. It should be appreciated that the figures are not necessarily drawn to scale. Items appearing in multiple figures are indicated by the same reference number in all the figures in which they appear.

[0006] FIG. 1A is a block diagram illustrating an audio controller of an audio recording system, according to some non-limiting embodiments.

[0007] FIG. 1B is a block diagram illustrating a mobile device configured to operate as part of an audio recording system, according to some non-limiting embodiments.

[0008] FIG. 2 is a block diagram illustrating control inputs of the audio controller of FIG. 1A, according to some non-limiting embodiments.

[0009] FIG. 3 is a block diagram illustrating an audio recording system and a communication network, according to some non-limiting embodiments.

[0010] FIG. 4A is a block diagram illustrating how multiple sequences associated with an audio input may be generated, according to some non-limiting embodiments.

[0011] FIG. 4B is a schematic diagram illustrating transmissions of data sequences using the communication network of FIG. 3, according to some non-limiting embodiments.

[0012] FIG. 5A is a flowchart illustrating a method for transmitting an audio input to a mobile device, according to some non-limiting embodiments.

[0013] FIG. 5B is a flowchart illustrating a method for receiving an audio input with a mobile device, according to some non-limiting embodiments.

[0014] FIG. 6A is a schematic diagram illustrating reception of a low resolution (LR) sequence with a mobile device, according to some non-limiting embodiments.

[0015] FIG. 6B is a schematic diagram illustrating a mobile device displaying a waveform, according to some non-limiting embodiments.

[0016] FIG. 6C is a schematic diagram illustrating reception of a high resolution (HR) sequence with a mobile device, according to some non-limiting embodiments.

[0017] FIG. 7A is a schematic diagram illustrating an example of a waveform displayed in a record mode, according to some non-limiting embodiments.

[0018] FIG. 7B is a schematic diagram illustrating an example of a waveform displayed in a play mode, according to some non-limiting embodiments.

[0019] FIG. 8A is a schematic diagram illustrating a mobile device configured to receive gain and pan information, according to some non-limiting embodiments.

[0020] FIG. 8B is a table illustrating examples of gain and pan information, according to some non-limiting embodiments.

[0021] FIG. 8C is a block diagram illustrating an example of a stereo sound system, according to some non-limiting embodiments.

[0022] FIG. 8D is a flowchart illustrating a method for adjusting gain and pan, according to some non-limiting embodiments.

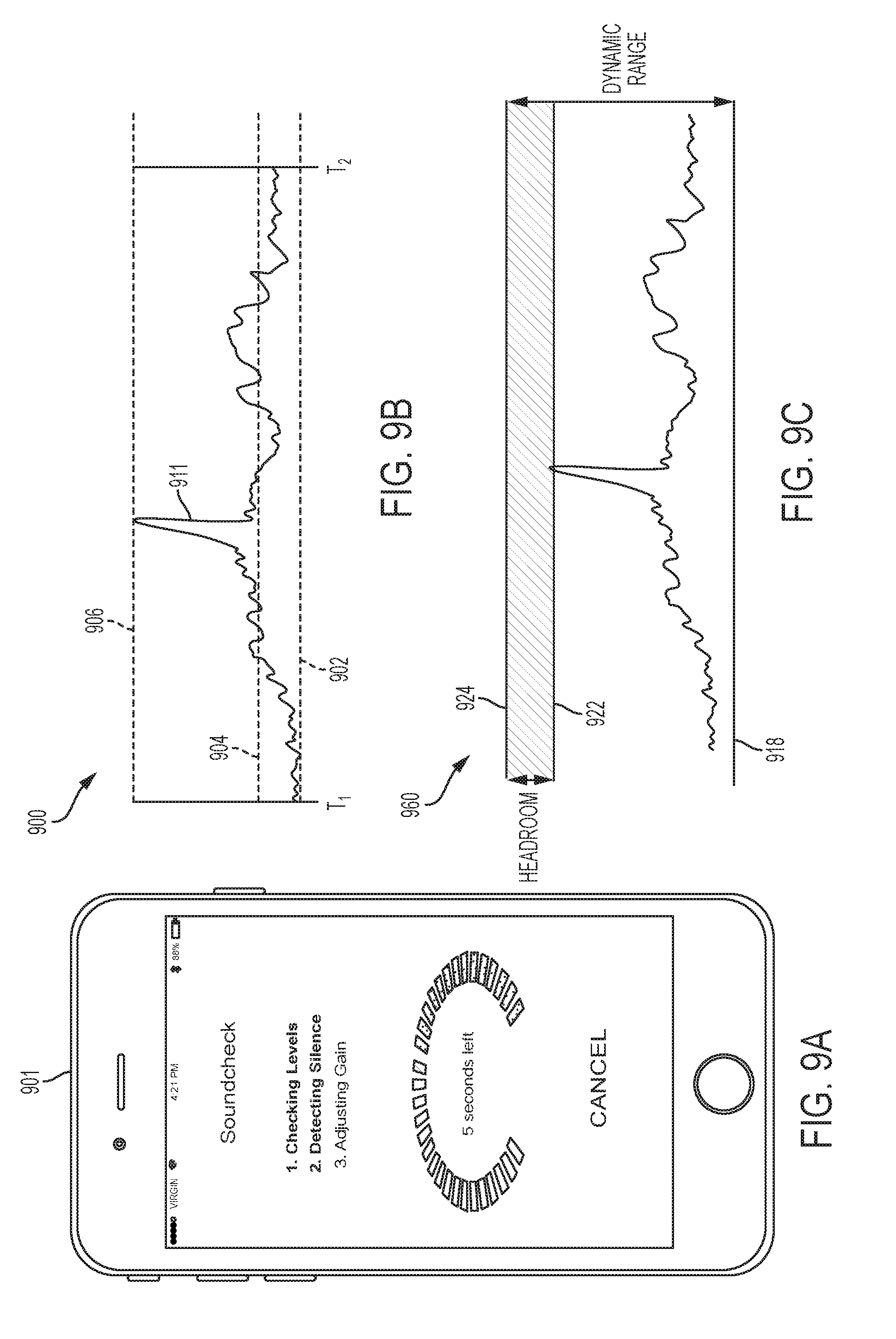

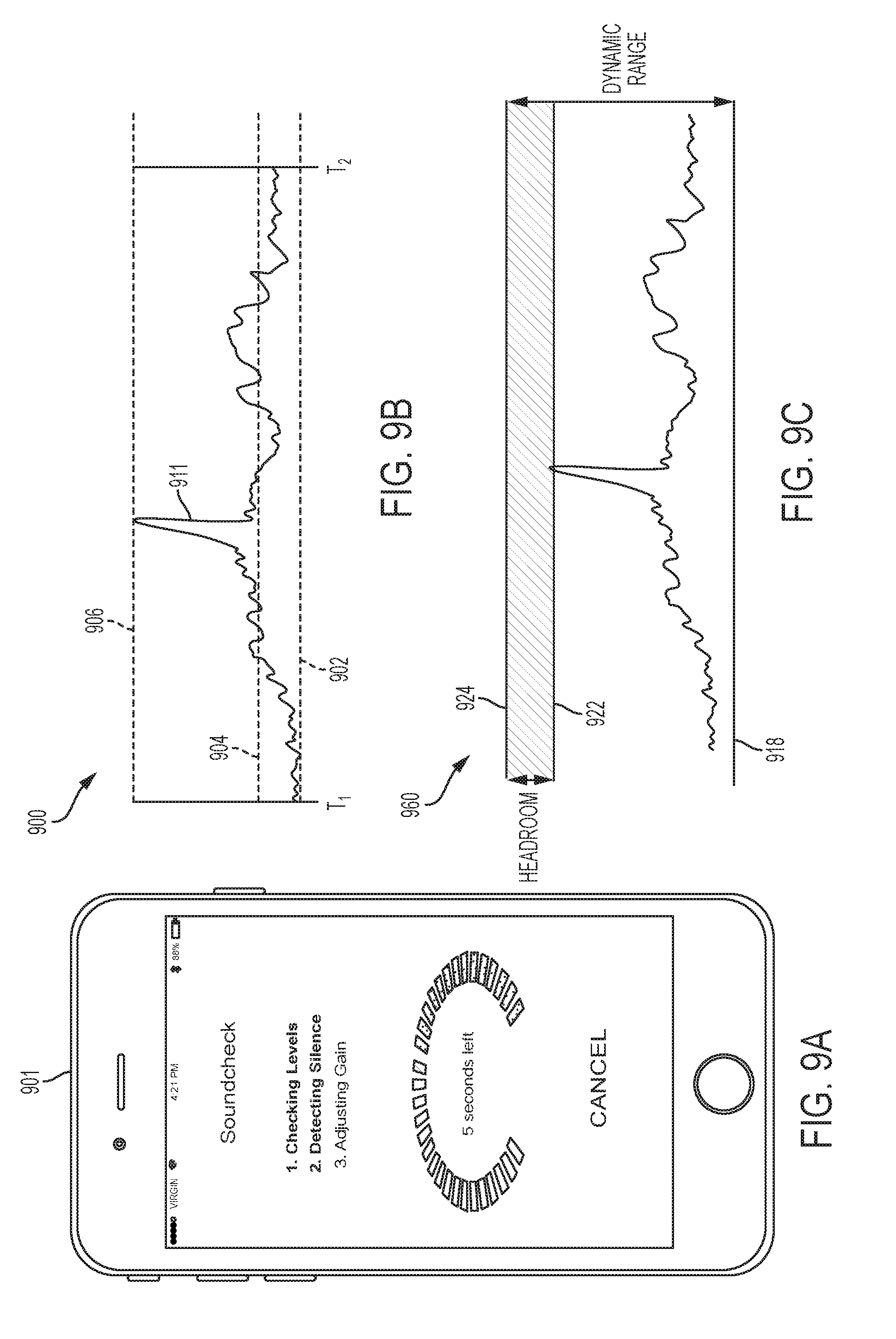

[0023] FIG. 9A is a schematic diagram illustrating a mobile device performing a sound check routine, according to some non-limiting embodiments.

[0024] FIG. 9B is a schematic diagram illustrating an example of an audio input received in a sound check routine, according to some non-limiting embodiments.

[0025] FIG. 9C is a schematic diagram illustrating an example of an audio output obtained from a sound check routine, according to some non-limiting embodiments.

[0026] FIG. 9D is a flowchart illustrating a method for performing a sound check routine, according to some non-limiting embodiments.

[0027] FIG. 10 is a block diagram illustrating an audio recording system having a plurality of amplification stages, according to some non-limiting embodiments.

[0028] FIG. 11A is a block diagram illustrating an audio recording system having a track mapping controller, according to some non-limiting embodiments.

[0029] FIG. 11B is a flowchart illustrating a method for automatically mapping audio inputs to tracks, according to some non-limiting embodiments.

[0030] FIG. 12 is a block diagram illustrating a computer system, according to some non-limiting embodiments.

DETAILED DESCRIPTION

I. Overview

[0031] The Assignee has appreciated that, for a significant number of users, conventional audio production tools suffer from four main deficiencies. First, conventional audio production tools are expensive. In this respect, many audio production tools are designed (and priced) for use by professional audio engineers to produce audio for professional musicians, studios and/or other high-end users. As a result, these tools are financially out of reach of many who may benefit from their features.

[0032] Second, producing audio using conventional audio production tools is time- and labor-intensive, and requires specialized expertise. As one example, mapping an audio recording to a corresponding track typically requires a number of manual steps. For example, a user may be forced to first create the new track using a software program, and then manually pair audio source input to the new track, and then ensure that the audio input is being produced and captured in the manner needed to combine the track with other tracks. This process may then be repeated for each additional track. As a result of the time- and labor-intensive nature of many types of audio production tasks, many potential users are discouraged from using conventional audio production tools.

[0033] Third, conventional audio production equipment is often bulky. Even when the tools used to process audio recordings are computer-implemented, other equipment involved in producing audio (e.g., sound production equipment like amplifiers, sound capture equipment like microphones, sound recording equipment, etc.) is often cumbersome. As a result, many users may find it inconvenient or impractical to use conventional audio production tools in certain (e.g., space-constrained) settings, and/or may be discouraged from producing audio at all if appropriate facilities are unavailable.

[0034] Fourth, functionality provided by conventional audio production tools is often more advanced than the average user needs or wants. As a result, the expense, time and bulkiness associated with sophisticated conventional audio production tools may not be worthwhile for many average users.

[0035] Some embodiments of the invention overcome these and other deficiencies to provide an audio production system that is less expensive, faster to set up, simpler and easier to use, and more portable than conventional audio production tools. An audio production system implemented in accordance with some embodiments of the invention may be more accessible to the typical user, and easier and more enjoyable to use, than conventional audio production tools.

[0036] In accordance with some embodiments of the invention, an audio production system comprises an audio controller that communicates with a mobile device. In some embodiments, the audio controller may have a relatively small form-factor, which makes it portable and easy to use in any of numerous settings. For example, in some embodiments, the audio controller may be small enough to fit in a backpack or a purse. The audio controller may include easy-to-use controls for acquiring, recording and playing back audio. Further, as described in detail below, an audio controller implemented in accordance with some embodiments may enable a user to quickly and easily create multiple tracks, stop and start recordings, discern that audio is being recorded at appropriate levels, and perform other audio production-related tasks.

[0037] In some embodiments, an audio controller and mobile device may communicate wirelessly, enabling various features of the mobile device to be employed to perform audio production tasks seamlessly. For example, the mobile device's graphical user interface may be used to present controls that a user may employ to process audio that is captured by the audio controller. The mobile device's graphical user interface may enable a user to mix, cut, filter, amplify, equalize or play back captured audio. Recorded tracks may, for example, be stored in the memory of the mobile device and be accessed for processing by a user at his/her convenience.

[0038] A graphical user interface of the mobile device may also, or alternatively, be used to provide visual feedback to a user while audio input is being captured by the audio controller, so that the user may gain confidence that audio being captured by the audio controller has any of numerous characteristics. For example, in some embodiments, the graphical user interface of the mobile device may display a waveform and/or other representation which visually represents to the user the gain level of the audio being captured by the audio controller, so that the user may be provided with visual feedback that the audio being captured by the audio controller has a suitable gain level. For example, the visual feedback may indicate that the gain level of the captured audio is within a suitable dynamic range, and/or has any other suitable characteristic(s).

[0039] In this respect, the Assignee has appreciated that the usefulness of such visual feedback is directly correlated to the visual feedback being presented to the user in real time or near real time (and indeed, that visual feedback which is delayed may confuse or frustrate the user). The Assignee has also appreciated, however, that wireless communication is commonly prone to delays and interruptions, particularly when large amounts of data are being transferred. As a result, some embodiments of the invention are directed to techniques for wireless communication between an audio controller and mobile device to enable visual feedback to be presented to the user in real time or near real time.

[0040] Given that a digitized version of captured audio may comprise large amounts of data, which may prove difficult to transmit from the audio controller to the mobile device in real time or near real time, some embodiments of the invention provide techniques for generating information that is useful for rendering a visual representation of captured audio, communicating this information to the mobile device in real time or near real time, and separately producing and later communicating a digitized version of the audio itself. As such, in some embodiments, data that is used to render a visual representation of an audio recording to the user via the mobile device may be decoupled from the digitized version of the audio, and transmitted separately from the digitized version of the audio to the mobile device, so that it may be delivered to the mobile device more quickly than the digitized version of the audio.

[0041] Such a transfer of information may be performed in any of numerous ways. In some embodiments, both the data useful for rendering the visual representation, and the digitized version of the audio recording, may be generated based on the same audio input, as it is captured by the audio controller. For example, in some embodiments, two distinct data sequences may be generated from captured audio. A first sequence, referred to herein as a "low-resolution (LR) sequence", may be generated by sampling a body of audio input at a first sampling rate. The sampling rate may, for example, be chosen so as to provide a sufficient number of data samples so that one or more representations (e.g., waveforms, bar meters, and/or any other suitable representations) may be rendered on the mobile device, but a small enough number of samples so that when each sample is sent to the mobile device, the transmission is unlikely to encounter delays due to latency or lack of bandwidth in the communication network. A second sequence, referred to herein as a "high-resolution (HR) sequence", may, for example, be generated by sampling the body of audio input at a second sampling rate which is greater than the first sampling rate. In some embodiments, the HR sequence may comprise a high-quality digitized version of the captured audio input which is suitable for playback and/or subsequent processing by the user. In some embodiments, the LR and HR sequences may be sent to the mobile device in a manner that enables the LR sequences to be received at the mobile device in real time or near real time, so that the visual representation of the captured audio may be rendered immediately, when the user finds it most useful. 
By contrast, the HR sequences may be received at the mobile device later, and indeed may not arrive at the mobile device until after the user stops recording audio with the audio controller, when the user is most likely to be ready to take on the task of playing back and/or processing the audio. Sending data useful for rendering the representation separately from the digitized version of captured audio may be accomplished in any of numerous ways, such as by assigning transmissions of LR sequences a higher priority than transmissions of HR sequences, by transmitting HR sequences less frequently than LR sequences, and/or using any other suitable technique(s). As a result, some embodiments of the invention may prevent the transmission of a digitized version of captured audio from interfering with the timely transmission of data useful for rendering a representation on the mobile device.
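The LR/HR split described in paragraph [0041] can be illustrated with a minimal Python sketch. It is not the applicant's implementation: the names `make_lr_sequence`, `PriorityTransmitQueue` and `queue_capture`, the decimation-based LR sampling, and the numeric priority values are all assumptions chosen for illustration. The sketch generates both sequences from the same captured samples and uses a priority queue so that queued LR values always leave before queued HR chunks.

```python
import heapq
from itertools import count

# Assumed convention: a lower number is transmitted first.
LR_PRIORITY = 0
HR_PRIORITY = 1


def make_lr_sequence(samples, decimation):
    """Keep every `decimation`-th sample, i.e. sample at a lower rate."""
    return samples[::decimation]


class PriorityTransmitQueue:
    """Orders outgoing packets so LR packets leave before HR packets."""

    def __init__(self):
        self._heap = []
        self._order = count()  # tie-breaker: FIFO order within one priority

    def enqueue(self, priority, kind, payload):
        heapq.heappush(self._heap, (priority, next(self._order), kind, payload))

    def dequeue(self):
        _, _, kind, payload = heapq.heappop(self._heap)
        return kind, payload

    def __len__(self):
        return len(self._heap)


def queue_capture(samples, decimation, hr_chunk, queue):
    """Queue HR chunks and LR values derived from the same captured audio."""
    for start in range(0, len(samples), hr_chunk):
        chunk = samples[start:start + hr_chunk]
        queue.enqueue(HR_PRIORITY, "HR", chunk)
        for value in make_lr_sequence(chunk, decimation):
            queue.enqueue(LR_PRIORITY, "LR", value)
```

Plain decimation is used here only because it matches the text's description of a lower sampling rate; a real system would likely low-pass filter or take per-block peaks before reducing the rate.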

[0042] Some embodiments of the invention enable users to perform certain audio production tasks more easily than conventional audio production tools allow. As one example, some embodiments may enable a user to automatically map a new audio input to a new track. For example, when a new audio input is received, some embodiments of the invention may automatically create a new track for the input, and map the input to the new track. As such, users may avoid the manual, time-intensive process involved in creating a new track using conventional audio production tools.
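As a rough illustration of the automatic mapping described in paragraph [0042], the following Python sketch creates a track the first time audio arrives from a given source and routes later audio from that source to the same track. The class and method names (`MultiTrackRecording`, `on_new_input`, `on_audio`) and the use of a string to identify a source are hypothetical and do not come from the application.

```python
from dataclasses import dataclass, field


@dataclass
class Track:
    index: int
    source: str
    samples: list = field(default_factory=list)


class MultiTrackRecording:
    """Holds tracks and the mapping from audio sources to tracks."""

    def __init__(self):
        self.tracks = []
        self._by_source = {}

    def on_new_input(self, source):
        """Create and map a track for a newly indicated input, with no user step."""
        track = Track(index=len(self.tracks), source=source)
        self.tracks.append(track)
        self._by_source[source] = track
        return track

    def on_audio(self, source, samples):
        """Route captured samples to the track mapped to this source."""
        if source not in self._by_source:
            self.on_new_input(source)
        self._by_source[source].samples.extend(samples)
```

In this sketch the arrival of audio from an unknown source serves as the "indication" of a new input; a record-button press or a plug-in event could call `on_new_input` directly instead.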

[0043] As another example, some embodiments of the invention may provide controls that enable a user to more easily adjust the gain and pan levels of recorded audio than conventional audio production tools allow. (In this respect, the gain level for recorded audio generally characterizes the power level of a signal representing the recorded audio as compared to a predetermined level, and the pan level generally characterizes the amount of a signal representing the recorded audio that is sent to each of multiple (e.g., left and right) channels.) In some embodiments of the invention, the graphical user interface of the mobile device may enable a user to easily specify both the gain level and the pan level for recorded audio using a single control and/or via a single input operation. For example, in some embodiments, the user interface may include a touchscreen configured to receive gain/pan data for each track of a multi-track recording, via a cursor provided for each track. The touchscreen may, in some embodiments, represent the gain and pan levels for each track on an X-Y coordinate system, with the gain level represented on one of the X-axis and the Y-axis, and the pan level represented on the other of the two axes. By enabling a user to move a cursor up/down/left/right within the coordinate system shown on the touchscreen, some embodiments may provide for easy user adjustment of the gain and pan for tracks in a recording.
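The single-control gain/pan idea in paragraph [0043] can be sketched in Python as a mapping from a cursor position to a gain value and a pan value. The choice of axes (X for pan, Y for gain), the decibel range, and the constant-power pan law are assumptions made for this example; the application does not specify them.

```python
import math


def cursor_to_gain_pan(x, y, width, height, min_db=-60.0, max_db=6.0):
    """Map a touch position to (gain_db, pan); pan runs from -1 (left) to +1 (right)."""
    pan = 2.0 * (x / width) - 1.0
    # Screen Y grows downward, so the top edge maps to the highest gain.
    gain_db = max_db - (y / height) * (max_db - min_db)
    return gain_db, pan


def channel_gains(gain_db, pan):
    """Return (left, right) linear multipliers using a constant-power pan law."""
    linear = 10.0 ** (gain_db / 20.0)
    angle = (pan + 1.0) * math.pi / 4.0  # 0 (hard left) .. pi/2 (hard right)
    return linear * math.cos(angle), linear * math.sin(angle)
```

For instance, a cursor at the horizontal center of the screen yields `pan == 0.0`, and `channel_gains` then sends equal signal to the left and right channels.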

[0044] As another example, some embodiments of the invention may provide controls which enable a user to more easily perform a "sound check" for recorded audio than conventional audio production tools allow. In this respect, sound checks are conventionally performed in audio recording or live performance settings to make sure that the sound is being produced at a sufficiently high gain level to enable it to be heard clearly, but not at such a high gain level that captured sound becomes distorted. Using conventional audio production tools, performing a sound check is a cumbersome process. For example, a user may play a portion of a music piece, check the gain level of the captured audio, adjust the gain level if it is not at the desired level, then play a portion of the music piece again, then re-check whether the gain level is appropriate, and so on until the right level is achieved. This is not only unnecessarily time-consuming, but can also be complicated and error-prone if some portions of the music piece are louder than others. Accordingly, some embodiments of the invention enable a user to perform a sound check routine automatically, such as in response to audio input being initially captured. For example, in some embodiments, when the user first begins creating sound, one or more components of the audio recording system may automatically detect one or more characteristics of the sound, and automatically adjust the gain level of captured audio so that it falls within a predetermined acceptable dynamic range.
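One way to picture the automatic sound check of paragraph [0044] is the Python sketch below. It measures the peak level of an initial stretch of audio and computes a single gain change that brings the peak inside a target range. The function names, the use of peak level as the detected characteristic, and the default bounds of -18 dB and -6 dB relative to full scale are illustrative assumptions, not values taken from the application.

```python
import math


def peak_dbfs(samples):
    """Peak level in dB relative to full scale (amplitude 1.0)."""
    peak = max(abs(s) for s in samples)
    return -math.inf if peak == 0 else 20.0 * math.log10(peak)


def sound_check_gain_db(samples, lower_db=-18.0, upper_db=-6.0):
    """Gain change (dB) that moves the measured peak into [lower_db, upper_db]."""
    level = peak_dbfs(samples)
    if level == -math.inf:
        return 0.0  # silence: nothing to measure, leave the gain unchanged
    if level > upper_db:
        return upper_db - level  # too hot: attenuate
    if level < lower_db:
        return lower_db - level  # too quiet: boost
    return 0.0


def apply_gain(samples, gain_db):
    """Scale samples by a gain expressed in dB."""
    factor = 10.0 ** (gain_db / 20.0)
    return [s * factor for s in samples]
```

Because the adjustment is computed from the loudest sample observed, it avoids the repeated play, check and adjust loop described above, provided the measured passage is representative of the loudest part of the piece.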

[0045] It should be appreciated that the foregoing is a non-limiting overview of only certain aspects of the invention. Some embodiments of the invention are described in further detail in the sections that follow.

II. Audio Production System

[0046] FIGS. 1A-1B depict components of a representative audio recording system, according to some embodiments of the invention. In particular, FIG. 1A illustrates a representative audio controller 102 and FIG. 1B illustrates a representative mobile device 152. In the example shown, representative audio controller 102 comprises a plurality of audio input ports 104.sub.1, 104.sub.2 . . . 104.sub.N, a processor 106, a memory 112, control inputs 108, amplifier 109, audio output port 110, transceiver 114, visual output unit 116, and power unit 118. The audio input ports 104.sub.1, 104.sub.2 . . . 104.sub.N may, for example, be connected to different audio sources, such as different instruments or microphones. Of course, some embodiments of the invention may not provide multiple audio input ports, as any suitable number may be provided. In some embodiments, easy access to one or more of the audio input ports may be provided by placing the audio input ports on the external housing of the audio controller.

[0047] In some embodiments, the audio controller may be equipped with a microphone, which may be connected to an audio input port. The microphone may, for example, reside within the audio controller's housing. Additionally, or alternatively, external microphones may be used. The audio input ports 104.sub.1, 104.sub.2 . . . 104.sub.N may receive audio inputs from one or more audio sources. An audio input may, for example, be time-delimited, such that it consists of sound captured during a particular time period. The time period may be fixed or variable, and be of any suitable length. Of course, an audio input need not be time-delimited, and may be delimited in any suitable fashion. For example, a user may cause the generation of a time-delimited audio input by creating audio during a certain time interval, such as between the time when the user actuates a "record" button and the time when the user actuates a "stop" button. The audio input(s) may be provided to processor 106. Processor 106 may be implemented using a microprocessor, a microcontroller, an application specific integrated circuit (ASIC), a field programmable gate array (FPGA), or any other suitable type of digital and/or analog circuitry. Processor 106 may be used to sample the audio inputs to digitize them. In addition, processor 106 may be configured to process the audio inputs in any suitable manner (e.g., to filter, equalize, amplify, or attenuate). For example, processor 106 may execute instructions to analyze the spectral content of audio input and determine whether such spectral content has a suitable distribution. Such instructions may, for example, produce a filter function aimed at filtering out certain spectral components of the audio input (e.g., providing a high-pass filter response, a low-pass filter response, a band-pass filter response and/or a stop-band filter response). 
Such instructions may also, or alternatively, balance the spectral content of the audio input by attenuating and/or amplifying certain spectral components with respect to others. For example, such instructions may be used to produce spectral equalization. Such instructions may also, or alternatively, analyze one or more levels of the audio input and determine the suitability of the level(s) for the desired recording/playback environment, and/or amplify or attenuate the level(s) if desired.
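
By way of a non-limiting sketch, one of the filter responses mentioned above (a high-pass response) could be realized with a first-order recursive filter such as the one below. The function name `high_pass`, the 100 Hz cutoff, and the 8 kHz sample rate are hypothetical values chosen for the example; the specification does not state which filter design processor 106 uses.

```python
import math

def high_pass(samples, sample_rate_hz, cutoff_hz):
    """Apply a first-order (one-pole) high-pass filter to a list of floats."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)   # time constant for the cutoff
    dt = 1.0 / sample_rate_hz                # sample period
    alpha = rc / (rc + dt)
    out = []
    prev_in = 0.0
    prev_out = 0.0
    for x in samples:
        # Pass changes in the input, let steady (low-frequency) content decay.
        y = alpha * (prev_out + x - prev_in)
        out.append(y)
        prev_in, prev_out = x, y
    return out

# A constant (DC) offset is a spectral component a high-pass filter removes.
dc_offset = [1.0] * 1000
filtered = high_pass(dc_offset, sample_rate_hz=8000, cutoff_hz=100.0)
```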

[0048] Processor 106 may be coupled to memory 112. Memory 112 may have any suitable size, and may be implemented using any suitable type of memory technology, including random access memory (RAM), read only memory (ROM), Flash memory, electrically erasable programmable read only memory (EEPROM), etc. Memory 112 may be configured to store audio inputs received through the audio input ports, and/or to store modified versions of the audio inputs. In some embodiments, as will be described further below, a portion of memory 112 may be used to buffer data to be transmitted to mobile device 152.

[0049] Processor 106 may be coupled to audio output port 110. In some embodiments, processor 106 may be coupled to audio output port 110 through amplifier 109. Audio output port 110 may be connected to a sound emitting device, such as a speaker set or a headphone set. The speaker set may be integrated with audio controller 102. For example, the speaker may be mounted in the housing of audio controller 102. Alternatively, or additionally, the speaker may be external. Processor 106 may comprise circuitry for driving the audio emitting device connected to audio output port 110. For example, processor 106 may comprise a digital-to-analog converter. Amplifier 109 may be used to adjust the level of the audio output as desired.

[0050] Processor 106 may be coupled to control inputs 108. Some representative control inputs 108 are shown in FIG. 2. Control inputs 108 may comprise any suitable user interface, including physical buttons, touch screen controls, and/or any other suitable control(s). It should be appreciated that control inputs 108 need not be manually actuated. For example, in some embodiments, control inputs 108 may be actuated via voice recognition.

[0051] In the example shown in FIG. 2, control inputs 108 comprise a "record" button 202. When the record button is actuated by a user, the audio controller 102 may begin to record an audio input. The record button may be configured to record any suitable number of audio inputs. In the example shown, control inputs 108 also comprise "play" button 204. When the play button is actuated by a user, the audio controller 102 may play a recorded audio input using audio output port 110, which may be connected to a speaker set or a headphone set. In the example shown, control inputs 108 may comprise a "stop" button 206. When the stop button is actuated by a user, the audio controller 102 may stop recording if recording is underway or stop playing if playing is underway. In the example shown, control inputs 108 comprise a "create project" button 208. When the create project button is actuated by a user, processor 106 may allocate a portion of memory 112 for a new project. The portion of the memory 112 may be populated with one or more audio inputs that are considered by the user as part of the same project.

[0052] In the example shown, control inputs 108 comprise a "sound check" button 210. When the sound check button is actuated by a user, the audio controller 102 may initiate a sound check routine. Sound check routines will be described further below. In the example shown, control inputs 108 comprise an "audio output level" button 212. The audio output level button may be used to increase or decrease the level of the audio produced using audio output port 110. In the example shown, control inputs 108 comprise a "mute track" button 214. When the mute track button is actuated by a user, the audio controller 102 may mute a desired track, while playing of other tracks may continue. In this way, a user may toggle tracks on/off as desired. In the example shown, control inputs 108 comprise a "delete track" button 216. When the delete track button is actuated by a user, the audio controller 102 may erase a track from memory 112. In the example shown, control inputs 108 may further comprise other control inputs 218 to provide any desired functionality, and/or may not include all of the control inputs 108 depicted in FIG. 2. Although a specific combination of control inputs is described above with reference to FIG. 2, any suitable combination of control inputs 108 may be provided.

[0053] Referring back to FIG. 1A, audio controller 102 may further comprise visual output unit 116. Visual output unit 116 may be configured to provide visual outputs in any suitable way. For example, visual output unit 116 may comprise an array of light emitting elements, such as light emitting diodes (LEDs), a display, such as a liquid crystal display (LCD), and/or any other suitable visual output component(s). In some embodiments, visual output unit 116 may light up in response to actuation of a button of control inputs 108, and/or in response to any other suitable form(s) of input. For example, visual output unit 116 may light up when a track is being recorded, or when the audio controller detects audio above a certain threshold. In some embodiments, visual output unit 116 may change lighting color in response to certain input being received. For example, a light-emitting element may be associated with a track, and may display one color when the track is being recorded and another color when the recording is paused. The visual output unit 116 may be used to provide any of numerous different types of visual feedback to the user. Of course, visual output unit 116 need not rely upon light or color to convey such information.

[0054] Audio controller 102 may further comprise transceiver (TX/RX) 114. Transceiver 114 may be a wireless transceiver in some embodiments, and may be configured to transmit and/or receive data to/from a mobile device, such as mobile device 152. Transceiver 114 may be configured to transmit/receive data using any suitable wireless communication protocol, whether now known or later developed, including but not limited to Wi-Fi, Bluetooth, ANT, UWB, ZigBee, LTE, GPRS, UMTS, EDGE, HSPA+, WIMAX and Wireless USB. Transceiver 114 may comprise one or more antennas, such as a strip antenna or a patch antenna, and circuitry for modulating and demodulating signals. Audio controller 102 may further comprise a power unit 118. The power unit 118 may power some or all of the components of audio controller 102, and may comprise one or more batteries.

[0055] FIG. 1B illustrates mobile device 152, according to some non-limiting embodiments. Mobile device 152 may comprise any suitable device(s), whether now known or later developed, including but not limited to a smartphone, a tablet, a smart watch or other wearable device, a laptop, a gaming device, a desktop computer, etc. In the example shown, mobile device 152 comprises transceiver (TX/RX) 164, processor 156, memory 162, audio input port 154, amplifier 159, audio output port 160, control inputs 158 and display 166. However, it should be appreciated that mobile device 152 may include any suitable collection of components, which may or may not include all of the components shown. Transceiver 164 may be configured to support any of the wireless protocols described in connection with transceiver 114, and/or other protocols. In some embodiments, transceiver 164 may receive signals, from transceiver 114, that are representative of audio inputs. The received signals may be processed in any suitable way using processor 156, and may be stored in memory 162. Control inputs 158 may comprise any suitable combinations of the inputs described in connection with FIG. 2. The inputs may be actuated using a touch screen display. Audio input port 154 may be connected to an audio source, such as a microphone or an instrument. The microphone may be integrated with the mobile device, and/or may be external. Audio output port 160 may be connected to a sound emitting device, such as a speaker set or a headphone set. The speaker set may be embedded in the mobile device. Audio output port 160 may be used to play audio recordings obtained through audio input ports 104.sub.1, 104.sub.2 . . . 104.sub.N or audio input port 154. In some embodiments, amplifier 159 may be used to set the audio output to a desired level. Display 166 may be used to display waveforms representative of recorded audio inputs.

[0056] Audio controller 102 and mobile device 152 may communicate via their respective transceivers. In the preferred embodiment, audio controller 102 and mobile device 152 are configured to communicate wirelessly, but it should be appreciated that a wired connection may be used, in addition to or as an alternative to a wireless connection.

[0057] In the example shown in FIG. 3, audio controller 102 and mobile device 152 communicate via communication network(s) 300. The communication network(s) 300 may employ any suitable communications infrastructure and/or protocol(s). For example, communication network 300 may comprise a Wi-Fi network, Bluetooth network, the Internet, any other suitable network(s), or any suitable combination thereof.

III. Mitigating Communication Delays to Provide a Visual Representation of Recorded Audio

[0058] The Assignee has appreciated that the performance of communication network(s) 300 may be affected by the distance between transceivers 114 and 164, any noise or interference present in the environment in which communication network(s) 300 operates, and/or other factors. For example, in some circumstances, communication network 300 may not provide sufficient bandwidth for all communication needs, imposing an upper limit on the rate at which data can be transferred between audio controller 102 and mobile device 152. Accordingly, one aspect of the present invention relates to the manner in which data is communicated between audio controller 102 and mobile device 152.

[0059] In accordance with some embodiments of the invention, a plurality of data sequences may be communicated between audio controller 102 and mobile device 152. For example, in some embodiments audio controller 102 may transmit, for a body of captured audio input, a low-resolution (LR) sequence and a high-resolution (HR) sequence representing the body of captured audio input to mobile device 152. While both the LR and HR sequence may be representative of the body of audio input captured through audio input ports 104.sub.1, 104.sub.2 . . . 104.sub.N, the way in which the sequences are generated and transmitted to mobile device 152 may differ. FIG. 4A is a block diagram illustrating how the sequences may be generated, according to some non-limiting embodiments of the invention.

[0060] In the example shown in FIG. 4A, the LR sequence is generated by sampling a body of audio input using sample generator 406 at a first sampling rate. Sample generator 406 may be implemented using processor 106 (FIG. 1A). The first sampling rate may be at any suitable frequency (e.g., between 10 Hz and 1 KHz, between 10 Hz and 100 Hz, between 10 Hz and 50 Hz, between 10 Hz and 30 Hz, between 10 Hz and 20 Hz, or between 20 Hz and 30 Hz). The LR sequence may be transmitted to mobile device 152 using transceiver 114. In some embodiments, the LR sequence may include data which is used to render a visual representation of captured audio to a user of the mobile device, and so the sampling rate may be chosen so the visual representation may be rendered in real time or near real time. As used herein, the term "near real time" indicates a delay of 50 ms or less, 30 ms or less, 20 ms or less, 10 ms or less, or 5 ms or less.

[0061] By contrast, an HR sequence may be generated by sampling the body of audio input with sample generator 402, which may also be implemented using processor 106 (FIG. 1A). The sample generator may, for example, sample the body of audio input at a second sampling rate which is greater than the first sampling rate. The second sampling rate may be configurable, and selected to provide a high-quality digitized version of the audio input. For example, the second sampling rate may be between 1 KHz and 100 KHz, between 1 KHz and 50 KHz, between 1 KHz and 20 KHz, between 10 KHz and 50 KHz, between 10 KHz and 30 KHz, between 10 KHz and 20 KHz, or within any suitable range within such ranges.
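
The relationship between the two sequences can be sketched as follows. The Python example below derives a low-rate sequence from high-rate samples by keeping the peak magnitude of each block of samples. The function name `make_lr_sequence` and the peak-per-block reduction are assumptions for illustration; the specification states only that the LR sequence is generated by sampling at a first rate that is lower than the second rate.

```python
def make_lr_sequence(hr_samples, hr_rate_hz, lr_rate_hz):
    """Reduce high-rate samples to one peak magnitude per LR sample period."""
    # Number of HR samples spanned by one LR sample (at least one).
    block = max(1, hr_rate_hz // lr_rate_hz)
    return [
        max(abs(s) for s in hr_samples[i:i + block])
        for i in range(0, len(hr_samples), block)
    ]

# Eight samples at 8 Hz reduced to a 2 Hz sequence (blocks of four samples).
hr = [0.1, -0.5, 0.2, 0.3, -0.9, 0.0, 0.4, 0.4]
lr = make_lr_sequence(hr, hr_rate_hz=8, lr_rate_hz=2)
```

With the example rates from the text, a 20 Hz LR sequence derived from 20 KHz audio would carry one value per 1,000 HR samples, which suggests why the LR data is far cheaper to transmit in near real time.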

[0062] The body of audio input that is sampled to produce the LR and the HR sequences may be delimited in any suitable way. For example, it may be time-delimited (e.g., representing an amount of audio captured by the audio controller over an amount of time), data-delimited (e.g., representing captured audio comprising an amount of data), and/or delimited in any other suitable fashion.

[0063] In some embodiments, the HR sequence may be temporarily stored prior to transmission in a buffer 404, which may be implemented using memory 112, a virtual data buffer, and/or any other suitable storage mechanism(s). In this respect, the Assignee has appreciated that due to the rate at which audio input is sampled to produce an HR sequence, the amount of bandwidth needed to transmit the data in the HR sequence may exceed the available bandwidth of communication network(s) 300. By temporarily storing HR sequence data in buffer 404 prior to transmission, some embodiments of the invention may throttle transmissions across communication network(s) 300, so that sufficient bandwidth is available to transmit LR sequence data on a timely basis. That is, through the use of buffer 404, HR sequences may be segmented into bursts for transmission. In some embodiments, first-in first-out (FIFO) schemes may be used, in which data is output from buffer 404 in the order in which it is received. However, it should be appreciated that other schemes may also, or alternatively, be used.

[0064] In some embodiments, HR sequence data may be held in buffer 404 until it is determined that the buffer stores a predetermined amount of data (e.g., 1 KB, 16 KB, 32 KB, 128 KB, 512 KB, 1 MB, 16 MB, 32 MB, 128 MB, 512 MB or any suitable value between such values), or until the amount of memory allocated to the buffer is completely populated. The amount of memory allocated to buffer 404 may be fixed or variable. If variable, the size may be varied to accomplish any of numerous objectives.
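
A minimal sketch of such a buffer, under stated assumptions, is shown below. The class name `BurstBuffer`, its methods, and the byte-count threshold are hypothetical; the sketch releases a burst in FIFO order once the stored data reaches the threshold, which is only one of the triggering conditions contemplated herein.

```python
from collections import deque

class BurstBuffer:
    """FIFO buffer that releases its contents as one burst at a size threshold."""

    def __init__(self, threshold_bytes):
        self.threshold_bytes = threshold_bytes
        self._chunks = deque()   # chunks are kept in arrival order
        self._size = 0

    def push(self, chunk):
        """Queue a chunk of HR data; return a burst if the threshold is reached."""
        self._chunks.append(chunk)
        self._size += len(chunk)
        if self._size >= self.threshold_bytes:
            return self.flush()
        return None

    def flush(self):
        """Return all buffered data in FIFO order and empty the buffer."""
        burst = b"".join(self._chunks)
        self._chunks.clear()
        self._size = 0
        return burst

buf = BurstBuffer(threshold_bytes=8)
first = buf.push(b"abc")    # below threshold: nothing to send yet
second = buf.push(b"def")   # still below threshold
third = buf.push(b"gh")     # threshold reached: a burst is released
```

Calling `flush()` directly could model the case in which remaining data is sent when recording stops.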

[0065] Of course, the invention is not limited to transmitting HR sequence data when the buffer stores a predetermined amount of data. For example, in some embodiments, HR sequence data may be transmitted when it is determined that communication network(s) 300 have enough bandwidth to support transmission of HR sequence data. In some embodiments, HR sequence data may be transmitted when a "stop recording" command is received at the audio controller. Any suitable technique(s) may be used, as the invention is not limited in this respect.

[0066] FIG. 4B depicts the transmission of the LR and HR sequence data via communication network(s) 300. In particular, exemplary LR sequences 420 and HR sequences 410 are shown. In the example depicted, multiple discrete LR sequences are transmitted serially during the time period depicted, and thus are depicted as a continuous transmission 420 in FIG. 4B for simplicity. Of course, it should be appreciated that transmissions of LR sequence data may not be performed serially, and that transmissions may be separated by any suitable time interval(s). It should also be appreciated that although FIG. 4B depicts LR sequences being transmitted from the time when recording begins (or shortly thereafter) to the time when recording stops (or shortly thereafter), the invention is not limited to being implemented in this manner, as transmission may start and end at any suitable point(s) in time. Transmission of LR sequences may be performed using any suitable technique(s), including but not limited to ZeroMQ.

[0067] In the example shown in FIG. 4B, transmission of HR sequences 410 is performed in multiple bursts, designated 412.sub.1, 412.sub.2 . . . 412.sub.N. Some or all of the bursts may, for example, be transmitted after recording of audio by the audio controller ends.

[0068] FIG. 5A depicts a representative method 500 for transmitting audio input to a mobile device, according to some embodiments of the invention. Method 500 begins at 502. In act 504, a body of audio input is received using an audio controller. The audio controller may receive the body of audio input, for example, through one or more audio input ports.

[0069] Method 500 then proceeds to act 506, wherein the audio input is processed, in any suitable way. For example, the audio input may be filtered, equalized, distorted, etc. Then, in act 508, one or more LR sequences are created based on the audio input. Each LR sequence may be generated by sampling the audio input(s) at a first sampling rate. In some embodiments, the LR sequence(s) may be compressed, using any suitable compression technique(s) and compression ratio(s) (e.g., 2:1, 4:1, 16:1, 32:1, or within any range between such values). For example, a free lossless audio codec (FLAC) format for compressing the LR sequence(s) may be used. Each LR sequence is then transmitted to a mobile device in act 510. In some embodiments, such transmission is wireless.

[0070] In act 512, one or more HR sequences are created by sampling the audio input(s) at a second sampling rate which is greater than the first sampling rate. As with the LR sequence(s), each HR sequence may be compressed, using any suitable compression technique (e.g., FLAC) and/or compression ratio(s). In act 514, the HR sequences are loaded into a buffer.

[0071] In act 516, the amount of data in the buffer is determined, and the method then proceeds to act 518, wherein a determination is made whether the amount of data stored in the buffer exceeds a predefined amount of data. If it is determined in the act 518 that the amount of data stored in the buffer does not exceed a predefined amount of data, then method 500 returns to act 516 and proceeds as described above.

[0072] However, if it is determined in act 518 that the amount of data stored in the buffer exceeds a predefined amount of data, then method 500 proceeds to act 520, wherein the data comprising one or more HR sequences is transmitted to the mobile device.

[0073] HR sequence data may be transmitted in any suitable fashion. For example, as noted above, in some embodiments HR sequence data may be transmitted in bursts. That is, HR sequence data may be partitioned into multiple segments, and each segment may be transmitted to the mobile device at a different time.

[0074] The partitioning of HR sequence data may be accomplished in any of numerous ways. As one example, a burst may be transmitted when the buffer stores a predefined amount of data. As another example, a burst may be transmitted after an amount of time passes after HR sequence data was first loaded to the buffer (e.g., less than 1 ms, less than 10 ms, less than 100 ms, less than 1 s, or less than 10 s after HR sequence data is first loaded to the buffer).

[0075] The size of HR sequence data bursts may be fixed or variable. If variable, the size may be chosen depending on any suitable factor(s), such as a comparison between the rate at which HR sequence data is loaded to the buffer and the rate at which HR sequence data is transmitted.

[0076] The bursts may, for example, be transmitted one at a time. That is, a first burst may be transmitted during a first time interval, and a second burst may be transmitted during a second time interval different from the first time interval. The first and second time intervals may be separated by any suitable amount of time or may be contiguous. Of course, the invention is not limited to such an implementation, as bursts may be transmitted simultaneously, or during overlapping time intervals.

[0077] In some embodiments, processor 106 may create each burst to include a payload and one or more fields describing the content of the burst. The payload may include data which represents a body of captured audio input. The field(s) may, for example, indicate that the burst includes HR sequence data, the placement of the burst among other HR sequence data, and/or other information. The field(s) may, for example, be appended to the payload (e.g., as a header).
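
For illustration only, a burst carrying a payload and descriptive fields might be laid out as in the Python sketch below. The header layout (a one-byte type, a four-byte placement index, and a four-byte payload length), the constants `TYPE_LR` and `TYPE_HR`, and the function names are all hypothetical and are not taken from the specification.

```python
import struct

# Hypothetical header: type (1 byte), placement index (4 bytes),
# payload length (4 bytes), in network byte order with no padding.
HEADER = struct.Struct("!BII")
TYPE_LR, TYPE_HR = 0, 1

def pack_burst(kind, index, payload):
    """Prepend a header describing the burst to its payload."""
    return HEADER.pack(kind, index, len(payload)) + payload

def unpack_burst(packet):
    """Split a packet back into (kind, index, payload)."""
    kind, index, length = HEADER.unpack_from(packet)
    payload = packet[HEADER.size:HEADER.size + length]
    return kind, index, payload

packet = pack_burst(TYPE_HR, 3, b"\x01\x02")
kind, index, payload = unpack_burst(packet)
```

The placement index in this sketch plays the role of the field indicating the placement of the burst among other HR sequence data.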

[0078] Act 520 may, in some embodiments, include deleting transmitted data from the buffer.

[0079] Method 500 then proceeds to act 522, wherein a determination is made whether a stop recording command has been received. If it is determined that a stop recording command has not been received, then method 500 returns to act 504, and proceeds to process additional audio input as described above. If, however, it is determined that a stop recording command has been received, then method 500 completes.

[0080] It should be appreciated that although representative process 500 includes generating an LR sequence and an HR sequence from the same body of audio input, the invention is not limited to such an implementation. Each LR sequence and HR sequence may represent any suitable portion(s) of captured audio input.

[0081] It should also be appreciated that any of numerous variations on method 500 may be employed. For example, some variations may not include all of the acts described above, some may include acts not described above, and some may involve acts being performed in a different sequence than that which is described above. It should further be appreciated that in some embodiments, certain of the acts described above may be performed in parallel, rather than serially as described above. For example, acts 508 and 510 may be performed to create and transmit one or more LR sequences from a body of audio input as acts 512-520 are performed to create and transmit one or more HR sequences from the same body of audio input.

[0082] FIG. 5B depicts a representative method 550, which a mobile device may perform to receive audio input(s). Method 550 begins at 552. In act 554, the mobile device receives data transmitted by an audio controller via one or more communication networks. In the act 556, a determination is made whether the received data comprises LR sequence data or HR sequence data. This determination may be made in any of numerous ways. For example, the received data may include a header or field(s) comprising information indicating what type of data it represents.

[0083] If it is determined in the act 556 that the data received comprises LR sequence data, process 550 proceeds to act 558, wherein the LR sequence data is processed by the mobile device, such as to render a representation of captured audio input on display 166. The representation may, for example, provide visual feedback on one or more characteristics of the audio input to the user of the mobile device.

[0084] If it is determined in the act 556 that the data received in the act 554 comprises HR sequence data, then process 550 proceeds to act 560, wherein the HR sequence data is stored in a memory of the mobile device.

[0085] At the completion of either of act 558 or 560, process 550 proceeds to act 562, wherein a determination is made whether recording of audio input by the audio controller has stopped. This determination may be made in any of numerous ways. For example, the audio controller may provide to the mobile device an explicit indication that recording has ceased.

[0086] If it is determined in the act 562 that recording has not stopped, then process 550 returns to act 554, and repeats as described above. If it is determined in the act 562 that recording has stopped, then process 550 completes, as indicated at 564.
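
The receive-side flow of acts 554 through 564 can be sketched as a simple dispatch loop. In the Python example below, each received item is modeled as a dictionary with a `"type"` field, and an explicit `"STOP"` item stands in for the indication that recording has ceased; this packet shape, the function name `receive_loop`, and the `render` and `store` callbacks are assumptions for illustration.

```python
def receive_loop(packets, render, store):
    """Route LR data to the display and HR data to storage until told to stop."""
    for packet in packets:                 # act 554: receive data
        if packet["type"] == "STOP":       # act 562: recording has stopped
            break
        if packet["type"] == "LR":         # act 556 -> act 558
            render(packet["data"])
        elif packet["type"] == "HR":       # act 556 -> act 560
            store(packet["data"])

rendered, stored = [], []
incoming = [
    {"type": "LR", "data": [0.2, 0.4]},
    {"type": "HR", "data": b"\x00\x01"},
    {"type": "LR", "data": [0.3]},
    {"type": "STOP"},
    {"type": "HR", "data": b"ignored"},
]
receive_loop(incoming, rendered.append, stored.append)
```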

[0087] A representation of captured audio input produced by a mobile device based upon LR sequence data may take any of numerous forms. As but one example, a representation may comprise a waveform. Such a waveform may, for example, indicate the gain level, and/or any other suitable characteristic(s), of the captured audio input. By viewing a rendered representation, the user may determine whether captured audio has any of numerous desired characteristics, and thus that audio is captured and recorded in the manner desired and expected.

[0088] FIG. 6A is a schematic diagram of a mobile device during reception of LR sequence data. In the example shown, LR sequence data 420 is received using transceiver 164 and processed using processor 156. In some embodiments, processor 156 may generate a representation of audio input based upon LR sequence data 420 for rendering on display 166. For example, processor 156 may execute one or more instructions for mapping samples included in the LR sequence data to corresponding locations on the display of the mobile device. The locations may, for example, correspond to the gain level reflected in different LR sequences. For example, a gain level for a LR sequence may be reflected as a location along an axis included in a coordinate system shown on the display (e.g., a vertical axis), such that the higher the gain level, the higher the location on the axis. As LR sequences representing consecutive portions of the audio input are processed, the result is a waveform representation of the captured audio input's gain level.
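
One way such a mapping might be implemented is sketched below in Python. The function name `levels_to_points`, the normalization of levels to the range 0.0 to 1.0, and the convention that pixel row 0 is the top of the display are assumptions for the example. The sketch also spreads the samples across the full display width, which is likewise an illustrative choice.

```python
def levels_to_points(levels, width_px, height_px):
    """Map levels in [0.0, 1.0] to (x, y) pixel positions on a display."""
    points = []
    count = len(levels)
    for i, level in enumerate(levels):
        # Spread the samples evenly across the available width.
        x = 0 if count == 1 else round(i * (width_px - 1) / (count - 1))
        clamped = min(max(level, 0.0), 1.0)
        # Higher levels appear higher on the display (smaller row number).
        y = round((1.0 - clamped) * (height_px - 1))
        points.append((x, y))
    return points

points = levels_to_points([0.0, 0.5, 1.0], width_px=101, height_px=51)
```

Connecting consecutive points would yield a waveform of the kind described above.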

[0089] FIG. 6B illustrates an example of a waveform. In particular, waveform 670 is displayed on display 666 of mobile device 652. (In the example shown, display 666 serves as display 166, and mobile device 652 serves as mobile device 152.) Waveform 670 provides a real time (or near real time) visual representation of the gain level of captured audio input. Of course, it should be appreciated that more than one waveform may be rendered on the display of the mobile device, with each waveform representing a different characteristic of captured audio, each waveform representing different audio inputs (e.g., different tracks of a recording), and/or any other suitable information.

[0090] It should also be appreciated that the invention is not limited to employing one or more waveforms to represent characteristics of captured audio, as any suitable representation(s) may be used. As but one example, a meter may be used to represent the gain level (and/or any other suitable characteristic(s)) of captured audio. The invention is not limited to using any particular type(s) of representation.

[0091] As noted above, HR sequence data comprising a digitized version of captured audio may be received by a mobile device, such as during the same time period as LR sequence data is received. In some embodiments, as HR sequence data is received, it may be stored in a memory of the mobile device, to accommodate subsequent playback and/or processing. FIG. 6C is a schematic diagram of a mobile device during reception of HR sequence data 412.sub.1-412.sub.N. In some embodiments, if HR sequence data is received in a different order than the order in which it was transmitted, processor 156 may re-order the sequences so as to recompose the audio input. For example, processor 156 may execute a sorting routine to sort the received data. This may be performed in any of numerous ways, such as by using a value (e.g., provided in a header) indicating a sequence's placement among other sequences. The recomposed audio input may be stored in memory 162.
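
The re-ordering step can be sketched briefly. In the Python example below, each received burst is modeled as an `(index, payload)` pair, where the index is the placement value carried in the header; this pair representation and the function name `recompose` are assumptions for illustration.

```python
def recompose(bursts):
    """Sort bursts by placement index and join their payloads in order."""
    ordered = sorted(bursts, key=lambda burst: burst[0])
    return b"".join(payload for _, payload in ordered)

# Bursts arriving out of order are restored to transmission order.
received = [(2, b"c"), (0, b"a"), (1, b"b")]
audio = recompose(received)
```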

[0092] In some embodiments, the mobile device may play back captured audio once it is received from the audio controller in its entirety. For example, mobile device 152 (FIG. 1B) may reproduce received audio using audio output port 160, such as in response to user commands.

[0093] In some embodiments, the received audio may be processed by a user, in any of numerous ways, such as to produce a desired acoustic effect. For example, the user may perform filtering, equalization, amplification, and/or noise compensation on received audio. A software application installed on the mobile device may provide functionality for processing received audio, and a user interface for accessing the functionality. The user interface may, for example, include a waveform panel for displaying waveforms representing captured audio.

[0094] FIGS. 7A-7B illustrate schematically a waveform panel 700 in a "record mode" and in a "play mode", respectively. In the record mode, the waveform panel may be configured to display a waveform corresponding to captured audio, as the audio input is being recorded. In the example shown in FIG. 7A, a waveform 704 is displayed. Progress bar 706 indicates the time elapsed since the beginning of the recording. In some embodiments the software application installed on the mobile device may automatically scale the waveform along the horizontal axis, so as to display the entirety of the body of captured audio in the waveform panel.

[0095] FIG. 7B illustrates the waveform panel 700 in the play mode. In the illustrated example, waveform 714 represents a previously recorded audio input. As the waveform is displayed, the mobile device may simultaneously play the previously recorded audio input using audio output port 160. Progress bar 716 indicates the time elapsed since the beginning of the audio input. Pause button 722 may be used to pause the play session. Record button 720 may be used to switch to the record mode.

IV. User Controls

[0096] A. Gain and Pan Adjustment

[0097] According to another aspect of the present invention, the software application may enable a user to set the gain and pan for captured audio, such as for each track in a multi-track recording. As noted above, the gain level for captured audio generally characterizes the power level of a signal representing the audio as compared to a predetermined level, and the pan level for captured audio generally characterizes the amount of a signal representing the audio that is sent to each of multiple (e.g., left and right) channels. By adjusting the gain and the pan for each track in a multi-track recording, a user may acoustically create the illusion that the sound captured in each track was produced from a different physical location relative to the listener. For example, a user may create the illusion that a guitar in one track is being played close to the listener and on the listener's right hand side. To do so, the user may increase the gain level (thereby indicating to the listener that the guitar is close by) and set the pan to the listener's right hand side. The user may also create the illusion that a bass guitar in another track is being played farther away from the listener and on the listener's left hand side. To do so, the user may decrease the gain (thereby indicating to the listener that the bass guitar is farther away than the guitar) and set the pan to the listener's left hand side.

[0098] In some embodiments, gain and pan settings for individual tracks may be set using a touch panel on a graphical user interface of a mobile device. In some embodiments, the touch panel may represent gain and pan levels for each track on an X-Y coordinate system, with the gain level represented on one of the X-axis and the Y-axis, and the pan level represented on the other of the X-axis and the Y-axis. By moving a cursor up and down and left and right within the coordinate system shown, the user may adjust the gain and the pan for a track. FIG. 8A illustrates an example. Mobile device 852, which may serve as mobile device 152, may be configured to render touch panel 830. In some embodiments, the touch panel 830 may render an X-Y coordinate system. The touch panel may display one or more gain/pan cursors, which in the non-limiting example shown in FIG. 8A are labeled "1", "2", "3", "4", "5", "6", "7", and "8". In the example shown, the gain/pan cursors are displayed as circles. However, it should be appreciated that any other suitable shape may be used for the gain/pan cursors. Each gain/pan cursor may be associated with a track, and may represent a point within the gain/pan coordinate system. For each recorded track, a user may enter a desired gain/pan combination by moving the corresponding gain/pan cursor within the touch panel. For example, the user may touch, with a finger, an area of the touch panel that includes a gain/pan cursor, and move the gain/pan cursor up/down and/or left/right. In the example shown, moving a gain/pan cursor up/down along the Y-axis causes an increase/decrease in the gain of the corresponding track, while moving a gain/pan cursor left/right along the X-axis causes the pan of the track to be shifted toward the left/right channel. It should be appreciated that gain and pan may be adjusted by moving a gain/pan cursor in other ways.
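
As a non-limiting sketch of how a cursor position might be translated into a gain/pan combination, the following assumes screen coordinates in which x grows to the right and y grows downward, a normalized gain in the range 0 to 1, and a pan in the range -1 (fully left) to +1 (fully right). These ranges, the coordinate convention, and the name `cursor_to_gain_pan` are assumptions made for illustration.

```python
def cursor_to_gain_pan(x, y, panel_width, panel_height):
    """Map a cursor position on the touch panel to (gain, pan).

    Assumes the origin is at the top-left corner of the panel, so moving
    the cursor up (smaller y) increases gain, and moving it right
    (larger x) shifts the pan toward the right channel.
    """
    # Clamp the touch position to the panel bounds.
    x = min(max(x, 0.0), panel_width)
    y = min(max(y, 0.0), panel_height)
    pan = 2.0 * x / panel_width - 1.0   # -1.0 = left, +1.0 = right
    gain = 1.0 - y / panel_height       # 0.0 = bottom, 1.0 = top
    return gain, pan
```

For instance, a cursor at the center of a 200-by-100 panel would yield a gain of 0.5 and a centered pan of 0.0 under these assumptions.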

[0099] While FIG. 8A illustrates a method for entering a gain/pan combination using a touch panel, the application is not limited in this respect and other methods may be used. For example, a gain/pan combination may be selected by entering values in a table, by vocally instructing a software application, or by using a control device (e.g., a mouse or keyboard). A table stored in memory 162 may keep track of the gain/pan values selected by the user. FIG. 8B illustrates an example of such a table. For each track 1 . . . 8, the table includes a first value representing the selected gain and a second value representing the selected pan. The values illustrated in the table are expressed in arbitrary units, but any suitable unit may be used. The selected values may be used to control the manner in which the recorded tracks are played. The gain value may be used to set the gain of an amplifier, and the pan value may be used to set the balance between the audio channels. In some embodiments, a stereo sound system may be used. An example of a stereo sound system is illustrated in FIG. 8C. In the example shown, the stereo sound system 800 comprises a control unit 820, amplifiers 822 and 824, and sound emitting devices 826 and 828 (labeled "L" and "R" for left and right). However, it should be appreciated that different configurations may be used. For example, more than two channels may be used. The control unit 820 may be implemented using processor 106 or processor 156. Amplifiers 822 and 824 may be implemented using amplifier 109 or amplifier 159. The sound emitting devices 826 and 828 may be connected to the amplifiers via audio output port 110 or audio output port 160. For each track, based on the selected gain/pan values, control unit 820 may set the gain of the amplifiers 822 and 824. For example, the sum (or the product) of the gains of amplifiers 822 and 824 may be based on the gain value, and the ratio (or the difference) of the gains of amplifiers 822 and 824 may be based on the pan value. However, other methods may be used.
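
One way the control unit might derive the two amplifier gains is sketched below, assuming a simple linear pan law in which the sum of the two gains equals the selected gain value and their ratio depends only on the pan value. The pan range of -1 to +1, the linear law, and the name `amplifier_gains` are assumptions for this sketch; other laws (e.g., constant-power panning) could equally be used.

```python
def amplifier_gains(gain, pan):
    """Split a track's gain between left and right amplifiers.

    With pan in [-1.0, 1.0]:
      left + right == gain                    (sum based on the gain value)
      right / left == (1 + pan) / (1 - pan)   (ratio based on the pan value)
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must lie in [-1.0, 1.0]")
    left = gain * (1.0 - pan) / 2.0
    right = gain * (1.0 + pan) / 2.0
    return left, right


# Hypothetical per-track table of (gain, pan) values in arbitrary units.
track_table = {1: (0.8, 0.25), 2: (0.5, -1.0)}
per_track_gains = {t: amplifier_gains(g, p) for t, (g, p) in track_table.items()}
```

With a pan of -1.0, the right amplifier gain is zero and the track is heard only from the left sound emitting device.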

[0100] FIG. 8D is a flowchart illustrating a representative method 801 for adjusting gain and pan for a track, according to some non-limiting embodiments. Method 801 begins at act 802. At act 804, a first value indicative of a user's preference with respect to a track's volume is received. In some embodiments, the first value may be entered by a user by moving a single cursor representing both gain and pan "up" and "down" within a coordinate system depicted in a touch panel. At act 806, a second value indicative of a user's preference with respect to the track's pan may be received. In some embodiments, the second value may be entered by a user by moving the cursor "left" and "right" within the coordinate system depicted by the touch panel. At act 808, based on the received gain and pan values, the gain of a first amplifier may be controlled. The first amplifier may be connected to a first sound-emitting device. At act 810, based on the received gain and pan values, the gain of a second amplifier may be controlled. The second amplifier may be connected to a second sound-emitting device. The first and second sound emitting devices may collectively form a stereo sound system. At act 812, it is determined whether additional gain/pan values are received. If no additional values are received, method 801 may end at act 814. If additional values are received, method 801 may repeat for another track.

[0101] In some embodiments, the first value and the second value may be entered by a user using a single input device, such as the user's finger, a stylus or any other suitable implement.

[0102] B. Sound Check

[0103] Sound checks are often performed to make sure that sound is produced clearly and at the right volume. Manual sound checks are performed by sound engineers or musicians by playing a portion of an audio piece and by determining whether the volume of the audio is acceptable. If it is not, the sound engineer or musician may readjust the level of an amplifier and re-play a portion of an audio piece. If the volume is still not acceptable, another iteration may be performed. This method may be cumbersome, as multiple iterations may be needed before the desired condition is reached. The Assignee has appreciated that a sound check may be performed automatically using an audio recording system of the type described herein. In automatic sound checks, gain may be adjusted using electronic circuits as the audio input is being acquired. In this way, the sound check may be performed automatically without having to interrupt the musician's performance. According to one aspect of the present invention, automatic sound checks may be performed using audio controller 102 and/or mobile device 152. In some embodiments, a sound check may be performed during a dedicated audio recording session, which will be referred to herein as a "sound check routine", which typically takes place before an actual recording session. However, the application is not limited in this respect and sound checks may be performed during an actual recording session. In this way, if there are variations in the recording environment (e.g., if a crowd fills the recording venue), or if there are variations in the characteristics of the audio being recorded (e.g., a single music piece includes multiple music genres), the recording parameters (e.g., gain) may be adjusted dynamically.

[0104] FIG. 9A is a schematic view illustrating a mobile device 901 during a sound check routine. Mobile device 901 may serve as mobile device 152. Mobile device 901 may initiate a sound check routine in response to receiving a user request. In some embodiments, such a request may be entered by a user by actuating a sound check button, such as sound check button 210. In some embodiments, sound check button 210 may be disposed on the top side of the housing of audio controller 102. Positioning sound check button 210 on the top side of the housing may place it within easy reach of the user.

[0105] In some embodiments, the sound check routine may comprise a "checking levels" phase, in which levels of the audio input are detected; a "detecting silence" phase, in which a silence level is detected; and an "adjusting gain" phase, in which a gain is adjusted. Such a routine may take a few seconds (e.g., less than 30 seconds, less than 20 seconds or less than 10 seconds). FIG. 9B illustrates schematically an audio input 900, which may be used during a sound check routine. Audio input 900 may be received through an audio controller or through an audio input port of the mobile device. In the checking levels phase, one or more levels of the audio input may be detected by a processor. For example, the average level 904 of audio input 900 may be detected between a time t.sub.1 and a time t.sub.2. The average level may be computed in any suitable way, including an arithmetic mean, a geometric mean, a root mean square, etc. In some embodiments, a moving average may be computed. Accordingly, the average level may be computed during periodic intervals. The durations of the time intervals may be between 1 second and 100 seconds, between 1 second and 50 seconds, between 1 second and 20 seconds, between 1 second and 10 seconds, between 5 seconds and 10 seconds, between 10 seconds and 20 seconds, or within any range within such ranges. In this way, if the audio input varies its characteristics over time, the average level may be adjusted accordingly.
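
The level computations mentioned above may be illustrated, without limitation, by the following sketch. It assumes the audio input is available as a list of linear-amplitude samples; the function names and the use of consecutive, non-overlapping windows for the periodic computation are assumptions made for illustration.

```python
import math


def mean_abs_level(samples):
    """Arithmetic mean of the sample magnitudes."""
    return sum(abs(s) for s in samples) / len(samples)


def rms_level(samples):
    """Root mean square of the samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def windowed_rms(samples, window):
    """RMS level over consecutive, non-overlapping windows of `window` samples.

    A trailing partial window is ignored.
    """
    return [
        rms_level(samples[i:i + window])
        for i in range(0, len(samples) - window + 1, window)
    ]
```

For a window duration of, e.g., 5 seconds at a hypothetical sampling rate of 48,000 samples per second, `window` would be 240,000 samples.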

[0106] In some embodiments, a peak level 906, corresponding to the intensity of a peak 911, may be detected. In the detecting silence phase, a silence level 902 may be detected. During this phase, the mobile device may prompt the user to stop playing. In this way, the mobile device may detect the level received when no audio is played. The silence level may be limited by the background noise. In the adjusting gain phase, the gain of an amplifier, such as amplifier 159, may be adjusted based on the levels detected in the checking levels and the detecting silence phases. The gain may be adjusted so as to allow the audio output to vary within the dynamic range of the audio output system.

[0107] In some circumstances, the dynamic range of the audio system may be limited by the amplifier, while in other circumstances, it may be limited by the sound emitting device. FIG. 9C illustrates output audio 960 obtained by amplifying audio input 900. The dynamic range of the audio output system may be bound by a lower level 918 and by an upper level 924. In some embodiments, the dynamic range may be defined as the region in which the response of the audio output system, which includes amplifier 109 and a sound-emitting device, is linear. In other embodiments, the dynamic range may be defined as the region in which harmonic distortion is less than 10%, less than 5%, less than 3%, less than 2%, or less than 1%. The gain of the amplifier may be selected such that output audio 960 is within the dynamic range of the audio output system. In some embodiments, the silence level of the audio input 900 may be set to correspond to the lower level 918 of the dynamic range.

[0108] In some embodiments, a "headroom" region may be provided within the dynamic range. The headroom region may provide sufficient room for sound having a peak level to be played without creating distortion. The headroom region may be confined between upper level 924 and a headroom level 922. In some embodiments, headroom level 922 may be set at the level of the maximum of the audio input (e.g., level 906). The headroom may occupy any suitable portion of the dynamic range, such as 30% or less, 25% or less, 20% or less, 15% or less, 10% or less, or 5% or less. Choosing the size of the headroom may be based on trade-off considerations between providing enough room for peaks and limiting noise.
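
A minimal sketch of one way to select the amplifier gain so that the detected peak lands at the headroom level is given below. It assumes that all levels are expressed on a common linear amplitude scale and that the headroom is specified as a fraction of the dynamic range; the function names and the default fraction of 0.25 are illustrative assumptions, not values prescribed by the description.

```python
def headroom_level(lower, upper, headroom_fraction):
    """Level separating the headroom region from the rest of the dynamic range."""
    if not 0.0 <= headroom_fraction < 1.0:
        raise ValueError("headroom_fraction must lie in [0.0, 1.0)")
    return upper - headroom_fraction * (upper - lower)


def select_gain(peak_level, lower, upper, headroom_fraction=0.25):
    """Gain that maps the detected input peak onto the headroom level."""
    if peak_level <= 0.0:
        raise ValueError("peak_level must be positive")
    return headroom_level(lower, upper, headroom_fraction) / peak_level
```

A larger headroom fraction leaves more room for unexpected peaks but yields a lower gain, which reflects the trade-off between providing enough room for peaks and limiting noise.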

[0109] The examples of FIGS. 9A-9C illustrate sound check routines performed using a mobile device. Alternatively, a sound check routine may be performed using audio controller 102. In this embodiment, processor 106 may detect the levels of an audio input received through an audio input port, and may adjust the gain of amplifier 109. The detection and the gain adjustment may be performed in the manner described in connection with FIGS. 9B-9C.