Self-learning Spatial Recognition System

Idrisov; Renat

U.S. patent application number 16/182299 was filed with the patent office on 2019-03-07 for self-learning spatial recognition system. The applicant listed for this patent is Satori Worldwide, LLC. Invention is credited to Renat Idrisov.

| Application Number | 20190073788 16/182299 |

| Document ID | / |

| Family ID | 64604905 |

| Filed Date | 2019-03-07 |

View All Diagrams

| United States Patent Application | 20190073788 |

| Kind Code | A1 |

| Idrisov; Renat | March 7, 2019 |

SELF-LEARNING SPATIAL RECOGNITION SYSTEM

Abstract

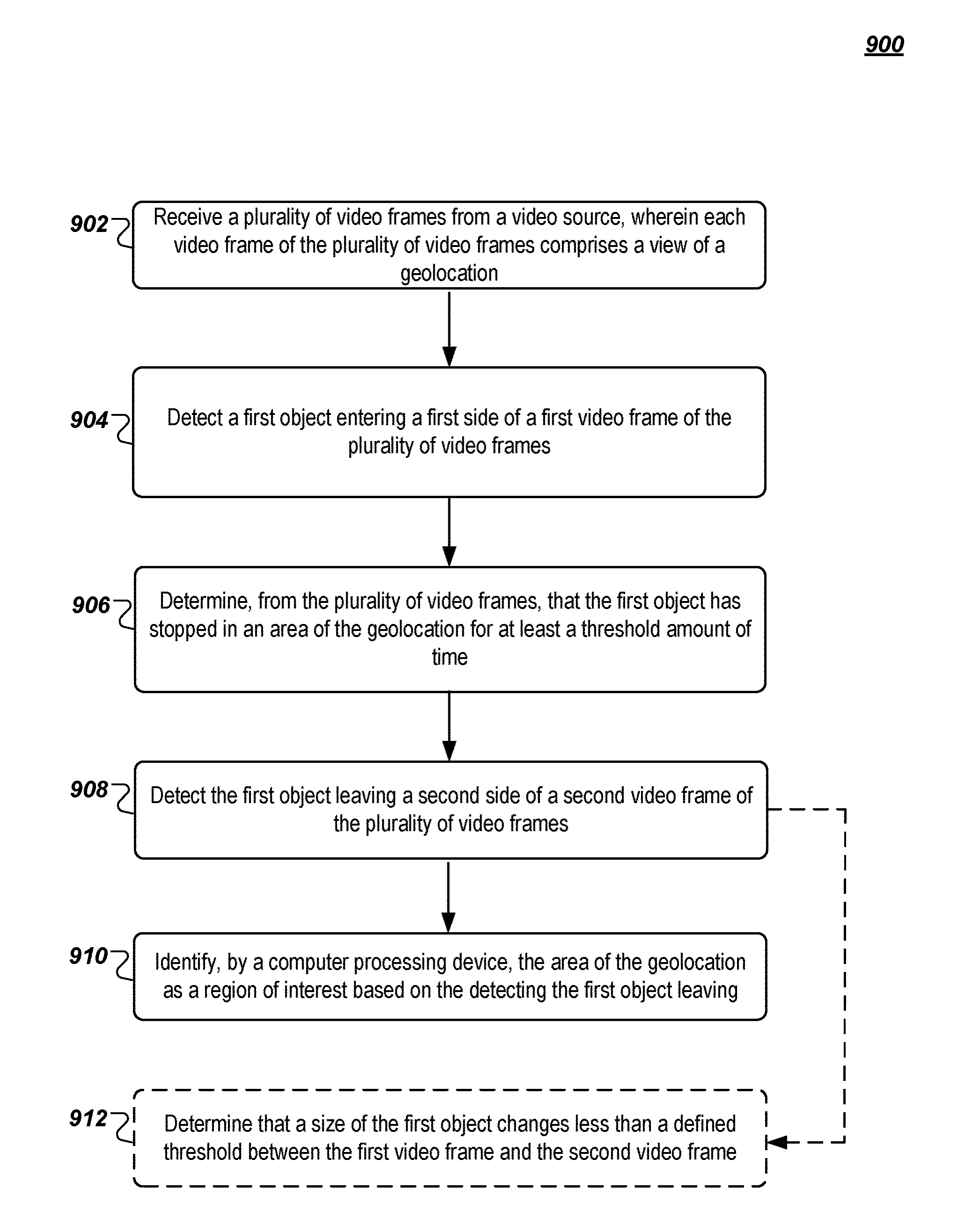

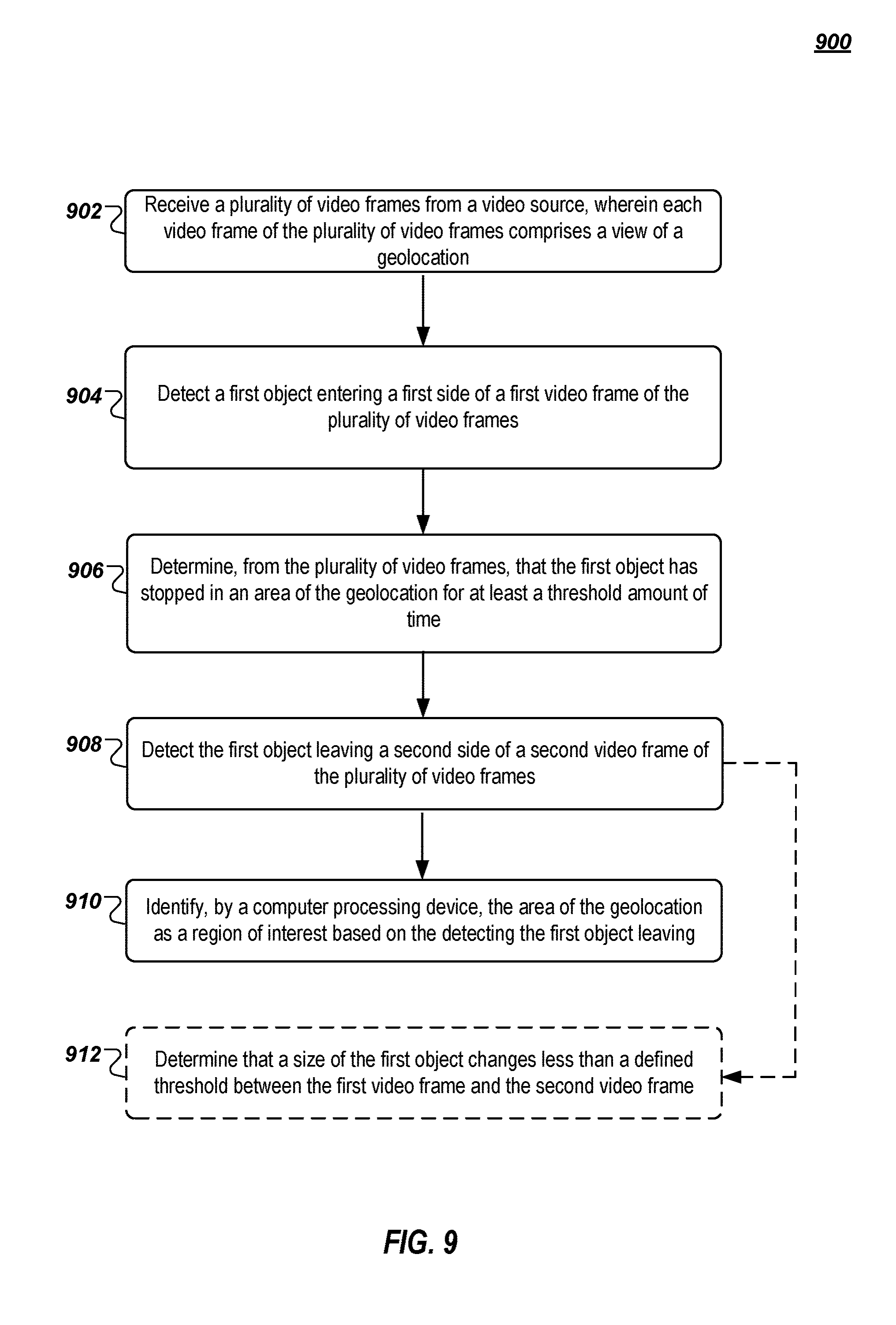

A method includes detecting a first object entering a first video frame of a plurality of video frames of a view of a geolocation and determining, from the plurality of video frames, that the first object has stopped in an area of the geolocation for at least a threshold amount of time. The method also includes detecting the first object leaving a second video frame of the plurality of video frames, and identifying, by a computer processing device, the area of the geolocation as a region of interest based on the detecting the first object leaving.

| Inventors: | Idrisov; Renat; (Berlin, DE) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 64604905 | ||||||||||

| Appl. No.: | 16/182299 | ||||||||||

| Filed: | November 6, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 15982877 | May 17, 2018 | 10157476 | ||

| 16182299 | ||||

| 62520328 | Jun 15, 2017 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 9/00369 20130101; H04L 67/1061 20130101; G06K 9/00778 20130101; G06K 9/00785 20130101; H04L 51/20 20130101; H04L 67/18 20130101; G06T 2207/30232 20130101; G06T 7/70 20170101; G06K 9/00771 20130101; G06T 2207/10016 20130101; G06K 9/3233 20130101; G06T 2207/30204 20130101 |

| International Class: | G06T 7/70 20060101 G06T007/70; G06K 9/00 20060101 G06K009/00; G06K 9/32 20060101 G06K009/32 |

Claims

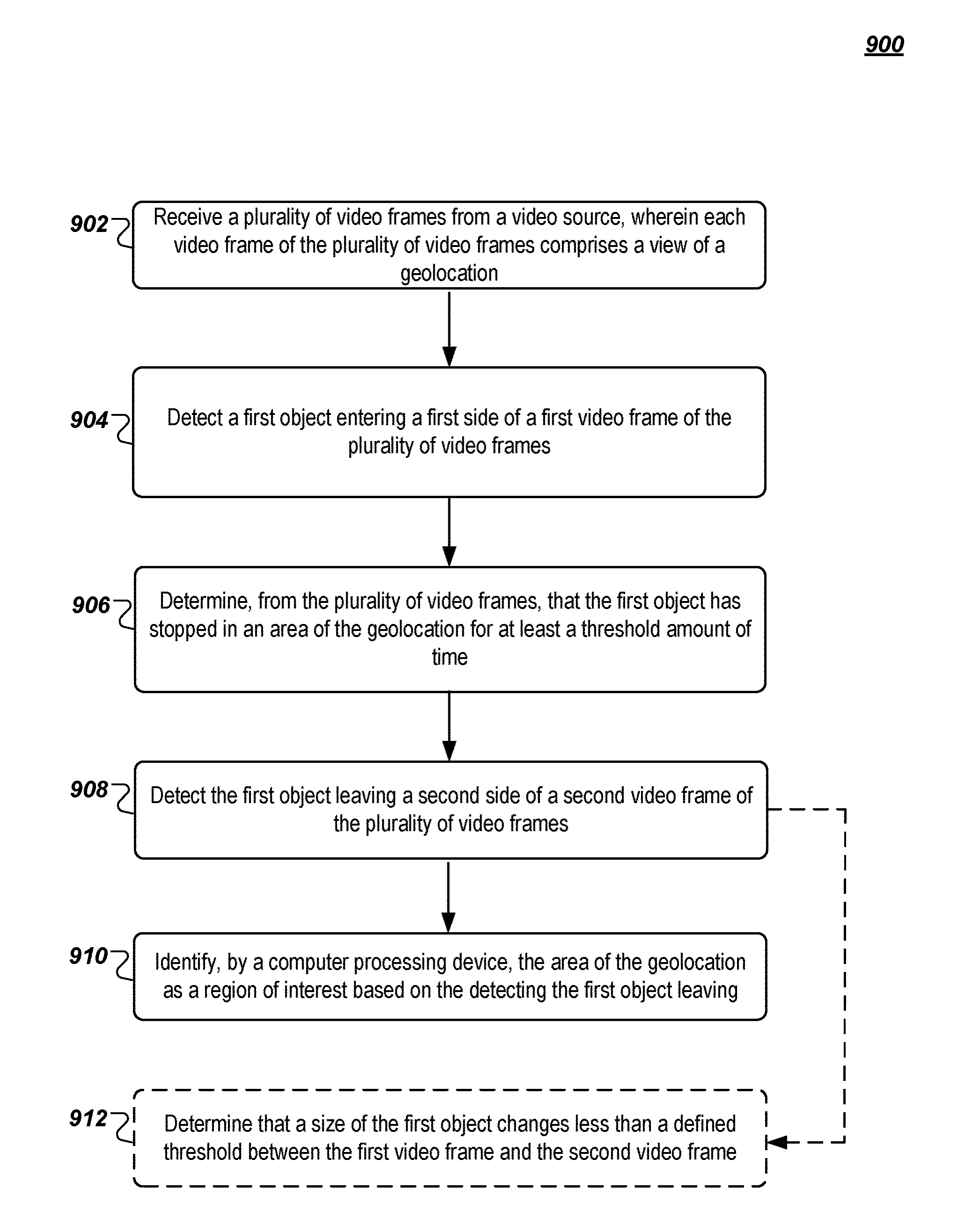

1. A method, comprising: detecting a first object entering a first video frame of a plurality of video frames of a view of a geolocation; detecting the first object leaving a second video frame of the plurality of video frames; and identifying, by a computer processing device, an area of the geolocation as a region of interest based on the detecting the first object leaving.

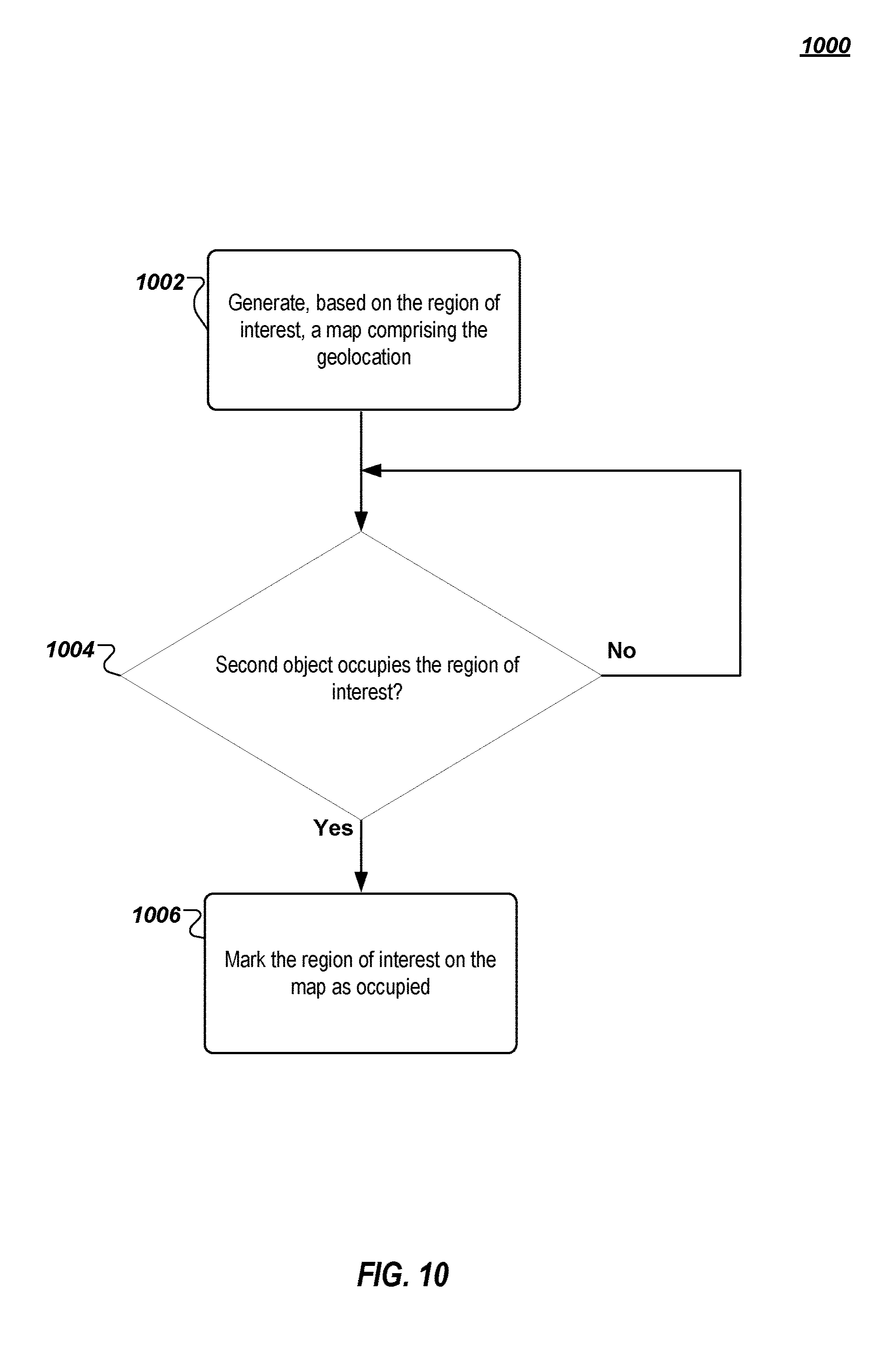

2. The method of claim 1, comprising: generating, based on the region of interest, a map comprising the geolocation.

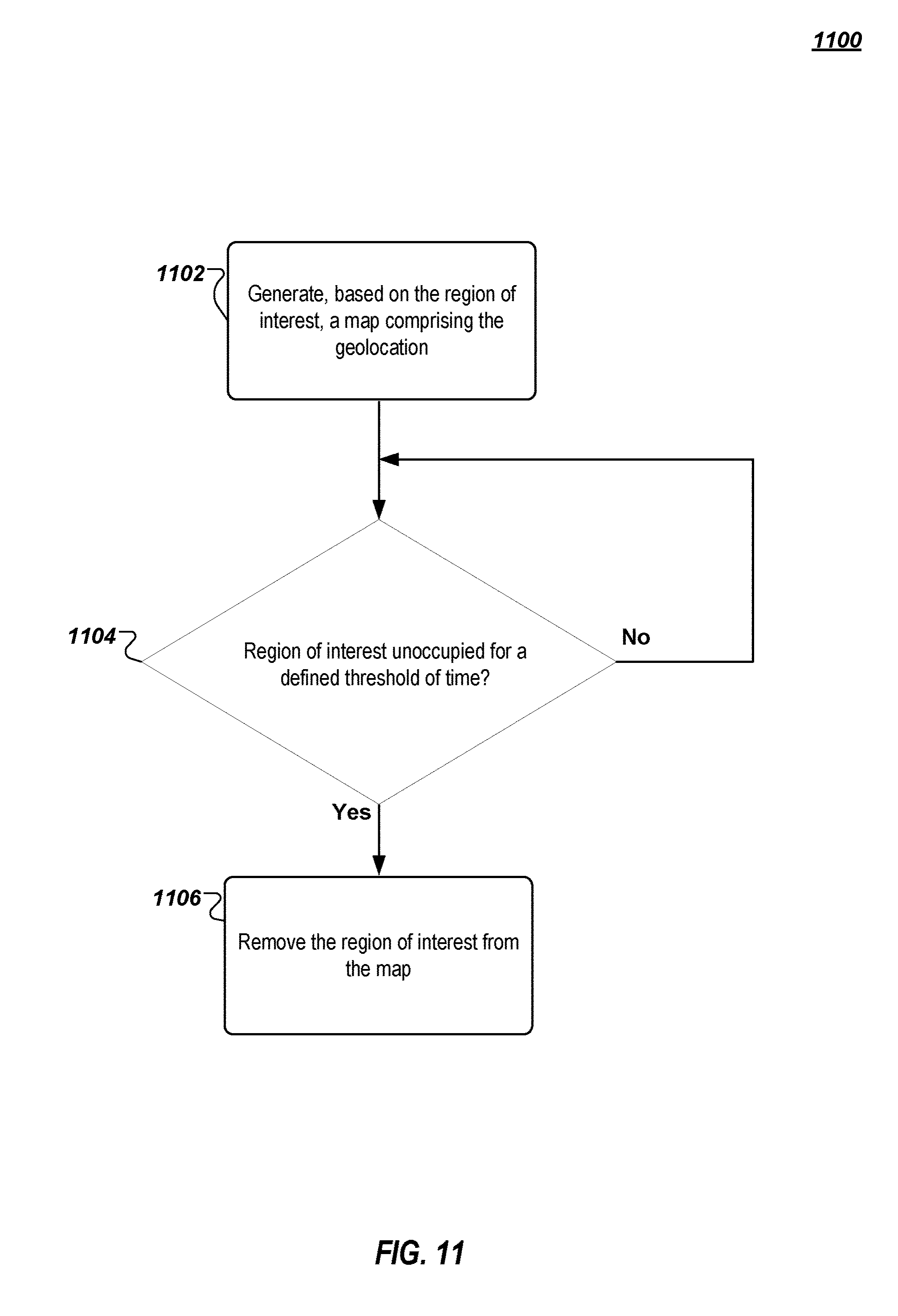

3. The method of claim 2, comprising: determining that a second object occupies the region of interest; and marking the region of interest on the map as occupied.

4. The method of claim 2, comprising: determining that the region of interest has not been occupied for a predetermined amount of time; and removing the region of interest from the map.

5. The method of claim 1, wherein the region of interest comprises a parking spot, and wherein the first object comprises a vehicle and wherein the method further comprises determining, from the plurality of video frames, that the first object has stopped in the area of the geolocation for at least a threshold amount of time.

6. The method of claim 1, comprising: receiving the plurality of video frames from a live video source.

7. The method of claim 6, wherein the plurality of video frames are received from the live video source via a publish-subscribe communication system.

8. The method of claim 1, further comprising determining, from the plurality of video frames, that the first object has stopped in the area of the geolocation for at least a threshold amount of time, wherein the threshold amount of time is selected to differentiate between different objects in the plurality of video frames.

9. The method of claim 1, comprising: detecting boundaries of the region of interest by extracting a difference between an image of an occupied region of interest and an image of a same unoccupied region of interest.

10. The method of claim 1, comprising: determining that a size of the first object changes less than a predetermined threshold between the first video frame and the second video frame.

11. A system, comprising: a computer processing device programmed to perform operations to: detect a first object entering a first video frame of a plurality of video frames of a view of a geolocation; detect the first object leaving a second video frame of the plurality of video frames; and identify an area of the geolocation as a region of interest based on the detecting the first object leaving.

12. The system of claim 11, the computer processing device further to: generate, based on the region of interest, a map comprising the geolocation.

13. The system of claim 12, the computer processing device further to: determine that a second object occupies the region of interest; and mark the region of interest on the map as occupied.

14. The system of claim 12, the computer processing device further to: determine that the region of interest has not been occupied for a predetermined amount of time; and remove the region of interest from the map.

15. The system of claim 11, wherein the computer processing device further to determine, from the plurality of video frames, that the first object has stopped in an area of the geolocation for at least a threshold amount of time, wherein the region of interest comprises a parking spot, and wherein the first object comprises a vehicle.

16. The system of claim 11, the computer processing device further to: receive the plurality of video frames from a live video source.

17. The system of claim 16, wherein the plurality of video frames are received from the live video source via a publish-subscribe communication system.

18. The system of claim 11, wherein the computer processing device further to determine, from the plurality of video frames, that the first object has stopped in an area of the geolocation for at least a threshold amount of time, and wherein the threshold amount of time is selected to differentiate between different objects in the plurality of video frames.

19. The system of claim 11, the computer processing device further to: detect boundaries of the region of interest by extracting a difference between an image of an occupied region of interest and an image of a same unoccupied region of interest.

20. A non-transitory computer-readable medium having instructions stored thereon that, when executed by a computer processing device, cause the computer processing device to: detect a first object entering a first video frame of a plurality of video frames of a view of a geolocation; detect the first object leaving a second video frame of the plurality of video frames; and identify an area of the geolocation as a region of interest based on the detecting the first object leaving.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 15/982,877, filed May 17, 2018, which claims the benefit of U.S. Provisional Patent Application No. 62/520,328, filed Jun. 15, 2017, the entire contents of each of which are hereby incorporated by reference.

BACKGROUND

[0002] This disclosure relates to a self-learning spatial recognition system and, more particularly, to a self-learning spatial recognition system in a publish-subscribe (or "PubSub") system.

[0003] The PubSub pattern is a data communication messaging arrangement implemented by software systems where so-called publishers publish messages to topics and so-called subscribers receive the messages pertaining to particular topics to which they are subscribed. There can be one or more publishers per topic and publishers generally have no knowledge of what subscribers, if any, will receive the published messages. Because publishers may publish large volumes of messages and subscribers may subscribe to many topics (or "channels"), the overall volume of messages directed to a particular channel and/or subscriber may be difficult to manage.

BRIEF DESCRIPTION OF THE DRAWINGS

[0004] FIG. 1A illustrates an example system that supports the PubSub communication pattern.

[0005] FIG. 1B illustrates functional layers of software on an example client device.

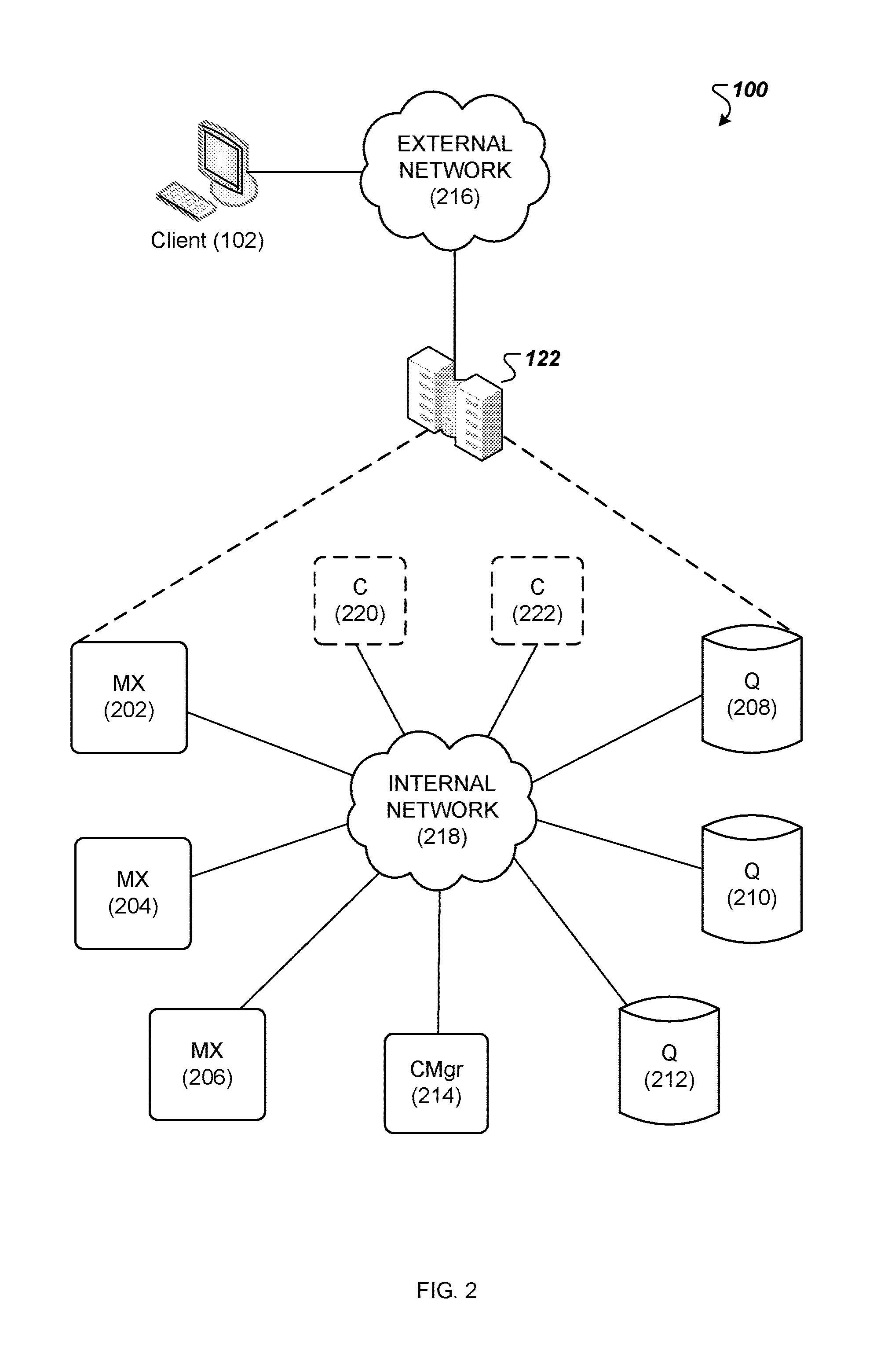

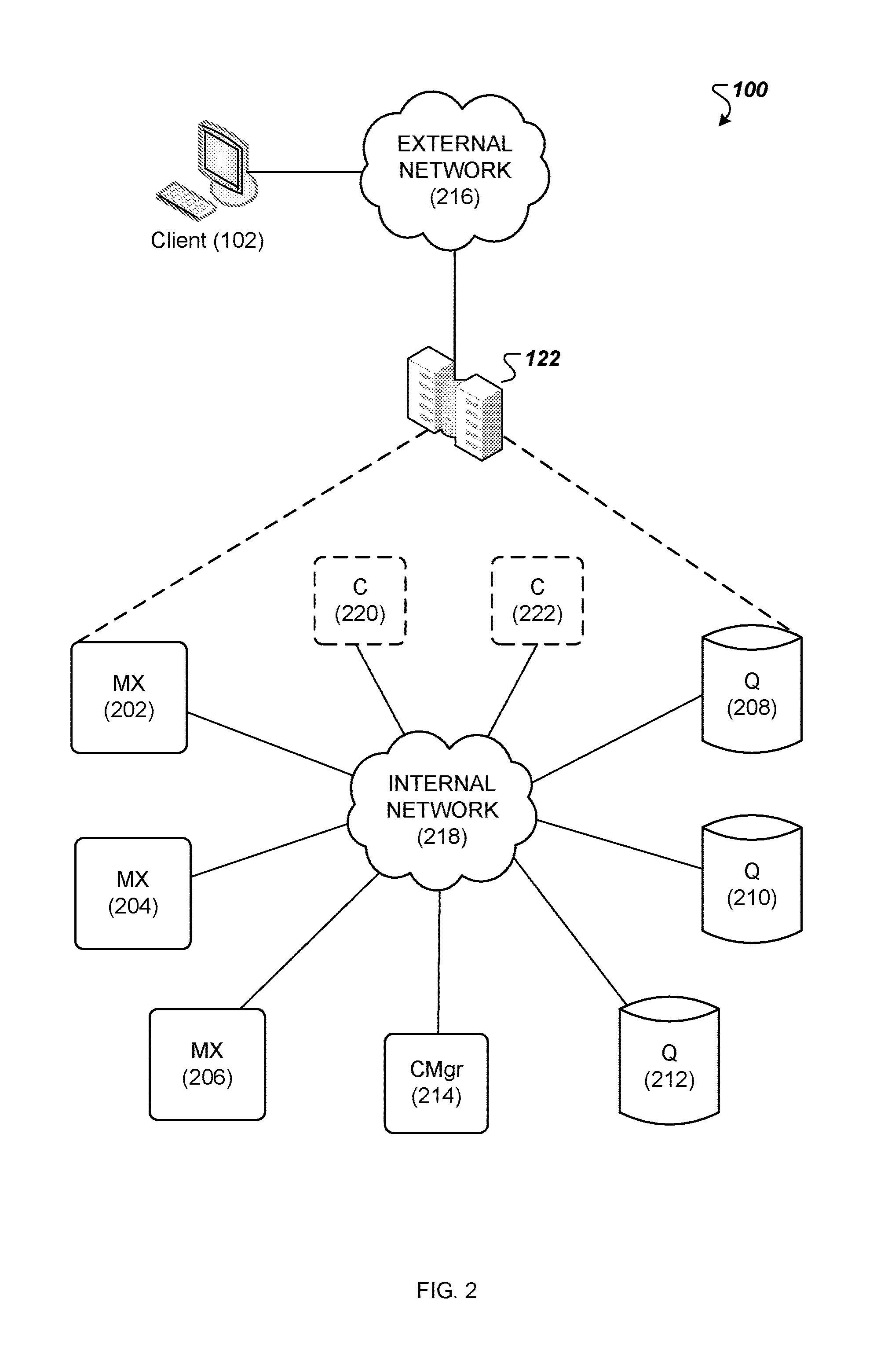

[0006] FIG. 2 is a diagram of an example messaging system.

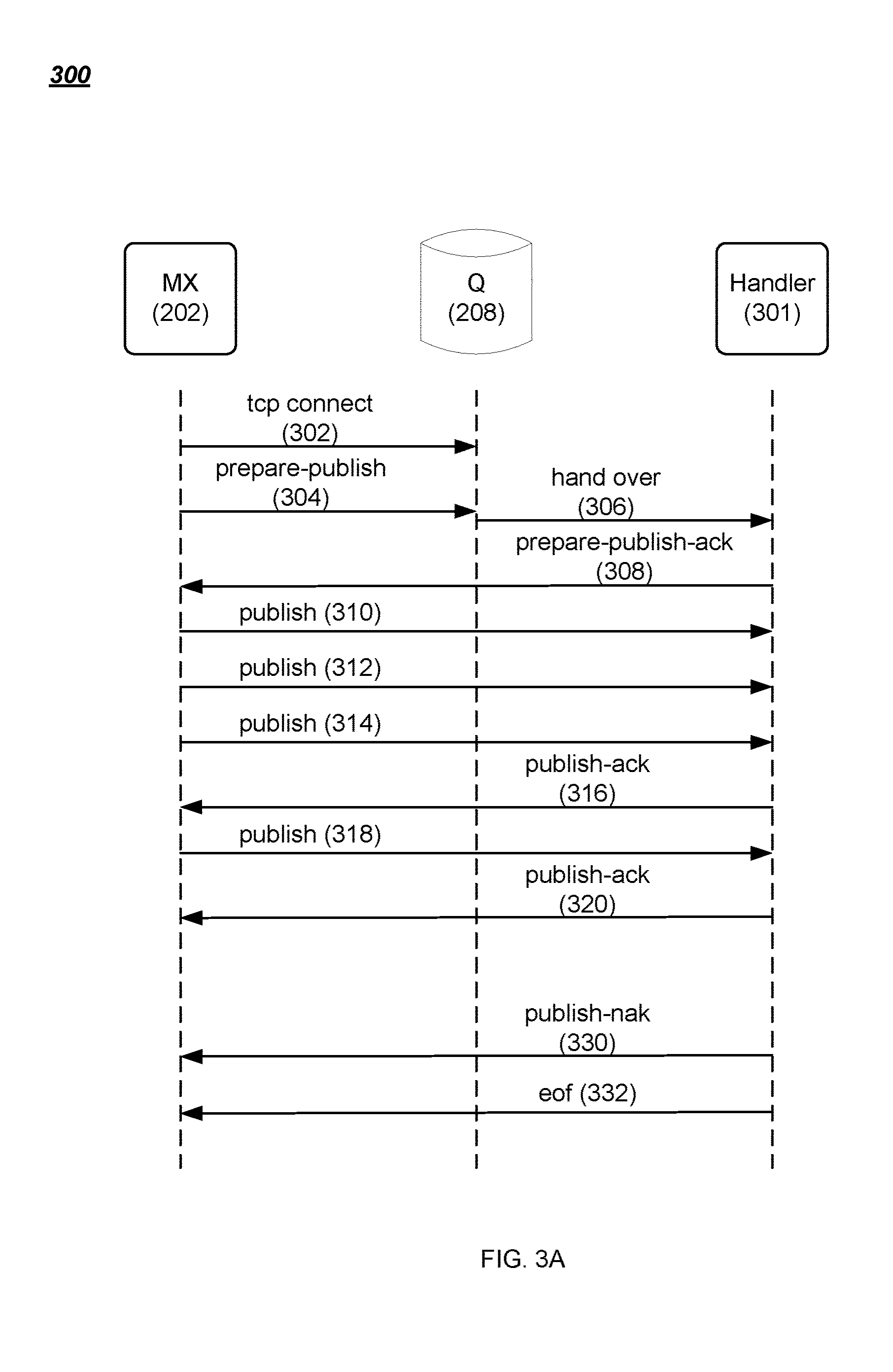

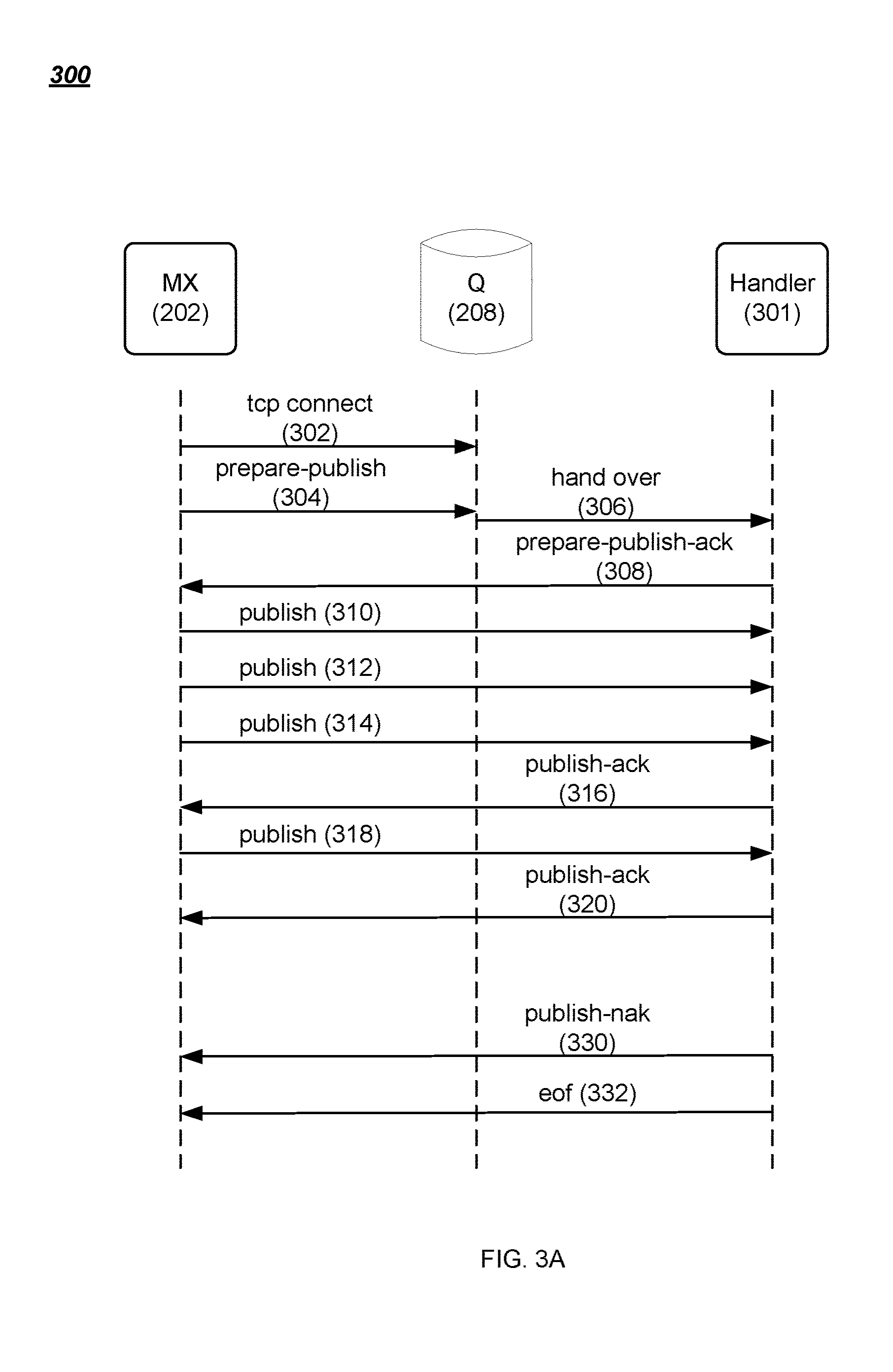

[0007] FIG. 3A is a data flow diagram of an example method for writing data to a streamlet.

[0008] FIG. 3B is a data flow diagram of an example method for reading data from a streamlet.

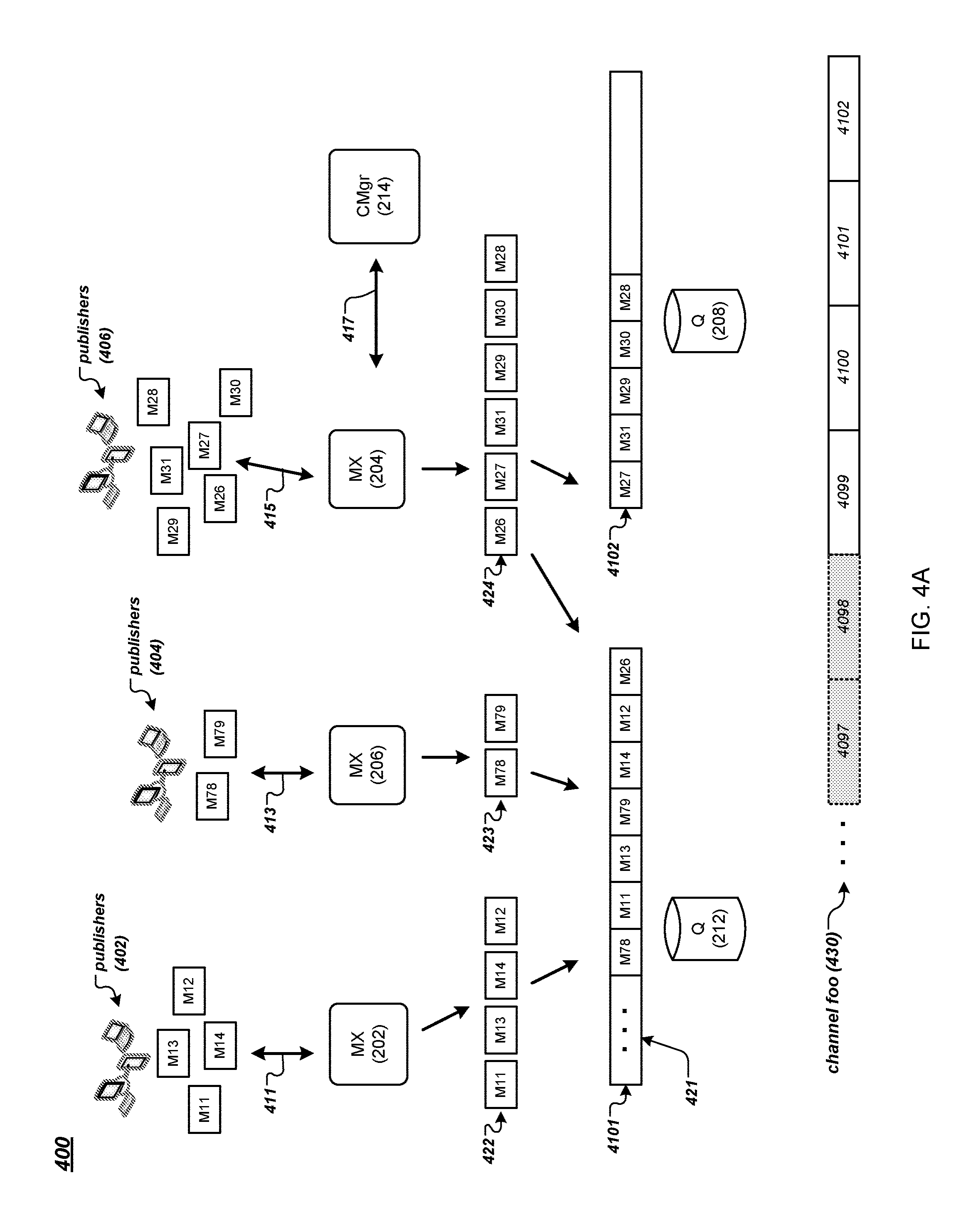

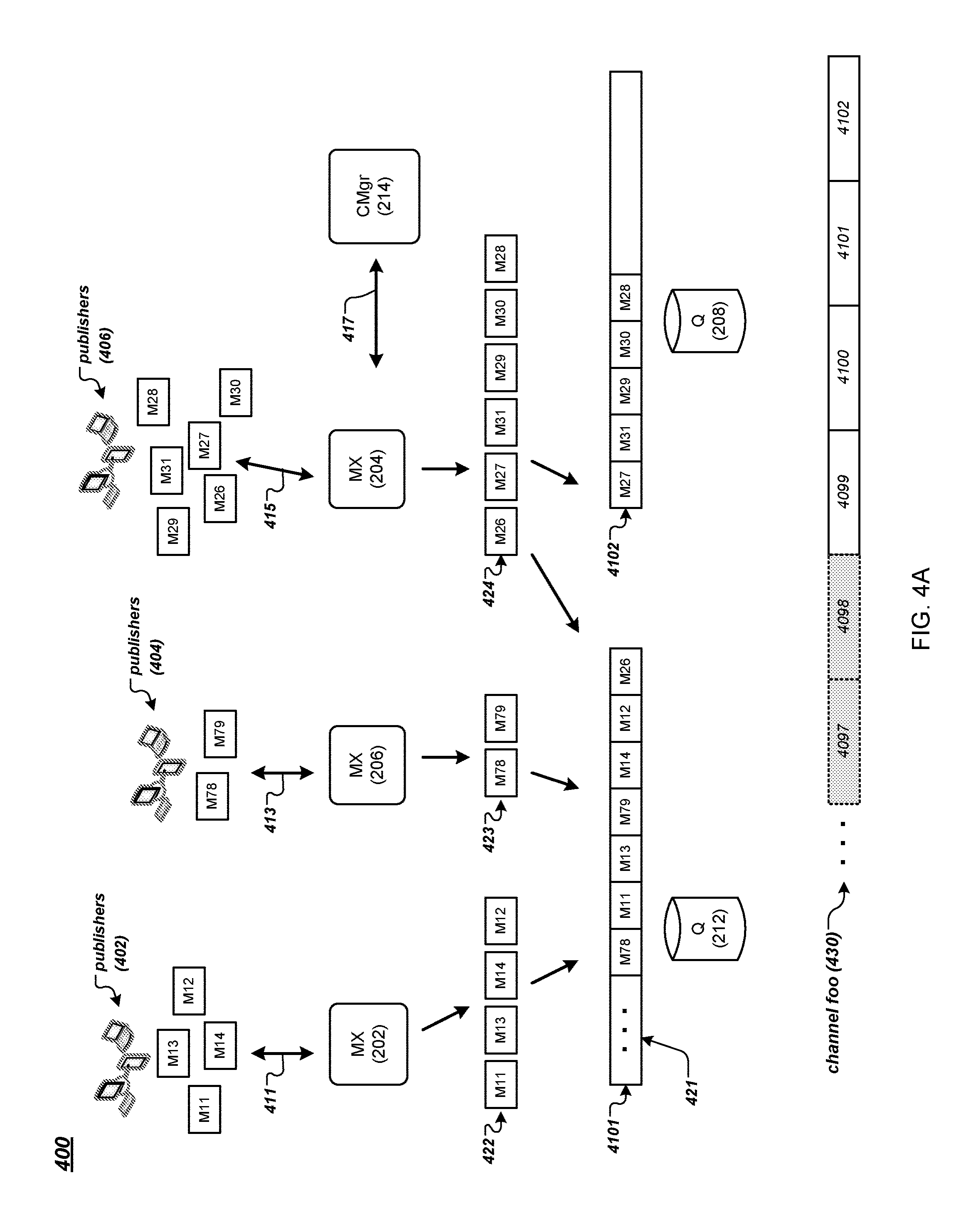

[0009] FIG. 4A is a data flow diagram of an example method for publishing messages to a channel of a messaging system.

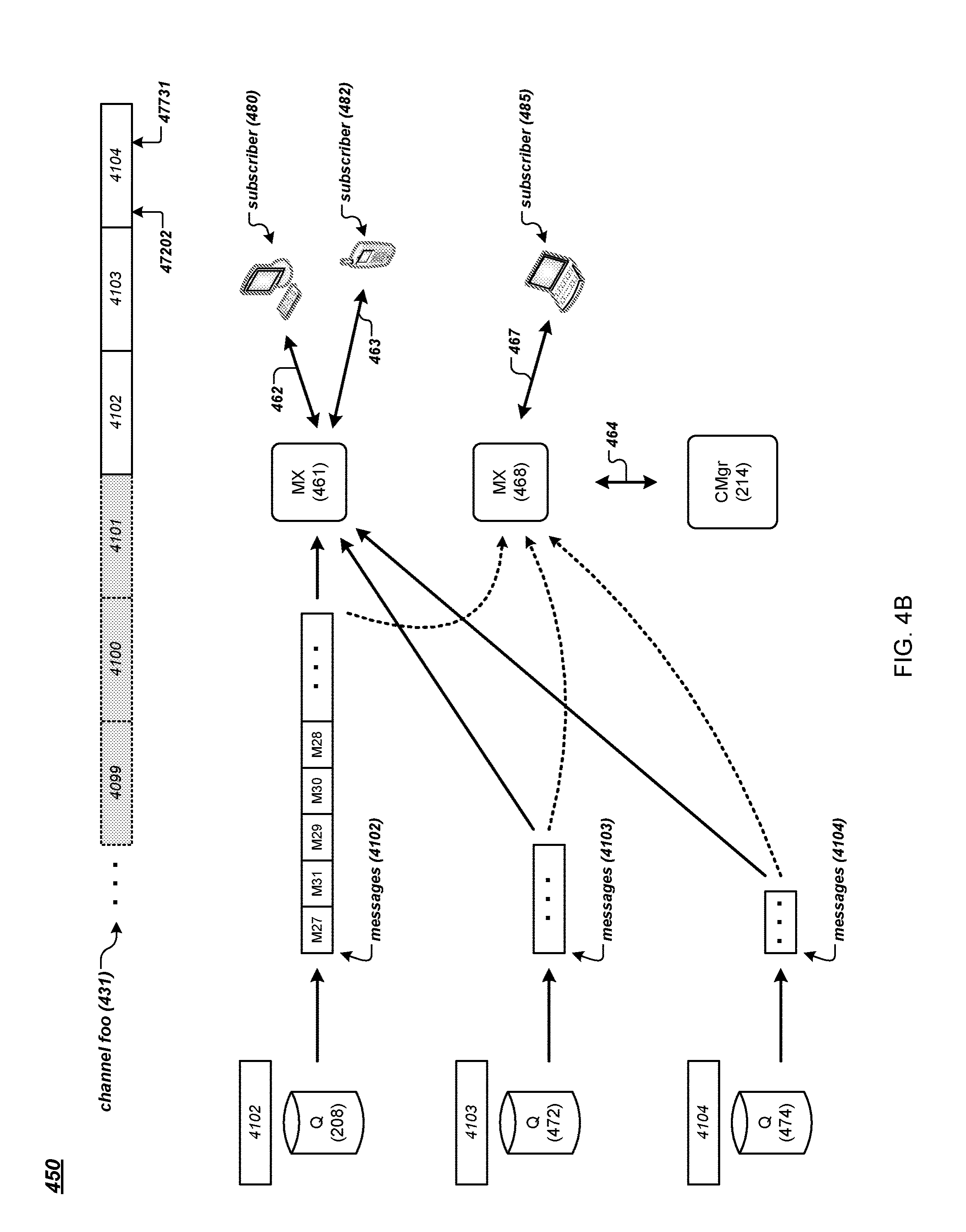

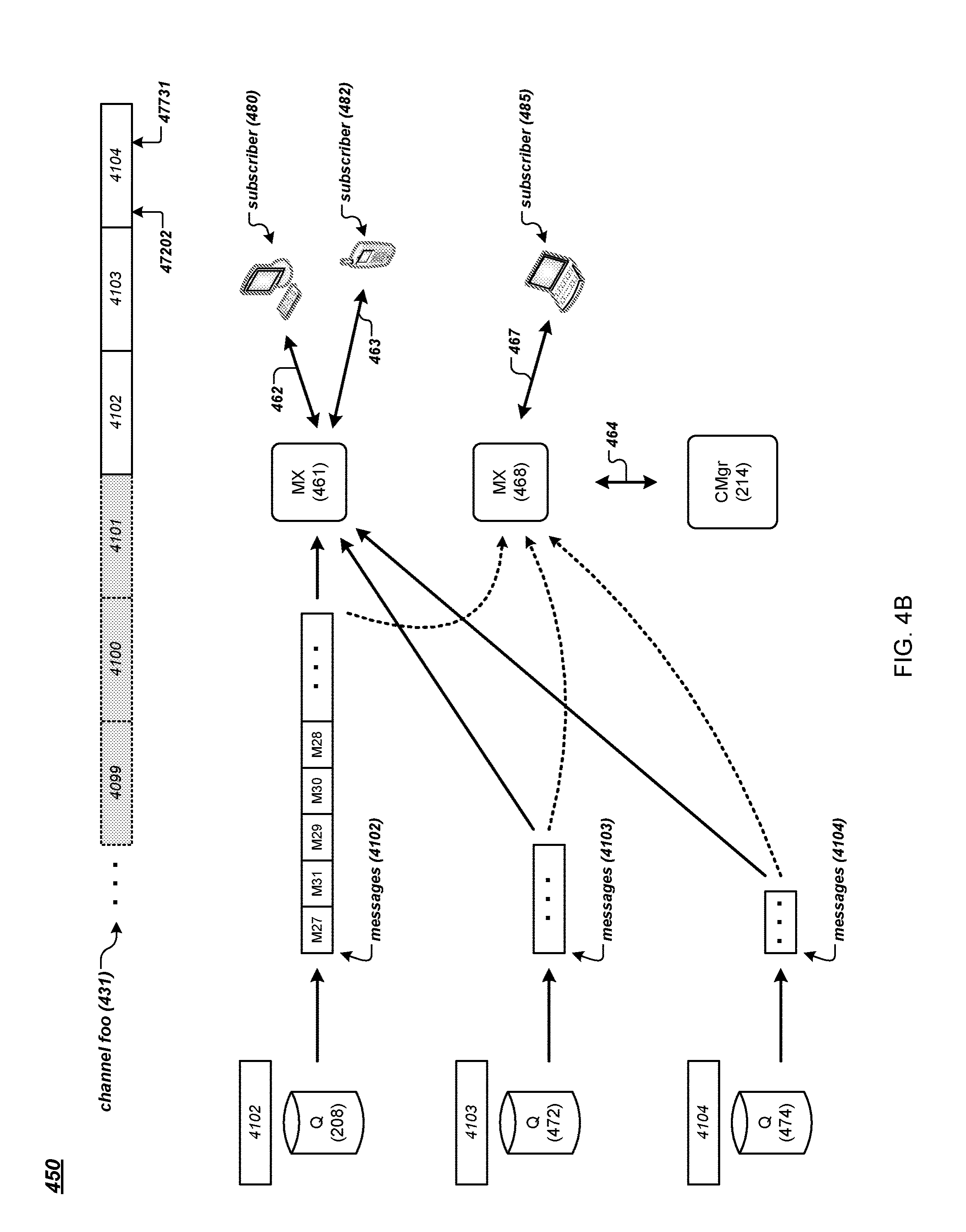

[0010] FIG. 4B is a data flow diagram of an example method for subscribing to a channel of a messaging system.

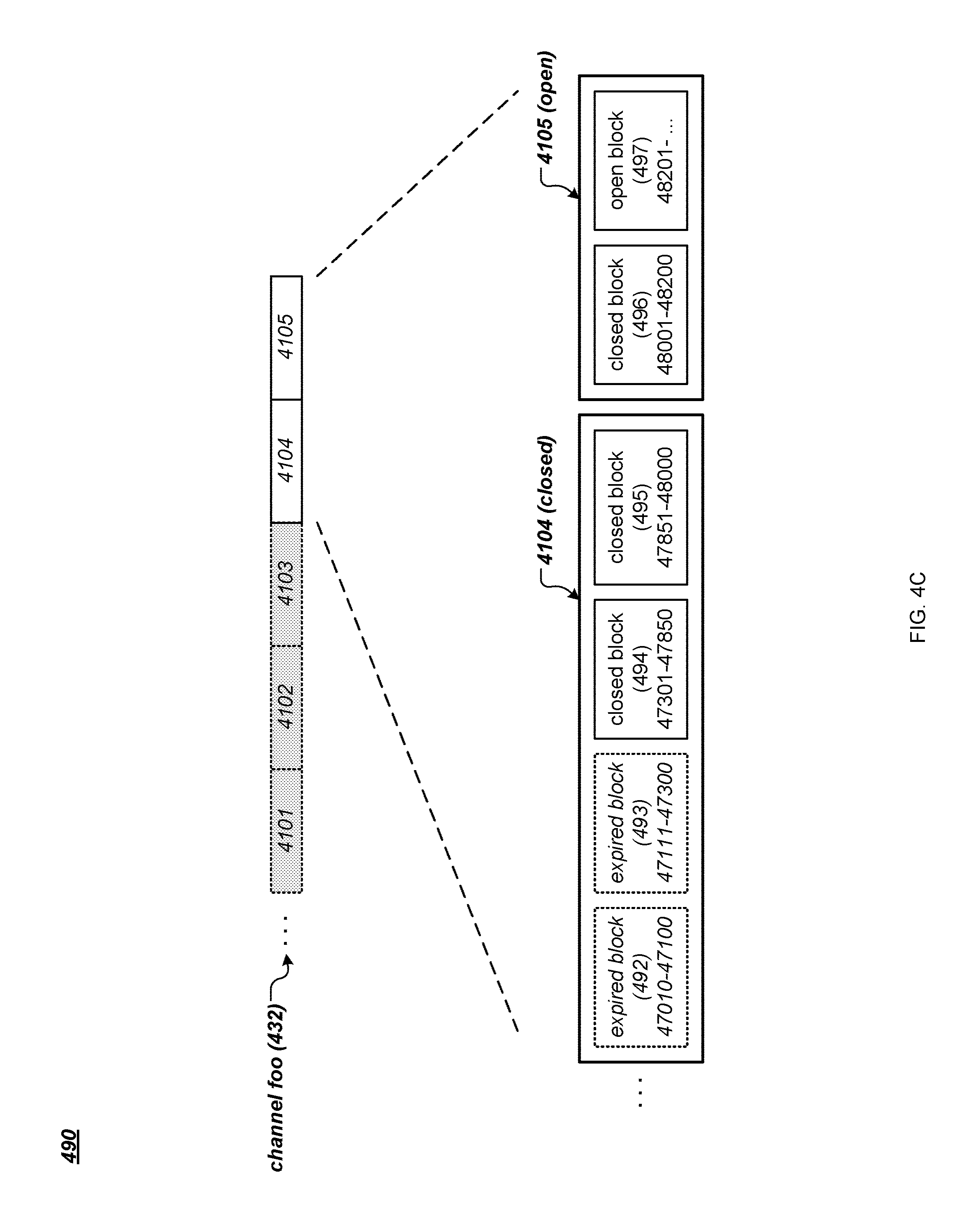

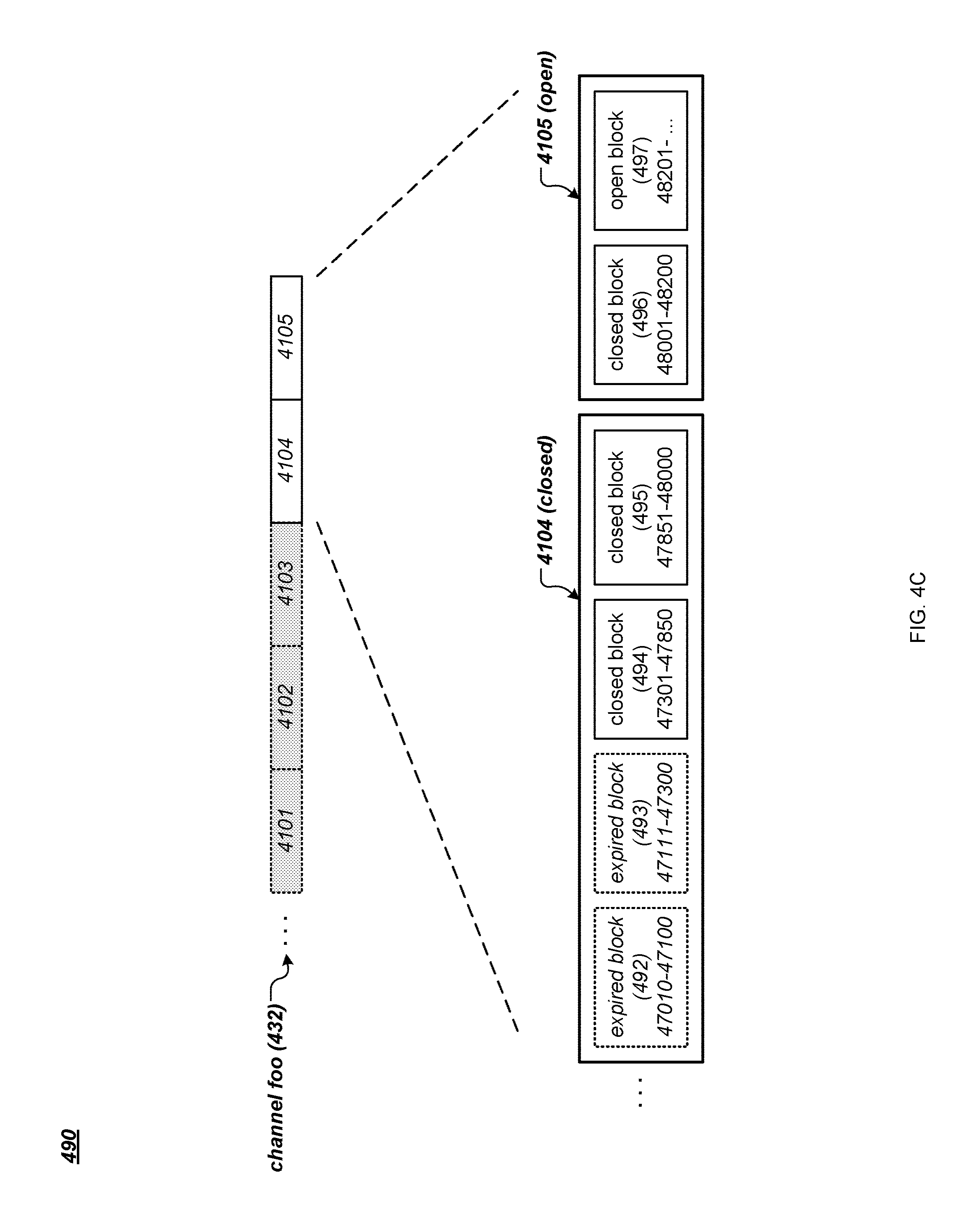

[0011] FIG. 4C is an example data structure for storing messages of a channel of a messaging system.

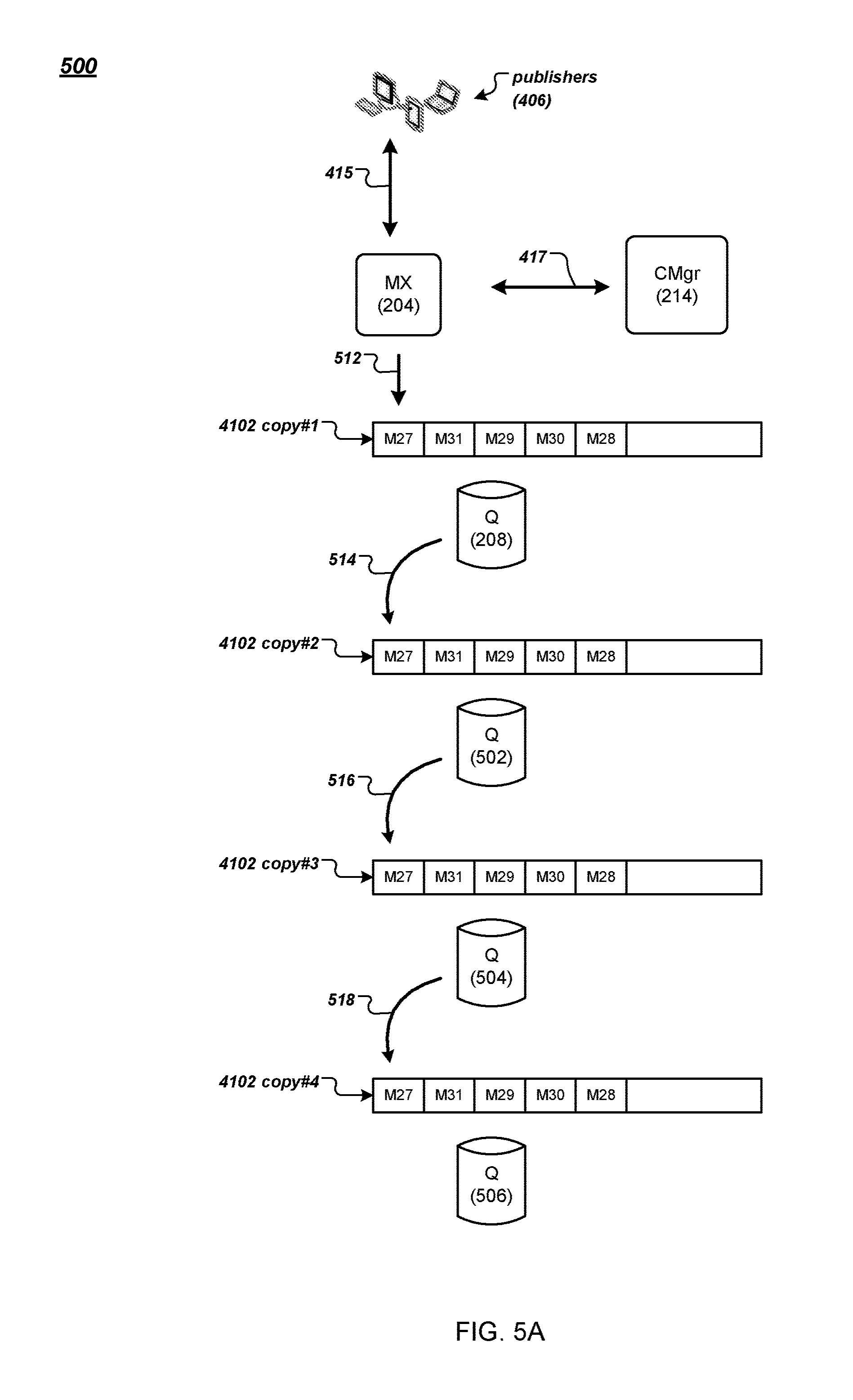

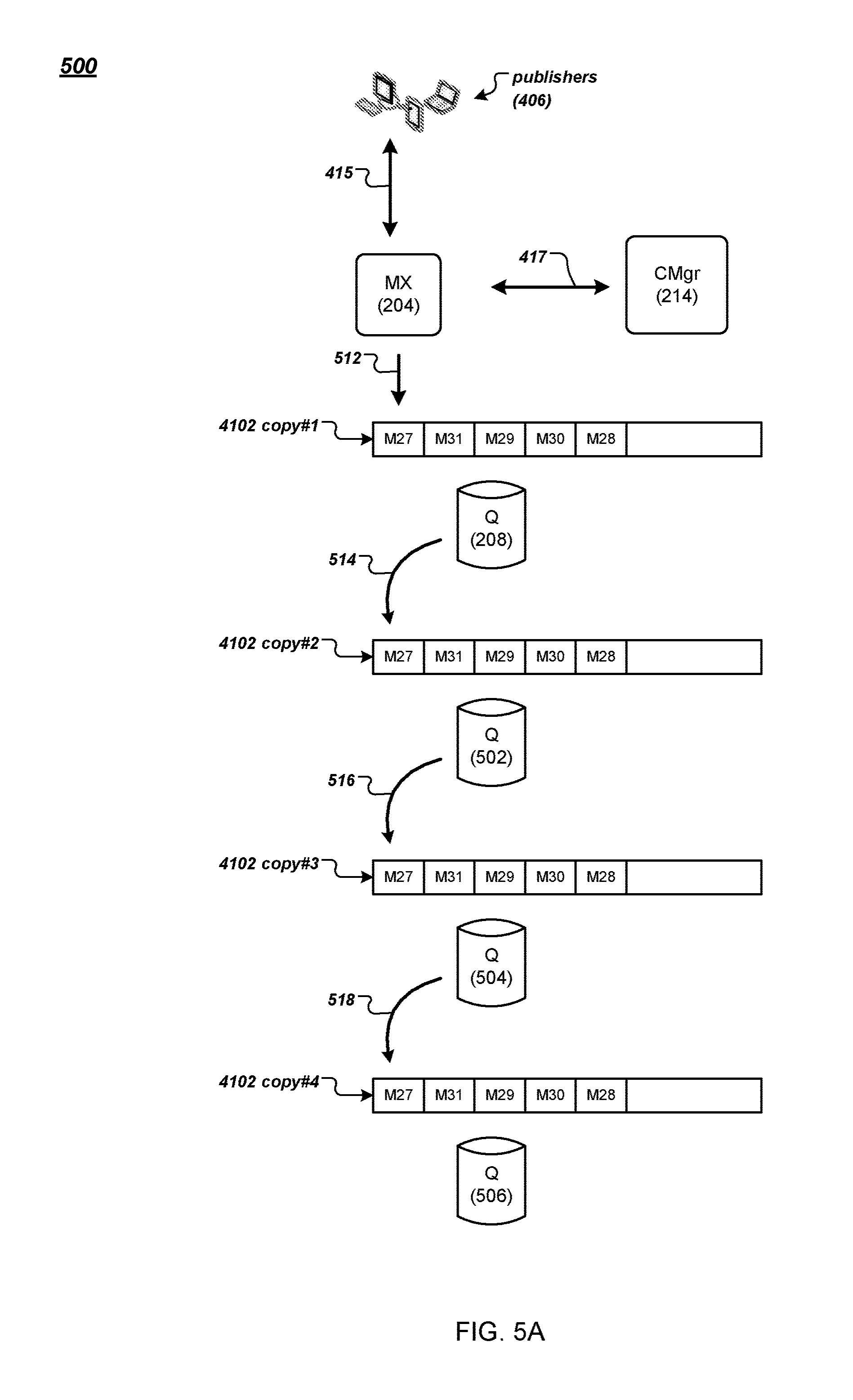

[0012] FIG. 5A is a data flow diagram of an example method for publishing and replicating messages of a messaging system.

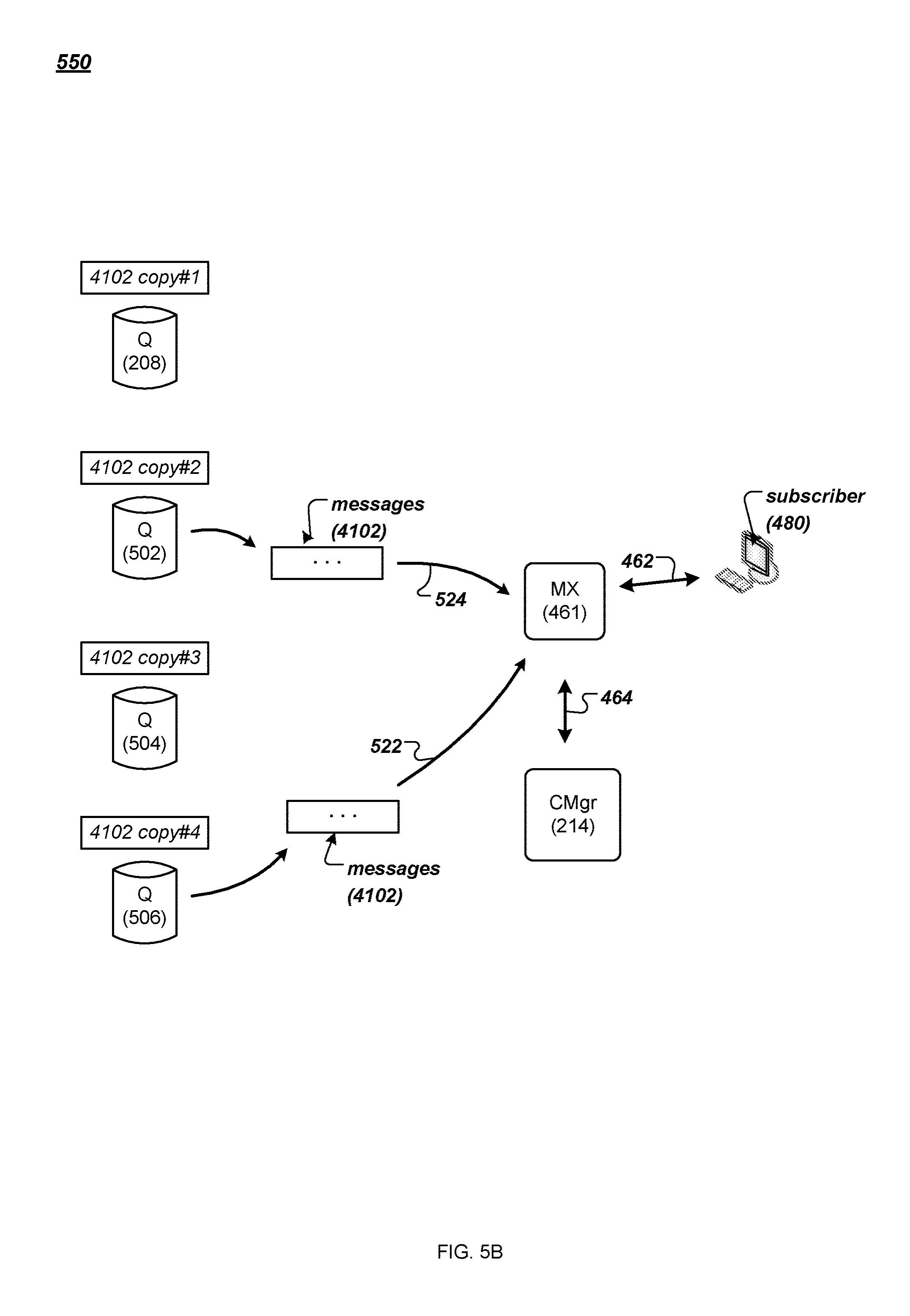

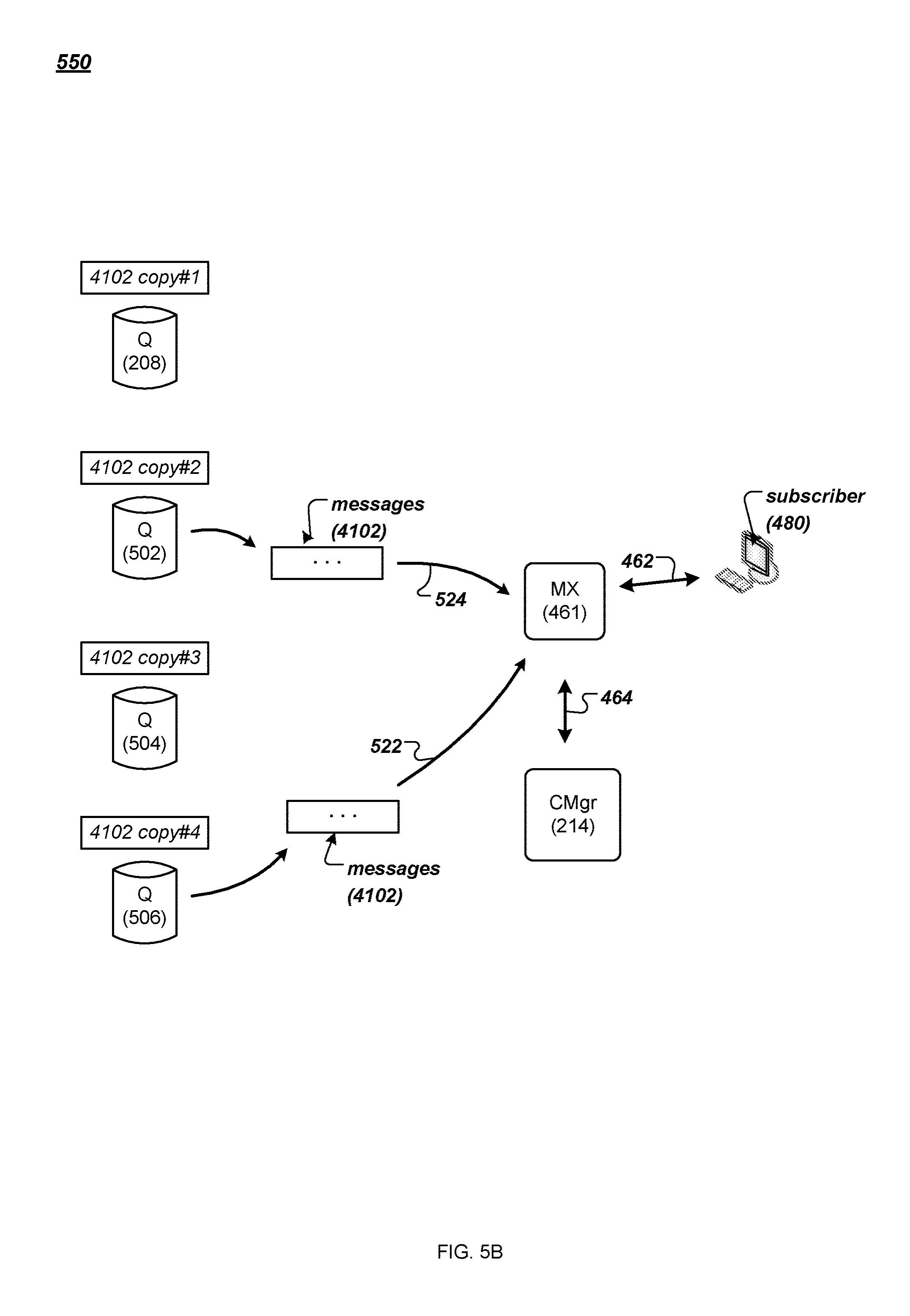

[0013] FIG. 5B is a data flow diagram of an example method for retrieving stored messages in a messaging system.

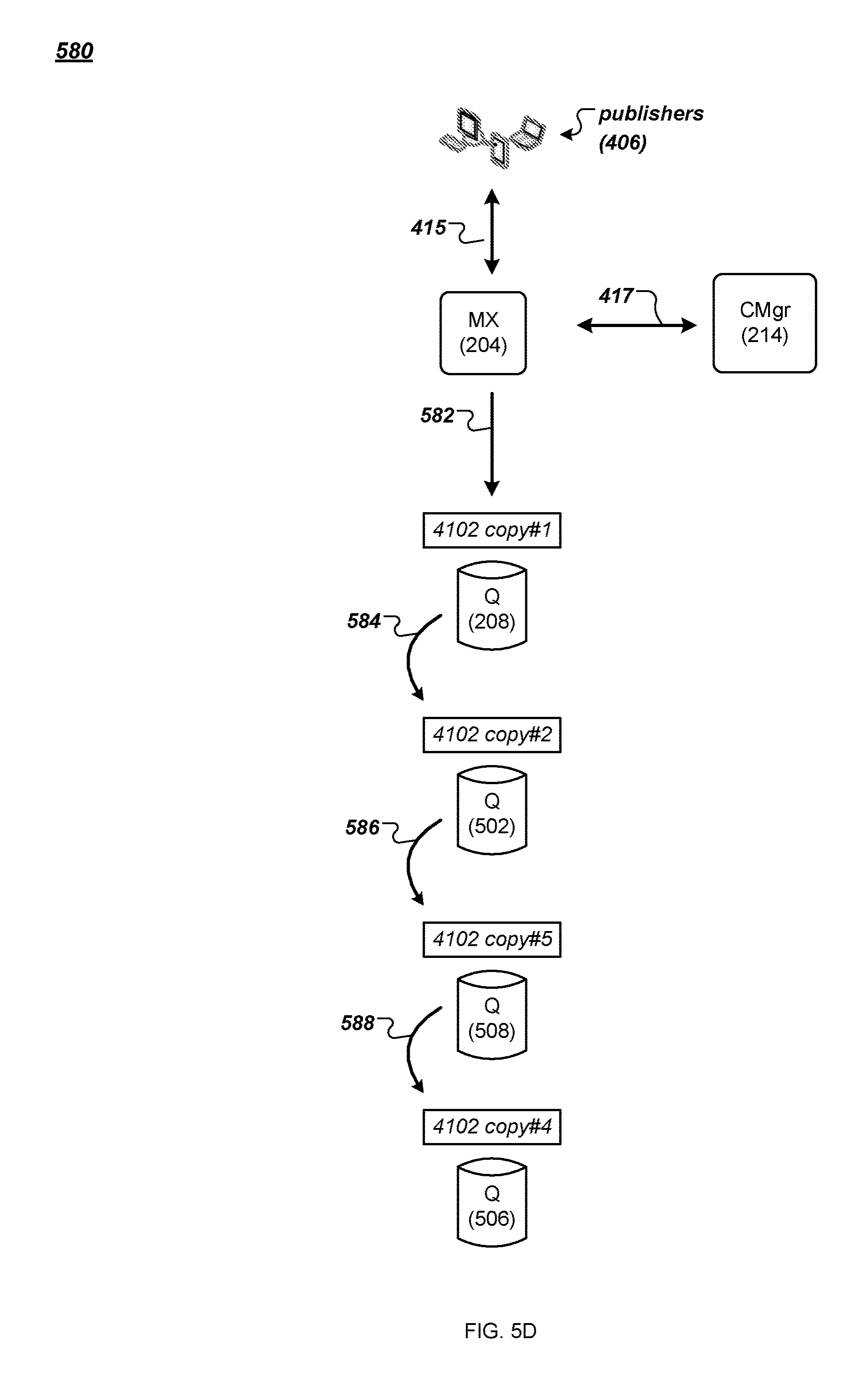

[0014] FIGS. 5C and 5D are data flow diagrams of example methods for repairing a chain of copies of data in a messaging system.

[0015] FIG. 6 is an example data flow diagram for the application of filtering criteria in a messaging system.

[0016] FIGS. 7A-7D are illustrations of how messages may be processed using query instructions that include a period-based parameter.

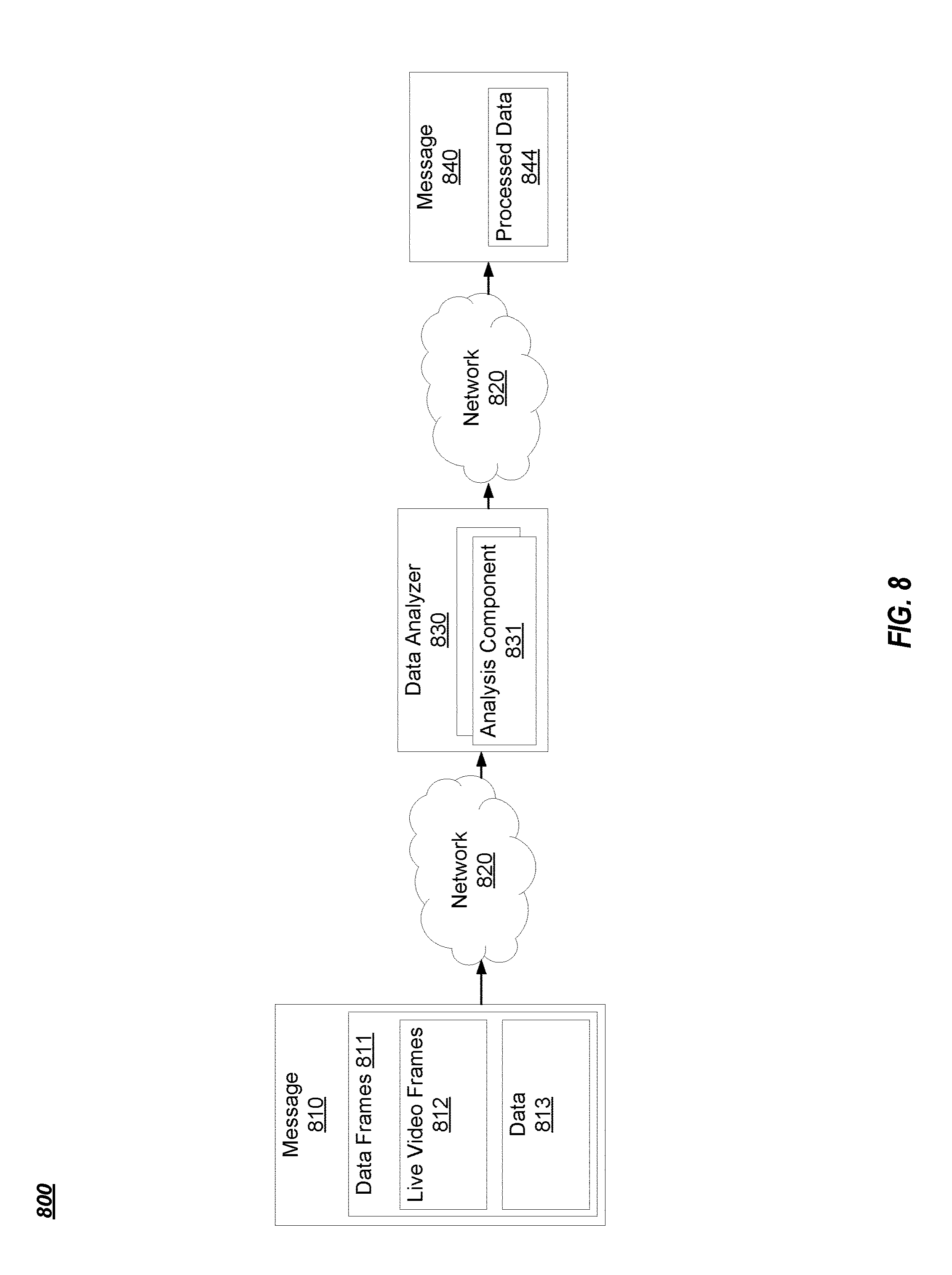

[0017] FIG. 8 is a diagram of an example messaging system for self-learning spatial recognition in a PubSub communication system.

[0018] FIG. 9 is a first flowchart of an example method for self-learning spatial recognition in a PubSub communication system.

[0019] FIG. 10 is a second flowchart of an example method for self-learning spatial recognition in a PubSub communication system.

[0020] FIG. 11 is a third flowchart of an example method for self-learning spatial recognition in a PubSub communication system.

[0021] FIG. 12 is a diagram of an example mapping for self-learning spatial recognition in a PubSub communication system.

[0022] FIG. 13 is a block diagram of an example computing device that may perform one or more of the operations described herein.

DETAILED DESCRIPTION

[0023] Elements of examples or embodiments described with respect to a given aspect of the invention can be used in various embodiments of another aspect of the invention. For example, it is contemplated that features of dependent claims depending from one independent claim can be used in apparatus, systems, and/or methods of any of the other independent claims.

[0024] The details of one or more embodiments of the subject matter described in this specification are set forth in the accompanying drawings and the description below. Other features, aspects, and advantages of the subject matter will become apparent from the description, the drawings, and the claims.

[0025] System architecture for self-learning spatial recognition may include a messaging system. The messaging system may support the PubSub communication pattern and may allow publishers and subscribers to publish and receive live messages. Users may include both publishers and subscribers in the messaging system. For example, publishers may publish messages via the messaging system that indicate useful information to subscribers. The subscribers may view the messages and associated information. It is noted the present embodiments may also be implemented using other suitable (non-PubSub) real-time or near real-time network topologies.

[0026] In certain embodiments, systems may track free or open geographical areas (e.g., parking lot spots) with a webcam or other suitable video or camera source if a map of the geographical area (e.g., parking lot) is available. For example, if a map of a parking lot already exists, defined parking spots may be labeled via either human intervention or computer-based artificial intelligence. However, if the parking lot is large and there are no markers or other identifiers on the parking lot surface (or the marks are not obvious or easily seen), detecting free or open parking spots in the parking lot may be problematic. Even if possible using existing methods, such methods of detecting free or open parking spots may be time-consuming, resource, and processor intensive. Accordingly, it would be desirable to provide systems and methods for self-learning spatial recognition, where current approaches cannot provide such spatial recognition without human intervention, and while minimizing computer resources and processing power.

[0027] Advantageously, the systems and methods of self-learning spatial recognition described herein resolve the above problems, and others, by utilizing a PubSub computing network to identify regions of interest (e.g., parking spots, seats, boat slips, spots in a queue, etc.) in a geographical location (e.g., parking lots, parking structures, streets, harbors, restaurants, sports complexes, theatres, parks, open fields, shopping areas, etc.). The present embodiments may provide techniques for resource and processing power minimizing self-learning spatial recognition, and may thereby increase processing capability and/or capacity and data throughput within the PubSub computing network.

[0028] Worth noting is that while the term "vehicle" is used throughout for convenience and simplicity, the term "vehicle" may refer to one of, for example, a bike, a skateboard, an automotive motor vehicle, a motorcycle, a scooter, a boat, an airplane, a train, a jet, or other suitable conveyance. Furthermore, the term "pedestrian" as used throughout may include both humans and animals.

[0029] FIG. 1A illustrates an example system 100 that supports the PubSub communication pattern. The example system 100 may support video streaming by providing messages from publishers to subscribers that include a video frame, for instance. Components of the example system 100 may also provide support for querying and updating live video streams. Publisher clients (e.g., Publisher 1) can publish messages to named channels (e.g., "Channel 1") by way of the system 100. A message can comprise any type of information including one or more of the following: text, image content, sound content, multimedia content, video content, binary data, and so on. Other types of message data are possible. Subscriber clients (e.g., Subscriber 2) can subscribe to a named channel using the system 100 and start receiving messages which occur after the subscription request or from a given position (e.g., a message number or time offset). A client can be both a publisher and a subscriber.

[0030] Depending on the configuration, a PubSub system can be categorized as follows: [0031] One to One (1:1). In this configuration there is one publisher and one subscriber per channel. A typical use case is private messaging. [0032] One to Many (1:N). In this configuration there is one publisher and multiple subscribers per channel. Typical use cases are broadcasting messages (e.g., stock prices). [0033] Many to Many (M:N). In this configuration there are many publishers publishing to a single channel. The messages are then delivered to multiple subscribers. Typical use cases are map applications.

[0034] There is no separate operation needed to create a named channel. A channel is created implicitly when the channel is subscribed to or when a message is published to the channel. In some implementations, channel names can be qualified by a name space. A name space comprises one or more channel names. Different name spaces can have the same channel names without causing ambiguity. The name space name can be a prefix of a channel name where the name space and channel name are separated by a dot or other suitable separator. In some implementations, name spaces can be used when specifying channel authorization settings. For instance, the messaging system 100 may have app1.foo and app1.system.notifications channels where "app1" is the name of the name space. The system can allow clients to subscribe and publish to the app1.foo channel. However, clients can only subscribe to, but not publish to the app1.system.notifications channel.

[0035] FIG. 1B illustrates functional layers of software on an example client device. A client device (e.g., client 102) is a data processing apparatus such as, for example, a personal computer, a laptop computer, a tablet computer, a smart phone, a smart watch, or a server computer. Other types of client devices are possible. The application layer 104 comprises the end-user application(s) that will integrate with the PubSub system 100. The messaging layer 106 is a programmatic interface for the application layer 104 to utilize services of the system 100 such as channel subscription, message publication, message retrieval, user authentication, and user authorization. In some implementations, the messages passed to and from the messaging layer 106 are encoded as JavaScript Object Notation (JSON) objects. Other message encoding schemes are possible.

[0036] The operating system 108 layer comprises the operating system software on the client 102. In various implementations, messages can be sent and received to/from the system 100 using persistent or non-persistent connections. Persistent connections can be created using, for example, network sockets. A transport protocol such as TCP/IP layer 112 implements the Transport Control Protocol/Internet Protocol communication with the system 100 that can be used by the messaging layer 106 to send messages over connections to the system 100. Other communication protocols are possible including, for example, User Datagram Protocol (UDP). In further implementations, an optional Transport Layer Security (TLS) layer 110 can be employed to ensure the confidentiality of the messages.

[0037] FIG. 2 is a diagram of an example messaging system 100. The system 100 provides functionality for implementing PubSub communication patterns. The system comprises software components and storage that can be deployed at one or more data centers 122 in one or more geographic locations, for example. The system comprises MX nodes (e.g., MX nodes or multiplexer nodes 202, 204 and 206), Q nodes (e.g., Q nodes or queue nodes 208, 210 and 212), one or more configuration manager nodes (e.g., configuration manager 214), and optionally one or more C nodes (e.g., C nodes or cache nodes 220 and 222). Each node can execute in a virtual machine or on a physical machine (e.g., a data processing apparatus). Each MX node can serve as a termination point for one or more publisher and/or subscriber connections through the external network 216. The internal communication among MX nodes, Q nodes, C nodes, and the configuration manager can be conducted over an internal network 218, for example. By way of illustration, MX node 204 can be the terminus of a subscriber connection from client 102. Each Q node buffers channel data for consumption by the MX nodes. An ordered sequence of messages published to a channel is a logical channel stream. For example, if three clients publish messages to a given channel, the combined messages published by the clients comprise a channel stream. Messages can be ordered in a channel stream, for example, by time of publication by the client, by time of receipt by an MX node, or by time of receipt by a Q node. Other ways for ordering messages in a channel stream are possible. In the case where more than one message would be assigned to the same position in the order, one of the messages can be chosen (e.g., randomly) to have a later sequence in the order. Each configuration manager node is responsible for managing Q node load, for example, by assigning channels to Q nodes and/or splitting channel streams into so-called streamlets. Streamlets are discussed further below. The optional C nodes provide caching and load removal from the Q nodes.

[0038] In the example messaging system 100, one or more client devices (publishers and/or subscribers) establish respective persistent connections (e.g., TCP connections) to an MX node (e.g., MX node 204). The MX node serves as a termination point for these connections. For instance, external messages (e.g., between respective client devices and the MX node) carried by these connections can be encoded based on an external protocol (e.g., JSON). The MX node terminates the external protocol and translates the external messages to internal communication, and vice versa. The MX nodes publish and subscribe to streamlets on behalf of clients. In this way, an MX node can multiplex and merge requests of client devices subscribing for or publishing to the same channel, thus representing multiple client devices as one, instead of one by one.

[0039] In the example messaging system 100, a Q node (e.g., Q node 208) can store one or more streamlets of one or more channel streams. A streamlet is a data buffer for a portion of a channel stream. A streamlet will close to writing when its storage is full. A streamlet will close to reading and writing and be de-allocated when its time-to-live (TTL) has expired. By way of illustration, a streamlet can have a maximum size of 1 MB and a TTL of three minutes. Different channels can have streamlets limited by different sizes and/or by different TTLs. For instance, streamlets in one channel can exist for up to three minutes, while streamlets in another channel can exist for up to 10 minutes. In various implementations, a streamlet corresponds to a computing process running on a Q node. The computing process can be terminated after the streamlet's TTL has expired, thus freeing up computing resources (for the streamlet) back to the Q node, for example.

[0040] When receiving a publish request from a client device, an MX node (e.g., MX node 204) makes a request to a configuration manager (e.g., configuration manager 214) to grant access to a streamlet to write the message being published. Note, however, that if the MX node has already been granted write access to a streamlet for the channel (and the channel has not been closed to writing), the MX node can write the message to that streamlet without having to request a grant to access the streamlet. Once a message is written to a streamlet for a channel, the message can be read by MX nodes and provided to subscribers of that channel.

[0041] Similarly, when receiving a channel subscription request from a client device, an MX node makes a request to a configuration manager to grant access to a streamlet for the channel from which messages are read. If the MX node has already been granted read access to a streamlet for the channel (and the channel's TTL has not been closed to reading), the MX node can read messages from the streamlet without having to request a grant to access the streamlet. The read messages can then be forwarded to client devices that have subscribed to the channel. In various implementations, messages read from streamlets are cached by MX nodes so that MX nodes can reduce the number of times needed to read from the streamlets.

[0042] By way of illustration, an MX node can request a grant from the configuration manager that allows the MX node to store a block of data into a streamlet on a particular Q node that stores streamlets of the particular channel. Example streamlet grant request and grant data structures are as follows:

TABLE-US-00001 StreamletGrantRequest = { "channel": string( ) "mode": "read" | "write" "position": 0 } StreamletGrantResponse = { "streamlet-id": "abcdef82734987", "limit-size": 2000000, # 2 megabytes max "limit-msgs": 5000, # 5 thousand messages max "limit-life": 4000, # the grant is valid for 4 seconds "q-node": string( ) "position": 0 }

[0043] The StreamletGrantRequest data structure stores the name of the stream channel and a mode indicating whether the MX node intends on reading from or writing to the streamlet. The MX node sends the StreamletGrantRequest to a configuration manager node. The configuration manager node, in response, sends the MX node a StreamletGrantResponse data structure. The StreamletGrantResponse contains an identifier of the streamlet (streamlet-id), the maximum size of the streamlet (limit-size), the maximum number of messages that the streamlet can store (limit-msgs), the TTL (limit-life), and an identifier of a Q node (q-node) on which the streamlet resides. The StreamletGrantRequest and StreamletGrantResponse can also have a position field that points to a position in a streamlet (or a position in a channel) for reading from the streamlet.

[0044] A grant becomes invalid once the streamlet has closed. For example, a streamlet is closed to reading and writing once the streamlet's TTL has expired and a streamlet is closed to writing when the streamlet's storage is full. When a grant becomes invalid, the MX node can request a new grant from the configuration manager to read from or write to a streamlet. The new grant will reference a different streamlet and will refer to the same or a different Q node depending on where the new streamlet resides.

[0045] FIG. 3A is a data flow diagram of an example method for writing data to a streamlet in various embodiments. In FIG. 3A, when an MX node (e.g., MX node 202) request to write to a streamlet is granted by a configuration manager (e.g., configuration manager 214), as described before, the MX node establishes a Transmission Control Protocol (TCP) connection with the Q node (e.g., Q node 208) identified in the grant response received from the configuration manager (302). A streamlet can be written concurrently by multiple write grants (e.g., for messages published by multiple publisher clients). Other types of connection protocols between the MX node and the Q node are possible.

[0046] The MX node then sends a prepare-publish message with an identifier of a streamlet that the MX node wants to write to the Q node (304). The streamlet identifier and Q node identifier can be provided by the configuration manager in the write grant as described earlier. The Q node hands over the message to a handler process 301 (e.g., a computing process running on the Q node) for the identified streamlet (306). The handler process can send to the MX node an acknowledgement (308). After receiving the acknowledgement, the MX node starts writing (publishing) messages (e.g., 310, 312, 314, and 318) to the handler process, which in turn stores the received data in the identified streamlet. The handler process can also send acknowledgements (316, 320) to the MX node for the received data. In some implementations, acknowledgements can be piggy-backed or cumulative. For instance, the handler process can send to the MX node an acknowledgement for every predetermined amount of data received (e.g., for every 100 messages received) or for every predetermined time period (e.g., for every one millisecond). Other acknowledgement scheduling algorithms, such as Nagle's algorithm, can be used.

[0047] If the streamlet can no longer accept published data (e.g., when the streamlet is full), the handler process sends a Negative-Acknowledgement (NAK) message (330) indicating a problem, following by an EOF (end-of-file) message (332). In this way, the handler process closes the association with the MX node for the publish grant. The MX node can then request a write grant for another streamlet from a configuration manager if the MX node has additional messages to store.

[0048] FIG. 3B is a data flow diagram of an example method for reading data from a streamlet in various embodiments. In FIG. 3B, an MX node (e.g., MX node 204) sends to a configuration manager (e.g., configuration manager 214) a request for reading a particular channel starting from a particular message or time offset in the channel. The configuration manager returns to the MX node a read grant including an identifier of a streamlet containing the particular message, a position in the streamlet corresponding to the particular message, and an identifier of a Q node (e.g., Q node 208) containing the particular streamlet. The MX node then establishes a TCP connection with the Q node (352). Other types of connection protocols between the MX node and the Q node are possible.

[0049] The MX node then sends to the Q node a subscribe message (354) with the identifier of the streamlet (in the Q node) and the position in the streamlet from which the MX node wants to read (356). The Q node hands over the subscribe message to a handler process 351 for the streamlet (356). The handler process can send to the MX node an acknowledgement (358). The handler process then sends messages (360, 364, 366), starting at the position in the streamlet, to the MX node. In some implementations, the handler process can send all of the messages in the streamlet to the MX node. After sending the last message in a particular streamlet, the handler process can send a notification of the last message to the MX node. The MX node can send to the configuration manager another request for another streamlet containing a next message in the particular channel.

[0050] If the particular streamlet is closed (e.g., after its TTL has expired), the handler process can send an unsubscribe message (390), followed by an EOF message (392), to close the association with the MX node for the read grant. The MX node can close the association with the handler process when the MX node moves to another streamlet for messages in the particular channel (e.g., as instructed by the configuration manager). The MX node can also close the association with the handler process if the MX node receives an unsubscribe message from a corresponding client device.

[0051] In various implementations, a streamlet can be written into and read from at the same time instance. For example, there can be a valid read grant and a valid write grant at the same time instance. In various implementations, a streamlet can be read concurrently by multiple read grants (e.g., for channels subscribed to by multiple publisher clients). The handler process of the streamlet can order messages from concurrent write grants based on, for example, time-of-arrival, and store the messages based on the order. In this way, messages published to a channel from multiple publisher clients can be serialized and stored in a streamlet of the channel.

[0052] In the messaging system 100, one or more C nodes (e.g., C node 220) can offload data transfers from one or more Q nodes. For instance, if there are many MX nodes requesting streamlets from Q nodes for a particular channel, the streamlets can be offloaded and cached in one or more C nodes. The MX nodes (e.g., as instructed by read grants from a configuration manager) can read the streamlets from the C nodes instead.

[0053] As described above, messages for a channel in the messaging system 100 are ordered in a channel stream. A configuration manager (e.g., configuration manager 214) splits the channel stream into fixed-sized streamlets that each reside on a respective Q node. In this way, storing a channel stream can be shared among many Q nodes; each Q node stores a portion (one or more streamlets) of the channel stream. More particularly, a streamlet can be stored in, for example, registers and/or dynamic memory elements associated with a computing process on a Q node, thus avoiding the need to access persistent, slower storage devices such as hard disks. This results in faster message access. The configuration manager can also balance load among Q nodes in the messaging system 100 by monitoring respective workloads of the Q nodes and allocating streamlets in a way that avoids overloading any one Q node.

[0054] In various implementations, a configuration manager maintains a list identifying each active streamlet, the respective Q node on which the streamlet resides, an identification of the position of the first message in the streamlet, and whether the streamlet is closed for writing. In some implementations, Q nodes notify the configuration manager and/or any MX nodes that are publishing to a streamlet that the streamlet is closed due to being full or when the streamlet's TTL has expired. When a streamlet is closed, the streamlet remains on the configuration manager's list of active streamlets until the streamlet's TTL has expired so that MX nodes can continue to retrieve messages from the streamlet.

[0055] When an MX node requests a write grant for a given channel and there is not a streamlet for the channel that can be written to, the configuration manager allocates a new streamlet on one of the Q nodes and returns the identity of the streamlet and the Q node in the StreamletGrantResponse. Otherwise, the configuration manager returns the identity of the currently open for writing streamlet and corresponding Q node in the StreamletGrantResponse. MX nodes can publish messages to the streamlet until the streamlet is full or the streamlet's TTL has expired, after which a new streamlet can be allocated by the configuration manager.

[0056] When an MX node requests a read grant for a given channel and there is not a streamlet for the channel that can be read from, the configuration manager allocates a new streamlet on one of the Q nodes and returns the identity of the streamlet and the Q node in the StreamletGrantResponse. Otherwise, the configuration manager returns the identity of the streamlet and Q node that contains the position from which the MX node wishes to read. The Q node can then begin sending messages to the MX node from the streamlet beginning at the specified position until there are no more messages in the streamlet to send. When a new message is published to a streamlet, MX nodes that have subscribed to that streamlet will receive the new message. If a streamlet's TTL has expired, the handler process 351 can send an EOF message (392) to any MX nodes that are subscribed to the streamlet.

[0057] In some implementations, the messaging system 100 can include multiple configuration managers (e.g., configuration manager 214 plus one or more other configuration managers). Multiple configuration managers can provide resiliency and prevent single point of failure. For instance, one configuration manager can replicate lists of streamlets and current grants it maintains to another "slave" configuration manager. As another example, multiple configuration managers can coordinate operations between them using distributed consensus protocols, such as, for example, Paxos or Raft protocols.

[0058] FIG. 4A is a data flow diagram of an example method for publishing messages to a channel of a messaging system. In FIG. 4A, publishers (e.g., publisher clients 402, 404, 406) publish messages to the messaging system 100 described earlier in reference to FIG. 2. For instance, publishers 402 respectively establish connections 411 and send publish requests to the MX node 202. Publishers 404 respectively establish connections 413 and send publish requests to the MX node 206. Publishers 406 respectively establish connections 415 and send publish requests to the MX node 204. Here, the MX nodes can communicate (417) with a configuration manager (e.g., configuration manager 214) and one or more Q nodes (e.g., Q nodes 212 and 208) in the messaging system 100 via the internal network 218.

[0059] By way of illustration, each publish request (e.g., in JSON key/value pairs) from a publisher to an MX node includes a channel name and a message. The MX node (e.g., MX node 202) can assign the message in the publish request to a distinct channel in the messaging system 100 based on the channel name (e.g., "foo") of the publish request. The MX node can confirm the assigned channel with the configuration manager 214. If the channel (specified in the subscribe request) does not yet exist in the messaging system 100, the configuration manager can create and maintain a new channel in the messaging system 100. For instance, the configuration manager can maintain a new channel by maintaining a list identifying each active streamlet of the channel's stream, the respective Q node on which the streamlet resides, and identification of the positions of the first and last messages in the streamlet as described earlier.

[0060] For messages of a particular channel, the MX node can store the messages in one or more buffers or streamlets in the messaging system 100. For instance, the MX node 202 receives from the publishers 402 requests to publish messages M11, M12, M13, and M14 to a channel foo. The MX node 206 receives from the publishers 404 requests to publish messages M78 and M79 to the channel foo. The MX node 204 receives from the publishers 406 requests to publish messages M26, M27, M28, M29, M30, and M31 to the channel foo.

[0061] The MX nodes can identify one or more streamlets for storing messages for the channel foo. As described earlier, each MX node can request a write grant from the configuration manager 214 that allows the MX node to store the messages in a streamlet of the channel foo. For instance, the MX node 202 receives a grant from the configuration manager 214 to write messages M11, M12, M13, and M14 to a streamlet 4101 on the Q node 212. The MX node 206 receives a grant from the configuration manager 214 to write messages M78 and M79 to the streamlet 4101. Here, the streamlet 4101 is the last one (at the moment) of a sequence of streamlets of the channel stream 430 storing messages of the channel foo. The streamlet 4101 has messages (421) of the channel foo that were previously stored in the streamlet 4101, but is still open, i.e., the streamlet 4101 still has space for storing more messages and the streamlet's TTL has not expired.

[0062] The MX node 202 can arrange the messages for the channel foo based on the respective time that each message was received by the MX node 202, e.g., M11, M13, M14, M12 (422), and store the received messages as arranged in the streamlet 4101. That is, the MX node 202 receives M11 first, followed by M13, M14, and M12. Similarly, the MX node 206 can arrange the messages for the channel foo based on their respective time that each message was received by the MX node 206, e.g., M78, M79 (423), and store the received messages as arranged in the streamlet 4101. Other arrangements or ordering of the messages for the channel are possible.

[0063] The MX node 202 (or MX node 206) can store the received messages using the method for writing data to a streamlet described earlier in reference to FIG. 3A, for example. In various implementations, the MX node 202 (or MX node 206) can buffer (e.g., in a local data buffer) the received messages for the channel foo and store the received messages in a streamlet for the channel foo (e.g., streamlet 4101) when the buffered messages reach a predetermined number or size (e.g., 100 messages) or when a predetermined time (e.g., 50 milliseconds) has elapsed. For instance, the MX node 202 can store in the streamlet 100 messages at a time or in every 50 milliseconds. Other appropriate algorithms and techniques, such as Nagle's algorithm, can be used for managing the buffered messages.

[0064] In various implementations, the Q node 212 (e.g., a handler process) stores the messages of the channel foo in the streamlet 4101 in the order as arranged by the MX node 202 and MX node 206. The Q node 212 stores the messages of the channel foo in the streamlet 4101 in the order the Q node 212 receives the messages. For instance, assume that the Q node 212 receives messages M78 (from the MX node 206) first, followed by messages M11 and M13 (from the MX node 202), M79 (from the MX node 206), and M14 and M12 (from the MX node 202). The Q node 212 stores in the streamlet 4101 the messages in the order as received, e.g., M78, M11, M13, M79, M14, and M12, immediately after the messages 421 that are already stored in the streamlet 4101. In this way, messages published to the channel foo from multiple publishers (e.g., 402, 404) can be serialized in a particular order and stored in the streamlet 4101 of the channel foo. Different subscribers that subscribe to the channel foo will receive messages of the channel foo in the same particular order, as will be described in more detail in reference to FIG. 4B.

[0065] In the example of FIG. 4A, at a time instance after the message M12 was stored in the streamlet 4101, the MX node 204 requests a grant from the configuration manager 214 to write to the channel foo. The configuration manager 214 provides the MX node 204 a grant to write messages to the streamlet 4101, as the streamlet 4101 is still open for writing. The MX node 204 arranges the messages for the channel foo based on the respective time that each message was received by the MX node 204, e.g., M26, M27, M31, M29, M30, M28 (424), and stores the messages as arranged for the channel foo.

[0066] By way of illustration, assume that the message M26 is stored to the last available position of the streamlet 4101. As the streamlet 4101 is now full, the Q node 212 sends to the MX node 204 a NAK message, following by an EOF message, to close the association with the MX node 204 for the write grant, as described earlier in reference to FIG. 3A. The MX node 204 then requests another write grant from the configuration manager 214 for additional messages (e.g., M27, M31, and so on) for the channel foo.

[0067] The configuration manager 214 can monitor available Q nodes in the messaging system 100 for their respective workloads (e.g., how many streamlets are residing in each Q node). The configuration manager 214 can allocate a streamlet for the write request from the MX node 204 such that overloading (e.g., too many streamlets or too many read or write grants) can be avoided for any given Q node. For instance, the configuration manager 214 can identify a least loaded Q node in the messaging system 100 and allocate a new streamlet on the least loaded Q node for write requests from the MX node 204. In the example of FIG. 4A, the configuration manager 214 allocates a new streamlet 4102 on the Q node 208 and provides a write grant to the MX node 204 to write messages for the channel foo to the streamlet 4102. As shown in FIG. 4A, the Q node stores in the streamlet 4102 the messages from the MX node 204 in an order as arranged by the MX node 204: M27, M31, M29, M30, and M28 (assuming that there is no other concurrent write grant for the streamlet 4102 at the moment).

[0068] When the configuration manager 214 allocates a new streamlet (e.g., streamlet 4102) for a request for a grant from an MX node (e.g., MX node 204) to write to a channel (e.g., foo), the configuration manager 214 assigns to the streamlet its TTL, which will expire after TTLs of other streamlets that are already in the channel's stream. For instance, the configuration manager 214 can assign to each streamlet of the channel foo's channel stream a TTL of 3 minutes when allocating the streamlet. That is, each streamlet will expire 3 minutes after it is allocated (created) by the configuration manager 214. Since a new streamlet is allocated after a previous streamlet is closed (e.g., filled entirely or expired), in this way, the channel foo's channel stream comprises streamlets that each expires sequentially after its previous streamlet expires. For instance, as shown in an example channel stream 430 of the channel foo in FIG. 4A, streamlet 4098 and streamlets before 4098 have expired (as indicated by the dotted-lined gray-out boxes). Messages stored in these expired streamlets are not available for reading for subscribers of the channel foo. Streamlets 4099, 4100, 4101, and 4102 are still active (not expired). The streamlets 4099, 4100, and 4101 are closed for writing, but still are available for reading. The streamlet 4102 is available for reading and writing, at the moment when the message M28 was stored in the streamlet 4102. At a later time, the streamlet 4099 will expire, following by the streamlets 4100, 4101, and so on.

[0069] FIG. 4B is a data flow diagram of an example method for subscribing to a channel of a messaging system. In FIG. 4B, a subscriber 480 establishes a connection 462 with an MX node 461 of the messaging system 100. Subscriber 482 establishes a connection 463 with the MX node 461. Subscriber 485 establishes a connection 467 with an MX node 468 of the messaging system 100. Here, the MX nodes 461 and 468 can respectively communicate (464) with the configuration manager 214 and one or more Q nodes in the messaging system 100 via the internal network 218.

[0070] A subscriber (e.g., subscriber 480) can subscribe to the channel foo of the messaging system 100 by establishing a connection (e.g., 462) and sending a request for subscribing to messages of the channel foo to an MX node (e.g., MX node 461). The request (e.g., in JSON key/value pairs) can include a channel name, such as, for example, "foo." When receiving the subscribe request, the MX node 461 can send to the configuration manager 214 a request for a read grant for a streamlet in the channel foo's channel stream.

[0071] By way of illustration, assume that at the current moment the channel foo's channel stream 431 includes active streamlets 4102, 4103, and 4104, as shown in FIG. 4B. The streamlets 4102 and 4103 each are full. The streamlet 4104 stores messages of the channel foo, including the last message (at the current moment) stored at a position 47731. Streamlets 4101 and streamlets before 4101 are invalid, as their respective TTLs have expired. Note that the messages M78, M11, M13, M79, M14, M12, and M26 stored in the streamlet 4101, described earlier in reference to FIG. 4A, are no longer available for subscribers of the channel foo, since the streamlet 4101 is no longer valid, as its TTL has expired. As described earlier, each streamlet in the channel foo's channel stream has a TTL of 3 minutes, thus only messages (as stored in streamlets of the channel foo) that are published to the channel foo (i.e., stored into the channel's streamlets) no earlier than 3 minutes from the current time can be available for subscribers of the channel foo.

[0072] The MX node 461 can request a read grant for all available messages in the channel foo, for example, when the subscriber 480 is a new subscriber to the channel foo. Based on the request, the configuration manager 214 provides the MX node 461 a read grant to the streamlet 4102 (on the Q node 208) that is the earliest streamlet in the active streamlets of the channel foo (i.e., the first in the sequence of the active streamlets). The MX node 461 can retrieve messages in the streamlet 4102 from the Q node 208, using the method for reading data from a streamlet described earlier in reference to FIG. 3B, for example. Note that the messages retrieved from the streamlet 4102 maintain the same order as stored in the streamlet 4102. However, other arrangements or ordering of the messages in the streamlet are possible. In various implementations, when providing messages stored in the streamlet 4102 to the MX node 461, the Q node 208 can buffer (e.g., in a local data buffer) the messages and send the messages to the MX node 461 when the buffer messages reach a predetermined number or size (e.g., 200 messages) or a predetermined time (e.g., 50 milliseconds) has elapsed. For instance, the Q node 208 can send the channel foo's messages (from the streamlet 4102) to the MX node 461 200 messages at a time or in every 50 milliseconds. Other appropriate algorithms and techniques, such as Nagle's algorithm, can be used for managing the buffered messages.

[0073] After receiving the last message in the streamlet 4102, the MX node 461 can send an acknowledgement to the Q node 208, and send to the configuration manager 214 another request (e.g., for a read grant) for the next streamlet in the channel stream of the channel foo. Based on the request, the configuration manager 214 provides the MX node 461 a read grant to the streamlet 4103 (on Q node 472) that logically follows the streamlet 4102 in the sequence of active streamlets of the channel foo. The MX node 461 can retrieve messages stored in the streamlet 4103, e.g., using the method for reading data from a streamlet described earlier in reference to FIG. 3B, until it retrieves the last message stored in the streamlet 4103. The MX node 461 can send to the configuration manager 214 yet another request for a read grant for messages in the next streamlet 4104 (on Q node 474). After receiving the read grant, the MX node 461 retrieves messages of the channel foo stored in the streamlet 4104, until the last message at the position 47731. Similarly, the MX node 468 can retrieve messages from the streamlets 4102, 4103, and 4104 (as shown with dotted arrows in FIG. 4B), and provide the messages to the subscriber 485.

[0074] The MX node 461 can send the retrieved messages of the channel foo to the subscriber 480 (via the connection 462) while receiving the messages from the Q nodes 208, 472, or 474. In various implementations, the MX node 461 can store the retrieved messages in a local buffer. In this way, the retrieved messages can be provided to another subscriber (e.g., subscriber 482) when the other subscriber subscribes to the channel foo and requests the channel's messages. The MX node 461 can remove messages stored in the local buffer that each has a time of publication that has exceeded a predetermined time period. For instance, the MX node 461 can remove messages (stored in the local buffer) with respective times of publication exceeding 3 minutes. In some implementations, the predetermined time period for keeping messages in the local buffer on MX node 461 can be the same as or similar to the time-to-live duration of a streamlet in the channel foo's channel stream, since at a given moment, messages retrieved from the channel's stream do not include those in streamlets having respective times-to-live that had already expired.

[0075] The messages retrieved from the channel stream 431 and sent to the subscriber 480 (by the MX node 461) are arranged in the same order as the messages were stored in the channel stream, although other arrangements or ordering of the messages are possible. For instance, messages published to the channel foo are serialized and stored in the streamlet 4102 in a particular order (e.g., M27, M31, M29, M30, and so on), then stored subsequently in the streamlet 4103 and the streamlet 4104. The MX node retrieves messages from the channel stream 431 and provides the retrieved messages to the subscriber 480 in the same order as the messages are stored in the channel stream: M27, M31, M29, M30, and so on, followed by ordered messages in the streamlet 4103, and followed by ordered messages in the streamlet 4104.

[0076] Instead of retrieving all available messages in the channel stream 431, the MX node 461 can request a read grant for messages stored in the channel stream 431 starting from a message at particular position, e.g., position 47202. For instance, the position 47202 can correspond to an earlier time instance (e.g., 10 seconds before the current time) when the subscriber 480 was last subscribing to the channel foo (e.g., via a connection to the MX node 461 or another MX node of the messaging system 100). The MX node 461 can send to the configuration manager 214 a request for a read grant for messages starting at the position 47202. Based on the request, the configuration manager 214 provides the MX node 461 a read grant to the streamlet 4104 (on the Q node 474) and a position on the streamlet 4104 that corresponds to the channel stream position 47202. The MX node 461 can retrieve messages in the streamlet 4104 starting from the provided position, and send the retrieved messages to the subscriber 480.

[0077] As described above in reference to FIGS. 4A and 4B, messages published to the channel foo are serialized and stored in the channel's streamlets in a particular order. The configuration manager 214 maintains the ordered sequence of streamlets as they are created throughout their respective times-to-live. Messages retrieved from the streamlets by an MX node (e.g., MX node 461, or MX node 468) and provided to a subscriber can be, in some implementations, in the same order as the messages are stored in the ordered sequence of streamlets. In this way, messages sent to different subscribers (e.g., subscriber 480, subscriber 482, or subscriber 485) can be in the same order (as the messages are stored in the streamlets), regardless which MX nodes the subscribers are connected to.

[0078] In various implementations, a streamlet stores messages in a set of blocks of messages. Each block stores a number of messages. For instance, a block can store two hundred kilobytes of messages (although other sizes of blocks of messages are possible). Each block has its own time-to-live, which can be shorter than the time-to-live of the streamlet holding the block. Once a block's TTL has expired, the block can be discarded from the streamlet holding the block, as described in more detail below in reference to FIG. 4C.

[0079] FIG. 4C is an example data structure for storing messages of a channel of a messaging system. As described with the channel foo in reference to FIGS. 4A and 4B, assume that at the current moment the channel foo's channel stream 432 includes active streamlets 4104 and 4105, as shown in FIG. 4C. Streamlet 4103 and streamlets before 4103 are invalid, as their respective TTLs have expired. The streamlet 4104 is already full for its capacity (e.g., as determined by a corresponding write grant) and is closed for additional message writes. The streamlet 4104 is still available for message reads. The streamlet 4105 is open and is available for message writes and reads.

[0080] By way of illustration, the streamlet 4104 (e.g., a computing process running on the Q node 474 shown in FIG. 4B) currently holds two blocks of messages. Block 494 holds messages from channel positions 47301 to 47850. Block 495 holds messages from channel positions 47851 to 48000. The streamlet 4105 (e.g., a computing process running on another Q node in the messaging system 100) currently holds two blocks of messages. Block 496 holds messages from channel positions 48001 to 48200. Block 497 holds messages starting from channel position 48201, and still accepts additional messages of the channel foo.

[0081] When the streamlet 4104 was created (e.g., by a write grant), a first block (sub-buffer) 492 was created to store messages, e.g., from channel positions 47010 to 47100. Later on, after the block 492 had reached its capacity, another block 493 was created to store messages, e.g., from channel positions 47111 to 47300. Blocks 494 and 495 were subsequently created to store additional messages. Afterwards, the streamlet 4104 was closed for additional message writes, and the streamlet 4105 was created with additional blocks for storing additional messages of the channel foo.

[0082] In this example, the respective TTL's of blocks 492 and 493 had expired. The messages stored in these two blocks (from channel positions 47010 to 47300) are no longer available for reading by subscribers of the channel foo. The streamlet 4104 can discard these two expired blocks, e.g., by de-allocating the memory space for the blocks 492 and 493. The blocks 494 or 495 could become expired and be discarded by the streamlet 4104, before the streamlet 4104 itself becomes invalid. Alternatively, streamlet 4104 itself could become invalid before the blocks 494 or 495 become expired. In this way, a streamlet can hold one or more blocks of messages, or contain no block of messages, depending on respective TTLs of the streamlet and blocks, for example.

[0083] A streamlet, or a computing process running on a Q node in the messaging system 100, can create a block for storing messages of a channel by allocating a certain size of memory space from the Q node. The streamlet can receive, from an MX node in the messaging system 100, one message at a time and store the received message in the block. Alternatively, the MX node can assemble (i.e., buffer) a group of messages and send the group of messages to the Q node. The streamlet can allocate a block of memory space (from the Q node) and store the group of messages in the block. The MX node can also perform compression on the group of messages, e.g., by removing a common header from each message or performing other suitable compression techniques.

[0084] As described above, a streamlet (a data buffer) residing on a Q node stores messages of a channel in the messaging system 100. To prevent failure of the Q node (a single point failure) that can cause messages being lost, the messaging system 100 can replicate messages on multiple Q nodes, as described in more detail below.

[0085] FIG. 5A is a data flow diagram of an example method 500 for publishing and replicating messages of the messaging system 100. As described earlier in reference to FIG. 4A, the MX node 204 receives messages (of the channel foo) from the publishers 406. The configuration manager 214 can instruct the MX Node 204 (e.g., with a write grant) to store the messages in the streamlet 4102 on the Q node 208. In FIG. 5A, instead of storing the messages on a single node (e.g., Q node 208), the configuration manager 214 allocates multiple Q nodes to store multiple copies of the streamlet 4102 on these Q nodes.

[0086] By way of illustration, the configuration manager 214 allocates Q nodes 208, 502, 504, and 506 in the messaging system 100 to store copies of the streamlet 4102. The configuration manager 214 instructs the MX node 204 to transmit the messages for the channel foo (e.g., messages M27, M31, M29, M30, and M28) to the Q node 208 (512). A computing process running on the Q node 208 stores the messages in the first copy (copy #1) of the streamlet 4102. Instead of sending an acknowledgement message to the MX node 204 after storing the messages, the Q node 208 forwards the messages to the Q node 502 (514). A computing process running on the Q node 502 stores the messages in another copy (copy #2) of the streamlet 4102. Meanwhile, the Q node 502 forwards the messages to the Q node 504 (516). A computing process running on the Q node 504 stores the messages in yet another copy (copy #3) of the streamlet 4102. The Q node 504 also forwards the message to the Q node 506 (518). A computing process running on the Q node 506 stores the messages in yet another copy (copy #4) of the streamlet 4102. The Q node 506 can send an acknowledgement message to the MX node 204, indicating that all the messages (M27, M31, M29, M30, and M28) have been stored successfully in streamlet copies #1, #2, #3 and #4.

[0087] In some implementations, after successfully storing the last copy (copy #4), the Q node 506 can send an acknowledgement to its upstream Q node (504), which in turns sends an acknowledgement to its upstream Q node (502), and so on, until the acknowledgement is sent to the Q node 208 storing the first copy (copy #1). The Q node 208 can send an acknowledgement message to the MX node 204, indicating that all messages have been stored successfully in the streamlet 4102 (i.e., in the copies #1, #2, #3 and #4).

[0088] In this way, four copies of the streamlet 4102 (and each message in the streamlet) are stored in four different Q nodes. Other numbers (e.g., two, three, five, or other suitable number) of copies of a streamlet are also possible. In the present illustration, the four copies form a chain of copies including a head copy in the copy #1 and a tail copy in the copy #4. When a new message is published to the streamlet 4102, the message is first stored in the head copy (copy #1) on the Q node 208. The message is then forwarded downstream to the next adjacent copy, the copy #2 on the Q node 502 for storage, then to the copy #3 on the Q node 504 for storage, until the message is stored in the tail copy the copy #4 on the Q node 506.

[0089] In addition to storing and forwarding by messages, the computing processes running on Q nodes that store copies of a streamlet can also store and forward messages by blocks of messages, as described earlier in reference to FIG. 4C. For instance, the computing process storing the copy #1 of the streamlet 4102 on Q node 208 can allocate memory and store a block of, for example, 200 kilobytes of messages (although other sizes of blocks of messages are possible), and forward the block of messages to the next adjacent copy (copy #2) of the chain for storage, and so on, until the block messages is stored in the tail copy (copy #4) on the Q node 506.

[0090] Messages of the streamlet 4102 can be retrieved and delivered to a subscriber of the channel foo from one of the copies of the streamlet 4102. FIG. 5B is a data flow diagram of an example method 550 for retrieving stored messages in the messaging system 100. For instance, the subscriber 480 can send a request for subscribing to messages of the channel to the MX node 461, as described earlier in reference to FIG. 4B. The configuration manager 214 can provide to the MX node 461 a read grant for one of the copies of the streamlet 4102. The MX node 461 can retrieve messages of the streamlet 4102 from one of the Q nodes storing a copy of the streamlet 4102, and provide the retrieved messages to the subscriber 480. For instance, the MX node 461 can retrieve messages from the copy #4 (the tail copy) stored on the Q node 506 (522). As for another example, the MX node 461 can retrieve messages from the copy #2 stored on the Q node 502 (524). In this way, the multiple copies of a streamlet (e.g., copies #1, #2, #3, and #4 of the streamlet 4102) provide replication and redundancy against failure if only one copy of the streamlet were stored in the messaging system 100. In various implementations, the configuration manager 214 can balance workloads among the Q nodes storing copies of the streamlet 4102 by directing the MX node 461 (e.g., with a read grant) to a particular Q node that has, for example, less current read and write grants as compared to other Q nodes storing copies of the streamlet 4102.

[0091] A Q node storing a particular copy in a chain of copies of a streamlet may fail, e.g., a computing process on the Q node storing the particular copy may freeze. Other failure modes of a Q node are possible. An MX node can detect a failed node (e.g., from non-responsiveness of the failed node) and report the failed node to a configuration manager in the messaging system 100 (e.g., configuration manager 214). A peer Q node can also detect a failed Q node and report the failed node to the configuration manager. For instance, an upstream Q node may detect a failed downstream Q node when the downstream Q node is non-responsive, e.g., fails to acknowledge a message storage request from the upstream Q node as described earlier. It is noted that failure of a Q node storing a copy of a particular streamlet of a particular channel stream does not have to be for publish or subscribe operations of the particular streamlet or of the particular channel stream. Failure stemming from operations on another streamlet or another channel stream can also alert a configuration manager about failure of a Q node in the messaging system 100.

[0092] When a Q node storing a particular copy in a chain of copies of a streamlet fails, a configuration manager in the messaging system 100 can repair the chain by removing the failed node, or by inserting a new node for a new copy into the chain, for example. FIGS. 5C and 5D are data flow diagrams of example methods for repairing a chain of copies of a streamlet in the messaging system 100. In FIG. 5C, for instance, after detecting that the Q node 504 fails, the configuration manager 214 can repair the chain of copies by redirecting messages intended to be stored in the copy #3 of the streamlet 4102 on the Q node 502 to the copy #4 of the streamlet 4102 on the Q node 506. In this example, a message (or a block of messages) is first sent from the MX node 204 to the Q node 208 for storage in the copy #1 of the streamlet 4102 (572). The message then is forwarded to the Q node 502 for storage in the copy #2 of the streamlet 4102 (574). The message is then forwarded to the Q node 506 for storage in the copy #4 of the streamlet 4102 (576). The Q node 506 can send an acknowledgement message to the configuration manager 214 indicating that the message has been stored successfully.

[0093] Here, a failed node can also be the node storing the head copy or the tail copy of the chain of copies. For instance, if the Q node 208 fails, the configuration manager 214 can instruct the MX node 204 first to send the message to the Q node 502 for storage in the copy #2 of the streamlet 4102. The message is then forwarded to the next adjacent copy in the chain for storage, until the message is stored in the tail copy.

[0094] If the Q node 506 fails, the configuration manager 214 can repair the chain of copies of the streamlet 4102 such that the copy #3 on the Q node 504 becomes the tail copy of the chain. A message is first stored in the copy #1 on the Q node 208, then subsequently stored in the copy #2 on the Q node 502, and the copy #3 on the Q node 504. The Q node 504 then can send an acknowledgement message to the configuration manager 214 indicating that the message has been stored successfully.

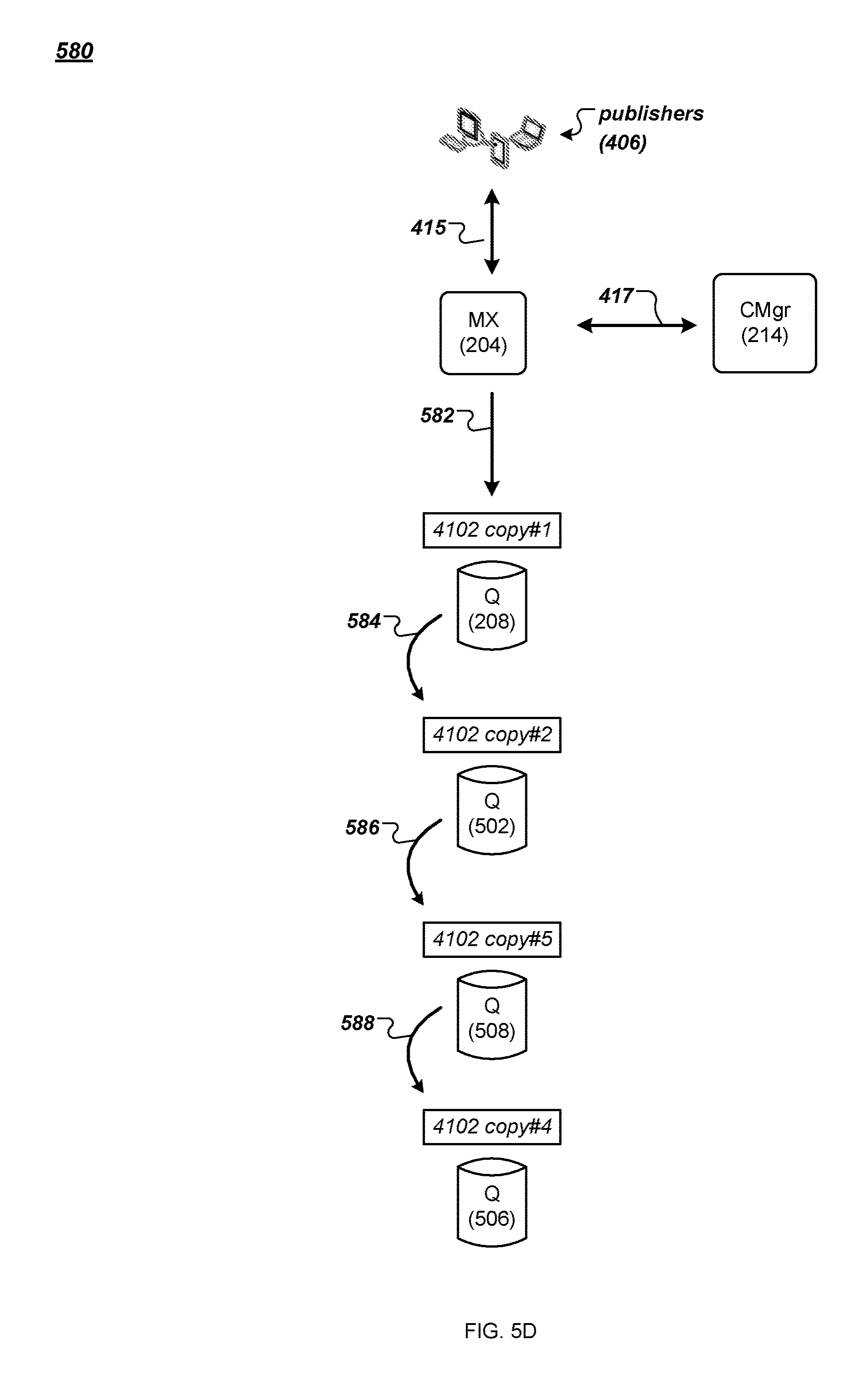

[0095] In FIG. 5D, the configuration manager 214 replaces the failed node Q node 504 by allocating a new Q node 508 to store a copy #5 of the chain of copies of the streamlet 4102. In this example, the configuration manager 214 instructs the MX node 204 to send a message (from the publishers 406) to the Q node 208 for storage in the copy #1 of the streamlet 4102 (582). The message is then forwarded to the Q node 502 for storage in the copy #2 of the streamlet 4102 (584). The message is then forwarded to the Q node 508 for storage in the copy #5 of the streamlet 4012 (586). The message is then forwarded to the Q node 506 for storage in the copy #4 of the streamlet 4102 (588). The Q node 506 can send an acknowledgement message to the configuration manager 214 indicating that the message has been stored successfully.

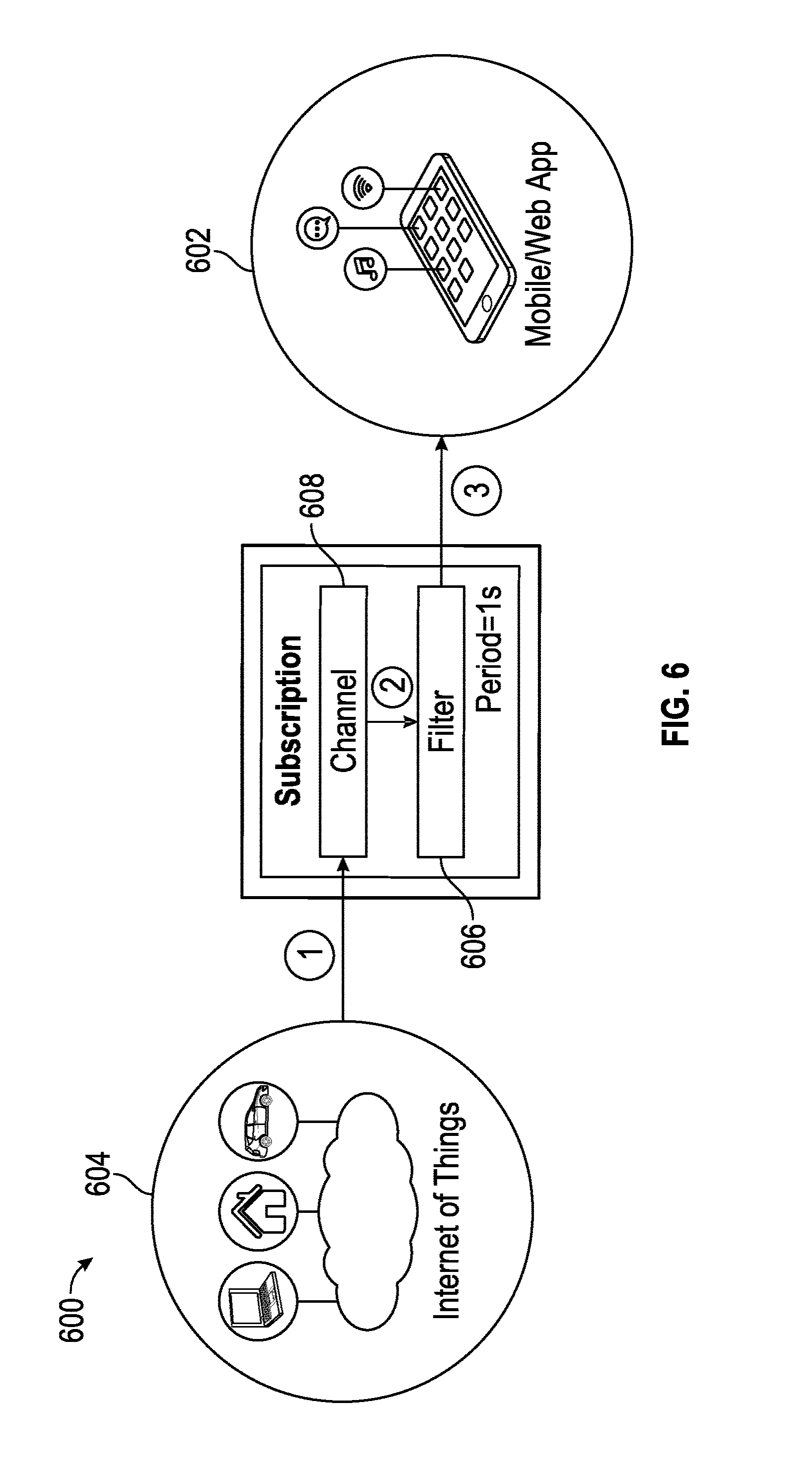

[0096] FIG. 6 is a data flow diagram 600 illustrating the application of selective filtering, searching, transforming, querying, aggregating and transforming of messages in real time to manage the delivery of messages into and through each channel and on to individual subscribers. Users operating applications on client devices, such as, for example, smartphones, tablets, and other internet-connected devices, act as subscribers (e.g., subscriber 480 in FIG. 4B, subscriber 602 in FIG. 6). The applications may be, for example, consumers of the messages to provide real-time information about news, transportation, sports, weather, or other subjects that rely on published messages attributed to one or more subjects and/or channels. Message publishers 604 can be any internet-connected service that provides, for example, status data, transactional data or other information that is made available to the subscribers 602 on a subscription basis. In some versions, the relationship between publishers and channels is 1:1, such that is there is one and only one publisher that provides messages into that particular channel. In other instances, the relationship may be many-to-one (more than one publisher provides messages into a channel), one-to-many (a publisher's messages are sent to more than one channel), or many-to-many (more than one publisher provides messages to more than one channel). Typically, when a subscriber subscribes to a channel, they receive all messages and all message data published to the channel as soon as it is published. The result, however, is that many subscribers can receive more data (or data that requires further processing) than is useful. The additional filtering or application of functions against the data places undue processing requirements on the subscriber application and can delay presentation of the data in its preferred format.

[0097] A filter 606 can be created by providing suitable query instructions at, for example, the time the subscriber 602 subscribes to the channel 608. The filter 606 that is specified can be applied to all messages published to the channel 608 (e.g., one message at a time), and can be evaluated before the subscriber 602 receives the messages (e.g., block 2 in FIG. 6). By allowing subscribers 602 to create query instructions a priori, that is upon subscribing to the channel 608 and before data is received into the channel 608, the burden of filtering and processing messages moves closer to the data source, and can be managed at the channel level. As a result, the messages are pre-filtered and/or pre-processed before they are forwarded to the subscriber 602. Again, the query instructions need not be based on any a priori knowledge of the form or substance of the incoming messages. The query instructions can be used to pre-process data for applications such as, for example, real-time monitoring services (for transportation, healthcare, news, sports, weather, etc.) and dashboards (e.g., industrial monitoring applications, financial markets, etc.) to filter data, summarize data and/or detect anomalies. One or more filters 606 can be applied to each channel 608.

[0098] The query instructions can implement real-time searches and queries, aggregate or summarize data, or transform data for use by a subscriber application. In some embodiments, including those implementing JSON formatted messages, the messages can be generated, parsed and interpreted using the query instructions, and the lack of a pre-defined schema (unlike conventional RDBMS/SQL-based applications) means that the query instructions can adapt to changing business needs without the need for schema or application layer changes. This allows the query instructions to be applied selectively at the message level within a channel, thus filtering and/or aggregating messages within the channel. In some instances, the queries may be applied at the publisher level--meaning channels that receive messages from more than one publisher may apply certain filters against messages from specific publishers. The query instructions may be applied on a going-forward basis, that is on only newly arriving messages, and/or in some cases, the query instructions may be applied to historical messages already residing in the channel queue.

[0099] The query instructions can be applied at either or both of the ingress and egress side of the PubSub service. On the egress side, the query instructions act as a per-connection filter against the message channels, and allow each subscriber to manage their own set of unique filters. On the ingress side, the query instructions operate as a centralized, system-wide filter that is applied to all published messages.

[0100] For purposes of illustration and not limitation, examples of query instructions that may be applied during message ingress include: [0101] A message may be distributed to multiple channels or to a different channel (e.g., based on geo-location in the message, or based on a hash function of some value in the message). [0102] A message may be dropped due to spam filtering or DoS rules (e.g., limiting the number of messages a publisher can send in a given time period). [0103] An alert message may be sent to an admin channel on some event arriving at any channel (e.g., cpu_temp>threshold).

[0104] For purposes of illustration and not limitation, examples of query instructions that may be applied during message egress include: [0105] Channels that contain events from various sensors where the user is only interested in a subset of the data sources. [0106] Simple aggregations, where a system reports real time events, such as cpu usage, sensor temperatures, etc., and we would like to receive some form of aggregation over a short time period, irrespective of the number of devices reporting or the reporting frequency, e.g., average (cpu_load), max(temperature), count(number_of_users), count(number_of_messages) group by country. [0107] Transforms, where a system reports real time events and metadata is added to them from mostly static external tables, e.g., adding a city name based on IP address, converting an advertisement ID to a marketing campaign ID or to a marketing partner ID. [0108] Adding default values to event streams where such values do not exist on certain devices. [0109] Advanced aggregations, where a system reports real time events, and combines some mostly static external table data into the aggregation in real time, e.g., grouping advertisement clicks by partners and counting number of events. [0110] Counting number of user events, grouping by a/b test cell allocation.

[0111] In some embodiments, the query instructions may be used to define an index or other suitable temporary data structure, which may then be applied against the messages as they are received into the channel to allow for the reuse of the data element(s) as searchable elements. In such cases, a query frequency may be maintained to describe the number of times (general, or in a given period) that a particular data element is referred to or how that element is used. If the frequency that the data element is used in a query exceeds some threshold, the index may be stored for subsequent use on incoming messages, whereas in other instances in which the index is used only once (or infrequently) it may be discarded. In some instances, the query instruction may be applied to messages having arrived at the channel prior to the creation of the index. Thus, the messages are not indexed according to the data elements described in the query instructions but processed using the query instructions regardless, whereas messages arriving after the creation of the index may be filtered and processed using the index. For queries or other subscriptions that span the time at which the index may have been created, the results of applying the query instructions to the messages as they are received and processed with the index may be combined with results of applying the query instructions to non-indexed messages received prior to receipt of the query instructions.

[0112] For purposes of illustration and not limitation, one use case for such a filtering application is a mapping application that subscribes to public transportation data feeds, such as the locations of all buses across a city. The published messages may include, for example, geographic data describing the location, status, bus agency, ID number, route number, and route name of the buses. Absent pre-defined query instructions, the client application would receive individual messages for all buses. However, query instructions may be provided that filter out, for example, inactive routes and buses and aggregate, for example, a count of buses by agency. The subscriber application receives the filtered bus data in real time and can create reports, charts and other user-defined presentations of the data. When new data is published to the channel, the reports can be updated in real time based on a period parameter (described in more detail below).

[0113] The query instructions can be provided (e.g., at the time the subscriber subscribes to the channel) in any suitable format or syntax. For example, the following illustrates the structure of several fields of a sample subscription request Protocol Data Unit (PDU) with the PDU keys specific to adding a filter to a subscription request:

TABLE-US-00002 { ''action'': ''subscribe'', "body": { ''channel'': "ChannelName" ''filter'': "QueryInstructions" ''period'': [1-60, OPTIONAL] } }

In the above subscription request PDU, the "channel" field can be a value (e.g., string or other appropriate value or designation) for the name of the channel to which the subscriber wants to subscribe. The "filter" field can provide the query instructions or other suitable filter commands, statements, or syntax that define the type of key/values in the channel message to return to the subscriber. The "period" parameter specifies the time period in, for example, seconds, to retain messages before returning them to the subscriber (e.g., an integer value from 1 to 60, with a default of, for example, 1). The "period" parameter will be discussed in more detail below. It is noted that a subscription request PDU can include any other suitable fields, parameters, or values.

[0114] One example of a query instruction is a "select" filter, which selects the most recent (or "top") value for all (e.g., "select.*") or selected (e.g., "select.name") data elements. In the example below, the Filter column shows the filter value sent in the query instructions as part of a subscription as the filter field. The Message Data column lists the input of the channel message data and the message data sent to the client as output. In this example, the value for the "extra" key does not appear in the output, as the "select" filter can return only the first level of results and does not return any nested key values.

TABLE-US-00003 Filter Message Data SELECT * Input {"name": "art", "eye": "blue"}, {"name": "art", "age": 11}, {"age": 12, "height": 190} Output {"name": "art", "age": 12, "eye": "blue", "height": 190} SELECT top.* Input {"top": {"age": 12, "eyes": "blue"}}, {"top": {"name": "joy", "height": 168}, "extra": 1}, {"top": {"name": "art"}} Output {"name": "art", "age": 12, "eye": "blue", "height": 168}

[0115] For aggregative functions, all messages can be combined that satisfy the query instructions included in the GROUP BY clause. The aggregated values can then be published as a single message to the subscriber(s) at the end of the aggregation period. The number of messages that are aggregated depends on, for example, the number of messages received in the channel in the period value for the filter. For instance, if the period parameter is set to 1, and 100 messages are received in one second, all 100 messages are aggregated into a single message for transmission to the subscsriber(s). As an example, a query instruction as shown below includes a filter to aggregate position data for an object, grouping it by obj_id, with a period of 1: [0116] SELECT*WHERE (<expression with aggregate function>) GROUP BY obj_id In this example, all messages published in the previous second with the same obj_id are grouped and sent as a batch to the subscriber(s).