Methods And Apparatus For Evaluating Developmental Conditions And Providing Control Over Coverage And Reliability

VAUGHAN; Brent ; et al.

U.S. patent application number 16/155794 was filed with the patent office on 2018-10-09 and published on 2019-02-07 for methods and apparatus for evaluating developmental conditions and providing control over coverage and reliability. The applicant listed for this patent is Cognoa, Inc. Invention is credited to Abdelhalim ABBAS, Jeffrey Ford GARBERSON, Clara LAJONCHERE, Brent VAUGHAN, Dennis WALL.

| Application Number | 20190043619 16/155794 |

| Document ID | / |

| Family ID | 62110089 |

| Filed Date | 2018-10-09 |


| United States Patent Application | 20190043619 |

| Kind Code | A1 |

| VAUGHAN; Brent ; et al. | February 7, 2019 |

METHODS AND APPARATUS FOR EVALUATING DEVELOPMENTAL CONDITIONS AND PROVIDING CONTROL OVER COVERAGE AND RELIABILITY

Abstract

The methods and apparatus disclosed herein can evaluate a subject for a developmental condition or conditions and provide improved sensitivity and specificity for categorical determinations indicating the presence or absence of the developmental condition by isolating hard-to-screen cases as inconclusive. The methods and apparatus disclosed herein can be configured to be tunable to control the tradeoff between coverage and reliability and to adapt to different application settings and can further be specialized to handle different population groups.

| Inventors: | VAUGHAN; Brent; (Portola Valley, CA) ; LAJONCHERE; Clara; (Los Angeles, CA) ; WALL; Dennis; (Palo Alto, CA) ; ABBAS; Abdelhalim; (San Jose, CA) ; GARBERSON; Jeffrey Ford; (Redwood City, CA) |

| Applicant: | Cognoa, Inc. |

| Family ID: | 62110089 |

| Appl. No.: | 16/155794 |

| Filed: | October 9, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| PCT/US2017/061552 | Nov 14, 2017 | |
| 16155794 | | |
| 62452908 | Jan 31, 2017 | |
| 62421958 | Nov 14, 2016 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G16H 20/10 20180101; G16H 50/30 20180101; G16H 50/20 20180101; G06N 20/00 20190101; G16H 20/70 20180101; G16H 50/70 20180101 |

| International Class: | G16H 50/30 20060101 G16H050/30; G16H 20/10 20060101 G16H020/10; G16H 20/70 20060101 G16H020/70; G06F 15/18 20060101 G06F015/18 |

Claims

1. A computer-implemented method for determining a treatment for an individual for a neurological disorder, said method comprising: (a) receiving data of said individual related to said neurological disorder; and (b) evaluating said data using at least one classifier to select at least one therapeutic agent for treating said neurological disorder.

2. The method of claim 1, wherein said at least one therapeutic agent comprises a stimulant or antipsychotic drug for treating said neurological disorder.

3. The method of claim 1, wherein said at least one therapeutic agent comprises amphetamine or an amphetamine-derived drug.

4. The method of claim 1, wherein said at least one therapeutic agent is selected from the group consisting of risperidone, quetiapine, amphetamine, dextroamphetamine, methylphenidate, methamphetamine, dexmethylphenidate, guanfacine, atomoxetine, lisdexamfetamine, clonidine, aripiprazole, and modafinil.

5. The method of claim 1, wherein said neurological disorder is selected from the group consisting of autism spectrum disorder, attention deficit disorder, attention deficit hyperactivity disorder, and dyslexia.

6. The method of claim 1, wherein said data comprises active data generated from at least one active data source and passive data generated from at least one passive data source.

7. The method of claim 6, wherein said passive data comprises passive data streams from user interactions with at least one of an activity, game, mobile device or application, smart toy, wearable sensor, and activity monitor.

8. The method of claim 1, wherein said data comprises information acquired from at least one of genetic data, floral data, a sleep monitor, and eye tracking of said individual.

9. The method of claim 1, wherein said data comprises at least one of demographic data and answers to a set of diagnostic questions.

10. The method of claim 1, wherein said at least one classifier comprises an assessment model for providing an evaluation result based on said data, wherein said evaluation result is a first categorical determination or a first inconclusive determination with respect to a presence or absence of said neurological disorder.

11. The method of claim 10, wherein said first categorical determination for said presence or absence of said neurological disorder in said individual is based on a specified sensitivity and a specified specificity.

12. The method of claim 10, wherein said at least one classifier comprises a subset of a plurality of tunable machine learning models.

13. The method of claim 12, further comprising: (a) requesting additional data when said evaluation result comprises said first inconclusive determination; and (b) generating a second categorical determination or a second inconclusive determination based on said additional data using at least one additional machine learning model selected from said plurality of tunable machine learning models.

14. The method of claim 12, further comprising: (a) combining scores for each of said subset of said plurality of tunable machine learning models to generate a combined preliminary output score; and (b) mapping said combined preliminary output score to said first categorical determination or to said first inconclusive determination for said presence or absence of said neurological disorder in said individual.

15. The method of claim 1, wherein said at least one classifier comprises a chain of classifiers for providing an evaluation result for said neurological disorder based on said data.

16. The method of claim 15, wherein said chain of classifiers comprises a first classifier that generates a first output based on said data and a second classifier that generates a second output based on said first output.

17. The method of claim 1, wherein said at least one classifier comprises a therapeutic model for selecting said at least one therapeutic agent.

18. The method of claim 17, wherein said at least one classifier generates a personal therapeutic treatment plan for said individual.

19. The method of claim 18, further comprising receiving feedback data based on performance of said personal therapeutic treatment plan and updating said personal therapeutic treatment plan based on said feedback data.

20. The method of claim 18, wherein said personal therapeutic treatment plan comprises a drug therapy and digital therapeutics.

Description

CROSS-REFERENCE

[0001] The present application is a bypass continuation of International Patent Application No. PCT/US2017/061552, filed Nov. 14, 2017, which claims priority to U.S. Provisional Application No. 62/421,958, filed on Nov. 14, 2016, and U.S. Provisional Application No. 62/452,908, filed on Jan. 31, 2017, each of which applications is herein incorporated in its entirety for all purposes.

[0002] The subject matter of the present application is related to U.S. application Ser. No. 14/354,032, filed on Apr. 24, 2014, now U.S. Pat. No. 9,443,205, and U.S. application Ser. No. 15/234,814, filed on Aug. 11, 2016, the entire disclosures of which are incorporated herein by reference.

BACKGROUND OF THE INVENTION

[0003] Prior methods and apparatus for diagnosing and treating cognitive function attributes of people such as, for example, people with a developmental condition or disorder can be less than ideal in at least some respects. Unfortunately, a less than ideal amount of time, energy and money can be required to obtain a diagnosis and treatment, and to determine whether a subject is at risk for decreased cognitive function such as dementia, Alzheimer's or a developmental disorder. Examples of cognitive and developmental disorders less than ideally treated by the prior approaches include autism, autistic spectrum, attention deficit disorder, attention deficit hyperactive disorder and speech and learning disability, for example. Examples of mood and mental illness disorders less than ideally treated by the prior approaches include depression, anxiety, ADHD, obsessive compulsive disorder, and substance disorders such as substance abuse and eating disorders. The prior approaches to diagnosis and treatment of several neurodegenerative diseases can be less than ideal in many instances, and examples of such neurodegenerative diseases include age related cognitive decline, cognitive impairment, Alzheimer's disease, Parkinson's disease, Huntington's disease, and amyotrophic lateral sclerosis ("ALS"), for example. The healthcare system is under increasing pressure to deliver care at lower costs, and prior methods and apparatus for clinically diagnosing or identifying a subject as at risk of a developmental disorder can result in greater expense and burden on the healthcare system than would be ideal. Further, at least some subjects are not treated as soon as ideally would occur, such that the burden on the healthcare system is increased with the additional care required for these subjects.

[0004] The identification and treatment of cognitive function attributes, including for example, developmental disorders in subjects can present a daunting technical problem in terms of both accuracy and efficiency. Many known methods for identifying such attributes or disorders are often time-consuming and resource-intensive, requiring a subject to answer a large number of questions or undergo extensive observation under the administration of qualified clinicians, who may be limited in number and availability depending on the subject's geographical location. In addition, many known methods for identifying and treating behavioral, neurological, or mental health conditions or disorders have less than ideal accuracy and consistency, as subjects to be evaluated using such methods often present a vast range of variation that can be difficult to capture and classify. A technical solution to such a technical problem would be desirable, wherein the technical solution can improve both the accuracy and efficiency of existing methods. Ideally, such a technical solution would reduce the required time and resources for administering a method for identifying and treating attributes of cognitive function, such as behavioral, neurological or mental health conditions or disorders, and improve the accuracy and consistency of the identification outcomes across subjects.

[0005] Although prior lengthy tests with questions can be administered to caretakers such as parents in order to diagnose or identify a subject as at risk for a developmental condition or disorder, such tests can be quite long and burdensome. For example, at least some of these tests have over one hundred questions, and more than one such lengthy test may be administered, further increasing the burden on healthcare providers and caretakers. Additional data may be required such as clinical observation of the subject, and clinical visits may further increase the amount of time and burden on the healthcare system. Consequently, the time between a subject being identified as needing to be evaluated and being clinically identified as at risk or diagnosed with a developmental delay can be several months, and in some instances over a year.

[0006] The delay between identified need for an evaluation and clinical diagnosis can result in less than ideal care in at least some instances. Some developmental disorders can be treated with timely intervention. However, the large gap between a caretaker initially identifying a prospective subject as needing an evaluation and clinically diagnosing the subject or clinically identifying the subject as at risk can result in less than ideal treatment. In at least some instances, a developmental disorder may have a treatment window, and the treatment window may be missed or the subject treated for only a portion of the treatment window.

[0007] Although prior methods and apparatus have been proposed to decrease the number of questions asked, such prior methods and apparatus can be less than ideal in at least some respects. Although prior methods and apparatus have relied on training and test datasets to train and validate, respectively, the methods and apparatus, the actual clinical results of such methods and apparatus can be less than ideal, as the clinical environment can present more challenging cases than the training and test dataset. The clinical environment can present subjects who may have one or more of several possible developmental disorders, and relying on a subset of questions may result in less than ideal sensitivity and specificity of the tested developmental disorder. Also, the use of only one test to diagnose only one developmental disorder, e.g. autism, may provide less than ideal results for diagnosing the intended developmental disorder and other disorders, as subject behavior from other developmental disorders may present confounding variables that decrease the sensitivity and specificity of the subset of questions targeting the one developmental disorder. Also, reliance on a predetermined subset can result in less than ideal results as more questions than would be ideal may be asked, and the questions asked may not be the ideal subset of questions for a particular subject.

[0008] Further, many subjects may have two or more related disorders or conditions. If each test is designed to diagnose or identify only a single disorder or condition, a subject presenting with multiple disorders may be required to take multiple tests. The evaluation of a subject using multiple diagnostic tests may be lengthy, expensive, inconvenient, and logistically challenging to arrange. It would be desirable to provide a way to test a subject using a single diagnostic test that is capable of identifying or diagnosing multiple related disorders or conditions with sufficient sensitivity and specificity.

[0009] Additionally, it would be helpful if diagnostic methods and treatments could be applied to subjects to advance cognitive function for subjects with advanced, normal and decreased cognitive function. In light of the above, improved methods and systems of diagnosing and identifying subjects at risk for a particular cognitive function attribute such as a developmental disorder and for providing improved digital therapeutics are needed. Ideally, such methods and apparatus would require fewer questions and less time, determine a plurality of cognitive function attributes, such as behavioral, neurological or mental health conditions or disorders, and provide clinically acceptable sensitivity and specificity in a clinical or nonclinical environment, which can be used to monitor and adapt treatment efficacy. Moreover, improved digital therapeutics can provide a customized treatment plan for a patient, receive updated diagnostic data in response to the customized treatment plan to determine progress, and update the treatment plan accordingly. Ideally, such methods and apparatus can also be used to determine the developmental progress of a subject, and offer treatment to advance developmental progress.

SUMMARY OF THE INVENTION

[0010] The methods and apparatus disclosed herein can determine a cognitive function attribute such as the developmental progress of a subject in a clinical or nonclinical environment. For example, the described methods and apparatus can identify a subject as developmentally advanced in one or more areas of development, or identify a subject as developmentally delayed or at risk of having one or more developmental disorders. The methods and apparatus disclosed can determine the subject's developmental progress by evaluating a plurality of characteristics or features of the subject based on an assessment model, wherein the assessment model can be generated from large datasets of relevant subject populations using machine-learning approaches. The methods and apparatus disclosed herein comprise improved logical structures and processes to diagnose a subject with a disorder among a plurality of disorders, using a single test.

[0011] The methods and apparatus disclosed herein can diagnose or identify a subject as at risk of having one or more cognitive function attributes such as, for example, a subject at risk of having one or more developmental disorders among a plurality of developmental disorders in a clinical or nonclinical setting, with fewer questions, in a decreased amount of time, and with clinically acceptable sensitivity and specificity in a clinical environment. A processor can be configured with instructions to identify a most predictive next question, such that a person can be diagnosed or identified as at risk with fewer questions. Identifying the most predictive next question in response to a plurality of answers has the advantage of increasing the sensitivity and the specificity with fewer questions. The methods and apparatus disclosed herein can be configured to evaluate a subject for a plurality of related developmental disorders using a single test, and diagnose or determine the subject as at risk of one or more of the plurality of developmental disorders using the single test. Decreasing the number of questions presented can be particularly helpful where a subject presents with a plurality of possible developmental disorders. Evaluating the subject for the plurality of possible disorders using just a single test can greatly reduce the length and cost of the evaluation procedure. The methods and apparatus disclosed herein can diagnose or identify the subject as at risk for having a single developmental disorder among a plurality of possible developmental disorders that may have overlapping symptoms.

[0012] While the most predictive next question can be determined in many ways, in many instances the most predictive next question is determined in response to a plurality of answers to preceding questions that may comprise prior most predictive next questions. The most predictive next question can be determined statistically, and a set of possible most predictive next questions evaluated to determine the most predictive next question. In many instances, answers to each of the possible most predictive next questions are related to the relevance of the question, and the relevance of the question can be determined in response to the combined feature importance of each possible answer to a question.
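As a minimal illustration of the preceding paragraph, the relevance of a candidate question can be sketched as a combination of the feature importances of its possible answers. The sketch below assumes one particular combination, a probability-weighted sum; the function names, the answer probabilities, and the importance values are hypothetical and are not taken from the application.

```python
def question_relevance(answer_probs, answer_importance):
    """Combine per-answer feature importances into one relevance value.

    answer_probs: dict mapping each possible answer to its estimated
        probability given the answers collected so far (assumed input).
    answer_importance: dict mapping each possible answer to its feature
        importance in the assessment model (assumed input).
    The probability-weighted sum is an assumption made for illustration.
    """
    return sum(p * answer_importance[a] for a, p in answer_probs.items())


def most_predictive_next_question(candidates):
    """Return the id of the candidate question with the highest relevance.

    candidates: dict mapping question id to (answer_probs, answer_importance).
    """
    return max(candidates, key=lambda q: question_relevance(*candidates[q]))


# Hypothetical candidate questions; every number is illustrative only.
candidates = {
    "eye_contact": ({"never": 0.2, "sometimes": 0.5, "often": 0.3},
                    {"never": 0.9, "sometimes": 0.4, "often": 0.1}),
    "points_to_objects": ({"no": 0.5, "yes": 0.5},
                          {"no": 0.3, "yes": 0.2}),
}
best = most_predictive_next_question(candidates)
```

With these illustrative numbers the first candidate has the higher combined importance, so it would be asked next.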

[0013] The methods and apparatus disclosed herein can categorize a subject into one of three categories: having one or more developmental conditions, being developmentally normal or typical, or inconclusive (i.e. requiring additional evaluation to determine whether the subject has any developmental conditions). A developmental condition can be a developmental disorder or a developmental advancement. Note that the methods and apparatus disclosed herein are not limited to developmental conditions, and may be applied to other cognitive function attributes, such as behavioral, neurological or mental health conditions. The methods and apparatus may initially categorize a subject into one of the three categories, and subsequently continue with the evaluation of a subject initially categorized as "inconclusive" by collecting additional information from the subject. Such continued evaluation of a subject initially categorized as "inconclusive" may be performed continuously with a single screening procedure (e.g., containing various assessment modules). Alternatively or additionally, a subject identified as belonging to the inconclusive group may be evaluated using separate, additional screening procedures and/or referred to a clinician for further evaluation.

[0014] The methods and apparatus disclosed herein can evaluate a subject using a combination of questionnaires and video inputs, wherein the two inputs may be integrated mathematically to optimize the sensitivity and/or specificity of classification or diagnosis of the subject. Optionally, the methods and apparatus can be optimized for different settings (e.g., primary vs secondary care) to account for differences in expected prevalence rates depending on the application setting.

[0015] The methods and apparatus disclosed herein can account for different subject-specific dimensions such as, for example, a subject's age, a geographic location associated with a subject, a subject's gender or any other subject-specific or demographic data associated with a subject. In particular, the methods and apparatus disclosed herein can take different subject-specific dimensions into account in identifying the subject as at risk of having one or more cognitive function attributes such as developmental conditions, in order to increase the sensitivity and specificity of evaluation, diagnosis, or classification of the subject. For example, subjects belonging to different age groups may be evaluated using different machine learning assessment models, each of which can be specifically tuned to identify the one or more developmental conditions in subjects of a particular age group. Each age group-specific assessment model may contain a unique group of assessment items (e.g., questions, video observations), wherein some of the assessment items may overlap with those of other age groups' specific assessment models.
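The age-group-specific selection described above might be sketched as a lookup from a subject's age to a set of assessment items. The age bands, item names, and function name below are hypothetical placeholders; the application does not specify them.

```python
from bisect import bisect_right

# Hypothetical age bands in months: under 48, 48 to 71, and 72 or older.
AGE_BAND_UPPER_BOUNDS = [48, 72]

# Hypothetical assessment items per band; some items overlap between bands.
AGE_BAND_ITEMS = [
    ["q_eye_contact", "q_pointing", "video_play"],
    ["q_eye_contact", "q_peer_play", "video_play"],
    ["q_peer_play", "q_conversation"],
]


def items_for_age(age_months):
    """Return the assessment items of the band that contains age_months."""
    band = bisect_right(AGE_BAND_UPPER_BOUNDS, age_months)
    return AGE_BAND_ITEMS[band]


toddler_items = items_for_age(30)
school_age_items = items_for_age(80)
```

In a fuller system each band would also select its own tuned assessment model; only the item lookup is shown here.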

[0016] In addition, the digital personalized medicine systems and methods described herein can provide digital diagnostics and digital therapeutics to patients. The digital personalized medicine system can use digital data to assess or diagnose symptoms of a patient in ways that inform personalized or more appropriate therapeutic interventions and improved diagnoses.

[0017] In one aspect, the digital personalized medicine system can comprise digital devices with processors and associated software that can be configured to: use data to assess and diagnose a patient; capture interaction and feedback data that identify relative levels of efficacy, compliance and response resulting from the therapeutic interventions; and perform data analysis. Such data analysis can include artificial intelligence, including for example machine learning, and/or statistical models to assess user data and user profiles to further personalize, improve or assess efficacy of the therapeutic interventions.

[0018] In some instances, the system can be configured to use digital diagnostics and digital therapeutics. Digital diagnostics and digital therapeutics can comprise a system or methods for digitally collecting information and processing and evaluating the provided data to improve the medical, psychological, or physiological state of an individual. A digital therapeutic system can apply software based learning to evaluate user data, monitor and improve the diagnoses and therapeutic interventions provided by the system.

[0019] Digital diagnostics data in the system can comprise data and meta-data collected from the patient, or a caregiver, or a party that is independent of the assessed individual. In some instances, the collected data can comprise monitoring behaviors, observations, judgments, or assessments made by a party other than the individual. In further instances, the assessment can comprise an adult performing an assessment or providing data for an assessment of a child or juvenile. The data and meta-data can be either actively or passively collected in digital format via one or more digital devices such as mobile phones, video capture, audio capture, activity monitors, or wearable digital monitors.

[0020] The digital diagnostic uses the data collected by the system about the patient, which can include complementary diagnostic data captured outside the digital diagnostic, with analysis from tools such as machine learning, artificial intelligence, and statistical modeling to assess or diagnose the patient's condition. The digital diagnostic can also provide an assessment of a patient's change in state or performance, directly or indirectly via data and meta-data that can be analyzed and evaluated by tools such as machine learning, artificial intelligence, and statistical modeling to provide feedback into the system to improve or refine the diagnoses and potential therapeutic interventions.

[0021] Data assessment and machine learning from the digital diagnostic and corresponding responses, or lack thereof, from the therapeutic interventions can lead to the identification of novel diagnoses for patients and novel therapeutic regimens for both patients and caregivers.

[0022] Types of data collected and utilized by the system can include patient and caregiver video, audio, responses to questions or activities, and active or passive data streams from user interaction with activities, games or software features of the system, for example. Such data can also include meta-data from patient or caregiver interaction with the system, for example, when performing recommended activities. Specific meta-data examples include data from a user's interaction with the system's device or mobile app that captures aspects of the user's behaviors, profile, activities, interactions with the software system, interactions with games, frequency of use, session time, options or features selected, and content and activity preferences. Data can also include data and meta-data from various third party devices such as activity monitors, games or interactive content.

[0023] Digital therapeutics can comprise instructions, feedback, activities or interactions provided to the patient or caregiver by the system. Examples include suggested behaviors, activities, games or interactive sessions with system software and/or third party devices.

[0024] In further aspects, the digital therapeutics methods and systems disclosed herein can diagnose and treat a subject at risk of having one or more behavioral, neurological or mental health conditions or disorders among a plurality of behavioral, neurological or mental health conditions or disorders in a clinical or nonclinical setting. This diagnosis and treatment can be accomplished using the methods and systems disclosed herein with fewer questions, in a decreased amount of time, and with clinically acceptable sensitivity and specificity in a clinical environment, and can provide treatment recommendations. This can be helpful when a subject initiates treatment based on an incorrect diagnosis, for example. A processor can be configured with instructions to identify a most predictive next question or most instructive next symptom or observation such that a person can be diagnosed or identified as at risk reliably using only the optimal number of questions or observations. Identifying the most predictive next question or most instructive next symptom or observation in response to a plurality of answers has the advantage of providing treatment with fewer questions without degrading the sensitivity or specificity of the diagnostic process. In some instances, an additional processor can be provided to predict or collect information on the next more relevant symptom. The methods and apparatus disclosed herein can be configured to evaluate and treat a subject for a plurality of related disorders using a single adaptive test, and diagnose or determine the subject as at risk of one or more of the plurality of disorders using the single test. Decreasing the number of questions presented or symptoms or measurements used can be particularly helpful where a subject presents with a plurality of possible disorders that can be treated. Evaluating the subject for the plurality of possible disorders using just a single adaptive test can greatly reduce the length and cost of the evaluation procedure and improve treatment. The methods and apparatus disclosed herein can diagnose and treat a subject at risk for having a single disorder among a plurality of possible disorders that may have overlapping symptoms.

[0025] The most predictive next question, most instructive next symptom or observation used for the digital therapeutic treatment can be determined in many ways. In many instances, the most predictive next question, symptom, or observation can be determined in response to a plurality of answers to preceding questions or observations, which may comprise prior most predictive next questions, symptoms, or observations, to evaluate the treatment and provide a closed-loop assessment of the subject. The most predictive next question, symptom, or observation can be determined statistically, and a set of candidates can be evaluated to determine the most predictive next question, symptom, or observation. In many instances, observations or answers to each of the candidates are related to the relevance of the question or observation, and the relevance of the question or observation can be determined in response to the combined feature importance of each possible answer to a question or observation. Once a treatment has been initiated, the questions, symptoms, or observations can be repeated or different questions, symptoms, or observations can be used to more accurately monitor progress and suggest changes to the digital treatment. The relevance of a next question, symptom or observation can also depend on the variance of the ultimate assessment among different answer choices of the question or potential options for an observation. For example, a question for which the answer choices might have a significant impact on the ultimate assessment down the line can be deemed more relevant than a question for which the answer choices might only help to discern differences in severity for one particular condition, or are otherwise less consequential.
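The variance criterion in the preceding paragraph can be sketched as follows, assuming that a projected assessment score is available for each answer choice of a candidate question. The projected scores, question names, and function names are hypothetical; how such projections would be obtained is not specified here.

```python
from statistics import pvariance


def outcome_variance(scores_by_answer):
    """Population variance of the projected assessment score across answers.

    scores_by_answer: dict mapping each answer choice to the assessment
        score projected if that answer were given (assumed input).
    """
    return pvariance(list(scores_by_answer.values()))


def most_relevant_by_variance(candidates):
    """Return the candidate whose answers move the assessment the most."""
    return max(candidates, key=lambda q: outcome_variance(candidates[q]))


# Hypothetical projections: the first question separates outcomes strongly,
# while the second only distinguishes degrees of severity.
candidates = {
    "responds_to_name": {"yes": 0.1, "no": 0.9},
    "severity_detail": {"mild": 0.55, "moderate": 0.60, "severe": 0.65},
}
pick = most_relevant_by_variance(candidates)
```

Under these assumed projections the first question is selected, since its answers lead to widely different assessments.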

[0026] In one aspect, a method of providing an evaluation of at least one cognitive function attribute of a subject may comprise: on a computer system having a processor and a memory storing a computer program for execution by the processor, the computer program comprising instructions for: receiving data of the subject related to the cognitive function attribute; evaluating the data of the subject using a machine learning model; and providing an evaluation for the subject, the evaluation selected from the group consisting of an inconclusive determination and a categorical determination in response to the data. The machine learning model may comprise a selected subset of a plurality of machine learning assessment models.

[0027] The categorical determination may comprise a presence of the cognitive function attribute and an absence of the cognitive function attribute. Receiving data from the subject may comprise receiving an initial set of data. Evaluating the data from the subject may comprise evaluating the initial set of data using a preliminary subset of tunable machine learning assessment models selected from the plurality of tunable machine learning assessment models to output a numerical score for each of the preliminary subset of tunable machine learning assessment models.

[0028] The method may further comprise providing a categorical determination or an inconclusive determination as to the presence or absence of the cognitive function attribute in the subject based on the analysis of the initial set of data, wherein the ratio of inconclusive to categorical determinations can be adjusted. The method may further comprise: determining whether to apply additional assessment models selected from the plurality of tunable machine learning assessment models if the analysis of the initial set of data yields an inconclusive determination; receiving an additional set of data from the subject based on an outcome of the decision; evaluating the additional set of data from the subject using the additional assessment models to output a numerical score for each of the additional assessment models based on the outcome of the decision; and providing a categorical determination or an inconclusive determination as to the presence or absence of the cognitive function attribute in the subject based on the analysis of the additional set of data from the subject using the additional assessment models, wherein the ratio of inconclusive to categorical determinations can be adjusted.
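The staged flow described above (evaluate an initial set of data, and only on an inconclusive result collect additional data and apply additional models) can be sketched as below. This is an illustrative outline under stated assumptions: models are represented as plain callables returning a score, and `decide`, the 0.3 and 0.7 cut-offs, and the data field names are hypothetical.

```python
INCONCLUSIVE = "inconclusive"


def run_stage(models, data, decide):
    """Score the data with every model in the stage and map to a determination."""
    scores = [model(data) for model in models]
    return decide(scores)


def staged_evaluation(initial_data, preliminary_models, additional_models,
                      get_additional_data, decide):
    """Run the preliminary stage; continue only if it is inconclusive."""
    first = run_stage(preliminary_models, initial_data, decide)
    if first != INCONCLUSIVE or not additional_models:
        return first
    # Additional data is requested only when it is actually needed.
    additional_data = get_additional_data()
    return run_stage(additional_models, additional_data, decide)


def decide(scores):
    """Hypothetical rule: average the scores and apply two cut-offs."""
    mean = sum(scores) / len(scores)
    if mean >= 0.7:
        return "present"
    if mean <= 0.3:
        return "absent"
    return INCONCLUSIVE


# Toy models that simply read a precomputed score from the data.
preliminary = [lambda d: d["questionnaire_score"]]
additional = [lambda d: d["video_score"]]

result = staged_evaluation({"questionnaire_score": 0.5}, preliminary,
                           additional, lambda: {"video_score": 0.85}, decide)
```

Here the questionnaire alone is inconclusive, so the video-based stage runs and yields a categorical determination.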

[0029] The method may further comprise: combining the numerical scores for each of the preliminary subset of assessment models to generate a combined preliminary output score; and mapping the combined preliminary output score to a categorical determination or to an inconclusive determination as to the presence or absence of the cognitive function attribute in the subject, wherein the ratio of inconclusive to categorical determinations can be adjusted.
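The combining and mapping steps above can be illustrated with a minimal sketch. It assumes, purely for illustration, that the per-model scores are combined by a plain mean and that two hypothetical cut-offs, `lower` and `upper`, bound an inconclusive band; neither the combining rule nor the threshold names are taken from the disclosure.

```python
from typing import Sequence


def combine_scores(scores: Sequence[float]) -> float:
    """Combine per-model scores into one score (a plain mean is an assumption)."""
    if not scores:
        raise ValueError("at least one model score is required")
    return sum(scores) / len(scores)


def map_to_determination(score: float, lower: float, upper: float) -> str:
    """Map a combined score to a categorical or inconclusive determination.

    Scores at or above `upper` map to "presence", scores at or below `lower`
    map to "absence", and anything in between is "inconclusive". Widening the
    band between the two hypothetical thresholds raises the share of
    inconclusive determinations; narrowing it lowers that share.
    """
    if lower > upper:
        raise ValueError("lower threshold must not exceed upper threshold")
    if score >= upper:
        return "presence"
    if score <= lower:
        return "absence"
    return "inconclusive"


combined = combine_scores([0.9, 0.8, 0.7])
determination = map_to_determination(combined, lower=0.3, upper=0.7)
```

In this sketch the ratio of inconclusive to categorical determinations is adjusted only through the gap between `lower` and `upper`.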

[0030] The method may further comprise employing rule-based logic or combinatorial techniques for combining the numerical scores for each of the preliminary subset of assessment models and for combining the numerical scores for each of the additional assessment models. The ratio of inconclusive to categorical determinations may be adjusted by specifying an inclusion rate. The categorical determination as to the presence or absence of the developmental condition in the subject may be assessed by providing a sensitivity and specificity metric. The inclusion rate may be no less than 70% and the categorical determination may result in a sensitivity of at least 70% with a corresponding specificity of at least 70%. The inclusion rate may be no less than 70% and the categorical determination may result in a sensitivity of at least 80% with a corresponding specificity of at least 80%. The inclusion rate may be no less than 70% and the categorical determination may result in a sensitivity of at least 90% with a corresponding specificity of at least 90%.
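As a hedged illustration of how these three figures relate, the sketch below computes them from a list of determinations and known outcomes. It assumes that the inclusion rate is the fraction of subjects receiving a categorical (non-inconclusive) determination and that sensitivity and specificity are computed over those categorical determinations only; both definitions, and the function name `screening_metrics`, are assumptions made for this example.

```python
from typing import Dict, Sequence


def screening_metrics(determinations: Sequence[str],
                      has_condition: Sequence[bool]) -> Dict[str, float]:
    """Compute inclusion rate, sensitivity and specificity.

    `determinations` holds "presence", "absence" or "inconclusive" per subject;
    `has_condition` holds the known outcome for the same subjects.
    """
    if len(determinations) != len(has_condition):
        raise ValueError("inputs must have the same length")
    tp = fn = tn = fp = 0
    for det, truth in zip(determinations, has_condition):
        if det == "inconclusive":
            continue  # excluded from sensitivity and specificity (assumption)
        if truth:
            if det == "presence":
                tp += 1
            else:
                fn += 1
        else:
            if det == "absence":
                tn += 1
            else:
                fp += 1
    total = len(determinations)
    conclusive = tp + fn + tn + fp
    return {
        "inclusion_rate": conclusive / total if total else float("nan"),
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }
```

Under these assumptions, ten subjects of whom two are inconclusive give an inclusion rate of 0.8, with sensitivity and specificity taken over the remaining eight.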

[0031] Data from the subject may comprise at least one of a sample of a diagnostic instrument, wherein the diagnostic instrument comprises a set of diagnostic questions and corresponding selectable answers, and demographic data.

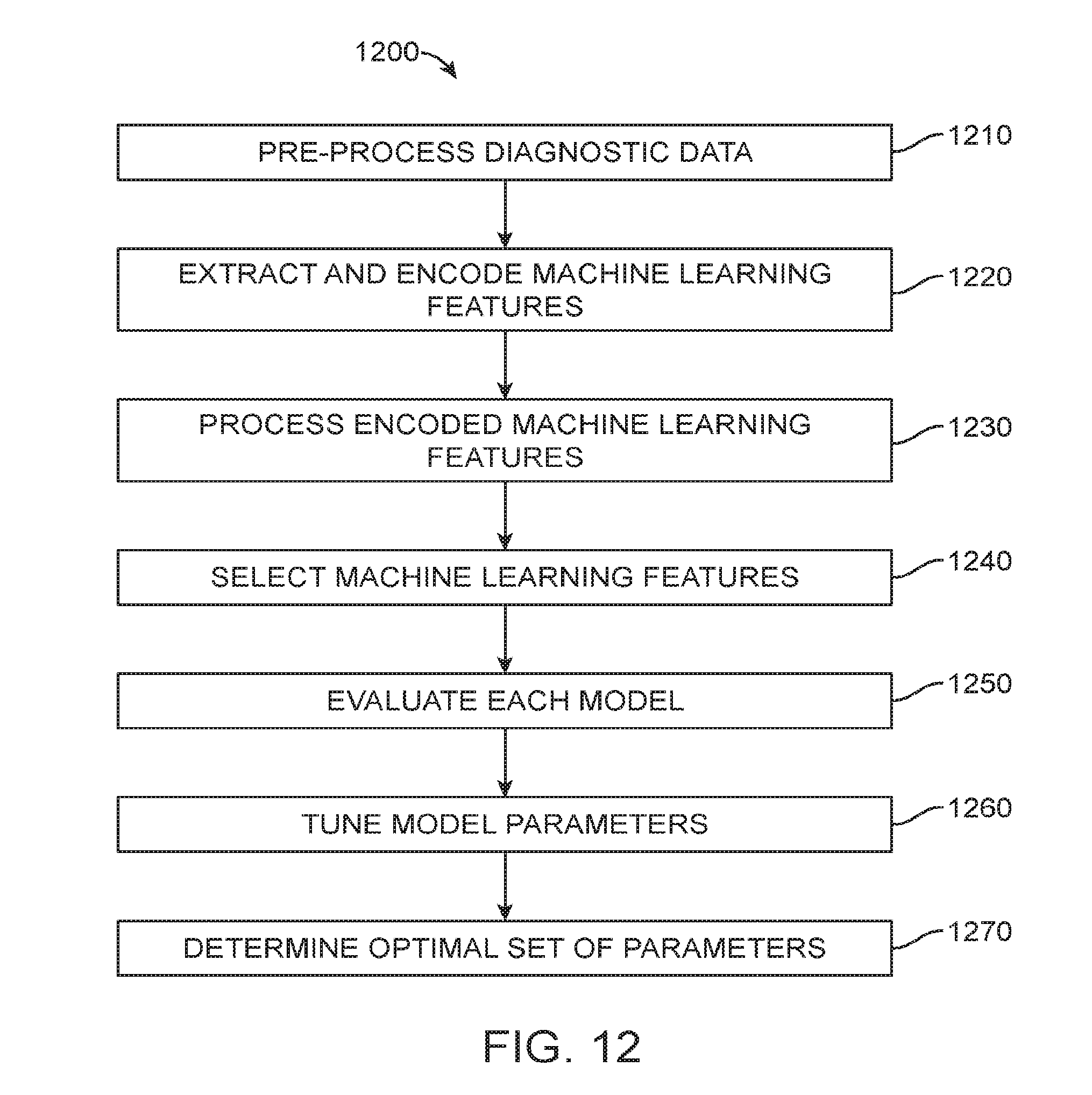

[0032] The method may further comprise: training a plurality of tunable machine learning assessment models using data from a plurality of subjects previously evaluated for the developmental condition, wherein training comprises: pre-processing the data from the plurality of subjects using machine learning techniques; extracting and encoding machine learning features from the pre-processed data; processing the data from the plurality of subjects to mirror an expected prevalence of a cognitive function attribute among subjects in an intended application setting; selecting a subset of the processed machine learning features; evaluating each model in the plurality of tunable machine learning assessment models for performance, wherein each model is evaluated for sensitivity and specificity for a pre-determined inclusion rate; and determining an optimal set of parameters for each model based on determining the benefit of using all models in a selected subset of the plurality of tunable machine learning assessment models. Determining an optimal set of parameters for each model may comprise tuning the parameters of each model under different tuning parameter settings.

[0033] Processing the encoded machine learning features may comprise: computing and assigning sample weights to every sample of data, wherein each sample of data corresponds to a subject in the plurality of subjects, wherein samples are grouped according to subject-specific dimensions, and wherein the sample weights are computed and assigned to balance one group of samples against every other group of samples to mirror the expected distribution of each dimension among subjects in an intended setting. The subject-specific dimensions may comprise a subject's gender, the geographic region where a subject resides, and a subject's age. Extracting and encoding machine learning features from the pre-processed data may comprise using feature encoding techniques such as but not limited to one-hot encoding, severity encoding, and presence-of-behavior encoding. Selecting a subset of the processed machine learning features may comprise using bootstrapping techniques to identify a subset of discriminating features from the processed machine learning features.
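The sample weighting described above may be sketched as follows. The example assumes a single grouping dimension and a caller-supplied dictionary of expected shares for the intended setting; the function name `compute_sample_weights` and the ratio formula (expected share divided by observed share) are illustrative assumptions rather than the disclosed computation.

```python
from collections import Counter
from typing import Dict, Hashable, List, Sequence


def compute_sample_weights(groups: Sequence[Hashable],
                           expected_share: Dict[Hashable, float]) -> List[float]:
    """Weight each sample so group totals mirror the expected distribution.

    `groups` gives the group of each sample (e.g. a gender or an age bracket);
    `expected_share` gives the assumed share of each group in the intended
    setting. Each sample is weighted by expected share / observed share.
    """
    counts = Counter(groups)
    n = len(groups)
    weights = []
    for g in groups:
        if g not in expected_share:
            raise ValueError(f"no expected share given for group {g!r}")
        observed_share = counts[g] / n
        weights.append(expected_share[g] / observed_share)
    return weights


# Three samples from one group and one from another, rebalanced to 50/50.
weights = compute_sample_weights(
    ["male", "male", "male", "female"],
    {"male": 0.5, "female": 0.5},
)
```

After weighting, the over-represented group and the under-represented group contribute equal total weight, which is the balancing effect the paragraph describes.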

[0034] The cognitive function attribute may comprise a behavioral disorder and a developmental advancement. The categorical determination provided for the subject may be selected from the group consisting of an inconclusive determination, a presence of multiple cognitive function attributes, and an absence of multiple cognitive function attributes in response to the data.

[0035] In another aspect, an apparatus to evaluate a cognitive function attribute of a subject may comprise a processor configured with instructions that, when executed, cause the processor to perform the method described above.

[0036] In another aspect, a mobile device for providing an evaluation of at least one cognitive function attribute of a subject may comprise: a display; and a processor configured with instructions to: receive and display data of the subject related to the cognitive function attribute; and receive and display an evaluation for the subject, the evaluation selected from the group consisting of an inconclusive determination and a categorical determination; wherein the evaluation for the subject has been determined in response to the data of the subject.

[0037] The categorical determination may be selected from the group consisting of a presence of the cognitive function attribute, and an absence of the cognitive function attribute. The cognitive function attribute may be determined with a sensitivity of at least 80% and a specificity of at least 80%, respectively, for the presence or the absence of the cognitive function attribute. The cognitive function attribute may be determined with a sensitivity of at least 90% and a specificity of at least 90%, respectively, for the presence or the absence of the cognitive function attribute. The cognitive function attribute may comprise a behavioral disorder and a developmental advancement.

[0038] In another aspect, a digital therapeutic system to treat a subject with a personal therapeutic treatment plan may comprise: one or more processors comprising software instructions; a diagnostic module to receive data from the subject and output diagnostic data for the subject, the diagnostic module comprising one or more classifiers built using machine learning or statistical modeling based on a subject population to determine the diagnostic data for the subject, and wherein the diagnostic data comprises an evaluation for the subject, the evaluation selected from the group consisting of an inconclusive determination and a categorical determination in response to data received from the subject; and a therapeutic module to receive the diagnostic data and output the personal therapeutic treatment plan for the subject, the therapeutic module comprising one or more models built using machine learning or statistical modeling based on at least a portion of the subject population to determine and output the personal therapeutic treatment plan of the subject, wherein the diagnostic module is configured to receive updated subject data from the subject in response to therapy of the subject and generate updated diagnostic data from the subject and wherein the therapeutic module is configured to receive the updated diagnostic data and output an updated personal treatment plan for the subject in response to the diagnostic data and the updated diagnostic data.

[0039] The diagnostic module may comprise a diagnostic machine learning classifier trained on the subject population and the therapeutic module may comprise a therapeutic machine learning classifier trained on at least the portion of the subject population, and the diagnostic module and the therapeutic module may be arranged for the diagnostic module to provide feedback to the therapeutic module based on performance of the treatment plan. The therapeutic classifier may comprise instructions trained on a data set comprising a population of which the subject is not a member and the subject may comprise a person who is not a member of the population. The diagnostic module may comprise a diagnostic classifier trained on a plurality of profiles of a subject population of at least 10,000 people and a therapeutic profile trained on the plurality of profiles of the subject population.

[0040] In another aspect, a system to evaluate at least one cognitive function attribute of a subject may comprise: a processor configured with instructions that when executed cause the processor to: present a plurality of questions from a plurality of chains of classifiers, the plurality of chains of classifiers comprising a first chain comprising a social/behavioral delay classifier and a second chain comprising a speech & language delay classifier. The social/behavioral delay classifier may be operatively coupled to an autism & ADHD classifier. The social/behavioral delay classifier may be configured to output a positive result if the subject has a social/behavioral delay and a negative result if the subject does not have the social/behavioral delay. The social/behavioral delay classifier may be configured to output an inconclusive result if it cannot be determined with a specified sensitivity and specificity whether or not the subject has the social/behavioral delay. The social/behavioral delay classifier output may be coupled to an input of an Autism and ADHD classifier and the Autism and ADHD classifier may be configured to output a positive result if the subject has Autism or ADHD. The output of the Autism and ADHD classifier may be coupled to an input of an Autism v. ADHD classifier, and the Autism v. ADHD classifier may be configured to generate a first output if the subject has autism and a second output if the subject has ADHD. The Autism v. ADHD classifier may be configured to provide an inconclusive output if it cannot be determined with specified sensitivity and specificity whether or not the subject has autism or ADHD. The speech & language delay classifier may be operatively coupled to an intellectual disability classifier. The speech & language delay classifier may be configured to output a positive result if the subject has a speech and language delay and a negative output if the subject does not have the speech and language delay.
The speech & language delay classifier may be configured to output an inconclusive result if it cannot be determined with a specified sensitivity and specificity whether or not the subject has the speech and language delay. The speech & language delay classifier output may be coupled to an input of an intellectual disability classifier and the intellectual disability classifier may be configured to generate a first output if the subject has intellectual disability and a second output if the subject has the speech and language delay but no intellectual disability. The intellectual disability classifier may be configured to provide an inconclusive output if it cannot be determined with a specified sensitivity and specificity whether or not the subject has the intellectual disability.
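One way to picture a chain of coupled classifiers is the sketch below. It assumes that each stage returns "positive", "negative" or "inconclusive" and that a downstream stage is consulted only after a positive upstream result; that gating rule, the function name `run_chain`, and the toy stage classifiers are assumptions for illustration, not the disclosed implementation.

```python
from typing import Callable, Dict, List, Tuple

Answers = Dict[str, str]
Stage = Tuple[str, Callable[[Answers], str]]


def run_chain(stages: List[Stage], answers: Answers) -> List[Tuple[str, str]]:
    """Run the stages of one chain in order and record each stage's result.

    The chain stops at the first stage whose result is not "positive", so a
    negative or inconclusive result is never passed downstream (assumption).
    """
    trace = []
    for name, classify in stages:
        result = classify(answers)
        trace.append((name, result))
        if result != "positive":
            break
    return trace


def toy_stage(key: str) -> Callable[[Answers], str]:
    """Hypothetical stand-in classifier: reads a precomputed result by key."""
    return lambda answers: answers.get(key, "inconclusive")


first_chain = [
    ("social/behavioral delay", toy_stage("delay")),
    ("autism & ADHD", toy_stage("autism_or_adhd")),
]
trace = run_chain(first_chain, {"delay": "positive", "autism_or_adhd": "inconclusive"})
```

A real stage would be a trained model rather than a dictionary lookup; the lookup is used here only so the control flow can be followed end to end.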

[0041] The processor may be configured with instructions to present questions for each chain in sequence and skip overlapping questions. The first chain may comprise the social/behavioral delay classifier coupled to an autism & ADHD classifier. The second chain may comprise the speech & language delay classifier coupled to an intellectual disability classifier. A user may go through the first chain and the second chain in sequence.
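Presenting the questions of each chain in sequence while skipping overlapping questions may be sketched as below. The question identifiers and the `ask` callback are hypothetical; the sketch assumes only that a question shared by two chains is identified by the same key in both.

```python
from typing import Callable, Dict, List


def present_chains(chains: List[List[str]],
                   ask: Callable[[str], str]) -> Dict[str, str]:
    """Ask each chain's questions in order, skipping any already answered."""
    answers: Dict[str, str] = {}
    for chain in chains:
        for question in chain:
            if question in answers:
                continue  # overlapping question: reuse the earlier answer
            answers[question] = ask(question)
    return answers


# Hypothetical question identifiers; "q2" appears in both chains.
asked: List[str] = []


def record_and_answer(question: str) -> str:
    asked.append(question)
    return "yes"


answers = present_chains([["q1", "q2", "q3"], ["q2", "q4"]], record_and_answer)
```

Here the shared question is put to the user once, during the first chain, and its stored answer is reused when the second chain is reached.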

[0042] In another aspect, a method for administering a drug to a subject may comprise: detecting a neurological disorder of the subject with a machine learning classifier; and administering the drug to the subject in response to the detected neurological disorder. The neurological disorder may comprise autism spectrum disorder, and the drug may be selected from the group consisting of risperidone, quetiapine, amphetamine, dextroamphetamine, methylphenidate, methamphetamine, dextroamphetamine, dexmethylphenidate, guanfacine, atomoxetine, lisdexamfetamine, clonidine, and aripiprazole; or the neurological disorder may comprise attention deficit disorder (ADD), and the drug may be selected from the group consisting of amphetamine, dextroamphetamine, methylphenidate, methamphetamine, dextroamphetamine, dexmethylphenidate, guanfacine, atomoxetine, lisdexamfetamine, clonidine, and modafinil; or the neurological disorder may comprise attention deficit hyperactivity disorder (ADHD), and the drug may be selected from the group consisting of amphetamine, dextroamphetamine, methylphenidate, methamphetamine, dextroamphetamine, dexmethylphenidate, guanfacine, atomoxetine, lisdexamfetamine, clonidine, and modafinil; or the neurological disorder may comprise obsessive-compulsive disorder, and the drug may be selected from the group consisting of buspirone, sertraline, escitalopram, citalopram, fluoxetine, paroxetine, venlafaxine, clomipramine, and fluvoxamine; or the neurological disorder may comprise acute stress disorder, and the drug may be selected from the group consisting of propranolol, citalopram, escitalopram, sertraline, paroxetine, fluoxetine, venlafaxine, mirtazapine, nefazodone, carbamazepine, divalproex, lamotrigine, topiramate, prazosin, phenelzine, imipramine, diazepam, clonazepam, lorazepam, and alprazolam; or the neurological disorder may comprise adjustment disorder, and the drug may be selected from the group consisting of buspirone, escitalopram,
sertraline, paroxetine, fluoxetine, diazepam, clonazepam, lorazepam, and alprazolam; or the neurological disorder may comprise agoraphobia, and the drug may be selected from the group consisting of diazepam, clonazepam, lorazepam, alprazolam, citalopram, escitalopram, sertraline, paroxetine, fluoxetine, and buspirone; or the neurological disorder may comprise Alzheimer's disease, and the drug may be selected from the group consisting of donepezil, galantamine, memantine, and rivastigmine; or the neurological disorder may comprise anorexia nervosa, and the drug may be selected from the group consisting of olanzapine, citalopram, escitalopram, sertraline, paroxetine, and fluoxetine; or the neurological disorder may comprise anxiety disorders, and the drug may be selected from the group consisting of sertraline, escitalopram, citalopram, fluoxetine, diazepam, buspirone, venlafaxine, duloxetine, imipramine, desipramine, clomipramine, lorazepam, clonazepam, and pregabalin; or the neurological disorder may comprise bereavement, and the drug may be selected from the group consisting of citalopram, duloxetine, and doxepin; or the neurological disorder may comprise binge eating disorder, and the drug may be selected from the group consisting of lisdexamfetamine; or the neurological disorder may comprise bipolar disorder, and the drug may be selected from the group consisting of topiramate, lamotrigine, oxcarbazepine, haloperidol, risperidone, quetiapine, olanzapine, aripiprazole, and fluoxetine; or the neurological disorder may comprise body dysmorphic disorder, and the drug may be selected from the group consisting of sertraline, escitalopram, and citalopram; or the neurological disorder may comprise brief psychotic disorder, and the drug may be selected from the group consisting of clozapine, asenapine, olanzapine, and quetiapine; or the neurological disorder may comprise bulimia nervosa, and the drug may be selected from the group consisting of sertraline and fluoxetine; or
the neurological disorder may comprise conduct disorder, and the drug may be selected from the group consisting of lorazepam, diazepam, and clobazam; or the neurological disorder may comprise delusional disorder, and the drug may be selected from the group consisting of clozapine, asenapine, risperidone, venlafaxine, bupropion, and buspirone; or the neurological disorder may comprise depersonalization disorder, and the drug may be selected from the group consisting of sertraline, fluoxetine, alprazolam, diazepam, and citalopram; or the neurological disorder may comprise depression, and the drug may be selected from the group consisting of sertraline, fluoxetine, citalopram, bupropion, escitalopram, venlafaxine, aripiprazole, buspirone, vortioxetine, and vilazodone; or the neurological disorder may comprise disruptive mood dysregulation disorder, and the drug may be selected from the group consisting of quetiapine, clozapine, asenapine, and pimavanserin; or the neurological disorder may comprise dissociative amnesia, and the drug may be selected from the group consisting of alprazolam, diazepam, lorazepam, and chlordiazepoxide; or the neurological disorder may comprise dissociative disorder, and the drug may be selected from the group consisting of bupropion, vortioxetine, and vilazodone; or the neurological disorder may comprise dissociative fugue, and the drug may be selected from the group consisting of amobarbital, aprobarbital, butabarbital, and methohexital; or the neurological disorder may comprise dysthymic disorder, and the drug may be selected from the group consisting of bupropion, venlafaxine, sertraline, and citalopram; or the neurological disorder may comprise eating disorders, and the drug may be selected from the group consisting of olanzapine, citalopram, escitalopram, sertraline, paroxetine, and fluoxetine; or the neurological disorder may comprise gender dysphoria, and the drug may be selected from the group consisting of estrogen, progestogen, and
testosterone; or the neurological disorder may comprise generalized anxiety disorder, and the drug may be selected from the group consisting of venlafaxine, duloxetine, buspirone, sertraline, and fluoxetine; or the neurological disorder may comprise hoarding disorder, and the drug may be selected from the group consisting of buspirone, sertraline, escitalopram, citalopram, fluoxetine, paroxetine, venlafaxine, and clomipramine; or the neurological disorder may comprise intermittent explosive disorder, and the drug may be selected from the group consisting of asenapine, clozapine, olanzapine, and pimavanserin; or the neurological disorder may comprise kleptomania, and the drug may be selected from the group consisting of escitalopram, fluvoxamine, fluoxetine, and paroxetine; or the neurological disorder may comprise panic disorder, and the drug may be selected from the group consisting of bupropion, vilazodone, and vortioxetine; or the neurological disorder may comprise Parkinson's disease, and the drug may be selected from the group consisting of rivastigmine, selegiline, rasagiline, bromocriptine, amantadine, cabergoline, and benztropine; or the neurological disorder may comprise pathological gambling, and the drug may be selected from the group consisting of bupropion, vilazodone, and vortioxetine; or the neurological disorder may comprise postpartum depression, and the drug may be selected from the group consisting of sertraline, fluoxetine, citalopram, bupropion, escitalopram, venlafaxine, aripiprazole, buspirone, vortioxetine, and vilazodone; or the neurological disorder may comprise posttraumatic stress disorder, and the drug may be selected from the group consisting of sertraline, fluoxetine, and paroxetine; or the neurological disorder may comprise premenstrual dysphoric disorder, and the drug may be selected from the group consisting of estradiol, drospirenone, sertraline, citalopram, fluoxetine, and buspirone; or the neurological disorder may comprise
pseudobulbar affect, and the drug may be selected from the group consisting of dextromethorphan hydrobromide, and quinidine sulfate; or the neurological disorder may comprise pyromania, and the drug may be selected from the group consisting of clozapine, asenapine, olanzapine, paliperidone, and quetiapine; or the neurological disorder may comprise schizoaffective disorder, and the drug may be selected from the group consisting of sertraline, carbamazepine, oxcarbazepine, valproate, haloperidol, olanzapine, and loxapine; or the neurological disorder may comprise schizophrenia, and the drug may be selected from the group consisting of chlorpromazine, haloperidol, fluphenazine, risperidone, quetiapine, ziprasidone, olanzapine, perphenazine, aripiprazole, and prochlorperazine; or the neurological disorder may comprise schizophreniform disorder, and the drug may be selected from the group consisting of paliperidone, clozapine, and risperidone; or the neurological disorder may comprise seasonal affective disorder, and the drug may be selected from the group consisting of sertraline, and fluoxetine; or the neurological disorder may comprise shared psychotic disorder, and the drug may be selected from the group consisting of clozapine, pimavanserin, risperidone, and lurasidone; or the neurological disorder may comprise social anxiety phobia, and the drug may be selected from the group consisting of amitriptyline, bupropion, citalopram, fluoxetine, sertraline, and venlafaxine; or the neurological disorder may comprise specific phobia, and the drug may be selected from the group consisting of diazepam, estazolam, quazepam, and alprazolam; or the neurological disorder may comprise stereotypic movement disorder, and the drug may be selected from the group consisting of risperidone, and clozapine; or the neurological disorder may comprise Tourette's disorder, and the drug may be selected from the group consisting of haloperidol, fluphenazine, risperidone, ziprasidone, pimozide,
perphenazine, and aripiprazole; or the neurological disorder may comprise transient tic disorder, and the drug may be selected from the group consisting of guanfacine, clonidine, pimozide, risperidone, citalopram, escitalopram, sertraline, paroxetine, and fluoxetine; or the neurological disorder may comprise trichotillomania, and the drug may be selected from the group consisting of sertraline, fluoxetine, paroxetine, desipramine, and clomipramine.

[0043] Amphetamine may be administered with a dosage of 5 mg to 50 mg. Dextroamphetamine may be administered with a dosage that is in a range of 5 mg to 60 mg. Methylphenidate may be administered with a dosage that is in a range of 5 mg to 60 mg. Methamphetamine may be administered with a dosage that is in a range of 5 mg to 25 mg. Dexmethylphenidate may be administered with a dosage that is in a range of 2.5 mg to 40 mg. Guanfacine may be administered with a dosage that is in a range of 1 mg to 10 mg. Atomoxetine may be administered with a dosage that is in a range of 10 mg to 100 mg. Lisdexamfetamine may be administered with a dosage that is in a range of 30 mg to 70 mg. Clonidine may be administered with a dosage that is in a range of 0.1 mg to 0.5 mg. Modafinil may be administered with a dosage that is in a range of 100 mg to 500 mg. Risperidone may be administered with a dosage that is in a range of 0.5 mg to 20 mg. Quetiapine may be administered with a dosage that is in a range of 25 mg to 1000 mg. Buspirone may be administered with a dosage that is in a range of 5 mg to 60 mg. Sertraline may be administered with a dosage of up to 200 mg. Escitalopram may be administered with a dosage of up to 40 mg. Citalopram may be administered with a dosage of up to 40 mg. Fluoxetine may be administered with a dosage that is in a range of 40 mg to 80 mg. Paroxetine may be administered with a dosage that is in a range of 40 mg to 60 mg. Venlafaxine may be administered with a dosage of up to 375 mg. Clomipramine may be administered with a dosage of up to 250 mg. Fluvoxamine may be administered with a dosage of up to 300 mg.

[0044] The machine learning classifier may have an inclusion rate of no less than 70%. The machine learning classifier may be capable of outputting an inconclusive result.

INCORPORATION BY REFERENCE

[0045] All publications, patents, and patent applications mentioned in this specification are herein incorporated by reference to the same extent as if each individual publication, patent, or patent application was specifically and individually indicated to be incorporated by reference.

BRIEF DESCRIPTION OF THE DRAWINGS

[0046] The novel features of the invention are set forth with particularity in the appended claims. A better understanding of the features and advantages of the present invention will be obtained by reference to the following detailed description that sets forth illustrative embodiments, in which the principles of the invention are utilized, and the accompanying drawings of which:

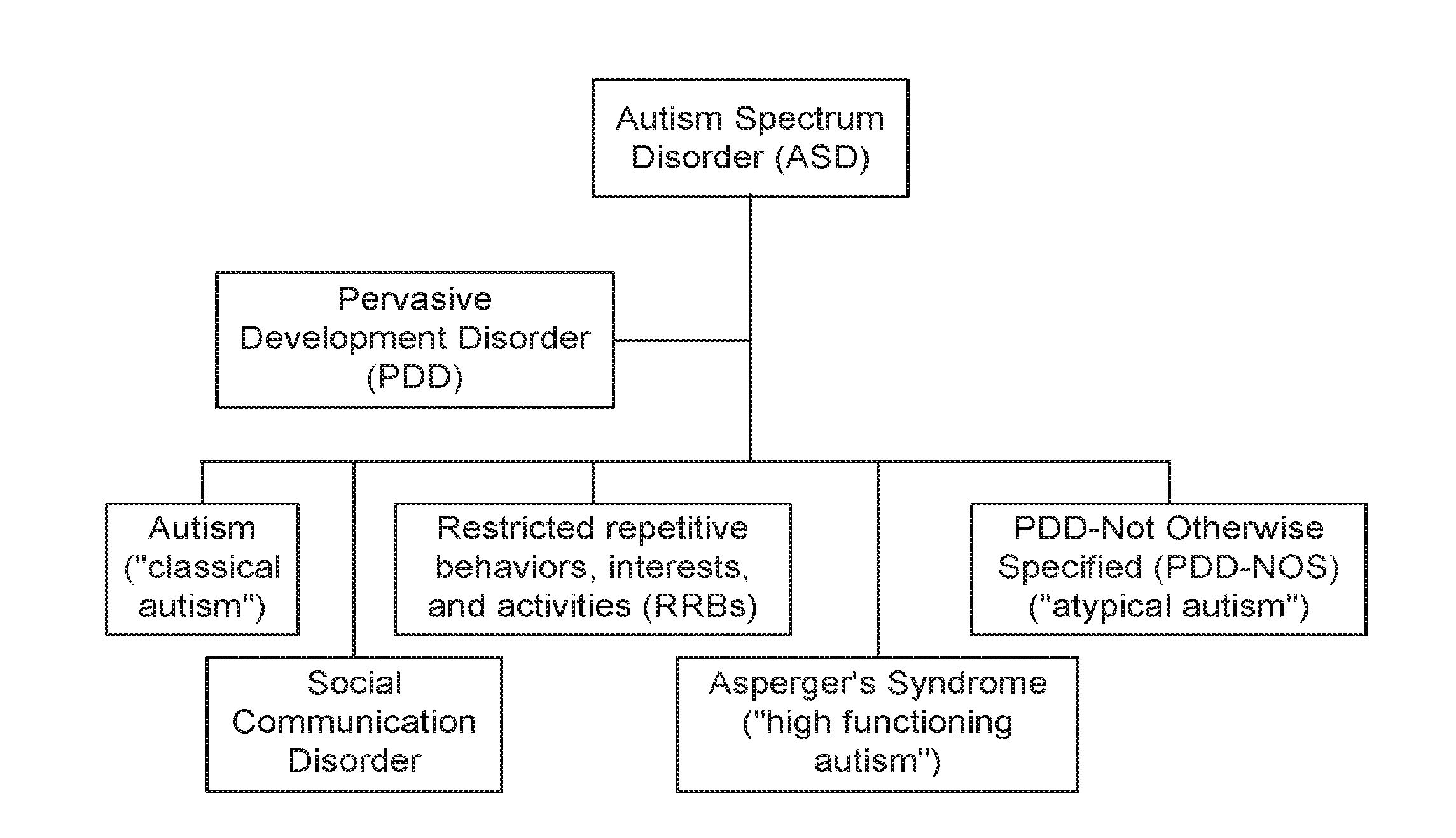

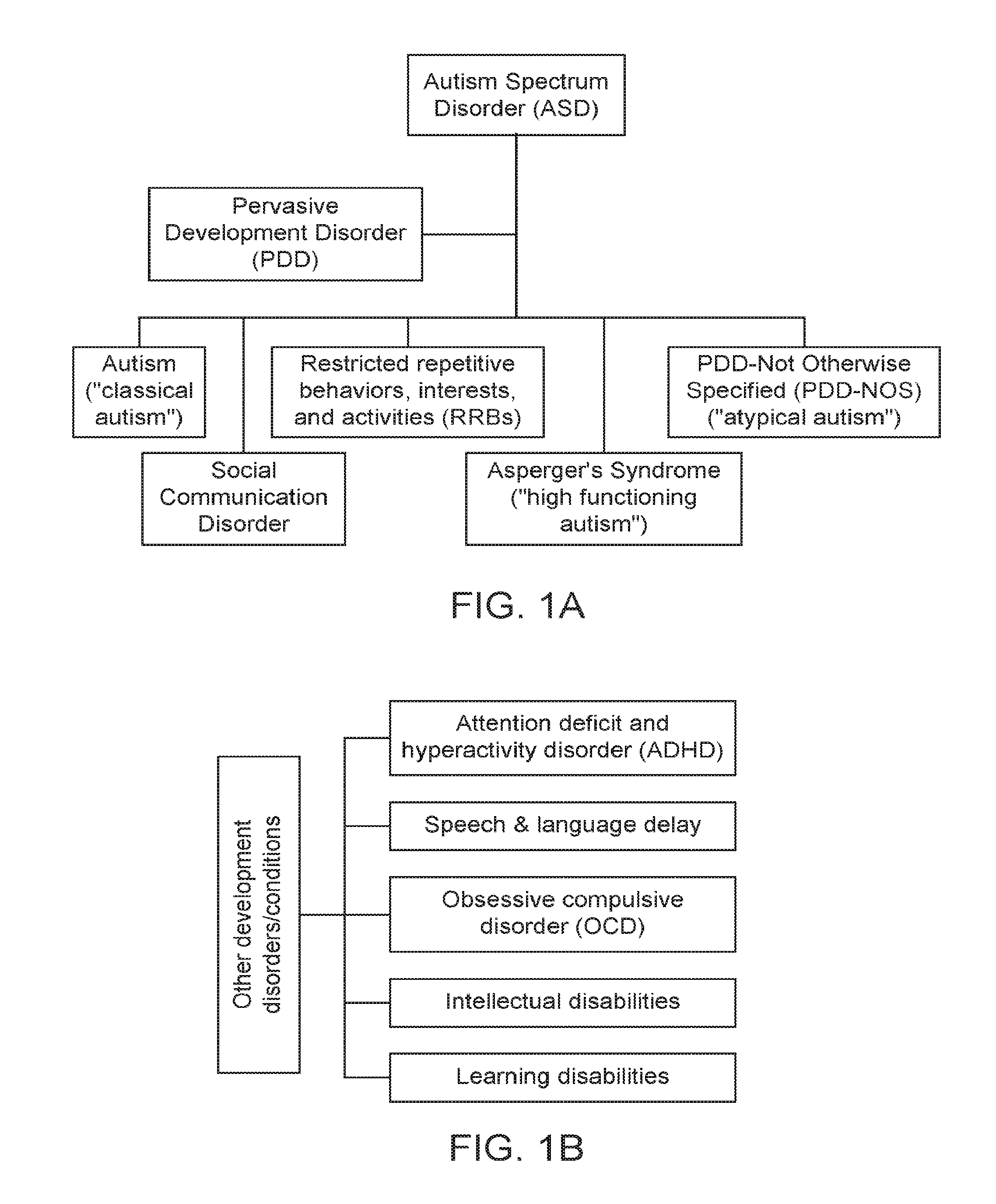

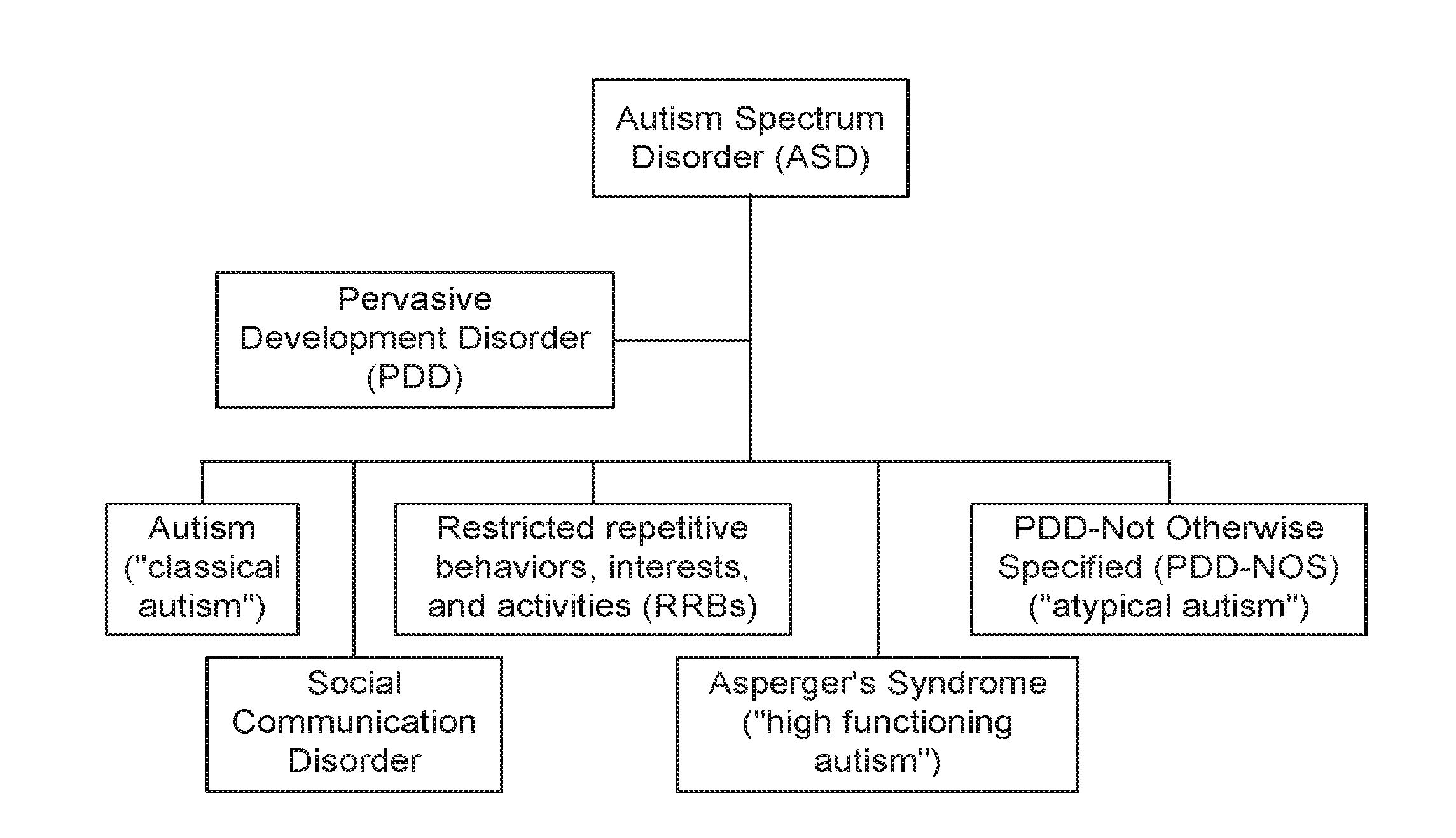

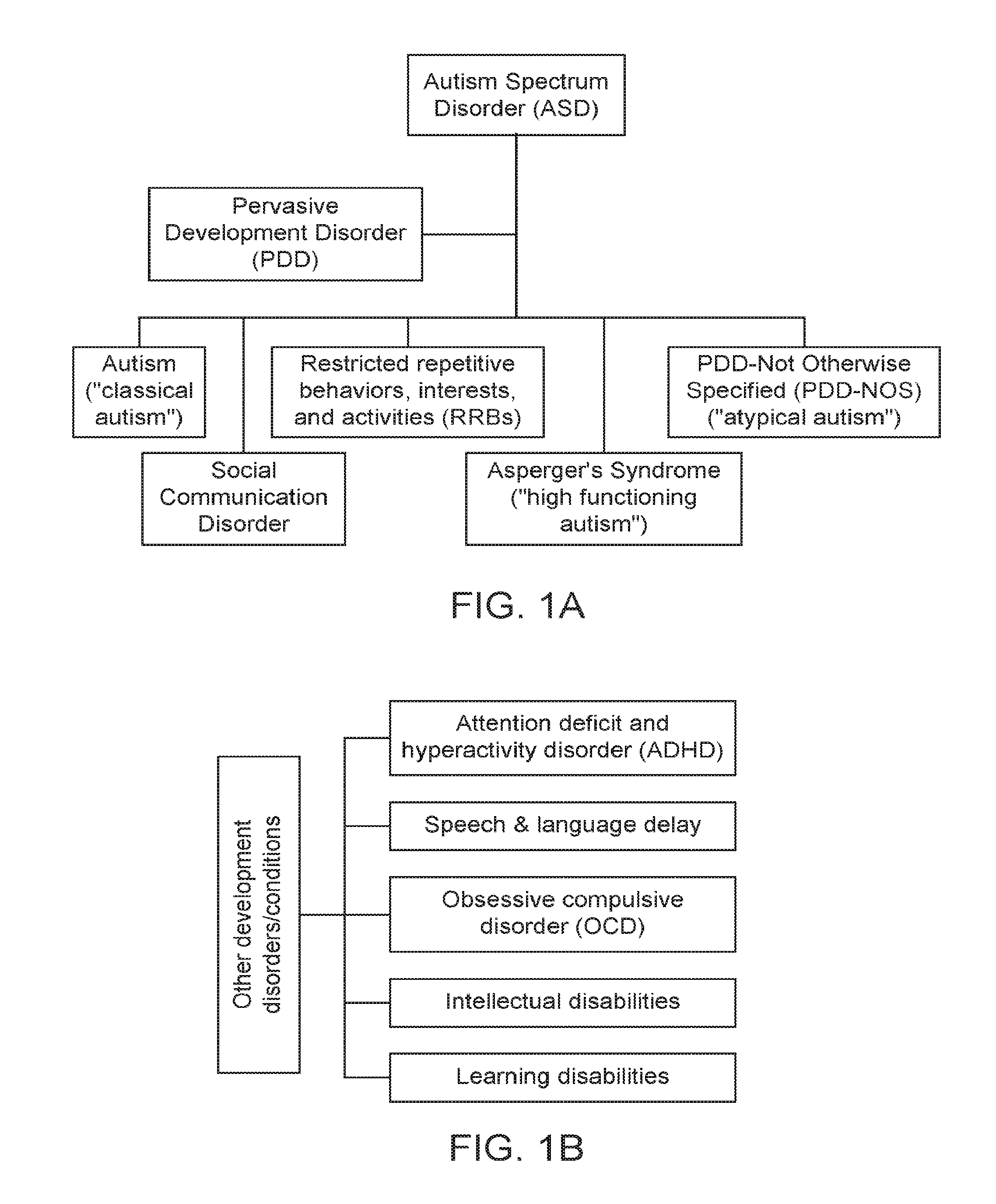

[0047] FIGS. 1A and 1B show some exemplary developmental disorders that may be evaluated using the assessment procedure as described herein.

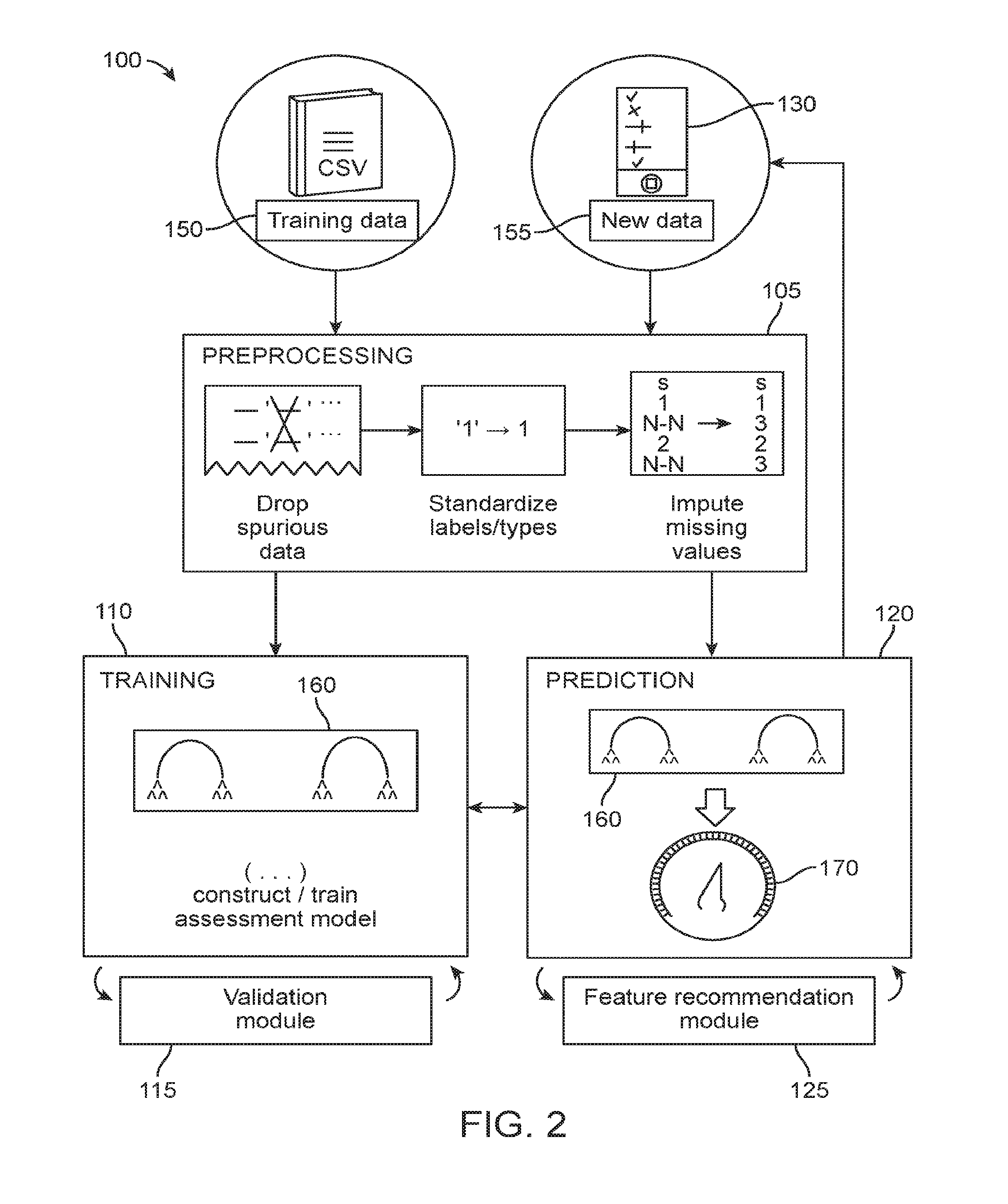

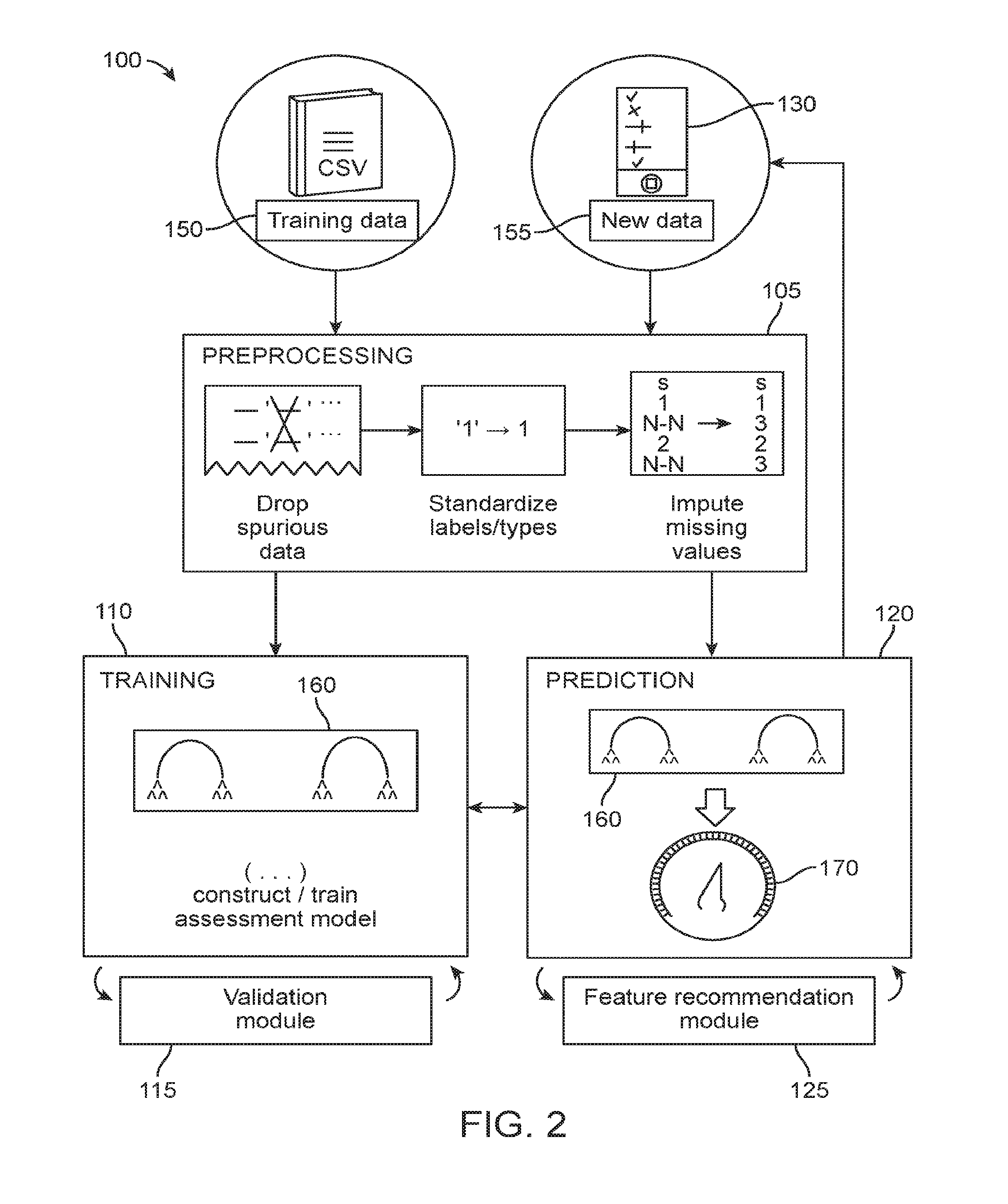

[0048] FIG. 2 is a schematic diagram of an exemplary data processing module for providing the assessment procedure as described herein.

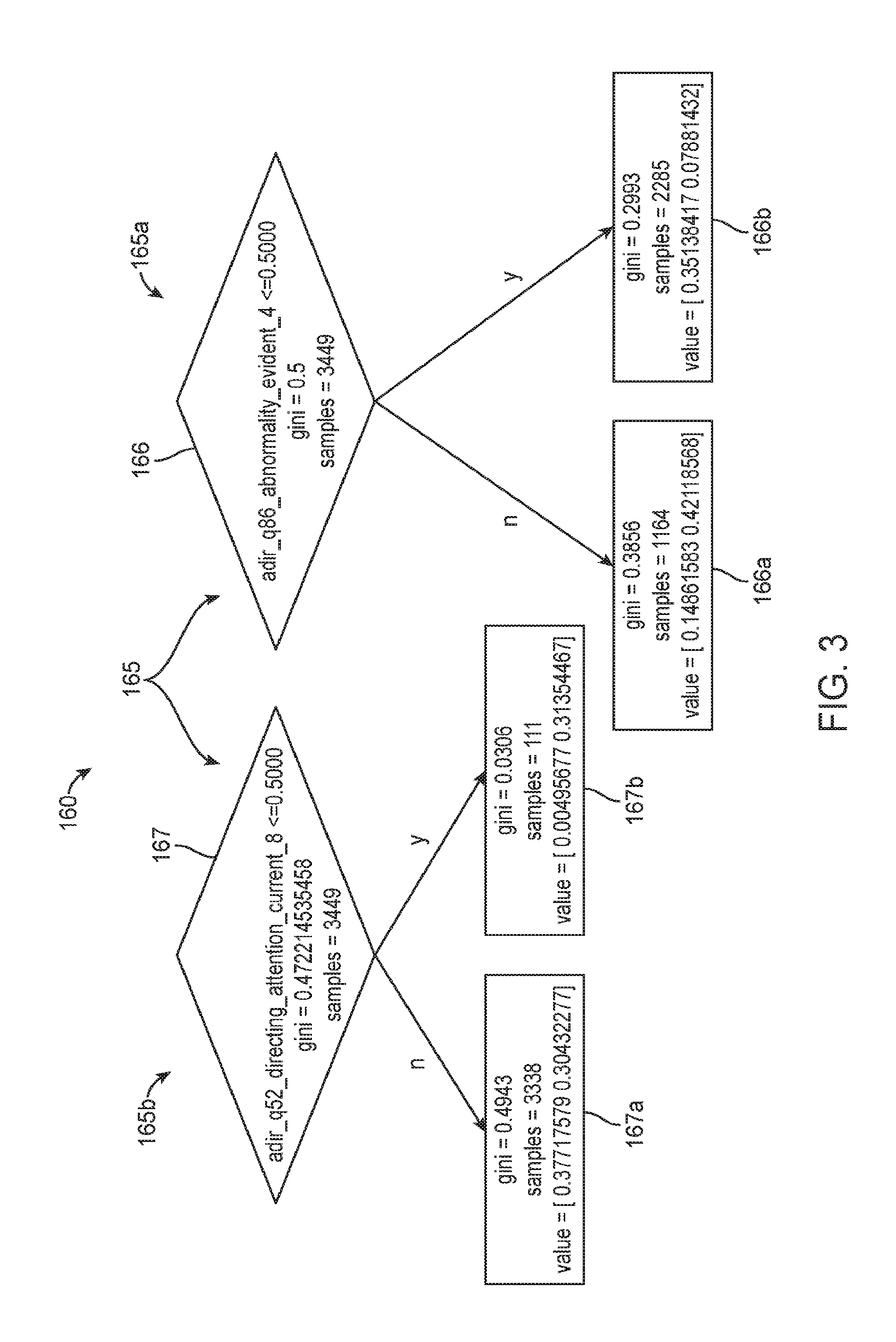

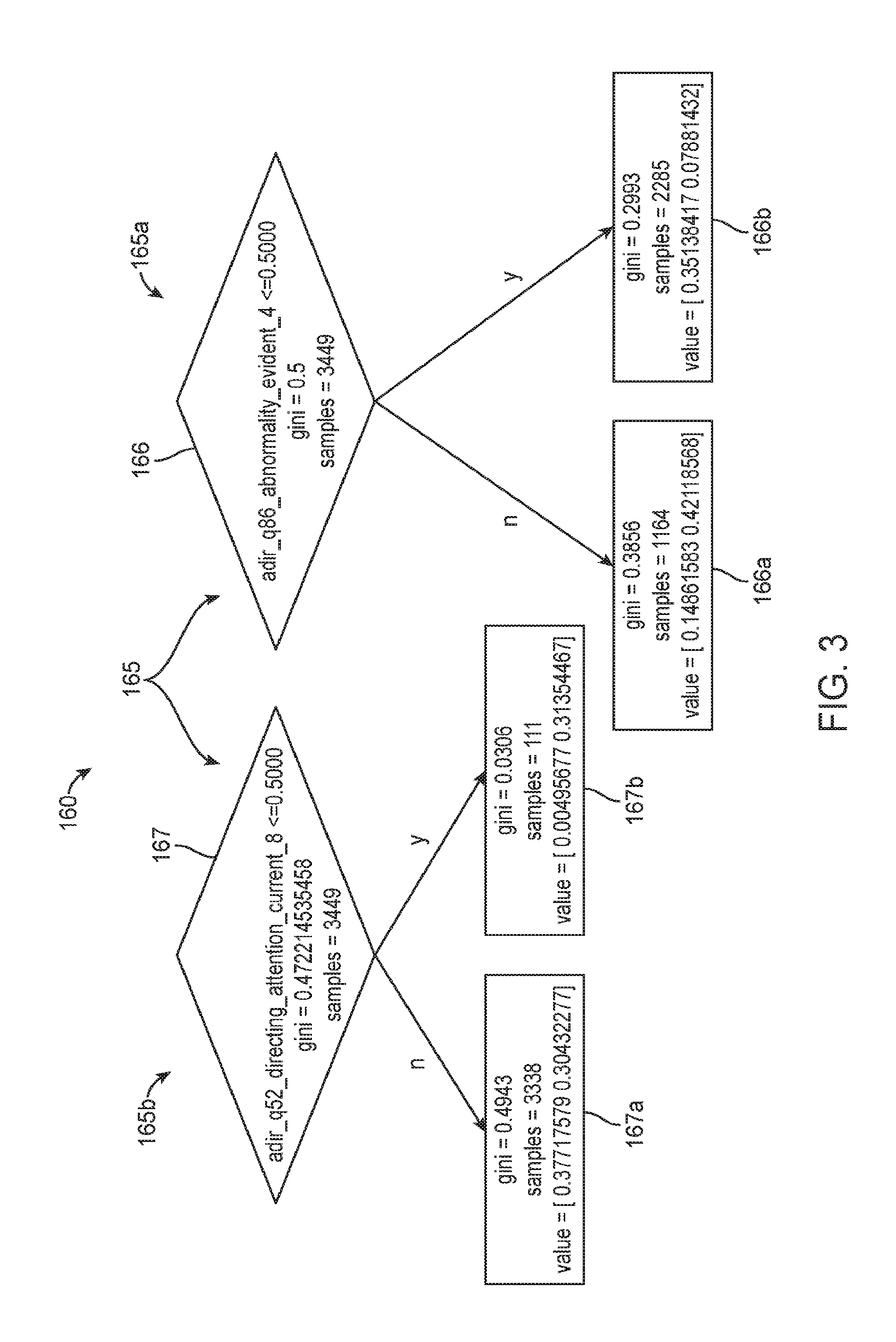

[0049] FIG. 3 is a schematic diagram illustrating a portion of an exemplary assessment model based on a Random Forest classifier.

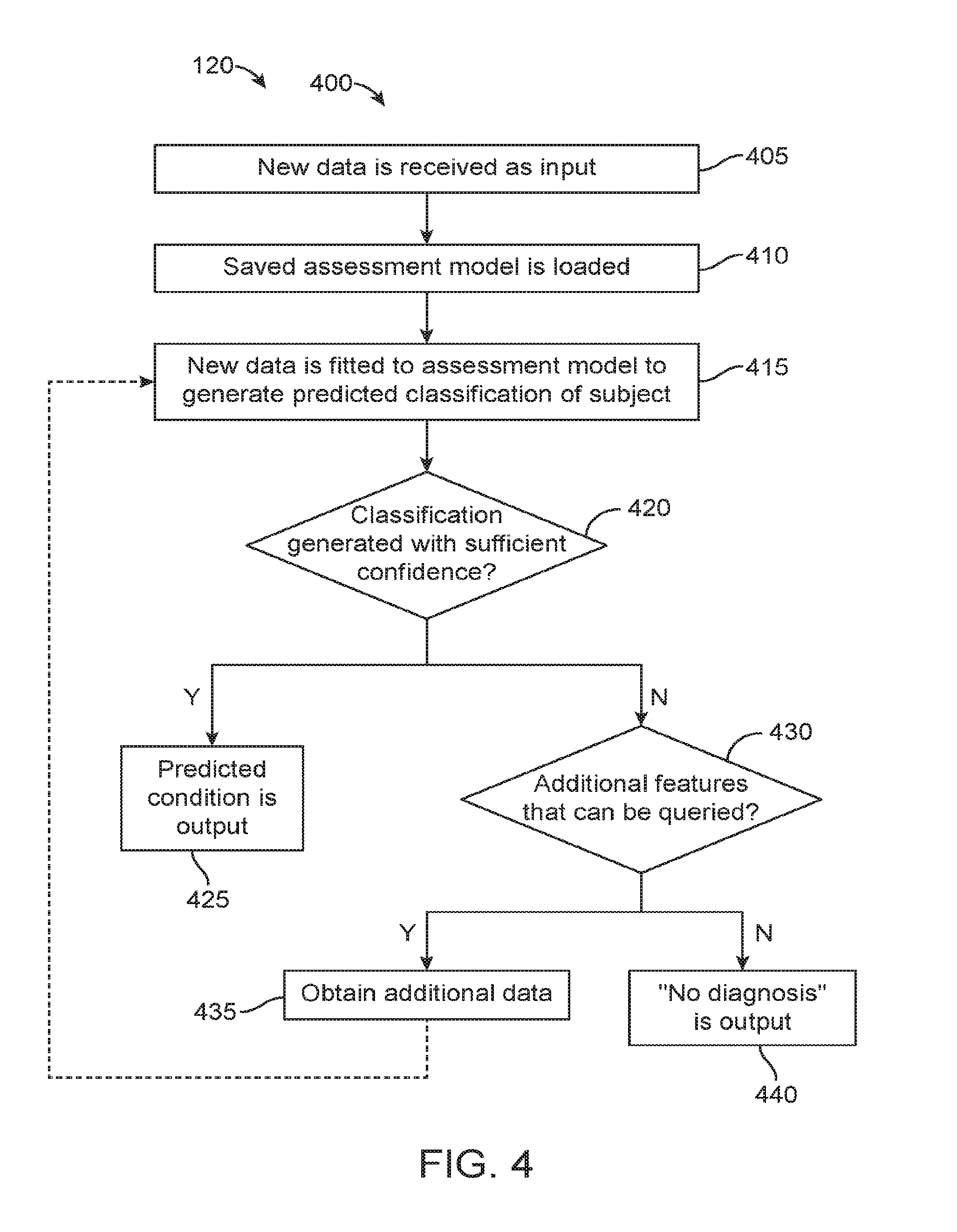

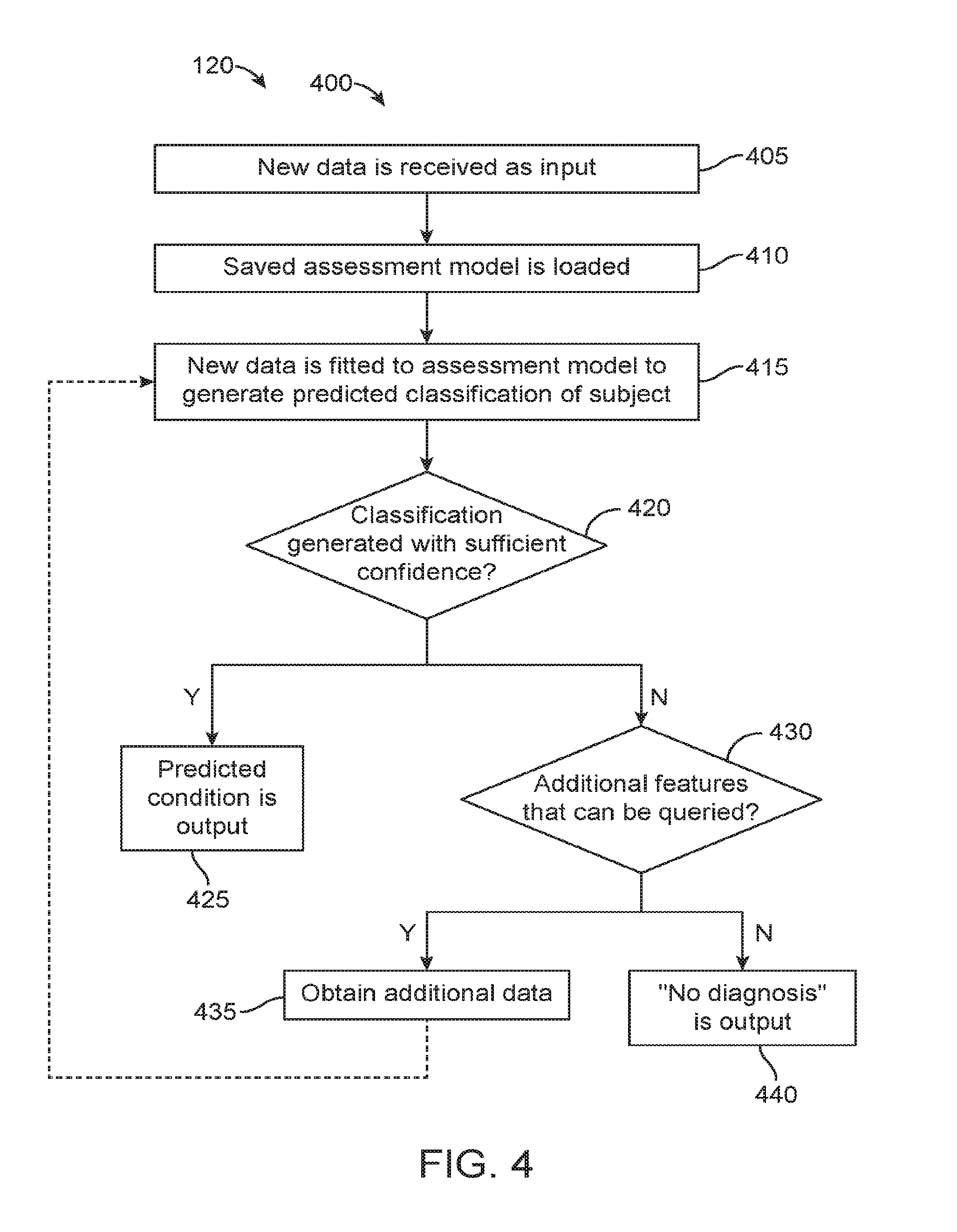

[0050] FIG. 4 is an exemplary operational flow of a prediction module as described herein.

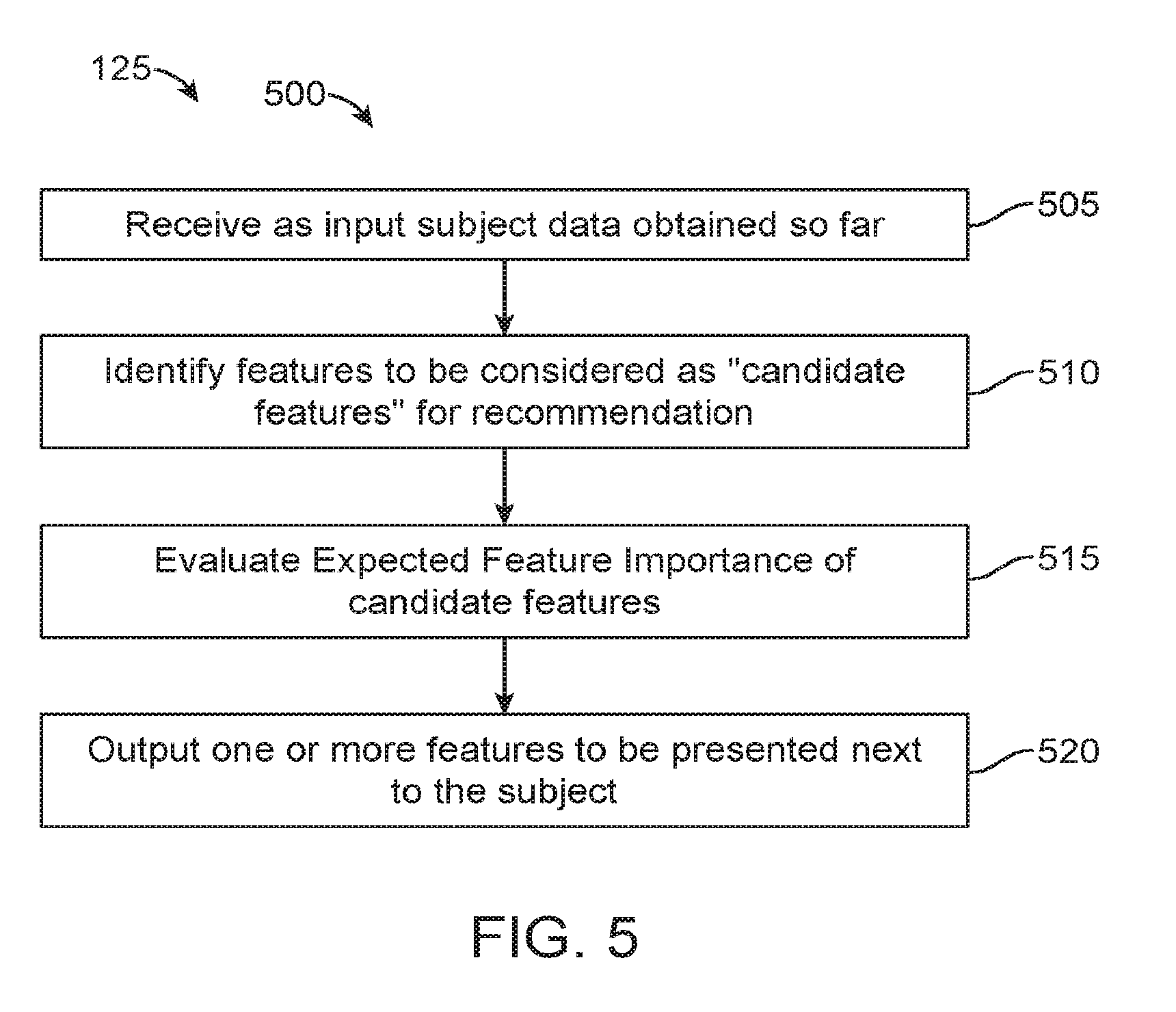

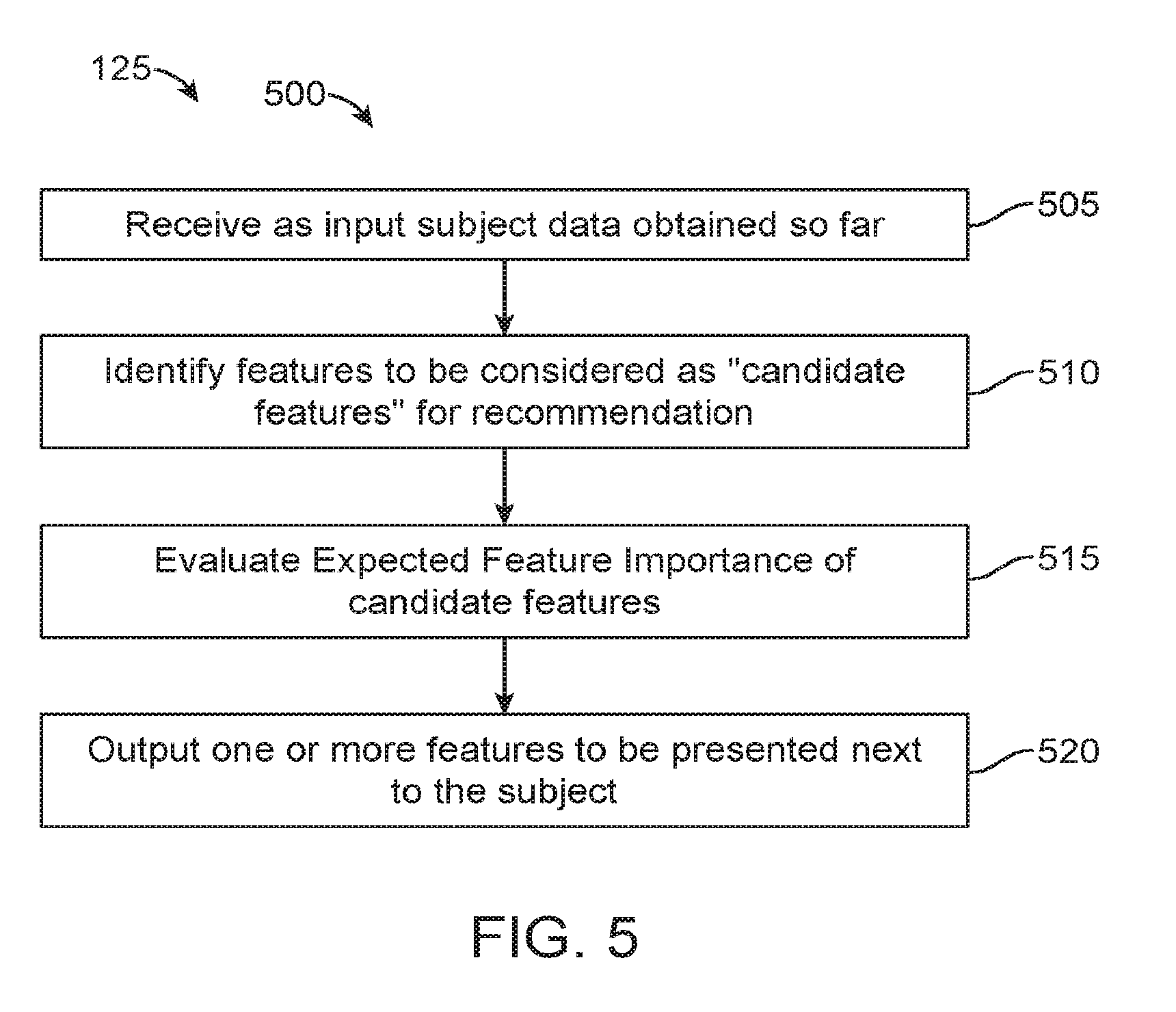

[0051] FIG. 5 is an exemplary operational flow of a feature recommendation module as described herein.

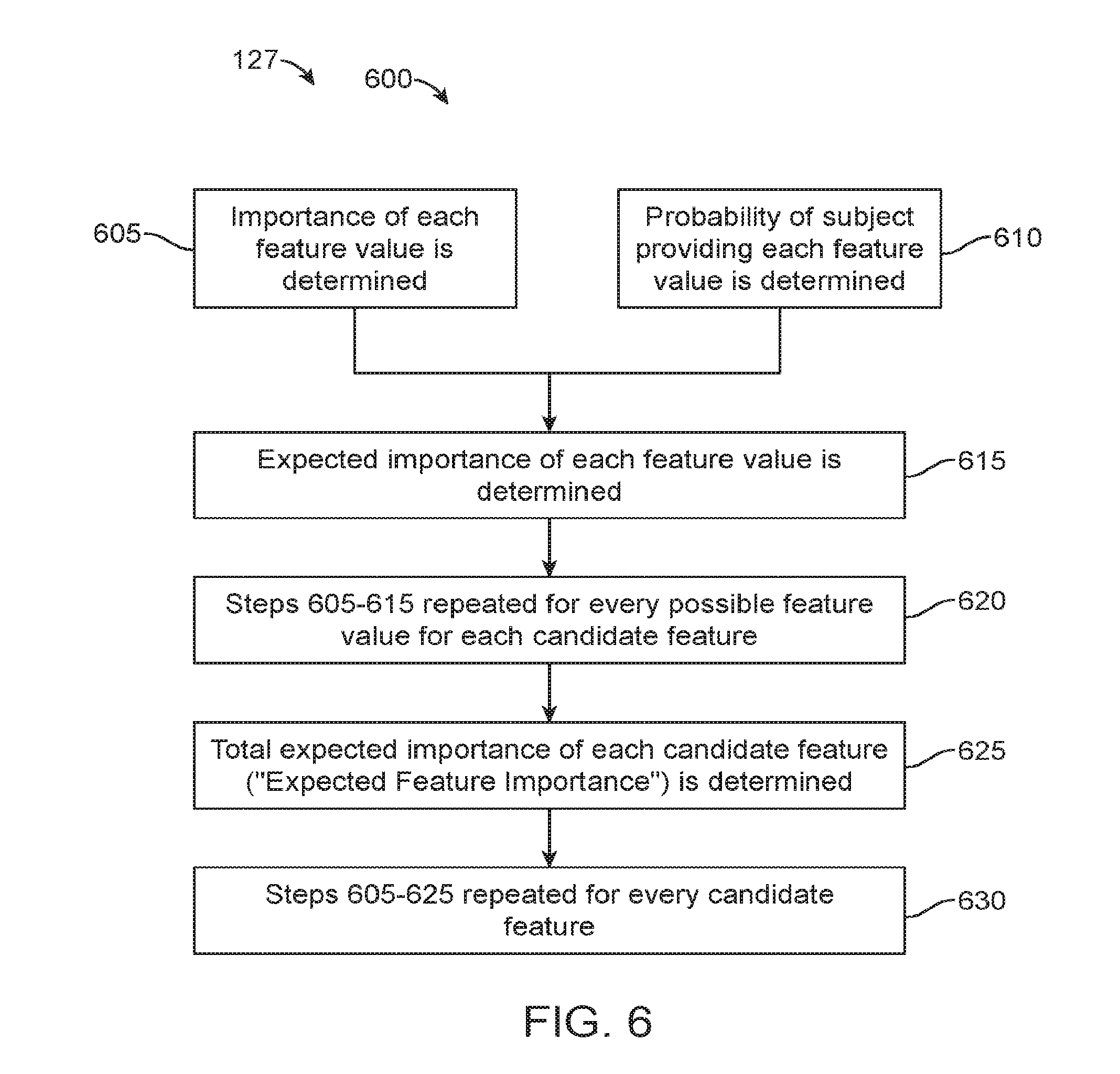

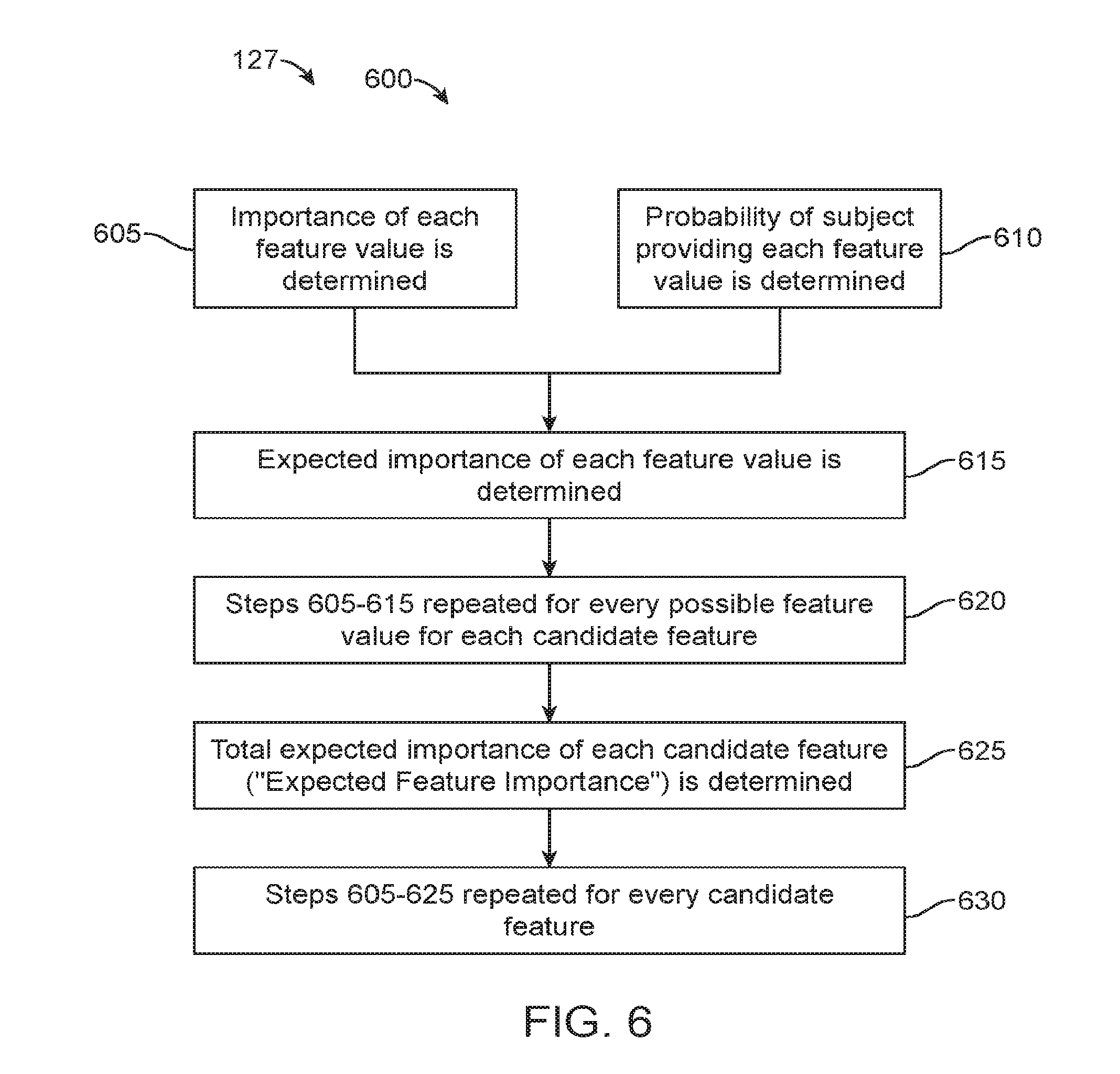

[0052] FIG. 6 is an exemplary operational flow of an expected feature importance determination algorithm as performed by a feature recommendation module described herein.

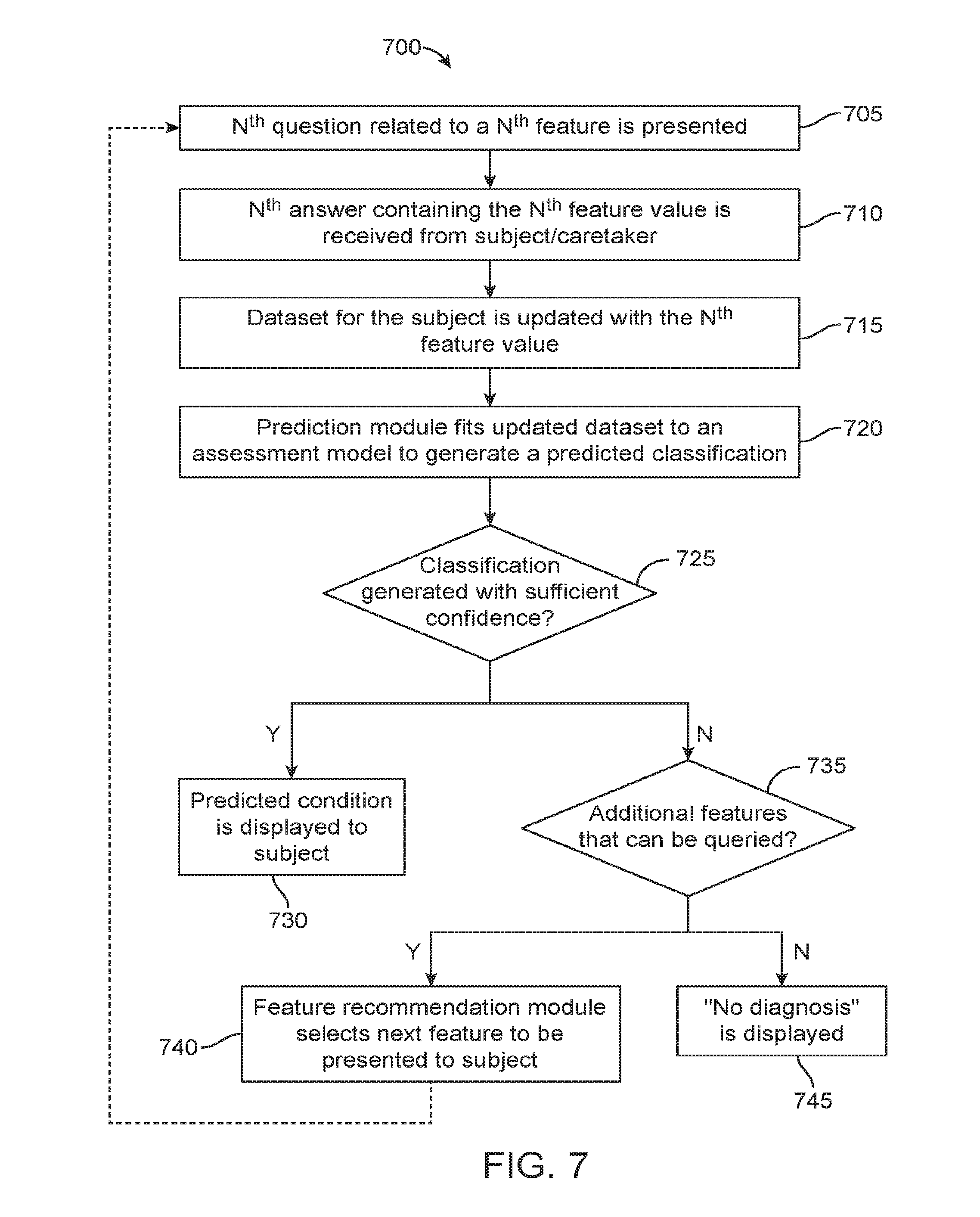

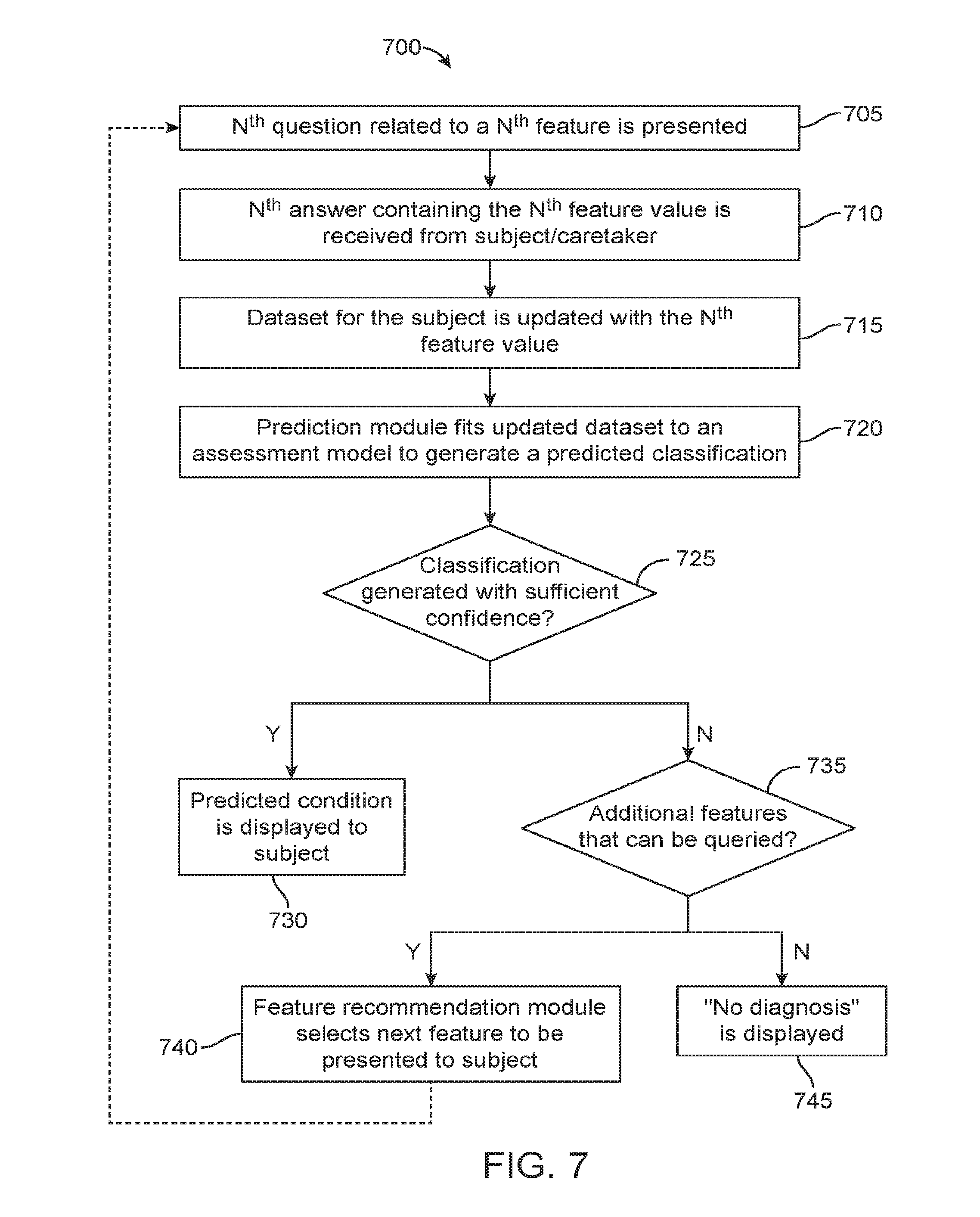

[0053] FIG. 7 illustrates a method of administering an assessment procedure as described herein.

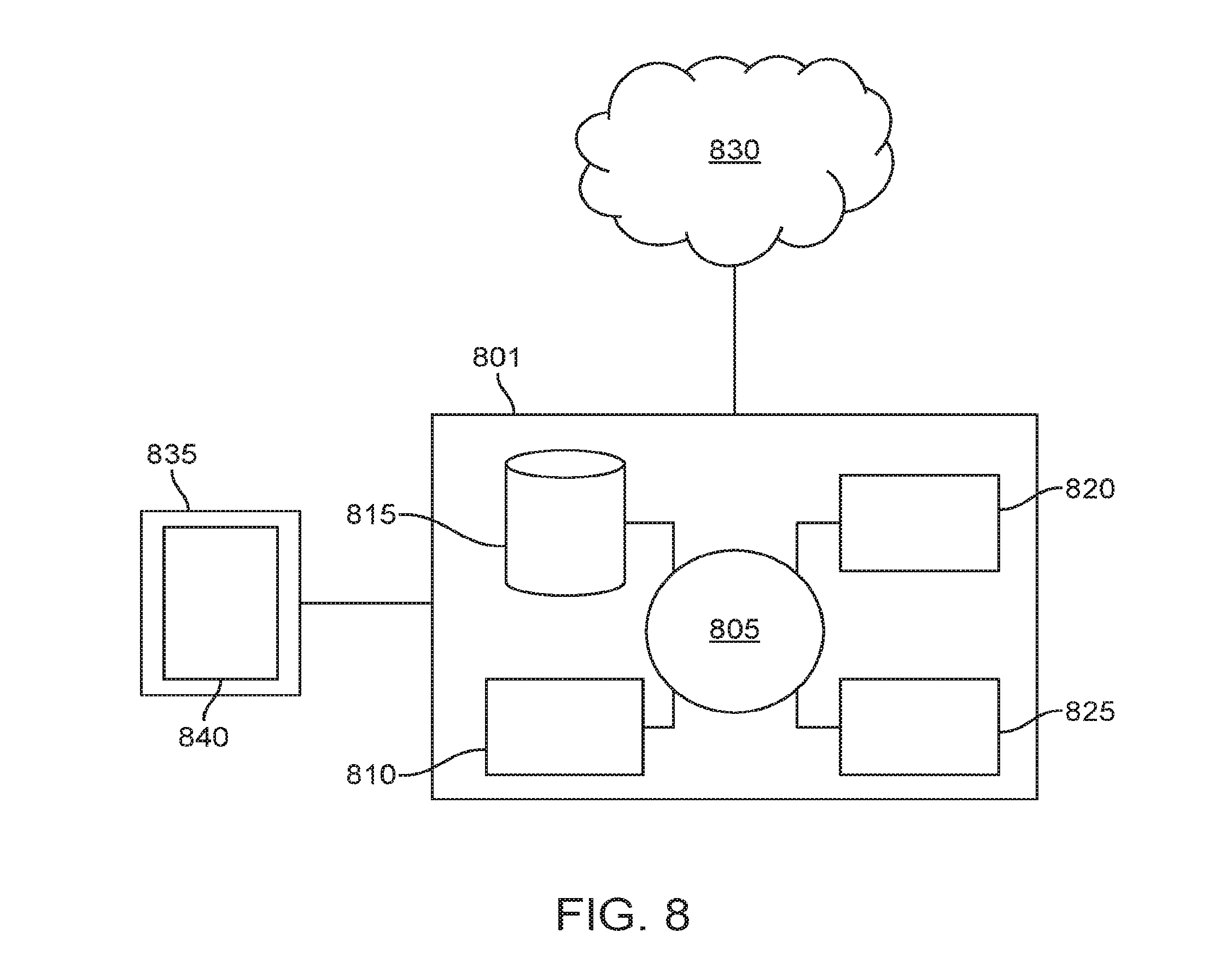

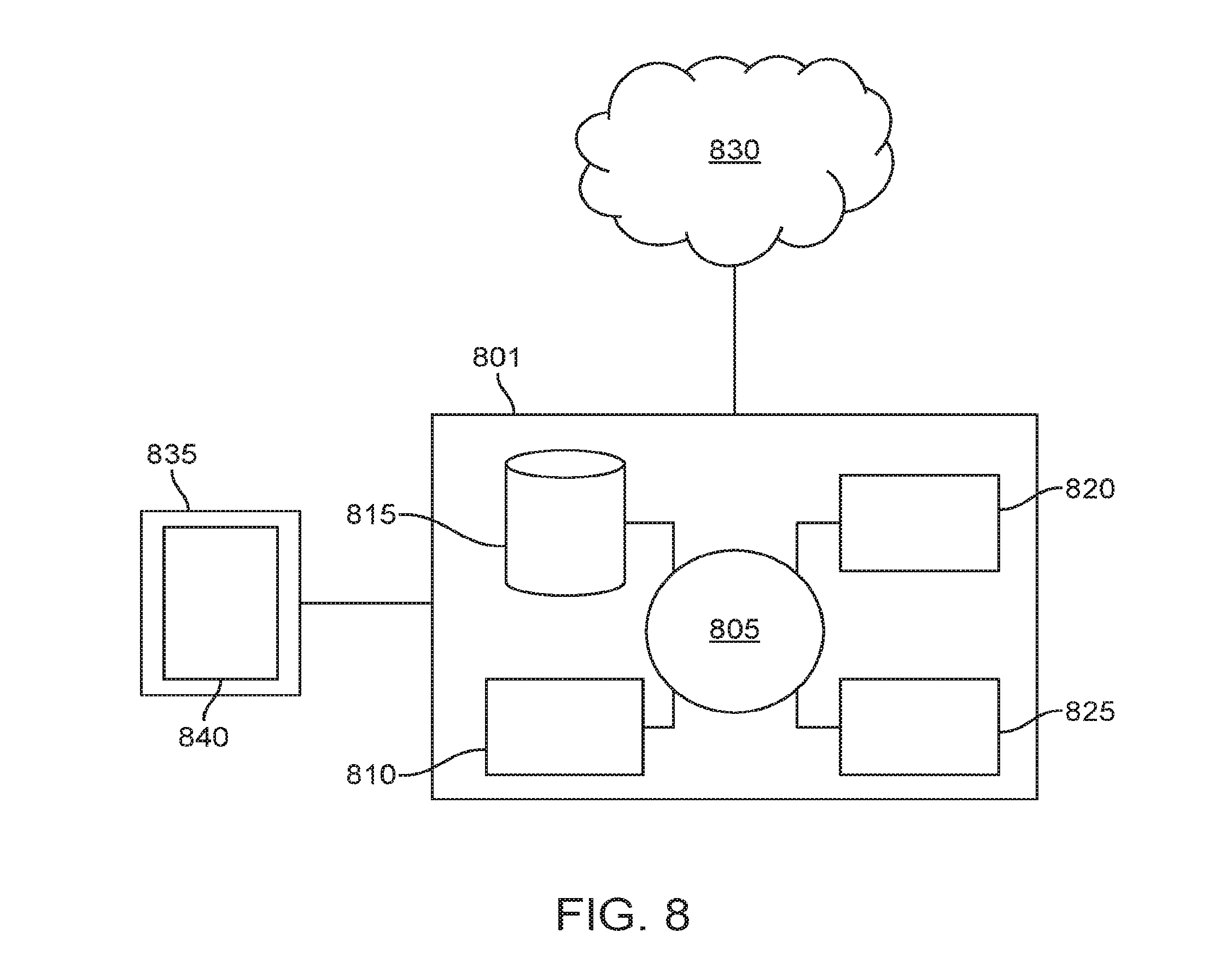

[0054] FIG. 8 shows a computer system suitable for incorporation with the methods and apparatus described herein.

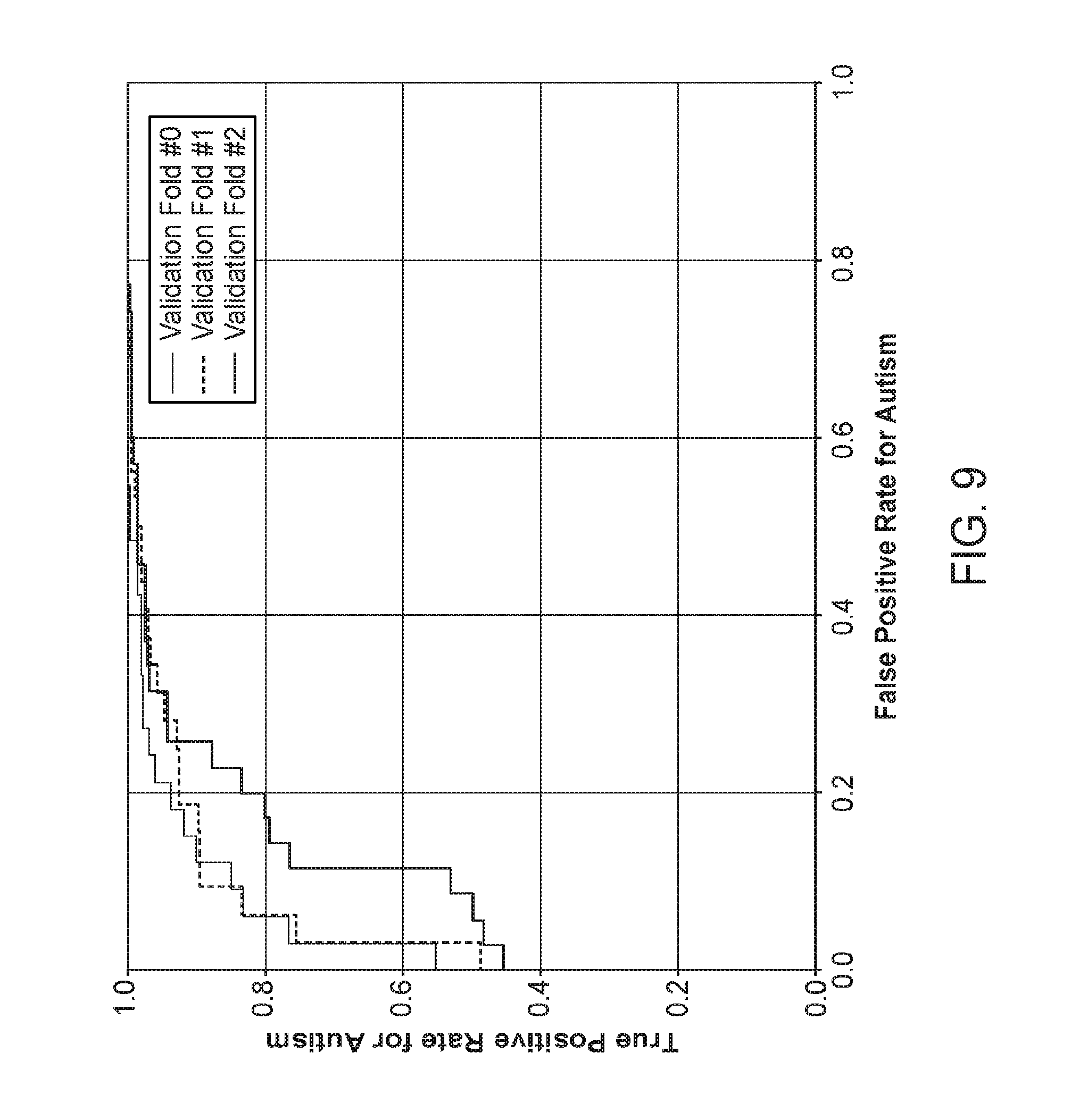

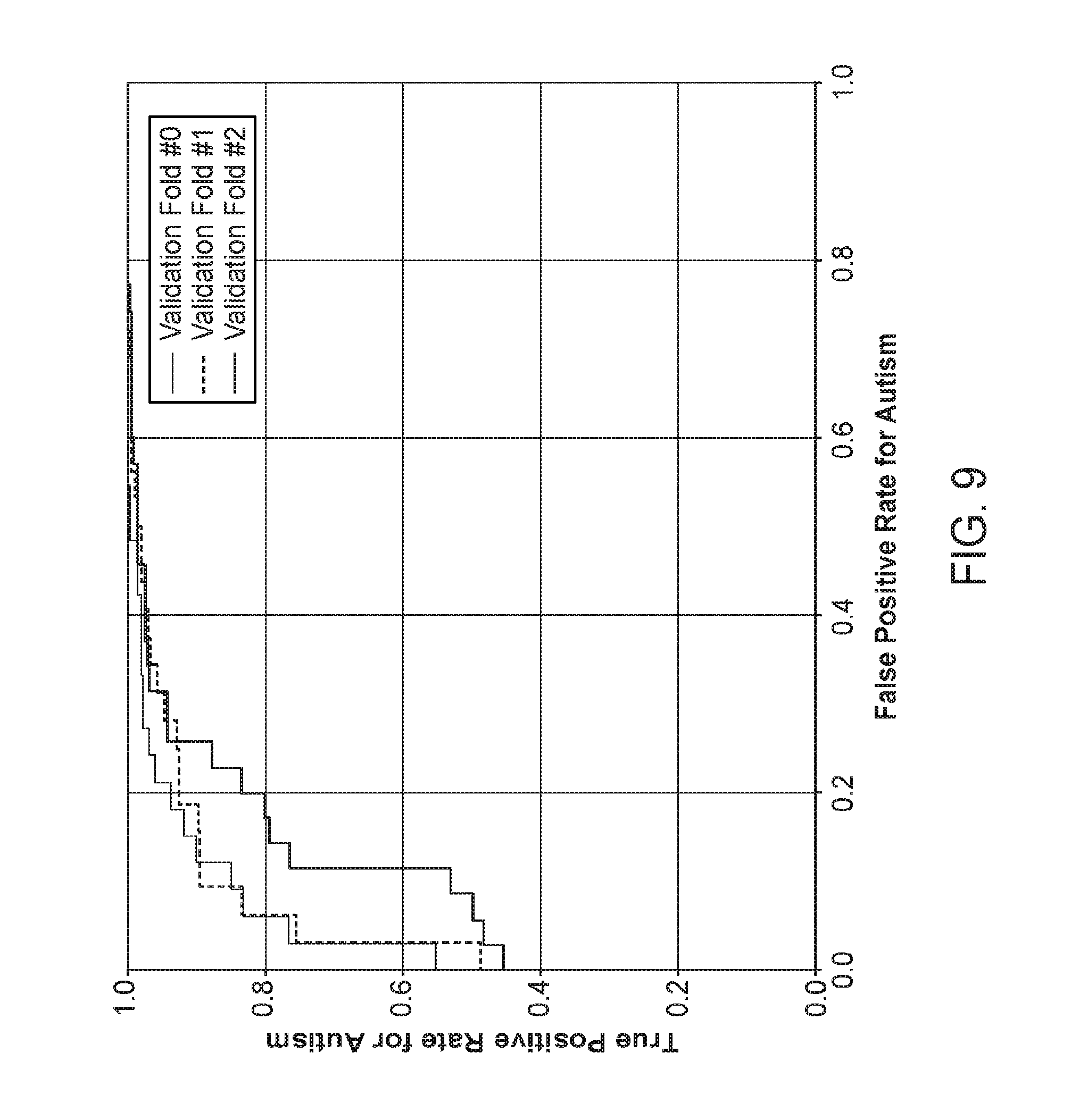

[0055] FIG. 9 shows receiver operating characteristic (ROC) curves mapping sensitivity versus fall-out for an exemplary assessment model as described herein.

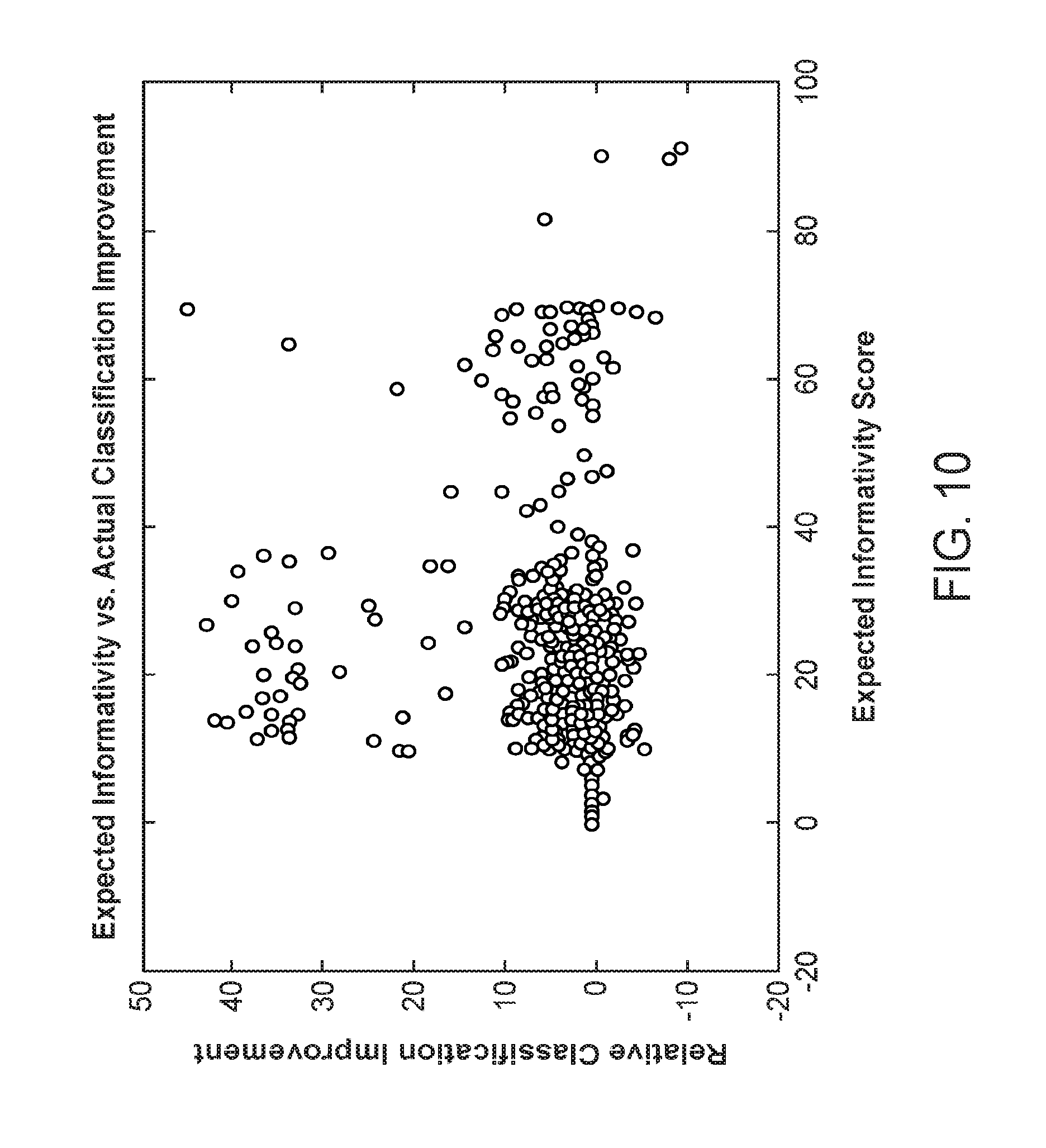

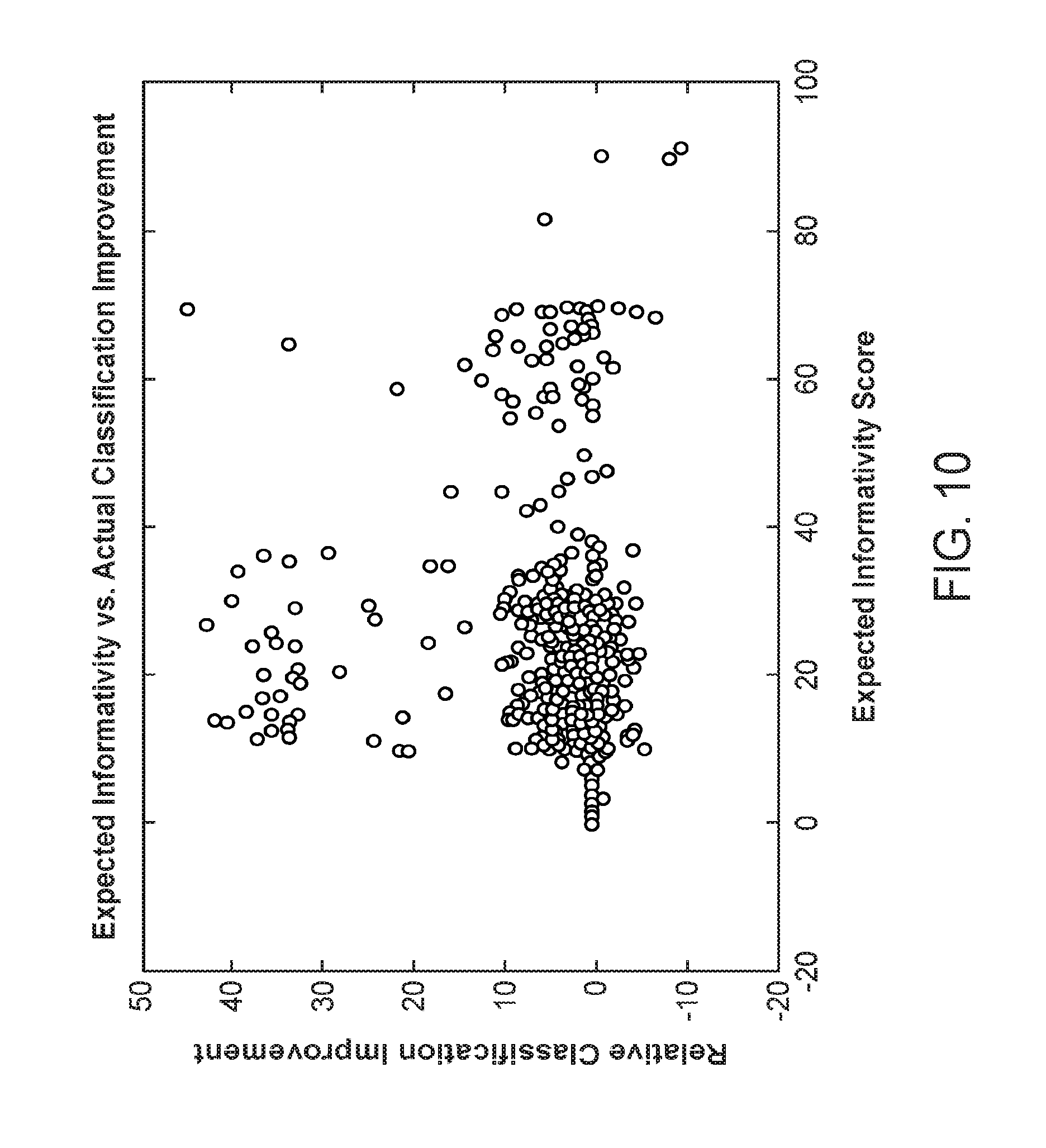

[0056] FIG. 10 is a scatter plot illustrating a performance metric for a feature recommendation module as described herein.

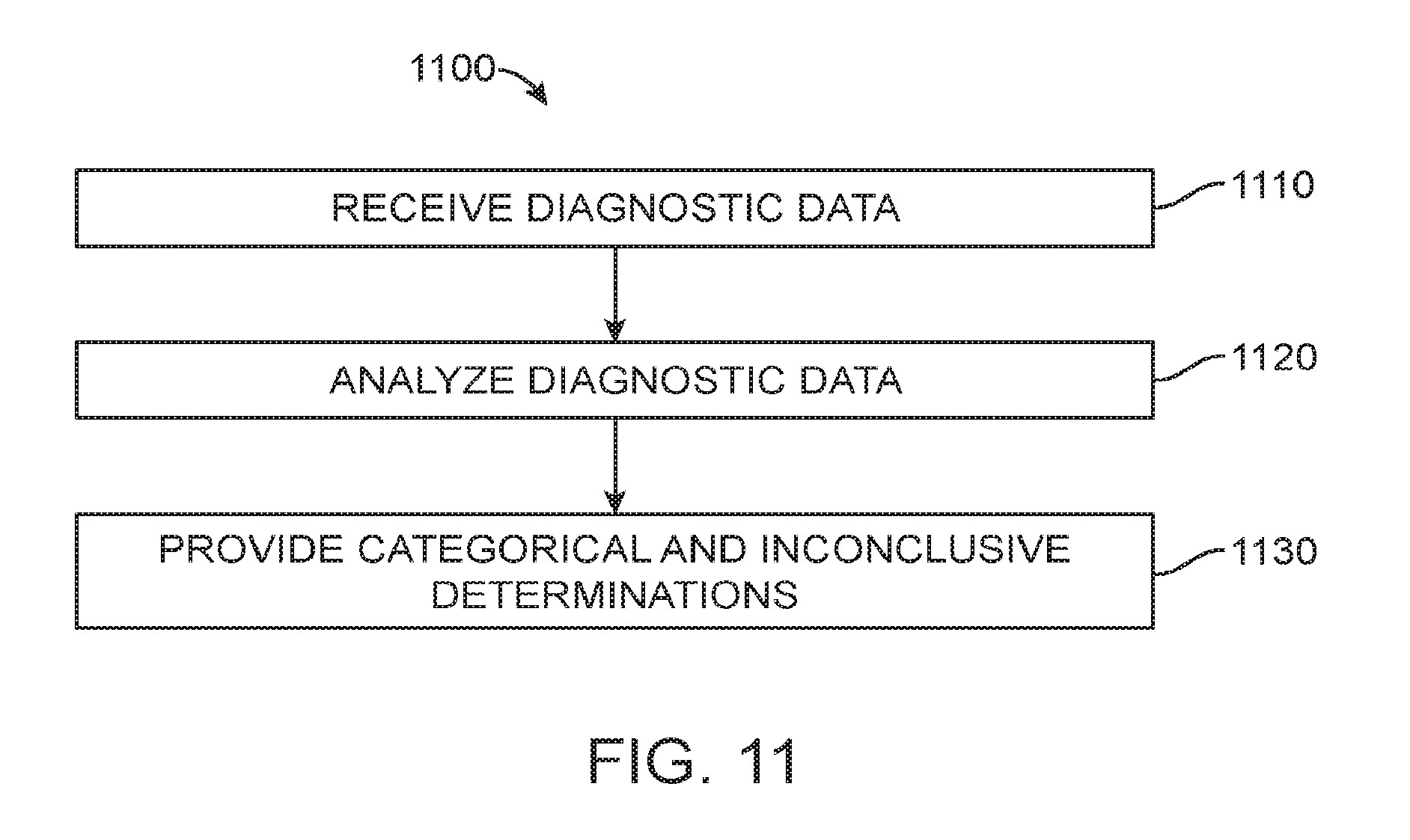

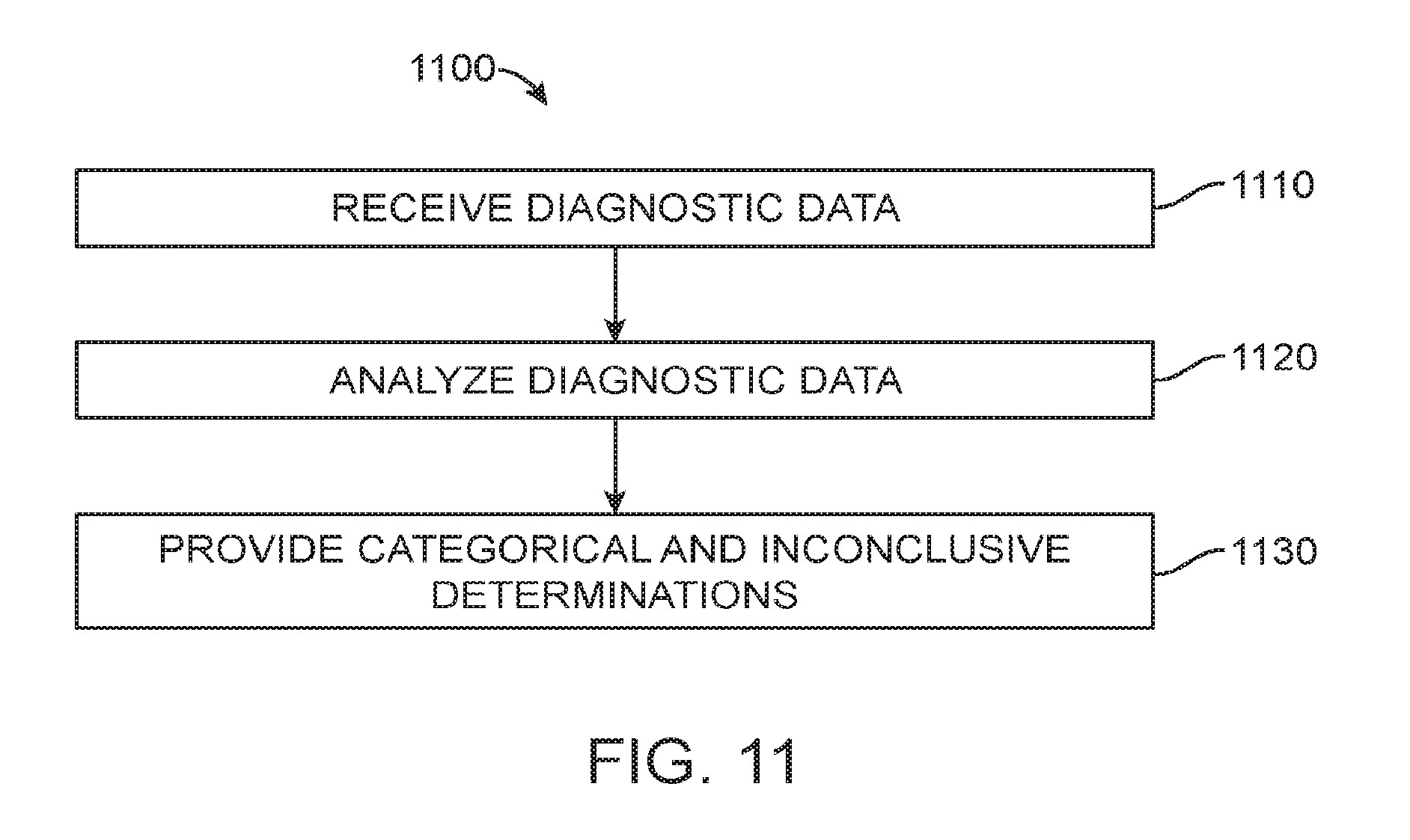

[0057] FIG. 11 is an exemplary operational flow of an evaluation module as described herein.

[0058] FIG. 12 is an exemplary operational flow of a model tuning module as described herein.

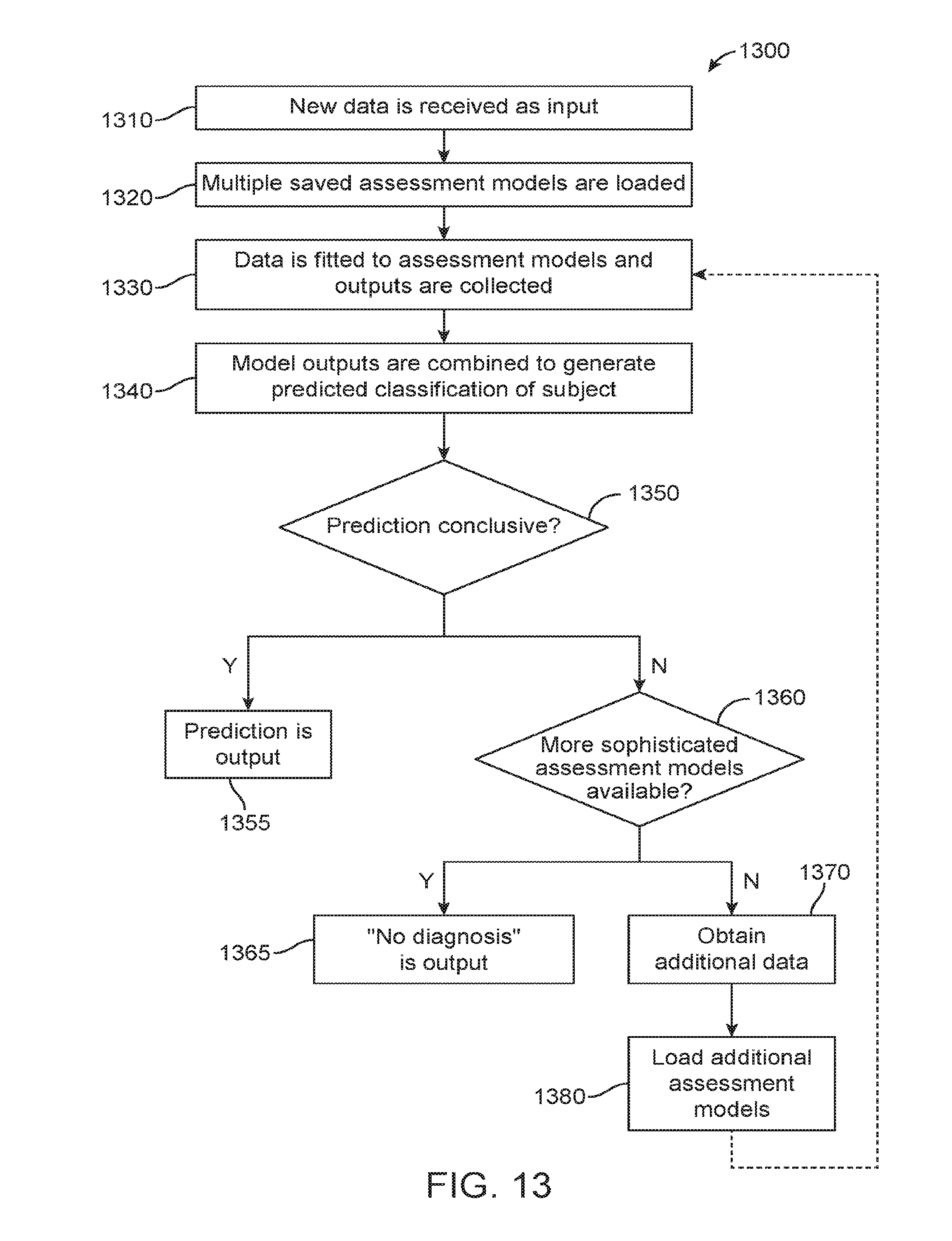

[0059] FIG. 13 is another exemplary operational flow of an evaluation module as described herein.

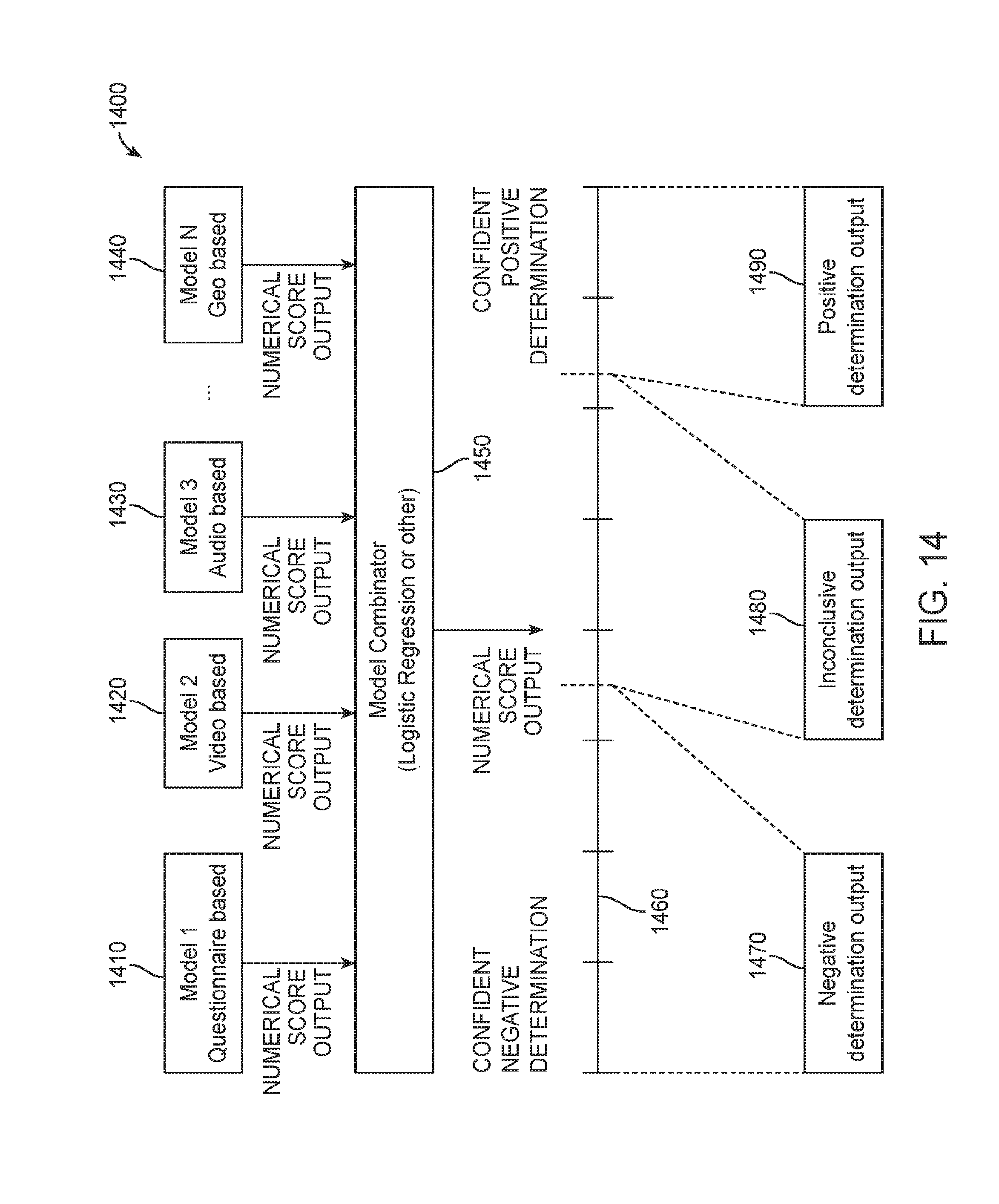

[0060] FIG. 14 is an exemplary operational flow of the model output combining step depicted in FIG. 13.

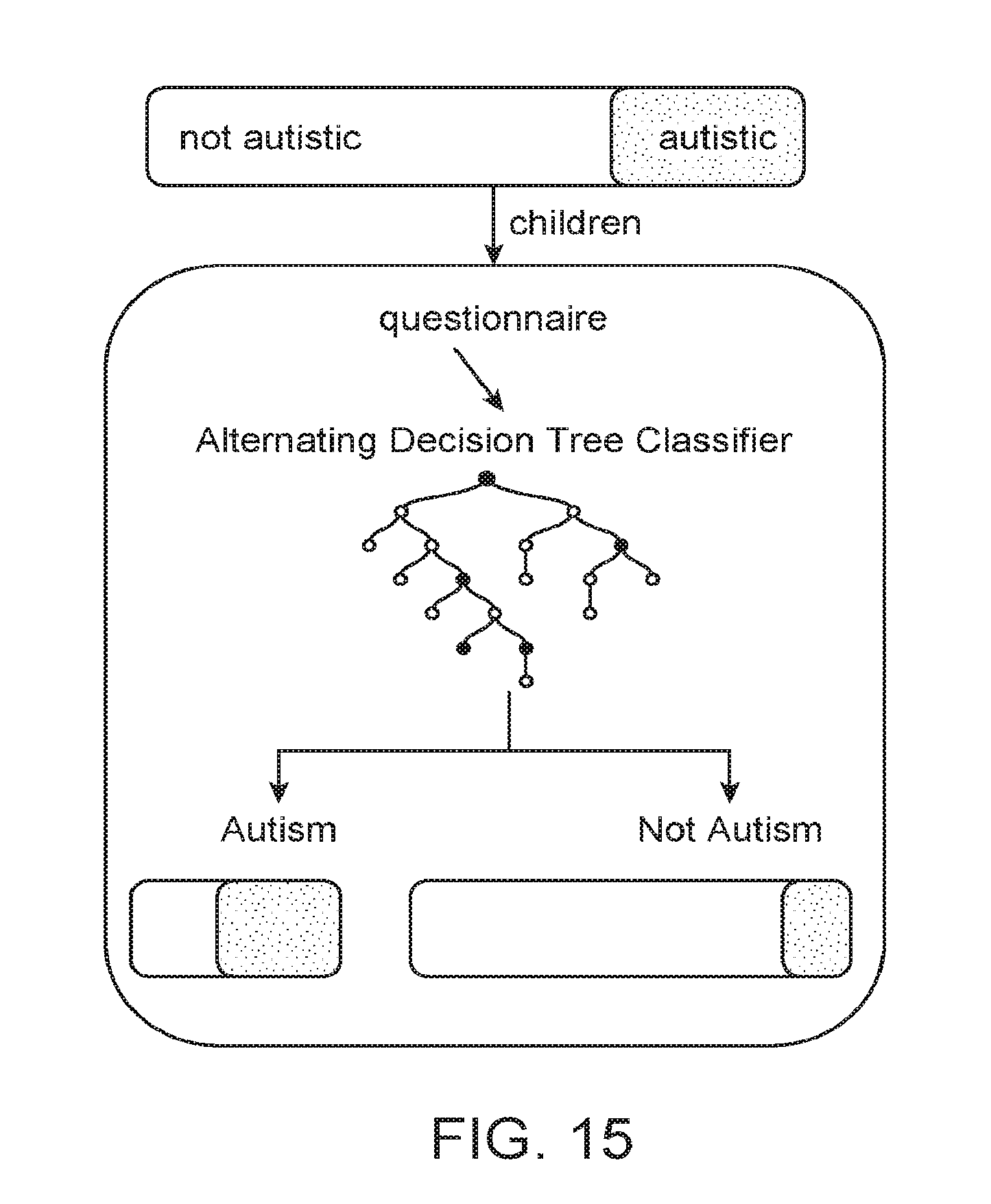

[0061] FIG. 15 shows an exemplary questionnaire screening algorithm configured to provide only categorical determinations as described herein.

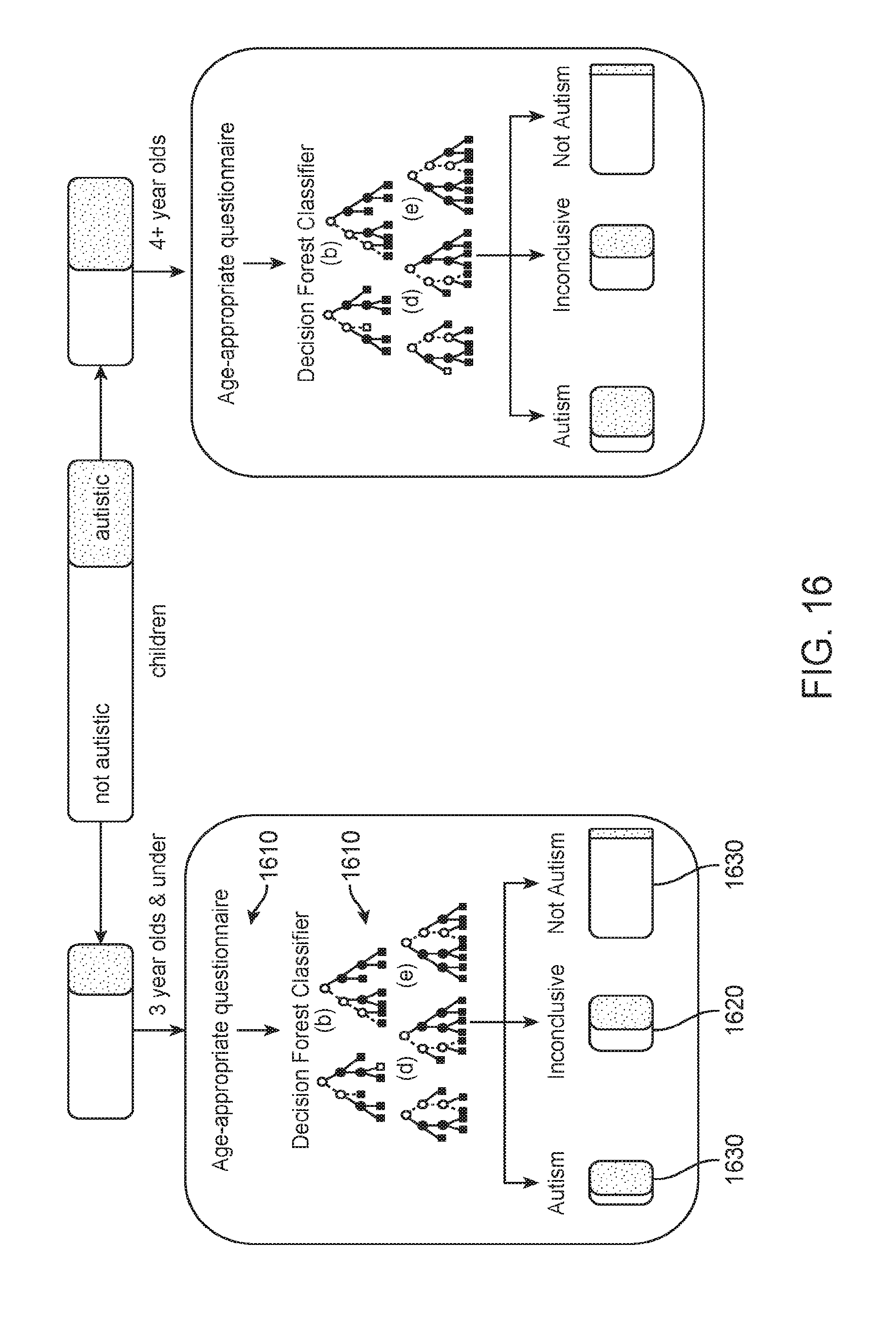

[0062] FIG. 16 shows an exemplary questionnaire screening algorithm configured to provide categorical and inconclusive determinations as described herein.

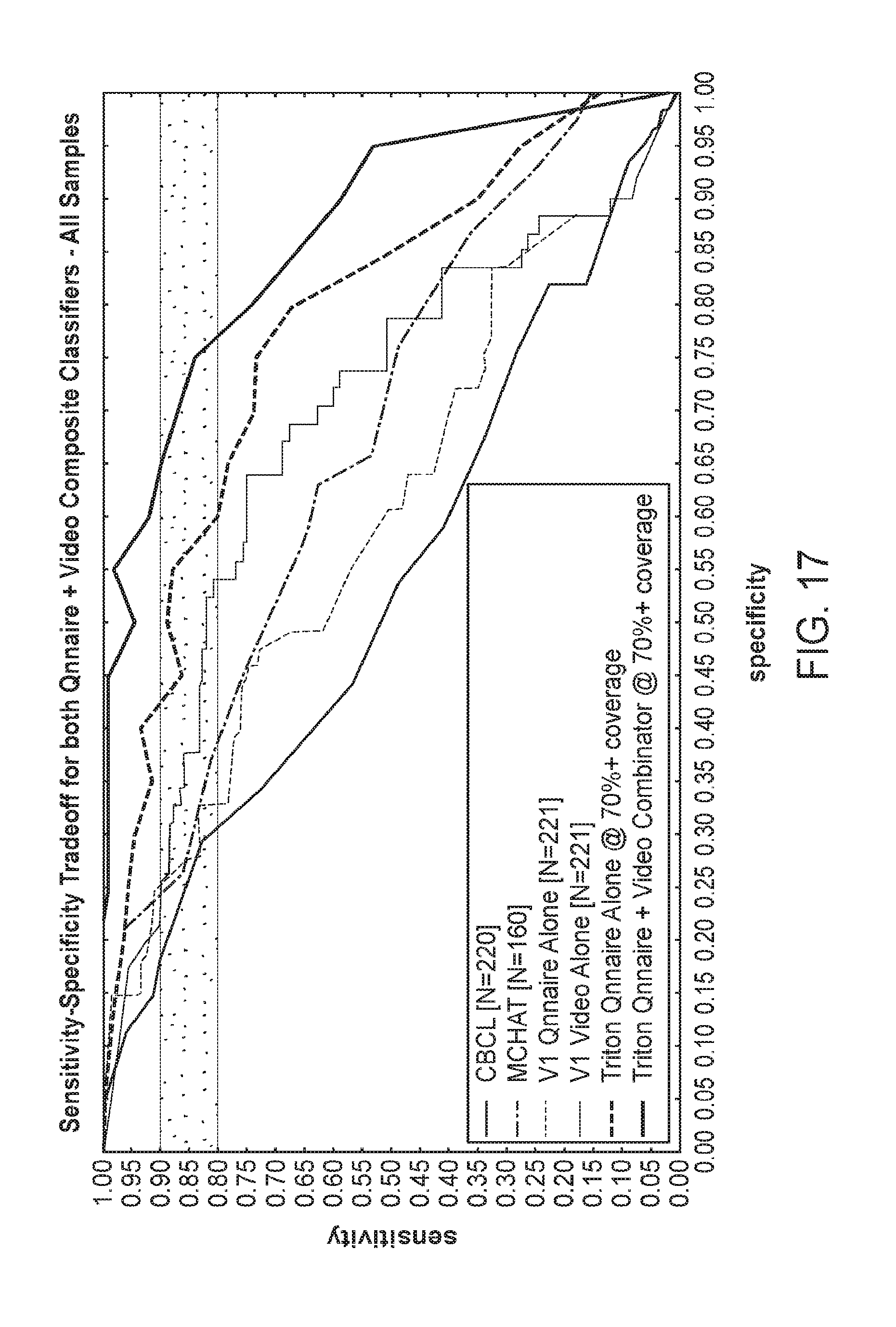

[0063] FIG. 17 shows a comparison of the performance for various algorithms for all samples as described herein.

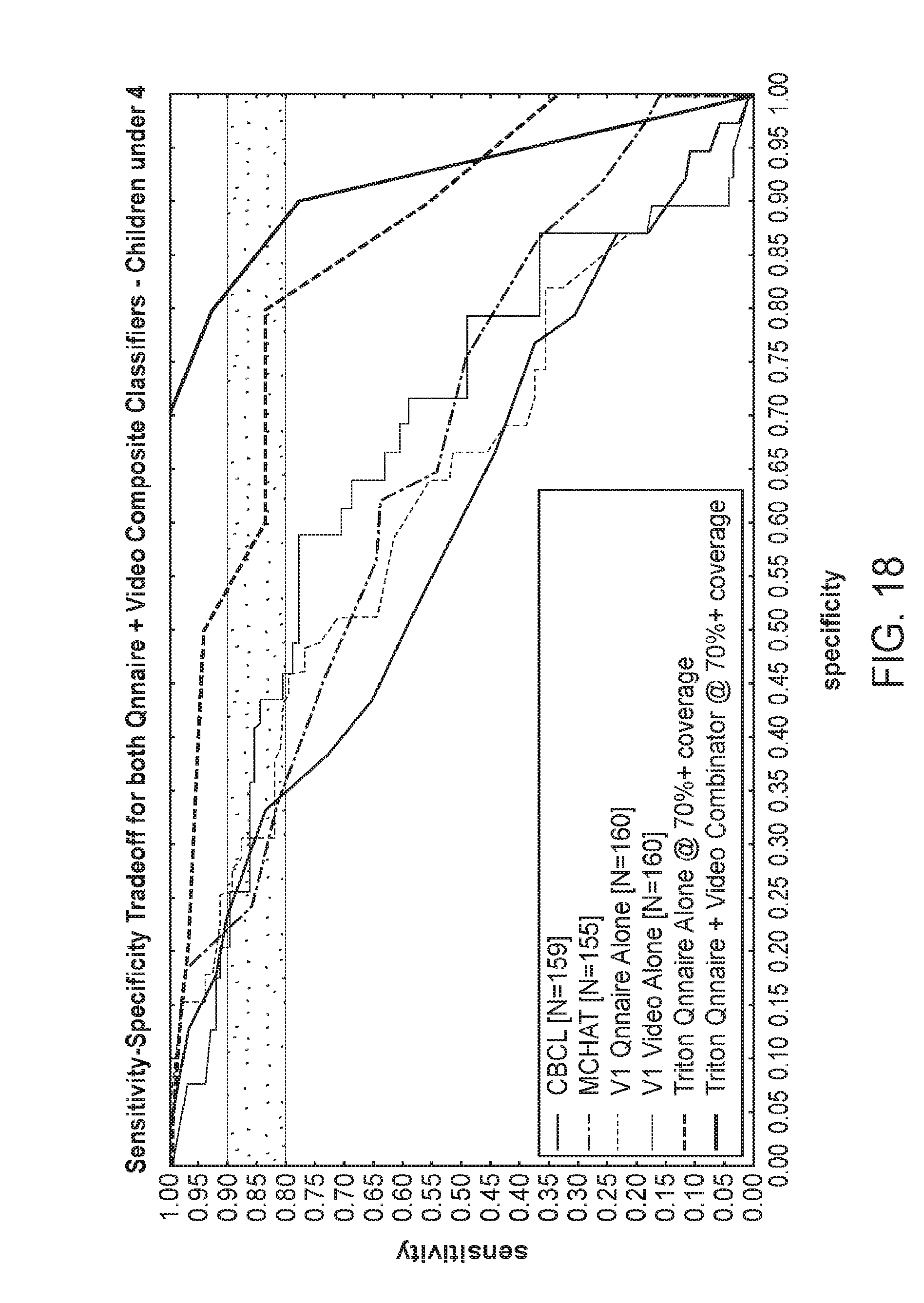

[0064] FIG. 18 shows a comparison of the performance for various algorithms for samples taken from Children Under 4 as described herein.

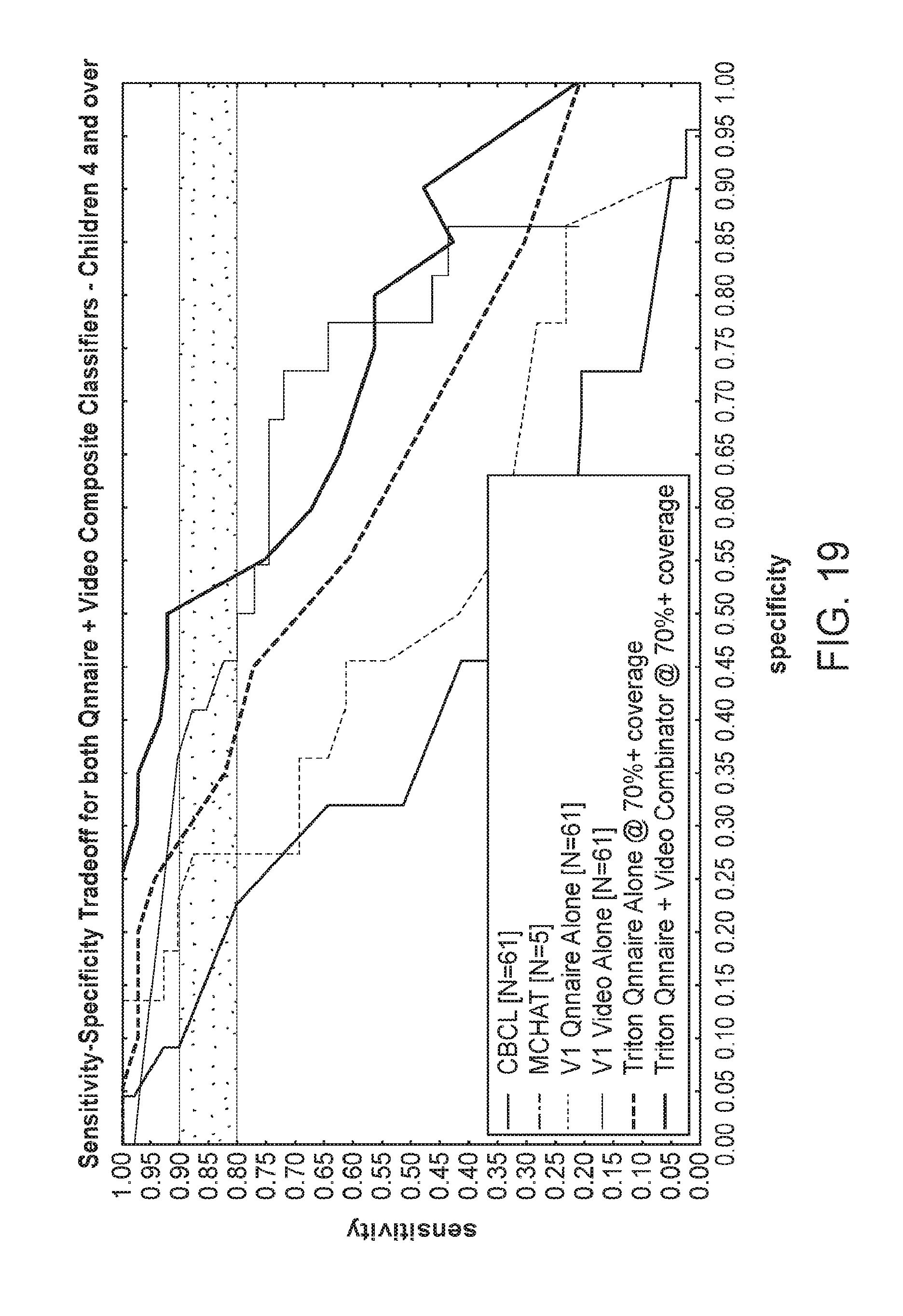

[0065] FIG. 19 shows a comparison of the performance for various algorithms for samples taken from Children 4 and Over as described herein.

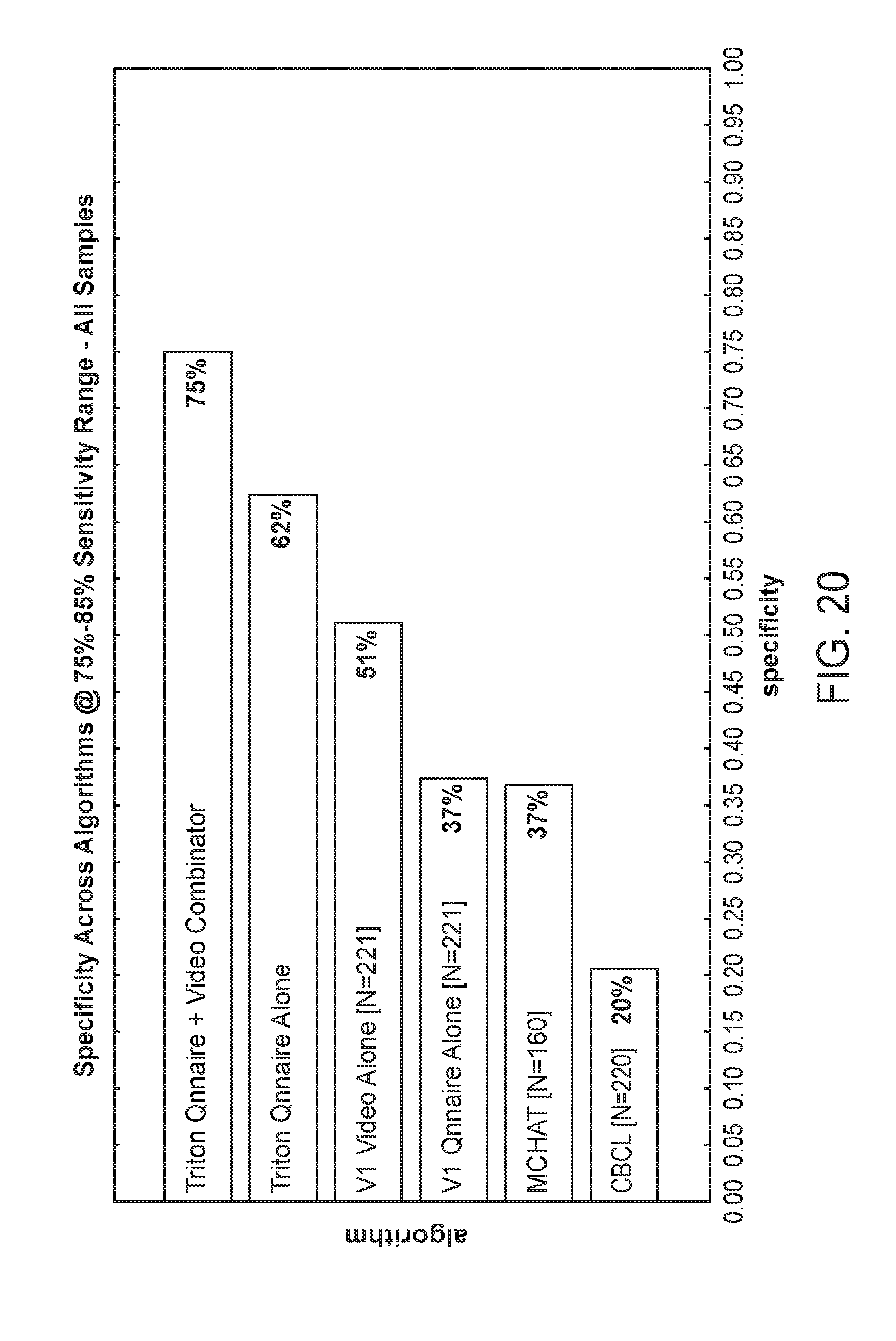

[0066] FIG. 20 shows the specificity across algorithms at 75%-85% sensitivity range for all samples as described herein.

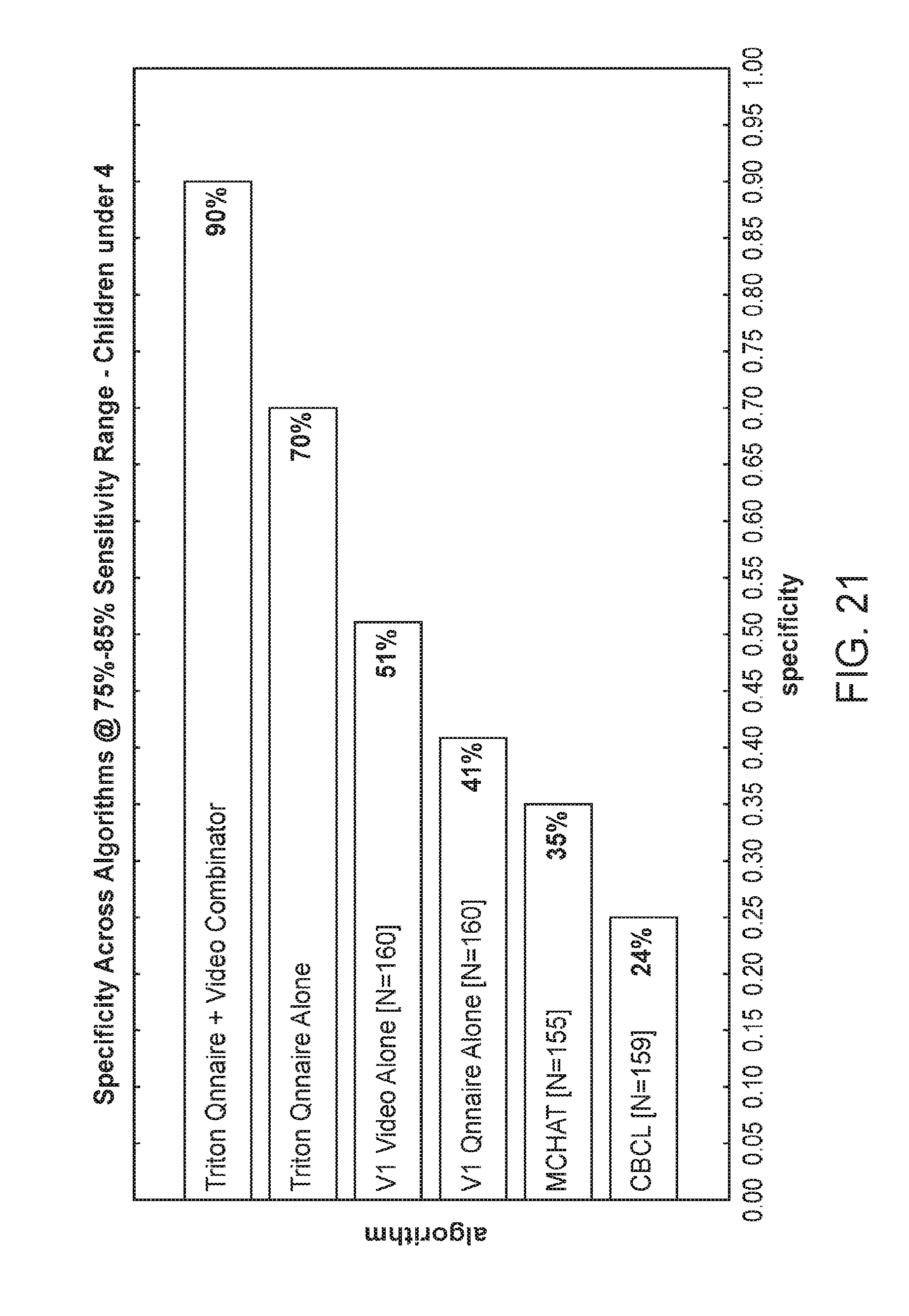

[0067] FIG. 21 shows the specificity across algorithms at 75%-85% sensitivity range for Children Under 4 as described herein.

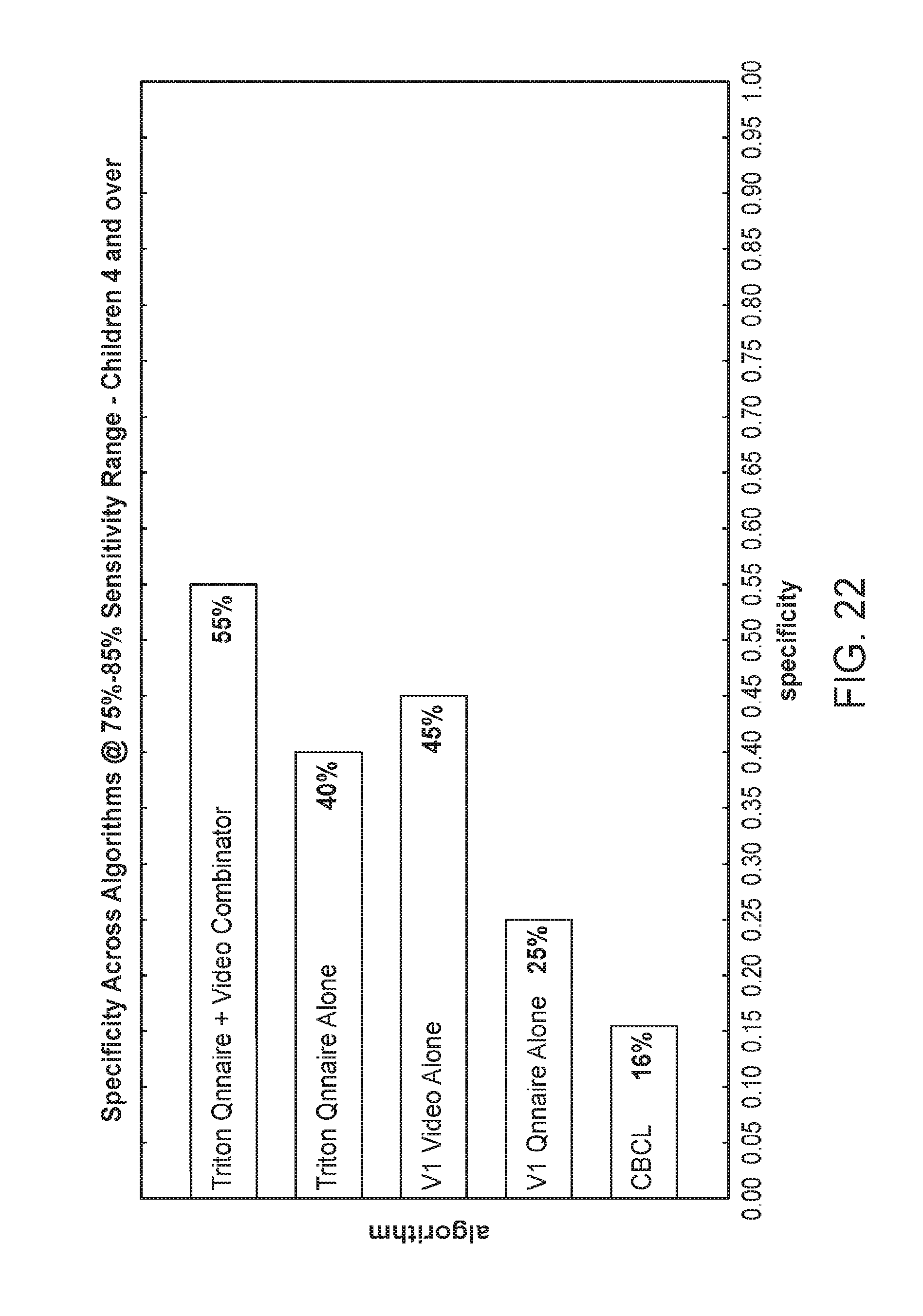

[0068] FIG. 22 shows the specificity across algorithms at 75%-85% sensitivity range for Children 4 and Over as described herein.

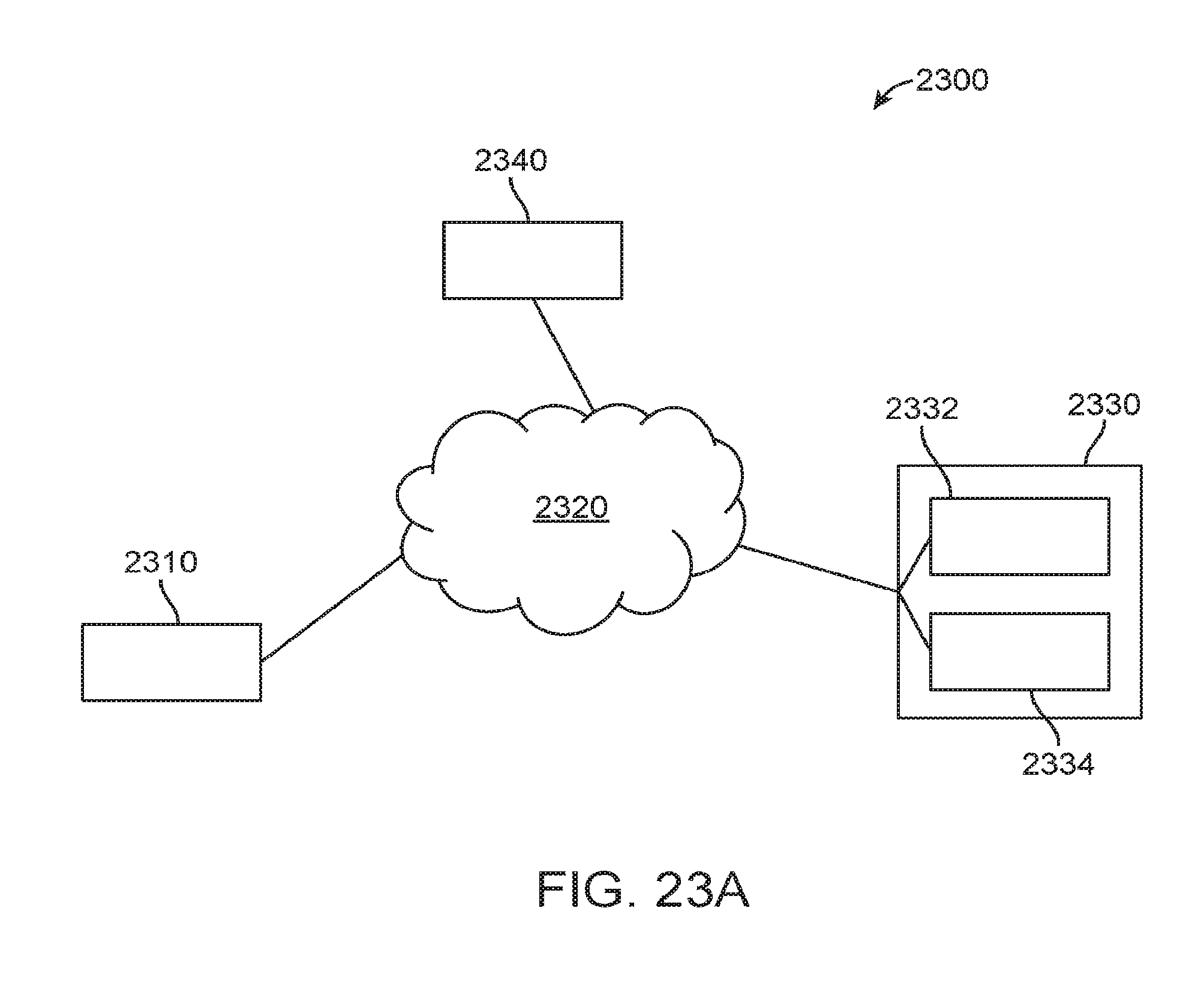

[0069] FIG. 23A illustrates an exemplary system diagram for a digital personalized medicine platform.

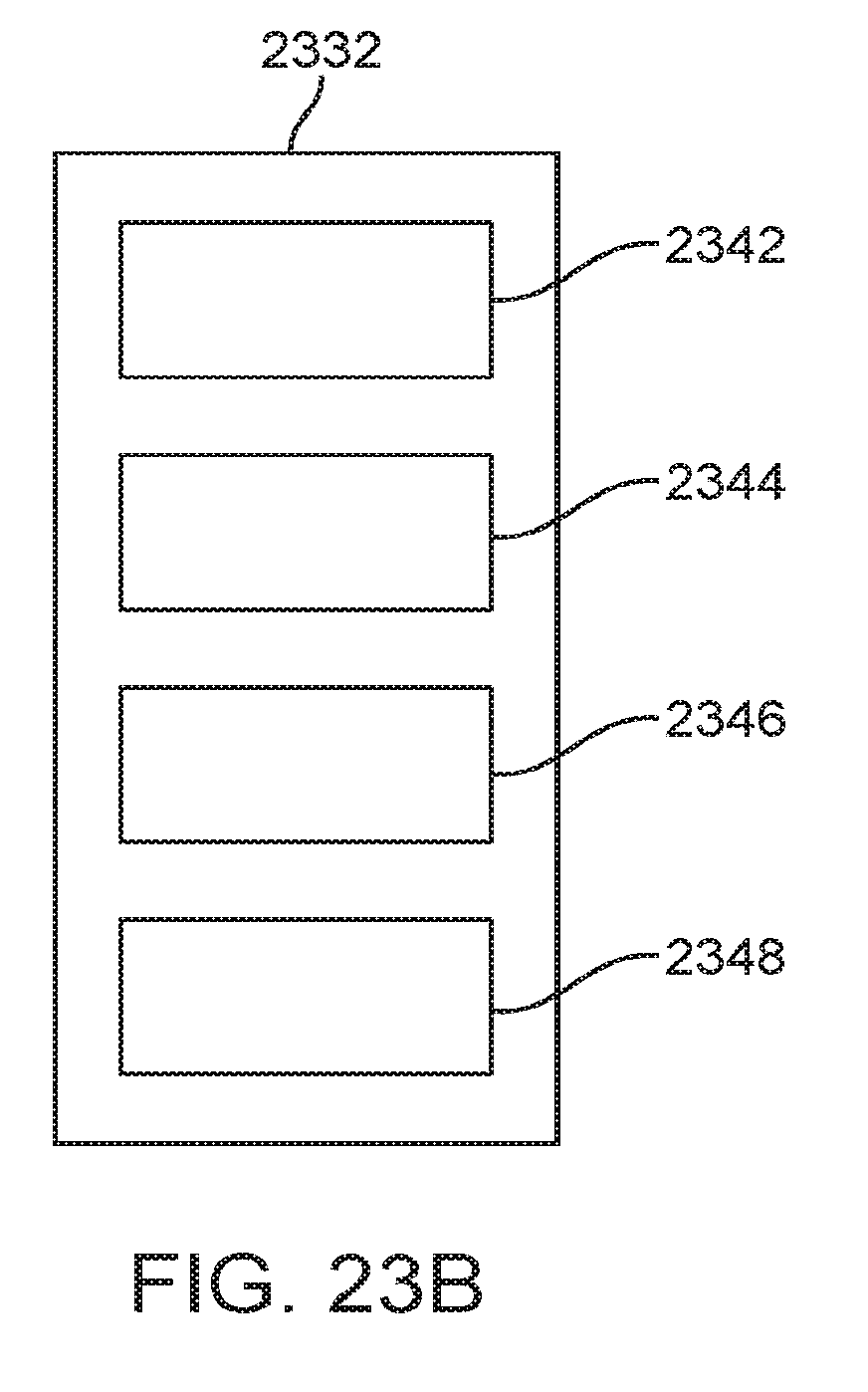

[0070] FIG. 23B illustrates a detailed diagram of an exemplary diagnosis module.

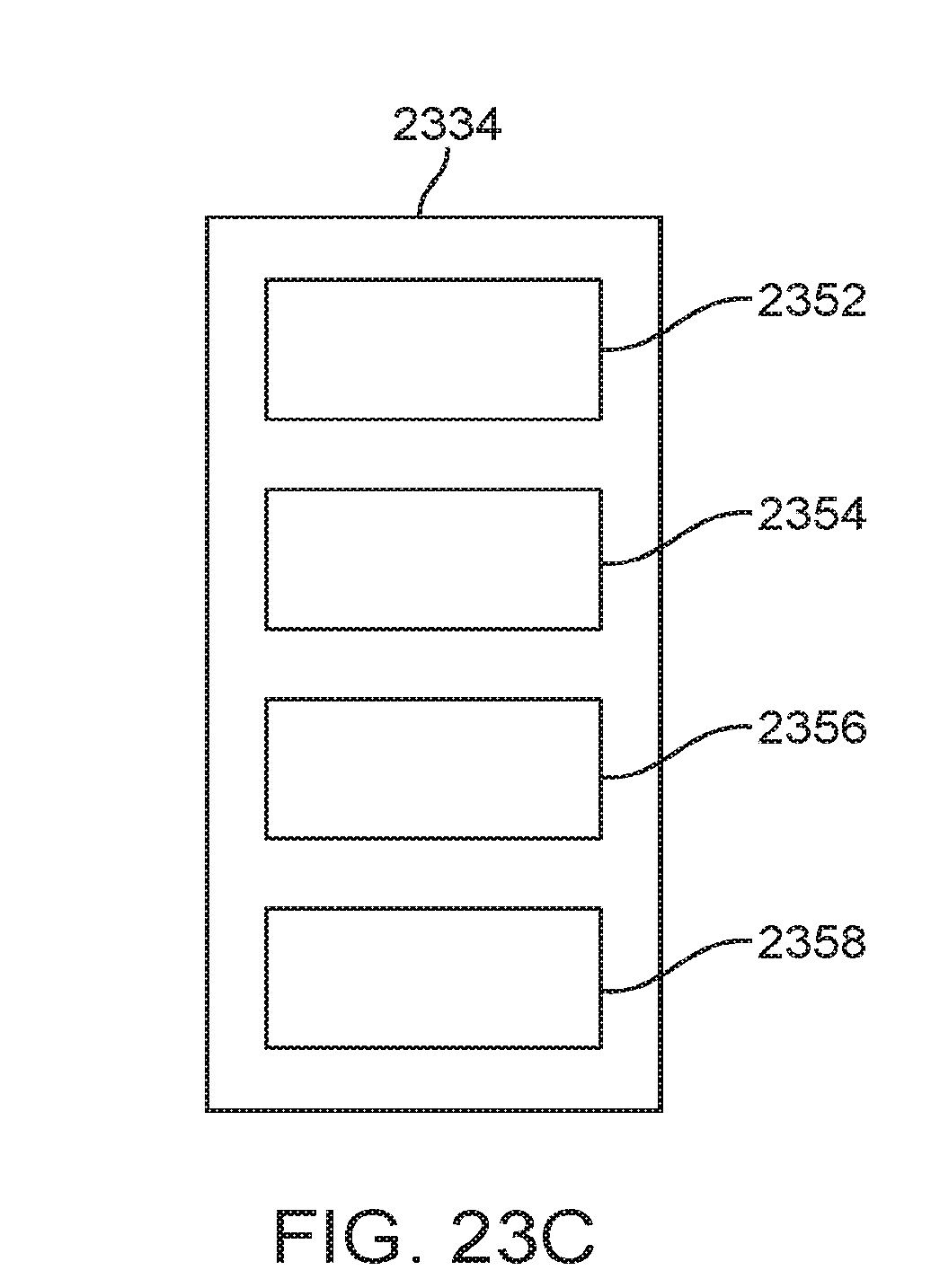

[0071] FIG. 23C illustrates a diagram of an exemplary therapy module.

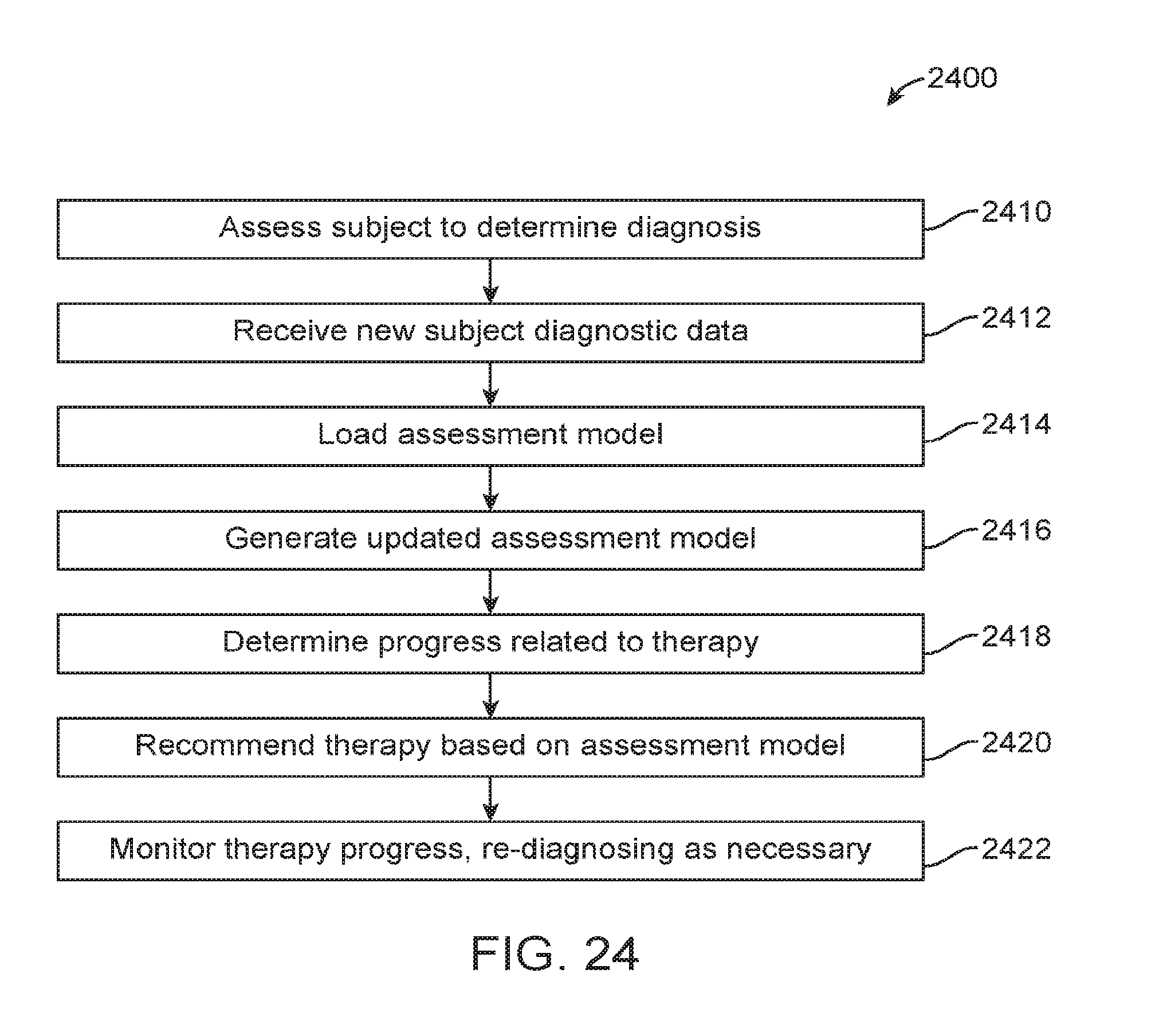

[0072] FIG. 24 illustrates an exemplary method for diagnosis and therapy to be provided in a digital personalized medicine platform.

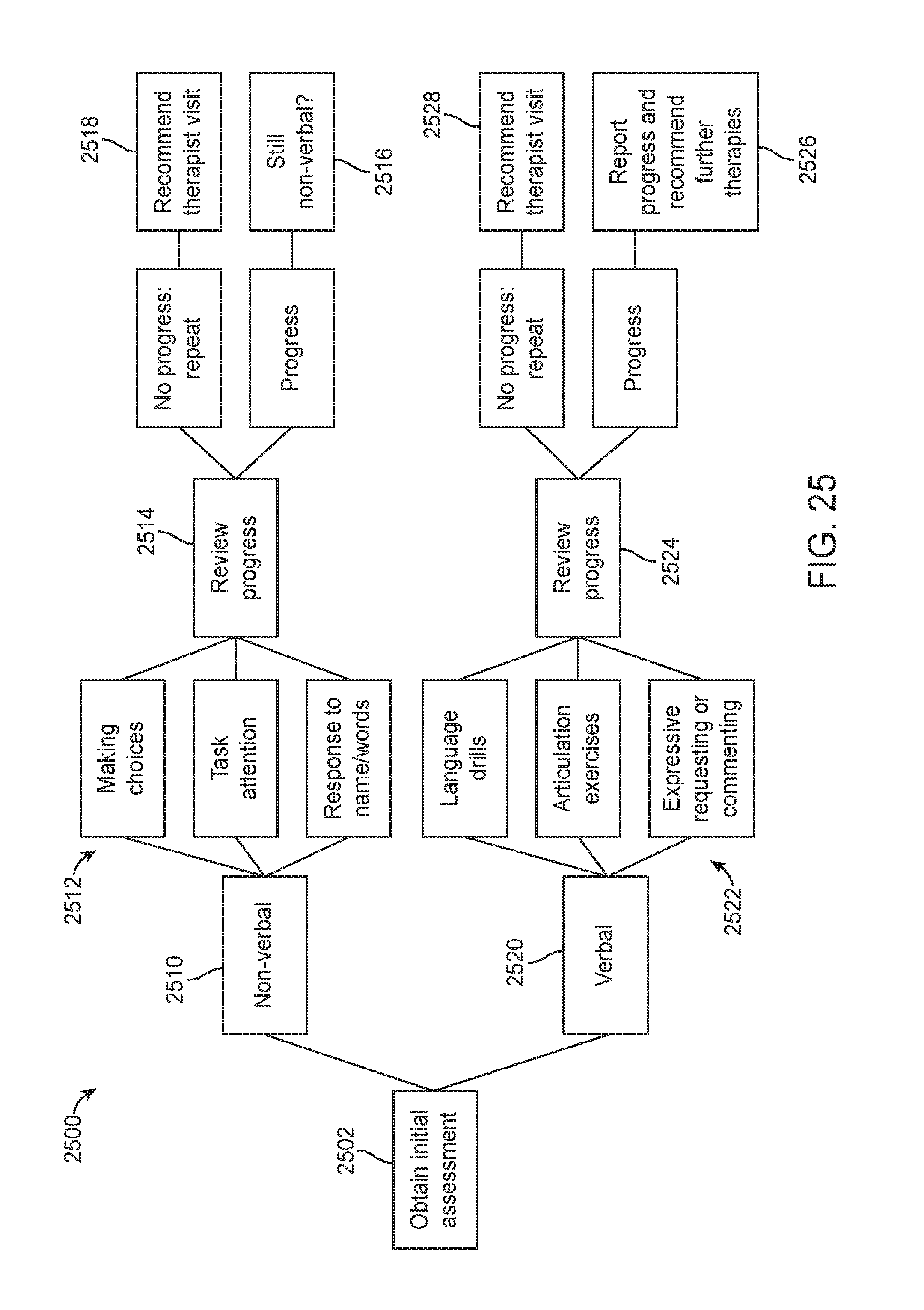

[0073] FIG. 25 illustrates an exemplary flow diagram showing the handling of autism-related developmental delay.

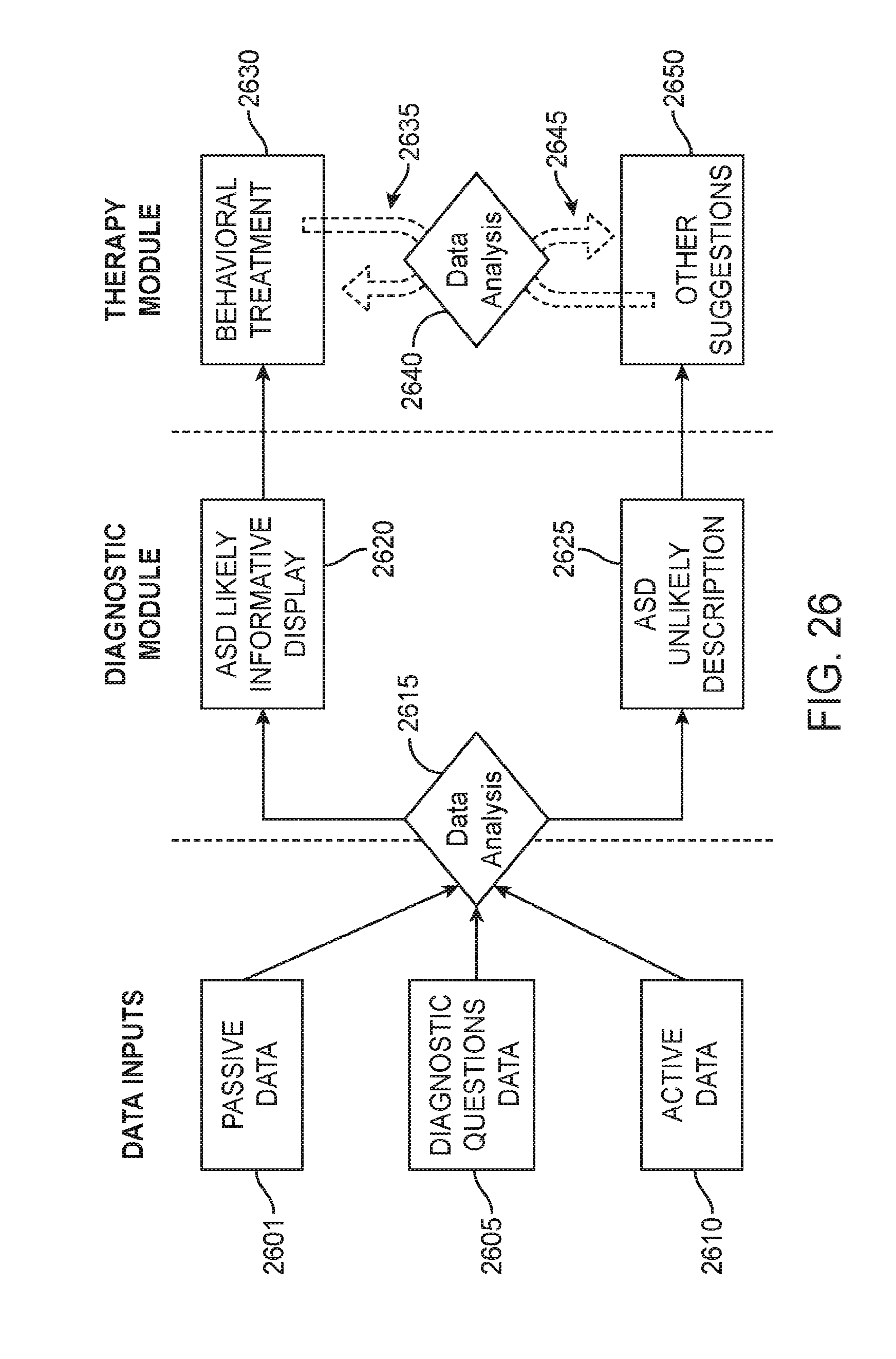

[0074] FIG. 26 illustrates an overview of data processing flows for a digital personalized medical system comprising a diagnostic module and a therapeutic module, configured to integrate information from multiple sources.

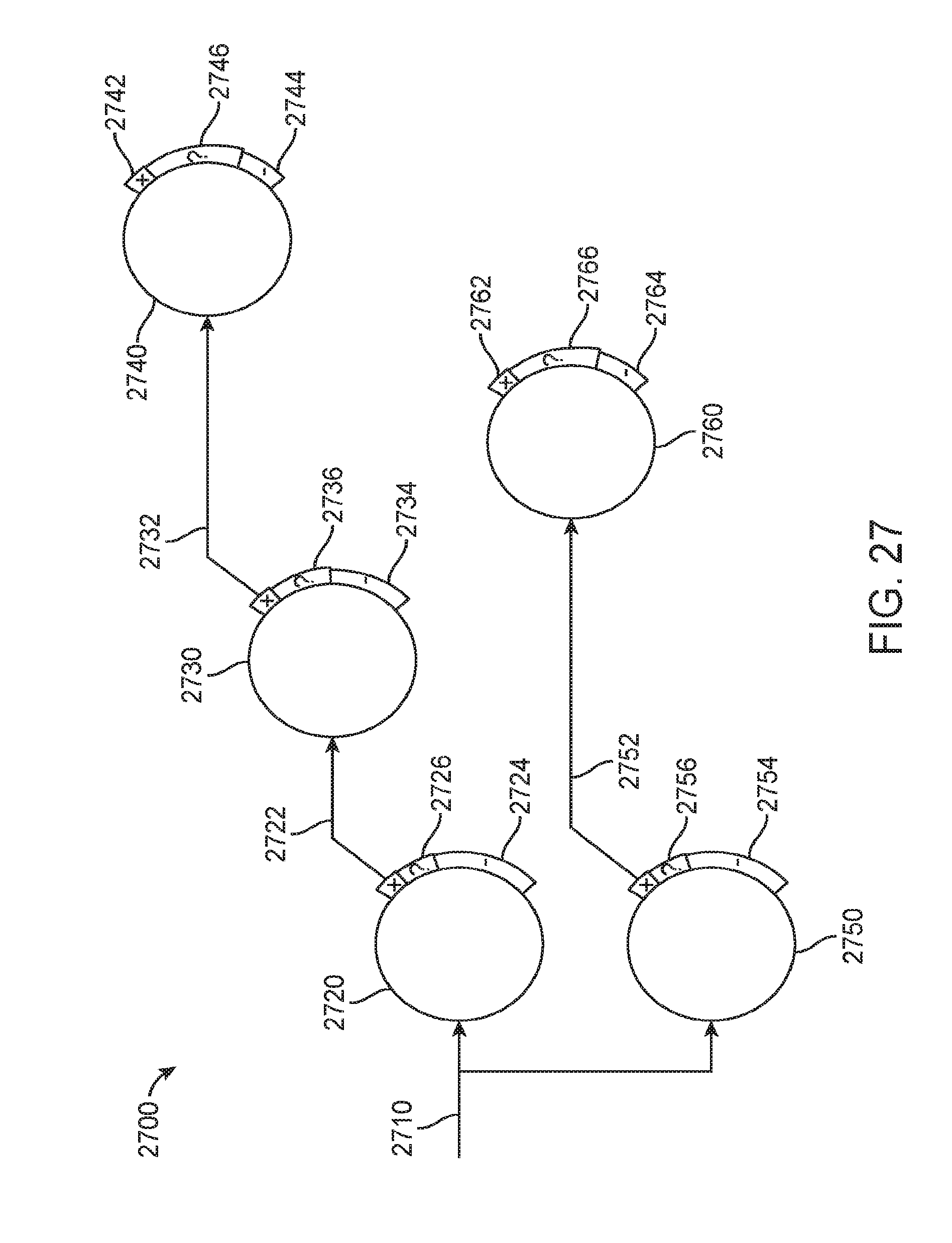

[0075] FIG. 27 shows a system for evaluating a subject for multiple clinical indications.

[0076] FIG. 28 shows a drug that may be administered in response to a diagnosis by the systems and methods described herein.

DETAILED DESCRIPTION OF THE INVENTION

[0077] While various embodiments of the invention have been shown and described herein, it will be obvious to those skilled in the art that such embodiments are provided by way of example only. Numerous variations, changes, and substitutions may occur to those skilled in the art without departing from the invention. It should be understood that various alternatives to the embodiments of the invention described herein may be employed. It shall be understood that different aspects of the invention can be appreciated individually, collectively, or in combination with each other.

[0078] The terms "based on" and "in response to" are used interchangeably in the present disclosure.

[0079] The term "processor" encompasses one or more of a local processor, a remote processor, or a processor system, and combinations thereof.

[0080] The term "feature" is used herein to describe a characteristic or attribute that is relevant to determining the developmental progress of a subject. For example, a "feature" may refer to a clinical characteristic that is relevant to clinical evaluation or diagnosis of a subject for one or more developmental disorders (e.g., age, ability of subject to engage in pretend play, etc.). The term "feature value" is herein used to describe a particular subject's value for the corresponding feature. For example, a "feature value" may refer to a clinical characteristic of a subject that is related to one or more developmental disorders (e.g., if feature is "age", feature value could be 3; if feature is "ability of subject to engage in pretend play", feature value could be "variety of pretend play" or "no pretend play").

[0081] As used herein, the phrases "autism" and "autism spectrum disorder" may be used interchangeably.

[0082] As used herein, the phrases "attention deficit disorder (ADD)" and "attention deficit/hyperactivity disorder (ADHD)" may be used interchangeably.

[0083] Described herein are methods and apparatus for determining the developmental progress of a subject. For example, the described methods and apparatus can identify a subject as developmentally advanced in one or more areas of development or cognitively declining in one or more cognitive functions, or identify a subject as developmentally delayed or at risk of having one or more developmental disorders. The methods and apparatus disclosed can determine the subject's developmental progress by evaluating a plurality of characteristics or features of the subject based on an assessment model, wherein the assessment model can be generated from large datasets of relevant subject populations using machine-learning approaches.

[0084] While methods and apparatus are herein described in the context of identifying one or more developmental disorders of a subject, the methods and apparatus are well-suited for use in determining any developmental progress of a subject. For example, the methods and apparatus can be used to identify a subject as developmentally advanced, by identifying one or more areas of development in which the subject is advanced. To identify one or more areas of advanced development, the methods and apparatus may be configured to assess one or more features or characteristics of the subject that are related to advanced or gifted behaviors, for example. The methods and apparatus as described can also be used to identify a subject as cognitively declining in one or more cognitive functions, by evaluating the one or more cognitive functions of the subject.

[0085] Described herein are methods and apparatus for diagnosing or assessing risk for one or more developmental disorders in a subject. The method may comprise providing a data processing module, which can be utilized to construct and administer an assessment procedure for screening a subject for one or more of a plurality of developmental disorders or conditions. The assessment procedure can evaluate a plurality of features or characteristics of the subject, wherein each feature can be related to the likelihood of the subject having at least one of the plurality of developmental disorders screenable by the procedure. Each feature may be related to the likelihood of the subject having two or more related developmental disorders, wherein the two or more related disorders may have one or more related symptoms. The features can be assessed in many ways. For example, the features may be assessed via a subject's answers to questions, observations of a subject, or results of a structured interaction with a subject, as described in further detail herein.

[0086] To distinguish among a plurality of developmental disorders of the subject within a single screening procedure, the procedure can dynamically select the features to be evaluated in the subject during administration of the procedure, based on the subject's values for previously presented features (e.g., answers to previous questions). The assessment procedure can be administered to a subject or a caretaker of the subject with a user interface provided by a computing device. The computing device comprises a processor having instructions stored thereon to allow the user to interact with the data processing module through a user interface. The assessment procedure may take less than 10 minutes to administer to the subject, for example 5 minutes or less. Thus, apparatus and methods described herein can provide a prediction of a subject's risk of having one or more of a plurality of developmental disorders using a single, relatively short screening procedure.

[0087] The methods and apparatus disclosed herein can be used to determine a most relevant next question related to a feature of a subject, based on previously identified features of the subject. For example, the methods and apparatus can be configured to determine a most relevant next question in response to previously answered questions related to the subject. A most predictive next question can be identified after each prior question is answered, and a sequence of most predictive next questions and a corresponding sequence of answers generated. The sequence of answers may comprise an answer profile of the subject, and the most predictive next question can be generated in response to the answer profile of the subject.
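
By way of non-limiting illustration, the selection of a most predictive next question can be sketched as follows. The paragraph above does not fix a selection criterion; this sketch assumes expected information gain (entropy reduction) computed over previously evaluated subjects whose answers match the current answer profile. The names `entropy`, `next_question`, `records`, and the example features are hypothetical.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a list of outcome labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())


def next_question(records, answered, candidates):
    """Pick the unanswered question with the largest expected information gain.

    records    -- list of (answers_dict, label) for previously evaluated subjects
    answered   -- the current subject's answer profile (question -> answer)
    candidates -- questions that may still be asked
    Assumption: information gain is used as the measure of predictiveness.
    """
    # Keep only past subjects consistent with the current answer profile.
    matching = [(a, y) for a, y in records
                if all(a.get(q) == v for q, v in answered.items())]
    base = entropy([y for _, y in matching])
    best_question, best_gain = None, -1.0
    for question in candidates:
        if question in answered:
            continue
        # Split the matching subjects by their answer to this question.
        groups = {}
        for a, y in matching:
            groups.setdefault(a.get(question), []).append(y)
        remainder = sum(len(g) / len(matching) * entropy(g)
                        for g in groups.values())
        gain = base - remainder
        if gain > best_gain:
            best_question, best_gain = question, gain
    return best_question, best_gain


# Hypothetical training records: (feature values, known outcome).
records = [
    ({"pretend_play": "none", "eye_contact": "poor"}, "at_risk"),
    ({"pretend_play": "none", "eye_contact": "good"}, "at_risk"),
    ({"pretend_play": "variety", "eye_contact": "poor"}, "typical"),
    ({"pretend_play": "variety", "eye_contact": "good"}, "typical"),
]
question, gain = next_question(records, {}, ["eye_contact", "pretend_play"])
```

In this toy data the outcome is fully determined by `pretend_play`, so that question is selected first; after each answer is recorded in `answered`, the function can be called again to generate the next question in the sequence.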

[0088] The methods and apparatus disclosed herein are well suited for use in combination with prior questions, and can be used to diagnose or identify the subject as at risk in response to fewer questions by identifying the most predictive next question in response to the previous answers, for example.

[0089] In one aspect, a method of providing an evaluation of at least one cognitive function attribute of a subject comprises performing operations on a computer system having a processor and a memory storing a computer program for execution by the processor. The computer program may comprise instructions for: 1) receiving data of the subject related to the cognitive function attribute; 2) evaluating the data of the subject using a machine learning model; and 3) providing an evaluation for the subject. The evaluation may be selected from the group consisting of an inconclusive determination and a categorical determination in response to the data. The machine learning model may comprise a selected subset of a plurality of machine learning assessment models. The categorical determination may comprise a presence of the cognitive function attribute and an absence of the cognitive function attribute.

[0090] Receiving data from the subject may comprise receiving an initial set of data. Evaluating the data from the subject may comprise evaluating the initial set of data using a preliminary subset of tunable machine learning assessment models selected from the plurality of tunable machine learning assessment models to output a numerical score for each of the preliminary subset of tunable machine learning assessment models. The method may further comprise providing a categorical determination or an inconclusive determination as to the presence or absence of the cognitive function attribute in the subject based on the analysis of the initial set of data, wherein the ratio of inconclusive to categorical determinations can be adjusted.

[0091] The method may further comprise the operations of: 1) determining whether to apply additional assessment models selected from the plurality of tunable machine learning assessment models if the analysis of the initial set of data yields an inconclusive determination; 2) receiving an additional set of data from the subject based on an outcome of the decision; 3) evaluating the additional set of data from the subject using the additional assessment models to output a numerical score for each of the additional assessment models based on the outcome of the decision; and 4) providing a categorical determination or an inconclusive determination as to the presence or absence of the cognitive function attribute in the subject based on the analysis of the additional set of data from the subject using the additional assessment models. The ratio of inconclusive to categorical determinations may be adjusted.
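
By way of non-limiting illustration, the staged flow of paragraphs [0090] and [0091] can be sketched as follows. Each stage is passed in as a callable so that the sketch does not assume any particular model; the names `staged_evaluation`, `should_escalate`, and `collect_additional` are hypothetical, and the default of always escalating after an inconclusive result is an assumption.

```python
from typing import Callable, Dict

PRESENT, ABSENT, INCONCLUSIVE = "present", "absent", "inconclusive"

Data = Dict[str, float]
Stage = Callable[[Data], str]


def staged_evaluation(
    initial_data: Data,
    preliminary_stage: Stage,
    additional_stage: Stage,
    collect_additional: Callable[[], Data],
    should_escalate: Callable[[Data], bool] = lambda data: True,
) -> str:
    """Run the preliminary models, escalating only on an inconclusive result."""
    first = preliminary_stage(initial_data)
    if first != INCONCLUSIVE:
        # A categorical determination ends the procedure early.
        return first
    # Operation 1: decide whether to apply the additional assessment models.
    if not should_escalate(initial_data):
        return INCONCLUSIVE
    # Operation 2: receive the additional set of data from the subject.
    additional_data = collect_additional()
    # Operations 3 and 4: evaluate it and report the resulting determination,
    # which may itself still be inconclusive.
    return additional_stage(additional_data)
```

Keeping the stages as callables means the same orchestration applies whether a stage wraps one model or a combined subset of models.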

[0092] The method may further comprise the operations: 1) combining the numerical scores for each of the preliminary subset of assessment models to generate a combined preliminary output score; and 2) mapping the combined preliminary output score to a categorical determination or to an inconclusive determination as to the presence or absence of the cognitive function attribute in the subject. The ratio of inconclusive to categorical determinations may be adjusted. The method may further comprise the operations of: 1) combining the numerical scores for each of the additional assessment models to generate a combined additional output score; and 2) mapping the combined additional output score to a categorical determination or to an inconclusive determination as to the presence or absence of the cognitive function attribute in the subject. The ratio of inconclusive to categorical determinations may be adjusted. The method may further comprise employing rule-based logic or combinatorial techniques for combining the numerical scores for each of the preliminary subset of assessment models and for combining the numerical scores for each of the additional assessment models.
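
By way of non-limiting illustration, combining the numerical scores and mapping the combined score to a determination can be sketched as follows. The sketch assumes that each model outputs a score between 0 and 1, that the scores are combined by a weighted mean, and that two thresholds delimit an inconclusive band; none of these choices is specified above, and the names `combine_scores` and `map_to_determination` are hypothetical.

```python
def combine_scores(scores, weights=None):
    """Weighted mean of per-model scores (equal weights by default)."""
    if weights is None:
        weights = [1.0] * len(scores)
    return sum(s * w for s, w in zip(scores, weights)) / sum(weights)


def map_to_determination(score, lower, upper):
    """Map a combined score to a categorical or inconclusive determination.

    Scores at or above `upper` indicate presence, scores at or below `lower`
    indicate absence, and scores strictly between them are inconclusive.
    """
    if lower > upper:
        raise ValueError("lower threshold must not exceed upper threshold")
    if score >= upper:
        return "present"
    if score <= lower:
        return "absent"
    return "inconclusive"


combined = combine_scores([0.9, 0.7])
determination = map_to_determination(combined, lower=0.3, upper=0.7)
```

Under these assumptions, widening the gap between `lower` and `upper` increases the share of inconclusive determinations, and narrowing it increases the share of categorical determinations, which is one way the ratio between the two could be adjusted.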

[0093] The ratio of inconclusive to categorical determinations may be adjusted by specifying an inclusion rate, and the categorical determination as to the presence or absence of the developmental condition in the subject may be assessed by providing sensitivity and specificity metrics. The inclusion rate may be no less than 70%, with the categorical determination resulting in a sensitivity of at least 70% with a corresponding specificity of at least 70%. The inclusion rate may be no less than 70%, with the categorical determination resulting in a sensitivity of at least 80% with a corresponding specificity of at least 80%. The inclusion rate may be no less than 70%, with the categorical determination resulting in a sensitivity of at least 90% with a corresponding specificity of at least 90%. The data from the subject may comprise at least one of demographic data and a sample of a diagnostic instrument, wherein the diagnostic instrument comprises a set of diagnostic questions and corresponding selectable answers.
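
By way of non-limiting illustration, the inclusion rate, sensitivity, and specificity can be computed from a set of determinations as sketched below. The sketch assumes that the inclusion rate is the fraction of subjects receiving a categorical (non-inconclusive) determination and that sensitivity and specificity are computed over those subjects only; the function name `coverage_and_reliability` and the example data are hypothetical.

```python
def coverage_and_reliability(determinations, truths):
    """Return inclusion rate, sensitivity, and specificity.

    determinations -- "present", "absent", or "inconclusive" per subject
    truths         -- True if the condition is actually present, per subject
    Assumption: inconclusive subjects are excluded from sensitivity and
    specificity and count only against the inclusion rate.
    """
    tp = fn = tn = fp = 0
    for determination, truth in zip(determinations, truths):
        if determination == "inconclusive":
            continue
        if truth and determination == "present":
            tp += 1
        elif truth and determination == "absent":
            fn += 1
        elif not truth and determination == "absent":
            tn += 1
        else:
            fp += 1
    conclusive = tp + fn + tn + fp
    return {
        "inclusion_rate": conclusive / len(determinations),
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }


# Hypothetical results for ten subjects.
determinations = ["present", "absent", "inconclusive", "present", "absent",
                  "absent", "inconclusive", "present", "absent", "present"]
truths = [True, False, True, True, False, True, False, False, False, True]
metrics = coverage_and_reliability(determinations, truths)
```

For this example, eight of ten subjects receive a categorical determination (inclusion rate 0.8), with three of four true cases detected and three of four non-cases correctly cleared (sensitivity and specificity of 0.75 each).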

[0094] The method may further comprise training a plurality of tunable machine learning assessment models using data from a plurality of subjects previously evaluated for the developmental condition. The training may comprise the operations of: 1) pre-processing the data from the plurality of subjects using machine learning techniques; 2) extracting and encoding machine learning features from the pre-processed data; 3) processing the data from the plurality of subjects to mirror an expected prevalence of a cognitive function attribute among subjects in an intended application setting; 4) selecting a subset of the processed machine learning features; 5) evaluating each model in the plurality of tunable machine learning assessment models for performance; and 6) determining an optimal set of parameters for each model based on determining the benefit of using all models in a selected subset of the plurality of tunable machine learning assessment models. Each model may be evaluated for sensitivity and specificity for a pre-determined inclusion rate. Determining an optimal set of parameters for each model may comprise tuning the parameters of each model under different tuning parameter settings. Processing the encoded machine learning features may comprise computing and assigning sample weights to every sample of data. Each sample of data may correspond to a subject in the plurality of subjects. Samples may be grouped according to subject-specific dimensions. Sample weights may be computed and assigned to balance one group of samples against every other group of samples to mirror the expected distribution of each dimension among subjects in an intended setting. The subject-specific dimensions may comprise a subject's gender, the geographic region where a subject resides, and a subject's age. Extracting and encoding machine learning features from the pre-processed data may comprise using feature encoding techniques such as but not limited to one-hot encoding, severity encoding, and presence-of-behavior encoding. Selecting a subset of the processed machine learning features may comprise using bootstrapping techniques to identify a subset of discriminating features from the processed machine learning features.
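
By way of non-limiting illustration, the computation of sample weights that balance groups of samples against an expected distribution can be sketched as follows for a single subject-specific dimension. The weighting formula (target share divided by observed share) is an assumption, as are the name `sample_weights` and the example target distribution.

```python
from collections import Counter


def sample_weights(groups, target_distribution):
    """Weight each sample so weighted group shares match the target shares.

    groups              -- group label of each sample (one per subject)
    target_distribution -- expected share of each group in the intended setting
    Each sample in group g receives target_share(g) / observed_share(g), so the
    weights sum to the number of samples when the target shares sum to 1.
    """
    counts = Counter(groups)
    total = len(groups)
    return [target_distribution[g] * total / counts[g] for g in groups]


# Hypothetical training set in which one group is under-represented
# relative to an assumed 50/50 split in the intended setting.
groups = ["F", "M", "M", "M"]
weights = sample_weights(groups, {"F": 0.5, "M": 0.5})
```

With several dimensions (for example gender, region, and age), one simple option under the same assumption is to compute a weight per dimension and multiply them, which treats the dimensions as independent.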

[0095] The cognitive function attribute may comprise a behavioral disorder and a developmental advancement. The categorical determination provided for the subject may be selected from the group consisting of an inconclusive determination, a presence of multiple cognitive function attributes and an absence of multiple cognitive function attributes in response to the data.

[0096] In another aspect, an apparatus to evaluate a cognitive function attribute of a subject may comprise a processor. The processor may be configured with instructions that, when executed, cause the processor to receive data of the subject related to the cognitive function attribute and apply rules to generate a categorical determination for the subject. The categorical determination may be selected from a group consisting of an inconclusive determination, a presence of the cognitive function attribute, and an absence of the cognitive function attribute in response to the data. The cognitive function attribute may be determined with a sensitivity of at least 70% and a specificity of at least 70%, respectively, for the presence or the absence of the cognitive function attribute. The cognitive function attribute may be selected from a group consisting of autism, autistic spectrum, attention deficit disorder, attention deficit hyperactive disorder and speech and learning disability. The cognitive function attribute may be determined with a sensitivity of at least 80% and a specificity of at least 80%, respectively, for the presence or the absence of the cognitive function attribute. The cognitive function attribute may be determined with a sensitivity of at least 90% and a specificity of at least 90%, respectively, for the presence or the absence of the cognitive function attribute. The cognitive function attribute may comprise a behavioral disorder and a developmental advancement.

[0097] In another aspect, a non-transitory computer-readable storage medium encoded with a computer program including instructions executable by a processor to evaluate a cognitive function attribute of a subject comprises: a database, recorded on the medium, comprising data of a plurality of subjects related to at least one cognitive function attribute and a plurality of tunable machine learning assessment models; an evaluation software module; and a model tuning software module. The evaluation software module may comprise instructions for: 1) receiving data of the subject related to the cognitive function attribute; 2) evaluating the data of the subject using a selected subset of a plurality of machine learning assessment models; and 3) providing a categorical determination for the subject, the categorical determination selected from the group consisting of an inconclusive determination, a presence of the cognitive function attribute and an absence of the cognitive function attribute in response to the data. The model tuning software module may comprise instructions for: 1) pre-processing the data from the plurality of subjects using machine learning techniques; 2) extracting and encoding machine learning features from the pre-processed data; 3) processing the encoded machine learning features to mirror an expected distribution of subjects in an intended application setting; 4) selecting a subset of the processed machine learning features; 5) evaluating each model in the plurality of tunable machine learning assessment models for performance; 6) tuning the parameters of each model under different tuning parameter settings; and 7) determining an optimal set of parameters for each model based on determining the benefit of using all models in a selected subset of the plurality of tunable machine learning assessment models. Each model may be evaluated for sensitivity and specificity for a pre-determined inclusion rate. The cognitive function attribute may comprise a behavioral disorder and a developmental advancement.