Image Processing Apparatus And Robot System

NISHITANI; Masanobu ; et al.

U.S. patent application number 16/157840 for an image processing apparatus and robot system was filed with the patent office on October 11, 2018 and published on February 7, 2019. The applicant listed for this patent is Seiko Epson Corporation. Invention is credited to Masanobu NISHITANI and Nobuyuki SETSUDA.

| Publication Number | 20190043150 |
|---|---|
| Application Number | 16/157840 |
| Family ID | 54249287 |
| Publication Date | 2019-02-07 |


| United States Patent Application | 20190043150 |
|---|---|
| Kind Code | A1 |
| NISHITANI; Masanobu ; et al. | February 7, 2019 |


Abstract

An image processing apparatus includes a first connecting unit connected to an image pickup apparatus; a plurality of second connecting units connected to one control apparatus or one other image processing apparatus, the control apparatus being configured to control a robot; and a processing unit configured to process picked-up images picked up by the image pickup apparatus.

| Inventors: | NISHITANI; Masanobu; (Suwa, JP) ; SETSUDA; Nobuyuki; (Okaya, JP) |
|---|---|
| Applicant: | Seiko Epson Corporation |
| Family ID: | 54249287 |
| Appl. No.: | 16/157840 |
| Filed: | October 11, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14854499 | Sep 15, 2015 | 10127622 |
| 16157840 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 5/23206 20130101; H04N 5/23229 20130101; G06T 7/73 20170101; B25J 9/1697 20130101; Y10S 901/09 20130101; G06T 1/0014 20130101 |

| International Class: | G06T 1/00 20060101 G06T001/00; B25J 9/16 20060101 B25J009/16; G06T 7/73 20060101 G06T007/73; H04N 5/232 20060101 H04N005/232 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Sep 16, 2014 | JP | 2014-187732 |
| Sep 16, 2014 | JP | 2014-188035 |
| Sep 16, 2014 | JP | 2014-188036 |
| Sep 16, 2014 | JP | 2014-188037 |
| Sep 16, 2014 | JP | 2014-188038 |
| Sep 16, 2014 | JP | 2014-188127 |

Claims

1. A controller comprising: a processor configured to execute computer-readable instructions stored in a memory so as to: receive status information of an image processing device that processes a captured image; determine a status of the image processing device based on the status information; and generate a transmission signal that is transmitted to an external apparatus based on the determined status of the image processing device.

2. The controller according to claim 1, wherein the determined status includes: a normal status that corresponds to a state in which the image processing device has no failure; a defective status that corresponds to a state in which the image processing device has a failure; and a possible defective status that corresponds to a state in which the image processing device has a high possibility of the failure.

3. The controller according to claim 2, wherein the status information includes a plurality of physical values that correspond to statuses of a plurality of hardware components in the image processing device, and the processor is configured to determine a phenomenon based on each of the plurality of physical values, and the phenomenon corresponds to the status of the image processing device.

4. The controller according to claim 3, wherein the plurality of physical values include an error code, and the error code corresponds to a control of the image processing device, and the processor is configured to determine the phenomenon based on the error code.

5. The controller according to claim 4, wherein the processor is configured to associate the status of the image processing device with an identifier, and the processor is configured to place the identifier into the transmission signal.

6. The controller according to claim 3, wherein the processor is configured to cause the external apparatus to transmit the status information including the plurality of physical values based on a request from the external apparatus.

7. The controller according to claim 5, wherein the processor is configured to cause the image processing device to transmit request information to change an output status of the image processing device based on the determined status of the image processing device.

8. An information processing apparatus comprising: a display that displays a physical value that indicates a status of a plurality of hardware components in an image processing device; and a processor that is configured to receive status information including a plurality of physical values that correspond to statuses of a plurality of hardware components in the image processing device based on a phenomenon that is determined according to the plurality of physical values.

9. A robot system comprising: a robot that is configured to perform a task; a camera that is configured to capture an image of an area where the robot performs the task; an image processing device that is configured to process the image so as to output a result; and a controller that is configured to control the robot based on the result from the image processing device, wherein the controller includes a processor that is configured to execute computer-readable instructions stored in a memory so as to: receive status information of the image processing device; determine a status of the image processing device based on the status information; and generate a transmission signal that is transmitted to an external apparatus based on the determined status of the image processing device.
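The status determination recited in claims 1 to 5 can be pictured with a minimal Python sketch. It assumes hypothetical physical values (a CPU temperature and a fan-speed drop), hypothetical warning and failure thresholds, and a hypothetical numeric identifier per status; the claims specify none of these. Treating any nonzero error code as a failure is also an assumption.

```python
from enum import Enum


class Status(Enum):
    NORMAL = "normal"                          # no failure
    POSSIBLE_DEFECTIVE = "possible_defective"  # high possibility of failure
    DEFECTIVE = "defective"                    # failure present


# Hypothetical (warning, failure) limits for each monitored physical value.
THRESHOLDS = {
    "cpu_temp_c": (75.0, 90.0),
    "fan_rpm_drop_pct": (30.0, 60.0),
}

# Hypothetical identifiers placed into the transmission signal (claim 5).
STATUS_IDS = {Status.NORMAL: 0, Status.POSSIBLE_DEFECTIVE: 1, Status.DEFECTIVE: 2}

SEVERITY = [Status.NORMAL, Status.POSSIBLE_DEFECTIVE, Status.DEFECTIVE]


def phenomenon(name: str, value: float) -> Status:
    """Classify one physical value against its warning and failure limits."""
    warn, fail = THRESHOLDS[name]
    if value >= fail:
        return Status.DEFECTIVE
    if value >= warn:
        return Status.POSSIBLE_DEFECTIVE
    return Status.NORMAL


def determine_status(physical_values: dict, error_code: int = 0) -> Status:
    """Overall status is the most severe phenomenon; a nonzero error code forces DEFECTIVE."""
    if error_code != 0:
        return Status.DEFECTIVE
    phenomena = [phenomenon(n, v) for n, v in physical_values.items()]
    return max(phenomena, key=SEVERITY.index, default=Status.NORMAL)


def build_transmission_signal(device_id: str, status: Status) -> dict:
    """Payload sent to the external apparatus, carrying the status identifier."""
    return {"device": device_id, "status_id": STATUS_IDS[status]}
```

Taking the most severe phenomenon keeps a single overheating component from being masked by other healthy readings.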

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a divisional of U.S. patent application Ser. No. 14/854,499, filed Sep. 15, 2015, which claims priority to Japanese Patent Application No. 2014-188035, filed Sep. 16, 2014; Japanese Patent Application No. 2014-188127, filed Sep. 16, 2014; Japanese Patent Application No. 2014-187732, filed Sep. 16, 2014; Japanese Patent Application No. 2014-188036, filed Sep. 16, 2014; Japanese Patent Application No. 2014-188037, filed Sep. 16, 2014; and Japanese Patent Application No. 2014-188038, filed Sep. 16, 2014, all of which are hereby expressly incorporated by reference herein in their entireties.

BACKGROUND

1. Technical Field

[0002] The present invention relates to an image processing apparatus and a robot system.

2. Related Art

[0003] There has been proposed a robot system that calculates a position and a posture of a work target using an image picked up by an image pickup apparatus and causes a robot to perform predetermined work on the basis of the calculated position and the calculated posture.

[0004] The robot system includes the image pickup apparatus, an image processing apparatus, a robot controller, and a robot. The image processing apparatus calculates a center position of the work target using the image picked up by the image pickup apparatus. The robot controller recognizes the position and the posture of the work target on the basis of information indicating the center position of the work target calculated by the image processing apparatus. The robot controller generates, on the basis of recognized positions and postures of work targets, a command for positioning a hand mechanism of the robot in an appropriate position and an appropriate posture and controls a gripping action of the robot. In the robot system, the image pickup apparatus is connected to the image processing apparatus, the robot controller is connected to the image processing apparatus, and the robot is connected to the robot controller (see, for example, JP-A-2012-242281 (Patent Literature 1)).
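How the center position of the work target is calculated is not detailed in the source. One common approach, shown here as a minimal Python sketch, takes the centroid of the pixels that a prior segmentation step has marked as belonging to the work target; the binary-mask input and the function name `center_position` are assumptions for illustration.

```python
from typing import Optional, Sequence, Tuple


def center_position(mask: Sequence[Sequence[int]]) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of nonzero pixels in a binary mask, or None if empty."""
    count = 0
    sum_x = 0
    sum_y = 0
    for y, row in enumerate(mask):
        for x, pixel in enumerate(row):
            if pixel:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None  # the work target was not found in the image
    return (sum_x / count, sum_y / count)
```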

[0005] The image processing apparatus in the past includes one LAN (Local Area Network) port for connection to the robot controller. The image processing apparatus and the robot controller are connected to each other, for example, by a LAN cable.

[0006] In recent years, at sites where robots are used, there is, for example, a demand for connecting a plurality of image processing apparatuses to one robot controller to control the robot. Alternatively, there is a demand for a plurality of robot controllers to share and use one image processing apparatus.

[0007] In the robot system in the past, the image pickup apparatus is connected to the image processing apparatus via a USB (Universal Serial Bus) or a LAN cable.

[0008] When the image pickup apparatus and the image processing apparatus are connected via the LAN cable, a user performs setting concerning a network for the image pickup apparatus. Alternatively, in the robot system in the past, a DHCP (Dynamic Host Configuration Protocol) server is provided in the network to automatically perform setting concerning a network for the image pickup apparatus.

[0009] There has been researched and developed a method of calculating a position and a posture of a work target on the basis of a picked-up image picked up by an image pickup unit and causing a robot to perform predetermined work on the basis of the calculated position and the calculated posture.

[0010] Concerning the method, there is known an image processing apparatus that is connected to a control apparatus that controls the robot and that, separately from the control apparatus, performs image processing such as calculation of the position and the posture of the work target based on the picked-up image (see http://robots.epson.com/admin/uploads/product_catalog/files/EPSON_CV1_Vision%20(RevB).pdf, "Vision Guidance for Epson Robots" (Non Patent Literature 1)).

[0011] There has been proposed a robot system that controls the operation of a robot using a picked-up image picked up by an image pickup apparatus. For example, JP-A-2009-218933 (Patent Literature 2) describes a robot system in which an image processing apparatus, a control apparatus, and a PC (Personal Computer) for control are connected via a network. The image processing apparatus processes a picked-up image around the robot acquired from the image pickup apparatus. The PC for control functions as a development environment for displaying the picked-up image acquired from the image pickup apparatus and creating a computer program for image processing.

[0012] For the image processing apparatus to apply the image processing to the picked-up image, physically connecting the image pickup apparatus to the image processing apparatus is not enough; setting of the PC for control is also necessary. For example, a user checks the IP (Internet Protocol) address of the image processing apparatus beforehand and manually inputs the checked IP address to the PC for control. A plurality of image pickup apparatuses are connectable to the image processing apparatus, and some image processing apparatuses can use a plurality of models of image pickup apparatuses. For such an image processing apparatus, the user checks in advance which camera model is connected to the image processing apparatus and sets, in the PC for control, which of the plurality of cameras is selected. When the PC for control and the image processing apparatus are connected to the same network segment, they can transmit and receive data immediately after the setting operation is completed, so the image pickup apparatus for which the setting is completed can be used. A network segment is a logically divided range of a LAN and is sometimes called a subnetwork.

[0013] However, the image processing apparatus in the past includes only one LAN port. Therefore, for example, when a plurality of image processing apparatuses are connected to one robot controller to control the robot, it is necessary to connect the robot controller and the plurality of image processing apparatuses via a network switch (a switching hub) and perform communication among the apparatuses.

[0014] In the image processing apparatus in the past, the DHCP server is necessary to automatically perform the setting concerning a network for the image pickup apparatus. When the DHCP server is not used, the user needs to perform this setting manually. In this case, the user needs to know the network settings of the image processing apparatus and the robot controller connected in the robot system.

[0015] In the image processing apparatus in the past, a serious error may occur that cannot be resolved by a person not having special knowledge (e.g., the user); for example, some executed processing causes trouble and, thereafter, the image processing apparatus cannot be started. In such a case, the user has to disassemble the image processing apparatus to remove a storing unit included in it, send the storing unit to a person having special knowledge (e.g., a technician of the manufacturer), and ask that person to repair the storing unit. This costs the user time and labor.

[0016] When the PC for control and the image processing apparatus are connected to different network segments, they cannot transmit and receive data even after the setting operation is completed. Therefore, the image pickup apparatus for which the setting is completed cannot be used unless the network segment setting of one of the PC for control and the image processing apparatus is adjusted to match that of the other. In particular, in a large robot system including a plurality of image processing apparatuses, the setting operation costs the user a great deal of labor.

[0017] Even in a state in which some abnormality occurs in hardware of the image processing apparatus, the control apparatus in the past cannot detect the state.

[0018] The image processing apparatus in the past does not take into account the case in which a person not having special knowledge (e.g., a user) updates an OS (Operating System). When the OS has to be updated, the user has to disassemble the image processing apparatus, send it to a person having special knowledge (e.g., a technician of the manufacturer), and ask that person to update the OS. This costs the user time and labor.

SUMMARY

[0019] An advantage of some aspects of the invention is to solve at least a part of the problems described above, and the invention can be implemented as the following aspects or application examples.

[0020] An aspect of the invention is directed to an image processing apparatus including: a first connecting unit connected to an image pickup apparatus; a plurality of second connecting units connected to one or more control apparatuses that control a robot or one or more other image processing apparatuses; and a processing unit configured to process picked-up images picked up by the image pickup apparatus.

[0021] With this configuration, the image processing apparatus includes the plurality of second connecting units. Consequently, in the image processing apparatus, it is possible to connect external apparatuses respectively to the plurality of second connecting units without using a network switch.

[0022] In the aspect of the invention, each of the plurality of second connecting units may include a different identifier. The processing unit may be capable of setting the same IP address in two or more of the plurality of second connecting units.

[0023] With this configuration, the image processing apparatus can set the same IP address in two or more second connecting units. Consequently, in the image processing apparatus, a user can easily perform connection to a plurality of external apparatuses without being aware of the IP address.

[0024] In the aspect of the invention, the processing unit may be capable of setting an IP address different from the same IP address in the second connecting units other than the second connecting units in which the same IP address is set.

[0025] With this configuration, the image processing apparatus can set another IP address in the second connecting units other than the second connecting units in which the same IP address is set. Consequently, the image processing apparatus can perform connection to a plurality of external apparatuses using different IP addresses without using a network switch.
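The port addressing of [0022] to [0025] can be modeled as simple bookkeeping. The following Python sketch is hypothetical: it assumes each second connecting unit is identified by a string (for example, a port name or MAC address), assigns one shared IP address to a chosen group of two or more units, and assigns a single other address to the rest. It only records addresses; it does not configure a real operating system's network stack.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address
from typing import List, Optional, Set


@dataclass
class SecondConnectingUnit:
    identifier: str                   # distinct per unit, e.g. a port name or MAC address
    ip: Optional[IPv4Address] = None  # None until an address is set


def assign_addresses(
    units: List[SecondConnectingUnit],
    shared_ip: str,
    shared_ids: Set[str],
    other_ip: str,
) -> List[SecondConnectingUnit]:
    """Set shared_ip on units whose identifier is in shared_ids, other_ip on the rest."""
    shared = IPv4Address(shared_ip)
    other = IPv4Address(other_ip)
    if shared == other:
        raise ValueError("other_ip must differ from the shared IP address")
    if len(shared_ids) < 2:
        raise ValueError("the shared IP address is meant for two or more units")
    for unit in units:
        unit.ip = shared if unit.identifier in shared_ids else other
    return units
```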

[0026] In the aspect of the invention, the same IP address may be information input from an information processing apparatus that outputs a control program for the robot to the control apparatus or information input from a setting screen on which an IP address can be set.

[0027] With this configuration, the same IP address can be input to the image processing apparatus from the control apparatus or from the setting screen on which an IP address can be set. Consequently, in the image processing apparatus, the IP address can be set from both the control apparatus and the setting screen.

[0028] Another aspect of the invention is directed to a robot system including: the image processing apparatus according to the aspect of the invention; a robot main body unit; the image pickup apparatus; and the control apparatus configured to perform driving control of the robot main body unit.

[0029] With this configuration, in the robot system, the image processing apparatus includes the plurality of second connecting units. Consequently, in the robot system, it is possible to connect the image processing apparatus and a plurality of external apparatuses and control the robot system without using a network switch.

[0030] According to the aspects of the invention, the image processing apparatus includes the plurality of second connecting units. Therefore, it is possible to connect an external apparatus to the image processing apparatus without using a network switch. As a result, according to the aspects of the invention, the image processing apparatus can communicate with a plurality of external apparatuses without using a network switch.

[0031] Still another aspect of the invention is directed to an image processing apparatus including: a connecting unit connected to an image pickup apparatus; a determining unit configured to determine, using setting information of the image pickup apparatus and setting information of the connecting unit, whether it is necessary to change the setting information of the image pickup apparatus; and a changing unit configured to change the setting information of the image pickup apparatus when the determining unit determines that it is necessary to change the setting information of the image pickup apparatus.

[0032] With this configuration, the image processing apparatus can change the setting information of the image pickup apparatus when it is determined using the setting information of the image pickup apparatus and the setting information of the connecting unit that it is necessary to change the setting information of the image pickup apparatus. Consequently, in the image processing apparatus, it is possible to automatically perform setting concerning a network for the image pickup apparatus.

[0033] In the aspect of the invention, the setting information of the image pickup apparatus and the setting information of the connecting unit may be respectively setting information concerning a network and include network segment information indicating a network segment and an IP address including the network segment information. The determining unit may compare the network segment information included in the setting information of the image pickup apparatus and the network segment information included in the setting information of the connecting unit, when these kinds of network segment information coincide with each other, compare the IP address included in the setting information of the image pickup apparatus and the IP address included in the setting information of the connecting unit, and, when the IP addresses coincide with each other, determine that it is necessary to change a fourth segment of the IP address included in the setting information of the image pickup apparatus.

[0034] With this configuration, the image processing apparatus can change the fourth segment of the IP address of the image pickup apparatus when the network segment of the connecting unit and the network segment of the image pickup apparatus coincide with each other and the IP address of the connecting unit and the IP address of the image pickup apparatus coincide with each other. Consequently, in the image processing apparatus, even when the IP addresses of the connecting unit and the image pickup apparatus coincide with each other, it is possible to automatically perform setting concerning a network for the image pickup apparatus.
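The determining rule of [0033] can be sketched in Python with the standard `ipaddress` module. The sketch assumes IPv4 with a /24 prefix, so that the first three segments form the network segment information and the fourth segment identifies the host; the function names and the policy of choosing the lowest free fourth segment are hypothetical. A real changing unit would also need to avoid addresses already used by other devices on the segment.

```python
from ipaddress import IPv4Address, IPv4Interface


def needs_fourth_segment_change(camera: IPv4Interface, port: IPv4Interface) -> bool:
    """Compare segments first; only if they coincide, compare the full IP addresses."""
    if camera.network != port.network:
        return False  # segments differ: this rule does not apply
    return camera.ip == port.ip


def change_fourth_segment(camera: IPv4Interface, port: IPv4Interface) -> IPv4Interface:
    """Keep the camera's network segment and pick a fourth segment unused by the port."""
    if camera.network.prefixlen != 24:
        raise ValueError("this sketch assumes a /24 network segment")
    base = int(camera.network.network_address)
    for host in range(1, 255):  # skip .0 (network) and .255 (broadcast)
        candidate = IPv4Address(base + host)
        if candidate != port.ip:
            return IPv4Interface(f"{candidate}/24")
    raise RuntimeError("no free fourth segment in this network segment")
```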

[0035] In the aspect of the invention, when the network segment information included in the setting information of the image pickup apparatus and the network segment information included in the setting information of the connecting unit do not coincide with each other, the determining unit may determine that it is necessary to change the IP address in the setting information of the image pickup apparatus so that its network segment information coincides with that of the connecting unit and its fourth segment differs from that of the connecting unit.

[0036] With this configuration, when the network segment of the connecting unit and the network segment of the image pickup apparatus do not coincide with each other, the image processing apparatus can change the IP address of the image pickup apparatus so that it belongs to the network segment of the connecting unit and has a different fourth segment. Consequently, in the image processing apparatus, even when the network segments of the connecting unit and the image pickup apparatus do not coincide with each other, it is possible to automatically perform setting concerning a network for the image pickup apparatus.
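The rule of [0035] can be sketched the same way. In this hypothetical Python example, again assuming IPv4 /24 segments, the camera is moved into the connecting unit's segment; it keeps its own fourth segment when that is a usable host number different from the connecting unit's, and otherwise takes the lowest free one. That preference is an assumption, not something the source specifies.

```python
from ipaddress import IPv4Address, IPv4Interface


def realign_camera(camera: IPv4Interface, port: IPv4Interface) -> IPv4Interface:
    """Move the camera into the connecting unit's /24 segment with a different fourth segment."""
    if camera.network == port.network:
        return camera  # segments already coincide; the rule of [0033] applies instead
    if port.network.prefixlen != 24:
        raise ValueError("this sketch assumes a /24 network segment")
    port_fourth = int(port.ip) & 0xFF
    fourth = int(camera.ip) & 0xFF  # prefer the camera's current fourth segment
    if fourth in (0, 255) or fourth == port_fourth:
        fourth = next(h for h in range(1, 255) if h != port_fourth)
    new_ip = IPv4Address(int(port.network.network_address) + fourth)
    return IPv4Interface(f"{new_ip}/24")
```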

[0037] In the aspect of the invention, the image processing apparatus may further include an external-communication connecting unit connected to a control apparatus that controls a robot or other image processing apparatuses. The determining unit may determine, using setting information of the external-communication connecting unit, whether it is necessary to change the setting information of the image pickup apparatus.

[0038] With this configuration, the image processing apparatus can change the setting information of the image pickup apparatus when it is determined, using the setting information of the external-communication connecting unit and the setting information of the connecting unit, that it is necessary to change the setting information of the image pickup apparatus. Consequently, in the image processing apparatus, it is possible to automatically perform setting concerning a network for the image pickup apparatus.

[0039] In the aspect of the invention, the setting information of the image pickup apparatus and the setting information of the external-communication connecting unit may be respectively setting information concerning a network and include network segment information indicating a network segment and an IP address including the network segment information. The determining unit may compare the network segment information included in the setting information of the image pickup apparatus and the network segment information included in the setting information of the external-communication connecting unit and, when these kinds of network segment information coincide with each other, determine that it is necessary to change the network segment information of the IP address included in the setting information of the image pickup apparatus.

[0040] With this configuration, the image processing apparatus can change the network segment of the image pickup apparatus when the network segment of the connecting unit and the network segment of the external-communication connecting unit coincide with each other. Consequently, even when the IP address of the external-communication connecting unit is changed and the network segments of the external-communication connecting unit and the image pickup apparatus come to coincide, the image processing apparatus can automatically perform setting concerning a network for the image pickup apparatus.
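For the external-communication rule of [0039], a minimal Python sketch might look as follows. The list of candidate segments, the choice of the first candidate that differs from the external-communication connecting unit's segment, and keeping the camera's host part unchanged are all assumptions for illustration.

```python
from ipaddress import IPv4Address, IPv4Interface, IPv4Network
from typing import Iterable


def resolve_external_collision(
    camera: IPv4Interface,
    external: IPv4Interface,
    candidate_segments: Iterable[str],
) -> IPv4Interface:
    """If the camera shares the external-communication unit's segment, move it to another one."""
    if camera.network != external.network:
        return camera  # no collision: keep the camera's settings
    host = int(camera.ip) - int(camera.network.network_address)  # host part of the address
    for text in candidate_segments:
        segment = IPv4Network(text)
        if segment != external.network and host < segment.num_addresses:
            new_ip = IPv4Address(int(segment.network_address) + host)
            return IPv4Interface(f"{new_ip}/{segment.prefixlen}")
    raise RuntimeError("no candidate segment differs from the external-communication segment")
```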

[0041] Yet another aspect of the invention is directed to a robot system including: the image processing apparatus according to the aspect of the invention; a robot main body unit; and a robot control apparatus configured to perform communication using the setting information changed by the changing unit of the image processing apparatus and perform driving control of the robot main body unit.

[0042] With this configuration, in the robot system, the image processing apparatus can change the setting information of the image pickup apparatus when it is determined using the setting information of the image pickup apparatus and the setting information of the connecting unit that it is necessary to change the setting information of the image pickup apparatus. Consequently, the robot system can control the robot using a result of the image processing apparatus automatically performing setting concerning a network for the image pickup apparatus.

[0043] According to the aspects of the invention, the image processing apparatus determines, using the network setting information of the image pickup apparatus and the network setting information of the connecting unit to which the image pickup apparatus is connected or the external-communication connecting unit to which another apparatus is connected, whether it is necessary to change the network setting information of the image pickup apparatus and, when it is necessary to change the network setting information of the image pickup apparatus, changes the network setting information of the image pickup apparatus. Therefore, it is possible to automatically perform setting concerning a network for the image pickup apparatus.

[0044] Still yet another aspect of the invention is directed to an image processing apparatus including: a processing unit configured to process a picked-up image picked up by an image pickup apparatus; and a storing unit configured to store data concerning the image processing apparatus. When a reset signal is input, the processing unit deletes at least a part of the data stored in the storing unit.

[0045] With this configuration, when the reset signal is input, the image processing apparatus deletes at least a part of the data concerning the image processing apparatus stored in the storing unit. Consequently, it is possible to easily restore the image processing apparatus to a predetermined state.

[0046] In the aspect of the invention, in the image processing apparatus, the data stored in the storing unit may include history information of hardware monitoring of the image processing apparatus, information related to processing performed by the processing unit, and a system log.

[0047] With this configuration, when the reset signal is input, the image processing apparatus deletes at least a part of the history information of the hardware monitoring of the image processing apparatus stored in the storing unit, the information related to the processing performed by the processing unit, and the system log. Consequently, it is possible to restore the image processing apparatus to a predetermined state based on a part or all of the history information of the hardware monitoring of the image processing apparatus, the information related to the processing performed by the processing unit, and the system log.
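The reset behavior of [0044] to [0047] can be pictured with a short Python sketch that models the storing unit as a dictionary keyed by data category. The categories follow [0046], but the string keys, the dictionary layout, and the `handle_reset` function are hypothetical; passing a subset of categories models deleting only a part of the data.

```python
from typing import Dict, Iterable, List

# Data categories named in [0046]; the string keys are hypothetical.
CATEGORIES = ("hardware_monitoring_history", "processing_information", "system_log")


def handle_reset(storing_unit: Dict[str, object],
                 targets: Iterable[str] = CATEGORIES) -> List[str]:
    """On a reset signal, delete the target categories and report which were present."""
    removed = []
    for name in targets:
        if name in storing_unit:
            del storing_unit[name]
            removed.append(name)
    return removed
```

Data outside the target categories (a hypothetical `network_settings` entry, for example) survives the reset.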

[0048] In the aspect of the invention, in the image processing apparatus, when a data saving signal is input, the processing unit may store, in an external storage device, at least a part of the data stored in the storing unit.

[0049] With this configuration, when the data saving signal is input, the image processing apparatus stores, in the external storage device, at least a part of the data concerning the image processing apparatus stored in the storing unit. Consequently, the image processing apparatus allows the user to check a state of the image processing apparatus on the basis of the data concerning the image processing apparatus stored in the external storage device.

[0050] In the aspect of the invention, the image processing apparatus may further include: an input receiving unit including one or more buttons; and an input determining unit configured to input the data saving signal to the processing unit when a predetermined button among the one or more buttons included in the input receiving unit is released before a predetermined time elapses from depression of the predetermined button and input the reset signal to the processing unit when the predetermined button is released after the predetermined time or more elapses from the depression of the predetermined button.

[0051] With this configuration, the image processing apparatus inputs the data saving signal to the processing unit when the predetermined button among the one or more buttons included in the input receiving unit is released before the predetermined time elapses from the depression of the predetermined button and inputs the reset signal to the processing unit when the predetermined button is released after the predetermined time or more elapses from the depression of the predetermined button. Consequently, the image processing apparatus can select, according to time from the depression until the release of the predetermined button, processing performed by the processing unit.

[0052] In the aspect of the invention, the image processing apparatus may further include an output control unit configured to change an output state of an output unit for a time determined in advance when the predetermined button continues to be depressed for the predetermined time or more.

[0053] With this configuration, the image processing apparatus changes the output state of the output unit for the time determined in advance when the predetermined button continues to be depressed for the predetermined time or more. Consequently, the image processing apparatus can notify the user that the predetermined button has been depressed for the predetermined time or more.

[0054] In the aspect of the invention, in the image processing apparatus, the output control unit may change the output state of the output unit according to success or failure of the deletion of at least part of the data by the processing unit or the storage in the external storage device of at least part of the data by the processing unit.

[0055] With this configuration, the image processing apparatus changes the output state of the output unit according to success or failure of the deletion of at least part of the data concerning the image processing apparatus by the processing unit or the storage in the external storage device of at least part of the data concerning the image processing apparatus by the processing unit. Consequently, the image processing apparatus can notify the user of success or failure of the deletion of at least part of the data concerning the image processing apparatus by the processing unit or the storage in the external storage device of at least a part of the data concerning the image processing apparatus by the processing unit.

[0056] In the aspect of the invention, in the image processing apparatus, when the external storage device is not connected to the image processing apparatus, the processing unit may not store at least a part of the data in the external storage device even when the data saving signal is input.

[0057] With this configuration, when the external storage device is not connected to the image processing apparatus, the image processing apparatus does not store at least a part of the data in the external storage device even when the data saving signal is input. Consequently, the image processing apparatus can prevent the processing unit from continuing processing triggered by an erroneous operation performed before preparation for storing at least a part of the data concerning the image processing apparatus in the external storage device is complete.
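The data-saving path of [0048] and [0054] to [0057] can be sketched as follows. This hypothetical Python example models the storing unit and the external storage device as dictionaries, uses `None` to mean that no external storage device is connected, stages the copy so that a failure leaves the external storage untouched, and returns an output state for the output unit. The state names, and leaving the output unchanged when no device is connected, are assumptions.

```python
import copy
from enum import Enum
from typing import Dict, Iterable, Optional


class OutputState(Enum):  # hypothetical states of the output unit (e.g. an LED)
    UNCHANGED = "unchanged"
    SUCCESS = "success"
    FAILURE = "failure"


def handle_data_saving(storing_unit: Dict[str, object],
                       external_storage: Optional[Dict[str, object]],
                       targets: Iterable[str]) -> OutputState:
    """Copy target categories to external storage and report the result."""
    if external_storage is None:
        return OutputState.UNCHANGED  # no device connected: store nothing ([0056])
    staged = {}
    for name in targets:
        if name not in storing_unit:
            return OutputState.FAILURE  # nothing written yet, external storage untouched
        staged[name] = copy.deepcopy(storing_unit[name])
    external_storage.update(staged)
    return OutputState.SUCCESS
```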

[0058] Another aspect of the invention is directed to a robot system including: a robot configured to perform predetermined work; an image pickup apparatus configured to pick up an image of a range related to the predetermined work; an image processing apparatus configured to process the picked-up image picked up by the image pickup apparatus; and a control apparatus configured to control the robot on the basis of a result of the processing by the image processing apparatus. The image processing apparatus includes: a processing unit configured to process the picked-up image picked up by the image pickup apparatus; and a storing unit configured to store data concerning the image processing apparatus. When a reset signal is input, the processing unit deletes at least a part of the data stored in the storing unit.

[0059] With this configuration, when the reset signal is input, the robot system deletes at least a part of the data stored in the storing unit. Consequently, it is possible to easily restore the robot system to a predetermined state.

[0060] As explained above, when the reset signal is input, the image processing apparatus and the robot system delete at least a part of the data stored in the storing unit. Consequently, it is possible to easily restore the image processing apparatus and the robot system to predetermined states.

[0061] Still another aspect of the invention is directed to an image processing system including: an image processing apparatus configured to process a picked-up image; and a display apparatus configured to display a screen on which setting of the image processing apparatus is performed. The image processing apparatus searches for image pickup apparatuses communicable with the image processing apparatus. The display apparatus displays information concerning the communicable image pickup apparatuses found by the image processing apparatus.

[0062] With this configuration, the information concerning the communicable image pickup apparatuses found by the image processing apparatus is displayed. Therefore, it is possible to select, from the displayed image pickup apparatuses, an image pickup apparatus that the user desires to use. Consequently, in the setting of the image processing apparatus, it is unnecessary to check in advance information concerning an image pickup apparatus that the user desires to use.

[0063] In the aspect of the invention, in the image processing system, the display apparatus may broadcast, to a network connected to the display apparatus, a search packet including a command for inquiring about information set in an apparatus at a transmission destination. When receiving the search packet from the display apparatus, the image processing apparatus may broadcast, to the network, a search response packet including information concerning the found communicable image pickup apparatuses.
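
The search exchange of [0063] can be sketched in Python under several assumptions: the packets are encoded as JSON (FIG. 33 and FIG. 36 show the real layouts), they travel over UDP, and the port number is hypothetical. Sending to the limited broadcast address 255.255.255.255 is what lets the exchange work across differing IP sub-networks: the datagram is delivered to every host on the same physical link, although routers do not forward it.

```python
import json
import socket
from typing import Dict, List, Optional

SEARCH_PORT = 50000  # hypothetical UDP port; the specification does not name one


def build_search_packet() -> bytes:
    """Display apparatus side: ask every receiver for the information set in it."""
    return json.dumps({"command": "search"}).encode("utf-8")


def build_search_response(own_ip: str, cameras: List[Dict[str, str]]) -> bytes:
    """Image processing apparatus side: report the cameras found and its own address."""
    return json.dumps(
        {"command": "search_response", "ip": own_ip, "cameras": cameras}
    ).encode("utf-8")


def handle_packet(data: bytes, own_ip: str,
                  cameras: List[Dict[str, str]]) -> Optional[bytes]:
    """Answer search packets; ignore anything else."""
    try:
        message = json.loads(data)
    except ValueError:
        return None  # not a well-formed packet
    if not isinstance(message, dict) or message.get("command") != "search":
        return None
    return build_search_response(own_ip, cameras)


def broadcast(payload: bytes, port: int = SEARCH_PORT) -> None:
    """Send to the limited broadcast address, which reaches every host on the
    local link regardless of the IP sub-network each host is configured for."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(payload, ("255.255.255.255", port))
```

In this sketch the display apparatus would call `broadcast(build_search_packet())`, and each image processing apparatus would pass whatever it receives to `handle_packet` and broadcast any non-`None` reply.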

[0064] With this configuration, even when the image processing apparatus to which the image pickup apparatus is connected belongs to a sub-network different from the sub-network to which the display apparatus belongs, the display apparatus can acquire information concerning the image pickup apparatus connected to the image processing apparatus. Therefore, the user can obtain the information needed to use, without omission, the image pickup apparatuses found by the image processing apparatus connected to the network.

[0065] In the aspect of the invention, in the image processing system, a network address of the image processing apparatus may be included in the search response packet. The display apparatus may display the network address in association with information concerning the image pickup apparatus.

[0066] With this configuration, the network address of the image processing apparatus related to the image pickup apparatus that the user desires to use is displayed. Therefore, in the setting for using the image pickup apparatus, the user can recognize the network address of the image processing apparatus.

[0067] In the aspect of the invention, in the image processing system, the display apparatus may acquire a new network address and broadcast a change packet including the acquired network address to a network connected to the display apparatus. The image processing apparatus may change a network address set in the image processing apparatus to the network address included in the change packet received from the display apparatus.
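
The following Python sketch shows how an image processing apparatus might apply a change packet according to [0067]. The JSON encoding and the `target` field carrying a MAC address are assumptions: because the packet is broadcast, some identifier is presumably needed so that only the intended apparatus adopts the new address, but the actual layout is the one in FIG. 40.

```python
import ipaddress
import json
from dataclasses import dataclass


@dataclass
class NetworkSetting:
    mac: str                            # identifies this apparatus (assumption)
    interface: ipaddress.IPv4Interface  # current address plus prefix length


def build_change_packet(target_mac: str, new_interface: str) -> bytes:
    """Display apparatus side: announce the new address, e.g. "192.168.1.20/24"."""
    return json.dumps(
        {"command": "change", "target": target_mac, "address": new_interface}
    ).encode("utf-8")


def apply_change_packet(setting: NetworkSetting, data: bytes) -> bool:
    """Image processing apparatus side: adopt the address if the packet is for us.

    Returns True when the network setting was changed.
    """
    message = json.loads(data)
    if message.get("command") != "change" or message.get("target") != setting.mac:
        return False  # the broadcast reached us, but it is meant for another apparatus
    setting.interface = ipaddress.IPv4Interface(message["address"])
    return True
```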

[0068] With this configuration, even when the image processing apparatus connected to the image pickup apparatus belongs to a sub-network different from the sub-network to which the display apparatus belongs, the network address set in the image processing apparatus can be changed to the network address acquired by the display apparatus. For example, by setting, in the display apparatus, an unused network address belonging to the same sub-network as the display apparatus, it is possible to cause the image processing apparatus to belong to the same sub-network as the display apparatus.

[0069] In the aspect of the invention, in the image processing system, the display apparatus may decide, as the network address of the image processing apparatus, an unused network address belonging to the same sub-network as the sub-network to which the display apparatus belongs.

[0070] With this configuration, the user can cause the image processing apparatus to belong to the same sub-network as the display apparatus without checking and entering a network address in advance. Consequently, communication is possible between the display apparatus and the image pickup apparatus connected to the image processing apparatus.
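
Deciding an unused address in the display apparatus's own sub-network ([0069]) can be sketched with Python's standard `ipaddress` module. The sketch assumes the set of addresses already in use is known, for example from search responses; a real implementation might also need to probe the network, which is not shown. The function name is hypothetical.

```python
import ipaddress
from typing import Iterable, Optional


def choose_unused_address(display_interface: str,
                          used: Iterable[str]) -> Optional[str]:
    """Pick an unused host address in the display apparatus's sub-network.

    display_interface: address and prefix of the display apparatus, e.g. "192.168.0.10/24".
    used: addresses known to be in use (assumed to be gathered elsewhere).
    """
    iface = ipaddress.ip_interface(display_interface)
    taken = {ipaddress.ip_address(a) for a in used}
    taken.add(iface.ip)  # never hand out the display apparatus's own address
    # hosts() skips the network and broadcast addresses of the sub-network.
    for host in iface.network.hosts():
        if host not in taken:
            return f"{host}/{iface.network.prefixlen}"
    return None  # no free address left in this sub-network
```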

[0071] In the aspect of the invention, in the image processing system, when the image processing apparatus belongs to the same sub-network as the display apparatus, the image processing apparatus may include the network address of the display apparatus in the search response packet as the network address of the transmission destination.

[0072] With this configuration, compared with broadcasting the search response packet, it is possible to avoid useless communication in which the search response packet is transmitted to apparatuses other than the display apparatus.
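
The choice of return destination in [0071] can be sketched as follows. It assumes that the image processing apparatus knows its own address and prefix length and takes the display apparatus's address from the received search packet. Falling back to broadcast outside the shared sub-network follows [0063]; the function name is hypothetical.

```python
import ipaddress

LIMITED_BROADCAST = "255.255.255.255"


def response_destination(own_interface: str, display_ip: str) -> str:
    """Choose where to send a search response packet.

    own_interface: address and prefix of the image processing apparatus, e.g. "192.168.0.20/24".
    display_ip: source address of the received search packet.
    """
    network = ipaddress.ip_interface(own_interface).network
    if ipaddress.ip_address(display_ip) in network:
        return display_ip      # same sub-network: unicast, no traffic to other hosts
    return LIMITED_BROADCAST   # different sub-network: broadcast so the reply still arrives
```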

[0073] Yet another aspect of the invention is directed to a robot system including: a robot; an image processing apparatus configured to process a picked-up image; a display apparatus configured to display a screen for performing setting of the image processing apparatus; and a control apparatus configured to control the operation of the robot on the basis of a processing result of the image processing apparatus. The image processing apparatus searches for image pickup apparatuses communicable with the image processing apparatus. The display apparatus displays information concerning the communicable image pickup apparatuses found by the image processing apparatus.

[0074] With this configuration, the information concerning the communicable image pickup apparatuses found by the image processing apparatus is displayed. Therefore, the user can select, from the displayed image pickup apparatuses, an image pickup apparatus that the user desires to use. Consequently, in the setting of the image pickup apparatus, it is unnecessary to check in advance information concerning the image pickup apparatus that the user desires to use.

[0075] According to the aspects of the invention explained above, it is possible to easily perform setting concerning the image pickup apparatus.

[0076] Another aspect of the invention is directed to a controller including: a receiving unit configured to receive, from an image processing apparatus that processes a picked-up image, state information of the image processing apparatus; a determining unit configured to determine a state of the image processing apparatus on the basis of the state information; and a generating unit configured to generate, on the basis of a determination result by the determining unit, a transmission signal to be transmitted to an external apparatus.

[0077] With this configuration, the controller receives, from the image processing apparatus that processes a picked-up image, state information of the image processing apparatus, determines a state of the image processing apparatus on the basis of the state information, and generates, on the basis of a result of the determination, a transmission signal to be transmitted to the external apparatus. Consequently, it is possible to notify a state of the image processing apparatus.

[0078] In the aspect of the invention, in the controller, the determining unit may determine, as the state of the image processing apparatus, any one of a normal state, a state in which the image processing apparatus is highly likely to be out of order, and a state in which the image processing apparatus is highly likely to fail in the future.

[0079] With this configuration, the controller determines, as the state of the image processing apparatus, any one of the normal state, the state in which the image processing apparatus is highly likely to be out of order, and the state in which the image processing apparatus is highly likely to fail in the future. Consequently, the controller makes it possible to notify which of these three states the image processing apparatus is in.
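
One way to combine detected events into the three states of [0078] is sketched below in Python. The precedence used here (a present fault outranks a predicted failure, which outranks the normal state) is an assumption made for illustration, not a rule stated in the specification.

```python
from enum import Enum
from typing import Iterable


class State(Enum):
    NORMAL = "normal"
    LIKELY_TO_FAIL = "likely_to_fail"            # highly likely to fail in the future
    LIKELY_OUT_OF_ORDER = "likely_out_of_order"  # highly likely to be out of order


def determine_state(implied_states: Iterable[State]) -> State:
    """Collapse the states implied by the detected events into one overall state.

    Assumed precedence: out of order > likely to fail > normal.
    """
    states = set(implied_states)
    if State.LIKELY_OUT_OF_ORDER in states:
        return State.LIKELY_OUT_OF_ORDER
    if State.LIKELY_TO_FAIL in states:
        return State.LIKELY_TO_FAIL
    return State.NORMAL  # no abnormal event detected
```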

[0080] In the aspect of the invention, in the controller, the state information may include information indicating one or more physical quantities indicating states of hardware included in the image processing apparatus. The controller may further include an event detecting unit configured to detect, on the basis of the one or more physical quantities, an event indicating abnormality of the image processing apparatus. The determining unit may determine the state of the image processing apparatus on the basis of the event detected by the event detecting unit.

[0081] With this configuration, the controller detects an event indicating abnormality of the image processing apparatus on the basis of the one or more physical quantities indicating the states of hardware included in the image processing apparatus and determines the state of the image processing apparatus on the basis of the detected event. Consequently, the controller can generate a transmission signal based on the state of the image processing apparatus determined on the basis of the event indicating the abnormality of the image processing apparatus.
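
Event detection from physical quantities ([0080]) amounts to a set of threshold rules. In this Python sketch the quantities (CPU temperature, fan speed), thresholds, and event names are all hypothetical; FIG. 49 lists the actual events and detection conditions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class EventRule:
    name: str                           # event indicating an abnormality
    quantity: str                       # which physical quantity is checked
    condition: Callable[[float], bool]  # detection condition on its value
    state: str                          # state implied by the event


# Hypothetical rules; quantities and thresholds are illustrative only.
RULES: List[EventRule] = [
    EventRule("cpu_overheat", "cpu_temp_c", lambda v: v >= 90.0, "likely_out_of_order"),
    EventRule("cpu_hot", "cpu_temp_c", lambda v: 75.0 <= v < 90.0, "likely_to_fail"),
    EventRule("fan_stopped", "fan_rpm", lambda v: v == 0.0, "likely_out_of_order"),
    EventRule("fan_slow", "fan_rpm", lambda v: 0.0 < v < 1000.0, "likely_to_fail"),
]


def detect_events(quantities: Dict[str, float]) -> List[EventRule]:
    """Return every rule whose condition holds for the reported quantities."""
    return [r for r in RULES
            if r.quantity in quantities and r.condition(quantities[r.quantity])]
```

The `state` of each detected rule would then be handed to the determining unit.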

[0082] In the aspect of the invention, in the controller, the state information may include an error code related to control of the image processing apparatus. The event detecting unit may detect an event indicating abnormality of the image processing apparatus on the basis of an error code related to control of the image processing apparatus.

[0083] With this configuration, the controller detects an event indicating abnormality of the image processing apparatus on the basis of the error code related to the control of the image processing apparatus. Consequently, the controller can generate a transmission signal based on the state of the image processing apparatus determined from the event detected on the basis of that error code.
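
Detection from error codes ([0082]) can be a simple table lookup, as in this Python sketch. The codes, event names, and implied states are invented for illustration only.

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical error codes mapped to (event, implied state).
ERROR_CODE_EVENTS: Dict[int, Tuple[str, str]] = {
    0x0101: ("camera_link_lost", "likely_out_of_order"),
    0x0201: ("storage_write_retry", "likely_to_fail"),
}


def events_from_error_codes(codes: Iterable[int]) -> List[Tuple[str, str]]:
    """Map reported error codes to events; codes without a rule are skipped."""
    return [ERROR_CODE_EVENTS[c] for c in codes if c in ERROR_CODE_EVENTS]
```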

[0084] In the aspect of the invention, in the controller, the determining unit may associate information for identifying the state of the image processing apparatus with the state of the image processing apparatus determined on the basis of the event. The generating unit may include, in the transmission signal, the information for identifying the state of the image processing apparatus associated by the determining unit.

[0085] With this configuration, the controller associates the information for identifying the state of the image processing apparatus with the state of the image processing apparatus determined on the basis of the event indicating the abnormality of the image processing apparatus, and includes the associated information in the transmission signal. Consequently, the controller can generate a transmission signal including the information for identifying the state of the image processing apparatus.
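
A sketch of how the generating unit might embed a state code in the transmission signal ([0084]) follows. The numeric codes, the JSON payload, and the `device` field are assumptions; FIG. 49 defines the real state codes.

```python
import json
from typing import Dict

# Hypothetical state codes for the three states of [0078].
STATE_CODES: Dict[str, int] = {
    "normal": 0,
    "likely_to_fail": 100,
    "likely_out_of_order": 200,
}


def generate_transmission_signal(device_id: str, state: str) -> bytes:
    """Build the signal that the communication unit would send to the external apparatus."""
    payload = {"device": device_id, "state": state, "state_code": STATE_CODES[state]}
    return json.dumps(payload).encode("utf-8")
```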

[0086] In the aspect of the invention, the controller may further include a communication control unit configured to cause a communication unit to transmit the transmission signal generated by the generating unit to the external apparatus.

[0087] With this configuration, the controller causes the communication unit to transmit the transmission signal generated on the basis of the determination result of the state of the image processing apparatus to the external apparatus. Consequently, the controller can notify a user of the external apparatus of the state of the image processing apparatus.

[0088] In the aspect of the invention, in the controller, the communication control unit may cause, according to a request from the external apparatus, the communication unit to transmit the information indicating the one or more physical quantities to the external apparatus.

[0089] With this configuration, the controller causes, according to a request from the external apparatus, the communication unit to transmit the information indicating one or more physical quantities indicating the states of the hardware included in the image processing apparatus to the external apparatus. Consequently, the controller can notify the user of the one or more physical quantities indicating the states of the hardware included in the image processing apparatus.

[0090] In the aspect of the invention, in the controller, the communication control unit may cause, according to the determination result by the determining unit, the communication unit to transmit information for requesting a change of the output state of the output unit included in the image processing apparatus to the image processing apparatus.

[0091] With this configuration, the controller causes, according to the determination result of the state of the image processing apparatus, the communication unit to transmit, to the image processing apparatus, information requesting a change of the output state of the output unit included in the image processing apparatus. Consequently, the controller allows the user to check the state of the image processing apparatus on the image processing apparatus itself.
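
The request to change the output state ([0090]) could be derived from the determined state with a table lookup, as in this Python sketch. The lighting patterns and the request format are hypothetical; FIG. 50 gives the actual rules.

```python
from typing import Dict, Optional

# Hypothetical mapping from determined state to the requested lighting pattern.
LIGHTING_REQUEST: Dict[str, Optional[str]] = {
    "normal": None,                   # no change requested
    "likely_to_fail": "yellow_blink",
    "likely_out_of_order": "red_on",
}


def output_change_request(state: str) -> Optional[Dict[str, str]]:
    """Return the request to send to the image processing apparatus, if any."""
    pattern = LIGHTING_REQUEST[state]
    if pattern is None:
        return None
    return {"command": "set_output", "pattern": pattern}
```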

[0092] A further aspect of the invention is directed to an information processing apparatus that displays a GUI (Graphical User Interface) for displaying the one or more physical quantities acquired from the controller.

[0093] With this configuration, the information processing apparatus displays the GUI for displaying the one or more physical quantities indicating the states of the hardware included in the image processing apparatus acquired from the controller. Consequently, the information processing apparatus can facilitate management of the state of the image processing apparatus by providing the user with the GUI for displaying the one or more physical quantities indicating the states of the hardware included in the image processing apparatus.

[0094] A still further aspect of the invention is directed to a robot system including: a robot configured to perform predetermined work; an image pickup apparatus configured to pick up an image of a range related to the predetermined work; an image processing apparatus configured to process the picked-up image picked up by the image pickup apparatus; and a controller configured to control the robot on the basis of a result of the processing by the image processing apparatus. The controller includes: a receiving unit configured to receive state information of the image processing apparatus from the image processing apparatus; a determining unit configured to determine a state of the image processing apparatus on the basis of the state information; and a generating unit configured to generate, on the basis of a determination result by the determining unit, a transmission signal to be transmitted to the external apparatus.

[0095] With this configuration, the robot system receives the state information of the image processing apparatus from the image processing apparatus, determines the state of the image processing apparatus on the basis of the state information, and generates, on the basis of a result of the determination, the transmission signal to be transmitted to the external apparatus. Consequently, the robot system enables notification of the state of the image processing apparatus.

[0096] As explained above, the controller and the robot system receive the state information of the image processing apparatus from the image processing apparatus, determine the state of the image processing apparatus on the basis of the state information, and generate, on the basis of a result of the determination, the transmission signal to be transmitted to the external apparatus. Consequently, the controller and the robot system enable notification of the state of the image processing apparatus.

[0097] The information processing apparatus displays a GUI for displaying the one or more physical quantities indicating the states of the hardware included in the image processing apparatus acquired from the controller. Consequently, the information processing apparatus can facilitate management of the state of the image processing apparatus by providing a user with the GUI for displaying the one or more physical quantities indicating the states of the hardware included in the image processing apparatus.

[0098] A yet further aspect of the invention is directed to an image processing apparatus including: a storing unit configured to store a first computer program and a second computer program; and a processing unit configured to process a picked-up image picked up by an image pickup apparatus. The first computer program is updated using first data stored in an external storage device. The second computer program is updated using second data stored in an information processing apparatus.

[0099] With this configuration, in the image processing apparatus, the first computer program is updated using the first data stored in the external storage device and the second computer program is updated using the second data stored in the information processing apparatus. Consequently, the image processing apparatus can easily update the computer programs.

[0100] In the aspect of the invention, the image processing apparatus may include a first updating unit configured to operate according to execution of another program different from the first computer program and the second computer program, read the first data stored in the external storage device, and update the first computer program on the basis of the read first data.

[0101] With this configuration, the image processing apparatus operates according to the execution of another program different from the first computer program and the second computer program, reads the first data stored in the external storage device, and updates the first computer program on the basis of the read first data. Consequently, the image processing apparatus can update the first computer program without executing the first computer program. Therefore, it is possible to prevent the first computer program from being damaged by being updated while it is being executed.

[0102] In the aspect of the invention, in the image processing apparatus, the first updating unit may back up the first computer program before the update in a storage region of the external storage device and thereafter update the first computer program on the basis of the first data.

[0103] With this configuration, the image processing apparatus backs up the first computer program before the update in the storage region of the external storage device and thereafter updates the first computer program on the basis of the first data. Consequently, the image processing apparatus does not need to secure a backup region in its own storage, which reduces the cost of securing storage in the image processing apparatus.

[0104] In the aspect of the invention, the image processing apparatus may further include: a checking unit configured to determine whether the first data is stored in the external storage device; and a display control unit configured to cause the display unit to display information indicating an error when the checking unit determines that the first data is not stored in the external storage device. When the checking unit determines that the first data is stored in the external storage device, the first updating unit may update the first computer program on the basis of the first data.

[0105] With this configuration, the image processing apparatus determines whether the first data is stored in the external storage device. When it is determined that the first data is not stored in the external storage device, the image processing apparatus causes the display unit to display the information indicating an error; when it is determined that the first data is stored, the image processing apparatus updates the first computer program on the basis of the first data. Consequently, the image processing apparatus can prevent the update of the first computer program from being continued by mistake while the first data is not stored in the external storage device.
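
The first updating unit of [0100] to [0105] can be sketched in Python as a standalone updater run instead of the first computer program. The file names are hypothetical, the external storage device is assumed to be a mounted directory, and `show_error` stands in for the display control unit. Staging the new image and swapping it in with `os.replace` is a design choice of this sketch, not something the specification requires.

```python
import os
import shutil
from pathlib import Path
from typing import Callable

# Hypothetical file names; the specification does not name them.
FIRST_DATA_NAME = "first_program_update.bin"
BACKUP_NAME = "first_program_backup.bin"


def update_first_program(program: Path, external: Path,
                         show_error: Callable[[str], None]) -> bool:
    """Updater run by a program other than the first computer program."""
    first_data = external / FIRST_DATA_NAME
    # Checking unit: refuse to continue if the first data is missing.
    if not first_data.is_file():
        show_error("Update data not found on the external storage device.")
        return False
    # Back up the current first program to the external storage device, so no
    # backup region is needed in the apparatus's own storage.
    shutil.copy2(program, external / BACKUP_NAME)
    # Write to a staging file first, then swap it in; os.replace is atomic when
    # both paths are on the same file system.
    staging = program.with_name(program.name + ".new")
    shutil.copy2(first_data, staging)
    os.replace(staging, program)
    return True
```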

[0106] In the aspect of the invention, the image processing apparatus may further include: a communication unit configured to communicate with an external apparatus; and a second updating unit configured to operate according to the execution of the first computer program, acquire the second data from the information processing apparatus via the communication unit, and update the second computer program on the basis of the acquired second data.

[0107] With this configuration, the image processing apparatus operates according to the execution of the first computer program, acquires the second data from the information processing apparatus via the communication unit, and updates the second computer program on the basis of the acquired second data. Consequently, the image processing apparatus can easily update the second computer program by using the information processing apparatus.
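
The second updating unit of [0106] can be sketched in the same way. Here `fetch` is a hypothetical stand-in for acquiring the second data through the communication unit, and the SHA-256 integrity check is an added assumption rather than part of the described method.

```python
import hashlib
import os
from pathlib import Path
from typing import Callable, Tuple


def update_second_program(program: Path,
                          fetch: Callable[[], Tuple[bytes, str]]) -> bool:
    """Second updating unit, running while the first computer program executes.

    `fetch` returns the second data plus its SHA-256 hex digest (assumption).
    """
    data, expected_sha256 = fetch()
    if hashlib.sha256(data).hexdigest() != expected_sha256:
        return False  # corrupted transfer: keep the current second program
    staging = program.with_name(program.name + ".new")
    staging.write_bytes(data)
    os.replace(staging, program)  # swap in the new second program
    return True
```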

[0108] A still yet further aspect of the invention is directed to a robot system including: a robot configured to perform predetermined work; an image pickup apparatus configured to pick up an image of a range related to the predetermined work; an image processing apparatus configured to process the picked-up image picked up by the image pickup apparatus; and a control apparatus configured to control the robot on the basis of a result of the processing by the image processing apparatus. The image processing apparatus includes: a storing unit configured to store a first computer program and a second computer program; and a processing unit configured to process a picked-up image picked up by the image pickup apparatus. The first computer program is updated using first data stored in an external storage device. The second computer program is updated using second data stored in an information processing apparatus.

[0109] With this configuration, in the robot system, the first computer program is updated using the first data stored in the external storage device and the second computer program is updated using the second data stored in the information processing apparatus. Consequently, the image processing apparatus can easily update the computer programs.

[0110] As explained above, in the image processing apparatus and the robot system, the first computer program is updated using the first data stored in the external storage device and the second computer program is updated using the second data stored in the information processing apparatus. Consequently, the image processing apparatus and the robot system can easily update the computer programs.

BRIEF DESCRIPTION OF THE DRAWINGS

[0111] The invention will be described with reference to the accompanying drawings, wherein like numbers reference like elements.

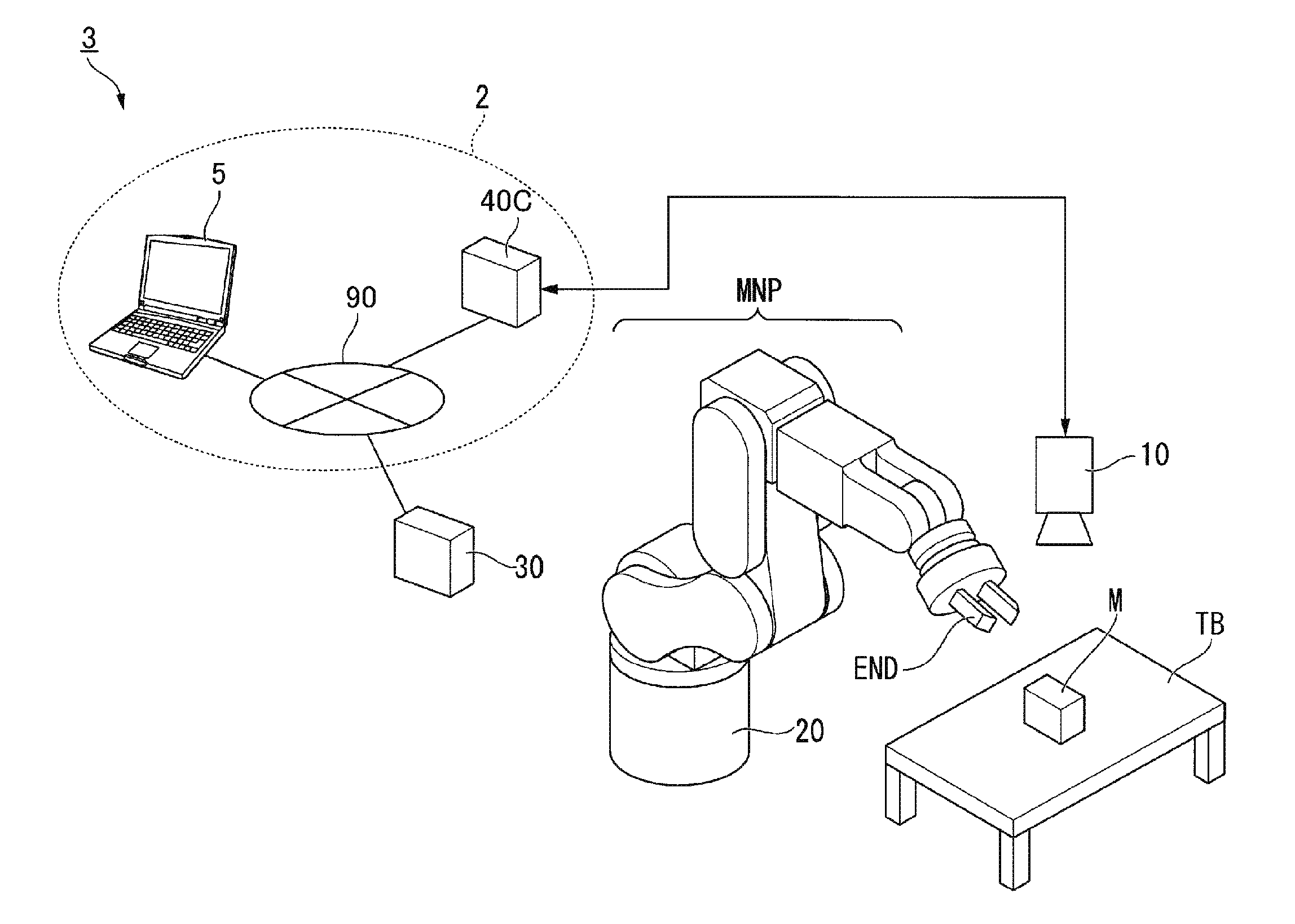

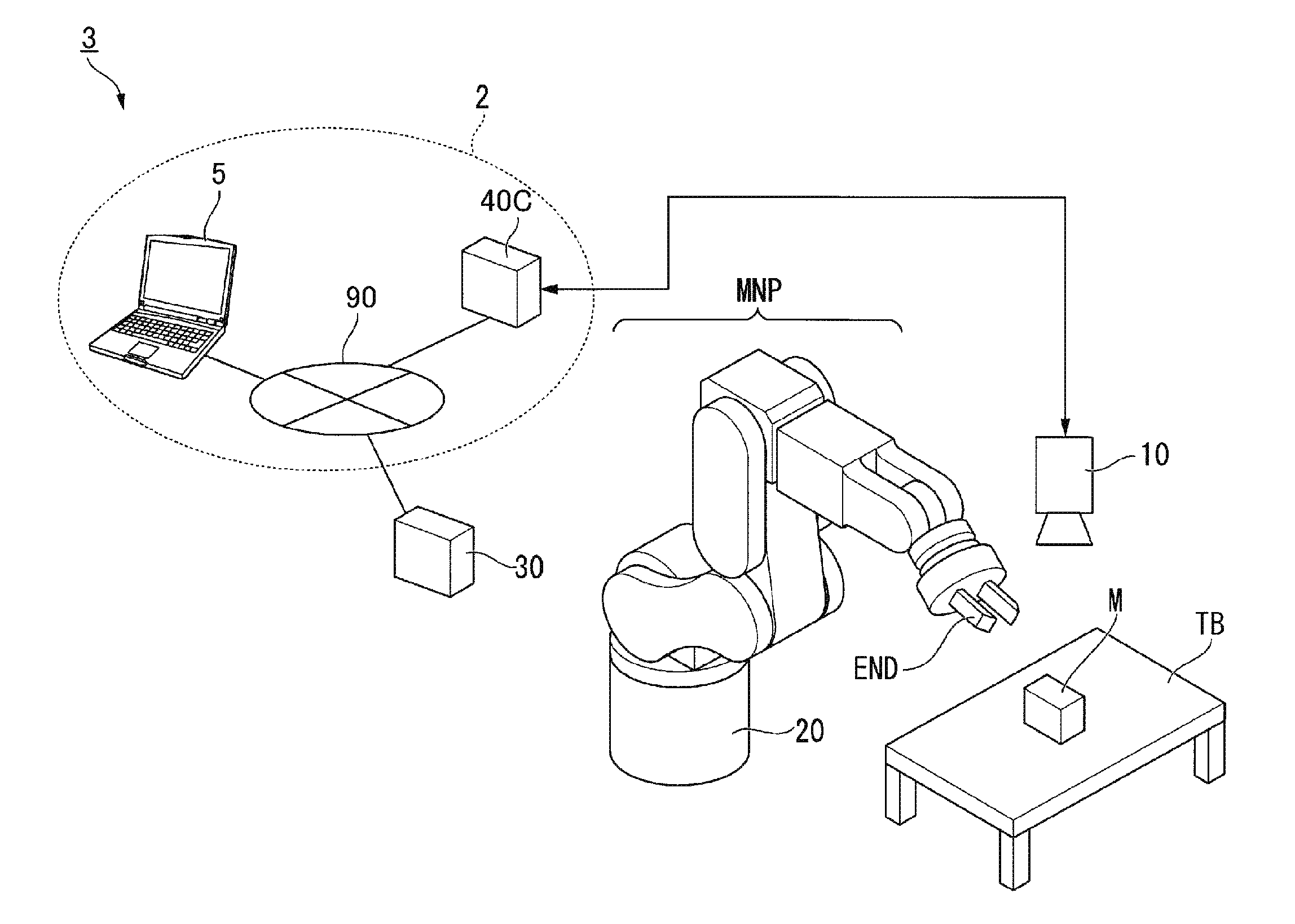

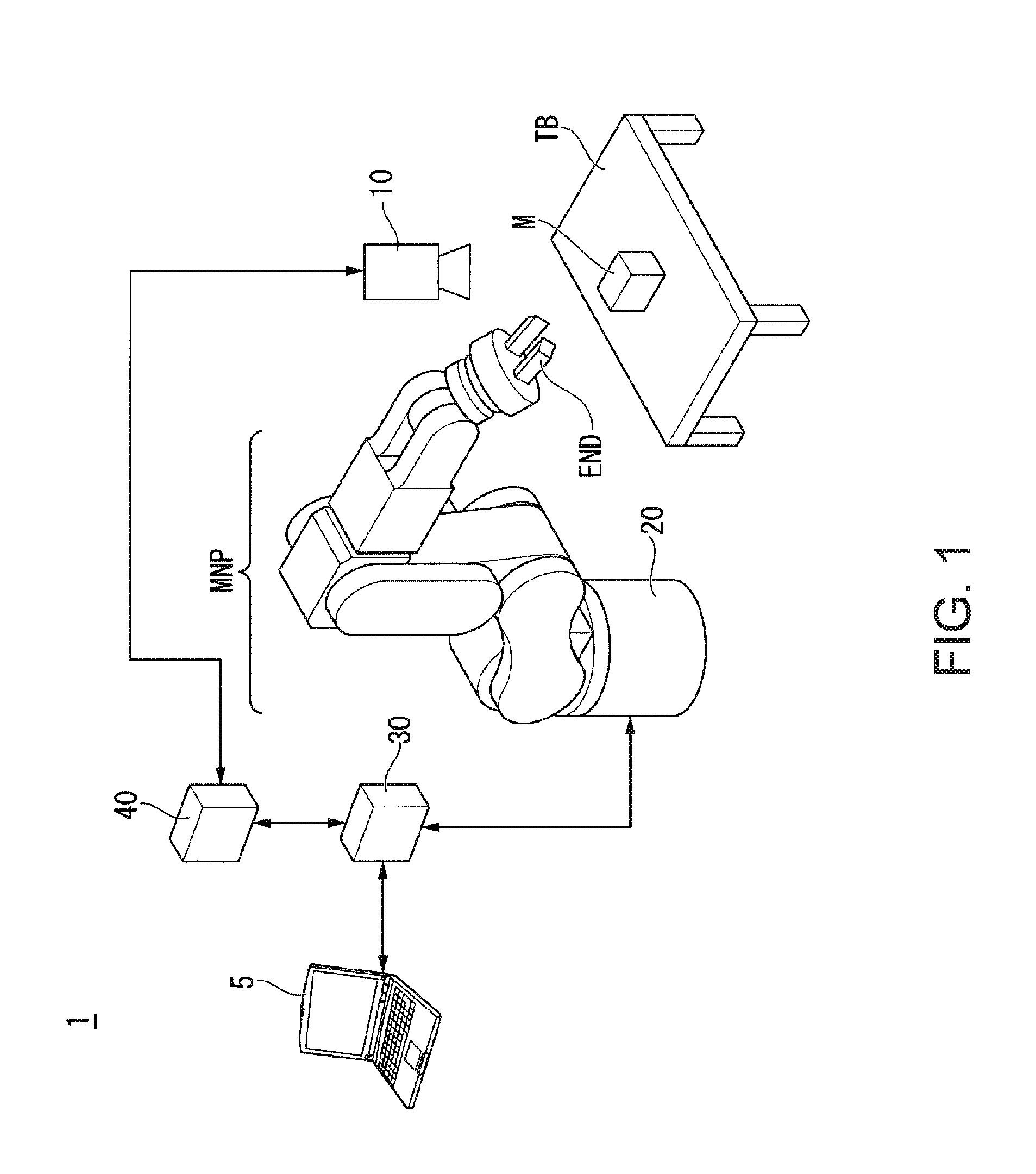

[0112] FIG. 1 is a configuration diagram showing an example of a robot system according to a first embodiment.

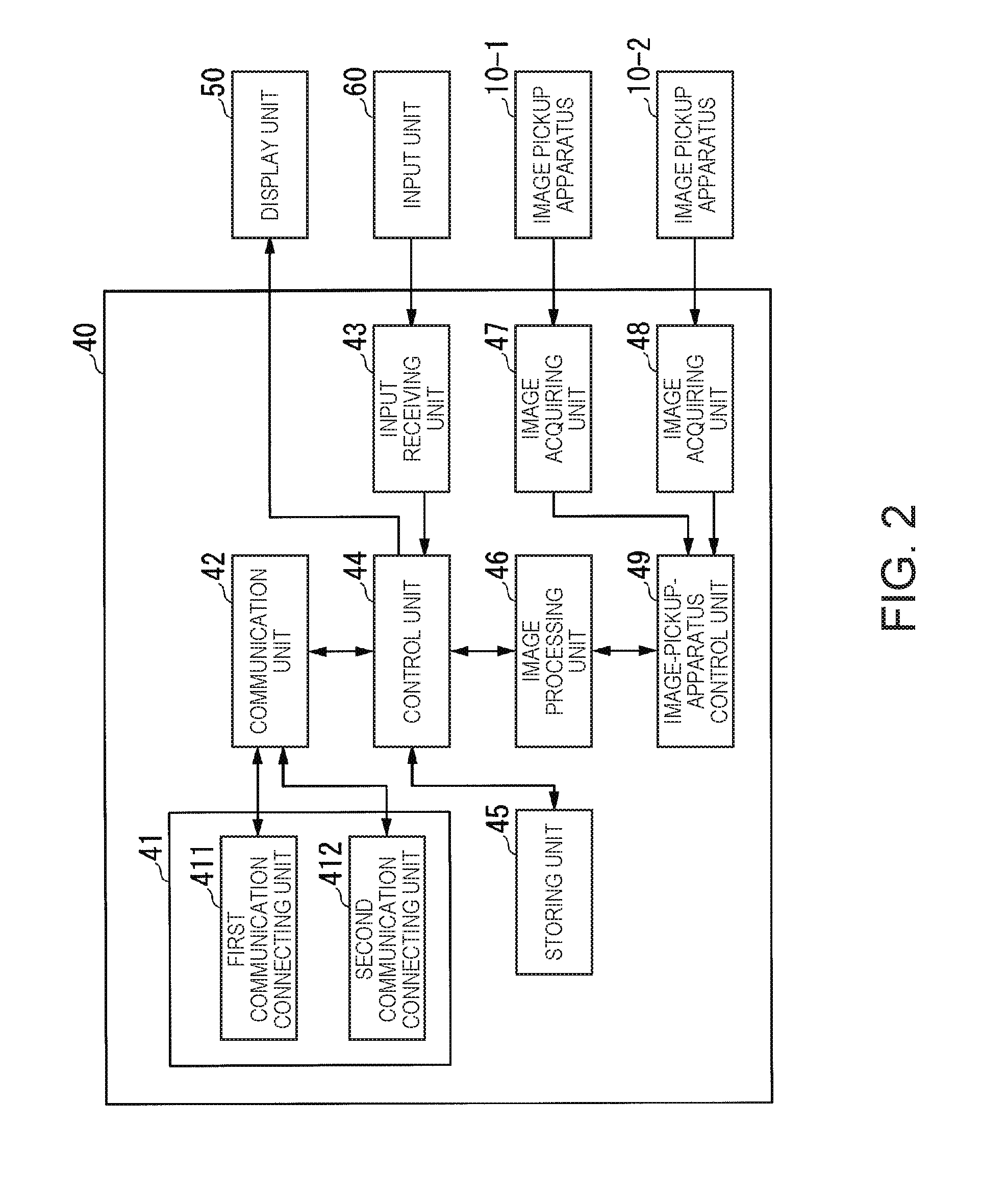

[0113] FIG. 2 is a schematic block diagram showing the configuration of an image processing apparatus according to the first embodiment.

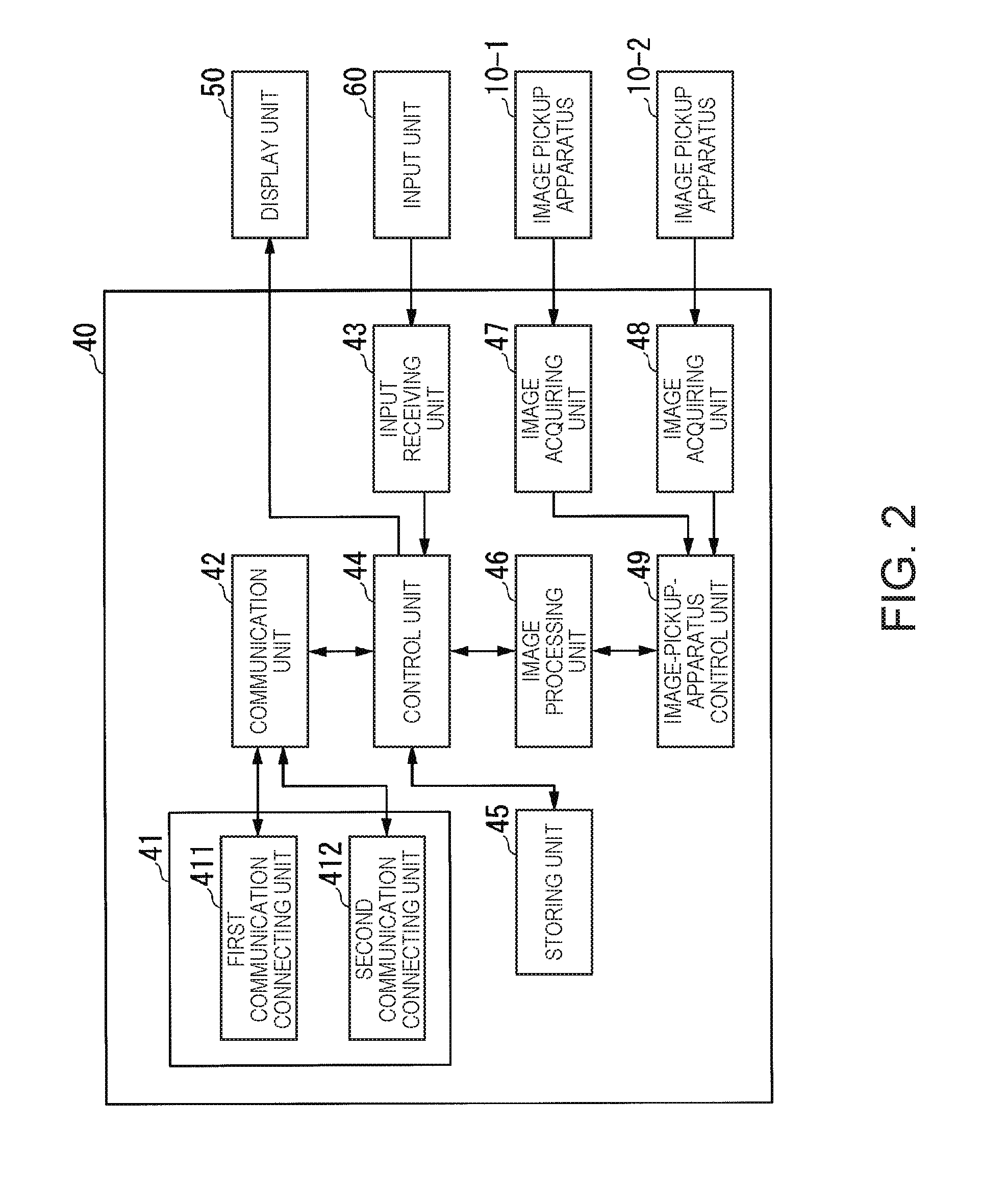

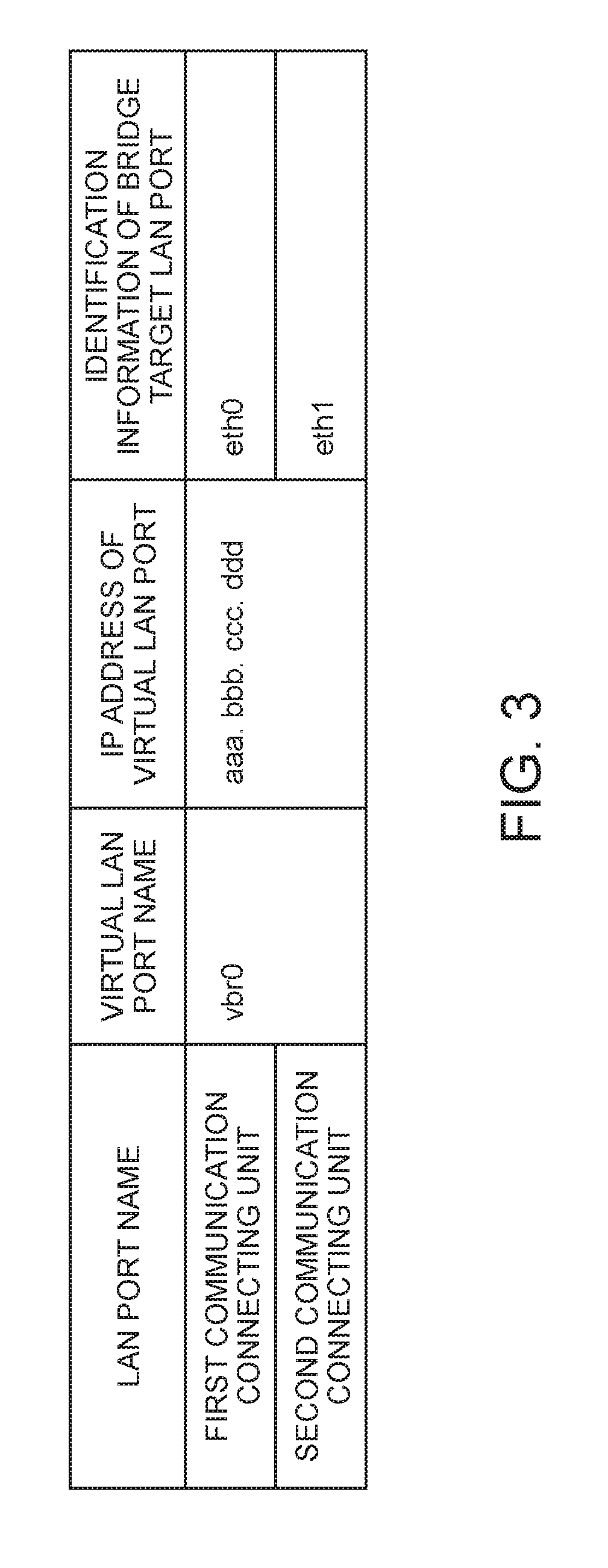

[0114] FIG. 3 is a diagram for explaining an example of LAN port names, a virtual LAN port name, an IP address of the virtual LAN port, and identification information of a bridge target LAN port of a first communication connecting unit and a second communication connecting unit according to the first embodiment.

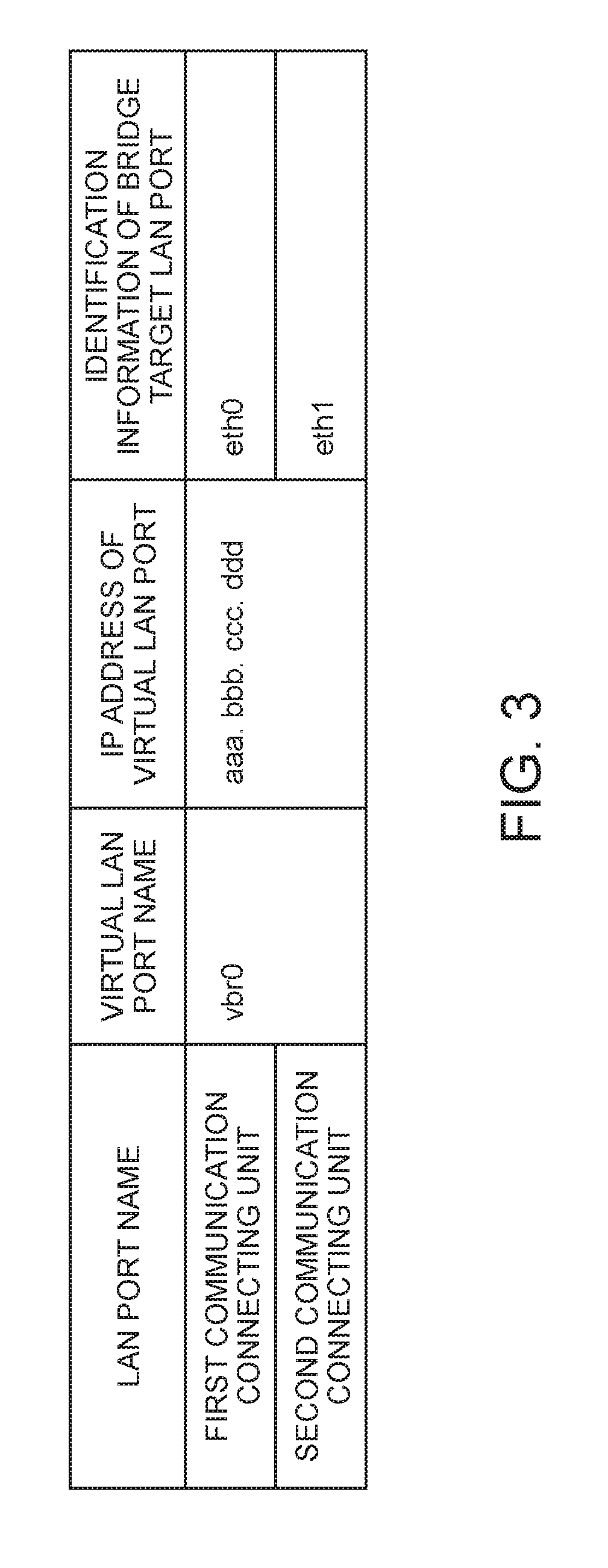

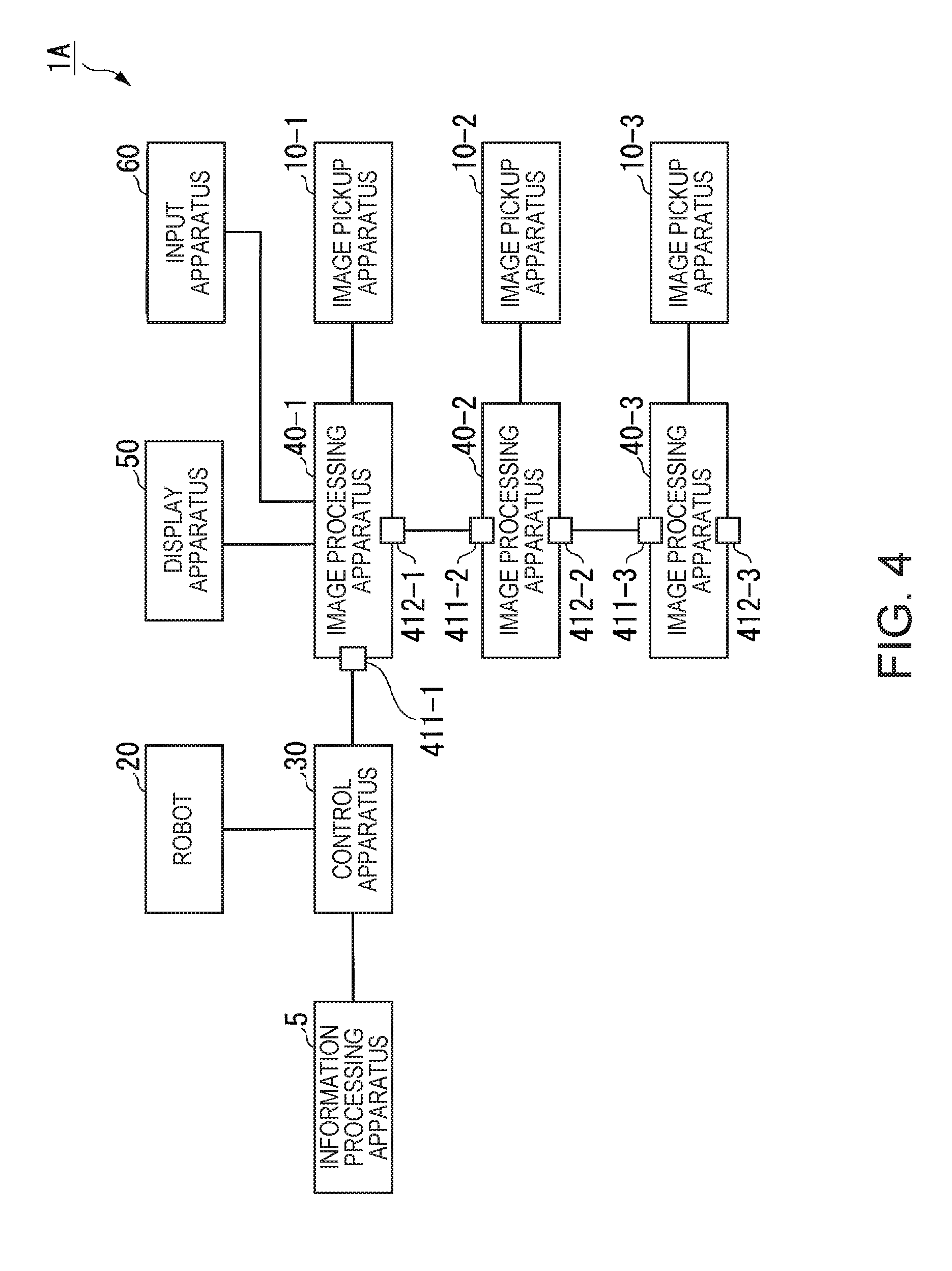

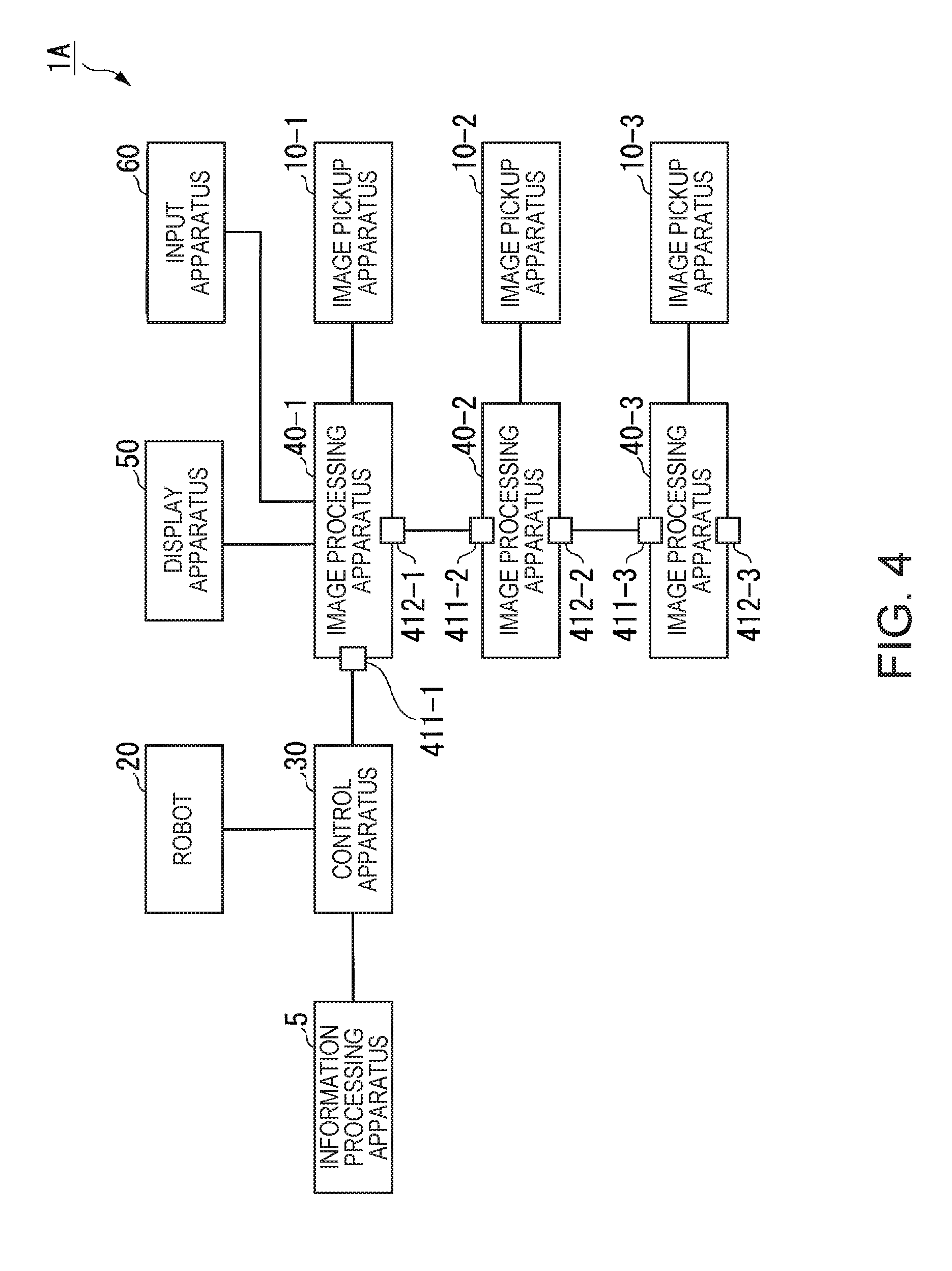

[0115] FIG. 4 is a diagram for explaining an example of the configuration of a robot system in which a plurality of image processing apparatuses are connected according to the first embodiment.

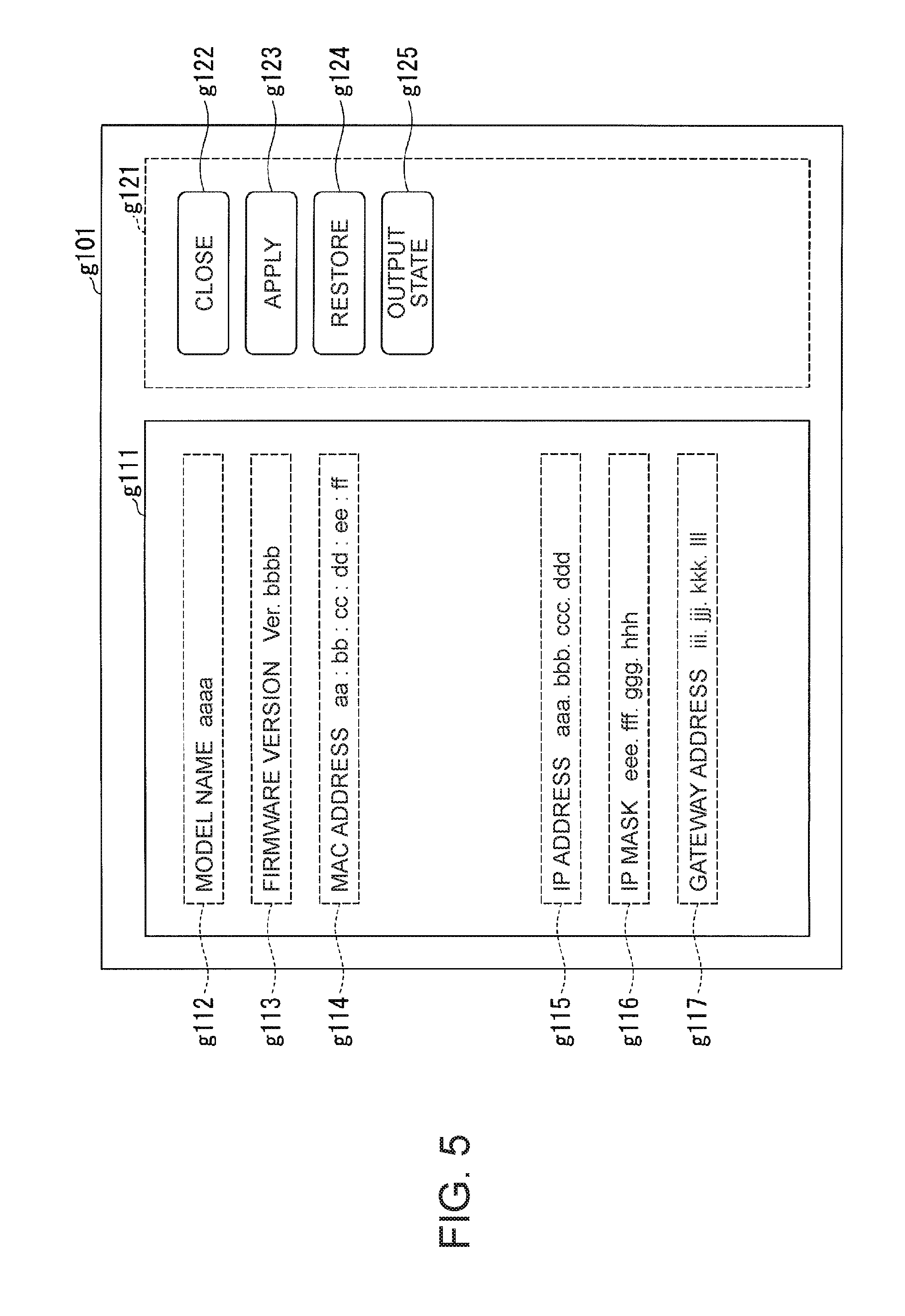

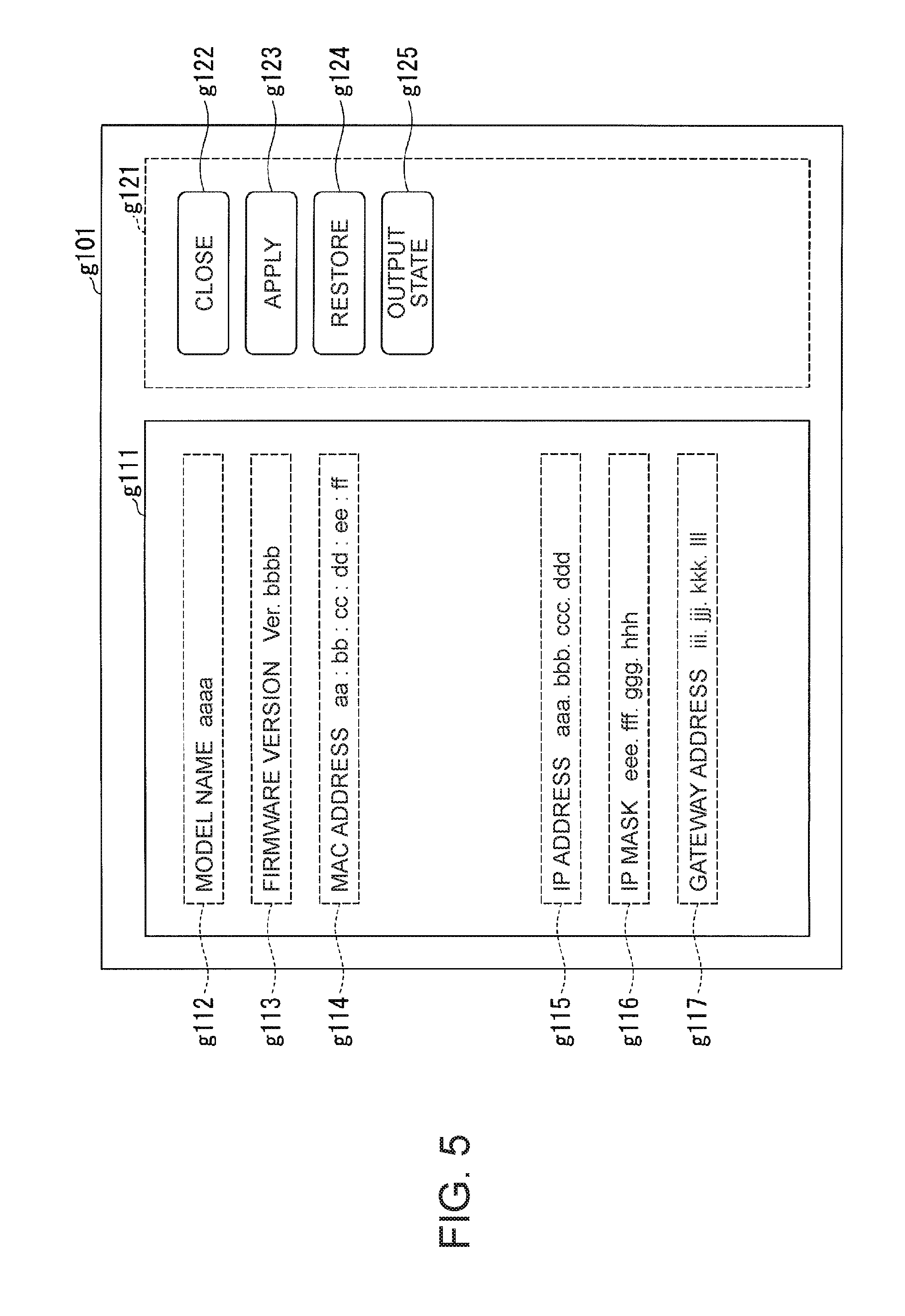

[0116] FIG. 5 is a diagram for explaining an example of a network setting screen displayed on a display of an information processing apparatus according to the first embodiment.

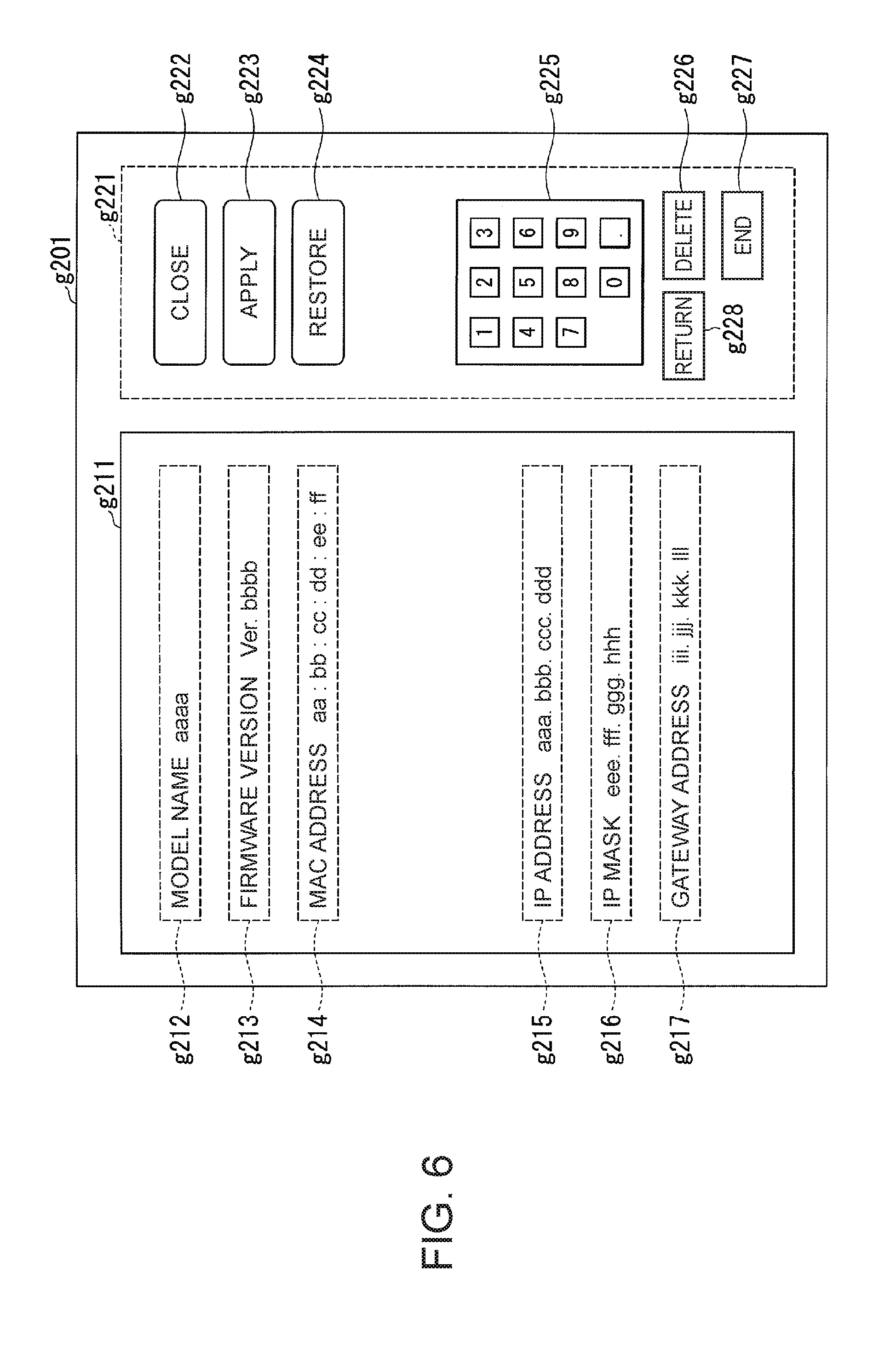

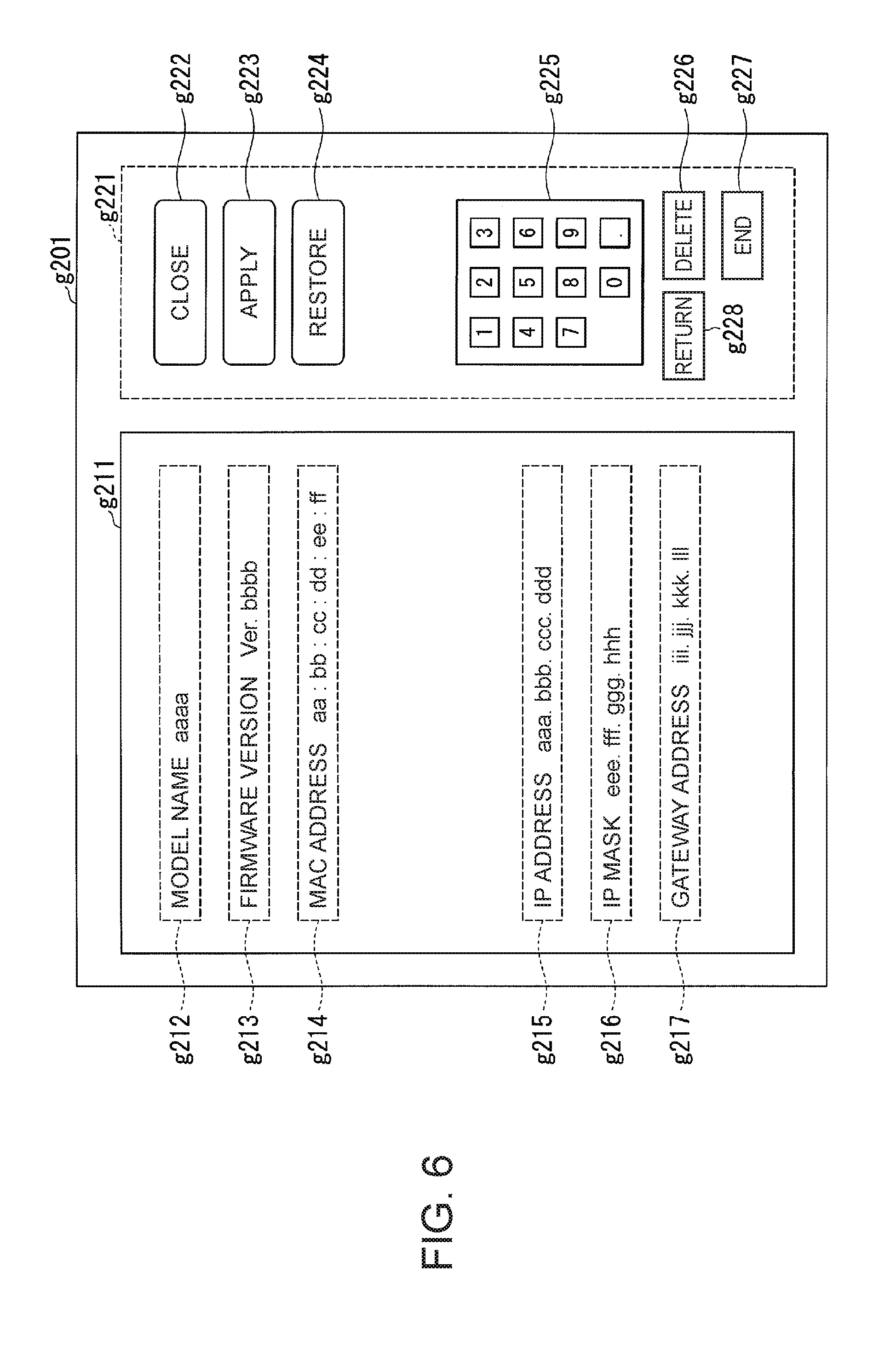

[0117] FIG. 6 is a diagram for explaining an example of a network setting screen displayed on a display unit connected to the image processing apparatus according to the first embodiment.

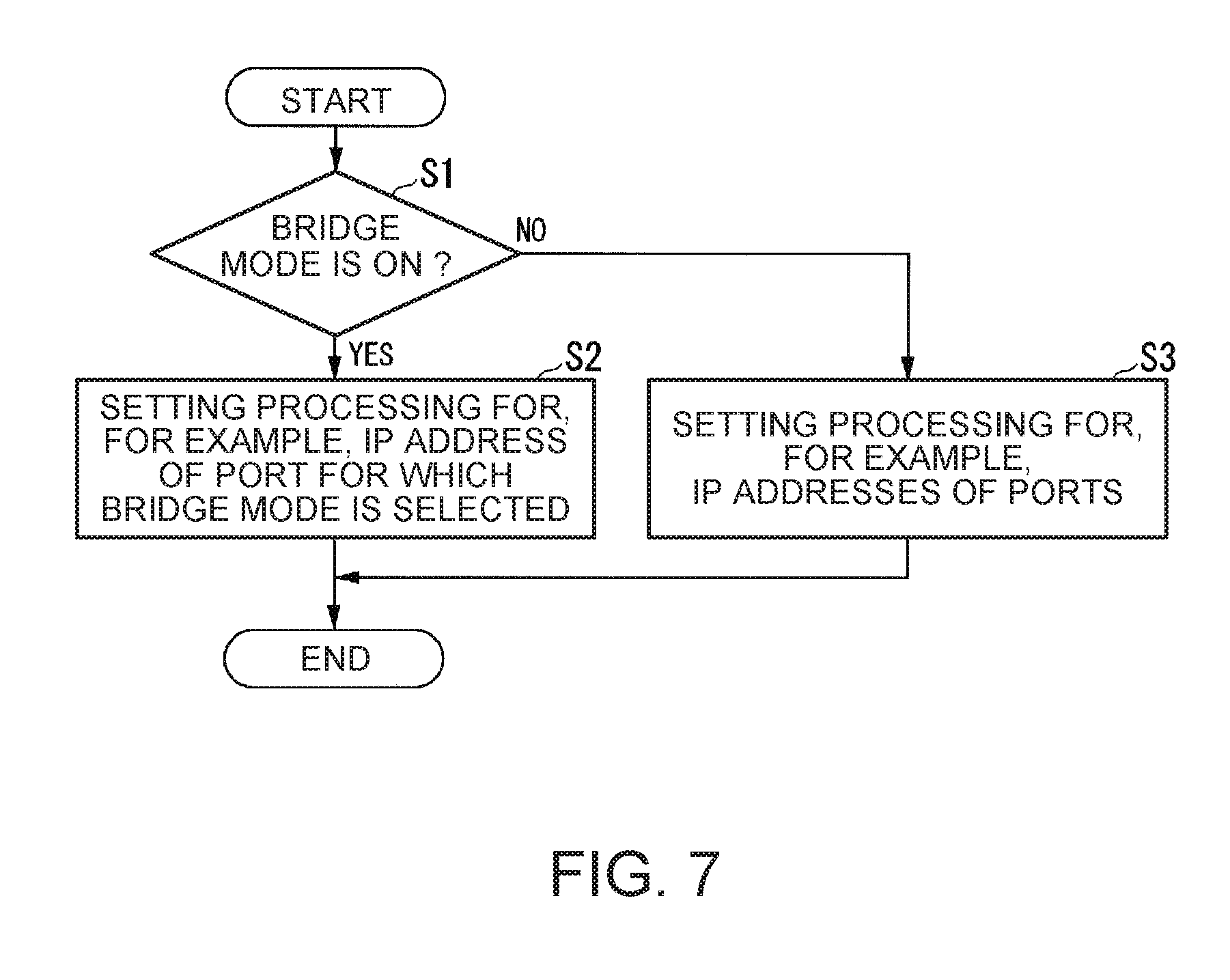

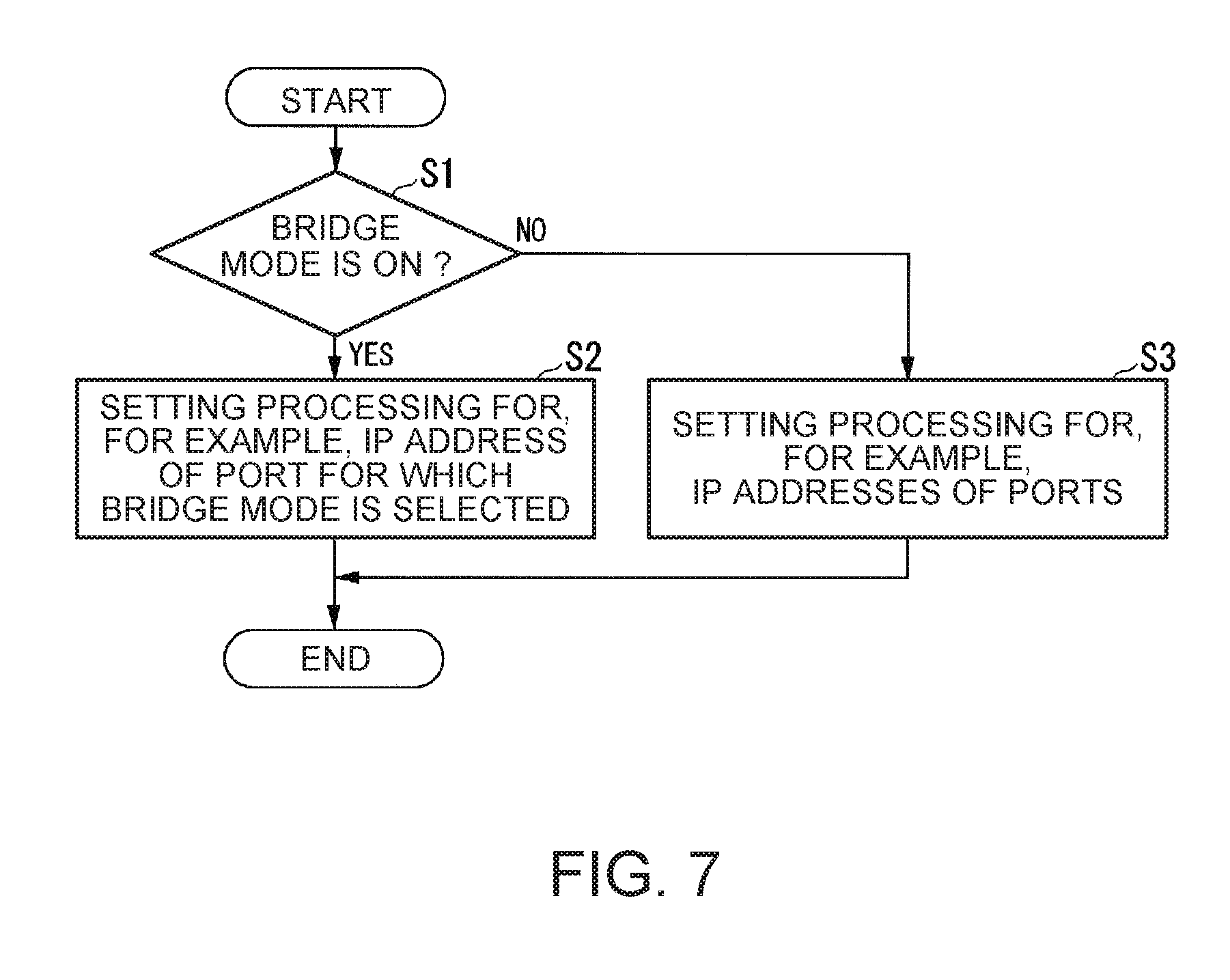

[0118] FIG. 7 is a flowchart of a procedure of network-related setting processing performed by the image processing apparatus according to the first embodiment.

[0119] FIG. 8 is a flowchart of a processing procedure for a received request performed by the image processing apparatus according to the first embodiment.

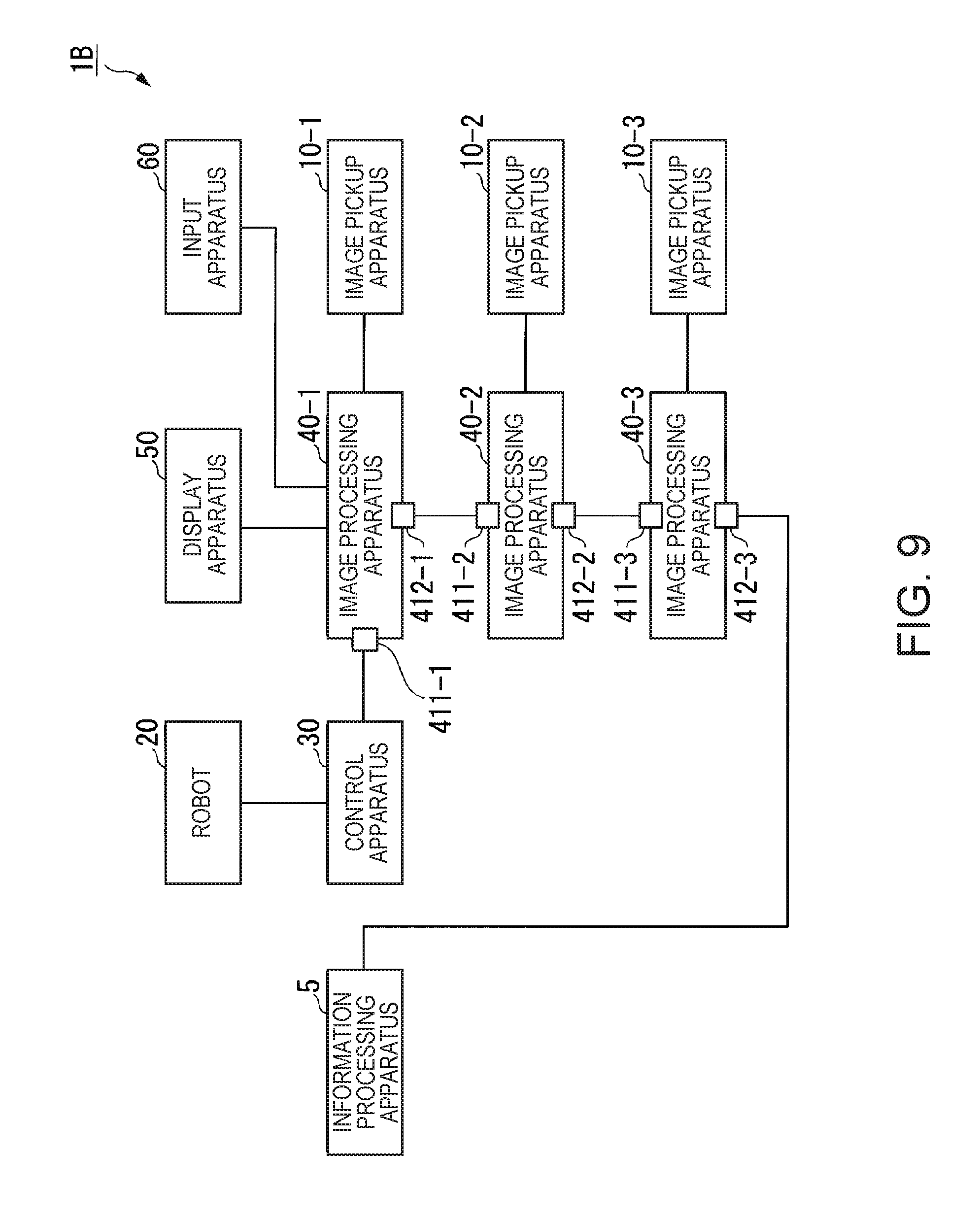

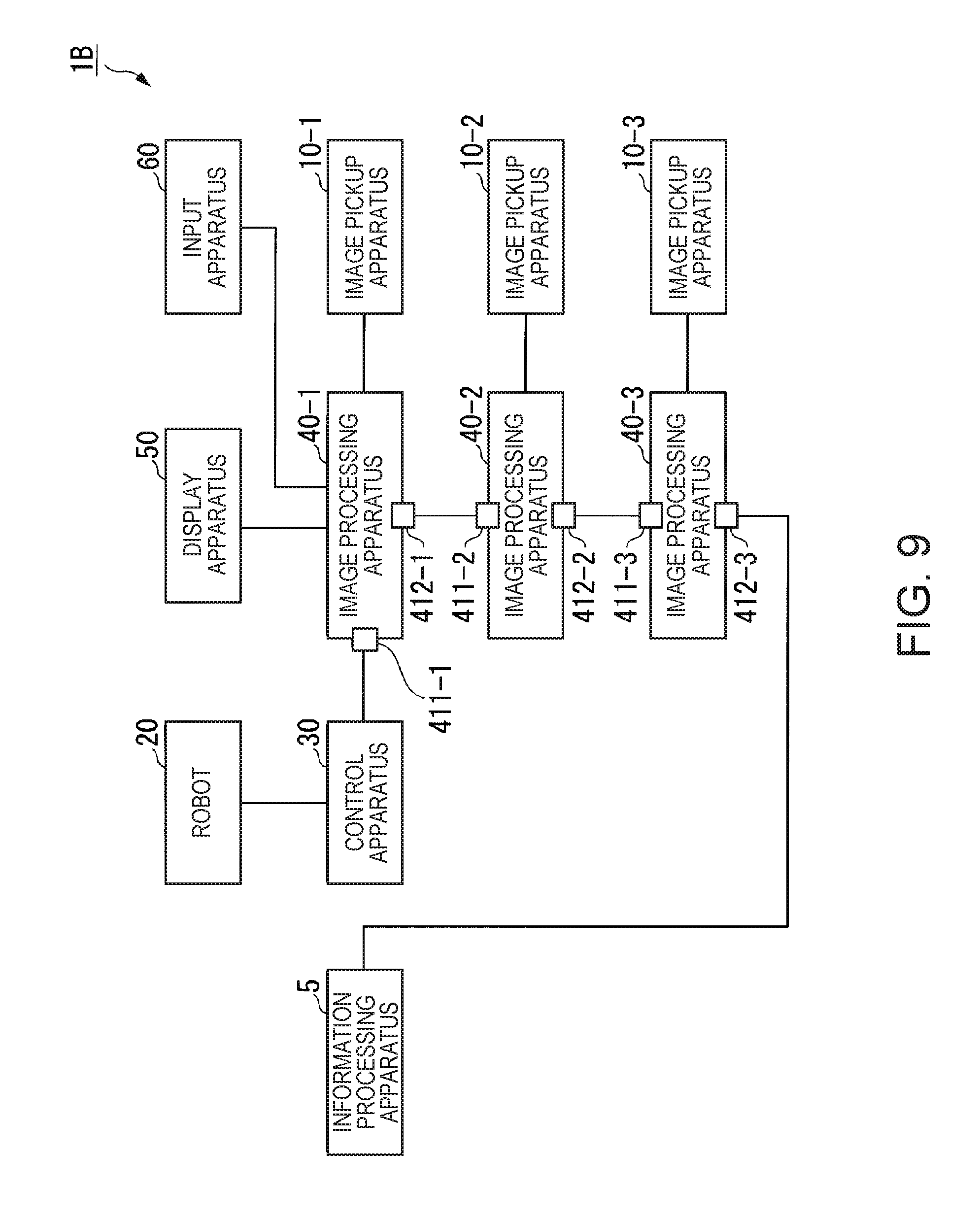

[0120] FIG. 9 is a diagram for explaining an example of the configuration of a robot system in which an information processing apparatus is connected to one of the plurality of image processing apparatuses according to the first embodiment.

[0121] FIG. 10 is a diagram for explaining an example of the configuration of a robot system in which a plurality of control apparatuses share one image processing apparatus according to the first embodiment.

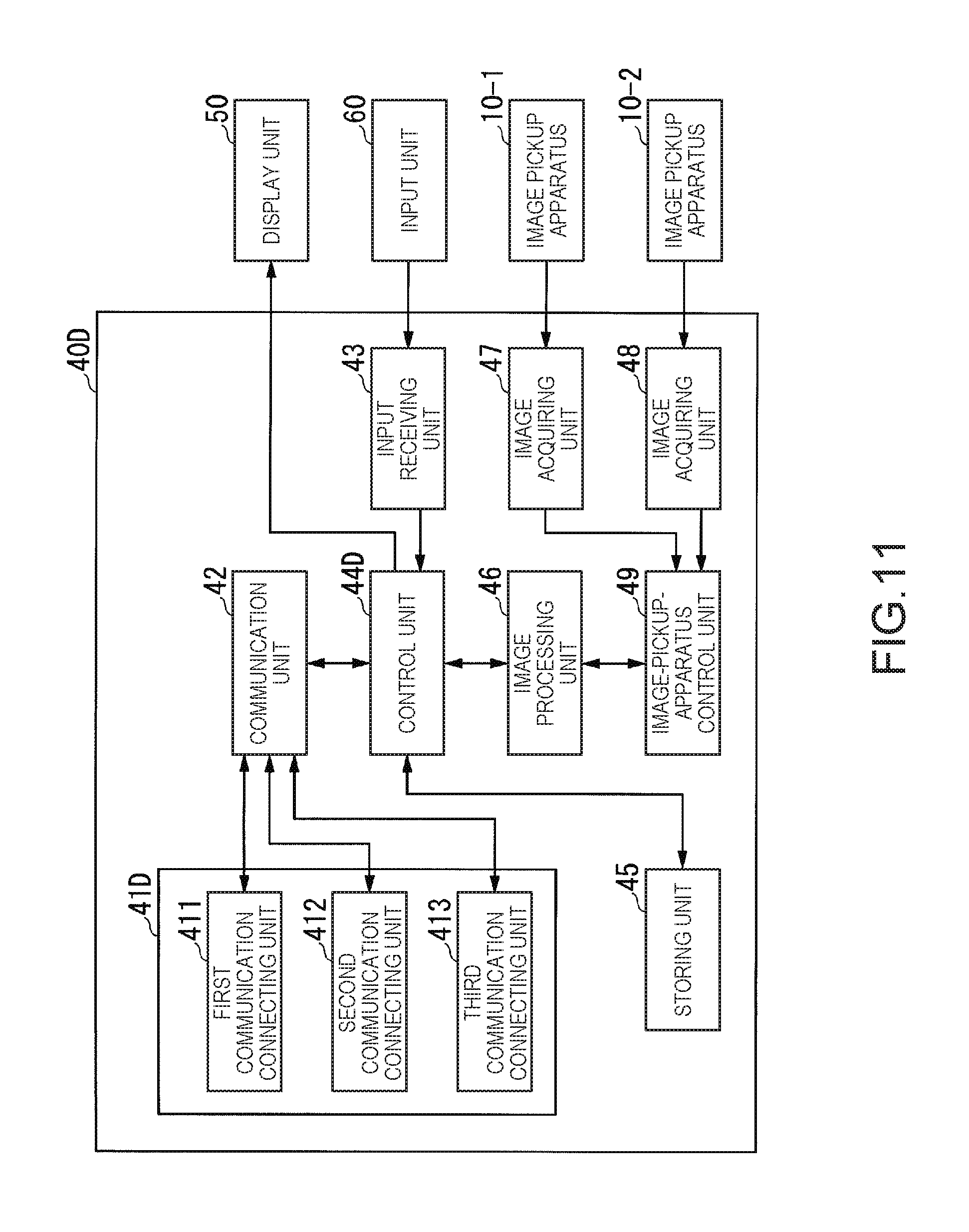

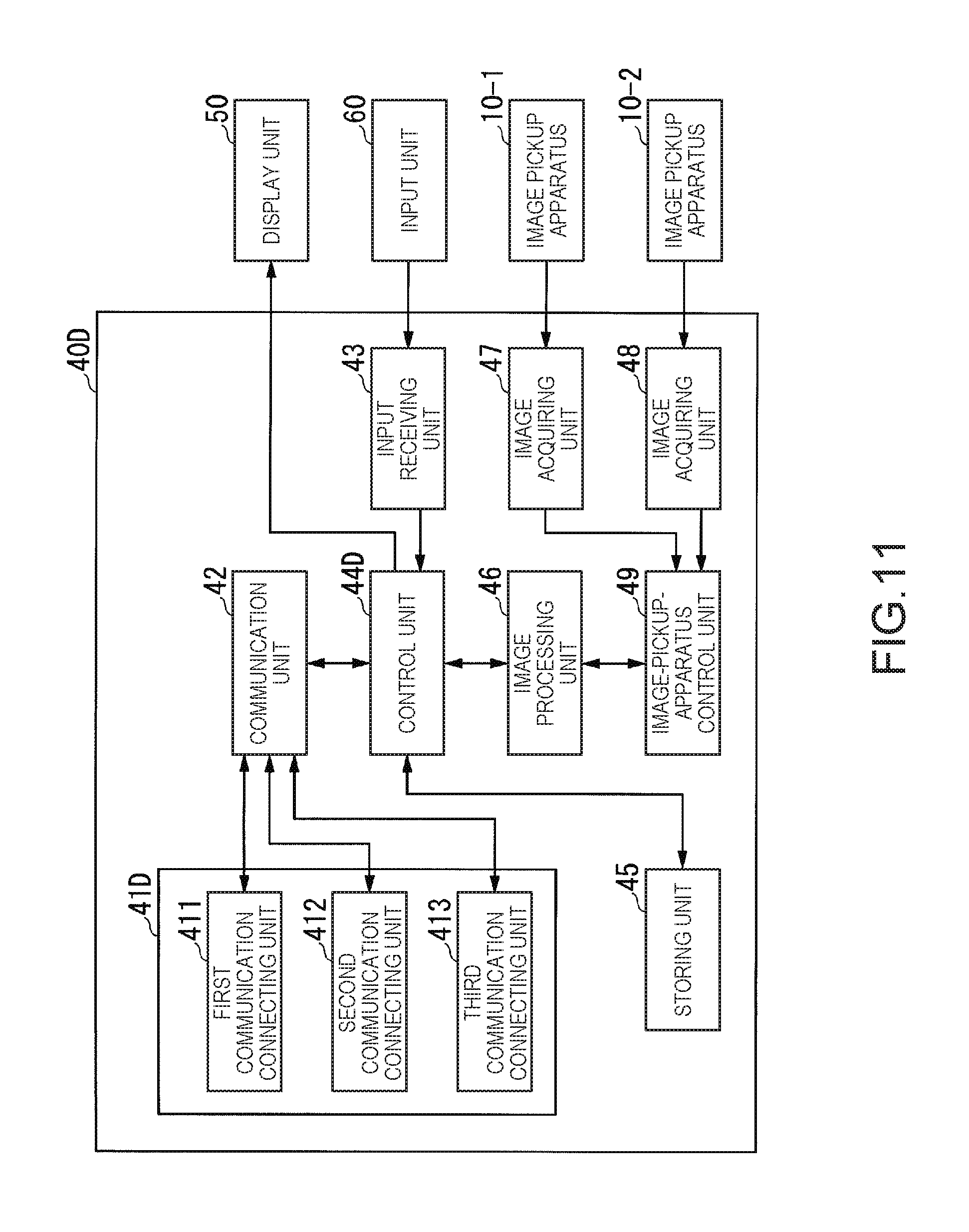

[0122] FIG. 11 is a schematic block diagram showing the configuration of an image processing apparatus according to a second embodiment.

[0123] FIG. 12 is a diagram for explaining an example of LAN port names, a virtual LAN port name, an IP address of the virtual LAN port, and identification information of a bridge target LAN port of a first communication connecting unit to a third communication connecting unit according to the second embodiment.

[0124] FIG. 13 is a diagram for explaining an example of a setting screen in a bridge mode according to the second embodiment.

[0125] FIG. 14 is a flowchart for explaining a procedure of network-related setting processing performed by the image processing apparatus according to the second embodiment.

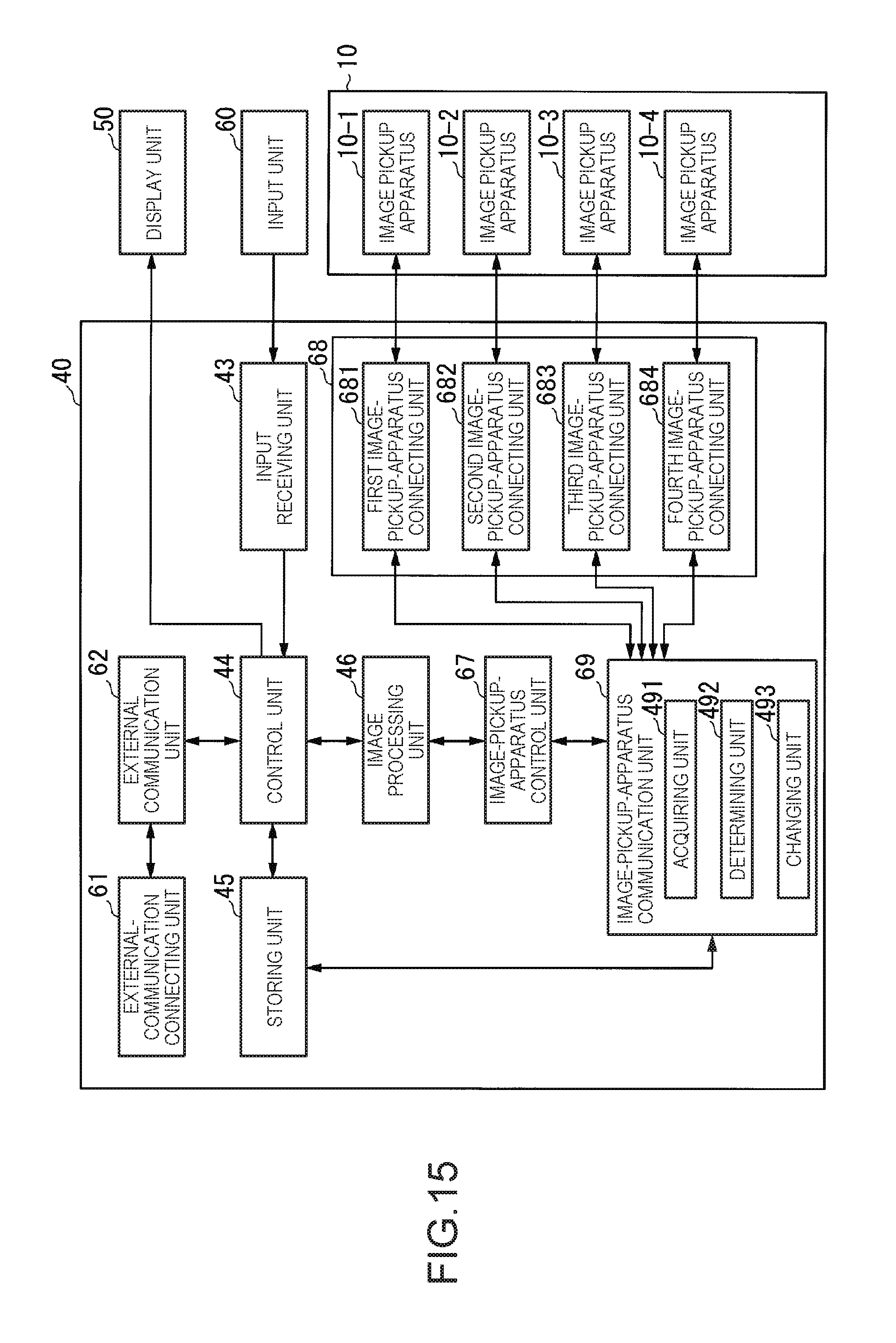

[0126] FIG. 15 is a schematic block diagram showing the configuration of an image processing apparatus according to a third embodiment.

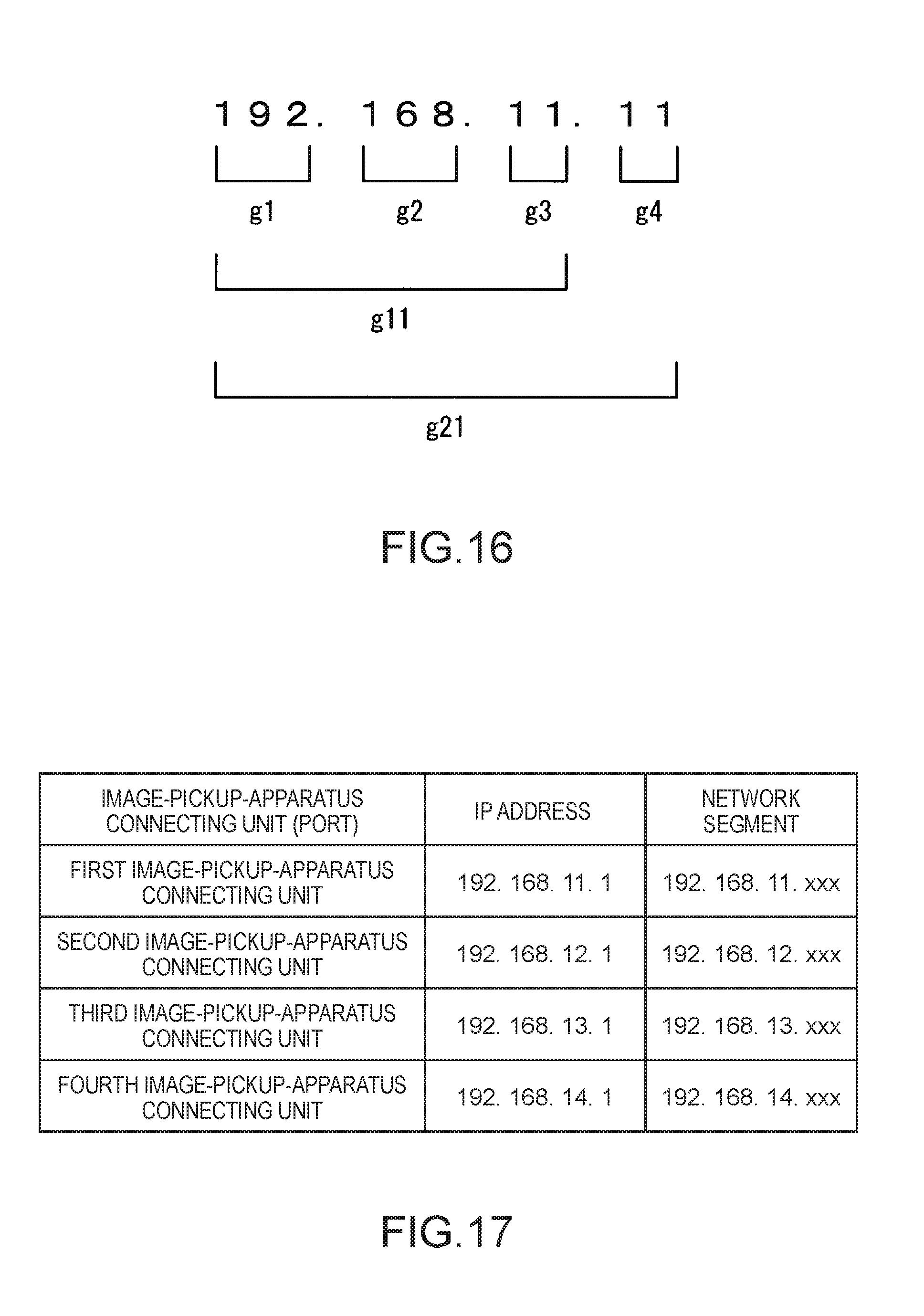

[0127] FIG. 16 is a diagram for explaining a network segment according to the third embodiment.

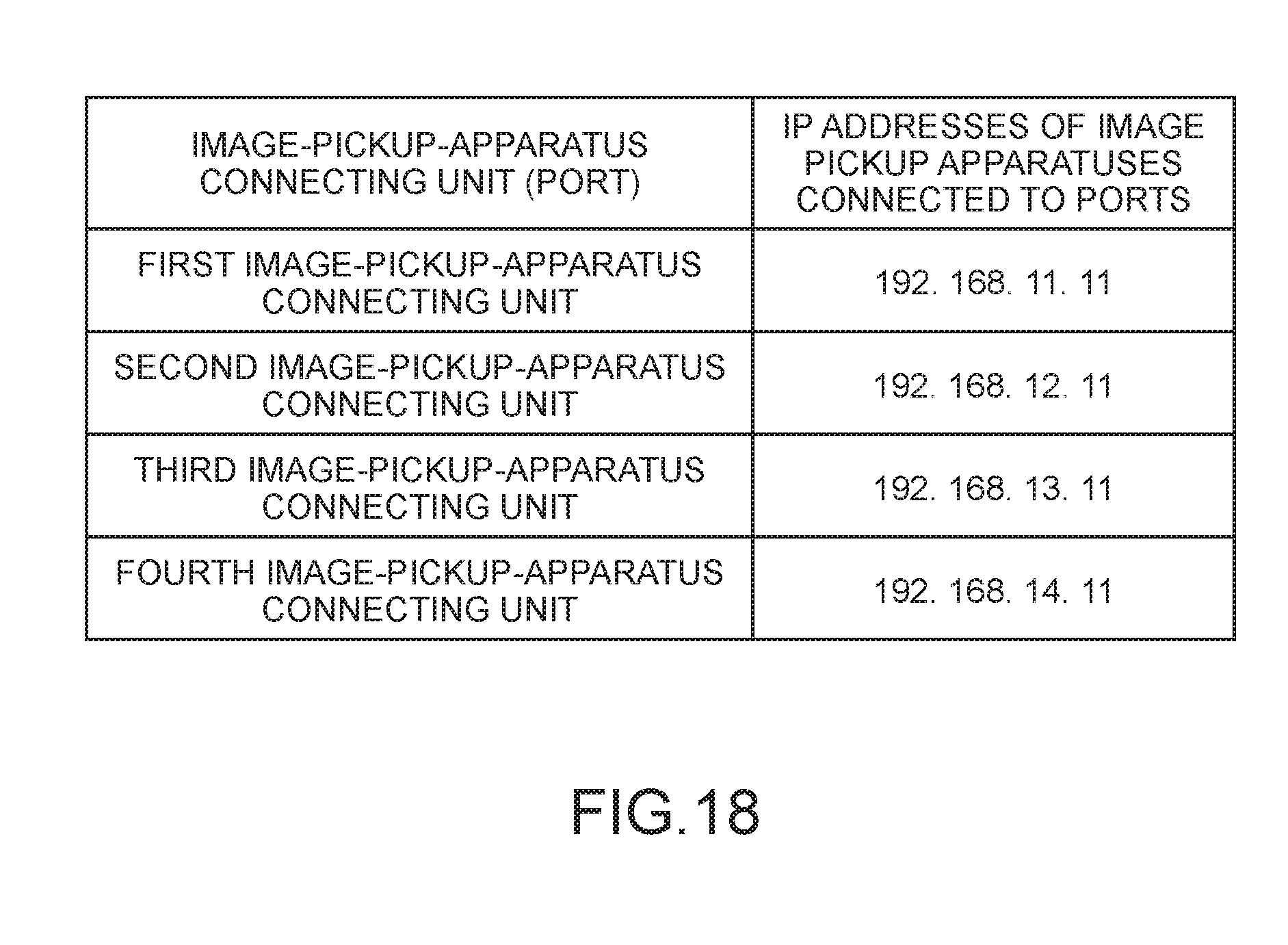

[0128] FIG. 17 is a diagram for explaining an example of a relation between IP addresses and network segments allocated in advance to a first image-pickup-apparatus connecting unit to a fourth image-pickup-apparatus connecting unit stored in a storing unit according to the third embodiment.

[0129] FIG. 18 is a diagram for explaining an example of IP addresses allocated in advance to image pickup apparatuses connected to the first image-pickup-apparatus connecting unit to the fourth image-pickup-apparatus connecting unit and stored in the storing unit according to the third embodiment.

[0130] FIG. 19 is a flowchart for explaining a processing procedure performed by an image-pickup-apparatus communication unit according to the third embodiment.

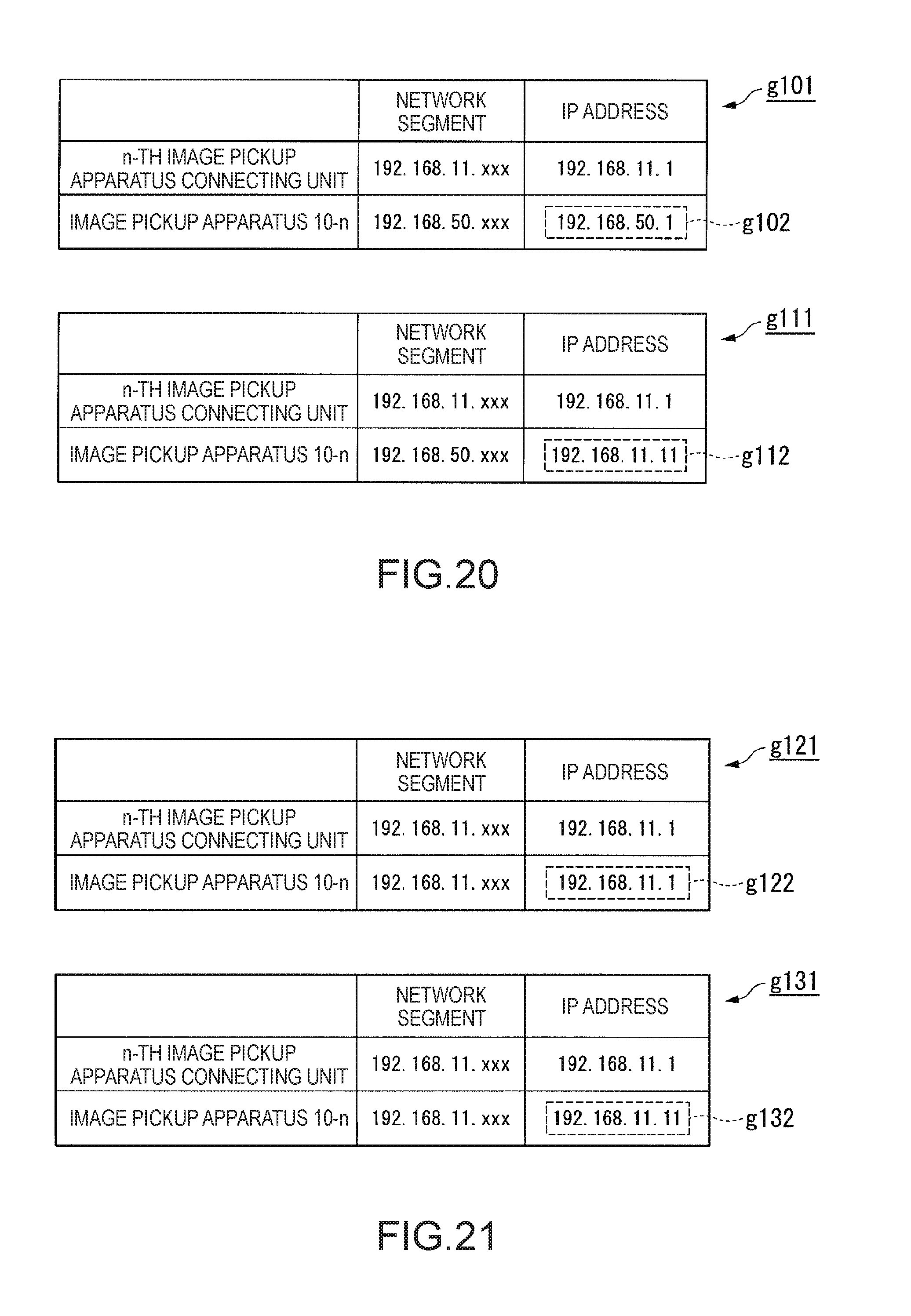

[0131] FIG. 20 is a diagram for explaining an example of network segments and IP addresses in which the network segments are different in an n-th image-pickup-apparatus connecting unit and an image pickup apparatus according to the third embodiment.

[0132] FIG. 21 is a diagram for explaining an example of network segments and IP addresses in which the network segments and the IP addresses are the same in the n-th image-pickup-apparatus connecting unit and the image pickup apparatus according to the third embodiment.

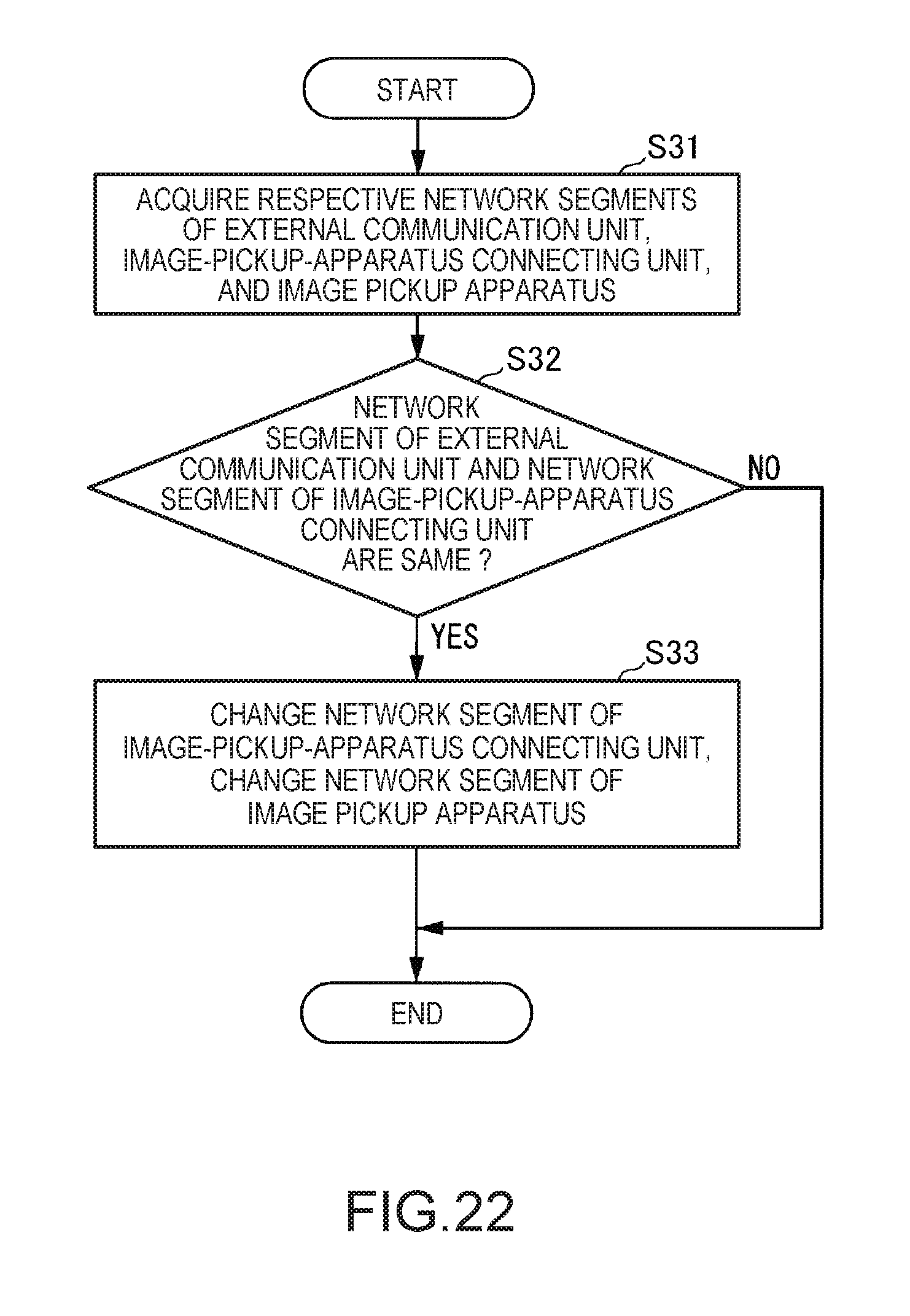

[0133] FIG. 22 is a flowchart of a processing procedure performed by an image-pickup-apparatus communication unit according to a fourth embodiment.

[0134] FIG. 23 is a diagram for explaining an example of network segments and IP addresses in which the network segments are the same in an external-communication connecting unit, an n-th image-pickup-apparatus connecting unit, and an image pickup apparatus according to the fourth embodiment.

[0135] FIG. 24 is a diagram showing an example of the hardware configuration of an image processing apparatus according to a fifth embodiment.

[0136] FIG. 25 is a diagram showing an example of the functional configuration of the image processing apparatus.

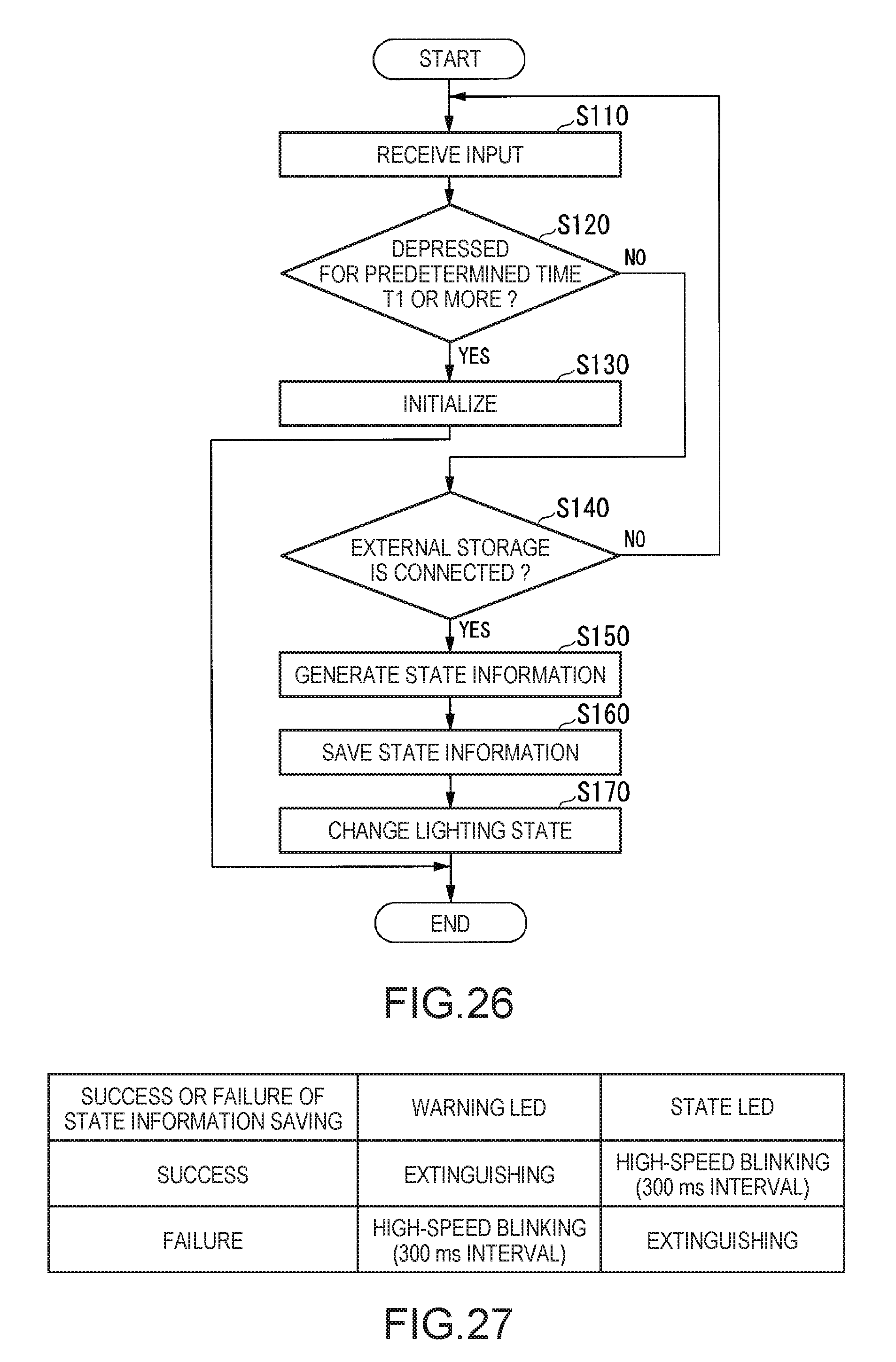

[0137] FIG. 26 is a flowchart for explaining an example of a flow of initialization processing and data saving processing of the image processing apparatus performed by a control unit.

[0138] FIG. 27 is a table showing an example of rules for a change of a lighting state of an output unit by an output control unit.

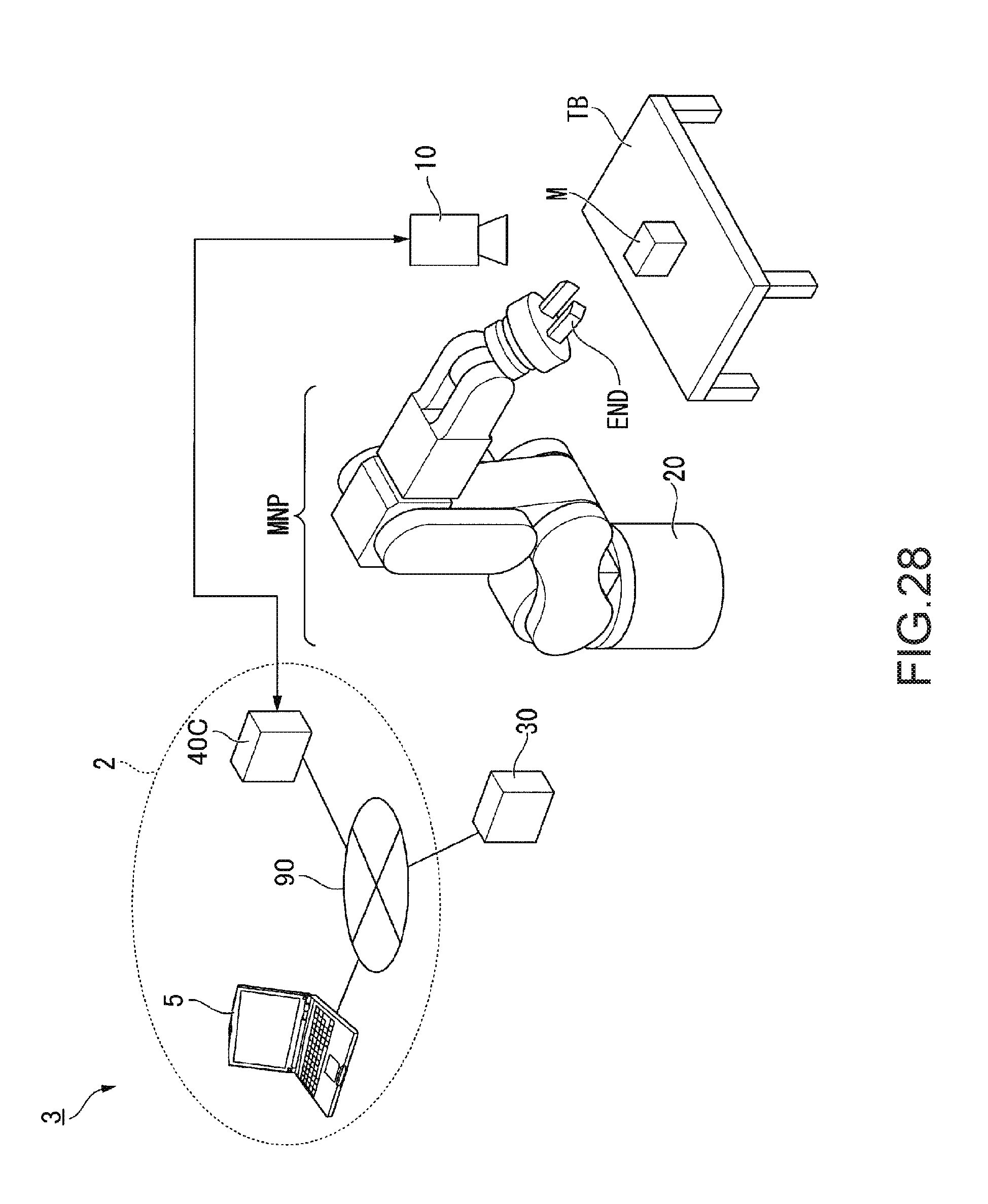

[0139] FIG. 28 is a configuration diagram showing a robot system according to a sixth embodiment.

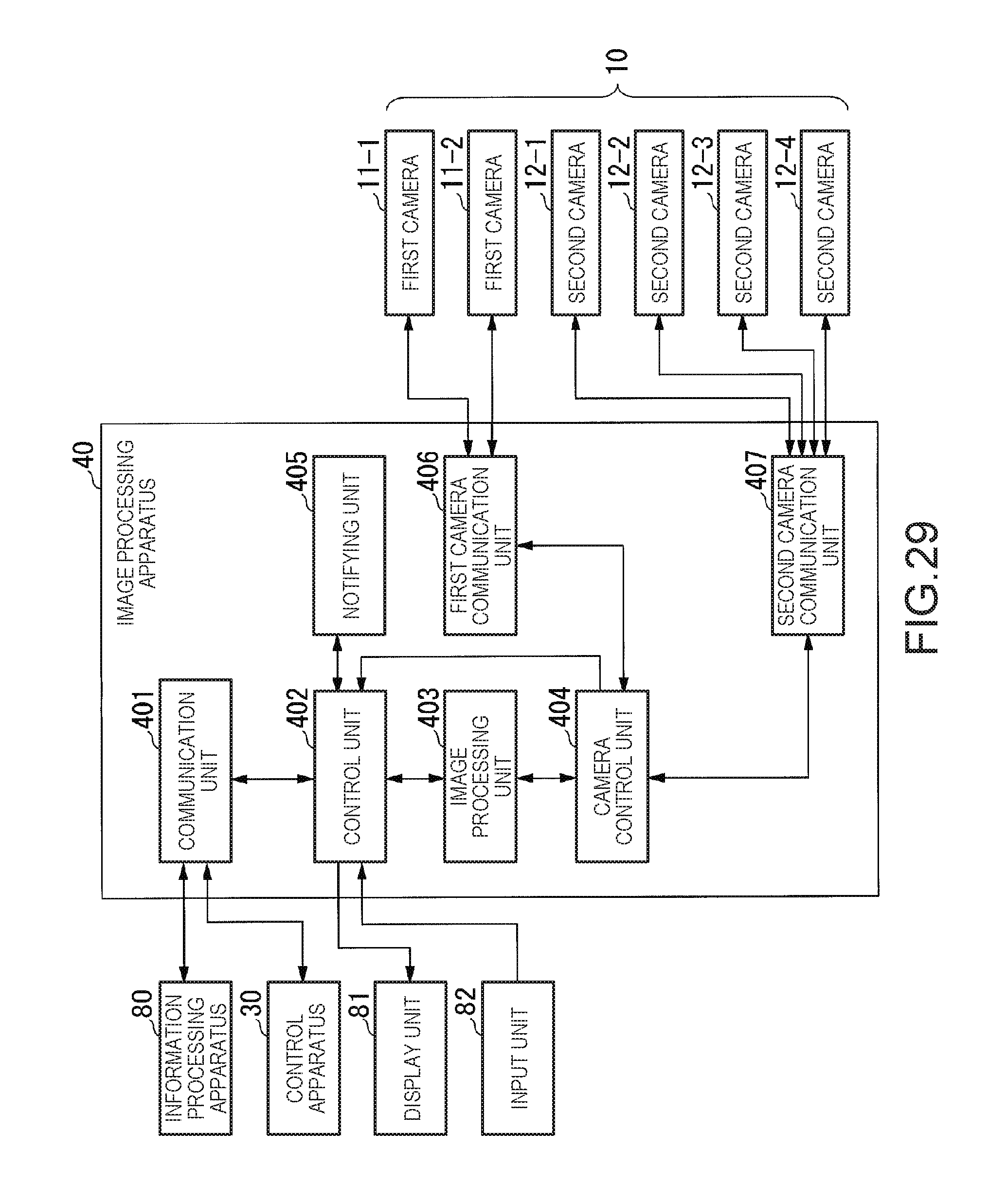

[0140] FIG. 29 is a block diagram showing the configuration of an image processing apparatus according to the sixth embodiment.

[0141] FIG. 30 is a block diagram showing the configuration of an information processing apparatus according to the sixth embodiment.

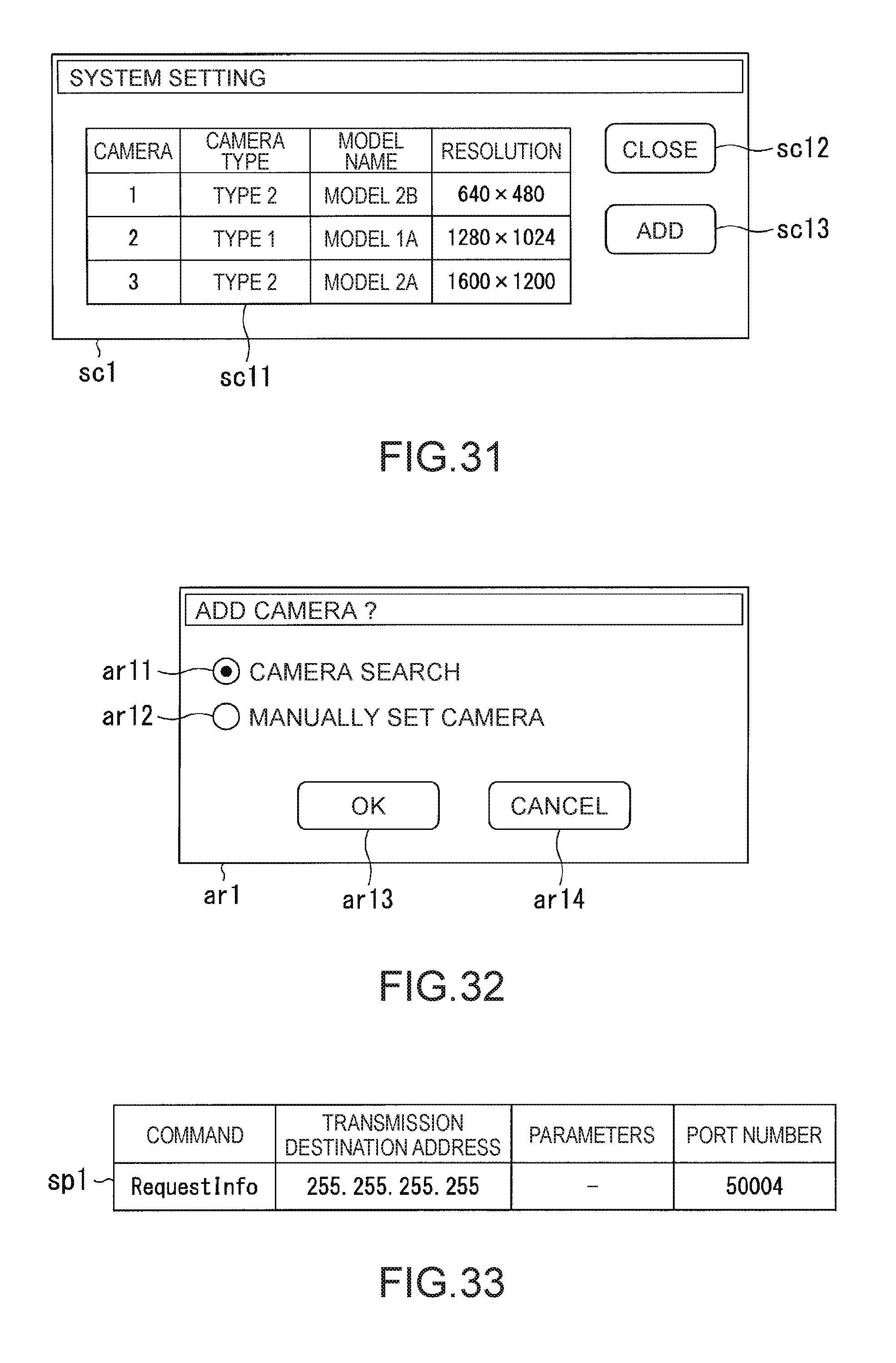

[0142] FIG. 31 is a diagram showing an example of a system display screen.

[0143] FIG. 32 is a diagram showing an example of an addition method inquiry screen.

[0144] FIG. 33 is a diagram showing an example of a search packet.

[0145] FIG. 34 is a diagram showing an example of a camera setting display screen.

[0146] FIG. 35 is a diagram showing an example of a system integration setting display screen.

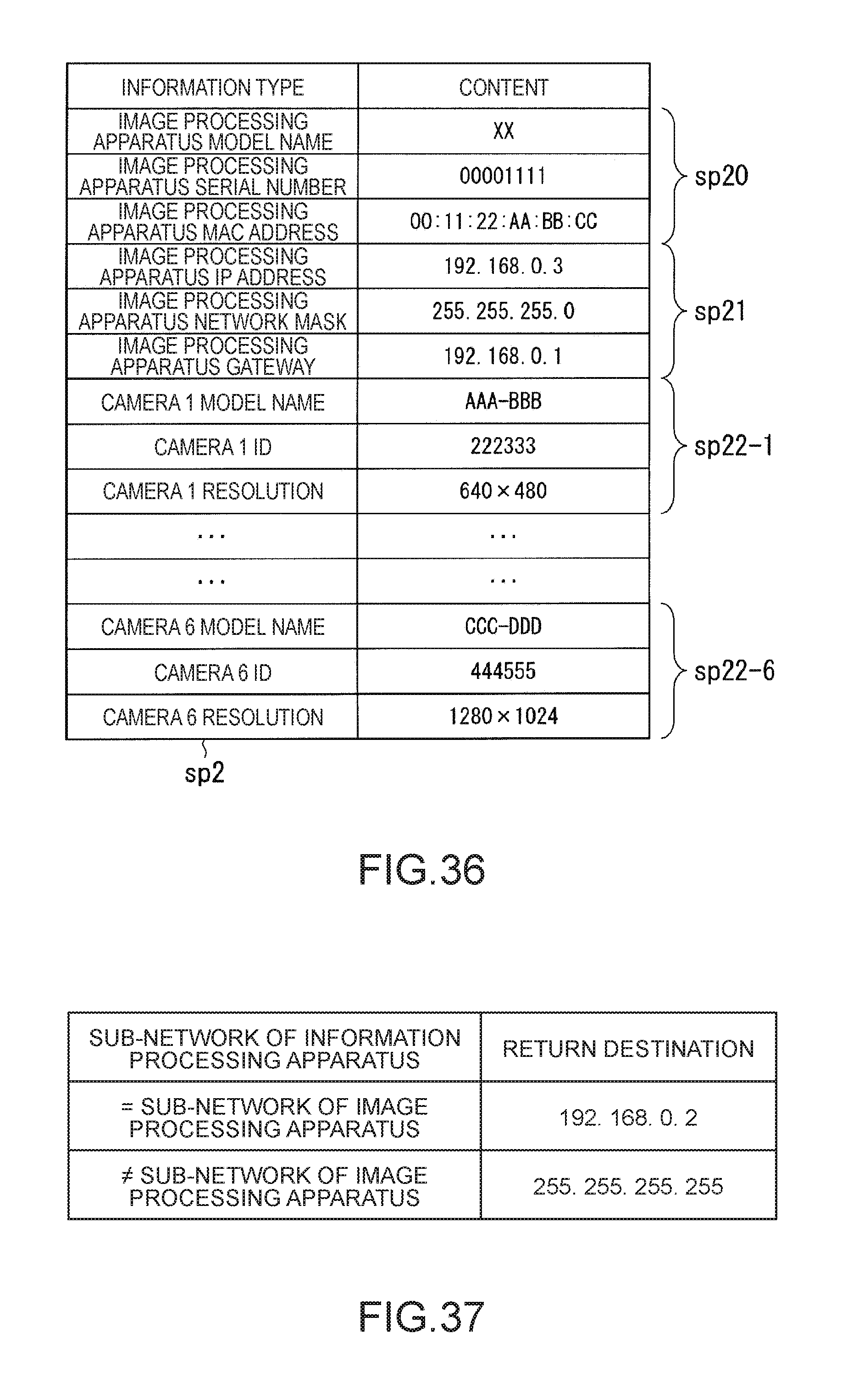

[0147] FIG. 36 is a diagram showing an example of a search response packet.

[0148] FIG. 37 is a diagram for explaining a return destination of the search response packet.

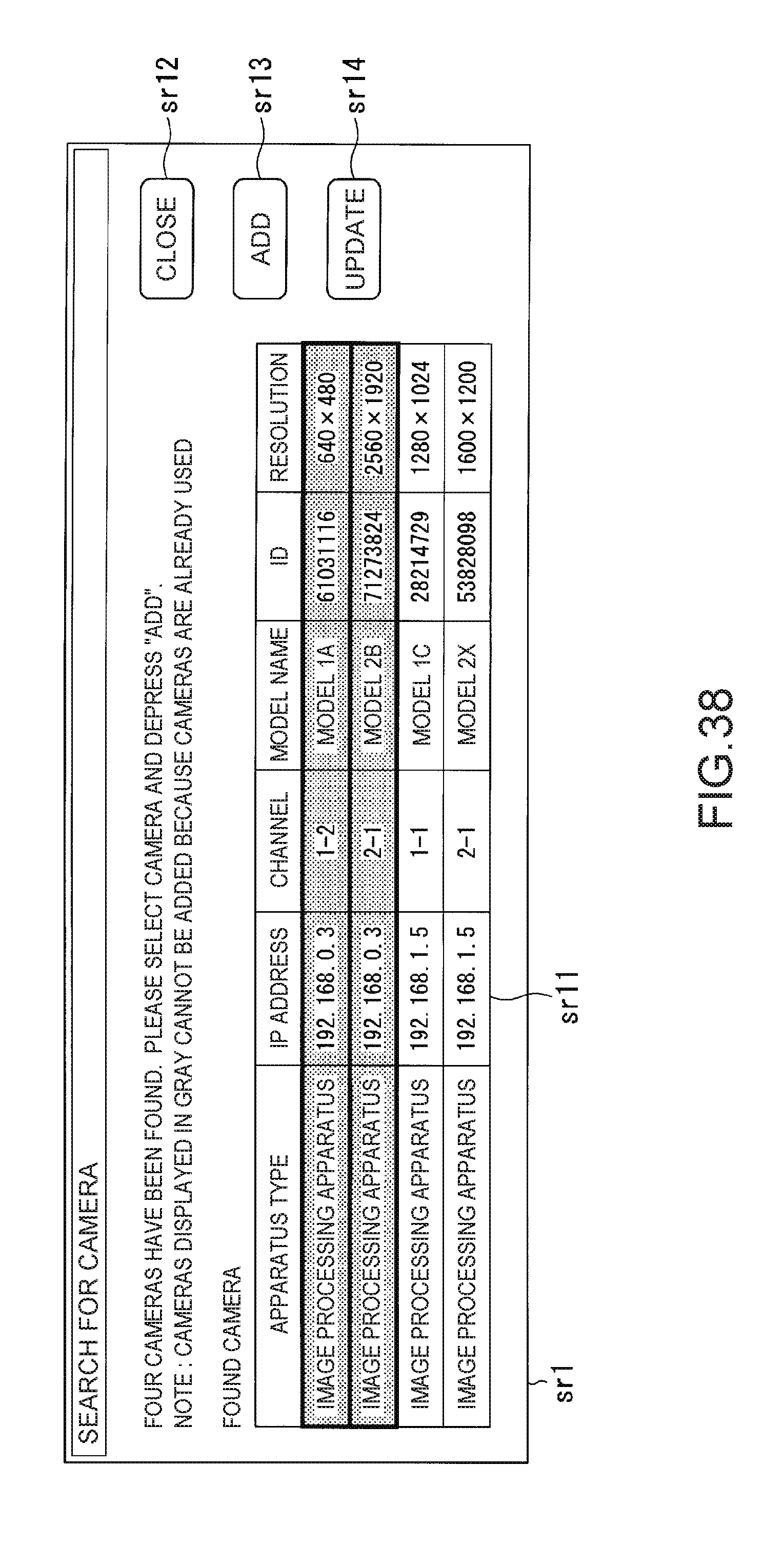

[0149] FIG. 38 is a diagram showing an example of a search result screen.

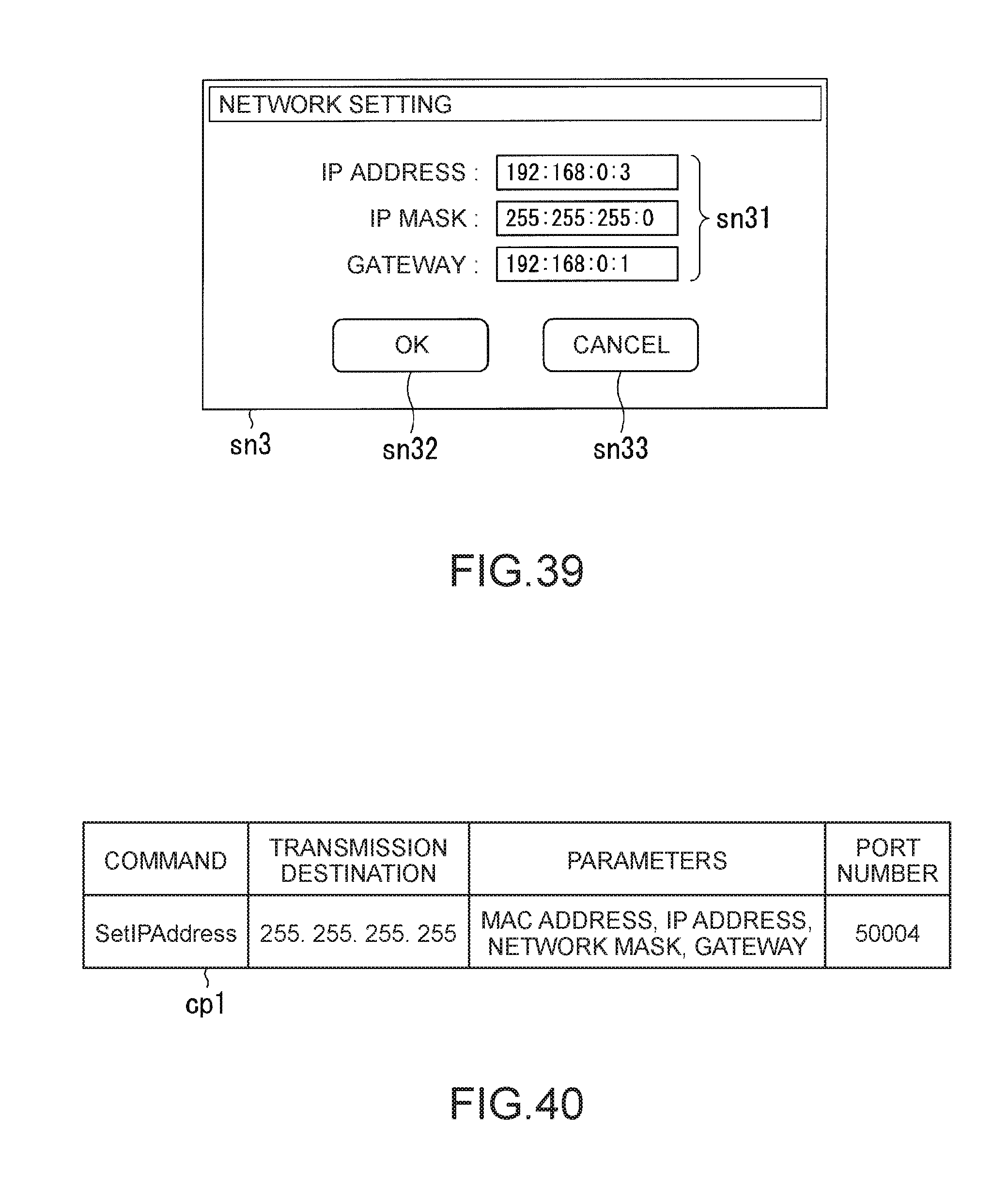

[0150] FIG. 39 is a diagram showing an example of a network setting screen.

[0151] FIG. 40 is a diagram showing an example of a change packet.

[0152] FIG. 41 is a diagram for explaining determination of necessity of a network setting information change.

[0153] FIG. 42 is a diagram showing an example of a change response packet.

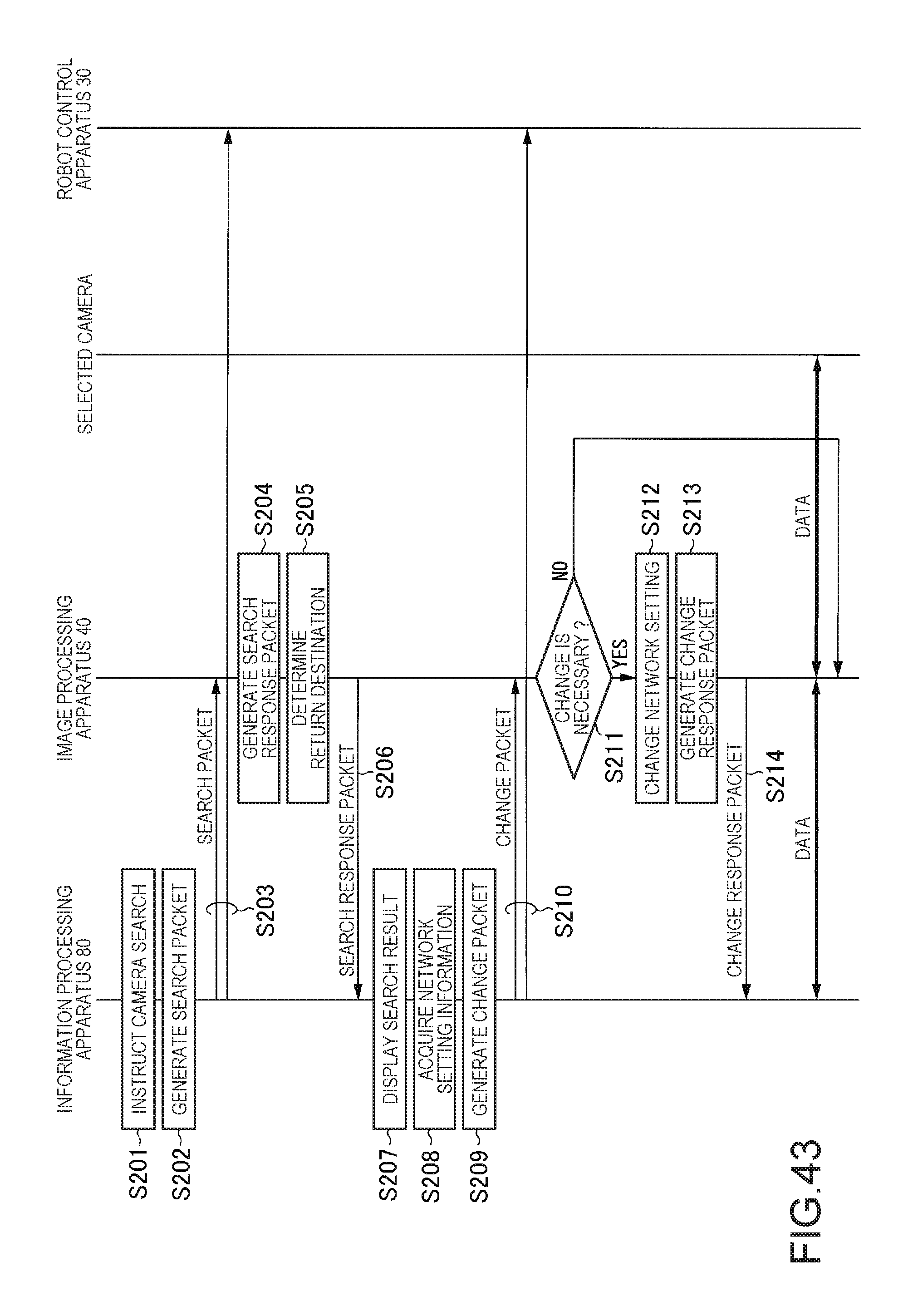

[0154] FIG. 43 is a sequence chart showing network processing according to a sixth embodiment.

[0155] FIG. 44 is a diagram showing an example of the hardware configuration of a control apparatus according to a seventh embodiment.

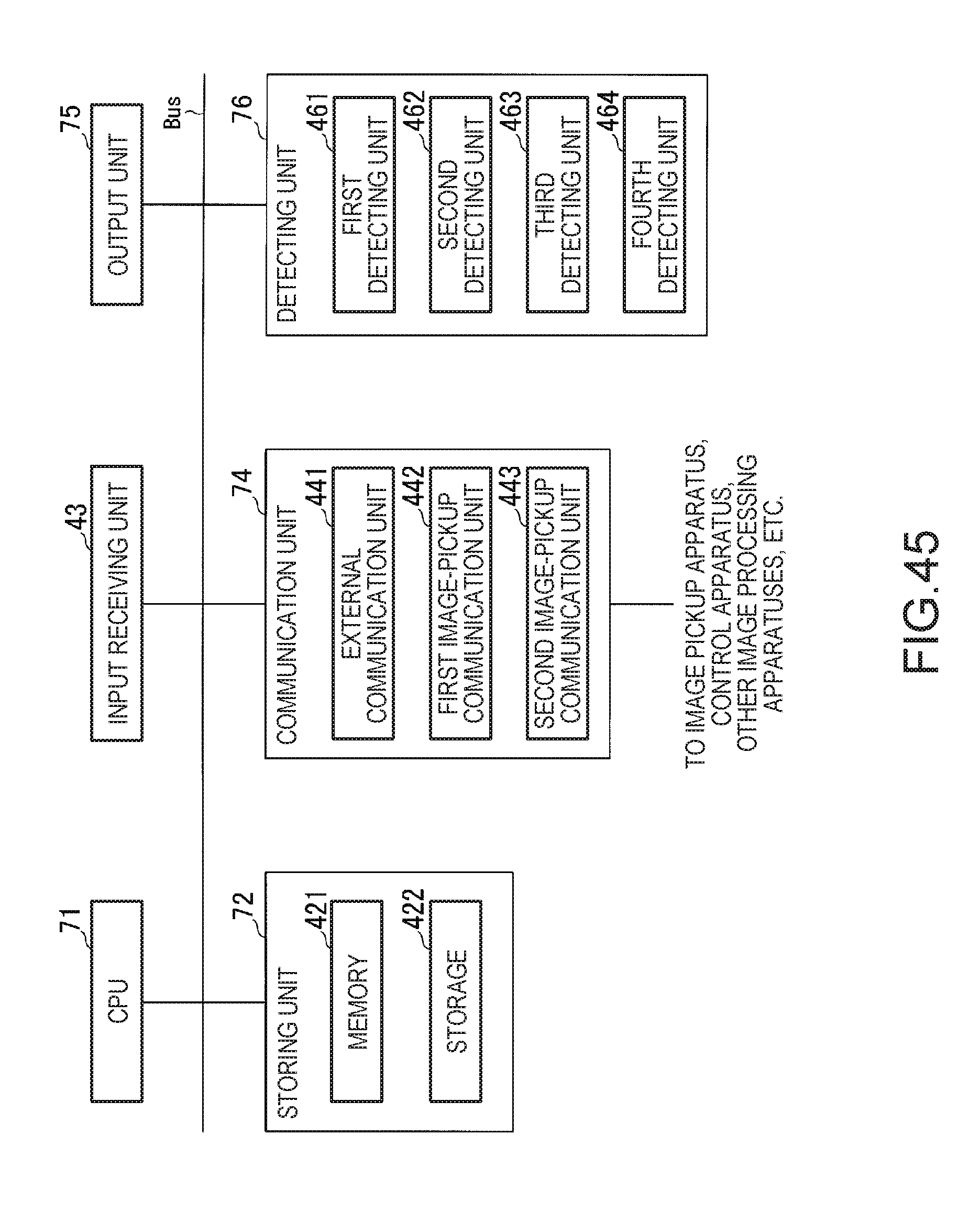

[0156] FIG. 45 is a diagram showing an example of the hardware configuration of an image processing apparatus.

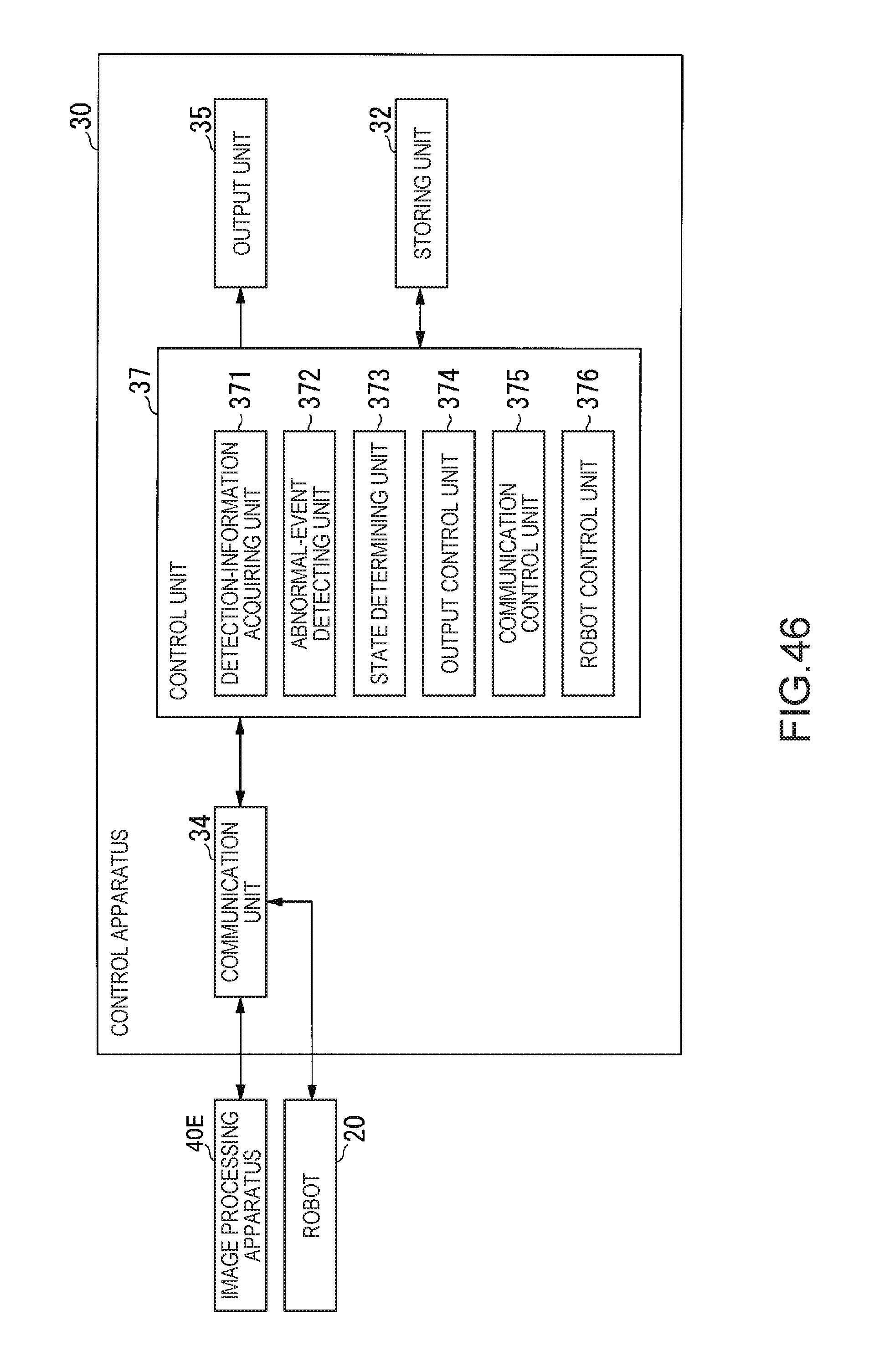

[0157] FIG. 46 is a diagram showing an example of the functional configuration of the control apparatus.

[0158] FIG. 47 is a diagram showing an example of the functional configuration of an image processing apparatus.

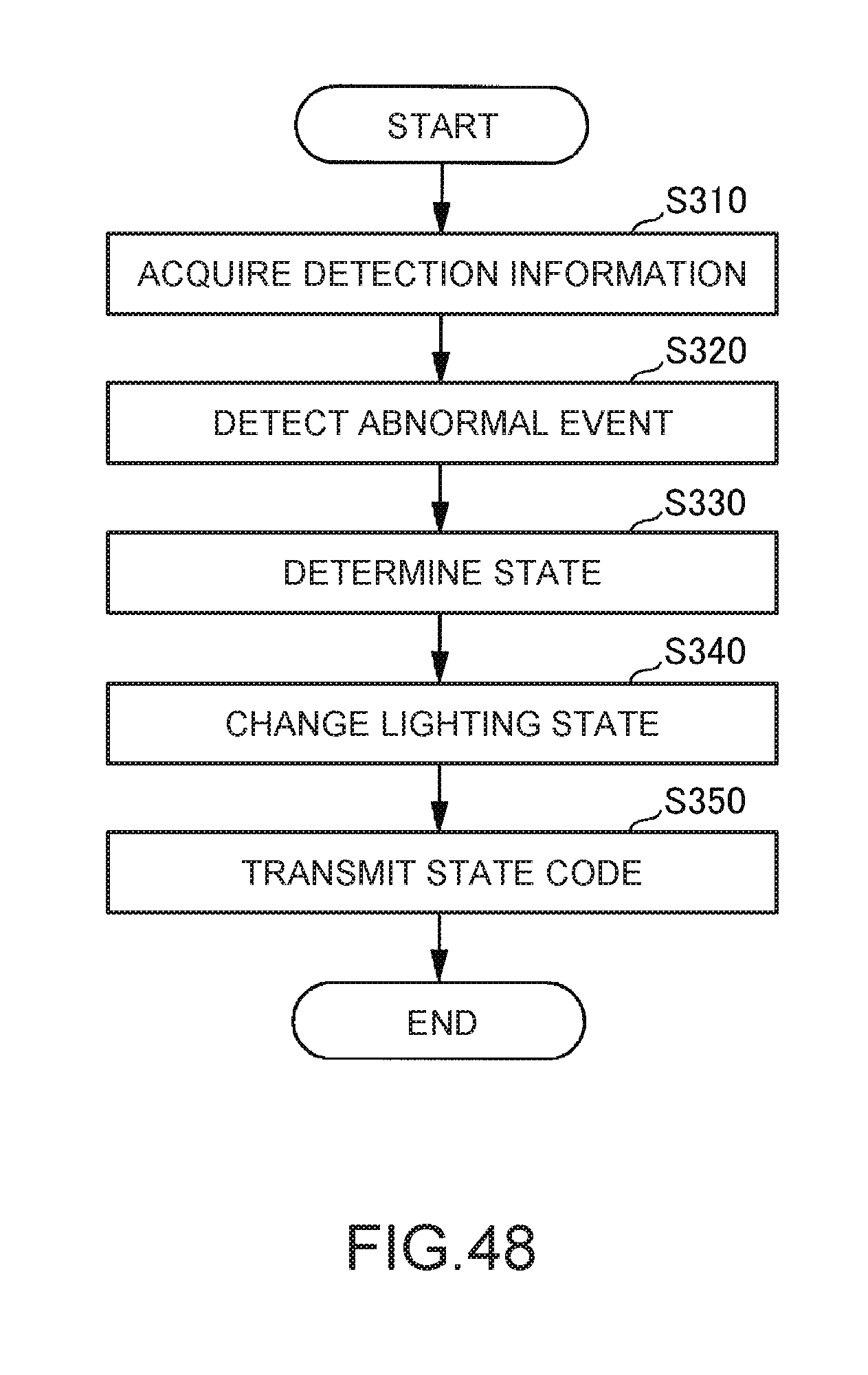

[0159] FIG. 48 is a flowchart showing an example of a flow of processing performed by a control unit of the control apparatus.

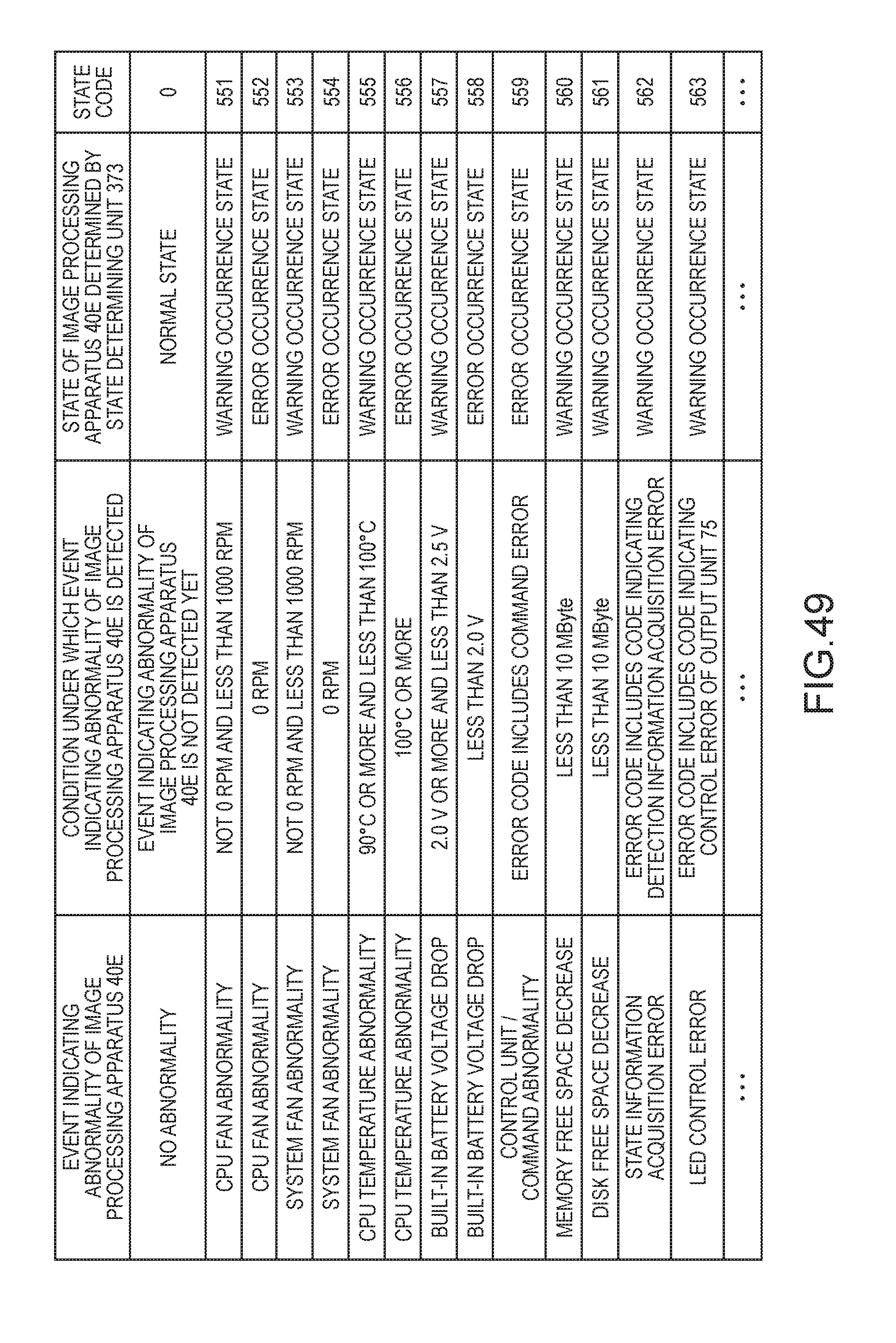

[0160] FIG. 49 is a diagram showing an example of a correspondence relation among an event indicating abnormality of the image processing apparatus, a condition under which the event indicating the abnormality of the image processing apparatus is detected, a state of the image processing apparatus, and a state code for identifying the state of the image processing apparatus.

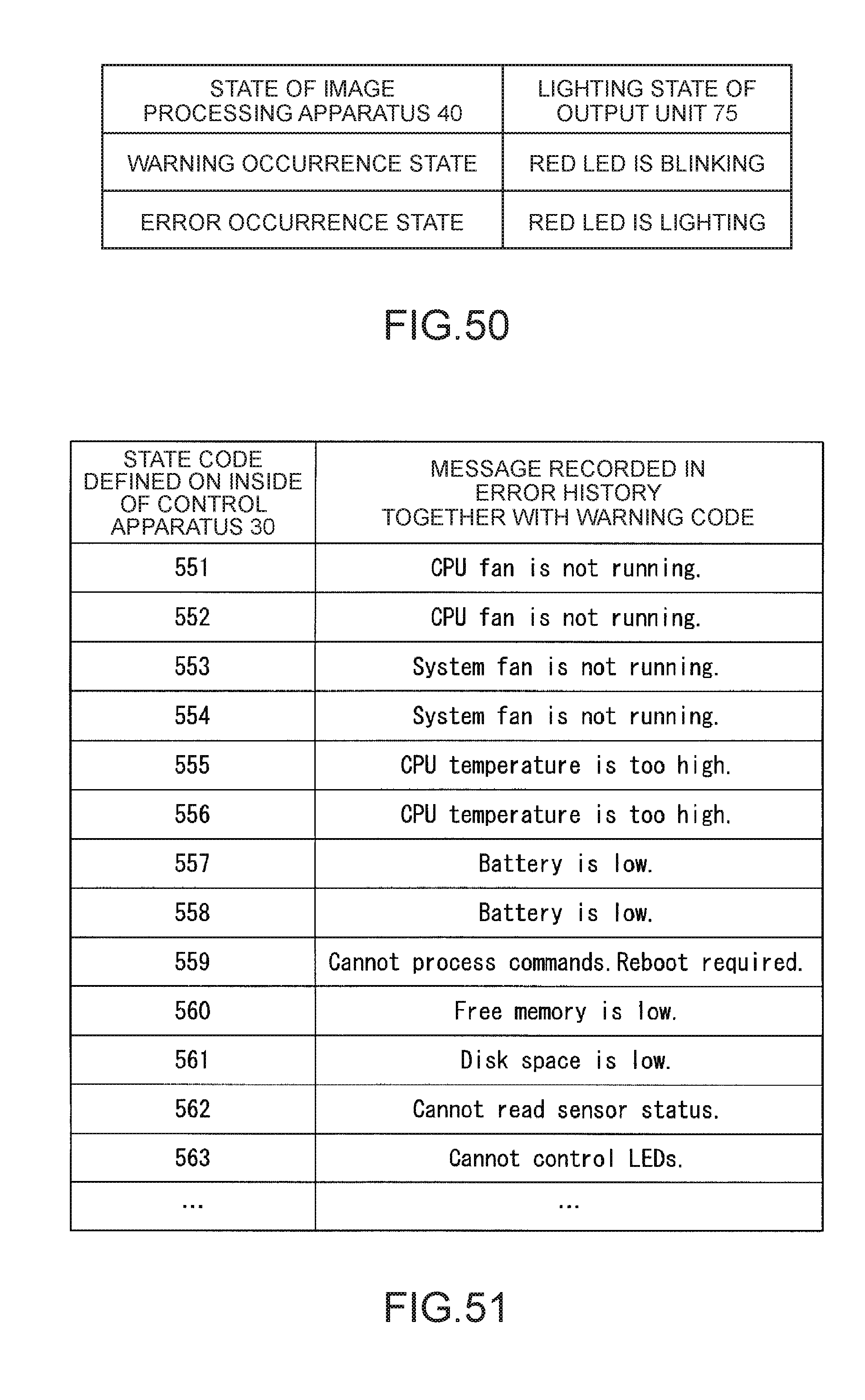

[0161] FIG. 50 is a table showing an example of rules for a change of a lighting state of an output unit by an output control unit.

[0162] FIG. 51 is a table showing an example of a correspondence relation between a state code for identifying a state of the image processing apparatus determined by a state determining unit in step S330 and a message recorded in an error history together with the state code.

[0163] FIG. 52 is a diagram showing an example of a GUI for displaying detection values included in detection information acquired from the image processing apparatus.

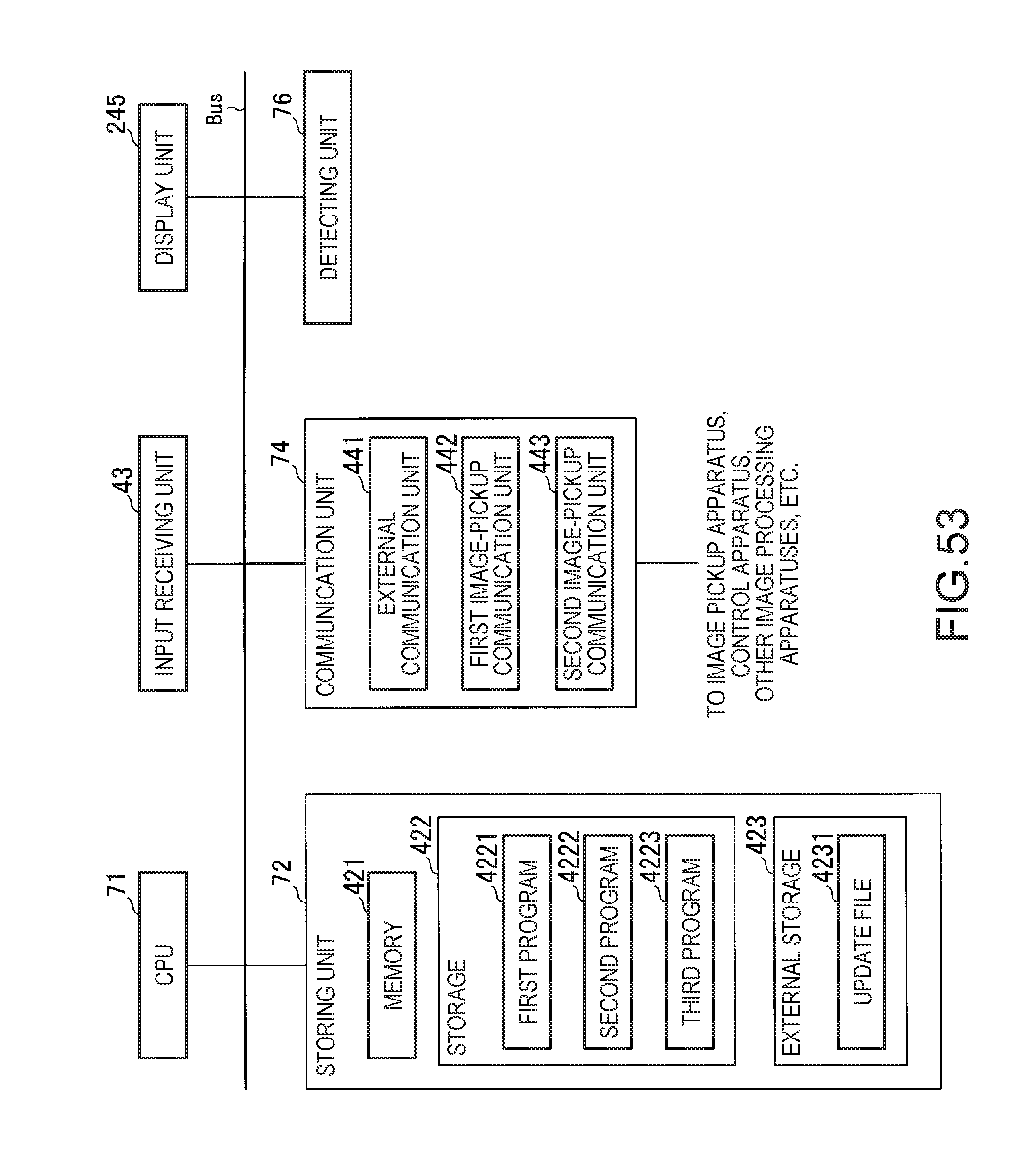

[0164] FIG. 53 is a diagram showing an example of a hardware configuration of an image processing apparatus according to an eighth embodiment.

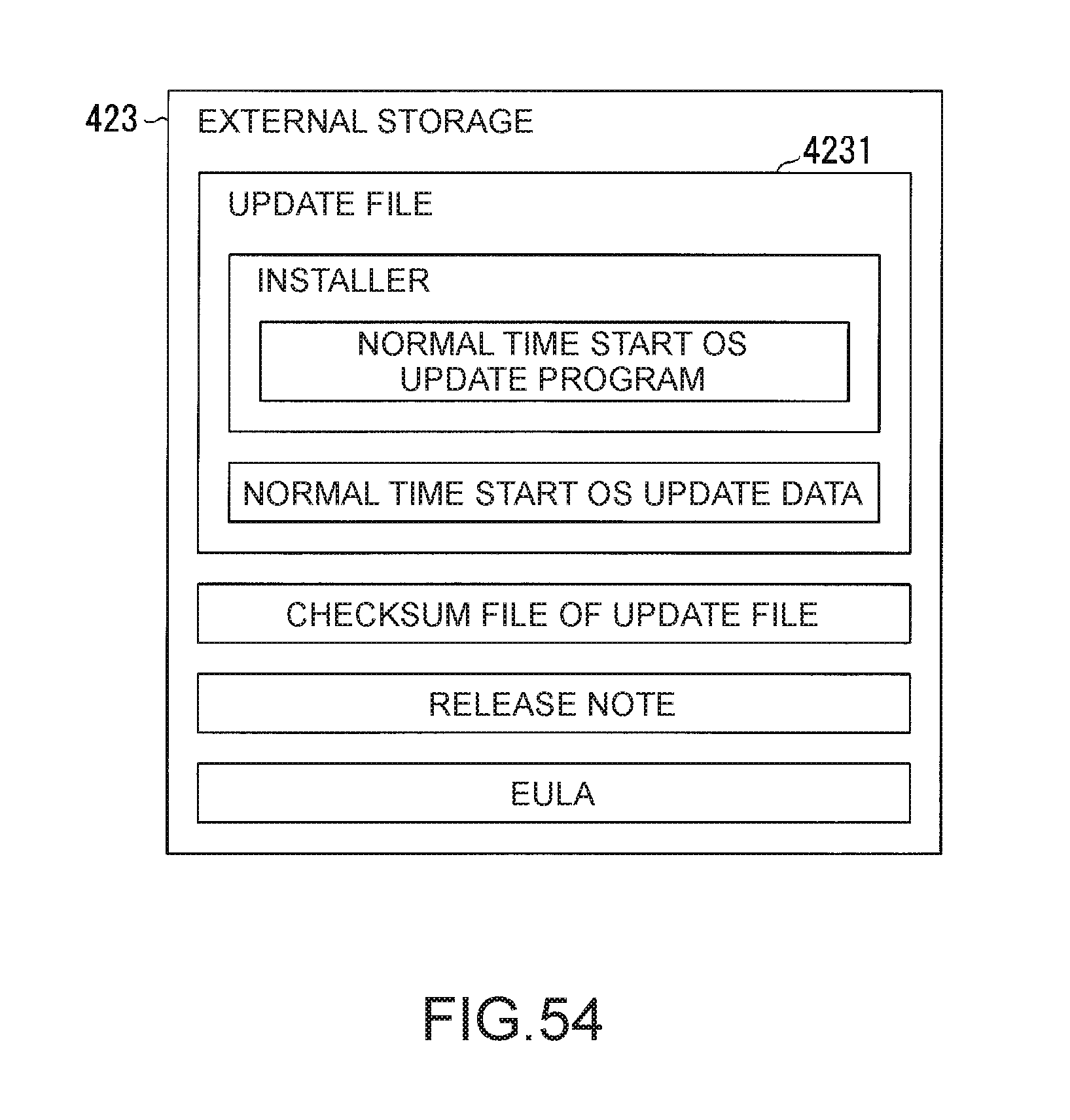

[0165] FIG. 54 is a diagram illustrating a plurality of files stored in an external storage.

[0166] FIG. 55 is a diagram showing an example of the functional configuration of the image processing apparatus while a normal time start OS is running.

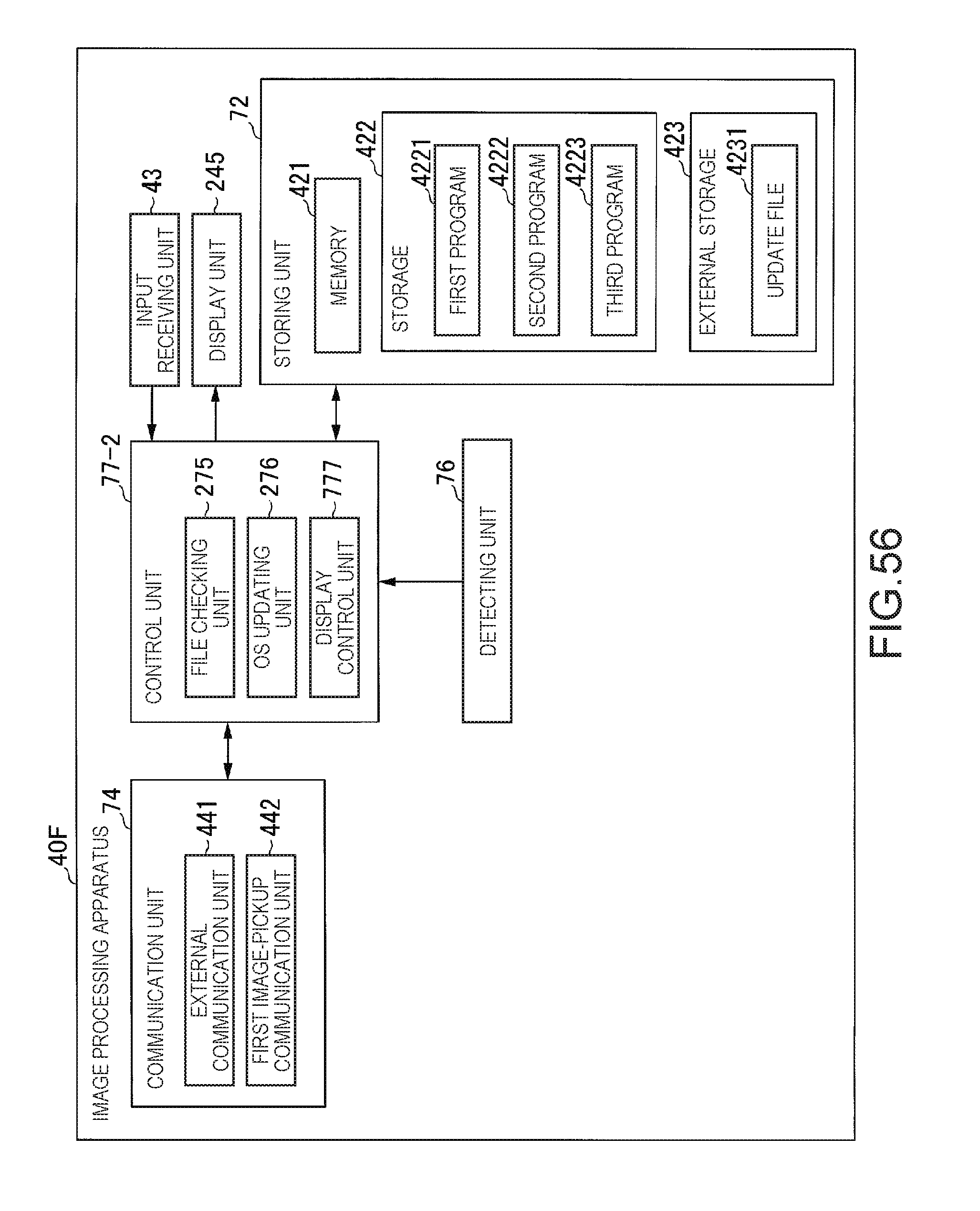

[0167] FIG. 56 is a diagram showing an example of the functional configuration of the image processing apparatus while a failure time start OS is running.

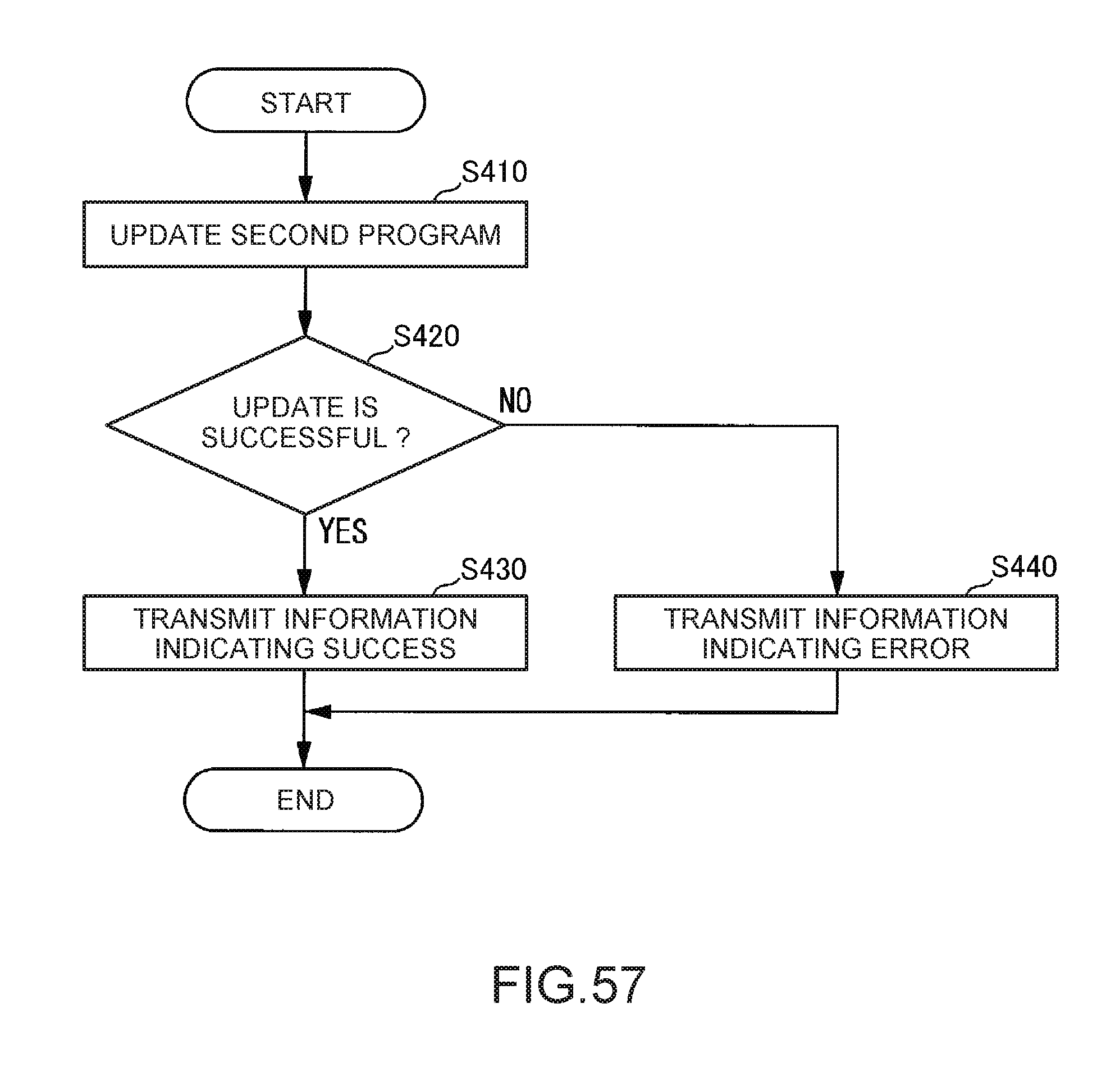

[0168] FIG. 57 is a flowchart showing an example of a flow of application update processing performed by a control unit.

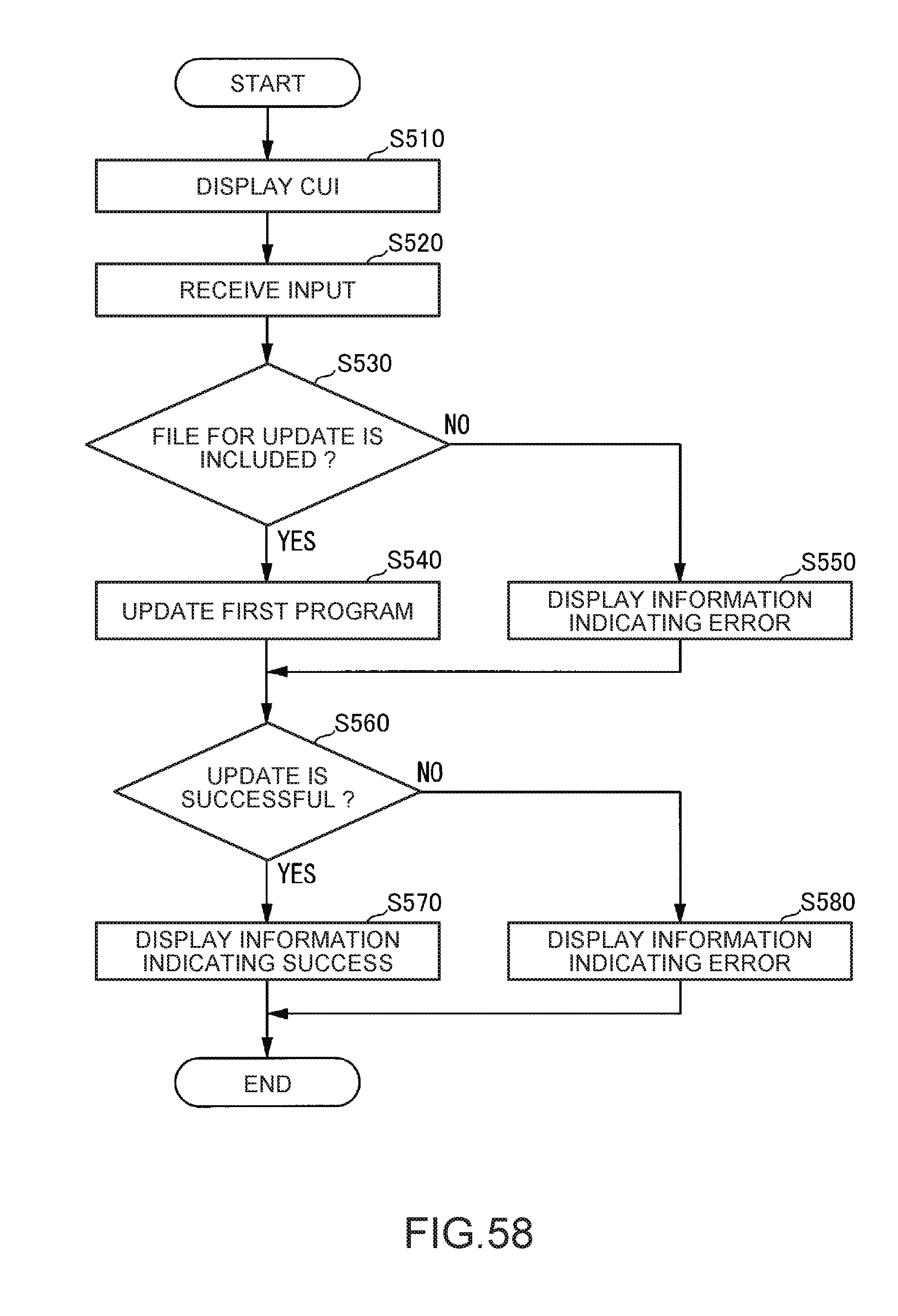

[0169] FIG. 58 is a flowchart showing an example of a flow of OS update processing performed by the control unit.

[0170] FIG. 59 is a diagram showing an example of a CUI for displaying a menu of the failure time start OS.

[0171] FIG. 60 is a diagram showing an example of a CUI that displays a warning about an event that occurs when the normal time start OS is updated and asks the user to confirm whether to execute the update of the normal time start OS.

[0172] FIG. 61 is a diagram showing an example of a CUI for displaying information indicating success of the update of the normal time start OS.

DESCRIPTION OF EXEMPLARY EMBODIMENTS

First Embodiment

[0173] An embodiment of the invention is explained below with reference to the drawings.

[0174] FIG. 1 is a configuration diagram showing an example of a robot system 1 according to this embodiment. The robot system 1 includes an information processing apparatus 5, an image pickup apparatus 10, a robot 20, a control apparatus 30, and an image processing apparatus 40.

[0175] In the robot system 1, the information processing apparatus 5 receives an operation from a user, and the robot system 1 causes the robot 20 to perform predetermined work on the basis of the received operation. The predetermined work is, for example, as shown in FIG. 1, work for moving a work target M, which is placed on the upper surface of a workbench TB, to a predetermined position. However, the predetermined work may be other work. The workbench TB is a stand such as a table. However, instead of a stand, the workbench TB may be a floor surface, a wall surface, or the like, as long as the work target M can be placed on it.

[0176] The work target M is an object that can be gripped by the robot 20, for example, a component of an industrial product such as a screw or a bolt, but may be another object. In FIG. 1, the work target M is shown as an object having a rectangular parallelepiped shape. The robot system 1 causes the robot 20 to perform the predetermined work on the basis of the position and the posture of the work target M calculated by the image processing apparatus 40.

[0177] The information processing apparatus 5 is an apparatus equipped with a display in which an application program for controlling the robot 20 can be installed, for example, a notebook PC, a desktop PC, a tablet PC, or a multi-function cellular phone terminal (a smartphone). The application program causes a display unit of the information processing apparatus 5 to display GUIs having various functions, such as a GUI for creating an image processing program for processing an image picked up by the image pickup apparatus 10 and a control program for controlling the robot 20, and a GUI for displaying the operation state of the robot system 1 (the respective states of the control apparatus 30 and the image processing apparatus 40, e.g., various statuses and the presence or absence of a failure).

[0178] The information processing apparatus 5 receives an operation from the user via a GUI displayed on the display unit, creates the control program on the basis of the received operation, and compiles the control program. Compilation converts the control program into object code in a format executable by the control apparatus 30. The information processing apparatus 5 then receives an operation from the user via the GUI and, on the basis of that operation, outputs the object code to the control apparatus 30. The object code describes, for example, a plurality of processing procedures. In each processing procedure in which the apparatus executing the object code communicates with an external apparatus, information indicating the transmission source of a request for the processing (the apparatus that executes the object code) and information indicating the transmission destination (the external apparatus) are described in association with each other for each kind of processing. The information indicating the transmission source is an IP address set in the information processing apparatus 5 or the control apparatus 30. The information indicating the transmission destination is an IP address set in the control apparatus 30 or in a communication connecting unit 41 (FIG. 2) of the image processing apparatus 40.
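The association between transmission source and transmission destination for each kind of processing can be pictured as a small lookup table. The following Python sketch is illustrative only: the `CommunicationStep` record, the processing names, and the IP addresses are hypothetical and are not the actual object code format, which is not specified here.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address


@dataclass(frozen=True)
class CommunicationStep:
    """One processing procedure in the object code that communicates externally."""
    processing: str           # kind of processing (hypothetical names below)
    source: IPv4Address       # apparatus that executes the object code
    destination: IPv4Address  # external apparatus that receives the request


def destinations_for(steps, source):
    """Map each kind of processing to its destination for one transmission source."""
    return {step.processing: step.destination for step in steps if step.source == source}


# Hypothetical addresses: no concrete values are given in the text.
CONTROLLER_IP = IPv4Address("192.168.0.1")  # control apparatus 30
VISION_IP = IPv4Address("192.168.0.2")      # communication connecting unit 41

object_code_steps = [
    CommunicationStep("locate_work_target", CONTROLLER_IP, VISION_IP),
    CommunicationStep("read_status", CONTROLLER_IP, VISION_IP),
]

routes = destinations_for(object_code_steps, CONTROLLER_IP)
```

Keying the table by source reflects that each executing apparatus only needs the destinations of the requests it itself sends.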

[0179] The information processing apparatus 5 also receives an operation from the user via the GUI displayed on the display unit and performs setting concerning a network for the image processing apparatus 40. The setting concerning a network includes setting an IP address of the image processing apparatus 40, an IP mask (also referred to as an IP address subnet mask), and an address of a gateway (hereinafter also referred to as a gateway address), as well as a setting for switching a bridge mode to an ON state. The bridge mode is a mode in which the same LAN port name and the same LAN port IP address are set in, and used by, both a first communication connecting unit 411 (FIG. 2) and a second communication connecting unit 412 (FIG. 2) included in the image processing apparatus 40.
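The network setting items listed above (IP address, IP mask, gateway address, bridge mode) lend themselves to a simple consistency check before they are applied. The Python sketch below uses the standard `ipaddress` module; the class names and the validation rules (the gateway must lie in at least one port's subnet, and the two ports must not share an IP address unless the bridge mode is ON) are assumptions for illustration, not rules stated in the text.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address, IPv4Interface


@dataclass
class PortSetting:
    name: str                 # LAN port name
    interface: IPv4Interface  # IP address together with its IP (subnet) mask


@dataclass
class NetworkSetting:
    first_port: PortSetting   # first communication connecting unit 411
    second_port: PortSetting  # second communication connecting unit 412
    gateway: IPv4Address
    bridge_mode: bool = False


def enable_bridge_mode(setting: NetworkSetting, name: str, interface: IPv4Interface) -> None:
    """Switch the bridge mode ON: both units use one LAN port name and one IP address."""
    shared = PortSetting(name, interface)
    setting.first_port = shared
    setting.second_port = shared
    setting.bridge_mode = True


def validate(setting: NetworkSetting) -> list:
    """Return a list of problems found in the setting (assumed rules; empty if none)."""
    problems = []
    ports = (setting.first_port, setting.second_port)
    if setting.bridge_mode:
        if setting.first_port != setting.second_port:
            problems.append("bridge mode requires identical port name and IP address")
    elif setting.first_port.interface.ip == setting.second_port.interface.ip:
        problems.append("separate ports must not share an IP address")
    if not any(setting.gateway in port.interface.network for port in ports):
        problems.append("gateway is outside every configured subnet")
    return problems
```

For example, two ports configured with the same address are flagged until the bridge mode is switched ON, after which the shared name and address are expected.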

[0180] The information processing apparatus 5 is communicably connected to the control apparatus 30 by a cable. Wired communication via the cable is performed according to a standard such as Ethernet (registered trademark).

[0181] The image pickup apparatus 10 is, for example, a camera including a CCD (Charge Coupled Device) or a CMOS (Complementary Metal Oxide Semiconductor) sensor, which is an image pickup device that converts condensed light into an electric signal. The image pickup apparatus 10 is communicably connected to the image processing apparatus 40 by a cable. Wired communication via the cable is performed, for example, according to a standard such as Ethernet (registered trademark) or USB. Note that the image pickup apparatus 10 and the image processing apparatus 40 may instead be connected by wireless communication performed according to a communication standard such as Wi-Fi (registered trademark). When the image processing apparatus 40 can be connected to a plurality of image pickup apparatuses 10, a plurality of image pickup apparatuses 10 may be provided.

[0182] The image pickup apparatus 10 is installed at a position where it can pick up, as a picked-up image, an image of a range including the work target M. The image pickup apparatus 10 is configured to pick up a still image as the picked-up image. Alternatively, the image pickup apparatus 10 may be configured to pick up a moving image as the picked-up image.

[0183] The robot 20 acquires, from the control apparatus 30, a control signal based on the position and the posture of the work target M and performs the predetermined work on the basis of the acquired control signal. The robot 20 is a single-arm robot including an end effector END having nail sections capable of gripping an object (in this example, the work target M), a manipulator MNP, and a plurality of actuators (not shown). A single-arm robot is a robot having one arm composed of the end effector END and the manipulator MNP (or of the manipulator MNP alone).

[0184] Note that the robot 20 may be a SCARA robot (a horizontal articulated robot), a double-arm robot, or the like instead of the single-arm robot. The SCARA robot is a robot in which a manipulator moves only in the horizontal direction and only a slide shaft at the distal end of the manipulator moves up and down. The double-arm robot is a robot including two arms, each configured by the end effector END and the manipulator MNP (or only the manipulator MNP).

[0185] In the example shown in FIG. 1, the arm of the robot 20 is of a six-axis vertical articulated type. A support stand, the manipulator MNP, and the end effector END can move with six degrees of freedom through the coordinated action of the actuators. Note that the arm of the robot 20 may operate with five or fewer degrees of freedom (five axes or fewer) or with seven or more degrees of freedom (seven axes or more). In the following explanation, the operation of the robot 20 performed by the arm including the end effector END and the manipulator MNP is explained.

[0186] The robot 20 is communicably connected to the control apparatus 30 by, for example, a cable. Wired communication via the cable is performed, for example, according to a standard such as Ethernet (registered trademark) or USB. Note that the robot 20 and the control apparatus 30 may instead be connected by wireless communication performed according to a communication standard such as Wi-Fi (registered trademark). As shown in FIG. 1, the robot 20 is connected to the control apparatus 30, which is installed outside the robot 20. However, the control apparatus 30 may instead be incorporated in the robot 20.

[0187] The control apparatus 30 acquires the object code from the information processing apparatus 5 and, on the basis of the acquired object code, controls the robot 20 to perform the predetermined work. More specifically, in this example, the control apparatus 30 outputs to the image processing apparatus 40, on the basis of the object code, a request to acquire a picked-up image of the range including the work target M picked up by the image pickup apparatus 10 and to perform, on the basis of the acquired picked-up image, image processing for calculating the position and the posture of the work target M. Note that the request output by the control apparatus 30 to the image processing apparatus 40 includes, as information indicating the transmission destination, information indicating the setting concerning a network for the communication connecting unit 41 (FIG. 2) of the image processing apparatus 40.

[0188] The control apparatus 30 is communicably connected to the image processing apparatus 40 by a LAN cable. Wired communication via the LAN cable is performed, for example, according to a standard such as Ethernet (registered trademark). When the image processing apparatus 40 starts, it outputs the information indicating the setting concerning a network for the communication connecting unit 41 (FIG. 2); the control apparatus 30 outputs this information to the information processing apparatus 5 and thereby shares it with the information processing apparatus 5.

[0189] After the image processing is finished by the image processing apparatus 40, the control apparatus 30 acquires information indicating the position and the posture of the work target M from the image processing apparatus 40. The control apparatus 30 generates a control signal based on the acquired information concerning the position and the posture of the work target M and outputs the generated control signal to the robot 20 to control the robot 20 to perform the predetermined work.
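The request and response cycle described in paragraphs [0187] to [0189] can be summarized with in-process stand-ins. The Python sketch below is hypothetical throughout: the class and method names, the planar `Pose` model, and the command tuples are assumptions made for illustration, and they replace the real LAN communication and the real control signals sent to the robot 20.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    """Position and posture of the work target M (planar model assumed)."""
    x: float
    y: float
    angle_deg: float


class ImageProcessingStub:
    """Stand-in for the image processing apparatus 40; returns a fixed pose."""

    def __init__(self, pose: Pose):
        self._pose = pose
        self.received_destinations = []

    def locate(self, destination_ip: str) -> Pose:
        # A real apparatus would acquire a picked-up image from the image pickup
        # apparatus 10 here and run image processing on it.
        self.received_destinations.append(destination_ip)
        return self._pose


class ControlApparatusSketch:
    """Stand-in for the control apparatus 30 executing one work cycle."""

    def __init__(self, vision: ImageProcessingStub, vision_ip: str):
        self.vision = vision
        self.vision_ip = vision_ip  # destination taken from the network setting

    def plan_work(self, place: Pose) -> list:
        # Send the request and wait for the position and posture of the work target M.
        target = self.vision.locate(self.vision_ip)
        # Control signals are modeled as simple command tuples for the robot 20.
        return [("move_to", target), ("grip",), ("move_to", place), ("release",)]
```

The control apparatus only proceeds to generate commands after the pose has been returned, mirroring the order in which the text describes the exchange.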

[0190] The image processing apparatus 40 acquires, according to the request from the control apparatus 30 based on the object code, a picked-up image of the range including the work target M from the image pickup apparatus 10. After acquiring the picked-up image from the image pickup apparatus 10, the image processing apparatus 40 performs, on the basis of the acquired picked-up image, image processing for calculating a position and a posture of the work target M. The image processing apparatus 40 outputs information indicating the position and the posture of the work target M obtained as a result of the image processing to the control apparatus 30 via the LAN cable.
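The text does not say which image processing algorithm calculates the position and the posture. One common technique, swapped in here purely as an illustration, is to compute image moments of a segmented target region: the centroid gives the position and the principal-axis angle gives the in-plane posture. The sketch assumes the picked-up image has already been reduced to a binary mask, and the function name is hypothetical.

```python
import math


def position_and_posture(mask):
    """Estimate position (centroid) and posture (principal-axis angle) of a blob.

    mask: list of rows of 0/1 values, where 1 marks pixels of the work target M.
    Returns (cx, cy, angle_deg) in pixel coordinates (x to the right, y downward);
    angle_deg is measured from the +x axis.
    """
    pixels = [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not pixels:
        raise ValueError("no work-target pixels in the mask")
    n = len(pixels)
    cx = sum(x for x, _ in pixels) / n
    cy = sum(y for _, y in pixels) / n
    # Second-order central moments describe how the pixels spread around the centroid.
    mu20 = sum((x - cx) ** 2 for x, _ in pixels)
    mu02 = sum((y - cy) ** 2 for _, y in pixels)
    mu11 = sum((x - cx) * (y - cy) for x, y in pixels)
    angle = 0.5 * math.atan2(2 * mu11, mu20 - mu02)
    return cx, cy, math.degrees(angle)
```

The angle is only defined modulo 180 degrees, so a real system would need additional cues (for example, asymmetric features) to distinguish the two ends of an elongated part.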

[0191] The image processing apparatus 40 performs the setting concerning a network for the communication connecting unit 41 (FIG. 2) according to an operation performed by the user on the information processing apparatus 5. Alternatively, the image processing apparatus 40 performs this setting when the user operates an input unit 60 (FIG. 2) on the basis of an image displayed on a display unit 50 (FIG. 2) connected to the image processing apparatus 40.

[0192] When the image processing apparatus 40 is started, the image processing apparatus 40 outputs the information indicating the setting concerning a network for the communication connecting unit 41 (FIG. 2) to the control apparatus 30.
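The startup report and the subsequent sharing with the information processing apparatus 5 can be sketched as a notice message plus a small registry on the control apparatus side. In the Python sketch below, the JSON layout, the field names, and the `SettingRegistry` class are all hypothetical; the actual message format is not specified in the text.

```python
import json


def build_startup_notice(port_name, ip, mask, gateway, bridge_mode):
    """Serialize the network setting of the communication connecting unit 41.

    The JSON message layout is hypothetical.
    """
    notice = {
        "type": "network_setting",
        "port_name": port_name,
        "ip": ip,
        "mask": mask,
        "gateway": gateway,
        "bridge_mode": bridge_mode,
    }
    return json.dumps(notice).encode("utf-8")


class SettingRegistry:
    """Control-apparatus side: remembers reported settings and shares them."""

    def __init__(self):
        self.known = {}        # IP address -> latest reported setting
        self.subscribers = []  # callables standing in for the information processing apparatus 5

    def on_notice(self, payload: bytes) -> None:
        notice = json.loads(payload.decode("utf-8"))
        if notice.get("type") != "network_setting":
            raise ValueError("unexpected message type")
        self.known[notice["ip"]] = notice
        for notify in self.subscribers:
            notify(notice)  # forward so both apparatuses hold the same information
```

Keeping only the latest notice per IP address means a restarted image processing apparatus simply overwrites its previous entry.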