Information Processing Apparatus And Information Processing Method

IMOTO; MAKI ; et al.

U.S. patent application number 16/154331 was filed with the patent office on 2018-10-08 and published on 2019-02-07 for information processing apparatus and information processing method. The applicant listed for this patent is SONY CORPORATION. Invention is credited to MAKI IMOTO, TAKURO NODA, RYOUHEI YASUDA.

| Application Number | 16/154331 |
|---|---|
| Family ID | 52665434 |
| Filed Date | 2018-10-08 |
| United States Patent Application | 20190041985 |
| Kind Code | A1 |
| IMOTO; MAKI ; et al. | February 7, 2019 |

INFORMATION PROCESSING APPARATUS AND INFORMATION PROCESSING METHOD

Abstract

There is provided an information processing apparatus including an image acquisition unit configured to acquire a captured image of users, a determination unit configured to determine an operator from among the users included in the acquired captured image, and a processing unit configured to conduct a process based on information about user line of sight corresponding to the determined operator.

| Inventors: | IMOTO; MAKI (TOKYO, JP); NODA; TAKURO (TOKYO, JP); YASUDA; RYOUHEI (KANAGAWA, JP) |
|---|---|
| Applicant: | SONY CORPORATION |
| Family ID: | 52665434 |
| Appl. No.: | 16/154331 |
| Filed: | October 8, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14917244 | Mar 7, 2016 | 10120441 |
| PCT/JP2014/067433 | Jun 30, 2014 | |
| 16154331 | | |

| Current U.S. Class: | 1/1 |
|---|---|
| Current CPC Class: | G06F 3/012 (2013.01); G06F 3/013 (2013.01); G06F 3/167 (2013.01); G06F 3/0304 (2013.01); G06F 3/017 (2013.01) |
| International Class: | G06F 3/01 (2006.01); G06F 3/03 (2006.01) |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Sep 13, 2013 | JP | 2013-190715 |

Claims

1. An information processing apparatus, comprising: a display screen; and one or more processors configured to: acquire a captured image that includes a plurality of users; detect a plurality of regions corresponding to physical features of the plurality of users in the captured image; determine an operator from the plurality of users included in the captured image, based on a position of a first input operation of a first user of the plurality of users in a specific region on the display screen and based on a size of each of the plurality of regions; and control a first process based on first information associated with the first input operation corresponding to the determined operator.

2. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to control the display screen to display an object based on a second input operation of a second user of the plurality of users, and the second user is different from the determined operator.

3. The information processing apparatus according to claim 2, wherein the one or more processors are further configured to control the display screen to hide the displayed object, based on one of an elapse of a first determined time or a position of the second input operation that is unchanged for a second determined time.

4. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to determine the operator based on a corresponding distance of each region of the plurality of regions from the display screen.

5. The information processing apparatus according to claim 4, wherein the one or more processors are further configured to determine the first user of the plurality of users as the operator based on a first region of the plurality of regions that has a shortest distance from the display screen among the corresponding distance of each region of the plurality of regions, and the first region is associated with the first user.

6. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to determine the operator based on a specific gesture detected from the captured image.

7. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to determine the operator based on a rank associated with each of the plurality of users.

8. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to: determine the operator as the first user; determine an operation level for the first user; and dynamically change available processes based on the determined operation level.

9. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to control a second process based on second information associated with a second input operation of a second user of the plurality of users and based on a recognition of a determined speech of the second user.

10. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to determine a new operator from the plurality of users based on unavailability of the first information.

11. The information processing apparatus according to claim 1, wherein the one or more processors are further configured to change the operator from the first user to a second user of the plurality of users based on a detection of a determined combination of gestures by the first user and the second user, and the second user is different from the first user.

12. The information processing apparatus according to claim 1, wherein the physical features of the plurality of users correspond to faces of the plurality of users.

13. The information processing apparatus according to claim 1, wherein the first input operation includes a line-of-sight operation.

14. An information processing method, comprising: in an information processing apparatus: acquiring a captured image that includes a plurality of users; detecting a plurality of regions corresponding to physical features of the plurality of users in the captured image; determining an operator from the plurality of users included in the captured image, based on a position of an input operation of a user of the plurality of users in a specific region on a display screen and based on a size of each of the plurality of regions; and controlling a process based on information associated with the input operation corresponding to the determined operator.

15. A non-transitory computer-readable medium having stored thereon computer-executable instructions that, when executed by a processor, cause the processor to execute operations, the operations comprising: acquiring a captured image that includes a plurality of users; detecting a plurality of regions corresponding to physical features of the plurality of users in the captured image; determining an operator from the plurality of users included in the captured image, based on a position of an input operation of a user of the plurality of users in a specific region on a display screen and based on a size of each of the plurality of regions; and controlling a process based on information associated with the input operation corresponding to the determined operator.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

[0001] The present application is a continuation application of U.S. patent application Ser. No. 14/917,244, filed Mar. 7, 2016, which is a National Stage of PCT/JP2014/067433, filed Jun. 30, 2014, and which claims the priority from Japanese Patent Application JP 2013-190715, filed Sep. 13, 2013, the entire content of which is hereby incorporated by reference.

TECHNICAL FIELD

[0002] The present disclosure relates to an information processing apparatus, an information processing method, and a program.

BACKGROUND ART

[0003] In recent years, user interfaces that allow a user to perform operations through the line of sight, using line-of-sight detection technology such as eye tracking technology, have been emerging. For example, the technology described in PTL 1 below can be cited as a technology concerning a user interface that allows the user to operate through the line of sight.

CITATION LIST

Patent Literature

[0004] Patent Literature 1: JP 2009-64395A

SUMMARY OF INVENTION

Technical Problem

[0005] For example, when an operation is performed through a user's line of sight, an apparatus that conducts a process based on a user's line of sight needs to conduct the process after determining which user's line of sight to use as the basis for conducting the process, or in other words, determining the operator who performs an operation through line of sight (hereinafter simply called the "operator" in some cases). However, a method of determining the operator in an apparatus like the above has not been established.

[0006] The present disclosure proposes a new and improved information processing apparatus and information processing method capable of determining an operator who performs an operation through line of sight, and conducting a process based on the line of sight of the determined operator.

Solution to Problem

[0007] According to the present disclosure, there is provided an information processing apparatus including: an image acquisition unit configured to acquire a captured image of users; a determination unit configured to determine an operator from among the users included in the acquired captured image; and a processing unit configured to conduct a process based on information about user line of sight corresponding to the determined operator.

[0008] According to the present disclosure, there is provided an information processing method executed by an information processing apparatus, the information processing method including: a step of acquiring a captured image of users; a step of determining an operator from among the users included in the acquired captured image; and a step of conducting a process based on information about user line of sight corresponding to the determined operator.

Advantageous Effects of Invention

[0009] According to the present disclosure, it is possible to determine an operator who performs an operation through line of sight, and conduct a process based on the line of sight of the determined operator.

Note that the above effect is not necessarily limiting. Together with the above effect, or instead of it, any of the effects described in this specification, or another effect that can be grasped from this specification, may be achieved.

BRIEF DESCRIPTION OF DRAWINGS

[0010] FIGS. 1A and 1B are explanatory diagrams for describing an example of a process in accordance with an information processing method according to the present embodiment.

[0011] FIGS. 2A and 2B are explanatory diagrams for describing an example of a process in accordance with an information processing method according to the present embodiment.

[0012] FIG. 3 is an explanatory diagram for describing an example of a process in accordance with an information processing method according to the present embodiment.

[0013] FIGS. 4A and 4B are explanatory diagrams for describing a second applied example of a process in accordance with an information processing method according to the present embodiment.

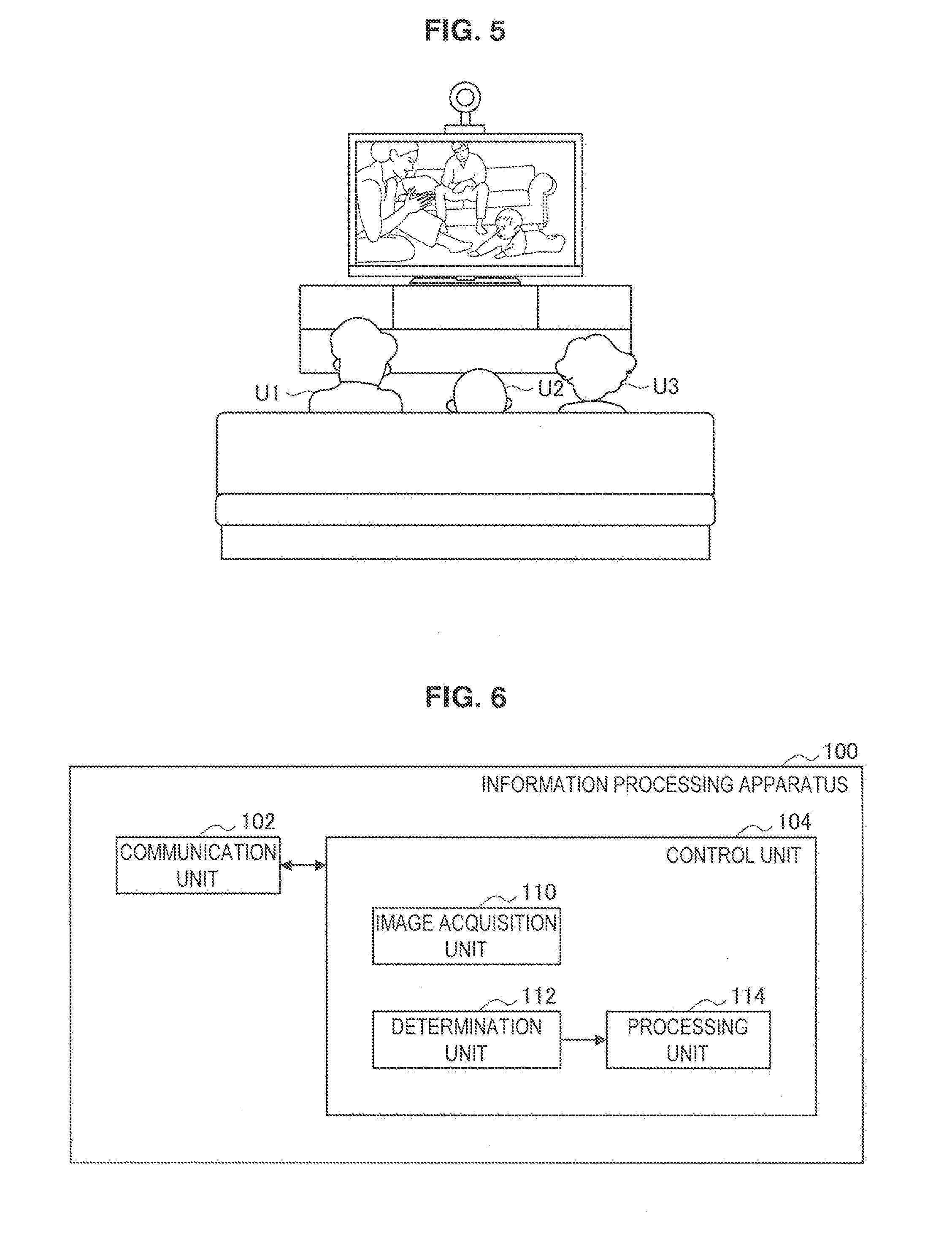

[0014] FIG. 5 is an explanatory diagram for describing a third applied example of a process in accordance with an information processing method according to the present embodiment.

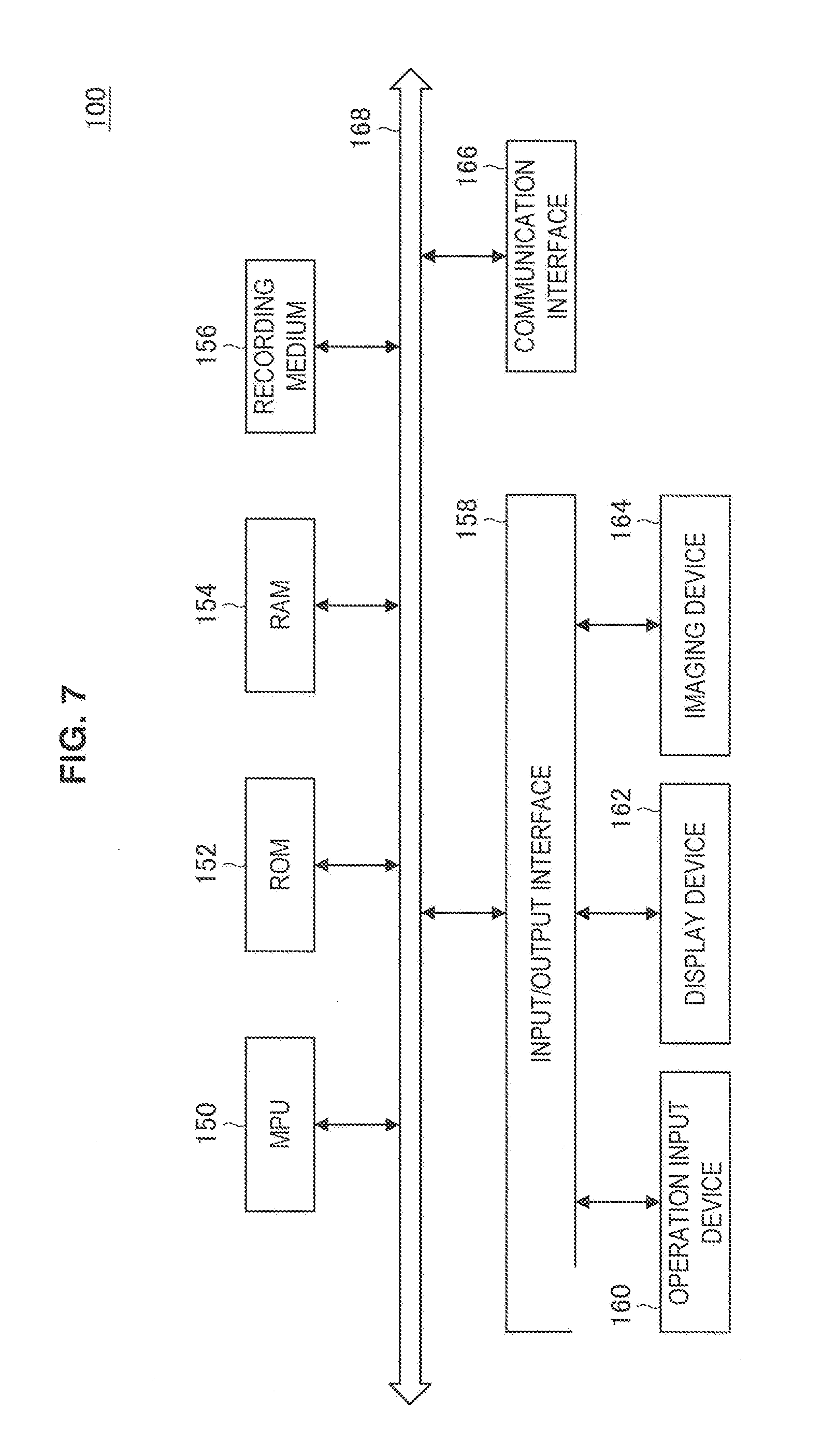

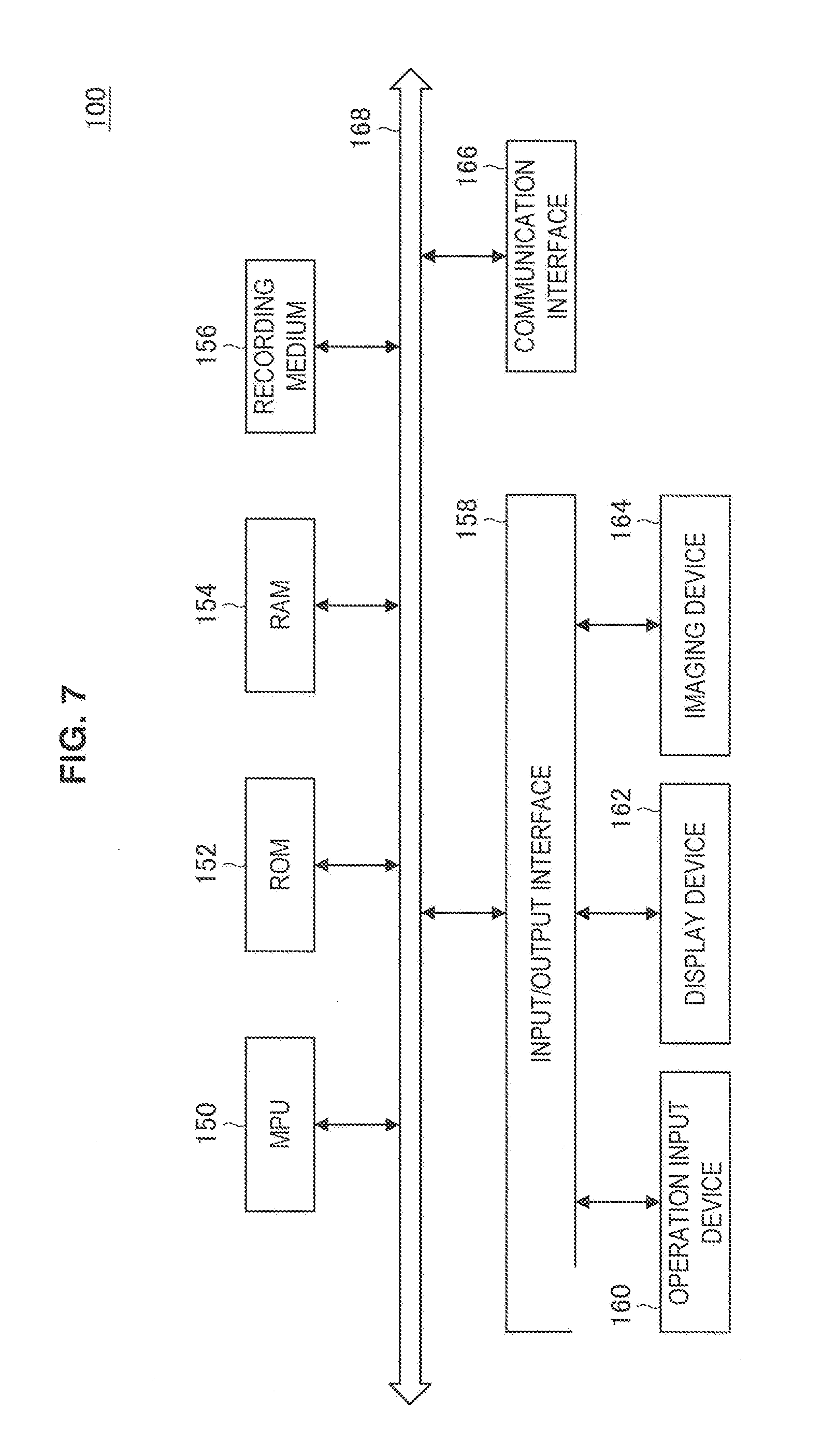

[0015] FIG. 6 is a block diagram illustrating an example of a configuration of an information processing apparatus according to the present embodiment.

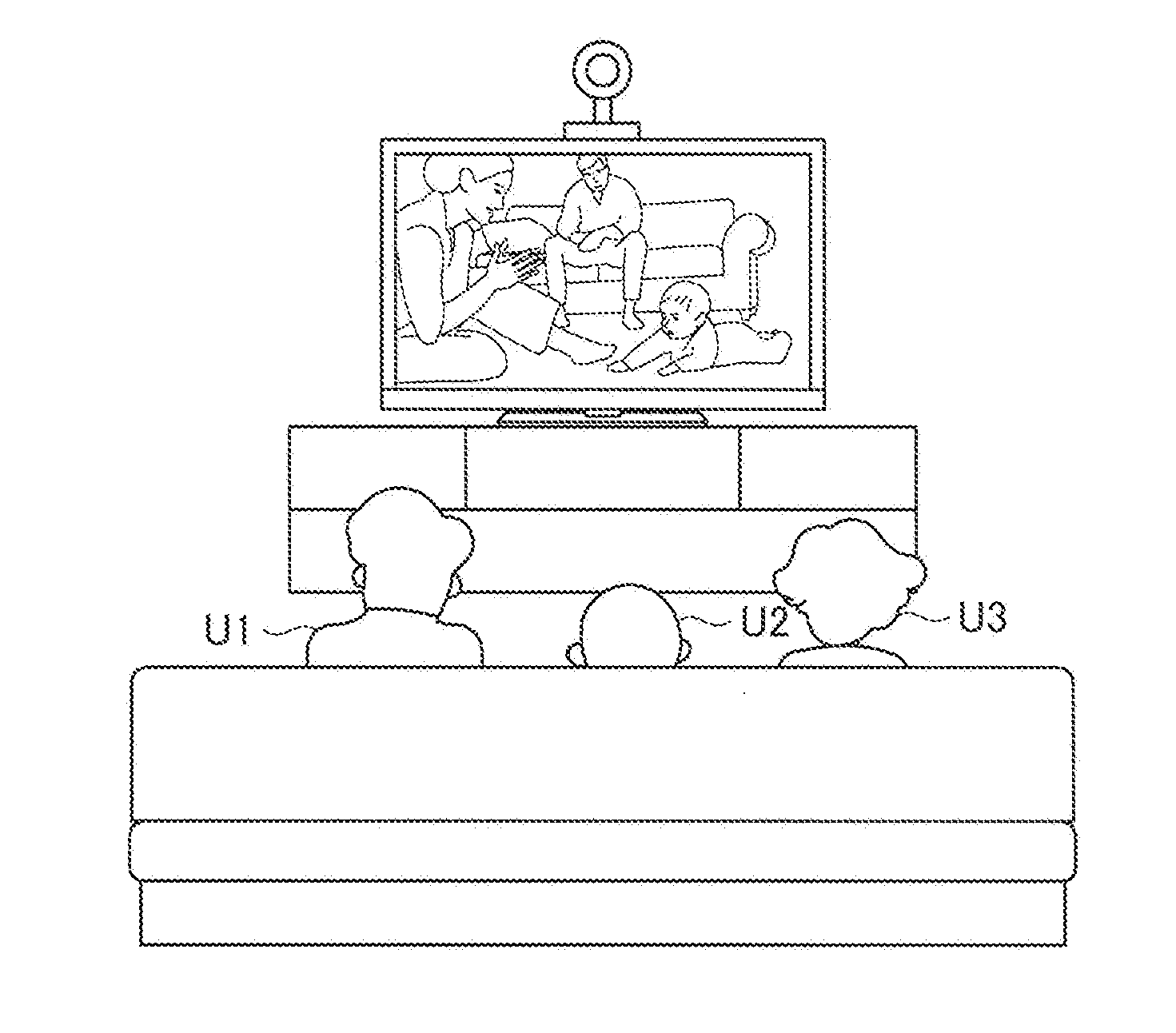

[0016] FIG. 7 is an explanatory diagram illustrating an example of a hardware configuration of an information processing apparatus according to the present embodiment.

DESCRIPTION OF EMBODIMENTS

[0017] A preferred embodiment of the present disclosure will be described in detail below with reference to the appended drawings. Note that in this specification and the drawings, the same reference signs are attached to elements having substantially the same function and configuration, thereby omitting duplicate descriptions.

[0018] The description will be provided in the order shown below:

1. Information Processing Method According to the Present Embodiment

2. Information Processing Apparatus According to the Present Embodiment

3. Program According to the Present Embodiment

Information Processing Method According to the Present Embodiment

[0019] Before describing the configuration of an information processing apparatus according to the present embodiment, an information processing method according to the present embodiment will first be described. The method is described below using, as an example, a case in which the processing according to the information processing method is performed by the information processing apparatus according to the present embodiment.

[1] Overview of Process According to Information Processing Method According to Present Embodiment

[0020] As discussed above, a method of deciding an operator who performs an operation through line of sight in an apparatus that conducts a process based on a user's line of sight has not been established. Herein, an operator according to the present embodiment refers to the user who performs an action that affects the behavior of the apparatus (or an application being executed), for example. Note that, as discussed later, as an example of a process according to an information processing method according to the present embodiment, an information processing apparatus according to the present embodiment is also capable of conducting a process that does not affect the behavior of the apparatus (or an application being executed) on the basis of the line of sight of a user not determined to be the operator.

[0021] Accordingly, an information processing apparatus according to the present embodiment acquires a captured image of users (image acquisition process), and determines the operator from among the users included in the acquired captured image (determination process). Subsequently, an information processing apparatus according to the present embodiment conducts a process on the basis of information about the line of sight of the user corresponding to the determined operator (execution process).

[0022] Herein, a captured image of users according to the present embodiment refers to a captured image that may include users, for example. Hereinafter, a captured image of users according to the present embodiment will simply be called the "captured image". The captured image according to the present embodiment may be a user-captured image viewable on a display screen, for example. In addition, the captured image according to the present embodiment may be generated by image capture in an imaging unit (discussed later) provided in an information processing apparatus according to the present embodiment, or in an external imaging device. The captured image according to the present embodiment may be a moving image or a still image, for example.

[0023] The display screen according to the present embodiment is, for example, a display screen on which various images are displayed and toward which the user directs the line of sight. As the display screen according to the present embodiment, for example, the display screen of a display unit (described later) included in the information processing apparatus according to the present embodiment and the display screen of an external display apparatus (or an external display device) connected to the information processing apparatus according to the present embodiment wirelessly or via a cable can be cited.

[0024] In addition, information about user line of sight according to the present embodiment refers to information (data) about a user's eyes, such as the position of a user's line of sight on the display screen and a user's eye movements, for example. The information about user line of sight according to the present embodiment may be information about the position of the line of sight of a user and information about eye movements of a user, for example.

[0025] Here, the information about the position of the line of sight of the user according to the present embodiment is, for example, data showing the position of the line of sight of the user or data that can be used to identify the position of the line of sight of the user (or data that can be used to estimate the position of the line of sight of the user; this also applies below).

[0026] As the data showing the position of the line of sight of the user according to the present embodiment, for example, coordinate data showing the position of the line of sight of the user on the display screen can be cited. The position of the line of sight of the user on the display screen is represented by, for example, coordinates in a coordinate system in which a reference position of the display screen is set as its origin. The data showing the position of the line of sight of the user according to the present embodiment may include data indicating the direction of the line of sight (for example, data showing the angle with the display screen).

[0027] When coordinate data indicating the position of the line of sight of the user on the display screen is used as information about the position of the line of sight of the user according to the present embodiment, the information processing apparatus according to the present embodiment identifies the position of the line of sight of the user on the display screen by using, for example, coordinate data acquired from an external apparatus having identified (estimated) the position of the line of sight of the user by using line-of-sight detection technology and indicating the position of the line of sight of the user on the display screen. When the data indicating the direction of the line of sight is used as information about the position of the line of sight of the user according to the present embodiment, the information processing apparatus according to the present embodiment identifies the direction of the line of sight by using, for example, data indicating the direction of the line of sight acquired from the external apparatus.

[0028] It is possible to identify the position of the line of sight of the user and the direction of the line of sight of the user on the display screen by using the line of sight detected by line-of-sight detection technology together with the position of the user and the orientation of the user's face with respect to the display screen, both detected from a captured image in which the direction in which images are displayed on the display screen is captured. However, the method of identifying the position of the line of sight of the user and the direction of the line of sight of the user on the display screen according to the present embodiment is not limited to the above method. For example, the information processing apparatus according to the present embodiment and the external apparatus can use any technology capable of identifying the position of the line of sight of the user and the direction of the line of sight of the user on the display screen.

[0029] As the line-of-sight detection technology according to the present embodiment, for example, a method of detecting the line of sight based on the position of a moving point (for example, a point corresponding to a moving portion in an eye such as the iris and the pupil) of an eye with respect to a reference point (for example, a point corresponding to a portion that does not move in the eye such as an eye's inner corner or corneal reflex) of the eye can be cited. However, the line-of-sight detection technology according to the present embodiment is not limited to the above technology and may be, for example, any line-of-sight detection technology capable of detecting the line of sight.
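As a non-limiting illustration of the moving-point and reference-point approach described above, the following Python sketch maps the offset of a pupil center from an inner eye corner to a coordinate on the display screen. The names `GazeCalibration` and `estimate_gaze_point`, and the linear calibration model itself, are assumptions introduced only for illustration and are not taken from the present disclosure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GazeCalibration:
    """Hypothetical linear calibration: screen = gain * offset + origin."""
    gain_x: float
    gain_y: float
    origin_x: float
    origin_y: float


def estimate_gaze_point(pupil, inner_corner, calib):
    """Map the pupil offset from the eye's reference point to screen coordinates.

    pupil is the moving point and inner_corner is the reference point, both
    given as (x, y) positions in the captured image. The linear mapping is an
    illustrative assumption, not a method stated in the source.
    """
    dx = pupil[0] - inner_corner[0]
    dy = pupil[1] - inner_corner[1]
    return (calib.gain_x * dx + calib.origin_x,
            calib.gain_y * dy + calib.origin_y)
```

In practice the calibration values would have to be obtained per user and per display arrangement; any other line-of-sight detection technology could be substituted for this sketch.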

[0030] As the data that can be used to identify the position of the line of sight of the user according to the present embodiment, for example, captured image data in which the direction in which images (moving images or still images) are displayed on the display screen is imaged can be cited. The data that can be used to identify the position of the line of sight of the user according to the present embodiment may further include detection data of any sensor obtaining detection values that can be used to improve estimation accuracy of the position of the line of sight of the user such as detection data of an infrared sensor that detects infrared radiation in the direction in which images are displayed on the display screen.

[0031] When data that can be used to identify the position of the line of sight of the user is used as information about the position of the line of sight of the user according to the present embodiment, the information processing apparatus according to the present embodiment uses, for example, captured image data acquired by an imaging unit (described later) included in the local apparatus (hereinafter, referred to as the information processing apparatus according to the present embodiment) or an external imaging device. In the above case, the information processing apparatus according to the present embodiment may use, for example, detection data (example of data that can be used to identify the position of the line of sight of the user) acquired from a sensor that can be used to improve estimation accuracy of the position of the line of sight of the user included in the local apparatus or an external sensor. The information processing apparatus according to the present embodiment performs processing according to an identification method of the position of the line of sight of the user and the direction of the line of sight of the user on the display screen according to the present embodiment using, for example, data that can be used to identify the position of the line of sight of the user acquired as described above to identify the position of the line of sight of the user and the direction of the line of sight of the user on the display screen.

[0032] The information related to a user's eye movements according to the present embodiment may be, for example, data indicating the user's eye movements, or data that may be used to specify the user's eye movements (or data that may be used to estimate the user's eye movements; this applies similarly hereinafter).

[0033] The data indicating a user's eye movements according to the present embodiment may be, for example, data indicating a predetermined eye movement, such as a single blink movement, multiple consecutive blink movements, or a wink movement (for example, data indicating a number or the like corresponding to a predetermined movement). In addition, the data that may be used to specify a user's eye movements according to the present embodiment may be, for example, captured image data depicting the direction in which an image (moving image or still image) is displayed on the display screen.

[0034] When data indicating a user's eye movements is used as the information related to a user's eye movements according to the present embodiment, the information processing apparatus according to the present embodiment determines that a predetermined eye movement has been performed by using data indicating the user's eye movements acquired from an external apparatus that specifies (or estimates) the user's eye movements on the basis of a captured image, for example.

[0035] Herein, for example, when a change in eye shape detected from a moving image (or a plurality of still images) depicting the direction in which an image is displayed on the display screen qualifies as a change in eye shape corresponding to a predetermined eye movement, it is possible to determine that the predetermined eye movement was performed. Note that the method of determining a predetermined eye movement according to the present embodiment is not limited to the above. For example, the information processing apparatus according to the present embodiment or an external apparatus is capable of using arbitrary technology enabling a determination that a predetermined eye movement was performed.
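As a minimal sketch of determining a predetermined eye movement from a change in eye shape, the following Python example counts blinks in a per-frame series of eye-openness values. The openness measure, the threshold of 0.2, and the function names `count_blinks` and `classify_eye_movement` are hypothetical and are introduced only for illustration.

```python
def count_blinks(openness, closed_threshold=0.2):
    """Count closed-then-reopened episodes in a per-frame eye-openness series.

    openness holds one value per frame (larger means more open); the scale
    and the threshold are illustrative assumptions.
    """
    blinks = 0
    closed = False
    for value in openness:
        if value < closed_threshold:
            closed = True
        elif closed:
            # The eye reopened after being closed: one blink completed.
            blinks += 1
            closed = False
    return blinks


def classify_eye_movement(openness, closed_threshold=0.2):
    """Label the series as no blink, a single blink, or multiple blinks."""
    blinks = count_blinks(openness, closed_threshold)
    if blinks == 0:
        return "none"
    if blinks == 1:
        return "single_blink"
    return "multiple_blinks"
```

A wink movement could be handled in the same manner by evaluating the left and right eyes separately, although that case is omitted from this sketch.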

[0036] When data that may be used to specify a user's eye movements is used as the information related to a user's eye movements according to the present embodiment, the information processing apparatus according to the present embodiment uses captured image data (an example of data that may be used to specify a user's eye movements) acquired from an imaging unit (discussed later) provided in the local apparatus or an external imaging device, for example. The information processing apparatus according to the present embodiment uses the data that may be used to specify a user's eye movements acquired as above to conduct a process related to a method of determining a predetermined eye movement according to the present embodiment, and determine that the predetermined eye movement was performed, for example.

[0037] Hereinafter, processes according to an information processing method according to the present embodiment will be described more specifically.

[2] Processes According to Information Processing Method According to Present Embodiment

(1) Image Acquisition Process

[0038] The information processing apparatus according to the present embodiment acquires the captured image according to the present embodiment. The information processing apparatus according to the present embodiment acquires the captured image according to the present embodiment by controlling image capture in an imaging unit (discussed later) provided in the information processing apparatus according to the present embodiment or an external imaging device, for example. The information processing apparatus according to the present embodiment controls image capture in the imaging unit (discussed later) or the like by transmitting control commands related to image capture to the imaging unit (discussed later), the external imaging device, or the like via a communication unit (discussed later) or a connected external communication device.

[0039] Note that the image acquisition process according to the present embodiment is not limited to the above. For example, the information processing apparatus according to the present embodiment may also passively acquire the captured image according to the present embodiment transmitted from the imaging unit (discussed later) or the external imaging device.

(2) Determination Process

[0040] The information processing apparatus according to the present embodiment determines the operator from among the users included in a captured image acquired by the process of (1) above (image acquisition process). The information processing apparatus according to the present embodiment determines a single user or multiple users from among the users included in the captured image as the operator(s).

(2-1) First Example of Determination Process

[0041] The information processing apparatus according to the present embodiment determines the operator on the basis of the size of a face region detected from the captured image, for example.

[0042] Herein, the face region according to the present embodiment refers to a region including the face portion of a user in the captured image. The information processing apparatus according to the present embodiment detects the face region by detecting features such as the user's eyes, nose, mouth, and bone structure from the captured image, or by detecting a region resembling a luminance distribution and structure pattern of a face from the captured image, for example. Note that the method of detecting the face region according to the present embodiment is not limited to the above, and the information processing apparatus according to the present embodiment may also use arbitrary technology enabling the detection of a face from the captured image.

(2-1-1) Process in the Case of Determining Single User from Among Users Included in Captured Image as the Operator

[0043] The information processing apparatus according to the present embodiment determines the operator to be a single user corresponding to the face region having the largest face region size from among face regions detected from the captured image, for example.

[0044] At this point, regions having the same (or approximately the same) face region size may be included among the face regions detected from the captured image.

[0045] Accordingly, when there exist multiple face regions having the largest face region size among the face regions detected from the captured image, the information processing apparatus according to the present embodiment determines the operator to be the user corresponding to the face region detected earlier, for example.

[0046] By determining as the operator the user corresponding to the face region detected earlier as above, for example, the information processing apparatus according to the present embodiment is able to determine a single user as the operator, even when face regions are the same (or approximately the same) size.

[0047] Note that the method of determining the operator when there exist multiple face regions having the largest face region size among the face regions detected from the captured image is not limited to the above.

[0048] For example, the information processing apparatus according to the present embodiment may also determine the operator to be the user corresponding to the face region detected later, or determine from among the face regions detected from the captured image a single user as the operator by following a configured rule (such as randomly, for example).
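The selection of a single operator by largest face region size, including the handling of regions of the same (or approximately the same) size, can be sketched as follows in Python. The `FaceRegion` type, the use of width times height as the size, the `detected_at` timestamp, and the `tolerance` parameter are assumptions made for this illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FaceRegion:
    user_id: str
    width: int
    height: int
    detected_at: float  # hypothetical detection time in seconds

    @property
    def size(self):
        # Area is used as the face region size here (an assumption).
        return self.width * self.height


def determine_single_operator(regions, prefer_earlier=True, tolerance=0):
    """Return the user id of the largest face region, or None if there is none.

    Regions whose size is within tolerance of the largest size are treated as
    tied; the tie is broken by detection order (earlier or later).
    """
    if not regions:
        return None
    largest = max(r.size for r in regions)
    candidates = [r for r in regions if r.size >= largest - tolerance]
    pick = min if prefer_earlier else max
    return pick(candidates, key=lambda r: r.detected_at).user_id
```

Setting `prefer_earlier=False` corresponds to the variant in which the user whose face region was detected later is determined as the operator.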

[0049] In addition, the information processing apparatus according to the present embodiment may also determine a single user as the operator by combining one or multiple processes from among the determination process according to the second example discussed later to the determination process according to the fifth example discussed later, for example. By determining the operator according to a process combining the determination process according to the first example with a determination process according to another example, it becomes possible to prevent the operator from changing frequently, for example.

[0050] The information processing apparatus according to the present embodiment determines a single user as the operator on the basis of the size of a face region detected from the captured image as above, for example.

[0051] Note that the process in the case of determining a single user as the operator in the determination process according to the first example according to the present embodiment is not limited to the above.

[0052] For example, the information processing apparatus according to the present embodiment may also determine that a user who had been determined to be the operator on the basis of the size of a face region detected from the captured image is not the operator.

[0053] For example, the information processing apparatus according to the present embodiment computes a first difference value obtained by subtracting the size of the face region corresponding to the user determined to be the operator from the size of a face region corresponding to a user not determined to be the operator (hereinafter called an "other user") from among the users included in the captured image. Subsequently, when the first difference value is equal to or greater than a configured first threshold value (or when the first difference value is greater than the first threshold value), the information processing apparatus according to the present embodiment determines that the user who had been determined to be the operator is not the operator.

[0054] The first threshold value may be a fixed value configured in advance, or a variable value that may be set appropriately by user operations or the like, for example. The degree to which the user determined to be the operator continues to be the operator changes according to the magnitude of the configured value of the first threshold value. Specifically, in the case in which the value of the first threshold value is 0 (zero), the user who had been determined to be the operator is determined not to be the operator when the size of the face region corresponding to the user determined to be the operator becomes smaller than the size of a face region corresponding to another user (or when the size of the face region corresponding to the user determined to be the operator becomes less than or equal to the size of a face region corresponding to another user). Also, as the value of the first threshold value becomes larger, the value of the first difference value needed for the user who had been determined to be the operator to be determined not to be the operator becomes larger, and thus the user who had been determined to be the operator is less likely to be determined not to be the operator.
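The threshold check described in [0053] and [0054] can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation of the embodiment: the function name `should_revoke_by_size`, the treatment of "size" as a plain number, and the `strict` flag (which selects "greater than" instead of "equal to or greater than") are all hypothetical. The first difference value is taken here as the other user's face-region size minus the operator's face-region size, which is the reading consistent with the zero-threshold case in [0054].

```python
def should_revoke_by_size(operator_size, other_sizes, first_threshold=0.0, strict=False):
    """Return True if the current operator should no longer be the operator.

    operator_size:   face-region size of the user currently determined to be the operator
    other_sizes:     face-region sizes of the other users in the captured image
    first_threshold: configured first threshold value (assumed non-negative)
    strict:          hypothetical flag; True uses ">" instead of ">="
    """
    for other_size in other_sizes:
        # First difference value: how much larger another user's face region is.
        first_difference = other_size - operator_size
        if strict:
            exceeded = first_difference > first_threshold
        else:
            exceeded = first_difference >= first_threshold
        if exceeded:
            return True
    return False
```

With a larger `first_threshold`, another user's face region has to exceed the operator's by a wider margin before the operator changes, which matches the statement that the operator becomes less likely to be replaced.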

(2-1-2) Process in the Case of Determining Multiple Users from Among Users Included in Captured Image as the Operator

[0055] The information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people in order of largest face region size detected from the captured image, for example. More specifically, the information processing apparatus according to the present embodiment determines, as the operator, users up to a configured number of people or a number of users less than the configured number of people, in order of largest face region size detected from the captured image, for example.

[0056] Herein, the configured number of people in the determination process according to the first example may be fixed, or varied by user operations or the like.

[0057] In addition, when the configured number of people is exceeded as a result of operator candidates being selected in order of largest face region size detected from the captured image due to face regions being the same size, the information processing apparatus according to the present embodiment does not determine the operator to be a user corresponding to a face region detected later from among the face regions having the same face region size, for example. Note that the method of determining the operator in the above case obviously is not limited to the above.

[0058] The information processing apparatus according to the present embodiment determines multiple users as the operator on the basis of the sizes of face regions detected from the captured image as above, for example.
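The selection in [0055] to [0057] amounts to ordering users by face-region size and keeping at most the configured number of people, with users detected later dropped first when sizes are equal. A minimal sketch, assuming each detected face is given as a hypothetical `(user_label, face_size)` pair listed in detection order; the function name and data layout are illustrative and do not come from the embodiment.

```python
def select_operators_by_size(faces, max_operators):
    """Pick up to max_operators users in order of largest face-region size.

    faces: list of (user_label, face_size) tuples in detection order (assumed layout).
    """
    # sorted() is stable, so users with equal sizes keep their detection order,
    # and the later-detected user is the one cut off at the limit.
    ranked = sorted(faces, key=lambda face: -face[1])
    return [label for label, _ in ranked[:max_operators]]
```

For example, with two users tied for the largest size and a limit of one, only the user detected earlier is returned.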

(2-2) Second Example of Determination Process

[0059] The information processing apparatus according to the present embodiment determines the operator on the basis of a distance, from the display screen, of a user corresponding to a face region detected from the captured image (hereinafter called the "distance corresponding to a face region"), for example. The information processing apparatus according to the present embodiment determines a single user or multiple users from among the users included in the captured image as the operator(s).

[0060] Herein, the "distance, from the display screen, of a user corresponding to a face region included in the captured image" according to the present embodiment is specified (or estimated) on the basis of a depth map captured by a method such as time of flight (TOF), for example. In addition, the information processing apparatus according to the present embodiment is also capable of specifying (or estimating) the "distance, from the display screen, of a user corresponding to a face region included in the captured image" according to the present embodiment on the basis of a face region detected from the captured image and a detection value from a depth sensor using infrared or the like, for example. In addition, the information processing apparatus according to the present embodiment may also specify the "distance, from the display screen, of a user corresponding to a face region included in the captured image" by specifying (or estimating) the coordinates of a face region using arbitrary technology, and computing the distance to the coordinates of a reference position, for example. Herein, the coordinates of the face region and the coordinates of the reference position are expressed as coordinates in a three-dimensional coordinate system made up of two axes representing a plane corresponding to the display screen and one axis representing the direction perpendicular to the display screen, for example. Note that the method of specifying (or the method of estimating) the "distance, from the display screen, of a user corresponding to a face region included in the captured image" according to the present embodiment obviously is not limited to the above.
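The last approach in [0060], computing the distance between the coordinates of a face region and the coordinates of a reference position, can be sketched as a Euclidean distance in the three-dimensional coordinate system. This is only an illustration: the function name `distance_to_reference` and the default reference position at the origin are assumptions, since the embodiment does not fix where the reference position lies.

```python
import math


def distance_to_reference(face_xyz, reference_xyz=(0.0, 0.0, 0.0)):
    """Euclidean distance between a face region and a reference position.

    Both points are (x, y, z) tuples: x and y span the plane corresponding to
    the display screen, z is the remaining axis. The default reference
    position (the origin) is a hypothetical choice.
    """
    return math.dist(face_xyz, reference_xyz)
```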

(2-2-1) Process in the Case of Determining Single User from Among Users Included in Captured Image as the Operator

[0061] The information processing apparatus according to the present embodiment determines the operator to be a single user corresponding to the face region having the shortest distance corresponding to a face region, for example.

[0062] At this point, the distances corresponding to a face region according to the present embodiment, which correspond to face regions detected from the captured image, may include multiple distances that are the same (or approximately the same).

[0063] Accordingly, when there exist multiple distances corresponding to a face region having the same (or approximately the same) distance among the distances corresponding to a face region according to the present embodiment, the information processing apparatus according to the present embodiment determines the operator to be the user corresponding to the face region detected earlier, for example.

[0064] By determining as the operator the user corresponding to the face region detected earlier as above, for example, the information processing apparatus according to the present embodiment is able to determine a single user as the operator, even when there exist multiple distances corresponding to a face region having the same (or approximately the same) distance.

[0065] Note that the method of determining the operator when there exist multiple distances corresponding to a face region having the same (or approximately the same) distance among the distances corresponding to a face region according to the present embodiment which correspond to face regions detected from the captured image is not limited to the above.

[0066] For example, the information processing apparatus according to the present embodiment may also determine the operator to be the user corresponding to the face region detected later, or determine from among the face regions detected from the captured image a single user as the operator by following a configured rule (such as randomly, for example).
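The single-operator rule in [0061] to [0066] can be sketched as follows. The sketch assumes a hypothetical list of `(user_label, distance)` pairs in detection order; the `tolerance` parameter is one possible way to express "approximately the same" distance, and the `prefer` parameter switches between the earlier-detected and later-detected alternatives. None of these names come from the embodiment.

```python
def nearest_operator(faces, tolerance=0.0, prefer="earlier"):
    """Return the user with the shortest distance corresponding to a face region.

    faces:     list of (user_label, distance) tuples in detection order (assumed layout)
    tolerance: distances within this margin of the shortest count as tied (assumption)
    prefer:    "earlier" or "later", selecting which tied detection wins
    """
    if not faces:
        return None
    shortest = min(distance for _, distance in faces)
    # Users whose distance is the same, or approximately the same, as the shortest.
    tied = [label for label, distance in faces if distance - shortest <= tolerance]
    return tied[0] if prefer == "earlier" else tied[-1]
```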

[0067] In addition, the information processing apparatus according to the present embodiment may also determine a single user as the operator by combining one or multiple processes from among the determination process according to the first example above and the determination process according to the third example discussed later to the determination process according to the fifth example discussed later, for example. By determining the operator according to a process combining the determination process according to the second example with a determination process according to another example, it becomes possible to prevent the operator from changing frequently, for example.

[0068] The information processing apparatus according to the present embodiment determines a single user as the operator on the basis of the distance corresponding to a face region according to the present embodiment, which corresponds to a face region detected from the captured image as above, for example.

[0069] Note that the process in the case of determining a single user as the operator in the determination process according to the second example according to the present embodiment is not limited to the above. For example, the information processing apparatus according to the present embodiment may also determine that a user who had been determined to be the operator on the basis of the length of the distance corresponding to a face region according to the present embodiment which corresponds to a face region detected from the captured image is not the operator.

[0070] For example, the information processing apparatus according to the present embodiment computes a second difference value indicating the difference in the distance corresponding to a face region corresponding to another user from the distance corresponding to a face region corresponding to the user determined to be the operator. Subsequently, when the second difference value is equal to or greater than a configured second threshold value (or when the second difference value is greater than the second threshold value), the information processing apparatus according to the present embodiment determines that the user who had been determined to be the operator is not the operator.

[0071] The second threshold value may be a fixed value configured in advance, or a variable value that may be set appropriately by user operations or the like, for example. The degree to which the user determined to be the operator continues to be the operator changes according to the magnitude of the configured value of the second threshold value. Specifically, in the case in which the value of the second threshold value is 0 (zero), the user who had been determined to be the operator is determined not to be the operator when the distance corresponding to the face region corresponding to the user determined to be the operator becomes longer than the distance corresponding to a face region corresponding to another user (or when the distance corresponding to the face region corresponding to the user determined to be the operator becomes greater than or equal to the distance corresponding to a face region corresponding to another user). Also, as the value of the second threshold value becomes larger, the value of the second difference value needed for the user who had been determined to be the operator to be determined not to be the operator becomes larger, and thus the user who had been determined to be the operator is less likely to be determined not to be the operator.

(2-2-2) Process in the Case of Determining Multiple Users from Among Users Included in Captured Image as the Operator

[0072] The information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people in order of shortest distance corresponding to a face region, for example. More specifically, the information processing apparatus according to the present embodiment determines, as the operator, users up to a configured number of people or a number of users less than the configured number of people, in order of shortest distance corresponding to a face region, for example.

[0073] Herein, the configured number of people in the determination process according to the second example may be fixed, or varied by user operations or the like.

[0074] In addition, when the configured number of people is exceeded as a result of operator candidates being selected in order of shortest distance corresponding to a face region due to distances corresponding to a face region according to the present embodiment which correspond to face regions detected from the captured image being the same, the information processing apparatus according to the present embodiment does not determine the operator to be a user corresponding to a face region detected later from among the face regions having the same distance corresponding to a face region, for example. Note that the method of determining the operator in the above case obviously is not limited to the above.

[0075] The information processing apparatus according to the present embodiment determines multiple users as the operator on the basis of the distance corresponding to a face region according to the present embodiment which corresponds to a face region detected from the captured image as above, for example.

(2-3) Third Example of Determination Process

[0076] The information processing apparatus according to the present embodiment determines the operator on the basis of a predetermined gesture detected from the captured image, for example.

[0077] Herein, the predetermined gesture according to the present embodiment may be various gestures, such as a gesture of raising a hand, or a gesture of waving a hand, for example.

[0078] For example, in the case of detecting a gesture of raising a hand, the information processing apparatus according to the present embodiment respectively detects the face region and the hand from the captured image. Subsequently, if the detected hand exists within a region corresponding to the face region (a region configured to determine that a hand was raised), the information processing apparatus according to the present embodiment detects the gesture of raising a hand by determining that the user corresponding to the relevant face region raised a hand.

[0079] As another example, in the case of detecting a gesture of waving a hand, the information processing apparatus according to the present embodiment respectively detects the face region and the hand from the captured image. Subsequently, if the detected hand is detected within a region corresponding to the face region (a region configured to determine that a hand was waved), and the frequency of luminance change in the captured image is equal to or greater than a configured predetermined frequency (or the frequency of the luminance change is greater than the predetermined frequency), the information processing apparatus according to the present embodiment detects the gesture of waving a hand by determining that the user corresponding to the relevant face region waved a hand.

[0080] Note that the predetermined gesture according to the present embodiment and the method of detecting the predetermined gesture according to the present embodiment are not limited to the above. The information processing apparatus according to the present embodiment may also detect an arbitrary gesture, such as a gesture of pointing a finger, by using an arbitrary method enabling detection from the captured image, for example.
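The two gesture checks in [0078] and [0079] can be sketched as simple predicates. This is a minimal illustration that assumes face and hand detection have already been performed elsewhere: the `Region` type, the way the region corresponding to the face region is supplied by the caller, and the representation of the luminance change as a frequency in hertz are all hypothetical choices, not details given by the embodiment.

```python
from typing import NamedTuple, Tuple


class Region(NamedTuple):
    """Axis-aligned rectangle in image coordinates (assumed representation)."""
    left: float
    top: float
    width: float
    height: float


def contains(region: Region, point: Tuple[float, float]) -> bool:
    """True if the point lies inside the region (edges included)."""
    x, y = point
    return (region.left <= x <= region.left + region.width
            and region.top <= y <= region.top + region.height)


def hand_raised(raise_region: Region, hand_position: Tuple[float, float]) -> bool:
    """Raised hand: the detected hand lies in the region configured for the face."""
    return contains(raise_region, hand_position)


def hand_waved(wave_region: Region, hand_position: Tuple[float, float],
               luminance_change_hz: float, min_hz: float) -> bool:
    """Waved hand: hand in the configured region and luminance changing fast enough."""
    return contains(wave_region, hand_position) and luminance_change_hz >= min_hz
```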

(2-3-1) Process in the Case of Determining Single User from Among Users Included in Captured Image as the Operator

[0081] The information processing apparatus according to the present embodiment determines, as the operator, a user for which a predetermined gesture was detected earlier from the captured image, for example.

[0082] Note that in the determination process according to the third example, the process of determining a single user as the operator is not limited to the above.

[0083] For example, when there exist multiple users for which a predetermined gesture was detected from the captured image, the information processing apparatus according to the present embodiment may determine the operator to be a user for which a predetermined gesture was detected later. Also, in the above case, the information processing apparatus according to the present embodiment may determine from among the users for which a predetermined gesture was detected from the captured image a single user as the operator by following a configured rule (such as randomly, for example). Furthermore, in the above case, the information processing apparatus according to the present embodiment may determine a single user as the operator by combining the determination process according to the first example above, the determination process according to the second example above, the determination process according to the fourth example discussed later, and the determination process according to the fifth example discussed later.

(2-3-2) Process in the Case of Determining Multiple Users from Among Users Included in Captured Image as the Operator

[0084] The information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people in order of a predetermined gesture being detected from the captured image, for example. More specifically, the information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people or a number of users less than the configured number of people, in order of a predetermined gesture being detected from the captured image, for example.

[0085] Herein, the configured number of people in the determination process according to the third example may be fixed, or varied by user operations or the like.

[0086] Note that in the determination process according to the third example, the process of determining multiple users as the operator is not limited to the above.

[0087] For example, the information processing apparatus according to the present embodiment may also determine the operator to be users up to the configured number of people selected from among the users for which a predetermined gesture was detected from the captured image by following a configured rule (such as randomly, for example).

(2-4) Fourth Example of Determination Process

[0088] When the position of a user's line of sight on the display screen is included in a configured region on the display screen, the information processing apparatus according to the present embodiment determines the operator to be the user corresponding to the relevant line of sight, for example.

[0089] Herein, the information processing apparatus according to the present embodiment uses the position of a line of sight of a user on the display screen, which is indicated by the information about the line of sight of a user according to the present embodiment. Also, the configured region on the display screen according to the present embodiment may be a fixed region configured in advance on the display screen, a region in which a predetermined object, such as an icon or character image, is displayed on the display screen, or a region configured by a user operation or the like on the display screen, for example.

(2-4-1) Process in the Case of Determining Single User from Among Users Included in Captured Image as the Operator

[0090] The information processing apparatus according to the present embodiment determines the operator to be a user for which the position of the user's line of sight was detected earlier within a configured region on the display screen, for example.

[0091] Note that in the determination process according to the fourth example, the process of determining a single user as the operator is not limited to the above.

[0092] For example, when there exist multiple users for which a line of sight was detected within the configured region on the display screen, the information processing apparatus according to the present embodiment may determine the operator to be a user for which the position of the user's line of sight was detected later within the configured region on the display screen. Also, in the above case, the information processing apparatus according to the present embodiment may determine, from among the users for which the position of the user's line of sight is included within the configured region on the display screen, a single user as the operator by following a configured rule (such as randomly, for example). Furthermore, in the above case, the information processing apparatus according to the present embodiment may determine a single user as the operator by combining the determination process according to the first example above, the determination process according to the second example above, the determination process according to the third example above, and the determination process according to the fifth example discussed later.
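The rule in [0090], together with the later-detected alternative in [0092], can be sketched as follows. The sketch assumes gaze positions arrive as hypothetical `(timestamp, user_label, (x, y))` samples in display-screen coordinates and that the configured region is a rectangle given as `(left, top, right, bottom)`; these layouts and the function name are illustrative only.

```python
def gaze_operator(gaze_samples, region, prefer="earlier"):
    """Return the user whose line of sight entered the configured region.

    gaze_samples: iterable of (timestamp, user_label, (x, y)) tuples (assumed layout)
    region:       (left, top, right, bottom) rectangle on the display screen
    prefer:       "earlier" picks the first user detected in the region,
                  "later" picks the last one
    """
    left, top, right, bottom = region
    first_hit = {}  # user_label -> time the gaze was first detected in the region
    for timestamp, user, (x, y) in sorted(gaze_samples, key=lambda s: s[0]):
        inside = left <= x <= right and top <= y <= bottom
        if inside and user not in first_hit:
            first_hit[user] = timestamp
    if not first_hit:
        return None
    pick = min if prefer == "earlier" else max
    return pick(first_hit, key=first_hit.get)
```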

(2-4-2) Process in the Case of Determining Multiple Users from Among Users Included in Captured Image as the Operator

[0093] The information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people in order of the position of the user's line of sight being detected within a configured region on the display screen, for example. More specifically, the information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people or a number of users less than the configured number of people, in order of the position of the user's line of sight being detected within the configured region on the display screen, for example.

[0094] Herein, the configured number of people in the determination process according to the fourth example may be fixed, or varied by user operations or the like.

[0095] Note that in the determination process according to the fourth example, the process of determining multiple users as the operator is not limited to the above.

[0096] For example, the information processing apparatus according to the present embodiment may also determine the operator to be users up to the configured number of people selected from among the users for which the position of the user's line of sight is included within the configured region on the display screen by following a configured rule (such as randomly, for example).

(2-5) Fifth Example of Determination Process

[0097] The information processing apparatus according to the present embodiment identifies a user included in a captured image on the basis of the captured image, for example. Subsequently, the information processing apparatus according to the present embodiment determines the operator on the basis of a ranking associated with the identified user.

[0098] Herein, a ranking according to the present embodiment refers to a value indicating an index by which the information processing apparatus according to the present embodiment preferentially determines the operator, for example. In the ranking according to the present embodiment, a smaller value may indicate a higher priority, or a larger value may indicate a higher priority, for example.

[0099] More specifically, the information processing apparatus according to the present embodiment detects a face region from the captured image, and conducts a face recognition process on the detected face region to extract face information (data) indicating features of a user's face, for example. Subsequently, the information processing apparatus according to the present embodiment uses a table (or database) associating a user ID uniquely indicating a user with face information and the extracted face information to identify the user by specifying the user ID corresponding to the face information, for example.

[0100] Herein, the user ID uniquely indicating a user according to the present embodiment additionally may be associated with the execution state of an application and/or information related to the calibration of a user interface (UI), for example. The information related to UI calibration may be data indicating positions where objects such as icons are arranged on the display screen, for example. By additionally associating the user ID uniquely indicating a user according to the present embodiment with the execution state of an application or the like, the information processing apparatus according to the present embodiment is able to manage identifiable users in greater detail, and also provide to an identifiable user various services corresponding to that user.

[0101] Note that the method of identifying a user based on the captured image according to the present embodiment is not limited to the above.

[0102] For example, the information processing apparatus according to the present embodiment may also use a table (or a database) associating a user ID indicating a user type, such as an ID or the like indicating whether the user is an adult or a child, with face information and extracted face information to specify a user ID corresponding to a face region, and thereby specify a user type.

[0103] After users are identified, the information processing apparatus according to the present embodiment uses a table (or database) associating a user ID with a ranking value and the specified user ID to specify the ranking corresponding to the identified users. Subsequently, the information processing apparatus according to the present embodiment determines the operator on the basis of the specified ranking.

[0104] By determining the operator on the basis of a ranking as above, for example, the information processing apparatus according to the present embodiment is able to realize the following. Obviously, however, an example of a determination process according to the fifth example is not limited to the examples given below.

[0105] When the ranking of a father is highest from among the users identifiable by the information processing apparatus according to the present embodiment, the information processing apparatus according to the present embodiment determines the operator to be the father while the father is being identified from the captured image (an example of a case in which the information processing apparatus according to the present embodiment determines the operator of equipment used at home).

[0106] When the ranking of an adult is higher than that of a child from among the users identifiable by the information processing apparatus according to the present embodiment, if a child user and an adult user are included in the captured image, the information processing apparatus according to the present embodiment determines the operator to be the adult while the adult is being identified from the captured image.

(2-5-1) Process in the Case of Determining Single User from Among Users Included in Captured Image as the Operator

[0107] The information processing apparatus according to the present embodiment determines the operator to be the user with the highest ranking associated with a user identified on the basis of the captured image, for example.

[0108] Note that in the determination process according to the fifth example, the process of determining a single user as the operator is not limited to the above.

[0109] For example, when there exist multiple users having the highest ranking, the information processing apparatus according to the present embodiment may determine the operator to be the user whose face region was detected earlier from the captured image or the user identified earlier on the basis of the captured image from among the users having the highest ranking. Also, in the above case, the information processing apparatus according to the present embodiment may determine from among the users having the highest ranking a single user as the operator by following a configured rule (such as randomly, for example).
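The ranking-based rule in [0107], with the earlier-detected tie-break from [0109], can be sketched with an ordinary lookup table. The sketch assumes the face recognition step has already produced user IDs in detection order; the table contents, the function name, and the `smaller_is_higher` flag (reflecting the two conventions in [0098]) are hypothetical.

```python
def operator_by_ranking(identified_user_ids, ranking_table, smaller_is_higher=True):
    """Return the identified user with the highest-priority ranking.

    identified_user_ids: user IDs in detection order (assumed to come from face recognition)
    ranking_table:       mapping of user ID to ranking value (hypothetical table)
    smaller_is_higher:   True if a smaller value means a higher priority
    """
    ranked = [uid for uid in identified_user_ids if uid in ranking_table]
    if not ranked:
        return None
    sign = 1 if smaller_is_higher else -1
    # min() returns the first minimal item, so ties go to the user detected earlier.
    return min(ranked, key=lambda uid: sign * ranking_table[uid])
```

For instance, with a table such as `{"father": 1, "child": 3}` and smaller values ranked higher, the father is returned whenever he is among the identified users, as in the household example of [0105].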

(2-5-2) Process in the Case of Determining Multiple Users from Among Users Included in Captured Image as the Operator

[0110] The information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people in order of highest ranking, for example. More specifically, the information processing apparatus according to the present embodiment determines the operator to be users up to a configured number of people or a number of users less than the configured number of people, in order of highest ranking, for example.

[0111] Herein, the configured number of people in the determination process according to the fifth example may be fixed, or varied by user operations or the like.

[0112] In addition, when the configured number of people is exceeded as a result of operator candidates being selected in order of highest ranking due to rankings being the same, the information processing apparatus according to the present embodiment does not determine the operator to be a user whose face region was detected later from the captured image or a user identified later on the basis of the captured image from among the users having the same ranking, for example. Note that the method of determining the operator in the above case obviously is not limited to the above.

(2-6) Sixth Example of Determination Process

[0113] The information processing apparatus according to the present embodiment may also determine the operator when speech indicating configured predetermined spoken content is additionally detected for a user determined to be the operator in each of the determination process according to the first example indicated in (2-1) above to the determination process according to the fifth example indicated in (2-5) above.

[0114] The information processing apparatus according to the present embodiment detects the speech indicating predetermined spoken content by performing speech recognition using source separation or source localization, for example. Herein, source separation according to the present embodiment refers to technology that extracts only speech of interest from among various sounds. Also, source localization according to the present embodiment refers to technology that measures the position (angle) of a sound source.
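The additional speech condition in [0113] can be sketched as a filter over the users already selected by one of the first to fifth examples. This is an illustration under strong assumptions: speech recognition, source separation, and source localization are treated as having already run, and their result is represented by a hypothetical mapping from user label to recognized text. How an utterance is attributed to a particular user is not specified here and is assumed to be solved upstream.

```python
def confirm_by_speech(candidates, utterances, required_phrase):
    """Keep only candidates for whom the predetermined spoken content was detected.

    candidates:      user labels selected by one of the first to fifth examples
    utterances:      mapping of user label to recognized text (assumed upstream output)
    required_phrase: the configured predetermined spoken content
    """
    wanted = required_phrase.strip().lower()
    return [user for user in candidates
            if utterances.get(user, "").strip().lower() == wanted]
```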

[0115] As a determination process according to the present embodiment, the information processing apparatus according to the present embodiment determines the operator from among users included in the captured image on the basis of the captured image by conducting one of the processes from the determination process according to the first example indicated in (2-1) above to the determination process according to the sixth example indicated in (2-6) above, for example.

[0116] Note that the process of determining the operator from among users included in the captured image on the basis of the captured image in a determination process according to the present embodiment is not limited to being from the determination process according to the first example indicated in (2-1) above to the determination process according to the sixth example indicated in (2-6) above. For example, the information processing apparatus according to the present embodiment may also determine the operator according to a detection order of faces detected from the captured image. Examples of determining the operator according to a face detection order include taking the operator to be the user whose face was detected first, or taking the operator to be users equal to a configured number of people in order of face detection.

[0117] Also, the determination process according to the present embodiment is not limited to being a process of determining the operator from among users included in the captured image. The information processing apparatus according to the present embodiment is also capable of conducting one or more of the processes from the determination process according to the seventh example indicated below to the determination process according to the tenth example indicated below as the determination process according to the present embodiment, for example.

(2-7) Seventh Example of Determination Process

[0118] For example, the information processing apparatus according to the present embodiment configures an operation level for a user determined to be the operator.

[0119] Herein, the operation level according to the present embodiment refers to a value indicating an index related to a range of operations that may be performed using line of sight by the determined operator, for example. The operation level according to the present embodiment is associated with a range of operations that may be performed using line of sight by a table (or a database) associating the operation level according to the present embodiment with information about operations that may be performed using line of sight, for example. The information about operations according to the present embodiment may be, for example, various data for realizing operations, such as data indicating operation-related commands or parameters, or data for executing an operation-related application (such as an address where an application is stored, and parameters, for example).

[0120] When an operation level is configured in the determination process according to the present embodiment, the information processing apparatus according to the present embodiment conducts a process corresponding to the operator's line of sight on the basis of the configured operation level in an execution process according to the present embodiment discussed later. In other words, when an operation level is configured in the determination process according to the present embodiment, the information processing apparatus according to the present embodiment is able to dynamically change the processes that the determined operator may perform on the basis of the configured operation level, for example.

[0121] The information processing apparatus according to the present embodiment configures a preconfigured operation level for a user determined to be the operator, for example.

[0122] In addition, when the user determined to be the operator is identified on the basis of the captured image, the information processing apparatus according to the present embodiment may configure an operation level corresponding to the identified user, for example. The information processing apparatus according to the present embodiment configures an operation level corresponding to the identified user by using a table (or database) associating a user ID with an operation level and the user ID corresponding to the user identified on the basis of the captured image, for example.
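The two tables described above (one associating an operation level with permitted operations, and one associating a user ID with an operation level) can be sketched as follows. All table contents, the default level, and the names `OPERATIONS_BY_LEVEL`, `LEVEL_BY_USER_ID`, `operation_level_for`, and `is_allowed` are illustrative assumptions, not values taken from the present disclosure.

```python
from typing import Dict, FrozenSet, Optional

# Hypothetical table: operation level -> operations allowed using line of sight.
OPERATIONS_BY_LEVEL: Dict[int, FrozenSet[str]] = {
    1: frozenset({"select"}),
    2: frozenset({"select", "move"}),
    3: frozenset({"select", "move", "launch_app"}),
}

# Hypothetical table: user ID -> operation level.
LEVEL_BY_USER_ID: Dict[str, int] = {"alice": 3, "bob": 1}

# Assumed preconfigured level for an operator who is not identified.
DEFAULT_LEVEL = 1


def operation_level_for(user_id: Optional[str]) -> int:
    """Return the level for an identified user, else the preconfigured level."""
    if user_id is None:
        return DEFAULT_LEVEL
    return LEVEL_BY_USER_ID.get(user_id, DEFAULT_LEVEL)


def is_allowed(level: int, operation: str) -> bool:
    """Check whether an operation falls within the range for the given level."""
    return operation in OPERATIONS_BY_LEVEL.get(level, frozenset())
```

In this sketch, an execution process would call `is_allowed` before acting on the operator's line of sight, so that changing the configured level changes the processes available to that operator.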

(2-8) Eighth Example of Determination Process

[0123] As a determination process according to the present embodiment, the information processing apparatus according to the present embodiment may also determine that a user who had been determined to be the operator is not the operator.

[0124] The information processing apparatus according to the present embodiment determines that a user who had been determined to be the operator is not the operator when information about the line of sight of a user corresponding to the user determined to be the operator cannot be acquired from the captured image, for example. As above, in the case of determining that a user is not the operator on the basis of information about the line of sight of a user corresponding to the user determined to be the operator, the information processing apparatus according to the present embodiment determines that the user who had been determined to be the operator is not the operator when the user determined to be the operator stops directing his or her line of sight towards the display screen, for example.

[0125] Note that the process of determining that a user who had been determined to be the operator is not the operator according to the present embodiment is not limited to the above.

[0126] The cause for being unable to acquire information about the line of sight of a user corresponding to the user determined to be the operator from the captured image may be, for example, that the user determined to be the operator is no longer included in the captured image, or that the user determined to be the operator is included in the captured image but is not looking at the display screen. In the latter case, if the information processing apparatus according to the present embodiment determines that the user who had been determined to be the operator is not the operator merely because information about the line of sight of the user cannot be acquired from the captured image, there is a risk of loss of convenience for the user who had been determined to be the operator.

[0127] Accordingly, even when information about the line of sight of a user corresponding to the user determined to be the operator cannot be acquired from the captured image, if the head of the user determined to be the operator is detected, the information processing apparatus according to the present embodiment does not determine that the user who had been determined to be the operator is not the operator.

[0128] The information processing apparatus according to the present embodiment detects a user's head from the captured image by detecting a shape corresponding to a head (such as a circular shape or an elliptical shape, for example) from the captured image, or by detecting luminance changes or the like in the captured image. The information processing apparatus according to the present embodiment detects the head of the user determined to be the operator by conducting a process related to the detection of a user's head on a partial region of the captured image that includes a region in which a face region corresponding to the user determined to be the operator was detected, for example. Note that the process related to the detection of a user's head and the method of detecting the head of the user determined to be the operator according to the present embodiment are not limited to the above. For example, the information processing apparatus according to the present embodiment may also detect the head of the user determined to be the operator by using an arbitrary method and process enabling the detection of the head of the user determined to be the operator, such as a method that uses detection results from various sensors such as an infrared sensor.

[0129] As above, by not determining that the user who had been determined to be the operator is not the operator while the head of that user is detected, that user remains the operator even if he or she looks away from the display screen. Consequently, a reduction in convenience for the user who had been determined to be the operator may be prevented.
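The decision described in the eighth example can be summarized by the following sketch, which assumes that two Boolean results are already available for the current captured image: whether line-of-sight information was acquired for the operator, and whether the operator's head was detected. The function name `remains_operator` and the flag `keep_while_head_detected` are hypothetical.

```python
def remains_operator(
    gaze_acquired: bool,
    head_detected: bool,
    keep_while_head_detected: bool = True,
) -> bool:
    """Decide whether a user already determined to be the operator stays so.

    With keep_while_head_detected=False, the operator status is revoked as
    soon as line-of-sight information cannot be acquired. With the flag set,
    the user stays the operator as long as his or her head is still detected.
    """
    if gaze_acquired:
        return True
    return keep_while_head_detected and head_detected
```

For instance, an operator who glances away from the display screen (no line-of-sight information, head still detected) stays the operator with the flag set, but would be released with the flag cleared.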

(2-9) Ninth Example of Determination Process

[0130] The determination process according to the eighth example above illustrates a process in which the information processing apparatus according to the present embodiment determines that the user who had been determined to be the operator is not the operator, on the basis of information about the line of sight of a user. When the determination process according to the eighth example above is used, it is possible to change the operator determined by the information processing apparatus according to the present embodiment, such as by having the user who is the operator hide his or her face so that information about the line of sight of a user is not acquired, or by having the user who is the operator move to a position where his or her head is not detected from the captured image, for example.

[0131] However, the method of changing the operator according to the present embodiment is not limited to a method using the determination process according to the eighth example above. For example, the information processing apparatus according to the present embodiment may also actively change the operator from a user who had been determined to be the operator to another user, on the basis of a predetermined combination of gestures by the user determined to be the operator and the other user.

[0132] More specifically, the information processing apparatus according to the present embodiment detects a predetermined combination of gestures by the user determined to be the operator and the other user, for example. The information processing apparatus according to the present embodiment detects the predetermined combination of gestures by using a method related to arbitrary gesture recognition technology, such as a method that uses image processing on the captured image, or a method utilizing detection values from an arbitrary sensor such as a depth sensor, for example.

[0133] Subsequently, when the predetermined combination of gestures by the user determined to be the operator and the other user is detected from the captured image, the information processing apparatus according to the present embodiment changes the operator from the user who had been determined to be the operator to the other user.

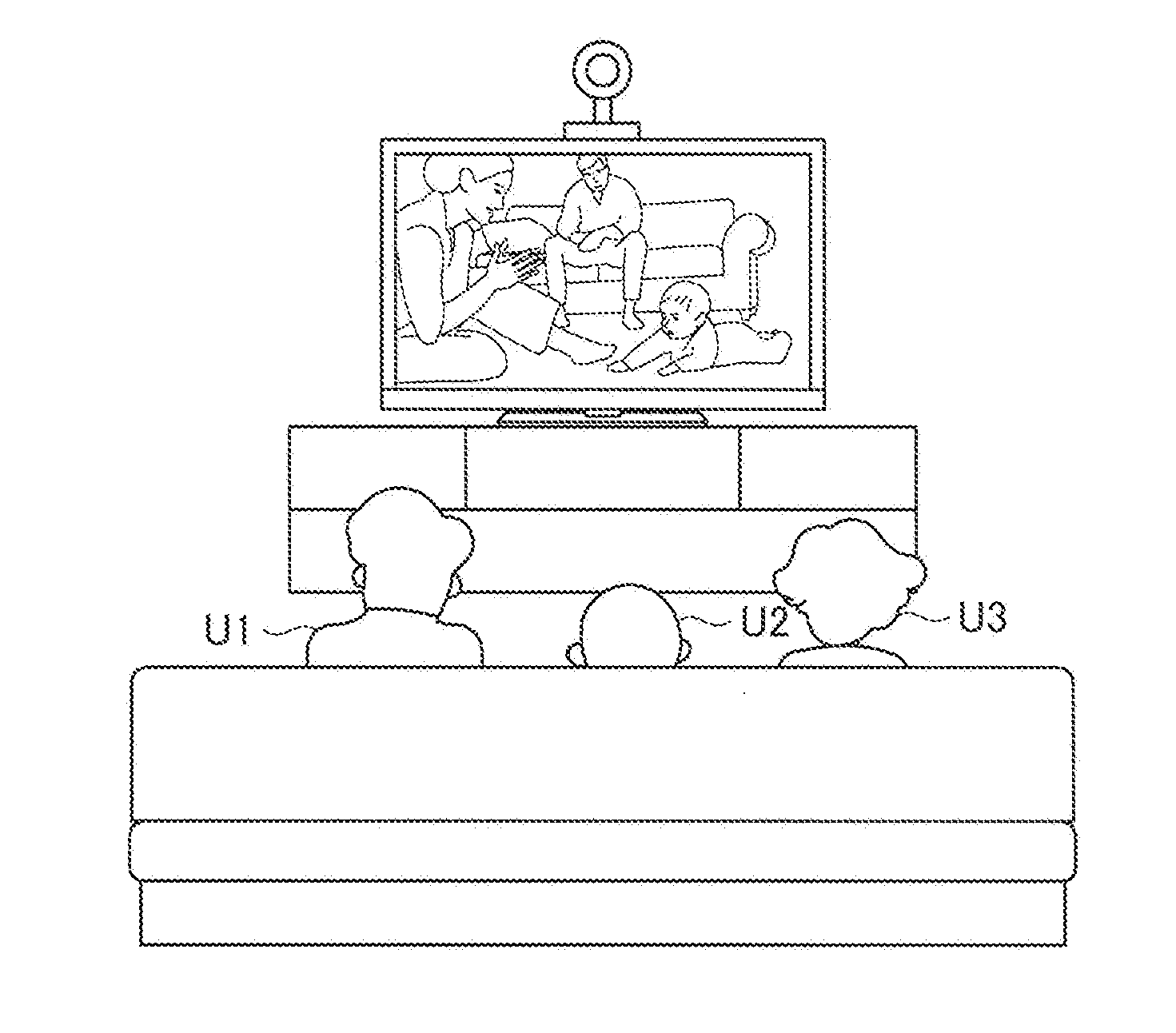

[0134] FIGS. 1A and 1B are explanatory diagrams for describing an example of a process in accordance with an information processing method according to the present embodiment. FIGS. 1A and 1B illustrate respective examples of a predetermined combination of gestures by a user determined to be the operator and another user detected by the information processing apparatus according to the present embodiment. In FIGS. 1A and 1B, the user U1 represents the user determined to be the operator, while the user U2 represents the other user.

[0135] When the predetermined combination of gestures by the user determined to be the operator and the other user, such as the high-five gesture illustrated in FIG. 1A or the hand-raising gesture illustrated in FIG. 1B, is detected from the captured image, the information processing apparatus according to the present embodiment changes the operator from the user U1 to the user U2. Needless to say, the predetermined combination of gestures by the user determined to be the operator and the other user according to the present embodiment is not limited to the examples illustrated in FIGS. 1A and 1B.

[0136] By having the information processing apparatus according to the present embodiment conduct the determination process according to the ninth example as above, the users are able to change the operator intentionally by performing gestures, even when the number of users determined to be the operator has reached a configured upper limit.
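A minimal sketch of the operator change in the ninth example follows. It assumes that gesture recognition has already produced one gesture label for the current operator and one for the other user; the labels, the set `HANDOVER_GESTURES`, and the function `hand_over` are hypothetical. Because the new operator replaces the previous one, the number of operators is unchanged, which is why the change works even at a configured upper limit.

```python
from typing import List, Set, Tuple

# Hypothetical predetermined combinations: (operator gesture, other user gesture).
HANDOVER_GESTURES: Set[Tuple[str, str]] = {
    ("high_five", "high_five"),
    ("raise_hand", "raise_hand"),
}


def hand_over(
    operators: List[str],
    current: str,
    other: str,
    gestures: Tuple[str, str],
) -> List[str]:
    """Return the operator list after a possible gesture-based handover.

    The list is returned unchanged unless `current` is an operator, `other`
    is not yet an operator, and the detected gesture pair is a predetermined
    combination.
    """
    if current not in operators or other in operators:
        return list(operators)
    if gestures not in HANDOVER_GESTURES:
        return list(operators)
    # Replace in place so that the operator count stays the same.
    return [other if user_id == current else user_id for user_id in operators]
```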

(2-10) Tenth Example of Determination Process

[0137] When the number of users determined to be the operator has not reached a configured upper limit (or alternatively, when an upper limit on the number of users determined to be the operator is not configured; this applies similarly hereinafter), the information processing apparatus according to the present embodiment determines a user newly included in the captured image to be the operator, for example. This determination is the result of conducting the process for the case of determining multiple users to be the operator in any of the determination processes according to the first example indicated in (2-1) above through the sixth example indicated in (2-6) above.

[0138] Note that the process related to the determination of the operator in the case in which the number of users determined to be the operator has not reached a configured upper limit is not limited to the above.

[0139] For example, depending on the application executed in the execution process according to the present embodiment discussed later, immediately determining the operator to be a user newly included in the captured image is not desirable in some cases. Accordingly, when a user is newly included in the captured image while the number of users determined to be the operator has not reached a configured upper limit, the information processing apparatus according to the present embodiment may also conduct a process as given below, for example. In addition, the information processing apparatus according to the present embodiment may also conduct a process selected by a user operation or the like from among the above process of determining the operator to be a user newly included in the captured image and the processes given below, for example.

[0140] The user newly included in the captured image is not determined to be the operator until a configured time elapses after the application is executed.

[0141] The user newly included in the captured image is selectively determined to be the operator according to the execution state of the application.
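The alternatives in the tenth example can be sketched as a selectable policy. The enumeration `NewUserPolicy`, the function `admit_new_user`, and all of its parameters are hypothetical; in particular, `app_accepts_new_operators` stands in for whatever the execution state of the application reports, which the present disclosure does not specify.

```python
from enum import Enum
from typing import Optional


class NewUserPolicy(Enum):
    IMMEDIATE = "immediate"        # determine the new user to be an operator at once
    AFTER_DELAY = "after_delay"    # wait until a configured time has elapsed
    BY_APP_STATE = "by_app_state"  # depend on the execution state of the application


def admit_new_user(
    policy: NewUserPolicy,
    operator_count: int,
    upper_limit: Optional[int],
    elapsed_s: float = 0.0,
    delay_s: float = 0.0,
    app_accepts_new_operators: bool = True,
) -> bool:
    """Decide whether a user newly included in the captured image becomes an operator.

    upper_limit=None models the case in which no upper limit is configured.
    elapsed_s is the time since the application was executed.
    """
    if upper_limit is not None and operator_count >= upper_limit:
        return False
    if policy is NewUserPolicy.IMMEDIATE:
        return True
    if policy is NewUserPolicy.AFTER_DELAY:
        return elapsed_s >= delay_s
    return app_accepts_new_operators
```

The policy itself could be chosen by a user operation, which corresponds to selecting one process from among those described above.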

(3) Execution Process

[0142] The information processing apparatus according to the present embodiment conducts a process on the basis of the information about the line of sight of the user corresponding to the operator determined in the process of (2) above (determination process).

[0143] Herein, the process based on the information about the line of sight of a user according to the present embodiment may be various processes using the information about the line of sight of a user according to the present embodiment, such as a process of selecting an object existing at the position of the line of sight indicated by the information about the position of the line of sight of a user (an example of information about the line of sight of a user), a process of moving an object depending on the position of the line of sight indicated by the information about the position of the line of sight of a user, a process associated with an eye movement indicated by information about the eye movements of a user (an example of information about the line of sight of a user), and a process of controlling, on the basis of an eye movement indicated by information about the eye movements of a user, the execution state of an application or the like corresponding to the position of the line of sight indicated by the information about the position of the line of sight of a user, for example. In addition, the above object according to the present embodiment may be various objects displayed on the display screen, such as an icon, a cursor, a message box, and a text string or image for notifying the user, for example.
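As one illustration of the process of selecting an object existing at the position of the line of sight, the following sketch performs a simple hit test. It assumes that the position of the line of sight is given as display-screen coordinates and that each object occupies an axis-aligned rectangle; the `ScreenObject` type and the function `select_object_at_gaze` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ScreenObject:
    """A displayed object (icon, message box, and so on) with a rectangular extent."""
    name: str
    x: float
    y: float
    width: float
    height: float

    def contains(self, gaze_x: float, gaze_y: float) -> bool:
        return (
            self.x <= gaze_x < self.x + self.width
            and self.y <= gaze_y < self.y + self.height
        )


def select_object_at_gaze(
    objects: Sequence[ScreenObject], gaze_x: float, gaze_y: float
) -> Optional[ScreenObject]:
    """Return the topmost object under the gaze position, or None.

    objects is assumed to be listed from bottom to top in drawing order,
    so the search runs from the end of the sequence.
    """
    for obj in reversed(objects):
        if obj.contains(gaze_x, gaze_y):
            return obj
    return None
```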