Privacy-preserving Demographics Identification

Kamarol; Siti Khairuni Amalina ; et al.

U.S. patent application number 15/859320 was filed with the patent office on 2019-01-31 for privacy-preserving demographics identification. This patent application is currently assigned to Intel Corporation. The applicant listed for this patent is Intel Corporation. Invention is credited to Siew Wen Chin, Siti Khairuni Amalina Kamarol, Addicam V. Sanjay, Shao-Wen Yang.

| Application Number | 20190034716 15/859320 |

| Document ID | / |

| Family ID | 65038673 |

| Filed Date | 2019-01-31 |

View All Diagrams

| United States Patent Application | 20190034716 |

| Kind Code | A1 |

| Kamarol; Siti Khairuni Amalina ; et al. | January 31, 2019 |

PRIVACY-PRESERVING DEMOGRAPHICS IDENTIFICATION

Abstract

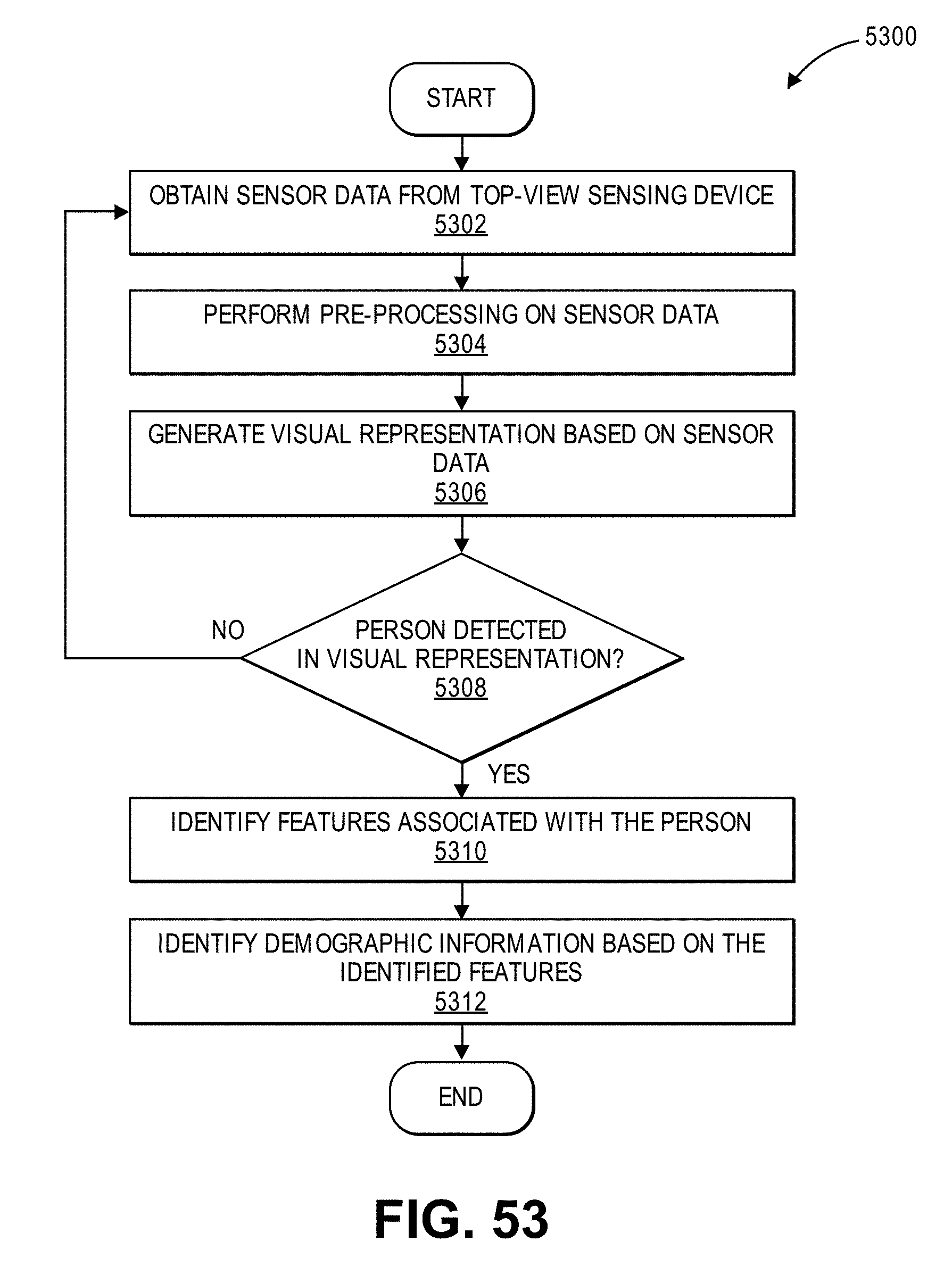

In one embodiment, an apparatus comprises a top-view sensing device to capture sensor data associated with an environment below the top-view sensing device. The apparatus further comprises a processor to: obtain the sensor data captured by the top-view sensing device; generate, based on the sensor data, a visual representation of the environment below the top-view sensing device; determine that the visual representation comprises a representation of a person; identify one or more features associated with the representation of the person; and identify demographic information associated with the person based on the one or more features.

| Inventors: | Kamarol; Siti Khairuni Amalina; (Penang, MY) ; Sanjay; Addicam V.; (Gilbert, AZ) ; Yang; Shao-Wen; (San Jose, CA) ; Chin; Siew Wen; (Penang, MY) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Assignee: | Intel Corporation Santa Clara CA |

||||||||||

| Family ID: | 65038673 | ||||||||||

| Appl. No.: | 15/859320 | ||||||||||

| Filed: | December 29, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 62611536 | Dec 28, 2017 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06K 9/00771 20130101; G06K 9/6268 20130101; G06Q 50/26 20130101; G06K 9/00369 20130101; G06F 2209/506 20130101; G06F 21/6245 20130101; G08G 1/087 20130101; G06F 9/4881 20130101; G06F 21/604 20130101; G06K 9/6271 20130101; G11B 27/031 20130101; H04N 7/181 20130101; G06F 9/505 20130101; G08G 1/091 20130101; G08G 1/0116 20130101; G06K 9/4604 20130101 |

| International Class: | G06K 9/00 20060101 G06K009/00; G06K 9/46 20060101 G06K009/46; G06K 9/62 20060101 G06K009/62 |

Claims

1. An apparatus, comprising: a top-view sensing device to capture sensor data associated with an environment below the top-view sensing device; and a processor to: obtain the sensor data captured by the top-view sensing device; generate, based on the sensor data, a visual representation of the environment below the top-view sensing device; determine that the visual representation comprises a representation of a person; identify one or more features associated with the representation of the person; and identify demographic information associated with the person based on the one or more features.

2. The apparatus of claim 1, wherein the top-view sensing device comprises a plurality of sensors.

3. The apparatus of claim 2, wherein the plurality of sensors comprises one or more of: a camera; an infrared sensor; or a laser-based sensor.

4. The apparatus of claim 1, wherein the representation of the person comprises a top-view representation of the person.

5. The apparatus of claim 1, wherein the demographic information comprises one or more of: age; gender; or race.

6. The apparatus of claim 1, wherein the one or more features comprise one or more anthropometric features associated with the person.

7. The apparatus of claim 1, wherein the processor to identify the one or more features associated with the representation of the person is further to perform feature extraction on the representation of the person.

8. The apparatus of claim 7, wherein the processor to perform feature extraction on the representation of the person is further to perform a discrete cosine transform to extract a feature associated with the representation of the person.

9. The apparatus of claim 7, wherein the processor to perform feature extraction on the representation of the person is further to perform a principal component analysis to extract a feature associated with the representation of the person.

10. The apparatus of claim 1, wherein the processor to identify the demographic information associated with the person based on the one or more features is further to classify the one or more features using a machine learning model.

11. The apparatus of claim 1, wherein the processor is further to perform pre-processing on the sensor data.

12. The apparatus of claim 11, wherein the processor to perform pre-processing on the sensor data is further to perform one or more of: a data transformation; or noise reduction.

13. A system, comprising: a top-view sensing device, wherein the top-view sensing device comprises a plurality of sensors to capture sensor data associated with an environment below the top-view sensing device; and an edge processing device to: obtain the sensor data captured by the top-view sensing device; generate, based on the sensor data, a visual representation of the environment below the top-view sensing device; determine that the visual representation comprises a representation of a person; identify one or more anthropometric features associated with the representation of the person; and identify demographic information associated with the person based on the one or more anthropometric features.

14. The system of claim 13, wherein the plurality of sensors comprises one or more of: a camera; an infrared sensor; or a laser-based sensor.

15. The system of claim 13, wherein the edge processing device to identify the demographic information associated with the person based on the one or more anthropometric features is further to classify the one or more anthropometric features using a machine learning model.

16. The system of claim 13, wherein the edge processing device comprises an edge gateway.

17. The system of claim 13: wherein the system further comprises a cloud processing device to perform analytics based on the demographic information; and wherein the edge processing device comprises a communication interface to transmit the demographic information to the cloud processing device.

18. The system of claim 0, wherein the cloud processing device to perform analytics based on the demographic information is further to generate: a heat map; or a people profile.

19. At least one machine accessible storage medium having instructions stored thereon, wherein the instructions, when executed on a machine, cause the machine to: obtain sensor data captured by a top-view sensing device, wherein the sensor data comprises information associated with an environment below the top-view sensing device; generate, based on the sensor data, a visual representation of the environment below the top-view sensing device; determine that the visual representation comprises a representation of a person; identify one or more anthropometric features associated with the representation of the person; and identify demographic information associated with the person based on the one or more anthropometric features.

20. The storage medium of claim 19, wherein the top-view sensing device comprises one or more of: a camera; an infrared sensor; or a laser-based sensor.

21. The storage medium of claim 19, wherein the instructions that cause the machine to identify the one or more anthropometric features associated with the representation of the person further cause the machine to perform feature extraction on the representation of the person.

22. The storage medium of claim 19, wherein the instructions that cause the machine to identify the demographic information associated with the person based on the one or more anthropometric features further cause the machine to classify the one or more anthropometric features using a machine learning model.

23. A method, comprising: obtaining sensor data captured by a top-view sensing device, wherein the sensor data comprises information associated with an environment below the top-view sensing device; generating, based on the sensor data, a visual representation of the environment below the top-view sensing device; determining that the visual representation comprises a representation of a person; identifying one or more anthropometric features associated with the representation of the person; and identifying demographic information associated with the person based on the one or more anthropometric features.

24. The method of claim 23, wherein identifying the demographic information associated with the person based on the one or more anthropometric features comprises classifying the one or more anthropometric features using a machine learning model.

25. A system, comprising: means for obtaining sensor data captured by a top-view sensing device, wherein the sensor data comprises information associated with an environment below the top-view sensing device; means for generating, based on the sensor data, a visual representation of the environment below the top-view sensing device; means for determining that the visual representation comprises a representation of a person; means for identifying one or more anthropometric features associated with the representation of the person; and means for identifying demographic information associated with the person based on the one or more anthropometric features.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This patent application claims the benefit of the filing date of U.S. Provisional Patent Application Ser. No. 62/611,536, filed on Dec. 28, 2017, and entitled "VISUAL FOG," the content of which is hereby expressly incorporated by reference.

FIELD OF THE SPECIFICATION

[0002] This disclosure relates in general to the field of computing systems, and more particularly, though not exclusively, to visual computing.

BACKGROUND

[0003] Advancements in modern computing have led to an increased use of visual computing for a variety of mainstream computing applications. In particular, rapid deployments of cameras have been leveraged for numerous visual computing applications that rely on large-scale video analytics and visual data processing. Existing approaches to large-scale visual computing, however, suffer from numerous limitations. For example, existing visual computing approaches are implemented using rigid designs that utilize resources inefficiently and provide limited functionality, privacy, and security. As a result, existing approaches often suffer from high latency and are inaccurate, unreliable, inflexible, and incapable of scaling efficiently.

BRIEF DESCRIPTION OF THE DRAWINGS

[0004] The present disclosure is best understood from the following detailed description when read with the accompanying figures. It is emphasized that, in accordance with the standard practice in the industry, various features are not necessarily drawn to scale, and are used for illustration purposes only. Where a scale is shown, explicitly or implicitly, it provides only one illustrative example. In other embodiments, the dimensions of the various features may be arbitrarily increased or reduced for clarity of discussion.

[0005] FIG. 1 illustrates an example embodiment of a visual fog system in accordance with certain embodiments.

[0006] FIGS. 2, 3, 4, and 5 illustrate examples of Internet-of-Things (IoT) networks and architectures that can be used in accordance with certain embodiments.

[0007] FIGS. 6 and 7 illustrate example computer architectures that can be used in accordance with certain embodiments.

[0008] FIG. 8 illustrates an example embodiment of an architecture for visual fog nodes.

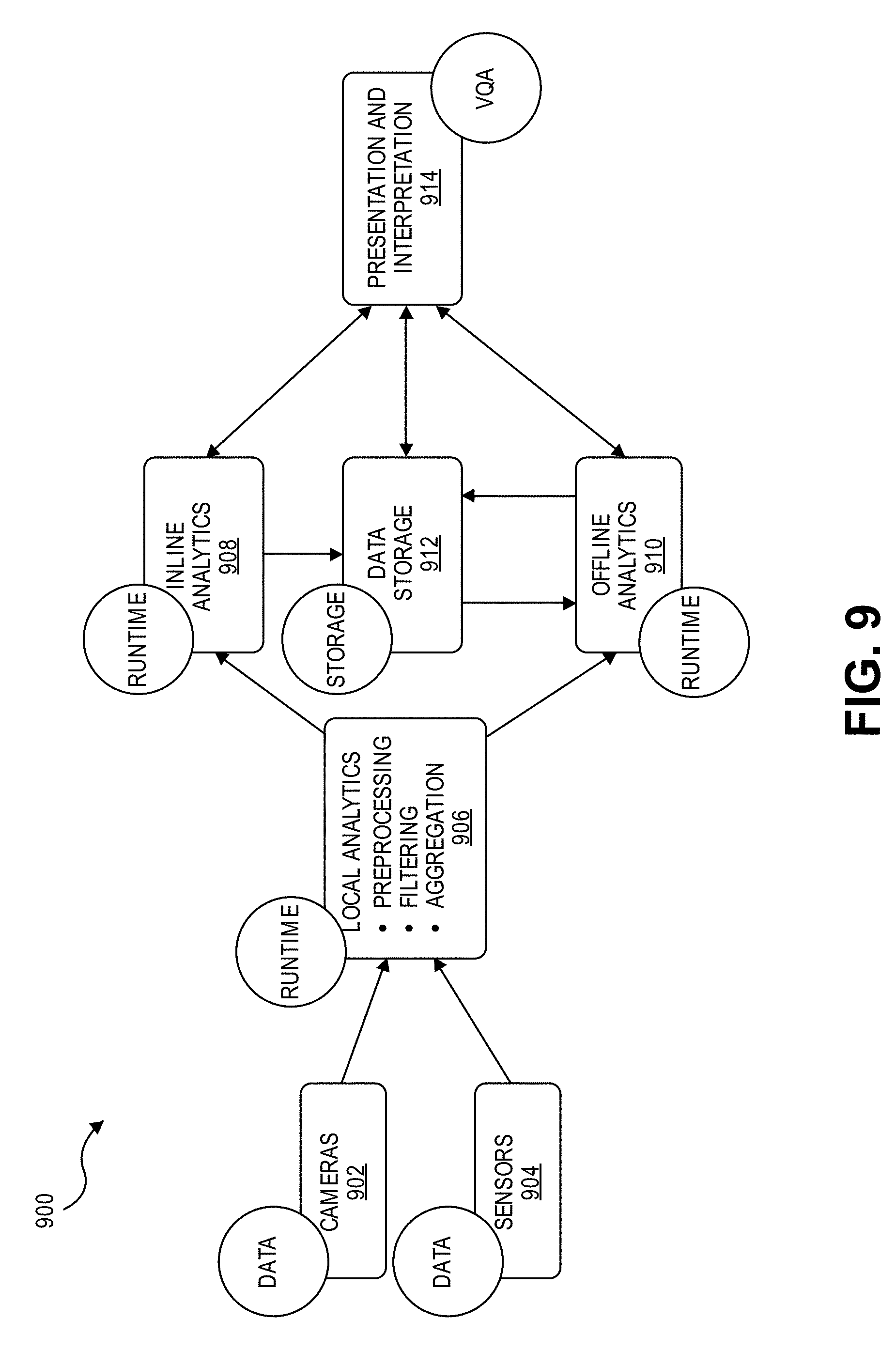

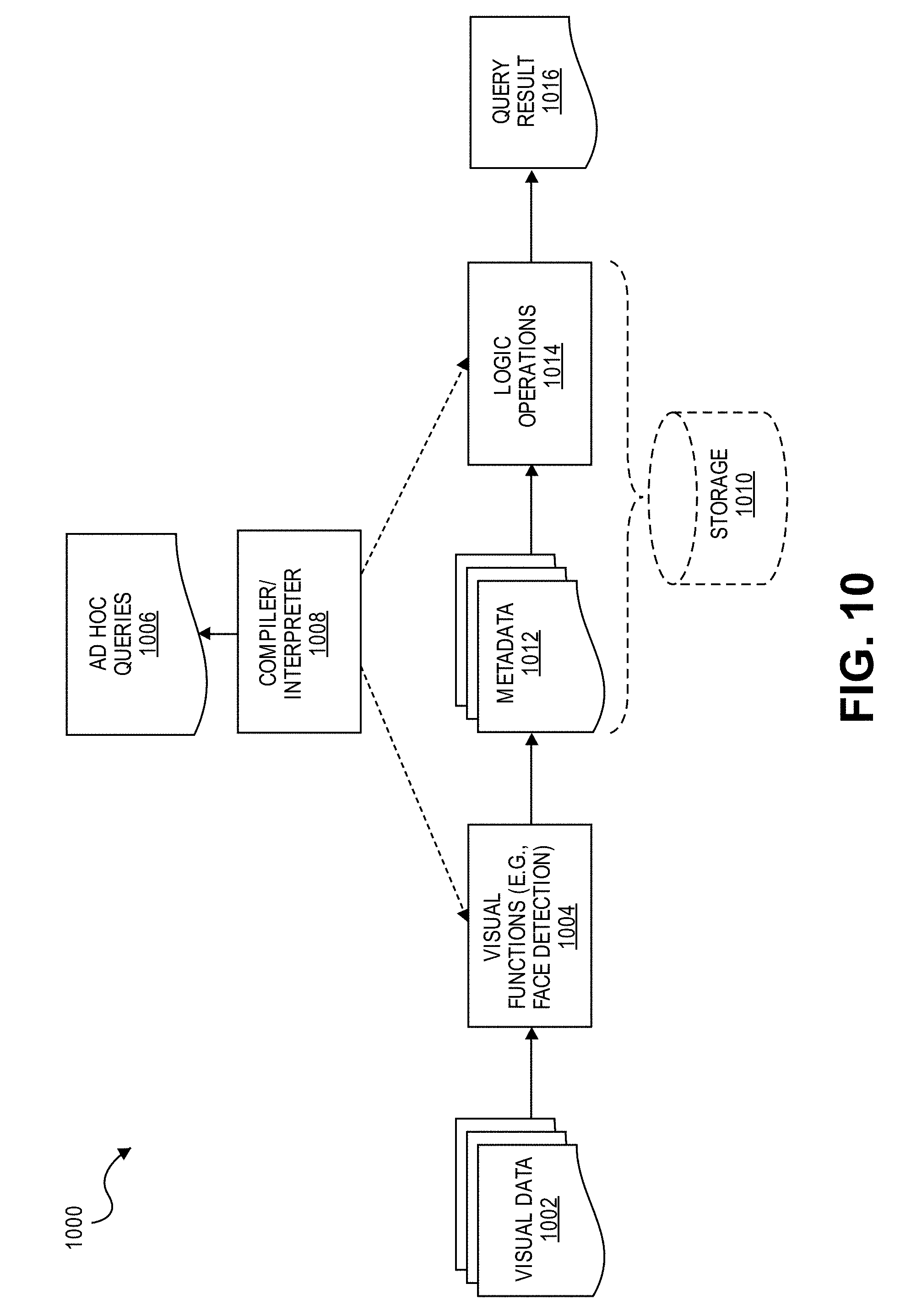

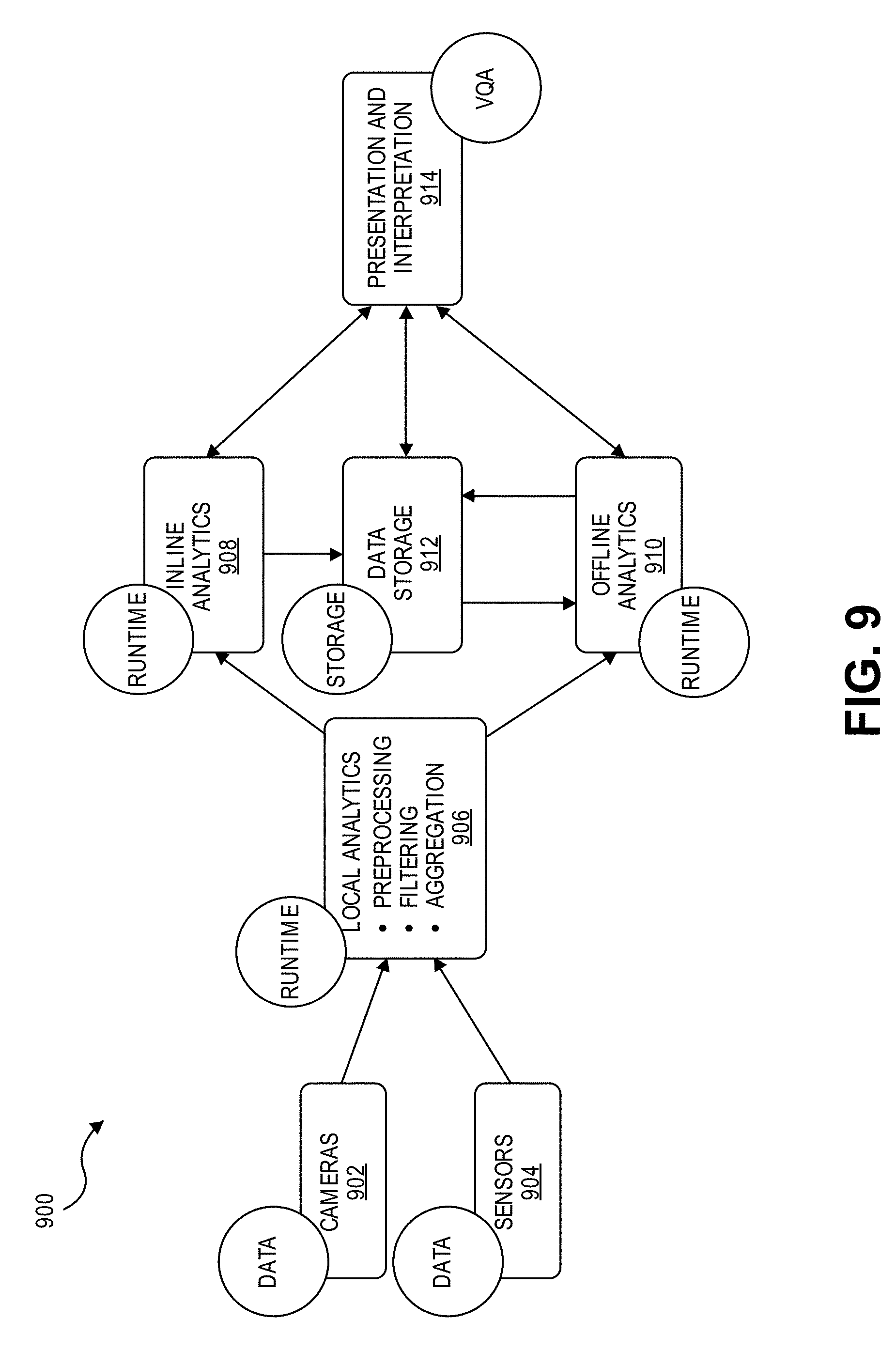

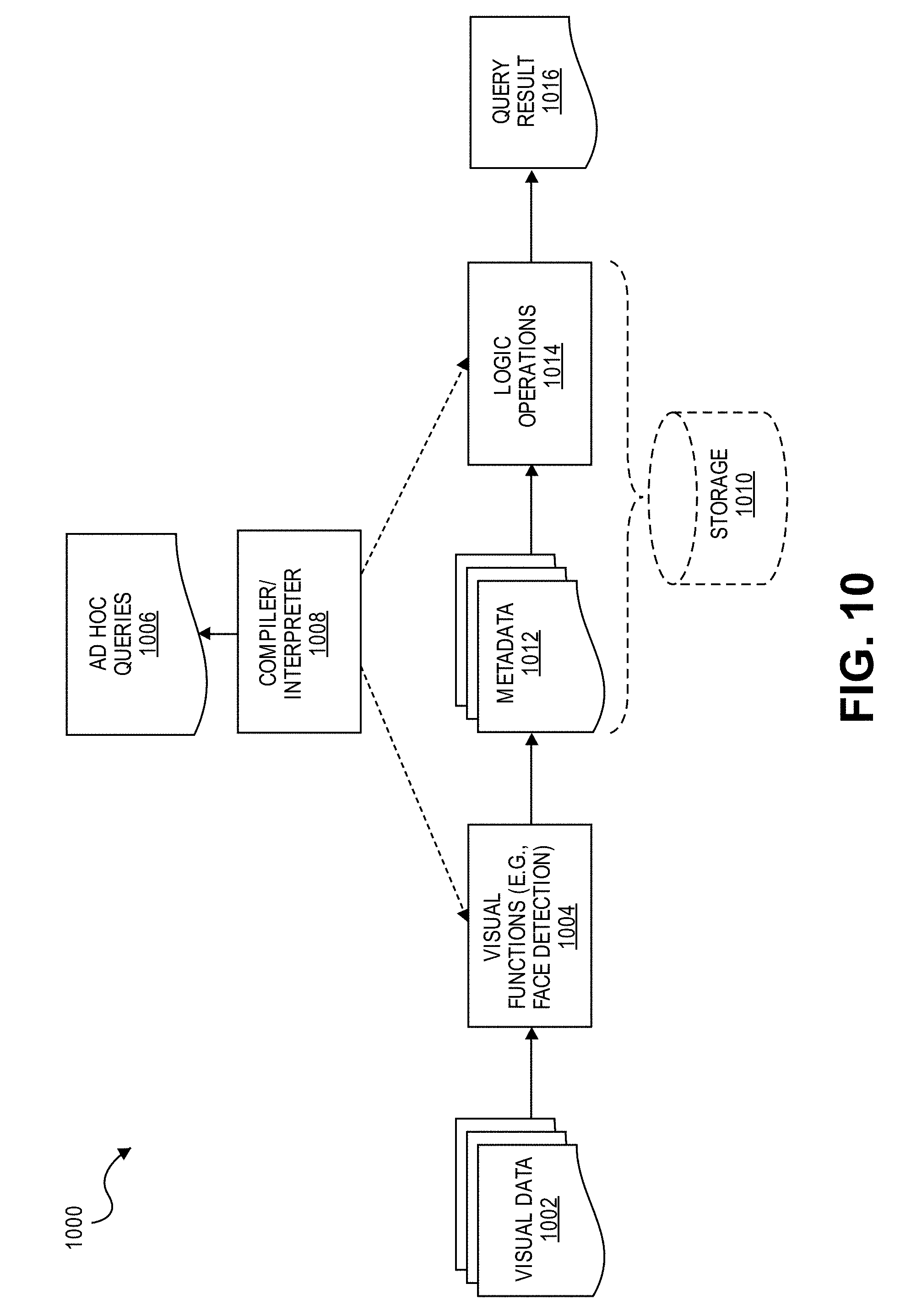

[0009] FIGS. 9, 10, 11, and 12A-B illustrate example embodiments of a visual fog architecture.

[0010] FIGS. 13 and 14 illustrate example embodiments associated with a visual question answering (VQA) framework.

[0011] FIGS. 15 and 16 illustrate example embodiments of device-centric scheduling for visual fog computing.

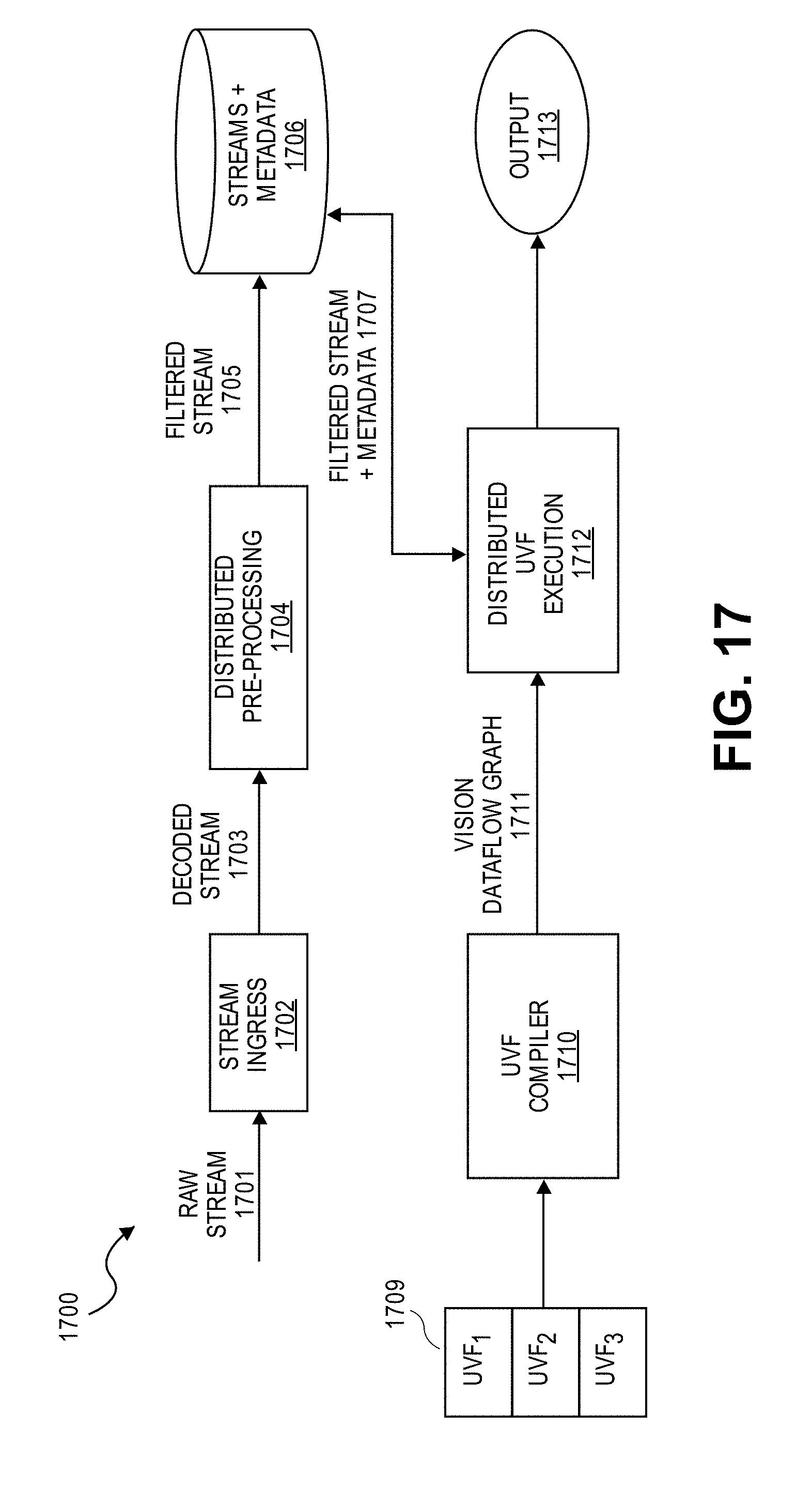

[0012] FIG. 17 illustrates an example embodiment of a runtime processing pipeline for a visual fog architecture.

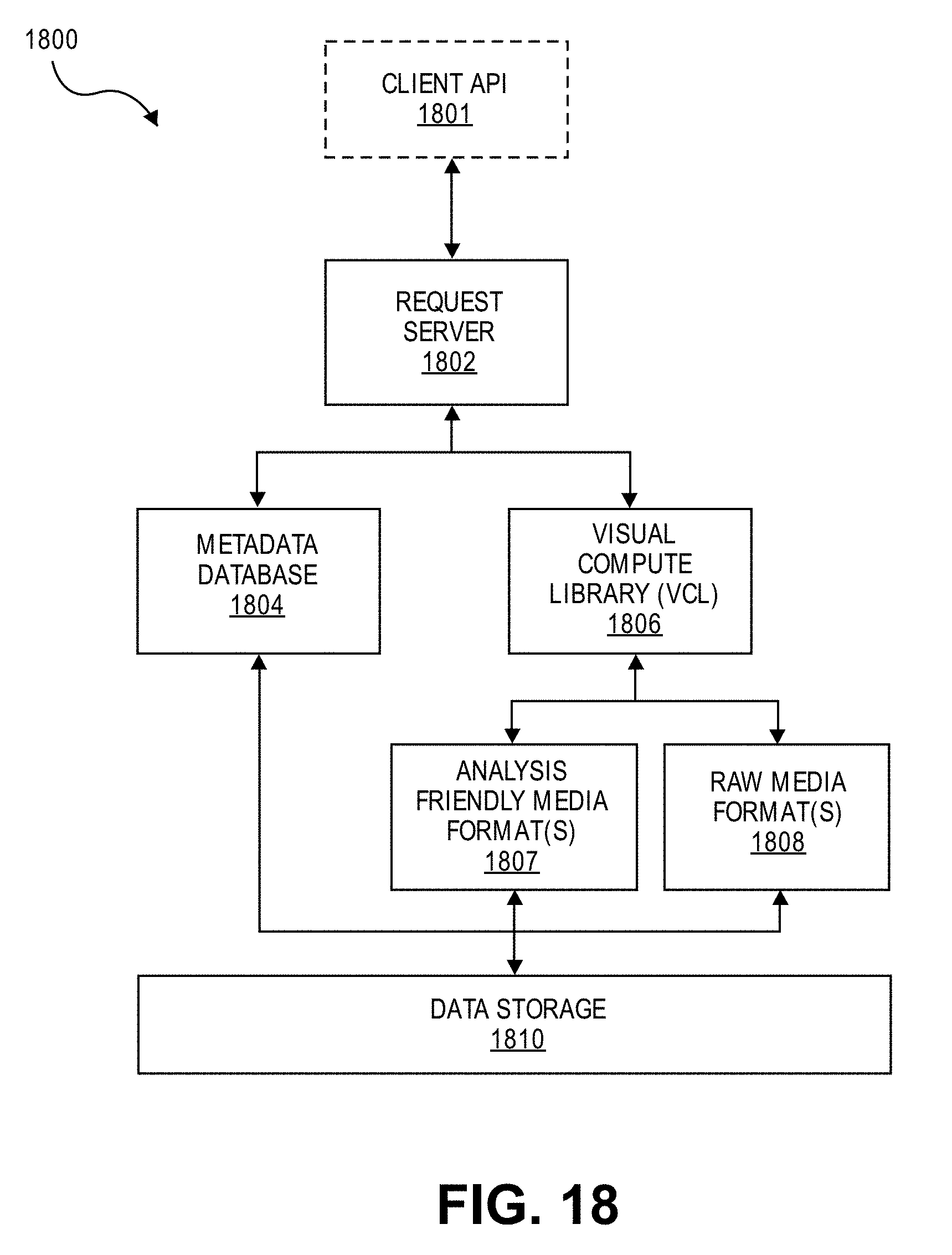

[0013] FIG. 18 illustrates an example embodiment of a visual data storage architecture.

[0014] FIG. 19 illustrates an example of a vision processing pipeline that leverages metadata for searching visual data.

[0015] FIGS. 20 and 21 illustrate examples of representing visual metadata using a property graph.

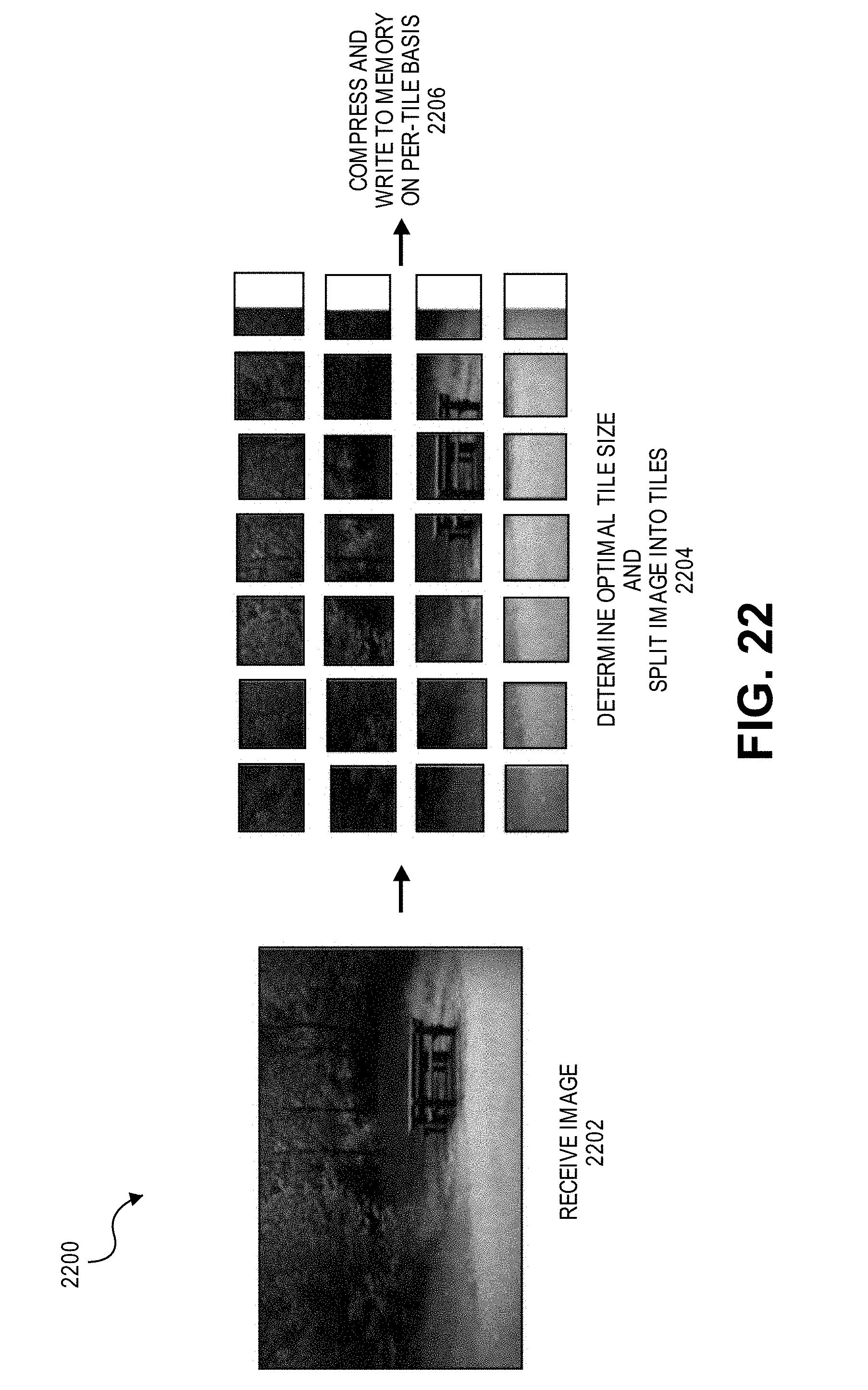

[0016] FIG. 22 illustrates an example embodiment of an analytic image format designed to aid in visual data processing.

[0017] FIG. 23 illustrates a performance graph for various image formats.

[0018] FIGS. 24A, 24B, and 24C illustrate an example embodiment of a multi-domain cascade convolutional neural network (CNN).

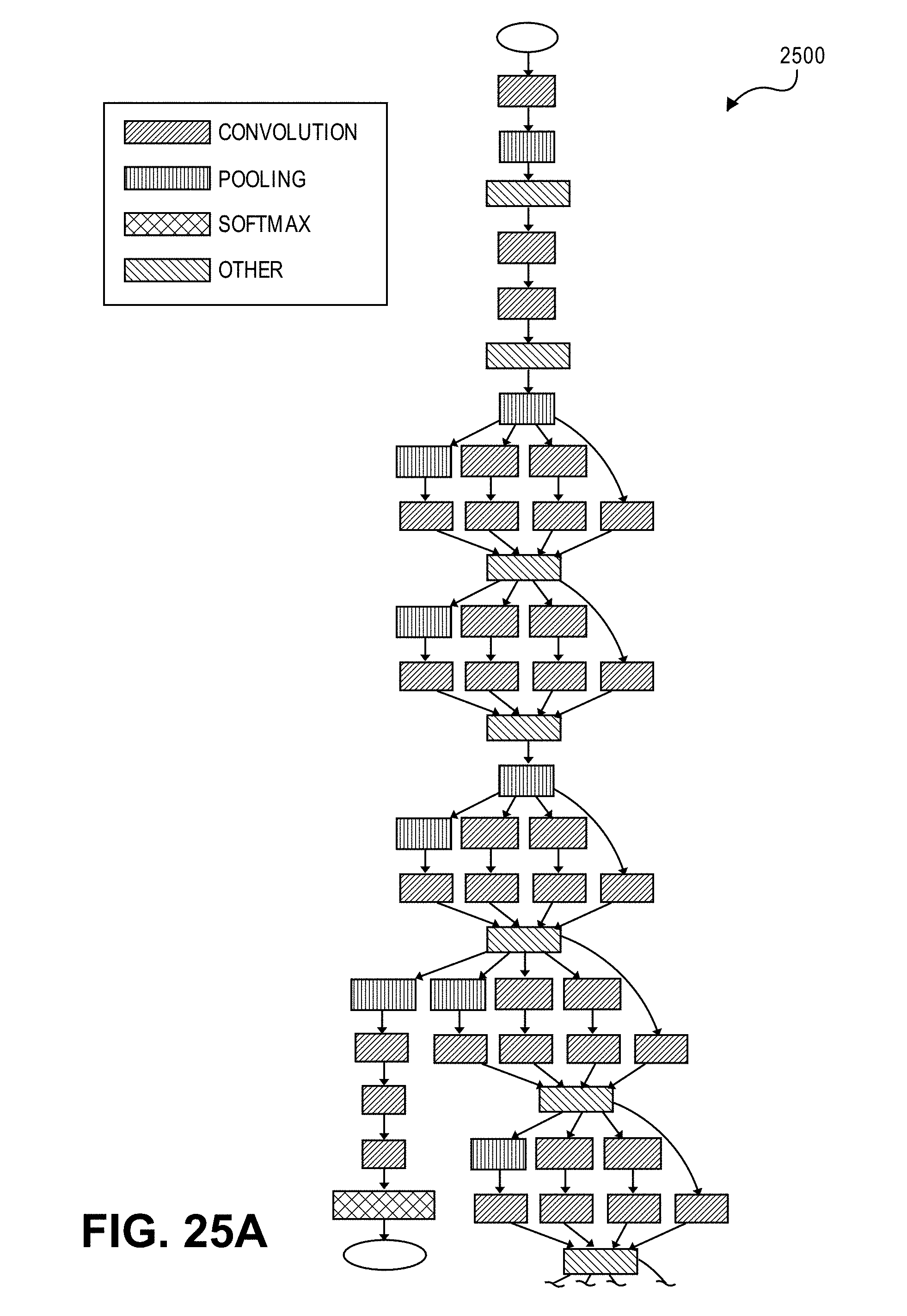

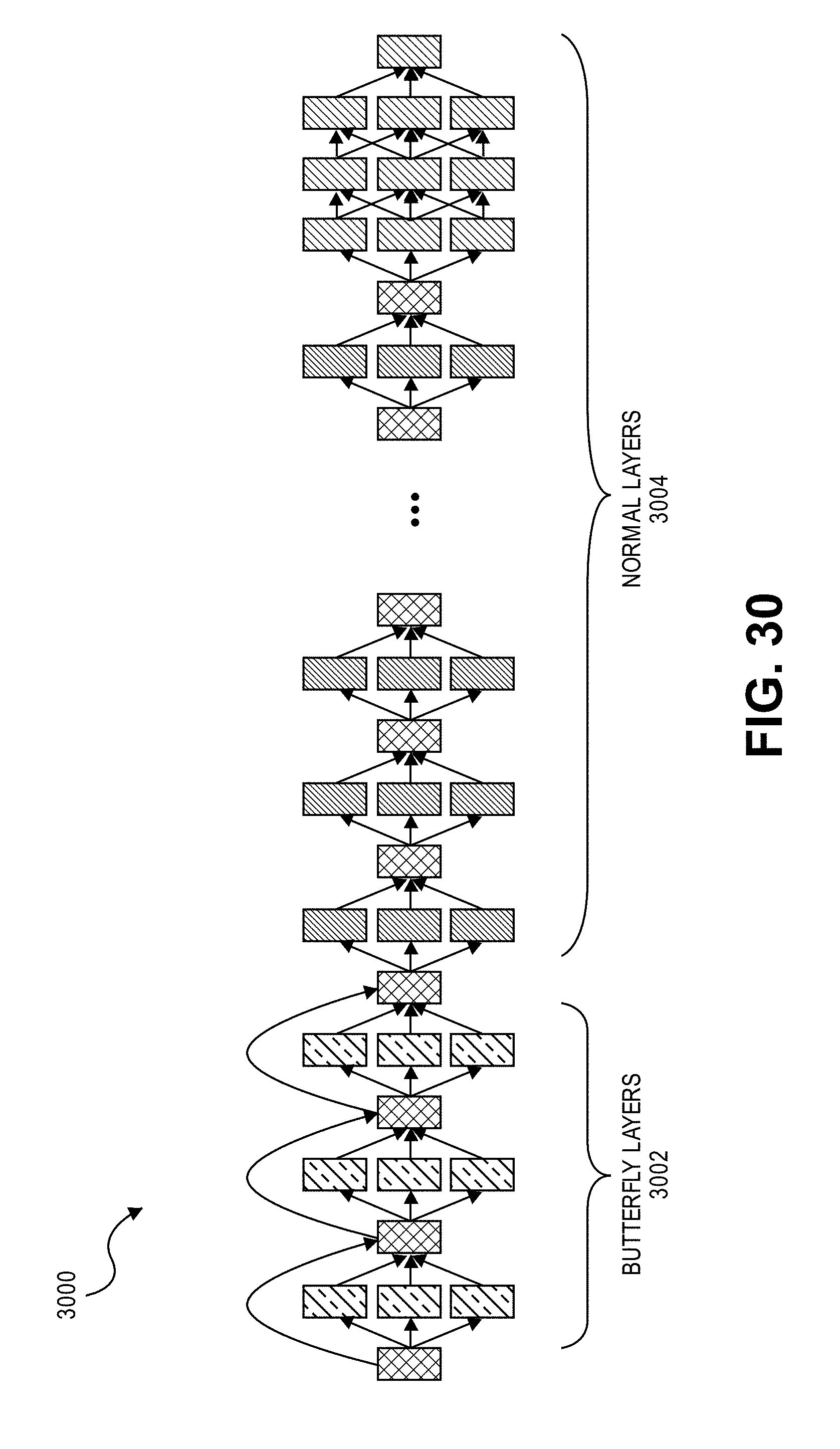

[0019] FIGS. 25A-B, 26, 27, 28, 29, 30, and 31A-B illustrate the use of butterfly operations for a multi-domain convolutional neural network (CNN).

[0020] FIGS. 32 and 33 illustrate an example embodiment of a three-dimensional (3D) CNN for processing compressed visual data.

[0021] FIG. 34 illustrates an example of a pixel-domain CNN.

[0022] FIG. 35 illustrates an example of a pixel-domain visual analytics pipeline.

[0023] FIGS. 36 and 37 illustrate example embodiments of compressed-domain visual analytics pipelines.

[0024] FIG. 38 illustrates a performance graph showing the precision of a CNN trained using compressed visual data.

[0025] FIG. 39 illustrates a flowchart for an example embodiment of context-aware image compression.

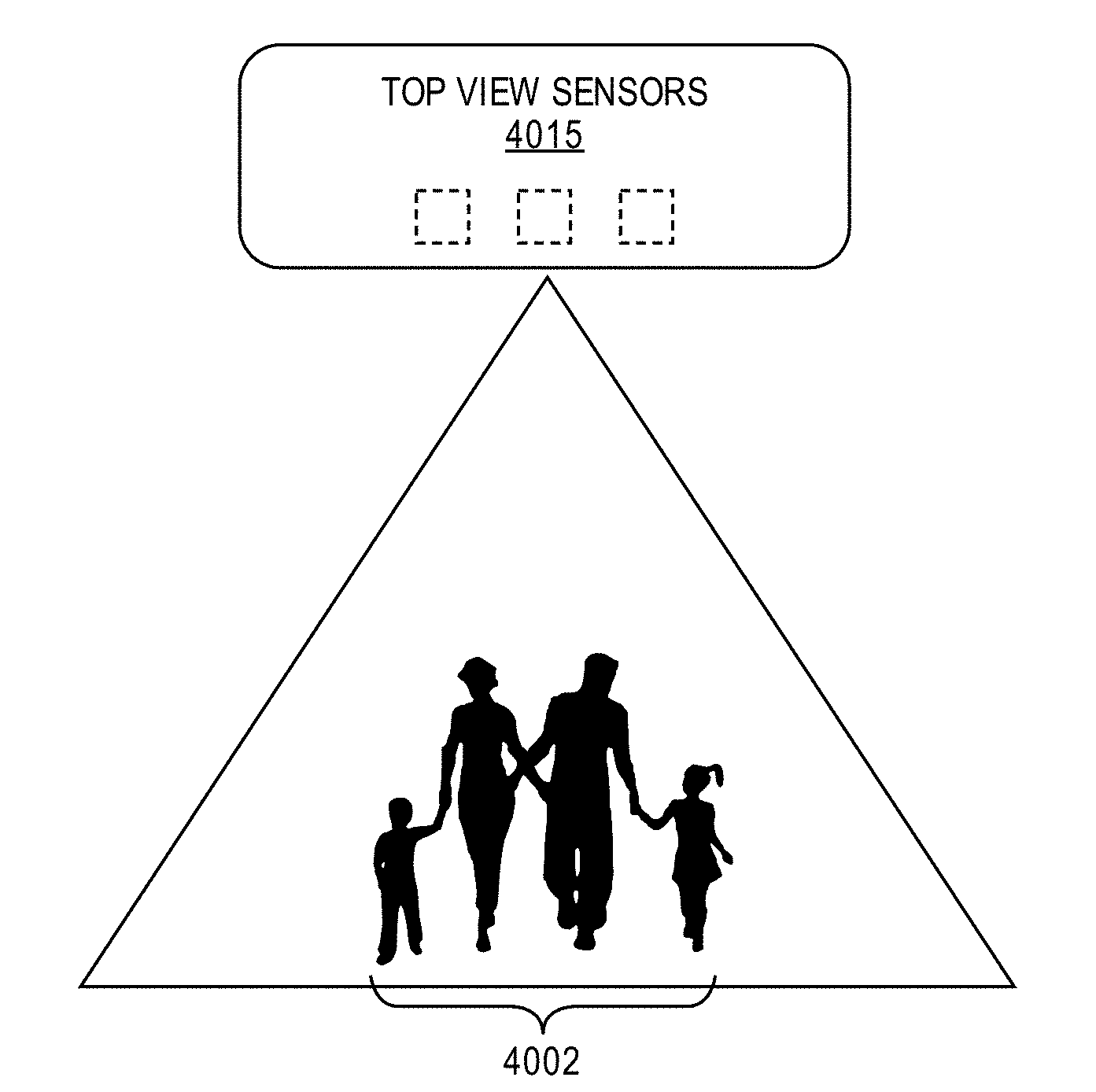

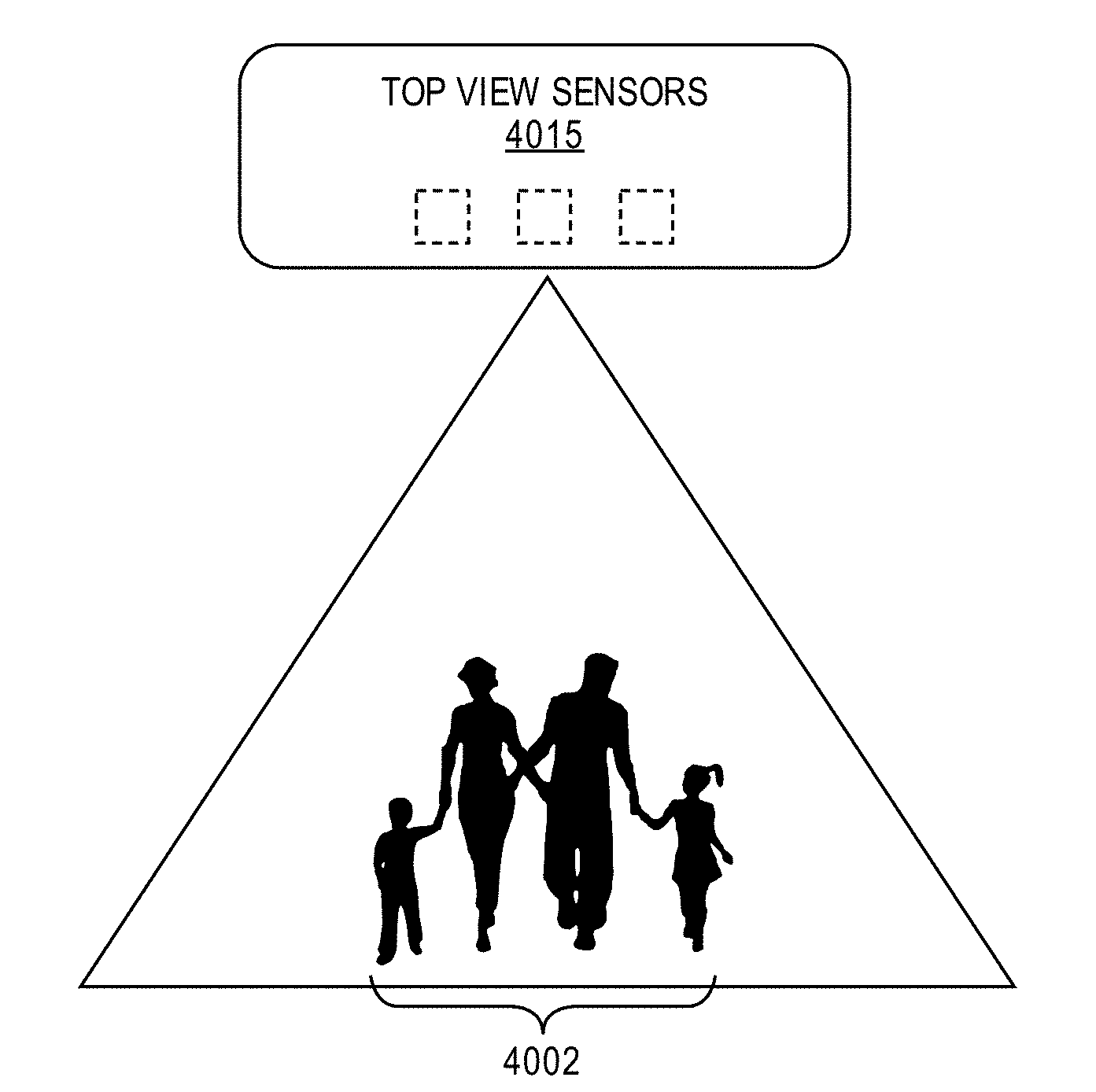

[0026] FIGS. 40A, 40B, and 40C illustrate an example embodiment of a privacy-preserving demographic identification system.

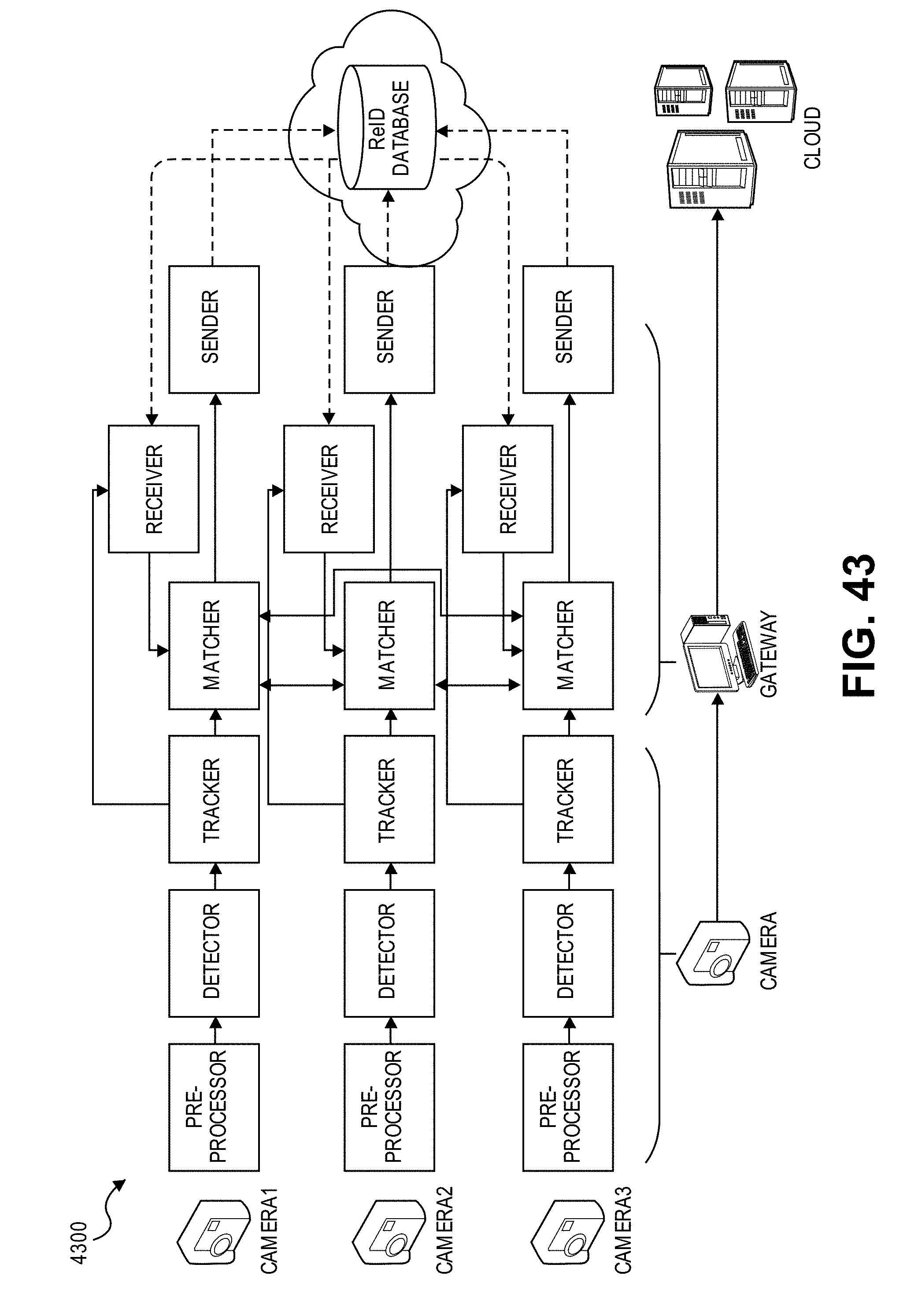

[0027] FIGS. 41, 42, and 43 illustrate an example embodiment of privacy-preserving distributed visual data processing.

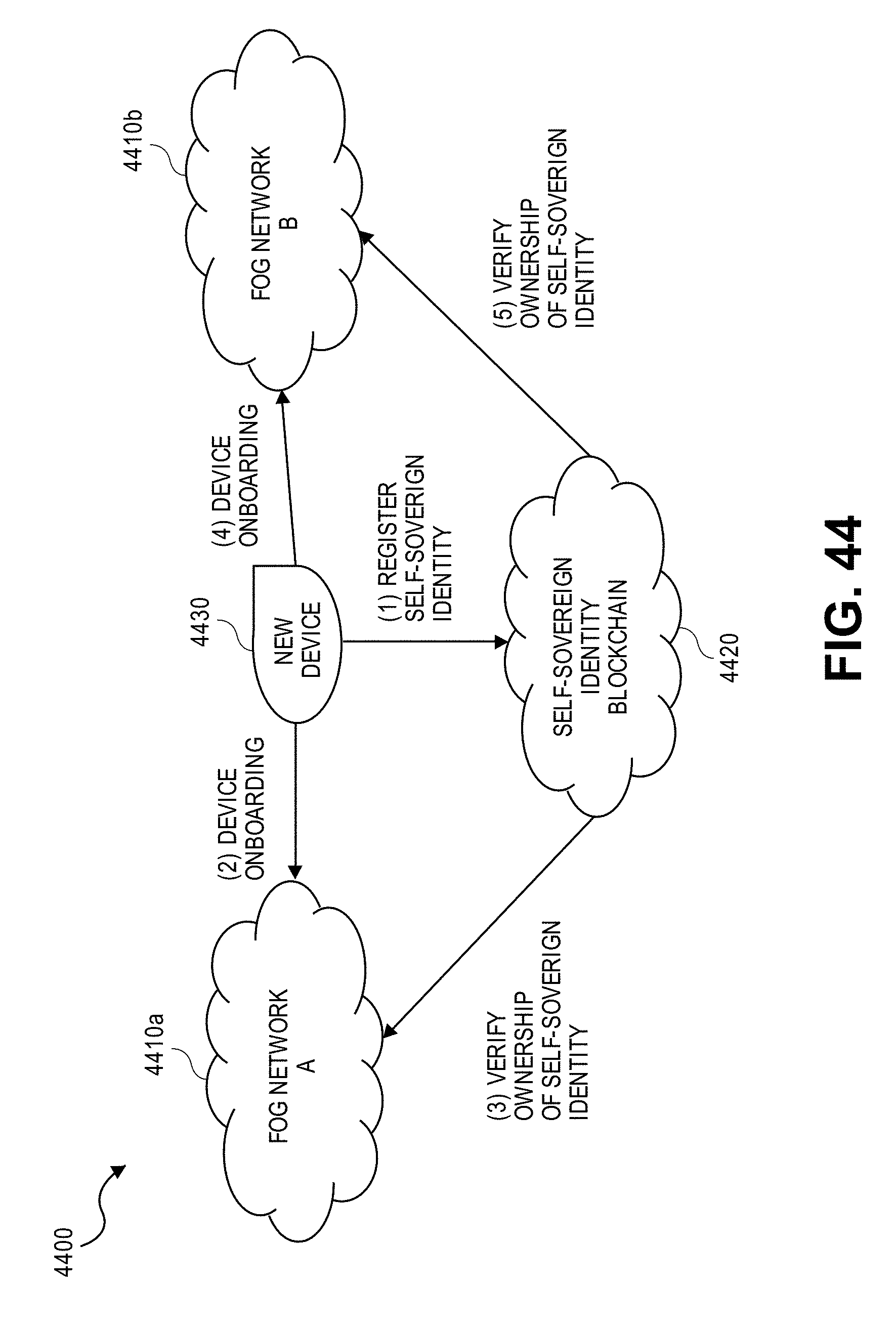

[0028] FIGS. 44, 45, and 46 illustrate example embodiments of self-sovereign device identification for distributed computing networks.

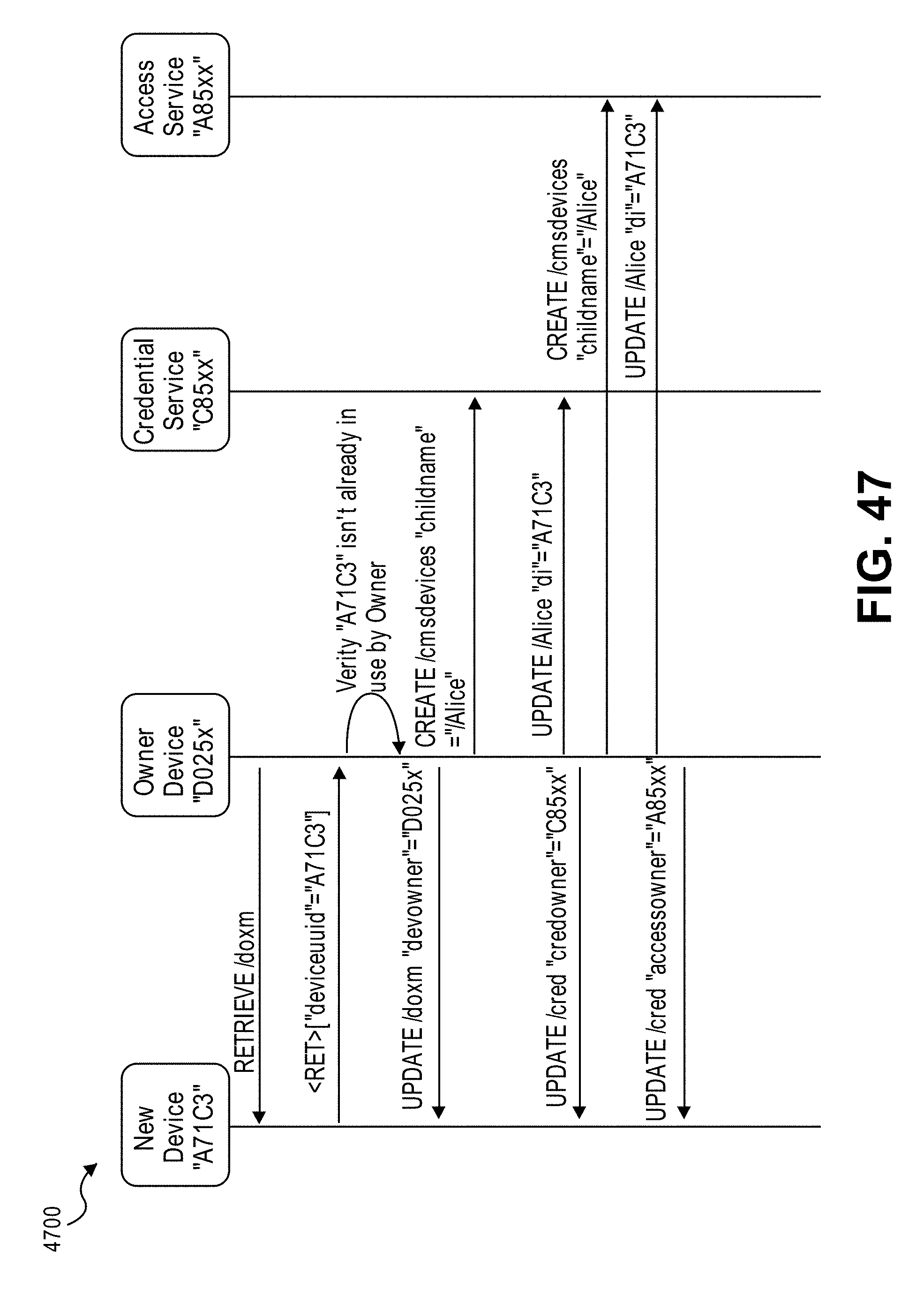

[0029] FIG. 47 illustrates an example of device onboarding/commissioning in a visual fog network without conflict resolution.

[0030] FIGS. 48 and 49 illustrate example embodiments of algorithm identification for distributed computing using a self-sovereign blockchain.

[0031] FIGS. 50, 51, and 52 illustrate example embodiments for processing traditional and analytic image formats.

[0032] FIG. 53 illustrates a flowchart for an example embodiment of privacy-preserving demographics identification.

[0033] FIG. 54 illustrates a flowchart for an example embodiment of privacy-preserving distributed visual processing.

EMBODIMENTS OF THE DISCLOSURE

[0034] This patent application claims the benefit of the filing date of U.S. Provisional Patent Application Ser. No. 62/611,536, filed on Dec. 28, 2017, and entitled "VISUAL FOG," the content of which is hereby expressly incorporated by reference.

[0035] The following disclosure provides many different embodiments, or examples, for implementing different features of the present disclosure. Specific examples of components and arrangements are described below to simplify the present disclosure. These are, of course, merely examples and are not intended to be limiting. Further, the present disclosure may repeat reference numerals and/or letters in the various examples. This repetition is for the purpose of simplicity and clarity and does not in itself dictate a relationship between the various embodiments and/or configurations discussed. Different embodiments may have different advantages, and no particular advantage is necessarily required of any embodiment.

[0036] Example embodiments that may be used to implement the features and functionality of this disclosure will now be described with more particular reference to the attached FIGURES.

[0037] Visual Fog Introduction

[0038] FIG. 1 illustrates an example embodiment of a visual fog system 100 in accordance with certain embodiments. Advancements in modern computing have led to an increased use of computer vision technologies and large-scale visual computing for a variety of mainstream computing applications. In particular, rapid deployments of cameras and other types of computer vision technologies have been leveraged for a variety of visual computing applications that rely on large-scale video analytics and visual data processing. For example, large-scale visual computing can be leveraged for security and surveillance, transportation (e.g., traffic monitoring, navigation, parking, infrastructure planning, security or amber alerts), retail (e.g., customer analytics), enterprise applications, and so forth.

[0039] Existing approaches to large-scale visual computing, however, suffer from numerous limitations. In particular, existing visual computing approaches are implemented using rigid designs that utilize resources inefficiently (e.g., processing, bandwidth, and storage resources) and provide limited functionality. For example, using existing approaches, visual data is typically captured by devices at the edge of a network and simply funneled to the cloud for processing and storage, thus relying heavily on the cloud infrastructure. Due to the large size of visual data, however, this approach typically consumes significant network bandwidth and requires substantial processing and storage resources in the cloud. As a result, existing approaches often suffer from high latency and inefficient resource utilization, and may also be inaccurate, unreliable, inflexible, and incapable of scaling efficiently.

[0040] Accordingly, this disclosure describes various embodiments of a visual fog computing system 100 for performing large-scale visual computing in an efficient and reliable manner. For example, rather than relying exclusively or primarily on cloud resources 130 for visual computing tasks, visual fog system 100 leverages both cloud 130 and edge 110 resources, which may be collectively referred to as the "fog." In this manner, visual fog system 100 can leverage all available "fog" resources to perform visual computing tasks more efficiently, thus improving resource utilization, latency, accuracy, precision, and reliability. Moreover, as described further throughout this disclosure, visual fog system 100 can be implemented using a flexible design that supports ad-hoc queries and is highly scalable, thus rendering it suitable for many visual computing applications and use cases.

[0041] In the illustrated embodiment of FIG. 1, visual fog system 100 includes edge resources 110 and a plurality of associated visual sensors 120, cloud resources 130, and communication networks 150, which are respectively discussed further below. Moreover, in various embodiments, these components of visual fog system 100 may be implemented some or all aspects of the visual computing functionality described throughout this disclosure in connection with the remaining FIGURES.

[0042] Edge resources 110 may include any equipment, devices, and/or components deployed or connected near the "edge" of a communication network. In the illustrated embodiment, for example, edge resources 110 include end-user devices 112a,b (e.g., desktops, laptops, mobile devices), Internet-of-Things (IoT) devices 114, and gateways or routers 116, as described further below. Edge resources 110 may communicate with each other and/or with other remote networks and resources (e.g., cloud resources 130) through one or more communication networks 150, such as local area network 150a and/or wide area network 150b. Moreover, in the illustrated embodiment, edge resources 110 collectively include a plurality of visual sensors 120 (e.g., cameras) for capturing visual representations and data associated with their surroundings. In some embodiments, for example, certain end-user devices 112 and/or IoT devices 114 may include one or more cameras and/or other types of visual sensors 120. Visual sensors 120 may include any type of visual or optical sensors, such as cameras, ultraviolet (UV) sensors, laser rangefinders (e.g., light detection and ranging (LIDAR)), infrared (IR) sensors, electro-optical/infrared (EO/IR) sensors, and so forth.

[0043] End-user devices 112 may include any device that enables or facilitates interaction with a user in visual fog system 100, including, for example, desktop computers, laptops, tablets, mobile phones and other mobile devices, and wearable devices (e.g., smart watches, smart glasses, headsets), among other examples.

[0044] IoT devices 114 may include any device capable of communicating and/or participating in an Internet-of-Things (IoT) system or network. IoT systems may refer to new or improved ad-hoc systems and networks composed of a variety of different devices (e.g., IoT devices 114) interoperating and synergizing for a particular application or use case. Such ad-hoc systems are emerging as more and more products and equipment evolve to become "smart," meaning they are controlled or monitored by computer processors and are capable of communicating with other devices. For example, an IoT device 114 may include a computer processor and/or communication interface to allow interoperation with other components of visual fog system 100, such as with cloud resources 130 and/or other edge resources 110. IoT devices 114 may be "greenfield" devices that are developed with IoT capabilities from the ground-up, or "brownfield" devices that are created by integrating IoT capabilities into existing legacy devices that were initially developed without IoT capabilities. For example, in some cases, IoT devices 114 may be built from sensors and communication modules integrated in or attached to "things," such as equipment, toys, tools, vehicles, living things (e.g., plants, animals, humans), and so forth. Alternatively, or additionally, certain IoT devices 114 may rely on intermediary components, such as edge gateways or routers 116, to communicate with the various components of system 100.

[0045] IoT devices 114 may include various types of sensors for monitoring, detecting, measuring, and generating sensor data and signals associated with characteristics of their environment. In some embodiments, for example, certain IoT devices 114 may include visual sensors 120 (e.g., cameras) for capturing visual representations and data associated with their surroundings. IoT devices 114 may also include other types of sensors configured to detect characteristics such as movement, weight, physical contact, temperature, wind, noise, light, position, humidity, radiation, liquid, specific chemical compounds, battery life, wireless signals, computer communications, and bandwidth, among other examples. Sensors can include physical sensors (e.g., physical monitoring components) and virtual sensors (e.g., software-based monitoring components). IoT devices 114 may also include actuators to perform various actions in their respective environments. For example, an actuator may be used to selectively activate certain functionality, such as toggling the power or operation of a security system (e.g., alarm, camera, locks) or household appliance (e.g., audio system, lighting, HVAC appliances, garage doors), among other examples.

[0046] Indeed, this disclosure contemplates use of a potentially limitless universe of IoT devices 114 and associated sensors/actuators. IoT devices 114 may include, for example, any type of equipment and/or devices associated with any type of system 100 and/or industry, including transportation (e.g., automobile, airlines), industrial manufacturing, energy (e.g., power plants), telecommunications (e.g., Internet, cellular, and television service providers), retail, medical (e.g., healthcare, pharmaceutical), and/or food and beverage, among others. In the transportation industry, for example, IoT devices 114 may include equipment and devices associated with aircrafts, automobiles, or vessels, such as navigation systems, autonomous flight or driving systems, traffic monitoring and/or planning systems, parking systems, and/or any internal mechanical or electrical components that are monitored by sensors (e.g., engines). IoT devices 114 may also include equipment, devices, and/or infrastructure associated with industrial manufacturing and production, shipping (e.g., cargo tracking), communications networks (e.g., gateways, routers, servers, cellular towers), server farms, electrical power plants, wind farms, oil and gas pipelines, water treatment and distribution, wastewater collection and treatment, and weather monitoring (e.g., temperature, wind, and humidity sensors), among other examples. IoT devices 114 may also include, for example, any type of "smart" device or system, such as smart entertainment systems (e.g., televisions, audio systems, videogame systems), smart household or office appliances (e.g., heat-ventilation-air-conditioning (HVAC) appliances, refrigerators, washers and dryers, coffee brewers), power control systems (e.g., automatic electricity, light, and HVAC controls), security systems (e.g., alarms, locks, cameras, motion detectors, fingerprint scanners, facial recognition systems), and other home automation systems, among other examples. IoT devices 114 can be statically located, such as mounted on a building, wall, floor, ground, lamppost, sign, water tower, or any other fixed or static structure. IoT devices 114 can also be mobile, such as devices in vehicles or aircrafts, drones, packages (e.g., for tracking cargo), mobile devices, and wearable devices, among other examples. Moreover, any type of edge resource 110 may also be considered as an IoT device 114, including end-user devices 112 and edge gateways 116, among other examples.

[0047] Edge gateways and/or routers 116 may be used to facilitate communication to and from edge resources 110. For example, gateways 116 may provide communication capabilities to existing legacy devices that were initially developed without any such capabilities (e.g., "brownfield" IoT devices 114). Gateways 116 can also be utilized to extend the geographical reach of edge resources 110 with short-range, proprietary, or otherwise limited communication capabilities, such as IoT devices 114 with Bluetooth or ZigBee communication capabilities. For example, gateways 116 can serve as intermediaries between IoT devices 114 and remote networks or services, by providing a front-haul to the IoT devices 114 using their native communication capabilities (e.g., Bluetooth, ZigBee), and providing a back-haul to other networks 150 and/or cloud resources 130 using another wired or wireless communication medium (e.g., Ethernet, Wi-Fi, cellular). In some embodiments, a gateway 116 may be implemented by a dedicated gateway device, or by a general-purpose device, such as another IoT device 114, end-user device 112, or other type of edge resource 110. In some instances, gateways 116 may also implement certain network management and/or application functionality (e.g., visual computing functionality, IoT application and management functionality), either separately or in conjunction with other components, such as cloud resources 130 and/or other edge resources 110.

[0048] Cloud resources 130 may include any resources or services that are hosted remotely over a network, which may otherwise be referred to as in the "cloud." In some embodiments, for example, cloud resources 130 may be remotely hosted on servers in a datacenter (e.g., application servers, database servers). Cloud resources 130 may include any resources, services, and/or functionality that can be utilized by or for edge resources 110, including but not limited to, visual computing applications and services, IoT application and management services, data storage, computational services (e.g., data analytics, searching, diagnostics and fault management), security services (e.g., surveillance, alarms, user authentication), mapping and navigation, geolocation services, network or infrastructure management, payment processing, audio and video streaming, messaging, social networking, news, and weather, among other examples.

[0049] Communication networks 150a,b may be used to facilitate communication between components of system 100. In the illustrated embodiment, for example, edge resources 110 are connected to local area network (LAN) 150a in order to facilitate communication with each other and/or other remote networks or resources, such as wide area network (WAN) 150b and/or cloud resources 130. In various embodiments, visual fog system 100 may be implemented using any number or type of communication network(s) 150, including local area networks, wide area networks, public networks, the Internet, cellular networks, Wi-Fi networks, short-range networks (e.g., Bluetooth or ZigBee), and/or any other wired or wireless communication networks or mediums.

[0050] In general, edge resources 110 (and in particular IoT devices 114) may generate an extremely large volume and variety of data. As one example, edge resources 110 with visual sensors 120 may generate large volumes of visual data, such as video and/or images. Edge resources 110 typically offload this data to the cloud 130 for processing and/or storage. Cloud resources 130, however, may not necessarily be suited to handle the rapidly growing volume, variety, and velocity of data generated by IoT devices 114 and other edge resources 110. For example, cloud-based processing may not be ideal in certain circumstances, such as processing time-sensitive or highly confidential data, or when faced with network bandwidth constraints, among other examples. Accordingly, in some embodiments, visual fog system 100 may leverage "edge" processing to augment the performance and capabilities of the cloud 130 using edge resources 110. Edge processing is an approach that involves processing certain data at the network edge (e.g., using edge resources 110), near where the data is generated, rather than simply funneling large volumes of data to the cloud for processing and storage. Certain data may still be sent to the cloud, as appropriate, such as for deeper analysis and/or long-term storage. Edge processing may be used to complement the shortcomings of cloud-based processing (e.g., when cloud-based processing is inefficient, ineffective, and/or unsecure), and thus improve the handling of the growing volume, variety, and velocity of data generated by IoT devices 114 and/or other edge resources 110. For example, in some cases, processing data near its source (e.g., in the network edge) rather than in the cloud may improve performance and/or avoid system failures or disasters. Edge processing may also conserve network bandwidth, which may be particularly beneficial when facing bandwidth constraints and/or limited network connectivity.

[0051] In some cases, the collective use of both edge 110 and cloud 130 resources may be referred to as "fog" computing, as functionality of the "cloud" 130 is effectively extended by the edge resources 110, thus forming a "fog" over the network edge. Moreover, in some embodiments, devices 110 in the "fog" may connect and/or communicate with each other using an interconnection standard or protocol, such as the open interconnect consortium (OIC) standard specification 1.0, released by the Open Connectivity Foundation.TM. (OCF) on Dec. 23, 2015, which enables devices to discover and connect with each other; Thread, a networking protocol for Internet-of-Things (IoT) devices used in "smart" home automation and similar deployments, developed by an alliance of organizations named the "Thread Group"; the optimized link state routing (OLSR) protocol; and/or the better approach to mobile ad-hoc networking (B.A.T.M.A.N.), among other examples.

[0052] Moreover, in some embodiments, fog computing may be leveraged by visual fog system 100 for large-scale visual computing applications. For example, in some embodiments, the components of visual fog system 100 (e.g., edge resources 110, cloud resources 130) may be implemented with some or all aspects of the visual computing functionality described throughout this disclosure in connection with the remaining FIGURES.

[0053] Any, all, or some of the computing devices of system 100 may be adapted to execute any operating system, including Linux or other UNIX-based operating systems, Microsoft Windows, Windows Server, MacOS, Apple iOS, Google Android, or any customized and/or proprietary operating system, along with virtual machines adapted to virtualize execution of a particular operating system.

[0054] While FIG. 1 is described as containing or being associated with a plurality of elements, not all elements illustrated within system 100 of FIG. 1 may be utilized in each alternative implementation of the present disclosure. Additionally, one or more of the elements described in connection with the examples of FIG. 1 may be located external to system 100, while in other instances, certain elements may be included within or as a portion of one or more of the other described elements, as well as other elements not described in the illustrated implementation. Further, certain elements illustrated in FIG. 1 may be combined with other components, as well as used for alternative or additional purposes in addition to those purposes described herein.

[0055] Additional embodiments associated with the implementation of a visual fog computing system 100 are described further in connection with the remaining FIGURES. Accordingly, it should be appreciated that visual fog system 100 of FIG. 1 may be implemented with any aspects of the embodiments described throughout this disclosure.

[0056] Example Internet-of-Things (IoT) Implementations

[0057] FIGS. 2-5 illustrate examples of Internet-of-Things (IoT) networks and devices that can be used in accordance with embodiments disclosed herein. For example, the operations and functionality described throughout this disclosure may be embodied by an IoT device or machine in the example form of an electronic processing system, within which a set or sequence of instructions may be executed to cause the electronic processing system to perform any one of the methodologies discussed herein, according to an example embodiment. The machine may be an IoT device or an IoT gateway, including a machine embodied by aspects of a personal computer (PC), a tablet PC, a personal digital assistant (PDA), a mobile telephone or smartphone, or any machine capable of executing instructions (sequential or otherwise) that specify actions to be taken by that machine. Further, while only a single machine may be depicted and referenced in the example above, such machine shall also be taken to include any collection of machines that individually or jointly execute a set (or multiple sets) of instructions to perform any one or more of the methodologies discussed herein. Further, these and like examples to a processor-based system shall be taken to include any set of one or more machines that are controlled by or operated by a processor (e.g., a computer) to individually or jointly execute instructions to perform any one or more of the methodologies discussed herein.

[0058] FIG. 2 illustrates an example domain topology for respective internet-of-things (IoT) networks coupled through links to respective gateways. The internet of things (IoT) is a concept in which a large number of computing devices are interconnected to each other and to the Internet to provide functionality and data acquisition at very low levels. Thus, as used herein, an IoT device may include a semiautonomous device performing a function, such as sensing or control, among others, in communication with other IoT devices and a wider network, such as the Internet.

[0059] Often, IoT devices are limited in memory, size, or functionality, allowing larger numbers to be deployed for a similar cost to smaller numbers of larger devices. However, an IoT device may be a smart phone, laptop, tablet, or PC, or other larger device. Further, an IoT device may be a virtual device, such as an application on a smart phone or other computing device. IoT devices may include IoT gateways, used to couple IoT devices to other IoT devices and to cloud applications, for data storage, process control, and the like.

[0060] Networks of IoT devices may include commercial and home automation devices, such as water distribution systems, electric power distribution systems, pipeline control systems, plant control systems, light switches, thermostats, locks, cameras, alarms, motion sensors, and the like. The IoT devices may be accessible through remote computers, servers, and other systems, for example, to control systems or access data.

[0061] The future growth of the Internet and like networks may involve very large numbers of IoT devices. Accordingly, in the context of the techniques discussed herein, a number of innovations for such future networking will address the need for all these layers to grow unhindered, to discover and make accessible connected resources, and to support the ability to hide and compartmentalize connected resources. Any number of network protocols and communications standards may be used, wherein each protocol and standard is designed to address specific objectives. Further, the protocols are part of the fabric supporting human accessible services that operate regardless of location, time or space. The innovations include service delivery and associated infrastructure, such as hardware and software; security enhancements; and the provision of services based on Quality of Service (QoS) terms specified in service level and service delivery agreements. As will be understood, the use of IoT devices and networks, such as those introduced in FIGS. 2-5, present a number of new challenges in a heterogeneous network of connectivity comprising a combination of wired and wireless technologies.

[0062] FIG. 2 specifically provides a simplified drawing of a domain topology that may be used for a number of internet-of-things (IoT) networks comprising IoT devices 204, with the IoT networks 256, 258, 260, 262, coupled through backbone links 202 to respective gateways 254. For example, a number of IoT devices 204 may communicate with a gateway 254, and with each other through the gateway 254. To simplify the drawing, not every IoT device 204, or communications link (e.g., link 216, 222, 228, or 232) is labeled. The backbone links 202 may include any number of wired or wireless technologies, including optical networks, and may be part of a local area network (LAN), a wide area network (WAN), or the Internet. Additionally, such communication links facilitate optical signal paths among both IoT devices 204 and gateways 254, including the use of MUXing/deMUXing components that facilitate interconnection of the various devices.

[0063] The network topology may include any number of types of IoT networks, such as a mesh network provided with the network 256 using Bluetooth low energy (BLE) links 222. Other types of IoT networks that may be present include a wireless local area network (WLAN) network 258 used to communicate with IoT devices 204 through IEEE 802.11 (Wi-Fi.RTM.) links 228, a cellular network 260 used to communicate with IoT devices 204 through an LTE/LTE-A (4G) or 5G cellular network, and a low-power wide area (LPWA) network 262, for example, a LPWA network compatible with the LoRaWan specification promulgated by the LoRa alliance, or a IPv6 over Low Power Wide-Area Networks (LPWAN) network compatible with a specification promulgated by the Internet Engineering Task Force (IETF). Further, the respective IoT networks may communicate with an outside network provider (e.g., a tier 2 or tier 3 provider) using any number of communications links, such as an LTE cellular link, an LPWA link, or a link based on the IEEE 802.15.4 standard, such as Zigbee.RTM.. The respective IoT networks may also operate with use of a variety of network and internet application protocols such as Constrained Application Protocol (CoAP). The respective IoT networks may also be integrated with coordinator devices that provide a chain of links that forms cluster tree of linked devices and networks.

[0064] Each of these IoT networks may provide opportunities for new technical features, such as those as described herein. The improved technologies and networks may enable the exponential growth of devices and networks, including the use of IoT networks into as fog devices or systems. As the use of such improved technologies grows, the IoT networks may be developed for self-management, functional evolution, and collaboration, without needing direct human intervention. The improved technologies may even enable IoT networks to function without centralized controlled systems. Accordingly, the improved technologies described herein may be used to automate and enhance network management and operation functions far beyond current implementations.

[0065] In an example, communications between IoT devices 204, such as over the backbone links 202, may be protected by a decentralized system for authentication, authorization, and accounting (AAA). In a decentralized AAA system, distributed payment, credit, audit, authorization, and authentication systems may be implemented across interconnected heterogeneous network infrastructure. This allows systems and networks to move towards autonomous operations. In these types of autonomous operations, machines may even contract for human resources and negotiate partnerships with other machine networks. This may allow the achievement of mutual objectives and balanced service delivery against outlined, planned service level agreements as well as achieve solutions that provide metering, measurements, traceability and trackability. The creation of new supply chain structures and methods may enable a multitude of services to be created, mined for value, and collapsed without any human involvement.

[0066] Such IoT networks may be further enhanced by the integration of sensing technologies, such as sound, light, electronic traffic, facial and pattern recognition, smell, vibration, into the autonomous organizations among the IoT devices. The integration of sensory systems may allow systematic and autonomous communication and coordination of service delivery against contractual service objectives, orchestration and quality of service (QoS) based swarming and fusion of resources. Some of the individual examples of network-based resource processing include the following.

[0067] The mesh network 256, for instance, may be enhanced by systems that perform inline data-to-information transforms. For example, self-forming chains of processing resources comprising a multi-link network may distribute the transformation of raw data to information in an efficient manner, and the ability to differentiate between assets and resources and the associated management of each. Furthermore, the proper components of infrastructure and resource based trust and service indices may be inserted to improve the data integrity, quality, assurance and deliver a metric of data confidence.

[0068] The WLAN network 258, for instance, may use systems that perform standards conversion to provide multi-standard connectivity, enabling IoT devices 204 using different protocols to communicate. Further systems may provide seamless interconnectivity across a multi-standard infrastructure comprising visible Internet resources and hidden Internet resources.

[0069] Communications in the cellular network 260, for instance, may be enhanced by systems that offload data, extend communications to more remote devices, or both. The LPWA network 262 may include systems that perform non-Internet protocol (IP) to IP interconnections, addressing, and routing. Further, each of the IoT devices 204 may include the appropriate transceiver for wide area communications with that device. Further, each IoT device 204 may include other transceivers for communications using additional protocols and frequencies.

[0070] Finally, clusters of IoT devices may be equipped to communicate with other IoT devices as well as with a cloud network. This may allow the IoT devices to form an ad-hoc network between the devices, allowing them to function as a single device, which may be termed a fog device. This configuration is discussed further with respect to FIG. 3 below.

[0071] FIG. 3 illustrates a cloud computing network in communication with a mesh network of IoT devices (devices 302) operating as a fog device at the edge of the cloud computing network. The mesh network of IoT devices may be termed a fog 320, operating at the edge of the cloud 300. To simplify the diagram, not every IoT device 302 is labeled.

[0072] The fog 320 may be considered to be a massively interconnected network wherein a number of IoT devices 302 are in communications with each other, for example, by radio links 322. As an example, this interconnected network may be facilitated using an interconnect specification released by the Open Connectivity Foundation.TM. (OCF). This standard allows devices to discover each other and establish communications for interconnects. Other interconnection protocols may also be used, including, for example, the optimized link state routing (OLSR) Protocol, the better approach to mobile ad-hoc networking (B.A.T.M.A.N.) routing protocol, or the OMA Lightweight M2M (LWM2M) protocol, among others.

[0073] Three types of IoT devices 302 are shown in this example, gateways 304, data aggregators 326, and sensors 328, although any combinations of IoT devices 302 and functionality may be used. The gateways 304 may be edge devices that provide communications between the cloud 300 and the fog 320, and may also provide the backend process function for data obtained from sensors 328, such as motion data, flow data, temperature data, and the like. The data aggregators 326 may collect data from any number of the sensors 328, and perform the back-end processing function for the analysis. The results, raw data, or both may be passed along to the cloud 300 through the gateways 304. The sensors 328 may be full IoT devices 302, for example, capable of both collecting data and processing the data. In some cases, the sensors 328 may be more limited in functionality, for example, collecting the data and allowing the data aggregators 326 or gateways 304 to process the data.

[0074] Communications from any IoT device 302 may be passed along a convenient path (e.g., a most convenient path) between any of the IoT devices 302 to reach the gateways 304. In these networks, the number of interconnections provide substantial redundancy, allowing communications to be maintained, even with the loss of a number of IoT devices 302. Further, the use of a mesh network may allow IoT devices 302 that are very low power or located at a distance from infrastructure to be used, as the range to connect to another IoT device 302 may be much less than the range to connect to the gateways 304.

[0075] The fog 320 provided from these IoT devices 302 may be presented to devices in the cloud 300, such as a server 306, as a single device located at the edge of the cloud 300, e.g., a fog device. In this example, the alerts coming from the fog device may be sent without being identified as coming from a specific IoT device 302 within the fog 320. In this fashion, the fog 320 may be considered a distributed platform that provides computing and storage resources to perform processing or data-intensive tasks such as data analytics, data aggregation, and machine-learning, among others.

[0076] In some examples, the IoT devices 302 may be configured using an imperative programming style, e.g., with each IoT device 302 having a specific function and communication partners. However, the IoT devices 302 forming the fog device may be configured in a declarative programming style, allowing the IoT devices 302 to reconfigure their operations and communications, such as to determine needed resources in response to conditions, queries, and device failures. As an example, a query from a user located at a server 306 about the operations of a subset of equipment monitored by the IoT devices 302 may result in the fog 320 device selecting the IoT devices 302, such as particular sensors 328, needed to answer the query. The data from these sensors 328 may then be aggregated and analyzed by any combination of the sensors 328, data aggregators 326, or gateways 304, before being sent on by the fog 320 device to the server 306 to answer the query. In this example, IoT devices 302 in the fog 320 may select the sensors 328 used based on the query, such as adding data from flow sensors or temperature sensors. Further, if some of the IoT devices 302 are not operational, other IoT devices 302 in the fog 320 device may provide analogous data, if available.

[0077] FIG. 4 illustrates a drawing of a cloud computing network, or cloud 400, in communication with a number of Internet of Things (IoT) devices. The cloud 400 may represent the Internet, or may be a local area network (LAN), or a wide area network (WAN), such as a proprietary network for a company. The IoT devices may include any number of different types of devices, grouped in various combinations. For example, a traffic control group 406 may include IoT devices along streets in a city. These IoT devices may include stoplights, traffic flow monitors, cameras, weather sensors, and the like. The traffic control group 406, or other subgroups, may be in communication with the cloud 400 through wired or wireless links 408, such as LPWA links, optical links, and the like. Further, a wired or wireless sub-network 412 may allow the IoT devices to communicate with each other, such as through a local area network, a wireless local area network, and the like. The IoT devices may use another device, such as a gateway 510 or 528 to communicate with remote locations such as the cloud 500; the IoT devices may also use one or more servers 530 to facilitate communication with the cloud 500 or with the gateway 510. For example, the one or more servers 530 may operate as an intermediate network node to support a local edge cloud or fog implementation among a local area network. Further, the gateway 528 that is depicted may operate in a cloud-to-gateway-to-many edge devices configuration, such as with the various IoT devices 514, 520, 524 being constrained or dynamic to an assignment and use of resources in the cloud 500.

[0078] Other example groups of IoT devices may include remote weather stations 414, local information terminals 416, alarm systems 418, automated teller machines 420, alarm panels 422, or moving vehicles, such as emergency vehicles 424 or other vehicles 426, among many others. Each of these IoT devices may be in communication with other IoT devices, with servers 404, with another IoT fog device or system (not shown, but depicted in FIG. 3), or a combination therein. The groups of IoT devices may be deployed in various residential, commercial, and industrial settings (including in both private or public environments).

[0079] As can be seen from FIG. 4, a large number of IoT devices may be communicating through the cloud 400. This may allow different IoT devices to request or provide information to other devices autonomously. For example, a group of IoT devices (e.g., the traffic control group 406) may request a current weather forecast from a group of remote weather stations 414, which may provide the forecast without human intervention. Further, an emergency vehicle 424 may be alerted by an automated teller machine 420 that a burglary is in progress. As the emergency vehicle 424 proceeds towards the automated teller machine 420, it may access the traffic control group 406 to request clearance to the location, for example, by lights turning red to block cross traffic at an intersection in sufficient time for the emergency vehicle 424 to have unimpeded access to the intersection.

[0080] Clusters of IoT devices, such as the remote weather stations 414 or the traffic control group 406, may be equipped to communicate with other IoT devices as well as with the cloud 400. This may allow the IoT devices to form an ad-hoc network between the devices, allowing them to function as a single device, which may be termed a fog device or system (e.g., as described above with reference to FIG. 3).

[0081] FIG. 5 is a block diagram of an example of components that may be present in an IoT device 550 for implementing the techniques described herein. The IoT device 550 may include any combinations of the components shown in the example or referenced in the disclosure above. The components may be implemented as ICs, portions thereof, discrete electronic devices, or other modules, logic, hardware, software, firmware, or a combination thereof adapted in the IoT device 550, or as components otherwise incorporated within a chassis of a larger system. Additionally, the block diagram of FIG. 5 is intended to depict a high-level view of components of the IoT device 550. However, some of the components shown may be omitted, additional components may be present, and different arrangement of the components shown may occur in other implementations.

[0082] The IoT device 550 may include a processor 552, which may be a microprocessor, a multi-core processor, a multithreaded processor, an ultra-low voltage processor, an embedded processor, or other known processing element. The processor 552 may be a part of a system on a chip (SoC) in which the processor 552 and other components are formed into a single integrated circuit, or a single package, such as the Edison.TM. or Galileo.TM. SoC boards from Intel. As an example, the processor 552 may include an Intel.RTM. Architecture Core.TM. based processor, such as a Quark.TM., an Atom.TM., an i3, an i5, an i7, or an MCU-class processor, or another such processor available from Intel.RTM. Corporation, Santa Clara, Calif. However, any number other processors may be used, such as available from Advanced Micro Devices, Inc. (AMD) of Sunnyvale, Calif., a MIPS-based design from MIPS Technologies, Inc. of Sunnyvale, Calif., an ARM-based design licensed from ARM Holdings, Ltd. or customer thereof, or their licensees or adopters. The processors may include units such as an A5-A10 processor from Apple.RTM. Inc., a Snapdragon.TM. processor from Qualcomm.RTM. Technologies, Inc., or an OMAP.TM. processor from Texas Instruments, Inc.

[0083] The processor 552 may communicate with a system memory 554 over an interconnect 556 (e.g., a bus). Any number of memory devices may be used to provide for a given amount of system memory. As examples, the memory may be random access memory (RAM) in accordance with a Joint Electron Devices Engineering Council (JEDEC) design such as the DDR or mobile DDR standards (e.g., LPDDR, LPDDR2, LPDDR3, or LPDDR4). In various implementations, the individual memory devices may be of any number of different package types such as single die package (SDP), dual die package (DDP) or quad die package (Q17P). These devices, in some examples, may be directly soldered onto a motherboard to provide a lower profile solution, while in other examples the devices are configured as one or more memory modules that in turn couple to the motherboard by a given connector. Any number of other memory implementations may be used, such as other types of memory modules, e.g., dual inline memory modules (DIMMs) of different varieties including but not limited to microDlMMs or MiniDIMMs.

[0084] To provide for persistent storage of information such as data, applications, operating systems and so forth, a storage 558 may also couple to the processor 552 via the interconnect 556. In an example, the storage 558 may be implemented via a solid state disk drive (SSDD). Other devices that may be used for the storage 558 include flash memory cards, such as SD cards, microSD cards, xD picture cards, and the like, and USB flash drives. In low power implementations, the storage 558 may be on-die memory or registers associated with the processor 552. However, in some examples, the storage 558 may be implemented using a micro hard disk drive (HDD). Further, any number of new technologies may be used for the storage 558 in addition to, or instead of, the technologies described, such resistance change memories, phase change memories, holographic memories, or chemical memories, among others.

[0085] The components may communicate over the interconnect 556. The interconnect 556 may include any number of technologies, including industry standard architecture (ISA), extended ISA (EISA), peripheral component interconnect (PCI), peripheral component interconnect extended (PCIx), PCI express (PCIe), or any number of other technologies. The interconnect 556 may be a proprietary bus, for example, used in a SoC based system. Other bus systems may be included, such as an I2C interface, an SPI interface, point to point interfaces, and a power bus, among others.

[0086] The interconnect 556 may couple the processor 552 to a mesh transceiver 562, for communications with other mesh devices 564. The mesh transceiver 562 may use any number of frequencies and protocols, such as 2.4 Gigahertz (GHz) transmissions under the IEEE 802.15.4 standard, using the Bluetooth.RTM. low energy (BLE) standard, as defined by the Bluetooth.RTM. Special Interest Group, or the ZigBee.RTM. standard, among others. Any number of radios, configured for a particular wireless communication protocol, may be used for the connections to the mesh devices 564. For example, a WLAN unit may be used to implement Wi-Fi.TM. communications in accordance with the Institute of Electrical and Electronics Engineers (IEEE) 802.11 standard. In addition, wireless wide area communications, e.g., according to a cellular or other wireless wide area protocol, may occur via a WWAN unit.

[0087] The mesh transceiver 562 may communicate using multiple standards or radios for communications at different range. For example, the IoT device 550 may communicate with close devices, e.g., within about 10 meters, using a local transceiver based on BLE, or another low power radio, to save power. More distant mesh devices 564, e.g., within about 50 meters, may be reached over ZigBee or other intermediate power radios. Both communications techniques may take place over a single radio at different power levels, or may take place over separate transceivers, for example, a local transceiver using BLE and a separate mesh transceiver using ZigBee.

[0088] A wireless network transceiver 566 may be included to communicate with devices or services in the cloud 500 via local or wide area network protocols. The wireless network transceiver 566 may be a LPWA transceiver that follows the IEEE 802.15.4, or IEEE 802.15.4g standards, among others. The IoT device 550 may communicate over a wide area using LoRaWAN.TM. (Long Range Wide Area Network) developed by Semtech and the LoRa Alliance. The techniques described herein are not limited to these technologies, but may be used with any number of other cloud transceivers that implement long range, low bandwidth communications, such as Sigfox, and other technologies. Further, other communications techniques, such as time-slotted channel hopping, described in the IEEE 802.15.4e specification may be used.

[0089] Any number of other radio communications and protocols may be used in addition to the systems mentioned for the mesh transceiver 562 and wireless network transceiver 566, as described herein. For example, the radio transceivers 562 and 566 may include an LTE or other cellular transceiver that uses spread spectrum (SPA/SAS) communications for implementing high speed communications. Further, any number of other protocols may be used, such as Wi-Fi.RTM. networks for medium speed communications and provision of network communications.

[0090] The radio transceivers 562 and 566 may include radios that are compatible with any number of 3GPP (Third Generation Partnership Project) specifications, notably Long Term Evolution (LTE), Long Term Evolution-Advanced (LTE-A), and Long Term Evolution-Advanced Pro (LTE-A Pro). It can be noted that radios compatible with any number of other fixed, mobile, or satellite communication technologies and standards may be selected. These may include, for example, any Cellular Wide Area radio communication technology, which may include e.g. a 5th Generation (5G) communication systems, a Global System for Mobile Communications (GSM) radio communication technology, a General Packet Radio Service (GPRS) radio communication technology, or an Enhanced Data Rates for GSM Evolution (EDGE) radio communication technology, a UMTS (Universal Mobile Telecommunications System) communication technology, In addition to the standards listed above, any number of satellite uplink technologies may be used for the wireless network transceiver 566, including, for example, radios compliant with standards issued by the ITU (International Telecommunication Union), or the ETSI (European Telecommunications Standards Institute), among others. The examples provided herein are thus understood as being applicable to various other communication technologies, both existing and not yet formulated.

[0091] A network interface controller (NIC) 568 may be included to provide a wired communication to the cloud 500 or to other devices, such as the mesh devices 564. The wired communication may provide an Ethernet connection, or may be based on other types of networks, such as Controller Area Network (CAN), Local Interconnect Network (LIN), DeviceNet, ControlNet, Data Highway+, PROFIBUS, or PROFINET, among many others. An additional NIC 568 may be included to allow connect to a second network, for example, a NIC 568 providing communications to the cloud over Ethernet, and a second NIC 568 providing communications to other devices over another type of network.

[0092] The interconnect 556 may couple the processor 552 to an external interface 570 that is used to connect external devices or subsystems. The external devices may include sensors 572, such as accelerometers, level sensors, flow sensors, optical light sensors, camera sensors, temperature sensors, a global positioning system (GPS) sensors, pressure sensors, barometric pressure sensors, and the like. The external interface 570 further may be used to connect the IoT device 550 to actuators 574, such as power switches, valve actuators, an audible sound generator, a visual warning device, and the like.

[0093] In some optional examples, various input/output (I/O) devices may be present within, or connected to, the IoT device 550. For example, a display or other output device 584 may be included to show information, such as sensor readings or actuator position. An input device 586, such as a touch screen or keypad may be included to accept input. An output device 584 may include any number of forms of audio or visual display, including simple visual outputs such as binary status indicators (e.g., LEDs) and multi-character visual outputs, or more complex outputs such as display screens (e.g., LCD screens), with the output of characters, graphics, multimedia objects, and the like being generated or produced from the operation of the IoT device 550.

[0094] A battery 576 may power the IoT device 550, although in examples in which the IoT device 550 is mounted in a fixed location, it may have a power supply coupled to an electrical grid. The battery 576 may be a lithium ion battery, or a metal-air battery, such as a zinc-air battery, an aluminum-air battery, a lithium-air battery, and the like.

[0095] A battery monitor/charger 578 may be included in the IoT device 550 to track the state of charge (SoCh) of the battery 576. The battery monitor/charger 578 may be used to monitor other parameters of the battery 576 to provide failure predictions, such as the state of health (SoH) and the state of function (SoF) of the battery 576. The battery monitor/charger 578 may include a battery monitoring integrated circuit, such as an LTC4020 or an LTC2990 from Linear Technologies, an ADT7488A from ON Semiconductor of Phoenix Ariz., or an IC from the UCD90xxx family from Texas Instruments of Dallas, Tex. The battery monitor/charger 578 may communicate the information on the battery 576 to the processor 552 over the interconnect 556. The battery monitor/charger 578 may also include an analog-to-digital (ADC) convertor that allows the processor 552 to directly monitor the voltage of the battery 576 or the current flow from the battery 576. The battery parameters may be used to determine actions that the IoT device 550 may perform, such as transmission frequency, mesh network operation, sensing frequency, and the like.

[0096] A power block 580, or other power supply coupled to a grid, may be coupled with the battery monitor/charger 578 to charge the battery 576. In some examples, the power block 580 may be replaced with a wireless power receiver to obtain the power wirelessly, for example, through a loop antenna in the IoT device 550. A wireless battery charging circuit, such as an LTC4020 chip from Linear Technologies of Milpitas, Calif., among others, may be included in the battery monitor/charger 578. The specific charging circuits chosen depend on the size of the battery 576, and thus, the current required. The charging may be performed using the Airfuel standard promulgated by the Airfuel Alliance, the Qi wireless charging standard promulgated by the Wireless Power Consortium, or the Rezence charging standard, promulgated by the Alliance for Wireless Power, among others.

[0097] The storage 558 may include instructions 582 in the form of software, firmware, or hardware commands to implement the techniques described herein. Although such instructions 582 are shown as code blocks included in the memory 554 and the storage 558, it may be understood that any of the code blocks may be replaced with hardwired circuits, for example, built into an application specific integrated circuit (ASIC).

[0098] In an example, the instructions 582 provided via the memory 554, the storage 558, or the processor 552 may be embodied as a non-transitory, machine readable medium 560 including code to direct the processor 552 to perform electronic operations in the IoT device 550. The processor 552 may access the non-transitory, machine readable medium 560 over the interconnect 556. For instance, the non-transitory, machine readable medium 560 may include storage units such as optical disks, flash drives, or any number of other hardware devices. The non-transitory, machine readable medium 560 may include instructions to direct the processor 552 to perform a specific sequence or flow of actions, for example, as described with respect to the flowchart(s) and diagram(s) of operations and functionality described throughout this disclosure.

[0099] Example Computing Architectures

[0100] FIGS. 6 and 7 illustrate example computer processor architectures that can be used in accordance with embodiments disclosed herein. For example, in various embodiments, the computer architectures of FIGS. 6 and 7 may be used to implement the visual fog functionality described throughout this disclosure. Other embodiments may use other processor and system designs and configurations known in the art, for example, for laptops, desktops, handheld PCs, personal digital assistants, engineering workstations, servers, network devices, network hubs, switches, embedded processors, digital signal processors (DSPs), graphics devices, video game devices, set-top boxes, micro controllers, cell phones, portable media players, hand held devices, and various other electronic devices, are also suitable. In general, a huge variety of systems or electronic devices capable of incorporating a processor and/or other execution logic as disclosed herein are generally suitable.

[0101] FIG. 6 illustrates a block diagram for an example embodiment of a processor 600. Processor 600 is an example of a type of hardware device that can be used in connection with the embodiments described throughout this disclosure. Processor 600 may be any type of processor, such as a microprocessor, an embedded processor, a digital signal processor (DSP), a network processor, a multi-core processor, a single core processor, or other device to execute code. Although only one processor 600 is illustrated in FIG. 6, a processing element may alternatively include more than one of processor 600 illustrated in FIG. 6. Processor 600 may be a single-threaded core or, for at least one embodiment, the processor 600 may be multi-threaded in that it may include more than one hardware thread context (or "logical processor") per core.

[0102] FIG. 6 also illustrates a memory 602 coupled to processor 600 in accordance with an embodiment. Memory 602 may be any of a wide variety of memories (including various layers of memory hierarchy) as are known or otherwise available to those of skill in the art. Such memory elements can include, but are not limited to, random access memory (RAM), read only memory (ROM), logic blocks of a field programmable gate array (FPGA), erasable programmable read only memory (EPROM), and electrically erasable programmable ROM (EEPROM).

[0103] Processor 600 can execute any type of instructions associated with algorithms, processes, or operations detailed herein. Generally, processor 600 can transform an element or an article (e.g., data) from one state or thing to another state or thing.

[0104] Code 604, which may be one or more instructions to be executed by processor 600, may be stored in memory 602, or may be stored in software, hardware, firmware, or any suitable combination thereof, or in any other internal or external component, device, element, or object where appropriate and based on particular needs. In one example, processor 600 can follow a program sequence of instructions indicated by code 604. Each instruction enters a front-end logic 606 and is processed by one or more decoders 608. The decoder may generate, as its output, a micro operation such as a fixed width micro operation in a predefined format, or may generate other instructions, microinstructions, or control signals that reflect the original code instruction. Front-end logic 606 may also include register renaming logic and scheduling logic, which generally allocate resources and queue the operation corresponding to the instruction for execution.

[0105] Processor 600 can also include execution logic 614 having a set of execution units 616a, 616b, 616n, etc. Some embodiments may include a number of execution units dedicated to specific functions or sets of functions. Other embodiments may include only one execution unit or one execution unit that can perform a particular function. Execution logic 614 performs the operations specified by code instructions.

[0106] After completion of execution of the operations specified by the code instructions, back-end logic 618 can retire the instructions of code 604. In one embodiment, processor 600 allows out of order execution but requires in order retirement of instructions. Retirement logic 620 may take a variety of known forms (e.g., re-order buffers or the like). In this manner, processor 600 is transformed during execution of code 604, at least in terms of the output generated by the decoder, hardware registers and tables utilized by register renaming logic 610, and any registers (not shown) modified by execution logic 614.

[0107] Although not shown in FIG. 6, a processing element may include other elements on a chip with processor 600. For example, a processing element may include memory control logic along with processor 600. The processing element may include I/O control logic and/or may include I/O control logic integrated with memory control logic. The processing element may also include one or more caches. In some embodiments, non-volatile memory (such as flash memory or fuses) may also be included on the chip with processor 600.

[0108] FIG. 7 illustrates a block diagram for an example embodiment of a multiprocessor 700. As shown in FIG. 7, multiprocessor system 700 is a point-to-point interconnect system, and includes a first processor 770 and a second processor 780 coupled via a point-to-point interconnect 750. In some embodiments, each of processors 770 and 780 may be some version of processor 600 of FIG. 6.

[0109] Processors 770 and 780 are shown including integrated memory controller (IMC) units 772 and 782, respectively. Processor 770 also includes as part of its bus controller units point-to-point (P-P) interfaces 776 and 778; similarly, second processor 780 includes P-P interfaces 786 and 788. Processors 770, 780 may exchange information via a point-to-point (P-P) interface 750 using P-P interface circuits 778, 788. As shown in FIG. 7, IMCs 772 and 782 couple the processors to respective memories, namely a memory 732 and a memory 734, which may be portions of main memory locally attached to the respective processors.

[0110] Processors 770, 780 may each exchange information with a chipset 790 via individual P-P interfaces 752, 754 using point to point interface circuits 776, 794, 786, 798. Chipset 790 may optionally exchange information with the coprocessor 738 via a high-performance interface 739. In one embodiment, the coprocessor 738 is a special-purpose processor, such as, for example, a high-throughput MIC processor, a network or communication processor, compression engine, graphics processor, GPGPU, embedded processor, matrix processor, or the like.

[0111] A shared cache (not shown) may be included in either processor or outside of both processors, yet connected with the processors via P-P interconnect, such that either or both processors' local cache information may be stored in the shared cache if a processor is placed into a low power mode.

[0112] Chipset 790 may be coupled to a first bus 716 via an interface 796. In one embodiment, first bus 716 may be a Peripheral Component Interconnect (PCI) bus, or a bus such as a PCI Express bus or another third generation I/O interconnect bus, although the scope of this disclosure is not so limited.

[0113] As shown in FIG. 7, various I/O devices 714 may be coupled to first bus 716, along with a bus bridge 718 which couples first bus 716 to a second bus 720. In one embodiment, one or more additional processor(s) 715, such as coprocessors, high-throughput MIC processors, GPGPU's, accelerators (such as, e.g., graphics accelerators or digital signal processing (DSP) units), matrix processors, field programmable gate arrays, or any other processor, are coupled to first bus 716. In one embodiment, second bus 720 may be a low pin count (LPC) bus. Various devices may be coupled to a second bus 720 including, for example, a keyboard and/or mouse 722, communication devices 727 and a storage unit 728 such as a disk drive or other mass storage device which may include instructions/code and data 730, in one embodiment. Further, an audio I/O 724 may be coupled to the second bus 720. Note that other architectures are possible. For example, instead of the point-to-point architecture of FIG. 7, a system may implement a multi-drop bus or other such architecture.

[0114] All or part of any component of FIG. 7 may be implemented as a separate or stand-alone component or chip, or may be integrated with other components or chips, such as a system-on-a-chip (SoC) that integrates various computer components into a single chip.

[0115] Embodiments of the mechanisms disclosed herein may be implemented in hardware, software, firmware, or a combination of such implementation approaches. Certain embodiments may be implemented as computer programs or program code executing on programmable systems comprising at least one processor, a storage system (including volatile and non-volatile memory and/or storage elements), at least one input device, and at least one output device.

[0116] Program code, such as code 730 illustrated in FIG. 7, may be applied to input instructions to perform the functions described herein and generate output information. The output information may be applied to one or more output devices, in known fashion. For purposes of this application, a processing system includes any system that has a processor, such as, for example; a digital signal processor (DSP), a microcontroller, an application specific integrated circuit (ASIC), or a microprocessor.

[0117] The program code may be implemented in a high level procedural or object oriented programming language to communicate with a processing system. The program code may also be implemented in assembly or machine language, if desired. In fact, the mechanisms described herein are not limited in scope to any particular programming language. In any case, the language may be a compiled or interpreted language.

[0118] One or more aspects of at least one embodiment may be implemented by representative instructions stored on a machine-readable medium which represents various logic within the processor, which when read by a machine causes the machine to fabricate logic to perform the techniques described herein. Such representations, known as "IP cores" may be stored on a tangible, machine readable medium and supplied to various customers or manufacturing facilities to load into the fabrication machines that actually make the logic or processor.

[0119] Such machine-readable storage media may include, without limitation, non-transitory, tangible arrangements of articles manufactured or formed by a machine or device, including storage media such as hard disks, any other type of disk including floppy disks, optical disks, compact disk read-only memories (CD-ROMs), compact disk rewritable's (CD-RWs), and magneto-optical disks, semiconductor devices such as read-only memories (ROMs), random access memories (RAMS) such as dynamic random access memories (DRAMs), static random access memories (SRAMs), erasable programmable read-only memories (EPROMs), flash memories, electrically erasable programmable read-only memories (EEPROMs), phase change memory (PCM), magnetic or optical cards, or any other type of media suitable for storing electronic instructions.

[0120] Accordingly, embodiments of this disclosure also include non-transitory, tangible machine-readable media containing instructions or containing design data, such as Hardware Description Language (HDL), which defines structures, circuits, apparatuses, processors and/or system features described herein. Such embodiments may also be referred to as program products.

[0121] Visual Fog Architecture