Image Filter Device

Ikai; Tomohiro

U.S. patent application number 16/126215 was filed with the patent office on 2019-01-17 for image filter device. The applicant listed for this patent is Sharp Kabushiki Kaisha. Invention is credited to Tomohiro Ikai.

| Application Number | 20190020882 16/126215 |

| Family ID | 46244706 |

| Filed Date | 2019-01-17 |

| United States Patent Application | 20190020882 |

| Kind Code | A1 |

| Ikai; Tomohiro | January 17, 2019 |

IMAGE FILTER DEVICE

Abstract

A loop filter (16) includes a filter processing unit (162c) configured to generate an output image by acting on an input image made up of a plurality of unit regions, and a reference region setting unit (162b) configured to reduce a reference region to be referenced by the filter processing unit (162c) for calculating a pixel value of each target pixel to a size according to a position of this target pixel in a unit region including this target pixel.

| Inventors: | Ikai; Tomohiro; (Sakai City, JP) |
|---|---|
| Applicant: | Sharp Kabushiki Kaisha |
| Family ID: | 46244706 |
| Appl. No.: | 16/126215 |
| Filed: | September 10, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 13992432 | Jun 7, 2013 | 10116932 |
| PCT/JP2011/078882 | Dec 14, 2011 | |
| 16126215 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 19/167 20141101; H04N 19/593 20141101; H04N 19/82 20141101; H04N 19/176 20141101; H04N 19/117 20141101 |

| International Class: | H04N 19/176 20060101 H04N019/176; H04N 19/167 20060101 H04N019/167 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Dec 14, 2010 | JP | 2010-278499 |

Claims

1. (canceled)

2: An image filter device comprising: a processor; and a memory associated with the processor, wherein the processor is configured to perform steps of: performing deblocking on an image; performing adaptive filter processing for a filter processing unit set to have a same size as a target largest coding unit (LCU), to have a position different from the target LCU, and to overlap a region where deblocking has been performed regarding the target LCU; setting pixels on which deblocking has been performed, and which are included in the filter processing unit, as target pixels in raster scan order; setting a reference region of each of the target pixels in a vicinity of the each of the target pixels based on a position of the each of the target pixels within the filter processing unit or a position of the each of the target pixels within the target LCU, wherein the reference region is determined depending on at least one of (1) a number of taps of a filter, (2) a width Ddf in a Y direction of a region where deblocking has not been completed in the target LCU, (3) a length LLCUy in the Y direction of the target LCU, and (4) an absolute value Ly of deviation in the Y direction between the target LCU and the filter processing unit; and calculating a pixel value of the each of the target pixels with reference to a pixel value of a pixel included in the reference region.

3: The image filter device according to the claim 2, wherein the processor restricts an upward reference range Dupper, specifying a distance along a Y axis between the target pixel and a pixel adjacent to an upper edge of the reference region, to a number of pixels equal to or smaller than a value of a Y coordinate of the target pixel within the target LCU in a case that the Y coordinate of the target pixel within the target LCU is less than a predetermined threshold Lupper1.

4: The image filter device according to the claim 2, wherein the processor restricts a downward reference range Dlower, specifying a distance along a Y axis between the target pixel and a pixel adjacent to a lower edge of the reference region, to a number of pixels equal to or smaller than a value of LLCUy-y-1 in a case that a Y coordinate y of the target pixel within the target LCU is equal to or greater than a predetermined threshold LLCUy-Llower1.

5: The image filter device according to the claim 2, wherein the processor restricts an upward reference range Dupper, specifying a distance along a Y axis between the target pixel and a pixel adjacent to an upper edge of the reference region, to a number of pixels equal to or smaller than a value of Ly+yr-LLCUy in a case that a normalized Y coordinate yr is equal to or greater than a predetermined threshold Lupper2, wherein the normalized Y coordinate yr is a Y coordinate of a relative coordinate of the target pixel from an upper left coordinate (0,0) of the target LCU.

6: The image filter device according to the claim 2, wherein the processor restricts a downward reference range Dlower, specifying a distance along a Y axis between the target pixel and a pixel adjacent to a lower edge of the reference region, to a number of pixels equal to or smaller than a value of LLCUy-Ddf-yr-1 in a case that a normalized Y coordinate yr is less than a predetermined threshold Llower2, wherein the normalized Y coordinate yr is a Y coordinate of a relative coordinate of the target pixel from an upper left coordinate (0,0) of the target LCU.

7: The image filter device according to the claim 2, wherein the processor restricts a downward reference range Dlower, specifying a distance along a Y axis between the target pixel and a pixel adjacent to a lower edge of the reference region, to a number of pixels equal to or smaller than a value of LLCUy-Ly-yr-1 in a case that a normalized Y coordinate yr is less than a predetermined threshold Llower3, wherein the normalized Y coordinate yr is a Y coordinate of a relative coordinate of the target pixel from an upper left coordinate (0,0) of the target LCU.

Description

TECHNICAL FIELD

[0001] The present invention relates to an image filter device configured to perform filtering of an image, also relates to an encoding device and a decoding device which include such an image filter device, and also relates to a data structure of encoded data to be decoded by such a decoding device.

BACKGROUND ART

[0002] In order to effectively transmit or record a moving image, there have been employed a moving image encoding device (encoding device) configured to generate encoded data by encoding the moving image, and a moving image decoding device (decoding device) configured to generate a decoded image by decoding this encoded data. Examples of a specific moving image coding system include H.264/MPEG-4 AVC (NPL 1), a system employed by KTA software, which is a codec for joint development in VCEG (Video Coding Experts Group), and a system (NPL 2) to be employed by TMuC (Test Model under Consideration) software, which is a succeeding codec.

[0003] With such a coding system, an image (picture) making up a moving image is managed by a layered structure made up of slices to be obtained by dividing an image, the maximum coding increment (LCU: Largest Coding Unit) to be obtained by dividing a slice, and coding increment (CU: Coding Unit) to be obtained by dividing a largest coding unit, a block and a partition to be obtained by dividing a coding unit, and in many cases, encoding is performed with a block as the minimum unit.

[0004] Also, with such a coding system, in general, a predicted image is generated based on a local decoded image to be obtained by encoding/decoding an input image, and a difference image (may also be referred to as residual image or predicted residual) between this predicted image and an input image is encoded. Also, as methods for generating a predicted image, there have been known methods called inter-frame prediction (inter prediction) and intra-screen prediction (intra prediction).

[0005] With inter prediction, motion compensation using a motion vector is applied to a reference image within a reference frame (decoded image) of which the entire frame has been decoded, and accordingly, a predicted image within a prediction target frame is generated for each prediction unit. On the other hand, with intra prediction, based on a local decoded image within the same frame, a predicted image in this frame is sequentially generated.

[0006] With NPL 2, an adaptive loop filter (ALF: Adaptive Loop Filter) (hereinafter, also simply referred to as "adaptive filter") configured to perform filter processing on a decoded image for each coding unit has been disclosed. This adaptive filter determines a filter coefficient where error between a decoded image subjected to filtering and the original image becomes the minimum, for each slice on a decoded image, and subjects each coding unit included in this slice to filtering based on this filter coefficient. An encoding device and a decoding device which include such an adaptive filter can improve precision of a predicted image to be generated by referencing an image subjected to filtering by improving image quality of an encoded/decoded image using filtering, and accordingly, coding efficiency can be improved.

[0007] Also, the adaptive filter disclosed in NPL 2 can increase the speed of filter processing by performing the filter processing with reference to pixel values transferred from frame memory to local memory. The frame memory can store pixel values of the entire frame but has a low access speed, whereas the local memory can store a target coding unit, which is a processing target, and an image in the vicinity thereof, and can be accessed at high speed.

CITATION LIST

Non Patent Literature

[0008] NPL 1: "Recommendation ITU-T H.264", Telecommunication Standardization Sector of ITU, March 2009 (disclosed in March, 2009)

[0009] NPL 2: "Test Model under Consideration JCTVC-B205 draft007", Joint Collaborative Team on Video Coding (JCT-VC) of ITU-T SG16 WP3 and ISO/IEC JTC1/SC29/WG11, 2nd Meeting: Geneva, CH, July 2010 (disclosed in July, 2010)

SUMMARY OF INVENTION

Technical Problem

[0010] However, an adaptive filter according to the related art disclosed in NPL 2 references a pixel value other than a target coding unit (a pixel value of a pixel in the vicinity of a target coding unit) in addition to a pixel value within a target coding unit at the time of performing filter processing on a target coding unit, and accordingly, this causes a problem wherein the amount of data to be transferred from the frame memory to the local memory increases. Description will specifically be made regarding this point with reference to FIG. 29.

[0011] FIG. 29 illustrates, for an adaptive filter according to the related art, in the event that the size of a target coding unit CU is 8×8 pixels, a reference region R with five taps to be set according to a position of a target pixel, and a reference block RA which is the union (summation group) of the reference regions R regarding each target pixel included in the target coding unit. The reference block RA is a group of pixels to be referenced by the adaptive filter according to the related art for generating an image subjected to filtering regarding a target coding unit. In the case of the example in FIG. 29, the adaptive filter according to the related art has to reference each pixel value included in the reference block RA with 12×12 pixels for generating an image subjected to filtering regarding a target coding unit with 8×8 pixels. In this manner, the adaptive filter according to the related art references, at the time of performing filter processing regarding a target coding unit, a pixel value in the vicinity of the target coding unit in addition to a pixel value within the target coding unit, and accordingly, this causes a problem wherein the amount of data to be transferred from the frame memory to the local memory increases.
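The arithmetic behind the 12×12 figure can be checked with a short sketch. It assumes a square reference region with an odd number of taps centred on each target pixel; the function name `reference_block_size` is hypothetical and does not appear in the application.

```python
def reference_block_size(cu_size: int, taps: int) -> int:
    """Side length of the union of all taps x taps reference regions
    centred on the pixels of a cu_size x cu_size coding unit."""
    if taps % 2 == 0:
        raise ValueError("taps is assumed to be odd so the region is centred")
    margin = (taps - 1) // 2  # pixels referenced beyond each edge of the CU
    return cu_size + 2 * margin


side = reference_block_size(cu_size=8, taps=5)
# Side of reference block, pixels referenced, pixels actually filtered
print(side, side * side, 8 * 8)  # 12 144 64
```

In this example 144 pixel values are referenced to filter 64 pixels, which is the transfer overhead the paragraph describes.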

[0012] On the other hand, in order to reduce the amount of data to be transferred, for example, it can be conceived to decrease the size of a reference region, but in this case, prediction precision of a predicted image to be calculated with an image subjected to filtering decreases, and accordingly, this causes a problem wherein coding efficiency decreases.

[0013] The present invention has been made in the light of the above problems, and its object is to realize an image filter device whereby throughput and processing time can be reduced by reducing the amount of data to be transferred at the time of adaptive filter processing while maintaining high coding efficiency.

Solution to Problem

[0014] In order to solve the above-mentioned problems, an image filter device according to the present invention includes: filter means configured to generate an output image by acting on an input image made up of a plurality of unit regions; and reference region modifying means configured to reduce a reference region, which the filter means references for calculating a pixel value of each target pixel, to a size according to a position of this target pixel in a unit region including this target pixel.

[0015] In the event that the image filter device is employed for a decoding device configured to decode encoded data to generate a decoded image, or an encoding device configured to encode an image to be encoded to generate encoded data, a predicted image with high prediction precision can be generated with reference to an output image of the image filter device, and accordingly, coding efficiency is improved.

[0016] Also, according to the image filter device configured as described above, a reference region to be referenced for the filter means calculating a pixel value of each target pixel is reduced to a size according to a position of this target pixel in a unit region including this target pixel, and accordingly, the number of pixels to be referenced for generating an output image can be reduced while maintaining high coding efficiency. Accordingly, according to the image filter device configured as described above, the amount of data to be transferred at the time of filter processing can be reduced while maintaining high coding efficiency.

[0017] Note that the unit region mentioned here may be, for example, the largest coding unit LCU, or may be a coding unit CU to be obtained by dividing the largest coding unit LCU, or may be another region.
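As a rough illustration of a reference region whose size depends on the position of the target pixel, the vertical restrictions recited in claims 3 and 4 can be sketched as follows. This is only a sketch under assumptions: the unrestricted range is taken to be half the tap count (for an odd number of taps), the function name `restrict_vertical_range` and the example threshold values are hypothetical, and the restrictions of claims 5 to 7 are not modelled.

```python
def restrict_vertical_range(y, lcu_height, taps, l_upper1, l_lower1):
    """Return (d_upper, d_lower) for a target pixel at intra-LCU row y.

    d_upper / d_lower are the numbers of rows referenced above / below
    the target pixel (Dupper and Dlower in the claims).
    """
    half = (taps - 1) // 2  # assumed unrestricted range for an odd tap count
    d_upper, d_lower = half, half
    if y < l_upper1:
        # Claim 3: Dupper is at most the Y coordinate within the LCU.
        d_upper = min(d_upper, y)
    if y >= lcu_height - l_lower1:
        # Claim 4: Dlower is at most LLCUy - y - 1.
        d_lower = min(d_lower, lcu_height - y - 1)
    return d_upper, d_lower


# Hypothetical values: 8-row LCU, 5 taps, both thresholds set to 2.
for y in range(8):
    print(y, restrict_vertical_range(y, lcu_height=8, taps=5,
                                     l_upper1=2, l_lower1=2))
```

With these example values, rows near the top or bottom edge of the LCU get a reference region that does not extend past that edge, while rows in the middle keep the full range.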

[0018] Also, an image filter device according to the present invention includes: filter means configured to generate an output image for each unit region by acting on an input image made up of a plurality of unit regions; and smoothing means configured to smooth a pixel value of each pixel, in the unit regions, whose distance from at least one of the two edges on the upstream side in processing order is equal to or shorter than DB; with the filter means acting on a target unit region after the smoothing means acts on the two edges on the upstream side of the target unit region and before the smoothing means acts on the two edges on the upstream side of the next unit region in processing order.

[0019] Also, an image filter device according to the present invention includes: smoothing means configured to smooth a pixel value of a pixel positioned near at least one of two edges on the upstream side in processing order of each unit region regarding a decoded image to be obtained by adding a predicted image to be generated for each unit region and a residual image; calculating means including first filter means configured to act on an output image from the smoothing means, second filter means configured to act on the predicted image, and third filter means configured to act on the residual image, and configured to add together and output the output images from the first to third filter means; and reference region setting means configured to set a reference region which the first to third filter means reference for calculating a pixel value of each target pixel, and to set the number of pixels in the vertical direction of the reference region to 1.

[0020] According to the image filter device, an output image is generated with reference to a decoded image, predicted image, and a residual image subjected to smoothing processing by the smoothing means. Accordingly, a decoding device including the image filter device, and an encoding device including the image filter device generate a predicted image with reference to an output image of the image filter device, and accordingly, coding efficiency is improved. Also, the number of pixels in the vertical direction of the reference region is set to 1, and accordingly, the throughput of filter processing and the amount of data to be transferred are reduced.

[0021] Also, an image filter device according to the present invention includes: filter means configured to generate an output image by acting on an input image made up of one or a plurality of slices; filter means configured to calculate a pixel value of a target pixel in the output image from each pixel value of the input image in a reference region set in the vicinity of this target pixel; and target pixel restricting means configured to restrict a position of a target pixel to a position where the entire reference region including this target pixel is included in a slice including this target pixel.

[0022] According to the image filter device, an output image in a target slice is generated with reference to pixel values within the target slice alone. In other words, an output image in a target slice is generated without referencing a pixel outside the target slice. Accordingly, according to the above-mentioned configuration, throughput for generating an output image is reduced. Also, according to the configuration, filter processing can be performed without waiting until each pixel in the next slice in processing order, which is a slice adjacent to the target slice, can be referenced, and accordingly, processing time is reduced.
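The restriction of target pixel positions can be sketched in a simplified setting. The sketch assumes that the slice is a rectangle and that the reference region is a square with an odd number of taps centred on the target pixel; neither assumption comes from this passage, and the function name `restricted_target_pixels` is hypothetical.

```python
def restricted_target_pixels(x0, y0, x1, y1, taps):
    """List target pixel positions whose taps x taps reference region
    lies entirely inside the rectangular slice [x0, x1) x [y0, y1)."""
    half = (taps - 1) // 2  # rows/columns referenced on each side
    return [(x, y)
            for y in range(y0 + half, y1 - half)
            for x in range(x0 + half, x1 - half)]


# Hypothetical slice of 8 x 4 pixels filtered with 3 taps.
pixels = restricted_target_pixels(0, 0, 8, 4, taps=3)
print(len(pixels), pixels[0], pixels[-1])  # 12 (1, 1) (6, 2)
```

Every position returned can be filtered using only pixels of the same slice, so no pixel of an adjacent slice has to be available first.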

Advantageous Effects of Invention

[0023] As described above, a filter device according to the present invention includes: filter means configured to generate an output image by acting on an input image made up of a plurality of unit regions; and reference region modifying means configured to reduce a reference region to be referenced for the filter means calculating a pixel value of each target pixel to a size according to a position of this target pixel in a unit region including this target pixel.

[0024] According to the image filter device configured as described above, the amount of data to be transferred at the time of filter processing can be reduced while maintaining high coding efficiency.

BRIEF DESCRIPTION OF DRAWINGS

[0025] FIG. 1 is a block diagram illustrating a configuration of an adaptive filter included in a moving image decoding device according to a first embodiment of the present invention.

[0026] FIG. 2 is a diagram illustrating a data structure of encoded data to be generated by the moving image encoding device according to the first embodiment of the present invention and to be referenced by the moving image decoding device according to the first embodiment of the present invention. (a) illustrates a configuration of a picture layer of encoded data, (b) illustrates a configuration of a slice layer included in the picture layer, (c) illustrates a configuration of each CU making up an LCU layer included in the slice layer, (d) illustrates a configuration of a leaf CU included in the CU layer, (e) illustrates a configuration of inter prediction information regarding the leaf CU, (f) illustrates a configuration of intra prediction information regarding the leaf CU, and (g) illustrates a configuration of a filter parameter included in a slice header.

[0027] FIG. 3 is a block diagram illustrating a configuration of the moving image decoding device according to the first embodiment of the present invention.

[0028] FIG. 4 is a diagram for describing deblocking processing by a deblocking filter which a loop filter according to the first embodiment of the present invention has, (a) illustrates processing order for multiple LCUs included in a slice, (b) illustrates processing order for multiple CUs included in the LCU, (c) illustrates a border (thick solid line) of deblocking completion at a point-in-time when deblocking regarding an LCU made up of multiple CUs is completed, and a border (thin solid line) of deblocking incompletion.

[0029] FIG. 5 is a diagram for describing processing by a loop filter according to the first embodiment of the present invention, (a) illustrates a first filter processing unit ALFU1 to be set regarding a target LCU in a first processing example, (b) illustrates a flowchart of the first processing example, (c) illustrates a second filter processing unit ALFU2 to be set regarding a target LCU in a second processing example, and (d) illustrates a flowchart of the second processing example.

[0030] FIG. 6 is a diagram for describing processing by an adaptive filter included in a loop filter according to the first embodiment of the present invention, (a) illustrates an example of a reference region to be set by the adaptive filter, and (b) illustrates another example of a reference region to be set by the adaptive filter.

[0031] FIG. 7 is a flowchart illustrating a flow of processing for generating a decoded image subjected to adaptive filtering by the adaptive filter according to the first embodiment of the present invention.

[0032] FIG. 8 is a diagram for describing processing for setting a reference region by the adaptive filter according to the first embodiment of the present invention, and (a) to (e) are flowcharts illustrating a flow of each setting example.

[0033] FIG. 9 is a diagram exemplifying a reference region to be set by the adaptive filter according to the first embodiment of the present invention.

[0034] FIG. 10 is a diagram for describing processing by the adaptive filter according to the first embodiment of the present invention, and is a diagram illustrating a position relation between a target LCU and a filter processing unit ALFU corresponding to the target LCU.

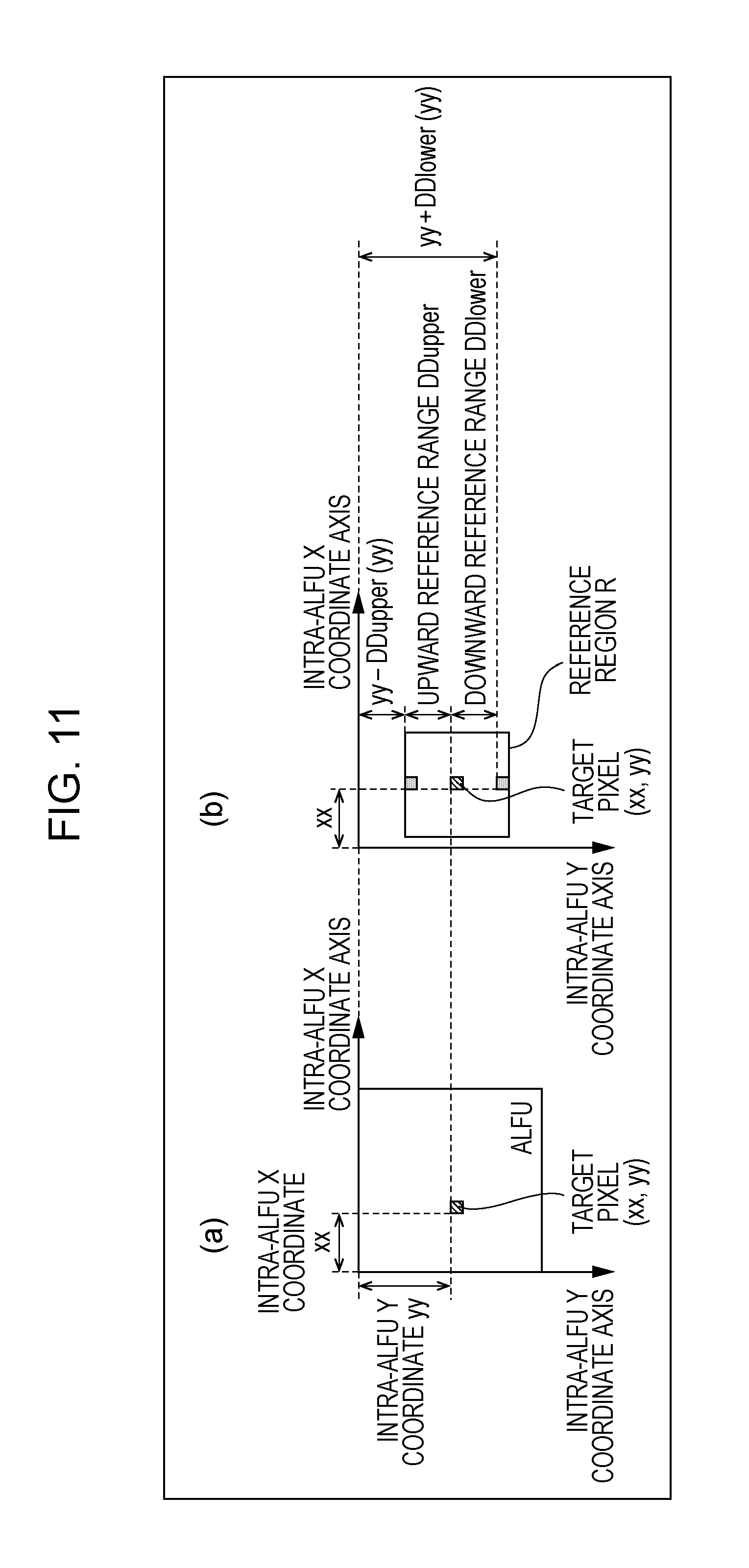

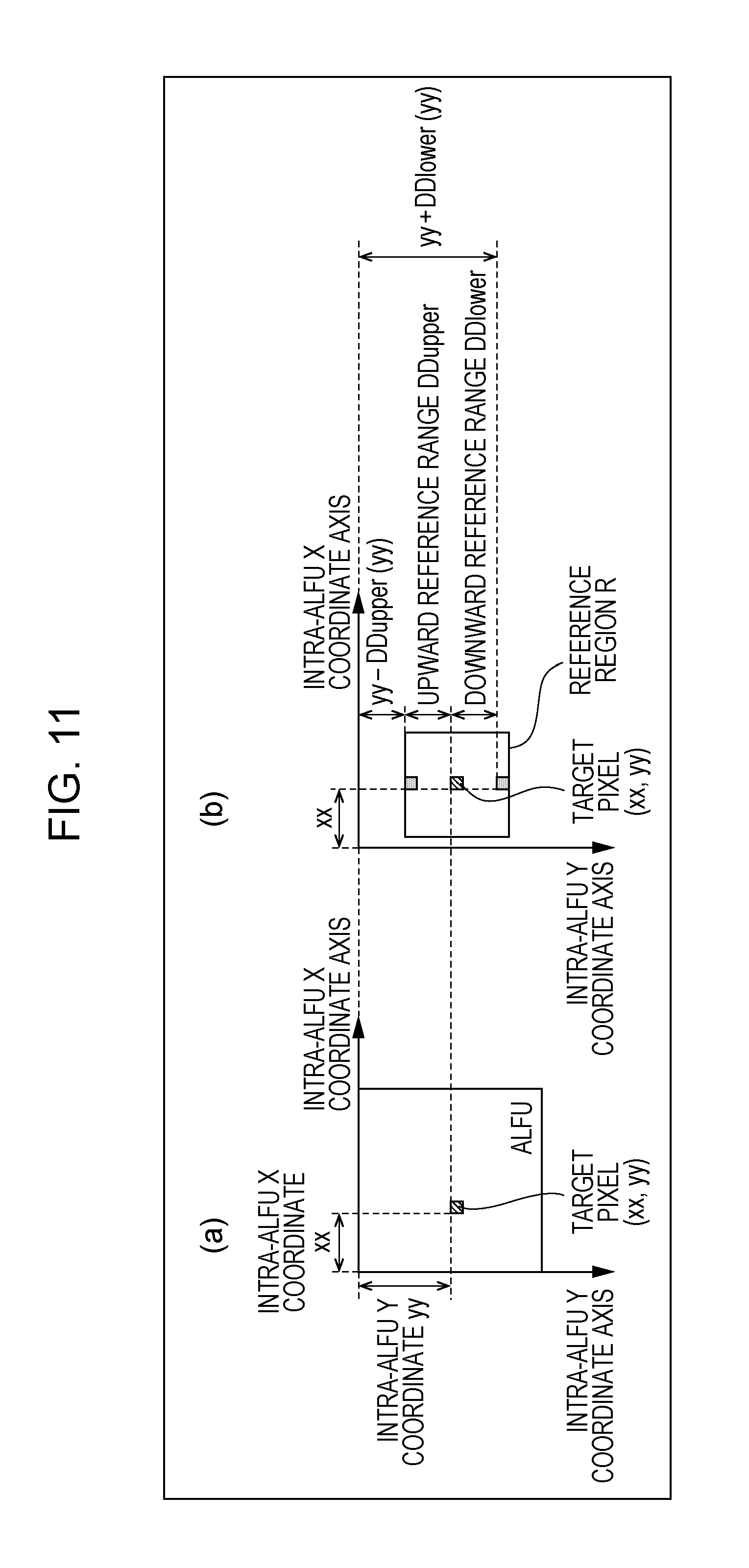

[0035] FIG. 11 is a diagram for describing processing by the adaptive filter according to the first embodiment of the present invention, (a) illustrates a target pixel within a filter processing unit ALFU, and (b) illustrates a reference region to be set in the vicinity of a target pixel.

[0036] FIG. 12 is a diagram for describing a reduction advantage of the amount of data to be transferred by the adaptive filter according to the first embodiment of the present invention, (a) illustrates a region of a pixel to be transferred at adaptive filter processing according to the related art, and (b) to (d) illustrate a region of a pixel to be transferred in a first processing example according to the present invention.

[0037] FIG. 13 is a diagram for describing a reduction advantage of the amount of data to be transferred by the adaptive filter according to the first embodiment of the present invention, (a) illustrates a region of a pixel to be transferred at adaptive filter processing according to the related art, and (b) illustrates a region of a pixel to be transferred in a second processing example according to the present invention.

[0038] FIG. 14 is a diagram for describing processing by the adaptive filter according to the first embodiment of the present invention, and (a) to (d) are diagrams for describing each constraint to be imposed at the time of setting a reference region.

[0039] FIG. 15 is for describing processing by the adaptive filter according to the first embodiment of the present invention, and is a table illustrating a specific example of an intra-ALFU Y coordinate, an intra-LCU Y coordinate, a normalized intra-LCU Y coordinate, an upward referable range, a first downward referable range, and a second downward referable range.

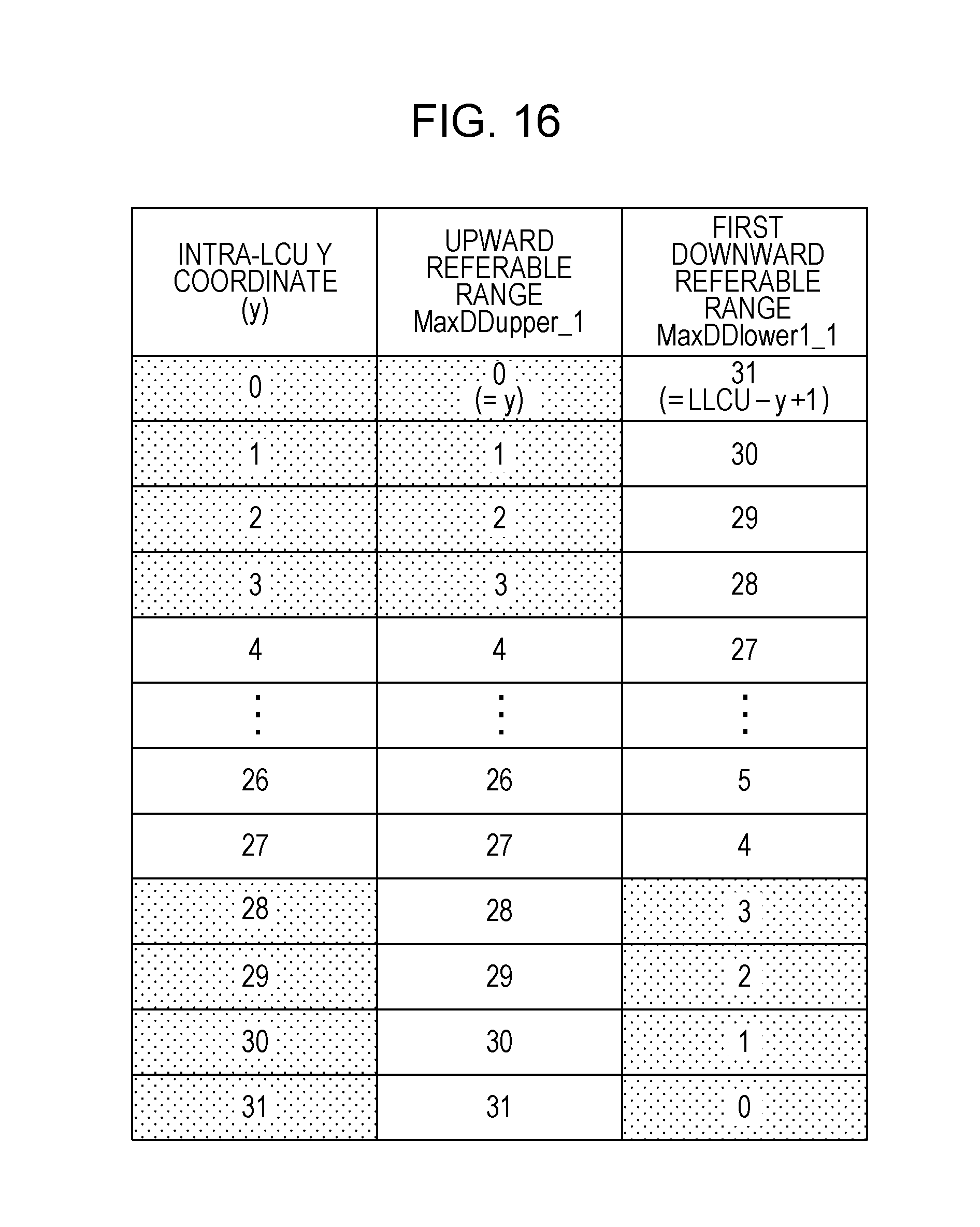

[0040] FIG. 16 is for describing processing by the adaptive filter according to the first embodiment of the present invention, and is a table illustrating a specific example of an intra-LCU Y coordinate, an upward referable range, and a first downward referable range.

[0041] FIG. 17 is for describing processing by the adaptive filter according to the first embodiment of the present invention, and is a table illustrating a specific example of an intra-ALFU Y coordinate, an intra-LCU Y coordinate, a normalized intra-LCU Y coordinate, an upward referable range, a first downward referable range, and a second downward referable range.

[0042] FIG. 18 is for describing processing by the adaptive filter according to the first embodiment of the present invention, and is a table illustrating a specific example of a normalized intra-LCU Y coordinate, an upward referable range, a first downward referable range, and a second downward referable range.

[0043] FIG. 19 is a diagram illustrating an example of a reference region to be set by the adaptive filter according to the first embodiment of the present invention.

[0044] FIG. 20 is a block diagram illustrating a configuration of a moving image encoding device according to the first embodiment of the present invention.

[0045] FIG. 21 is a block diagram illustrating a configuration of a loop filter included in the moving image encoding device according to the first embodiment of the present invention.

[0046] FIG. 22 is a block diagram illustrating a configuration of a moving image decoding device according to a second embodiment of the present invention.

[0047] FIG. 23 is a block diagram illustrating a configuration of a loop filter included in the moving image decoding device according to the second embodiment of the present invention.

[0048] FIG. 24 is a block diagram illustrating a configuration of a moving image encoding device according to the second embodiment of the present invention.

[0049] FIG. 25 is a block diagram illustrating a configuration of a loop filter included in the moving image encoding device according to the second embodiment of the present invention.

[0050] FIG. 26 is a block diagram illustrating a configuration of a loop filter according to a third embodiment of the present invention.

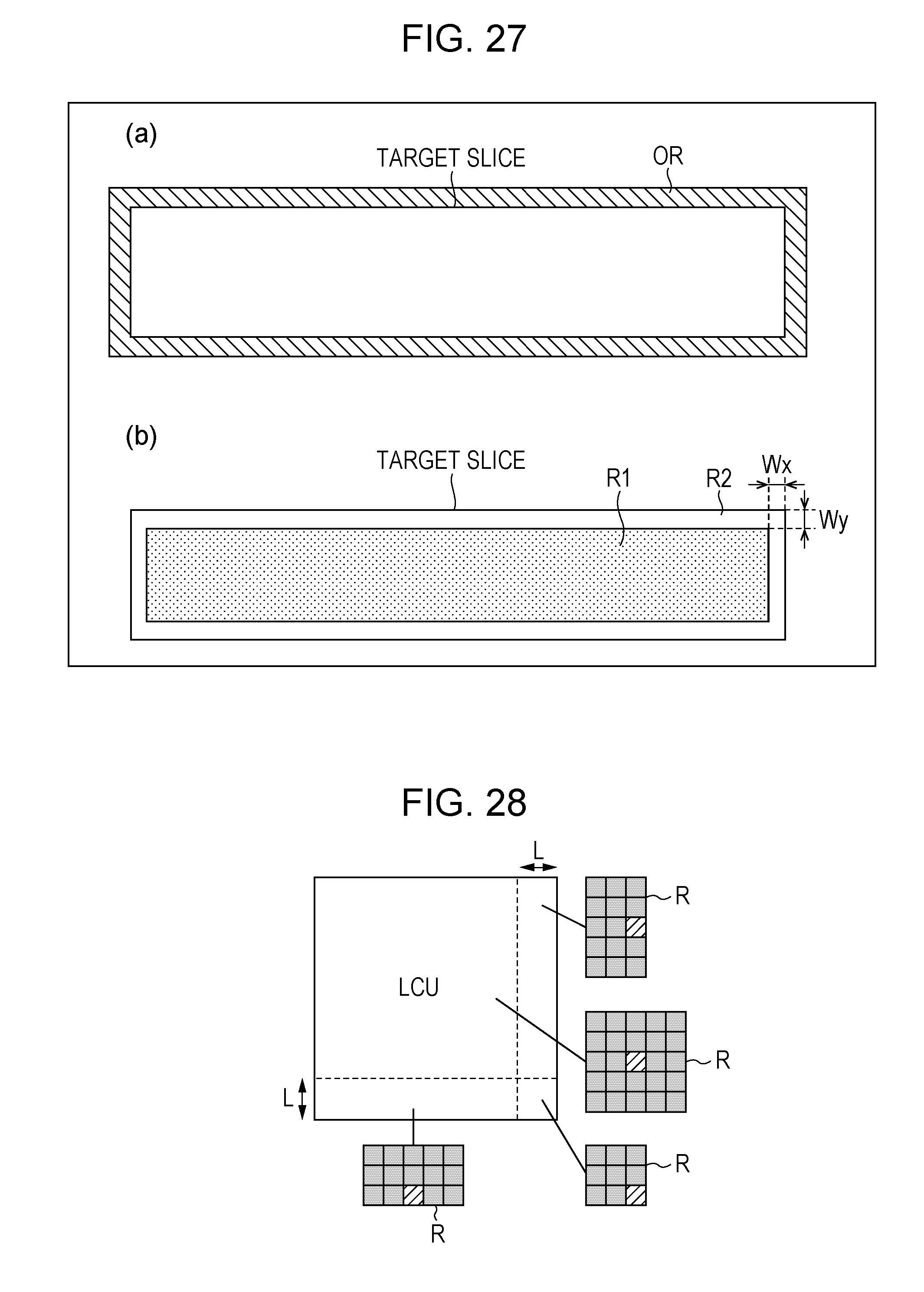

[0051] FIG. 27 is a diagram for describing processing by the loop filter according to the third embodiment of the present invention, (a) illustrates an internal region and an adjacent region to be set on a slice by this loop filter, and (b) illustrates a region to be referenced by a loop filter according to the related art.

[0052] FIG. 28 is a diagram illustrating an example of a reference region to be set by an adaptive filter according to a fourth embodiment of the present invention.

[0053] FIG. 29 is a diagram illustrating a reference region R to be set according to a position of a target pixel, and a reference block RA which is a summation group of the reference region R regarding each target pixel included in a target coding unit, with a conventional adaptive filter.

[0054] FIG. 30 is a diagram for describing that a moving image decoding device and a moving image encoding device according to an embodiment of the present invention can be used for transmission and reception of a moving image, (a) is a block diagram illustrating a configuration of a transmission device on which the moving image encoding device has been mounted, and (b) is a block diagram illustrating a configuration of a reception device on which the moving image decoding device has been mounted.

[0055] FIG. 31 is a diagram for describing that a moving image decoding device and a moving image encoding device according to an embodiment of the present invention can be used for recording and playing of a moving image, (a) is a block diagram illustrating a configuration of a recording device on which the moving image encoding device has been mounted, and (b) is a block diagram illustrating a configuration of a playing device on which the moving image decoding device has been mounted.

DESCRIPTION OF EMBODIMENTS

[0056] Description regarding an embodiment of a decoding device and an encoding device according to the present invention based on drawings is as follows. Note that the decoding device according to the present embodiment is for decoding a moving image from encoded data. Accordingly, hereinafter, this will be referred to as "moving image decoding device". Also, the encoding device according to the present embodiment is for generating encoded data by encoding a moving image. Accordingly, hereinafter, this will be referred to as "moving image encoding device".

[0057] However, the application range of the present invention is not restricted to this. Specifically, as apparent from the following description, features of the present invention are realized without assuming multiple frames. That is to say, the present invention can be applied to decoding devices in general and encoding devices in general regardless of whether to take a moving image as a target or a still image as a target.

(Configuration of Encoded Data #1)

[0058] Prior to description of a moving image decoding device 1 according to the present embodiment, a structure of encoded data #1 to be generated by a moving image encoding device 2 according to the present embodiment and to be decoded by the moving image decoding device 1 will be described with reference to FIG. 2. The encoded data #1 includes a layered structure made up of a sequence layer, a GOP (Group of Pictures) layer, a picture layer, a slice layer, and a largest coding unit (LCU: Largest Coding Unit) layer.

[0059] A structure of layers of the picture layer and thereafter in the encoded data #1 is illustrated in FIG. 2. (a) to (f) in FIG. 2 are diagrams illustrating a structure of a picture layer P, a slice layer S, an LCU layer LCU, a leaf CU (referred to as CUL in (d) in FIG. 2) included in the LCU, inter prediction information PI_Inter which is prediction information PI regarding an inter prediction (inter-frame prediction) partition, and intra prediction information PI_Intra which is prediction information PI regarding an intra prediction (intra-screen prediction) partition, respectively.

(Picture Layer)

[0060] The picture layer P is a group of data to be referenced by the moving image decoding device 1 for decoding a target picture which is a picture to be processed. The picture layer P includes, as illustrated in (a) in FIG. 2, a picture header PH, and slice layers S1 to SNs (Ns is a total number of slice layers included in the picture layer P).

[0061] The picture header PH includes an encoding parameter group to be referenced by the moving image decoding device 1 for determining a decoding method of a target picture. For example, encoding mode information (entropy_coding_mode_flag) which indicates a mode of variable length coding to be used by the moving image encoding device 2 at the time of encoding is an example of encoding parameters included in the picture header PH.

(Slice Layer)

[0062] The slice layers S included in the picture layer P are a group of data to be referenced by the moving image decoding device 1 for decoding a target slice which is a slice to be processed. The slice layer S includes, as illustrated in (b) in FIG. 2, a slice header SH, and LCU layers LCU1 to LCUNc (Nc is a total number of LCUs included in the slice S).

[0063] The slice header SH includes an encoding parameter group to be referenced by the moving image decoding device 1 for determining a decoding method of a target slice. Slice type specifying information (slice_type) for specifying a slice type is an example of the encoding parameters included in the slice header SH.

[0064] Examples of a slice type that can be specified with the slice type specifying information include (1) an I slice, which uses intra prediction alone at the time of encoding, (2) a P slice, which uses uni-directional prediction or intra prediction at the time of encoding, and (3) a B slice, which uses uni-directional prediction, bi-directional prediction, or intra prediction at the time of encoding.

[0065] Also, the slice header SH includes filter parameters FP to be referenced by the adaptive filter included in the moving image decoding device 1. Note that a configuration of the filter parameters FP will be described later, and accordingly, description thereof will be omitted here.

(LCU Layer)

[0066] The LCU layers LCU included in the slice layer S are a group of data to be referenced by the moving image decoding device 1 for decoding a target LCU which is an LCU to be processed.

[0067] The LCU layer LCU is made up of multiple coding units (CU: Coding Unit) obtained by hierarchically dividing this LCU by quadtree division. In other words, the LCU layer LCU is the coding unit equivalent to the uppermost level of a layered structure recursively including multiple CUs. Each CU included in the LCU layer LCU has, as illustrated in (c) in FIG. 2, a CU header CUH, and a layered structure recursively including multiple CUs to be obtained by quadtree division of this CU.

[0068] The size of each CU excluding the LCU is half, both vertically and horizontally, of the size of the CU to which this CU directly belongs (i.e., the CU one hierarchy higher than this CU), and the size that each CU can take depends on the size and hierarchical depth of the LCU included in a sequence parameter set SPS of the encoded data #1. For example, in the event that the size of the LCU is 128×128 pixels, and the maximum hierarchical depth is 5, CUs in hierarchies equal to or lower than this LCU can take five types of sizes, that is, any of 128×128 pixels, 64×64 pixels, 32×32 pixels, 16×16 pixels, and 8×8 pixels. Also, a CU which is not further divided will be referred to as a leaf CU.
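The relationship between the LCU size, the maximum hierarchical depth, and the available CU sizes can be illustrated with a minimal sketch. The function name cu_sizes is hypothetical and is not part of the encoded data #1; the sketch only assumes that each hierarchy halves the edge length of the hierarchy above it, as stated above.

```python
from typing import List


def cu_sizes(lcu_size: int, max_depth: int) -> List[int]:
    """Return the CU edge length (in pixels) available at each hierarchy."""
    # Each hierarchy halves the edge length of the CU it is divided from.
    return [lcu_size >> depth for depth in range(max_depth)]


# The example from the text: a 128x128 LCU with a maximum depth of 5.
print(cu_sizes(128, 5))  # [128, 64, 32, 16, 8]
```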

(CU Header)

[0069] The CU header CUH includes an encoding parameter to be referenced by the moving image decoding device 1 for determining a method for decoding a target CU. Specifically, as illustrated in (c) in FIG. 2, there is included a CU division flag SP_CU for specifying whether to further divide the target CU into four lower-order CUs. In the event that the CU division flag SP_CU is 0, that is, in the event that the CU is not further divided, this CU is a leaf CU.
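The recursive division controlled by the CU division flag SP_CU can be sketched as follows. This is an illustration only: the function name leaf_cus, the order in which flags are consumed (upper-left, upper-right, lower-left, lower-right), and the rule that no flag is read for a CU of the minimum size are assumptions and are not stated in the text above.

```python
from typing import Iterator, List, Tuple


def leaf_cus(flags: Iterator[int], x: int, y: int, size: int,
             min_size: int) -> List[Tuple[int, int, int]]:
    """Return (x, y, size) for every leaf CU under the CU at (x, y)."""
    # Assumption: a CU at the minimum size cannot be divided, so no flag is read.
    if size > min_size and next(flags) == 1:
        half = size // 2
        leaves: List[Tuple[int, int, int]] = []
        for dy in (0, half):          # upper row first, then lower row
            for dx in (0, half):      # left CU first, then right CU
                leaves += leaf_cus(flags, x + dx, y + dy, half, min_size)
        return leaves
    # SP_CU == 0 (or minimum size reached): this CU is a leaf CU.
    return [(x, y, size)]


# A 64x64 LCU divided once, with only its upper-right CU divided again.
split_flags = iter([1, 0, 1, 0, 0, 0, 0, 0, 0])
for leaf in leaf_cus(split_flags, 0, 0, 64, 8):
    print(leaf)
```

With the flag sequence shown, the sketch yields three 32×32 leaf CUs and four 16×16 leaf CUs, which together cover the 64×64 LCU without overlap.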

(Leaf CU)

[0070] A CU that is not further divided (leaf CU) is treated as a prediction unit (PU: Prediction Unit) and a transform unit (TU: Transform Unit).

[0071] As illustrated in (d) in FIG. 2, a leaf CU (referred to as CUL in (d) in FIG. 2) includes (1) PU information PUI to be referenced at the time of a predicted image being generated by the moving image decoding device 1, and (2) TU information TUI to be referenced at the time of residual data being decoded by the moving image decoding device 1.

[0072] A skip flag SKIP is a flag that indicates whether or not a skip mode is applied to a target PU, and in the event that the value of the skip flag SKIP is 1, that is, in the event that the skip mode has been applied to the target leaf, the PU information PUI and TU information TUI in the leaf CU thereof are omitted. Note that the skip flag SKIP is omitted in the I slice.

[0073] The PU information PUI includes, as illustrated in (d) in FIG. 2, the skip flag SKIP, prediction type information PT, and prediction information PI. The prediction type information PT is information for specifying whether to use intra prediction or inter prediction as a predicted image generating method regarding a target leaf CU (target PU). The prediction information PI is made up of intra prediction information PI_Intra or inter prediction information PI_Inter according to which prediction procedure the prediction type information PT specifies. Hereinafter, a PU to which intra prediction is applied will also be referred to as an intra PU, and a PU to which inter prediction is applied will also be referred to as an inter PU.

[0074] The PU information PUI includes information specifying the shape, size, and position within a target PU of each partition included in the target PU. The partitions mentioned here are one or more non-overlapping regions which make up a target leaf CU, and generation of a predicted image is performed with a partition as a unit.

[0075] The TU information TUI includes, as illustrated in (d) in FIG. 2, a quantization parameter difference Δqp (tu_qp_delta) for specifying the size of a quantization step, TU division information SP_TU for specifying a division pattern for each block of a target leaf CU (target TU), and quantized predicted residual QD1 to QDNT (NT is a total number of blocks included in the target TU).

[0076] The quantization parameter difference Δqp is the difference qp-qp' between a quantization parameter qp in the target TU, and a quantization parameter qp' in a TU encoded immediately before the TU thereof.

[0077] The TU division information SP_TU is specifically information specifying the shape, size, and position within the target TU of each block included in the target TU. Each TU can take a size from 64×64 pixels to 2×2 pixels, for example. The blocks mentioned here are one or more non-overlapping regions which make up the target leaf CU, and encoding/decoding of predicted residual is performed with a block as a unit.

[0078] Each quantized predicted residual QD is encoded data generated by the moving image encoding device 2 subjecting a target block which is a block to be processed to the following processes 1 to 3. Process 1: A predicted residual obtained by subtracting a predicted image from an image to be encoded is subjected to DCT transform (Discrete Cosine Transform). Process 2: A DCT coefficient obtained in Process 1 is subjected to quantization. Process 3: The DCT coefficient subjected to quantization in Process 2 is subjected to variable length encoding. The above-mentioned quantization parameter qp represents the size of a quantization step QP used at the time of the moving image encoding device 2 subjecting the DCT coefficient to quantization (QP=2^(qp/6)).

(Inter Prediction Information PI_Inter)

[0079] The inter prediction information PI_Inter includes encoding parameters to be referenced at the time of the moving image decoding device 1 generating an inter predicted image by inter prediction. As illustrated in (e) in FIG. 2, the inter prediction information PI_Inter includes inter PU division information SP_Inter for specifying a division pattern for each partition of the target PU, and inter prediction parameters PP_Inter1 to PP_InterNe (Ne is a total number of inter prediction partitions included in the target PU) regarding the partitions.

[0080] The inter PU division information SP_Inter is specifically the shape, size, and information specifying a position within the target PU of each inter prediction partition included in the target PU (inter PU).

[0081] The inter PU can be divided into eight types of partitions in total of four symmetric divisions (symmetric splittings) of 2N×2N pixels, 2N×N pixels, N×2N pixels, and N×N pixels, and four asymmetric divisions (asymmetric splittings) of 2N×nU pixels, 2N×nD pixels, nL×2N pixels, and nR×2N pixels. A specific value of N is stipulated with the size of a CU to which this PU belongs, and specific values of nU, nD, nL, and nR are determined according to the value of N. For example, the inter PU of 128×128 pixels can be divided into inter prediction partitions of 128×128 pixels, 128×64 pixels, 64×128 pixels, 64×64 pixels, 128×32 pixels, 128×96 pixels, 32×128 pixels, or 96×128 pixels.
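The eight partition sizes can be enumerated with a short sketch. The function name inter_partition_sizes is hypothetical. The values nU = nL = N/2 and nD = nR = 3N/2 are an assumption inferred from the 128×128 example above; the text only states that they are determined according to the value of N.

```python
from typing import Dict, Tuple


def inter_partition_sizes(pu_size: int) -> Dict[str, Tuple[int, int]]:
    """Return (width, height) of the partition named by each division type."""
    n = pu_size // 2
    short = n // 2            # assumed nU and nL (N/2), inferred from the example
    long_ = pu_size - short   # assumed nD and nR (3N/2), inferred from the example
    return {
        "2Nx2N": (pu_size, pu_size),
        "2NxN": (pu_size, n),
        "Nx2N": (n, pu_size),
        "NxN": (n, n),
        "2NxnU": (pu_size, short),
        "2NxnD": (pu_size, long_),
        "nLx2N": (short, pu_size),
        "nRx2N": (long_, pu_size),
    }


for name, (w, h) in inter_partition_sizes(128).items():
    print(f"{name}: {w}x{h}")
```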

(Inter Prediction Parameters PP_Inter)

[0082] The inter prediction parameters PP_Inter include, as illustrated in (e) in FIG. 2, a reference image index RI, an estimated motion vector index PMVI, and a motion vector residual MVD.

[0083] The motion vector residual MVD is encoded data generated by the moving image encoding device 2 executing the following processes 4 to 6. Process 4: An encoded/decoded local decoded image (more accurately, image obtained by subjecting an encoded/decoded local decoded image to deblocking and adaptive filter processing) is selected, and a motion vector mv for a target partition is derived with reference to the selected encoded/decoded local decoded image (hereinafter, also referred to as "reference image"). Process 5: An estimating method is selected, and an estimated value (hereinafter, also referred to as "estimated motion vector") pmv of the motion vector mv to be assigned to the target partition is derived using the selected estimating method. Process 6: The motion vector residual MVD obtained by subtracting the estimated motion vector pmv derived in Process 5 from the motion vector mv derived in Process 4 is encoded.

[0084] The reference image index RI is for specifying an encoded/decoded local decoded image (reference image) selected in Process 4, and the above-mentioned estimated motion vector index PMVI is for specifying the estimating method selected in Process 5. Examples of the estimating method that can be selected in Process 5 include (1) With a local decoded image being encoded/decoded (more accurately, image to be obtained by subjecting a decoded region of a local decoded image being encoded/decoded to deblocking and adaptive filter processing), a method wherein median of a motion vector assigned to a partition adjacent to the target partition (hereinafter, also referred to as "adjacent partition") is taken as the estimated motion vector pmv, and (2) With an encoded/decoded local decoded image, a method wherein a motion vector assigned to a partition which occupies the same position as with the target partition (often referred to as "colocate partition") is taken as the estimated motion vector pmv.

[0085] Note that prediction parameters PP regarding a partition where uni prediction is performed include, as illustrated in (e) in FIG. 2, each of the reference image index RI, estimated motion vector index PMVI, and motion vector residual MVD one at a time, but prediction parameters PP regarding a partition where bi prediction (weighted prediction) is performed include two reference image indexes RI1 and RI2, two estimated motion vector indexes PMVI1 and PMVI2, and two motion vector residuals MVD1 and MVD2.

(Intra Prediction Information PI_Intra)

[0086] The intra prediction information PI_Intra includes encoding parameters to be referenced at the time of the moving image decoding device 1 generating an intra predicted image by intra prediction. As illustrated in (f) in FIG. 2, the intra prediction information PI_Intra includes intra PU division information SP_Intra for specifying a division pattern for the partitions of the target PU (intra PU), and intra prediction parameters PP_Intra1 to PP_IntraNa (Na is a total number of intra prediction partitions included in the target PU) regarding the partitions.

[0087] The intra PU division information SP_Intra is specifically information specifying the shape, size, and position within the target PU of each intra prediction partition included in the target PU. The intra PU division information SP_Intra includes an intra division flag (intra_split_flag) for specifying whether to divide the target PU into partitions. In the event that the intra division flag is 1, the target PU is symmetrically divided into four partitions, and in the event that the intra division flag is 0, the target PU is treated as one partition without being divided. Accordingly, if we say that the size of the target PU is 2N×2N pixels, the intra prediction partition takes a size of either 2N×2N pixels (without division) or N×N pixels (four divisions) (here, N=2^n, where n is an arbitrary integer equal to or greater than 1). For example, an intra PU of 128×128 pixels can be divided into intra prediction partitions of 128×128 pixels and 64×64 pixels.

(Intra Prediction Parameters PP_Intra)

[0088] The intra prediction parameters PP_Intra include, as illustrated in (f) in FIG. 2, an estimation flag MPM, and a residual prediction mode index RIPM. The intra prediction parameters PP_Intra are parameters for specifying an intra prediction procedure (prediction mode) regarding the partitions.

[0089] The estimation flag MPM is a flag that indicates whether a prediction mode estimated based on a prediction mode assigned to a partition in the vicinity of the target partition which is a processing target agrees with the prediction mode regarding this target partition. Examples of the partition in the vicinity of the target partition include a partition adjacent to the upper edge of the target partition, and a partition adjacent to the left edge of the target partition.

[0090] The residual prediction mode index RIPM is an index to be included in the intra prediction parameters PP_Intra in the event that the estimated prediction mode differs from the prediction mode regarding the target partition, and is an index for specifying a prediction mode to be assigned to this target partition.

(Filter Parameters FP)

[0091] As described above, the slice header SH includes the filter parameters FP to be referenced by the adaptive filter included in the moving image decoding device 1. The filter parameters FP include, as illustrated in (g) in FIG. 2, a filter coefficient group. The filter coefficient group includes (1) tap count specification information for specifying the number of taps of the filter, (2) filter coefficients a_0 to a_(NT-1) (NT is a total number of filter coefficients included in the filter coefficient group), and (3) offset o.
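How the filter coefficient group might be held and applied can be sketched as follows. The class name FilterParams and the function filter_pixel are hypothetical, and the weighted-sum-plus-offset form is an assumption based on the common form of an adaptive loop filter; the paragraph above only lists the contents of the filter coefficient group.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class FilterParams:
    taps: int                 # number of taps per dimension (e.g. 3 for 3x3)
    coeffs: Sequence[float]   # filter coefficients a_0 .. a_(NT-1)
    offset: float             # offset o


def filter_pixel(fp: FilterParams, ref_pixels: Sequence[float]) -> float:
    """Assumed form: sum of a_i times the i-th reference pixel, plus offset o."""
    if len(ref_pixels) != len(fp.coeffs):
        raise ValueError("one reference pixel is needed per coefficient")
    return sum(a * s for a, s in zip(fp.coeffs, ref_pixels)) + fp.offset


# A 3x3 filter that passes the center pixel through and adds an offset of 2.
fp = FilterParams(taps=3, coeffs=[0, 0, 0, 0, 1, 0, 0, 0, 0], offset=2.0)
print(filter_pixel(fp, [0, 1, 2, 3, 4, 5, 6, 7, 8]))  # 6.0
```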

(Moving Image Decoding Device 1)

[0092] Hereinafter, the moving image decoding device 1 according to the present embodiment will be described with reference to FIG. 1 to FIG. 19. The moving image decoding device 1 is a decoding device which includes, at a portion thereof, technology employed in H.264/MPEG-4 AVC, technology employed by KTA software, which is a codec for joint development in VCEG (Video Coding Expert Group), and technology employed by TMuC (Test Model under Consideration) software, which is a succeeding codec thereof.

[0093] FIG. 3 is a block diagram illustrating the configuration of the moving image decoding device 1. As illustrated in FIG. 3, the moving image decoding device 1 includes a variable length code decoding unit 11, a predicted image generator 12, an inverse quantization/inverse transformation unit 13, an adder 14, frame memory 15, and a loop filter 16. Also, as illustrated in FIG. 3, the predicted image generator 12 includes a motion vector restoring unit 12a, an inter predicted image generator 12b, an intra predicted image generator 12c, and a prediction procedure determining unit 12d. The moving image decoding device 1 is a device configured to generate a moving image #2 by decoding encoded data #1.

(Variable Length Code Decoding Unit 11)

[0094] The variable length code decoding unit 11 decodes prediction parameters PP regarding the partitions from the encoded data #1 to supply these to the predicted image generator 12. Specifically, the variable length code decoding unit 11 decodes, with regard to an inter prediction partition, the inter prediction parameters PP_Inter including the reference image index RI, estimated motion vector index PMVI, and motion vector residual MVD from the encoded data #1, and supplies these to the motion vector restoring unit 12a. On the other hand, the variable length code decoding unit 11 decodes, with regard to an intra prediction partition, the intra prediction parameters PP_Intra including the estimation flag MPM, residual prediction mode index RIPM, and additional index AI from the encoded data #1, and supplies these to the intra predicted image generator 12c.

[0095] Also, the variable length code decoding unit 11 decodes the prediction type information PT regarding each of the partitions from the encoded data #1, and supplies this to the prediction procedure determining unit 12d. Further, the variable length code decoding unit 11 decodes the quantization prediction residual QD regarding each of the blocks, and the quantization parameter difference Δqp regarding the TU including the block thereof from the encoded data #1, and supplies these to the inverse quantization/inverse transformation unit 13. Also, the variable length code decoding unit 11 decodes the filter parameters FP from the encoded data #1, and supplies these to the loop filter 16.

(Predicted Image Generator 12)

[0096] The predicted image generator 12 identifies whether each partition is an inter prediction partition to be subjected to inter prediction or an intra prediction partition to be subjected to intra prediction based on the prediction type information PT regarding each partition. In the case of the former, the predicted image generator 12 generates an inter predicted image Pred_Inter, and also supplies the generated inter predicted image Pred_Inter to the adder 14 as a predicted image Pred, and in the case of the latter, generates an intra predicted image Pred_Intra, and also supplies the generated intra predicted image Pred_Intra to the adder 14. Note that, in the event that the skip mode has been applied to the PU to be processed, the predicted image generator 12 omits decoding of another parameter belonging to this PU.

(Motion Vector Restoring Unit 12a)

[0097] The motion vector restoring unit 12a restores the motion vector mv regarding each inter prediction partition from the motion vector residual MVD regarding the partition thereof, and a decoded motion vector mv' regarding another partition. Specifically, (1) in accordance with an estimation method specified by the estimated motion vector index PMVI, the estimated motion vector pmv is derived from the decoded motion vector mv', and (2) the motion vector mv is obtained by adding the derived estimated motion vector pmv and motion vector residual MVD. Note that the decoded motion vector mv' regarding another partition can be read out from the frame memory 15. The motion vector restoring unit 12a supplies the restored motion vector mv to the inter predicted image generator 12b along with the corresponding reference image index RI.
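The restoration of a motion vector from the estimated motion vector pmv and the motion vector residual MVD can be sketched as follows. The function names median_pmv and restore_mv are hypothetical, and taking the median separately for each vector component is an assumption; the description only states that the median of the motion vectors assigned to adjacent partitions may be used as pmv.

```python
from statistics import median
from typing import Sequence, Tuple

Vector = Tuple[int, int]


def median_pmv(neighbor_mvs: Sequence[Vector]) -> Vector:
    """Estimated motion vector pmv (assumed: component-wise median)."""
    return (median(v[0] for v in neighbor_mvs),
            median(v[1] for v in neighbor_mvs))


def restore_mv(pmv: Vector, mvd: Vector) -> Vector:
    """Motion vector = estimated motion vector pmv + residual MVD."""
    return (pmv[0] + mvd[0], pmv[1] + mvd[1])


# Three decoded motion vectors of adjacent partitions and a decoded residual.
pmv = median_pmv([(1, 2), (3, -4), (2, 0)])
print(pmv)                       # (2, 0)
print(restore_mv(pmv, (1, 1)))   # (3, 1)
```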

(Inter Predicted Image Generator 12b)

[0098] The inter predicted image generator 12b generates a motion compensation image mc regarding each inter prediction partition by inter-frame prediction. Specifically, the inter predicted image generator 12b generates a motion compensation image mc from an adaptive-filtered decoded image P_ALF' specified by the reference image index RI supplied from the motion vector restoring unit 12a, using the motion vector mv supplied from the same motion vector restoring unit 12a. Here, the adaptive-filtered decoded image P_ALF' is an image to be obtained by subjecting a decoded image for which decoding of the entire frame has already been completed to filter processing by the loop filter 16, and the inter predicted image generator 12b can read out the pixel value of each pixel which makes up the adaptive-filtered decoded image P_ALF' from the frame memory 15. The motion compensation image mc generated by the inter predicted image generator 12b is supplied to the prediction procedure determining unit 12d as the inter predicted image Pred_Inter.

(Intra Predicted Image Generator 12c)

[0099] The intra predicted image generator 12c generates a predicted image Pred_Intra regarding each intra prediction partition. Specifically, first, the intra predicted image generator 12c identifies a prediction mode based on the intra prediction parameters PP_Intra supplied from the variable length code decoding unit 11, and assigns the identified prediction mode to the target partition, for example, in raster scan order.

[0100] Here, identification of a prediction mode based on the intra prediction parameters PP_Intra can be performed as follows. (1) The estimation flag MPM is decoded, and in the event that this estimation flag MPM indicates that the prediction mode regarding the target partition which is a processing target agrees with the prediction mode assigned to a partition in the vicinity of this target partition, the prediction mode assigned to a partition in the vicinity of this target partition is assigned to the target partition. (2) On the other hand, in the event that this estimation flag MPM indicates that the prediction mode regarding the target partition which is a processing target does not agree with the prediction mode assigned to a partition in the vicinity of this target partition, the residual prediction mode index RIPM is decoded, and a prediction mode that this residual prediction mode index RIPM indicates is assigned to the target partition.
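The two cases above can be sketched as a small selection function. The function name identify_prediction_mode is hypothetical. It is assumed here that an estimation flag value of 1 means agreement, that the estimated prediction mode has already been derived from the partitions in the vicinity, and that the residual prediction mode index RIPM has already been mapped to a prediction mode; none of these details are fixed by the text.

```python
from typing import Optional


def identify_prediction_mode(mpm_flag: int, estimated_mode: int,
                             ripm_mode: Optional[int] = None) -> int:
    """Select the prediction mode to assign to the target partition."""
    if mpm_flag == 1:
        # Case (1): the estimated mode agrees, so RIPM is not needed.
        return estimated_mode
    # Case (2): RIPM must have been decoded and indicates the mode.
    if ripm_mode is None:
        raise ValueError("RIPM is required when the estimation flag is 0")
    return ripm_mode


print(identify_prediction_mode(1, estimated_mode=2))               # 2
print(identify_prediction_mode(0, estimated_mode=2, ripm_mode=7))  # 7
```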

[0101] The intra predicted image generator 12c generates a predicted image Pred_Intra from the (local) decoded image P by intra-screen prediction in accordance with a prediction procedure that the prediction mode assigned to the target partition indicates. The intra predicted image Pred_Intra generated by the intra predicted image generator 12c is supplied to the prediction procedure determining unit 12d. Note that an arrangement may be made wherein the intra predicted image generator 12c generates a predicted image Pred_Intra from the adaptive-filtered decoded image P_ALF by intra-screen prediction.

(Prediction Procedure Determining Unit 12d)

[0102] The prediction procedure determining unit 12d determines, based on the prediction type information PT regarding a PU to which each partition belongs, whether each partition is an inter prediction partition to be subjected to inter prediction or an intra prediction partition to be subjected to intra prediction. In the case of the former, the prediction procedure determining unit 12d supplies the inter predicted image Pred_Inter generated at the inter predicted image generator 12b to the adder 14 as a predicted image Pred, and in the case of the latter, the prediction procedure determining unit 12d supplies the intra predicted image Pred_Intra generated at the intra predicted image generator 12c to the adder 14 as a predicted image Pred.

(Inverse Quantization/Inverse Transformation Unit 13)

[0103] The inverse quantization/inverse transformation unit 13 (1) subjects the quantization prediction residual QD to inverse quantization, (2) subjects a DCT coefficient obtained by inverse quantization to inverse DCT (Discrete Cosine Transform) transform, and (3) supplies a prediction residual D obtained by inverse DCT transform to the adder 14. Note that, at the time of subjecting the quantization prediction residual QD to inverse quantization, the inverse quantization/inverse transformation unit 13 derives a quantization step QP from the quantization parameter difference Δqp supplied from the variable length code decoding unit 11. The quantization parameter qp can be derived by adding the quantization parameter difference Δqp to the quantization parameter qp' regarding a TU which has been subjected to inverse quantization/inverse DCT transform immediately before, and the quantization step QP can be derived from the quantization parameter qp by QP=2^(qp/6), for example. Also, generation of the prediction residual D by the inverse quantization/inverse transformation unit 13 is performed with a TU or a block divided from a TU as a unit.
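The derivation of the quantization step on the decoding side can be illustrated with a minimal sketch. The function name quantization_step is hypothetical; the two relations used (adding the quantization parameter difference to the quantization parameter of the immediately preceding TU, and taking 2 to the power of qp/6) are those given above.

```python
from typing import Tuple


def quantization_step(prev_qp: int, delta_qp: int) -> Tuple[int, float]:
    """Return (qp, QP) for the target TU."""
    qp = prev_qp + delta_qp        # qp = qp' + delta qp
    step = 2.0 ** (qp / 6)         # QP = 2^(qp/6)
    return qp, step


# The preceding TU used qp' = 10 and the decoded difference is 2.
print(quantization_step(10, 2))  # (12, 4.0)
```

Under this relation, an increase of 6 in the quantization parameter doubles the quantization step.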

(Adder 14)

[0104] The adder 14 generates a decoded image P by adding the predicted image Pred supplied from the predicted image generator 12 and the prediction residual D supplied from the inverse quantization/inverse transformation unit 13. The generated decoded image P is stored in the frame memory 15.

(Loop Filter 16)

[0105] The loop filter 16 has (1) a function as a deblocking filter (DF: Deblocking Filter) configured to perform smoothing (deblocking) of an image in the vicinity of a block boundary or a partition boundary in the decoded image P, and (2) a function as an adaptive filter (ALF: Adaptive Loop Filter) configured to perform adaptive filter processing on an image on which the deblocking filter acts, using the filter parameters FP.

[0106] FIG. 1 is a block diagram illustrating the configuration of the loop filter 16. As illustrated in FIG. 1, the loop filter 16 includes a deblocking filter 161, an adaptive filter 162, and internal memory 163.

(Deblocking Filter 161)

[0107] The deblocking filter 161 generates a deblocked decoded image P_DB by performing smoothing of an image in a region in the vicinity of a block boundary or a partition boundary in the decoded image P.

[0108] The deblocking filter 161 performs deblocking on the decoded image P in the target slice in raster scan order for each LCU as illustrated in (a) in FIG. 4. Also, in the event that the target LCU includes multiple CUs, as illustrated in (b) in FIG. 4, the deblocking filter 161 performs deblocking in raster scan order for each CU included in the target LCU. Also, regarding the target CU, the deblocking filter 161 first performs deblocking on a boundary on the left side of the target CU, and then performs deblocking on a boundary on the upper side of the target CU.
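The processing order described above can be sketched as a generator. The function name deblock_order and the callback cus_in_lcu are hypothetical; the callback is assumed to return the CUs of a given LCU already arranged in raster scan order.

```python
from typing import Callable, Iterator, List, Tuple


def deblock_order(lcu_cols: int, lcu_rows: int,
                  cus_in_lcu: Callable[[int, int], List[str]]
                  ) -> Iterator[Tuple[int, int, str, str]]:
    """Yield (lcu_x, lcu_y, cu, boundary) in the order deblocking is applied."""
    for lcu_y in range(lcu_rows):            # LCUs in raster scan order
        for lcu_x in range(lcu_cols):
            for cu in cus_in_lcu(lcu_x, lcu_y):   # CUs in raster scan order
                yield (lcu_x, lcu_y, cu, "left")   # left boundary first
                yield (lcu_x, lcu_y, cu, "upper")  # then upper boundary


# Two LCUs in one row, each including four CUs.
for step in deblock_order(2, 1, lambda x, y: ["CU1", "CU2", "CU3", "CU4"]):
    print(step)
```

Note that the right and lower boundaries of an LCU never appear in the steps emitted for that LCU; they are reached only as the left or upper boundary of a later LCU.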

[0109] (c) in FIG. 4 is a diagram illustrating a case where the deblocking filter 161 acts on the target LCU including four CUs (CU1 to 4). In (c) in FIG. 4, deblocking by the deblocking filter 161 has been applied to boundaries indicated by a thick solid line, and deblocking has not been applied to boundaries indicated by a thin solid line even at the time of deblocking regarding the target LCU being ended. Note that, of the boundaries indicated by the thin solid lines, deblocking is performed on the boundary of the right edge of the target LCU at the time of performing deblocking on an LCU adjacent to the right side of the target LCU, and deblocking is performed on the boundary of the lower edge of the target LCU at the time of performing deblocking on an LCU adjacent to the lower edge of the target LCU.

[0110] In this manner, even at the time of deblocking regarding the target LCU being ended, deblocking has not been performed on the boundaries of the right edge and lower edge of the target LCU, which serves as a key point for understanding at least one aspect of the present invention.

[0111] Hereinafter, prior to specific description regarding configuration of the units of the loop filter 16, processing by the loop filter 16 will be schematically described. The loop filter 16 performs one of the following two processing examples (processing example 1, processing example 2).

Processing Example 1

[0112] With the present processing example, the loop filter 16 performs deblocking in increments of LCUs, and performs adaptive filter processing for each first filter processing unit. Note that the first filter processing unit in the present processing example is set so as to have the same size and same position as with the target LCU as illustrated in (a) in FIG. 5. Also, with the following description, the first filter processing unit will also be referred to as ALFU1.

[0113] (b) in FIG. 5 is a flowchart illustrating a flow of processing in the present processing example. The loop filter 16 performs the following processing in the present processing example.

(Step S101)

[0114] The loop filter 16 initializes the value of a loop variable n to 1, and starts first loop processing over n satisfying 1 ≤ n ≤ N, wherein the loop variable n is incremented by 1 for each loop. Here, N indicates a total number of LCUs included in a slice to be processed. Note that, hereinafter, the n'th LCU will also be referred to as LCU(n).

(Step S102)

[0115] The loop filter 16 obtains, of the pixel values of the decoded image P stored in the frame memory 15, a pixel value to be referenced by the deblocking filter 161 for performing deblocking regarding the LCU(n) from the frame memory 15, and stores this in the internal memory 163.

(Step S103)

[0116] Next, the loop filter 16 generates a deblocked image P_DB regarding the LCU(n) by performing deblocking regarding the LCU(n) in the decoded image P stored in the internal memory 163 using the deblocking filter 161, and stores the generated deblocked image P_DB in the internal memory 163.

(Step S104)

[0117] This step is termination of the first loop processing.

(Step S105)

[0118] Next, the loop filter 16 initializes the value of a loop variable n to 1, and starts second loop processing over n satisfying 1 ≤ n ≤ N, wherein the loop variable n is incremented by 1 for each loop. Here, N indicates a total number of ALFU1s included in a slice to be processed, and is the same as the total number of LCUs. Note that, hereinafter, the n'th first filter processing unit ALFU1 will also be referred to as ALFU1(n).

(Step S106)

[0119] The loop filter 16 obtains, of the pixel values of the deblocked decoded image P_DB stored in the frame memory 15, a pixel value to be referenced by the adaptive filter 162 for performing adaptive filter processing regarding the ALFU1(n) from the frame memory 15, and stores this in the internal memory 163.

(Step S107)

[0120] Next, the loop filter 16 generates a filtered image P_ALF regarding the ALFU1(n) by performing adaptive filter processing regarding the ALFU1(n) in the deblocked decoded image P_DB stored in the internal memory 163 using the adaptive filter 162, and stores the generated filtered image P_ALF in the internal memory 163.

(Step S108)

[0121] This step is termination of the second loop.

[0122] The above is the flow of the processing in the processing example 1. Note that the filtered image P_ALF stored in the internal memory 163 by the adaptive filter 162 is transferred to and stored in the frame memory 15.

[0123] In this manner, with the present processing example, deblocking is first performed on all of the LCUs included in the target slice, and adaptive filter processing is then performed. Accordingly, with the present processing example, the loop filter 16 performs transfer of data from the frame memory 15 to the internal memory 163 twice regarding each n (step S102 and step S106).
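The flow of processing example 1 and the resulting number of transfers can be traced with a short sketch. The function name processing_example_1 and the log labels are hypothetical; the sketch records only the order of steps S102, S103, S106, and S107 and performs no actual filtering.

```python
from typing import List, Tuple


def processing_example_1(num_lcus: int) -> List[Tuple[str, int]]:
    """Return the sequence of (step, n) performed for a slice of N LCUs."""
    log: List[Tuple[str, int]] = []
    for n in range(1, num_lcus + 1):         # first loop (S101 to S104)
        log.append(("S102 transfer", n))     # frame memory -> internal memory
        log.append(("S103 deblock", n))
    for n in range(1, num_lcus + 1):         # second loop (S105 to S108)
        log.append(("S106 transfer", n))     # frame memory -> internal memory
        log.append(("S107 adaptive filter", n))
    return log


log = processing_example_1(4)
transfers = sum(1 for step, _ in log if "transfer" in step)
print(transfers)  # 8
```

For a slice of four LCUs the sketch counts eight transfers, that is, two for each n.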

Processing Example 2

[0124] With the present processing example, the loop filter 16 performs deblocking in increments of LCUs, and performs adaptive filter processing for each second filter processing unit. Note that the second filter processing unit in the present processing example is set so as to have the same size as with the target LCU and so as to have a position different from the target LCU as illustrated in (c) in FIG. 5. Also, the second filter processing unit regarding the target LCU is set so as not to be overlapped with a region where deblocking has not been performed regarding the target LCU (shaded portion in (c) in FIG. 5). Also, with the following description, the second filter processing unit will also be referred to as ALFU2.

[0125] (d) in FIG. 5 is a flowchart illustrating a flow of processing in the present processing example. The loop filter 16 performs the following processing in the present processing example.

(Step S201)

[0126] The loop filter 16 initializes the value of a loop variable n to 1, and starts loop processing over n satisfying 1 ≤ n ≤ N, wherein the loop variable n is incremented by 1 for each loop. Here, as described above, N indicates a total number of LCUs included in a slice to be processed. Note that, hereinafter, the n'th second filter processing unit ALFU2 will also be referred to as ALFU2(n).

(Step S202)

[0127] The loop filter 16 obtains, of the pixel values of the decoded image P stored in the frame memory 15, a pixel value to be referenced for performing deblocking regarding the LCU(n) and adaptive filter processing regarding the ALFU2(n) from the frame memory 15, and stores this in the internal memory 163.

(Step S203)

[0128] Next, the loop filter 16 generates a deblocked image P_DB regarding the LCU(n) by performing deblocking regarding the LCU(n) in the decoded image P stored in the internal memory 163 using the deblocking filter 161, and stores the generated deblocked image P_DB in the internal memory 163.

(Step S204)

[0129] Next, the loop filter 16 generates a filtered image P_ALF regarding the ALFU2(n) by performing adaptive filter processing regarding the ALFU2(n) in the deblocked decoded image P_DB stored in the internal memory 163 using the adaptive filter 162, and stores the generated filtered image P_ALF in the internal memory 163.

(Step S205)

[0130] This step is the termination of the loop.

[0131] The above is the flow of the processing in the processing example 2. Note that the filtered image P_ALF stored in the internal memory 163 by the adaptive filter 162 is transferred to and stored in the frame memory 15.

[0132] In this manner, with the present processing example, after deblocking is performed on the LCU(n) included in the target slice, adaptive filter processing is performed on the ALFU2(n) corresponding to the LCU(n). Accordingly, with the present processing example, it is sufficient that the loop filter 16 performs transfer of data from the frame memory 15 to the internal memory 163 once for each n (step S202).
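The flow of steps S201 to S205 may be sketched, purely for illustration, as the following Python loop. The function name, the dictionary standing in for the frame memory 15, and the `deblock` and `adaptive_filter` callables are all hypothetical stand-ins and are not part of the described device; in particular, the sketch ignores the positional offset between the LCU(n) and the ALFU2(n) and only shows that one transfer per n suffices.

```python
def run_processing_example_2(frame_memory, num_lcus, deblock, adaptive_filter):
    """Hypothetical sketch of steps S201-S205 (one memory transfer per n)."""
    filtered = {}   # stands in for P_ALF written back to the frame memory
    transfers = 0   # counts frame-memory -> internal-memory transfers
    for n in range(1, num_lcus + 1):                    # S201: 1 <= n <= N
        # S202: fetch the pixels needed for both filters in a single transfer
        internal_memory = {"P": list(frame_memory[n])}
        transfers += 1
        # S203: deblocking regarding LCU(n)
        internal_memory["P_DB"] = deblock(internal_memory["P"])
        # S204: adaptive filter processing regarding ALFU2(n)
        internal_memory["P_ALF"] = adaptive_filter(internal_memory["P_DB"])
        filtered[n] = internal_memory["P_ALF"]
    return filtered, transfers                          # S205: end of loop
```

With toy filters such as `lambda p: [v + 1 for v in p]`, the returned transfer count equals the number of LCUs, which mirrors the single transfer per n noted above.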

(Adaptive Filter 162)

[0133] Hereinafter, the configuration of the adaptive filter 162 included in the loop filter 16 will be described with reference to FIG. 1. As illustrated in FIG. 1, the adaptive filter 162 includes a target pixel setting unit 162a, a reference region setting unit 162b, and a filter processing unit 162c.

[0134] The target pixel setting unit 162a sets each of the pixels included in the filter processing unit ALFU as the target pixel in raster scan order. Target pixel position information that indicates the position of the target pixel set by the target pixel setting unit 162a is supplied to the reference region setting unit 162b. The reference region R is set for each target pixel by the reference region setting unit 162b, and a pixel value is calculated by the filter processing unit 162c for each target pixel.

[0135] The reference region setting unit 162b sets a filter reference region R (also simply referred to as reference region R) in the vicinity of the target pixel based on the position of the target pixel within the filter processing unit ALFU or the position of the target pixel within the target LCU. Here, the filter reference region R is determined depending on at least one of (1) tap information included in the filter parameters FP, (2) width Ddf in the Y direction of a region where deblocking by the deblocking filter 161 has not been completed in the target LCU, (3) length LLCUy in the Y direction of the target LCU, and (4) an absolute value Ly of deviation in the Y direction between the target LCU and the target ALFU. Note that the length LLCUy in the Y direction of the target LCU may also be expressed as the height LcuHeight of the LCU, and that LLCUy (LcuHeight) is derived with the following expression using the base-2 logarithm Log2MaxCUSize of the LCU size.

LcuHeight=(1<<Log2MaxCUSize)

[0136] The reference region setting unit 162b sets the reference region R so as to satisfy at least one of restraints A to E listed below.

[0137] Restraint A: In the case that the Y coordinate within the LCU of the target pixel is less than a predetermined threshold Lupper1, an upward reference range Dupper is restricted to the number of pixels equal to or smaller than the Y coordinate within the LCU of the target pixel.

[0138] The upward reference range Dupper mentioned here specifies distance along the Y axis between the target pixel and a pixel within the reference region R which is a pixel adjacent to the upper edge of the reference region R (this will be applied to the following). Also, let us say that the upward reference range Dupper is represented with a pixel as a unit (this will be applied to the following).

[0139] Also, as the predetermined threshold Lupper1, a fixed value such as 4 or 8 or the like may be employed, or a variable value may be employed (this will be applied to the following). Also, the upward reference range Dupper in the event that the Y coordinate within the LCU of the target pixel is less than the predetermined threshold Lupper1 may be set so as to depend on the Y coordinate within the LCU of the target pixel, or may be set to, for example, 0 without depending on the Y coordinate within the LCU of the target pixel.
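As a minimal sketch only, restraint A may be written as a clamp on the upward reference range. The function and parameter names below are assumptions introduced for illustration, and the sketch takes the variant in which Dupper depends on the Y coordinate within the LCU (the alternative of setting Dupper to 0 is not shown).

```python
def restrict_upper_a(y_in_lcu, d_upper, l_upper1):
    """Hypothetical clamp for restraint A.

    y_in_lcu : Y coordinate of the target pixel within the LCU
    d_upper  : upward reference range Dupper requested by the filter taps
    l_upper1 : predetermined threshold Lupper1 (e.g. 4 or 8)
    """
    if y_in_lcu < l_upper1:
        # Near the upper edge: reference no more rows than lie above the
        # target pixel inside the LCU.
        return min(d_upper, y_in_lcu)
    return d_upper
```

For example, with Lupper1 = 4 and a requested range of 4 rows, a target pixel at Y coordinate 2 would be limited to 2 rows upward, while a target pixel at Y coordinate 5 would keep the full 4 rows.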

[0140] More generally, the restraint A may also be expressed as a restraint that, when the distance DU between the upstream edge of the LCU including the target pixel and this target pixel is less than a predetermined threshold, restricts the position of the upstream edge of the reference region R regarding this target pixel so that its distance from this target pixel is equal to or shorter than DU.

[0141] Note that the above-mentioned "upstream edge" specifies any one of the upper edge and left edge when the processing is performed in raster scan order, and specifies any one of the lower edge and right edge when the processing is performed in inverse order of raster scan. Also, the above-mentioned "downstream edge" specifies any one of the lower edge and right edge when the processing is performed in raster scan order, and specifies any one of the upper edge and left edge when the processing is performed in inverse order of raster scan (this will be applied to the following).

[0142] Also, with the following description, "the downstream side of the downstream edge" specifies, when the processing is performed in raster scan order, any one of "the lower side of the lower edge", and "the right side of the right edge", and specifies, when the processing is performed in inverse order of raster scan, any one of "the upper side of the upper edge", and "the left side of the left edge". Also, with the following description, "the upstream side of the downstream edge" specifies, when the processing is performed in raster scan order, any one of "the upper side of the lower edge", and "the left side of the right edge", and specifies, when the processing is performed in inverse order of raster scan, any one of "the lower side of the upper edge", and "the right side of the left edge".

[0143] Restraint B: In the case that the Y coordinate within the LCU of the target pixel is equal to or greater than a predetermined threshold LLCUy-Llower1, a downward reference range Dlower is restricted to the number of pixels equal to or smaller than LLCUy-(Y coordinate within the LCU of the target pixel)-1.

[0144] The downward reference range Dlower mentioned here specifies distance along the Y axis between the target pixel and a pixel within the reference region R which is a pixel adjacent to the lower edge of the reference region R (this will be applied to the following). Also, let us say that the downward reference range Dlower is represented with a pixel as a unit (this will be applied to the following).

[0145] Also, the downward reference range Dlower in the event that the Y coordinate within the LCU of the target pixel is equal to or greater than the predetermined threshold LLCUy-Llower1 may be set so as to depend on the Y coordinate within the LCU of the target pixel, or may be set to, for example, 0 without depending on the Y coordinate within the LCU of the target pixel.

[0146] More generally, the restraint B may also be expressed as a restraint that, when the distance DL between the downstream edge of the LCU including the target pixel and this target pixel is less than a predetermined threshold, restricts the position of the downstream edge of the reference region R regarding this target pixel so that its distance from this target pixel is equal to or shorter than DL.
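Restraint B may likewise be sketched as a clamp on the downward reference range. The names below are assumptions for illustration, and only the variant in which Dlower depends on the Y coordinate within the LCU is shown.

```python
def restrict_lower_b(y_in_lcu, d_lower, lcu_height, l_lower1):
    """Hypothetical clamp for restraint B.

    y_in_lcu   : Y coordinate of the target pixel within the LCU
    d_lower    : downward reference range Dlower requested by the filter taps
    lcu_height : LLCUy (LcuHeight)
    l_lower1   : Llower1, so that the threshold is LLCUy - Llower1
    """
    if y_in_lcu >= lcu_height - l_lower1:
        # Near the lower edge: reference no more rows than remain below the
        # target pixel inside the LCU.
        return min(d_lower, lcu_height - y_in_lcu - 1)
    return d_lower
```

With LLCUy = 64 and Llower1 = 4, a target pixel at Y coordinate 62 would be limited to 1 row downward, and a target pixel on the last row (63) to 0 rows.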

[0147] Restraint C: In the case that the normalized Y coordinate within the LCU of the target pixel is equal to or greater than a predetermined threshold Lupper2, the upward reference range Dupper is restricted to the number of pixels equal to or smaller than Ly+(normalized Y coordinate within the LCU of the target pixel)-LLCUy.

[0148] The boundary indicated by the predetermined threshold Lupper2 will be called a virtual boundary line (Virtual Boundary Line). The position of the virtual boundary line will be referred to as VBLine.

[0149] The normalized Y coordinate within the LCU mentioned here is defined with (Y coordinate within the LCU) % LLCUy in which a symbol "%" that represents remainder operation is used, and takes a value equal to or greater than 0 (this will be applied to the following). Also, the Y coordinate within the LCU=(Y coordinate of the target pixel) % LLCUy, and consequently, the normalized Y coordinate within the LCU is the same as (Y coordinate % LLCUy) using the Y coordinate of the target pixel. Also, in the event that the position of the target pixel is expressed with the upper left coordinates (xC, yC) of a block including the target pixel and relative coordinates (x, y) from the upper left coordinates of the block, normalized Y coordinate yr within the LCU is represented with (yC+y) % LcuHeight.
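The derivation of LcuHeight and of the normalized Y coordinate yr can be illustrated with the short sketch below. The function names are assumptions; the arithmetic follows the expressions LcuHeight=(1<<Log2MaxCUSize) and yr=(yC+y) % LcuHeight given in the description.

```python
def lcu_height(log2_max_cu_size):
    """LcuHeight = 1 << Log2MaxCUSize."""
    return 1 << log2_max_cu_size


def normalized_y(y_c, y, log2_max_cu_size):
    """Normalized Y coordinate yr within the LCU.

    y_c : Y component of the upper left coordinates (xC, yC) of the block
    y   : Y component of the relative coordinates (x, y) within the block
    """
    return (y_c + y) % lcu_height(log2_max_cu_size)
```

For instance, with Log2MaxCUSize = 6 the LCU height is 64, and a pixel with yC = 120 and y = 10 has picture row 130 and therefore yr = 2.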

[0150] Also, the predetermined threshold Lupper2 has to be a value equal to or smaller than LLCUy-Ly (this will be applied to the following). If we say that Ly=4, the position VBLine (=LcuHeight-Ly) of the virtual boundary line in the restraint C becomes LcuHeight-4. If we say that Ly=3, the VBLine becomes LcuHeight-3. Also, the upward reference range Dupper in the event that the normalized Y coordinate within the LCU of the target pixel is equal to or greater than the predetermined threshold Lupper2 may be set so as to depend on the Y coordinate within the LCU of the target pixel, or may be set to, for example, 0 without depending on the Y coordinate within the LCU of the target pixel.

[0151] More generally, the restraint C may also be expressed as a restraint that, when the target pixel is positioned on the downstream side of the downstream edge of the ALFU corresponding to the LCU including this target pixel (equal to or lower than the virtual boundary line VBLine), restricts the position of the upstream edge of the reference region R regarding the target pixel so that its distance from this target pixel becomes equal to or shorter than the distance between this target pixel and the upstream edge of the output unit thereof (virtual boundary line VBLine). In this case, the reference region R is restricted so as not to include a pixel above the virtual boundary line VBLine. That is to say, only pixels of which the Y coordinate is equal to or lower than the virtual boundary line VBLine are referenced.
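Restraint C may be sketched as follows. The names are hypothetical, and the sketch assumes that the threshold Lupper2 coincides with the virtual boundary line position VBLine = LcuHeight - Ly, which keeps the bound Ly + yr - LLCUy non-negative; the description also permits smaller thresholds, which are not handled here.

```python
def restrict_upper_c(yr, d_upper, lcu_height, ly):
    """Hypothetical clamp for restraint C, assuming Lupper2 == VBLine.

    yr         : normalized Y coordinate of the target pixel within the LCU
    d_upper    : upward reference range Dupper requested by the filter taps
    lcu_height : LLCUy (LcuHeight)
    ly         : absolute Y deviation Ly between the LCU and the ALFU
    """
    vb_line = lcu_height - ly          # VBLine = LcuHeight - Ly
    if yr >= vb_line:
        # At or below the virtual boundary: do not reach above it.
        return min(d_upper, ly + yr - lcu_height)
    return d_upper
```

With LcuHeight = 64 and Ly = 4 (VBLine = 60), a target pixel at yr = 61 would be limited to 1 row upward, so the highest referenced row is row 60.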

[0152] Restraint D: In the case that the normalized Y coordinate within the LCU of the target pixel is less than a predetermined threshold Llower2, the downward reference range Dlower is restricted to the number of pixels equal to or smaller than LLCUy-Ddf-(normalized Y coordinate within the LCU of the target pixel)-1.

[0153] Here, the downward reference range Dlower in the event that the normalized Y coordinate within the LCU of the target pixel is less than the predetermined threshold Llower2 may be set so as to depend on the Y coordinate within the LCU of the target pixel, or may be set to, for example, 0 without depending on the Y coordinate within the LCU of the target pixel. Also, the threshold Llower2 has to be a value equal to or greater than LLCUy-Ly.

[0154] More generally, the restraint D may also be expressed as a restraint that, when the target pixel is positioned on the upstream side of the downstream edge of the ALFU corresponding to the LCU including this target pixel, restricts the position of the downstream edge of the reference region R regarding the target pixel so that its distance from this target pixel becomes equal to or shorter than the distance obtained by subtracting Ddf from the distance between this target pixel and the downstream edge of this LCU.
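Restraint D may be sketched in the same style. The names are assumptions introduced for illustration; the example values further assume Llower2 = LLCUy - Ly and Ly >= Ddf, under which the bound LLCUy - Ddf - yr - 1 stays non-negative for every yr below the threshold.

```python
def restrict_lower_d(yr, d_lower, lcu_height, ddf, l_lower2):
    """Hypothetical clamp for restraint D.

    yr         : normalized Y coordinate of the target pixel within the LCU
    d_lower    : downward reference range Dlower requested by the filter taps
    lcu_height : LLCUy (LcuHeight)
    ddf        : width Ddf of the not-yet-deblocked region in the Y direction
    l_lower2   : predetermined threshold Llower2 (>= LLCUy - Ly)
    """
    if yr < l_lower2:
        # Stay above the rows of the LCU whose deblocking is not complete.
        return min(d_lower, lcu_height - ddf - yr - 1)
    return d_lower
```

With LLCUy = 64, Ddf = 4 and Llower2 = 60, a target pixel at yr = 58 would be limited to 1 row downward, so that rows 60 to 63 (not yet deblocked) are never referenced.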

[0155] Restraint E: In the case that the normalized Y coordinate within the LCU of the target pixel is less than a predetermined threshold Llower3, the downward reference range Dlower is restricted to the number of pixels equal to or smaller than LLCUy-Ly-(normalized Y coordinate within the LCU of the target pixel)-1. The boundary indicated by the predetermined threshold Llower3 will also be called a virtual boundary line (Virtual Boundary Line).

[0156] Here, the threshold Llower3 has to be a value equal to or greater than LLCUy-Ly. Now, if we say that Ly=4, a position of the virtual boundary line VBLine (=LcuHeight-Ly) in the restraint E becomes LcuHeight-4. If we say that Ly=3, the VBLine becomes LcuHeight-3.

[0157] More generally, the restraint E may also be expressed as a restraint that, when the target pixel is positioned on the upstream side of the downstream edge of the ALFU corresponding to the LCU including this target pixel (above the virtual boundary line VBLine), restricts the position of the downstream edge of the reference region R regarding this target pixel so that its distance from this target pixel becomes equal to or shorter than the distance between this target pixel and the downstream edge of the output unit thereof (virtual boundary line VBLine). In this case, the reference region R is restricted so as not to include a pixel below the virtual boundary line VBLine. That is to say, only pixels of which the Y coordinate is above the virtual boundary line VBLine are referenced.
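Restraint E may be sketched as a clamp against the virtual boundary line. The names are hypothetical, and the sketch assumes that the threshold Llower3 coincides with VBLine = LcuHeight - Ly.

```python
def restrict_lower_e(yr, d_lower, lcu_height, ly):
    """Hypothetical clamp for restraint E, assuming Llower3 == VBLine.

    yr         : normalized Y coordinate of the target pixel within the LCU
    d_lower    : downward reference range Dlower requested by the filter taps
    lcu_height : LLCUy (LcuHeight)
    ly         : absolute Y deviation Ly between the LCU and the ALFU
    """
    vb_line = lcu_height - ly          # VBLine = LcuHeight - Ly
    if yr < vb_line:
        # Above the virtual boundary: do not reach row VBLine or below.
        return min(d_lower, vb_line - yr - 1)
    return d_lower
```

With LcuHeight = 64 and Ly = 4 (VBLine = 60), a target pixel at yr = 58 would be limited to 1 row downward, so the lowest referenced row is row 59, immediately above the virtual boundary line.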

(Filter Processing Unit 162c)

[0158] The filter processing unit 162c calculates the pixel value of the target pixel of the adaptive-filtered decoded image P_ALF (also referred to as "image after filtering") with reference to the pixel values of those pixels of the deblocked decoded image P_DB (also referred to as "image before filtering") that are included in the reference region R set regarding this target pixel by the reference region setting unit 162b. The generated adaptive-filtered decoded image P_ALF is stored once in the internal memory 163, and then transferred to the frame memory 15.

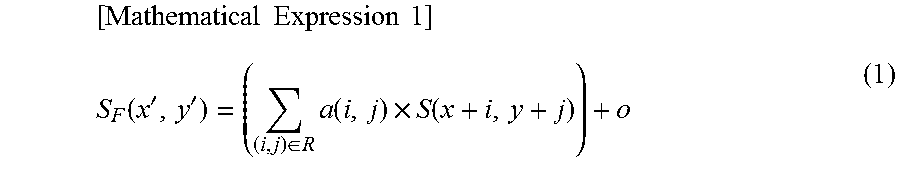

[0159] If we represent the pixel value of the target pixel in the adaptive-filtered decoded image P_ALF as SF(x', y'), and represent a pixel value of the deblocked decoded image P_DB in the filter reference range as S(x, y), the filter processing unit 162c calculates the pixel value SF(x', y') using the following Expression (1).

[Mathematical Expression 1]

$$S_F(x', y') = \left( \sum_{(i, j) \in R} a(i, j) \times S(x + i,\, y + j) \right) + o \qquad (1)$$
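Expression (1) may be illustrated with the following sketch, which sums the weighted pixel values over the reference region R and adds the offset o. The function name, the row-major indexing `S[y][x]`, and the representation of R together with its coefficients a(i, j) as a dictionary are assumptions made for illustration; the sketch also does not model the relation between (x, y) and (x', y'), and simply evaluates the right-hand side at a given (x, y).

```python
def filter_pixel(S, x, y, coeffs, offset):
    """Evaluate (sum over (i, j) in R of a(i, j) * S(x+i, y+j)) + o.

    S      : deblocked image P_DB as a list of rows, indexed S[y][x]
    coeffs : dict mapping each (i, j) in the reference region R to a(i, j)
    offset : the offset o
    """
    total = 0.0
    for (i, j), a in coeffs.items():
        total += a * S[y + j][x + i]   # a(i, j) x S(x + i, y + j)
    return total + offset
```

For example, a reference region restricted to the target row and its two vertical neighbours corresponds to `coeffs` with the keys (0, -1), (0, 0) and (0, 1); narrowing the region under restraints A to E amounts to dropping keys from this dictionary.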