Dynamic Calibration Systems And Methods For Wearable Heads-up Displays

Aleem; Idris S. ; et al.

U.S. patent application number 16/036558 was filed with the patent office on 2019-01-17 for dynamic calibration systems and methods for wearable heads-up displays. The applicant listed for this patent is Thalmic Labs Inc.. Invention is credited to Idris S. Aleem, Mayank Bhargava, Dylan Jacobs.

| Application Number | 20190018485 16/036558 |

| Document ID | / |

| Family ID | 64998882 |

| Filed Date | 2019-01-17 |

| United States Patent Application | 20190018485 |

| Kind Code | A1 |

| Aleem; Idris S. ; et al. | January 17, 2019 |

DYNAMIC CALIBRATION SYSTEMS AND METHODS FOR WEARABLE HEADS-UP DISPLAYS

Abstract

Systems, methods and articles that provide dynamic calibration of eye tracking systems for wearable heads-up displays (WHUDs). The eye tracking system may determine a user's gaze location on a display of the WHUD utilizing a calibration point model that includes a plurality of calibration points. During regular use of the WHUD by the user, the calibration point model may be dynamically updated based on the user's interaction with user interface (UI) elements presented on the display. The UI elements may be specifically designed (e.g., shaped, positioned, displaced) to provide in-use and on-going dynamic calibration of the eye tracking system, which in at least some implementations may be unnoticeable to the user.

| Inventors: | Aleem; Idris S.; (Kitchener, CA) ; Bhargava; Mayank; (Waterloo, CA) ; Jacobs; Dylan; (Kitchener, CA) | ||||||||||

| Applicant: |

|

||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| Family ID: | 64998882 | ||||||||||

| Appl. No.: | 16/036558 | ||||||||||

| Filed: | July 16, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 62533463 | Jul 17, 2017 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G02B 2027/014 20130101; G02B 27/017 20130101; G09G 2354/00 20130101; G09G 2370/022 20130101; G02B 2027/0174 20130101; G02B 27/0179 20130101; G09G 2340/0464 20130101; G06F 3/147 20130101; G09G 2320/0693 20130101; G06F 3/013 20130101; G06T 13/80 20130101; G09G 5/37 20130101; G02B 2027/0178 20130101; G02B 2027/0187 20130101; G09G 3/002 20130101; G06K 9/00 20130101; G06K 9/00604 20130101; G02B 27/0172 20130101; G02B 27/0093 20130101 |

| International Class: | G06F 3/01 20060101 G06F003/01; G02B 27/01 20060101 G02B027/01 |

Claims

1. A wearable heads-up display (WHUD), comprising: a support frame; a display carried by the support frame; a glint detection module carried by the support frame that, in operation, determines glint space points in a glint space that correspond to a region in a field of view of an eye of a user at which a gaze of the eye is directed; at least one processor carried by the support frame, the at least one processor communicatively coupled to the display and the glint detection module; and at least one nontransitory processor-readable storage medium carried by the support frame, the at least one nontransitory processor-readable storage medium communicatively coupled to the at least one processor, wherein the at least one nontransitory processor-readable storage medium stores data or processor-executable instructions that, when executed by the at least one processor, cause the at least one processor to: receive a glint space point in a glint space captured by the glint detection module, the glint space point representative of a position of an eye of a user of the WHUD; from time-to-time during regular operation of the WHUD by the user, determine a gaze location in a display space of the display based on the received glint space point and one or more calibration point models, each of the one or more calibration point models comprising a plurality of calibration points, each calibration point comprising: a glint space point; and a display space point in the display space of the display, the display space point representative of a location on the display of a user interface (UI) element displayed on the display; and dynamically generating one or more child calibration point models, wherein to dynamically generate one or more child calibration point models, the at least one processor, for each calibration point model: generates one or more additional calibration points; and adds the one or more additional calibration points to the calibration point model.

2. The WHUD of claim 1 wherein the additional calibration point comprises an additional inferred calibration point.

3. The WHUD of claim 1 wherein the additional calibration point comprises a selected calibration point.

4. The WHUD of claim 1 wherein the at least one processor: evicts a calibration point from the one or more calibration point models.

5. The WHUD of claim 4 wherein the at least one processor evicts an oldest calibration point from the one or more calibration point models.

6. The WHUD of claim 4 wherein the at least one processor evicts a calibration point based on at least one of the locations of calibration points in the one or more calibration point models or the times at which the calibration points in the one or more calibration point models were obtained.

7. The WHUD of claim 1 wherein the at least one processor generates a transform from the glint space to the display space for each of the one or more calibration point models.

8. The WHUD of claim 7 wherein the at least one processor generates an affine transform from the glint space to the display space.

9. The WHUD of claim 7 wherein the at least one processor solves a matrix utilizing at least one of a QR decomposition method or singular value decomposition method.

10. The WHUD of claim 7, wherein the at least one processor: receives at least one auxiliary sensor value from at least one auxiliary sensor during regular operation of the WHUD by the user; and optimizes the transform from the glint space to the display space for each of the one or more calibration point models based at least in part on the received at least one auxiliary sensor value.

11. The WHUD of claim 1, further comprising: at least one auxiliary sensor selected from a group consisting of: a proximity sensor, a gyroscope sensor, and an accelerometer.

12. The WHUD of claim 11 wherein, for each of the one or more calibration point models, the at least one processor trains a machine learning model utilizing the plurality of calibration points, or data derived therefrom, wherein the trained machine learning model receives as inputs at least one current auxiliary sensor value and outputs a set of calibration points.

Description

BACKGROUND

Technical Field

[0001] The present disclosure generally relates to wearable heads-up displays, and more particularly, to calibration systems and methods for eye tracking systems of wearable heads-up displays.

Description of the Related Art

[0002] Wearable Heads-Up Displays

[0003] A head-mounted display is an electronic device that is worn on a user's head and, when so worn, secures at least one electronic display within a viewable field of at least one of the user's eyes, regardless of the position or orientation of the user's head. A wearable heads-up display is a head-mounted display that enables the user to see displayed content but also does not prevent the user from being able to see their external environment. The "display" component of a wearable heads-up display is either transparent or at a periphery of the user's field of view so that it does not completely block the user from being able to see their external environment. Examples of wearable heads-up displays include: the Google Glass.RTM., the Optinvent Ora.RTM., the Epson Moverio.RTM., and the Sony Glasstron.RTM., just to name a few.

[0004] The optical performance of a wearable heads-up display is an important factor in its design. When it comes to face-worn devices, however, users also care a lot about aesthetics. This is clearly highlighted by the immensity of the eyeglass (including sunglass) frame industry. Independent of their performance limitations, many of the aforementioned examples of wearable heads-up displays have struggled to find traction in consumer markets because, at least in part, they lack fashion appeal. Most wearable heads-up displays presented to date employ large display components and, as a result, most wearable heads-up displays presented to date are considerably bulkier and less stylish than conventional eyeglass frames.

[0005] A challenge in the design of wearable heads-up displays is to minimize the bulk of the face-worn apparatus while still providing displayed content with sufficient visual quality. There is a need in the art for wearable heads-up displays of more aesthetically-appealing design that are capable of providing high-quality images to the user without limiting the user's ability to see their external environment.

[0006] Eye Tracking

[0007] Eye tracking is a process by which the position, orientation, and/or motion of the eye may be measured, detected, sensed, determined (collectively, "measured"), and/or monitored. In many applications, this is done with a view towards determining the gaze direction of a user. The position, orientation, and/or motion of the eye may be measured in a variety of different ways, the least invasive of which typically employ one or more optical sensor(s) (e.g., cameras) to optically track the eye. Common techniques involve illuminating or flooding the entire eye, all at once, with infrared light and measuring reflections with at least one optical sensor that is tuned to be sensitive to the infrared light. Information about how the infrared light is reflected from the eye is analyzed to determine the position(s), orientation(s), and/or motion(s) of one or more eye feature(s) such as the cornea, pupil, iris, and/or retinal blood vessels.

[0008] Eye tracking functionality is highly advantageous in applications of wearable heads-up displays. Some examples of the utility of eye tracking in wearable heads-up displays include: influencing where content is displayed in the user's field of view, conserving power by not displaying content that is outside of the user's field of view, influencing what content is displayed to the user, determining where the user is looking or gazing, determining whether the user is looking at displayed content on the display or through the display at their external environment, and providing a means through which the user may control/interact with displayed content.

BRIEF SUMMARY

[0009] A method of operating a wearable heads-up display device (WHUD) comprising a display and a glint detection module may be summarized as including during regular operation of the WHUD by a user, populating, by at least one processor, the display of the WHUD with at least one user interface (UI) element; and detecting, by the at least one processor, a gaze location of a user based at least in part on glint information received from the glint detection module and a known location of at least one of the at least one UI element on the display. Populating the display of the WHUD with at least one UI element may include populating the display with a number of UI elements that is below a threshold determined to enable accurate gaze location detection. Populating the display of the WHUD with at least one UI element may include maximizing the respective distances between a plurality of UI elements displayed on the display. Populating the display of the WHUD with at least one UI element may include minimizing a similarity between an angle and a length of vectors that join pairs of a plurality of UI elements displayed on the display. Populating the display of the WHUD with at least one UI element may include populating the display of the WHUD with at least one animated UI element.

[0010] The method may further include updating, by the at least one processor, an eye tracking calibration based at least in part on the detected gaze location. Updating an eye tracking calibration may include generating at least one calibration point based at least in part on the detected gaze location, the at least one calibration point including a glint space point received from the glint detection module and a display space point that corresponds to a location of a UI element displayed on the display. Generating at least one calibration point may include generating a calibration point for each UI element displayed on the display, each calibration point associated with a UI element including a glint space point received from the glint detection module and a display space point that corresponds to the location of the UI element on the display. Updating an eye tracking calibration may include adding a calibration point to at least one parent calibration point model that includes a plurality of calibration points to generate at least one child calibration point model.

[0011] The method may further include fitting, by the at least one processor, a transform to the calibration points of the at least one child calibration point model.

[0012] A wearable heads-up display device (WHUD) may be summarized as including: a support frame; a display carried by the support frame; a glint detection module carried by the support frame; at least one processor carried by the support frame, the at least one processor communicatively coupled to the display and the glint detection module; and at least one nontransitory processor-readable storage medium carried by the support frame, the at least one nontransitory processor-readable storage medium communicatively coupled to the at least one processor, wherein the at least one nontransitory processor-readable storage medium stores data or processor-executable instructions that, when executed by the at least one processor, cause the at least one processor to: cause the display to display at least one user interface (UI) element; and detect a gaze location of a user based at least in part on glint information received from the glint detection module and a known location of at least one of the at least one UI element on the display.

[0013] The data or processor-executable instructions, when executed by the at least one processor, may cause the at least one processor to cause the display to display a number of UI elements that is below a threshold determined to enable accurate gaze location detection.

[0014] The data or processor-executable instructions, when executed by the at least one processor, may cause the at least one processor to cause the display to maximize the respective distances between a plurality of UI elements displayed on the display.

[0015] The data or processor-executable instructions, when executed by the at least one processor, may cause the at least one processor to cause the display to minimize a similarity between an angle and a length of vectors that join pairs of a plurality of UI elements displayed on the display.

[0016] The data or processor-executable instructions, when executed by the at least one processor, may cause the at least one processor to cause the display to display at least one animated UI element.

[0017] The data or processor-executable instructions, when executed by the at least one processor, may cause the at least one processor to update an eye tracking calibration based at least in part on the detected gaze location. The at least one processor may generate at least one calibration point based at least in part on the detected gaze location, the at least one calibration point comprising a glint space point received from the glint detection module and a display space point that corresponds to a location of a UI element displayed on the display. The at least one processor may generate a calibration point for each UI element displayed on the display, each calibration point associated with a UI element comprising a glint space point received from the glint detection module and a display space point that corresponds to the location of the UI element on the display. The at least one processor may add a calibration point to at least one parent calibration point model that comprises a plurality of calibration points to generate at least one child calibration point model. The at least one processor may fit a transform to the calibration points of the at least one child calibration point model.

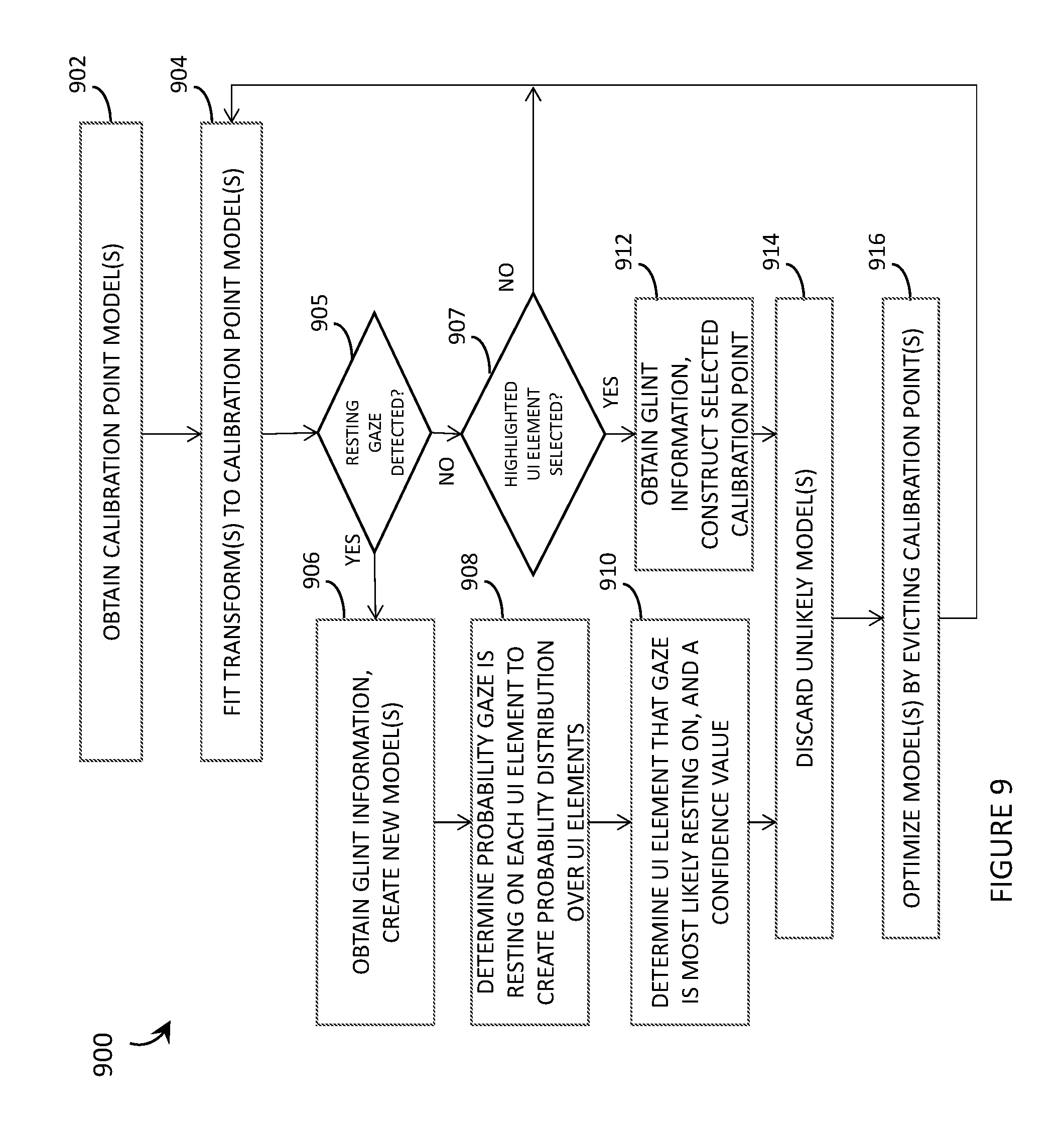

[0018] A method of operating a wearable heads-up display device (WHUD) comprising a display and a glint detection module may be summarized as including obtaining, by at least one processor, one or more calibration point models each comprising a plurality of calibration points, each calibration point comprising: a glint space point in a glint space captured by the glint detection module, the glint space point representative of a position of an eye of a user of the WHUD; and a display space point in a display space of the display, the display space point representative of a location on the display at which a gaze of the user is inferred to be resting when the glint space point is captured by the glint detection module; generating, by the at least one processor, a transform from the glint space to the display space for each of the one or more calibration point models; determining, by the at least one processor, user gaze location in the display space using received glint information and the generated transform; from time-to-time during regular operation of the WHUD by the user, generating, by the at least one processor, at least one additional calibration point; adding, by the at least one processor, the additional calibration point to at least one of the calibration point models to generate one or more child calibration point models; generating, by the at least one processor, a transform for each of the one or more child calibration point models; and determining, by the at least one processor, a user gaze location in the display space using at least one glint space point received from the glint detection module and at least one transform of the one or more child calibration point models.

[0019] Generating at least one additional calibration point may include generating an additional inferred calibration point including a glint space point received from the glint detection module; and a display space point that corresponds to a location in the display space of a UI element determined to be the user gaze location.

[0020] Generating at least one additional calibration point may include generating an additional inferred calibration point for each of a plurality of UI elements displayed on the display, each inferred calibration point including a glint space point received from the glint detection module; and a display space point that corresponds to a location in the display space of one of the plurality of UI elements.

[0021] Generating at least one additional calibration point may include generating at least one additional selected calibration point including a glint space point received from the glint detection module; and a display space point that is a location of a UI element on the display selected by the user during regular operation of the WHUD. Determining a user gaze location in the display space using at least one glint space point received from the glint detection module and at least one transform may include determining a user gaze location in the display space using at least one glint space point received from the glint detection module and the one or more child calibration point models that include the at least one additional selected calibration point. Generating a transform may include generating an affine transform from the glint space to the display space. Generating a transform may include solving a matrix utilizing at least one of a QR decomposition method or singular value decomposition method.

[0022] The method may further include from time-to-time during regular operation of the WHUD by the user, evicting at least one calibration point from a calibration point model. Evicting at least one calibration point from a calibration point model may include evicting an oldest calibration point from the calibration point model. Evicting at least one calibration point from a calibration point model may include evicting a calibration point based on at least one of the locations of calibration points in the calibration point model or the times at which the calibration points in the calibration point model were obtained.

[0023] Obtaining a calibration point model including a plurality of calibration points may include populating, by the at least one processor, the display of the WHUD with a plurality of UI elements; for each of the plurality of UI elements, receiving, by the at least one processor, a selection of the UI element by the user; receiving, by the at least one processor, a glint space point from the glint detection module obtained concurrently with the selection of the UI element by the user; and generating, by the at least one processor, a calibration point that comprises the received glint space point and a display space point representative of the location of the UI element on the display of the WHUD. Populating the display of the WHUD with a plurality of UI elements may include populating the display with the plurality of UI elements one at a time in a sequential order.

[0024] Obtaining a calibration point model including a plurality of calibration points may include causing, by the at least one processor, four UI elements to be sequentially displayed on the display, each of the four UI elements sequentially displayed in a different one of four corners of the display; and obtaining, by the at least one processor, four calibration points that each correspond to a respective one of the UI elements, each calibration point comprising a display point the display space and a glint space point in the glint space.

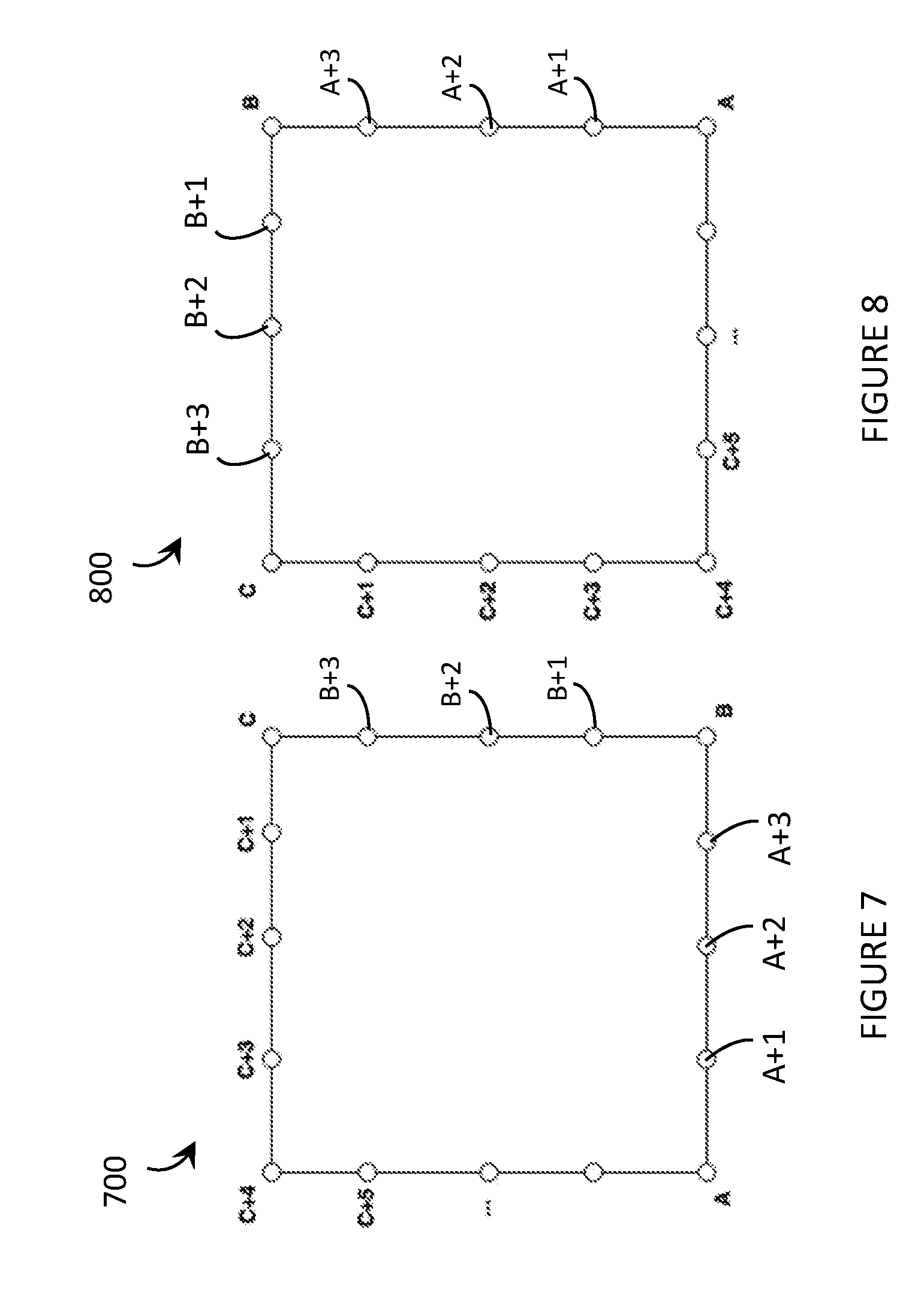

[0025] Obtaining a calibration point model including a plurality of calibration points may include causing, by at least one processor, a UI element to move on the display of the WHUD according to a determined pattern; and generating, by the at least one processor, a plurality of calibration points as the UI element moves on the display, each calibration point includes a glint space point in the glint space captured by the glint detection module; and a display space point in the display space, the display space point representative of a location on the display of the moving UI element when the corresponding glint space point is captured by the glint detection module. Causing a UI element to move on the display of the WHUD according to a determined pattern may include causing a UI element to move on the display of the WHUD according to a rectangular-shaped pattern in a first direction and a second direction, the second direction opposite the first direction.

[0026] The method may further include receiving, by the at least one processor, at least one auxiliary sensor value from at least one auxiliary sensor during regular operation of the WHUD by the user; and optimizing, by the at least one processor, a transform of at least one calibration point model based at least in part on the received at least one auxiliary sensor value. Receiving at least one auxiliary sensor value may include obtaining at least one auxiliary sensor value from at least one of a proximity sensor, a gyroscope sensor or an accelerometer.

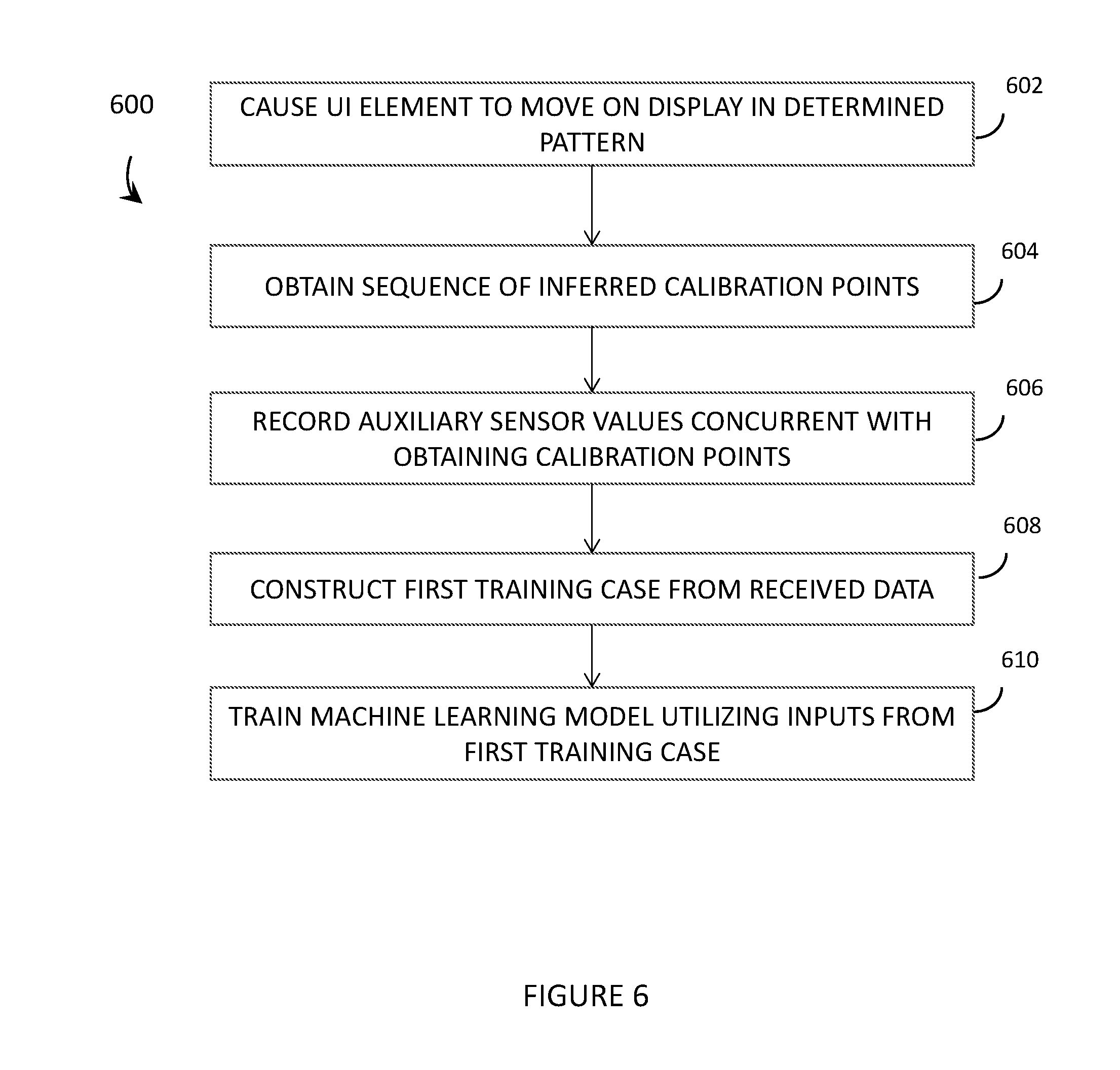

[0027] The method may further include receiving, by the at least one processor, a plurality of calibration points, each calibration point including a glint space point; a display space point; and at least one auxiliary sensor value from at least one auxiliary sensor obtained concurrently with the glint space point and the display space point; and training a machine learning model utilizing the plurality of calibration points, or data derived therefrom, the trained machine learning model receives as inputs at least one current auxiliary sensor value and outputs at least one of a set of calibration points or transform parameters.

[0028] The method may further include optimizing, by the at least one processor, at least one transform utilizing the trained machine learning model. Receiving a plurality of calibration points may include receiving a plurality of calibration points from the WHUD and from a population of WHUDs operated by a population of users.

[0029] A wearable heads-up display (WHUD) may be summarized as including a support frame; a display carried by the support frame; a glint detection module carried by the support frame that, in operation, determines glint space points in a glint space that correspond to a region in a field of view of an eye of a user at which a gaze of the eye is directed; at least one processor carried by the support frame, the at least one processor communicatively coupled to the display and the glint detection module; and at least one nontransitory processor-readable storage medium carried by the support frame, the at least one nontransitory processor-readable storage medium communicatively coupled to the at least one processor, wherein the at least one nontransitory processor-readable storage medium stores data or processor-executable instructions that, when executed by the at least one processor, cause the at least one processor to: obtain one or more calibration point models each comprising a plurality of calibration points, each calibration point comprising: a glint space point in a glint space captured by the glint detection module, the glint space point representative of a position of an eye of a user of the WHUD; and a display space point in a display space of the display, the display space point representative of a location on the display at which a gaze of the user is inferred to be resting when the glint space point is captured by the glint detection module; generate a transform from the glint space to the display space for each of the calibration point models; determine user gaze location in the display space using received glint information and the generated transform; from time-to-time during regular operation of the WHUD by the user, generate at least one additional calibration point; add the additional calibration point to at least one of the calibration point models to generate one or more child calibration point models; generate a transform for each of the one or more child calibration point models; and determine a user gaze location in the display space using at least one glint space point received from the glint detection module and at least one transform of the one or more child calibration point models.

[0030] The at least one processor may generate an additional inferred calibration point including a glint space point received from the glint detection module; and a display space point that corresponds to a location in the display space of a UI element determined to be the user gaze location. The at least one processor may generate an additional inferred calibration point for each of a plurality of UI elements displayed on the display, each inferred calibration point including a glint space point received from the glint detection module; and a display space point that corresponds to a location in the display space of one of a plurality of UI elements. The at least one processor may generate an additional selected calibration point including a glint space point received from the glint detection module; and a display space point that is a location of a UI element on the display selected by the user during regular operation of the WHUD. The at least one processor may determine a user gaze location in the display space using at least one glint space point received from the glint detection module and the one or more child calibration point models that include the additional selected calibration point. The at least one processor may generate an affine transform from the glint space to the display space. The at least one processor may solve a matrix utilizing at least one of a QR decomposition method or singular value decomposition method. The at least one processor may, from time-to-time during regular operation of the WHUD by the user, evict at least one calibration point from a calibration point model. The at least one processor may evict an oldest calibration point from the calibration point model. The at least one processor may evict a calibration point based on at least one of the locations of calibration points in the calibration point model or the times at which the calibration points in the calibration point model were obtained.

[0031] To obtain a calibration point model including a plurality of calibration points, the at least one processor may populate the display of the WHUD with a plurality of UI elements; and for each of the plurality of UI elements, receive a selection of the UI element by the user; receive a glint space point from the glint detection module obtained concurrently with the selection of the UI element by the user; and generate a calibration point that comprises the received glint space point and a display space point representative of the location of the UI element on the display of the WHUD. The at least one processor may populate the display of the WHUD with a plurality of UI elements one at a time in a sequential order. To obtain a calibration point model including a plurality of calibration points, the at least one processor may cause four UI elements to be sequentially displayed on the display, each of the four UI elements sequentially displayed in a different one of four corners of the display; and obtain four calibration points that each correspond to a respective one of the UI elements, each calibration point comprising a display point the display space and a glint space point in the glint space.

[0032] To obtain a calibration point model including a plurality of calibration points, the at least one processor may cause a UI element to move on the display of the WHUD according to a determined pattern; and generate a plurality of calibration points as the UI element moves on the display, each calibration point includes a glint space point in the glint space captured by the glint detection module; and a display space point in the display space, the display space point representative of a location on the display of the moving UI element when the corresponding glint space point is captured by the glint detection module. The determined pattern may include a rectangular-shaped pattern, and the at least one processor may cause the UI element to move in the rectangular-shaped pattern in a first direction; and cause the UI element to move in the rectangular-shaped pattern in a second direction, the second direction opposite the first direction. The at least one processor may receive at least one auxiliary sensor value from at least one auxiliary sensor during regular operation of the WHUD by the user; and optimize a transform of at least one calibration point model based at least in part on the received at least one auxiliary sensor value. The at least one auxiliary sensor may include at least one of a proximity sensor, a gyroscope sensor or an accelerometer.

[0033] The at least one processor may receive a plurality of calibration points, each calibration point including a glint space point; a display space point; and at least one auxiliary sensor value from at least one auxiliary sensor obtained concurrently with the glint space point and the display space point; and train a machine learning model utilizing the plurality of calibration points, or data derived therefrom, the trained machine learning model receives as inputs at least one current auxiliary sensor value and outputs at least one of a set of calibration points or transform parameters. The at least one processor may optimize at least one transform utilizing the trained machine learning model. The at least one processor may receive a plurality of calibration points from the WHUD and from a population of WHUDs operated by a population of users.

[0034] A method of operating a wearable heads-up display device (WHUD) comprising a display and a glint detection module may be summarized as including receiving, by at least one processor, a glint space point in a glint space captured by the glint detection module, the glint space point representative of a position of an eye of a user of the WHUD; from time-to-time during regular operation of the WHUD by the user, determining, by the at least one processor, a gaze location in a display space of the display based on the received glint space point and one or more calibration point models, each of the one or more calibration point models comprising a plurality of calibration points, each calibration point including a glint space point; and a display space point in the display space of the display, the display space point representative of a location on the display of a user interface (UI) element displayed on the display; and dynamically generating one or more child calibration point models by, for each calibration point model: generating, by the at least one processor, one or more additional calibration points; and adding, by the at least one processor, the one or more additional calibration points to the calibration point model. Generating the one or more additional calibration points may include generating an additional inferred calibration point. Generating the one or more additional calibration points may include generating a selected calibration point.

[0035] The method may further include evicting a calibration point from the one or more calibration point models. Evicting a calibration point from the one or more calibration point models may include evicting an oldest calibration point from the one or more calibration point models. Evicting a calibration point from the one or more calibration point models may include evicting a calibration point based on at least one of the locations of calibration points in the one or more calibration point models or the times at which the calibration points in the one or more calibration point models were obtained.

[0036] The method may further include generating, by the at least one processor, a transform from the glint space to the display space for each of the one or more calibration point models. Generating a transform may include generating an affine transform from the glint space to the display space. Generating a transform may include solving a matrix utilizing at least one of a QR decomposition method or singular value decomposition method. The method may further include receiving, by the at least one processor, at least one auxiliary sensor value from at least one auxiliary sensor during regular operation of the WHUD by the user; and optimizing, by the at least one processor, the transform from the glint space to the display space for each of the one or more calibration point models based at least in part on the received at least one auxiliary sensor value. Receiving at least one auxiliary sensor value may include obtaining at least one auxiliary sensor value from at least one of a proximity sensor, a gyroscope sensor or an accelerometer.

[0037] Each calibration point may further include at least one auxiliary sensor value from at least one auxiliary sensor obtained concurrently with the glint space point and the display space point, and the method may further include, for each of the one or more calibration point models, training a machine learning model utilizing the plurality of calibration points, or data derived therefrom, wherein the trained machine learning model receives as inputs at least one current auxiliary sensor value and outputs a set of calibration points.

[0038] A wearable heads-up display (WHUD) may be summarized as including a support frame; a display carried by the support frame; a glint detection module carried by the support frame that, in operation, determines glint space points in a glint space that correspond to a region in a field of view of an eye of a user at which a gaze of the eye is directed; at least one processor carried by the support frame, the at least one processor communicatively coupled to the display and the glint detection module; and at least one nontransitory processor-readable storage medium carried by the support frame, the at least one nontransitory processor-readable storage medium communicatively coupled to the at least one processor, wherein the at least one nontransitory processor-readable storage medium stores data or processor-executable instructions that, when executed by the at least one processor, cause the at least one processor to: receive a glint space point in a glint space captured by the glint detection module, the glint space point representative of a position of an eye of a user of the WHUD; from time-to-time during regular operation of the WHUD by the user, determine a gaze location in a display space of the display based on the received glint space point and one or more calibration point models, each of the one or more calibration point models comprising a plurality of calibration points, each calibration point comprising: a glint space point; and a display space point in the display space of the display, the display space point representative of a location on the display of a user interface (UI) element displayed on the display; and dynamically generating one or more child calibration point models, wherein to dynamically generate one or more child calibration point models, the at least one processor, for each calibration point model: generates one or more additional calibration points; and adds the one or more additional calibration points to the calibration point model. The additional calibration point may include an additional inferred calibration point. The additional calibration point may include a selected calibration point. The at least one processor may evict a calibration point from the one or more calibration point models. For example, the at least one processor may evict an oldest calibration point from the one or more calibration point models, and/or the at least one processor may evict a calibration point based on at least one of the locations of calibration points in the one or more calibration point models or the times at which the calibration points in the one or more calibration point models were obtained.

[0039] The at least one processor may generate a transform from the glint space to the display space for each of the one or more calibration point models. The at least one processor may generate an affine transform from the glint space to the display space. The at least one processor may solve a matrix utilizing at least one of a QR decomposition method or singular value decomposition method. The at least one processor may receive at least one auxiliary sensor value from at least one auxiliary sensor during regular operation of the WHUD by the user, and optimize the transform from the glint space to the display space for each of the one or more calibration point models based at least in part on the received at least one auxiliary sensor value.

[0040] The WHUD may further include at least one auxiliary sensor selected from a group consisting of: a proximity sensor, a gyroscope sensor, and an accelerometer. For each of the one or more calibration point models, the at least one processor may train a machine learning model utilizing the plurality of calibration points, or data derived therefrom, wherein the trained machine learning model receives as inputs at least one current auxiliary sensor value and outputs a set of calibration points.

BRIEF DESCRIPTION OF THE SEVERAL VIEWS OF THE DRAWINGS

[0041] In the drawings, identical reference numbers identify similar elements or acts. The sizes and relative positions of elements in the drawings are not necessarily drawn to scale. For example, the shapes of various elements and angles are not necessarily drawn to scale, and some of these elements may be arbitrarily enlarged and positioned to improve drawing legibility. Further, the particular shapes of the elements as drawn, are not necessarily intended to convey any information regarding the actual shape of the particular elements, and may have been solely selected for ease of recognition in the drawings.

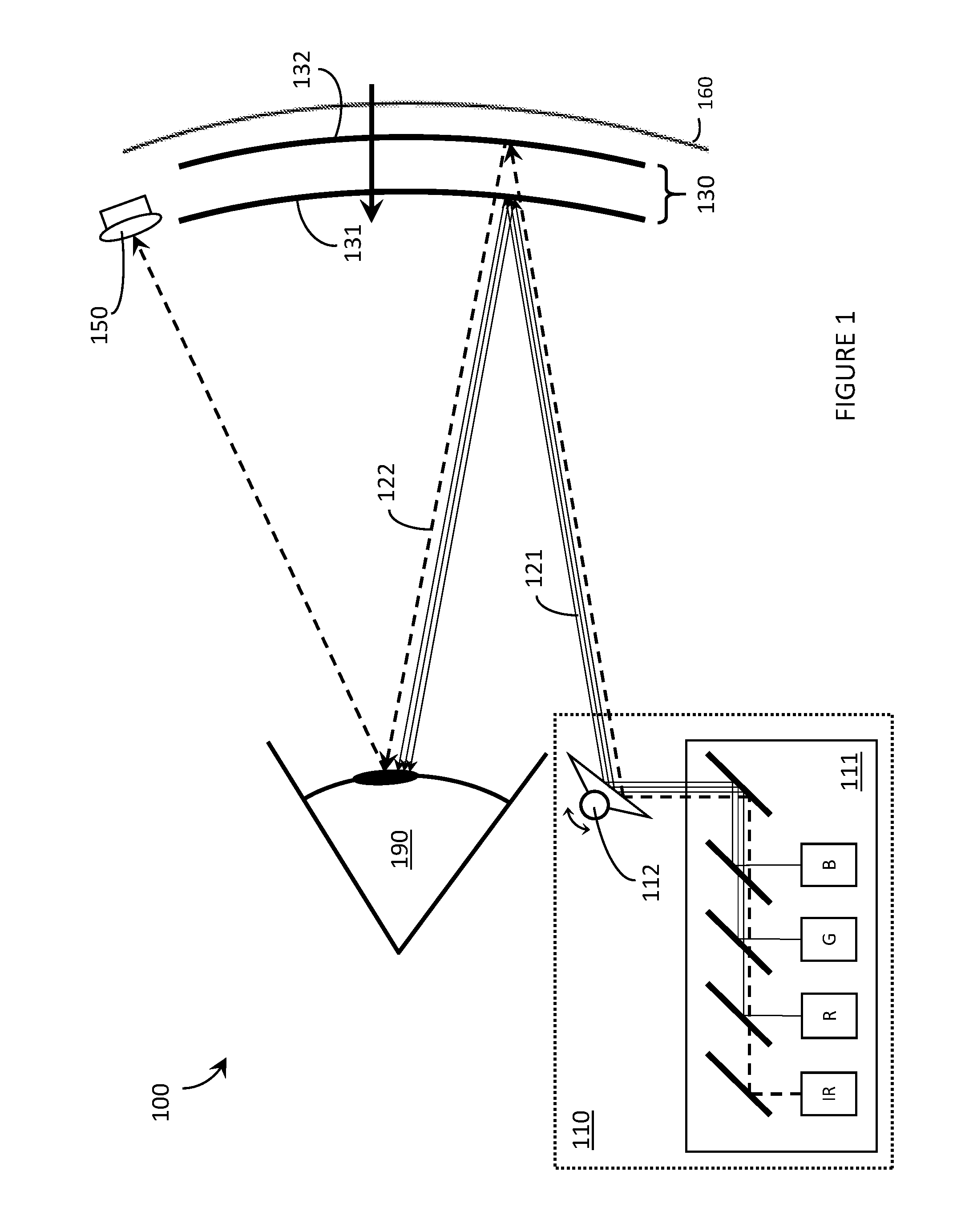

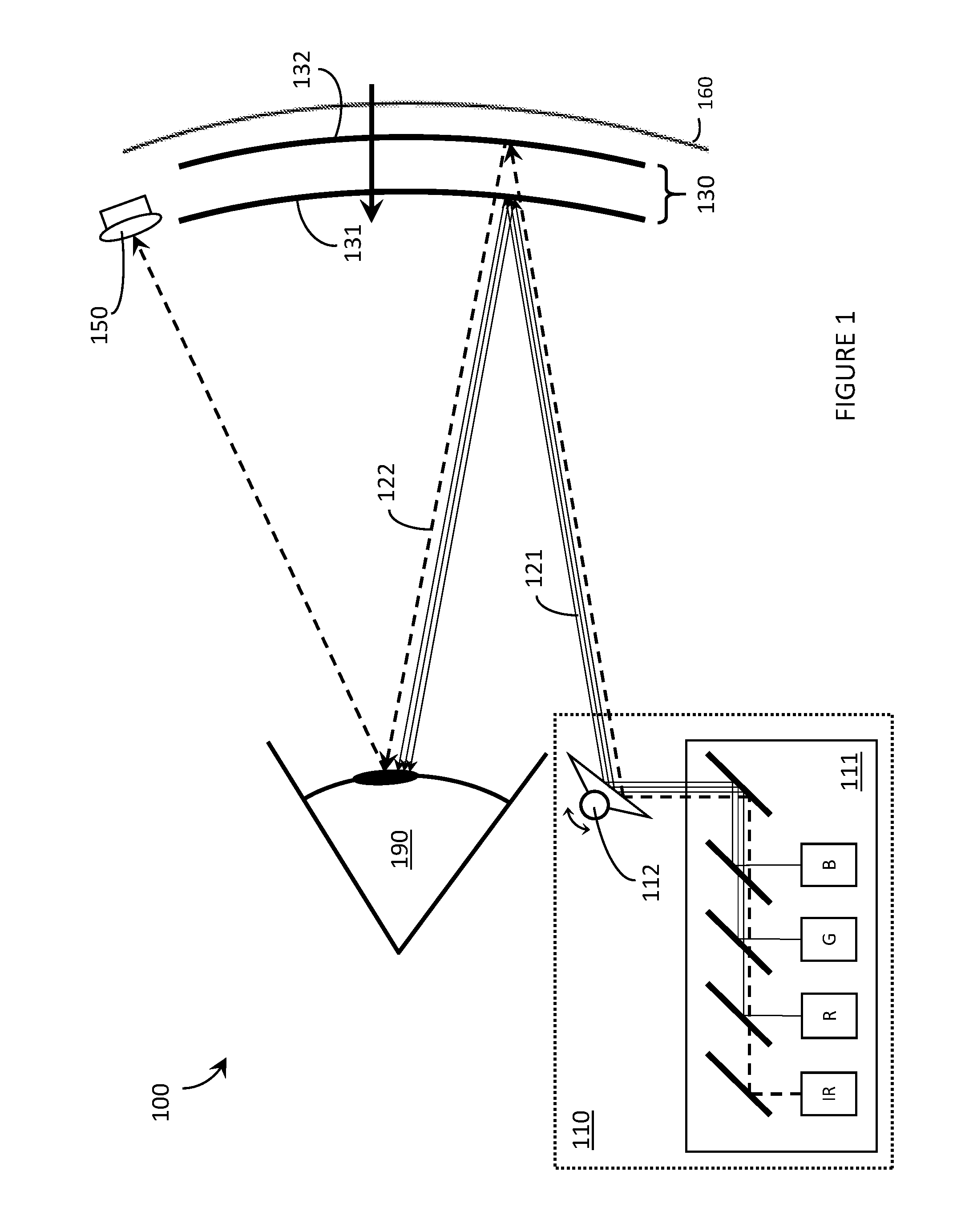

[0042] FIG. 1 is an illustrative diagram showing a side view of a wearable heads-up display, in accordance with the present systems, devices, and methods.

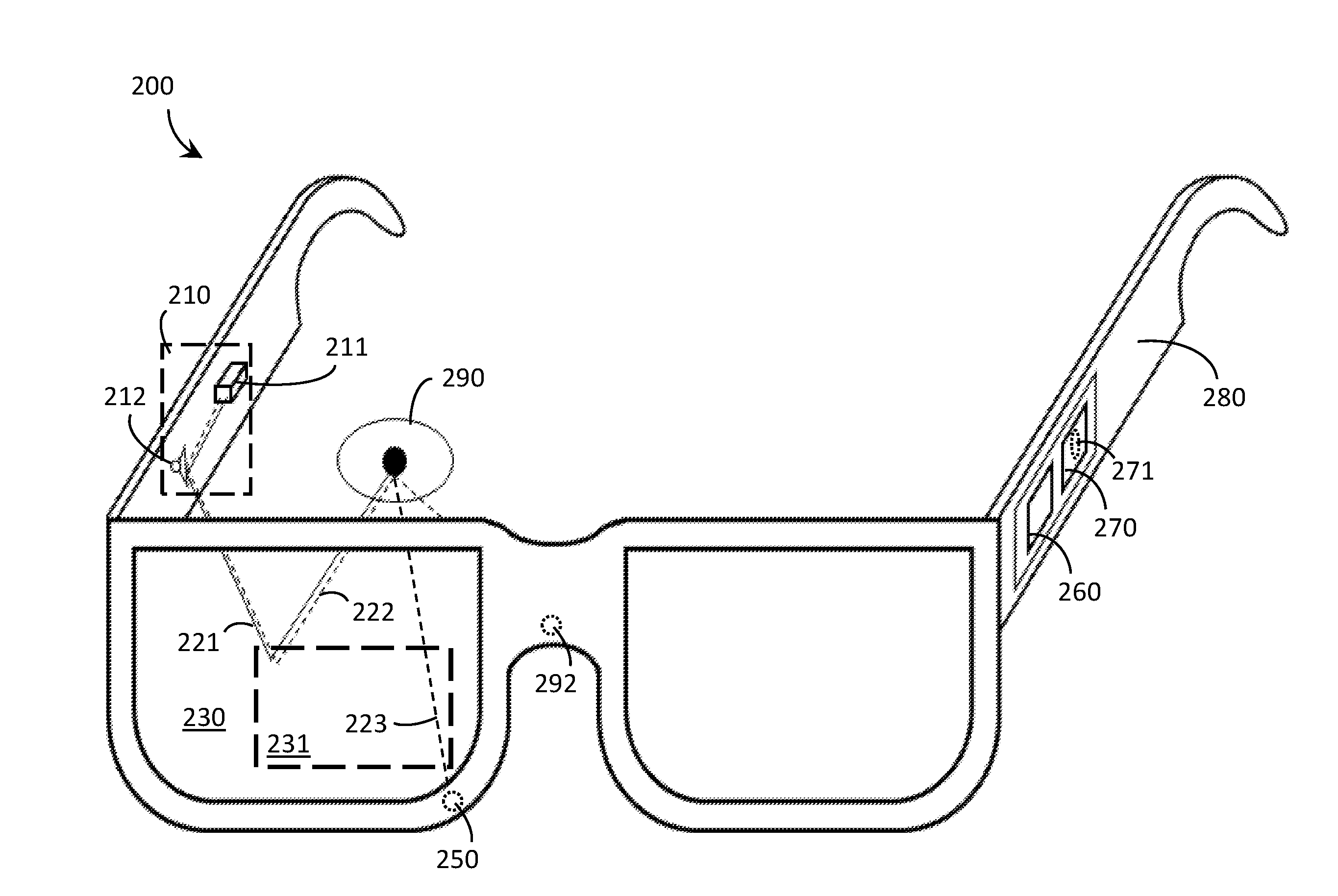

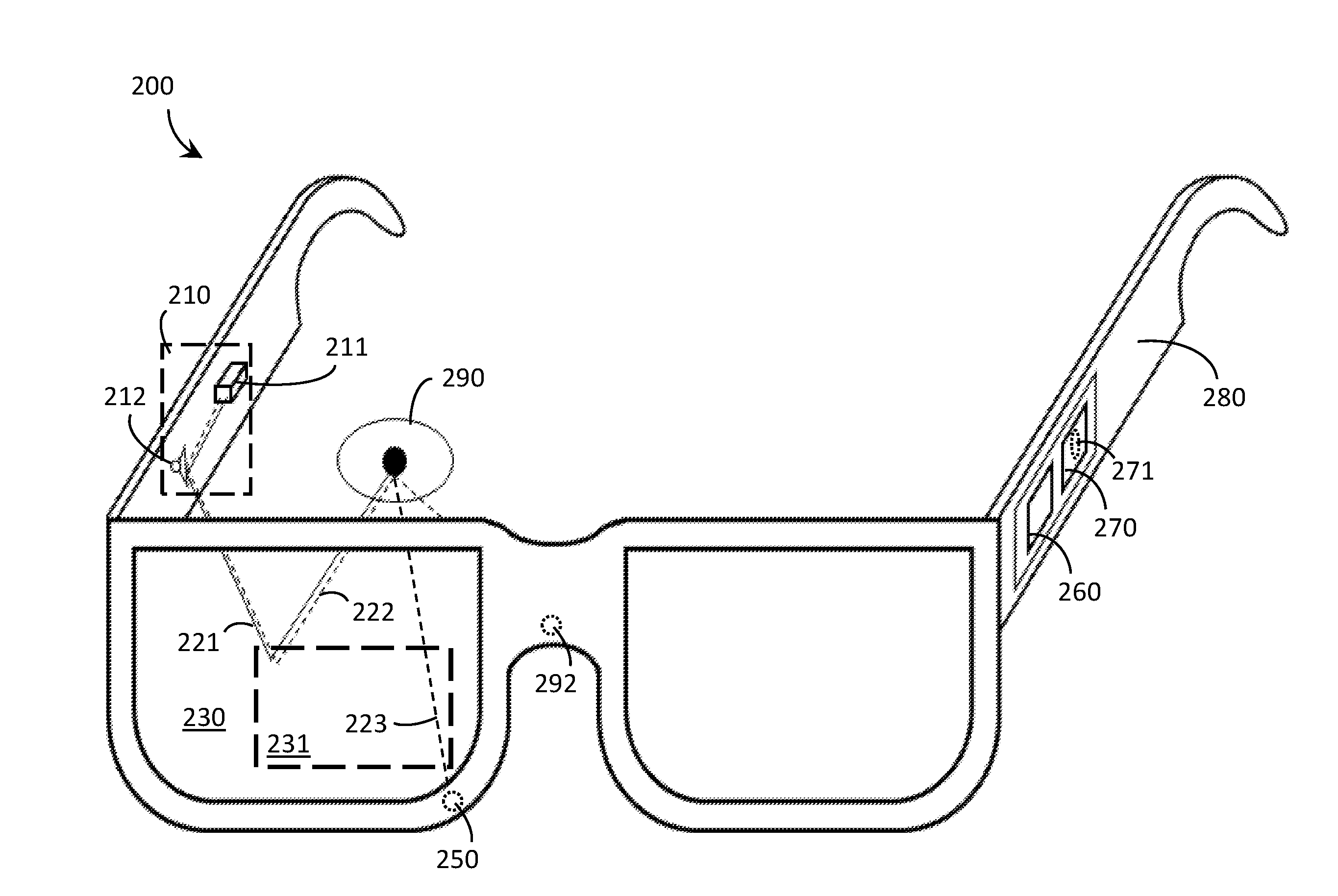

[0043] FIG. 2 is a perspective view of a wearable heads-up display, in accordance with the present systems, devices, and methods.

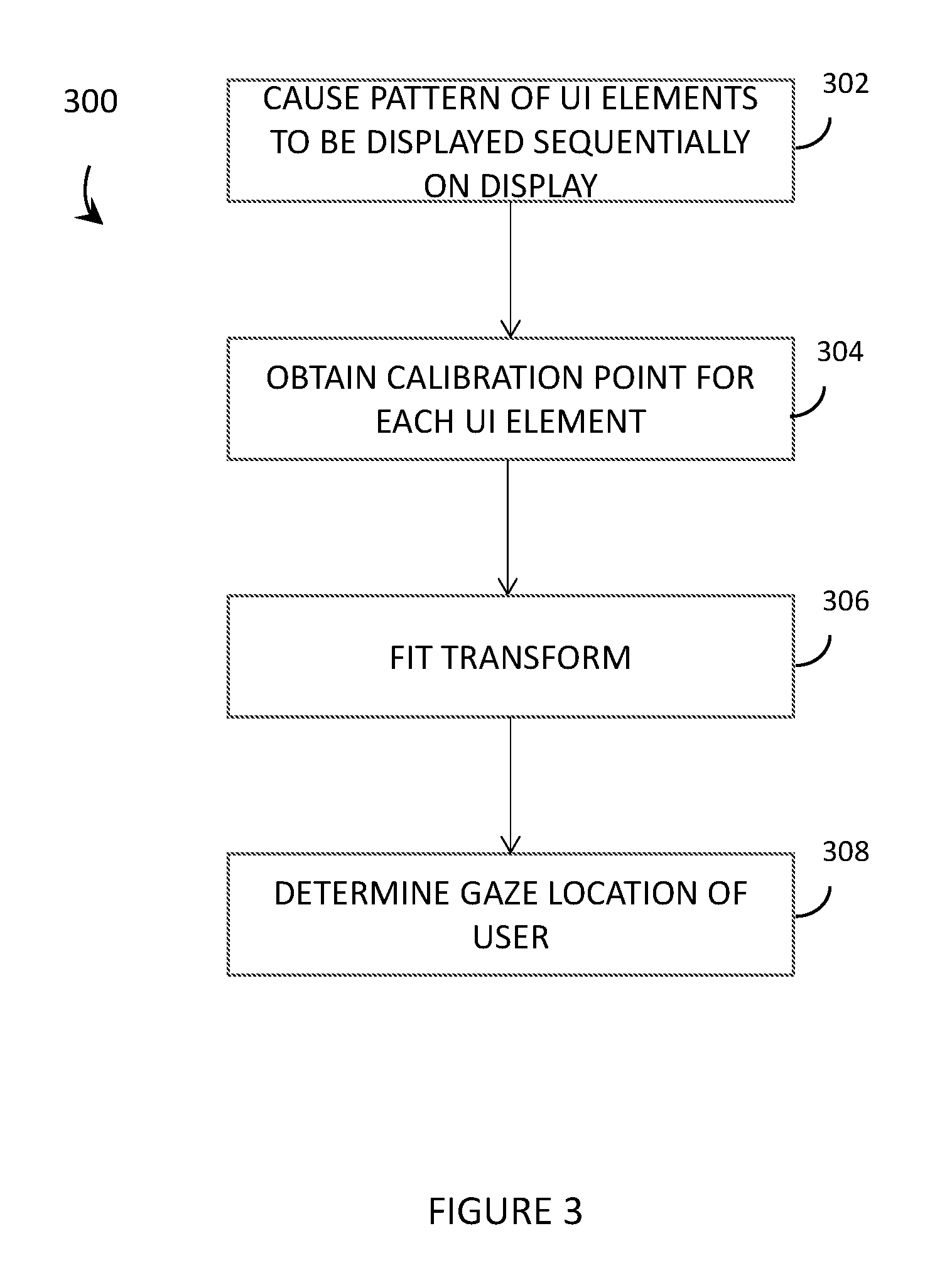

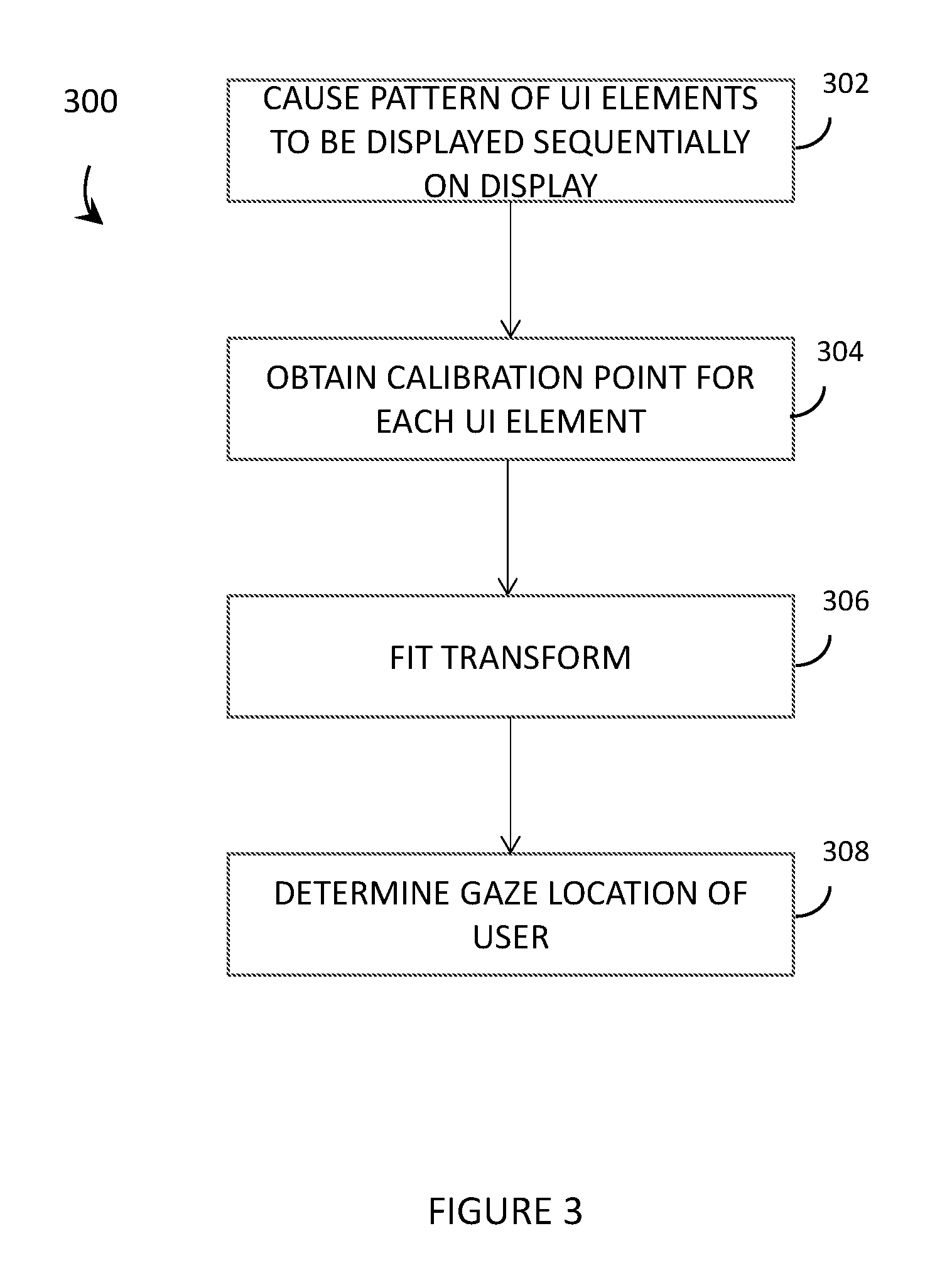

[0044] FIG. 3 is a flowchart for a method of operation of an eye tracking system of a wearable heads-up display to perform an explicit calibration process, in accordance with the present systems, devices, and methods.

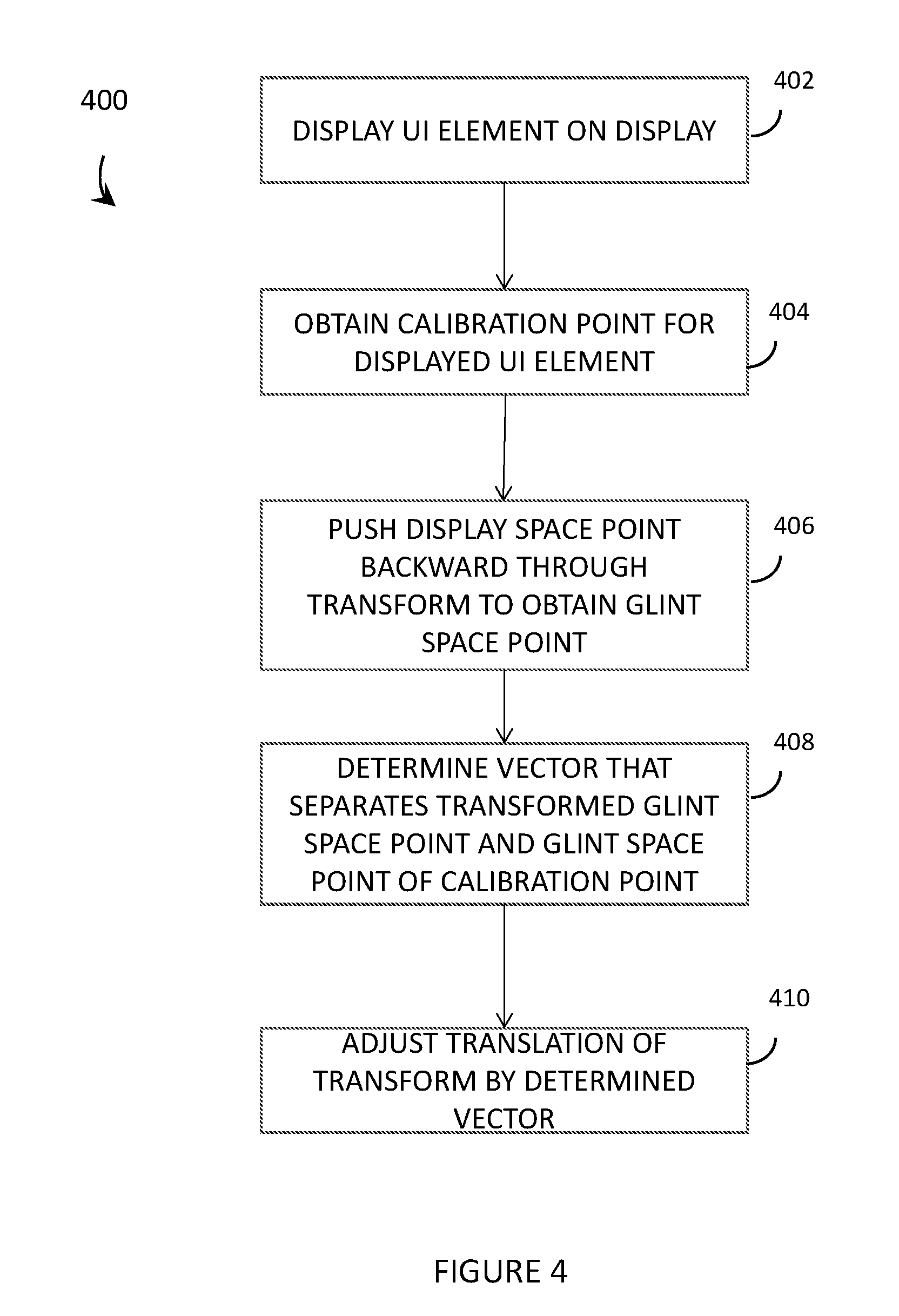

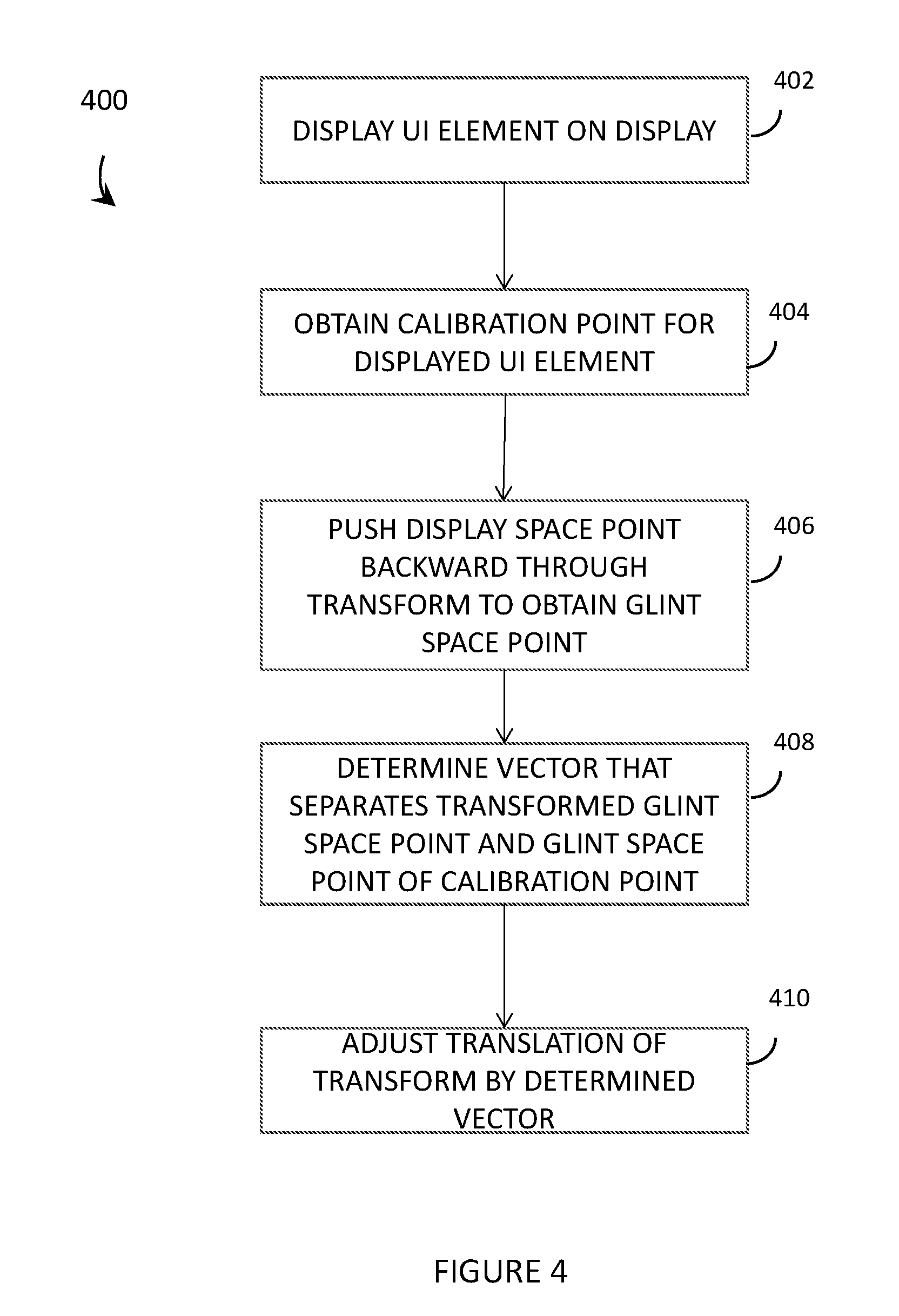

[0045] FIG. 4 is a flowchart for a method of operation of an eye tracking system of a wearable heads-up display to perform an explicit 1-point re-centering of an explicit calibration process, in accordance with the present systems, devices, and methods.

[0046] FIG. 5 is a functional block diagram of an eye tracking system of a wearable heads-up display that utilizes a machine learning model to improve a dynamic calibration scheme, in accordance with the present systems, devices, and methods.

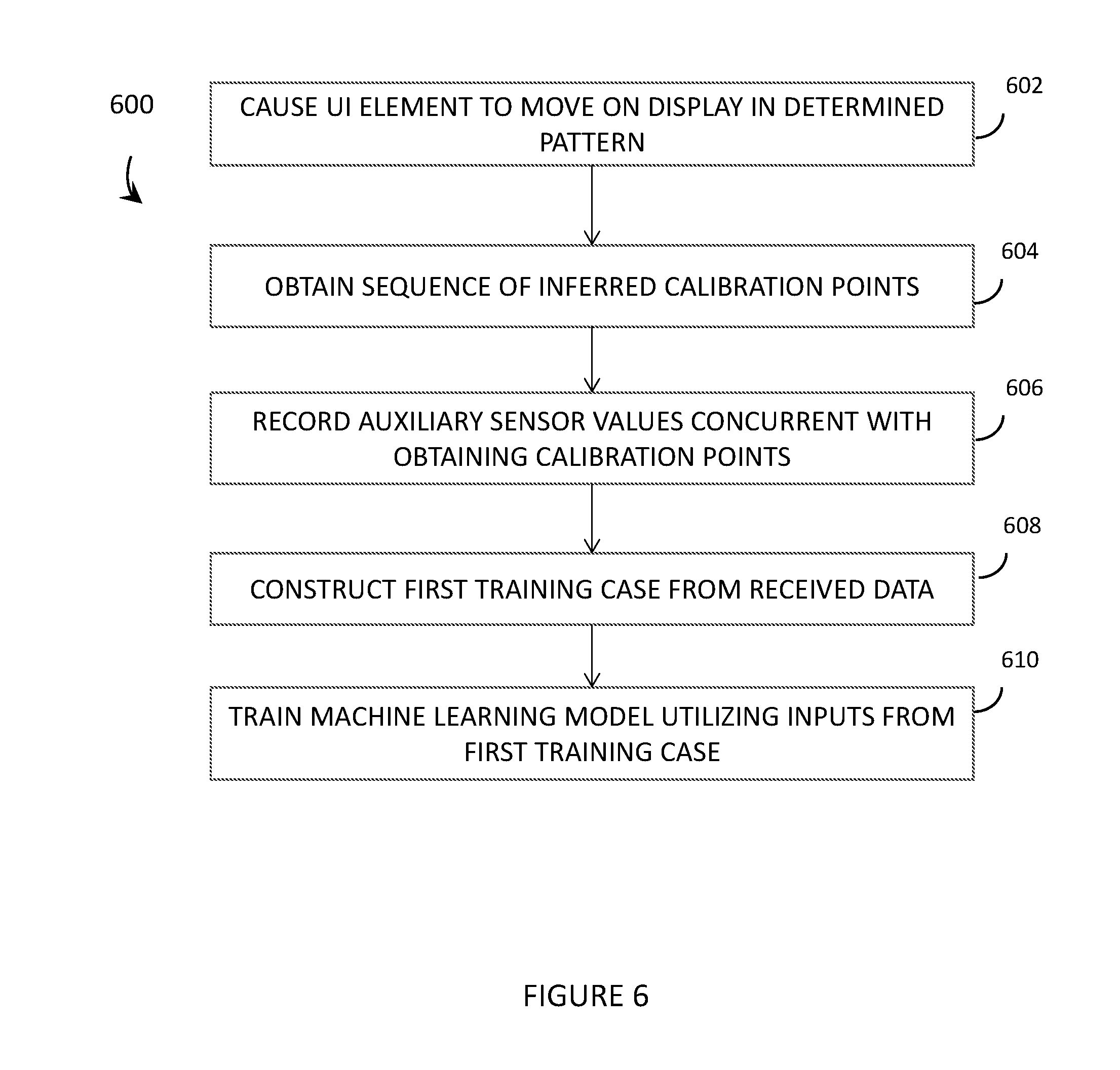

[0047] FIG. 6 is a flowchart for a method of operation of an eye tracking system of a wearable heads-up display to obtain training data and to train one or more machine learning models using the obtained training data, in accordance with the present systems, devices, and methods.

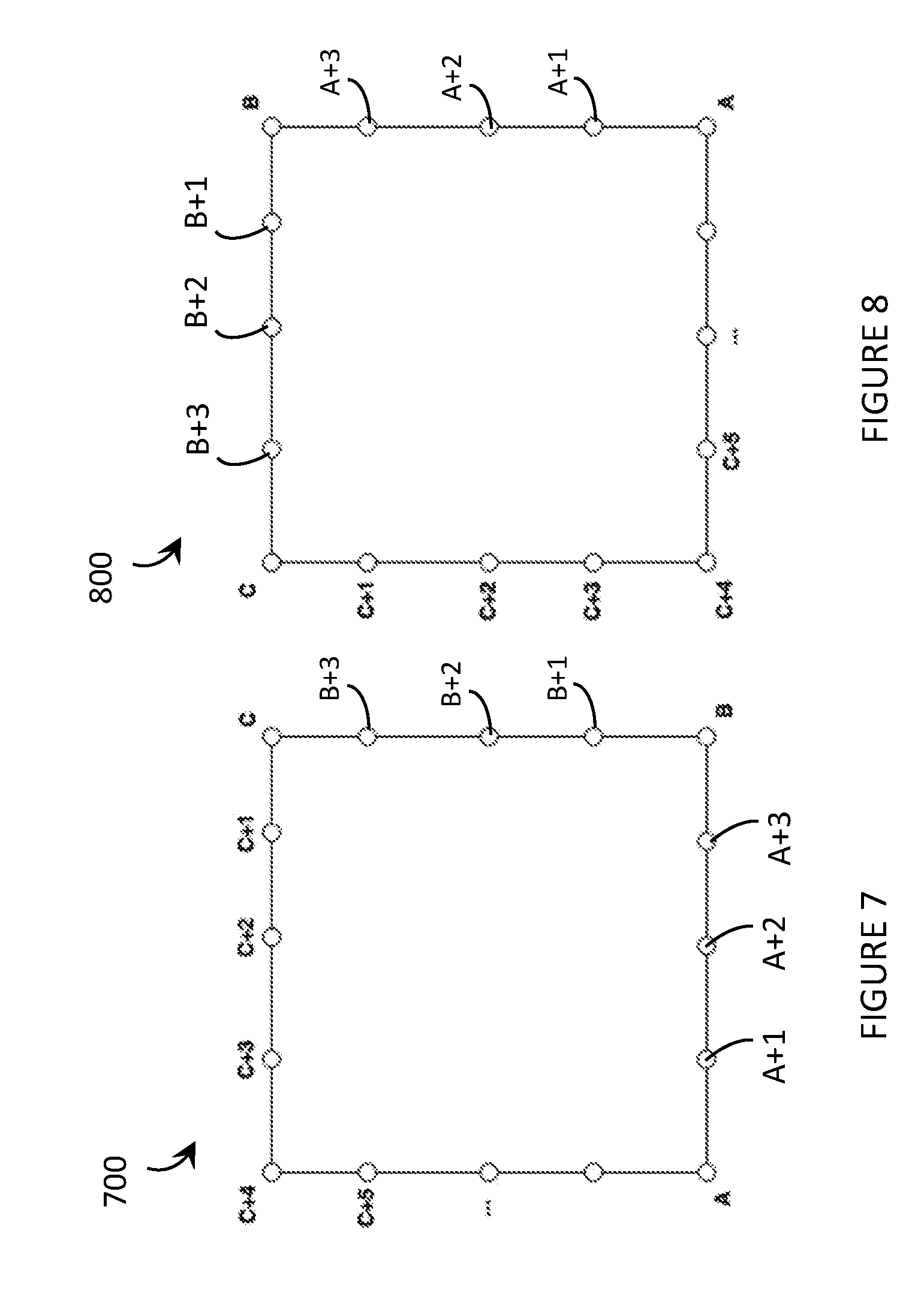

[0048] FIG. 7 depicts a display of a wearable heads-up display, and shows a number of calibration points obtained while a UI element moves in a rectangular pattern around the perimeter of the display, in accordance with the present systems, devices, and methods.

[0049] FIG. 8 depicts a display of a wearable heads-up display, and shows a number of calibration points obtained while a UI element moves in a rectangular pattern around the perimeter of the display, with the calibration points shifted around the perimeter of the display with respect to the calibration points shown in FIG. 7, in accordance with the present systems, devices, and methods.

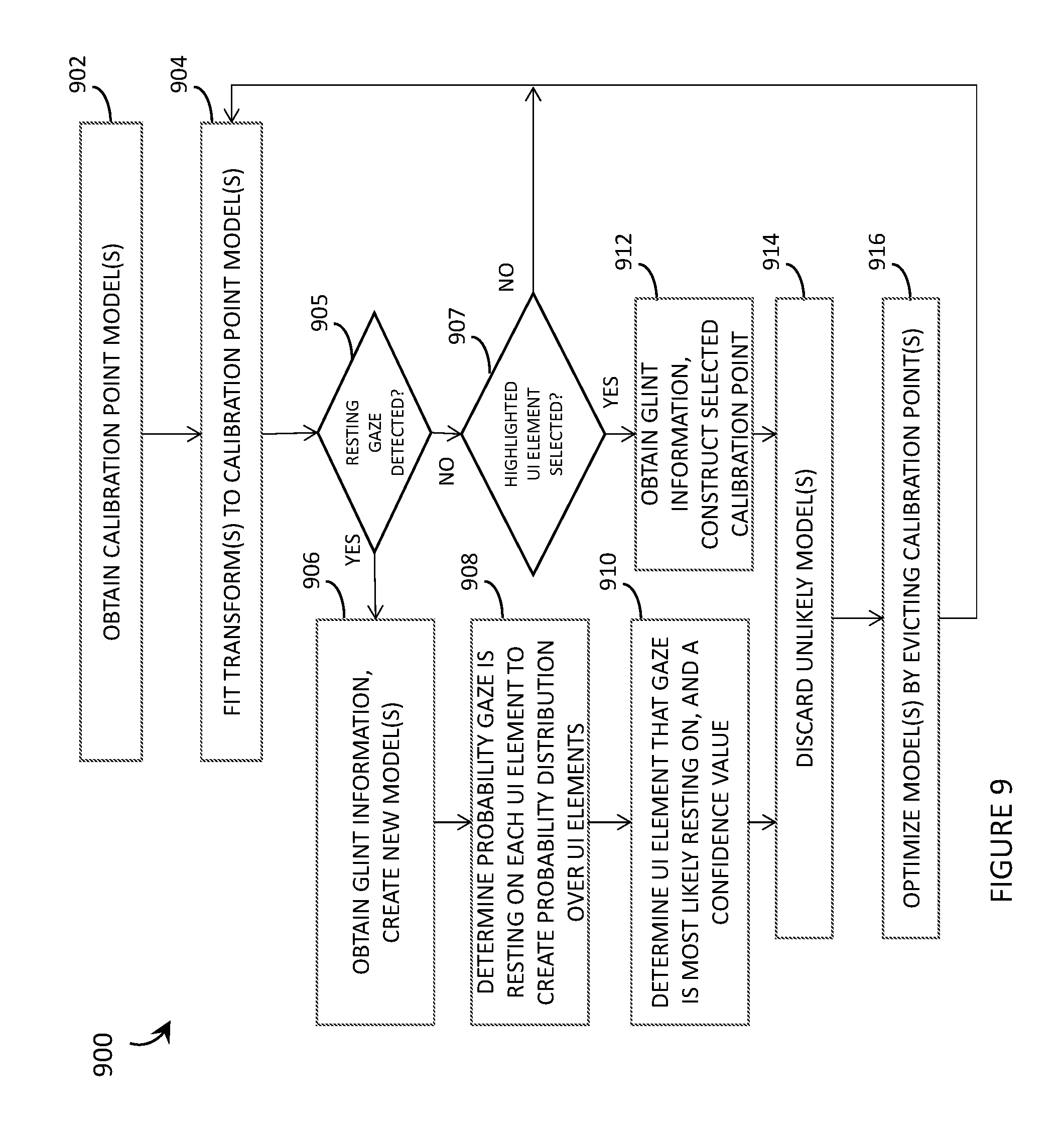

[0050] FIG. 9 is a flowchart for a method of operation of an eye tracking system of a wearable heads-up display that implements a dynamic calibration scheme, in accordance with the present systems, devices, and methods.

DETAILED DESCRIPTION

[0051] In the following description, certain specific details are set forth in order to provide a thorough understanding of various disclosed implementations. However, one skilled in the relevant art will recognize that implementations may be practiced without one or more of these specific details, or with other methods, components, materials, etc. In other instances, well-known structures associated with computer systems, server computers, and/or communications networks have not been shown or described in detail to avoid unnecessarily obscuring descriptions of the implementations.

[0052] Unless the context requires otherwise, throughout the specification and claims that follow, the word "comprising" is synonymous with "including," and is inclusive or open-ended (i.e., does not exclude additional, unrecited elements or method acts).

[0053] Reference throughout this specification to "one implementation" or "an implementation" means that a particular feature, structure or characteristic described in connection with the implementation is included in at least one implementation. Thus, the appearances of the phrases "in one implementation" or "in an implementation" in various places throughout this specification are not necessarily all referring to the same implementation. Furthermore, the particular features, structures, or characteristics may be combined in any suitable manner in one or more implementations.

[0054] As used in this specification and the appended claims, the singular forms "a," "an," and "the" include plural referents unless the context clearly dictates otherwise. It should also be noted that the term "or" is generally employed in its sense including "and/or" unless the context clearly dictates otherwise.

[0055] The headings and Abstract of the Disclosure provided herein are for convenience only and do not interpret the scope or meaning of the implementations.

[0056] The various implementations described herein provide systems, devices, and methods for laser eye tracking in wearable heads-up displays. More specifically, the various implementations described herein provide methods of determining the gaze direction of an eye of a user and are particularly well-suited for use in wearable heads-up displays ("WHUDs") that employ scanning laser projectors ("SLPs"). Examples of WHUD systems, devices, and methods that are particularly well-suited for use in conjunction with the present systems, devices, and methods for laser eye tracking are described in, for example, U.S. Non-Provisional patent application Ser. No. 15/167,458, U.S. Non-Provisional patent application Ser. No. 15/167,472, U.S. Non-Provisional patent application Ser. No. 15/167,484, and U.S. Non-Provisional patent application Ser. No. 15/331,204.

[0057] Initially, an example method of determining a gaze direction of an eye of a user of a WHUD is provided. Next, various example WHUDs are discussed with regard to FIGS. 1 and 2. Then, various static and dynamic calibration systems, methods and articles of the present disclosure are discussed with reference to FIGS. 3-9.

Method of Eye Tracking

[0058] For the purpose of the following discussion, the term "user" refers to a person that is operating and/or wearing the hardware elements described below (e.g., a person that is wearing a wearable heads-up display, as described in more detail below).

[0059] In at least some implementations, an eye tracking system of a WHUD may include a "glint detection module" that includes at least one infrared laser diode, at least one scan mirror, at least one infrared sensor, at least one processor, and at least one nontransitory processor-readable storage medium that stores at least one of instructions or data that, when executed by the at least one processor, cause the WHUD to implement the functionality discussed below. Examples of glint detection modules are shown in FIGS. 1 and 2.

[0060] In at least some implementations, the infrared laser diode may generate infrared laser light. Depending on the specific implementation, the infrared laser diode may activate and remain active in order to continuously generate a continuous beam of infrared laser light, or the infrared laser diode may be modulated to generate a sequence or pattern of infrared laser light. Throughout this specification and the appended claims, the term "infrared" includes "near infrared" and generally refers to a wavelength of light that is larger than the largest wavelength of light that is typically visible to the average human eye. Light that is visible to the average human eye (i.e., "visible light" herein) is generally in the range of 400 nm-700 nm, so as used herein the term "infrared" refers to a wavelength that is greater than 700 nm, up to 1 mm. As used herein and in the claims, visible means that the light includes wavelengths within the human visible portion of the electromagnetic spectrum, typically from approximately 400 nm (violet) to approximately 700 nm (red).

[0061] The at least one scan mirror may scan the infrared laser light over the eye of the user. Depending on the modulation of the infrared laser diode, the at least one scan mirror may scan the infrared laser light over (e.g., completely illuminate) a substantially continuous surface of the eye or the at least one scan mirror may scan the infrared laser light to form an illumination pattern on the surface of the eye (such as a grid pattern, a crosshairs pattern, and so on). Generally, in order for the at least one scan mirror to scan the infrared laser light over the eye of the user, the at least one scan mirror may sweep through a range of orientations and, for a plurality of orientations of the at least one scan mirror (i.e., for each respective orientation of the at least one scan mirror if the infrared laser diode is continuously active in order to completely illuminate the corresponding surface of the eye, or for a subset of orientations of the at least one scan mirror if the infrared laser diode is modulated such that the combination of subsets of orientations of the at least one scan mirror and the modulation pattern of the infrared laser diode produces an illumination pattern on the corresponding surface of the eye), the at least one scan mirror may receive the infrared laser light from the infrared laser diode and reflect the infrared laser light towards a respective region of the eye of the user.

[0062] The at least one scan mirror may include one or multiple (e.g., in a DLP configuration) digital microelectromechanical systems ("MEMS") mirror(s) or one or multiple piezoelectric mirrors.

[0063] In some implementations, the at least one scan mirror may scan infrared laser light directly over at least a portion of the eye of the user. In other implementations (e.g., in applications in which eye tracking is performed by a scanning laser-based WHUD), the at least one scan mirror may indirectly scan infrared laser light over at least a portion of the eye of the user by scanning the infrared laser light over an area of a light-redirection element (such as a holographic optical element ("HOE"), a diffraction grating, a mirror, a partial mirror, or a waveguide structure) positioned in the field of view of the eye of the user and the light-redirection element may redirect the infrared laser light towards the eye of the user. In implementations that employ such indirect scanning, the light-redirection element (e.g., the HOE) may, upon redirection of the infrared laser light towards the eye of the user, converge the infrared laser light to an exit pupil at the eye of the user, where the exit pupil encompasses at least the cornea of the eye of the user (when the user is looking in a specific direction, such as straight ahead or straight towards display content displayed by a WHUD).

[0064] Reflections of the infrared laser light from the eye of the user are detected by the at least one infrared sensor, such as an infrared detector or, more specifically, an infrared photodetector. As will be discussed in more detail below, the at least one infrared sensor may be communicatively coupled to a processor (e.g., a digital processor, or an application-specific integrated circuit) and provide an output signal having a magnitude that depends on an intensity of the infrared laser light detected by the infrared sensor.

[0065] The at least one processor communicatively coupled to the at least one infrared sensor may determine a respective intensity of a plurality of the reflections of the infrared laser light detected by the infrared sensor (i.e., "detected reflections"). The percentage of detected reflections for which the processor determines an intensity may depend on, for example, the sampling rate of the processor. The "intensity" of a detected reflection may be a measure of, for example, the brightness of the detected reflection, the luminance of the detected reflection, and/or the power of the detected reflection.

[0066] The processor may identify at least one detected reflection for which the intensity exceeds a threshold value. Generally, the at least one infrared sensor may be oriented to detect both spectral and diffuse reflections of the infrared laser light from the eye of the user; however, in some implementations the processor may specifically identify a detected reflection for which the intensity exceeds a threshold value only when the infrared sensor detects a spectral reflection of the infrared laser light from the eye of the user. Such spectral reflection may, for example, correspond to the cornea reflection, first Purkinje image, or "glint."

[0067] As previously described, the processor may sample the signal output by the at least one infrared sensor, where the magnitude of the signal (and therefore the magnitude of each sample) depends on the intensity of the infrared laser light detected by the at least one infrared sensor. In this case, the processor may identify at least one detected reflection for which the intensity exceeds a threshold value by identifying a first sample (in a series of samples) for which the magnitude exceeds a threshold magnitude. In other words, identifying, by the processor, at least one detected reflection for which the intensity exceeds a threshold value may be an edge-triggered (e.g., rising edge-triggered) process. If desired, the processor may then continue to identify that subsequent detected reflections each have intensities that do exceed the threshold until the processor identifies a second sample in the series for which the magnitude does not exceed the threshold magnitude (e.g., a falling edge-triggered process).

[0068] The processor may determine the orientation of the at least one scan mirror that corresponds to the at least one detected reflection for which the intensity exceeds the threshold value. In other words, the processor determines which orientation of the at least one scan mirror caused the infrared laser light to reflect from the eye of the user, as detected, with an intensity that exceeds the determined threshold value.

[0069] The processor may determine a region in a field of view of the eye of the user at which a gaze of the eye is directed based on the orientation of the at least one scan mirror that corresponds to the at least one detected reflection for which the intensity exceeds the determined threshold value. Generally, this may include effecting, by the processor, a mapping between the orientation of the at least one scan mirror that corresponds to the at least one detected reflection for which the intensity exceeds the threshold value and the field of view of the eye of the user.

[0070] As an example, the processor may essentially effect a mapping between "detected reflection space" and "mirror orientation space" which, since only detected reflections that exceed the threshold value are of interest and since detected reflections that exceed the threshold value may generally be "glints," may be interpreted as a mapping between "glint space" and "mirror orientation space." Then, the processor may essentially effect a mapping between "mirror orientation space" and gaze direction of the eye based on established correlations between various mirror orientations and where the corresponding infrared laser light would appear in the user's field of view (e.g., if redirected by a light-redirection element such as an HOE positioned in the user's field of view) if the infrared laser light was visible to the user. In this way, the method may essentially effect a mapping between "glint space" and "gaze direction space."

[0071] Fundamentally, the processor may effect a mapping between the orientation of the at least one scan mirror that corresponds to the at least one detected reflection for which the intensity exceeds the threshold value (e.g., "glint space") and the field of view of the eye of the user (e.g., "field of view space") or a location on a display of the WHUD (e.g., "display space") by performing at least one transformation between a set of scan mirror orientations and a set of gaze directions of the eye of the user. Non-limiting examples of the at least one transformation include a linear transformation, a geometric transformation, an affine transformation, a neural network-based transformation, etc. In various implementations, such may include any number of intervening transformations, such as a transformation from glint space to a set of display coordinates and a transformation from the set of display coordinates to the set of gaze directions of the eye of the user.

[0072] Depending on the specific implementation, the at least one scan mirror may include a single scan mirror that is controllably orientable about two orthogonal axes or two scan mirrors that are each respectively controllable about a respective axis, with the respective axes about which the two scan mirrors are controllably orientable being orthogonal to one another. For example, a single scan mirror may scan the infrared laser light over two dimensions of the user's eye, or a first scan mirror may scan the infrared laser light across a first dimension of the eye and a second scan mirror may scan the infrared laser light across a second dimension of the eye. The at least one scan mirror may sweep through a range of orientations. In the case of two orthogonal scan mirrors, this may mean that a first scan mirror sweeps through a first range of orientations and, for each respective orientation of the first scan mirror, a second scan mirror sweeps through a second range of orientations. It follows that, for a plurality of orientations of the at least one scan mirror, the at least one scan mirror receives the infrared laser light from the infrared laser diode and reflects the infrared laser light towards a respective region of the eye of the user, with two orthogonal scan mirrors the infrared laser light is reflected towards a respective region of the eye of the user for each respective combination of a first orientation of the first scan mirror and a second orientation of the second scan mirror. Furthermore, with two orthogonal scan mirrors the processor may determine the combination of the first orientation of the first scan mirror and the second orientation of the second scan mirror that corresponds to the at least one detected reflection for which the intensity exceeds the threshold value and the processor may determine the region in the field of view of the eye of the user at which the gaze of the eye is directed based on the combination of the first orientation of the first scan mirror and the second orientation of the second scan mirror that corresponds to the at least one detected reflection for which the intensity exceeds the threshold value.

[0073] As previously described, the method may be particularly advantageous when implemented in a WHUD that employs a SLP because in such an implementation the eye tracking (i.e., gaze direction detection) functionality of the method may be achieved with minimal hardware additions (and correspondingly minimal bulk and impact on aesthetic design) to the WHUD. In this case, the method may be extended to include a projection of display content to the user and a determination of where in the display content the user's gaze is directed.

[0074] For example, the infrared laser diode and the at least one scan mirror of the method may be components of a SLP, and the SLP may further include at least one additional laser diode to generate visible laser light. In this case, the method may be extended to include projecting visible display content in the field of view of the eye of the user by the SLP and the processor may determine a region of the visible display content at which the gaze of the eye is directed based on the orientation of the at least one scan mirror that corresponds to the at least one detected reflection for which the intensity exceeds the threshold value. The processor may determine a region of the visible display content at which the gaze of the eye is directed by performing a transformation between a set of scan mirror orientations and a set of regions of the visible display content. In other words, the processor may effect a mapping between "mirror orientation space" (or "glint space," as previously described) and "display space."

Example WHUDs

[0075] FIG. 1 is an illustrative diagram showing a WHUD 100 that includes a SLP 110 with an integrated eye tracking functionality in accordance with the present systems, devices, and methods. In brief, WHUD 100 includes the SLP 110 which is adapted to include an infrared laser diode (labeled as "IR" in FIG. 1) for eye tracking purposes and a transparent combiner comprising a wavelength-multiplexed HOE 130 integrated with (e.g., laminated or otherwise layered upon, or cast within) an eyeglass lens 160. Integration of HOE 130 with lens 160 may include and/or employ the systems, devices, and methods described in U.S. Non-Provisional patent application Ser. No. 15/256,148 and/or U.S. Provisional Patent Application Ser. No. 62/268,892.

[0076] In WHUD 100, scanning laser projection and eye tracking components are both integrated into a single package/module 110. Specifically, SLP 110 comprises a laser module 111 that includes red laser diode (labelled "R" in FIG. 1), a green laser diode (labelled "G" in FIG. 1), and a blue laser diode (labelled "B" in FIG. 1) and a scan mirror 112 (a single mirror illustrated for simplicity, though as previously described at least two orthogonally-orientable mirrors may be used). In addition, laser module 111 also includes an infrared laser diode (labelled "IR" in FIG. 1) for use in eye tracking/gaze detection. Scan mirror 112 simultaneously serves as both the scan mirror for laser projection and a scan mirror for eye tracking, whereby scan mirror 112 scans infrared laser light (represented by dashed lines 122 in FIG. 1) over the area of eye 190 to sequentially illuminate an area of eye 190 (e.g., via a raster scan of IR light). In WHUD 100, infrared laser diode is integrated into laser module 111 of SLP 110 and scan mirror 112 serves to scan both visible (R, G, and/or B) and infrared (IR) laser light over eye 190.

[0077] Scan mirror 112 may advantageously include one or multiple (e.g., in a DLP configuration) digital microelectromechanical systems ("MEMS") mirror(s). In typical operation, scan mirror 112 of SLP 110 repeatedly scans over its entire range of orientations and effectively scans over the entire field of view of the display. Whether or not an image/pixel is projected at each scan orientation depends on controlled modulation of laser module 111 and its synchronization with scan mirror 112. The fact that scan mirror 112 generally scans over its entire range during operation as a laser projector makes scan mirror 112 of SLP 110 compatible with use for eye tracking purposes. SLP 110 is adapted to provide eye tracking functionality without having to compromise or modify its operation as a SLP. In operation, scan mirror 112 repeatedly scans over its entire range of orientations while the RGB laser diodes are modulated to provide the visible light 121 corresponding to pixels of a scanned image or, generally, "display content." At the same time, the infrared laser diode may be activated to illuminate the user's eye 190 (one spot or pixel at a time, each corresponding to a respective scan mirror orientation) with infrared laser light 122 for eye tracking purposes. Depending on the implementation, the infrared laser diode may simply be on at all times to completely illuminate (i.e., scan over the entire area of) eye 190 with infrared laser light 122 or the infrared laser diode may be modulated to provide an illumination pattern (e.g., a grid, a set of parallel lines, a crosshair, or any other shape/pattern) on eye 190. Because infrared laser light 122 is invisible to eye 190 of the user, infrared laser light 122 does not interfere with the scanned image being projected by SLP 110.

[0078] In order to detect the (e.g., portions of) infrared laser light 122 that reflects from eye 190, WHUD 100 includes at least one infrared photodetector 150. While only one photodetector 150 is depicted in FIG. 1, in alternative implementations any number of photodetectors 150 may be used (e.g., an array of photodetectors 150, or a charge-coupled device based camera that is responsive to light in the infrared wavelength range) positioned in any arrangements and at any desired location(s) depending on the implementation.

[0079] As scan mirror 112 scans modulated R, G, and/or B light 121 over eye 190 to produce display content based on modulation of the R, G, and/or B laser diodes, scan mirror 112 also scans infrared laser light 122 over eye 190 based on modulation of the IR laser diode. Photodetector 150 detects an intensity pattern or map of reflected infrared laser light 122 that depends on the position/orientation of eye 190. That is, each distinct orientation of scan mirror 112 may result in a respective intensity of infrared laser light 122 being detected by photodetector 150 that depends on the position/orientation of eye 190 (or the position/orientation of feature(s) of eye 190, such as the cornea, iris, pupil, and so on). The intensity pattern/map detected by photodetector 150 depends on where eye 190 is looking. In this way, the same SLP 110 in WHUD 100 enables both i) image projection, and ii) the gaze direction and movements of eye 190 to be measured and tracked.

[0080] Another adaptation to WHUD 100, for the purpose of integrating eye tracking functionality into SLP 110, is wavelength-multiplexing of HOE 130. WHUD 100 includes a HOE 130 that redirects laser light output from the laser module 111 of SLP 110 towards eye 190; however, in WHUD 100, HOE 130 includes at least two wavelength-multiplexed holograms: at least a first hologram 131 that is responsive to (i.e., redirects at least a portion of, the magnitude of the portion depending on the playback efficiency of the first hologram) the visible light 121 output by laser module 111 and unresponsive to (i.e., transmits) the infrared light 122 output by laser module 111, and a second hologram 132 that is responsive to (i.e., redirects at least a portion of, the magnitude of the portion depending on the playback efficiency of the second hologram) the infrared light 122 output by laser module 111 and unresponsive to (i.e., transmits) the visible light 121 output by laser module 111. While FIG. 1 depicts first hologram 131 as a single hologram, in practice the aspect(s) of HOE 130 that is/are responsive to the visible light 121 output by laser module 111 may include any number of holograms that may be multiplexed in a variety of different ways, including without limitation: wavelength multiplexed (i.e., a "red" hologram that is responsive to only red light from the red laser diode of laser module 111, a "green" hologram that is responsive to only green light from the green laser diode of laser module 111, and a "blue" hologram that is responsive to only blue light from the blue laser diode of laser module 111), angle multiplexed (e.g., for the purpose of eye box expansion/replication), phase multiplexed, spatially multiplexed, temporally multiplexed, and so on. Upon redirection of visible light 121, first hologram 131 may apply a first optical power to visible light 121. Advantageously, the first optical power applied by first hologram 131 (or by the first set of multiplexed holograms if the implementation employs a set of multiplexed holograms for redirecting the visible light 121) may be a positive optical power that focuses or converges the visible light 121 to, for example, an exit pupil having a diameter less than one centimeter (e.g., 6 mm, 5 mm, 4 mm, 3 mm) at the eye 190 of the user for the purpose of providing a clear and focused image with a wide field of view. Upon redirection of infrared light 122, second hologram 132 may apply a second optical power to infrared light 122, where the second optical power applied by second hologram 132 is different from the first optical power applied by first hologram 131. Advantageously, the first optical power may be greater than the second optical power (and therefore, the second optical power may be less than the first optical power) so that second hologram 132 redirects infrared light 122 over an area of eye 190 that is larger than the exit pupil of visible light 121 at eye 190. In other words, the first hologram that is responsive to the visible light may converge the visible light to a first exit pupil at the eye of the user and the second hologram that is responsive to the infrared light may converge the infrared light to a second exit pupil at the eye of the user, where the first exit pupil is completely contained within the second exit pupil at the eye of the user. For example, the second optical power of second hologram 132 may apply a rate of convergence to infrared light 122 that is less than the rate of convergence applied to visible light 121 by the first optical power of first hologram 131, or the second optical power may be zero such that second hologram 132 redirects infrared light 122 towards eye 190 without applying any convergence thereto, or the second optical power may be negative (i.e., less than zero) so that the second optical power of second hologram 132 causes infrared light 122 to diverge (i.e., applies a rate of divergence thereto) to cover, for example, the entire area of eye 190 (and beyond, if desired) for the purpose of illuminating a large area of eye 190 and tracking all eye positions/motions within that illuminated area.

[0081] Depending on the specific implementation, HOE 130 may comprise a single volume of holographic material (e.g., photopolymer or a silver halide compound) that encodes, carries, has embedded therein or thereon, or generally includes both first hologram 131 and second hologram 132, or alternatively HOE 130 may comprise at least two distinct layers of holographic material (e.g., photopolymer and/or a silver halide compound) that are laminated or generally layered together, a first layer of holographic material that includes first hologram 131 and a second layer of holographic material that includes second hologram 132.

[0082] The use of infrared light is advantageous in eye tracking systems because infrared light is invisible to the (average) human eye and so does not disrupt or interfere with other optical content being displayed to the user. Integrating an infrared laser diode into a SLP, in accordance with the present systems, devices, and methods, enables visible laser projection and invisible eye tracking to be simultaneously performed by substantially the same hardware of a WHUD, thereby minimizing overall bulk and processing/power requirements of the system.

[0083] In accordance with the present systems, devices, and methods, an eye tracking system (or an "eye tracker") may include one or more digital processor(s) communicatively coupled to the one or more infrared photodetector(s) and to one or more non-transitory processor-readable storage medium(ia) or memory(ies). The memory(ies) may store processor-executable instructions and/or data that, when executed by the processor, enable the processor to determine the position and/or motion of an eye of the user, or the gaze direction of the eye of the user, based on information (e.g., intensity information, such as an intensity pattern/map) provided by the one or more photodetector(s).

[0084] FIG. 2 is a perspective view of a WHUD 200 that integrates eye tracking and scanning laser projection in accordance with the present systems, devices, and methods. WHUD 200 includes many of the elements depicted in FIG. 1, namely: an SLP 210 comprising laser module 211 with at least one visible laser diode (e.g., a red laser diode, a green laser diode, a blue laser diode, or any combination thereof) to output a visible laser light 221 (e.g., a red laser light, a green laser light, a blue laser light, or any combination thereof) and an infrared laser diode to output infrared laser light 222, at least one scan mirror 212 aligned to receive laser light output from the laser module 211 and controllably orientable to reflect (i.e., scan) the laser light, a wavelength-multiplexed HOE 230 aligned to redirect the laser light 221 and 222 towards an eye 290 of a user, and at least one infrared photodetector 250 responsive to infrared laser light 222. Depending on the implementation, the visible laser light 221 may correspond to any of, either alone or in any combination, a red laser light, a green laser light, and/or a blue laser light. WHUD 200 also includes a support frame 280 that has a general shape and appearance or a pair of eyeglasses. Support frame 280 carries SLP 210, photodetector 250, and wavelength-multiplexed HOE 230 so that HOE 230 is positioned within a field of view of the eye 290 of the user when support frame 280 is worn on a head of the user.

[0085] Support frame 280 of WHUD 200 also carries a digital processor 260 communicatively coupled to SLP 210 and photodetector 250, and a non-transitory processor-readable storage medium or memory 270 communicatively coupled to digital processor 270. Memory 270 stores data and/or processor-executable instructions 271 that, when executed by processor 260, cause WHUD 200 to perform the functionality discussed herein. More specifically, data and/or processor-executable instructions 271, when executed by processor 260, cause WHUD 200 to: generate an infrared laser light 222 by the infrared laser diode of SLP 210; scan the infrared laser light 222 over the eye 290 of the user by the at least one scan mirror 212, wherein scanning the infrared laser light 222 over the eye 290 of the user by the at least one scan mirror 212 includes sweeping the at least one scan mirror 212 through a range of orientations and, for a plurality of orientations of the at least one scan mirror 212, reflecting the infrared laser light 222 towards a respective region of the eye 290 of the user; detect reflections 223 of the infrared laser light 222 from the eye 290 of the user by the at least one infrared photodetector 250; determine a respective intensity of each detected reflection 223 of the infrared laser light 222 by the processor 260; identify, by the processor 260, at least one detected reflection 223 for which the intensity exceeds a threshold value; determine, by the processor 260, the orientation of the at least one scan mirror 212 that corresponds to the at least one detected reflection 223 for which the intensity exceeds the threshold value; and determine, by the processor 260, a region in a field of view of the eye 290 of the user at which a gaze of the eye 290 is directed based on the orientation of the at least one scan mirror 212 that corresponds to the at least one detected reflection 223 for which the intensity exceeds the threshold value. Together, all of these acts enable WHUD 200 to determine a gaze direction of eye 290.

[0086] Since, in addition to eye tracking/gaze direction detection capability, WHUD 200 also has a display capability, memory 270 further stores data and/or processor-executable instructions that, when executed by processor 260, cause WHUD 200 to project visible display content 231 in the field of view of the eye 290 of the user by SLP 210 (in conjunction with HOE 230). In this case, data and/or processor-executable instructions 271, when executed by processor 260, may cause WHUD 200 to determine, by the processor 260, a region in a field of view of the eye 290 of the user at which a gaze of the eye 290 is directed based on the orientation of the at least one scan mirror 212 that corresponds to the at least one detected reflection 223 for which the intensity exceeds the threshold value, by causing WHUD 200 to determine, by the processor 260, a region of the visible display content 231 at which the gaze of the eye 290 is directed based on the orientation of the at least one scan mirror 212 that corresponds to the at least one detected reflection 223 for which the intensity exceeds the threshold value.

[0087] As previously described, infrared photodetector 250 may advantageously be positioned on support frame 280 at a periphery of the field of view of the eye 290 of the user when the eye 290 is gazing straight ahead (e.g., on the rims of frame 280 that surround the eyeglass lens that carries HOE 230). In this case, the data and/or processor-executable instructions that, when executed by the processor 260, cause WHUD 200 to project visible display content 231 in the field of view of the eye 290 of the user by the SLP 210, may advantageously cause the SLP 210 to position the visible display content 231 away-from-center in the field of view of the eye 290 of the user and towards the position of the at least one infrared photodetector 250 at the periphery of the field of view of the eye 290 of the user, as depicted in the exemplary implementation of FIG. 2.

[0088] In at least some implementations, the WHUD 200 may include one more auxiliary sensors 292 carried by the support frame 280 at one or more locations thereof. Non-limiting examples of such auxiliary sensors include proximity sensors, gyroscopes or accelerometers. A proximity sensor may be used to indicate where the support frame 280 is positioned relative to a "home" position on a user's face (e.g., distance from the proximity sensor to a point on the user's face, such as the nose bridge), which provides information about where the support frame 280 is located relative to the eye(s) of the user. A gyroscope sensor may be used to determine the orientation of a user's head during use of the WHUD 200. An accelerometer may be used to indicate patterns of acceleration, including sudden movements, that are occurring as the user wears the WHUD. As discussed further below, in at least some implementations auxiliary sensors may be used to improve calibration methods for the WHUD 200.

[0089] Throughout this specification, Figures, as well as the appended claims, reference is often made to the eye of the user. For example, FIG. 1 depicts eye 190, and FIG. 2 depicts eye 290. In general, the systems, devices, and methods described herein are suitable for use in association with at least one eye of a user (e.g., 190, 290) but do not themselves include the eye of the user. In other words, eye 190 is not a part of WHUD 100 and eye 290 is not a part of WHUD 200.

[0090] The various implementations described herein generally reference and illustrate a single eye of a user (i.e., monocular applications), but a person of skill in the art will readily appreciate that the present systems, devices, and methods may be duplicated in a WHUD in order to provide scanned laser projection and/or scanned laser eye tracking for both eyes of the user (i.e., binocular applications).