Apparatus And Methods For Programming And Training Of Robotic Devices

Szatmary; Botond ; et al.

U.S. patent application number 16/131128, for apparatus and methods for programming and training of robotic devices, was filed with the patent office on September 14, 2018 and published on January 10, 2019. The applicant listed for this patent is Brain Corporation. The invention is credited to Oyvind Grotmol, Eugene Izhikevich, Oleg Sinyavskiy, Botond Szatmary.

| Publication Number | 20190009408 |
|---|---|
| Application Number | 16/131128 |
| Family ID | 55632136 |
| Publication Date | 2019-01-10 |
| Kind Code | A1 |
| Document Type | United States Patent Application |
| Inventors | Szatmary; Botond ; et al. |


Abstract

Apparatus and methods for training and operating robotic devices. A robotic controller may comprise a plurality of predictor apparatus configured to generate motor control output. One predictor may be operable in accordance with a pre-configured process; another predictor may be operable in accordance with a learning process configured based on a teaching signal. An adaptive combiner component may be configured to determine a combined control output; for example, a controller block may provide control output that may be combined with the predicted control output. The pre-programmed predictor may be configured to operate a robot to perform a task. Based on detection of a context, the controller may adaptively switch to using the control output of the learning process to perform the given task or another task. User feedback may be utilized during learning.

| Inventors: | Botond Szatmary (San Diego, CA); Oyvind Grotmol (San Diego, CA); Eugene Izhikevich (San Diego, CA); Oleg Sinyavskiy (San Diego, CA) |
|---|---|
| Applicant: | Brain Corporation |
| Family ID: | 55632136 |
| Appl. No.: | 16/131128 |
| Filed: | September 14, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 14613237 | Feb 3, 2015 | 10105841 |
| 16131128 | | |
| 62059039 | Oct 2, 2014 | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G06N 3/00 20130101; B25J 9/1607 20130101; G05D 1/0246 20130101; G06N 20/00 20190101; B25J 9/0081 20130101; B25J 9/1666 20130101; G06N 3/008 20130101; B25J 9/163 20130101; Y10S 901/01 20130101; Y10S 901/03 20130101; G05D 2201/02 20130101; B25J 9/1697 20130101; Y10S 901/47 20130101; G06N 3/049 20130101; Y10S 901/09 20130101; G05D 1/0088 20130101; B25J 9/1602 20130101; B25J 9/161 20130101 |

| International Class: | B25J 9/16 20060101 B25J009/16; B25J 9/00 20060101 B25J009/00; G06N 99/00 20060101 G06N099/00 |

Claims

1. An apparatus for controlling a robot, the apparatus comprising: a sensor interface configured to receive a first representation of an object associated with an environment of the robot; a predictor component configured to determine a first control output, the first control output being configured to cause the robot to execute a task in accordance with the first representation of the object; an input interface configured to receive a teaching input associated with a second representation of the object, the teaching input being configured to convey information related to a target trajectory associated with execution of the task by the robot; an evaluation component configured to provide a second control output based on an evaluation of the control signal and the teaching input; a learning predictor component configured to determine a third control output, the third control output being determined based on analysis of the first representation of the object and the second control output; and a combiner configured to combine the first control output and the third control output to produce a fourth control output, the fourth control output being configured to cause the robot to execute the task in accordance with the first representation of the object; wherein: execution of the task based on the fourth control output produces a first trajectory that is closer to the target trajectory compared to a second trajectory associated with execution of the task based on the first control output.

2. The apparatus of claim 1, wherein: the learning predictor component is operable in accordance with a learning process, the learning process being configured to determine an association between the object representation and the second control output; and the learning process is characterized by a learning configuration that is adapted based on an occurrence of the object representation contemporaneously with provisioning of the second control output.

3. The apparatus of claim 2, wherein: the learning process comprises a spiking neuron classifier, the spiking neuron classifier comprising a plurality of spiking neurons interconnected via a plurality of connections, individual ones of the plurality of connections being characterized by connection efficacy; and the learning configuration adaptation comprises modifying efficacy of one or more of the plurality of connections.

4. The apparatus of claim 2, wherein: the learning process comprises a look up table comprising a plurality of entries; and the determination of the third control output by the learning process comprises incrementing an entry of the plurality of entries based on an occurrence of the first representation of the object contemporaneously with provisioning of the third control output.

5. The apparatus of claim 2, wherein: the predictor component is further configured to provide to the learning process confidence information associated with the first control output; the learning process comprises a look up table comprising a plurality of entries; and the determination of the third control output by the learning process comprises incrementing an entry of the plurality of entries based on an occurrence of the first representation of the object contemporaneously with provisioning of the second control output and provided that the confidence information satisfies a given condition.

6. The apparatus of claim 2, wherein the first representation of the object, the first control output, and the second control output comprise a plurality of features of a first type and one or more features of a second type; and the determination of the third control output by the learning process comprises: determining a subset of features by randomly selecting a portion of the plurality of features and at least one feature from the second input features; comparing individual features of the subset to corresponding features of a plurality of training feature sets, individual ones of the plurality of training feature sets comprising a number of training features, the number being equal to or greater than the quantity of features within the subset of features; based on the comparison, determining a similarity measure for a given training set of the plurality of training feature sets, the similarity measure characterizing similarity between features of the subset and features of the given training set; responsive to the similarity measure breaching a threshold, selecting one or more training sets from the plurality of training sets; determining one or more potential control outputs, individual ones of the one or more potential control outputs being associated with a corresponding training set of the plurality of training sets; and determining the third control output based on a transformation obtained from the one or more potential control outputs; wherein: individual ones of the plurality of training feature sets comprise features of the first type and at least one feature of the second type; individual ones of the plurality of training feature sets are obtained during training operation of the robot, the training operation being performed responsive to receiving a training signal from the robot; and individual ones of the one or more potential control outputs being determined based on the training signal and the features of the given training set.

7. The apparatus of claim 6, wherein the similarity measure is determined based on a difference between values of the features of the subset and values of the features of the given training set.

8. The apparatus of claim 6, wherein the similarity measure is determined based on a distance between individual features of the subset of features and corresponding features of the given training set.

9. The apparatus of claim 1, wherein execution of the task based solely on the third control output is configured to produce a third trajectory that is farther from the target trajectory compared to the second trajectory.

10. The apparatus of claim 1, wherein: the robot comprises a vehicle, the vehicle comprising a platform configured to move in at least one dimension; the apparatus is disposed on the platform; and the training input is provided by an entity disposed external to the platform.

11. The apparatus of claim 10, further comprising: a wireless communications interface configured to receive remote data transmissions from the entity; wherein: the training input is provided via the remote data transmissions; and the training input is configured based on an evaluation of the second representation of the object, the second representation being distinct from the first representation.

12. The apparatus of claim 1, wherein the evaluation component is configured to produce the second control output based on a discrepancy between the control signal and the teaching input.

13. The apparatus of claim 12, wherein the combiner is operable in accordance with at least one of an addition or a union operation on the first control output and the third control output.

14. The apparatus of claim 1, wherein the combination is configured based on a concatenation of the first control output and the third control output.

15. The apparatus of claim 1, further comprising: another combiner component configured to combine the fourth control output and the training input to produce a motor control output, the combination of the fourth control output and the training input being characterized by a transfer function; wherein: the robot comprises an actuator configured to displace at least a portion of the robot in at least one dimension based on application of the motor control output; and the transfer function is configured to provide an override combination wherein the motor control output is configured based solely on the teaching input, provided the teaching signal satisfies a given condition.

16. A non-transitory computer-readable storage medium having instructions embodied thereon, the instructions being executable by a processor to perform a method of determining a combined control output for a task being executed by a robot, the method comprising: for a sensory context, determining a first instance of a control output using a pre-configured and non-learning prediction process and a second instance of the control output using a learning prediction process; combining the first instance of the control output with the second instance of the control output using a combination process to produce the combined control output; and causing the task to be executed responsive to providing the combined control output to the robot; wherein: the learning prediction process is configured to determine the second instance of the control output based on a teaching input indicative of a target trajectory associated with the task execution; and execution of the task by the robot in accordance with the combined control output is configured to produce a trajectory that is closer to the target trajectory compared to task execution based on one or both of the first instance of the control output or the second instance of the control output.

17. The method of claim 16, wherein: the learning process is configured to associate the sensory context with the target trajectory, the association being based on updating a parameter of the learning process based on a contemporaneous occurrence of the sensory context and the training input.

18. The method of claim 17, wherein: the robot comprises a plurality of actuators characterized by a first operational degree of freedom and a second operational degree of freedom; the first instance of the control output is configured for operating the first operational degree of freedom and the second instance of the control output is configured for operating the second operational degree of freedom; and the combination process comprises a concatenation operation.

19. A method of determining a control signal for a robot, the method being performed by one or more processors executing instructions stored by a non-transitory computer-readable storage medium, the method comprising: determining an occurrence of a first context in sensory input; accessing a learning process configuration, the learning process configuration adapted to convey an association between a given context and a respective action; determining a first action associated with the first context; responsive to the first action corresponding to a pre-programmed action, activating a pre-programmed predictor component to produce the control signal based on analysis of the sensory input; responsive to the first action corresponding to a learned action, activating a learning predictor component to produce the control signal based on analysis of the sensory input and a training input; and updating the learning process configuration in accordance with the activated component.

20. The method of claim 19, wherein: the robot comprises an autonomous vehicle and a collector apparatus; the pre-programmed action comprises an object search task configured based on a random exploration of environment by the robot; and the learned action comprises an object collection task configured based on a provision of the training input.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation of U.S. patent application Ser. No. 14/613,237, filed Feb. 3, 2015, now U.S. Pat. No. 10,105,841, which claims the priority benefit of co-pending and co-owned U.S. Provisional Patent Application Ser. No. 62/059,039 entitled "LEARNING APPARATUS AND METHODS", filed Oct. 2, 2014, which is incorporated herein by reference in its entirety. This application is related to co-pending and co-owned U.S. patent application Ser. No. 14/542,391 entitled "ROBOTIC NAVIGATION APPARATUS AND METHODS", filed Nov. 14, 2014; Ser. No. 14/070,239 entitled "REDUCED DEGREE OF FREEDOM ROBOTIC CONTROLLER APPARATUS AND METHODS", filed Nov. 1, 2013; Ser. No. 14/070,269 entitled "APPARATUS AND METHODS FOR OPERATING ROBOTIC DEVICES USING SELECTIVE STATE SPACE TRAINING", filed Nov. 1, 2013; Ser. No. 14/070,114 entitled "APPARATUS AND METHODS FOR ONLINE TRAINING OF ROBOTS", filed Nov. 1, 2013; Ser. No. 14/244,890 entitled "LEARNING APPARATUS AND METHODS FOR CONTROL OF ROBOTIC DEVICES", filed Apr. 3, 2014; Ser. No. 13/918,338 entitled "ROBOTIC TRAINING APPARATUS AND METHODS", filed Jun. 14, 2013; Ser. No. 13/918,298 entitled "HIERARCHICAL ROBOTIC CONTROLLER APPARATUS AND METHODS", filed Jun. 14, 2013; Ser. No. 13/907,734 entitled "ADAPTIVE ROBOTIC INTERFACE APPARATUS AND METHODS", filed May 31, 2013; Ser. No. 13/842,530 entitled "ADAPTIVE PREDICTOR APPARATUS AND METHODS", filed Mar. 15, 2013; Ser. No. 13/842,562 entitled "ADAPTIVE PREDICTOR APPARATUS AND METHODS FOR ROBOTIC CONTROL", filed Mar. 15, 2013; Ser. No. 13/842,616 entitled "ROBOTIC APPARATUS AND METHODS FOR DEVELOPING A HIERARCHY OF MOTOR PRIMITIVES", filed Mar. 15, 2013; Ser. No. 13/842,647 entitled "MULTICHANNEL ROBOTIC CONTROLLER APPARATUS AND METHODS", filed Mar. 15, 2013; and Ser. No. 13/842,583 entitled "APPARATUS AND METHODS FOR TRAINING OF ROBOTIC DEVICES", filed Mar. 15, 2013, each of the foregoing being incorporated herein by reference in its entirety.

COPYRIGHT

[0002] A portion of the disclosure of this patent document contains material that is subject to copyright protection. The copyright owner has no objection to the facsimile reproduction by anyone of the patent document or the patent disclosure, as it appears in the Patent and Trademark Office patent files or records, but otherwise reserves all copyright rights whatsoever.

FIELD OF THE DISCLOSURE

[0003] The present disclosure relates to, inter alia, computerized apparatus and methods for training of robotic devices to perform various tasks.

BACKGROUND

[0004] Robotic devices are used in a variety of industries, such as manufacturing, medical, safety, military, exploration, and/or other industries. Robotic "autonomy", i.e., the degree to which a robot operates without human control, varies significantly according to application. Some existing robotic devices (e.g., manufacturing assembly and/or packaging robots) may be programmed to provide desired functionality without further supervision. Other robotic devices (e.g., surgical robots) may be controlled by humans.

[0005] Robotic devices may comprise hardware components that enable the robot to perform actions in 1 dimension (e.g., a single range of movement), 2 dimensions (e.g., a plane of movement), and/or 3 dimensions (e.g., a space of movement). Typically, movement is characterized according to so-called "degrees of freedom". A degree of freedom is an independent range of movement; a mechanism with N possible independent relative movements is said to have N degrees of freedom. Some robotic devices may operate with multiple degrees of freedom (e.g., a turret and/or a crane arm configured to rotate around vertical and/or horizontal axes). Other robotic devices may be configured to follow one or more trajectories characterized by one or more state parameters (e.g., position, velocity, acceleration, orientation, and/or other parameters). Some robotic devices may also control multiple actuators (degrees of freedom) simultaneously, resulting in complex movements.

[0006] Users may utilize robots that provide functionality out of the box (i.e., pre-programmed functionality). Some users may train a robot using supervised learning to perform a task (e.g., navigation, manipulation, and/or other tasks).

SUMMARY

[0007] One aspect of the disclosure relates to an apparatus for controlling a robot. The apparatus may comprise a sensor interface, a predictor component, an input interface, an evaluation component, a learning predictor component, and a combiner. The sensor interface may be configured to receive a first representation of an object associated with an environment of the robot. The predictor component may be configured to determine a first control output. The first control output may be configured to cause the robot to execute a task in accordance with the first representation of the object. The input interface may be configured to receive a teaching input associated with a second representation of the object. The teaching input may be configured to convey information related to a target trajectory associated with execution of the task by the robot. The evaluation component may be configured to provide a second control output based on an evaluation of the control signal and the teaching input. The learning predictor component may be configured to determine a third control output. The third control output may be determined based on analysis of the first representation of the object and the second control output. The combiner may be configured to combine the first control output and the third control output to produce a fourth control output. The fourth control output may be configured to cause the robot to execute the task in accordance with the first representation of the object. Execution of the task based on the fourth control output may produce a first trajectory that is closer to the target trajectory compared to a second trajectory associated with execution of the task based on the first control output.
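The signal flow of paragraph [0007] can be sketched as follows. This is a minimal illustration under stated assumptions, not the disclosed implementation: control outputs are scalars, the pre-configured predictor is a hypothetical fixed gain on the first feature, the evaluation component is assumed to output the discrepancy between the teaching input and the first control output, the learning predictor is assumed to be a linear regressor trained with a delta (LMS) rule, and the combiner is assumed to be additive. All function and class names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


def preconfigured_predictor(x: List[float]) -> float:
    """Hypothetical pre-configured (non-learning) controller: fixed gain on feature 0."""
    return 0.5 * x[0]


def evaluate(first_output: float, teaching: float) -> float:
    """Assumed evaluation component: discrepancy between teaching input and first output."""
    return teaching - first_output


@dataclass
class LearningPredictor:
    """Hypothetical linear learning predictor trained with a delta (LMS) rule."""

    n_features: int
    rate: float = 0.1
    weights: List[float] = field(default_factory=list)

    def __post_init__(self) -> None:
        if not self.weights:
            self.weights = [0.0] * self.n_features

    def predict(self, x: List[float]) -> float:
        return sum(w * xi for w, xi in zip(self.weights, x))

    def update(self, x: List[float], target: float) -> None:
        # Move the prediction toward the evaluation output (second control output).
        err = target - self.predict(x)
        self.weights = [w + self.rate * err * xi for w, xi in zip(self.weights, x)]


def combine(first_output: float, third_output: float) -> float:
    """Assumed additive combiner producing the fourth control output."""
    return first_output + third_output


def control_step(learner: LearningPredictor, x: List[float],
                 teaching: Optional[float] = None) -> float:
    u1 = preconfigured_predictor(x)   # first control output
    u3 = learner.predict(x)           # third control output
    if teaching is not None:
        u2 = evaluate(u1, teaching)   # second control output
        learner.update(x, u2)
    return combine(u1, u3)            # fourth control output
```

In a usage scenario where the teacher wants an output equal to `x[0]` (with a constant bias feature appended), the learner gradually absorbs the residual `0.5 * x[0]` that the fixed controller misses, so the combined output approaches the target while the pre-configured output alone does not.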

[0008] In some implementations, the learning predictor component may be operable in accordance with a learning process. The learning process may be configured to determine an association between the object representation and the second control output. The learning process may be characterized by a learning configuration that is adapted based on an occurrence of the object representation contemporaneously with provisioning of the second control output.

[0009] In some implementations, the learning process may comprise a spiking neuron classifier. The spiking neuron classifier may comprise a plurality of spiking neurons interconnected via a plurality of connections. Individual ones of the plurality of connections may be characterized by connection efficacy. The learning configuration adaptation may comprise modifying efficacy of one or more of the plurality of connections.
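As a rough illustration of "modifying efficacy of one or more of the plurality of connections", the sketch below uses a single discrete-time leaky integrate-and-fire neuron and an assumed teacher-driven rule: efficacies of active inputs are raised when the neuron misses a requested spike and lowered when it fires without one. The neuron model, the rule, and all names are assumptions for illustration; the disclosure does not specify them here.

```python
from typing import List


class LIFNeuron:
    """Hypothetical discrete-time leaky integrate-and-fire neuron."""

    def __init__(self, n_inputs: int, threshold: float = 1.0, leak: float = 0.9) -> None:
        self.efficacy = [0.0] * n_inputs  # one efficacy value per input connection
        self.threshold = threshold
        self.leak = leak
        self.v = 0.0  # membrane potential

    def reset(self) -> None:
        self.v = 0.0

    def step(self, spikes: List[int]) -> int:
        # Leak, then integrate input spikes weighted by connection efficacy.
        self.v = self.leak * self.v + sum(w * s for w, s in zip(self.efficacy, spikes))
        if self.v >= self.threshold:
            self.v = 0.0  # reset after emitting an output spike
            return 1
        return 0


def train_step(neuron: LIFNeuron, spikes: List[int], teacher: int, rate: float = 0.2) -> int:
    """Assumed supervised rule: change efficacy of active inputs by rate * (teacher - output)."""
    out = neuron.step(spikes)
    delta = teacher - out  # +1 missed spike, -1 spurious spike, 0 correct
    if delta:
        neuron.efficacy = [w + rate * delta * s for w, s in zip(neuron.efficacy, spikes)]
    return out
```

Presenting one input pattern with a teaching spike and another without one strengthens only the connections active in the first pattern, after which the neuron responds to that pattern alone.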

[0010] In some implementations, the learning process may comprise a look up table comprising a plurality of entries. The determination of the third control output by the learning process may comprise incrementing an entry of the plurality of entries based on an occurrence of the first representation of the object contemporaneously with provisioning of the third control output.
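A count-based look-up table of the kind described in paragraph [0010] might look like the sketch below. It assumes that the object representation is discretized into a hashable context (here, a hypothetical bearing-sector encoding), that each table entry counts co-occurrences of a context with an output, and that the prediction is the most frequent output for the context. These choices are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Hashable, Optional


def discretize(bearing_deg: float, bin_width: float = 30.0) -> int:
    """Assumed context encoding: bin the object's bearing into angular sectors."""
    return int(bearing_deg // bin_width)


class LookupTablePredictor:
    """Hypothetical count-based look-up table mapping context -> control output."""

    def __init__(self) -> None:
        # counts[context][output] = number of co-occurrences observed so far
        self.counts: Dict[Hashable, Dict[Hashable, int]] = defaultdict(lambda: defaultdict(int))

    def update(self, context: Hashable, output: Hashable) -> None:
        # Increment the entry for this context/output pair.
        self.counts[context][output] += 1

    def predict(self, context: Hashable) -> Optional[Hashable]:
        row = self.counts.get(context)
        if not row:
            return None  # unseen context: no prediction
        return max(row, key=row.get)  # most frequent output for this context
```

For example, after observing "left" twice and "right" once for bearings in the 0 to 30 degree sector, the table predicts "left" for any bearing in that sector and returns `None` for sectors it has never seen.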

[0011] In some implementations, the predictor component may be configured to provide to the learning process confidence information associated with the first control output. The learning process may comprise a look up table comprising a plurality of entries. The determination of the third control output by the learning process may comprise incrementing an entry of the plurality of entries based on an occurrence of the first representation of the object contemporaneously with provisioning of the second control output and provided that the confidence information satisfies a given condition.
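Paragraph [0011] gates the table update on confidence information supplied by the pre-configured predictor. The sketch below assumes the "given condition" is that this confidence falls below a threshold, so the table only accumulates corrections where the pre-configured predictor is unsure; the opposite condition could be substituted without changing the structure. Class and method names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Hashable


class GatedLookupTable:
    """Hypothetical look-up table whose updates are gated by predictor confidence."""

    def __init__(self, confidence_threshold: float = 0.5) -> None:
        self.threshold = confidence_threshold
        self.counts: Dict[Hashable, Dict[Hashable, int]] = defaultdict(lambda: defaultdict(int))

    def update(self, context: Hashable, correction: Hashable, confidence: float) -> bool:
        """Increment the (context, correction) entry only if the gating condition holds.

        Assumed condition: confidence of the pre-configured predictor below threshold.
        Returns True when the table was modified.
        """
        if confidence >= self.threshold:
            return False
        self.counts[context][correction] += 1
        return True

    def count(self, context: Hashable, correction: Hashable) -> int:
        row = self.counts.get(context, {})
        return row.get(correction, 0)
```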

[0012] In some implementations, the first representation of the object, the first control output, and the second control output may comprise a plurality of features of a first type and one or more features of a second type. The determination of the third control output by the learning process may comprise: determining a subset of features by randomly selecting a portion of the plurality of features and at least one feature of the second type; comparing individual features of the subset to corresponding features of a plurality of training feature sets, individual ones of the plurality of training feature sets comprising a number of training features, the number being equal to or greater than the quantity of features within the subset of features; based on the comparison, determining a similarity measure for a given training set of the plurality of training feature sets, the similarity measure characterizing similarity between features of the subset and features of the given training set; responsive to the similarity measure breaching a threshold, selecting one or more training sets from the plurality of training sets; determining one or more potential control outputs, individual ones of the one or more potential control outputs being associated with a corresponding training set of the plurality of training sets; and determining the third control output based on a transformation obtained from the one or more potential control outputs. Individual ones of the plurality of training feature sets may comprise features of the first type and at least one feature of the second type. Individual ones of the plurality of training feature sets may be obtained during training operation of the robot. The training operation may be performed responsive to receiving a training signal from the robot. Individual ones of the one or more potential control outputs may be determined based on the training signal and the features of the given training set.
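The random-subset procedure of paragraph [0012] can be sketched as below. The sketch assumes features scaled to [0, 1], a similarity of one minus the mean absolute difference over the selected features, and the mean of the matching training outputs as the "transformation". The function name, the `TrainingSet` alias, and the default parameters are hypothetical.

```python
import random
from statistics import mean
from typing import List, Optional, Sequence, Tuple

TrainingSet = Tuple[List[float], float]  # (feature vector, control output recorded in training)


def predict_from_random_subset(
    x: Sequence[float],
    n_first: int,
    training: Sequence[TrainingSet],
    subset_size: int = 3,
    threshold: float = 0.8,
    rng: Optional[random.Random] = None,
) -> Optional[float]:
    """Hypothetical random-subset similarity predictor.

    Features 0..n_first-1 are of the first type (e.g., sensory input);
    the remaining features are of the second type (e.g., prior control output).
    """
    rng = rng or random.Random()
    first_idx = rng.sample(range(n_first), subset_size)  # random portion of first-type features
    second_idx = [rng.randrange(n_first, len(x))]        # at least one second-type feature
    subset = first_idx + second_idx

    candidates = []
    for features, output in training:
        # Similarity: 1 minus mean absolute difference over the selected features.
        sim = 1.0 - mean(abs(x[i] - features[i]) for i in subset)
        if sim > threshold:
            candidates.append(output)  # potential control output of a matching training set
    # Transformation of the potential outputs: here, their mean (an assumption).
    return mean(candidates) if candidates else None
```

Drawing a fresh random subset on each call makes individual predictions depend on different feature combinations, which is one way to reduce sensitivity to any single noisy feature; averaging several such calls would be a natural extension.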

[0013] In some implementations, the similarity measure may be determined based on a difference between values of the features of the subset and values of the features of the given training set.

[0014] In some implementations, the similarity measure may be determined based on a distance between individual features of the subset of features and corresponding features of the given training set.
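Paragraphs [0013] and [0014] name two families of similarity measure. The sketch below shows one assumed instance of each: the negated sum of absolute feature differences, and a similarity derived from Euclidean distance that equals 1 for identical feature sets and decays toward 0 as they diverge. Both function names and forms are illustrative assumptions.

```python
import math
from typing import Sequence


def difference_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Assumed difference-based measure: negated sum of absolute feature differences."""
    return -sum(abs(x - y) for x, y in zip(a, b))


def distance_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Assumed distance-based measure: 1 / (1 + Euclidean distance)."""
    return 1.0 / (1.0 + math.dist(a, b))
```

For feature sets `[0, 0]` and `[3, 4]`, the difference-based measure is -7 while the Euclidean distance is 5, giving a distance-based similarity of 1/6. Either value can then be compared against the threshold described in paragraph [0012].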

[0015] In some implementations, execution of the task based solely on the third control output may be configured to produce a third trajectory that is farther from the target trajectory compared to the second trajectory.
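"Closer to the target trajectory" needs a metric. One assumed choice is the mean Euclidean distance between time-aligned samples of the executed and target trajectories, sketched below with a hypothetical `mean_deviation` helper.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def mean_deviation(trajectory: List[Point], target: List[Point]) -> float:
    """Mean Euclidean distance between time-aligned trajectory samples (assumed metric)."""
    if len(trajectory) != len(target):
        raise ValueError("trajectories must be sampled at the same time steps")
    return sum(math.dist(p, q) for p, q in zip(trajectory, target)) / len(target)
```

With a straight-line target along y = 0, a pre-configured trajectory offset by 0.4, a combined trajectory offset by 0.1, and a learning-only trajectory offset by 0.6 (illustrative numbers, not measured results), the metric orders them as the claims describe: combined closest, learning-only farthest.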

[0016] In some implementations, the robot may comprise a vehicle. The vehicle may comprise a platform configured to move in at least one dimension. The apparatus may be disposed on the platform. The training input may be provided by an entity disposed external to the platform.

[0017] In some implementations, the apparatus may comprise a wireless communications interface configured to receive remote data transmissions from the entity. The training input may be provided via the remote data transmissions. The training input may be configured based on an evaluation of the second representation of the object. The second representation may be distinct from the first representation.

[0018] In some implementations, the evaluation component may be configured to produce the second control output based on a discrepancy between the control signal and the teaching input.

[0019] In some implementations, the combiner may be operable in accordance with at least one of an addition or a union operation on the first control output and the third control output.

[0020] In some implementations, the combination may be configured based on a concatenation of the first control output and the third control output.
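The three combiner operations named in paragraphs [0019] and [0020] can be illustrated as follows. Addition assumes both outputs drive the same degrees of freedom; union assumes a discrete encoding such as a set of activated motor primitives; concatenation assumes each predictor drives its own degrees of freedom, as in paragraph [0024]. The function names and encodings are hypothetical.

```python
from typing import List, Set


def combine_add(u1: List[float], u3: List[float]) -> List[float]:
    """Element-wise sum: both outputs drive the same degrees of freedom."""
    return [a + b for a, b in zip(u1, u3)]


def combine_union(u1: Set[str], u3: Set[str]) -> Set[str]:
    """Union of discrete outputs, e.g., sets of activated motor primitives (assumed encoding)."""
    return u1 | u3


def combine_concat(u1: List[float], u3: List[float]) -> List[float]:
    """Concatenation: each predictor drives its own degrees of freedom."""
    return u1 + u3
```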

[0021] In some implementations, the apparatus may comprise another combiner component configured to combine the fourth control output and the training input to produce a motor control output. The combination of the fourth control output and the training input may be characterized by a transfer function. The robot may comprise an actuator configured to displace at least a portion of the robot in at least one dimension based on application of the motor control output. The transfer function may be configured to provide an override combination wherein the motor control output is configured based solely on the teaching input, provided the teaching signal satisfies a given condition.
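One possible override transfer function for the second combiner of paragraph [0021] is sketched below. The "given condition" is assumed to be that the teaching input's magnitude exceeds a threshold; below it, the teaching input is added to the combined output, and without any teaching input the combined output passes through unchanged. The function name and threshold are hypothetical.

```python
from typing import Optional


def motor_output(combined: float, teaching: Optional[float],
                 override_threshold: float = 0.5) -> float:
    """Hypothetical transfer function for the second combiner.

    - No teaching input: pass the combined (fourth) control output through.
    - Teaching magnitude above threshold (assumed condition): override, use teaching alone.
    - Otherwise: additive combination of combined output and teaching input.
    """
    if teaching is None:
        return combined
    if abs(teaching) > override_threshold:
        return teaching
    return combined + teaching
```

The override branch lets a trainer take over the actuator outright with a strong correction, while weak corrections only nudge the robot's own output.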

[0022] Another aspect of the disclosure relates to a non-transitory computer-readable storage medium having instructions embodied thereon. The instructions may be executable by a processor to perform a method of determining a combined control output for a task being executed by a robot. The method may comprise: for a sensory context, determining a first instance of a control output using a pre-configured and non-learning prediction process and a second instance of the control output using a learning prediction process; combining the first instance of the control output with the second instance of the control output using a combination process to produce the combined control output; and causing the task to be executed responsive to providing the combined control output to the robot. The learning prediction process may be configured to determine the second instance of the control output based on a teaching input indicative of a target trajectory associated with the task execution. Execution of the task by the robot in accordance with the combined control output may be configured to produce a trajectory that is closer to the target trajectory compared to task execution based on one or both of the first instance of the control output or the second instance of the control output.

[0023] In some implementations, the learning process may be configured to associate the sensory context with the target trajectory. The association may be based on updating a parameter of the learning process based on a contemporaneous occurrence of the sensory context and the training input.

[0024] In some implementations, the robot may comprise a plurality of actuators characterized by a first operational degree of freedom and a second operational degree of freedom. The first instance of the control output may be configured for operating the first operational degree of freedom and the second instance of the control output may be configured for operating the second operational degree of freedom. The combination process may comprise a concatenation operation.

[0025] Yet another aspect of the disclosure relates to a method of determining a control signal for a robot. The method may be performed by one or more processors executing instructions stored by a non-transitory computer-readable storage medium. The method may comprise: determining an occurrence of a first context in sensory input; accessing a learning process configuration, the learning process configuration adapted to convey an association between a given context and a respective action; determining a first action associated with the first context; responsive to the first action corresponding to a pre-programmed action, activating a pre-programmed predictor component to produce the control signal based on analysis of the sensory input; responsive to the first action corresponding to a learned action, activating a learning predictor component to produce the control signal based on analysis of the sensory input and a training input; and updating the learning process configuration in accordance with the activated component.

[0026] In some implementations, the robot may comprise an autonomous vehicle and a collector apparatus. The pre-programmed action may comprise an object search task configured based on a random exploration of the environment by the robot. The learned action may comprise an object collection task configured based on a provision of the training input.
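The context-switching method of paragraphs [0025] and [0026] can be sketched as a controller that detects a context, looks up the associated action, and activates either a pre-programmed or a learned predictor. The context detector (an object counts as visible if any feature exceeds 0.5), the predictor signatures, and the representation of the configuration update as a per-action activation counter are all assumptions; the disclosure does not specify these forms here.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Sequence

# Hypothetical predictor signature: (sensory input, optional training input) -> control signal.
Predictor = Callable[[Sequence[float], Optional[float]], float]


@dataclass
class HybridController:
    """Hypothetical context-switched controller following the steps of [0025]."""

    preprogrammed: Dict[str, Predictor]
    learned: Dict[str, Predictor]
    context_to_action: Dict[str, str] = field(default_factory=dict)
    usage: Dict[str, int] = field(default_factory=dict)

    def detect_context(self, sensory: Sequence[float]) -> str:
        # Assumed context detector: an object is "visible" if any feature exceeds 0.5.
        return "object_visible" if max(sensory) > 0.5 else "no_object"

    def step(self, sensory: Sequence[float], training_input: Optional[float] = None) -> float:
        context = self.detect_context(sensory)
        action = self.context_to_action[context]
        if action in self.preprogrammed:
            signal = self.preprogrammed[action](sensory, None)
        else:
            signal = self.learned[action](sensory, training_input)
        # Assumed stand-in for the configuration update: count activations per action.
        self.usage[action] = self.usage.get(action, 0) + 1
        return signal
```

Mapping "no_object" to a pre-programmed search routine and "object_visible" to a learned collection routine mirrors the example of paragraph [0026]; the lambdas in such a setup would be placeholders for real predictors.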

[0027] These and other objects, features, and characteristics of the system and/or method disclosed herein, as well as the methods of operation and functions of the related elements of structure and the combination of parts and economies of manufacture, will become more apparent upon consideration of the following description and the appended claims with reference to the accompanying drawings, all of which form a part of this specification, wherein like reference numerals designate corresponding parts in the various figures. It is to be expressly understood, however, that the drawings are for the purpose of illustration and description only and are not intended as a definition of the limits of the disclosure. As used in the specification and in the claims, the singular forms "a", "an", and "the" include plural referents unless the context clearly dictates otherwise.

BRIEF DESCRIPTION OF THE DRAWINGS

[0028] FIG. 1 is a graphical illustration depicting a robotic apparatus comprising an adaptive controller configured for autonomous navigation, in accordance with one or more implementations.

[0029] FIG. 2 is a functional block diagram illustrating a robotic system comprising an adaptive predictor component, according to some implementations.

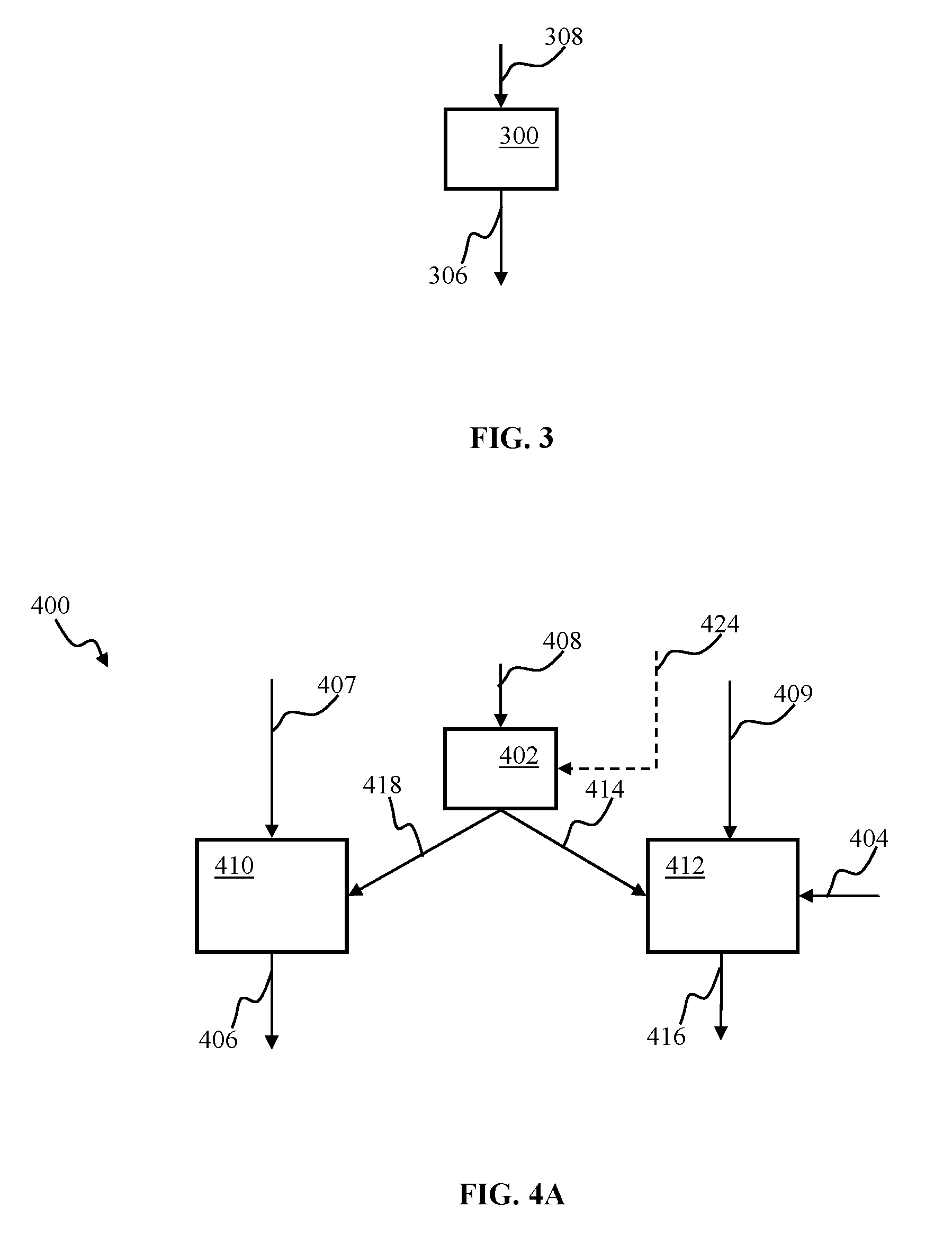

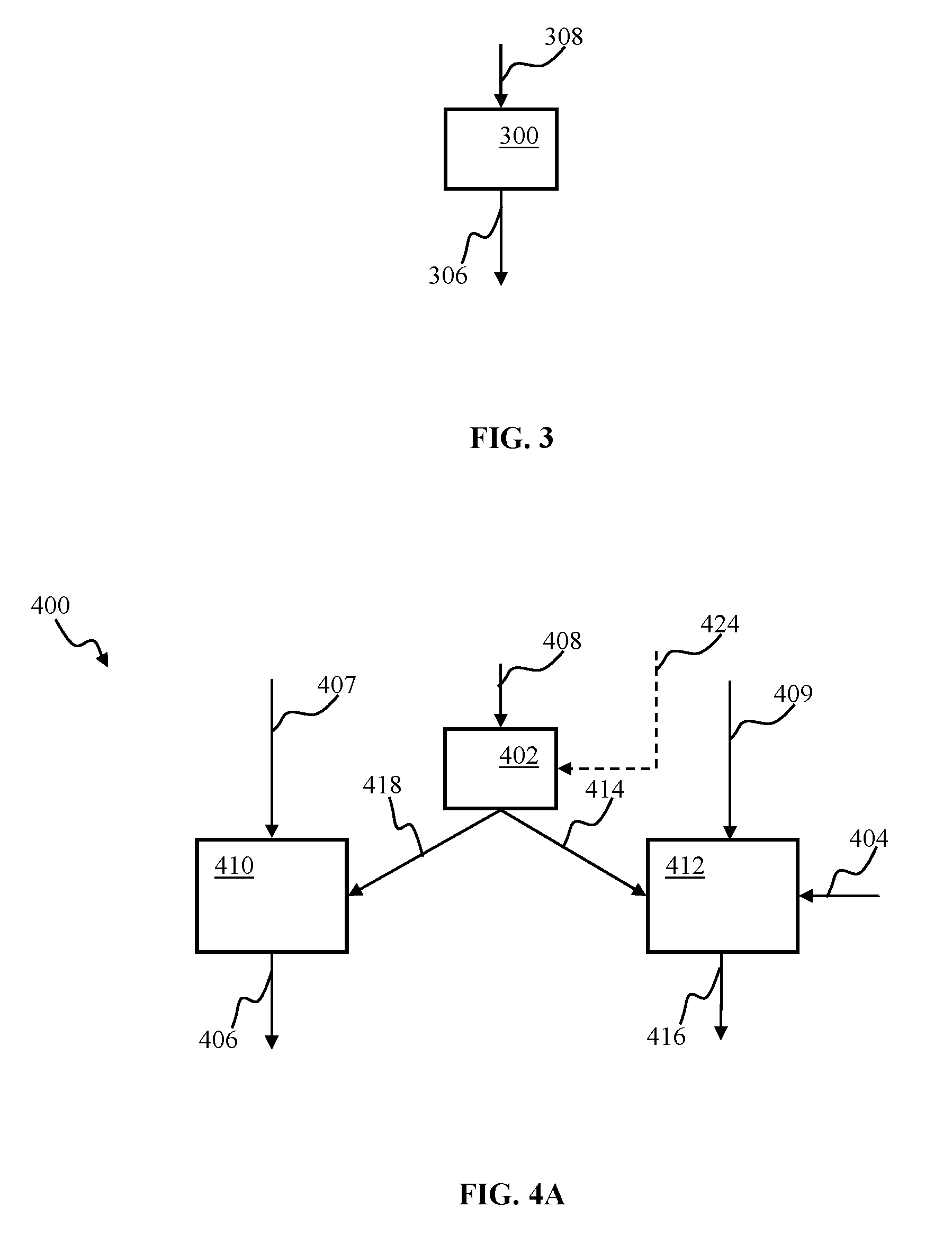

[0030] FIG. 3 is a block diagram illustrating an unattended robotic controller configured to determine a control output based on sensory input, according to one or more implementations.

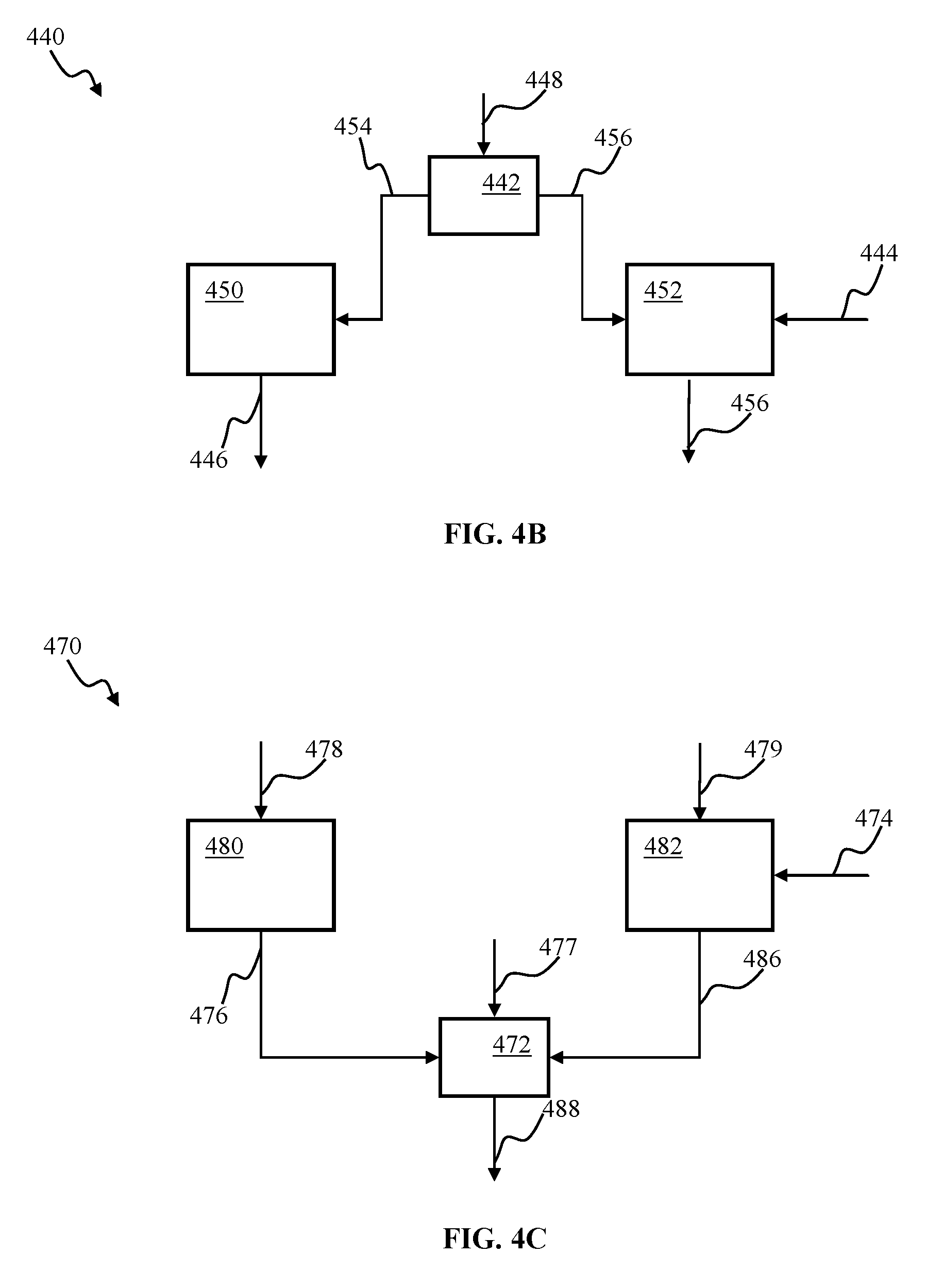

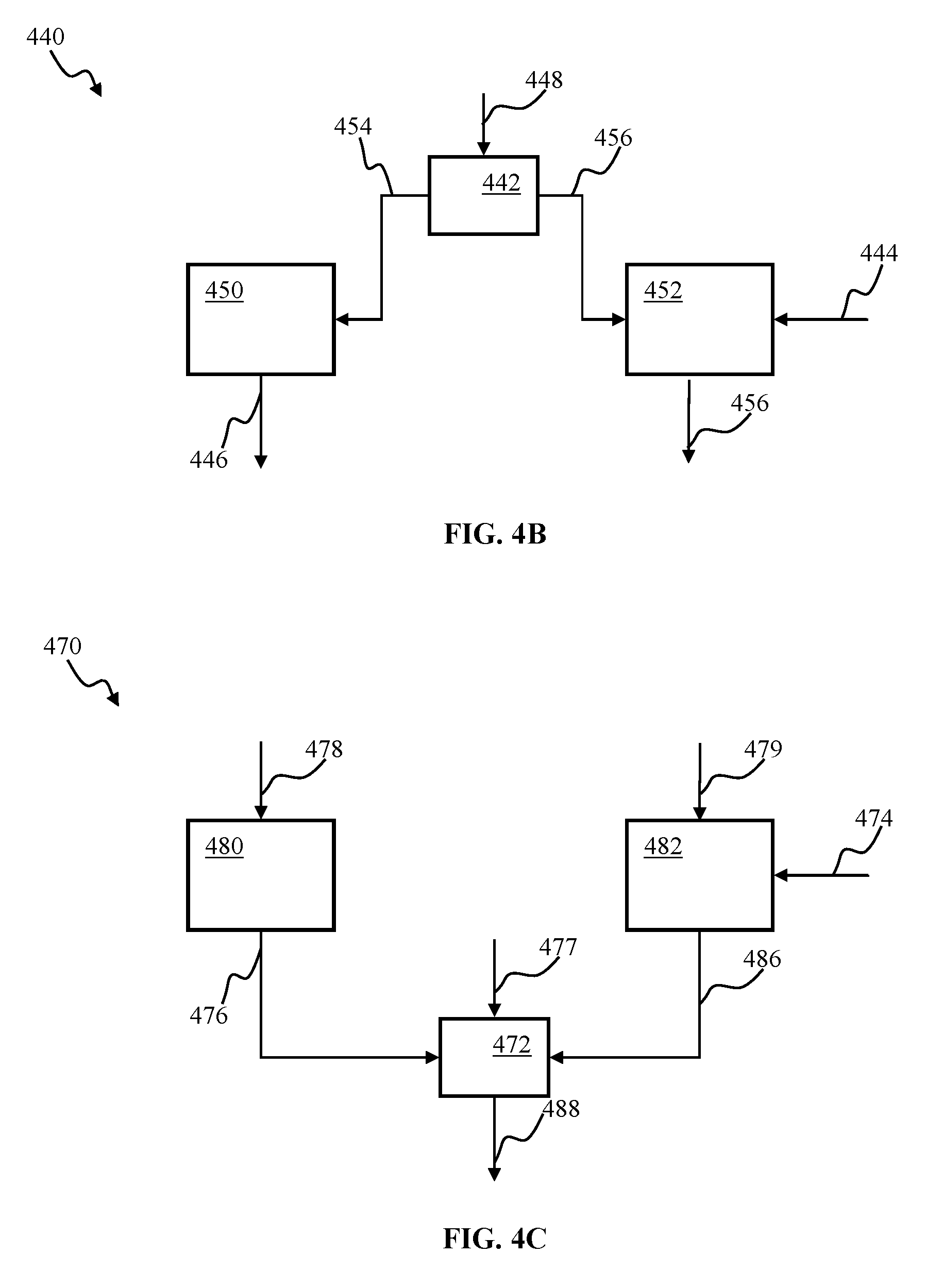

[0031] FIG. 4A is a block diagram illustrating a hybrid controller apparatus comprising a switching component, according to one or more implementations.

[0032] FIG. 4B is a block diagram illustrating a hybrid robotic controller apparatus comprising input switching, according to one or more implementations.

[0033] FIG. 4C is a block diagram illustrating a hybrid robotic controller apparatus comprising output switching, according to one or more implementations.

[0034] FIG. 5A is a block diagram illustrating a control apparatus comprising two cascaded predictors configured to determine a control output based on sensory input, according to one or more implementations.

[0035] FIG. 5B is a block diagram illustrating a control apparatus comprising cascaded predictors configured to determine a control output based on sensory input, according to one or more implementations.

[0036] FIG. 6 is a functional block diagram illustrating components of a robotic controller apparatus for use with the programming and training robotic control methodology, in accordance with one or more implementations.

[0037] FIG. 7 is a functional block diagram depicting a system comprising robotic controller apparatus, according to one or more implementations.

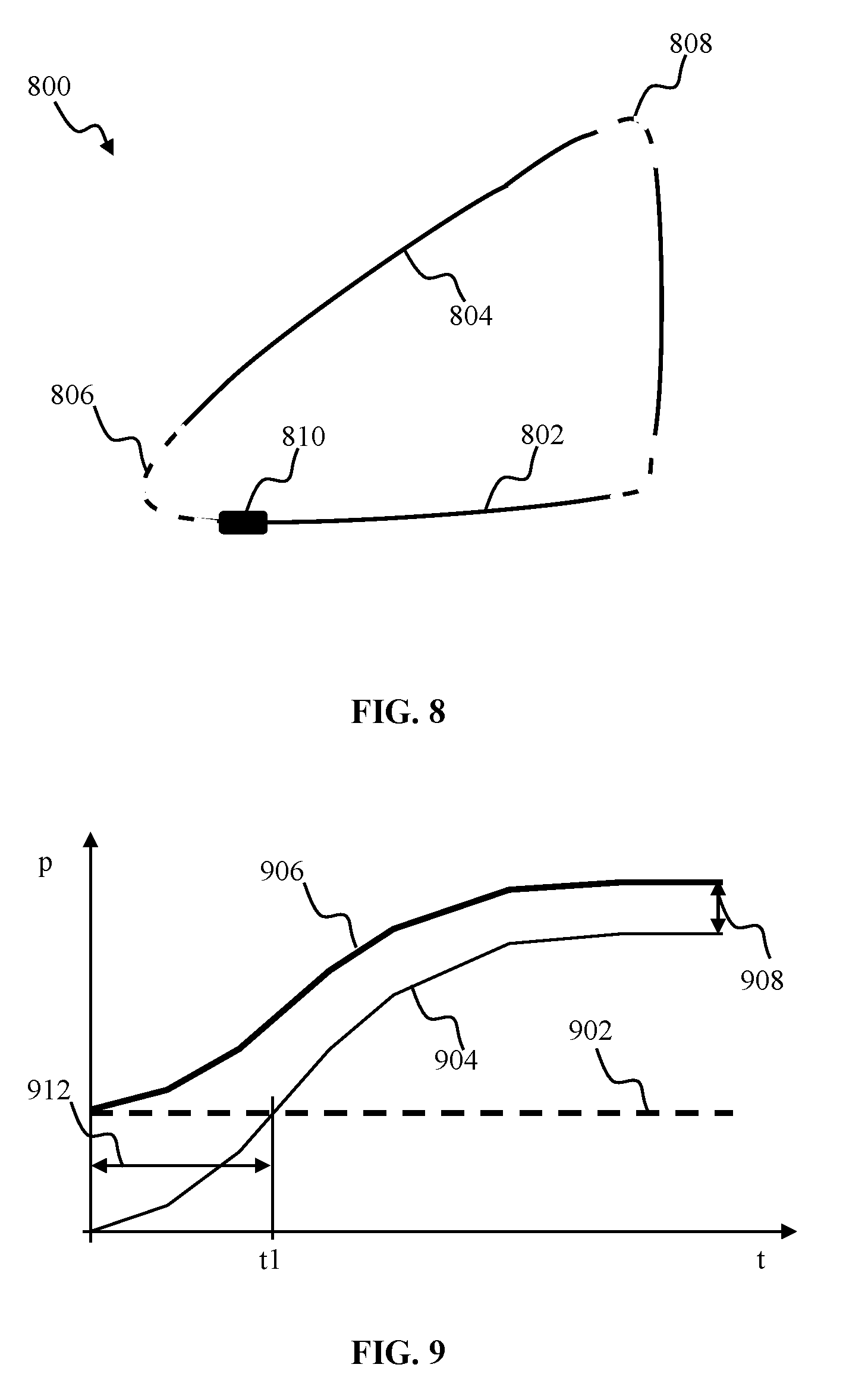

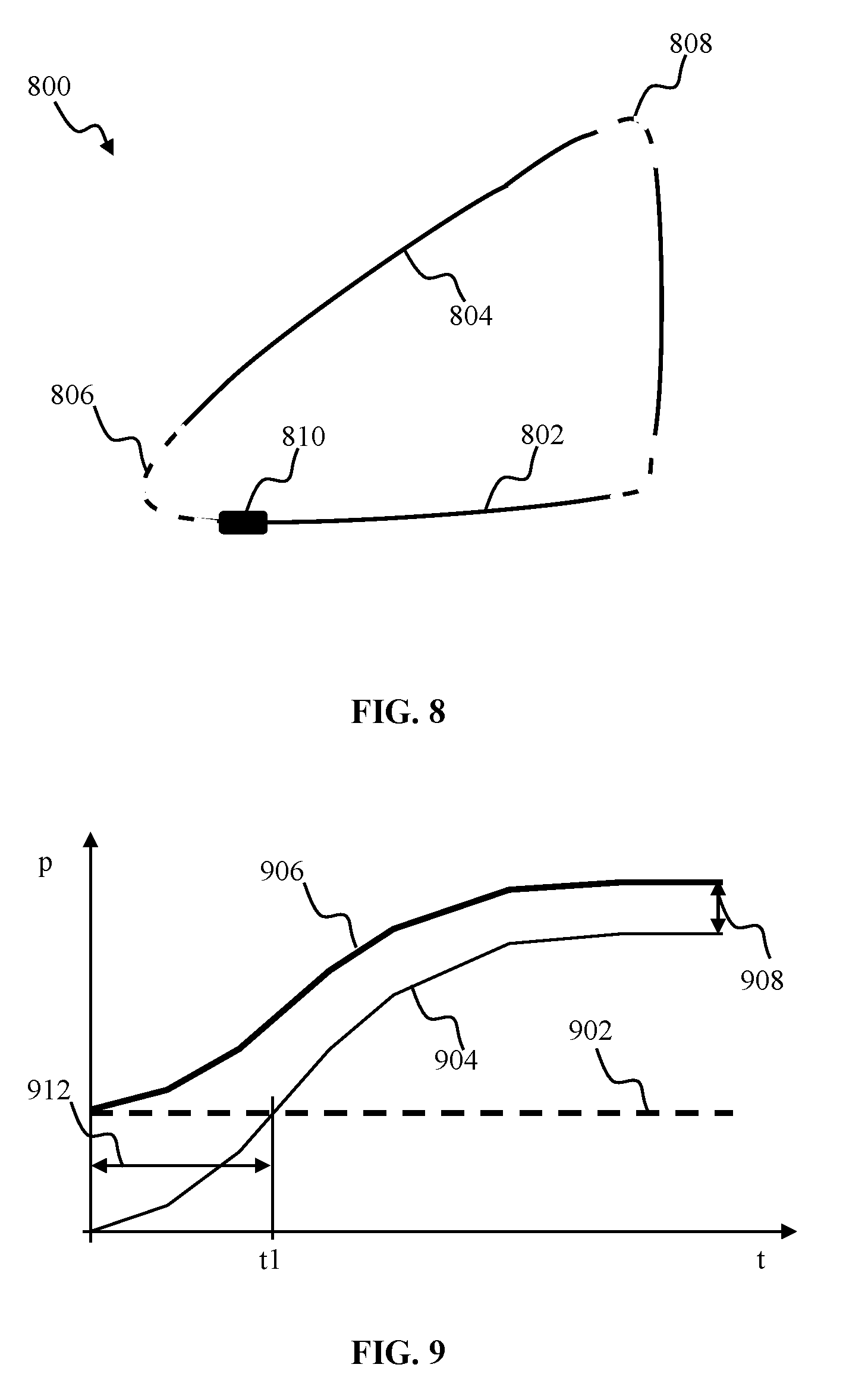

[0038] FIG. 8 is a graphical illustration depicting navigation of an exemplary trajectory by a robotic vehicle configured for operation with the programming and training robotic control methodology of the disclosure, in accordance with one or more implementations.

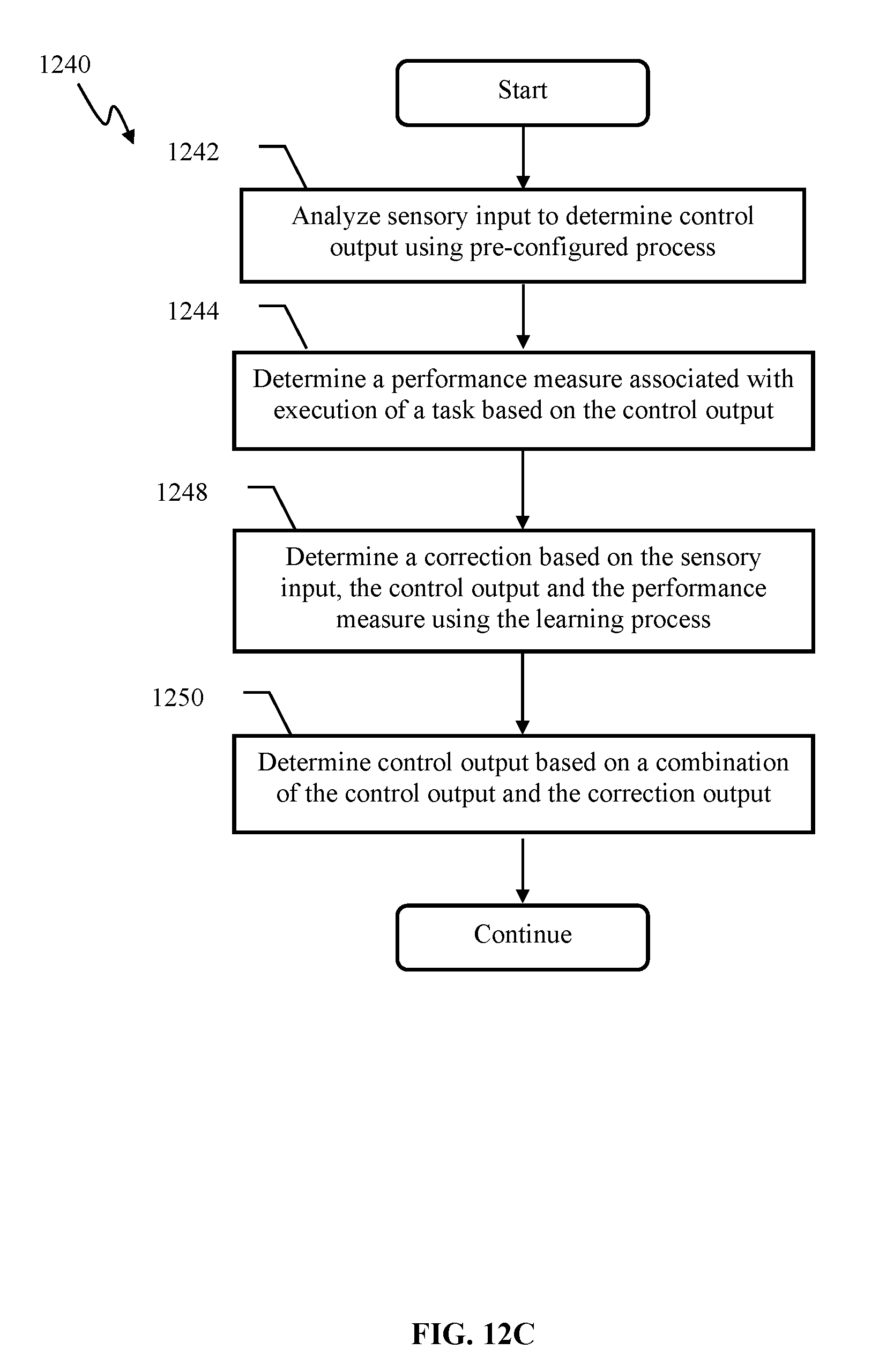

[0039] FIG. 9 is a plot depicting performance of a controller apparatus of, e.g., FIGS. 4A-4C, according to one or more implementations.

[0040] FIG. 10 is an isometric view of the EyeRover™ robotic apparatus comprising an articulated camera component and configured for use with the programming and training robotic control methodology of the disclosure, in accordance with one or more implementations.

[0041] FIG. 11 is a graphical illustration depicting target following by, e.g., the camera of the EyeRover™ apparatus of FIG. 10, in accordance with one or more implementations.

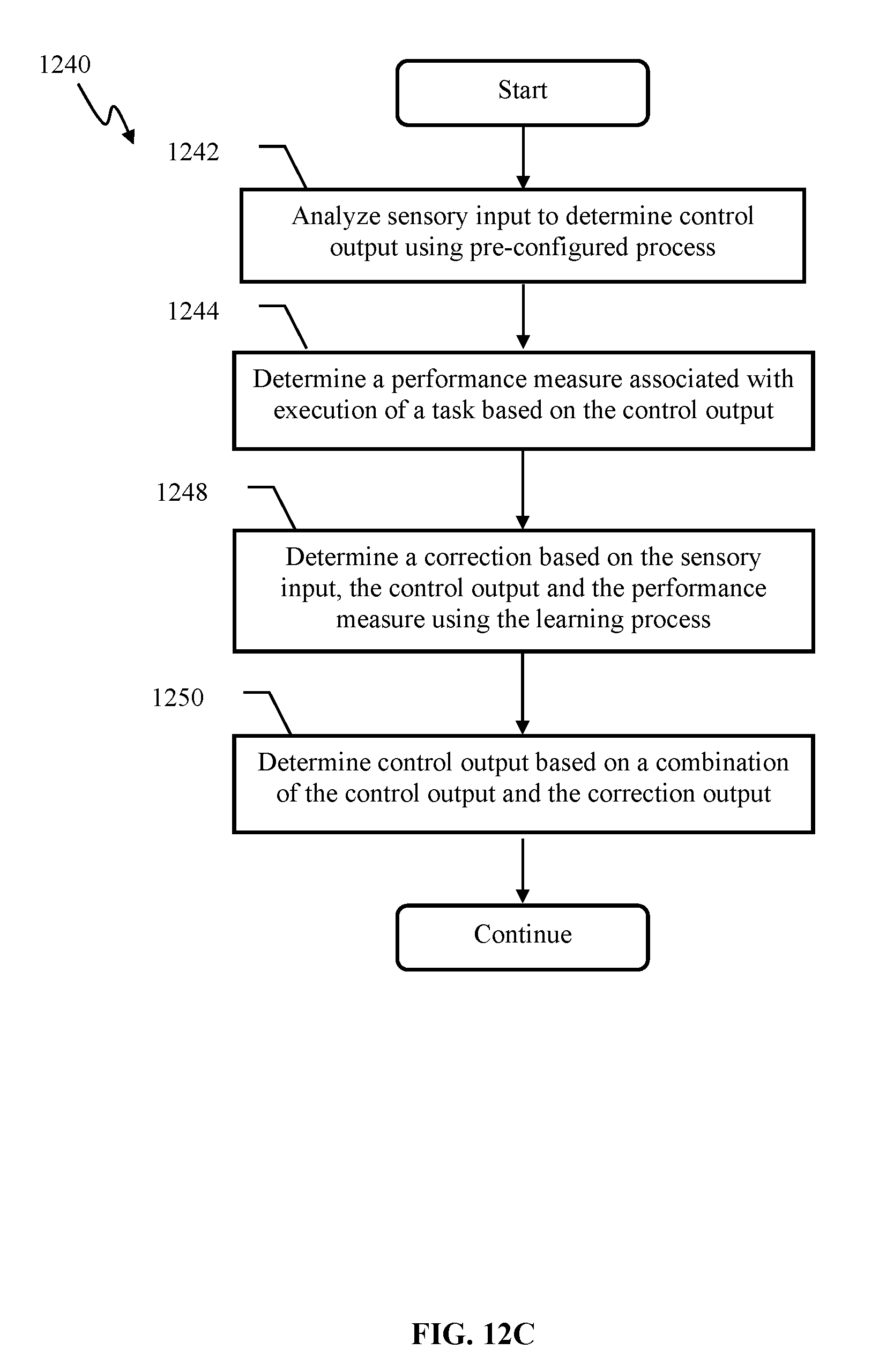

[0042] FIGS. 12A-12C illustrate exemplary methods of operating an adaptive apparatus comprising pre-configured and learning prediction components, according to one or more implementations.

[0043] All Figures disclosed herein are © Copyright 2018 Brain Corporation. All rights reserved.

DETAILED DESCRIPTION

[0044] Implementations of the present disclosure will now be described in detail with reference to the drawings, which are provided as illustrative examples so as to enable those skilled in the art to practice the present technology. Notably, the figures and examples below are not meant to limit the scope of the present disclosure to a single implementation, but other implementations are possible by way of interchange of or combination with some or all of the described or illustrated elements. Wherever convenient, the same reference numbers will be used throughout the drawings to refer to same or like parts.

[0045] Although the system(s) and/or method(s) of this disclosure have been described in detail for the purpose of illustration based on what is currently considered to be the most practical and preferred implementations, it is to be understood that such detail is solely for that purpose and that the disclosure is not limited to the disclosed implementations, but, on the contrary, is intended to cover modifications and equivalent arrangements that are within the spirit and scope of the appended claims. For example, it is to be understood that the present disclosure contemplates that, to the extent possible, one or more features of any implementation may be combined with one or more features of any other implementation.

[0046] In the present disclosure, an implementation showing a singular component should not be considered limiting; rather, the disclosure is intended to encompass other implementations including a plurality of the same component, and vice-versa, unless explicitly stated otherwise herein.

[0047] Further, the present disclosure encompasses present and future known equivalents to the components referred to herein by way of illustration.

[0048] As used herein, the term "bus" is meant generally to denote all types of interconnection or communication architecture that are used to access the synaptic and neuron memory. The "bus" could be optical, wireless, infrared or another type of communication medium. The exact topology of the bus could be, for example, a standard "bus", hierarchical bus, network-on-chip, address-event-representation (AER) connection, or other type of communication topology used for accessing, e.g., different memories in a pulse-based system.

[0049] As used herein, the terms "computer", "computing device", and "computerized device" include, but are not limited to, personal computers (PCs) and minicomputers, whether desktop, laptop, or otherwise, mainframe computers, workstations, servers, personal digital assistants (PDAs), handheld computers, embedded computers, programmable logic devices, personal communicators, tablet or "phablet" computers, portable navigation aids, J2ME equipped devices, smart TVs, cellular telephones, smart phones, personal integrated communication or entertainment devices, or literally any other device capable of executing a set of instructions and processing an incoming data signal.

[0050] As used herein, the term "computer program" or "software" is meant to include any sequence of human or machine cognizable steps which perform a function. Such program may be rendered in virtually any programming language or environment including, for example, C/C++, C#, Fortran, COBOL, MATLAB™, PASCAL, Python, assembly language, markup languages (e.g., HTML, SGML, XML, VoXML), and the like, as well as object-oriented environments such as the Common Object Request Broker Architecture (CORBA), Java™ (including J2ME, Java Beans), Binary Runtime Environment (e.g., BREW), and other languages.

[0051] As used herein, the terms "connection", "link", "synaptic channel", "transmission channel", "delay line", are meant generally to denote a causal link between any two or more entities (whether physical or logical/virtual), which enables information exchange between the entities.

[0052] As used herein, the term "feature" may refer to a representation of an object edge, determined by change in color, luminance, brightness, transparency, texture, and/or curvature. The object features may comprise, inter alia, individual edges, intersections of edges (such as corners), orifices, and/or curvature.

[0053] As used herein, the term "memory" includes any type of integrated circuit or other storage device adapted for storing digital data including, without limitation, ROM, PROM, EEPROM, DRAM, Mobile DRAM, SDRAM, DDR/2 SDRAM, EDO/FPMS, RLDRAM, SRAM, "flash" memory (e.g., NAND/NOR), memristor memory, and PSRAM.

[0054] As used herein, the terms "processor", "microprocessor" and "digital processor" are meant generally to include all types of digital processing devices including, without limitation, digital signal processors (DSPs), reduced instruction set computers (RISC), general-purpose (CISC) processors, microprocessors, gate arrays (e.g., field programmable gate arrays (FPGAs)), PLDs, reconfigurable computer fabrics (RCFs), array processors, secure microprocessors, and application-specific integrated circuits (ASICs). Such digital processors may be contained on a single unitary IC die, or distributed across multiple components.

[0055] As used herein, the term "network interface" refers to any signal, data, or software interface with a component, network or process including, without limitation, those of the FireWire (e.g., FW400, FW800, and/or other FireWire implementation), USB (e.g., USB2), Ethernet (e.g., 10/100, 10/100/1000 (Gigabit Ethernet), 10-Gig-E, and/or other technologies), MoCA, Coaxsys (e.g., TVnet™), radio frequency tuner (e.g., in-band or OOB, cable modem, and/or other technologies), Wi-Fi (802.11), WiMAX (802.16), PAN (e.g., 802.15), cellular (e.g., 3G, LTE/LTE-A/TD-LTE, GSM, and/or other cellular interface implementation) or IrDA families.

[0056] As used herein, the terms "pulse", "spike", "burst of spikes", and "pulse train" are meant generally to refer to, without limitation, any type of a pulsed signal, e.g., a rapid change in some characteristic of a signal, e.g., amplitude, intensity, phase or frequency, from a baseline value to a higher or lower value, followed by a rapid return to the baseline value and may refer to any of a single spike, a burst of spikes, an electronic pulse, a pulse in voltage, a pulse in electrical current, a software representation of a pulse and/or burst of pulses, a software message representing a discrete pulsed event, and any other pulse or pulse type associated with a discrete information transmission system or mechanism.

[0057] As used herein, the term "Wi-Fi" refers to, without limitation, any of the variants of IEEE-Std. 802.11 or related standards including 802.11 a/b/g/n/s/v and 802.11-2012.

[0058] As used herein, the term "wireless" means any wireless signal, data, communication, or other interface including without limitation Wi-Fi, Bluetooth, 3G (3GPP/3GPP2), HSDPA/HSUPA, TDMA, CDMA (e.g., IS-95A, WCDMA, and/or other wireless interface implementation), FHSS, DSSS, GSM, PAN/802.15, WiMAX (802.16), 802.20, narrowband/FDMA, OFDM, PCS/DCS, LTE/LTE-A/TD-LTE, analog cellular, CDPD, RFID or NFC (e.g., EPC Global Gen. 2, ISO 14443, ISO 18000-3), satellite systems, millimeter wave or microwave systems, acoustic, and infrared (e.g., IrDA).

[0059] FIG. 1 depicts a mobile robotic apparatus 100 that may be configured with an adaptive controller in accordance with one or more implementations of, e.g., the learning apparatuses illustrated in FIGS. 2-5, infra. The robotic apparatus 100 may comprise a sensor component 166. The sensor component 166 may be characterized by an aperture and/or field of view 168. Generally speaking, a field of view may be described as an extent of the observable world that may be captured by the sensor at a given moment. The sensor component 166 may provide information associated with objects within the field-of-view 168. In one or more implementations, such as object recognition, and/or obstacle avoidance, the output provided by the sensor component 166 may comprise a stream of pixel values associated with one or more digital images. In one or more implementations wherein the sensor 166 may comprise video, radar, sonography, x-ray, magnetic resonance imaging, and/or other types of sensors, the sensor output may be based on electromagnetic waves (e.g., visible light, infrared (IR), ultraviolet (UV), and/or other types of electromagnetic waves) entering an imaging sensor array. In some implementations, the imaging sensor array may comprise one or more of artificial retinal ganglion cells (RGCs), a charge coupled device (CCD), an active-pixel sensor (APS), and/or other sensors. The input signal may comprise a sequence of images and/or image frames. The sequence of images and/or image frames may be received from a CCD camera via a receiver apparatus and/or downloaded from a file. The image may comprise a two-dimensional matrix of red/green/blue (RGB) values refreshed at a 25 Hz frame rate. In some implementations, the RGB image data may be augmented with range (visual scene depth) data to produce an RGB-D frame.
It will be appreciated by those skilled in the arts that the above image parameters are merely exemplary, and many other image representations (e.g., bitmap, CMYK, HSV, HSL, grayscale, and/or other representations) and/or frame rates may be utilized in various implementations of the present disclosure. Pixels and/or groups of pixels associated with objects and/or features in the input frames may be encoded using, for example, latency encoding described in co-owned U.S. patent application Ser. No. 12/869,583, filed Aug. 26, 2010 and entitled "INVARIANT PULSE LATENCY CODING SYSTEMS AND METHODS"; U.S. Pat. No. 8,315,305, issued Nov. 20, 2012, and entitled "SYSTEMS AND METHODS FOR INVARIANT PULSE LATENCY CODING"; U.S. patent application Ser. No. 13/152,084, filed Jun. 2, 2011, and entitled "APPARATUS AND METHODS FOR PULSE-CODE INVARIANT OBJECT RECOGNITION"; and/or latency encoding comprising a temporal winner take all mechanism described in U.S. patent application Ser. No. 13/757,607, filed Feb. 1, 2013, and entitled "TEMPORAL WINNER TAKES ALL SPIKING NEURON NETWORK SENSORY PROCESSING APPARATUS AND METHODS", each of the foregoing being incorporated herein by reference in its entirety.
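As a generic illustration of latency coding (not the specific method of the applications cited above), pixel intensity may be mapped to spike latency so that brighter pixels fire earlier. The sketch below assumes 8-bit grayscale intensities, a logarithmic intensity-to-latency mapping, and a 40 ms coding window chosen to match one frame period at the 25 Hz rate mentioned above (1/25 s = 40 ms); the function name and parameters are hypothetical.

```python
import math
from typing import List, Optional


def latency_encode(pixels: List[int], t_max_ms: float = 40.0,
                   threshold: int = 1) -> List[Optional[float]]:
    """Map 8-bit pixel intensities to spike latencies in milliseconds.

    Brighter pixels spike earlier; pixels below `threshold` emit no spike (None).
    The logarithmic mapping and the 40 ms window are illustrative assumptions.
    """
    latencies: List[Optional[float]] = []
    for p in pixels:
        if p < threshold:
            latencies.append(None)  # too dim: no spike within this frame
            continue
        # log(255 / p) is 0 for the brightest pixel and grows as p decreases;
        # dividing by log(255 / threshold) scales the dimmest spiking pixel to t_max_ms.
        latency = t_max_ms * math.log(255.0 / p) / math.log(255.0 / threshold)
        latencies.append(latency)
    return latencies


# The brightest pixel spikes at 0.0 ms; the black pixel produces no spike (None).
print(latency_encode([255, 128, 16, 0]))
```

A logarithmic mapping is a common choice because it makes relative latencies depend on intensity ratios rather than absolute brightness; a linear mapping would work equally well for illustration.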

[0060] In one or more implementations, object recognition and/or classification may be implemented using a spiking neuron classifier comprising conditionally independent subsets as described in co-owned U.S. patent application Ser. No. 13/756,372 filed Jan. 31, 2013, and entitled "SPIKING NEURON CLASSIFIER APPARATUS AND METHODS" and/or co-owned U.S. patent application Ser. No. 13/756,382 filed Jan. 31, 2013, and entitled "REDUCED LATENCY SPIKING NEURON CLASSIFIER APPARATUS AND METHODS", each of the foregoing being incorporated herein by reference in its entirety.

[0061] In one or more implementations, encoding may comprise adaptive adjustment of neuron parameters, such as neuron excitability which is described in U.S. patent application Ser. No. 13/623,820 entitled "APPARATUS AND METHODS FOR ENCODING OF SENSORY DATA USING ARTIFICIAL SPIKING NEURONS", filed Sep. 20, 2012, the foregoing being incorporated herein by reference in its entirety.

[0062] In some implementations, analog inputs may be converted into spikes using, for example, kernel expansion techniques described in co-owned U.S. patent application Ser. No. 13/623,842 filed Sep. 20, 2012, and entitled "SPIKING NEURON NETWORK ADAPTIVE CONTROL APPARATUS AND METHODS", the foregoing being incorporated herein by reference in its entirety. The term "continuous signal" may be used to describe a non-spiking signal (e.g., analog, n-ary digital signal characterized by n-bits of resolution, n>1). In one or more implementations, analog and/or spiking inputs may be processed by mixed signal spiking neurons, such as those described in co-owned U.S. patent application Ser. No. 13/313,826 entitled "APPARATUS AND METHODS FOR IMPLEMENTING LEARNING FOR ANALOG AND SPIKING SIGNALS IN ARTIFICIAL NEURAL NETWORKS", filed Dec. 7, 2011, and/or co-owned U.S. patent application Ser. No. 13/761,090 entitled "APPARATUS AND METHODS FOR IMPLEMENTING LEARNING FOR ANALOG AND SPIKING SIGNALS IN ARTIFICIAL NEURAL NETWORKS", filed Feb. 6, 2013, each of the foregoing being incorporated herein by reference in its entirety.

[0063] In some implementations of robotic navigation in an arbitrary environment, the sensor component 166 may comprise a camera configured to provide an output comprising a plurality of digital image frames refreshed at, e.g., 25 Hz frame rate. Output of the sensor 166 in FIG. 1 may comprise representations of one or more objects (e.g., target 174, and/or obstacle 176). The sensor output may be processed by a feature detection apparatus, e.g., as described in U.S. patent application Ser. No. 14/542,391 entitled "ROBOTIC NAVIGATION APPARATUS AND METHODS", filed Nov. 14, 2014, incorporated supra. In one or more implementations of visual data processing, the features that may be detected in the sensory output may comprise one or more of representations (e.g., representations of objects, corners, edges, patches of texture, color, brightness, and/or other patterns that may be present in visual output), audio patterns (e.g., speech elements), and/or other persistent signal patterns that may be relevant to a given task. A given pattern and/or data item (e.g., representation of an orange fruit on a tree and/or time of day) may comprise a relevant feature for one task (e.g., harvesting of oranges) and may be ignored by other tasks (e.g., navigation around trees). Various feature detection methodologies may be applied to processing of the sensor output. In some implementations, the feature detection may be configured to implement one or more of a filter operation (e.g., orange mask to detect orange objects), a Radon transform edge detection, corner detection (e.g., using Harris operator), texture detection (e.g., using Laws masks), patterns of motion (e.g., using optical flow), and/or other methodologies.
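A minimal sketch of the filter operation mentioned above (an "orange mask" to detect orange objects), assuming RGB pixels stored as (R, G, B) tuples with values from 0 to 255. The color thresholds and the centroid helper are hypothetical, hand-picked for illustration; a practical detector would more likely threshold hue in HSV space.

```python
from typing import List, Optional, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0..255


def orange_mask(image: List[List[Pixel]]) -> List[List[bool]]:
    """Return a boolean mask marking 'orange' pixels.

    Thresholds are illustrative assumptions: strong red, moderate green,
    weak blue, and red clearly exceeding green.
    """
    return [[r > 180 and 80 < g < 180 and b < 90 and r - g > 50
             for (r, g, b) in row]
            for row in image]


def mask_centroid(mask: List[List[bool]]) -> Optional[Tuple[float, float]]:
    """Centroid (row, col) of the True pixels, or None if nothing was detected."""
    hits = [(i, j) for i, row in enumerate(mask) for j, v in enumerate(row) if v]
    if not hits:
        return None
    n = len(hits)
    return (sum(i for i, _ in hits) / n, sum(j for _, j in hits) / n)


frame = [[(20, 120, 30), (255, 140, 0)],
         [(250, 130, 10), (200, 200, 200)]]
m = orange_mask(frame)
print(m)                 # [[False, True], [True, False]]
print(mask_centroid(m))  # (0.5, 0.5)
```

The centroid is one simple way a detected feature could be reduced to a target location (e.g., target 174) that a controller can act on.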

[0064] Output of the feature detection may be utilized by the robot to perform a task. The tasks of the robot 100 may be configured based on a context. In one or more implementations, the context may comprise one or more of an occurrence of one or more features in the sensor output, one or more robot states, a state of the environment, environmental conditions, previous state information, and/or other information. Examples of a robot state may include one or more of location or motion information (e.g., position, orientation, speed, and/or other information), platform state or configuration (e.g., manipulator size, manipulator position, and/or other information), available power and/or other robot states. Examples of a state of the environment may include one or more of an object size, object location, and/or other states of the environment. Examples of environmental conditions may include information indicating whether there is one or more of wind, rain, and/or other environmental conditions. In some implementations, previous state information may be based on historic states of robot motions. The robotic apparatus 100 may be operated using an adaptive controller, e.g., such as described below with respect to FIGS. 2-5.

[0065] FIG. 2 illustrates an implementation of an adaptive control system 200 for use with, e.g., the robotic apparatus 100 of FIG. 1 and/or 810 of FIG. 8. The adaptive control system 200 of FIG. 2 may comprise a corrector 212, an adaptive predictor 222, and a combiner 214 cooperating to control a robotic platform 230. The learning process of the adaptive predictor 222 may comprise one or more of a supervised learning process, a reinforcement learning process, an unsupervised learning process, and/or other processes. The corrector 212, the predictor 222, and the combiner 214 may cooperate to produce a control signal 220 for the robotic platform 230. In one or more implementations, the control signal 220 may comprise one or more motor commands (e.g., pan camera to the right, turn right wheel forward), sensor acquisition commands (e.g., use high resolution camera mode), and/or other commands.

[0066] In some implementations, the predictor 222 and the combiner 214 components may be configured to operate a plurality of robotic platforms. The control signal 220 may be adapted by a decoder component in accordance with a specific implementation of a given robotic platform 230. In one or more implementations of robotic vehicle control, the adaptation by the decoder may comprise translating binary signal representation 220 into one or more formats (e.g., pulse code modulation) that may be utilized by a given robotic vehicle. U.S. patent application Ser. No. 14/244,890 entitled "LEARNING APPARATUS AND METHODS FOR CONTROL OF ROBOTIC DEVICES", filed Apr. 3, 2014 describes some implementations of control signal conversion.

[0067] In some implementations of the decoder corresponding to the analog control and/or analog corrector 212 implementations, the decoder may be further configured to rescale the drive and/or steering signals to a range appropriate for the motors and/or actuators of the platform 230.
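A sketch of the rescaling step, assuming the corrector and predictor produce normalized drive and steering values in [-1, 1] and that the platform's motor controllers accept pulse widths of 1000 to 2000 microseconds. That output range is a common hobby-servo convention used here purely as an example; `rescale` and `decode_drive_steer` are hypothetical names.

```python
from typing import Tuple


def rescale(value: float, in_lo: float = -1.0, in_hi: float = 1.0,
            out_lo: float = 1000.0, out_hi: float = 2000.0) -> float:
    """Linearly map `value` from [in_lo, in_hi] to [out_lo, out_hi].

    Inputs outside the source range are clipped first so that the actuator
    never receives an out-of-range command.
    """
    clipped = min(max(value, in_lo), in_hi)
    fraction = (clipped - in_lo) / (in_hi - in_lo)
    return out_lo + fraction * (out_hi - out_lo)


def decode_drive_steer(drive: float, steer: float) -> Tuple[float, float]:
    """Hypothetical decoder: normalized (drive, steer) -> pulse widths in microseconds."""
    return rescale(drive), rescale(steer)


print(decode_drive_steer(0.0, 1.5))  # (1500.0, 2000.0): steer 1.5 is clipped to 1.0
```

Clipping before scaling keeps the decoder safe even if an additive combiner produces a value outside the normalized range.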

[0068] In some implementations of the discrete state space control implementation of the corrector 212, the decoder may be configured to convert an integer control index into a corresponding steering/drive command using, e.g., a look-up table approach described in detail in, e.g., U.S. patent application Ser. No. 14/265,113 entitled "TRAINABLE CONVOLUTIONAL NETWORK APPARATUS AND METHODS FOR OPERATING A ROBOTIC VEHICLE", filed Apr. 29, 2014 ("the '113 application"), the foregoing being incorporated herein by reference in its entirety.

[0069] The corrector 212 may receive a control input 228 from a control entity. The control input 228 may be determined based on one or more of (i) sensory input 202 and (ii) feedback from the platform (not shown). In some implementations, the feedback may comprise proprioceptive signals. Examples of a proprioceptive signal may include one or more of feedback from servo motors, feedback from joint position sensors, torque resistance, and/or other proprioceptive signals. In some implementations, the sensory input 202 may correspond to the sensory input, described, e.g., with respect to FIG. 1, supra. In one or more implementations, the control entity providing the input 228 to the corrector may comprise a human trainer, communicating with the robot via a remote controller (wired and/or wireless). In some implementations, the control entity may comprise a computerized agent such as a multifunction adaptive controller operable using reinforcement and/or unsupervised learning and capable of training other robotic devices for one and/or multiple tasks. In one such implementation, the control entity and the corrector 212 may comprise a single computerized apparatus.

[0070] The corrector 212 may be operable to generate control signal 208 using a plurality of approaches. In some implementations of analog control for robotic vehicle navigation, the corrector output 208 may comprise target vehicle velocity and target vehicle steering angle. Such implementations may comprise an "override" functionality configured to cause the robotic platform 230 to execute an action in accordance with the user-provided control signal instead of the predicted control signal.

[0071] In one or more implementations of analog correction provision for robotic vehicle navigation, the control signal 208 may comprise a correction to the target trajectory. The signals 208 may comprise a target "correction" to the current velocity and/or steering angle of the platform 230. In one such implementation, when the corrector output 208 comprises a zero signal (or substantially a null value), the platform 230 may continue its operation unaffected.

[0072] In some implementations of state space for vehicle navigation, the actions of the platform 230 may be encoded using, e.g., a 1-of-10 integer signal, where eight (8) states may be used to indicate 8 directions of motion (e.g., forward-left, forward, forward-right, left, right, back-left, back, back-right), one state may indicate "stay-still", and one state may indicate "neutral". The neutral state may comprise a default state. When the corrector outputs a neutral state, the predictor may control the robot directly. It will be appreciated by those skilled in the arts that various other encoding approaches may be utilized in accordance with controlled configuration of the platform (e.g., controllable degrees of freedom).
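A minimal look-up-table decoder for the 1-of-10 action encoding described above, in the spirit of the integer-index look-up of [0068]. The index ordering, the (drive, steering) values, and the fallback to "stay-still" when both sources are neutral are illustrative assumptions, not the encoding of the '113 application.

```python
from typing import Dict, Optional, Tuple

Command = Tuple[float, float]  # (drive, steering), each in [-1, 1]

# Hypothetical index assignment for the 1-of-10 encoding.
ACTION_TABLE: Dict[int, Optional[Command]] = {
    0: (1.0, -1.0),   # forward-left
    1: (1.0, 0.0),    # forward
    2: (1.0, 1.0),    # forward-right
    3: (0.0, -1.0),   # left (turn in place)
    4: (0.0, 1.0),    # right (turn in place)
    5: (-1.0, -1.0),  # back-left
    6: (-1.0, 0.0),   # back
    7: (-1.0, 1.0),   # back-right
    8: (0.0, 0.0),    # stay-still
    9: None,          # neutral: the corrector defers to the predictor
}
NEUTRAL = 9
STAY_STILL = 8


def select_command(corrector_index: int, predictor_index: int) -> Command:
    """Use the corrector's action unless it is neutral; otherwise use the predictor's."""
    index = predictor_index if corrector_index == NEUTRAL else corrector_index
    command = ACTION_TABLE[index]
    if command is None:
        # Both sources are neutral: fall back to stay-still (an assumption).
        command = ACTION_TABLE[STAY_STILL]
    return command


print(select_command(NEUTRAL, 1))  # (1.0, 0.0): predictor drives forward
print(select_command(6, 1))        # (-1.0, 0.0): corrector overrides with "back"
```

Keeping "neutral" distinct from "stay-still" matters: stay-still is an explicit command from the trainer, whereas neutral hands control to the predictor.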

[0073] In some implementations of control for vehicle navigation, the action space of the platform 230 may be represented as a 9-element state vector, e.g., as described in the above-referenced '113 application. Individual elements of the state vector may indicate the probability of the platform being subjected to (i.e., controlled within) a given control state. In one such implementation, output 218 of the predictor 222 may be multiplied with the output 208 of the corrector 212 in order to determine the probability of a given control state.
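Assuming both the predictor output 218 and the corrector output 208 are 9-element probability vectors over the same control states, the element-wise multiplication described above can be sketched as follows. Renormalizing the product, falling back to the prediction when the product is all zeros, and picking the most probable state are assumptions added for illustration.

```python
from typing import List


def combine_probabilities(predicted: List[float], corrector: List[float]) -> List[float]:
    """Element-wise product of two 9-element state vectors, renormalized to sum to 1."""
    if len(predicted) != 9 or len(corrector) != 9:
        raise ValueError("expected 9-element state vectors")
    product = [p * c for p, c in zip(predicted, corrector)]
    total = sum(product)
    if total == 0.0:
        # The two sources fully disagree; fall back to the predictor (an assumption).
        return list(predicted)
    return [x / total for x in product]


pred = [0.0, 0.5, 0.5, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
corr = [0.0, 0.2, 0.8, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
combined = combine_probabilities(pred, corr)
best_state = max(range(9), key=lambda k: combined[k])
print(best_state)  # 2
```

Here the predictor is undecided between states 1 and 2, and the corrector's preference for state 2 breaks the tie.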

[0074] The adaptive predictor 222 may be configured to generate predicted control signal 218 based on one or more of (i) the sensory input 202 and (ii) the platform feedback (not shown). The predictor 222 may be configured to adapt its internal parameters, e.g., according to a supervised learning rule, and/or other machine learning rules.

[0075] Predictor realizations comprising platform feedback may be employed in applications such as, for example, where: (i) the control action may comprise a sequence of purposefully timed commands (e.g., associated with approaching a stationary target (e.g., a cup) by a robotic manipulator arm), or where (ii) the platform may be characterized by platform state parameters (e.g., arm inertia, and/or motor response time) that change faster than the rate of action updates. Parameters of a subsequent command within the sequence may depend on the control plant state. A "control plant" may refer to the logical combination of the process being controlled and the actuator (often expressed mathematically). For example, control plant feedback might be the exact location and/or position of the arm joints, which can be provided to the predictor.

[0076] In some implementations, the predictor 222 may comprise a convolutional network configured to predict the output 220 of the combiner 214 given the input 202. The convolutional network may be combined with other components that learn to predict the corrector signal given other elements of the sensory context. When the corrector 212 output comprises a zero signal (or null value), the combiner output 220 may equal the predictor output 218. When the corrector provides a non-zero signal, a discrepancy may occur between the prediction 218 and the output 220 of the combiner 214. The discrepancy may be utilized by the predictor 222 in order to adjust parameters of the learning process in order to minimize future discrepancies during subsequent iterations.
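To illustrate how the discrepancy between the prediction 218 and the combined output 220 can drive learning, the sketch below substitutes a linear predictor trained with the least-mean-squares (delta) rule for the convolutional network mentioned above. The two-element context vector, the learning rate, the desired command, and the additive combiner are assumptions chosen for brevity.

```python
from typing import List


class LinearPredictor:
    """Linear predictor u_P = w . x, trained with the LMS (delta) rule."""

    def __init__(self, n_inputs: int, learning_rate: float = 0.1):
        self.w = [0.0] * n_inputs
        self.lr = learning_rate

    def predict(self, x: List[float]) -> float:
        return sum(wi * xi for wi, xi in zip(self.w, x))

    def learn(self, x: List[float], target: float) -> float:
        """Nudge the weights to shrink (target - prediction); return that discrepancy."""
        error = target - self.predict(x)
        self.w = [wi + self.lr * error * xi for wi, xi in zip(self.w, x)]
        return error


predictor = LinearPredictor(n_inputs=2)
context = [1.0, 0.5]   # hypothetical feature vector for one sensory context
desired = 1.0          # command the trainer wants in this context (assumed)
corrections = []
for step in range(50):
    u_p = predictor.predict(context)
    correction = desired - u_p           # trainer supplies only what the predictor lacks
    combined = correction + u_p          # additive combiner output (signal 220)
    predictor.learn(context, combined)   # combined output acts as the teaching signal
    corrections.append(correction)

print(round(predictor.predict(context), 3))  # close to 1.0
```

As the prediction improves, the trainer's correction shrinks toward zero, which is the hand-over from corrector to predictor that the paragraph above describes.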

[0077] The sensory input and/or the plant feedback may collectively be referred to as sensory context. The sensory context may be utilized by the predictor 222 to produce the predicted output 218. By way of a non-limiting illustration, one exemplary scenario of obstacle avoidance by an autonomous rover uses an image of an obstacle (e.g., wall representation in the sensory input 202) combined with rover motion (e.g., speed and/or direction) to generate Context A. When the Context A is encountered, the control output 220 may comprise one or more commands configured to avoid a collision between the rover and the obstacle. Based on one or more prior encounters of Context A and the associated avoidance control output, the predictor may build an association between these events as described in detail below.

[0078] The combiner 214 may implement a transfer function h(x) where x includes the control signal 208 and the predicted control signal 218. In some implementations, the combiner 214 operation may be expressed, e.g., as described in detail in co-owned U.S. patent application Ser. No. 13/842,530 entitled "ADAPTIVE PREDICTOR APPARATUS AND METHODS", filed Mar. 15, 2013, as follows:

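$$\hat{u} = h(u, u^P), \tag{Eqn. 1}$$

where $\hat{u}$ denotes the combined output (e.g., 220 in FIG. 2), $u$ the control signal (e.g., 208), and $u^P$ the predicted control signal (e.g., 218).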

[0079] Various realizations of the transfer function of Eqn. 1 may be utilized. In some implementations, the transfer function may comprise one or more of addition, multiplication, union, a logical `AND` operation, a logical `OR` operation, and/or other transfer functions.

[0080] In one or more implementations, the transfer function may comprise a convolution operation, e.g., a dot product. In spiking network realizations of the combiner function, the convolution operation may be supplemented by use of a finite support kernel (i.e., a mapping function from a linear space to a non-linear space) such as a Gaussian, rectangular, exponential, and/or other kernel. In some implementations, a finite support kernel may implement a low pass filtering operation of input spike train(s). In some implementations, the transfer function h may be characterized by a commutative property.
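A minimal sketch of low-pass filtering a spike train by convolution with a finite-support kernel. The binary spike representation (one sample per time step), the kernel lengths, and the decay constant are illustrative assumptions; the exponential kernel is truncated so that its support is finite.

```python
import math
from typing import List


def exponential_kernel(length: int, tau: float) -> List[float]:
    """Truncated (finite-support) causal exponential kernel, normalized to unit sum."""
    k = [math.exp(-n / tau) for n in range(length)]
    s = sum(k)
    return [v / s for v in k]


def rectangular_kernel(length: int) -> List[float]:
    """Finite-support box kernel with unit sum (a moving average)."""
    return [1.0 / length] * length


def filter_spikes(spikes: List[int], kernel: List[float]) -> List[float]:
    """Causal convolution y[t] = sum_k kernel[k] * spikes[t - k]."""
    out = []
    for t in range(len(spikes)):
        acc = 0.0
        for k, w in enumerate(kernel):
            if t - k >= 0:
                acc += w * spikes[t - k]
        out.append(acc)
    return out


spike_train = [0, 1, 0, 0, 1, 1, 0, 0, 0, 0]
print(filter_spikes(spike_train, rectangular_kernel(4)))
# [0.0, 0.25, 0.25, 0.25, 0.5, 0.5, 0.5, 0.5, 0.25, 0.0]
smooth = filter_spikes(spike_train, exponential_kernel(5, tau=2.0))
```

The filtered trace is a continuous estimate of the recent firing rate, which is what allows spiking control and prediction signals to be combined arithmetically.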

[0081] In one or more implementations, the transfer function of the combiner 214 may be configured as follows:

$$h(0, u^P) = u^P. \tag{Eqn. 2}$$

[0082] In some implementations, the transfer function h may be configured as:

$$h(u, 0) = u. \tag{Eqn. 3}$$

[0083] In some implementations, the transfer function h may be configured as a combination of realizations of Eqn. 2-Eqn. 3 as:

$$h(0, u^P) = u^P \quad \text{and} \quad h(u, 0) = u. \tag{Eqn. 4}$$

[0084] In one exemplary implementation, the transfer function satisfying Eqn. 4 may be expressed as:

$$h(u, u^P) = 1 - (1 - u)\times(1 - u^P). \tag{Eqn. 5}$$

[0085] In one such realization, the combiner transfer function is configured according to Eqn. 2-Eqn. 5 to implement additive feedback. In other words, output of the predictor (e.g., 218) may be additively combined with the control signal (e.g., 208), and the combined signal 220 may be used as the teaching input (e.g., 204) for the predictor. In some implementations, the combined signal 220 may be utilized as an input (context) into the predictor 222, e.g., as described in co-owned U.S. patent application Ser. No. 13/842,530 entitled "ADAPTIVE PREDICTOR APPARATUS AND METHODS", filed Mar. 15, 2013, incorporated supra.
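One closed form that satisfies both identities of Eqn. 4 is $h(u, u^P) = 1 - (1-u)(1-u^P) = u + u^P - u\,u^P$. The sketch below checks the identities numerically on a few arbitrary sample values, and also checks the commutative property noted in [0080]. For binary inputs the same expression behaves as a logical OR.

```python
def h(u: float, u_p: float) -> float:
    """Combiner transfer function 1 - (1 - u)(1 - u_p), i.e. u + u_p - u*u_p."""
    return 1.0 - (1.0 - u) * (1.0 - u_p)


for value in (0.0, 0.3, 1.0, -2.5):
    assert abs(h(0.0, value) - value) < 1e-12  # h(0, u_P) = u_P  (Eqn. 2)
    assert abs(h(value, 0.0) - value) < 1e-12  # h(u, 0) = u      (Eqn. 3)
    assert h(value, 0.7) == h(0.7, value)      # commutative

print(h(0.5, 0.5))  # 0.75
```

Because the identities hold for any real value, a zero on either input passes the other input through unchanged, which is the behavior the corrector relies on when it is silent.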

[0086] In some implementations, the combiner transfer function may be characterized by a delay expressed as:

$$\hat{u}(t_{i+1}) = h\big(u(t_i), u^P(t_i)\big), \tag{Eqn. 6}$$

where $\hat{u}(t_{i+1})$ denotes the combined output (e.g., 220 in FIG. 2) at time $t_{i+1} = t_i + \Delta t$.

[0087] As used herein, the symbol $t_i$ may be used to refer to a time instance associated with individual controller update events (e.g., as expressed by Eqn. 6), for example $t_1$ denoting the time of the first control output, e.g., a simulation time step and/or a sensory input frame step. In some implementations of training autonomous robotic devices (e.g., rovers, bipedal robots, wheeled vehicles, aerial drones, robotic limbs, and/or other robotic devices), the update periodicity $\Delta t$ may be configured to be between 1 ms and 1000 ms.

[0088] In some implementations, the combiner transfer function may be configured to implement override functionality (e.g., an override combiner). The "override" combiner may detect a non-zero signal provided by the corrector and provide the corrector signal as the combined output. When a zero (or no) corrector signal is detected, the predicted signal may be routed by the combiner as the output. In some implementations, the zero corrector signal may be represented as not-a-number (NaN); a non-zero signal may then comprise any value other than NaN.

[0089] In one or more implementations of a multi-channel controller, the corrector may simultaneously provide "no" signal on some channels and one or more signals on other channels, allowing the user to control one degree of freedom (DOF) of the robotic platform while the predictor may control another DOF.
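A sketch of the per-channel "override" combiner described in [0088] and [0089], assuming a two-channel (drive, steering) control vector and using `math.nan` to mark channels on which the corrector provides no signal. The function name is hypothetical.

```python
import math
from typing import List


def override_combiner(corrector: List[float], predicted: List[float]) -> List[float]:
    """Per channel: use the corrector value if present, else the predicted value.

    A NaN corrector entry means "no signal" on that channel.
    """
    return [p if math.isnan(c) else c for c, p in zip(corrector, predicted)]


# The trainer steers (channel 1) while the predictor keeps control of drive (channel 0).
corrector = [math.nan, -0.4]
predicted = [0.8, 0.1]
print(override_combiner(corrector, predicted))  # [0.8, -0.4]
```

Using NaN rather than 0.0 as the "no signal" marker lets the trainer explicitly command a zero value (e.g., stop) on a channel without handing that channel back to the predictor.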

[0090] It will be appreciated by those skilled in the art that various other realizations of the transfer function of the combiner 214 may be applicable. For example, in some implementations, the transfer function may include a Heaviside step function, a sigmoid function (e.g., hyperbolic tangent), Gauss error function, logistic function, a stochastic operation, and/or other transfer functions. Operation of the predictor 222 learning process may be aided by a teaching signal 204. As shown in FIG. 2, the teaching signal 204 may comprise the output 220 of the combiner 214. In some implementations wherein the combiner transfer function may be characterized by a delay (e.g., Eqn. 6), the teaching signal at time $t_i$ may be configured based on values of $u$, $u^P$ at a prior time $t_{i-1}$, for example as:

$$u^d(t_i) = h\big(u(t_{i-1}), u^P(t_{i-1})\big). \tag{Eqn. 7}$$

[0091] The training signal $u^d$ at time $t_i$ may be utilized by the predictor in order to determine the predicted output $u^P$ at a subsequent time corresponding to the context (e.g., the sensory input $x$) at time $t_i$:

$$u^P(t_{i+1}) = F\big[x_i, W\big(u^d(t_i)\big)\big]. \tag{Eqn. 8}$$

[0092] In Eqn. 8, the function W may refer to a learning process implemented by the predictor, e.g., a perceptron, and/or a look-up table.
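The delayed teaching loop of Eqn. 7 and Eqn. 8 can be sketched with a look-up-table predictor, one of the options named above for W. The discrete context label, the running-average update rate, and the override combiner used for h are assumptions made for illustration. The loop also uses a simplified reading of Eqn. 8 in which the updated W is applied to the current context within the same iteration; with a recurring context this gives the same result as predicting one step ahead.

```python
import math
from collections import defaultdict
from typing import Dict, Hashable, List, Optional, Tuple


class TablePredictor:
    """Look-up-table predictor W: one stored output per discrete context."""

    def __init__(self, rate: float = 0.5):
        self.table: Dict[Hashable, float] = defaultdict(float)  # unseen contexts start at 0.0
        self.rate = rate

    def predict(self, context: Hashable) -> float:
        return self.table[context]

    def update(self, context: Hashable, u_d: float) -> None:
        # Move the stored prediction for this context toward the teaching signal u^d.
        self.table[context] += self.rate * (u_d - self.table[context])


def combine(u: float, u_p: float) -> float:
    """Override combiner h: the corrector value u wins unless it is NaN (no signal)."""
    return u_p if math.isnan(u) else u


predictor = TablePredictor()
contexts = ["wall_ahead"] * 6                                # x_i: the same context recurs
corrections = [1.0, 1.0, 1.0, math.nan, math.nan, math.nan]  # trainer stops after three steps
outputs: List[float] = []
prev: Optional[Tuple[Hashable, float, float]] = None         # (x, u, u^P) from step i-1
for x, u in zip(contexts, corrections):
    if prev is not None:
        x_prev, u_prev, up_prev = prev
        u_d = combine(u_prev, up_prev)   # Eqn. 7: teaching signal built from step i-1
        predictor.update(x_prev, u_d)    # adapt W toward u^d
    u_p = predictor.predict(x)           # Eqn. 8 (simplified): prediction for context x
    outputs.append(combine(u, u_p))      # combined output at this step
    prev = (x, u, u_p)

print(outputs)  # [1.0, 1.0, 1.0, 0.875, 0.875, 0.875]
```

After the trainer stops correcting, the predictor alone keeps producing most of the trained response (0.875 of the commanded 1.0); further training episodes would close the remaining gap.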

[0093] In one or more implementations, such as illustrated in FIG. 2, the sensory input 206, the control signal 208, the predicted output 218, the combined output 220 and/or plant feedback may comprise spiking signals, analog signals, and/or a combination thereof. Analog-to-spiking and/or spiking-to-analog signal conversion may be effectuated using mixed signal spiking neuron networks, such as, for example, those described in co-owned U.S. patent application Ser. No. 13/313,826 entitled "APPARATUS AND METHODS FOR IMPLEMENTING LEARNING FOR ANALOG AND SPIKING SIGNALS IN ARTIFICIAL NEURAL NETWORKS", filed Dec. 7, 2011, and/or co-owned U.S. patent application Ser. No. 13/761,090 entitled "APPARATUS AND METHODS FOR IMPLEMENTING LEARNING FOR ANALOG AND SPIKING SIGNALS IN ARTIFICIAL NEURAL NETWORKS", filed Feb. 6, 2013, incorporated supra.

[0094] Output 220 of the combiner (e.g., 214 in FIG. 2) may be gated. In some implementations, the gating information may be provided to the combiner by the corrector 212 using, e.g., an "override" indication in order to cause the robotic platform 230 to execute actions according to the user-provided control instead of the predicted control signal.

[0095] In one such realization of spiking controller output, the control signal 208 may comprise positive spikes indicative of a control command. The control signal 208 may be configured to be combined with the predicted control signal (e.g., 218). The control signal 208 may comprise negative spikes. The timing of the negative spikes may be configured to communicate the control command. The (negative) amplitude sign may be configured to communicate the combination inhibition information to the combiner 214 to enable the combiner to "ignore" the predicted control signal 218 for constructing the combined output 220.
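The sign-encoded inhibition described above can be sketched in Python on binned spike trains. The encoding (+1 for a positive spike, -1 for a negative spike, 0 for no spike), the OR-style merge when no inhibition is present, and the choice to ignore the prediction for the whole window once any negative spike arrives are all assumptions for illustration; `combine_spikes` is a hypothetical name.

```python
from typing import List


def combine_spikes(control: List[int], predicted: List[int]) -> List[int]:
    """Combine control and predicted spike trains binned on the same time grid.

    Hypothetical encoding: +1 = positive spike, -1 = negative spike, 0 = no spike.
    """
    if any(c < 0 for c in control):
        # Negative spikes: their timing carries the command and their sign
        # tells the combiner to ignore the predicted train.
        return [1 if c != 0 else 0 for c in control]
    # No inhibition: a spike on either input yields an output spike.
    return [1 if (c > 0 or p > 0) else 0 for c, p in zip(control, predicted)]


print(combine_spikes([0, 1, 0, 0], [0, 0, 1, 0]))   # prediction passes through
print(combine_spikes([0, -1, 0, 0], [1, 0, 1, 1]))  # prediction is ignored
```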

[0096] In some implementations of spiking signal output, the combiner 214 may comprise a spiking neuron network. The control signal 208 may be communicated via two or more connections. One such connection may be configured to communicate spikes indicative of a control command to the combiner neuron. The other connection may be used to communicate an inhibitory signal to the combiner network. The inhibitory signal may inhibit one or more neurons of the combiner (e.g., one or more input neurons of the combiner network), thereby effectively removing the predicted control signal from the combined output (e.g., 220 in FIG. 2).

[0097] The gating information may be provided to the combiner by another entity (e.g., a human operator controlling the system with a remote control and/or external controller) and/or by another output of the corrector 212 (e.g., an adaptation block, an optimization controller). In one or more implementations, the gating information may comprise one or more of: a command, a memory address of a register storing a flag, a message, an inhibitory efficacy, a value (e.g., a weight of zero to be applied to the predicted control signal by the combiner), and/or other information capable of conveying gating instructions to the combiner.

[0098] The gating information may be used by the combiner network to inhibit and/or suppress the transfer function operation. The suppression (or "veto") may cause the combiner output (e.g., 220) to consist solely of the control signal portion 208, e.g., configured in accordance with Eqn. 3. In one or more implementations, the gating information may be used to suppress ("veto") provision of the context signal to the predictor without affecting the combiner output 220. In one or more implementations, the gating information may be used to suppress ("veto") the feedback from the platform.
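The three veto targets listed above (predicted output, context, platform feedback) can be sketched in Python as independent flags. The `Gate` dataclass, the additive combiner `u + u_p`, and the use of `None` to mark a withheld input are hypothetical choices made for illustration, not a prescribed interface.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass
class Gate:
    """Hypothetical gating flags; each one vetoes a different signal path."""
    veto_prediction: bool = False  # drop u^P from the combined output
    veto_context: bool = False     # withhold sensory context from the predictor
    veto_feedback: bool = False    # withhold platform feedback from the predictor


def combiner_output(u: float, u_p: float, gate: Gate) -> float:
    # With the prediction vetoed, the output consists solely of the control signal.
    return u if gate.veto_prediction else u + u_p


def predictor_inputs(
    context: Sequence[float], feedback: Sequence[float], gate: Gate
) -> Tuple[Optional[Sequence[float]], Optional[Sequence[float]]]:
    # Context and feedback vetoes leave the combiner output untouched.
    return (
        None if gate.veto_context else context,
        None if gate.veto_feedback else feedback,
    )


print(combiner_output(0.5, 0.25, Gate()))                      # prediction included
print(combiner_output(0.5, 0.25, Gate(veto_prediction=True)))  # control signal only
```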

[0099] In one or more implementations, the gating signal may comprise an inhibitory indication that may be configured to inhibit the output from the combiner. Zero combiner output may, in some realizations, cause a zero teaching signal (e.g., 204 in FIG. 2) to be provided to the predictor so as to signal to the predictor a discrepancy between the target action (e.g., controller output 208) and the predicted control signal (e.g., output 218).

[0100] The gating signal may be used to veto predictor output 218 based on, for example, the predicted control output 218 deviating from the target output by more than a given margin. The margin may be configured based on the application and/or the state of the trajectory. For example, a smaller margin may be applicable in navigation applications wherein the platform is proximate to a hazard (e.g., a cliff) and/or an obstacle. A larger error may be tolerated when approaching one of many targets.
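A margin-based veto decision might look like the following Python sketch. The two-level margin schedule and its values (`tight=0.05`, `loose=0.3`) are hypothetical placeholders; a real controller would derive the margin from its application and trajectory state.

```python
def veto_margin(near_hazard: bool, tight: float = 0.05, loose: float = 0.3) -> float:
    """Hypothetical margin schedule: tolerate less deviation near a hazard."""
    return tight if near_hazard else loose


def should_veto(u_p: float, u_target: float, near_hazard: bool) -> bool:
    # Veto the prediction when it deviates from the target by more than the margin.
    return abs(u_p - u_target) > veto_margin(near_hazard)


print(should_veto(0.9, 1.0, near_hazard=True))   # close to a cliff: veto
print(should_veto(0.9, 1.0, near_hazard=False))  # approaching one of many targets: keep
```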

[0101] In one or more implementations, the gating/veto functionality may be implemented on a "per-channel" basis in a multi-channel controller wherein some components of the combined control vector may comprise predicted components, while some components may comprise the corrector components.
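Per-channel gating can be sketched in Python as a boolean mask applied element-wise to the control vector. The additive combination on non-vetoed channels and the (speed, steering) channel layout in the usage line are assumptions for illustration; `per_channel_combine` is a hypothetical name.

```python
from typing import List, Sequence


def per_channel_combine(
    u: Sequence[float], u_p: Sequence[float], veto: Sequence[bool]
) -> List[float]:
    """Combine a multi-channel control vector with a per-channel veto mask.

    Vetoed channels carry only the corrector component; the rest carry the
    sum of corrector and predicted components (assumed additive combiner).
    """
    if not (len(u) == len(u_p) == len(veto)):
        raise ValueError("all inputs must have the same number of channels")
    return [uc if v else uc + pc for uc, pc, v in zip(u, u_p, veto)]


# Channels: (speed, steering). Keep the predicted speed, veto the predicted steering.
print(per_channel_combine([0.0, 0.5], [1.0, 2.0], [False, True]))
```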

[0102] By way of a non-limiting illustration, if the turn is to be completed and/or aborted (e.g., due to a trajectory change and/or sensory input change), and the predictor output still produces turn instructions to the plant, the gating signal may cause the combiner to veto (ignore) the predictor contribution and pass through the controller contribution.

[0103] Predicted control signal 218 and the control input 208 may be of opposite signs. In one or more implementations, a positive predicted control signal (e.g., 218) may exceed the target output that may be appropriate for performance of a task. The control signal 208 may be configured to include negative signaling in order to compensate for over-prediction by the predictor.

[0104] Gating and/or sign reversal of controller outputs may be useful, for example, where the predictor output is incompatible with the sensory input (e.g., navigating towards a wrong target). Rapid changes in the environment (rapid compared to the predictor learning time scale), caused by, e.g., the appearance of a new obstacle or the disappearance of a target, may require an "override" capability enabling the controller (and/or supervisor) to override the predictor output. In one or more implementations, compensation for over-prediction may be controlled by a graded form of the gating signal.
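Sign reversal and graded gating can be illustrated with a short Python sketch. It assumes an additive combiner in which a gate value `g` in [0, 1] scales the predicted contribution (1 passes it fully, 0 is a full veto); both function names are hypothetical.

```python
def graded_combine(u: float, u_p: float, g: float) -> float:
    """Combine control u with prediction u_p scaled by a graded gate g in [0, 1].

    g = 1 passes the full prediction; g = 0 is a complete veto.
    """
    if not 0.0 <= g <= 1.0:
        raise ValueError("gate must lie in [0, 1]")
    return u + g * u_p


def compensating_input(target: float, u_p: float, g: float = 1.0) -> float:
    # Control input that brings the combined output back to the target.
    return target - g * u_p


# Predictor over-predicts 1.5 where 1.0 is appropriate.
u = compensating_input(1.0, 1.5)         # negative: opposite sign to u_p
print(u, graded_combine(u, 1.5, 1.0))
u = compensating_input(1.0, 1.5, g=0.5)  # partial gating needs a smaller correction
print(u, graded_combine(u, 1.5, 0.5))
```

With the full gate, the controller must send a negative signal to cancel the over-prediction; attenuating the prediction with a graded gate reduces the correction it has to supply.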

[0105] FIG. 3 illustrates an unattended robotic controller apparatus configured to determine a control output based on sensory input, according to one or more implementations. The apparatus 300 of FIG. 3 may operate an unsupervised control process configured based on a software application (programmed), a learned configuration (e.g., a table and/or array of weights determined during training), and/or another control process operable without training input from a human and/or computerized training entity.

[0106] The apparatus 300 may be configured to receive context 308 and to produce control output 306. In one or more implementations, the context 308 may comprise sensory input, e.g., 202, described above with respect to FIG. 2. The output 306 may comprise one or more of motor commands (e.g., voltage, force, torque, represented as discrete and/or continuous values), action indications, e.g., as described in Ser. No. 13/842,616 entitled "ROBOTIC APPARATUS AND METHODS FOR DEVELOPING A HIERARCHY OF MOTOR PRIMITIVES", filed Mar. 15, 2013, the foregoing being incorporated herein by reference in its entirety. The term "action indication" may be used to describe an instruction, a memory value, a signal, and/or another indication mechanism configured to convey a higher level control directive. The higher level control directive may not be directly communicated to the motor but rather may serve as an action directive to another component of the control system (e.g., the predictor 222 and/or a combiner 214 in FIG. 2). In one or more implementations, the action indication may comprise, for example, a directive. Examples of a directive may include one or more of "turn", "move ahead", "turn left", "turn right", and/or other directives. In some implementations, the control system may utilize a hierarchy of action indications, ranging from less complex/more specific (e.g., turn, move) to more abstract: approach, avoid, fetch, park, grab, and/or other instructions.

[0107] In some implementations, training a predictor (e.g., 222) to produce a control output may be combined with a predictor operating using an unattended (e.g., programmed, pre-trained) process (e.g., the component 300) in a hybrid control process. The unattended (e.g., programmed, pre-trained) process may also be referred to as a "reflex".

[0108] FIG. 4A illustrates use of a hybrid control methodology with a robotic controller apparatus configured to determine a control output based on sensory input, according to one or more implementations. Apparatus 400 of FIG. 4A may comprise a switching component 402 coupled to predictor components 410, 412. The components 402, 410, 412 may be configured to receive sensory information 407, 408, 409, respectively. In one or more implementations, the sensory input 407, 408, 409 may comprise all or a portion of sensory information available to the apparatus 400. In some implementations, the sensory information may comprise, e.g., the input 202 described above with respect to FIG. 2. By way of an illustration, the sensory input 407, 408, 409 may comprise data from one or more sensors (audio, video, range, acoustic, IR, structured light, LiDAR, radio frequency, positioning, inertial, environmental, and/or other sensors) characterizing the robotic apparatus and/or its environment, and/or the state (feedback) of the robotic platform (e.g., motor torque, motor position, motor load, battery current draw, battery voltage, position of actuators and/or controllable elements such as a rotor blade, rudder, or wheel, and/or other parameters). In some implementations of a learning controller for home animation, the sensory information may comprise ambient environment data (e.g., temperature, humidity, pressure, daylight), inputs from appliances such as valves, doors, locks, light switches, light fixture status, entertainment components, and/or other data. In some implementations, the sensory data may comprise pre-processed data, e.g., edges and color patches obtained from camera data, platform speed and tilt obtained from inertial measurements, and/or other processed data.

[0109] In one or more implementations, individual ones of the sensory inputs 407, 408, 409 may be configured differently from one another, e.g., comprising different subsets of the available sensory information. By way of an illustration of a target search and manipulation application, a robotic platform may comprise a navigation sensor (e.g., camera, LiDAR, ultrasonic range sensor, and/or other navigation sensor) configured to provide information to a navigation component (e.g., pre-programmed predictor 410) used to operate the platform during path following, target search, and/or target approach tasks. The platform may further comprise a manipulator supporting another sensor (e.g., a gripper-mounted camera) useful for providing sensory information to a learning predictor for grasping the target. The information 407 may comprise navigation sensor output. The information 409 may comprise data provided by the gripper camera, the position and/or orientation of the manipulator, and/or other information.

[0110] Predictor components 410, 412 may be configured to determine control output (406, 416, respectively) based on the sensory input (407, 409, respectively). The predictor component 410 may be configured to operate an unattended control process, e.g., comprising a programmed, a pre-trained and/or otherwise configured process that may be operable without input from a trainer, e.g., random exploration based on visual input; obstacle avoidance based on distance sensor and visual input as described above with respect to FIG. 3.

[0111] In some implementations of self-stabilized camera support, the predictor component 410 may be configured to implement a stabilization behavior wherein a camera module mounted on an arm is stabilized (e.g., kept pointing in a given direction at a given elevation). The component 410 may be configured to produce control output for joints of the arm in order to maintain camera orientation.
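One simple way a pre-programmed stabilization behavior could be realized is a proportional controller on a single arm joint, sketched below in Python. The planar model (camera pitch equals base pitch plus joint angle), the single pitch joint, and the gain of 0.5 are all assumptions for illustration; `stabilize_step` is a hypothetical name.

```python
def stabilize_step(joint: float, base_pitch: float, target_pitch: float,
                   gain: float = 0.5) -> float:
    """One proportional-control update of a single pitch joint (radians).

    Assumes a planar arm where camera pitch = base pitch + joint angle.
    """
    camera_pitch = base_pitch + joint
    error = target_pitch - camera_pitch
    return joint + gain * error


joint = 0.0
for _ in range(40):
    # Base is tilted 0.2 rad; keep the camera level (target pitch 0).
    joint = stabilize_step(joint, base_pitch=0.2, target_pitch=0.0)
print(round(joint, 4))
```

The joint settles at the angle that cancels the base tilt, which keeps the camera pointing at the target elevation without any trainer input.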

[0112] The predictor component 412 may be configured to operate a learning control process configured based on a teaching signal 404 from a source external to the entity 412. In some implementations, the component 412 may comprise the adaptive predictor 222 described above with respect to FIG. 2. The teaching input may comprise an output of a combiner component (e.g., the output 204 in FIG. 2) configured based on a training input (208 in FIG. 2). In one or more implementations of robotic vehicle navigation, the training signal 404 may comprise target vehicle velocity and/or target vehicle steering angle. Such implementations may comprise an "override" functionality configured to cause a robot to execute an action in accordance with the trainer-provided control signal instead of the predicted control signal. In some implementations, the training signal 404 may comprise a correction (an increment/decrement) to vehicle velocity and/or direction, and/or other parameters.
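The two forms of the training signal 404 described above (an override carrying target velocity and steering, or an increment/decrement applied to the prediction) can be sketched in Python as follows. The `(velocity, steering)` tuple layout, the `TeachingMode` enum, and `teaching_signal` are hypothetical names introduced only for this illustration.

```python
from enum import Enum
from typing import Tuple

Command = Tuple[float, float]  # (velocity, steering angle), hypothetical layout


class TeachingMode(Enum):
    OVERRIDE = "override"      # trainer supplies the target velocity/steering
    CORRECTION = "correction"  # trainer supplies an increment/decrement


def teaching_signal(mode: TeachingMode, predicted: Command, trainer: Command) -> Command:
    if mode is TeachingMode.OVERRIDE:
        # Act on the trainer-provided command instead of the prediction.
        return trainer
    # Apply the trainer's increment/decrement to the predicted command.
    return (predicted[0] + trainer[0], predicted[1] + trainer[1])


print(teaching_signal(TeachingMode.OVERRIDE, (1.0, 0.0), (0.5, 0.25)))
print(teaching_signal(TeachingMode.CORRECTION, (1.0, 0.0), (-0.5, 0.25)))
```

Both calls yield the same command: the override states the target directly, while the correction reaches it by adjusting the prediction.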

[0113] In some implementations, the training signal 404 may comprise a desired motor velocity for motors operating a robotic arm while training a controller to lift the arm once an object is in the gripper. In one or more implementations of training a Baxter robot (available from Rethink Robotics™), the training signal may comprise a 7-dimensional vector consisting of target positions and/or velocities of the motors operating the joints of the Baxter arm. Velocity information may be used as the training signal when the Baxter arm needs to be rotated relative to the direction of a target object that the arm gripper is about to pick up.

[0114] The controller configuration comprising trained and unattended predictor components (e.g., as illustrated in FIGS. 4A-4C) may be referred to as the hybrid controller and/or hybrid configuration.

[0115] FIG. 8 illustrates use of a hybrid control approach for controlling navigation of a trajectory by a robotic vehicle. In FIG. 8, the robotic vehicle 810 may be configured to follow the trajectory 800. The trajectory 800 may comprise one or more straightaway portions (802, 804) and one or more turns (806, 808). The vehicle 810 may comprise a hybrid controller, e.g., 400 shown and described with respect to FIG. 4A. The vehicle 810 may comprise one or more sensors configured to communicate information related to vehicle position (e.g., with respect to the track), vehicle motion (e.g., orientation, velocity, acceleration), wheel traction, wheel position, brake status, motor speed, torque, and/or other parameters.

[0116] By way of an illustration, in one or more implementations, the predictor component 410 of apparatus 400 may be configured to provide one or more control signals to navigate the vehicle 810 along straight portions (e.g., segments 802, 804) of the trajectory 800 in FIG. 8. Output 406 of the predictor 410 may be configured based on sensory input 407 (e.g., vehicle speed, position, and/or other parameters).

[0117] The predictor component 412 may be configured to provide one or more control signals to navigate a robotic vehicle (e.g., 100 in FIG. 1 and/or 810 in FIG. 8) along turns (e.g., segments 806, 808 of the trajectory 800 in FIG. 8). Output 416 of the predictor 412 may be configured based on the sensory input 409 and the training input 404. In one or more implementations, the training input 404 may comprise an indication from the trainer to slow down or speed up, a wheel orientation, and/or other navigation instructions.
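The division of labor on trajectory 800 can be sketched in Python as a switching component that routes straightaways to a pre-programmed reflex and turns to a trained predictor. The curvature-based context test and its threshold, the lane-centering reflex, and the look-up-table learner are hypothetical stand-ins for components 402, 410, and 412, not the described implementation.

```python
from typing import Callable, Dict, Tuple

Sensors = Dict[str, float]
Control = Tuple[float, float]  # (speed, steering), hypothetical layout


def straight_reflex(s: Sensors) -> Control:
    # Stand-in for pre-programmed predictor 410: cruise and re-center in the lane.
    return (1.0, -0.5 * s["lateral_offset"])


class TurnLearner:
    """Stand-in for learning predictor 412: a look-up table keyed by turn direction."""

    def __init__(self) -> None:
        self.table: Dict[str, Control] = {"left": (0.5, 0.0), "right": (0.5, 0.0)}

    def _key(self, s: Sensors) -> str:
        return "left" if s["curvature"] > 0 else "right"

    def __call__(self, s: Sensors) -> Control:
        return self.table[self._key(s)]

    def teach(self, s: Sensors, target: Control) -> None:
        # Store the trainer-provided command (e.g., slow down, steer) for this turn.
        self.table[self._key(s)] = target


def switch(s: Sensors, reflex: Callable[[Sensors], Control],
           learner: Callable[[Sensors], Control],
           threshold: float = 0.1) -> Tuple[str, Control]:
    # Hypothetical context test: low track curvature means a straightaway.
    if abs(s["curvature"]) < threshold:
        return "reflex", reflex(s)
    return "learner", learner(s)


learner = TurnLearner()
learner.teach({"curvature": 0.4, "lateral_offset": 0.0}, (0.4, 0.3))
print(switch({"curvature": 0.0, "lateral_offset": 0.2}, straight_reflex, learner))
print(switch({"curvature": 0.4, "lateral_offset": 0.0}, straight_reflex, learner))
```

Keeping the context test in the switching component means the reflex never needs training data, while only the turn behavior depends on trainer input.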