Image Blurring Correction Apparatus, Imaging Apparatus, And Image Blurring Correction Method

TSUCHIYA; Hitoshi

U.S. patent application number 16/010735 was filed with the patent office on June 18, 2018 and published on 2019-01-03 for an image blurring correction apparatus, imaging apparatus, and image blurring correction method. This patent application is currently assigned to OLYMPUS CORPORATION. The applicant listed for this patent is OLYMPUS CORPORATION. The invention is credited to Hitoshi TSUCHIYA.

| United States Patent Application | 20190007616 |
|---|---|
| Kind Code | A1 |
| Application Number | 16/010735 |
| Family ID | 64739283 |
| Filed Date | 2018-06-18 |
| Publication Date | 2019-01-03 |
| Inventor | TSUCHIYA; Hitoshi |


Abstract

An image blurring correction apparatus includes: an angular-velocity sensor that detects an angular velocity; and a processor. The processor includes: a first-panning detection section that detects first panning on the basis of the angular velocity; an LPF processing section that performs LPF processing on the angular velocity; a second-panning detection section that detects, on the basis of a processing result of the LPF processing section, second panning whose panning velocity is lower than that of the first panning; an HPF processing section that performs HPF processing on the angular velocity; a calculation section that calculates an image-blurring-correction amount on the basis of a detection result of the first-panning detection section or a detection result of the second-panning detection section, and a processing result of the HPF processing section; and a drive circuit that drives an image-blurring-correction mechanism on the basis of the image-blurring-correction amount.

| Inventors: | TSUCHIYA; Hitoshi; (Tokyo, JP) |
|---|---|
| Applicant: | OLYMPUS CORPORATION |
| Assignee: | OLYMPUS CORPORATION, Tokyo, JP |
| Family ID: | 64739283 |
| Appl. No.: | 16/010735 |
| Filed: | June 18, 2018 |
| Current U.S. Class: | 1/1 |
| Current CPC Class: | H04N 5/23258 20130101; H04N 5/23287 20130101; G01P 3/00 20130101 |
| International Class: | H04N 5/232 20060101 H04N005/232 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Jun 29, 2017 | JP | 2017-126902 |

Claims

1. An image blurring correction apparatus comprising: an angular-velocity sensor that detects an angular velocity of an apparatus; and a processor, wherein the processor includes a first-panning detection section that detects first panning on the basis of the angular velocity, a low-pass-filter (LPF) processing section that performs LPF processing on the angular velocity, a second-panning detection section that detects second panning that has a panning velocity that is lower than that of the first panning on the basis of a processing result of the LPF processing section, a high-pass-filter (HPF) processing section that performs HPF processing on the angular velocity, a calculation section that calculates an image-blurring-correction amount on the basis of a detection result of the first-panning detection section or a detection result of the second-panning detection section, and a processing result of the HPF processing section, and a drive circuit that drives an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, and wherein when the first-panning detection section has detected the first panning, or when the second-panning detection section has detected the second panning, the processor changes one of, or both, a characteristic of the HPF processing section and a characteristic of the calculation section in such a manner as to decrease the image-blurring-correction amount.

2. The image blurring correction apparatus of claim 1, wherein when the first-panning detection section has detected the first panning, or when the second-panning detection section has detected the second panning, the processor increases a cutoff frequency specific to the HPF processing section and/or decreases a coefficient that is multiplied when the calculation section calculates the image-blurring-correction amount.

3. The image blurring correction apparatus of claim 1, wherein when the first panning detection section has detected the first panning, the processor invalidates the detection result of the second panning detection section.

4. The image blurring correction apparatus of claim 1, wherein the processor further includes a selection section that selects the detection result of the first panning detection section or the detection result of the second panning detection section, and the calculation section calculates the image-blurring-correction amount on the basis of the processing result of the HPF processing section, and the detection result of the first panning detection section or the detection result of the second panning detection section that has been selected by the selection section.

5. The image blurring correction apparatus of claim 4, wherein the apparatus is an imaging apparatus, and the selection section performs the selecting on the basis of at least one of an image-shooting condition of the imaging apparatus, a movement vector detected from a video image signal of the imaging apparatus, and past-image-shooting-history information of the imaging apparatus.

6. The image blurring correction apparatus of claim 5, wherein when an image-shooting condition suitable for shooting an image of a sport scene or a child is set as the image-shooting condition, the selection section selects the detection result of the first panning detection section, and when an image-shooting condition suitable for shooting an image of landscape or nightscape is set as the image-shooting condition, the selection section selects the detection result of the second panning detection section.

7. The image blurring correction apparatus of claim 5, wherein when a magnitude of the movement vector is greater than a predetermined value, the selection section selects the detection result of the first panning detection section, and when the magnitude of the movement vector is less than the predetermined value, the selection section selects the detection result of the second panning detection section.

8. An imaging apparatus comprising: the image blurring correction apparatus of claim 1.

9. An image blurring correction method for an image blurring correction apparatus, the image blurring correction method comprising: detecting an angular velocity of an apparatus; detecting first panning on the basis of the angular velocity; performing low-pass-filter (LPF) processing on the angular velocity; detecting second panning that has a panning velocity that is lower than that of the first panning on the basis of a processing result of the LPF processing; performing high-pass-filter (HPF) processing on the angular velocity; calculating an image-blurring-correction amount on the basis of a result of the detection of the first panning or a result of the detection of the second panning, and a processing result of the HPF processing; and driving an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, wherein when the first panning has been detected, or when the second panning has been detected, one of, or both, a characteristic of the HPF processing and a characteristic of the calculating of the image-blurring-correction amount is changed in such a manner as to decrease the image-blurring-correction amount.

10. An image blurring correction apparatus comprising: an angular-velocity sensor that detects an angular velocity of an apparatus; and a processor, wherein the processor includes a panning detection section that detects panning on the basis of the angular velocity, a high-pass-filter (HPF) processing section that performs HPF processing on the angular velocity, a limit section that performs clip processing on a processing result of the HPF processing section on the basis of a detection result of the panning detection section, a calculation section that calculates an image-blurring-correction amount on the basis of a processing result of the limit section, and a drive circuit that drives an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, wherein when the panning detection section has detected the panning, the limit section performs the clip processing under a condition in which a threshold that has a sign that corresponds to an opposite direction from a direction of the panning is defined as a limit value for the processing result of the HPF processing section, and when the panning detection section has detected an end of the panning, the processor initializes the HPF processing section.

11. The image blurring correction apparatus of claim 10, wherein the panning detection section includes a determination section that determines whether an absolute value of the angular velocity has exceeded a panning detection threshold, a clocking section that measures, on the basis of a determination result of the determination section, a time period during which the absolute value of the angular velocity continues to be higher than the panning detection threshold, and a sign detection section that detects a sign of the angular velocity, and when the time period measured by the clocking section is longer than a predetermined time period, the panning detection section detects the panning and detects, as the direction of the panning, the sign detected at that time by the sign detection section.

12. The image blurring correction apparatus of claim 10, wherein an absolute value of the threshold defined as the limit value is variable.

13. The image blurring correction apparatus of claim 12, wherein as a focal length of an optical system of the apparatus becomes greater, the absolute value of the threshold defined as the limit value becomes lower.

14. An imaging apparatus comprising: the image blurring correction apparatus of claim 10.

15. An image blurring correction apparatus comprising: an angular-velocity sensor that detects an angular velocity of an apparatus; and a processor, wherein the processor includes a panning detection section that detects panning on the basis of the angular velocity, a high-pass-filter (HPF) processing section that performs HPF processing on the angular velocity, a decision section that decides an upper limit value and a lower limit value for a processing result of the HPF processing section, a limit section that performs clip processing on a processing result of the HPF processing section on the basis of a detection result of the panning detection section, the upper limit value, and the lower limit value, a calculation section that calculates an image-blurring-correction amount on the basis of a processing result of the limit section, and a drive circuit that drives an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, wherein when the panning detection section has not detected the panning, the limit section performs the clip processing using the upper limit value and the lower limit value.

16. The image blurring correction apparatus of claim 15, wherein the upper limit value and the lower limit value have different signs, and an absolute value of the upper limit value and an absolute value of the lower limit value are equal.

17. The image blurring correction apparatus of claim 16, wherein the decision section decides an amplitude of the angular velocity from the angular velocity specific to a period of time beginning at a predetermined time before a present time and extending up to the present time, and decides the absolute value on the basis of the amplitude.

18. The image blurring correction apparatus of claim 17, wherein the absolute value is obtained by multiplying the amplitude by a coefficient.

19. The image blurring correction apparatus of claim 18, wherein the coefficient is variable, and as a focal length of an optical system of the apparatus becomes greater, the coefficient becomes lower.

20. The image blurring correction apparatus of claim 16, wherein the absolute value is equal to or lower than an absolute value of a panning detection threshold used by the panning detection section for detection of the panning.

21. The image blurring correction apparatus of claim 16, wherein the apparatus is an imaging apparatus, and the decision section decides the absolute value on the basis of one of, or both, an image-shooting condition of the imaging apparatus and a movement vector detected from a video image signal of the imaging apparatus.

22. The image blurring correction apparatus of claim 21, wherein when the image-shooting condition includes a long focal length, or when a magnitude of the movement vector is greater than a predetermined value, the decision section decides the upper limit value and the lower limit value such that the absolute value becomes low.

23. An imaging apparatus comprising: the image blurring correction apparatus of claim 15.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is based upon and claims the benefit of priority of the prior Japanese Patent Application No. 2017-126902, filed on Jun. 29, 2017, the entire contents of which are incorporated herein by reference.

FIELD

[0002] The present invention relates to an image blurring correction apparatus that corrects image blurring, an imaging apparatus provided with the image blurring correction apparatus, and an image blurring correction method for the image blurring correction apparatus.

BACKGROUND

[0003] Many recent digital cameras (hereinafter simply referred to as cameras) perform optical shake correction while recording a moving image, or apply such correction to the finder view. The finder view is a video image of a subject that is captured and displayed (in a finder) in real time, for example, in an image-shooting standby mode. It is also referred to as a live view.

[0004] Applying shake correction to the continuous video images of the finder view and of video filming prevents the images from being blurred by camera shake, so that the images do not look unnatural.

[0005] A photographer's intentional actions include, for example, swinging the camera rightward or leftward (horizontal direction), upward or downward (vertical direction), or diagonally. In a broad sense, all of these types of camera work are referred to as panning. In a narrower sense, swinging the camera rightward or leftward is referred to as panning, and swinging it upward or downward is referred to as tilting. Unless otherwise noted, panning herein refers to panning in the broad sense.

[0006] FIGS. 12 and 13 each depict two exemplary temporal variations. The upper part of each figure shows the angular velocity of a camera detected when panning is performed. The lower part shows the amount of movement of a video image captured by the camera in that situation, i.e., the magnitude of the image movement vector. In the upper part, a solid line indicates the detected angular velocity (the angular velocity of the camera). A broken line indicates the angular velocity after high-pass filter (HPF) processing, i.e., a process for removing low-frequency components, has been applied to the detected angular velocity. In the lower part, a solid line indicates the amount of image movement without shake correction, and a broken line indicates the amount of image movement with shake correction.
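The broken line in the upper part of FIGS. 12 and 13 can be approximated with a minimal sketch. It assumes the HPF is a first-order discrete RC high-pass filter; the actual filter order and coefficients are not given here. The panning profile is hypothetical: a 20 deg/s constant-velocity pan lasting 2 s, sampled at 1 kHz and filtered with an assumed 0.5 Hz cutoff. During the pan, the filtered angular velocity decays toward zero. When the pan stops, it swings to nearly the same magnitude with the opposite sign.

```python
import math


def first_order_hpf(samples, cutoff_hz, dt):
    """Discrete RC high-pass filter: y[n] = a * (y[n-1] + x[n] - x[n-1])."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    a = rc / (rc + dt)
    out = []
    prev_x = prev_y = 0.0
    for x in samples:
        y = a * (prev_y + x - prev_x)
        out.append(y)
        prev_x, prev_y = x, y
    return out


# Hypothetical panning profile sampled at 1 kHz: 0.5 s at rest,
# 2 s of constant-velocity panning at 20 deg/s, then 1 s at rest.
DT = 0.001
profile = [0.0] * 500 + [20.0] * 2000 + [0.0] * 1000
filtered = first_order_hpf(profile, cutoff_hz=0.5, dt=DT)

print(f"pan starts: {filtered[500]:.2f}")   # close to +20
print(f"pan ends:   {filtered[2499]:.2f}")  # decayed close to 0
print(f"after pan:  {filtered[2500]:.2f}")  # swings close to -20
```

The opposite-sign swing after the pan is inherent to any HPF that has been tracking a sustained input. It is one plausible reading of why the corrected image (broken line, lower part) can move even after the camera has stopped.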

[0007] In recent years, high-quality video filming has become easy, and even ordinary users have begun to use camera work as a means of producing elaborate images.

[0008] For example, an image may be shot while panning at a low angular velocity so as to bring a main subject into, or move it out of, the view.

[0009] FIG. 14 depicts an exemplary temporal change in the angular velocity of a camera detected when slow panning is performed (upper part of the figure), and an example of the panning detected in that situation (lower part of the figure). In FIG. 14, Vth indicates a threshold used to detect panning.
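The failure mode suggested by FIG. 14 can be reproduced with a hypothetical numerical sketch. A slow pan whose angular velocity sits near Vth, with a hand-shake component superimposed, is compared with Vth sample by sample. All values (a 3 deg/s pan, 1.5 deg/s of shake at 5 Hz, Vth = 3 deg/s, 1 kHz sampling) are assumptions chosen for illustration. The plain per-sample comparison stands in for the conventional technique and is not taken from the source.

```python
import math


def detect_panning(omega, vth):
    """Per-sample panning flag: True while |omega| exceeds the threshold vth."""
    return [abs(w) > vth for w in omega]


def count_pan_ends(flags):
    """Count True -> False transitions, i.e. detected ends of panning."""
    return sum(1 for prev, cur in zip(flags, flags[1:]) if prev and not cur)


# Hypothetical slow pan: 3 deg/s with a 5 Hz, 1.5 deg/s hand-shake component,
# sampled at 1 kHz for 2 s and compared with Vth = 3 deg/s.
DT = 0.001
VTH = 3.0
omega = [3.0 + 1.5 * math.sin(2 * math.pi * 5 * n * DT) for n in range(2000)]
flags = detect_panning(omega, VTH)
print("detected ends of panning:", count_pan_ends(flags))
```

For what is physically a single continuous pan, this detector reports a separate end of panning on every shake cycle.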

[0010] There have already been various proposals regarding the matters described above.

[0011] An imaging apparatus described in patent document 1 (Japanese Laid-open Patent Publication No. 2012-168420) performs, for example, the following processes:
- An angular velocity sensor detects shake applied to the imaging apparatus.
- An HPF calculation unit attenuates low-frequency components in the output of the angular velocity sensor.
- An image blurring compensation amount is calculated on the basis of that output, and image blurring compensation is executed by drive control of a compensation optical system.

For each sampling period, a digital high-pass filter that constitutes the HPF calculation unit retains an intermediate value as a calculation result. A panning control unit detects panning operations performed by the imaging apparatus. When it detects the completion of a panning operation, it initializes the intermediate value retained in the digital high-pass filter, so that the output of the HPF calculation unit becomes approximately zero. Initializing the intermediate value in this way reduces the sense of unnaturalness that the photographer could experience when panning ends.
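The reset described for patent document 1 can be sketched as follows. This is an illustration only. It assumes a first-order digital HPF whose retained intermediate values are the previous input and the previous output. The class name `DigitalHPF`, the 0.5 Hz cutoff, the panning profile, and the assumption that the end of panning is detected at the exact sample where the pan stops are all hypothetical.

```python
import math


class DigitalHPF:
    """First-order digital HPF that retains intermediate values between samples."""

    def __init__(self, cutoff_hz, dt):
        rc = 1.0 / (2.0 * math.pi * cutoff_hz)
        self.a = rc / (rc + dt)
        self.prev_x = 0.0  # retained previous input
        self.prev_y = 0.0  # retained previous output

    def step(self, x):
        y = self.a * (self.prev_y + x - self.prev_x)
        self.prev_x, self.prev_y = x, y
        return y

    def reset(self, x):
        """Initialize the retained values so the next output for input x is zero."""
        self.prev_x, self.prev_y = x, 0.0


DT = 0.001
profile = [0.0] * 500 + [20.0] * 2000 + [0.0] * 1000  # hypothetical pan, 1 kHz
plain, with_reset = DigitalHPF(0.5, DT), DigitalHPF(0.5, DT)
plain_out, reset_out = [], []
for n, x in enumerate(profile):
    if n == 2500:  # assumption: the end of panning is detected as soon as it occurs
        with_reset.reset(x)
    plain_out.append(plain.step(x))
    reset_out.append(with_reset.step(x))
```

Without the reset, the output swings to almost -20 deg/s when the pan stops. With the reset, it stays at zero. In practice, detection of the end of panning lags the actual stop, so some swing would remain.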

[0012] An imaging apparatus described in patent document 2 (Japanese Laid-open Patent Publication No. 2013-78104) has been proposed as an apparatus that makes a shake correction when panning is performed. The imaging apparatus includes a sensor that detects a shake amount, a movable member for shake correction, and a signal processing apparatus. On the basis of a detection signal of the sensor, the signal processing apparatus generates a signal representing a target position of the movable member. The signal processing apparatus includes a cut-off-frequency control unit and a high-pass filter. The high-pass filter has a variable cut-off frequency and applies high-pass filter processing to the detection signal of the sensor. The cut-off-frequency control unit raises the cut-off frequency when the level of the integral of the detection signal exceeds a predetermined threshold. It returns the cut-off frequency to its original value when the level becomes equal to or less than the threshold. Such a signal processing apparatus allows panning and tilting to be accommodated appropriately.
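A minimal sketch of the cut-off control described for patent document 2 follows. It assumes a first-order HPF and computes the integral by simple rectangular accumulation of the detection signal. The cutoffs (0.1 Hz normal, 2 Hz raised), the 5 deg threshold, and the helper names are hypothetical. The test signal pans out and back, so the integral falls below the threshold again and the cutoff returns to its original value.

```python
import math


def hpf_coefficient(cutoff_hz, dt):
    """Coefficient a of the discrete RC high-pass y[n] = a * (y[n-1] + x[n] - x[n-1])."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    return rc / (rc + dt)


def variable_cutoff_hpf(samples, dt, base_hz, raised_hz, integral_threshold):
    """Raise the HPF cutoff while |integral of the detection signal| exceeds a threshold."""
    prev_x = prev_y = 0.0
    integral = 0.0
    outputs, cutoffs = [], []
    for x in samples:
        integral += x * dt  # rectangular integration of the detection signal
        cutoff = raised_hz if abs(integral) > integral_threshold else base_hz
        a = hpf_coefficient(cutoff, dt)
        y = a * (prev_y + x - prev_x)
        prev_x, prev_y = x, y
        outputs.append(y)
        cutoffs.append(cutoff)
    return outputs, cutoffs


DT = 0.001
# Hypothetical pan out and back: +20 deg/s for 1 s, -20 deg/s for 1 s, then rest.
signal = [20.0] * 1000 + [-20.0] * 1000 + [0.0] * 500
outputs, cutoffs = variable_cutoff_hpf(signal, DT, base_hz=0.1, raised_hz=2.0,
                                       integral_threshold=5.0)
```

A higher cutoff makes the HPF output decay faster, so less of a sustained pan is treated as shake to be corrected.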

[0013] A pan shooting device described in patent document 3 (Japanese Laid-open Patent Publication No. 5-216104) has been proposed as an apparatus for determining whether panning is being performed. The pan shooting device includes:
- a camera shake detecting means for detecting a camera shake and outputting a camera shake signal;
- a correction optical system for correcting the camera shake;
- a correction driving unit for driving the correction optical system on the basis of the camera shake signal from the camera shake detecting means;
- a pan shooting determination means; and
- a switching means.

The pan shooting determination means determines whether pan shooting is being performed by eliminating high-frequency components from the camera shake signal output by the camera shake detecting means. When the pan shooting determination means has determined that pan shooting is being performed, the switching means stops transmission of the camera shake signal to the correction driving unit. This configuration allows the start of pan shooting to be determined automatically, so that pan shooting can be performed without any advance preparation.
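The determination and switching described for patent document 3 can be sketched under stated assumptions. A first-order IIR low-pass filter removes the high-frequency components. Pan shooting is judged when the absolute value of the filtered signal exceeds a threshold, and the signal is replaced by zero while panning is judged. The filter type, the 0.5 Hz cutoff, the 1 deg/s threshold, the signals, and the function name are all hypothetical.

```python
import math


def gate_shake_signal(shake_signal, dt, lpf_cutoff_hz, pan_threshold):
    """Low-pass the shake signal to judge pan shooting; block the signal while panning."""
    rc = 1.0 / (2.0 * math.pi * lpf_cutoff_hz)
    alpha = dt / (rc + dt)  # first-order IIR low-pass coefficient
    lpf = 0.0
    gated, panning_flags = [], []
    for x in shake_signal:
        lpf += alpha * (x - lpf)  # remove high-frequency (hand-shake) components
        panning = abs(lpf) > pan_threshold
        panning_flags.append(panning)
        gated.append(0.0 if panning else x)  # switching: stop the signal while panning
    return gated, panning_flags


DT = 0.001
# Hypothetical input: 2 s of 5 Hz hand shake, then 2 s of a 10 deg/s pan with shake.
shake = [2.0 * math.sin(2 * math.pi * 5 * n * DT) for n in range(2000)]
pan = [10.0 + 2.0 * math.sin(2 * math.pi * 5 * n * DT) for n in range(2000, 4000)]
gated, flags = gate_shake_signal(shake + pan, DT, lpf_cutoff_hz=0.5, pan_threshold=1.0)
```

Hand shake alone is strongly attenuated by the low-pass filter and never triggers the judgment. The sustained pan does, after which the correction signal is cut off.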

SUMMARY

[0014] An aspect of the present invention provides an image blurring correction apparatus that includes: an angular-velocity sensor that detects an angular velocity of an apparatus; and a processor, wherein the processor includes: a first-panning detection section that detects first panning on the basis of the angular velocity; a low-pass-filter (LPF) processing section that performs LPF processing on the angular velocity; a second-panning detection section that detects, on the basis of a processing result of the LPF processing section, second panning whose panning velocity is lower than that of the first panning; a high-pass-filter (HPF) processing section that performs HPF processing on the angular velocity; a calculation section that calculates an image-blurring-correction amount on the basis of a detection result of the first-panning detection section or a detection result of the second-panning detection section, and a processing result of the HPF processing section; and a drive circuit that drives an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, wherein when the first-panning detection section has detected the first panning, or when the second-panning detection section has detected the second panning, the processor changes one of, or both, a characteristic of the HPF processing section and a characteristic of the calculation section in such a manner as to decrease the image-blurring-correction amount.
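The aspect above can be illustrated with a minimal sketch, which is not the embodiment's actual implementation. First panning is detected by comparing the raw angular velocity with a high threshold. Second, slower panning is detected by comparing a first-order LPF output with a lower threshold. When either is detected, settings are chosen that raise the HPF cutoff and lower the multiplication coefficient, the two characteristic changes named in claim 2. All thresholds, cutoffs, gains, and names are hypothetical. The duration check of claim 11 is omitted for brevity.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class CorrectionSettings:
    hpf_cutoff_hz: float  # cutoff frequency of the HPF processing section
    gain: float           # coefficient multiplied in the calculation section


# Hypothetical values: panning raises the cutoff and lowers the coefficient,
# both of which decrease the image-blurring-correction amount.
NORMAL = CorrectionSettings(hpf_cutoff_hz=0.1, gain=1.0)
PANNING = CorrectionSettings(hpf_cutoff_hz=1.0, gain=0.5)


class DualPanningDetector:
    """First panning: |omega| above a fast threshold.
    Second (slower) panning: |LPF(omega)| above a lower threshold."""

    def __init__(self, dt, fast_threshold, slow_threshold, lpf_cutoff_hz):
        rc = 1.0 / (2.0 * math.pi * lpf_cutoff_hz)
        self.alpha = dt / (rc + dt)  # first-order IIR low-pass coefficient
        self.fast_threshold = fast_threshold
        self.slow_threshold = slow_threshold
        self.lpf = 0.0

    def update(self, omega):
        self.lpf += self.alpha * (omega - self.lpf)
        first = abs(omega) > self.fast_threshold
        second = abs(self.lpf) > self.slow_threshold
        return first, second


def select_settings(first, second):
    return PANNING if (first or second) else NORMAL


DT = 0.001
detector = DualPanningDetector(DT, fast_threshold=10.0, slow_threshold=2.0,
                               lpf_cutoff_hz=0.5)
# Hypothetical slow pan: 3 deg/s plus a 5 Hz, 1.5 deg/s hand-shake component.
results = [detector.update(3.0 + 1.5 * math.sin(2 * math.pi * 5 * n * DT))
           for n in range(3000)]
```

For this slow pan, the raw-signal detector never fires. The LPF-based detector fires continuously once the filter has settled, because the LPF suppresses the shake ripple that would otherwise make the decision chatter around a threshold.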

[0015] Another aspect of the invention provides an imaging apparatus that includes the image blurring correction apparatus in accordance with the aspect described in paragraph [0014].

[0016] Yet another aspect of the invention provides an image blurring correction method for an image blurring correction apparatus, the image blurring correction method including: detecting an angular velocity of an apparatus; detecting first panning on the basis of the angular velocity; performing low-pass-filter (LPF) processing on the angular velocity; detecting second panning that has a panning velocity that is lower than that of the first panning on the basis of a processing result of the LPF processing; performing high-pass-filter (HPF) processing on the angular velocity; calculating an image-blurring-correction amount on the basis of a result of the detection of the first panning or a result of the detection of the second panning, and a processing result of the HPF processing; and driving an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, wherein when the first panning has been detected, or when the second panning has been detected, one of, or both, a characteristic of the HPF processing and a characteristic of the calculating of the image-blurring-correction amount is changed in such a manner as to decrease the image-blurring-correction amount.

[0017] A further aspect of the invention provides an image blurring correction apparatus that includes: an angular-velocity sensor that detects an angular velocity of an apparatus; and a processor, wherein the processor includes: a panning detection section that detects panning on the basis of the angular velocity; a high-pass-filter (HPF) processing section that performs HPF processing on the angular velocity; a limit section that performs clip processing on a processing result of the HPF processing section on the basis of a detection result of the panning detection section; a calculation section that calculates an image-blurring-correction amount on the basis of a processing result of the limit section; and a drive circuit that drives an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, wherein when the panning detection section has detected the panning, the limit section performs the clip processing under a condition in which a threshold that has a sign that corresponds to an opposite direction from the direction of the panning is defined as a limit value for the processing result of the HPF processing section, and when the panning detection section has detected an end of the panning, the processor initializes the HPF processing section.
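One reading of this aspect is sketched below, under explicit assumptions. While panning in the positive direction is detected, the HPF output is prevented from going below -limit. While panning in the negative direction is detected, it is prevented from going above +limit. The limit value therefore carries the sign opposite to the pan and bounds the swing back. When the end of panning is detected, the HPF is initialized. The 2 deg/s limit, the 0.5 Hz cutoff, the 100 ms detection delay, and the class name are hypothetical.

```python
import math


class ClippedHPF:
    """HPF followed by a one-sided limit that is active only while panning."""

    def __init__(self, cutoff_hz, dt, limit_abs):
        rc = 1.0 / (2.0 * math.pi * cutoff_hz)
        self.a = rc / (rc + dt)
        self.limit_abs = limit_abs
        self.prev_x = 0.0
        self.prev_y = 0.0

    def reset(self, x):
        """Initialize the HPF (called when the end of panning is detected)."""
        self.prev_x, self.prev_y = x, 0.0

    def step(self, x, pan_direction):
        """pan_direction: +1 or -1 while panning is detected, 0 otherwise."""
        y = self.a * (self.prev_y + x - self.prev_x)
        self.prev_x, self.prev_y = x, y  # clipping does not alter the filter state
        if pan_direction > 0:
            return max(y, -self.limit_abs)  # limit value has the sign opposite to the pan
        if pan_direction < 0:
            return min(y, self.limit_abs)
        return y


DT = 0.001
hpf = ClippedHPF(cutoff_hz=0.5, dt=DT, limit_abs=2.0)
profile = [0.0] * 500 + [20.0] * 2000 + [0.0] * 1000  # hypothetical +20 deg/s pan
out = []
for n, x in enumerate(profile):
    panning = 500 <= n < 2600  # assume the end is detected 100 ms after the pan stops
    if n == 2600:
        hpf.reset(x)  # initialize the HPF when the end of panning is detected
    out.append(hpf.step(x, pan_direction=+1 if panning else 0))
```

Between the actual stop and the detected end, the swing back is held at -2 deg/s instead of about -20 deg/s. After the reset, the output is zero.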

[0018] A still further aspect of the invention provides an imaging apparatus that includes the image blurring correction apparatus in accordance with the aspect described in paragraph [0017].

[0019] A yet further aspect of the invention provides an image blurring correction apparatus that includes: an angular-velocity sensor that detects an angular velocity of an apparatus; and a processor, wherein the processor includes: a panning detection section that detects panning on the basis of the angular velocity; a high-pass-filter (HPF) processing section that performs HPF processing on the angular velocity; a decision section that decides an upper limit value and a lower limit value for a processing result of the HPF processing section; a limit section that performs clip processing on a processing result of the HPF processing section on the basis of a detection result of the panning detection section, the upper limit value, and the lower limit value; a calculation section that calculates an image-blurring-correction amount on the basis of a processing result of the limit section; and a drive circuit that drives an image-blurring-correction mechanism on the basis of the image-blurring-correction amount, wherein when the panning detection section has not detected the panning, the limit section performs the clip processing using the upper limit value and the lower limit value.
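The decision and limit sections of this aspect, together with the refinements in claims 16 to 18 and 20, can be sketched as follows:
- symmetric limits;
- magnitude equal to amplitude times a coefficient over a recent window;
- magnitude no greater than the panning threshold.

Two points are assumptions not fixed by the text: "amplitude" is taken as the peak absolute angular velocity in the window, and the window is a fixed number of samples. The class name and all numeric values are hypothetical. The focal-length dependence of the coefficient (claim 19) is not modeled.

```python
from collections import deque


class AmplitudeLimiter:
    """Symmetric clip limits derived from recent angular-velocity amplitude."""

    def __init__(self, window_len, coefficient, panning_threshold):
        self.history = deque(maxlen=window_len)  # angular velocity over the recent window
        self.coefficient = coefficient
        self.panning_threshold = panning_threshold

    def limits(self):
        # Assumption: "amplitude" is taken as the peak |omega| in the window.
        amplitude = max((abs(w) for w in self.history), default=0.0)
        # Absolute value = amplitude x coefficient, capped at the panning threshold.
        bound = min(amplitude * self.coefficient, self.panning_threshold)
        return -bound, bound

    def process(self, omega, hpf_out, panning):
        self.history.append(omega)
        if panning:
            return hpf_out  # these limits are applied only while not panning
        lower, upper = self.limits()
        return min(max(hpf_out, lower), upper)


limiter = AmplitudeLimiter(window_len=5, coefficient=1.5, panning_threshold=10.0)
for w in [1.0, -2.0, 1.5, -1.0, 0.5]:
    limiter.process(w, 0.0, panning=False)
print(limiter.limits())  # (-3.0, 3.0)
```

Tying the limits to the recent shake amplitude lets genuine hand shake pass while bounding larger, slow drifts that the HPF would otherwise turn into a correction.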

[0020] An additional aspect of the invention provides an imaging apparatus that includes the image blurring correction apparatus in accordance with the aspect described in paragraph [0019].

BRIEF DESCRIPTION OF DRAWINGS

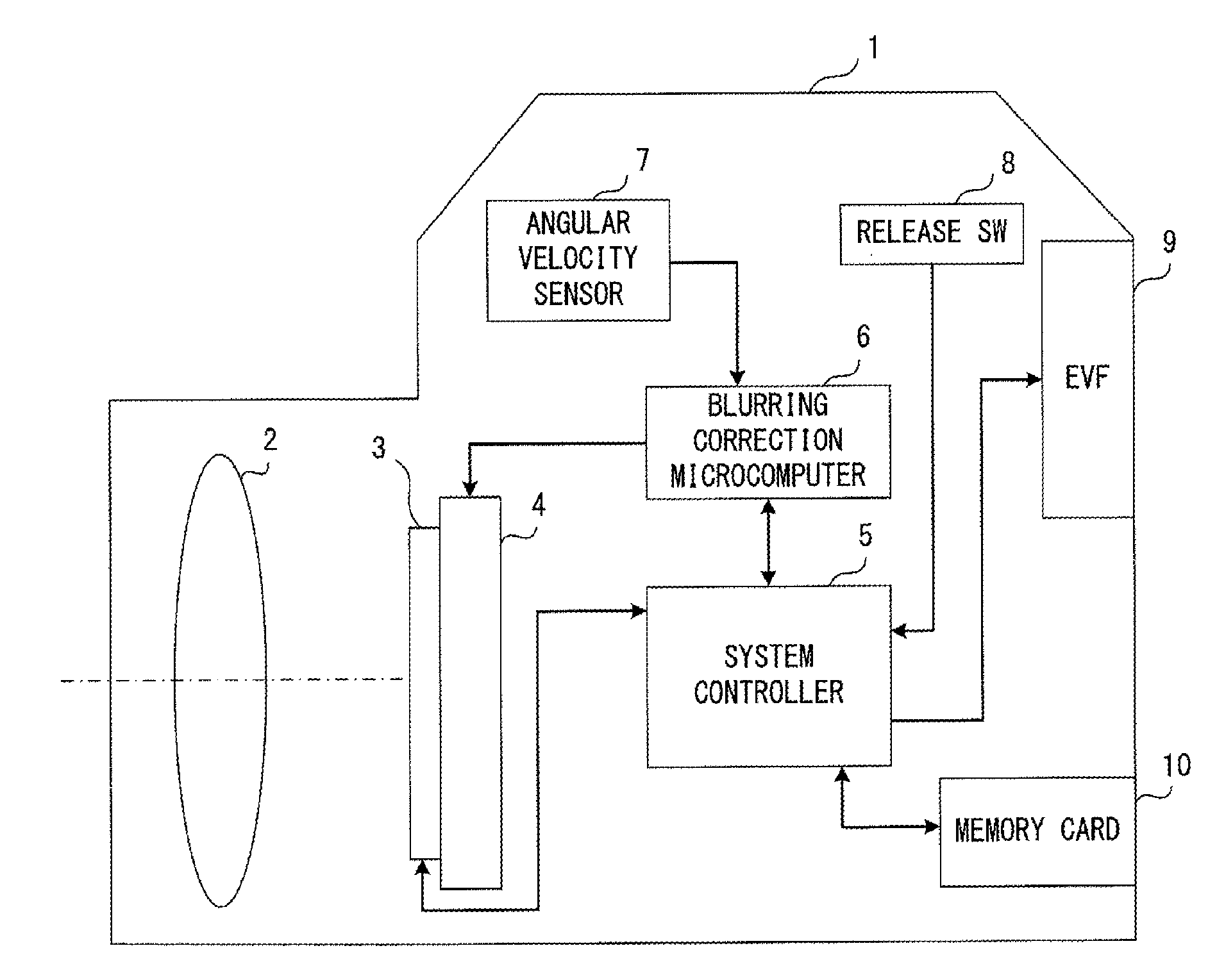

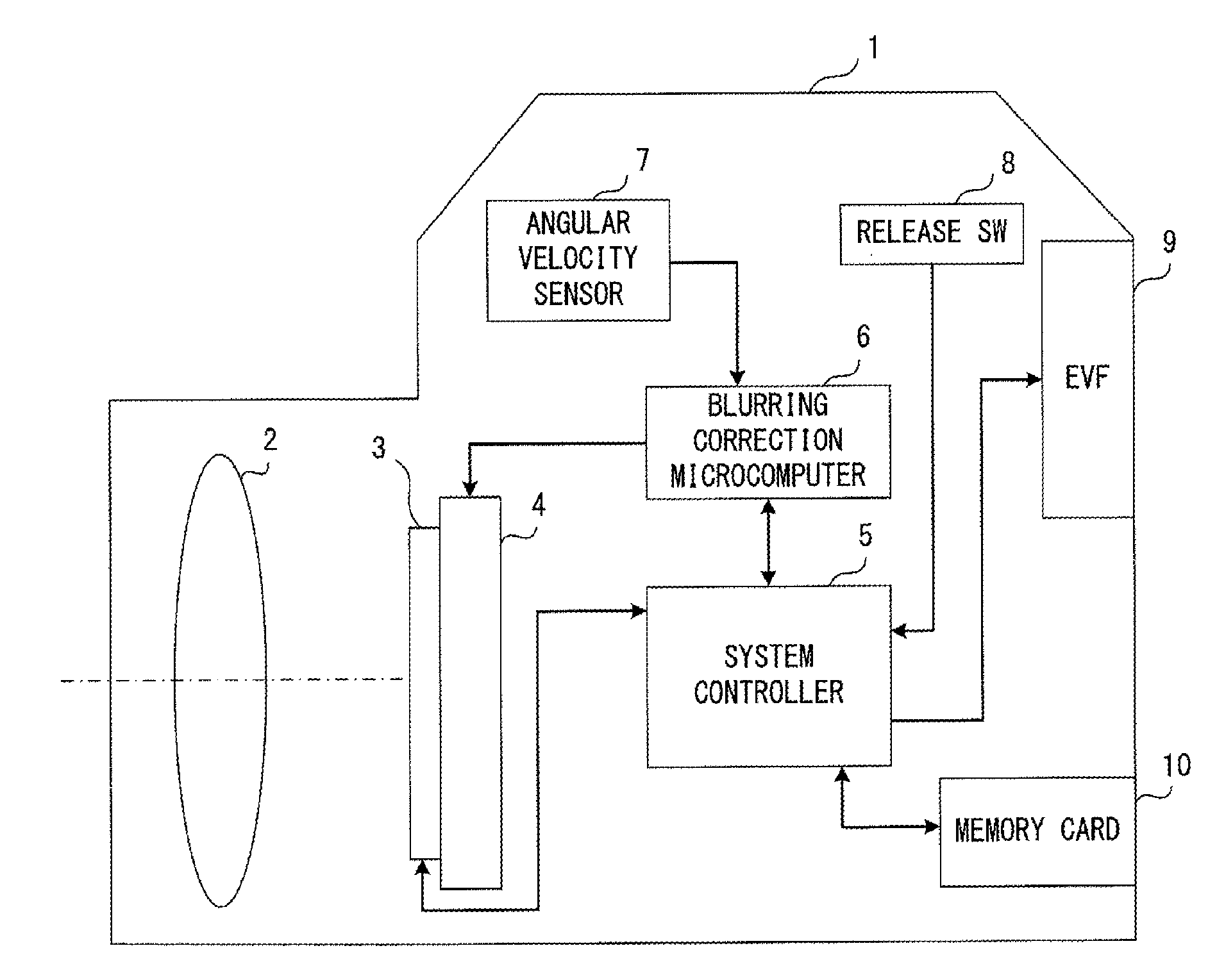

[0021] FIG. 1 illustrates an exemplary configuration of a camera that is an imaging apparatus that includes an image blurring correction apparatus in accordance with a first embodiment;

[0022] FIG. 2 illustrates an exemplary functional configuration of a blurring correction microcomputer in accordance with a first embodiment;

[0023] FIG. 3 illustrates exemplary effects of a blurring correction microcomputer in accordance with a first embodiment that are achieved when panning has been performed;

[0024] FIG. 4 is a flowchart indicating an example of limit processing performed on a predetermined cycle by a limit unit;

[0025] FIG. 5 illustrates an exemplary functional configuration of a panning detection unit in accordance with a second embodiment;

[0026] FIG. 6 illustrates an exemplary functional configuration of a first panning detection unit in accordance with a second embodiment;

[0027] FIG. 7 illustrates an exemplary functional configuration of a second panning detection unit in accordance with a second embodiment;

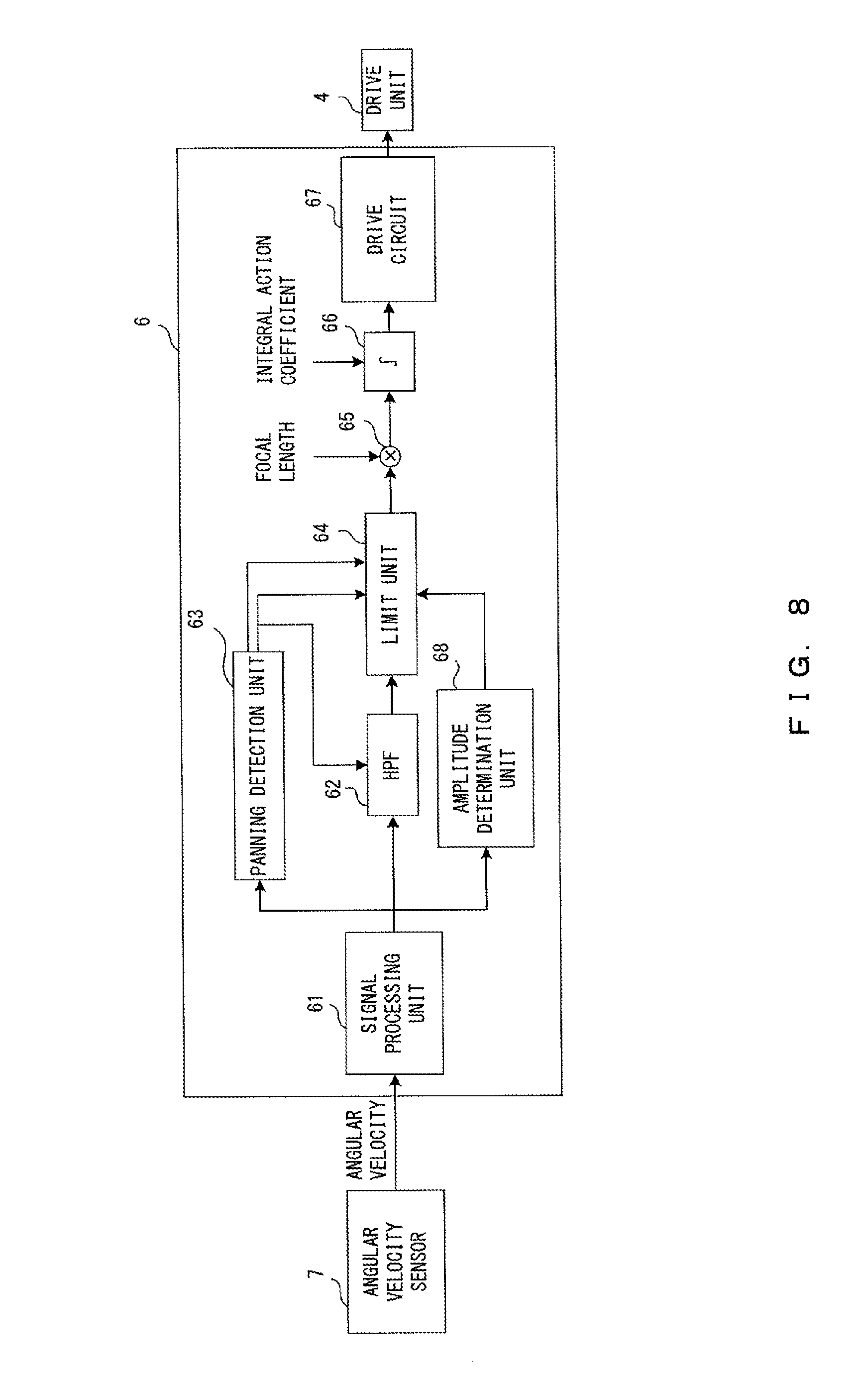

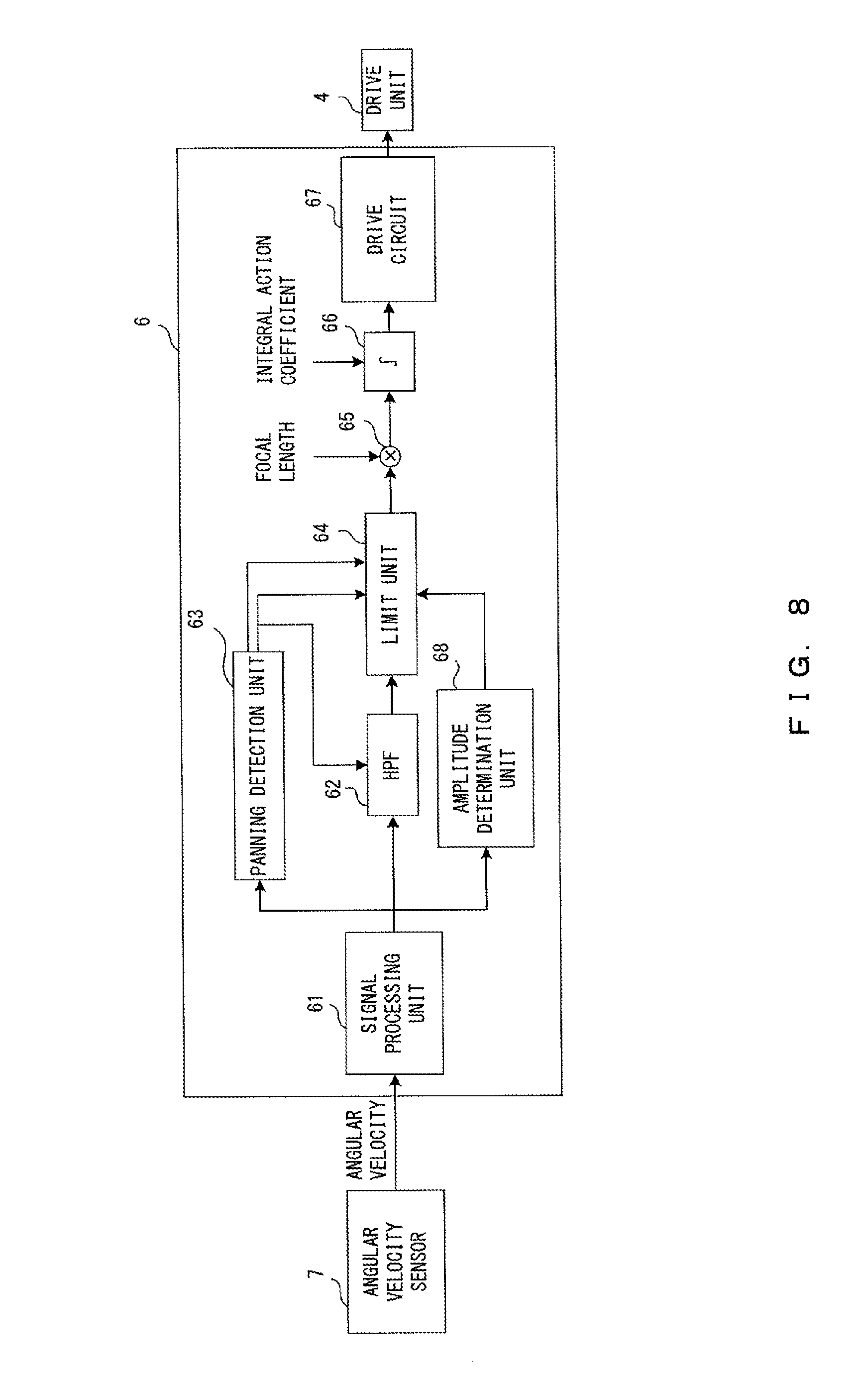

[0028] FIG. 8 illustrates an exemplary functional configuration of a blurring correction microcomputer in accordance with a third embodiment;

[0029] FIG. 9 illustrates an example of a threshold (th_l1) set by an amplitude determination unit;

[0030] FIG. 10 illustrates an exemplary functional configuration of a panning detection unit in accordance with a fourth embodiment;

[0031] FIG. 11 illustrates an exemplary functional configuration of a blurring correction microcomputer in accordance with a fifth embodiment;

[0032] FIG. 12 depicts an exemplary temporal variation in an angular velocity of a camera detected when panning has been performed, and an exemplary temporal variation in the amount of movement of a video image captured by the camera (the quantity of image movement vector) in that situation (example 1);

[0033] FIG. 13 depicts an exemplary temporal variation in an angular velocity of a camera detected when panning has been performed, and an exemplary temporal variation in the amount of movement of a video image captured by the camera (the quantity of image movement vector) in that situation (example 2); and

[0034] FIG. 14 illustrates an exemplary situation in which a camera erroneously detects an end of panning when a panning determination has been made using a conventional technique.

DESCRIPTION OF EMBODIMENTS

[0035] The following describes embodiments of the present invention by referring to the drawings.

First Embodiment

[0036] FIG. 1 illustrates an exemplary configuration of a camera that is an imaging apparatus that includes an image blurring correction apparatus in accordance with a first embodiment of the invention.

[0037] As depicted in FIG. 1, a camera 1 includes an image shooting optical system 2, an image pickup element 3, a drive unit 4, a system controller 5, a blurring correction microcomputer 6, an angular velocity sensor 7, a release switch (SW) 8, an electric viewfinder (EVF) 9, and a memory card 10.

[0038] The image shooting optical system 2 includes a focus lens and a zoom lens and forms an optical image of a subject on the image pickup element 3.

[0039] The image pickup element 3 converts the image (optical image) of a subject formed by the image shooting optical system 2 into an electrical signal. The image pickup element 3 is an image sensor, e.g., a charge-coupled device (CCD) or complementary metal-oxide-semiconductor (CMOS) sensor.

[0040] The system controller 5 controls the overall operation of the camera 1. For example, the system controller 5 may read, as a video image signal, the electrical signal obtained through conversion by the image pickup element 3, and apply predetermined image processing to it. It may then display the signal as a video image (live view, finder view) on the EVF 9, or record it in the memory card 10 as a recorded image. The memory card 10 is a nonvolatile memory that can be attached to and detached from the camera 1, for example, an SD memory card (registered trademark).

[0041] The angular velocity sensor 7 detects angular velocities in a yaw direction and a pitch direction, that is, rotations about two axes that are orthogonal to the optical axis of the image shooting optical system 2. These two axes are also the vertical axis and the horizontal axis of the camera 1.

[0042] On the basis of the angular velocity detected by the angular velocity sensor 7, the blurring correction microcomputer 6 calculates the amount of image blurring generated on an image plane (imaging plane of the image pickup element 3) and detects a posture state of the camera 1. Details of the blurring correction microcomputer 6 will be described hereinafter by referring to FIG. 2.

[0043] The drive unit 4 is a drive mechanism that holds the image pickup element 3 or moves it within a plane orthogonal to the optical axis of the image shooting optical system 2. The drive unit 4 is provided with a motor such as a voice coil motor (VCM), and moves the image pickup element 3 using the driving force generated by the motor. On the basis of an instruction (driving signal) from the blurring correction microcomputer 6, the drive unit 4 moves the image pickup element 3 in a direction that cancels the image blurring generated on the image plane. This corrects the image blurring.

[0044] The release SW 8 is a switch for reporting a photographer's image-shooting operation to the system controller 5. When the photographer presses the release SW 8, the system controller 5 starts or ends the recording of a moving image, or starts the shooting of a still image. Although not illustrated, the camera 1 also includes an operation unit for performing other instruction operations, in addition to the release SW 8.

[0045] The EVF 9 displays a video image of a subject so that the photographer can visually check the framing, or displays a menu screen for camera settings.

[0046] In the camera 1 which has such a configuration, the system controller 5 and the blurring correction microcomputer 6 each include a processor (e.g., CPU), a memory, and an electronic circuit. The functions of the system controller 5 and the blurring correction microcomputer 6 are each achieved by the processor executing a program stored in the memory. Alternatively, the system controller 5 and the blurring correction microcomputer 6 may each comprise a dedicated circuit, e.g., an application specific integrated circuit (ASIC) or a field-programmable gate array (FPGA).

[0047] FIG. 2 illustrates an exemplary functional configuration of the blurring correction microcomputer 6.

[0048] The blurring correction microcomputer 6 includes a functional configuration for processing the angular velocity detected in the yaw direction by the angular velocity sensor 7, and a functional configuration for processing the angular velocity detected in the pitch direction by the angular velocity sensor 7. Because these configurations are similar to each other, FIG. 2 depicts only one of them, and only that configuration is described herein.

[0049] As depicted in FIG. 2, the blurring correction microcomputer 6 includes a signal processing unit 61, a HPF 62, a panning detection unit 63, a limit unit 64, a multiplication unit 65, an integration unit 66, and a drive circuit 67.

[0050] The signal processing unit 61 converts an angular velocity output as an analog signal from the angular velocity sensor 7 into a digital value, and subtracts a reference value from the conversion result. The reference value is obtained by converting into a digital value an angular velocity output as an analog signal from the angular velocity sensor 7 when the camera 1 is in a static state. Through such processing, a signed digital value is obtained. In this example, the sign indicates a direction (rotation direction) of an angular velocity, and the absolute value of a signed digital value indicates the magnitude of an angular velocity.

[0051] When the angular velocity sensor 7 includes a component that outputs a detected angular velocity as a digital signal, the signal processing unit 61 does not perform a process of converting an analog signal into a digital signal. In this case, the reference value is the value of an angular velocity output as a digital signal from the angular velocity sensor 7 when the camera 1 is in a static state.
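A minimal sketch of the offset subtraction performed by the signal processing unit 61, assuming the sensor output has already been digitized (the A/D conversion itself is outside the sketch). The function names are hypothetical, and estimating the reference value as the mean of samples taken while the camera is static is an assumption; the text only states that the reference is the sensor output in a static state.

```python
from statistics import fmean
from typing import Sequence, Tuple


def estimate_reference(static_samples: Sequence[float]) -> float:
    """Hypothetical: average the sensor output recorded while the camera is static."""
    return fmean(static_samples)


def signed_angular_velocity(raw: float, reference: float) -> float:
    """Subtract the static reference so that the sign encodes the rotation direction."""
    return raw - reference


def direction_and_magnitude(omega: float) -> Tuple[int, float]:
    """Split a signed angular velocity into direction (+1, -1, or 0) and magnitude."""
    direction = (omega > 0) - (omega < 0)
    return direction, abs(omega)
```

The sign of the result gives the rotation direction and its absolute value gives the magnitude, matching paragraph [0050].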

[0052] The HPF 62 performs processing for removing low frequency components (HPF processing) from the angular velocity that is a processing result of the signal processing unit 61. The HPF 62 is initialized when the panning detection unit 63 detects the end of panning.
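The text does not specify how the HPF 62 is realized. The sketch below assumes a standard first-order (RC-type) discrete high-pass filter; the class name, the sample rate, and the way `reset()` re-primes the filter with the next input are assumptions made for illustration.

```python
import math


class FirstOrderHPF:
    """Assumed realization of HPF 62: y[n] = a * (y[n-1] + x[n] - x[n-1])."""

    def __init__(self, cutoff_hz: float, sample_rate_hz: float):
        self.sample_rate_hz = sample_rate_hz
        self.set_cutoff(cutoff_hz)
        self.reset()

    def set_cutoff(self, cutoff_hz: float) -> None:
        """Recompute the filter coefficient a = RC / (RC + dt) for a new cut-off."""
        rc = 1.0 / (2.0 * math.pi * cutoff_hz)
        dt = 1.0 / self.sample_rate_hz
        self.alpha = rc / (rc + dt)

    def reset(self) -> None:
        """Initialize the filter state (called, e.g., at the end of panning)."""
        self.prev_x = None  # None means "prime with the next input"
        self.prev_y = 0.0

    def step(self, x: float) -> float:
        if self.prev_x is None:
            # First sample after a reset: no input history yet, so output stays 0.
            self.prev_x = x
            return self.prev_y
        y = self.alpha * (self.prev_y + x - self.prev_x)
        self.prev_x, self.prev_y = x, y
        return y
```

A constant (panning-like) input produces an output that decays toward 0, which is the attenuation shown by the broken line in FIG. 3. `set_cutoff()` changes the cut-off without clearing the filter state.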

[0053] On the basis of the processing result of the signal processing unit 61, the panning detection unit 63 detects panning and a panning direction. More particularly, the panning detection unit 63 detects a start of panning when (1) the absolute value of an angular velocity that is a processing result of the signal processing unit 61 has exceeded a predetermined threshold and (2) the state of (1) has continued for a predetermined period. (3) After this, the panning detection unit 63 detects an end of the panning when the angular velocity that is the processing result of the signal processing unit 61 has crossed zero (zero cross). The panning detection unit 63 also detects, as a direction of the panning, a sign of the angular velocity, i.e., the processing result of the signal processing unit 61, that is indicated at a time at which the start of the panning is detected. The panning detection unit 63 is achieved by, for example, the functional configuration depicted in FIG. 6 which will be described hereinafter with reference to a second embodiment.

[0054] On the basis of a detection result of the panning detection unit 63, the limit unit 64 performs clip processing on an angular velocity that is a processing result of the HPF 62. Details of the clip processing will be described hereinafter with reference to FIGS. 3 and 4.

[0055] The multiplication unit 65 multiplies an angular velocity that is a processing result of the limit unit 64 by a focal length of the image shooting optical system 2. This converts the angular velocity that is a processing result of the limit unit 64 into the amount of movement of an image on an image plane. Information related to the focal length of the image shooting optical system 2 is reported from, for example, the system controller 5.

[0056] The integration unit 66 integrates (cumulatively adds) the multiplication results of the multiplication unit 65 using an integral action coefficient and outputs the integration result as an image-blurring-correction amount. In this integration process, on a predetermined cycle, an integration result $Y_n$ is obtained by adding the previous integration result $Y_{n-1}$, multiplied by an integral action coefficient $K$, to the multiplication result $X_n$ of the multiplication unit 65, as indicated by the recursion formula (1).

$$Y_n = X_n + K \times Y_{n-1} = X_n + K \times \sum X_{n-1} \qquad \text{Formula (1)}$$

[0057] In this formula, the integral action coefficient $K$ is equal to or less than 1 ($K \le 1$).

[0058] When, for example, the integral action coefficient K is 0.99, the previous integration result is reduced by 1% on each cycle, so the image-blurring-correction amount gradually approaches 0 and the drive unit 4 gradually returns to its initial position. When K is 1 (complete integration), the drive unit 4 does not return to the initial position; when K is 0, the image-blurring-correction function itself is stopped.
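Formula (1), together with the scaling by the focal length in the multiplication unit 65, can be sketched as a leaky integrator. This is an illustrative sketch only: the function name is hypothetical, and the sample period and the unit conversion from angular velocity to image-plane displacement, which a real implementation needs, are assumed to be folded into the inputs.

```python
from typing import Iterable, List


def correction_amounts(omegas: Iterable[float], focal_length: float, k: float) -> List[float]:
    """Apply Y_n = X_n + K * Y_{n-1}, where X_n = omega_n * focal_length."""
    y = 0.0
    out = []
    for omega in omegas:
        x = omega * focal_length  # multiplication unit 65: angular velocity -> image movement
        y = x + k * y             # integration unit 66: Formula (1)
        out.append(y)
    return out
```

With K = 0.99 and no further input, each cycle multiplies the accumulated result by 0.99, which is the 1% per-cycle decay described above; K = 1 keeps the result unchanged, and K = 0 makes each output equal to the current input alone.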

[0059] The drive circuit 67 converts the image-blurring-correction amount, i.e., the integration result of the integration unit 66, into a driving signal for the drive unit 4 and outputs the driving signal to the drive unit 4. The drive unit 4 is driven in accordance with the driving signal and moves the image pickup element 3 in a direction that cancels out the movement of the image on the image plane.

[0060] FIG. 3 illustrates exemplary effects of the blurring correction microcomputer 6 that are achieved when panning has been performed.

[0061] The upper side of FIG. 3 depicts, using a solid line, an exemplary temporal change in an angular velocity that is a processing result of the signal processing unit 61, and depicts, using a broken line, a temporal change in an angular velocity that is a processing result of the HPF 62 in the same situation.

[0062] In the example depicted on the upper side of FIG. 3, when panning starts, the angular velocity that is a processing result of the signal processing unit 61 deviates in one direction (the positive direction). However, the angular velocity that is a processing result of the HPF 62 is attenuated by the HPF 62 and approaches 0.

[0063] On the upper side of FIG. 3, "Limit 1 (th_l₁)" indicates a panning detection threshold (the threshold for the positive direction) used when the panning detection unit 63 detects panning. The threshold for the negative direction is not illustrated; it differs from the threshold for the positive direction only in sign. In this example, the angular velocity that is a processing result of the signal processing unit 61 exceeds "Limit 1 (th_l₁)" at time "t₀", and when this state continues for a period "P₁" (until time "t₂" in this example), the panning detection unit 63 detects the start of panning. When the angular velocity that is a processing result of the signal processing unit 61 crosses zero (zero cross) at time "t₃", the panning detection unit 63 detects the end of the panning. Accordingly, the period "P₂" from time "t₂" to time "t₃" is a period in which panning has been detected (panning-detected period).

[0064] While the panning detection unit 63 does not detect panning, "Limit 1 (th_l₁)" also serves as the upper limit value used when the limit unit 64 performs clip processing. The lower limit value is not illustrated; it differs from the upper limit value only in sign. When panning has not been detected, the components of the angular velocity output by the HPF 62 that fall outside the range from the lower limit value to the upper limit value are removed through clip processing, i.e., they are excluded from the components to which image blurring correction is applied. Hence, during the period before time "t₂", in which panning is not detected, components "A" indicated by oblique lines are selected as components to which image blurring correction is applied, and components "B" indicated as a shaded area are excluded from them. Time "t₁" is the time at which the angular velocity that is a processing result of the HPF 62 first exceeds the upper limit value after time "t₀".

[0065] On the upper side of FIG. 3, "Limit 2 (th_l₂)" indicates a limit value that the limit unit 64 sets, during clip processing, only for the direction opposite to the panning direction while the panning detection unit 63 has detected panning (during the panning-detected period). In this example, the panning direction detected by the panning detection unit 63 is positive, and hence the limit value "Limit 2 (th_l₂)" is set in the negative direction. The panning direction is also the sign of the angular velocity that is a processing result of the signal processing unit 61 during the panning-detected period. As a result, during the panning-detected period, components below "Limit 2 (th_l₂)" are removed through clip processing from the angular velocity that is a processing result of the HPF 62, i.e., they are excluded from the components to which image blurring correction is applied. Hence, during the period "P₂" (panning-detected period) from time "t₂" to time "t₃", components "A" indicated by oblique lines are selected as components to which image blurring correction is applied, and components "B" indicated as a shaded area are excluded from them. Consequently, while the panning velocity is decreasing before the panning ends, the components below "Limit 2 (th_l₂)" do not undergo image blurring correction.

[0066] The center of FIG. 3 depicts, using a solid line, a temporal change in an image-blurring-correction amount (integration result of the integration unit 66) indicated when the limit unit 64 does not perform limit processing, in comparison with the temporal change in the angular velocity indicated on the upper side of FIG. 3 using a broken line (processing result of the HPF 62). The center of FIG. 3 also depicts, using a broken line, a temporal change in the amount of image blurring correction that is indicated when the limit unit 64 has performed limit processing. In this case, the integral action coefficient K given to the integration unit 66 is a value that is less than 1.

[0067] Because of the integral action coefficient K used by the integration unit 66, both image-blurring-correction amounts decrease as time elapses, as depicted at the center of FIG. 3. The image-blurring-correction amount is smaller when the limit unit 64 performs limit processing than when it does not. Because the variation in $K \times \sum X_{n-1}$ in formula (1) is smaller, the influence of the integral action coefficient K is reduced.

[0068] The lower side of FIG. 3 depicts, using a solid line, a temporal change in the amount of movement of a video image (quantity of image movement vector) that is indicated when the limit unit 64 does not perform limit processing, in comparison with the temporal change in the angular velocity indicated using a broken line on the upper side of FIG. 3 (processing result of the HPF 62). The lower side also depicts, using a broken line, a temporal change in the amount of movement of a video image that is indicated when the limit unit 64 has performed limit processing.

[0069] When the limit unit 64 has performed limit processing, the image blurring correction that would otherwise compensate for the decrease in panning velocity as the camera motion slows down is weakened, as depicted on the lower side of FIG. 3. Hence, in comparison with the example depicted in FIG. 13, no abrupt change appears in the amount of image movement at the end of panning.

[0070] FIG. 4 is a flowchart indicating an example of limit processing performed by the limit unit 64 on a predetermined cycle.

[0071] As depicted in FIG. 4, the limit unit 64 determines from the detection result of the panning detection unit 63 whether panning is being performed (whether the current cycle falls within a panning-detected period) (S401).

[0072] When the result of the determination in S401 is Yes, the limit unit 64 determines from the detection result of the panning detection unit 63 whether the panning direction is positive (+) or negative (-) (S402).

[0073] When the result of the determination in S402 is the positive (+) direction, the limit unit 64 performs limit determination 2 for the negative direction. More particularly, the limit unit 64 determines whether the angular velocity (ω) that is a processing result of the HPF 62 is less than the limit value for the negative direction (th_l₂ × (-1)) (S403).

[0074] When the result of the determination in S403 is Yes, the limit unit 64 clips the angular velocity (ω) that is a processing result of the HPF 62 to th_l₂ × (-1), outputs the clipped value (S404), and ends the limit processing.

[0075] When the result of the determination in S403 is No, the limit unit 64 outputs the angular velocity (ω) that is a processing result of the HPF 62 unchanged and ends the limit processing.

[0076] When the result of the determination in S402 is the negative (-) direction, the limit unit 64 performs limit determination 2 for the positive direction. More particularly, the limit unit 64 determines whether the angular velocity (ω) that is a processing result of the HPF 62 exceeds the limit value for the positive direction (th_l₂) (S405).

[0077] When the result of the determination in S405 is Yes, the limit unit 64 clips the angular velocity (ω) output by the HPF 62 to th_l₂, outputs the clipped value (S406), and ends the limit processing.

[0078] When the result of the determination in S405 is No, the limit unit 64 outputs the angular velocity (ω) that is a processing result of the HPF 62 unchanged and ends the limit processing.

[0079] When the result of the determination in S401 is No, the limit unit 64 performs limit determination 1. More particularly, the limit unit 64 determines whether the absolute value of the angular velocity (ω) that is the processing result of the HPF 62 exceeds th_l₁ (S407).

[0080] When the result of the determination in S407 is Yes, the limit unit 64 determines the sign of the angular velocity (ω) that is the processing result of the HPF 62. More particularly, the limit unit 64 determines whether ω is greater than 0 (S408).

[0081] When the result of the determination in S408 is Yes, the limit unit 64 clips the angular velocity (ω) to th_l₁, outputs the clipped value (S409), and ends the limit processing.

[0082] When the result of the determination in S408 is No, the limit unit 64 clips the angular velocity (ω) to th_l₁ × (-1), outputs the clipped value (S410), and ends the limit processing.

[0083] When the result of the determination in S407 is No, the limit unit 64 outputs the angular velocity (ω) that is a processing result of the HPF 62 unchanged and ends the limit processing.
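The flowchart of FIG. 4 can be written as a single function executed once per cycle. This is a sketch, not the actual firmware: the function and parameter names are hypothetical, and the panning state and direction are assumed to be supplied by the panning detection unit 63.

```python
def limit(omega: float, panning: bool, direction: int, th_l1: float, th_l2: float) -> float:
    """Clip processing of the limit unit 64 following FIG. 4.

    omega:     angular velocity output by the HPF 62
    panning:   True while panning is detected (S401)
    direction: +1 for a positive panning direction, -1 for negative (S402)
    """
    if panning:                                  # S401: Yes
        if direction > 0:                        # S402: positive direction
            return max(omega, -th_l2)            # S403/S404: clip values below -th_l2
        return min(omega, th_l2)                 # S405/S406: clip values above +th_l2
    if abs(omega) > th_l1:                       # S407: limit determination 1
        return th_l1 if omega > 0 else -th_l1    # S408-S410: clip, keeping the sign
    return omega                                 # S407: No, pass through unchanged
```

As in the flowchart, while panning is detected only the side opposite to the panning direction is clipped (at th_l₂); values in the panning direction pass through unclipped.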

[0084] Accordingly, the first embodiment may improve the responsiveness of the video image to camera work such as panning. It may also reduce the sense of unnaturalness that a video image that has undergone image blurring correction (a video image shot during panning) could give the photographer.

[0085] The first embodiment may be varied as follows.

[0086] For example, the processes related to limit determination 1 (S407-S410) may be removed from the limit processing depicted in FIG. 4. In this case, only the sense of unnaturalness that the video image may give the photographer at the end of panning is reduced.

[0087] For example, th_l₂ used in limit determination 2 in S403 and S405 may be variable within the range from 0 to a predetermined value. In this case, setting th_l₂ to a lower value makes the video image at the end of panning less likely to appear unnatural to the photographer. However, when the result of the determination in S403 or S405 is Yes during slow panning, a low th_l₂ leads to a small image-blurring-correction amount, and hence some image blurring may remain. In view of this, th_l₂ may be set to, for example, an angular velocity of about 2 degrees per second (dps). This effectively reduces the unnaturalness at the end of panning while preventing image blurring from frequently remaining during slow panning.

[0088] When th_l₂ is variable within the range from 0 to a predetermined value, how the video image looks depends on the usage state of the camera 1 and the focal length of the image shooting optical system 2. Hence, th_l₂ may be switched according to the usage state of the camera 1 or the focal length of the image shooting optical system 2. For example, when the focal length of the image shooting optical system 2 is long, th_l₂ may be set to a lower value, because the same shake of the camera 1 produces a larger image-blurring-correction amount, and hence a larger influence on image movement, than with a short focal length. The resulting smaller image-blurring-correction amount reduces the influence on image movement, thereby more effectively reducing the sense of unnaturalness that could be given to the photographer when panning ends.
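The text leaves the rule for switching th_l₂ open. One hypothetical rule, shown only as an illustration, keeps th_l₂ at about 2 dps up to a reference focal length and then lowers it in inverse proportion to the focal length, so that the product of focal length and th_l₂ (roughly the image-plane velocity that can still be corrected on the clipped side) stays constant. The 50 mm reference focal length, the inverse-proportional form, and the function name are all assumptions.

```python
def th_l2_for_focal_length(focal_length_mm: float,
                           th_max_dps: float = 2.0,
                           ref_focal_length_mm: float = 50.0) -> float:
    """Hypothetical rule: th_l2 = th_max up to the reference focal length, then ~ 1/f."""
    if focal_length_mm <= 0:
        raise ValueError("focal length must be positive")
    # Longer focal length -> lower th_l2; the result always stays within (0, th_max].
    return min(th_max_dps, th_max_dps * ref_focal_length_mm / focal_length_mm)
```

Any monotonically non-increasing mapping into the range from 0 to the predetermined value would satisfy the description equally well; the inverse-proportional form is just one simple choice.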

Second Embodiment

[0089] The following describes a second embodiment of the invention, focusing only on its differences from the first embodiment. Components of the second embodiment that are the same as those of the first embodiment are given the same reference numerals, and descriptions of those components are omitted herein.

[0090] FIG. 5 illustrates an exemplary functional configuration of the panning detection unit 63 in accordance with the second embodiment.

[0091] As depicted in FIG. 5, the panning detection unit 63 in accordance with the second embodiment includes a first-panning detection unit 631, a second-panning detection unit 632, and a logic circuit 633.

[0092] The first-panning detection unit 631 detects first panning and a direction of the panning on the basis of an angular velocity that is a processing result of the signal processing unit 61, and outputs a first-panning detection result signal and a first-panning-direction detection result signal. More particularly, the first-panning detection unit 631 outputs a high-level signal as the first-panning detection result signal during the period extending from a moment at which a start of the first panning is detected to a moment at which an end of the first panning is detected (while the first panning has been detected), and outputs a low-level signal during the other periods. The first-panning detection unit 631 also outputs, as the first-panning-direction detection result signal, a high-level signal when a positive panning direction has been detected and a low-level signal when a negative panning direction has been detected. Details of the first-panning detection unit 631 will be described hereinafter with reference to FIG. 6.

[0093] The second-panning detection unit 632 detects second panning, which is slower than the first panning, and its direction on the basis of the angular velocity that is a processing result of the signal processing unit 61, and outputs a second-panning detection result signal and a second-panning-direction detection result signal. More particularly, the second-panning detection unit 632 outputs a high-level logic signal as the second-panning detection result signal from the moment a start of the second panning is detected to the moment an end of the second panning is detected (while the second panning has been detected), and outputs a low-level logic signal during the other periods. However, when the first-panning detection result signal of the first-panning detection unit 631 is a high-level signal, the second-panning detection unit 632 outputs a low-level signal as the second-panning detection result signal. The second-panning detection unit 632 outputs, as the second-panning-direction detection result signal, a high-level signal when a positive panning direction has been detected and a low-level signal when a negative panning direction has been detected. Details of the second-panning detection unit 632 will be described hereinafter with reference to FIG. 7.

[0094] The logic circuit 633 includes a selection circuit (SW) 6331 and an OR circuit 6332.

[0095] The selection circuit 6331 selects and outputs the panning-direction detection result signal of whichever of the first-panning detection unit 631 and the second-panning detection unit 632 has detected panning. When both units have detected panning, the direction detected by the first-panning detection unit 631 takes priority. More specifically, the selection circuit 6331 uses the logical state of the first-panning detection result signal output by the first-panning detection unit 631 as its switching signal. In the present embodiment, when the first-panning detection result signal input to the selection circuit 6331 is a low-level signal, the selection circuit 6331 determines that the second-panning detection unit 632 has detected the second panning, and selects and outputs the second-panning-direction detection result signal from the second-panning detection unit 632.

[0096] The OR circuit 6332 performs an OR operation (logical sum) on the first-panning detection result signal of the first-panning detection unit 631 and the second-panning detection result signal of the second-panning detection unit 632, and outputs the resultant operation result signal as a panning detection result of the panning detection unit 63 in accordance with the second embodiment. As a result, the panning detection unit 63 in accordance with the second embodiment detects panning when the first-panning detection unit 631 has detected the first panning, or when the second-panning detection unit 632 has detected the second panning.
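The behavior of the logic circuit 633 reduces to a Boolean OR plus a two-way selector. A minimal sketch, with signal levels modeled as booleans (True for a high-level signal); the function name is hypothetical.

```python
from typing import Tuple


def logic_circuit_633(first_detected: bool, first_dir_positive: bool,
                      second_detected: bool, second_dir_positive: bool) -> Tuple[bool, bool]:
    """Return (panning detected, panning direction positive)."""
    detected = first_detected or second_detected  # OR circuit 6332
    # Selection circuit 6331: the first-panning detection result is the switching signal,
    # so the first-panning direction is used whenever first panning is detected.
    direction_positive = first_dir_positive if first_detected else second_dir_positive
    return detected, direction_positive
```

When neither unit detects panning, the direction output simply follows the second-panning direction signal and is not meaningful, since the panning detection result is low.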

[0097] FIG. 6 illustrates an exemplary functional configuration of the first-panning detection unit 631.

[0098] As depicted in FIG. 6, the first-panning detection unit 631 includes a panning-start determination unit 6311, a zero-cross detection unit 6312, a clocking unit 6313, a panning-detection-flag setting unit 6314, a panning-direction detection unit 6315, and a panning-direction-detection-flag setting unit 6316.

[0099] The panning-start determination unit 6311 obtains the absolute value of the angular velocity that is a processing result of the signal processing unit 61 and determines whether this value exceeds a panning-start-detection threshold (th_s).

[0100] The zero-cross detection unit 6312 detects zero cross for the angular velocity that is a processing result of the signal processing unit 61.

[0101] On the basis of the determination result of the panning-start determination unit 6311, the clocking unit 6313 counts the period during which the absolute value of the angular velocity that is a processing result of the signal processing unit 61 continuously exceeds the panning-start-detection threshold (th_s). When the count value reaches a value corresponding to a predetermined period (e.g., the period "P₁" depicted on the upper side of FIG. 3), it is determined that first panning has started. However, when the absolute value of the angular velocity that is a processing result of the signal processing unit 61 does not exceed the panning-start-detection threshold (th_s), or when the zero-cross detection unit 6312 detects a zero cross, the clocking unit 6313 resets the count value to its initial value (count value = 0).

[0102] The panning-detection-flag setting unit 6314 sets a panning detection flag when the clocking unit 6313 determines that the first panning has started, and clears the panning detection flag when the zero-cross detection unit 6312 detects a zero cross. Here, setting a flag means setting its logical state to a high level, and clearing a flag means setting its logical state to a low level; this also applies to the other flags. When the panning detection flag is set, the panning-detection-flag setting unit 6314 outputs a high-level logic signal as the first-panning detection result signal; when the flag is cleared, it outputs a low-level logic signal.

[0103] When the clocking unit 6313 has determined that the first panning has been started, the panning-direction detection unit 6315 detects, as a direction of the first panning, a sign of the angular velocity that is a processing result of the signal processing unit 61 as of that moment.

[0104] When the direction detected by the panning-direction detection unit 6315 is positive, the panning-direction-detection-flag setting unit 6316 sets the panning-direction detection flag.

[0105] When the direction detected by the panning-direction detection unit 6315 is negative, the panning-direction-detection-flag setting unit 6316 clears the panning-direction detection flag. When the panning-direction-detection flag has been set, the panning-direction detection unit 6315 outputs a high-level signal as the first-panning-direction detection result signal. When the panning-direction-detection flag has been cleared, the panning-direction detection unit 6315 outputs a low-level signal as the first-panning-direction detection result signal.
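A per-cycle sketch of the first-panning detection unit 631, following the start rule (|ω| > th_s continuously for the period P₁, counted here in cycles) and the zero-cross end rule described for FIG. 6 and in paragraph [0053]. The class name, the cycle-count representation of P₁, and treating an input that reaches exactly zero as a zero cross are assumptions.

```python
from typing import Tuple


class FirstPanningDetector:
    """Sketch of first-panning detection unit 631 (FIG. 6)."""

    def __init__(self, th_s: float, hold_samples: int):
        self.th_s = th_s                  # panning-start-detection threshold
        self.hold_samples = hold_samples  # number of cycles corresponding to P1
        self.count = 0                    # clocking unit 6313
        self.panning = False              # panning detection flag (unit 6314)
        self.direction_positive = False   # panning-direction detection flag (unit 6316)
        self.prev_omega = 0.0

    def step(self, omega: float) -> Tuple[bool, bool]:
        # Zero-cross detection unit 6312 (assumption: reaching 0 also counts).
        zero_cross = ((self.prev_omega > 0 and omega <= 0)
                      or (self.prev_omega < 0 and omega >= 0))
        self.prev_omega = omega
        if zero_cross:
            self.count = 0
            self.panning = False                         # end of first panning
        elif abs(omega) > self.th_s:                     # panning-start determination 6311
            self.count += 1
            if not self.panning and self.count >= self.hold_samples:
                self.panning = True                      # start of first panning
                self.direction_positive = omega > 0      # direction detection 6315
        else:
            self.count = 0                               # threshold not exceeded
        return self.panning, self.direction_positive
```

Note that, as described, dropping below th_s only resets the counter; once panning has been detected, it ends only at a zero cross.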

[0106] FIG. 7 illustrates an exemplary functional configuration of the second-panning detection unit 632.

[0107] The second-panning detection unit 632 detects panning of a very low angular velocity of, for example, about 1 dps as second panning. Accordingly, the second-panning detection unit 632 includes components that apply low-pass-filter (LPF) processing for removing high frequency components to an angular velocity that is a processing result of the signal processing unit 61 and then determine a start and end of panning.

[0108] As depicted in FIG. 7, the second-panning detection unit 632 includes a LPF 6321, a panning-start determination unit 6322, a panning-end determination unit 6323, a panning-detection-flag setting unit 6324, a panning-direction detection unit 6325, and a panning-direction-detection-flag setting unit 6326.

[0109] The LPF 6321 applies processing of removing high frequency components (LPF processing) to an angular velocity that is a processing result of the signal processing unit 61. The LPF 6321 may be provided outside the second-panning detection unit 632.

[0110] The panning-start determination unit 6322 determines that second panning has been started when the absolute value of an angular velocity that is an output of the LPF 6321 has exceeded a panning-start-detection threshold (th_s).

[0111] The panning-end determination unit 6323 determines that second panning has ended when the absolute value of the angular velocity output from the LPF 6321 falls below a panning-end-detection threshold (th_e), or when the first-panning detection result signal of the first-panning detection unit 631 is a high-level signal (i.e., when first panning has been detected). Because second panning is also deemed to have ended in the latter case, first panning takes precedence over second panning. This maintains the responsiveness of the captured video image to both slow panning (second panning) and fast panning (first panning).

[0112] The panning-detection-flag setting unit 6324 sets a panning detection flag when the panning-start determination unit 6322 has determined that second panning has been started. Meanwhile, the panning-detection-flag setting unit 6324 clears the panning detection flag when the panning-end determination unit 6323 has determined that second panning has been ended. When the panning detection flag has been set, the panning-detection-flag setting unit 6324 outputs a high-level signal as a second-panning detection result signal. When the panning-detection flag has been cleared, the panning-detection-flag setting unit 6324 outputs a low-level signal as a second-panning detection result signal.

[0113] When the panning-start determination unit 6322 has determined that second panning has been started, the panning-direction detection unit 6325 detects, as a direction of the second panning, a sign of an angular velocity that is an output of the LPF 6321 as of that moment.

[0114] When the direction detected by the panning-direction detection unit 6325 is positive, the panning-direction-detection-flag setting unit 6326 sets the panning-direction detection flag. When the direction detected by the panning-direction detection unit 6325 is negative, the panning-direction-detection-flag setting unit 6326 clears the panning-direction detection flag. When the panning-direction-detection flag has been set, the panning-direction detection unit 6325 outputs a high-level signal as the second-panning-direction detection result signal. When the panning-direction-detection flag has been cleared, the panning-direction-detection-flag setting unit 6326 outputs a low-level signal as the second-panning-direction detection result signal.

[0115] When the second-panning detection unit 632 configured in this way is to detect panning at an angular velocity of, for example, 1 dps or greater, the LPF 6321 advantageously has a cut-off frequency of about 1 Hz. The detection thresholds for the start and end of panning desirably have hysteresis; for example, the panning-start-detection threshold (th_s) may be set to 1 dps, and the panning-end-detection threshold (th_e) may be set to 0.5 dps.
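The second-panning detection unit 632 can be sketched the same way, with a first-order low-pass filter standing in for the LPF 6321 and the example values from paragraph [0115] (about 1 Hz cut-off, th_s = 1 dps, th_e = 0.5 dps) as defaults. The first-order filter form, the 1 kHz sample rate used when exercising it, and the class name are assumptions.

```python
import math
from typing import Tuple


class SecondPanningDetector:
    """Sketch of second-panning detection unit 632 (FIG. 7), inputs in dps."""

    def __init__(self, sample_rate_hz: float, cutoff_hz: float = 1.0,
                 th_s: float = 1.0, th_e: float = 0.5):
        rc = 1.0 / (2.0 * math.pi * cutoff_hz)
        dt = 1.0 / sample_rate_hz
        self.alpha = dt / (rc + dt)      # smoothing factor of a first-order IIR low-pass
        self.th_s, self.th_e = th_s, th_e
        self.lpf = 0.0                   # LPF 6321 state
        self.panning = False             # panning detection flag (unit 6324)
        self.direction_positive = False  # panning-direction detection flag (unit 6326)

    def step(self, omega: float, first_detected: bool) -> Tuple[bool, bool]:
        self.lpf += self.alpha * (omega - self.lpf)          # LPF processing
        if first_detected:
            self.panning = False                             # first panning takes precedence
        elif not self.panning and abs(self.lpf) > self.th_s:
            self.panning = True                              # start determination 6322
            self.direction_positive = self.lpf > 0           # direction detection 6325
        elif self.panning and abs(self.lpf) < self.th_e:
            self.panning = False                             # end determination 6323
        return self.panning, self.direction_positive
```

Because th_e is lower than th_s, a slow pan whose filtered velocity sags between the two thresholds stays detected, and a single short spike is smoothed away by the LPF before it can trigger a start.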

[0116] The HPF 62 of the blurring correction microcomputer 6 in accordance with the second embodiment switches its cut-off frequency according to the panning detection result of the panning detection unit 63 in accordance with the second embodiment. More particularly, the HPF 62 uses a cut-off frequency f1 when panning has not been detected and a cut-off frequency f2 (f2>f1) when panning has been detected. The processing characteristics of the HPF 62 thus change with the panning detection result; in particular, when panning has been detected, the higher cut-off frequency yields a smaller image-blurring-correction amount than when panning has not been detected.
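The cut-off switching of the HPF 62 can be illustrated with a first-order discrete high-pass filter. This is a sketch under stated assumptions: the filter structure, the 1 kHz sampling period, and the example values f1 = 0.1 Hz and f2 = 1 Hz are hypothetical; the source only requires f2 > f1.

```python
import math


class SwitchableHPF:
    """Hypothetical first-order HPF whose cut-off is f1 (no panning) or f2 > f1 (panning)."""

    def __init__(self, dt: float = 0.001, f1: float = 0.1, f2: float = 1.0) -> None:
        if not f2 > f1:
            raise ValueError("f2 must be greater than f1")
        self.dt, self.f1, self.f2 = dt, f1, f2
        self.prev_x = 0.0
        self.y = 0.0

    def _coeff(self, fc: float) -> float:
        # RC discretization: a higher cut-off gives a smaller coefficient (faster decay).
        rc = 1.0 / (2.0 * math.pi * fc)
        return rc / (rc + self.dt)

    def update(self, x: float, panning: bool) -> float:
        """Filter one angular-velocity sample, using f2 while panning is detected."""
        a = self._coeff(self.f2 if panning else self.f1)
        # y[n] = a * (y[n-1] + x[n] - x[n-1])
        self.y = a * (self.y + x - self.prev_x)
        self.prev_x = x
        return self.y
```

For a sustained angular velocity such as a pan, the output with f2 decays toward zero much faster than with f1, so less of the panning motion reaches the later stages and the resulting correction amount is smaller.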

[0117] The blurring correction microcomputer 6 in accordance with the second embodiment also switches the integral action coefficient K provided to the integration unit 66 according to the panning detection result of the panning detection unit 63 in accordance with the second embodiment. More particularly, K is set to k1 when panning has not been detected and to k2 (k2<k1) when panning has been detected. The processing characteristics of the integration unit 66 thus change with the panning detection result; when panning has been detected, the change yields a smaller image-blurring-correction amount than when panning has not been detected.
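The source does not define how K enters the integration. One common interpretation, assumed here purely for illustration, is a leaky integrator angle[n] = K × angle[n-1] + ω[n] × dt, in which a smaller K makes the accumulated angle decay faster. The class name and the values k1 = 0.999 and k2 = 0.99 are hypothetical.

```python
class SwitchableIntegrator:
    """Hypothetical model of integration unit 66 with a switchable coefficient K.

    Assumes a leaky integrator: angle[n] = K * angle[n-1] + omega[n] * dt.
    """

    def __init__(self, dt: float = 0.001, k1: float = 0.999, k2: float = 0.99) -> None:
        if not (0.0 < k2 < k1 <= 1.0):
            raise ValueError("expected 0 < k2 < k1 <= 1")
        self.dt, self.k1, self.k2 = dt, k1, k2
        self.angle = 0.0  # accumulated image-blurring-correction amount [deg]

    def update(self, omega_dps: float, panning: bool) -> float:
        """Integrate one angular-velocity sample, using k2 while panning is detected."""
        k = self.k2 if panning else self.k1
        self.angle = k * self.angle + omega_dps * self.dt
        return self.angle
```

Under this assumption, a constant input ω settles at ω·dt / (1 − K), so switching from k1 to k2 lowers the steady-state correction amount (here from about 1.0° to about 0.1° for ω = 1 dps).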

[0118] The cut-off frequency and the integral action coefficient K need not both be switched; only one of them may be switched.

[0119] The second embodiment maintains the responsiveness of the video image not only to slow panning (second panning) but also to fast panning (first panning).

Third Embodiment

[0120] The following describes a third embodiment of the invention. The third embodiment will be described only regarding differences from the first embodiment. In the third embodiment, components like those in the first embodiment are given like reference marks, and descriptions of those components are omitted herein.

[0121] FIG. 8 illustrates an exemplary functional configuration of a blurring correction microcomputer 6 in accordance with a third embodiment.

[0122] As depicted in FIG. 8, in comparison with the blurring correction microcomputer 6 in accordance with the first embodiment depicted in FIG. 2, the blurring correction microcomputer 6 in accordance with the third embodiment further includes an amplitude determination unit 68.

[0123] The amplitude determination unit 68 changes the threshold that decides the upper and lower limit values used when the limit unit 64 performs clip processing while panning has not been detected ("th_l1" in S409 and S410 in FIG. 4). The change is based on the angular velocity output by the signal processing unit 61 over a period extending from a predetermined time (a predetermined number of cycles) before the present time up to the present time. More particularly, the amplitude determination unit 68 obtains an amplitude value W of the angular velocity on the basis of one or both of the maximum and minimum values that the angular velocity reaches during that period. For example, the absolute value of the maximum or minimum value of the angular velocity may be used as the amplitude value W. The amplitude value W multiplied by a predetermined scale factor (coefficient) α is set as the threshold (th_l1) that decides the upper and lower limit values used when the limit unit 64 performs clip processing while panning has not been detected.

[0124] The threshold (th_l1) set in this way is equal to or less than the panning detection threshold, taken as an absolute value, that the panning detection unit 63 uses to detect panning. The scale factor α is variable; for example, a longer focal length of the image shooting optical system 2 may lead to a smaller scale factor α.
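The threshold computation of the amplitude determination unit 68 described in [0123] and [0124] can be sketched with a fixed-length history buffer. This is a minimal sketch, assuming that W is taken as the larger of |max| and |min| over the window (one of the options in [0123]) and that th_l1 is capped at the absolute panning detection threshold; the class and parameter names are hypothetical.

```python
from collections import deque


class AmplitudeDetermination:
    """Hypothetical model of amplitude determination unit 68."""

    def __init__(self, window: int, alpha: float, th_pan: float) -> None:
        self.buf = deque(maxlen=window)  # last `window` outputs of signal processing unit 61
        self.alpha = alpha               # scale factor alpha (may depend on focal length)
        self.th_pan = th_pan             # absolute value of the panning detection threshold

    def update(self, omega: float) -> float:
        """Store the latest angular velocity and return the clip threshold th_l1."""
        self.buf.append(omega)
        # Amplitude W from both extremes of the window.
        w = max(abs(max(self.buf)), abs(min(self.buf)))
        # th_l1 = alpha * W, never exceeding the panning detection threshold.
        return min(self.alpha * w, self.th_pan)
```

Using `deque(maxlen=...)` discards the oldest sample automatically, so the buffer always covers the most recent predetermined number of cycles.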

[0125] FIG. 9 illustrates an example of a threshold (th_l1) set by the amplitude determination unit 68.

[0126] In the example depicted in FIG. 9, the amplitude determination unit 68 obtains, as the amplitude value W, the maximum value that the angular velocity output by the signal processing unit 61 reaches during the period from a predetermined time before the present time up to the present time, and sets the amplitude value W multiplied by the scale factor α as the threshold (th_l1), thereby providing an upper limit value of W×α.

[0127] Consider an example in which the maximum value of the angular velocity reached during the 0.5 second preceding the present time is obtained as the amplitude value W, and the amplitude value W multiplied by 1.5 (α=1.5) is set as the threshold (th_l1). In this case, while the posture of the camera 1 does not change, the limit unit 64 does not limit the angular velocity output by the HPF 62. However, when the posture of the camera 1 changes, or when the photographer changes the framing, the limit unit 64 immediately limits the angular velocity output by the HPF 62, thereby suppressing image blurring correction.
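The clip processing in this example can be sketched as below, using the 0.5 second window and α = 1.5 from [0127]. The 1 kHz sampling rate, the function name, and the use of the maximum absolute value (rather than the signed maximum of FIG. 9) as W are assumptions for illustration; only the behavior while panning has not been detected is modeled.

```python
from typing import Iterable

FS_HZ = 1000                # assumed control-loop sampling rate [Hz]
WINDOW = int(0.5 * FS_HZ)   # 0.5 s of history, as in the example
ALPHA = 1.5                 # scale factor alpha from the example


def clip_while_not_panning(hpf_out: float, history: Iterable[float]) -> float:
    """Clip one HPF 62 output sample to +/-th_l1, with th_l1 = ALPHA * W.

    `history` holds the last WINDOW outputs of the signal processing unit 61;
    W is assumed here to be their maximum absolute value.
    """
    w = max(abs(v) for v in history)
    th_l1 = ALPHA * w
    return max(-th_l1, min(th_l1, hpf_out))
```

With a steady hand shake of ±2 dps in the history, th_l1 is 3 dps: a shake-sized HPF output of 2.5 dps passes unchanged, whereas an 8 dps output caused by a change in posture or framing is clipped to 3 dps. In practice the history could be kept in a `collections.deque(maxlen=WINDOW)`.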

[0128] Accordingly, the threshold (th_l1) is set to a low value when the camera 1 shakes little, e.g., when the photographer holds the camera 1 firmly. As a result, when panning is performed, the absolute value of the angular velocity output by the HPF 62 quickly exceeds the threshold (th_l1) once the camera 1 starts moving, which strengthens the suppression of image blurring correction and improves the response of the video image to the panning.

[0129] The threshold (th_l1) is set to a high value when the camera 1 shakes strongly, e.g., when the photographer is walking or holding the camera 1 with one hand. As a result, the absolute value of the angular velocity output by the HPF 62 exceeds the threshold (th_l1) less frequently, so the limit processing performed by the limit unit 64 restricts image blurring correction less often, which prevents the performance of image blurring correction from being decreased.

[0130] The upper limit of the threshold (th_l1) may be the panning detection threshold, taken as an absolute value, that the panning detection unit 63 uses to detect panning. In this case, the threshold (th_l1) varies without exceeding the panning detection threshold, so the image-blurring-correction amount calculated up to the time at which the panning detection unit 63 detects panning can be limited.

[0131] As described above, in the third embodiment, while panning has not been detected, the threshold (th_l1) that decides the upper and lower limit values for clip processing performed by the limit unit 64 is changed according to the changes in the posture of the camera 1 made immediately before panning is detected. This prevents the limit processing performed by the limit unit 64 from decreasing the performance of image blurring correction.

Fourth Embodiment

[0132] The following describes a fourth embodiment of the invention. The fourth embodiment will be described only regarding differences from the second embodiment. In the fourth embodiment, components like those in the second embodiment are given like reference marks, and descriptions of those components are omitted herein.

[0133] The fourth embodiment is a variation of the second embodiment.

[0134] In the panning detection unit 63 in accordance with the second embodiment (see FIG. 5), the detection result of the first-panning detection unit 631 is given higher priority than that of the second-panning detection unit 632. However, depending on the photographer or the scene being shot, the only panning performed may be slow panning (the second panning) or fast panning (the first panning). Accordingly, the panning detection unit 63 in accordance with the fourth embodiment can select which of the detection results of the first-panning detection unit 631 and the second-panning detection unit 632 is used to decide whether to reset (initialize) the HPF 62 when panning has ended.

[0135] FIG. 10 illustrates an exemplary functional configuration of the panning detection unit 63 in accordance with the fourth embodiment.

[0136] As depicted in FIG. 10, the panning detection unit 63 in accordance with the fourth embodiment includes a selector 634 in place of the logic circuit 633 depicted in FIG. 5, and further includes a panning selection unit 635 and a communication unit 636. In the configuration of the panning detection unit 63 in accordance with the fourth embodiment, a first-panning detection result signal of the first-panning detection unit 631 is not input to the second-panning detection unit 632.

[0137] The communication unit 636 communicates with the system controller 5. The communication unit 636 may be provided outside the panning detection unit 63.

[0138] On the basis of information reported from the system controller 5 via the communication unit 636, the panning selection unit 635 decides which of the detection results of the first-panning detection unit 631 and the second-panning detection unit 632 is to be selected. The reported information concerns a movement vector (motion vector), which the system controller 5 detects from the video image, and the image-shooting conditions (e.g., image-shooting scene mode, exposure) set for the camera 1.

[0139] For example, when the movement vector detected from the video image indicates that slow panning has been performed (e.g., the camera 1 is being moved slowly), the panning selection unit 635 selects the detection result of the second-panning detection unit 632; for instance, the detection result of the second-panning detection unit 632 may be selected when the magnitude of the movement vector is less than a predetermined value. Meanwhile, when fast panning is estimated to have been performed (e.g., the subject is frequently switched), the detection result of the first-panning detection unit 631 is selected. For instance, when the variation (amount of change) in the magnitude of the movement vector is greater than a predetermined value, or when the magnitude of the movement vector is greater than a predetermined value, it is highly likely that fast panning to chase a subject has been performed. In this case, the panning selection unit 635 selects the detection result of the first-panning detection unit 631.

[0140] When, for example, the image-shooting scene mode is one suited to shooting sports scenes or children, fast panning to chase a subject is highly likely, so the panning selection unit 635 selects the detection result of the first-panning detection unit 631. Meanwhile, when the image-shooting scene mode is one suited to shooting landscapes or nightscapes, slow panning is highly likely. In this case, the detection result of the second-panning detection unit 632 is selected.

[0141] In accordance with the selection decision of the panning selection unit 635, the selector 634 performs switching to output a detection result of the first-panning detection unit 631 to the HPF 62 and the limit unit 64 or to output a detection result of the second-panning detection unit 632 to the HPF 62 and the limit unit 64. In particular, when the panning selection unit 635 has selected a detection result of the first-panning detection unit 631, the selector 634 outputs the detection result of the first-panning detection unit 631 to the HPF 62 and the limit unit 64. When the panning selection unit 635 has selected a detection result of the second-panning detection unit 632, the selector 634 outputs the detection result of the second-panning detection unit 632 to the HPF 62 and the limit unit 64.
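The selection logic of [0139] to [0141] can be summarized in a short sketch. Everything concrete here is hypothetical: the scene-mode names, the numeric thresholds for movement-vector magnitude and variation (in arbitrary units), the precedence of scene mode over the movement vector, and the fallback to the first-panning detection unit 631 (mirroring the priority of the second embodiment) when neither rule applies.

```python
from enum import Enum
from typing import Optional


class Source(Enum):
    FIRST = "first-panning detection unit 631"
    SECOND = "second-panning detection unit 632"


FAST_PANNING_SCENES = {"sports", "children"}      # hypothetical scene-mode names
SLOW_PANNING_SCENES = {"landscape", "nightscape"}


def select_panning_source(mv_magnitude: float, mv_change: float,
                          scene_mode: Optional[str] = None,
                          mag_slow_max: float = 2.0, mag_fast_min: float = 20.0,
                          change_fast_min: float = 10.0,
                          default: Source = Source.FIRST) -> Source:
    """Panning selection unit 635: pick which detection result to use."""
    # Assumed precedence: an explicit scene mode decides first.
    if scene_mode in FAST_PANNING_SCENES:
        return Source.FIRST
    if scene_mode in SLOW_PANNING_SCENES:
        return Source.SECOND
    # Large or rapidly changing movement vector: fast panning chasing a subject.
    if mv_change > change_fast_min or mv_magnitude > mag_fast_min:
        return Source.FIRST
    # Small movement vector: slow panning.
    if mv_magnitude < mag_slow_max:
        return Source.SECOND
    return default


def route(first_result: bool, second_result: bool, source: Source) -> bool:
    """Selector 634: forward the chosen result to the HPF 62 and the limit unit 64."""
    return first_result if source is Source.FIRST else second_result
```

Keeping the decision (`select_panning_source`) separate from the switching (`route`) matches the split between the panning selection unit 635 and the selector 634, and also accommodates the variation in which the system controller 5 makes the decision instead.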

[0142] As described above, in the fourth embodiment, on the basis of a panning detection result selected according to an image-shooting scene, the timing of resetting the HPF 62 is changed, and the limit unit 64 performs limit processing. This allows the camera 1 to perform image-blurring-correction control tailored to an image-shooting scene.

[0143] A variation of the fourth embodiment is as follows. For example, the system controller 5, instead of the panning selection unit 635, may decide which of the detection results of the first-panning detection unit 631 and the second-panning detection unit 632 is to be selected. In this case, in accordance with the selection decision of the system controller 5, reported via the communication unit 636 and the panning selection unit 635, the selector 634 outputs either the detection result of the first-panning detection unit 631 or the detection result of the second-panning detection unit 632 to the HPF 62 and the limit unit 64.