Data Buffering

Pope; Steven Leslie ; et al.

U.S. patent application number 16/119053 was filed with the patent office on 2018-08-31 and published on 2018-12-27 for data buffering. This patent application is currently assigned to Solarflare Communications, Inc. The applicant listed for this patent is Solarflare Communications, Inc. Invention is credited to Steven Leslie Pope, David James Riddoch.

| Application Number | 20180375782 16/119053 |

| Family ID | 35911635 |

| Filed Date | 2018-08-31 |

| United States Patent Application | 20180375782 |

| Kind Code | A1 |

| Pope; Steven Leslie ; et al. | December 27, 2018 |

DATA BUFFERING

Abstract

A method is disclosed for bridging between a first data link carrying data units of a first data protocol and a second data link for carrying data units of a second protocol by means of a bridging device. The method may comprise receiving, by means of a first interface entity, data units of the first protocol and storing those data units in a memory. A protocol processing entity then accesses the protocol data of the data units stored in the memory and thereby performs protocol processing for those data units under the first protocol. A second interface entity accesses the traffic data of the data units stored in the memory and thereby transmits that traffic data over the second data link in data units of the second data protocol.

| Inventors: | Pope; Steven Leslie (Cambridge, GB); Riddoch; David James (Fenstanton, GB) |
|---|---|
| Applicant: | Solarflare Communications, Inc. |
| Assignee: | Solarflare Communications, Inc., Irvine, CA |
| Family ID: | 35911635 |
| Appl. No.: | 16/119053 |
| Filed: | August 31, 2018 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 13644433 | Oct 4, 2012 | 10104005 |
| 16119053 | | |
| 12215437 | Jun 26, 2008 | 8286193 |
| 13644433 | | |
| PCT/GB2006/004946 | Dec 28, 2006 | |
| 12215437 | | |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 47/27 20130101; G06F 9/544 20130101; H04L 47/62 20130101; G06F 9/45533 20130101; H04L 29/06 20130101; H04L 69/16 20130101; H04L 47/30 20130101 |

| International Class: | H04L 12/863 20060101 H04L012/863; H04L 29/06 20060101 H04L029/06; H04L 12/835 20060101 H04L012/835; H04L 12/807 20060101 H04L012/807; G06F 9/455 20060101 G06F009/455; G06F 9/54 20060101 G06F009/54 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| Dec 28, 2005 | GB | 0526519.4 |

| Feb 1, 2006 | GB | 0602033.3 |

| Jan 10, 2006 | GB | 0600417.0 |

Claims

1-27. (canceled)

28. A method comprising: receiving, at a first interface entity, data units in accordance with a first protocol, and storing at least traffic data of those data units in a memory buffer, wherein the data units in accordance with each protocol include protocol data and traffic data; receiving a call from a protocol processing entity, at the first interface entity, and returning in response thereto a message comprising a reference to the memory buffer and at least some protocol data, control of said memory buffer being handed over to said protocol processing entity; performing, in response to said message, by the protocol processing entity, protocol processing for those data units, control of said memory buffer being handed over to a second interface entity; and accessing, by the second interface entity, the traffic data of data units stored in the memory buffer and thereby transmitting that traffic data through an output in data units in accordance with a second protocol.

29. A method as claimed in claim 28, wherein the protocol processing entity is arranged to perform protocol processing for the data units stored in the memory buffer without it accessing the traffic data of those units stored in said memory buffer.

30. A method as claimed in claim 29, wherein the first protocol is such that protocol data of a data unit of the first protocol includes check data that is a function of the traffic data of the data unit, and the method comprises: applying the function by the first interface entity to the content of a data unit of the first protocol received by the first interface entity to calculate first check data; transmitting the first check data to the protocol processing entity; and comparing by the protocol processing entity the first check data calculated for a data unit with the check data included in the protocol data of that data unit.

31. A method as claimed in claim 28, wherein: the memory buffer is at least one memory buffer; the first interface entity, the second interface entity and the protocol processing entity are each arranged to access a first memory buffer of the at least one memory buffer only when they have control of the first memory buffer; and the method comprises: the first interface entity storing a received data unit of the first protocol in a given memory buffer, of the at least one memory buffer, of which it has control and subsequently passing control of the given memory buffer to the protocol processing entity; the protocol processing entity passing control of a given memory buffer to the second interface entity when it has performed protocol processing of the or each data unit stored in the given memory buffer; and the second interface entity passing control of a given memory buffer to the first interface entity when it has transmitted the traffic data contained in the given memory buffer through the output in data units in accordance with the second data protocol.

32. A method as claimed in claim 28, comprising: generating, by the protocol processing entity, protocol data of the second protocol for the data units to be transmitted under the second protocol; communicating that protocol data to the second interface entity; and the second interface entity including that protocol data in the said data units in accordance with the second protocol.

33. A method as claimed in claim 32, wherein the second protocol is such that protocol data of a data unit of the second protocol includes check data that is a function of the traffic data of the data unit, and the method comprises: applying the function by the second interface entity to the content of a data unit of the second protocol to be transmitted by the second interface entity to calculate first check data; combining that check data with protocol data received from the protocol processing entity to form second protocol data; and the second interface entity including the second protocol data in the said data units in accordance with the second protocol.

34. A method as claimed in claim 28, wherein one of the first and second protocols is TCP.

35. A method as claimed in claim 28, wherein one of the first and second protocols is Fibrechannel.

36. A method as claimed in claim 28, wherein the first and second protocols are the same protocol.

37. A method as claimed in claim 28, wherein the first and second interface entities each communicate with the respective data link via a respective hardware interface.

38. A method as claimed in claim 28, wherein the protocol processing comprises terminating a link of the first protocol.

39. A method as claimed in claim 28, wherein the protocol processing comprises: inspecting the traffic data of the first protocol; comparing the traffic data of the first protocol with one or more pre-set rules; and, if the traffic data does not satisfy the rules, preventing that traffic data from being transmitted by the second interface entity.

40. An apparatus comprising: one or more processors configured to provide a first interface entity for interfacing with an input, a second interface entity for interfacing with an output, and a protocol processing entity, wherein the input carries data units in accordance with a first protocol and the output carries data units in accordance with a second protocol, the first and second protocols being such that data units in accordance with each protocol include protocol data and traffic data; the first interface entity being arranged to receive data units in accordance with the first protocol, store those data units in a memory buffer, and in response to receipt of a call from the protocol processing entity, return a message thereto comprising a reference to the memory buffer and at least some protocol data, control of said memory buffer being handed over to said protocol processing entity; the protocol processing entity being arranged to perform protocol processing for those data units, control of said memory buffer being handed over to said second interface entity; and the second interface entity being arranged to access the traffic data of the data units stored in the memory buffer and thereby transmit that traffic data through the output in data units in accordance with a second data protocol.

41. An apparatus comprising: an interface for interfacing with an input, wherein the input carries data units in accordance with a first protocol, the first protocol being such that data units in accordance with the first protocol include protocol data and traffic data; and one or more processors configured to provide a protocol processing entity, wherein said interface is configured to perform at least some protocol processing for data units received at the input, and respond to at least one message from said protocol processing entity with a reference to a memory buffer in which one or more data units are stored, control of said memory buffer being handed over to said protocol processing entity by removing said memory buffer from an owned buffer list of the interface and adding said memory buffer to an owned buffer list of the protocol processing entity, and the protocol processing entity is arranged to cause said one or more processors to perform protocol processing for said data units stored in said memory buffer without accessing the traffic data of those data units stored in said memory buffer.

42. A method as claimed in claim 28, wherein the first interface entity comprises a first transport library, and the second interface entity comprises a second transport library.

43. A method as claimed in claim 28, further comprising: in response to receiving a message from the protocol processing entity at the second interface entity, processing protocol data to form protocol data of a second protocol.

44. A method as claimed in claim 31, wherein: each memory buffer is identifiable by a virtual reference; the step of the first interface entity passing control of a buffer to the protocol processing entity comprises passing to the protocol processing entity a virtual reference to that buffer; and the step of the protocol processing entity passing control of a buffer to the second interface entity comprises passing to the second interface entity a virtual reference to that buffer.

45. A method as claimed in claim 28, further comprising: the protocol processing entity periodically issuing calls to the first interface entity to initiate protocol processing of data units stored in said memory buffer; the first interface entity, in response to receiving one or more of said calls, returning a response message to the protocol processing entity comprising at least one of: protocol data of data units stored in the memory buffer; and/or an indication of a location in memory of the traffic data of data units stored in the memory buffer.

46. A method as claimed in claim 28, wherein the one or more processors are configured to provide the first interface entity, second interface entity, and protocol processing entity at user level.

Description

PRIORITY CLAIM

[0001] This application is a continuation of and claims priority to U.S. patent application No. 12/215,437 filed Jun. 26, 2008, which claims priority to PCT Application No. PCT/GB2006/004946 filed on Dec. 28, 2006 which claims priority to Great Britain Application No. 0602033.3 filed on Feb. 1, 2006.

FIELD OF THE INVENTION

[0002] This invention relates to the buffering of data, for example in the processing of data units in a device bridging between two data protocols.

BACKGROUND OF THE INVENTION

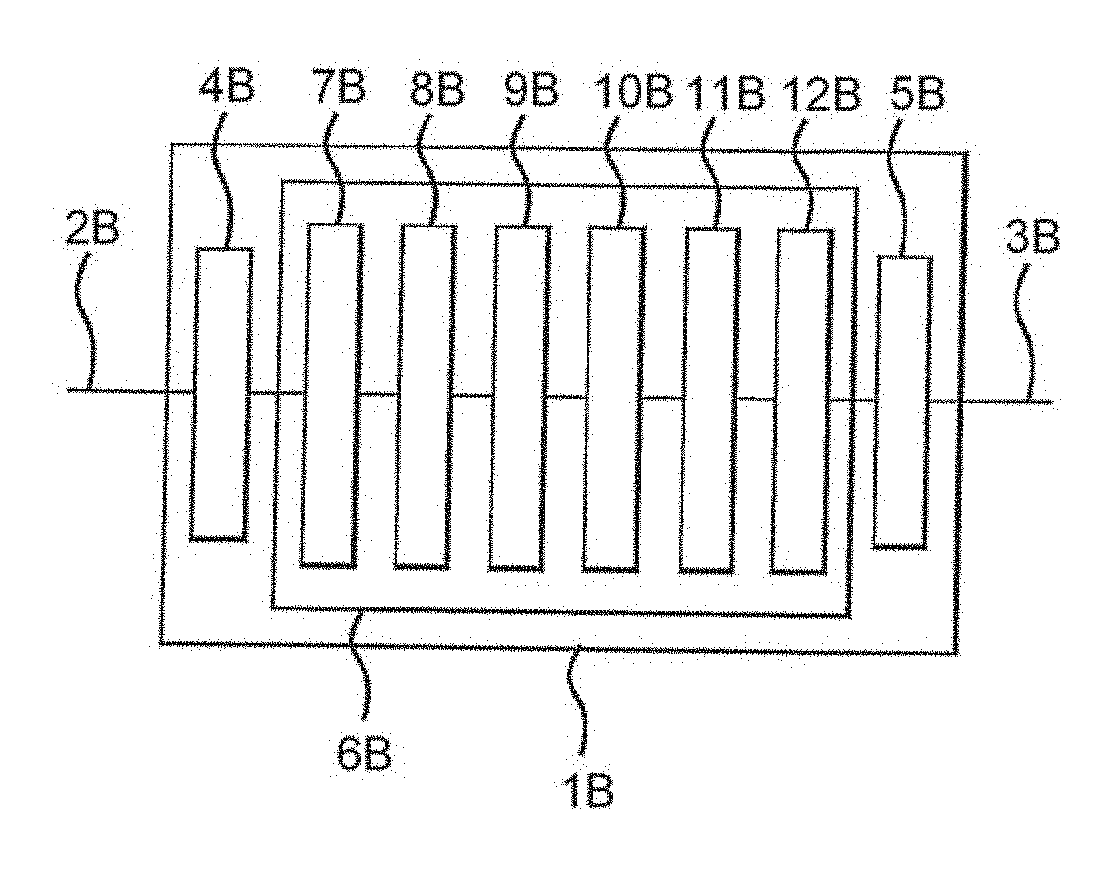

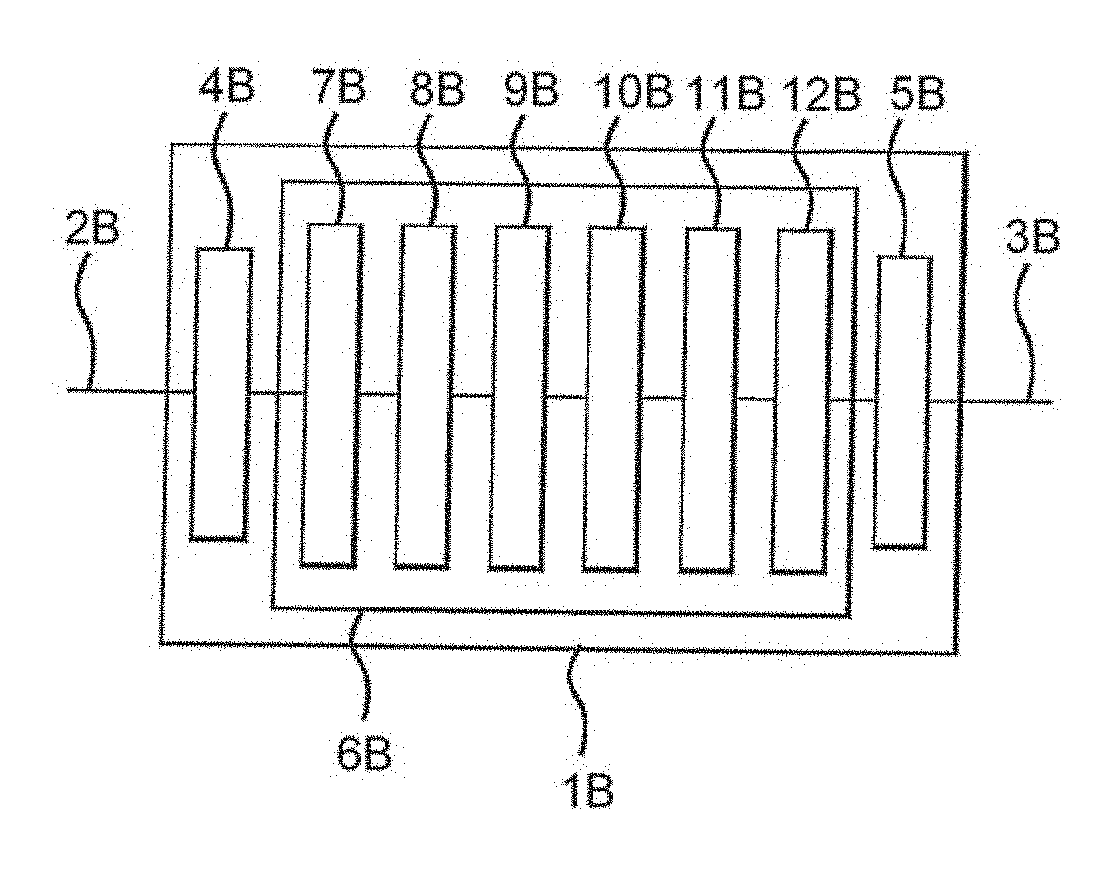

[0003] FIG. 1 shows in outline the logical and physical architecture of a bridge 1 for bridging between data links 2 and 3. In this example link 2 carries data according to the Fibrechannel protocol and link 3 carries data according to the ISCSI (Internet Small Computer Systems Interface) protocol over the Ethernet protocol (known as ISCSI-over-Ethernet). The bridge comprises a Fibrechannel hardware interface 4, an Ethernet hardware interface 5 and a data processing section 6. The interfaces link the data processing section to the respective data links 2 and 3. The data processing section implements a series of logical protocol layers: a Fibrechannel driver 7, a Fibrechannel stack 8, a bridge/buffer cache 9, an ISCSI stack 10, a TCP (transmission control protocol) stack 11 and an Ethernet driver 12. These layers convert packets that have been received in accordance with one of the protocols into packets for transmission according to the other of the protocols, and buffer the packets as necessary to accommodate flow control over the links.

[0004] FIG. 2 shows the physical architecture of the data processing section 6. The data processing section 6 comprises a data bus 12, such as a PCI (Peripheral Component Interconnect) bus. Connected to the data bus 12 are the Ethernet hardware interface 5, the Fibrechannel hardware interface 4 and the memory bus 13. Connected to the memory bus 13 are a memory unit 14, such as a RAM (random access memory) chip, and a CPU (central processing unit) 15 which has an integral cache 16.

[0005] The example of an ISCSI-over-Ethernet packet being received and translated to Fibrechannel will be discussed, in order to explain problems of the prior art. The structure of the Ethernet packet is shown in FIG. 3. The packet 30 comprises an Ethernet header 31, a TCP header 32, an ISCSI header 33 and ISCSI traffic data 34.

[0006] Arrows 20 to 22 in FIG. 2 illustrate the conventional manner of processing an incoming Ethernet packet in this system. The Ethernet packet is received by Ethernet interface 5 and passed over the PCI and memory buses 12, 13 to memory 14 (step 20), where it is stored until it can be processed by the CPU 15. When the CPU is ready to process the Ethernet packet, it is passed over the memory bus to the cache 16 of the CPU (step 21). The CPU processes the packet to perform protocol processing and re-encapsulate the data for transmission over Fibrechannel. The Fibrechannel packet is then passed over the memory bus and the PCI bus to the Fibrechannel interface 4 (step 22), from which it is transmitted. It will be appreciated that this process involves passing the entire Ethernet packet three times over the memory bus 13. These bus traversals slow down the bridging process.

[0007] It would be possible to pass the Ethernet packet directly from the Ethernet interface 5 to the CPU, without it first being stored in memory. However, this would require the CPU to signal the Ethernet hardware to tell it to pass the packet, or alternatively for the CPU and the Ethernet hardware to be synchronised, which would be inefficient and could also lead to poor cache performance. In any event, this is not readily possible in current server chipsets.

[0008] An alternative process is illustrated in FIG. 4. FIG. 4 is analogous to FIG. 2 but shows different process steps. In step 23 the received Ethernet packet is passed from the Ethernet hardware to the memory 14. When the CPU is ready to process the packet, only the header data is passed to the CPU (step 24). The CPU processes the header data, forms a Fibrechannel header and transmits the Fibrechannel header to the Fibrechannel interface (step 25). Then the traffic data 34 is passed to the Fibrechannel hardware (step 26), which mates it with the received header to form a Fibrechannel packet for transmission. This method has the advantage that the traffic data 34 traverses the memory bus only twice. However, this method is not straightforward to implement, since the CPU must be capable of arranging for the traffic data to be passed from the memory 14 to the Fibrechannel hardware in step 26. This is problematic because the CPU would conventionally have received only the headers for that packet, without any indication of where the packet was located in memory, and so it would have no knowledge of where the traffic data is located in the memory. As a result, the CPU would be unable to inform the bridging entity that is to transmit that data onwards of what data is to be transmitted. Furthermore, if that transmitting entity is to be implemented in software then it could be implemented at user level, for example as an application, or as part of the operating system kernel. If it is implemented at user level then it would not conventionally be able to access physical memory addresses, being restricted instead to accessing memory via virtual memory addresses. As a result, it could not access the packet data in memory directly via a physical address. Alternatively, if the transmitting entity is implemented in the kernel then for software abstraction and engineering reasons it would be preferable for it to interface with the network at a high level of abstraction, for instance by way of a sockets API (application programming interface). As a result, it would be preferred that it does not access the packet data in memory directly via a physical address.
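By way of illustration only, the memory-bus traffic of the conventional process of FIG. 2 and the header-only process of FIG. 4 may be compared with a short calculation. The following Python sketch is not part of the original disclosure. The function name and the header and payload sizes are assumptions chosen for the example, and the model assumes that every transfer between the CPU, the memory and the PCI bus crosses the memory bus exactly once.

```python
def memory_bus_bytes(in_header_len, payload_len, out_header_len):
    """Bytes crossing the memory bus for one bridged packet (illustrative model).

    Returns (conventional, header_only) for the processes of FIG. 2 and FIG. 4.
    """
    packet_len = in_header_len + payload_len

    # FIG. 2: step 20 (packet to memory), step 21 (packet to CPU cache),
    # step 22 (re-encapsulated packet to the outgoing interface).
    conventional = packet_len + packet_len + (out_header_len + payload_len)

    # FIG. 4: step 23 (packet to memory), step 24 (headers only to the CPU),
    # step 25 (new header to the outgoing interface),
    # step 26 (traffic data from memory to the outgoing interface).
    header_only = packet_len + in_header_len + out_header_len + payload_len

    return conventional, header_only


# Hypothetical sizes: 100 bytes of incoming headers, 1400 bytes of traffic
# data and a 36-byte outgoing header.
conventional, header_only = memory_bus_bytes(100, 1400, 36)
print(conventional, header_only)
```

In this model the traffic data crosses the memory bus three times under the conventional process and twice under the header-only process, so the saving grows with the size of the traffic data relative to the headers.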

[0009] One way of addressing this problem is to permit the Ethernet hardware 5 to access the memory 14 by RDMA (remote direct memory access), and for the Ethernet hardware to be allocated named buffers in the memory. Then the Ethernet hardware can write the traffic data of each packet to a specific named buffer and through the RDMA interface with the bridging application (e.g. uDAPL) indicate to the application the location/identity of the buffer which has received data. The CPU can access the data by means of reading the buffer, for example by means of a post( ) instruction having as its operand the name of the buffer that is to be read. The Fibrechannel hardware can then be passed a reference to the named buffer by the application and so (also by RDMA) read the data from the named buffer. The buffer remains allocated to the Ethernet hardware during the reading step(s).

[0010] One problem with this approach is that it requires the Ethernet hardware to be capable of accessing the memory 14 by RDMA, and to include functionality that can handle the named buffer protocol. If the Ethernet hardware is not compatible with RDMA or with the named buffer protocol, or if the remainder of the system is not configured to communicate with the Ethernet hardware by RDMA, then this method cannot be used. Also, RDMA typically involves performance overheads.

[0011] Analogous problems arise when bridging in the opposite direction: from Fibrechannel to ISCSI, and when using other protocols.

[0012] There is therefore a need to improve the processing of data units in bridging situations.

SUMMARY

[0013] According to one aspect of the present invention there is provided a method for bridging between a first data link carrying data units of a first data protocol and a second data link for carrying data units of a second protocol by means of a bridging device, the first and second protocols being such that data units of each protocol include protocol data and traffic data and the bridging device comprising a first interface entity for interfacing with the first data link, a second interface entity for interfacing with the second data link, a protocol processing entity and a memory accessible by the first interface entity, the second interface entity and the protocol processing entity, the method comprising: receiving by means of the first interface entity data units of the first protocol, and storing those data units in the memory; accessing by means of the protocol processing entity the protocol data of data units stored in the memory and thereby performing protocol processing for those data units under the first protocol; and accessing by means of the second interface entity the traffic data of data units stored in the memory and thereby transmitting that traffic data over the second data link in data units of the second data protocol.

[0014] According to a second aspect of the present invention there is provided a bridging device for bridging between a first data link carrying data units of a first data protocol and a second data link for carrying data units of a second protocol, the first and second protocols being such that data units of each protocol include protocol data and traffic data and the bridging device comprising: a first interface entity for interfacing with the first data link, a second interface entity for interfacing with the second data link, a protocol processing entity and a memory accessible by the first interface entity, the second interface entity and the protocol processing entity; the first interface entity being arranged to receive data units of the first protocol, and to store those data units in the memory; the protocol processing entity being arranged to access the protocol data of data units stored in the memory and thereby perform protocol processing for those data units under the first protocol; and the second interface entity being arranged to access the traffic data of data units stored in the memory and thereby transmit that traffic data over the second data link in data units of the second data protocol.

[0015] According to a third aspect of the present invention there is provided a data processing system comprising: a memory comprising a plurality of buffer regions; an operating system for supporting processing entities running on the data processing system and for restricting access to the buffer regions to one or more entities; a first interface entity running on the data processing system whereby a first hardware device may communicate with the buffer regions; and an application entity running on the data processing system; the first interface entity and the application entity being configured to, in respect of a buffer region to which the operating system permits access by both the interface entity and the application entity, communicate ownership data so as to indicate which of the first interface entity and the application entity may access the buffer region and to access the buffer region only in accordance with the ownership data.

[0016] According to a fourth aspect of the present invention there is provided a method for operating a data processing system comprising: a memory comprising a plurality of buffer regions; an operating system for supporting processing entities running on the data processing system and for restricting access to the buffer regions to one or more entities; a first interface entity running on the data processing system whereby a first hardware device may communicate with the buffer regions; and an application entity running on the data processing system; the method comprising, in respect of a buffer region to which the operating system permits access by both the interface entity and the application entity, communicating ownership data by means of the first interface entity and the application entity so as to indicate which of the first interface entity and the application entity may access the buffer region and to access the buffer region only in accordance with the ownership data.

[0017] According to a fifth aspect of the present invention there is provided a protocol processing entity for operation in a bridging device for bridging between a first data link carrying data units of a first data protocol and a second data link for carrying data units of a second protocol by means of a bridging device, the first and second protocols being such that data units of each protocol include protocol data and traffic data and the protocol processing entity being arranged to cause a processor of the bridging device to perform protocol processing for data units stored in the memory without it accessing the traffic data of those units stored in the memory. The protocol processing entity may be implemented in software. The software may be stored on a data carrier.

[0018] The protocol processing entity may be arranged to perform protocol processing for the data units stored in the memory without it accessing the traffic data of those units stored in the memory.

[0019] The first protocol may be such that protocol data of a data unit of the first protocol includes check data that is a function of the traffic data of the data unit. The method may then comprise: applying the function by means of the first interface entity to the content of a data unit of the first protocol received by the first interface entity to calculate first check data; transmitting the first check data to the protocol processing entity; and comparing by means of the protocol processing entity the first check data calculated for a data unit with the check data included in the protocol data of that data unit.
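By way of illustration only, the division of the check between the first interface entity and the protocol processing entity may be sketched as follows. This Python sketch is not part of the original disclosure. The names `ProtocolData`, `compute_check` and `protocol_entity_verify` are hypothetical, and CRC-32 is used merely as a stand-in for whatever check function the first protocol defines.

```python
import zlib
from dataclasses import dataclass


@dataclass
class ProtocolData:
    """Header fields of a received data unit (only the check field is modelled)."""
    check: int


def compute_check(traffic: bytes) -> int:
    """Check function applied by the first interface entity.

    CRC-32 is an assumption for this example, not the function of any
    particular protocol.
    """
    return zlib.crc32(traffic) & 0xFFFFFFFF


def protocol_entity_verify(protocol_data: ProtocolData, first_check: int) -> bool:
    """Comparison performed by the protocol processing entity.

    The traffic data is deliberately not a parameter: only the check value
    calculated by the interface and the check value from the header are used.
    """
    return protocol_data.check == first_check


traffic = b"example traffic data"
header = ProtocolData(check=compute_check(traffic))   # as sent by the peer

first_check = compute_check(traffic)                  # first interface entity
print(protocol_entity_verify(header, first_check))    # intact data unit

corrupted_check = compute_check(traffic + b"!")       # traffic altered in transit
print(protocol_entity_verify(header, corrupted_check))
```

The point of the arrangement is that the protocol processing entity validates the data unit by comparing two small values, so the traffic data itself need not be brought to it.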

[0020] The memory may comprise a plurality of buffer regions. The first interface entity, the second interface entity and the protocol processing entity may each be arranged to access a buffer region only when they have control of it. The method may then comprise: the first interface entity storing a received data unit of the first protocol in a buffer of which it has control and subsequently passing control of that buffer to the protocol processing entity; the protocol processing entity passing control of a buffer to the second interface entity when it has performed protocol processing of the or each data unit stored in that buffer; and the second interface entity passing control of a buffer to the first interface entity when it has transmitted the traffic data contained in that buffer over the second data link in data units of the second data protocol.
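By way of illustration only, the cycle of control over a buffer may be sketched as a small state machine. This Python sketch is not part of the original disclosure. The `Owner` and `Buffer` names are hypothetical, and the ownership check that raises an error is an assumption added for the example; in the arrangement described, the entities may simply cooperate by accessing a buffer only when they have control of it.

```python
from enum import Enum


class Owner(Enum):
    FIRST_INTERFACE = "first interface entity"
    PROTOCOL = "protocol processing entity"
    SECOND_INTERFACE = "second interface entity"


# Order in which control of a buffer is passed on.
NEXT_OWNER = {
    Owner.FIRST_INTERFACE: Owner.PROTOCOL,
    Owner.PROTOCOL: Owner.SECOND_INTERFACE,
    Owner.SECOND_INTERFACE: Owner.FIRST_INTERFACE,
}


class Buffer:
    def __init__(self, size: int):
        self.data = bytearray(size)
        self.owner = Owner.FIRST_INTERFACE  # receive buffers start with the receiver

    def access(self, entity: Owner) -> bytearray:
        """Return the buffer contents, but only to the entity that has control."""
        if entity is not self.owner:
            raise PermissionError(f"{entity.value} does not control this buffer")
        return self.data

    def pass_control(self, entity: Owner) -> None:
        """Hand the buffer from its current controller to the next entity."""
        if entity is not self.owner:
            raise PermissionError(f"{entity.value} does not control this buffer")
        self.owner = NEXT_OWNER[entity]


buf = Buffer(16)
buf.access(Owner.FIRST_INTERFACE)[:5] = b"hello"   # store a received data unit
buf.pass_control(Owner.FIRST_INTERFACE)            # to the protocol processing entity
buf.pass_control(Owner.PROTOCOL)                   # to the second interface entity
print(bytes(buf.access(Owner.SECOND_INTERFACE)[:5]))
buf.pass_control(Owner.SECOND_INTERFACE)           # back to the first interface entity
print(buf.owner.value)
```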

[0021] The method may comprise: generating by means of the protocol processing entity protocol data of the second protocol for the data units to be transmitted under the second protocol; communicating that protocol data to the second interface entity; and the second interface entity including that protocol data in the said data units of the second protocol.

[0022] The second protocol may be such that protocol data of a data unit of the second protocol includes check data that is a function of the traffic data of the data unit. The method may then comprise: applying the function by means of the second interface entity to the content of a data unit of the second protocol to be transmitted by the second interface entity to calculate first check data; combining that check data with protocol data received from the protocol processing entity to form second protocol data; and the second interface entity including the second protocol data in the said data units of the second protocol.
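By way of illustration only, the formation of the second protocol data on the transmit side may be sketched as follows. This Python sketch is not part of the original disclosure. The function name `build_outgoing_unit`, the header layout (protocol data followed by a 4-byte check field) and the use of CRC-32 as the check function are all assumptions made for the example.

```python
import struct
import zlib


def build_outgoing_unit(protocol_data: bytes, traffic: bytes) -> bytes:
    """Second interface entity: add check data and assemble the data unit.

    protocol_data is the header content supplied by the protocol processing
    entity; the check data is calculated here, next to the traffic data.
    """
    check = zlib.crc32(traffic) & 0xFFFFFFFF                      # assumed check function
    second_protocol_data = protocol_data + struct.pack("!I", check)
    return second_protocol_data + traffic


unit = build_outgoing_unit(b"\x01\x02", b"traffic data")
print(unit.hex())
```

As on the receive side, the entity that already has the traffic data to hand performs the calculation over it, and the protocol processing entity contributes only header fields.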

[0023] One of the first and second protocols may be TCP. One of the first and second protocols may be Fibrechannel. The first and second protocols may be the same.

[0024] The first and second interface entities may each communicate with the respective data link via a respective hardware interface.

[0025] The first and second interface entities may each communicate with the respective data link via the same hardware interface.

[0026] The protocol processing may comprise terminating a link of the first protocol.

[0027] The protocol processing may comprise: inspecting the traffic data of the first protocol; comparing the traffic data of the first protocol with one or more pre-set rules; and, if the traffic data does not satisfy the rules, preventing that traffic data from being transmitted by the second interface entity.
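By way of illustration only, the comparison of traffic data with pre-set rules may be sketched as follows. This Python sketch is not part of the original disclosure. The function name `may_forward` and the two example rules (a length limit and a forbidden byte pattern) are hypothetical.

```python
from typing import Callable, Iterable

# A rule is modelled as a predicate that the traffic data must satisfy.
Rule = Callable[[bytes], bool]


def may_forward(traffic: bytes, rules: Iterable[Rule]) -> bool:
    """Return True only if the traffic data satisfies every pre-set rule."""
    return all(rule(traffic) for rule in rules)


# Hypothetical rules for the example.
rules = [
    lambda t: len(t) <= 1024,           # traffic data must not exceed 1024 bytes
    lambda t: b"FORBIDDEN" not in t,    # traffic data must not contain this pattern
]

for traffic in (b"ordinary payload", b"a FORBIDDEN payload"):
    if may_forward(traffic, rules):
        print("pass to second interface entity:", traffic)
    else:
        print("blocked:", traffic)
```

Unlike the header-only processing described above, this form of protocol processing does read the traffic data.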

[0028] The data processing system may comprise a second interface entity running on the data processing system whereby a second hardware device may communicate with the buffer regions. The first and second interface entities and the application entity may be configured to, in respect of a buffer region to which the operating system permits access by the first and second interface entities and the application entity, communicate ownership data so as to indicate which of the first and second interface entities and the application entity may access each buffer region and to access each buffer region only in accordance with the ownership data.

[0029] The first interface entity may be arranged to, on receiving a data unit, store that data unit in a buffer region that it may access in accordance with the ownership data and to subsequently modify the ownership data such that the application entity may access that buffer region in accordance with the ownership data. The application entity may be arranged to perform protocol processing on data unit(s) stored in a buffer region that it may access in accordance with the ownership data and to subsequently modify the ownership data such that the second interface entity may access that buffer region in accordance with the ownership data. The second interface entity may be arranged to transmit at least some of the content of data unit(s) stored in a buffer region that it may access in accordance with the ownership data and to subsequently modify the ownership data such that the application entity may access that buffer region in accordance with the ownership data.

BRIEF DESCRIPTION OF THE DRAWINGS

[0030] The present invention will now be described by way of example with reference to the accompanying drawings. In the drawings:

[0031] FIG. 1 shows in outline the logical and physical architecture of a bridge.

[0032] FIG. 2 shows the architecture of the bridge of FIG. 1 in more detail, illustrating data transfer steps.

[0033] FIG. 3 shows the structure of an ISCSI-over-Ethernet packet.

[0034] FIG. 4 shows the architecture of the bridge of FIG. 1, illustrating alternative data transfer steps.

[0035] FIG. 5 illustrates the physical architecture of a bridging device.

[0036] FIG. 6 illustrates the logical architecture of the bridging device of FIG. 5.

[0037] FIG. 7 shows the processing of data in the bridging device of FIG. 5.

DETAILED DESCRIPTION OF THE INVENTION

[0038] In the bridging device described below, data units of a first protocol are received by interface hardware and written to one or more receive buffers. In the example described below, those data units are TCP packets which encapsulate ISCSI packets. The TCP and ISCSI header data is then passed to the entity that performs protocol processing. The header data is passed to that entity without the traffic data of the packets, but with information that identifies the location of the traffic data within the buffer(s). The protocol processing entity performs TCP and ISCSI protocol processing. If protocol processing is successful then it also passes the data identifying the location of the traffic data in the buffers to an interface that will be used for transmitting the outgoing packets. The interface can then read that data, form one or more headers for transmitting it as data units of a second protocol, and transmit it. In bridging between the data links that carry the packets of the respective protocols, the bridging device receives data units of one protocol and transmits data units of another protocol which include the traffic data contained in the received data units.
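By way of illustration only, the flow outlined above may be sketched as follows: the headers are handed to the protocol processing entity together with a descriptor locating the traffic data, and only the transmitting interface reads the traffic data itself. This Python sketch is not part of the original disclosure. The `Descriptor` type, the function names, the `b"HDR"` validity test and the `b"FC"` outgoing header are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class Descriptor:
    """Locates the traffic data of one data unit inside a receive buffer."""
    buffer_id: int
    offset: int
    length: int


def receive(buffers: Dict[int, bytearray], buffer_id: int,
            packet: bytes, header_len: int) -> Tuple[bytes, Descriptor]:
    """Receiving interface: store the packet, return its headers and a descriptor."""
    buf = buffers[buffer_id]
    if len(packet) > len(buf):
        raise ValueError("packet does not fit in the receive buffer")
    buf[:len(packet)] = packet
    headers = bytes(buf[:header_len])
    return headers, Descriptor(buffer_id, header_len, len(packet) - header_len)


def protocol_process(headers: bytes, desc: Descriptor) -> Optional[Descriptor]:
    """Protocol processing on the headers only; the traffic data is never read.

    The startswith test stands in for real TCP and ISCSI processing.
    """
    return desc if headers.startswith(b"HDR") else None


def transmit(buffers: Dict[int, bytearray], desc: Descriptor,
             new_header: bytes) -> bytes:
    """Transmitting interface: read the traffic data in place and re-encapsulate it."""
    view = memoryview(buffers[desc.buffer_id])
    payload = view[desc.offset:desc.offset + desc.length]
    return new_header + bytes(payload)


buffers = {0: bytearray(64)}
headers, desc = receive(buffers, 0, b"HDR1" + b"traffic", header_len=4)
forward = protocol_process(headers, desc)
if forward is not None:
    print(transmit(buffers, forward, b"FC"))
```

Passing a buffer identifier with an offset and length, rather than a physical address, is one way in which an entity restricted to virtual references could still identify the traffic data to be transmitted.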

[0039] FIG. 5 shows the physical architecture of a device 40 for bridging between an ISCSI-over-Ethernet data link 41 and a Fibrechannel data link 42. The device comprises an Ethernet hardware interface 43, a Fibrechannel hardware interface 44 and a central processing section 45. The hardware interfaces link the respective data links to the central processing section 45 via a bus 46, which could be a PCI bus. The central processing section comprises a CPU 47, which includes a cache 47a and a processing section 47b, and random access memory 48 which are linked by a memory bus 49 to the PCI bus. A non-volatile storage device 50, such as a hard disc, stores program code for execution by the CPU.

[0040] FIG. 6 shows the logical architecture provided by the central processing section 45 of the bridging device 40. The CPU provides four main logical functions: an Ethernet transport library 51, a bridging application 52, a Fibrechannel transport library 53 and an operating system kernel 54. The transport libraries, the bridging application and the operating system are implemented in software which is executed by the CPU. The general principles of operation of such systems are discussed in WO 2004/025477.

[0041] Areas of the memory 48 are allocated for use as buffers 55, 56. These buffers are configured in such a way that the interface that receives the incoming data can write to them, the bridging application can read from them, and the interface that transmits the outgoing data can read from them. This may be achieved in a number of ways. In a system that is configured not to police memory access any buffer may be accessible in this way. In other operating systems they may be set up as anonymous memory: i.e. memory that is not mapped to a specific process; so that they can be freely accessed by both interfaces. Another approach is to implement a further process, or a set of instruction calls, or an API that is able to act as an intermediary to access the buffers on behalf of the interfaces.

[0042] The present example will be described with reference to a system in which the operating system allocates memory resources to specific processes and restricts other processes from accessing those resources. The transport libraries 51, 53 and the bridging application 52 are implemented in a single process, by virtue of them occupying a common instruction space. As a result, a buffer allocated to any of those three entities can be accessible to the other two. (Under a normal operating system (OS), OS-allocated buffers are only accessible if the OS chooses for them to be). The interfaces 43, 44 should be capable of writing to and reading from the buffers. This can be achieved in a number of ways. For example, each transport library may implement an API through which the respective interface can access the buffers. Alternatively, the interface could interact directly with the operating system to access the buffers. This may be convenient where, in an alternative embodiment, one of the transport libraries is implemented as part of the operating system and derives its ability to access the buffers through its integration with the operating system rather than its sharing of an instruction space with the bridging application.

[0043] Each buffer is identifiable by a handle that acts as a virtual reference to the buffer. The handle is issued by the operating system when the buffer is allocated. An entity wishing to read from the buffer can issue a read call to the operating system identifying the buffer by the handle, in response to which the operating system will return the content of the buffer or the part of the buffer cited in the read call. An entity wishing to write to the buffer can issue a write call to the operating system identifying the buffer by the handle, in response to which the operating system will write data supplied with the call to the buffer or to the part of the buffer cited in the write call. As a result, the buffers need not be referenced by a physical address, and can hence be accessed by user-level entities under operating systems that limit the access of user-level entities to physical memory.

[0044] The transport libraries and the bridging application implement a protocol to allow them to cooperatively access the buffers that are allocated to the instruction space that they share. In this protocol each of those entities maintains an "owned buffer" list of the buffers that it has responsibility for. Each entity is arranged to access only those buffers currently included in its owned buffer list. Each entity can pass a "handover" message to one of the other entities. The handover message includes the handle of a buffer. On transmitting the handover message (or alternatively on acknowledgement of the handover message), the entity that transmitted the handover message deletes the buffer mentioned in the message from its owned buffer list. On receipt of a handover message an entity adds the buffer mentioned in the message to its owned buffer list. This process allows the entities to cooperatively assign control of each buffer between each other, independently of the operating system. The entity whose owned buffer list includes a buffer is also responsible for the administration of that buffer: for example for returning the buffer to the operating system when it is no longer required. Buffers that are subject to this protocol will be termed "anonymous buffers" since the operating system does not discriminate between the entities of the common instruction space in policing access to those buffers.
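By way of illustration only, the cooperative handover protocol described above might be sketched as follows. The class name `Entity`, the methods `hand_over` and `access`, and the use of integer handles are assumptions made for this sketch and do not appear in the specification; the sketch removes the handle from the sender's list on transmission rather than on acknowledgement.

```python
class Entity:
    """One participant in the cooperative protocol (hypothetical name)."""

    def __init__(self, name):
        self.name = name
        self.owned = set()  # the "owned buffer" list, holding buffer handles

    def hand_over(self, handle, recipient):
        """Send a handover message for `handle` to `recipient`."""
        if handle not in self.owned:
            raise PermissionError(f"{self.name} does not own buffer {handle}")
        self.owned.remove(handle)    # sender deletes the buffer from its list
        recipient.owned.add(handle)  # recipient adds the buffer on receipt

    def access(self, handle, buffers):
        """Return the buffer only if this entity currently owns it."""
        if handle not in self.owned:
            raise PermissionError(f"{self.name} does not own buffer {handle}")
        return buffers[handle]


# Handles are assumed to be small integers mapping to blocks of memory.
buffers = {1: bytearray(64)}
app = Entity("bridging application")
eth = Entity("Ethernet transport library")

app.owned.add(1)       # buffer initially allocated to the application
app.hand_over(1, eth)  # ownership passes to the incoming transport library
```

After the handover in this sketch, only `eth` may access buffer 1; an attempt by `app` to do so raises an error. The policing is done by the entities themselves, not by the operating system, which is the sense in which the buffers are "anonymous".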

[0045] The operation of the device for bridging packets from the Ethernet interface to the Fibrechannel interface will now be explained. The device operates in an analogous way to bridge packets in the opposite direction.

[0046] At the start of operations the bridging application 52 requests the operating system 54 to allocate blocks of memory for use by the bridging system as buffers 55. The operating system allocates a set of buffers accordingly and passes handles to them to the application. These buffers can then be accessed directly by the bridging application and the transport libraries, and can be accessed by the interfaces by means of the anonymous APIs implemented by the respective transport libraries.

[0047] One or more of the buffers are passed to the incoming transport library 51 by means of one or more handover messages. The transport library adds those buffers to its owned buffer list. The transport library maintains a data structure that permits it to identify which of those buffers contains unprocessed packets. This may be done by queuing the buffers or by storing a flag indicating whether each buffer is in use. On being passed a buffer the incoming transport library notes that buffer as being free. The data structure preferably indicates the order in which the packets were received, in order that that information can be used to help prioritise their subsequent processing. Multiple packets could be stored in each buffer, and a data structure maintained by the Ethernet transport library to indicate the location of each packet.

[0048] Referring to FIG. 7, as Ethernet packets are received Ethernet protocol processing is performed by the Ethernet interface hardware 43, and the Ethernet headers are removed from the Ethernet packets, leaving TCP packets in which ISCSI packets are encapsulated. Each of these packets is written by the Ethernet hardware into one of the buffers 55. (Step 60). This is achieved by the Ethernet hardware issuing a buffer write call to the API of the Ethernet transport library, with the TCP packet as an operand. In response to this call the transport library identifies a buffer that is included in its owned buffer list and that is free to receive a packet. It stores the received packet in that buffer and then modifies its data structure to mark the buffer as being occupied.

[0049] Thus, at least some of the protocol processing that is to be performed on the packet can be performed by the interface (43, in this example) that received the incoming packet data. This is especially efficient if that interface includes dedicated hardware for performing that function. Such hardware can also be used in protocol processing for non-bridged packets: for example packets sent to the bridge and that are to terminate there. One example of such a situation is when an administrator is transmitting data to control the bridging device remotely. The interface that receives the incoming packet data has access to both the header and the traffic data of the packet. As a result, it can readily perform protocol processing operations that require knowledge of the traffic data in addition to the header data. Examples of these operations include verifying checksum data, CRC (cyclic redundancy check) data or bit-count data. In addition to Ethernet protocol processing the hardware could conveniently perform TCP protocol processing of received packets.

[0050] The application 52 runs continually. Periodically it makes a call, which may for example be "recv( )" or "complete( )", to the transport library 51 to initiate the protocol processing of any Ethernet packet that is waiting in one of the buffers 55. (Step 61). The recv( )/complete( ) call does not specify any buffer. In response to the recv( )/complete( ) call the transport library 51 checks its data structure to find whether any of the buffers 55 contain unprocessed packets. Preferably the transport library identifies the buffer that contains the earliest-received packet that is still unprocessed, or if the buffer is capable of prioritising certain traffic then it may bias its identification of a packet based on that prioritisation. If an unprocessed packet has been identified then the transport library responds to the recv( )/complete( ) call by returning a response message to the application (step 62), which includes:

[0051] the TCP and ISCSI headers of the identified packet, which may collectively be considered to constitute a header or header data of the packet;

[0052] the handle of the buffer in which the identified packet is stored;

[0053] the start point within that buffer of the traffic data block of the packet; and

[0054] the length of the traffic data block of the packet.
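As a non-limiting sketch, the response message listed above and the selection of the earliest-received unprocessed packet might be modelled as follows. The names `Response`, `IncomingLibrary`, `write_packet` and `pending` are hypothetical, and the sketch assumes one packet per buffer with the headers stored at the start of the buffer.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Response:
    headers: bytes  # TCP and ISCSI headers of the identified packet
    handle: int     # handle of the buffer holding the packet
    start: int      # start point of the traffic data block in that buffer
    length: int     # length of the traffic data block


class IncomingLibrary:
    """Hypothetical stand-in for the incoming (Ethernet) transport library."""

    def __init__(self, buffers):
        self.buffers = buffers      # handle -> bytearray
        self.owned = set(buffers)   # owned buffer list
        self.pending = deque()      # unprocessed packets in arrival order

    def write_packet(self, handle, packet, header_len):
        """Called on behalf of the interface: store a packet, mark it unprocessed."""
        self.buffers[handle][:len(packet)] = packet
        self.pending.append((handle, header_len, len(packet)))

    def recv(self):
        """Return a Response for the earliest unprocessed packet, or None."""
        if not self.pending:
            return None
        handle, header_len, total_len = self.pending.popleft()
        self.owned.discard(handle)  # the response hands the buffer to the caller
        headers = bytes(self.buffers[handle][:header_len])
        return Response(headers, handle, header_len, total_len - header_len)


lib = IncomingLibrary({7: bytearray(32)})
lib.write_packet(7, b"HDRpayload", header_len=3)
resp = lib.recv()
```

Note that `recv` in this sketch copies only the header bytes; the traffic data stays in the buffer and is described solely by `handle`, `start` and `length`.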

[0055] By means of the headers the application can perform protocol processing on the received packet. The other data collectively identifies the location of the traffic data for the packet. The response message including a buffer handle is treated by the incoming transport library and the bridging application as handing that buffer over to the bridging application. The incoming transport library deletes that buffer handle from its owned buffer list as one of the buffers 55, and the bridging application adds the handle to its owned buffer list.

[0056] It will be noted that by this message the application has received the header of the packet and a handle to the traffic data of the packet. However, the traffic data itself has not been transferred. The application can now perform protocol processing on the header data.

[0057] The protocol processing that is to be performed by the application may involve functions that are to be performed on the traffic data of the packet. For example, ISCSI headers include a CRC field, which needs to be verified over the traffic data. Since the application does not have access to the traffic data it cannot straightforwardly perform this processing. Several options are available. First, the application could assume that that CRC (or other such error-check data) is correct. This may be a useful option if the data is delay-critical and need not anyway be re-transmitted, or if error checking is being performed in a lower-level protocol. Another option is for the interface to calculate the error-check data over the relevant portion of the received packet and to store it in the buffer together with the packet. The error-check data can then be passed to the application in the response message detailed above, and the application can simply verify whether that data matches the data that is included in the header. This requires the interface to be capable of identifying data of the relevant higher-level protocol (e.g. ISCSI) embedded in received packets of a lower-level protocol (e.g. Ethernet or TCP), and to be capable of executing the error-check algorithm appropriate to that higher-level data. Thus, in this approach the execution of the error-check algorithm is performed by a different entity from that which carries out the remainder of the protocol processing, and by a different entity from that which verifies the error-check data.
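The second option above, in which the interface computes the error-check data and the application merely compares values, might be sketched as follows. The function names `interface_store` and `application_check` and the dictionary used to stand in for a buffer are assumptions of this sketch. CRC32C is used because ISCSI digests are commonly CRC32C; the bitwise routine shown is a simple reference form, not an optimised one.

```python
def crc32c(data: bytes) -> int:
    """Bitwise CRC32C (Castagnoli), reflected polynomial 0x82F63B78."""
    crc = 0xFFFFFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            crc = (crc >> 1) ^ (0x82F63B78 if crc & 1 else 0)
    return crc ^ 0xFFFFFFFF


def interface_store(buffer: dict, traffic: bytes) -> None:
    """Interface side: store the traffic data and the CRC computed over it."""
    buffer["traffic"] = traffic
    buffer["computed_crc"] = crc32c(traffic)


def application_check(header_crc: int, computed_crc: int) -> bool:
    """Application side: compare two values without touching the traffic data."""
    return header_crc == computed_crc


buffer = {}
interface_store(buffer, b"123456789")
ok = application_check(0xE3069283, buffer["computed_crc"])   # header value matches
bad = application_check(0x00000000, buffer["computed_crc"])  # header value differs
```

In this sketch the application never reads `buffer["traffic"]`; it receives only the computed value, consistent with the traffic data not passing through the CPU.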

[0058] Not all of the headers of the packet as received at the hardware interface need be passed in the response message that is sent to the application, or even stored in the buffer. If protocol processing for one or more protocols is performed at the interface then the headers for those protocols can be omitted from the response and not stored in the buffer. However, it may still be useful for the application to receive the headers of one or more protocols for which the application does not perform protocol processing. One reason for this is that it provides a way of allowing the application to calculate the outgoing route. The outgoing route could be determined by the Fibrechannel transport library 53 making use of system-wide route tables that could, for example, be maintained by the operating system. The Fibrechannel transport library 53 can look up a destination address in the route tables so as to resolve it to the appropriate outgoing FC interface.

[0059] The application is configured in advance to perform protocol processing on one or more protocol levels. The levels that are to be protocol processed by the application will depend on the bridging circumstances. The application is configured to be capable of performing such protocol processing in accordance with the specifications for the protocol(s) in question. In the present example the application performs protocol processing on the ISCSI header. (Step 63).

[0060] Having performed protocol processing on the header as received from the incoming transport library, the application then passes a send( ) command to the Fibrechannel transport library (step 65). The send( ) command includes as an operand the handle of the buffer that includes the packet in question. It may also include data that specifies the location of the traffic data in the buffer, for example the start point and length of the traffic data block of the packet. The send( ) command is interpreted by the bridging application and by the outgoing transport library as handing over that buffer to the outgoing transport library. Accordingly, the bridging application deletes that buffer handle from its owned buffer list, and the outgoing transport library adds the handle to its owned buffer list, as one of the buffers 56.

[0061] The Fibrechannel transport library then reads the header data from that buffer (step 66) and using the header alone (i.e. without receiving the traffic data stored in the buffer) it forms a Fibrechannel header for onward transmission of the corresponding traffic data (step 67).

[0062] The Fibrechannel transport library then provides that header and the traffic data to the Fibrechannel interface, which combines them into a packet for transmission (step 68). The header and the traffic data could be provided to the Fibrechannel interface in a number of ways. For example, the header could be written into the buffer and the start location and length of the header and the traffic data could be passed to the Fibrechannel interface. Conveniently the header could be written to the buffer immediately before the traffic data, so that only one set of start location and length data needs to be transmitted. If the outgoing header or header set is longer than the incoming header or header set this may require the incoming interface to write the data to the buffer in such a way as to leave sufficient free space before the traffic data to accommodate the outgoing header. The Fibrechannel interface could then read the data from the buffer, for example by DMA (direct memory access). Alternatively, the header could be transmitted to the Fibrechannel interface together with the start location and length of the traffic data and the interface could then read the traffic data, by means of an API call to the transport library, and combine the two together. Alternatively, both the header and the traffic data could be transmitted to the Fibrechannel interface. The header and the start/length data could be provided to the Fibrechannel interface by being written to a queue stored in a predefined set of memory locations, which is polled periodically by the interface.
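The arrangement in which the outgoing header is written immediately before the traffic data, so that a single start location and length describes the whole outgoing packet, might be sketched as follows. The constant `HEADROOM` and the functions `store_incoming` and `prepend_header` are hypothetical, and the 16-byte headroom is an arbitrary value chosen for illustration.

```python
HEADROOM = 16  # assumed free space left before the traffic data on receipt


def store_incoming(buffer: bytearray, traffic: bytes):
    """Incoming side: write the traffic data after the reserved headroom."""
    start = HEADROOM
    buffer[start:start + len(traffic)] = traffic
    return start, len(traffic)


def prepend_header(buffer: bytearray, start: int, length: int, header: bytes):
    """Outgoing side: place the header directly before the traffic data."""
    if len(header) > start:
        raise ValueError("insufficient free space before the traffic data")
    new_start = start - len(header)
    buffer[new_start:start] = header
    # One (start, length) pair now covers header and traffic data together.
    return new_start, len(header) + length


buf = bytearray(64)
start, length = store_incoming(buf, b"DATA")
pkt_start, pkt_len = prepend_header(buf, start, length, b"FCHDR")
frame = bytes(buf[pkt_start:pkt_start + pkt_len])
```

The final slice stands in for the read that the outgoing interface might perform, for example by DMA; the traffic data itself is never copied or moved within the buffer.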

[0063] The outgoing header might have to include calculated data, such as CRCs, that is to be calculated as a function of the traffic data. In this situation the header as formed by the transport library can include space (e.g. as zero bits) for receiving that calculated data. The outgoing hardware interface can then calculate the calculated data and insert it into the appropriate location in the header. This avoids the outgoing transport library having to access the traffic data.
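A minimal sketch of this division of labour follows. The header layout (a 4-byte destination followed by a 4-byte CRC field), the function names `form_header` and `interface_fill_crc`, and the use of `zlib.crc32` as a stand-in for whatever check the outgoing protocol actually requires are all assumptions made for illustration.

```python
import struct
import zlib


def form_header(dest: int) -> bytearray:
    """Transport library side: build the header with a zeroed CRC field."""
    return bytearray(struct.pack(">II", dest, 0))


def interface_fill_crc(header: bytearray, traffic: bytes) -> bytes:
    """Interface side: compute the CRC over the traffic data, insert it,
    and combine header and traffic data into the outgoing packet."""
    struct.pack_into(">I", header, 4, zlib.crc32(traffic))
    return bytes(header) + traffic


header = form_header(0x0A)  # formed without reading the traffic data
frame = interface_fill_crc(header, b"payload")
```

Only `interface_fill_crc` reads the traffic data in this sketch; `form_header` works from header information alone.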

[0064] Once the Fibrechannel packet has been transmitted for a particular incoming packet the buffer in which the incoming packet had been stored can be re-used. The Fibrechannel transport library hands over ownership of the buffer to the Ethernet transport library. Accordingly, the Fibrechannel transport library deletes that buffer handle from its owned buffer list, and the Ethernet transport library adds the handle to its owned buffer list, marking the buffer as free for storage of an incoming packet.

[0065] As indicated above, the buffers in which the packets are stored are implemented as anonymous buffers. When a packet is received the buffer that is to hold that packet is owned by the incoming hardware and/or the incoming transport library. When the packet comes to be processed by the bridging application ownership of the buffer is transferred to the bridging application. Then when the packet comes to be transmitted ownership of the buffer is transferred to the outgoing hardware and/or the outgoing transport library. Once the packet has been transmitted ownership of the buffer can be returned to the incoming hardware and/or the incoming transport library. In this way the buffers can be used efficiently, and without problems of access control. The use of anonymous buffers avoids the need for the various entities to have to support named buffers. This is especially significant in the case of the incoming and outgoing hardware since it may not be possible to modify pre-existing hardware to support named buffers. It may also not be economically viable to use such hardware since it requires significant additional complexity--namely the ability to fully perform complex protocol processing e.g. to support TCP and RDMA (iWARP) protocol processing. This would in practice require a powerful CPU to be embedded in the hardware, which would make the hardware excessively expensive.

[0066] Once each layer of protocol processing is completed for a packet the portion of the packet's header that relates to that protocol is no longer required. As a result, the memory in which that portion of header was stored can be used to store other data structures. This will be described in more detail below.

[0067] When a packet is received the incoming hardware and/or transport library should have one or more buffers in its ownership. It selects one of those buffers for writing the packet to. That buffer may include one or more other received packets, in which case the hardware/library selects suitable free space in the buffer for accommodating the newly received packet. Preferably it attempts to pack the available space efficiently. There are various ways to aim at this: one is to find a space in a buffer that most closely matches the size of the received packet, whilst not being smaller than the received packet. The space in the buffer may be managed by a data structure stored in the buffer itself which provides pointers to the start and end of the packets stored in the buffer. If the buffer includes multiple packets then ownership of the buffer is passed to the application when any of those is to be protocol processed by the application. When the packet has been transmitted the remaining packets in the buffer remain unchanged but the data structure is updated to show the space formerly occupied by the packet as being vacant.
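The space-selection approach mentioned above, namely choosing the free space that most closely matches the size of the received packet without being smaller than it, might be sketched as follows. The function name `best_fit` and the representation of free spaces as `(start, size)` pairs are assumptions of this sketch.

```python
def best_fit(free_spaces, packet_len):
    """Return the (start, size) free space that fits most tightly, or None.

    free_spaces: iterable of (start, size) pairs describing vacant regions.
    """
    candidates = [space for space in free_spaces if space[1] >= packet_len]
    return min(candidates, key=lambda space: space[1], default=None)


free = [(0, 100), (200, 40), (300, 64)]
choice = best_fit(free, 50)       # the 64-byte space is the tightest fit
no_room = best_fit([(0, 10)], 50)  # nothing large enough
```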

[0068] If the TCP and ISCSI protocol processing is unsuccessful then the traffic data of the packet may be dropped. The data need not be deleted from the buffer: instead the anonymous buffer handle can simply be passed back to the Ethernet transport library for reuse.

[0069] This mechanism has the consequence that the traffic data needs to pass only twice over the memory bus: once from the Ethernet hardware to memory and once from memory to the Fibrechannel hardware. It does not need to pass through the CPU; in particular it does not need to pass through the cache of the CPU. The same approach could be used for other protocols; it is not limited to bridging between Ethernet and Fibrechannel.

[0070] The transport libraries and the application can run at user level. This can improve reliability and efficiency over prior approaches in which protocol processing is performed by the operating system. Reliability is improved because the machine can continue in operation even if a user-level process fails.

[0071] The transport libraries and the application are configured programmatically so that if their ownership list does not include the identification of a particular buffer they will not access that buffer.

[0072] If the machine is running other applications in other address spaces then the named buffers for one application are not accessible to the others. This feature provides for isolation between applications and system integrity. This is enforced by the operating system in the normal manner of protecting applications' memory spaces.

[0073] The received data can be delivered directly from the hardware to the ISCSI stack, which is constituted by the Ethernet transport library and the application operating in cooperation with each other. This avoids the need for buffering received data on the hardware, and for transmitting the data via the operating system as in some prior implementations.

[0074] The trigger for the passing of data from the buffers to the CPU is the polling of the transport library at step 61. The polling can be triggered by an event sent by the Ethernet hardware to the application on receipt of data, by a timer controlled by the application, by a command from a higher level process or from a user, or in response to a condition in the bridging device such as the CPU running out of headers to process. This approach means that there is no need for the protocol processing to be triggered by an interrupt when data arrives. This economises on the use of interrupts.

[0075] The bridging device may be implemented on a conventional personal computer or server. The hardware interfaces could be provided as network interface cards (NICs) which could each be peripheral devices or built into the computer. For example, the NICs could be provided as integrated circuits on the computer's motherboard.

[0076] When multiple packets have been received the operations in FIG. 7 can be combined for multiple packets. For example, the response data (at step 62) for multiple packets can be passed to the CPU and stored in the CPU's cache awaiting processing.

[0077] There may be limitations on the size of the outgoing packets that mean that the traffic data of an incoming packet cannot be contained in a single outgoing packet. In that case the traffic data can be contained in two or more outgoing packets, each of whose headers is generated by the transport library of the outgoing protocol.

[0078] Since the packets are written to contiguous blocks of free space in the buffers 55, as packets get removed from the buffers 55 gaps can appear in the stream of data in the buffers. If the received packets are of different lengths then those gaps might not be completely filled by new received data. As a result the buffers can become fragmented, and therefore inefficiently utilised. To mitigate this, as soon as the header of a received packet has been passed to the CPU for processing the space occupied by that header can be freed up immediately. That space can be used to allow a larger packet to be received in a gap in memory preceding that header. Alternatively that space can be used for various data constructs. For example, it can be used to store a linked-list data structure that allows packets to be stored discontiguously in the buffer. Alternatively, it could be used to store the data structure that indicates the location of each packet and the order in which it was received. Fragmentation may also be reduced by performing a defragmentation operation on the content of a buffer, or by moving packets whose headers have not been passed to the CPU for processing from one buffer to another. One preferred defragmentation algorithm is to check from time to time for buffers that contain less data than a pre-set threshold level. The data in such a buffer is moved out to another buffer, and the data structure that indicates which packet is where is updated accordingly.
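The threshold-based check described above might be sketched as follows. The function names `used` and `consolidate`, the modelling of each buffer as a list of packets, and the choice to move data only into a buffer that already holds packets and has room are assumptions of this sketch, not requirements of the specification.

```python
def used(packets):
    """Total number of bytes occupied by the packets in one buffer."""
    return sum(len(p) for p in packets)


def consolidate(buffers, capacity, threshold):
    """Move the packets of under-filled buffers into other buffers.

    buffers: dict mapping handle -> list of packets (bytes).
    Returns an updated index mapping each packet to the handle holding it.
    """
    for src in list(buffers):
        if not 0 < used(buffers[src]) < threshold:
            continue  # empty, or already holding enough data
        for dst in buffers:
            fits = used(buffers[dst]) + used(buffers[src]) <= capacity
            if dst != src and buffers[dst] and fits:
                buffers[dst].extend(buffers[src])  # move the data out
                buffers[src] = []                  # source is now vacant
                break
    return {pkt: handle for handle, pkts in buffers.items() for pkt in pkts}


buffers = {1: [b"aaaa"], 2: [b"bbbbbbbbbbbb"], 3: []}
index = consolidate(buffers, capacity=32, threshold=8)
```

In this example buffer 1 holds less than the threshold, so its packet is moved into buffer 2 and the returned index records the new location.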

[0079] In a typical architecture, when the Ethernet packet headers are read to the CPU for processing by the bridging application they will normally be stored in a cache of the CPU. The headers will then be marked as "dirty" data. Therefore, in normal circumstances they would be flushed out of the cache and written back to the buffer so as to preserve the integrity of that memory. However, once the headers have been processed by the bridging application they are not needed any more, and so writing them back to the buffer is wasteful. Therefore, efficiency can be increased by taking measures to prevent the CPU from writing the headers back to memory. One way to achieve this is by using an instruction such as the wbinv (write-back invalidate) instruction which is available on some architectures. This instruction can be used in respect of the header data stored in the cache to prevent the bridging application from writing that dirty data back to the memory. The instruction can conveniently be invoked by the bridging application on header data that is stored in the cache when it completes processing of that header data. At the same point, it can arrange for the space in the buffer(s) that was occupied by that header data to be marked as free for use, for instance by updating the data directory that indicates the buffer contents.

[0080] The principles described above can be used for bridging in the opposite direction: from Fibrechannel to ISCSI, and when using other protocols. Thus the references herein to Ethernet and Fibrechannel can be substituted for references to other incoming and outgoing protocols respectively. They could also be used for bridging between links that use two identical protocols. In the apparatus of FIG. 5 the software could be configured to permit concurrent bridging in both directions. If the protocols are capable of being operated over a common data link then the same interface hardware could be used to provide the interface for incoming and for outgoing packets.

[0081] The anonymous buffer mechanism described above could be used in applications other than bridging. In general it can be advantageous wherever multiple devices that have their own processing capabilities are to process data units in a buffer, and where one of those devices is to carry out processing on only a part of each data unit. In such situations the anonymous buffer mechanism allows the devices or their interfaces to the buffer to cooperate so that the entirety of each data unit need not pass excessively through the system. One example of such an application is a firewall in which a network card is to provide data units to an application that is to inspect the header of each data unit and in dependence on that header either block or pass the data unit. In that situation, the processor would not need to terminate a link of the incoming protocol to perform the required processing: it could simply inspect incoming packets, compare them with pre-stored rules and allow them to pass only if they satisfy the rules. Another example is a tape backup application where data is being received by a computer over a network, written to a buffer and then passed to a tape drive interface for storage. Another example is a billing system for a telecommunications network, in which a network device inspects the headers of packets in order to update billing records for subscribers based on the amount or type of traffic passing to or from them.

[0082] The applicant hereby discloses in isolation each individual feature described herein and any combination of two or more such features, to the extent that such features or combinations are capable of being carried out based on the present specification as a whole in the light of the common general knowledge of a person skilled in the art, irrespective of whether such features or combinations of features solve any problems disclosed herein, and without limitation to the scope of the claims. The applicant indicates that aspects of the present invention may consist of any such individual feature or combination of features. In view of the foregoing description it will be evident to a person skilled in the art that various modifications may be made within the scope of the invention.

* * * * *
