Data Processing Apparatus To Generate An Alarm

Boghossian; Boghos ; et al.

U.S. patent application number 15/642028 was filed with the patent office on 2017-12-28 for data processing apparatus to generate an alarm. The applicant listed for this patent is IPSOTEK LTD. Invention is credited to James Black, Boghos Boghossian.

| Application Number | 20170372575 15/642028 |

| Document ID | / |

| Family ID | 38219446 |

| Filed Date | 2017-12-28 |

| United States Patent Application | 20170372575 |

| Kind Code | A1 |

| Boghossian; Boghos ; et al. | December 28, 2017 |

DATA PROCESSING APPARATUS TO GENERATE AN ALARM

Abstract

A method of operating a data processing apparatus is provided. The method includes receiving video data; receiving a virtual test signal representative of a location of a virtual object; setting data representing the horizontal and vertical size of the virtual object dependent upon the location of the virtual object; overlaying the received video data with an image of the virtual object at locations determined from the virtual test signal to generate virtual test video data; and analysing the virtual test video data and generating an alarm signal based on an output signal generated by said analysis.

| Inventors: | Boghossian; Boghos (Wimbledon, GB); Black; James (Wimbledon, GB) |

| Applicant: | IPSOTEK LTD |

| Family ID: | 38219446 |

| Appl. No.: | 15/642028 |

| Filed: | July 5, 2017 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | ||

|---|---|---|---|---|

| 13595306 | Aug 27, 2012 | |||

| 15642028 | ||||

| 13595275 | Aug 27, 2012 | 8547436 | ||

| 13595306 | ||||

| 12600380 | May 7, 2010 | 8305441 | ||

| PCT/GB2008/001679 | May 15, 2008 | |||

| 13595275 | ||||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | G08B 13/19682 20130101; G06K 9/00771 20130101; H04N 7/188 20130101; G06K 2009/00738 20130101; G08B 13/19697 20130101; G08B 13/1961 20130101 |

| International Class: | G08B 13/196 20060101 G08B013/196; G06K 9/00 20060101 G06K009/00; H04N 7/18 20060101 H04N007/18 |

Foreign Application Data

| Date | Code | Application Number |

|---|---|---|

| May 15, 2007 | GB | 0709329.7 |

| May 18, 2007 | GB | 0709620.9 |

Claims

1. A method of testing an operation of a data processing apparatus configured for operation in a normal mode for generating an alarm signal as a result of analysis of video data signals derived from a real scene and in a testing mode, the method comprising operating the apparatus in the testing mode by: simulating one or more conditions in a scene by: receiving video data representing the scene; receiving a virtual test signal representative of one or more locations of a virtual object in said scene; setting data representing a horizontal size and a vertical size of the virtual object dependent upon said one or more locations of the virtual object in said scene; and overlaying the received video data with an image of the virtual object at said one or more locations determined from the virtual test signal to generate virtual test video data; and analysing the virtual test video data and generating an alarm signal based on an output signal generated by said analysis.

2. Data processing apparatus, which is configured for operation in a normal mode for generating an alarm signal as a result of analysis of video data signals derived from a real scene and for operation in a testing mode, comprising: (a) a video data receiver configured to receive video data; (b) a module for operating said apparatus in said testing mode by simulating one or more conditions in a scene, said module comprising: (i) a virtual test signal receiver configured to receive a virtual test signal representative of one or more locations of a virtual object in said scene; and (ii) a video overlay engine configured to overlay the received video data with the virtual object at said one or more locations determined from the virtual test signal to generate virtual test video data, wherein the video overlay engine is configured to set data representative of a horizontal size and a vertical size of the virtual object dependent upon the location of the virtual object; and (c) an alarm engine configured to analyse the virtual test video data and generate an alarm signal based on an output signal generated by said analysis.

3. A non-transitory carrier carrying a computer program comprising non-transitory processor-implementable instructions for configuring a data processing apparatus for operation in a normal mode for generating an alarm as a result of analysis of video data signals derived from a real scene and in a testing mode, said instructions being executable to cause the data processing apparatus to perform, in the testing mode, the steps of: receiving video data representing a scene; receiving a virtual test signal representative of one or more locations of a virtual object in said scene; setting data representing a horizontal size and a vertical size of the virtual object dependent upon said one or more locations of the virtual object in said scene; overlaying the received video data with an image of the virtual object at said one or more locations determined from the virtual test signal to generate virtual test video data; and analysing the virtual test video data and generating an alarm signal based on an output signal generated by said analysis.

Description

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application is a continuation application of U.S. application Ser. No. 13/595,306, filed Aug. 27, 2012 (pending), which, in turn, is a divisional application of U.S. application Ser. No. 13/595,275, filed Aug. 27, 2012 and issued on Oct. 1, 2013 as U.S. Pat. No. 8,547,436, which, in turn, is a divisional application of U.S. application Ser. No. 12/600,380, filed on Nov. 16, 2009 and issued on Nov. 6, 2012 as U.S. Pat. No. 8,305,441, which, in turn, claims priority to International Patent Application No. PCT/GB2008/001679 filed on May 15, 2008, which claims priority to GB Patent Application Nos. 0709329.7 filed on May 15, 2007 and 0709620.9 filed on May 18, 2007, all of which are herein incorporated by reference.

BACKGROUND

[0002] The present disclosure relates to data processing apparatus, in particular, but not exclusively, to a data processing apparatus that is configured to generate an alarm signal as a result of analysis performed on received audio and video data signals.

[0003] Closed circuit television (CCTV) systems are known to record video data, and display the video data to a human operator in order that the human operator can identify any anomalies in the video data, for example breaches of security, or illegal activities.

[0004] US 2006/0222206 (Garoutte) discloses a methodology of implementing complex behaviour recognition in an intelligent video system.

[0005] U.S. Pat. No. 6,628,835 (Brill et al) discloses a method and system for defining and recognizing complex events in a video sequence.

[0006] WO 97/08896 (Scientific-Atlanta, Inc.) discloses an open area security system that comprises an acoustic sensor array capable of forming elevational and azimuthal beams, and a camera mounted in the vicinity of the array that is automatically directed toward a detected, sound-producing event.

[0007] Aspects and embodiments disclosed herein were devised with the foregoing in mind.

SUMMARY

[0008] According to a first aspect of the invention, there is provided data processing apparatus comprising:

[0009] a video data receiver configured to receive video data; and/or

[0010] an audio data receiver configured to receive audio data; and

[0011] an alarm engine configured to analyse said received video and/or audio data and generate an alarm signal based on an output signal generated by said analysis.

[0012] According to a second aspect of the invention, there is provided a method of operating a data processing apparatus comprising:

[0013] receiving video data; and/or

[0014] receiving audio data; and

[0015] analysing said received video and/or audio data and generating an alarm signal based on an output signal generated by said analysis.

[0016] The analysis may comprise one or more processing operations that perform an analysis of the received data and may be based on computations based on physical modelling to identify incidents from the received data.

[0017] The result of the analysis may be a binary output, for example whether or not one or more criteria are satisfied, or may be an output signal that represents a degree to which one or more criteria are met, or may be a count of how many times a criterion is met. In some embodiments, the analysis may be referred to as analytics; that is, a logical analysis such as the application of algorithms and transformations that operate on a digital representation of the received data.

[0018] An example of a count of how many times a criterion is met is how many times an object, for example a person or a vehicle, passes a predetermined point. That is, a criterion may be a person passing a predetermined point. The predetermined point may be an entry to a building, a field, an arena, or any other area.

[0019] In one embodiment, a video camera may be set up to monitor a doorway to a building, and the results of analysis performed on the video data may be a count of the number of people who have passed through the doorway, and/or the total number of people who are currently inside the building. In order to maintain a count of the total number of people inside the building, the video analysis may be able to differentiate between people entering and exiting the building.

[0020] In other embodiments, the predetermined area may be a gate to a field/enclosure, an entrance to a stadium, an entrance to a public transport station such as a train station, or any other area through which objects/people pass.
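The counting described in paragraphs [0018] to [0020] can be sketched as follows. This is a minimal illustration only: the `OccupancyCounter` class and the `"in"`/`"out"` direction labels are hypothetical names, and the sketch assumes that the video analysis has already classified each crossing of the predetermined point by direction.

```python
from dataclasses import dataclass


@dataclass
class OccupancyCounter:
    """Tracks crossings of a predetermined point such as a doorway."""
    entered: int = 0
    exited: int = 0

    def record(self, direction: str) -> None:
        # `direction` is a hypothetical label supplied by the video analysis.
        if direction == "in":
            self.entered += 1
        elif direction == "out":
            self.exited += 1
        else:
            raise ValueError(f"unknown direction: {direction!r}")

    @property
    def total_passed(self) -> int:
        # Number of objects that have passed the point in either direction.
        return self.entered + self.exited

    @property
    def inside(self) -> int:
        # Number of objects currently inside the monitored area.
        return self.entered - self.exited
```

Keeping separate entry and exit totals allows both quantities mentioned in [0019], the number who have passed through and the number currently inside, to be derived from the same record.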

[0021] Generating the alarm signal as a result of analysis performed on both the received audio and video data can enable complex alarm monitoring incidents/scenarios to be considered and/or detection accuracy to be improved and/or the number of false alarms generated to be reduced.

[0022] The analysis may be performed on both the audio and video data, either independently or in combination, or in sequence, and this can enable a greater degree of confidence to be placed on alarms.

[0023] In some embodiments, incidents that can be determined from a result of analysis of audio data complement incidents that can be determined from a result of analysis of video data due to the different types of incident that each type of data are particularly suited to monitoring.

[0024] For example, analysis of video data may provide high detection accuracy and low false alarm potential for determining an "Intruder Detection" incident, whereas analysis of audio data may provide low detection accuracy and high false alarm potential for determining the same "Intruder Detection" incident. Similarly, analysis of video data may provide low detection accuracy and high false alarm potential for determining a "Personal Attack" incident, whereas analysis of audio data may provide high detection accuracy and low false alarm potential for determining the same "Personal Attack" incident. However, using a combination of analysis of both video and audio data enables a high detection accuracy and low false alarm potential to be obtained for both the "Intruder Detection" incident and the "Personal Attack" incident.

[0025] An example where use of analysis of audio and video data together can improve detection accuracy and false alarm potential includes identifying a shooting. Use of audio data analysis alone may wrongly identify a car back-firing as a gunshot, and use of video data analysis alone may wrongly identify a person running for a bus as running away from a shooting. However, use of audio and video data analysis together can ensure that a shooting alarm is only generated when a gunshot sound is identified and it is determined that people are running in the scene.

[0026] It will be appreciated that embodiments of the invention provide a system/method wherein the analysis of audio data can be used to complement any deficiencies in an analysis of video data, and vice versa. This provides an improved system/method for automatically generating alarms that are determined as a result of an improved determination of incidents/scenarios from the received data.

[0027] Furthermore, it may be possible to determine the occurrence of alarms that previously were not determinable. For example, security threats and potentially dangerous situations can be determined early by utilising the more sophisticated incidents/scenarios that can be recognised to enable preventative pro-active measures to be taken where possible. This may be preferable to re-active measures that are taken after a dangerous situation has occurred.

[0028] The alarm engine may be configured to:

[0029] perform incident analysis on said received audio and/or video data, and

[0030] generate an incident analysis output signal,

[0031] wherein said incident analysis output signal comprises a representation of the result of one or more pattern recognition operations performed on said received audio and/or video data.

[0032] The one or more pattern recognition operations may comprise identifying objects from the video data. Examples of objects in the video data may comprise components of the foreground of a video image, for example a person, a bag, or a vehicle. The incident analysis may comprise performing one or more pattern recognition operations on one or more objects identified from the video data. The pattern recognition operations may comprise determining spatial and/or temporal relationships of objects identified in the video data.

[0033] The one or more pattern recognition operations may comprise applying pattern recognition operations to the audio data. For example, the audio pattern recognition operations may comprise one or more of performing ridge estimation, running autocorrelation operations under the ridges to provide a measure of the periodicity of contours, performing instantaneous frequency estimation to determine a local frequency for each moment of the fundamental period contour, performing fundamental period contour estimation to estimate the pitch of the signal.
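As a much simplified illustration of using autocorrelation as a measure of periodicity, the following sketch estimates the fundamental frequency of a signal from the lag with the strongest autocorrelation. It is not the ridge-based method of [0033]: there is no ridge estimation or instantaneous frequency estimation, and the function names, the search range of 50 to 400 Hz and the synthetic test tone are all assumptions made for the example.

```python
import math


def autocorrelation(samples, lag):
    """Unnormalised autocorrelation of `samples` at a single lag."""
    return sum(samples[n] * samples[n + lag] for n in range(len(samples) - lag))


def estimate_fundamental_hz(samples, sample_rate_hz, min_hz=50.0, max_hz=400.0):
    """Return the frequency whose period gives the strongest autocorrelation."""
    # Restricting the lag range excludes the trivial peak around lag 0.
    min_lag = int(sample_rate_hz / max_hz)
    max_lag = int(sample_rate_hz / min_hz)
    best_lag = max(range(min_lag, max_lag + 1),
                   key=lambda lag: autocorrelation(samples, lag))
    return sample_rate_hz / best_lag


# Synthetic 100 Hz tone sampled at 8 kHz for 0.1 s (illustrative input only).
SAMPLE_RATE_HZ = 8000
tone = [math.sin(2 * math.pi * 100 * n / SAMPLE_RATE_HZ) for n in range(800)]
estimated_hz = estimate_fundamental_hz(tone, SAMPLE_RATE_HZ)
```

A strongly periodic sound such as a siren would give a pronounced autocorrelation peak, whereas broadband noise would not, which is the sense in which autocorrelation measures periodicity.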

[0034] Performing one or more comparisons of said received audio and video data with one or more patterns enables situations comprising different types of incidents to be determined. Examples of incidents include identifying a stationary object, loitering, congestion, running, a gun shot, aggressive noise, an emergency vehicle's siren, and a scream. The pattern recognition operations on the received audio and video data may be performed independently, in combination, or in sequence.

[0035] The video incident analysis may be performed on a detection area that comprises a sub-area of said video data.

[0036] Use of the detection area enables more advanced incidents to be determined as the incident analysis is focussed on areas of interest. This level of refinement can enable events which would otherwise have constituted an incident to be ignored if they are not within the detection area. The detection area may comprise one or more sub-areas of images represented by the video data, and the sub-areas may or may not be contiguous. For example, sub-areas may be defined that correspond to areas of an image that display doors and windows when performing security analysis.

[0037] One or more of the sub-areas may be dynamically determined. A dynamically determined sub-area may focus/restrict analysis, or a function defined by said analysis, to a sub-area related to an earlier incident as identified by the incident analysis. The dynamically determined sub-area may be a sub-area of a detection area, which in turn may be a sub-area of an existing sub-area.
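A detection area made up of possibly non-contiguous sub-areas, as described in [0035] to [0037], might be represented as a list of rectangles. The sketch below assumes rectangular sub-areas in pixel coordinates; the `Rect` type and `in_detection_area` function are hypothetical names, and the source does not limit sub-areas to rectangles.

```python
from typing import Iterable, NamedTuple


class Rect(NamedTuple):
    left: int
    top: int
    right: int   # exclusive
    bottom: int  # exclusive

    def contains(self, x: int, y: int) -> bool:
        return self.left <= x < self.right and self.top <= y < self.bottom


def in_detection_area(x: int, y: int, sub_areas: Iterable[Rect]) -> bool:
    """True if the image point lies in any sub-area of the detection area."""
    return any(rect.contains(x, y) for rect in sub_areas)
```

An event located outside every sub-area would then be ignored by the incident analysis. A dynamically determined sub-area could be modelled by appending or replacing a `Rect` after an earlier incident is identified.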

[0038] The alarm engine may be configured to:

[0039] perform contemporaneous analysis on said received audio and/or video data, and

[0040] generate a contemporaneous analysis output signal,

[0041] wherein said contemporaneous analysis output signal comprises a representation of the result of one or more contemporaneous analysis functions applied either directly or indirectly to the received audio and/or video data.

[0042] A contemporaneous analysis function is a function of the contemporaneous analysis.

[0043] One or more of said contemporaneous analysis functions may comprise a contemporaneous analysis rule and a delay period. The delay period defines a period of time for which the contemporaneous analysis rule must be satisfied for the contemporaneous analysis function to be satisfied. The contemporaneous analysis rule may comprise an operation that is to be performed on one or more incident analysis output signals, and the operation may be a Boolean operation.

[0044] Using a delay period enables the analysis to generate an alarm when criteria are satisfied for a predetermined time. This provides the advantage that potential false alarms that would be generated where a criterion is satisfied for only a short period of time are reduced. Another advantage is that a user defined delay that is appropriate to a behaviour/incident that is being monitored can be incorporated. Furthermore, additional confidence can be provided by automatically utilising the determination of the concurrence of audio and video incidents/detections.
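The combination of a contemporaneous analysis rule and a delay period described in [0043] and [0044] can be sketched as below. The class name, the dictionary of incident analysis outputs and the use of timestamps in seconds are assumptions for illustration; the rule is any Boolean operation on the incident outputs.

```python
class ContemporaneousFunction:
    """A Boolean rule that must hold continuously for a delay period."""

    def __init__(self, rule, delay_s):
        self.rule = rule              # callable: incident outputs -> bool
        self.delay_s = delay_s        # time for which the rule must hold
        self._satisfied_since = None  # when the rule last became true

    def update(self, incidents, now_s):
        """Feed the current incident outputs; return the function output."""
        if self.rule(incidents):
            if self._satisfied_since is None:
                self._satisfied_since = now_s
            return now_s - self._satisfied_since >= self.delay_s
        # The rule lapsed, so the delay timer starts again from scratch.
        self._satisfied_since = None
        return False
```

For example, a rule such as `lambda i: i["loitering"] and i["aggressive_noise"]` with a delay of 5 seconds would produce a true output only once both incidents have been detected together for 5 seconds, so a brief coincidence would not raise an alarm.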

[0045] The alarm engine may be configured to:

[0046] perform sequential analysis either directly or indirectly on the received audio and/or video data, and

[0047] generate a sequential analysis output signal,

[0048] wherein said sequential analysis comprises the sequential application of one or more sequential analysis functions to the received audio and/or video data,

[0049] wherein said sequential analysis output signal comprises a representation of the result of the last of the one or more sequential analysis functions.

[0050] In embodiments where there is one sequential analysis function, the only sequential analysis function is the last sequential analysis function. That is, the sequence is a sequence of one function.

[0051] In embodiments where the one or more sequential analysis functions comprise a plurality of sequential analysis functions, one or more of the plurality of sequential analysis functions may comprise a sequential analysis rule and a timeout period. The timeout period may define a period of time after the sequential analysis rule has been satisfied during which the output signal for that sequential analysis function is considered true. That is, the timeout period defines a period of time during which the next sequential analysis function must be satisfied for the sequence to be continued. If the next sequential function is not satisfied before the timeout period of the previous sequential function expires, then the alarm engine may be configured to terminate the processing of the current sequence. In the meantime, any further sequences that have been initiated are continued.

[0052] The timeout period for a sequential analysis function may be applied from the time at which the sequential analysis rule of that sequential analysis function is satisfied. In such an embodiment, the timeout period timer is triggered when the sequential analysis rule is satisfied, and therefore the output of the sequential analysis function is true for the period of time defined by the timeout period after the sequential analysis rule becomes satisfied.

[0053] In other embodiments, the timeout period may be applied from the time at which the sequential analysis function is no longer satisfied. That is, the output of the sequential analysis function is true for the period of time during which the sequential analysis rule is satisfied plus the period of time defined by the timeout period after the sequential analysis rule is no longer satisfied.

[0054] One or more of the sequential analysis rules may comprise an operation that is to be performed on the contemporaneous analysis output signal. The operation may be a Boolean function.
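The sequential analysis of [0045] to [0054] behaves like a small state machine. The sketch below follows the variant of [0052], in which the timeout runs from the moment a rule is satisfied. It is a simplification under stated assumptions: it tracks a single sequence at a time rather than several concurrently initiated sequences, and the class name and signal dictionary are hypothetical.

```python
class SequentialAnalysis:
    """Steps must be satisfied in order, each within the previous timeout."""

    def __init__(self, steps):
        # steps: list of (rule, timeout_s); rule maps a signal dict to bool.
        self.steps = steps
        self._next = 0         # index of the step waiting to be satisfied
        self._deadline = None  # latest time at which that step may be met

    def update(self, signals, now_s):
        """Feed one sample; return True when the last step is satisfied."""
        if self._deadline is not None and now_s > self._deadline:
            # The previous step timed out: terminate the current sequence.
            self._next, self._deadline = 0, None
        rule, timeout_s = self.steps[self._next]
        if not rule(signals):
            return False
        if self._next == len(self.steps) - 1:
            # The last function in the sequence gives the output signal.
            self._next, self._deadline = 0, None
            return True
        self._next += 1
        self._deadline = now_s + timeout_s
        return False
```

With one step the sequence output is simply that step's rule, matching [0050]. The variant of [0053] could be obtained by refreshing the deadline for as long as the previous rule remains satisfied.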

[0055] The video data may be data representative of output signals generated by a plurality of cameras. The audio data may be data representative of output signals generated by a plurality of microphones.

[0056] The audio data may be representative of sound emanating from outside of the field of the received video data. This can provide the advantage that alarms can be generated in relation to situations where the cameras do not necessarily capture the situation for which the alarm is generated and/or where incidents outside the field of view for the received video data assist in determining what incidents are occurring in the field of view.

[0057] The data processing apparatus may further comprise an external data receiver configured to receive external data, wherein the alarm engine is also configured to perform analysis on said received external data. Examples of external data may include data representative of an indication of smoke, fire or an intruder, and the data may be received from a smoke alarm, a temperature sensor or an infra-red motion detector.

[0058] In other embodiments, the external data receiver may be configured to receive data from a radio frequency identification (RFID) system. For example, the external data received from an RFID system may comprise a count of the number of RFID tags/transponders that are identified by an RFID reader.

[0059] The RFID reader may be one wire that runs under the ground across an entrance to an area such as a building, wherein a count is incremented each time an RFID tag passes over the wire.

[0060] In some embodiments, the RFID reader may be two wires that run under the ground across an entrance, wherein the wires are spaced apart, for example by 1 metre, such that an incident analysis function can determine in which direction the RFID tag is passing through the entrance. The direction can be determined by identifying the order in which the two RFID readers/wires were activated by a given RFID tag. By monitoring in which direction RFID tags pass by the reader, it may be possible to maintain a count of the total number of RFID tags that are within a building or any other area. For example, a count may be incremented when a tag passes through the entrance in one direction, and the count may be decremented when a tag passes through the entrance in the other direction.
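The two-wire arrangement of [0060] can be illustrated with a short sketch. It assumes that each crossing is reported as a pair of activation times, one for the outer wire and one for the inner wire; the function names and that data layout are hypothetical.

```python
def crossing_direction(outer_time_s: float, inner_time_s: float) -> str:
    """Return 'in' if the outer wire was activated first, otherwise 'out'."""
    # Identical timestamps are treated as 'out' here, an arbitrary choice.
    return "in" if outer_time_s < inner_time_s else "out"


def occupancy(crossings) -> int:
    """Count tags inside, given (outer_time_s, inner_time_s) per crossing."""
    count = 0
    for outer_time_s, inner_time_s in crossings:
        if crossing_direction(outer_time_s, inner_time_s) == "in":
            count += 1  # tag passed through the entrance in one direction
        else:
            count -= 1  # tag passed through in the other direction
    return count
```

For instance, `occupancy([(0.0, 0.8), (5.0, 5.9), (12.4, 11.6)])` describes two tags entering and one leaving.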

[0061] In some embodiments, the alarm engine may be configured to perform analysis on the external data to perform a comparison between the external data and video and/or audio data. A discrepancy between the external data and the video and/or audio data may cause an alarm signal to be generated by the alarm engine.

[0062] An embodiment where external data representative of a count of the number of people who pass through an entrance is compared with video data representative of a count of the number of people passing through the same entrance may be considered as a "covert access control system". The external data may be obtained from an RFID system, for example. It will be appreciated that such a "covert access control system" is not limited to monitoring people entering a building, but could be used as a barrier-less entry control system in any situation where crowd flow, or any other object flow such as vehicles, can be monitored.

[0063] Another embodiment where external data representative of a count of the number of people who pass through an entrance is compared with video data representative of a count of the number of people passing through the same entrance can be used to detect "tailgating", possibly through ticket barriers or at an access control point at a security door that is opened by the swiping of a security pass.

[0064] An example of tailgating is where two or more people pass through an automated ticket barrier using a ticket that is only valid for a single person. Typically this may mean two people walking very closely together through a ticket barrier that automatically opens when a ticket is presented, for example at a train station. In some embodiments, the ticket barrier may accept paper tickets, and in other embodiments the ticket barrier may be configured as an RFID reader to access data on an RFID tag associated with a ticket.

[0065] Optionally, embodiments described herein may be used with systems that do not have a physical barrier to access. In such an embodiment, the external data may comprise data representative of the covert reading of RFID tags as they pass through an area that is monitored by an RFID reader. The monitored area may be considered as comprising a "virtual trip wire" which can generate an external data output signal when the presence of an RFID tag is determined. The external data output signal may be a count of the number of RFID tags passing through the monitored area in a predetermined time. The alarm engine may be configured to compare a count of the number of RFID tags passing through the monitored area as determined from the external data output signal, with a count of objects passing through the monitored area as determined from a video data signal.
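The comparison of counts described in [0061] to [0065] might be sketched as follows. The function name and the `tolerance` parameter are assumptions; the source says only that a discrepancy between the external data and the video data may cause an alarm signal to be generated.

```python
def count_discrepancy_alarm(rfid_count: int, video_count: int,
                            tolerance: int = 0) -> bool:
    """Alarm when the two counts for the same period differ too much."""
    # `tolerance` is a hypothetical allowance for counting error.
    return abs(video_count - rfid_count) > tolerance
```

In a tailgating scenario the video count would exceed the RFID or ticket count, for example 12 people seen against 10 tags read, so `count_discrepancy_alarm(10, 12)` evaluates to `True`.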

[0066] According to a further embodiment of the invention, there is provided a method of configuring data processing apparatus for automatically determining a real size of an object in an image of a scene displayed on a display screen, the method comprising:

[0067] responding to user input by displaying in an image of a scene displayed on a display screen a virtual object representative of a real size object to establish a first datum position;

[0068] varying the size of the virtual object in the image responsive to user input;

[0069] storing data representative of the horizontal and vertical size of the virtual object relative to the displayed image at the first datum position;

[0070] displaying said virtual object at a second datum position in said image responsive to user input;

[0071] varying the size of the virtual object on the display screen at the second datum position responsive to user input; and

[0072] storing data representative of the vertical and horizontal size of the virtual object at the second datum position relative to the displayed image.

[0073] According to a further embodiment of the invention, there is provided data processing apparatus configured for automatically determining a real size of an object in an image of a scene displayed on a display screen, the apparatus operative to:

[0074] respond to user input by displaying in an image of a scene displayed on a display screen a virtual object representative of a real size object to establish a first datum position;

[0075] vary the size of the virtual object in the image responsive to user input;

[0076] store data representative of the horizontal and vertical size of the virtual object relative to the displayed image at the first datum position;

[0077] display said virtual object at a second datum position in said image responsive to user input;

[0078] vary the size of the virtual object on the display screen at the second datum position responsive to user input; and

[0079] store data representative of the vertical and horizontal size of the virtual object at the second datum position relative to the displayed image.

[0080] The method may further comprise automatically establishing said first datum position by displaying said virtual object such that the foot of the virtual object is at the foot of said displayed image.

[0081] The method may further comprise automatically establishing said second datum position by displaying said virtual object such that the foot of the virtual object is on the horizon of the scene in the displayed image.

[0082] The method may further comprise storing data representative of the real size represented by said virtual object.

[0083] This may provide the advantage that the user can define the real size represented by the virtual object, for example to suit the sizes of the objects in the image.
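One way the sizes stored at the two datum positions could be used is to interpolate the expected object size at any other position in the image. The sketch below assumes linear interpolation on the image row of the object's foot, which this section does not itself specify; the `Datum` type and `size_at` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Datum:
    foot_y: float  # image row of the virtual object's foot
    width: float   # stored horizontal size, in pixels
    height: float  # stored vertical size, in pixels


def size_at(foot_y: float, first: Datum, second: Datum) -> Tuple[float, float]:
    """Interpolate (width, height) for an object whose foot is at `foot_y`."""
    if first.foot_y == second.foot_y:
        raise ValueError("datum positions must be at different rows")
    # t is 0 at the first datum position and 1 at the second.
    t = (foot_y - first.foot_y) / (second.foot_y - first.foot_y)
    width = first.width + t * (second.width - first.width)
    height = first.height + t * (second.height - first.height)
    return width, height
```

With a first datum at the foot of the image and a second on the horizon, as in [0080] and [0081], an object halfway between the two rows would be given a size halfway between the two stored sizes.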

[0084] According to a further aspect of the invention, there may be provided data processing apparatus comprising:

[0085] a video data receiver configured to receive video data;

[0086] a virtual test signal receiver configured to receive a virtual test signal representative of a location of a virtual object;

[0087] a video overlay engine configured to overlay the received video data with a virtual object at locations determined from the virtual test signal to generate virtual test video data; and

[0088] an alarm engine configured to analyse the virtual test video data and generate an alarm signal based on an output signal generated by said analysis.

[0089] The video overlay engine may be configured to set the size of the virtual object dependent upon the location of the virtual object.

[0090] The received video data may be pre-recorded video data retrieved from computer memory, or may be live video data.

[0091] The virtual object may be representative of an object that has been captured from video data, or may be a virtual object that has been loaded from a library of virtual objects.
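The testing arrangement of [0084] to [0091] can be illustrated with a toy pipeline. Everything here is an assumption made for the example: frames are greyscale images held as lists of rows, the virtual object is a plain bright rectangle, and `toy_alarm_engine` is a stand-in that merely looks for bright pixels in a zone rather than performing real video analysis.

```python
def make_sprite(width, height, value=255):
    """A filled rectangle standing in for the image of a virtual object."""
    return [[value] * width for _ in range(height)]


def overlay(frame, sprite, top, left):
    """Return a copy of `frame` with `sprite` pasted at (top, left), clipped."""
    out = [row[:] for row in frame]
    for dy, sprite_row in enumerate(sprite):
        for dx, value in enumerate(sprite_row):
            y, x = top + dy, left + dx
            if 0 <= y < len(out) and 0 <= x < len(out[0]):
                out[y][x] = value
    return out


def virtual_test_video(frames, locations, size_for_location):
    """Overlay the virtual object on each frame at the signalled location."""
    result = []
    for frame, (top, left) in zip(frames, locations):
        # The object's size is set dependent upon its location.
        width, height = size_for_location(top, left)
        result.append(overlay(frame, make_sprite(width, height), top, left))
    return result


def toy_alarm_engine(frames, zone, threshold=200):
    """Stand-in analysis: alarm if a bright pixel appears inside `zone`."""
    top, left, bottom, right = zone
    return any(frame[y][x] >= threshold
               for frame in frames
               for y in range(top, bottom)
               for x in range(left, right))
```

Here `locations` plays the role of the virtual test signal and `size_for_location` the role of the size setting in [0089]. Running the same analysis on the overlaid frames as on real video lets an alarm configuration be exercised without staging a real incident.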

[0092] According to a further embodiment of the invention, there is provided data processing apparatus comprising:

[0093] a video data receiver configured to receive video data;

[0094] an external data receiver configured to receive external data; and

[0095] an alarm engine configured to analyse said received video and external data and generate an alarm signal based on an output signal generated by said analysis.

[0096] According to a further embodiment of the invention, there is provided a method of operating a data processing apparatus comprising:

[0097] receiving video data;

[0098] receiving external data; and

[0099] analysing said received video and external data and generating an alarm signal based on an output signal generated by said analysis.

[0100] The alarm engine may be configured to perform sequential analysis of said received video and external data to determine a sequence of one or more incidents.

[0101] The external data may be received from, and representative of the data output by, one or more of: an RFID system; a thermal camera; a fingerprint reader; an iris reader; an access card reader; an automatic number plate recognition system; a fire alarm; an access control system; a speed camera; a relay input; a keypad entry system; a thermal sensor; an ear shape detection system; a face recognition system; an object tracker system; a smoke detector; a voice identification (speaker identification) system; a voice recognition system; a gait detection (identification) system; a temperature measuring system; an infrared or radio frequency remote control system/signal; a radar; a proximity detection system; an odour detector; an electrical fence intruder detection system; and/or a sonar system.

DRAWINGS

[0102] Specific embodiments in accordance with the present invention will now be described, by way of example only, with reference to the drawings, in which:

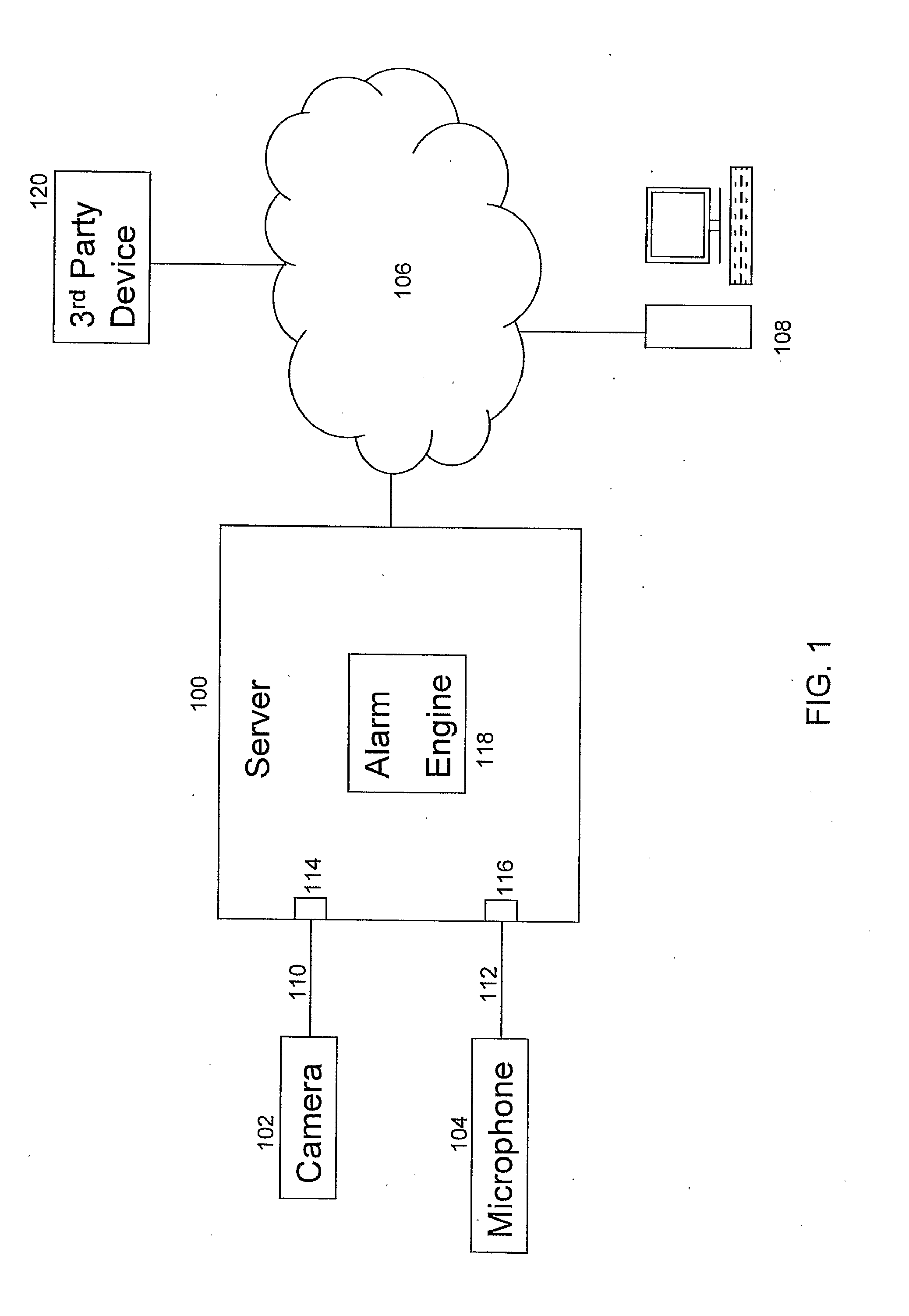

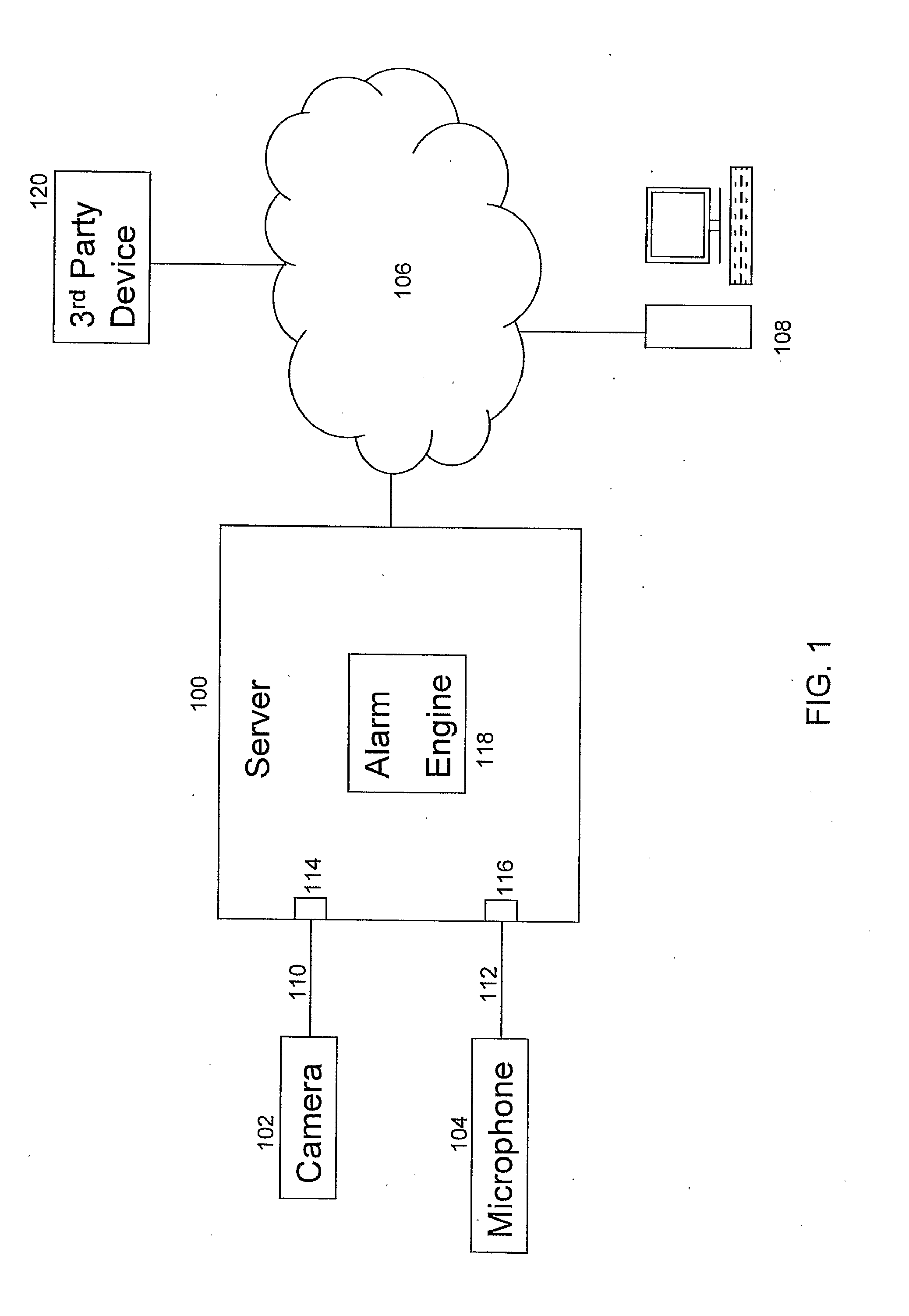

[0103] FIG. 1 shows a system according to an embodiment of the invention;

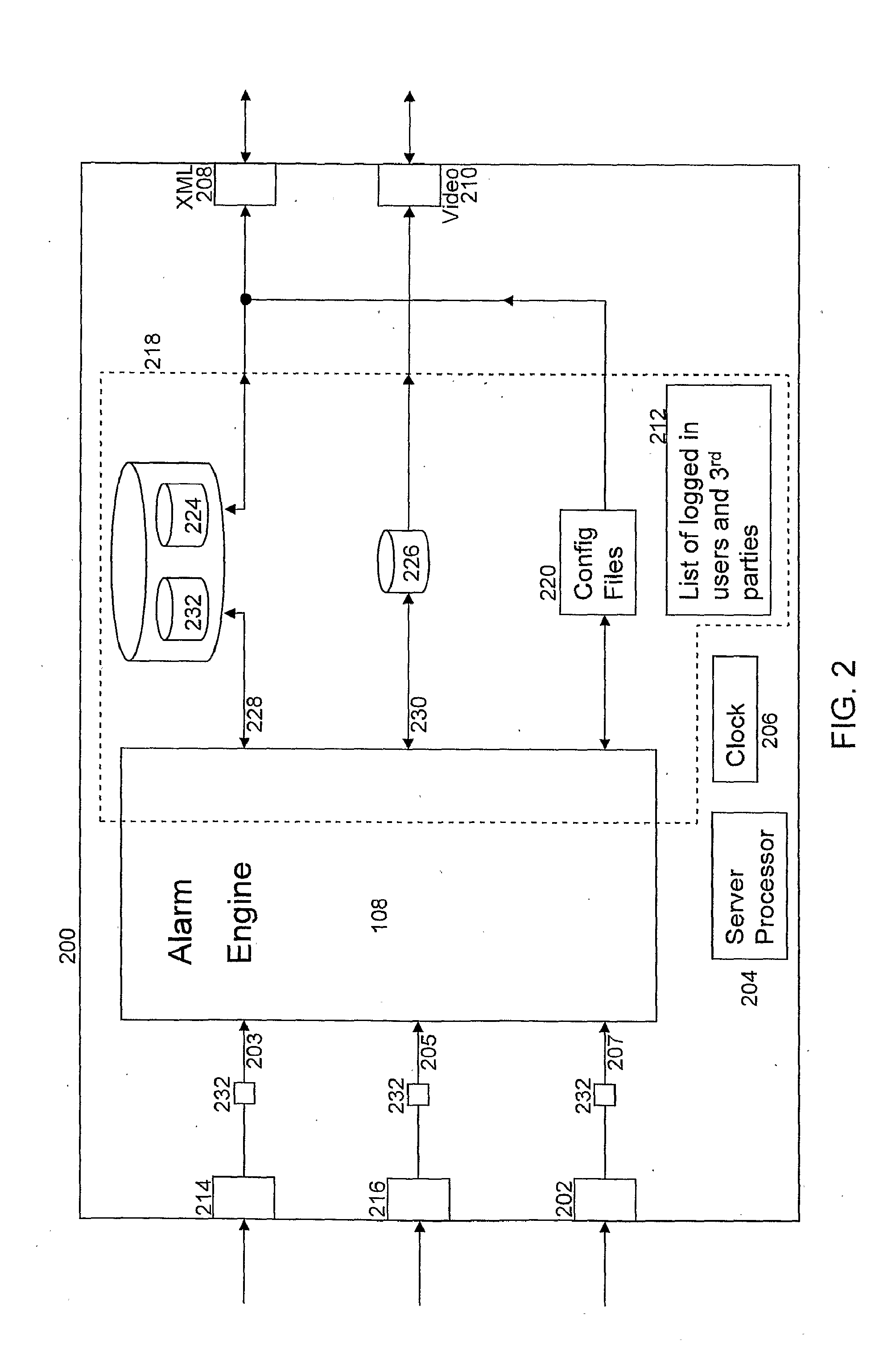

[0104] FIG. 2 shows an embodiment of a data-processing apparatus according to an embodiment of the invention;

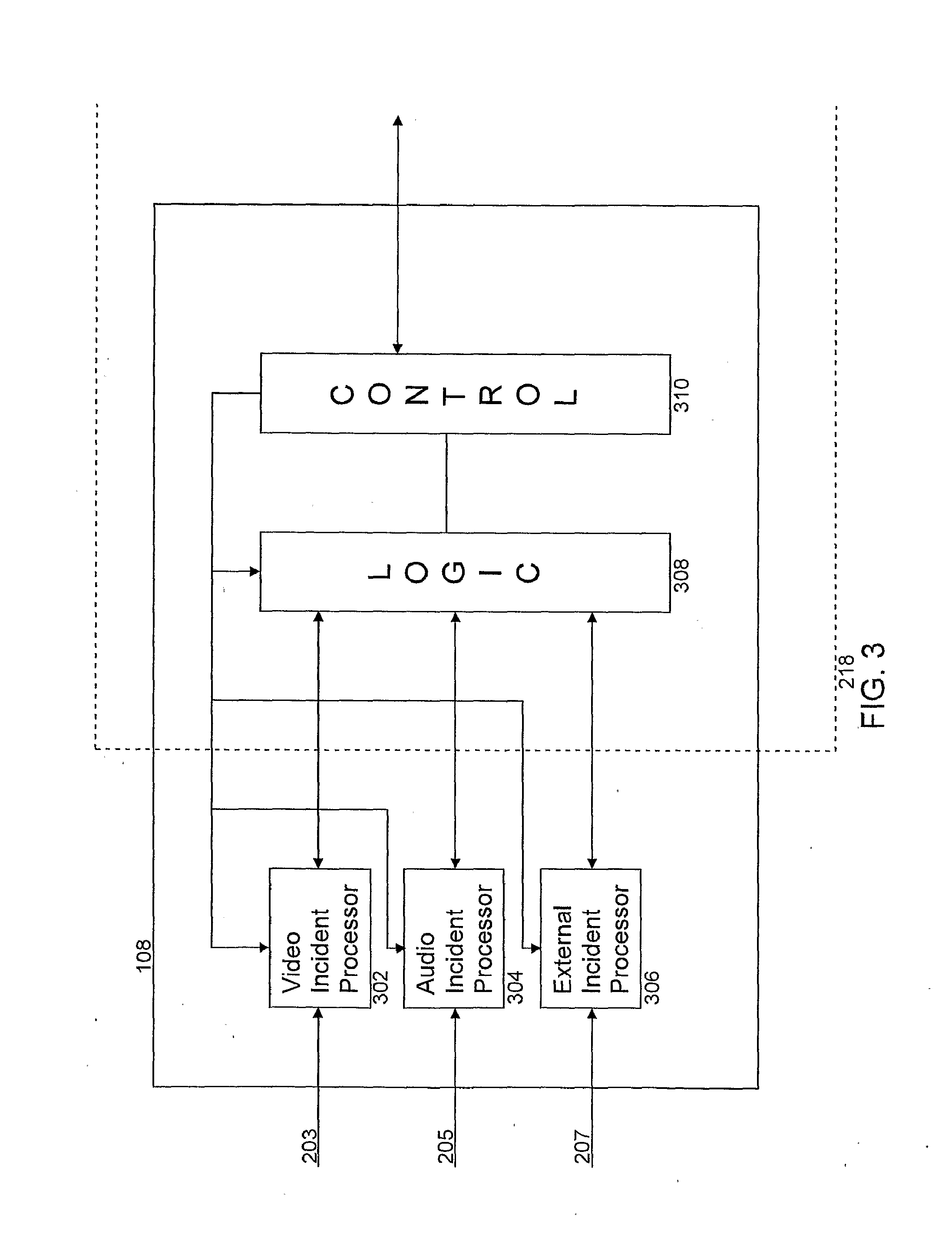

[0105] FIG. 3 shows an embodiment of an alarm engine according to an embodiment of the invention;

[0106] FIG. 4 shows an embodiment of processing performed by an alarm engine according to an embodiment of the invention;

[0107] FIG. 5 shows an embodiment of a contemporaneous analysis function according to an embodiment of the present invention;

[0108] FIG. 6A shows an example of how the timer delay is used by a contemporaneous analysis function, and

[0109] FIG. 6B shows an example of how the timeout is used by a sequential analysis function according to an embodiment of the invention;

[0110] FIG. 7 shows an embodiment of an image file according to an embodiment of the invention;

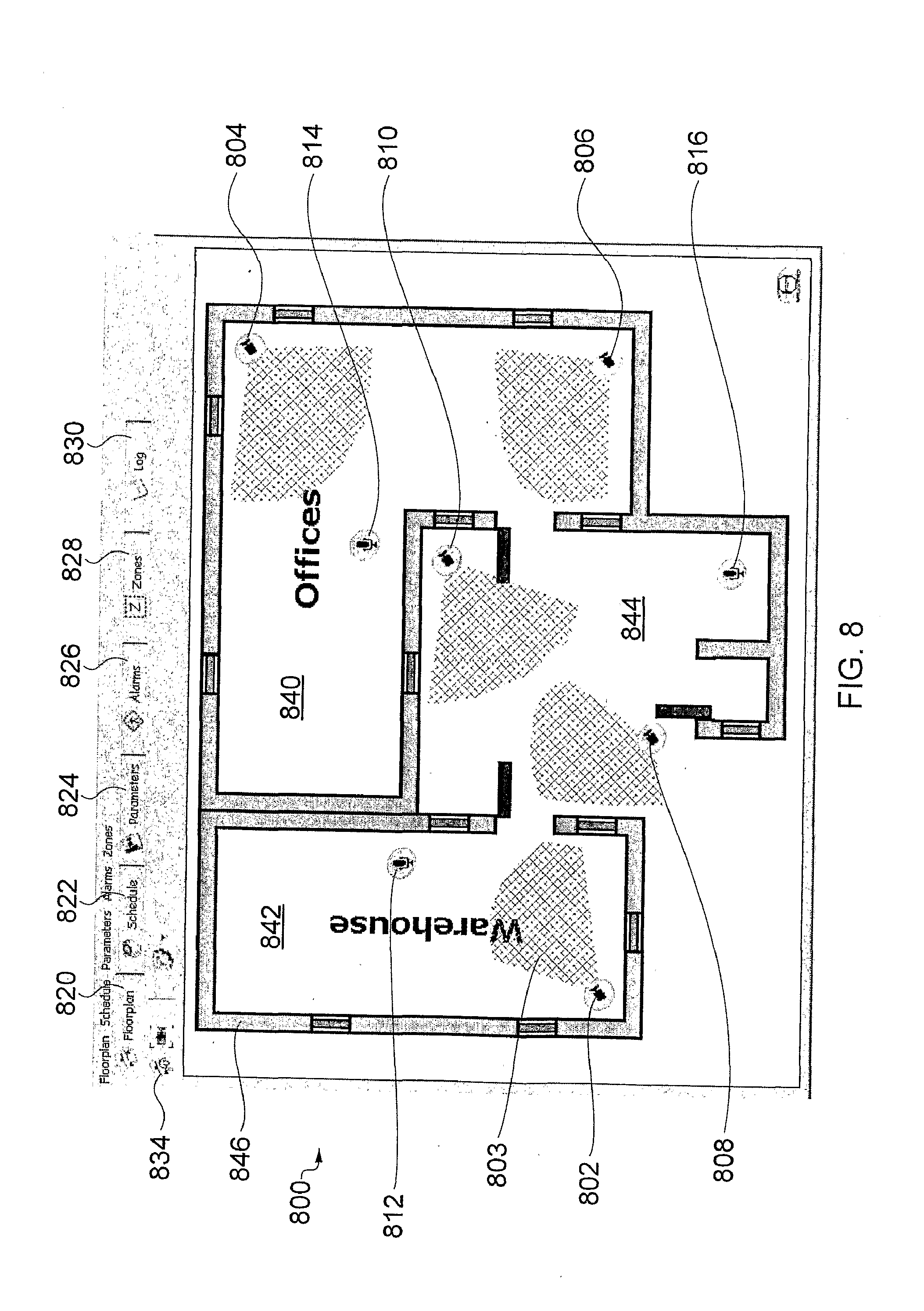

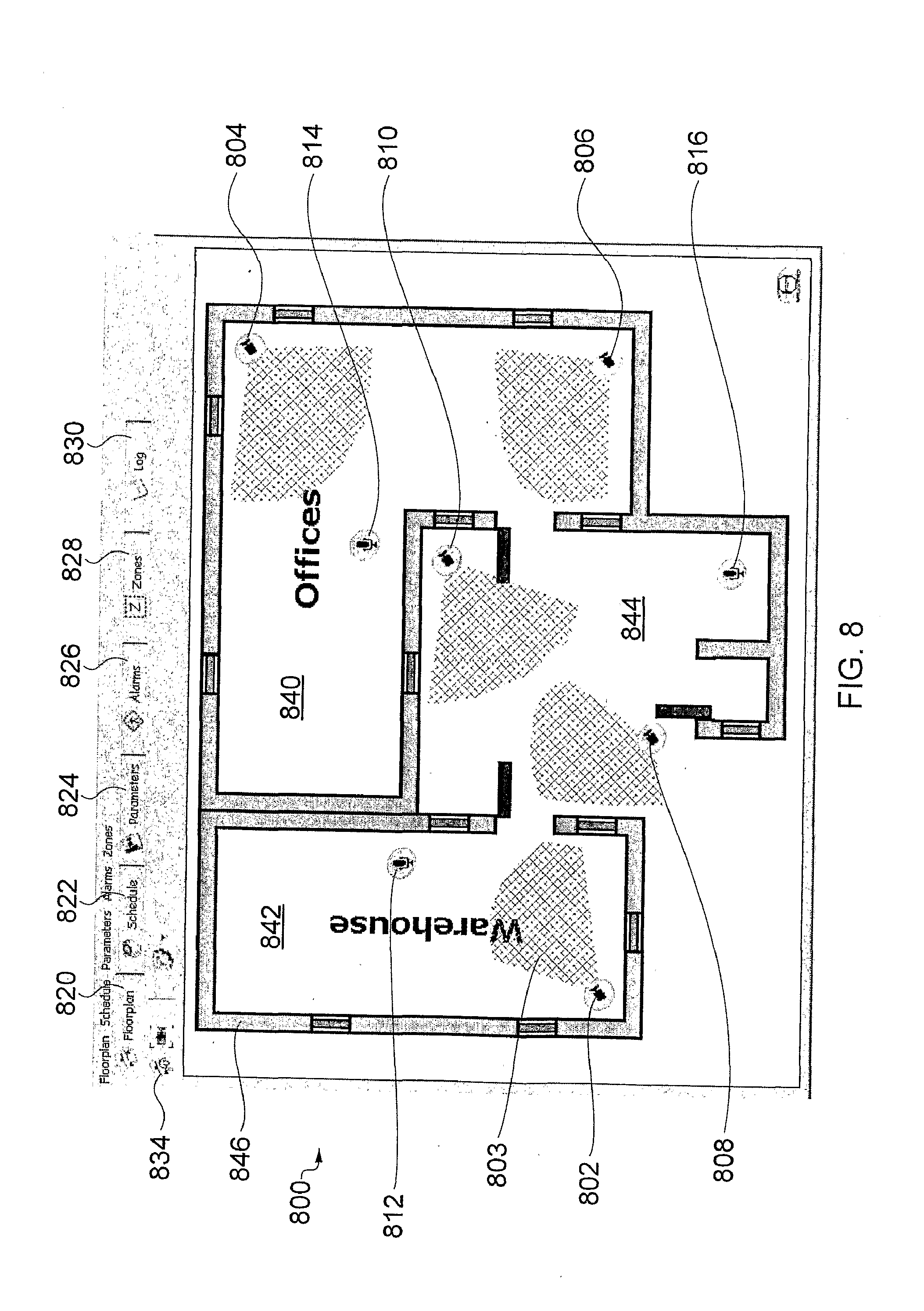

[0111] FIG. 8 shows an example of a floorplan display screen of a CONFIGURE GUI,

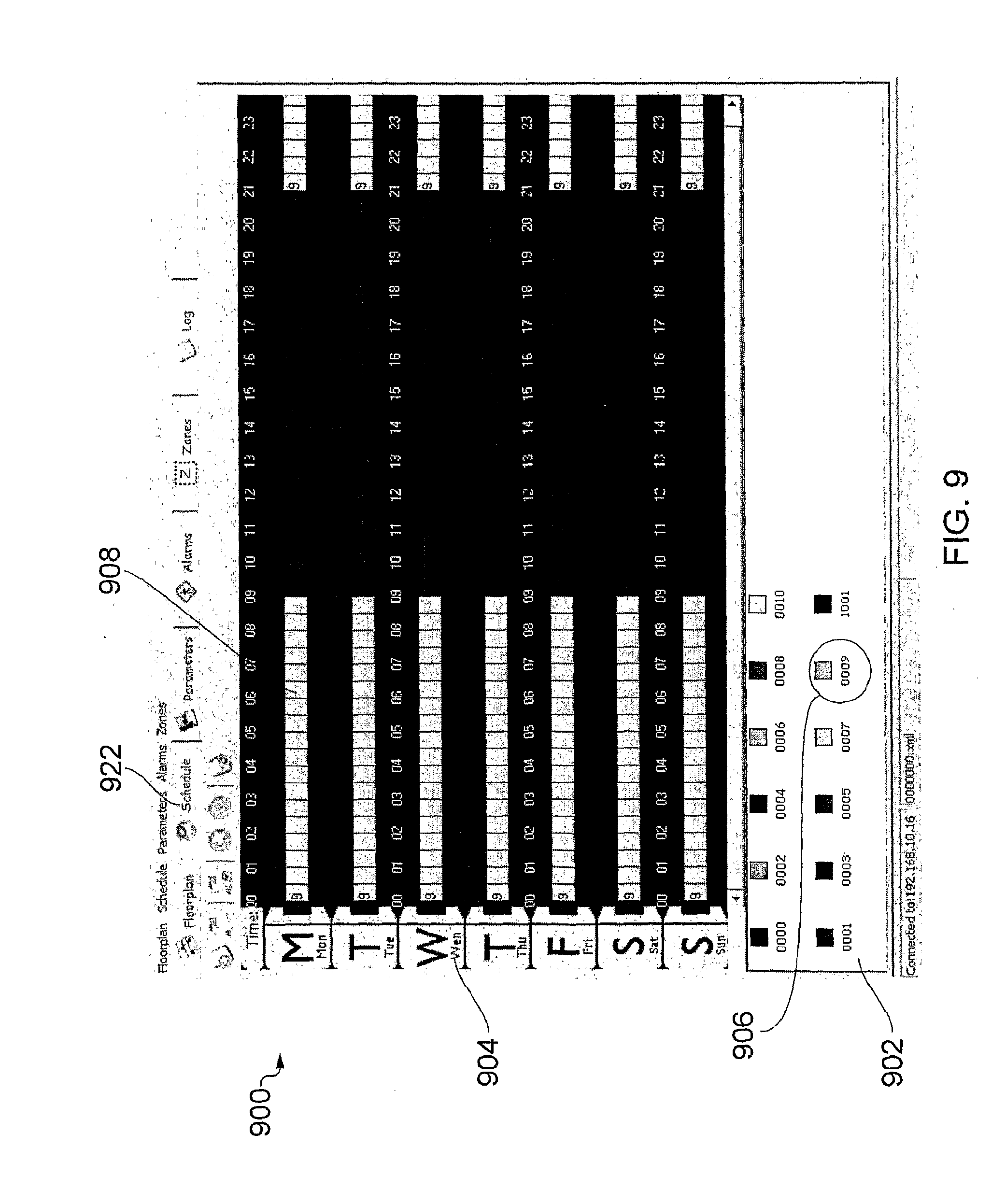

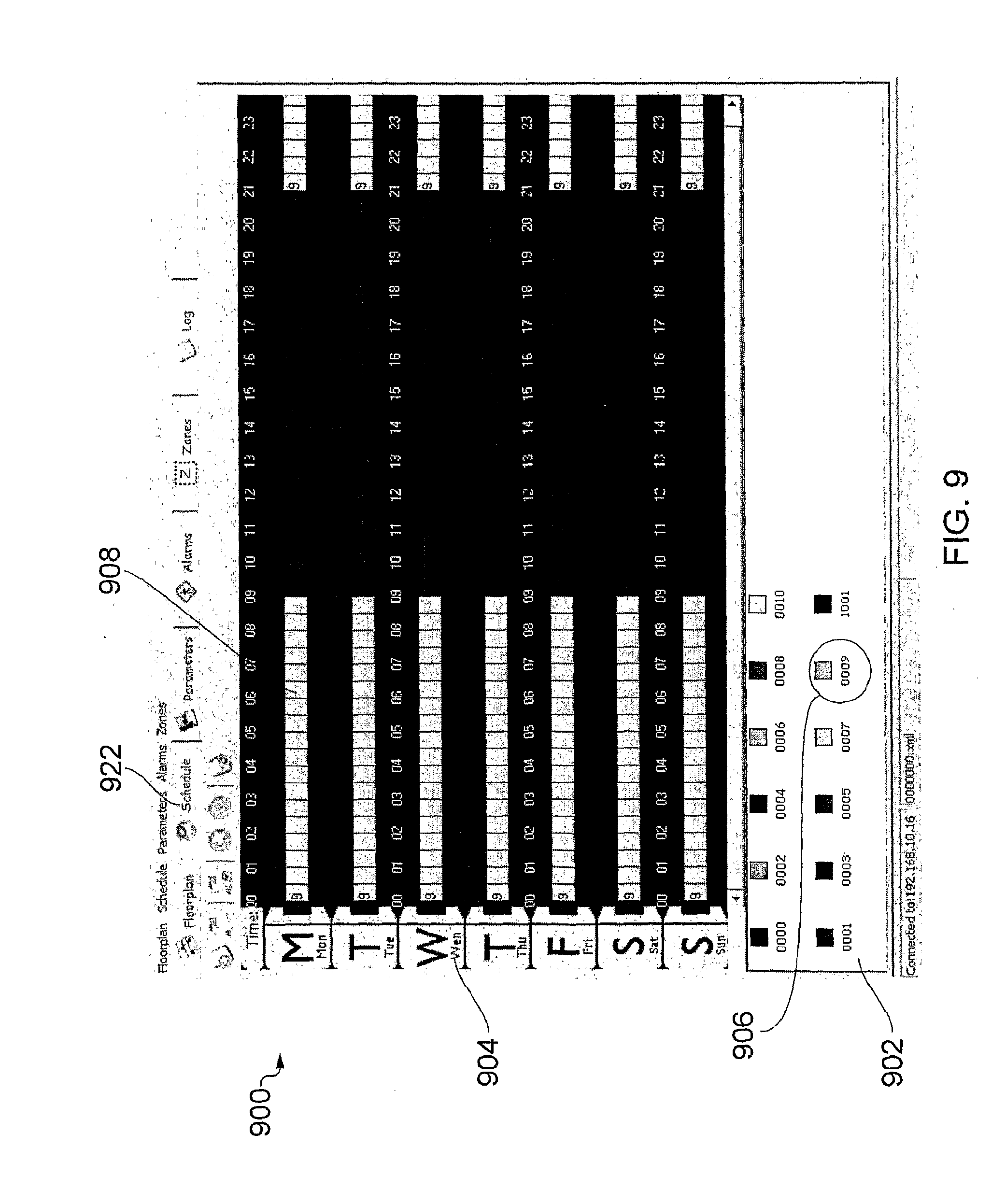

[0112] FIG. 9 shows an embodiment of the schedule display screen of the CONFIGURE GUI,

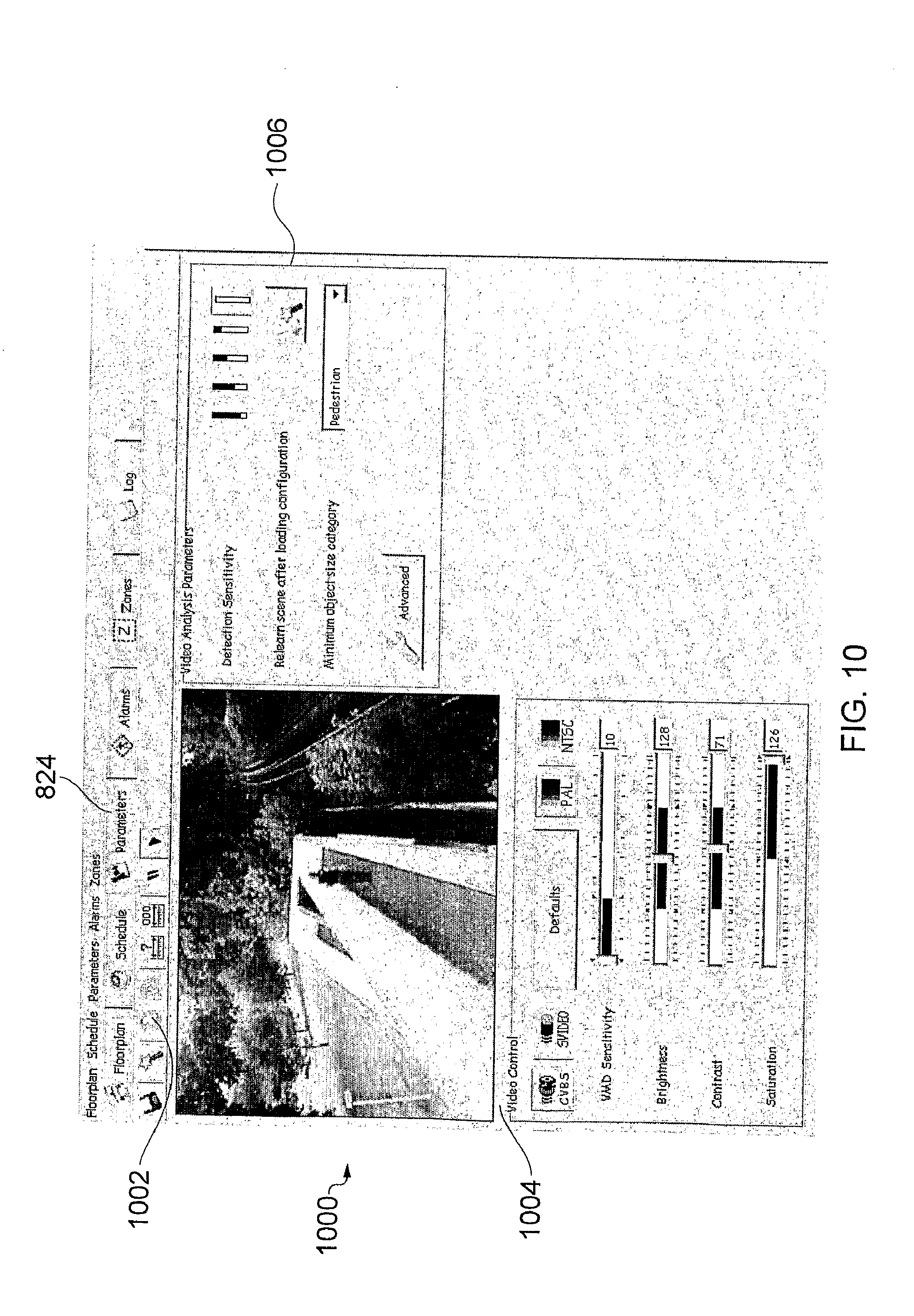

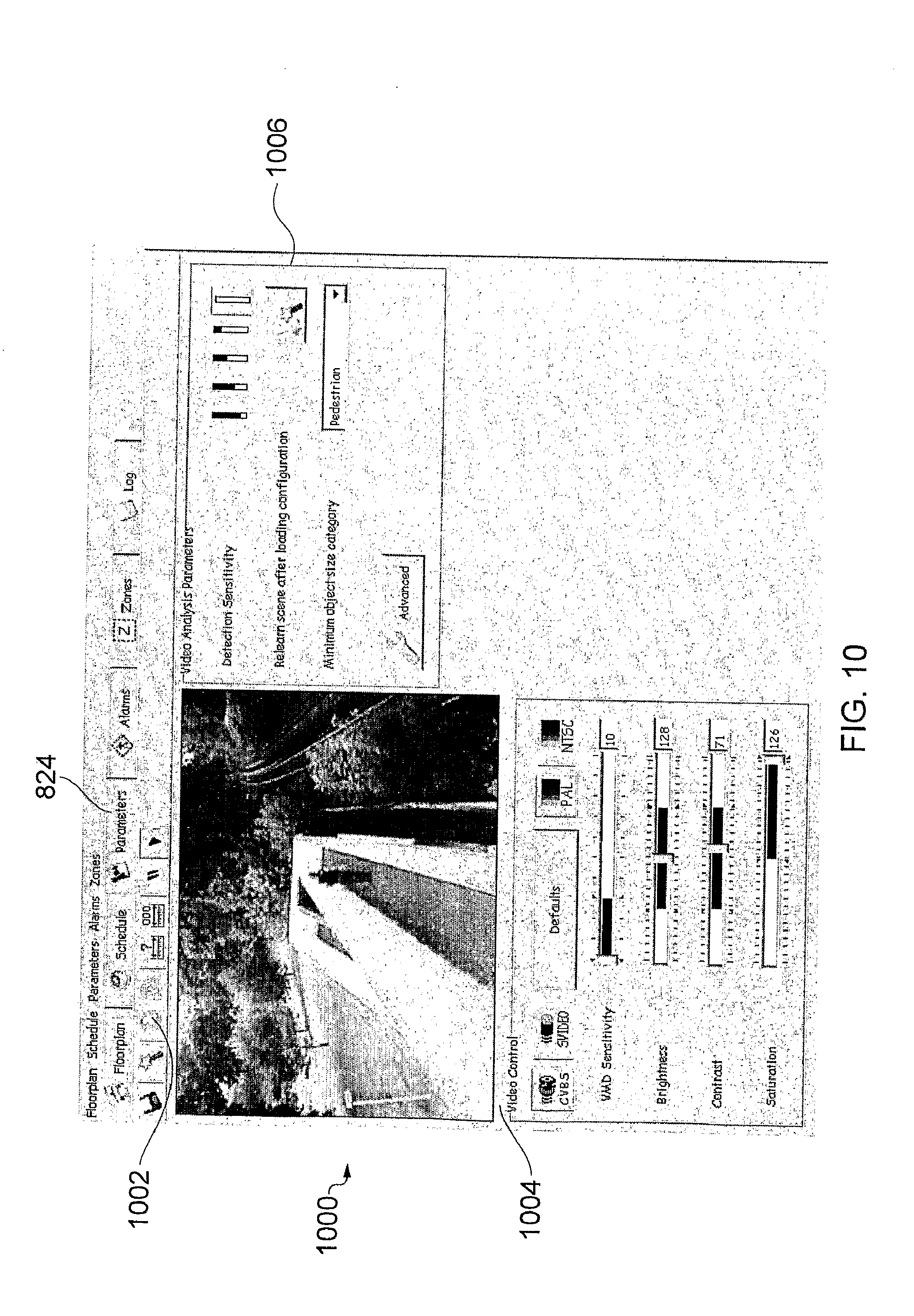

[0113] FIG. 10 shows an embodiment of a video parameters display screen,

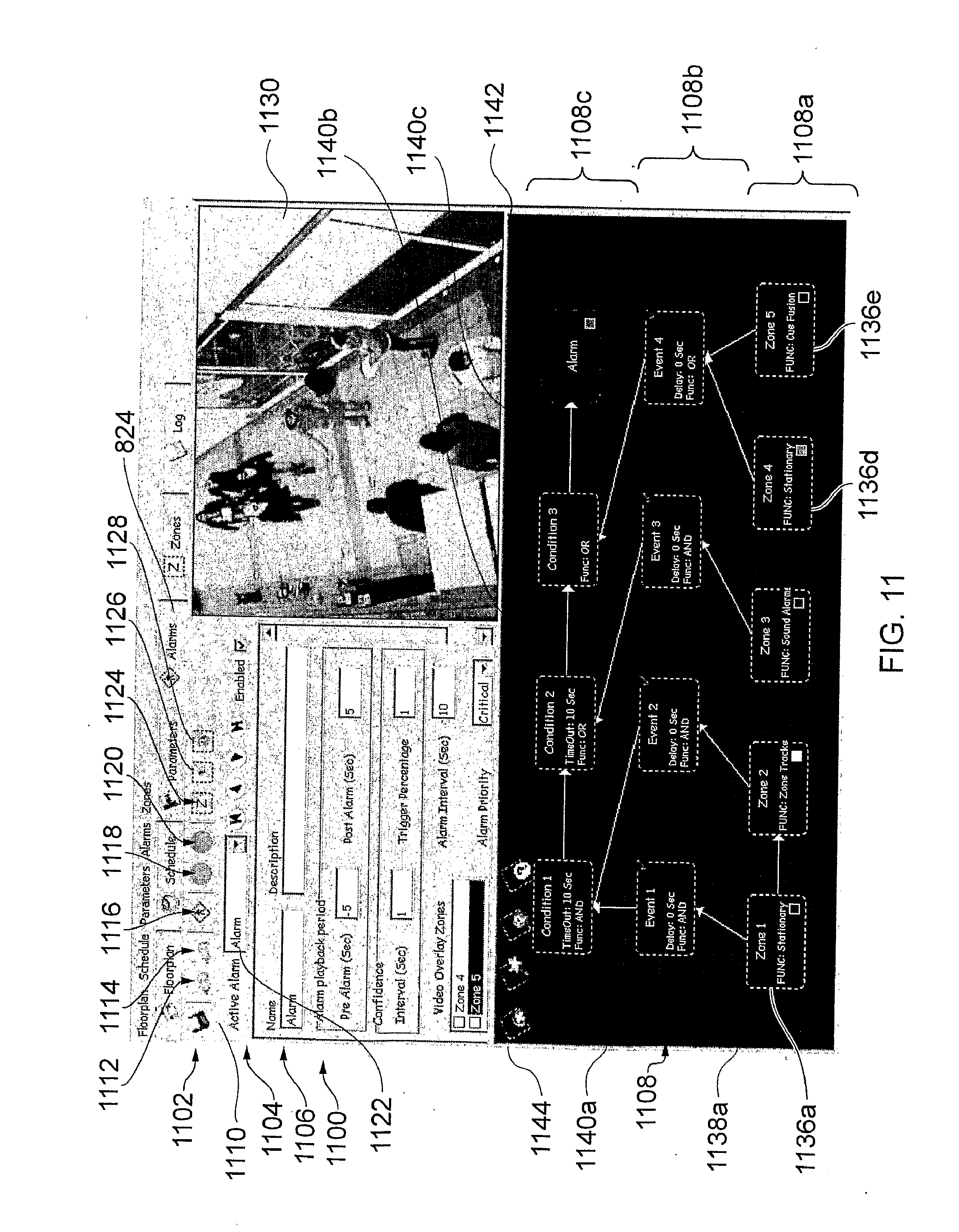

[0114] FIG. 11 shows an embodiment of the alarms display screen,

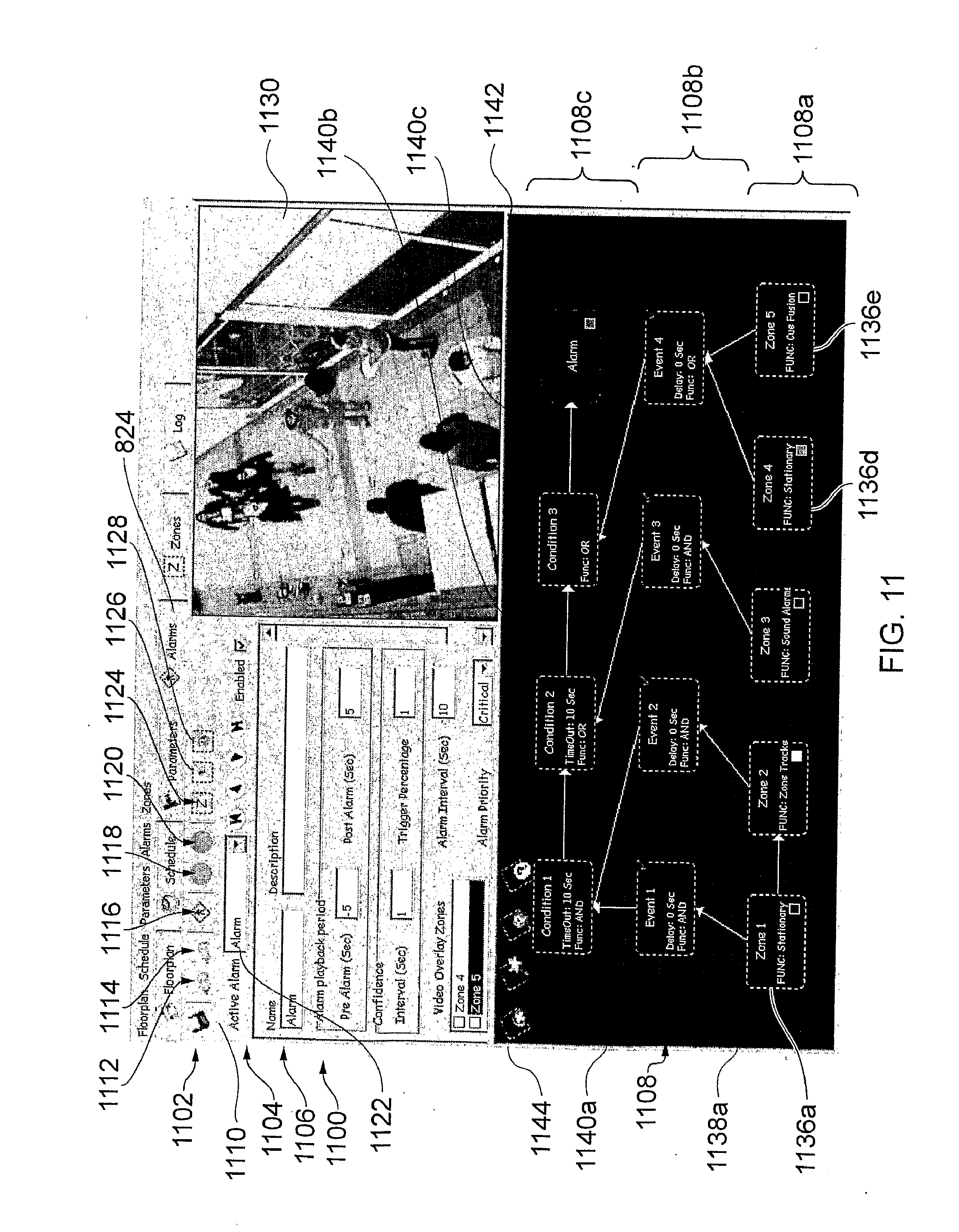

[0115] FIG. 12 illustrates a zone configuration dialogue box,

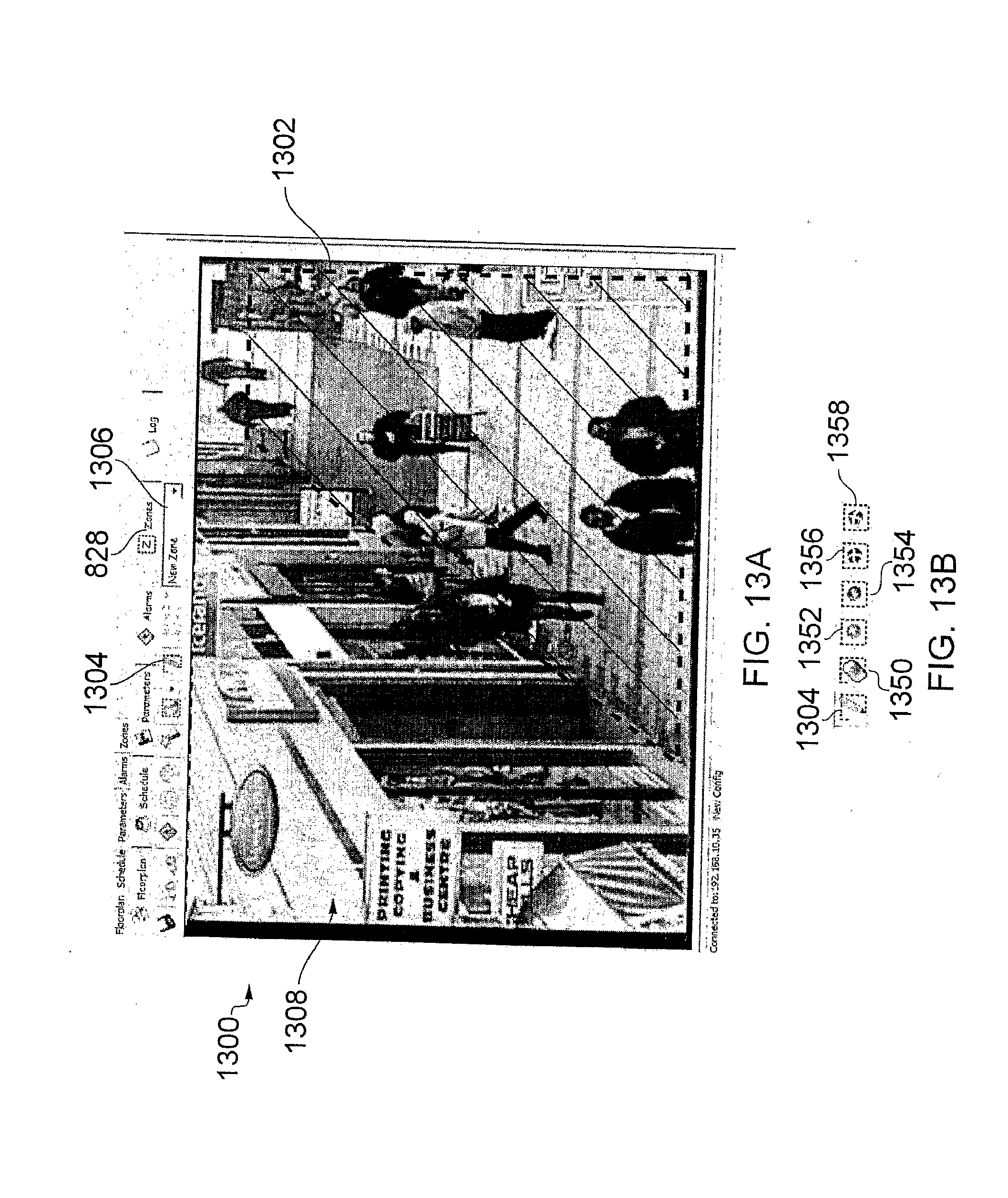

[0116] FIG. 13A shows an embodiment of the zones display screen,

[0117] FIG. 13B shows five additional icons concerning the modification of the detection area, and

[0118] FIG. 14 shows an embodiment of a log display screen of the CONFIGURE GUI according to an embodiment of the invention;

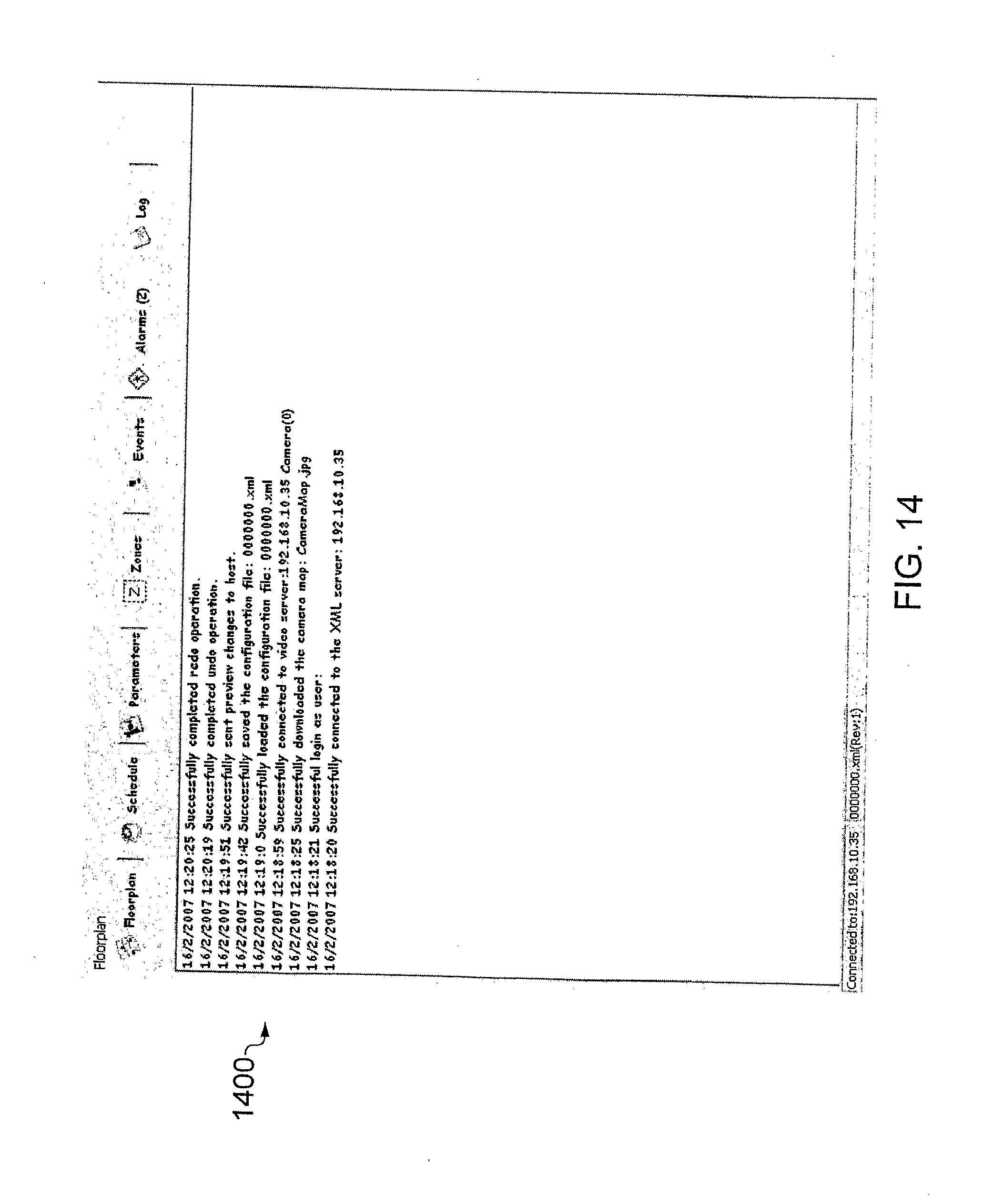

[0119] FIG. 15 shows a MANAGE GUI display screen, and

[0120] FIG. 16 illustrates an alternative display screen of a MANAGE GUI according to an embodiment of the invention;

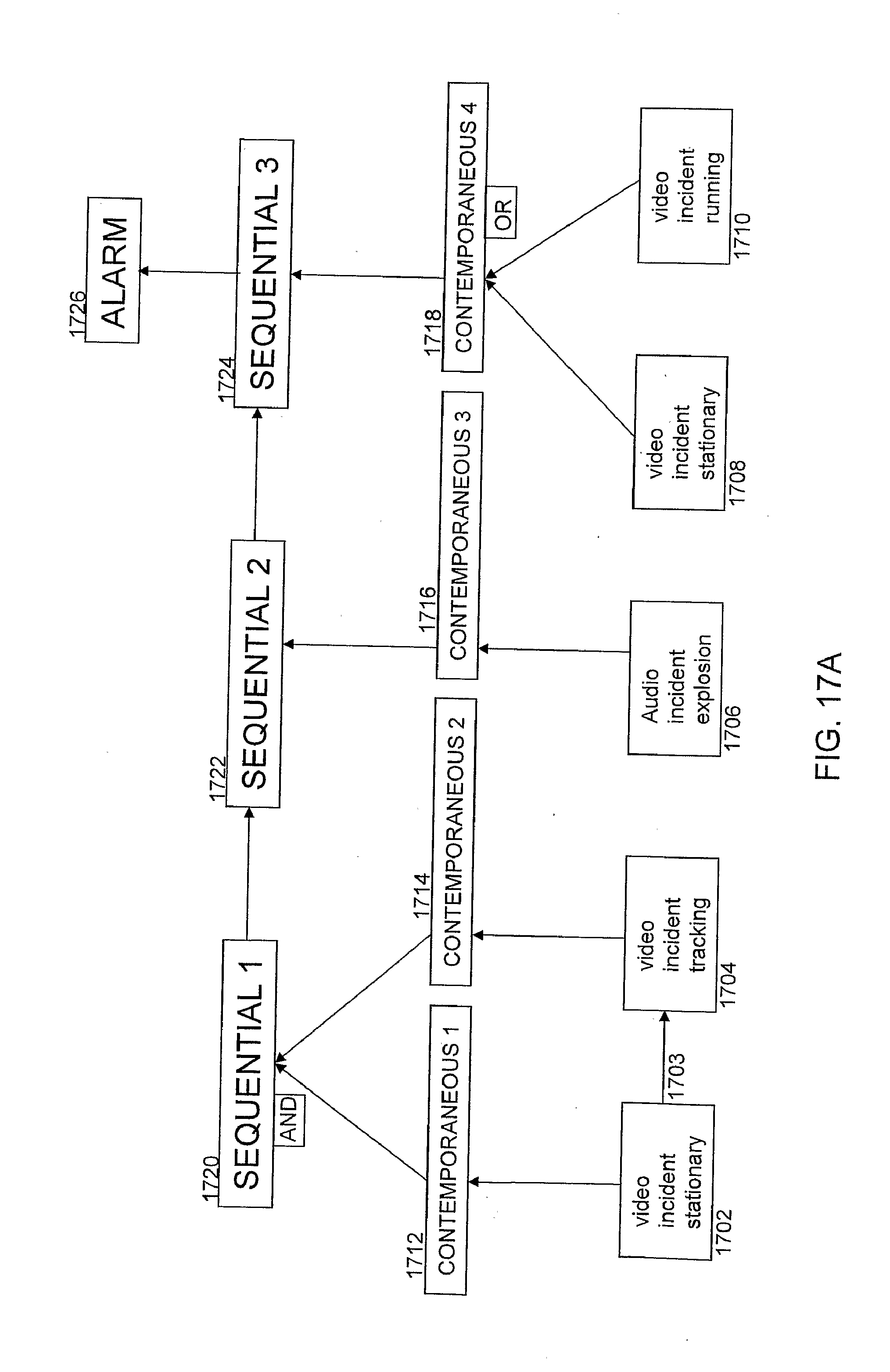

[0121] FIG. 17A illustrates schematically an embodiment of a scenario/sequence of analysis functions, and

[0122] FIG. 17B illustrates an example of an image/scene on which the analysis functions illustrated in FIG. 17A are performed according to an embodiment of the invention;

[0123] FIG. 18 illustrates a process flow according to an embodiment of the invention;

[0124] FIG. 19 illustrates a virtual object according to an embodiment of the invention;

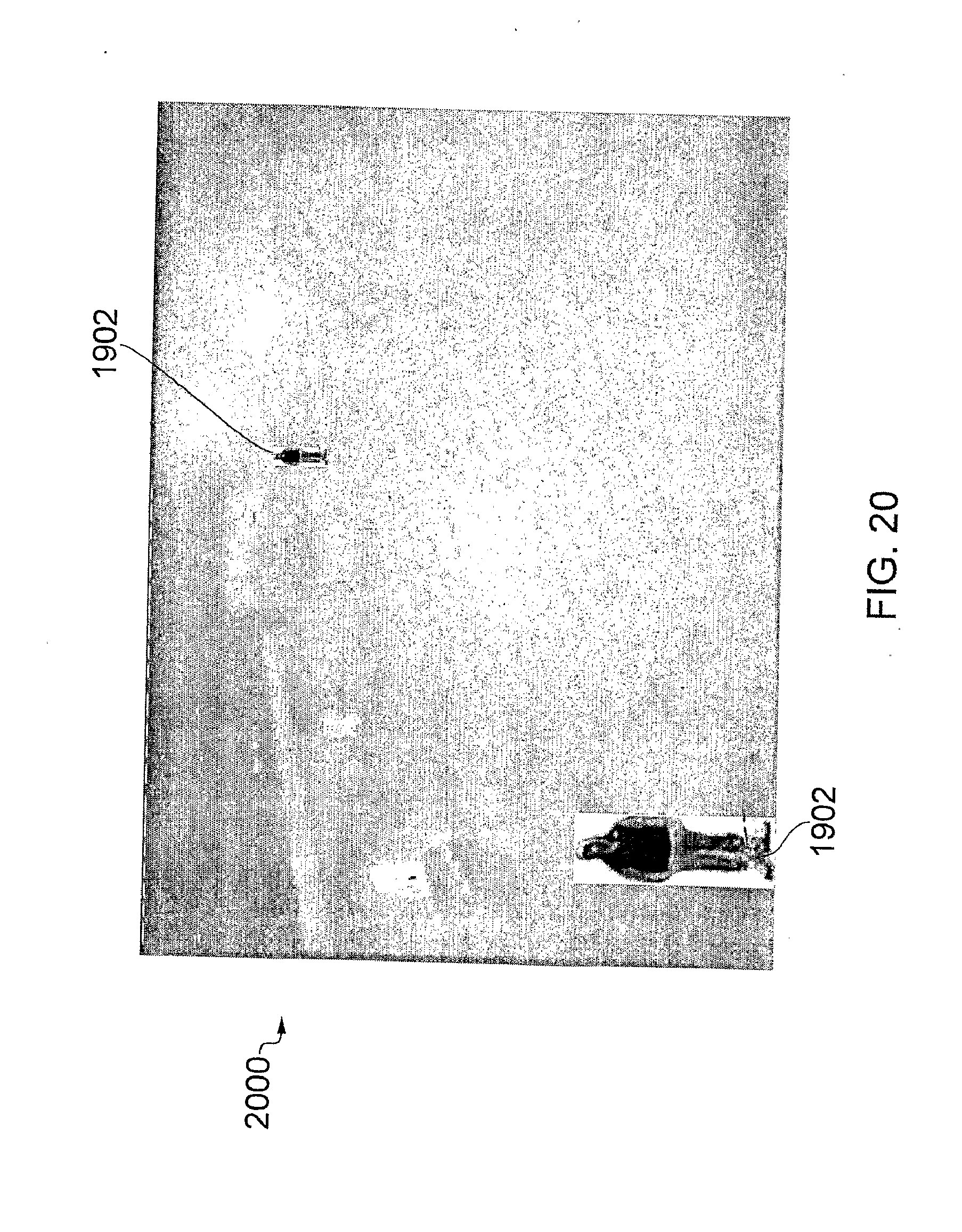

[0125] FIG. 20 illustrates a virtual object in a scene according to an embodiment of the invention;

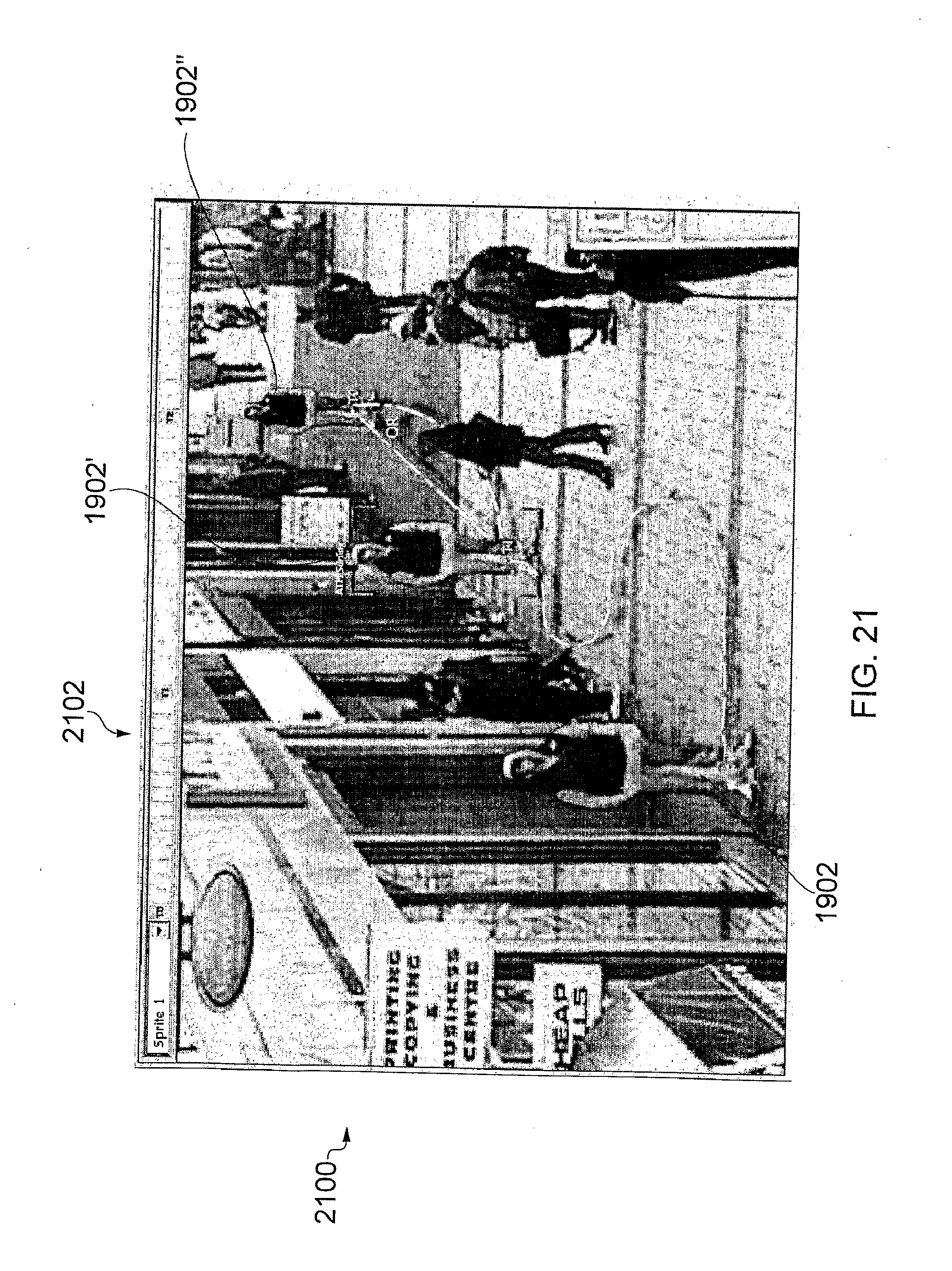

[0126] FIG. 21 illustrates an embodiment of a testing display screen according to an embodiment of the invention; and

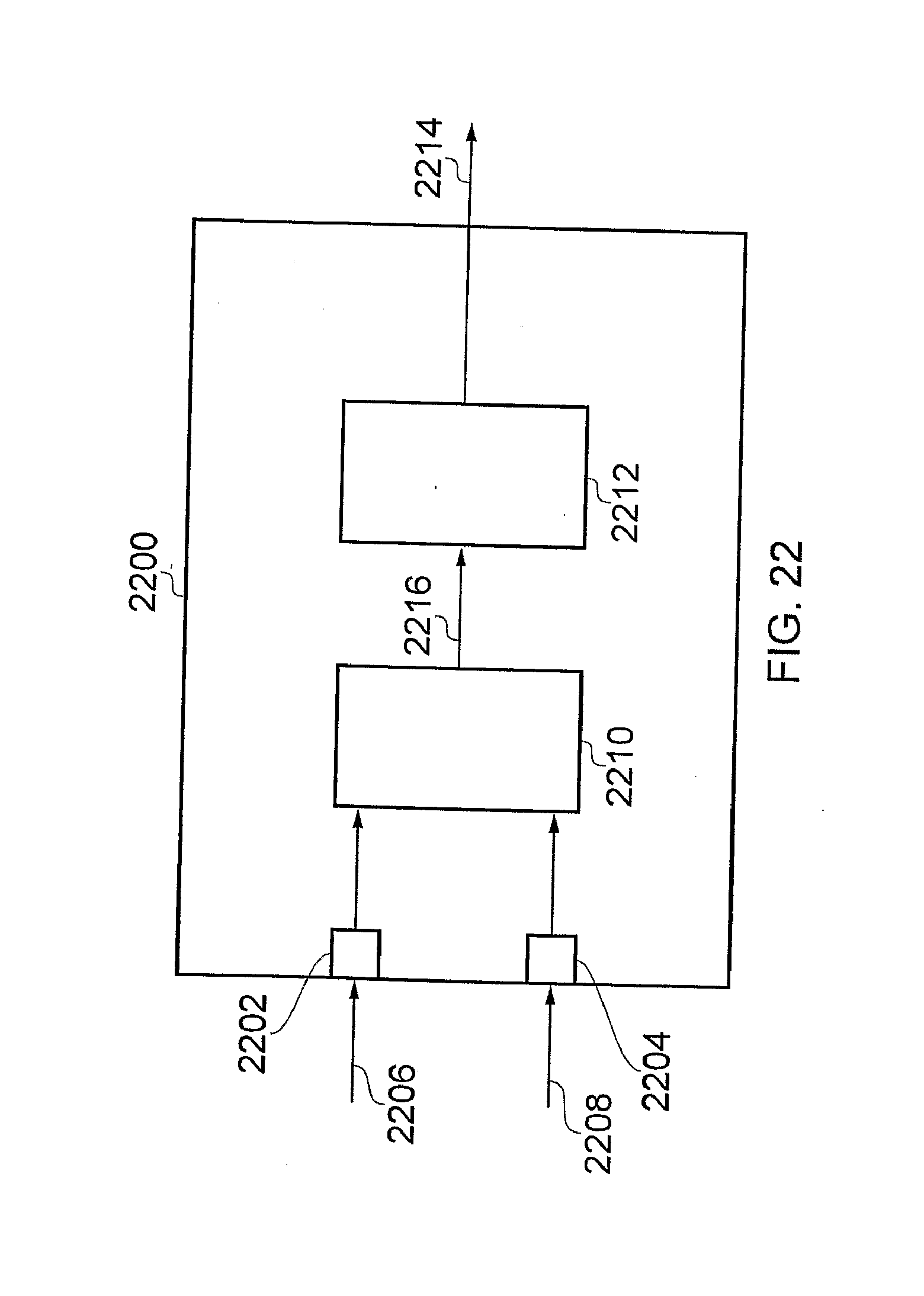

[0127] FIG. 22 illustrates an embodiment of data processing apparatus according to an embodiment of the invention.

DETAILED DESCRIPTION

[0128] FIG. 1 shows a system according to an embodiment of the invention for generating an alarm based on the result of analysis of received audio and video data.

[0129] In this embodiment, the analysis utilises algorithms and transformations applied to the received data in order to detect incidents that are modelled on physical parameters/characteristics. Detecting incidents may include performing pattern recognition operations on the received data, and such an analysis may be referred to as analytics.

[0130] The system comprises a data processing apparatus, which in this embodiment is a server 100, together with a camera 102, a microphone 104, a user terminal 108 and a third party device 120.

[0131] The server 100 is in electronic communication with the camera 102 and the microphone 104 over communications links 110, 112 respectively, and is in communication with the user terminal 108 and third party device 120 over network 106.

[0132] The camera 102 is an analogue camera configured to generate analogue video data and make the analogue video data available to the server 100 over the electronic communications link 110. Similarly, the microphone 104 is configured to generate analogue audio data and make the analogue audio data available to the server 100 over electronic communications link 112.

[0133] In this embodiment, the server 100 converts the analogue audio and video data into digital data for subsequent processing, although it will be appreciated that in other embodiments a digital camera and/or digital microphone may be used to generate digital video and/or audio data and make the digital data available to the server such that analogue to digital conversion is not required at the server 100.

[0134] In this embodiment, the server 100, camera 102, and microphone 104 are all located in the same geographical area, for example in a building that is being monitored, and therefore the communication links 110, 112 are hard-wired.

[0135] The server 100 comprises a video data receiver 114 configured to receive video data from the camera 102 and an audio data receiver 116 configured to receive audio data from the microphone 104. The server 100 also comprises an alarm engine 118 configured to perform analysis on both the received audio and video data, and generate an alarm based on the result of the analysis.

[0136] Typically an alarm is generated to identify a security threat, an illegal action, or to identify a potentially unsafe situation such as detecting congestion on a train platform. The server 100 may automatically take a predetermined action when an alarm is generated. The predetermined action that is taken may depend upon a priority associated with the alarm. The predetermined action may include transmitting a signal to the user terminal 108 indicative of the alarm, thereby reporting the alarm to a human operator of the user terminal 108.

[0137] The server 100 may also be configured to record/write data in relation to the analysis to memory. The recorded data may be associated with the video and/or audio data to which the analysis has been applied. In some embodiments, the data may be written/recorded by appending a header to the received video and/or audio data, wherein the header contains a representation of the results of the analysis.

[0138] Non-limiting examples of analysis that may be performed on video data include detecting a stationary object, tracking an object, detecting motion of an object in a predefined direction, detecting loitering, and any combination thereof. Non-limiting examples of analysis that may be performed on audio data include identifying sounds that relate to aggression, breaking glass, a gunshot, a scream, and any combination thereof. Individual analysis operations performed on the audio and/or video data may be combined such that a plurality of analysis operations can be considered independently, and/or contemporaneously and/or sequentially in order to generate an alarm signal. Examples of analysis are described in more detail below.

[0139] Generating the alarm signal as a result of analysis performed on both the received audio and video data enables sophisticated alarm monitoring scenarios to be considered with a high level of detection accuracy and may also reduce the number of false alarms generated. The results of the analysis on both the audio and video data, either independently or in combination, may enable a greater degree of confidence to be placed on alarms that are generated.

[0140] The server 100 is also in communication with the user terminal 108 and the third party device 120 over a network 106. In this embodiment, the network 106 is an IP network such as a Local Area Network (LAN) and a user of the user terminal 108 can use a graphical user interface (GUI) displayed by Web browser software stored on the user terminal 108 to manage and display alarm signals generated by the alarm engine 118 in the server 100.

[0141] In other embodiments, the network may be a Wide Area Network (WAN), a Metropolitan Area Network (MAN) or the Internet.

[0142] In this embodiment, the user must log in to use the GUI. Each user has an associated electronic user profile file that defines the privileges of that user. Depending upon the privileges of the user profile that is logged in, the Web browser software is configured to control the functionality that is available to the user. For example, a manager may have access to a GUI that is arranged to configure and manage alarms, whereas an operator may only have access to a GUI that is arranged to manage alarms.

[0143] The third party device 120 is associated with a third party who has registered to receive alarm information from the server 100. The server 100 is configured to be in client-server communication with the third party device 120 such that the third party device 120 is the server, and the alarm server 100 is the client.

[0144] The third party device 120 is configured to receive alarm signals from the server 100 and in this embodiment the third party device 120 is not arranged to configure how the alarm signals are generated. The configuration of how the alarm signals are generated is provided at user terminal 108.

[0145] It will be appreciated that the functionality of the server/data processing apparatus 100 may be provided by a single device in a single location, or alternatively may be distributed over a number of devices which may be in one or more different locations, for example distributed over multiple servers.

[0146] It will be appreciated that in some embodiments there may be provided a plurality of cameras 102 and/or a plurality of microphones 104, and that analysis is performed on video and audio data received from one or more of the cameras 102 and microphones 104.

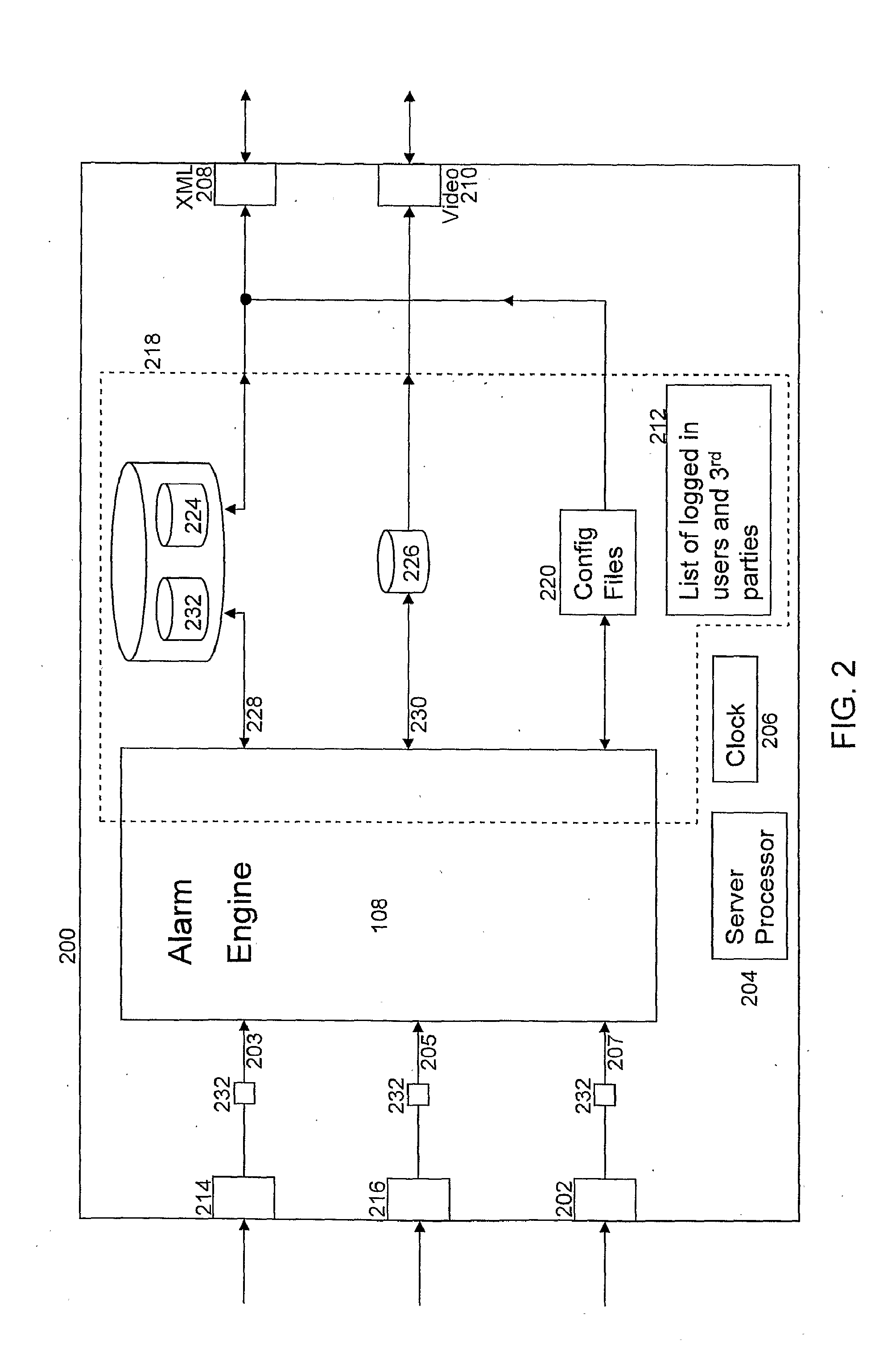

[0147] FIG. 2 shows another embodiment of a data processing apparatus 200 according to the invention.

[0148] The data processing apparatus is a server 200 that comprises a video data receiver 214, an audio data receiver 216, an external data receiver 202, an XML transmitter/receiver 208 and a video transmitter 210. The server comprises a server processor 204, a clock 206 and memory 218. In this embodiment part of the functionality provided by the alarm engine 108 is stored as software modules in memory 218, and part of the functionality of the alarm engine 108 is provided by designated processors as discussed in more detail in relation to FIG. 3. Memory 218 also comprises a video storage data store 226, a plurality of databases 224, a list of logged-in users 212 and one or more configuration files 220.

[0149] In addition to the audio and video data receivers that are present in the data processing apparatus 100 illustrated in FIG. 1, the data processing apparatus 200 illustrated in FIG. 2 is configured to also receive external data at external data receiver 202. External data is data received from an external data source/sensor, and is representative of a physical quantity such as temperature, the presence of smoke, motion detection (for example through use of an infrared beam), or the activation of an external relay.

[0150] The server 200 comprises three analogue to digital converters 232, which are configured to convert the analogue video, audio and external data received at data receivers 214, 216, 202 and provide corresponding digital data 203, 205, 207 to the alarm engine 108. In this embodiment, the analogue to digital converters 232 are configured to sample the analogue signals at a sampling frequency of 5 Hz. That is, a digital representation of the analogue data is generated every 0.2 seconds.

[0151] The alarm engine 108 is configured to process/analyse the received audio, video and external data and generate an alarm message when an alarm is generated/triggered as determined from the analysis. Specifically, the alarm engine 108 is configured to apply one or more analysis functions as defined by a user of user terminal 108 to one or more of the received audio, video and external data, and to use the result of the one or more analysis functions to determine whether or not an alarm message should be generated.

[0152] A user can use a GUI accessible from the user terminal 108 in communication with the XML transceiver 208 on the server 200 to configure the analysis that is to be performed on the received data by the server 200 to generate an alarm. The GUI is arranged to enable the user to create a configuration file that defines the analysis functions that are to be performed on the received data, and define when that configuration file is to be applied. The user can select the analysis functions that are to be performed from a preset list of analysis functions. In response to the user's input, the GUI generates a configuration signal representative of the user's input and the user terminal 108 sends that signal to the XML receiver 208 of the server 200.

[0153] The configuration signal defines an identifier for the configuration file, identifiers of one or more analysis functions, the parameters associated with those analysis functions, and the times at which the configuration file is to be applied. The parameters include a data source identifier (for example, a camera or microphone name/identifier) to which the analysis function should be applied. In this example, a week is split into 30 minute timeslots and the signal represents which of the 30 minute timeslots in a week the configuration file is to be applied.

[0154] Upon receipt of the configuration signal, the server 200 is configured to store a configuration file in data store 220 and populate the scheduler database 224 to define when the configuration file is to be applied.

[0155] The configuration file comprises the configuration identifier, the identifiers of the one or more analysis functions, and the parameters associated with those analysis functions as determined from the received configuration signal. The configuration file is used by the alarm engine 108 to define which analysis functions are performed on the received data, the values that are to be attributed as the parameters for those analysis functions, and how those functions are logically connected together.

[0156] In addition, the server 200 populates the scheduler database 224 upon receipt of the configuration signal to define at what times the corresponding configuration file is to be applied. The scheduler database 224 comprises a row for each timeslot, and each row comprises two columns: a first that represents a timeslot identifier and a second that represents a configuration file identifier. The server is configured to populate the scheduler database 224 by inserting the configuration file identifier into the rows corresponding to the timeslots to which the user has applied the configuration. In this example the scheduler database 224 comprises 336 rows (48 × 7), one for each 30 minute timeslot in a week.
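
By way of non-limiting illustration only, the timeslot arithmetic described above might be sketched in Python as follows. The slot numbering (slot 0 starting at Monday 00:00), the function names, the configuration identifier and the use of a dictionary in place of the scheduler database 224 are all assumptions made for this sketch and are not taken from the embodiment.

```python
from datetime import datetime

SLOT_MINUTES = 30
SLOTS_PER_DAY = 24 * 60 // SLOT_MINUTES   # 48 half-hour slots per day
SLOTS_PER_WEEK = SLOTS_PER_DAY * 7        # 336 rows in the scheduler table


def timeslot_id(moment: datetime) -> int:
    """Return the weekly slot index, assuming slot 0 starts Monday 00:00."""
    minutes_into_day = moment.hour * 60 + moment.minute
    return moment.weekday() * SLOTS_PER_DAY + minutes_into_day // SLOT_MINUTES


def empty_schedule() -> dict:
    """One row per timeslot: timeslot identifier -> configuration file identifier."""
    return {slot: None for slot in range(SLOTS_PER_WEEK)}


def apply_configuration(schedule: dict, config_id: str, slots) -> None:
    """Insert the configuration identifier into the rows the user selected."""
    for slot in slots:
        schedule[slot] = config_id


schedule = empty_schedule()
# Hypothetical configuration applied to Monday 09:00-10:00 (slots 18 and 19).
apply_configuration(schedule, "office-hours", [18, 19])
```

In this sketch a lookup such as `schedule[timeslot_id(now)]` would give the configuration file identifier for the current timeslot, or `None` where no configuration has been scheduled.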

[0157] In this embodiment, the server 200 only enables a single configuration file to be applied at a given time, although in other embodiments a plurality of configuration files may be applied to the same timeslot, and therefore more than one configuration file identifier may be associated with a single timeslot identifier in the scheduler database 224.

[0158] During operation, the alarm engine 108 is configured to use the time provided by the clock 206 to poll the scheduler database 224 every 30 minutes, and if the alarm engine 108 determines that a different configuration file is to be applied for the new timeslot, the alarm engine 108 loads the appropriate configuration file from configuration files data store 220 into alarm engine 108. The alarm engine is preloaded with the functionality to perform the available analysis functions. The analysis function identifiers in the configuration file provide an identification of which of the preloaded analysis functions to enable.

[0159] The alarm engine 108 is configured to apply the analysis functions as identified by the analysis function identifiers, and apply algorithms that determine whether or not an alarm message should be generated based on the results of the analysis functions.

[0160] When an alarm message is generated, it is sent from the alarm engine 108 to alarms database 232 where an entry is made that corresponds to the generated alarm. In addition, the alarm message is sent to XML transmitter 208, which is configured to send the alarm message to all user terminals 108 that are logged on to the server 200 and to one or more third party servers 120. The destinations to which the alarm message is sent are determined from the list 212 of logged-on users and third party servers (if there are any), the addresses of which are stored in memory 218.

[0161] The alarm message includes an identifier of the alarm, one or more of the results of the analysis that led to the generation of the alarm, and a timestamp. The timestamp may be used to retrieve video and/or audio and/or external data from data store 226 relative to the time at which the alarm was generated, for example for the ten seconds before and ten seconds after an alarm is generated.

[0162] The alarm message may also comprise information relating to the data source that provided the data that caused the alarm to be generated, for example one or more camera identifiers, and/or one or more microphone identifiers and/or one or more external data source identifiers. The results of the analysis may also comprise coordinates of objects within an image that caused the alarm to be generated. For example, when an alarm is configured to identify a stationary vehicle, the coordinates of the identified stationary vehicle in the image may be provided.
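
Purely as an illustrative sketch, an alarm message and the timestamp-based retrieval described above could be modelled in Python as shown below. The class name, field names and the representation of stored data as (timestamp, frame) pairs are hypothetical; the embodiment does not prescribe a message layout beyond the items listed above.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class AlarmMessage:
    """Hypothetical container for the fields listed in the description."""
    alarm_id: str
    results: dict                 # analysis results that led to the alarm
    timestamp: datetime
    source_ids: list = field(default_factory=list)  # optional camera/microphone ids


def retrieval_window(alarm, before_s=10, after_s=10):
    """Return (start, end) of the period to retrieve around the alarm time."""
    start = alarm.timestamp - timedelta(seconds=before_s)
    end = alarm.timestamp + timedelta(seconds=after_s)
    return start, end


def frames_in_window(frames, window):
    """Select stored frames, given as (timestamp, frame) pairs, inside the window."""
    start, end = window
    return [frame for stamp, frame in frames if start <= stamp <= end]
```

The ten second defaults mirror the example given above and could be any other period.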

[0163] In addition to the generation of alarms, the alarm engine 108 generates an image file five times every second that corresponds to a snapshot image of the video data received from a camera. The alarm engine 108 is configured to append supplemental data representative of the analysis performed on the image to each image file. In this embodiment, the data is appended in the form of a header that provides a timestamp as well as the data representative of the analysis performed on the image.

[0164] The data representative of the analysis performed on the image provides information in relation to characteristics of the associated video data, the results of the one or more of the analysis functions that have been performed on the received data, and an alarm indicator if the analysis has caused an alarm to be generated. The data representative of the analysis performed on the image may include characteristics of objects that are identified in the image. An object in the video data may comprise a component of the foreground of a video image, for example a person, a bag, or a vehicle.

[0165] The appended image files are stored in memory 218 in the data store 226.

[0166] In other embodiments, the image files may also be appended with a representation of the corresponding audio and/or external data including the results of one or more analysis functions that have been applied to the audio and/or external data. This can enable video, audio and/or external data to be retrieved from data store 226 along with the information provided by the associated header, for a given time frame.

[0167] The information provided in the header can enable further analysis operations to be performed on the results of the analysis that has already been performed on the received data as recorded in the appended header. For example, where the analysis that has been performed on the received data consists of a number of layers/tiers of analysis functions, the recorded results of the first tier (incident) analysis can be retroactively processed in a different way from the processing that was applied to those results when the data was originally received.

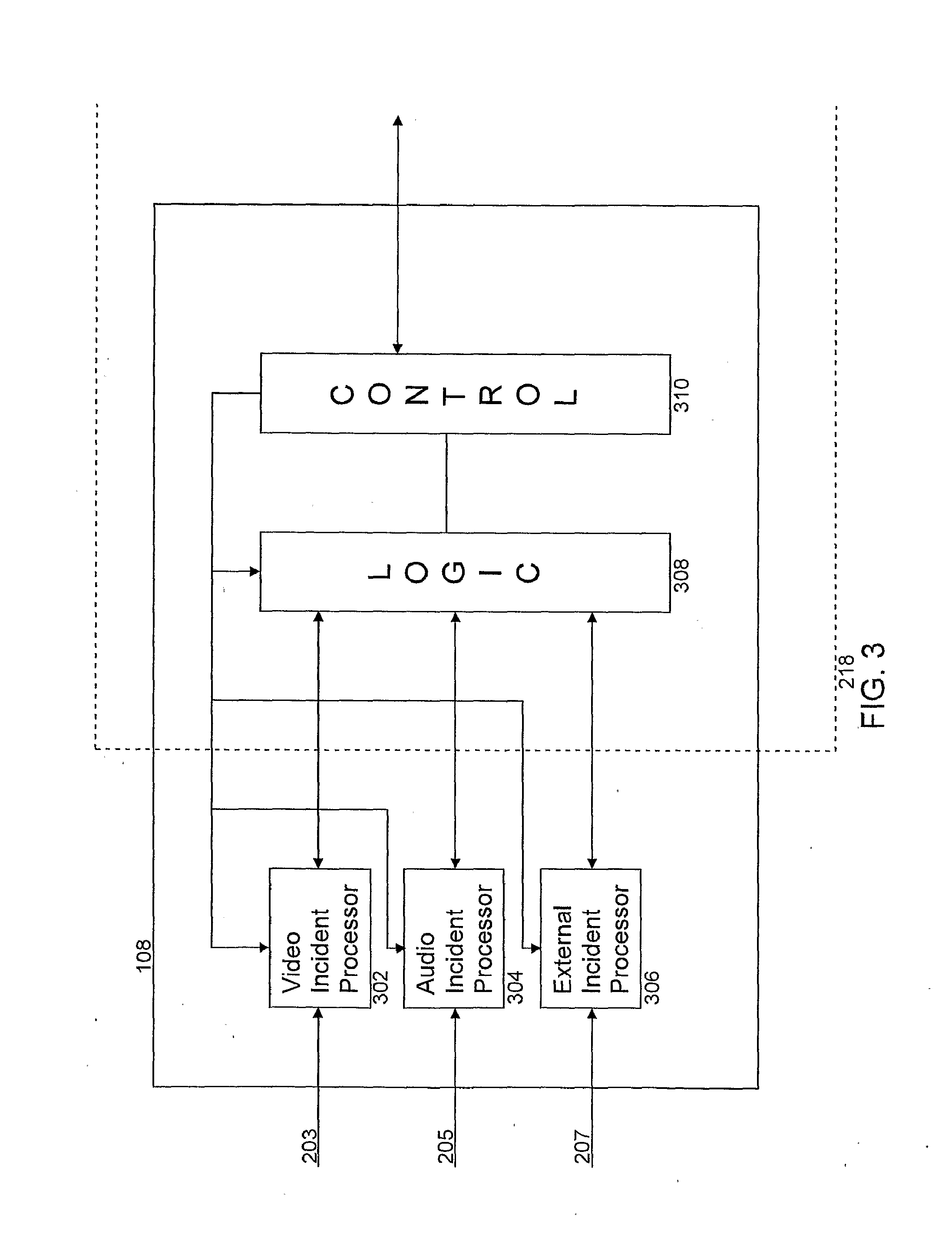

[0168] FIG. 3 shows another embodiment of an alarm engine 108 according to the invention. The alarm engine 108 comprises a video incident processor 302, an audio incident processor 304, an external incident processor 306, a logic unit 308, and a control unit 310. The logic unit 308 and the control unit 310 are software modules stored in memory 218. The video incident processor 302, audio incident processor 304, and external incident processor 306 are designated digital signal processors.

[0169] The video incident processor 302, audio incident processor 304, and external incident processor 306 are configured to perform computationally intensive incident analysis functions on the received data, and therefore it is efficient for designated digital signal processors to perform this computationally intensive analysis. The implementation of the incident analysis functions in software may place an unacceptably high burden on a single processor 204 within the server. However, in other embodiments, a single processing resource may be capable of performing the incident analysis processing without detracting from the overall performance of the alarm engine 108. In one embodiment all of the processing may be performed on a single processor located on the server.

[0170] Each of the incident processors 302, 304, 306 is preloaded with one or more incident analysis functions which can be set as disabled or enabled depending on the user defined configuration that is to be applied. The incident analysis functions that are used by a configuration file are enabled and configured with the required parameters in the incident processors 302, 304, 306 by control unit 310.

[0171] An example of a video incident analysis function is one that determines a stationary object. The function is configured to initially detect the background of an image by applying a mixture of Gaussians to model the scene background. This step may be performed over a period of time to provide a suitable level of confidence that the background has been properly detected, and therefore provide confidence that any differences between a subsequent image and the detected background will identify elements of the foreground. Subsequent images/frames are then compared with the modelled background to identify foreground objects. Block matching motion estimation techniques are then applied to the foreground objects identified from the current and previous image/frame to deduce any moving objects. If it is determined that a foreground object has not moved for a predefined number of frames, that foreground object is determined as being stationary. The predefined number of frames may be set by a user to configure how long an object must be stationary for the incident analysis function to be triggered. This video incident analysis function is an example of a pixel-level function, as the analysis is performed at the pixel level.
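
The final step of this function, in which an object is deemed stationary once it has not moved for a predefined number of frames, may be sketched in Python as follows. This is a deliberately simplified illustration: the mixture of Gaussians background model and the block matching motion estimation are not implemented, and are replaced by an assumed per-frame (x, y) position for a single foreground object. The function name and the `tolerance` parameter are hypothetical.

```python
def stationary_flags(positions, min_frames, tolerance=0):
    """Return one flag per frame: True once the object has been still long enough.

    positions: per-frame (x, y) of one foreground object (assumed input).
    min_frames: predefined number of consecutive frames without movement.
    tolerance: hypothetical pixel tolerance below which motion is ignored.
    """
    flags = []
    still_count = 0
    previous = None
    for current in positions:
        if previous is not None:
            dx = abs(current[0] - previous[0])
            dy = abs(current[1] - previous[1])
            # Count consecutive unmoved frames; any movement resets the count.
            still_count = still_count + 1 if max(dx, dy) <= tolerance else 0
        flags.append(still_count >= min_frames)
        previous = current
    return flags


# Object moves once, rests for several frames, then moves again.
flags = stationary_flags([(0, 0), (5, 0), (5, 0), (5, 0), (5, 0), (9, 0)], min_frames=3)
```

At the 5 Hz sampling rate of this embodiment, a `min_frames` value of 3 would correspond to roughly 0.6 seconds without movement.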

[0172] A further example of a video analysis function is a zone tracker function that identifies when an object moves from a first user defined detection area to a second user defined detection area of the frame/image. The function initially detects the background of an image/frame in the same way as described above in relation to identifying a stationary object, and similarly identifies foreground objects. In this function, the foreground objects are segmented and tracked using a Kalman filter to calculate the trajectories of the observed foreground objects from one frame/image to the next. The zone tracker function is satisfied when an observed foreground object is determined as having moved from the first user defined detection area to the second user defined detection area.
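
As a hedged illustration of the zone tracker condition only, the following Python sketch tests whether a trajectory passes from a first detection area to a second. The Kalman filter tracking is not implemented; the trajectory is assumed to be already available as a list of (x, y) points, and the detection areas are assumed to be axis-aligned rectangles, neither of which is specified by the embodiment.

```python
def in_zone(point, zone):
    """zone is an axis-aligned rectangle (x_min, y_min, x_max, y_max)."""
    x, y = point
    x_min, y_min, x_max, y_max = zone
    return x_min <= x <= x_max and y_min <= y <= y_max


def zone_tracker(trajectory, first_zone, second_zone):
    """Return True once the object is seen in first_zone and later in second_zone."""
    seen_first = False
    for point in trajectory:
        if in_zone(point, first_zone):
            seen_first = True
        elif seen_first and in_zone(point, second_zone):
            return True
    return False


zone_a = (0, 0, 10, 10)    # hypothetical first detection area
zone_b = (20, 0, 30, 10)   # hypothetical second detection area
crossed = zone_tracker([(5, 5), (15, 5), (25, 5)], zone_a, zone_b)
```

Movement in the opposite direction, from the second area to the first, does not satisfy the function in this sketch.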

[0173] Other individual video analysis functions may be provided, including functions that combine the functionality of one or more of the other video analysis functions.

[0174] The control unit 310 is configured to poll the scheduler database 224 periodically at the start of every timeslot, in this example every 30 minutes, and retrieve from configuration file data store 220 the configuration files that are recorded as being applicable to the next timeslot. If the configuration file for the next timeslot is the same as the configuration file for the previous timeslot, the control unit 310 determines that the analysis functions that have been enabled in the incident processors 302, 304, 306 should remain the same and takes no further action in relation to the loading of configuration files until the next timeslot when the control unit 310 polls the scheduler database 224 again.

[0175] If the control unit 310 determines that the next configuration file is different to the configuration file for the previous timeslot, the control unit 310 retrieves the identified configuration file from the configuration file data store 220 and loads each of the incident analysis functions into the corresponding incident processors 302, 304, 306 as determined from the data source identifiers that form part of the configuration file.
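
The polling behaviour of the control unit 310 might be sketched, by way of example only, as the following Python class. The class and attribute names are hypothetical, dictionaries stand in for the scheduler database 224 and the configuration file data store 220, and the handling of a timeslot with no scheduled configuration is an assumption, since the embodiment does not describe that case.

```python
class ControlUnit:
    """Hypothetical stand-in for control unit 310."""

    def __init__(self, schedule, config_store):
        self.schedule = schedule          # timeslot id -> configuration id
        self.config_store = config_store  # configuration id -> configuration
        self.active_id = None
        self.loads = 0                    # counts how often a file was loaded

    def on_timeslot_start(self, slot):
        """Poll the schedule; reload only if the configuration changed."""
        next_id = self.schedule.get(slot)
        if next_id == self.active_id:
            return None                   # same configuration: nothing to do
        self.active_id = next_id
        if next_id is None:
            return None                   # assumed: nothing scheduled, nothing loaded
        self.loads += 1
        return self.config_store[next_id]


unit = ControlUnit(
    {0: "a", 1: "a", 2: "b"},
    {"a": {"functions": ["loitering"]}, "b": {"functions": ["gunshot"]}},
)
```

Calling `on_timeslot_start` for slots 0, 1 and 2 in turn would load a configuration twice, because slots 0 and 1 share the same configuration identifier.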

[0176] Non-limiting examples of video incident analysis functions include the determination of a stationary object, loitering, congestion, and running. Non-limiting examples of audio incident analysis functions include the determination of a gunshot, aggressive noise, and a scream. Non-limiting examples of external incident analysis functions include the activation of a fire alarm, an emergency alarm, and the triggering of a motion sensor.

[0177] In addition, the control unit 310 configures the logic unit 308 to perform logical operations on the outputs of the incident processors 302, 304, 306 as identified in the configuration file. The logical operations include applying one or more contemporaneous analysis functions to the outputs of the incident processors 302, 304, 306, applying one or more sequential analysis functions to the output of the contemporaneous analysis functions, and applying one or more alarm parameters to the output of the sequential analysis functions.

[0178] The contemporaneous and sequential analysis functions are described in more detail in relation to FIG. 4.

[0179] In this embodiment the video incident processor 302 is configured to receive video data 203 from a single camera, and a separate video incident processor is provided for each camera supported by the alarm engine 108. In other embodiments, a video incident processor may be configured to receive and process data received from a plurality of cameras.

[0180] In this embodiment, the video incident processor 302 is configured to perform up to a maximum of 32 separate video incident analysis functions on the data received from the camera and provide the results of the application of the video incident analysis functions to the logic unit 308.

[0181] Similarly, the audio incident processor 304 is configured to receive audio data from a single microphone and perform up to a maximum of 32 separate audio incident analysis functions on the data received from the microphone. The audio incident processor 304 is configured to provide the results of the audio analysis functions to the logic unit 308. In other embodiments, an audio incident processor may be configured to receive and process data received from a plurality of microphones.

[0182] In this embodiment the external incident processor 306 is configured to receive external data from one or more external sources/sensors. The external incident processor 306 is configured to perform up to a maximum of 32 separate external incident analysis functions on the data received from the external sources. The external incident processor 306 is configured to provide the results of the external analysis functions to the logic unit 308.

[0183] In this embodiment a total of 32 incident analysis functions can be configured for a single configuration file. That is, the combination of the video, audio and external incident analysis functions that are utilised by the configuration file cannot exceed a sum total of 32. This restriction is enforced by a user interface when the user is configuring the configuration file. A single configuration file may define a plurality of alarms that process the results of the 32 incident analysis functions in different ways. In embodiments where a configuration file defines a single alarm, a total of 32 incident analysis functions can be configured for a single alarm.
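
The restriction on the total number of incident analysis functions could be checked, for example, along the following lines. The function name and the representation of each group of functions as a list of names are assumptions made for this Python sketch; the embodiment states only that the user interface enforces the limit.

```python
MAX_INCIDENT_FUNCTIONS = 32  # combined limit per configuration file


def validate_function_count(video, audio, external):
    """Return the combined count, or raise if it exceeds the limit of 32."""
    total = len(video) + len(audio) + len(external)
    if total > MAX_INCIDENT_FUNCTIONS:
        raise ValueError(
            f"{total} incident analysis functions configured; "
            f"the maximum is {MAX_INCIDENT_FUNCTIONS}"
        )
    return total


count = validate_function_count(["stationary_object", "loitering"], ["gunshot"], ["fire_alarm"])
```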

[0184] Conveniently, the output signal generated by each of the incident analysis processors is a 32 bit signal which is known as a "dword", wherein each of the bits can represent a result of an incident analysis function. A dword is a known computer science term for a unit of data having 32 bits.

[0185] Using a 32 bit dword makes efficient use of computer resources as the signals can be easily manipulated/transformed using logical operations including "AND" and "OR" functionality, as well as "equals to", "greater than" and "less than", examples of which are described in relation to FIG. 4 below.

[0186] The logic unit 308 is configured to combine the video, audio and external incident output signals to generate an incident analysis output signal that provides a representation of the result of the application of the incident analysis functions to the received video, audio and external data such that the results of the different analysis functions can be processed collectively.

[0187] The logic unit 308 is configured to perform up to 32 contemporaneous analysis functions on the incident analysis output signal and generate a contemporaneous analysis output signal representative of the result of the contemporaneous analysis functions. The contemporaneous output signal is a 32 bit dword wherein each bit represents the result of a contemporaneous analysis function.

[0188] The contemporaneous analysis functions are performed on the incident analysis output signal, which is an indirect representation of the received audio, video and external data.

[0189] A contemporaneous analysis function comprises applying one or more rules to the results of the incident analysis functions. The rules may define a Boolean equation that is applied to one or more of the results of the incident analysis functions. In addition, a contemporaneous analysis function includes a variable that defines a timer delay period, which defines a period of time for which the contemporaneous analysis rule must be satisfied in order for the contemporaneous analysis function to be satisfied.
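
One possible reading of a contemporaneous analysis function, offered as a sketch rather than as the implementation of the embodiment, is shown below in Python. The timer delay is expressed as a number of consecutive samples (one sample every 0.2 seconds at 5 Hz), the incident results are assumed to arrive as an integer bit mask, and the class name, the example rule and its bit positions are hypothetical.

```python
class ContemporaneousFunction:
    """Hypothetical model of one contemporaneous analysis function."""

    def __init__(self, rule, required_samples):
        self.rule = rule                                  # Boolean rule over the mask
        self.required_samples = max(1, required_samples)  # timer delay in samples
        self.held = 0                                     # consecutive satisfied samples

    def update(self, incident_mask):
        """Feed one sample; return True once the rule has held for the delay."""
        self.held = self.held + 1 if self.rule(incident_mask) else 0
        return self.held >= self.required_samples


def channels_1_and_2(mask):
    """Example rule: channel 1 AND channel 2 (assumed to be bits 0 and 1)."""
    return (mask & 0b11) == 0b11


function = ContemporaneousFunction(channels_1_and_2, required_samples=3)
samples = [0b11, 0b11, 0b01, 0b11, 0b11, 0b11, 0b11]
outputs = [function.update(sample) for sample in samples]
```

In this example the rule is interrupted at the third sample, so the count restarts and the output only becomes true once the rule has held for three further consecutive samples, that is about 0.6 seconds.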

[0190] The logic unit 308 is also configured to sequentially perform one or more sequential analysis functions on the contemporaneous analysis output signal and generate a sequential analysis output signal that is representative of the output of the last sequential analysis function in the sequence. The output of a sequential analysis function is only set as `1` if the output of the previous sequential analysis function is `1` when the current sequential analysis function is triggered. The output of the last sequential analysis function is a single bit that identifies whether or not an alarm has been determined.

[0191] The sequential analysis functions are performed on the contemporaneous analysis output signal, which is itself an indirect representation of the received audio, video and external data.

[0192] A sequential analysis function comprises applying one or more rules to the results of the contemporaneous analysis functions. The rules may define a Boolean equation that is applied to one or more of the results of the contemporaneous analysis functions. In addition, a sequential analysis function includes a variable that defines a timeout period, which defines a time period for which the output of the sequential analysis function remains true after the corresponding rule has been satisfied. The timeout period is used to define a time period during which the next sequential analysis function must become true for the sequence to continue.
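
The chaining of sequential analysis functions with timeout periods may be illustrated by the following Python sketch, which is one interpretation under stated assumptions. Timeouts are counted in samples, including the sample on which the rule is satisfied; a stage other than the first is only armed if the preceding stage's output was true at the end of the previous sample; and the class name and example rules are hypothetical.

```python
class SequentialChain:
    """Hypothetical chain of sequential analysis functions."""

    def __init__(self, stages):
        self.stages = stages                  # list of (rule, timeout_samples)
        self.remaining = [0] * len(stages)    # samples each output stays true

    def update(self, contemporaneous_mask):
        """Feed one sample; return the output of the last stage."""
        # Outputs as they stood before this sample, used to arm later stages.
        previous_outputs = [count > 0 for count in self.remaining]
        for index, (rule, timeout) in enumerate(self.stages):
            armed = index == 0 or previous_outputs[index - 1]
            if armed and rule(contemporaneous_mask):
                self.remaining[index] = timeout   # (re)start the timeout
            elif self.remaining[index] > 0:
                self.remaining[index] -= 1        # timeout running down
        return self.remaining[-1] > 0


def first_event(mask):
    return bool(mask & 0b01)   # assumed: bit 0 of the contemporaneous mask


def second_event(mask):
    return bool(mask & 0b10)   # assumed: bit 1 of the contemporaneous mask


chain = SequentialChain([(first_event, 2), (second_event, 1)])
in_time = [chain.update(mask) for mask in [0b01, 0b00, 0b10]]

late_chain = SequentialChain([(first_event, 2), (second_event, 1)])
too_late = [late_chain.update(mask) for mask in [0b01, 0b00, 0b00, 0b10]]
```

In the first run the second event arrives while the first stage's timeout is still running, so the final output becomes true. In the second run the timeout has expired and the sequence does not continue.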

[0193] FIG. 4 illustrates an example of how received audio, video and external data are analysed/processed by the video incident processor 302, audio incident processor 304, external incident processor 306, and logic unit 308.

[0194] As described above, the video, audio and external data 203, 205, 207 received by the incident processors 302, 304, 306 have been converted from analogue to digital data at a sampling frequency of 5 Hz. Therefore the subsequently described analysis is performed for each piece of digital data (audio, video and external), and a new piece of digital data is received every 0.2 seconds.

[0195] The received video data 203 is applied to 32 video incident analysis functions 402a to 402n. The video incident analysis functions 402 are pattern recognition algorithms that are configured to recognise certain incidents/behaviours in the video data. The specific video incident analysis functions 402 that are enabled, and their associated function, are set by the control unit 310 as described above in relation to FIG. 3.

[0196] The output of each video incident analysis function 402 is a single bit 404 that indicates whether or not the incident/behaviour has been recognised.

[0197] The video incident analysis processor 302 then concatenates the 32 single bits 404 into a 32 bit "video incident mask" dword 406, and sends the "video incident mask" dword 406 to the logic unit 308.

[0198] Similarly, the audio incident analysis processor 304 applies 32 audio incident analysis functions 408 to the received digital audio data 205 and generates 32 output single bits 410, which are concatenated into a 32 bit "audio incident mask" dword 412 and sent to the logic unit 308.

[0199] In the same way, the external incident analysis processor 306 applies 32 external incident analysis functions 414 to the received digital external data 207 and generates 32 output single bits 416, which are concatenated into a 32 bit "external incident mask" dword 418 and sent to the logic unit 308.

[0200] In some embodiments, the received audio data signal 205 and/or the received external data signal 207 may already provide a representation of whether or not predefined incident analysis functions are satisfied. In such embodiments, the audio incident processor 304 and/or external incident processor 306 are configured to translate the received signal 205, 207 into the "audio/external incident mask" 412, 418 that represents the specific audio/external incident function outputs for the configuration file that has been loaded into the audio/external incident processors 304, 306.

[0201] Each of the video, audio and external incident analysis functions 402, 408, 414 can be considered as being associated with a channel between 1 and 32 which corresponds to the bit position in the "video/audio/external incident mask" 406, 412, 418. Typically the channel to which the incident analysis function is attributed is set when a user sets up the configuration file.
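The channel-to-bit mapping described above can be illustrated with a minimal Python sketch. The function names `pack_incident_mask` and `channel_is_set` are hypothetical, and mapping channel 1 to the least significant bit is an assumption, since the description does not state the bit ordering.

```python
def pack_incident_mask(bits):
    """Concatenate up to 32 single-bit outputs into one 32 bit mask.

    bits[0] is the output for channel 1. Placing channel 1 at the least
    significant bit is an assumption made for this sketch.
    """
    if len(bits) > 32:
        raise ValueError("at most 32 channels are supported")
    mask = 0
    for channel, bit in enumerate(bits, start=1):
        if bit:
            mask |= 1 << (channel - 1)
    return mask


def channel_is_set(mask, channel):
    """Return True if the given channel (1 to 32) is set in the mask."""
    return bool((mask >> (channel - 1)) & 1)


# Channels 1 and 3 report an incident, channel 2 does not.
example_mask = pack_incident_mask([1, 0, 1])
```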

[0202] In this embodiment, a single incident analysis function 402, 408, 414 is configured to perform an analysis of only one of audio or video or external data 203, 205, 207. The setup of the configuration file ensures that for a given bit position/channel an incident analysis function 402, 408, 414 can be enabled for only one of the video incident analysis processor 302, or the audio incident analysis processor 304, or the external incident analysis processor 306.

[0203] When the incident analysis function 402, 408, 414 is satisfied, a `1` is stored in the corresponding audio, video or external 32 bit "incident mask" 406, 412, 418 at the corresponding bit position. Therefore, for a given bit position, the value can be `1` in at most one of the "incident masks" 406, 412, 418.

[0204] The logic unit 308 performs a summation or a bitwise "OR" of the 32 bit audio, video and external incident masks 406, 412, 418 to generate an incident analysis output signal 420 which in this embodiment is a 32 bit dword that represents a mask of the output of all incident analysis functions.
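Because a given bit position can be `1` in at most one of the three masks, a summation and a bitwise "OR" give the same result. The following Python sketch illustrates this under that assumption; the function name `combine_incident_masks` and the example values are hypothetical.

```python
MASK_32 = 0xFFFFFFFF  # keeps the result within a 32 bit dword


def combine_incident_masks(video_mask, audio_mask, external_mask):
    """Bitwise OR of the three incident masks, limited to 32 bits."""
    return (video_mask | audio_mask | external_mask) & MASK_32


# Hypothetical masks with no bit position shared between them.
video_mask = 0b0001
audio_mask = 0b0100
external_mask = 0b1000

combined = combine_incident_masks(video_mask, audio_mask, external_mask)

# With disjoint bit positions the sum equals the bitwise OR.
same_as_sum = combined == video_mask + audio_mask + external_mask
```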

[0205] The 32 bit incident analysis output dword 420 is made available for contemporaneous analysis processing by the logic unit 308.

[0206] The contemporaneous analysis processing comprises 32 contemporaneous analysis functions 424a to 424n that are configured to process the incident analysis output signal 420 and generate a single output bit 438 representative of whether or not the contemporaneous analysis function 424 is satisfied. Each contemporaneous analysis function 424 is represented by two 32 bit dword operational masks 426, 430, a delay period parameter 440, and an "and/or identifier" 442, the application of which will be described in more detail with reference to the example illustrated in FIG. 5.

[0207] The example illustrated in FIG. 5 is described in relation to 8 bit signals to aid clarity, and it will be appreciated that the same principles apply to the 32 bit dword signals of the embodiment illustrated in FIG. 4.

[0208] As described above, the values of the bits in the operational masks 426, 430, the value of the delay period 440, and the "and/or identifier" 442 are set automatically by the control unit 310 in accordance with the values stored in configuration file 1514.

[0209] The first operation that is performed on the 32 bit incident analysis output dword 420 as part of the contemporaneous analysis function is to invert any individual incident analysis function output bits using an invert mask 426. This functionality enables a user to define whether the presence or absence of an incident is utilised as part of the contemporaneous analysis function 424.

[0210] The control unit 310 stores a "1" in the invert operational mask 426 where the configuration file defines that the functionality of a "NOT" gate is applied to the output of the corresponding incident analysis function 402, 408, 414. Application of the invert operational mask 426 to the incident analysis output dword 420 is provided by applying a bitwise logical "XOR" function to the incident analysis output dword 420 and the invert mask 426 to generate an inverted incident 32 bit dword 428. The logical functions are applied bitwise: that is, the corresponding bits are operated on, and it is not the 32 bit dwords that are operated on collectively.

[0211] The second operation that is performed as part of the contemporaneous analysis function 424 is to mask out any incident analysis functions that are not enabled for the contemporaneous analysis function 424 in question. This functionality is provided by using a 32 bit operational mask dword 430 that comprises a "1" for bit positions/channels for which corresponding incident analysis functions are to be considered, and a "0" for bit positions/channels for which corresponding incident analysis functions are not to be considered.

[0212] Bitwise logical "AND" functionality is applied to the inverted incident mask dword 428 and the operational mask dword 430 to generate a masked inverted incident dword 432. This ensures that the output of the incident analysis functions 402, 408, 414 (inverted where necessary) is carried through to the masked inverted incident dword 432 for bits that are enabled for the contemporaneous function 424. Any incident analysis functions that are not enabled, as identified by a "0" in the corresponding bits in the operational mask dword 430, will always be "0" in the masked inverted incident dword 432.
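The invert and mask operations can be sketched in Python as follows. This is an illustration only: the function name `masked_inverted_incidents` is hypothetical, and the 8 bit example values are chosen for readability and are not the values shown in FIG. 5.

```python
MASK_32 = 0xFFFFFFFF


def masked_inverted_incidents(incident_output, invert_mask, operational_mask):
    """Apply the invert mask (XOR), then the operational mask (AND)."""
    inverted = (incident_output ^ invert_mask) & MASK_32
    return inverted & operational_mask


# Hypothetical 8 bit example values.
incident_output = 0b10110010
invert_mask = 0b00000011       # invert the two lowest channels
operational_mask = 0b00110011  # only these four channels are considered

masked = masked_inverted_incidents(incident_output, invert_mask, operational_mask)
```

In this example the XOR step yields `0b10110001`, and the AND step then clears every channel that is not enabled in the operational mask.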

[0213] The "and/or identifier" 442 is used to define whether "AND" or "OR" functionality is applied to the enabled incident analysis functions. The value of the "and/or identifier" 442 is set by the control unit 310 in accordance with the configuration file retrieved from memory.

[0214] If the "and/or identifier" 442 identifies that logical "AND" functionality is to be applied, the logic unit 308 compares the operational mask dword 430 with the masked inverted incident dword 432. If the logic unit 308 determines that the operational mask dword 430 is the same as the masked inverted incident dword 432 at that instant and also for the previous period of time defined by the delay period 440, then the output 438' of the contemporaneous analysis function 424 is set as "1" (step 434). In the example shown in FIG. 5, the operational mask dword 430 is not the same as the masked inverted incident dword 432, and therefore the output 438' of the contemporaneous analysis function 424 is set as "0".

[0215] If the "and/or identifier" 442 identifies that logical "OR" functionality is to be applied, the logic unit 308 performs a bitwise comparison of the masked inverted incident dword 432 with a 32 bit dword having all bits set as `0` (not shown). If the result of the comparison identifies that the dwords are not the same, the logic unit 308 determines that the masked inverted incident dword 432 has at least one bit that is set as `1` at that instant, and therefore the "OR" functionality is satisfied. If the "OR" functionality has also been satisfied for the previous period of time defined by the delay period 440, then the output 438'' of the contemporaneous analysis function 424 is set as "1" (step 436). In the example shown in FIG. 5, the masked inverted incident dword 432 is greater than zero, and as long as the masked inverted incident dword 432 has been greater than zero for at least the delay period, the output 438'' is set as "1".
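The instantaneous "AND" and "OR" tests can be summarised in a short Python sketch. It deliberately leaves out the delay period, and the function name `rule_satisfied` and the `use_and` flag are hypothetical stand-ins for the "and/or identifier".

```python
def rule_satisfied(masked_inverted, operational_mask, use_and):
    """Evaluate the rule for one sample, ignoring the delay period.

    AND: every enabled bit must be set, so the masked inverted incident
         dword must equal the operational mask.
    OR:  at least one enabled bit must be set, so the masked inverted
         incident dword must differ from an all-zero dword.
    """
    if use_and:
        return masked_inverted == operational_mask
    return masked_inverted != 0


# Hypothetical 8 bit values: three of the four enabled channels are set.
operational_mask = 0b00110011
masked_inverted = 0b00110001

and_result = rule_satisfied(masked_inverted, operational_mask, use_and=True)
or_result = rule_satisfied(masked_inverted, operational_mask, use_and=False)
```

With these values the "AND" rule is not satisfied, because one enabled bit is `0`, while the "OR" rule is satisfied.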

[0216] In other embodiments, the logic unit 308 can support the application of more complex logical functions to the incident analysis function output signal 420.

[0217] The output of each contemporaneous analysis function 424 is a single bit 438 that indicates whether or not the contemporaneous analysis function 424 is satisfied.

[0218] The logic unit 308 is configured to concatenate the 32 single bits 438 into a 32 bit "contemporaneous incident mask" dword 440, and to make the "contemporaneous incident mask" dword 440 available for sequential analysis processing.

[0219] The sequential analysis processing is performed by up to four sequential analysis functions 442 on the contemporaneous analysis output signal 440. The sequential analysis functions 442 are evaluated in sequence, whereby the immediately subsequent sequential analysis function 442 cannot be triggered unless the immediately previous sequential analysis function 442 is satisfied at the same time as the triggering of the subsequent sequential analysis function 442.

[0220] The sequential analysis functions 442 comprise an invert operational mask 446, an operational mask 448 which defines which of the contemporaneous analysis functions 424 are utilised by the sequential analysis function 442, a timeout period parameter 450, and an "and/or identifier" 452.

[0221] The invert operational mask 446, the operational mask 448, and the "and/or identifier" 452 are implemented in a similar way to the corresponding features of the contemporaneous analysis function 424 described above, and therefore are not described again. The timeout period parameter 450 defines a period of time for which the associated sequential analysis function 442 remains true after the sequential analysis function 442 is initially satisfied.

[0222] As shown schematically in FIG. 4, the output of each of the sequential analysis functions 442 is a single bit 454, and the immediately subsequent sequential analysis function output bit 454 can only be set as `1` when the immediately preceding sequential analysis function output bit 454 is also a `1`. For example, the output bit 454b of the second sequential analysis function cannot be set as a `1` unless the output bit 454a of the first sequential analysis function is also `1`.

[0223] The output 454d of the last sequential analysis function 442 is also an alarm identifier. That is, when the last sequential analysis function 442 is satisfied the alarm is generated. It will be appreciated that the configuration file may not necessarily use all of the available sequential analysis functions, and that if only two sequential analysis functions are required, the first two sequential analysis functions 442 are not used, and the sequential analysis processing begins with the generation of the output bit 454c of the third sequential analysis function 442.
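The chaining of sequential analysis functions and their timeout periods can be sketched in Python as follows. This is one possible reading rather than a definitive implementation: the function name `run_sequence` is hypothetical, timeouts are expressed in samples rather than seconds, at least one stage is assumed, and treating a stage as "true" for `timeout` samples after it was last triggered is an assumption about how the timeout is counted.

```python
def run_sequence(rule_inputs, timeouts):
    """Return one alarm bit per sample for a chain of sequential stages.

    rule_inputs: list of tuples, one per sample, holding one boolean per
                 stage that says whether that stage's rule is satisfied.
    timeouts:    per-stage timeout in samples (assumed unit).
    """
    last_trigger = [None] * len(timeouts)
    alarms = []
    for index, sample in enumerate(rule_inputs):
        predecessor_true = True  # the first stage has no predecessor
        for stage, rule in enumerate(sample):
            # A stage is triggered only while its predecessor is true.
            if rule and predecessor_true:
                last_trigger[stage] = index
            triggered_at = last_trigger[stage]
            predecessor_true = (
                triggered_at is not None
                and index - triggered_at <= timeouts[stage]
            )
        # The alarm is an impulse at the sample that triggers the last stage.
        alarms.append(1 if last_trigger[-1] == index else 0)
    return alarms


# Two stages; stage 1 stays true for 3 samples after being triggered.
inputs = [
    (False, True),   # stage 2 rule met, but stage 1 never triggered
    (False, False),
    (True, False),   # stage 1 triggered
    (False, False),
    (False, True),   # within the timeout of stage 1: alarm
    (False, False),
    (False, True),   # timeout of stage 1 has expired: no alarm
]
alarms = run_sequence(inputs, timeouts=[3, 0])
```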

[0224] An example of how the timer delay is used by a contemporaneous analysis function is illustrated in FIG. 6a. FIG. 6a shows the value of a contemporaneous analysis function output 604, and the rule output 602 associated with that contemporaneous analysis function over time. A value of 0 indicates that the rule or contemporaneous analysis function is not satisfied, and an output value of 1 indicates that the rule or contemporaneous analysis function is satisfied.

[0225] At time t1, the inputs to the contemporaneous analysis function satisfy the rule and the value of the rule output 602 changes from 0 to 1 which initiates the timer delay period. However, in this example the inputs to the contemporaneous analysis function change at time t2 such that the rule is no longer satisfied. The time period between t2 and t1 is less than the timer delay, and therefore the contemporaneous analysis function is not triggered.

[0226] At time t3, the inputs to the contemporaneous analysis function again satisfy the rule and the value of the rule output 602 changes from 0 to 1. This time, the rule output 602 remains at 1 when the timer delay expires at time t4 and therefore the contemporaneous analysis function output 604 changes from 0 to 1. The contemporaneous analysis function output 604 remains as 1 until the rule is no longer satisfied at time t5.
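The timer delay behaviour described for FIG. 6a can be sketched in Python as follows. The function name `apply_delay` is hypothetical, the delay is expressed as a number of samples (one sample every 0.2 seconds in this embodiment), and the exact sample at which the output rises is an assumption.

```python
def apply_delay(rule_outputs, delay_samples):
    """Return the function output for each sample of the rule output.

    The output becomes 1 only once the rule has stayed satisfied for
    more than delay_samples consecutive samples, and it returns to 0
    as soon as the rule is no longer satisfied.
    """
    outputs = []
    run_length = 0  # consecutive samples for which the rule has held
    for rule in rule_outputs:
        run_length = run_length + 1 if rule else 0
        outputs.append(1 if run_length > delay_samples else 0)
    return outputs


# The first burst is shorter than the delay and is ignored; the second
# burst outlasts the delay and raises the output until the rule drops.
rule_output = [0, 1, 1, 0, 0, 1, 1, 1, 1, 1, 0]
function_output = apply_delay(rule_output, delay_samples=3)
```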

[0227] In this embodiment, a maximum number of thirty two contemporaneous analysis functions can be defined for a single alarm.

[0228] An example of how the timeout is used by a sequential analysis function is illustrated in FIG. 6b. FIG. 6b shows the value of sequential analysis function 1 output 620, sequential analysis function 2 output 622, and the alarm output 624. Sequential analysis function 1, sequential analysis function 2, and the alarm are sequentially configured such that sequential analysis function 1 must precede sequential analysis function 2 before the alarm is generated.

[0229] A value of 0 indicates that the sequential analysis function is not satisfied, and an output value of 1 indicates that the sequential analysis function is satisfied. The generation of an alarm is indicated as an impulse signal.

[0230] At time t1, the inputs to sequential analysis function 2 satisfy the associated rule. However, as sequential analysis function 1 620 has not been satisfied in the preceding pre-requisite timeframe as defined by the timeout value of sequential analysis function 1, the alarm 624 is not generated.

[0231] At time t2, the inputs to sequential analysis function 1 satisfy the associated rule. As sequential analysis function 1 is the first sequential analysis function in the sequence there is no preceding sequential analysis function output value to be considered and the output of sequential analysis function 1 620 changes from 0 to 1. This initiates the timeout period associated with the sequential analysis function 1 as illustrated in FIG. 6b. It does not matter how long the output of sequential analysis function 1 620 remains as a 1, as the timeout period has been initiated.

[0232] At time t3, the inputs to sequential analysis function 2 satisfy the associated rule. The difference in time between t3 and t2 is less than the timeout period as illustrated in the figure, and therefore sequential analysis function 2 is satisfied and the output of sequential analysis function 2 622 changes from 0 to 1.