System And Method For Implementing Personal Emergency Response System Based On Uwb Interferometer

ZACK; Rafi ; et al.

U.S. patent application number 14/753062 was filed with the patent office on 2015-06-29 and published on 2016-12-29 for system and method for implementing personal emergency response system based on UWB interferometer. The applicant listed for this patent is ECHOCARE TECHNOLOGIES LTD. Invention is credited to Yossi Kofman, Rafi ZACK.

| Application Number | 20160379474 14/753062 |

| Document ID | / |

| Family ID | 57483982 |

| Filed Date | 2015-06-29 |

| United States Patent Application | 20160379474 |

| Kind Code | A1 |

| ZACK; Rafi ; et al. | December 29, 2016 |

SYSTEM AND METHOD FOR IMPLEMENTING PERSONAL EMERGENCY RESPONSE SYSTEM BASED ON UWB INTERFEROMETER

Abstract

A non-wearable Personal Emergency Response System (PERS) architecture is provided, having a synthetic aperture antenna based RF interferometer followed by two-stage human state classifier and abnormal states pattern recognition. In addition, it contains a communication sub-system to communicate with the remote operator and centralized system for multiple users' data analysis. The system is trained to learn the person's body features as well as the home environment. The decision process is carried out based on the instantaneous human state (Local Decision) followed by abnormal states patterns recognition (Global decision). The system global decision (emergency alert) is communicated to the operator through the communication system and two-ways communication is enabled between the monitored person and the remote operator. In some embodiments, a centralized system (cloud) receives data from distributed PERS systems to perform further analysis and upgrading the systems with updated database (codebooks).

| Inventors: | ZACK; Rafi (Kiryat-Ono, IL); Kofman; Yossi (Raanana, IL) |

| Applicant: | ECHOCARE TECHNOLOGIES LTD. |

| Family ID: | 57483982 |

| Appl. No.: | 14/753062 |

| Filed: | June 29, 2015 |

| Current U.S. Class: | 340/539.11 |

| Current CPC Class: | G08B 25/016 20130101 |

| International Class: | G08B 25/01 20060101 G08B025/01 |

Claims

1. A non-wearable monitoring system comprising: a radio frequency (RF) interferometer configured to transmit signals at a specified area and receive echo signals via an antenna array; an environmental clutter cancellation module configured to filter out static non-human related echo signals; a human state feature extractor configured to extract, from the filtered echo signals, a quantified representation of position, postures, movements, motions and breathing of at least one human located within the specified area; a human state classifier configured to identify a most probable fit of human current state that represents an actual human instantaneous status; and an abnormality situation pattern recognition module configured to apply a pattern recognition based decision function to the identified states patterns and determine whether an abnormal physical event has occurred to the at least one human in the specified area.

2. The system according to claim 1, wherein the extracted features are vector quantized, and wherein the classifier is configured to find the best match to a codebook which represents the state being a set of human instantaneous condition/situation which is based on said quantized features.

3. The system according to claim 1, wherein the RF interferometer is an ultra-wide band (UWB) RF interferometer which includes: multiple synthetic aperture antenna arrays; a UWB pulse generator; a UWB transmitter; and a UWB receiver configured to capture an echo signal from every antenna and every array.

4. The system according to claim 1, wherein the environmental clutter cancellation module comprises a static environment detector configured to ensure that no human body is present in the environment, a static clutter estimator and static clutter subtraction.

5. The system according to claim 1, wherein the human state features extractor comprises: a back-projection unit configured to estimate the reflected clutter from a specific voxel to extract the human position and posture features; a Doppler estimator configured to extract the human motions and breathing features; and a features vector generator configured to create quantized vectors of the extracted features.

6. The system according to claim 1, wherein the human state classifier comprises a training unit configured to quantize the known states features vectors and generate the states code-vectors; a distance function configured to measure the distance between unknown tested features vectors and pre-defined known code-vectors; and a classifier unit configured to find the best fit between unknown tested features vector and pre-determined code-vectors set, classifier output consisting of the most probable state and the relative statistical distance to the tested features vector.

7. The system according to claim 1, wherein the abnormality pattern recognition module comprises: a training unit configured to generate a set of abnormal states patterns as a reference codebook and a set of states transition probabilities; and a states patterns matching function configured to find, and alert on, a match between a tested states pattern and a pre-defined abnormal pattern of the codebook.

8. The system according to claim 1, further comprising a communication sub-system configured to communicate an alert upon determining of an abnormal physical event.

9. The system according to claim 8, wherein the communication sub-system comprises: a signaling link configured to transmit the system alerts to a far end; a two-way voice and video communication link configured to interact with the monitored person; and a two-way data link configured to remotely upgrade the system.

10. The system according to claim 8, further comprising a remote centralized data analyzing unit comprising: a data collection unit configured to receive the data of the monitoring and alert units; a data analyzer configured to find new abnormal patterns based on multiple users' situations; and an abnormal patterns codebook generator configured to update the codebook with the new abnormal patterns.

11. The system according to claim 1, configured to operate with similar systems in a master-slave configuration in which the RF interferometer and set of antennas are configured to act as a repeater communicating between at least two systems.

12. The system according to claim 1, wherein parameters obtained in a training sequence are used to configure the human state classifier per a specific person.

13. The system according to claim 12, wherein the trained environmental parameters are calculated by monitoring a human in various locations and positions in a predefined order.

14. The system according to claim 7, wherein the abnormal patterns reference codebook is remotely updated with new abnormal patterns received from data analysis by multiple users.

15. The system according to claim 1, wherein the system further includes an interface with at least one of: a wearable medical sensor configured to sense vital signs of the human, and a home safety sensor configured to sense ambient conditions at said specified area, and wherein data from said at least one sensor are used by said decision function for improving the decision whether an abnormal physical event has occurred to the at least one human in said specified area.

Description

FIELD OF THE INVENTION

[0001] The present invention relates to the field of elderly monitoring, and more particularly, to a system architecture for personal emergency response system (PERS).

BACKGROUND OF THE INVENTION

[0002] Elderly people have a higher risk of falling, for example, in residential environments. As most elderly people will need immediate help after such a fall, it is crucial that these falls are monitored and addressed in real time. Specifically, one fifth of elders who fall are admitted to hospital after staying on the floor for over one hour following the fall. The late admission increases the risk of dehydration, pressure ulcers, hypothermia, and pneumonia. Acute falls also lead to a strong psychological effect of fear and a high impact on daily life quality.

[0003] Most of the existing personal emergency response systems (PERS), which take the form of fall detectors and alarm buttons, are wearable devices. These wearable devices have several disadvantages. First, they cannot recognize the human body positioning and posture.

[0004] Second, they suffer from limited acceptance and use due to: elders' perception and image issues; a high rate of false alarms and missed detections; elders neglecting to re-wear the device after getting out of bed or the bath; and skin irritation that long-term use of a wearable may cause. Third, wearable PERS devices are used mainly after the person has already experienced a fall (a very limited addressable market).

[0005] Therefore, there is a need for a paradigm shift toward automated and remote monitoring systems.

SUMMARY OF THE INVENTION

[0006] Some embodiments of the present invention provide a unique sensing system and a breakthrough for the supervision of the elderly during their stay in the house, in general, and for the detection of falls, in particular. The system may include: a UWB-RF interferometer, a Vector Quantization based human states classifier, a Cognitive Situation Analysis unit, a communication unit and a processing unit.

[0007] According to some embodiments of the present invention, the system may be installed on the ceiling of the house, and covers a typical elder's apartment with a single sensor, using Ultra-Wideband RF technology. It is a machine learning based solution that learns the elder's unique characteristics (e.g., stature, gait and the like) and the primary locations of the home (e.g., bedroom, restroom, bathroom, kitchen, entry, etc.), as well as the boundaries of the home's external walls.

[0008] According to some embodiments of the present invention, the system may automatically detect and identify emergency situations that might be encountered by elders while at home, and alert on them.

[0009] According to some embodiments of the present invention, the system may detect falls of elderly people, but may also identify other emergency situations, such as labored breathing and sleep apnea, as well as other abnormal cases, e.g., a sedentary situation or repetitive non-acute falls that are not reported by the person. It is considered a key element of the connected smart home for the elderly and, by connecting the system to the network and cloud, it can also make use of big data analytics to identify new patterns of emergencies and abnormal situations.

[0010] These, additional, and/or other aspects and/or advantages of the present invention are set forth in the detailed description which follows; possibly inferable from the detailed description; and/or learnable by practice of the present invention.

BRIEF DESCRIPTION OF THE DRAWINGS

[0011] The subject matter regarded as the invention is particularly pointed out and distinctly claimed in the concluding portion of the specification. The invention, however, both as to organization and method of operation, together with objects, features, and advantages thereof, may best be understood by reference to the following detailed description when read with the accompanying drawings in which:

[0012] FIG. 1 is a block diagram illustrating a non-limiting exemplary architecture of a system in accordance with embodiments of the present invention;

[0013] FIG. 2 is another block diagram illustrating the architecture of a system in further details in accordance with embodiments of the present invention;

[0014] FIG. 3 is a diagram illustrating conceptual 2D Synthetic Aperture Antennas arrays in accordance with some embodiments of the present invention;

[0015] FIG. 4 is a table illustrating an exemplary states definition in accordance with some embodiments of the present invention;

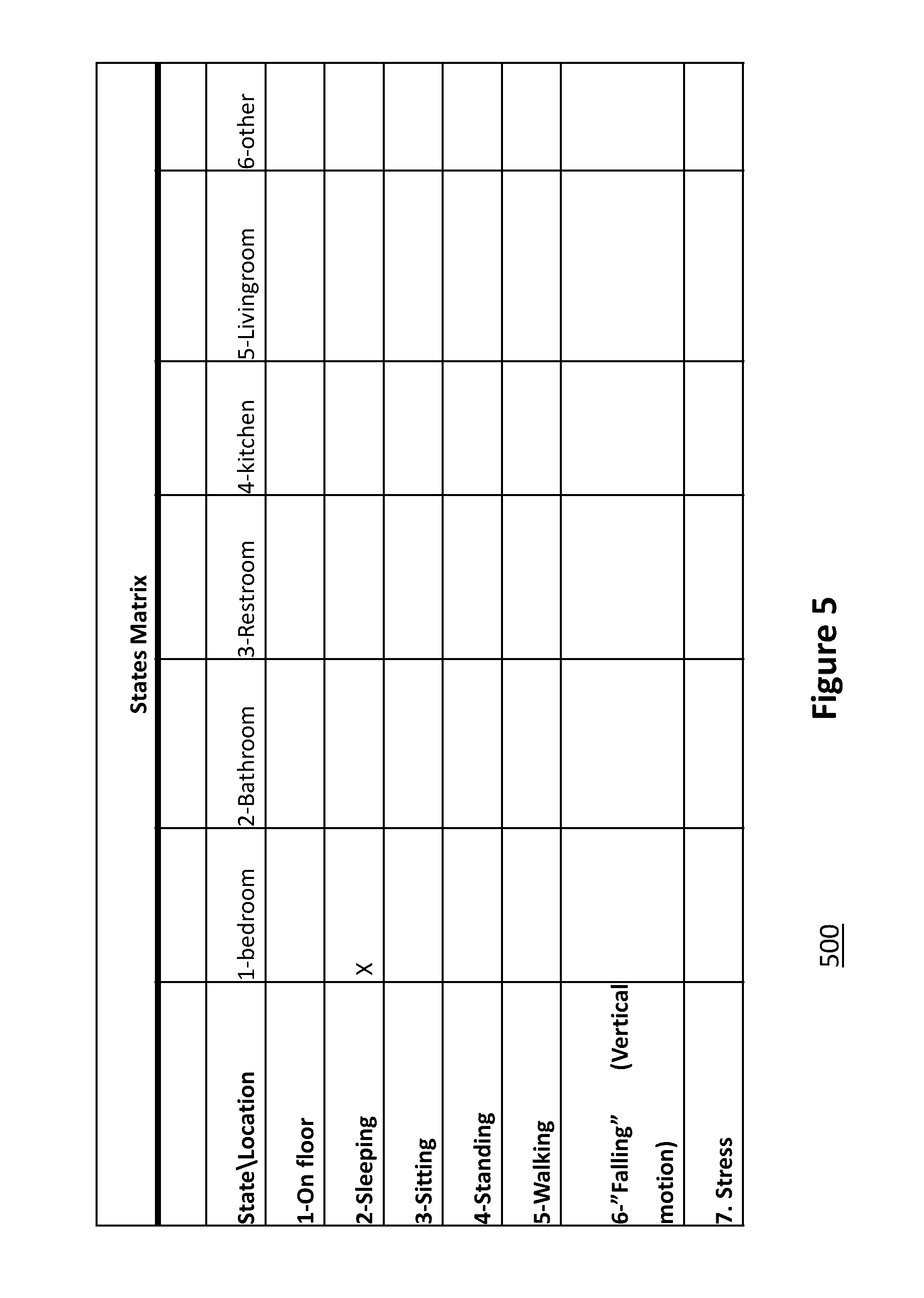

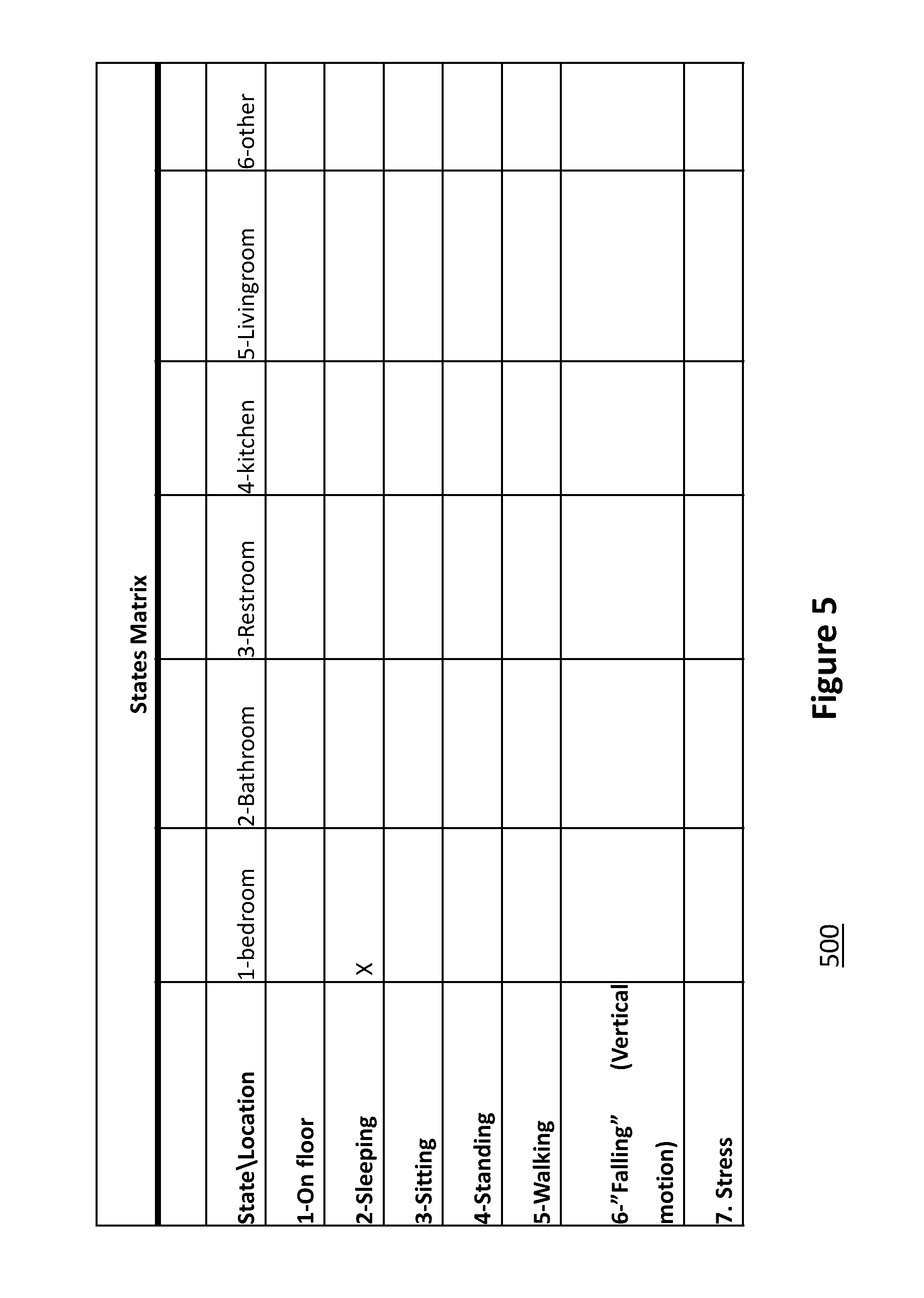

[0016] FIG. 5 is a table illustrating an exemplary states matrix in accordance with some embodiments of the present invention;

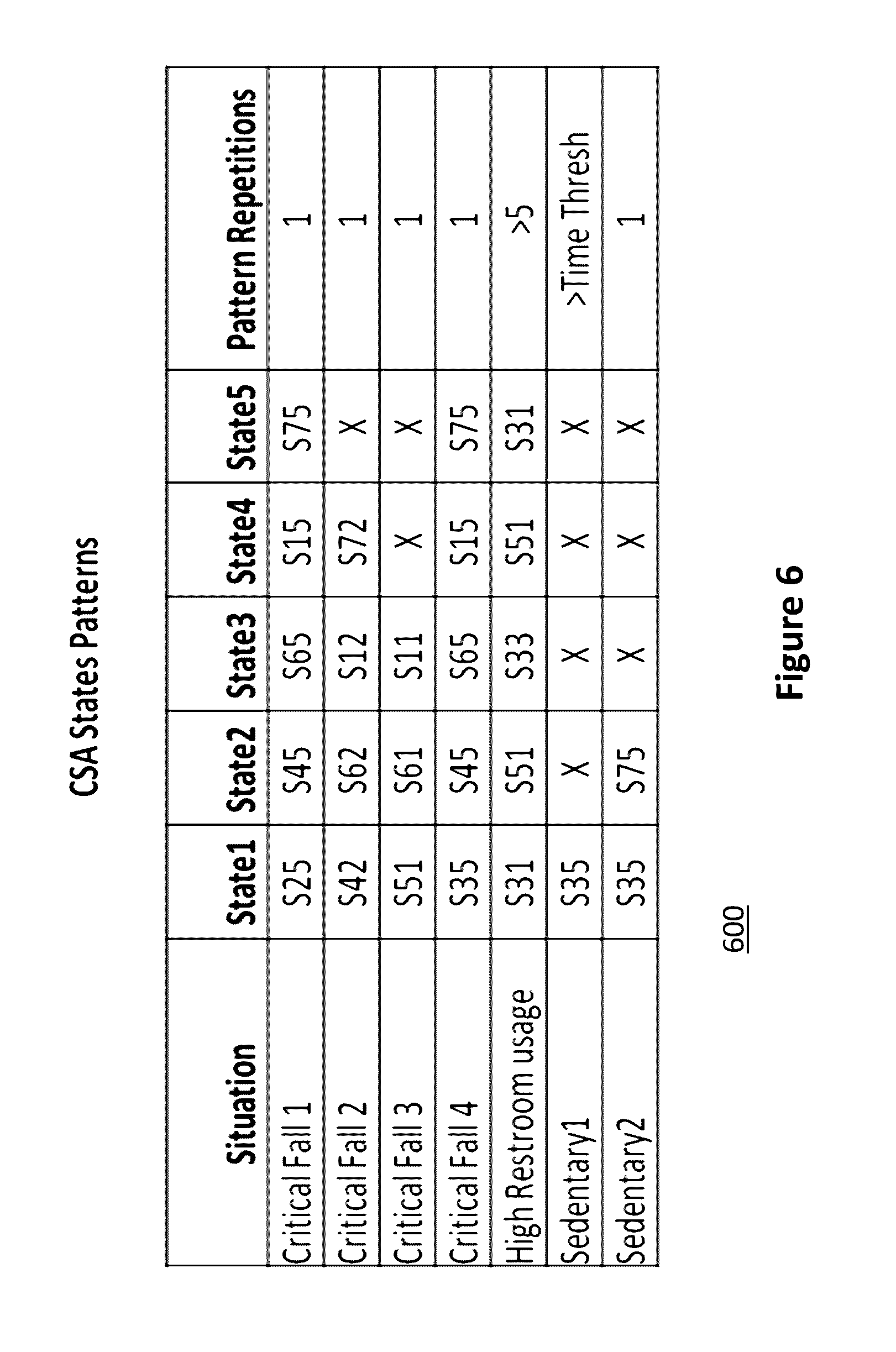

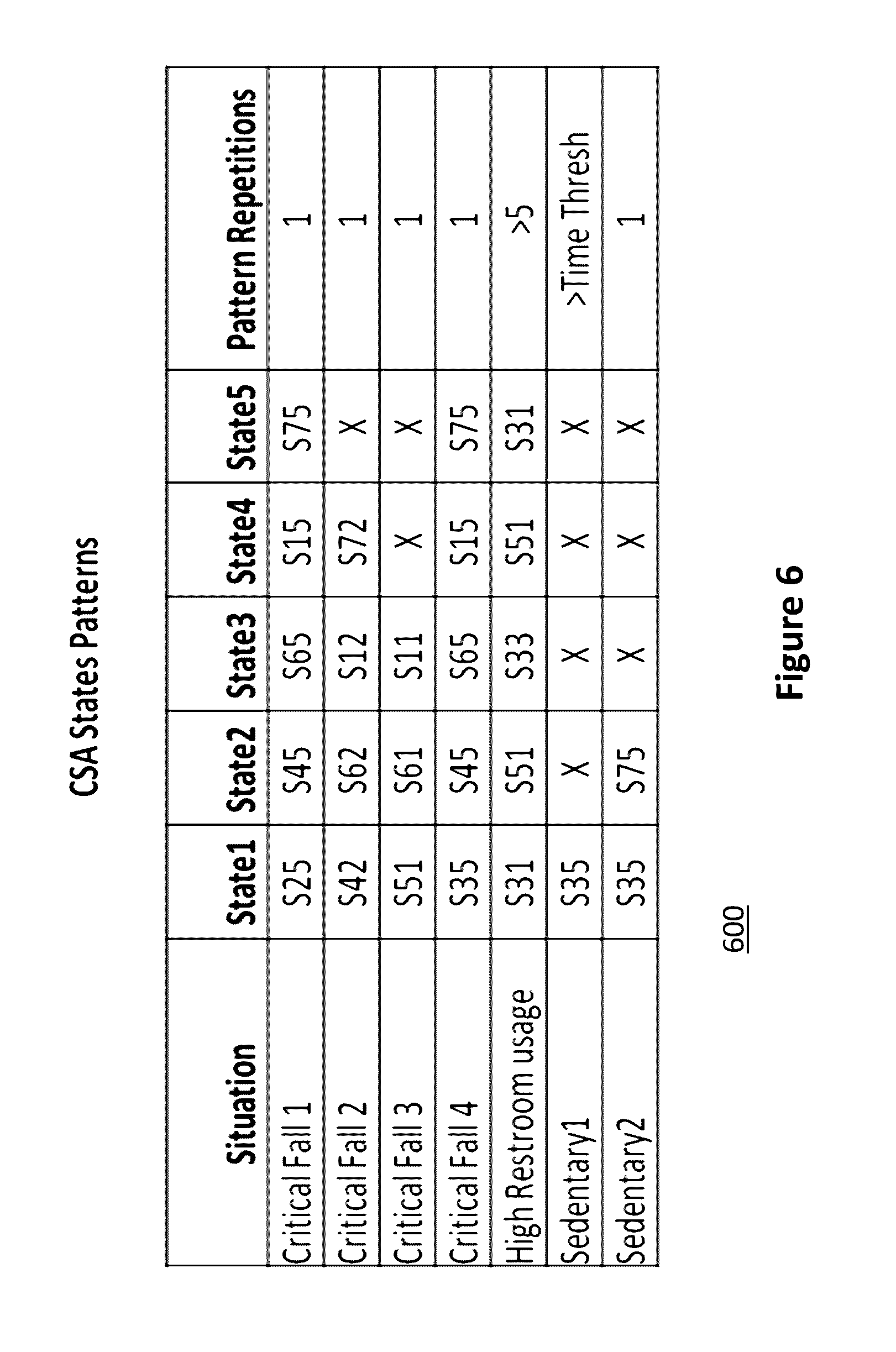

[0017] FIG. 6 is a table illustrating exemplary abnormal patterns in accordance with some embodiments of the present invention;

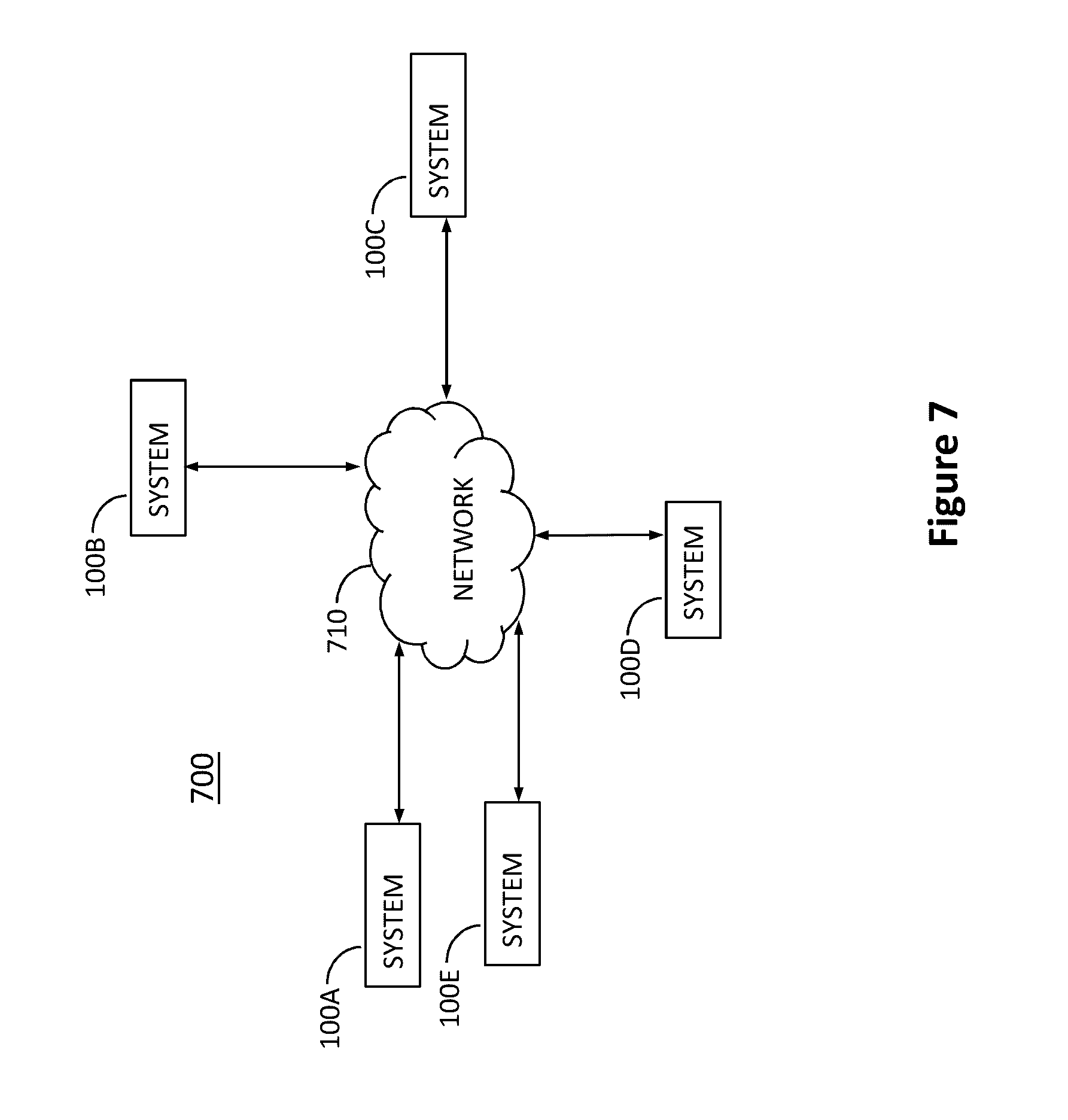

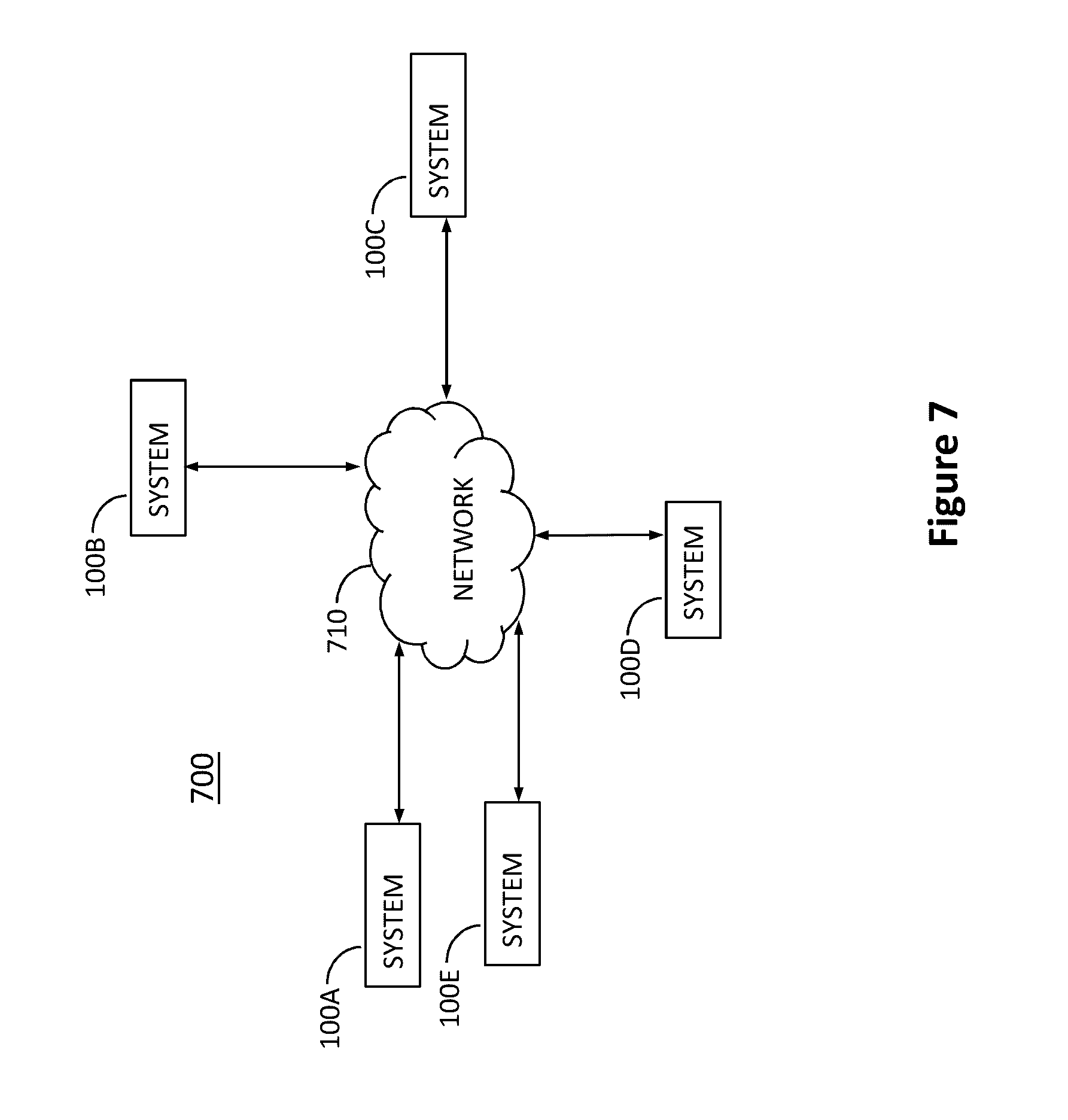

[0018] FIG. 7 is a diagram illustrating a cloud based architecture of the system in accordance with embodiments of the present invention;

[0019] FIG. 8 is a floor plan diagram illustrating initial monitored person training as well as the home environment and primary locations training in accordance with embodiments of the present invention; and

[0020] FIG. 9 is a diagram illustrating yet another aspect in accordance with some embodiments of the present invention.

[0021] It will be appreciated that for simplicity and clarity of illustration, elements shown in the figures have not necessarily been drawn to scale. For example, the dimensions of some of the elements may be exaggerated relative to other elements for clarity. Further, where considered appropriate, reference numerals may be repeated among the figures to indicate corresponding or analogous elements.

DETAILED DESCRIPTION OF THE INVENTION

[0022] With specific reference now to the drawings in detail, it is stressed that the particulars shown are by way of example and for purposes of illustrative discussion of the preferred embodiments of the present invention only, and are presented in the cause of providing what is believed to be the most useful and readily understood description of the principles and conceptual aspects of the invention. In this regard, no attempt is made to show structural details of the invention in more detail than is necessary for a fundamental understanding of the invention, the description taken with the drawings making apparent to those skilled in the art how the several forms of the invention may be embodied in practice.

[0023] Before at least one embodiment of the invention is explained in detail, it is to be understood that the invention is not limited in its application to the details of construction and the arrangement of the components set forth in the following description or illustrated in the drawings. The invention is applicable to other embodiments and is capable of being practiced or carried out in various ways. Also, it is to be understood that the phraseology and terminology employed herein is for the purpose of description and should not be regarded as limiting.

[0024] FIG. 1 is a block diagram illustrating a non-limiting exemplary architecture of a system 100 in accordance with embodiments of the present invention. System 100 may include a radio frequency (RF) interferometer 120 configured to transmit signals via Tx antenna 101 and receive echo signals via array 110-1 to 110-N. It should be noted that the transmit antennas and receive antennas may take different forms and, according to a preferred embodiment, in each antenna array there may be a single transmit antenna and several receive antennas. An environmental clutter cancellation module may or may not be used to filter out static non-human related echo signals. System 100 may include a human state feature extractor 130 configured to extract, from the filtered echo signals, a quantified representation of position, postures, movements, motions and breathing of at least one human located within the specified area. A human state classifier may be configured to identify a most probable fit of human current state that represents an actual human instantaneous status. System 100 may include an abnormality situation pattern recognition module 140 configured to apply a pattern recognition based decision function to the identified states patterns and determine whether an abnormal physical event has occurred to the at least one human in the specified area. System 100 may further include a communication system 150 for communicating with a remote server and end-user equipment for alerting (not shown here). Communication system 150 may further include a two-way communication system between the caregiver and the monitored person for real-time assistance.

[0025] FIG. 2 is another block diagram illustrating the architecture of a system in further details in accordance with some embodiments of the present invention as follows:

[0026] UWB-RF interferometer 220--this unit transmits an ultra-wideband signal (e.g., a pulse) into the monitored environment and receives back the echo signals from multiple antenna arrays to provide a better spatial resolution by using the Synthetic Antenna Aperture approach. In order to increase the received signal-to-noise ratio (SNR), the transmitter sends multiple UWB pulses and the receiver receives and integrates multiple echo signals (processing gain). The multiple received signals (one signal per Rx antenna) are sampled and digitally stored for further signal processing.

[0027] Environmental Clutter Cancellation 230--The echo signals are pre-processed to reduce the environmental clutter (the unwanted reflected echo components that arrive from the home walls, furniture, etc.). The output signal mostly contains only the echo components that are reflected back from the monitored human body. Environmental Clutter Cancellation 230 is fed with the trained environmental parameters 232. In addition, the clutter cancellation includes stationary environment detection (i.e., detecting that no human body is in the zone) to retrain the reference environmental clutter in cases of door or furniture movement.

[0028] Feature extraction 240--The "cleaned" echo signals are then processed to extract the set of features that will be used to classify the instantaneous state of the monitored human person (e.g. posture, location, motion, movement, breathing). The set of the extracted features constructs the feature vector that is the input for the classifier.

[0029] Human state classifier 250--The features vector is fed into a Vector Quantization based classifier that classifies the instantaneous features vector by statistically finding the closest pre-trained state out of a set of N possible states, i.e., finding the closest code vector (centroid) out of all code vectors in a codebook 234. The classifier output is the most probable state with its relative probability (local decision).

[0030] Cognitive Situation Analysis (CSA) 260--This unit recognizes whether the monitored person is in an emergency or abnormal situation. This unit is based on a pattern recognition engine (e.g., one based on a Hidden Markov Model, HMM). The instantaneous states with their probabilities are streamed in and the CSA searches for states patterns that are tagged as emergency or abnormal patterns, such as a fall. These predefined patterns are stored in a patterns codebook 234. In case the CSA recognizes such a pattern, it sends an alarm notification to the healthcare center or family caregiver through the communication unit (e.g., Wi-Fi or cellular).

[0031] Two-way voice/video communication unit 150--this unit may be activated by the remote caregiver to communicate with the monitored person when necessary.

[0032] The UWB-RF interferometer unit 220 may include the following blocks:

[0033] Two-dimensional UWB antenna array 110-1 to 110-N to generate the synthetic aperture through all directions, followed by an antenna selector.

[0034] UWB pulse generator and Tx RF chain to transmit the pulse to the monitored environment.

[0035] UWB Rx chain to receive the echo signals from the antenna array, followed by an analog-to-digital converter (ADC).

[0036] The sampled signals (from each antenna) are stored in the memory, such as SRAM or DRAM.

[0037] In order to increase the received SNR, the RF interferometer repeats the pulse transmission and echo signal reception per each antenna (of the antenna array) and coherently integrates the digital signal to improve the SNR.
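As a non-limiting illustration of the coherent integration described above, the following minimal Python sketch averages repeated echo captures from one antenna. The pulse count, sample count, noise level, and the synthetic single-reflection echo are assumptions chosen for illustration only and are not taken from the specification.

```python
import numpy as np

def coherent_integrate(echoes: np.ndarray) -> np.ndarray:
    """Average repeated echo captures (shape: pulses x samples), sample by sample."""
    return echoes.mean(axis=0)

rng = np.random.default_rng(0)
n_pulses, n_samples = 64, 256          # assumed values, for illustration only

# Hypothetical noise-free echo: a single reflection at sample index 100.
clean = np.zeros(n_samples)
clean[100] = 1.0

# Each repeated pulse returns the same echo plus independent receiver noise.
noise_std = 1.0
echoes = clean + rng.normal(0.0, noise_std, size=(n_pulses, n_samples))

integrated = coherent_integrate(echoes)
residual_noise_std = np.std(integrated - clean)

# Averaging N independent noise realizations lowers the noise standard
# deviation by about sqrt(N), i.e. an SNR gain of about 10*log10(N) dB.
expected_gain_db = 10 * np.log10(n_pulses)
```

Under these assumptions the residual noise level after integration is roughly `noise_std / sqrt(n_pulses)`, which is the processing gain referred to above.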

Environmental Clutter Cancellation

[0038] The environmental clutter cancellation is required to remove the unwanted echo components that are reflected from the apartment's static items, such as walls, doors, furniture, etc.

[0039] The clutter cancellation is done by subtracting the unwanted environmental clutter from the received echo signals. The residual signal represents the echo signals reflected from the monitored human body.

[0040] According to some embodiments of the present invention, the clutter cancellation also includes stationary environment detection to detect whether no person is present in the environment, such as when the person is not at home, or is not in the estimated zone. Therefore, a periodic stationary clutter check is carried out and a new reference clutter fingerprint is captured when the environment is identified as stationary.

[0041] The system according to some embodiments of the present invention re-estimates the environmental clutter to overcome the clutter changes due to doors or furniture movements.
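As a non-limiting illustration, the subtraction and re-estimation steps described above might be sketched in Python as follows. The class name `ClutterCanceller`, the frame-to-frame RMS change used as a stand-in for stationary environment detection, and the threshold and smoothing values are all assumptions made for this sketch; the specification does not define how the stationary check is performed.

```python
import numpy as np

class ClutterCanceller:
    """Subtracts a reference clutter fingerprint and refreshes it when the scene is static."""

    def __init__(self, n_samples, stationary_threshold=0.05, alpha=0.5):
        self.reference = np.zeros(n_samples)   # reference clutter fingerprint
        self.previous = None                   # last echo frame seen
        self.stationary_threshold = stationary_threshold  # assumed RMS threshold
        self.alpha = alpha                     # assumed update weight for the reference

    def is_stationary(self, echo):
        # Assumption: a very small frame-to-frame change means nothing is moving.
        if self.previous is None:
            return False
        change = np.sqrt(np.mean((echo - self.previous) ** 2))
        return change < self.stationary_threshold

    def process(self, echo):
        echo = np.asarray(echo, dtype=float)
        if self.is_stationary(echo):
            # Re-estimate the reference clutter (e.g., after furniture was moved).
            self.reference = (1 - self.alpha) * self.reference + self.alpha * echo
        self.previous = echo
        return echo - self.reference           # residual after clutter subtraction

# Hypothetical 4-sample echoes: static walls, then walls plus a person.
walls = np.array([0.0, 2.0, 0.0, 1.0])
person = np.array([0.0, 0.0, 0.5, 0.0])

canceller = ClutterCanceller(n_samples=4, alpha=1.0)
canceller.process(walls)                       # first frame, nothing to compare with
canceller.process(walls)                       # unchanged frame: reference is captured
residual = canceller.process(walls + person)   # only the person's echo remains
```

A practical system would need a stationary check that does not absorb a motionless person into the reference; this sketch does not address that case.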

Multiple Features Extraction

[0042] The "cleaned" echo signal vectors are used as the raw data for the features extraction unit. This unit extracts the features that best describe the instantaneous state of the monitored person. The following are examples of the extracted features and the methods by which they are extracted:

[0043] Position--the position is extracted as the position metrics output (in the 2D case, angle/range; in the 3D case, x, y, z coordinates) of each array baseline. The actual position of the person at home is determined by a "finger print" method, i.e., as the closest match in the pre-trained home position matrices (centroids) codebook.

[0044] Posture--the person's posture (sitting, standing, or lying) is extracted by creating an "image" of the person using, e.g., a back-projection algorithm.

[0045] Both position and posture are extracted by applying, e.g., the back-projection algorithm to the received echo signals, as acquired from the multiple antenna arrays in SAR operational mode.

[0046] The following procedure is used to find the human position and posture:

[0047] Dividing the surveillance space into voxels (small cubes) in cross range, down range and height

[0048] Estimating the reflected EM signal from a specific voxel by the back projection algorithm

[0049] Estimating the human position by averaging the coordinates of the human reflecting voxels for each baseline (Synthetic Aperture Antenna Array).

[0050] Triangulating all baselines' position to generate the human position in the environment

[0051] Estimating the human posture by mapping the human related high-power voxels into the form-factor vector

[0052] Tracking the human movements in the environment (bedroom, restroom, etc.)
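As a non-limiting illustration of the first steps of the above procedure, the following Python sketch applies a simplified delay-and-sum back projection for a single baseline. It is reduced to two dimensions (pixels rather than voxels), and the antenna geometry, sampling rate, grid spacing, impulse-like echoes, and the function names `path_samples`, `back_project` and `estimate_position` are assumptions introduced for this sketch only.

```python
import numpy as np

C = 3.0e8  # propagation speed in m/s (speed of light)

def path_samples(points, tx, rx, fs):
    """Round-trip delay Tx -> point -> Rx for each point, in whole samples."""
    path_m = np.linalg.norm(points - tx, axis=1) + np.linalg.norm(points - rx, axis=1)
    return np.rint(path_m / C * fs).astype(int)

def back_project(echoes, rx_positions, tx, voxels, fs):
    """Delay-and-sum: add up, per voxel, each antenna's echo at that voxel's delay."""
    image = np.zeros(len(voxels))
    for echo, rx in zip(echoes, rx_positions):
        idx = path_samples(voxels, tx, rx, fs)
        valid = idx < len(echo)
        image[valid] += echo[idx[valid]]
    return image

def estimate_position(image, voxels, rel_threshold=0.9):
    """Average the coordinates of the strongly reflecting voxels."""
    power = image ** 2
    strong = power >= rel_threshold * power.max()
    return voxels[strong].mean(axis=0)

# Hypothetical 2D scene (metres): one Tx, four Rx antennas on a line, one point reflector.
fs = 10e9
tx = np.array([0.0, 0.0])
rx_positions = np.array([[-0.3, 0.0], [-0.1, 0.0], [0.1, 0.0], [0.3, 0.0]])
target = np.array([[1.0, 2.0]])

# Simulated clutter-free echoes: a unit impulse at the target's round-trip delay.
echoes = np.zeros((len(rx_positions), 512))
for echo, rx in zip(echoes, rx_positions):
    echo[path_samples(target, tx, rx, fs)[0]] = 1.0

# Grid with 0.1 m spacing covering x in [0, 2] and y in [1, 3].
xs, ys = np.meshgrid(np.arange(0, 21) / 10.0, np.arange(10, 31) / 10.0)
voxels = np.column_stack([xs.ravel(), ys.ravel()])

image = back_project(echoes, rx_positions, tx, voxels, fs)
position = estimate_position(image, voxels)
```

Triangulation across several baselines, posture mapping into a form-factor vector, and tracking are not covered by this sketch.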

[0053] Human motion--The monitored human body may create vibrations and other motions (such as gestures and gait), which introduce frequency modulation on the returned echo signal. The modulation due to these motions is referred to as the micro-Doppler (m-D) phenomenon. The human body's motion feature is extracted by estimating the micro-Doppler frequency shift vector at the target distance from the system (down range).

[0054] Human breathing--During breathing (respiration) the chest wall moves. The average respiratory rate of a healthy adult is usually 12-20 breaths/min at rest (~0.3 Hz) and 35-45 breaths/min (~0.75 Hz) during labored breathing. The breathing frequency feature is extracted by estimating the spectrum of the slow-time sampled received echo signal at the target distance (down range) from the system.
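As a non-limiting illustration of the breathing feature, the following Python sketch estimates the dominant frequency of a slow-time signal with an FFT. The frame rate, observation length, search band, and the sinusoidal chest-motion model are assumptions for illustration; the specification does not prescribe a particular spectrum estimator.

```python
import numpy as np

def breathing_rate_hz(slow_time, frame_rate_hz, band=(0.1, 1.0)):
    """Return the strongest spectral peak within an assumed breathing band."""
    x = np.asarray(slow_time, dtype=float)
    x = x - x.mean()                                   # remove the static (DC) component
    spectrum = np.abs(np.fft.rfft(x * np.hanning(len(x))))
    freqs = np.fft.rfftfreq(len(x), d=1.0 / frame_rate_hz)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return freqs[in_band][np.argmax(spectrum[in_band])]

# Hypothetical slow-time samples at the target's down-range bin:
# 60 seconds at 20 frames per second, chest wall moving at 0.3 Hz.
frame_rate_hz = 20.0
t = np.arange(1200) / frame_rate_hz
chest = 0.005 * np.sin(2 * np.pi * 0.3 * t)

rate_hz = breathing_rate_hz(chest, frame_rate_hz)
breaths_per_min = 60.0 * rate_hz
```

With a 60-second window the frequency resolution is 1/60 Hz, i.e., one breath per minute, which is why a fairly long slow-time window is assumed here.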

[0055] The features vector is prepared by quantizing the extracted features with a finite number of bits per field and adding a time stamp to the prepared vector. This vector is used as the input data for the human state classifier (for both the training and classifying stages).
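As a non-limiting illustration, a features vector with a fixed number of bits per field and a time stamp might be built as in the Python sketch below. The field names, value ranges, and bit widths are hypothetical; the specification does not list them.

```python
from dataclasses import dataclass
import time

@dataclass(frozen=True)
class Field:
    name: str     # hypothetical feature name
    lo: float     # smallest representable value
    hi: float     # largest representable value
    bits: int     # number of bits allotted to this field

def quantize(value, field):
    """Map a value onto an integer code of field.bits bits (uniform quantizer)."""
    levels = (1 << field.bits) - 1
    clipped = min(max(value, field.lo), field.hi)
    return round((clipped - field.lo) / (field.hi - field.lo) * levels)

# Assumed layout of the features vector, for illustration only.
FIELDS = [
    Field("range_m", 0.0, 10.0, 8),
    Field("height_m", 0.0, 2.5, 6),
    Field("doppler_hz", -50.0, 50.0, 8),
    Field("breathing_hz", 0.0, 1.0, 6),
]

def make_feature_vector(features, timestamp=None):
    """Quantize every field and attach a time stamp."""
    codes = tuple(quantize(features[f.name], f) for f in FIELDS)
    return {"timestamp": time.time() if timestamp is None else timestamp, "codes": codes}

vector = make_feature_vector(
    {"range_m": 10.0, "height_m": 0.0, "doppler_hz": 50.0, "breathing_hz": 0.3},
    timestamp=0.0,
)
```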

Human State Classifier

[0056] The Human state classifier is a VQ (Vector Quantization) based classifier. This classifier consists of two main phases:

[0057] Training phase--this is done offline (supervised training) and online (unsupervised training), where a stream of features vectors reflecting various states is used as a preliminary database for vector quantization and for finding the set of code-vectors (centroids) that sufficiently represent the instantaneous human states. The set of calculated code-vectors is called the codebook. Some embodiments of the training sessions are described in more detail hereinafter.

[0058] Classifying phase--this is executed during online operation, when an unknown features vector is entered into the classifier and the classifier determines the most probable state that it represents. The classifier output is the determined state and the set of measured statistical distances (probabilities), i.e., the probability of state i given the observation O (the features vector). This probability may be written as P(S_i | O). The determined instantaneous state is called the "Local Decision".
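As a non-limiting illustration of the two phases, the Python sketch below builds a codebook from labeled features vectors and classifies an unknown vector by its nearest code-vector. The per-state mean used for training, the Euclidean distance, and the softmax-style conversion of distances into scores standing in for P(S_i | O) are assumptions of this sketch, not the method defined in the specification.

```python
import numpy as np

def train_codebook(vectors, labels, n_states):
    """Supervised training sketch: one code-vector (centroid) per labeled state."""
    return np.stack([vectors[labels == s].mean(axis=0) for s in range(n_states)])

def classify(features, codebook, temperature=1.0):
    """Return the closest state and a normalized score per state."""
    distances = np.linalg.norm(codebook - features, axis=1)
    # Assumption: smaller distance -> larger score, normalized to sum to 1.
    scores = np.exp(-(distances - distances.min()) / temperature)
    probabilities = scores / scores.sum()
    return int(np.argmin(distances)), probabilities

# Hypothetical two-dimensional training vectors for two states.
train_vectors = np.array([[0.0, 0.0], [0.0, 2.0], [10.0, 10.0], [10.0, 12.0]])
train_labels = np.array([0, 0, 1, 1])
codebook = train_codebook(train_vectors, train_labels, n_states=2)

# Local decision for an unknown features vector.
state, probabilities = classify(np.array([1.0, 1.0]), codebook)
```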

[0059] The VQ states are defined as the set of instantaneous states at various locations in the monitored home environment. Therefore, any state is a two-dimensional result that is mapped onto the VQ state matrix.

[0060] The state matrix consists of the state (row) and location (column), followed by a time stamp. A typical elderly home environment consists of specific locations (primary zones) and other non-specified locations (secondary zones). A state is defined as the combination of posture/motion at a specific location (e.g., S21 indicates sleeping in the bedroom).

[0061] FIG. 3 is a diagram illustrating conceptual 2D Synthetic Aperture Antennas arrays in accordance with some embodiments of the present invention. Antenna array system 300 may include several arrays of antennas 320, 330, 340, and 350. Each row may have at least one transmit antenna and a plurality of receive antennas. The aforementioned non-limiting exemplary configuration makes it possible to validate a location of a real target 310 by eliminating the possible images 310A and 310B after checking reflections received at corresponding arrays of antennas 330 and 320, respectively. It is well understood that the aforementioned configuration is a non-limiting example and other antenna configurations may be used effectively.

[0062] FIG. 4 is a table 400 illustrating an exemplary states definition in accordance with some embodiments of the present invention.

[0063] FIG. 5 is a table 500 illustrating an exemplary states matrix in accordance with some embodiments of the present invention.

Cognitive Situation Analysis (CSA)

[0064] The CSA's objective is to recognize abnormal human patterns according to a trained model that contains the possible abnormal cases (e.g., a fall). The core of the CSA, in this embodiment, may be, as a non-limiting example, Hidden Markov Model (HMM) based pattern recognition.

[0065] The CSA engine searches for states patterns that are tagged as emergency or abnormal patterns. These predefined patterns are stored in a patterns codebook.

[0066] The output of the CSA is the Global recognized human situation.

[0067] FIG. 6 is a table 600 illustrating exemplary abnormal patterns in accordance with some embodiments of the present invention. In the first abnormal case (critical fall), the person was sleeping in the living room (S25), then was standing (S45) and immediately fell down (S65). The person stayed on the floor (S15) and started to be in distress, as indicated by a high respiration rate (S75).

[0068] The CSA may contain an additional codebook (an irrelevant-patterns codebook) to identify irrelevant patterns that might mislead the system decision.
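As a non-limiting illustration of searching a stream of local decisions for a tagged abnormal pattern, the Python sketch below uses exact sequence matching as a simplified stand-in for the HMM based pattern recognition mentioned above. The critical fall pattern is the one described for FIG. 6; the class name `SituationAnalyzer`, the history length, and the collapsing of repeated states are assumptions of this sketch.

```python
from collections import deque

# Abnormal patterns codebook; the single entry follows the FIG. 6 example.
ABNORMAL_PATTERNS = {
    "critical_fall": ("S25", "S45", "S65", "S15", "S75"),
}

class SituationAnalyzer:
    """Matches the most recent state transitions against tagged patterns."""

    def __init__(self, patterns, history=32):
        self.patterns = patterns
        self.recent = deque(maxlen=history)   # assumed history length

    def push(self, state):
        """Add one local decision; return a matched pattern name, or None."""
        # Assumption: consecutive repeats of a state count as a single step.
        if not self.recent or self.recent[-1] != state:
            self.recent.append(state)
        tail = tuple(self.recent)
        for name, pattern in self.patterns.items():
            if tail[-len(pattern):] == pattern:
                return name
        return None

analyzer = SituationAnalyzer(ABNORMAL_PATTERNS)
stream = ["S25", "S25", "S45", "S65", "S15", "S15", "S75"]
decisions = [analyzer.push(state) for state in stream]
```

An HMM based engine would additionally weigh the state probabilities and transition probabilities, which exact matching ignores.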

Communication Unit

[0069] The communication unit creates the channel between the system and the remote caregiver (family member or operator center). It may be based on either wired connectivity (e.g., Ethernet) or wireless connectivity (e.g., cellular, Wi-Fi, or any other communication channel).

[0070] The communication unit provides the following functionalities:

[0071] 1. This unit transmits any required ongoing situation of the monitored person and emergency alerts.

[0072] 2. It enables two-way voice/video communication with the monitored person when necessary. Such communication is activated either automatically, whenever the system recognizes an emergency situation, or remotely by the caregiver.

[0073] 3. It enables remote system upgrades of both software and updated codebooks (as will be described in further detail below).

[0074] 4. It enables communication with the centralized system (cloud) to share common information and for further big data analytics based on multiple deployments of such a system.

[0075] FIG. 7 is a diagram illustrating cloud-based architecture 700 of the system in accordance with embodiments of the present invention. Raw data history (e.g., the states stream) is passed from each local system 100A-100E to the central unit located on a cloud system 710, which performs various data analyses to find correlations of states patterns among the multiple users' data and to identify new abnormal patterns that may appear just before a recognized abnormal pattern. New pattern code vectors are added to the CSA codebook, and the cloud remotely updates the multiple local systems with the new codebook. The data is also used to analyze the daily operation of local systems 100A-100E.

[0076] FIG. 8 is a diagram illustrating a floor plan 800 of an exemplary residential environment (e.g., an apartment) on which the process for the initial training is described herein. The home environment is mapped into the primary zones (the major home places where the monitored person spends most of the time, such as bedroom 810, restroom 820, living room 830 and the like) and secondary zones (the rest of the barely used environments).

[0077] The VQ based human state classifier (described above) is trained to know the various primary places in the home. This is done during the system setup, while the installer 10A (being the elderly person or another person) stands or walks at each primary place, such as bedroom 810, restroom 820, and living room 830, and lets the system learn the "finger print" of the extracted echo signal features that best represents that place. These finger prints are stored in the VQ positions codebook.

[0078] In addition, the system learns the boundaries of the home's external walls. This is done during the system setup, while the installer stands at various places along the external walls and lets the system tune its power and processing gain (integration) towards each direction. For example, in bedroom 810, installer 10A may walk along the walls in route 840 so that the borders of bedroom 810 are detected by tracking the changes in the RF signal reflections throughout the process of walking. A similar border identification process can be carried out in restroom 820 and living room 830.

[0079] Finally, the system learns to identify the monitored person 10B. This is done by capturing the finger print of the extracted features under several conditions, such as (1) while the person lies in the default bed 812 (where he or she is supposed to be during nighttime), to learn the overall body volume; (2) while the person is standing, to learn the stature; and (3) while the person walks, to learn the gait.

[0080] All the captured cases are stored in the VQ unit and are used to weight the pre-trained codebooks and to generate the specific home/person codebooks.

[0081] According to some embodiments, one or more additional persons, such as person 20, can also be monitored simultaneously. The additional person can be another elderly person with a specific fingerprint, or a caregiver who need not be monitored for abnormal postures.

[0082] FIG. 9 is a diagram illustrating yet another aspect in accordance with some embodiments of the present invention. System 900 is similar to the system described above but it is further enhanced by the ability to interface with at least one wearable medical sensor 910A or 910B coupled to the body of human 10 configured to sense vital signs of human 10, and a home safety sensor 920 configured to sense ambient conditions at said specified area, and wherein data from said at least one sensor are used by said decision function for improving the decision whether an abnormal physical event has occurred to the at least one human in said specified area. The vital signs sensor may sense ECG, heart rate, blood pressure, respiratory system parameters and the like. Home safety sensors may include temperature sensors, smoke detectors, open-door detectors and the like. Data from all or some of these additional sensors may be used in order to improve the decision making process described above.

[0083] In the above description, an embodiment is an example or implementation of the inventions. The various appearances of "one embodiment," "an embodiment" or "some embodiments" do not necessarily all refer to the same embodiments.

[0084] Although various features of the invention may be described in the context of a single embodiment, the features may also be provided separately or in any suitable combination. Conversely, although the invention may be described herein in the context of separate embodiments for clarity, the invention may also be implemented in a single embodiment.

[0085] Reference in the specification to "some embodiments", "an embodiment", "one embodiment" or "other embodiments" means that a particular feature, structure, or characteristic described in connection with the embodiments is included in at least some embodiments, but not necessarily all embodiments, of the inventions.

[0086] It is to be understood that the phraseology and terminology employed herein are not to be construed as limiting and are for descriptive purposes only.

[0087] The principles and uses of the teachings of the present invention may be better understood with reference to the accompanying description, figures and examples.

[0088] It is to be understood that the details set forth herein do not constitute a limitation to an application of the invention.

[0089] Furthermore, it is to be understood that the invention can be carried out or practiced in various ways and that the invention can be implemented in embodiments other than the ones outlined in the description above.

[0090] It is to be understood that the terms "including", "comprising", "consisting" and grammatical variants thereof do not preclude the addition of one or more components, features, steps, or integers or groups thereof and that the terms are to be construed as specifying components, features, steps or integers.

[0091] If the specification or claims refer to "an additional" element, that does not preclude there being more than one of the additional element.

[0092] It is to be understood that where the claims or specification refer to "a" or "an" element, such reference is not to be construed as meaning that there is only one of that element.

[0093] It is to be understood that where the specification states that a component, feature, structure, or characteristic "may", "might", "can" or "could" be included, that particular component, feature, structure, or characteristic is not required to be included.

[0094] Where applicable, although state diagrams, flow diagrams or both may be used to describe embodiments, the invention is not limited to those diagrams or to the corresponding descriptions. For example, flow need not move through each illustrated box or state, or in exactly the same order as illustrated and described.

[0095] Methods of the present invention may be implemented by performing or completing manually, automatically, or a combination thereof, selected steps or tasks.

[0096] The descriptions, examples, methods and materials presented in the claims and the specification are not to be construed as limiting but rather as illustrative only.

[0097] Technical and scientific terms used herein are to be understood as they are commonly understood by one of ordinary skill in the art to which the invention belongs, unless otherwise defined.

[0098] The present invention may be implemented in the testing or practice with methods and materials equivalent or similar to those described herein.

[0099] While the invention has been described with respect to a limited number of embodiments, these should not be construed as limitations on the scope of the invention, but rather as exemplifications of some of the preferred embodiments. Other possible variations, modifications, and applications are also within the scope of the invention. Accordingly, the scope of the invention should not be limited by what has thus far been described, but by the appended claims and their legal equivalents.

* * * * *
