Context Dependent Application/event Activation

JUNQUA; Jean-Claude ; et al.

U.S. patent application number 14/758208 was filed with the patent office on 2015-12-31 for context dependent application/event activation. This patent application is currently assigned to PANASONIC CORPORATION OF NORTH AMERICA. The applicant listed for this patent is PANASONIC CORPORATION OF NORTH AMERICA. Invention is credited to Jean-Claude JUNQUA, Philippe MORIN, Gary David SASAKI, Ricardo H. TEIXEIRA.

| Application Number | 20150379477 14/758208 |


| Family ID | 48743520 |

| Filed Date | 2015-12-31 |


| United States Patent Application | 20150379477 |

| Kind Code | A1 |

| JUNQUA; Jean-Claude ; et al. | December 31, 2015 |

CONTEXT DEPENDENT APPLICATION/EVENT ACTIVATION

Abstract

The system includes a computer-readable memory having a data structure configured to store information about a time-based event for a patient having reduced cognitive abilities, and optionally also electronic data reflecting the patient's cognitive ability. A networked computer system coupled to the computer-readable memory provides an information communicating interface to the patient. The computer system is programmed to monitor context information relevant to the patient and to dynamically adjust the presentation of the stored information based on the context, and optionally also based upon the patient's cognitive ability.

| Inventors: | JUNQUA; Jean-Claude; (Cupertino, CA) ; SASAKI; Gary David; (Cupertino, CA) ; TEIXEIRA; Ricardo H.; (Cupertino, CA) ; MORIN; Philippe; (Goleta, CA) |
|---|---|
| Applicant: | PANASONIC CORPORATION OF NORTH AMERICA |
| Assignee: | PANASONIC CORPORATION OF NORTH AMERICA, Newark, NJ |
| Family ID: | 48743520 |
| Appl. No.: | 14/758208 |
| Filed: | December 20, 2013 |
| PCT Filed: | December 20, 2013 |
| PCT NO: | PCT/US13/76824 |
| 371 Date: | June 26, 2015 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number |
|---|---|---|
| 13730327 | Dec 28, 2012 | 8803690 |
| 14758208 | | |
| 61631500 | Jan 6, 2012 | |

| Current U.S. Class: | 705/2 |

| Current CPC Class: | G06F 19/3418 20130101; G06Q 10/1093 20130101; G06F 16/211 20190101; G16H 50/30 20180101; G06F 19/3456 20130101; G16H 20/70 20180101; G16H 40/67 20180101; G08B 21/0263 20130101; G06Q 50/22 20130101; G08B 5/22 20130101; G08B 21/0423 20130101 |

| International Class: | G06Q 10/10 20060101 G06Q010/10; G06Q 50/22 20060101 G06Q050/22 |

Claims

1. A computer-implemented system for assisting persons of reduced cognitive ability to manage upcoming events, comprising: a computer-implemented data store configured to store information about a context-based event for a patient having reduced cognitive abilities; a networked computer system coupled to said data store that provides an information communicating interface to the patient; said computer system being programmed to monitor context information relevant to the patient; said computer system being further programmed to dynamically adjust the presentation of said stored information to the patient based on said context information and the cognitive ability of the patient ascertained from the patient during interactive use of the computer-implemented system or from a caregiver associated with the patient.

2. The system of claim 1 wherein the context information includes a time relative to the context-based event.

3. The system of claim 1 wherein the context information includes information relating to at least one of: the patient's medical condition, weight, vital signs, activity level, habitual behaviors and lifestyle.

4. The system of claim 1 further comprising at least one sensor for measuring at least one of: the patient's medical condition, weight, vital signs, activity level, habitual behaviors and lifestyle.

5. The system of claim 1 wherein the context information includes information relating to at least one of: location of patient, mobility of patient and ambient temperature and weather conditions in proximity of patient.

6. The system of claim 1 further comprising at least one sensor for measuring at least one of: location of patient, mobility of patient and ambient temperature and weather conditions in proximity of patient.

7. The system of claim 1 wherein said context information is a patient-related context.

8. The system of claim 1 wherein said context information is a situational context external to the patient.

9. The system of claim 1 wherein said context information is a technology context associated with the networked computer system.

10. The system of claim 1 wherein said computer system being further programmed to dynamically adjust the presentation of said stored information by automatically launching an application running on the computer system.

11. The system of claim 1 wherein said computer system being further programmed to dynamically adjust the presentation of said stored information by automatically launching an application running on a device in proximity to the patient.

12. The system of claim 1 wherein said computer system being further programmed to dynamically adjust the presentation of said stored information by adapting the modality of a multi-modal device.

13. The system of claim 1 wherein said computer system being further programmed to dynamically adjust the complexity of information presented.

14. The system of claim 1 wherein said computer system being further programmed to dynamically adjust the content of information presented.

15. The system of claim 1 wherein said computer system includes a caregiver interface through which a caregiver furnishes information to said data store about time-based events for the patient.

16. The system of claim 1 wherein said computer system is further programmed to automatically transfer the presentation from a first device to a second device based on said context information.

17. The system of claim 1 wherein said computer system is programmed to present said stored information on a first device and a second device and further programmed to separately and dynamically adjust the presentation on said first and second devices based on said context information.

18. A computer-implemented system for assisting persons of reduced cognitive ability to manage upcoming events, comprising: a computer-implemented data store configured to store plural items of information about a context-based event for a patient having reduced cognitive abilities; a networked computer system coupled to said data store that provides: a first information communicating interface to the patient and a second information communicating interface to a caregiver associated with the patient; the data store having a data structure in which to store electronic data indicative of the patient's cognitive ability; the networked computer system being programmed to receive through said second interface plural items of information about a specific context-based event and being programmed to store said received plural items of information as a record in said data store associated with said specific context-based event; the networked computer system being programmed to dynamically render said stored information to the patient through the first interface in a manner such that the rendering changes as the context changes; the networked computer system being further programmed to access said data structure storing said electronic data indicative of the patient's cognitive ability and to control the manner in which the dynamic presentation is delivered to the patient based on the accessed electronic data; and the networked computer system being further programmed to collect information indicative of cognitive ability from the patient through the first interface during interactive use or from a caregiver through the second interface and to store the collected information in the data structure storing electronic data indicative of the patient's cognitive ability.

19. The system of claim 18 wherein the context-based event is a time-based event.

20. The system of claim 18 wherein the networked computer system is further programmed to collect interaction data from the patient through the first interface and to supply the collected interaction data to the caregiver through the second interface.

21. (canceled)

22. The system of claim 18 wherein the networked computer system is further programmed to collect information indicative of cognitive ability and wherein the networked computer system is programmed to deliver a memory exercising game through the first interface and to extract from said game said information indicative of cognitive ability.

23. The system of claim 18 wherein the first interface is a display interface supported by at least one of a portable device and a wearable device used by the patient.

24. The system of claim 18 wherein the first interface includes a display screen and the electronic data indicative of the patient's cognitive ability is used to adjust the amount of information presented concurrently on the display screen.

25. The system of claim 18 wherein the first interface includes an aural interface producing speech messages and the electronic data indicative of the patient's cognitive ability is used to adjust at least one of speaking speed, vocabulary, and grammatical complexity of the speech messages.

26. The system of claim 18 further comprising a sensor measuring at least one of the patient's medical condition, weight, vital signs, activity level, habitual behaviors and lifestyle, location, mobility, ambient temperature and weather conditions in proximity of patient; and wherein the networked computer system is programmed to adjust the dynamic presentation based on said sensor-measured condition.

27. The system of claim 18 wherein the plural items of information each provide successively greater amounts of information about the time-based event.

28. The system of claim 18 wherein said caregiver is a member of the patient's family and the second interface is supported by a browser running on a device operated by the caregiver and communicating with said networked computer system.

29. The system of claim 18 wherein said caregiver is a member of a professional nursing home or healthcare organization and the second interface is supported by a browser running on a device operated by the caregiver and communicating with said networked computer system.

30. The system of claim 18 wherein said caregiver is a member of a professional nursing home or healthcare organization and the second interface is supported by a client application running on a device operated by the caregiver and communicating with said networked computer system.

31. The system of claim 18 wherein the first interface employs a computer device operated by or in proximity to the patient, said computer device running an autonomous program that delivers said dynamic presentation even when communication with the networked computer system is interrupted.

32. The system of claim 18 wherein said first interface includes speech input responsive to speech of the patient and wherein said networked computer system is programmed to receive said speech input and use it to assess the cognitive ability or emotional state of the patient.

33. The system of claim 18 wherein the networked computer system provides a third information communicating interface to another caregiver who inputs information about the condition of the patient.

34. The system of claim 18 wherein the networked computer system provides a third information communicating interface to another caregiver who inputs information about the condition of the patient wherein the information input by the another caregiver is used to update the electronic data indicative of the patient's cognitive ability.

35. The system of claim 18 wherein the networked computer system provides a third information communicating interface to another caregiver who inputs information about the condition of the patient wherein the information input by the another caregiver is used to supply status information about the patient through the second interface.

36. The system of claim 18 wherein the networked computer system provides a software interface adapted to allow other software systems to interact with the data store.

37. The system of claim 18 wherein the networked computer system provides a software interface adapted to allow a sensor measuring at least one of the patient's medical condition, weight, activity level, habitual behaviors, lifestyle, location, mobility, ambient temperature and weather conditions in proximity of patient to interact with the data store.

38. The system of claim 18 wherein the data structure is configured to represent cognitive ability as a model corresponding to a set of dimensions including at least one of anxiety level, vision impairment, vision skills, short-term memory skills, long-term memory skills, recognizing and remembering names/familiar faces, reading comprehension skills, attention skills, time and space sensing, speech skills, hearing and comprehension skills, ability to solve simple logical problems, and inference skill including ability to understand normal implied consequences of actions and facts.

39. The system of claim 18 wherein said networked computer system is programmed to automatically adjust the message as the patient's cognitive ability changes, as reflected by said accessed electronic data.

Description

TECHNICAL FIELD

[0001] This disclosure relates to a computer-implemented system for assisting persons of reduced cognitive ability to manage upcoming events.

BACKGROUND

[0002] This section provides background information related to the present disclosure which is not necessarily prior art.

[0003] People with early to moderate Alzheimer's disease or dementia suffer from both memory loss and the inability to operate complex devices. These people are often anxious about missing events or activities, or forgetting other time-based issues. Consequently, these people often write copious notes to themselves. The accumulation of notes results in another form of confusion because they forget which notes matter and when they matter.

[0004] Notes placed on calendars are not always effective because the current date and/or time are not always known. In fact, keeping track of today's date, which day of the week it is, or even what part of the day it is (e.g. morning vs. evening) can be challenging.

[0005] If the person with Alzheimer's disease does remember that an important event is coming up, but this event is still many days or weeks away, anxiety can set in because they remember the event, but not when it is. Or, they can mistakenly think the event is happening tomorrow, even though it is not happening for several weeks. Consequently, people get told the wrong information by this person and/or repeated phone calls are made by this person to friends or family asking about details of the event.

[0006] If the person with Alzheimer's disease needs to remember a periodic activity, such as taking pills at certain times of the day, the first challenge is to remember to take the pills. The second challenge is to remember that they took the pills after they have already done so. The third challenge is giving a remote friend or family member some indication that the pills were taken so that a reminder phone call could be made if not.

[0007] Friends and family that wish to remind this person about an event may try to add their own notes, if they happen to be visiting. But, again, these notes can add to the pile of other notes that often get ignored or forgotten. Further, if someone takes this person on a short trip, other people may not know about this trip and consequently wonder if this person is OK when the phone is not answered.

[0008] If the person with Alzheimer's disease is in an assisted living home, staff can put a reminder note in an obvious place on the day of an event; but, placing these notes requires labor and the note is still often ignored when it comes time for the event to start. Consequently, staff may have to visit the person again to remind them when it is time.

[0009] Thus, in an age of accessibility, the idea of being able to have one's medical records, doctor's contact information and even one's calendar schedule at the click of a button seems commonplace. However, for the over five million people who suffer from mental diseases such as Alzheimer's disease and dementia, these commonplace conveniences are beyond reach. Such persons are incapable of utilizing the new technology and applications due to age or mental disease. Individuals with impaired cognitive abilities have difficulty focusing and are easily confused, making it a challenge to interact with displays, computers, remote controls and many other daily objects/devices. Further, cognitively impaired individuals require the constant assistance of third parties (e.g. family members, friends, and other such caregivers) to perform simple day to day tasks.

[0010] Individuals with impaired cognitive abilities, herein referred to as patients, are not completely incapable of independent actions. Many are capable of getting dressed and feeding themselves, yet it may be the simple action of remembering to perform such an action that prevents them from living without the assistance of a third party.

[0011] Current market technologies include products such as portable data storage devices for medical records, emergency notification devices, portable medical monitoring systems, daily calendar alerts, etc. However, all of these devices require the continuous action of a third party and/or are limited in their usage due to the patient's cognitive ability.

[0012] While third parties can assist people with impaired cognitive abilities to perform simple tasks (such as remembering events), there is a need to develop automatic solutions which assist people with varying levels of impaired cognitive abilities to perform and enjoy a variety of tasks and events in a natural and non-intrusive manner.

SUMMARY

[0013] This section provides a general summary of the disclosure, and is not a comprehensive disclosure of its full scope or all of its features.

[0014] The disclosed computer-implemented system assists persons of reduced cognitive ability. It can dynamically offload mental tasks of the patient to a computer system based on the patient's cognitive ability. In addition, it provides an interface that dynamically customizes the manner of interaction with the patient based on the patient's cognitive ability. In some instances the patient's reduced cognitive ability may be due to a diagnosed medical condition, such as Alzheimer's disease or dementia. In other instances the reduced cognitive ability may be due to other factors, such as aging, stress or anxiety. The disclosed system is capable of assisting patients in all of these situations, and is thus not limited to diagnosed medical conditions such as Alzheimer's disease or dementia.

[0015] In one aspect, the disclosed computer-implemented system employs a memory having a data structure configured to store electronic data indicative of a patient's cognitive ability. The computer system is programmed to dynamically present information based on the patient's cognitive ability, as ascertained by accessing the data structure.

[0016] In another aspect, the computer system is programmed to acquire and store context information relevant to the patient.

[0017] The computer-implemented system uses the patient's cognitive ability and/or context information to customize how interaction is performed with the patient and with third parties, such as the patient's caregiver. As used herein, the term "caregiver" is intended to refer to any person who provides assistance to the patient, including family members, doctors, professional nursing home staff, and the like.

[0018] Based on the patient's cognitive ability and/or context information, the computer-implemented system dynamically renders assistive information to the patient and dynamically and automatically launches computer applications to assist the patient without the necessity of the patient's mindful interaction. The system dynamically adapts and customizes the presentation of information to the patient by adapting the content and complexity of messages presented and by adapting the modality of multi-modal devices used by the patient, including providing audible and visual information to the patient based on cognitive ability and/or context. The audible information may include speech, which the system is able to dynamically adapt to suit the abilities and needs of the patient, for example by adapting the vocabulary, speaking speed, grammar complexity and length of messages based on cognitive ability and/or context.

[0019] In another aspect the system employs a computer-implemented data store configured to store plural items of information about a time-based event for a patient having reduced cognitive abilities. A networked computer system coupled to said data store provides a first information communicating interface to the patient and a second information communicating interface to a caregiver associated with the patient.

[0020] The data store has a data structure in which to store electronic data indicative of the patient's cognitive ability. The networked computer system is programmed to receive, through the second interface, plural items of information about a specific time-based event and is further programmed to store the received plural items of information as a record in said data store associated with said specific time-based event.

[0021] The networked computer system is further programmed to supply information to the patient through the first interface in a fashion such that the stored plural items of information associated with the event are used to construct a dynamic message communicated to the patient in increasing levels of detail as the time of the event draws nearer.

[0022] In addition, the networked computer system is further programmed to access the data structure storing said electronic data indicative of the patient's cognitive ability so as to control the manner in which the dynamic message is delivered to the patient based on the accessed electronic data.
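By way of non-limiting illustration, the following Python sketch shows one way the stored plural items could be assembled into a message that grows more detailed as the event nears, while a cognitive ability setting caps how much is shown at once. The names `EventRecord`, `build_message` and `max_items`, and the seven-day and one-day thresholds, are assumptions made for this sketch and do not appear in the disclosure.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class EventRecord:
    """One time-based event with plural items of increasing detail."""
    start: datetime
    items: list  # strings ordered from least to most detailed


def build_message(record: EventRecord, now: datetime, max_items: int) -> str:
    """Reveal more items as the event nears, capped by cognitive ability.

    The day thresholds and `max_items` are hypothetical tuning values.
    """
    remaining = record.start - now
    if remaining > timedelta(days=7):
        level = 1                      # far away: headline only
    elif remaining > timedelta(days=1):
        level = 2                      # within a week: add the day
    else:
        level = len(record.items)      # within a day: full detail
    # Always show at least one item, never more than the ability cap.
    shown = record.items[: max(1, min(level, max_items))]
    return " ".join(shown)
```

In this sketch a caregiver-supplied record with three items yields one, two or three sentences depending on how close the event is, and a patient profile with `max_items=1` always receives only the shortest form.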

[0023] Further areas of applicability will become apparent from the description provided herein. The description and specific examples in this summary are intended for purposes of illustration only and are not intended to limit the scope of the present disclosure.

BRIEF DESCRIPTION OF THE DRAWINGS

[0024] The drawings described herein are for illustrative purposes only of selected embodiments and not all possible implementations, and are not intended to limit the scope of the present disclosure.

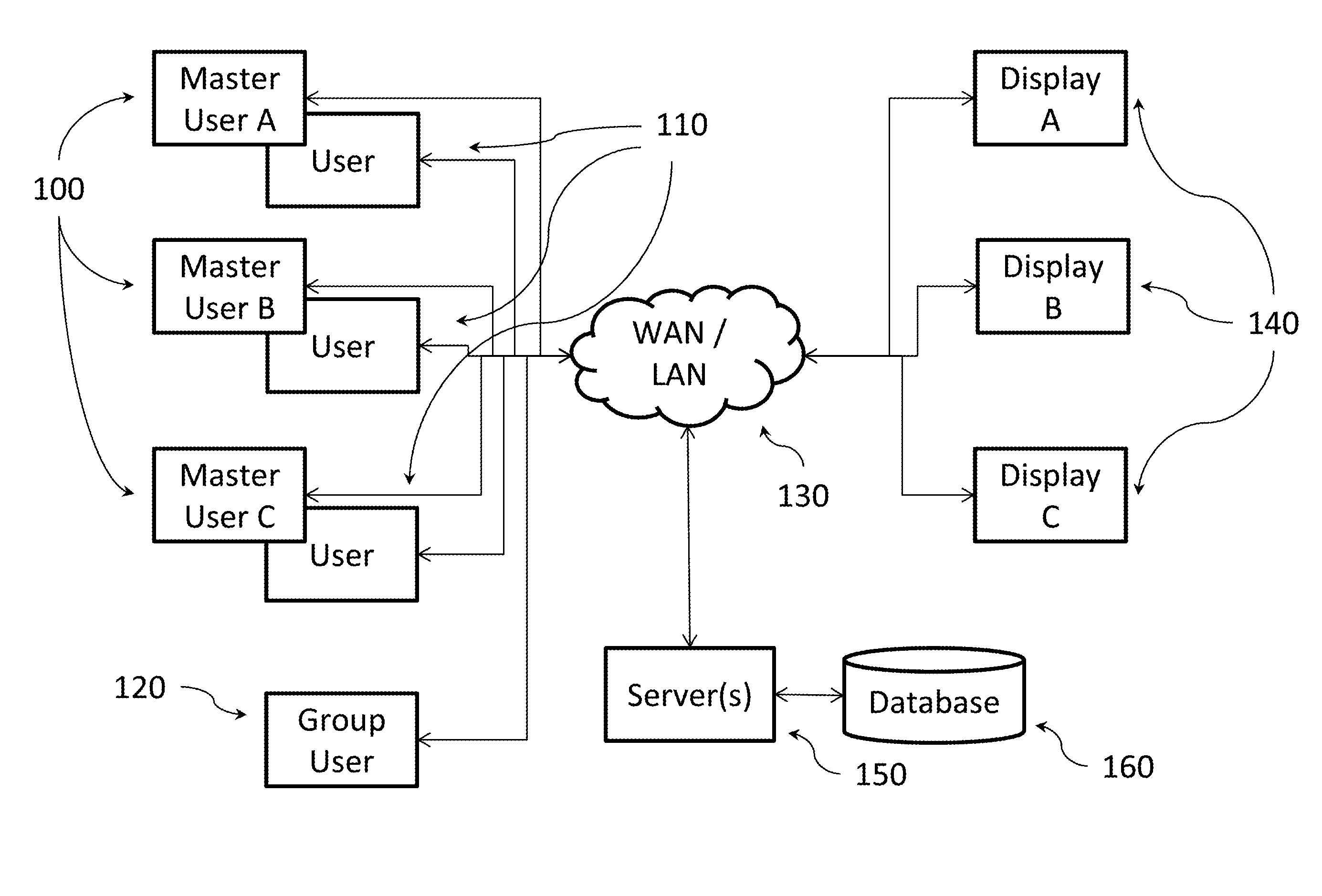

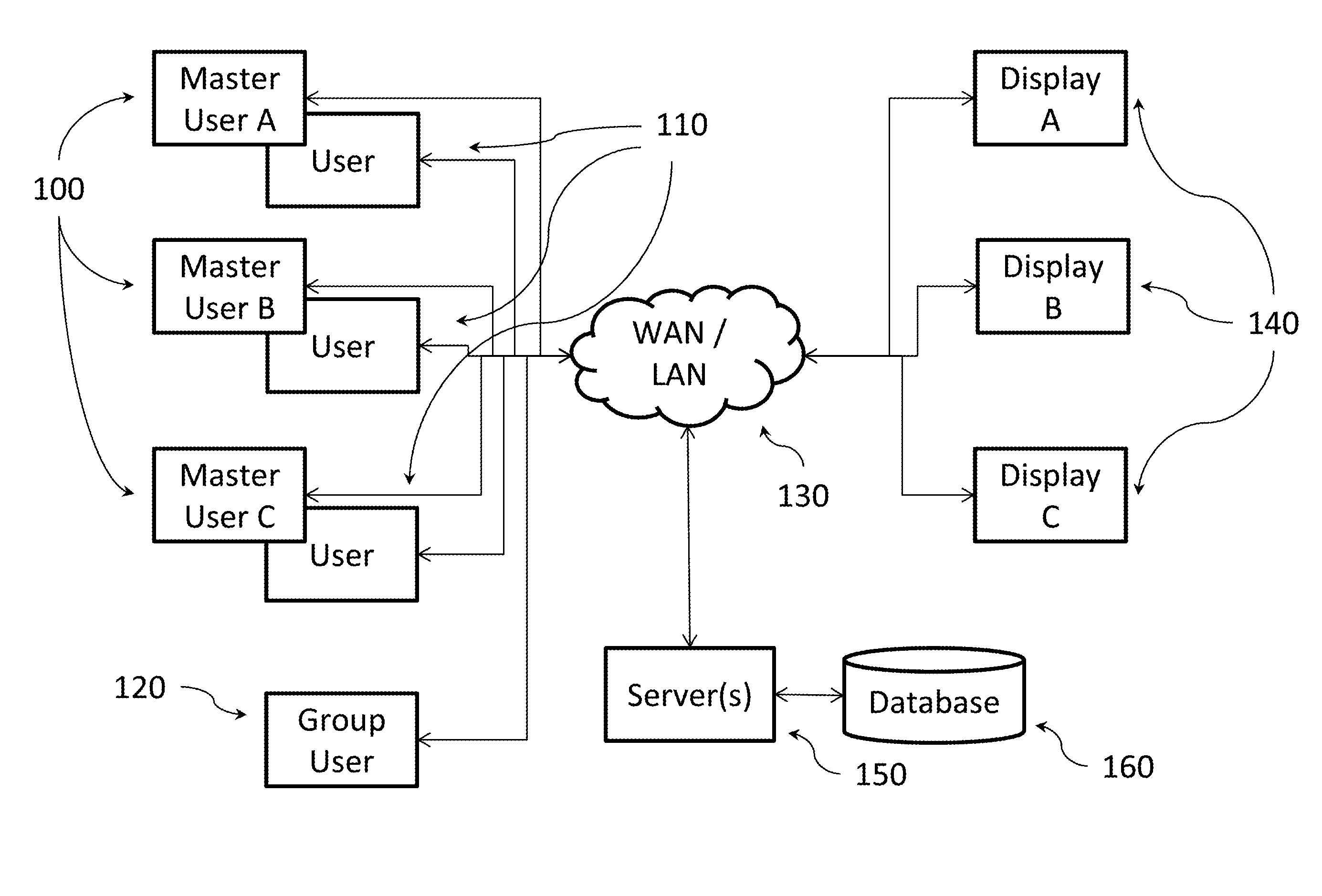

[0025] FIG. 1 shows a general system architecture showing users, display devices, server-based system, and network.

[0026] FIG. 2 shows example messaging seen on a display device.

[0027] FIG. 3 shows example messaging seen on a display device after a reminder was acknowledged.

[0028] FIG. 4 shows examples of alternative approaches for where to place system logic within the overall system.

[0029] FIG. 5 illustrates exemplary database elements used by the system.

[0030] FIG. 6 provides an example of a simplified flow of logic for the display device.

[0031] FIG. 7 shows the relationship of group messages and different types of users for a particular display device.

[0032] FIG. 8 shows an example process for setting up a relationship between group and master users for a display device.

[0033] FIG. 9 shows a subset of the system for illustrating reminder acknowledgement.

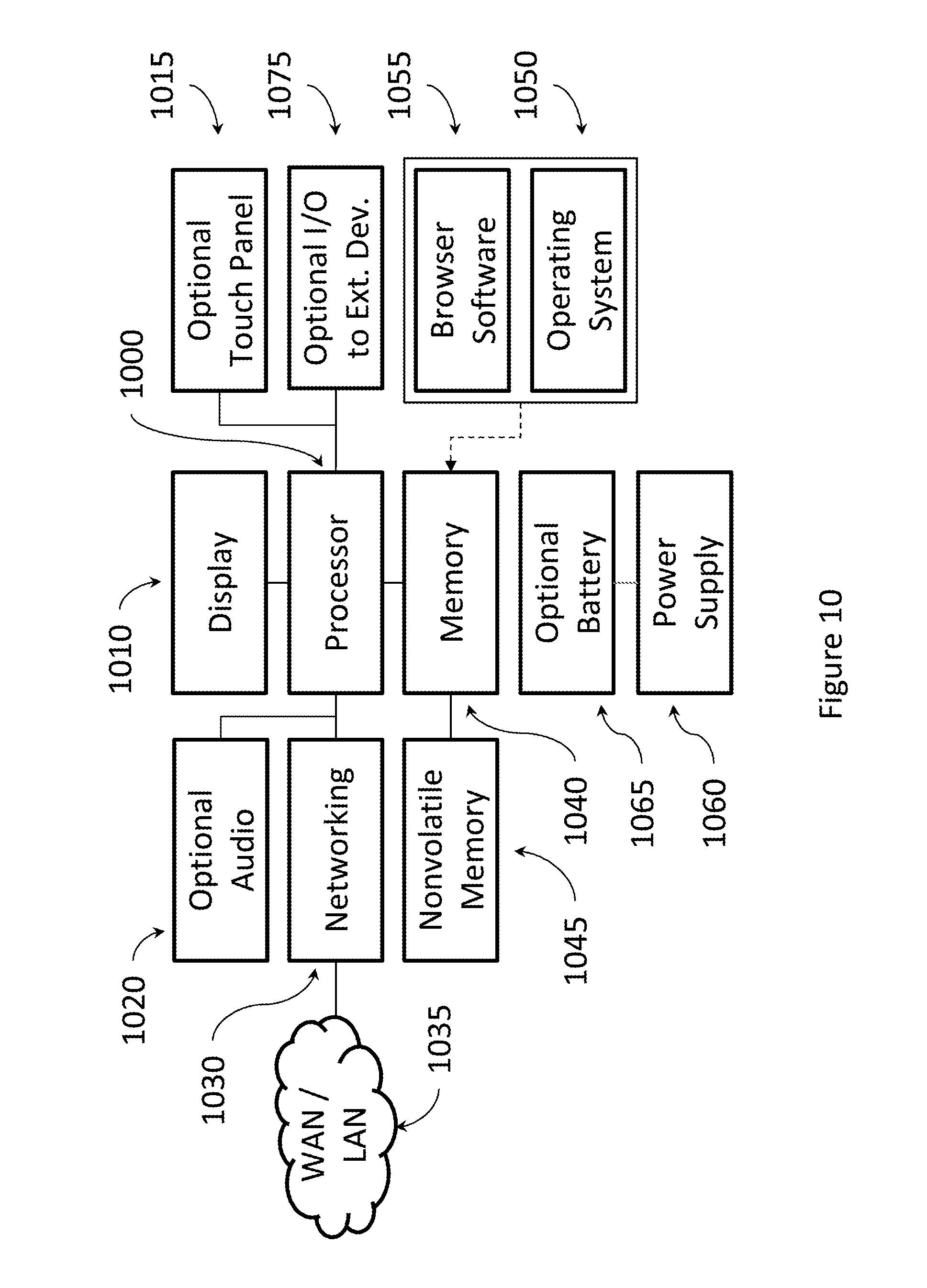

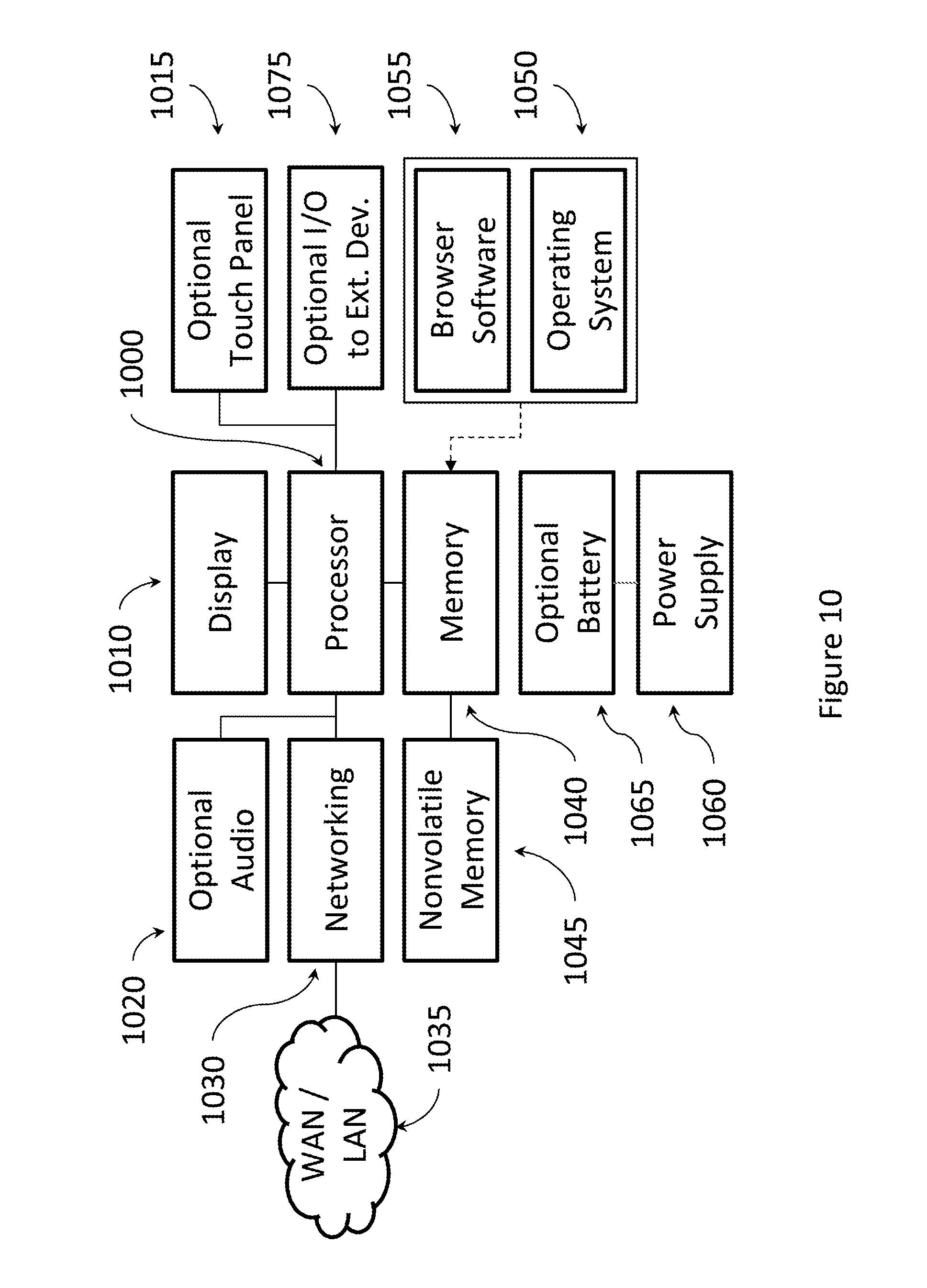

[0034] FIG. 10 shows an exemplary hardware block diagram for a display device.

[0035] FIG. 11 illustrates an example user interface for creating and/or editing reminder messages.

[0036] FIG. 12 illustrates an example user interface as seen by a master user for reviewing all active reminders and messages for a particular display device.

[0037] FIG. 13 illustrates an example user interface as seen by a regular user for reviewing all active reminders and messages for a particular display device.

[0038] FIG. 14 illustrates an example of a similar user interface formatted for smart phones and other mobile devices.

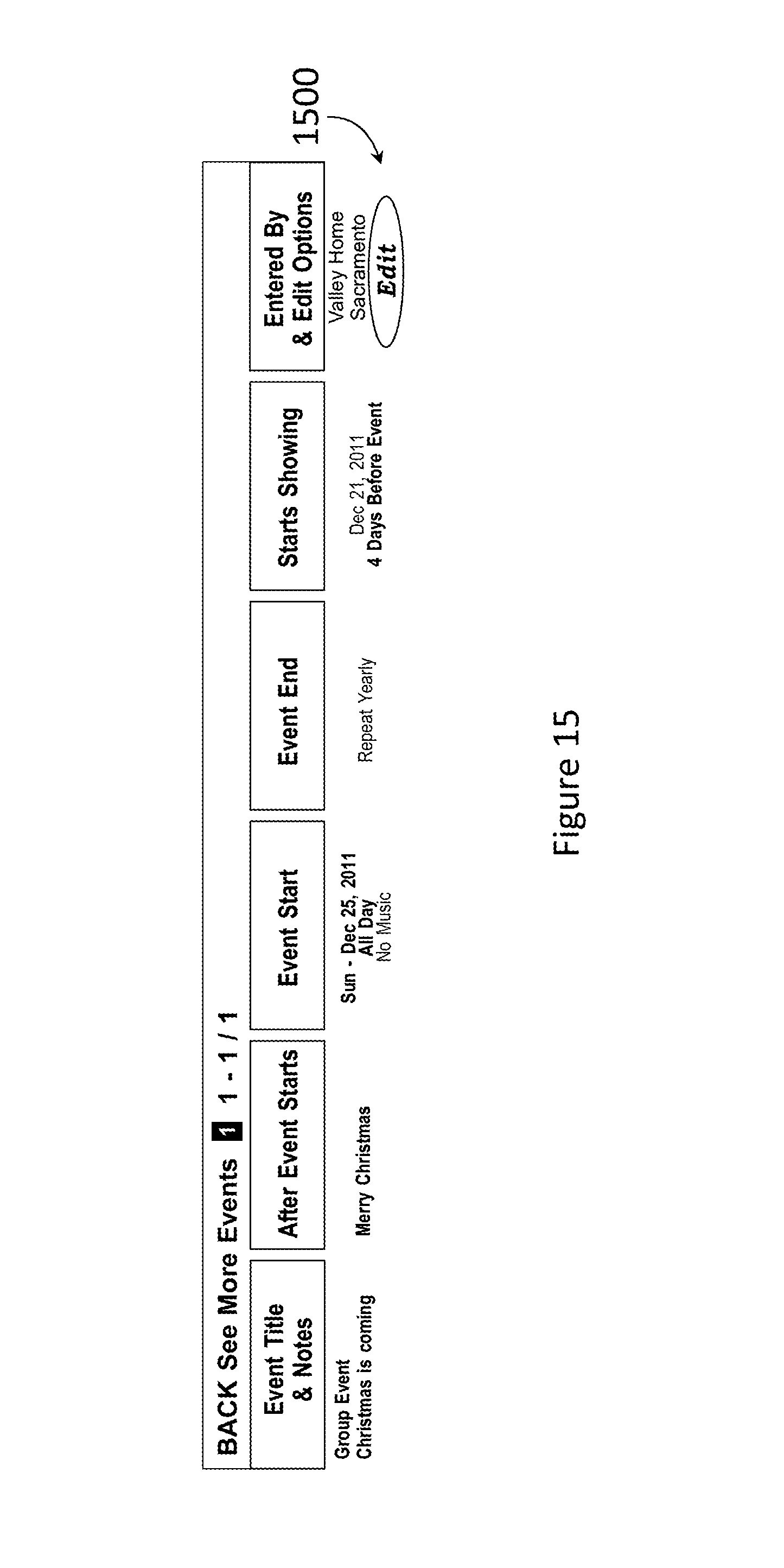

[0039] FIG. 15 illustrates an example user interface as seen by a group user for viewing group reminders and messages.

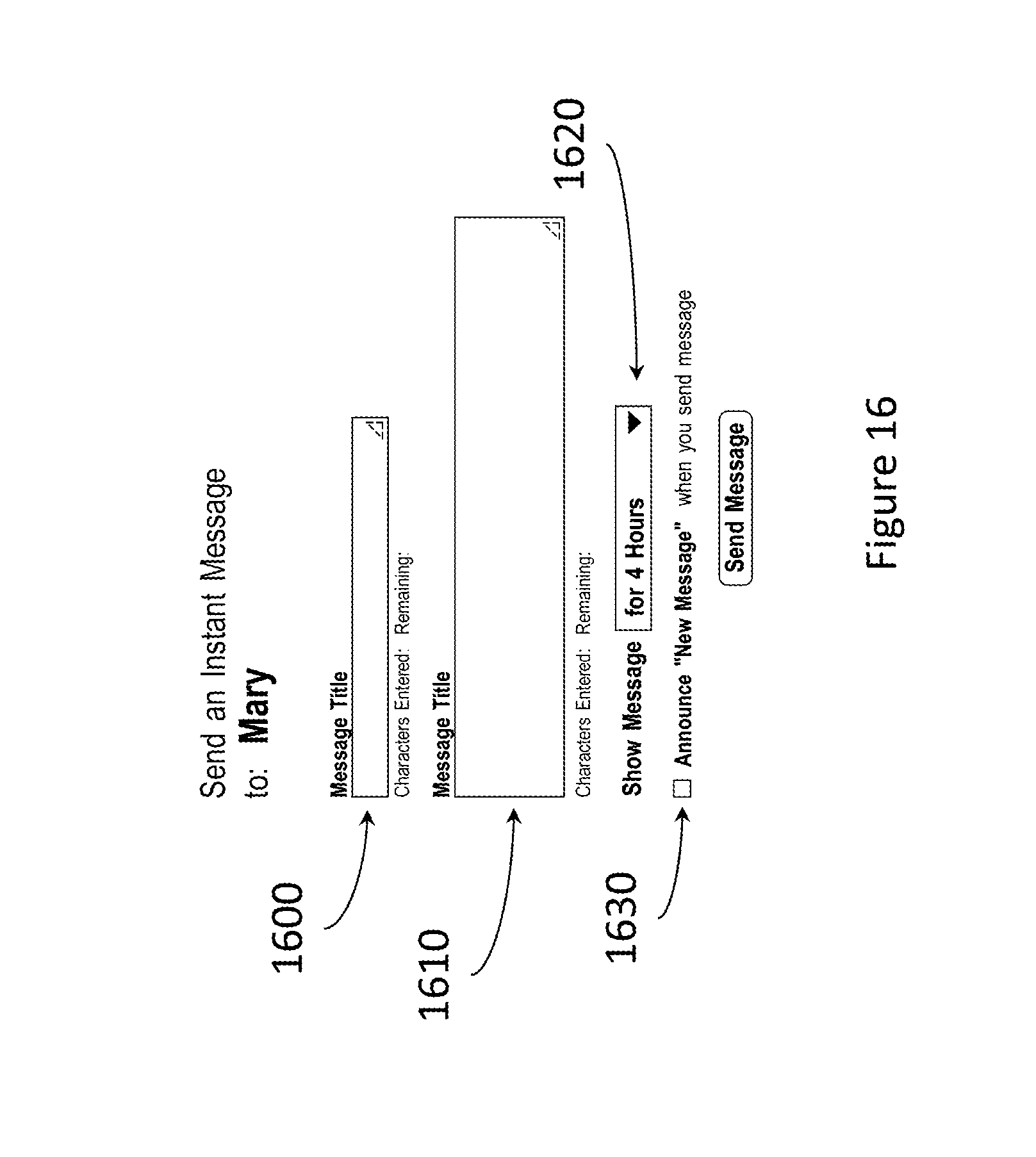

[0040] FIG. 16 illustrates an example user interface for creating or editing an instant message.

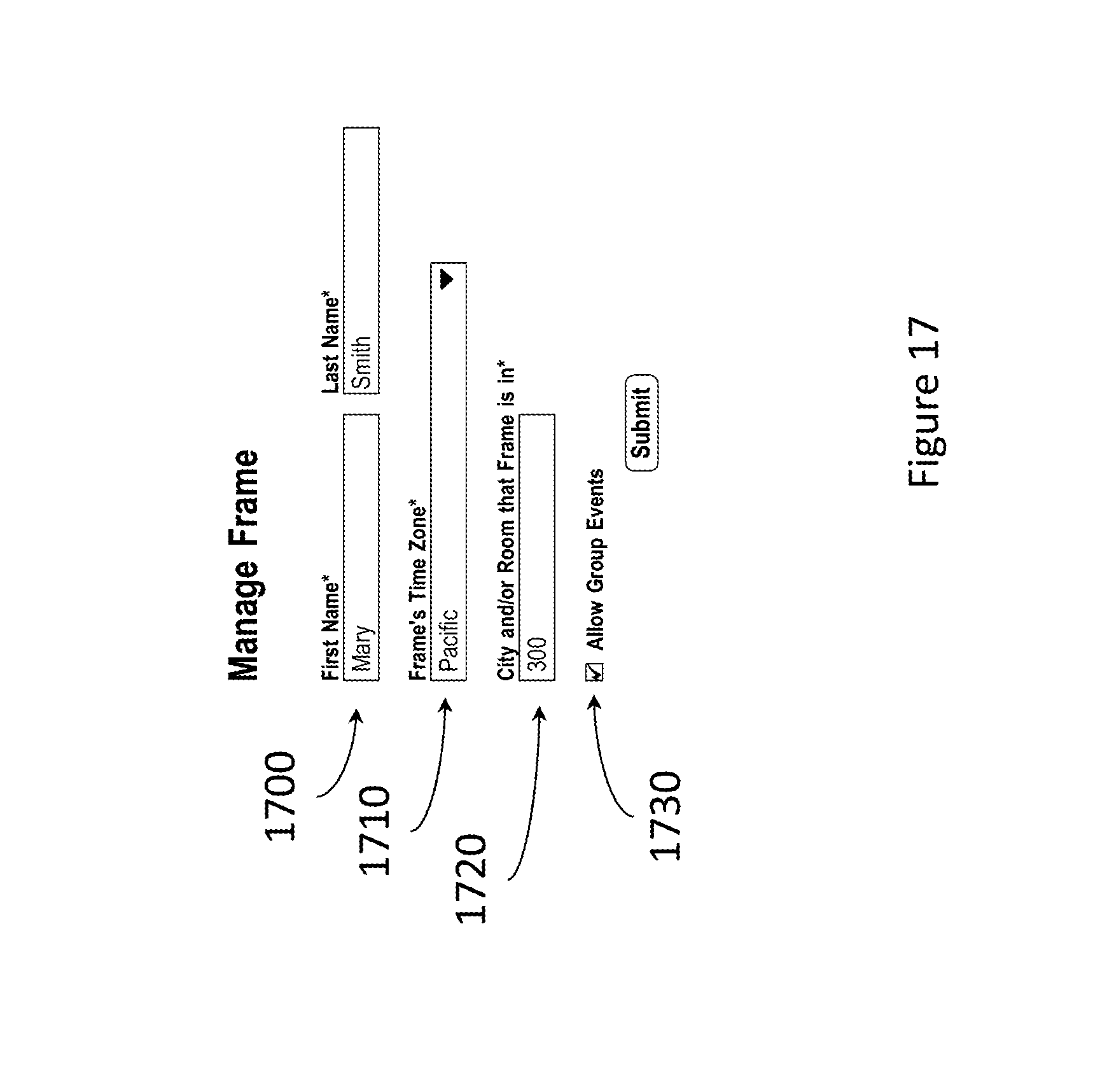

[0041] FIG. 17 illustrates an example user interface for managing parameters for a particular display device.

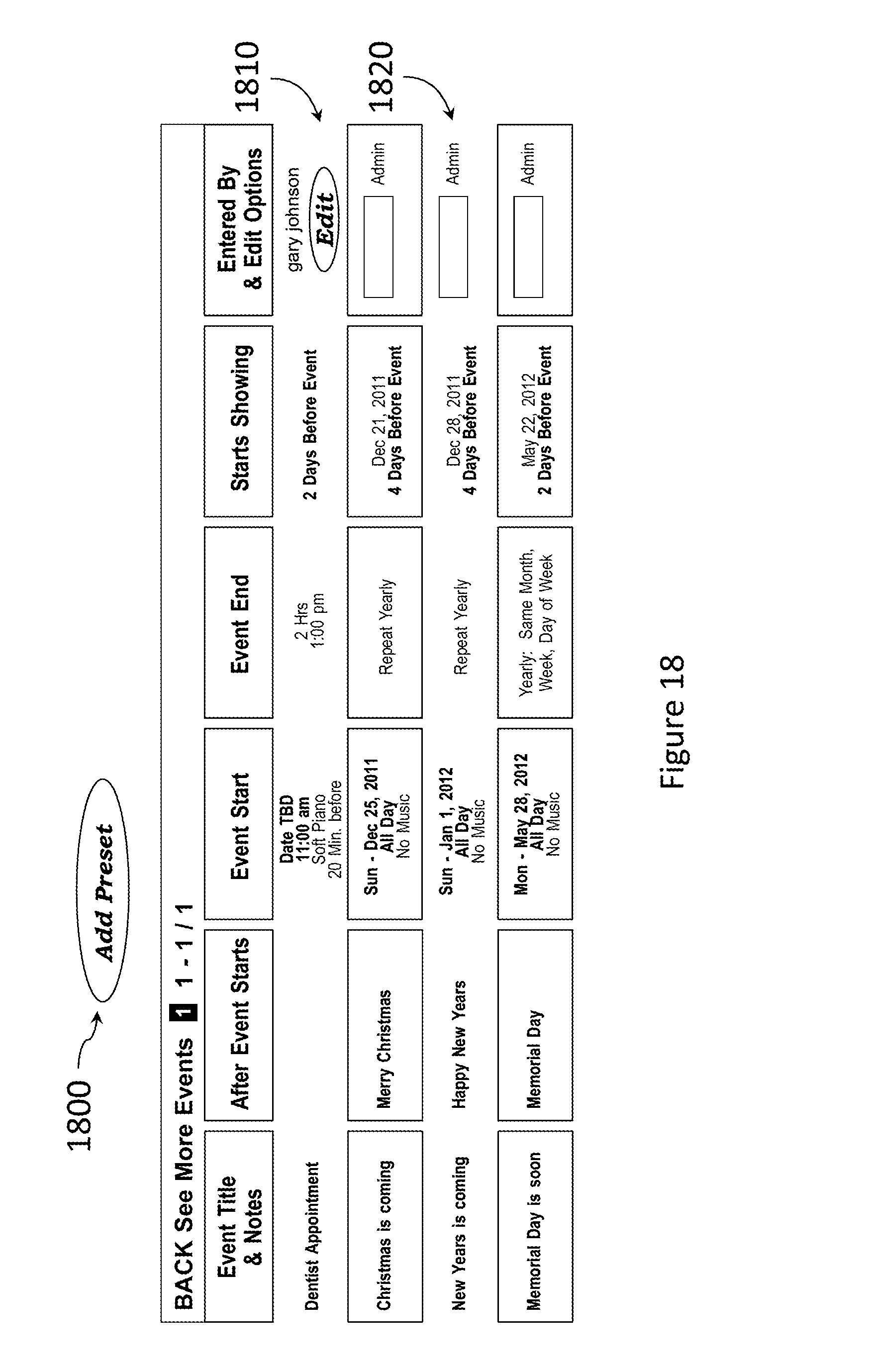

[0042] FIG. 18 illustrates an example user interface showing existing preset reminders.

[0043] FIG. 19 illustrates an example user interface for selecting a preset reminder.

[0044] FIG. 20 is a diagram for showing how a display device's health can be monitored.

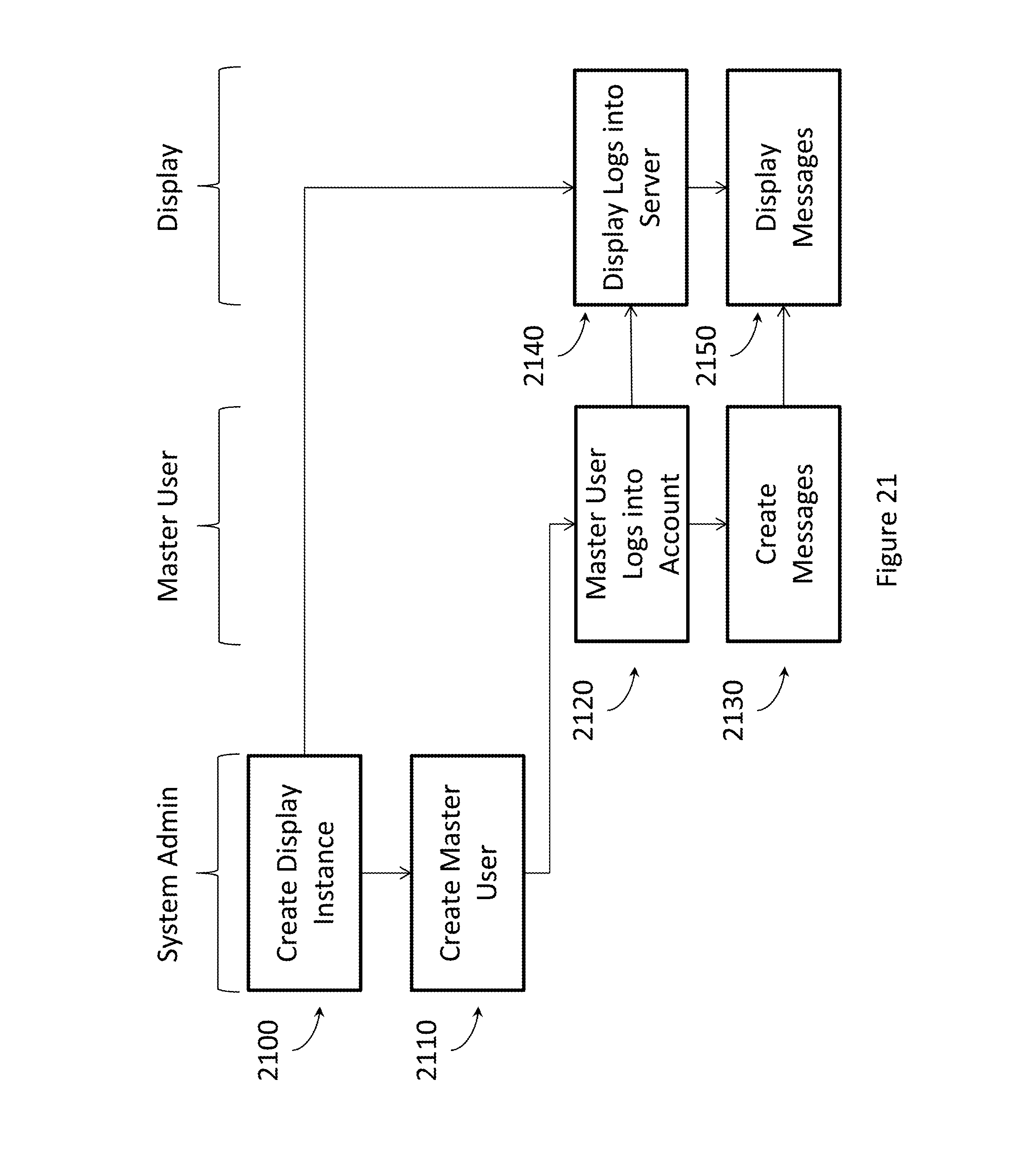

[0045] FIG. 21 depicts the display and master user setup.

[0046] FIG. 22 depicts an example display device showing a few example reminder messages.

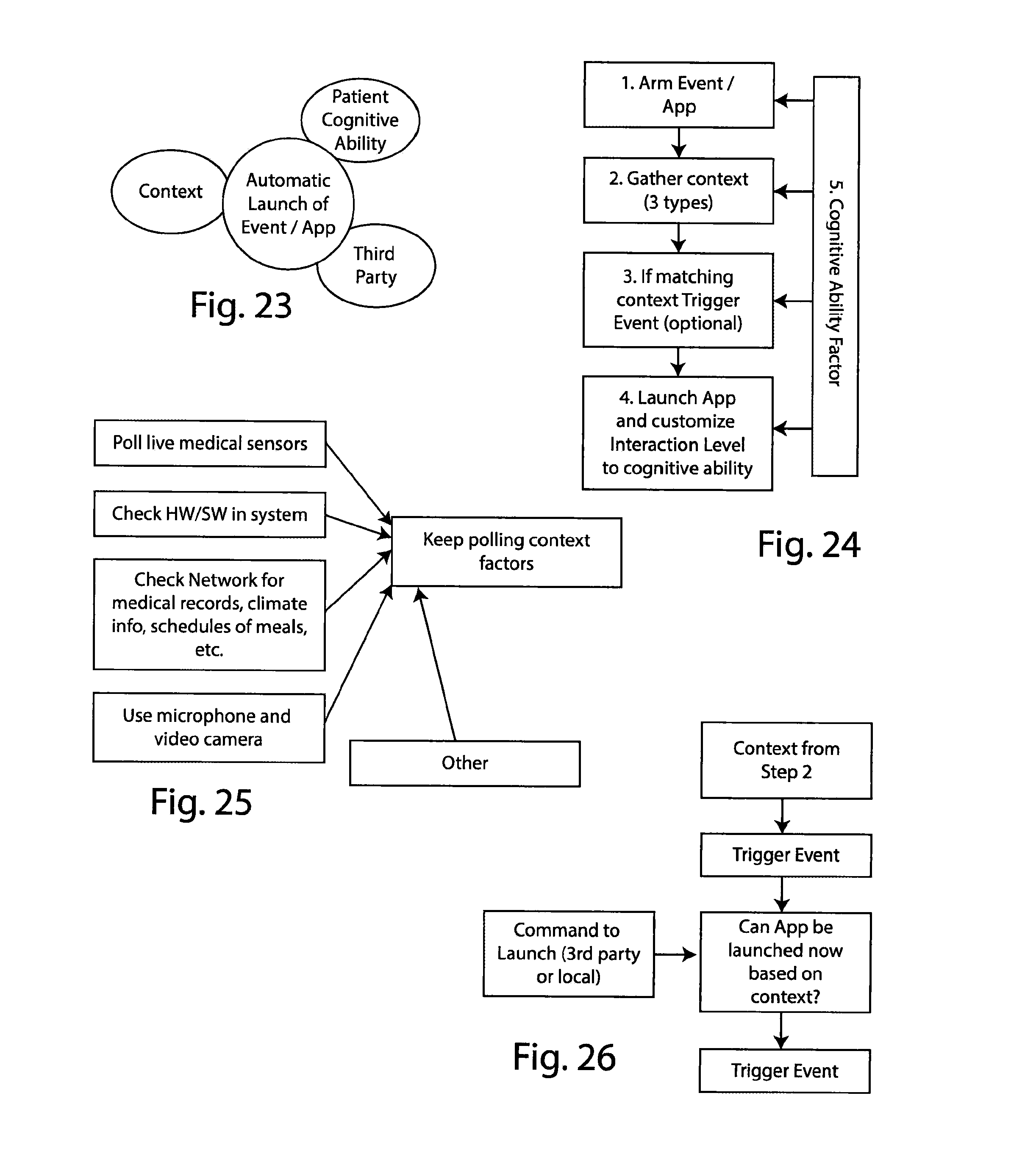

[0047] FIG. 23 is an entity diagram illustrating basic components of how an event or application is launched automatically using the disclosed system.

[0048] FIG. 24 is a high level flowchart diagram illustrating how cognitive ability factors into the launching of an event or application.

[0049] FIG. 25 is a flowchart depicting how context is gathered and used by various components within the system.

[0050] FIG. 26 is a flowchart illustrating the trigger event flow implemented by the system.

[0051] FIG. 27 is a flowchart illustrating the event launch flow implemented by the system.

[0052] FIG. 28 is a use case diagram showing an exemplary use of the system.

[0053] FIG. 29 is an interaction diagram showing how the interaction level of the system is customized based on cognitive ability and based on preferences and technology context information.

[0054] FIG. 30 is a diagram showing how cognitive ability is modeled by the system, as reflected in the cognitive ability data structure maintained in computer memory by the system.

[0055] FIG. 31 is a block diagram showing one tablet-based, web-enabled system embodiment.

[0056] FIG. 32 shows an example screen display with several exemplary applications/events launched.

[0057] FIG. 33 is a block diagram showing the computer-implemented system and its associated database and data structures.

[0058] Corresponding reference numerals indicate corresponding parts throughout the several views of the drawings.

DETAILED DESCRIPTION

Overview

[0059] The disclosed system lets people (e.g. friends, family, administrators) in a remote or local location create reminder messages that will show at the appropriate times and with appropriate messaging on a relatively simple display device. This display device need not have any controls that the viewer interacts with, so a person with Alzheimer's disease does not need to learn how to operate it. The only interaction that this display device needs happens during a one-time initial setup step, and optional reminder acknowledgements that require only the press of one button.

[0060] The system works via a network, such as the Internet and/or local area network (LAN). People (friends, family, administrators) interface to the system via any modern browser. The system, in turn, interacts with the display device via the network.

[0061] The system accommodates multiple display devices and multiple accounts. More than one person can be given the ability to create a reminder message. One or more master accounts are also given the ability to edit messages from other accounts, as well as other privileges. For situations such as an assisted living home, a group administrator account can send messages to groups of display devices, or to just one display device. However, accounts that are associated directly with a particular display device can hide such group messages if needed.

[0062] Account holders associated with a particular display device can see each other's reminders, including group messages, so that friends and family can be informed about the planned or current activities of the person for which the reminders are intended. However, group account holders can only see their own group messages, unless permission is granted otherwise, to preserve privacy.

[0063] Messaging can be set up in advance, and made to appear at the appropriate time relative to the event they refer to. The content and level of detail of the messaging, including audio, changes according to how close it is to the event in question. Once the event starts, messaging continues until the event is finished, and the content of this messaging changes according to when it is relative to the end of the event.
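As a hedged illustration of the timing behavior described in paragraph [0063], the sketch below classifies the current time relative to an event's start and end so that a display could choose different wording for each phase. The function name `reminder_phase`, the phase labels, and the `lead` and `wrap_up` thresholds are hypothetical and are not taken from the disclosure.

```python
from datetime import datetime, timedelta


def reminder_phase(now, start, end,
                   lead=timedelta(days=3), wrap_up=timedelta(minutes=15)):
    """Classify `now` relative to an event so a display can pick its wording.

    `lead` and `wrap_up` are hypothetical thresholds, not disclosed values.
    """
    if now < start - lead:
        return "hidden"        # too early to show anything
    if now < start:
        return "upcoming"      # reminder is visible before the event
    if now < end - wrap_up:
        return "in_progress"   # event has started
    if now < end:
        return "ending_soon"   # wording changes near the end of the event
    return "finished"          # stop showing the reminder
```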

[0064] Reminders can be programmed to automatically repeat at specified intervals, from daily to yearly, to accommodate a variety of situations and events.
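One possible way to compute the next repetition of a reminder is sketched below. The interval names and the rule of clamping to the last day of a shorter month are assumptions for illustration only; the disclosure states only that intervals range from daily to yearly.

```python
import calendar
from datetime import datetime, timedelta


def next_occurrence(start: datetime, interval: str) -> datetime:
    """Return the next repeat of a reminder that last occurred at `start`.

    The interval names ("daily", "weekly", "monthly", "yearly") are illustrative.
    """
    if interval == "daily":
        return start + timedelta(days=1)
    if interval == "weekly":
        return start + timedelta(weeks=1)
    if interval == "monthly":
        year = start.year + start.month // 12   # roll over after December
        month = start.month % 12 + 1
    elif interval == "yearly":
        year, month = start.year + 1, start.month
    else:
        raise ValueError(f"unknown interval: {interval}")
    # Clamp the day so that, e.g., Jan 31 repeats on the last day of February.
    day = min(start.day, calendar.monthrange(year, month)[1])
    return start.replace(year=year, month=month, day=day)
```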

[0065] Reminders can optionally require that an acknowledgement by the viewer take place. Multiple acknowledgement requests can be active at one time. If such a reminder is not acknowledged, remote users (friends, family, and administrators) can check the status and/or receive an alert via a short message service or email.
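The acknowledgement tracking described in paragraph [0065] might be modeled as in the following sketch, which keeps several requests active at once and reports those that remain unacknowledged. The `AckRequest` type, the `overdue_requests` function and the 30-minute grace period are hypothetical; the delivery of the alert itself (short message service or email) is left to the caller.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class AckRequest:
    """A reminder that asks the viewer to press the acknowledge button."""
    reminder_id: int
    due: datetime
    acknowledged_at: Optional[datetime] = None


def overdue_requests(requests, now, grace=timedelta(minutes=30)):
    """Return requests still unacknowledged after a hypothetical grace period."""
    return [r for r in requests
            if r.acknowledged_at is None and now > r.due + grace]
```

A server process could call `overdue_requests` periodically and notify the remote users associated with the display for each request returned.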

[0066] Preset reminders exist to help save typing. Account holders can use system defined preset messages or create their own for future use. Preset messages can be customized by the account user.

[0067] Messages can also be "instant messages" that are not tied to any particular event. Such instant messages appear relatively quickly and do not require any action by the viewer to see.

[0068] To avoid potential failure situations, such as equipment failure, loss of power or communications, the system can monitor the health of each display device and alert the appropriate account holders and/or administrators of such a failure.
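The overview does not specify how display health is monitored. Purely as an assumption, the sketch below supposes that each display periodically checks in with the server and that a display is considered unhealthy when its last check-in is older than a timeout. The function name `stale_displays` and the 10-minute timeout are hypothetical.

```python
from datetime import datetime, timedelta


def stale_displays(last_seen: dict, now: datetime,
                   timeout=timedelta(minutes=10)):
    """Return the IDs of displays whose last check-in is older than `timeout`.

    `last_seen` maps a display ID to the time of its most recent check-in.
    The check-in mechanism and the timeout are assumptions for this sketch.
    """
    return sorted(d for d, seen in last_seen.items() if now - seen > timeout)
```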

[0069] In one aspect the system focuses on providing hybrid care assistance dependent on the cognitive abilities of a patient, ranging from full third party control to shared control to an independently functioning patient, in an automatic and natural manner. Third party control of the system can be local or remote. Further, the system itself will adapt the level of interaction provided to the patient based on further improvement or decline in cognitive ability.

[0070] Thus, the system works to automatically and naturally adapt the triggering of events (e.g. launching applications/events on a display) based on the following core functionalities:

[0071] A. Arming/setting of the event/application by a third party (e.g. a family member).

[0072] B. Estimation of an event context. The context can take several forms (medical, situational, etc.) depending on the event to be triggered.

[0073] C. Launch of the event based on matching the context with the present situation and the person's cognitive ability.
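The three core functionalities above (arming, context estimation and launch) can be illustrated with the minimal sketch below. It assumes that context is represented as a dictionary of named conditions and that cognitive ability is reduced to a single numeric score; both representations, along with the names `ArmedEvent` and `should_launch`, are simplifying assumptions rather than the disclosed design.

```python
from dataclasses import dataclass


@dataclass
class ArmedEvent:
    """An event/application armed by a third party (functionality A)."""
    name: str
    required_context: dict   # conditions chosen when the event is armed
    min_ability: int = 0     # hypothetical 0-10 cognitive ability score


def should_launch(event: ArmedEvent, context: dict, ability: int) -> bool:
    """Decide whether to launch (functionality C).

    `context` is the estimated present situation (functionality B). The event
    launches only when every armed condition matches the present context and
    the patient's ability score meets the event's minimum.
    """
    matches = all(context.get(key) == value
                  for key, value in event.required_context.items())
    return matches and ability >= event.min_ability
```

For example, a family member might arm a photo slideshow with the conditions "awake" and "in the living room"; the slideshow would then start without any action by the patient once the sensed context satisfies both conditions.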

[0074] In addition to the above core functionalities, the system also offers a number of additional advantages, including the following:

[0075] An application can be triggered automatically in a non-intrusive way (e.g. an audio message when the patient is not sleeping).

[0076] The launch of and the interaction with the application can be customized to the patient's cognitive ability.

[0077] The patient can enjoy a number of services (e.g. seeing family pictures, video conferences or reminders) without having to know how to launch the application/event.

[0078] Personal preferences of the patient can be taken into account to customize the system's services.

[0079] The solution combines implicit interaction (from the patient's point of view) with explicit interaction (from the point of view of the third party who arms the event/application).

[0080] The solution addresses both when to launch an application/event and how to launch an application/event.

DETAILED DESCRIPTION

[0081] Example embodiments will now be described more fully with reference to the accompanying drawings.

[0082] FIG. 1 illustrates the general system architecture, showing a set of different types of remote users, a server system and a set of display devices (or simply "display" or "displays" in subsequent descriptions). Each User 100, 110 interacts with the system via the network 130, which can be a combination of wide area (such as the Internet) or local area networks. Each user is associated with a particular display 140. In the illustration, Master User A 100 and a related normal User 110 interact with Display A. In turn, there is a separate set of users associated with display B, etc.

[0083] User accounts center around the display. There is at least one Master User 100 associated with each display. The Master User has ultimate control over how the display looks. The Master User can do the following: [0084] Create new event reminders and messages. [0085] Edit event reminders and messages that they created or those created by any other Master or Normal user that belongs to the managed display. [0086] Create new Master or Normal users for their display. [0087] Control whether or not Group events are enabled on their display (see Group users). [0088] Hide or Show a specific Group event reminder that was sent to their display. Hiding a Group event reminder might be necessary if this event is in conflict with another event that the Master (or Normal) user has planned. [0089] See event reminders and messages that anyone has created for their display. [0090] Create and edit preset event reminders that others can also use. [0091] Change the display's details, such as names, location and time zone. [0092] Change their own user details, such as names, username, email address and password.

[0093] Normal or regular Users 110 can place messages on this display, but have fewer privileges: [0094] Edit event reminders and messages that they created. [0095] Hide or Show a specific Group event reminder that was sent to the display. [0096] See event reminders and messages that anyone has created for the display. [0097] Create and edit preset event reminders that others can also use. [0098] Change their own user details, such as names, username, email address and password.

[0099] Group users 120 can be associated with more than one display. FIG. 1 only shows one group user to illustrate a situation where there are three displays (A, B, C) at a particular facility that this group user has access to. Group Users can do the following: [0100] Invite a Master user to join this Group by sending them an email invitation. To send such an invitation, the Group user will need to ask for the Master user's `username`. The Master user that receives the email invitation to join the Group must then click on a link to accept the invitation. The Master User still reserves the right to disable any or all Group events from showing on their display. [0101] Create and edit Group event reminders and instant messages that go to all displays enabled to accept group events from this Group user. [0102] Specify that an event reminder or instant message go to just one display (instead of all displays). [0103] Group users cannot see the event reminders and messages that have been created by Master and Normal users for any particular display, unless permission is given to expose a particular item. [0104] Change their own user details, such as names, username, email address and password.

[0105] The Server 150 manages the system, including the access to the system by each of the users and the updating of each of the displays. Again, FIG. 1 only shows a subset of what is possible because one server 150 can manage a number of sets of users and displays scattered around the world. Databases 160 store all of the information on all users, displays and messages. Sensitive information, such as passwords and email addresses, is kept encrypted.

[0106] In a typical operation a user interacts via a web browser or dedicated application with the system to create a reminder. This reminder is stored in the database and the server then determines which reminders should go to each particular display at the appropriate times. Users can view the status of all reminders and messages, including making edits and hiding messages as appropriate.

[0107] The displays merely display the messages that they are sent. Optionally, they can do a small amount of management of these messages to minimize the amount of communications needed during operation. Optionally, these displays may provide a simple way (e.g. touching the display, speaking, etc.) for a viewer to respond to a message, if requested, and this response is sent back to the server.

[0108] FIG. 2 illustrates a typical set of messages that might be seen on a display. Because complex messages and even graphics can lead to confusion if a person has Alzheimer's disease, messaging must be kept simple, direct, and appropriate to the situation.

[0109] The top of the display 200 simply shows the current date and time. The part of the day, such as "Morning" or "Evening" is also shown. Time and date are automatically obtained from the network. Since the display can be in one time zone while a user is in another time zone, the display's time zone is determined by a selection 1710 made by the display's master user.

[0110] In the sample display, event or reminder "titles" 210 are shown on the left because the illustration assumes people tend to read from left to right. Of course, different cultures can work differently, and so how the display is arranged can be adapted to different countries.

[0111] Message titles are kept deliberately short by limiting their length in the input menu 1100.

[0112] The size and font color used to display the message title (and other parts of the messaging) change according to how close it is to the event in question. The closer it is to the event's start (and end if the event is of any length) the larger the font and more urgent the color.
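The proximity-based styling described above can be illustrated with a minimal Python sketch. The tier boundaries, point sizes and color names are hypothetical values chosen for illustration; the disclosure does not specify them. The sketch also assumes that an event in progress is shown with the most urgent style.

```python
from datetime import datetime, timedelta

# Hypothetical tiers: (maximum time remaining, font size in points, color name).
URGENCY_TIERS = [
    (timedelta(minutes=15), 48, "red"),
    (timedelta(hours=2), 36, "orange"),
    (timedelta(days=1), 28, "black"),
]
DEFAULT_STYLE = (22, "gray")


def title_style(now: datetime, start: datetime, end: datetime) -> tuple:
    """Return (font_size, color) for a message title at time `now`."""
    if start <= now <= end:
        # Assumption: an event that is under way uses the most urgent tier.
        return URGENCY_TIERS[0][1], URGENCY_TIERS[0][2]
    remaining = start - now
    for limit, size, color in URGENCY_TIERS:
        if timedelta(0) <= remaining <= limit:
            return size, color
    # Far in the future (or already over): calmest style.
    return DEFAULT_STYLE
```

Keeping the tiers in a single table would let a caregiver-facing setting adjust them without touching the selection logic.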

[0113] Since message titles might be too short for some occasions, a second line 210 is allowed for putting additional messages or instructions. This second line is optional and it can be made to not appear until the time gets closer to the event. This delayed showing of the second line follows the assumption that showing too much information too early would only confuse the reader.

[0114] Additional messaging 240, 250 is added to reminders to give clues on when an event is to take place. The wording of the supplemental timing messages is designed in a simple conversational style. It would be too confusing to the reader to say that an event is supposed to start at "11:30 AM, Apr. 10, 2011" if something like "In about 2 Hours" can communicate the same thing. The algorithms for how such timing messaging works can be fairly involved and must be tailored to the cultural and language norms of the viewer. As always, messaging must be kept to a minimum; but, it can also be a problem if too little information is given.
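As a rough illustration of conversational timing messages, the following Python sketch converts an event start time into a short phrase. The thresholds and the exact English wording are assumptions; a real deployment would need locale-specific rules, as the paragraph above notes.

```python
from datetime import datetime


def _plural(n: int, unit: str) -> str:
    """Format a count with a singular or plural unit, e.g. '2 Hours'."""
    return f"{n} {unit}" if n == 1 else f"{n} {unit}s"


def timing_phrase(now: datetime, start: datetime) -> str:
    """Return a short conversational phrase for how far away `start` is."""
    seconds = (start - now).total_seconds()
    if seconds <= 0:
        return "Now"
    if seconds < 3600:
        return "In about " + _plural(max(1, round(seconds / 60)), "Minute")
    if seconds < 86400:
        return "In about " + _plural(round(seconds / 3600), "Hour")
    # A day or more away: count calendar days rather than 24-hour blocks.
    return "In " + _plural((start.date() - now.date()).days, "Day")
```

For example, an event at 11:30 AM viewed at 9:30 AM the same day yields "In about 2 Hours".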

[0115] FIG. 22 shows more sample messaging on a working display.

[0116] The sample message "Morning Pills" is asking for a response--in this case the pressing of the "OK button" 220. Instructions on how to respond can be given verbally or by other means. In this illustration, the OK button is simply a graphic on the display, and the system senses the pressing of this button by using a touch sensitive display 1015 system. The status of the response can be monitored, as is explained later.

[0117] FIG. 3 shows a sample display similar to FIG. 2, but with one difference--the OK button has been replaced with a checked box icon 320. This icon, or a similar type of indicator, tells the viewer that the message was acted on. Sometimes people will forget that they already acted on something that they regularly do, such as taking pills. The checked box icon serves as another form of reminder.

[0118] FIG. 4 shows a couple of ways to distribute the system's logic.

[0119] The top version places almost all logic in the server side 400, 410 and the display 430 is not much more than a thin client, such as a browser connected to the Internet 420. Such an arrangement means that off-the-shelf products, such as modern tablet computers can be used for the display.

[0120] In this arrangement, the tablet computer is basically used as a browser display. HTML and PHP commands in various web pages determine what to display and when to display it.

[0121] Refreshing of the display just after the top of each minute, or at other selected times, is programmed into the webpage by reading the network time and calculating the time for the next auto-redirect command (header('Location: page_url.php')). Upon each refresh the display can update the displayed time of day, retrieve new messages and update the wording and fonts of currently active reminder messages. Audio can be played, if required, via commands found in HTML5, or alternatives.
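The refresh timing reduces to a small calculation: find the next minute boundary and add a short offset so the refresh lands just after it. The sketch below shows that arithmetic in Python purely for illustration (the text describes a PHP/HTML page); the function name and the two-second offset are assumptions.

```python
from datetime import datetime, timedelta


def seconds_until_refresh(now: datetime, offset_seconds: float = 2.0) -> float:
    """Seconds to wait so the next refresh lands just after the next minute."""
    next_minute = now.replace(second=0, microsecond=0) + timedelta(minutes=1)
    return (next_minute - now).total_seconds() + offset_seconds
```

At 11:29:45 network time this gives a 17 second delay, so the page reloads at 11:30:02 and shows the new minute.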

[0122] The bottom version places some of the messaging logic into the client side 440. Information on future events can be stored locally in the display's local database 460. Algorithms that have been placed into the display's system can then determine what to display at any given time without having to communicate with the server's system. The display will still need to periodically communicate with the server to get message updates, but such communication can be less frequent. Much of the system's logic, particularly for Master, Regular and Group interfacing, account management and general system management, etc. still resides in the server 450.

[0123] Implementation can be done in a number of ways. In one version, software code could effectively be downloaded into the display's browser using a technique such as AJAX.

[0124] In another version, the display could contain a software application that stays resident in nonvolatile memory, if present. This software can be made to automatically execute when the display is first turned on. This means that power and communication interruptions can be automatically addressed.

[0125] FIG. 5 shows categories of typical database 500 tables used in the system. During operation the algorithms stored in the server access the following database tables to determine how to handle each display, user and situation.

[0126] A table for Displays 505 contains information about each individual display, such as the names associated with the display and time zone. The table for Users 510 contains information on each user, including their names, contact information, passwords, and type of user account. Users found in this table are associated with a display or set of displays (if this is a group user). The Messages table 515 holds all of the messages, including information on how and when each individual message should be displayed, who created the message and type of message. These three tables comprise the core of the database used by the system.
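One possible shape for the three core tables is sketched below using Python's built-in sqlite3 module. All column names and types are hypothetical; the disclosure names the tables and their general contents but not a schema. Password and email columns are omitted here because the text states that such fields are kept encrypted, which is outside the scope of this sketch.

```python
import sqlite3

# Hypothetical schema for the Displays, Users and Messages tables.
SCHEMA = """
CREATE TABLE displays (
    id        INTEGER PRIMARY KEY,
    name      TEXT NOT NULL,
    location  TEXT,
    time_zone TEXT NOT NULL
);
CREATE TABLE users (
    id           INTEGER PRIMARY KEY,
    username     TEXT NOT NULL UNIQUE,
    account_type TEXT NOT NULL
                 CHECK (account_type IN ('master', 'normal', 'group')),
    display_id   INTEGER REFERENCES displays(id)  -- NULL for group users
);
CREATE TABLE messages (
    id            INTEGER PRIMARY KEY,
    display_id    INTEGER NOT NULL REFERENCES displays(id),
    created_by    INTEGER NOT NULL REFERENCES users(id),
    title         TEXT NOT NULL,
    start_utc     TEXT NOT NULL,      -- ISO 8601 timestamp
    show_from_utc TEXT NOT NULL,      -- when the message starts to appear
    needs_ok      INTEGER NOT NULL DEFAULT 0
);
"""


def create_database(path: str = ":memory:") -> sqlite3.Connection:
    """Create the sketch schema and return an open connection."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn
```

Because a Group User can be associated with several displays, a fuller design would likely add a separate membership table rather than a single `display_id` column.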

[0127] In addition to the core tables, there are a number of important supplemental tables. The Display Checks table 520 is used to store the health of each display. The Presets table stores predefined messages that can be used to save some typing. These preset messages contain most of the same information as regular messages stored in the Messages table. The Group Requests table is used to store requests that a Group User has made to Master Users to join a group. The Group Hide table is used to store information that determines if a particular Group message should be displayed on a particular display, or not. The OK Buttons table stores the status of responses for each message that requires such a response. The Instructions table is used to store localized (different languages) instructions and wording for the user interface. The Images table is used to store images that can be associated with particular messages. The Audio table is used to store audio files in appropriate formats that can be associated with particular messages or situations.

[0128] FIG. 6 begins to show how the algorithms and database tables work together to manage all of the displays.

[0129] At periodic times the database 600 is looked at to see which messages are currently active 610. A message is active if the entry in the Messages table indicates that a message should be displayed based on the current time zone, date and time 650.

[0130] Next, if a particular message comes from a Group User, the Group Hide table is accessed 615 to determine if this message should be displayed on this particular display.

[0131] Next, the OK Button table is accessed 620 to determine if a response is required at this particular time or not. A message can be displayed without requiring a response until a predetermined time before the event is to start. Thus, for example, a viewer can see that an event is about to come up, but a response from the viewer is not asked for until the event is just about to happen.

[0132] Next, based on parameters stored in the Messages and other tables, the exact wording and choice of fonts is compiled 625. How messaging is tailored to meet each situation is perhaps just as much an art as a science, but the important element of this disclosure is that such tailoring is integral to the system.

[0133] Next, if there is any audio associated with the message or situation, the Audio table is accessed and compiled 630 into the message as appropriate. As with the wording and fonts chosen earlier in the previous step, audio can be tailored, too.

[0134] Similarly, if there are any graphics or images associated with the message, these are also integrated in 635. Again, tailoring to fit the situation can be done.

[0135] Finally, the complete compiled message is rendered on the display 640. This includes any text, audio and/or images that were determined to be part of the message in earlier steps. The display and message is then refreshed as necessary based on the refresh timer.
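The first steps of the FIG. 6 flow (active check 610, Group Hide check 615, OK Button check 620) can be sketched as a simple filter. The `Message` fields and the function name are hypothetical, all times are assumed to be already expressed in the display's local time zone, and the wording, audio and image steps are left out for brevity.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Message:
    id: int
    title: str
    show_from: datetime                 # when the message starts to appear
    end: datetime                       # when the event (and message) ends
    from_group: bool = False            # True if created by a Group User
    ok_from: Optional[datetime] = None  # when a response starts being asked


def compile_for_display(messages, hidden_group_ids, now):
    """Return render-ready dicts for the messages one display should show."""
    rendered = []
    for m in messages:
        if not (m.show_from <= now <= m.end):
            continue  # step 610: not currently active
        if m.from_group and m.id in hidden_group_ids:
            continue  # step 615: hidden on this particular display
        # Step 620: only ask for a response once the request time is reached.
        show_ok = m.ok_from is not None and now >= m.ok_from
        rendered.append({"title": m.title, "show_ok_button": show_ok})
    return rendered
```

The `hidden_group_ids` set stands in for the rows of the Group Hide table that apply to this display.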

[0136] FIG. 7 shows a part of the relationship between a Group User 700, Master User B 715 and regular User B 720 when placing messages on a particular display.

[0137] The simplest situation is when a Master User wishes to place a Message B1 730 onto the Display B 760. Since Display B is managed by this user, the message is allowed. Other displays in the network, such as Display A, Display C 770 and Display D 780, ignore Message B1. Similarly, User B can place a Message B2 735 onto the same Display B because this user has been authorized by Master User B to do so.

[0138] Master User B also has the ability to edit or delete Message B2 that was created by regular User B. But, while regular User B can also edit Message B2, this user cannot edit Message B1 created by Master User B.
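These edit rules can be condensed into a single predicate. The sketch below is a hypothetical helper, not part of the disclosure; it assumes the editor and the message already belong to the same display, and it treats Group Messages as editable only by the Group User who created them.

```python
def can_edit(editor_role: str, editor_id: int,
             creator_role: str, creator_id: int) -> bool:
    """Decide whether a user may edit a message on a shared display.

    Roles are the hypothetical strings 'master', 'normal' and 'group'.
    """
    if creator_role == "group":
        # Group Messages can be hidden by others, but edited only by their author.
        return editor_role == "group" and editor_id == creator_id
    if editor_role == "master":
        # A Master User can edit any Master or Normal message on the display.
        return True
    if editor_role == "normal":
        # A Normal User can edit only their own messages.
        return editor_id == creator_id
    return False
```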

[0139] Both Master User B and regular User B can see all of the messages that are directed to Display B, whether or not they are currently showing on this display.

[0140] The Group User in this diagram is shown as creating two Group Messages 705, 710. These group messages potentially go to all displays 760, 770 that belong to this group, but not displays 780 that are not part of this group, even if such displays are on the same network.

[0141] When a Group Message is directed at any display, the Master and regular Users associated with that display also see this message. If either the Master or regular User decides that a Group Message conflicts with an event that they are planning, these users have the ability to hide this Group Message. Each individual Group Message can be allowed to show or be hidden, so Group Message 1 705 can be hidden 745 independently from Group Message 2 710 being hidden 750. Decisions by this Master User B and regular User B do not affect what is shown or not on other Displays 770, 780.

[0142] FIG. 8 shows the flow of activities that determine if a particular display is part of a particular group. Control of the display belongs to that display's Master User, so the Group User must first ask for the Master User's username 800. If the Master User agrees 810, the Group User can then send that Master User an invitation via email to join the group 820. This email contains a special link with an encrypted key that, when clicked, takes the Master User to a web page that displays the group the display just joined 830. From this point the Group User's Group Messages will be seen on the display in question 840, unless the Master User decides to remove this display from the group 850 or hide that particular Group Message 860. Normal Users can also hide individual Group Messages (similar to step 860), but cannot remove the display from the Group.
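The text does not say how the key in the invitation link is produced. One common approach, shown here only as an assumption, is to sign the invitation details with a server-side secret (an HMAC) rather than encrypt them, so the server can verify that a clicked link was genuinely issued for that group and Master User. The function names and payload layout are hypothetical.

```python
import hashlib
import hmac


def make_invite_token(secret: bytes, group_id: int, master_username: str) -> str:
    """Return a hex token binding a group to the invited Master User."""
    payload = f"{group_id}:{master_username}".encode("utf-8")
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_invite_token(secret: bytes, group_id: int,
                        master_username: str, token: str) -> bool:
    """Check a token from a clicked link using a constant-time comparison."""
    expected = make_invite_token(secret, group_id, master_username)
    return hmac.compare_digest(expected, token)
```

A production design would typically also include an expiry time in the signed payload so that old invitations cannot be reused.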

[0143] FIG. 9 shows a variation of FIG. 1, and is used to illustrate how the OK button or acknowledgement system works. First, a reminder message is created by the Master 900, regular 910 or Group User 915 that specifies the need for an acknowledgement by the display's viewer. The message is saved in the database 980 and served up 970 to the display 940 at the appropriate time. Then, at the specified time, the OK button is displayed 950, along with any other verbal or visual prompts. Alternatively, an external device 960 can be activated to ask for some type of action. The requested acknowledgement is then made by the viewer and logged into the database 980. The various users can then see via a web page if the acknowledgement was made. Alternatively, the server can send a short message (SMS), email or even make a phone call.

[0144] FIG. 10 shows a typical hardware block diagram of a display. The display consists of a typical set of elements, including a processor(s) 1000, memory for instruction operation and variables 1040, nonvolatile memory 1045 for BIOS, operating system 1050 and applications 1055, power supply 1060 and optional battery 1065, display 1010 and optional touch panel system 1015, networking (wired and/or wireless) 1030 for connecting to the WAN/LAN 1035.

[0145] This display can be a stand-alone product or be part of another product. For example, this display can be integrated into a television. If so, the touch panel user interface might be replaced with a remote control arrangement. Since almost all of the other elements are already part of today's televisions, these elements can be shared and leveraged.

[0146] FIG. 11 shows an example user interface screenshot for entering or editing a reminder message. This can be part of a webpage or be part of an application.

[0147] It begins with a place for entering the message title or headline 1100. There is also a place to enter a second line of description 1105. While there can be even more lines, this illustration limits descriptions to just these two parts to keep the message to the viewer simple.

[0148] Sometimes it helps to change the message once the event starts. For example, a message can read "Your Birthday Soon" on days leading up to the birthday, but read "Happy Birthday" on the day of the birthday. To accommodate this option, a second set of message titles and notes are allowed for 1110.

[0149] Each reminder message is then given a start date and time 1120. Some types of events, such as holidays and birthdays are really about the day itself, so events can be designated as being "All-Day" 1125.

[0150] If the event is not an All-Day event, the next thing to specify is how long the event lasts 1130. If the event lasts less than a day the length of the event can be specified in minutes, hours, etc. If the event takes place over multiple days, the end of the event can be defined by specifying a specific date and time 1140.

[0151] Next, one can specify when to start showing the event on the display 1150. The timing of when to start showing the event is highly dependent on the type of event and preferences of the users and viewers, and is not tied to the length of the event.

[0152] Optionally, audio reminders can be played to draw attention to an event. One can specify when to start playing such audio messaging 1160 independently from when the event starts to show, except that audio messaging should not start until the message shows visually. The type of audio messaging can be chosen separately 1165.

[0153] If an acknowledgement of the reminder message is required, there is a checkbox that the user can check 1170. Further, if the user wishes to be alerted if acknowledgement is not given after a specified period of time (by the end of the event), another checkbox 1175 is provided for doing so.
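The two checkboxes 1170 and 1175 imply a simple decision at alert time, sketched below. The function and parameter names are hypothetical, and the deadline is assumed to be supplied by the caller (for example, the end of the event).

```python
from datetime import datetime
from typing import Optional


def missed_ack_alert_due(ack_required: bool, alert_enabled: bool,
                         acknowledged_at: Optional[datetime],
                         deadline: datetime, now: datetime) -> bool:
    """Return True if the creator should be alerted about a missed response."""
    if not (ack_required and alert_enabled):
        return False  # either checkbox 1170 or 1175 is unchecked
    if acknowledged_at is not None:
        return False  # the viewer already responded
    return now >= deadline
```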

[0154] If the event repeats in some predictable way, the system lets the user specify how this event should repeat 1180. A number of repeat options, from daily to yearly and several options in between can be provided. Unlike calendar systems used in PC, PDA and phone systems, only one occurrence of a repeating event is shown at a time to avoid confusion by the display's viewer.
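Showing only one occurrence of a repeating event means computing the next start time on or after the current time. The sketch below handles fixed-interval repeats such as daily or weekly; monthly and yearly repeats need calendar arithmetic and are not covered. It also ignores event duration, so an occurrence already in progress is skipped. The function name is hypothetical.

```python
from datetime import datetime, timedelta


def next_occurrence(first_start: datetime, interval: timedelta,
                    now: datetime) -> datetime:
    """Return the first occurrence start that is not earlier than `now`."""
    if now <= first_start:
        return first_start
    elapsed = now - first_start
    # Ceiling division on timedeltas: number of whole intervals to skip.
    periods = -(-elapsed // interval)
    return first_start + periods * interval
```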

[0155] FIG. 12 shows a typical user interface that Master Users see for reviewing and managing all of the messages scheduled to show on the display. This can be found on a webpage or be part of an application.

[0156] The interface shows information about which display it is showing 1200, plus other supplemental information such as the time where the display sits, and if this display is enabled to accept Group Messages 1205.

[0157] There is a button for showing the information in a format that is friendlier for mobile devices 1210 (automatic switching to this mode is also possible). There are buttons for adding a new reminder message 1215 or instant message 1220. There is a button to see what the display itself looks like at the moment 1225. There are other buttons for displaying help and infrequently used administrative functions 1230.

[0158] The main table shows a summary of all of the active events currently lined up for this display. Table columns show titles and notes (1240, 1245), information on when events start and what the display should do at various times 1250, information on when events end and if or how they should repeat 1255, information on when events should start to show, or if they are currently showing on the display 1260. There is also information on if acknowledgements will be requested, or if an acknowledgement has been given or not, and if an alert should be issued if an acknowledgement is missed 1270. A final column shows who created the message 1275, and shows an edit button if the message is one that this user can edit 1280. Since FIG. 12 is for a Master User, this user has edit privileges for any message created by any other Master or regular User. The edit button can be made to look slightly different if the particular message was made by someone else 1280.

[0159] Not illustrated is a flag that appears if two or more events overlap or conflict. Since different users can be placing event reminders onto the same display, one user could accidentally create an event that conflicts with another, so it is important to give some indication of such a conflict.
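A conflict flag of this kind can be derived with a standard interval-overlap test: two events conflict when each starts before the other ends. The following is a minimal sketch; the tuple layout and function name are assumptions.

```python
from datetime import datetime
from itertools import combinations


def find_conflicts(events):
    """Return pairs of titles whose time ranges overlap.

    `events` is a list of (title, start, end) tuples for one display.
    """
    conflicts = []
    for (t1, s1, e1), (t2, s2, e2) in combinations(events, 2):
        if s1 < e2 and s2 < e1:
            conflicts.append((t1, t2))
    return conflicts
```

Events that merely touch (one ends exactly when the next begins) are not flagged by this test.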

[0160] For Group messages, instead of an edit button, there is a button that is used to hide or show that Group message. In this case, the button shows an open eye 1285 if the message is visible, but a closed eye if not. Specific implementations of this feature can be different according to user interface preferences.

[0161] FIG. 13 shows a similar illustration for managing reminder messages, only this one shows what it might look like for a normal User. Since a Normal user can only edit messages that they entered themselves, the edit button only shows on a subset of the listed messages 1300, and not for messages that others have created 1310. Since normal Users can hide and show Group Messages, they still see the button for doing so 1320.

[0162] FIG. 14 shows what these interfaces might look like for a mobile device. Only part of the overall interface is shown--the rest can be seen by scrolling or paging. There is also a way to get to the "full" interface 1400.

[0163] FIG. 15 shows what the interface might look like for a Group User. Since the example being used only had one Group Message in it, only one message 1500 is shown on this table. Unlike Master and normal Users, a Group User can edit a Group Message, so we also see an edit button.

[0164] FIG. 16 shows a typical interface for sending an Instant Message to a display. A place for a message title 1600 and second line of details 1610 is given. A way to specify how long the message should be displayed is then provided 1620.

[0165] Unlike typical instant messages used on phones or PCs, the viewer of the type of display described in this disclosure is a more passive viewer. No action is required by the viewer to get the message onto the display, but at the same time, there is no guarantee that this person will ever notice the message. To draw attention to the message an audio notice can be specified 1630. Alternatively (not illustrated here) a message can be made to ask for an acknowledgement, similar to messages illustrated earlier.

[0166] FIG. 17 shows a part of the system's administration functions--in this case the management of the display (or "Frame" as it is called in the illustration). Each display can be given a name 1700, which is generally the name of the person that will be viewing the display. Next is a way to specify the display's Time Zone 1710. Alternatively, time zone information can be obtained via the network that the display is connected to.

[0167] Some form of location description, such as city or room number can be specified next 1720. The combination of display names and location help to uniquely identify each display.

[0168] If it is OK for this display to accept Group Messages, a checkbox 1730 is provided for doing so. This checkbox is automatically checked when the Master User clicks on the email invitation 830, but can be subsequently unchecked or rechecked at any time.

[0169] FIG. 18 shows an interface for managing Preset reminders. Some preset reminders are defined by the system and are shown here as coming from Admin 1820. Some presets might have been defined by a Master User, normal User or Group User. If the user has edit privileges (which follow rules similar to regular reminder messages), an edit button will appear 1810.

[0170] FIG. 19 shows a simple way for picking a Preset. Once Presets have been defined, they are available for picking 1900 when creating a new reminder message by clicking on a button for Presets (not illustrated, but it would be found on an interface similar to that shown in FIG. 11). Once a Preset is selected, it can be subsequently modified and customized. Thus, users are not locked into a particular set of parameters, dates, times, etc.

[0171] FIG. 20 shows a low-level operation designed to monitor the health of a given set of displays. Displays can be accidentally turned off, lose power or communications, or have a hardware failure. Since the display is usually not near the people that manage it, there needs to be a way to get some indication about its health.

[0172] Monitoring starts with the display sending out a periodic "keep-alive" signal 2000 to the server via the network 2020. The frequency of this keep-alive signal can be preset 2010 and does not need to be too frequent, depending upon needs.

[0173] The server ("system" in this illustration) accepts the keep-alive signals from all of the displays that it is monitoring 2030. If one or more of the displays fails to send a keep-alive signal 2040, an alert can be sent 2050. Alternatively, a webpage can be updated to show the suspect display name and location.
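On the server side, detecting a missed keep-alive amounts to comparing each display's last check-in time against the expected interval. The sketch below assumes a hypothetical mapping of display names to last-seen times and a grace factor of two intervals before a display is considered suspect.

```python
from datetime import datetime, timedelta


def overdue_displays(last_seen: dict, now: datetime,
                     interval: timedelta, grace: int = 2) -> list:
    """Return the names of displays whose keep-alive is overdue.

    `last_seen` maps display name -> datetime of the last keep-alive.
    """
    limit = interval * grace
    return sorted(name for name, seen in last_seen.items()
                  if now - seen > limit)
```

The returned list could feed either the alert step 2050 or the status webpage mentioned above.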

[0174] Meanwhile, Users 2060 can view the status of the display and/or receive alerts even though they are nowhere near the display.

[0175] FIG. 21 shows another low-level admin function, display and Master User setup.

[0176] For each display a unique account needs to be created. This account can be created by a system administrator 2100. This administrator can be a service provider or someone at a factory. If the display is a unique device made specifically to work in this system, a unique account code, probably algorithmically generated, can be stored in the display's nonvolatile memory. If a service provider is creating the display's account, any number of means may be used to create unique codes. Once created, these unique account codes are also stored in the system database 160, 505.

[0177] Next, the system administrator creates a new Master User account. This account consists of a unique username and a pointer to a specific display. A password, advisably unique, is also generated. Again, if the display is made specifically with this system in mind, the Master User setup can be done in the display's factory. Alternatively, a service provider can create the Master User account details. Either way, once created, this information is also stored in the same database 160, 510.

[0178] Next, the new Master User is given the new username and password. This Master User then logs into the system 2120. Once logged in, this person can create new reminder messages 2130, create other users, etc., as described earlier.

[0179] Before or after this step the Master User installs the display where it is intended to be used (e.g. with the person who has Alzheimer's disease). Installation consists of logging the display into the system 2140. Logging into the system involves two steps. The first step is to establish a network connection. This connection can be accomplished in a number of ways depending upon the specific type of network connectivity used. Connectivity can be accomplished via various wired (e.g. LAN via a cable, modem via phone) or wireless (e.g. Wi-Fi, cellular, Bluetooth) means. For example, if there is an existing Internet service available via a Wi-Fi connection, the display would first need to establish a link to this Wi-Fi.

[0180] The second step for logging the display into the system is to make the system aware of the display's unique identification code established earlier 2100. This step can be done manually or automatically by the display.

[0181] If done manually a screen on the display would ask for the display's account log in information, such as a username and password. The user could use any of a variety of input devices (e.g. touchscreen, remote control or keyboard) to enter the required information.

[0182] If done automatically, the display would read its unique identification information from nonvolatile memory and pass this information to the system. Automatic logging in of the display can be done once the display's nonvolatile memory is loaded with the required information, either by the factory or the Master User.

[0183] Passwords, in particular, would be encrypted before being passed to the server. Encryption is necessary to preserve privacy.

[0184] FIG. 22 shows a prototype display device. It is in a stand so that it can be placed on a tabletop. Alternatively, such a display can be built into a wall or be part of another device, such as a television.

[0185] Notice that similar to FIGS. 2 and 3, messaging is tailored to fit the current time relative to each event. For example, "Dinner with Jerry" is shown as "In 2 Days" 2210, which is a Tuesday at 6 PM 2215. The birthday is "In 3 Days", and since this is an All-Day event, no time of day is given--it just says it is on "Wednesday" 2220.

[0186] A "Visit Alice" event shows a bit more detail 2230. This happens to be a multi-day event, so we see that it starts "In about a Week" on "December 17" and lasts "For 3 Days" 2235.

[0187] Each of these messages will automatically change over time, depending upon how close to the event it is, and if the event has started, or just ended.

[0188] The illustrated sample display has a white background because the photo was taken during the day. To reduce the possibility of disturbing someone's sleeping, during night hours the display's background becomes black and font colors are adjusted accordingly for readability. Timing for when the display goes into night mode can be arbitrary, set by Master User selected options, or automatically adjusted according to where in the world the display is located, as determined by the geo-location of the IP address detected by the display.
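Deciding whether the display should be in night mode is a time-window test that has to handle a window wrapping past midnight. The sketch below uses hypothetical default hours (9 PM to 7 AM) and assumes the time passed in is already local to the display.

```python
from datetime import time


def is_night(local_time: time,
             night_start: time = time(21, 0),
             night_end: time = time(7, 0)) -> bool:
    """Return True if `local_time` falls inside the night-mode window."""
    if night_start <= night_end:
        # Window does not cross midnight (e.g. 01:00 to 05:00).
        return night_start <= local_time < night_end
    # Window wraps past midnight (e.g. 21:00 to 07:00).
    return local_time >= night_start or local_time < night_end
```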

[0189] Referring now to FIG. 33, a computer-implemented system for assisting persons of reduced cognitive ability to manage upcoming events will now be discussed. The computer-implemented system is shown generally at 10, and includes a computer system 12, which may be implemented using a single computer or using a networked group of computers to handle the various functions described herein in a distributed fashion. The computer system 12 manages an electronic database 14 and also optionally an analytics system used to analyze data stored in the database 14. The database 14 functions as a data store configured to store plural items of information about time-based events (and other context-based events) for the patient. The analytics system may be programmed, for example, to analyze trends in a particular patient's cognitive abilities, so as to adjust the performance of the system to match those abilities, and also to provide feedback information about the patient to interested parties such as the patient's caregiver.

[0190] If desired, several different presentation devices may be used by a single patient. For example, one device may be a tablet computer operated by the patient, while another device may be a wall-mounted television display in the patient's room. The system can dynamically control which device to use to interact with the patient. In some instances both devices may be used simultaneously. The system is able to customize the presentation sent to each device individually. Thus, in a given situation, the level of complexity for the television display might be different from that used for the tablet computer. The system is able to use context information and also the patient's cognitive ability to adapt each display as appropriate for the patient's needs.

[0191] The computer system 12 may also be programmed to generate memory games that are supplied to the patient. Thus a memory game generator 16 is shown as coupled to the computer system. It will be understood that the generator may be implemented by programming the computer system 12 to generate and make available the appropriate memory games, based on the patient's cognitive ability. Memory games can be extremely helpful to exercise the patient's memory, possibly slowing the progress of the patient's disease. In addition, feedback information captured automatically as the patient plays the game is used to gather information about the patient's current cognitive ability, which is used by other systems as will be more fully explained below.

[0192] The computer system 12 also preferably includes an application program interface (API) that presents a set of standardized inputs/outputs and accompanying communications protocols to allow third party application developers to build software applications and physical devices that interact with the system 10, perhaps reading or writing data to the database 14.

[0193] The computer system 12 includes a web server 22 by which the caregiver 26 and patient 28 communicate with the computer system 12. In this regard web pages are delivered for viewing and interaction by computer devices such as tablets, laptop computers, desktop computers, smartphones and the like. The computer system 12 may also be connected to a local area network (LAN) 24, which allows other computer devices to communicate with the computer system 12, such as a workstation computer terminal utilized by a nursing home staff member 30, for example.

[0194] The database 14 is configured to store data organized according to predefined data structures that facilitate provision of the services performed by the computer system. The database includes a data structure 32 that stores plural items of information (informational content) that are each associated with a set of relevant context attributes and associated triggers. By way of example, an item of informational content might be a reminder message that the patient has an optometrist appointment. Associated with that message might be a trigger datum indicating when the appointment is scheduled. Also associated with the message might be other context attributes, such as how large the message should be displayed based on what device the message is being viewed upon. See FIG. 32 as an example of a display of this message.

[0195] When the appointment is still distant in time, the informational content stored for that event might include a very general text reminder, stored as one record in the data structure 32. As the time for the event draws near, the system might provide more detailed information about the event (such as a reminder to "bring your old glasses"). This would be stored as a second record in the data structure. The system chooses the appropriate item of information, by selecting the one that matches the current context.

[0196] In this regard, the system also stores, in another data structure, the current context for the patient, such as where the patient is located, any relevant medical condition attributes, and the like. These are shown as context data structure 34. Further details of the context attributes are discussed below. The computer system 12 uses the current context attributes in structure 34 in determining which information content to retrieve from structure 32.
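As a non-limiting sketch of how records in data structure 32 might be matched against the current context in structure 34, the example below stores two records for the optometrist appointment and selects the one whose lead-time window contains the time remaining before the event. The class names, field names, and the use of a lead-time window as the matching rule are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict, List, Optional


@dataclass
class ContentRecord:
    """One item of informational content with its trigger and context attributes."""
    event_id: str
    message: str
    trigger_time: datetime      # when the event is scheduled
    min_lead: timedelta         # record applies when min_lead <= time to event
    max_lead: timedelta         # ... and time to event < max_lead
    font_size_by_device: Dict[str, int] = field(default_factory=dict)


@dataclass
class CurrentContext:
    """A small subset of the current context for the patient."""
    now: datetime
    device: str


def select_record(records: List[ContentRecord], event_id: str,
                  ctx: CurrentContext) -> Optional[ContentRecord]:
    """Return the first record for event_id that matches the current context."""
    for rec in records:
        lead = rec.trigger_time - ctx.now
        if rec.event_id == event_id and rec.min_lead <= lead < rec.max_lead:
            return rec
    return None
```

With a general record covering one to thirty days before the appointment and a detailed record ("bring your old glasses") covering the final day, the same query returns the general reminder while the appointment is distant and the detailed reminder as it draws near.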

[0197] In addition to the patient's current context, the computer system further maintains a cognitive ability data structure 36 which stores data indicative of the patient's cognitive ability. This may be quantified, for example as a relative value suitable for representing as a sliding scale, e.g., a 1-10 scale. The patient's cognitive ability may be assessed by explicit entry by the caregiver or nursing home staff. Alternatively the system can establish the cognitive ability data itself through feedback from the memory game generator 18 or by analyzing how well the patient is able to interact with the system generally.

[0198] In one embodiment the system automatically launches specific applications and events based on set parameters configured by third parties, taking into account specific information, such as patient context, technology context, and situation context. FIG. 23 shows the key considerations that are taken into account during the process of determining which application/event to launch, when to launch it, and how to launch it. If the context information meets the parameter settings, the execution of an application and/or event is triggered. This provides some information or interaction for an individual to see or use on a computer terminal such as a tablet computer. The system also adjusts the level of interactivity based on the cognitive ability of the patient. The goal is to provide a patient or user a non-intrusive, automatic way to get information and services that are relevant and sometimes necessary.

[0199] With reference to FIG. 23, the third party is a person or entity that generally has at least some involvement in care giving. Such third party may have control to put in reminders, start videoconferences, upload pictures, set appointments, and other features of remote care. As used herein, the term "caregiver" refers to such a third party and may include family members, doctors, nursing staff and the like.

[0200] As depicted in FIG. 23, context also provides useful information. The system is able to initiate some of the events/applications with knowledge of other factors that the caregiver may not be aware of. These include the situation currently at the nursing home or other patient center (current situation detectable by cameras, microphones, nurse/doctor input, medical sensors, and the like), active/available technology information (e.g., don't send the reminder to the person's watch but put it on the TV), and medical information (data from medical sensors, current doctor reports, current status reports by users).

Cognitive Ability

[0201] Patient cognitive ability also forms an important aspect of the system, as shown in FIG. 23. Patient cognitive ability is the current level (on a rating scale) of the patient's ability to interact with the electronics system, tablet, or other device in the system. If the rating is high, the patient likely can interact with the device himself or herself and may not need as much assistance from context or third party support. If the rating is low, the system and third parties can provide more support. The cognitive ability scale, and how it is determined, is discussed further in relation to FIGS. 29 and 30 below.

[0202] The computer-implemented system captures and stores an electronic data record indicative of the patient's cognitive ability. In one embodiment the electronic data corresponds to a collection of individual measurements or assessments of skill (skill variables), each represented numerically over a suitable range, such as a range from 0 to 10. If desired, an overall cognitive ability rating or aggregate assessment may also be computed and stored, based on the individual measurements or assessments.

[0203] The dynamic rendering system uses these skill variables to render facts in the most appropriate manner based on the patient's skill set. In this embodiment the collection of skill variables, stored in memory of the computer, thus corresponds to the overall "cognitive ability" of the patient.

[0204] The skill variables comprise a set that can be static or dynamic. Some variables are measured or assessed by human operators and some are automatically assessed by the system based on historical observations and sensor data. The following is a list of the skill variables utilized by the system. In this regard, a system may not require all of these variables, and likewise there are other variables, not listed here, that are within the scope of this disclosure as would be understood by those of skill in the art.

[0205] Anxiety level

[0206] Vision impairment or skills

[0207] Short term memory skills

[0208] Long term memory skills

[0209] Recognizing and remembering names/familiar faces

[0210] Reading comprehension skills

[0211] Attention skills

[0212] Time and space sensing

[0213] Speech skills

[0214] Hearing and comprehension skills

[0215] Ability to solve simple logical problems

[0216] Inference skill (ability to understand normal implied consequences of actions and facts)

[0217] If desired, these skill variables may be algorithmically combined by the computer system to derive a single value "cognitive ability" score. A suitable scoring mechanism may be based on the clinically recognized stages of Alzheimer's disease, namely:

[0218] Stage 1: No impairment

[0219] Stage 2: Very mild decline

[0220] Stage 3: Mild decline

[0221] Stage 4: Moderate decline

[0222] Stage 5: Moderately severe decline

[0223] Stage 6: Severe decline

[0224] Stage 7: Very severe decline
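One possible way of combining the skill variables into a single score and relating that score to the seven stages is sketched below. The disclosure does not specify the combining algorithm; the unweighted mean and the linear score-to-stage mapping shown here are assumptions for illustration only and carry no clinical meaning. The sketch further assumes that every variable has been normalized to the 0 to 10 range so that a higher value means greater ability (an anxiety level, for example, would be inverted before entry).

```python
from statistics import mean
from typing import Dict

STAGES = {
    1: "No impairment",
    2: "Very mild decline",
    3: "Mild decline",
    4: "Moderate decline",
    5: "Moderately severe decline",
    6: "Severe decline",
    7: "Very severe decline",
}


def aggregate_score(skills: Dict[str, float]) -> float:
    """Combine skill variables (each 0 to 10, higher is better) into one score.

    An unweighted mean is used here purely as an example.
    """
    for name, value in skills.items():
        if not 0 <= value <= 10:
            raise ValueError(f"{name} must be between 0 and 10, got {value}")
    return mean(skills.values())


def stage_for(score: float) -> int:
    """Map a 0 to 10 score onto stages 1 to 7 (hypothetical linear mapping)."""
    if not 0 <= score <= 10:
        raise ValueError("score must be between 0 and 10")
    return 1 + round((10 - score) * 6 / 10)
```

A weighted combination, or thresholds set by a clinician, could be substituted for either function without changing how the rest of the system consumes the resulting value.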

Context

[0225] In addition to cognitive ability, the system also takes contextual information relevant to the patient into account. FIG. 24 shows the high level flow chart for this context-dependent application/event activation for people with various cognitive abilities. (Step 1) A third party (e.g., family, friend, caregiver) will input information and configure the parameters for triggering of events and applications. (Step 2) Once the system has been armed, the system will gather and store contextual information. Such context information, about events and the like, is preferably composed of three sub-contexts: patient related information, situational/external information, and event/application/device information. (Step 3) If the contexts meet the armed settings of the system, an event may be triggered. (Step 4) If triggered, the system will launch the application whilst customizing the interaction level for the patient.
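The four steps above can be sketched, under stated assumptions, as follows. The names ArmedTrigger, interaction_level and evaluate, the dictionary layout of the context, and the rating thresholds (7 and 4 on a 1-10 scale) are hypothetical and serve only to make the flow concrete.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class ArmedTrigger:
    """Step 1: parameters configured by a third party such as a caregiver."""
    name: str
    condition: Callable[[Dict], bool]  # returns True when the context matches
    application: str                   # application/event to launch


def interaction_level(cognitive_rating: int) -> str:
    """Step 4 helper: choose an interaction level from a 1-10 rating.

    The thresholds are assumptions made for this example.
    """
    if cognitive_rating >= 7:
        return "full"      # patient can operate the application unaided
    if cognitive_rating >= 4:
        return "guided"    # simplified controls with prompts
    return "passive"       # information is shown; no input is required


def evaluate(triggers: List[ArmedTrigger], context: Dict,
             cognitive_rating: int) -> List[Tuple[str, str]]:
    """Steps 2 to 4: test gathered context against each armed trigger.

    Returns (application, interaction level) pairs for triggers that fire.
    """
    level = interaction_level(cognitive_rating)
    return [(t.application, level) for t in triggers if t.condition(context)]
```

In this sketch the gathered context is passed in as a dictionary holding the three sub-contexts, so a caregiver-defined condition can refer to patient, situational and device information together.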

[0226] In one embodiment the context of an event (an event being an application, task, etc.) can be composed of three sub-contexts: a patient-related context, a situational or external condition context, and a technology context. The state of these contexts is stored in a context data structure within the memory of a computer forming part of the system.

The Patient-Related Context

[0227] The patient-related context contains all the information that is available from the patient. This information is stored as data in the context data structure. Examples of patient-related context data include the following (the list is not exclusive):

[0228] Medical context obtained from sensors (e.g., vital signs)

[0229] Digital medical record (history)

[0230] Patient behavior (e.g., sleeping or not)

[0231] Patient location (e.g., in the room, looking at the display)

[0232] Patient preference (e.g., audio trigger/notification preferred, preferences of sounds, videos, TV shows, pictures)

[0233] Family/Caregiver wishes

The Situational or External Condition Context

[0234] The situational/external context contains all the information that is available from sources external to the patient. This information is likewise stored as data in the context data structure. Examples of situational or external condition context data include the following (the list is not exclusive):

[0235] Weather information

[0236] Time

[0237] Third party(ies) information (e.g., identity)

[0238] Whether the patient is watching TV and what is on

[0239] Whether other people are in the patient's room

The Technology Context

[0240] The technology (event application/device) context contains all the information that is available from the devices that make up the system. This information, collected by communicating with the devices themselves, is likewise stored as data in the context data structure. Examples of technology context data include:

[0241] Status of the tablet display device

[0242] Amount of bandwidth available

[0243] Type of display (e.g., size)

[0244] Type of network

[0245] Other devices available (smart watches, TVs in the room, other components in the system)
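A context data structure holding the three sub-contexts might be laid out as in the following sketch. All class and field names are hypothetical, and only a few of the listed attributes are represented. The helper that picks the largest online display is likewise an assumption, included to show how technology context could inform which device receives a message.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class PatientContext:
    vital_signs: Dict[str, float] = field(default_factory=dict)
    behavior: str = "unknown"       # e.g. "sleeping" or "awake"
    location: str = "unknown"       # e.g. "in the room"
    preferences: Dict[str, str] = field(default_factory=dict)


@dataclass
class SituationalContext:
    weather: str = "unknown"
    local_time: str = ""
    tv_program: Optional[str] = None   # None when the TV is not being watched
    others_in_room: bool = False


@dataclass
class Device:
    name: str
    screen_inches: float
    online: bool = True


@dataclass
class TechnologyContext:
    bandwidth_kbps: int = 0
    network_type: str = "unknown"
    devices: List[Device] = field(default_factory=list)


@dataclass
class EventContext:
    """The three sub-contexts grouped into one context data structure."""
    patient: PatientContext = field(default_factory=PatientContext)
    situation: SituationalContext = field(default_factory=SituationalContext)
    technology: TechnologyContext = field(default_factory=TechnologyContext)


def largest_online_display(tech: TechnologyContext) -> Optional[Device]:
    """Return the online device with the largest screen, or None if none is online."""
    online = [d for d in tech.devices if d.online]
    return max(online, key=lambda d: d.screen_inches, default=None)
```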