Method And Apparatus For Inputting Information Including Coordinate Data

FUJIOKA; SUSUMU

U.S. patent application number 13/345044, for a method and apparatus for inputting information including coordinate data, was filed with the patent office on January 6, 2012, and published on December 27, 2012. This patent application is currently assigned to SMART TECHNOLOGIES ULC. The invention is credited to SUSUMU FUJIOKA.

| Publication Number | 20120327031 |
|---|---|
| Application Number | 13/345044 |
| Family ID | 17992706 |
| Publication Date | 2012-12-27 |


| United States Patent Application | 20120327031 |
|---|---|
| Kind Code | A1 |
| FUJIOKA; SUSUMU | December 27, 2012 |

METHOD AND APPARATUS FOR INPUTTING INFORMATION INCLUDING COORDINATE DATA

Abstract

A method, computer readable medium, and apparatus for inputting information, including coordinate data, include: extracting a predetermined object from an image including the predetermined object above a plane; detecting motion of the predetermined object while the predetermined object is within a predetermined distance from the plane; and then determining whether to input predetermined information.

| Inventors: | FUJIOKA; SUSUMU; (Zama City, JP) |
|---|---|
| Assignee: | SMART TECHNOLOGIES ULC, Calgary, CA |
| Family ID: | 17992706 |
| Appl. No.: | 13/345044 |
| Filed: | January 6, 2012 |

Related U.S. Patent Documents

| Application Number | Filing Date | Patent Number | Child Application |
|---|---|---|---|
| 12722345 | Mar 11, 2010 | RE43084 | 13345044 |
| 10717456 | Nov 21, 2003 | 7342574 | 12722345 |
| 09698031 | Oct 30, 2000 | 6674424 | 10717456 |

| Current U.S. Class: | 345/175 |
|---|---|
| Current CPC Class: | G06F 3/0428 20130101 |
| Class at Publication: | 345/175 |
| International Class: | G06F 3/042 20060101 G06F003/042 |

Foreign Application Data

| Date | Code | Application Number |
|---|---|---|
| Oct 29, 1999 | JP | 11-309412 |

Claims

1. A method for inputting information including coordinate data, comprising: providing at least two cameras at respective corners of a display; extracting, based on outputs from the at least two cameras, a predetermined object from an image including the predetermined object above a plane of the display and a plane of the display; determining whether the predetermined object is within a predetermined distance from the plane of the display; detecting, based on outputs from the at least two cameras, a position of the predetermined object while the predetermined object is determined to be within a predetermined distance from the plane; calculating angles of view of each of the at least two cameras to the detected position; and calculating coordinates of the predetermined object on the display panel utilizing the calculated angles.

2. A method for inputting information including coordinate data according to claim 1, wherein the at least two cameras are in opposite corners of the display.

3. A device for inputting information including coordinate data, comprising: at least two cameras at respective corners of a display; an object extracting device configured to extract a predetermined object from an image including the predetermined object above a plane of the display and a plane of the display, and to determine whether the predetermined object is within a predetermined distance from the plane of the display; a detector device configured to detect a position of the predetermined object while the predetermined object is within a predetermined distance from the plane; and a controller configured to calculate angles of view of each of the at least two cameras to the detected position and to calculate coordinates of the predetermined object on the display panel utilizing the calculated angles.

4. A device for inputting information including coordinate data according to claim 3, wherein the at least two cameras are in opposite corners of the display.

5. A device for inputting information including coordinate data, comprising: at least two imaging means at respective corners of a display; means for extracting, based on outputs from the at least two imaging means, a predetermined object from an image including the predetermined object above a plane of the display and a plane of the display, and for determining whether the predetermined object is within a predetermined distance from the plane of the display; means for detecting, based on outputs from the at least two imaging means, a position of the predetermined object while the predetermined object is within a predetermined distance from the plane; means for calculating angles of view of each of the at least two imaging means and for calculating coordinates of the predetermined object on the display panel utilizing the calculated angles.

6. A device for inputting information including coordinate data according to claim 5, wherein the at least two imaging means are in opposite corners of the display.

Description

[0001] This is a continuation of U.S. patent application Ser. No. 12/722,345, filed Mar. 11, 2010, which is a reissue application of U.S. patent application Ser. No. 10/717,456, filed Nov. 21, 2003, now U.S. Pat. No. 7,342,574, issued Mar. 11, 2008, which is a continuation of U.S. patent application Ser. No. 09/698,031, filed Oct. 30, 2000, now U.S. Pat. No. 6,674,424, issued Jan. 6, 2004, which is based on Japanese Patent Appln. No. 11-309412, filed Oct. 29, 1999, the contents of each of which are incorporated herein by reference.

BACKGROUND OF THE INVENTION

[0002] 1. Field of the Invention

[0003] The present invention relates to a method and apparatus for inputting information including coordinate data. More particularly, the present invention relates to a method and apparatus for inputting information including coordinate data of a location of a coordinate input member, such as a pen, a human finger, etc., on an image displayed on a relatively large screen.

[0004] 2. Discussion of the Background

[0005] Recently, presentation systems, electronic copyboards, and electronic blackboard systems provided with a relatively large display device, such as a plasma display panel or a rear projection display, have come into wide use. Certain types of presentation systems also provide a touch input device disposed in front of the screen for inputting information related to the image displayed on the screen. Such a touch input device is also referred to as an electronic tablet, an electronic pen, etc.

[0006] In such a presentation system, for example, when a user touches an icon on the display screen, the touch input device detects the touch and inputs the coordinates of the touched location. Similarly, when the user draws a line, the touch input device repeatedly detects and inputs a series of coordinates as the locus of the drawn line.

[0007] As an example, Japanese Laid-Open Patent Publication No. 11-85376 describes a touch input apparatus provided with light reflecting devices disposed around a display screen, light beam scanning devices, and light detectors. The light reflecting devices reflect incident light back in a direction close to the direction from which it arrived. During operation of the apparatus, scanning light beams emitted by the light beam scanning devices are reflected by the light reflecting devices and then received by the light detectors. When a coordinate input member, such as a pen or a user's finger, touches the surface of the screen at a location, it interrupts the path of the scanning light beams, and the light detectors can thereby detect the touched location as the position at which the scanning light beams are missing.

[0008] In this apparatus, when a certain location-detecting accuracy in the direction perpendicular to the screen is required, the scanning light beams must be thin and must scan a plane sufficiently close to the screen. However, when the surface of the screen is contorted, the contorted surface may obstruct the scanning light beams, and consequently the coordinate input operation may be impaired. As a result, for example, a double-click operation might not be detected properly, and freehand lines and characters might be detected erroneously.

[0009] As another example, Japanese Laid-Open Patent Publication No. 61-196317 describes a touch input apparatus provided with a plurality of television cameras, which detect the three-dimensional coordinates of a moving object, such as a pen, used as a coordinate input member. Because the apparatus detects three-dimensional coordinates, it is desirable that the television cameras capture images of the moving object at a relatively high frame rate.

[0010] As a further example, a touch input apparatus provided with an electromagnetic tablet and an electromagnetic stylus is known. In this apparatus, the location of the stylus is detected based on electromagnetic induction between the tablet and the stylus. Therefore, the distance between the tablet and the stylus tends to be limited to a rather short range, for example, eight millimeters, unless a large stylus or a battery-powered stylus is used.

SUMMARY OF THE INVENTION

[0011] The present invention has been made in view of the above-discussed and other problems associated with the background methods and apparatus, and to overcome them. Accordingly, an object of the present invention is to provide a novel method and apparatus that can input information including coordinate data even when the surface of a display screen is contorted to a certain extent, and without using a light scanning device.

[0012] Another object of the present invention is to provide a novel method and apparatus that can input information including coordinate data using a plurality of coordinate input members, such as a pen, a human finger, a stick, a rod, a piece of chalk, etc.

[0013] Another object of the present invention is to provide a novel method and apparatus that can input information including coordinate data with a plurality of background devices, such as a chalkboard, a whiteboard, etc., in addition to a display device, such as a plasma display panel, a rear projection display, etc.

[0014] To achieve these and other objects, the present invention provides a method, computer readable medium, and apparatus for inputting information including coordinate data that include extracting a predetermined object from an image including the predetermined object above a plane, detecting a motion of the predetermined object while the predetermined object is within a predetermined distance from the plane, and determining whether to input predetermined information.

BRIEF DESCRIPTION OF THE DRAWINGS

[0015] A more complete appreciation of the present invention and many of the attendant advantages thereof will be readily obtained as the same becomes better understood by reference to the following detailed description when considered in connection with the accompanying drawings, wherein:

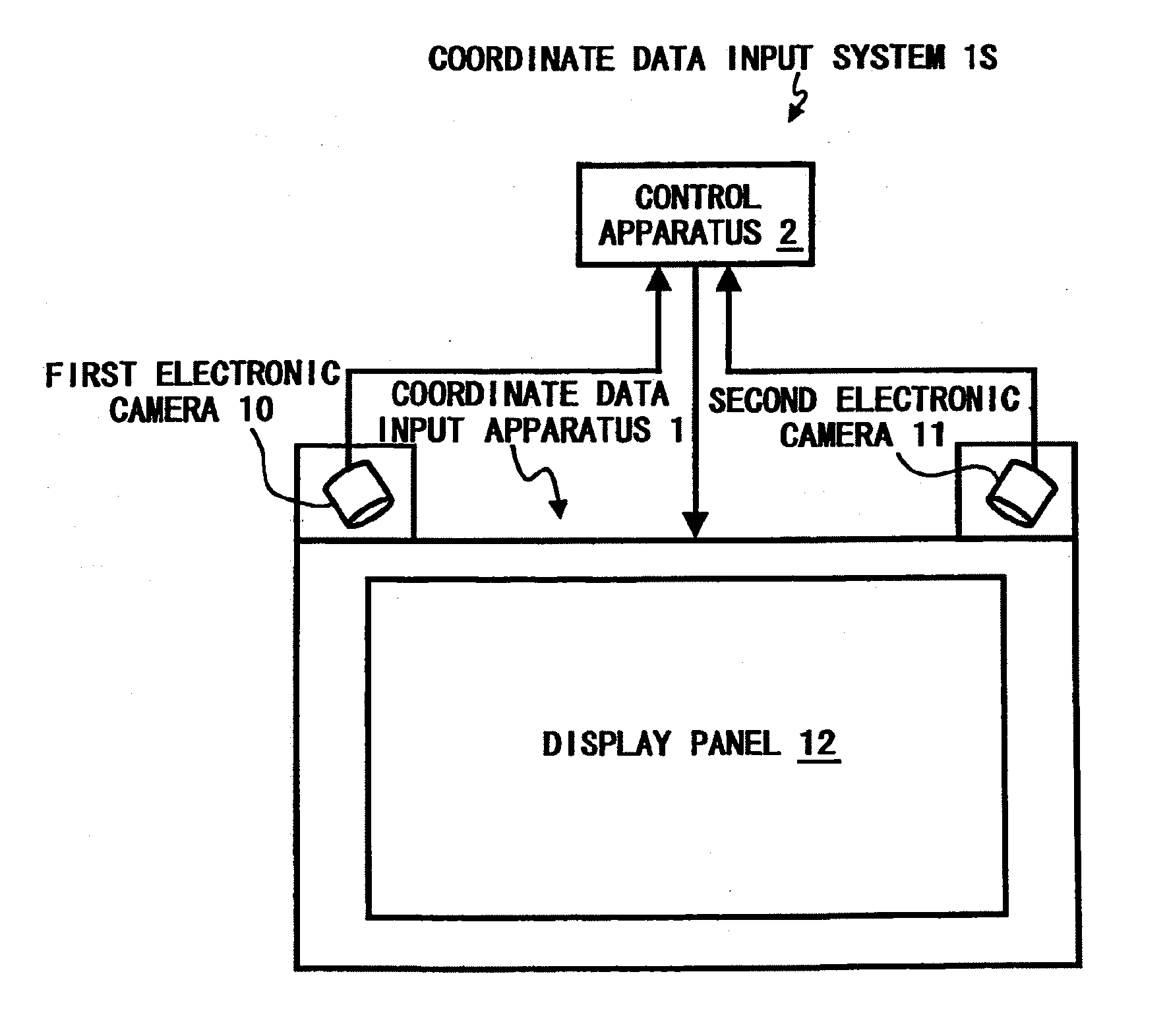

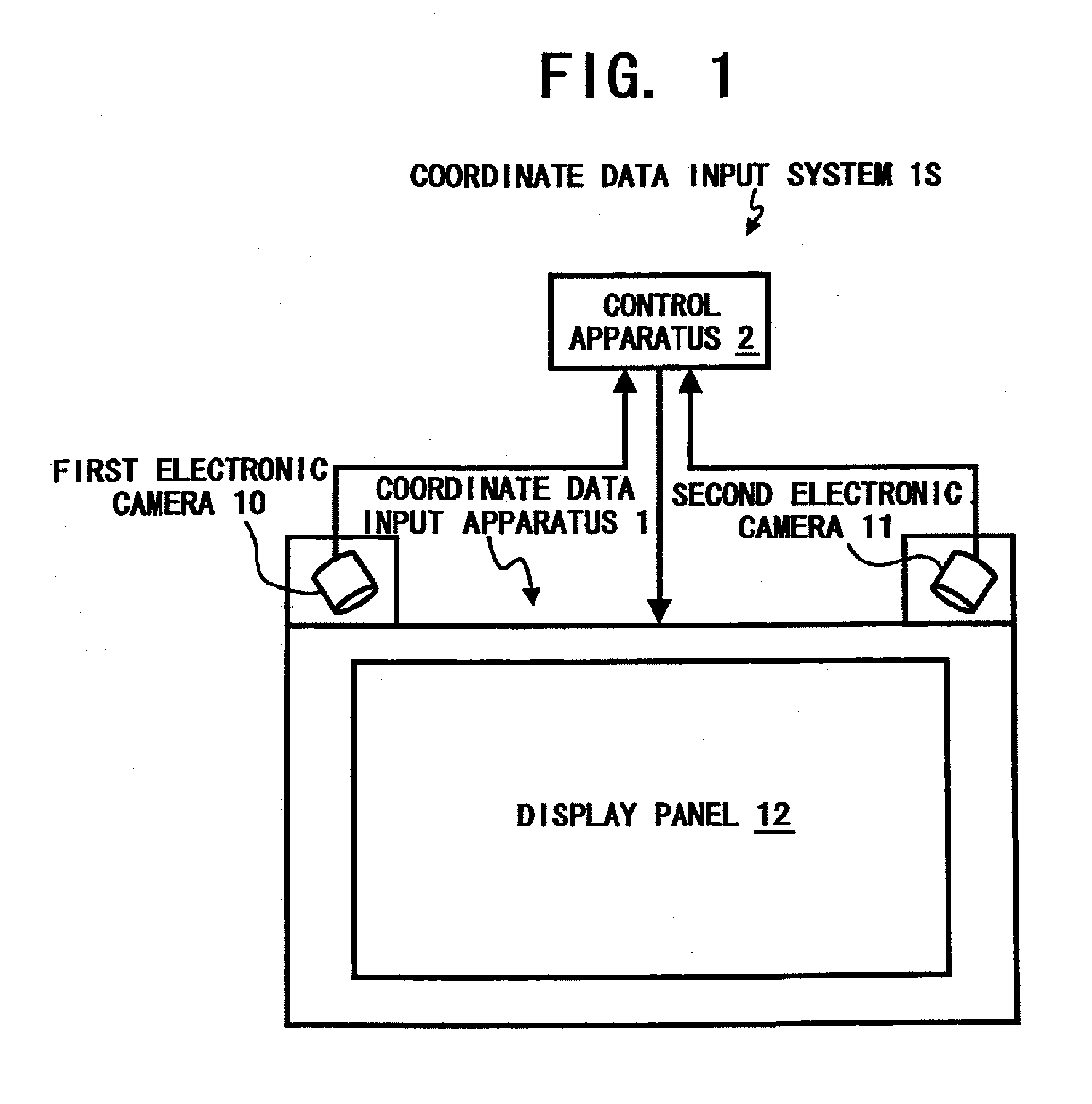

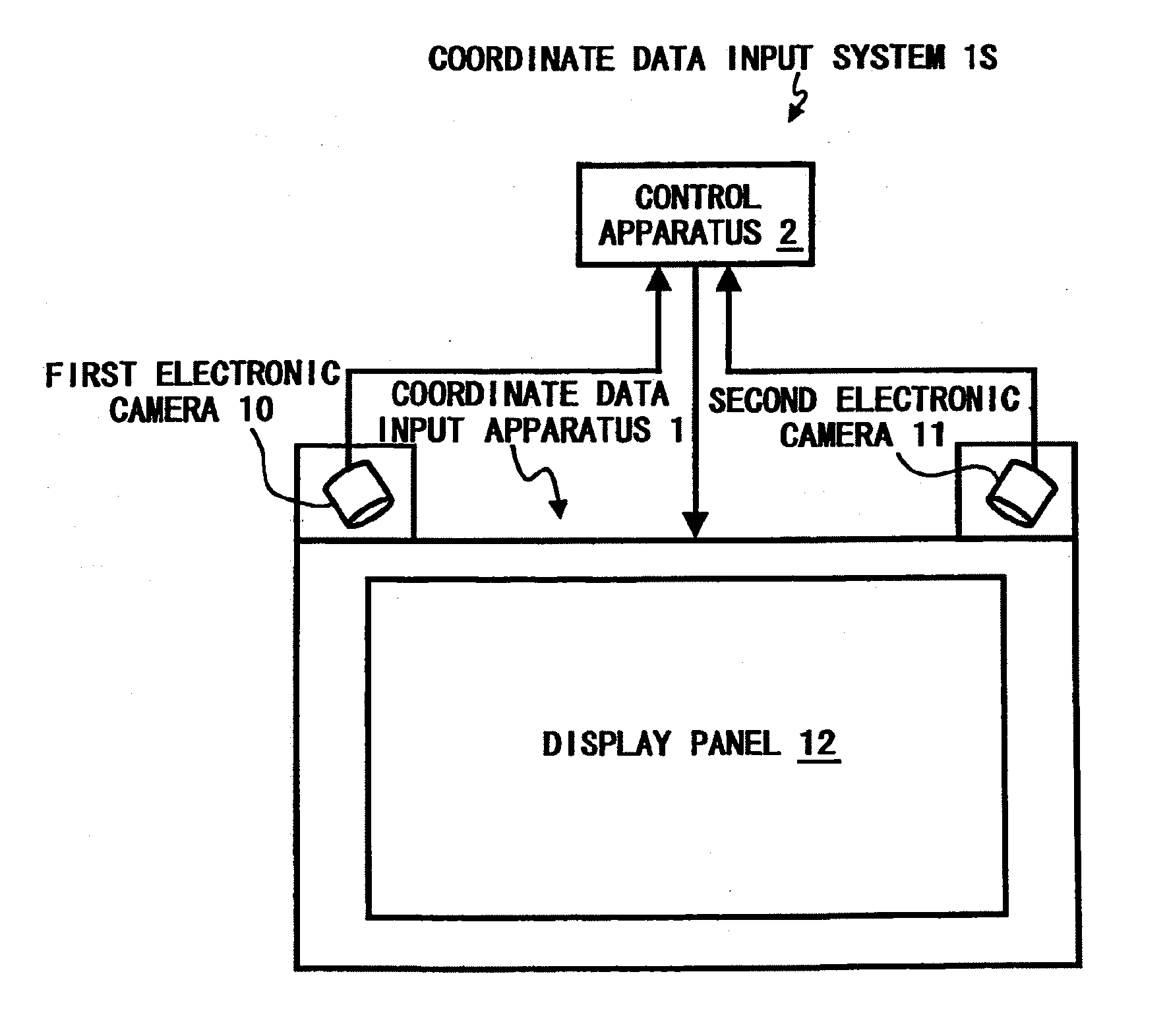

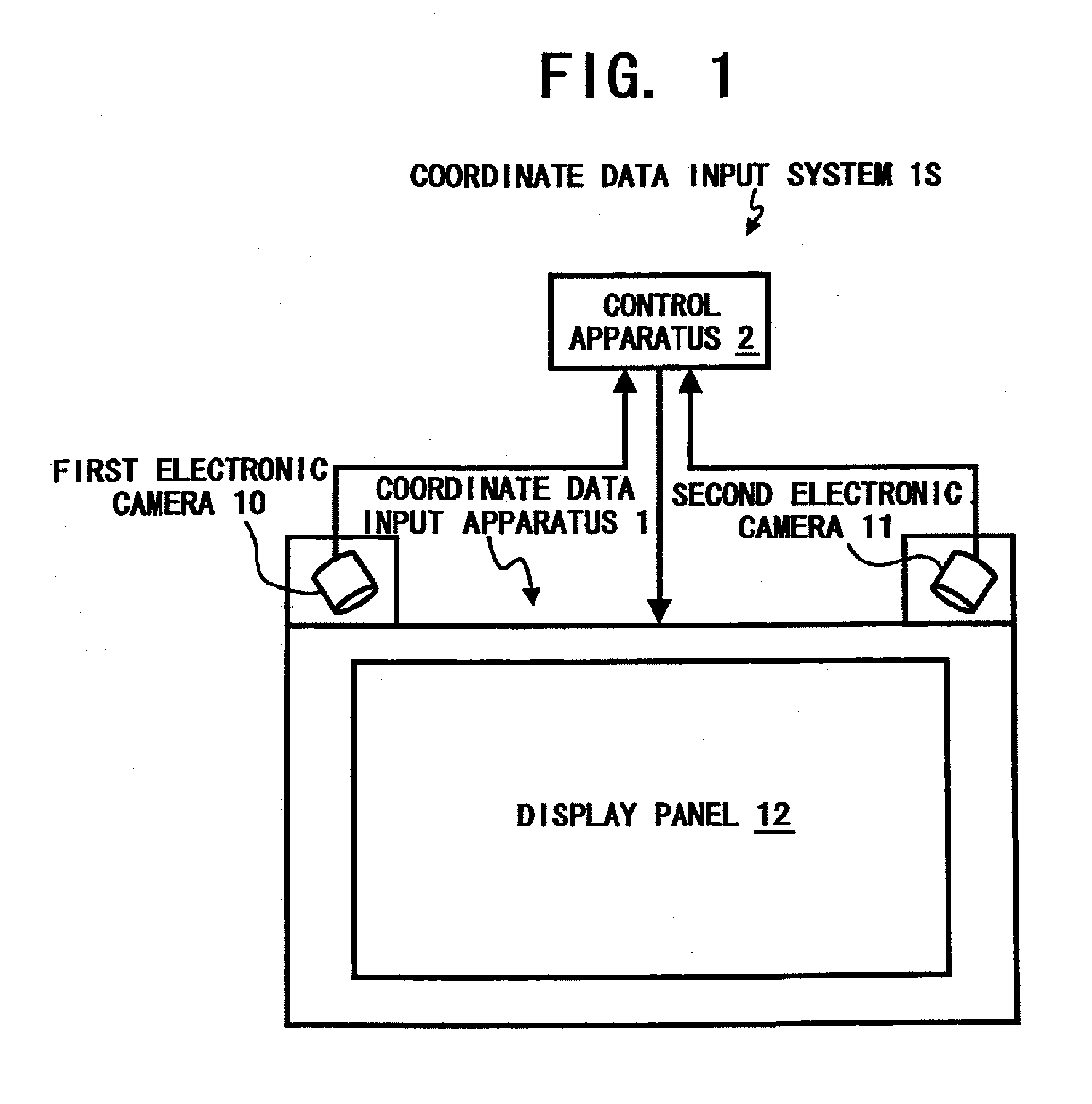

[0016] FIG. 1 is a schematic view illustrating a coordinate data input system as an example configured according to the present invention;

[0017] FIG. 2 is an exemplary block diagram of a control apparatus of the coordinate data input system of FIG. 1;

[0018] FIG. 3 is a diagram illustrating a method for obtaining coordinates where a coordinate input member contacts a display panel;

[0019] FIG. 4 is a magnified view of the wide-angle lens and the CMOS image sensor of FIG. 3;

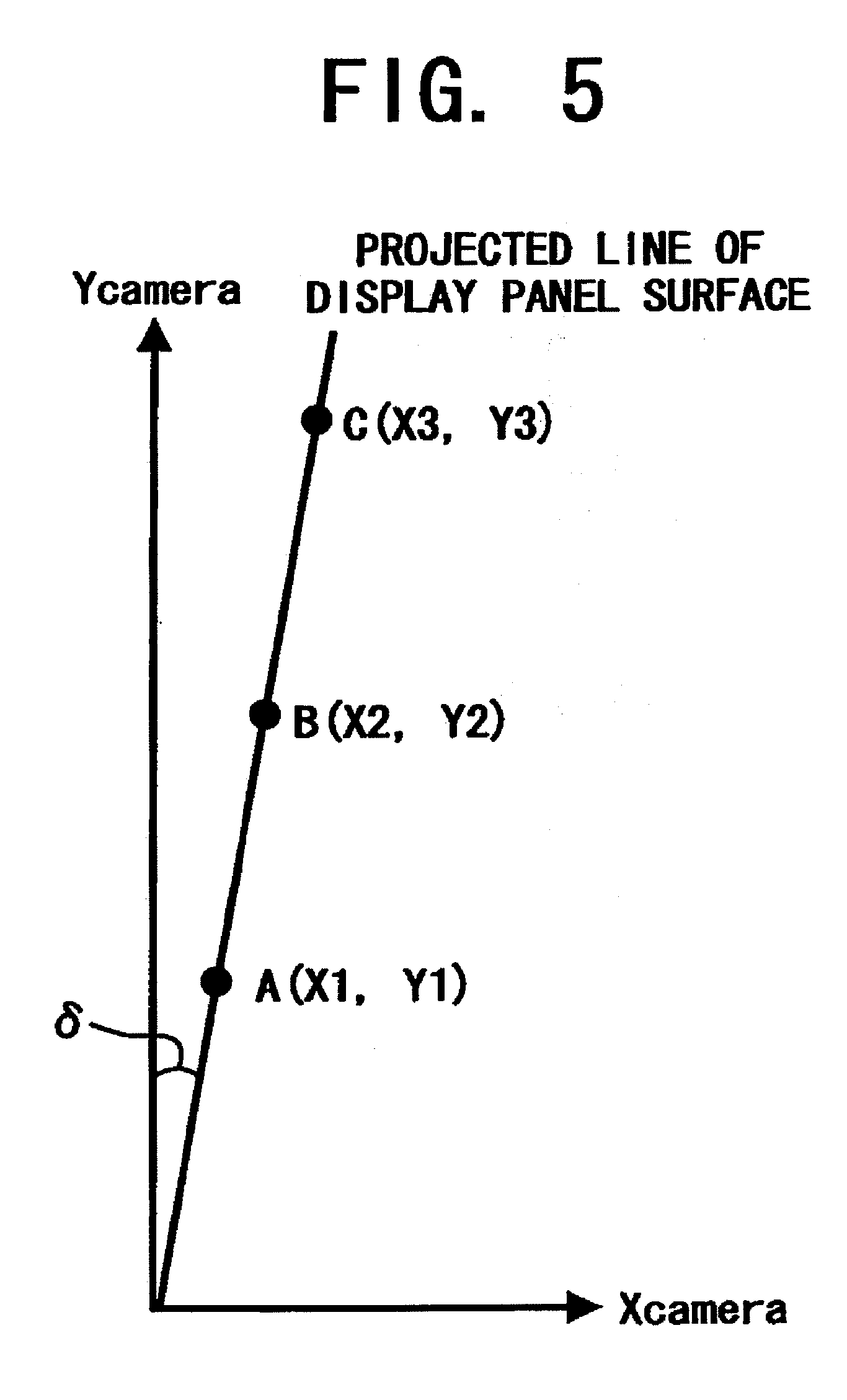

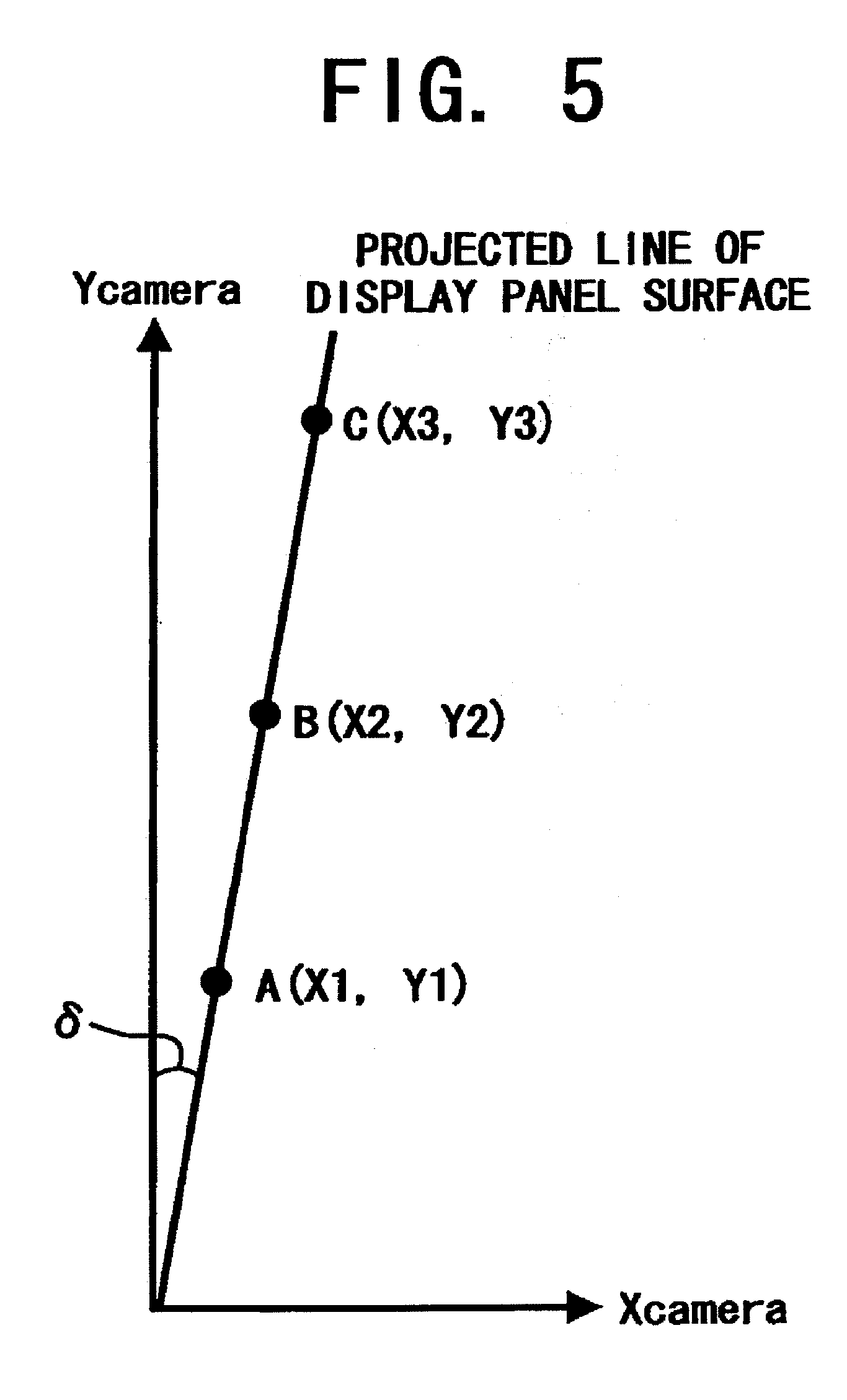

[0020] FIG. 5 is a diagram illustrating a tilt of the surface of the display panel relative to the CMOS image sensor;

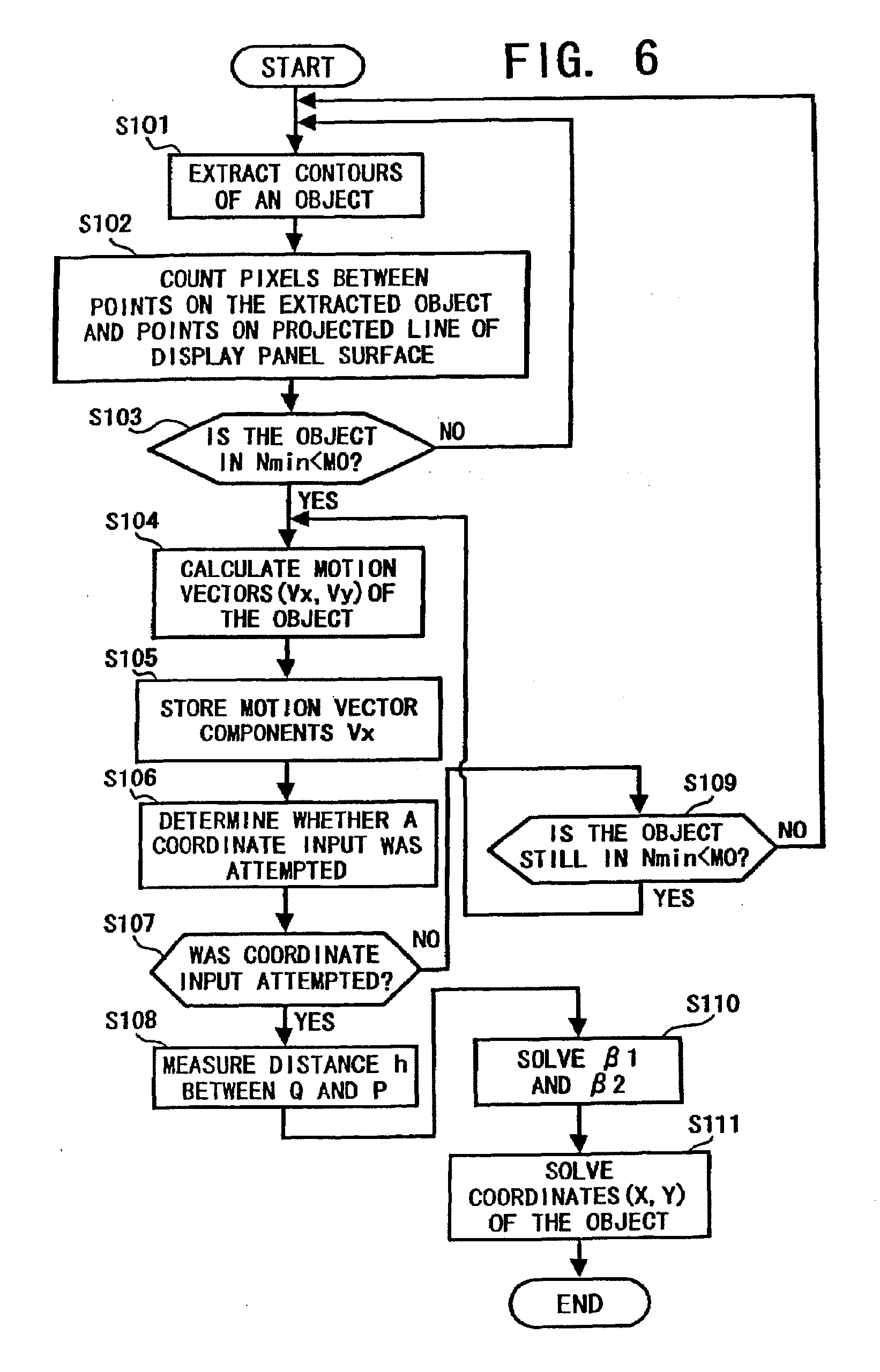

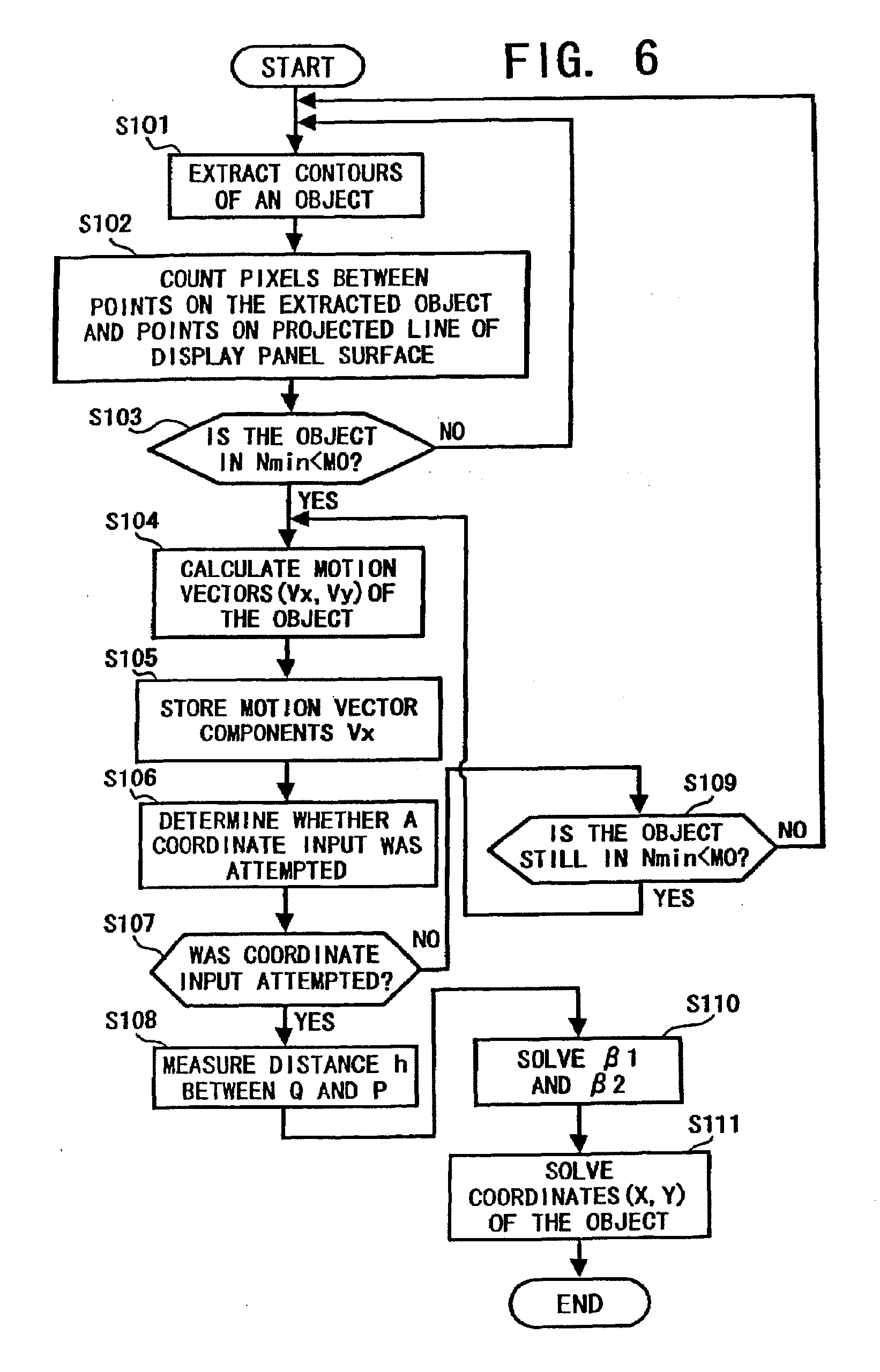

[0021] FIG. 6 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation in the coordinate data input system of FIG. 1 as an example configured according to the present invention;

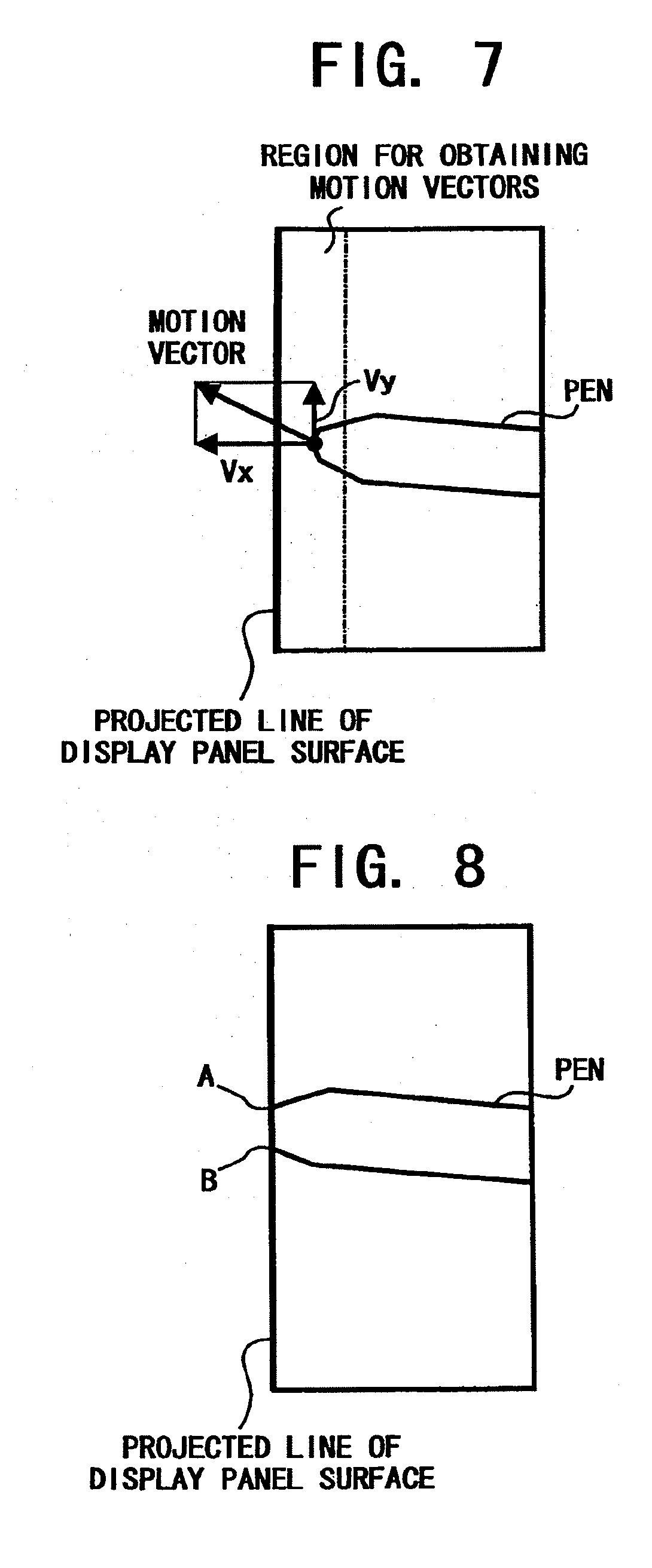

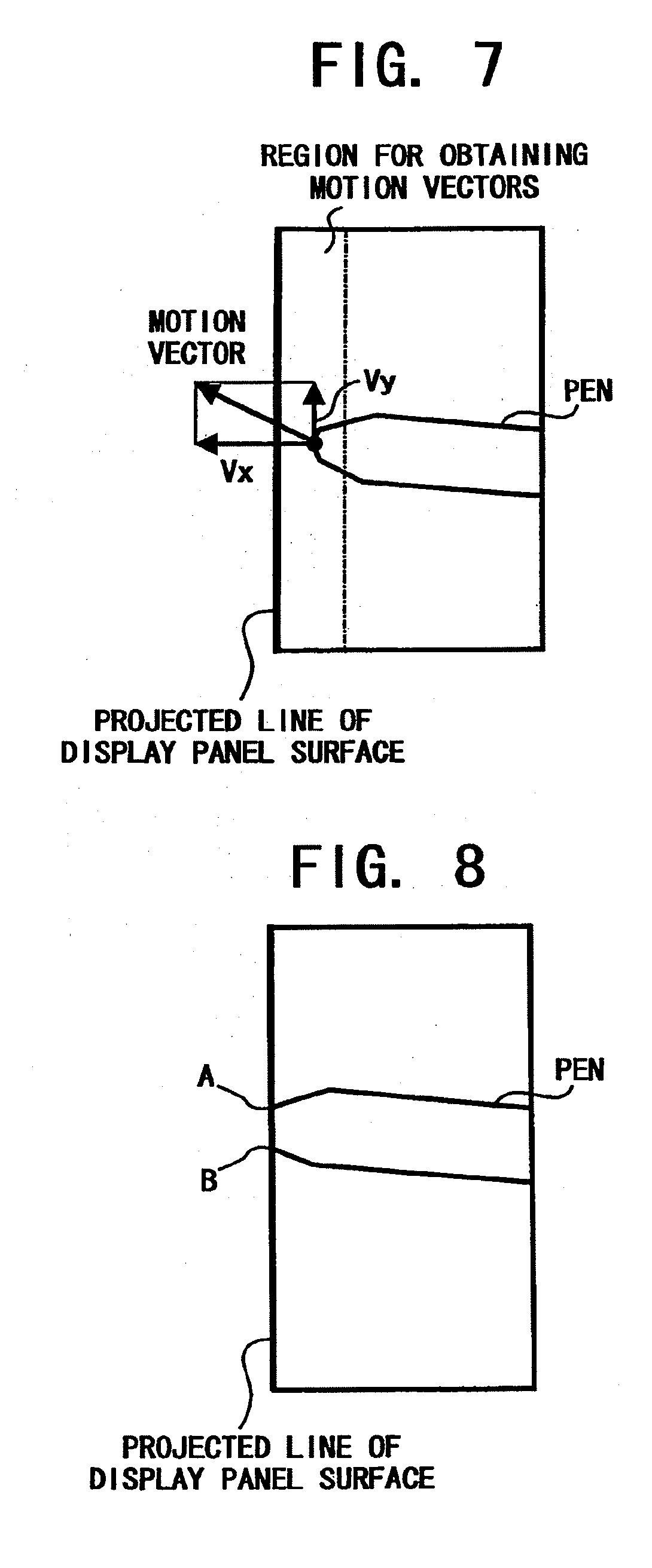

[0022] FIG. 7 is a diagram illustrating an image captured by the first electronic camera of FIG. 1;

[0023] FIG. 8 is a diagram illustrating an image captured by the first electronic camera when an input pen distorts the surface of a display panel;

[0024] FIG. 9 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention;

[0025] FIG. 10 is a diagram illustrating an image captured by the first electronic camera when an input pen is tilted relative to the surface of a display panel;

[0026] FIG. 11 is a diagram illustrating an image of an axially symmetric pen captured by the first electronic camera;

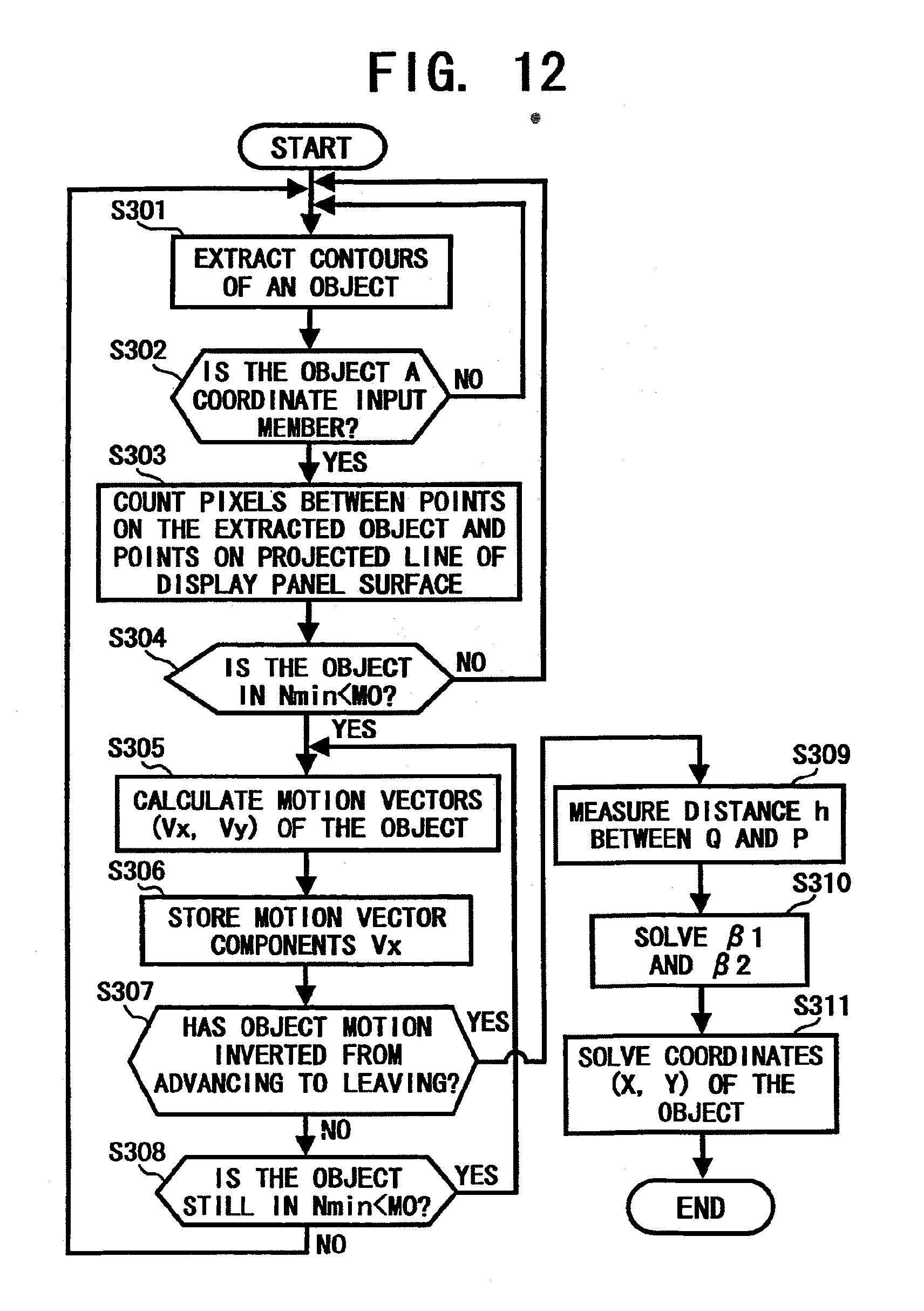

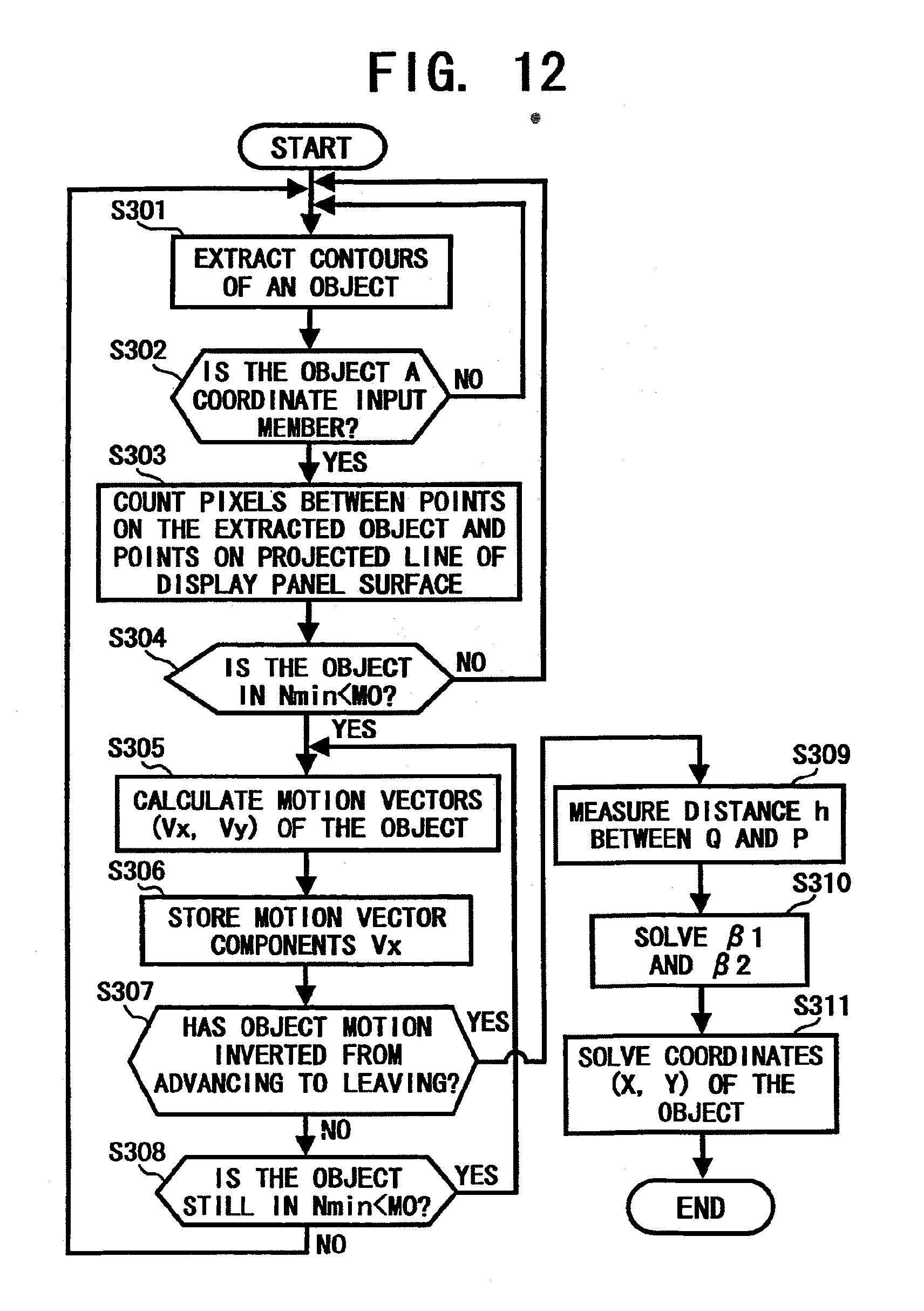

[0027] FIG. 12 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention;

[0028] FIG. 13 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention;

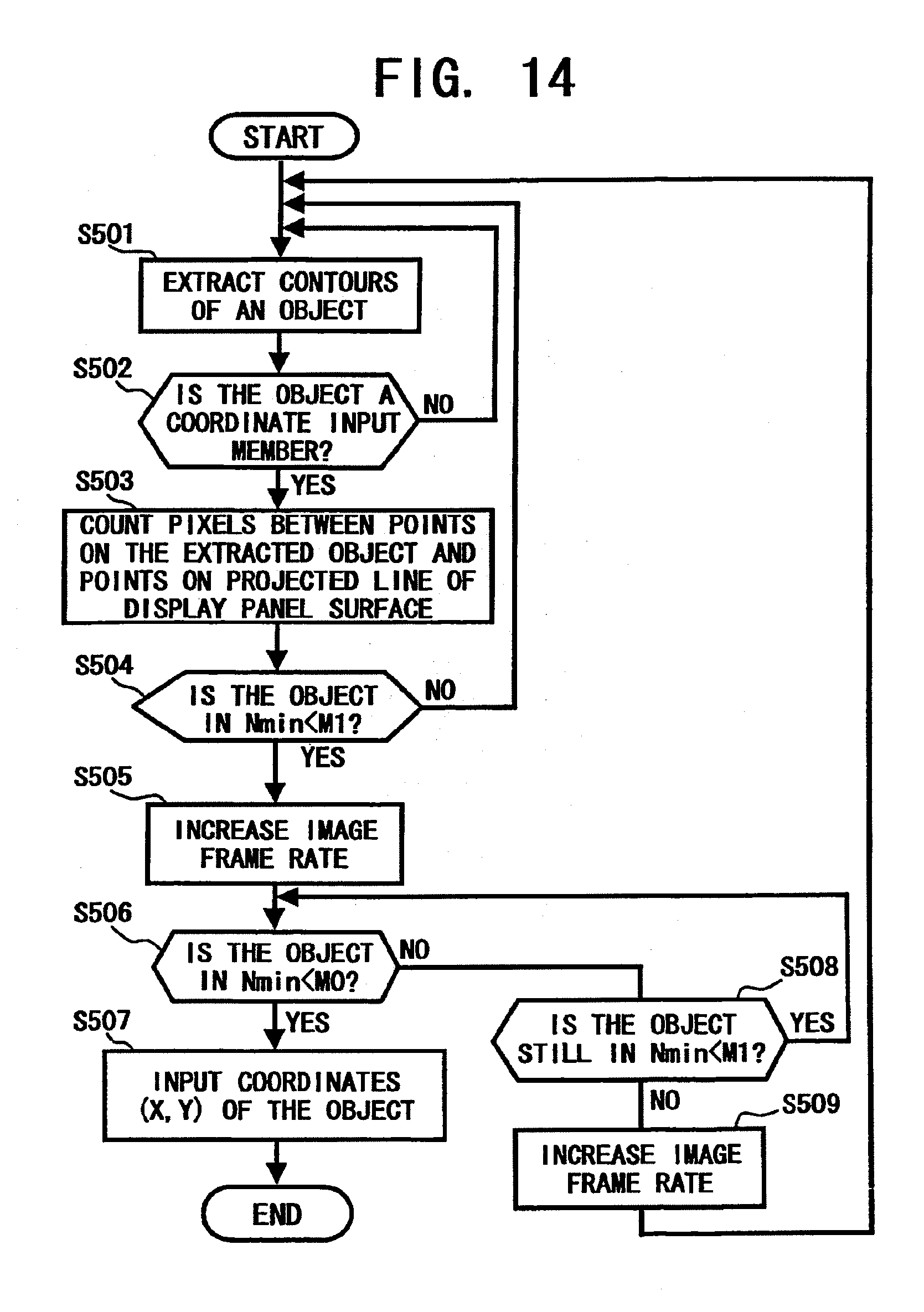

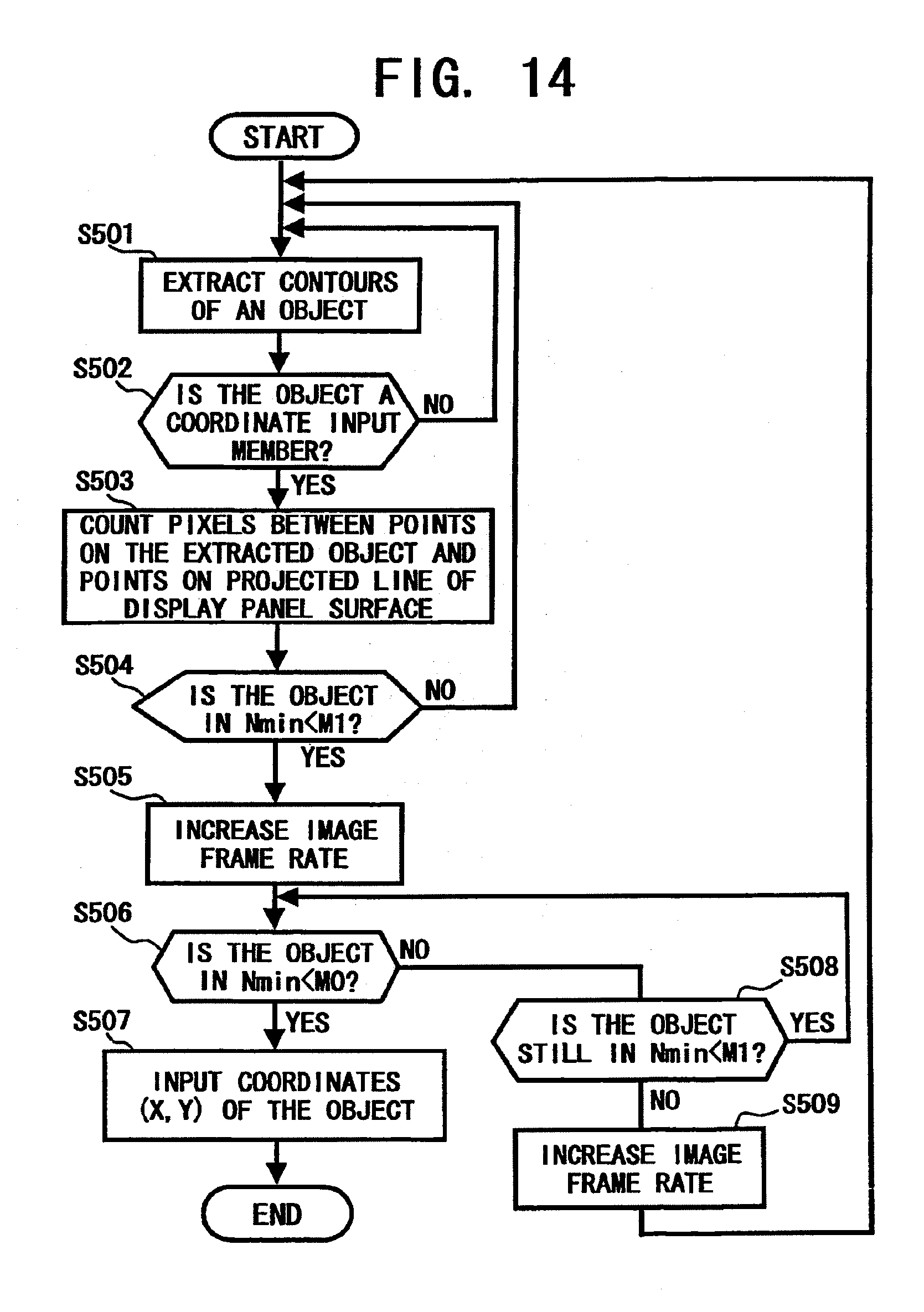

[0029] FIG. 14 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention;

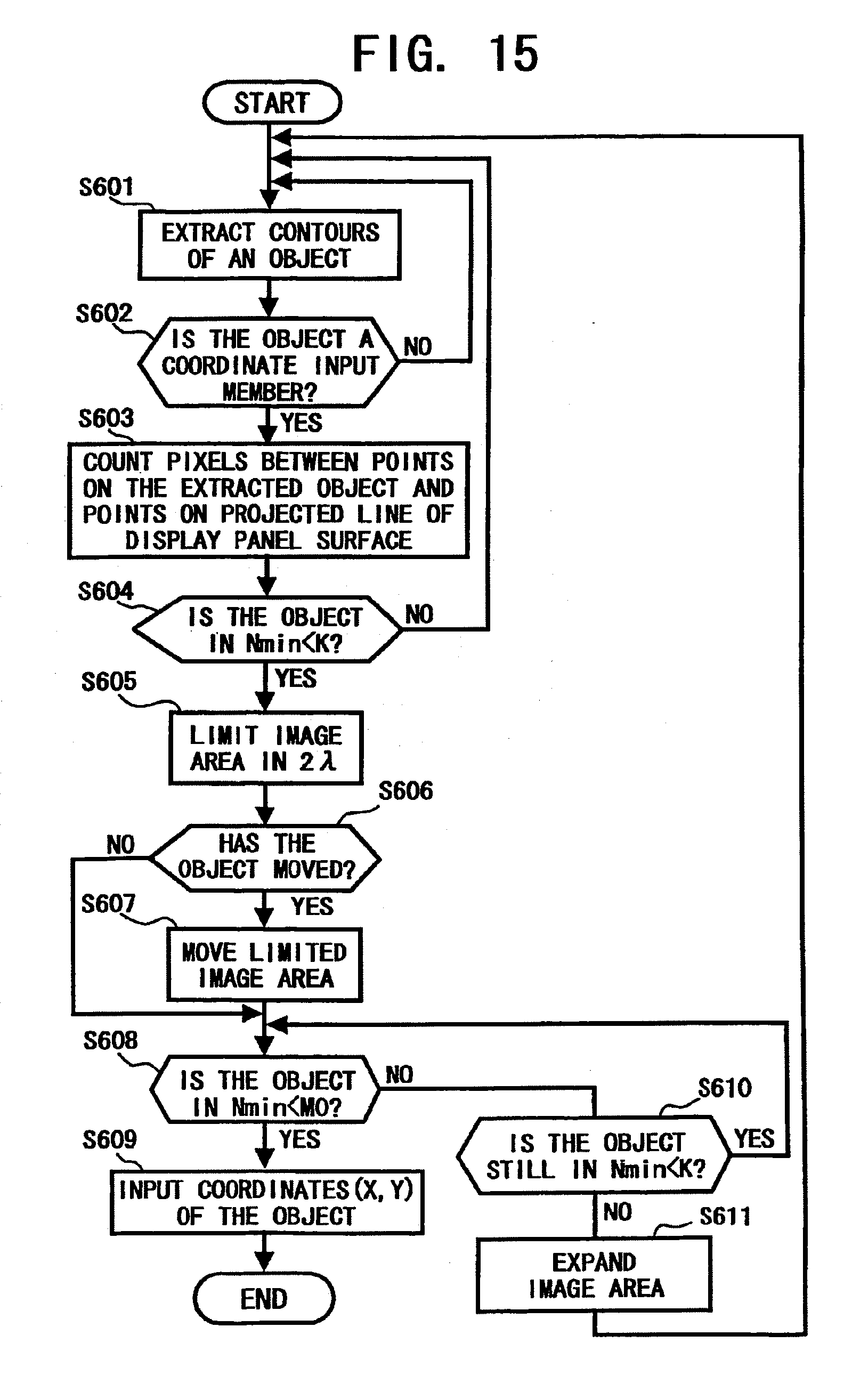

[0030] FIG. 15 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention;

[0031] FIG. 16A is a diagram illustrating an image captured by the first electronic camera and an output limitation of the image;

[0032] FIG. 16B is a diagram illustrating an image captured by the first electronic camera and a displaced output limitation of the image;

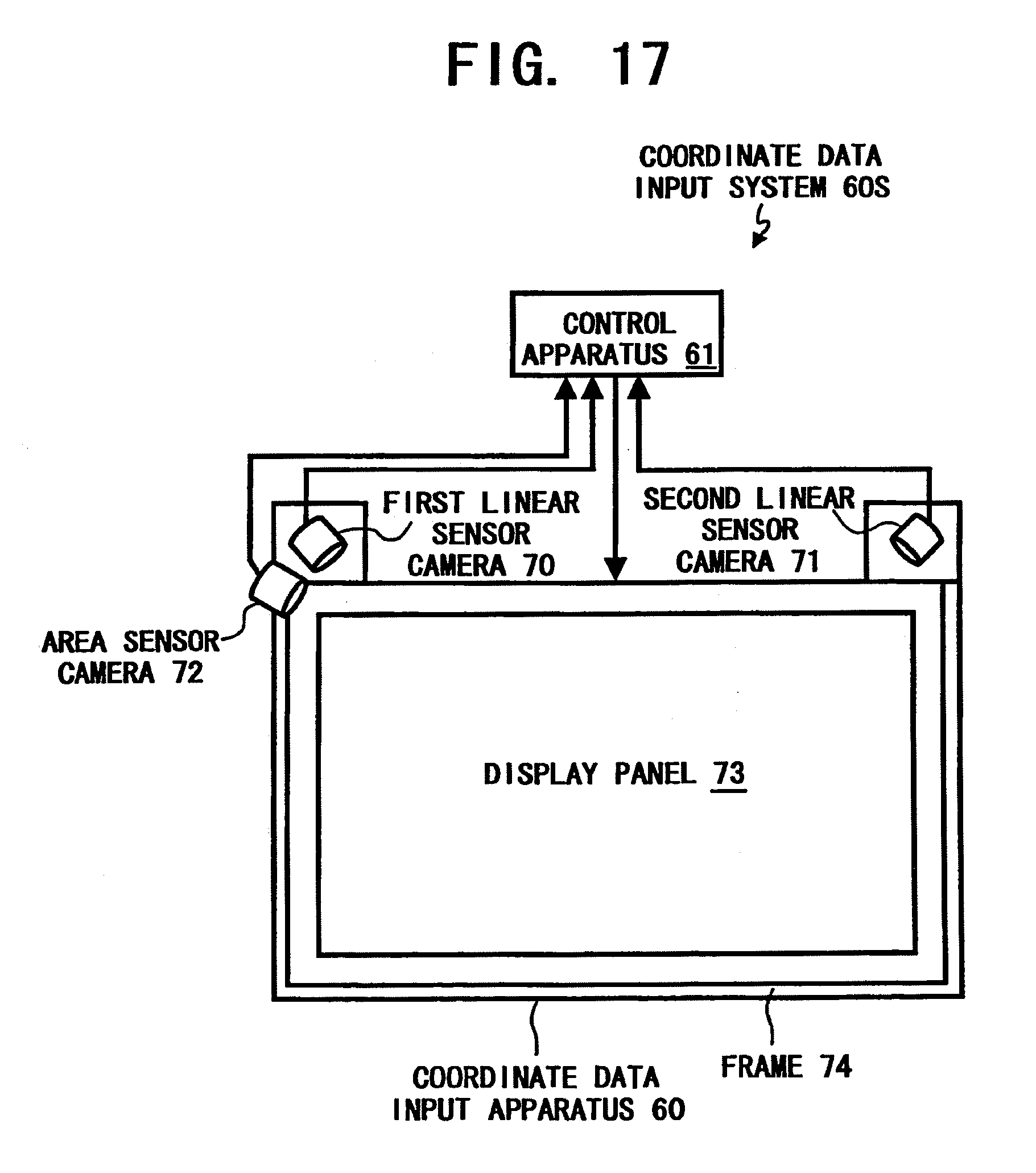

[0033] FIG. 17 is a schematic view illustrating a coordinate data input system as another example configured according to the present invention;

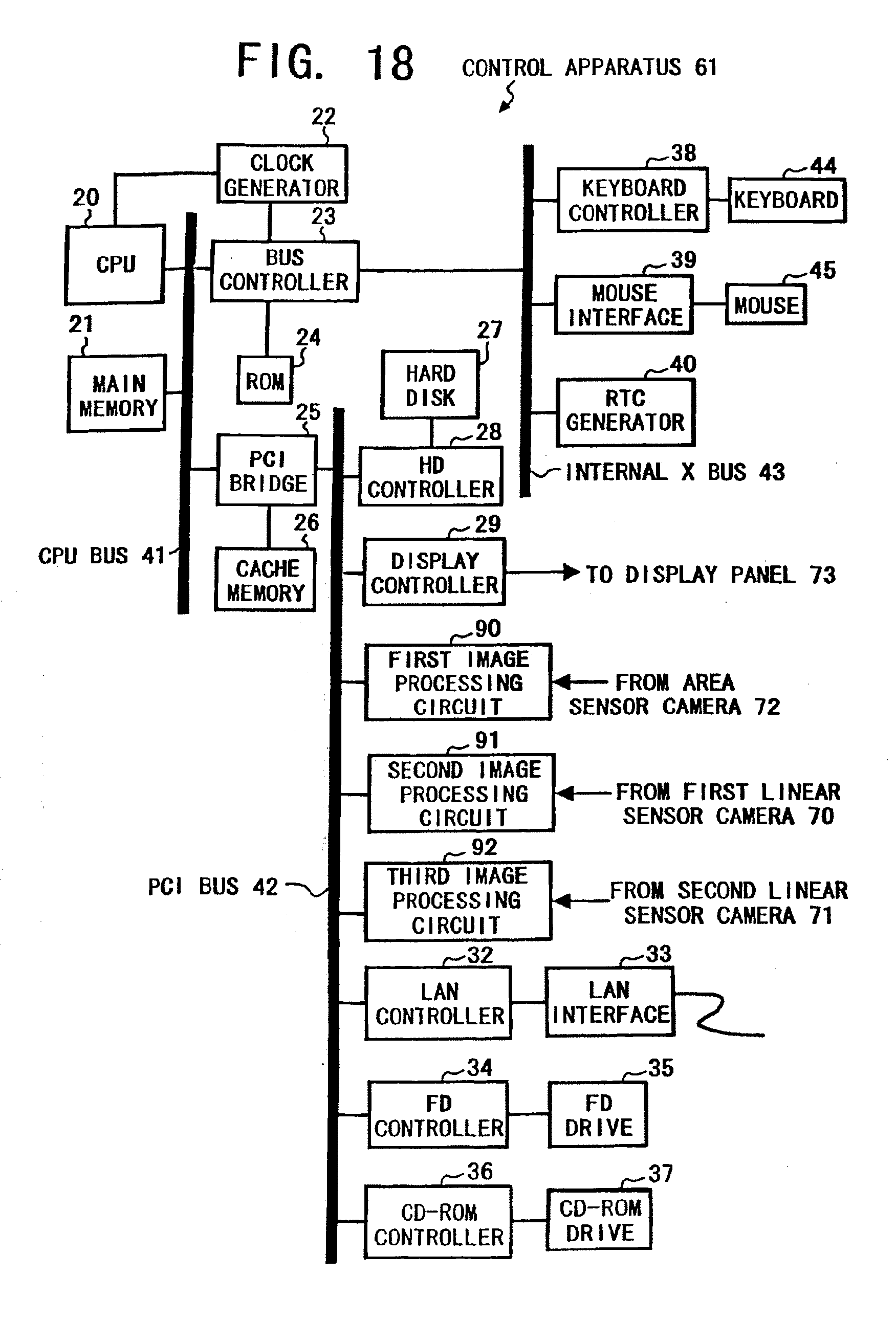

[0034] FIG. 18 is an exemplary block diagram of a control apparatus of the coordinate data input system of FIG. 17 configured according to the present invention;

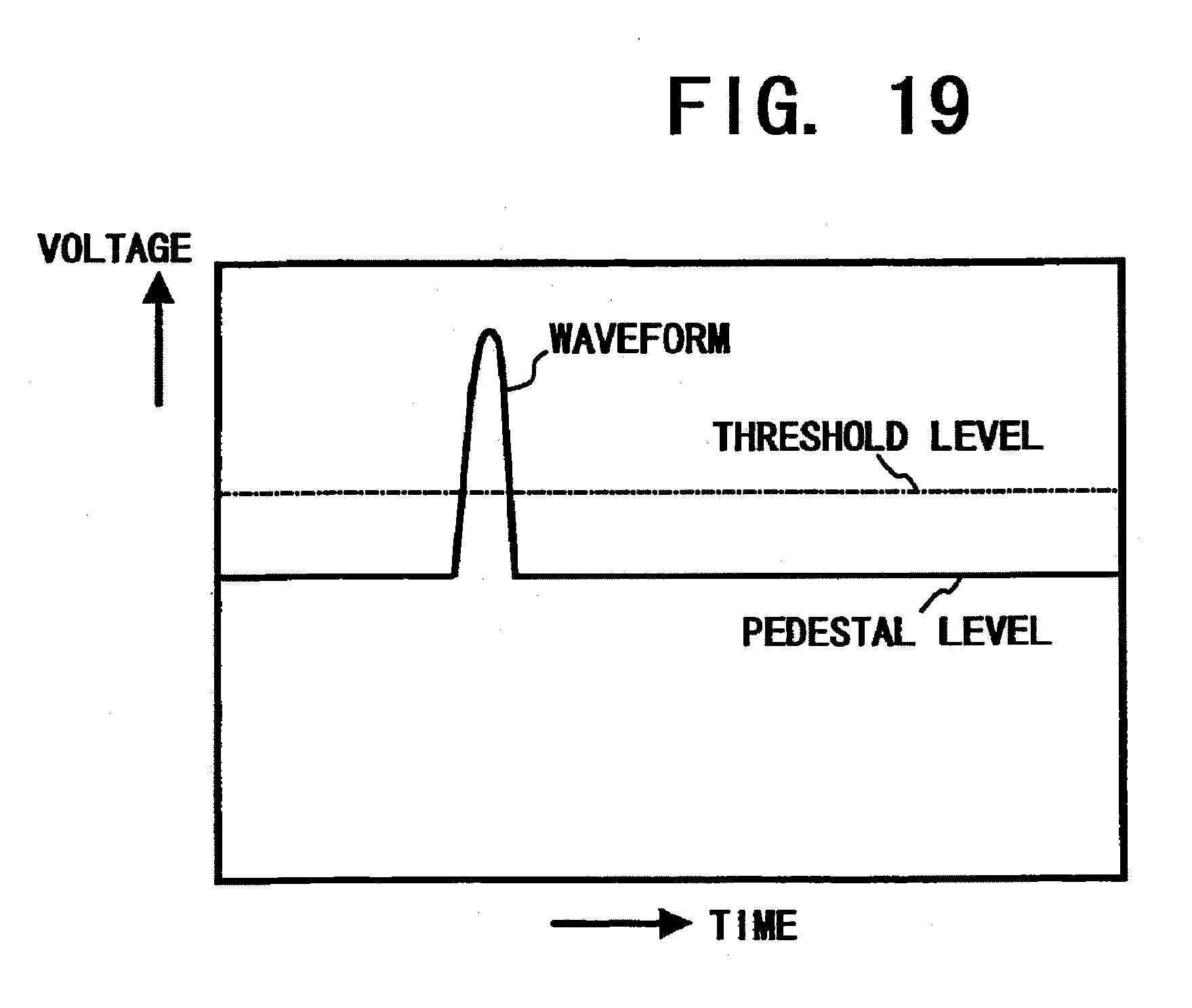

[0035] FIG. 19 is a diagram illustrating an analog signal waveform output from a linear sensor camera;

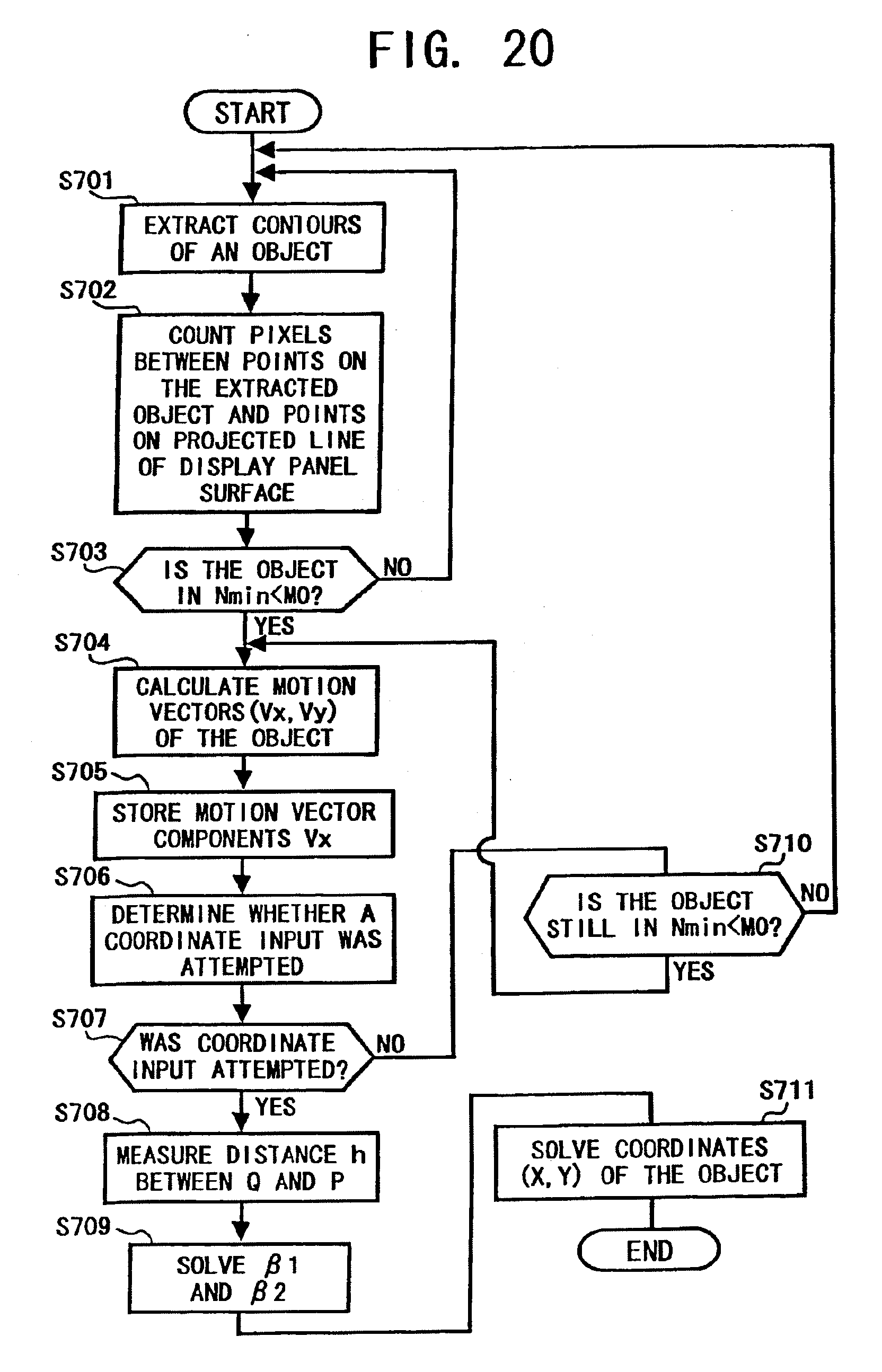

[0036] FIG. 20 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation in the coordinate data input system of FIG. 17 as an example configured according to the present invention;

[0037] FIG. 21 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation in the coordinate data input system of FIG. 17 as another example configured according to the present invention;

[0038] FIG. 22 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation in the coordinate data input system of FIG. 17 as another example configured according to the present invention;

[0039] FIG. 23 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation in the coordinate data input system of FIG. 1 as another example configured according to the present invention;

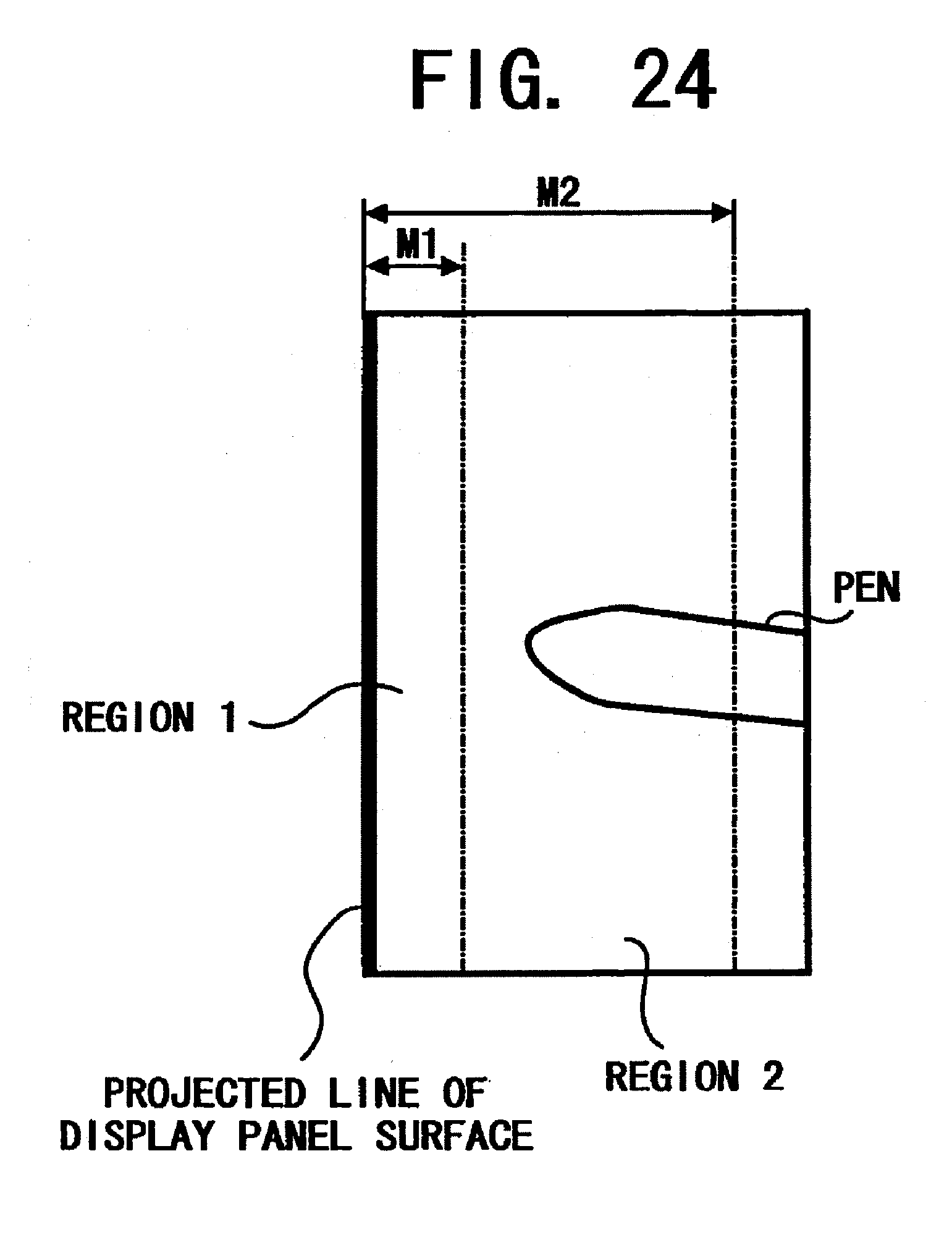

[0040] FIG. 24 is a diagram illustrating an image captured by the first electronic camera in the coordinate data input system of FIG. 1; and

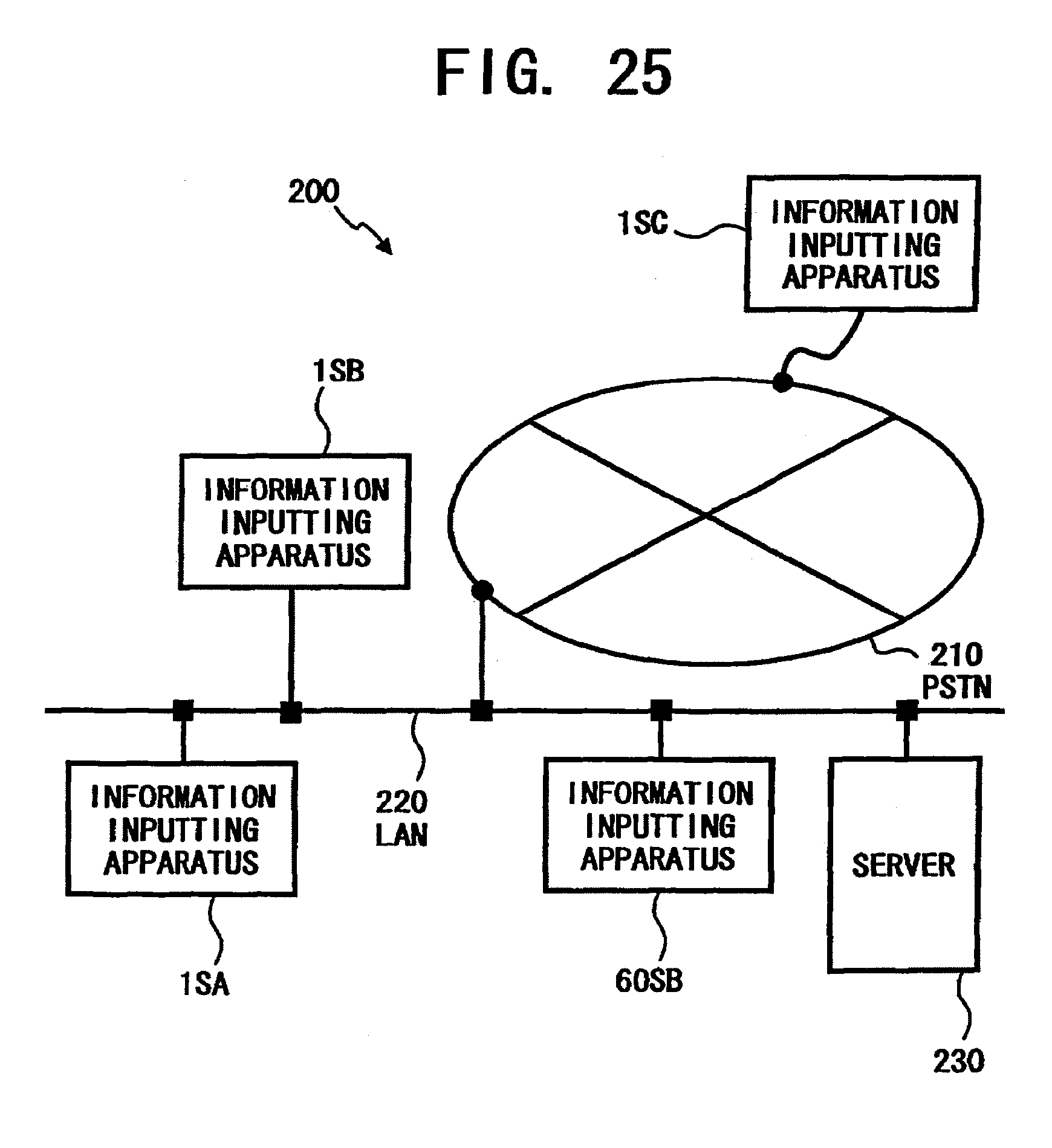

[0041] FIG. 25 is an exemplary network system including the coordinate data input systems of FIG. 1 and FIG. 17.

DESCRIPTION OF THE PREFERRED EMBODIMENTS

[0042] Referring now to the drawings, wherein like reference numerals designate identical or corresponding parts throughout the several views, and more particularly to FIG. 1 thereof, there is shown a schematic view of a coordinate data input system 1S as an example configured according to the present invention. The coordinate data input system 1S includes a coordinate data input apparatus 1 and a control apparatus 2. The coordinate data input apparatus 1 includes a first electronic camera 10, a second electronic camera 11, and a display panel 12.

[0043] The display panel 12 displays an image on, for example, a 48 by 36 inch screen (60 inches diagonally) at a resolution of 1024 by 768 pixels, which is referred to as an XGA screen. For example, a plasma display panel, a rear projection display, etc., may be used as the display panel 12. Each of the first electronic camera 10 and the second electronic camera 11 includes a two-dimensional imaging device whose resolution is sufficient for operations such as selecting an item in a menu window and drawing freehand lines, letters, etc. A two-dimensional imaging device is also referred to as an area sensor.
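As a quick arithmetic check of the example figures, a 48 by 36 inch screen does have a 60 inch diagonal, and at 1024 by 768 pixels the display pixels are square, about 1.19 mm on a side. The sketch below only restates that arithmetic; it says nothing about the camera resolution, which the text leaves unspecified.

```python
import math

# Example panel from the text: 48 x 36 inch screen at XGA (1024 x 768) resolution.
WIDTH_IN, HEIGHT_IN = 48.0, 36.0
COLS, ROWS = 1024, 768
MM_PER_INCH = 25.4

diagonal_in = math.hypot(WIDTH_IN, HEIGHT_IN)   # Pythagorean diagonal
pitch_x_mm = WIDTH_IN * MM_PER_INCH / COLS      # width of one display pixel
pitch_y_mm = HEIGHT_IN * MM_PER_INCH / ROWS     # height of one display pixel

print(f"diagonal: {diagonal_in:.1f} in")
print(f"pixel pitch: {pitch_x_mm:.3f} mm x {pitch_y_mm:.3f} mm")
```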

[0044] The two-dimensional imaging device preferably has a variable output frame rate. The two-dimensional imaging device also preferably has a random access capability that allows any imaging cell therein to be accessed randomly to obtain an image signal from that cell. Such a random access capability is sometimes also referred to as random addressability. As an example of such a random access two-dimensional imaging device, a complementary metal oxide semiconductor (CMOS) sensor may be used.

[0045] The electronic camera 10 also includes a wide-angle lens 50, which covers an angle of view of approximately 90 degrees or wider, and an analog to digital converter. Likewise, the electronic camera 11 also includes a wide-angle lens 52, which covers an angle of view of approximately 90 degrees or wider, and an analog to digital converter. The first electronic camera 10 is disposed at an upper corner of the display panel 12 such that the optical axis of the wide-angle lens 50 forms an angle of approximately 45 degrees with the horizontal edge of the display panel 12. The second electronic camera 11 is disposed at the other upper corner of the display panel 12 such that the optical axis of the wide-angle lens 52 forms an angle of approximately 45 degrees with the horizontal edge of the display panel 12.

[0046] Further, the optical axis of each of the electronic cameras 10 and 11 is disposed approximately parallel to the display screen surface of the display panel 12. Thus, each of the electronic cameras 10 and 11 can capture the whole display screen surface of the display panel 12. Each captured image is converted into digital data, and the digital image data is then transmitted to the control apparatus 2.

[0047] FIG. 2 is an exemplary block diagram of the control apparatus 2 of the coordinate data input system 1S of FIG. 1. Referring to FIG. 2, the control apparatus 2 includes a central processing unit (CPU) 20, a main memory 21, a clock generator 22, a bus controller 23, a read only memory (ROM) 24, a peripheral component interconnect (PCI) bridge 25, a cache memory 26, a hard disk 27, a hard disk controller (HD controller) 28, a display controller 29, a first image processing circuit 30, and a second image processing circuit 31.

[0048] The control apparatus 2 also includes a local area network controller (LAN controller) 32, a LAN interface 33, a floppy disk controller (FD controller) 34, a FD drive 35, a compact disc read only memory controller (CD-ROM controller) 36, a CD-ROM drive 37, a keyboard controller 38, a mouse interface 39, a real time clock generator (RTC generator) 40, a CPU bus 41, a PCI bus 42, an internal X bus 43, a keyboard 44, and a mouse 45.

[0049] The CPU 20 executes a boot program and a basic input/output system (BIOS) program stored in the ROM 24, an operating system (OS), application programs, etc. The main memory 21 may be implemented by, e.g., a dynamic random access memory (DRAM) and is used as a work memory for the CPU 20. The clock generator 22 may be implemented by, for example, a crystal oscillator and a frequency divider, and supplies the generated clock signal to the CPU 20, the bus controller 23, etc., to operate those devices at the clock speed.

[0050] The bus controller 23 controls data transmission between the CPU bus 41 and the internal X bus 43. The ROM 24 stores a boot program, which is executed immediately after the coordinate data input system 1S is turned on, device control programs for controlling the devices included in the system 1S, etc. The PCI bridge 25 is disposed between the CPU bus 41 and the PCI bus 42 and transmits data between the PCI bus 42 and devices connected to the CPU bus 41, such as the CPU 20, using the cache memory 26. The cache memory 26 may be implemented by, for example, a DRAM.

[0051] The hard disk 27 stores system software such as an operating system, a plurality of application programs, and various data for multiple users of the coordinate data input system 1S. The hard disk (HD) controller 28 implements a standard interface, such as an integrated drive electronics (IDE) interface, and transfers data between the PCI bus 42 and the hard disk 27 at a relatively high data transfer rate.

[0052] The display controller 29 converts digital letter/character data and graphic data into an analog video signal, and controls the display panel 12 of the coordinate data input apparatus 1 so as to display an image of the letters/characters and graphics thereon according to the analog video signal.

[0053] The first image processing circuit 30 receives digital image data output from the first electronic camera 10 through a digital interface, such as an RS-422 interface. The first image processing circuit 30 then executes an object extraction process, an object shape recognition process, a motion vector detection process, etc. Further, the first image processing circuit 30 supplies the first electronic camera 10 with a clock signal and an image transfer pulse via the above-described digital interface.

[0054] Similarly, the second image processing circuit 31 receives digital image data output from the second electronic camera 11 through a digital interface, also such as an RS-422 interface. The second image processing circuit 31 has substantially the same hardware configuration as the first image processing circuit 30 and operates in substantially the same way. That is, the second image processing circuit 31 also executes an object extraction process, an object shape recognition process, and a motion vector detection process, and also supplies a clock signal and an image transfer pulse to the second electronic camera 11.

[0055] In addition, the clock signal and the image transfer pulse supplied to the first electronic camera 10 and those signals supplied to the second electronic camera 11 are maintained in synchronization.

[0056] The LAN controller 32 controls communications between the control apparatus 2 and external devices connected to a local area network, such as Ethernet, via the LAN interface 33 according to the protocol of the network. As an example of an interface protocol, the Institute of Electrical and Electronics Engineers (IEEE) 802.3 standard may be used.

[0057] The FD controller 34 transmits data between the PCI bus 42 and the FD drive 35. The FD drive 35 reads data from and writes data to a floppy disk inserted therein. The CD-ROM controller 36 transmits data between the PCI bus 42 and the CD-ROM drive 37. The CD-ROM drive 37 reads a CD-ROM disc inserted therein and sends the read data to the CD-ROM controller 36. The CD-ROM controller 36 and the CD-ROM drive 37 may be connected via an IDE interface.

[0058] The keyboard controller 38 converts serial key input signals generated at the keyboard 44 into parallel data. The mouse interface 39 is provided with a mouse port for connection with the mouse 45 and is controlled by mouse driver software or a mouse control program. In this example, the coordinate data input apparatus 1 functions as a data input device, and therefore the keyboard 44 and the mouse 45 may be omitted from the coordinate data input system 1S during normal operation and used only during maintenance of the coordinate data input system 1S. The RTC generator 40 generates and supplies calendar data, such as the day, hour, and minute, and is backed up by a battery.

[0059] Now, a method for determining a location where a coordinate input member has touched or come close to the image display surface of the display panel 12 is described. FIG. 3 is a diagram illustrating a method for obtaining coordinates where a coordinate input member contacts or comes close to the display panel 12. Referring to FIG. 3, the first electronic camera 10 includes the wide-angle lens 50 and a CMOS image sensor 51, and the second electronic camera 11 also includes the wide-angle lens 52 and a CMOS image sensor 53.

[0060] As stated above, the first and second electronic cameras 10 and 11 are disposed such that the optical axes of the wide-angle lenses 50 and 52, i.e., the optical axes of the light incident on the cameras, are parallel to the display surface of the display panel 12. Further, the first and second electronic cameras 10 and 11 are disposed such that the angle of view of each of the electronic cameras 10 and 11 covers substantially the whole area where the coordinate input member can come close to or touch the display panel 12.

[0061] In FIG. 3, the symbol L denotes the distance between the wide-angle lens 50 and the wide-angle lens 52, and the symbol X-Line denotes the line connecting the wide-angle lens 50 and the wide-angle lens 52. The symbol A(x, y) denotes a point A where a coordinate input member comes close to or touches the display panel 12, together with its coordinates (x, y). The point A(x, y) is referred to as a contacting point. Further, the symbol $\beta_1$ denotes the angle that the X-line forms with a line connecting the wide-angle lens 50 and the contacting point A(x, y), and the symbol $\beta_2$ denotes the angle that the X-line forms with a line connecting the wide-angle lens 52 and the contacting point A(x, y).

[0062] FIG. 4 is a magnified view of the wide-angle lens 50 and the CMOS image sensor 51 of FIG. 3. Referring to FIG. 4, the symbol f denotes the distance between the wide-angle lens 50 and the CMOS image sensor 51. The symbol Q denotes the point at which the optical axis of the wide-angle lens 50 intersects the CMOS image sensor 51. The point Q is referred to as the optical axis crossing point.

[0063] The symbol P denotes the point where an image of the contacting point A(x, y) is formed on the CMOS image sensor 51. The point P is referred to as the projected point P of the contacting point A(x, y). The symbol h denotes the distance between the point P and the point Q. The symbol $\alpha$ denotes the angle that the optical axis of the wide-angle lens 50 forms with the X-line, and the symbol $\theta$ denotes the angle that the optical axis of the wide-angle lens 50 forms with a line connecting the contacting point A(x, y) and the point P.

[0064] Referring to FIG. 3 and FIG. 4, the following equations hold:

$$\theta = \arctan\left(\frac{h}{f}\right) \tag{1}$$

$$\beta_1 = \alpha - \theta \tag{2}$$

[0065] Here, the angle $\alpha$ and the distance f are constant, because these values are determined at the manufacturing plant by the relative mounting positions of the wide-angle lens 50 and the CMOS image sensor 51 and by the mounting angle of the wide-angle lens 50 with respect to the X-line. Therefore, when the distance h is given, the angle $\beta_1$ can be solved for. Similar equations hold for the second electronic camera 11, and thus the angle $\beta_2$ can also be solved for.
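Equations (1) and (2) can be sketched as a small function. This is a minimal illustration, not the patent's implementation: the names `camera_angle` and `offset_from_pixel`, the use of radians, and the conversion from a pixel index to the signed offset h (cell index of P minus the cell index of the optical axis crossing point Q, times an assumed cell pitch) are all assumptions introduced here, as are the numeric values in the example.

```python
import math


def offset_from_pixel(pixel_index: float, q_index: float, pitch: float) -> float:
    """Hypothetical helper: signed distance h from Q to the projected point P.

    pixel_index -- sensor cell index (along the Ycamera axis) where P is imaged
    q_index     -- cell index of the optical axis crossing point Q
    pitch       -- assumed spacing between imaging cells, same unit as f
    """
    return (pixel_index - q_index) * pitch


def camera_angle(h: float, f: float, alpha: float) -> float:
    """Return beta = alpha - arctan(h / f), following equations (1) and (2).

    h     -- signed offset of P from Q on the sensor
    f     -- distance between the wide-angle lens and the sensor
    alpha -- angle between the optical axis and the X-line, in radians
    """
    theta = math.atan(h / f)  # equation (1)
    return alpha - theta      # equation (2)


# Example with hypothetical values: optical axis mounted at 45 degrees,
# Q at cell 640, a 0.006 mm cell pitch, and f = 4.0 mm.
alpha = math.radians(45.0)
beta1 = camera_angle(offset_from_pixel(700, 640, 0.006), 4.0, alpha)
print(f"beta1 = {math.degrees(beta1):.2f} degrees")
```

Equation (1) is the ideal pinhole relation; a real wide-angle lens would likely also need a distortion correction, which the text does not discuss.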

[0066] After the angles $\beta_1$ and $\beta_2$ are obtained, the coordinates of the contacting point A(x, y) are calculated by triangulation as follows:

$$x = \frac{L \tan\beta_2}{\tan\beta_1 + \tan\beta_2} \tag{3}$$

$$y = x \tan\beta_1 \tag{4}$$
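Equations (3) and (4) follow from $\tan\beta_1 = y/x$ and $\tan\beta_2 = y/(L - x)$: equating $x\tan\beta_1 = (L - x)\tan\beta_2$ and solving for x gives (3). This assumes the origin is at the wide-angle lens 50, the x axis runs along the X-line toward the wide-angle lens 52, and y is measured perpendicular to the X-line; that frame is an assumption made here to check the formulas. The sketch below, with the hypothetical name `triangulate`, applies them and round-trips a known point.

```python
import math
from typing import Tuple


def triangulate(beta1: float, beta2: float, L: float) -> Tuple[float, float]:
    """Contacting point A(x, y) from the two camera angles, per equations (3)-(4).

    beta1, beta2 -- angles (radians) between the X-line and the lines from
                    the wide-angle lenses 50 and 52 to A
    L            -- distance between the two wide-angle lenses
    """
    t1, t2 = math.tan(beta1), math.tan(beta2)
    x = L * t2 / (t1 + t2)  # equation (3)
    y = x * t1              # equation (4)
    return x, y


# Round trip: compute the angles for a known point, then recover the point.
L = 1.3
true_x, true_y = 0.4, 0.7
b1 = math.atan2(true_y, true_x)        # angle seen from lens 50 at (0, 0)
b2 = math.atan2(true_y, L - true_x)    # angle seen from lens 52 at (L, 0)
print(triangulate(b1, b2, L))          # approximately (0.4, 0.7)
```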

[0067] Next, the relation between the CMOS image sensor 51 and the image of the edges of the display panel 12 formed on the CMOS image sensor 51 is described. Each of the CMOS image sensors 51 and 53 has a two-dimensional array, or matrix, of imaging picture elements (pixels), or imaging cells. When the numbers of imaging cells in the two directions differ, the CMOS image sensors 51 and 53 are disposed such that the side having the larger number of imaging cells is parallel to the surface of the display panel 12.

[0068] Regarding the CMOS image sensors 51 and 53, the coordinate axis along the direction having the larger number of imaging cells is referred to as the Ycamera axis, and the coordinate axis along the direction having the smaller number of imaging cells, i.e., the direction perpendicular to the Ycamera axis, is referred to as the Xcamera axis. Thus, the images of the edges or margins of the display panel 12 formed on the CMOS image sensors 51 and 53 become a line parallel to the Ycamera axis and perpendicular to the Xcamera axis. The projection of the surface of the display panel 12 onto the CMOS image sensors 51 and 53 forms substantially the same line. Accordingly, such a line formed on the CMOS image sensors 51 and 53 is hereinafter referred to as "a formed line of the surface of the display panel 12," "a projected line of the surface of the display panel 12," or simply "the surface of the display panel 12."

[0069] FIG. 5 is a diagram illustrating a tilt of the surface of the display panel 12 relative to the CMOS image sensors 51 and 53. Referring to FIG. 5, when the surface of the display panel 12 is not parallel to the Ycamera axis, i.e., is tilted relative to it as illustrated, the tilt angle $\delta$ relative to the Ycamera axis is obtained as follows.

[0070] Let points A$(x_{1c}, y_{1c})$, B$(x_{2c}, y_{2c})$, and C$(x_{3c}, y_{3c})$ be arbitrary points on the projected line of the surface of the display panel 12. The angle between the Ycamera axis and the line connecting each point to the origin of the coordinate system is given by:

$$\delta_1 = \arctan\left(\frac{x_{1c}}{y_{1c}}\right) \tag{5}$$

$$\delta_2 = \arctan\left(\frac{x_{2c}}{y_{2c}}\right) \tag{6}$$

$$\delta_3 = \arctan\left(\frac{x_{3c}}{y_{3c}}\right) \tag{7}$$

[0071] The tilt angle $\delta$ is then obtained as the average of these angles:

$$\delta = \frac{\delta_1 + \delta_2 + \delta_3}{3} \tag{8}$$
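Equations (5) through (8) can be sketched as below. The text averages three points; this hypothetical `estimate_tilt` accepts any number of points, which is a generalization. Because each $\delta_i$ is the angle of the line from the origin to a point, the average equals the tilt of the projected line only when that line passes through (or very near) the origin of the (Xcamera, Ycamera) system, so the sample points below are chosen that way.

```python
import math
from typing import Iterable, Tuple


def estimate_tilt(points: Iterable[Tuple[float, float]]) -> float:
    """Tilt angle delta (radians) of the projected panel line, per equations (5)-(8).

    Each point (xc, yc) lies on the projected line of the panel surface, in
    (Xcamera, Ycamera) coordinates; yc must be nonzero.
    """
    angles = [math.atan(xc / yc) for xc, yc in points]  # equations (5)-(7)
    if not angles:
        raise ValueError("at least one point is required")
    return sum(angles) / len(angles)                     # equation (8)


# Three hypothetical sample points on a line through the origin tilted 2 degrees.
samples = [(y * math.tan(math.radians(2.0)), y) for y in (120.0, 250.0, 400.0)]
print(f"delta = {math.degrees(estimate_tilt(samples)):.3f} degrees")
```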

[0072] When the surface of the display panel 12 is tilted relative to the Ycamera axis, a tilted coordinate system, rotated by the angle $\delta$ relative to the original coordinate system (Xcamera, Ycamera), may conveniently be used to obtain the location of a coordinate input member and its motion vector. The tilted coordinate system is obtained by rotating the original coordinate system by the angle $\delta$: when the surface of the display panel 12 tilts clockwise, the coordinate system is rotated counterclockwise, and vice versa. The relations between the original coordinate system (Xcamera, Ycamera) and the tilted coordinate system, denoted by (X1camera, Y1camera), are as follows:

$$\mathrm{X1camera} = \mathrm{Xcamera}\cos\delta + \mathrm{Ycamera}\sin\delta \tag{9}$$

$$\mathrm{Y1camera} = \mathrm{Ycamera}\cos\delta - \mathrm{Xcamera}\sin\delta \tag{10}$$
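Equations (9) and (10) form a plane rotation, so the conversion preserves distances. The sketch below applies them as written; the function name `to_tilted` is hypothetical. With these signs, points on a line through the origin with direction (-sin δ, cos δ) map onto the Y1camera axis, so which sign of δ to pass for a given tilt depends on the orientation convention of the camera coordinates, which the text describes only as a rotation opposite to the panel tilt.

```python
import math
from typing import Tuple


def to_tilted(xc: float, yc: float, delta: float) -> Tuple[float, float]:
    """Map (Xcamera, Ycamera) to (X1camera, Y1camera), per equations (9)-(10).

    delta -- rotation angle in radians; its sign must follow the tilt
             direction convention described in the text.
    """
    c, s = math.cos(delta), math.sin(delta)
    x1 = xc * c + yc * s  # equation (9)
    y1 = yc * c - xc * s  # equation (10)
    return x1, y1


# A point on a line through the origin along (-sin d, cos d) lands on the
# Y1camera axis (X1camera = 0) after the conversion.
d = math.radians(3.0)
print(to_tilted(-math.sin(d) * 50.0, math.cos(d) * 50.0, d))  # approximately (0.0, 50.0)
```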

[0073] When the surface of the display panel 12 is not tilted relative to the Ycamera axis, for example as a result of adjusting the electronic cameras 10 and 11 at the production factory or during installation and maintenance at a customer office, these coordinate conversions are not needed.

[0074] FIG. 6 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation in the coordinate data input system 1S of FIG. 1 as an example configured according to the present invention. The CMOS image sensor 51 may not always have an appropriate aspect ratio, i.e., ratio of the number of imaging cells in one direction to that in the other. In such a case, the first electronic camera 10 may output only the portion of the image signal captured by the CMOS image sensor 51 that lies within a predetermined range from the surface of the display panel 12 in the direction perpendicular to the surface. In other words, the first electronic camera 10 outputs digital image data in the predetermined range to the first image processing circuit 30 in the control apparatus 2. Likewise, the second electronic camera 11 outputs digital image data in a predetermined range to the second image processing circuit 31.
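The windowed output can be emulated on a full frame, as in the sketch below. A random-access CMOS sensor would instead read only the addressed cells; here a full frame stored as a list of rows is simply sliced. The sketch assumes, hypothetically, that each row index runs along the Xcamera axis (perpendicular to the panel), that the projected line of the panel surface lies in row `surface_row`, and that rows with smaller indices image the space in front of the panel. `crop_near_surface` and `band` are illustrative names for the text's "predetermined range".

```python
from typing import List

Frame = List[List[int]]  # frame[row][col]; row ~ Xcamera, col ~ Ycamera (assumed)


def crop_near_surface(frame: Frame, surface_row: int, band: int) -> Frame:
    """Keep only the rows within `band` rows in front of the panel-surface row.

    Rows surface_row - band .. surface_row (inclusive) are returned, clamped to
    the top of the frame; copies are returned so the caller may modify them.
    """
    if not 0 <= surface_row < len(frame):
        raise ValueError("surface_row is outside the frame")
    top = max(0, surface_row - band)
    return [row[:] for row in frame[top:surface_row + 1]]


# Hypothetical 10-row frame whose pixel values equal their row index.
frame = [[r] * 4 for r in range(10)]
window = crop_near_surface(frame, surface_row=8, band=3)
print([row[0] for row in window])  # [5, 6, 7, 8]
```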

[0075] With reference to FIG. 6, in step S101, the first image processing circuit 30 extracts the contours of an object serving as a coordinate input member from frame image data received from the first electronic camera 10. As one example of a contour extraction method, the first image processing circuit 30 first determines the gradients of image density among the pixels by differentiation and then extracts contours based on the direction and magnitude of those gradients. The method described in Japanese Patent Publication No. 8-16931 may also be applied to extract an object serving as a coordinate input member from frame image data.
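The gradient-based step can be sketched as follows. This is a minimal illustration, not the patent's implementation: it assumes a grayscale frame stored as a list of rows (`image[y][x]`), estimates gradients with central differences, and uses a hypothetical `threshold` on the gradient magnitude. It only marks edge pixels; linking them into closed contours is not shown.

```python
import math


def extract_edge_pixels(image: list[list[float]], threshold: float) -> list[tuple[int, int, float, float]]:
    """Return (x, y, magnitude, direction) for every pixel whose image-density
    gradient magnitude exceeds `threshold`.

    Central differences are used, so the one-pixel border is skipped.
    Direction is in radians, 0 meaning density increases along +x.
    """
    height = len(image)
    width = len(image[0]) if height else 0
    edges = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = (image[y][x + 1] - image[y][x - 1]) / 2.0  # d(density)/dx
            gy = (image[y + 1][x] - image[y - 1][x]) / 2.0  # d(density)/dy
            magnitude = math.hypot(gx, gy)
            if magnitude > threshold:
                edges.append((x, y, magnitude, math.atan2(gy, gx)))
    return edges
```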

[0076] In step S102, the first image processing circuit 30 measures plural distances between the object and the projected line of the surface of the display panel 12 on the CMOS image sensor 51. To measure a distance, the first image processing circuit 30 counts the pixels between a point on the contours of the extracted object and a point on the projected line of the surface of the display panel 12 on the CMOS image sensor 51. Because the image-forming reduction ratio on the CMOS image sensor 51 is fixed and the pixel pitch of the CMOS image sensor 51 (i.e., the interval between imaging cells) is known, the number of pixels between two points determines the distance between them.

[0077] To measure plural distances between the object and the surface of the display panel 12, the first image processing circuit 30 counts the pixels for each of the plural distances between the contours of the extracted object and the projected line of the surface of the display panel 12.

[0078] In step S103, the first image processing circuit 30 extracts the smallest of the pixel counts obtained in step S102; this minimum value is denoted by Nmin. The minimum value Nmin therefore corresponds to the point of the object nearest to the surface of the display panel 12. The first image processing circuit 30 then determines whether Nmin is smaller than a predetermined number M0. When Nmin is smaller than M0, i.e., YES in step S103, the process proceeds to step S104; otherwise, i.e., NO in step S103, the process returns to step S101.
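Steps S102 and S103 can be sketched as below. The sketch assumes, for simplicity, that the panel surface projects onto the image line `x == surface_x`, with x counted along Xcamera (perpendicular to the surface); `surface_x`, the pixel pitch value, and the function names are hypothetical.

```python
def nearest_point(contour: list[tuple[int, int]], surface_x: int) -> tuple[tuple[int, int], int]:
    """Return the contour point nearest the projected surface line and its
    pixel count Nmin (steps S102/S103).

    Assumes the surface projects onto x == surface_x in image coordinates.
    """
    point = min(contour, key=lambda p: abs(p[0] - surface_x))
    return point, abs(point[0] - surface_x)


def pixels_to_sensor_distance(n_pixels: int, pixel_pitch: float) -> float:
    """Distance on the sensor: number of pixels times the pixel pitch."""
    return n_pixels * pixel_pitch


def within_motion_region(n_min: int, m0: int) -> bool:
    """Step S103 decision: go on to motion vectors only if Nmin < M0."""
    return n_min < m0
```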

[0079] In step S104, the first image processing circuit 30 calculates motion vectors for predetermined plural points on the extracted contours of the object, including the point nearest to the display panel 12. For this calculation, the first image processing circuit 30 uses the same frame image data used for extracting the contours together with the next frame image data received from the first electronic camera 10.

[0080] In this example, the first image processing circuit 30 obtains optical flows, i.e., velocity vectors, by calculating the rate of temporal change of the image density of a pixel. The first image processing circuit 30 also obtains the rate of spatial change of the image densities of pixels in the vicinity of that pixel. The motion vectors are expressed in the coordinate system (Xcamera, Ycamera), in which the Ycamera axis runs along the projected line of the surface of the display panel 12 on the CMOS image sensor 51 and the Xcamera axis is perpendicular to the surface of the display panel 12.
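The patent describes a gradient-based optical flow built from temporal and spatial density changes. One well-known concrete form of this is the Lucas-Kanade least-squares solution over a small window, used below as a stand-in rather than as the patent's stated method. The sketch assumes frames stored as `image[y][x]`; mapping image x/y onto Xcamera/Ycamera depends on camera orientation and is left as an assumption.

```python
from typing import Optional


def lucas_kanade_at(prev: list[list[float]], curr: list[list[float]],
                    cx: int, cy: int, radius: int = 2) -> Optional[tuple[float, float]]:
    """Estimate the velocity (u, v) in pixels/frame at (cx, cy).

    Solves Ix*u + Iy*v + It = 0 in the least-squares sense over a
    (2*radius+1)^2 window. Returns None if the window lacks texture
    (singular normal equations). The window must stay at least one pixel
    inside the image border.
    """
    sxx = sxy = syy = sxt = syt = 0.0
    for y in range(cy - radius, cy + radius + 1):
        for x in range(cx - radius, cx + radius + 1):
            ix = (prev[y][x + 1] - prev[y][x - 1]) / 2.0  # spatial change along x
            iy = (prev[y + 1][x] - prev[y - 1][x]) / 2.0  # spatial change along y
            it = curr[y][x] - prev[y][x]                  # temporal change
            sxx += ix * ix
            sxy += ix * iy
            syy += iy * iy
            sxt += ix * it
            syt += iy * it
    det = sxx * syy - sxy * sxy
    if abs(det) < 1e-9:
        return None
    # Cramer's rule for [[sxx, sxy], [sxy, syy]] @ (u, v) = (-sxt, -syt)
    u = (-sxt * syy + sxy * syt) / det
    v = (-syt * sxx + sxy * sxt) / det
    return u, v
```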

[0081] FIG. 7 is a diagram illustrating an image captured by the first electronic camera 10. With reference to FIG. 7, a thick line illustrates the projected line of the surface of the display panel 12 on the CMOS image sensor 51. The display panel 12 includes a display area and a frame that surrounds it at approximately the same level as the display screen surface. Therefore, the surface of the display panel 12 can also be taken as the surface of the frame. The alternating long and short dash line is drawn at a predetermined distance from the projected line of the surface of the display panel 12. The predetermined distance corresponds to the predetermined number M0 of pixels in step S103 of FIG. 6, and the region bounded by this distance is labeled REGION FOR OBTAINING MOTION VECTORS. The connected line segments illustrate a pen, i.e., the contours of the object extracted in step S101 of FIG. 6.

[0082] In this example, the point of the pen nearest to the display panel 12, marked by the black dot at the tip of the pen in FIG. 7, lies in the REGION FOR OBTAINING MOTION VECTORS. Accordingly, the motion vector calculation executed in step S104 of FIG. 6 yields, for the nearest point (black dot) of the pen, the motion vector and its components Vx and Vy illustrated in FIG. 7.

[0083] Referring back to FIG. 6, in step S105, the CPU 20 stores in the main memory 21 the components of the calculated motion vectors along the Xcamera direction, such as the component Vx illustrated in FIG. 7. The CPU 20 stores these vector components for each successive frame of image data. The successively stored motion vector data is also referred to as trace data.

[0084] In step S106, the CPU 20 determines, based on the trace data of motion vectors, whether the extracted object, such as the pen in FIG. 7, has made an attempt to input coordinates on the display panel 12. The determination method is described later. When the extracted object has made an attempt to input coordinates, i.e., YES in step S107, the process proceeds to step S108; when it has not, i.e., NO in step S107, the process branches to step S109.

[0085] In step S108, the CPU 20 measures the distance h between the optical axis crossing point Q and a projected point P of a contacting point A(x, y) of the object. When the extracted object is physically soft, such as a human finger, it may make contact over an area rather than at a point. In such a case, the contacting point A(x, y) can be replaced with the center of the contact area. In addition, as stated earlier, the term contacting point A(x, y) applies not only to a state in which the object contacts the display panel 12 but also to a state in which the object is adjacent to the display panel 12.

[0086] The range from the optical axis crossing point Q to an end of the CMOS image sensor 51 contains a fixed number of pixels, denoted by N1, which depends only on the relative positions at which the wide-angle lens 50 and the CMOS image sensor 51 are disposed.

[0087] On the other hand, the range from the point P to the same end of the CMOS image sensor 51 contains a variable number of pixels, denoted by N2, which depends on the location of the contacting point A(x, y) of the object. Therefore, the range between the point Q and the point P contains |N1 - N2| pixels, and the distance between the point Q and the point P in the Ycamera direction, i.e., the distance h, is |N1 - N2| × the pixel pitch.
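The computation of h from the two pixel counts is a one-liner; the sketch below names it with a hypothetical function and assumes both counts are taken from the same end of the sensor.

```python
def sensor_offset_h(n1: int, n2: int, pixel_pitch: float) -> float:
    """Distance h on the sensor between the optical-axis crossing point Q and
    the projected point P.

    n1: pixels from Q to the sensor end (fixed by lens/sensor placement).
    n2: pixels from P to the same sensor end (varies with the contact point).
    """
    return abs(n1 - n2) * pixel_pitch
```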

[0088] Referring back again to FIG. 6, in step S110, the CPU 20 solves for the angle β1 using equations (1) and (2), with the known quantities f and α and the measured distance h. For the image data received from the second electronic camera 11, the CPU 20 solves for the angle β2 in a similar manner.
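Equations (1) and (2) are defined earlier in the document and are not reproduced in this section. The sketch below therefore rests on an assumption: a pinhole-lens reading in which the ray through P leaves the optical axis at θ = atan(h / f), and β = α − θ. It ignores wide-angle lens distortion, and h is taken as signed (positive on the side of Q that brings the ray toward the baseline), which is also an assumption. Check both against the actual equations before relying on it.

```python
import math


def camera_angle(h: float, f: float, alpha: float) -> float:
    """Angle beta (radians) between the baseline and the ray to the object.

    Hypothetical reading of equations (1) and (2):
      theta = atan(h / f)   # offset of P from the optical axis, pinhole model
      beta  = alpha - theta # alpha: angle of the optical axis to the baseline
    h is signed and in the same units as the focal length f.
    """
    theta = math.atan2(h, f)  # equals atan(h / f) for f > 0
    return alpha - theta
```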

[0089] In step S111, the CPU 20 solves for the coordinates x and y of the object on the display panel 12 using equations (3) and (4), with the known quantity L and the solved angles β1 and β2.
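Equations (3) and (4) are also given earlier. As an illustration only, the sketch below uses standard two-ray triangulation under stated assumptions: camera 1 at (0, 0), camera 2 at (L, 0) on the same baseline, y measured into the display, and β1, β2 measured from the baseline. Intersecting y = x·tan β1 with y = (L − x)·tan β2 gives x = L·tan β2 / (tan β1 + tan β2) and y = x·tan β1.

```python
import math


def triangulate(beta1: float, beta2: float, length: float) -> tuple[float, float]:
    """Intersect the rays from two cameras a distance `length` apart.

    Assumes camera 1 at (0, 0), camera 2 at (length, 0), and both angles
    (radians) measured from the baseline toward the display area.
    """
    t1 = math.tan(beta1)
    t2 = math.tan(beta2)
    x = length * t2 / (t1 + t2)  # from x*t1 == (length - x)*t2
    y = x * t1
    return x, y
```

For example, with L = 4 and a contact point at (1, 2), the angles atan2(2, 1) and atan2(2, 3) recover x = 1 and y = 2.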

[0090] In step S109, the CPU 20 determines whether the object is still within the predetermined region above the display panel 12 using the trace data of motion vector components Vx of the object. When the object is in the predetermined region, i.e., YES in step S109, the process returns to step S104 to obtain motion vectors again, and when the object is out of the predetermined region, i.e., NO in step S109, the process returns to step S101.

[0091] As stated above, the CPU 20 executes the calculations that solve for β1, β2, x and y using equations (1), (2), (3) and (4). However, the angles β1 and β2 may also be solved by the first image processing circuit 30 and the second image processing circuit 31, respectively, and then transferred to the CPU 20, which solves for the coordinates x and y.

[0092] In addition, the CPU 20 may execute, in place of the first image processing circuit 30, the above-described contour extraction in step S101, the distance measurement in step S102, and the minimum-count extraction, comparison, and motion vector calculation in steps S103 and S104. When the CPU 20 executes these operations, the program code may be stored on the hard disk 27 in advance and loaded into the main memory 21 for execution each time the system 1S boots up.

[0093] When the coordinate data input system 1S is in a writing input mode or a drawing input mode, the CPU 20 generates display data according to the obtained plural sets of coordinates x and y of the object, i.e., the locus data of the object, and sends the generated display data to the display controller 29. Thus, the display controller 29 displays an image corresponding to the locus of the object on the display panel 12 of the coordinate data input apparatus 1.

[0094] A certain type of display panel, such as a rear projection display, has a relatively elastic surface, such as a plastic sheet screen. FIG. 8 is a diagram illustrating an image captured by the first electronic camera 10 when an input pen distorts the surface of a display panel. In the captured image, the tip of the pen is out of the frame due to the distortion or warp in the surface of the display panel caused by the pressure of the pen stroke. Intersections of the surface of the display panel 12 and the contours of the pen are denoted by point A and point B.

[0095] Accordingly, when the method of FIG. 6 is applied to a display panel having such a relatively elastic surface, the midpoint of the points A and B may be regarded as substantially equivalent to the nearest point of the pen, in the same way as the literal nearest point, such as the black dot at the tip of the pen illustrated in FIG. 7.

[0096] FIG. 9 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention. The method is also executed on the coordinate data input system 1S of FIG. 1.

[0097] With reference to FIG. 9, in step S201, the first image processing circuit 30 or the CPU 20 extracts contours of an object as a coordinate input member from frame image data received from the first electronic camera 10.

[0098] In step S202, the first image processing circuit 30 or the CPU 20 first extracts geometrical features of the shape of the extracted contours of the object. To do so, the first image processing circuit 30 or the CPU 20 determines the position of the barycenter of the contours of the object and then measures the distances from the barycenter to plural points on the extracted contours in all radial directions, like the spokes of a wheel. The CPU 20 then extracts geometrical features of the contour shape of the object based on the relation between each direction and the respective distance. Japanese Laid-Open Patent Publication No. 8-315152 may also be referred to for executing the above-stated feature extraction method.
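The "spokes of a wheel" feature can be sketched as a radial signature: one barycenter-to-contour distance per angular sector. The sector count, the use of the mean of contour points as the barycenter, and keeping the largest distance per sector are all assumptions of this sketch.

```python
import math


def radial_signature(contour: list[tuple[float, float]], bins: int = 36) -> list[float]:
    """Distance from the barycenter to the contour in each of `bins` angular
    sectors. Each sector keeps the largest distance seen; empty sectors
    stay 0.0. The barycenter is taken as the mean of the contour points.
    """
    cx = sum(x for x, _ in contour) / len(contour)
    cy = sum(y for _, y in contour) / len(contour)
    signature = [0.0] * bins
    for x, y in contour:
        angle = math.atan2(y - cy, x - cx) % (2 * math.pi)
        k = int(angle / (2 * math.pi) * bins) % bins  # % guards angle == 2*pi
        signature[k] = max(signature[k], math.hypot(x - cx, y - cy))
    return signature
```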

[0099] After that, the CPU 20 compares the extracted geometrical features of the contour shape of the object with features of cataloged shapes of potential coordinate input members one after the other. The shapes of potential coordinate input members may be stored in the ROM 24 or the hard disk 27 in advance.

[0100] When the operator of the coordinate data input system 1S points to a menu item or an icon, or draws a line, etc., with a coordinate input member, the axis of the coordinate input member may tilt in any direction at various angles. Therefore, the CPU 20 may rotate the contour shape of the object by predetermined angles before comparing it with the cataloged shapes.

[0101] FIG. 10 is a diagram illustrating an image captured by the first electronic camera 10 when an input pen serving as a coordinate input member is tilted with respect to the surface of the display panel 12. In this case, the pen is tilted at an angle AR, as illustrated. Therefore, when the CPU 20 rotates the contour shape of the object back by the angle AR, i.e., counterclockwise, the contour shape readily coincides with one of the cataloged shapes.

[0102] Instead of rotating the contour shape in this way, the shapes of potential coordinate input members may be rotated by plural angles in advance and the rotated shapes stored in the ROM 24 or the hard disk 27. The contour shape then need not be rotated in real time, which further reduces the execution time of the coordinate data inputting operation.
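When shapes are described by a radial signature with equal angular sectors, rotating a shape by one sector is a cyclic shift of its signature. The sketch below exploits this to compare against cataloged templates at every sector rotation, which plays the role of rotating by predetermined angles (or of storing pre-rotated templates). The sum-of-squared-differences score, the `tolerance`, and the function names are hypothetical choices, and templates are assumed to use the same number of sectors.

```python
def match_distance(signature: list[float], template: list[float]) -> tuple[float, int]:
    """Best (sum of squared differences, shift) over all cyclic shifts.

    A shift of k sectors corresponds to rotating the contour by
    k * 360 / len(signature) degrees.
    """
    best = (float("inf"), 0)
    for shift in range(len(signature)):
        rotated = signature[shift:] + signature[:shift]
        err = sum((a - b) ** 2 for a, b in zip(rotated, template))
        best = min(best, (err, shift))
    return best


def is_input_member(signature: list[float], catalog: list[list[float]], tolerance: float) -> bool:
    """True if any cataloged template matches at some rotation."""
    return any(match_distance(signature, t)[0] <= tolerance for t in catalog)
```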

[0103] FIG. 11 is a diagram illustrating an image having an axially symmetric pen captured by the first electronic camera 10. As in the illustrated example, various sorts of potential coordinate input members, such as a pen, a magic marker, a stick, a rod, etc., have axial symmetry. Therefore, the CPU 20 may analyze whether the captured object has axial symmetry, and when the captured object has axial symmetry, the CPU 20 can simply presume the captured object to be a coordinate input member.

[0104] With this method, the cataloged shapes of all potential coordinate input members need not be stored in the ROM 24 or the hard disk 27, which saves storage capacity. As an example, the axial symmetry may be determined based on the distances from the barycenter to plural points on the extracted contours.
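One way to test axial symmetry from barycenter distances, offered as an assumption rather than the patent's procedure: reflecting a shape about an axis through the barycenter maps angular sector i to sector (s − i) mod n for some s, so a radial signature is mirror-symmetric if some s makes every pair match within a hypothetical `tolerance`.

```python
def has_axial_symmetry(signature: list[float], tolerance: float) -> bool:
    """True if the radial signature is mirror-symmetric about some axis
    through the barycenter (sector i pairs with sector (s - i) mod n)."""
    n = len(signature)
    for s in range(n):
        if all(abs(signature[i] - signature[(s - i) % n]) <= tolerance for i in range(n)):
            return True
    return False
```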

[0105] Referring back to FIG. 9, in step S203, the CPU 20 determines, by determination methods including those stated above, whether the contour shape of the object, from which features have been extracted, coincides with one of the cataloged shapes of potential coordinate input members. When the contour shape coincides with one of the cataloged shapes, i.e., YES in step S203, the process proceeds to step S204; when it does not coincide with any of the cataloged shapes, i.e., NO in step S203, the process returns to step S201.

[0106] In step S204, the first image processing circuit 30 or the CPU 20 measures plural distances between points on the contours of the extracted object and points on the projected line of the surface of the display panel 12. For measuring those distances, the first image processing circuit 30 or the CPU 20 counts pixels included between a point on the contours of the extracted object and a point on the projected line of the surface of the display panel 12 with respect to each of the plural distances. A distance between two points is obtained as the product of the pixel pitch of the CMOS image sensor 51 and the number of pixels between the points.

[0107] In step S205, the first image processing circuit 30 or the CPU 20 extracts the least number of pixels, which is denoted by Nmin, among the plural numbers of pixels counted in step S204, and determines whether the minimum value Nmin is smaller than a predetermined number M0. When the minimum value Nmin is smaller than the predetermined number M0, i.e., YES in step S205, the process proceeds to step S206, and when the minimum value Nmin is not smaller than the predetermined number M0, i.e., NO in step S205, the process returns to step S201.

[0108] In step S206, the first image processing circuit 30 or the CPU 20 calculates motion vectors (Vx, Vy) regarding predetermined plural points on the extracted contours of the object including the nearest point to the display panel 12. The component Vx is a vector component along the Xcamera axis, i.e., a direction perpendicular to the projected line of the surface of the display panel 12, and the component Vy is a vector component along the Ycamera axis, i.e., a direction along the surface of the display panel 12. For calculating the motion vectors, the first image processing circuit 30 or the CPU 20 uses two consecutive frames and utilizes the optical flow method stated above.

[0109] In step S207, the CPU 20 successively stores the motion vector components along the Xcamera direction (i.e., Vx) of the motion vectors calculated for each frame in the main memory 21 as trace data. In step S208, the CPU 20 determines, based on the trace data of motion vectors, whether the extracted object has made an attempt to input coordinates on the display panel 12. When the object has made an attempt to input coordinates, i.e., YES in step S209, the process branches to step S211; when it has not, i.e., NO in step S209, the process proceeds to step S210.

[0110] In step S210, the CPU 20 determines whether the object is within a predetermined region above the display panel 12 using the trace data of motion vector components Vx of the object. When the object is in the predetermined region, i.e., YES in step S210, the process returns to step S206 to obtain new motion vectors again, and when the object is out of the predetermined region, i.e., NO in step S210, the process returns to step S201.

[0111] In step S211, the first image processing circuit 30 or the CPU 20 measures the distance h on the CMOS image sensor 51 between the optical axis crossing point Q and a projected point P of a contacting point A(x, y). In step S212, with reference to FIG. 4, the CPU 20 solves for the angle β1 using equations (1) and (2), with the known quantities f and α and the measured distance h. For the image data received from the second electronic camera 11, the CPU 20 solves for the angle β2 in a similar manner.

[0112] In step S213, referring to FIG. 3, the CPU 20 solves for the coordinates x and y of the object on the display panel 12 using equations (3) and (4), with the known quantity L and the solved angles β1 and β2.

[0113] As described, the CPU 20 only inputs coordinates of an object that coincides with one of cataloged shapes of potential coordinate input members. Accordingly, the coordinate data input system 1S can prevent an erroneous or unintentional inputting operation, e.g., inputting coordinates of an operator's arm, head, etc.

[0114] FIG. 12 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention. This example applies to, e.g., a pointing or clicking operation on an icon, a menu item, etc., displayed on the display panel 12. The method is also executed on the coordinate data input system 1S of FIG. 1.

[0115] With reference to FIG. 12, in step S301, the first image processing circuit 30 or the CPU 20 extracts contours of an object as a coordinate input member from frame image data received from the first electronic camera 10. In step S302, the first image processing circuit 30 or the CPU 20 determines whether the contour shape of the object is regarded as a coordinate input member. When the contour shape of the object is regarded as a coordinate input member, i.e., YES in step S302, the process proceeds to step S303, and when the contour shape of the object is not regarded as a coordinate input member, i.e., NO in step S302, the process returns to step S301.

[0116] In step S303, the first image processing circuit 30 or the CPU 20 measures plural distances between points on the contours of the extracted object and points on the projected line of the surface of the display panel 12. For measuring those distances, the first image processing circuit 30 or the CPU 20 counts pixels included between a point on the contours of the extracted object and a point on the projected line of the surface of the display panel 12 regarding each of the distances. A distance between two points is obtained as the product of the pixel pitch of the CMOS image sensor 51 and the number of pixels between the points.

[0117] In step S304, the first image processing circuit 30 or the CPU 20 extracts the least number of pixels Nmin among the plural numbers of pixels counted in step S303, and determines whether the minimum value Nmin is smaller than a predetermined number M0. When the minimum value Nmin is smaller than the predetermined number M0, i.e., YES in step S304, the process proceeds to step S305, and when the minimum value Nmin is not smaller than the predetermined number M0, i.e., NO in step S304, the process returns to step S301.

[0118] In step S305, the first image processing circuit 30 or the CPU 20 calculates motion vectors (Vx, Vy) regarding predetermined plural points on the extracted contours of the object including the nearest point to the display panel 12. The component Vx is a vector component along the Xcamera axis, i.e., a direction perpendicular to the projected line of the surface of the display panel 12, and the component Vy is a vector component along the Ycamera axis, i.e., a direction along the surface of the display panel 12. For calculating the motion vectors, the first image processing circuit 30 or the CPU 20 uses two consecutive frames of image data and utilizes the optical flow method stated above.

[0119] In step S306, the CPU 20 successively stores motion vector components along the direction Xcamera, i.e., component Vx, of plural frames in the main memory 21 as trace data.

[0120] In step S307, the CPU 20 determines, based on the trace data of motion vectors, whether the moving direction of the extracted object has reversed from an advancing motion toward the display panel 12 to a motion away from the display panel 12. When the moving direction has reversed, i.e., YES in step S307, the process branches to step S309; when it has not, i.e., NO in step S307, the process proceeds to step S308.
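A minimal sketch of the reversal test in step S307 on the stored Vx trace, following the sign convention stated for FIG. 13 (positive Vx toward the panel, negative away from it). The minimum run length `min_advancing` and the dead band `eps` are hypothetical parameters; frames with |Vx| ≤ eps are treated as a pause that neither extends nor breaks the advancing run.

```python
def direction_reversed(trace: list[float], min_advancing: int = 2, eps: float = 0.0) -> bool:
    """True if at least `min_advancing` advancing frames (Vx > eps) are
    followed, possibly after near-zero frames, by a leaving frame (Vx < -eps).
    """
    advancing = 0
    for vx in trace:
        if vx > eps:
            advancing += 1
        elif vx < -eps:
            if advancing >= min_advancing:
                return True
            advancing = 0  # a leaving frame without enough approach resets
        # |vx| <= eps: pause, keep the current advancing count
    return False
```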

[0121] In step S308, the first image processing circuit 30 or the CPU 20 determines whether the object is within a predetermined region above the display panel 12 using the trace data of motion vector components Vx of the object. When the object is in the predetermined region, i.e., YES in step S308, the process returns to step S305 to obtain new motion vectors again, and when the object is out of the predetermined region, i.e., NO in step S308, the process returns to step S301.

[0122] In step S309, the first image processing circuit 30 or the CPU 20 measures the distance h on the CMOS image sensor 51 between the optical axis crossing point Q and a projected point P of a contacting point A(x, y) of the object. As the projected point P, for example, the starting point of the centermost of the plural motion vectors whose direction has reversed may be used.

[0123] In step S310, referring to FIG. 4, the CPU 20 solves for the angle β1 using equations (1) and (2), with the known quantities f and α and the measured distance h. For the image data received from the second electronic camera 11, the CPU 20 solves for the angle β2 in a similar manner.

[0124] In step S311, referring to FIG. 3, the CPU 20 solves for the coordinates x and y of the object on the display panel 12 using equations (3) and (4), with the known quantity L and the solved angles β1 and β2.

[0125] FIG. 13 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention. This example applies to, for example, inputting information while a coordinate input member remains at the surface of the display panel 12. The method is also executed on the coordinate data input system 1S of FIG. 1.

[0126] Referring to FIG. 13, in step S401, the first image processing circuit 30 or the CPU 20 extracts contours of an object as a coordinate input member from frame image data received from the first electronic camera 10. In step S402, the first image processing circuit 30 or the CPU 20 determines whether the contour shape of the object is regarded as a coordinate input member. When the contour shape of the object is regarded as a coordinate input member, i.e., YES in step S402, the process proceeds to step S403, and when the contour shape of the object is not regarded as a coordinate input member, i.e., NO in step S402, the process returns to step S401.

[0127] In step S403, the first image processing circuit 30 or the CPU 20 measures plural distances between points on the contours of the extracted object and points on the projected line of the surface of the display panel 12. For measuring those distances, the first image processing circuit 30 or the CPU 20 counts pixels included between a point on the contours of the extracted object and a point on the projected line of the surface of the display panel 12 for each of the distances. A distance between two points is obtained as the product of the pixel pitch of the CMOS image sensor 51 and the number of pixels between the points.

[0128] In step S404, the first image processing circuit 30 or the CPU 20 extracts the least number of pixels Nmin among the plural numbers of pixels counted in step S403, and determines whether the minimum value Nmin is smaller than a predetermined number M0. When the minimum value Nmin is smaller than the predetermined number M0, i.e., YES in step S404, the process proceeds to step S405, and when the minimum value Nmin is not smaller than the predetermined number M0, i.e., NO in step S404, the process returns to step S401.

[0129] In step S405, the first image processing circuit 30 or the CPU 20 calculates motion vectors (Vx, Vy) regarding predetermined plural points on the extracted contours of the object including the nearest point to the display panel 12. Vx is a vector component along the Xcamera axis, i.e., a direction perpendicular to the projected line of the surface of the display panel 12, and Vy is a vector component along the Ycamera axis, i.e., a direction along the surface of the display panel 12. For calculating the motion vectors, the first image processing circuit 30 or the CPU 20 uses two consecutive frames and utilizes the optical flow method stated above.

[0130] In step S406, the CPU 20 successively stores motion vector components along the direction Xcamera of the calculated vectors, i.e., the component Vx, in the main memory 21 as trace data.

[0131] In step S407, the CPU 20 determines whether the vector component Vx, which is perpendicular to the plane of the display panel 12, has fallen to zero after an advancing motion toward the display panel 12. When the component Vx has become practically zero, i.e., YES in step S407, the process branches to step S409; when Vx has not yet become zero, i.e., NO in step S407, the process proceeds to step S408.

[0132] In step S408, the CPU 20 determines whether the object is located within a predetermined region above the display panel 12 using the trace data of the motion vector component Vx of the object. When the object is located in the predetermined region, i.e., YES in step S408, the process returns to step S405 to obtain new motion vectors, and when the object is out of the predetermined region, i.e., NO in step S408, the process returns to step S401.

[0133] In step S409, the CPU 20 determines that a coordinate inputting operation has started and switches the coordinate data input system 1S to a coordinate input state. In step S410, the first image processing circuit 30 or the CPU 20 measures the distance h on the CMOS image sensor 51 between the optical axis crossing point Q and the projected point P of a contacting point A(x, y) of the object.

[0134] In step S411, referring to FIG. 4, the CPU 20 solves for the angle β1 using equations (1) and (2), with the known quantities f and α and the measured distance h. For the image data received from the second electronic camera 11, the CPU 20 solves for the angle β2 in a similar manner. In step S412, referring to FIG. 3, the CPU 20 solves for the coordinates x and y of the object on the display panel 12 using equations (3) and (4), with the known quantity L and the solved angles β1 and β2.

[0135] In step S413, the CPU 20 determines whether the motion vector component Vy at the point P has changed while the other component Vx is zero. In other words, the CPU 20 determines whether the object has moved in any direction along the surface of the display panel 12. When Vy has changed while Vx is zero, i.e., YES in step S413, the process returns to step S410 to obtain the coordinates x and y of the object at its new location. When Vy has not changed, i.e., NO in step S413, the process proceeds to step S414.

[0136] Further, in addition to the above-described condition that the component Vx is zero, the CPU 20 may also evaluate the motion vector component Vy under the condition that Vx is positive, which represents motion toward the display panel 12.

[0137] In step S414, the CPU 20 determines whether the motion vector component Vx for the point P has become negative, which represents motion away from the display panel 12. When Vx has become negative, i.e., YES in step S414, the process proceeds to step S415; if NO, the process returns to step S410. In step S415, the CPU 20 determines that the coordinate inputting operation has been completed and terminates the coordinate input state of the coordinate data input system 1S.
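The FIG. 13 logic can be sketched as a small state machine over the per-frame (Vx, Vy) at point P. This is a simplified illustration: the event names and the "practically zero" threshold `eps` are hypothetical, and, as permitted in [0136], positive Vx during the input state is treated like zero Vx.

```python
def touch_events(trace: list[tuple[float, float]], eps: float = 0.05) -> list[str]:
    """One event per frame: 'approach', 'start', 'move', 'hold', 'end', 'idle'.

    Vx > 0: toward the panel; Vx < 0: away from it (FIG. 13 convention).
    """
    events = []
    advancing = False
    inputting = False
    for vx, vy in trace:
        if not inputting:
            if vx > eps:
                advancing = True
                events.append("approach")
            elif abs(vx) <= eps and advancing:
                inputting = True      # step S409: enter coordinate input state
                events.append("start")
            else:
                advancing = False
                events.append("idle")
        elif vx < -eps:
            inputting = False         # step S415: input completed
            advancing = False
            events.append("end")
        elif abs(vy) > eps:
            events.append("move")     # step S413 YES: recompute x and y
        else:
            events.append("hold")     # step S414 NO: stay in input state
    return events
```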

[0138] Thus, the CPU 20 can generate display data according to the coordinate data obtained during the above-described coordinate input state and transmit the generated display data to the display controller 29 to display an image of the input data on the display panel 12.

[0139] FIG. 14 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention. These operational steps are also executed on the coordinate data input system 1S of FIG. 1. In this example, the frame rate output from each of the first and second electronic cameras 10 and 11 varies depending on the distance of a coordinate input member from the display panel 12. The frame rate may be expressed as the number of frames per second.

[0140] When a coordinate input member is within a predetermined distance, the frame rate output from each of the CMOS image sensors 51 and 53 is increased to capture the motion of the coordinate input member in greater detail. When the coordinate input member is beyond the predetermined distance, the output frame rate is decreased to reduce the load on the other devices in the coordinate data input system 1S, such as the first image processing circuit 30, the second image processing circuit 31, the CPU 20, etc.

[0141] The frame rate of each of the first and second electronic cameras 10 and 11, i.e., the frame rate of each of the CMOS image sensors 51 and 53, can be switched as necessary between at least two frame rates, referred to as a high frame rate and a low frame rate. The amount of data per unit time input to the first image processing circuit 30 and the second image processing circuit 31 varies with the frame rate of the image data. When the coordinate data input system 1S is powered on, the low frame rate is initially selected as the default frame rate.

[0142] Referring now to FIG. 14, in step S501, the first image processing circuit 30 or the CPU 20 extracts contours of an object as a coordinate input member from frame image data received from the first electronic camera 10. In step S502, the first image processing circuit 30 or the CPU 20 determines whether the contour shape of the object is regarded as a coordinate input member. When the contour shape of the object is regarded as a coordinate input member, i.e., YES in step S502, the process proceeds to step S503, and when the contour shape of the object is not regarded as a coordinate input member, i.e., NO in step S502, the process returns to step S501.

[0143] In step S503, the first image processing circuit 30 or the CPU 20 measures plural distances between points on the contours of the extracted object and points on the projected line of the surface of the display panel 12. For measuring those distances, the first image processing circuit 30 or the CPU 20 counts pixels included between a point on the contours of the extracted object and a point on the projected line of the surface of the display panel 12 regarding each of the distances. A distance between two points is obtained as the product of the pixel pitch of the CMOS image sensor 51 and the number of pixels between the points.
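Steps S503 and S504 reduce each frame to a single number, Nmin. The Python sketch below assumes the contour is given as (column, row) pixel coordinates and that each contour point is paired with the point of the projected surface line in the same sensor column. The pairing rule, the function name `min_gap_to_surface`, and the 6.0 µm pitch are illustrative assumptions, not taken from the specification.

```python
from typing import Dict, List, Sequence, Tuple


def min_gap_to_surface(
    contour: Sequence[Tuple[int, int]],
    surface_row_at: Dict[int, int],
    pixel_pitch_um: float,
) -> Tuple[int, float]:
    """Return (Nmin, distance on the sensor in micrometres) for one frame.

    contour: (column, row) pixel coordinates of the extracted object's contour.
    surface_row_at: row of the projected display-surface line at each column.
    """
    # Pixel count between each contour point and the surface line in the same column.
    counts: List[int] = [abs(row - surface_row_at[col]) for col, row in contour]
    n_min = min(counts)
    # Distance = pixel pitch x number of pixels, as described for step S503.
    return n_min, n_min * pixel_pitch_um


contour = [(10, 40), (11, 45), (12, 47)]
surface = {10: 50, 11: 50, 12: 50}
print(min_gap_to_surface(contour, surface, pixel_pitch_um=6.0))  # (3, 18.0)
```

Step S504 then compares the returned Nmin with the threshold M1.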

[0144] In step S504, the first image processing circuit 30 or the CPU 20 extracts the least number of pixels Nmin among the plural numbers of pixels counted in step S503, and determines whether the minimum value Nmin is smaller than a first predetermined number M1. When the minimum value Nmin is smaller than the first predetermined number M1, i.e., YES in step S504, the process proceeds to step S505, and when the minimum value Nmin is not smaller than the first predetermined number M1, i.e., NO in step S504, the process returns to step S501.

[0145] The first predetermined number M1 used in step S504 is larger than a second predetermined number M0, which is used in the following steps as the threshold for starting to trace motion vectors.

[0146] In step S505, the first image processing circuit 30 sends a command to the first electronic camera 10 to request increasing the output frame rate of the CMOS image sensor 51. Such a command for switching the frame rate, i.e., from the low frame rate to the high frame rate or from the high frame rate to the low frame rate, is transmitted through a cable that also carries image data. When the first electronic camera 10 receives the command, the first electronic camera 10 controls the CMOS image sensor 51 to increase its output frame rate. As one way of increasing the output frame rate of the CMOS image sensor 51, the charge time of each of the photoelectric conversion devices, i.e., the imaging cells, in the CMOS image sensor 51 may be shortened.

[0147] In step S506, the CPU 20 determines whether the object is within a second predetermined distance from the display panel 12 to start a tracing operation of motion vectors of the object. In other words, the CPU 20 determines whether the minimum value Nmin is smaller than the second predetermined number M0, which corresponds to the second predetermined distance; if YES, the process proceeds to step S507, and if NO, the process branches to step S508.

[0148] In step S507, the CPU 20 traces the motion of the object and generates coordinate data of the object according to the traced motion vectors. As stated earlier, the second predetermined number M0 is smaller than the first predetermined number M1; therefore, the spatial range for tracing motion vectors of the object is smaller than the spatial range for outputting image data with the high frame rate from the CMOS image sensor 51.

[0149] In step S508, the first image processing circuit 30 determines whether the minimum value Nmin is still smaller than the first predetermined number M1, i.e., the object is still in the range of the first predetermined number M1. When the minimum value Nmin is still smaller than the first predetermined number M1, i.e., YES in step S508, the process returns to step S506, and when the minimum value Nmin is no longer smaller than the first predetermined number M1, i.e., NO in step S508, the process proceeds to step S509.

[0150] In step S509, the first image processing circuit 30 sends a command to the first electronic camera 10 to request decreasing the output frame rate of the CMOS image sensor 51, and then the process returns to step S501. On receiving the command, the first electronic camera 10 controls the CMOS image sensor 51 to decrease its output frame rate again.
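The FIG. 14 loop behaves like a two-threshold state machine: M1 decides the frame rate, and the smaller M0 decides whether motion vectors are traced. A simplified Python sketch, assuming one Nmin value per frame and that the contour check of steps S501 and S502 has already succeeded; the names `FrameRate` and `run_fig14` and the threshold values are hypothetical.

```python
from enum import Enum
from typing import Iterable, List, Tuple


class FrameRate(Enum):
    LOW = "low"
    HIGH = "high"


def run_fig14(n_min_per_frame: Iterable[int], m1: int, m0: int) -> List[Tuple[FrameRate, bool]]:
    """For each frame's Nmin, return (frame rate in effect, motion vectors traced?)."""
    if not m0 < m1:
        raise ValueError("M0 must be smaller than M1")
    rate = FrameRate.LOW  # default frame rate at power-on
    out: List[Tuple[FrameRate, bool]] = []
    for n_min in n_min_per_frame:
        if n_min < m1:
            rate = FrameRate.HIGH   # S504 YES -> S505 (no change if already high)
            tracing = n_min < m0    # S506: YES -> S507 trace, NO -> S508
        else:
            rate = FrameRate.LOW    # S504 NO (stay low) or S508 NO -> S509
            tracing = False
        out.append((rate, tracing))
    return out


for rate, tracing in run_fig14([30, 15, 4, 12, 25], m1=20, m0=5):
    print(rate.value, tracing)
```

Because both "NO in step S504" and "NO in step S508" end at the low frame rate, the per-frame decision collapses to comparing Nmin with M1; the band between M0 and M1 keeps the camera at the high rate without tracing.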

[0151] In the above-described operational steps, the second electronic camera 11 and the second image processing circuit 31 operate in substantially the same manner as the first electronic camera 10 and the first image processing circuit 30.

[0152] In this example, while the coordinate input member is far from the display panel 12, the first electronic camera 10 and the second electronic camera 11 operate at the low frame rate and output a relatively small quantity of image data to the other devices. Consequently, the power consumption of the coordinate data input system 1S is decreased.

[0153] FIG. 15 is a flowchart illustrating operational steps for practicing a coordinate data inputting operation as another example configured according to the present invention. These operational steps are also executed on the coordinate data input system 1S of FIG. 1. In this example, the image area output from each of the CMOS image sensors 51 and 53 varies depending on the distance of a coordinate input member from the display panel 12. In other words, the output image area is limited to within a predetermined distance of the coordinate input member, depending on the location of the coordinate input member. When the output image area is limited to a small area, the image data size per frame also decreases, which reduces the load on devices such as the first image processing circuit 30, the second image processing circuit 31, the CPU 20, etc. As a result, the power consumption of the coordinate data input system 1S is also decreased.

[0154] The pixels in each of the CMOS image sensors 51 and 53 can be randomly accessed individually, i.e., the pixels in the CMOS image sensors 51 and 53 can be randomly addressed to output their image signals. This random accessibility enables the above-stated limitation of the output image area. When the coordinate data input system 1S is powered on, the output image area is set by default to cover a region bounded by the whole horizontal span of the display panel 12 and a predetermined altitude range above it.

[0155] Referring now to FIG. 15, in step S601, the first image processing circuit 30 or the CPU 20 extracts contours of an object as a coordinate input member from frame image data received from the first electronic camera 10. In step S602, the first image processing circuit 30 or the CPU 20 determines whether the contour shape of the object is regarded as a coordinate input member. When the contour shape of the object is regarded as a coordinate input member, i.e., YES in step S602, the process proceeds to step S603, and when the contour shape of the object is not regarded as a coordinate input member, i.e., NO in step S602, the process returns to step S601.

[0156] In step S603, the first image processing circuit 30 or the CPU 20 measures plural distances between points on the contours of the extracted object and points on the projected line of the surface of the display panel 12. For measuring those distances, the first image processing circuit 30 or the CPU 20 counts the pixels between a point on the contours of the extracted object and a point on the projected line of the surface of the display panel 12 for each of the distances. A distance between two points is obtained as the product of the pixel pitch of the CMOS image sensor 51 and the number of pixels between the two points.

[0157] In step S604, the first image processing circuit 30 or the CPU 20 extracts the least number of pixels Nmin among the plural numbers of pixels counted in step S603, and determines whether the minimum value Nmin is smaller than a predetermined number K. When the minimum value Nmin is smaller than the predetermined number K, i.e., YES in step S604, the process proceeds to step S605, and when the minimum value Nmin is not smaller than the predetermined number K, i.e., NO in step S604, the process returns to step S601.

[0158] FIG. 16A is a diagram illustrating an image captured by the first electronic camera 10 and an output limitation of the image. In FIG. 16A, the symbol K denotes a predetermined distance, and the symbol ym denotes the position of the illustrated coordinate input member, measured from an end of the CMOS image sensor 51 in the Ycamera axis direction.

[0159] Referring back to FIG. 15, in step S605, the first image processing circuit 30 first calculates the distance ym of the object from an end of the CMOS image sensor 51. The first image processing circuit 30 then sends a command to the first electronic camera 10 to limit the output image area of the CMOS image sensor 51 to a relatively small area. As illustrated in FIG. 16A, the limited area extends a predetermined distance λ from the coordinate input member on both sides in the Ycamera axis direction.

[0160] Such a command for limiting the output image area is transmitted through the same cable that carries the image data. When the first electronic camera 10 receives the command, the first electronic camera 10 controls the CMOS image sensor 51 so as to limit its output image area.

[0161] FIG. 16B is a diagram illustrating an image captured by the first electronic camera 10 and a displaced output limitation of the image. In FIG. 16B, the symbol ym denotes an original location of a coordinate input member and the symbol ym1 denotes its displaced location. The symbol LL denotes the displacement of the coordinate input member from the original location ym to the displaced location ym1. As illustrated, when the coordinate input member moves from the original location ym to the location ym1, the limited range of the output image follows it to the new location ym1.
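The limited readout window of FIG. 16A and its following of the displaced location in FIG. 16B can be expressed as an index range along the Ycamera axis. A minimal sketch, assuming integer pixel indices, a sensor of `sensor_len` pixels in that direction, and clipping at the sensor ends; the clipping rule, the name `limited_window`, and all numeric values are assumptions. In discrete pixels the window spans 2λ + 1 cells.

```python
def limited_window(ym: int, lam: int, sensor_len: int) -> range:
    """Ycamera pixel indices to read out: ym - lam .. ym + lam, clipped to the sensor."""
    lo = max(0, ym - lam)
    hi = min(sensor_len - 1, ym + lam)
    return range(lo, hi + 1)


SENSOR_LEN, LAM = 1024, 40   # hypothetical sensor length and lambda, in pixels
ym, ll = 300, 25             # hypothetical original location ym and displacement LL

before = limited_window(ym, LAM, SENSOR_LEN)       # window around ym (FIG. 16A)
after = limited_window(ym + ll, LAM, SENSOR_LEN)   # window follows to ym1 (FIG. 16B)
print(before.start, before.stop - 1, len(before) / SENSOR_LEN)
print(after.start, after.stop - 1)
```

The fraction printed on the first line is the share of the sensor's Ycamera span that is still read out, which is where the data and load reduction comes from.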

[0162] Referring back to FIG. 15, in step S606, the first image processing circuit 30 determines whether the object has moved in the Ycamera axis direction. When the object has moved, i.e., YES in step S606, the process proceeds to step S607; if NO in step S606, the process skips step S607 and proceeds to step S608.

[0163] In step S607, the first image processing circuit 30 sends a command to the first electronic camera 10 to limit the output image area of the CMOS image sensor 51 to within the distance λ around the moved location ym1 of the object, as illustrated in FIG. 16B. Thus, as long as the object stays below the predetermined altitude K above the display panel 12, the first electronic camera 10 continues sending images limited to the area within the distance λ around the object to the first image processing circuit 30.

[0164] In step S608, the CPU 20 determines whether the object is within a predetermined distance from the display panel 12 to start a tracing operation of motion vectors of the object. In other words, the CPU 20 determines whether the minimum value Nmin is smaller than the predetermined number M0, which corresponds to the predetermined distance; if YES in step S608, the process proceeds to step S609, and if NO in step S608, the process branches to step S610.

[0165] In step S609, the CPU 20 traces motion vectors of the object, and inputs coordinate data of the object according to traced motion vectors.

[0166] In step S610, the CPU 20 determines whether the object is still within the predetermined altitude K above the display panel 12, the condition for outputting image data limited to the range 2λ. When the object is within the predetermined altitude K, i.e., YES in step S610, the process returns to step S608, and when the object is no longer within the predetermined altitude K, i.e., NO in step S610, the process proceeds to step S611.

[0167] In step S611, the first image processing circuit 30 sends a command to the first electronic camera 10 to expand the output image area of the CMOS image sensor 51 to cover the whole area of the display panel 12, and then the process returns to step S601. When the first electronic camera 10 receives the command, it controls the CMOS image sensor 51 to expand the output image area to cover the whole area of the display panel 12, returning to the same state as when the coordinate data input system 1S is turned on.
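Taken together, steps S604 to S611 decide two things per frame: how large a readout window to request and whether to trace motion vectors. The Python sketch below assumes one (Nmin, ym) pair per frame, that M0 is smaller than K, that the window is clipped to the sensor ends, and that a limited window always keeps the object in view. The names `run_fig15` and `Window` and all values are hypothetical, and the S601/S602 contour check is taken as already passed.

```python
from typing import Iterable, List, Optional, Tuple

# None means the full default image area; otherwise (first, last) Ycamera pixel.
Window = Optional[Tuple[int, int]]


def run_fig15(
    frames: Iterable[Tuple[int, int]], k: int, m0: int, lam: int, sensor_len: int
) -> List[Tuple[Window, bool]]:
    """Return (readout window, motion vectors traced?) for each (Nmin, ym) frame."""
    out: List[Tuple[Window, bool]] = []
    for n_min, ym in frames:
        if n_min < k:
            # S605/S607: limit readout to lam on each side of ym; follows the object.
            window: Window = (max(0, ym - lam), min(sensor_len - 1, ym + lam))
            tracing = n_min < m0  # S608: YES -> S609 trace, NO -> S610
        else:
            # S604 NO (stay full) or S610 NO -> S611 (expand back to the full area).
            window = None
            tracing = False
        out.append((window, tracing))
    return out


frames = [(50, 300), (20, 300), (3, 320), (25, 330), (60, 330)]
for w, t in run_fig15(frames, k=30, m0=5, lam=40, sensor_len=1024):
    print(w, t)
```

As in the frame-rate example, the outer threshold K sets the readout mode while the inner threshold M0 alone gates tracing.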

[0168] In the above-described operational steps, the second electronic camera 11 and the second image processing circuit 31 operate in substantially the same manner as the first electronic camera 10 and the first image processing circuit 30.

[0169] Present-day large-screen display devices on the market, such as plasma display panels (PDPs) and rear projection displays, generally have a 40-inch to 70-inch screen with a resolution of 1024 by 768 pixels, known as XGA. To take full advantage of such resolution in a coordinate data input system, image sensors such as the CMOS image sensors 51 and 53 should preferably have about 2000 imaging cells (pixels) in one direction. Against this background, the following examples according to the present invention are configured to further reduce the cost of a coordinate data input system.

[0170] FIG. 17 is a schematic view illustrating a coordinate data input system 60S as another example configured according to the present invention. The coordinate data input system 60S includes a coordinate data input apparatus 60 and a control apparatus 61. The coordinate data input apparatus 60 includes a first linear sensor camera 70, a second linear sensor camera 71, an area sensor camera 72, a display panel 73, and a frame 74.

[0171] The linear sensor camera may also be referred to as a line sensor camera, a one-dimensional sensor camera, a 1-D camera, etc., and the area sensor camera may also be referred to as a video camera, a two-dimensional camera, a two-dimensional electronic camera, a 2-D camera, a digital still camera, etc.