Image processing device, image processing method, and program for generating a focused image

Hayasaka, et al. May 18, 2021

U.S. patent number 11,012,605 [Application Number 16/344,217] was granted by the patent office on 2021-05-18 for image processing device, image processing method, and program for generating a focused image. This patent grant is currently assigned to SONY CORPORATION. The grantee listed for this patent is SONY CORPORATION. Invention is credited to Kengo Hayasaka, Katsuhisa Ito.


| United States Patent | 11,012,605 |

| Hayasaka, et al. | May 18, 2021 |

Image processing device, image processing method, and program for generating a focused image

Abstract

The present technology relates to an image processing device, an image processing method, and a program that enable refocusing accompanied by desired optical effects. A light collection processing unit performs a light collection process to generate a processing result image focused at a predetermined distance, using images of a plurality of viewpoints. The light collection process is performed with the images of the plurality of viewpoints having pixel values adjusted with adjustment coefficients for the respective viewpoints. The present technology can be applied in a case where a refocused image is obtained from images of a plurality of viewpoints, for example.

| Inventors: | Hayasaka; Kengo (Saitama, JP), Ito; Katsuhisa (Tokyo, JP) |
|---|---|
| Applicant: | |
| Assignee: | SONY CORPORATION (Tokyo, JP) |
| Family ID: | 1000005562678 |
| Appl. No.: | 16/344,217 |
| Filed: | October 25, 2017 |
| PCT Filed: | October 25, 2017 |
| PCT No.: | PCT/JP2017/038469 |
| 371(c)(1),(2),(4) Date: | April 23, 2019 |
| PCT Pub. No.: | WO2018/088211 |
| PCT Pub. Date: | May 17, 2018 |

Prior Publication Data

| Document Identifier | Publication Date | |

|---|---|---|

| US 20190260925 A1 | Aug 22, 2019 | |

Foreign Application Priority Data

| Nov 8, 2016 [JP] | JP2016-217763 | |||

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 5/225 (20130101); H04N 5/23212 (20130101); G06T 1/00 (20130101); H04N 5/232 (20130101); H04N 13/282 (20180501) |

| Current International Class: | H04N 5/232 (20060101); G06T 1/00 (20060101); H04N 13/282 (20180101); H04N 5/225 (20060101) |

References Cited [Referenced By]

U.S. Patent Documents

| 9055218 | June 2015 | Nishiyama |

| 9681042 | June 2017 | Hiasa |

| 2016/0094835 | March 2016 | Takanashi |

| 2017/0193642 | July 2017 | Naruse |

| 2017/0193643 | July 2017 | Naruse |

| 2017/0359565 | December 2017 | Ito |

| 2013-121050 | Jun 2013 | JP | |||

| 2015-201722 | Nov 2015 | JP | |||

| WO 2016/132950 | Aug 2016 | WO | |||

Other References

Wilburn et al., High Performance Imaging Using Large Camera Arrays, ACM Transactions on Graphics (TOG), Jul. 2005, pp. 765-776, vol. 24, Issue 3. cited by applicant.

Primary Examiner: Haliyur; Padma

Attorney, Agent or Firm: Paratus Law Group, PLLC

Claims

The invention claimed is:

1. An image processing device comprising: an acquisition unit configured to acquire images of a plurality of viewpoints; a light collection processing unit configured to perform a light collection process to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints, wherein the light collection processing unit is configured to perform the light collection process using the images of the plurality of viewpoints, the images having pixel values adjusted with adjustment coefficients for the respective viewpoints, wherein the adjustment coefficients are set for the respective viewpoints to correspond to a gain distribution of transmittance, and wherein the gain distribution has a peak at a center of the plurality of viewpoints, decreases smoothly toward ends of the plurality of viewpoints, and becomes 0 between the center and each end of the plurality of viewpoints; and an adjustment unit that adjusts the pixel values of pixels of the images of the viewpoints with the adjustment coefficients corresponding to the viewpoints, wherein the light collection processing unit performs the light collection process by setting a shift amount for shifting the pixels of the images of the plurality of viewpoints, shifting the pixels of the images of the plurality of viewpoints in accordance with the shift amount, and integrating the pixel values, and performs the light collection process using the pixel values of the pixels of the images of the plurality of viewpoints, the pixel values having been adjusted by the adjustment unit.

2. The image processing device according to claim 1, wherein the images of the plurality of viewpoints include a plurality of captured images captured by a plurality of cameras.

3. The image processing device according to claim 2, wherein the images of the plurality of viewpoints include the plurality of captured images and a plurality of interpolation images generated by interpolation using the captured images.

4. The image processing device according to claim 3, further comprising: a parallax information generation unit that generates parallax information about the plurality of captured images; and an interpolation unit that generates the plurality of interpolation images of different viewpoints, using the captured images and the parallax information.

5. An image processing device comprising: an acquisition unit configured to acquire images of a plurality of viewpoints; a light collection processing unit configured to perform a light collection process to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints, wherein the light collection processing unit is configured to perform the light collection process using the images of the plurality of viewpoints, the images having pixel values adjusted with adjustment coefficients for the respective viewpoints, wherein the adjustment coefficients are set for the respective viewpoints to correspond to a gain distribution, and wherein the gain distribution gradually decreases from one end to the other end of the plurality of viewpoints; and an adjustment unit that adjusts the pixel values of pixels of the images of the viewpoints with the adjustment coefficients corresponding to the viewpoints, wherein the light collection processing unit performs the light collection process by setting a shift amount for shifting the pixels of the images of the plurality of viewpoints, shifting the pixels of the images of the plurality of viewpoints in accordance with the shift amount, and integrating the pixel values, and performs the light collection process using the pixel values of the pixels of the images of the plurality of viewpoints, the pixel values having been adjusted by the adjustment unit.

6. The image processing device according to claim 5, wherein the gain distribution corresponds to at least one color component.

7. An image processing method comprising: acquiring images of a plurality of viewpoints; and performing a light collection process to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints, wherein the light collection process is performed using the images of the plurality of viewpoints, the images having pixel values adjusted with adjustment coefficients for the respective viewpoints, wherein the adjustment coefficients are set for the respective viewpoints to correspond to a gain distribution of transmittance, wherein the gain distribution has a peak at a center of the plurality of viewpoints, decreases smoothly toward ends of the plurality of viewpoints, and becomes 0 between the center and each end of the plurality of viewpoints, wherein the pixel values of pixels of the images of the viewpoints are adjusted with the adjustment coefficients corresponding to the viewpoints, wherein the light collection process is performed by setting a shift amount for shifting the pixels of the images of the plurality of viewpoints, shifting the pixels of the images of the plurality of viewpoints in accordance with the shift amount, and integrating the pixel values, and wherein the light collection process is performed using the adjusted pixel values of the pixels of the images of the plurality of viewpoints.

8. A non-transitory computer-readable medium having embodied thereon a program, which when executed by a computer causes the computer to execute a method, the method comprising: acquiring images of a plurality of viewpoints; and performing a light collection process to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints, wherein the light collection processing unit performs the light collection process using the images of the plurality of viewpoints, the images having pixel values adjusted with adjustment coefficients for the respective viewpoints, wherein the adjustment coefficients are set for the respective viewpoints to correspond to a gain distribution of transmittance, wherein the gain distribution has a peak at a center of the plurality of viewpoints, decreases smoothly toward ends of the plurality of viewpoints, and becomes 0 between the center and each end of the plurality of viewpoints, wherein the pixel values of pixels of the images of the viewpoints are adjusted with the adjustment coefficients corresponding to the viewpoints, wherein the light collection process is performed by setting a shift amount for shifting the pixels of the images of the plurality of viewpoints, shifting the pixels of the images of the plurality of viewpoints in accordance with the shift amount, and integrating the pixel values, and wherein the light collection process is performed using the adjusted pixel values of the pixels of the images of the plurality of viewpoints.

9. An image processing device comprising: an acquisition unit configured to acquire images of a plurality of viewpoints; a light collection processing unit configured to perform a light collection process to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints, wherein the light collection processing unit is configured to perform the light collection process using the images of the plurality of viewpoints, the images having pixel values adjusted with adjustment coefficients for the respective viewpoints, wherein the adjustment coefficients are set for the respective viewpoints in accordance with a gain distribution of transmittance, and wherein the gain distribution has at least two peaks at positions different from a center of the plurality of viewpoints, and changes smoothly toward ends of the plurality of viewpoints; and an adjustment unit that adjusts the pixel values of pixels of the images of the viewpoints with the adjustment coefficients corresponding to the viewpoints, wherein the light collection processing unit performs the light collection process by setting a shift amount for shifting the pixels of the images of the plurality of viewpoints, shifting the pixels of the images of the plurality of viewpoints in accordance with the shift amount, and integrating the pixel values, and performs the light collection process using the pixel values of the pixels of the images of the plurality of viewpoints, the pixel values having been adjusted by the adjustment unit.

10. The image processing device according to claim 9, wherein the gain distribution becomes 0 between the center and each end of the plurality of viewpoints.

Description

CROSS REFERENCE TO PRIOR APPLICATION

This application is a National Stage Patent Application of PCT International Patent Application No. PCT/JP2017/038469 (filed on Oct. 25, 2017) under 35 U.S.C. § 371, which claims priority to Japanese Patent Application No. 2016-217763 (filed on Nov. 8, 2016), which are all hereby incorporated by reference in their entirety.

TECHNICAL FIELD

The present technology relates to an image processing device, an image processing method, and a program, and more particularly, to an image processing device, an image processing method, and a program for enabling refocusing accompanied by desired optical effects, for example.

BACKGROUND ART

A light field technique has been suggested for reconstructing, from images of a plurality of viewpoints, a refocused image, that is, an image captured with an optical system whose focus is changed, or the like, for example (see Non-Patent Document 1, for example).

For example, Non-Patent Document 1 discloses a refocusing method using a camera array formed with 100 cameras.

CITATION LIST

Non-Patent Document

Non-Patent Document 1: Bennett Wilburn et al., "High Performance Imaging Using Large Camera Arrays"

SUMMARY OF THE INVENTION

Problems to be Solved by the Invention

As for refocusing, the need for realizing refocusing accompanied by optical effects desired by users and the like is expected to increase in the future.

The present technology has been made in view of such circumstances, and aims to enable refocusing accompanied by desired optical effects.

Solutions to Problems

An image processing device or a program according to the present technology is

an image processing device including: an acquisition unit that acquires images of a plurality of viewpoints; and a light collection processing unit that performs a light collection process to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints, in which the light collection processing unit performs the light collection process using the images of the plurality of viewpoints, the images having pixel values adjusted with adjustment coefficients for the respective viewpoints, or

a program for causing a computer to function as such an image processing device.

An image processing method according to the present technology is an image processing method including: acquiring images of a plurality of viewpoints; and performing a light collection process to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints, in which the light collection process is performed using the images of the plurality of viewpoints, the images having pixel values adjusted with adjustment coefficients for the respective viewpoints.

In the image processing device, the image processing method, and the program according to the present technology, images of a plurality of viewpoints are acquired, and a light collection process is performed to generate a processing result image focused at a predetermined distance, using the images of the plurality of viewpoints. This light collection process is performed with the images of the plurality of viewpoints, the pixel values of the images having been adjusted with adjustment coefficients for the respective viewpoints.

Note that the image processing device may be an independent device, or may be an internal block in a single device.

Meanwhile, the program to be provided may be transmitted via a transmission medium or may be recorded on a recording medium.

Effects of the Invention

According to the present technology, it is possible to perform refocusing accompanied by desired optical effects.

Note that effects of the present technology are not limited to the effects described herein, and may include any of the effects described in the present disclosure.

BRIEF DESCRIPTION OF DRAWINGS

FIG. 1 is a block diagram showing an example configuration of an embodiment of an image processing system to which the present technology is applied.

FIG. 2 is a rear view of an example configuration of an image capturing device 11.

FIG. 3 is a rear view of another example configuration of the image capturing device 11.

FIG. 4 is a block diagram showing an example configuration of an image processing device 12.

FIG. 5 is a flowchart showing an example process to be performed by the image processing system.

FIG. 6 is a diagram for explaining an example of generation of an interpolation image at an interpolation unit 32.

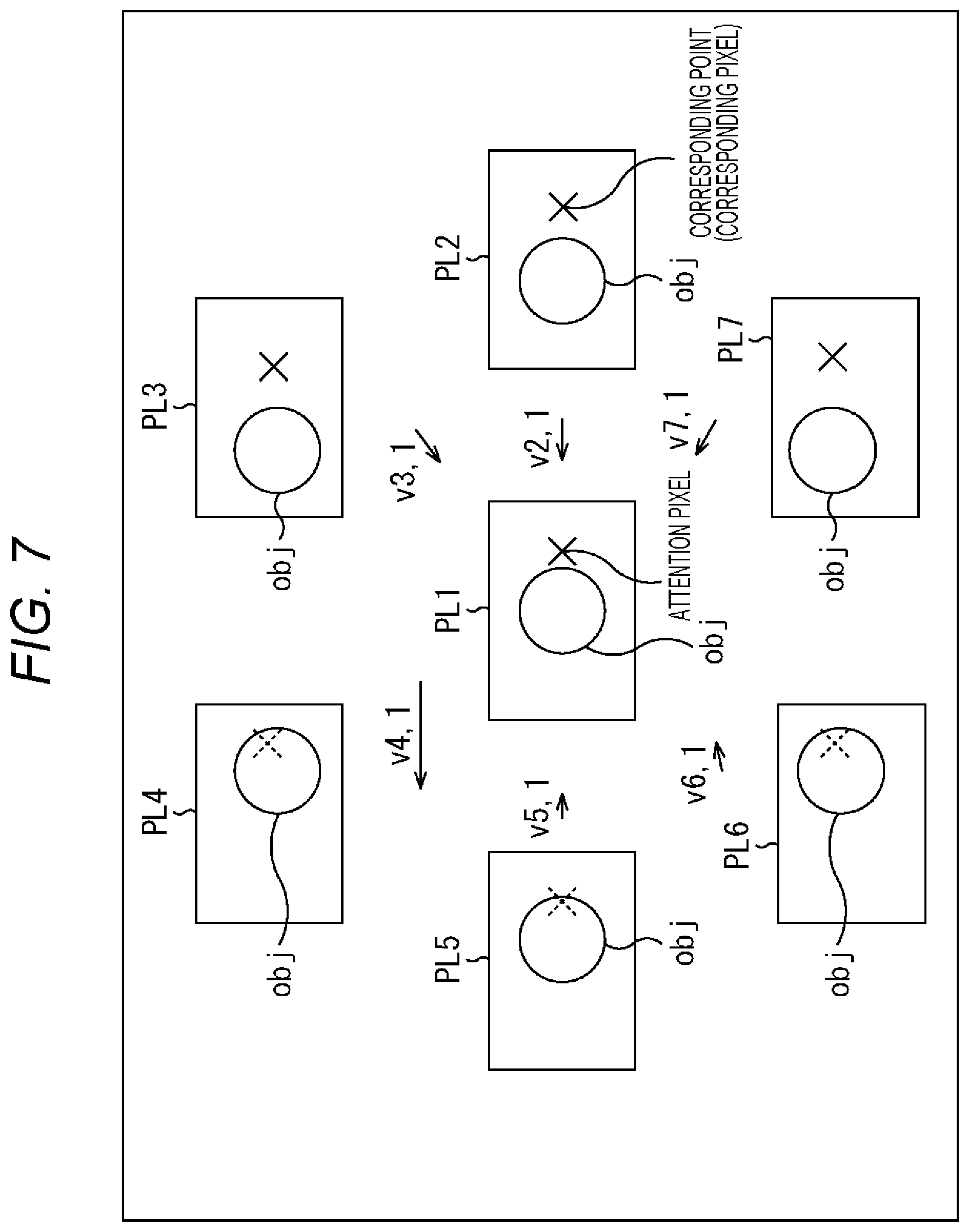

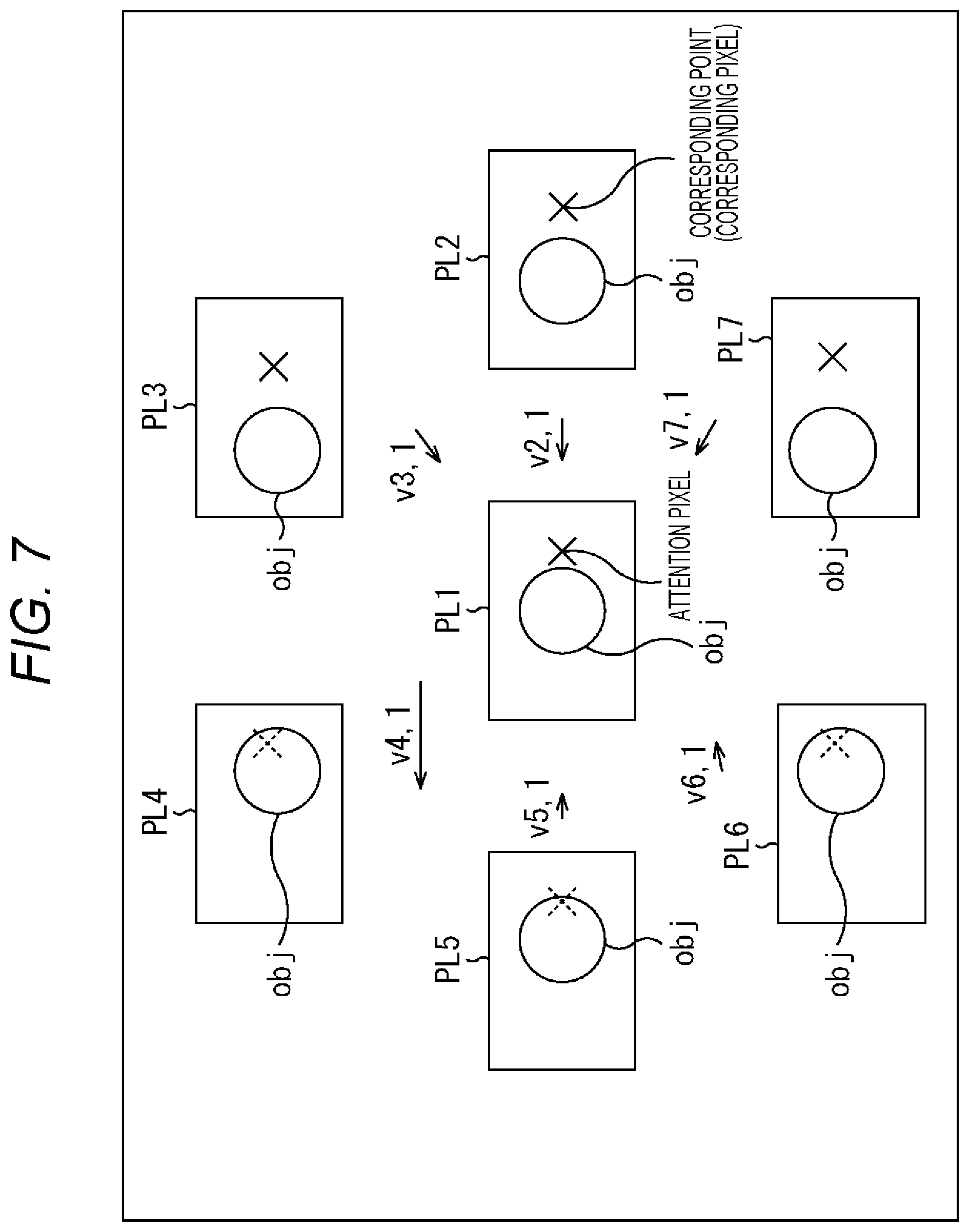

FIG. 7 is a diagram for explaining an example of generation of a disparity map at a parallax information generation unit 31.

FIG. 8 is a diagram for explaining an outline of refocusing through a light collection process to be performed by a light collection processing unit 34.

FIG. 9 is a diagram for explaining an example of disparity conversion.

FIG. 10 is a diagram for explaining an outline of refocusing.

FIG. 11 is a flowchart for explaining an example of a light collection process to be performed by the light collection processing unit 34.

FIG. 12 is a flowchart for explaining an example of an adjustment process to be performed by an adjustment unit 33.

FIG. 13 is a diagram showing a first example of lens aperture parameters.

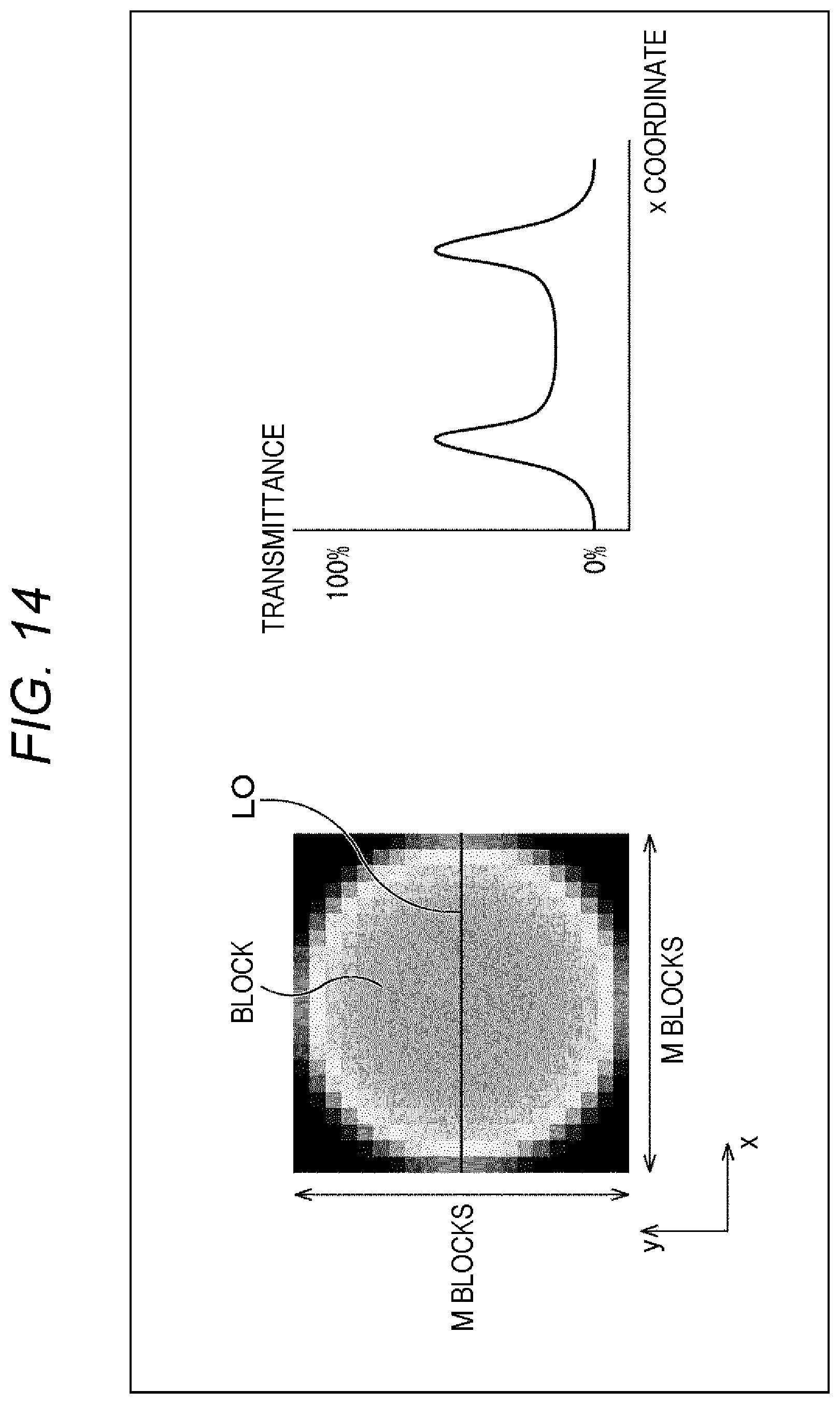

FIG. 14 is a diagram showing a second example of lens aperture parameters.

FIG. 15 is a diagram showing a third example of lens aperture parameters.

FIG. 16 is a diagram showing a fourth example of lens aperture parameters.

FIG. 17 is a diagram showing an example of filter parameters.

FIG. 18 is a block diagram showing another example configuration of the image processing device 12.

FIG. 19 is a flowchart for explaining an example of a light collection process to be performed by a light collection processing unit 51.

FIG. 20 is a block diagram showing an example configuration of an embodiment of a computer to which the present technology is applied.

MODES FOR CARRYING OUT THE INVENTION

<Embodiment of an Image Processing System to which the Present Technology is Applied>

FIG. 1 is a block diagram showing an example configuration of an embodiment of an image processing system to which the present technology is applied.

In FIG. 1, the image processing system includes an image capturing device 11, an image processing device 12, and a display device 13.

The image capturing device 11 captures images of an object from a plurality of viewpoints, and supplies, for example, (almost) pan-focus images obtained as a result of the capturing from the plurality of viewpoints, to the image processing device 12.

The image processing device 12 performs image processing such as refocusing for generating (reconstructing) an image focused on a desired object by using the captured images of the plurality of viewpoints supplied from the image capturing device 11, and supplies a processing result image obtained as a result of the image processing, to the display device 13.

The display device 13 displays the processing result image supplied from the image processing device 12.

Note that, in FIG. 1, the image capturing device 11, the image processing device 12, and the display device 13 constituting the image processing system can be all installed in an independent apparatus such as a digital (still/video) camera, or a portable terminal like a smartphone or the like, for example.

Alternatively, the image capturing device 11, the image processing device 12, and the display device 13 can be installed in apparatuses independent of one another.

Furthermore, any two devices among the image capturing device 11, the image processing device 12, and the display device 13 can be installed in an apparatus independent of the apparatus in which the remaining one device is installed.

For example, the image capturing device 11 and the display device 13 can be installed in a portable terminal owned by a user, and the image processing device 12 can be installed in a server in a cloud.

Alternatively, some of the blocks of the image processing device 12 can be installed in a server in a cloud, and the remaining blocks of the image processing device 12, the image capturing device 11, and the display device 13 can be installed in a portable terminal.

<Example Configuration of the Image Capturing Device 11>

FIG. 2 is a rear view of an example configuration of the image capturing device 11 shown in FIG. 1.

The image capturing device 11 includes a plurality of camera units (hereinafter also referred to as cameras) 21ᵢ that capture images having RGB values as pixel values, for example, and the plurality of cameras 21ᵢ capture images from a plurality of viewpoints.

In FIG. 2, the image capturing device 11 includes seven cameras 21₁, 21₂, 21₃, 21₄, 21₅, 21₆, and 21₇ as the plurality of cameras, for example, and these seven cameras 21₁ through 21₇ are arranged in a two-dimensional plane.

Further, in FIG. 2, the seven cameras 21₁ through 21₇ are arranged such that one of the seven cameras 21₁ through 21₇, such as the camera 21₁, for example, is disposed at the center, and the other six cameras 21₂ through 21₇ are disposed around the camera 21₁, to form a regular hexagon.

Therefore, in FIG. 2, the distance between one camera 21ᵢ (i=1, 2, . . . , or 7) out of the seven cameras 21₁ through 21₇ and a camera 21ⱼ (j=1, 2, . . . , or 7) closest to the camera 21ᵢ (the distance between the optical axes) is the same distance B.

The distance B between the cameras 21ᵢ and 21ⱼ may be about 20 mm, for example. In this case, the image capturing device 11 can be designed to have almost the same size as a card such as an IC card.
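The hexagonal arrangement of FIG. 2 can be checked numerically with a minimal sketch such as the following. The function names, the camera ordering, and the orientation of the hexagon are assumptions made for illustration only; the description fixes only the center camera, the regular hexagon, and the distance B (here 20 mm).

```python
import math

def hexagon_camera_positions(b=20.0):
    """Return (x, y) optical-axis positions in mm: the reference camera at the
    origin, followed by six peripheral cameras on a regular hexagon of radius b.
    The starting angle of the hexagon is an arbitrary, assumed choice."""
    positions = [(0.0, 0.0)]
    for k in range(6):
        angle = math.radians(60 * k)
        positions.append((b * math.cos(angle), b * math.sin(angle)))
    return positions

def nearest_neighbor_distances(positions):
    """For each camera, return the distance to its closest other camera."""
    result = []
    for i, (xi, yi) in enumerate(positions):
        result.append(min(math.hypot(xi - xj, yi - yj)
                          for j, (xj, yj) in enumerate(positions) if j != i))
    return result

positions = hexagon_camera_positions(20.0)
distances = nearest_neighbor_distances(positions)
```

In this layout every camera, including the center one, has its closest neighbor at the distance B, because the side of a regular hexagon equals its circumradius.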

Note that the number of the cameras 21ᵢ constituting the image capturing device 11 is not necessarily seven; any number from two to six, or eight or more, can be adopted.

Also, in the image capturing device 11, the plurality of cameras 21ᵢ may be disposed at any appropriate positions, other than being arranged to form a regular polygon such as a regular hexagon as described above.

Hereinafter, of the cameras 21₁ through 21₇, the camera 21₁ disposed at the center will be also referred to as the reference camera 21₁, and the cameras 21₂ through 21₇ disposed around the reference camera 21₁ will be also referred to as the peripheral cameras 21₂ through 21₇.

FIG. 3 is a rear view of another example configuration of the image capturing device 11 shown in FIG. 1.

In FIG. 3, the image capturing device 11 includes nine cameras 21₁₁ through 21₁₉, and the nine cameras 21₁₁ through 21₁₉ are arranged in three rows and three columns. Each of the 3×3 cameras 21ᵢ (i=11, 12, . . . , or 19) is disposed at the distance B from an adjacent camera 21ⱼ (j=11, 12, . . . , or 19) above the camera 21ᵢ, below the camera 21ᵢ, or to the left or right of the camera 21ᵢ.

In the description below, the image capturing device 11 includes the seven cameras 21₁ through 21₇ as shown in FIG. 2, for example, unless otherwise specified.

Meanwhile, the viewpoint of the reference camera 21₁ is also referred to as the reference viewpoint, and a captured image PL1 captured by the reference camera 21₁ is also referred to as the reference image PL1. Further, a captured image PL #i captured by a peripheral camera 21ᵢ is also referred to as the peripheral image PL #i.

Note that the image capturing device 11 includes a plurality of cameras 21ᵢ as shown in FIGS. 2 and 3, but may be formed with a microlens array (MLA) as disclosed by Ren Ng and seven others in "Light Field Photography with a Hand-Held Plenoptic Camera", Stanford Tech Report CTSR 2005-02, for example. Even in a case where the image capturing device 11 is formed with an MLA, it is possible to obtain images substantially captured from a plurality of viewpoints.

Further, the method of capturing images from a plurality of viewpoints is not necessarily the above method by which the image capturing device 11 includes a plurality of cameras 21ᵢ or the method by which the image capturing device 11 is formed with an MLA.

<Example Configuration of the Image Processing Device 12>

FIG. 4 is a block diagram showing an example configuration of the image processing device 12 shown in FIG. 1.

In FIG. 4, the image processing device 12 includes a parallax information generation unit 31, an interpolation unit 32, an adjustment unit 33, a light collection processing unit 34, and a parameter setting unit 35.

The image processing device 12 is supplied with captured images PL1 through PL7 captured from seven viewpoints by the cameras 21₁ through 21₇, from the image capturing device 11.

In the image processing device 12, the captured images PL #i are supplied to the parallax information generation unit 31 and the interpolation unit 32.

The parallax information generation unit 31 obtains parallax information using the captured images PL #i supplied from the image capturing device 11, and supplies the parallax information to the interpolation unit 32 and the light collection processing unit 34.

Specifically, the parallax information generation unit 31 performs a process of obtaining the parallax information between each of the captured images PL #i supplied from the image capturing device 11 and the other captured images PL #j, as image processing of the captured images PL #i of a plurality of viewpoints, for example. The parallax information generation unit 31 then generates a map in which the parallax information is registered for (the position of) each pixel of the captured images, for example, and supplies the map to the interpolation unit 32 and the light collection processing unit 34.

Here, any appropriate information that can be converted into parallax, such as a disparity representing parallax with the number of pixels or distance in the depth direction corresponding to parallax, can be adopted as the parallax information. In this embodiment, disparities are adopted as the parallax information, for example, and in the parallax information generation unit 31, a disparity map in which the disparity is registered is generated as a map in which the parallax information is registered.

Using the captured images PL1 through PL7 of the seven viewpoints of the cameras 21₁ through 21₇ from the image capturing device 11, and the disparity map from the parallax information generation unit 31, the interpolation unit 32 performs interpolation to generate images to be obtained from viewpoints other than the seven viewpoints of the cameras 21₁ through 21₇.

Here, through the later described light collection process performed by the light collection processing unit 34, the image capturing device 11 including the plurality of cameras 21₁ through 21₇ can be made to function as a virtual lens having the cameras 21₁ through 21₇ as a synthetic aperture. In the image capturing device 11 shown in FIG. 2, the synthetic aperture of the virtual lens has a substantially circular shape with a diameter of approximately 2B connecting the optical axes of the peripheral cameras 21₂ through 21₇.

For example, where the viewpoints are a plurality of points equally spaced in a square having the diameter of 2B of the virtual lens (or a square inscribed in the synthetic aperture of the virtual lens), such as 21 points in the horizontal direction and 21 points in the vertical direction, the interpolation unit 32 performs interpolation to generate images of the 21×21−7 viewpoints that are the 21×21 viewpoints minus the seven viewpoints of the cameras 21₁ through 21₇.
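As a worked illustration of the viewpoint count, the following minimal sketch lays out 21 by 21 equally spaced viewpoints and subtracts the seven captured ones. It assumes, for illustration only, that the square has a side of 2B centered on the reference viewpoint; the function name and the normalized coordinates are hypothetical.

```python
def viewpoint_grid(b=1.0, n=21):
    """Return n*n equally spaced (x, y) viewpoint positions covering a square
    of side 2*b centered on the reference viewpoint (an assumed layout)."""
    step = 2.0 * b / (n - 1)
    return [(-b + ix * step, -b + iy * step)
            for iy in range(n) for ix in range(n)]

grid = viewpoint_grid(b=1.0, n=21)
num_captured = 7                              # cameras 1 through 7 of FIG. 2
num_interpolated = len(grid) - num_captured   # 21 * 21 - 7 = 434
```

Under these assumptions, 434 of the 441 viewpoint images would be produced by interpolation rather than captured directly.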

The interpolation unit 32 then supplies the captured images PL1 through PL7 of the seven viewpoints of the cameras 21₁ through 21₇ and the images of the 21×21−7 viewpoints generated by the interpolation using the captured images, to the adjustment unit 33.

Here, in the interpolation unit 32, the images generated by the interpolation using the captured images are also referred to as interpolation images.

Further, the images of the 21×21 viewpoints, which are the total of the captured images PL1 through PL7 of the seven viewpoints of the cameras 21₁ through 21₇ and the interpolation images of the 21×21−7 viewpoints supplied from the interpolation unit 32 to the adjustment unit 33, are also referred to as viewpoint images.

The interpolation in the interpolation unit 32 can be considered as a process of generating viewpoint images of a larger number of viewpoints (21×21 viewpoints in this case) from the captured images PL1 through PL7 of the seven viewpoints of the cameras 21₁ through 21₇. The process of generating the viewpoint images of the large number of viewpoints can be regarded as a process of reproducing light beams entering the virtual lens having the cameras 21₁ through 21₇ as a synthetic aperture from real-space points in the real space.

The adjustment unit 33 is supplied not only with the viewpoint images of the plurality of viewpoints from the interpolation unit 32, but also with adjustment parameters from the parameter setting unit 35. The adjustment parameters are adjustment coefficients for adjusting pixel values, and are set for the respective viewpoints, for example.

The adjustment unit 33 adjusts the pixel values of the pixels of the viewpoint images of the respective viewpoints supplied from the interpolation unit 32, using the adjustment coefficients for the respective viewpoints as the adjustment parameters supplied from the parameter setting unit 35. The adjustment unit 33 then supplies the viewpoint images of the plurality of viewpoints with the adjusted pixel values to the light collection processing unit 34.
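The adjustment described above can be sketched as follows. This is a minimal sketch that assumes the adjustment is a multiplication of each pixel value by the coefficient of its viewpoint, with either one coefficient per viewpoint or one per RGB component; the function name and array layout are hypothetical and not taken from the description.

```python
import numpy as np

def adjust_viewpoint_images(images, coefficients):
    """Scale every pixel of each viewpoint image by that viewpoint's coefficient.

    images:       array of shape (V, H, W, 3), one RGB image per viewpoint.
    coefficients: array of shape (V,) for one gain per viewpoint, or (V, 3)
                  for a separate gain per color component (assumed layouts).
    """
    images = np.asarray(images, dtype=np.float64)
    coefficients = np.asarray(coefficients, dtype=np.float64)
    if coefficients.ndim == 1:
        coefficients = coefficients[:, None]   # same gain for R, G, and B
    # Broadcast each viewpoint's gain over the height and width axes.
    return images * coefficients[:, None, None, :]
```

For example, a coefficient of 0 removes a viewpoint from the subsequent light collection entirely, while a coefficient of 1 leaves its pixel values unchanged.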

Using the viewpoint images of the plurality of viewpoints supplied from the adjustment unit 33, the light collection processing unit 34 performs a light collection process that is image processing equivalent to forming an image of the object by collecting light beams that have passed through an optical system such as a lens from the object, onto an image sensor or a film in a real camera.

In the light collection process by the light collection processing unit 34, refocusing is performed to generate (reconstruct) an image focused on a desired object. The refocusing is performed using the disparity map supplied from the parallax information generation unit 31 and a light collection parameter supplied from the parameter setting unit 35.
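The claims characterize the light collection process as setting a shift amount, shifting the pixels of the viewpoint images accordingly, and integrating the pixel values. A minimal shift-and-add sketch of that idea is given below. It makes several simplifying assumptions that are not in the description: a single focus disparity for the whole image, integer pixel shifts, wrap-around at the borders via `np.roll`, and a particular sign convention for the shift. All names are hypothetical.

```python
import numpy as np

def refocus_shift_and_add(images, offsets, focus_disparity):
    """Shift each viewpoint image in proportion to its offset, then integrate.

    images:          (V, H, W) or (V, H, W, C) adjusted viewpoint images.
    offsets:         V pairs (x, y) giving each viewpoint's position relative
                     to the reference viewpoint, in units of the baseline B.
    focus_disparity: disparity in pixels, per baseline B, of the plane that
                     should end up in focus.
    """
    images = np.asarray(images, dtype=np.float64)
    accumulator = np.zeros_like(images[0])
    for image, (ox, oy) in zip(images, offsets):
        shift_x = int(round(focus_disparity * ox))
        shift_y = int(round(focus_disparity * oy))
        # np.roll wraps pixels around the border; a practical implementation
        # would more likely pad or crop instead.
        accumulator += np.roll(image, shift=(shift_y, shift_x), axis=(0, 1))
    return accumulator
```

With this sign convention, an object whose disparity matches `focus_disparity` lands on the same pixel in every shifted image and is reinforced by the integration, whereas objects at other disparities are spread over several pixels and appear blurred.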

The image obtained through the light collection process by the light collection processing unit 34 is output as a processing result image (to the display device 13).

The parameter setting unit 35 sets a pixel of the captured image PL #i (the reference image PL1, for example) located at a position designated by the user operating an operation unit (not shown), a predetermined application, or the like, as the focus target pixel for focusing (or showing the object), and supplies the focus target pixel as (part of) the light collection parameter to the light collection processing unit 34.

The parameter setting unit 35 further sets adjustment coefficients for adjusting pixel values for each of the plurality of viewpoints in accordance with an operation by the user or an instruction from a predetermined application, and supplies the adjustment coefficients for the respective viewpoints as the adjustment parameters to the adjustment unit 33.

The adjustment parameters are parameters for controlling pixel value adjustment at the adjustment unit 33, and include the adjustment coefficients for each of the viewpoints of the viewpoint images to be used in the light collection process at the light collection processing unit 34, or for each of the viewpoints of the viewpoint images obtained by the interpolation unit 32.

The adjustment parameters may be lens aperture parameters for achieving optical image effects that can be actually or theoretically achieved with an optical system such as an optical lens and a diaphragm, filter parameters for achieving optical image effects that can be actually or theoretically achieved with a lens filter, or the like, for example.

Note that the image processing device 12 may be configured as a server, or may be configured as a client. Further, the image processing device 12 may be configured as a server-client system. In a case where the image processing device 12 is configured as a server-client system, some of the blocks of the image processing device 12 can be configured as a server, and the remaining blocks can be configured as a client.

<Process to Be Performed by the Image Processing System>

FIG. 5 is a flowchart showing an example process to be performed by the image processing system shown in FIG. 1.

In step S11, the image capturing device 11 captures images PL1 through PL7 of seven viewpoints as a plurality of viewpoints. The captured images PL #i are supplied to the parallax information generation unit 31 and the interpolation unit 32 of the image processing device 12 (FIG. 4).

The process then moves from step S11 on to step S12, and the image processing device 12 acquires the captured image PL #i from the image capturing device 11. Further, in the image processing device 12, the parallax information generation unit 31 performs a parallax information generation process, to obtain the parallax information using the captured image PL #i supplied from the image capturing device 11, and generate a disparity map in which the parallax information is registered.

The parallax information generation unit 31 supplies the disparity map obtained through the parallax information generation process to the interpolation unit 32 and the light collection processing unit 34, and the process moves from step S12 on to step S13. Note that, in this example, the image processing device 12 acquires the captured images PL #i directly from the image capturing device 11. However, the image processing device 12 can also acquire, from a cloud, captured images PL #i that have been captured by the image capturing device 11 or some other image capturing device (not shown) and stored beforehand in the cloud, for example.

In step S13, the interpolation unit 32 performs an interpolation process of generating interpolation images of a plurality of viewpoints other than the seven viewpoints of the cameras 21.sub.1 through 21.sub.7, using the captured images PL1 through PL7 of the seven viewpoints of the cameras 21.sub.1 through 21.sub.7 supplied from the image capturing device 11, and the disparity map supplied from the parallax information generation unit 31.

The interpolation unit 32 further supplies the captured images PL1 through PL7 of the seven viewpoints of the cameras 21.sub.1 through 21.sub.7 supplied from the image capturing device 11 and the interpolation images of the plurality of viewpoints obtained through the interpolation process, as viewpoint images of a plurality of viewpoints to the adjustment unit 33. The process then moves from step S13 on to step S14.

In step S14, the parameter setting unit 35 sets the light collection parameter and the adjustment parameters.

In other words, the parameter setting unit 35 sets adjustment coefficients for the respective viewpoints of the viewpoint images, in accordance with a user operation or the like.

The parameter setting unit 35 also sets a pixel of the reference image PL1 located at a position designated by a user operation or the like, as the focus target pixel for focusing.

Here, the parameter setting unit 35 causes the display device 13 to also display, for example, a message prompting designation of the object onto which the reference image PL1 is to be focused among the captured images PL1 through PL7 of the seven viewpoints supplied from the image capturing device 11. The parameter setting unit 35 then waits for the user to designate a position (in the object shown) in the reference image PL1 displayed on the display device 13, and then sets the pixel of the reference image PL1 located at the position designated by the user as the focus target pixel.

The focus target pixel can be set not only in accordance with user designation as described above, but also in accordance with designation from an application or in accordance with designation based on predetermined rules or the like, for example.

For example, a pixel showing an object moving at a predetermined speed or higher, or a pixel showing an object moving continuously for a predetermined time or longer can be set as the focus target pixel.

The parameter setting unit 35 supplies the adjustment coefficients for the respective viewpoints of the viewpoint images as the adjustment parameters to the adjustment unit 33, and supplies the focus target pixel as the light collection parameter to the light collection processing unit 34. The process then moves from step S14 on to step S15.

In step S15, the adjustment unit 33 performs an adjustment process to adjust the pixel values of the pixels of the images of the respective viewpoints supplied from the interpolation unit 32, using the adjustment coefficients for the respective viewpoints as the adjustment parameters supplied from the parameter setting unit 35. The adjustment unit 33 supplies the viewpoint images of the plurality of viewpoints subjected to the pixel value adjustment, to the light collection processing unit 34, and the process then moves from step S15 on to step S16.
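The adjustment process of step S15 can be sketched as follows. This is a minimal illustration that assumes the adjustment is a per-viewpoint multiplication of every pixel value by that viewpoint's adjustment coefficient; the function name `adjust_viewpoint_images` and the list-of-rows image layout are hypothetical and are not taken from the description.

```python
def adjust_viewpoint_images(images, coefficients):
    """Scale every pixel of each viewpoint image by that viewpoint's coefficient.

    images: dict mapping a viewpoint id to a 2-D list of pixel values.
    coefficients: dict mapping the same viewpoint ids to adjustment coefficients.
    Multiplication is an assumption made for this sketch.
    """
    adjusted = {}
    for vp, image in images.items():
        k = coefficients[vp]
        adjusted[vp] = [[k * value for value in row] for row in image]
    return adjusted


# Two hypothetical 2x2 viewpoint images; viewpoint 2 is attenuated by half.
images = {1: [[10, 20], [30, 40]], 2: [[50, 60], [70, 80]]}
coefficients = {1: 1.0, 2: 0.5}
adjusted = adjust_viewpoint_images(images, coefficients)
```

Under this assumption, a coefficient of 0 would exclude a viewpoint from the subsequent integration, and a coefficient of 1 would leave it unchanged.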

In step S16, the light collection processing unit 34 performs a light collection process equivalent to collecting light beams that have passed through the virtual lens having the cameras 21.sub.1 through 21.sub.7 as the synthetic aperture from the object onto a virtual sensor (not shown), using the viewpoint images of the plurality of viewpoints subjected to the pixel value adjustment from the adjustment unit 33, the disparity map from the parallax information generation unit 31, and the focus target pixel as the light collection parameter from the parameter setting unit 35.

The virtual sensor onto which the light beams having passed through the virtual lens are collected is actually a memory (not shown), for example. In the light collection process, the pixel values of the viewpoint images of a plurality of viewpoints are integrated (as the stored value) in the memory as the virtual sensor, the pixel values being regarded as the luminance of the light beams gathered onto the virtual sensor. In this manner, the pixel values of the image obtained as a result of collection of the light beams having passed through the virtual lens are determined.

In the light collection process by the light collection processing unit 34, a reference shift amount BV (described later), which is a pixel shift amount for performing pixel shifting on the pixels of the viewpoint images of the plurality of viewpoints, is set. The pixels of the viewpoint images of the plurality of viewpoints are subjected to pixel shifting in accordance with the reference shift amount BV, and are then integrated. Thus, refocusing, or processing result image generation, is performed to determine the respective pixel values of a processing result image focused on an in-focus point at a predetermined distance.

As described above, the light collection processing unit 34 performs a light collection process (integration of (the pixel values of) pixels) on the viewpoint images of the plurality of viewpoints subjected to the pixel value adjustment. Thus, refocusing accompanied by various kinds of optical effects can be performed with the adjustment coefficients for the respective viewpoints as the adjustment parameters for adjusting the pixel values.

Here, an in-focus point is a real-space point in the real space where focusing is achieved, and, in the light collection process by the light collection processing unit 34, the in-focus plane as the group of in-focus points is set with focus target pixels as light collection parameters supplied from the parameter setting unit 35.

Note that, in the light collection process by the light collection processing unit 34, a reference shift amount BV is set for each of the pixels of the processing result image. As a result, other than an image focused on an in-focus point at a distance, an image formed on a plurality of in-focus points at a plurality of distances can be obtained as the processing result image.

The light collection processing unit 34 supplies the processing result image obtained as a result of the light collection process to the display device 13, and the process then moves from step S16 on to step S17.

In step S17, the display device 13 displays the processing result image supplied from the light collection processing unit 34.

Note that, although the adjustment parameters and the light collection parameter are set in step S14 in FIG. 5, the adjustment parameters can be set at any appropriate timing until immediately before the adjustment process in step S15, and the light collection parameter can be set at any appropriate timing during the period from immediately after the capturing of the captured images PL1 through PL7 of the seven viewpoints in step S11 until immediately before the light collection process in step S16.

Further, the image processing device 12 (FIG. 4) can be formed only with the light collection processing unit 34.

For example, in a case where the light collection process at the light collection processing unit 34 is performed with images captured by the image capturing device 11 but without any interpolation image, the image processing device 12 can be configured without the interpolation unit 32. However, in a case where the light collection process is performed not only with captured images but also with interpolation images, ringing can be prevented from appearing in an unfocused object in the processing result image.

Further, in a case where parallax information about captured images of a plurality of viewpoints captured by the image capturing device 11 can be generated by an external device using a distance sensor or the like, and the parallax information can be acquired from the external device, for example, the image processing device 12 can be configured without the parallax information generation unit 31.

Furthermore, in a case where the adjustment parameters can be set at the adjustment unit 33 while the light collection parameters can be set at the light collection processing unit 34 in accordance with predetermined rules or the like, for example, the image processing device 12 can be configured without the parameter setting unit 35.

<Generation of Interpolation Images>

FIG. 6 is a diagram for explaining an example of generation of an interpolation image at the interpolation unit 32 shown in FIG. 4.

In a case where an interpolation image of a certain viewpoint is to be generated, the interpolation unit 32 sequentially selects a pixel of the interpolation image as an interpolation target pixel for interpolation. The interpolation unit 32 further selects pixel value calculation images to be used in calculating the pixel value of the interpolation target pixel. The pixel value calculation images may be all of the captured images PL1 through PL7 of the seven viewpoints, or the captured images PL #i of some viewpoints close to the viewpoint of the interpolation image. Using the disparity map from the parallax information generation unit 31 and the viewpoint of the interpolation image, the interpolation unit 32 determines the pixel (the pixel showing the same spatial point as the spatial point shown on the interpolation target pixel, if image capturing is performed from the viewpoint of the interpolation image) corresponding to the interpolation target pixel from each of the captured images PL #i of a plurality of viewpoints selected as the pixel value calculation images.

The interpolation unit 32 then weights the pixel value of the corresponding pixel, and determines the resultant weighted value to be the pixel value of the interpolation target pixel.

The weight used for the weighting of the pixel value of the corresponding pixel may be a value that is inversely proportional to the distance between the viewpoint of the captured image PL #i as the pixel value calculation image having the corresponding pixel and the viewpoint of the interpolation image having the interpolation target pixel.
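The inverse-distance weighting described above can be sketched as follows. The function name `interpolate_pixel`, the viewpoint coordinates, and the pixel values are hypothetical; dividing by the sum of the weights so that the result stays in the original pixel value range is an assumption of this sketch, not something stated in the description.

```python
import math


def interpolate_pixel(target_vp, samples):
    """Blend corresponding pixel values with inverse-distance weights.

    target_vp: (x, y) position of the interpolation viewpoint.
    samples: list of ((x, y) viewpoint position, corresponding pixel value).
    Normalizing by the weight sum is an assumption of this sketch.
    """
    weights = []
    for vp, _ in samples:
        distance = math.dist(target_vp, vp)
        if distance == 0.0:
            raise ValueError("interpolation viewpoint coincides with a camera viewpoint")
        weights.append(1.0 / distance)
    total = sum(weights)
    return sum(w * value for w, (_, value) in zip(weights, samples)) / total


# Interpolation viewpoint at 1/4 of the way between two camera viewpoints:
# the nearer camera contributes with weight 0.75, the farther one with 0.25.
value = interpolate_pixel((0.25, 0.0), [((0.0, 0.0), 100.0), ((1.0, 0.0), 200.0)])
```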

Note that, in a case where intense light with directivity is reflected on the captured images PL #i, it is preferable to select captured images PL #i of some viewpoints such as three or four viewpoints as the pixel value calculation images, rather than selecting all of the captured images PL1 through PL7 of the seven viewpoints as the pixel value calculation images. With captured images PL #i of some of the viewpoints, it is possible to obtain an interpolation image similar to an image that would be obtained if image capturing is actually performed from the viewpoint of the interpolation image.

<Generation of a Disparity Map>

FIG. 7 is a diagram for explaining an example of generation of a disparity map at the parallax information generation unit 31 shown in FIG. 4.

In other words, FIG. 7 shows an example of captured images PL1 through PL7 captured by the cameras 21.sub.1 through 21.sub.7 of the image capturing device 11.

In FIG. 7, the captured images PL1 through PL7 show a predetermined object obj as the foreground in front of a predetermined background. Since the captured images PL1 through PL7 have different viewpoints from one another, the positions (the positions in the captured images) of the object obj shown in the respective captured images PL2 through PL7 differ from the position of the object obj shown in the captured image PL1 by the amounts equivalent to the viewpoint differences, for example.

Here, the viewpoint (position) of a camera 21.sub.i, or the viewpoint of a captured image PL #i captured by a camera 21.sub.i, is represented by vp #i.

For example, in a case where a disparity map of the viewpoint vp1 of the captured image PL1 is to be generated, the parallax information generation unit 31 sets the captured image PL1 as the attention image PL1 to which attention is paid. The parallax information generation unit 31 further sequentially selects each pixel of the attention image PL1 as the attention pixel to which attention is paid, and detects the corresponding pixel (corresponding point) corresponding to the attention pixel from each of the other captured images PL2 through PL7.

The method of detecting the pixel corresponding to the attention pixel of the attention image PL1 from each of the captured images PL2 through PL7 may be a method utilizing the principles of triangulation, such as stereo matching or multi-baseline stereo, for example.

Here, the vector representing the positional shift of the corresponding pixel of a captured image PL #i relative to the attention pixel of the attention image PL1 is set as a disparity vector v #i, 1.

The parallax information generation unit 31 obtains disparity vectors v2, 1 through v7, 1 for the respective captured images PL2 through PL7. The parallax information generation unit 31 then performs a majority decision on the magnitudes of the disparity vectors v2, 1 through v7, 1, for example, and sets the magnitude of the disparity vectors v #i, 1, which are the majority, as the magnitude of the disparity (at the position) of the attention pixel.

Here, in a case where the distance between the reference camera 21.sub.1 for capturing the attention image PL1 and each of the peripheral cameras 21.sub.2 through 21.sub.7 for capturing the captured images PL2 through PL7 is the same distance of B in the image capturing device 11 as described above with reference to FIG. 2, when the real-space point shown in the attention pixel of the attention image PL1 is also shown in the captured images PL2 through PL7, vectors that differ in orientation but are equal in magnitude are obtained as the disparity vectors v2, 1 through v7, 1.

In other words, the disparity vectors v2, 1 through v7, 1 in this case are vectors that are equal in magnitude and are in the directions opposite to the directions of the viewpoints vp2 through vp7 of the other captured images PL2 through PL7 relative to the viewpoint vp1 of the attention image PL1.

However, among the captured images PL2 through PL7, there may be an image with occlusion, or an image in which the real-space point appearing in the attention pixel of the attention image PL1 is hidden behind the foreground.

From a captured image PL #i that does not show the real-space point shown in the attention pixel of the attention image PL1 (this captured image PL #i will be hereinafter also referred to as the occlusion image), it is difficult to correctly detect the pixel corresponding to the attention pixel.

Therefore, regarding the occlusion image PL #i, a disparity vector v #i, 1 having a different magnitude from the disparity vectors v #j, 1 of the captured images PL #j showing the real-space point shown in the attention pixel of the attention image PL1 is obtained.

Among the captured images PL2 through PL7, the number of images with occlusion with respect to the attention pixel is estimated to be smaller than the number of images with no occlusion. In view of this, the parallax information generation unit 31 performs a majority decision on the magnitudes of the disparity vectors v2, 1 through v7, 1, and sets the magnitude of the disparity vectors v #i, 1, which are the majority, as the magnitude of the disparity of the attention pixel, as described above.

In FIG. 7, among the disparity vectors v2, 1 through v7, 1, the three disparity vectors v2, 1, v3, 1, and v7, 1 are vectors of the same magnitude. Meanwhile, there are no disparity vectors of the same magnitude among the disparity vectors v4, 1, v5, 1, and v6, 1.

Therefore, the magnitude of the three disparity vectors v2, 1, v3, 1, and v7, 1 is obtained as the magnitude of the disparity of the attention pixel.
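The majority decision illustrated with FIG. 7 can be sketched as follows. The numeric magnitudes are hypothetical, and quantizing the magnitudes into bins before voting (so that nearly equal floating-point values are counted together) is an assumption of this sketch; the description does not specify how equality of magnitudes is judged.

```python
from collections import Counter


def majority_magnitude(magnitudes, step=0.25):
    """Return the most frequent disparity magnitude.

    Magnitudes are quantized to multiples of `step` before voting so that
    nearly equal values fall into the same bin; the quantization step is an
    assumption of this sketch.
    """
    bins = Counter(round(m / step) for m in magnitudes)
    best_bin, _ = bins.most_common(1)[0]
    return best_bin * step


# Hypothetical magnitudes of v2,1 through v7,1: three agree (non-occluded
# images), while the other three all differ from one another.
magnitudes = {2: 4.0, 3: 4.0, 4: 1.5, 5: 6.25, 6: 2.75, 7: 4.0}
disparity = majority_magnitude(magnitudes.values())
```

Because the images with occlusion are assumed to be the minority, their inconsistent magnitudes are outvoted by the magnitudes from the images that actually show the real-space point.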

Note that the direction of the disparity between the attention pixel of the attention image PL1 and any captured image PL #i can be recognized from the positional relationship (such as the direction from the viewpoint vp1 toward the viewpoint vp #i) between the viewpoint vp1 of the attention image PL1 (the position of the camera 21.sub.1) and the viewpoint vp #i of the captured image PL #i (the position of the camera 21.sub.i).

The parallax information generation unit 31 sequentially selects each pixel of the attention image PL1 as the attention pixel, and determines the magnitude of the disparity. The parallax information generation unit 31 then generates, as a disparity map, a map in which the magnitude of the disparity of each pixel of the attention image PL1 is registered with respect to the position (x-y coordinate) of the pixel. Accordingly, the disparity map is a map (table) in which the positions of the pixels are associated with the disparity magnitudes of the pixels.
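As a small illustration of the disparity map as a table that associates pixel positions with disparity magnitudes, the following sketch uses a dictionary keyed by (x, y). The function name `build_disparity_map` and the callable `disparity_at`, which stands in for the per-pixel majority decision, are hypothetical.

```python
def build_disparity_map(width, height, disparity_at):
    """Return {(x, y): disparity magnitude} for every pixel position.

    disparity_at is a hypothetical callable standing in for the per-pixel
    majority decision; it takes (x, y) and returns a magnitude.
    """
    return {(x, y): disparity_at(x, y) for y in range(height) for x in range(width)}


# Toy rule: a foreground object occupies the left column and therefore has
# the larger disparity; the background has the smaller one.
disparity_map = build_disparity_map(2, 2, lambda x, y: 4.0 if x == 0 else 1.0)
```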

The disparity maps of the viewpoints vp #i of the other captured images PL #i can also be generated like the disparity map of the viewpoint vp #1.

However, in the generation of the disparity maps of the viewpoints vp #i other than the viewpoint vp #1, the majority decisions are performed on the disparity vectors, after the magnitudes of the disparity vectors are adjusted on the basis of the positional relationship between the viewpoint vp #i of a captured image PL #i and the viewpoints vp #j of the captured images PL #j other than the captured image PL #i (the positional relationship between the cameras 21.sub.i and 21.sub.j) (the distance between the viewpoint vp #i and the viewpoint vp #j).

In other words, in a case where the captured image PL5 is set as the attention image PL5, and disparity maps are generated with respect to the image capturing device 11 shown in FIG. 2, for example, the disparity vector obtained between the attention image PL5 and the captured image PL2 is twice as large as the disparity vector obtained between the attention image PL5 and the captured image PL1.

This is because, while the baseline length that is the distance between the optical axes of the camera 21.sub.5 for capturing the attention image PL5 and the camera 21.sub.1 for capturing the captured image PL1 is the distance of B, the baseline length between the camera 21.sub.5 for capturing the attention image PL5 and the camera 21.sub.2 for capturing the captured image PL2 is the distance of 2B.

In view of this, the distance of B, which is the baseline length between the reference camera 21.sub.1 and the other cameras 21.sub.i, for example, is referred to as the reference baseline length, which is the reference in determining a disparity. A majority decision on disparity vectors is performed after the magnitudes of the disparity vectors are adjusted so that the baseline lengths can be converted into the reference baseline length of B.

In other words, since the baseline length of B between the camera 21.sub.5 for capturing the attention image PL5 and the reference camera 21.sub.1 for capturing the captured image PL1 is equal to the reference baseline length of B, for example, the magnitude of the disparity vector to be obtained between the attention image PL5 and the captured image PL1 is multiplied by 1 (that is, left unchanged).

Further, since the baseline length of 2B between the camera 21.sub.5 for capturing the attention image PL5 and the camera 21.sub.2 for capturing the captured image PL2 is equal to twice the reference baseline length of B, for example, the magnitude of the disparity vector to be obtained between the attention image PL5 and the captured image PL2 is multiplied by 1/2 (the ratio of the reference baseline length of B to the baseline length of 2B between the camera 21.sub.5 and the camera 21.sub.2).

Likewise, the magnitude of the disparity vector to be obtained between the attention image PL5 and another captured image PL #i is multiplied by the ratio of the reference baseline length of B to the baseline length between the camera 21.sub.5 and the camera 21.sub.i.

A disparity vector majority decision is then performed with the use of the disparity vectors subjected to the magnitude adjustment.
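The magnitude adjustment followed by the majority decision can be sketched as follows for the attention image PL5. The measured magnitudes are hypothetical, only the two baselines stated in the text (B to the camera of PL1 and 2B to the camera of PL2) are used, and the function name `normalize_to_reference_baseline` is an assumption for illustration.

```python
from collections import Counter


def normalize_to_reference_baseline(magnitude, baseline, reference_baseline):
    """Scale a disparity magnitude by reference_baseline / baseline."""
    return magnitude * reference_baseline / baseline


B = 1.0  # reference baseline length (arbitrary unit)

# (measured magnitude, baseline length to the attention camera 21_5).
# The magnitudes are illustrative values, not taken from the description.
measurements = {
    "PL1": (3.0, B),      # baseline B: multiplied by 1
    "PL2": (6.0, 2 * B),  # baseline 2B: multiplied by 1/2
}
normalized = {
    name: normalize_to_reference_baseline(m, baseline, B)
    for name, (m, baseline) in measurements.items()
}

# After normalization, magnitudes from different baselines are comparable
# and can be put to a majority vote.
majority, votes = Counter(normalized.values()).most_common(1)[0]
```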

Note that, in the parallax information generation unit 31, the disparity of (each of the pixels of) a captured image PL #i can be determined with the precision of the pixels of the captured images captured by the image capturing device 11, for example. Alternatively, the disparity of a captured image PL #i can be determined with a precision finer than the pixels of the captured image PL #i (for example, sub-pixel precision such as 1/4 pixels).

In a case where a disparity is determined with sub-pixel precision, the disparity can be used as it is in a process using disparities, or can be used after being rounded down, rounded up, or rounded off to the closest whole number.
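The three rounding options mentioned above can be sketched as follows for a non-negative disparity magnitude. The function name `to_integer_disparity`, the mode names, and the sample value are hypothetical.

```python
import math


def to_integer_disparity(disparity, mode):
    """Convert a sub-pixel disparity magnitude to a whole number of pixels."""
    if mode == "down":
        return math.floor(disparity)
    if mode == "up":
        return math.ceil(disparity)
    if mode == "nearest":
        # floor(x + 0.5) rounds halves upward, unlike Python's built-in
        # round(), which rounds halves to the nearest even integer.
        return math.floor(disparity + 0.5)
    raise ValueError(f"unknown rounding mode: {mode}")


quarter_pixel_disparity = 3.25  # hypothetical value with 1/4-pixel precision
results = {
    mode: to_integer_disparity(quarter_pixel_disparity, mode)
    for mode in ("down", "up", "nearest")
}
```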

Here, the magnitude of a disparity registered in the disparity map is hereinafter also referred to as a registered disparity. For example, in a case where a disparity is expressed as a vector in a two-dimensional coordinate system in which the axis extending in a rightward direction is the x-axis while the axis extending in a downward direction is the y-axis, a registered disparity is equal to the x component of the disparity between each pixel of the reference image PL1 and the captured image PL5 of the viewpoint to the left of the reference image PL1 (or the x component of the vector representing the pixel shift from a pixel of the reference image PL1 to the corresponding pixel of the captured image PL5, the corresponding pixel corresponding to the pixel of the reference image PL1).

<Refocusing Through a Light Collection Process>

FIG. 8 is a diagram for explaining an outline of refocusing through a light collection process to be performed by the light collection processing unit 34 shown in FIG. 4.

Note that, for ease of explanation, the three images, which are the reference image PL1, the captured image PL2 of the viewpoint to the right of the reference image PL1, and the captured image PL5 of the viewpoint to the left of the reference image PL1, are used as the viewpoint images of a plurality of viewpoints for the light collection process in FIG. 8.

In FIG. 8, two objects obj1 and obj2 are shown in the captured images PL1, PL2, and PL5. For example, the object obj1 is located on the near side, and the object obj2 is on the far side.

For example, refocusing is performed to focus on (or put the focus on) the object obj1 at this stage, so that an image viewed from the reference viewpoint of the reference image PL1 is obtained as the post-refocusing processing result image.

Here, DP1 represents the disparity of the viewpoint of the processing result image with respect to the pixel showing the object obj1 of the captured image PL1, or the disparity (of the corresponding pixel of the reference image PL1) of the reference viewpoint in this case. Likewise, DP2 represents the disparity of the viewpoint of the processing result image with respect to the pixel showing the object obj1 of the captured image PL2, and DP5 represents the disparity of the viewpoint of the processing result image with respect to the pixel showing the object obj1 of the captured image PL5.

Note that, since the viewpoint of the processing result image is equal to the reference viewpoint of the captured image PL1 in FIG. 8, the disparity DP1 of the viewpoint of the processing result image with respect to the pixel showing the object obj1 of the captured image PL1 is (0, 0).

As for the captured images PL1, PL2, and PL5, pixel shift is performed on the captured images PL1, PL2, and PL5 in accordance with the disparities DP1, DP2, and DP5, respectively, and the captured images PL1, PL2, and PL5 subjected to the pixel shift are integrated. In this manner, the processing result image focused on the object obj1 can be obtained.

In other words, pixel shift is performed on the captured images PL1, PL2, and PL5 so as to cancel the disparities DP1, DP2, and DP5 (the pixel shift being in the opposite direction from the disparities DP1, DP2, and DP5). As a result, the positions of the pixels showing obj1 match among the captured images PL1, PL2, and PL5 subjected to the pixel shift.

As the captured images PL1, PL2, and PL5 subjected to the pixel shift are integrated in this manner, the processing result image focused on the object obj1 can be obtained.

Note that, among the captured images PL1, PL2, and PL5 subjected to the pixel shift, the positions of the pixels showing the object obj2 located at a different position from the object obj1 in the depth direction are not the same. Therefore, the object obj2 shown in the processing result image is blurry.

Furthermore, since the viewpoint of the processing result image is the reference viewpoint, and the disparity DP1 is (0, 0) as described above, there is no substantial need to perform pixel shift on the captured image PL1.

In the light collection process by the light collection processing unit 34, the pixels of viewpoint images of a plurality of viewpoints are subjected to pixel shift so as to cancel the disparity of the viewpoint (the reference viewpoint in this case) of the processing result image with respect to the focus target pixel showing the focus target, and are then integrated, as described above, for example. Thus, an image subjected to refocusing for the focus target is obtained as the processing result image.
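The pixel shift and integration outlined with FIG. 8 can be sketched as follows. This is a toy illustration with integer disparities and one-row images; the function name `refocus`, the convention that a disparity (dx, dy) is the offset at which the focus target appears in a viewpoint image relative to the reference image, and the skipping of samples that fall outside an image are all assumptions of this sketch.

```python
def refocus(images, disparities):
    """Shift each viewpoint image to cancel its disparity, then sum.

    images: dict mapping viewpoint id -> 2-D list of pixel values (same size).
    disparities: dict mapping viewpoint id -> (dx, dy), the integer offset at
        which the focus target appears in that image relative to the
        reference image.
    Samples that fall outside an image are skipped (an assumption).
    """
    first = next(iter(images.values()))
    height, width = len(first), len(first[0])
    result = [[0.0] * width for _ in range(height)]
    for vp, image in images.items():
        dx, dy = disparities[vp]
        for y in range(height):
            for x in range(width):
                sx, sy = x + dx, y + dy  # where this scene point sits in image vp
                if 0 <= sx < width and 0 <= sy < height:
                    result[y][x] += image[sy][sx]
    return result


# One-row toy scene: a bright point (value 9) appears at x=2 in PL1,
# at x=1 in PL2 (viewpoint to the right), and at x=3 in PL5 (viewpoint to the left).
images = {
    "PL1": [[0, 0, 9, 0, 0]],
    "PL2": [[0, 9, 0, 0, 0]],
    "PL5": [[0, 0, 0, 9, 0]],
}
disparities = {"PL1": (0, 0), "PL2": (-1, 0), "PL5": (1, 0)}
focused = refocus(images, disparities)
```

In this toy case the three samples of the bright point line up at x=2 and add to a single sharp peak, whereas a point at another depth (with different disparities) would be spread over several positions and appear blurred.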

<Disparity Conversion>

FIG. 9 is a diagram for explaining an example of disparity conversion.

As described above with reference to FIG. 7, the registered disparities registered in a disparity map are equivalent to the x components of the disparities of the pixels of the reference image PL1 with respect to the respective pixels of the captured image PL5 of the viewpoint to the left of the reference image PL1.

In refocusing, it is necessary to perform pixel shift on each viewpoint image so as to cancel the disparity of the focus target pixel.

Attention is now drawn to a certain viewpoint as the attention viewpoint. In this case, the disparity of the focus target pixel of the processing result image with respect to the viewpoint image of the attention viewpoint, or the disparity of the focus target pixel of the reference image PL1 of the reference viewpoint in this case, is required in pixel shift of the captured image of the attention viewpoint, for example.

The disparity of the focus target pixel of the reference image PL1 with respect to the viewpoint image of the attention viewpoint can be determined from the registered disparity of the focus target pixel of the reference image PL1 (the corresponding pixel of the reference image PL1 corresponding to the focus target pixel of the processing result image), with the direction from the reference viewpoint (the viewpoint of the processing result image) toward the attention viewpoint being taken into account.

Here, the direction from the reference viewpoint toward the attention viewpoint is indicated by a counterclockwise angle, with the x-axis being 0 [radian].

For example, the camera 21.sub.2 is located at a distance equivalent to the reference baseline length of B in the +x direction, and the direction from the reference viewpoint toward the viewpoint of the camera 21.sub.2 is 0 [radian]. In this case, (the vector as) the disparity DP2 of the focus target pixel of the reference image PL1 with respect to the viewpoint image (the captured image PL2) at the viewpoint of the camera 21.sub.2 can be determined to be $(-RD, 0) = (-(B/B) \times RD \times \cos\theta, -(B/B) \times RD \times \sin\theta)$ with $\theta = 0$ from the registered disparity RD of the focus target pixel, as 0 [radian] is the direction of the viewpoint of the camera 21.sub.2.

Meanwhile, the camera 21.sub.3 is located at a distance equivalent to the reference baseline length of B in the $\pi/3$ direction, for example, and the direction from the reference viewpoint toward the viewpoint of the camera 21.sub.3 is $\pi/3$ [radian]. In this case, the disparity DP3 of the focus target pixel of the reference image PL1 with respect to the viewpoint image (the captured image PL3) of the viewpoint of the camera 21.sub.3 can be determined to be $(-RD \times \cos(\pi/3), -RD \times \sin(\pi/3)) = (-(B/B) \times RD \times \cos(\pi/3), -(B/B) \times RD \times \sin(\pi/3))$ from the registered disparity RD of the focus target pixel, as the direction of the viewpoint of the camera 21.sub.3 is $\pi/3$ [radian].

Here, an interpolation image obtained by the interpolation unit 32 can be regarded as an image captured by a virtual camera located at the viewpoint vp of the interpolation image. The viewpoint vp of this virtual camera is assumed to be located at a distance L from the reference viewpoint in the direction of the angle $\theta$ [radian]. In this case, the disparity DP of the focus target pixel of the reference image PL1 with respect to the viewpoint image of the viewpoint vp (the image captured by the virtual camera) can be determined to be $(-(L/B) \times RD \times \cos\theta, -(L/B) \times RD \times \sin\theta)$ from the registered disparity RD of the focus target pixel, as the direction of the viewpoint vp is the angle $\theta$.

Determining the disparity of a pixel of the reference image PL1 with respect to the viewpoint image of the attention viewpoint from a registered disparity RD and the direction of the attention viewpoint as described above, or converting a registered disparity RD into the disparity of a pixel of the reference image PL1 (the processing result image) with respect to the viewpoint image of the attention viewpoint, is also called disparity conversion.
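The disparity conversion described above can be sketched as a small function. The function name `disparity_conversion`, the numeric value of RD, and the virtual camera placement are hypothetical; the formula itself follows the expression given in the text for a viewpoint at distance L and angle theta from the reference viewpoint.

```python
import math


def disparity_conversion(rd, distance, angle, reference_baseline):
    """Convert a registered disparity RD into the disparity vector for a
    viewpoint at `distance` L and direction `angle` theta (radians) from the
    reference viewpoint: (-(L/B)*RD*cos(theta), -(L/B)*RD*sin(theta)).
    """
    scale = distance / reference_baseline
    return (-scale * rd * math.cos(angle), -scale * rd * math.sin(angle))


B = 1.0   # reference baseline length (arbitrary unit)
RD = 4.0  # hypothetical registered disparity of the focus target pixel

dp2 = disparity_conversion(RD, B, 0.0, B)          # camera 21_2: theta = 0
dp3 = disparity_conversion(RD, B, math.pi / 3, B)  # camera 21_3: theta = pi/3
# Hypothetical virtual camera halfway toward the left-hand neighbor (theta = pi).
dp_virtual = disparity_conversion(RD, 0.5 * B, math.pi, B)
```

For the camera 21.sub.2 this reproduces (-RD, 0), and for the camera 21.sub.3 it gives (-RD cos(pi/3), -RD sin(pi/3)), in agreement with the two worked cases in the text.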

In refocusing, the disparity of the focus target pixel of the reference image PL1 with respect to the viewpoint image of each viewpoint is determined from the registered disparity RD of the focus target pixel through disparity conversion, and pixel shift is performed on the viewpoint images of the respective viewpoints so as to cancel the disparity of the focus target pixel.

In refocusing, pixel shift is performed on a viewpoint image so as to cancel the disparity of the focus target pixel with respect to the viewpoint image, and the shift amount of this pixel shift is also referred to as the focus shift amount.

Here, in the description below, the viewpoint of the ith viewpoint image among the viewpoint images of a plurality of viewpoints obtained by the interpolation unit 32 is also written as the viewpoint vp #i. The focus shift amount of the viewpoint image of the viewpoint vp #i is also written as the focus shift amount DP #i.

The focus shift amount DP #i of the viewpoint image of the viewpoint vp #i can be uniquely determined from the registered disparity RD of the focus target pixel through disparity conversion taking into account the direction from the reference viewpoint toward the viewpoint vp #i.

Here, in the disparity conversion, (the vector as) a disparity (-(L/B).times.RD.times.cos .theta., -(L/B).times.RD.times.sin .theta.) is calculated from the registered disparity RD, as described above.

Accordingly, the disparity conversion can be regarded as an operation to multiply the registered disparity RD by -(L/B).times.cos .theta. and -(L/B).times.sin .theta., as an operation to multiply the registered disparity RD.times.-1 by (L/B).times.cos .theta. and (L/B).times.sin .theta., or the like, for example.

Here, the disparity conversion can be regarded as an operation to multiply the registered disparity RD.times.-1 by (L/B).times.cos .theta. and (L/B).times.sin .theta., for example.

In this case, the value to be subjected to the disparity conversion, which is the registered disparity RD.times.-1, is the reference value for determining the focus shift amount of the viewpoint image of each viewpoint, and will be hereinafter also referred to as the reference shift amount BV.

The focus shift amount is uniquely determined through disparity conversion of the reference shift amount BV. Accordingly, the pixel shift amount for performing pixel shift on the pixels of the viewpoint image of each viewpoint in refocusing is substantially set depending on the setting of the reference shift amount BV.

Note that, in a case where the registered disparity RD.times.-1 is adopted as the reference shift amount BV as described above, the reference shift amount BV at a time when the focus target pixel is focused, or the registered disparity RD of the focus target pixel.times.-1, is equal to the x component of the disparity of the focus target pixel with respect to the captured image PL2.
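As a minimal sketch of the relationship between the reference shift amount BV and the focus shift amount, the following Python code treats the disparity conversion as a multiplication of BV by (L/B).times.cos .theta. and (L/B).times.sin .theta.. The function names and the numeric values are hypothetical and serve only as an illustration.

```python
import math


def reference_shift_amount(rd):
    """Reference shift amount BV, taken here as the registered disparity RD x -1."""
    return -rd


def focus_shift_amount(bv, length, baseline, theta):
    """Disparity conversion of BV: multiply BV by (L/B)cos(theta) and (L/B)sin(theta)."""
    scale = length / baseline
    return (bv * scale * math.cos(theta), bv * scale * math.sin(theta))


# Illustrative values only: RD = 4, and the camera 21_2 at L = B, theta = 0.
bv = reference_shift_amount(4.0)
dp_cam2 = focus_shift_amount(bv, 1.0, 1.0, 0.0)
# The x component of dp_cam2 equals BV, as noted for the captured image PL2.
```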

<Light Collection Process>

FIG. 10 is a diagram for explaining refocusing through a light collection process.

Here, a plane formed with a group of in-focus points (in-focus real-space points in the real space) is set as an in-focus plane.

In a light collection process, refocusing is performed by setting an in-focus plane that is a plane in which the distance in the depth direction in the real space is constant (does not vary), for example, and generating a processing result image focused on an object located on the in-focus plane (or in the vicinity of the in-focus plane), using viewpoint images of a plurality of viewpoints.

In FIG. 10, one person is shown on the near side while another person is shown in the middle in each of the viewpoint images of the plurality of viewpoints. Further, a plane that passes through the position of the person in the middle and is at a constant distance in the depth direction is set as the in-focus plane, and a processing result image focused on an object on the in-focus plane, or the person in the middle, for example, is obtained from the viewpoint images of the plurality of viewpoints.

Note that the in-focus plane may be a plane or a curved plane whose distance in the depth direction in the real space varies, for example. Alternatively, the in-focus plane may be formed with a plurality of planes or the like at different distances in the depth direction.

FIG. 11 is a flowchart for explaining an example of a light collection process to be performed by the light collection processing unit 34.

In step S31, the light collection processing unit 34 acquires (information about) the focus target pixel serving as a light collection parameter from the parameter setting unit 35, and the process then moves on to step S32.

Specifically, the reference image PL1 or the like among the captured images PL1 through PL7 captured by the cameras 21.sub.1 through 21.sub.7 is displayed on the display device 13, for example. When the user designates a position in the reference image PL1, the parameter setting unit 35 sets the pixel at the position designated by the user as the focus target pixel, and supplies (information indicating) the focus target pixel as a light collection parameter to the light collection processing unit 34.

In step S31, the light collection processing unit 34 acquires the focus target pixel supplied from the parameter setting unit 35 as described above.

In step S32, the light collection processing unit 34 acquires the registered disparity RD of the focus target pixel registered in a disparity map supplied from the parallax information generation unit 31. The light collection processing unit 34 then sets the reference shift amount BV in accordance with the registered disparity RD of the focus target pixel, or sets the registered disparity RD of the focus target pixel.times.-1 as the reference shift amount BV, for example. The process then moves from step S32 on to step S33.

In step S33, the light collection processing unit 34 sets a processing result image that is an image corresponding to one of the viewpoint images of the plurality of viewpoints that have been supplied from the adjustment unit 33 and been subjected to pixel value adjustment, such as an image corresponding to the reference image, or an image that has the same size as the reference image as viewed from the viewpoint of the reference image and has 0 as the initial value of each pixel value, for example. The light collection processing unit 34 further determines the attention pixel that is one of the pixels that are of the processing result image and have not been selected as the attention pixel. The process then moves from step S33 on to step S34.

In step S34, the light collection processing unit 34 determines the attention viewpoint vp #i to be one viewpoint vp #i that has not been determined to be the attention viewpoint (with respect to the attention pixel) among the viewpoints of the viewpoint images supplied from the adjustment unit 33. The process then moves on to step S35.

In step S35, the light collection processing unit 34 determines the focus shift amounts DP #i of the respective pixels of the viewpoint image of the attention viewpoint vp #i, from the reference shift amount BV. The focus shift amounts DP #i are necessary for focusing on the focus target pixel (putting the focus on the object shown in the focus target pixel).

In other words, the light collection processing unit 34 performs disparity conversion on the reference shift amount BV by taking into account the direction from the reference viewpoint toward the attention viewpoint vp #i, and acquires the values (vectors) obtained through the disparity conversion as the focus shift amounts DP #i of the respective pixels of the viewpoint image of the attention viewpoint vp #i.

After that, the process moves from step S35 on to step S36. The light collection processing unit 34 then performs pixel shift on the respective pixels of the viewpoint image of the attention viewpoint vp #i in accordance with the focus shift amount DP #i, and integrates the pixel value of the pixel at the position of the attention pixel in the viewpoint image subjected to the pixel shift, with the pixel value of the attention pixel.

In other words, the light collection processing unit 34 integrates the pixel value of the pixel at a distance equivalent to the vector (for example, the focus shift amount DP #i.times.-1 in this case) corresponding to the focus shift amount DP #i from the position of the attention pixel among the pixels of the viewpoint image of the attention viewpoint vp #i, with the pixel value of the attention pixel.

The process then moves from step S36 on to step S37, and the light collection processing unit 34 determines whether or not all the viewpoints of the viewpoint images supplied from the adjustment unit 33 have been set as the attention viewpoint.

If it is determined in step S37 that not all the viewpoints of the viewpoint images from the adjustment unit 33 have been set as the attention viewpoint, the process returns to step S34, and thereafter, a process similar to the above is repeated.

If it is determined in step S37 that all the viewpoints of the viewpoint images from the adjustment unit 33 have been set as the attention viewpoint, on the other hand, the process moves on to step S38.

In step S38, the light collection processing unit 34 determines whether or not all of the pixels of the processing result image have been set as the attention pixel.

If it is determined in step S38 that not all of the pixels of the processing result image have been set as the attention pixel, the process returns to step S33, and the light collection processing unit 34 newly determines the attention pixel that is one of the pixels that are of the processing result image and have not been determined to be the attention pixel. After that, a process similar to the above is repeated.

If it is determined in step S38 that all the pixels of the processing result image have been set as the attention pixel, on the other hand, the light collection processing unit 34 outputs the processing result image, and ends the light collection process.
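The flow of steps S32 through S38 can be sketched in Python as follows. This is only an illustrative sketch under several assumptions that are not stated in the description: each viewpoint image is a list of rows of scalar pixel values, each viewpoint is given as a pair (L, .theta.), the focus shift amount is rounded to whole pixels, source positions falling outside a viewpoint image are skipped, and the function name light_collection is hypothetical.

```python
import math


def light_collection(viewpoint_images, viewpoints, rd, baseline):
    """Sketch of the FIG. 11 flow (attention pixel loop outside, viewpoint loop inside).

    viewpoint_images -- list of same-sized images, each a list of rows of numbers
    viewpoints       -- list of (L, theta) pairs, one per viewpoint image (assumed format)
    rd               -- registered disparity RD of the focus target pixel
    baseline         -- reference baseline length B
    """
    height = len(viewpoint_images[0])
    width = len(viewpoint_images[0][0])
    bv = -rd  # step S32: reference shift amount BV = RD x -1
    # Step S33: processing result image with 0 as the initial pixel value.
    result = [[0.0] * width for _ in range(height)]
    for y in range(height):      # attention pixel loop (steps S33 to S38)
        for x in range(width):
            # Attention viewpoint loop (steps S34 to S37).
            for image, (length, theta) in zip(viewpoint_images, viewpoints):
                # Step S35: disparity conversion of BV into the focus shift amount.
                scale = length / baseline
                dx = round(bv * scale * math.cos(theta))  # assumption: integer shift
                dy = round(bv * scale * math.sin(theta))
                # Step S36: the pixel at (focus shift amount x -1) from the
                # attention pixel is integrated with the attention pixel.
                sx, sy = x - dx, y - dy
                if 0 <= sx < width and 0 <= sy < height:  # assumption: skip outside
                    result[y][x] += image[sy][sx]
    return result


# Illustrative data only: a 1x4 reference image and one image from a viewpoint
# at L = B, theta = 0, in which the same object appears one pixel to the right.
reference = [[0, 0, 9, 0]]
right_view = [[0, 0, 0, 9]]
focused = light_collection([reference, right_view], [(0.0, 0.0), (1.0, 0.0)], 1.0, 1.0)
```

In this example the two values of the object are integrated at the same position of the processing result image when RD = 1 is used, whereas with RD = 0 they would be integrated at different positions.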

Note that, in the light collection process shown in FIG. 11, the reference shift amount BV is set in accordance with the registered disparity RD of the focus target pixel, and varies neither with the attention pixel nor with the attention viewpoint vp #i. In view of this, the reference shift amount BV is set regardless of the attention pixel and the attention viewpoint vp #i.

Meanwhile, the focus shift amount DP #i varies with the attention viewpoint vp #i and the reference shift amount BV. In the light collection process shown in FIG. 11, however, the reference shift amount BV varies neither with the attention pixel nor with the attention viewpoint vp #i, as described above. Accordingly, the focus shift amount DP #i varies with the attention viewpoint vp #i, but does not vary with the attention pixel. In other words, the focus shift amount DP #i has the same value for each pixel of the viewpoint image of one viewpoint, irrespective of the attention pixel.

In FIG. 11, the process in step S35 for obtaining the focus shift amount DP #i forms a loop for repeatedly calculating the focus shift amount DP #i for the same viewpoint vp #i, regarding different attention pixels (the loop from step S33 to step S38). However, as described above, the focus shift amount DP #i has the same value for each pixel of a viewpoint image of one viewpoint, regardless of the attention pixel.

Therefore, in FIG. 11, the process in step S35 for obtaining the focus shift amount DP #i needs to be performed only once for one viewpoint.

In the light collection process shown in FIG. 11, the plane having a constant distance in the depth direction is set as the in-focus plane, as described above with reference to FIG. 10. Accordingly, the reference shift amount BV of the viewpoint image necessary for focusing on the focus target pixel has such a value as to cancel the disparity of the focus target pixel showing a spatial point on the in-focus plane having the constant distance in the depth direction, or the disparity of the focus target pixel whose disparity is the value corresponding to the distance to the in-focus plane.

Therefore, the reference shift amount BV depends neither on a pixel (attention pixel) of the processing result image nor on the viewpoint (attention viewpoint) of a viewpoint image in which the pixel values are integrated, and accordingly, does not need to be set for each pixel of the processing result image or each viewpoint of the viewpoint images (even if the reference shift amount BV is set for each pixel of the processing result image or each viewpoint of the viewpoint images, the reference shift amount BV is set at the same value, and accordingly, is not actually set for each pixel of the processing result image or each viewpoint of the viewpoint images).

Note that, in FIG. 11, pixel shift and integration of the pixels of the viewpoint images are performed for each pixel of the processing result image. In the light collection process, however, pixel shift and integration of the pixels of the viewpoint images can be performed for each subpixel obtained by finely dividing each pixel of the processing result image, other than for each pixel of the processing result image.

Further, in the light collection process shown in FIG. 11, the attention pixel loop (the loop from step S33 to step S38) is on the outer side, and the attention viewpoint loop (the loop from step S34 to step S37) is on the inner side. However, the attention viewpoint loop can be the outer-side loop while the attention pixel loop is the inner-side loop.
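A variation in which the attention viewpoint loop is the outer-side loop, so that the focus shift amount is obtained only once for one viewpoint, might look like the following Python sketch. The function name, the (L, .theta.) viewpoint format, the rounding of the shift to whole pixels, the skipping of positions outside the image, and the counter returned for illustration are all assumptions and not part of the description.

```python
import math


def light_collection_by_viewpoint(viewpoint_images, viewpoints, rd, baseline):
    """Light collection with the viewpoint loop outside and the pixel loop inside.

    Returns the processing result image and the number of times the focus
    shift amount was calculated (the counter is for illustration only).
    """
    height = len(viewpoint_images[0])
    width = len(viewpoint_images[0][0])
    bv = -rd  # reference shift amount BV = RD x -1, common to all pixels and viewpoints
    result = [[0.0] * width for _ in range(height)]
    shift_calculations = 0
    for image, (length, theta) in zip(viewpoint_images, viewpoints):  # outer loop
        # The focus shift amount depends only on the viewpoint, so it is
        # calculated once here rather than once per attention pixel.
        scale = length / baseline
        dx = round(bv * scale * math.cos(theta))  # assumption: integer shift
        dy = round(bv * scale * math.sin(theta))
        shift_calculations += 1
        for y in range(height):                   # inner loop over attention pixels
            for x in range(width):
                sx, sy = x - dx, y - dy
                if 0 <= sx < width and 0 <= sy < height:  # assumption: skip outside
                    result[y][x] += image[sy][sx]
    return result, shift_calculations


# Illustrative data only: same layout as a 1x4 reference image plus one
# image from a viewpoint at L = B, theta = 0.
images = [[[0, 0, 9, 0]], [[0, 0, 0, 9]]]
image_out, count = light_collection_by_viewpoint(images, [(0.0, 0.0), (1.0, 0.0)], 1.0, 1.0)
```

Because the integration is a sum, the order of the two loops does not change the processing result image; only the number of focus shift calculations differs.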

<Adjustment Process>

FIG. 12 is a flowchart for explaining an example of an adjustment process to be performed by the adjustment unit 33 shown in FIG. 4.

In step S51, the adjustment unit 33 acquires the adjustment coefficients for the respective viewpoints as the adjustment parameters supplied from the parameter setting unit 35, and the process moves on to step S52.