Microscopy method and apparatus for optical tracking of emitter objects

Duocastella, et al. September 15, 2020

U.S. patent number 10,775,602 [Application Number 16/479,808] was granted by the patent office on 2020-09-15 for microscopy method and apparatus for optical tracking of emitter objects. This patent grant is currently assigned to FONDAZIONE ISTITUTO ITALIANO DI TECNOLOGIA. The grantee listed for this patent is FONDAZIONE ISTITUTO ITALIANO DI TECNOLOGIA. Invention is credited to Alberto Diaspro, Marti Duocastella, Giuseppe Sancataldo.

| United States Patent | 10,775,602 |

| Duocastella, et al. | September 15, 2020 |

Microscopy method and apparatus for optical tracking of emitter objects

Abstract

Microscopy method and apparatus for determining the positions of emitter objects in a three-dimensional space that comprises focusing scattered light or fluorescent light emitted by an emitter object, separating the focused beam in a first and a second optical beam, directing the first and the second optical beam through a varifocal lens having an optical axis such that the first optical beam impinges on the lens along the optical axis and the second beam impinges decentralized with respect to the optical axis of the varifocal lens, simultaneously capturing a first image created by the first optical beam and a second image created by the second optical beam, and determining the relative displacement of the position of the object in the first and in the second image, wherein the relative displacement contains the information of the axial position of the object along a perpendicular direction to the image plane.

| Inventors: | Duocastella; Marti (Arenzano, IT), Sancataldo; Giuseppe (Bagheria, IT), Diaspro; Alberto (Genoa, IT) |
|---|---|
| Applicant: | |
| Assignee: | FONDAZIONE ISTITUTO ITALIANO DI TECNOLOGIA (Genoa, IT) |
| Family ID: | 58995057 |
| Appl. No.: | 16/479,808 |
| Filed: | January 16, 2018 |
| PCT Filed: | January 16, 2018 |
| PCT No.: | PCT/IB2018/050257 |
| 371(c)(1),(2),(4) Date: | July 22, 2019 |
| PCT Pub. No.: | WO2018/134730 |
| PCT Pub. Date: | July 26, 2018 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20200142174 A1 | May 7, 2020 |

Foreign Application Priority Data

| Jan 23, 2017 [IT] | 102017000006925 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04N 13/239 (20180501); G02B 21/08 (20130101); H04N 5/23212 (20130101); G02B 21/22 (20130101); G02B 21/367 (20130101); H04N 13/128 (20180501); G02B 21/365 (20130101); H04N 13/167 (20180501); G02B 21/18 (20130101); G02B 21/361 (20130101); G06K 9/00134 (20130101); G02B 21/16 (20130101); G02B 21/025 (20130101); H04N 13/254 (20180501); H04N 5/2352 (20130101); G02B 27/0075 (20130101); H04N 2013/0081 (20130101); H04N 2013/0096 (20130101); H04N 2013/0085 (20130101) |

| Current International Class: | G02B 21/36 (20060101); G02B 21/22 (20060101); H04N 5/235 (20060101); H04N 5/232 (20060101); G06K 9/00 (20060101); G02B 21/08 (20060101); G02B 21/16 (20060101); H04N 13/128 (20180101); H04N 13/167 (20180101); H04N 13/254 (20180101); H04N 13/239 (20180101); G02B 21/02 (20060101); H04N 13/00 (20180101) |

References Cited [Referenced By]

U.S. Patent Documents

| 6201899 | March 2001 | Bergen |

| 2014/0008549 | January 2014 | Theriault |

| 2014/0192166 | July 2014 | Cogswell et al. |

| 2017/0078549 | March 2017 | Emtman |

| 2017/0318216 | November 2017 | Gladnick |

| 2019/0162945 | May 2019 | Hua |

Other References

Sun et al., "Parallax: High Accuracy Three-Dimensional Single Molecule Tracking Using Split Images", Nano Letters, vol. 9, No. 7, pp. 2676-2682 (Jun. 4, 2009). Cited by applicant.

Liu et al., "Extended depth-of-field microscopic imaging with a variable focus microscope objective", Optics Express, vol. 19, No. 1, pp. 353-362 (Jan. 3, 2011). Cited by applicant.

Duocastella et al., "Three-dimensional particle tracking via tunable color-encoded multiplexing", Optics Letters, vol. 41, No. 5, pp. 863-866 (Mar. 1, 2016). Cited by applicant.

Ram et al., "High Accuracy 3D Quantum Dot Tracking with Multifocal Plane Microscopy for the Study of Fast Intracellular Dynamics in Live Cells", Biophysical Journal, vol. 95, pp. 6025-6043 (Dec. 2008). Cited by applicant.

Toprak et al., "Three-Dimensional Particle Tracking via Bifocal Imaging", Nano Letters, vol. 7, No. 7, pp. 2043-2045 (Jul. 11, 2007). Cited by applicant.

Small et al., "Fluorophore localization algorithms for super-resolution microscopy", Nature Methods, vol. 11, No. 3, pp. 267-279 (Mar. 2014). Cited by applicant.

Patterson et al., "Superresolution Imaging using Single-Molecule Localization", Annual Review of Physical Chemistry, vol. 61, pp. 345-367 (Jan. 4, 2010). Cited by applicant.

Primary Examiner: Cattungal; Rowina J

Attorney, Agent or Firm: Volpe and Koenig, P.C.

Claims

The invention claimed is:

1. Microscopy method for determining the position of an emitter object in a three dimensional (3D) space which comprises:

a) illuminating an emitter object so as to cause an emission of scattered light or fluorescent light from the emitter object;

b) focusing the emitted light in a primary focused optical beam through an objective lens;

c) splitting the primary optical beam in a first secondary beam and in a second secondary beam;

d) directing the first and the second secondary beam through a varifocal lens, having an optical axis, along respective optical paths such that the first secondary beam impinges on the varifocal lens along a direction corresponding to said optical axis and the second secondary beam impinges decentralized on the varifocal lens at an offset distance $\Delta d$ from the optical axis and along a direction parallel to the same, in which the varifocal lens has an electronically controllable focal length,

e) electronically controlling the focal length of the varifocal lens changing the focal length through a range of focal length values so as to move the respective focal positions of the first and second beam along said optical axis through said range of focal length values in a predetermined travel time,

f) simultaneously acquiring a first image and a second image of the emitter object in an integration time greater than or equal to the travel time of the focal positions simultaneously detecting the first secondary beam in-axis and the decentralized second secondary beam in respective first detection area and second detection area arranged on an image plane (x, y);

g) analyzing the first and the second image for determining a first object position on the first image and a second object position on the second image and determining a relative displacement $\Delta r$ in the image plane (x, y) of the position of the object in the two images, and

h) determining an axial position $z_p$ of the emitter object along an axis z perpendicular to the image plane on the basis of the relative displacement $\Delta r$.

2. Method according to claim 1, further comprising, after step h): i) associating the coordinates defined by the first position on the image plane (x, y) and by the axial position $z_p$ with the 3D position of the emitter object.

3. Method according to claim 1 wherein, in step h), the axial position $z_p$ is determined on the basis of a linear relationship between $z_p$ and $\Delta r$.

4. Method according to claim 1, which further comprises, subsequently to focusing the light emitted in a primary optical beam and prior to splitting the primary optical beam into a first secondary beam and into a second secondary beam, directing the primary optical beam through a relay optical unit having a magnification ratio, the relay optical unit being arranged on a rear focal plane of the objective lens.

5. Method according to claim 1, wherein the varifocal lens is arranged on a conjugate plane of the rear focal plane of the objective lens.

6. Method according to claim 1, wherein the offset distance $\Delta d$ of the second secondary beam from the optical axis of the varifocal lens is such as to cause a lateral displacement $\Delta r = \Delta y$ between the first and the second position of the emitter object along one of the two image plane coordinates (x, y).

7. Method according to claim 6 wherein, in step h), the axial position $z_p$ of the emitter object along axis z is determined according to a linear relationship $\Delta y = C z_p$, wherein C is a conversion factor.

8. Method according to claim 1, wherein the step of simultaneously acquiring a first image and a second image of the emitter object comprises simultaneously acquiring a plurality of respective first and second images at successive instants so as to trace the 3D position of the object over time.

9. Method according to claim 1, wherein simultaneously acquiring is carried out by a two-dimensional image sensor which comprises an array of photosensitive elements which extend in the image plane (x, y) in a detection area which comprises the first detection area and the second detection area.

10. Method according to claim 1, wherein simultaneously acquiring comprises acquiring the first secondary beam through a first two-dimensional image sensor and acquiring the second secondary beam through a second two-dimensional image sensor, wherein the first and the second two-dimensional image sensors are mutually synchronized and each image sensor comprises a respective array of photosensitive elements defining a respective first and second detection area in the image plane (x, y).

11. Method according to claim 1, wherein: splitting the primary optical beam into a first secondary beam and a second secondary beam comprises transmitting the primary beam through a beam splitter configured for power-splitting the beam, and the beam splitter is configured in such a way as to produce a first secondary beam and a second secondary beam which propagate along two distinct directions not parallel to each other and step d) comprises directing at least one between the first and the second secondary beam through a directing system configured such that the first and the second secondary beam, in output from the directing optical system, propagate along two distinct and mutually parallel directions.

12. Microscopy apparatus for determining the position of one or more emitter objects in a three dimensional (3D) space which comprises:

an objective lens configured for collecting light emitted by an emitter object and focusing the emitted light in a primary light beam;

a beam splitter arranged for receiving the primary optical beam and configured for power-splitting the primary optical beam in a first secondary optical beam and a second secondary optical beam;

a varifocal lens with electronically tunable focal length and having an optical axis, the varifocal lens being arranged downstream of the beam splitter;

an optical beam directing optical system for directing at least one between the first secondary optical beam and the second secondary optical beam, the directing optical system being arranged between the beam splitter and the varifocal lens and configured such that the first and the second secondary beams in output from the directing optical system, propagate along two distinct and mutually parallel directions;

at least one photodetector device arranged so as to receive the first and the second secondary beam in output from the varifocal lens,

wherein the varifocal lens and the directing optical system are arranged in such a way that the first secondary optical beam impinges on the varifocal lens along its optical axis and the second secondary optical beam impinges on the varifocal lens decentralized along a direction parallel to the optical axis and at an offset distance $\Delta d$ from the same, and

the at least one photodetector device is configured for simultaneously detecting the first secondary beam and the second secondary beam on a respective first and second detection area to form at least one two-dimensional image in an image plane (x, y), which comprises respective first image of the emitter object formed on the first detection area by the first beam in axis and the second image of the same emitter object formed on the second detection area by the second decentralized beam.

13. Apparatus according to claim 12, wherein the varifocal lens is configured to be electronically controlled by setting a variation in the focal length through a range of focal length values so as to move the respective focal positions of the first and second beam along the optical axis of the varifocal lens through said range of focal length values in a predetermined travel time and the at least one detector device is configured for forming the at least one two-dimensional image in an integration time greater than or equal to the travel time.

14. Apparatus according to claim 12, which further comprises: a relay optical unit positioned on a rear focal plane of the objective lens (23) and arranged in such a way as to receive the primary optical beam upstream of the beam splitter, the optical relay unit being configured for transferring an image formed by the objective lens to an image plane conjugate with a magnification ratio.

15. Apparatus according to claim 14, wherein the relay optical unit is a telecentric optical system with a magnification ratio of 1:1.

16. Apparatus according to claim 12, wherein the varifocal lens is arranged on a conjugate plane of the rear focal plane of the objective lens.

17. Apparatus according to claim 12, which further comprises a data processing device connected to the at least one photodetector device configured for: receiving the first image and the second image of the emitter object; analyzing the first and the second image for determining a first position of the object on the first image and a second object position on the second image, determining a relative displacement $\Delta r$ in the image plane (x, y) of the position of the object in the first and in the second image; determining an axial position $z_p$ of the emitter object along an axis z perpendicular to the image plane on the basis of $\Delta r$, and associating the coordinates defined by the first position on the image plane (x, y) and by the axial position $z_p$ with the position (x, y, z) of the emitter object.

Description

CROSS REFERENCE TO RELATED APPLICATIONS

This application is a 35 USC § 371 national stage application of PCT/IB2018/050257, which was filed Jan. 16, 2018, and claimed priority to IT 102017000006925, filed Jan. 23, 2017, both of which are incorporated herein by reference as if fully set forth.

FIELD OF THE INVENTION

The present invention relates to a microscopy method and apparatus for determining the 3D position of an emitter, in particular for the three-dimensional optical tracking of nanometric emitters.

BACKGROUND OF THE INVENTION

The development of efficient and rapid technologies for the optical tracking of individual molecules or particles makes it possible to investigate dynamic biological processes or the rheological behaviour of complex fluids, such as polymer networks, often in a non-invasive manner. Interest is generally directed at the ability to create well focused images of an object in a 3D volume.

In most cases, the nanometric object is a fluorescent emitter, whose signal is collected using a "wide-field" detection system, in which the entire field of view of a microscope is illuminated with simultaneous detection of the fluorescence emitted using a camera. Superposing multiple frames detected in sequence and using appropriate interpolation procedures, it is possible to obtain the two-dimensional localisation of the emitter.
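As a rough illustration of the two-dimensional localisation step, the sketch below estimates the sub-pixel position of a single bright spot with an intensity-weighted centroid. The centroid is a simple stand-in chosen for brevity and is not the interpolation procedure of any cited work; the helper name `centroid_xy` and the synthetic Gaussian spot are assumptions made for the example.

```python
import numpy as np

def centroid_xy(image, background=0.0):
    """Intensity-weighted centroid (x, y) in pixel units (hypothetical helper)."""
    # Subtract a constant background and discard negative residuals.
    img = np.clip(np.asarray(image, dtype=float) - background, 0.0, None)
    total = img.sum()
    if total <= 0:
        raise ValueError("image contains no signal above background")
    ys, xs = np.indices(img.shape)  # row (y) and column (x) index grids
    return (xs * img).sum() / total, (ys * img).sum() / total

# Synthetic spot: a Gaussian used here as an assumed approximation of the PSF.
ys, xs = np.indices((41, 41))
x0, y0, sigma = 20.3, 18.7, 2.0
spot = np.exp(-((xs - x0) ** 2 + (ys - y0) ** 2) / (2 * sigma ** 2))

x_est, y_est = centroid_xy(spot)
print(f"estimated position: x={x_est:.2f}, y={y_est:.2f}")
```

On real data the background level and the size of the window around the spot would strongly affect the estimate, which is why fitting-based procedures are often preferred.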

The typical resolution of a microscope in visible light causes nanometric objects spread out in a sample to appear in the image as luminous diffraction spots. The impulse response of an optical instrument is commonly described by the Point Spread Function (PSF), i.e. the amplitude distribution of the electromagnetic field on the image plane when a point source is observed. In the case of a non-point source, for example particles of at least a few tens of nm, the apparent dimension of the particle substantially corresponds to the dimension of the luminous spot, which is the convolution of the real dimension with the PSF.

Two main questions have been addressed in the development of techniques for the localisation of a single emitter in a volume instead of in a plane, i.e. the 3D localisation.

The first question pertains to the loss of photon collection efficiency for objects positioned outside the focal plane. In this case, single emitters appear not as spots but as diffraction rings. The spreading of light into rings, with the consequent loss of measured intensity, reduces the precision of the 2D localisation outside the focal plane, up to the point where the emitter can no longer be localised. The second question is that the axial symmetry of the PSF along the z-axis in common microscopes does not allow discriminating whether an object is positioned at a distance $\Delta z$ above or below the focal plane.

In other words, the axial distance traveled by a particle can be determined by measuring, in the plane (x,y), the diameter of the first diffraction ring (if the dimension of the particle is known); however, it is not possible to determine whether the particle moved above or below the focal plane along the z-axis.

Moreover, the reduction of the signal-to-noise ratio when the particle moves out of focus limits, in practice, the axial distance within which the particle is visible.

A system for 3D tracking of a single fluorescent molecule, called Parallax, was presented in "Parallax: High Accuracy Three-Dimensional Single Molecule Tracking Using Split Images" by Y. Sun et al., Nano Letters, vol. 9, pages 2676-2682 (2009). The light beam emitted by an object is collimated by a lens positioned at a focal length from the primary image and separated into two optical paths by mirrors positioned at an additional focal length. The two optical paths form two images on the upper and lower part of the camera, separated by a distance $\Delta y_1$. When the object is out of focus, the beam is no longer collimated and the separate images formed on the camera move closer to or farther from each other in the y direction, with separation $\Delta y_2$. The difference between $\Delta y_1$ and $\Delta y_2$ provides the signal to measure the displacement of the object along the z axis, while the positions in the plane (x,y) are obtained by averaging the positions in the two images.
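The split-image readout described for Parallax can be sketched in a few lines. This is only an illustration of the arithmetic stated above (change in separation as the axial signal, average position as the lateral estimate); the function name, argument layout and numbers are assumptions, and the conversion of the signal to an actual z value would require a calibration that is not given here.

```python
def parallax_signal(pos_upper, pos_lower, dy_in_focus):
    """Return ((x, y), signal) for one emitter seen in the two split images.

    pos_upper, pos_lower: (x, y) of the emitter in the upper and lower image.
    dy_in_focus: separation between the two images when the emitter is in focus.
    """
    (x_u, y_u), (x_l, y_l) = pos_upper, pos_lower
    dy_now = y_u - y_l                        # current separation of the two images
    xy = ((x_u + x_l) / 2, (y_u + y_l) / 2)   # lateral position: average of both
    return xy, dy_now - dy_in_focus           # change in separation encodes z

# Illustrative numbers only (pixels).
xy, signal = parallax_signal((10.0, 130.0), (10.0, 28.0), dy_in_focus=100.0)
print(xy, signal)
```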

The application US 2014/0192166 describes a microscope for generating a 3D image of an object that comprises a first and a second detector, an optical system that includes a waveplate between the 3D object and the detectors, wherein the waveplate is configured in such a way that the optical system simultaneously produces a depth of field extended to the second detector and the depth-encoded image exhibits a PSF that maps the positioning in various points inside the 3D object.

Use of a lens with variable/tunable focal length, often referred to as a varifocal lens, in a microscope, when positioned in a conjugate plane of the rear focal plane of the microscope lens, makes it possible to obtain focused images on focal planes selectable by a user. If the focal spot of a varifocal lens sweeps through the focal range in a time shorter than the exposure time of the detector, the information on multiple planes can be integrated in a single image capture, creating an extended depth of field (EDOF) effect.

Sheng Liu and Hong Hua in "Extended depth-of-field microscopic imaging with a variable focus microscope objective", published in Optics Express, vol. 19, pages 353-362 (2011), describe a microscope able to capture EDOF images in a single captured image. The volumetric optical sampling method uses a rapid scan of the focus of a varifocal objective lens through the extended depth of a thick sample during a single exposure of a detector. The captured image is the fusion of infinite sections (slices) of image within the focal interval of the objective lens and an EDOF image is reconstructed by applying the deconvolution technique. In the optical system used, a miniature liquid lens is attached to the rear surface of the objective. The simultaneous imaging of multiple focal planes was applied in "wide-field" microscopes to extend the axial tracking of a nanometric emitter.

M. Duocastella et al. in "Three-dimensional particle tracking via tunable color-encoded multiplexing", published in Optics Letters Vol. 41, Issue 5, pp. 863-866 (2016), describe a method for 3D tracking in light field optical microscopy using multiple, selectable focal planes. A lens with electronically tunable focal length and high speed is synchronised with three different sources of monochromatic light, each with a different colour: red, green and blue (RGB). The control electronics make possible the selection and independent control of the position at which each colour is focused. In this way, each individual exposure by means of a colour camera simultaneously captures the three colours corresponding to the three different focal planes. The authors observe that measuring the diameter and the position of the centroid of the diffraction rings for each of the three focal planes allows the localisation and tracking of individual objects in significantly larger axial intervals than those obtainable with conventional approaches with a single focal plane.

S. Ram et al. "High Accuracy 3D Quantum Dot Tracking with Multifocal Plane Microscopy for the Study of Fast Intracellular Dynamics in Live Cells", published in Biophys J. (2008); vol. 95(12), pages 6025-6043, describe a localisation algorithm for determining the 3D position of a point source in a multifocal plane microscopy image mode, in which the simultaneous imaging of two distinct planes within the sample is generated.

SUMMARY OF THE INVENTION

The Applicant has observed that the Parallax technique described by Y. Sun et al. can generally work in an axial interval along the z axis (i.e. outside the image plane) that is relatively limited, often smaller than 1 µm, because, when an emitter exits the focus, it appears as a diffraction ring, thus preventing an accurate localisation in the plane (x,y).

With the use of a varifocal lens actuated so that the focused optical beam sweeps the axial range in a time shorter than the exposure time of the detection system, the multiplanar information can be integrated in a single image capture, creating an EDOF effect. This makes it possible to concentrate the fluorescence light in a relatively small region, in order to maintain a high signal-to-noise ratio and to reduce potential superpositions between particles near each other. A single emitter situated inside the EDOF thus appears to be focused in the image and its coordinates (x,y) can be determined with sufficient precision.

The Applicant has observed that the concentration of light in the EDOF region created by the varifocal lens takes place at the expense of a loss of information on the axial position of the particle, outside the image plane. In an image acquired "in-axis", i.e. along the optical axis of the objective lens of the imaging system, the single emitter is represented by a focal spot in the image plane (x,y), which encloses the information on its axial position in a direction z perpendicular to the plane; however, this information is not recognisable.

The simultaneous imaging of multiple focal planes of the method described in the aforementioned paper by Duocastella et al. is able to extend the axial distance with respect to other conventional, single plane approaches. However, use of more than two measurement planes leads to a reduction of the signal-to-noise ratio. Moreover, the particle localisation precision depends on the position of the focal planes and in general it is not uniform. The Applicant has then noted that the method can be difficult to implement in the case of tracking of fluorescent particles and that it is not possible to use more than three focal planes.

The Applicant has understood that if, simultaneously with a first image acquired with a first optical beam in-axis with respect to the optical axis of the varifocal lens, a second image is captured, created by a second optical beam that is off-axis with respect to the optical axis of the varifocal lens, the comparison between the two images contains the axial information of a single emitter, encoded in a lateral shift, $\Delta y$ or $\Delta x$, on one of the two axes of the image plane, between the position of the emitter in the first image and the position of the same emitter in the second image. The lateral shift of the emitter on one or on both the axes of the image plane is defined by the decentralizing of the second optical beam with respect to the optical axis of the varifocal lens, in particular by the offset distance between the second optical beam and the first optical beam in-axis.

The Applicant has noted that there is a linear relationship between the lateral displacement of the emitter, due to the decentralizing of the second beam, and the axial position of the emitter. Hereinafter, reference shall be made to an emitter object, preferably with nanometric size, for example a fluorescent molecule, which emits scattered light or fluorescent light when illuminated by an optical beam.

In the present description, the "axial position" of an emitter object means the position outside the plane of a detected image, preferably perpendicular to the plane of the image. The plane (x,y) shall indicate the plane of the image and z shall indicate the axial direction perpendicular to the plane (x,y).

The Applicant has observed that there is a linear relationship between the axial position, $z_p$, of a single emitter and the focal length, $f_{TL}$, of the varifocal lens, which varies within a range of values, generally selectable by a user. Therefore, the quantification of $\Delta y$ makes it possible to extract $z_p$ with high accuracy within the EDOF region created by the varifocal lens.
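Because the lateral shift is stated to depend linearly on the axial position, a conversion factor can in principle be estimated from a set of reference measurements and then inverted. The sketch below assumes a proportional model (shift equal to a constant times axial position) and a calibration set acquired at known axial positions, for example with a translation stage; both the calibration procedure and the numbers are illustrative assumptions, not values from the source.

```python
import numpy as np

def fit_conversion_factor(z_known, dy_measured):
    """Least-squares slope C of the model dy = C * z (line through the origin)."""
    z = np.asarray(z_known, dtype=float)
    dy = np.asarray(dy_measured, dtype=float)
    return float(np.dot(z, dy) / np.dot(z, z))

def axial_position(dy, conversion_factor):
    """Invert the linear model: z_p = dy / C."""
    return dy / conversion_factor

# Made-up calibration data: z in micrometres, lateral shift in pixels.
z_cal = [-2.0, -1.0, 0.0, 1.0, 2.0]
dy_cal = [-3.0, -1.5, 0.0, 1.5, 3.0]

C = fit_conversion_factor(z_cal, dy_cal)   # pixels per micrometre
z_p = axial_position(0.75, C)              # axial position for a 0.75 px shift
print(C, z_p)
```

A model with an intercept (for instance via `numpy.polyfit` with degree 1) may be more appropriate if the two detection areas are not perfectly registered at the reference plane.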

By adjusting one or more parameters of the imaging system, such as the offset distance of the second beam from the optical axis of the varifocal lens and the range of values of focal length $f_{TL}$, it is possible to change the tracking area of the emitter object and/or the accuracy of its axial position.

In accordance with the present disclosure, a microscopy method is provided for determining the position of one or more emitter objects in a three-dimensional (3D) space which comprises:

a) illuminating an emitter object so as to cause an emission of scattered light or fluorescent light from the emitter object;

b) focusing the emitted light in a primary focused optical beam through an objective lens;

c) splitting the primary optical beam in a first secondary beam and in a second secondary beam;

d) directing the first and the second secondary beam through a varifocal lens, having an optical axis, along respective optical paths such that the first secondary beam impinges on the varifocal lens along a direction corresponding to said optical axis and the second secondary beam impinges decentralized on the varifocal lens at an offset distance $\Delta d$ from the optical axis and along a direction parallel to the same, in which the varifocal lens has an electronically controllable focal length,

e) electronically controlling the focal length of the varifocal lens changing the focal length through a range of focal length values so as to move the respective focal positions of the first and second beam along said optical axis through said range of focal length values in a predetermined travel time,

f) simultaneously acquiring a first image and a second image of the emitter object in an integration time greater than or equal to the travel time of the focal positions simultaneously detecting the first secondary beam in-axis and the decentralized second secondary beam in respective first detection area and second detection area arranged on an image plane (x, y);

g) analyzing the first and the second image for determining a first position of the object on the first image and a second object position on the second image and determining a relative displacement $\Delta r$ in the image plane (x,y) of the position of the object in the first and in the second image, and

h) determining an axial position $z_p$ of the emitter object along an axis z perpendicular to the image plane on the basis of $\Delta r$.
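Steps g) and h) can be illustrated with a minimal sketch on a synthetic frame. Several assumptions are made for the example: a single sensor whose top half is the first detection area and whose bottom half is the second, one emitter per area, a centroid as the localisation routine, a displacement that lies along y only, and a known conversion factor. None of these choices is prescribed by the text; they only make the steps concrete.

```python
import numpy as np

def localise(area):
    """Intensity-weighted centroid (x, y) of one detection area (assumed method)."""
    area = np.asarray(area, dtype=float)
    ys, xs = np.indices(area.shape)
    total = area.sum()
    return (xs * area).sum() / total, (ys * area).sum() / total

def position_3d(frame, conversion_factor):
    """Return (x, y, z_p) from one frame holding both images (assumed layout)."""
    half = frame.shape[0] // 2
    x1, y1 = localise(frame[:half])           # first image: in-axis beam
    x2, y2 = localise(frame[half:2 * half])   # second image, in its own coordinates
    dy = y2 - y1                              # signed lateral displacement along y
    return x1, y1, dy / conversion_factor     # step h): linear conversion to z_p

def gaussian_spot(shape, x0, y0, sigma=1.5):
    """Synthetic focused spot used only to exercise the sketch."""
    ys, xs = np.indices(shape)
    return np.exp(-((xs - x0) ** 2 + (ys - y0) ** 2) / (2 * sigma ** 2))

# Second image shifted by 3 px along y with respect to the first one.
frame = np.vstack([gaussian_spot((32, 32), 15.0, 16.0),
                   gaussian_spot((32, 32), 15.0, 19.0)])
x, y, z = position_3d(frame, conversion_factor=1.5)  # assumed 1.5 px per micrometre
print(x, y, z)
```

Keeping the sign of the displacement, rather than only its magnitude, is a design choice of this sketch so that positions on either side of the reference plane remain distinguishable.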

Preferably, after step h), the method comprises: i) associating the coordinates defined by the first position on the image plane (x,y) and by the axial position $z_p$ with the 3D position of the emitter object.

Preferably, in the step h), the axial position $z_p$ is determined on the basis of a linear relationship between $z_p$ and $\Delta r$.

Preferably, the emitter object has nanometric dimension.

Preferably, the step of simultaneously acquiring a first image and a second image of the emitter object comprises simultaneously acquiring a plurality of respective first and second images at successive instants so as to trace the 3D position of the object over time.

Preferably, the successive instants of synchronous acquisition of first and second images are separated from each other by a longer time interval than the integration time of the at least one photodetector device.

Preferably, the steps from the acquisition of the first and of the second image to the determination of the 3D position of the emitter object are carried out automatically.

In some embodiments, the method is a fluorescence microscopy method and the emitter object is a nanometric fluorescent object.

The focal length of the varifocal lens is electronically tunable through an electronic control signal. An electronic control of the focal length of the lens has the advantage of achieving a relatively fast displacement of the focus of the lens, along the optical axis thereof, with controlled displacement speed. To create the effect of an EDOF, the displacement speed is selected so as to travel through a determined interval of focal lengths in a travel time that is shorter than or equal to the time of exposure of the detector device for the collection of the light that hits its photosensitive area, i.e., the time during which the sensor actively collects the photons for the acquisition of a snapshot, indicated also as integration time.

Preferably, electronically controlling the focal length of the varifocal lens is achieved in such a way as to produce a continuous change of the focal length through said interval of values of focal length.

Preferably, the control signal of the varifocal lens is frequency modulated, in which the frequency $\nu_{TL}$ determines the axial displacement speed of the focal spot. For equal paths of the focal spot, an increase in the frequency $\nu_{TL}$ implies an increase in the axial displacement speed. To create the EDOF effect, the modulation period of the focal length of the lens is selected to be no longer than the integration time. For example, if the detector is a CCD with an integration time of 100 ms, the modulation frequency at which the varifocal lens operates is selected at a value that is equal to or greater than 10 Hz.
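The numerical example above amounts to requiring that one modulation period fit within the integration time. A small sketch of that check follows; the function names are hypothetical and the criterion is simply the one implied by the 100 ms / 10 Hz example.

```python
def min_modulation_frequency_hz(integration_time_s):
    """Lowest modulation frequency whose period fits in the integration time."""
    if integration_time_s <= 0:
        raise ValueError("integration time must be positive")
    return 1.0 / integration_time_s

def sweeps_within_exposure(modulation_frequency_hz, integration_time_s):
    """True if one modulation period is no longer than the integration time."""
    return 1.0 / modulation_frequency_hz <= integration_time_s

f_min = min_modulation_frequency_hz(0.100)   # CCD with 100 ms integration time
print(f_min)                                  # minimum frequency in Hz
print(sweeps_within_exposure(20.0, 0.100))    # faster modulation
print(sweeps_within_exposure(5.0, 0.100))     # slower modulation
```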

Since the first and the second secondary beam are synchronous to each other, the electronic control of the varifocal lens produces a same change of the focal length in each secondary optical beam, causing an equal EDOF effect in the corresponding image.

The first and the second images acquired simultaneously are associated to a same time instant, in which the same object can occupy two different positions in the image plane (x,y) depending on its axial position.

The first and the second image acquired in the step f) of the method are preferably digital images.

Preferably, subsequently to focusing the light emitted in a primary optical beam and prior to splitting the primary optical beam into a first secondary beam and into a second secondary beam, the method comprises directing the primary optical beam through a relay optical unit having a magnification ratio, the relay optical unit being arranged on a rear focal plane of the objective lens. The relay optical unit is configured for transferring an image formed by the objective lens to an image plane conjugate with an image magnification ratio.

Preferably, the relay optical unit is a telecentric optical system from the image side on the rear focal plane of the objective lens.

Preferably, the magnification ratio is 1:1.

Preferably, the relay optical unit comprises a first converging lens and a second converging lens, the second converging lens being arranged so as to receive the primary optical beam that has passed through the first converging lens.

In the embodiments described hereafter, the first and the second secondary beam are focused in the image plane by means of a tube lens arranged so as to receive the first and the second secondary beam that have passed through the varifocal lens and configured to focus the first and the second secondary beam in an intermediate plane that coincides with the image plane. The intermediate focus plane of the tube lens corresponds to a value of focal length included in said interval of values of focal length of the varifocal lens.

In some embodiments, the first position of the object is defined in the image plane by the coordinates (x.sub.1, y.sub.1), the relative displacement between the first position and the second position in the image plane is .DELTA.r= {square root over (.DELTA.x.sup.2+.DELTA.y.sup.2)}, and the axial position z.sub.p of the emitter object along the z axis is determined in accordance with a relationship .DELTA.r=C'z.sub.p, in which C' is a conversion factor.

Preferably, the offset distance .DELTA.d of the second secondary beams from the optical axis of the varifocal lens is along one of the two coordinates that define a plane perpendicular to the optical axis so as to produce a lateral displacement .DELTA.r=.DELTA.y between the first and the second position of the object along one of the two coordinates of the image plane (x,y). Preferably, the axial position z.sub.p of the emitter object along the z axis is determined in accordance with a linear relationship .DELTA.y=Cz.sub.p, in which C is a conversion factor. In the preferred embodiments, the conversion factor is a proportionality constant.

The 3D position of the object is defined by (x.sub.1, y.sub.1, z.sub.p).
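As an illustration of how the two image positions combine into a 3D position, the following minimal sketch computes the displacement magnitude and divides it by the conversion factor. It assumes that both positions are expressed in a common coordinate frame (the second position referred to the origin of the first detection area) and that the conversion factor is already known; the function names and numerical values are hypothetical.

```python
import math


def axial_position(first_xy, second_xy, conversion_factor):
    """Axial position z_p from the relative displacement: delta_r = C' * z_p."""
    dx = second_xy[0] - first_xy[0]
    dy = second_xy[1] - first_xy[1]
    delta_r = math.hypot(dx, dy)  # sqrt(dx**2 + dy**2), always non-negative
    return delta_r / conversion_factor


def position_3d(first_xy, second_xy, conversion_factor):
    """(x_1, y_1, z_p): lateral coordinates come from the first (in-axis) image."""
    z_p = axial_position(first_xy, second_xy, conversion_factor)
    return (first_xy[0], first_xy[1], z_p)


# Hypothetical positions (arbitrary length units) and conversion factor.
print(position_3d((10.0, 20.0), (10.0, 23.0), 1.5))
```

Because the magnitude of the displacement is unsigned, this sketch does not distinguish positions above and below the reference plane; when the offset lies along a single coordinate, the signed difference along that coordinate can be used instead.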

In accordance with the present invention, with a single snap shot of the at least one photodetector device it is possible to obtain the information on the 3D position of an emitter object contained in a sample.

The interval of the axial displacement of the emitter object, which can be measured along the z axis, can be modified by changing at least one of the ends of the interval of the focal length of the varifocal lens. In some embodiments, the range of trackable axial displacements is between 0 and 20 .mu.m.

The accuracy of the axial position within the EDOF created by the varifocal lens can be controlled by changing the offset distance of the decentralized beam with respect to the beam in axis. In some exemplary embodiments, it is possible to obtain an accuracy .delta.z on the axial position that is lower than 100 nm.

Therefore, the present microscopy technique offers flexibility in selecting some parameters of the optical imaging system, making it possible to prefer a broader interval of axial tracking of a single emitter or a higher accuracy in the axial localization thereof, depending on the application.
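The trade-off can be made concrete with a first-order error-propagation sketch. Under the linear relationship between lateral displacement and axial position, an uncertainty in the measured lateral displacement maps to an axial uncertainty divided by the conversion factor. This is an assumption of simple linear propagation, not a statement of the measured performance; names and values are hypothetical.

```python
def axial_precision(lateral_precision, conversion_factor):
    """First-order estimate: delta_z = delta(lateral displacement) / |C|."""
    if conversion_factor == 0:
        raise ValueError("conversion factor must be non-zero")
    return lateral_precision / abs(conversion_factor)


# Hypothetical numbers: the same lateral uncertainty with two conversion factors.
# A larger offset distance gives a larger C and hence a finer axial precision.
print(axial_precision(2.0, 100.0))
print(axial_precision(2.0, 200.0))
```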

The quantification of the lateral displacement .DELTA.y, or more generically the determination of the displacement .DELTA.r, of the emitter object in the image plane relative to the first position because of the decentralization of the optical detection beam, can be carried out using a cross correlation algorithm of the two images or an interpolation function. Preferably, in step g), determining a relative displacement .DELTA.r in the image plane (x,y) is achieved using a cross-correlation algorithm between the first and the second image.

In one embodiment, the lateral displacement .DELTA.y is calculated using an algorithm based on the cross-correlation analysis of a portion of a first image and of a portion of the second image, each image portion containing the emitter object.
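A cross-correlation between two image portions can be sketched as follows. This is a deliberately simple, brute-force version limited to integer-pixel shifts on small patches; it is not the algorithm of the embodiment, which may use sub-pixel refinement. The helper `make_spot` only generates synthetic test data and is hypothetical.

```python
def cross_correlation_shift(patch_a, patch_b, max_shift):
    """Integer (d_row, d_col) shift of patch_b relative to patch_a that maximizes
    the cross-correlation sum over the overlapping pixels."""
    rows, cols = len(patch_a), len(patch_a[0])
    best_score, best_shift = float("-inf"), (0, 0)
    for d_row in range(-max_shift, max_shift + 1):
        for d_col in range(-max_shift, max_shift + 1):
            score = 0.0
            for r in range(rows):
                for c in range(cols):
                    r2, c2 = r + d_row, c + d_col
                    if 0 <= r2 < rows and 0 <= c2 < cols:
                        score += patch_a[r][c] * patch_b[r2][c2]
            if score > best_score:
                best_score, best_shift = score, (d_row, d_col)
    return best_shift


def make_spot(size, row, col):
    """Synthetic 3x3 bright spot centred at (row, col) on a dark background."""
    img = [[0.0] * size for _ in range(size)]
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            img[row + dr][col + dc] = 4.0 / (1 + abs(dr) + abs(dc))
    return img


in_axis = make_spot(9, 3, 3)    # particle as seen by the beam in axis
off_axis = make_spot(9, 5, 3)   # same particle, displaced by 2 pixels along the rows
print(cross_correlation_shift(in_axis, off_axis, 3))
```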

Preferably, the value of the conversion factor, C or C'= {square root over (2)}C, for the quantification of the position in the z axis, is determined using a calibration function obtained detecting the lateral displacement of a particle displaced axially along z by one or more known quantities.

In an embodiment, the conversion factor C is determined carrying out the steps from a) to f) of the method, in which the offset distance .DELTA.d of the second secondary beam from the optical axis of the varifocal lens is such as to cause a lateral displacement .DELTA.y between the first and the second position of the object along one of the two coordinates of the image plane (x,y) and the emitter object has a fixed position in the image plane, in which: step f) comprises simultaneously acquiring a plurality of respective first and second images at successive instants moving the emitter object only along the axial direction z in predetermined axial positions between successive acquisitions of first and second images, step g) comprises determining a relative displacement .DELTA.y of the y coordinate of the object in the two images for each acquisition of a first and second image and hence for each predetermined axial position, and step h) is replaced by a step that comprises calculating a linear interpolation function that has as its input values the predetermined axial positions and the respective relative displacements .DELTA.y so as to determine the conversion factor as angular coefficient of the interpolation function.
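The calibration described above amounts to a straight-line fit of the measured lateral displacements against the known axial positions, with the slope taken as the conversion factor. The following minimal sketch uses an ordinary least-squares fit; the function name and the calibration data are hypothetical and serve only as an illustration.

```python
def fit_conversion_factor(axial_positions, lateral_displacements):
    """Least-squares line through (z, delta_y) pairs; returns (slope C, intercept)."""
    n = len(axial_positions)
    if n < 2 or n != len(lateral_displacements):
        raise ValueError("need at least two (z, delta_y) pairs of equal length")
    mean_z = sum(axial_positions) / n
    mean_dy = sum(lateral_displacements) / n
    s_zz = sum((z - mean_z) ** 2 for z in axial_positions)
    if s_zz == 0:
        raise ValueError("axial positions must not all be identical")
    s_zy = sum((z - mean_z) * (dy - mean_dy)
               for z, dy in zip(axial_positions, lateral_displacements))
    slope = s_zy / s_zz                    # angular coefficient = conversion factor C
    intercept = mean_dy - slope * mean_z   # displacement at the reference plane z = 0
    return slope, intercept


# Hypothetical calibration data: known axial steps and measured displacements.
z_known = [0.0, 1.0, 2.0, 3.0, 4.0]
dy_measured = [0.5, 2.5, 4.5, 6.5, 8.5]
print(fit_conversion_factor(z_known, dy_measured))
```

A non-zero intercept simply reflects the choice of the axial reference plane and can be subtracted before converting displacements into axial positions.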

In some embodiments, simultaneously acquiring the first and the second image is carried out by a two-dimensional image sensor which comprises an array of photosensitive elements which extend in the image plane (x,y) in a detection area which comprises the first detection area and the second detection area.

In other embodiments, simultaneously acquiring the first and the second image comprises acquiring the first secondary beam through a first two-dimensional image sensor and acquiring the second secondary beam through a second two-dimensional image sensor, wherein the first and the second two-dimensional image sensors are mutually synchronized and each image sensor comprises a respective array of photosensitive elements defining a respective first and second detection area in the image plane (x,y).

Preferably, the at least one two-dimensional image sensor is a photocamera or a digital television camera.

Preferably, splitting the primary optical beam into a first secondary beam and a second secondary beam comprises transmitting the primary beam through a beam splitter configured for power-splitting the beam.

Preferably, the beam splitter is configured in such a way as to produce a first secondary beam and a second secondary beam which propagate along two distinct directions not parallel to each other and step d) of the method comprises directing at least one between the first and the second secondary beam through a directing optical system configured such that the first and the second secondary beam, in output from the directing optical system, propagate along two distinct and mutually parallel directions.

In an additional embodiment, the primary optical beam passes through a relay optical unit, the relay optical unit is formed by a first and by a second converging lens arranged along the optical path of the primary beam and the conversion factor C is determined by the relationship C=f.sub.t.DELTA.d/(M.sub.R.sup.2f.sub.o.sup.2), wherein f.sub.t is the focal length of the tube lens, f.sub.o the focal length of the objective lens and M.sub.R=-f.sub.R1/f.sub.R2, wherein f.sub.R1 and f.sub.R2 are the respective focal lengths of the first and of the second converging lens.

In accordance with the present disclosure, a microscopy apparatus is provided for determining the position of one or more emitter objects in a three-dimensional (3D) space, which comprises: an objective lens configured for collecting light emitted by an emitter object and focusing the emitted light in a primary optical beam; a beam splitter arranged for receiving the primary optical beam and configured for power-splitting the primary optical beam into a first secondary optical beam and a second secondary optical beam; a varifocal lens with electronically tunable focal length and having an optical axis, the varifocal lens being arranged downstream of the beam splitter; a directing optical system for directing at least one between the first secondary optical beam and the second secondary optical beam, the directing optical system being arranged between the beam splitter and the varifocal lens and configured such that the first and the second secondary beams exiting from the directing optical system propagate along two distinct and mutually parallel directions; at least one photodetector device arranged so as to receive the first and the second secondary beam in output from the varifocal lens, wherein the varifocal lens and the directing optical system are arranged in such a way that the first secondary optical beam impinges on the varifocal lens along its optical axis and the second secondary optical beam impinges on the varifocal lens decentralized along a direction parallel to the optical axis and at an offset distance .DELTA.d from the same, and the at least one photodetector device is configured for simultaneously detecting the first secondary beam and the second secondary beam on a respective first and second detection area to form at least one two-dimensional image in an image plane (x, y), said two-dimensional image comprising a respective first image of the emitter object formed on the first detection area by the first beam in axis and a second image of the same emitter object formed on the second detection area by the second decentralized beam.

Preferably, the varifocal lens is configured to be electronically controlled by setting a variation in the focal length through a range of focal length values so as to move the respective focal positions of the first and second beam along the optical axis of the varifocal lens through said range of focal length values in a predetermined travel time and the at least one detector device is configured for forming the at least one two-dimensional image in an integration time greater than or equal to the travel time.

Preferably, the microscopy apparatus further comprises: a relay optical unit positioned on a rear focal plane of the objective lens and arranged in such a way as to receive the primary optical beam upstream of the beam splitter, the relay optical unit being configured for transferring an image formed by the objective lens to an image plane conjugate with a magnification ratio.

Preferably, the relay optical unit is a telecentric optical system with a magnification ratio of 1:1.

Preferably, the microscopy apparatus further comprises a data processing device connected to the at least one photodetector device configured for: receiving the first image and the second image of the emitter object; analyzing the first and the second image for determining a first position of the object on the first image and a second position of the object on the second image; determining a relative displacement .DELTA.r in the image plane (x,y) of the position of the object in the first and in the second image; determining an axial position z.sub.p of the emitter object along an axis z perpendicular to the image plane on the basis of .DELTA.r, and associating the coordinates defined by the first position on the image plane (x,y) and by the axial position z.sub.p with the position (x,y,z) of the emitter object.

Preferably, the at least one photodetector device is a two-dimensional image sensor which comprises an array of photosensitive elements that extend in the image plane (x,y).

Preferably, the microscopy apparatus further comprises a tube lens arranged so as to receive the first and the second secondary beam that have passed through the varifocal lens and configured for focusing the first and the second secondary beam in an intermediate plane that coincides with the image plane.

BRIEF DESCRIPTION OF THE FIGURES

The present invention will be described in more detail below with reference to the accompanying drawings, in which some embodiments of the invention are shown. The drawings that illustrate the embodiments are schematic representations, not drawn to scale.

FIGS. 1(a) and 1(b) schematically illustrate the operating principle constituting the basis of the method and apparatus in accordance with the present disclosure.

FIG. 2 is an optical diagram of a telecentric microscope that comprises a varifocal lens and a relay optical unit.

FIG. 3 is a schematic representation of a perpendicular plane to the optical axis z of coordinates (x,y) with origin O in the optical axis of the varifocal lens.

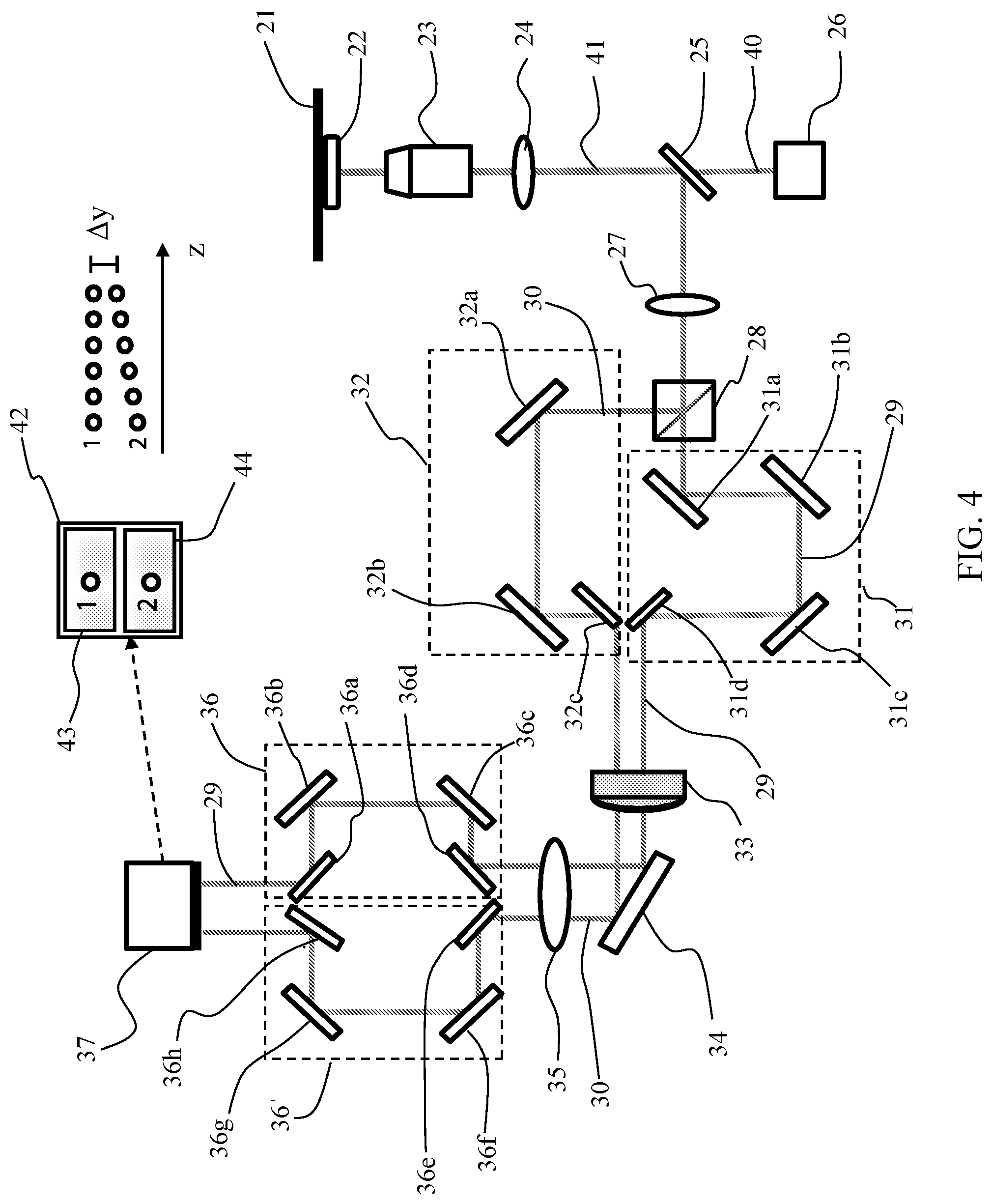

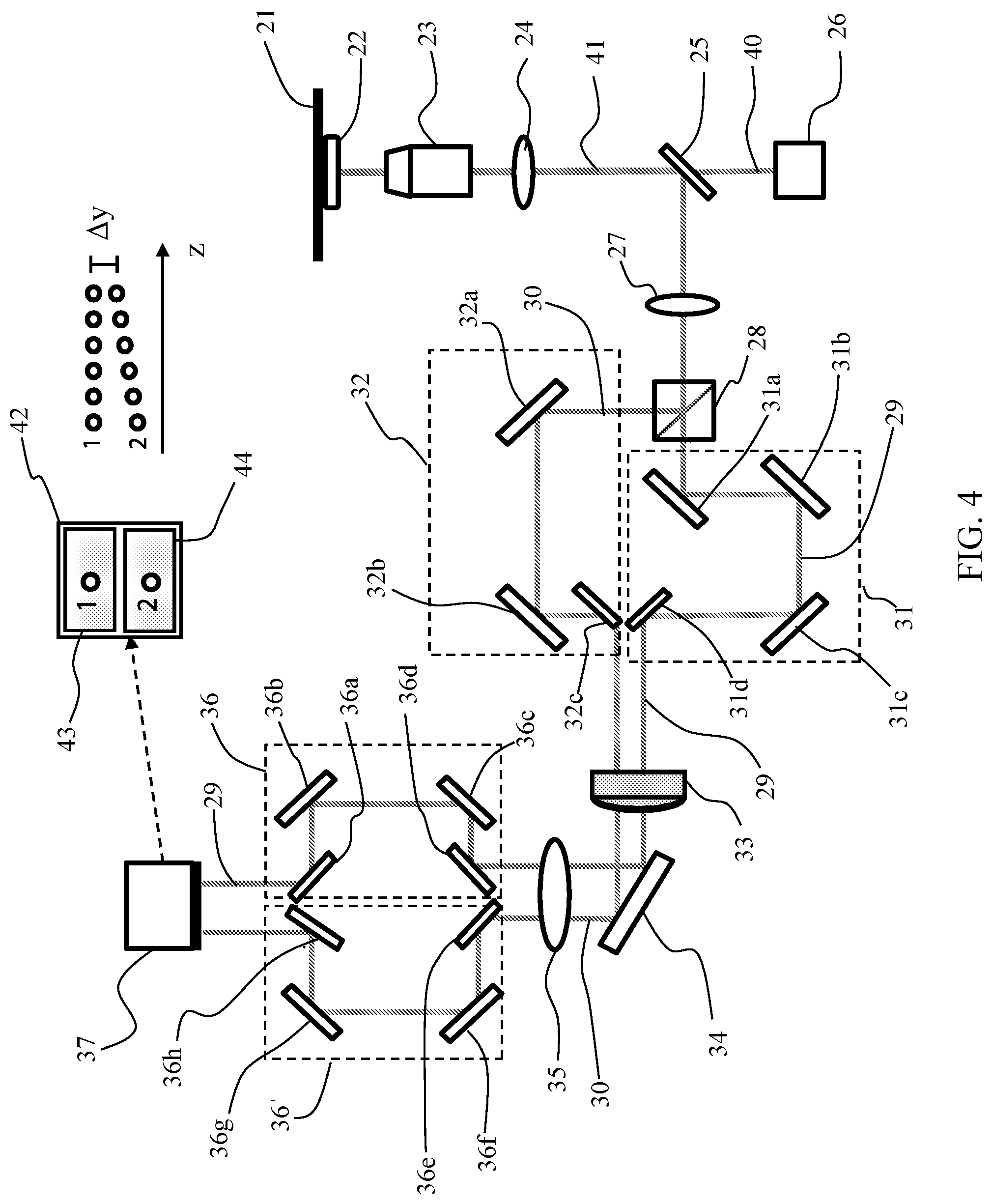

FIG. 4 is a schematic diagram of a microscopy apparatus, in accordance with an embodiment of the present invention.

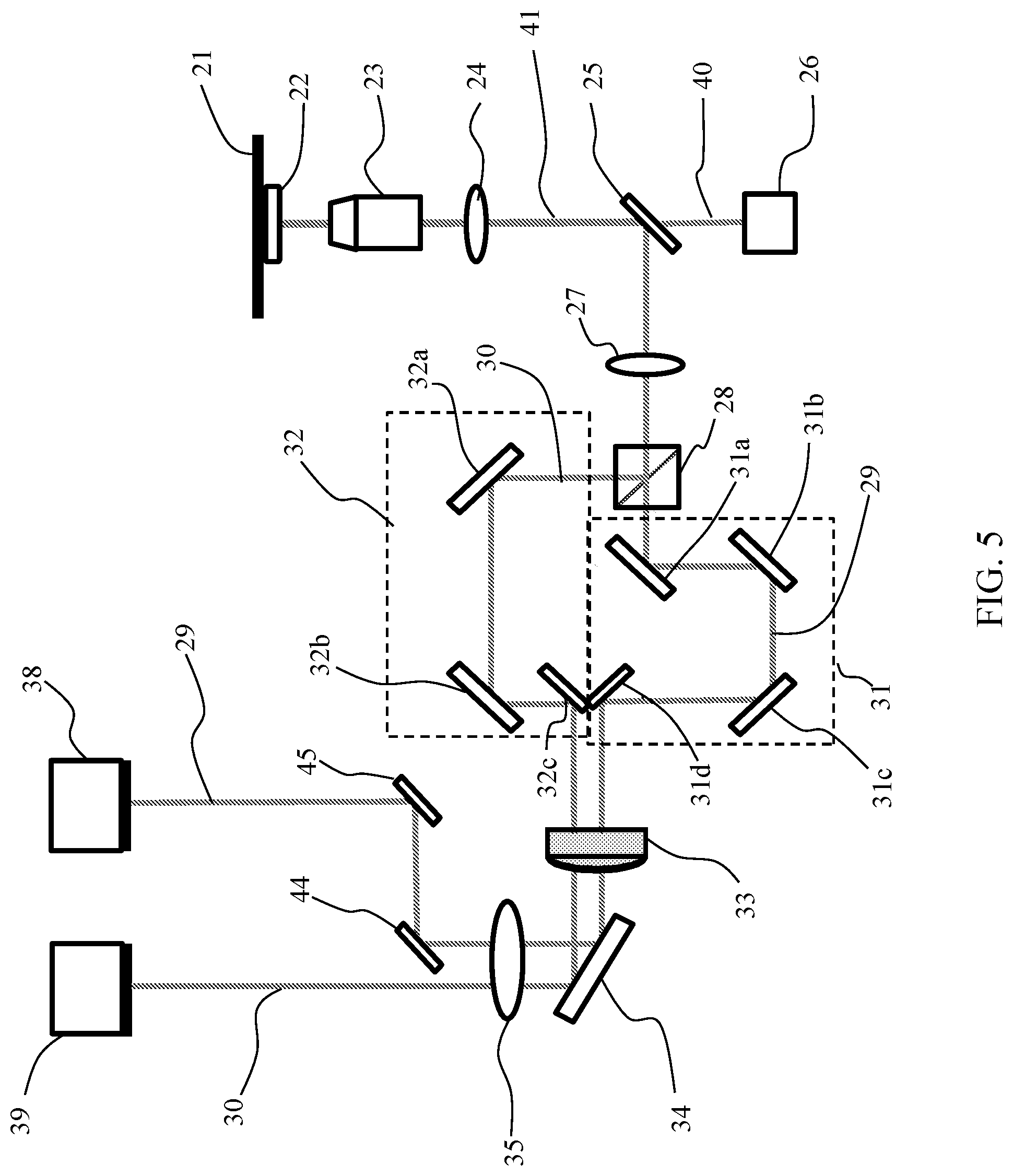

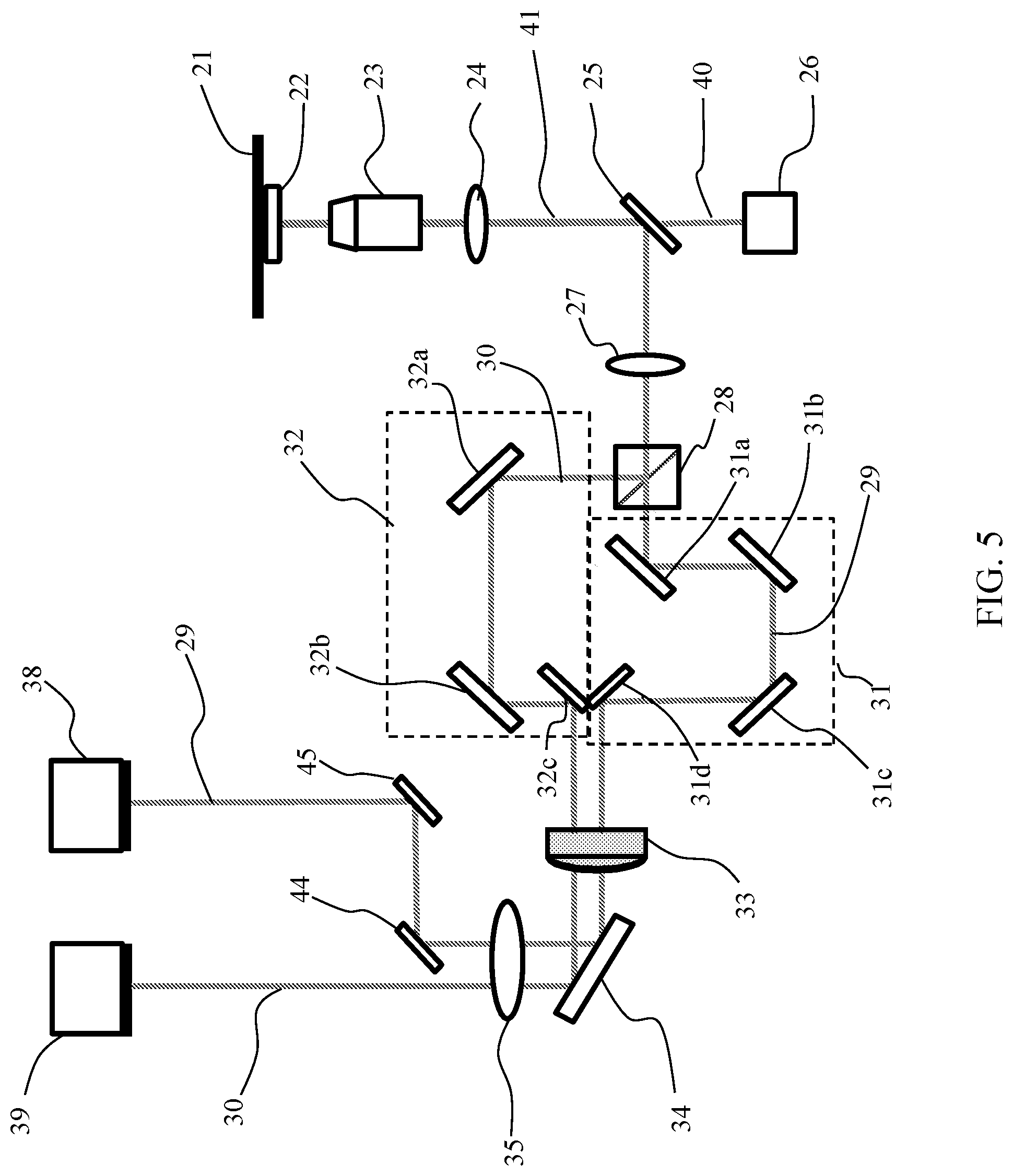

FIG. 5 is a schematic diagram of a microscopy apparatus, in accordance with a further embodiment of the present invention.

FIG. 6 is a schematic diagram of a microscopy apparatus, in accordance with another embodiment of the present invention.

FIGS. 7A and 7B show the experimental PSF, in the plane (y,z), for the positions of a fluorescent microsphere measured with the beam in axis, according to an embodiment of the present invention.

FIGS. 7C and 7D show the experimental PSF for the positions of the microsphere of FIGS. 7A and 7B, with the beam off axis.

FIG. 8 is a series of image portions, in which each image shows the position of the microsphere acquired with the beam in axis, position "I", and with the optical beam off axis, position "O".

FIGS. 9A and 9B show an example of a first and a second fluorescence microscopy image acquired simultaneously detecting, respectively, the beam along the optical axis of the varifocal lens and the beam off axis.

FIGS. 10A to 10D schematically show the work flow of the localization algorithm used to determine the lateral and axial displacement of a particle, in accordance with an exemplary embodiment of the present invention.

FIG. 11 shows the evolution over time of the 3D position of a single fluorescent particle on the superficial membrane of neurons in vivo, in which the particle was coupled to a neuronal ionotropic receptor GABAA, in accordance with an exemplary embodiment of the invention.

DETAILED DESCRIPTION

FIGS. 1(a) and 1(b) schematically illustrate the operating principle constituting the basis of the method and apparatus in accordance with the present disclosure. With reference to FIG. 1(a), a varifocal lens 12 is optically coupled to the optical detection system of a microscope, downstream of the objective lens (not shown). The detection system comprises a tube lens 13 arranged along the direction of detection (not shown in detail) that defines an image plane 14. As per se generally known, the tube lens is configured to focus a parallel light beam exiting the objective lens (i.e. in an infinity-corrected imaging configuration) at an intermediate image plane 14, whereon the photodetector is positioned. The varifocal lens 12 is positioned in a conjugate plane of the rear focal plane of the objective lens of the microscope and is aligned with the optical axis of the objective lens. A first optical beam 10, indicated with a dashed line, for example a beam of fluorescent light emitted by an object, is aligned with the optical axis 15 of the varifocal lens, along the direction of detection, and passes through the tube lens to be then detected by the photodetector device at a position (x.sub.f, y.sub.f) in the image plane 14 (x,y), the coordinate y.sub.f whereof is visible in the figure. The position of a point in the image plane is conventionally defined by the coordinates of maximum intensity of the point spread function (PSF), which describes the response of an imaging system and its spatial resolution.

A second optical beam 11, synchronous with the first beam and generated by the same emitter object, passes through the varifocal lens 12 off axis with respect to the optical axis of the lens, parallel to the optical axis 15 and at an offset distance $\Delta d$ therefrom. In the case shown in the figure, the offset of the second beam in a plane perpendicular to the optical axis is along a y direction. As described in more detail below, the first and the second beam originate from the splitting in two beams of the fluorescent/scattered light emitted by the object itself. The decentralization with respect to the optical axis causes a "deflection" of the collimated beam, which emerges from the lens 12 at an angle $\theta$ (not indicated in the figure) with respect to the direction of incidence of the collimated beam on the varifocal lens. The angle depends on the focal length of the lens 12, $f_{TL}$, and on the distance $\Delta d$ of the optical axis of the beam 11 from the optical axis 15 of the beam in axis 10, in accordance with the relationship:

$$\tan\theta = \frac{\Delta d}{f_{TL}} \qquad (1)$$

Following the deflection of the off-axis beam 11, the image of the object formed on the detector will be displaced, along the y axis of the image plane, by a quantity $\Delta y$, referred to as the lateral displacement. In the optical configuration of FIG. 1,

$$\Delta y = f_t \tan\theta \qquad (2)$$

wherein $f_t$ is the focal length of the tube lens.
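The two relationships can be chained numerically. The following minimal sketch assumes that relationship (1) takes the thin-lens form in which the tangent of the deflection angle equals the offset distance divided by the focal length of the varifocal lens; the function name and the numerical values are hypothetical.

```python
import math


def lateral_displacement(tube_focal_length, offset_distance, varifocal_focal_length):
    """Lateral displacement on the image plane for a beam offset from the lens axis.

    All lengths must be given in the same unit.
    """
    tan_theta = offset_distance / varifocal_focal_length  # assumed form of relationship (1)
    return tube_focal_length * tan_theta                   # relationship (2)


# Hypothetical values in millimetres.
print(lateral_displacement(200.0, 2.0, 1000.0))
# An infinite focal length (no optical power) gives no deflection.
print(lateral_displacement(200.0, 2.0, math.inf))
```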

The lateral displacement contains the information about the axial position along an axis z perpendicular to the image plane.

In FIG. 1(a), it is assumed that the object is in a position z.sub.1 that corresponds to a lateral displacement .DELTA.y.sub.1, while in FIG. 1(b) it is assumed that the object is in a position z.sub.2 represented by a lateral displacement .DELTA.y.sub.2.

As described in more detail below, the optical parameters of the optical elements downstream of the objective lens are constant quantities for a given optical configuration of the microscope, and there is a linear relationship between the lateral displacement .DELTA.y and the axial displacement .DELTA.z, .DELTA.y=C.DELTA.z, (3) where C is a conversion factor that is proportional to .DELTA.d and to f.sub.t and that also depends on the focal length of the objective lens. An axial displacement .DELTA.z in the direction of incidence of the light on the photodetector can be calculated with sufficient accuracy from the focal parameters. In a preferred embodiment, the conversion factor is determined in a calibration step.

Taking an arbitrary axial plane as the reference plane z.sub.0=0, it is possible to write the equation (3) as .DELTA.y=Cz.sub.p, (4)

wherein z.sub.p is the axial position relative to the axial reference plane.

It is noted that, if the offset distance .DELTA.d from the optical axis is unchanged, as indicated in FIGS. 1(a) and 1(b), once an optical configuration of the beam in axis and of the offset beam is selected, the position of the object can be tracked both in the plane of the image and along the axis z.

FIG. 2 is an optical diagram of a telecentric microscope for the production of an EDOF image by means of a varifocal lens. The optical system comprises an objective lens LO with focal length f.sub.o, a relay optical unit formed by a first converging lens L1 and a second converging lens L2 with respective focal lengths f.sub.R1 and f.sub.R2. The microscope further comprises a varifocal lens with focal length f.sub.TL and a tube lens with focal length f.sub.t. The focal plane of the object, P, is indicated, as well as the image plane, Pi, whereon is focused the image of the object by means of the tube lens. The lens L1 is positioned at a distance from the rear focal plane of the objective that is equal to f.sub.R1+f.sub.o.

According to a mathematical approach, per se known, for calculating the focal properties of a microscope in paraxial conditions, which is based on the use of ABCD matrices for tracing a light ray, the position s of a focal point is given by the equation:

$$s = f_o - \frac{M_R^2 f_o^2}{f_{TL}} \qquad (5)$$

wherein M.sub.R is the magnification ratio, M.sub.R=-f.sub.R1/f.sub.R2, which defines the magnification of the relay optical unit. The corresponding displacement in the axial position, .DELTA.z, with respect to the initial position f.sub.o of the focus, is:

$$\Delta z = \frac{M_R^2 f_o^2}{f_{TL}} \qquad (6)$$

where a positive value of .DELTA.z implies a movement towards the objective lens.

In FIG. 2, an optical path at depth z=0 is shown with a dotted line, while the optical path indicated with the dashed line refers to an axial position displaced by a quantity .DELTA.z. Combining the equations (1), (2) and (6), the conversion factor C is expressed as C=f.sub.t.DELTA.d/(M.sub.R.sup.2f.sub.o.sup.2) (7).

Once the optical parameters of the optical detection system of the microscope are set, the conversion factor is a proportionality constant.
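As a worked illustration of equation (7), the following minimal sketch evaluates the conversion factor from the optical parameters and uses it to convert a lateral displacement into an axial position. The function name and all numerical values are hypothetical and are not taken from the described embodiments.

```python
def conversion_factor(f_t, delta_d, m_r, f_o):
    """Equation (7): C = f_t * delta_d / (M_R**2 * f_o**2), lengths in one common unit."""
    if m_r == 0 or f_o == 0:
        raise ValueError("M_R and f_o must be non-zero")
    return f_t * delta_d / (m_r ** 2 * f_o ** 2)


# Hypothetical values in millimetres: tube lens 200, offset 2, 1:1 relay, objective 2.
C = conversion_factor(f_t=200.0, delta_d=2.0, m_r=-1.0, f_o=2.0)
print(C)

# Axial position for a measured lateral displacement of 0.4 mm on the image plane.
z_p = 0.4 / C
print(z_p)

# Doubling the offset distance doubles the conversion factor.
print(conversion_factor(200.0, 4.0, -1.0, 2.0) / C)
```

Since the factor is dimensionless when all lengths share one unit, the same value applies whether the displacements are expressed in millimetres or micrometres.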

In some preferred embodiments, the conversion factor is determined in a calibration step wherein a non-movable emitter object is detected by the beam in axis and by the decentralized beam in a plurality of axial positions having known values, obtained by displacing the object only along the axis z. A respective plurality of lateral displacements, corresponding to said plurality of axial positions, is then determined by measuring the quantity .DELTA.y in the two images formed by the first and by the second beam. The interpolation function of the pairs of discrete values (.DELTA.y, z) is used as a calibration function .DELTA.y(z) for determining the correspondence between a determined value of lateral displacement .DELTA.y and the axial position z.sub.p of the particle.

Preferably, the varifocal lens is arranged on a conjugate plane of the objective lens, in particular at or in proximity to the conjugate plane of the rear focal plane of the objective lens, in such a way as to maintain substantially constant the magnification of an object on the image plane as the focus is varied.

Preferably, M.sub.R is selected to be equal to 1, i.e. the relay unit has 1:1 magnification, thereby making the variation of the focal plane of the varifocal lens possible without introducing a magnification of the object on the image plane.

It is understood that the present invention can use a telecentric optical system with M.sub.R different from 1. If the optical system does not comprise a relay optical unit, it is preferable to offset the magnification effects within the EDOF, for example by modifying the focal length of the tube lens, in order to increase the tracking precision of the particle.

The Applicant has observed that the present approach makes it possible to maintain an approximately constant localization precision over the entire EDOF. The extended field depth can be adjusted electronically by controlling the focal length of the varifocal lens, e.g. selecting the current signal applied to the lens. It is further noted that, varying the constant C, for example varying the offset distance .DELTA.d of the second beam from the optical axis of the lens, it is possible to adjust the precision in the axial localization z.sub.p.

The offset of one of the two secondary beams with respect to the optical axis of the varifocal lens can be along the axis y or along the axis x of a plane perpendicular to the optical axis so as to produce a lateral displacement .DELTA.y or a lateral displacement .DELTA.x, respectively, on the image plane. Also in the case of simultaneous detection of a beam in axis and of a beam decentralized along the axis x, the relationships (3) and (4) apply, in particular .DELTA.x=C.DELTA.z or .DELTA.x=Cz.sub.p. The displacement of the position of the particle on the image plane, .DELTA.r, deriving from an offset distance given by the relationship (6) with x and y different from zero, wherein the position O of coordinates (0,0) corresponds to the optical axis, is not necessarily a lateral displacement on one of the two coordinates of the image plane, but more generally a displacement in the plane, e.g. it can be "diagonal" with respect to the real position of the particle in the image plane. More generally, the relative displacement .DELTA.r on the image plane is given by .DELTA.r= {square root over (.DELTA.x.sup.2+.DELTA.y.sup.2)} (8).

In this case, too, there is a linear relationship between the displacement .DELTA.r of the planar coordinates of the emitter particle and the axial displacement of the particle along the axis z perpendicular to the image plane that is given by .DELTA.r=C'.DELTA.z, with the proportionality constant C'= {square root over (2)}C.

A lateral displacement both in x and in y is determined by an offset distance of the second beam from the optical axis of the varifocal lens both in x and in y.

FIG. 3 is a schematic representation of a plane perpendicular to the optical axis of the varifocal lens of coordinates (x,y) with origin O in the optical axis in the direction z. The optical axis of a lens is generally defined as a straight line that passes through the geometric centre of the lens and joins the respective centres of curvature of the surfaces of the lens through which an optical beam passes. In the figure, three possible offset distances from the optical axis of the decentralized beam are exemplified: a distance .DELTA.d.sub.1 along the axis x, .DELTA.d.sub.2 along the y axis and .DELTA.d.sub.3 that is defined by an offset from the origin both on the x axis and on the y axis. Since the direction of incidence of the optical beams on the detector device is typically perpendicular to the two-dimensional array of pixels on which the light is collected, the plane indicated in FIG. 3 is typically parallel to the image plane.

The point of incidence of the second optical beam on the varifocal lens with respect to the optical axis of the lens can be selected by a user, for example by means of an optical system for directing at least one of the two secondary beams. Without thereby limiting the present invention, in the description that follows reference will be made to a lateral displacement .DELTA.y, wherein y is generally one of the two coordinates of the image plane.

FIG. 4 is a schematic representation of a microscopy apparatus for determining the individual position of a particle that emits light (scattered or fluorescent) in a three-dimensional space, in accordance with an embodiment of the present invention. A light source 26 is configured for emitting a laser beam 40 that illuminates a sample containing dispersed emitting particles, such as molecules or cells dispersed in a biological sample. For example, the emitting particles can be fluorescent proteins bonded or conjugated to the surface of cells for the imaging of the cells. The emitting objects are preferably nanometric, with typical dimensions that vary from a few nm, for example if the tracked particle is a fluorescent protein, to a few hundreds of nm. The sample is contained in a sample holder 22 arranged on a translation system 21 along the axes (x,y,z) for the lateral positioning of the sample with respect to the incident beam emitted by the laser source 26 and for focusing the particles in the optical microscope, as described below. For example, the translation system is a piezoelectric position transducer.

The apparatus of FIG. 4 is used with the bright-field or fluorescence imaging technique. In the case of fluorescence microscopy, the sample contains chemically excitable species and the light source 26 is a lamp or a laser source. If the collected light is light scattered by the particles, the light source 26 is a laser source configured to emit a collimated beam at a determined wavelength in the visible spectrum.

In the examples shown in FIGS. 4-6, the microscopy apparatus comprises an inverted microscope in epi-illumination, which makes it possible to display the cells even on non-transparent supports. However, the method in accordance with the present disclosure can also be applied on an upright microscope.

The fluorescent light emitted or the light scattered by the sample is collected by a microscope objective lens 23 configured to focus the light emitted by the sample in a primary optical beam that is directed towards a first converging lens 24.

Preferably, the objective lens 23 has high numerical aperture (NA), inasmuch as a greater numerical aperture generally implies a greater focusing of the beam of fluorescent or scattered light. In some embodiments, the numerical aperture of the objective lens is between 0.90 and 1.49, preferably greater than 1.2.

A first optical deflection element 25 is positioned downstream of the first lens so as to receive the light that passed through the first lens 24. In the case of fluorescent light, preferably, the first optical deflection element 25 is a dichroic mirror that is so configured as to reflect the beam emitted by the sample and transmit the beam coming from the light source, e.g. laser source. The optical features of the dichroic mirror are selected as a function of the wavelength of the laser beam that hits the sample and of the optical spectrum of fluorescence or of emission of the particles. In the case of measurement of light scattered by the particles, the first deflection element can be a beam splitter.

Without thereby limiting the present invention, hereafter for the sake of brevity reference will mainly be made to fluorescence microscopy. The beam of fluorescent light or of light scattered by the sample will be indicated as secondary beam.

The fluorescent light is deflected by the dichroic mirror 25 towards a second converging lens 27 to enter a beam splitter 28 configured for dividing in power the light beam in a first secondary optical beam 29 and a second secondary optical beam 30. For example, the beam splitter is a 50:50 splitter.

The first and the second converging lens 24, 27, arranged between the objective lens 23 and the beam splitter 28, form a relay optical unit. As is generally known, a relay optical unit produces a shadow image of the object in a first intermediate focal plane of the first converging lens 24, and this shadow image is magnified by the second converging lens 27 to produce a magnified image projected on a second intermediate focal plane, i.e. a conjugate image plane. The magnification ratio depends on the focal lengths of the two relay lenses and is preferably selected to be equal to 1:1. Preferably, the first lens 24 is positioned at a distance from the rear focal plane of the objective lens that is equal to the sum of the focal length of the objective lens and of the focal length of the first lens itself.

Preferably, the relay optical unit is a telecentric optical system from the image side on the rear focal plane of the objective lens. For example, the telecentric system is a 4f optical system, wherein the first lens 24 and the second lens 27 have a same focal length, f.sub.1=f.sub.R1=f.sub.R2, and are arranged at an optical distance equal to 2f.sub.1 from each other.

Downstream of the beam splitter 28, with respect to the direction of propagation of the secondary beam of fluorescent light, a varifocal lens 33 with electronically tunable focal length is arranged. The varifocal lens has an optical axis. To maintain constant the magnification of an object on the image plane for the different values of focal length, the varifocal lens 33 is arranged on the conjugate plane of the rear focal plane of the objective lens 23, and the magnification factor is defined by the focal lengths of the relay lens unit 24, 27.

The microscopy apparatus is so configured that the first secondary optical beam 29 impinges on the varifocal lens in axis (i.e. along the optical axis) and the second secondary beam 30 impinges thereon along a direction parallel to the optical axis, at an offset distance .DELTA.d from the optical axis of the lens.

Since a beam splitter typically introduces a bifurcation of the incoming optical beam, the two beams emerge from the splitter along optical paths with two different directions. Therefore, the direction of at least one of the two beams generally needs to be modified so as to be parallel to the direction of the other beam when it impinges on the varifocal lens. Moreover, depending on the specific configuration according to which the main optical elements are arranged, it is possible that the optical path of one or of both of the beams has to be modified, for example translated and/or deflected, so as to enter into the varifocal lens in the correct position in axis or off axis.

In the embodiment of FIG. 4, the first optical beam 29 passes through a first beam-directing optical unit 31 configured to direct the first beam 29 towards the varifocal lens 33 along the optical axis of the lens. In the illustrated example, the first directing optical unit 31 consists of a set of mirrors, in particular four mirrors 31a, 31b, 31c and 31d.

The second optical beam 30 passes through a second beam-directing optical unit 32 to deflect the beam and direct it towards the varifocal lens 33 along a direction parallel to the optical axis of the lens at an offset distance .DELTA.d from the optical axis. In the illustrated example, the second directing optical unit 32 consists of a set of three mirrors 32a, 32b and 32c.

It is understood that the first and the second directing optical unit 31, 32 can comprise a single directing optical element, e.g. a mirror or a prism, or a plurality of mirrors/prisms in a different number from the illustrated ones.

The first and the second directing optical unit are generically indicated as beam-directing optical system, which is configured to direct at least a secondary optical beam exiting the beam splitter. It is understood that the present invention is not limited to the configuration of the directing optical system able to deflect one or both optical beams in the desired direction, e.g. towards the varifocal lens or towards the at least one photodetector device, or to the presence of a directing optical system for both secondary beams of fluorescent or scattered light. Since the two secondary beams originate from the splitting in two of the fluorescent light or of the light scattered by the same object, it is possible to obtain the synchronization between the two beams with no need for complex synchronization systems.

In ways known in themselves, the focal length of the varifocal lens is controlled by means of adjusting elements operatively connected to the lens. Typically, the focal length is electronically controllable by means of an actuator (electrical, mechanical or electromagnetic) connected to a current or voltage regulator that supplies current/voltage from zero to a maximum value. The control of the focal length is for example achieved by means of an electrical control signal with variable amplitude. In the usual ways, the current or voltage supplied to the actuator can be controlled electronically by software, for example integrated in an electronic control system of the microscopy apparatus, which can also control other elements, such as the sample translation system, the switching on and off of the light source, and the photodetector device. Although it is not shown in the figures, the varifocal lens comprises an actuator that controls its focal length, wherein the actuator is connected to a current or voltage regulator, in turn connected to an electronic control unit (which are also not shown in the figures). In these embodiments, the actuator and the current/voltage regulator constitute the adjusting elements.

For example, the varifocal lens 33 is a TAG Lens.TM. or an electronically tunable lens produced by Optotune AG or by Varioptic.

The microscopy apparatus comprises a tube lens 35 arranged downstream of the varifocal lens 33 with respect to the optical path of the secondary beams 29 and 30 exiting the varifocal lens and configured in such a way as to receive the first beam 29 and the second beam 30, optionally after said beams have been deflected by a deflection element 34, e.g. a mirror.

A photodetector device 37 is arranged along the optical path of the first and second secondary beam 29, 30, downstream with respect to the tube lens 35. The photodetector device is arranged on a detection plane, indicated as the image plane, which coincides with a main focusing plane of the tube lens.

The photodetector device is preferably a two-dimensional image sensor that comprises a two-dimensional array of photosensitive elements (pixels), more preferably a photo camera or a CCD or CMOS digital video camera. The image sensor is set to have a determined exposure time or integration time, which is defined to be the time during which the photosensitive elements of the sensor can collect the incoming photons for the acquisition of an image. The image sensor is characterised by a frame rate approximately equal to the reciprocal of the exposure time. A change in the focal length of the lens 33 corresponds to a displacement of the position of the focal plane along the optical axis of the varifocal lens. Since the varifocal lens is arranged along the optical path of the beams between the objective lens 23 and the tube lens 35, the change of the focal length introduced by the varifocal lens 33 causes a displacement of the focal plane defined by the tube lens. As noted above, the positioning of the varifocal lens at or in proximity to the conjugate plane of the rear focal plane of the objective lens allows the magnification of an object on the image plane to be kept substantially constant for the different values of focal length.

Preferably, the varifocal lens is controlled in such a way that the focal plane defined by the tube lens moves axially in a continuous manner from an initial position, f.sub.i, to a final position, f.sub.f, along the optical axis of the lens. As is generally known, the continuity of variation of signals depends on the control electronics that establish a differential variation (increases or decreases) of amplitude of the control signal of the varifocal lens between an amplitude value and the next one.

An electronic control of the focal length of the lens with tunable focal length has, in many embodiments, the advantage of achieving a relatively fast displacement of the focal plane, with controllable displacement speed.

The initial position f.sub.i and the final position f.sub.f of the displacement of the focal plane along the optical axis of the varifocal lens, hence along a direction perpendicular to the image plane, are selected so that there is at least one position included in the range [f.sub.i, f.sub.f] whereat the focal plane of the tube lens corresponds to the image plane on which the detector device is arranged. In this way, if the integration time of the detector device is greater than or equal to the travel time of the focal plane in the interval [f.sub.i, f.sub.f], a single image captured by the detector device is an integration of 2D projections in the image plane of a 3D object in focus or out of focus. The control signal of the varifocal lens is preferably a frequency modulated analogue electrical signal at a frequency .nu..sub.TL that determines the displacement speed of the focal length f.sub.TL and hence an axial displacement of the focal plane formed by the tube lens. In particular, the speed of the displacement of the focal plane, v.sub.fs, is a function of the frequency of the control signal of the tunable lens, .nu..sub.TL, and/or of the distance .DELTA.f.sub.TL=(f.sub.f-f.sub.i) traveled by the beam during a scan: v.sub.fs=2(f.sub.f-f.sub.i).nu..sub.TL. (9)

At constant axial travel distance .DELTA.f.sub.TL, a frequency increase implies an increase in the axial displacement speed. The modulation frequency of the control signal of the varifocal lens is selected in such a way that the scan .DELTA.f.sub.TL takes place in a time that is shorter than or equal to the integration time of the photodetector device.
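The relation (9) and the timing condition can be illustrated with a minimal Python sketch. The function names, the units (metres, hertz, seconds) and the numerical values are assumptions chosen only for illustration; the scan time is taken as T.sub.TL=1/.nu..sub.TL, as the description does further on.

```python
def focal_scan_speed(f_i, f_f, nu_tl):
    """Relation (9): v_fs = 2 * (f_f - f_i) * nu_TL.

    f_i, f_f: end positions of the focal-plane scan (assumed in metres).
    nu_tl: frequency of the lens control signal (assumed in hertz).
    """
    return 2.0 * (f_f - f_i) * nu_tl


def scan_fits_exposure(nu_tl, exposure_time):
    """True when the scan time T_TL = 1 / nu_TL does not exceed the
    integration (exposure) time of the photodetector, in seconds."""
    return 1.0 / nu_tl <= exposure_time


# Hypothetical values: 100 micrometre scan range, 100 Hz control signal,
# 20 ms exposure time.
speed = focal_scan_speed(0.0, 100e-6, 100.0)
ok = scan_fits_exposure(100.0, 0.020)
print(f"scan speed: {speed:.4f} m/s, scan fits in exposure: {ok}")
```

At a fixed scan range, doubling the control frequency doubles the scan speed and halves the scan time, which is how a shorter exposure can be accommodated.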

In the embodiment of FIG. 4, the image sensor 37 comprises two detection areas (not shown) arranged on the image plane, separated to have a physical separation between two secondary optical beams that impinge respectively thereon. In particular, the sensor comprises a first and a second detection area. The image sensor is configured for the synchronous acquisition of images generated, respectively, by the first and by the second area of the sensor. The image sensor is therefore configured to generate two synchronous digital images of a same object, that occupies a determined position in the image plane (x,y).

Preferably, the image sensor is an Electron Multiplying Charge-Coupled Device with photoactive area divided in two detection regions.

When the varifocal lens 33 is shut off, the first and the second optical beam 29, 30 form an identical image of a 3D object in the first and in the second area of detection of the image sensor, i.e. the 2D projections of the object on the image plane (x,y) are identical. It is understood that with the varifocal lens off, the EDOF effect in the captured image is absent. When the varifocal lens 33 is on, a scan of the focal length of the varifocal lens is carried out in a time T.sub.TL=1/.nu..sub.TL that is shorter than or equal to the integration time of the photodetector device, and hence a (synchronous) scan of the focal position of the first and of the second secondary beam through the image plane takes place. For example, assuming a longitudinal scan of the focal length, the change of the focal length over time is given by:

$$ f(t) = f_{min} + \frac{\Delta f_{TL}}{2}\left[1 + \sin\left(2\pi\,\nu_{TL}\,t\right)\right] $$

wherein f.sub.min is the minimum focal length of the varifocal lens, corresponding to an end of the axial range [f.sub.i, f.sub.f], f.sub.min=f.sub.i.

The EDOF can be expressed with the sum of the original field depth (i.e. with the varifocal lens off), DOF, and of the range of focal positions scanned in the travel time T.sub.TL

$$ \mathrm{EDOF} = \mathrm{DOF} + \Delta f_{TL} = \mathrm{DOF} + \frac{v_{fs}\,T_{TL}}{2} $$

The second beam 30, that passes off axis through the varifocal lens, undergoes a deflection and the position of the object in the image formed on the detector is displaced, along the y axis of the image plane, by a quantity .DELTA.y (as exemplified in FIGS. 1(a) and 1(b)). Two images are then detected, wherein the object, in the same instant, occupies two different positions in the image plane (x,y), wherein the position of the object in each image is defined by the coordinates of maximum intensity of the luminous spot that represents the object in the image. In particular, the object in the image formed by the first secondary beam in axis is in a position (x, y.sub.1), while the object in the image formed by the second secondary beam is in a position (x, y.sub.2), wherein (y.sub.1-y.sub.2)=.DELTA.y.

The lateral displacement .DELTA.y can be calculated starting from the localization of the particle in each of the two images.
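The simplest form of this calculation, in which each position is taken as the pixel of maximum intensity, can be sketched in Python as follows. The function names are hypothetical, the images are assumed to be plain 2D lists of intensities with the row index running along the y axis, and the example frames are made-up data. The result has one-pixel resolution only.

```python
def brightest_pixel(image):
    """Return (row, col) of the maximum-intensity pixel of a 2D list."""
    best_value, best_row, best_col = max(
        ((value, r, c)
         for r, row in enumerate(image)
         for c, value in enumerate(row)),
        key=lambda item: item[0],
    )
    return best_row, best_col


def lateral_displacement(image_on_axis, image_off_axis):
    """Delta y = y1 - y2, with y1 taken from the on-axis image and y2
    from the off-axis image (rows are assumed to run along y)."""
    y1, _ = brightest_pixel(image_on_axis)
    y2, _ = brightest_pixel(image_off_axis)
    return y1 - y2


# Hypothetical 4x3 frames: the spot sits in row 1 of the first image
# and in row 3 of the second image.
first = [[0, 0, 0], [0, 9, 0], [0, 0, 0], [0, 0, 0]]
second = [[0, 0, 0], [0, 0, 0], [0, 0, 0], [0, 9, 0]]
delta_y = lateral_displacement(first, second)
print("lateral displacement in pixels:", delta_y)
```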

The evolution of the axial position z.sub.p of the particle over time is calculated on the basis of the time evolution of the lateral displacement of the position of the particle in the second image with respect to its position in the first image, for example using an algorithm based on the analysis of the cross correlation between the images relating to the two channels. Alternatively, a Gaussian sub-pixel interpolation algorithm can be used.
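One possible way to combine the two approaches is sketched below in Python, under stated assumptions: each image has already been reduced to a one-dimensional intensity profile along y, the profiles are cross-correlated over a limited range of lags, and the correlation peak is refined with a three-point Gaussian fit. All function names and test data are hypothetical, and this is an illustration rather than the specific algorithm used by the inventors.

```python
import math


def cross_correlation(p1, p2, max_lag):
    """c[lag] = sum_k p1[k + lag] * p2[k] for lags in [-max_lag, max_lag]."""
    n = len(p1)
    corr = {}
    for lag in range(-max_lag, max_lag + 1):
        total = 0.0
        for k in range(len(p2)):
            j = k + lag
            if 0 <= j < n:
                total += p1[j] * p2[k]
        corr[lag] = total
    return corr


def gaussian_subpixel_offset(left, centre, right):
    """Three-point Gaussian peak fit; returns the offset of the true
    peak from the centre sample (0.0 when the fit is not applicable)."""
    if min(left, centre, right) <= 0.0:
        return 0.0
    la, lb, lc = math.log(left), math.log(centre), math.log(right)
    denom = la - 2.0 * lb + lc
    if denom == 0.0:
        return 0.0
    return 0.5 * (la - lc) / denom


def displacement_from_profiles(p1, p2, max_lag):
    """Estimate delta y = y1 - y2 from two y profiles, sub-pixel."""
    corr = cross_correlation(p1, p2, max_lag)
    best = max(corr, key=corr.get)
    if abs(best) == max_lag:  # peak at the edge: no neighbours to fit
        return float(best)
    return best + gaussian_subpixel_offset(
        corr[best - 1], corr[best], corr[best + 1])


def gaussian_profile(centre, sigma, length):
    """Synthetic test profile (made-up data, not measured)."""
    return [math.exp(-((k - centre) ** 2) / (2.0 * sigma ** 2))
            for k in range(length)]


profile_1 = gaussian_profile(12.0, 2.0, 24)  # spot at y1 = 12.0
profile_2 = gaussian_profile(8.4, 2.0, 24)   # spot at y2 = 8.4
estimate = displacement_from_profiles(profile_1, profile_2, 8)
print(f"estimated displacement: {estimate:.3f} pixels")
```

Repeating the estimate frame by frame gives the time evolution of .DELTA.y, from which the axial position follows through the calibration.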

For example, the lateral displacement .DELTA.y can be calculated using a localization algorithm described in A. Small and S. Stahlheber, "Fluorophore localization algorithms for super-resolution microscopy", Nature Methods 11, 267-279 (2014).

Using a calibration function .DELTA.y(z) it is possible to calculate the axial position associated with a lateral displacement .DELTA.y.
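As a minimal sketch of this step, the calibration can be stored as a table of measured pairs and inverted by linear interpolation. The sketch assumes that the calibration is monotonically increasing over the range of interest; the function name, the units and the table values are hypothetical.

```python
from bisect import bisect_right


def axial_position(delta_y, calib_z, calib_dy):
    """Invert a tabulated calibration delta_y(z) by linear interpolation.

    calib_z: axial positions of the calibration points.
    calib_dy: lateral displacements measured at those positions; assumed
    strictly increasing so that the inversion is unambiguous.
    """
    if not calib_dy[0] <= delta_y <= calib_dy[-1]:
        raise ValueError("displacement outside the calibrated range")
    # Index of the upper calibration point of the bracketing interval.
    hi = min(bisect_right(calib_dy, delta_y), len(calib_dy) - 1)
    lo = hi - 1
    fraction = (delta_y - calib_dy[lo]) / (calib_dy[hi] - calib_dy[lo])
    return calib_z[lo] + fraction * (calib_z[hi] - calib_z[lo])


# Hypothetical calibration: z in micrometres, delta y in pixels.
z_points = [-2.0, -1.0, 0.0, 1.0, 2.0]
dy_points = [-4.0, -2.0, 0.0, 2.0, 4.0]
z_p = axial_position(1.0, z_points, dy_points)
print("axial position in micrometres:", z_p)
```

A fitted analytical curve could replace the table if the calibration data are noisy; the lookup is used here only because it makes no assumption about the functional form of .DELTA.y(z).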

Since the detection areas of a single image sensor, albeit spatially separated, are usually physically close, the microscopy apparatus preferably comprises an additional directing optical system configured to direct the first optical beam 29 towards the first detection area and the second optical beam 30 towards the second detection area.

In the embodiment of FIG. 4, the microscopy apparatus comprises a third directing optical unit 36 configured to direct the first optical beam 29 in the first detection area of the sensor 37 and a fourth directing optical unit 36' configured to direct the second optical beam 30 in the second detection area of the sensor 37. In the illustrated example, the third directing optical unit comprises four mirrors 36a, 36b, 36c and 36d, while the fourth directing unit comprises four mirrors 36e, 36f, 36h and 36g.

The third and the fourth directing optical unit 36, 36' constitute the beam directing optical system of the embodiment of FIG. 4, which is configured to direct at least a secondary optical beam (in this case both beams) coming from the tube lens 35. It is understood that the present invention is not limited to the presence or to a particular configuration of the directing optical system adapted to deflect one or both optical beams in the desired direction.

In ways known in themselves, an image acquisition processor (not shown), integrated with the photodetector device or logically connected thereto, is adapted to digitise the output analogue signal of each detection area of the device and to store a respective digitised acquired image collected from each detection area. The acquisition processor transmits the digital images to an electronic image processing unit 42 that comprises a processor apt to process numerically the digital images and a memory. The electronic image processing unit is connected to an image display unit that comprises a first screen 43 for displaying the image captured in the first detection area and a second screen 44 for displaying the image captured in the second detection area.