Traffic management for high-bandwidth switching

Fairhurst , et al. Feb

U.S. patent number 10,567,307 [Application Number 15/965,829] was granted by the patent office on 2020-02-18 for traffic management for high-bandwidth switching. This patent grant is currently assigned to AVAGO TECHNOLOGIES INTERNATIONAL SALES PTE. LIMITED. The grantee listed for this patent is Avago Technologies General IP (Singapore) Pte. Ltd. Invention is credited to Ari Aravinthan, Yehuda Avidan, Ankit Sajjan Kumar Bansal, Mark Fairhurst, Noam Halevy, Manoj Lakshmygopalakrishnan, Michael H. Lau, Eugene N. Opsasnick.


| United States Patent | 10,567,307 |

| Fairhurst , et al. | February 18, 2020 |

Traffic management for high-bandwidth switching

Abstract

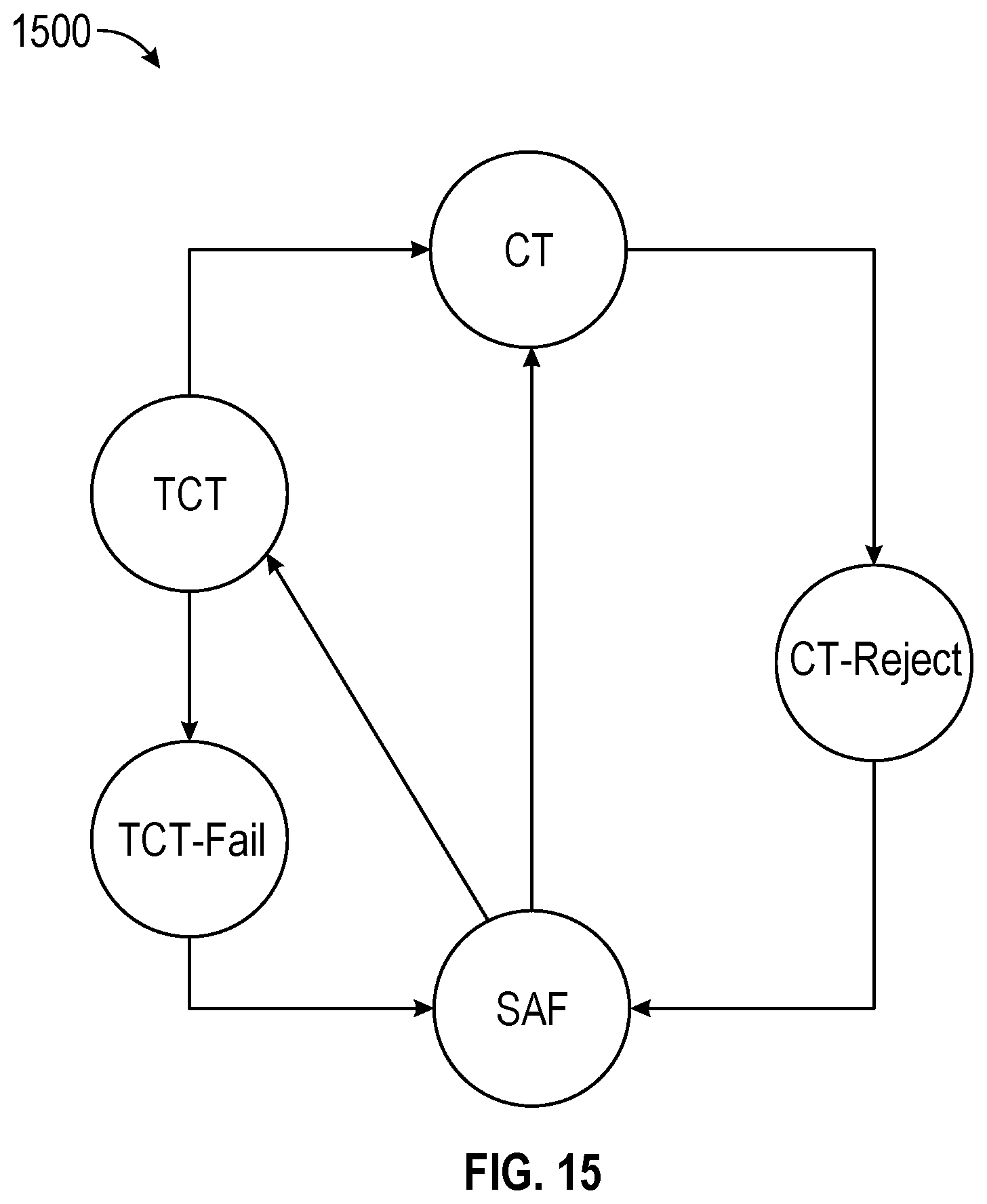

In the subject system, a network switch may determine to transition an output port of the network switch between a store-and-forward (SAF) state and a cut-through (CT) state based on at least one factor. The network switch may determine, based on a condition of the output port, whether to transition the output port to a transition-cut-through (TCT) state or directly to a CT state when transitioning the output port to the CT state. When the output port is transitioned to the TCT state, the network switch may determine, based on the condition of the output port, whether to transition the output port to the CT state or to transition the output port back to the SAF state.

| Inventors: | Fairhurst; Mark (Didsbury, GB), Opsasnick; Eugene N. (San Jose, CA), Lau; Michael H. (San Jose, CA), Aravinthan; Ari (San Jose, CA), Lakshmygopalakrishnan; Manoj (San Jose, CA), Bansal; Ankit Sajjan Kumar (San Jose, CA), Avidan; Yehuda (San Jose, CA), Halevy; Noam (Yakum, IL) |
|---|---|
| Applicant: | |
| Assignee: | AVAGO TECHNOLOGIES INTERNATIONAL SALES PTE. LIMITED (Singapore, SG) |
| Family ID: | 68291950 |
| Appl. No.: | 15/965,829 |
| Filed: | April 27, 2018 |

Prior Publication Data

| Document Identifier | Publication Date |
|---|---|
| US 20190334837 A1 | Oct 31, 2019 |

| Current U.S. Class: | 1/1 |

| Current CPC Class: | H04L 49/3027 (20130101); H04L 49/251 (20130101); H04L 47/2433 (20130101); H04L 49/351 (20130101); H04L 49/9005 (20130101); H04L 49/252 (20130101) |

| Current International Class: | H04L 12/947 (20130101); H04L 12/861 (20130101); H04L 12/931 (20130101); H04L 12/851 (20130101) |

References Cited

U.S. Patent Documents

| 2002/0083173 | June 2002 | Musoll |

| 2008/0120450 | May 2008 | Mott |

| 2012/0023295 | January 2012 | Nemawarkar |

| 2014/0098818 | April 2014 | Matthews |

| 2014/0160935 | June 2014 | Zecharia |

| 2016/0127267 | May 2016 | Kumar |

| 2016/0226797 | August 2016 | Aravinthan |

| 2017/0063609 | March 2017 | Philip |

| WO 2007/003370 | Jan 2007 | WO |

Other References

Extended European Search Report from European Patent Application No. 19171403.9, dated Jul. 23, 2019, 8 pages. cited by applicant.

Primary Examiner: Mattis; Jason E

Attorney, Agent or Firm: Morgan, Lewis & Bockius LLP

Claims

What is claimed is:

1. A network switch, comprising: at least one egress controller of an egress buffer component associated with an output port, the at least one egress controller configured to: determine to transition the output port of the network switch between a store-and-forward (SAF) state and a cut-through (CT) state based on at least one factor; transition, based on a condition of the output port, the output port to a transition-cut-through (TCT) state or directly to a CT state when transitioning the output port to the CT state; and when the output port is transitioned to the TCT state, transition, based on the condition of the output port, the output port to the CT state or back to the SAF state.

2. The network switch of claim 1, wherein the at least one egress controller is further configured to: receive a CT request from an ingress tile, the CT request requesting a transition of the output port to the CT state; and issue a CT decision to the ingress tile by the egress buffer component, the CT decision indicating whether to transition the output port to the CT state, wherein a packet arrived at the ingress tile is held from processing until the CT decision is returned.

3. The network switch of claim 1, comprising: at least one ingress controller configured to: when the output port is in the CT state, forward one or more packets to a CT path within the network switch to send the one or more packets directly to a read launcher of the network switch; and when the output port is in the SAF state, forward the one or more packets to an SAF path within the network switch to pass the one or more packets through one or more processes and to the read launcher.

4. The network switch of claim 3, wherein a CT queue is maintained for a packet through the CT path and a SAF queue is maintained for a packet through the SAF path.

5. The network switch of claim 3, wherein the one or more processes include processes by a source context block, an OQS block, and a ToQ block.

6. The network switch of claim 1, wherein the at least one egress controller is configured to: determine that a new packet is not granted CT access, the new packet having newly arrived during the CT state of the output port; transition the output port from the CT state to a CT reject state during which one or more CT packets remaining in an egress buffer are drained, the CT packets having been received at the egress buffer via a CT path within the network switch during the CT state of the output port; and transition the output port from the CT reject state to the SAF state when the one or more remaining CT packets in the egress buffer are drained.

7. The network switch of claim 1, wherein the at least one egress controller is configured to: determine that the output port is empty during the SAF state; and transition the output port directly from the SAF state to the CT state when the output port is empty.

8. The network switch of claim 1, wherein the at least one egress controller is configured to: determine that a fill level of the output port is below a threshold during the SAF state; and transition the output port from the SAF state to the TCT state in response to determining that the fill level is below the threshold.

9. The network switch of claim 1, wherein the at least one egress controller is configured to: determine whether one or more SAF packets remaining in an egress buffer are drained from the egress buffer, the SAF packets having been received at the egress buffer via an SAF path within the network switch during the SAF state of the output port; and transition the output port from the TCT state to the CT state when the one or more remaining SAF packets are drained from the egress buffer.

10. The network switch of claim 1, wherein the at least one egress controller is configured to: determine to transition the output port back to the SAF state when one or more packets to be sent to an SAF path within the network switch have been received during the TCT state; transition the output port from the TCT state to a TCT fail state to drain packets received during the TCT state upon determining to transition the output port back to the SAF state; and transition the output port from the TCT fail state to the SAF state when remaining packets that have remained in the egress buffer since before the transition to the TCT fail state have been drained from the egress buffer.

11. The network switch of claim 1, wherein the at least one egress controller is configured to: grant a higher priority to CT packets on a CT path within the network switch than to SAF packets on a SAF path within the network switch; and output the CT packets and the SAF packets based on the priority.

12. The network switch of claim 1, wherein the at least one egress controller is further configured to: determine a burst of cells to an egress buffer block while the output port is in the CT state; and absorb the burst in the egress buffer block without transitioning the output port out of the CT state.

13. The network switch of claim 1, wherein the at least one egress controller is further configured to: determine an order of arrival of packets at the network switch; and transmit the packets out of the network switch in the order of arrival.

14. A method comprising: determining to transition an output port of a network switch between a store-and-forward (SAF) state and a cut-through (CT) state based on at least one factor; transitioning, based on a condition of the output port, the output port to a transition-cut-through (TCT) state or directly to a CT state when transitioning the output port to the CT state; and when the output port is transitioned to the TCT state, determining, based on the condition of the output port, whether to transition the output port to the CT state or to revert back to the SAF based on a condition of the output port.

15. The method of claim 14, further comprising: determining that a new packet is not granted CT access, the new packet having newly arrived during the CT state of the output port; transitioning the output port from the CT state to a CT reject state during which one or more CT packets remaining in an egress buffer are drained, the CT packets having been received at the egress buffer via a CT path within the network switch during the CT state of the output port; and transitioning the output port from the CT reject state to the SAF state when the one or more remaining CT packets in the egress buffer are drained.

16. The method of claim 14, further comprising: determining that the output port is empty during the SAF state; and transitioning the output port directly from the SAF state to the CT state when the output port is empty.

17. The method of claim 14, further comprising: determining that a fill level of the output port is below a threshold during the SAF state; and transitioning the output port from the SAF state to the TCT state in response to determining that the fill level is below the threshold.

18. The method of claim 14, further comprising: determining whether one or more SAF packets remaining in an egress buffer are drained from the egress buffer, the SAF packets having been received at the egress buffer via an SAF path within the network switch during the SAF state of the output port; and transitioning the output port from the TCT state to the CT state when the one or more remaining SAF packets are drained from the egress buffer.

19. The method of claim 14, further comprising: determining to transition the output port back to the SAF state when one or more packets to be sent to an SAF path within the network switch have been received during the TCT state; transitioning the output port from the TCT state to a TCT fail state to drain packets received during the TCT state upon determining to transition the output port back to the SAF state; and transitioning the output port from the TCT fail state to the SAF state when remaining packets that have remained in an egress buffer since before the transition to the TCT fail state have been drained from the egress buffer.

20. A system comprising: a plurality of input ports configured to receive one or more packets; an ingress tile connected to the plurality of input ports to receive the one or more packets via the plurality of input ports; and one or more egress buffer components connected to one or more output ports to transmit the one or more packets received from the ingress tile, wherein each of the one or more egress buffer components is configured to: determine to transition a respective output port between a store-and-forward (SAF) state and a cut-through (CT) state based on at least one factor, transition the respective output port to a transition-cut-through (TCT) state or directly to a CT state when transitioning to the CT state, and when the respective output port is transitioned to the TCT state, transition, based on a condition of the output port, the respective output port to the CT state or to revert back to the SAF.
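By way of illustration only, the port states recited in claims 1 and 6-10 can be summarized as a small state machine. The following Python sketch is not part of the claims or of any described implementation. The names `PortState` and `next_state` and all flag names are hypothetical, and the order in which conditions are checked within the TCT state is an assumption.

```python
from enum import Enum, auto


class PortState(Enum):
    SAF = auto()        # store-and-forward
    TCT = auto()        # transition-cut-through
    CT = auto()         # cut-through
    CT_REJECT = auto()  # draining remaining CT packets before returning to SAF
    TCT_FAIL = auto()   # draining packets after an abandoned TCT attempt


def next_state(state, *, port_empty=False, fill_below_threshold=False,
               saf_drained=False, saf_packet_arrived=False,
               ct_denied=False, ct_drained=False, old_packets_drained=False):
    """Return the next state of one output port (all flag names are assumed)."""
    if state is PortState.SAF:
        if port_empty:
            return PortState.CT        # claim 7: an empty port goes directly to CT
        if fill_below_threshold:
            return PortState.TCT       # claim 8: low fill level enters TCT
        return PortState.SAF
    if state is PortState.TCT:
        if saf_packet_arrived:
            return PortState.TCT_FAIL  # claim 10: SAF-path traffic aborts the attempt
        if saf_drained:
            return PortState.CT        # claim 9: remaining SAF packets have drained
        return PortState.TCT
    if state is PortState.CT:
        # claim 6: a new packet that is not granted CT access starts the drain
        return PortState.CT_REJECT if ct_denied else PortState.CT
    if state is PortState.CT_REJECT:
        return PortState.SAF if ct_drained else PortState.CT_REJECT
    if state is PortState.TCT_FAIL:
        return PortState.SAF if old_packets_drained else PortState.TCT_FAIL
    raise ValueError(f"unknown state: {state!r}")
```

For example, `next_state(PortState.SAF, fill_below_threshold=True)` yields `PortState.TCT`, and a later call with `saf_drained=True` completes the move to `PortState.CT`.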

Description

TECHNICAL FIELD

The present description relates generally to a hybrid-shared traffic managing system capable of performing a switching function in a network switch.

BACKGROUND

A network switch may be used to connect devices so that the devices may communicate with each other. The network switch includes a traffic managing system to handle incoming traffic of data received by the network switch and outgoing traffic transmitted by the network switch. The network switch may further include buffers used by the traffic managing system for managing data traffic. The input ports and the output ports of the network switch may be arranged differently for different purposes. For example, an operating clock frequency may be scaled to run faster. Further, various features such as a cut-through feature may be implemented to enhance the network switch performance.

BRIEF DESCRIPTION OF THE DRAWINGS

Certain features of the subject technology are set forth in the appended claims. However, for purpose of explanation, several embodiments of the subject technology are set forth in the following figures.

FIG. 1 illustrates an example network environment in which traffic flow management within a network switch may be implemented in accordance with one or more implementations.

FIG. 2 is an example diagram illustrating a shared-buffer architecture for a network switch that processes a single packet per cycle.

FIG. 3 is an example diagram illustrating a scaled-up shared-buffer architecture for a network switch that processes two packets per cycle.

FIG. 4 is an example diagram illustrating an implementation of the input-output-buffered traffic manager for a network switch that is configured to process eight packets per cycle.

FIG. 5 is an example diagram illustrating a hybrid-shared switch architecture for a network switch, in accordance with one or more implementations.

FIG. 6 is an example diagram illustrating banks of a buffer per ITM in a hybrid-shared switch architecture and data paths to egress buffers within a network switch, in accordance with one or more implementations.

FIG. 7 is an example diagram illustrating an orthogonal queue set block in accordance with one or more implementations.

FIG. 8 is an example diagram illustrating a Queuing block partitioned to support Orthogonal Queue Sets in accordance with one or more implementations.

FIG. 9 is an example diagram illustrating a queue structure, in accordance with one or more implementations.

FIG. 10 is an example diagram illustrating rate protected dequeue control/data path limits for a network switch, in accordance with one or more implementations.

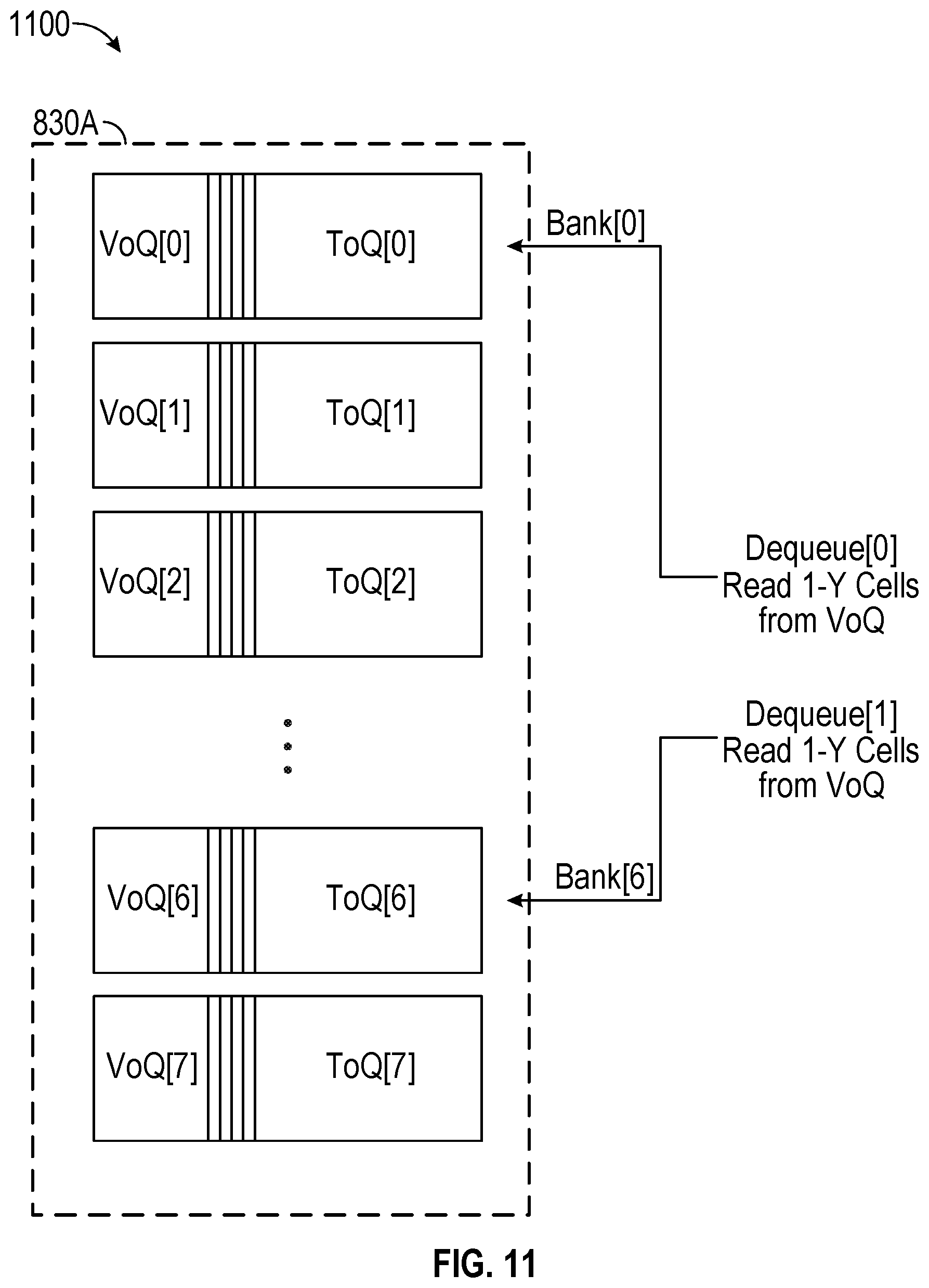

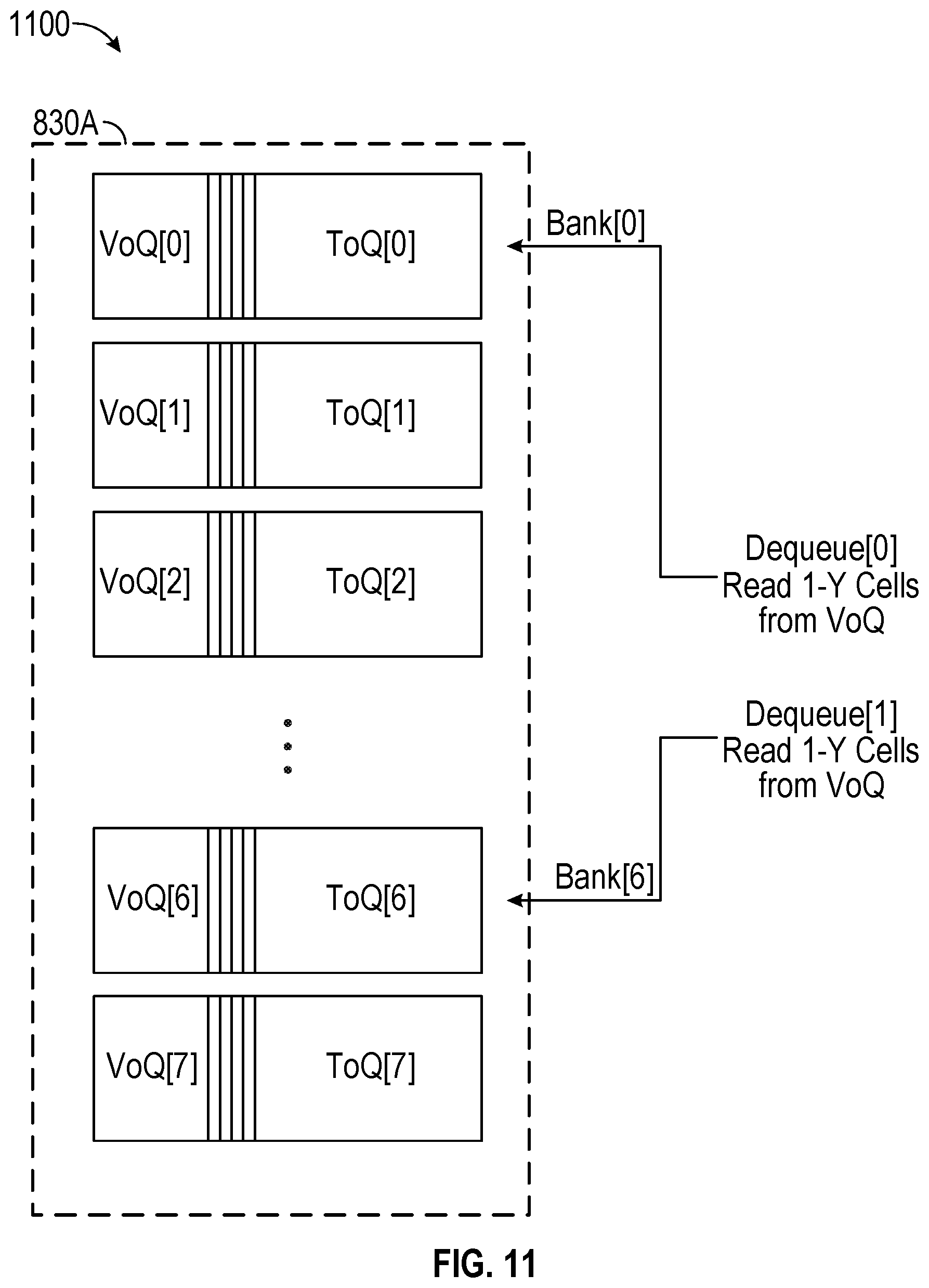

FIG. 11 is an example diagram illustrating a queue dequeue, in accordance with one or more implementations.

FIG. 12 is an example diagram illustrating an egress buffer architecture, in accordance with one or more implementations.

FIG. 13 is an example diagram illustrating a cut-through data path in a memory management unit for a network switch.

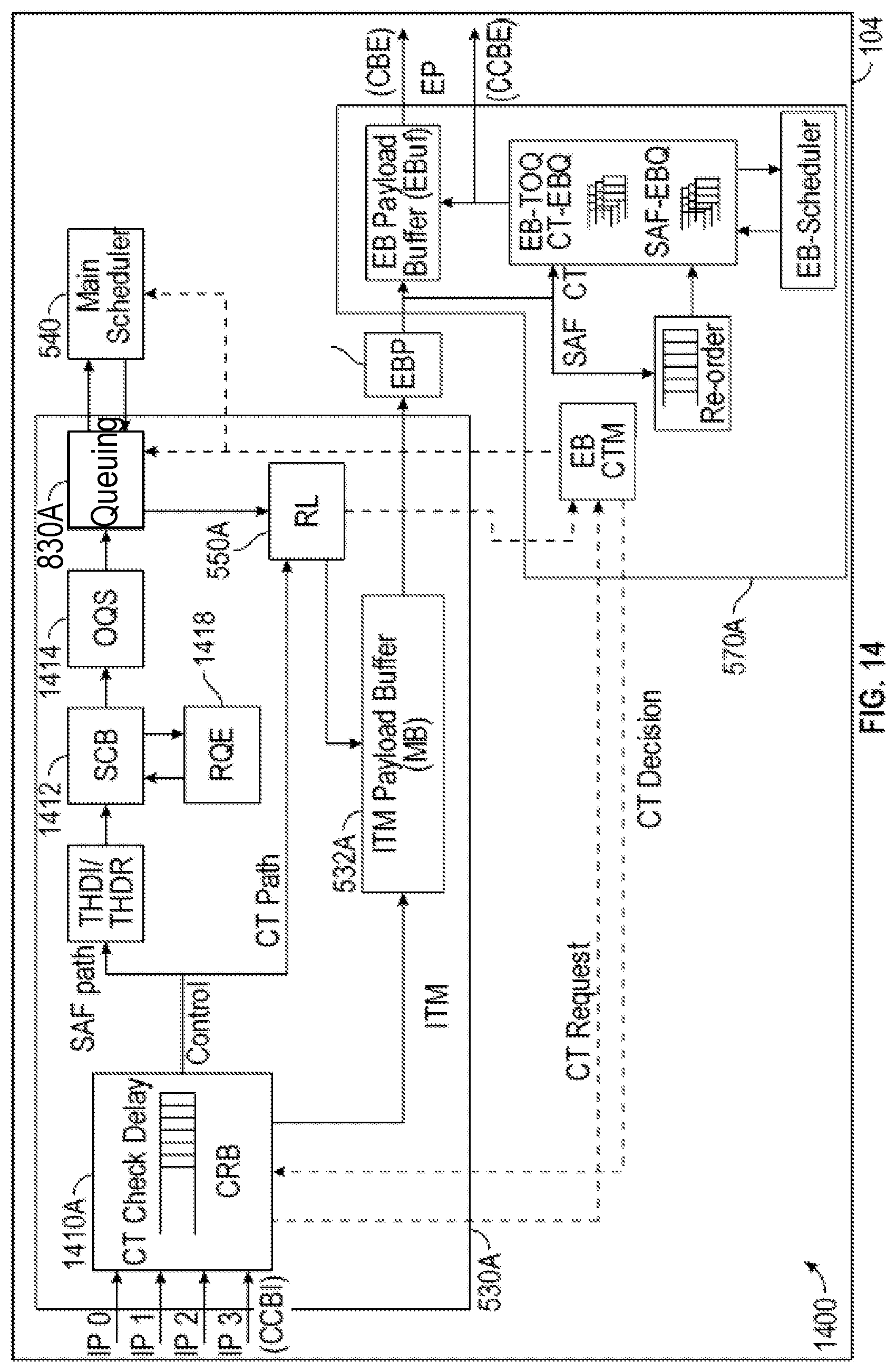

FIG. 14 is an example diagram illustrating a cut-through state machine for a network switch, in accordance with one or more implementations.

FIG. 15 is an example diagram illustrating the store-and-forward path in a traffic manager, in accordance with one or more implementations.

FIG. 16 illustrates a flow diagram of an example process of traffic flow management within a network switch in accordance with one or more implementations.

FIG. 17 illustrates a flow diagram of an example process of traffic flow management within a network switch in accordance with one or more implementations.

FIG. 18 illustrates a flow diagram of an example process of traffic flow management within a network switch in accordance with one or more implementations.

FIG. 19 illustrates a flow diagram of an example process of traffic flow management within a network switch in accordance with one or more implementations, continuing from the example process of FIG. 18.

FIG. 20 illustrates a flow diagram of an example process of traffic flow management within a network switch in accordance with one or more implementations, continuing from the example process of FIG. 19.

FIG. 21 illustrates an example electronic system with which aspects of the subject technology may be implemented in accordance with one or more implementations.

DETAILED DESCRIPTION

The detailed description set forth below is intended as a description of various configurations of the subject technology and is not intended to represent the only configurations in which the subject technology can be practiced. The appended drawings are incorporated herein and constitute a part of the detailed description. The detailed description includes specific details for the purpose of providing a thorough understanding of the subject technology. However, the subject technology is not limited to the specific details set forth herein and can be practiced using one or more implementations. In one or more implementations, structures and components are shown in block diagram form in order to avoid obscuring the concepts of the subject technology.

FIG. 1 illustrates an example network environment 100 in which traffic flow management within a network switch may be implemented in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

The network environment 100 includes one or more electronic devices 102A-C connected via a network switch 104. The electronic devices 102A-C may be connected to the network switch 104, such that the electronic devices 102A-C may be able to communicate with each other via the network switch 104. The electronic devices 102A-C may be connected to the network switch 104 via wire (e.g., Ethernet cable) or wirelessly. The network switch 104, may be, and/or may include all or part of, the network switch discussed below with respect to FIG. 5 and/or the electronic system discussed below with respect to FIG. 21. The electronic devices 102A-C are presented as examples, and in other implementations, other devices may be substituted for one or more of the electronic devices 102A-C.

For example, the electronic devices 102A-C may be computing devices such as laptop computers, desktop computers, servers, peripheral devices (e.g., printers, digital cameras), mobile devices (e.g., mobile phone, tablet), stationary devices (e.g. set-top-boxes), or other appropriate devices capable of communication via a network. In FIG. 1, by way of example, the electronic devices 102A-C are depicted as network servers. The electronic devices 102A-C may also be network devices, such as other network switches, and the like.

The network switch 104 may implement the subject traffic flow management within a network switch. An example network switch 104 implementing the subject system is discussed further below with respect to FIG. 5, and example processes of the network switch 104 implementing the subject system are discussed further below with respect to FIGS. 16-20.

The network switch 104 may implement a hybrid-shared traffic manager architecture in which a traffic manager includes a main packet payload buffer memory. The traffic manager performs a central main switching function that involves moving packet data received from input ports to the correct output port(s). Main functions of the traffic manager may include admission control, queuing, and scheduling. Admission control involves determining whether a packet is admitted into the packet buffer or discarded, based on buffer fullness and fair sharing between ports and queues. In queuing, packets that are admitted into the packet buffer are linked together into output queues. For example, each output port may have multiple separate logical queues (e.g., 8 separate logical queues). Packets are enqueued upon arrival into the traffic manager and are dequeued after being scheduled for departure to their output ports. In scheduling, a port with backlogged packet data in multiple queues may select one queue at a time to dequeue a packet, such that backlogged packet data may be transmitted to the output port. This may be done based on a programmable set of Quality of Service (QoS) parameters.
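As a minimal sketch of how the three functions fit together, the following Python example models a traffic manager with eight logical queues per output port. The class name `TrafficManager`, the cell-count admission test, and the strict-priority scheduling policy are assumptions made for illustration; the description above only requires admission based on buffer fullness and fair sharing, and scheduling based on programmable QoS parameters.

```python
from collections import deque

QUEUES_PER_PORT = 8  # example value from the text: 8 logical queues per output port


class TrafficManager:
    """Toy model of admission control, queuing, and scheduling (hypothetical)."""

    def __init__(self, num_ports, buffer_cells):
        self.free_cells = buffer_cells
        self.queues = [[deque() for _ in range(QUEUES_PER_PORT)]
                       for _ in range(num_ports)]

    def enqueue(self, port, queue, packet, cells):
        # Admission control: admit only if the shared buffer has room.
        # Fair sharing between ports and queues is omitted in this sketch.
        if cells > self.free_cells:
            return False
        self.free_cells -= cells
        # Queuing: link the admitted packet into its output queue.
        self.queues[port][queue].append((packet, cells))
        return True

    def dequeue(self, port):
        # Scheduling: pick one backlogged queue per call. Strict priority with
        # the highest-numbered queue first is an assumed policy.
        for q in reversed(self.queues[port]):
            if q:
                packet, cells = q.popleft()
                self.free_cells += cells  # buffer space is released on departure
                return packet
        return None
```

With a four-cell buffer, two two-cell packets are admitted and a third packet is discarded until one of the first two departs.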

The network switch 104 may include a switching chip that may be generally configured to scale the operating clock frequency to run faster when the network switch 104 includes more ports and/or faster interfaces. Such configurations may be made while using a shared-buffer architecture for the traffic manager, where input ports and output ports have equal access to the entire payload memory, and the control structures of the traffic manager operate on one or two packets (or part of a packet) in each clock cycle.

However, recent switching chips cannot scale the operating clock frequency beyond the speed at which the transistors can operate, and thus the chip's clock frequency may not allow faster operation. Other constraints such as total device power may also limit the maximum clock operating frequency. Therefore, new switching chips may need to support more and/or faster ports without increasing the chip's clock frequency, which makes it difficult to use the existing shared-buffer architecture for newer and larger generations of switch chips.

An alternative switch architecture may be used to support more and/or faster ports without scaling the operating clock frequency to very high rates. For example, the alternative switch architecture may be an input-output buffered architecture, where the payload memory is divided into several smaller segments, each of which can handle a fraction of the total switch bandwidth. Each segment can then operate at a lower clock frequency than would be required to switch the entire bandwidth. This architecture may be capable of scaling the switch bandwidth to a much higher bandwidth than the shared-buffer architecture. However, each input port or output port has access to only a fraction of the total payload memory. For requirements with limited total payload memory, a memory segment may be too small to allow efficient sharing of the memory space.

In the shared-buffer architecture, a limited number of packets (e.g., one or two packets) may be processed at a time.

FIG. 2 is an example diagram 200 illustrating a shared-buffer architecture for a network switch (e.g., the network switch 104) that processes a single packet per cycle. The traffic manager may process a single packet or a single packet segment per cycle and the shared-buffer architecture may include a shared data buffer, an admission control component, a queuing component, and a scheduling component. A packet payload buffer (e.g., the shared data buffer) may be implemented with single-port memories by utilizing multiple physical banks within the total buffer. When a packet is scheduled to be transmitted, the packet can be located anywhere in the buffer, in any bank. While this packet is being read from one bank, a newly received packet can be written into a different bank of the buffer.
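The bank-steering idea can be illustrated with a short Python sketch: in each cycle, the write for a newly received packet is directed to any bank other than the one being read. The function name `pick_write_bank` and the tie-break rule (prefer the bank with the most free cells) are assumptions for illustration and are not taken from the description.

```python
def pick_write_bank(num_banks, read_bank, free_cells_per_bank):
    """Choose a bank for this cycle's write that is not being read.

    read_bank is the bank serving this cycle's read, or None if no read is
    in progress. Returns a bank index, or None if no eligible bank has space.
    """
    candidates = [b for b in range(num_banks)
                  if b != read_bank and free_cells_per_bank[b] > 0]
    if not candidates:
        return None
    # Assumed policy: spread writes by preferring the emptiest eligible bank.
    return max(candidates, key=lambda b: free_cells_per_bank[b])
```

For instance, with four banks holding 5, 1, 9, and 3 free cells and bank 2 being read, the write goes to bank 0 even though bank 2 has the most free space.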

FIG. 3 is an example diagram 300 illustrating a scaled-up shared-buffer architecture for a network switch (e.g., the network switch 104) that processes two packets per cycle. Each physical bank of the buffer memory implemented in the shared-buffer architecture of FIG. 3 is capable of supporting two random access reads within a single bank because two scheduled packets for transmission may reside in the same bank at the same time. The received packets in the same cycle can always be directed to be written into memory banks other than the ones being read while avoiding collisions with other writes. This type of memory is more expensive (e.g., in terms of area per bit of memory), but may be simple to implement. However, scaling the memory design to support more than two random access reads at a time may become very expensive and may not be a cost-effective approach.

The packet processing in a switch chip that examines each packet and determines an output port to switch the packet to can be parallelized, such that multiple packet processing processes may be performed in parallel. For example, the chip may support a total of 64 100 Gbps interfaces (ports). To keep up with the packet processing requirements of many interfaces (e.g., 4 pipelines each with 32×100 Gbps interfaces), the chip may implement eight separate packet processing elements, where, for example, each of the packet processing elements may support up to eight 100 Gbps interfaces by processing one packet per clock cycle. The clock frequency of the chip and the number of ports may dictate the number of packet processors that are necessary for the parallel packet processing. As such, the traffic manager in the chip may need to be able to simultaneously handle eight incoming packets or eight cells, where each cell is a portion of a packet. For example, the packets (e.g., 2000 bytes per packet) may be divided into cells (e.g., 128 bytes per cell). The traffic manager may also need to select eight outgoing packets or cells in every cycle where the egress packets are of independent flows.
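The cell arithmetic in the example above can be checked with a few lines of Python. The helper names `cells_per_packet` and `split_into_cells` are hypothetical, and the handling of the final partial cell (left short rather than padded) is an assumption.

```python
def cells_per_packet(packet_bytes, cell_bytes=128):
    """Number of cells needed to hold a packet, rounding the last cell up."""
    return (packet_bytes + cell_bytes - 1) // cell_bytes


def split_into_cells(payload, cell_bytes=128):
    """Split a payload into fixed-size cells; the last cell may be shorter."""
    return [payload[i:i + cell_bytes]
            for i in range(0, len(payload), cell_bytes)]


cells = split_into_cells(bytes(2000))
print(cells_per_packet(2000), len(cells), len(cells[-1]))  # prints "16 16 80"
```

A 2000-byte packet therefore occupies fifteen full 128-byte cells plus one 80-byte cell.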

A single shared-buffer switch may need to be able to write eight separate cells and read eight separate cells every cycle. Handling the write operations in a multi-banked shared memory is easier than handling the multiple read operations. The writing of the cells to the buffer can be handled by directing individual writes to separate banks within the shared memory. However, the eight buffer reads each cycle may collide on common banks because the eight buffer reads are scheduled independently, creating bank conflicts that may not be easily resolved in the shared-buffer architecture.
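To give a sense of how often independently scheduled reads collide, the following Python sketch counts the reads that cannot be served in a cycle and computes the exact probability that a set of reads lands on distinct banks. It assumes that each read targets a bank uniformly at random, which is a simplification introduced here and not a statement from the description; the function names are hypothetical.

```python
from collections import Counter
from fractions import Fraction


def blocked_reads(read_banks, reads_per_bank=1):
    """Count reads that exceed what each bank can serve in one cycle."""
    counts = Counter(read_banks)
    return sum(max(0, n - reads_per_bank) for n in counts.values())


def prob_no_conflict(num_reads, num_banks):
    """Probability that all reads hit distinct banks (uniform, independent)."""
    p = Fraction(1)
    for i in range(num_reads):
        p *= Fraction(num_banks - i, num_banks)
    return p
```

Under the uniform assumption, `float(prob_no_conflict(8, 32))` is roughly 0.39, so even with four times as many banks as reads, most cycles would contain at least one bank conflict.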

Another traffic manager architecture is an input-output-buffered traffic manager architecture. The input-output-buffered architecture implements separate ingress buffer elements and egress buffer elements that each support a fraction of the total switch bandwidth. For example, each buffer element may be capable of supporting a single input and output cell. Further, a mesh interconnect may be implemented to provide connections between the ingress buffers and the egress buffers. Typically, each of the ingress buffers and the egress buffers has its own queuing and scheduling control structures. As a result, the total packet payload memory is divided into several smaller pieces across the ingress buffers and the egress buffers, and thus each input port has access to only a fraction of the total buffer. The independent control and limited bandwidth of each element also mean that input blocking can occur.

FIG. 4 is an example diagram 400 illustrating an implementation of the input-output-buffered traffic manager for a network switch (e.g., the network switch 104) that is configured to process eight packets per cycle. The input-output-buffered traffic manager of FIG. 4 includes 8 ingress traffic managers (ITM) and 8 egress traffic managers (ETM). Each ingress traffic manager includes an ingress buffer, an admission control element, a queuing element, and a scheduling element. Each egress traffic manager includes an egress data buffer, a queuing component, and a scheduling component. Each ingress buffer is configured to support a single input cell and a single output cell. Each egress buffer is configured to support a single input cell and a single output cell. The cross-connect component provides a mesh interconnect between the 8 ingress buffers and 8 egress buffers.

The input-output-buffered architecture may suffer from several shortcomings. For example, each input port or each output port may have access to only a fraction of the total payload memory because the buffer is divided into several smaller portions, where each portion handles a single packet per cycle. As such, the buffering bandwidth-delay product (e.g., the amount of buffering available to any one output port) can be severely limited. Dividing the buffer into smaller portions also means that the control logic that performs admission control and queuing should be replicated at each ingress and egress buffer, which may have a significant impact on the size and the cost of this architecture in a single chip ASIC design and/or a multi-chip ASIC design. The thresholding may be compromised or become more complicated as it is difficult to control the buffer space allocated to a logical queue that has several physical queues (VoQs) each using up space. The scheduling function increases in complexity compared to a shared-buffer architecture as the scheduler has to select from and provide fairness for multiple sources (VoQs) within each logical queue.

The input-output-buffered architecture may also suffer from input blocking due to source congestion that can occur when several output ports associated with different egress buffers all need to transmit packets from a single ingress buffer. The single ingress buffer may not be able to provide enough bandwidth to support all of the output ports at the same time, which may lead to loss in performance due to reduced bandwidth to all affected output ports. Although the input blocking problem may be mitigated by introducing internal speed-up between the ingress buffers and egress buffers, such internal speed-up may have a significant impact on the size and complexity of the ingress stages, interconnect, and output stages, and may affect the clock frequency (power) and area of the chip to a degree that calls the feasibility of the chip design into question.
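The bandwidth loss from input blocking can be illustrated with simple arithmetic. The Python sketch below assumes that every contending output port wants one cell per cycle and that the ingress buffer divides its read bandwidth equally among them; both assumptions, and the function name `per_port_share`, are introduced here for illustration only.

```python
from fractions import Fraction


def per_port_share(num_outputs, ingress_reads_per_cycle=1):
    """Fraction of line rate each output port receives from one ingress buffer.

    num_outputs: output ports pulling from the same ingress buffer.
    ingress_reads_per_cycle: cells the ingress buffer can supply per cycle
    (values above 1 model internal speed-up).
    """
    if num_outputs < 1:
        raise ValueError("num_outputs must be at least 1")
    return min(Fraction(1), Fraction(ingress_reads_per_cycle, num_outputs))
```

With four output ports contending for a single-read ingress buffer, each port receives one quarter of its line rate; a 2x internal speed-up raises that to one half, at the cost described above.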

The subject technology includes a switch traffic manager architecture called a hybrid-shared switch architecture. The hybrid-shared switch architecture combines elements of both the shared-buffer switch architecture and the input-output-buffered switch architecture to be able to scale the total switch bandwidth in a switch to very high levels, while retaining the advantages of a shared-buffer switch where all inputs and outputs have access to a high percentage of the switch's payload buffer.

The hybrid-shared switch architecture may utilize a small number of large Ingress Data Buffers to achieve a very high level of buffer sharing among groups of input ports. Each Ingress Data Buffer element (e.g., referred to as an ingress tile or an ITM) may service multiple input ports. For example, if the hybrid-shared switch architecture has two ITMs, each ITM may be configured to service half of the total switch ports. In some aspects, the hybrid-shared switch architecture may include a single central scheduler that is configured to schedule traffic across all ingress buffers to simultaneously maximize the bandwidth of each ingress tile and to keep all output ports satisfied. The packets scheduled by the scheduler may be forwarded to multiple egress buffers (EBs). The EBs may be associated with a set of output ports. The packets, once scheduled by the scheduler, do not need to be scheduled again even though there are several small EBs. The scheduled packets may be forwarded through the EBs based on a time of arrival (e.g., on a first-come first-served basis). In one or more implementations, the switch may include a distributed scheduler where destination-based schedulers simultaneously pull from ITMs.
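Two points of the preceding paragraph lend themselves to a short sketch: the grouping of input ports under ingress tiles, and the first-come first-served behavior of the egress buffers. In the Python example below, the contiguous assignment of ports to ITMs and the names `itm_for_port` and `EgressBuffer` are assumptions; the description only states that each of two ITMs may service half of the ports.

```python
from collections import deque


def itm_for_port(port, num_ports=8, num_itms=2):
    """Map an input port index to an ITM index (contiguous groups assumed)."""
    if num_ports % num_itms != 0:
        raise ValueError("ports must divide evenly among ITMs")
    if not 0 <= port < num_ports:
        raise ValueError("port index out of range")
    return port // (num_ports // num_itms)


class EgressBuffer:
    """FIFO egress buffer: packets leave in arrival order, with no rescheduling."""

    def __init__(self):
        self._fifo = deque()

    def push(self, packet):
        self._fifo.append(packet)

    def pop(self):
        return self._fifo.popleft() if self._fifo else None
```

Because the central scheduler has already ordered the traffic, the egress buffer in this sketch needs no queue selection logic of its own.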

FIG. 5 is an example diagram 500 illustrating a hybrid-shared switch architecture for a network switch, in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

As shown in FIG. 5, the network switch 104 may include a hybrid-shared traffic manager 510, input ports (ingress ports (IPs)) 520A-520H, and output ports (egress ports (EPs)) 580A-580H. The input ports 520A-520H may be coupled to respective ingress pipelines, and the output ports 580A-580H may be coupled to respective egress pipelines. In some aspects, the distinction between the input ports 520A-520H and the output ports 580A-580H may be a logical distinction, and same physical ports may be used for one or more of the input ports 520A-520H and one or more of the output ports 580A-580H. As discussed above, the packets received via the input ports 520A-520H may be divided into cells via packet processing, such that the cells may be stored and queued at the hybrid-shared traffic manager 510.

The example hybrid-shared traffic manager 510 is connected to the input ports 520A-520H and the output ports 580A-580H and includes two ITMs (also referred to as ingress tiles) 530A-B including a first ITM 530A and a second ITM 530B, where each of the ITMs 530A-B is connected to four input ports. Each of the ITMs 530A-B includes an ingress buffer, a queuing component, and an admission control component. Thus, the first ITM 530A includes the ingress buffer 532A and the queuing component 534A, and the second ITM 530B includes the ingress buffer 532B and the queuing component 534B. The ingress buffer 532A and the queuing component 534A of the first ITM 530A are connected to a read launcher 550A. The ingress buffer 532B and the queuing component 534B of the second ITM 530B are connected to the read launcher 550B. Although the read launchers 550A-B in FIG. 5 are illustrated as separate components from the ITMs 530A-B, the read launchers 550A-B may reside in the ITMs 530A-B respectively. The queuing component 534A of the first ITM 530A and the queuing component 534B of the second ITM 530B are connected to a centralized main scheduler 540.

Each of the ITMs 530A and 530B may be controlled by its own controller and/or processor. For example, the first ITM 530A may be controlled by the first ingress controller 538A and the second ITM 530B may be controlled by the second ingress controller 538B. Each of the ITMs 530A and 530B may be implemented in hardware (e.g., an Application Specific Integrated Circuit (ASIC), a Field Programmable Gate Array (FPGA), a Programmable Logic Device (PLD), a controller, a state machine, gated logic, discrete hardware components, or any other suitable devices).

The packets from the ingress buffer 532A of the first ITM 530A and the ingress buffer 532B of the second ITM 530B are forwarded to a cross-connect component 560, which provides connectivity between the ingress buffers 532A and 532B of the two ITMs 530A and 530B and the egress buffer (EB) components 570A-570H having respective egress buffers. The EB components 570A-570H are connected to the output ports 580A-580H, respectively.

Each of the EB components 570A-570H may be controlled by its own controller and/or processor. For example, the EB components 570A-570H may be controlled by respective egress controllers 572A-572H. In one or more implementations, the EB components 570A-570H may be first-in first-out buffers that store data (e.g. cells, packets, etc.) received from the ITMs 530A-B. In one or more implementations, one or more EB components 570A-570H may be connected to two or more packet processors. The data may then be read out for transmission by one or more egress packet processors. Each of the EB components 570A-570H may be implemented in hardware (e.g., an ASIC, an FPGA, a PLD, a controller, a state machine, gated logic, discrete hardware components, or any other suitable devices), software, and/or a combination of both.

Each of the ingress buffers 532A-B may be partitioned into multiple memory banks. For example, in some aspects, the bandwidth of the memory banks in each of the ITMs 530A-B may be used to support higher total read and write bandwidth than that provided by a single memory. Write operations may be forwarded to memory banks in such a way as to avoid conflicts with read operations and/or other write operations.

The single centralized main scheduler 540 in the hybrid-shared traffic manager 510 may schedule packets based on quality of service (QoS) requirements and/or bandwidth availability across the ingress buffers 532A-B. Thus, the main scheduler 540 may issue read requests to read cells from the ingress buffers 532A-B of the ITMs 530A-B based on QoS requirements and/or bandwidth availability across the ingress buffers 532A-B of the ITMs 530A-B. For example, if eight cells are set to be transferred to the egress pipelines per clock cycle, an average of eight cells may be scheduled and read from the ingress buffers every cycle to maintain bandwidth to the output ports. The main scheduler 540 may be controlled by its own scheduling controller 542. The main scheduler 540 may be implemented in software (e.g., subroutines and code), hardware (e.g., an ASIC, an FPGA, a PLD, a controller, a state machine, gated logic, discrete hardware components, or any other suitable devices), and/or a combination of both.

If the main scheduler 540 schedules reading multiple cells at the same time from the same memory location, colliding read requests to the ingress buffers may result. The collision may occur when two or more read requests read cells from the same memory bank of the ingress buffer (e.g., ingress buffer 532A or 532B) in a same cycle. For example, the collision may occur because the scheduling is based on QoS parameters and may require any queued packet at any time to be sent to a proper output port. This results in an uncontrolled selection of read address banks (memory read requests) to the buffers. Therefore, it may not be possible to guarantee that the main scheduler 540 will schedule non-colliding reads. Such collisions should be avoided to prevent stalling output scheduling and dequeue operations.

In some aspects, to compensate for these scheduling conflicts, the architecture may allow for memory read requests to be delayed and thus to occur out-of-order. In one or more implementations, the cell read requests from the main scheduler 540 may be forwarded to a corresponding read launcher (e.g., read launcher 550A or 550B), which may be a logical block and/or a dedicated hardware component. The read launcher 550A or 550B resolves the memory bank conflicts and can issue a maximum number of non-colliding reads per clock cycle to each ITM's data buffer. One or more of the read requests that collide with each other are held by a corresponding read launcher (e.g., the read launcher 550A or 550B) until later cycles when the one or more read requests can be issued with no collisions. In one example, if first and second read requests collide with each other, the first read request may be issued during a next cycle and the second read request may be issued during a subsequent cycle after the next cycle. In another example, if first and second read requests collide with each other, the first read request may be issued during a current cycle and the second read request may be issued during a next cycle. In one or more implementations, the read requests with collisions may be held in temporary request FIFOs (e.g., associated with the read launcher 550A or 550B), allowing the read requests with collisions to be delayed in the read launcher without blocking read requests to non-colliding banks. This allows the main scheduler 540 to continue scheduling cells as needed to keep up with the output port bandwidth demands without stalling. Hence, using the read launcher 550A or 550B, the cell read requests may be reordered to avoid collisions. For example, while reordering, older read requests may be prioritized over newer read requests.
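By way of illustration only, the collision handling described above may be sketched as follows. The class name `ReadLauncher`, the use of one request FIFO per memory bank, and the arrival counter used to prefer older requests are assumptions made for this sketch and are not taken from the disclosure.

```python
from collections import deque


class ReadLauncher:
    """Toy model of a read launcher (hypothetical structure, not the patented design)."""

    def __init__(self, num_banks, max_reads_per_cycle):
        # One temporary request FIFO per memory bank (an assumption of this sketch).
        self.pending = [deque() for _ in range(num_banks)]
        self.max_reads = max_reads_per_cycle
        self.seq = 0  # arrival counter, used to prefer older requests

    def submit(self, bank, cell_id):
        """Accept a read request from the scheduler without stalling it."""
        self.pending[bank].append((self.seq, cell_id))
        self.seq += 1

    def issue_cycle(self):
        """Issue at most one read per bank this cycle, oldest head request first."""
        heads = [(fifo[0][0], bank) for bank, fifo in enumerate(self.pending) if fifo]
        heads.sort()  # oldest head-of-FIFO request first
        issued = []
        for _, bank in heads[: self.max_reads]:
            _, cell_id = self.pending[bank].popleft()
            issued.append((bank, cell_id))
        return issued


rl = ReadLauncher(num_banks=4, max_reads_per_cycle=2)
rl.submit(0, "c0")
rl.submit(0, "c1")  # collides with c0 on bank 0, so it is held
rl.submit(1, "c2")
rl.submit(2, "c3")
cycle1 = rl.issue_cycle()
cycle2 = rl.issue_cycle()
```

In this sketch the newer request `c2` is issued before the older, held request `c1`, which is the out-of-order behavior that the egress side must later undo.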

The read launcher 550A or 550B allows some newer read requests to be issued before older delayed read requests, thus creating possible "out-of-order" data reads from the buffer. After being read from the ingress buffer, the packets and cells are then put back in order before being sent out to the final destination by the egress buffers. Further, the architecture may provide read speed-up. For example, an ITM with 4 writes per cycle may support 4+overhead reads per cycle. This read speed-up over the write bandwidth allows the system to catch up after any collisions that may occur. The combination of the out-of-order reads and the read speed-up may allow the architecture to maintain full bandwidth to all output ports of the switch chip.

In one or more implementations, each egress packet processor and the output ports it serves may be supported by a single EB component and/or an EB component may support multiple egress packet processors. For example, the output port 580A and the packet processor serving the output port 580A are supported by the EB component 570A. There are eight EB components 570A-570H in the hybrid-shared switch architecture 500 illustrated in FIG. 5. The EB component may contain a relatively small buffer that re-orders the data from the ingress buffers and feeds each egress packet processor. Because the EB component supports only a single egress packet processor, the EB component may be implemented as a simple buffer structure in one or more implementations.
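As a non-limiting sketch, the re-ordering performed by an EB component may be modeled as a small buffer that releases cells in their original order. The disclosure does not state how order is tracked; the per-cell sequence number and the name `ReorderBuffer` are assumptions of this example.

```python
class ReorderBuffer:
    """Releases cells in sequence order even when they arrive out of order."""

    def __init__(self):
        self.waiting = {}   # sequence number -> cell, for cells that arrived early
        self.next_seq = 0   # next sequence number allowed to leave

    def push(self, seq, cell):
        """Accept one cell and return the cells that can now be released in order."""
        self.waiting[seq] = cell
        released = []
        while self.next_seq in self.waiting:
            released.append(self.waiting.pop(self.next_seq))
            self.next_seq += 1
        return released


rb = ReorderBuffer()
first = rb.push(1, "b")    # arrives early, must wait for sequence 0
second = rb.push(0, "a")   # unblocks both cells
```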

The ITM's shared buffer may be capable of supporting X incoming cells and X+overspeed outgoing cells per cycle, where the ITM may include the standard admission control and queuing of a shared-buffer traffic manager. A centralized main scheduler in the hybrid-shared switch architecture may have visibility into all ITMs and can schedule queues based on QoS parameters and ITM availability (e.g., ingress buffer availability). A read launcher is implemented to resolve buffer bank conflicts of multiple scheduled cells (e.g., read collisions) and allows out-of-order buffer reads to prevent stalling the main scheduler. Each EB component in the hybrid-shared architecture may be capable of re-ordering data (e.g., to the order of arrival) before forwarding to the egress packet processor (and to output ports).

The hybrid-shared switch architecture is capable of scaling bandwidth higher without the limitations of the shared-buffer and input-output-buffered architectures. The hybrid-shared architecture has close to the same performance of the shared-memory architecture in terms of buffering capacity for each port, but can scale to a much larger total bandwidth. Further, compared to the shared-buffer architecture, the hybrid-shared architecture has a smaller bandwidth requirement on its ingress buffers of the ITMs. This makes the ingress buffer easier to implement with simple single-port memories. The hybrid-shared switch can scale up more in capacity by adding more ingress tiles with the same bandwidth requirement on each element.

The hybrid-shared switch architecture also has the following advantages over the input-output-buffered architecture. For example, a larger buffer space is available to all ports to store data in the event of temporary over-subscription of an output port. Since there are fewer ingress buffers, each one has a significantly larger percentage of the overall payload buffer. Further, less overhead may be needed for queuing control structures. The input-output-buffered architecture requires a full set of virtual output queues at each ingress buffer block. While the hybrid-shared switch architecture also requires a full set of virtual output queues at each ingress buffer, the hybrid-shared switch architecture has fewer ingress buffers, so the number of redundant VoQs is greatly reduced. In the hybrid-shared switch architecture, temporary collisions of the cell requests are non-blocking. The reordering of read requests of cells and internal speed-up make the hybrid-shared architecture non-blocking, while the input-output-buffered architecture may suffer input blocking from source congestion under various traffic patterns. A single centralized main scheduler may be aware of all VoQs and thus allows for optimal scheduling across both input tile buffers. The main scheduler can take into account source tile availability as well as the QoS requirements.

In each of the ITMs 530A-B, a set of output port queues (e.g., virtual output queues (VoQs)) may be enqueued. The main scheduler 540 may be configured to select an ITM of the ITMs 530A-B in the hybrid-shared traffic manager 510 and to select VoQs in the selected ITM. Each of the ITMs 530A-B may be able to handle a maximum number of dequeues per cycle, and thus the main scheduler 540 may schedule up to this maximum per cycle per ITM. In one or more implementations, the input tile read bandwidth may provide overspeed compared to the input tile write bandwidth. For example, each of the ITMs 530A-B may be capable of writing X cells per clock while being capable of reading X+overspeed cells per clock. Each of the EB components 570A-H may implement a shallow destination buffer for burst absorption plus flow control isolation.

The payload memory of each ingress buffer of the Ingress Data Buffers 532A-B may be partitioned into segments referred to as memory banks, where the total read and write bandwidth is higher than the bandwidth of each memory bank. Each memory bank may be accessed in parallel to other memory banks, and thus a read operation and/or a write operation may be performed at each memory bank. In some cases, the payload memory may include a large number of memory banks, but only a fraction of available bandwidth may be used.

In addition, the control paths may be configured for multi-cell enqueue and multi-cell dequeue. Traditionally, a traffic manager control structure may be capable of enqueuing and dequeuing 1 or 2 packets or cells per cycle. For example, traditionally, each packet received may trigger generating an enqueue, and each packet transmitted may trigger a dequeue, which may set the enqueue/dequeue rate to be greater than or equal to a maximum packet-per-second rate for a switch. However, the traditional approach may limit the ITM capacity, where, for example, a 4 input/output ITM may require 16-port memories (4 enqueues and 4 dequeues, each requiring a read and a write) for VoQ state.

In the hybrid-shared switch architecture 500, to provide a high capacity ITM while providing capability to receive 1 packet or cell per clock from each input pipeline and to transmit 1 packet or cell per clock to each egress pipeline, a control path in each ITM (e.g., ITM 530A or 530B) may be configured to handle multi-packet enqueues and multi-packet dequeues. By creating multi-packet events with multi-packet enqueues and dequeues, the frequency of events being handled by the control path decreases, which may allow the hybrid-shared traffic manager 510 to operate at lower frequencies and/or use more efficient lower port count memories. For example, each ITM may support 6 input pipelines and thus an average of 6 packets per cycle may be enqueued. For larger packets, multiple cells of a large packet may be enqueued at a time. Smaller packets may be accumulated together to allow multi-packet enqueue and multi-packet dequeue. For example, a single scheduling event may naturally dequeue 6 or more cells (e.g., from a single large packet or multiple small packets). Multiple small packets may also be accumulated for single multi-packet enqueue events to an output queue.

Each ITM may also include a shared buffer that may be a high bandwidth buffer, where the buffer is partitioned per ITM. For example, each ITM may support 8 writes and 8+overspeed reads per cycle. There is no limitation on sharing within each ingress buffer (e.g., ingress buffer 532A or 532B).

In some examples, the shared buffer may be implemented using efficient memories. For example, the total payload buffer size may be 64 MB, with 32 MB of a shared buffer (e.g., ingress buffer 532A or 532B) per ITM, implemented as N banks of (32/N) MB per bank. A memory bank may perform 1 read or 1 write per clock cycle. The write bandwidth may be deterministic (e.g., with flexible addressing). The read bandwidth may be non-deterministic (e.g., with fixed addressing), where reads cannot be blocked by writes and reads can be blocked by other reads.
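As an illustrative sketch of why writes may be deterministic under flexible addressing, a write can be steered to any bank that is not being read in the current cycle, so reads are never displaced by writes. The function name `pick_write_banks` and the lowest-index-first choice are assumptions of this example; an actual implementation may balance bank fill levels instead.

```python
def pick_write_banks(num_banks, read_banks, num_writes):
    """Choose banks for this cycle's writes, skipping banks that are being read.

    Each bank performs one read or one write per cycle, so a bank that is
    serving a read is unavailable for writes in that cycle.
    """
    busy = set(read_banks)
    free = [bank for bank in range(num_banks) if bank not in busy]
    if len(free) < num_writes:
        raise ValueError("not enough free banks for this cycle's writes")
    return free[:num_writes]


# 16 banks, 5 reads in flight (4 writes plus overspeed reads), 4 cells to write.
chosen = pick_write_banks(16, read_banks=[0, 1, 2, 3, 5], num_writes=4)
```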

FIG. 6 is an example diagram 600 illustrating banks of a buffer per tile in a hybrid-shared switch architecture and data paths to egress buffers within a network switch, in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

As shown in FIG. 6, the network switch 104 may include the ingress buffer 532A configured to receive data via IPs 520A-520D and EB components 570A-570D. In an example data path for the ITM 530A of the hybrid-shared switch architecture, the ingress buffer 532A may be divided into N payload memory banks. 4 writes per cycle may be performed into the ingress buffer 532A and 5 reads per cycle may be performed from ingress buffer 532A. The 5 reads from the ITM buffer memory may be meshed with 5 reads from another ingress buffer (e.g., ingress buffer 532B) from another ITM (e.g., ITM 530B), and then the results may be sent to 4 EB components 570A-570D, to be output through 4 EPs 580A-580D. No more than a maximum number of cells per cycle may be sent to a single EB component of the EB components 570A-570D from the ingress buffer 532A. In one or more implementations, a small EB staging buffer may be implemented per EP to reorder read data and to absorb short bursts per EP.

In one or more implementations, a control plane of the hybrid-shared switch architecture may not need to scale to the highest packet-per-second (pps) rate. VoQ enqueue/dequeue events may be reduced, and the enqueue rate and the dequeue rate may be less than the smallest-packet pps rate. Multi-packet enqueue/dequeue events may require VoQ enqueue and dequeue cell or packet accumulation, and/or may require multi-packet enqueue/dequeue control structures. Controlled distribution of accesses across physical memories may reduce the individual memory bandwidth requirement.

In the subject technology, an increased number of input port interfaces are available per control plane, and thus fewer control planes may be needed. Fewer control planes may require fewer VoQ structures, which may reduce the area taken by VoQ structures and/or may reduce partitioning of output queue guarantees/fairness. Further, the increased number of input port interfaces per control plane improves sharing of resources among sources within a tile and may also reduce source congestion. For example, a single pipeline may burst to egress at higher than a pipeline bandwidth (within an input tile limit). In addition to sharing within the input tile, the input tile may provide the overspeed, as discussed above (e.g., 5 cell reads for 4 input pipelines). The control plane may be implemented using low port count memories due to low rate multi-packet enqueue and dequeue events.

For larger packets, multi-cell enqueues may be created by having a reassembly FIFO per input port accumulate the packet, which may then be enqueued as a single event to its target VoQ. For small packets that are each targeting different VoQs, reassembled packet state is not sufficient. Thus, according to an aspect of the disclosure, the total VoQ database may be segmented into N VoQ banks, where each VoQ bank has (total VoQs/N) VoQ entries and there is no duplication of VoQ state. Output accumulation FIFOs are implemented prior to the VoQ enqueue stage, where cells within each FIFO cannot address more than M VoQs in any VoQ bank, where M is the maximum number of VoQ enqueues that a VoQ bank can receive per clock cycle. Multiple cells may then be read from a FIFO addressing up to N VoQs, knowing that no more than M VoQs in any bank are accessed by the event. In an example implementation, N=8 and M=1.

The following description explains the traffic manager control plane with regard to the ITM 530A, as an example. Another ITM (e.g., ITM 530B) may include a similar traffic manager control plane to the traffic manager control plane of the ITM 530A. The traffic manager control plane of the ITM 530A may include an orthogonal queue set (OQS) block 1414 as part of the Queuing block 830A. The traffic manager control plane may reside in the ITM 530A. In one or more implementations, the traffic manager control plane may reside in the queuing component 534A of the ITM 530A. In one or more implementations, the Queuing component 534A may utilize the ingress buffer 532A to store data and/or queues. The Queuing block 830A is connected to a main scheduler 540 of the traffic manager, where the main scheduler 540 is capable of communicating with a read launcher (RL) 550A. The RL 550A communicates with the ingress buffer 532A to read data packets to be forwarded to EPs via an EB block. The ingress buffer 532A may also communicate with the ingress buffer 532B of the ITM 530B. Additional details regarding the OQS block 1414 and its parent Queuing block 830A and the RL 550A are provided infra.

At the OQS block 1414, the output accumulation FIFO of the OQS block 1414 may accumulate cells/packets for the same VoQ(s) to create multi-cell and/or multi-packet enqueues. This allows the control path enqueue rate to be less than the maximum packet per second rate. The OQS block 1414 may compress multiple enqueues into OQS queues. At the OQS block 1414, each packet received from the input pipelines may be switched to an output accumulation FIFO in the OQS block 1414. For example, the OQS block 1414 may receive up to 4 cells per cycle from the input pipelines.

Further, at the OQS block 1414, the output accumulation FIFO may also accumulate cells/packets within each output accumulation FIFO for a set of VoQs. The set of VoQs within an output accumulation FIFO may be called an OQS, where each VoQ within the same OQS is put in a separate VoQ bank in the Queuing block. Thus, draining the output accumulation FIFO in the OQS block 1414 may generate one or more VoQ enqueues (e.g., up to the number of VoQ banks in Queuing block 830A) that are distributed across VoQ banks in the Queuing block 830A, each VoQ enqueue to a different VoQ bank. Each VoQ enqueue may be a multi-cell and/or multi-packet enqueue, i.e., it may add from 1 up to the maximum number of cells per clock cycle to the VoQ. This may achieve multiple VoQ enqueues per clock cycle using VoQ banks in the Queuing block 830A, where each VoQ bank supports 1 VoQ enqueue per clock. In one or more implementations, a VoQ bank may support multiple enqueues per clock cycle, in which case the OQS set of queues within an Output Accumulation FIFO may contain multiple VoQs in each VoQ bank.
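For illustration only, draining one output accumulation FIFO into per-bank VoQ enqueues may be sketched as below for the case where each bank accepts one enqueue per clock. The function name `drain_to_enqueues` and the modulo mapping from VoQ number to VoQ bank are hypothetical and are chosen only to keep the example short.

```python
def drain_to_enqueues(cells, num_banks=8):
    """Group cells drained from one output accumulation FIFO into VoQ enqueues.

    cells: list of (voq_id, cell) pairs in FIFO order.
    Returns {bank: (voq_id, [cells])}, i.e. one multi-cell enqueue per bank.
    """
    enqueues = {}
    for voq_id, cell in cells:
        bank = voq_id % num_banks  # hypothetical VoQ-to-bank mapping
        if bank in enqueues and enqueues[bank][0] != voq_id:
            # Within one OQS, each VoQ is expected to sit in a separate bank.
            raise ValueError("OQS rule violated: two VoQs share a bank")
        enqueues.setdefault(bank, (voq_id, []))[1].append(cell)
    return enqueues


# Two cells for VoQ 0 and one for VoQ 1 become two enqueues, one per bank.
result = drain_to_enqueues([(0, "a"), (1, "b"), (0, "c")])
```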

Up to X cells from the input pipelines can be written to between 1 and X output accumulation FIFOs per clock cycle. For example, all packets/cells may be written to one output accumulation FIFO or may be written to one or more different output accumulation FIFOs. In some aspects, the output accumulation FIFO throughput may be large enough to ensure no continuous accumulating build-up and to avoid FIFO buffer management/drops. Thus, for example, the output accumulation FIFO throughput may be greater than or equal to the total input pipe bandwidth plus any required multicast enqueue bandwidth within the ITM. This also allows the FIFOs to be shallow and fixed in size, which minimizes the control state required to manage each FIFO. Although the Output Accumulation FIFOs have a high enqueue plus dequeue rate, there are fewer Output Accumulation FIFOs than VoQs, and the control state per Output Accumulation FIFO is considerably smaller than the VoQ enqueue state, which the architecture allows to be implemented in area- and power-efficient memories supporting as few as 1 enqueue per clock.

The output accumulation FIFO state has a high access count, e.g., a read plus write for each FIFO enqueue and dequeue event. In one implementation, there may be one output accumulation FIFO per output port and the number of VoQ banks may be equal to the number of queues within a port. In another implementation, there may be one output accumulation FIFO per a pair of output ports and the number of VoQ banks may be twice the number of queues within a port.

The architecture according to the disclosure may ensure that a dequeue from an output accumulation FIFO cannot overload a VoQ bank enqueue rate. Multiple cells may be read from an output accumulation FIFO which can contain one or more packets. For example, one large packet may be read from an Output Accumulation FIFO and enqueued to a single VoQ or multiple small packets may be read to the same or different VoQs. The VoQ bank implementation (and underlying VoQ structure) is configured to support multiple cells/packets being added to a VoQ in a single enqueue update.

FIG. 7 is an example diagram 700 illustrating the OQS block in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

The output accumulation FIFO provides (output) enqueue accumulation. In one or more implementations, the output port accumulation FIFO for the OQS block 1414 may be a part of the ingress buffer 532A or may reside in the queuing component 534A. The OQS block 1414 receives a serial stream of packets from the Input Pipelines. Up to the number of cells received per clock from the Input Pipelines may be written and the cells to be written per clock cycle may be distributed to one or more of the output accumulation FIFOs. For example, 8 cells in a clock cycle may be distributed to one or more of 8 output accumulation FIFOs. In the example diagram, in one clock cycle, the OQS block 1414 receives 8 cells (A0-A7). Out of the 8 cells in one clock cycle, one cell (A2) is written to one output accumulation FIFO, three cells (A7, A1, and A0) are written to another output accumulation FIFO, and four cells (A3, A4, A5, A6) are written to another output accumulation FIFO. The OQS block 1414 may serve as a control switching point from a source to a destination.

The output accumulation FIFOs may be sized to typically avoid creating back pressure when a large packet is written into an output accumulation FIFO. The OQS arbiter 720 may dequeue more cells per clock cycle from an Output Accumulation FIFO than are written in a clock cycle. This prevents the output accumulation FIFO from reaching its maximum fill level and provides additional dequeue bandwidth to read Output Accumulation FIFOs with shallow fill levels. In one or more implementations, each output port or set of output ports may be mapped to an output accumulation FIFO.

The OQS arbiter 720 can make up to N FIFO selections per clock cycle to attempt to read Y cells per clock, where Y may contain overspeed compared to the number of cells received by the ITM from its Ingress Pipelines in one clock cycle. Different implementations may have different values of N and Y to meet the switch requirements. In an example, N may be 1 and Y may be 6; in another example, N may be 2 and Y may be 8. The OQS arbiter 720 may generate a serial stream of packets. For example, the OQS arbiter 720 may completely drain one output accumulation FIFO to end of packet before switching to a different output accumulation FIFO. The output accumulation FIFOs with the deepest fill level (quantized) may have the highest priority followed by FIFOs that have been in a non-empty state the longest.
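The priority rule stated at the end of the preceding paragraph (deepest quantized fill level first, then longest time non-empty) may be sketched as follows. The function name `select_fifo`, the quantization step of 4 cells, and the final tie-break on FIFO name are assumptions of this example only.

```python
def select_fifo(fifos, now, quantum=4):
    """Pick the next output accumulation FIFO to drain.

    fifos: {name: (fill_level, nonempty_since)} with times in clock cycles.
    Priority: highest quantized fill level, then longest time non-empty.
    Returns the chosen FIFO name, or None if every FIFO is empty.
    """
    candidates = [
        (fill // quantum, now - since, name)
        for name, (fill, since) in fifos.items()
        if fill > 0
    ]
    if not candidates:
        return None
    return max(candidates)[2]


state = {"a": (9, 5), "b": (2, 1), "c": (3, 7)}
deep_choice = select_fifo(state, now=10)
shallow_choice = select_fifo({"b": (2, 1), "c": (3, 7)}, now=10)
```

With these values FIFO `a` is chosen first because it is in a deeper quantized level. Between `b` and `c`, which fall in the same quantized level, `b` is chosen because it has been non-empty longer, even though `c` holds one more cell.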

The OQS arbiter 720 may use a FIFO ager scheme. The FIFO ager scheme is used to raise the priority of aged FIFOs with shallow fill levels above other non-aged FIFOs also with shallow fill levels. FIFO(s) with high fill levels (aged or not) have the highest priority, as these have efficient dequeues that provide overspeed when selected and free up dequeue bandwidth for less efficient shallow dequeues. The output FIFO ager scheme of the OQS arbiter 720 may further be able to set the ager timer based upon the output port speed and queue high/low priority configuration. Hence, for example, an output FIFO ager scheme may be used to minimize the delay through the output stage for packets requiring low latency.

FIG. 8 is an example diagram 800 illustrating a Queuing block 830A in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

The Queuing block 830A implements a VoQ banking stage containing VoQ accumulation for multi-cell dequeue events (a single dequeue can be multiple cells). Cells from the same output accumulation FIFO 820A cannot overload any one VoQ bank. Other control structures such as Output Admission Control may have the same banking structure as the VoQ banking in Queuing block 830A. Controlled distribution of multi-cell enqueues across physical memory banks is performed. In one or more implementations, the VoQ banks of the Queuing block 830A may be a part of the ingress buffer 532A or may reside in the queuing component 534A.

This structure performs multi-cell enqueues to one or more VoQs within an OQS block each clock cycle while implementing the VoQ banks with databases that only support as few as one enqueue per clock cycle. Each VoQ enqueue can add multiple cells/packets to the VoQ.

As previously discussed, each read from an output accumulation FIFO may generate enqueue requests for VoQ(s) that do not overload any one VoQ bank. The output accumulation FIFO stage can provide up to Y cells from N output accumulation FIFOs in each clock cycle. This may generate N VoQ enqueues per clock cycle where each OQS is assigned one VoQ per VoQ bank or a multiple of N VoQ enqueues per clock cycle where each OQS is assigned a multiple of VoQs per VoQ bank. An implementation may support reading from N Output Accumulation FIFOs per clock such that the maximum number of VoQ enqueues generated to a VoQ bank exceeds the number the VoQ bank can support in a clock cycle. If this occurs the implementation should hold back the latest packets that overload the VoQ bank to be enqueued first in the next clock cycle. The VoQ enqueues held back until the next cycle can be combined with new enqueue requests received in the next cycle.
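As a minimal sketch of the hold-back behavior described above, enqueue requests that exceed a bank's per-cycle limit may be kept in a per-bank queue and served first in the next cycle. The class name `VoqBankEnqueuer` is hypothetical, and this sketch does not merge held requests with new requests to the same VoQ, which an implementation may do.

```python
from collections import deque


class VoqBankEnqueuer:
    """Accepts at most `per_bank_limit` enqueues per VoQ bank per cycle."""

    def __init__(self, num_banks, per_bank_limit=1):
        self.held = [deque() for _ in range(num_banks)]
        self.limit = per_bank_limit

    def cycle(self, requests):
        """requests: list of (bank, enqueue). Returns enqueues accepted this cycle."""
        for bank, enqueue in requests:
            # New requests queue behind anything held back from earlier cycles.
            self.held[bank].append(enqueue)
        accepted = []
        for bank, queue in enumerate(self.held):
            for _ in range(min(self.limit, len(queue))):
                accepted.append((bank, queue.popleft()))
        return accepted


enq = VoqBankEnqueuer(num_banks=2)
c1 = enq.cycle([(0, "x"), (0, "y"), (1, "z")])  # "y" overloads bank 0 and is held
c2 = enq.cycle([(0, "w")])                      # held "y" goes ahead of new "w"
c3 = enq.cycle([])
```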

FIG. 9 is an example diagram 900 illustrating a hybrid-shared queue structure, in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

Each cell (or packet) within a VoQ is assigned a slot entry in a Queue Block to hold the cell/packet's control state. The VoQ structure for the VoQ in FIG. 9 is constructed of a linked list of Queue Blocks, where the Queue Block Link database is used to create the links. This VoQ structure has the benefit of allowing multiple cells or packets within a single Queue Block to be read in one access while still supporting the flexible and dynamic allocation of Queue depth to active VoQs. Thus, a backlogged queue is constructed of dynamically allocated Queue Blocks. For example, the VoQ structure in FIG. 9 includes a Queue Block implementation containing 8 cells or packets per Queue Block. The cell control holds the cell payload memory address. Up to 8 cells can be written to a VoQ Queue Block per clock cycle.
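By way of example only, a VoQ built from a linked list of Queue Blocks may be sketched as below. The class names and the use of object references in place of a separate Queue Block Link database are simplifications assumed for this illustration.

```python
class QueueBlock:
    SLOTS = 8  # cells per Queue Block, as in the FIG. 9 example

    def __init__(self):
        self.cells = []   # cell control entries (payload memory addresses)
        self.next = None  # link to the next Queue Block in the VoQ


class VoQ:
    """A queue made of dynamically allocated Queue Blocks."""

    def __init__(self):
        self.head = None
        self.tail = None

    def enqueue(self, addrs):
        """Append payload addresses, allocating a new Queue Block when the tail is full."""
        for addr in addrs:
            if self.tail is None or len(self.tail.cells) == QueueBlock.SLOTS:
                block = QueueBlock()
                if self.tail is None:
                    self.head = block
                else:
                    self.tail.next = block
                self.tail = block
            self.tail.cells.append(addr)

    def dequeue_block(self):
        """Read every cell held in the head Queue Block in a single access."""
        if self.head is None:
            return []
        block, self.head = self.head, self.head.next
        if self.head is None:
            self.tail = None
        return block.cells


q = VoQ()
q.enqueue(range(10))            # 10 cells span two Queue Blocks
first_block = q.dequeue_block()
second_block = q.dequeue_block()
```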

In one or more implementations, the number of cell control slots within a Queue Block may change depending upon the size (number of cells) and frequency of the device's multi-cell enqueues and dequeues.

Configurable mapping of {EP number, MMU Port number, MMU Queue number} to VoQ banks may be provided. The mapping may avoid the same MMU Queue number for all ports being mapped to the same VoQ bank. For implementations where N OQS FIFOs are read in a clock cycle that may overload the maximum enqueue rate of a VoQ bank(s), the mapping can attempt to distribute the enqueue load across the VoQ banks to reduce the probability of any one bank being overloaded.
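One hypothetical mapping with this property is a modular sum of the three identifiers. The source states only that the mapping is configurable, so the function `voq_bank` and its formula are assumptions chosen to show how the same MMU Queue number on different ports can land in different banks.

```python
def voq_bank(ep, port, queue, num_banks):
    """Map {EP number, MMU Port number, MMU Queue number} to a VoQ bank.

    Hypothetical formula: adding the port number rotates the bank
    assignment, so queue q of adjacent ports maps to different banks.
    """
    return (ep + port + queue) % num_banks


# MMU Queue 0 on ports 0..3 of EP 0, with 4 banks, spreads over all banks.
banks = [voq_bank(0, port, 0, 4) for port in range(4)]
```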

According to one or more implementations, the subject disclosure has a payload memory per ITM. For example, an ITM payload memory supports NUMIPITM cell writes plus (NUMIPITM+X) cell reads per clock cycle, where NUMIPITM is the number of IP interfaces connected to the ITM and X is the required read overhead to minimize input blocking and egress buffering to maintain port throughput. The subject disclosure's data path allows a payload memory supporting multiple writes and reads per clock to be implemented using efficient single port memories. To achieve such features, the total ITM payload depth is segmented into a number of shallower payload memory banks. Thus, an ITM payload memory may be segmented into multiple payload memory banks. Each payload memory bank may be partitioned into several payload memory instances. In an example payload memory, each payload memory bank supports one write or one read per clock, which can be implemented using one or more single port memory instances.

With regard to the dequeue feature of the subject disclosure, the dequeue architecture utilizes multi-cell VoQ dequeues to support dequeue rates lower than the required packet per second rate. In one or more implementations, the number of dequeues per clock and the maximum number of cells per dequeue may be set so that the maximum total dequeue cell rate is higher than the sum of the required output port bandwidth to allow for shallow VoQ dequeues. Under maximum VoQ enqueue loads, shallow dequeues may cause other VoQs to back up which can then be drained at (up to) the maximum rate to achieve the overall required throughput. The number of cells per dequeue and the number of dequeues per clock cycle may be device specific.

Each of the RLs 550A-B may buffer bursts of read requests. For example, the RLs 550A-B may each generate a maximum of 8 cell read requests to payload memory per clock. The goal may be to issue the 8 oldest non-conflicting payload reads. The RLs 550A-B may each reorder read requests to avoid payload memory bank collisions. Each of the RLs 550A-B may back pressure the main scheduler 540 if a generated read request rate cannot keep up with the dequeue rate. The RLs 550A-B may exchange state to minimize the cell read burst length for an EB.
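The goal of issuing the oldest non-conflicting reads can be sketched as below. The sketch assumes one read per payload memory bank per clock and represents each request as a `(bank, address)` pair; the function name `select_reads` and this data layout are illustrative assumptions, not the RL design.

```python
def select_reads(pending, max_reads=8):
    """Pick up to max_reads of the oldest requests that hit distinct banks.

    pending is a list of (bank, address) pairs in age order, oldest first.
    Returns (issued, remaining); remaining keeps its age order so that
    skipped requests are the first candidates in the next clock.
    """
    issued, remaining = [], []
    busy_banks = set()
    for req in pending:
        bank = req[0]
        if len(issued) < max_reads and bank not in busy_banks:
            busy_banks.add(bank)
            issued.append(req)
        else:
            # Bank collision or issue limit reached: defer, which reorders
            # this read behind younger reads to other banks.
            remaining.append(req)
    return issued, remaining
```

Because younger reads may overtake a deferred read, the read data can return out of scheduler order and has to be reordered downstream.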

EB buffering may behave almost as a single port FIFO. The EB components 570A-H may not interfere with priority/fairness decisions of the main scheduler 540. In one or more implementations, each of the EB components 570A-H may contain multiple queues to allow for fast response to Priority-based flow control.

In one or more implementations, the main scheduler 540 may be configured to select a VoQ from which a number of cells will be dequeued. The selection may attempt to maintain output port bandwidth while selecting VoQs within the port to adhere to the port's QoS configuration.

In this architecture each dequeue selection can read multiple cells from a VoQ. For simplicity, in one or more implementations, it is expected (though not required) that each dequeue will read a maximum of the number of cell slots within a Queue Block, e.g. 8 using the VoQ structure shown in FIG. 9. The main scheduler 540 may adjust back to back port selection spacing based upon the port speed but also the number of cells within each dequeue. An ITM may not be able to provide sufficient dequeue bandwidth for all output ports. The main scheduler 540 may consider loading and availability of VoQs in both ITMs and optimize throughput by issuing dequeues to each ITM when possible without compromising a port's QoS requirements.

The main scheduler (e.g., main scheduler 540) transmits packets from the ITM payload buffers (e.g., ingress buffers 532A-B) to an egress buffer of an EB component per EP interface or set of EP interfaces. As the main scheduler can transmit with overspeed to each port, the EB component can contain several packets per port. In addition to the main scheduler, each EB component may or may not contain its own scheduler to transmit from the EB component to its EP interface(s). This EB scheduler matches the main scheduler's strict priority policies (to minimize strict priority packet latencies through the EB component) and port bandwidth allocations.

As the main scheduler can schedule multiple cells per dequeue, it can generate significant overspeed when scheduling full dequeues. The dequeue control and data path may have restrictions on the total number of cells, the number of cells per EB component, and the number of cells per port that the main scheduler should observe. This may be implemented using credits/flow control and scheduler pacing (awareness of maximum and average burst cell rates for the total, EBs, and ports). EB rates are constant across EB components independent of the port bandwidth active within an EB component. Thus, the main scheduler does not attempt to control EB fairness. Port rates are different for the different port speeds supported by the device, and may have configured values.

Minimum port to port spacing may be enforced based upon the number of cells within each dequeue. One or more implementations may also consider the number of bytes to be transmitted from each cell. Dequeues with a higher number of cells or bytes may observe longer port to port spacing to allow other ports to obtain more dequeue bandwidth. Even with higher spacing, the port is still allocated overspeed compared to the required rate to the EP.

FIG. 10 is an example diagram 1000 illustrating credit protected dequeue control/data path limits for a network switch (e.g., network switch 104), in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

As illustrated in FIG. 10, the main scheduler 540 may observe the output port credit within the EB component 570A, and may determine whether to select an output port based on the observed output port credit. Scheduler picks by the main scheduler 540 are passed to an ITM's Queuing block which retrieves the cell control including payload read address before issuing cell read requests to the RL.

Each dequeue accesses a different VoQ state within the Queuing block. As described within the enqueue flow, the Queuing block contains VoQ banks and the OQS FIFOs control the number of enqueues addressing each bank. In certain applications, the scheduling decision may ensure that the dequeue rate to any VoQ bank does not exceed the bank's guaranteed dequeue bandwidth. For an example implementation in FIG. 11 that supports 2 dequeues per VoQ bank, the main scheduler 540 may be unaware of VoQ banking in which case both dequeues could access the same VoQ bank. In other applications, the number of dequeues per bank may be more or less than 2 dequeues per clock. The scheduler may actively select VoQs to avoid overloading a VoQ bank's dequeue rate without impacting the port's QoS requirements. In other applications, the scheduler VoQ selections may overload a VoQ bank's dequeue rate in which case later VoQ selections may be held back to subsequent clocks.

FIG. 11 is an example diagram 1100 illustrating a set of dequeues in one clock cycle, in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

The queue database (e.g., in the Queuing block 830A) may support up to Z dequeues per clock cycle. Each dequeue is independent. The dequeues may be for the same or different VoQ banks within the Queuing structure. In FIG. 11 with Z=2, each bank supports 2 simultaneous dequeue operations. Each dequeue results in 1 to Y cell addresses out of Queuing block 830A (e.g., up to Z×Y cell addresses in total, i.e., 2Y with 2 dequeues).

One task performed by the EB component may be to reorder cells per ITM to original scheduler order. The Read Launcher 550A can reorder cell read requests issued to the ITM to optimize payload memory bandwidth while avoiding payload bank read collisions. The EB component contains a reorder FIFO that reorders the cell read data to the original scheduler order. Once reordered, the cells are forwarded to the EB queues.
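A minimal sketch of such reordering follows. It assumes that each cell carries a sequence number assigned in scheduler order; the source does not describe the tagging mechanism, so the sequence number and the class name `ReorderBuffer` are hypothetical.

```python
class ReorderBuffer:
    """Release cells in the original scheduler order."""

    def __init__(self):
        self.next_seq = 0  # sequence number of the next cell to release
        self.waiting = {}  # seq -> cell data that arrived early

    def push(self, seq, cell):
        """Store an arriving cell and return the cells now in order."""
        self.waiting[seq] = cell
        released = []
        while self.next_seq in self.waiting:
            released.append(self.waiting.pop(self.next_seq))
            self.next_seq += 1
        return released
```

A cell that arrives ahead of its predecessors is held until the gap is filled, after which the whole in-order run is forwarded to the EB queues.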

Another task performed by the EB component is to observe flow control. Pause or PFC flow control to each port may be supported. If pause xoff is received, the EB will stop transmitting that port's traffic to the EP, and the state may be mapped back to the main scheduler to stop it from scheduling to this port. The implementation may allow the EB port FIFO to fill, which will cause the main scheduler to run out of EB port credits and stop scheduling to that port. In one or more implementations, no packet loss due to pause is allowed.
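The credit mechanism described above can be illustrated with a small model in which one credit represents one free cell slot in the EB port FIFO. The class name `EbPort`, its methods, and the one-credit-per-cell accounting are assumptions for illustration.

```python
class EbPort:
    def __init__(self, depth):
        self.depth = depth     # EB port FIFO depth in cells
        self.credits = depth   # free slots as seen by the main scheduler
        self.paused = False    # True while the port is in pause xoff

    def try_schedule(self, cells):
        """Main scheduler side: consume credits, or refuse if too few."""
        if cells > self.credits:
            return False
        self.credits -= cells
        return True

    def drain_one(self):
        """EB side: send one cell to the EP and return its credit."""
        if self.paused or self.credits == self.depth:
            return False  # paused, or the FIFO is empty
        self.credits += 1
        return True
```

While the port is paused the FIFO only fills, so the scheduler eventually runs out of credits and stops selecting the port without dropping any cell.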

For PFC flow control, each port can receive, for example, up to 8 PFC Class xon/xoff flow control status. This state may be mapped back to MMU Queues within the main scheduler so that the main scheduler will stop transmitting from VoQ(s) that are mapped to a PFC class in an xoff state. Further, the EB implementation may support multiple PFC class queues per port that can also be flow controlled by PFC Class(es) to enable faster PFC response times. PFC class(es) mapped to EB PFC class queues may also be mapped to MMU Queues that are mapped to that EB PFC class queue. The EB PFC class queue will stop draining from the EB component and the main scheduler should stop transmitting to the EB PFC class queue.

In one or more implementations, there should be no packet loss within the dequeue flow due to PFC flow control. In this regard, the EB scheduler may not transmit packets from EB PFC class queues in an xoff state to the EP while allowing packets to transmit to the port as long as they are mapped to an EB PFC class queue in an xon state. EB PFC class queues may require EB buffering to absorb packets that were in flight when xoff was received.

Another task performed by the EB component may be EB scheduling to the EP interface. Each EB component may contain an EB scheduler to allocate bandwidth to each port within the EB component. Each port should be allocated a fair portion of the EP bandwidth in line with that allocated by the main scheduler.

The EB component may contain a set of PFC class queues per port. To minimize latency for strict priority packets, an EB PFC class queue can be configured for strict priority selection against other EB PFC class queues within the same port. In addition to observing PFC flow control, this allows strict priority packets to bypass lower priority packets already stored within the EB component. The EB component 570A contains minimum buffering needed to maintain full line rate.
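The interaction between strict priority selection and PFC xoff state can be sketched as below. As a simplification, the sketch treats every class queue of a port as strict priority, whereas the text above only says that a queue can be configured that way; the function name and arguments are hypothetical.

```python
from collections import deque


def pick_class_queue(queues, xoff, strict_order):
    """Select the next packet for one port.

    queues: dict mapping PFC class -> deque of packets.
    xoff: set of PFC classes currently in the xoff state.
    strict_order: list of classes, highest priority first.
    Returns (pfc_class, packet), or None if nothing is eligible.
    """
    for pfc_class in strict_order:
        if pfc_class in xoff:
            continue  # flow controlled: hold these packets, do not drop
        queue = queues.get(pfc_class)
        if queue:
            return pfc_class, queue.popleft()
    return None
```

A packet in a higher priority class queue is selected ahead of packets already stored in lower priority queues, and a class in xoff is skipped while classes in xon continue to transmit.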

FIG. 12 is an example diagram 1200 illustrating an egress buffer component architecture, in accordance with one or more implementations. Not all of the depicted components may be used in all implementations, however, and one or more implementations may include additional or different components than those shown in the figure. Variations in the arrangement and type of the components may be made without departing from the spirit or scope of the claims as set forth herein. Additional components, different components, or fewer components may be provided.

In the example diagram 1200, there is one EB component per EP. The EB component 570A contains minimum buffering needed to maintain full line rate. The EB component 570A implements a second SAF point due to the non-deterministic delay through the RL and the ingress buffer. As shown in FIG. 12, the EB component 570A receives 2 cells per cycle from the main tile buffers and writes 2 cells per cycle into the EB component 570A (e.g., cell0 and cell1). The EB component 570A reads 1 cell per cycle to send to the output port 580A.

A network switch generally supports two methods of passing packets from input ports to output ports: store-and-forward (SAF) and cut-through (CT). Thus, the hybrid-shared traffic manager architecture may also support SAF switching and CT switching. SAF switching accumulates entire packets in the ITM's data buffer before scheduling and transmitting them to the output port. SAF switching is utilized when an output port is congested. When two or more input ports attempt to send data to the same output port, the output port becomes congested and thus enters an SAF state. In the SAF state, each packet should be completely received before its first byte is allowed to exit the switch output port, which may cause a longer latency than in the CT state.